Wavelet Transform Denoising of EEG Signals: A Comprehensive Guide for Biomedical Research and Clinical Applications

Abstract

Electroencephalogram (EEG) signals are fundamental for diagnosing neurological disorders, monitoring brain function, and developing brain-computer interfaces. However, their low amplitude makes them highly susceptible to contamination from various artifacts, which can compromise analysis and lead to inaccurate conclusions. This article provides a comprehensive exploration of wavelet transform techniques for EEG denoising, a method particularly suited to the non-stationary nature of neural data. We cover the foundational principles of why wavelets are effective, detail core methodological approaches including Discrete Wavelet Transform (DWT) and Stationary Wavelet Transform (SWT), and address key troubleshooting and optimization challenges such as optimal wavelet selection. Furthermore, we validate these techniques through performance metrics and comparative analysis with hybrid and deep learning methods, offering researchers and drug development professionals a robust framework for enhancing EEG signal fidelity in both research and clinical applications.

The Why and What: Fundamentals of EEG Artifacts and Wavelet Theory

Understanding the Critical Need for EEG Denoising in Clinical and Research Settings

Electroencephalography (EEG) is a non-invasive technique that records the brain's electrical activity through electrodes placed on the scalp, offering millisecond-level temporal resolution that is invaluable for monitoring fast-changing cognitive and neuronal processes [1] [2]. This technology plays a vital role in neuroscience research, clinical diagnosis, and emerging brain-computer interface (BCI) applications [3]. However, the utility of EEG is significantly compromised by its vulnerability to various artifacts and noise sources that contaminate the neural signals of interest [4].

The critical need for effective EEG denoising stems from the microvolt-range amplitudes of genuine neural signals, which are easily obscured by both physiological and non-physiological artifacts [3]. Physiological artifacts include ocular movements (eye blinks), muscle activity (EMG), cardiac signals (ECG), and motion-related disturbances, while non-physiological sources encompass power line interference, electrode pop, and environmental noise [1] [4]. These contaminants often overlap spectrally and temporally with actual brain activity, making their separation and removal particularly challenging [3].

The consequences of inadequate denoising are severe across applications. In clinical settings, artifacts can lead to misinterpretation of brain activity, potentially resulting in false diagnoses of neurological conditions such as epilepsy, Alzheimer's disease, or depression [3]. For brain-computer interfaces, noise corruption diminishes classification accuracy and system reliability, hindering effective communication and control for users with severe neurological disabilities [1]. In pharmaceutical development and cognitive research, artifact contamination can obscure subtle neural responses to interventions, reducing statistical power and potentially leading to erroneous conclusions about treatment efficacy [3] [1].

The Impact of Artifacts on EEG Signal Integrity

Types and Characteristics of EEG Artifacts

EEG artifacts manifest with distinct temporal and spectral properties that determine the appropriate denoising strategy. Ocular artifacts from eye blinks and movements appear as slow, high-amplitude waves predominantly in frontal electrodes, with amplitudes up to ten times greater than the underlying EEG signal [3]. Muscle artifacts from jaw clenching, head movement, or talking introduce broadband noise (0-200 Hz) that particularly distorts the beta and gamma frequency bands critical for studying active cognitive processes [3] [4]. Cardiac artifacts from heartbeats have frequencies and amplitudes similar to genuine EEG, making them particularly difficult to separate without distorting neural signals [3]. Motion artifacts resulting from physical movement produce sudden high-amplitude spikes across multiple channels, while electrode artifacts caused by poor contact or abnormal impedance create irregular signal patterns [4].

Quantitative Impact on Signal Quality

The degradation of EEG signal quality due to artifacts can be quantified through several key metrics that underscore the critical need for robust denoising approaches. The following table summarizes the most common quantitative measures used to evaluate denoising performance across methodologies:

Table 1: Key Metrics for Evaluating EEG Denoising Performance

| Metric | Formula/Description | Interpretation | Typical Target for Denoised EEG |
|---|---|---|---|
| Signal-to-Noise Ratio (SNR) | $SNR = 10 \log_{10}\left(\frac{P_{signal}}{P_{noise}}\right)$ | Higher values indicate better noise suppression | >15 dB for clinical applications |
| Mean Square Error (MSE) | $MSE = \frac{1}{n}\sum_{i=1}^{n}(f_{\theta}(y_i)-x_i)^2$, where $f_{\theta}$ is the denoiser, $y_i$ the noisy sample, and $x_i$ the clean sample | Lower values indicate better reconstruction accuracy | <0.1 for effective denoising (scale-dependent; assumes amplitude-normalized signals) |
| Peak SNR (PSNR) | $PSNR = 10 \log_{10}\left(\frac{MAX^2}{MSE}\right)$ | Higher values indicate better peak preservation | >30 dB for quality reconstruction |
| Correlation Coefficient | $\rho = \frac{\text{cov}(X,Y)}{\sigma_X\sigma_Y}$ | Measures waveform similarity with ground truth | >0.9 for minimal distortion |
| Artifact-to-Signal Ratio (ASR) | $ASR = \frac{P_{artifact}}{P_{signal}}$ | Inverse of the linear SNR; lower values preferred | <0.1 for effective artifact removal |
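The metrics in Table 1 are straightforward to compute when a clean reference is available, as in semi-synthetic benchmarks. The NumPy sketch below is one possible implementation, not code from the cited studies; the function names are illustrative, the residual (estimate minus clean signal) is assumed to play the role of $P_{noise}$, and $MAX$ in PSNR is assumed to be the peak absolute amplitude of the clean reference, which is a convention rather than a fixed standard.

```python
import numpy as np


def snr_db(clean, estimate):
    """SNR in dB; assumption: the residual (estimate - clean) is the noise term."""
    noise = estimate - clean
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum(noise ** 2))


def mse(clean, estimate):
    """Mean squared error between the denoised estimate and the clean signal."""
    return float(np.mean((estimate - clean) ** 2))


def psnr_db(clean, estimate):
    """Peak SNR; assumption: MAX is the peak absolute amplitude of the clean signal."""
    peak = np.max(np.abs(clean))
    return 10.0 * np.log10(peak ** 2 / mse(clean, estimate))


def correlation(clean, estimate):
    """Pearson correlation coefficient between clean and denoised waveforms."""
    return float(np.corrcoef(clean, estimate)[0, 1])


def asr(artifact, signal):
    """Artifact-to-signal power ratio (linear, not dB)."""
    return float(np.mean(artifact ** 2) / np.mean(signal ** 2))
```

For a 10 Hz reference with a 50 Hz residual one tenth its amplitude, the power ratio is 100, so `snr_db` returns 20 dB and `asr` returns 0.01.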

Without effective denoising, artifact contamination can reduce SNR to unacceptably low levels (often <5 dB), severely limiting the detectability of event-related potentials and other neural features of interest [5]. Studies have reported that proper denoising can raise EEG classification accuracy by roughly 20-30 percentage points, from approximately 70% with contaminated signals to over 98% with properly denoised data [6].

Denoising Performance of Various Methodologies

Comparative Analysis of Denoising Techniques

Multiple algorithmic approaches have been developed to address the challenge of EEG denoising, each with distinct strengths, limitations, and performance characteristics. The following table provides a quantitative comparison of major denoising methodologies:

Table 2: Performance Comparison of EEG Denoising Techniques

| Method | Best Reported SNR (dB) | Best Reported MSE | Classification Accuracy | Computational Efficiency | Key Limitations |
|---|---|---|---|---|---|
| WT-Based (Symlet2-SWT) | 27.32 [7] | 5.09 [7] | ~90-95% [4] | Medium | Manual parameter selection, basis function dependency |
| EMD-DFA-WPD (Hybrid) | Not reported | Lower values reported [6] | 98.51% (RF), 98.10% (SVM) [6] | Low | Mode mixing problems, computationally intensive |
| WPTEMD (Hybrid) | Not reported | Lowest RMSE [8] | Not reported | Low | Complex implementation, parameter tuning |
| GAN (Standard) | 12.37 [5] | Not reported | Not reported | Very Low | Training instability, detail preservation issues |
| WGAN-GP | 14.47 [5] | Not reported | Not reported | Very Low | Computational demands, over-suppression risk |
| ICA | Not reported | Not reported | ~85-90% [2] | Medium | Requires manual component inspection, statistical assumptions |
| Adaptive Filtering | 10-15 [1] | Not reported | ~80-85% [1] | High | Requires reference signal, limited to specific artifacts |

Wavelet Transform Denoising Protocols

Discrete Wavelet Transform (DWT) for Ocular Artifact Removal

Principle: DWT decomposes EEG signals into approximation and detail coefficients using a mother wavelet, effectively separating neural activity from artifacts in the time-frequency domain [4] [7].

Protocol:

- Signal Preparation: Segment multichannel EEG into epochs of 2-5 seconds. Apply necessary pre-filtering (0.5-40 Hz bandpass) to remove extreme frequency components [4].

- Mother Wavelet Selection: Choose an appropriate mother wavelet (e.g., Symlet, Coiflet, or Daubechies families) that matches the morphological characteristics of both the EEG signal and the target artifacts [7].

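- Decomposition Level: Determine the optimal decomposition level based on the target artifact frequency range. Because each level halves the analyzed band (detail level $j$ spans roughly $f_s/2^{j+1}$ to $f_s/2^{j}$ Hz for a sampling rate $f_s$), the appropriate level also depends on the sampling rate. Typical choices are:

  - For ocular artifacts (0.5-4 Hz): 4-6 decomposition levels

  - For muscle artifacts (20-60 Hz): 3-5 decomposition levels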

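- Thresholding Application: Apply thresholding to detail coefficients using established methods:

  - Universal threshold: $TH = \sigma \sqrt{2 \log N}$, where $\log$ is the natural logarithm, $\sigma$ is the noise standard deviation (commonly estimated as the median absolute value of the finest-level detail coefficients divided by 0.6745), and $N$ is the signal length

  - Adaptive thresholds: level-dependent thresholding based on SURE (Stein's Unbiased Risk Estimate) or minimax principles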

- Signal Reconstruction: Reconstruct the denoised signal from thresholded coefficients using inverse DWT.

- Validation: Quantify performance using SNR, MSE, and correlation coefficients with ground truth where available [7].
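The SURE-based adaptive option in the protocol can be made concrete. The NumPy sketch below is an illustration, not code from the cited studies: it picks the soft threshold that minimizes Stein's Unbiased Risk Estimate for one level of detail coefficients. The function name `sure_threshold` is hypothetical, and `sigma` is assumed to be estimated separately (for example from the finest detail level via MAD / 0.6745). Applying it to each detail level of a decomposition such as `pywt.wavedec` yields level-dependent thresholds.

```python
import numpy as np


def sure_threshold(coeffs, sigma):
    """Soft-threshold value minimizing SURE for one set of detail coefficients.

    For coefficients normalized to unit noise variance, the SURE risk of soft
    thresholding at t is  n - 2 * #{i : |x_i| <= t} + sum_i min(|x_i|, t)**2.
    Between consecutive sorted |x_i| the risk grows with t, so it is evaluated
    only at the sorted |x_i| (t = 0 is ignored in this sketch).
    """
    # Assumption: sigma is a noise estimate supplied by the caller
    x = np.abs(np.asarray(coeffs, dtype=float)) / sigma  # unit-noise scale
    n = x.size
    candidates_sq = np.sort(x) ** 2          # t**2 for each candidate t = |x|_(k)
    k = np.arange(1, n + 1)                  # number of |x_i| <= t_k
    risks = n - 2 * k + np.cumsum(candidates_sq) + (n - k) * candidates_sq
    best = np.argmin(risks)
    return sigma * np.sqrt(candidates_sq[best])  # back to the original scale
```

On mostly Gaussian noise with a few large spikes, the selected threshold lands between the noise level and the spike amplitude, so the spikes survive shrinkage while most noise coefficients are zeroed.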

Optimization Considerations: The choice of mother wavelet significantly impacts performance. Studies indicate Symlet2 and Coiflet2 wavelets generally provide superior results for EEG signals compared to Haar wavelets [7]. The decomposition level should be optimized to match the spectral characteristics of both the neural signals of interest and the target artifacts.
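Which decomposition level isolates a given artifact depends on the sampling rate, because each DWT level halves the analyzed band. The helper below is a hypothetical sketch that assumes ideal half-band filters (real wavelet filters such as Symlet2 overlap noticeably between neighbouring bands), so its output is a starting point for the level optimization described above, not a substitute for it.

```python
def dwt_band_edges(fs, levels):
    """Approximate frequency band (Hz) of each DWT sub-band.

    Assumption: ideal half-band filters, so detail level j spans
    fs/2**(j+1)..fs/2**j and the final approximation spans 0..fs/2**(levels+1).
    """
    bands = {f"D{j}": (fs / 2 ** (j + 1), fs / 2 ** j) for j in range(1, levels + 1)}
    bands[f"A{levels}"] = (0.0, fs / 2 ** (levels + 1))
    return bands


def levels_for_cutoff(fs, cutoff_hz):
    """Smallest level L whose approximation band lies at or below cutoff_hz."""
    if cutoff_hz <= 0:
        raise ValueError("cutoff_hz must be positive")
    level = 1
    while fs / 2 ** (level + 1) > cutoff_hz:
        level += 1
    return level
```

At 256 Hz, for example, `dwt_band_edges(256, 6)` places D6 at 2-4 Hz and A6 at 0-2 Hz, and reaching an approximation band at or below 4 Hz takes 5 levels.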

Stationary Wavelet Transform (SWT) with Symlet2 for Motion Artifacts

Principle: SWT addresses DWT's translation variance by omitting the downsampling step, providing more stable artifact removal particularly suitable for motion artifacts [7].

Protocol:

- Parameter Initialization: Select Symlet2 as mother wavelet with 4 decomposition levels based on empirical optimization [7].

- Redundant Decomposition: Perform SWT without downsampling at each level, maintaining constant signal length throughout decomposition.

- Level-Dependent Thresholding: Apply adaptive thresholds to detail coefficients at each level, with stricter thresholds for higher decomposition levels containing more artifact-dominated components.

- Boundary Effect Management: Implement symmetric signal extension to minimize edge artifacts during reconstruction.

- Quality Verification: Validate using both quantitative metrics (SNR, PSNR) and qualitative assessment of time-domain waveform preservation.

Performance Benchmark: This approach has demonstrated superior performance with SNR values up to 27.32 dB and PSNR of 40.02 dB when applied to EEG contaminated with motion artifacts [7].
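The SWT protocol can be sketched with PyWavelets, whose `pywt.swt` requires the input length to be a multiple of `2**level`. In this illustration the signal is extended symmetrically at its end to satisfy that constraint and trimmed after `pywt.iswt`. For simplicity it applies one universal soft threshold to every level, with the noise level estimated from the finest detail level; the stricter level-dependent rule described in the protocol would replace that single threshold. The function name and defaults (`sym2`, 4 levels) are assumptions chosen to mirror the text.

```python
import numpy as np
import pywt


def swt_denoise(signal, wavelet="sym2", level=4):
    """Stationary wavelet transform denoising (illustrative sketch).

    Assumptions: symmetric extension at the end of the signal to reach a
    length that is a multiple of 2**level, noise estimated from the finest
    detail level, and one universal soft threshold for every level.
    """
    x = np.asarray(signal, dtype=float)
    n = x.size
    pad = (-n) % (2 ** level)  # extra samples so pywt.swt accepts the length
    x_ext = np.pad(x, (0, pad), mode="symmetric")

    # Undecimated decomposition: every level keeps the full extended length
    coeffs = pywt.swt(x_ext, wavelet, level=level)  # [(cA_L, cD_L), ..., (cA_1, cD_1)]

    # With an orthogonal wavelet and pywt's default norm=False, white noise has
    # the same variance at every SWT level, so one estimate is reused
    sigma = np.median(np.abs(coeffs[-1][1])) / 0.6745
    thr = sigma * np.sqrt(2.0 * np.log(x_ext.size))
    shrunk = [(c_a, pywt.threshold(c_d, thr, mode="soft")) for c_a, c_d in coeffs]

    return pywt.iswt(shrunk, wavelet)[:n]  # invert and drop the padding
```

Because no downsampling occurs, shifting the input by a few samples shifts the output by the same amount, which is the translation invariance that motivates SWT over DWT.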

Advanced Hybrid Denoising Protocol: EMD-DFA-WPD for Depression Detection

Principle: This sophisticated hybrid approach combines the adaptive decomposition capability of Empirical Mode Decomposition (EMD) with the scaling property analysis of Detrended Fluctuation Analysis (DFA) and the frequency localization of Wavelet Packet Decomposition (WPD) [6].

Protocol:

- EMD Decomposition:

  - Apply EMD to raw EEG signals to decompose them into Intrinsic Mode Functions (IMFs)

  - Repeat the sifting process until the stopping criterion is met (standard deviation between consecutive sifts < 0.2-0.3)

  - Typically obtain 7-10 IMFs plus a residue from standard EEG recordings

- DFA-Based Mode Selection:

  - Compute the scaling exponent ($\alpha$) of each IMF using DFA

  - Classify IMFs by $\alpha$: $\alpha < 0.5$ indicates anti-correlated fluctuations, $\alpha \approx 0.5$ uncorrelated (random) noise, and $\alpha > 0.5$ persistent, long-range-correlated activity typical of neural signals; IMFs with $\alpha \le 0.5$ are treated as noise- or artifact-dominated

  - Select the relevant neural signal components for further processing

- Wavelet Packet Denoising:

  - Apply WPD to the selected IMFs using the Symlet2 mother wavelet at decomposition level 4

  - Apply adaptive thresholding to the wavelet packet coefficients using the Birgé-Massart strategy

  - Reconstruct the denoised components from the thresholded coefficients

- Signal Reconstruction:

  - Combine the denoised components with the EMD residue

  - Apply amplitude normalization to maintain the physiological signal range

- Validation:

  - Quantitative assessment using SNR and MAE metrics

  - Clinical validation through depression classification using SVM and Random Forest classifiers

Performance Outcomes: This hybrid methodology has demonstrated exceptional performance for depression detection, achieving classification accuracy of 98.51% with Random Forest and 98.10% with SVM classifiers, significantly outperforming individual techniques [6].
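The DFA step is compact enough to sketch directly. The NumPy function below is a hypothetical helper, not the implementation used in [6]: it computes first-order DFA by integrating the mean-removed signal, removing a linear trend in non-overlapping windows, and taking the slope of log fluctuation versus log window size as $\alpha$. The default window sizes are an assumption and should be adapted for short IMFs.

```python
import numpy as np


def dfa_alpha(x, scales=None):
    """First-order DFA scaling exponent alpha of a 1-D signal (sketch)."""
    x = np.asarray(x, dtype=float)
    profile = np.cumsum(x - x.mean())  # integrated, mean-removed series
    if scales is None:
        # Assumed default: 12 log-spaced window sizes from 16 samples to N/10
        scales = np.unique(
            np.logspace(np.log10(16), np.log10(x.size // 10), 12).astype(int)
        )
    fluctuations = []
    for s in scales:
        n_windows = profile.size // s
        segments = profile[: n_windows * s].reshape(n_windows, s)
        t = np.arange(s)
        # np.polyfit fits one line per column, i.e. one linear trend per window
        slope, intercept = np.polyfit(t, segments.T, 1)
        trends = np.outer(slope, t) + intercept[:, None]
        fluctuations.append(np.sqrt(np.mean((segments - trends) ** 2)))
    alpha, _ = np.polyfit(np.log(scales), np.log(fluctuations), 1)
    return alpha
```

As sanity checks, white noise gives $\alpha$ near 0.5 and its cumulative sum (a random walk) gives $\alpha$ near 1.5. Applied to each IMF from an EMD implementation, IMFs with $\alpha$ above 0.5 would be kept as neural candidates under the selection rule in the protocol.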

Contemporary Deep Learning Protocol: GAN and WGAN-GP for Adaptive Denoising

Principle: Generative Adversarial Networks (GANs) and their Wasserstein variant with Gradient Penalty (WGAN-GP) learn complex, non-linear mappings between noisy and clean EEG signals through adversarial training, offering exceptional adaptability to diverse artifact types [5].

Protocol:

- Data Preparation:

  - Acquire paired clean and artifact-contaminated EEG datasets

  - Apply band-pass filtering (8-30 Hz) and channel standardization

  - Segment into fixed-length epochs (e.g., 2-second windows)

  - Normalize amplitude to zero mean and unit variance

- Generator Network Architecture:

  - Design an encoder with temporal convolutional layers (kernel size: 8, stride: 2)

  - Implement a bottleneck with a dense latent representation

  - Construct a decoder with transposed convolutional layers

  - Include skip connections to preserve temporal details

- Discriminator/Critic Network:

  - For standard GAN: binary classifier distinguishing real vs. generated signals

  - For WGAN-GP: critic network with gradient penalty (λ=10)

  - Use spectral normalization for training stability

- Adversarial Training:

  - Alternate between generator and discriminator/critic updates

  - For WGAN-GP: use the RMSProp optimizer, learning rate=5e-5

  - For standard GAN: use the Adam optimizer, learning rate=1e-4

  - Implement early stopping based on validation loss

- Multi-Component Loss Function:

  - Adversarial loss: the generator tries to fool the discriminator

  - Content loss: mean squared error between generated and clean signals

  - Spectral loss: maintains fidelity of the frequency distribution

  - Temporal consistency loss: preserves signal smoothness

- Performance Evaluation:

  - Quantitative metrics: SNR, PSNR, correlation coefficient, mutual information

  - Qualitative assessment: visual inspection of time series and spectrograms

  - Downstream validation: improvement in BCI classification accuracy

Performance Outcomes: WGAN-GP achieves superior SNR (14.47 dB vs. 12.37 dB for standard GAN) with greater training stability, while standard GANs better preserve finer signal details (correlation coefficient >0.90) [5].
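The multi-component generator loss listed in the protocol can be written down explicitly. The NumPy sketch below only evaluates the terms; a real implementation would compute them in TensorFlow or PyTorch so that gradients reach the generator. The term definitions are assumptions: spectral loss as the MSE between rFFT magnitudes, temporal consistency as the mean squared first difference, and the adversarial term in the WGAN form $-\mathbb{E}[D(G(y))]$. The weights are hypothetical placeholders, not values from [5].

```python
import numpy as np


def content_loss(clean, generated):
    """Mean squared error between generated and clean epochs."""
    return np.mean((generated - clean) ** 2)


def spectral_loss(clean, generated):
    """Assumed form: MSE between magnitude spectra along the time axis."""
    return np.mean((np.abs(np.fft.rfft(generated, axis=-1))
                    - np.abs(np.fft.rfft(clean, axis=-1))) ** 2)


def temporal_consistency_loss(generated):
    """Assumed form: mean squared first difference (penalizes abrupt jumps)."""
    return np.mean(np.diff(generated, axis=-1) ** 2)


def generator_loss(clean, generated, critic_scores,
                   w_content=1.0, w_spec=0.1, w_temp=0.01):
    """Weighted sum of terms; the weights here are hypothetical placeholders."""
    adversarial = -np.mean(critic_scores)  # WGAN-style generator objective
    return (adversarial
            + w_content * content_loss(clean, generated)
            + w_spec * spectral_loss(clean, generated)
            + w_temp * temporal_consistency_loss(generated))
```

Keeping the terms as separate functions makes it easy to log each one during training and to see which term dominates when the generator over-suppresses detail.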

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Toolkit for EEG Denoising Studies

| Category | Item | Specification/Example | Research Function |
|---|---|---|---|
| EEG Hardware | Acquisition System | 64-channel wireless systems (e.g., Enobio) | High-quality signal recording with minimal setup artifact [8] |
| Software Libraries | Signal Processing | MATLAB Wavelet Toolbox, Python MNE, PyWavelets | Implementation of DWT, SWT, WPD algorithms [4] [7] |
| Deep Learning Frameworks | Neural Network | TensorFlow (v2.15.1), Keras, PyTorch | Implementation of GAN, WGAN-GP, autoencoder models [5] [9] |
| Mother Wavelets | Basis Functions | Symlet2, Coiflet2, Daubechies (db4) | Time-frequency decomposition optimized for EEG characteristics [7] |
| Validation Datasets | Benchmark Data | BCI Competition IV, EEGdenoiseNet | Performance benchmarking and comparative analysis [5] [9] |
| Computational Resources | GPU Acceleration | NVIDIA Tesla V100, RTX 3090 | Training deep learning models (GANs, VAEs) with large EEG datasets [5] [3] |

EEG denoising represents a critical preprocessing step that significantly impacts the validity and reliability of neural data interpretation across clinical, research, and commercial applications. The progression from traditional wavelet-based methods to sophisticated hybrid approaches and deep learning architectures has steadily improved our capacity to separate neural signals from contaminating artifacts while preserving biologically relevant information.

The future of EEG denoising lies in the development of adaptive, real-time capable algorithms that can maintain performance across diverse recording conditions and subject populations. Emerging approaches including lightweight generative AI frameworks, cross-subject transfer learning, and self-supervised methods offer promising directions for overcoming current limitations in generalizability and computational efficiency [3] [9]. As these technologies mature, they are likely to enhance the translational potential of EEG across clinical diagnostics, therapeutic monitoring, and basic neuroscience research.

Electroencephalography (EEG) provides a non-invasive method for recording the brain's spontaneous electrical activity, playing a critical role in neuroscience research, clinical diagnosis, and brain-computer interface (BCI) applications [10] [3]. However, the accuracy and reliability of EEG-based expert systems are significantly compromised by contamination from various artifacts, which can originate from both physiological sources and environmental interference [11] [10]. These artifacts often exhibit spectral and temporal overlap with genuine neural signals, making their separation particularly challenging [3]. Effective artifact management is thus a crucial preprocessing step for ensuring signal quality, especially within research focused on wavelet transform denoising techniques, which leverage distinct time-frequency properties to separate neural activity from contaminants [11]. This document characterizes the most common EEG artifacts—ocular, muscle, cardiac, and power line interference—within the context of wavelet-based denoising research, providing structured protocols for their identification and removal.

Artifact Characterization and Impact on EEG Signals

Artifacts in EEG recordings are broadly classified as physiological (originating from the body) or non-physiological (environmental or instrumental) [10]. The table below summarizes the key characteristics of common artifacts, knowledge of which is fundamental for developing effective wavelet-based denoising strategies that target specific frequency bands and morphological features.

Table 1: Characteristics of Common EEG Artifacts

| Artifact Type | Origin | Frequency Range | Amplitude | Primary Affected Channels | Key Morphological Features |
|---|---|---|---|---|---|
| Ocular (EOG) | Eye movements & blinks [10] | Mainly <4 Hz [10] [12] | Can be 10x greater than EEG [3] | Frontal [3] | Slow, large deflections [10] |
| Muscle (EMG) | Muscle activity (head, jaw) [10] | 0 - >200 Hz [10] [3] | Varies with contraction [10] | Temporal, widespread [3] | High-frequency, random spikes [10] |
| Cardiac (ECG) | Heart electrical activity [10] | ~1.2 Hz (pulse) [10] | Similar to EEG [10] | Near blood vessels, posterior [10] | Periodic, sharp QRS complex [10] |
| Power Line | Mains electricity [10] | 50/60 Hz & harmonics [10] | Varies with environment | All channels | Sinusoidal, continuous oscillation [10] |

The overlapping frequency characteristics of these artifacts with cerebral rhythms complicate their removal. For instance, ocular artifacts predominantly affect the delta band, which is also critical for studying deep sleep [10]. Similarly, muscle artifacts distort the higher-frequency beta and gamma bands associated with active thinking and cognitive processing [3]. Wavelet transform denoising frameworks are designed to overcome these challenges by leveraging distinct energy distribution patterns in the time-frequency domain to separate neural signals from noise [11].

Experimental Protocols for Artifact Analysis and Denoising

Protocol for a Semi-Automated Preprocessing Pipeline

A robust preprocessing protocol is essential prior to advanced wavelet denoising. This protocol ensures the removal of large-amplitude artifacts and bad channels that could impede subsequent analysis [13].

1. Data Acquisition and Import:

- Acquire EEG data according to experimental requirements, noting the sampling rate and channel locations.

- Import the raw data into a preprocessing environment (e.g., EEGLAB, Python MNE).

2. Bandpass Filtering:

- Apply a bandpass filter (e.g., 1-40 Hz) to remove slow drifts and very high-frequency noise outside the range of primary neural signals [5].

3. Bad Channel Identification and Interpolation:

- Identify channels with excessive noise, flat signals, or unusually high impedance using automated algorithms and visual inspection.

- Interpolate the identified bad channels using signals from surrounding good channels (e.g., spherical spline interpolation).

4. Ocular Artifact Correction with ICA:

- Perform Independent Component Analysis (ICA) on the filtered data to separate statistically independent sources.

- Visually inspect the ICA components to identify those corresponding to ocular artifacts based on their topography (frontal distribution), time course, and power spectrum [13].

- Remove the artifact-laden components and reconstruct the signal.

5. Large-Amplitude Transient Removal with PCA:

- For remaining large-amplitude, short-duration artifacts (e.g., muscle pops), apply Principal Component Analysis (PCA) [13].

- Identify and remove principal components representing these transients, then reconstruct the data.

6. Quality Checking and Data Export:

- Visually compare the raw and cleaned data across all channels to verify artifact removal and signal preservation.

- Export the preprocessed data for subsequent wavelet denoising analysis.
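Step 5 can be illustrated with a plain SVD-based PCA over channels. In this hypothetical NumPy sketch the `n_remove` highest-variance spatial components are assumed to be the transients; in the protocol they are identified by inspection, so removing the top components automatically is a simplification. Data are laid out as channels by samples, and channel means are restored after projection.

```python
import numpy as np


def remove_top_components(data, n_remove=1):
    """Remove the n_remove highest-variance spatial principal components.

    data: array of shape (n_channels, n_samples).
    Assumption (for illustration): the largest components carry the
    large-amplitude transients.
    """
    data = np.asarray(data, dtype=float)
    mean = data.mean(axis=1, keepdims=True)
    centered = data - mean
    # SVD of the centered channels-by-samples matrix: columns of u are the
    # spatial principal directions; singular values come sorted descending
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    s_kept = s.copy()
    s_kept[:n_remove] = 0.0  # drop the strongest components
    return (u * s_kept) @ vt + mean  # reconstruct and restore channel means
```

A transient that appears with a fixed spatial pattern across channels concentrates in a single component, which is why projecting out one component can suppress it almost completely while leaving the remaining activity untouched.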

Protocol for Wavelet-Based Denoising Evaluation

This protocol outlines the steps for evaluating the performance of a wavelet-based denoising method, such as the Fractional Wavelet Transform (FrWT) with adaptive thresholding [11].

1. Data Preparation:

- Use a preprocessed, artifact-corrected EEG dataset as a "clean" reference. This can be the output of the previous protocol or a separately validated clean dataset.

- Introduce synthetic artifacts (e.g., Gaussian noise, simulated EMG) at controlled signal-to-noise ratio (SNR) levels to create a noisy dataset with a known ground truth [11] [5].

2. Wavelet Decomposition:

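- Select a mother wavelet (e.g., Daubechies or Symlet) and a decomposition level appropriate for the EEG frequency bands of interest. Continuous wavelets such as Morlet suit time-frequency visualization but have no orthogonal discrete filter bank, so they cannot be used with the standard DWT.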

- Apply the discrete wavelet transform (DWT) or an advanced FrWT to the noisy EEG signals to decompose them into approximation and detail coefficients [11] [14].

3. Thresholding and Denoising:

- Apply a thresholding rule (e.g., adaptive thresholding) to the detail coefficients associated with noise. The FrWT method optimizes this threshold in the fractional domain to better separate non-stationary neural components from noise [11].

- Invert the wavelet transform using the thresholded coefficients to reconstruct the denoised EEG signal.

4. Performance Quantification:

- Calculate quantitative metrics to compare the denoised signal ($\hat{x}$) against the clean ground truth ($x$) and the original noisy signal ($y$) [12] [5]:

- Signal-to-Noise Ratio (SNR): Measures the level of desired signal relative to noise. An increase indicates better denoising.

- Root Mean Square Error (RMSE): Quantifies the difference between the denoised and clean signal. Lower values are better.

- Correlation Coefficient (CC): Assesses the linear relationship between the denoised and clean signal. Closer to 1 is better.

- Perform a visual waveform analysis to ensure critical EEG features (e.g., epileptic spikes, event-related potentials) are preserved [11].
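The synthetic contamination in step 1 of this protocol requires noise scaled to an exact SNR. A minimal NumPy sketch, assuming white Gaussian noise as the contaminant (simulated EMG or ocular templates would be scaled the same way), is shown below; the function name is illustrative.

```python
import numpy as np


def add_noise_at_snr(clean, snr_db, rng=None):
    """Return clean + noise with 10*log10(P_clean / P_noise) equal to snr_db.

    Assumption: white Gaussian noise stands in for the artifact; any
    artifact template can be rescaled with the same formula.
    """
    rng = np.random.default_rng() if rng is None else rng
    noise = rng.standard_normal(clean.shape)
    p_clean = np.mean(clean ** 2)
    p_noise = np.mean(noise ** 2)
    # Choose the scale so that scale**2 * p_noise = p_clean / 10**(snr_db / 10)
    scale = np.sqrt(p_clean / (p_noise * 10 ** (snr_db / 10)))
    return clean + scale * noise
```

Sweeping `snr_db` over a range such as -5 to 10 dB produces the graded contamination levels needed to plot denoising performance against input SNR.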

Table 2: Key Reagents and Computational Tools for EEG Denoising Research

| Name | Type/Function | Application in Research |
|---|---|---|
| Public EEG Datasets | Benchmark data (e.g., EEGdenoiseNet, PhysioNet) [5] | Model training, validation, and comparative performance benchmarking. |
| Independent Component Analysis (ICA) | Blind source separation algorithm [13] | Isolation and removal of ocular and other physiological artifacts in preprocessing. |
| Discrete Wavelet Transform (DWT) | Time-frequency decomposition tool [11] [14] | Core function in denoising pipelines for decomposing signals and applying thresholds. |
| Fractional Wavelet Transform (FrWT) | Advanced wavelet transform with optimal energy concentration [11] | Enhances noise separation by optimizing the transform order for non-stationary EEG components. |
| Deep Learning Models (e.g., GANs, CNNs) | Data-driven models for learning complex signal mappings [3] [12] [5] | End-to-end denoising, often used to learn the transformation from noisy to clean EEG. |

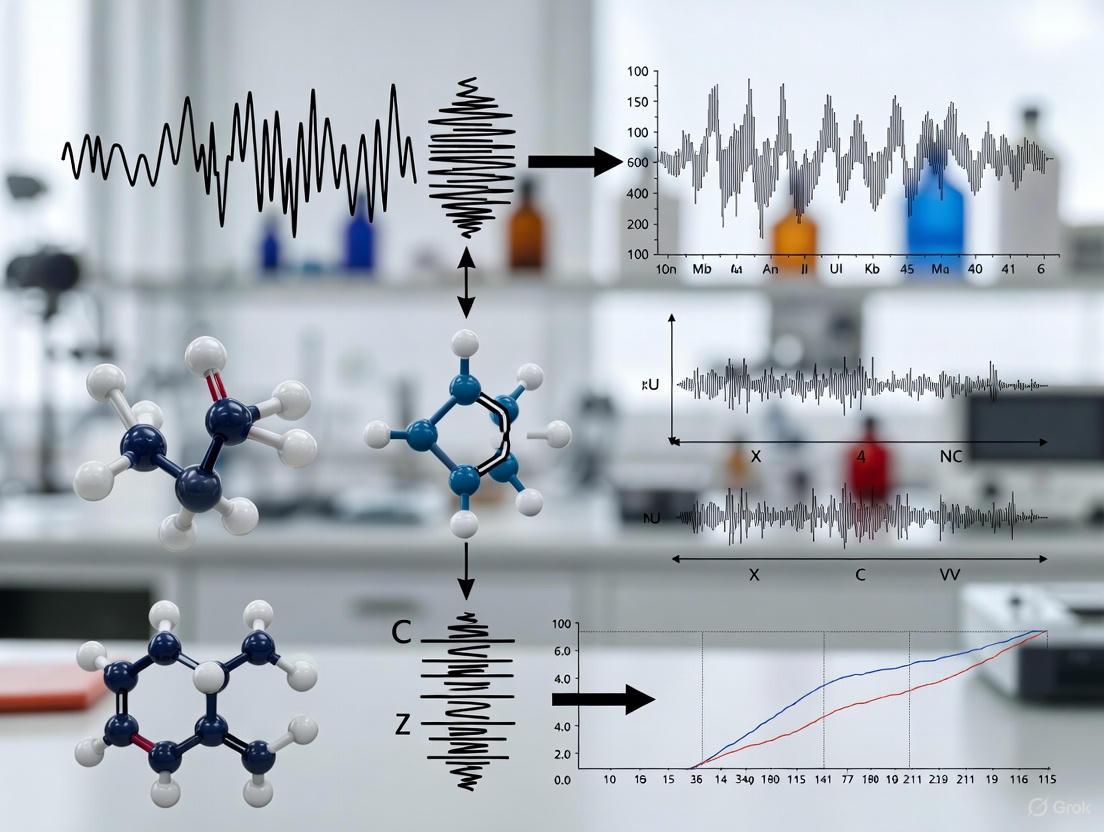

Workflow Visualization

The following diagram illustrates the integrated experimental workflow for EEG artifact management, from raw data acquisition to final denoised output, highlighting the role of wavelet transforms.

Theoretical Foundation: From Fourier to Wavelet Analysis

The journey of signal processing has evolved significantly from the traditional Fourier Transform to the more adaptive Wavelet Transform. The Fourier Transform (FT) is a fundamental mathematical tool that decomposes a function into its constituent sinusoidal frequencies of different amplitudes and phases. While powerful for analyzing the spectral composition of signals, it captures only global frequency information that persists over an entire sequence, lacking any temporal localization. This makes it unsuitable for analyzing non-stationary signals where frequency components change over time [15].

The Wavelet Transform (WT) was developed to overcome this critical limitation. Unlike Fourier's sine and cosine basis functions, which extend over the entire signal, the wavelet transform decomposes a signal into a set of wave-like oscillations called wavelets that are localized in both time and frequency. A wavelet is characterized by two fundamental properties: scale (which defines how stretched or compressed the wavelet is and is inversely related to frequency) and location (which defines where the wavelet is positioned in time). This allows the wavelet transform to perform a time-frequency analysis, revealing not only which frequencies are present in a signal but also when they occur [15].

The mathematical foundation of wavelet analysis involves convolving the signal with a set of wavelets at different scales and locations. For a particular scale, the wavelet is slid across the entire signal; at each time step, the wavelet and the signal are multiplied sample by sample and the products are summed (integrated), giving one coefficient for that wavelet scale at that time step. This process is repeated across increasing wavelet scales [15]. The two main types of wavelet transformation are the Continuous Wavelet Transform (CWT) and the Discrete Wavelet Transform (DWT); the latter is particularly valuable for digital signal processing and denoising applications [15].
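In the DWT, this sliding multiply-and-sum is carried out with a pair of short filters followed by downsampling by two. The NumPy sketch below shows one level with the Haar wavelet, chosen here only because it is the simplest case: local averages form the approximation, local differences form the detail, and the inverse step reconstructs the input exactly. The function names are illustrative.

```python
import numpy as np

SQRT2 = np.sqrt(2.0)


def haar_dwt_level(x):
    """One analysis step: Haar lowpass/highpass filtering plus downsampling by 2."""
    x = np.asarray(x, dtype=float)
    if x.size % 2:
        raise ValueError("this sketch expects an even-length signal")
    even, odd = x[0::2], x[1::2]
    approx = (even + odd) / SQRT2   # local averages -> low-frequency content
    detail = (even - odd) / SQRT2   # local differences -> high-frequency content
    return approx, detail


def haar_idwt_level(approx, detail):
    """Inverse step: perfect reconstruction from approximation and detail."""
    x = np.empty(2 * approx.size)
    x[0::2] = (approx + detail) / SQRT2
    x[1::2] = (approx - detail) / SQRT2
    return x
```

The example also shows the time localization that the Fourier Transform lacks: for a 16-sample signal that is zero except for an impulse at index 9, only detail coefficient 4 (the pair formed by samples 8 and 9) is nonzero, whereas every Fourier coefficient of the same impulse has the same magnitude.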

Table: Fundamental Comparison Between Fourier and Wavelet Transforms

| Feature | Fourier Transform | Wavelet Transform |
|---|---|---|
| Basis Functions | Sine and cosine waves | Localized wavelets (e.g., Daubechies, Haar, Morlet) |
| Time Localization | No | Yes |
| Frequency Localization | Yes (global) | Yes (localized) |
| Ideal Signal Type | Stationary signals | Non-stationary, transient signals |
| Core Parameters | Frequency | Scale and location |

Wavelet Transform in EEG Denoising: Principles and Applications

Electroencephalography (EEG) signals, which record the brain's electrical activity, are inherently non-stationary and are highly susceptible to contamination from various physiological and non-physiological artifacts. These artifacts can have amplitudes up to ten times greater than the neural signal of interest, severely compromising the accuracy and reliability of EEG-based expert systems for clinical diagnosis, brain-computer interfaces, and cognitive monitoring [11] [3].

Wavelet transform is exceptionally well-suited for EEG denoising due to its ability to separate signal components based on their distinct time-frequency characteristics. The core principle involves decomposing the noisy EEG signal into different frequency sub-bands at multiple resolutions. This multi-resolution analysis allows for the separation of neural activity from artifacts like muscle noise, eye blinks, and power line interference, which often overlap in frequency but differ in their temporal and morphological properties [16] [17].

A key advantage in denoising is the process of wavelet thresholding. After decomposition, small coefficients in the detail sub-bands are typically associated with noise, while larger coefficients are associated with the underlying neural signal. By applying a threshold to these coefficients—setting small values to zero or reducing their magnitude—noise can be effectively suppressed. The clean signal is then reconstructed from the modified coefficients using the inverse wavelet transform [11]. Advanced methods, such as the Fractional Wavelet Transform (FrWT), further optimize energy concentration in the fractional domain, leading to more precise noise suppression while preserving critical non-stationary and quasi-stationary components of the EEG signal [11].
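The two shrinkage rules used throughout this guide differ only in what happens to coefficients above the threshold. A minimal NumPy sketch of both follows; PyWavelets offers similar behaviour through `pywt.threshold` with `mode="hard"` or `mode="soft"`, but the functions here are written out for clarity and their names are illustrative.

```python
import numpy as np


def hard_threshold(coeffs, lam):
    """Keep-or-kill: zero coefficients with |c| <= lam, leave the rest unchanged."""
    return np.where(np.abs(coeffs) > lam, coeffs, 0.0)


def soft_threshold(coeffs, lam):
    """Shrink toward zero: sign(c) * max(|c| - lam, 0)."""
    return np.sign(coeffs) * np.maximum(np.abs(coeffs) - lam, 0.0)
```

With $\lambda = 1$, the coefficients [-3, -0.5, 0.2, 1, 2.5] become [-3, 0, 0, 0, 2.5] under hard thresholding and [-2, 0, 0, 0, 1.5] under soft thresholding. The soft rule also shrinks the surviving coefficients, which gives a smoother reconstruction at the cost of some amplitude bias.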

Table: Performance Comparison of EEG Denoising Techniques

| Denoising Method | Key Principle | Reported Advantages | Reported Limitations |
|---|---|---|---|
| Wavelet Thresholding [11] [16] | Time-frequency decomposition and coefficient thresholding | Effective for non-stationary signals, preserves transient features | Requires careful selection of wavelet and threshold |
| Empirical Mode Decomposition (EMD) [11] | Adaptive decomposition into intrinsic mode functions | Data-driven, does not require a basis function | Prone to mode mixing, can distort non-stationary patterns |
| Independent Component Analysis (ICA) [3] | Statistical separation of sources based on independence | Effective for separating artifacts from distinct sources | Sensitive to initial conditions, relies on statistical assumptions |
| Deep Learning (DL) [3] | Learning nonlinear mapping from noisy to clean signals | High performance, can model complex artifacts | Demands large training datasets, high computational cost |
| ARICB + FrWT (proposed in [11]) | Chirp-based decomposition & fractional wavelet thresholding | Outperforms others in noise reduction and detail preservation | Complex implementation, computationally intensive |

Detailed Experimental Protocol: EEG Denoising via Discrete Wavelet Transform

This protocol provides a step-by-step methodology for denoising a single-channel EEG signal using Discrete Wavelet Transform (DWT) thresholding, a common and effective approach.

Research Reagent Solutions and Materials

Table: Essential Materials and Software for Wavelet-Based EEG Denoising

| Item | Function/Description |
|---|---|
| Raw EEG Data | The contaminated signal to be denoised; can be single-channel or multi-channel. |
| Computing Environment | Software such as MATLAB, Python (with the PyWavelets, SciPy, and NumPy libraries), or other scientific computing platforms. |
| Wavelet Family | A set of basis functions (e.g., Daubechies, Symlet, Coiflet) from which a specific wavelet is chosen. |
| Threshold Selection Rule | A rule (e.g., Universal, SURE) for computing the threshold value that decides which coefficients to shrink or remove. |
| Thresholding Function | How the threshold is applied: hard (keep or kill) or soft (shrink toward zero). |
| Performance Metrics | Quantitative measures (e.g., SNR, RMSE, PRD) to evaluate denoising effectiveness. |

Step-by-Step Procedure

Data Preprocessing:

- Input: Begin with a raw, single-channel EEG signal y_raw(t), which is a composite of the true neural signal x(t) and noise z(t) [3].
- Preprocessing: Apply necessary preliminary steps such as band-pass filtering to remove DC offset and very high-frequency noise outside the EEG range of interest (e.g., 0.5-70 Hz). Downsample the data if needed. This yields the preprocessed signal y(t) for denoising.

Wavelet Decomposition:

- Wavelet Selection: Choose an appropriate mother wavelet (e.g., 'sym4', a Symlet with 4 vanishing moments) that matches the morphological characteristics of the desired EEG components [15].
- Decomposition Level: Select the number of decomposition levels L. A common practice is to decompose until the lowest-frequency sub-band (the approximation) lies predominantly below 1 Hz.
- Perform DWT: Decompose the signal y(t) using the DWT. This produces one set of approximation coefficients A_L (representing the signal's broad trends) and L sets of detail coefficients D_1, D_2, ..., D_L (representing finer details and noise, with D_1 covering the highest frequencies and each subsequent level a lower band).

Thresholding of Detail Coefficients:

- Threshold Selection: Calculate a threshold λ for each level of detail coefficients, or a single universal threshold for all levels. A common choice is the universal threshold λ = σ * sqrt(2 * ln(N)), where N is the signal length and σ is an estimate of the noise level, usually the median absolute deviation of the level-1 detail coefficients divided by 0.6745 [11].
- Apply Threshold: Apply a soft or hard thresholding function to the detail coefficients D_1 to D_L. Soft thresholding is generally preferred because it provides a smoother reconstruction [16].
  - Soft Thresholding: D_thresh = sign(D) * max(0, |D| - λ)

Signal Reconstruction:

- Using the original approximation coefficients A_L and the thresholded detail coefficients D_thresh_1 ... D_thresh_L, perform the Inverse Discrete Wavelet Transform (IDWT) to reconstruct the denoised EEG signal x_hat(t).

Validation and Analysis:

- Visual Inspection: Plot the original and denoised signals together to qualitatively assess the removal of obvious artifacts and the preservation of neural patterns.
- Quantitative Metrics: If a ground-truth clean signal x_clean(t) is available, calculate performance metrics to quantify denoising efficacy [11] [3]:
  - Signal-to-Noise Ratio (SNR): SNR = 10 * log10(P_signal / P_noise), where P_signal is the power of x_clean and P_noise is the power of the residual x_clean - x_hat
  - Root Mean Square Error (RMSE): RMSE = sqrt(mean((x_clean - x_hat)^2))
  - Percentage Root-mean-square Difference (PRD): PRD = sqrt( sum((x_clean - x_hat)^2) / sum(x_clean^2) ) * 100%
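The DWT procedure above can be sketched in Python with PyWavelets (`pywt.wavedec`, `pywt.threshold`, `pywt.waverec`). This is a minimal sketch rather than a reference implementation: the function name `dwt_denoise`, the default `'sym4'` wavelet, and the `fs`-based rule for choosing L (the smallest level whose nominal approximation band ends at or below 1 Hz) are illustrative assumptions.

```python
import numpy as np
import pywt


def dwt_denoise(y, fs=None, wavelet="sym4", level=None, mode="soft"):
    """Hypothetical helper: DWT -> universal threshold on D_1..D_L -> IDWT.

    The approximation A_L is passed through untouched, as in the protocol.
    """
    y = np.asarray(y, dtype=float)
    w = pywt.Wavelet(wavelet)
    max_level = pywt.dwt_max_level(len(y), w.dec_len)
    if level is None:
        if fs is not None:
            # Nominal A_L band is [0, fs / 2**(L + 1)]; choose the smallest L that
            # brings it to <= 1 Hz (approximate, since real filters overlap).
            level = int(np.ceil(np.log2(fs))) - 1
        else:
            level = max_level
    level = min(level, max_level)

    coeffs = pywt.wavedec(y, w, level=level)  # [A_L, D_L, ..., D_1]
    # Noise estimate from the finest details D_1: MAD / 0.6745.
    sigma = np.median(np.abs(coeffs[-1])) / 0.6745
    lam = sigma * np.sqrt(2.0 * np.log(len(y)))  # universal threshold
    shrunk = [coeffs[0]] + [pywt.threshold(d, lam, mode=mode) for d in coeffs[1:]]
    # waverec can return one extra sample for odd lengths; trim to the input length.
    return pywt.waverec(shrunk, w)[: len(y)]
```

Because one universal threshold is applied to every level, activity that spreads over many coefficients (a sustained rhythm) is shrunk more than a sparse transient; level-dependent thresholds are a common refinement.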

The following workflow diagram illustrates the key stages of this protocol.

Advanced Protocol: R-Peak Detection in ECG via MODWT

This protocol details a specific application of wavelet transform for feature extraction, demonstrating its utility beyond simple denoising. The goal is to detect R-peaks in an Electrocardiogram (ECG) signal, which are critical for computing heart rate and heart rate variability [15].

Research Reagent Solutions and Materials

Table: Materials for MODWT-Based R-Peak Detection

| Item | Function/Description |
|---|---|
| Raw ECG Data | Noisy ECG signal, typically from a public database or clinical recording. |
| MATLAB or Python | Computing environment with signal processing toolkits (e.g., MATLAB's Wavelet Toolbox). |
| Maximal Overlap DWT (MODWT) | A non-decimated version of the DWT that is shift-invariant and better for time-series analysis. |
| 'sym4' Wavelet | The specific wavelet used for decomposition, chosen for its similarity to the QRS complex morphology. |
| Peak Finding Algorithm | A function or routine to identify local maxima in the reconstructed signal. |

Step-by-Step Procedure

Data Acquisition and Preprocessing: Obtain a raw ECG signal. The signal is often noisy, with baseline wander and muscle artifact.

Multi-Scale Decomposition: Perform MODWT on the ECG signal using the 'sym4' wavelet across multiple scales (e.g., 7 levels, corresponding to scales 2⁰ to 2⁶) [15].

Coefficient Analysis: Analyze the wavelet coefficients at different scales:

- Small scales (2⁰, 2¹): Correspond to high frequencies and are predominantly noise.

- Intermediate scales (2², 2³, 2⁴): The R-peak signal emerges from the noise. The QRS complex produces significant coefficients at these scales.

- Large scales (2⁵, 2⁶): Correspond to low-frequency information like baseline wander and the T-wave.

Selective Reconstruction: Reconstruct the signal using information primarily from a single scale where the R-peaks are most prominent (e.g., scale 2³). This effectively acts as a custom filter that highlights the QRS complex while suppressing noise and other waveform components [15].

Peak Detection: Apply a peak-finding algorithm to the reconstructed signal. The peaks in this cleaned-up signal correspond to the R-peaks. Set an appropriate amplitude threshold and minimum distance between peaks to avoid false positives.

Validation: Plot the detected R-peak timestamps on top of the original ECG signal to visually validate the accuracy of the detection.
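The selective-reconstruction idea can be sketched in Python. PyWavelets does not provide a MODWT, so this hypothetical `r_peaks_swt` helper substitutes the closely related undecimated Stationary Wavelet Transform (`pywt.swt` / `pywt.iswt`), keeps only the detail level corresponding to scale 2³ (level 4, if level 1 is scale 2⁰ as in the steps above), and then calls `scipy.signal.find_peaks` with an amplitude threshold and a minimum R-R spacing. The 30% relative height and 0.25 s minimum spacing are assumed defaults to tune on real recordings.

```python
import numpy as np
import pywt
from scipy.signal import find_peaks


def r_peaks_swt(ecg, fs, wavelet="sym4", level=7, keep_level=4,
                min_rr_s=0.25, rel_height=0.3):
    """Hypothetical helper: R-peak sample indices from a single SWT detail level.

    SWT stands in for MODWT here; keep_level=4 corresponds to scale 2**3.
    """
    ecg = np.asarray(ecg, dtype=float)
    n = len(ecg)
    # pywt.swt needs a length divisible by 2**level: pad the end symmetrically.
    pad = (-n) % (2 ** level)
    x = np.pad(ecg - ecg.mean(), (0, pad), mode="symmetric")
    coeffs = pywt.swt(x, wavelet, level=level)  # [(cA_L, cD_L), ..., (cA_1, cD_1)]
    selected = []
    for i, (cA, cD) in enumerate(coeffs):
        lvl = level - i
        # Zero the approximation and every other detail so that the inverse
        # SWT returns only the band carrying the QRS complexes.
        selected.append((np.zeros_like(cA),
                         cD if lvl == keep_level else np.zeros_like(cD)))
    band = pywt.iswt(selected, wavelet)[:n]
    energy = band ** 2  # squaring turns every large QRS lobe into a positive peak
    peaks, _ = find_peaks(energy, height=rel_height * energy.max(),
                          distance=max(1, int(min_rr_s * fs)))
    return peaks
```

Dividing the returned indices by `fs` gives R-peak times in seconds. These can then be overlaid on the original ECG for the visual validation step.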

The following diagram illustrates the multi-scale analysis and feature extraction process.

Emerging Frontiers and Hybrid Approaches

The field of wavelet-based signal processing continues to evolve, particularly through integration with advanced machine learning and neuromorphic computing paradigms.

One significant advancement is the development of the Adaptive Residual-incorporating Chirp-based (ARICB) model used with a Fractional Wavelet Transform (FrWT). This method decomposes the EEG signal into non-stationary, quasi-stationary, and noise components using a coarse-to-fine fitting strategy with chirp atoms. The optimal-order FrWT then applies adaptive thresholding to preserve the neural components while removing noise based on their distinct energy distributions in the fractional domain. This tri-component model overcomes the limitations of conventional binary models that often cause irreversible feature damage [11].

Another frontier is the fusion of wavelet transforms with Spiking Neural Networks (SNNs). For instance, the SpikeWavformer framework integrates a discrete wavelet transform with a spiking self-attention mechanism. This hybrid approach leverages the wavelet's strength in automatic time-frequency decomposition and the SNN's biologically plausible, event-driven computation for superior energy efficiency. This is particularly promising for portable, resource-constrained BCI devices, achieving high performance in tasks like emotion recognition and auditory attention decoding while maintaining low computational overhead [17] [18].

Furthermore, deep learning models are being combined with wavelet analysis to create powerful denoising architectures. For example, some deep networks use Haar wavelet transforms in specially designed "Up and Down blocks" to better extract texture and structural information from data [19]. These hybrid models can learn complex, nonlinear mappings from noisy to clean signals, moving beyond the limitations of manually tuned thresholding parameters [3].

Advantages of Wavelets for Non-Stationary and Non-Linear EEG Signals

Electroencephalography (EEG) provides a non-invasive window into brain dynamics, capturing neural oscillations that are inherently non-stationary and non-linear. Traditional signal processing techniques, such as Fourier analysis, often fall short as they assume signal stationarity and struggle to resolve transient events. Wavelet Transform (WT) has emerged as a fundamental tool in EEG analysis, overcoming these limitations through its innate ability to provide multi-resolution analysis and adaptive time-frequency localization [20] [17].

The value of wavelet analysis extends across clinical and research domains, including the identification of epileptic seizures [21], monitoring anesthesia depth [22], and decoding auditory attention [17]. Its capacity to preserve crucial signal features while effectively removing noise makes it particularly suitable for applications requiring high precision, such as in drug development and neurotherapy monitoring, where accurate biomarker identification is essential.

Core Advantages of Wavelet Analysis for EEG

Wavelet transforms offer a suite of advantages specifically suited to the complex nature of neural signals.

Multi-Resolution Analysis (MRA)

MRA allows for the simultaneous examination of a signal at different resolutions or scales. This is crucial for EEG, where disparate frequency bands (e.g., delta, theta, alpha, beta, gamma) carry distinct physiological information. Wavelet decomposition enables the adaptive hierarchical representation of non-stationary neural activities, effectively characterizing both transient features and long-range rhythmic patterns [17] [18]. Unlike Fourier methods, MRA can isolate short-duration, high-frequency oscillations (like those in event-related potentials) while also capturing sustained, low-frequency oscillations (such as alpha waves) within the same analysis framework [20].

Joint Time-Frequency Localization

The wavelet transform adapts its time-frequency resolution by adjusting the scale and translation parameters of its basis functions: compressed wavelets localize high-frequency events sharply in time, while dilated wavelets resolve low frequencies finely. This makes it possible to pinpoint when, and in which frequency range, specific brain events occur. A comparative overview of its capabilities against other common techniques is provided in Table 1.

Table 1: Comparative Analysis of EEG Signal Processing Techniques

| Method | Time-Frequency Resolution | Handling of Non-Stationary Signals | Key Limitations |
|---|---|---|---|
| Fourier Transform (FT) | Provides only global frequency-domain information [17]. | Poor; assumes signal stationarity. | Loses all temporal information. |
| Short-Time FT (STFT) | Fixed window resolution; trades off time and frequency resolution [20]. | Moderate; limited by fixed window size. | Cannot resolve very brief transients and sustained oscillations equally well. |
| Empirical Mode Decomposition (EMD) | Data-driven and adaptive. | Effective but lacks a solid mathematical foundation [22]. | Prone to mode mixing and pattern distortion [11]. |
| Wavelet Transform (WT) | Adaptive resolution: High temporal resolution for high frequencies, high spectral resolution for low frequencies [20] [17]. | Excellent; designed for non-stationary, transient-rich signals. | Choice of mother wavelet and decomposition level is critical. |

Sparsity and Efficient Denoising

EEG signals are often contaminated by noise and artifacts (e.g., from eye blinks or muscle movement). Wavelet-based denoising exploits the fact that the true neural signal can be represented by a sparse set of large wavelet coefficients, whereas broadband noise spreads its energy thinly across many small coefficients. Thresholding can therefore suppress noise while preserving signal integrity, and it is a cornerstone of modern automated denoising frameworks such as onEEGwaveLAD [23]. Advanced methods, such as the Adaptive Residual-Incorporating Chirp-Based (ARICB) model, refine this further by decomposing EEG into non-stationary, quasi-stationary, and noise components before applying a fractional wavelet transform with adaptive thresholding for superior noise suppression [11].
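A toy illustration of this sparsity argument, assuming the arrays below stand in for already-computed wavelet coefficients (no transform is performed here): white noise with standard deviation σ almost never exceeds the universal threshold σ·sqrt(2 ln N), while a handful of large "signal" coefficients survive it.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 4096
sigma = 1.0
noise = rng.normal(scale=sigma, size=n)        # dense: every coefficient is a little noisy
signal = np.zeros(n)
signal[rng.choice(n, size=20, replace=False)] = 10.0  # sparse: a few large coefficients
coeffs = signal + noise

lam = sigma * np.sqrt(2.0 * np.log(n))  # universal threshold, about 4.08 for n = 4096
kept = np.abs(coeffs) > lam
print(f"threshold = {lam:.2f}")
print("pure-noise values above threshold:", int(np.sum(np.abs(noise) > lam)))
print("signal coefficients kept:", int(np.sum(kept & (signal != 0))), "of 20")
```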

Synergy with Advanced Computational Models

Wavelet transforms integrate seamlessly with modern AI architectures. They are used for automatic feature extraction, eliminating the need for manual feature crafting and its inherent biases [17] [18]. Furthermore, the time-frequency maps generated by wavelets (e.g., scalograms) can be fed directly into Convolutional Neural Networks (CNNs) for classification tasks [24]. A particularly promising development is the fusion of wavelet transforms with Spiking Neural Networks (SNNs) in frameworks like SpikeWavformer, which combines the superior time-frequency analysis of wavelets with the energy-efficient, event-driven processing of SNNs, making it ideal for portable brain-computer interface applications [17] [18].
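As a sketch of the scalogram step, the hypothetical helper below uses PyWavelets' `pywt.cwt` with the real Morlet wavelet (`'morl'`) on a geometric grid of target frequencies. The frequency range, grid size, and wavelet are assumptions, and any normalization or resizing that a particular CNN expects is left out.

```python
import numpy as np
import pywt


def scalogram(x, fs, fmin=1.0, fmax=40.0, n_freqs=60, wavelet="morl"):
    """Hypothetical helper: |CWT| image (n_freqs x len(x)) plus its frequencies in Hz."""
    target = np.geomspace(fmin, fmax, n_freqs)
    # Scale that maps the wavelet's centre frequency onto each target frequency.
    scales = pywt.central_frequency(wavelet) * fs / target
    coefs, freqs = pywt.cwt(np.asarray(x, dtype=float), scales, wavelet,
                            sampling_period=1.0 / fs)
    return np.abs(coefs), freqs
```

Each row of the returned magnitude array corresponds to one frequency and each column to one time sample, which is the 2-D image layout a CNN consumes.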

Application Notes and Protocols

This section provides a detailed methodological framework for applying wavelet transforms in EEG research, complete with a standard denoising protocol and a specific classification workflow.

Standard Wavelet-Based EEG Denoising Protocol

The following protocol, illustrated in Figure 1, is adapted from recent research on automated denoising pipelines [23] and optimization frameworks [25].

Diagram Title: Wavelet Denoising Protocol

Figure 1: Workflow for a standard wavelet-based denoising protocol.

- Step 1: Pre-processing and Segmentation. Begin with a raw, single- or multi-channel EEG signal. For online processing, segment the signal into windows. A 2017 study used 8-second epochs for seizure detection [21], while modern online denoisers like onEEGwaveLAD can operate on much smaller segments (e.g., 10-50 ms) for near real-time performance [23].

- Step 2: Wavelet Decomposition. Select an appropriate mother wavelet (e.g., Daubechies – 'db4' is common for EEG [21]) and the number of decomposition levels. The levels should be chosen so that the lowest frequency band adequately captures the slowest oscillation of interest (e.g., delta waves).

- Step 3: Thresholding. Apply a thresholding function (e.g., soft thresholding) to the detail coefficients. The threshold can be selected using rules like the universal threshold or a minimax threshold. This step is critical for separating signal from noise [25] [23].

- Step 4: Wavelet Reconstruction. Perform an inverse wavelet transform using the original approximation coefficients and the modified (thresholded) detail coefficients to reconstruct the denoised EEG signal in the time domain.
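Two small helper sets illustrate Steps 1 and 3 under stated assumptions: epochs are non-overlapping with any trailing partial epoch dropped, and thresholds are expressed in units of the noise standard deviation σ. The minimax constants are those commonly used for the "minimaxi" rule (for example in MATLAB's `thselect`); all function names are hypothetical.

```python
import math


def epoch_bounds(n_samples, fs, epoch_s):
    """(start, stop) sample indices of non-overlapping epochs; a trailing partial epoch is dropped."""
    step = int(round(epoch_s * fs))
    return [(s, s + step) for s in range(0, n_samples - step + 1, step)]


def universal_threshold(n):
    """Universal rule sqrt(2 ln n), in units of sigma."""
    return math.sqrt(2.0 * math.log(n))


def minimax_threshold(n):
    """Minimax rule, in units of sigma: 0 for n <= 32, else 0.3936 + 0.1829 * log2(n)."""
    return 0.0 if n <= 32 else 0.3936 + 0.1829 * math.log2(n)
```

For the same N, the minimax threshold is smaller than the universal one (about 2.59σ versus 4.08σ at N = 4096), so it removes less noise but also distorts the signal less.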

Protocol for EEG Feature Extraction and Classification

This protocol, depicted in Figure 2, outlines a methodology for using wavelets to extract features for machine learning models, as applied in schizophrenia classification and epileptic seizure detection [20] [21].

Diagram Title: Feature Extraction & Classification

Figure 2: Workflow for EEG feature extraction and classification using wavelets.

- Step 1: Discrete Wavelet Transform (DWT). Decompose the denoised EEG signal into multiple frequency sub-bands corresponding to standard neural oscillations (Delta, Theta, Alpha, Beta, Gamma) using DWT. The number of decomposition levels is typically set to 5 for a sampling frequency of 173.61 Hz to isolate these bands effectively [21].

- Step 2: Feature Vector Calculation. From each derived sub-band, calculate statistical features that characterize the signal. Commonly used features include:

- Energy: Sum of squares of the coefficient values.

- Entropy: Measure of randomness or complexity in the signal.

- Standard Deviation: Dispersion of the coefficients around the mean.

These features are concatenated to form a comprehensive feature vector representing the original EEG epoch [21].

- Step 3: Classifier Model Training and Testing. The feature vectors are used to train a classifier. As shown in Table 2, Support Vector Machines (SVM) and Artificial Neural Networks (ANN) are widely used. For instance, SVM has achieved up to 100% accuracy in classifying ictal EEG signals [21]. Scalogram images from wavelets can also be input into Convolutional Neural Networks (CNNs) for end-to-end learning [24].
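Steps 1 and 2 can be sketched with two hypothetical helpers: one lists the nominal frequency range of each sub-band in the order `pywt.wavedec` returns them, and the other computes energy, entropy, and standard deviation per sub-band. Entropy is taken here as the Shannon entropy of each sub-band's normalized coefficient energies; this is one common definition and an assumption, since the protocol does not fix one.

```python
import numpy as np


def dwt_band_edges(fs, levels):
    """Nominal (low, high) Hz of each sub-band, ordered like pywt.wavedec: [A_L, D_L, ..., D_1]."""
    bands = [(0.0, fs / 2 ** (levels + 1))]
    bands += [(fs / 2 ** (j + 1), fs / 2 ** j) for j in range(levels, 0, -1)]
    return bands


def subband_features(coeffs):
    """Concatenate [energy, entropy, std] for each coefficient array in coeffs."""
    feats = []
    for c in coeffs:
        c = np.asarray(c, dtype=float)
        energy = float(np.sum(c ** 2))
        # Normalised energy distribution within the sub-band (zeros dropped before log).
        p = c ** 2 / energy if energy > 0 else np.zeros_like(c)
        p = p[p > 0]
        entropy = float(-np.sum(p * np.log2(p)))
        feats.extend([energy, entropy, float(np.std(c))])
    return np.array(feats)
```

With fs = 173.61 Hz and 5 levels, the nominal bands are roughly A5 0-2.7 Hz, D5 2.7-5.4 Hz, D4 5.4-10.9 Hz, D3 10.9-21.7 Hz, D2 21.7-43.4 Hz, and D1 43.4-86.8 Hz. These only approximate the conventional delta-to-gamma boundaries because real wavelet filters overlap. In practice `coeffs` would be the output of, e.g., `pywt.wavedec(epoch, 'db4', level=5)`.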

Table 2: Performance of Wavelet-Based Classifiers for EEG

| Application | Wavelet Type / Features | Classifier | Reported Performance |
|---|---|---|---|
| Epileptic Seizure Detection [21] | Daubechies (db4) / Energy, Entropy, Standard Deviation | SVM | Accuracy: 100% (Ictal), Sensitivity: 94.11%, Specificity: 100% |
| Epileptic Seizure Detection [21] | Daubechies (db4) / Energy, Entropy, Standard Deviation | ANN (MLP) | Accuracy: 97%, Sensitivity: 96.42%, Specificity: 100% |
| Schizophrenia Classification [20] | Continuous WT (CWT) & Discrete WT (DWT) / Statistical Features | Decision Trees | Accuracy: 97.98%, Sensitivity: 98.2%, Specificity: 97.72% |
| Cross-Task BCI Analysis [17] [18] | DWT with Spiking Self-Attention / Automatic Feature Extraction | SpikeWavformer (SNN) | High performance in Emotion Recognition and Auditory Attention Decoding |

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of wavelet-based EEG analysis requires a combination of software, data, and methodological components.

Table 3: Essential Research Reagents and Tools

| Item / Resource | Function / Description | Relevance to Wavelet EEG Research |
|---|---|---|
| MATLAB (Wavelet Toolbox) / Python (PyWavelets) | Software environments with built-in wavelet analysis functions. | Provides standardized, validated algorithms for DWT, CWT, and inverse transforms, ensuring reproducibility. |
| Daubechies (dbN) Wavelets [21] | A family of orthogonal wavelets with the maximal number of vanishing moments for a given support width. | The 'db4' wavelet is frequently selected for EEG due to its similarity in shape to neural waveforms, enabling efficient decomposition. |
| Public EEG Datasets (e.g., Bonn EEG dataset [21]) | Curated, often annotated, repositories of EEG data. | Serves as a critical benchmark for developing and validating new wavelet-based denoising and classification algorithms. |
| onEEGwaveLAD Framework [23] | A specific, fully automated online EEG wavelet-based learning adaptive denoiser. | Provides a modern, open framework for developing and testing online denoising pipelines without needing reference signals. |
| Adaptive Thresholding Functions [25] [11] | Algorithms (e.g., soft, hard) that determine how wavelet coefficients are modified to suppress noise. | Core to the denoising process; advanced adaptive strategies optimize the trade-off between noise removal and signal preservation. |

Wavelet transforms provide a mathematically robust and highly adaptable framework for tackling the core challenges of EEG signal analysis. Their strengths in multi-resolution analysis, adaptive time-frequency localization, and effective noise suppression make them indispensable for both basic neuroscience research and applied clinical diagnostics. The ongoing integration of wavelet methods with advanced deep learning and energy-efficient neuromorphic computing architectures, such as spiking neural networks, signals a future where wavelet-based analysis will be at the heart of real-time, portable, and highly accurate brain-computer interfaces and neurotherapeutic applications [17] [18]. For researchers, mastering the protocols and tools outlined in this document is fundamental to advancing the field of quantitative EEG analysis.

Wavelet transform has emerged as a powerful mathematical framework for processing non-stationary signals like electroencephalogram (EEG), effectively addressing limitations inherent in traditional Fourier analysis. Unlike sine and cosine waves that extend infinitely, wavelets are "little waves" that begin at zero, swell to a maximum, and quickly decay to zero again, providing localized time-frequency analysis capabilities [26]. This fundamental property makes them particularly suitable for analyzing EEG signals characterized by transient neural events and non-stationary characteristics. The wavelet transform decomposes a time-domain signal into its constituent wavelet coefficients through shifting and dilation of a mother wavelet function, enabling multi-resolution analysis that simultaneously captures both macroscopic patterns and microscopic fluctuations in neural signals [17].

The efficacy of wavelet-based denoising in EEG processing depends critically on three interdependent concepts: mother wavelet selection, which determines how well the basis function matches signal characteristics; time-frequency localization, which enables precise identification of transient artifacts; and multi-resolution analysis, which decomposes signals into different frequency bands at respective temporal resolutions [27]. These core principles form the theoretical foundation for advanced denoising frameworks that outperform traditional filtering methods, especially for physiological signals where preserving diagnostically relevant neurological information while removing artifacts is paramount [3] [27].

Table 1: Core Wavelet Types and Their Characteristics in EEG Denoising

| Wavelet Family | Representative Members | Key Characteristics | EEG Applications |
|---|---|---|---|
| Daubechies | db2-db11 [28] | Orthogonal, asymmetric; good for transient detection | General-purpose EEG denoising [29] |
| Symlets | sym2-sym8 [28] | Nearly symmetric; improved phase properties | Muscle artifact removal [29] |
| Coiflets | coif1-coif5 [28] | Nearly symmetric with higher vanishing moments | Ocular artifact correction [27] |
| Biorthogonal | bior1.1-bior2.6 [28] | Symmetric, perfect reconstruction | Signal decomposition [27] |
| Reverse Biorthogonal | rbio1.3-rbio2.8 [28] | Symmetric reconstruction properties | Multi-component analysis [27] |

Mother Wavelet Selection Framework

Mathematical Foundations and Selection Criteria

The mother wavelet function, denoted as Ψ(t), serves as the prototype for generating all wavelet basis functions through translation and scaling operations: Ψ(a,b)(t) = (1/√|a|)·Ψ((t-b)/a), where 'a' represents the scaling parameter and 'b' the translation parameter [27]; the factor 1/√|a| gives every basis function the same energy as Ψ. This flexible generation allows wavelet transforms to adapt to signal features across different temporal and frequency scales. Optimal mother wavelet selection is critical for maximizing separation between neural signals and artifact components in the wavelet domain, which subsequently enhances thresholding efficacy during denoising procedures [28].
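Under the standard continuous-wavelet convention, the scaled and shifted wavelet carries a 1/√|a| factor so that every Ψ(a,b) has the same energy as the mother wavelet. A quick numerical check follows, assuming a Mexican-hat mother wavelet (chosen only because it has a simple closed form); without the factor, the energy would grow in proportion to a.

```python
import numpy as np


def mexican_hat(t):
    """Mexican-hat (Ricker) mother wavelet without its usual constant factor."""
    return (1.0 - t ** 2) * np.exp(-t ** 2 / 2.0)


def psi_ab(t, a, b):
    """Scaled and shifted wavelet: |a|**-0.5 * psi((t - b) / a)."""
    return mexican_hat((t - b) / a) / np.sqrt(abs(a))


t = np.linspace(-200.0, 200.0, 400_001)  # fine grid, wide enough for a = 16
dt = t[1] - t[0]
energies = {a: float(np.sum(psi_ab(t, a, b=3.0) ** 2) * dt) for a in (0.5, 1.0, 4.0, 16.0)}
print(energies)  # every value is close to 0.75 * sqrt(pi), about 1.3293
```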

Recent research has demonstrated that suboptimal wavelet selection can lead to either inadequate noise reduction or undesirable signal distortion, particularly for low Signal-to-Noise Ratio (SNR) EEG recordings [28]. The mean of sparsity change (μsc) parameter has emerged as an effective empirical metric for quantifying this separation by capturing the mean variation of the noisy Detail components across decomposition levels [28]. This approach represents a significant advancement over traditional heuristic selection methods that often rely on trial-and-error processes susceptible to human bias.

Quantitative Selection Protocols

Experimental evidence indicates that signals with low SNR (typically below 10dB) can only be efficiently denoised with a limited subset of wavelets, while high-SNR signals (above 20dB) exhibit greater flexibility in wavelet choice [28]. For low-SNR EEG data, the change in μsc between the highest and second-highest performing wavelets is approximately 8-10%, whereas for high-SNR data this difference reduces to around 5%, indicating more competitive performance among candidate wavelets [28].

Table 2: Optimal Wavelet Selection Based on Application Scenarios

| EEG Application Context | Recommended Mother Wavelet | Performance Evidence | Decomposition Level |
|---|---|---|---|
| Ocular Artifact Removal | Coiflet with vanishing moment 3 [27] | Effective OA zone identification via SWT | Level 5 decomposition [27] |
| Muscle Artifact Removal | Symlets (sym29 recommended) [29] | Superior compatibility for EMG artifacts | Level-dependent optimization [28] |
| General Purpose Denoising | Daubechies (db4-db8) [28] | Balanced time-frequency localization | Adaptive level selection [28] |
| Epileptic Spike Detection | Sym8 [26] | Optimal for transient detection | Medium-high levels (5-7) [26] |
| Real-time Implementation | Biorthogonal (bior1.1-bior1.5) [30] | Computational efficiency | Lower levels (3-5) [30] |

The implementation protocol for optimal wavelet selection follows a systematic methodology. First, create a comprehensive wavelet sample space encompassing major families (Biorthogonal, Coiflet, Daubechies, Reverse biorthogonal, Symlet) with varying filter lengths [28]. For each candidate wavelet, compute the maximum decomposition level using the ratio Rj = LDj / Lf, where LDj is the length of Detail component at level j and Lf is the wavelet filter length, with the threshold Rj > 1.5 determining the maximum useful level [28]. Calculate the sparsity parameter for Detail components at each decomposition level, then compute the mean of sparsity change (μsc) across all valid levels [28]. Finally, select the optimal wavelet(s) based on the highest μsc values, choosing either a single best-performing wavelet or a group of top performers (e.g., top 3-5) for ensemble approaches [28].
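The level-selection part of this protocol can be sketched without any wavelet library. The hypothetical helper below assumes PyWavelets' coefficient-length convention for its default (non-periodization) extension modes, floor((n + Lf - 1) / 2) per level, and stops at the deepest level whose Detail length still satisfies Rj = LDj / Lf > 1.5. It does not reproduce the sparsity parameter or μsc, whose exact definition is not given here.

```python
def detail_length(n, filter_len):
    """Output length of one DWT level for a length-n input (non-periodization modes)."""
    return (n + filter_len - 1) // 2


def max_level_by_ratio(n, filter_len, min_ratio=1.5):
    """Deepest level j whose Detail length L_Dj satisfies R_j = L_Dj / L_f > min_ratio."""
    level, length = 0, n
    while True:
        length = detail_length(length, filter_len)
        if length / filter_len <= min_ratio:
            return level
        level += 1
```

Daubechies dbN and Symlet symN filters have 2N taps (so 'db4' and 'sym4' have 8), and PyWavelets reports the length as `pywt.Wavelet(name).dec_len`. For a 1024-sample signal and an 8-tap filter, the helper returns 7: the level-7 Detail has 14 coefficients (R = 1.75), while level 8 would have only 10 (R = 1.25).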

Advanced Denoising Protocols

Thresholding Strategies and Parameter Optimization

Wavelet thresholding is the step where noise separation actually occurs, and two approaches dominate contemporary research: non-linear time-scale adaptive denoising using Stein's unbiased risk estimate (SURE) with soft-like thresholding functions [27], and non-negative garrote shrinkage functions, which offer a compromise between soft and hard thresholding characteristics [27]. The threshold value (tj,l) for wavelet coefficients at level l is typically calculated using a modified universal threshold: t'j,l = K·αj,l·√(2 ln N), where αj,l is the estimated noise standard deviation (αj,l = median(|wj,l|)/0.6745), N is the signal length, and K is an empirically determined parameter (0
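The three shrinkage rules and the level-dependent modified universal threshold can be written compactly in NumPy. This is a sketch: the function names are hypothetical, K must be supplied by the caller (its recommended range is not reproduced here), and PyWavelets offers equivalent built-ins through `pywt.threshold(data, value, mode=...)` with `'hard'`, `'soft'`, or `'garrote'`.

```python
import numpy as np


def hard(w, t):
    """Keep coefficients with |w| > t unchanged, zero the rest."""
    w = np.asarray(w, dtype=float)
    return np.where(np.abs(w) > t, w, 0.0)


def soft(w, t):
    """Zero small coefficients and shrink the rest toward zero by t."""
    w = np.asarray(w, dtype=float)
    return np.sign(w) * np.maximum(np.abs(w) - t, 0.0)


def garrote(w, t):
    """Non-negative garrote: w - t**2 / w for |w| > t, else 0."""
    w = np.asarray(w, dtype=float)
    out = np.zeros_like(w)
    big = np.abs(w) > t
    out[big] = w[big] - t ** 2 / w[big]
    return out


def modified_universal_threshold(w_level, n, k):
    """t' = K * alpha * sqrt(2 ln N), with alpha = median(|w|) / 0.6745 for this level."""
    alpha = np.median(np.abs(w_level)) / 0.6745
    return k * alpha * np.sqrt(2.0 * np.log(n))
```

For a coefficient of 3 with t = 2, hard thresholding keeps 3, soft thresholding returns 1, and the garrote returns 3 - 4/3 ≈ 1.67. The garrote therefore kills small coefficients like both other rules but biases large ones less and less as |w| grows.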

For real-time implementations, an adaptive thresholding approach utilizing a feedback control loop has demonstrated significant promise, particularly for portable brain-computer interface applications [30]. This method employs a noise-level estimator module based on the first-level detail coefficients (d1) to estimate the unknown standard deviation of the background noise, with performance optimized by adjusting the integral gain (G) and selecting the window size (M) [30]. Experimental results indicate this approach can achieve approximately 8 dB improvement in SNR with acceptable settling time for real-time processing constraints [30].

Multi-Resolution Analysis and Decomposition Strategies

Multi-resolution analysis (MRA) provides the mathematical foundation for decomposing EEG signals into constituent frequency bands while maintaining temporal information. Through MRA, EEG signals are decomposed into Approximation coefficients (representing low-frequency components) and Detail coefficients (representing high-frequency components) at multiple resolution levels [27]. This hierarchical decomposition enables targeted artifact removal at specific frequency bands while preserving neural information in other bands.

The Discrete Wavelet Transform (DWT) implementation involves passing signals through half-band high-pass and low-pass filters, producing Detail and Approximation coefficients respectively, with the process iterating on the Approximation coefficients until the desired frequency resolution is achieved [27]. A significant advancement addresses DWT's time-variance limitation through Stationary Wavelet Transform (SWT), which maintains translation invariance—particularly critical for statistical EEG processing—though at the cost of increased computational complexity and redundancy [27].

Experimental Protocols and Validation Frameworks

Benchmarking Protocol for Denoising Performance

Rigorous evaluation of wavelet denoising efficacy requires standardized protocols employing multiple quantitative metrics. The established methodology involves calculating Signal-to-Noise Ratio (SNR) improvement, Root Mean Square Error (RMSE), and Pearson correlation coefficient between denoised and ground-truth clean signals [31]. For clinical applications, additional qualitative assessment by domain experts is recommended to ensure preserved diagnostic information [3].

The benchmarking workflow begins with preparing datasets containing both synthetic and real-world EEG recordings with varying artifact types (ocular, muscle, cardiac, power line interference) [27]. For each candidate denoising method, apply the identical preprocessing pipeline including band-pass filtering (typically 0.5-70Hz) and notch filtering (50/60Hz) [31]. Implement wavelet denoising using the selected parameters (mother wavelet, decomposition level, thresholding method) across all test signals [28]. Compute performance metrics (SNR improvement, RMSE, Pearson correlation) for quantitative comparison [31]. Finally, perform statistical testing (e.g., repeated measures ANOVA) to determine significant performance differences between methods [3].
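The metric computation in this workflow can be sketched as a single hypothetical helper. SNR is computed against the residual (clean minus estimate), so the SNR improvement is the output SNR minus the input SNR. Repeated-measures statistics across methods are left to a statistics package.

```python
import numpy as np


def snr_db(clean, est):
    """10 * log10(P_signal / P_noise), where P_noise is the power of the residual clean - est."""
    clean, est = np.asarray(clean, float), np.asarray(est, float)
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum((clean - est) ** 2))


def denoising_report(clean, noisy, denoised):
    """SNR improvement (dB), RMSE, PRD (%), and Pearson r against the ground truth."""
    clean, noisy, denoised = (np.asarray(a, float) for a in (clean, noisy, denoised))
    err = clean - denoised
    return {
        "snr_improvement_db": float(snr_db(clean, denoised) - snr_db(clean, noisy)),
        "rmse": float(np.sqrt(np.mean(err ** 2))),
        "prd_percent": float(100.0 * np.sqrt(np.sum(err ** 2) / np.sum(clean ** 2))),
        "pearson_r": float(np.corrcoef(clean, denoised)[0, 1]),
    }
```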

Experimental data demonstrates that optimized wavelet methods can achieve SNR improvements above 27 dB even at high noise levels, with average Pearson correlation coefficients of 0.91 compared to ground truth signals [31]. Furthermore, recent studies implementing adaptive real-time wavelet denoising architectures report consistent SNR improvements of approximately 8 dB with computational performance suitable for embedded systems (average denoising time of 4.86 ms per signal window) [30].

Integrated Denoising Protocol for EEG Artifact Removal

Building upon the core concepts and experimental validation, the following integrated protocol provides a comprehensive methodology for wavelet-based EEG denoising:

Signal Preprocessing: Apply band-pass filter (0.5-70Hz) and notch filter (50/60Hz) to remove out-of-band noise [31].

Wavelet Selection: Implement the μsc-based selection protocol to identify optimal mother wavelet from a comprehensive sample space [28].

Decomposition Level Determination: Calculate maximum effective decomposition level using Rj = LDj / Lf > 1.5 criterion [28].

Signal Decomposition: Perform DWT/SWT using selected parameters to obtain Approximation and Detail coefficients [27].

Coefficient Thresholding: Apply modified universal thresholding with non-negative garrote shrinkage function to Detail coefficients [27].

Signal Reconstruction: Perform inverse DWT/SWT using thresholded coefficients to reconstruct denoised EEG [27].

Quality Validation: Compute performance metrics (SNR improvement, RMSE, Pearson correlation) and clinical validation [31].
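The integrated protocol (preprocessing, decomposition, thresholding, and reconstruction) can be sketched with SciPy and PyWavelets under several assumptions: a 4th-order zero-phase Butterworth band-pass, a notch with Q = 30, SWT rather than DWT, `'coif3'` as a placeholder for the wavelet the μsc procedure would select, 5 levels, and a hypothetical K = 0.75. Following the modified universal threshold, the noise level is estimated separately for each level. That matters after a 0.5-70 Hz band-pass, because the finest SWT band (fs/4 to fs/2) then contains little noise, so a single σ taken from it would underestimate the noise elsewhere.

```python
import numpy as np
import pywt
from scipy.signal import butter, filtfilt, iirnotch, sosfiltfilt


def preprocess(x, fs, band=(0.5, 70.0), line_hz=50.0):
    """Zero-phase band-pass followed by a zero-phase notch at the mains frequency."""
    sos = butter(4, band, btype="bandpass", fs=fs, output="sos")
    y = sosfiltfilt(sos, np.asarray(x, dtype=float))
    b, a = iirnotch(line_hz, 30.0, fs=fs)
    return filtfilt(b, a, y)


def swt_garrote_denoise(x, wavelet="coif3", level=5, k=0.75):
    """SWT, garrote shrinkage of each detail level with t' = K * alpha * sqrt(2 ln N), inverse SWT."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    pad = (-n) % (2 ** level)  # pywt.swt needs len % 2**level == 0
    xp = np.pad(x, (0, pad), mode="symmetric")
    coeffs = pywt.swt(xp, wavelet, level=level)  # [(cA_L, cD_L), ..., (cA_1, cD_1)]
    shrunk = []
    for cA, cD in coeffs:
        alpha = np.median(np.abs(cD)) / 0.6745  # level-dependent robust noise estimate
        thr = k * alpha * np.sqrt(2.0 * np.log(len(xp)))
        shrunk.append((cA, pywt.threshold(cD, thr, mode="garrote")))
    return pywt.iswt(shrunk, wavelet)[:n]
```

The per-level estimate assumes noise dominates most coefficients at every level. At a level dominated by a strong, sustained rhythm (e.g., a large alpha oscillation), it overestimates the noise and can shrink the rhythm itself, so the choice of K is worth checking against the quality-validation metrics in the final step.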

Research Reagent Solutions

Table 3: Essential Research Materials and Computational Tools for Wavelet-Based EEG Denoising

| Category | Specific Tool/Reagent | Function/Purpose | Implementation Notes |
|---|---|---|---|
| Wavelet Families | Daubechies (db2-db11) [28] | Signal decomposition basis functions | db4-db8 recommended for general EEG [28] |
| | Symlets (sym2-sym8) [28] | Artifact-specific denoising | sym29 optimal for EMG artifacts [29] |
| | Coiflets (coif1-coif5) [28] | Ocular artifact correction | coif3 with vanishing moment 3 [27] |
| Decomposition Algorithms | Discrete Wavelet Transform (DWT) [27] | Multi-resolution analysis | Computationally efficient [27] |
| | Stationary Wavelet Transform (SWT) [27] | Translation-invariant analysis | Prevents artifact introduction [27] |
| | Fractional Wavelet Transform (FrWT) [11] | Advanced time-frequency analysis | Superior for non-stationary components [11] |
| Thresholding Functions | Non-negative Garrote Shrinkage [27] | Coefficient thresholding | Optimal soft-hard compromise [27] |
| | SURE-based Adaptive [27] | Automated threshold selection | Minimizes estimation risk [27] |
| | Feedback Control Loop [30] | Real-time adaptation | Adjusts to changing noise [30] |
| Validation Metrics | SNR Improvement [31] | Denoising efficacy quantification | Target >8 dB for real-time [30] |
| | Pearson Correlation [31] | Signal preservation assessment | Target >0.9 for clinical use [31] |
| | Sparsity Change (μsc) [28] | Wavelet selection optimization | Automated parameter selection [28] |

Core Techniques and Workflows: Implementing Wavelet Denoising

Within the field of electroencephalogram (EEG) signal processing, the imperative for effective denoising is paramount. Artifacts, particularly ocular artifacts (OA) from eye blinks and movement, can significantly corrupt the neuronal signals of interest, complicating both clinical diagnosis and brain-computer interface (BCI) applications [32]. The quest for minimalistic, few-channel, and even single-channel EEG systems for use in natural environments has further intensified the need for robust, unsupervised denoising techniques [32]. Wavelet Transform (WT) has emerged as a powerful tool for this purpose, capable of handling the non-stationary nature of EEG signals [27]. Among the various wavelet methods, the Discrete Wavelet Transform (DWT) and the Stationary Wavelet Transform (SWT) are two of the most widely used approaches. This application note provides a practical comparison of DWT and SWT, framing them within the broader context of wavelet-based denoising research for EEG signals. It is designed to equip researchers, scientists, and drug development professionals with the quantitative data and detailed protocols necessary to make an informed choice between these two techniques for their specific applications.

Theoretical Foundations and Key Differences

Both DWT and SWT represent a signal in terms of wavelets: basis functions obtained by dilating (scaling) and shifting a single mother wavelet [32]. The decomposition yields approximation coefficients, which capture the low-frequency content, and detail coefficients, which capture the high-frequency content, at multiple levels, providing a time-frequency representation of the signal [32].
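As a minimal sketch of this decomposition, assuming Python with the PyWavelets package (`pywt`), a synthetic 4 s signal sampled at 256 Hz, and the `coif3` wavelet, the snippet below runs a five-level DWT and prints the size of each coefficient array with its nominal frequency band. The band edges assume ideal half-band splitting (level-$j$ details span roughly $f_s/2^{j+1}$ to $f_s/2^j$ Hz); real wavelet filters overlap, so treat them as approximate.

```python
import numpy as np
import pywt

fs = 256.0                          # sampling rate in Hz (assumed for illustration)
t = np.arange(0, 4.0, 1.0 / fs)     # 4 s -> 1024 samples
# Synthetic stand-in for EEG: a 10 Hz alpha-like rhythm plus white noise.
rng = np.random.default_rng(0)
x = np.sin(2 * np.pi * 10 * t) + 0.5 * rng.standard_normal(t.size)

level = 5
# wavedec returns [a_J, d_J, d_(J-1), ..., d_1]; "periodization" halves the length per level.
coeffs = pywt.wavedec(x, "coif3", mode="periodization", level=level)

print(f"a_{level}: {coeffs[0].size:4d} coefficients, ~0-{fs / 2 ** (level + 1):.1f} Hz")
for j, d in zip(range(level, 0, -1), coeffs[1:]):
    lo, hi = fs / 2 ** (j + 1), fs / 2 ** j   # nominal (ideal-filter) band of d_j
    print(f"d_{j}: {d.size:4d} coefficients, ~{lo:.1f}-{hi:.1f} Hz")
```

At 256 Hz the level-5 approximation covers roughly 0-4 Hz, which is why the decomposition level is tied to the sampling rate when targeting low-frequency activity.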

The primary distinction between DWT and SWT lies in their structural approach to this decomposition, which leads to critical practical differences [27]:

- Discrete Wavelet Transform (DWT): DWT is a non-redundant, computationally efficient transform. At each level of decomposition, the input (the signal itself at level 1, the previous level's approximation coefficients thereafter) is passed through low-pass and high-pass filters, and each output is downsampled by a factor of two. This downsampling makes DWT shift-variant: a simple shift of the input signal can produce a substantially different set of wavelet coefficients [32] [27].

- Stationary Wavelet Transform (SWT): SWT was developed precisely to overcome DWT's lack of translation invariance. It achieves this by omitting the downsampling step. At each level, the filters are upsampled instead (by inserting zeros between their taps), so the approximation and detail sequences have the same length as the original signal. The resulting representation is redundant but translation-invariant, at the cost of slower computation and greater memory use [32] [27].
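The difference in shift behavior can be checked numerically. The sketch below is an illustration under stated assumptions (PyWavelets, random data standing in for an EEG segment, the `coif3` wavelet, three levels, and circular shifts): it delays a signal by one sample and compares the level-1 detail coefficients before and after the delay.

```python
import numpy as np
import pywt

def is_shifted_copy(a, b):
    """True if b equals some circular shift of a (within floating-point tolerance)."""
    return any(np.allclose(np.roll(a, k), b) for k in range(len(a)))

rng = np.random.default_rng(0)
x = rng.standard_normal(256)    # synthetic stand-in for a short EEG segment
x_shifted = np.roll(x, 1)       # the same segment delayed by one sample (circularly)

# DWT: "periodization" mode yields exactly N / 2**j coefficients at level j.
# wavedec returns [a_3, d_3, d_2, d_1], so [-1] selects the level-1 details.
d1 = pywt.wavedec(x, "coif3", mode="periodization", level=3)[-1]
d1_shifted = pywt.wavedec(x_shifted, "coif3", mode="periodization", level=3)[-1]

# SWT: swt returns [(a_3, d_3), (a_2, d_2), (a_1, d_1)], each array of length N.
s1 = pywt.swt(x, "coif3", level=3)[-1][1]
s1_shifted = pywt.swt(x_shifted, "coif3", level=3)[-1][1]

print(len(d1), len(s1))
print("DWT details are a shifted copy:", is_shifted_copy(d1, d1_shifted))
print("SWT details shift with the input:", np.allclose(np.roll(s1, 1), s1_shifted))
```

Because the DWT keeps only every other filter output, a one-sample delay moves the signal onto the samples that were previously discarded, so the DWT details of the delayed signal are not a shifted copy of the originals. The SWT details, computed without downsampling, simply shift along with the input.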

Table 1: Core Algorithmic Differences Between DWT and SWT.

| Feature | Discrete Wavelet Transform (DWT) | Stationary Wavelet Transform (SWT) |
|---|---|---|
| Downsampling | Applied at each level | Not applied |
| Coefficient Length | Reduces by half at each level | Remains equal to original signal length at all levels |
| Translation Invariance | Not translation-invariant | Translation-invariant |
| Computational Efficiency | Higher (non-redundant) | Lower (redundant) |
| Primary Strength | Computational efficiency, non-redundancy | Preservation of signal features, artifact removal accuracy |

Quantitative Performance Comparison in EEG Denoising

A systematic evaluation of DWT and SWT for ocular artifact (OA) removal from single-channel EEG data compared their performance quantitatively using correlation coefficients, mutual information, signal-to-artifact ratio (SAR), and normalized mean square error (NMSE) [32].
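For reference, three of the simpler metrics can be computed as below. This is a sketch assuming common textbook definitions (Pearson correlation; NMSE as residual energy divided by reference energy; output SNR in dB); [32] and [7] may normalize differently, and SAR is omitted because its definition varies between studies. All three require a clean reference signal.

```python
import numpy as np

def correlation_coefficient(clean, denoised):
    """Pearson correlation between the clean reference and the denoised signal."""
    return float(np.corrcoef(clean, denoised)[0, 1])

def nmse(clean, denoised):
    """Normalized MSE: residual energy over reference energy (lower is better)."""
    clean, denoised = np.asarray(clean, float), np.asarray(denoised, float)
    return float(np.sum((clean - denoised) ** 2) / np.sum(clean ** 2))

def snr_db(clean, denoised):
    """Output SNR in dB: reference energy over residual energy (higher is better)."""
    clean, denoised = np.asarray(clean, float), np.asarray(denoised, float)
    return float(10 * np.log10(np.sum(clean ** 2) / np.sum((clean - denoised) ** 2)))

# Usage with synthetic data: a 6 Hz sinusoid and a slightly noisy copy of it.
rng = np.random.default_rng(1)
t = np.linspace(0, 1, 500, endpoint=False)
clean = np.sin(2 * np.pi * 6 * t)
denoised = clean + 0.1 * rng.standard_normal(t.size)
print(correlation_coefficient(clean, denoised), nmse(clean, denoised), snr_db(clean, denoised))
```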

The choice of the mother wavelet and the thresholding method significantly influences the performance of both DWT and SWT. Commonly used wavelet basis functions that resemble the characteristics of eye blinks include Haar, Coiflets (e.g., Coif3), Symlets (e.g., Sym3), and Biorthogonal wavelets (e.g., Bior4.4) [32]. For thresholding, universal threshold (UT) and statistical threshold (ST) are two common approaches, with ST often producing superior denoised results as it is based on the statistics of the signal [32].
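For reference, the universal threshold of Donoho and Johnstone is usually written as below, with the noise level $\hat{\sigma}$ estimated from the median absolute value of the finest detail coefficients $d_{1,k}$. The worked values show how slowly it grows with record length $N$: quadrupling $N$ from 1024 to 4096 samples raises the threshold only from about $3.72\hat{\sigma}$ to about $4.08\hat{\sigma}$.

$$
\lambda_{\mathrm{UT}} = \hat{\sigma}\sqrt{2\ln N}, \qquad \hat{\sigma} = \frac{\operatorname{median}_k \lvert d_{1,k} \rvert}{0.6745}
$$

$$
N = 1024:\ \sqrt{2\ln 1024} = \sqrt{20\ln 2} \approx 3.72, \qquad N = 4096:\ \sqrt{2\ln 4096} = \sqrt{24\ln 2} \approx 4.08
$$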

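Table 2: Performance Comparison of DWT and SWT with Different Configurations for Single-Channel EEG OA Removal. Adapted from [32]. Entries are qualitative ratings relative to the other configurations (Optimal > High > Moderate), not metric values; for NMSE, a better rating means a lower error.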

| Wavelet Transform | Mother Wavelet | Thresholding Method | Correlation Coefficient (↑) | Signal-to-Artifact Ratio, SAR (↑) | Normalized MSE (↓) |
|---|---|---|---|---|---|
| DWT | Coif3 | Statistical Threshold (ST) | Optimal | Optimal | Optimal |
| DWT | Bior4.4 | Statistical Threshold (ST) | High | High | High |
| DWT | Haar | Universal Threshold (UT) | Moderate | Moderate | Moderate |
| SWT | Coif3 | Universal Threshold (UT) | High | High | High |
| SWT | Sym3 | Statistical Threshold (ST) | Moderate | Moderate | Moderate |

These results indicate that the best combination for OA removal is typically DWT with a Statistical Threshold and the Coif3 or Bior4.4 mother wavelet, with Coif3 rated highest in Table 2 [32]. This combination performed best across multiple metrics in a single-channel setting, which is critical for minimalist EEG systems. SWT nonetheless remains a robust alternative, particularly when translation invariance is a priority. A separate study also supports SWT's effectiveness, reporting that SWT with a Symlet 2 (sym2) wavelet at decomposition level 4 achieved a high Signal-to-Noise Ratio (SNR) of 27.32 and a low Mean Square Error (MSE) of 5.09 [7].

Detailed Experimental Protocols

This section provides step-by-step protocols for implementing DWT and SWT denoising, allowing for the reproduction of results and practical application.

Generalized Wavelet Denoising Workflow

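The following diagram illustrates the common workflow for wavelet-based denoising, which forms the basis for both the DWT and SWT methods: decompose the signal, estimate a threshold for each level, threshold the detail coefficients, and reconstruct the signal with the inverse transform.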
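A compact sketch of these four stages follows. It assumes PyWavelets, a `sym4` wavelet, four decomposition levels, and the universal threshold with a median-absolute-deviation noise estimate from the finest detail level. This VisuShrink-style choice is made here for illustration and is not the specific configuration of either protocol below.

```python
import numpy as np
import pywt

def wavelet_denoise(x, wavelet="sym4", level=4, mode="soft"):
    """Generic DWT denoising: decompose, threshold the details, reconstruct."""
    x = np.asarray(x, dtype=float)
    # 1. Decompose: coeffs = [a_J, d_J, ..., d_1]
    coeffs = pywt.wavedec(x, wavelet, level=level)
    # 2. Estimate the noise level from the finest details (MAD) and
    #    compute the universal threshold sigma * sqrt(2 ln N).
    sigma = np.median(np.abs(coeffs[-1])) / 0.6745
    lam = sigma * np.sqrt(2 * np.log(x.size))
    # 3. Threshold the detail coefficients only; keep the approximation.
    thresholded = [coeffs[0]] + [pywt.threshold(d, lam, mode=mode) for d in coeffs[1:]]
    # 4. Reconstruct; trim in case the inverse transform returns an extra sample.
    return pywt.waverec(thresholded, wavelet)[: x.size]

# Usage on synthetic data: a 3 Hz sinusoid sampled at 256 Hz plus white noise.
fs = 256
t = np.arange(0, 4, 1 / fs)
clean = np.sin(2 * np.pi * 3 * t)
noisy = clean + 0.5 * np.random.default_rng(0).standard_normal(t.size)
denoised = wavelet_denoise(noisy)
print("MSE before:", np.mean((noisy - clean) ** 2))
print("MSE after: ", np.mean((denoised - clean) ** 2))
```

The noise estimate and the thresholding function are the parts that typically change between methods; the decompose, threshold, reconstruct structure stays the same.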

Protocol 1: Ocular Artifact Removal using DWT

This protocol is optimized for single-channel EEG data based on the findings of [32].

- Objective: To remove ocular artifacts from a single-channel EEG recording using DWT with a Statistical Threshold.

- Materials: Single-channel EEG data contaminated with ocular artifacts, software with DWT implementation (e.g., MATLAB, Python with PyWavelets).

- Procedure:
  - Parameter Selection:
    - Mother Wavelet: Select `coif3` or `bior4.4`.
    - Decomposition Level (J): Choose an appropriate level (e.g., 5-8) based on the sampling frequency and the frequency band of the artifacts. Ocular artifacts are typically below 5 Hz.
    - Thresholding Method: Statistical Threshold (ST).
  - Wavelet Decomposition: Decompose the raw EEG signal $x[n]$ using DWT to obtain one set of approximation coefficients $a_J$ and multiple sets of detail coefficients $d_1$ to $d_J$.
  - Threshold Calculation & Application:
    - For each level of detail coefficients $d_j$, calculate the noise threshold $\lambda_j$ using the Statistical Threshold formula $\lambda_j = \sigma_j \sqrt{2 \log N}$, where $\sigma_j$ is the standard deviation of the wavelet coefficients at level $j$, $N$ is the length of the data, and $\log$ denotes the natural logarithm.
    - Apply hard thresholding to the detail coefficients: set to zero all coefficients with an absolute value less than $\lambda_j$, and leave the others unchanged. This preserves edge sharpness.
  - Signal Reconstruction: Perform the inverse DWT using the thresholded detail coefficients and the original approximation coefficients to reconstruct the denoised EEG signal.
  - Validation: Quantify performance using the Correlation Coefficient, Signal-to-Artifact Ratio (SAR), and Normalized Mean Square Error (NMSE) against a clean reference signal, if one is available [32].
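The steps above can be sketched in Python with PyWavelets as follows. The helper `choose_level` is hypothetical: it picks the smallest level whose nominal approximation band (0 to $f_s/2^{J+1}$ Hz) falls at or below a given artifact frequency, capped at the library's maximum useful level. The thresholding follows the procedure as written (per-level standard deviation, $\lambda_j = \sigma_j\sqrt{2\log N}$, hard thresholding of the details only). The function name `dwt_hard_threshold` and the synthetic input are assumptions, and the sketch does not claim to reproduce the exact pipeline of [32].

```python
import numpy as np
import pywt

def choose_level(fs, artifact_max_hz, n_samples, wavelet="coif3"):
    """Hypothetical helper: smallest J whose nominal approximation band
    (0 to fs / 2**(J + 1) Hz) lies at or below artifact_max_hz, capped at
    the maximum useful DWT level for this signal length and wavelet."""
    level = 1
    while fs / 2 ** (level + 1) > artifact_max_hz:
        level += 1
    return min(level, pywt.dwt_max_level(n_samples, pywt.Wavelet(wavelet).dec_len))

def dwt_hard_threshold(x, wavelet="coif3", level=5):
    """Protocol 1 steps as listed: DWT, lambda_j = std(d_j) * sqrt(2 ln N),
    hard thresholding of the detail coefficients, inverse DWT."""
    x = np.asarray(x, dtype=float)
    n = x.size
    coeffs = pywt.wavedec(x, wavelet, level=level)      # [a_J, d_J, ..., d_1]
    out = [coeffs[0]]                                    # approximation left unchanged
    for d in coeffs[1:]:
        lam = np.std(d) * np.sqrt(2 * np.log(n))         # statistical threshold per level
        out.append(pywt.threshold(d, lam, mode="hard"))  # zero |d| < lambda_j, keep others
    return pywt.waverec(out, wavelet)[:n]

# Usage with a synthetic stand-in for a 4 s single-channel recording at 256 Hz.
fs = 256
x = np.random.default_rng(0).standard_normal(4 * fs)
J = choose_level(fs, artifact_max_hz=5.0, n_samples=x.size)
y = dwt_hard_threshold(x, level=J)
print("level:", J, "output samples:", y.size)
```

Because the approximation coefficients $a_J$ pass through unchanged, all content in the nominal $a_J$ band (about 0-4 Hz for $f_s = 256$ Hz and $J = 5$) is retained in the output.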

Protocol 2: Translation-Invariant Denoising using SWT

This protocol is suited for applications where preserving the exact temporal features of the EEG signal is critical.

- Objective: To denoise a multi-channel or single-channel EEG signal using the translation-invariant properties of SWT.

- Materials: EEG data, software with SWT implementation.

- Procedure:
  - Parameter Selection:
    - Mother Wavelet: Select `coif3` or `sym3`.
    - Decomposition Level (J): Similar to the DWT protocol.
    - Thresholding Method: Universal Threshold (UT) or Level-Dependent Threshold (LDT).
  - Wavelet Decomposition: Decompose the raw EEG signal $x[n]$ using SWT. This generates $J$ sets of detail coefficients $w_{j,k}$, each of length $N$ (the original signal length), and one set of approximation coefficients $a_{J,k}$ of length $N$.
  - Threshold Calculation & Application:
    - Estimate the noise level $\sigma_j$ at each level using the Median Absolute Deviation (MAD): $\sigma_j = \operatorname{median}_k\left(\lvert w_{j,k} - \operatorname{median}_k(w_{j,k}) \rvert\right) / 0.6745$.
    - Calculate the Level-Dependent Threshold $\lambda_j = \sigma_j \sqrt{2 \log N_j}$, where $N_j$ is the number of coefficients at level $j$ (for SWT, $N_j = N$).
    - Apply a compromise thresholding function such as the non-negative garrote, $T(x, \lambda) = 0$ if $|x| \le \lambda$ and $T(x, \lambda) = x - \lambda^2 / x$ if $|x| > \lambda$. This function balances the smoothness of soft thresholding against the edge preservation of hard thresholding [33].
  - Signal Reconstruction: Perform the inverse SWT using the thresholded detail coefficients and the approximation coefficients to obtain the denoised signal.
  - Validation: Use the same performance metrics as Protocol 1. Additionally, inspect the denoised signal for the absence of artifact-induced distortions temporally aligned with eye-blink events.
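A corresponding sketch for Protocol 2, again assuming PyWavelets, uses `pywt.swt` and `pywt.iswt`, a MAD noise estimate per level, $\lambda_j = \sigma_j\sqrt{2\log N}$, and PyWavelets' built-in non-negative garrote (`pywt.threshold(..., mode="garrote")`). Because `pywt.swt` requires the signal length to be a multiple of $2^J$, the function pads the signal symmetrically and trims the result; this padding step and the name `swt_garrote_denoise` are implementation choices for illustration, not part of the protocol.

```python
import numpy as np
import pywt

def swt_garrote_denoise(x, wavelet="sym3", level=5):
    """Protocol 2 sketch: SWT, per-level MAD noise estimate,
    lambda_j = sigma_j * sqrt(2 ln N), non-negative garrote, inverse SWT."""
    x = np.asarray(x, dtype=float)
    n = x.size
    # pywt.swt needs a length divisible by 2**level: pad symmetrically, trim later.
    pad = (-n) % (2 ** level)
    xp = np.pad(x, (0, pad), mode="symmetric")
    N = xp.size                                   # every SWT level has N coefficients
    coeffs = pywt.swt(xp, wavelet, level=level)   # [(a_J, d_J), ..., (a_1, d_1)]
    out = []
    for a, d in coeffs:
        sigma = np.median(np.abs(d - np.median(d))) / 0.6745   # MAD noise estimate
        lam = sigma * np.sqrt(2 * np.log(N))                   # level-dependent threshold
        # Garrote: 0 where |d| <= lam, d - lam**2 / d elsewhere.
        out.append((a, pywt.threshold(d, lam, mode="garrote")))
    return pywt.iswt(out, wavelet)[:n]

# Usage on synthetic data: a 3 Hz sinusoid at 256 Hz plus white noise.
fs = 256
t = np.arange(0, 4, 1 / fs)
clean = np.sin(2 * np.pi * 3 * t)
noisy = clean + 0.3 * np.random.default_rng(2).standard_normal(t.size)
denoised = swt_garrote_denoise(noisy, level=4)
print("MSE before:", np.mean((noisy - clean) ** 2))
print("MSE after: ", np.mean((denoised - clean) ** 2))
```

`pywt.iswt` rebuilds the signal from the coarsest approximation and all detail arrays, so thresholding only the details leaves the low-frequency band untouched, mirroring the reconstruction step listed above.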

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key components required for implementing the wavelet denoising protocols described in this note.

Table 3: Essential Research Reagents and Computational Solutions for Wavelet-Based EEG Denoising.

| Item | Function / Description | Example / Note |
|---|---|---|
| EEG Data Source | Raw signal for processing. Can be from public databases or newly acquired. | Karunya University database [34]; Department of Epileptology, University of Bonn [7]. |
| Computational Software | Platform for implementing DWT/SWT algorithms and signal analysis. | MATLAB (with Wavelet Toolbox) [32], Python (with PyWavelets, SciPy). |
| Mother Wavelets | Basis functions for decomposing the EEG signal. | Coif3, Bior4.4 (optimal for DWT-OA removal) [32]; Haar, Sym3 (commonly used) [32] [7]. |
| Thresholding Functions | Algorithms to modify wavelet coefficients for noise removal. | Hard Thresholding (preserves edges) [32], Soft Thresholding (smoother) [32], Non-negative Garrote (compromise) [33]. |
| Performance Metrics | Quantitative measures to evaluate denoising efficacy. | Correlation Coefficient, Signal-to-Artifact Ratio (SAR), Normalized MSE [32], Signal-to-Noise Ratio (SNR) [7]. |

The choice between DWT and SWT for EEG denoising is not a matter of one being universally superior to the other. Rather, it is a decision that must be aligned with the specific research goals and constraints. DWT, particularly when configured with a Statistical Threshold and an appropriate mother wavelet like coif3, offers a compelling combination of high performance and computational efficiency, making it exceptionally well-suited for minimalist, single-channel systems and potential real-time applications [32]. In contrast, SWT provides the critical benefit of translation invariance, which can be indispensable for analyses where the precise temporal localization of signal features is paramount, albeit at a higher computational cost.

Wavelet-based EEG denoising continues to evolve rapidly. Promising directions include hybrid models that combine the strengths of multiple signal processing techniques, such as integrating wavelet transforms with blind source separation (BSS) methods like Independent Component Analysis (ICA) [27]. Advanced frameworks such as the Adaptive Residual-Incorporating Chirp-Based (ARICB) model, which decomposes EEG into non-stationary, quasi-stationary, and noise components in the fractional wavelet domain, go a significant step beyond conventional binary signal-versus-noise models [11]. As demand for ambulatory EEG monitoring and high-fidelity BCIs grows, refining these unsupervised, computationally intelligent denoising techniques will remain a critical area of investigation for researchers and drug development professionals alike.

Electroencephalogram (EEG) signals are pivotal in clinical diagnosis, brain-computer interface (BCI) systems, and neurological disorder studies, yet their low amplitude makes them highly susceptible to contamination from physiological and environmental artifacts. Effective denoising is therefore a critical preprocessing step for preserving the integrity of neural information. The wavelet transform has emerged as a powerful tool for this purpose, offering multi-resolution analysis and excellent time-frequency localization that are particularly suited to the non-stationary nature of EEG signals. This application note details a standardized pipeline for wavelet-based denoising of EEG signals, providing researchers and drug development professionals with experimentally validated protocols to enhance signal quality for downstream analysis and interpretation.

The Core Denoising Workflow