Validating EEG Artifact Removal: A Comprehensive Guide to Simulated Data and Ground-Truth Methodologies

Abstract

This article provides a comprehensive framework for researchers and drug development professionals on validating electroencephalography (EEG) artifact removal techniques using simulated data. It covers the fundamental necessity of ground-truth data for reliable validation, explores established and emerging methodologies for generating and utilizing simulated EEG, addresses common troubleshooting and optimization challenges, and establishes rigorous protocols for the comparative evaluation of artifact removal performance. By synthesizing current best practices and validation strategies, this guide aims to enhance the rigor and reproducibility of EEG preprocessing in both clinical and research settings, ultimately leading to more robust neural biomarkers and neurophysiological insights.

The Critical Role of Ground-Truth Data in EEG Artifact Removal

Why Simulated Data is Indispensable for Validation

In electroencephalography (EEG) research, the ability to accurately remove artifacts—unwanted noise from muscle movement, eye blinks, or cardiac activity—is fundamental to interpreting brain signals. However, validating the algorithms designed to remove these artifacts presents a unique challenge: in real-world recordings, the underlying true brain signal is never known. Simulated data provides an indispensable solution to this problem by creating scenarios where the "ground truth" is explicitly defined, enabling rigorous and quantitative validation of artifact removal techniques.

The Fundamental Challenge of Unknown Truth

Electroencephalography is a non-invasive technique that records the brain's electrical activity through electrodes placed on the scalp. Owing to its high temporal resolution, portability, and non-invasiveness, EEG is widely used in applications ranging from sleep-quality monitoring and emotion recognition to the detection of Alzheimer's disease and epilepsy [1].

However, EEG signals are notoriously susceptible to contamination from multiple sources:

- Physiological artifacts: Including Electromyography (EMG) from muscle activity, Electrooculography (EOG) from eye movements, and Electrocardiography (ECG) from heart activity [1]

- Motion artifacts: Particularly problematic in mobile EEG (mo-EEG) systems used in real-world settings [2]

- Technical artifacts: Stemming from environmental noise and recording system limitations [2]

The central problem for validation is straightforward yet profound: when analyzing real EEG data, researchers can never be certain what constitutes genuine brain activity versus artifact because both are mixed in the recorded signal. This makes it impossible to quantitatively assess how well artifact removal algorithms work, as there's no benchmark for comparison [1] [2].

Simulated Data as a Validation Solution

Simulated data addresses this fundamental limitation by creating scenarios where the ground truth brain signal is known. The core approach involves:

- Starting with clean EEG recordings obtained under ideal, restricted-movement conditions

- Artificially introducing controlled artifacts into these clean signals

- Applying artifact removal algorithms to the contaminated signals

- Comparing the results against the original clean recordings [1]

This methodology enables researchers to calculate precise performance metrics because both the contaminated input and the processed output can be compared against a known standard: the original clean EEG.

Performance Metrics Enabled by Simulated Data

The use of simulated data allows quantification of artifact removal performance through standardized metrics:

Table 1: Key Performance Metrics for EEG Artifact Removal Validation

| Metric | Description | Interpretation |
|---|---|---|
| Signal-to-Noise Ratio (SNR) | Ratio of signal power to noise power, usually expressed in dB | Higher values indicate better artifact removal |
| Correlation Coefficient (CC) | Linear relationship between processed and clean EEG | Values closer to 1.0 indicate better preservation of original signal |
| Relative Root Mean Square Error (RRMSE) | Root mean square of the difference between processed and clean EEG, relative to the root mean square of the clean EEG | Lower values indicate more accurate reconstruction |
| Artifact Reduction Percentage (η) | Percentage reduction in artifact components | Higher values indicate more effective artifact removal |
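As a rough illustration of how the first three metrics in the table can be computed once a ground-truth signal is available, the following NumPy sketch may help. The function names are hypothetical, and the SNR here is defined as clean-signal power over residual-error power, which is one common convention and an assumption on our part; individual studies may define it differently. The artifact reduction percentage is left out because its exact definition varies between papers.

```python
import numpy as np


def snr_db(clean, processed):
    """Output SNR in dB: power of the clean signal over power of the residual error."""
    clean = np.asarray(clean, dtype=float)
    residual = np.asarray(processed, dtype=float) - clean
    return float(10.0 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2)))


def correlation_coefficient(clean, processed):
    """Pearson correlation between the clean and processed signals."""
    return float(np.corrcoef(clean, processed)[0, 1])


def rrmse(clean, processed):
    """RMS of the residual error divided by the RMS of the clean signal."""
    clean = np.asarray(clean, dtype=float)
    residual = np.asarray(processed, dtype=float) - clean
    return float(np.sqrt(np.mean(residual ** 2)) / np.sqrt(np.mean(clean ** 2)))


# Toy check: a "processed" signal equal to the clean signal with a 10% amplitude error.
t = np.linspace(0.0, 1.0, 500, endpoint=False)
clean = np.sin(2 * np.pi * 10 * t)
processed = 1.1 * clean
print(snr_db(clean, processed), correlation_coefficient(clean, processed), rrmse(clean, processed))
```

All three functions need the clean reference as an argument, which is why they can only be evaluated on simulated or semi-synthetic data.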

Experimental Evidence from Recent Advances

Recent research demonstrates how simulated data has driven innovations in EEG artifact removal by enabling quantitative validation:

CLEnet: Dual-Scale CNN and LSTM Architecture

The CLEnet model integrates dual-scale convolutional neural networks (CNN) with Long Short-Term Memory (LSTM) networks and an improved EMA-1D attention mechanism. When validated on simulated datasets, CLEnet demonstrated significant improvements:

- In removing mixed artifacts (EMG + EOG), CLEnet achieved an SNR of 11.498 dB and CC of 0.925 [1]

- For ECG artifact removal, CLEnet showed a 5.13% increase in SNR and 8.08% decrease in temporal RRMSE compared to previous models [1]

- The model exhibited 2.45% and 2.65% improvements in SNR and CC respectively for multi-channel EEG containing unknown artifacts [1]

These quantitative results, made possible by simulated data validation, demonstrated CLEnet's capability to handle various artifact types more effectively than previous approaches.

Motion-Net: Subject-Specific Motion Artifact Removal

Motion-Net represents a specialized approach for removing motion artifacts in mobile EEG applications. Using simulated data with known ground truth references, researchers demonstrated:

- Motion artifact reduction percentage (η) of 86% ± 4.13 [2]

- SNR improvement of 20 ± 4.47 dB [2]

- Mean Absolute Error (MAE) of 0.20 ± 0.16 [2]

The study incorporated visibility graph features to enhance model accuracy with smaller datasets, with performance rigorously quantified through simulated data validation [2].

A²DM: Artifact-Aware Denoising Model

The A²DM framework introduced an innovative approach by fusing artifact representation into the time-frequency domain. When evaluated on the benchmark EEGdenoiseNet dataset:

- A²DM demonstrated a 12% improvement in correlation coefficient metrics compared to previous state-of-the-art methods [3]

- The model effectively removed both ocular and muscle artifacts in a unified framework [3]

This performance validation relied critically on simulated data where ground truth signals were available for comparison.

Experimental Protocols for Simulation-Based Validation

The validation of EEG artifact removal methods follows structured experimental protocols when using simulated data:

Protocol 1: Semi-Synthetic Data Generation

This approach combines clean EEG segments with recorded artifact signals:

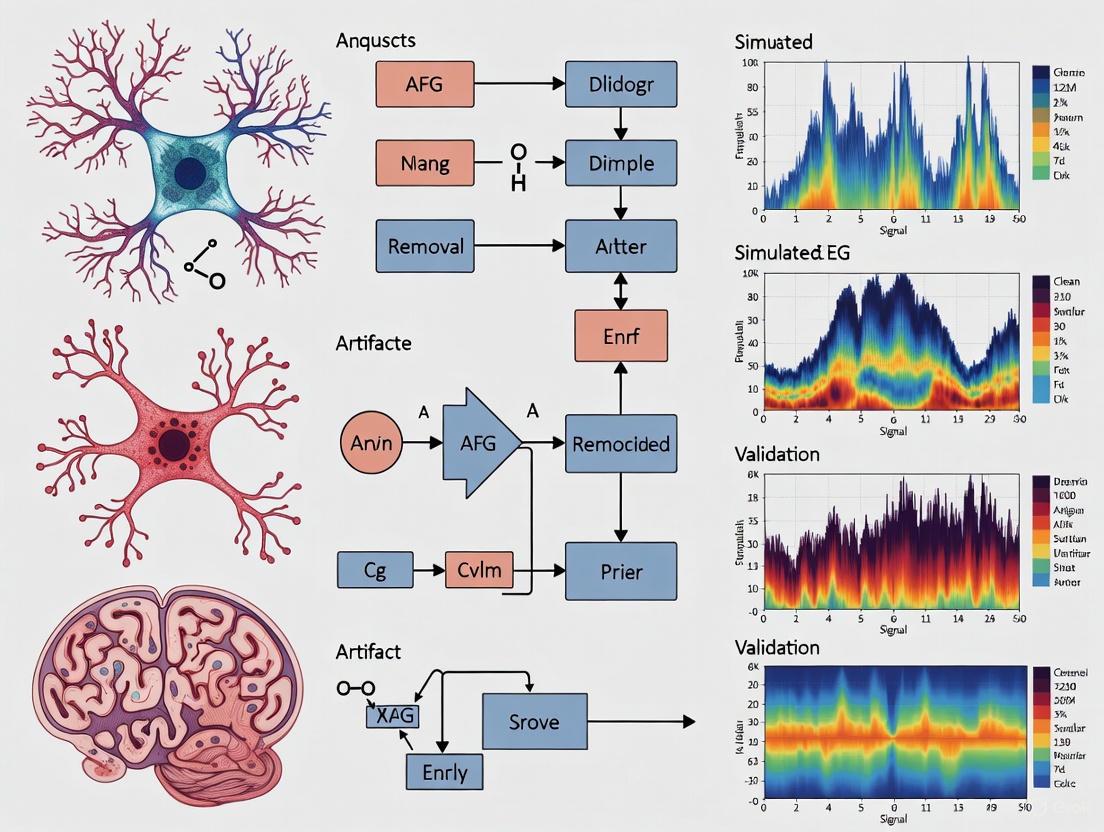

Diagram 1: Semi-Synthetic Data Validation Workflow

This protocol involves:

- Source Data Collection: Clean EEG is typically recorded under restricted movement conditions, while artifacts (EOG, EMG, ECG) are separately recorded [1]

- Mixing Process: Artifacts are artificially introduced into clean EEG at controlled signal-to-noise ratios [1]

- Algorithm Testing: The contaminated signals are processed through artifact removal algorithms

- Performance Quantification: Processed signals are compared to original clean EEG using standardized metrics [1]
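The mixing step of this protocol can be sketched as follows. This is a minimal example under stated assumptions: the helper name `mix_at_snr` is hypothetical, the random arrays merely stand in for recorded clean EEG and artifact segments, and the SNR is defined as a power ratio in dB (some benchmarks use an RMS-amplitude ratio instead).

```python
import numpy as np


def mix_at_snr(clean, artifact, snr_db):
    """Scale the artifact so that clean-to-artifact power ratio equals snr_db, then add it."""
    clean = np.asarray(clean, dtype=float)
    artifact = np.asarray(artifact, dtype=float)
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    # Solve 10*log10(p_clean / (scale**2 * p_artifact)) = snr_db for scale.
    scale = np.sqrt(p_clean / (p_artifact * 10.0 ** (snr_db / 10.0)))
    return clean + scale * artifact, scale


# Placeholder signals (assumption): white noise standing in for real recordings.
rng = np.random.default_rng(0)
clean_eeg = rng.standard_normal(1000)
eog_like = 5.0 * rng.standard_normal(1000)

contaminated, scale = mix_at_snr(clean_eeg, eog_like, snr_db=-3.0)
achieved = 10.0 * np.log10(np.mean(clean_eeg ** 2) / np.mean((contaminated - clean_eeg) ** 2))
print(round(float(achieved), 3))
```

Sweeping `snr_db` over a range of values yields a family of contaminated signals of graded difficulty, all sharing the same known clean reference.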

Protocol 2: Real Data with Ground Truth Reference

This approach uses specialized experimental setups to capture both contaminated and reference signals simultaneously:

Diagram 2: Real Data with Reference Validation Workflow

This method includes:

- Specialized Recording: Using systems specifically designed to capture both clean and contaminated signals [2]

- Motion Tasks: Subjects perform specific movements known to generate artifacts [2]

- Reference Signals: Obtained through electrode placements minimally affected by motion [2]

- Cross-Validation: Comparing algorithm output against reference signals [2]

Table 2: Key Research Resources for EEG Artifact Removal Validation

| Resource | Function | Application in Validation |
|---|---|---|
| EEGdenoiseNet [1] [3] | Benchmark dataset with semi-synthetic EEG | Provides standardized data for algorithm comparison |
| Mockaroo [4] | Data generation tool | Creates synthetic datasets with controlled parameters |
| Independent Component Analysis (ICA) [5] | Blind source separation technique | Baseline method for performance comparison |
| Visibility Graph Features [2] | Signal transformation method | Enhances artifact detection in deep learning models |
| Monte Carlo Simulations [6] [7] | Statistical sampling method | Assesses algorithm robustness across variations |

Advancing the Field Through Simulation

The indispensable role of simulated data in EEG artifact removal validation extends beyond initial algorithm development. It enables:

- Objective benchmarking of different approaches [1] [3]

- Identification of algorithm strengths and weaknesses across different artifact types [3]

- Robustness testing under varying signal conditions [7]

- Reproducible research through standardized evaluation protocols [1]
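Robustness testing of this kind is often done with Monte Carlo repetition: the contamination is re-drawn many times under randomly varied conditions and the metric distribution is summarized. The sketch below is only an illustration under loud assumptions. A 5-point moving average stands in for the algorithm under test, a 10 Hz sinusoid stands in for clean EEG, white noise stands in for the artifact, and all names and parameter ranges are hypothetical.

```python
import numpy as np


def moving_average(x, width=5):
    """Placeholder 'artifact removal' method: a simple smoothing filter."""
    kernel = np.ones(width) / width
    return np.convolve(x, kernel, mode="same")


def monte_carlo_cc(n_trials=200, fs=250, seconds=2.0, seed=0):
    """Correlation with ground truth before and after processing, over random trials."""
    rng = np.random.default_rng(seed)
    t = np.arange(int(fs * seconds)) / fs
    before, after = [], []
    for _ in range(n_trials):
        clean = np.sin(2 * np.pi * 10.0 * t + rng.uniform(0.0, 2.0 * np.pi))
        snr_db = rng.uniform(-5.0, 5.0)          # random contamination level per trial
        noise = rng.standard_normal(t.size)
        scale = np.sqrt(np.mean(clean ** 2) / (np.mean(noise ** 2) * 10.0 ** (snr_db / 10.0)))
        contaminated = clean + scale * noise
        cleaned = moving_average(contaminated)
        before.append(np.corrcoef(clean, contaminated)[0, 1])
        after.append(np.corrcoef(clean, cleaned)[0, 1])
    before, after = np.array(before), np.array(after)
    return {
        "cc_before": (float(before.mean()), float(before.std())),
        "cc_after": (float(after.mean()), float(after.std())),
    }


summary = monte_carlo_cc()
print(summary)
```

Reporting the mean and spread across trials, rather than a single run, gives a fairer picture of how an algorithm behaves when contamination levels and signal phases vary.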

As the field progresses toward more sophisticated applications—including mobile EEG, brain-computer interfaces, and clinical monitoring systems—the role of simulated data in validating artifact removal techniques becomes increasingly critical. Only through rigorous simulation-based validation can researchers ensure that the brain signals they analyze truly represent neurological activity rather than methodological artifacts.

Common EEG Artifacts and Their Impact on Data Integrity

Electroencephalography (EEG) is a vital tool for investigating brain function, but the signals it records are notoriously susceptible to contamination from unwanted sources, known as artifacts. These artifacts, which can originate from the patient's own body or the external environment, pose a significant threat to data integrity, potentially leading to the misinterpretation of neural signals and incorrect conclusions in both research and clinical settings [8] [9]. This guide provides an objective comparison of common EEG artifacts and the methodologies used to mitigate them, with a specific focus on experimental data and protocols relevant to validating artifact removal techniques using simulated data.

EEG artifacts are undesired signals that introduce changes in measurements and can obscure the neural signal of interest. Given that the amplitude of cerebral EEG is typically in the microvolt range, it is highly sensitive to contamination from sources with much larger amplitudes [8] [10]. Artifacts are broadly categorized as physiological (originating from the subject's body) or non-physiological (technical, from external sources) [9] [11].

The table below summarizes the characteristics and impacts of the most prevalent physiological artifacts.

Table 1: Characteristics and Impact of Common Physiological EEG Artifacts

| Artifact Type | Origin | Typical Waveform/Morphology | Spectral Characteristics | Primary Electrodes Affected | Potential Impact on Data Integrity |
|---|---|---|---|---|---|
| Ocular (Eye Blinks/Movements) | Corneo-retinal dipole (eye as dipole: cornea +, retina -) [8] [11] | Slow, high-amplitude deflections; blinks cause positive waveform in frontal electrodes [8] | Dominant in low frequencies (Delta, Theta bands) [9] | (Pre)frontal (Fp1, Fp2); lateral movements affect F7, F8 [8] [9] | Mimics slow-wave activity; can be misinterpreted as interictal discharges in epilepsy [8] |
| Muscle (EMG) | Contraction of head, face, or neck muscles [8] | High-frequency, sharp, irregular activity [9] | Broadband, but primarily >30 Hz (Beta/Gamma bands) [8] [9] | Widespread, but often localized to temporal regions [8] | Obscures genuine brain rhythms; reduces SNR in high-frequency bands critical for cognitive/motor studies [9] |
| Cardiac (ECG/Pulse) | Electrical activity of the heart (ECG) or pulsation of blood vessels under electrodes (pulse) [8] [11] | Rhythmic, stereotyped waveforms synchronized with heartbeat [11] | Overlaps multiple EEG bands; pulse artifact ~1.2 Hz [10] | Left-side brain electrodes (due to heart's position); electrodes over pulsating vessels [8] [10] | Rhythmic waveforms may be mistaken for abnormal cerebral activity such as sharp waves [11] |

Non-physiological artifacts include electrode pops (sudden, high-amplitude transients from impedance changes), cable movement, 50/60 Hz AC line noise, and incorrect reference placement [9] [11]. These are often addressed through proper experimental setup and hardware solutions, but may require dedicated post-processing when they do occur [10].

# Methodologies for Artifact Detection and Removal

A wide array of techniques has been developed to manage EEG artifacts. The choice of method often involves a trade-off between the amount of data preserved and the risk of introducing new distortions or failing to remove the artifact.

Table 2: Comparison of Common EEG Artifact Removal Methodologies

| Method | Underlying Principle | Common Applications | Key Experimental Findings | Advantages | Limitations |
|---|---|---|---|---|---|
| Regression (Time/Frequency Domain) | Estimates artifact contribution via reference channels (e.g., EOG) and subtracts it from EEG signal [10] | Primarily for ocular artifacts [10] | Traditional method; can be affected by bidirectional interference (EEG contaminating EOG reference) [10] | Conceptually simple [10] | Requires exogenous reference channels; may remove neural signals along with artifact [10] |
| Independent Component Analysis (ICA) | Blind source separation (BSS); decomposes EEG into statistically independent components, which are classified and removed [8] [10] | Ocular, muscle, and cardiac artifacts; BCG artifacts in EEG-fMRI [8] [12] | A 2025 study found ICA did not significantly improve decoding performance in most cases but was essential to avoid artifact-related confounds [13]. For BCG removal, ICA showed sensitivity to frequency-specific patterns in dynamic connectivity graphs [12]. | Does not require reference channels; can isolate and remove multiple artifact types [8] | Requires manual component inspection (expertise-dependent); computationally intensive; performance degrades with low-channel counts [8] [14] |
| Artifact Subspace Reconstruction (ASR) | Identifies and removes high-variance components in multi-channel EEG using a sliding window and statistical thresholding [15] | Non-stereotyped artifacts, movement artifacts; adaptable for mobile EEG and infant data [15] | The NEAR pipeline, combining ASR with bad channel detection, successfully reconstructed established EEG responses from noisy newborn datasets [15]. | Effective for non-stereotypical artifacts; can be used in real-time; suitable for low-channel setups [15] | Performance depends on calibration data and parameter tuning; may remove neural data if too aggressive [15] |
| Wavelet Transform | Decomposes signal into time-frequency space, allowing for targeted removal of artifact coefficients [10] [14] | Ocular and muscular artifacts, particularly in wearable EEG [14] | Emerging as a frequently used technique for managing ocular and muscular artifacts in wearable EEG pipelines, often using thresholding [14]. | Good for non-stationary signals; does not require multi-channel data [10] | Choice of mother wavelet and threshold rules can impact performance [10] |
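To make the regression row of the table above concrete, the following is a minimal time-domain sketch: propagation weights from a reference channel to each EEG channel are estimated by least squares and the fitted contribution is subtracted. The function name `regress_out` and the simulated signals are hypothetical. The example also assumes an ideal reference containing no brain activity, which is precisely the assumption that bidirectional interference violates in practice.

```python
import numpy as np


def regress_out(eeg, reference):
    """Subtract the least-squares projection of the reference channels from each EEG channel.

    eeg: array (n_channels, n_samples); reference: array (n_refs, n_samples).
    Returns the corrected EEG and the estimated weights (n_channels, n_refs).
    """
    eeg = np.atleast_2d(np.asarray(eeg, dtype=float))
    ref = np.atleast_2d(np.asarray(reference, dtype=float))
    ref_c = ref - ref.mean(axis=1, keepdims=True)   # remove offsets before fitting
    eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
    # Solve ref_c.T @ coeffs ~= eeg_c.T in the least-squares sense.
    coeffs, *_ = np.linalg.lstsq(ref_c.T, eeg_c.T, rcond=None)
    corrected = eeg - (ref_c.T @ coeffs).T
    return corrected, coeffs.T


# Simulated example (assumption): two EEG channels contaminated by one EOG-like source.
rng = np.random.default_rng(0)
brain = rng.standard_normal((2, 2000))
eog = 50.0 * rng.standard_normal((1, 2000))
true_weights = np.array([[0.8], [0.2]])
eeg = brain + true_weights @ eog

corrected, est_weights = regress_out(eeg, eog)
print(est_weights.ravel())
```

Because the reference here is artifact-only by construction, the estimated weights land close to the true ones; with a real EOG channel that also picks up frontal brain activity, the same subtraction would remove some neural signal as well.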

Experimental Protocol: Impact of Artifact Correction on Decoding Performance

A 2025 study systematically evaluated how artifact correction and rejection affect the performance of Support Vector Machine (SVM) and Linear Discriminant Analysis (LDA)-based decoding of EEG signals [13].

- Objective: To determine whether eliminating artifacts during preprocessing enhances the performance of multivariate pattern analysis (MVPA), given that artifact rejection reduces the number of trials available for training the decoder [13].

- Methodology: The researchers used Independent Component Analysis (ICA) to correct for ocular artifacts. Artifact rejection was employed to discard trials with large voltage deflections from other sources, such as muscle artifacts. They assessed decoding performance across seven common event-related potential (ERP) paradigms and more challenging multi-way decoding tasks [13].

- Key Result: The combination of artifact correction and rejection did not significantly improve decoding performance in the vast majority of cases. However, the study strongly recommends using artifact correction prior to decoding analyses to reduce artifact-related confounds that might artificially inflate decoding accuracy [13].

# Validation and Emerging Frontiers in Artifact Management

Validation of artifact removal techniques is increasingly relying on sophisticated computational approaches and multi-modal data integration. A key development is the use of data assimilation (DA) to estimate neurophysiological parameters like cortical excitation-inhibition (E/I) balance from EEG data. A 2025 study validated a DA-based computational approach by comparing its E/I estimates with concurrent Transcranial Magnetic Stimulation and EEG (TMS-EEG) measures, finding significant correlations [16]. This demonstrates that computational methods can provide neurophysiologically valid estimations, offering a framework for benchmarking artifact removal pipelines.

Simultaneous EEG-fMRI presents a unique artifact removal challenge in the form of the ballistocardiogram (BCG) artifact. A 2025 systematic evaluation of BCG removal methods—Average Artifact Subtraction (AAS), Optimal Basis Set (OBS), and ICA—found that the choice of method significantly impacts subsequent functional connectivity analysis [12]. AAS achieved the best signal fidelity (MSE = 0.0038, PSNR = 26.34 dB), while OBS best preserved structural similarity (SSIM = 0.72). ICA, though weaker on signal metrics, was sensitive to frequency-specific patterns in dynamic network graphs [12]. This highlights that the optimal method depends on the downstream analysis goal.
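The idea behind Average Artifact Subtraction can be sketched in a few lines: epochs time-locked to each heartbeat are averaged into a template, which is then subtracted at every beat. The version below is a simplified illustration, not the implementation evaluated in [12]. It uses one global template rather than a sliding average of neighbouring beats, assumes non-overlapping epochs with known onsets, and defines PSNR from the peak absolute amplitude of the reference, which is our assumption. All names are hypothetical.

```python
import numpy as np


def average_artifact_subtraction(signal, onsets, length):
    """Build a mean template from artifact-locked epochs and subtract it at each onset."""
    signal = np.asarray(signal, dtype=float)
    onsets = [int(o) for o in onsets if o + length <= signal.size]
    epochs = np.stack([signal[o:o + length] for o in onsets])
    template = epochs.mean(axis=0)
    cleaned = signal.copy()
    for o in onsets:  # assumes epochs do not overlap
        cleaned[o:o + length] -= template
    return cleaned, template


def mse(reference, estimate):
    return float(np.mean((np.asarray(reference) - np.asarray(estimate)) ** 2))


def psnr_db(reference, estimate):
    """PSNR with the peak taken as max |reference| (an assumed convention)."""
    peak = np.max(np.abs(reference))
    return float(10.0 * np.log10(peak ** 2 / mse(reference, estimate)))


# Simulated example (assumption): white-noise "EEG" plus a repeating pulse-like artifact.
rng = np.random.default_rng(0)
brain = rng.standard_normal(5000)
onsets = np.arange(100, 4800, 200)
pulse = 20.0 * np.hanning(100)
contaminated = brain.copy()
for o in onsets:
    contaminated[o:o + 100] += pulse

cleaned, template = average_artifact_subtraction(contaminated, onsets, 100)
print(mse(brain, contaminated), mse(brain, cleaned), psnr_db(brain, cleaned))
```

The template also absorbs the average of the underlying brain signal across epochs, so any activity that is phase-locked to the heartbeat would be removed along with the artifact.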

The workflow for validating artifact removal pipelines often combines simulated and real data.

For researchers designing experiments involving EEG artifact validation, the following tools and data are essential.

Table 3: Essential Research Reagents and Resources for EEG Artifact Research

| Tool/Resource | Function/Role in Research | Application Notes |
|---|---|---|
| Independent Component Analysis (ICA) | A blind source separation algorithm to decompose multi-channel EEG into independent components for artifact identification and removal [8] [10]. | Implemented in toolboxes like EEGLAB; requires expertise for manual component selection. Performance is best with high-density EEG systems [8] [14]. |
| Simulated Data Generation | Creates EEG signals with known ground truth and controlled artifact properties to quantitatively evaluate removal algorithms [16]. | Critical for initial validation. Can be generated using neural mass models or by adding real artifacts to clean baseline data [16]. |
| Artifact Subspace Reconstruction (ASR) | An adaptive, automated method for cleaning continuous EEG data by identifying and removing high-variance segments [15]. | Particularly useful for non-stereotypical artifacts in mobile EEG and motion-heavy recordings (e.g., infant studies) [15]. |
| Auxiliary Reference Sensors | Sensors (EOG, ECG, IMU) that provide direct measurements of physiological artifacts (eye, heart, movement) [10] [14]. | Used for regression-based removal and for validating the performance of other methods like ICA. Still underutilized in wearable EEG [10] [14]. |
| Public EEG Datasets with Artifacts | Benchmarks for comparing the performance of different artifact removal pipelines across laboratories [14]. | Should include data from varied populations (adults, infants) and recording setups (lab, mobile) to ensure generalizability [14] [15]. |
| Multi-Modal Neuroimaging (TMS-EEG) | Provides neurophysiological benchmarks (e.g., E/I balance indices) for validating the neurophysiological integrity of data after artifact removal [16]. | Serves as a "gold standard" for validating that artifact cleaning preserves genuine brain signals, not just removes noise [16]. |

The integrity of EEG data is fundamentally linked to the effective management of artifacts. While techniques like ICA, ASR, and wavelet transforms offer powerful solutions, the choice of method is not one-size-fits-all. Experimental evidence indicates that the optimal pipeline depends on the artifact type, the recording context (lab-based vs. wearable), and the ultimate goal of the analysis, whether it is ERP decoding, functional connectivity, or estimating neurophysiological parameters. A robust validation strategy, combining simulated data with known ground truth and multi-modal benchmarks like TMS-EEG, is essential for developing and selecting artifact removal methods that ensure the reliability of neuroscientific and clinical findings.

Spectral and Temporal Characteristics of Key Artifacts

Electroencephalography (EEG) is a vital tool in clinical and cognitive neuroscience, prized for its high temporal resolution and non-invasive nature [1]. However, its diagnostic accuracy is consistently challenged by the presence of artifacts—extraneous signals that obscure underlying brain activity. These artifacts originate from diverse sources, including physiological processes like eye movements and muscle activity, as well as non-physiological sources such as environmental interference and electrode motion [2] [17]. In wearable EEG systems, which employ dry electrodes and are used in mobile, real-world settings, these challenges are exacerbated due to reduced electrode-skin stability and the uncontrolled nature of the recording environment [18] [17]. The reliable removal of these artifacts is therefore a critical step in EEG analysis, forming an essential foundation for downstream applications in brain-computer interfaces, neurological diagnosis, and cognitive monitoring. This guide provides a comparative analysis of the spectral and temporal characteristics of key EEG artifacts and the performance of modern methods designed for their removal, with a specific focus on validation using simulated data.

Spectral and Temporal Profiles of Major Artifact Types

EEG artifacts exhibit distinct signatures in both the spectral (frequency) and temporal (time) domains. Understanding these characteristics is the first step in developing effective artifact removal strategies. The table below summarizes the defining features of the most common artifact types.

Table 1: Spectral and Temporal Characteristics of Key EEG Artifacts

| Artifact Type | Spectral Characteristics | Temporal Characteristics | Primary Sources |
|---|---|---|---|
| Ocular (EOG) | Low-frequency content (< 4 Hz); overlaps with delta rhythm [17]. | Slow, large-amplitude deflections; correlated with eye blinks and movements [17]. | Eye movements, blinks [2]. |
| Muscular (EMG) | Broad-spectrum, high-frequency activity (20-200 Hz); often overlaps with beta and gamma rhythms [17]. | Rapid, irregular, high-frequency spikes [17]. | Muscle contractions in head, neck, jaw [2]. |
| Motion | Can contaminate a broad frequency range [2]. | Sharp transients, baseline shifts, and periodic oscillations; patterns can be arrhythmic [2]. | Head movements, gait cycles, electrode displacement [2]. |
| Cardiac (ECG) | Periodic component around 1-1.7 Hz [1]. | Stereotypical, periodic spike patterns synchronized with heartbeat [18]. | Heartbeat [2]. |
| Non-Physiological/Technical | Specific to noise source (e.g., 50/60 Hz line noise) [1]. | Sudden, large-amplitude shifts (e.g., electrode pops); continuous interference (e.g., static) [19]. | Electrode pops, static, line noise, instrumental interference [2] [17]. |
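The spectral signatures in the table above can be checked on a given segment by measuring how its power is distributed across frequency bands. The sketch below uses a plain FFT periodogram and returns relative band power; the function name and the two toy signals (a 1 Hz wave as an ocular-like component, a 60 Hz wave as a high-frequency component) are illustrative assumptions only.

```python
import numpy as np


def relative_band_power(signal, fs, low, high):
    """Fraction of total spectral power falling in the band [low, high) Hz."""
    signal = np.asarray(signal, dtype=float)
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    power = np.abs(np.fft.rfft(signal - signal.mean())) ** 2
    in_band = (freqs >= low) & (freqs < high)
    return float(power[in_band].sum() / power.sum())


fs = 250
t = np.arange(0, 4 * fs) / fs
slow_wave = np.sin(2 * np.pi * 1.0 * t)    # ocular-like: energy below 4 Hz
fast_wave = np.sin(2 * np.pi * 60.0 * t)   # high-frequency: energy above 20 Hz

print(relative_band_power(slow_wave, fs, 0.5, 4.0))
print(relative_band_power(fast_wave, fs, 20.0, 125.0))
```

A segment whose sub-4 Hz fraction is unusually high at frontal electrodes, or whose fraction above 20 Hz is unusually high at temporal electrodes, is a candidate for closer inspection; the cut-offs themselves would need to be tuned per dataset.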

Comparative Performance of Artifact Removal Algorithms

A variety of algorithms, from classical signal processing to modern deep learning, have been developed to tackle artifact removal. Their performance varies significantly based on the artifact type and the experimental setup. The following table synthesizes quantitative results from recent studies, providing a basis for comparison.

Table 2: Performance Comparison of Representative Artifact Removal Algorithms

| Algorithm | Artifact Type | Key Performance Metrics | Reported Experimental Setup |
|---|---|---|---|
| CLEnet [1] | Mixed (EMG + EOG) | SNR: 11.50 dB; CC: 0.925; RRMSEt: 0.300 [1]. | End-to-end removal from multi-channel EEG; tested on semi-synthetic and real 32-channel data [1]. |
| Motion-Net [2] | Motion | Artifact Reduction (η): 86% ± 4.13; SNR Improvement: 20 ± 4.47 dB; MAE: 0.20 ± 0.16 [2]. | Subject-specific CNN; trained/tested on real EEG with motion artifacts and ground truth [2]. |
| Fingerprint + ARCI + Improved SPHARA [18] | Multiple (Dry EEG) | SD: 6.15 μV; SNR: 5.56 dB [18]. | Combination of ICA-based methods and spatial filtering; tested on 64-channel dry EEG during motor tasks [18]. |
| ICA-based Framework [20] | TMS-Evoked Muscle | High reproducibility of TEPs with 35+ training trials [20]. | Real-time, two-step ICA; validated on pre-published TMS-EEG datasets [20]. |
| Deep Lightweight CNN [19] | Eye, Muscle, Non-Physiological | Eye (ROC AUC): 0.975; Muscle (Accuracy): 93.2%; Non-Physio (F1-score): 77.4% [19]. | Artifact-specific CNN models; trained/tested on Temple University Hospital EEG Corpus [19]. |

Experimental Protocols for Key Studies

The performance data presented above is derived from rigorous experimental protocols. The following workflow generalizes the common steps involved in validating artifact removal methods using simulated or semi-synthetic data, a cornerstone of research in this field.

Diagram 1: Workflow for Validating Artifact Removal Methods.

The methodology for validating artifact removal algorithms often follows a structured pipeline [1] [2]:

- Data Acquisition: The process begins with the collection of clean EEG data. Simultaneously, artifacts are either recorded from real subjects (e.g., during specific tasks to induce eye movements or muscle activity) or are mathematically simulated [1].

- Semi-Synthetic Data Generation: To establish a reliable ground truth for quantitative evaluation, clean EEG signals are artificially contaminated with the recorded or simulated artifacts. This creates a semi-synthetic dataset where the true, artifact-free signal is known [1].

- Algorithm Training: The removal algorithm is trained. For deep learning models, this is typically done in a supervised manner, where the model learns to map the contaminated input to the clean output. Other methods, like Independent Component Analysis (ICA), operate in an unsupervised fashion, learning to separate sources without labeled examples [1] [20].

- Execution and Evaluation: The trained or configured algorithm is applied to the contaminated data. The output is then quantitatively compared against the known ground truth using metrics like Signal-to-Noise Ratio (SNR), Correlation Coefficient (CC), and Root Mean Square Error (RMSE) [1] [2].
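The first two steps of this pipeline, in the case where artifacts are mathematically simulated rather than recorded, might look like the sketch below. Everything in it is an illustrative assumption: the clean EEG is replaced by a 10 Hz rhythm plus white noise, blinks are modelled as Gaussian-shaped deflections, and the amplitudes and function names are placeholders rather than values from the cited studies.

```python
import numpy as np


def simulate_background(n_samples, fs, rng, alpha_uv=10.0, noise_uv=2.0):
    """Stand-in for clean EEG: a 10 Hz alpha-like rhythm plus white noise (microvolts)."""
    t = np.arange(n_samples) / fs
    return alpha_uv * np.sin(2 * np.pi * 10.0 * t) + noise_uv * rng.standard_normal(n_samples)


def simulate_blinks(n_samples, fs, blink_times_s, amplitude_uv=100.0, width_s=0.15):
    """Blink-like artifact: one Gaussian-shaped deflection per blink time (microvolts)."""
    t = np.arange(n_samples) / fs
    artifact = np.zeros(n_samples)
    for center in blink_times_s:
        artifact += amplitude_uv * np.exp(-0.5 * ((t - center) / width_s) ** 2)
    return artifact


fs = 250
rng = np.random.default_rng(0)
clean = simulate_background(4 * fs, fs, rng)
artifact = simulate_blinks(4 * fs, fs, blink_times_s=[1.0, 3.0])
contaminated = clean + artifact  # the clean array remains available as ground truth
```

Keeping `clean`, `artifact`, and `contaminated` as separate arrays is what makes the later evaluation step possible: any algorithm output can be scored directly against `clean`.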

To facilitate replication and further research, the table below details key computational tools and data resources used in the featured studies.

Table 3: Key Research Reagents and Computational Resources

| Tool/Resource | Type | Function in Research | Example Use Case |
|---|---|---|---|
| EEGdenoiseNet [1] | Benchmark Dataset | Provides semi-synthetic single-channel EEG data with clean and artifact components for standardized algorithm testing [1]. | Training and benchmarking of artifact removal models like CLEnet [1]. |
| Temple University Hospital (TUH) EEG Corpus [19] | Clinical EEG Dataset | Offers a large corpus of real, clinical EEG recordings with expert-annotated artifact labels, enabling validation in real-world conditions [19]. | Training and testing artifact-specific deep learning models [19]. |
| Independent Component Analysis (ICA) [18] [20] | Algorithm | A blind source separation method that decomposes multi-channel EEG into statistically independent components, allowing for manual or automatic identification and removal of artifact components [18] [20]. | Removal of ocular and muscular artifacts; suppression of TMS-evoked artifacts in real-time [20]. |
| Convolutional Neural Network (CNN) [1] [2] [19] | Deep Learning Architecture | Excels at extracting spatial and morphological features from data; can be designed for 1D signals or 2D topographic maps. Used for end-to-end artifact removal or detection [1] [19]. | Motion-Net for motion artifact removal; lightweight CNNs for detecting specific artifact classes [2] [19]. |
| Long Short-Term Memory (LSTM) [1] | Deep Learning Architecture | A type of recurrent neural network designed to learn long-range dependencies and temporal features in sequential data like EEG signals [1]. | Integrated in CLEnet to capture the temporal dynamics of EEG for better separation from artifacts [1]. |

The effective removal of artifacts from EEG signals hinges on a deep understanding of their unique spectral and temporal fingerprints. As demonstrated, ocular artifacts dominate the low-frequency range, while muscular and motion artifacts present complex, broad-spectrum challenges. The contemporary landscape of artifact removal is increasingly dominated by deep learning approaches, such as CLEnet and Motion-Net, which show superior performance in handling complex and mixed artifacts in multi-channel and mobile settings. However, traditional methods like ICA remain highly relevant, especially in scenarios with sufficient channels and for specific artifact types like those evoked by TMS. Validation through semi-synthetic data with known ground truth remains a critical and standard practice for the objective quantification of algorithm performance. As the field progresses, the fusion of spatial, spectral, and temporal processing techniques, coupled with the availability of robust public datasets, will continue to enhance the reliability of EEG analysis across clinical and research applications.

The Critical Role of Ground-Truth in Validating EEG Artifact Removal

Electroencephalography (EEG) functional connectivity (FC) research provides invaluable insights into brain network dynamics in both healthy and clinical populations. However, the accurate interpretation of EEG FC patterns is critically dependent on successfully removing artifacts from the signal. Artifacts from physiological sources (e.g., eye blinks, muscle activity, cardiac rhythms) and non-physiological sources (e.g., environmental noise, motion) can significantly distort connectivity metrics, leading to false conclusions about brain network organization [1] [21]. Consequently, establishing reliable ground-truth connectivity patterns is fundamental for validating the performance of artifact removal algorithms.

Research demonstrates that methodological choices in EEG processing pipelines significantly impact the estimation of functional connectivity, creating considerable variability across studies [22] [23]. Simulated data has emerged as an essential validation tool because researchers know the precise "ground truth" of the underlying neural connections, enabling objective evaluation of how different artifact removal techniques affect connectivity estimates [23]. Without this ground-truth benchmark, it is impossible to determine whether an artifact removal method preserves genuine neural signals while effectively eliminating non-neural contaminants.
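A toy simulation shows why a known ground truth matters for connectivity work. In the sketch below, two channels are driven by independent sources, so their true connectivity is approximately zero, yet a shared artifact makes them appear strongly coupled. Pearson correlation is used here only as a stand-in for a functional connectivity metric, and the function name and amplitudes are assumptions for illustration.

```python
import numpy as np


def connectivity_demo(n_samples=5000, artifact_gain=3.0, seed=0):
    """Return (true, contaminated) correlation between two simulated channels."""
    rng = np.random.default_rng(seed)
    source_a = rng.standard_normal(n_samples)
    source_b = rng.standard_normal(n_samples)      # independent of source_a
    shared = artifact_gain * rng.standard_normal(n_samples)  # artifact common to both
    chan_a = source_a + shared
    chan_b = source_b + shared
    fc_true = float(np.corrcoef(source_a, source_b)[0, 1])
    fc_contaminated = float(np.corrcoef(chan_a, chan_b)[0, 1])
    return fc_true, fc_contaminated


fc_true, fc_contaminated = connectivity_demo()
print(fc_true, fc_contaminated)
```

With real recordings only the contaminated estimate would be observable. In simulation, an artifact removal method can be judged by how closely the connectivity computed on its output returns to the known true value.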

Comparative Performance of Artifact Removal Methodologies

Traditional Signal Processing Approaches

Traditional approaches to EEG artifact removal include regression-based methods, blind source separation (BSS) techniques like Independent Component Analysis (ICA), wavelet transforms, and hybrid methods [1]. Among these, ICA remains one of the most widely used methods in both research and clinical applications [24] [25]. ICA operates on the principle of separating statistically independent components from multidimensional data, effectively isolating neural signals from artifactual sources [25]. However, ICA's performance is contingent on meeting specific statistical assumptions and is influenced by measurement uncertainty [24].

Table 1: Performance Characteristics of Traditional Artifact Removal Methods

| Method | Key Mechanism | Advantages | Limitations | Impact on FC Metrics |

|---|---|---|---|---|

| ICA (FastICA, Infomax) | Blind source separation of statistically independent components | Effective for ocular, muscle, and line noise artifacts; Widely available in toolboxes | Requires manual component inspection; Performance degrades with measurement uncertainty (SNR < 15 dB) [24] | Can preserve connectivity with proper component rejection [22] |

| Wavelet-Enhanced ICA (wICA) | Combines wavelet decomposition with ICA | Improved artifact separation; Better preservation of neural signal morphology | Increased computational complexity; Parameter sensitivity | Provides high test-retest reliability for alpha band FC [22] |

| Artifact Subspace Reconstruction (ASR) | Statistical rejection of high-variance components | Suitable for online processing; Effective for large-amplitude motion artifacts | May remove neural signals with high amplitude; Threshold selection critical | Limited evidence on specific FC impact |

Modern Deep Learning Architectures

Recent advances in deep learning have transformed EEG artifact removal by enabling automated, end-to-end processing without manual intervention. These approaches leverage convolutional neural networks (CNNs), long short-term memory (LSTM) networks, transformers, and generative adversarial networks (GANs) to learn complex patterns in artifact-contaminated EEG data [1] [21].

Table 2: Comparative Performance of Deep Learning Artifact Removal Models

| Model | Architecture | Artifact Types Addressed | Performance Metrics | Experimental Validation |

|---|---|---|---|---|

| CLEnet [1] | Dual-scale CNN + LSTM with EMA-1D attention | EMG, EOG, ECG, and unknown artifacts | SNR: 11.498 dB; CC: 0.925; RRMSEt: 0.300; RRMSEf: 0.319 (mixed artifacts) | Semi-synthetic datasets with known ground truth |

| AnEEG [21] | LSTM-based GAN | Ocular, muscle, powerline interference | Lower NMSE and RMSE; Higher CC vs. wavelet methods | Multiple public EEG datasets |

| IMU-Enhanced LaBraM [26] | Fine-tuned transformer with IMU fusion | Motion artifacts during physical activities | Improved robustness under diverse motion scenarios vs. ASR-ICA | Mobile BCI dataset with standing/walking/running conditions |

| Unsupervised Encoder-Decoder [27] | Deep encoder-decoder with outlier detection | Task-specific artifacts without pre-labeling | 10% relative improvement in downstream classification | Clinical EEG data for coma prognostication |

The reported results suggest that deep learning approaches generally outperform traditional methods, particularly for complex artifact types and in real-world conditions with motion [1] [26]. The studies in Table 2 use different datasets and metrics, however, so their figures are not directly comparable with one another. CLEnet demonstrates particular strength in handling mixed artifacts and unknown noise sources, while IMU-enhanced approaches show promise for mobile brain-computer interface applications where motion artifacts are prevalent.

Experimental Protocols for Ground-Truth Validation

Simulated EEG Functional Connectivity Data

Rigorous validation of artifact removal methods requires experimental protocols that incorporate known ground-truth connectivity patterns. One established approach involves simulating EEG data with predefined functional connectivity networks, enabling precise quantification of how artifact removal affects connectivity estimation [23].

Simulation Methodology:

- Generate synthetic EEG signals with predetermined connectivity patterns between brain regions

- Introduce controlled artifacts with varying properties (amplitude, frequency, spatial distribution)

- Apply artifact removal techniques to the contaminated signals

- Compare estimated connectivity with the original ground-truth patterns

Key Design Considerations:

- Incorporate realistic artifact properties based on empirical measurements [24]

- Systematically vary signal-to-noise ratios to test robustness [24]

- Include both physiological (EOG, EMG, ECG) and non-physiological artifacts

- Test across multiple connectivity metrics (phase-based, amplitude-based, directed)

This simulated approach enables researchers to determine optimal processing parameters for accurate FC estimation. Studies using this methodology have shown that specific combinations of processing choices, such as epoch length, number of epochs, and re-referencing scheme, improve the accuracy of connectivity estimates [23].
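The four simulation steps above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the procedure of [23]: the sampling rate, the shared 10 Hz source, the blink shape, the scalp weights in `mixing`, and the use of plain correlation as the connectivity measure are all hypothetical choices, and least-squares regression on a reference channel stands in for whichever artifact removal method is under test.

```python
import numpy as np

rng = np.random.default_rng(0)
fs, n = 250, 250 * 20  # assumed sampling rate (Hz) and 20 s of data

# 1) Ground truth: two channels driven by a shared 10 Hz source (known coupling).
t = np.arange(n) / fs
shared = np.sin(2 * np.pi * 10 * t)
clean = np.vstack([shared + 0.5 * rng.standard_normal(n),
                   shared + 0.5 * rng.standard_normal(n)])
fc_true = np.corrcoef(clean)[0, 1]

# 2) Controlled artifact: blink-like pulses with different weights per channel.
eog = np.zeros(n)
for onset in range(fs, n - fs, 3 * fs):          # one pulse every 3 s
    eog[onset:onset + fs // 2] += 5.0 * np.hanning(fs // 2)
mixing = np.array([[1.0], [0.6]])                # assumed scalp weights
contaminated = clean + mixing * eog

# 3) Stand-in removal method: regress the reference EOG out of each channel.
beta = contaminated @ eog / (eog @ eog)
cleaned = contaminated - np.outer(beta, eog)

# 4) Compare connectivity estimates against the known ground truth.
fc_contaminated = np.corrcoef(contaminated)[0, 1]
fc_cleaned = np.corrcoef(cleaned)[0, 1]
print(f"true={fc_true:.3f} contaminated={fc_contaminated:.3f} cleaned={fc_cleaned:.3f}")
```

In this toy setting the shared artifact inflates the apparent coupling, and the regression step brings the estimate back toward the ground-truth value. The same comparison can be repeated for other connectivity metrics and artifact amplitudes.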

Diagram Title: Simulated Data Validation Workflow

Semi-Synthetic Benchmark Datasets

An alternative validation approach employs semi-synthetic datasets, created by combining artifact-free EEG segments with separately recorded artifact signals [1]. This method preserves the statistical properties of genuine artifacts while maintaining ground-truth knowledge of the underlying neural signals.

Protocol Implementation:

- Data Collection: Obtain clean EEG segments during resting state or specific tasks

- Artifact Acquisition: Record pure artifact signals (EOG, EMG, ECG) separately

- Mixing Procedure: Linearly combine clean EEG and artifacts with controlled weighting

- Algorithm Validation: Apply artifact removal methods and quantify signal preservation

This approach has been utilized in benchmark studies such as those employing the EEGdenoiseNet dataset [1], enabling standardized comparison across multiple artifact removal algorithms. The semi-synthetic paradigm offers a compelling balance between experimental control and physiological realism.
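The mixing step of this protocol can be illustrated with a short sketch. It assumes a power-based SNR definition, SNR = 10·log10(P_clean / P_scaled_artifact); benchmark datasets may define SNR differently (for example via RMS ratios), so the formula, the function name `mix_at_snr`, and the random stand-in signals are assumptions for illustration only.

```python
import numpy as np

def mix_at_snr(clean, artifact, snr_db):
    """Return (clean + lam * artifact, lam), with lam chosen to hit snr_db."""
    clean = np.asarray(clean, dtype=float)
    artifact = np.asarray(artifact, dtype=float)
    p_clean = np.mean(clean ** 2)        # power of the ground-truth segment
    p_art = np.mean(artifact ** 2)       # power of the raw artifact segment
    lam = np.sqrt(p_clean / (p_art * 10 ** (snr_db / 10)))
    return clean + lam * artifact, lam

rng = np.random.default_rng(1)
clean = rng.standard_normal(1024)           # stand-in for a clean EEG segment
artifact = 3.0 * rng.standard_normal(1024)  # stand-in for a recorded artifact
noisy, lam = mix_at_snr(clean, artifact, snr_db=-3.0)

# The achieved SNR can be verified because the clean segment is known.
achieved = 10 * np.log10(np.mean(clean ** 2) / np.mean((noisy - clean) ** 2))
```

Sweeping `snr_db` over a range of values yields contaminated segments of graded difficulty, each paired with its ground truth.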

Optimal Processing Pipelines for Connectivity Research

Evidence from ground-truth validation studies provides specific guidance for constructing optimal EEG processing pipelines for functional connectivity research. The most reliable approaches combine multiple techniques in a sequential manner to address different artifact types.

Integrated Processing Pipeline

The most effective pipelines incorporate artifact reduction techniques, appropriate re-referencing methods, and carefully selected functional connectivity metrics [22]. Research indicates that the combination of wavelet-enhanced ICA (wICA) artifact cleaning, current source density (CSD) re-referencing, and real magnitude squared coherence (rMSC) as a FC metric provides particularly high accuracy and test-retest reliability for detecting age-related differences in alpha band functional connectivity [22].

Optimal Parameters for FC Estimation:

- Epoch Length: 6 seconds or longer [23]

- Number of Epochs: 40 or more [23]

- Re-referencing: Reference Electrode Standardization Technique (REST) or common average reference (CAR) for phase-based metrics; CSD for magnitude-based metrics [23]

- FC Metrics: Imaginary coherence (iCOH) and weighted phase lag index (wPLI) for phase-based connectivity; rMSC for amplitude-based connectivity [22] [23]
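As an illustration of the phase-based metrics listed above, the sketch below estimates band-averaged imaginary coherence and wPLI from epoched data using the common cross-spectral definitions. The Hann taper, the alpha band limits, the 100 Hz sampling rate, and the synthetic test signals are assumptions; only the epoch length (6 s) and epoch count (40) follow the parameters above. It is not the implementation used in [22] or [23].

```python
import numpy as np

def phase_connectivity(x_epochs, y_epochs, fs, band=(8.0, 13.0)):
    """Band-averaged |iCOH| and wPLI for two (n_epochs, n_samples) arrays."""
    n_samples = x_epochs.shape[1]
    freqs = np.fft.rfftfreq(n_samples, d=1.0 / fs)
    sel = (freqs >= band[0]) & (freqs <= band[1])
    win = np.hanning(n_samples)
    X = np.fft.rfft(x_epochs * win, axis=1)[:, sel]
    Y = np.fft.rfft(y_epochs * win, axis=1)[:, sel]
    sxy = X * np.conj(Y)                          # cross-spectrum per epoch
    sxx = np.mean(np.abs(X) ** 2, axis=0)         # auto-spectra averaged over epochs
    syy = np.mean(np.abs(Y) ** 2, axis=0)
    icoh = np.imag(np.mean(sxy, axis=0) / np.sqrt(sxx * syy))
    wpli = np.abs(np.mean(sxy.imag, axis=0)) / np.mean(np.abs(sxy.imag), axis=0)
    return float(np.mean(np.abs(icoh))), float(np.mean(wpli))

rng = np.random.default_rng(0)
fs, n_epochs, n_samples = 100, 40, 600            # 40 epochs of 6 s
delay = 2                                         # samples (20 ms at 100 Hz)
src = rng.standard_normal((n_epochs, n_samples + delay))
x = src[:, delay:] + 0.3 * rng.standard_normal((n_epochs, n_samples))
y_lagged = src[:, :-delay] + 0.3 * rng.standard_normal((n_epochs, n_samples))
y_zero_lag = src[:, delay:] + 0.3 * rng.standard_normal((n_epochs, n_samples))

icoh_lag, wpli_lag = phase_connectivity(x, y_lagged, fs)
icoh_zero, wpli_zero = phase_connectivity(x, y_zero_lag, fs)
```

Both metrics should be high for the lagged pair and low for the zero-lag pair, which is the property that makes them attractive when volume conduction is a concern.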

Diagram Title: Optimal EEG-FC Processing Pipeline

Method Selection Guidelines

Choosing the appropriate artifact removal method depends on multiple factors, including the research question, artifact types, available computational resources, and the specific functional connectivity metrics of interest. The following guidelines emerge from ground-truth validation studies:

- For clinical applications with limited technical expertise: Deep learning approaches (e.g., CLEnet, AnEEG) offer automated processing with minimal manual intervention [1] [21]

- For motion-rich environments: IMU-enhanced methods provide superior artifact removal during physical activities [26]

- For phase-based connectivity metrics: ICA with wavelet enhancement combined with REST re-referencing delivers optimal performance [22] [23]

- For amplitude-based connectivity metrics: Current source density re-referencing with rMSC metrics is preferable [22]

- When measurement uncertainty is high (SNR < 15 dB): ICA performance degrades significantly, necessitating alternative approaches or quality checks [24]

Essential Research Reagents and Computational Tools

Table 3: Essential Research Resources for EEG Artifact Removal Validation

| Resource Category | Specific Tools & Datasets | Primary Function | Access Information |

|---|---|---|---|

| Public EEG Datasets | BrainClinics Repository [22]; EEGdenoiseNet [1]; Mobile BCI Dataset [26] | Benchmarking artifact removal algorithms; Ground-truth validation | Publicly available through respective repositories |

| Processing Toolboxes | EEGLAB [24]; MNE-Python [24] | Implementation of ICA and other artifact removal methods | Open-source platforms with extensive documentation |

| Deep Learning Frameworks | TensorFlow; PyTorch | Developing and training custom artifact removal models | Open-source with active community support |

| Simulation Platforms | MATLAB; Python (MNE, NumPy, SciPy) | Generating ground-truth connectivity patterns; Method validation | Commercial and open-source options available |

| Performance Metrics | SNR; CC; RRMSEt; RRMSEf [1] | Quantitative evaluation of artifact removal efficacy | Standardized calculation methods |

Future Directions and Emerging Approaches

The field of EEG artifact removal continues to evolve with several promising research directions. Multi-modal approaches that combine EEG with complementary physiological recordings (e.g., IMU, EOG, EMG) show significant potential for improved artifact identification and removal [26]. Additionally, self-supervised and unsupervised learning methods address the challenge of obtaining labeled training data by leveraging the inherent statistical properties of clean versus artifact-contaminated EEG segments [27].

Future validation efforts should focus on developing more sophisticated simulation frameworks that better capture the complex spatial, temporal, and spectral properties of both neural signals and artifacts. Furthermore, standardized benchmarking protocols using shared ground-truth datasets will enable more direct comparison between existing and emerging artifact removal methodologies, ultimately advancing the reliability of EEG functional connectivity research.

Limitations of Real Data for Methodological Benchmarking

The validation of electroencephalogram (EEG) artifact removal methods represents a critical challenge in computational neuroscience and biomedical engineering. While real EEG data provides the ultimate test environment, significant methodological limitations complicate its use for standardized benchmarking of new algorithms. This analysis examines these constraints within the broader context of validation research, where simulated data offers complementary advantages for controlled, reproducible evaluation of algorithmic performance.

Limitations of Real EEG Data for Benchmarking

Using real EEG data for benchmarking artifact removal methods introduces several fundamental challenges that can compromise validation integrity. The table below summarizes the primary limitations identified in current research.

Table 1: Key Limitations of Real EEG Data for Methodological Benchmarking

| Limitation Category | Specific Challenge | Impact on Benchmarking |

|---|---|---|

| Unknown Ground Truth | Inability to precisely separate true neural activity from artifacts [28] | Prevents accurate calculation of performance metrics and recovery fidelity |

| Artifact Variability | Uncontrolled, subject-specific artifact composition and intensity [28] | Introduces uncontrolled variables that complicate performance comparisons |

| Channel Correlations | Poor performance on multi-channel data due to overlooked inter-channel relationships [28] | Limits generalizability of single-channel focused algorithms |

| Data Scarcity | Difficulty obtaining sufficient real data representing all artifact types [21] | Restricts training data for deep learning models and comprehensive testing |

| Subjective Annotation | Manual component rejection requiring expert intervention and prior knowledge [28] | Introduces human bias and limits reproducibility across studies |

| Resource Intensity | Requirement for reference signals and manual inspection in traditional methods [28] | Increases operational complexity and cost of data collection |

The absence of known ground truth presents the most fundamental constraint. Without precise knowledge of the underlying neural signal, researchers cannot accurately quantify how effectively an algorithm removes artifacts while preserving genuine brain activity [28]. This problem is compounded by the presence of unknown artifacts in real recordings, whose proportion relative to the original signal remains unquantified [28].

Artifact variability further complicates benchmarking. Real biological artifacts (EOG, EMG, ECG) exhibit substantial inter-subject variability in characteristics and intensity, creating uncontrolled variables that hinder fair algorithm comparison [28]. This variability is particularly problematic for deep learning approaches that require extensive, diverse datasets for training [21].

Simulated Data as a Complementary Validation Tool

To address these limitations, researchers have developed sophisticated simulation approaches that enable controlled benchmarking. The following workflow illustrates a standard methodology for creating semi-synthetic EEG data, which combines clean EEG segments with recorded artifacts.

Semi-synthetic datasets created through this process provide researchers with contaminated signals alongside pristine ground truth, enabling precise quantification of artifact removal performance using standardized metrics [28] [21].

Quantitative Performance Metrics for Algorithm Benchmarking

The validation of EEG artifact removal methods relies on specific quantitative metrics that enable objective comparison between different algorithms. The following table outlines key performance indicators derived from experimental protocols in recent literature.

Table 2: Quantitative Metrics for EEG Artifact Removal Benchmarking

| Metric | Description | Interpretation | Experimental Results from Recent Studies |

|---|---|---|---|

| Signal-to-Noise Ratio (SNR) | Ratio of signal power to noise power [28] | Higher values indicate better artifact removal | CLEnet: 11.498 dB for mixed artifacts [28] |

| Correlation Coefficient (CC) | Linear correlation between processed and clean EEG [28] | Values closer to 1.0 indicate better signal preservation | CLEnet: 0.925 for mixed artifacts [28] |

| Relative Root Mean Square Error (RRMSE) | Temporal (t) and frequency (f) domain reconstruction error [28] | Lower values indicate higher fidelity | CLEnet: RRMSEt 0.300, RRMSEf 0.319 [28] |

| Normalized Mean Square Error (NMSE) | Normalized reconstruction error [21] | Lower values indicate better agreement with original signal | AnEEG demonstrated lower NMSE vs. wavelet techniques [21] |

| Signal-to-Artifact Ratio (SAR) | Ratio of signal power to residual artifact power [21] | Higher values indicate more complete artifact removal | AnEEG showed improvements in SAR values [21] |

These metrics provide complementary insights into algorithm performance. For instance, CLEnet demonstrated significant improvements across multiple metrics when evaluated on semi-synthetic datasets, achieving a 2.45% increase in SNR and 2.65% increase in CC compared to other models, while reducing temporal and frequency domain errors by 6.94% and 3.30% respectively [28].
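The metrics in Table 2 can be computed directly once the clean reference is known. The sketch below uses common textbook forms: an error-power SNR, the Pearson correlation coefficient, and RMS-normalized errors, with a plain periodogram for the spectral variant. Exact definitions vary between papers, so these formulas and the helper names are assumptions rather than the precise versions used in [28] or [21].

```python
import numpy as np

def snr_db(clean, denoised):
    """SNR in dB, treating (denoised - clean) as the residual noise."""
    residual = denoised - clean
    return 10 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2))

def cc(clean, denoised):
    """Pearson correlation between the clean and denoised signals."""
    return np.corrcoef(clean, denoised)[0, 1]

def rrmse(reference, estimate):
    """RMS error of the estimate, normalized by the RMS of the reference."""
    return np.sqrt(np.mean((estimate - reference) ** 2)) / np.sqrt(np.mean(reference ** 2))

def psd(x):
    """Plain periodogram; a Welch estimate could be substituted."""
    return np.abs(np.fft.rfft(x)) ** 2 / len(x)

def rrmse_t(clean, denoised):
    return rrmse(clean, denoised)

def rrmse_f(clean, denoised):
    return rrmse(psd(clean), psd(denoised))

# Toy check: a "denoiser" that returns the clean signal scaled by 0.9.
t = np.arange(1000) / 250.0
clean = np.sin(2 * np.pi * 10 * t)
denoised = 0.9 * clean
scores = (snr_db(clean, denoised), cc(clean, denoised),
          rrmse_t(clean, denoised), rrmse_f(clean, denoised))
```

For this scaled output the residual is 10% of the signal, so the SNR is 20 dB and RRMSEt is 0.1, while CC stays at 1 because correlation ignores amplitude scaling. This is one reason the metrics are reported together.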

Experimental Protocols for Benchmarking Studies

Standardized experimental protocols enable fair comparison between different artifact removal approaches. The following diagram illustrates a comprehensive benchmarking workflow that incorporates both simulated and real validation stages.

This protocol emphasizes initial validation on semi-synthetic datasets with known ground truth, followed by confirmation on real EEG recordings. For example, recent studies have utilized EEGdenoiseNet as a standardized semi-synthetic dataset, combining clean EEG with recorded EOG and EMG artifacts at controlled signal-to-noise ratios [28]. Additional datasets incorporate ECG artifacts from the MIT-BIH Arrhythmia Database to evaluate algorithm performance on cardiac artifacts [28] [21].

Table 3: Research Reagent Solutions for EEG Artifact Removal Studies

| Resource | Type | Function | Example Implementation |

|---|---|---|---|

| EEGdenoiseNet | Benchmark Dataset | Provides standardized semi-synthetic data with ground truth [28] | Mixed EEG, EOG, and EMG artifacts with known mixing ratios [28] |

| MIT-BIH Database | Artifact Source | Supplies clean ECG signals for cardiac artifact simulation [28] [21] | Combined with EEGdenoiseNet for ECG artifact evaluation [28] |

| CLEnet | Deep Learning Architecture | Dual-scale CNN with LSTM for multi-channel artifact removal [28] | Incorporates EMA-1D attention mechanism for temporal feature enhancement [28] |

| AnEEG | Deep Learning Framework | LSTM-based GAN for artifact removal [21] | Generator produces clean EEG, discriminator evaluates quality [21] |

| GCTNet | Hybrid Architecture | GAN-guided parallel CNN with transformer network [21] | Captures both global and temporal dependencies in EEG [21] |

| 1D-ResCNN | Baseline Algorithm | One-dimensional residual convolutional neural network [28] | Uses multiple convolutional kernels for multi-scale feature extraction [28] |

These resources enable standardized implementation and comparison of artifact removal methods. For instance, CLEnet's architecture specifically addresses the limitation of previous models that performed poorly on multi-channel data by effectively capturing inter-channel correlations [28]. Similarly, AnEEG's adversarial training approach enables the generation of artifact-free EEG signals while maintaining original neural activity patterns [21].

The limitations of real EEG data for methodological benchmarking underscore the critical importance of simulated and semi-synthetic datasets in validation research. While real data remains essential for final performance confirmation, controlled simulations enable rigorous, reproducible evaluation of artifact removal algorithms using quantitative metrics with known ground truth. Future benchmarking efforts should leverage both approaches, utilizing standardized datasets and evaluation protocols to enable meaningful comparison across the rapidly evolving landscape of EEG artifact removal methodologies.

Methodologies for Generating and Utilizing Simulated EEG Data

Electroencephalography (EEG) is a fundamental tool for investigating brain function in clinical, neuroscience, and cognitive research. A significant challenge in developing EEG analysis techniques, particularly for artifact removal, is the absence of a known ground truth in real neural data. Without this reference, validating the accuracy and efficacy of new algorithms becomes problematic. Simulated EEG data with precisely known properties provides an essential solution, creating a controlled test bench for method validation [29] [30].

This guide compares three prominent toolboxes for simulated EEG generation: SEED-G, EEGSourceSim, and SEREEGA. We focus on their application within a research thesis dedicated to validating EEG artifact removal methods, providing objective performance data and experimental protocols to inform researchers' selections.

Comparative Analysis of Simulated EEG Toolboxes

The table below summarizes the core characteristics and capabilities of the three featured toolboxes.

| Toolbox Name | Primary Simulation Approach | Key Features & Strengths | Best Suited for Validating | Accessibility |

|---|---|---|---|---|

| SEED-G [29] [31] [32] | Multivariate Autoregressive (MVAR) Models | Designed for testing connectivity estimators; Imposes known ground-truth connectivity patterns; Controls network parameters (density, nodes); Models non-stationary and inter-trial variable connectivity [29] | Connectivity-based artifact removal; Dynamic network analysis | MATLAB; Publicly available on GitHub [32] |

| EEGSourceSim [33] | MRI-based Forward Models & Realistic Noise Embedding | High anatomical realism with individual head models; Embeds signal in realistic biological noise; Suitable for source estimation & connectivity [33] | Source localization methods; Spatially-focused artifact removal | MATLAB; Open-source toolbox and dataset [33] |

| SEREEGA [30] | Lead Field Projection & Configurable Signal Mixing | Modular and general-purpose design; Simulates event-related potentials (ERPs) and oscillations; Configurable head models and signal types [30] | ERP analysis methods; Temporal artifact filtering | MATLAB; Free and open-source [30] |

Quantitative Performance and Experimental Data

SEED-G Performance Metrics

SEED-G is optimized for computational efficiency and realistic spectral properties. Performance testing demonstrates that datasets with up to 60 time series can be generated in less than 5 seconds [29] [31]. The toolbox successfully produces signals with spectral features similar to real EEG data, a critical factor for meaningful validation [29].

To illustrate the impact of data length on connectivity estimation accuracy—a key consideration for artifact removal validation—SEED-G documentation provides the following experimental results [32]:

| Number of Samples | False Positive Rate (FPR) | False Negative Rate (FNR) |

|---|---|---|

| 500 samples | 2% | 50% |

| 1500 samples | 1% | 11% |

| 2500 samples | 0% | 6% |

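These results show that longer simulated epochs substantially improve how accurately the imposed ground-truth connectivity is recovered: the false negative rate falls from 50% at 500 samples to 6% at 2500 samples. Data length should therefore be chosen deliberately when designing a benchmark for artifact removal algorithms, so that estimator error is not mistaken for an effect of the cleaning step.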
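False positive and false negative rates of this kind are computed by comparing an estimated connectivity pattern with the imposed one. The sketch below shows one way to do so for binary adjacency matrices. The convention that `adj[i, j]` marks a link from node i to node j, the exclusion of the diagonal, and the 4-node example network are assumptions for illustration and are not taken from the SEED-G code.

```python
import numpy as np

def fpr_fnr(true_adj, est_adj):
    """False positive and false negative rates over off-diagonal links."""
    true_adj = np.asarray(true_adj, dtype=bool)
    est_adj = np.asarray(est_adj, dtype=bool)
    off_diag = ~np.eye(true_adj.shape[0], dtype=bool)   # ignore self-connections
    truth, est = true_adj[off_diag], est_adj[off_diag]
    false_pos = np.sum(est & ~truth)     # links detected but not imposed
    false_neg = np.sum(~est & truth)     # links imposed but not detected
    return false_pos / np.sum(~truth), false_neg / np.sum(truth)

# Hypothetical 4-node network with four imposed links.
true_adj = np.zeros((4, 4), dtype=bool)
for i, j in [(0, 1), (1, 2), (2, 3), (0, 3)]:
    true_adj[i, j] = True

# Hypothetical estimate: misses link (2, 3) and adds a spurious link (3, 1).
est_adj = np.zeros((4, 4), dtype=bool)
for i, j in [(0, 1), (1, 2), (0, 3), (3, 1)]:
    est_adj[i, j] = True

fpr, fnr = fpr_fnr(true_adj, est_adj)
```

Here one of the eight absent links is falsely detected and one of the four true links is missed.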

EEGSourceSim emphasizes realism through its use of a large set of 23 individual MRI-based head models and surface-based regions of interest brought into registration for each subject [33]. This approach allows for simulation studies that account for individual-subject variability in structure and function, providing a more rigorous test for artifact removal methods that may be sensitive to anatomical differences.

Experimental Protocols for Artifact Removal Validation

Here, we outline a general experimental workflow for validating an EEG artifact removal method using simulated data, adaptable to any of the toolboxes above.

Core Experimental Workflow

The following diagram visualizes the multi-stage process of creating a benchmark and validating a method against it.

Protocol 1: Validating a Deep Learning Denoiser for tES Artifacts

This protocol is inspired by a study that benchmarked deep learning models, including Complex CNN and State Space Models (SSMs), for removing transcranial Electrical Stimulation (tES) artifacts [34].

- Data Generation: Use a toolbox like SEREEGA to generate clean, synthetic EEG signals. Alternatively, use real, artifact-free EEG recordings as your baseline.

- Artifact Introduction: Create a semi-synthetic dataset by adding mathematically generated tES artifacts (e.g., for tDCS, tACS, tRNS) to the clean EEG. This provides a known ground truth for the underlying brain signal [34].

- Method Application: Apply the deep learning-based denoising method (e.g., Complex CNN for tDCS, multi-modular SSM for tACS/tRNS) to the contaminated dataset [34].

- Performance Quantification: Compare the denoised output to the original clean signal using metrics such as:

  - Relative Root Mean Square Error (RRMSE) in the temporal and spectral domains.

  - Correlation Coefficient (CC) between the cleaned and ground-truth signals [34].
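The protocol above can be sketched end to end with idealized waveforms. Everything specific here is an assumption: the artifact shapes (constant offset for tDCS, sinusoid for tACS, white noise for tRNS) are simplified stand-ins rather than the artifact models of [34], white noise stands in for clean EEG, and a least-squares sinusoid fit (`remove_sinusoid`, a hypothetical helper) replaces the deep learning denoiser so that the example stays self-contained.

```python
import numpy as np

def tes_artifact(kind, n_samples, fs, amplitude, freq=10.0, rng=None):
    """Idealized stimulation waveforms (assumed shapes, for illustration only)."""
    t = np.arange(n_samples) / fs
    if kind == "tDCS":
        return np.full(n_samples, float(amplitude))          # constant offset
    if kind == "tACS":
        return amplitude * np.sin(2 * np.pi * freq * t)      # sinusoid
    if kind == "tRNS":
        rng = np.random.default_rng() if rng is None else rng
        return amplitude * rng.standard_normal(n_samples)    # white noise
    raise ValueError(f"unknown stimulation type: {kind}")

def remove_sinusoid(signal, fs, freq):
    """Stand-in denoiser: fit and subtract a sinusoid of known frequency."""
    t = np.arange(signal.size) / fs
    basis = np.column_stack([np.sin(2 * np.pi * freq * t),
                             np.cos(2 * np.pi * freq * t)])
    coef, *_ = np.linalg.lstsq(basis, signal, rcond=None)
    return signal - basis @ coef

rng = np.random.default_rng(0)
fs, n_samples = 250, 2500
clean = rng.standard_normal(n_samples)               # stand-in for clean EEG
contaminated = clean + tes_artifact("tACS", n_samples, fs, amplitude=20.0)
denoised = remove_sinusoid(contaminated, fs, freq=10.0)

# Quantify recovery against the known ground truth.
rrmse_t = np.sqrt(np.mean((denoised - clean) ** 2)) / np.sqrt(np.mean(clean ** 2))
cc = np.corrcoef(denoised, clean)[0, 1]
```

Because the clean signal is known, any denoiser can be dropped in place of `remove_sinusoid` and scored with the same two lines.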

Protocol 2: Testing a Connectivity-Based Method with Non-Stationary Data

This protocol leverages SEED-G's unique capabilities to test methods in dynamic scenarios.

- Ground-Truth Design: Use SEED-G to generate a pseudo-EEG dataset with a known, time-varying connectivity pattern. For example, impose a connectivity link that changes in intensity at a specific time point [29] [32].

- Artifact Simulation: Introduce a controlled, non-brain artifact (e.g., an ocular blink simulated by a spike or slow wave) that overlaps with the period of connectivity change.

- Validation:

  - Apply the artifact removal algorithm to the contaminated dataset.

  - Run a dynamic connectivity estimator (e.g., Partial Directed Coherence) on both the clean ground-truth data and the artifact-corrected data.

  - Assess whether the artifact removal process preserved or distorted the underlying, known dynamic change in connectivity.

The Scientist's Toolkit: Key Research Reagents

The table below lists essential "reagents" or components for designing realistic EEG simulation experiments.

| Research Reagent | Function in the Simulation Experiment |

|---|---|

| Head Model (Forward Model) | Prescribes how electrical activity from brain sources is projected to scalp electrodes. Realistic boundary element method (BEM) models are crucial for simulating volume conduction effects [33] [30]. |

| Multivariate Autoregressive (MVAR) Model | Acts as a generator filter to produce synthetic time series with specific, user-imposed statistical connectivity patterns between signals, creating a ground-truth network [29]. |

| Synthetic Artifact Model | A mathematical or data-driven model of a specific artifact (e.g., ocular blink, muscle activity, tES stimulation) that can be added to clean EEG with controlled amplitude and timing [34]. |

| Realistic Biological Noise | A model of ongoing, background brain activity, often derived from fitting components to measured resting-state EEG, which provides a plausible noise floor for the simulated signal [33]. |

Selecting the ideal simulated EEG toolbox depends directly on the validation goals of the artifact removal research. For studies focusing on the integrity of functional connectivity networks before and after cleaning, SEED-G is the superior choice due to its dedicated feature set for imposing and testing ground-truth connectivity. When the research question involves the spatial accuracy of source reconstruction following artifact removal, EEGSourceSim offers unparalleled anatomical realism. For more general-purpose validation, particularly of methods targeting event-related potentials or oscillatory activity, SEREEGA provides the necessary flexibility and modularity. By leveraging the experimental protocols and performance data outlined in this guide, researchers can make an informed decision and build a robust validation framework for their specific thesis on EEG artifact removal.

Leveraging Multivariate Autoregressive (MVAR) Models

Multivariate Autoregressive (MVAR) modeling is a powerful parametric approach for estimating dynamic, directed interactions from physiological signals like electroencephalography (EEG). In neuroscience, it is particularly valued for its ability to quantify directed functional connectivity with high temporal resolution, helping researchers understand how different brain areas interact over time scales as brief as tens of milliseconds [35]. The core principle of an MVAR model is that the current value of a multivariate time series can be predicted by a linear combination of its own past values. For a d-dimensional time series, the general form of a time-varying MVAR process of order p at each time step n is represented as:

$$Y(n) = \sum_{r=1}^{p} A_r(n)\, Y(n-r) + E(n)$$

where $A_r(n)$ is the matrix of time-varying MVAR coefficients at time $n$, and $E(n)$ is a zero-mean, uncorrelated white noise vector process [35]. The model order $p$ determines the number of past observations included in the model. A key advantage of MVAR models in the context of artifact removal validation is that, when fitted to clean neural data, they can generate synthetic EEG signals with known ground-truth properties, free from artifacts. This makes them an indispensable tool for creating realistic benchmark datasets where the true underlying brain activity is known, thereby allowing for objective evaluation of artifact removal algorithms [36] [37].
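To make the generative use of the model concrete, the sketch below simulates a stationary special case of the equation above, in which the coefficient matrices do not change with time. The coefficient values, the burn-in length, and the function name `simulate_mvar` are hypothetical. The final lines refit the coefficients by ordinary least squares as a sanity check that the imposed ground truth is recoverable.

```python
import numpy as np

def simulate_mvar(coeffs, n_samples, noise_std=1.0, burn_in=500, seed=0):
    """Simulate Y(n) = sum_r A_r Y(n-r) + E(n); coeffs has shape (p, d, d)."""
    rng = np.random.default_rng(seed)
    p, d, _ = coeffs.shape
    total = n_samples + burn_in
    y = np.zeros((total, d))
    e = noise_std * rng.standard_normal((total, d))   # white innovation E(n)
    for n in range(p, total):
        y[n] = e[n]
        for r in range(1, p + 1):
            y[n] += coeffs[r - 1] @ y[n - r]
    return y[burn_in:]                                # drop the initial transient

# Hypothetical ground truth: channel 0 drives channel 1 at lag 1 (A_1[1, 0] = 0.4).
A = np.array([[[0.5, 0.0], [0.4, 0.5]],
              [[-0.3, 0.0], [0.0, -0.3]]])
y = simulate_mvar(A, n_samples=5000)

# Sanity check: least-squares refit of [A_1, A_2] from the simulated data.
Z = np.hstack([y[1:-1], y[:-2]])                      # regressors Y(n-1), Y(n-2)
B, *_ = np.linalg.lstsq(Z, y[2:], rcond=None)
A_est = np.stack([B[:2].T, B[2:].T])
```

The zero entry `A[0][0, 1]` encodes the absence of a link from channel 1 to channel 0, so any directed connectivity an estimator reports in that direction after artifact removal can be counted as an error.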

Comparative Analysis of MVAR Algorithm Performance

Several recursive algorithms exist for estimating time-varying MVAR (tvMVAR) models from non-stationary neural data. The choice of algorithm and its parameter settings significantly impacts the accuracy and reliability of the resulting connectivity estimates and synthetic data generation. The following table provides a structured comparison of four prominent tvMVAR algorithms.

Table 1: Comparison of Time-Varying MVAR (tvMVAR) Estimation Algorithms

| Algorithm Name | Core Methodology | Key Adaptation Parameters | Strengths | Weaknesses & Sensitivity |

|---|---|---|---|---|

| Recursive Least Squares (RLS) [35] | Extends Yule-Walker equations using a forgetting factor (λ) to weight errors over time. | Forgetting factor (λ); Model order (p) | Lower computational complexity; Suitable for single-trial modeling followed by averaging. | Performance degrades with signal downsampling; Sensitive to choice of λ. |

| General Linear Kalman Filter (GLKF) [35] | Models state process as a random walk using observation and state equations. | Two adaptation constants (c1, c2); Model order (p) | Allows for both single-trial and multi-trial modeling; Effective with well-tuned constants. | High c1/c2 values increase estimate variance; Low values slow adaptation. |

| Multivariate Adaptive Autoregressive (MVAAR) [35] | Kalman filter variant updating measurement noise from prior prediction error. | Adaptation coefficient (c); Model order (p) | Effective for single-trial analysis. | Limited to single-trial modeling; Performance varies with model order and sampling. |

| Dual Extended Kalman Filter (DEKF) [35] | Simultaneously estimates states and parameters of the dynamical system. | Adaptation coefficient; Model order (p) | Efficient for nonlinear dynamical systems. | Limited to single-trial modeling; Sensitive to parameter initialization. |

Performance and Sensitivity Insights

Experimental comparisons using both simulated data and benchmark EEG recordings have revealed critical performance insights. Across a broad range of model orders, all algorithms can correctly reproduce interaction patterns, demonstrating a degree of robustness to this parameter [35]. However, signal downsampling often degrades connectivity estimation accuracy for most algorithms, though in some cases it can reduce estimate variability by lowering the number of model parameters [35]. Furthermore, the strategy for handling multiple trials significantly impacts outcomes. Single-trial modeling followed by averaging can achieve optimal performance with larger adaptation coefficients than previously suggested, but it exhibits slower adaptation speeds compared to multi-trial modeling, where one tvMVAR model is fitted simultaneously across all trials [35].
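The role of the forgetting factor can be illustrated with a textbook exponentially weighted RLS recursion applied to single-trial data. This is a generic sketch, and the exact formulation compared in [35] may differ. The function name `rls_tvmvar`, the initialization constant `delta`, and the simulated two-channel process with a coupling that switches on halfway through are assumptions.

```python
import numpy as np

def rls_tvmvar(y, p, lam=0.98, delta=100.0):
    """Track [A_1 ... A_p] over time; returns an array of shape (n, d, d * p)."""
    n, d = y.shape
    theta = np.zeros((d * p, d))          # stacked coefficients, transposed
    P = delta * np.eye(d * p)             # inverse correlation matrix estimate
    history = np.zeros((n, d, d * p))
    for t in range(p, n):
        phi = y[t - p:t][::-1].ravel()    # regressor [Y(t-1), ..., Y(t-p)]
        err = y[t] - theta.T @ phi        # a priori prediction error
        gain = P @ phi / (lam + phi @ P @ phi)
        theta += np.outer(gain, err)
        P = (P - np.outer(gain, phi @ P)) / lam   # lam < 1 discounts old samples
        history[t] = theta.T
    return history

# Simulated non-stationary data: the 0 -> 1 coupling switches on at the midpoint.
rng = np.random.default_rng(0)
n = 2000
y = np.zeros((n, 2))
for t in range(1, n):
    a10 = 0.0 if t < n // 2 else 0.5
    A = np.array([[0.5, 0.0], [a10, 0.5]])
    y[t] = A @ y[t - 1] + rng.standard_normal(2)

coef = rls_tvmvar(y, p=1, lam=0.98)
before = coef[500:1000, 1, 0].mean()      # average estimate before the switch
after = coef[1500:2000, 1, 0].mean()      # average estimate after the switch
```

Values of `lam` closer to 1 give smoother but slower-adapting estimates, while smaller values adapt faster at the cost of higher variance. This is the sensitivity to λ noted in the table above.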

Experimental Protocols for Artifact Removal Validation

A rigorous protocol for validating EEG artifact removal methods using MVAR models involves two main stages: 1) generating a simulated, ground-truth EEG dataset, and 2) applying and evaluating the artifact removal techniques on this controlled data.

Protocol 1: Generating Synthetic EEG with Ground Truth

This protocol creates realistic, artifact-free EEG signals with known connectivity properties.

- Step 1: Define Head Model and Source Locations: Begin by using a realistic head model, such as the New-York head model, to define the locations of neural sources and their projections to scalp electrodes [36].

- Step 2: Fit MVAR Model to Clean Data: Fit a high-dimensional MVAR model to long segments of clean, high-quality intracranial or scalp EEG data. This step captures realistic neural interaction dynamics. Techniques like the group Least Absolute Shrinkage and Selection Operator (gLASSO) can be effectively used to fit these models, even with a high number of recording sites (~100-200) [37].

- Step 3: Generate Synthetic EEG Signals: Use the fitted MVAR model to generate synthetic multichannel EEG time series. The dynamic directed connectivity in this synthetic data is known by design, providing a perfect ground truth [36] [38].

- Step 4: Simulate Artifacts: Introduce controlled artifacts into the clean, synthetic EEG. For example, to simulate motion artifacts, one can model the effects at the skin-electrode interface, connecting cables, and the electrode-amplifier system [39]. This results in a final dataset with known brain signals and known artifacts.
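
Steps 3 and 4 can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the procedure of the cited studies: the coefficient matrices are hand-picked rather than fitted to recordings (Step 2), no head model is applied (Step 1), and the motion artifact is a simple half-sine transient. The function names `is_stable`, `simulate_mvar`, and `add_motion_artifact` are hypothetical.

```python
import numpy as np


def is_stable(coeffs):
    """Return True if all companion-matrix eigenvalues lie inside the unit circle."""
    p, n_ch, _ = coeffs.shape
    companion = np.zeros((p * n_ch, p * n_ch))
    companion[:n_ch] = np.hstack(list(coeffs))          # top block row: [A_1 ... A_p]
    companion[n_ch:, :-n_ch] = np.eye((p - 1) * n_ch)   # shifted identity below it
    return bool(np.max(np.abs(np.linalg.eigvals(companion))) < 1.0)


def simulate_mvar(coeffs, n_samples, noise_std=1.0, burn_in=500, seed=0):
    """Simulate x[t] = sum_k coeffs[k] @ x[t-k-1] + e[t]; returns (n_samples, n_channels)."""
    rng = np.random.default_rng(seed)
    p, n_ch, _ = coeffs.shape
    total = n_samples + burn_in
    e = rng.normal(0.0, noise_std, size=(total, n_ch))
    x = np.zeros((total, n_ch))
    for t in range(p, total):
        x[t] = e[t]
        for k in range(p):
            x[t] += coeffs[k] @ x[t - k - 1]
    return x[burn_in:]  # drop the initial transient


def add_motion_artifact(clean, fs, onset_s, duration_s, amplitude, channels):
    """Add a half-sine transient to the chosen channels; return data and a sample mask."""
    t = np.arange(clean.shape[0]) / fs
    mask = (t >= onset_s) & (t < onset_s + duration_s)
    bump = np.zeros(clean.shape[0])
    bump[mask] = amplitude * np.sin(np.pi * (t[mask] - onset_s) / duration_s)
    contaminated = clean.copy()
    contaminated[:, channels] += bump[:, None]
    return contaminated, mask


# Illustrative ground truth (assumed values): channel 0 drives channel 1 at lag 1,
# and channel 1 drives channel 2 at lag 2.
A = np.zeros((2, 3, 3))
A[0] = np.diag([0.5, 0.4, 0.3])
A[0, 1, 0] = 0.3
A[1, 2, 1] = -0.2
assert is_stable(A)

clean = simulate_mvar(A, n_samples=2000)
contaminated, artifact_mask = add_motion_artifact(
    clean, fs=250.0, onset_s=2.0, duration_s=1.0, amplitude=20.0, channels=[0, 2]
)
```

Because `A`, `clean`, and `artifact_mask` are all known, both the connectivity pattern and the artifact location can later be compared against whatever an artifact removal method returns.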

The following diagram illustrates this workflow for creating validated synthetic EEG data.

Protocol 2: Benchmarking Artifact Removal Methods

This protocol tests the efficacy of different artifact removal algorithms on the simulated data.

- Step 1: Apply Artifact Removal: Process the artifact-laden synthetic dataset from Protocol 1 with various artifact removal techniques. Prominent methods include:

  - Independent Component Analysis (ICA): A blind source separation method that separates mixed signals into statistically independent components [40] [41].

  - ERASE: A modified ICA approach that uses additional EMG reference channels to force more artifact power into identifiable components [41].

  - RMD-SVD: A method combining Regenerative Multi-Dimensional Singular Value Decomposition with ICA, effective for single-channel artifact removal [40].

- Step 2: Compare to Ground Truth: Compare the "cleaned" output from each method against the original, known ground-truth EEG from Protocol 1.

- Step 3: Quantify Performance: Calculate performance metrics to objectively compare methods. Key metrics include:

  - Signal-to-Noise Ratio (SNR): Measures the level of desired signal relative to noise/artifacts [40].

  - Mean Squared Error (MSE): Quantifies the average squared difference between the cleaned and ground-truth signals [40].

  - Sensitivity & False Positive Rates: Assess the ability to correctly identify and remove artifacts without discarding neural signals [41].
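
Steps 2 and 3 reduce to a direct comparison once the ground truth is available. The sketch below assumes one common convention, in which everything in the cleaned signal that differs from the ground truth counts as noise; the cited papers may define SNR differently. The helper names `mse` and `snr_db` are hypothetical.

```python
import numpy as np


def mse(cleaned, truth):
    """Mean squared error between a cleaned signal and the ground truth."""
    cleaned = np.asarray(cleaned, dtype=float)
    truth = np.asarray(truth, dtype=float)
    return float(np.mean((cleaned - truth) ** 2))


def snr_db(signal, truth):
    """SNR in dB, treating (signal - truth) as the residual noise.

    Returns inf (with a NumPy warning) if the residual is exactly zero.
    """
    signal = np.asarray(signal, dtype=float)
    truth = np.asarray(truth, dtype=float)
    residual = signal - truth
    return float(10.0 * np.log10(np.sum(truth ** 2) / np.sum(residual ** 2)))


# Toy example: a 10 Hz "neural" sinusoid, a contaminated version, and a cleaned
# version in which the residual amplitude is five times smaller.
rng = np.random.default_rng(0)
t = np.arange(1000) / 250.0
truth = np.sin(2 * np.pi * 10 * t)
noise = rng.normal(size=t.size)
contaminated = truth + 0.5 * noise
cleaned = truth + 0.1 * noise

gain_db = snr_db(cleaned, truth) - snr_db(contaminated, truth)
print(f"SNR gain: {gain_db:.2f} dB, MSE after cleaning: {mse(cleaned, truth):.4f}")
```

Reporting the SNR before and after cleaning, rather than the output SNR alone, makes results comparable across datasets that start from different contamination levels.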

Table 2: Key Performance Metrics for Artifact Removal Validation

| Metric | Definition | Interpretation in Validation |
|---|---|---|
| Signal-to-Noise Ratio (SNR) | Ratio of signal power to noise power. | A higher SNR indicates more effective artifact suppression and better preservation of the neural signal. |
| Mean Squared Error (MSE) | Average of the squares of the errors between cleaned and true signal. | A lower MSE indicates the cleaned signal is closer to the true, artifact-free neural signal. |
| Sensitivity | Proportion of true artifacts correctly identified and removed. | Measures the method's ability to detect artifacts. High sensitivity means fewer artifacts remain. |
| False Positive Rate | Proportion of neural signal incorrectly identified as artifact. | A low false positive rate indicates the method preserves brain activity well, minimizing data loss. |
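
The two detection metrics in the table can be computed from a pair of boolean masks when the simulation records where artifacts were injected. The sketch below assumes the masks are aligned and share one unit of analysis (samples, epochs, or components); which unit is appropriate depends on the method under test. The function name `detection_rates` is hypothetical.

```python
import numpy as np


def detection_rates(true_artifact, flagged):
    """Return (sensitivity, false_positive_rate) from two aligned boolean masks."""
    true_artifact = np.asarray(true_artifact, dtype=bool)
    flagged = np.asarray(flagged, dtype=bool)
    tp = np.sum(true_artifact & flagged)     # artifacts that were flagged
    fn = np.sum(true_artifact & ~flagged)    # artifacts that were missed
    fp = np.sum(~true_artifact & flagged)    # clean data wrongly flagged
    tn = np.sum(~true_artifact & ~flagged)   # clean data left alone
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    false_positive_rate = fp / (fp + tn) if (fp + tn) else float("nan")
    return float(sensitivity), float(false_positive_rate)


# Ten epochs: four contain an injected artifact, and the method flags four epochs.
truth_mask = [0, 0, 1, 1, 1, 0, 0, 0, 1, 0]
flag_mask = [0, 1, 1, 1, 0, 0, 0, 0, 1, 0]
sens, fpr = detection_rates(truth_mask, flag_mask)
print(f"sensitivity={sens:.2f}, false positive rate={fpr:.3f}")
```

Here three of the four artifact epochs are caught (sensitivity 0.75) and one of the six clean epochs is discarded (false positive rate of about 0.167).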

The following diagram outlines this benchmarking process.

The Scientist's Toolkit: Essential Research Reagents

Successfully implementing the aforementioned experimental protocols requires a combination of specific computational tools, software, and methodological components.

Table 3: Essential Reagents for MVAR-based Validation Research

| Research Reagent | Function & Role in Validation | Exemplars & Notes |
|---|---|---|
| Computational Head Model | Provides a biophysically realistic volume conductor to simulate scalp potentials from neural sources. | New York Head model [36]; Allows for accurate forward modeling of EEG signals. |
| MVAR Model Fitting Toolbox | Software package for estimating MVAR parameters from time series data. | Custom MATLAB scripts [36]; gLASSO for high-dimensional data [37]; SEED-G toolbox for inter-brain simulation [38]. |
| Artifact Simulation Module | Introduces controlled, realistic artifacts into clean EEG signals for validation. | Models for motion artifacts at skin-electrode interface and cables [39]; Models for EMG artifacts [41]. |
| Blind Source Separation (BSS) Algorithm | Core computational engine for separating neural signals from artifacts in mixed recordings. | Independent Component Analysis (ICA) [40] [41]; Canonical Correlation Analysis (CCA) [41]. |
| Performance Metric Calculator | Quantitatively assesses the fidelity of artifact-cleaned signals against ground truth. | Scripts to calculate SNR, MSE, PSNR [40]; Sensitivity and False Positive rate calculators [41]. |

MVAR models provide a rigorous mathematical framework for generating synthetic EEG with known ground-truth properties, making them a cornerstone for the objective validation of artifact removal algorithms. Systematic comparison of tvMVAR algorithms reveals that while all can recover basic interaction patterns, their performance is sensitive to parameters like adaptation coefficients and sampling rate. The experimental protocols outlined—involving synthetic data generation followed by systematic benchmarking—offer a robust pathway for evaluating the next generation of EEG artifact removal techniques. This approach is critical for advancing the reliability of EEG analysis in both basic neuroscience and applied settings such as clinical drug development.

Incorporating Realistic Non-Idealities and Non-Stationarities

Validating electroencephalography (EEG) artifact removal algorithms requires frameworks that incorporate the realistic non-idealities and non-stationarities inherent in real-world data acquisition. As research into neural dynamics during natural movement and real-world tasks accelerates, the limitations of traditional artifact removal methods become increasingly apparent. These methods often struggle with the complex, time-varying artifacts encountered in mobile EEG, where motion-induced signals and physiological interference are non-stationary and overlap with the neural signals of interest both temporally and spectrally. This section compares contemporary artifact removal methods, focusing on how their performance is validated using simulated data and controlled experimental setups that reproduce these challenging conditions.

The transition from laboratory-based EEG systems to mobile brain imaging approaches has created an urgent need for validation frameworks that can accurately replicate the non-ideal conditions encountered during movement. These frameworks employ sophisticated simulation techniques, including head phantoms with electrical dipoles, semi-synthetic datasets combining clean EEG with recorded artifacts, and experimental protocols that systematically introduce non-stationarities. By testing algorithms against known ground truth signals in controlled yet realistic environments, researchers can establish meaningful performance benchmarks and identify the most suitable approaches for specific application contexts, from clinical monitoring to athletic performance optimization.

Performance Comparison of Contemporary Artifact Removal Methods

Table 1: Quantitative Performance Metrics of Deep Learning-Based Methods

| Method | Architecture | Artifact Types Addressed | SNR Improvement (dB) | Correlation Coefficient | Artifact Reduction (%) | RMSE |
|---|---|---|---|---|---|---|
| Motion-Net | 1D CNN with Visibility Graph features | Motion artifacts | 20 ± 4.47 [2] | N/R | 86 ± 4.13 [2] | 0.20 ± 0.16 [2] |
| CLEnet | Dual-scale CNN + LSTM with EMA-1D | EMG, EOG, ECG, Mixed artifacts | 11.50 [1] | 0.925 [1] | N/R | 0.300 (temporal) [1] |
| A²DM | Artifact-aware CNN with frequency enhancement | Ocular (EOG), Muscle (EMG) | N/R | 12% improvement over NovelCNN [3] | N/R | N/R |
| LSTEEG | LSTM-based Autoencoder | Multiple artifact types | N/R | Superior to convolutional autoencoders [42] | N/R | N/R |
| Complex CNN | Convolutional Neural Network | tDCS artifacts | Best performance for tDCS [34] | N/R | N/R | N/R |
| M4 Network | State Space Models (SSMs) | tACS, tRNS artifacts | Best performance for tACS/tRNS [34] | N/R | N/R | N/R |

SNR: Signal-to-Noise Ratio; RMSE: Root Mean Square Error; N/R: Not Reported
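
For reference, the table's column headings can be written out under one common set of conventions. These definitions are an assumption for orientation only: here $x$ is the ground-truth signal, $y_{\mathrm{in}}$ the contaminated input, $\hat{x}$ the cleaned output, and $N$ the number of samples. The cited papers may normalize differently (for example, by reporting a relative RMSE), and the artifact reduction percentage is method-specific and is not defined here, so values in different rows are not necessarily directly comparable.

```latex
\begin{align}
\mathrm{SNR}(y) &= 10 \log_{10}
  \frac{\sum_{n=1}^{N} x[n]^2}{\sum_{n=1}^{N} \bigl(y[n] - x[n]\bigr)^2}, \\
\Delta\mathrm{SNR} &= \mathrm{SNR}(\hat{x}) - \mathrm{SNR}(y_{\mathrm{in}}), \\
\mathrm{CC} &= \frac{\sum_{n=1}^{N} \bigl(x[n] - \bar{x}\bigr)\bigl(\hat{x}[n] - \bar{\hat{x}}\bigr)}
  {\sqrt{\sum_{n=1}^{N} \bigl(x[n] - \bar{x}\bigr)^2}\,
   \sqrt{\sum_{n=1}^{N} \bigl(\hat{x}[n] - \bar{\hat{x}}\bigr)^2}}, \\
\mathrm{RMSE} &= \sqrt{\frac{1}{N} \sum_{n=1}^{N} \bigl(\hat{x}[n] - x[n]\bigr)^2}.
\end{align}
```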

Table 2: Performance Comparison of Non-Deep Learning Methods for Motion Artifacts

| Method | Core Approach | Applicable Scenarios | Key Performance Advantages | Computational Considerations |
|---|---|---|---|---|
| iCanClean | Canonical Correlation Analysis with reference signals | Walking, running | Better P300 congruency effect recovery [43], Improved dipolarity [43] | Effective with pseudo-reference signals [43] |
| Artifact Subspace Reconstruction (ASR) | Sliding-window PCA with thresholding | Walking, running | Reduced power at gait frequency [43], Similar ERP latency to standing task [43] | Less effective than iCanClean for P300 [43] |
| onEEGwaveLAD | Wavelet Transform + Isolation Forest | Real-time single-channel applications | Fully automated, no reference signals required [44] | Configurable window length tradeoffs [44] |
| Tripolar Concentric Ring Electrodes | Hardware-based Laplacian filtering | High-density mobile EEG | Improved spatial selectivity [45], Better localization accuracy at high artifact amplitudes [45] | Hardware solution requiring specialized equipment [45] |

The quantitative comparison reveals distinctive performance profiles across methodological categories. Deep learning approaches generally excel at handling complex, non-stationary artifacts when sufficient training data is available, with architectures like CLEnet demonstrating versatility across multiple artifact types [1]. Motion-Net shows exceptional performance specifically for motion artifacts, achieving approximately 86% artifact reduction while maintaining signal integrity through its subject-specific training approach [2]. The incorporation of visibility graph features provides structural information that enhances performance with smaller datasets, addressing a critical limitation of many deep learning methods [2].

Non-deep learning methods offer compelling advantages in scenarios requiring real-time processing or where training data is limited. iCanClean demonstrates particular effectiveness for motion artifact removal during dynamic tasks like running, successfully recovering expected P300 congruency effects that other methods miss [43]. Hardware-based solutions like tripolar concentric ring electrodes provide unique value in high-artifact environments, maintaining performance even when software approaches struggle with extreme amplitude artifacts [45]. The choice between these methodological approaches ultimately depends on specific application requirements, including computational constraints, available channels, and the nature of expected artifacts.

Experimental Protocols and Validation Methodologies

Simulation-Based Validation with Head Phantoms

Advanced head phantom systems provide the most physiologically realistic validation environments for evaluating artifact removal performance under controlled conditions. These systems incorporate electrical dipoles at anatomically relevant positions to simulate neural sources with precise spatial and temporal characteristics. In a comprehensive validation study, researchers constructed a head phantom using ballistics gelatin with fourteen dipolar sources: ten simulating neural generators in regions including occipital lobes, sensorimotor cortices, cerebellum, frontal and parietal lobes, premotor cortex, and anterior cingulate gyrus; and four simulating myoelectric sources in neck muscles (sternocleidomastoids and semispinalis capitis) [45]. This configuration enabled direct comparison of conventional disk electrodes versus tripolar concentric ring electrodes in recovering known neural signals amid contaminating muscle artifacts.

The experimental protocol broadcast simulated neural signals as random, time-varying, single-frequency sinusoidal bursts within standard EEG spectral bands (5-37 Hz), using prime-number frequencies to avoid harmonic overlap between sources in the recorded signals [45]. Simultaneously, actual recorded human neck muscle activity during walking was broadcast at scaled amplitudes ranging from 0× to 2× typical surface recording levels. This approach systematically tested the robustness of each method across varying artifact intensities while maintaining ground-truth knowledge of the neural signals. Performance was evaluated through spectral power peak detection, scalp map spatial entropy, and localization accuracy metrics, providing a comprehensive assessment of both signal preservation and spatial fidelity [45].
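
The stimulus design described above can be imitated in software as a rough sketch. Several details here are assumptions rather than facts from the cited study: one prime frequency per source, 1-second Hann-windowed bursts, five bursts per 20-second recording, intermediate gain steps between 0× and 2×, and white noise standing in for the recorded neck-muscle activity. The sketch also omits volume conduction entirely, so it produces source waveforms, not scalp recordings. The names `burst_train` and `scale_artifact` are hypothetical.

```python
import numpy as np

# Primes inside the 5-37 Hz range quoted above; one per source is illustrative.
PRIME_FREQS_HZ = (5, 7, 11, 13, 17, 19, 23, 29, 31, 37)


def burst_train(freq_hz, fs, duration_s, burst_s, n_bursts, rng):
    """Place non-overlapping, Hann-windowed sinusoidal bursts at random time slots."""
    n_total = int(round(duration_s * fs))
    n_burst = int(round(burst_s * fs))
    t = np.arange(n_burst) / fs
    burst = np.sin(2 * np.pi * freq_hz * t) * np.hanning(n_burst)
    slots = rng.choice(n_total // n_burst, size=n_bursts, replace=False)
    signal = np.zeros(n_total)
    active = np.zeros(n_total, dtype=bool)   # ground-truth burst timing
    for slot in slots:
        start = slot * n_burst
        signal[start:start + n_burst] = burst
        active[start:start + n_burst] = True
    return signal, active


def scale_artifact(neural, artifact, gains=(0.0, 0.5, 1.0, 1.5, 2.0)):
    """Return one mixture per artifact gain, keyed by the gain value."""
    return {g: neural + g * artifact for g in gains}


rng = np.random.default_rng(0)
fs = 250.0
sources = np.stack(
    [burst_train(f, fs, 20.0, 1.0, 5, rng)[0] for f in PRIME_FREQS_HZ]
)
emg_stand_in = rng.normal(0.0, 1.0, size=sources.shape[1])  # placeholder artifact
conditions = scale_artifact(sources[0], emg_stand_in)
```

Sweeping the gain while holding the neural sources fixed isolates the effect of artifact amplitude, which is the property the phantom study exploited.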

Semi-Synthetic Dataset Construction

Semi-synthetic approaches provide a flexible framework for evaluating artifact removal performance when specific artifact types are of interest. The CLEnet validation employed three distinct datasets: Dataset I combined single-channel EEG with EMG and EOG artifacts; Dataset II incorporated ECG artifacts from the MIT-BIH Arrhythmia Database; while Dataset III utilized real 32-channel EEG collected during a 2-back task containing unknown physiological artifacts [1]. This progressive validation approach tested generalizability from controlled semi-synthetic conditions to realistic unknown artifacts.
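
A semi-synthetic mixture is typically built by scaling a recorded artifact segment so that the contaminated signal reaches a chosen SNR. The sketch below shows that general convention; it is an assumption that the cited datasets were mixed this way, and the sinusoid and random walk are stand-ins for real clean EEG and EOG segments. The function name `mix_at_snr` is hypothetical.

```python
import numpy as np


def mix_at_snr(clean, artifact, snr_db):
    """Return (clean + lam * artifact, lam) so that the mixture has the requested SNR.

    SNR is defined here as 20*log10(rms(clean) / rms(lam * artifact)).
    """
    clean = np.asarray(clean, dtype=float)
    artifact = np.asarray(artifact, dtype=float)
    rms_clean = np.sqrt(np.mean(clean ** 2))
    rms_artifact = np.sqrt(np.mean(artifact ** 2))
    lam = rms_clean / (rms_artifact * 10.0 ** (snr_db / 20.0))
    return clean + lam * artifact, float(lam)


# Stand-in segments: a 10 Hz sinusoid as "clean EEG" and a random walk as "EOG".
rng = np.random.default_rng(0)
t = np.arange(512) / 256.0
eeg = np.sin(2 * np.pi * 10 * t)
eog = np.cumsum(rng.normal(size=t.size))

for target_db in (-5.0, 0.0, 5.0):
    noisy, lam = mix_at_snr(eeg, eog, target_db)
    achieved = 10 * np.log10(np.mean(eeg ** 2) / np.mean((noisy - eeg) ** 2))
    print(f"target {target_db:+.1f} dB, achieved {achieved:+.1f} dB, lambda {lam:.4f}")
```

Because the clean segment is stored alongside each mixture, every contaminated example carries its own ground truth, which is what allows metrics such as correlation coefficient and RMSE to be computed.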