Untangling the Signal: Overcoming Frequency Overlap in Neural Data for Advanced Biomedical Research


Abstract

This article addresses the critical challenge of frequency overlap between neural signals and biological artifacts, a fundamental problem that confounds data interpretation in neuroscience and drug development. We explore the spectral characteristics of common artifacts—such as ocular, muscular, and cardiac interference—that inhabit the same frequency bands as key neural oscillations. The scope spans from foundational concepts explaining why traditional filtering fails, to advanced methodological solutions including deep learning and targeted artifact reduction. We also provide a comparative analysis of validation metrics and troubleshooting frameworks to optimize signal processing pipelines, ultimately empowering researchers to enhance the reliability of their neural data for clinical and research applications.

The Core Conflict: Why Frequency Overlap Challenges Neural Signal Interpretation

A fundamental challenge in neurophysiological signal analysis is the spectral overlap between neural signals of interest and non-neural physiological artifacts. This overlap occurs when artifacts generated by eye movements, muscle activity, and other biological processes share nearly identical frequency bands with crucial brain rhythms, making their separation exceptionally difficult [1] [2]. The intrinsic low amplitude of neural signals, typically measured in microvolts for EEG, further exacerbates this problem, as artifacts often exhibit amplitudes an order of magnitude larger, drastically reducing the signal-to-noise ratio (SNR) and obscuring genuine brain activity [3] [2]. This contamination compromises data integrity and can lead to clinical misdiagnosis, particularly when artifacts mimic epileptiform abnormalities or sleep rhythms [2]. Understanding and addressing this spectral convergence is therefore paramount for accurate interpretation of neural data in both research and clinical settings.
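To make the amplitude argument concrete, the short sketch below expresses an amplitude ratio as an SNR in decibels, SNR_dB = 20·log10(A_signal / A_artifact). The 10 µV and 100 µV amplitudes are illustrative assumptions, not values from the cited studies; an artifact one order of magnitude larger than the neural signal corresponds to an SNR of -20 dB.

```python
import math


def snr_db(signal_amplitude_uv: float, artifact_amplitude_uv: float) -> float:
    """Amplitude-based SNR in decibels: 20 * log10(A_signal / A_artifact)."""
    if signal_amplitude_uv <= 0 or artifact_amplitude_uv <= 0:
        raise ValueError("amplitudes must be positive")
    return 20.0 * math.log10(signal_amplitude_uv / artifact_amplitude_uv)


# Illustrative (assumed) amplitudes: a 10 uV rhythm under a 100 uV ocular artifact.
print(f"{snr_db(10.0, 100.0):.1f} dB")  # -20.0 dB
print(f"{snr_db(10.0, 10.0):.1f} dB")   # 0.0 dB
```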

Quantitative Analysis of Spectral Overlap

The following table systematically categorizes common physiological artifacts and their spectral characteristics relative to standard neural oscillations, highlighting the specific frequency bands where overlap occurs.

Table 1: Spectral Overlap Between Neural Signals and Physiological Artifacts

| Signal/Artifact Type | Frequency Range | Primary Overlapping Neural Rhythms | Key Characteristics & Impact |
|---|---|---|---|
| Ocular Artifacts (EOG) [1] [2] | 3–15 Hz | Delta (0.5–4 Hz), Theta (4–8 Hz) | High-amplitude (100–200 µV) deflections; obscures low-frequency cognitive processes and evoked potentials. |
| Muscle Artifacts (EMG) [2] | 20–300 Hz | Beta (13–30 Hz), Gamma (>30 Hz) | Broadband high-frequency noise; masks cognitive and motor activity signals. |
| Sleep-Related Waveforms [3] | Varies (e.g., Delta) | Delta, Theta | Vertex waves and K-complexes can be confused with or obscured by other low-frequency artifacts. |
| Respiration Artifact [2] | ~0.1–0.3 Hz | Delta (0.5–4 Hz) | Slow baseline drifts; impairs sleep studies and low-frequency cognitive assessments. |
| Perspiration Artifact [2] | <1 Hz | Delta (0.5–4 Hz) | Slow potential shifts due to changing electrode impedance; contaminates delta and theta bands. |

Quantitative studies confirm the significant impact of this overlap. Mutual information and SNR analyses reveal that while MEG generally offers higher total information content, both EEG and MEG are susceptible to these confounding signals. Notably, sleep waveforms such as vertex waves and K-complexes can carry significantly higher total information in MEG, whereas EEG is more sensitive to high-amplitude artifacts such as swallowing and muscle activity [3].

Methodological Approaches for Artifact Identification and Removal

Traditional and Classical Methods

Early approaches to artifact removal relied on well-established signal processing techniques, which are often used as benchmarks for newer methods.

- Regression-Based Methods: These are among the simplest techniques, operating under the linear assumption that the recorded signal is a sum of the true EEG and the artifact [1]. The process involves using a reference signal, such as an electrooculography (EOG) channel, to estimate the artifact's contribution to each EEG channel and subtract it. The time-domain regression method, for instance, involves filtering both the EEG and EOG signals, estimating a subject-specific weighting coefficient for each channel, and subtracting the scaled EOG artifact [1].

- Blind Source Separation (BSS) & Independent Component Analysis (ICA): ICA is a powerful BSS technique that decomposes multi-channel EEG data into statistically independent components [1] [2]. It assumes that each electrode records a linear mixture of these sources; because neural and artifactual generators are anatomically and physiologically distinct, they project onto the electrodes with different spatial patterns and can therefore be separated. Experts can then identify and remove components corresponding to blinks, eye movements, or muscle activity before reconstructing the clean EEG signal. This method is particularly effective for high-density EEG systems (e.g., >40 channels) [1].

- Artifact Subspace Reconstruction (ASR): ASR is an advanced, automated method that operates by statistically identifying and reconstructing portions of the EEG data contaminated by artifacts. It is especially useful for real-time applications and wearable EEG systems, as it can handle non-stationary noise bursts caused by gross motor movements [1].
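The time-domain regression idea can be sketched in a few lines. This is a minimal illustration, not the exact procedure of [1]: it assumes a single EOG reference channel, the additive model recorded = EEG + b·EOG, one ordinary-least-squares coefficient b per channel, and synthetic (hypothetical) signals. It also ignores the bidirectional contamination of the EOG reference by cerebral activity.

```python
import numpy as np


def regress_out_eog(eeg: np.ndarray, eog: np.ndarray):
    """Subtract a scaled EOG reference from each EEG channel.

    eeg: (n_channels, n_samples); eog: (n_samples,).
    Returns (cleaned_eeg, b), where b holds one least-squares coefficient per channel.
    """
    eog_c = eog - eog.mean()
    eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
    b = (eeg_c @ eog_c) / (eog_c @ eog_c)   # OLS slope per channel (model with intercept)
    cleaned = eeg - np.outer(b, eog)         # remove the estimated EOG contribution
    return cleaned, b


# Hypothetical demo data: 3 channels of 10 Hz "alpha" plus noise, and blink-like EOG.
fs = 250
t = np.arange(10 * fs) / fs
rng = np.random.default_rng(0)
true_eeg = np.vstack([10 * np.sin(2 * np.pi * 10 * t + phase) for phase in (0.0, 1.0, 2.0)])
true_eeg += rng.normal(0.0, 2.0, true_eeg.shape)
eog = sum(150 * np.exp(-((t - c) ** 2) / (2 * 0.1**2)) for c in (1, 3, 5, 7, 9))
true_b = np.array([0.8, 0.4, 0.1])           # assumed propagation factors (frontal to posterior)
recorded = true_eeg + np.outer(true_b, eog)

cleaned, b_hat = regress_out_eog(recorded, eog)
print(np.round(b_hat, 2))
```

Centering both signals before estimating b makes the slope insensitive to DC offsets, while subtracting the uncentered EOG restores the original baseline of each channel.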

Advanced Deep Learning and Generative Models

Modern research has pivoted towards using deep learning models to tackle the complex, non-linear nature of artifact contamination.

- Generative Adversarial Networks (GANs): GANs have shown remarkable success in generating artifact-free EEG signals. The architecture consists of a generator, which attempts to produce clean EEG from noisy input, and a discriminator, which learns to distinguish the generated signal from ground-truth clean data [4]. This adversarial training process guides the generator to create physiologically accurate, denoised outputs. Variants like Wasserstein GAN with Gradient Penalty (WGAN-GP) have demonstrated superior training stability and signal quality [5].

- Hybrid Deep Learning Models: Researchers are developing sophisticated hybrid models that combine the strengths of different architectures. For example, the AnEEG model integrates Long Short-Term Memory (LSTM) networks with a GAN to capture the temporal dependencies inherent in EEG data [4]. Other models, like GCTNet, use a GAN-guided parallel CNN alongside a transformer network to capture both global and local temporal features, reportedly reducing error and improving SNR compared to older methods [4].

- State Space Models (SSMs): For specific challenges like artifacts induced by Transcranial Electrical Stimulation (tES), multi-modular networks based on SSMs have been shown to excel, particularly for removing complex artifacts from tACS and tRNS protocols [6].
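For orientation, the critic objective of a standard WGAN-GP is shown below in its generic form; how [5] or the models in [4] condition it on noisy EEG is not specified in this article and is left as an assumption. Here x is a clean EEG segment drawn from the real-data distribution P_r, x̃ = G(z) is the generator's output for a noisy input z (distribution P_g), x̂ is a random interpolation between the two, and λ weights the gradient penalty that replaces the weight clipping of the original WGAN. The generator minimizes -E[D(x̃)]; denoising variants may add a reconstruction term, which is not shown.

```latex
\mathcal{L}_{D} = \mathbb{E}_{\tilde{x}\sim\mathbb{P}_{g}}\left[D(\tilde{x})\right]
  - \mathbb{E}_{x\sim\mathbb{P}_{r}}\left[D(x)\right]
  + \lambda\,\mathbb{E}_{\hat{x}\sim\mathbb{P}_{\hat{x}}}\left[\left(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_{2} - 1\right)^{2}\right],
\qquad \hat{x} = \epsilon x + (1-\epsilon)\tilde{x},\quad \epsilon \sim \mathcal{U}[0,1]

\mathcal{L}_{G} = -\mathbb{E}_{\tilde{x}\sim\mathbb{P}_{g}}\left[D(\tilde{x})\right]
```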

Table 2: Research Reagent Solutions for Artifact Removal

| Solution / Algorithm | Primary Function | Key Advantage |
|---|---|---|
| Independent Component Analysis (ICA) [1] [2] | Blind source separation of EEG signals into independent components. | Effective for isolating and removing stereotypical artifacts (e.g., blinks) from high-channel-count data with limited loss of neural signal. |
| Artifact Subspace Reconstruction (ASR) [1] | Statistical detection and reconstruction of artifact-contaminated data segments. | Suitable for real-time application and mobile EEG; robust against large, non-stationary artifacts. |
| Generative Adversarial Network (GAN) [4] [5] | Generative model that learns to produce artifact-free EEG signals. | Capable of modeling complex, non-linear artifact properties; can synthesize clean data. |
| Long Short-Term Memory (LSTM) Network [4] | Type of recurrent neural network for processing sequential data. | Models temporal dynamics and long-range dependencies in EEG time series. |
| State Space Models (SSMs) [6] | Models dynamical systems for time-series denoising. | Particularly effective for removing structured, periodic noise like tACS artifacts. |

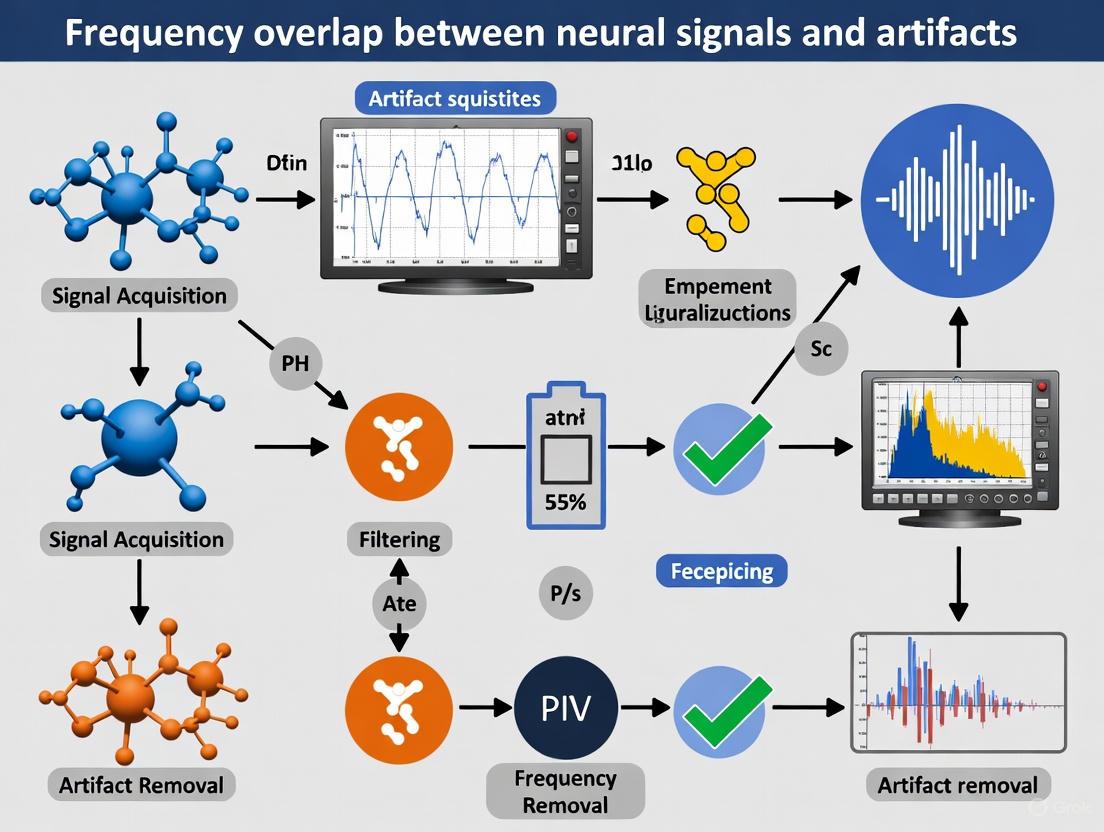

Visualizing the Problem and Solutions

The following diagrams illustrate the core problem of spectral overlap and the workflow of a modern deep-learning solution.

Spectral Overlap Visualization

Artifact Removal Workflow

The spectral overlap between neural signals and physiological artifacts remains a critical obstacle in electrophysiology. While classical methods like regression and ICA provide foundational solutions, the future lies in advanced, data-driven approaches. Deep learning models, particularly GANs and hybrid architectures, demonstrate a superior capacity to model the complex, non-linear relationships within the signal mixture, enabling more precise and effective artifact removal. As these technologies evolve, they will unlock more robust analysis of neural dynamics, directly enhancing the accuracy of clinical diagnostics and the efficacy of therapeutic interventions in neurology and drug development.

Neural oscillations, the rhythmic electrical activity generated by the brain, form a fundamental mechanism for coordinating large-scale neural communication and are critical for cognitive functions ranging from sensory processing to memory. A primary challenge in their accurate identification and quantification is the significant spectral overlap between authentic brain rhythms and non-cerebral artifacts, particularly those originating from muscular activity. This overlap can confound analysis, leading to misinterpretations in both basic neuroscience and clinical drug development. This technical guide details the spectral and spatial profiles of key oscillations, provides methodologies for their robust characterization amid contaminating signals, and frames these concepts within the essential context of artifact discrimination.

Foundational Spectral Properties of Neural Oscillations

The brain's oscillatory activity is not random; it is organized into distinct frequency bands that exhibit characteristic spatial distributions and functional correlates. Understanding these profiles is the first step in differentiating them from artifacts. The following table summarizes the core spectral and functional attributes of the key neural rhythms.

Table 1: Spectral Profiles and Functional Correlates of Key Neural Oscillations

| Rhythm | Frequency Range (Hz) | Prominent Cortical Distribution | Primary Functional Correlates |
|---|---|---|---|
| Delta | 0.5 – 4 [7] [8] | Medial fronto-temporal regions [9] | Deep sleep, unconscious states (e.g., UWS), pathological conditions [10] [9] |
| Theta | 4 – 8 [7] [8] | Medial fronto-temporal regions; Hippocampus [7] [9] | Spatial navigation, memory consolidation, focused attention [7] |
| Alpha | 8 – 13 [10] [8] | Posterior regions (e.g., occipital, precuneus) [9] | Idle state, relaxed wakefulness with eyes closed, sensory gating [10] [8] |
| Beta | 13 – 32 [8] | Lateral prefrontal cortex; Somatomotor cortex [9] | Active motor processing, sustained attention, cognitive control [9] |

The brain's spatial organization of these natural frequencies follows a structured gradient. Research using magnetoencephalography (MEG) has confirmed a medial-to-lateral and a posterior-to-anterior gradient of increasing frequency [9]. Slow rhythms like delta and theta are characteristic of medial fronto-temporal areas, while posterior regions are dominated by the alpha rhythm. In contrast, the lateral prefrontal cortex is distinguished by high-beta oscillations [9].
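As a concrete use of the band edges in Table 1 of this section, the sketch below computes relative band power from a single Hann-windowed periodogram. It is a simplified illustration under stated assumptions (one segment with no averaging, beta capped at 32 Hz as in the table, synthetic data); for real recordings, Welch or multitaper estimates are preferable.

```python
import numpy as np

# Band edges in Hz, taken from Table 1 (beta upper edge 32 Hz as listed there).
BANDS = {"delta": (0.5, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 32.0)}


def relative_band_power(x: np.ndarray, fs: float) -> dict:
    """Relative power per band from one Hann-windowed periodogram."""
    x = x - x.mean()
    spectrum = np.abs(np.fft.rfft(x * np.hanning(len(x)))) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    lo_all, hi_all = BANDS["delta"][0], BANDS["beta"][1]
    total = spectrum[(freqs >= lo_all) & (freqs < hi_all)].sum()
    return {name: float(spectrum[(freqs >= lo) & (freqs < hi)].sum() / total)
            for name, (lo, hi) in BANDS.items()}


# Synthetic (assumed) eyes-closed-like signal: strong 10 Hz alpha, weaker 6 Hz theta, noise.
fs = 250
t = np.arange(10 * fs) / fs
rng = np.random.default_rng(1)
x = 20 * np.sin(2 * np.pi * 10 * t) + 5 * np.sin(2 * np.pi * 6 * t) + rng.normal(0, 1, t.size)

powers = relative_band_power(x, fs)
for name, rel in powers.items():
    print(f"{name:>5}: {rel:.2f}")
```

Because the four bands tile 0.5–32 Hz without gaps, the relative powers sum to one; power outside that range (including any gamma activity) is simply ignored.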

The Critical Challenge of Frequency Overlap with Artifacts

A principal technical hurdle in analyzing these rhythms is that their spectral bandwidths overlap substantially with those of common biological artifacts, most notably electromyographic (EMG) activity from muscles.

The Muscle Artifact Problem

The spectral bandwidth of muscle activity is broad, typically ranging from ~20–300 Hz [11]. This creates direct interference with neural signals:

- High-Frequency Contamination: Muscle activity from head and neck muscles (e.g., frontalis, masseter) has peak frequencies in the 30-100 Hz range, directly encroaching on the beta and gamma bands [11].

- Low-Frequency Contamination: The lower band-limit of muscle activity can extend down to approximately 15 Hz, leading to potential overlap with the upper alpha and lower beta bands [11].

- Amplitude Disparity: The amplitude of muscle artifacts can be several orders of magnitude larger than genuine high-frequency neural activity, making tiny neural oscillations particularly vulnerable to being obscured [11].

Spectral vs. Rhythmicity-Based Dissociation

Traditional analysis relies heavily on power spectral density (PSD), which quantifies signal power as a function of frequency but not the stability or "oscillatoriness" of the rhythm. A peak in the PSD above the aperiodic (1/f) component is often taken as evidence of an oscillation [8]. However, power and rhythmicity are dissociable; a signal can have high power in a frequency band without being strongly rhythmic, and vice-versa [8]. This is a critical distinction when discriminating brain activity from artifacts, as artifacts may produce high power but lack stable phase dynamics.

The phase-autocorrelation function (pACF) has been introduced as a method to directly quantify rhythmicity in an amplitude-independent manner [8]. The pACF measures the predictability of a future phase as a function of time lag, yielding a direct measurement of temporal stability. Studies using pACF have shown that significant rhythmicity is often present in narrow spectral peaks (e.g., 2-3 Hz wide), whereas the PSD can show elevated power across a much wider and potentially artifactual frequency range [8].

Table 2: Key Methodologies for Discriminating Neural Oscillations from Artifacts

| Method | Underlying Principle | Advantages | Limitations |
|---|---|---|---|
| Power Spectral Density (PSD) & FOOOF | Separates periodic spectral peaks from aperiodic 1/f background [10] [8] | Gold standard for initial identification; FOOOF parameterization enables quantitative comparison [10] | Confounds amplitude with rhythmicity; susceptible to contamination from broadband artifacts [8] |
| Phase-Autocorrelation Function (pACF) | Quantifies phase stability over time, independent of signal amplitude [8] | Directly measures "oscillatoriness"; reveals narrowband rhythmicity invisible to PSD [8] | Newer method with less established normative benchmarks; requires sufficient data length |
| Stationary Wavelet Transform (SWT) | Decomposes signal into frequency bands; artifacts detected and removed via thresholding [12] | Effective for removing localized, transient artifacts without relying on other channels [12] | Performance depends on selected frequency bands and threshold parameters [12] |
| Machine Learning (LSTM Networks) | Learns normal signal dynamics to predict and replace artifactual segments [13] | Channel-independent; recreates realistic signal to maintain data continuity [13] | Requires high-quality training data; computationally intensive |

Detailed Experimental Protocols for Oscillation Profiling

Protocol 1: Resting-State EEG Acquisition and Spectral Parameterization

This protocol is adapted from studies on disorders of consciousness, where spectral profiling is crucial for diagnosis [10].

- 1. Participant Preparation & Recording: Acquire at least 10 minutes of resting-state EEG during wakefulness. Standardize conditions (eyes open/closed). Use a high-density EEG system (e.g., 64+ channels) with a sampling rate ≥ 500 Hz to adequately capture higher frequencies. Impedance should be kept below 10 kΩ [10].

- 2. Preprocessing: Apply band-pass filtering (e.g., 0.5-70 Hz) and notch filtering (e.g., 50/60 Hz). Identify and reject segments with large muscle artifacts, eye blinks, or gross head movements. This can be done manually or via automated algorithms. In patient studies, high rejection rates (~37%) are common due to neurological symptoms [10].

- 3. Power Spectrum Calculation: For each artifact-free epoch and channel, compute the power spectral density (PSD) using a multitaper or Welch's method.

- 4. Spectral Parameterization with FOOOF: Fit the FOOOF algorithm to the PSD to separate the aperiodic ("1/f") component from periodic oscillatory peaks [10]. Key parameters to extract include:

  - Aperiodic Exponent & Offset: The exponent quantifies the slope of the 1/f background; the offset quantifies its overall broadband power level.

  - Oscillatory Peaks: Center frequency, bandwidth (Hz), and power of each identified peak.

- 5. Spatial & Clinical Correlation: Calculate the antero-posterior (AP) gradient of peak frequencies [10]. Correlate spectral features (e.g., dominant peak frequency in 1-14 Hz range) with behavioral scores like the Coma Recovery Scale-Revised (CRS-R) [10].
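Step 4 relies on FOOOF itself; the sketch below is only a simplified, hypothetical stand-in that shows the underlying idea. It fits the aperiodic component as a straight line in log-log space, log10 P = offset - exponent·log10 f, refits after dropping the points furthest above the first fit (an assumed 75th-percentile cutoff), and reports the largest remaining residual as a candidate peak. Unlike FOOOF, it fits no Gaussian peaks, no knee, and no peak widths.

```python
import numpy as np


def fit_aperiodic(freqs, psd, keep_percentile=75.0):
    """Fit log10(P) = offset - exponent * log10(f); return (offset, exponent).

    Simplified stand-in for FOOOF's 'fixed' aperiodic mode (no knee, no Gaussians).
    """
    logf, logp = np.log10(freqs), np.log10(psd)
    slope, intercept = np.polyfit(logf, logp, 1)                           # first pass, biased by peaks
    residual = logp - (intercept + slope * logf)
    background = residual <= np.percentile(residual, keep_percentile)      # drop likely peak points
    slope, intercept = np.polyfit(logf[background], logp[background], 1)   # refit on background
    return intercept, -slope


def largest_peak(freqs, psd, offset, exponent):
    """Frequency and height (log10 units) of the largest residual above the aperiodic fit."""
    residual = np.log10(psd) - (offset - exponent * np.log10(freqs))
    i = int(np.argmax(residual))
    return float(freqs[i]), float(residual[i])


# Synthetic (assumed) spectrum: 10**2 / f**1.5 background with a Gaussian alpha peak at 10 Hz.
freqs = np.arange(1.0, 40.0, 0.5)
psd = 10**2.0 / freqs**1.5 * (1 + 3 * np.exp(-((freqs - 10.0) ** 2) / (2 * 1.0**2)))

offset, exponent = fit_aperiodic(freqs, psd)
peak_f, peak_height = largest_peak(freqs, psd, offset, exponent)
print(f"offset={offset:.2f}, exponent={exponent:.2f}, peak at {peak_f:.1f} Hz")
```

The second pass matters: a single least-squares line is pulled upward by the alpha peak, which biases both the exponent and the apparent peak height.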

Protocol 2: Quantifying Rhythmicity with the Phase-Autocorrelation Function (pACF)

This protocol measures the temporal stability of oscillations, complementing power-based analyses [8].

- 1. Signal Acquisition & Preprocessing: Follow Steps 1 and 2 from Protocol 1.

- 2. Complex Wavelet Filtering: To obtain the phase time series, filter the data using a complex wavelet filter (e.g., Morlet wavelet) across a log-spaced set of frequencies (e.g., 2 to 100 Hz) [8].

- 3. Compute Phase Time Series: Extract the instantaneous phase angle from the complex analytic signal at each frequency and time point.

- 4. Calculate pACF: For a given frequency, compute the autocorrelation of the complex phase time series across a range of time lags. The pACF value at lag k is given by the circular-linear correlation between the phase time series and its time-lagged copy [8].

- 5. Determine pACF Lifetime: Transform the pACF into a cumulative function. The pACF lifetime is the first lag at which this cumulative function exceeds a predefined threshold (e.g., 0.9), indicating the lag that accounts for 90% of the total above-chance phase autocorrelation [8].

- 6. Statistical Validation: Generate surrogate data (e.g., phase-randomized) to create a null distribution of pACF lifetimes. The observed pACF lifetime is significant if it exceeds the 99th percentile of the surrogate distribution (p ≤ 0.01) [8].
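Steps 2 to 5 can be sketched with NumPy alone. Two choices here are assumptions rather than details confirmed by [8]: the pACF at lag k is estimated as the magnitude of the mean phase-difference vector, |mean(exp(i(φ(t+k) - φ(t))))|, i.e., phase locking between the signal and its lagged copy; and a fixed chance level stands in for the surrogate-based test of step 6, which is not implemented. The 5-cycle Morlet width and the synthetic signals are also illustrative.

```python
import numpy as np


def morlet_phase(x, fs, freq, n_cycles=5.0):
    """Instantaneous phase at `freq` from convolution with a complex Morlet wavelet."""
    sigma_t = n_cycles / (2 * np.pi * freq)            # s.d. of the Gaussian envelope (s)
    half = int(np.ceil(3.5 * sigma_t * fs))
    tw = np.arange(-half, half + 1) / fs
    wavelet = np.exp(2j * np.pi * freq * tw) * np.exp(-tw**2 / (2 * sigma_t**2))
    coeffs = np.convolve(x, wavelet, mode="same")
    return np.angle(coeffs[half:-half])                 # drop edge-affected samples


def pacf(phase, max_lag):
    """|mean(exp(i*(phi[t+k] - phi[t])))| for k = 0..max_lag (assumed estimator)."""
    z = np.exp(1j * phase)
    values = [1.0] + [abs(np.mean(z[k:] * np.conj(z[:-k]))) for k in range(1, max_lag + 1)]
    return np.array(values)


def pacf_lifetime(values, chance, threshold=0.9):
    """First lag at which the cumulative above-chance pACF reaches `threshold` of its total."""
    above = np.clip(values - chance, 0.0, None)
    total = above.sum()
    if total == 0:
        return 0
    return int(np.argmax(np.cumsum(above) / total >= threshold))


fs, freq, max_lag = 250, 10.0, 500                      # max_lag = 2 s
t = np.arange(60 * fs) / fs
rng = np.random.default_rng(2)
rhythmic = np.sin(2 * np.pi * freq * t) + rng.normal(0, 0.2, t.size)
noise = rng.normal(0, 1, t.size)

p_rhythmic = pacf(morlet_phase(rhythmic, fs, freq), max_lag)
p_noise = pacf(morlet_phase(noise, fs, freq), max_lag)
chance = 0.15                                           # assumed; [8] uses surrogate statistics
print(pacf_lifetime(p_rhythmic, chance), pacf_lifetime(p_noise, chance))
```

For the noisy sinusoid the phase stays predictable across the whole 2 s lag range, so its lifetime is long; for white noise the pACF decays within roughly the wavelet's duration, which is exactly the filter-induced "rhythmicity" that a proper surrogate test has to account for.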

The following diagram illustrates the core workflow and logical relationships of the pACF method for quantifying rhythmicity.

The Scientist's Toolkit: Research Reagent Solutions

This section details essential tools and computational methods for conducting rigorous research on neural oscillations.

Table 3: Essential Tools for Neural Oscillation and Artifact Research

| Tool / Solution | Type | Primary Function | Application Note |
|---|---|---|---|
| FOOOF | Algorithm / Software | Parameterizes neural power spectra into periodic & aperiodic components [10] | Critical for moving beyond visual inspection of spectra; enables quantification of peak characteristics and 1/f background [10]. |
| SANTIA Toolbox | Software Toolbox | Detects and removes artifacts in LFP/EEG using machine learning [13] | Employs LSTM networks to recreate artifactual segments, maintaining signal continuity without discarding data [13]. |
| Phase-Autocorrelation Function (pACF) | Analytical Metric | Quantifies rhythmicity (temporal stability) independent of amplitude [8] | Reveals narrowband oscillations that are obscured in power spectra; dissociates "oscillatoriness" from signal strength [8]. |
| Long Short-Term Memory (LSTM) Network | Machine Learning Model | Predicts and replaces artifact-corrupted signal segments [13] | Provides a channel-independent solution for artifact removal, effective even when global artifacts corrupt most channels [13]. |
| High-Density MEG/EEG Systems | Recording Equipment | Maps the cortical distribution of natural frequencies with high spatial resolution [9] | Essential for revealing medial-to-lateral and posterior-to-anterior frequency gradients in the human brain [9]. |

Accurate characterization of delta, theta, alpha, and beta rhythms is foundational to neuroscience research and its clinical applications, including drug development for neurological and psychiatric disorders. This endeavor is complicated by the inherent spectral overlap between these oscillations and non-neural artifacts. A modern approach requires moving beyond simple power spectral analysis to embrace methods that directly quantify rhythmicity, such as the pACF, and robust artifact removal techniques, such as those based on wavelet transforms and machine learning. By integrating these advanced protocols and tools, researchers can achieve a more precise and reliable dissection of brain dynamics, ultimately leading to clearer biomarkers and more effective therapeutic interventions.

The accurate interpretation of neurophysiological signals, such as electroencephalography (EEG), is fundamental to neuroscience research and clinical neurology. However, these signals are persistently contaminated by physiological artifacts originating from ocular movement (EOG), muscle activity (EMG), and cardiac rhythms (ECG). A central challenge in this domain is the significant spectral overlap between genuine neural signals and these artifacts, which can obscure true brain activity and lead to erroneous conclusions in both academic research and drug development studies. Characterizing the spectral and temporal signatures of these artifacts is therefore not merely a signal processing exercise but a critical prerequisite for valid scientific and clinical outcomes. This guide provides an in-depth technical analysis of these major artifacts, detailing their spectral characteristics, methodologies for their identification and removal, and the implications of their frequency overlap with neural signals, providing researchers with the tools to enhance data fidelity.

Ocular Artifacts (EOG)

Spectral and Temporal Characteristics

Ocular artifacts, generated by eye blinks and movements, are among the most prevalent contaminants in EEG data. The electrooculographic (EOG) signal arises from the corneo-retinal potential, a steady potential difference across the eyeball. During blinks and saccades, this dipole rotates, creating a large electrical field that propagates across the scalp, most prominently over the frontal and prefrontal regions due to their proximity to the eyes [14]. The table below summarizes the core characteristics of EOG artifacts.

Table 1: Characteristics of Ocular (EOG) Artifacts

| Feature | Characteristics | Impact on Neural Signals |
|---|---|---|
| Spectral Content | Predominantly low-frequency, typically below 4 Hz [15]. | Overlaps with and can obscure delta brain rhythms (0.5–4 Hz). |
| Amplitude | High-amplitude peaks, often an order of magnitude larger than EEG [14]. | Can saturate amplifiers and mask concurrent neural activity. |
| Topography | Most pronounced in frontal EEG channels; volume conducts to central sites [16]. | Limits the reliability of frontal lobe signal interpretation. |
| Morphology | Slow, biphasic for blinks; sharper for saccades [14]. | Can be mistaken for slow cortical potentials or epileptiform spikes. |

Advanced Removal Methodologies

Given the spectral overlap with neural signals, simple high-pass filtering is ineffective: a cutoff high enough to remove most EOG energy (below ~4 Hz) would also remove genuine delta-band brain activity. Advanced blind source separation techniques are required.

- Independent Component Analysis (ICA): ICA is a dominant method for EOG artifact removal. It decomposes multi-channel EEG recordings into statistically independent components (ICs), a subset of which often represent ocular artifacts. These can be manually or automatically identified and removed before signal reconstruction [14] [16].

- Wavelet-Enhanced ICA (wICA): An advanced hybrid method that improves upon standard ICA. After ICA decomposition, a wavelet transform is applied to the artifactual ICs. EOG peaks are identified and corrected selectively within the component using wavelet thresholding, rather than removing the entire component. This approach preserves more of the neural information present in the component, reducing signal distortion in both the time and spectral domains [14].

- Regression with EEMD-Cleaned References: Conventional regression uses a reference EOG channel to subtract artifacts from EEG but suffers from bidirectional contamination (the EOG channel itself contains cerebral activity). An improved method involves first applying Ensemble Empirical Mode Decomposition (EEMD) to the raw reference EOG signal to obtain Intrinsic Mode Functions (IMFs). An unsupervised technique like PCA is then used to reconstruct a "clean" EOG reference, which is subsequently used in the regression, leading to lower distortion of the underlying EEG [16].

The following diagram illustrates the workflow of the wICA and EEMD-Regression methods, which are designed to minimize neural data loss.

Figure 1: Workflows for advanced EOG artifact removal.
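The core wICA step, correcting an artifactual component instead of discarding it, can be sketched as follows. This is an illustrative simplification, not the implementation in [14]: it uses an orthonormal Haar DWT (so the component length must be divisible by 2^levels), a universal threshold σ·sqrt(2 ln N) with σ estimated from the median absolute deviation of the finest detail coefficients, and treats every coefficient above the threshold as part of the ocular artifact. In a full pipeline the corrected component would be projected back through the ICA mixing matrix, which is not shown.

```python
import numpy as np


def haar_dwt(x, levels):
    """Orthonormal multi-level Haar DWT; returns (approximation, [detail_1, ..., detail_L])."""
    if len(x) % (2**levels):
        raise ValueError("length must be divisible by 2**levels")
    approx, details = np.asarray(x, dtype=float), []
    for _ in range(levels):
        even, odd = approx[0::2], approx[1::2]
        details.append((even - odd) / np.sqrt(2))      # detail_1 is the finest scale
        approx = (even + odd) / np.sqrt(2)
    return approx, details


def haar_idwt(approx, details):
    """Inverse of haar_dwt."""
    for detail in reversed(details):
        out = np.empty(2 * len(approx))
        out[0::2] = (approx + detail) / np.sqrt(2)
        out[1::2] = (approx - detail) / np.sqrt(2)
        approx = out
    return approx


def wavelet_correct_component(component, levels=5):
    """Keep large wavelet coefficients as the artifact estimate and subtract it."""
    approx, details = haar_dwt(component, levels)
    sigma = np.median(np.abs(details[0])) / 0.6745      # robust noise estimate (MAD)
    thr = sigma * np.sqrt(2 * np.log(len(component)))  # universal threshold

    def keep(c):
        return np.where(np.abs(c) > thr, c, 0.0)

    artifact = haar_idwt(keep(approx), [keep(d) for d in details])
    return component - artifact, artifact


# Hypothetical ocular IC: small neural activity plus five large blinks.
fs, n = 250, 2560                                      # 2560 = 80 * 2**5
t = np.arange(n) / fs
rng = np.random.default_rng(4)
neural = 0.5 * np.sin(2 * np.pi * 10 * t) + rng.normal(0, 2.0, n)
blinks = sum(100 * np.exp(-((t - c) ** 2) / (2 * 0.1**2)) for c in (1.5, 3.5, 5.5, 7.5, 9.5))
component = neural + blinks

corrected, artifact = wavelet_correct_component(component)
print(f"max |component| = {np.abs(component).max():.0f}, max |corrected| = {np.abs(corrected).max():.0f}")
```

Away from the blinks, nearly all coefficients fall below the threshold, so the corrected component equals the original there; only the high-amplitude blink coefficients are removed.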

Muscular Artifacts (EMG)

Spectral and Temporal Characteristics

Electromyographic (EMG) artifacts originate from the electrical activity of cranial, facial, neck, and head muscles during contraction. Even minor contractions, such as jaw clenching or forehead tensing, can generate significant EMG. Unlike EOG, EMG has a broad spectral profile that extensively overlaps with neural signals, making it particularly challenging to remove.

Table 2: Characteristics of Muscular (EMG) Artifacts

| Feature | Characteristics | Impact on Neural Signals |
|---|---|---|
| Spectral Content | Broadband, high-frequency content, typically 5-2000 Hz, with significant overlap in beta (13-30 Hz) and gamma (>30 Hz) ranges [16] [17]. | Can mask high-frequency neural oscillations crucial for cognitive process studies. |
| Amplitude | Highly variable; can be large and transient. | Increases overall signal power, obscuring lower-amplitude EEG. |
| Topography | Localized to muscle group proximity (e.g., temporal for jaw); can be widespread. | Contaminates signals from temporal and frontal electrodes. |
| Morphology | Stochastic, spike-like, non-stationary. | Can be misinterpreted as epileptic spikes or pathological high-frequency activity. |

Quantitative Analysis for Fatigue and Artifact Identification

Beyond contaminating EEG, muscle activity recorded at the skin surface (surface EMG, sEMG) is also a primary tool for monitoring muscle fatigue, which is characterized by specific spectral and temporal shifts.

- Power Spectral Analysis: During sustained or fatiguing muscle contractions, the median frequency (MDF) of the sEMG power spectrum demonstrates a consistent decrease. This shift to lower frequencies is attributed to a reduction in muscle fiber conduction velocity and metabolic changes [18].

- Turns-Amplitude Analysis (TAA): This time-domain analysis quantifies the number of turns per second (T/s)—signal peak points where polarity changes with a minimum amplitude difference—and the mean turn amplitude (MTA). During dynamic fatiguing tasks, T/s shows a significant decrease and is strongly correlated with grip strength fatigue. During recovery, T/s increases, making it a robust indicator of both fatigue and recovery states [18].
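Both measures can be computed from a single sEMG epoch. The sketch below is hypothetical in its details: the median frequency uses a plain periodogram; a turn is counted at each local extremum that differs from the previously accepted turn by at least `min_diff` (100 µV here, an assumed value); and the mean turn amplitude is taken as the mean amplitude difference between successive turns. Fatigue is imitated by smoothing white noise more heavily, which compresses the spectrum toward lower frequencies.

```python
import numpy as np


def median_frequency(x, fs):
    """Frequency that splits the one-sided periodogram into two halves of equal power."""
    x = x - x.mean()
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    cumulative = np.cumsum(power)
    return float(freqs[np.searchsorted(cumulative, cumulative[-1] / 2)])


def turns_amplitude(x, fs, min_diff=100.0):
    """Turns per second and mean turn amplitude (hypothetical TAA variant)."""
    d = np.diff(x)
    extrema = np.where(np.sign(d[1:]) * np.sign(d[:-1]) < 0)[0] + 1   # slope changes sign
    turn_values, last = [], x[0]
    for i in extrema:
        if abs(x[i] - last) >= min_diff:                # amplitude criterion vs previous turn
            turn_values.append(x[i])
            last = x[i]
    amplitudes = np.abs(np.diff(turn_values)) if len(turn_values) > 1 else np.zeros(1)
    return len(turn_values) / (len(x) / fs), float(amplitudes.mean())


def smoothed_noise(rng, n, sigma_samples, std_uv=200.0):
    """White noise smoothed by a Gaussian kernel, rescaled to a fixed s.d. (assumed units: uV)."""
    k = np.arange(-4 * sigma_samples, 4 * sigma_samples + 1)
    kernel = np.exp(-0.5 * (k / sigma_samples) ** 2)
    y = np.convolve(rng.normal(size=n), kernel / kernel.sum(), mode="same")
    return y / y.std() * std_uv


fs = 2000                                               # assumed sEMG sampling rate (Hz)
rng = np.random.default_rng(5)
fresh = smoothed_noise(rng, 5 * fs, sigma_samples=1)
fatigued = smoothed_noise(rng, 5 * fs, sigma_samples=2)  # narrower band imitates spectral compression

for label, sig in (("fresh", fresh), ("fatigued", fatigued)):
    tps, mta = turns_amplitude(sig, fs)
    print(f"{label:>8}: MDF={median_frequency(sig, fs):.0f} Hz, T/s={tps:.0f}, MTA={mta:.0f} uV")
```

Both signals are rescaled to the same standard deviation so that the drop in MDF and T/s reflects the spectral shift alone, not a change in amplitude.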

Table 3: sEMG Parameters for Muscle Fatigue Assessment

| sEMG Parameter | Definition | Change During Fatigue | Correlation with Fatigue Level |
|---|---|---|---|
| Median Frequency (MDF) | Frequency that divides the power spectrum into two equal halves. | Significant decrease [18]. | Negative, moderate correlation [18]. |
| Turns per Second (T/s) | Number of signal peaks with polarity change per second. | Significant decrease [18]. | Strongest correlation; effective for fatigue and recovery [18]. |
| Mean Turn Amplitude (MTA) | Average amplitude of the identified turns. | Gradual increase [18]. | Lowest correlation [18]. |

Cardiac Artifacts (ECG)

Spectral and Temporal Characteristics

Cardiac artifacts manifest in EEG recordings primarily through the electrical activity of the heart, specifically the QRS complex. This artifact arises due to the volume conduction of the heart's electrical field to the scalp, a phenomenon more likely in specific electrode montages and with certain physiological conditions.

Table 4: Characteristics of Cardiac (ECG) Artifacts

| Feature | Characteristics | Impact on Neural Signals |
|---|---|---|
| Spectral Content | The QRS complex is broadband, with most of its energy concentrated around 10-20 Hz, overlapping the alpha and beta bands. | Can introduce rhythmic, spike-like contamination in central and parietal channels. |
| Amplitude | Can be highly variable; often large enough to be clearly distinguishable. | May obscure genuine epileptiform spikes that occur synchronously with the QRS complex. |
| Topography | Often observed in EEG channels with a common reference, particularly in central and posterior regions [19]. | Affects regions typically associated with somatosensory and visual processing. |
| Morphology | Stereotypical, periodic waveform reflecting the QRS complex (approx. 100 ms duration) [19]. | Its regularity helps in discrimination from aperiodic neural spikes. |

Advanced Detection and Removal Techniques

The periodic nature and stereotypical shape of the ECG artifact facilitate its detection and removal.

- QRS Complex Detection and Selective Filtering: A highly effective method involves first detecting the R-peaks in the concurrent ECG recording (e.g., using open-source algorithms like R_peak_detect.m in MATLAB). Once the QRS complexes are located, a filter (e.g., a zero-phase filter) is applied only to the EEG segments coinciding with these complexes. This selective approach prevents the loss of critical neural information, such as epileptiform spikes, in non-QRS segments [19].

- Spectral Feature Extraction for Cardiac-Related Conditions: ECG spectral properties can also be used diagnostically. For sleep apnea detection, a method combining EEMD and ICA (EEMD-ICA) can denoise single-lead ECG signals. The Hilbert-Huang Transform (HHT) is then applied to specific IMFs to extract spectral features such as the maximum instantaneous frequency (femax). These features show statistically significant differences (p < 0.001) between normal and sleep apnea subjects and can be classified with high accuracy (92.9%) using a Random Forest model [20] [21].

The workflow below details the process of selective QRS filtering and ECG-based condition diagnosis.

Figure 2: Workflows for ECG artifact handling and analysis.
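Selective QRS filtering can be sketched as below. All pieces are illustrative assumptions rather than the method of [19]: `detect_r_peaks` is a naive threshold-and-refractory detector, not the open-source MATLAB detector mentioned above; the zero-phase filter is a moving average applied forward and backward; and the ±50 ms window around each R peak is an assumed parameter. EEG samples outside these windows are left untouched, which is the point of the selective approach.

```python
import numpy as np


def detect_r_peaks(ecg, fs, rel_height=0.6, refractory_s=0.25):
    """Naive R-peak detector (hypothetical): local maxima above rel_height * max(ecg),
    separated by at least refractory_s seconds."""
    thr = rel_height * np.max(ecg)
    mid = ecg[1:-1]
    candidates = np.where((mid > thr) & (mid >= ecg[:-2]) & (mid > ecg[2:]))[0] + 1
    peaks, last = [], -np.inf
    for i in candidates:
        if i - last >= refractory_s * fs:
            peaks.append(i)
            last = i
    return np.array(peaks, dtype=int)


def zero_phase_moving_average(x, width):
    """Moving average applied forward and then backward (filtfilt-style), so no net phase shift."""
    kernel = np.ones(width) / width
    forward = np.convolve(x, kernel, mode="same")
    return np.convolve(forward[::-1], kernel, mode="same")[::-1]


def filter_qrs_segments(eeg, r_peaks, fs, half_window_s=0.05, width=15):
    """Replace EEG only within +/- half_window_s of each R peak by its filtered version."""
    smoothed = zero_phase_moving_average(eeg, width)
    cleaned = eeg.copy()
    half = int(half_window_s * fs)
    for r in r_peaks:
        lo, hi = max(r - half, 0), min(r + half + 1, len(eeg))
        cleaned[lo:hi] = smoothed[lo:hi]
    return cleaned


# Hypothetical data: ECG at 75 bpm and EEG = 10 Hz alpha plus a spike-like QRS leak.
fs = 250
t = np.arange(10 * fs) / fs
beats = np.arange(0.5, 10.0, 0.8)
rng = np.random.default_rng(6)
ecg = sum(np.exp(-((t - b) ** 2) / (2 * 0.01**2)) for b in beats) + rng.normal(0, 0.02, t.size)
alpha = 10 * np.sin(2 * np.pi * 10 * t)
eeg = alpha + sum(30 * np.exp(-((t - b) ** 2) / (2 * 0.008**2)) for b in beats)

r_peaks = detect_r_peaks(ecg, fs)
cleaned = filter_qrs_segments(eeg, r_peaks, fs)
print(len(r_peaks), "R peaks; max QRS residual:", round(float(np.max(np.abs(cleaned - alpha))), 1))
```

Inside each window the smoothing also attenuates genuine 10 Hz activity; confining the filter to the QRS windows keeps that cost local instead of applying it to the whole recording.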

The Scientist's Toolkit: Research Reagent Solutions

This section catalogs key computational tools and data processing techniques essential for artifact research.

Table 5: Essential Research Tools and Methods

| Tool/Method | Function | Example Use Case |
|---|---|---|
| Independent Component Analysis (ICA) | Blind source separation to isolate neural and artifactual components. | Core to multiple EOG and EMG artifact removal pipelines [14] [16]. |
| Ensemble Empirical Mode Decomposition (EEMD) | Adaptive, data-driven signal decomposition into Intrinsic Mode Functions (IMFs). | Used for denoising ECG/EOG references and feature extraction [20] [16]. |
| Discrete Wavelet Transform (DWT) | Multi-resolution time-frequency analysis using wavelet basis functions. | Selective correction of EOG peaks in ICA components (wICA) [14]. |
| Turns-Amplitude Analysis (TAA) | Quantifies motor unit action potential firing patterns in time domain. | Assessing muscle fatigue and recovery from sEMG signals [18]. |
| Graph Signal Processing (GSP) | Analyzes signals defined on graph structures, such as brain connectivity networks. | Modeling spectral features of brain connectivity for disorder classification [22]. |
| Random Forest Classifier | Ensemble machine learning model for classification and regression. | Classifying spectral features for sleep apnea detection from ECG [20]. |
| R-peak Detection Algorithms | Algorithms to identify the R-wave in ECG signals. | Critical first step for QRS-complex-based EEG artifact removal [19]. |

The pervasive challenge of spectral overlap between neural signals and physiological artifacts necessitates a sophisticated, methodical approach to data preprocessing. Ocular (EOG), muscular (EMG), and cardiac (ECG) artifacts each possess distinct yet overlapping spectral signatures that, if unaddressed, critically compromise data integrity. As demonstrated, modern mitigation strategies move beyond simple filtering towards adaptive, data-driven methodologies such as wavelet-enhanced ICA, EEMD-based regression, and selective QRS-filtering, which prioritize the preservation of underlying neural information. For researchers, particularly in drug development where biomarker accuracy is paramount, the rigorous application of these advanced characterization and removal protocols is not optional but fundamental. The continued development and validation of these tools, especially those leveraging machine learning for automatic classification, will further empower scientists to isolate true brain activity, thereby enhancing the reliability and translational impact of neurophysiological research.

The analysis of neural signals, particularly electroencephalography (EEG), is fundamentally complicated by the pervasive issue of frequency overlap between neurophysiologically relevant brain activity and various biological artifacts. This spectral entanglement presents a critical challenge for traditional filtering techniques, which operate on the assumption that neural information and artifacts occupy distinct, separable frequency bands. In reality, ocular artifacts from eye blinks exhibit low-frequency components that substantially overlap with the clinically crucial delta rhythm (<4 Hz), while muscle artifacts from jaw clenching or forehead tension produce high-frequency content that intrudes upon the beta and gamma bands (>13 Hz) essential for understanding cognitive processing and motor commands [4]. This overlap renders simple frequency-based filtering inadequate, as such methods cannot discriminate between artifact and brain signal within shared frequency ranges, inevitably leading to the irreversible loss of neural information.

Traditional approaches, including regression-based methods, blind source separation (BSS), and wavelet transforms, have provided foundational tools for artifact management [4]. However, their effectiveness remains limited by their reliance on simplistic assumptions about the statistical properties and generative mechanisms of both neural signals and artifacts. The emergence of wearable EEG technology with its dry electrodes, reduced channel counts, and operation in uncontrolled environments has further exacerbated these limitations, intensifying the need for more sophisticated processing pipelines that can preserve neural information integrity under challenging recording conditions [23]. This whitepaper examines the fundamental limitations of traditional filtering methods, explores advanced deep learning approaches that offer promising alternatives, and provides practical guidance for researchers seeking to minimize neural information loss in their experimental workflows.

Fundamental Limitations of Traditional Filtering Approaches

Traditional artifact removal techniques share a common vulnerability: their operation inevitably discards valuable neural information while targeting artifacts, due to their inability to resolve the fundamental frequency overlap problem. These methods can be broadly categorized into several classes, each with distinct mechanisms of information loss.

Spectral Filtering and Blind Source Separation

Basic spectral filtering (e.g., high-pass, low-pass, band-stop) represents the most straightforward approach to artifact removal but suffers from the crudest form of information loss. By eliminating entire frequency bands suspected of containing artifacts, these filters simultaneously remove neural signals sharing those frequency ranges. For instance, applying a high-pass filter at 2 Hz to remove eye-blink artifacts would also eliminate portions of the clinically relevant delta rhythm, while a low-pass filter at 30 Hz to reduce muscle artifacts would truncate important gamma-band activity related to cognitive processing [4].
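A small synthetic test makes the trade-off concrete. The sketch below assumes SciPy and arbitrarily chosen parameters:
- 250 Hz sampling;
- a 1 Hz sinusoid standing in for delta activity;
- a 10 Hz sinusoid standing in for alpha activity.

A 2 Hz zero-phase Butterworth high-pass of the kind used against blinks leaves the 10 Hz component essentially intact. It removes almost all of the 1 Hz component, which is the content a delta-band analysis depends on.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

fs = 250.0                                  # sampling rate in Hz (assumed)
t = np.arange(int(20 * fs)) / fs
delta = np.sin(2 * np.pi * 1.0 * t)         # 1 Hz stand-in for a delta rhythm
alpha = np.sin(2 * np.pi * 10.0 * t)        # 10 Hz stand-in for an alpha rhythm

# 4th-order Butterworth high-pass at 2 Hz, applied forward-backward (zero phase).
sos = butter(4, 2.0, btype="high", fs=fs, output="sos")


def retained_amplitude(x):
    """RMS after filtering divided by RMS before, ignoring 5 s of edge transients."""
    y = sosfiltfilt(sos, x)
    core = slice(int(5 * fs), -int(5 * fs))
    return np.sqrt(np.mean(y[core] ** 2)) / np.sqrt(np.mean(x[core] ** 2))


delta_kept = retained_amplitude(delta)
alpha_kept = retained_amplitude(alpha)
print(f"delta retained: {delta_kept:.3f}, alpha retained: {alpha_kept:.3f}")
```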

Blind Source Separation (BSS) techniques, particularly Independent Component Analysis (ICA), represent a more sophisticated approach that separates multichannel EEG signals into statistically independent components. While theoretically capable of distinguishing neural from artifactual sources based on their statistical properties, ICA faces practical limitations including:

- Dependency on high channel counts for effective separation, making it less suitable for wearable EEG systems with limited electrodes [23]

- Subjectivity in component classification requiring manual inspection and labeling of components as neural or artifactual

- Inability to fully separate sources with similar statistical properties or temporal dynamics

The core limitation of both approaches stems from their fundamental assumption that artifacts and neural signals occupy separable manifolds in either the frequency or statistical domain. In reality, the complex nonlinear interactions between neurophysiological processes and artifact generators create overlapping distributions that cannot be fully disentangled through linear decomposition or simple frequency exclusion [6] [4].

Impact on Wearable EEG and Real-World Applications

The limitations of traditional filtering become particularly pronounced in the context of wearable EEG systems, which increasingly support applications in real-world environments beyond controlled laboratory settings. A systematic review of artifact management techniques for wearable EEG identified that traditional methods like ICA and wavelet transforms face significant challenges when confronted with the specific artifact properties of wearable systems, including reduced spatial resolution, motion artifacts from natural movement, and electromagnetic interference in uncontrolled environments [23].

Table 1: Traditional Filtering Methods and Their Limitations

| Method | Mechanism | Primary Limitations | Information Loss Risk |
|---|---|---|---|
| Spectral Filtering | Attenuates predefined frequency bands | Cannot resolve frequency overlap; removes valid neural signals | High - non-selective removal of frequency content |
| Regression-Based | Estimates and subtracts artifact using reference signals | Requires reference channels; imperfect artifact modeling | Medium - may over/under subtract neural content |
| ICA/BSS | Separates sources by statistical independence | Requires high channel count; manual component selection | Variable - dependent on correct component rejection |
| Wavelet Transform | Time-frequency decomposition and thresholding | Threshold selection critical; mixed efficacy across artifact types | Medium - co-elimination of neural features with similar time-frequency signatures |

Advanced Deep Learning Approaches for Artifact Removal

The limitations of traditional methods have spurred the development of sophisticated deep learning approaches that leverage neural networks to learn complex, nonlinear mappings between artifact-contaminated and clean EEG signals. These methods fundamentally differ from traditional techniques by operating on pattern recognition principles rather than explicit statistical or frequency-based assumptions.

Architectures for Neural Signal Processing

Convolutional Neural Networks (CNNs) have demonstrated particular effectiveness for certain artifact types, with Complex CNN architectures showing superior performance for removing transcranial direct current stimulation (tDCS) artifacts according to comparative benchmarks [6]. CNNs excel at capturing both spatial and temporal patterns in multichannel EEG data through their hierarchical feature learning capabilities.

State Space Models (SSMs) represent another advanced architecture, with multi-modular SSM networks (M4) demonstrating state-of-the-art performance for removing complex artifacts from transcranial alternating current stimulation (tACS) and transcranial random noise stimulation (tRNS) [6]. These models effectively capture the dynamic nature of neural signals and artifacts through their internal state representations.

Generative Adversarial Networks (GANs) have emerged as particularly powerful tools for artifact removal, employing a dual-network architecture where a generator network produces cleaned EEG signals while a discriminator network evaluates their similarity to genuine artifact-free data [4]. The adversarial training process enables the model to learn sophisticated representations of both artifacts and neural signals without explicit manual specification of their characteristics.

Specialized Deep Learning Models

Recent research has produced several specialized deep learning architectures optimized for specific artifact removal challenges:

The AnEEG model incorporates Long Short-Term Memory (LSTM) layers within a GAN architecture, enabling the network to capture temporal dependencies and contextual information critical for distinguishing artifacts from neural activity patterns [4]. By integrating LSTM's memory capabilities with GAN's generative power, this approach can effectively separate overlapping temporal patterns of neural signals and artifacts.

GCTNet combines transformer networks with GAN-guided parallel CNNs to capture both global and temporal dependencies in EEG signals [4]. The transformer components enable attention mechanisms that focus on clinically relevant neural patterns while suppressing artifacts, demonstrating performance improvements of 11.15% in relative root mean square error (RRMSE) and 9.81 in signal-to-noise ratio (SNR) compared to conventional methods.

Table 2: Performance Comparison of Deep Learning vs. Traditional Methods

| Model/Technique | Artifact/Stimulation Type | Performance Metric | Result | Reference |
|---|---|---|---|---|
| Complex CNN | tDCS | RRMSE (temporal) | Best performance for tDCS | [6] |
| Multi-modular SSM (M4) | tACS, tRNS | RRMSE (spectral) | Best performance for tACS/tRNS | [6] |
| AnEEG (LSTM-GAN) | Multiple artifacts | Correlation Coefficient | Strong linear agreement with ground truth | [4] |
| GCTNet | Ocular, muscle | SNR improvement | +9.81 SNR improvement | [4] |
| Wavelet Transform | General artifacts | RRMSE | Lower performance than deep learning | [6] [4] |

Experimental Protocols and Methodological Considerations

Rigorous experimental design is essential for developing and validating artifact removal techniques that minimize neural information loss. The following protocols represent current best practices in the field.

Benchmarking and Evaluation Framework

Comprehensive evaluation of artifact removal techniques requires standardized benchmarking approaches:

Semi-Synthetic Dataset Creation: Combining clean EEG data with carefully calibrated synthetic artifacts enables controlled evaluation with known ground truth [6]. This approach allows precise quantification of performance metrics by providing a reference clean signal that is unavailable in real contaminated recordings. The process involves:

- Acquisition of high-quality EEG during resting state with minimal artifacts

- Recording of actual artifacts (ocular, muscle, movement) in separate sessions

- Linear mixing of artifacts with clean EEG at controlled signal-to-noise ratios

- Validation of the mixing process to ensure physiological plausibility
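Linear mixing at a controlled SNR comes down to choosing a scale factor λ for the artifact. The minimal NumPy sketch below makes these assumptions:
- SNR is defined from RMS amplitudes as SNR_dB = 20·log10(RMS(x) / RMS(λ·n)), which is equivalent to a power ratio in decibels. Conventions vary between studies, so check the one a given benchmark uses.
- The random "clean EEG" and the Hann-window "blink" are hypothetical placeholders for the recorded signals described in the list above.

```python
import numpy as np


def rms(x):
    """Root mean square of a signal."""
    return np.sqrt(np.mean(np.square(x)))


def mix_at_snr(clean, artifact, snr_db):
    """Return (clean + lam * artifact, lam) with the requested SNR in dB.

    SNR is taken as 20*log10(RMS(clean) / RMS(lam * artifact)),
    a convention assumed for this sketch.
    """
    lam = rms(clean) / (rms(artifact) * 10 ** (snr_db / 20.0))
    return clean + lam * artifact, lam


rng = np.random.default_rng(0)
clean = rng.standard_normal(1000)            # placeholder for artifact-free EEG
blink = np.zeros(1000)
blink[400:500] = np.hanning(100) * 50.0      # crude blink-like transient (hypothetical)

for target_db in (-5.0, 0.0, 5.0):
    noisy, lam = mix_at_snr(clean, blink, target_db)
    achieved = 20 * np.log10(rms(clean) / rms(noisy - clean))
    print(f"target {target_db:+.1f} dB -> achieved {achieved:+.2f} dB (lambda={lam:.3f})")
```

Because the clean component is known exactly, every denoised output can later be compared against `clean` itself.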

Performance Metrics: Multiple quantitative measures should be employed to comprehensively evaluate preservation of neural information:

- Relative Root Mean Squared Error (RRMSE) in temporal and spectral domains

- Correlation Coefficient (CC) between cleaned and ground truth signals

- Signal-to-Noise Ratio (SNR) and Signal-to-Artifact Ratio (SAR) improvements

- Normalized Mean Squared Error (NMSE) for overall reconstruction accuracy [4]
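Several of these metrics have simple closed forms. The NumPy/SciPy sketch below uses one common set of definitions:
- RRMSE: RMS(error) / RMS(ground truth), computed on the time series and on Welch power spectra;
- CC: Pearson correlation between the cleaned signal and the ground truth;
- SNR: an energy ratio in dB.

The Welch segment length and the exact normalizations are assumptions, and individual studies may define these quantities slightly differently. SAR and NMSE are not shown.

```python
import numpy as np
from scipy.signal import welch


def rrmse_temporal(clean, denoised):
    """Relative RMS error in the time domain: RMS(denoised - clean) / RMS(clean)."""
    return np.sqrt(np.mean((denoised - clean) ** 2)) / np.sqrt(np.mean(clean ** 2))


def rrmse_spectral(clean, denoised, fs, nperseg=256):
    """The same ratio computed on Welch power spectral densities."""
    _, p_clean = welch(clean, fs=fs, nperseg=nperseg)
    _, p_den = welch(denoised, fs=fs, nperseg=nperseg)
    return np.sqrt(np.mean((p_den - p_clean) ** 2)) / np.sqrt(np.mean(p_clean ** 2))


def correlation_coefficient(clean, denoised):
    """Pearson correlation between the cleaned signal and the ground truth."""
    return float(np.corrcoef(clean, denoised)[0, 1])


def snr_db(clean, estimate):
    """SNR of an estimate relative to the ground truth, in dB (energy ratio)."""
    residual = estimate - clean
    return 10 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2))
```

An SNR improvement can then be reported as `snr_db(clean, denoised) - snr_db(clean, noisy)`. For example, a denoiser that shrinks the residual amplitude by a factor of 10 yields a 20 dB improvement under this definition.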

Cross-Stimulation Validation: Techniques should be evaluated across different stimulation types (tDCS, tACS, tRNS) as performance varies significantly depending on artifact characteristics [6].

Implementation Workflow for Deep Learning Approaches

The implementation of deep learning models for artifact removal follows a structured pipeline:

Deep Learning Artifact Removal Workflow

Implementing effective artifact removal requires both computational resources and carefully curated data materials. The following table summarizes key resources mentioned in recent literature.

Table 3: Research Reagent Solutions for Artifact Removal Research

| Resource | Type | Function/Application | Example Sources |
|---|---|---|---|
| Semi-Synthetic Datasets | Data | Controlled evaluation with known ground truth | EEG + synthetic tES artifacts [6] |
| Public EEG Repositories | Data | Model training and benchmarking | PhysioNet, EEG Eye Artefact Dataset, BCI Competition IV2b [4] |
| Deep Learning Frameworks | Software | Implementation of neural networks | TensorFlow, PyTorch, Keras [24] |
| Visualization Tools | Software | Model interpretation and debugging | TensorBoard, PyTorchViz, NN-SVG [24] |
| Wearable EEG Systems | Hardware | Real-world data acquisition | Systems with dry electrodes, ≤16 channels [23] |
| Auxiliary Sensors | Hardware | Enhanced artifact detection | IMU, EOG, EMG reference sensors [23] |

Visualization and Interpretation of Results

Effective visualization is critical for interpreting artifact removal performance and understanding model behavior. The following diagram illustrates the relationship between different neural signal components and the overlapping challenge this creates for traditional filtering.

Neural Signal and Artifact Frequency Overlap

The irreversible loss of neural information through traditional filtering methods represents a fundamental challenge in neural signal processing, particularly given the spectral overlap between artifacts and brain activity of interest. While traditional approaches like spectral filtering and blind source separation have provided valuable tools, their inherent limitations in resolving frequency overlap necessitate more sophisticated solutions. Deep learning methods, including GANs, state space models, and hybrid architectures, offer promising alternatives by learning complex, nonlinear mappings that can separate neural signals from artifacts while preserving clinically and scientifically relevant information.

Future advancements in this field will likely focus on several key areas: the development of brain foundation models (BFMs) pretrained on large-scale neural datasets to enable robust generalization across tasks and modalities [25]; improved interpretability techniques to build trust in deep learning models and provide insights into neural mechanisms; and hardware-efficient implementations suitable for real-time processing in implantable and wearable devices with strict power and computational constraints [26]. As these technologies mature, they will increasingly enable researchers to extract meaningful neural information from noisy recordings while minimizing the irreversible information loss that has historically plagued traditional filtering approaches.

The proliferation of neurotechnologies across clinical diagnostics, basic neuroscience, and drug development is fundamentally contingent on the integrity of neural signals. A primary threat to this integrity stems from physiological and environmental artifacts that share spectral bandwidth with neural signals of interest, a phenomenon known as frequency overlap. This technical guide examines how unresolved artifacts systematically distort research findings and clinical interpretations. We detail the biophysical origins of these artifacts, quantify their impact on analytical outcomes, and present a contemporary evaluation of advanced mitigation strategies, including deep learning and state-space models. Furthermore, we situate these technical challenges within a growing regulatory landscape focused on neurorights and data protection, underscoring the ethical imperative of robust artifact management for trustworthy neural data science.

Neural data, obtained from technologies such as electroencephalography (EEG), magnetoencephalography (MEG), and brain-computer interfaces (BCIs), provides a window into brain function. However, this data is invariably contaminated by artifacts—unwanted signals from non-neural sources. The central problem is that these artifacts often occupy the same frequency domains as vital neural oscillations, making separation exceptionally difficult [4].

For instance, eye blinks generate low-frequency signals (typically below 4 Hz) that obscure delta brain waves, while muscle activity (EMG) produces high-frequency noise (above 13 Hz) that overlaps with and can mask beta and gamma neural activity [4]. This spectral overlap means that simple frequency-based filtering is often ineffective, as it removes valuable neural information along with the artifact. The inability to cleanly separate these signals directly compromises the validity of subsequent analyses, from functional connectivity maps to biomarker identification for neurological drugs. The issue is particularly acute in wearable neurotechnology, which operates in ecologically valid but noisy environments, promising richer data at the cost of increased contamination [27]. Consequently, unresolved artifacts are not merely a technical nuisance; they represent a fundamental challenge to data integrity with cascading effects on research conclusions, clinical diagnostics, and therapeutic development.

Quantifying the Impact: A Data-Driven Perspective

The consequences of inadequate artifact management can be quantified across multiple dimensions, from signal fidelity to clinical predictive power. The table below summarizes key performance metrics from recent artifact removal studies, illustrating the measurable benefits of advanced denoising techniques.

Table 1: Performance Metrics of Advanced Artifact Removal Methods

| Study & Technology | Artifact Type | Key Metric | Result | Implication for Data Integrity |
|---|---|---|---|---|
| OPM-MEG with Channel Attention Mechanism [28] | Ocular & Cardiac | Artifact Recognition Accuracy | 98.52% | Prevents misinterpretation of artifacts as neural events, crucial for ERF studies. |
| | | Macro-average Score | 98.15% | |
| Deep Learning (AnEEG) on EEG [4] | Mixed Biological | Correlation Coefficient (CC) | Higher CC vs. baselines | Ensures cleaned signal maintains a strong linear relationship with ground-truth neural data. |
| | | Signal-to-Noise Ratio (SNR) | Improved SNR & SAR | Enhances the detectability of true neural signals against background noise. |
| ADC Histogram in Spinal MRI [29] | MRI Artifacts | Predictive AUC (Clinical Model) | 0.704 | Shows baseline performance without advanced artifact correction. |
| | | Predictive AUC (ADC Histogram Model) | 0.871 | Demonstrates significantly improved prognostic accuracy for tumor recurrence when using corrected data. |

Beyond these metrics, a systematic review of wearable EEG revealed that most processing pipelines integrate detection and removal but rarely separate their individual impact on performance, making it difficult to benchmark progress in the field [27]. This highlights a critical methodological gap. Furthermore, the selection of an optimal artifact removal strategy is highly dependent on the stimulation type or artifact source. For example, in Transcranial Electrical Stimulation (tES), a convolutional network (Complex CNN) performed best for tDCS artifacts, while a multi-modular network based on State Space Models (SSMs) was superior for the more complex tACS and tRNS artifacts [6]. A one-size-fits-all approach is insufficient for preserving data integrity across diverse experimental and clinical paradigms.

Experimental Protocols for Artifact Management

To ensure data integrity, researchers must employ rigorous and validated experimental protocols. The following sections detail methodologies from recent, high-impact studies.

Protocol: Deep Learning for tES Artifact Removal

This protocol is adapted from a benchmark study comparing machine learning methods for removing transcranial electrical stimulation artifacts from EEG data [6].

1. Data Preparation and Synthesis: Create a semi-synthetic dataset by combining clean, artifact-free EEG recordings with synthetically generated tES artifacts (tDCS, tACS, tRNS). This provides a known ground truth for controlled model evaluation.

2. Model Selection and Training: Train a suite of eleven artifact removal models. This should include:
   - Shallow Methods: Standard filters and blind source separation (e.g., ICA).
   - Deep Neural Networks: Including Complex CNN.
   - State Space Models (SSMs): Such as the multi-modular M4 network.

3. Model Evaluation:
   - Quantitative Analysis: Calculate the Relative Root Mean Squared Error (RRMSE) in both temporal and spectral domains and the Correlation Coefficient (CC) between the cleaned signal and the ground-truth clean EEG.
   - Model Selection: Determine the best-performing model for each specific tES modality (e.g., Complex CNN for tDCS, M4 SSM for tACS/tRNS).

4. Application and Validation: Apply the selected model to real, contaminated EEG data and validate the physiological plausibility of the resulting "cleaned" neural signals.
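Step 1 needs synthetic stimulation artifacts. The cited benchmark's generation procedure is not described in this article, so the following is only a hypothetical NumPy/SciPy sketch of three simple templates:
- tDCS: a ramped DC offset;
- tACS: a sinusoid at the stimulation frequency;
- tRNS: band-limited Gaussian noise.

All amplitudes (arbitrary units), the stimulation frequency, the noise band, the ramp time and the function names are placeholder assumptions. Real stimulation artifacts also show nonlinear and non-stationary effects that these templates ignore.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt


def tdcs_artifact(n, fs, amplitude=100.0, ramp_s=1.0):
    """Hypothetical tDCS template: a DC offset reached through a linear ramp."""
    t = np.arange(n) / fs
    return amplitude * np.clip(t / ramp_s, 0.0, 1.0)


def tacs_artifact(n, fs, freq_hz=10.0, amplitude=100.0):
    """Hypothetical tACS template: a sinusoid at the stimulation frequency."""
    t = np.arange(n) / fs
    return amplitude * np.sin(2.0 * np.pi * freq_hz * t)


def trns_artifact(n, fs, band_hz=(100.0, 200.0), amplitude=100.0, seed=0):
    """Hypothetical tRNS template: Gaussian noise band-limited to band_hz."""
    rng = np.random.default_rng(seed)
    sos = butter(4, band_hz, btype="bandpass", fs=fs, output="sos")
    noise = sosfiltfilt(sos, rng.standard_normal(n))
    return amplitude * noise / noise.std()   # rescale so the std equals `amplitude`


fs, n = 500.0, 5000                           # 10 s at 500 Hz (assumed)
templates = {
    "tDCS": tdcs_artifact(n, fs),
    "tACS": tacs_artifact(n, fs),
    "tRNS": trns_artifact(n, fs),
}
for name, art in templates.items():
    print(name, art.shape, round(float(art.std()), 1))
```

Each template can be scaled and added to clean EEG at a chosen SNR to produce the semi-synthetic pairs used for training and evaluation.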

Protocol: Automatic OPM-MEG Artifact Removal with Magnetic Reference

This protocol uses a magnetic reference signal and a channel attention mechanism to automatically identify and remove physiological artifacts in OPM-MEG data [28].

1. Reference Signal Acquisition: Utilize magnetic reference sensors, placed near the head but away from primary neural sources, to capture clean templates of artifact signals (e.g., eye blinks, cardiac activity).

2. Component Correlation Analysis: Perform blind source separation (e.g., ICA) on the main OPM-MEG data to obtain independent components (ICs). Calculate the Randomized Dependence Coefficient (RDC) between each IC and the magnetic reference signals to reliably identify artifact-laden components.

3. Attention Model Training:
   - Dataset Construction: Use the RDC results to create a labeled dataset of artifact and neural components.
   - Network Training: Train a channel attention network that fuses features from global average pooling (GAP) and global max pooling (GMP) layers. This model learns to automatically recognize artifact components based on their correlation with the reference signal.

4. Artifact Removal and Reconstruction: Remove the components classified as artifacts by the model and reconstruct the OPM-MEG signal. Quantify improvements in the Event-Related Field (ERF) response and Signal-to-Noise Ratio (SNR).

Diagram 1: OPM-MEG artifact removal workflow.
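The dependence measure in step 2 can be sketched with NumPy. The Randomized Dependence Coefficient has three stages:
1. an empirical copula (rank) transform of each signal;
2. random sinusoidal projections of the transformed values;
3. the largest canonical correlation between the two projected feature sets.

Together these let it detect nonlinear as well as linear dependence. In the protocol, `x` would be one IC time course and `y` a magnetic reference channel.

The following settings are illustrative assumptions for this sketch, not those of the original RDC paper or of [28]:
- the number of projections `k = 20`;
- the projection scale `s = 6.0`;
- the small ridge term.

```python
import numpy as np


def rdc(x, y, k=20, s=6.0, ridge=1e-6, seed=0):
    """Randomized Dependence Coefficient between two signals (sketch).

    Steps: empirical copula transform -> random sinusoidal projections ->
    largest canonical correlation between the two feature sets.
    k, s and ridge are illustrative defaults, not published settings.
    """
    rng = np.random.default_rng(seed)

    def random_features(z):
        z = np.asarray(z, dtype=float).reshape(len(z), -1)
        n = z.shape[0]
        # Copula transform: replace each column by its normalized ranks.
        u = np.argsort(np.argsort(z, axis=0), axis=0) / n
        u = np.column_stack([u, np.ones(n)])          # ones column acts as a random bias
        w = rng.normal(0.0, s, size=(u.shape[1], k))  # random projection weights
        f = np.sin(u @ w)                             # nonlinear random features
        return f - f.mean(axis=0)

    fx, fy = random_features(x), random_features(y)
    n = fx.shape[0]
    cxx = fx.T @ fx / n + ridge * np.eye(k)
    cyy = fy.T @ fy / n + ridge * np.eye(k)
    cxy = fx.T @ fy / n

    def inv_sqrt(c):
        vals, vecs = np.linalg.eigh(c)
        return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T

    # Singular values of the whitened cross-covariance are the canonical correlations.
    m = inv_sqrt(cxx) @ cxy @ inv_sqrt(cyy)
    return float(min(1.0, np.linalg.svd(m, compute_uv=False)[0]))


rng = np.random.default_rng(1)
ic = rng.standard_normal(2000)        # stand-in for an IC time course
reference = ic ** 2                   # nonlinearly related stand-in "reference"
unrelated = rng.standard_normal(2000)
print(round(rdc(ic, reference), 2), round(rdc(ic, unrelated), 2))
```

The squared signal has essentially zero linear correlation with `ic` but is fully determined by it. RDC therefore scores it high, whereas an unrelated channel stays near the small value expected from finite-sample canonical correlation.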

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful artifact management relies on a combination of computational tools, curated data, and hardware. The following table catalogues key resources for developing and validating artifact removal pipelines.

Table 2: Key Reagents and Resources for Neural Artifact Research

| Item Name | Type | Function/Benefit | Example Use Case |
|---|---|---|---|
| Semi-Synthetic Datasets | Data | Provides a ground truth for controlled algorithm evaluation by mixing clean EEG with known artifacts. | Benchmarking denoising models [6] [4]. |
| Public EEG Datasets (e.g., EEG Eye Artefact, PhysioNet) | Data | Enables method validation and reproducibility on standardized, annotated data. | Training deep learning models like GANs [4]. |
| Magnetic Reference Sensors | Hardware | Provides a clean recording of artifact sources (eye, heart) independent of neural signals. | OPM-MEG artifact removal [28]. |
| Independent Component Analysis (ICA) | Algorithm | Separates mixed signals into statistically independent components for artifact identification. | Isolating ocular and muscular artifacts in EEG [27]. |
| Generative Adversarial Network (GAN) | Computational Model | Generates artifact-free signals through an adversarial process between generator and discriminator. | EEG denoising with temporal fidelity [4]. |
| State Space Models (SSMs) | Computational Model | Excels at modeling complex, time-dependent dynamics like tACS and tRNS artifacts. | Removing transcranial stimulation artifacts [6]. |
| Channel Attention Mechanism | Computational Model | Allows a model to focus on the most relevant sensor channels for detecting specific artifacts. | Automatic artifact recognition in MEG [28]. |

Regulatory and Ethical Dimensions

The technical challenge of ensuring data integrity is increasingly intertwined with a rapidly evolving regulatory and ethical landscape. Neural data is uniquely sensitive, potentially revealing thoughts, emotions, and decision-making patterns, and is thus being classified as a special category of data requiring heightened protection [30] [31].

Neurorights and Cognitive Liberty: There is a growing global movement to establish "neurorights," focusing on mental privacy, identity, and cognitive liberty. Chile has already amended its constitution to protect mental integrity, and Spain's Charter of Digital Rights explicitly addresses neurotechnology [32]. The core ethical argument is that if a person's thoughts are to be considered inviolable, then the data that can reveal those thoughts deserves exceptional protection.

Emerging Legislation and Guidelines: In the United States, the proposed MIND Act would direct the FTC to study neural data processing and identify regulatory gaps [30]. Simultaneously, states like California, Colorado, and Montana have amended their privacy laws to include neural data within the definition of "sensitive data," triggering stricter consent and processing requirements [30] [32]. Internationally, the Council of Europe is developing detailed guidelines for data protection in neuroscience, interpreting principles like purpose limitation, data minimization, and fairness in the context of neural data [31].

The ethical imperative is clear: the failure to adequately resolve artifacts does not merely produce scientifically invalid results; it risks generating misleading neural profiles that could lead to misdiagnosis, discriminatory profiling, or unwarranted neuromarketing. Implementing state-of-the-art artifact removal is thus not just a scientific best practice but a fundamental component of ethical and compliant research in the age of neurotechnology.

Diagram 2: From technical problem to regulatory response.

The integrity of neural data is the bedrock upon which valid neuroscience research and safe clinical neurotechnology are built. The pervasive issue of frequency overlap between artifacts and neural signals poses a continuous threat to this integrity, capable of skewing everything from basic brain dynamics research to the development of neurology drugs. While modern approaches, particularly deep learning and model-based frameworks, show significant promise in mitigating these artifacts, their success is contingent upon a rigorous, context-aware application. Furthermore, as regulatory bodies worldwide begin to recognize the profound sensitivity of neural data, the technical capability to robustly clean this data will become inseparable from the ethical and legal obligations of researchers and clinicians. Therefore, a continued focus on advancing and standardizing artifact management protocols is not merely a technical pursuit but a prerequisite for trustworthy and responsible progress in the neural sciences.

From Blind Source Separation to AI: Modern Methodologies for Artifact Management

The analysis of neural signals, such as those obtained via electroencephalography (EEG), is fundamentally complicated by the presence of artifacts—unwanted signals originating from both internal (e.g., eye blinks, muscle activity) and external (e.g., electrical interference) sources. A significant challenge in this domain is the frequency overlap between genuine neural signals and these artifacts, making their separation a non-trivial task. Techniques for Blind Source Separation (BSS), which aim to recover underlying source signals from observed mixtures without prior knowledge of the mixing process, are therefore indispensable. This technical guide delves into the theory and practice of the two most prominent BSS techniques—Principal Component Analysis (PCA) and Independent Component Analysis (ICA)—framed within the critical context of neuroscientific research where distinguishing neural activity from artifacts is paramount.

Theoretical Foundations

The Problem of Blind Source Separation

The core objective of BSS is to estimate a set of source signals $S$ from observed mixed signals $X$, based on the linear model

$$X = AS$$

where $A$ is an unknown mixing matrix. The goal is to find a separation matrix $W$ such that

$$Y = WX$$

provides a close estimate of the original source signals $S$ [33] [34]. The "blind" aspect signifies that this is done with little to no knowledge of the sources $S$ or the mixing process $A$.

Principal Component Analysis (PCA)

PCA is a statistical technique primarily used for dimensionality reduction and decorrelation. It transforms the original correlated variables into a new set of uncorrelated variables called principal components (PCs). These components are ordered such that the first few retain most of the variation present in the original dataset [35].

- Objective: To find orthogonal directions of maximum variance in the data.

- Assumption: The underlying sources are uncorrelated.

- Limitation for BSS: While PCA can decorrelate signals, statistical independence is a stronger condition than uncorrelation. PCA relies on second-order statistics (variance) and may fail to separate sources with identical frequency content or non-Gaussian distributions, which are common in biological signals [35] [34].
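The gap between uncorrelatedness and independence can be stated precisely. Let $Z$ denote the whitened observations, so that $\mathbb{E}[ZZ^{\top}] = I$. Any orthogonal matrix $R$ leaves this property unchanged:

$$
\mathbb{E}\left[(RZ)(RZ)^{\top}\right] = R\,\mathbb{E}\left[ZZ^{\top}\right]R^{\top} = R\,I\,R^{\top} = I \qquad \text{for every } R \text{ with } R^{\top}R = I.
$$

Every rotation of the whitened data is therefore equally uncorrelated. Second-order statistics (PCA or whitening) thus determine the sources only up to an unknown rotation, and ICA uses higher-order statistics to select that rotation.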

Independent Component Analysis (ICA)

ICA is a more powerful BSS technique designed to recover statistically independent, non-Gaussian source signals from their linear mixtures.

- Objective: To find components that are as statistically independent as possible by maximizing non-Gaussianity or minimizing mutual information [34].

- Assumptions: The sources are statistically independent, at most one of them is Gaussian, and they are combined according to the linear mixing model above.

- Measures of Independence: ICA employs higher-order statistics, using measures such as:

  - Kurtosis: A measure of the "peakedness" or "tailedness" of a distribution.

  - Negentropy: A measure of non-Gaussianity based on differential entropy.

  - Mutual Information: A measure of the mutual dependence between variables, which ICA seeks to minimize [34].
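The first two measures are easy to compute. The NumPy sketch below uses two choices common in the FastICA literature:
- excess kurtosis (zero for a Gaussian);
- the log-cosh negentropy approximation J(y) ∝ (E[G(y)] − E[G(ν)])², with G(u) = log cosh u, ν a standard Gaussian variable, and proportionality up to a positive constant.

The Gaussian reference value E[G(ν)] is obtained by Gauss-Hermite quadrature. The sample sizes and distributions are illustrative: a uniform variable stands in for a sub-Gaussian source and a Laplace variable for a super-Gaussian one.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss


def standardize(y):
    """Rescale to zero mean and unit variance."""
    return (y - y.mean()) / y.std()


def excess_kurtosis(y):
    """Fourth standardized moment minus 3 (zero for a Gaussian)."""
    z = standardize(y)
    return float(np.mean(z ** 4) - 3.0)


# Reference value E[log cosh(v)] for v ~ N(0, 1), by Gauss-Hermite quadrature
# (probabilists' weight exp(-x^2 / 2), whose weights sum to sqrt(2*pi)).
_nodes, _weights = hermegauss(80)
GAUSS_REF = float(np.sum(_weights * np.log(np.cosh(_nodes))) / np.sqrt(2.0 * np.pi))


def negentropy_logcosh(y):
    """Negentropy approximation J(y) ~ (E[G(y)] - E[G(v)])^2 with G = log cosh."""
    z = standardize(y)
    return float((np.mean(np.log(np.cosh(z))) - GAUSS_REF) ** 2)


rng = np.random.default_rng(0)
samples = {
    "gaussian": rng.standard_normal(200_000),
    "uniform": rng.uniform(-1.0, 1.0, 200_000),   # sub-Gaussian
    "laplace": rng.laplace(0.0, 1.0, 200_000),    # super-Gaussian
}
for name, y in samples.items():
    print(f"{name:9s} kurtosis={excess_kurtosis(y):+.2f}  J={negentropy_logcosh(y):.2e}")
```

Both measures are close to zero for the Gaussian sample and clearly non-zero for the other two. That contrast is what ICA exploits when it searches for maximally non-Gaussian projections.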

Table 1: Core Conceptual Differences between PCA and ICA.

| Feature | Principal Component Analysis (PCA) | Independent Component Analysis (ICA) |
|---|---|---|
| Primary Goal | Dimensionality reduction, variance maximization | Source separation, independence maximization |
| Statistical Basis | Second-order statistics (uncorrelation) | Higher-order statistics (independence) |
| Component Orthogonality | Yes, components are orthogonal | No, components are statistically independent but not necessarily orthogonal |
| Suitability for BSS | Limited, as it only decorrelates signals | High, specifically designed for separating independent sources |
| Typical Input | Correlated variables | Mixed signals from independent sources |

A Comparative Analysis: ICA vs. PCA

The "cocktail party problem" serves as a classic illustration of the difference in performance between PCA and ICA. In this scenario, the goal is to separate individual voices (independent sources) from a mixture of conversations recorded by multiple microphones.

- PCA Performance: PCA would identify the principal components that account for the most variance in the recorded mixtures. However, these components often remain mixtures of the original voices and fail to isolate individual speakers, because PCA only ensures that its outputs are uncorrelated, not independent [35].

- ICA Performance: ICA, by leveraging the statistical independence and non-Gaussianity of the speech signals, is typically able to separate the individual voices from the mixed recordings with high fidelity. This makes it the preferred methodology for BSS tasks where the sources are independent [35].
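The cocktail-party comparison can be reproduced in miniature with NumPy alone. This sketch mixes three hypothetical "speakers" (a sine, a square wave, and Laplacian noise) through an assumed 3 x 3 mixing matrix. It then compares PCA with a compact symmetric FastICA that uses the log-cosh contrast (g = tanh). Before ICA, the data are centered and whitened.

For each true source, the score is the largest absolute correlation with any recovered component. PCA outputs are uncorrelated but generally remain mixtures, whereas ICA should recover each source up to order, sign, and scale. This is an illustrative implementation, not the reference FastICA code.

```python
import numpy as np

def whiten(X):
    """Center each row of X (channels x samples) and apply symmetric (ZCA) whitening."""
    Xc = X - X.mean(axis=1, keepdims=True)
    d, E = np.linalg.eigh(np.cov(Xc))
    return E @ np.diag(d ** -0.5) @ E.T @ Xc

def sym_decorrelate(W):
    """W <- (W W^T)^(-1/2) W, which keeps the rows of W orthonormal."""
    d, E = np.linalg.eigh(W @ W.T)
    return E @ np.diag(d ** -0.5) @ E.T @ W

def fastica(Z, n_iter=500, tol=1e-6, seed=0):
    """Symmetric FastICA with the log-cosh contrast (g = tanh) on whitened data Z."""
    rng = np.random.default_rng(seed)
    n, T = Z.shape
    W = sym_decorrelate(rng.standard_normal((n, n)))
    for _ in range(n_iter):
        G = np.tanh(W @ Z)
        # Fixed-point update for every row at once: w <- E[z g(w^T z)] - E[g'(w^T z)] w
        W_new = sym_decorrelate(G @ Z.T / T - np.diag((1.0 - G ** 2).mean(axis=1)) @ W)
        done = np.max(np.abs(np.abs(np.sum(W_new * W, axis=1)) - 1.0)) < tol
        W = W_new
        if done:
            break
    return W @ Z   # estimated sources (up to order, sign, and scale)

def pca_components(X):
    """Project centered data onto the covariance eigenvectors, largest variance first."""
    Xc = X - X.mean(axis=1, keepdims=True)
    d, E = np.linalg.eigh(np.cov(Xc))
    return E[:, ::-1].T @ Xc

def best_match(S_true, S_est):
    """For each true source, the largest |correlation| with any estimated component."""
    k = len(S_true)
    C = np.corrcoef(np.vstack([S_true, S_est]))[:k, k:]
    return np.abs(C).max(axis=1)

rng = np.random.default_rng(1)
t = np.arange(4000) / 500.0
S = np.vstack([np.sin(2 * np.pi * 2 * t),            # "speaker" 1
               np.sign(np.sin(2 * np.pi * 3 * t)),   # "speaker" 2
               rng.laplace(size=t.size)])            # "speaker" 3
A = np.array([[1.0, 0.5, 0.3],                        # hypothetical mixing ("room acoustics")
              [0.4, 1.0, 0.6],
              [0.7, 0.2, 1.0]])
X = A @ S                                             # what the three "microphones" record

pca_match = best_match(S, pca_components(X))
ica_match = best_match(S, fastica(whiten(X)))
print("PCA best |r| per source:", np.round(pca_match, 3))
print("ICA best |r| per source:", np.round(ica_match, 3))
```

In practice, a maintained implementation such as scikit-learn's `FastICA` would normally replace the hand-written loop. The loop is kept here only to make the fixed-point update and the role of whitening visible.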

Table 2: Comparative Summary of PCA and ICA in Practical Applications.

| Aspect | PCA | ICA |
|---|---|---|
| Separation Capability | Poor for mixed independent sources | Effective for independent sources |
| Processing Speed | Generally faster | Can be computationally intensive |
| Output Interpretation | Components ordered by variance; may not correspond to physiologically distinct sources | Components are fundamental sources; can correspond to neural or artifactual signals |
| Key Advantage | Efficient dimensionality reduction and noise reduction if noise contributes little variance | Accurate separation of independent sources, even with overlapping frequencies |
| Main Disadvantage for BSS | Inability to separate sources based on independence | Relies on the independence and non-Gaussianity of sources; sensitive to algorithm choice |

Critical Application in Neural Signal Processing

The Challenge of Frequency Overlap

A central issue in EEG and other neurophysiological signal analysis is that artifacts (e.g., from eye movements, muscle activity, or cardiac signals) often share spectral properties with the signals of interest. The same problem arises in other bioelectrical recordings. For example, the frequency band of a fetal ECG (fECG) can overlap with that of the maternal ECG (mECG) and other bioelectrical potentials [36]. Similarly, TMS-induced artifacts can mask early TMS-evoked potentials (TEPs) [37]. A frequency-based filter cannot remove an artifact without also removing the signal content that shares its band. These scenarios therefore call for BSS techniques that exploit other statistical properties, such as independence.
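A minimal NumPy sketch illustrates why frequency-based filtering fails under spectral overlap. The signals are hypothetical: a 10 Hz "neural" rhythm and a 9 Hz artifact burst lasting about one second. An idealized 8–12 Hz filter is applied by zeroing FFT bins outside that band. Because the filter is linear, the part of the artifact that survives is simply the filtered artifact. Nearly all of its energy lies inside the pass band, so the filter keeps the artifact along with the rhythm.

```python
import numpy as np

def ideal_bandpass(x, fs, lo, hi):
    """Zero every FFT bin outside [lo, hi] Hz (an idealized 'brick-wall' filter)."""
    X = np.fft.rfft(x)
    f = np.fft.rfftfreq(x.size, d=1.0 / fs)
    X[(f < lo) | (f > hi)] = 0.0
    return np.fft.irfft(X, n=x.size)

fs = 250.0                                  # assumed sampling rate (Hz)
t = np.arange(1000) / fs                    # 4 s of data
neural = np.sin(2 * np.pi * 10 * t)         # hypothetical 10 Hz alpha-like rhythm
# Hypothetical artifact: a 9 Hz burst confined to roughly the middle second
artifact = 2.0 * np.sin(2 * np.pi * 9 * t) * ((t > 1.5) & (t < 2.5))
recorded = neural + artifact

filtered = ideal_bandpass(recorded, fs, 8.0, 12.0)
residual = filtered - ideal_bandpass(neural, fs, 8.0, 12.0)   # = the filtered artifact
kept_artifact = np.sum(residual ** 2) / np.sum(artifact ** 2)
print(f"fraction of artifact energy surviving the 8-12 Hz filter: {kept_artifact:.2f}")
```

Narrowing the band does not solve the problem. Any pass band that keeps the 10 Hz rhythm also keeps most of an artifact whose spectrum overlaps it.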

ICA for Artifact Removal in EEG and TMS-Evoked Potentials

ICA has become a cornerstone in EEG preprocessing pipelines for artifact removal. The process typically involves:

- Applying ICA to the multi-channel EEG data to decompose it into independent components (ICs).

- Identifying which ICs correspond to artifacts (e.g., via visual inspection of topographical maps and time courses, or using automated classifiers).

- Removing the artifact-related ICs.

- Reconstructing the "cleaned" EEG signal from the remaining neural components [37] [34].
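The last three steps of this process can be sketched in NumPy once an unmixing matrix W (components x channels) is available from any ICA routine. To keep the example self-contained and checkable, W is taken as the exact inverse of a simulated mixing matrix, which serves as a stand-in for a real ICA estimate. Artifact components are flagged with a simple excess-kurtosis threshold. This threshold is a hypothetical heuristic: it works because blink components are sparse and peaky. It does not replace visual inspection or a trained classifier.

```python
import numpy as np

def flag_by_kurtosis(S, kurt_thresh=3.0):
    """Hypothetical heuristic: flag components whose excess kurtosis exceeds kurt_thresh."""
    Z = (S - S.mean(axis=1, keepdims=True)) / S.std(axis=1, keepdims=True)
    return np.where((Z ** 4).mean(axis=1) - 3.0 > kurt_thresh)[0]

def remove_components(X, W, bad):
    """Drop the components in `bad` and back-project the rest to the channels.

    X : (channels x samples) centered EEG; W : (components x channels) unmixing matrix.
    """
    S = W @ X                                  # component time courses
    A = np.linalg.pinv(W)                      # mixing matrix; its columns are the scalp maps
    keep = np.setdiff1d(np.arange(W.shape[0]), bad)
    return A[:, keep] @ S[keep]                # reconstruct from the retained components

# Simulated data: two oscillatory "neural" sources plus one blink source on 4 electrodes
rng = np.random.default_rng(0)
fs, n = 250, 2500
t = np.arange(n) / fs
neural = np.vstack([np.sin(2 * np.pi * 10 * t), np.sin(2 * np.pi * 6 * t + 1.0)])
blink = sum(5.0 * np.exp(-0.5 * ((np.arange(n) - c) / 15.0) ** 2) for c in (300, 1200, 2100))
A_true = rng.uniform(0.2, 1.0, size=(4, 3))
X = A_true @ np.vstack([neural, blink])
X -= X.mean(axis=1, keepdims=True)

W = np.linalg.pinv(A_true)     # stand-in for an ICA estimate (real ones are known only up to order/scale)
bad = flag_by_kurtosis(W @ X)
X_clean = remove_components(X, W, bad)

reference = A_true[:, :2] @ neural
reference -= reference.mean(axis=1, keepdims=True)
print("flagged components:", bad, " max error:", np.abs(X_clean - reference).max())
```

With a real ICA decomposition, the reconstruction is only as good as the estimated W. This is exactly where the limitation discussed next becomes relevant.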

However, a critical limitation of ICA has been identified in the context of TMS-evoked potentials (TEPs). Research shows that when an artifact repeats with very low trial-to-trial variability, it can create dependencies between underlying components. In such cases, ICA may become unreliable and incorrectly remove not only the artifact but also non-artifactual, brain-derived EEG data, leading to biased results. The study suggests measuring artifact variability from the ICA components themselves to predict cleaning accuracy, providing a crucial practical check for researchers [37].

The standard ICA model assumes source independence. In practical applications, however, source signals may exhibit some degree of dependence. To address this, Bounded Component Analysis (BCA) has been developed as an alternative BSS method. BCA replaces the strict independence assumption of ICA with the more relaxed assumptions that the source signals are bounded and have a Cartesian separable support. This allows BCA to separate both independent and dependent source signals, broadening its applicability. Recent research has focused on optimizing BCA algorithms, such as using an improved Beetle Antennae Search (BAS) algorithm, to enhance convergence speed and separation accuracy [33].
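The "improved" BAS variant used in [33] is not described here, so the sketch below shows only the basic Beetle Antennae Search heuristic as it is commonly formulated. At each step, a random unit direction is drawn and the objective is evaluated at the two "antennae". The beetle then steps away from the worse side, and both the step and the antenna length shrink over time. The toy quadratic objective stands in for a BCA separation criterion. The decay factor of 0.95 and the 0.01 antenna increment are assumed values.

```python
import numpy as np

def beetle_antennae_search(f, x0, step=1.0, antenna=1.0, n_iter=200, decay=0.95, seed=0):
    """Basic Beetle Antennae Search (BAS) minimizing f, keeping the best point visited."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    best_x, best_f = x.copy(), f(x)
    for _ in range(n_iter):
        b = rng.standard_normal(x.size)
        b /= np.linalg.norm(b)                       # random unit search direction
        f_right, f_left = f(x + antenna * b), f(x - antenna * b)
        x = x - step * b * np.sign(f_right - f_left)  # move toward the better antenna
        fx = f(x)
        if fx < best_f:
            best_x, best_f = x.copy(), fx
        antenna = decay * antenna + 0.01             # antenna length shrinks toward a floor
        step *= decay                                # step size shrinks geometrically
    return best_x, best_f

# Toy stand-in for a BCA objective: a quadratic bowl with its minimum at `target`
target = np.array([1.0, -2.0])
objective = lambda x: float(np.sum((x - target) ** 2))
best_x, best_f = beetle_antennae_search(objective, [0.0, 0.0])
print("best point:", np.round(best_x, 4), " objective:", best_f)
```

BAS needs only two function evaluations per step and no gradients. This makes it attractive for non-smooth objectives such as support-volume criteria, at the cost of slower, stochastic convergence.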

Experimental Protocols and Methodologies

Protocol 1: ICA for Fetal ECG (fECG) Extraction

Objective: To isolate the fetal ECG (fECG) from abdominal recordings of a pregnant woman, which contain a mixture of maternal ECG (mECG), fECG, and other noise [36].

- Data Acquisition: Place multiple electrodes on the maternal abdomen and thorax. The thorax signal is primarily mECG, while the abdominal signal is a mixture of mECG, fECG, and other bioelectrical potentials.

- Preprocessing: Center the data (subtract the mean) and whiten it (remove correlations and scale variances to unity). This simplifies the subsequent ICA problem [36] [34].

- ICA Application: Apply an ICA algorithm (e.g., FastICA, Infomax) to the preprocessed multi-channel abdominal signals. The algorithm estimates a separation matrix W and outputs the independent components.

- Component Identification: Identify the components corresponding to the fECG and mECG. This can be done by comparing the components to the thoracic mECG reference or based on their temporal and spectral characteristics.

- Signal Reconstruction: Select the component(s) identified as the fECG. These can then be used to calculate the fetal heart rate (fBPM) and for further clinical analysis.

- Implementation: This method has been successfully implemented in real-time on embedded systems like the Arduino DUE for continuous monitoring [36].
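The component-identification and heart-rate steps can be sketched as follows. The "components" are synthetic stand-ins for ICA outputs: Gaussian pulse trains at assumed rates of 75 and 140 bpm, plus a noise row. The following rules are illustrative assumptions, not the method of [36]:

- The maternal component is the one most correlated with the thoracic reference.
- The fetal component is the most peaked (highest kurtosis) of the rest.
- Heart rate comes from a crude threshold-plus-refractory peak detector.

The detector uses the absolute deviation from the median, so it tolerates the sign ambiguity of ICA outputs.

```python
import numpy as np

def excess_kurtosis(x):
    z = (x - x.mean()) / x.std()
    return float(np.mean(z ** 4) - 3.0)

def pulse_train(t, bpm, width=0.01, phase=0.0):
    """Hypothetical stand-in for an ECG component: Gaussian 'R waves' at a fixed rate."""
    beats = np.arange(phase, t[-1] + 60.0 / bpm, 60.0 / bpm)
    return np.exp(-0.5 * ((t[:, None] - beats[None, :]) / width) ** 2).sum(axis=1)

def heart_rate_bpm(x, fs, refractory_s=0.25, thresh_frac=0.6):
    """Crude R-peak detector: local maxima above thresh_frac * max, at least refractory_s apart."""
    y = np.abs(x - np.median(x))                     # insensitive to the component's sign
    thresh = thresh_frac * y.max()
    peaks, last = [], -np.inf
    for i in range(1, len(y) - 1):
        is_local_max = y[i] >= y[i - 1] and y[i] >= y[i + 1]
        if is_local_max and y[i] > thresh and (i - last) / fs > refractory_s:
            peaks.append(i)
            last = i
    return 60.0 / np.median(np.diff(peaks) / fs)     # median R-R interval -> beats per minute

fs = 500.0
t = np.arange(5000) / fs                             # 10 s of data
rng = np.random.default_rng(0)
# Pretend these rows are ICA outputs from the abdominal leads (order and sign are arbitrary)
components = np.vstack([
    -0.3 * pulse_train(t, 140, phase=0.1),           # fetal-like, sign-flipped
    rng.standard_normal(t.size),                     # residual noise
    pulse_train(t, 75),                              # maternal-like
])
thoracic = pulse_train(t, 75) + 0.05 * rng.standard_normal(t.size)   # thoracic mECG reference

corr = [abs(np.corrcoef(c, thoracic)[0, 1]) for c in components]
maternal = int(np.argmax(corr))                      # most similar to the mECG reference
others = [k for k in range(len(components)) if k != maternal]
fetal = max(others, key=lambda k: excess_kurtosis(components[k]))
fbpm = heart_rate_bpm(components[fetal], fs)
print(f"maternal component: {maternal}, fetal component: {fetal}, fBPM ~ {fbpm:.1f}")
```

On real abdominal recordings, residual mECG leakage and noise would call for a more robust QRS detector than this fixed threshold.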

Protocol 2: Assessing ICA Accuracy for TMS-EEG Artifact Removal

Objective: To systematically evaluate the accuracy of ICA in removing TMS-induced artifacts from TMS-evoked potentials (TEPs) and to determine conditions under which ICA becomes unreliable [37].

- Simulation Setup: Use measured, artifact-free TEPs as a clean "ground truth" neural signal. Simulate TMS artifacts with controlled properties and superimpose them on the clean TEPs.

- Variability Manipulation: Systematically vary the trial-to-trial variability of the simulated artifact waveform, creating a range from deterministic (low variability) to stochastic (high variability) artifacts.

- ICA Processing: For each level of artifact variability, perform ICA-based cleaning on the simulated dataset.

- Accuracy Measurement: Compare the ICA-cleaned output to the original artifact-free TEPs. Measure the cleaning accuracy (e.g., using mean squared error or correlation) to determine how artifact variability affects performance.

- Variability Estimation: Develop a method to estimate the artifact variability directly from the ICA-derived components, without knowing the ground truth. Correlate this estimated variability with the measured cleaning accuracy.

- Outcome: The experiment demonstrates that low artifact variability leads to unreliable ICA cleaning and biased TEPs. It also shows that the accuracy of ICA can be predicted by measuring variability from the components, providing a practical guideline for researchers [37].
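The simulation and scoring scaffolding of this protocol can be sketched in NumPy. The TEP and artifact waveforms are invented, and trial-to-trial variability is modeled as a random per-trial gain (one simple choice among many). The ICA cleaning step is left as a placeholder into which any ICA-based cleaner could be plugged. `variability_index` is a hypothetical proxy (across-trial variance relative to the energy of the trial average), not the estimator used in [37]. Here it is applied to the known artifact trials, whereas in the protocol it would be applied to an ICA component's trial-wise time courses.

```python
import numpy as np

def simulate_trials(tep, artifact, n_trials, variability, noise_sd, seed=0):
    """Superimpose an artifact on a clean TEP with controlled trial-to-trial variability.

    variability = 0 repeats an identical (deterministic) artifact on every trial;
    larger values scale a random per-trial gain, making the artifact more stochastic.
    """
    rng = np.random.default_rng(seed)
    gains = 1.0 + variability * rng.standard_normal(n_trials)
    art_trials = gains[:, None] * artifact[None, :]                  # (trials, samples)
    noise = noise_sd * rng.standard_normal((n_trials, tep.size))
    return tep[None, :] + art_trials + noise, art_trials

def variability_index(trials):
    """Hypothetical proxy: mean across-trial variance relative to the energy of the trial average."""
    avg = trials.mean(axis=0)
    return float(trials.var(axis=0).mean() / np.mean(avg ** 2))

def cleaning_accuracy(cleaned_avg, true_avg):
    """Mean squared error and Pearson correlation against the artifact-free ground truth."""
    mse = float(np.mean((cleaned_avg - true_avg) ** 2))
    r = float(np.corrcoef(cleaned_avg, true_avg)[0, 1])
    return mse, r

fs = 1000.0
t = np.arange(300) / fs                                        # 0-300 ms after the pulse
tep = 5.0 * np.exp(-t / 0.08) * np.sin(2 * np.pi * 15 * t)     # hypothetical ground-truth TEP
artifact = 50.0 * np.exp(-t / 0.005)                           # hypothetical decaying TMS artifact

levels = [0.0, 0.1, 0.3, 0.6]
indices = []
for v in levels:
    trials, art_trials = simulate_trials(tep, artifact, n_trials=100, variability=v, noise_sd=1.0)
    indices.append(variability_index(art_trials))
    # Placeholder for ICA cleaning: without it, the trial average still contains the
    # mean artifact, which the accuracy metrics expose.
    mse, r = cleaning_accuracy(trials.mean(axis=0), tep)
    print(f"variability={v:.1f}  index={indices[-1]:.4f}  uncleaned MSE={mse:.2f}  r={r:.2f}")
```

Sweeping `variability` from 0 upward produces the deterministic-to-stochastic range described in the protocol. Replacing the placeholder with ICA cleaning then allows accuracy to be plotted against the index.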

Table 3: Key Software and Analytical Tools for BSS Research.

| Tool / Resource | Function / Description | Relevance to BSS |
|---|---|---|
| EEGLAB | An open-source MATLAB toolbox for EEG analysis. | Implements ICA algorithms (e.g., Infomax) and provides visualization tools (e.g., topoplots) for component inspection and rejection [34]. |
| FastICA Algorithm | A computationally efficient algorithm for ICA. | Widely used for estimating independent components by maximizing non-Gaussianity via negentropy [34]. |
| JADE Algorithm | Joint Approximate Diagonalization of Eigenmatrices. | An ICA algorithm that uses fourth-order cumulants to separate sources, known for its speed and deterministic output [34]. |
| Embedded Systems (e.g., Arduino DUE) | Microcontroller boards for real-time processing. | Enable real-time implementation of BSS algorithms for point-of-care applications, such as continuous fetal monitoring [36]. |
| Simulated Data | Computer-generated signals with known ground truth. | Crucial for validating and benchmarking the performance of BSS algorithms under controlled conditions, as seen in TMS-artifact studies [37]. |

Visualizing Workflows

[Figure: Generalized BSS Model for Neural Data]

[Figure: ICA-Based EEG Artifact Removal Process]

The separation of neural signals from artifacts, particularly in the presence of frequency overlap, relies heavily on sophisticated BSS techniques. While PCA serves as an excellent tool for initial dimensionality reduction and noise suppression, its inability to enforce statistical independence limits its utility for core BSS problems. ICA has emerged as a powerful and widely adopted method for this purpose, successfully isolating artifacts and neural sources in applications ranging from fetal ECG extraction to EEG cleaning. However, practitioners must be aware of its limitations, such as its reliance on source independence and its potential inaccuracy when dealing with highly stable, repetitive artifacts, as evidenced in TMS-EEG research. The field continues to evolve with methods like Bounded Component Analysis (BCA) offering promising avenues for separating even dependent sources. Ultimately, the informed application of these techniques, coupled with robust experimental protocols and validation, is essential for advancing the accuracy and reliability of neural signal analysis in both research and clinical diagnostics.

A central challenge in neural signal processing, particularly in electroencephalography (EEG) research, is the frequency overlap between neurophysiologically relevant brain activity and various biological artifacts. This overlap significantly complicates the isolation and analysis of neural correlates for scientific and clinical applications, including drug development and therapeutic monitoring. Ocular artifacts, such as blinks, typically appear in the low-frequency range (below 4 Hz), while muscle artifacts are concentrated at higher frequencies (above 13 Hz). Both ranges fall within the standard EEG frequency band of 1–100 Hz [4]. Traditional filtering and signal processing techniques struggle to separate these overlapping sources without discarding valuable neural information. This technical guide explores how hybrid CNN-LSTM architectures provide a deep-learning framework for targeted artifact cleaning, thereby improving the fidelity of neural data analysis.

Core Architecture: Principles of CNN-LSTM Hybrid Models

The CNN-LSTM hybrid model is a powerful synergy of two deep learning paradigms, designed to exploit the complementary strengths of each for processing complex, time-series data like neural signals.

Architectural Components and Data Flow

Convolutional Neural Network (CNN) Component: The CNN layers form the front end of the hybrid model. They extract spatial features by applying learnable filters that convolve across the input data. For multi-channel EEG, these layers can identify local patterns and correlations across electrodes. Because the same filter weights are reused at every position (parameter sharing), convolution is translation-equivariant, and with pooling it becomes approximately translation-invariant. This lets the network detect salient features regardless of their exact temporal location [38] [39].
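The CNN front end can be sketched in NumPy as a multi-channel 1-D convolution over time followed by a ReLU. The layer sizes (8 electrodes, 16 filters of width 7) are hypothetical. As in deep-learning libraries, the operation is computed as a cross-correlation. The final check shows what weight sharing buys: delaying the input by 5 samples delays every feature map by 5 samples. The sketch uses `numpy.lib.stride_tricks.sliding_window_view`, which requires NumPy 1.20 or later.

```python
import numpy as np

def conv1d_relu(x, kernels, bias):
    """Valid 1-D convolution over time followed by ReLU.

    x       : (channels, time) multi-channel EEG segment
    kernels : (filters, channels, width) weights, shared across every time position
    bias    : (filters,)
    returns : (filters, time - width + 1) feature maps
    """
    width = kernels.shape[2]
    # windows[c, t, k] = x[c, t + k]
    windows = np.lib.stride_tricks.sliding_window_view(x, width, axis=1)
    out = np.einsum("ctk,fck->ft", windows, kernels) + bias[:, None]
    return np.maximum(out, 0.0)

rng = np.random.default_rng(0)
x = rng.standard_normal((8, 256))               # hypothetical: 8 electrodes, 256 samples
kernels = 0.1 * rng.standard_normal((16, 8, 7))
bias = np.zeros(16)
feats = conv1d_relu(x, kernels, bias)           # shape (16, 250)

# Translation equivariance: shifting the input by 5 samples shifts the feature maps
# by 5 samples (ignoring the wrapped-around edge introduced by np.roll).
shifted = conv1d_relu(np.roll(x, 5, axis=1), kernels, bias)
print(feats.shape, np.allclose(shifted[:, 5:], feats[:, :-5]))
```

Each filter mixes all electrodes within a short time window, which is how the layer captures local spatial correlations across channels.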

Long Short-Term Memory (LSTM) Component: The features extracted by the CNN are then fed into LSTM layers. LSTMs are a type of recurrent neural network (RNN) designed to mitigate the vanishing gradient problem. Input, forget, and output gates regulate the flow of information, allowing the network to learn and retain long-range temporal dependencies in sequential data [38]. This capacity is crucial for tracking the dynamic evolution of neural states and distinguishing sustained brain activity from transient artifacts.
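The gating mechanism can be made concrete with a single LSTM step in NumPy. The dimensions are hypothetical (16 CNN features per time step, 32 hidden units). The stacking order of the gate weights, [i, f, o, g], is an arbitrary choice here, since libraries order them differently. The final check illustrates the memory property. With the input gate shut and the forget gate open, the cell state passes through unchanged. Because the cell-state update is additive, gradients can also flow over long spans.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, U, b):
    """One LSTM time step.

    W : (4H, D) input weights, U : (4H, H) recurrent weights, b : (4H,) biases,
    stacked in the order [input i, forget f, output o, candidate g].
    """
    H = h_prev.shape[0]
    z = W @ x_t + U @ h_prev + b
    i = sigmoid(z[0:H])              # input gate: how much new information to write
    f = sigmoid(z[H:2 * H])          # forget gate: how much of the old cell state to keep
    o = sigmoid(z[2 * H:3 * H])      # output gate: how much of the cell state to expose
    g = np.tanh(z[3 * H:4 * H])      # candidate cell update
    c_t = f * c_prev + i * g         # additive cell-state update
    h_t = o * np.tanh(c_t)
    return h_t, c_t

def run_lstm(X, W, U, b, H):
    """Run the cell over a sequence X of shape (time, D); return all hidden states."""
    h, c = np.zeros(H), np.zeros(H)
    hs = []
    for x_t in X:
        h, c = lstm_step(x_t, h, c, W, U, b)
        hs.append(h)
    return np.stack(hs)

rng = np.random.default_rng(0)
D, H, T = 16, 32, 50                 # hypothetical: 16 CNN features/step, 32 units, 50 steps
W = 0.1 * rng.standard_normal((4 * H, D))
U = 0.1 * rng.standard_normal((4 * H, H))
b = np.zeros(4 * H)
hs = run_lstm(rng.standard_normal((T, D)), W, U, b, H)      # (50, 32) hidden states

# Memory check: input gate shut, forget gate open -> cell state is carried over unchanged
b_mem = np.concatenate([-50 * np.ones(H), 50 * np.ones(H), np.zeros(2 * H)])
c0 = np.ones(H)
_, c1 = lstm_step(rng.standard_normal(D), np.zeros(H), c0, np.zeros_like(W), np.zeros_like(U), b_mem)
print(hs.shape, np.allclose(c1, c0))
```

In the hybrid model, the per-step input `x_t` would be one time slice of the CNN feature maps.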

The integrated data flow lets the hybrid model learn from both the spatial hierarchy of features and their temporal evolution. The resulting representation can be better suited to disentangling overlapping sources than either component alone. In [38], for example, the hybrid model outperformed a standalone LSTM.

Quantitative Performance Advantages

Empirical studies in several domains, mostly outside EEG, report performance advantages for hybrid CNN-LSTM models over standalone architectures.

Table 1: Performance Comparison of Deep Learning Models in Signal Processing Tasks

| Model Architecture | Application Domain | Key Performance Metric | Reported Result |
|---|---|---|---|
| Hybrid CNN-LSTM | IoT Intrusion Detection [38] | Accuracy | 99.87% |
| Hybrid CNN-LSTM | IoT Intrusion Detection [38] | False Positive Rate | 0.13% |
| Standard LSTM | IoT Intrusion Detection [38] | Accuracy | Lower than Hybrid Model |
| GRU | Random Sea Wave Forecasting [40] | R² Score (short-term) | 0.74 |
| LSTM | Random Sea Wave Forecasting [40] | R² Score (short-term) | 0.70 |
| CNN-Only | Road Surface Classification [39] | Macro F1-Score | Lower than CNN-LSTM |

Experimental Protocols for Neural Signal Cleaning

Implementing a CNN-LSTM model for targeted artifact cleaning requires a methodical pipeline from data preparation to model evaluation. The following protocol is synthesized from successful applications in EEG denoising [4] and related time-series processing tasks.

Data Preparation and Preprocessing

Data Sourcing and Simulation: For controlled experimentation, a common approach is to create a semi-synthetic dataset by linearly mixing clean EEG segments with recorded artifact signals (e.g., EOG for ocular artifacts, EMG for muscle artifacts) [4]. This provides a known ground truth for validation. For real data, use publicly available datasets like the EEG Eye Artefact Dataset or the Grasp and Lift (GAL) dataset, which are annotated with artifact types [4].
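The semi-synthetic mixing step can be sketched in NumPy. A clean segment is combined with a scaled artifact, y = x + λ·n, with λ chosen to hit a target signal-to-noise ratio. This sketch uses the amplitude-ratio convention SNR = 20·log10(RMS(x) / RMS(λ·n)). Papers differ on this convention, so check the one used by the dataset or benchmark being reproduced. The EEG and blink waveforms and the SNR levels are hypothetical. Each noisy/clean pair becomes one supervised training example.

```python
import numpy as np

def rms(x):
    return float(np.sqrt(np.mean(np.square(x))))

def mix_at_snr(clean, artifact, snr_db):
    """Return (clean + lam * artifact, lam), with lam chosen so that
    20 * log10(rms(clean) / rms(lam * artifact)) == snr_db."""
    lam = rms(clean) / (rms(artifact) * 10.0 ** (snr_db / 20.0))
    return clean + lam * artifact, lam

fs = 256.0
t = np.arange(512) / fs                                                       # 2 s segment
rng = np.random.default_rng(0)
clean_eeg = np.sin(2 * np.pi * 10 * t) + 0.5 * rng.standard_normal(t.size)    # hypothetical clean EEG
eog = np.exp(-0.5 * ((t - 1.0) / 0.1) ** 2)                                   # hypothetical blink waveform

achieved_db, pairs = [], []
for snr in (-5.0, 0.0, 5.0):
    noisy, lam = mix_at_snr(clean_eeg, eog, snr)
    achieved_db.append(20.0 * np.log10(rms(clean_eeg) / rms(noisy - clean_eeg)))
    pairs.append((noisy, clean_eeg))          # (network input, training target)
    print(f"target {snr:+.1f} dB -> achieved {achieved_db[-1]:+.2f} dB (lambda = {lam:.2f})")
```

Sampling λ across a range of SNRs exposes the network to both mild and severe contamination, and the known clean target enables the validation described above.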

Signal Preprocessing: