Setting the Standard: A Comprehensive Guide to Benchmarking Computational Neuroscience Models

Abstract

This article provides a rigorous framework for benchmarking computational neuroscience models, addressing a critical need for standardization in the field. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of model validation, from canonical neuron models to large-scale network simulations. It details methodological approaches for creating and applying benchmarks, including the use of synthetic and experimental data. The guide further offers practical strategies for troubleshooting and optimizing model performance, and finally, establishes robust protocols for the comparative validation of models against empirical data and other models. By synthesizing insights from recent literature and community initiatives, this article serves as a vital resource for advancing reproducible and clinically relevant computational neuroscience.

The Why and What: Core Principles and Canonical Models in Computational Neuroscience

In computational neuroscience, the journey from simulating a single ion channel to modeling an entire brain represents a spectrum of immense methodological complexity. Benchmarking serves as the essential practice that grounds this endeavor, enabling researchers to validate model correctness, assess computational efficiency, and facilitate meaningful comparisons across diverse simulation technologies. As the field progresses toward more integrated multi-scale models, establishing robust and standardized benchmarking practices becomes paramount for accelerating scientific discovery and ensuring the reliability of computational findings. This guide provides a comprehensive framework for defining benchmarking scope, offering practical methodologies and tools tailored for researchers and drug development professionals operating across biological scales.

Foundational Concepts and Definitions

Benchmarking in computational neuroscience systematically evaluates models and simulation technologies against standardized criteria, metrics, and datasets. This process transcends simple performance measurement; it provides the fundamental infrastructure for validating biological plausibility, quantifying computational efficiency, and ensuring reproducible results across different research environments. For drug development applications, rigorous benchmarking directly impacts risk assessment by providing data-driven estimates of a model's predictive power for clinical outcomes [1].

The scope of a benchmarking initiative must explicitly define the biological scale, specific research questions, and evaluation criteria. Clear scoping prevents mission creep—the tendency for a project's objectives to expand uncontrollably—by maintaining focus on distinguishing features of the phenomena and intuition about which factors require inclusion in an explanation [2]. Properly delineated scope acts as a natural Occam's razor, ensuring models address core knowledge gaps while minimizing unnecessary complexity.

Benchmarking Across Biological Scales

Computational neuroscience encompasses multiple biological scales, each requiring specialized benchmarking approaches. The following table summarizes key characteristics, challenges, and primary benchmarking metrics relevant to each scale.

Table 1: Benchmarking Considerations Across Biological Scales in Computational Neuroscience

| Biological Scale | Core Modeling Focus | Primary Benchmarking Metrics | Unique Challenges |
|---|---|---|---|
| Ion Channels [3] | Permeation, selectivity, and gating mechanisms | Conductance rates, selectivity ratios, gating kinetics, energy profiles | Reconciling high selectivity with high permeability; simulating rare events |
| Single Neurons | Electrical activity and signal integration | Spike timing accuracy, input resistance, firing patterns, computational cost | Balancing biophysical detail with simulation speed |
| Microcircuits & Networks [4] | Emergent dynamics from neuronal populations | Firing rate distributions, oscillation patterns, synchronization measures, scaling efficiency | Managing combinatorial complexity; interpreting population-level dynamics |
| Whole-Brain Systems [5] [6] | Large-scale functional connectivity and dynamics | Structure-function coupling, individual fingerprinting, brain-behavior prediction | Data integration across modalities; massive computational resources required |

Cross-Scale Integration and Validation

A critical challenge in multi-scale benchmarking involves validating how phenomena emerging at one level (e.g., network oscillations) relate to mechanisms at lower levels (e.g., channel kinetics). Effective benchmarking protocols must establish quantitative bridges between scales, ensuring that simplifications at lower levels do not invalidate emergent properties at higher levels. This often requires designing specific experiments that probe the sensitivity of macro-scale outputs to micro-scale parameters, creating a cohesive framework for integrated model validation across biological hierarchies.

Quantitative Benchmarking Data and Metrics

Establishing comprehensive quantitative profiles is fundamental to effective benchmarking. The table below synthesizes key benchmarking data from recent studies, providing a reference for expected performance across different metrics and methodologies.

Table 2: Quantitative Benchmarking Data for Neuroscience Models

| Benchmark Category | Specific Metric | Reported Values / Range | Context & Implications |
|---|---|---|---|
| Functional Connectivity (FC) Mapping [5] | Structure-Function Coupling (R²) | 0 to 0.25 | Precision-based statistics showed strongest correspondence with structural connectivity |
| FC Mapping [5] | Weight-Distance Correlation (∣r∣) | < 0.1 to > 0.3 | Fundamental network property varies significantly with FC estimation method |
| Ion Channel Properties [3] | K⁺ Conductance (KcsA) | 10⁷ to 10⁸ ions/second | Approaches theoretical diffusion limit; creates selectivity-permeability paradox |
| Ion Channel Selectivity [3] | K⁺/Na⁺ Selectivity (KcsA) | ~150:1 | Challenges simple size-exclusion models; highlights role of dehydration energy |
| Drug Development [1] | Probability of Success (POS) | Varies by phase/therapeutic area | Traditional benchmarking often overestimates POS; dynamic benchmarks improve accuracy |

Interpreting Quantitative Benchmarks

When applying these quantitative benchmarks, researchers must consider contextual factors that significantly influence results. For example, functional connectivity benchmarks depend heavily on the specific pairwise statistic used (e.g., covariance versus precision), with different methods revealing distinct aspects of network organization [5]. Similarly, ion channel conductance measurements are sensitive to simulation parameters such as membrane potential, ion concentrations, and specific force fields used in molecular dynamics simulations. Effective benchmarking requires transparent reporting of these contextual parameters to enable meaningful cross-study comparisons and scientific replication.

Experimental Protocols and Methodologies

Protocol for Benchmarking Functional Connectivity Methods

Based on large-scale comparisons of 239 pairwise statistics, the following protocol provides a standardized approach for evaluating functional connectivity methods [5]:

- Data Preparation: Utilize resting-state fMRI data from established public repositories (e.g., Human Connectome Project). Preprocess data using standardized pipelines for motion correction, normalization, and denoising. Parcellate brains using consistent atlases (e.g., Schaefer 100 × 7).

- FC Matrix Calculation: Apply multiple pairwise interaction statistics across different families (covariance, precision, information-theoretic, spectral, distance, linear model fits). The pyspi Python package provides a standardized implementation of these measures.

- Topological Analysis: Calculate probability density of edge weights and weighted degree (strength) for each brain region across all FC matrices. Identify network hubs and compare spatial distributions across methods.

- Geometric and Structural Validation: Quantify the relationship between functional connectivity and Euclidean distance between regions. Evaluate structure-function coupling by correlating FC matrices with diffusion MRI-based structural connectivity.

- Biological Alignment Assessment: Compute correlation between FC matrices and multimodal neurophysiological data, including gene expression, laminar similarity, neurotransmitter receptor similarity, electrophysiological connectivity, and metabolic connectivity.

- Individual Differences Quantification: Assess fingerprinting capability by measuring how well FC matrices can identify individuals across scanning sessions. Evaluate brain-behavior prediction performance using cross-validated models.
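
The FC matrix calculation and structural validation steps above can be sketched with NumPy alone. This is a minimal illustration on synthetic data, not the pyspi implementation: the random time series, the random "structural" matrix, and the choice of a squared Pearson correlation over upper-triangle edges as the coupling measure are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
n_regions, n_timepoints = 20, 500

# Synthetic "structural connectivity": symmetric, non-negative (assumption).
sc = rng.random((n_regions, n_regions))
sc = (sc + sc.T) / 2
np.fill_diagonal(sc, 0.0)

# Synthetic regional time series with shared latent signals so regions covary.
shared = rng.standard_normal((n_timepoints, 3))
mixing = rng.standard_normal((3, n_regions))
ts = shared @ mixing + rng.standard_normal((n_timepoints, n_regions))

def fc_correlation(x):
    """Covariance-family estimate: Pearson correlation between regions."""
    return np.corrcoef(x, rowvar=False)

def fc_partial_correlation(x):
    """Precision-family estimate: partial correlation from the inverse covariance."""
    precision = np.linalg.inv(np.cov(x, rowvar=False))
    d = np.sqrt(np.diag(precision))
    pc = -precision / np.outer(d, d)
    np.fill_diagonal(pc, 1.0)
    return pc

def coupling_r2(fc, structural):
    """Squared Pearson correlation between FC and SC over upper-triangle edges."""
    iu = np.triu_indices_from(fc, k=1)
    r = np.corrcoef(fc[iu], structural[iu])[0, 1]
    return r ** 2

fc_cov = fc_correlation(ts)
fc_prec = fc_partial_correlation(ts)
r2_cov = coupling_r2(fc_cov, sc)
r2_prec = coupling_r2(fc_prec, sc)
print(f"R^2 (correlation): {r2_cov:.3f}, R^2 (partial correlation): {r2_prec:.3f}")
```

Because the structural matrix here is random, both coupling values should be near zero; with real data the same two functions make the covariance-versus-precision comparison explicit.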

Protocol for Benchmarking Spiking Neural Network Simulators

For large-scale network simulations, the following modular workflow ensures comprehensive benchmarking [4]:

- Benchmark Specification: Define the benchmarking goal (e.g., weak scaling, strong scaling, energy efficiency). Select appropriate network models with different complexity levels (e.g., balanced random networks, multi-area models with natural density).

- Hardware Configuration: Document complete hardware specifications including compute nodes, processors, memory, interconnect, and GPU accelerators if applicable. For energy measurements, specify whether consumption includes only compute nodes or entire support infrastructure.

- Software Configuration: Record simulator versions, compiler versions, math libraries, and all relevant configuration options. Maintain consistent software environments across benchmarking runs.

- Model Implementation: Implement standardized network models (e.g., Brunel-type balanced networks with leaky integrate-and-fire neurons). For weak scaling, increase network size proportionally with computational resources. For strong scaling, maintain fixed network size while increasing resources.

- Execution and Monitoring: Execute simulations with detailed timing measurements separated into setup phase and simulation phase. Monitor memory usage, power consumption, and network activity dynamics throughout execution.

- Data Collection and Analysis: Record benchmarking data and metadata in standardized formats. Calculate key metrics including time-to-solution, energy-to-solution, memory consumption, and scaling efficiency. Compare activity statistics (firing rates, distributions) across simulators.
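
The scaling metrics named in the last step reduce to simple ratios. The sketch below uses the usual definitions of strong- and weak-scaling efficiency and a real-time factor; the function names and the timing values are hypothetical and serve only to show the arithmetic.

```python
def strong_scaling_efficiency(t_base, t_n, n_base, n):
    """Fixed problem size: ideal runtime shrinks by the factor n / n_base."""
    speedup = t_base / t_n
    return speedup / (n / n_base)

def weak_scaling_efficiency(t_base, t_n):
    """Problem size grows with resources: ideal runtime stays constant."""
    return t_base / t_n

def real_time_factor(wall_clock_s, biological_s):
    """Wall-clock seconds needed per second of simulated biological time."""
    return wall_clock_s / biological_s

# Hypothetical timings in seconds, for illustration only.
strong = strong_scaling_efficiency(t_base=400.0, t_n=125.0, n_base=1, n=4)
weak = weak_scaling_efficiency(t_base=100.0, t_n=125.0)
rtf = real_time_factor(wall_clock_s=125.0, biological_s=10.0)
print(strong, weak, rtf)
```

An efficiency of 1.0 is ideal in both regimes; a real-time factor below 1.0 means the simulation runs faster than biological time.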

A Generalized 10-Step Modeling Process

For developing new models across biological scales, the following systematic process ensures rigorous benchmarking [2]:

- Frame the Question: Identify a specific phenomenon and formulate a precise question. Define evaluation criteria and stopping conditions.

- Define the Core Assumptions: Make simplifying assumptions explicit and determine the model's intended scope.

- Formalize the Model: Translate concepts into mathematical frameworks and computational structures.

- Implement the Model: Develop code using appropriate programming languages and simulation technologies.

- Check the Implementation: Verify code correctness through unit tests and sanity checks.

- Test the Model: Assess basic operation and qualitative behavior against expected outcomes.

- Perform Preliminary Fitting: Compare initial model outputs with target data using simple parameter adjustments.

- Formally Fit the Model: Employ rigorous estimation procedures to optimize model parameters.

- Validate the Model: Evaluate performance on held-out data not used during fitting.

- Interpret the Model: Draw scientific conclusions based on model behavior and performance.

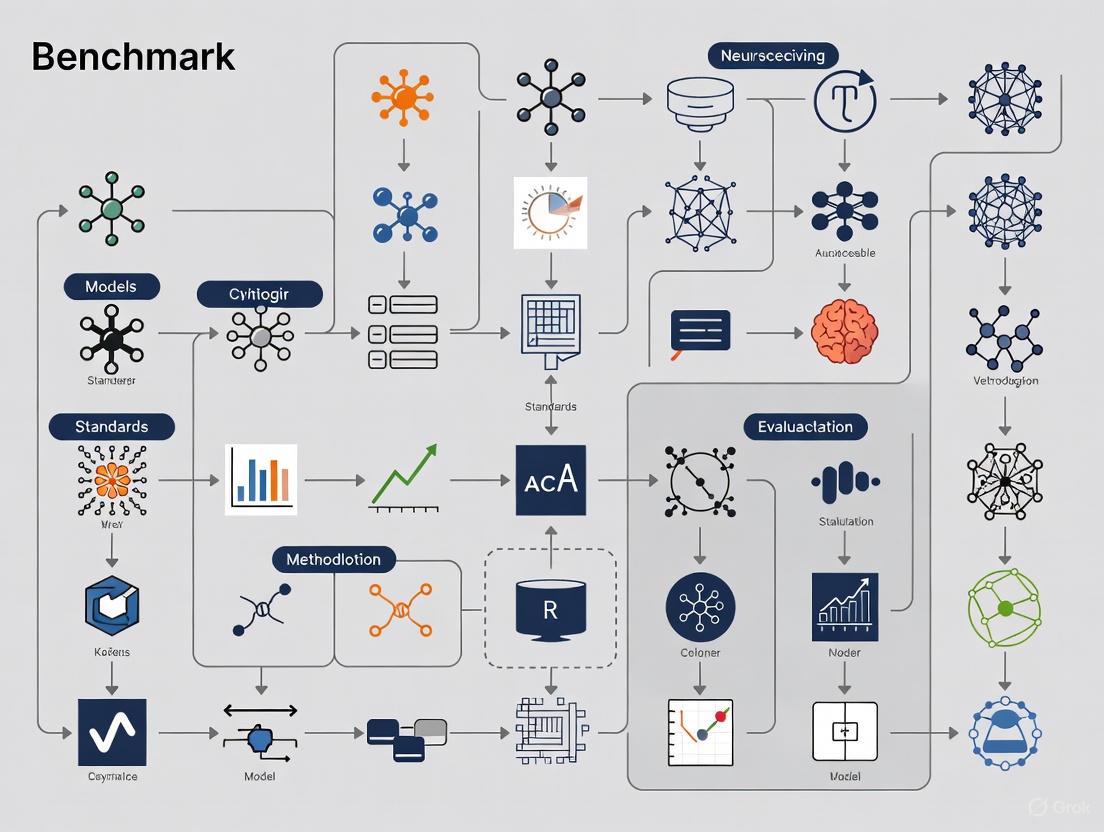

The diagram below illustrates this iterative modeling and benchmarking workflow:

Visualization and Workflow Diagrams

Functional Connectivity Benchmarking Workflow

The following diagram outlines the key stages in benchmarking functional connectivity methods, from data preparation to quantitative evaluation:

Ion Channel Simulation and Analysis Pipeline

For ion channel research, computational studies typically follow this pathway to characterize key biophysical properties:

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful benchmarking requires both computational tools and conceptual frameworks. The following table details essential components for designing and executing rigorous benchmarking studies.

Table 3: Essential Research Reagents and Tools for Computational Neuroscience Benchmarking

| Tool Category | Specific Tool/Resource | Function/Purpose | Example Applications |
|---|---|---|---|
| Simulation Engines [4] | NEST, Brian, NEURON, Arbor, GeNN | Simulate spiking neuronal networks at different scales | Large-scale network models, detailed cellular simulations |
| FC Analysis Packages [5] | pyspi (Python) | Implements 239 pairwise interaction statistics | Benchmarking functional connectivity methods |
| Benchmarking Frameworks [4] | beNNch | Configures, executes, and analyzes simulation benchmarks | Standardized performance comparisons across HPC systems |
| Data Resources [5] [6] | Human Connectome Project, ZAPBench Dataset | Provides standardized neuroimaging data for benchmarking | Testing FC methods, whole-brain activity prediction |
| Modeling Guidance [2] | 10-Step Modeling Process | Provides systematic framework for model development | Structuring modeling projects across biological scales |
| Visualization Tools [7] [8] | Sigma, ColorBrewer, specialized neuro tools | Creates clear, honest data visualizations | Presenting benchmarking results, uncertainty visualization |

Defining the scope for benchmarking computational neuroscience models requires careful consideration of biological scale, research questions, and appropriate evaluation metrics. By adopting the standardized protocols, quantitative benchmarks, and systematic workflows outlined in this guide, researchers can establish rigorous benchmarking practices that enhance model reliability, facilitate cross-study comparisons, and accelerate scientific discovery. The ongoing development of specialized benchmarking tools and shared datasets will further strengthen these efforts, ultimately contributing to more robust, reproducible, and biologically-grounded computational models across all scales of neuroscience inquiry.

Computational neuroscience builds quantitative models of neural systems across scales, from single ion channels to entire networks and behavior [9]. Canonical models provide a shared vocabulary for researchers, enabling effective communication, collaboration, and benchmarking across the discipline [9]. These families of models capture fundamental neural phenomena including excitability, rhythms, and circuit-level dynamics, forming the foundation for both theoretical exploration and experimental validation.

This technical guide examines three foundational canonical models that operate at different biological scales: the Hodgkin-Huxley model (single neuron biophysics), the Izhikevich model (single neuron phenomenology), and the Wilson-Cowan model (population dynamics). For each model, we provide the mathematical formalisms, experimental protocols for validation, and their specific roles in establishing standards for computational neuroscience research.

The Hodgkin-Huxley Model: Cellular-Level Biophysical Foundation

Model Definition and Mathematical Formalisms

The Hodgkin-Huxley (HH) model, developed in 1952, represents the biophysical foundation of neuroscience and describes how action potentials in neurons are initiated and propagated [10] [11]. It approximates the electrical characteristics of excitable cells through nonlinear differential equations that model the neuron as an electrical circuit [12] [13].

The lipid bilayer is represented as a capacitance (\(C_m\)), voltage-gated ion channels as electrical conductances (\(g_n\)), leak channels as linear conductances (\(g_L\)), and electrochemical gradients as voltage sources (\(E_n\)) [10]. The model describes three types of ion currents: sodium (Na⁺), potassium (K⁺), and a leak current that consists mainly of Cl⁻ ions [12].

The core equation describes the current balance across the membrane:

\[ I(t) = C_m\frac{dV_m}{dt} + \bar{g}_{\text{K}} n^4 (V_m - V_K) + \bar{g}_{\text{Na}} m^3 h (V_m - V_{Na}) + \bar{g}_l (V_m - V_l) \]

where \(I(t)\) is the total membrane current per unit area, \(V_m\) is the membrane potential, \(C_m\) is the membrane capacitance per unit area, \(\bar{g}_i\) are the maximum conductances, and \(V_i\) are the reversal potentials for each ion species [10].

The gating variables \(m\), \(n\), and \(h\) (representing sodium activation, potassium activation, and sodium inactivation respectively) evolve according to:

\[ \frac{dx}{dt} = \alpha_x(V_m)(1 - x) - \beta_x(V_m)x \]

where \(x\) represents \(m\), \(n\), or \(h\), and \(\alpha_x\) and \(\beta_x\) are voltage-dependent rate functions that describe the transition rates between open and closed states of ion channels [12] [10].
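
A standard way to read the gating equation, added here as a worked step rather than taken from the cited sources, is to hold \(V_m\) fixed (as in a voltage clamp). The equation is then linear in \(x\) and can be rewritten in terms of a steady-state value and a time constant:

\[ x_\infty(V_m) = \frac{\alpha_x(V_m)}{\alpha_x(V_m) + \beta_x(V_m)}, \qquad \tau_x(V_m) = \frac{1}{\alpha_x(V_m) + \beta_x(V_m)}, \qquad \frac{dx}{dt} = \frac{x_\infty(V_m) - x}{\tau_x(V_m)} \]

so that, starting from \(x(0) = x_0\), each gating variable relaxes exponentially toward its steady state:

\[ x(t) = x_\infty - (x_\infty - x_0)\,e^{-t/\tau_x} \]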

Figure 1: Hodgkin-Huxley Model Conceptual Workflow. The diagram shows the mathematical and experimental relationships between core components in the Hodgkin-Huxley framework, highlighting how voltage clamp data informs gating variables that ultimately generate action potentials.

Experimental Protocol and Parameterization

The original HH model was parameterized using voltage-clamp experiments on the giant axon of the squid [12] [10]. This experimental approach holds the membrane potential at a constant value while measuring ionic currents, allowing researchers to characterize the nonlinear conductance properties of voltage-gated ion channels.

Voltage-Clamp Experimental Protocol:

- Setup Preparation: Insert microelectrodes into squid giant axon (maintained at appropriate physiological temperature)

- Voltage Control: Clamp membrane potential at predetermined values (-100 mV to +100 mV range)

- Current Measurement: Record ionic currents flowing through membrane at each voltage level

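- Ionic Separation: Isolate individual current components. Hodgkin and Huxley did this by ion substitution (replacing external Na⁺ with choline); modern replications typically use specific channel blockers (TTX for Na⁺ channels, TEA for K⁺ channels)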

- Kinetic Analysis: Fit α and β rate functions to current traces at different voltage levels

The standard parameters derived from these experiments are summarized in Table 1 [12].

Table 1: Standard Parameters for the Hodgkin-Huxley Model

| Parameter | Symbol | Value | Units |
|---|---|---|---|
| Sodium Reversal Potential | \(E_{Na}\) | 55 | mV |
| Potassium Reversal Potential | \(E_K\) | -77 | mV |
| Leak Reversal Potential | \(E_L\) | -65 | mV |
| Maximum Sodium Conductance | \(\bar{g}_{Na}\) | 40 | mS/cm² |
| Maximum Potassium Conductance | \(\bar{g}_K\) | 35 | mS/cm² |
| Leak Conductance | \(\bar{g}_L\) | 0.3 | mS/cm² |
| Membrane Capacitance | \(C_m\) | 1 | μF/cm² |

The voltage-dependent rate functions are typically parameterized as [10]:

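\[ \alpha_n(V_m) = \frac{0.01(10 - V)}{\exp((10 - V)/10) - 1} \qquad \beta_n(V_m) = 0.125\exp(-V/80) \]

\[ \alpha_m(V_m) = \frac{0.1(25 - V)}{\exp((25 - V)/10) - 1} \qquad \beta_m(V_m) = 4\exp(-V/18) \]

\[ \alpha_h(V_m) = 0.07\exp(-V/20) \qquad \beta_h(V_m) = \frac{1}{\exp((30 - V)/10) + 1} \]

where \(V = V_m - V_{rest}\) is the depolarization from rest in mV [10]. With this sign convention the activation rates \(\alpha_m\) and \(\alpha_n\) increase with depolarization, as required for sodium and potassium activation; the opposite sign, \(V = V_{rest} - V_m\), would pair with rate functions in which \(V\) is replaced by \(-V\).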
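
A minimal sketch of how these rate functions drive a simulation is given below. It measures voltage as depolarization from rest (\(V = V_m - V_{rest}\), positive when depolarized) and integrates with forward Euler. The conductances and reversal potentials in the code are the classic squid-axon values, which are an assumption here and differ from the entries in the parameter table above; the step size, stimulus current, and spike threshold are likewise illustrative choices.

```python
import math

# Classic squid-axon constants, voltages in mV relative to rest (assumed values,
# not the entries of the parameter table above).
C_M = 1.0                              # uF/cm^2
G_NA, G_K, G_L = 120.0, 36.0, 0.3      # mS/cm^2
E_NA, E_K, E_L = 115.0, -12.0, 10.6    # mV relative to rest

def _x_over_expm1(x, scale):
    """x / (exp(x/scale) - 1), using the limit value at the removable singularity."""
    if abs(x) < 1e-7:
        return scale
    return x / math.expm1(x / scale)

def rates(v):
    """Return (alpha, beta) pairs for n, m, h at depolarization v (mV)."""
    a_n = 0.01 * _x_over_expm1(10.0 - v, 10.0)
    b_n = 0.125 * math.exp(-v / 80.0)
    a_m = 0.1 * _x_over_expm1(25.0 - v, 10.0)
    b_m = 4.0 * math.exp(-v / 18.0)
    a_h = 0.07 * math.exp(-v / 20.0)
    b_h = 1.0 / (math.exp((30.0 - v) / 10.0) + 1.0)
    return (a_n, b_n), (a_m, b_m), (a_h, b_h)

def steady_state(v):
    """Steady-state (n, m, h) at a fixed depolarization v."""
    return tuple(a / (a + b) for a, b in rates(v))

def simulate(i_ext, t_stop=50.0, dt=0.01):
    """Forward-Euler integration; returns depolarization (mV) at each step."""
    v = 0.0
    n, m, h = steady_state(v)
    trace = []
    for _ in range(int(round(t_stop / dt))):
        (a_n, b_n), (a_m, b_m), (a_h, b_h) = rates(v)
        i_ion = (G_K * n**4 * (v - E_K)
                 + G_NA * m**3 * h * (v - E_NA)
                 + G_L * (v - E_L))
        v += dt * (i_ext - i_ion) / C_M
        n += dt * (a_n * (1.0 - n) - b_n * n)
        m += dt * (a_m * (1.0 - m) - b_m * m)
        h += dt * (a_h * (1.0 - h) - b_h * h)
        trace.append(v)
    return trace

def count_spikes(trace, threshold=50.0):
    """Count upward crossings of the threshold (mV above rest)."""
    return sum(1 for a, b in zip(trace, trace[1:]) if a < threshold <= b)

n_spikes = count_spikes(simulate(i_ext=10.0))  # 10 uA/cm^2 step current
print("spikes in 50 ms:", n_spikes)
```

Checking the resting gating values and the spike count against a reference implementation is a simple instance of the "check the implementation" step before any fitting is attempted.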

Benchmarking Applications

The HH model serves as a gold standard for biophysically detailed single-neuron simulations. It accurately reproduces the shape and propagation velocity of action potentials, refractory periods, and anode-break excitation [12] [11]. Modern implementations can incorporate additional channel types, making it suitable for studying channelopathies and pharmacological interventions relevant to drug development [11].

The Izhikevich Model: Efficient Single-Neuron Phenomenology

Model Definition and Mathematical Formalisms

The Izhikevich model represents a compromise between biophysical realism and computational efficiency, capable of reproducing the firing patterns of various cortical neuron types with minimal computational overhead [9] [14]. The model combines continuous spike-generation mechanisms with a discrete reset condition, capturing the essential dynamics of neural excitability with just two variables.

The model is described by the following equations:

\[ \frac{dv}{dt} = 0.04v^2 + 5v + 140 - u + I \]

\[ \frac{du}{dt} = a(bv - u) \]

with the reset condition:

\[ \text{if } v \geq 30 \text{ mV, then } v \leftarrow c \text{ and } u \leftarrow u + d \]

where \(v\) represents the membrane potential, \(u\) represents a membrane recovery variable, \(I\) is the input current, and \(a\), \(b\), \(c\), \(d\) are dimensionless parameters that determine the firing pattern of the neuron [14].

Parameterization for Different Neuron Types

The Izhikevich model can reproduce various firing patterns by adjusting just four parameters (\(a\), \(b\), \(c\), \(d\)), as summarized in Table 2.

Table 2: Izhikevich Model Parameters for Different Neural Firing Patterns

| Neuron Type | Parameter a | Parameter b | Parameter c | Parameter d |
|---|---|---|---|---|
| Regular Spiking (RS) | 0.02 | 0.2 | -65 | 8 |
| Intrinsically Bursting (IB) | 0.02 | 0.2 | -55 | 4 |
| Chattering (CH) | 0.02 | 0.2 | -50 | 2 |
| Fast Spiking (FS) | 0.1 | 0.2 | -65 | 2 |
| Thalamo-Cortical (TC) | 0.02 | 0.25 | -65 | 0.05 |
| Resonator (RZ) | 0.1 | 0.26 | -65 | 2 |
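
The two equations and the reset rule translate almost line by line into code. The sketch below uses the RS and FS rows of the table; the forward-Euler scheme, the 0.25 ms step, the constant input of 10, and the initial conditions are assumptions made for illustration.

```python
# (a, b, c, d) taken from the RS and FS rows of the parameter table.
PARAMS = {
    "RS": (0.02, 0.2, -65.0, 8.0),
    "FS": (0.1, 0.2, -65.0, 2.0),
}

def simulate_izhikevich(a, b, c, d, i_ext=10.0, t_stop=500.0, dt=0.25):
    """Forward-Euler integration of the Izhikevich model; returns spike times in ms."""
    v = -65.0          # initial membrane potential (assumed)
    u = b * v          # recovery variable started on its nullcline (assumed)
    spike_times = []
    for step in range(int(round(t_stop / dt))):
        if v >= 30.0:  # spike cutoff: apply the discrete reset
            spike_times.append(step * dt)
            v = c
            u += d
        dv = 0.04 * v * v + 5.0 * v + 140.0 - u + i_ext
        du = a * (b * v - u)
        v += dt * dv
        u += dt * du
    return spike_times

rs_spikes = simulate_izhikevich(*PARAMS["RS"])
fs_spikes = simulate_izhikevich(*PARAMS["FS"])
print("RS spikes:", len(rs_spikes), "FS spikes:", len(fs_spikes))
```

Under the same input, the fast-spiking parameters should produce more spikes than the regular-spiking ones, which is the kind of qualitative check a firing-pattern benchmark can start from.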

Benchmarking Applications

The computational efficiency of the Izhikevich model (approximately 13 FLOPs per integration step) makes it particularly suitable for large-scale network simulations involving thousands to millions of neurons [14] [15]. This enables researchers to investigate emergent phenomena in neural networks while maintaining biological plausibility of individual unit dynamics. The model has been extensively used in studies of network synchronization, pattern generation, and memory formation.

The Wilson-Cowan Model: Population-Level Dynamics

Model Definition and Mathematical Formalisms

The Wilson-Cowan model describes the population dynamics of synaptically coupled excitatory and inhibitory neurons in the neocortex [16] [17]. Introduced in 1972-1973, it tracks the mean numbers of activated and quiescent excitatory and inhibitory neurons, providing a mean-field description of large-scale neuronal network activity [16].

The model consists of two coupled differential equations representing excitatory (\(E\)) and inhibitory (\(I\)) neuronal populations:

\[ \tau_E\frac{dE}{dt} = -E + (1 - E)\,S_E(c_{EE}E - c_{IE}I + P) \]

\[ \tau_I\frac{dI}{dt} = -I + (1 - I)\,S_I(c_{EI}E - c_{II}I + Q) \]

where \(E(t)\) and \(I(t)\) represent the proportion of excitatory and inhibitory cells firing per unit time, \(\tau_E\) and \(\tau_I\) are time constants, \(c_{ij}\) are connection weights between populations, \(P\) and \(Q\) represent external inputs, and \(S_E\) and \(S_I\) are sigmoidal response functions [16] [17].

The sigmoidal functions are typically of the form:

\[ S(x) = \frac{1}{1 + \exp(-a(x - \theta))} - \frac{1}{1 + \exp(a\theta)} \]

where \(a\) determines the steepness and \(\theta\) the threshold of the sigmoid.
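
A minimal sketch of these equations in code follows. The coupling weights, sigmoid parameters, inputs, initial conditions, and Euler step are illustrative assumptions rather than values from the cited sources, so the resulting dynamics should not be read as a reference result.

```python
import math

def sigmoid(x, a, theta):
    """Shifted logistic response function, constructed so that S(0) = 0."""
    return 1.0 / (1.0 + math.exp(-a * (x - theta))) - 1.0 / (1.0 + math.exp(a * theta))

# Illustrative parameter values (assumptions, not taken from the cited sources).
WC_PARAMS = dict(
    c_EE=16.0, c_IE=12.0, c_EI=15.0, c_II=3.0,
    a_E=1.3, theta_E=4.0, a_I=2.0, theta_I=3.7,
    tau_E=1.0, tau_I=1.0, P=1.25, Q=0.0,
)

def simulate_wilson_cowan(p, e0=0.1, i0=0.05, t_stop=100.0, dt=0.01):
    """Forward-Euler integration; returns lists of E(t) and I(t)."""
    e, i = e0, i0
    e_trace, i_trace = [e], [i]
    for _ in range(int(round(t_stop / dt))):
        s_e = sigmoid(p["c_EE"] * e - p["c_IE"] * i + p["P"], p["a_E"], p["theta_E"])
        s_i = sigmoid(p["c_EI"] * e - p["c_II"] * i + p["Q"], p["a_I"], p["theta_I"])
        de = (-e + (1.0 - e) * s_e) / p["tau_E"]
        di = (-i + (1.0 - i) * s_i) / p["tau_I"]
        e, i = e + dt * de, i + dt * di
        e_trace.append(e)
        i_trace.append(i)
    return e_trace, i_trace

e_trace, i_trace = simulate_wilson_cowan(WC_PARAMS)
print("E range:", min(e_trace), max(e_trace))
```

The \((1 - E)\) and \((1 - I)\) factors keep both activities below 1, which gives a cheap sanity check on any implementation before comparing against recordings.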

Figure 2: Wilson-Cowan Population Model Structure. The diagram illustrates the connectivity between excitatory and inhibitory populations in the Wilson-Cowan model, showing both recurrent connections and external inputs that drive the system dynamics.

Experimental Validation and Neural Mass Effects

The Wilson-Cowan equations successfully explain several phenomena observed in large-scale neural recordings [16]:

Stimulus Response Patterns:

- Weak stimuli trigger waves propagating at ~0.3 m/s with exponential amplitude decay

- Strong stimuli produce larger responses that remain localized

Pair Correlation Measurements:

- Resting cortex shows slowly decaying pair correlations between neighboring populations

- Stimulated cortex exhibits rapidly decaying pair correlations

Spontaneous Activity:

- Isolated cortical slabs generate spontaneous bursts of propagating activity

- Activity follows power-law avalanche size distributions with exponent ≈ -1.5

Recent extensions incorporate synaptic resources through combined effects of synaptic depression and recovery, following the Tsodyks-Markram scheme [17]:

\[ \dot{R}_E = \frac{\xi_E - R_E}{\tau_{R_E}} - \frac{E R_E}{\tau_{D_E}} \]

where \(R_E\) represents the fraction of available excitatory synaptic resources, \(\tau_{R_E}\) and \(\tau_{D_E}\) are recovery and depletion time constants, and \(\xi_E\) is the baseline resource level [17].
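
As a worked step (not taken from the cited source), the resource equation can be solved for its steady state at a constant activity level \(E\). Setting \(\dot{R}_E = 0\) gives

\[ \frac{\xi_E - R_E^*}{\tau_{R_E}} = \frac{E R_E^*}{\tau_{D_E}} \quad\Longrightarrow\quad R_E^* = \frac{\xi_E}{1 + E\,\tau_{R_E}/\tau_{D_E}} \]

so the available resources equal the baseline \(\xi_E\) when the population is silent and decrease monotonically as activity increases, with the ratio \(\tau_{R_E}/\tau_{D_E}\) setting how strongly sustained firing depletes them.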

Benchmarking Applications

The Wilson-Cowan model provides a framework for studying population-level phenomena including oscillations, multistability, traveling waves, and self-organized patterns [16] [17]. Its simplicity enables analytical treatment while capturing essential features of large-scale brain activity, making it invaluable for interpreting EEG, fMRI, and LFP recordings.

Integrated Benchmarking Framework

Multi-Scale Model Integration

A unified benchmarking framework for computational neuroscience requires integration across biological scales, from subcellular mechanisms to population dynamics [13]. Recent work has focused on developing models that unify temporal and spatial excitability mechanisms, bridging the gap between HH-type cellular models and Wilson-Cowan-type population models [13].

The memristive modeling approach provides one promising framework, representing each neuron as the parallel connection of a capacitive element with voltage-gated current sources, where the conductances have memory (memductances) [13]. This principle operates effectively at both cellular and population scales.

Standardized Research Toolkit

Table 3: Essential Research Reagents and Computational Tools for Neuroscience Benchmarking

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Voltage-Clamp Apparatus | Measures ionic currents across membrane | Hodgkin-Huxley parameterization |
| Tetrodotoxin (TTX) | Blocks voltage-gated sodium channels | Isolating potassium currents |
| Tetraethylammonium (TEA) | Blocks potassium channels | Isolating sodium currents |
| Microelectrode Arrays | Records extracellular potentials | Wilson-Cowan model validation |
| NEURON Simulation Environment | Simulates biophysically detailed neurons | Hodgkin-Huxley implementation |
| NEST (Neural Simulation Tool) | Simulates large neural networks | Izhikevich model deployment |
| Local Field Potential (LFP) Recording | Measures population activity | Wilson-Cowan model validation |

Validation Metrics and Standards

Rigorous benchmarking requires standardized metrics across spatial and temporal scales:

Single-Neuron Level:

- Action potential shape characteristics (width, amplitude, after-hyperpolarization)

- Firing frequency versus input current (F-I) curves

- Phase response curves

Network Level:

- Power spectral density of population activity

- Pair correlation functions between neural units

- Avalanche size and duration distributions

Computational Performance:

- Simulation time per unit biological time

- Memory requirements for network simulations

- Scalability with network size
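
As one example of a network-level metric from the list above, the power spectral density of a population signal can be estimated with a plain periodogram. The sketch below is a minimal version using NumPy's FFT; the synthetic 10 Hz signal, the sampling rate, and the noise level are assumptions chosen so the expected peak is known in advance.

```python
import numpy as np

def power_spectral_density(signal, fs):
    """One-sided periodogram of a real, evenly sampled signal (even length assumed)."""
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()          # remove the DC offset
    spectrum = np.fft.rfft(signal)
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    psd = (np.abs(spectrum) ** 2) / (fs * signal.size)
    psd[1:-1] *= 2.0                         # fold in the negative frequencies
    return freqs, psd

# Synthetic "population activity": a 10 Hz rhythm plus white noise.
rng = np.random.default_rng(1)
fs = 1000.0                                   # sampling rate in Hz
t = np.arange(2000) / fs                      # 2 s of data
activity = np.sin(2.0 * np.pi * 10.0 * t) + 0.5 * rng.standard_normal(t.size)

freqs, psd = power_spectral_density(activity, fs)
peak_hz = freqs[np.argmax(psd)]
print("peak frequency (Hz):", peak_hz)
```

Comparing peak frequency and band power between a model's output and recorded data gives a compact, reproducible summary of oscillatory behavior; smoother estimators such as Welch's method are usually preferred for noisy recordings.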

Canonical models provide an essential shared vocabulary that enables productive collaboration and cumulative progress in computational neuroscience. The Hodgkin-Huxley model offers biophysical precision at the cellular level, the Izhikevich model balances efficiency and biological plausibility for network simulations, and the Wilson-Cowan model captures population-level dynamics essential for understanding large-scale brain activity.

As computational power continues to grow exponentially (from ~10 TeraFLOPS in the early 2000s to above 1 ExaFLOPS in 2022) [15], the integration of these canonical models across scales becomes increasingly feasible. Future benchmarking standards should focus on cross-validation between models operating at different biological scales, ensuring that insights gained at one level of analysis can inform and constrain models at adjacent levels.

The development of unified frameworks that incorporate both temporal and spatial excitability mechanisms [13], along with standardized validation metrics across biological scales, will accelerate progress toward more accurate, efficient, and biologically grounded models of neural function with significant implications for basic research and therapeutic development.

The field of computational neuroscience is experiencing a data deluge, fueled by rapid developments in experimental methods for acquiring neural data. However, this abundance has revealed a critical bottleneck: the lack of standardized datasets and benchmarks for comparing the proliferation of models that aim to preprocess this data or explain brain function. Without these standards, researchers struggle to answer fundamental questions about model accuracy, performance dependencies on specific datasets, and which model is appropriate for a given neuroscientific question [18]. This benchmarking crisis stands in stark contrast to the field of computer vision, where the establishment of the ImageNet Large Scale Visual Recognition Challenge in 2010 catalyzed explosive progress, catapulting model accuracy from just over 50% to more than 90% within a decade [18]. Many experts trace this rapid growth to two key elements: widespread adoption of a standardized dataset for tracking progress, and a structured framework for conducting this tracking [18]. This whitepaper examines how neuroscience can adapt the lessons from ImageNet's success to address its own benchmarking challenges, with a focus on establishing rigorous standards for evaluating computational neuroscience models.

Deconstructing the ImageNet Success Story

The ImageNet Challenge demonstrated how carefully designed benchmarks can accelerate progress across an entire field. Its success was not accidental but resulted from specific, replicable elements that neuroscience can adapt.

Core Success Factors

- Standardized Datasets: ImageNet provided a massive, curated dataset with clear ground truth labels, enabling direct comparison between different approaches [18].

- Unified Evaluation Framework: The challenge established consistent evaluation metrics and procedures, ensuring results were comparable across research groups [18].

- Competitive yet Collaborative Structure: The annual challenge fostered healthy competition while building a community around shared goals [18].

- Progressive Difficulty: As performance improved, the challenge evolved to address more difficult aspects of visual recognition, including robustness to real-world variations [19].

The ImageNet Legacy in AI Robustness

The ImageNet legacy continues through next-generation benchmarks like ImageNet-D, which uses diffusion models to create challenging test images with diversified backgrounds, textures, and materials. This benchmark has revealed significant accuracy drops (up to 60%) in state-of-the-art vision models, including foundation models like CLIP and MiniGPT-4 [19]. The methodology of creating "hard image mining with shared perception failures"—selectively retaining images that cause failures in multiple models—demonstrates how benchmarks can evolve to probe specific weaknesses in computational models [19].

Table 1: Evolution of ImageNet-style Benchmarks

| Benchmark | Focus | Key Innovation | Impact |
|---|---|---|---|
| Original ImageNet Challenge | Object recognition | Large-scale standardized dataset | Increased accuracy from ~50% to >90% |
| ImageNet-C | Robustness to corruptions | Synthetic corruptions (noise, blur) | Tested resilience to low-level distortions |
| ImageNet-9 | Background independence | Foreground-background separation | Evaluated reliance on contextual information |
| ImageNet-D | Real-world variations | Diffusion-generated hard examples | Revealed vulnerabilities in foundation models |

Current Benchmarking Initiatives in Neuroscience

A loosely coordinated group of investigators is spearheading benchmarking initiatives across different areas of neuroscience research, using community challenges, standardized datasets, publicly available code, and accessible websites [18].

Standardizing Foundational Analysis

The critical first steps in neural data analysis have become major foci for benchmarking efforts:

Spike Sorting: SpikeForest, an initiative from the Flatiron Institute, standardizes and benchmarks spike-sorting algorithms through curated benchmark datasets (including gold-standard, synthetic, and hybrid-synthetic data), maintains up-to-date performance results, and lowers technical barriers to using spike-sorting software [18]. The platform runs algorithms on benchmark datasets and publishes accuracy metrics on an interactive website, giving users current information on algorithm performance [18]. Worryingly, these efforts have revealed low concordance between different sorters for challenging cases where spike size is small compared to background noise [18].

Functional Microscopy: For optical microscopy data, tools like the NAOMi simulator generate detailed, end-to-end simulations of brain activity that include the noise and artifacts imaging methods introduce [18]. Because the ground truth is known, researchers can determine precisely how effective their preprocessing methods are and where they fall short [18].
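
To make the spike-sorting comparison above concrete, the sketch below scores how well one spike train agrees with another, for example a sorter's output against a ground-truth unit or against a second sorter. It uses one common agreement measure (matched spikes divided by the union of spikes); the 1 ms tolerance, the function names, and the toy spike times are assumptions for illustration and are not taken from SpikeForest.

```python
def count_matches(times_a, times_b, tol=0.001):
    """Count one-to-one matches between two sorted spike-time lists (seconds)."""
    i = j = matches = 0
    while i < len(times_a) and j < len(times_b):
        dt = times_a[i] - times_b[j]
        if abs(dt) <= tol:
            matches += 1      # spikes coincide within the tolerance window
            i += 1
            j += 1
        elif dt < 0:
            i += 1            # spike in A has no partner in B
        else:
            j += 1            # spike in B has no partner in A
    return matches

def agreement(times_a, times_b, tol=0.001):
    """Agreement score: matches / (n_a + n_b - matches), between 0 and 1."""
    m = count_matches(times_a, times_b, tol)
    denom = len(times_a) + len(times_b) - m
    return m / denom if denom else 0.0

# Toy example with made-up spike times.
ground_truth = [0.010, 0.050, 0.120, 0.200]
sorted_unit = [0.0104, 0.0495, 0.150, 0.2002]
n_matched = count_matches(ground_truth, sorted_unit)
score = agreement(ground_truth, sorted_unit)
print(n_matched, round(score, 2))
```

The same function applied to two sorters' outputs, rather than to a sorter and ground truth, gives a simple concordance measure of the kind that drops for low signal-to-noise units.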

Modeling Brain Function

Beyond preprocessing, benchmarks are emerging for models of brain function:

Brain-Score: This initiative ranks models of the ventral visual stream by how well they account for benchmark datasets. Its composite "brain scores" combine multiple criteria: how well models capture real neural responses in multiple brain regions during object identification tasks, and how well they predict behavioral choices [18]. This multi-faceted approach lets researchers examine trade-offs: whether improvements in explaining one aspect enhance or diminish performance on others [18].

Functional Connectivity Mapping: A 2025 comprehensive benchmarking study evaluated 239 pairwise interaction statistics for mapping functional connectivity from fMRI data, examining how network features varied with the choice of statistical method [5]. The study found substantial quantitative and qualitative variation across methods, with measures like covariance, precision, and distance displaying multiple desirable properties including correspondence with structural connectivity and capacity to differentiate individuals [5].
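
The dependence of a connectivity matrix on the chosen statistic can be illustrated with three of the families named above. The sketch below is an assumption-laden toy: it uses synthetic time series and plain NumPy rather than the study's data or software, and it compares the resulting matrices by correlating their upper-triangle edge weights.

```python
import numpy as np

rng = np.random.default_rng(0)
n_time, n_regions = 500, 6
# Synthetic time series: a shared signal makes the regions correlated.
shared = rng.standard_normal((n_time, 1))
ts = 0.6 * shared + rng.standard_normal((n_time, n_regions))

def covariance_fc(ts):
    return np.cov(ts, rowvar=False)

def precision_fc(ts):
    # Precision = inverse covariance; reflects conditional dependence.
    return np.linalg.inv(np.cov(ts, rowvar=False))

def distance_fc(ts):
    # Euclidean distance between z-scored regional time series.
    z = (ts - ts.mean(axis=0)) / ts.std(axis=0)
    diff = z[:, :, None] - z[:, None, :]
    return np.sqrt((diff ** 2).sum(axis=0))

def upper_triangle(m):
    i, j = np.triu_indices_from(m, k=1)
    return m[i, j]

matrices = {
    "covariance": covariance_fc(ts),
    "precision": precision_fc(ts),
    "distance": distance_fc(ts),
}
names = list(matrices)
edge_vectors = np.array([upper_triangle(matrices[n]) for n in names])
similarity = np.corrcoef(edge_vectors)  # method-by-method edge-weight correlation
print(names)
print(np.round(similarity, 2))
```

Off-diagonal entries of `similarity` far from 1 indicate that two statistics order the same region pairs differently, which is the kind of variation a benchmark across many statistics is designed to expose.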

Table 2: Key Neuroscience Benchmarking Initiatives

| Initiative | Neuroscience Domain | Benchmarking Approach | Key Finding |
|---|---|---|---|
| SpikeForest | Electrophysiology | Standardized datasets + consistent evaluation | Low concordance between sorters for challenging cases |
| NAOMi | Optical microscopy | Synthetic ground-truth data | Enables precise quantification of preprocessing effectiveness |
| Brain-Score | Visual processing | Multi-region neural + behavioral prediction | Reveals trade-offs between explaining different neural responses |
| Functional Connectivity Benchmark | Network neuroscience | Comparison of 239 interaction statistics | Precision-based statistics optimize structure-function coupling |

Essential Methodologies for Rigorous Benchmarking

Based on lessons from successful benchmarking efforts across computational fields, several essential methodologies emerge for rigorous benchmark design and implementation.

Benchmark Design Principles

Well-designed benchmarks must balance comprehensiveness with practical constraints while avoiding inherent biases:

- Clear Purpose and Scope: The benchmark's purpose should be clearly defined at the study's outset, whether that is demonstrating a new method's merits, neutrally comparing existing methods, or running a community challenge [20].

- Comprehensive Method Selection: Neutral benchmarks should include all available methods for a specific analysis type, or define explicit, unbiased inclusion criteria [20].

- Diverse Dataset Selection: Including a variety of datasets ensures methods are evaluated under a wide range of conditions, using either simulated data (with known ground truth) or real experimental data [20].

- Balanced Parameter Tuning: To avoid bias, all methods should receive equivalent attention to parameter tuning rather than extensively tuning some while using defaults for others [20].

Evaluation Criteria and Metrics

Choosing appropriate evaluation criteria is fundamental to meaningful benchmarking:

- Multiple Performance Metrics: Benchmarks should employ multiple quantitative metrics to capture different aspects of performance, as single metrics can provide over-optimistic estimates [20].

- Scientifically Relevant Dimensions: Beyond pure performance metrics, evaluation should consider explainability, robustness, uncertainty, computational efficiency, and code quality [21].

- Interpretable Rankings and Guidelines: Results should be summarized to provide clear guidelines for method users while highlighting weaknesses for developers to address [20].
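
The criteria above can be combined into a simple ranking scheme. The following sketch ranks methods on each metric separately and then averages the ranks; the method names, metric names, and score values are invented for illustration, and mean rank is only one of several reasonable aggregation rules (ties are not handled here).

```python
import numpy as np

methods = ["method_A", "method_B", "method_C"]
metrics = ["accuracy", "robustness", "runtime_s"]
higher_is_better = np.array([True, True, False])

# Rows are methods, columns are metrics (hypothetical values).
scores = np.array([
    [0.92, 0.60, 120.0],
    [0.88, 0.75, 30.0],
    [0.90, 0.70, 45.0],
])

def rank_per_metric(scores, higher_is_better):
    """Rank methods within each metric column; rank 1 is best."""
    oriented = np.where(higher_is_better, -scores, scores)  # smaller = better
    order = oriented.argsort(axis=0)
    ranks = np.empty_like(order)
    n_methods = scores.shape[0]
    for col in range(scores.shape[1]):
        ranks[order[:, col], col] = np.arange(1, n_methods + 1)
    return ranks

ranks = rank_per_metric(scores, higher_is_better)
mean_rank = ranks.mean(axis=1)
best_overall = methods[int(mean_rank.argmin())]
best_by_accuracy = methods[int(scores[:, 0].argmax())]
print(ranks)
print(best_overall, best_by_accuracy)
```

In this toy case the method with the highest accuracy is not the one with the best mean rank, which illustrates why a single metric can give an over-optimistic picture.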

Diagram 1: Benchmarking Workflow

Implementation Framework for Neuroscience Benchmarks

Translating benchmarking principles into practical neuroscience applications requires specialized frameworks addressing the field's unique challenges.

Addressing Neuroscience-Specific Challenges

Neuroscience presents distinct benchmarking challenges that require tailored solutions:

Ground Truth Data Acquisition: Unlike computer vision with its clear labels, obtaining ground truth in neuroscience is particularly challenging. For spike sorting, creating "gold standard" datasets requires simultaneously recording from neurons using extracellular methods and from within the cell itself—a time-consuming approach feasible only for small neuron numbers [18]. Synthetic data generation through tools like MEArec provides a viable alternative by simulating physical processes underlying data generation [18].

System-Level Brain Modeling: System-level brain models occupy an intermediate position between detailed neuronal circuit models and abstract cognitive models, distinguished by their structural and functional resemblance to the brain while allowing thorough testing and evaluation [22]. Effective benchmarking requires clear specification of model components, their modeling level, connection structures, component functions, information flow between components, and coding schemes [22].

Integrating Behavioral and Neural Data: The Visual Accumulator Model (VAM) exemplifies how convolutional neural network models of visual processing can be integrated with traditional evidence accumulation models of decision-making in a unified Bayesian framework [23]. This approach jointly fits CNN and EAM parameters to trial-level response times and raw visual stimuli from individual subjects, constraining both visual representations and decision parameters with behavioral data [23].

Computational Infrastructure and Sustainability

Advanced benchmarking requires sophisticated computational infrastructure and long-term sustainability planning:

- High-Performance Computing: Supercomputer performance has increased from ~10 TeraFLOPS in the early 2000s to above 1 ExaFLOPS in 2022—a 100,000-fold increase enabling complex simulations previously impossible [24].

- Sustainable Software Development: Neuroscience must acknowledge that scientific software can have lifespans of 40+ years, making sustainability and portability crucial for community-serving tools [24].

- Emerging Computing Architectures: Future benchmarking platforms may leverage specialized components like neuromorphic hardware (SpiNNaker, BrainScaleS, Loihi) that are proving suitable for conventional computing applications [24].

Table 3: The Scientist's Toolkit: Essential Benchmarking Resources

| Resource Category | Specific Tools | Function | Application Context |
|---|---|---|---|
| Synthetic Data Generators | NAOMi, MEArec | Generate ground-truth data with known properties | Testing analysis methods for optical microscopy and electrophysiology |
| Standardized Evaluation Platforms | SpikeForest, Brain-Score | Provide consistent evaluation metrics and datasets | Comparing spike sorting algorithms and visual processing models |
| Simulation Environments | NEURON, NEST | Simulate neuronal networks at various scales | Testing hypotheses about neural computation and dynamics |
| Data Analysis Packages | PySPI | Calculate multiple pairwise interaction statistics | Functional connectivity mapping and method comparison |
| High-Performance Computing | GPU clusters, Neuromorphic hardware | Enable large-scale simulations and analyses | Processing complex models and massive datasets |

Future Directions and Implementation Roadmap

As neuroscience continues its benchmarking journey, several critical pathways emerge for advancing the field.

Developing a Collaborative Neuroimaging Benchmarking Platform

Future progress may hinge on establishing a collaborative neuroimaging benchmarking platform that combines multiple evaluation aspects in an agile framework, allowing researchers across disciplines to work together on key predictive problems in neuroimaging and psychiatry [21]. Such platforms should incorporate extended evaluation procedures that focus on scientifically relevant aspects including explainability, robustness, uncertainty, computational efficiency, and code quality [21].

Integrated Cognitive Computational Neuroscience

The future lies in integrative approaches that build bridges between theory (instantiated in task-performing computational models) and experiment (providing brain and behavioral data) [25]. This cognitive computational neuroscience would combine the strengths of cognitive science (task-performing models that explain behavior), computational neuroscience (neurobiologically plausible mechanisms explaining brain activity), and artificial intelligence (scalable algorithms for complex tasks) [25].

Diagram 2: VAM Architecture

Implementation Roadmap

Successfully implementing comprehensive benchmarking in neuroscience requires coordinated effort across multiple fronts:

- Community Consensus Building: Establish working groups to define priority areas and develop standards for data formats, evaluation metrics, and reporting [18] [20].

- Infrastructure Development: Create shared resources for dataset hosting, code distribution, and results dissemination following models like SpikeForest's interactive website [18].

- Incentive Structures: Develop recognition systems that reward creation of high-quality benchmarks and participation in community challenges [20].

- Iterative Refinement: Implement processes for regular benchmark updates to address new challenges and methodological advances [20].

The critical need for benchmarks in neuroscience represents both a challenge and an opportunity. By learning from the ImageNet success story while adapting to neuroscience's unique complexities, the field can accelerate progress toward understanding brain function and disorders. The standardized datasets, evaluation frameworks, and community engagement that powered computer vision's revolution now stand ready to catalyze similar advances in computational neuroscience, potentially transforming our understanding of the brain and accelerating the development of treatments for neurological and psychiatric disorders.

The fundamental challenge in modern computational neuroscience is one of integration and scale. For years, research has produced models that successfully capture experimental results in individual behavioral tasks or account for neural activity in specific brain regions. However, this piecemeal approach has limited our ability to develop comprehensive theories of intelligence. Integrative benchmarking represents a paradigm shift that addresses this fragmentation by assembling suites of benchmarks from many laboratories, creating evaluation frameworks that push mechanistic models toward explaining entire domains of intelligence, such as vision, language, and motor control [26] [27]. This approach moves beyond isolated experimental validation to assess how well models generalize across diverse cognitive challenges, ultimately driving the field toward more unified, neurally mechanistic explanations of intelligence.

The timing for this approach is increasingly favorable. Recent years have witnessed both the rising success of neurally mechanistic models and an unprecedented surge in the availability of neural, anatomical, and behavioral data [26]. These developments create an ideal environment for implementing integrative benchmarking platforms that can incentivize the development of more ambitious, unified models. By establishing clear standards for model evaluation across multiple dimensions of intelligence, the field can accelerate progress toward its organizing goal: accurately explaining domains of human intelligence as executable, neurally mechanistic models [26].

Core Principles and Methodological Framework

Defining Integrative Benchmarking

Integrative benchmarking constitutes a systematic approach to model evaluation that differs fundamentally from traditional single-measure validation. At its core, it involves:

- Aggregated Benchmark Suites: Curating diverse benchmarks from multiple laboratories and experimental paradigms into unified evaluation platforms [26]

- Multi-Dimensional Assessment: Evaluating models against neural, behavioral, and anatomical data simultaneously rather than optimizing for single metrics

- Domain-Wide Explanatory Scope: Pushing models beyond task-specific performance toward explaining entire domains of intelligence [26]

- Mechanistic Grounding: Ensuring models implement biologically plausible computations rather than merely fitting input-output relationships

This approach stands in stark contrast to traditional modeling practices in computational neuroscience, which have often focused on reproducing results from individual publications or explaining activity in isolated brain regions.

The Brain-Score Case Study

The development of Brain-Score provides a concrete example of how integrative benchmarking operates in practice. This platform for visual intelligence implements several key principles:

- Hierarchical Neural Alignment: Evaluating how well model activity patterns match neural recordings along the ventral visual stream across multiple brain regions

- Behavioral Correspondence: Assessing whether model predictions align with behavioral measures such as psychophysical thresholds and categorization performance

- Unified Metric Generation: Combining neural and behavioral benchmarks into composite scores that reflect overall biological fidelity [26]

The power of this approach lies in its ability to objectively compare diverse models against the same set of benchmarks, creating a competitive environment that drives rapid improvement in biological plausibility.

Experimental Protocols and Implementation

Benchmark Assembly and Curation

Implementing integrative benchmarking begins with systematic benchmark assembly. The process requires:

- Data Aggregation: Collecting neural recordings, behavioral measurements, and anatomical constraints from published literature and shared datasets

- Standardization: Establishing common formats, pre-processing pipelines, and evaluation metrics for disparate data types

- Metric Definition: Creating quantitative measures that capture essential aspects of neural mechanisms and cognitive functions

- Platform Development: Building technical infrastructure for transparent, reproducible model evaluation

This process demands careful attention to experimental consistency while respecting the natural variability present in biological data. Effective benchmarks must be comprehensive enough to constrain theories yet flexible enough to accommodate legitimate biological variation.

Model Evaluation Procedures

The evaluation phase follows rigorous protocols to ensure fair comparison across modeling approaches:

Table: Core Components of Integrative Model Evaluation

| Evaluation Dimension | Data Sources | Key Metrics | Validation Approach |
|---|---|---|---|
| Neural Predictivity | fMRI, electrophysiology, ECoG, MEG | Pearson correlation, noise-normalized R² | Cross-validation across stimuli, subjects, and recording sites |
| Behavioral Alignment | Psychophysics, task performance, reaction times | Accuracy matching, choice probability, behavioral transfer | Out-of-distribution generalization testing |
| Architectural Constraints | Anatomical tracing, connectivity maps | Graph similarity, connection specificity | Ablation studies and lesion comparisons |
| Computational Principles | Theoretical neuroscience, circuit mechanisms | Dynamical regime analysis, robustness testing | Perturbation responses and stability assessment |

For each benchmark, models are evaluated using cross-validation procedures that test generalization to novel stimuli, conditions, and subjects. The evaluation must carefully separate training data from testing data to prevent overfitting and ensure genuine predictive power [26].
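
A minimal sketch of such a cross-validated neural predictivity score follows. It assumes a linear (ridge) mapping from model features to recorded responses and uses Pearson correlation on held-out stimuli; the function names, the regularization strength `alpha`, the fold count, and the synthetic data are illustrative assumptions rather than the procedure of any specific platform.

```python
import numpy as np

def ridge_fit(X, Y, alpha=1.0):
    """Closed-form ridge regression weights mapping features X to responses Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)

def pearson_columns(a, b):
    """Pearson correlation between matching columns of a and b."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    return (a * b).sum(axis=0) / np.sqrt((a ** 2).sum(axis=0) * (b ** 2).sum(axis=0))

def cross_validated_predictivity(features, responses, n_folds=5, alpha=1.0, seed=0):
    """Mean held-out Pearson r per recording site, split across stimuli."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(features)), n_folds)
    scores = []
    for test_idx in folds:
        train_mask = np.ones(len(features), dtype=bool)
        train_mask[test_idx] = False
        # Centre with training statistics only, so no test information leaks in.
        x_mean = features[train_mask].mean(axis=0)
        y_mean = responses[train_mask].mean(axis=0)
        W = ridge_fit(features[train_mask] - x_mean,
                      responses[train_mask] - y_mean, alpha)
        pred = (features[test_idx] - x_mean) @ W + y_mean
        scores.append(pearson_columns(pred, responses[test_idx]))
    return np.mean(scores, axis=0)

# Synthetic data: 200 stimuli, 10 model features, 4 predictable sites + 1 noise site.
rng = np.random.default_rng(1)
features = rng.standard_normal((200, 10))
true_weights = rng.standard_normal((10, 4))
signal = features @ true_weights + 0.5 * rng.standard_normal((200, 4))
noise_only = rng.standard_normal((200, 1))
responses = np.hstack([signal, noise_only])

predictivity = cross_validated_predictivity(features, responses)
print(np.round(predictivity, 2))
```

With real recordings, the raw correlation is typically divided by an estimate of each site's reliability (a noise ceiling) so that noisy sites do not unfairly penalize a model; that step is omitted here.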

Current Applications and Implementation

The Algonauts Project: A Contemporary Example

The Algonauts Challenge represents a cutting-edge implementation of integrative benchmarking principles. The 2025 edition specifically focused on predicting human brain activity in response to long, multimodal movies, requiring models to account for temporal dynamics and cross-modal integration [28]. Key aspects included:

- Stimulus Complexity: Using nearly 80 hours of naturalistic movie stimuli from the CNeuroMod project, including both television series and feature films

- Comprehensive Neural Measurement: Predicting fMRI responses across 1,000 whole-brain parcels in four participants

- Generalization Testing: Evaluating models on both in-distribution (season 7 of Friends) and out-of-distribution (six held-out films) stimuli [28]

This approach moves significantly beyond static image presentation to capture how brains process complex, dynamic, real-world stimuli.

Methodological Insights from Leading Approaches

Analysis of top-performing models in the Algonauts 2025 Challenge reveals several critical success factors for modern integrative benchmarking:

Table: Comparative Analysis of Top Algonauts 2025 Approaches

| Team/Model | Architecture | Feature Sources | Ensembling Strategy | Key Innovation |
|---|---|---|---|---|
| TRIBE (1st) | Transformer | Unimodal vision, audio, text models | Parcel-specific softmax weighting | Modality dropout during training |
| VIBE (2nd) | Dual transformers | Multimodal (Qwen2.5, BEATs, SlowFast) | 20-model ensemble | Separate fusion and prediction transformers |
| Multimodal Recurrent (3rd) | Hierarchical RNNs | Mixed unimodal and multimodal extractors | 100-model diverse ensemble | Brain-inspired curriculum learning |
| MedARC (4th) | Convolution + linear | Multimodal (InternVL3, Qwen2.5-Omni) | Parcel-specific ensembles | Architectural simplicity (no nonlinearities) |

A striking observation across these approaches is that while multimodality was essential—all top teams used pre-trained models spanning vision, audio, and language—architectural choices mattered less than expected. First and second place used transformers, third place used RNNs, and fourth place used simple convolutions and linear layers without nonlinearities, yet all achieved remarkably similar performance [28]. This suggests that current benchmarking approaches effectively reward general computational principles rather than specific implementation details.
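
The parcel-specific ensembling listed in the table can be illustrated with a short sketch. It assumes that each ensemble member has a validation score per parcel and that weights are obtained by a softmax over members, computed independently for every parcel. The `temperature` parameter, function names, and toy numbers are assumptions for this example; the teams' actual implementations may differ.

```python
import numpy as np

def parcel_softmax_weights(val_scores, temperature=0.1):
    """Softmax over models, computed separately for each parcel.

    val_scores: array (n_models, n_parcels) of validation scores.
    Returns weights of the same shape; each column sums to 1.
    """
    logits = val_scores / temperature
    logits = logits - logits.max(axis=0, keepdims=True)  # numerical stability
    weights = np.exp(logits)
    return weights / weights.sum(axis=0, keepdims=True)

def ensemble_predict(predictions, weights):
    """Weighted sum of model predictions.

    predictions: (n_models, n_timepoints, n_parcels)
    weights:     (n_models, n_parcels)
    """
    return np.einsum("mtp,mp->tp", predictions, weights)

# Toy example: model 0 is better on parcel 0, model 1 on parcel 1.
val_scores = np.array([[0.30, 0.10],
                       [0.10, 0.30]])
weights = parcel_softmax_weights(val_scores)

predictions = np.stack([np.ones((3, 2)), np.zeros((3, 2))])  # 2 models, 3 timepoints
ensemble = ensemble_predict(predictions, weights)
print(np.round(weights, 3))
print(np.round(ensemble, 3))
```

A lower temperature pushes each parcel toward its single best model, while a higher temperature approaches a uniform average across models.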

Essential Research Reagents and Computational Tools

The Scientist's Toolkit

Implementing integrative benchmarking requires leveraging a sophisticated ecosystem of research reagents and computational tools. The following table details essential components used in modern approaches:

Table: Essential Research Reagents for Integrative Benchmarking

| Tool Category | Specific Examples | Function in Research |
|---|---|---|
| Neural Recording Technologies | fMRI, ECoG, MEG, high-density electrophysiology | Provides ground truth neural data for benchmark development and model validation |
| Pre-trained Feature Extractors | V-JEPA2 (vision), Whisper (speech), Llama 3.2 (language), BEATs (audio) | Converts complex stimuli into feature representations for brain activity prediction [28] |
| Multimodal Fusion Models | Qwen2.5-Omni, InternVL3, CLIP | Integrates information across sensory modalities to predict activity in higher-order associative cortices [28] |
| Benchmarking Platforms | Brain-Score, Algonauts Challenge infrastructure | Provides standardized evaluation frameworks and comparative leaderboards [26] [28] |
| Simulation Technologies | NEST, PyNN, neuromorphic hardware | Enables large-scale simulation of neural circuit models for mechanistic testing [29] |

These tools collectively enable researchers to move from isolated model development to integrated evaluation against biological benchmarks. The predominance of pre-trained feature extractors in top Algonauts approaches is particularly notable—no leading team trained their own feature extractors from scratch, instead leveraging foundation models to convert stimuli into high-quality representations [28].

Visualizing Integrative Benchmarking Frameworks

Conceptual Workflow Diagram

The following diagram illustrates the comprehensive workflow for integrative benchmarking, from data aggregation through model evaluation and refinement:

Multimodal Integration Architecture

Modern approaches to integrative benchmarking increasingly require handling multiple data modalities, as demonstrated by leading Algonauts Challenge entries:

Future Directions and Implementation Challenges

Scaling and Methodological Refinements

As integrative benchmarking matures, several critical challenges must be addressed to advance its effectiveness:

- Neural Data Resolution: Current benchmarks rely heavily on fMRI data with limited temporal resolution. Future platforms must incorporate higher-resolution neural recording techniques, including ECoG, MEG, and large-scale electrophysiology, to constrain models with finer temporal dynamics and spatial specificity.

- Behavioral Complexity: Moving beyond simple perceptual tasks to incorporate complex behaviors, including naturalistic movement, social interactions, and multi-step decision making, will be essential for capturing the full scope of intelligence.

- Lifelong Learning and Adaptation: Developing benchmarks that assess how models adapt to experience over time, rather than just static performance, will better reflect the dynamic nature of biological intelligence.

- Cross-Species Validation: Creating parallel benchmarks for non-human species will enable testing of evolutionary theories and facilitate integration with rich neurophysiological datasets from animal models.

The Algonauts 2025 Challenge already points toward one important scaling relationship: encoding performance increases with more training sessions (up to 80 hours per subject), though the gain appears sub-linear and plateaus rather than following the clean power laws observed in large language models [28].

Institutional and Collaborative Frameworks

Successfully implementing integrative benchmarking requires more than technical solutions—it demands new collaborative structures and incentive systems:

- Data Sharing Standards: Establishing common formats, metadata requirements, and quality controls for neural and behavioral data

- Model Reproducibility: Developing containerization and workflow management tools to ensure model evaluations are exactly reproducible across research groups

- Academic Credit Systems: Creating citation and contribution frameworks that properly reward researchers for benchmark development and model contributions

- Industry-Academic Partnerships: Facilitating knowledge transfer between academic neuroscience and industry AI research while protecting scientific values

The experience with the Potjans-Diesmann model highlights both the challenges and opportunities of model sharing in computational neuroscience. Despite urgent calls for more systematic model sharing, research practice still shows limited re-use of circuit models, with the PD14 model being a rare exception [29]. Integrative benchmarking platforms can help address this by creating structured incentives for model reuse and extension.

Integrative benchmarking represents more than just a methodological refinement—it offers a pathway to transform how we build and evaluate theories of intelligence. By creating comprehensive evaluation frameworks that push models to explain entire domains of intelligence, the field can move beyond isolated findings toward cumulative, integrated knowledge. The initial successes of platforms like Brain-Score and the Algonauts Project demonstrate the feasibility and power of this approach, while current challenges highlight areas for continued development and refinement.

As the volume and quality of neural data continue to grow, and as computational models become increasingly sophisticated, integrative benchmarking provides the essential glue to connect these advancements. Through continued development of these approaches, the field can work toward its most ambitious goal: executable, neurally mechanistic models that genuinely explain, rather than merely describe, the foundations of intelligence.

The expansion of computational neuroscience necessitates robust frameworks for model validation and collaboration. This whitepaper examines how infrastructure initiatives like the International Neuroinformatics Coordinating Facility (INCF) and benchmarking platforms such as Brain-Score establish critical standards for the field. We detail how INCF's community-driven programs foster open, FAIR (Findable, Accessible, Interoperable, and Reusable) neuroscience, while Brain-Score provides quantitative, empirical benchmarks for evaluating model performance against neural data. Within the context of a broader thesis on benchmarking standards, this analysis explores their integrated role in advancing neurally mechanistic models of intelligence, supported by detailed methodologies, quantitative data summaries, and visual workflows.

Computational neuroscience builds quantitative models of neural systems across scales, from single ion channels to entire networks governing behavior [9]. The field employs canonical models like Hodgkin-Huxley for biophysical detail, Izhikevich for efficient spiking units, and Wilson-Cowan for population dynamics [9]. However, the proliferation of models and extensive datasets creates a critical challenge: the lack of standardized benchmarks to evaluate model accuracy and biological plausibility across different datasets and research questions. Without standardized datasets and benchmarks, researchers struggle to determine model accuracy and comparative performance [18].

This challenge mirrors one previously faced in machine learning, where the establishment of the ImageNet benchmark catalyzed rapid progress by allowing direct model comparisons [18]. Neuroscience initiatives are now adopting this successful paradigm. This whitepaper examines how the International Neuroinformatics Coordinating Facility (INCF) and the Brain-Score platform collaboratively establish and maintain the standards and infrastructure necessary for rigorous, reproducible, and cumulative progress in computational neuroscience.

INCF: Building the Community and Infrastructure for Standards

The International Neuroinformatics Coordinating Facility (INCF) is a pivotal organization that builds collaborative infrastructure and establishes standards for neuroscience. Its mission is to create an open, FAIR, and collaborative global neuroscience ecosystem.

Strategic Activities and Governance

INCF's work is executed through a network of specialized councils and committees, each focusing on a key area of neuroscience infrastructure [30]. The organization's 2025 calendar includes coordinated activities such as town halls, committee meetings, and major events, reflecting a strategic, year-round approach to community building.

Table: INCF Committees and Core Functions

| Committee | Full Name | Core Function |
|---|---|---|
| GB | Governing Board | Strategic oversight and governance |
| CTSI | Council for Training, Science, & Infrastructure | Aligning training, scientific research, and infrastructure development |
| SBP | Standards & Best Practices committee | Developing and promoting community standards |
| TEC | Training & Education Committee | Fostering training and educational resources |
| IC | Infrastructure Committee | Overseeing technical infrastructure development |
| IAC | Industry Advisory Committee | Facilitating industry-academia collaboration |

A central theme of INCF's 2025 agenda is strengthening collaboration between large-scale initiatives. A dedicated session at the joint EBRAINS Summit 2025 – INCF Assembly 2025 will bring together leaders from major international research infrastructures to identify common gaps and explore opportunities for partnership and interoperability [31]. This reflects a mature understanding that overcoming fragmentation is essential for advancing the field.

Brain-Score: A Platform for Empirical Model Benchmarking

Brain-Score is an open-source, community-driven platform that quantitatively evaluates computational models of brain function by testing them against a wide array of biological measurements [32] [33]. Its core mission is to "democratize the search for scientific models of natural intelligence" by providing a standardized suite of benchmarks.

Platform Architecture and Core Principles

The platform operates on several key principles. It is integrative, demanding that a model predict multiple aspects of neural data and behavior, not just a single dataset [18]. Its collaborative and open-source nature ensures all code is freely available, and many community members make their data and model weights public [33]. The platform is also domain-agnostic, beginning with primate vision and expanding to include human language processing, enabling the evaluation of large language models (LLMs) [32].

At a technical level, Brain-Score operationalizes experimental data into quantitative, machine-executable benchmarks. Models adhering to the defined BrainModel interface can be automatically scored on dozens of neural and behavioral benchmarks [34]. A modular plugin system simplifies the integration of new data, metrics, and models, encouraging community contribution [32].

Benchmarking Metrics and Quantitative Outcomes

Brain-Score evaluates models against two primary classes of benchmarks: neural benchmarks, which measure the match between model activations and neural activity recorded from the brain, and behavioral benchmarks, which assess the alignment of model outputs with human behavioral choices [33] [18]. The platform's leaderboard ranks models based on their average score across all benchmarks, promoting the development of models that provide a comprehensive explanation of brain function [33].

Table: Core Benchmark Categories in Brain-Score

| Benchmark Category | Measured Aspect | Example Data Type | Scoring Metric |
|---|---|---|---|
| Neural Alignment | Match to neural activity | fMRI, electrophysiology | Predictivity (e.g., linear regression) |
| Behavioral Alignment | Match to human decisions | Psychophysical task data | Accuracy correlation |
| Composite Brain-Score | Overall explanatory power | Aggregate of all benchmarks | Weighted average score |

The platform has been used to benchmark numerous models, revealing that no single model currently excels across all benchmarks. This highlights specific gaps in our understanding and provides a clear, empirical direction for future model development.
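The neural predictivity and score averaging described above can be illustrated with a minimal sketch. It is not Brain-Score's code or API: the function names, the ridge penalty, the five-fold split, the median over recording sites, and the unweighted mean are all assumptions chosen for illustration.

```python
import numpy as np


def pearson_columns(a, b):
    """Pearson r between matching columns of two (samples, sites) arrays."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    denom = np.sqrt((a ** 2).sum(axis=0) * (b ** 2).sum(axis=0))
    return (a * b).sum(axis=0) / denom


def neural_predictivity(features, responses, n_folds=5, ridge=1.0, seed=0):
    """Cross-validated ridge regression from model features to neural responses.

    features:  (n_stimuli, n_features) model activations (hypothetical input)
    responses: (n_stimuli, n_sites) recorded activity (hypothetical input)
    Returns the median held-out Pearson r across sites (an assumed summary).
    """
    rng = np.random.default_rng(seed)
    order = rng.permutation(features.shape[0])
    predictions = np.zeros_like(responses, dtype=float)
    for test_idx in np.array_split(order, n_folds):
        train_idx = np.setdiff1d(order, test_idx)
        x_mean = features[train_idx].mean(axis=0)
        y_mean = responses[train_idx].mean(axis=0)
        x = features[train_idx] - x_mean
        y = responses[train_idx] - y_mean
        # Closed-form ridge solution fitted on the training stimuli only.
        weights = np.linalg.solve(x.T @ x + ridge * np.eye(x.shape[1]), x.T @ y)
        predictions[test_idx] = (features[test_idx] - x_mean) @ weights + y_mean
    return float(np.median(pearson_columns(predictions, responses)))


def composite_score(benchmark_scores):
    """Unweighted mean over benchmarks (assumption; the platform may weight them)."""
    return float(np.mean(list(benchmark_scores.values())))
```

Scoring on held-out stimuli rather than on the training set is what makes the number a predictivity estimate instead of a goodness of fit, so the fold loop is the part of the sketch that matters most.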

Integrated Methodologies: From Data to Benchmarking

The synergy between infrastructure organizations like INCF and benchmarking platforms like Brain-Score is critical for establishing end-to-end workflows in computational neuroscience. This section details the methodologies that underpin this integrated approach.

Workflow for Model Benchmarking

The following diagram illustrates the standardized workflow for submitting and evaluating a model on the Brain-Score platform, from community contribution to the final leaderboard ranking.

Protocol for Functional Connectivity Benchmarking

A recent landmark study published in Nature Methods (2025) provides a robust example of a large-scale benchmarking methodology relevant to the broader field [5]. The study benchmarked 239 pairwise interaction statistics for mapping functional connectivity (FC) in the brain.

- Objective: To determine how the organization of the FC matrix varies with the choice of pairwise statistic, and to identify which statistics are optimal for specific neuroscience questions [5].

- Data Source: Functional time series from N = 326 unrelated healthy young adults from the Human Connectome Project (HCP) S1200 release [5].

- Benchmarked Statistics: 239 pairwise statistics from 49 pairwise interaction measures across 6 families (e.g., covariance, precision, information theoretic, spectral) were calculated using the pyspi package [5].

- Evaluation Metrics: Each resulting FC matrix was evaluated on multiple canonical features:

  - Topological & Geometric Organization: Hub mapping, weight-distance trade-offs.

  - Structure-Function Coupling: Goodness of fit with diffusion MRI-estimated structural connectivity.

  - Multimodal Alignment: Correspondence with gene expression, neurotransmitter receptor similarity, and electrophysiological connectivity.

  - Individual Differences: Capacity for individual fingerprinting and brain-behavior prediction [5].

- Key Findings: The study found substantial quantitative and qualitative variation across FC methods. Precision-based and covariance-based statistics generally showed strong structure-function coupling, while no single statistic was optimal for all use cases, underscoring the need for tailored benchmarking [5].
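To make the protocol concrete, the sketch below computes three hand-written pairwise statistics from a regional time series and scores each against a structural connectivity matrix. It does not use pyspi and does not reproduce the study's pipeline: the three statistics are stand-ins for the 239 benchmarked, and the edge-wise Pearson correlation is a simplified, assumed substitute for the study's goodness-of-fit measure.

```python
import numpy as np


def fc_matrices(timeseries):
    """Compute a few FC matrices from a (n_timepoints, n_regions) array."""
    cov = np.cov(timeseries, rowvar=False)
    corr = np.corrcoef(timeseries, rowvar=False)
    precision = np.linalg.pinv(cov)
    # Partial correlation is the sign-flipped, rescaled precision matrix.
    scale = np.sqrt(np.diag(precision))
    partial = -precision / np.outer(scale, scale)
    np.fill_diagonal(partial, 1.0)
    return {"covariance": cov, "correlation": corr, "partial_correlation": partial}


def upper_triangle(matrix):
    """Return the off-diagonal upper-triangle edges as a flat vector."""
    rows, cols = np.triu_indices_from(matrix, k=1)
    return matrix[rows, cols]


def structure_function_coupling(fc, sc):
    """Edge-wise Pearson r between functional and structural connectivity."""
    return float(np.corrcoef(upper_triangle(fc), upper_triangle(sc))[0, 1])


def benchmark_statistics(timeseries, sc):
    """Score every FC statistic against the same structural matrix."""
    return {name: structure_function_coupling(fc, sc)
            for name, fc in fc_matrices(timeseries).items()}
```

Running `benchmark_statistics` on one subject's time series returns one coupling value per statistic, which is the shape of comparison the study performs at much larger scale.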

For researchers engaging with these community initiatives, a suite of key platforms and tools is essential. The following table details these critical "research reagents" and their primary functions.

Table: Essential Resources for Standards-Based Computational Neuroscience

| Resource Name | Type | Primary Function | Relevance to Benchmarking |
|---|---|---|---|
| Brain-Score Platform | Benchmarking Platform | Quantifies alignment of AI/ML models with neural & behavioral data | Provides the core empirical benchmark for model quality [32] [33]. |
| INCF Standards | Knowledge Repository | Curates and disseminates community standards and best practices | Defines the protocols for data and model sharing [30]. |
| EBRAINS Research Infrastructure | Digital Platform | Provides tools, data, and compute for brain research | Offers a scalable environment for running large-scale benchmarks [35]. |
| HCP Datasets | Data Resource | Provides large-scale, multimodal neuroimaging data | Serves as a foundational benchmark dataset for human brain function [5]. |
| SpikeForest | Software Suite | Benchmarks spike-sorting algorithms against ground-truth data | Standardizes a critical preprocessing step for electrophysiology [18]. |
| NAOMi Simulator | Software Tool | Generates synthetic ground-truth data for optical microscopy | Creates benchmarks for validating functional imaging analysis [18]. |

The Future of Neuroscience Benchmarking

The future of benchmarking in computational neuroscience lies in deeper integration and expanding scope. The partnership between INCF and EBRAINS exemplifies this, creating a unified forum for discussing neuro-AI, neuromorphic computing, and the ethics of data [35]. A key upcoming session on "Building bridges between large-scale brain initiatives" will directly address gaps in interoperability and data governance, which are fundamental for the next generation of benchmarks [31].

Technically, platforms like Brain-Score are expanding from vision to language, paving the way for evaluating large language models (LLMs) [32]. Furthermore, benchmarking efforts are becoming more nuanced, moving beyond a single "best" metric to a suite of benchmarks tailored to specific scientific questions, as demonstrated by the functional connectivity study [5]. This evolution, supported by community infrastructure, will enable a more refined and effective search for accurate models of the brain.

The establishment of rigorous standards for benchmarking is a cornerstone of computational neuroscience as a mature discipline. The synergistic efforts of the INCF, which builds the community and infrastructural backbone, and platforms like Brain-Score, which provide the empirical evaluation framework, are indispensable to this endeavor. By providing standardized datasets, clear benchmarks, and open-source tools, these initiatives enable reproducible research, guide model development, and ultimately accelerate the creation of neurally accurate models of cognition and behavior. As the field progresses, this integrated approach will be critical for translating data into a deeper, mechanistic understanding of the brain.

Building and Applying Effective Benchmarking Frameworks and Datasets

In computational neuroscience, the development of robust benchmarks is paramount for quantifying progress, ensuring reproducibility, and fostering the reuse of models. Benchmarks serve as standardized tests that allow researchers to compare the performance of different computational models against a common set of criteria and datasets. A well-designed benchmark provides a neutral ground for evaluating model correctness, performance, and biological plausibility, thereby accelerating scientific discovery and technological development. The success of computational neuroscience hinges on the community's ability to create benchmarks that are not only technically sound but also widely accepted and adopted. This guide outlines the core principles and practical methodologies for designing such benchmarks, framed within the broader context of establishing standards for computational neuroscience model research.

Foundational Principles of Benchmarking

Defining Purpose and Scope

The first step in designing a benchmark is to articulate its primary purpose with clarity. A benchmark's purpose defines the specific problem it aims to solve and the scientific questions it seeks to enable. For instance, a benchmark might be designed to evaluate how well different functional connectivity methods map the brain's network architecture [5], or to assess the capacity of deep learning models to predict brain activity from multimodal, naturalistic stimuli, as seen in the Algonauts Challenge [36]. Without a precisely defined purpose, a benchmark risks becoming a vague exercise that yields inconclusive or non-comparable results.

Closely linked to purpose is the benchmark's scope, which establishes its boundaries. The scope explicitly defines what the benchmark will and will not measure. Key considerations include:

- Biological Scale: Does the benchmark target subcellular processes, single neurons, microcircuits, or entire brain systems?

- Functional Domain: Is the benchmark focused on sensory processing, cognitive function, motor control, or a specific clinical application?

- Data Modalities: What types of data are included (e.g., fMRI, MEG, EEG, genetic, transcriptomic)?

- Model Class: Is the benchmark intended for spiking neuron models, deep learning architectures, or mean-field models?

A clearly articulated scope prevents "benchmark creep," where the tool becomes overloaded with disparate objectives, diluting its utility and focus.

Ensuring Neutrality and Avoiding Bias

A foundational principle of effective benchmarking is neutrality. A benchmark must be designed to evaluate models fairly, without inherent bias toward a particular methodological approach or implementation technology. Neutrality ensures that the results are credible and drive the field forward on scientific merit rather than engineering optimizations for a specific benchmark.

Strategies to ensure neutrality include:

- Diverse Dataset Curation: Utilizing multiple, independent datasets that reflect a variety of experimental conditions and, if applicable, subject populations. The Algonauts Challenge, for example, rigorously separates in-distribution and out-of-distribution test sets to foreground generalization as a key criterion for success [36].

- Task-Agnostic Metrics: Selecting performance metrics that are relevant to the benchmark's purpose but do not inherently favor a specific model architecture. For brain activity prediction, a metric like mean Pearson's correlation is a common, relatively neutral choice [36].

- Community Engagement: Involving a broad group of stakeholders during the benchmark's design phase to identify and mitigate potential sources of bias before release. The success of the Potjans-Diesmann cortical microcircuit model as a benchmark has been attributed in part to its wide acceptance and utility as a community resource [37].
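As an illustration of the first two strategies, the sketch below computes a mean Pearson correlation across brain parcels and reports it separately for each test split. The function names, the split labels, and the choice to score a constant (zero-variance) parcel as 0 are assumptions for this example, not the Algonauts Challenge's evaluation code.

```python
import numpy as np


def mean_pearson(predicted, measured):
    """Mean over parcels of the Pearson r between predicted and measured activity.

    Both inputs are (n_timepoints, n_parcels) arrays. Parcels with zero
    variance in either array contribute r = 0 (an assumed convention).
    """
    p = predicted - predicted.mean(axis=0)
    m = measured - measured.mean(axis=0)
    numerator = (p * m).sum(axis=0)
    denom = np.sqrt((p ** 2).sum(axis=0) * (m ** 2).sum(axis=0))
    r = np.divide(numerator, denom, out=np.zeros_like(denom), where=denom > 0)
    return float(r.mean())


def evaluate_splits(predictions, measurements):
    """Score each named test split separately so generalization stays visible.

    predictions, measurements: dicts mapping a split name (for example
    "in_distribution" or "out_of_distribution") to a data array.
    """
    return {split: mean_pearson(predictions[split], measurements[split])
            for split in measurements}
```

Keeping the splits apart in the returned dictionary, rather than pooling them into one number, prevents a strong in-distribution score from masking weak generalization.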

The Role of Standardized Metrics and Evaluation

Standardized evaluation protocols are the backbone of any benchmark. They define how models are to be executed, how their outputs are to be reported, and how performance is quantified. Standardization is critical for ensuring that results from different groups are directly comparable.

Evaluation should extend beyond a single performance metric. A comprehensive benchmark should assess a model across multiple dimensions, which may include:

- Predictive Accuracy: The model's ability to replicate or predict neural data.

- Computational Performance: The model's efficiency in terms of simulation speed, memory footprint, and energy consumption, which is crucial for large-scale simulations [37].

- Biological Plausibility: The degree to which the model's mechanisms and dynamics align with known neurobiology.

- Robustness and Generalization: The model's performance on unseen data or under perturbed conditions [36] [38].

Table 1: Key Dimensions for Benchmark Evaluation

| Dimension | Description | Example Metrics |
|---|---|---|
| Predictive Accuracy | Fidelity in replicating neural data or behavior. | Pearson correlation, explained variance, representational similarity. |
| Computational Performance | Resource efficiency of simulation. | Simulation time per second of biological time, memory usage. |
| Biological Plausibility | Alignment with known neurobiological principles. | Qualitative comparison to known circuitry, dynamical regimes. |
| Robustness | Performance stability on unseen or noisy data. | Performance drop on out-of-distribution test sets. |
| Interpretability | Ability to derive mechanistic insights from the model. | Applicability of saliency maps, concept-based explanations [38]. |
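Two of the metrics in Table 1 reduce to simple arithmetic, sketched here under stated assumptions. The real-time factor is taken to be wall-clock seconds divided by simulated biological seconds, the robustness metric is taken to be the relative score drop from in-distribution to out-of-distribution data, and `toy_lif` is a hypothetical stand-in for a real simulator. Note that `tracemalloc` counts only Python-level allocations and slows execution, so a serious benchmark would measure time and memory in separate runs.

```python
import time
import tracemalloc


def toy_lif(biological_seconds, dt=1e-4, tau=0.02, threshold=1.0, drive=1.5):
    """Hypothetical workload: one leaky integrate-and-fire neuron, forward Euler."""
    voltage, spikes = 0.0, 0
    for _ in range(int(round(biological_seconds / dt))):
        voltage += dt * (drive - voltage) / tau
        if voltage >= threshold:
            voltage = 0.0  # reset after a spike
            spikes += 1
    return spikes


def measure_performance(simulate, biological_seconds):
    """Run `simulate` once and report wall-clock time, real-time factor, memory."""
    tracemalloc.start()
    start = time.perf_counter()
    simulate(biological_seconds)
    wall_clock = time.perf_counter() - start
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return {
        "wall_clock_s": wall_clock,
        # Values above 1 mean the simulation runs slower than biological time.
        "real_time_factor": wall_clock / biological_seconds,
        "peak_memory_bytes": peak_bytes,
    }


def relative_drop(in_distribution_score, out_of_distribution_score):
    """Fraction of the in-distribution score lost on out-of-distribution data."""
    return (in_distribution_score - out_of_distribution_score) / in_distribution_score
```

A call such as `measure_performance(toy_lif, 1.0)` returns a dictionary that can be reported alongside accuracy scores, so that efficiency and fidelity are compared on the same footing.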

A Framework for Benchmark Construction

Core Components of a Benchmark

A robust benchmark is composed of several interconnected core components that work together to provide a complete evaluation framework.

Standardized Datasets: The benchmark must provide a curated, pre-processed, and clearly partitioned set of data for training, validation, and testing. These datasets should be of high quality, well-annotated, and publicly accessible to ensure broad participation. The CNeuroMod dataset used in the Algonauts 2025 Challenge, with its nearly 80 hours of fMRI data synchronized with movie stimuli, is a prime example [36].

Evaluation Metrics and Protocols: This component defines the quantitative and qualitative measures of success. It includes not only the mathematical definitions of the metrics but also detailed protocols for running the evaluation, such as specifying the software environment, input formats, and output requirements. A modular workflow for performance benchmarking can help standardize this process [37].

Reference Models and Baselines: To contextualize results, a benchmark should provide a set of reference implementations, including simple baseline models and state-of-the-art models. This helps newcomers quickly understand the task and allows for meaningful progress tracking over time.

A Clear Submission and Reporting Format: A standardized template for reporting results ensures that all necessary information is provided by participants, facilitating fair comparison and meta-analysis.
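One way to enforce such a reporting format is a small, validated record type. The sketch below is hypothetical: the field names, the two required splits, and the assumption that scores are correlations in [-1, 1] are illustrative choices, not the format of any existing benchmark.

```python
import json
from dataclasses import asdict, dataclass

# Assumed split names; a real benchmark would define its own.
REQUIRED_SPLITS = ("in_distribution", "out_of_distribution")


@dataclass
class Submission:
    """Hypothetical standardized report for one model on one benchmark."""
    model_name: str
    benchmark_version: str
    software_environment: str
    scores: dict  # split name -> correlation-type score

    def validate(self):
        """Return a list of human-readable problems; empty means valid."""
        problems = []
        for name in ("model_name", "benchmark_version", "software_environment"):
            if not getattr(self, name).strip():
                problems.append(f"{name} must not be empty")
        for split in REQUIRED_SPLITS:
            if split not in self.scores:
                problems.append(f"missing score for split '{split}'")
            elif not -1.0 <= self.scores[split] <= 1.0:
                problems.append(f"score for '{split}' is outside [-1, 1]")
        return problems

    def to_json(self):
        """Serialize the report, refusing to emit an incomplete one."""
        problems = self.validate()
        if problems:
            raise ValueError("; ".join(problems))
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

Rejecting incomplete reports at serialization time means every entry that reaches the leaderboard carries the metadata needed for later comparison and meta-analysis.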

Workflow for Benchmark Implementation

The following diagram illustrates a generalized, iterative workflow for developing and executing a computational benchmark, from initial dataset preparation to the final analysis of model submissions.

Diagram 1: Benchmark Implementation Workflow

The Scientist's Toolkit: Essential Research Reagents

In the context of benchmarking computational neuroscience models, "research reagents" extend beyond biological materials to include essential datasets, software tools, and model architectures that form the building blocks for research and development.

Table 2: Key Research Reagent Solutions in Computational Neuroscience