P300 and SSVEP Brain-Computer Interfaces: Principles, Applications, and Future Directions in Biomedical Research

Abstract

This comprehensive review explores the foundational principles, methodological approaches, and clinical applications of P300 and Steady-State Visual Evoked Potential (SSVEP) brain-computer interfaces (BCIs). Targeting researchers, scientists, and drug development professionals, the article synthesizes current literature on these non-invasive EEG paradigms, highlighting their complementary strengths in creating robust hybrid systems. We examine signal processing techniques including wavelet transforms, Support Vector Machines (SVM), and ensemble Task-Related Component Analysis (TRCA) that achieve classification accuracies exceeding 90% in recent implementations. The review covers diverse applications from communication spellers and neurorehabilitation to virtual reality control, while addressing critical challenges such as visual fatigue, signal interference, and individual variability. Through comparative performance analysis of accuracy, information transfer rates, and practical implementation considerations, this work provides a scientific foundation for advancing BCI technology in clinical research and therapeutic development.

Fundamental Neurophysiology and Signal Characteristics of P300 and SSVEP

Historical Foundations and Core Principles

The field of Brain-Computer Interfaces (BCIs) has evolved significantly since the discovery of electrical brain activity. Event-Related Potentials (ERPs) are electrophysiological responses time-locked to sensory, cognitive, or motor events, and the P300 component in particular was first reported by Sutton and colleagues in 1965 [1]. ERPs are extracted from the ongoing electroencephalogram (EEG) by averaging across repeated trials. Because background activity that is uncorrelated with the stimulus shrinks by roughly the square root of the number of averaged trials, averaging raises the signal-to-noise ratio and reveals characteristic voltage deflections that are otherwise obscured by spontaneous brain activity [2] [3].
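The effect of signal averaging can be illustrated with a small simulation. The sketch below is not taken from the cited studies: the sampling rate, the Gaussian-shaped 5 µV "ERP", and the 20 µV noise level are all illustrative assumptions, chosen only to show how residual noise falls as more epochs are averaged.

```python
import numpy as np

rng = np.random.default_rng(0)

fs = 256                          # sampling rate in Hz (assumed)
t = np.arange(0, 0.8, 1 / fs)     # one 0-800 ms epoch
# Hypothetical ERP: a 5 uV Gaussian bump peaking at 300 ms.
erp = 5.0 * np.exp(-0.5 * ((t - 0.3) / 0.05) ** 2)


def simulate_epochs(n_epochs, noise_sd=20.0):
    """Return n_epochs trials: the same ERP plus independent Gaussian noise."""
    noise = rng.normal(0.0, noise_sd, size=(n_epochs, t.size))
    return erp + noise


def residual_noise_sd(n_epochs):
    """Standard deviation of what is left after averaging and removing the true ERP."""
    average = simulate_epochs(n_epochs).mean(axis=0)
    return float(np.std(average - erp))


for n in (1, 16, 64, 256):
    print(f"{n:4d} epochs: residual noise ~ {residual_noise_sd(n):.2f} uV")
```

In this toy setting the residual noise shrinks approximately with the square root of the number of epochs, which is why ERP studies average many trials per condition.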

The foundational principle of evoked potential BCIs rests on detecting these stereotyped neural responses to external stimuli. When a user attends to a specific stimulus, their brain generates a distinct, measurable electrical signature. A BCI system detects this signature and translates it into a control command [1]. The P300 potential, a positive deflection occurring approximately 250-750 milliseconds after a rare or significant stimulus, was first identified in the context of the "oddball" paradigm, where subjects respond to infrequent target stimuli among a series of standard stimuli [1]. Similarly, the Steady-State Visual Evoked Potential (SSVEP) represents a continuous oscillatory brain response to repetitive visual stimulation, typically at frequencies above 6 Hz, where the visual cortex entrains to the frequency of the flickering stimulus [4] [5].

The non-invasive nature of EEG-based BCIs, utilizing electrodes placed on the scalp, has made these systems particularly attractive for communication and control applications, especially for individuals with severe motor impairments such as amyotrophic lateral sclerosis (ALS) and locked-in syndrome (LIS) [6] [1]. Early work by Farwell and Donchin (1988) established the P300 speller, a seminal application that allowed users to select characters from a matrix by counting flashes of the target character [7] [6]. This established the viability of ERP-based BCIs for assistive communication.

Technical Foundations of P300 and SSVEP

P300 Event-Related Potential

The P300 is an endogenous component of the ERP, meaning it is influenced by the internal cognitive state of the user rather than merely the physical characteristics of the stimulus. It is most prominently elicited in oddball paradigms, where the user must discriminate between frequent standard stimuli and rare target stimuli [1]. The amplitude of the P300 is sensitive to the probability of the target stimulus, with lower probability events eliciting larger responses. Its latency is considered an index of stimulus classification speed [3] [1].

The P300 waveform is often divided into two subcomponents: the P3a, which is fronto-centrally distributed with a shorter latency and reflects initial orienting to novelty, and the P3b, which is parietally distributed and associated with memory updating and the allocation of attentional resources [3] [1]. The neural generators of the P300 are believed to involve a distributed network including the parietal cortex, medial temporal lobe, and prefrontal cortex, with possible contributions from noradrenergic signaling via the locus coeruleus [3].

Steady-State Visual Evoked Potential (SSVEP)

SSVEPs are oscillatory neural responses generated in the visual cortex when the retina is stimulated by a visual stimulus flickering at a constant frequency [5]. The resulting EEG signal exhibits peaks in power at the fundamental frequency of the stimulus and its harmonics [8]. A key advantage of SSVEP-based BCIs is their high signal-to-noise ratio and the fact that they require minimal user training [4] [9]. Users need only to gaze at a visual target flickering at a specific frequency to generate a recognizable brain response.

The SSVEP response is strongest for stimulation frequencies in the 12-18 Hz range, though effective systems utilize frequencies from 6 Hz to over 20 Hz [8]. The response amplitude generally decreases with increasing stimulation frequency, but the signal-to-noise ratio often improves at higher frequencies due to lower background EEG power [5]. Recent advancements have explored multi-frequency SSVEP, where stimuli are encoded using combinations of frequencies rather than a single frequency, thereby expanding the number of available commands without requiring an impractical number of distinct base frequencies [8].

Current Paradigms and Performance Comparisons

Hybrid and Combined Paradigms

A significant trend in modern BCI research is the development of hybrid systems that combine multiple signal modalities to improve performance and robustness.

P300 and M-VEP Combined BCI: One innovative approach combined P300 potentials with motion-onset visual evoked potentials (M-VEPs). In this paradigm, stimuli could change color (eliciting P300), move (eliciting M-VEP), or do both simultaneously. Offline and online tests confirmed that the combined paradigm yielded significantly better performance than either approach alone, achieving a mean classification accuracy of 96% and a high practical bit rate [7].

P300-SSVEP Hybrid Speller: Another prominent hybrid system uses a Frequency Enhanced Row and Column (FERC) paradigm. In this design, each row and column in a 6×6 speller matrix is assigned a unique flickering frequency (e.g., 6.0-11.5 Hz). When a row or column flashes randomly to elicit a P300, it also flickers continuously to elicit an SSVEP. This allows for simultaneous detection of both signals. One study reported that this hybrid speller achieved an online accuracy of 94.29% and an information transfer rate (ITR) of 28.64 bits/min, outperforming single-modality spellers (P300-only: 75.29%; SSVEP-only: 89.13%) [6].

Table 1: Performance Comparison of BCI Paradigms

| Paradigm Type | Key Features | Reported Accuracy | Information Transfer Rate (ITR) | Key Advantages |
|---|---|---|---|---|
| P300-based BCI [7] [6] | Oddball paradigm; Row/Column flashing | ~75-96% | Varies with setup | Reliable; works with most users |
| SSVEP-based BCI [4] [6] | Frequency-coded flickering stimuli | ~89% | High (often >200 bits/min) [9] | High ITR; Minimal training |
| Combined P300 & M-VEP [7] | Stimuli change color and/or move | 96% (mean) | 26.7 bit/s (practical) | Superior accuracy and bit rate |
| Hybrid P300-SSVEP [6] | FERC paradigm; Simultaneous stimulation | 94.29% (online) | 28.64 bits/min | High accuracy & compatibility |
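ITR values such as those in Table 1 are usually computed with the Wolpaw formula, which combines the number of selectable targets, the accuracy, and the time per selection. The sketch below implements that standard definition; whether each cited study used exactly this formula, and what selection time it assumed, is not stated in the text above, so the function should be read as a general-purpose helper rather than a reproduction of the reported figures.

```python
import math


def wolpaw_itr(n_targets, accuracy, seconds_per_selection):
    """Information transfer rate in bits/min under the Wolpaw definition.

    bits per selection = log2(N) + P*log2(P) + (1 - P)*log2((1 - P)/(N - 1))
    """
    if n_targets < 2:
        raise ValueError("n_targets must be at least 2")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    if seconds_per_selection <= 0:
        raise ValueError("seconds_per_selection must be positive")

    n, p = n_targets, accuracy
    bits = math.log2(n)
    if p > 0.0:
        bits += p * math.log2(p)               # the P*log2(P) term vanishes at P = 0
    if p < 1.0:
        bits += (1 - p) * math.log2((1 - p) / (n - 1))
    return bits * 60.0 / seconds_per_selection


# Hypothetical usage: a 36-target speller at 94.29% accuracy with an
# assumed 10 s per selection (the selection time is NOT from the source).
print(round(wolpaw_itr(36, 0.9429, 10.0), 2), "bits/min")
```

Because ITR depends so strongly on the selection time, accuracy figures from different studies are only comparable when the timing and the number of targets are reported alongside them.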

Novel Stimulus Presentations

Research into improving the user experience and signal quality has led to novel stimulus presentation methods.

AR and VR Integration: Head-mounted augmented reality (AR) displays are being used to create more portable and wearable SSVEP-BCI systems. A recent study explored binocularly incongruent stimulation, where the two lenses of an AR headset present flickers with distinct frequencies and/or phases to each eye. This approach has shown potential for improving target separability and presents a compelling strategy for future portable BCIs [9].

Stimulus Pattern Modification: To address issues of visual fatigue and low response intensity in SSVEP-BCIs, researchers have tested alternatives to the standard checkerboard pattern. One study found that using Quick Response (QR) code patterns could yield higher classification accuracy than traditional checkerboards. Furthermore, these patterns, particularly at low frequencies, were reported to reduce visual fatigue, a common drawback of SSVEP-based systems [4].

Table 2: Key Artifacts and Mitigation Strategies in EEG for BCI

| Artifact Type | Origin | Impact on Evoked Potentials | Common Mitigation Strategies |
|---|---|---|---|
| Ocular Artifacts | Eye movements and blinks [7] | Can obscure neural signals, especially over frontal sites | Regression techniques, Independent Component Analysis (ICA) [2] |
| Muscle Artifacts (EMG) | Tension in head/neck muscles | Introduces high-frequency noise | Filtering, Artifact rejection [2] |
| Line Noise | Power line interference (50/60 Hz) | Can mask SSVEP signals at specific frequencies | Notch filtering [8] |
| Cue-Related Potentials | Neural responses to abrupt visual cues [10] | Can superimpose and alter motor-related potentials | Using fading or rotational cues [10] |

Detailed Experimental Protocols

Protocol for a Hybrid P300-SSVEP Speller

This protocol is adapted from the FERC paradigm study [6].

Stimulus Interface Setup:

- Create a 6×6 matrix of characters (A-Z, 0-9) displayed on a monitor.

- Assign a unique flickering frequency to each row and each column. For example, assign columns frequencies from 6.0 to 8.5 Hz in 0.5 Hz steps, and rows frequencies from 9.0 to 11.5 Hz in 0.5 Hz steps.

- The flicker should be a white-black pattern with a specific duty cycle (e.g., 50%).
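The frequency assignment described in this setup can be written down as a small lookup. The sketch below assumes the symbols are laid out row by row as A-Z followed by 0-9; that ordering, and the helper name `symbol_code`, are illustrative choices rather than details from the cited study.

```python
import string

# 36 symbols for a 6x6 speller: A-Z followed by 0-9 (layout order is an assumption).
SYMBOLS = string.ascii_uppercase + string.digits
N = 6

column_freqs = [6.0 + 0.5 * i for i in range(N)]   # 6.0, 6.5, ..., 8.5 Hz
row_freqs = [9.0 + 0.5 * i for i in range(N)]      # 9.0, 9.5, ..., 11.5 Hz


def symbol_code(symbol):
    """Return (row_index, col_index, row_freq_hz, col_freq_hz) for one symbol."""
    idx = SYMBOLS.index(symbol)
    row, col = divmod(idx, N)
    return row, col, row_freqs[row], column_freqs[col]


print(symbol_code("H"))
```

Each symbol is thus identified by one row frequency and one column frequency, so twelve distinct flicker frequencies are enough to code all 36 characters.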

EEG Data Acquisition:

- Participants: Recruit subjects with normal or corrected-to-normal vision. They should be seated comfortably approximately 70 cm from the screen.

- Equipment: Use an EEG amplifier with a sampling rate of at least 256 Hz. A higher rate (e.g., 512 Hz or 1024 Hz) is preferable.

- Electrode Placement: Record from at least 8 channels, including key positions for P300 (e.g., Fz, Cz, Pz) and SSVEP (e.g., POz, Oz, O1, O2) according to the international 10-10 or 10-20 system. Maintain electrode impedances below 10 kΩ.

Experimental Procedure:

- Instruct the participant that their task is to focus on a target character specified by the experimenter and silently count the number of times it flashes.

- A trial consists of a random sequence of flashes, where each row and each column flashes once. Each flash (intensification) lasts for a fixed duration (e.g., 100 ms), with a subsequent inter-stimulus interval (e.g., 75 ms).

- During the flash, the corresponding row or column intensifies (for P300) while continuing its unique frequency flicker (for SSVEP).

- Multiple trials are repeated to form a block. Spelling one character typically takes 5-15 trials, whose responses are averaged.
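The timing implied by this procedure is simple arithmetic. The sketch below uses the example values from the list above (100 ms flash, 75 ms inter-stimulus interval, 6 rows plus 6 columns per trial) and ignores any pause between characters, which is an assumption.

```python
FLASH_MS = 100           # intensification duration (example value from the protocol)
ISI_MS = 75              # inter-stimulus interval (example value from the protocol)
FLASHES_PER_TRIAL = 12   # each of 6 rows and 6 columns flashes once


def selection_time_s(n_trials):
    """Stimulation time for one character, ignoring pauses between characters."""
    return n_trials * FLASHES_PER_TRIAL * (FLASH_MS + ISI_MS) / 1000.0


for n_trials in (5, 10, 15):
    print(n_trials, "trials ->", selection_time_s(n_trials), "s per character")
```

With these example values a single trial lasts 2.1 s, so averaging over 5 to 15 trials corresponds to roughly 10.5 to 31.5 s of stimulation per character. This is the trade-off between accuracy and speed that the number of trials controls.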

Signal Processing and Classification:

- Preprocessing: Apply a band-pass filter (e.g., 0.1-20 Hz for P300; 5-30 Hz for SSVEP) and a 50/60 Hz notch filter.

- P300 Detection: For each channel, epoch the EEG from 0 to 800 ms after each flash onset. Extract features (e.g., wavelet coefficients) and use a classifier like Support Vector Machine (SVM) to determine whether the epoch contains a P300.

- SSVEP Detection: For each row and column frequency, apply a frequency-recognition method such as Canonical Correlation Analysis (CCA) or ensemble Task-Related Component Analysis (TRCA) to the EEG epochs. The target character lies at the intersection of the row and the column whose P300 and SSVEP responses are strongest.

- Data Fusion: Fuse the probabilities from the P300 and SSVEP classifiers using a weighted sum or a similar approach to make the final character decision.
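The preprocessing step in the list above can be sketched with SciPy. The filter order, the notch quality factor, and the function name `preprocess` are assumptions made for illustration; the cited study may have used different filter designs.

```python
import numpy as np
from scipy.signal import butter, filtfilt, iirnotch, sosfiltfilt


def preprocess(eeg, fs, band, line_freq=50.0, order=4):
    """Zero-phase band-pass plus notch filtering along the last axis.

    eeg  : array of shape (n_channels, n_samples)
    band : (low_hz, high_hz), e.g. (0.1, 20.0) for P300 or (5.0, 30.0) for SSVEP
    """
    # Second-order sections keep the band-pass numerically stable at low cut-offs.
    sos = butter(order, band, btype="bandpass", fs=fs, output="sos")
    filtered = sosfiltfilt(sos, eeg, axis=-1)
    # Narrow notch at the power-line frequency (50 Hz or 60 Hz depending on region).
    b, a = iirnotch(line_freq, Q=30.0, fs=fs)
    return filtfilt(b, a, filtered, axis=-1)


# Synthetic check: a 10 Hz component, 50 Hz line noise, and a DC offset.
fs = 256
t = np.arange(0, 4, 1 / fs)
raw = np.sin(2 * np.pi * 10 * t) + np.sin(2 * np.pi * 50 * t) + 5.0
clean = preprocess(raw[np.newaxis, :], fs, band=(5.0, 30.0))[0]
```

Zero-phase filtering (`sosfiltfilt`, `filtfilt`) is used here because it does not shift component latencies, which matters when P300 timing is analyzed. It is only suitable offline, since it needs the whole recording; an online system would require causal filters.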

Protocol for Binocular SSVEP in AR

This protocol is based on the dataset collected with an augmented reality headset [9].

Stimulus Setup:

- Use a binocular AR headset (e.g., Microsoft HoloLens 2) with a refresh rate of 60 Hz.

- Program an interface with multiple visual targets (e.g., 8 targets).

- For binocular-congruent stimulation, present the same flickering frequency to both eyes.

- For binocular-incongruent stimulation, present different flickering frequencies and/or phases to the left and right lenses for a single target.
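The difference between congruent and incongruent stimulation can be made concrete by generating per-frame luminance values for each lens. The sketch below uses sampled sinusoidal modulation, a common way to render arbitrary flicker frequencies on a fixed-refresh display. The specific frequencies, phases, and function names are hypothetical and not taken from the cited dataset.

```python
import numpy as np

REFRESH_HZ = 60  # headset refresh rate stated in the protocol


def flicker_frames(freq_hz, phase_rad, duration_s, refresh_hz=REFRESH_HZ):
    """Per-frame luminance in [0, 1] using sampled sinusoidal modulation."""
    n_frames = int(round(duration_s * refresh_hz))
    frame_idx = np.arange(n_frames)
    angle = 2 * np.pi * freq_hz * frame_idx / refresh_hz + phase_rad
    return 0.5 * (1.0 + np.sin(angle))


def binocular_target(left, right, duration_s):
    """left/right are (freq_hz, phase_rad) pairs; equal pairs give congruent stimulation."""
    return flicker_frames(*left, duration_s), flicker_frames(*right, duration_s)


# Hypothetical example values.
congruent = binocular_target((10.0, 0.0), (10.0, 0.0), duration_s=4.0)
incongruent = binocular_target((10.0, 0.0), (12.0, np.pi / 2), duration_s=4.0)
```

In the congruent case both lenses receive identical frame sequences, while in the incongruent case each eye is driven by its own frequency and phase, which gives every target a two-part code.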

EEG Data Acquisition:

- Equipment: Use a high-performance portable EEG amplifier.

- Electrode Placement: Record from 30 electrodes placed over the parieto-occipital brain region (e.g., POz, O1, O2, PO3, PO4, etc.) with a reference at Cz. Sampling rate should be high (e.g., 1024 Hz).

Experimental Procedure:

- Participants wear the AR headset and the EEG cap simultaneously.

- In each trial, a visual cue indicates the target the participant should attend to. The stimuli then begin flickering for a fixed duration (e.g., 4-5 seconds).

- Participants are instructed to maintain their gaze on the target during the stimulation period.

- The experiment systematically collects data for all target conditions and for both congruent and incongruent stimulation modes.

Signal Analysis:

- The EEG data is analyzed to compare the strength and harmonic components of the SSVEP response between congruent and incongruent stimulation conditions.

- Classification algorithms are used to quantify the target identification accuracy for each paradigm.

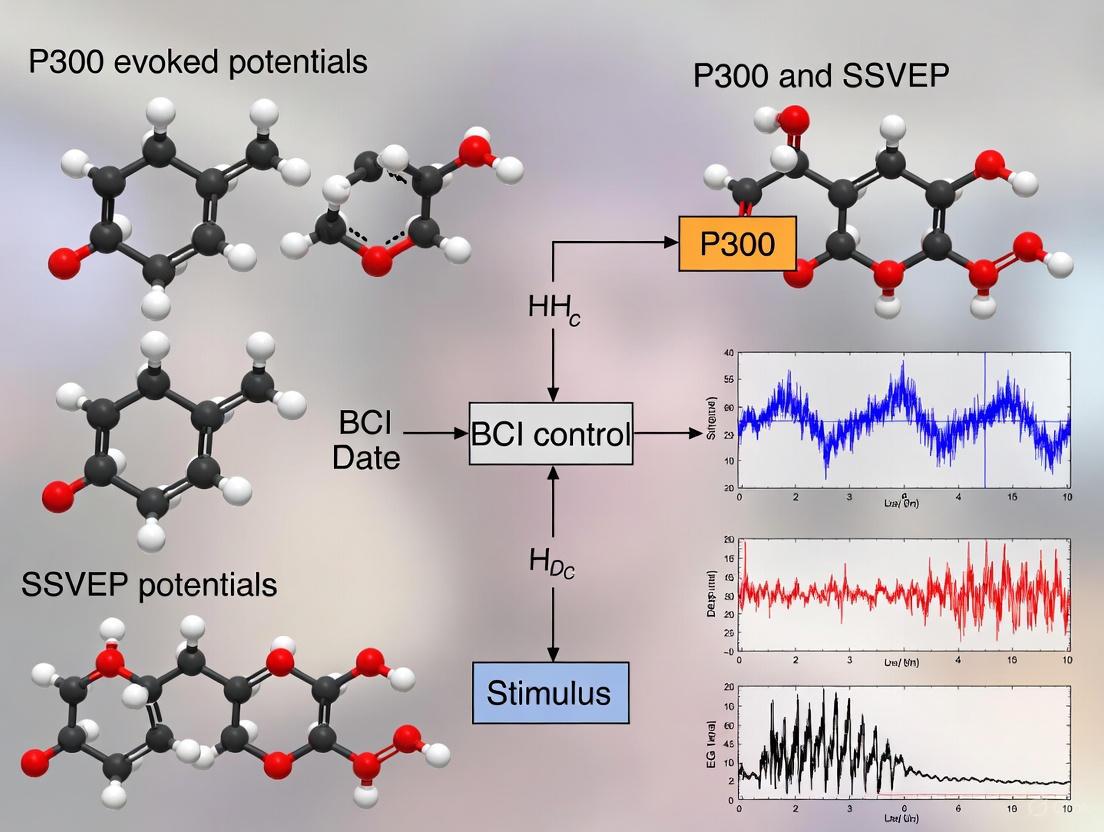

Visualization of BCI Signaling Pathways and Workflows

The following diagrams illustrate the core signaling pathways in evoked potentials and the workflow of a hybrid BCI system.

Diagram 1: Neural Signaling Pathway of Evoked Potentials

Diagram 2: Hybrid P300-SSVEP BCI Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials and Equipment for Evoked Potential BCI Research

| Item Name | Function / Application | Specification Notes |
|---|---|---|
| EEG Amplifier System [9] [8] | Records electrical brain activity from the scalp. | High input impedance; Sampling rate ≥512 Hz; Integrated band-pass and notch filters. |
| Active Electrode Cap [9] [10] | Holds electrodes in standard positions (10-10 or 10-20 system). | 32 to 64 channels typical; Requires stable, low-impedance connections (<10 kΩ). |
| Visual Stimulation Display [4] [8] | Presents flickering or flashing stimuli to evoke P300/SSVEP. | LCD/LED monitors with high refresh rate (≥120 Hz) or Head-Mounted AR/VR displays [9]. |
| Stimulus Presentation Software [9] [8] | Programs and controls the timing and sequence of visual stimuli. | Requires millisecond precision timing (e.g., Unity, Psychtoolbox). |
| Signal Processing Toolkit [2] [6] | For preprocessing, feature extraction, and classification of EEG data. | Includes algorithms for ICA, SVM, CCA, TRCA, and deep learning. |
| g.SAHARA Dry Electrodes [8] | Alternative to wet electrodes for easier setup. | Dry electrodes simplify setup without conductive gel, favoring real-world use [8]. |
| Microcontroller (e.g., STM32) [9] | Synchronizes stimulus presentation with EEG recording. | Delivers transistor-transistor logic (TTL) pulses to the amplifier to mark stimulus onsets. |

The P300 event-related potential (ERP), a positive deflection in the human electroencephalogram (EEG) occurring approximately 300 ms post-stimulus, serves as a critical neural index of cognitive processes such as attention, context updating, and memory operations. Its reliable elicitation, typically through the oddball paradigm, has established it as a cornerstone for cognitive neuroscience research and a vital signal for non-invasive Brain-Computer Interface (BCI) systems. This technical guide provides an in-depth examination of the P300's core neurophysiological principles, detailing its temporal dynamics, the experimental protocols used for its study, and its spatial distribution across the scalp. Furthermore, the integration of the P300 with Steady-State Visually Evoked Potentials (SSVEP) in hybrid BCI systems is discussed, highlighting synergistic approaches that enhance the performance and reliability of neural-driven applications for communication and control.

Temporal Characteristics of the P300

The temporal profile of the P300 is a key determinant of its functional significance and utility in BCI systems. Its latency and amplitude are modulated by a variety of task-related and subject-specific factors.

Latency and Amplitude Modulators

P300 latency is widely regarded as a measure of stimulus classification speed or evaluation time, which is independent of subsequent motor response processes [11]. It varies systematically with the difficulty of discriminating the target stimulus from standard stimuli; more challenging discriminations result in longer latencies [11]. A typical peak latency for a young adult performing a simple discrimination is around 300 ms, though this can vary significantly with task demands and subject population.

P300 amplitude, measured in microvolts (μV), is fundamentally linked to the probability of the target stimulus. Amplitude increases as the target's global and local sequence probability decreases [12]. This amplitude-probability relationship is a cornerstone of the "context updating" theory, which posits that the P300 reflects the revision of mental models in working memory when incoming stimuli deviate from expectations [12].

Table 1: Factors Influencing P300 Temporal Characteristics

| Factor | Effect on Amplitude | Effect on Latency | Theoretical Implication |
|---|---|---|---|
| Target Probability | Inversely related; lower probability yields higher amplitude [12]. | Minimal direct effect. | Supports context updating in working memory [12]. |
| Discrimination Difficulty | Can be reduced with increased difficulty due to resource allocation [12]. | Directly related; more difficult discriminations yield longer latency [11]. | Indexes stimulus evaluation time [11]. |
| Target-to-Target Interval (TTI) | Increases with longer intervals since previous target [12]. | Not reported in the cited sources. | Relates to habituation and resource replenishment [12]. |
| Attentional Resources | Proportional to resources allocated to the task; reduced in dual-task paradigms [12]. | Longer latency with greater resource demands [12]. | Links P300 to limited-capacity attention systems. |

Emitted P300 and Temporal Uncertainty

The P300 can also be elicited by the non-occurrence of an expected stimulus, termed the "emitted P300." Research has demonstrated that the amplitude of the emitted P300 is generally lower than that of the evoked P300 (from an actual stimulus), and this decrement is more pronounced at longer inter-stimulus intervals (e.g., 1500 ms vs. 700 ms). This phenomenon is attributed to increased temporal uncertainty at longer intervals, as evidenced by greater variability in subjective time estimations and emitted P300 latencies. This suggests that the brain's internal timing mechanisms and the apprehension of stimulus omission play a crucial role in the generation of the P300 [13].

The Oddball Paradigm: Experimental Protocols

The oddball paradigm is the principal experimental method for eliciting the P300. Its design leverages the brain's sensitivity to deviant, task-relevant stimuli within a repetitive background.

Paradigm Variants and Workflow

The core principle involves presenting a sequence of stimuli where a rare "deviant" or "target" stimulus is interspersed among frequent "standard" stimuli. The subject's task is to detect and respond to these target stimuli, typically via mental counting or a physical button press [12] [14]. The P300 wave is only observed if the subject is actively engaged in the task of detecting the targets [11].
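A stimulus sequence for the classic two-stimulus variant can be generated in a few lines. The sketch below fixes the proportion of targets and additionally prevents two targets from appearing back to back. That spacing constraint is a common practical choice but is an assumption here, as are the function name and the 'S'/'T' labels.

```python
import random


def oddball_sequence(n_stimuli, target_prob=0.2, seed=None):
    """Return a list of 'S' (standard) and 'T' (target) labels.

    The number of targets is fixed at round(n_stimuli * target_prob), and
    no two targets are adjacent (an assumed design constraint).
    """
    rng = random.Random(seed)
    n_targets = round(n_stimuli * target_prob)
    if 2 * n_targets - 1 > n_stimuli:
        raise ValueError("too many targets to keep them non-adjacent")
    # Draw positions in a shortened sequence, then shift the i-th position by i
    # so that at least one standard separates consecutive targets.
    slots = sorted(rng.sample(range(n_stimuli - n_targets + 1), n_targets))
    target_positions = {pos + i for i, pos in enumerate(slots)}
    return ["T" if i in target_positions else "S" for i in range(n_stimuli)]


sequence = oddball_sequence(100, target_prob=0.2, seed=1)
print("".join(sequence))
```

Fixing the target count, rather than drawing each stimulus independently, keeps the global probability exact within a block, which simplifies comparisons of P300 amplitude across probability conditions.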

Diagram 1: Oddball Experiment Workflow

Table 2: Oddball Paradigm Variants and Their Specifications

| Paradigm Variant | Stimulus Types & Probabilities | Subject Task | Primary ERP Components Elicited | Application in BCI/Research |
|---|---|---|---|---|
| Classic Two-Stimulus | Standard (∼80%), Target (∼20%) [14]. | Detect and respond to targets. | P3b | Foundational P300 studies; basic BCI spellers. |
| Three-Stimulus (with novel distractors) | Standard (Frequent), Target (Infrequent), Novel Distractor (Infrequent) [12] [14]. | Detect and respond only to targets. | P3a (to novel distractors), P3b (to targets) | Studying involuntary attention (P3a) and its interaction with voluntary attention (P3b). |
| Single-Stimulus | Target only, presented infrequently with no other stimuli [12]. | Detect each stimulus. | P300 | Simplifying the stimulus environment. |
| Hybrid P300-SSVEP (FERC) | Rows/Columns flash with specific frequencies (e.g., 6.0-11.5 Hz) in random order [6]. | Gaze at target character. | P300 and SSVEP simultaneously | High-performance hybrid BCI spellers, improving accuracy and ITR [6]. |

The P3a and P3b Subcomponents

The P300 is not a unitary phenomenon but is composed of at least two subcomponents: the P3a and P3b. The P3a is a fronto-centrally maximal response to novel or unexpected distractor stimuli, reflecting a stimulus-driven frontal attention mechanism associated with the orienting response. In contrast, the P3b is a parieto-centrally maximal potential elicited by task-relevant target stimuli. It is linked to temporal-parietal activity and is thought to be related to subsequent memory processing [12]. The three-stimulus oddball paradigm is particularly effective for disentangling these subcomponents.

Spatial Distribution and Neural Origins

The topographic distribution of the P300 across the scalp provides critical insights into its underlying neural generators and functional dissociation.

Scalp Topography and Averaging Techniques

The P3b demonstrates a characteristic scalp distribution that increases in amplitude from frontal (Fz) to central (Cz) to parietal (Pz) electrode sites [12]. The P3a, in contrast, has a more anterior distribution. To enhance the reliability of P300 detection in BCI applications, advanced signal processing techniques are employed. For instance, Independent Component Analysis (ICA) can be used to create a subject-specific averaged spatial distribution template of the P300. This technique improves spatial filtering by identifying and combining P300-like independent components from multiple target epochs, leading to more accurate single-trial detection without requiring extensive prior training data [15] [16].

Neurobiological Generators and Pathways

Neuroimaging and neuropharmacological studies suggest that the P3a and P3b originate from distinct but interacting neural circuits. The P3a is associated with stimulus-driven frontal attention mechanisms and is linked to the frontal/dopaminergic neurotransmitter pathways. The P3b, on the other hand, is generated by temporal-parietal activity associated with attention and is thought to involve parietal/norepinephrine pathways [12]. While the hippocampus and various association areas of the neocortex are proposed contributors to the scalp-recorded P300, its precise intracerebral origin remains an area of active research [11].

Diagram 2: P300 Signaling Pathways

The Scientist's Toolkit: Research Reagents & Materials

This section details essential resources and materials for conducting P300 research, particularly in the context of BCI development.

Table 3: Key Research Resources for P300 BCI Experiments

| Resource Category | Specific Example / Specification | Function & Application in Research |
|---|---|---|
| EEG Data Acquisition System | g.tec medical engineering GmbH amplifiers with passive gel-based or active dry electrodes; recording at 256 Hz [17]. | Non-invasive recording of raw EEG signals. Signals are often bandpass and notch filtered at the amplifier stage. |
| Experimental Control Software | BCI2000 open-source software platform [17]. | Stimulus presentation, experiment control, data acquisition synchronization, and real-time BCI operation. |
| Stimulus Presentation Hardware | Custom hybrid SSVEP-P300 LED stimuli (e.g., COB LEDs controlled by 32-bit microcontroller) [18]. | Precise, customizable visual stimulation to simultaneously evoke SSVEP (via rhythmic flashing) and P300 (via random oddball flashes). |
| Public Datasets | bigP3BCI Dataset on PhysioNet [17]. | Provides a large, open, machine-learning-ready dataset for algorithm development and testing. Includes EEG, event markers, eye-tracking, and demographic/clinical data. |
| Eye-Tracking System | Tobii Pro X2-30 infrared eye tracker [17]. | Monitoring gaze and pupil dynamics for hybrid BCI studies, ensuring subject compliance, and studying overt attention. |
| Signal Processing Algorithms | Independent Component Analysis (ICA) [15] [16]; Wavelet and Support Vector Machine (SVM) for P300 detection [6]. | Spatial filtering, noise reduction, single-trial P300 detection, and classification for improving BCI accuracy and information transfer rate (ITR). |

P300 in Hybrid BCI Systems: Integration with SSVEP

The integration of the P300 with other evoked-potential modalities, particularly SSVEP, has emerged as a powerful strategy for building more robust and efficient BCIs.

Hybrid BCIs, such as the Frequency Enhanced Row and Column (FERC) paradigm, stimulate P300 and SSVEP responses simultaneously within the same interface. In this paradigm, each row and column of a speller grid is assigned a specific flickering frequency (e.g., 6.0 to 11.5 Hz). The random flashing of rows/columns induces the P300 potential, while the constant frequency-specific flicker evokes the SSVEP response. This dual coding allows the BCI to fuse detection probabilities from both signals, leading to significantly higher spelling accuracy (e.g., 94.29% online) and information transfer rates compared to using either signal alone [6]. While a competing effect exists where SSVEP stimuli can reduce P300 amplitude and vice versa, the extracted features remain discriminable for target classification [6]. This hybrid approach is a leading example of how the fundamental neurophysiology of the P300 can be leveraged within a broader framework of evoked potentials for advanced BCI control.
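The fusion of detection probabilities mentioned above can be sketched as a weighted sum over candidate rows and columns. The equal default weighting, the normalization step, and the function names are illustrative assumptions; the cited study may have combined the two classifiers differently.

```python
def fuse_scores(p300_scores, ssvep_scores, w_p300=0.5):
    """Weighted sum of two non-negative score lists (one entry per candidate)."""
    def normalise(scores):
        total = sum(scores)
        if total <= 0:
            raise ValueError("scores must be non-negative with a positive sum")
        return [s / total for s in scores]

    p300 = normalise(p300_scores)
    ssvep = normalise(ssvep_scores)
    return [w_p300 * a + (1.0 - w_p300) * b for a, b in zip(p300, ssvep)]


def decide(row_p300, row_ssvep, col_p300, col_ssvep, w_p300=0.5):
    """Return (row_index, col_index) of the character with the highest fused scores."""
    rows = fuse_scores(row_p300, row_ssvep, w_p300)
    cols = fuse_scores(col_p300, col_ssvep, w_p300)
    return rows.index(max(rows)), cols.index(max(cols))


# Hypothetical classifier outputs for six rows and six columns.
row, col = decide(
    row_p300=[0.1, 0.1, 0.5, 0.1, 0.1, 0.1],
    row_ssvep=[0.1, 0.2, 0.4, 0.1, 0.1, 0.1],
    col_p300=[0.3, 0.3, 0.1, 0.1, 0.1, 0.1],
    col_ssvep=[0.1, 0.6, 0.1, 0.1, 0.05, 0.05],
)
print(row, col)
```

In the column example the P300 scores are tied between the first two candidates and the SSVEP scores break the tie, which illustrates how a second signal can rescue an ambiguous decision.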

Steady-State Visual Evoked Potentials (SSVEPs) are periodic neural responses elicited by repetitive visual stimulation, typically at frequencies above 4 Hz [19]. These responses are characterized by oscillatory brain activity that is phase-locked to the rhythm of a visual stimulus, encompassing both the stimulus fundamental frequency and its harmonic components [19]. Within Brain-Computer Interface (BCI) research, SSVEPs, along with the event-related P300 potential, have become cornerstone paradigms due to their high information transfer rates (ITR), robust signal-to-noise ratios, and minimal user training requirements [20] [21] [22]. The utility of SSVEPs extends beyond neural engineering into cognitive neuroscience, where they serve as a powerful tool for investigating the dynamic mechanisms of visual processing and attention [23] [24]. This whitepaper provides an in-depth technical analysis of SSVEP mechanisms, focusing on the principles of frequency-tagging, the functional significance of harmonic responses, and their cortical origins, thereby offering a foundational resource for researchers and scientists engaged in BCI and cognitive research.

The SSVEP is a resonant brain response; when a visual stimulus is presented at a fixed frequency, the visual cortex produces an electrophysiological output that is frequency-locked to the input [19] [25]. This phenomenon, known as "frequency-tagging," allows researchers to tag specific elements in a complex visual scene by flickering them at unique frequencies [23]. The ensuing SSVEP can be measured via electroencephalography (EEG) and is typically analyzed in the frequency domain, where it manifests as distinct peaks at the fundamental driving frequency and its harmonics [19] [9].
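The frequency-domain signature described here can be illustrated on synthetic data. The sketch below builds a hypothetical occipital signal containing a 10 Hz fundamental, a weaker second harmonic, and white noise, then estimates a simple signal-to-noise ratio by comparing each bin with its neighbors. The amplitudes, the noise level, and the neighbor-based SNR definition are assumptions chosen for illustration.

```python
import numpy as np


def ssvep_snr(signal, fs, freq_hz, n_neighbors=4):
    """Spectral amplitude at freq_hz divided by the mean of neighboring bins."""
    spectrum = np.abs(np.fft.rfft(signal)) / signal.size
    freqs = np.fft.rfftfreq(signal.size, d=1 / fs)
    k = int(np.argmin(np.abs(freqs - freq_hz)))
    neighbors = np.r_[spectrum[k - n_neighbors:k], spectrum[k + 1:k + 1 + n_neighbors]]
    return float(spectrum[k] / neighbors.mean())


rng = np.random.default_rng(1)
fs, f0 = 250, 10.0
t = np.arange(4 * fs) / fs        # 4 s of data, 0.25 Hz frequency resolution
eeg = (
    np.sin(2 * np.pi * f0 * t)                # fundamental
    + 0.5 * np.sin(2 * np.pi * 2 * f0 * t)    # second harmonic
    + rng.normal(0.0, 1.0, t.size)            # background activity (white noise)
)

for f in (f0, 2 * f0, 13.0):
    print(f"{f:5.1f} Hz: SNR ~ {ssvep_snr(eeg, fs, f):.1f}")
```

The tagged frequency and its harmonic stand out clearly from neighboring bins, while an untagged frequency such as 13 Hz stays near the noise floor.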

SSVEP-based BCIs leverage this precise, time-locked response for command and control. When a user attends to a specific flickering target, the SSVEP response at that target's frequency is amplified, allowing the system to decode user intent [20]. Hybrid systems that integrate SSVEP with other paradigms, such as the P300 potential (a positive deflection in the EEG signal occurring approximately 300 ms after an infrequent or significant stimulus), demonstrate enhanced performance by providing multiple, verifiable neural correlates of user intention [20] [22]. These systems achieve higher classification accuracy and information transfer rates than single-paradigm BCIs [20] [22].
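Canonical correlation analysis (CCA) is one widely used way to decode which flickering target a user attends to: the multichannel EEG is correlated against sine and cosine templates at each candidate frequency, and the frequency with the largest canonical correlation wins. The sketch below is a minimal NumPy version using a QR-based computation. The synthetic data, channel weights, and function names are assumptions, and the code is not the method of any specific cited study.

```python
import numpy as np


def reference_signals(freq_hz, fs, n_samples, n_harmonics=2):
    """Sine/cosine templates at freq_hz and its harmonics, one per column."""
    t = np.arange(n_samples) / fs
    cols = []
    for h in range(1, n_harmonics + 1):
        cols.append(np.sin(2 * np.pi * h * freq_hz * t))
        cols.append(np.cos(2 * np.pi * h * freq_hz * t))
    return np.column_stack(cols)


def max_canonical_corr(x, y):
    """Largest canonical correlation between column spaces of x and y (samples in rows)."""
    qx, _ = np.linalg.qr(x - x.mean(axis=0))
    qy, _ = np.linalg.qr(y - y.mean(axis=0))
    return float(np.linalg.svd(qx.T @ qy, compute_uv=False)[0])


def classify_ssvep(eeg, fs, candidate_freqs):
    """eeg has shape (n_samples, n_channels); returns (best_freq, all_scores)."""
    scores = [
        max_canonical_corr(eeg, reference_signals(f, fs, eeg.shape[0]))
        for f in candidate_freqs
    ]
    return candidate_freqs[int(np.argmax(scores))], scores


# Synthetic 3-channel recording with a 10 Hz response buried in noise.
rng = np.random.default_rng(2)
fs, n_samples = 250, 500
t = np.arange(n_samples) / fs
source = np.sin(2 * np.pi * 10.0 * t + 0.7)
eeg = np.outer(source, [1.0, 0.8, 0.5]) + rng.normal(0.0, 1.0, (n_samples, 3))

best, scores = classify_ssvep(eeg, fs, [8.0, 10.0, 12.0, 15.0])
print(best, [round(s, 2) for s in scores])
```

Including both sine and cosine templates makes the score insensitive to the unknown phase lag between stimulus and cortical response, and adding harmonic templates lets the method use energy beyond the fundamental.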

Neural Generators and Cortical Origins

The SSVEP response is not generated in a single brain area but arises from a network of visual cortical regions. The primary and secondary visual areas are major contributors, but the specific neural generators can be identified by combining SSVEP recordings with source localization techniques like dipole modeling and functional magnetic resonance imaging (fMRI).

Table 1: Cortical Sources of the SSVEP

| Brain Region | Contribution to SSVEP | Functional Specialization | Key Evidence |
|---|---|---|---|
| Primary Visual Cortex (V1) | A major source, particularly for the fundamental frequency component [26]. | Basic visual feature processing | Dipole localization colocalized with fMRI activation in medial occipital cortex [26]. |
| Motion-Sensitive Area (MT/V5) | A major source, especially for certain stimulus types [26]. | Motion processing | Source localized to mid-temporal regions of the contralateral hemisphere [26]. |
| Mid-Occipital (V3A) & Ventral Occipital (V4/V8) | Minor contributions [26]. | Higher-order visual processing (e.g., shape, color) | Considered in spatiotemporal modeling of SSVEP sources [26]. |
| Frontal-Parietal-Occipital Network | Supports the functional network of SSVEP harmonic responses [19]. | Attention and large-scale integration | Graph theory analysis shows main connections between frontal and parietal-occipital regions [19]. |

Research indicates that the sequence of cortical activation for steady-state stimulation is similar to that of transient stimulation, with early involvement of V1 followed by higher-tier areas [26]. Furthermore, large-scale brain modeling suggests that SSVEPs are supported by efficient functional connectivity across this distributed network, with stronger responses correlated with more efficient network properties [24].

Harmonic Components and Functional Segregation

The SSVEP response is not limited to the fundamental frequency of the stimulus; it also includes energy at integer multiples, known as harmonics. The second harmonic (twice the stimulus frequency) is particularly significant and has been shown to have distinct spatial and functional roles from the fundamental component [19] [25].

The dissociation between these components is rooted in the neurophysiology of the visual cortex. The fundamental (first harmonic) response is strongly linked to frequency-following neural populations, such as simple cells in V1 that are selective for luminance polarity (light or dark) [25]. In contrast, the second harmonic is linked to frequency-doubling neural populations, such as complex cells in V1 and other areas that respond to both light and dark phases of the stimulus [25].
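The link between response type and harmonic content can be illustrated with a toy model. In the sketch below, a "frequency-following" unit is approximated by half-wave rectification (it responds to one luminance polarity only) and a "frequency-doubling" unit by full-wave rectification (it responds to both light and dark phases). These rectifier models are deliberate simplifications introduced for illustration, not a description of the cited physiology.

```python
import numpy as np

fs, f0 = 1000, 8.0
t = np.arange(2 * fs) / fs                    # 2 s, an integer number of cycles
drive = np.sin(2 * np.pi * f0 * t)            # luminance modulation around the mean

following = np.maximum(drive, 0.0)            # toy unit driven by one polarity
doubling = np.abs(drive)                      # toy unit driven by both polarities


def amplitude_at(signal, freq_hz):
    """Single-sided spectral amplitude at the bin closest to freq_hz."""
    spectrum = 2 * np.abs(np.fft.rfft(signal)) / signal.size
    freqs = np.fft.rfftfreq(signal.size, d=1 / fs)
    return float(spectrum[int(np.argmin(np.abs(freqs - freq_hz)))])


for name, resp in (("following", following), ("doubling", doubling)):
    print(name, "f0:", round(amplitude_at(resp, f0), 3),
          "2*f0:", round(amplitude_at(resp, 2 * f0), 3))
```

The half-wave unit keeps most of its energy at the stimulus frequency, whereas the full-wave unit has essentially no energy at the fundamental and a dominant component at twice the stimulus frequency. This is the signature attributed above to frequency-doubling populations.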

Table 2: Functional Roles of SSVEP Harmonic Components

| Component | Neural Population | Scalp Topography | Modulation by Attention | Postulated Functional Role |

|---|---|---|---|---|

| First Harmonic (Fundamental) | Frequency-following (e.g., simple cells) | More medial maximum [25] | Negligible to weak modulation [25] | Preserves relatively undistorted sensory fidelity [25]. |

| Second Harmonic | Frequency-doubling (e.g., complex cells) | More lateral, contralateral maximum [25] | Strongly modulated by voluntary attention [25] | Mediates top-down signal modulation for attentional selection [25]. |

This functional segregation is critical for BCIs. The strongly attention-dependent second harmonic provides a robust neural signal for inferring user focus, which can be exploited to improve the performance of SSVEP-based applications [25]. Graph theoretical analyses confirm that the strength of the second harmonic response is positively correlated with the efficiency (e.g., higher clustering coefficient, global efficiency) of its underlying functional brain network [19].

Experimental Protocols and Methodologies

Frequency-Tagging for Competitive Stimulus Processing

Objective: To quantify how attention and reinforcement history modulate cortical representation of competing visual stimuli [23] [27].

Procedure:

- Stimuli: Present two or more stimuli on a screen, each flickering at a unique, known frequency (e.g., 7 Hz and 10 Hz). This is the core of "frequency-tagging" [23] [27].

- Task: Instruct participants to attend to one stimulus while ignoring the other(s). Alternatively, use a learning paradigm where one stimulus is made more behaviorally relevant (e.g., it predicts a specific outcome) [27].

- EEG Recording: Record high-density EEG, focusing on occipital and parieto-occipital electrodes.

- Data Analysis:

  - Apply a Fast Fourier Transform (FFT) to the EEG signal to compute the power spectral density.

  - Extract SSVEP amplitude (or signal-to-noise ratio) at the tag frequencies of each stimulus.

  - Compare the amplitude at the tagged frequency of the attended/high-priority stimulus versus the unattended/low-priority stimulus. An increase indicates attentional enhancement or learned prioritization [23] [27].
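
The analysis steps above can be sketched on synthetic data. This is a minimal illustration, not the cited studies' pipeline: the function names, the 250 Hz sampling rate, the 4 s window, the sinusoid amplitudes, and the noise level are all assumptions chosen for the example. It uses a single-bin Fourier sum from the standard library instead of a full FFT, and in practice an FFT routine from a numerical library would normally be used.

```python
import math
import random

def amplitude_at(signal, fs, freq):
    """Amplitude of `signal` at `freq` (Hz) from a single-bin Fourier sum."""
    n = len(signal)
    w = 2 * math.pi * freq / fs
    re = sum(x * math.cos(w * i) for i, x in enumerate(signal))
    im = sum(x * math.sin(w * i) for i, x in enumerate(signal))
    return 2 * math.hypot(re, im) / n

def snr_at(signal, fs, freq, n_neighbors=4):
    """Amplitude at `freq` divided by the mean amplitude of nearby bins.

    The bins directly adjacent to `freq` are skipped to limit leakage.
    """
    df = fs / len(signal)  # frequency resolution of the recording
    offsets = [k for k in range(-n_neighbors - 1, n_neighbors + 2) if abs(k) > 1]
    noise = sum(amplitude_at(signal, fs, freq + k * df) for k in offsets) / len(offsets)
    return amplitude_at(signal, fs, freq) / noise

def simulate_occipital_channel(fs=250, seconds=4, attended=(7.0, 2.0),
                               ignored=(10.0, 0.5), seed=0):
    """Synthetic stand-in for one occipital channel (hypothetical values).

    Each stimulus is a (frequency in Hz, amplitude) pair; Gaussian noise is added.
    """
    rng = random.Random(seed)
    return [
        attended[1] * math.sin(2 * math.pi * attended[0] * i / fs)
        + ignored[1] * math.sin(2 * math.pi * ignored[0] * i / fs)
        + rng.gauss(0.0, 0.5)
        for i in range(int(fs * seconds))
    ]

FS = 250
eeg = simulate_occipital_channel(fs=FS)
amp_attended = amplitude_at(eeg, FS, 7.0)   # tag of the attended stimulus
amp_ignored = amplitude_at(eeg, FS, 10.0)   # tag of the ignored stimulus
print(f"7 Hz: {amp_attended:.2f}, 10 Hz: {amp_ignored:.2f}")
print("attended tag is larger:", amp_attended > amp_ignored)
```

The 4 s window contains a whole number of cycles of both tag frequencies, which keeps each tag in a single frequency bin. With real recordings the window length is usually chosen with the same consideration in mind.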

Visualization: The following diagram illustrates the workflow and neural correlates of this frequency-tagging protocol.

Hybrid SSVEP + P300 BCI Protocol

Objective: To create a high-accuracy BCI system by simultaneously eliciting and integrating SSVEP and P300 responses for robust intent detection [20] [22].

Procedure:

- Stimulus Design: Create a visual interface where each command option flickers at a specific frequency (to evoke SSVEP) and also flashes briefly in a random sequence (to evoke P300).

- Calibration: Present each command target in a known sequence to record individual-specific SSVEP and P300 patterns.

- Real-Time Operation:

  - The user focuses their gaze and attention on the desired command option.

  - The system records EEG data in epochs time-locked to the flash onsets.

- Signal Processing & Classification:

  - SSVEP Pathway: FFT is used to compute the power spectrum. The target is identified as the frequency with the maximum amplitude [20].

  - P300 Pathway: The time-domain EEG is averaged for each stimulus type (target vs. non-target). A classifier (e.g., linear discriminant analysis) detects the P300 peak to identify the target [20] [22].

  - Data Fusion: The classifications from both pathways are combined (e.g., through a voting system or probabilistic fusion) to determine the final command with higher accuracy and reduced false positives [20] [22].
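
The P300 pathway in the procedure above rests on epoch averaging, which can be sketched as follows. This is a simplified illustration under stated assumptions: the trained classifier is replaced by a plain mean-amplitude score in a 250-500 ms window, and the sampling rate, stimulus labels, waveform shape, and noise level are hypothetical values used only to generate test data.

```python
import math
import random

def average_epochs(epochs):
    """Point-by-point mean across equally long epochs (lists of samples)."""
    n = len(epochs)
    return [sum(samples) / n for samples in zip(*epochs)]

def window_mean(erp, fs, window=(0.25, 0.50)):
    """Mean amplitude of an averaged epoch inside a post-stimulus window (s)."""
    start, stop = int(window[0] * fs), int(window[1] * fs)
    segment = erp[start:stop]
    return sum(segment) / len(segment)

def pick_target(epochs_by_stimulus, fs):
    """Score every stimulus by its averaged response and return the largest."""
    scores = {
        stim: window_mean(average_epochs(epochs), fs)
        for stim, epochs in epochs_by_stimulus.items()
    }
    return max(scores, key=scores.get), scores

def simulate_epoch(rng, fs, is_target, seconds=0.8):
    """Synthetic epoch: noise, plus a positive bump near 300 ms for targets."""
    epoch = []
    for i in range(int(fs * seconds)):
        t = i / fs
        bump = 5.0 * math.exp(-((t - 0.3) ** 2) / (2 * 0.05 ** 2)) if is_target else 0.0
        epoch.append(bump + rng.gauss(0.0, 3.0))
    return epoch

FS = 100
rng = random.Random(1)
epochs = {
    stim: [simulate_epoch(rng, FS, is_target=(stim == "B")) for _ in range(15)]
    for stim in ("A", "B", "C", "D")  # hypothetical command options
}
selected, scores = pick_target(epochs, FS)
print("selected:", selected)
```

Averaging 15 epochs reduces the noise in each averaged trace by roughly the square root of 15, which is why the simulated deflection becomes detectable even though it is comparable to the single-trial noise.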

Visualization: The data flow and decision fusion in a hybrid BCI system are shown below.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Equipment for SSVEP Research

| Item | Specification / Example | Function in SSVEP Research |

|---|---|---|

| Visual Stimulator | LCD/LED monitor, head-mounted AR (HMAR) display (e.g., HoloLens 2), custom LED array [20] [9] | Presents repetitive visual stimuli with precise temporal control. |

| EEG Acquisition System | High-density amplifier (e.g., Neuroscan Grael 4K), 32+ channels [9] | Records electrical brain activity from the scalp with high temporal resolution. |

| Stimulation Control Hardware | Microcontroller (e.g., Teensy, STM32) [20] [9] | Precisely controls flicker frequency and timing, and generates event triggers. |

| Electrodes & Application Gel | Ag/AgCl sintered electrodes, electrolyte gel [21] | Ensures high-fidelity signal conduction from scalp to amplifier. |

| Stimulation Software | Unity 3D, Psychtoolbox for MATLAB [9] | Programs and renders the visual stimulus paradigm. |

| Frequency-Tagging Paradigm | Binocularly congruent/incongruent flicker, light-dark modulation [25] [9] | Elicits distinct SSVEP fundamental and harmonic responses. |

| Signal Processing Toolkit | Fast Fourier Transform (FFT), Power Spectral Density (PSD) analysis [20] [19] | Extracts SSVEP features (amplitude, phase) from the EEG signal. |

SSVEPs offer a unique window into the oscillatory dynamics and functional organization of the visual brain. The mechanisms of frequency-tagging, the functional segregation of harmonic components, and the distributed cortical origins of the response are not merely academic concerns; they are fundamental to advancing BCI technology. A deep understanding of these mechanisms enables the design of more efficient stimulation paradigms, more robust decoding algorithms, and higher-performance hybrid systems like the SSVEP+P300 BCI. Future research leveraging large-scale computational modeling [24], advanced neuroimaging, and novel stimulation platforms such as augmented reality [9] will continue to unlock the potential of SSVEPs, both as a tool for restoring communication and for probing the complexities of human cognition.

The efficacy of non-invasive brain-computer interfaces (BCIs) hinges on the precise delineation of neural generators for evoked potentials. This technical analysis provides a comparative examination of parietal and occipital cortex activation patterns underlying two dominant BCI paradigms: the event-related P300 potential and the steady-state visual evoked potential (SSVEP). We synthesize neurophysiological measurements, hybrid paradigm designs, and source localization findings to elucidate the distinct neural substrates and temporal dynamics associated with each cortical region. The findings indicate that P300 responses predominantly engage parietal attention networks, while SSVEP responses are generated primarily in occipital visual processing pathways, with specialized regions like the parieto-occipital sulcus demonstrating paradigm-specific reactivity. This systematic characterization provides a foundational reference for optimizing BCI stimulus paradigms, enhancing classification algorithms, and developing targeted applications in neurorehabilitation and communication.

Electroencephalography (EEG)-based brain-computer interfaces (BCIs) translate distinct neural activation patterns into control commands for external devices, offering communication pathways for individuals with severe neuromuscular impairments [28]. Among non-invasive approaches, two evoked potentials have demonstrated particular reliability for practical implementation: the P300 event-related potential and the steady-state visual evoked potential (SSVEP) [6] [20]. The P300 component manifests as a positive deflection in parietal regions approximately 300 ms after the presentation of an infrequent, task-relevant stimulus, reflecting cognitive processes related to attention and context updating. In contrast, SSVEPs are continuous oscillatory responses in the occipital lobe that are phase-locked to rhythmic visual stimulation, typically a flicker between 6 and 30 Hz [6] [9].

A critical challenge in advancing BCI technology lies in accurately characterizing the cortical origins of these signals. The neural generators—specific cortical populations whose synchronized activity gives rise to scalp-measured potentials—determine the spatial fingerprints and signal properties that inform paradigm design and decoding algorithms. Understanding the differential contributions of parietal and occipital cortices enables researchers to exploit their complementary strengths through hybrid systems, mitigate limitations of individual paradigms, and optimize target applications from spellers to neuroprosthetics [6] [20].

This whitepaper provides a technical analysis of parietal versus occipital cortex activation patterns within the context of P300 and SSVEP BCIs. We integrate neuromagnetic measurements, hybrid BCI architectures, and source localization evidence to provide a reference for researchers and developers working at the intersection of cognitive neuroscience and neuroengineering.

Neural Generators and Cortical Activation Patterns

Occipital Cortex Generators

The occipital cortex, encompassing primary visual area V1 and extrastriate areas V2-V5, serves as the principal neural generator for SSVEP responses. Neuromagnetic measurements reveal that pattern stimuli (e.g., checkerboards) elicit strong contralateral activation in the occipital V1/V2 cortex, characterized by an initial transient response at 65-75 ms followed by sustained activation throughout stimulus presentation [29]. This sustained activation represents continuous visual processing in the ventral stream and provides the robust frequency-tagged signal exploited by SSVEP-BCIs.

The occipital cortex demonstrates specialized functional organization relevant to BCI design. SSVEP frequency responses located in the center of the visual field produce the most pronounced energy increase, decaying approximately in a Gaussian distribution toward the periphery [6]. This physiological property necessitates precise gaze control for optimal BCI performance. Furthermore, activation patterns differ significantly between stimulus types; luminance stimuli produce weaker sustained occipital activation and more bilateral response distributions compared to pattern stimuli, suggesting varying degrees of magnocellular and parvocellular pathway engagement [29].

Parietal Cortex Generators

The parietal cortex, particularly the medial parietal regions and temporoparietal junction, serves as the dominant generator for the P300 component. Source localization studies consistently identify the parietal lobe as the origin of the characteristic positive deflection occurring approximately 300 ms post-stimulus, reflecting its role in attention allocation and context updating [20] [28]. The superior temporal gyrus (BA38) and medial frontal gyrus (BA10) have also been implicated as secondary generators during different cognitive states, indicating that distributed networks contribute to the final scalp-recorded potential [30].

Beyond the classic P300, the parietal cortex demonstrates specialized activation patterns during visual processing. The medial parieto-occipital sulcus (POS) region shows particular reactivity to luminance stimuli, activating 60-70 ms later than initial occipital responses and generating strong responses to both foveal and extrafoveal stimuli [29]. This parietal region appears preferentially engaged by stimuli with higher "attention-catching" value, suggesting its role in orienting and attentional capture mechanisms relevant to BCI oddball paradigms.

Table 1: Comparative Characteristics of Primary Neural Generators

| Cortical Region | Dominant BCI Paradigm | Temporal Response Profile | Key Anatomical Structures | Functional Correlates |

|---|---|---|---|---|

| Occipital Cortex | SSVEP | Sustained oscillation during stimulation; Initial transient (65-75 ms) | V1/V2 visual areas; Striate and extrastriate cortex | Early visual processing; Frequency tagging; Pattern recognition |

| Parietal Cortex | P300 | Late positive deflection (~300 ms) | Medial parietal lobe; Temporoparietal junction; Parieto-occipital sulcus | Attention allocation; Context updating; Oddball processing |

| Parieto-Occipital Sulcus | Luminance-evoked potentials | Delayed response (130-145 ms post-stimulus) | Medial POS region | Attention capture; Luminance processing; Eye movement planning |

Integrated Cortical Networks in Hybrid BCIs

Advanced BCI systems increasingly leverage both parietal and occipital generators through hybrid P300-SSVEP paradigms. These integrated approaches demonstrate synergistic benefits, with the parietal P300 providing complementary information to the occipital SSVEP. For instance, the Frequency Enhanced Row and Column (FERC) paradigm simultaneously evokes both responses by incorporating frequency coding into the traditional P300 speller matrix, achieving significantly higher accuracy (94.29%) compared to single-paradigm implementations (P300-only: 75.29%; SSVEP-only: 89.13%) [6].

Neurophysiological evidence suggests partially independent generation mechanisms for these signals, enabling their simultaneous detection without catastrophic interference. Although competitive effects can occur—with SSVEP stimuli potentially reducing P300 amplitude and vice-versa—the extracted features remain sufficiently discriminative for classification purposes [6]. This neural independence permits the development of sequential verification systems where SSVEP frequencies provide primary classification confirmed by P300 event markers, substantially reducing false positives in practical applications [20].

Experimental Protocols and Methodologies

Paradigm Design and Stimulation Parameters

P300 Oddball Paradigm: The classic oddball paradigm presents rare target stimuli within a stream of frequent non-target stimuli. In visual P300 spellers, a 6×6 character matrix flashes rows or columns in random order, with targets occurring with low probability (typically 0.17-0.20) to elicit the P300 response [6] [31]. Stimulus duration is typically 100-500 ms, with inter-stimulus intervals of 500-1500 ms. Participants keep a mental count of target appearances, which enhances attention and P300 amplitude.

SSVEP Frequency Paradigm: SSVEP paradigms employ visual stimuli flickering at fixed frequencies between 6 and 30 Hz, with the 6-12 Hz range often producing the strongest responses [6] [9]. Modern implementations use frequency intervals of 0.5 Hz or less to maximize the number of selectable targets. For binocular AR headsets, incongruent dual-frequency stimulation presents a different frequency to each eye, improving target separability and BCI performance [9].

Hybrid FERC Paradigm: The Frequency Enhanced Row and Column paradigm assigns specific flicker frequencies (e.g., 6.0-11.5 Hz in 0.5 Hz steps) to each row and column of a 6×6 matrix. Rows and columns flash pseudorandomly to elicit P300 responses, while continuous flickering evokes SSVEPs, enabling simultaneous detection of both signals [6].
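
The FERC frequency layout can be written out explicitly. This is a minimal sketch assuming the assignment described for this paradigm elsewhere in this review (columns on 6.0-8.5 Hz, rows on 9.0-11.5 Hz); the variable and function names are illustrative and not taken from [6].

```python
ROWS, COLS = 6, 6
STEP_HZ = 0.5

# Columns take the lower band and rows the higher band, in 0.5 Hz steps.
col_freqs = [6.0 + STEP_HZ * c for c in range(COLS)]  # 6.0 ... 8.5 Hz
row_freqs = [9.0 + STEP_HZ * r for r in range(ROWS)]  # 9.0 ... 11.5 Hz

def tags_for(row, col):
    """Return the (row, column) flicker frequencies that tag one matrix cell."""
    return row_freqs[row], col_freqs[col]

# One row and one column contain the target among the 12 flashing groups.
target_probability = 2 / (ROWS + COLS)

print("column tags:", col_freqs)
print("row tags:   ", row_freqs)
print("cell (2, 3) is tagged by", tags_for(2, 3))
print("target probability per flash:", round(target_probability, 2))
```

Twelve distinct frequencies are enough to address all 36 cells because each cell is identified by a row tag and a column tag together. The resulting target probability of 2/12 also matches the lower end of the 0.17-0.20 range quoted for the oddball paradigm.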

Signal Acquisition and Preprocessing

EEG Configuration: High-density EEG systems with 32-64 channels provide optimal spatial resolution for source localization. Electrodes should be concentrated over parietal and occipital regions according to the international 10-10 system, with specific attention to positions Pz, P3, P4, POz, O1, and O2 for capturing P300 and SSVEP generators [9] [31]. The reference electrode is typically placed at Cz or linked mastoids, with the ground between Fz and FPz. Impedance should be maintained below 10-20 kΩ for optimal signal quality [9].

Artifact Removal: EEG preprocessing requires robust artifact removal techniques. Independent Component Analysis (ICA) effectively separates and removes ocular artifacts [28]. Canonical Correlation Analysis (CCA) is particularly effective for SSVEP processing, while wavelet transforms address myogenic artifacts [28]. For mobile applications, additional motion artifact correction using inertial measurement units (IMUs) is recommended [31].

Filtering Parameters: For P300 detection, bandpass filtering from 0.1 to 20 Hz captures the relevant components while reducing high-frequency noise [28]. SSVEP analysis typically uses a 1-40 Hz bandpass to capture fundamental and harmonic responses, with notch filters at 50/60 Hz to eliminate line noise [6].

Table 2: Standardized Experimental Parameters for Evoked Potential Recording

| Parameter | P300 Paradigm | SSVEP Paradigm | Hybrid Paradigm |

|---|---|---|---|

| Stimulus Type | Random row/column highlighting | Frequency-specific flickering | Frequency-coded flashing |

| Target Probability | 0.17-0.20 | N/A (gaze-dependent) | 0.17-0.20 + gaze |

| Stimulus Duration | 100-500 ms | Continuous | 100-500 ms (P300) + Continuous (SSVEP) |

| Frequency Range | N/A | 6-30 Hz (optimal: 6-12 Hz) | 6-11.5 Hz for tagging |

| EEG Channels | Pz, P3, P4, Cz, Fz | POz, O1, O2, Oz | Pz, P3, P4, POz, O1, O2 |

| Filter Range | 0.1-20 Hz Bandpass | 1-40 Hz Bandpass | 0.1-40 Hz Bandpass |

| Trial Repetitions | 10-15 for averaging | 5-10 cycles per frequency | 10-15 for P300, continuous for SSVEP |

Source Localization Procedures

Accurate identification of neural generators requires sophisticated source localization techniques applied to high-density EEG recordings:

Electrode Placement: Minimum 32-channel systems with dense coverage over posterior regions are recommended. The integration of electrodes at the left and right mastoids improves source modeling accuracy [31].

Head Model Construction: Individual MRI-based head models provide optimal accuracy, though standardized boundary element models (BEM) or finite element models (FEM) offer practical alternatives when structural imaging is unavailable.

Inverse Solution Methods: Standardized low-resolution electromagnetic tomography (sLORETA) and its variants (e.g., swLORETA) effectively localize cortical and subcortical generators of oscillatory activity [30]. For SSVEP sources, frequency-domain beamforming approaches demonstrate particular efficacy in isolating generators of specific frequency responses.

Validation Metrics: Localization accuracy should be verified through: (1) dipole goodness of fit (>85%); (2) consistency across participants; and (3) congruence with fMRI and MEG literature on visual processing [29] [30].

Signaling Pathways and Experimental Workflows

The neural processing pathways for P300 and SSVEP responses involve distinct yet partially overlapping cortical networks. The following diagrams illustrate these pathways and representative experimental workflows.

Neural Processing Pathways for P300 and SSVEP

Diagram 1: Neural processing pathways for SSVEP (blue) and P300 (green) responses, illustrating distinct cortical generators and processing streams.

Hybrid BCI Experimental Workflow

Diagram 2: Hybrid BCI experimental workflow integrating P300 and SSVEP pathways from stimulus to application control.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Methods for Neural Generator Research

| Research Tool | Specifications & Variants | Primary Function | Technical Considerations |

|---|---|---|---|

| EEG Acquisition Systems | 32-64 channel wet systems (BrainAmp); Portable systems (SMARTING); Mobile headsets (Emotiv) | Neural signal recording with high temporal resolution | Scalp vs. ear-EEG configurations; 500-1024 Hz sampling rate; Electrode impedance <20 kΩ |

| Visual Stimulation Devices | LCD/LED monitors (60-120 Hz); Custom LED arrays (COB LEDs); AR/VR headsets (HoloLens 2) | Presentation of visual paradigms with precise timing | Refresh rate limitations; Luminance control; Binocular stimulation capability |

| Stimulus Control Systems | Microcontrollers (Teensy, STM32); Software platforms (Unity, Psychtoolbox) | Precise timing control; Trigger synchronization | TTL pulse generation; Integration with EEG systems |

| Source Localization Software | sLORETA/swLORETA; BrainStorm; FieldTrip; EEGLAB | 3D reconstruction of neural generators | Individual MRI vs. template head models; Inverse solution algorithms |

| Signal Processing Tools | Independent Component Analysis (ICA); Canonical Correlation Analysis (CCA); Wavelet Transform | Artifact removal; Feature enhancement | Computational demands; Real-time capability |

| Classification Algorithms | Support Vector Machines (SVM); Task-Related Component Analysis (TRCA); Deep Learning models | Intent recognition from neural features | Single-trial vs. ensemble methods; Transfer learning approaches |

Discussion and Future Directions

The comparative analysis of parietal and occipital cortex activation patterns reveals distinct neurophysiological signatures that can be leveraged for specialized BCI applications. Occipital SSVEP generators provide robust, frequency-specific signals ideal for continuous control applications requiring multiple discrete commands, while parietal P300 generators offer cognitive biomarkers well-suited for attention-based communication systems.

Future research directions should focus on several critical areas. First, the development of personalized stimulation parameters based on individual neuroanatomical variations could significantly enhance signal quality. Second, advanced source separation techniques that dynamically isolate parietal and occipital contributions in hybrid paradigms would enable more efficient parallel processing. Third, the integration of novel stimulation approaches, such as binocular incongruent frequency stimulation in AR environments, promises to expand the target capacity of SSVEP systems while maintaining user comfort [9]. Finally, miniaturized acquisition systems that maintain high signal quality in mobile environments will be essential for translating laboratory findings to real-world applications [31].

The characterization of comparative neural generators provided in this analysis establishes a foundational framework for the next generation of evoked potential BCIs. By leveraging the distinct properties of parietal and occipital cortex activation patterns, researchers can develop more efficient, robust, and user-friendly systems that advance both theoretical understanding and practical applications in neurotechnology.

Brain-Computer Interface (BCI) technology establishes a direct communication pathway between the brain and an external device, offering significant potential for neurorestorative applications and assistive technologies [20]. Among non-invasive electroencephalography (EEG)-based BCIs, the P300 event-related potential and Steady-State Visual Evoked Potential (SSVEP) are two of the most widely implemented paradigms because of their comparatively high information transfer rates (ITR) and minimal user training requirements [20] [32]. The P300 is a positive deflection in the EEG signal occurring approximately 300 ms after a rare, significant stimulus in an "oddball" paradigm, while SSVEP is a periodic response elicited by a visual stimulus flickering at a constant frequency, typically observed over the occipital cortex [6] [33] [32].

Historically, BCI systems relied on single paradigms, which often faced limitations such as user incompatibility, susceptibility to erroneous signal classification, and inherent performance trade-offs [20] [33]. Hybrid BCIs that combine multiple paradigms have emerged as a powerful strategy to overcome these constraints. Specifically, integrating P300 and SSVEP leverages their complementary strengths, resulting in enhanced system performance, including improved classification accuracy, increased information transfer rates, and greater reliability [6] [20] [34]. This whitepaper elucidates the theoretical foundations, performance advantages, and methodological considerations of hybrid P300-SSVEP BCIs compared to their single-paradigm counterparts.

Theoretical Foundations and Performance Advantages

Core Mechanisms and Synergistic Integration

The P300 and SSVEP signals originate from distinct neural processes and are analyzed in different domains—time and frequency, respectively. This independence is a key theoretical basis for their successful integration, as it minimizes interference during signal processing and classification [6].

- P300 Mechanism: The P300 potential is an endogenous component of event-related potentials (ERPs), arising from cognitive processing of infrequent or task-relevant stimuli. It is predominantly observed over the parietal cortex and requires attention from the user [33] [32].

- SSVEP Mechanism: SSVEP is an exogenous response, manifesting as frequency-locked oscillations in the visual cortex synchronized to the frequency of an external flickering stimulus and its harmonics [20] [32].

When combined in a hybrid paradigm, these signals provide complementary information channels. For instance, in a speller application, the SSVEP component can identify the general group or region of a target, while the P300 can pinpoint the specific target within that group, leading to more robust decision-making [6] [35]. While initial concerns existed about potential competition or interference between simultaneous stimuli, studies have demonstrated that although SSVEP stimuli may reduce P300 amplitude and vice-versa, the extracted features remain sufficiently discriminative for high classification accuracy [6].

Quantitative Performance Comparison

Empirical studies consistently demonstrate that hybrid P300-SSVEP BCIs outperform single-paradigm systems across key metrics such as classification accuracy and information transfer rate.

Table 1: Performance Comparison of Single vs. Hybrid P300-SSVEP Paradigms

| Paradigm | Reported Accuracy (%) | Information Transfer Rate (ITR, bits/min) | Key Features | Source |

|---|---|---|---|---|

| P300 Only | 75.29 | N/A | Classical Row-Column (RC) paradigm [6] | [6] |

| SSVEP Only | 89.13 | N/A | Used ensemble TRCA for detection [6] | [6] |

| Hybrid (FERC) | 94.29 | 28.64 | Fused P300 (SVM) & SSVEP (TRCA) [6] | [6] |

| Hybrid (LED) | 86.25 | 42.08 | LED-based stimulator, 4-direction control [20] | [20] |

| Hybrid (Adaptive LDA) | 97.4 | N/A | Adaptive classifier for non-stationary EEG [34] | [34] |
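
The ITR column can be related to accuracy and target count with a short calculation. The sketch below assumes the commonly used Wolpaw definition of ITR; the cited studies may compute it differently, and the function names are illustrative.

```python
import math

def bits_per_selection(n_targets, accuracy):
    """Wolpaw information per selection for equiprobable targets.

    Assumes errors are spread evenly over the remaining targets.
    """
    if n_targets < 2 or not 0.0 < accuracy <= 1.0:
        raise ValueError("need n_targets >= 2 and 0 < accuracy <= 1")
    bits = math.log2(n_targets)
    if accuracy < 1.0:
        p = accuracy
        bits += p * math.log2(p) + (1 - p) * math.log2((1 - p) / (n_targets - 1))
    return bits

def itr_bits_per_minute(n_targets, accuracy, seconds_per_selection):
    """Scale the per-selection information by the number of selections per minute."""
    return bits_per_selection(n_targets, accuracy) * 60.0 / seconds_per_selection

# 36-target matrix at the FERC accuracy reported in the table.
ferc_bits = bits_per_selection(36, 0.9429)
print(f"FERC: {ferc_bits:.2f} bits per selection")
```

For the 36-target FERC matrix at 94.29% accuracy this gives roughly 4.56 bits per selection. Converting that to bits/min requires the time per selection, which the table does not list, so the sketch does not attempt to reproduce the reported ITR values.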

The performance superiority of hybrid systems is further validated by their ability to mitigate the "BCI illiteracy" or "inefficiency" problem, where a significant minority of users cannot effectively control a single-paradigm BCI [33]. By providing multiple neural pathways for control, hybrid BCIs offer a fallback mechanism, thereby expanding the usable population [33] [35].

Detailed Experimental Protocols and Methodologies

The Frequency Enhanced Row and Column (FERC) Paradigm

The FERC paradigm is a sophisticated hybrid approach designed to simultaneously evoke P300 and SSVEP responses [6].

- Stimulus Design: A standard 6×6 character matrix is used. Each row and column is assigned a unique flickering frequency within the 6.0 Hz to 11.5 Hz range (intervals of 0.5 Hz). Columns are assigned lower frequencies (6.0-8.5 Hz), while rows are assigned higher frequencies (9.0-11.5 Hz) [6].

- Stimulation Sequence: To induce the P300 potential, rows and columns flash in a pseudorandom sequence. Each row or column flashes once per trial. Concurrently, the continuous flickering at the assigned frequencies evokes the SSVEP response [6].

- Data Acquisition: EEG data is typically recorded from multiple scalp electrodes, with a focus on occipital and parietal sites for SSVEP and P300, respectively.

- Signal Processing and Fusion:

  - P300 Detection: EEG epochs time-locked to the flash events are analyzed. A common method involves wavelet decomposition for feature extraction, followed by a Support Vector Machine (SVM) classifier [6].

  - SSVEP Detection: The frequency-domain EEG signal is analyzed. Ensemble Task-Related Component Analysis (TRCA) has been shown to outperform traditional methods like Canonical Correlation Analysis (CCA) [6].

  - Decision Fusion: The probabilities from the P300 and SSVEP classifiers are fused using a weighted control approach to make the final target character determination [6].
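
The decision fusion step can be illustrated with a simple weighted sum of the two classifiers' probabilities. This is only a sketch under assumptions: the source does not specify the exact weighting rule, so the linear combination, the weight `w_p300`, the character layout, and the example probabilities are all hypothetical.

```python
def fuse(p300_probs, ssvep_probs, w_p300=0.5):
    """Weighted sum of two probability vectors, renormalised to sum to 1."""
    fused = [w_p300 * a + (1 - w_p300) * b for a, b in zip(p300_probs, ssvep_probs)]
    total = sum(fused)
    return [f / total for f in fused]

def decode_character(matrix, row_p300, row_ssvep, col_p300, col_ssvep, w_p300=0.5):
    """Pick the most probable row and column after fusion, then look up the cell."""
    rows = fuse(row_p300, row_ssvep, w_p300)
    cols = fuse(col_p300, col_ssvep, w_p300)
    r = max(range(len(rows)), key=rows.__getitem__)
    c = max(range(len(cols)), key=cols.__getitem__)
    return matrix[r][c]

# Hypothetical 6x6 speller layout and classifier outputs.
MATRIX = ["ABCDEF", "GHIJKL", "MNOPQR", "STUVWX", "YZ0123", "456789"]
row_p300 = [0.40, 0.38, 0.06, 0.06, 0.05, 0.05]   # P300 is unsure: row 0 or 1
row_ssvep = [0.10, 0.60, 0.10, 0.10, 0.05, 0.05]  # SSVEP clearly favours row 1
col_p300 = [0.05, 0.05, 0.70, 0.10, 0.05, 0.05]
col_ssvep = [0.10, 0.10, 0.50, 0.10, 0.10, 0.10]

fused_choice = decode_character(MATRIX, row_p300, row_ssvep, col_p300, col_ssvep, w_p300=0.4)
p300_only = decode_character(MATRIX, row_p300, row_ssvep, col_p300, col_ssvep, w_p300=1.0)
print("fused:", fused_choice, "| P300 only:", p300_only)
```

In this constructed case the P300 evidence alone picks the wrong row, while the fused decision follows the stronger SSVEP evidence. It shows how a second information channel can resolve an ambiguous first one.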

Figure 1: The FERC Hybrid BCI Workflow. This diagram illustrates the parallel processing paths for P300 and SSVEP signals, from stimulus evocation to final decision fusion.

LED-Based Dual-Mode Visual Stimulation System

Conventional LCD monitors have refresh rate limitations that can restrict the selection of stimulation frequencies. A novel hardware-based approach uses Light-Emitting Diodes (LEDs) to create a more robust visual stimulator [20].
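
The refresh-rate limitation can be made concrete with a short calculation. The sketch assumes the simplest rendering scheme, in which every flicker cycle spans a whole number of display frames, and a 60 Hz monitor. The function names are illustrative.

```python
def frame_locked_frequencies(refresh_hz, f_min, f_max):
    """Flicker frequencies whose cycle spans a whole number of frames."""
    freqs = []
    frames_per_cycle = 2  # shortest possible cycle: one frame on, one frame off
    while refresh_hz / frames_per_cycle >= f_min:
        f = refresh_hz / frames_per_cycle
        if f <= f_max:
            freqs.append(round(f, 3))
        frames_per_cycle += 1
    return freqs

def is_frame_locked(freq_hz, refresh_hz):
    """True when the refresh rate is an integer multiple of the flicker rate."""
    return (refresh_hz / freq_hz).is_integer()

available = frame_locked_frequencies(60, 6.0, 12.0)
led_set = [7.0, 8.0, 9.0, 10.0]  # frequencies used by the LED stimulator [20]
renderable = [f for f in led_set if is_frame_locked(f, 60)]

print("frame-locked options on a 60 Hz display:", available)
print("LED frequencies that are frame-locked at 60 Hz:", renderable)
```

Under this assumption a 60 Hz display offers only six frame-locked frequencies between 6 and 12 Hz, and of the 7, 8, 9, and 10 Hz set only 10 Hz fits. A microcontroller-driven LED is not tied to a frame clock in this way.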

- Hardware Design: The stimulator comprises an array of eight LEDs. Four large green Chip-on-Board (COB) LEDs are used for SSVEP elicitation, flickering at precise frequencies (7, 8, 9, and 10 Hz). Four high-power red LEDs are concentrically positioned to provide the P300-evoking flashes [20].

- Control System: A microcontroller (e.g., Teensy) ensures precise temporal control over the flickering and flashing sequences, minimizing frequency deviation (reported errors between 0.15% and 0.20%) [20].

- Feature Extraction and Classification:

  - SSVEP Detection: Power Spectral Density (PSD) analysis or Fast Fourier Transform (FFT) is used to identify the frequency component with the maximal amplitude.

  - P300 Detection: Time-domain analysis is performed to detect the characteristic positive peak around 300 ms post-stimulus.

  - Intent Recognition: Directional control is determined by the SSVEP frequency with the highest amplitude, while the presence of a P300 potential provides secondary verification, reducing false positives [20].
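
The intent recognition rule above can be sketched as a gated decision. This is an illustration rather than the implementation in [20]: the mapping of frequencies to directions, the function name, and the example amplitudes are hypothetical.

```python
# Hypothetical mapping of the four SSVEP frequencies (Hz) to directions.
COMMANDS = {7: "forward", 8: "left", 9: "right", 10: "backward"}

def recognise_intent(ssvep_amplitudes, p300_detected, commands=COMMANDS):
    """Return a command only when the P300 confirms the strongest SSVEP target.

    ssvep_amplitudes: {frequency_hz: spectral amplitude}
    p300_detected:    {frequency_hz: True if a P300 followed that target's flash}
    """
    best = max(ssvep_amplitudes, key=ssvep_amplitudes.get)
    if p300_detected.get(best, False):
        return commands[best]
    return None  # withhold the command instead of risking a false positive

amplitudes = {7: 0.8, 8: 2.4, 9: 0.9, 10: 0.7}
confirmed = recognise_intent(amplitudes, {8: True})
unconfirmed = recognise_intent(amplitudes, {9: True})
print("confirmed:", confirmed, "| unconfirmed:", unconfirmed)
```

Returning no command when the two signals disagree trades some speed for fewer unintended actions, which matches the stated goal of reducing false positives.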

Shape-Changing Hybrid Paradigm

To mitigate the interference caused by color-changing stimuli in traditional "flash and flicker" paradigms, a shape-changing hybrid paradigm has been developed [33].

- Stimulus Design: Instead of changing color to elicit the P300, the target stimuli change their shape. This method aims to decrease the degradation of SSVEP strength that can occur with color changes, which disrupt the stable visual pattern required for strong SSVEP responses [33].

- Experimental Comparison: Studies comparing this new paradigm to the normal color-changing hybrid paradigm showed a nearly 20% increase in SSVEP classification accuracy, while maintaining P300 classification accuracy at 100% [33].

- Conclusion: This approach demonstrates that careful design of the stimulus property used to evoke the P300 (shape instead of color) can significantly enhance the overall hybrid BCI performance by minimizing interference between the two evoked potentials.

The Scientist's Toolkit: Essential Research Reagents and Materials

Implementing a hybrid P300-SSVEP BCI requires a suite of specialized hardware and software components. The table below details key materials and their functions.

Table 2: Essential Research Materials for Hybrid P300-SSVEP BCI Research

| Item Name | Function/Description | Application in Research |

|---|---|---|

| Multi-channel EEG System (e.g., BioSemi, g.USBamp) | Records electrical brain activity from the scalp with high temporal resolution. | Primary data acquisition for both P300 (parietal) and SSVEP (occipital) signals [6] [35] [36]. |

| Visual Stimulator (LCD Monitor or Custom LED Array) | Presents the visual paradigm to the user (e.g., flickering stimuli). | LCDs are common but limited by refresh rates. Custom LED arrays offer precise frequency control and stronger SSVEP responses [20]. |

| Stimulation Control Software (e.g., Psychtoolbox) | Provides precise timing control for visual stimulus presentation. | Critical for generating accurate flicker frequencies and random flash sequences to reliably evoke SSVEP and P300 [36]. |

| Signal Processing & Classification Algorithms (SVM, TRCA, CCA, Adaptive LDA) | Extracts and classifies features from noisy EEG data. | SVM for P300 detection; TRCA/CCA for SSVEP detection; Adaptive classifiers handle non-stationary EEG [6] [34]. |

| Electrode Caps (with Ag/AgCl electrodes) | Holds EEG electrodes in standardized positions (10-20/10-10 system). | Ensures consistent and reproducible signal acquisition across subjects and sessions [37]. |

Advanced Classification and Future Directions

Handling Non-Stationarity with Adaptive Classification

A significant challenge in BCI is the non-stationary nature of EEG signals, which can degrade classifier performance over time. An adaptive version of the Linear Discriminant Analysis (LDA) classifier has been proposed specifically for hybrid SSVEP+P300 BCIs [34].

- Method: This classifier continuously updates its parameters—specifically the covariance matrix and mean values for each class—based on incoming EEG signals. It prioritizes recent signal data over older history, allowing it to adapt to changes in the user's brain responses [34].

- Performance: This adaptive LDA achieved an estimated classification accuracy of 97.4%, outperforming both pooled mean LDA and conventional multiclass LDA classifiers. This high accuracy is crucial for neurorestorative applications where reliable feedback is essential [34].
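The update rule described above can be illustrated with a minimal sketch. It assumes an exponential forgetting factor as the way recent data are prioritized, a covariance matrix shared across classes, and equal class priors; the class name `AdaptiveLDA` and the parameter `forget` are hypothetical and are not taken from [34].

```python
import numpy as np


class AdaptiveLDA:
    """LDA whose class means and shared covariance track recent trials."""

    def __init__(self, n_features, n_classes, forget=0.05):
        # `forget` is an assumed update rate: larger values adapt faster
        # but make the estimates noisier.
        self.forget = forget
        self.means = np.zeros((n_classes, n_features))
        self.cov = np.eye(n_features)

    def update(self, x, label):
        """Exponentially weighted update from one labeled feature vector."""
        x = np.asarray(x, dtype=float)
        self.means[label] += self.forget * (x - self.means[label])
        d = x - self.means[label]
        self.cov = (1.0 - self.forget) * self.cov + self.forget * np.outer(d, d)

    def predict(self, x):
        """Return the class with the largest linear discriminant score."""
        x = np.asarray(x, dtype=float)
        inv = np.linalg.pinv(self.cov)
        scores = [m @ inv @ x - 0.5 * (m @ inv @ m) for m in self.means]
        return int(np.argmax(scores))
```

Because every update discounts older trials by a factor of `1 - forget`, a slow drift in the class means is followed automatically, at the cost of higher variance than a classifier fitted once on all calibration data.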

Figure 2: Adaptive Classification Workflow. This diagram shows the continuous feedback loop where the classifier model updates its parameters based on the incoming EEG data stream to maintain high accuracy over time.

Emerging Frontiers: Omitted Stimulus Potentials and Sequential Coding

Beyond the classic P300, researchers are exploring other time-domain features like the Omitted Stimulus Potential (OSP), which is elicited when an expected stimulus in a regular train is occasionally omitted [36] [37].

- Hybrid SSVEP-OSP Paradigm: This novel approach uses repetitive visual stimuli with randomly interspersed "missing" flickers. The steady-state flicker evokes the SSVEP, while the omission event evokes the OSP, combining frequency and time-domain features within a single, unified stimulus [36].

- Multiple Time-Frequencies Sequential Coding (MTFSC): This advanced strategy further develops the hybrid concept by combining multiple OSPs and Steady-State Motion VEPs (SSMVEP). It aims to more efficiently use both time and frequency information to expand the number of BCI targets and improve performance, with one study reporting 89.04% accuracy and 36.37 bits/min ITR for a nine-target stimulator [37].

The rationale for combining P300 and SSVEP in a hybrid BCI rests on their complementary neural origins and signal characteristics. The studies reviewed above report higher classification accuracy, higher information transfer rates, and greater robustness for hybrid systems than for the corresponding single-paradigm approaches. Stimulus paradigms such as FERC, LED-based stimulation hardware, and adaptive classification algorithms each address specific limitations of traditional BCIs. Emerging work on OSPs and sequential coding strategies suggests that hybrid BCIs could offer further gains in communication bandwidth and reliability for both clinical and research applications.

Advanced Signal Processing and Emerging Biomedical Applications

In brain-computer interface (BCI) research, the stimulus paradigm (the set of external stimuli or mental tasks designed to elicit specific brain responses) is a fundamental component that directly affects system performance [38]. For evoked potentials such as P300 and steady-state visual evoked potentials (SSVEP), paradigm design determines how effectively a user's intentions are "written" into measurable brain signals [38]. This section examines three advanced approaches for P300 and SSVEP BCI control: the Frequency Enhanced Row and Column (FERC) paradigm, Region-Based paradigms, and Checkerboard paradigms.

The P300 wave is an event-related potential component elicited during decision-making, typically appearing as a positive deflection in voltage approximately 250-500 ms after a rare "target" stimulus in an oddball paradigm [39] [11]. SSVEPs are oscillatory brain responses phase-locked to periodic visual stimuli, typically occurring at the same frequency as the flickering stimulus and its harmonics [40]. Effective paradigm design must optimize the elicitation of these signals while considering user comfort, performance, and practical implementation constraints.

Fundamental Neurophysiological Principles

P300 and SSVEP Mechanisms

The P300 component is considered an endogenous potential because its occurrence depends not on the physical attributes of a stimulus but on the person's reaction to it, reflecting processes involved in stimulus evaluation or categorization [39]. It is usually recorded most strongly over the parietal lobe and is thought to have multiple intracerebral generators, possibly including the hippocampus and various association areas of the neocortex [39] [11].

SSVEPs reflect the entrained activity of visual cortical populations: when the retina is excited by a visual stimulus flickering at a constant rate (typically 3.5-75 Hz), the brain generates oscillatory activity at the same frequency and its harmonics [40]. Amplitude and phase depend on stimulus frequency, contrast, and duty cycle [40]. The responses show resonance-like enhancement near 10, 20, and 40 Hz and are strongest over occipital electrodes [40].
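Since an SSVEP appears as a narrow spectral peak at the stimulus frequency and its harmonics, a common way to quantify it is to compare the power in the target frequency bin with the power in neighboring bins. The sketch below is one simple way to compute such a ratio on a single channel; the function name `ssvep_snr` and the choice of four neighboring bins on each side are illustrative assumptions, not a procedure taken from the cited studies.

```python
import numpy as np


def ssvep_snr(signal, fs, freq, n_neighbors=4):
    """Power at `freq` divided by the mean power of neighboring FFT bins."""
    signal = np.asarray(signal, dtype=float)
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    k = int(np.argmin(np.abs(freqs - freq)))       # bin closest to `freq`
    lo = max(k - n_neighbors, 1)                   # skip the DC bin
    hi = min(k + n_neighbors, power.size - 1)
    neighbors = np.r_[power[lo:k], power[k + 1:hi + 1]]
    return power[k] / neighbors.mean()


# Synthetic example: a 12 Hz response with a weaker 24 Hz harmonic in noise.
fs, seconds = 250, 4
t = np.arange(fs * seconds) / fs
rng = np.random.default_rng(0)
eeg = (np.sin(2 * np.pi * 12 * t)
       + 0.5 * np.sin(2 * np.pi * 24 * t)
       + rng.normal(0.0, 1.0, t.size))
snr_fundamental = ssvep_snr(eeg, fs, 12)
snr_harmonic = ssvep_snr(eeg, fs, 24)
snr_control = ssvep_snr(eeg, fs, 17)   # no stimulus at this frequency
```

The segment length is chosen so that 12 Hz and 24 Hz fall exactly on FFT bins; with other lengths, spectral leakage spreads the peak into the neighboring bins and lowers the ratio.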

Design Principles for BCI Paradigms

Effective BCI paradigm design follows several key principles. The central nervous system signals evoked by paradigm-specific tasks should have good separability for reliable classification [38]. Paradigm tasks must be easy and safe for users to perform, providing a good experience and comfort level [38]. Additionally, tasks specified by the paradigm should be consistent with tasks controlled by the BCI, avoiding non-transparent mappings that may affect performance [38].

FERC (Frequency Enhanced Row and Column) Paradigm

Paradigm Architecture and Implementation

The FERC paradigm is a hybrid BCI approach that incorporates frequency coding into the traditional row-column (RC) paradigm to simultaneously evoke P300 and SSVEP signals [41]. In this design, a 6×6 matrix layout is used, and each row or column is assigned its own flickering frequency between 6.0 and 11.5 Hz at 0.5 Hz intervals, which yields twelve distinct frequencies for the six rows and six columns [41]. The row/column flashes occur in a pseudorandom sequence, maintaining the "oddball" presentation principle necessary for P300 elicitation while adding frequency tagging for SSVEP generation.

The paradigm uses a white-black flicker pattern with distinct frequencies assigned to different rows and columns. During operation, when a user focuses on a target character, the specific row and column containing that character flash at their respective frequencies, simultaneously evoking both P300 potentials (due to the rare flashing event) and SSVEP responses (due to the frequency-specific flickering) [41].
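The frequency tagging and flash ordering can be sketched in a few lines. The frequency range and step come from the description above, but which frequency belongs to which row or column is not specified there, so the sequential assignment below, like the function names `ferc_frequencies` and `flash_sequence`, is an assumption made for illustration.

```python
import random


def ferc_frequencies(start_hz=6.0, step_hz=0.5, n_rows=6, n_cols=6):
    """Give each row and column of the matrix its own flicker frequency."""
    labels = [f"row{r}" for r in range(n_rows)]
    labels += [f"col{c}" for c in range(n_cols)]
    # Assumed mapping: rows take the lowest frequencies, columns the rest.
    return {label: start_hz + i * step_hz for i, label in enumerate(labels)}


def flash_sequence(freq_map, seed=None):
    """One trial: every row and column flashes exactly once, shuffled."""
    order = list(freq_map)
    random.Random(seed).shuffle(order)
    return [(label, freq_map[label]) for label in order]


freq_map = ferc_frequencies()
trial = flash_sequence(freq_map, seed=42)
```

Each entry of `trial` pairs a row or column with the frequency at which it flickers while highlighted, so the target character is tagged by two frequencies (its row and its column) in addition to the two rare flashes that evoke the P300.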

Signal Processing and Classification Methods

For P300 detection, the FERC paradigm employs a combination of wavelet transformation and support vector machine (SVM) classification, which has demonstrated superior performance compared to traditional linear discriminant classifiers [41]. For SSVEP detection, an ensemble task-related component analysis (TRCA) method is used, outperforming canonical correlation analysis [41]. The detection probabilities from both approaches are fused using a weight control approach to determine the final character selection.
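The fusion step can be illustrated as follows. The exact weighting rule used in [41] is not given here, so this sketch assumes a simple convex combination of normalized scores, with six row scores followed by six column scores from each detector; `fuse_and_select` and `weight` are hypothetical names.

```python
import numpy as np


def fuse_and_select(p300_scores, ssvep_scores, weight=0.5, n_rows=6):
    """Fuse per-row/column scores and return the selected (row, column).

    Both inputs hold non-negative scores: `n_rows` row scores followed by
    the column scores. `weight` is the share given to the P300 detector.
    """
    def normalize(scores):
        scores = np.asarray(scores, dtype=float)
        rows, cols = scores[:n_rows], scores[n_rows:]
        # Normalize rows and columns separately so each part sums to 1.
        return np.concatenate([rows / rows.sum(), cols / cols.sum()])

    fused = weight * normalize(p300_scores) + (1.0 - weight) * normalize(ssvep_scores)
    row = int(np.argmax(fused[:n_rows]))
    col = int(np.argmax(fused[n_rows:]))
    return row, col


# The detectors disagree on the row; the more confident one wins after fusion.
p300 = [0.1, 0.1, 0.4, 0.2, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.5, 0.1]
ssvep = [0.05, 0.05, 0.1, 0.7, 0.05, 0.05, 0.1, 0.1, 0.1, 0.1, 0.5, 0.1]
selected = fuse_and_select(p300, ssvep, weight=0.5)
```

In practice the weight would be tuned on calibration data, for example per subject, since the relative reliability of the P300 and SSVEP detectors varies between users.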

Table 1: Performance Comparison of FERC Paradigm Components

| Component | Method | Performance | Comparison with Alternatives |
|---|---|---|---|
| P300 Detection | Wavelet + SVM | Significant improvement over baseline | Outperformed LDA variants (61.90-72.22%) |
| SSVEP Detection | Ensemble TRCA | High accuracy | Superior to CCA method (73.33%) |
| Hybrid Fusion | Weighted control | Optimized combination | Leverages complementary strengths of both signals |

Experimental Protocol and Validation

In validation experiments, subjects were presented with the 6×6 character matrix and instructed to focus on target characters. The row and column flashes were performed in random order, with each row or column flashing once per trial. EEG data was typically recorded from multiple electrodes following standard placements. For offline analysis, the recorded data was processed using the wavelet and SVM approach for P300 detection and ensemble TRCA for SSVEP detection, with subsequent fusion of results [41].

Online tests with 10 subjects demonstrated that the implemented BCI speller achieved an accuracy of 94.29% and an information transfer rate (ITR) of 28.64 bit/min, outperforming single-modality approaches (P300-only: 75.29%; SSVEP-only: 89.13%) [41]. The offline calibration tests showed even higher accuracy of 96.86% [41].

Figure 1: FERC Paradigm Workflow - Integrating P300 and SSVEP evocation through combined random flashing and frequency tagging

Region-Based Paradigms

RSVP-Based Spellers

Region-Based paradigms, particularly those using Rapid Serial Visual Presentation (RSVP), present characters sequentially in the same spatial location, eliminating the need for gaze shifting between different screen areas. The Triple RSVP speller represents an advancement in this category, presenting three different characters simultaneously in a single trial, with each character appearing three times across different blocks to enhance ITR [42].

In this approach, the presentation interface consists of three symbol presentation areas (60×65 pixels each) arranged side by side, with the middle area offset downward by 30 pixels to facilitate simultaneous viewing [42]. Characters are presented in groups of three, and each 250 ms group presentation constitutes one trial. The presentation order follows a designed sequence in which each block distributes the 36 characters across 12 symbol groups [42].

Experimental Protocol for Triple RSVP

The experimental protocol involves subjects watching three blocks of symbol groups to identify a single target character. During this process, the target character appears three times in different symbol groups across different blocks. EEG signals corresponding to each character presentation are averaged, with the P300 component used to identify the intended character [42].
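A small sketch can make the block structure concrete. The actual presentation sequence of [42] is not reproduced here; the construction below is one hypothetical way to build three blocks of 12 triples in which no two symbols share a group more than once, so that only the target appears in all three target groups. The symbol set (A-Z plus 0-9) is also assumed, and decoding sums one classifier score per group as a simplified stand-in for the epoch averaging described above.

```python
import string

SYMBOLS = list(string.ascii_uppercase + string.digits)  # 36 symbols (assumed set)


def build_blocks(symbols, n_blocks=3):
    """Split the symbols into triples per block; no pair shares a group twice."""
    n_groups = len(symbols) // 3
    # One "lane" per presentation area; lanes are rotated against each other
    # by a different offset in every block.
    lanes = [symbols[i * n_groups:(i + 1) * n_groups] for i in range(3)]
    return [
        [tuple(lanes[i][(g + i * b) % n_groups] for i in range(3))
         for g in range(n_groups)]
        for b in range(n_blocks)
    ]


def decode_target(blocks, group_scores):
    """Sum each symbol's score over its appearances and return the best one."""
    totals = {}
    for block, scores in zip(blocks, group_scores):
        for group, score in zip(block, scores):
            for symbol in group:
                totals[symbol] = totals.get(symbol, 0.0) + score
    return max(totals, key=totals.get)


blocks = build_blocks(SYMBOLS)
# Idealized scores: 1 for every group that contains the attended symbol.
scores = [[1.0 if "Q" in group else 0.0 for group in block] for block in blocks]
decoded = decode_target(blocks, scores)
```

With 12 groups per block, three blocks, and 250 ms per group, one selection takes 36 × 0.25 s = 9 s of stimulation, which is consistent with a spelling speed of roughly 10 seconds per character.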

This paradigm achieves gaze-independence and space-independence, making it suitable for integration into mobile smart devices with limited display areas. Testing demonstrated an online average accuracy of 79.0% and ITR of 20.259 bit/min at a spelling speed of 10 seconds per character [42].
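ITR values such as the one above are commonly computed with the Wolpaw formula, which combines the number of targets, the selection accuracy, and the time per selection. The sketch below assumes that this definition applies and that the speller has 36 targets; the function name `wolpaw_itr` is illustrative.

```python
import math


def wolpaw_itr(n_targets, accuracy, seconds_per_selection):
    """Information transfer rate in bit/min under the Wolpaw definition."""
    if n_targets < 2 or not 0.0 <= accuracy <= 1.0:
        raise ValueError("need n_targets >= 2 and accuracy in [0, 1]")
    bits = math.log2(n_targets)
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits * 60.0 / seconds_per_selection


# 36 targets, 79.0% accuracy, 10 s per character.
itr_triple_rsvp = wolpaw_itr(36, 0.79, 10.0)
```

With these inputs the formula gives about 20.1 bit/min, close to the reported 20.259 bit/min. An exact match is not expected if the published value was averaged over per-subject ITRs rather than computed from the mean accuracy.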

Table 2: Region-Based Paradigm Performance Characteristics

| Parameter | Triple RSVP | Traditional RSVP | Advantages |
|---|---|---|---|
| Spatial Requirement | 90×195 pixels | Varies | Minimal space needed |
| Gaze Dependence | Independent | Independent | Suitable for users with limited eye movement |
| ITR | 20.259 bit/min | Lower than Triple RSVP | Improved through character grouping |
| Accuracy | 79.0% | Comparable | Maintained despite multiple characters |

Checkerboard Paradigms

Paradigm Structure and Visual Arrangement

The Checkerboard Paradigm (CBP), introduced by Townsend et al., represents a significant advancement over the traditional row-column paradigm by reorganizing the stimulus layout to address the adjacency-distraction problem [43]. In this approach, characters are arranged so that no two adjacent rows or columns flash sequentially, reducing classification errors caused by nearby non-target flashes eliciting P300 responses.

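The CBP displays an 8×9 matrix (72 items) overlaid on a virtual checkerboard. The items on the two checkerboard colors are assigned to two virtual 6×6 matrices, and the flash groups are formed from the rows and columns of these virtual matrices, with the rows flashing before the columns [43]. Because items that flash together come from the same checkerboard color, they are never horizontally or vertically adjacent on the display. The flashing order also reduces the "double-flash" problem, in which the same item flashes twice in rapid succession and the two P300 responses become difficult to separate.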
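The layout idea can be checked with a short sketch. It assumes that the two 6×6 matrices correspond to the two colors of a checkerboard laid over the 8×9 display and that cells are assigned to positions in each virtual matrix at random; the function name `checkerboard_flash_groups` and the exact flash order are illustrative rather than taken from [43].

```python
import random


def checkerboard_flash_groups(n_rows=8, n_cols=9, side=6, seed=None):
    """Return flash groups built from two virtual matrices of a checkerboard."""
    rng = random.Random(seed)
    cells = [(r, c) for r in range(n_rows) for c in range(n_cols)]
    virtual = []
    for color in (0, 1):
        same = [cell for cell in cells if (cell[0] + cell[1]) % 2 == color]
        assert len(same) == side * side, "layout must split into two squares"
        rng.shuffle(same)  # random placement inside the virtual matrix
        virtual.append([same[i * side:(i + 1) * side] for i in range(side)])
    groups = []
    for matrix in virtual:            # virtual rows first ...
        groups.extend(matrix)
    for matrix in virtual:            # ... then virtual columns
        groups.extend([[row[j] for row in matrix] for j in range(side)])
    return groups


def has_adjacent_pair(group):
    """True if two cells in the group touch horizontally or vertically."""
    return any(abs(r1 - r2) + abs(c1 - c2) == 1
               for r1, c1 in group for r2, c2 in group)


groups = checkerboard_flash_groups(seed=1)
```

Each displayed cell belongs to one virtual row and one virtual column, so the target is still identified by the intersection of two flash groups, as in the row-column speller, while `has_adjacent_pair` returns `False` for every group.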

Color and Pattern Optimization

Research has explored various color combinations to enhance performance in checkerboard paradigms. Studies investigating red face with white rectangle (RFW), red face with blue rectangle (RFB), and red face with red rectangle (RFR) patterns found that RFW achieved the highest average online accuracy at 96.94%, significantly outperforming RFR (93.61%) and RFB (92.22%) patterns [43].

Additional research has examined the effects of different colors across varying frequency ranges. One comprehensive study compared white, red, and green stimuli at low (5 Hz), medium (12 Hz), and high (30 Hz) frequencies across 42 subjects [44]. Results indicated that middle frequencies (12 Hz) generated the best signal-to-noise ratio (SNR), followed by low and then high frequencies [44]. While red stimuli performed well at middle frequencies, white generated comparable SNR without potential photosafety concerns associated with red flicker [44].

Figure 2: Checkerboard Paradigm Optimization - Addressing key limitations of row-column paradigms through layout and sequence design

Comparative Analysis and Performance Metrics

Performance Across Paradigms

Table 3: Comprehensive Performance Comparison of BCI Paradigms

| Paradigm | Accuracy (%) | ITR (bit/min) | User Comfort | Key Advantages |
|---|---|---|---|---|
| FERC | 94.29 (online) | 28.64 | Moderate | Hybrid approach, high ITR |
| Triple RSVP | 79.0 (online) | 20.26 | High | Gaze-independent, compact |
| Checkerboard | Up to 96.94 | Varies | Moderate | Reduced adjacency errors |
| Traditional RC | 75.29 (P300 only) | Lower than hybrid | Moderate | Established baseline |

Stimulus Parameter Optimization