Optimizing Variational Mode Decomposition with Genetic Algorithms: A Guide for Advanced Drug Discovery and Biomedical Data Analysis


Abstract

This article provides a comprehensive exploration of Variational Mode Decomposition (VMD) optimized by Genetic Algorithms (GA) for researchers and professionals in drug development. It covers foundational principles of VMD and its sensitivity to parameter selection, detailing how GA automates the optimization of key parameters like mode number and penalty factor to enhance signal processing. The content explores methodological implementations and applications in spectral analysis and fault diagnosis, with transferable insights for biomedical data. It addresses troubleshooting common optimization challenges and presents validation strategies through comparative analysis with other algorithms. This guide synthesizes key performance metrics and outlines future directions for applying GA-VMD in clinical research and pharmaceutical development.

Understanding VMD and Genetic Algorithms: Core Principles for Biomedical Signal Processing

The Critical Challenge of VMD Parameter Selection in Complex Data Analysis

Variational Mode Decomposition (VMD) has emerged as a powerful signal processing technique for decomposing complex, non-stationary signals into a discrete number of band-limited intrinsic mode functions (IMFs). Unlike empirical mode decomposition (EMD), VMD employs a solid mathematical foundation based on variational principles, making it highly effective for applications ranging from fault diagnosis in rotating machinery to biomedical signal processing [1] [2]. However, the performance and accuracy of VMD are profoundly influenced by the appropriate selection of its key parameters, namely the mode number (K) and the penalty factor (α, also known as the bandwidth control parameter). The decomposition result of VMD largely depends on the choice of penalty parameter α and decomposition number K, while other parameters are typically set based on experience [3]. This parameter sensitivity presents a critical challenge that significantly impacts the method's reliability and effectiveness across diverse application domains.

The fundamental VMD algorithm operates by solving a constrained variational problem that aims to minimize the sum of bandwidths of all modes while ensuring their collective reconstruction of the original signal [1]. This process involves three key parameters that must be specified in advance: the mode number K, initial center frequencies, and the quadratic penalty term α. Research has demonstrated that the values of other parameters such as noise-tolerance τ and convergence criterion tolerance c exert minimal influence on decomposition performance, with default settings (τ = 0 and c = 1 × 10⁻⁶) generally proving effective for vibration signals [1]. Consequently, the selection of K and α represents the most significant challenge for researchers and practitioners implementing VMD in complex data analysis scenarios.

Critical Parameters and Their Impact on VMD Performance

Mode Number (K) Selection

The mode number K dictates how many intrinsic mode functions the input signal will be decomposed into, making it one of the most crucial parameters in VMD. Selecting an inappropriate K value leads to two primary problems: under-decomposition or over-decomposition. When K is set too low, the algorithm fails to separate all relevant components, resulting in mode mixing where multiple distinct signal elements are combined within a single IMF. Conversely, when K is set too high, the decomposition produces redundant or spurious components that lack physical meaning and may obscure genuine signal features [3] [2]. This challenge is particularly acute in real-world applications where the optimal number of intrinsic components is unknown a priori, requiring sophisticated approaches to determine the appropriate decomposition level.

The impact of incorrect K selection extends beyond theoretical concerns to practical consequences across various domains. In fault diagnosis for rolling element bearings, an improper K value can prevent the accurate extraction of weak fault information from vibration signals, allowing critical failure precursors to remain obscured by noise and interference [1]. Similarly, in operational modal analysis of civil structures, an incorrect mode number can lead to mode mixing, "greatly impairing the quality of decomposition" and compromising the identification of closely spaced structural modes [2]. These examples underscore the critical importance of appropriate K selection for ensuring the reliability of VMD-based analysis in safety-critical applications.

Penalty Factor (α) and Initial Center Frequencies

The penalty factor α governs the bandwidth constraint of the extracted IMFs, controlling the trade-off between mode fidelity and compactness in the frequency domain. A higher α value results in narrower bandwidth modes with reduced overlap, while a lower α permits broader bandwidth components [3]. This parameter significantly influences the separation quality between adjacent modes, particularly when dealing with signals containing components with closely spaced center frequencies. Research has shown that for vibration signals, a penalty term α = 2000 has proven effective in many scenarios, though this setting may not be optimal for all applications [1].

Initial center frequencies represent another critical parameter set that strongly influences VMD decomposition performance and diagnostic reliability [1]. Proper initialization of these frequencies facilitates faster convergence and helps avoid local minima during the optimization process. Despite their importance, the influence of initial center frequencies has been largely overlooked in many VMD implementations, with most approaches focusing exclusively on optimizing K and α [1]. This oversight can lead to suboptimal decomposition results, particularly when processing signals with complex spectral characteristics or significant noise contamination.

Table 1: Key VMD Parameters and Their Impact on Decomposition Performance

| Parameter | Role in VMD | Impact of Improper Selection | Typical Optimization Approaches |
|---|---|---|---|
| Mode Number (K) | Determines number of extracted IMFs | Under-decomposition (mode mixing) or over-decomposition (redundant components) | Optimization algorithms, scale space representation, indicator-based selection |
| Penalty Factor (α) | Controls bandwidth of extracted IMFs | Poor separation of closely spaced components or excessive smoothing of transient features | Multi-objective optimization, empirical testing, population-based heuristics |
| Initial Center Frequencies | Starting points for frequency domain optimization | Slow convergence, suboptimal decomposition, mode alignment issues | Scale space peak detection, prior knowledge, recursive initialization |

Optimization Approaches for VMD Parameters

Computational Intelligence and Genetic Algorithms

Computational intelligence approaches, particularly genetic algorithms (GAs), have demonstrated significant promise in addressing the VMD parameter optimization challenge. These evolutionary algorithms excel at solving complex, multi-objective optimization problems where traditional gradient-based methods struggle due to non-convex search spaces and multiple local optima [4] [3]. The multi-objective multi-island genetic algorithm (MIGA) represents one advanced implementation that has been successfully applied to optimize VMD parameters for rolling bearing fault feature extraction [3]. This approach leverages multiple parallel evolving populations (islands) with periodic migration to maintain diversity while exploring the parameter space more effectively than single-population alternatives.

The effectiveness of GA-based VMD optimization hinges on appropriate fitness function selection. Envelope entropy (Ee) and Renyi entropy (Re) have been employed as complementary fitness measures, with Ee reflecting signal sparsity and Re characterizing energy aggregation degree in the time-frequency distribution [3]. This multi-objective approach enables simultaneous optimization for both component separation quality and feature concentration, addressing dual aspects of decomposition performance. Similarly, other implementations have utilized kurtosis-based indices, with the grasshopper optimization algorithm (GOA) employed to maximize kurtosis weighted by correlation coefficient for vibration signal analysis and machinery fault diagnosis [2]. These approaches demonstrate how domain-specific knowledge can be incorporated into the optimization process to enhance VMD performance for targeted applications.
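As a minimal sketch of the envelope entropy measure mentioned above, the snippet below computes the Shannon entropy of a signal's normalized Hilbert envelope. It assumes the common definition (envelope normalized to sum to one, natural logarithm); the cited work may use a different normalization. The function names and the `eps` guard are illustrative choices, not taken from the source.

```python
import numpy as np

def analytic_signal(x):
    """FFT-based analytic signal (discrete Hilbert transform construction)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    spectrum = np.fft.fft(x)
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * weights)

def envelope_entropy(x, eps=1e-12):
    """Shannon entropy of the normalized envelope; lower values mean a sparser envelope."""
    envelope = np.abs(analytic_signal(x))
    p = envelope / (envelope.sum() + eps)
    return float(-np.sum(p * np.log(p + eps)))

# Synthetic comparison: periodic impacts versus a constant-envelope tone.
n = 1024
t = np.arange(n)
impulsive = np.zeros(n)
impulsive[::128] = 1.0                      # sparse, fault-like impacts
tone = np.sin(2 * np.pi * 52 * t / n)       # whole number of cycles, flat envelope

ee_impulsive = envelope_entropy(impulsive)
ee_tone = envelope_entropy(tone)
```

The flat-envelope tone reaches the maximum possible value, `log(n)`, while the impulsive signal scores lower, which is why minimizing this quantity tends to favor IMFs that isolate impact-like features.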

Adaptive and De-Mixing VMD Approaches

Recent research has introduced innovative VMD variants that address parameter selection challenges through algorithmic modifications rather than external optimization. The Improved VMD (IVMD) method employs scale space representation to adaptively determine both the number of modes and their initial center frequencies [1]. This approach constructs a scale space by computing the inner product between the signal's Fourier spectrum and a Gaussian function, then identifies mode parameters through peak detection in this transformed domain. By leveraging the scale space representation, IVMD achieves more accurate and stable decomposition while reducing reliance on manually set parameters [1].
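The idea of reading K and the initial center frequencies off a smoothed spectrum can be sketched as follows. This is a deliberately simplified, single-scale version: the magnitude spectrum is smoothed with one Gaussian kernel and local maxima above a relative threshold are kept, whereas the scale space method described above examines a range of Gaussian widths. The function name and the parameters `sigma_bins` and `rel_height` are hypothetical.

```python
import numpy as np

def spectrum_peaks(x, fs, sigma_bins=8.0, rel_height=0.1):
    """Estimate candidate center frequencies from a Gaussian-smoothed spectrum."""
    x = np.asarray(x, dtype=float)
    mag = np.abs(np.fft.rfft(x - x.mean()))
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)

    # Gaussian kernel truncated at +/- 4 sigma and normalized to unit sum
    half = int(4 * sigma_bins)
    k = np.arange(-half, half + 1)
    kernel = np.exp(-0.5 * (k / sigma_bins) ** 2)
    kernel /= kernel.sum()
    smooth = np.convolve(mag, kernel, mode="same")

    # local maxima of the smoothed spectrum that exceed the relative threshold
    is_peak = (smooth[1:-1] > smooth[:-2]) & (smooth[1:-1] >= smooth[2:])
    idx = np.where(is_peak)[0] + 1
    idx = idx[smooth[idx] >= rel_height * smooth.max()]
    return freqs[idx]

# Synthetic three-tone signal
fs = 1000.0
t = np.arange(2000) / fs
x = (np.sin(2 * np.pi * 50 * t)
     + 0.8 * np.sin(2 * np.pi * 120 * t)
     + 0.6 * np.sin(2 * np.pi * 300 * t))

centers = spectrum_peaks(x, fs)   # candidate initial center frequencies in Hz
K = len(centers)                  # candidate mode number
```

On real data the smoothing width matters: too narrow and noise peaks inflate K, too wide and closely spaced components merge, which is the motivation for examining several scales rather than one.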

The de-mixing VMD (D-VMD) framework represents another significant advancement, specifically designed to alleviate mode mixing through modifications to the core variational formulation [2]. This approach introduces an ensemble correlation coefficient as an additional Lagrangian multiplier term to enhance constraints on mode separation, particularly beneficial for signals with closely spaced modes. The multivariate extension, D-MVMD, applies the same principles to multi-channel signals, maintaining coordinated decomposition across channels while preventing mode mixing [2]. These methodological innovations complement parameter optimization strategies by embedding stronger separation constraints directly into the decomposition process, reducing sensitivity to initial parameter selection.

Table 2: VMD Optimization Methods and Their Applications

| Optimization Method | Key Mechanism | Advantages | Representative Applications |
|---|---|---|---|
| Multi-Island Genetic Algorithm (MIGA) | Parallel evolving populations with migration | Enhanced search diversity, avoidance of local optima | Bearing fault feature extraction [3] |
| Scale Space Representation | Gaussian filtering of Fourier spectrum with peak detection | Fully adaptive parameter determination, no optimization required | Locomotive bearing fault diagnosis [1] |
| Grasshopper Optimization Algorithm (GOA) | Swarm intelligence mimicking grasshopper behavior | Efficient exploration of high-dimensional parameter spaces | Vibration signal analysis [2] |
| De-Mixing VMD (D-VMD) | Additional Lagrangian multiplier for mode separation | Intrinsic mitigation of mode mixing, especially for close modes | Operational modal analysis [2] |

Experimental Protocols for VMD Parameter Optimization

Protocol 1: Multi-Objective Genetic Algorithm Optimization

This protocol outlines the procedure for optimizing VMD parameters using a multi-objective genetic algorithm approach, suitable for applications where the optimal parameter values are unknown.

Materials and Reagents:

- Signal data set (vibration, biomedical, or other non-stationary signals)

- Computing environment with MATLAB, Python, or similar analytical software

- Multi-objective optimization toolbox or custom genetic algorithm implementation

- VMD algorithm implementation with adjustable parameters

Procedure:

- Signal Preparation: Acquire and preprocess the target signals. For vibration signals, apply appropriate filtering to remove extreme outliers while preserving relevant frequency content. For biomedical signals like impedance cardiography (ICG), address both stationary and non-stationary noise sources including power-line interference, motion artifacts, and baseline wander [5].

- Fitness Function Definition: Establish multiple fitness criteria based on decomposition objectives. Implement envelope entropy (Ee) to measure sparsity and Renyi entropy (Re) to quantify energy aggregation in time-frequency distribution [3]. Alternatively, use kurtosis weighted by correlation coefficient for fault detection applications [2].

- Algorithm Configuration: Initialize the multi-island genetic algorithm with appropriate population sizes, migration intervals, and termination criteria. Define the search ranges for parameters K (typically 3-10 for most applications) and α (commonly 100-3000 based on signal characteristics) [3].

- Optimization Execution: Execute the genetic algorithm to evolve parameter combinations across multiple generations. Employ elite preservation strategies to maintain high-performing candidates throughout the evolutionary process.

- Validation and Selection: Evaluate optimized parameters on validation datasets separate from training data. Select the final parameter set based on Pareto optimality considering multiple fitness objectives.

- Application: Implement VMD with optimized parameters for the target application, such as fault feature extraction or signal denoising.
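The Pareto-based selection in the validation step can be illustrated with a small non-dominated filter over two minimized objectives. The candidate list below uses made-up placeholder scores purely to show the mechanics; the keys `ee` and `re` stand for envelope entropy and Renyi entropy and are naming assumptions.

```python
def pareto_front(candidates):
    """Return the candidates not dominated by any other (both objectives minimized)."""
    front = []
    for i, a in enumerate(candidates):
        dominated = False
        for j, b in enumerate(candidates):
            if i == j:
                continue
            no_worse = b["ee"] <= a["ee"] and b["re"] <= a["re"]
            strictly_better = b["ee"] < a["ee"] or b["re"] < a["re"]
            if no_worse and strictly_better:
                dominated = True
                break
        if not dominated:
            front.append(a)
    return front

# Placeholder scores for illustration only (not measured results)
candidates = [
    {"K": 4, "alpha": 1500, "ee": 6.10, "re": 3.20},
    {"K": 5, "alpha": 2000, "ee": 5.90, "re": 3.40},
    {"K": 6, "alpha": 2500, "ee": 6.00, "re": 3.50},  # worse than K=5 on both objectives
    {"K": 3, "alpha": 800,  "ee": 6.30, "re": 3.10},
]

front = pareto_front(candidates)
front_K = [c["K"] for c in front]
```

Choosing one parameter set from the resulting front still needs a tie-breaking rule, for example downstream validation accuracy or a preference for the smaller K.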

Protocol 2: Scale Space-Based Adaptive VMD

This protocol describes the procedure for implementing the Improved VMD (IVMD) method using scale space representation for fully adaptive parameter determination.

Materials and Reagents:

- Raw signal data with potential fault components or features of interest

- Computational resources for Fourier analysis and peak detection algorithms

- Scale space representation implementation

- Multipoint kurtosis (MKurt) calculation capability

Procedure:

- Signal Acquisition: Collect the target signal using appropriate sensors and acquisition systems. For rolling bearing analysis, use accelerometers with sufficient sampling frequency to capture relevant impact frequencies [1].

- Scale Space Construction: Compute the Fourier spectrum of the input signal. Construct the scale space representation by calculating the inner product between the signal's Fourier spectrum and a Gaussian function with varying widths [1].

- Parameter Identification: Detect local maxima within the scale space representation to identify both the mode number K and initial center frequencies. This step replaces manual parameter specification with data-driven determination.

- VMD Decomposition: Execute the VMD algorithm using the adaptively determined parameters. The Wiener filtering approach in the Fourier domain is applied iteratively to extract the mode components [1].

- Mode Selection and Merging: Calculate multipoint kurtosis (MKurt) values for each decomposed mode. Identify fault-relevant components based on MKurt criteria and merge them to enhance diagnostic clarity while suppressing redundancy [1].

- Feature Analysis: Perform subsequent analysis (e.g., envelope spectrum analysis for bearing faults) on the reconstructed signal containing merged fault components.

Protocol 3: Real-Time VMD for Sensor Signal Processing

This protocol outlines the implementation of Parameter-Optimized Recursive Sliding VMD (PO-RSVMD) for applications requiring real-time signal processing, such as industrial sensor systems.

Materials and Reagents:

- Streamed sensor data (e.g., from inertial measurement units)

- Computing platform with sufficient processing capabilities for real-time operation

- Recursive sliding window implementation

- Performance monitoring for iteration time and RMSE tracking

Procedure:

- System Initialization: Configure the recursive sliding window parameters appropriate for the target application. For industrial polishing motor monitoring, establish baseline signal characteristics during normal operation [6].

- Termination Condition Setting: Implement an iterative termination condition based on modal component error mutation judgment to prevent over-decomposition and reduce computational load [6].

- Rate Learning Factor Integration: Incorporate a rate learning factor to automatically adjust the initial center frequency of the current window. This factor combines the current center frequency with the previous window's center frequency to minimize errors [6].

- Real-Time Processing: Apply the PO-RSVMD algorithm to incoming data streams. For IMU signals in industrial polishing, target angular velocity measurements affected by strong interference components [6].

- Performance Monitoring: Track iteration time, number of iterations, and root mean square error (RMSE) during operation. Under typical conditions, PO-RSVMD achieves iteration time reduction of at least 53% compared to standard VMD and RSVMD [6].

- Output Extraction: Utilize the denoised signal components for subsequent control decisions or feature extraction, maintaining the real-time operation constraints of the application.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagents and Computational Tools for VMD Optimization

| Tool/Reagent | Function/Purpose | Application Context | Implementation Notes |
|---|---|---|---|
| Multi-Island Genetic Algorithm (MIGA) | Parallel optimization of VMD parameters | Bearing fault diagnosis, feature extraction | Uses envelope entropy and Renyi entropy as fitness functions [3] |
| Scale Space Representation | Adaptive determination of mode number and center frequencies | Fault diagnosis in rolling bearings | Based on Fourier spectrum and Gaussian filtering [1] |
| Multipoint Kurtosis (MKurt) | Identification of fault-relevant IMF components | Machinery condition monitoring | Guides selection and merging of modes after decomposition [1] |
| Grasshopper Optimization Algorithm (GOA) | Swarm intelligence-based parameter optimization | Vibration signal analysis | Maximizes kurtosis weighted by correlation coefficient [2] |
| Recursive Sliding VMD (RSVMD) | Real-time signal processing with sliding windows | Industrial sensor signal denoising | Incorporates prior knowledge from previous decompositions [6] |
| De-Mixing VMD (D-VMD) | Enhanced mode separation through modified variational formulation | Operational modal analysis with close modes | Uses ensemble correlation coefficient to reduce mode mixing [2] |

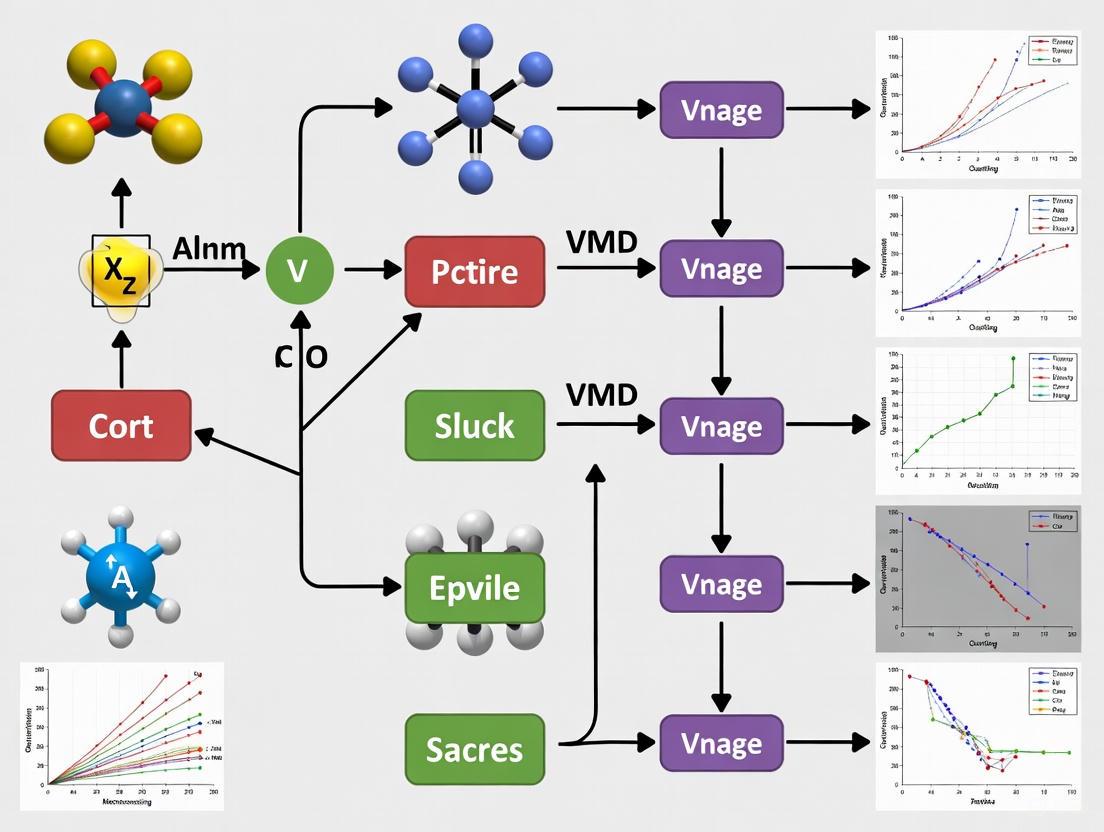

Workflow Diagrams for VMD Optimization

Mathematical Formulation

Variational Mode Decomposition (VMD) is a fully non-recursive, adaptive signal decomposition technique that intrinsically models signals as an ensemble of amplitude-modulated and frequency-modulated components, known as Intrinsic Mode Functions (IMFs). Its core strength lies in formulating the decomposition process as a constrained variational problem in which all modes are estimated concurrently. Provided its parameters are well chosen, this makes it far less prone to the mode mixing prevalent in empirical methods [7].

The fundamental goal of VMD is to decompose a real-valued input signal ( x(t) ) into a predefined number ( K ) of discrete modes ( u_k(t) ), each compact around a respective center pulsation ( \omega_k ). This is achieved by constructing and solving the following constrained variational problem [8]:

\[ \min_{\{u_k\},\{\omega_k\}} \left\{ \sum_{k=1}^{K} \left\| \partial_t \left[ \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) \right] e^{-j\omega_k t} \right\|_2^2 \right\} \]

\[ \text{subject to} \quad \sum_{k=1}^{K} u_k(t) = x(t) \]

Here:

- ( u_k ) represents the ( k )-th mode.

- ( \omega_k ) is the center frequency of the ( k )-th mode.

- ( \delta(t) ) is the Dirac delta function.

- ( * ) denotes the convolution operator.

- The term ( \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) ) is the analytic signal of ( u_k(t) ), obtained via the Hilbert transform [9].

- The exponential term ( e^{-j\omega_k t} ) frequency-shifts each mode's spectrum to baseband.

- The squared L²-norm of the gradient estimates the bandwidth of each mode.

To render this problem tractable, it is transformed into an unconstrained form using an augmented Lagrangian function ( \mathcal{L} ) [8]:

\[ \mathcal{L}(\{u_k\},\{\omega_k\},\lambda) = \alpha \sum_{k=1}^{K} \left\| \partial_t \left[ \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) \right] e^{-j\omega_k t} \right\|_2^2 + \left\| x(t) - \sum_{k=1}^{K} u_k(t) \right\|_2^2 + \left\langle \lambda(t), x(t) - \sum_{k=1}^{K} u_k(t) \right\rangle \]

This Lagrangian incorporates:

- The data fidelity constraint ( \left\| x(t) - \sum_{k} u_k(t) \right\|_2^2 ).

- The reconstruction constraint, enforced by the Lagrange multiplier ( \lambda(t) ).

- The penalty factor ( \alpha ), which balances the importance of bandwidth constraint against reconstruction fidelity.

The solution is efficiently found using the Alternating Direction Method of Multipliers (ADMM), which iteratively updates the mode estimates ( u_k ), their center frequencies ( \omega_k ), and the Lagrangian multiplier ( \lambda ) in an alternating fashion [10] [8].

In the frequency domain, the update equations are:

1. Mode Update:

\[ \hat{u}_k^{n+1}(\omega) = \frac{\hat{x}(\omega) - \sum_{i \neq k} \hat{u}_i(\omega) + \frac{\hat{\lambda}(\omega)}{2}}{1 + 2\alpha (\omega - \omega_k)^2} \]

This acts as a Wiener filter, applied to the current residual signal, favoring frequencies near ( \omega_k ) [8] [7].

2. Center Frequency Update:

\[ \omega_k^{n+1} = \frac{\int_0^\infty \omega \left| \hat{u}_k(\omega) \right|^2 d\omega}{\int_0^\infty \left| \hat{u}_k(\omega) \right|^2 d\omega} \]

This equation updates the center frequency as the center of gravity of the mode's power spectrum [8].

The algorithm iterates until convergence, determined by a specified tolerance tol [8].
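The two update equations translate almost line for line into NumPy. The following is a minimal sketch, not a reference implementation: it skips the mirror extension that published implementations typically apply at the signal boundaries, drops the Nyquist bin, uses normalized frequency in cycles per sample, and follows the sign and scaling conventions of the equations above. The function name `vmd_sketch` and its defaults are assumptions made for illustration.

```python
import numpy as np

def vmd_sketch(x, K, alpha=2000.0, tau=0.0, tol=1e-7, max_iter=500):
    """Simplified VMD: alternate the Wiener-filter and center-of-gravity updates."""
    x = np.asarray(x, dtype=float)
    T = x.size
    freqs = np.fft.fftfreq(T)                  # normalized frequency, cycles/sample
    pos = freqs >= 0                           # non-negative half of the spectrum
    x_hat = np.where(pos, np.fft.fft(x), 0.0)  # one-sided spectrum of the input
    u_hat = np.zeros((K, T), dtype=complex)
    omega = 0.5 * np.arange(K) / K             # uniformly spaced initial centers
    lam = np.zeros(T, dtype=complex)

    for _ in range(max_iter):
        u_prev = u_hat.copy()
        for k in range(K):
            # sum of all other modes, using the most recent estimates
            others = u_hat.sum(axis=0) - u_hat[k]
            # mode update (Wiener filter centered on omega[k])
            u_hat[k] = (x_hat - others + lam / 2) / (1 + 2 * alpha * (freqs - omega[k]) ** 2)
            # center frequency update (center of gravity of the power spectrum)
            power = np.abs(u_hat[k, pos]) ** 2
            omega[k] = np.sum(freqs[pos] * power) / (np.sum(power) + 1e-30)
        # multiplier update; has no effect when tau = 0
        lam = lam + tau * (x_hat - u_hat.sum(axis=0))
        if np.sum(np.abs(u_hat - u_prev) ** 2) / T < tol:
            break

    # rebuild real-valued modes from the one-sided spectra
    weights = np.where(freqs > 0, 2.0, 1.0) * pos
    modes = np.real(np.fft.ifft(u_hat * weights, axis=1))
    order = np.argsort(omega)
    return modes[order], omega[order]

# Three tones at normalized frequencies 0.01, 0.1 and 0.3
n = np.arange(1000)
x = (np.cos(2 * np.pi * 0.01 * n)
     + 0.5 * np.cos(2 * np.pi * 0.1 * n)
     + 0.25 * np.cos(2 * np.pi * 0.3 * n))
modes, omega = vmd_sketch(x, K=3)
```

For this clean synthetic signal the estimated centers settle close to the three tone frequencies. Real signals usually benefit from the boundary handling and other refinements found in maintained implementations.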

Key Parameters and Their Optimization via Genetic Algorithm

The performance of VMD is highly sensitive to the selection of its key parameters. Inappropriate choices can lead to mode mixing (insufficient ( K )) or over-decomposition (excessive ( K )), and poor bandwidth separation (suboptimal ( \alpha )) [8]. Manual tuning is often inadequate for complex signals, necessitating robust optimization frameworks like the Genetic Algorithm (GA).

Key Decomposition Parameters

The two most critical parameters requiring optimization are the number of modes ( K ) and the penalty factor ( \alpha ).

Decomposition Number (( K )): This parameter defines the total number of Intrinsic Mode Functions (IMFs) to be extracted from the input signal.

- Impact: If ( K ) is set too low, the decomposition will be insufficient, leading to mode mixing where a single IMF contains multiple, distinct frequency components. Conversely, if ( K ) is set too high, it results in over-decomposition, creating spurious or redundant modes with no physical meaning and increasing computational load [8].

- Optimization Goal: Find the minimal ( K ) that fully resolves the signal's constituent components without mixing.

Penalty Factor (( \alpha )): Also known as the bandwidth parameter, ( \alpha ) controls the compactness of each mode around its center frequency.

- Impact: A lower value of ( \alpha ) results in wider bandwidth, allowing each mode to capture a broader range of frequencies. This can be beneficial for transient or non-stationary signals. A higher value of ( \alpha ) enforces a narrower bandwidth, producing more constrained, tonal components suitable for harmonic signals. An incorrectly chosen ( \alpha ) leads to poor frequency separation and blurred mode boundaries [8] [7].

- Optimization Goal: Balance the bandwidth constraint to match the signal's inherent frequency characteristics.
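A short worked calculation makes the bandwidth effect of ( \alpha ) concrete. Taking the Wiener-filter gain from the mode update given earlier, the offset from ( \omega_k ) at which the gain falls to one half follows directly. The numbers assume that frequency is expressed in normalized units (cycles per sample) and that the denominator carries the factor ( 2\alpha ) exactly as written in this article; implementations that scale the penalty differently will give different values.

```latex
\[
\frac{1}{1 + 2\alpha(\omega - \omega_k)^2} = \frac{1}{2}
\quad\Longleftrightarrow\quad
|\omega - \omega_k| = \frac{1}{\sqrt{2\alpha}}
\]
\[
\alpha = 500:\ \tfrac{1}{\sqrt{1000}} \approx 0.0316, \qquad
\alpha = 2000:\ \tfrac{1}{\sqrt{4000}} \approx 0.0158, \qquad
\alpha = 5000:\ \tfrac{1}{\sqrt{10000}} = 0.0100
\]
```

Under these assumptions, raising ( \alpha ) from 500 to 5000 narrows the half-gain width by a factor of about 3.2, consistent with the inverse square-root dependence.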

Table 1: Key Parameters of Variational Mode Decomposition (VMD)

| Parameter | Symbol | Role in Decomposition | Effect of Low Value | Effect of High Value | Common Optimization Approach |
|---|---|---|---|---|---|
| Decomposition Number | ( K ) | Determines the number of extracted IMFs. | Mode Mixing: Multiple components merged into one IMF. | Over-decomposition: Creates redundant, non-physical modes. | Multi-objective optimization using metrics like Envelope Entropy and Rényi Entropy [8]. |
| Penalty Factor | ( \alpha ) | Controls the bandwidth of each IMF. | Wider Bandwidth: Modes are less compact, better for transients. | Narrower Bandwidth: Modes are more compact, better for tonal signals. | Searched alongside ( K ) within a defined range (e.g., 100-5000) [8] [7]. |
| Time Step | ( \tau ) | Step size of the Lagrangian multiplier update (noise-tolerance parameter). | At ( \tau = 0 ) the multiplier update is switched off, so exact reconstruction is not enforced and noise is tolerated. | Stricter enforcement of the reconstruction constraint, which can hinder convergence on noisy signals. | Often fixed at 0 (noise-tolerant setting) or a small positive value (e.g., 0.1-0.3) [8]. |
| Convergence Tolerance | tol | Stopping criterion for the optimization process. | Prolonged Computation: Diminishing returns on accuracy. | Early Termination: Potential incomplete decomposition. | Typically fixed at a small value like ( 1 \times 10^{-7} ) [8]. |

Genetic Algorithm for Parameter Optimization

The Genetic Algorithm (GA) is a population-based metaheuristic inspired by natural selection. It requires no gradient information and is well suited to searching complex, non-linear parameter spaces for a near-global optimum, although convergence to the true global optimum is not guaranteed. Its application to VMD parameter optimization has proven effective [8].

Core Components of the GA-VMD Framework:

- Chromosome Encoding: A candidate solution (chromosome) is encoded as a pair of parameters ( (K, \alpha) ). ( K ) is a positive integer, while ( \alpha ) is a positive real number [8].

- Fitness Function: This function evaluates the quality of a decomposition resulting from a specific ( (K, \alpha) ) pair. An effective fitness function should promote sparsity and discriminative power in the resulting IMFs. A powerful combination uses:

- Envelope Entropy (( Ee )): Measures the sparsity of the signal. A lower envelope entropy indicates a more sparse and informative IMF, which is often desirable for feature extraction [8].

- Rényi Entropy (( Re )): Reflects the energy concentration and aggregation degree of the signal's time-frequency distribution. Lower Rényi entropy signifies better energy aggregation [8]. The multi-objective fitness function can be designed to minimize a weighted sum of these entropies across all extracted IMFs.

- Genetic Operators:

- Selection: Fitter chromosomes (parameter pairs yielding lower entropy) are preferentially selected for reproduction.

- Crossover: Pairs of selected chromosomes exchange genetic material, creating offspring that combine parameters from both parents.

- Mutation: A small, random alteration is applied to parameters in some offspring, introducing new genetic material and helping to escape local optima.
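The components listed above can be assembled into a compact GA loop. The sketch below uses only the standard library and a placeholder objective with a known minimum so that it runs instantly; in a real workflow `toy_fitness` would be replaced by a function that runs VMD and returns an entropy-based score. The search ranges, operator choices (tournament selection, blend crossover for ( \alpha ), elitism) and all names are illustrative assumptions.

```python
import random

K_RANGE = (3, 10)
ALPHA_RANGE = (100.0, 3000.0)

def toy_fitness(K, alpha):
    """Placeholder objective (lower is better), minimal at K=5, alpha=2000.
    A real fitness would decompose the signal and score the resulting IMFs."""
    return (K - 5) ** 2 + ((alpha - 2000.0) / 500.0) ** 2

def random_individual(rng):
    return (rng.randint(*K_RANGE), rng.uniform(*ALPHA_RANGE))

def tournament(pop, scores, rng, size=3):
    picks = rng.sample(range(len(pop)), size)
    return pop[min(picks, key=lambda i: scores[i])]

def crossover(a, b, rng):
    K = rng.choice((a[0], b[0]))              # integer gene: inherit from one parent
    w = rng.random()
    alpha = w * a[1] + (1 - w) * b[1]         # real gene: blend of both parents
    return (K, alpha)

def mutate(ind, rng, rate=0.1):
    K, alpha = ind
    if rng.random() < rate:
        K = min(max(K + rng.choice((-1, 1)), K_RANGE[0]), K_RANGE[1])
    if rng.random() < rate:
        alpha = min(max(alpha + rng.gauss(0.0, 200.0), ALPHA_RANGE[0]), ALPHA_RANGE[1])
    return (K, alpha)

def run_ga(fitness, pop_size=30, generations=40, cx_prob=0.7, seed=0):
    rng = random.Random(seed)
    pop = [random_individual(rng) for _ in range(pop_size)]
    for _ in range(generations):
        scores = [fitness(*ind) for ind in pop]
        best = pop[min(range(pop_size), key=lambda i: scores[i])]
        children = [best]                     # elitism: keep the best unchanged
        while len(children) < pop_size:
            p1 = tournament(pop, scores, rng)
            p2 = tournament(pop, scores, rng)
            child = crossover(p1, p2, rng) if rng.random() < cx_prob else p1
            children.append(mutate(child, rng))
        pop = children
    scores = [fitness(*ind) for ind in pop]
    return pop[min(range(pop_size), key=lambda i: scores[i])]

best_K, best_alpha = run_ga(toy_fitness)
```

Because the best individual is carried over unchanged each generation, the best score can never get worse. That property is convenient when each fitness evaluation is an expensive VMD run.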

Table 2: Genetic Algorithm Optimization of VMD Parameters

| GA Component | Role in VMD Optimization | Typical Configuration/Remarks |
|---|---|---|
| Chromosome | Encodes a potential solution as a parameter set ( (K, \alpha) ). | ( K ) is a positive integer; ( \alpha ) is a positive real number. The search space for both must be predefined. |
| Fitness Function | Quantifies the quality of the decomposition for a given ( (K, \alpha) ) pair. | Multi-objective functions are effective, e.g., minimizing a combination of Envelope Entropy (for sparsity) and Rényi Entropy (for energy concentration) [8]. |
| Selection | Preferentially selects parameter sets that yield better fitness scores for reproduction. | Techniques like tournament selection or roulette wheel selection are commonly used. |
| Crossover | Combines parts of two parent parameter sets to generate new offspring sets. | Simulates the exchange of genetic information, exploring new combinations of ( K ) and ( \alpha ). |
| Mutation | Randomly modifies a parameter in an offspring set with a small probability. | Introduces diversity into the population, helping to avoid premature convergence on a local optimum. |
| Termination | Criteria to stop the evolutionary process and return the best solution. | Based on a maximum number of generations, a fitness threshold, or convergence stability. |

Application Notes and Protocols

This section provides detailed experimental protocols for implementing VMD, both in its standard form and optimized with a Genetic Algorithm, across different scientific domains.

Protocol 1: Standard VMD Implementation for Signal Denoising

Application Context: This protocol is designed for preprocessing noisy signals, such as those from biomedical sensors [5] or mechanical vibration data [8], where the goal is to isolate a signal of interest from contaminating noise.

Objective: To decompose a noisy signal using standard VMD parameters and reconstruct a denoised version by selectively summing relevant IMFs.

Materials and Software:

- Software: MATLAB (with Signal Processing Toolbox) [11] or Python (using the `vmdpy` package) [7].

- Input Data: A one-dimensional, uniformly-sampled time series signal.

Procedure:

- Signal Preparation: Load the input signal. If the signal is non-stationary, consider detrending. Normalize the signal to zero mean if necessary.

- Parameter Initialization: Make an initial estimate for the key parameters:

- Decomposition Number (( K )): If the signal's frequency components are unknown, use a spectral analysis (e.g., FFT or Power Spectral Density) to count dominant peaks as an initial ( K ) [10]. Start with a low number (e.g., 3-5) to avoid over-decomposition.

- Penalty Factor (( \alpha )): A default value of 2000 is a good starting point for many applications. Adjust based on signal characteristics: use lower values (500-1500) for transient signals and higher values (2000-5000) for tonal, harmonic signals [7].

- Other Parameters: Typically set `tau=0` (multiplier update disabled, so exact reconstruction is not enforced and noise is tolerated), `DC=0` (no mode fixed at DC), `init=1` (initialize center frequencies uniformly), and `tol=1e-7` [11] [7].

- Execute VMD: Run the VMD function (e.g., `vmd(x)` in MATLAB [11] or `VMD(signal, alpha, tau, K, DC, init, tol)` in Python [7]) to obtain the ( K ) IMFs and the residual signal.

- IMF Analysis and Selection: Analyze the IMFs in the frequency domain to identify which modes contain the signal of interest and which contain noise. Noise often resides in the highest-frequency modes (whether these are the first or the last IMFs depends on how the implementation orders its output) or in modes with irregular, non-oscillatory morphology.

- Signal Reconstruction: Sum the IMFs identified as containing the signal of interest to reconstruct the denoised signal. Exclude the noise-dominant IMFs and, if applicable, the residual.
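The selection and reconstruction steps can be sketched with a simple rule: keep every mode whose dominant frequency lies below a cutoff and sum the kept modes. The `modes` array below is a synthetic stand-in for the output of a VMD call, and the cutoff `f_max` and function names are hypothetical. Real selection criteria are often richer, for example correlation with the original signal or kurtosis.

```python
import numpy as np

def dominant_frequency(mode, fs):
    """Frequency (Hz) of the largest spectral peak of one mode."""
    spectrum = np.abs(np.fft.rfft(mode))
    freqs = np.fft.rfftfreq(mode.size, d=1.0 / fs)
    return float(freqs[np.argmax(spectrum)])

def reconstruct_below(modes, fs, f_max):
    """Sum the modes whose dominant frequency does not exceed f_max."""
    modes = np.asarray(modes, dtype=float)
    keep = np.array([dominant_frequency(m, fs) <= f_max for m in modes])
    return modes[keep].sum(axis=0), keep

# Stand-in for decomposed IMFs: two low-frequency components and one
# high-frequency component treated here as noise.
fs = 1000.0
t = np.arange(1000) / fs
modes = np.vstack([
    np.sin(2 * np.pi * 5 * t),
    0.5 * np.sin(2 * np.pi * 12 * t),
    0.2 * np.sin(2 * np.pi * 200 * t),
])

denoised, kept = reconstruct_below(modes, fs, f_max=40.0)
```

Selecting by measured frequency rather than by IMF index avoids relying on any particular ordering of the modes.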

Troubleshooting:

- Persistent Noise: If noise remains, increment ( K ) and repeat the process. This may better isolate noise into a specific, discardable mode.

- Loss of Signal Features: If signal features are lost, the ( K ) might be too high (causing signal splitting) or ( \alpha ) might be too high (over-constraining bandwidth). Reduce ( K ) or ( \alpha ) and re-run.

Protocol 2: GA-Optimized VMD for Complex Feature Extraction

Application Context: This protocol is essential for analyzing highly complex, non-stationary signals where manual parameter tuning fails, such as in fault diagnosis of rolling bearings [8], forecasting of agricultural prices [12], or predicting the state-of-health of lithium-ion batteries [13].

Objective: To automatically search for near-optimal VMD parameters ( (K, \alpha) ) that maximize the extraction of meaningful features for a specific downstream task (e.g., classification or regression).

Materials and Software:

- Software: Python is recommended for flexibility (using `vmdpy` and GA libraries like `DEAP` or `pymoo`).

- Input Data: A labeled dataset of representative signals.

Procedure:

- Define Search Space: Establish realistic bounds for the parameters to be optimized.

- Formulate Fitness Function: Design a function that quantifies decomposition quality. A robust approach is to use:

- Envelope Entropy Minimization: For each candidate (K, α), run VMD, calculate the envelope entropy for each IMF, and use the minimum entropy value among the IMFs as one fitness component. This promotes sparsity [8].

- Rényi Entropy Minimization: Calculate the Rényi entropy of the time-frequency distribution for the same decomposition. This promotes energy concentration.

- The overall fitness can be a simple sum or a weighted sum of these two entropy measures:

Fitness = w1 * min(Envelope_Entropy) + w2 * Rényi_Entropy, where the goal is minimization.

- Configure GA: Set the genetic algorithm's operational parameters.

- Population Size: Typically 20-50 individuals.

- Generations: 20-100 generations, depending on computational budget.

- Crossover & Mutation Rates: Standard values (e.g., crossover probability 0.7, mutation probability 0.1) are a good start.

- Run GA Optimization: Execute the GA. The algorithm will iteratively evaluate populations of (K, α) pairs, selecting, crossing, and mutating them over generations until the termination criteria are met.

- Validation: Apply the best-performing (K, α) pair from the GA to a held-out validation set of signals to confirm its generalization performance for the intended task (e.g., fault detection accuracy or forecasting error).
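The two-term fitness described in this procedure can be sketched in NumPy as shown below. Several choices here are assumptions made for illustration only: the envelope is obtained from an FFT-based analytic signal, the time-frequency distribution is a plain Hann-window spectrogram summed over the modes, the Rényi order is 3, and the weights default to 0.5 each. The input `imfs` is expected to hold one mode per row, as produced by whatever VMD routine is in use.

```python
import numpy as np

def hilbert_envelope(x):
    """Amplitude envelope via the FFT-based analytic signal."""
    x = np.asarray(x, dtype=float)
    n = x.size
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.abs(np.fft.ifft(np.fft.fft(x) * h))

def envelope_entropy(imf):
    """Shannon entropy of the envelope after normalizing it to sum to one."""
    env = hilbert_envelope(imf)
    p = env / env.sum()
    p = p[p > 0]
    return float(-np.sum(p * np.log(p)))

def spectrogram(x, win=64, hop=32):
    """Squared-magnitude short-time Fourier transform with a Hann window."""
    window = np.hanning(win)
    frames = np.array([x[i:i + win] * window
                       for i in range(0, len(x) - win + 1, hop)])
    return np.abs(np.fft.rfft(frames, axis=1)) ** 2

def renyi_entropy(energy, order=3.0):
    """Rényi entropy of a nonnegative energy distribution (order != 1)."""
    p = np.asarray(energy, dtype=float).ravel()
    p = p / p.sum()
    return float(np.log(np.sum(p ** order)) / (1.0 - order))

def fitness(imfs, w1=0.5, w2=0.5):
    """Value to minimize: w1 * min(envelope entropy) + w2 * Rényi entropy."""
    imfs = np.asarray(imfs, dtype=float)
    min_env = min(envelope_entropy(m) for m in imfs)
    # Assumption: the summed per-mode spectrograms stand in for the TFD.
    tfd = sum(spectrogram(m) for m in imfs)
    return w1 * min_env + w2 * renyi_entropy(tfd)

# Toy check: an impulsive mode is sparser than a steady sinusoid.
n = 256
sine = np.sin(2 * np.pi * 8 * np.arange(n) / n)
impulse = np.zeros(n)
impulse[100] = 1.0
score = fitness(np.vstack([sine, impulse]))
```

A steady sinusoid has a flat envelope and therefore the maximum possible envelope entropy, log(N), while the impulsive mode scores lower, which is the behavior the minimization relies on.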

Troubleshooting:

- Premature Convergence: If the GA converges too quickly to a suboptimal solution, increase the mutation rate or population size to introduce more diversity.

- High Computational Cost: Each fitness evaluation requires a full VMD run. Use a smaller representative subset of data for the optimization phase or reduce the maximum number of generations.
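One further way to limit the number of VMD runs, offered here as a suggestion rather than something taken from the cited studies, is to cache fitness values. Because K is an integer and α can be quantized to a coarse grid, many candidates in a population map to the same evaluation. The wrapper below is a sketch; `make_cached_fitness`, `alpha_step`, and the toy `slow_evaluate` are hypothetical names, and the real `evaluate` callable would run VMD and score the result.

```python
from functools import lru_cache

def make_cached_fitness(evaluate, alpha_step=50):
    """Wrap an expensive (K, alpha) -> fitness callable with a cache."""
    @lru_cache(maxsize=None)
    def _cached(k_int, alpha_q):
        return evaluate(k_int, alpha_q)

    def fitness(k, alpha):
        # Snap candidates to the grid so near-duplicates share one evaluation.
        k_int = int(round(k))
        alpha_q = int(round(alpha / alpha_step)) * alpha_step
        return _cached(k_int, alpha_q)

    return fitness

# Toy stand-in for a costly VMD-based evaluation; it records each real call.
calls = []

def slow_evaluate(k, alpha):
    calls.append((k, alpha))
    return (k - 5) ** 2 + (alpha - 2000) ** 2 / 1e6

fit = make_cached_fitness(slow_evaluate)
a = fit(5.2, 2010.0)   # evaluated as (5, 2000)
b = fit(4.9, 1990.0)   # same grid cell, served from the cache
```

The grid step trades resolution in α for fewer evaluations, so it should be chosen no coarser than the sensitivity of the decomposition to α allows.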

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Software for VMD Research and Application

| Tool/Software | Function | Usage Context |
|---|---|---|
| MATLAB Signal Processing Toolbox | Provides the official `vmd` function for signal decomposition and analysis [11]. | Industry and academia; preferred for integrated signal analysis environments and prototyping. |
| Python `vmdpy` Package | An open-source Python implementation of the VMD algorithm [7]. | Data science, machine learning pipelines, and open-source research projects. |
| Genetic Algorithm Library (e.g., DEAP, pymoo) | Provides frameworks for setting up and running custom GA optimizations [8]. | Essential for automating the search for optimal VMD parameters (K, α). |
| Envelope Entropy & Rényi Entropy Code | Custom scripts to calculate these entropy measures from IMFs for fitness evaluation. | Serves as the objective function in the GA-VMD optimization loop [8]. |
| High-Precision Accelerometer | Captures raw vibration or motion signals for decomposition (e.g., in pile foundation testing [14] or bearing fault diagnosis [8]). | Field data collection in mechanical, civil, and aerospace engineering. |
| Impedance Cardiography (ICG) Monitor | Acquires physiological signals for denoising and analysis using VMD frameworks [5]. | Clinical and biomedical research for non-invasive cardiovascular monitoring. |

Biological Inspiration and Core Analogy

Genetic Algorithms are a class of evolutionary algorithms whose core operational principles are inspired by the mechanisms of natural selection and genetics first formally introduced by John Holland [15]. GAs emulate the process of natural evolution to solve complex optimization and search problems by treating potential solutions as individuals in a population that evolves over successive generations.

The foundational analogy maps biological evolutionary concepts directly onto computational optimization processes, creating a powerful heuristic search methodology [16]. The following table summarizes this direct biological-to-computational mapping that forms the basis of all GA operations.

Table 1: Core Analogy Between Biological Evolution and Genetic Algorithms

| Biological Concept | GA Component | Function in Optimization Process |

|---|---|---|

| Chromosome | Solution (as parameter set) | Encodes a potential solution to the problem, typically as a string (bit, integer, real-valued) |

| Gene | Single parameter/variable | A component of the solution string representing one optimizable parameter |

| Population | Set of candidate solutions | Collection of potential solutions undergoing evolution simultaneously |

| Fitness | Objective function value | Quantitative measure of a solution's quality relative to optimization goal |

| Selection | Selection operator | Process that chooses fitter individuals to reproduce based on fitness scores |

| Crossover | Recombination operator | Combines genetic material from two parents to create novel offspring solutions |

| Mutation | Mutation operator | Introduces random changes to maintain diversity and explore new regions of search space |

| Generation | Iteration | One cycle of evaluation, selection, recombination, and mutation |

The biological inspiration provides GAs with distinct advantages over traditional optimization methods, particularly their ability to perform global search across broad, multi-modal landscapes with a reduced risk of becoming trapped in local optima, their flexibility in handling diverse variable types and complex constraints, and their robustness in noisy environments where gradient information is unreliable or unavailable [16].

Optimization Mechanics and Algorithmic Framework

The optimization mechanics of Genetic Algorithms follow a structured, iterative process that emulates evolutionary pressure. Each generation, the population undergoes evaluation, selection, and variation operations that collectively improve solution quality over time [16].

Core Operational Mechanics

The optimization process follows a systematic workflow with clearly defined genetic operators:

Initialization: The process begins by generating an initial population of candidate solutions, typically created randomly to sample diverse regions of the search space. Solution representation varies by problem domain, with common encoding schemes including binary, integer, and real-valued vectors [16].

Evaluation: Each individual in the population is evaluated using a predefined fitness function that quantifies its performance on the optimization task. The fitness function serves as the primary selection pressure, directly determining an individual's probability of contributing genetic material to subsequent generations [16].

Selection: Selection operators choose individuals from the current population to serve as parents for reproduction, favoring fitter individuals. Common selection strategies include tournament selection, roulette wheel selection (where the selection probability is proportional to fitness), and rank-based selection, each providing different balances between selection pressure and population diversity [16].

Crossover (Recombination): This operator combines genetic information from two parent solutions to create one or more offspring. By exchanging solution segments between parents, crossover can construct novel solutions that potentially combine beneficial traits from both parents. The crossover rate parameter controls the probability that recombination occurs for any given parent pair [16].

Mutation: Mutation introduces random perturbations to individual solution components, providing a mechanism for exploring new regions of the search space and maintaining genetic diversity. The mutation rate parameter controls the frequency of these random changes, typically set to low values to preserve building blocks while enabling exploration [16].
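The five operations above fit into a short loop. The following is a minimal real-valued GA written with the standard library only, intended as a sketch under stated assumptions rather than a reference implementation: it uses tournament selection of size 3, uniform crossover, Gaussian mutation scaled to 10% of each variable's range, and one elite individual carried over per generation. All of these choices, and the function name `run_ga`, are illustrative.

```python
import random

def run_ga(fitness, bounds, pop_size=30, generations=40,
           cx_prob=0.7, mut_prob=0.1, elite=1, seed=0):
    """Minimize `fitness` over the box `bounds` with a basic real-valued GA."""
    rng = random.Random(seed)

    def tournament(scored, k=3):
        # Pick k random individuals and return the fittest (lowest score).
        return min(rng.sample(scored, k), key=lambda s: s[0])[1]

    # Initialization: random individuals inside the bounds.
    population = [[rng.uniform(lo, hi) for lo, hi in bounds]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        # Evaluation: score and rank the current population.
        scored = sorted(((fitness(ind), ind) for ind in population),
                        key=lambda s: s[0])
        history.append(scored[0][0])
        # Elitism: copy the best individuals unchanged.
        next_pop = [list(ind) for _, ind in scored[:elite]]
        while len(next_pop) < pop_size:
            # Selection.
            p1, p2 = tournament(scored), tournament(scored)
            child = list(p1)
            # Crossover: each gene comes from either parent.
            if rng.random() < cx_prob:
                child = [a if rng.random() < 0.5 else b
                         for a, b in zip(p1, p2)]
            # Mutation: Gaussian perturbation, clipped to the bounds.
            for i, (lo, hi) in enumerate(bounds):
                if rng.random() < mut_prob:
                    step = rng.gauss(0.0, 0.1 * (hi - lo))
                    child[i] = min(hi, max(lo, child[i] + step))
            next_pop.append(child)
        population = next_pop
    best = min(population, key=fitness)
    return best, fitness(best), history

# Toy problem: minimum of a shifted quadratic bowl at (1, -2).
best, best_fit, history = run_ga(
    lambda v: (v[0] - 1) ** 2 + (v[1] + 2) ** 2, [(-5, 5), (-5, 5)])
```

Because one elite is always retained, the best fitness recorded in `history` can never get worse from one generation to the next.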

The following diagram illustrates the complete iterative workflow of a standard Genetic Algorithm, showing the sequence of operations from initialization through termination:

Advanced Algorithmic Variants

Beyond the standard GA framework, several enhanced variants have been developed to address specific optimization challenges:

Elitist Genetic Algorithms: This variant explicitly preserves a predetermined number of best-performing individuals from one generation to the next, preventing the loss of high-quality solutions through the stochastic selection and variation processes [17].

Hybrid GA-Neural Network Frameworks: Recent research has explored integrating deep learning with evolutionary processes. These frameworks utilize neural networks, particularly Multi-Layer Perceptrons (MLPs), to extract "synthesis insights" from the evolutionary data generated during the GA search process. These insights guide the algorithm toward more promising search regions, significantly enhancing optimization efficiency and effectiveness [15].

Application Protocol: VMD-GA Hybrid for Agricultural Price Forecasting

The integration of Variational Mode Decomposition with Genetic Algorithm optimization represents a powerful hybrid methodology for handling complex, non-stationary time series data. The following protocol details a specific implementation for agricultural commodity price forecasting, which demonstrates the practical application of VMD-GA fusion [12].

Experimental Workflow and Design

The VMD-GA hybrid methodology follows a staged approach that leverages the strengths of both techniques:

Table 2: VMD-GA Hybrid Model Workflow Stages

| Stage | Primary Operation | Key Parameters | Objective |

|---|---|---|---|

| 1. Data Preparation | Acquisition & preprocessing of agricultural price series | Commodity selection, time granularity, normalization | Ensure data quality and compatibility with decomposition |

| 2. GA-VMD Optimization | Simultaneous optimization of VMD parameters [K, α] | Population size, generations, fitness function | Achieve optimal signal decomposition with minimal information loss |

| 3. Component Forecasting | Individual LSTM modeling of each IMF | LSTM architecture, lookback period, GA-optimized hyperparameters | Accurately predict future values of each decomposed component |

| 4. Ensemble Reconstruction | Aggregation of component forecasts into final prediction | Summation of IMF forecasts | Generate comprehensive price prediction from component models |

The following diagram visualizes this integrated workflow, highlighting the sequential interaction between the VMD, GA, and LSTM components:

Detailed Experimental Protocol

Phase 1: GA-Optimized Variational Mode Decomposition

Objective: Decompose complex agricultural price series into intrinsic mode functions (IMFs) with minimal information loss through optimized VMD parameters [12].

Materials and Reagents:

- Historical agricultural commodity price data (monthly frequencies for maize, palm oil, soybean oil)

- Computational environment with MATLAB or Python (NumPy, SciPy)

- VMD implementation package (original or compatible open-source version)

Procedure:

- Data Preparation:

- Collect historical price data for target commodities (minimum 5-10 years recommended)

- Preprocess data: handle missing values using interpolation, normalize series to zero mean and unit variance

- Partition data: 70-80% for training, 20-30% for testing temporal validity

- Genetic Algorithm Configuration:

- Population initialization: Generate random population of VMD parameter sets [K, α]

- Parameter bounds: Set K range [3, 10] (number of IMFs), α range [100, 5000] (balancing parameter)

- Fitness function: Implement envelope entropy minimization as fitness evaluation criterion

- GA parameters: Set population size (30-50), generations (50-100), crossover rate (0.7-0.9), mutation rate (0.05-0.1)

- Optimization Execution:

- Run GA for specified generations, evaluating fitness for each parameter set

- Apply VMD to training data using each candidate parameter set [K, α]

- Calculate envelope entropy for resulting IMFs

- Select top performers for reproduction using tournament selection

- Apply crossover (simulated binary) and mutation (Gaussian) to create new generation

- Terminate when fitness improvement falls below threshold (e.g., <0.001 for 5 consecutive generations)

- Signal Decomposition:

- Extract optimal parameters [K_opt, α_opt] from best-performing individual

- Apply VMD with optimized parameters to entire price series

- Validate decomposition quality through IMF orthogonality and completeness checks
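The variation operators and the stopping rule named in this phase can be sketched for the mixed encoding [K, α] as follows. The bounds and the stagnation threshold follow the values listed above; the SBX distribution index `eta`, the mutation spread `sigma_frac`, and all function names are assumptions chosen for illustration. K is rounded to an integer after each operator because VMD needs a whole number of modes.

```python
import random

K_BOUNDS = (3, 10)
ALPHA_BOUNDS = (100.0, 5000.0)

def clip(value, lo, hi):
    return max(lo, min(hi, value))

def repair(ind):
    """Round K to an integer and force both genes back inside their bounds."""
    k, alpha = ind
    return (int(clip(round(k), *K_BOUNDS)), float(clip(alpha, *ALPHA_BOUNDS)))

def sbx_pair(p1, p2, eta, rng):
    """Simulated binary crossover for one real-valued gene."""
    u = rng.random()
    if u <= 0.5:
        beta = (2.0 * u) ** (1.0 / (eta + 1.0))
    else:
        beta = (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta + 1.0))
    c1 = 0.5 * ((1 + beta) * p1 + (1 - beta) * p2)
    c2 = 0.5 * ((1 - beta) * p1 + (1 + beta) * p2)
    return c1, c2

def crossover(parent_a, parent_b, rng, eta=15.0):
    """Apply SBX gene by gene, then repair both children."""
    k1, k2 = sbx_pair(parent_a[0], parent_b[0], eta, rng)
    a1, a2 = sbx_pair(parent_a[1], parent_b[1], eta, rng)
    return repair((k1, a1)), repair((k2, a2))

def mutate(ind, rng, rate=0.1, sigma_frac=0.1):
    """Gaussian mutation with a spread proportional to each gene's range."""
    k, alpha = ind
    if rng.random() < rate:
        k += rng.gauss(0.0, sigma_frac * (K_BOUNDS[1] - K_BOUNDS[0]))
    if rng.random() < rate:
        alpha += rng.gauss(0.0, sigma_frac * (ALPHA_BOUNDS[1] - ALPHA_BOUNDS[0]))
    return repair((k, alpha))

def has_stagnated(best_history, tol=1e-3, patience=5):
    """True when the best fitness changed by less than tol for `patience` steps."""
    if len(best_history) <= patience:
        return False
    recent = best_history[-(patience + 1):]
    return all(abs(recent[i] - recent[i + 1]) < tol for i in range(patience))

rng = random.Random(1)
child_a, child_b = crossover((4, 500.0), (8, 3000.0), rng)
mutant = mutate(child_a, rng)
```

A larger `eta` keeps SBX children closer to their parents, so it acts as a knob between exploitation and exploration.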

Phase 2: GA-Optimized LSTM Forecasting

Objective: Develop accurate forecasting models for each IMF using Long Short-Term Memory networks with GA-optimized hyperparameters [12].

Procedure:

- LSTM Architecture Setup:

- Design LSTM network with input layer, 1-3 hidden layers, and output layer

- Define hyperparameter search space: hidden units [32, 128], learning rate [0.001, 0.1], batch size [16, 64], dropout rate [0.1, 0.5]

- Hyperparameter Optimization:

- Initialize GA population with random hyperparameter combinations

- Implement fitness function using root mean square error (RMSE) on validation set

- Execute GA optimization for 30-50 generations with population size 20-40

- Apply elitism to preserve top 10-15% performers between generations

- Component Model Training:

- Train separate GA-optimized LSTM for each IMF using optimal hyperparameters

- Use lookback period of 6-12 time steps for sequential input

- Train for 100-200 epochs with early stopping based on validation loss

- Store all trained IMF forecasting models
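Before any LSTM can be trained on an IMF, the series has to be turned into lookback windows. The sketch below shows one way to do this with NumPy; the output shape (samples, time steps, features) is the layout commonly expected by recurrent layers, and the helper names and the 80% split are illustrative assumptions. The split is chronological so that no future value leaks into training.

```python
import numpy as np

def make_windows(series, lookback):
    """Build (X, y) pairs where each row of X holds `lookback` past values."""
    series = np.asarray(series, dtype=float)
    X = np.array([series[i:i + lookback]
                  for i in range(len(series) - lookback)])
    y = series[lookback:]
    # Add a trailing feature axis: (samples, time steps, 1).
    return X[..., np.newaxis], y

def chronological_split(X, y, train_frac=0.8):
    """Split without shuffling so the test set lies strictly in the future."""
    cut = int(len(X) * train_frac)
    return (X[:cut], y[:cut]), (X[cut:], y[cut:])

# Toy IMF: a ramp makes the window contents easy to verify by eye.
imf = np.arange(20, dtype=float)
X, y = make_windows(imf, lookback=6)
(train_X, train_y), (test_X, test_y) = chronological_split(X, y)
```

The same two helpers would be applied to every IMF in turn, giving one training set per component model.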

Phase 3: Ensemble and Validation

Objective: Aggregate component forecasts and validate model performance against benchmark approaches [12].

Procedure:

- Forecast Aggregation:

- Generate forecasts for each IMF using respective LSTM models

- Sum IMF predictions to reconstruct final price forecast

- Apply inverse normalization to restore original scale

- Performance Validation:

- Calculate performance metrics: RMSE, MAPE, Directional Accuracy (D_stat)

- Compare against benchmarks: individual LSTM, EMD-LSTM, EEMD-LSTM, CEEMDAN-LSTM

- Perform statistical significance testing (Diebold-Mariano test)

- Execute TOPSIS analysis for multi-criteria performance evaluation
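The aggregation step and the three headline metrics can be written compactly. The sketch below assumes the IMFs were obtained from a series normalized to zero mean and unit variance, so the summed forecast is rescaled with the stored mean and standard deviation. The directional accuracy uses one common definition (the predicted move from the last actual value has the same sign as the actual move); the cited study may define D_stat differently. The numbers are made up for illustration.

```python
import numpy as np

def aggregate_forecasts(imf_forecasts, mean, std):
    """Sum per-IMF forecasts, then undo zero-mean/unit-variance scaling."""
    return np.sum(imf_forecasts, axis=0) * std + mean

def rmse(actual, predicted):
    actual, predicted = np.asarray(actual, float), np.asarray(predicted, float)
    return float(np.sqrt(np.mean((actual - predicted) ** 2)))

def mape(actual, predicted):
    actual, predicted = np.asarray(actual, float), np.asarray(predicted, float)
    return float(100.0 * np.mean(np.abs((actual - predicted) / actual)))

def directional_accuracy(actual, predicted):
    """Percentage of steps where the forecast moves in the actual direction."""
    actual, predicted = np.asarray(actual, float), np.asarray(predicted, float)
    actual_move = actual[1:] - actual[:-1]
    predicted_move = predicted[1:] - actual[:-1]
    return float(100.0 * np.mean(actual_move * predicted_move >= 0))

# Illustrative values only: two IMF forecasts in normalized units.
imf_forecasts = np.array([[0.0, 0.2, 0.1, 0.3],
                          [0.0, 0.1, -0.1, 0.1]])
forecast = aggregate_forecasts(imf_forecasts, mean=100.0, std=10.0)
actual = np.array([100.0, 102.0, 101.0, 105.0])

scores = {
    "RMSE": rmse(actual, forecast),
    "MAPE": mape(actual, forecast),
    "D_stat": directional_accuracy(actual, forecast),
}
```

Benchmark models would be scored with the same functions on the same test window so that the comparison is like for like.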

Performance Metrics and Validation

The VMD-GA hybrid model demonstrates statistically significant improvements over traditional approaches according to comprehensive evaluation across multiple metrics and agricultural commodities [12].

Table 3: Performance Comparison of VMD-GA Hybrid Model vs. Benchmark Methods

| Commodity | Model | RMSE | MAPE (%) | Directional Accuracy (%) |
|---|---|---|---|---|
| Maize | VMD-LSTM (Proposed) | 8.24 | 3.92 | 85.7 |
| Maize | CEEMDAN-LSTM | 19.13 | 7.00 | 71.4 |
| Maize | EEMD-LSTM | 21.83 | 8.50 | 64.3 |
| Maize | EMD-LSTM | 25.74 | 9.17 | 57.1 |
| Maize | Individual LSTM | 28.91 | 9.83 | 50.0 |
| Palm Oil | VMD-LSTM (Proposed) | 95.65 | 3.39 | 82.4 |
| Palm Oil | CEEMDAN-LSTM | 122.35 | 4.33 | 70.6 |
| Palm Oil | EEMD-LSTM | 131.96 | 5.03 | 64.7 |
| Palm Oil | EMD-LSTM | 148.32 | 5.66 | 58.8 |
| Palm Oil | Individual LSTM | 163.56 | 6.15 | 52.9 |
| Soybean Oil | VMD-LSTM (Proposed) | 76.63 | 3.12 | 87.5 |
| Soybean Oil | CEEMDAN-LSTM | 104.99 | 4.21 | 75.0 |
| Soybean Oil | EEMD-LSTM | 115.46 | 4.89 | 68.8 |
| Soybean Oil | EMD-LSTM | 130.29 | 5.55 | 62.5 |
| Soybean Oil | Individual LSTM | 144.82 | 6.07 | 56.3 |

Research Reagent Solutions

The experimental implementation of Genetic Algorithms and hybrid frameworks requires specific computational tools and analytical resources. The following table outlines essential research reagents and their functions in GA-based research [18].

Table 4: Essential Research Reagents and Computational Tools for GA Research

| Research Reagent / Tool | Function | Application Context |

|---|---|---|

| Multi-layer Perceptron (MLP) Networks | Extraction of synthesis insights from evolutionary data | Deep-learning guided evolutionary frameworks [15] |

| Variational Mode Decomposition (VMD) | Non-recursive signal decomposition into intrinsic mode functions | Pre-processing of non-stationary time series data [12] |

| Long Short-Term Memory (LSTM) | Temporal sequence modeling and forecasting | Prediction of decomposed signal components [12] |

| Envelope Entropy | Fitness function for signal decomposition quality | Optimization criterion for VMD parameter tuning [12] |

| RayBiotech Assay Services | Biomarker discovery and validation | Drug target identification in pharmaceutical applications [18] |

| Particle Swarm Optimization (PSO) | Alternative bio-inspired optimization | Performance comparison with GA approaches [19] |

| Support Vector Machines (SVM) | Fitness function approximation | Synthetic data generation for imbalanced learning [17] |

Why Combine GA with VMD? Synergistic Advantages for Precision Signal Processing

Variational Mode Decomposition (VMD) has emerged as a powerful alternative to traditional decomposition techniques like Empirical Mode Decomposition (EMD), offering superior mathematical foundation, reduced mode mixing, and stronger noise robustness [20] [12]. However, its performance is critically dependent on the proper selection of two key parameters: the number of decomposition modes (K) and the penalty factor (α) [21] [22]. Inappropriate parameter selection leads to either under-decomposition, where insufficient feature extraction occurs, or over-decomposition, which creates spurious, physically meaningless components and increases computational complexity [20] [22]. Manual parameter tuning relies heavily on expert experience and becomes impractical for large-scale or automated signal processing systems. This parameter sensitivity creates a significant bottleneck for applying VMD to complex, non-stationary signals across various scientific and engineering domains, from biomedical engineering to renewable energy forecasting.

The Synergistic Partnership: How GA Complements VMD

The integration of Genetic Algorithm (GA) with VMD creates a powerful synergy that automates parameter selection and enhances decomposition quality. This partnership leverages the complementary strengths of both techniques.

- VMD's Role: Provides a sophisticated decomposition framework that can effectively separate multi-component signals into quasi-orthogonal Intrinsic Mode Functions (IMFs) with specific sparsity properties when properly parameterized [12].

- GA's Role: Offers a robust, population-based optimization strategy inspired by natural selection that efficiently explores the vast parameter space to find optimal or near-optimal [K, α] combinations without requiring gradient information [12] [23].

This synergy is quantified through specific fitness functions that guide the evolutionary process. Common optimization objectives include:

- Minimum Envelope Entropy: Favors decompositions that concentrate signal features into a minimal number of components [22].

- Maximum Kurtosis or Sparsity: Enhances feature detection in mechanical fault diagnosis applications [21].

- Dual Criteria Approaches: Combine multiple objectives, such as minimizing permutation entropy while maximizing subsequence variability, for balanced performance [20].

Table 1: Key Fitness Functions for GA-VMD Optimization

| Fitness Function | Optimization Goal | Typical Application Domain |

|---|---|---|

| Minimum Envelope Entropy | Concentrate signal energy into sparse components | General signal denoising [22] |

| Permutation Entropy Minimization | Enhance pattern extraction and predictability | Wind speed forecasting [20] |

| Spectral Kurtosis Maximization | Detect transient impulses and faults | Mechanical fault diagnosis [21] |

| Multi-objective Criteria | Balance multiple decomposition qualities | Complex biomedical signals [24] |

Quantitative Advantages: Evidence from Cross-Domain Applications

Empirical validation across diverse domains indicates that GA-optimized VMD generally outperforms standalone VMD and competing decomposition-based models in forecasting accuracy and decomposition quality, although some newer optimizers report faster convergence.

In agricultural price forecasting, a GA-optimized VMD-LSTM hybrid model reduced RMSE by 56.93%, 21.83%, and 27.00% for maize, palm oil, and soybean oil, respectively, compared to the next best CEEMDAN-LSTM model [12]. Similarly, for short-term power load forecasting, the GA-VMD-BP model showed a 31.71% higher R² value than a standard BP model and 1.46% improvement over a non-optimized VMD-BP model [23].
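The quoted RMSE reductions can be checked against the RMSE values this article reports for the proposed model and CEEMDAN-LSTM. The short calculation below uses those rounded table entries, so the recomputed percentages agree with the quoted figures only to within about 0.02 percentage points.

```python
# (proposed RMSE, CEEMDAN-LSTM RMSE) as reported in this article's
# performance comparison table.
rmse_table = {
    "Maize": (8.24, 19.13),
    "Palm Oil": (95.65, 122.35),
    "Soybean Oil": (76.63, 104.99),
}

def percent_reduction(proposed, baseline):
    """Relative improvement of the proposed model over the baseline, in %."""
    return 100.0 * (baseline - proposed) / baseline

reductions = {name: percent_reduction(p, b)
              for name, (p, b) in rmse_table.items()}

for name, value in reductions.items():
    print(f"{name}: {value:.2f}% lower RMSE than CEEMDAN-LSTM")
```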

Convergence efficiency is one area where GA can be surpassed. In wind power prediction applications, the Beluga Whale Optimization (BWO) algorithm converged 23.3% faster than GA [25], indicating that while GA is highly effective, continued algorithm innovation may yield further improvements. The recently proposed Intelligent Vortex Optimization (IVO) method also claims superior accuracy and faster convergence compared to GA for mechanical fault diagnosis [21].

Table 2: Performance Comparison of VMD Optimization Algorithms

| Optimization Algorithm | Key Advantages | Performance Evidence | Application Context |

|---|---|---|---|

| Genetic Algorithm (GA) | Robust global search, handles non-differentiable functions | 31.71% R² improvement over baseline model [23] | Power load forecasting |

| Particle Swarm (PSO) | Simple implementation, fast convergence | Prone to local optima without dynamic adjustments [22] | General signal processing |

| Intelligent Vortex (IVO) | Enhanced global search, golden section rule | 76.27% computational efficiency gain [21] | Mechanical fault diagnosis |

| Beluga Whale (BWO) | Fast convergence, strong global search | 23.3% faster convergence than GA [25] | Wind power prediction |

Experimental Protocols: Implementing GA-VMD Integration

Protocol 1: Basic GA-VMD Parameter Optimization Framework

This protocol provides a generalized workflow for optimizing VMD parameters using a genetic algorithm, applicable across most signal processing domains.

Research Reagent Solutions:

- Input Signal: The raw temporal data to be decomposed (e.g., ECG, vibration, price series).

- VMD Algorithm: The core decomposition routine requiring parameters [K, α].

- Genetic Algorithm Framework: Optimization library with selection, crossover, and mutation operators.

- Fitness Function: Quantitative metric to evaluate decomposition quality (e.g., envelope entropy).

- Computing Environment: MATLAB, Python, or similar platform with sufficient processing power.

Step-by-Step Procedure:

- Signal Preprocessing: Prepare the input signal by removing obvious artifacts and normalizing if necessary. For long sequences, consider segmentation.

- Define Parameter Bounds: Establish realistic search boundaries for K (typically 3-10) and α (typically 100-5000) based on domain knowledge [22].

- Initialize GA Population: Generate an initial population of candidate solutions [K, α] with random values within the defined bounds.

- VMD Decomposition: For each candidate solution in the population, perform VMD decomposition using its specific [K, α] values.

- Fitness Evaluation: Calculate the fitness value for each decomposition. For general denoising, use minimum envelope entropy: \( H_{\text{envelope}} = -\sum_{i} p_i \log(p_i) \), where \( p_i \) is the normalized envelope signal [22].

- GA Evolution: Apply selection, crossover, and mutation operators to create a new generation of candidate solutions.

- Convergence Check: Terminate when fitness improvement falls below a threshold or maximum generations are reached.

- Validation: Apply the optimized VMD parameters to a test signal to verify decomposition quality.
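For reference, the envelope entropy used in the fitness evaluation step can be written out in full. The form below is the commonly used definition and is given as an assumption about what the cited work intends: \( a(i) \) is the Hilbert envelope of one IMF \( x \) of length \( N \), and \( \mathcal{H}[x] \) denotes its Hilbert transform.

```latex
a(i) = \sqrt{x(i)^2 + \mathcal{H}[x](i)^2}, \qquad
p_i = \frac{a(i)}{\sum_{j=1}^{N} a(j)}, \qquad
H_{\text{envelope}} = -\sum_{i=1}^{N} p_i \log(p_i), \qquad
0 \le H_{\text{envelope}} \le \log N
```

The upper bound \( \log N \) is reached when the envelope is flat, so a mode dominated by a few sharp impulses scores well below it. This is why minimizing the quantity favors sparse, feature-rich modes.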

Protocol 2: Multi-Criteria IMF Selection Post-Decomposition

After obtaining optimized decomposition, this protocol ensures selective reconstruction by identifying and excluding noise-dominant components.

Research Reagent Solutions:

- Decomposed IMFs: The set of intrinsic mode functions from GA-optimized VMD.

- Variance Contribution Rate (VCR): Metric quantifying each IMF's energy contribution.

- Correlation Coefficient Metric (CCM): Measure of linear relationship between each IMF and original signal.

- Threshold Criteria: Predefined values for VCR and CCM to classify IMFs as relevant or noise.

Step-by-Step Procedure:

- Calculate Variance Contribution Rate: Compute VCR for each IMF_k: \( \mathrm{VCR}_k = \frac{\mathrm{Energy}(\mathrm{IMF}_k)}{\sum_{i=1}^{K} \mathrm{Energy}(\mathrm{IMF}_i)} \times 100\% \).

- Compute Correlation Coefficients: Calculate Pearson correlation coefficient between each IMF and the original signal.

- Establish Threshold Criteria: Set thresholds based on empirical observation (e.g., VCR < 1% or correlation coefficient < 0.1 may indicate noise) [22].

- Classify IMFs: Categorize each IMF as signal-dominant or noise-dominant using the dual-criteria screening.

- Selective Reconstruction: Sum only the signal-dominant IMFs to obtain the denoised signal.

- Quality Assessment: Evaluate the reconstructed signal using domain-appropriate metrics (SNR, RMSE, predictive accuracy).
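The dual-criteria screening can be sketched as follows. The thresholds are the example values mentioned in the procedure (1% for VCR, 0.1 for the correlation coefficient). The synthetic modes and the function names are illustrative assumptions, and energy is taken as the sum of squared samples.

```python
import numpy as np

def variance_contribution(imfs):
    """Energy share of each mode, in percent of the total over all modes."""
    energy = np.sum(np.asarray(imfs, dtype=float) ** 2, axis=1)
    return 100.0 * energy / energy.sum()

def correlations(imfs, signal):
    """Pearson correlation between each mode and the original signal."""
    return np.array([np.corrcoef(m, signal)[0, 1] for m in imfs])

def screen_imfs(imfs, signal, vcr_min=1.0, corr_min=0.1):
    """Keep a mode only if it passes both the VCR and correlation thresholds."""
    vcr = variance_contribution(imfs)
    corr = correlations(imfs, signal)
    keep = (vcr >= vcr_min) & (corr >= corr_min)
    return keep, vcr, corr

# Synthetic stand-in for a decomposition: two tones plus a weak noise mode.
t = np.arange(1000) / 1000.0
rng = np.random.default_rng(0)
imfs = np.vstack([
    np.sin(2 * np.pi * 5 * t),
    0.5 * np.sin(2 * np.pi * 50 * t),
    0.01 * rng.standard_normal(t.size),
])
signal = imfs.sum(axis=0)

keep, vcr, corr = screen_imfs(imfs, signal)
denoised = imfs[keep].sum(axis=0)   # selective reconstruction
```

Here the weak noise mode carries far less than 1% of the energy and is excluded, while both tonal modes pass the two checks.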

Conceptual Framework and Workflow Visualization

GA-VMD Optimization and Denoising Workflow - This diagram illustrates the complete process from raw input signal to denoised output, highlighting the integration between genetic algorithm optimization and variational mode decomposition.

Advanced Applications and Emerging Methodologies

The GA-VMD framework has demonstrated significant utility across diverse domains requiring high-precision signal processing:

In agricultural economics, GA-optimized VMD effectively decomposes complex, non-stationary price series, enabling more accurate forecasting when combined with LSTM networks [12]. For renewable energy systems, the approach enhances wind power prediction accuracy by decomposing non-stationary power sequences into more manageable components, addressing critical grid integration challenges [25]. In biomedical engineering, multi-objective optimization approaches combined with VMD improve arrhythmia classification from ECG signals, though these advanced methods may use specialized algorithms beyond standard GA [24]. For infrastructure monitoring, optimized VMD enables precise denoising of pressure signals in water supply networks, facilitating more accurate predictive maintenance and leak detection [22].

Cross-Domain Applications and Performance - This diagram showcases the diverse applications of GA-VMD frameworks and their demonstrated performance improvements across different domains.

The integration of Genetic Algorithm with Variational Mode Decomposition represents a powerful methodology for precision signal processing, effectively addressing VMD's critical parameter sensitivity limitation. This synergistic combination leverages GA's robust global search capabilities to automate the optimization of VMD's [K, α] parameters, leading to statistically significant improvements in forecasting accuracy, noise reduction, and feature extraction across diverse application domains. While emerging optimization algorithms continue to push performance boundaries, GA remains a foundational approach due to its proven effectiveness, conceptual clarity, and reliable convergence properties. The experimental protocols and frameworks presented provide researchers with practical methodologies for implementing this powerful synergistic approach in their signal processing applications.

Variational Mode Decomposition (VMD), particularly when enhanced by genetic algorithms (GA), has established itself as a powerful and adaptable signal processing technique across diverse scientific fields. This note details its foundational applications in two key areas: the analysis of non-stationary time-series data in industrial fault diagnosis and agricultural forecasting, and its potential in processing complex spectral data. The core strength of the VMD-GA synergy lies in its ability to overcome the limitations of traditional decomposition methods by automatically and optimally extracting intrinsic mode functions (IMFs) from noisy, complex signals. We provide a detailed protocol for implementing a GA-optimized VMD model, structured data on its performance, and a catalog of essential research tools.

Quantitative Performance of VMD-GA Hybrid Models

The following table summarizes the documented performance of GA-optimized VMD models against other techniques in various applications.

Table 1: Performance Comparison of VMD-GA Hybrid Models Against Benchmark Models

| Application Domain | Model | Key Performance Metrics | Reference |

|---|---|---|---|

| Agricultural Price Forecasting | GA-VMD-LSTM | RMSE reduced by 21.83-56.93%; MAPE reduced by 21.67-44% compared to the next best model (CEEMDAN-LSTM). | [12] [26] |

| Short-Term Power Load Forecasting | GA-VMD-BP | R² increased by 31.71% vs. BP and 1.46% vs. VMD-BP; MAE decreased by 205.91 MW and 48.51 MW, respectively. | [23] |

| Rolling Bearing Fault Diagnosis | MIGA-VMD (Multi-Island GA) | Accurately identifies fault characteristic frequencies for both single-point and composite faults, overcoming mode mixing. | [27] [8] |

| Multi-Sensor Fault Recognition | Two-Layer GA-BP | Recognition accuracy for lost, high-bias, and low-bias signals improved by 26.09%, 18.18%, and 7.15%, respectively, over a single BP model. | [28] |

Research Reagent Solutions: The VMD-GA Toolkit

The following table outlines the essential computational "reagents" required for constructing and deploying a VMD-GA research pipeline.

Table 2: Key Research Reagents and Computational Tools for VMD-GA Studies

| Item Name | Function / Definition | Application Context | Reference |

|---|---|---|---|

| Variational Mode Decomposition (VMD) | A non-recursive, adaptive signal decomposition method that separates a signal into discrete sub-signals (IMFs) with specific sparsity properties in the spectral domain. | Core decomposition technique for non-stationary signals like bearing vibrations or commodity prices. | [12] [27] [8] |

| Genetic Algorithm (GA) | An optimization technique that mimics natural selection to search a vast parameter space and find optimal solutions, such as the best VMD parameters (K, α). | Used to automate and optimize the selection of VMD's key parameters, overcoming manual and suboptimal selection. | [12] [8] |

| Intrinsic Mode Functions (IMFs) | The finite-bandwidth, quasi-orthogonal components into which VMD decomposes the original input signal. | Represent the simplified building blocks of the complex signal, which are individually modeled and forecast. | [12] [23] [8] |

| Fitness Function (e.g., Envelope Entropy) | A quantitative criterion (e.g., Envelope Entropy, Renyi Entropy) used by the GA to evaluate the quality of a given set of VMD parameters. | Guides the GA optimization process; low envelope entropy indicates a sparse and informative IMF. | [8] |

| Long Short-Term Memory (LSTM) | A type of recurrent neural network capable of learning long-term dependencies in sequential data. | Used for forecasting the decomposed IMF components in time-series prediction applications. | [12] |

| Back Propagation (BP) Neural Network | A classic artificial neural network that uses backpropagation for training. | Serves as a regression or prediction model for the decomposed signal components. | [23] [28] |

Experimental Protocol: GA-Optimized VMD for Signal Decomposition and Forecasting

This protocol provides a step-by-step methodology for applying the GA-VMD hybrid model, as utilized in agricultural price forecasting and fault diagnosis studies [12] [27].

Procedure

Step 1: Signal Acquisition and Preprocessing

- Acquire the raw time-series signal (e.g., vibration data, commodity prices).

- Perform necessary preprocessing, including data cleaning, normalization, and addressing missing values to prepare a robust dataset for analysis.

Step 2: Genetic Algorithm Optimization of VMD Parameters

- Objective: Determine the optimal VMD parameters: the number of modes (K) and the penalty factor (α).

- Fitness Function: Define a fitness function for the GA to minimize. A common and effective choice is the envelope entropy [8]. A signal with clearer impulsive features (indicative of a fault or a key pattern) has a lower envelope entropy.

- For a given {K, α} pair, decompose the signal using VMD.

- Calculate the envelope of each resulting IMF (for example, via Hilbert demodulation).

- Compute the envelope entropy for each IMF. The fitness value can be the minimum or average entropy across all IMFs.

- GA Execution: Run the GA to search the {K, α} parameter space, iteratively evaluating candidates with the above fitness function until a termination criterion is met. The best-scoring pair is taken as the optimized parameters.
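
The envelope-entropy fitness described above can be sketched as follows. This is a minimal illustration, assuming the usual definition in which the Hilbert envelope is normalized to a probability distribution and its Shannon entropy is taken. The function names are hypothetical, and the naive DFT is used only to keep the example dependency-free; in practice a routine such as `scipy.signal.hilbert` would normally supply the analytic signal.

```python
import cmath
import math


def hilbert_envelope(x):
    """Magnitude of the analytic signal, via a naive O(N^2) DFT (short signals only)."""
    n = len(x)
    twiddle = [cmath.exp(-2j * math.pi * k / n) for k in range(n)]
    spectrum = [sum(x[t] * twiddle[(f * t) % n] for t in range(n)) for f in range(n)]
    # One-sided spectrum: keep DC and Nyquist, double positive bins, zero negative bins.
    for f in range(1, n):
        if 2 * f < n:
            spectrum[f] *= 2
        elif 2 * f > n:
            spectrum[f] = 0
    # Inverse DFT uses the conjugated twiddle factors.
    return [abs(sum(spectrum[f] * twiddle[(f * t) % n].conjugate() for f in range(n))) / n
            for t in range(n)]


def envelope_entropy(x):
    """Shannon entropy of the normalized envelope; lower means more impulsive."""
    env = hilbert_envelope(x)
    total = sum(env)
    if total == 0:
        return math.log(len(x))  # assumption: treat an all-zero mode as maximally flat
    probs = [a / total for a in env if a > 0]
    return -sum(p * math.log(p) for p in probs)


def fitness(imfs, reduce=min):
    """Score one decomposition; pass reduce=statistics.mean to average instead."""
    return reduce([envelope_entropy(imf) for imf in imfs])


# Demo on two synthetic "modes": a steady tone and a train of decaying bursts.
n = 128
tone = [math.sin(2 * math.pi * 5 * t / n) for t in range(n)]
bursts = [math.exp(-0.2 * (t % 32)) * math.sin(2 * math.pi * t / 4) for t in range(n)]
print(envelope_entropy(tone), envelope_entropy(bursts))
```

The steady tone has a flat envelope and therefore the maximum possible entropy, log(N), while the burst train scores lower, which is why minimizing this quantity favors impulsive components.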

Step 3: Decompose Signal with Optimized VMD

- Using the GA-optimized parameters K and α, perform the final VMD on the entire preprocessed signal.

- This yields K IMF components (IMF1, IMF2, ..., IMFK) and potentially a residual component.

Step 4: Component Forecasting and Reconstruction

- For forecasting applications (e.g., price prediction):

- Divide the IMFs into training and testing sets.

- Train a forecasting model (e.g., LSTM, BP Neural Network) on each IMF's training data [12] [23].

- Use the trained models to predict the future values of each IMF.

- Aggregate (ensemble) the forecasts of all IMFs to reconstruct the final prediction of the original signal.
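
The decompose-forecast-aggregate pattern in Step 4 can be sketched as below. The forecaster here is a deliberately simple seasonal-naive model standing in for the LSTM or BP network named in the text, and the per-component `periods` argument is an assumption made only so the toy forecaster has something to work with.

```python
def split(series, test_size):
    """Chronological split: the last test_size samples are held out."""
    return series[:-test_size], series[-test_size:]


def seasonal_naive(train, horizon, period):
    """Stand-in forecaster: repeat the last observed cycle of the component."""
    last_cycle = train[-period:]
    return [last_cycle[h % period] for h in range(horizon)]


def forecast_signal(imfs, periods, test_size):
    """Forecast each IMF separately, then sum the forecasts sample by sample."""
    per_imf = []
    for imf, period in zip(imfs, periods):
        train, _ = split(imf, test_size)
        per_imf.append(seasonal_naive(train, test_size, period))
    return [sum(values) for values in zip(*per_imf)]


# Two toy components whose sum plays the role of the original signal.
imf_a = [0, 1, 0, -1] * 5
imf_b = [2, 3] * 10
original = [a + b for a, b in zip(imf_a, imf_b)]

prediction = forecast_signal([imf_a, imf_b], periods=[4, 2], test_size=4)
_, actual = split(original, 4)
print(prediction, actual)
```

Swapping `seasonal_naive` for a trained model per component leaves the aggregation step unchanged.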

Step 5: Feature Identification (for Fault Diagnosis)

- For diagnostic applications (e.g., bearing fault detection):

- Select the IMF(s) containing the most critical fault information, often using an index like the kurtosis or Holder coefficient [8].

- Perform envelope spectrum analysis on the selected IMF(s).

- Identify the characteristic fault frequencies in the envelope spectrum to diagnose the fault type.
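
As an illustration of the IMF selection step, the sketch below ranks modes by kurtosis and returns the most impulsive one. It assumes the plain (non-excess) Pearson kurtosis; the cited studies may use a different index or normalization, and the function names are hypothetical.

```python
import math


def kurtosis(x):
    """Pearson kurtosis m4 / m2^2 (a Gaussian gives about 3, a sine gives 1.5)."""
    n = len(x)
    mean = sum(x) / n
    m2 = sum((v - mean) ** 2 for v in x) / n
    m4 = sum((v - mean) ** 4 for v in x) / n
    return m4 / (m2 ** 2) if m2 > 0 else 0.0


def select_imf(imfs):
    """Index of the IMF with the highest kurtosis."""
    return max(range(len(imfs)), key=lambda i: kurtosis(imfs[i]))


# A smooth tone versus a sparse impulse train, as two hypothetical IMFs.
n = 200
tone = [math.sin(2 * math.pi * 5 * t / n) for t in range(n)]
impulses = [1.0 if t % 50 == 0 else 0.0 for t in range(n)]

chosen = select_imf([tone, impulses])
print(chosen, kurtosis(tone), kurtosis(impulses))
```

The selected mode would then be passed on to envelope spectrum analysis.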

Workflow Visualization

[Diagram: Unified Workflow for GA-VMD Signal Analysis]

Advanced Protocol: Adaptive VMD via Spectrum Reconstruction and Segmentation (SRAS-VMD)

For applications requiring fully adaptive decomposition without pre-defining K, the SRAS-VMD method provides a robust solution, particularly effective in noisy environments [27].

Procedure

Step 1: Fourier Spectrum Reconstruction

- Compute the Fourier spectrum of the input signal.

- Apply a spectrum reconstruction algorithm to simplify the spectrum, reducing the influence of noise and highlighting dominant frequency components.

Step 2: Spectrum Segmentation and Boundary Fusion

- Extract the energy spectrum of the reconstructed Fourier spectrum.

- Perform a pre-segmentation of the energy spectrum using an algorithm like "locmaxmin" to identify initial boundaries between potential modes.

- Fuse adjacent segmentation boundaries based on the Gini index of the squared envelope. This step merges over-segmented components, leading to a more accurate estimation of the true number of modes K.
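
The Gini index used in the fusion step is a sparsity measure. A minimal sketch of one common definition (the Hurley and Rickard form) is given below; it is applied to a sequence of non-negative samples such as a squared envelope. Only the index itself is shown: the rule that SRAS-VMD uses to decide when two boundaries are fused is not specified here and is not reproduced.

```python
def gini_index(values):
    """Gini sparsity index: 0 for a flat sequence, 1 - 1/N for a single spike."""
    c = sorted(abs(v) for v in values)
    n = len(c)
    total = sum(c)
    if total == 0:
        return 0.0
    weighted = sum((ck / total) * (n - k + 0.5) / n
                   for k, ck in enumerate(c, start=1))
    return 1.0 - 2.0 * weighted


flat = [1.0] * 8            # energy spread evenly: not sparse
spike = [0.0] * 7 + [5.0]   # energy in one sample: maximally sparse
print(gini_index(flat), gini_index(spike))
```

A higher value indicates a more impulsive squared envelope, which is the property the fusion criterion is described as exploiting.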

Step 3: Parameter Determination and VMD Execution

- The final number of fused segments directly determines the VMD parameter K.

- The center frequencies of these segments are used to initialize the VMD's center frequencies (ωk).

- Execute the standard VMD algorithm with these adaptively determined parameters.

Step 4: Optimal Mode Selection and Analysis

- To select the IMF containing the most critical information (e.g., a fault signature), use an index like Periodic Modulation Intensity (PMI) to quantify the periodicity and information content of each IMF [27].

- Analyze the envelope spectrum of the optimal IMF to identify characteristic frequencies and complete the diagnosis.

Workflow Visualization

[Diagram: SRAS-VMD Adaptive Decomposition Workflow]

Implementing GA-VMD: Step-by-Step Methodology and Drug Discovery Applications

The GA-VMD framework represents a significant advancement in signal processing by integrating the optimization power of Genetic Algorithms (GAs) with the adaptive decomposition capabilities of Variational Mode Decomposition (VMD). This hybrid approach effectively addresses one of the most significant challenges in using VMD: the need for manual parameter selection. VMD requires users to predefine two critical parameters—the number of decomposition modes (k) and the balancing parameter of the data-fidelity constraint (α). Inappropriate selection of these values can lead to insufficient decomposition or over-decomposition, adversely affecting subsequent analysis [29]. The GA-VMD framework automates this parameter selection process, enabling more accurate and efficient signal analysis across various scientific domains, including drug discovery and pharmaceutical development.

Theoretical Foundation

Variational Mode Decomposition (VMD)

VMD is a non-recursive, adaptive signal decomposition technique that fundamentally differs from earlier methods like Empirical Mode Decomposition (EMD). The core principle of VMD involves decomposing a real-valued input signal f into a discrete number of mode functions uₖ(t), each with limited bandwidth in the spectral domain. The method formulates this as a constrained variational problem [29]:

$$\min_{\{u_k\},\{\omega_k\}} \left\{ \sum_{k} \left\| \partial_t \left[ \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) \right] e^{-j\omega_k t} \right\|_2^2 \right\}$$

subject to:

$$\sum_{k} u_k(t) = f(t)$$

where uₖ represents the modes, ωₖ denotes their center frequencies, δ(t) is the Dirac distribution, and * denotes convolution. This formulation aims to ensure that each mode is compact around a center pulsation ωₖ, determined along with the decomposition process.
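
The penalty factor α does not appear in the constrained problem itself; it enters when the constraint is relaxed. As a sketch of the standard treatment in the original VMD formulation (the multiplier λ and dual step size τ are notation introduced here, not taken from this article's sources), the augmented Lagrangian is

$$\mathcal{L}(\{u_k\},\{\omega_k\},\lambda) = \alpha \sum_{k} \left\| \partial_t \left[ \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) \right] e^{-j\omega_k t} \right\|_2^2 + \left\| f(t) - \sum_{k} u_k(t) \right\|_2^2 + \left\langle \lambda(t),\, f(t) - \sum_{k} u_k(t) \right\rangle$$

and it is minimized by alternating updates in the frequency domain:

$$\hat{u}_k^{n+1}(\omega) = \frac{\hat{f}(\omega) - \sum_{i \neq k} \hat{u}_i(\omega) + \hat{\lambda}(\omega)/2}{1 + 2\alpha(\omega - \omega_k)^2}$$

$$\omega_k^{n+1} = \frac{\int_0^\infty \omega \, |\hat{u}_k^{n+1}(\omega)|^2 \, d\omega}{\int_0^\infty |\hat{u}_k^{n+1}(\omega)|^2 \, d\omega}$$

$$\hat{\lambda}^{n+1}(\omega) = \hat{\lambda}^{n}(\omega) + \tau \left( \hat{f}(\omega) - \sum_{k} \hat{u}_k^{n+1}(\omega) \right)$$

The mode update acts as a Wiener-type filter centered on ωₖ, so a larger α narrows the bandwidth of each mode, while a smaller α lets each mode absorb a wider band. This is why α and the number of modes have to be tuned together.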

Genetic Algorithm (GA) Optimization

Genetic Algorithms belong to the class of evolutionary optimization techniques inspired by natural selection. In the context of parameter optimization for VMD, GAs employ several biologically-inspired operations [29] [30]:

- Initialization: A population of candidate solutions (parameter sets) is randomly generated within predefined search bounds.

- Selection: Individuals are selected for reproduction based on their fitness scores, favoring better solutions.

- Crossover: Pairs of selected individuals exchange genetic information to create offspring with combined characteristics.

- Mutation: Random alterations are introduced to maintain diversity within the population and explore new regions of the solution space.

This evolutionary process continues iteratively until a termination criterion is satisfied, typically reaching a maximum number of generations or achieving a target fitness level.
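
The four operations above can be put together in a compact loop. The sketch below is a minimal illustration using only the standard library: the search bounds follow the ranges quoted later in Protocol 1, the operator choices (tournament selection, uniform crossover, small bounded mutations) are assumptions, and `surrogate_fitness` is a hypothetical placeholder for a score that would in practice be computed by running VMD.

```python
import random

K_BOUNDS = (3, 15)              # integer number of modes
ALPHA_BOUNDS = (100.0, 5000.0)  # penalty factor


def clamp(value, bounds):
    return min(max(value, bounds[0]), bounds[1])


def random_individual(rng):
    """Initialization: one random (k, alpha) pair inside the search bounds."""
    return (rng.randint(*K_BOUNDS), rng.uniform(*ALPHA_BOUNDS))


def tournament(population, scores, rng, size=3):
    """Selection: the lowest-score individual among a few random contenders."""
    contenders = rng.sample(range(len(population)), size)
    return population[min(contenders, key=scores.__getitem__)]


def crossover(a, b, rng):
    """Uniform crossover: each gene is inherited from either parent."""
    return (rng.choice((a[0], b[0])), rng.choice((a[1], b[1])))


def mutate(individual, rng, rate):
    """Mutation: occasionally nudge k by one step and alpha by Gaussian noise."""
    k, alpha = individual
    if rng.random() < rate:
        k = clamp(k + rng.choice((-1, 1)), K_BOUNDS)
    if rng.random() < rate:
        alpha = clamp(alpha + rng.gauss(0.0, 250.0), ALPHA_BOUNDS)
    return (k, alpha)


def run_ga(fitness, pop_size=30, generations=40, crossover_rate=0.8,
           mutation_rate=0.1, seed=0):
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(pop_size)]
    best, best_score = None, float("inf")
    for generation in range(generations):
        scores = [fitness(ind) for ind in population]
        i_best = min(range(pop_size), key=scores.__getitem__)
        if scores[i_best] < best_score:
            best, best_score = population[i_best], scores[i_best]
        if generation == generations - 1:
            break  # termination: maximum number of generations reached
        offspring = []
        while len(offspring) < pop_size:
            a = tournament(population, scores, rng)
            b = tournament(population, scores, rng)
            child = crossover(a, b, rng) if rng.random() < crossover_rate else a
            offspring.append(mutate(child, rng, mutation_rate))
        population = offspring
    return best, best_score


def surrogate_fitness(individual):
    """Hypothetical stand-in for a VMD-based score, smallest near k=6, alpha=2000."""
    k, alpha = individual
    return (k - 6) ** 2 + ((alpha - 2000.0) / 500.0) ** 2


best, best_score = run_ga(surrogate_fitness)
print(best, best_score)
```

Because each fitness call would involve a full VMD run in a real application, population size and generation count are the main levers on total runtime.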

Integration of GA and VMD

In the combined framework, the GA serves as the search mechanism that explores the parameter space to identify well-performing (k, α) combinations. The search is guided by a fitness function that scores the quality of the resulting decomposition. Common fitness metrics include correlation coefficient, root mean square error, sample entropy, and central frequency observation [29]. Decomposing with the optimized parameters then produces modes with improved properties such as sparsity and near-orthogonality.

Application Notes: Implementation in Drug Discovery

Enhanced Biomolecular Signal Processing

In pharmaceutical research, the GA-VMD framework provides powerful capabilities for analyzing complex biomolecular signals derived from various spectroscopic and computational techniques. The method has demonstrated particular utility in processing signals from molecular dynamics simulations, where it can separate relevant conformational changes from stochastic noise [31]. For instance, when applied to analyze protein-ligand binding dynamics, GA-VMD can effectively isolate distinct frequency components corresponding to different molecular motions, ranging from rapid side-chain fluctuations to slower domain movements. This decomposition enables researchers to focus specifically on motions relevant to binding events, potentially revealing insights into allosteric mechanisms and intermediate states that might be obscured in raw data [32] [31].

Virtual Screening and Binding Affinity Prediction

The GA-VMD framework significantly enhances virtual screening processes in structure-based drug design. By optimizing the decomposition of molecular interaction signals, researchers can achieve more accurate predictions of binding affinities—a crucial parameter in early drug discovery. When integrated with molecular docking approaches, the framework helps identify subtle patterns in binding interactions that might be missed by conventional analysis methods [30]. This capability is particularly valuable for targeting challenging protein classes such as G-protein coupled receptors (GPCRs) and ion channels, where dynamic behavior plays a critical role in function and drug binding [31]. The optimized signal processing enables more reliable ranking of candidate compounds, potentially reducing false positives in virtual screening campaigns.

Table 1: Performance Comparison of GA-VMD Framework in Different Applications

| Application Domain | Performance Metric | Standard VMD | GA-VMD Framework | Improvement |
|---|---|---|---|---|
| Wind Speed Prediction [29] | RMSE | 0.215 | 0.130 | 39.5% |
| Wind Speed Prediction [29] | MAE | 0.162 | 0.099 | 38.9% |
| Wind Speed Prediction [29] | R² | 0.981 | 0.995 | 1.4% |
| Agricultural Price Forecasting [12] | MAPE (Maize) | 15.32% | 8.58% | 44.0% |
| Agricultural Price Forecasting [12] | MAPE (Palm Oil) | 9.47% | 7.41% | 21.7% |
| Multi-step Forecasting [33] | MAPE | 0.208 | 0.100 | 51.9% |

Analysis of Molecular Dynamics Trajectories

Molecular dynamics (MD) simulations generate vast amounts of high-dimensional data representing the temporal evolution of molecular systems. The GA-VMD framework offers an effective approach for analyzing these complex trajectories by decomposing atomic motions into distinct modes with specific frequency characteristics [31]. This decomposition facilitates the identification of functionally relevant conformational changes and collective motions that may be difficult to detect using standard principal component analysis. Additionally, the application of GA-VMD to analyze time-dependent properties from MD simulations, such as distance fluctuations between binding site residues or changes in solvent accessibility, can provide valuable insights into the dynamics of molecular recognition events [32] [34].

Experimental Protocols

Protocol 1: Standard Implementation of GA-VMD

Purpose: To provide a standardized methodology for applying the GA-VMD framework to signal processing tasks in pharmaceutical research.

Materials and Software Requirements:

- MATLAB or Python programming environment

- VMD implementation package

- Genetic Algorithm toolbox

- Input signal data (molecular dynamics trajectories, spectroscopic measurements, etc.)

Procedure:

Signal Preprocessing:

- Load the input signal and normalize if necessary

- Remove obvious artifacts or outliers that may interfere with decomposition

- For molecular dynamics data, extract relevant collective variables or time-series metrics

GA Parameter Initialization:

- Set population size (typically 20-50 individuals)

- Define search bounds for k (usually [3, 15]) and α (typically [100, 5000])

- Specify genetic operators: selection method (tournament or roulette wheel), crossover rate (0.6-0.9), and mutation rate (0.01-0.1)

- Set termination criteria (maximum generations or fitness threshold)
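
One way to keep these settings explicit and checked is a small configuration object. The sketch below is an assumption about how such a container might look, not part of any GA toolbox; the defaults sit inside the ranges listed above, and `max_generations` is an arbitrary illustrative value.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class GAConfig:
    population_size: int = 30                            # listed range: 20-50
    k_bounds: Tuple[int, int] = (3, 15)
    alpha_bounds: Tuple[float, float] = (100.0, 5000.0)
    selection: str = "tournament"                        # or "roulette"
    crossover_rate: float = 0.8                          # listed range: 0.6-0.9
    mutation_rate: float = 0.05                          # listed range: 0.01-0.1
    max_generations: int = 100                           # assumed value
    fitness_threshold: Optional[float] = None            # optional early stop

    def __post_init__(self):
        if self.population_size < 2:
            raise ValueError("population_size must be at least 2")
        for name in ("k_bounds", "alpha_bounds"):
            low, high = getattr(self, name)
            if low >= high:
                raise ValueError(f"{name} must satisfy low < high")
        if self.selection not in ("tournament", "roulette"):
            raise ValueError("selection must be 'tournament' or 'roulette'")
        for name in ("crossover_rate", "mutation_rate"):
            if not 0.0 <= getattr(self, name) <= 1.0:
                raise ValueError(f"{name} must lie in [0, 1]")
        if self.max_generations < 1:
            raise ValueError("max_generations must be at least 1")


config = GAConfig(population_size=40, mutation_rate=0.02)
print(config)
```

Validation checks only that the values are well-formed; the listed ranges are typical choices rather than hard limits, so they are not enforced.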

Fitness Function Definition:

- Implement a fitness evaluation function that:

  a. Applies VMD to the input signal using the candidate (k, α) parameters

  b. Calculates fitness metrics such as sample entropy, correlation coefficient, or energy difference between modes

  c. Returns a composite fitness score
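
A composite fitness of this kind might be sketched as follows, here combining mean sample entropy of the modes with the reconstruction error. The weights, the choice of these two terms, and the `decompose(signal, k, alpha)` callable are all assumptions for illustration; `fake_decompose` is a hypothetical stand-in for a real VMD call so that the example runs on its own.

```python
import math
import statistics


def sample_entropy(x, m=2, r_factor=0.2):
    """SampEn(m, r) with r = r_factor * std(x); lower means more regular."""
    n = len(x)
    r = r_factor * statistics.pstdev(x)

    def matches(length):
        count = 0
        for i in range(n - m):
            for j in range(i + 1, n - m):
                if all(abs(x[i + d] - x[j + d]) <= r for d in range(length)):
                    count += 1
        return count

    b, a = matches(m), matches(m + 1)
    if a == 0 or b == 0:
        return float("inf")
    return -math.log(a / b)


def rmse(a, b):
    return math.sqrt(sum((u - v) ** 2 for u, v in zip(a, b)) / len(a))


def make_fitness(signal, decompose, w_entropy=1.0, w_recon=1.0):
    """Build fitness(k, alpha); lower scores are better."""
    def fitness(k, alpha):
        modes = decompose(signal, k, alpha)
        mean_entropy = statistics.fmean([sample_entropy(mode) for mode in modes])
        reconstruction = [sum(values) for values in zip(*modes)]
        return w_entropy * mean_entropy + w_recon * rmse(signal, reconstruction)
    return fitness


n = 200
slow = [math.sin(2 * math.pi * 3 * t / n) for t in range(n)]
fast = [0.5 * math.sin(2 * math.pi * 20 * t / n) for t in range(n)]
signal = [s + f for s, f in zip(slow, fast)]


def fake_decompose(signal, k, alpha):
    """Hypothetical stand-in for VMD: returns the known components unchanged."""
    return [slow, fast]


fitness = make_fitness(signal, fake_decompose)
score = fitness(2, 2000.0)
print(score)
```

The relative weighting of the terms changes which decompositions the GA prefers, so it is worth checking the scale of each term on representative data before fixing the weights.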

GA Optimization Execution:

- Initialize population with random parameter sets within bounds

- For each generation:

  a. Evaluate fitness of all individuals

  b. Select parents based on fitness scores

  c. Apply crossover and mutation to create offspring

  d. Evaluate offspring fitness

  e. Implement elitism to preserve best solutions

- Continue until termination criteria are met
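
The elitism step can be isolated in a few lines. In this sketch, `breed` is a hypothetical callable that wraps selection, crossover, and mutation and returns one child; fitness is assumed to be minimized, and the population and scores are made-up values.

```python
def next_generation(population, scores, breed, n_elite=2):
    """Copy the n_elite lowest-score individuals unchanged, then breed the rest."""
    ranked = sorted(range(len(population)), key=scores.__getitem__)
    elites = [population[i] for i in ranked[:n_elite]]
    children = [breed(population, scores)
                for _ in range(len(population) - n_elite)]
    return elites + children


# Illustrative (k, alpha) individuals with made-up fitness values.
population = [(4, 800.0), (6, 2100.0), (9, 3500.0), (12, 4800.0)]
scores = [4.2, 0.3, 9.1, 36.5]


def worst_case_breed(population, scores):
    """Deliberately poor breeder, to show that the elite survives regardless."""
    return population[-1]


new_population = next_generation(population, scores, worst_case_breed, n_elite=1)
print(new_population)
```

With at least one elite carried over, the best fitness in the population cannot get worse from one generation to the next, whatever the offspring look like.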

Final Decomposition:

- Extract the optimal (k, α) parameters from the best individual

- Perform VMD decomposition using these optimized parameters

- Validate decomposition quality through statistical analysis and visual inspection

Expected Outcomes: The protocol should yield an optimized decomposition of the input signal into intrinsic mode functions with minimal overlap in the frequency domain and maximal sparsity properties.

Protocol 2: GA-VMD for Binding Free Energy Analysis

Purpose: To analyze molecular dynamics trajectories of protein-ligand complexes for enhanced binding free energy calculations using the GA-VMD framework.

Materials:

- Molecular dynamics simulation trajectories of protein-ligand complexes

- GROMACS, AMBER, or NAMD simulation software

- Custom scripts for trajectory analysis

- MM-PBSA or LIE calculation tools

Procedure:

Trajectory Preprocessing:

- Align trajectories to remove global rotation and translation

- Extract relevant time-series data: protein-ligand distances, interaction energies, dihedral angles
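
As an illustration of turning a trajectory into a one-dimensional series suitable for decomposition, the sketch below computes the protein-ligand centroid distance per frame. The in-memory frame layout is a hypothetical simplification; real trajectories would be read and aligned with the analysis tools of the simulation package in use.

```python
import math


def centroid(coords):
    """Unweighted geometric center of a list of (x, y, z) coordinates."""
    n = len(coords)
    return tuple(sum(c[axis] for c in coords) / n for axis in range(3))


def centroid_distance_series(frames):
    """One distance per frame; frames yields (protein_coords, ligand_coords)."""
    return [math.dist(centroid(protein), centroid(ligand))
            for protein, ligand in frames]


# Two toy frames: a two-atom "protein" and a one-atom "ligand".
protein = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
frames = [
    (protein, [(1.0, 3.0, 4.0)]),
    (protein, [(1.0, 0.0, 0.0)]),
]

distances = centroid_distance_series(frames)
print(distances)
```

The resulting list is the kind of time series that Protocol 1 takes as its input signal.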

GA-VMD Parameter Optimization:

- Apply Protocol 1 to identify optimal VMD parameters for each time-series

- Focus on minimizing mode mixing while capturing relevant binding dynamics