Optimizing Neural Population Dynamics: A Comprehensive Workflow for Computational Neuroscience and Drug Development

Abstract

This article provides a comprehensive guide to Neural Population Dynamics Optimization Algorithms (NPDOAs), a class of computational methods at the intersection of neuroscience and machine learning. Aimed at researchers, scientists, and drug development professionals, it details the workflow from foundational concepts to advanced applications. We explore the theoretical basis of neural manifolds and dynamical systems, present state-of-the-art methodologies like MARBLE and LangevinFlow, and address critical troubleshooting and optimization challenges such as hyperparameter tuning and computational bottlenecks. The guide concludes with rigorous validation frameworks and comparative analyses against established benchmarks, highlighting the transformative potential of NPDOAs for modeling brain function, accelerating therapeutic discovery, and developing personalized medicine approaches for neurological disorders.

Theoretical Foundations of Neural Population Dynamics

Theoretical Foundation

Neural manifolds are low-dimensional, geometric structures that describe the patterns of activity within high-dimensional neural populations. The core principle is that complex brain dynamics, which underlie cognitive functions and behavior, are constrained to flow along a simplified, low-dimensional subspace within the vast state space of all possible neural activity patterns [1] [2] [3]. This organization allows the brain to perform computations efficiently.

The emergence of these low-dimensional structures is theorized to result from mechanisms such as time-scale separation and averaging [1]. In this framework, fast, oscillatory neuronal activity averages out over time, allowing slower, task-related dynamics to dominate the system's trajectory. This process effectively collapses the high-dimensional system onto a slower, invariant manifold that captures the essential computational states [1]. Furthermore, the separation of neural processes into orthogonal dimensions within a manifold explains how the same population of neurons can encode different variables (e.g., movement preparation vs. execution) without interference [3].

Experimental Protocols and Methodologies

Protocol: Uncovering Low-Dimensional Manifolds from EEG Data in Clinical Stroke Research

This protocol outlines the methodology for identifying stable low-dimensional neural dynamics in stroke patients during a motor imagery task, adapted from a recent Brain-Computer Interface (BCI) study [4].

- Aim: To assess the restoration of neural population dynamics following BCI training for stroke rehabilitation.

- Experimental Setup:

  - Participants: Chronic stroke patients. A public dataset of acute stroke patients can also be used for validation.

  - Task: Patients perform motor imagery (MI) tasks (e.g., imagining hand movement) before and after a regimen of BCI training.

  - Recording: Whole-brain EEG recordings are acquired during the task.

- Procedure:

  - Data Acquisition: Record EEG signals from patients during MI tasks pre- and post-BCI training.

  - Source Localization: Project the sensor-level EEG signals into the brain's voxel space using a source localization algorithm (e.g., eLORETA). This step estimates the neural population activity within specific Regions of Interest (ROIs).

  - Dimensionality Reduction: Apply a dimensionality reduction technique (such as Laplacian Eigenmaps or PCA) to the source-localized neural activity to extract the low-dimensional neural manifold.

  - Analysis: Compare the features of the low-dimensional manifolds (e.g., trajectory structure, occupied dimensions) before and after BCI training to quantify restoration of neural dynamics. Correlate manifold features with behavioral outcomes and specific frequency bands (e.g., theta oscillations).

- Key Outputs: Low-dimensional neural manifolds; quantitative metrics of manifold stability and restoration; correlation between theta-band power and manifold features.
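
The dimensionality reduction and "occupied dimensions" comparison in this protocol can be illustrated with a minimal sketch. It assumes the source-localized activity is available as a (time x channels) array; the synthetic data, the function names `pca_manifold` and `participation_ratio`, and the choice of the participation ratio as a dimensionality measure are illustrative assumptions, not details taken from the cited study [4].

```python
import numpy as np

def pca_manifold(activity, n_components=3):
    """Project (time x channels) activity onto its leading principal components."""
    centered = activity - activity.mean(axis=0)
    # SVD of the centered data: rows of vt are the principal axes
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    variances = s**2 / (activity.shape[0] - 1)
    embedding = centered @ vt[:n_components].T
    return embedding, variances

def participation_ratio(variances):
    """Effective dimensionality: (sum of variances)^2 / sum of squared variances."""
    return variances.sum() ** 2 / np.sum(variances**2)

# Synthetic stand-in for source-localized ROI activity (NOT real EEG):
# two latent oscillations mixed into 40 channels plus small noise.
rng = np.random.default_rng(0)
t = np.linspace(0, 10, 500)
latents = np.column_stack([np.sin(2 * np.pi * 0.5 * t), np.cos(2 * np.pi * 0.5 * t)])
mixing = rng.normal(size=(2, 40))
activity = latents @ mixing + 0.05 * rng.normal(size=(500, 40))

embedding, variances = pca_manifold(activity, n_components=2)
print(embedding.shape, participation_ratio(variances))
```

Running the same two functions on pre- and post-training recordings would give one simple way to compare how many dimensions the activity occupies in each condition.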

Protocol: A Universal Workflow for Creating and Validating Detailed Neuronal Models

This protocol describes a generalized, automated workflow for creating robust, detailed electrical models of neurons, which can serve as building blocks for simulating larger neural populations and their dynamics [5].

- Aim: To generate detailed single-neuron models that accurately reproduce experimentally observed electrophysiological behaviors and show high generalizability.

- Experimental Setup:

  - Inputs: 3D morphological reconstructions of neurons (e.g., in SWC or Neurolucida format) and electrophysiological recordings (e.g., in NWB or Igor format).

- Procedure:

  - Feature Extraction: From the electrophysiological data, extract features that define the neuron's electrical behavior (e.g., spike timing, threshold, adaptation).

  - Model Optimization: Use an evolutionary algorithm to optimize the parameters of the neuronal model (ionic conductances, channel distributions) to match the extracted experimental features. The model integrates the neuron's morphology and a set of ionic mechanisms.

  - Model Validation: Test the optimized model against additional, novel stimulus patterns not used during the optimization process to validate its robustness.

  - Generalization Assessment: Assess the model's generalizability by applying it to a population of similar morphologies to ensure it performs reliably across variations.

- Key Outputs: Validated and generalized single-neuron models (e.g., in NeuroML or NEURON HOC format); a reported 5-fold improvement in generalizability compared to canonical models [5].
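
The model optimization step can be sketched with a small elitist evolutionary loop. This is a toy under stated assumptions: `toy_features` is a hypothetical stand-in for simulating a neuron and extracting electrophysiological features, the two parameters and their bounds are invented for illustration, and the loop is a generic truncation-selection scheme with Gaussian mutation rather than the algorithm used in the cited workflow [5].

```python
import random

def toy_features(params):
    """Hypothetical stand-in for simulating a neuron and extracting features."""
    g_na, g_k = params
    spike_rate = 10.0 * g_na / (1.0 + g_k)   # arbitrary toy mapping
    adaptation = 0.2 * g_k + 0.05 * g_na     # arbitrary toy mapping
    return (spike_rate, adaptation)

def feature_error(params, targets, stds):
    """Sum of absolute deviations from target features, in units of std."""
    feats = toy_features(params)
    return sum(abs(f - t) / s for f, t, s in zip(feats, targets, stds))

def evolve(targets, stds, bounds, pop_size=30, generations=60, sigma=0.1, seed=0):
    rng = random.Random(seed)
    pop = [tuple(rng.uniform(lo, hi) for lo, hi in bounds) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        pop.sort(key=lambda p: feature_error(p, targets, stds))
        history.append(feature_error(pop[0], targets, stds))
        parents = pop[: pop_size // 2]        # elitist truncation selection
        children = []
        for _ in range(pop_size - len(parents)):
            parent = rng.choice(parents)
            # Gaussian mutation, clipped to the parameter bounds
            child = tuple(
                min(hi, max(lo, x + rng.gauss(0.0, sigma * (hi - lo))))
                for x, (lo, hi) in zip(parent, bounds)
            )
            children.append(child)
        pop = parents + children
    best = min(pop, key=lambda p: feature_error(p, targets, stds))
    return best, history

targets = toy_features((1.2, 0.8))   # pretend these came from recordings
best, history = evolve(targets, stds=(1.0, 0.1), bounds=[(0.0, 3.0), (0.0, 3.0)])
print(best, history[0], history[-1])
```

Because the best half of each generation is carried over unchanged, the best error never increases from one generation to the next. Validation on held-out stimuli would correspond to evaluating `best` against features that were not part of `targets`.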

Data Analysis and Workflow Visualization

Dimensionality Reduction Techniques for Manifold Extraction

The following table summarizes standard techniques used to uncover low-dimensional manifolds from high-dimensional neural data.

Table 1: Dimensionality Reduction Techniques for Neural Manifold Extraction

| Technique | Type | Key Principle | Typical Use Case in Neuroscience |
|---|---|---|---|
| Principal Component Analysis (PCA) [1] [2] | Linear | Finds orthogonal axes that capture maximum variance in the data. | Initial data exploration; denoising; as a preprocessing step for non-linear methods. |
| Laplacian Eigenmaps (LEM) [1] | Non-Linear | Preserves local geometric relationships and captures the global flow structure of dynamics. | Uncovering the underlying continuous manifold from neural trajectories; visualizing transitions between attractor states [1]. |
| t-SNE [1] | Non-Linear | Emphasizes the visualization of local data structure by preserving pairwise similarities. | Creating intuitive 2D/3D visualizations of neural states from high-dimensional data. |
| UMAP [1] | Non-Linear | Balances the preservation of local and global data structure. | Similar to t-SNE, often with faster runtimes and better global structure preservation. |
| CEBRA [3] | Non-Linear (Hybrid) | Uses contrastive learning to identify compact representations that relate neural activity to behavior. | Creating latent spaces where neural dynamics and behavioral variables are jointly embedded. |
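
To make the Laplacian Eigenmaps entry concrete, the following is a minimal NumPy sketch of the method. It assumes a symmetric k-nearest-neighbour graph with binary weights and uses the symmetric normalized Laplacian; the function name, the neighbour count, and the synthetic ring-shaped data are illustrative choices, not settings from the cited work [1].

```python
import numpy as np

def laplacian_eigenmaps(X, n_components=2, n_neighbors=10):
    """Embed rows of X using a symmetric kNN graph with binary weights."""
    n = X.shape[0]
    sq_dists = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)
    # Nearest neighbours of each point, skipping the point itself (column 0)
    neighbors = np.argsort(sq_dists, axis=1)[:, 1 : n_neighbors + 1]
    W = np.zeros((n, n))
    rows = np.repeat(np.arange(n), n_neighbors)
    W[rows, neighbors.ravel()] = 1.0
    W = np.maximum(W, W.T)                     # symmetrize the graph
    degree = W.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(degree)
    # Symmetric normalized Laplacian: I - D^{-1/2} W D^{-1/2}
    L_sym = np.eye(n) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    eigvals, eigvecs = np.linalg.eigh(L_sym)   # eigenvalues in ascending order
    # Map back to generalized eigenvectors of (L, D); drop the trivial first one
    embedding = d_inv_sqrt[:, None] * eigvecs[:, 1 : n_components + 1]
    return embedding, eigvals

# Synthetic data (an assumption): a ring embedded in 30 dimensions
rng = np.random.default_rng(1)
theta = np.linspace(0, 2 * np.pi, 200, endpoint=False)
ring = np.column_stack([np.cos(theta), np.sin(theta)])
X = ring @ rng.normal(size=(2, 30)) + 0.01 * rng.normal(size=(200, 30))

embedding, eigvals = laplacian_eigenmaps(X, n_components=2, n_neighbors=8)
print(embedding.shape, eigvals[:3])
```

The dense pairwise-distance matrix keeps the sketch short but scales quadratically with the number of samples, so a library implementation with sparse neighbour graphs would be the practical choice for real recordings.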

Core Analytical Workflow

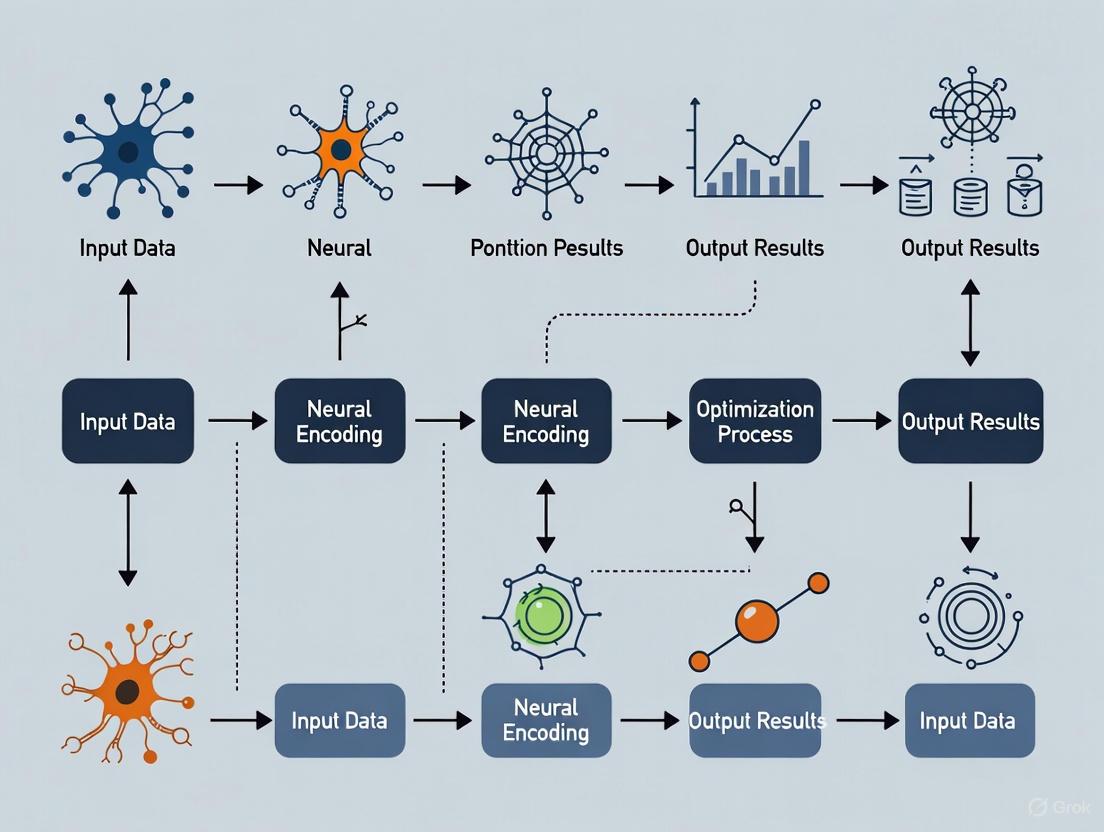

The diagram below illustrates the logical flow from raw neural data to the interpretation of low-dimensional neural manifolds, integrating the protocols and techniques described above.

The Scientist's Toolkit: Research Reagents & Essential Materials

Table 2: Essential Tools for Neural Manifold and Dynamics Research

| Item / Reagent | Function / Explanation |
|---|---|
| High-Density Neural Recorders (e.g., Neuropixels, EEG) | Enables simultaneous recording from hundreds to thousands of neurons or brain-wide signals, providing the high-dimensional data required for population analyses [2] [4]. |
| Source Localization Software (e.g., eLORETA) | Projects signals from sensor space (e.g., EEG) to source space, allowing estimation of activity within specific brain regions for subsequent manifold analysis [4]. |
| Dimensionality Reduction Libraries (e.g., scikit-learn, UMAP) | Software implementations of algorithms like PCA, LEM, and UMAP used to project high-dimensional data into a low-dimensional manifold [1]. |
| Neural Simulation Environments (e.g., NEURON, Arbor) | Platforms for building and simulating detailed computational models of neurons and networks, as used in the universal workflow for model creation [5]. |
| Model Optimization Tools (e.g., BluePyOpt) | Tools that use evolutionary algorithms or other methods to fit model parameters to experimental data, a key step in creating generalizable models [5]. |
| Behavioral Task Control Software (e.g., BCI2000, PyGame) | Presents stimuli and records behavioral outputs, generating the task-related variables that are correlated with neural manifold dynamics [4] [3]. |

Theoretical Foundations

Dynamical systems theory provides a mathematical framework for describing the evolution of systems over time. In the context of neural population dynamics, these concepts are essential for understanding how neural circuits process information and converge on optimal states for decision-making and computation.

Dynamical Flows represent the trajectory of a system's state through phase space, defined by differential or difference equations that specify how system variables change over time [6]. In neural systems, these flows describe the temporal evolution of neural population activities, guiding the system toward stable states representing perceptual decisions or motor outputs [7].

Fixed Points occur where the dynamical flow reaches equilibrium (dx/dt = 0). A system placed exactly at such a point remains there indefinitely in the absence of perturbation [6]. In neural population models, stable fixed points correspond to attractor states associated with categorical decisions or memory representations [7]. The stability of a fixed point determines whether the system returns to that state after a small perturbation or transitions to an alternative.

Attractors are sets of states toward which a system tends to evolve, representing the long-term behavior of dynamical systems [6]. Attractors can take various forms:

- Point Attractors: Single stable equilibrium points [8]

- Limit Cycles: Periodic, oscillatory states [6]

- Strange Attractors: Complex, fractal-structured sets with chaotic dynamics [6]

In neural systems, attractors enable stable representation of categorical information despite noisy inputs, with neural trajectories flowing toward defined regions in state space [7].
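
A one-dimensional toy system makes these definitions concrete. The flow dx/dt = x - x^3 is an assumption chosen for illustration: it has fixed points at -1, 0, and 1, and the sign of the derivative of the flow at each fixed point determines stability.

```python
def flow(x):
    """Toy one-dimensional flow dx/dt = x - x**3 (chosen for illustration)."""
    return x - x**3

def flow_derivative(x):
    """Derivative of the flow with respect to x."""
    return 1.0 - 3.0 * x**2

def classify_fixed_point(x_star):
    """A fixed point of a 1-D flow is stable when df/dx < 0 there."""
    return "stable" if flow_derivative(x_star) < 0 else "unstable"

def integrate(x0, dt=0.01, steps=2000):
    """Forward-Euler integration of the flow from initial state x0."""
    x = x0
    for _ in range(steps):
        x += dt * flow(x)
    return x

for x_star in (-1.0, 0.0, 1.0):
    print(x_star, flow(x_star), classify_fixed_point(x_star))

# Trajectories starting on either side of the unstable point at 0
# end up in different point attractors.
print(integrate(0.2), integrate(-0.2))
```

The unstable fixed point at 0 acts as the boundary between the two basins of attraction: initial states with x > 0 flow to the attractor at 1, and those with x < 0 flow to the attractor at -1.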

Application in Neural Population Dynamics Optimization

The Neural Population Dynamics Optimization Algorithm (NPDOA) implements these dynamical concepts through three core strategies that balance exploration and exploitation in optimization tasks [9].

Attractor Trending Strategy

This strategy drives neural populations toward optimal decisions by leveraging fixed-point dynamics, ensuring exploitation capability. The neural state evolves toward attractors representing high-quality solutions in the optimization landscape, analogous to how biological neural networks converge to perceptual decisions [9] [7].

Coupling Disturbance Strategy

This mechanism introduces controlled perturbations that deviate neural populations from attractors through coupling with other neural populations, improving exploration ability. This prevents premature convergence to local optima by leveraging repeller dynamics that push the system away from suboptimal fixed points [9].

Information Projection Strategy

This approach controls communication between neural populations, enabling transition from exploration to exploitation by regulating the impact of attractor trending and coupling disturbance on neural states [9]. This mirrors how top-down signals modulate neural dynamics in biological systems to prioritize different information streams [7].

Table 1: Core Strategies in the Neural Population Dynamics Optimization Algorithm

| Strategy | Dynamical Concept | Function in Optimization | Biological Correspondence |
|---|---|---|---|
| Attractor Trending | Fixed Point Dynamics | Exploitation: Drives convergence to optimal solutions | Neural population convergence to categorical representations [7] |
| Coupling Disturbance | Repeller Dynamics | Exploration: Prevents premature convergence to local optima | Neural variability enhancing behavioral exploration |
| Information Projection | Flow Control | Balance Regulation: Controls exploration-exploitation transition | Top-down modulation of neural processing [7] |
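
The interplay of the three strategies can be sketched as a population-based search loop. This is an illustrative sketch only: the update formulas, the linear schedule for the projection weight `w`, the greedy acceptance rule, and the function name `npdoa_sketch` are assumptions made for this example and are not the update equations of the published NPDOA [9].

```python
import random

def sphere(x):
    """Simple test objective: sum of squares, minimized at the origin."""
    return sum(v * v for v in x)

def npdoa_sketch(objective, dim=5, n_pop=20, iters=200, bounds=(-5.0, 5.0), seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    pops = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_pop)]
    best = min(pops, key=objective)[:]
    history = [objective(best)]
    for it in range(iters):
        # Information projection (assumed linear schedule): the weight shifts
        # influence from coupling disturbance to attractor trending over time.
        w = it / max(1, iters - 1)
        for i, state in enumerate(pops):
            partner = pops[rng.randrange(n_pop)]
            candidate = []
            for d in range(dim):
                # Attractor trending: pull toward the best state found so far
                trend = rng.random() * (best[d] - state[d])
                # Coupling disturbance: perturbation driven by another population
                disturb = rng.uniform(-1.0, 1.0) * (partner[d] - state[d])
                value = state[d] + w * trend + (1.0 - w) * disturb
                candidate.append(min(hi, max(lo, value)))
            if objective(candidate) < objective(state):   # greedy acceptance (assumed)
                pops[i] = candidate
        current = min(pops, key=objective)
        if objective(current) < objective(best):
            best = current[:]
        history.append(objective(best))
    return best, history

best, history = npdoa_sketch(sphere)
print(history[0], history[-1])
```

Early iterations are dominated by the disturbance term, which spreads the populations across the search space, while later iterations are dominated by the trending term, which contracts them around the best state.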

Quantitative Framework

The dynamical properties of neural systems can be quantified through several key metrics that inform optimization performance:

Table 2: Quantitative Metrics for Neural Population Dynamics

| Metric | Definition | Measurement Approach | Optimization Significance |
|---|---|---|---|
| Convergence Rate | Speed at which system approaches attractor | Lyapunov exponent analysis [6] | Determines optimization speed and efficiency |
| Basin of Attraction Size | Region in phase space leading to an attractor [8] | Phase space analysis | Defines robustness to initial conditions and noise |
| Mutual Information | Information between stimulus and neural response [10] | Information-theoretic analysis | Quantifies coding efficiency and solution quality |
| Tuning Strength | Modulation depth of neural response | Reverse-correlation analysis [10] | Reflects discrimination capacity between solutions |
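
As an example of the mutual information metric, the sketch below computes a plug-in estimate from paired discrete samples. It assumes stimuli and responses have already been discretized (for example, orientation labels and binned spike counts); the function name is illustrative.

```python
import math
from collections import Counter

def mutual_information(stimuli, responses):
    """Plug-in estimate of I(S;R) in bits from paired discrete samples."""
    n = len(stimuli)
    joint = Counter(zip(stimuli, responses))
    count_s = Counter(stimuli)
    count_r = Counter(responses)
    mi = 0.0
    for (s, r), count in joint.items():
        p_sr = count / n
        p_s = count_s[s] / n
        p_r = count_r[r] / n
        mi += p_sr * math.log2(p_sr / (p_s * p_r))
    return mi

# Response fully determined by a binary stimulus: 1 bit
print(mutual_information([0, 1, 0, 1], [0, 1, 0, 1]))
# Response independent of the stimulus: 0 bits
print(mutual_information([0, 0, 1, 1], [0, 1, 0, 1]))
```

The plug-in estimator is biased upward when the number of samples is small relative to the number of stimulus-response combinations, so a bias correction is usually needed for real spike data.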

Experimental Protocols

Protocol: Attractor Dynamics Mapping in Neural Populations

Purpose: To characterize fixed points and attractor landscapes in neural population activities during decision-making tasks.

Materials:

- Multielectrode array system for population recording

- Visual stimulation setup (for sensory paradigms)

- Computational framework for dynamical systems analysis (e.g., Mozaik) [11]

Procedure:

- Stimulus Presentation: Implement rapid serial visual presentation (RSVP) of oriented gratings with periodic changes in stimulus features (orientation, luminance, contrast) [10]

- Neural Recording: Record extracellular activity from a neural population (e.g., V1) during stimulus presentation with sufficient temporal resolution (≤ 1 ms)

- State Space Reconstruction: Embed neural population activity in lower-dimensional state space using dimensionality reduction techniques (PCA, t-SNE)

- Flow Field Estimation: Calculate instantaneous population activity vectors to map dynamical flows

- Fixed Point Identification: Locate regions where flow magnitude approaches zero using numerical root-finding algorithms

- Stability Analysis: Compute Jacobian matrices at fixed points to determine stability (eigenvalue analysis)

- Basin Boundary Mapping: Identify separatrices between attraction basins through phase space sampling

Analysis:

- Quantify convergence times to different attractors

- Measure attractor strength through local flow field divergence

- Compute mutual information between stimulus features and neural states [10]
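
The fixed point identification and stability analysis steps can be sketched on a known flow field. The two-dimensional system below is a toy assumption; with recorded data, the function `flow` would be replaced by a model fitted to the estimated flow field. Newton's method is used as the root finder and the Jacobian is approximated by central differences.

```python
import numpy as np

def flow(state):
    """Toy 2-D flow field (an assumption): dx/dt = x - x^3, dy/dt = -y."""
    x, y = state
    return np.array([x - x**3, -y])

def numerical_jacobian(f, state, eps=1e-6):
    """Central-difference Jacobian of f at state."""
    n = state.size
    J = np.zeros((n, n))
    for j in range(n):
        step = np.zeros(n)
        step[j] = eps
        J[:, j] = (f(state + step) - f(state - step)) / (2 * eps)
    return J

def find_fixed_point(f, guess, tol=1e-10, max_iter=50):
    """Newton's method for f(state) = 0."""
    state = np.asarray(guess, dtype=float)
    for _ in range(max_iter):
        residual = f(state)
        if np.linalg.norm(residual) < tol:
            break
        state = state - np.linalg.solve(numerical_jacobian(f, state), residual)
    return state

def is_stable(f, fixed_point):
    """Stable if every Jacobian eigenvalue has a negative real part."""
    eigvals = np.linalg.eigvals(numerical_jacobian(f, fixed_point))
    return bool(np.all(eigvals.real < 0))

fp_a = find_fixed_point(flow, [0.8, 0.3])
fp_b = find_fixed_point(flow, [0.1, 0.1])
print(fp_a, is_stable(flow, fp_a))
print(fp_b, is_stable(flow, fp_b))
```

Newton's method converges to whichever root is reachable from the initial guess, so in practice the search is started from many points sampled along observed trajectories.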

Protocol: NPDOA Implementation for Drug Discovery Optimization

Purpose: To apply neural population dynamics optimization to molecular design and virtual screening in pharmaceutical development.

Materials:

- Chemical compound libraries (e.g., ZINC, ChEMBL)

- Molecular descriptor calculation software

- High-performance computing infrastructure

- NPDOA implementation framework [9]

Procedure:

- Problem Formulation:

  - Define optimization objective (e.g., binding affinity, ADMET properties)

  - Encode molecular structures as neural population states (neurons represent molecular features)

  - Set fitness function based on quantitative structure-activity relationships (QSAR)

- Algorithm Configuration:

  - Initialize multiple neural populations with random states

  - Set parameters for attractor trending, coupling disturbance, and information projection [9]

  - Define termination criteria (fitness threshold, maximum iterations)

- Optimization Execution:

  - Iterate through attractor trending phase to exploit promising regions

  - Apply coupling disturbance to escape local optima

  - Regulate balance using information projection based on convergence metrics

  - Record trajectory of best solution across iterations

- Validation:

  - Select top candidates from final population

  - Conduct molecular docking simulations

  - Perform experimental validation through high-throughput screening

Analysis:

- Compare convergence performance against traditional optimization algorithms

- Quantify diversity of solution population throughout optimization

- Analyze exploration-exploitation balance through state space coverage metrics

Visualization Schematics

Figure: Neural Population Dynamics Optimization Workflow

Figure: Dynamical Flows and Fixed Points in State Space

Research Reagent Solutions

Table 3: Essential Research Materials for Neural Dynamics Experiments

| Reagent/Resource | Function/Purpose | Example Applications | Key Specifications |
|---|---|---|---|
| Multielectrode Array Systems | Simultaneous recording from neuronal populations | Mapping population dynamics during decision tasks [10] | High channel count (>64), suitable temporal resolution (<1 ms) |
| Mozaik Workflow Platform | Integrated simulation environment for spiking networks [11] | Testing computational models of attractor dynamics | PyNN compatibility, Neo data structure support |
| BluePyOpt Optimization Toolbox | Parameter optimization for neuronal models [5] | Tuning model parameters to match experimental data | Evolutionary algorithm implementation, feature extraction |
| Neural Simulation Environments (NEURON, NEST) | Large-scale network simulation | Implementing attractor network models [11] | Parallel computing support, multi-compartment neurons |
| Information Theory Toolkits | Quantifying neural coding efficiency [10] | Measuring mutual information in population codes | Spike train analysis, bias correction methods |

The Role of Optimization in Modeling Neural Computations

Optimization algorithms serve as the computational engine for training models that decipher how neural populations perform computations. In the context of neural population dynamics—which describes how the coordinated activity of groups of neurons evolves over time to drive perception, cognition, and action—optimization provides the essential mechanisms for fitting models to high-dimensional neural data and extracting meaningful computational principles [2]. The convergence of sophisticated optimization techniques with large-scale neural recordings has enabled a new generation of models that move beyond describing single neurons to capturing the collective dynamics of entire neural circuits [12] [13]. This application note outlines key optimization algorithms, presents structured experimental protocols, and provides practical tools for researchers investigating how neural populations implement computations through dynamics.

Optimization Algorithms in Neural Computation

Fundamental Optimization Algorithms

Optimization algorithms minimize loss functions by adjusting model parameters (weights and biases) through iterative updates. The choice of optimizer significantly impacts a model's ability to capture the temporal dependencies and low-dimensional manifold structure characteristic of neural population dynamics [14] [15].

Table 1: Comparison of Optimization Algorithms for Neural Dynamics Modeling

| Optimizer | Key Mechanism | Advantages | Disadvantages | Neural Dynamics Applications |
|---|---|---|---|---|
| Stochastic Gradient Descent (SGD) | Updates parameters using gradient from random data subset | Simple, easy to implement, less memory | Slow convergence, requires careful learning rate tuning | Baseline method for recurrent neural network training [14] |
| SGD with Momentum | Accumulates gradient from previous steps to accelerate convergence | Reduces oscillations, faster convergence | Introduces additional hyperparameter (β) | Modeling dynamics with smooth temporal trajectories [14] |
| Adam | Combines momentum with adaptive learning rates for each parameter | Fast convergence, handles noisy gradients | Memory intensive, more hyperparameters | Training complex dynamical systems on large-scale neural recordings [14] [16] |
| RMSProp | Adapts learning rate based on moving average of squared gradients | Prevents rapid decay of learning rates | Sensitive to learning rate and decay-rate settings; extra per-parameter state | Modeling neural dynamics with sparse coding patterns [14] |
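
The update rules summarized in the table can be written out explicitly. The sketch below applies each rule to a small ill-conditioned quadratic loss, an assumption chosen so the behavior is easy to inspect. The gradient is computed exactly here, whereas in stochastic training it would come from a random mini-batch; the hyperparameter values are illustrative defaults, not recommendations from the cited sources.

```python
import numpy as np

CURVATURE = np.array([1.0, 10.0])   # toy loss: 0.5 * (w0^2 + 10 * w1^2)

def loss(w):
    return 0.5 * float(np.sum(CURVATURE * w**2))

def grad(w):
    return CURVATURE * w

def sgd(w, state, lr=0.05):
    return w - lr * grad(w), state

def momentum(w, state, lr=0.05, beta=0.9):
    v = beta * state.get("v", 0.0) + grad(w)        # accumulated gradient
    return w - lr * v, {"v": v}

def rmsprop(w, state, lr=0.01, decay=0.9, eps=1e-8):
    g = grad(w)
    s = decay * state.get("s", 0.0) + (1 - decay) * g**2   # moving average of g^2
    return w - lr * g / (np.sqrt(s) + eps), {"s": s}

def adam(w, state, lr=0.05, b1=0.9, b2=0.999, eps=1e-8):
    g = grad(w)
    t = state.get("t", 0) + 1
    m = b1 * state.get("m", 0.0) + (1 - b1) * g     # first moment (momentum)
    v = b2 * state.get("v", 0.0) + (1 - b2) * g**2  # second moment (scaling)
    m_hat, v_hat = m / (1 - b1**t), v / (1 - b2**t) # bias correction
    return w - lr * m_hat / (np.sqrt(v_hat) + eps), {"t": t, "m": m, "v": v}

def run(step, n_steps=300):
    w, state = np.array([5.0, 5.0]), {}
    for _ in range(n_steps):
        w, state = step(w, state)
    return loss(w)

results = {f.__name__: run(f) for f in (sgd, momentum, rmsprop, adam)}
print(results)
```

The `state` dictionary makes the memory cost of each method visible: plain SGD keeps nothing, momentum and RMSProp keep one array the size of the parameters, and Adam keeps two.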

Advanced Optimization Frameworks for Neural Dynamics

Recent methodological advances have introduced specialized optimization frameworks tailored to the unique challenges of neural population modeling:

MARBLE (MAnifold Representation Basis LEarning): Uses geometric deep learning to decompose neural dynamics into local flow fields on low-dimensional manifolds, employing unsupervised optimization to map these fields into a common latent space [16]. This approach explicitly leverages the manifold hypothesis of neural computation during optimization.

CroP-LDM (Cross-population Prioritized Linear Dynamical Modeling): Implements a prioritized learning objective that specifically optimizes for cross-population dynamics, preventing them from being confounded by within-population dynamics [17]. This is particularly valuable for multi-region neural recordings.

BLEND (Behavior-guided Neural Population Dynamics Modeling): Employs privileged knowledge distillation where a teacher model trained on both neural activity and behavior distills its knowledge to a student model that uses only neural activity [18]. This optimization strategy allows models to benefit from behavioral data even when such data is unavailable at inference time.

Experimental Protocols for Neural Dynamics Optimization

Protocol 1: Fitting Dynamical Systems Models to Neural Population Data

Objective: To fit a dynamical system model \( \frac{dx}{dt} = f(x(t), u(t)) \) that describes how neural population state \( x \) evolves over time under external inputs \( u(t) \) [2].

Materials and Reagents:

- Multi-electrode arrays (e.g., Neuropixels probes) for large-scale neural recordings [12]

- Computational framework for dynamical systems modeling (e.g., Python with PyTorch/TensorFlow)

- Dimensionality reduction tools (PCA, FA) for initial processing of high-dimensional neural data [19]

Procedure:

- Neural Data Preprocessing:

  - Bin spike counts into 10-50 ms time windows to construct population activity vectors [2]

  - Apply smoothing filters to reduce noise while preserving temporal structure

  - Z-score each neuron's firing rate across time bins and trials

- Model Architecture Selection:

  - Choose Recurrent Neural Networks (RNNs) as flexible parameterizations of the unknown function \( f \) [2]

  - Initialize network weights using schemes appropriate for RNNs (e.g., orthogonal initialization)

  - Determine appropriate state dimensionality based on neural population size and task complexity

- Optimization Configuration:

  - Select Adam optimizer with initial learning rate of 0.001-0.01 for balanced convergence speed and stability [14]

  - Define loss function as mean squared error between predicted and actual neural activity

  - Implement gradient clipping (max norm: 1.0) to prevent exploding gradients in RNNs

- Training and Validation:

  - Split data into training (70%), validation (15%), and test (15%) sets preserving trial structure

  - Implement early stopping based on validation loss with patience of 20-50 epochs

  - Monitor both reconstruction accuracy and dynamical systems properties (e.g., fixed points, stability)

Troubleshooting:

- If model fails to capture temporal dependencies, increase hidden state dimensionality or incorporate explicit delays

- For unstable training, reduce the learning rate or lower the gradient clipping threshold so that gradients are clipped more aggressively

- If model overfits, introduce dropout or add an L2 regularization term to the loss function
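
The preprocessing and data-splitting steps of this protocol can be sketched in NumPy. The sketch assumes spike times are given per neuron in seconds; the function names, the Gaussian kernel width, and the synthetic spike trains are illustrative assumptions. Model fitting itself (RNN, Adam, gradient clipping) is not shown.

```python
import numpy as np

def bin_spikes(spike_times, n_neurons, duration, bin_size=0.02):
    """Count spikes per neuron in fixed bins; spike_times is a list of arrays (s)."""
    n_bins = int(round(duration / bin_size))
    edges = np.linspace(0.0, duration, n_bins + 1)
    counts = np.zeros((n_bins, n_neurons))
    for i, times in enumerate(spike_times):
        counts[:, i], _ = np.histogram(times, bins=edges)
    return counts

def smooth(counts, sigma_bins=2.0):
    """Gaussian smoothing along time, applied to each neuron separately."""
    half = int(3 * sigma_bins)
    kernel = np.exp(-0.5 * (np.arange(-half, half + 1) / sigma_bins) ** 2)
    kernel /= kernel.sum()
    return np.apply_along_axis(lambda x: np.convolve(x, kernel, mode="same"), 0, counts)

def zscore(rates, eps=1e-8):
    """Z-score each neuron (column) across time bins."""
    return (rates - rates.mean(axis=0)) / (rates.std(axis=0) + eps)

def split_trials(n_trials, fractions=(0.7, 0.15, 0.15), seed=0):
    """Shuffle trial indices and split them so that whole trials stay together."""
    order = np.random.default_rng(seed).permutation(n_trials)
    n_train = int(round(fractions[0] * n_trials))
    n_val = int(round(fractions[1] * n_trials))
    return order[:n_train], order[n_train : n_train + n_val], order[n_train + n_val :]

# Synthetic spike trains for 8 neurons over 1 s (NOT real data)
rng = np.random.default_rng(0)
spike_times = [np.sort(rng.uniform(0.0, 1.0, size=int(rng.integers(5, 30)))) for _ in range(8)]

counts = bin_spikes(spike_times, n_neurons=8, duration=1.0, bin_size=0.02)
rates = zscore(smooth(counts))
train_idx, val_idx, test_idx = split_trials(100)
print(counts.shape, rates.shape, len(train_idx), len(val_idx), len(test_idx))
```

Splitting by trial index rather than by time bin keeps each trial's temporal structure intact and avoids leaking neighbouring time bins from the same trial into the validation or test sets.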

Protocol 2: Identifying Latent Dynamics Using Regression Subspace Optimization

Objective: To extract temporal structures of neural modulations by task parameters in a regression subspace, linking rate-coding and dynamical systems perspectives [19].

Materials and Reagents:

- Task design with controlled parameters (continuous or categorical)

- Single-unit recording equipment with precise temporal alignment to task events

- Statistical computing environment (MATLAB, R, or Python with statsmodels)

Procedure:

- Neural Response Characterization:

  - Align neural activity to relevant task events (e.g., stimulus onset, movement initiation)

  - Compute trial-averaged firing rates for each task condition

  - For each neuron and time point, fit regression model: \( \text{rate} = \beta_0 + \beta_1 \cdot \text{param}_1 + \dots + \beta_k \cdot \text{param}_k \)

- Regression Subspace Construction:

  - Construct regression matrix \( B(t) \) containing all regression coefficients across time and neurons [19]

  - Apply Principal Component Analysis (PCA) to \( B(t) \) to identify dominant patterns of task-related modulation

  - Project neural activity into the regression subspace to visualize modulation dynamics

- Dynamics Analysis:

  - Plot trajectories of neural population activity in the regression subspace

  - Identify fixed points, limit cycles, or other dynamical features

  - Relate trajectory geometry to behavioral outcomes or task parameters

- Validation:

  - Compare extracted dynamics across different neural populations

  - Test generalization to held-out task conditions

  - Verify that dynamics reflect known neurobiological constraints

Troubleshooting:

- If regression models show poor fit, check for non-linear relationships between task parameters and neural activity

- If subspace dimensionality remains high, consider demixed PCA or targeted dimensionality reduction

- For noisy trajectories, increase trial counts or apply smoothing before regression
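
The regression and subspace construction steps can be sketched as follows. The sketch assumes trial-averaged rates arranged as a (conditions x time x neurons) array and task parameters as a (conditions x k) array; the function name, the array layout, and the way coefficients are stacked before PCA are illustrative assumptions rather than the exact procedure of the cited work [19].

```python
import numpy as np

def regression_subspace(rates, params, n_components=2):
    """
    rates:  (conditions, time, neurons) trial-averaged firing rates
    params: (conditions, k) task parameters per condition
    Returns B with shape (time, neurons, k + 1) and PCA axes (components, neurons).
    """
    n_cond, n_time, n_neurons = rates.shape
    design = np.column_stack([np.ones(n_cond), params])     # intercept + parameters
    # Solve every (time, neuron) regression at once by least squares
    Y = rates.reshape(n_cond, n_time * n_neurons)
    coefs, *_ = np.linalg.lstsq(design, Y, rcond=None)      # (k + 1, time * neurons)
    B = coefs.T.reshape(n_time, n_neurons, -1)
    # PCA in neuron space on the task coefficients (intercept excluded),
    # stacked across time points and parameters
    task_coefs = B[:, :, 1:].transpose(0, 2, 1).reshape(-1, n_neurons)
    centered = task_coefs - task_coefs.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return B, vt[:n_components]

# Noiseless synthetic data built from known coefficients (an assumption)
rng = np.random.default_rng(0)
params = rng.normal(size=(6, 2))
B_true = rng.normal(size=(20, 15, 3))
design = np.column_stack([np.ones(6), params])
rates = np.einsum("cj,tnj->ctn", design, B_true)

B, axes = regression_subspace(rates, params)
projected = rates @ axes.T          # (conditions, time, components)
print(B.shape, axes.shape, projected.shape)
```

Plotting `projected` for each condition over time gives the trajectories in the regression subspace that the dynamics analysis step refers to.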

Table 2: Research Reagent Solutions for Neural Dynamics Optimization

| Reagent/Tool | Function | Example Applications | Implementation Considerations |
|---|---|---|---|
| Linear Dynamical Systems | Models neural dynamics as linear state transitions | Cross-population dynamics (CroP-LDM), initial data exploration [17] | Limited capacity for nonlinear dynamics; mathematically tractable |
| Recurrent Neural Networks | Flexible parameterization of nonlinear neural dynamics | MARBLE, LFADS, full neural population modeling [2] [16] | Requires careful regularization; optimization challenges |
| Factor Analysis | Identifies latent factors underlying correlated neural variability | Dimensionality reduction before dynamical modeling [12] | Determines intrinsic dimensionality of neural recordings |
| Geometric Deep Learning | Leverages manifold structure in optimization | MARBLE's local flow field analysis [16] | Computationally intensive; requires specialized architectures |
| Privileged Knowledge Distillation | Transfers knowledge from privileged (behavior) to regular (neural) features | BLEND framework for behavior-guided optimization [18] | Enables use of behavioral data without requiring it at inference |

Workflow Visualization

Neural Dynamics Optimization Workflow: This diagram illustrates the comprehensive pipeline from neural data acquisition to computational interpretation, highlighting the role of optimization algorithms at each stage.

Computational Framework of Neural Dynamics: This diagram illustrates the relationship between neural population states, their underlying dynamics, and the optimization processes used to model them, highlighting the low-dimensional latent structure.

Application Notes

Optimizing for Behavioral State-Dependent Dynamics

Behavioral states such as locomotion significantly alter neural population dynamics. During locomotion, mouse visual cortex exhibits shifts from transient to sustained response modes, facilitating rapid emergence of stimulus tuning [12]. When optimizing models of these state-dependent dynamics:

- Incorporate behavioral state as an explicit input to dynamical systems models

- Use separate initialization schemes or learning rates for state-dependent parameters

- Employ behavior-guided distillation frameworks like BLEND when behavioral data is partially available [18]

- Validate that optimized models capture both the temporal dynamics and correlation structure changes observed across behavioral states

Cross-Population Dynamics Optimization

When modeling interactions between multiple neural populations (e.g., different brain regions), specialized optimization approaches are required:

- Implement CroP-LDM's prioritized learning objective to specifically optimize cross-population predictive accuracy [17]

- Use separate loss terms for within-population and cross-population dynamics

- Employ causal filtering during optimization to ensure temporal interpretability of cross-region influences

- Regularize models to prevent overfitting to dominant within-population dynamics that may mask cross-population interactions

Interpretable Dynamics Through Regularized Optimization

To extract scientifically meaningful dynamics from neural population models:

- Incorporate manifold consistency constraints into the loss function to ensure discovered dynamics respect low-dimensional structure [16]

- Use targeted regularization to encourage dynamical features (fixed points, limit cycles) that have clear computational interpretations

- Optimize for both reconstruction accuracy and dynamical interpretability metrics

- Validate that optimized models generate trajectories consistent with trial-to-trial variability observed in experimental data

Optimization algorithms provide the fundamental machinery for building computational bridges between neural activity measurements and theoretical principles of neural computation. The specialized frameworks and protocols outlined here enable researchers to move beyond descriptive accounts of neural activity to mechanistic models of how neural populations implement computations through dynamics. As neural recording technologies continue to scale, the development of increasingly sophisticated optimization approaches will be essential for uncovering the universal computational principles governing neural population dynamics across brain regions, behavioral states, and species.

Linking Population Dynamics to Cognitive Functions and Behavior

A fundamental shift is occurring in neuroscience: the population doctrine is drawing level with the single-neuron doctrine that has long dominated the field [20]. This doctrine posits that the fundamental computational unit of the brain is the population of neurons, not the individual neuron [21]. Representations in the brain are encoded as patterns of activity across large populations of highly interconnected neurons, a view formalized in parallel distributed processing (PDP) [22]. PDP models achieve neurological verisimilitude and have successfully accounted for a wide range of cognitive phenomena in healthy individuals as well as impairments resulting from neurological conditions [22]. Understanding the dynamics of these neural populations is crucial for linking brain activity to cognitive functions and behavior, and provides a framework for developing optimization algorithms in computational neuroscience.

Core Theoretical Concepts

The population-level approach to neurophysiology is built upon several foundational concepts that provide a spatial and dynamic perspective on neural computation.

Foundational Principles

- State Spaces: The canonical analysis for population neurophysiology is the neural state space [20]. Instead of plotting the firing rate of a single neuron over time, the state space is a coordinate system where each axis represents the activity of one neuron. At any moment, the population's activity is a single point (a vector) in this high-dimensional space, and over time, it forms a trajectory [20].

- Manifolds: The full set of possible neural states occupied during a behavior often forms a low-dimensional, non-linear surface embedded within the high-dimensional state space. This structure is known as a manifold [20] [21]. The geometry of this manifold constrains the neural dynamics and is thought to reflect the underlying computational structure of the task.

- Dynamics: Neural dynamics refer to how the population's state evolves over time, forming trajectories through the state space [20] [21]. These trajectories are not random; they are governed by rules that can be modeled mathematically. The speed, direction, and stability of these trajectories are thought to correspond to cognitive processes like evidence accumulation, decision-making, and motor planning.

Implications for Cognitive Function and Dysfunction

The properties of population-encoding networks provide an orderly explanation for numerous brain functions and dysfunctions [22]. Knowledge is represented in the strength of the connections between neurons, and learning consists of alterations of these connection strengths. In a semantic network, for example, knowledge is organized in an energy landscape with attractor basins [22]. A central "centroid" might represent the most typical mammal, with sub-basins for specific animals like dogs or cats. The depth of these basins is determined by factors like the frequency of experience and the age of acquisition. With network damage, as in semantic dementia, these basins become shallower, leading to errors where atypical exemplars are lost and responses settle into more typical or superordinate categories, a phenomenon accurately simulated by PDP models [22].

Table 1: Core Concepts of Neural Population Doctrine

| Concept | Description | Functional Significance |

|---|---|---|

| State Space | A coordinate system where each axis represents a neuron's activity. The population's activity is a point in this space [20]. | Provides a spatial view of population activity, enabling analysis of patterns and distances between states. |

| Manifold | A low-dimensional surface within the state space that contains the neural trajectories for a specific behavior or computation [20] [21]. | Reflects the underlying computational structure and constraints of a task. |

| Neural Dynamics | The time-evolution of the population state, forming trajectories through the state space or manifold [20] [21]. | Correlates with cognitive processes like decision-making and motor planning. |

| Attractor Dynamics | The tendency of a network to settle into stable, preferred states (attractors) from a range of similar input patterns [22]. | Supports content-addressable memory, pattern completion, and stable categorical perception. |

Experimental Protocols & Methodologies

To translate population-level theory into empirical findings, specific experimental and analytical methodologies are required.

Protocol 1: Investigating Cognitive Representations using Population State Analysis

This protocol outlines the steps for analyzing how a cognitive variable (e.g., a memorized stimulus) is represented in a neural population.

- Neural Data Acquisition: Simultaneously record the activity of N neurons (e.g., via Neuropixels probes, tetrodes, or calcium imaging) from a relevant brain region (e.g., prefrontal cortex) while an animal performs a cognitive task (e.g., a delayed match-to-sample task).

- Data Preprocessing: Bin the spike counts of all N neurons into successive time bins (e.g., 50-200 ms) to create a series of population activity vectors: A(t) = [a1(t), a2(t), ..., aN(t)].

- Dimensionality Reduction: Apply a dimensionality reduction technique (e.g., Principal Component Analysis, PCA) to the collection of population vectors. This projects the high-dimensional data into a lower-dimensional (e.g., 2D or 3D) state space defined by the main axes of variance (the principal components) for visualization and analysis.

- State Space Visualization: Plot the neural trajectories in the low-dimensional state space. Color-code the trajectories or points based on the cognitive condition (e.g., the identity of the memorized stimulus).

- Distance Analysis: Calculate the distance (e.g., Euclidean or Mahalanobis distance) between neural states corresponding to different conditions. For instance, measure the average distance between population vectors for different remembered stimuli during the delay period [20].

- Relate Distance to Behavior: Correlate the neural distance measures with behavioral performance (e.g., accuracy or reaction time) to establish a functional link between population representation and cognition.
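The preprocessing, dimensionality reduction, and distance steps of this protocol can be sketched in a few lines of NumPy. This is a minimal illustration on synthetic Poisson spike counts, not an analysis pipeline from [20]; the array shapes, the two-condition design, and all variable names are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for recorded data (assumption): spike counts with shape
# (trials, time_bins, neurons) and one condition label per trial.
n_trials, n_bins, n_neurons = 40, 20, 30
labels = np.repeat([0, 1], n_trials // 2)
tuning = rng.normal(size=(2, n_neurons))               # condition-specific drive
rates = np.exp(0.5 * tuning[labels])[:, None, :]       # (trials, 1, neurons)
counts = rng.poisson(np.broadcast_to(rates, (n_trials, n_bins, n_neurons)) * 2.0)

# Population vectors A(t): one row per (trial, time bin), one column per neuron.
A = counts.reshape(-1, n_neurons).astype(float)

# PCA via SVD of the mean-centered data matrix.
A_centered = A - A.mean(axis=0)
_, s, Vt = np.linalg.svd(A_centered, full_matrices=False)
n_components = 3
Z = A_centered @ Vt[:n_components].T                   # low-dimensional states
explained = (s[:n_components] ** 2).sum() / (s ** 2).sum()

# Distance analysis: Euclidean distance between the condition-mean states.
Z_trials = Z.reshape(n_trials, n_bins, n_components)
mean_0 = Z_trials[labels == 0].mean(axis=(0, 1))
mean_1 = Z_trials[labels == 1].mean(axis=(0, 1))
distance = np.linalg.norm(mean_0 - mean_1)

print(f"variance explained by {n_components} PCs: {explained:.2f}")
print(f"distance between condition means: {distance:.2f}")
```

With real recordings, the per-trial or per-session distances computed this way would then be correlated with accuracy or reaction time, as in the final step of the protocol.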

Protocol 2: A PINN Framework for Modeling Neural and Behavioral Dynamics

Physics-Informed Neural Networks (PINNs) offer a powerful tool for modeling the dynamics of neural populations or the behaviors they drive, blending data-driven learning with physical (or biological) constraints [23]. This protocol is adapted from recent work on applying PINNs to ordinary differential equation (ODE) systems.

Problem Formulation:

- Forward Problem: Given a system of ODEs representing neural or behavioral dynamics (e.g., a Wilson-Cowan model for neural populations or a mosquito population model for disease vector behavior), and initial/boundary conditions, solve for the system states.

- Inverse Problem: Given sparse observational data of the system states, infer the unknown parameters of the ODEs.

Network Architecture and Training:

- Inputs: The independent variable(s), typically time t.

- Outputs: The system state variables U(t) (e.g., firing rates of different neural populations or counts of different organism life stages).

- Loss Function Construction:

  - Data Loss (L_data): Mean squared error (MSE) between network predictions and observed data.

  - Physics Loss (L_physics): MSE of the ODE residuals, calculated by substituting the network's predictions into the governing ODEs.

  - Total Loss: L_total = ω_data * L_data + ω_physics * L_physics.

- Customized Training Techniques:

  - Normalization: Apply Min-Max scaling to all inputs, outputs, and the ODEs themselves to address multi-scale issues [23].

  - Loss Re-weighting: Implement an adaptive algorithm to balance the weights (ω_data, ω_physics) during training to prevent one loss term from dominating [23].

  - Causal Training: Train the network sequentially on expanding time domains to respect temporal causality and improve convergence [23].
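For reference, the loss terms can be written out for a generic ODE system dU/dt = f(U, t; θ). The symbols Û (network output), N_d (number of observations), N_c (number of collocation points), and θ (ODE parameters) are notation assumed here for illustration and may differ from the notation in [23].

```latex
\begin{aligned}
\mathcal{L}_{\text{data}} &= \frac{1}{N_d}\sum_{i=1}^{N_d}
  \left\lVert \hat{U}(t_i) - U_i^{\text{obs}} \right\rVert^2, \\
\mathcal{L}_{\text{physics}} &= \frac{1}{N_c}\sum_{j=1}^{N_c}
  \left\lVert \frac{d\hat{U}}{dt}(t_j) - f\big(\hat{U}(t_j),\, t_j;\, \theta\big) \right\rVert^2, \\
\mathcal{L}_{\text{total}} &= \omega_{\text{data}}\,\mathcal{L}_{\text{data}}
  + \omega_{\text{physics}}\,\mathcal{L}_{\text{physics}}.
\end{aligned}
```

In the forward problem θ is fixed and only the network weights are trained; in the inverse problem θ is treated as an additional trainable quantity and optimized jointly with the weights.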

Visualization of Workflows and Signaling Pathways

The following diagrams, generated with Graphviz, illustrate the core logical relationships and experimental workflows described in these protocols.

Neural Population Analysis Workflow

PINN Architecture for Dynamical Systems

The Scientist's Toolkit: Research Reagent Solutions

This section details key computational tools and conceptual frameworks essential for research in neural population dynamics.

Table 2: Essential Research Tools for Neural Population Dynamics

| Research Tool | Category | Function & Application |

|---|---|---|

| Dimensionality Reduction (PCA, t-SNE, UMAP) | Analytical Software | Projects high-dimensional neural data into a low-dimensional state space for visualizing manifolds and neural trajectories [20]. |

| Physics-Informed Neural Networks (PINNs) | Computational Model | A multi-task learning framework that integrates observational data with the constraints of governing differential equations to solve forward and inverse problems in dynamics [23]. |

| High-Density Neural Probes (e.g., Neuropixels) | Hardware | Enables simultaneous recording of hundreds to thousands of neurons, providing the necessary data density for population-level analysis [20]. |

| State Space Vector | Conceptual Framework | The mathematical representation of population activity at a single time point; its direction and magnitude can predict stimulus identity and behavioral outcomes, respectively [20]. |

| FAIR Data Management Plan | Data Protocol | A set of principles (Findable, Accessible, Interoperable, Reusable) that guides research data management, ensuring data can be effectively shared and reused by the community [24]. |

Data Presentation and Analysis

Quantitative analysis is central to the population doctrine. The following table summarizes key metrics and their cognitive correlates.

Table 3: Quantitative Metrics in Population Analysis and Their Cognitive Correlates

| Metric | Definition | Cognitive/Behavioral Correlation |

|---|---|---|

| State Vector Magnitude | The norm (e.g., L2-norm) of the population activity vector. Essentially the total activity across the population [20]. | Predicts how well a stimulus will be remembered; may reflect attentional engagement or cognitive effort [20]. |

| Inter-state Distance | The Euclidean or Mahalanobis distance between two population states in the high- or low-dimensional space [20]. | Quantifies the dissimilarity of neural representations (e.g., of two different concepts or decisions). Larger distances may correlate with easier discrimination. |

| Trajectory Speed | The rate of change of the population state over time (derivative of the state vector). | May correspond to the speed of cognitive processing, such as the rate of evidence accumulation in a decision-making task. |

| Choice Probability | The ability to decode an animal's upcoming choice from the population activity prior to the behavior. | A direct link between population dynamics and behavioral output, crucial for validating computational models. |
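The first three metrics in the table have direct numerical definitions. The sketch below shows one way to compute them for a trajectory stored as a (time, neurons) array; the function names and the toy trajectory are illustrative assumptions, not code from [20].

```python
import numpy as np

def state_magnitude(X):
    """L2 norm of each population vector; X has shape (time, neurons)."""
    return np.linalg.norm(X, axis=1)

def trajectory_speed(X, dt):
    """Finite-difference speed ||x(t + dt) - x(t)|| / dt along a trajectory."""
    return np.linalg.norm(np.diff(X, axis=0), axis=1) / dt

def mahalanobis_distance(x, y, cov):
    """Mahalanobis distance between two states under a shared covariance."""
    diff = x - y
    return float(np.sqrt(diff @ np.linalg.solve(cov, diff)))

# Toy trajectory: three "neurons" moving along the direction (1, 2, 2),
# so the state changes by 3 units of activity per unit time.
dt = 0.1
t = np.arange(0.0, 1.0, dt)
X = np.stack([t, 2 * t, 2 * t], axis=1)

speed = trajectory_speed(X, dt)
magnitude = state_magnitude(X)
# With an identity covariance the Mahalanobis distance equals the Euclidean one.
d_first_last = mahalanobis_distance(X[0], X[-1], np.eye(3))
```

In practice the covariance passed to `mahalanobis_distance` would be estimated from trial-to-trial variability, which makes the distance sensitive to how reliably two states can be told apart rather than only how far apart their means are.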

Neural population dynamics describe the time evolution of patterned activity across groups of neurons, which is fundamental to brain functions like motor control, decision-making, and working memory [25]. The core concept is that neural computations emerge from these collective dynamics, shaped by underlying network connectivity [25]. Research in this field seeks to identify the principles governing these dynamics and leverage them for algorithmic optimization, such as in the novel Neural Population Dynamics Optimization Algorithm (NPDOA), a brain-inspired meta-heuristic that simulates interconnected neural populations during cognition and decision-making [9].

A significant challenge in the field is defining what constitutes a neural population. Definitions often rely on arbitrary boundaries of measurement technology or physical cartography, rather than dynamical boundaries based on functional independence or computational unity [26]. This review synthesizes the current research landscape, highlighting key computational frameworks, empirical findings, and methodological challenges, with a focus on implications for optimization algorithm development.

Current Research Landscape

Brain-Inspired Optimization Algorithms

The NPDOA represents a direct translation of neural dynamic principles into a meta-heuristic optimization framework. It incorporates three novel strategies inspired by brain neuroscience:

- Attractor Trending Strategy: Drives neural populations towards optimal decisions, ensuring exploitation capability.

- Coupling Disturbance Strategy: Deviates neural populations from attractors via coupling, improving exploration ability.

- Information Projection Strategy: Controls communication between neural populations, enabling a transition from exploration to exploitation [9].

Systematic experiments on benchmark and practical problems have verified NPDOA's effectiveness, demonstrating distinct benefits for addressing single-objective optimization problems [9].
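To make the interplay of the three strategies concrete, the following is a deliberately simplified toy optimizer. It is not the published NPDOA update rule from [9]: the specific formulas for trending, disturbance, and the linear exploration-to-exploitation schedule are assumptions chosen only to illustrate the roles the strategies play.

```python
import numpy as np

def toy_npdoa(objective, dim, n_pop=20, n_iter=200, bounds=(-5.0, 5.0), seed=0):
    """Toy population optimizer loosely modeled on the three NPDOA strategies."""
    rng = np.random.default_rng(seed)
    lo, hi = bounds
    pop = rng.uniform(lo, hi, size=(n_pop, dim))        # one row per "neural population"
    fitness = np.apply_along_axis(objective, 1, pop)
    history = []
    for it in range(n_iter):
        best = pop[np.argmin(fitness)].copy()
        # Information projection (assumed linear schedule): 0 = explore, 1 = exploit.
        w = it / max(n_iter - 1, 1)
        # Attractor trending: pull each population toward the current best state.
        trend = rng.random((n_pop, 1)) * (best - pop)
        # Coupling disturbance: perturb each population using a random partner.
        partners = pop[rng.permutation(n_pop)]
        disturb = rng.normal(size=(n_pop, dim)) * np.abs(partners - pop)
        cand = np.clip(pop + w * trend + (1.0 - w) * disturb, lo, hi)
        cand_fit = np.apply_along_axis(objective, 1, cand)
        improved = cand_fit < fitness                   # greedy acceptance
        pop[improved] = cand[improved]
        fitness[improved] = cand_fit[improved]
        history.append(float(fitness.min()))
    i = int(np.argmin(fitness))
    return pop[i], float(fitness[i]), history

def sphere(x):
    """Standard single-objective test function with minimum 0 at the origin."""
    return float(np.sum(x ** 2))

best_x, best_f, history = toy_npdoa(sphere, dim=5)
print(f"best objective value after {len(history)} iterations: {best_f:.4g}")
```

Because candidates are only accepted when they improve, the best objective value never increases across iterations; the schedule `w` simply shifts the step from partner-driven perturbation early on to attractor-driven refinement later.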

Advanced Analysis and Modeling Techniques

Recent methodological advances have significantly improved the ability to infer and model latent neural dynamics.

- MARBLE (MAnifold Representation Basis LEarning): This geometric deep learning method decomposes neural dynamics into local flow fields over neural manifolds and maps them into a common latent space. It provides an unsupervised, interpretable representation that can discover consistent dynamics across different networks and animals without requiring behavioral supervision [16].

- Low-Rank Linear Dynamical Models: These models effectively capture the low-dimensional structure prevalent in neural population activity. Their low-rank parameterization (e.g., A_s = D_{A_s} + U_{A_s} V_{A_s}^⊤) separates diagonal components (accounting for neuron-specific properties) from low-rank components (capturing population-wide interactions), enabling efficient estimation of causal interactions from photostimulation data [27].

- Active Learning for System Identification: Novel active learning procedures have been developed to design optimal photostimulation patterns for efficiently identifying neural population dynamics. This approach can yield up to a two-fold reduction in the data required to achieve a given predictive power by targeting the low-dimensional structure of the dynamics [27].

- Cross-Population Prioritized Linear Dynamical Modeling (CroP-LDM): This method prioritizes learning dynamics shared across different neural populations (e.g., from different brain regions) over within-population dynamics. This ensures that the extracted cross-population dynamics are not confounded by local dynamics and provides a more interpretable model of interactions [17].

- Behavior-Guided Modeling (BLEND): This framework uses privileged knowledge distillation to train a model on both neural activity and behavior during training. The distilled student model can then infer behavior using only neural activity as input, enhancing neural dynamics modeling without requiring specialized architectures [28].

- Task-Driven Neural Network Models: These models use performance on a computational task (e.g., predicting limb position) as an optimization objective to generate neural representations. The resulting task-optimized internal representations have been shown to successfully predict neural dynamics in proprioceptive areas, generalizing from synthetic data to real neural activity [29].

- Scalable Computational Tools: Frameworks like AutoLFADS (using Population Based Training for hyperparameter optimization) and its subsequent implementations via KubeFlow and Ray provide scalable solutions for the computationally intensive process of extracting latent dynamics from large-scale neural recordings [30].
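The diagonal-plus-low-rank parameterization mentioned above is easy to illustrate numerically. The sketch below, with sizes chosen arbitrarily for the example, shows that the matrix never needs to be formed explicitly and how many fewer parameters it requires than a dense matrix.

```python
import numpy as np

rng = np.random.default_rng(1)
n, r = 500, 5                        # neurons and rank (illustrative values)

d = rng.normal(size=n)               # diagonal part D: neuron-specific terms
U = rng.normal(size=(n, r))          # low-rank factors: population-wide interactions
V = rng.normal(size=(n, r))

def lowrank_matvec(d, U, V, x):
    """Compute (diag(d) + U V^T) x in O(n r) without forming the n x n matrix."""
    return d * x + U @ (V.T @ x)

x = rng.normal(size=n)
A_dense = np.diag(d) + U @ V.T       # built here only to check the result

n_params_lowrank = n + 2 * n * r     # diagonal plus two n x r factors
n_params_dense = n * n

print(n_params_lowrank, n_params_dense)
```

For 500 neurons and rank 5 this is 5,500 parameters instead of 250,000, which is the reason such models can be estimated from a limited number of photostimulation trials.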

Table 1: Key Computational Frameworks in Neural Population Dynamics

| Framework Name | Core Methodology | Primary Application | Key Advantage |

|---|---|---|---|

| NPDOA [9] | Brain-inspired meta-heuristic with attractor, coupling, and projection strategies. | Solving complex single-objective optimization problems. | Balances exploration and exploitation using neural principles. |

| MARBLE [16] | Geometric deep learning on neural manifolds. | Unsupervised, interpretable representation of dynamics across systems. | Discovers consistent latent representations without behavioral labels. |

| CroP-LDM [17] | Prioritized linear dynamical systems. | Modeling cross-regional neural interactions. | Isolates shared cross-population dynamics from within-population dynamics. |

| BLEND [28] | Privileged knowledge distillation from behavior. | Behavior-guided neural dynamics modeling. | Improves behavioral decoding and identity prediction without paired data at inference. |

| Active LDS [27] | Active learning for low-rank regression. | Efficient system identification via optimal photostimulation. | Reduces amount of experimental data required for model fitting. |

| AutoLFADS [30] | Deep learning (LFADS) with hyperparameter tuning. | Extracting latent dynamics from neural population data. | Scalable, automated hyperparameter optimization for diverse datasets. |

Empirical Findings on Dynamical Constraints and Representations

Empirical studies have provided critical insights into the nature and constraints of neural dynamics, which are highly relevant for developing robust algorithms.

- Dynamical Constraints are Robust: A seminal study using a brain-computer interface (BCI) challenged monkeys to volitionally alter or time-reverse the natural time courses of neural population activity in motor cortex. Animals were unable to violate these natural neural trajectories, suggesting that the underlying network connectivity imposes strong constraints on the possible paths of neural activity [25].

- Working Memory Involves Reformatted Representations: Research on human working memory using fMRI has revealed that neural representations are both stable and dynamic. Surprisingly, dynamics were stronger in early visual cortex than in higher-level frontoparietal areas. The neural code for a memory target in V1 transformed over the delay period, spreading from the target location towards the fovea, effectively reformatting the memory into a representation more proximal to the forthcoming guided behavior [31].

- Low-Dimensionality of Dynamics: Neural population activity consistently resides in a low-dimensional subspace, a finding that is leveraged by most modern modeling approaches to improve estimation and prediction [27].

Table 2: Key Empirical Findings on Neural Population Dynamics

| Neural System | Key Finding | Implication for Algorithms |

|---|---|---|

| Motor Cortex (Brain-Computer Interface) [25] | Neural trajectories are difficult to violate or time-reverse volitionally. | Optimization landscapes may have inherent, constrained pathways; exploration must work within these dynamics. |

| Working Memory (Human fMRI) [31] | Coexisting stable and dynamic codes; dynamics reformat information for behavior. | Algorithms may need parallel processes for stable memory maintenance and dynamic transformation of solutions. |

| Premotor/Motor Cortex (Cross-regional) [17] | Interactions from premotor to motor cortex are dominant, quantifiable via prioritized dynamics. | Modeling hierarchical interactions in multi-population systems requires methods that prioritize directional influence. |

| Proprioception (CN & S1) [29] | Task-driven models predicting limb state best predict neural activity. | Defining a relevant computational objective (task) is crucial for generating accurate models of neural coding. |

Key Challenges and Future Directions

Despite significant progress, the field of neural population dynamics faces several interconnected challenges that represent opportunities for future research, particularly in the context of optimization algorithm development.

- Defining Functional Neural Populations: A fundamental challenge is moving beyond arbitrary or physically defined neural populations towards a definition based on dynamical or functional independence. A population should be defined as a group of neurons whose dynamics are strongly constrained by internal interactions but only weakly influenced by external connections [26]. Developing and applying methods to identify such dynamical boundaries remains an open problem.

- Balancing Exploration and Exploitation: The NPDOA explicitly addresses this balance through its three strategies [9]. However, empirically demonstrating that neural circuits achieve an optimal balance, and understanding how that balance is modulated by task demands or brain state, is an ongoing area of inquiry with direct implications for adaptive meta-heuristics.

- Integrating Cross-Population Interactions: As evidenced by the development of CroP-LDM, a major challenge is accurately modeling interactions between distinct neural populations without having the shared dynamics confounded by stronger within-population dynamics [17]. This is crucial for understanding brain-wide computation.

- Linking Dynamics to Behavior: While methods like BLEND [28] and task-driven modeling [29] make strides in connecting neural dynamics to behavior, establishing a complete, causal link from specific dynamical features to the generation of specific behaviors is still a central goal in neuroscience.

- Modeling with Limited Data: The high dimensionality of neural data and the complexity of models necessitate large datasets. Active learning approaches [27] and efficient low-rank models are promising solutions, but developing methods that are robust and accurate with limited sampling is a persistent challenge.

Experimental Protocols

Protocol: Validating Neural Dynamics Constraints via BCI

This protocol is based on the experiments described in [25] that tested the robustness of neural trajectories.

Objective: To determine if the natural time courses of neural population activity in motor cortex can be volitionally altered.

Materials and Reagents:

- Non-human primate (e.g., Rhesus monkey) implanted with a multi-electrode array in primary motor cortex.

- Real-time neural signal processing system.

- Brain-Computer Interface (BCI) setup for providing visual feedback of neural activity.

- Gaussian Process Factor Analysis (GPFA) algorithm for dimensionality reduction.

Procedure:

- Neural Recording and Decoding: Record spiking activity from ~90 neural units. Use causal GPFA to project the high-dimensional activity into a 10-dimensional (10D) latent state in real-time.

- Establish Baseline Mapping: Define an initial BCI mapping (MoveInt projection) that translates the 10D latent state to a 2D cursor position. This mapping should be intuitive for the animal to use for cursor control.

- Identify Natural Trajectories: Have the animal perform a two-target center-out task. Observe and record the neural trajectories in the 10D latent space for movements between target pairs.

- Find Structured Projections: Identify a 2D projection of the 10D state (e.g., a SepMax projection) where the neural trajectories for opposing movements (A-to-B vs. B-to-A) are distinct and exhibit directional curvature.

- Alter Visual Feedback: Change the BCI feedback to the animal from the MoveInt projection to the SepMax projection. The animal now sees a curved trajectory for straight-line cursor movements.

- Challenge with Path Control: Directly challenge the animal to follow a prescribed, unnatural path in the SepMax projection (e.g., a time-reversed version of its natural trajectory) to acquire a target.

- Data Analysis: Compare the attempted neural trajectories during the challenge phase to the natural trajectories. Quantify the deviation and the animal's success rate in following the prescribed path.

Interpretation: A failure to substantially alter the neural trajectory from its natural path, despite strong incentive, provides evidence that the neural dynamics are constrained by the underlying network.
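The data-analysis step can be sketched as a comparison of path deviations in the 2D projection. Everything below is synthetic and hypothetical: the trajectories are hand-constructed curves, the projection matrix simply selects two latent dimensions (it is not a fitted SepMax projection), and the attempted trajectory is generated near the natural one purely to show how the comparison is computed.

```python
import numpy as np

def project(latents, P):
    """Map (time, 10) latent states to (time, 2) positions with a 2 x 10 projection."""
    return latents @ P.T

def mean_path_deviation(path_a, path_b):
    """Mean pointwise Euclidean distance between two equally sampled paths."""
    return float(np.mean(np.linalg.norm(path_a - path_b, axis=1)))

rng = np.random.default_rng(2)
T = 50
s = np.linspace(0.0, 1.0, T)

# Hypothetical natural trajectories in a 10D latent space with opposite curvature.
natural_ab = np.zeros((T, 10))
natural_ab[:, 0], natural_ab[:, 1] = s, 0.3 * np.sin(np.pi * s)
natural_ba = np.zeros((T, 10))
natural_ba[:, 0], natural_ba[:, 1] = 1.0 - s, -0.3 * np.sin(np.pi * s)

# Prescribed "unnatural" path from A to B: the natural B-to-A route, time-reversed.
prescribed = natural_ba[::-1]

# Synthetic attempt (assumption): stays near the natural A-to-B trajectory.
attempted = natural_ab + 0.02 * rng.normal(size=natural_ab.shape)

P = np.zeros((2, 10))
P[0, 0] = P[1, 1] = 1.0              # placeholder projection onto two latent axes

dev_natural = mean_path_deviation(project(attempted, P), project(natural_ab, P))
dev_prescribed = mean_path_deviation(project(attempted, P), project(prescribed, P))
print(f"deviation from natural: {dev_natural:.3f}, from prescribed: {dev_prescribed:.3f}")
```

A small deviation from the natural path combined with a large deviation from the prescribed path is the pattern the interpretation above refers to.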

Protocol: Active Learning of Dynamics via Photostimulation

This protocol is based on [27] for efficiently identifying neural population dynamics.

Objective: To actively select informative photostimulation patterns for estimating a low-rank linear dynamical system model of neural population activity.

Materials and Reagents:

- Mouse expressing optogenetic actuators (e.g., Channelrhodopsin-2) in excitatory neurons in motor cortex.

- Two-photon microscope for simultaneous calcium imaging of a neural population (500-700 neurons).

- Two-photon holographic photostimulation system for precise targeting of groups of 10-20 neurons.

- Computational pipeline for fitting low-rank autoregressive models.

Procedure:

- Initial Data Collection: Perform an initial set of photostimulation trials targeting random groups of neurons. Record the calcium responses of the entire imaged population.

- Model Initialization: Fit an initial low-rank autoregressive (AR) model to the recorded data. This model will have the form:

x_{t+1} = Σ_{s=0}^{k-1} (A_s x_{t-s} + B_s u_{t-s}) + v

where A_s and B_s are diagonal plus low-rank matrices.

- Active Stimulus Selection: Using the current model estimate, compute which photostimulation pattern (i.e., which group of neurons to stimulate) is expected to maximally reduce the uncertainty in the model parameters (e.g., the pattern that minimizes the predicted error variance).

- Iterative Data Collection and Update: a. Apply the selected photostimulation pattern and record the neural response. b. Update the low-rank AR model with the new data. c. Repeat stimulus selection and steps a-b for a set number of trials or until model performance converges.

- Comparison to Passive Learning: Compare the predictive accuracy of the model trained with active stimulus selection against a model trained using the same number of randomly selected photostimulation patterns.

Interpretation: The active learning approach should achieve a higher model accuracy with fewer trials, demonstrating a more efficient identification of the causal neural dynamics.
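As a rough illustration of the initial data collection and model fitting steps, the sketch below simulates the AR model with a single lag (k = 1) under random stimulation and recovers its parameters. Two simplifications are assumptions of this example and differ from [27]: the fit uses ordinary unconstrained least squares rather than a low-rank estimator, and the active stimulus-selection step is not implemented. The simulation is also noise-free and uses a handful of neurons.

```python
import numpy as np

rng = np.random.default_rng(3)
n, r, T = 6, 1, 300                  # neurons, rank, trials (illustrative values)

def diag_plus_lowrank(scale):
    """Random diagonal-plus-low-rank matrix D + U V^T."""
    D = np.diag(rng.uniform(0.2, 0.5, n))
    return D + scale * rng.normal(size=(n, r)) @ rng.normal(size=(r, n))

A = diag_plus_lowrank(0.05)          # recurrent dynamics
B = diag_plus_lowrank(0.05)          # effect of stimulation
v = rng.normal(scale=0.1, size=n)    # constant offset

# Random ("passive") stimulation: each trial targets 1 to 3 randomly chosen neurons.
U_in = np.zeros((T, n))
for t in range(T):
    group = rng.choice(n, size=rng.integers(1, 4), replace=False)
    U_in[t, group] = 1.0

# Simulate x_{t+1} = A x_t + B u_t + v.
X = np.zeros((T + 1, n))
for t in range(T):
    X[t + 1] = A @ X[t] + B @ U_in[t] + v

# Least-squares fit of [A, B, v] from regressors [x_t, u_t, 1].
Phi = np.hstack([X[:-1], U_in, np.ones((T, 1))])
W, *_ = np.linalg.lstsq(Phi, X[1:], rcond=None)
A_hat, B_hat, v_hat = W[:n].T, W[n:2 * n].T, W[2 * n]

print("max |A_hat - A|:", np.abs(A_hat - A).max())
```

With noisy recordings and hundreds of neurons the unconstrained fit would need far more trials, which is where the low-rank structure and the choice of informative stimulation patterns become important.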

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Neural Population Dynamics Research

| Item | Function/Brief Explanation | Example Use Case |

|---|---|---|

| Two-photon Holographic Optogenetics [27] | Precise simultaneous photostimulation of specified groups of individual neurons while measuring activity. | Causally probing network connectivity and testing dynamical models. |

| Multi-electrode Arrays [25] | Chronic, high-yield recording of spiking activity from dozens to hundreds of neurons. | Tracking neural trajectories with high temporal resolution for BCI studies. |

| Low-Rank Autoregressive Models [27] | A class of models that parsimoniously capture the low-dimensional dynamics of neural populations. | Simulating neural dynamics and predicting responses to perturbation. |

| Latent Factor Analysis via Dynamical Systems (LFADS/AutoLFADS) [30] | A deep learning method for inferring latent dynamics from noisy, high-dimensional neural data. | Denoising neural recordings and extracting underlying dynamical states. |

| Gaussian Process Factor Analysis (GPFA) [25] | A dimensionality reduction technique for extracting smooth, low-dimensional latent trajectories from neural data. | Visualizing neural trajectories in a low-dimensional state space for BCI control. |

| Task-Driven Neural Networks [29] | Models whose internal representations are optimized to perform specific computational tasks. | Generating and testing hypotheses about the computational goals of neural circuits. |

Workflow and Conceptual Diagrams

NPDOA Algorithm Workflow

Diagram Title: NPDOA's Three Core Strategies for Optimization

Dynamical Constraints Experimental Paradigm

Diagram Title: Testing the Robustness of Neural Trajectories with BCI

Core Algorithms and Practical Implementation

MARBLE (MAnifold Representation Basis LEarning) is a representation learning method that leverages geometric deep learning to infer interpretable and consistent latent representations from neural population dynamics. The core premise of MARBLE is that neural dynamics evolve on low-dimensional manifolds, and by decomposing these on-manifold dynamics into local flow fields, it can map them into a common latent space in a fully unsupervised manner [16] [32]. This approach addresses a fundamental challenge in neuroscience: inferring latent dynamical processes from neural data and interpreting their relevance to computational tasks, even when neural states are embedded differently across recording sessions, individuals, or artificial neural networks [16]. MARBLE provides a powerful similarity metric to compare cognitive computations across different systems without requiring behavioral supervision, enabling researchers to discover global latent structures that parametrize high-dimensional neural dynamics during processes such as gain modulation, decision-making, and changes in internal state [16] [32].

Unlike traditional dimensionality reduction methods such as PCA or UMAP that treat neural activations as static point clouds, or supervised approaches like CEBRA that require behavioral labels, MARBLE utilizes the temporal information of neural dynamics and the manifold structure to learn representations without alignment constraints [32]. This unsupervised capability is particularly valuable for scientific discovery where behavioral labels may introduce unintended correspondences or may not be available. The framework has demonstrated state-of-the-art within- and across-animal decoding accuracy when applied to experimental single-neuron recordings from primates and rodents, as well as to recurrent neural networks performing cognitive tasks [16].

Theoretical Foundation and Algorithmic Principles

Core Mathematical Concepts

MARBLE is grounded in differential geometry and dynamical systems theory. It represents neural population activity during a task as a set of d-dimensional time series {x(t; c)} under various experimental conditions c. Rather than analyzing individual trajectories, MARBLE treats the ensemble of trials under condition c as a vector field Fc = (f1(c), ..., fn(c)) anchored to a point cloud Xc = (x1(c), ..., xn(c)) representing all sampled neural states [16] [32]. The framework assumes these states lie on a smooth, low-dimensional manifold embedded in the high-dimensional neural state space.

The algorithm approximates this unknown manifold using a proximity graph constructed from Xc. This graph provides the structure to define tangent spaces around each neural state and establish notions of smoothness and parallel transport between nearby vectors [16]. This construction enables MARBLE to define a learnable vector diffusion process that denoises the flow field while preserving its fixed point structure, which is crucial for maintaining the dynamical properties of the original system [16].

Key Computational Steps

MARBLE implements several innovative computational steps to transform raw neural data into interpretable latent representations:

Local Flow Field (LFF) Extraction: For each neural state i, MARBLE extracts a local flow field defined as the vector field within a graph distance p from i. This LFF encodes the local dynamical context, providing information about short-term dynamical effects of perturbations [16]. The parameter p determines the scale of local approximation and can be considered the order of the function that locally approximates the vector field.

Geometric Feature Encoding: MARBLE employs a specialized geometric deep learning architecture with three components: (1) p gradient filter layers that compute the best p-th order approximation of the LFF around each point; (2) inner product features with learnable linear transformations that ensure invariance to different embeddings of neural states; and (3) a multilayer perceptron that outputs the final latent vector zi [16].

Unsupervised Contrastive Learning: The model is trained using an unsupervised contrastive objective that leverages the continuity of LFFs over the manifold. Adjacent LFFs are typically more similar than non-adjacent ones, providing a natural self-supervision signal without requiring external labels [16].

Table 1: Key Hyperparameters in the MARBLE Framework

| Hyperparameter | Description | Typical Settings |

|---|---|---|

| Proximity Graph Parameter (p) | Order of local approximation for LFFs | Varied based on dataset (see Supplementary Table 3 in [16]) |

| Latent Space Dimension (E) | Dimensionality of output latent vectors | Optimized for specific applications |

| Gradient Filter Layers | Number of layers for LFF approximation | Corresponds to p value |

| Training Epochs | Number of training iterations | Until convergence (default settings typically sufficient) |

Experimental Protocols and Implementation

Data Preparation and Preprocessing

Successful application of MARBLE requires careful data preparation. The input to MARBLE consists of neural firing rates organized as trials under different experimental conditions. For single-neuron recordings, spike times should be converted to continuous firing rates using appropriate smoothing kernels. The data structure should maintain the relationship between trials, conditions, and temporal sequences [16] [32]. While MARBLE is designed to handle the inherent noise in neural recordings, standard preprocessing steps such as normalization and outlier removal may be applied as needed. The method does not require explicit behavioral labels, but user-defined labels of experimental conditions under which trials are dynamically consistent can be provided to permit local feature extraction [16].

Step-by-Step Implementation Protocol

Data Loading and Structure: Load neural data structured as trials × time × neurons. Organize trials by experimental conditions. MARBLE assumes trials within the same condition c are dynamically consistent.

Manifold Graph Construction:

- Construct a proximity graph from the point cloud Xc of all neural states across trials.

- This graph approximates the underlying neural manifold and enables definition of local neighborhoods for LFF extraction.

- The graph construction method (e.g., k-nearest neighbors or ε-ball) and parameters should be chosen based on data density and structure.
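A k-nearest-neighbor proximity graph over a point cloud of neural states might be built as in the sketch below. It uses brute-force pairwise distances, which is adequate only for small point clouds; the function name, the value of k, and the synthetic ring-shaped data are assumptions for illustration.

```python
import numpy as np

def knn_graph(points, k=5):
    """Return a symmetric boolean adjacency matrix of the k-nearest-neighbour graph."""
    diffs = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diffs ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)              # exclude self-matches
    nearest = np.argsort(dist, axis=1)[:, :k]   # k closest points per row
    adj = np.zeros(dist.shape, dtype=bool)
    rows = np.repeat(np.arange(len(points)), k)
    adj[rows, nearest.ravel()] = True
    return adj | adj.T                          # symmetrise

# Toy point cloud: a noisy ring embedded in a 3-D "neural state space"
rng = np.random.default_rng(0)
theta = rng.uniform(0, 2 * np.pi, size=200)
cloud = np.column_stack(
    [np.cos(theta), np.sin(theta), 0.05 * rng.standard_normal(200)]
)
adjacency = knn_graph(cloud, k=5)
```

Symmetrizing the graph means some nodes end up with more than k neighbors, which is usually acceptable when the graph is only used to define local neighborhoods.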

Local Flow Field Computation:

- For each neural state i in the point cloud, compute the local flow field within a graph distance p.

- The LFF captures the local dynamical context around each point, encoding short-term temporal evolution.
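One simple way to picture a local flow field is to approximate the vector at each state by a finite difference along the trial and then collect the vectors of all states within graph distance p. The sketch below does this on a toy chain graph; the helper names are hypothetical, and the finite-difference estimate is an assumption rather than the procedure specified in [16].

```python
import numpy as np

def finite_difference_velocities(trajectory):
    """Approximate the vector field along one trial: v_t = x_{t+1} - x_t."""
    return np.diff(trajectory, axis=0)

def p_hop_neighbourhood(adj, node, p):
    """Indices of all nodes within graph distance p of `node` (itself included)."""
    reached = np.zeros(adj.shape[0], dtype=bool)
    reached[node] = True
    frontier = reached.copy()
    for _ in range(p):
        # Nodes adjacent to the current frontier that were not visited before
        frontier = adj[frontier].any(axis=0) & ~reached
        reached |= frontier
    return np.flatnonzero(reached)

# Toy example: 7 states along one trajectory give 6 velocity vectors
traj = np.column_stack([np.linspace(0, 1, 7), np.linspace(0, 1, 7) ** 2])
vel = finite_difference_velocities(traj)            # shape (6, 2)

# Chain graph 0-1-2-3-4-5 linking consecutive states
adj = np.zeros((6, 6), dtype=bool)
idx = np.arange(5)
adj[idx, idx + 1] = adj[idx + 1, idx] = True

hood = p_hop_neighbourhood(adj, node=2, p=2)         # nodes 0..4
local_flow_field = vel[hood]                         # vectors in the neighbourhood
```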

Network Configuration and Training:

- Configure the geometric deep learning architecture with p gradient filter layers.

- Set up inner product features with learnable transformations to ensure embedding invariance.

- Train the network using the unsupervised contrastive learning objective that preserves LFF continuity.

- Most hyperparameters can be kept at default values, with only a few requiring tuning as summarized in Supplementary Table 3 of the original publication [16].

Latent Representation Extraction:

- Pass all neural states through the trained network to obtain latent representations Zc = (z1(c), ..., zn(c)).

- These latent vectors form an empirical distribution Pc representing the flow field under condition c.

Cross-Condition Comparison:

- Map multiple flow fields (different conditions within or across systems) simultaneously.

- Compute distances between latent representations using optimal transport distance d(Pc, Pc′) to quantify dynamical overlap [16].
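To make the cross-condition comparison concrete, the sketch below estimates a distance between two empirical distributions of latent vectors. It uses a sliced Wasserstein approximation (random one-dimensional projections compared through their quantiles) as an inexpensive stand-in for a full optimal transport solver, so it is not the exact distance computed in [16]. The function name, the number of projections, and the synthetic latent vectors are assumptions.

```python
import numpy as np

def sliced_wasserstein(z_a, z_b, n_projections=200, n_quantiles=100, seed=0):
    """Approximate 2-Wasserstein distance between two latent point clouds."""
    rng = np.random.default_rng(seed)
    directions = rng.standard_normal((n_projections, z_a.shape[1]))
    directions /= np.linalg.norm(directions, axis=1, keepdims=True)
    q = np.linspace(0.0, 1.0, n_quantiles)
    # Project onto each direction, then compare the 1-D quantile functions
    qa = np.quantile(z_a @ directions.T, q, axis=0)
    qb = np.quantile(z_b @ directions.T, q, axis=0)
    return float(np.sqrt(np.mean((qa - qb) ** 2)))

# Synthetic latent vectors for three hypothetical conditions
rng = np.random.default_rng(1)
z_c1 = rng.standard_normal((300, 3))
z_c2 = rng.standard_normal((250, 3))           # same distribution, new sample
z_c3 = rng.standard_normal((300, 3)) + 2.0     # shifted distribution

d_same = sliced_wasserstein(z_c1, z_c1)
d_close = sliced_wasserstein(z_c1, z_c2)
d_far = sliced_wasserstein(z_c1, z_c3)
```

Comparing quantiles rather than sorted samples lets the two conditions contain different numbers of points, which is common when trial counts differ across conditions.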

MARBLE Computational Workflow

Validation and Benchmarking Protocol

To validate MARBLE implementations and compare performance against alternative methods, follow this benchmarking protocol:

Dataset Selection: Use standardized datasets including:

- Simulated nonlinear dynamical systems with known ground truth

- Recordings from primate premotor cortex during reaching tasks

- Hippocampal recordings from rodents during spatial navigation

- Activity from recurrent neural networks trained on cognitive tasks

Comparison Methods: Compare against current representation learning approaches including:

- Linear methods: PCA, targeted dimensionality reduction (TDR)

- Nonlinear manifold learning: t-SNE, UMAP

- Dynamical systems methods: LFADS

- Supervised or self-supervised representation learning: CEBRA

Evaluation Metrics:

- Within-animal decoding accuracy for behavioral variables

- Across-animal decoding consistency

- Interpretability of latent representations in terms of global system variables

- Robustness to sparse sampling and representational drift

Table 2: Performance Benchmarking of MARBLE Against Alternative Methods

| Method | Within-Animal Decoding Accuracy | Across-Animal Consistency | Interpretability | Supervision Required |
|---|---|---|---|---|
| MARBLE | State-of-the-art | State-of-the-art | High - directly parametrizes neural dynamics | Unsupervised |
| CEBRA | High | Moderate to High | Moderate | Supervised or self-supervised |
| LFADS | Moderate | Low to Moderate | Moderate | Requires alignment |
| PCA | Low | Low | Low | Unsupervised |
| UMAP | Low to Moderate | Low | Low to Moderate | Unsupervised |

Applications in Neural Population Analysis

Analyzing Decision-Making and Internal States

MARBLE is particularly useful for discovering latent representations that parametrize neural dynamics during cognitive processes such as decision-making. When applied to recordings from the premotor cortex of macaques during a reaching task, MARBLE uncovered emergent low-dimensional representations that corresponded to decision variables and internal states [16]. Because the framework represents dynamics statistically over ensembles of trajectories using local dynamical contexts, it can capture meaningful computational variables without supervision. This distinguishes it from methods that require explicit behavioral labels or assume linear dynamics.

In decision-making tasks, MARBLE has revealed how neural populations implement decision thresholds and how these thresholds change under different task conditions. The method's sensitivity to subtle changes in high-dimensional dynamical flows allows researchers to detect alterations in decision-making strategies that are not apparent through linear subspace alignment methods [16] [32]. This capability makes MARBLE particularly valuable for studying how cognitive computations are implemented in biological and artificial neural systems.

Characterizing Gain Modulation and Adaptation

MARBLE is well suited to investigating neural adaptation phenomena such as contrast gain control in visual processing. Although the studies of contrast adaptation in visual cortex cited here did not apply MARBLE, they illustrate the kind of population-level recoding that MARBLE is designed to detect and characterize [33] [34]. In primary visual cortex, contrast adaptation can be understood as a reparameterization of population responses, where the contrast-response function shifts along the log contrast axis in different environments [33].

MARBLE's distributional representation of vector fields can capture such gain modulation by comparing the latent representations obtained under different adaptation states. The optimal transport distance between distributions Pc and Pc′ quantifies how much the underlying dynamics have changed due to adaptation, providing a data-driven metric of changes in neural computation that complements traditional information-theoretic approaches [34]. Such comparisons can help show how neural systems maintain stable representations despite changes in input statistics, a fundamental challenge in sensory processing.

MARBLE Network Architecture

Research Reagent Solutions

Table 3: Essential Research Tools for MARBLE Implementation

| Resource Category | Specific Tools/Solutions | Function in MARBLE Workflow |
|---|---|---|
| Neural Recording Systems | Two-photon calcium imaging, Neuropixels, extracellular arrays | Provides raw neural population data (firing rates) for analysis |
| Data Processing Tools | Suite2p, SpikeInterface, custom preprocessing pipelines | Signal extraction, spike sorting, firing rate estimation |
| Computational Frameworks | Python (PyTorch, TensorFlow), Geometric Deep Learning libraries | Implementation of MARBLE architecture and training |
| Visualization Tools | Matplotlib, Plotly, Graphviz | Visualization of manifolds, latent spaces, and dynamics |
| Benchmark Datasets | Primate premotor cortex data, rodent hippocampus data, RNN activity | Validation and benchmarking of MARBLE implementations |
| Comparison Methods | PCA, UMAP, LFADS, CEBRA implementations | Performance comparison and method validation |

The Neural Population Dynamics Optimization Algorithm (NPDOA) is a novel brain-inspired meta-heuristic method that simulates the activities of interconnected neural populations in the brain during cognition and decision-making processes [9]. This algorithm is grounded in the population doctrine from theoretical neuroscience, where each solution is treated as a neural state of a neural population [9]. Within this framework, individual decision variables represent neurons, and their values correspond to the firing rates of these neurons [9]. The NPDOA operates by having the neural states of these populations evolve according to neural population dynamics, effectively translating the brain's efficient information processing and optimal decision-making capabilities into a powerful optimization methodology [9]. Its design specifically addresses the critical challenge in meta-heuristic algorithms: maintaining an effective balance between exploration (discovering promising areas of the search space) and exploitation (thoroughly searching these promising areas) [9].

Core Algorithmic Framework and Workflow

The NPDOA framework is built upon three fundamental strategies that work in concert to navigate the solution space effectively.

The Three Core Strategies of NPDOA

Attractor Trending Strategy: This strategy drives neural populations toward optimal decisions by guiding their neural states to converge towards different attractors, which represent favorable decisions [9]. This process ensures the algorithm's exploitation capability, allowing it to thoroughly search promising regions identified in the search space [9].

Coupling Disturbance Strategy: This mechanism creates interference in neural populations by coupling them with other neural populations, thereby disrupting the tendency of their neural states to move directly toward attractors [9]. This strategy enhances the algorithm's exploration ability, helping to prevent premature convergence to local optima by maintaining population diversity [9].

Information Projection Strategy: This component controls communication between neural populations, regulating the impact of the attractor trending and coupling disturbance strategies on the neural states [9]. This enables a smooth transition from exploration to exploitation throughout the optimization process [9].
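The interplay of the three strategies can be pictured with a toy population search. The update rules below are illustrative placeholders chosen to mirror the verbal description (a pull toward the best state, a perturbation derived from a randomly coupled population, and a weight that shifts from exploration to exploitation); they are not the equations published in [9], and every name and constant is an assumption.

```python
import numpy as np

def npdoa_like_search(objective, dim, bounds, n_pop=30, n_iter=200, seed=0):
    """Toy minimiser loosely modelled on the three strategies described above."""
    rng = np.random.default_rng(seed)
    low, high = bounds
    states = rng.uniform(low, high, size=(n_pop, dim))     # neural states
    fitness = np.array([objective(s) for s in states])
    for t in range(n_iter):
        attractor = states[np.argmin(fitness)]              # current best decision
        # Information projection (placeholder): weight moves from
        # coupling disturbance early on to attractor trending later
        alpha = (t + 1) / n_iter
        partners = states[rng.permutation(n_pop)]           # coupled populations
        trend = attractor - states                          # attractor trending
        disturb = rng.standard_normal((n_pop, dim)) * (partners - states)
        step = rng.uniform(0, 1, size=(n_pop, 1))
        candidates = np.clip(
            states + alpha * step * trend + (1 - alpha) * disturb, low, high
        )
        cand_fit = np.array([objective(c) for c in candidates])
        improved = cand_fit < fitness                       # greedy selection
        states[improved] = candidates[improved]
        fitness[improved] = cand_fit[improved]
    best = np.argmin(fitness)
    return states[best], float(fitness[best])

def sphere(x):
    """Simple convex test function with its minimum at the origin."""
    return float(np.sum(x ** 2))

best_x, best_f = npdoa_like_search(sphere, dim=5, bounds=(-10.0, 10.0))
```

The greedy selection step guarantees that the best fitness never gets worse between iterations, but it says nothing about convergence speed; the published algorithm should be consulted for the actual dynamics.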

Computational Workflow

[Diagram: logical workflow and interaction between the core strategies of the NPDOA]

Performance Analysis and Benchmarking

Rigorous evaluation of the NPDOA against standard benchmark functions and practical problems has demonstrated its competitive performance compared to other state-of-the-art metaheuristic algorithms.

Quantitative Performance Metrics

Table 1: Benchmark Performance Comparison of Metaheuristic Algorithms

| Algorithm | Average Friedman Ranking (30D) | Average Friedman Ranking (50D) | Average Friedman Ranking (100D) | Key Strengths |
|---|---|---|---|---|
| NPDOA | 3.00 | 2.71 | 2.69 | Balanced exploration-exploitation, effective avoidance of local optima [9] |
| PMA | 3.00 | 2.71 | 2.69 | High convergence efficiency [35] |
| CSBOA | Not specified | Not specified | Not specified | Competitive performance on most benchmark functions [36] |
| INPDOA | Validated on 12 CEC2022 functions | Validated on 12 CEC2022 functions | Validated on 12 CEC2022 functions | Enhanced AutoML optimization [37] |

Statistical Validation

The performance of NPDOA has been statistically validated using non-parametric tests including the Wilcoxon rank-sum test and Friedman test, which confirm the robustness and reliability of the algorithm compared to other metaheuristic approaches [9] [35]. These statistical evaluations provide confidence in NPDOA's consistent performance across various problem domains and dimensionalities.
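An average Friedman ranking of the kind reported above is obtained by ranking the algorithms on each benchmark function and then averaging the ranks per algorithm. The sketch below shows the computation on made-up error values for three unnamed algorithms; the numbers are purely hypothetical and do not reproduce any result from [9] or [35]. Ties are broken by column order here rather than by averaging tied ranks.

```python
import numpy as np

def average_friedman_ranks(errors):
    """Mean rank per algorithm; errors has shape (n_functions, n_algorithms).

    Lower error is better, so rank 1 goes to the smallest value in each row.
    """
    order = np.argsort(errors, axis=1)
    ranks = np.empty_like(order)
    rows = np.arange(errors.shape[0])[:, None]
    ranks[rows, order] = np.arange(1, errors.shape[1] + 1)
    return ranks.mean(axis=0)

# Hypothetical final errors: 4 benchmark functions x 3 algorithms (A, B, C)
errors = np.array([
    [0.10, 0.50, 0.30],
    [0.20, 0.10, 0.40],
    [0.05, 0.30, 0.20],
    [0.30, 0.60, 0.10],
])
mean_ranks = average_friedman_ranks(errors)   # one value per algorithm
```

In this toy table algorithm A is ranked 1, 2, 1, and 2 across the four functions, giving a mean rank of 1.5, the lowest (best) of the three.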

Application Protocols

Protocol 1: Medical Prognostic Prediction Modeling

This protocol details the application of an improved NPDOA (INPDOA) for automated machine learning in medical prognostic modeling, specifically for autologous costal cartilage rhinoplasty (ACCR) outcomes [37].

Objective: To develop an AutoML-based prognostic prediction model and visualization system for ACCR, addressing clinical challenges of postoperative complications and satisfaction disparity [37].

Dataset Preparation:

- Collect retrospective data from 447 patients (2019-2024) integrating 20+ parameters spanning biological, surgical, and behavioral domains [37].

- Include demographic variables (age, sex, BMI), preoperative clinical factors (nasal pore size, prior surgery history, ROE score), intraoperative variables (surgical duration, hospital stay), and postoperative behavioral factors (nasal trauma, antibiotic duration, smoking) [37].

- Divide cohort into training (n=264) and internal test sets (n=66) using 8:2 split, with external validation set (n=117) [37].

- Apply SMOTE to training set to address class imbalance [37].
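The split sizes follow directly from the cohort numbers: 447 patients minus the 117 in the external set leaves 330, and an 8:2 split of 330 gives 264 training and 66 internal test cases. The sketch below reproduces that arithmetic and balances only the training portion with a simplified SMOTE-style interpolation. The features and labels are random placeholders, the 20% minority rate is invented, and `smote_like` is a hypothetical helper rather than the implementation used in [37].

```python
import numpy as np

def smote_like(X_minority, n_new, k=5, seed=0):
    """Create n_new synthetic rows by interpolating between minority neighbours."""
    rng = np.random.default_rng(seed)
    diffs = X_minority[:, None, :] - X_minority[None, :, :]
    dist = np.linalg.norm(diffs, axis=-1)
    np.fill_diagonal(dist, np.inf)
    neighbours = np.argsort(dist, axis=1)[:, :k]
    base = rng.integers(0, len(X_minority), size=n_new)
    partner = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.uniform(0.0, 1.0, size=(n_new, 1))
    return X_minority[base] + gap * (X_minority[partner] - X_minority[base])

rng = np.random.default_rng(42)
n_internal, n_external = 330, 117                     # 330 + 117 = 447 patients
X = rng.standard_normal((n_internal, 20))             # placeholder features
y = (rng.uniform(size=n_internal) < 0.2).astype(int)  # placeholder labels

shuffled = rng.permutation(n_internal)
n_train = n_internal * 8 // 10                        # 8:2 split -> 264
train_idx, test_idx = shuffled[:n_train], shuffled[n_train:]

# Oversample the training set only, so the test set stays untouched
X_train, y_train = X[train_idx], y[train_idx]
minority = X_train[y_train == 1]
n_needed = int((y_train == 0).sum() - (y_train == 1).sum())
X_balanced = np.vstack([X_train, smote_like(minority, n_needed)])
y_balanced = np.concatenate([y_train, np.ones(n_needed, dtype=int)])
```

Oversampling after the split, and only on the training indices, keeps synthetic samples from leaking information into the internal test set.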

INPDOA-Enhanced AutoML Framework:

- Encode three decision spaces into hybrid solution vector: base-learner type, feature selection, and hyper-parameters [37].

- Implement dynamically weighted fitness function:

f(x) = w₁(t)·ACC_CV + w₂·(1-‖δ‖₀/m) + w₃·exp(-T/T_max) [37]

- Configure weight coefficients to adapt across iterations, prioritizing accuracy initially, balancing accuracy and sparsity mid-phase, and emphasizing model parsimony terminally [37].
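The fitness function can be evaluated as in the sketch below. Several readings are assumed because the source does not define the symbols: δ is treated as a binary feature-selection mask of length m, T as the training time of the candidate model, and T_max as a time budget. The three-phase weight schedule and its numeric values are hypothetical and are not taken from [37].

```python
import math

def phase_weights(iteration, max_iterations):
    """Hypothetical three-phase schedule for (w1, w2, w3)."""
    progress = iteration / max_iterations
    if progress < 1 / 3:
        return 0.8, 0.1, 0.1       # prioritise accuracy
    if progress < 2 / 3:
        return 0.45, 0.45, 0.1     # balance accuracy and sparsity
    return 0.3, 0.4, 0.3           # emphasise parsimony

def fitness(acc_cv, selected_mask, train_time, time_budget, w):
    """f(x) = w1*ACC_CV + w2*(1 - ||delta||_0 / m) + w3*exp(-T / T_max)."""
    w1, w2, w3 = w
    m = len(selected_mask)
    n_selected = sum(1 for s in selected_mask if s)   # ||delta||_0
    return (w1 * acc_cv
            + w2 * (1 - n_selected / m)
            + w3 * math.exp(-train_time / time_budget))

mask = [1, 0, 1, 0, 0, 0, 1, 0, 0, 0]                # 3 of 10 features selected
early = fitness(0.85, mask, train_time=30.0, time_budget=60.0,
                w=phase_weights(5, 100))
late = fitness(0.85, mask, train_time=30.0, time_budget=60.0,
               w=phase_weights(90, 100))
```

Because the weights change over the run, the same candidate model receives a different score early and late in the search, so fitness values are only comparable within one iteration.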

Validation:

Expected Outcomes:

Protocol 2: Engineering Design Optimization

This protocol outlines the application of NPDOA for solving complex engineering design problems, demonstrating its versatility beyond medical applications.