Optimizing Inter-Stimulus Intervals in fMRI: A Strategic Guide for Robust Cognitive Paradigm Design

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing inter-stimulus intervals (ISIs) in functional magnetic resonance imaging (fMRI) cognitive paradigms. It synthesizes foundational principles, advanced methodological applications, and practical troubleshooting strategies to enhance statistical efficiency and data reliability. Covering topics from the basic hemodynamic response function to the design of ultrafast and precision fMRI studies, the content addresses critical challenges like head motion and individual variability. Furthermore, it explores validation techniques and comparative analyses of different design approaches, offering evidence-based recommendations to maximize detection power and reproducibility in both basic cognitive neuroscience and clinical trial contexts.

The Building Blocks: Understanding ISI and the BOLD Response

Defining Inter-Stimulus Interval (ISI) and Stimulus Onset Asynchrony (SOA) in fMRI Contexts

FAQs & Troubleshooting Guide

Q1: What is the precise definition of ISI and SOA in an fMRI paradigm? A: The Inter-Stimulus Interval (ISI) is the time between the offset of one stimulus and the onset of the next. Stimulus Onset Asynchrony (SOA) is the time between the onsets of two consecutive stimuli. In a paradigm where stimulus duration is fixed, SOA = Stimulus Duration + ISI. Confusing these two is a common source of timing errors in experimental design.
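The SOA relation converts directly into stimulus onset times. Below is a minimal Python sketch. It assumes durations and ISIs are in seconds, with exactly one ISI between each pair of consecutive stimuli. The helper name `onsets_from_isis` is hypothetical.

```python
from itertools import accumulate

def onsets_from_isis(durations, isis, first_onset=0.0):
    """Return onset times (s) for stimuli with the given durations and following ISIs."""
    if len(isis) != len(durations) - 1:
        raise ValueError("need exactly one ISI between each pair of consecutive stimuli")
    # SOA between stimulus k and k + 1 = duration of stimulus k + the ISI that follows it
    soas = [d + i for d, i in zip(durations, isis)]
    return [first_onset] + [first_onset + s for s in accumulate(soas)]

# 1-s stimuli separated by ISIs of 2 s and 4 s give SOAs of 3 s and 5 s
print(onsets_from_isis([1.0, 1.0, 1.0], [2.0, 4.0]))  # [0.0, 3.0, 8.0]
```

Working from onsets rather than ISIs avoids the common mistake of treating an ISI as if it were an SOA.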

Q2: My BOLD signal shows poor contrast-to-noise. Could my ISI be the issue? A: Yes. An ISI that is too short can lead to the "overlapping responses" problem, where the hemodynamic response from one stimulus has not returned to baseline before the next begins. This reduces the detectability of individual events. For better separation, consider using a jittered ISI, or increase the mean ISI so that successive responses overlap less.
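The following sketch shows how long a single response takes to settle. It evaluates a double-gamma HRF using the shape parameters commonly associated with SPM's canonical HRF: gamma shapes 6 and 16, an undershoot ratio of 1/6, and unit scale. This parameterization and the helper names are assumptions for illustration. For each SOA, the code reports how much of the first response (peak or undershoot) is still unresolved when the next stimulus arrives, assuming linear summation.

```python
import math
import numpy as np

def double_gamma_hrf(t, peak_shape=6.0, undershoot_shape=16.0, ratio=1 / 6):
    """Gamma-shaped response minus a scaled gamma-shaped undershoot (unit scale, t in s)."""
    t = np.asarray(t, dtype=float)
    response = t ** (peak_shape - 1) * np.exp(-t) / math.gamma(peak_shape)
    undershoot = t ** (undershoot_shape - 1) * np.exp(-t) / math.gamma(undershoot_shape)
    return response - ratio * undershoot

def unresolved_fraction(soa, dt=0.1, length=32.0):
    """Largest |response| of the first event at or after the next onset, relative to its peak."""
    t = np.arange(0.0, length, dt)
    h = double_gamma_hrf(t)
    return float(np.abs(h[t >= soa - 1e-9]).max() / h.max())

for soa in (2, 4, 8, 12, 16, 24):
    print(f"SOA {soa:>2} s: {unresolved_fraction(soa):.2f} of the first response's peak")
```

With these parameters the response peaks about 5 s after onset, and the undershoot is still present 20 s later. Short SOAs therefore always produce overlap, which has to be handled by jitter and deconvolution rather than by waiting it out.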

Q3: I am getting unexpected habituation or priming effects. How does SOA influence this? A: Cognitive effects like habituation (a decreasing response to repeated stimulation) and priming (facilitated processing after related exposure) are highly sensitive to SOA. Shorter SOAs leave less time for recovery between stimuli, so the balance between facilitation and response attenuation can shift with SOA in ways that differ across paradigms. If your results contradict your hypotheses, systematically varying the SOA in a follow-up experiment can help dissociate these cognitive temporal dynamics.

Q4: What is "temporal jittering" and why is it critical for event-related fMRI? A: Temporal jittering is the introduction of variable, pseudo-random ISIs between trials. It is critical because it prevents the predicted responses to different events from being perfectly correlated with one another and with slow, periodic noise (e.g., respiration, scanner drift). This decorrelation is what allows overlapping responses to be deconvolved, which is essential for obtaining independent estimates of the HRF for each trial type.
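One common way to generate a jittered ISI sequence is to sample from a truncated exponential distribution, which yields many short intervals and a few long ones. The minimal sketch below does this. The bounds, the scale `tau`, the 0.5-s rounding grid, and the function name are illustrative assumptions, not recommended values.

```python
import numpy as np

def jittered_isis(n, isi_min=2.0, isi_max=12.0, tau=3.0, resolution=0.5, seed=0):
    """Draw n ISIs (s) from an exponential distribution with scale tau, truncated to [isi_min, isi_max]."""
    rng = np.random.default_rng(seed)
    u = rng.random(n)
    # inverse CDF of the truncated exponential: many short ISIs, progressively fewer long ones
    span = 1.0 - np.exp(-(isi_max - isi_min) / tau)
    isis = isi_min - tau * np.log1p(-u * span)
    # round to a grid finer than a typical TR so onsets do not always coincide with volume acquisition
    return np.round(isis / resolution) * resolution

isis = jittered_isis(200)
print(f"mean ISI {isis.mean():.2f} s, range {isis.min():.1f}-{isis.max():.1f} s")
```

In practice, candidate sequences drawn this way are usually compared by design efficiency before one is chosen.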

Q5: My design efficiency is low. How can I optimize my ISI/SOA distribution? A: Low design efficiency often stems from a predictable, fixed ISI. Use a genetic algorithm or a similar tool to generate an optimized, jittered sequence of ISIs. This sequence should minimize the correlation among the HRF-convolved regressors in your General Linear Model (GLM), thereby improving statistical power.

Table 1: Impact of SOA on fMRI Design Types and BOLD Response

| Design Type | Typical SOA Range | Key Characteristics | BOLD Response Profile | Best Use Cases |
|---|---|---|---|---|
| Slow Event-Related | 10 - 16 s | Allows HRF to return fully to baseline. | Well-separated, high-amplitude peaks. | Estimating full HRF shape; strong, isolated cognitive events. |
| Rapid Event-Related | 2 - 6 s (jittered) | HRFs overlap; relies on jitter for deconvolution. | Overlapping responses, modeled via GLM. | High trial count; measuring reaction times; efficient scanning. |
| Blocked Design | N/A (Stimuli grouped) | Alternating blocks of task and rest/control. | Sustained, plateau-like signal. | Localizing brain areas involved in a sustained cognitive process. |

Table 2: Recommended ISI/Jitter Ranges for Cognitive Domains

| Cognitive Domain | Suggested Mean ISI | Jitter Range | Rationale |
|---|---|---|---|
| Perceptual Tasks | 3 - 6 s | ± 1 - 3 s | Short processing time allows for rapid presentation and high efficiency. |
| Working Memory | 8 - 12 s | ± 2 - 4 s | Longer ISI accommodates encoding, maintenance, and retrieval phases. |
| High-Level Reasoning | 10 - 16 s | ± 3 - 5 s | Complex cognitive operations require longer durations and full HRF recovery. |

Experimental Protocol: Optimizing ISI Using a Genetic Algorithm

Objective: To determine the ISI distribution that maximizes statistical power for detecting differences between two task conditions in an event-related fMRI design.

Methodology:

- Define Constraints: Set the minimum and maximum allowable ISI (e.g., 2s and 12s) and the total number of trials and scan duration.

- Generate Candidate Designs: Create a population of potential trial sequences, each with a random (but constrained) order of conditions and jittered ISIs.

- Model the HRF: Convolve each candidate's stimulus onset sequence (one indicator time series per condition) with a canonical Hemodynamic Response Function (e.g., double-gamma function) to build a design matrix of predicted BOLD signals.

- Calculate Efficiency: For each design, compute the efficiency of the contrast between the two task conditions. Efficiency is typically defined as the inverse of the variance of the contrast estimate in the GLM, $E = 1 / \left(c\,(X^\top X)^{-1} c^\top\right)$, where $X$ is the design matrix and $c$ is the contrast row vector.

- Apply Genetic Algorithm:

- Selection: Select the top-performing designs (highest efficiency).

- Crossover: "Breed" these designs by combining parts of their trial/ISI sequences.

- Mutation: Introduce small random changes (mutations) to the ISI values or trial order in the offspring to avoid getting trapped in local optima.

- Iterate: Repeat the HRF modeling, efficiency calculation, and genetic-algorithm steps for hundreds or thousands of generations until efficiency converges on a maximum.

- Validate: The final, optimized sequence is used in the actual fMRI experiment.
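The protocol above can be sketched in Python as follows. This is a toy illustration under stated assumptions, not the implementation of any published toolbox:

- trials occupy fixed 2-s slots, and jittered ISIs arise from randomly placed null (fixation) slots;
- efficiency is computed for the A-minus-B contrast using the canonical double-gamma HRF;
- selection keeps the top half of each generation unchanged (elitism), so the best design found is never lost.

All names and parameter values are hypothetical.

```python
import math
import numpy as np

TR, DT = 2.0, 0.1                       # assumed: one trial slot per 2-s TR, 0.1-s modelling grid
N_SLOTS = 120                           # assumed number of slots per run
CONTRAST = np.array([1.0, -1.0, 0.0])   # condition A minus condition B (last column = intercept)

def canonical_hrf(length=32.0):
    t = np.arange(0.0, length, DT)
    h = t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))
    return h / h.max()

HRF = canonical_hrf()

def design_matrix(seq):
    """seq[k] is 0 (null), 1 (A) or 2 (B); returns HRF-convolved regressors sampled once per TR."""
    step = int(round(TR / DT))
    n_hi = len(seq) * step
    cols = []
    for cond in (1, 2):
        sticks = np.zeros(n_hi)
        sticks[np.flatnonzero(seq == cond) * step] = 1.0
        cols.append(np.convolve(sticks, HRF)[:n_hi:step])
    return np.column_stack(cols + [np.ones(len(seq))])

def fitness(seq):
    """Contrast efficiency 1 / (c (X'X)^-1 c'); too few trials of either condition scores 0."""
    if np.bincount(seq, minlength=3)[1:].min() < N_SLOTS // 5:
        return 0.0
    X = design_matrix(seq)
    return 1.0 / float(CONTRAST @ np.linalg.pinv(X.T @ X) @ CONTRAST)

def genetic_search(pop_size=40, generations=40, mutation_rate=0.02, seed=1):
    rng = np.random.default_rng(seed)
    pop = [rng.integers(0, 3, N_SLOTS) for _ in range(pop_size)]
    for _ in range(generations):
        scores = [fitness(s) for s in pop]
        ranked = np.argsort(scores)[::-1]
        parents = [pop[i] for i in ranked[: pop_size // 2]]        # selection (elitist)
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.choice(len(parents), size=2, replace=False)
            cut = int(rng.integers(1, N_SLOTS))                     # single-point crossover
            child = np.concatenate([parents[a][:cut], parents[b][cut:]])
            flip = rng.random(N_SLOTS) < mutation_rate              # mutation
            child[flip] = rng.integers(0, 3, int(flip.sum()))
            children.append(child)
        pop = parents + children
    scores = [fitness(s) for s in pop]
    best = int(np.argmax(scores))
    return pop[best], scores[best]
```

Calling `best_seq, best_eff = genetic_search()` returns the highest-efficiency sequence found. A production tool would typically also score counterbalancing and psychological constraints before the chosen sequence is validated.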

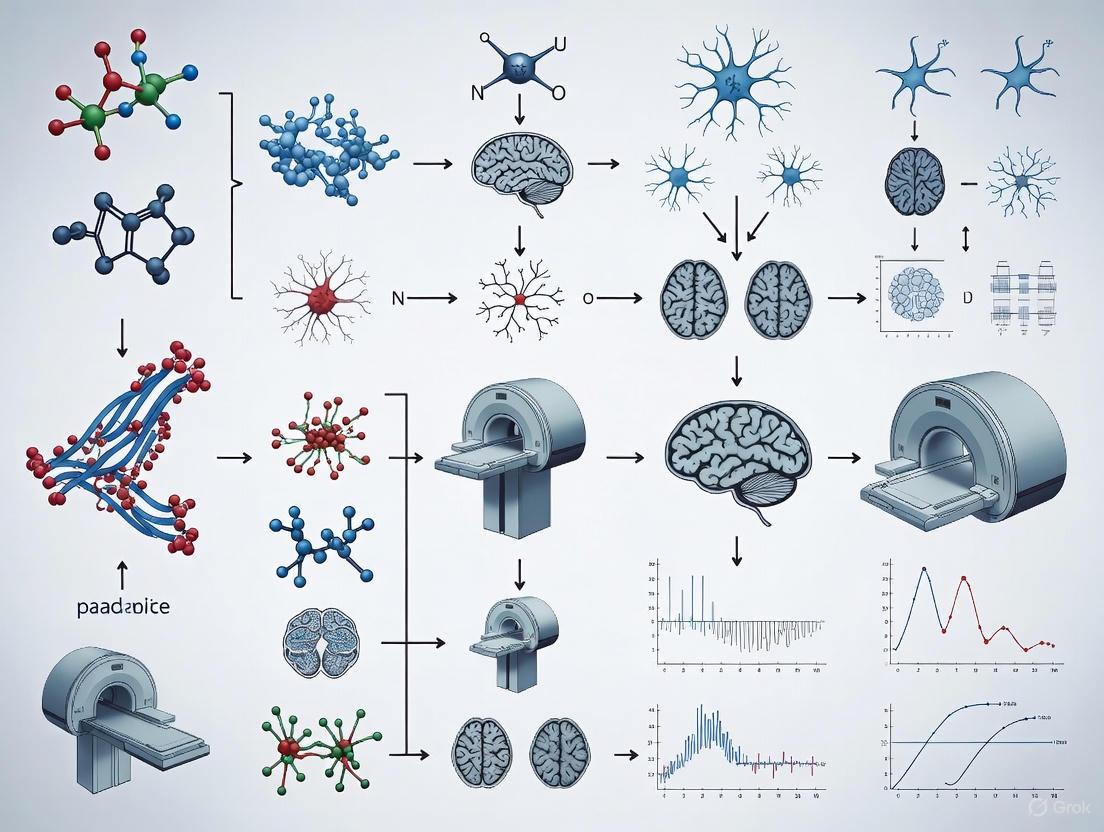

Experimental Workflow & Conceptual Diagrams

Title: fMRI ISI Optimization Workflow

Title: ISI vs. SOA Timing Relationship

The Scientist's Toolkit

Table 3: Essential Research Reagents & Solutions for fMRI Paradigm Design

| Item | Function & Explanation |
|---|---|
| Stimulus Presentation Software (e.g., PsychoPy, E-Prime, Presentation) | Precisely controls and delivers visual/auditory stimuli while recording timing and participant responses with millisecond accuracy. Critical for implementing jittered ISIs. |
| fMRI Scanner (3T/7T) | The core instrument for measuring the Blood-Oxygen-Level-Dependent (BOLD) signal. Higher field strength (7T) increases raw signal-to-noise ratio, although physiological noise also grows with field strength. |
| Canonical Hemodynamic Response Function (HRF) | A mathematical model (e.g., double-gamma function) of the typical BOLD response to a brief neural event. Used to convolve with the stimulus timing model in the GLM. |
| Genetic Algorithm Toolbox (e.g., in MATLAB, Python's DEAP) | Software library used to computationally optimize the sequence of trials and ISIs to maximize the statistical power of the experimental design. |
| General Linear Model (GLM) Analysis Package (e.g., SPM, FSL, AFNI) | Statistical software used to model the fMRI data, where the predicted BOLD response (stimulus convolved with HRF) is fit to the actual measured data. |

Frequently Asked Questions

FAQ 1: How stable is the Hemodynamic Response Function (HRF) over time in longitudinal studies?

The HRF demonstrates remarkable long-term stability. Research shows that both the amplitude and temporal dynamics of strong HRFs are highly repeatable across sessions separated by intervals of up to 3 months [1]. This stability is observed when using high spatial resolution (2-mm voxels) to minimize partial-volume effects, which can otherwise introduce variability [1].

Positive HRFs generally show greater consistency than negative HRFs, which tend to be weaker and more variable across sessions [1]. The time-to-peak (TTP) parameter is notably the most stable HRF characteristic, while onset time and poststimulus undershoot amplitude typically show greater variability [1].

FAQ 2: What is the optimal Inter-Stimulus Interval (ISI) for event-related fMRI designs?

The optimal ISI depends on whether you use a fixed or jittered design. For fixed ISI designs, statistical efficiency drops dramatically with intervals shorter than 15 seconds [2]. However, with properly jittered or randomized ISIs, efficiency improves monotonically with decreasing mean ISI [2].

Jittered designs with variable ISIs can provide more than 10 times greater statistical efficiency compared to fixed ISI designs [2]. This approach also enables direct comparison and integration with EEG/MEG studies by using similar experimental designs across imaging modalities [2].
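A small simulation reproduces the contrast between fixed and randomized timing. This sketch rests on several assumptions:

- a single event type with very brief stimuli, so ISI is approximately equal to SOA;
- the canonical double-gamma HRF and a 2-s TR;
- randomized onsets drawn as independent Bernoulli events in 1-s slots.

It is an illustration in the spirit of the comparison described above, not a reproduction of the cited analysis. Efficiency values are in arbitrary units and are only meaningful relative to one another.

```python
import math
import numpy as np

DT, TR, RUN = 0.1, 2.0, 600.0    # assumed: 0.1-s model grid, 2-s TR, 10-min run

def hrf(length=32.0):
    t = np.arange(0.0, length, DT)
    h = t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))
    return h / h.max()

def regressor(onsets):
    """Stick function at the onsets, convolved with the HRF and sampled once per TR."""
    n_hi = int(round(RUN / DT))
    sticks = np.zeros(n_hi)
    idx = np.round(np.asarray(onsets) / DT).astype(int)
    np.add.at(sticks, idx[idx < n_hi], 1.0)
    return np.convolve(sticks, hrf())[:n_hi:int(round(TR / DT))]

def detection_efficiency(onsets):
    """Efficiency of the event-versus-baseline contrast in a GLM with an intercept."""
    x = regressor(onsets)
    X = np.column_stack([x, np.ones_like(x)])
    c = np.array([1.0, 0.0])
    return 1.0 / float(c @ np.linalg.inv(X.T @ X) @ c)

def fixed_onsets(soa):
    return np.arange(0.0, RUN, soa)

def randomized_onsets(mean_soa, slot=1.0, seed=0):
    """Each 1-s slot holds an event with probability slot / mean_soa."""
    rng = np.random.default_rng(seed)
    slots = np.arange(0.0, RUN, slot)
    return slots[rng.random(slots.size) < slot / mean_soa]

for soa in (16, 8, 4, 2):
    fixed = detection_efficiency(fixed_onsets(soa))
    rand = detection_efficiency(randomized_onsets(soa))
    print(f"mean SOA {soa:>2} s   fixed: {fixed:7.1f}   randomized: {rand:7.1f}")
```

With a fixed 2-s SOA, the regressor is nearly constant after the first few volumes. It is therefore almost collinear with the intercept, and efficiency collapses. Randomizing the onsets restores variance to the regressor at the same mean event rate.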

FAQ 3: How do I choose between block and event-related designs for cognitive paradigms?

Your choice should balance statistical power with psychological considerations. Block designs cluster trials of the same condition together, providing the highest signal-to-noise ratio and statistical power for detection [3]. However, they may introduce confounds like participant habituation or prediction effects due to their repetitive nature [3].

Event-related designs present trials from different conditions in random order, making experiments more engaging for participants [3]. They are better suited for estimating the detailed shape of the HRF and are essential for studying trial-unique cognitive processes [3]. Rapid event-related designs with jittered ISIs allow for more trials within a given scanning duration while maintaining the ability to deconvolve overlapping BOLD responses [3].

FAQ 4: How does vascular health affect HRF shape and fMRI interpretation?

Vascular health significantly influences HRF characteristics, particularly in older populations or those with cerebrovascular risk factors. Aging and vascular risk have the largest impacts on the maximum peak value of the HRF [4]. Using a canonical HRF in populations with altered cerebrovascular health can lead to misinterpretation of brain activity patterns [4].

Employing subject-specific HRFs in these populations results in more consistent activation patterns and larger effect sizes compared to using a canonical HRF [4]. Even small errors in HRF onset time estimation (as little as 1 second) can affect statistical sensitivity and cause false negatives [4].

Troubleshooting Guides

Problem: Low Statistical Power in Event-Related Design

Potential Cause: Suboptimal ISI selection without proper jitter.

Solution: Implement variable ISI designs rather than fixed intervals. Use optimization software like optseq2 or OptimizeX to generate timing schedules that maximize design efficiency [3]. Variable ISI designs can provide more than 10 times greater efficiency than fixed ISI designs [2].

Implementation Steps:

- Determine your trial types and approximate scanning duration

- Use optseq2 to optimize for HRF estimation or OptimizeX to optimize for detection of specific contrasts [3]

- Validate your design by checking for collinearity between regressors

- Conduct behavioral pilot testing to ensure psychological validity
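The collinearity check can be done with pairwise correlations and variance inflation factors (VIF); each VIF equals the corresponding diagonal element of the inverse correlation matrix of the regressors. The sketch below uses a hypothetical cue-target design. When the target always follows the cue after a short fixed delay, the two regressors are highly correlated; jittering the delay reduces this. The trial spacing, the delays, the TR, and the canonical HRF are all assumptions for illustration.

```python
import math
import numpy as np

DT, TR, RUN = 0.1, 2.0, 600.0    # assumed model grid, TR and run length (s)

def hrf(length=32.0):
    t = np.arange(0.0, length, DT)
    h = t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))
    return h / h.max()

def regressor(onsets):
    """Stick function at the onsets, convolved with the HRF and sampled once per TR."""
    n_hi = int(round(RUN / DT))
    sticks = np.zeros(n_hi)
    idx = np.round(np.asarray(onsets) / DT).astype(int)
    np.add.at(sticks, idx[idx < n_hi], 1.0)
    return np.convolve(sticks, hrf())[:n_hi:int(round(TR / DT))]

def vif(X):
    """Variance inflation factor of each column of X (the model is assumed to include an intercept)."""
    return np.diag(np.linalg.inv(np.corrcoef(X, rowvar=False)))

def collinearity(cue_onsets, target_onsets):
    X = np.column_stack([regressor(cue_onsets), regressor(target_onsets)])
    return float(np.corrcoef(X, rowvar=False)[0, 1]), float(vif(X).max())

rng = np.random.default_rng(0)
cues = np.arange(0.0, RUN - 20.0, 12.0)          # hypothetical cue every 12 s
fixed_targets = cues + 1.0                       # target always 1 s after the cue
jittered_targets = cues + rng.uniform(1.0, 8.0, cues.size)

for label, targets in (("fixed 1-s delay", fixed_targets), ("jittered 1-8 s delay", jittered_targets)):
    r, v = collinearity(cues, targets)
    print(f"{label}: r = {r:+.2f}, max VIF = {v:.1f}")
```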

Problem: Inconsistent HRF Across Sessions or Subjects

Potential Cause: Vascular variability or partial volume effects.

Solution: Implement acquisition and analysis strategies that account for HRF variability.

Implementation Steps:

- Acquire data with high spatial resolution (2-mm voxels) focused on central gray matter to minimize partial volume effects [1]

- For populations with potential cerebrovascular issues (older adults, those with vascular risk factors), consider estimating subject-specific HRFs using a simple localizer task [4]

- Use Finite Impulse Response (FIR) models in analysis when precise HRF shape estimation is critical [3]

- Focus on the most stable HRF parameters (like time-to-peak) when making cross-session comparisons [1]
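Below is a minimal Finite Impulse Response (FIR) sketch. Each post-stimulus time bin gets its own regressor, so the HRF shape is estimated rather than assumed. The sketch assumes that:

- onsets fall on the TR grid;
- 16 bins of 2 s (32 s in total) cover the response;
- the data are simulated from a double-gamma HRF plus Gaussian noise, purely to check that the estimate recovers the shape.

The names are hypothetical.

```python
import math
import numpy as np

def fir_design(onset_scans, n_scans, n_bins):
    """FIR regressors: column k is 1 at scan onset + k for every onset (overlaps add)."""
    X = np.zeros((n_scans, n_bins))
    for onset in onset_scans:
        for k in range(n_bins):
            if onset + k < n_scans:
                X[onset + k, k] += 1.0
    return X

TR, N_SCANS, N_BINS = 2.0, 300, 16       # assumed: 16 bins x 2 s = 32-s window
rng = np.random.default_rng(0)
onsets = np.sort(rng.choice(N_SCANS - N_BINS, size=60, replace=False))   # onsets on the TR grid

# Simulated data: double-gamma HRF sampled at the TR, plus Gaussian noise
t = np.arange(N_BINS) * TR
true_hrf = t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))
true_hrf /= true_hrf.max()
sticks = np.zeros(N_SCANS)
sticks[onsets] = 1.0
y = np.convolve(sticks, true_hrf)[:N_SCANS] + rng.normal(0.0, 0.1, N_SCANS)

X = np.column_stack([fir_design(onsets, N_SCANS, N_BINS), np.ones(N_SCANS)])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
fir_estimate = beta[:N_BINS]             # one amplitude per post-stimulus bin
print("estimated peak:", t[np.argmax(fir_estimate)], "s after onset")
```

Because FIR estimates one parameter per bin, it needs more trials than a canonical-HRF model for the same precision. That is the trade-off for not assuming a shape.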

Problem: Poor Differentiation Between Conditions

Potential Cause: High collinearity between regressors in rapid event-related designs.

Solution: Optimize jitter and trial ordering to maximize discriminability.

Implementation Steps:

- Ensure sufficient jitter in your design - the overlap between BOLD responses should vary across trials [3]

- Use software packages to calculate and maximize the efficiency of your design matrix [3]

- Consider psychological effects like the Gratton effect (congruency sequence effect) in cognitive control tasks, where the condition of the preceding trial modulates behavioral and BOLD responses to the current trial [3]

- Balance the number of trials with realistic scanning durations (typically 60-90 minutes maximum) [3]
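For sequence effects such as the Gratton effect, one simple check is whether each condition follows every other condition about equally often (first-order counterbalancing). Below is a minimal sketch. It assumes trials are coded by condition labels, here "C" for congruent and "I" for incongruent. The helper names are hypothetical.

```python
from collections import Counter
from itertools import product

def transition_counts(seq):
    """Count how often each condition follows each other condition (first-order transitions)."""
    return Counter(zip(seq, seq[1:]))

def balance_ratio(seq):
    """Max/min transition count over all condition pairs; 1.0 means perfectly balanced."""
    conditions = sorted(set(seq))
    counts = transition_counts(seq)
    values = [counts[pair] for pair in product(conditions, repeat=2)]
    return float("inf") if min(values) == 0 else max(values) / min(values)

seq = ["C", "I", "I", "C", "C", "I"]
print(transition_counts(seq), balance_ratio(seq))
```

A ratio close to 1 means that congruent-after-incongruent, incongruent-after-congruent, and the repeat transitions are all represented often enough to be modelled separately.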

Quantitative Data Tables

Table 1: HRF Temporal Stability Across Sessions

| HRF Parameter | Cross-Session Variability | Notes |
|---|---|---|
| Time-to-Peak (TTP) | Highly stable | Most reliable parameter for cross-session comparisons [1] |
| Peak Amplitude | Highly repeatable for strong HRFs | Positive HRFs more stable than negative HRFs [1] |
| Onset Time | Variable | Defined as 1 SD above baseline [1] |
| Undershoot Amplitude | Most variable parameter | Shows greatest session-to-session fluctuation [1] |
| Overall Shape | Remarkably consistent | Stable across 3-hour, 3-day, and 3-month intervals [1] |

Table 2: Design Efficiency Comparison

| Design Type | ISI | Statistical Efficiency | Best Use Cases |
|---|---|---|---|
| Fixed ISI | >15 sec | Moderate | Simple paradigms, pilot studies [2] |
| Fixed ISI | <15 sec | Severely reduced | Not recommended [2] |
| Jittered ISI | 500ms-2s | High (10x fixed ISI) | Rapid presentation, maximum trials [2] |
| Block Design | N/A | Highest for detection | Robust activation mapping [3] |
| Slow Event-Related | 12-15s | Moderate | Individual trial analysis [3] |

Table 3: Stimulus Delivery Software Comparison

| Software | Timing Accuracy | Learning Curve | Key Features |
|---|---|---|---|
| Cogent | Moderate | Steep (requires MATLAB) | Open-source, completely programmable [5] |
| E-Prime | Good | Gentle (GUI with drag-and-drop) | User-friendly, integrated analysis tools [5] |
| Presentation | Excellent (<1ms) | Steep (custom scripting language) | Sub-millisecond precision, fMRI mode for scanner sync [5] |

Experimental Protocols

Protocol 1: Measuring HRF Stability Across Sessions

Purpose: To quantify the long-term reliability of HRF parameters for longitudinal studies [1].

Stimulus: Use a 2-second multisensory stimulus to evoke strong, localized neural responses across the majority of the cortex [1].

Acquisition Parameters:

- Spatial resolution: 2-mm cubic voxels

- Focus on central gray matter

- Cover >70% of cerebral cortex

- Multiple sessions: 3-hour, 3-day, and 3-month intervals [1]

Analysis:

- Extract HRF parameters: peak amplitude, time-to-peak (TTP), full width at half maximum (FWHM), undershoot amplitude, and time-to-undershoot (TTU)

- Calculate within-session and across-session variability

- Compare spatial patterns of HRF parameters across subjects
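The parameter-extraction step can be sketched as follows. The sketch assumes an already averaged HRF sampled on a regular time grid, with a single contiguous region above half maximum. TTU is taken here as the time of the undershoot minimum. Onset time is omitted because it requires a baseline noise estimate. The canonical double-gamma HRF serves only as test input, and the function name is hypothetical.

```python
import math
import numpy as np

def hrf_parameters(t, h):
    """Peak amplitude, TTP, FWHM, undershoot amplitude and TTU of a sampled HRF (t in s)."""
    t, h = np.asarray(t, dtype=float), np.asarray(h, dtype=float)
    i_peak = int(np.argmax(h))
    peak = h[i_peak]
    above = np.flatnonzero(h >= peak / 2)      # assumes one contiguous supra-half-max region
    i0, i1 = above[0], above[-1]

    def cross(a, b):
        # linear interpolation of the half-maximum crossing between samples a and b
        return t[a] + (peak / 2 - h[a]) * (t[b] - t[a]) / (h[b] - h[a])

    rise = cross(i0 - 1, i0) if i0 > 0 else t[i0]
    fall = cross(i1, i1 + 1) if i1 < len(h) - 1 else t[i1]
    i_under = i_peak + int(np.argmin(h[i_peak:]))   # deepest point after the peak
    return {
        "peak_amplitude": float(peak),
        "ttp": float(t[i_peak]),
        "fwhm": float(fall - rise),
        "undershoot_amplitude": float(h[i_under]),
        "ttu": float(t[i_under]),
    }

t = np.arange(0.0, 32.0, 0.1)
h = t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))
params = hrf_parameters(t, h / h.max())
print({k: round(v, 2) for k, v in params.items()})
```

Applied to each session's HRF estimate, these parameters can then be compared within and across sessions.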

Protocol 2: Optimized Event-Related Design Implementation

Purpose: To maximize statistical power while maintaining psychological validity [3].

Design Optimization:

- Use optseq2 for HRF estimation-focused designs or OptimizeX for detection-focused designs [3]

- Specify desired contrasts for efficiency maximization

- Generate multiple design candidates and compare efficiency metrics

Validation:

- Check for collinearity between regressors

- Conduct behavioral pilot testing to ensure task engagement

- Verify that trial sequences avoid psychological confounds (e.g., Gratton effects)

The Scientist's Toolkit

Research Reagent Solutions

| Tool | Function | Application Notes |
|---|---|---|
| High-Resolution fMRI (2-mm voxels) | Minimizes partial volume effects | Essential for reliable gray matter HRF measurement [1] |
| Multisensory Stimulus Protocol | Activates majority of cortex | Simple but effective for evoking strong HRFs [1] |
| optseq2 Software | Optimizes experimental designs for estimation | Maximizes ability to estimate HRF shape [3] |
| OptimizeX Software | Optimizes designs for detection | Maximizes power for specific contrasts [3] |
| Subject-Specific HRF Modeling | Accounts for vascular differences | Critical for populations with cerebrovascular risk factors [4] |
| Finite Impulse Response (FIR) Analysis | Models HRF without shape assumptions | Ideal for estimating individual time points of BOLD response [3] |

Workflow Diagrams

HRF Experimental Design Workflow

Troubleshooting Guides and FAQs

Frequently Asked Questions

FAQ 1: What is the minimum Inter-Stimulus Interval (ISI) I can use without causing significant hemodynamic refractoriness? Using an ISI that is too short prevents the Blood Oxygen Level-Dependent (BOLD) signal from fully recovering to its baseline, leading to an attenuated response for subsequent stimuli. While one study demonstrated functionally linear response summation with ISIs as short as 2 seconds for simple motor tasks, a minimum ISI of 6 seconds is recommended for complex cognitive stimuli like faces to avoid this signal attenuation [6].

FAQ 2: Can I use identical stimulus repetitions to save time in my experiment? Repeating identical stimuli can confound your results by introducing repetition suppression (or fMRI adaptation). One study found that presenting pairs of identical faces, compared to different faces, led to significantly less signal recovery in bilateral mid-fusiform and right prefrontal regions [6]. This effect can be mistaken for, or mask, a true hemodynamic refractory period. For general experimental designs not specifically studying adaptation, it is better to use different stimuli.

FAQ 3: Why is my experiment's test-retest reliability poor even with a well-designed ISI? The reliability of fMRI measures is a known challenge. Recent converging reports suggest that standard univariate measures (e.g., voxel-level activation) often have poor test-retest reliability [7]. This can be influenced by factors beyond ISI, including the specific brain region, the cognitive paradigm, and the preprocessing pipeline. To improve reliability, consider using multivariate approaches that aggregate signals across multiple voxels or regions, as they often demonstrate better reliability and validity [7].
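Test-retest reliability of an fMRI measure is often summarized with an intraclass correlation coefficient (ICC). Below is a minimal sketch of ICC(3,1), the consistency form in Shrout and Fleiss's notation. It assumes one value per subject per session, for example a mean contrast estimate in a region. Whether ICC(3,1) or an absolute-agreement form such as ICC(2,1) suits a given study is a separate analysis decision. The simulated data are illustrative only.

```python
import numpy as np

def icc_3_1(Y):
    """ICC(3,1), consistency, for an (n_subjects x k_sessions) array."""
    Y = np.asarray(Y, dtype=float)
    n, k = Y.shape
    grand = Y.mean()
    ss_rows = k * ((Y.mean(axis=1) - grand) ** 2).sum()    # between-subject variation
    ss_cols = n * ((Y.mean(axis=0) - grand) ** 2).sum()    # between-session variation
    ss_err = ((Y - grand) ** 2).sum() - ss_rows - ss_cols  # residual
    ms_rows = ss_rows / (n - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err)

rng = np.random.default_rng(0)
subject_effect = rng.normal(0.0, 1.0, size=(30, 1))             # stable between-subject differences
sessions = subject_effect + rng.normal(0.0, 1.0, size=(30, 2))  # plus session-specific noise
print(f"ICC(3,1) = {icc_3_1(sessions):.2f}")
```

The same function can be applied to univariate and multivariate summary measures, which allows their reliability to be compared directly.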

FAQ 4: My paradigm is long. How can I make it more time-efficient without sacrificing data quality? Consider employing a mixed block/event-related design. This design allows you to present a large number of stimuli in a limited time by overlaying transient events on sustained blocks. Research has shown that such designs can successfully separate sustained activity (related to overall task maintenance) from transient activity (related to individual stimuli) while enabling a versatile range of contrasts within a brief scanning session [8].

Troubleshooting Common Experimental Problems

Problem 1: Incomplete Hemodynamic Recovery

- Symptoms: Attenuated BOLD signal for stimuli presented later in a sequence or block; difficulty detecting significant activation for later items.

- Root Causes: ISI is too short for the complexity of the stimuli; identical stimulus repetition causing neural adaptation.

- Solutions:

- Increase ISI: For complex stimuli, ensure a minimum ISI of 6 seconds. For a more complete recovery, an average ISI of 9 seconds may be necessary, especially if the expected signal differences between conditions are small [6].

- Vary Stimuli: Avoid using identical stimuli in quick succession unless repetition suppression is the effect of interest [6].

- Validate with Simulations: Use empirical data to simulate the expected BOLD responses at different ISIs to choose the most efficient design that maintains detection power [6].

Problem 2: Low Test-Retest Reliability

- Symptoms: High variability in activation maps or connectivity strength across scanning sessions for the same participant.

- Root Causes: Over-reliance on univariate measures; high autocorrelation in signals; preprocessing choices that introduce spurious correlations.

- Solutions:

- Adopt Multivariate Measures: Shift focus from single-voxel activation to network-based or pattern-based analyses, which generally show higher reliability [7].

- Refine Preprocessing: Be cautious with band-pass filtering (e.g., 0.01-0.1 Hz) in resting-state fMRI, as it can inflate correlation estimates and false positives. Adjust sampling rates to align with the analyzed frequency band and use surrogate data methods to account for autocorrelation [9].

Problem 3: Confounds Masquerading as Neural Signals

- Symptoms: A neural signal that perfectly tracks sequence position might be driven by collinear variables rather than a dedicated "positional code."

- Root Causes: Cognitive processes like memory load, sensory adaptation, reward expectation, and the mere passage of time are inherently correlated with an item's position in a sequence [10].

- Solutions:

- Careful Experimental Design: Actively control for or manipulate these collinear variables. For example, design conditions that dissociate memory load from temporal position.

- Interpret with Caution: Acknowledge that a multivariate pattern that decodes sequence position could be reading out from these correlated cognitive states rather than a pure positional signal [10].

Table 1: Impact of Inter-Stimulus Interval (ISI) on Hemodynamic Recovery

| ISI Duration | Stimulus Type | Key Finding | Experimental Context |
|---|---|---|---|
| 3 seconds | Identical Faces | Significantly less signal recovery in mid-fusiform & prefrontal cortex [6] | Paired-stimulus design, gender discrimination task [6] |
| 6 seconds | Identical Faces | Better signal recovery compared to 3s ISI, but still less than different faces [6] | Paired-stimulus design, gender discrimination task [6] |
| 6 seconds | Different Faces | Good signal recovery; suitable for avoiding refractoriness with complex stimuli [6] | Paired-stimulus design, gender discrimination task [6] |
| 2-5 seconds | Checker-boards / Simple Motor | Functionally linear response summation possible [6] | Basic sensory/motor tasks [6] |

Table 2: fMRI Reliability and Preprocessing Biases

| Metric / Method | Reliability / Effect | Key Consideration |
|---|---|---|
| Univariate Activation | Poor test-retest reliability [7] | Less suitable for individual differences research [7] |
| Multivariate Patterns | Better test-retest reliability [7] | Preferred for robust measurement [7] |
| Band-pass Filter (0.01-0.1 Hz) | Inflates correlation estimates [9] | Can cause 50-60% of detected correlations in white noise to be significant post-correction [9] |
| Filtering without Downsampling | Further distorts correlation coefficients [9] | Increases false positive rate [9] |

Experimental Protocols

Protocol 1: Assessing Hemodynamic Recovery and Adaptation

This protocol is adapted from a study investigating signal recovery and repetition suppression using face stimuli [6].

- Objective: To quantify the recovery of the hemodynamic response at different ISIs and to estimate the contribution of fMRI adaptation (fMR-A) to signal loss.

- Stimuli: Colored photographs of unfamiliar human faces. No face is repeated across trials.

- Trial Types:

- A single face.

- A pair of identical faces at ISI of 3 sec.

- A pair of identical faces at ISI of 6 sec.

- A pair of different faces at ISI of 3 sec.

- A pair of different faces at ISI of 6 sec.

- Task: Participants perform a gender discrimination task for each presented face to ensure attention.

- fMRI Acquisition:

- Scanner: 3T Siemens Allegra.

- Sequence: T2*-weighted gradient-echo EPI.

- Parameters: TR = 3000 ms, TE = 30 ms, 32 axial slices, 3.0 mm thickness.

- Data Analysis:

- Preprocessing: Motion correction, spatial smoothing (4 mm FWHM), temporal high-pass filtering.

- Statistical Modeling: Use a General Linear Model (GLM) with Finite Impulse Response (FIR) predictors to model the hemodynamic response to the first and second stimulus in each pair without assuming a canonical shape.

- Comparison: Compare the estimated response magnitude and shape for the second stimulus across the different ISI and repetition conditions.

Protocol 2: A Time-Efficient Mixed Design for Memory Encoding

This protocol describes a versatile paradigm for mapping memory encoding across sensory conditions within a short scanning time [8].

- Objective: To comprehensively measure sensory-specific and sensory-unspecific memory encoding activity within 10 minutes.

- Stimuli:

- Auditory: 80 environmental sounds and 80 human vocal sounds.

- Visual: 80 scenes and 80 faces.

- Paradigm Design: A mixed block/event-related design.

- Blocks: 20-second blocks of auditory (environmental/vocal) or visual (scene/face) stimuli, interleaved with 15-second rest blocks.

- Events: Within each block, individual stimuli are presented as discrete events.

- Task: Participants are instructed to encode the stimuli. This is followed by a post-scan recognition test with old and new items to identify remembered (hit) and forgotten (miss) trials.

- fMRI Acquisition:

- Standard whole-brain acquisition on a 3T scanner.

- Data Analysis:

- Contrasts:

- Sensory Activity: Auditory vs. Visual blocks.

- Stimulus-Selective Activity: Faces vs. Scenes; Voices vs. Environmental sounds.

- Encoding Success Activity (ESA): Contrasting neural activity during the encoding of subsequently remembered vs. forgotten items.

- Sustained vs. Transient Activity: Modeling block-related and event-related responses.

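A mixed design's sustained and transient components can be modelled with two regressors: a block boxcar and a set of event sticks, each convolved with the HRF. This sketch assumes the 20-s task / 15-s rest structure described above, with a single block type, a 2-s TR, and the canonical double-gamma HRF. The number of stimuli per block (six) and their 0.5-s jitter grid are hypothetical, since the protocol does not specify them.

```python
import math
import numpy as np

DT, TR = 0.1, 2.0
BLOCK, REST, N_BLOCKS = 20.0, 15.0, 16         # 20-s task blocks alternating with 15-s rest
EVENTS_PER_BLOCK = 6                           # hypothetical number of stimuli per block
N_HI = int(round(N_BLOCKS * (BLOCK + REST) / DT))

def hrf(length=32.0):
    t = np.arange(0.0, length, DT)
    h = t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))
    return h / h.max()

def convolve_at_tr(signal):
    """Convolve a 0.1-s resolution signal with the HRF and sample it once per TR."""
    return np.convolve(signal, hrf())[:N_HI:int(round(TR / DT))]

rng = np.random.default_rng(0)
t_hi = np.arange(N_HI) * DT
block_onsets = np.arange(N_BLOCKS) * (BLOCK + REST)
boxcar = np.zeros(N_HI)    # sustained (block) regressor before convolution
sticks = np.zeros(N_HI)    # transient (event) regressor before convolution
for onset in block_onsets:
    boxcar[(t_hi >= onset) & (t_hi < onset + BLOCK)] = 1.0
    offsets = rng.choice(np.arange(0.0, BLOCK - 1.0, 0.5), size=EVENTS_PER_BLOCK, replace=False)
    sticks[np.round((onset + offsets) / DT).astype(int)] = 1.0

sustained, transient = convolve_at_tr(boxcar), convolve_at_tr(sticks)
X = np.column_stack([sustained, transient, np.ones_like(sustained)])
r = np.corrcoef(sustained, transient)[0, 1]
print(f"{X.shape[0]} scans; sustained/transient correlation r = {r:.2f}")
```

Because events occur only inside blocks, the two regressors are necessarily correlated. Checking `r` before scanning shows whether the within-block jitter is enough to separate sustained from transient activity.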

Experimental Workflow and Decision Diagrams

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for fMRI Paradigm Optimization

| Item | Function in Research | Specific Example / Note |
|---|---|---|
| Stimulus Sets (Standardized) | Provides consistent, validated experimental inputs to reduce variance and improve reproducibility. | Sets of unfamiliar faces [6], environmental sounds, vocal sounds, scenes, and faces [8]. |
| Cognitive Task Protocols | Defines the experimental procedure and participant instructions to ensure consistent cognitive engagement. | Gender discrimination task [6], memory encoding instruction followed by post-scan recognition test [8]. |
| Data Simulation Tools | Allows researchers to model and predict BOLD responses and statistical power for different ISI choices before running costly experiments. | Critical for evaluating the efficiency/recovery trade-off and avoiding underpowered designs [6]. |
| Multivariate Analysis Pipelines | Software tools for analyzing pattern-based information across multiple voxels, offering better reliability than univariate methods. | Recommended to improve test-retest reliability of fMRI measures [7]. |
| Physiological Noise Modeling Tools | Methods to measure and correct for noise from cardiac and respiratory cycles, which is crucial for brainstem fMRI and reliable signals elsewhere. | Includes noise modeling and spatial masking techniques [11]. |

What are the primary sources of noise in fMRI data? The main sources are physiological fluctuations (from cardiac and respiratory cycles), low-frequency scanner drift, and other scanner-related instabilities. Physiological noise originates from the subject and includes changes in cerebral blood flow, blood volume, arterial pulsatility, and CSF flow due to the cardiac cycle, as well as magnetic field changes from the respiratory cycle [12]. Low-frequency drift (0.0-0.015 Hz) is often caused by scanner instabilities rather than subject motion or physiology, and is more pronounced in image regions with high spatial intensity gradients [13] [14].

How does magnetic field strength (e.g., 3T vs. 7T) affect physiological noise? Physiological noise increases with the square of the magnetic field strength, whereas the signal-to-noise ratio (SNR) increases only linearly [12]. This means that at higher field strengths (like 7T), physiological noise can become the dominant source of noise. While higher fields allow for increased spatial resolution, the temporal SNR for fMRI does not necessarily improve in areas like the brainstem where physiological noise is already strong [12].

What is low-frequency drift, and what causes it? Low-frequency drift is a slow, steady change in the fMRI signal baseline over time, typically in the frequency range of 0.0–0.015 Hz [13] [14]. It was historically attributed to physiological noise or subject motion, but controlled experiments on cadavers and phantoms have demonstrated that scanner instabilities are a major cause, particularly in magnetically non-homogeneous regions [13] [14].
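A common way to model low-frequency drift in the GLM is a set of discrete cosine regressors whose periods exceed a chosen cutoff. This is similar in spirit to SPM's default high-pass filter, which uses a 128-s cutoff. Below is a minimal sketch; the cutoff, the TR, and the simulated signals are assumptions for illustration. The 0.0-0.015 Hz drift band corresponds to periods longer than about 67 s, so the cutoff should be chosen with the task's own frequencies in mind.

```python
import numpy as np

def dct_drift_basis(n_scans, tr, cutoff_s=128.0):
    """Discrete cosine regressors with periods of at least cutoff_s seconds (constant excluded)."""
    n = np.arange(n_scans)
    # basis j oscillates at j / (2 * n_scans * tr) Hz, i.e. with period 2 * n_scans * tr / j seconds
    n_basis = int(np.floor(2.0 * n_scans * tr / cutoff_s))
    cols = [np.sqrt(2.0 / n_scans) * np.cos(np.pi * (2 * n + 1) * j / (2 * n_scans))
            for j in range(1, n_basis + 1)]
    return np.column_stack(cols) if cols else np.zeros((n_scans, 0))

def remove_drift(y, tr, cutoff_s=128.0):
    """Regress out the drift basis and the mean; returns the mean-centred, detrended series."""
    D = np.column_stack([dct_drift_basis(len(y), tr, cutoff_s), np.ones(len(y))])
    beta, *_ = np.linalg.lstsq(D, y, rcond=None)
    return y - D @ beta

tr, n_scans = 2.0, 300
t = np.arange(n_scans) * tr
task = np.sin(2 * np.pi * 0.05 * t)             # hypothetical task-related signal at 0.05 Hz
y = 100.0 + 0.01 * t + task                     # plus a slow linear drift
cleaned = remove_drift(y, tr)
print("corr with task before:", round(np.corrcoef(y, task)[0, 1], 2),
      "after:", round(np.corrcoef(cleaned, task)[0, 1], 2))
```

In a full analysis, these cosine columns are usually included in the design matrix alongside the task regressors rather than removed in a separate step.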

What is the impact of poor experimental design on noise? Poor design choices can reduce statistical power and complicate the interpretation of results. The order and timing of stimulus events (the experimental design) interact with noise sources and the hemodynamic response. Optimizing the design using tools like genetic algorithms can maximize efficiency for detecting activations and estimating the hemodynamic response shape, mitigating the impact of noise [15].

How can I identify and correct for physiological noise in my data? Correction often involves modeling the noise sources based on independent measurements of the cardiac and respiratory cycles. One common method is RETROICOR (Retrospective Image Correction) [12]. Data-driven approaches, such as Independent Component Analysis (ICA), can also identify and remove noise components [12]. Furthermore, standardized preprocessing pipelines like HALFpipe and fMRIPrep offer various denoising strategies, including regressing out signals from white matter and cerebrospinal fluid [16].

Quantitative Data on fMRI Noise

Table 1: Prevalence of Low-Frequency Drift Across Different Sources [13] [14]

| Source Type | Percentage of Significant Voxels (Range) | Key Finding |
|---|---|---|
| Homogeneous Phantom | ~1.10% | Minimal drift in a controlled, uniform object. |
| Cadaver | 13.7% - 49.0% | Significant drift present despite absence of living physiology. |
| Normal Volunteer | 22.1% - 61.9% | Drift is present in living humans. |
| Non-Homogeneous Phantom | 46.4% - 68.0% | Drift is most pronounced in magnetically inhomogeneous objects. |

Table 2: Impact of Field Strength on Noise Characteristics [12]

| Field Strength | Physiological Noise | Thermal Noise | Practical Implication |
|---|---|---|---|
| 3 Tesla (3T) | Lower relative contribution | Higher relative contribution | Physiological noise is less dominant. |
| 7 Tesla (7T) | Higher relative contribution (increases with B₀²) | Lower relative contribution | Physiological noise can become the dominant noise source, especially at standard resolutions. |

Experimental Protocols for Noise Investigation

Protocol 1: Isolating Scanner-Induced Low-Frequency Drift

This protocol is based on the seminal study by Smith et al. (1999) that systematically investigated the causes of low-frequency drift [13] [14].

- Sample Preparation: Acquire time-series T2*-weighted fMRI volumes from the following:

- A homogeneous phantom.

- A non-homogeneous phantom.

- A human cadaver.

- A normal, living volunteer.

- Data Acquisition: Perform scans on clinical 1.5 T MRI systems using different readout gradients (e.g., spiral and EPI) to test for consistency across pulse sequences.

- Data Analysis:

- Test the time-series data from each voxel for significant deviations from Gaussian noise within the low-frequency range (0.0–0.015 Hz).

- Set a statistical significance threshold (e.g., P=0.001).

- Calculate the percentage of voxels showing significant drift for each sample type.

- Interpretation: The finding of significant drift in cadavers and non-homogeneous phantoms, where physiological noise and subject motion are absent, provides strong evidence for scanner instabilities as a major cause [13] [14].
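The analysis steps above can be sketched in Python. This is a minimal illustration, not the statistic used in the original study: it assumes the per-voxel test is the fraction of spectral power in the 0 to 0.015 Hz band, compared against a white-noise null built by shuffling each time series (shuffling destroys temporal structure but keeps the amplitude distribution). The function names, TR and permutation count are hypothetical choices.

```python
import numpy as np

def low_freq_fraction(ts, tr, f_max=0.015):
    """Fraction of non-DC spectral power at or below f_max (Hz)."""
    ts = np.asarray(ts, dtype=float)
    ts = ts - ts.mean()
    power = np.abs(np.fft.rfft(ts)) ** 2
    freqs = np.fft.rfftfreq(ts.size, d=tr)
    power, freqs = power[1:], freqs[1:]  # drop the DC bin
    return power[freqs <= f_max].sum() / power.sum()

def drift_p_value(ts, tr, n_perm=1000, f_max=0.015, rng=None):
    """Permutation p-value of the low-frequency fraction against a shuffled (white) null."""
    rng = np.random.default_rng(rng)
    observed = low_freq_fraction(ts, tr, f_max)
    null = np.array([low_freq_fraction(rng.permutation(ts), tr, f_max)
                     for _ in range(n_perm)])
    # the +1 terms keep the p-value strictly positive; n_perm must exceed
    # 1/alpha - 1 for p < alpha to be attainable at all
    return (np.sum(null >= observed) + 1) / (n_perm + 1)

def percent_significant(data, tr, alpha=0.001, n_perm=1000, seed=0):
    """data: (n_voxels, n_timepoints) array; returns % of voxels with significant drift."""
    rng = np.random.default_rng(seed)
    p = np.array([drift_p_value(v, tr, n_perm, rng=rng) for v in data])
    return 100.0 * np.mean(p < alpha)

# Synthetic check: 10 pure-noise voxels and the same voxels plus a linear drift
rng = np.random.default_rng(42)
tr, n = 2.0, 300
noise = rng.normal(size=(10, n))
drifting = noise + 0.05 * np.arange(n)
print(percent_significant(noise, tr), percent_significant(drifting, tr))
```

Applied separately to each sample type (phantom, cadaver, volunteer), the returned percentage plays the role of the "percentage of significant voxels" column in Table 1.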

Protocol 2: Implementing a Physiological Noise Model

This protocol outlines the use of a general linear model (GLM) to correct for physiological noise, as described by Harvey et al. (2013) [12].

- Independent Measurement: Record the subject's cardiac pulse (using a pulse oximeter) and respiratory cycle (using a belt) simultaneously with the fMRI acquisition.

- Noise Regressor Generation: Use a method like RETROICOR to generate regressors that model the expected noise based on the phase of the cardiac and respiratory cycles at each time point in the scan.

- Model Expansion (Optional): Include additional regressors to model other effects, such as:

- Respiratory volume per time (RVT).

- Heart rate (HR).

- Interactions between cardiac and respiratory cycles.

- GLM Analysis: Include the generated physiological noise regressors in the GLM alongside your task-related regressors. This models out the variance associated with physiology, leaving a cleaner estimate of the BOLD signal related to the experimental task.

- Quality Assessment: Compare the temporal Signal-to-Noise Ratio (tSNR) or model fit statistics before and after physiological noise correction to evaluate the benefit, particularly in high-noise regions like the brainstem.
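A minimal sketch of the regressor-generation, GLM and quality-assessment steps, assuming cardiac peak times have already been detected from the pulse-oximeter trace and the respiratory belt is sampled at a known rate. It follows the RETROICOR idea of expanding cardiac and respiratory phase in low-order Fourier series, but simplifies it: the respiratory phase uses a rank-based form of the histogram-equalization step, regressors are sampled once per volume rather than per slice, and RVT/HR terms are omitted. All function names are hypothetical, not an existing package's API.

```python
import numpy as np

def cardiac_phase(t, peak_times):
    """Cardiac phase in [0, 2*pi) at times t (s), from pulse-peak times (s)."""
    peaks = np.asarray(peak_times, dtype=float)
    idx = np.clip(np.searchsorted(peaks, t, side="right") - 1, 0, peaks.size - 2)
    t0, t1 = peaks[idx], peaks[idx + 1]
    return (2 * np.pi * (t - t0) / (t1 - t0)) % (2 * np.pi)

def respiratory_phase(resp, fs, t):
    """Respiratory phase in [-pi, pi]: amplitude CDF (rank-based histogram
    equalization) signed by breathing direction (rising +, falling -)."""
    resp = np.asarray(resp, dtype=float)
    cdf = np.argsort(np.argsort(resp)) / (resp.size - 1)
    phase = np.pi * cdf * np.sign(np.gradient(resp))
    idx = np.clip(np.round(np.asarray(t) * fs).astype(int), 0, resp.size - 1)
    return phase[idx]

def retroicor_regressors(t, cardiac_peaks, resp, resp_fs, order=2):
    """cos/sin of m*phase (m = 1..order) for both cardiac and respiratory phase."""
    phc = cardiac_phase(t, cardiac_peaks)
    phr = respiratory_phase(resp, resp_fs, t)
    cols = []
    for m in range(1, order + 1):
        cols += [np.cos(m * phc), np.sin(m * phc), np.cos(m * phr), np.sin(m * phr)]
    return np.column_stack(cols)

def remove_physio(y, task, nuisance):
    """Fit y ~ intercept + task + nuisance by OLS; subtract only the nuisance fit."""
    X = np.column_stack([np.ones_like(y), task, nuisance])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return y - nuisance @ beta[2:]

def tsnr(y):
    return y.mean() / y.std()

# Synthetic example: one voxel contaminated by a cardiac-locked fluctuation
rng = np.random.default_rng(1)
t = np.arange(200) * 2.0                                   # volume times, TR = 2 s
peaks = np.cumsum(0.8 + 0.2 * rng.random(600))             # synthetic heartbeats
t_resp = np.arange(0, 410, 1 / 50)                         # belt sampled at 50 Hz
resp = np.sin(2 * np.pi * 0.3 * t_resp)                    # synthetic breathing
R = retroicor_regressors(t, peaks, resp, 50)
task = np.tile(np.r_[np.zeros(10), np.ones(10)], 10)
y = 100 + 2 * task + 3 * R[:, 0] + rng.normal(0, 1, 200)
print(tsnr(y), tsnr(remove_physio(y, task, R)))
```

Subtracting only the physiological fit keeps the mean and the task signal, so the before/after tSNR comparison isolates the variance attributed to physiology. Note that the cardiac cycle is aliased at this TR; the regressors still work because they are built from the recorded phase rather than from the fMRI sampling.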

Experimental Design Optimization Workflow

The following diagram illustrates a general framework for optimizing an fMRI experimental design, such as the inter-stimulus interval (ISI), to maximize statistical power in the presence of noise, using a genetic algorithm [15].

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software and Analytical Tools for fMRI Noise Management

| Tool Name | Type/Function | Key Application in Noise Handling |
|---|---|---|
| Genetic Algorithm (GA) [15] | Optimization Algorithm | Searches the space of possible experimental designs (e.g., event sequences) to maximize statistical efficiency and counterbalancing, mitigating the impact of noise. |
| RETROICOR [12] | Physiological Noise Model | Corrects for signal changes induced by cardiac and respiratory cycles using externally recorded physiological data. |
| Independent Component Analysis (ICA) [12] [17] | Data-Driven Denoising | Identifies and removes noise components (e.g., motion, scanner artifacts) from the data without external measurements. |
| HALFpipe [16] | Standardized fMRI Processing Pipeline | Provides a containerized, reproducible workflow for preprocessing and denoising, including various confound regression strategies. |
| FSL FIX [17] | ICA-Based Denoising Tool | Uses a trained classifier to automatically identify and remove noise components from ICA decompositions, as used in the HCP pipelines. |

The diagram below maps the logical relationships between the primary sources of fMRI noise and their downstream effects on the acquired signal.

Troubleshooting Guides

FAQ 1: Why does my rapid event-related fMRI design suffer from low statistical power even with a high number of trials?

Problem: Your design uses a fixed, short Inter-Stimulus Interval (ISI), leading to severe overlap of the hemodynamic responses and low efficiency for estimating the response to individual events [18].

Solution: Implement a jittered or randomized ISI design instead of a fixed one.

- Root Cause: The sluggish Blood Oxygen Level Dependent (BOLD) hemodynamic response causes signals from consecutive events to overlap. With a fixed, short ISI, this overlap is systematic and creates high collinearity between predictors in your statistical model, making it difficult to estimate the response to a single event [18] [19].

- Fix: Transition to a variable ISI design. By properly jittering or randomizing the time between trial onsets, the overlap between hemodynamic responses becomes asynchronous. This de-correlates the predictors in your design matrix, dramatically improving the efficiency with which the brain response to each event type can be estimated [18]. The efficiency of such variable ISI designs can be more than 10 times greater than that achieved by fixed ISI designs [18].
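The efficiency gap between fixed and jittered timing can be checked with a small simulation. This sketch assumes an SPM-like double-gamma HRF, a 2 s TR, a 300 s run and a single event type tested against baseline; the jittered intervals (measured onset to onset) are drawn from a shifted exponential with the same 2 s mean as the fixed design. With a fixed 2 s interval the convolved regressor settles to a near-constant plateau that is almost collinear with the intercept, which is what drives its efficiency down. Function names and parameters are illustrative.

```python
import math
import numpy as np

def canonical_hrf(dt=0.1, length=32.0):
    """SPM-like double-gamma HRF (peak ~5 s, undershoot ~15 s), scaled to unit peak."""
    t = np.arange(0, length, dt)
    h = t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))
    return h / h.max()

def regressor(onsets, n_scans, tr, dt=0.1):
    """Stick function at `onsets` (s), convolved with the HRF, sampled every TR."""
    n_hi = int(round(n_scans * tr / dt))
    sticks = np.zeros(n_hi)
    idx = np.round(np.asarray(onsets) / dt).astype(int)
    np.add.at(sticks, idx[idx < n_hi], 1.0)
    conv = np.convolve(sticks, canonical_hrf(dt))[:n_hi]
    return conv[:: int(round(tr / dt))][:n_scans]

def detection_efficiency(onsets, n_scans=150, tr=2.0):
    """1 / (c'(X'X)^-1 c) for the event-vs-baseline contrast, intercept included."""
    X = np.column_stack([regressor(onsets, n_scans, tr), np.ones(n_scans)])
    c = np.array([1.0, 0.0])
    return 1.0 / (c @ np.linalg.pinv(X.T @ X) @ c)

rng = np.random.default_rng(0)
fixed = np.arange(0, 300, 2.0)                      # fixed 2 s interval
isi = 0.5 + rng.exponential(1.5, size=400)          # jittered, mean interval 2 s
jittered = np.concatenate([[0.0], np.cumsum(isi)])
jittered = jittered[jittered < 300]
print(detection_efficiency(fixed), detection_efficiency(jittered))
```

The exact ratio depends on the jitter distribution, run length and TR; the point of the sketch is the direction of the effect, not a particular factor.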

FAQ 2: My cognitive paradigm requires a fixed, alternating event sequence (e.g., cue-target). How can I optimize it when I cannot randomize the trial order?

Problem: In paradigms like cue-target attention or working memory tasks, the event order is inherently fixed and non-random, so the BOLD responses to consecutive events overlap in a systematic way that is hard to separate [19].

Solution: Optimize other design parameters, such as ISI range and the inclusion of null events.

- Root Cause: When event sequences cannot be randomized (e.g., in a cue-target paradigm where a cue is always followed by a target), the BOLD signals from consecutive events temporally overlap in a predictable, and often suboptimal, way. This reduces the efficacy of standard deconvolution approaches [19].

- Fix: Use a quantitative framework to explore the "fitness landscape" of your design. Key parameters to manipulate include:

- ISI Bounds: Systematically vary the minimum and maximum ISI within the constraints of your task [19].

- Proportion of Null Events: Incorporate trials with no stimulus or task (null events) to introduce variability and improve the estimation efficiency of your conditions of interest [19].

- Leverage Advanced Toolboxes: Use simulation tools like the deconvolve Python toolbox to model the nonlinear properties of the BOLD signal and identify the optimal combination of design parameters for your specific alternating sequence [19].

FAQ 3: How do I choose between optimizing for detection power versus estimation efficiency in my design?

Problem: There is an inherent trade-off in fMRI design between the power to detect an activated brain region (detection) and the power to accurately estimate the shape and timing of the hemodynamic response (estimation) [15].

Solution: Select a design that aligns with your primary research question.

- For Detection Power (e.g., contrasting two conditions): Blocked designs or rapid event-related designs with a known Hemodynamic Response Function (HRF) are highly efficient. They maximize the signal when you are primarily interested in whether one condition evokes a larger response than another [15].

- For Estimation Efficiency (e.g., characterizing the HRF shape): Designs with randomized events and jittered ISIs that contain both high and low spectral frequencies are best. These designs allow the shape, timing, and amplitude of the HRF to be estimated with high precision, which is crucial if your hypothesis concerns the response dynamics themselves [15].

- Balanced Approach: Pseudorandom designs that mix blocks and isolated events can provide a reasonable compromise, offering good performance for both detection and estimation [15].
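The detection/estimation trade-off can be illustrated by scoring the same event density in two arrangements. This sketch assumes a 1 s TR, 320 scans, and 16 s on/16 s off blocks versus a random sequence with 50% occupancy. Detection efficiency uses a canonical-HRF regressor; estimation efficiency uses a 16-lag finite impulse response (FIR) model that makes no assumption about HRF shape, scored as 1/trace(C(X'X)⁻¹C'). Names and parameters are hypothetical.

```python
import math
import numpy as np

TR, N = 1.0, 320

def hrf(t):
    """SPM-like double-gamma HRF (unnormalized)."""
    return t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))

def efficiency(X, C):
    """1 / trace(C (X'X)^-1 C'); reduces to 1 / (c'(X'X)^-1 c) for a single contrast."""
    C = np.atleast_2d(np.asarray(C, dtype=float))
    return 1.0 / np.trace(C @ np.linalg.pinv(X.T @ X) @ C.T)

def detection(s):
    """Canonical-HRF model: one convolved regressor plus intercept."""
    x = np.convolve(s, hrf(np.arange(0, 32, TR)))[:N]
    return efficiency(np.column_stack([x, np.ones(N)]), [1, 0])

def estimation(s, n_lags=16):
    """FIR model: one shifted copy of the stimulus train per post-stimulus lag."""
    lags = [np.r_[np.zeros(k), s[: N - k]] for k in range(n_lags)]
    X = np.column_stack(lags + [np.ones(N)])
    return efficiency(X, np.eye(n_lags + 1)[:n_lags])   # all FIR betas, not the intercept

block_design = np.tile(np.r_[np.ones(16), np.zeros(16)], N // 32)  # 16 s on / 16 s off
rng = np.random.default_rng(0)
random_design = (rng.random(N) < 0.5).astype(float)               # same density, random order
print("detection: ", detection(block_design), detection(random_design))
print("estimation:", estimation(block_design), estimation(random_design))
```

In the block design, adjacent FIR columns differ only at block edges, so they are highly collinear and the HRF shape is poorly determined even though the canonical-model contrast is strong; the random design reverses that ranking.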

Table 1: A Comparison of Fixed vs. Jittered ISI Experimental Designs

| Design Parameter | Fixed ISI Design | Jittered/Randomized ISI Design |
|---|---|---|
| Statistical Efficiency | Dramatically falls off with short ISIs (< 4-5s) [18]. | Improves monotonically with decreasing mean ISI; can be >10x more efficient than fixed designs [18]. |
| Typical ISI Range | Historically, ISIs of 15 s or more were often recommended for optimal power [18]. | Feasible to use mean ISIs as short as 500 ms [18]. |
| BOLD Signal Overlap | Systematic and predictable, leading to high collinearity [18]. | Asynchronous and variable, leading to de-correlated predictors [18]. |
| HRF Estimation | Poor for characterizing the shape of the hemodynamic response [15]. | Excellent; allows for reliable estimation of the HRF time course with sub-second resolution [15]. |
| Psychological Validity | Higher risk of habituation and anticipatory effects due to predictable timing. | Reduces participant anticipation and habituation, improving psychological validity [15]. |
| Paradigm Flexibility | Less compatible with the timing of natural cognitive processes and other modalities like EEG/MEG [18]. | Highly compatible; allows for identical experimental designs across fMRI and EEG/MEG [18]. |

Table 2: Key Parameters for Optimizing Non-Randomized, Alternating Designs

| Parameter | Impact on Design Efficiency | Practical Recommendation |
|---|---|---|
| Inter-Stimulus Interval (ISI) Bounds | Directly controls the degree of temporal overlap between consecutive events (e.g., cue and target). Influences both detection and estimation power [19]. | Explore a wide range of minimum and maximum ISIs through simulation to find the optimal balance for your specific paradigm [19]. |
| Proportion of Null Events | Introducing "empty" trials provides a baseline and increases the variability of the design matrix, improving the estimation of trial-specific responses [19]. | The optimal proportion is context-dependent; simulations are necessary to determine the right amount for a given design [19]. |
| Stimulus Sequence | The fixed order in alternating designs (e.g., C-T-C-T) is the primary constraint on efficiency [19]. | While the sequence is fixed, optimization of ISI and null trials is critical. Advanced analysis tools (e.g., GLMsingle) can help post-hoc [19]. |

Experimental Protocols & Methodologies

Protocol 1: Implementing a Jittered, Rapid Event-Related Design

This methodology allows for the efficient estimation of brain responses to individual events presented at a rapid rate [18].

- Define Conditions and Trial Number: Determine your experimental conditions and the total number of trials per condition. Power considerations may require many trials, which rapid designs facilitate.

- Create a Pseudorandom Sequence: Generate a sequence where trials from different conditions are presented in a randomized or pseudorandom order. This helps to counterbalance condition order across participants.

- Jitter the Inter-Stimulus Interval (ISI): Instead of a fixed interval, select ISIs from a predefined distribution. The mean ISI can be very short (e.g., 2-4 seconds or less). The ISI distribution can be:

- Stochastic: Fully randomized within a range (e.g., 0.5 to 6 seconds).

- Discrete: Drawn from a fixed set of specific values chosen to optimize design efficiency [15].

- Incorporate Null Events (Optional): Randomly intersperse trials where no stimulus is presented. This introduces a baseline condition and further improves the estimation of the hemodynamic response for actual trials [19].

- Optimize the Design: Use an optimization algorithm, such as a Genetic Algorithm (GA), to search the vast space of possible event sequences. The GA selects a sequence that maximizes fitness criteria, such as:

- Contrast Estimation Efficiency: The ability to precisely estimate the difference between conditions.

- HRF Estimation Efficiency: The ability to accurately estimate the shape of the hemodynamic response.

- Psychological Validity: Factors like proper counterbalancing to avoid confounds [15].
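The sequence-construction steps (pseudorandom order, jittered ISIs, null events) can be sketched as a small generator. It assumes two conditions, null events specified as a fraction of all trials, and either a uniform ("stochastic") interval or draws from a discrete set of values; `make_sequence` and its defaults are hypothetical. Null trials keep an onset in the list but would be left out of the model so that they act as an implicit baseline. Scoring many such candidates, for example with a GA, is the optimization step.

```python
import numpy as np

def make_sequence(n_per_cond, conditions=("A", "B"), null_fraction=0.25,
                  isi_mode="stochastic", isi_range=(0.5, 6.0),
                  isi_set=(2.0, 3.0, 4.0, 6.0), seed=None):
    """Return a list of (onset_s, label) pairs in pseudorandom order.

    null_fraction is the share of *all* trials that are null events.
    isi_mode="stochastic" draws intervals uniformly from isi_range;
    isi_mode="discrete" draws them from the fixed values in isi_set.
    Intervals are measured onset to onset.
    """
    rng = np.random.default_rng(seed)
    trials = [c for c in conditions for _ in range(n_per_cond)]
    n_null = int(round(null_fraction * len(trials) / (1 - null_fraction)))
    trials += ["null"] * n_null
    order = rng.permutation(len(trials))                  # shuffle trial order
    trials = [trials[i] for i in order]
    if isi_mode == "stochastic":
        isis = rng.uniform(*isi_range, size=len(trials))
    elif isi_mode == "discrete":
        isis = rng.choice(isi_set, size=len(trials))
    else:
        raise ValueError(f"unknown isi_mode: {isi_mode}")
    onsets = np.concatenate([[0.0], np.cumsum(isis[:-1])])
    return [(float(t), c) for t, c in zip(onsets, trials)]

seq = make_sequence(30, seed=1)        # 30 A + 30 B + 20 null trials
print(seq[:5])
```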

Protocol 2: Fitness Landscape Exploration for Fixed-Sequence Paradigms

For paradigms with non-randomizable event orders (e.g., cue-target), this protocol uses simulation to find the best possible parameters [19].

- Define Fixed Event Sequence: Establish the unchangeable sequence of events (e.g., Cue-Target, Cue-Target, ...).

- Parameterize the Design: Identify the variable parameters:

- ISI between consecutive event pairs (e.g., a range from 1 to 8 seconds).

- The proportion of null trials to insert (e.g., 10% to 30%).

- Model the BOLD Signal: Use a realistic forward model to simulate the BOLD signal. This model should:

- Incorporate a canonical Hemodynamic Response Function (HRF).

- Include nonlinearities using a Volterra series expansion to capture "memory" effects where the response to one event influences the next [19].

- Add realistic noise derived from actual fMRI data (e.g., using the fmrisim Python package) to simulate experimental conditions accurately [19].

- Run Exhaustive Simulations: Systematically run simulations across the defined parameter space (e.g., all combinations of ISI bounds and null trial proportions).

- Calculate Efficiency Metrics: For each simulated design, calculate estimation efficiency (the inverse of the sum of the variance of parameter estimates) and detection power.

- Identify the Optimum: Analyze the resulting "fitness landscape" to select the design parameters that provide the highest efficiency for your key contrasts of interest [19].
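A reduced version of this protocol fits in a few dozen lines. It deliberately simplifies several steps: responses add linearly (no Volterra terms), efficiency is computed analytically rather than from noisy simulated data (so no fmrisim noise), run length is fixed so designs are compared at equal scan time, and a null event is modeled as an empty interval drawn from the same ISI range. The grid values, run length and function names are hypothetical; the output is a small fitness landscape indexed by (minimum ISI, maximum ISI, null proportion).

```python
import math
import numpy as np

DT, TR, RUN_LEN = 0.1, 1.0, 480.0   # hypothetical: 0.1 s grid, 1 s TR, 8-min run

def hrf():
    t = np.arange(0, 32, DT)
    return t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))

def simulate_onsets(isi_min, isi_max, p_null, rng):
    """Fixed cue -> target order; every interval jittered in [isi_min, isi_max]."""
    t, cues, targets = 0.0, [], []
    while t < RUN_LEN:
        if rng.random() < p_null:
            t += rng.uniform(isi_min, isi_max)           # null event: an empty slot
            continue
        cue_t, target_t = t, t + rng.uniform(isi_min, isi_max)
        if target_t >= RUN_LEN:
            break
        cues.append(cue_t)
        targets.append(target_t)
        t = target_t + rng.uniform(isi_min, isi_max)
    return np.array(cues), np.array(targets)

def design(cues, targets):
    """Linear forward model: HRF-convolved cue and target regressors plus intercept."""
    n_hi, step = int(round(RUN_LEN / DT)), int(round(TR / DT))
    cols = []
    for onsets in (cues, targets):
        s = np.zeros(n_hi)
        idx = np.round(onsets / DT).astype(int)
        np.add.at(s, idx[idx < n_hi], 1.0)
        cols.append(np.convolve(s, hrf())[:n_hi][::step])
    return np.column_stack(cols + [np.ones(len(cols[0]))])

def estimation_efficiency(X):
    """Inverse of the summed variances of the cue and target estimates (unit noise)."""
    C = np.eye(X.shape[1])[:2]
    return 1.0 / np.trace(C @ np.linalg.pinv(X.T @ X) @ C.T)

def fitness_landscape(isi_mins, isi_maxs, null_props, n_reps=20, seed=0):
    rng = np.random.default_rng(seed)
    F = np.full((len(isi_mins), len(isi_maxs), len(null_props)), np.nan)
    for i, lo in enumerate(isi_mins):
        for j, hi in enumerate(isi_maxs):
            if hi < lo:
                continue                                  # invalid bounds stay NaN
            for k, p in enumerate(null_props):
                F[i, j, k] = np.mean([estimation_efficiency(design(*simulate_onsets(lo, hi, p, rng)))
                                      for _ in range(n_reps)])
    return F

F = fitness_landscape([1.0, 2.0], [4.0, 8.0], [0.0, 0.1, 0.3], n_reps=5)
best = np.unravel_index(np.nanargmax(F), F.shape)
print(F.round(2), best)
```

Averaging over several random realizations per grid cell matters because a single jittered sequence can be unusually good or bad; a fuller implementation would add the nonlinear and realistic-noise components described in the protocol.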

Visual Workflow: Shifting from Fixed to Optimized Designs

The following diagram illustrates the conceptual and practical shift from a traditional fixed-ISI design to a modern, optimized approach, highlighting the key considerations at each stage.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for fMRI Experimental Design Optimization

| Tool / Reagent | Function / Purpose | Application Notes |
|---|---|---|
| Genetic Algorithm (GA) | A flexible search algorithm used to find an optimal sequence of event trials from a vast number of possibilities by evolving solutions against fitness criteria [15]. | Ideal for optimizing rapid event-related designs with multiple conditions. It can simultaneously maximize contrast estimation efficiency, HRF estimation efficiency, and psychological counterbalancing [15]. |
| deconvolve Toolbox | A Python-based toolbox designed to provide guidance on optimal design parameters for non-randomized, alternating event sequences common in cognitive neuroscience [19]. | Use this when your paradigm has a fixed event order (e.g., cue-target). It helps find the best ISI and null-event proportion through simulations with realistic noise and BOLD nonlinearity [19]. |
| Volterra Series | A mathematical model used to capture the nonlinear dynamics of the BOLD signal, such as how the response to one event is influenced by previous events [19]. | Critical for creating accurate forward models in simulation-based optimization. It moves beyond simple linear convolution, leading to more realistic efficiency estimates [19]. |
| Jittered ISI Distribution | A set of variable time intervals between trial onsets, essential for de-correlating overlapping hemodynamic responses [18]. | Can be stochastic (fully random) or deterministic (a fixed set of values). The mean ISI can be very short (e.g., 500ms), allowing for high trial counts without a severe loss of power [18]. |
| Null Events | Trials in which no stimulus is presented and no task is performed, serving as an implicit baseline [19]. | Introducing these "empty" trials increases the variability of the design matrix, which improves the estimability of the hemodynamic response for actual trials of interest [19]. |

From Theory to Practice: Implementing Advanced ISI Designs

Frequently Asked Questions (FAQs)

What is the fundamental problem that variable ISIs solve in fMRI design? The blood oxygen level-dependent (BOLD) signal measured in fMRI is sluggish, unfolding over several seconds. When stimuli are presented too close together in a fixed, predictable order, their hemodynamic responses overlap significantly. This overlap makes it difficult to isolate the brain activity related to each individual event or condition. Variable Inter-Stimulus Intervals (ISIs) introduce "jitter" into the design, which helps to deconvolve, or separate, these overlapping signals, leading to more precise measurements of the neural response to each stimulus [20] [21].

My event sequence cannot be fully randomized (e.g., in a cue-target paradigm). How can I optimize it? In non-randomized, alternating designs (e.g., a fixed cue-target sequence), you cannot rely on random event order to separate signals. In these cases, varying the ISI becomes the primary tool for optimization. By systematically jittering the time between the cue and the target, you can change the temporal overlap of their BOLD responses on each trial. Simulations show that exploring a wide range of feasible ISIs is critical for finding a sequence that maximizes the efficiency with which the two responses can be separated during analysis [20].

How does randomization improve statistical efficiency? Efficiency is a measure of the precision of your parameter estimates in a statistical model. Randomization of event order and the use of variable ISIs work to decrease the collinearity (correlation) between the model's predictors. When predictors are less correlated, the statistical model can estimate the unique contribution of each condition with greater confidence and lower variance, thereby increasing the power to detect a true effect [15] [21].

Beyond separation of signals, what other confounds does randomization help control? A consistent change in neural activity as a sequence progresses can masquerade as a dedicated "positional code." However, this apparent positional signal can be confounded by other cognitive processes that are collinear with sequence position, such as:

- Memory load, which increases with each subsequent item.

- Sensory adaptation, where neural responses in sensory cortex decrease to repeated stimuli.

- Reward expectation, which changes as the end of a sequence (and potential reward) approaches.

Randomization helps to break these systematic correlations, allowing researchers to isolate the neural representation of order from these unrelated processes [10].

Troubleshooting Common Experimental Issues

Problem: Low detection power for contrasts between conditions. Solution:

- Diagnosis: The design may have high collinearity between regressors for the conditions you wish to compare.

- Action: Ensure that the event order for the conditions of interest is randomized or counterbalanced. Using a variable ISI can further help. Refer to the efficiency calculations in the table below to evaluate your design before running the experiment. Scanning for a longer duration, if feasible for your participants, can also increase the degrees of freedom and overall power [21].

Problem: Inability to separate BOLD responses in a fixed, alternating sequence (e.g., Cue-Target, Cue-Target...). Solution:

- Diagnosis: In a strictly alternating design with a fixed ISI, the BOLD responses to the two event types overlap in the same way on every trial, so their regressors are highly collinear and cannot be reliably separated.

- Action: Jitter the interval between the cue and the target. Use simulations to find the optimal range of ISIs that maximizes estimation efficiency for your specific paradigm. Tools like the deconvolve Python toolbox are designed to help with this optimization [20].

Problem: Suspected contamination of results by low-frequency scanner drift. Solution:

- Diagnosis: The experimental design may have created very low-frequency signals that are difficult to distinguish from background noise and scanner drift.

- Action: Avoid using very long blocks or contrasting trials that are very far apart in time. Most fMRI analysis packages apply high-pass filtering to remove these low-frequency signals, which can also remove your experimental effect of interest if it is of a similar frequency [21].
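The interaction between block length and high-pass filtering can be checked directly. This sketch assumes an SPM-style discrete cosine transform (DCT) drift basis with a 128 s cutoff and measures how much of a task regressor's variance survives once the basis is regressed out; for brevity the block regressors are not HRF-convolved. Function names and parameters are hypothetical.

```python
import numpy as np

def dct_highpass_basis(n_scans, tr, cutoff=128.0):
    """DCT-II drift regressors with periods of at least `cutoff` seconds (constant excluded)."""
    k_max = int(np.floor(2 * n_scans * tr / cutoff))
    n = np.arange(n_scans)
    return np.column_stack([np.cos(np.pi * k * (2 * n + 1) / (2 * n_scans))
                            for k in range(1, k_max + 1)])

def retained_variance(x, basis):
    """Fraction of a mean-centred regressor's variance left after regressing out the basis."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    beta, *_ = np.linalg.lstsq(basis, x, rcond=None)
    resid = x - basis @ beta
    return resid.var() / x.var()

n_scans, tr = 300, 2.0
basis = dct_highpass_basis(n_scans, tr)
long_blocks = np.tile(np.r_[np.ones(50), np.zeros(50)], 3)     # 100 s on / 100 s off
short_blocks = np.tile(np.r_[np.ones(10), np.zeros(10)], 15)   # 20 s on / 20 s off
print(retained_variance(long_blocks, basis), retained_variance(short_blocks, basis))
```

With a 300-scan run at a 2 s TR, most of the 100 s on/off regressor is absorbed by the drift basis, while the 20 s on/off regressor is left largely intact.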

Experimental Protocols & Design Parameters

Protocol 1: Efficiency Calculation for a Simple Contrast

This protocol allows you to compute the statistical efficiency of a design for detecting a specific effect.

- Define Design Matrix (X): Create a design matrix where each column is a predictor (e.g., a condition) convolved with a canonical Hemodynamic Response Function (HRF).

- Define Contrast Vector (c): Specify a contrast vector that represents the statistical comparison of interest (e.g., Condition A vs. Baseline would be [1], while A vs. B would be [1, -1]; add zeros for any nuisance columns such as the intercept).

- Calculate Efficiency: The efficiency for a contrast is defined as:

$$\text{Efficiency} = \frac{1}{c^{\top}(X^{\top}X)^{-1}c}$$

A higher value indicates a more efficient design for detecting that specific contrast [15] [21].
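The formula translates directly into code. The toy design matrices below use 0/1 entries so the result can be checked by hand; in practice each column would be an HRF-convolved regressor and the matrix would include an intercept (with a zero in the contrast). `contrast_efficiency` is a hypothetical helper name.

```python
import numpy as np

def contrast_efficiency(X, c):
    """Efficiency = 1 / (c' (X'X)^-1 c) for design matrix X (scans x regressors)."""
    X = np.asarray(X, dtype=float)
    c = np.asarray(c, dtype=float)
    return 1.0 / (c @ np.linalg.inv(X.T @ X) @ c)

# Columns = conditions A and B, rows = scans
X_separate = np.array([[1, 0], [0, 1], [1, 0], [0, 1]])   # A and B never overlap
X_overlap = np.array([[1, 0], [1, 1], [0, 1], [0, 0]])    # A and B overlap in one scan

for name, X in [("separate", X_separate), ("overlap", X_overlap)]:
    print(name,
          contrast_efficiency(X, [1, 0]),    # A vs. baseline
          contrast_efficiency(X, [1, -1]))   # A vs. B
```

For the non-overlapping toy design the efficiencies are 2 (A vs. baseline) and 1 (A vs. B). Letting the two columns overlap in a single scan lowers them to 1.5 and 0.5: the same collinearity penalty that overlapping hemodynamic responses impose on a real design.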

Protocol 2: Optimizing a Design Using a Genetic Algorithm (GA)

For complex designs with multiple conditions and constraints, a GA can find a near-optimal sequence.

- Encode Sequence: Represent a potential experimental sequence as a "chromosome," where each gene is a condition or a specific ISI.

- Define Fitness Function: The fitness of a sequence is its statistical efficiency for your key contrasts. You can also add penalties for psychological invalidity (e.g., too many repetitions of the same stimulus).

- Evolve Solutions: The GA creates a population of sequences, "breeds" them (combining parts of good sequences), and introduces random "mutations." Over many generations, it selects for sequences with higher and higher fitness [15].
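The three steps can be sketched as a compact genetic algorithm. All settings are hypothetical: trials sit on a 2 s grid that coincides with the TR; each gene is 0 (null), 1 (condition A) or 2 (condition B); fitness averages the efficiencies of an A minus B contrast and an average-activation contrast; and runs of more than four identical conditions are penalized as a stand-in for psychological constraints. Selection keeps the top half, crossover is single-point, and the two best sequences are carried over unchanged (elitism), so the best fitness never decreases across generations.

```python
import math
import numpy as np

TR, N_TRIALS = 2.0, 120                 # hypothetical: one trial slot per 2 s TR
N_SCANS = N_TRIALS + 10                 # extra scans let the last responses decay

def hrf(t):
    """SPM-like double-gamma HRF (unnormalized)."""
    return t**5 * np.exp(-t) / math.gamma(6) - t**15 * np.exp(-t) / (6 * math.gamma(16))

H = hrf(np.arange(0, 32, TR))
CONTRASTS = np.array([[1.0, -1.0, 0.0],   # A minus B
                      [0.5, 0.5, 0.0]])   # mean of A and B vs. baseline

def design(seq):
    """One HRF-convolved column per condition (gene 1 = A, 2 = B; 0 = null) + intercept."""
    cols = []
    for cond in (1, 2):
        s = np.zeros(N_SCANS)
        s[np.flatnonzero(seq == cond)] = 1.0   # slot i falls on scan i because SOA == TR
        cols.append(np.convolve(s, H)[:N_SCANS])
    return np.column_stack(cols + [np.ones(N_SCANS)])

def fitness(seq, max_run=4, penalty=10.0):
    X = design(seq)
    M = np.linalg.pinv(X.T @ X)
    eff = np.mean([1.0 / (c @ M @ c) for c in CONTRASTS])
    run = longest = 1                       # longest run of one non-null condition
    for a, b in zip(seq[:-1], seq[1:]):
        run = run + 1 if (a == b and a != 0) else 1
        longest = max(longest, run)
    return eff - penalty * max(0, longest - max_run)

def evolve(pop_size=40, n_gen=30, p_mut=0.02, n_elite=2, seed=0):
    rng = np.random.default_rng(seed)
    pop = rng.integers(0, 3, size=(pop_size, N_TRIALS))
    history = []
    for _ in range(n_gen):
        fit = np.array([fitness(ind) for ind in pop])
        order = np.argsort(fit)[::-1]
        history.append(fit[order[0]])
        parents = pop[order[: pop_size // 2]]                  # truncation selection
        children = [pop[i].copy() for i in order[:n_elite]]    # elitism
        while len(children) < pop_size:
            a, b = parents[rng.integers(len(parents), size=2)]
            cut = rng.integers(1, N_TRIALS)                    # single-point crossover
            child = np.concatenate([a[:cut], b[cut:]])
            mutate = rng.random(N_TRIALS) < p_mut              # random point mutations
            child[mutate] = rng.integers(0, 3, size=mutate.sum())
            children.append(child)
        pop = np.array(children)
    return pop[0], history

best, history = evolve(pop_size=20, n_gen=10)
print(history[0], history[-1])
```

The penalty weight and run limit are arbitrary; in a real study the fitness terms and their weights should reflect the contrasts and counterbalancing constraints of the actual paradigm.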

Table 1: Key Parameters for fMRI Design Optimization

| Parameter | Description | Impact on Efficiency | Recommended Range / Approach |
|---|---|---|---|
| Inter-Stimulus Interval (ISI) | Time between successive trials (when measured onset to onset, this is strictly the SOA). | Shorter ISIs generally increase efficiency for detection, but can increase collinearity. Jittered ISIs are critical for separation. | Vary between ~2-20 seconds; avoid fixed, very short ISIs for all trials [20] [21]. |
| Null Events | Trials with no stimulus, often just a fixation cross. | Provide a baseline and add jitter, improving estimation of overlapping responses [20] [21]. | Insert null events in ~20-35% of trials [20] [21]. |
| Design Efficiency | A quantitative measure of the precision of a statistical estimate. | The goal of optimization. Depends on the specific contrast of interest. | Calculate as 1 / (c'(X'X)⁻¹c); use optimization algorithms to maximize this value [15]. |
| Estimation vs. Detection | Efficiency for estimating HRF shape vs. detecting an effect of known shape. | There is a trade-off. Block designs are best for detection; rapid, jittered event-related designs are better for estimation [15]. | Choose based on the primary goal of your experiment. For new paradigms, prioritize HRF estimation. |

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Research Reagents and Computational Tools

| Item | Function in Research | Example / Note |
|---|---|---|
| Genetic Algorithm (GA) | A flexible optimization algorithm used to search the vast space of possible stimulus sequences to find those with maximum statistical efficiency for a given set of contrasts [15]. | Can be implemented in MATLAB, Python, or R. Allows incorporation of multiple, custom fitness criteria. |
| deconvolve Toolbox | A Python-based toolbox specifically designed to provide guidance and simulate the optimal design parameters for non-random, alternating event-related designs common in cognitive neuroscience [20]. | Available at: https://github.com/soukhind2/deconv |
| GLMsingle | A data-driven tool for estimating single-trial BOLD responses from fMRI data. It can be used to improve detection efficiency post-hoc through techniques like HRF fitting and denoising [20]. | Useful for analyzing data from experiments with closely spaced events. |
| fmrisim | A Python package that can generate realistic simulated fMRI noise, which is crucial for accurate and powerful simulations when testing experimental designs [20]. | Helps in building a "fitness landscape" for design parameters by using noise with accurate statistical properties. |
| Canonical HRF | The assumed model of the hemodynamic response used in the General Linear Model (GLM) to create predictors from your stimulus timing. | A double-gamma function is standard in packages like SPM. Variation from this model can be captured using basis functions. |

Experimental Workflow and Signaling Pathways

The following diagram illustrates the logical workflow for optimizing and validating an fMRI experimental design using variable ISIs and randomization.

Optimization and Validation Workflow

The diagram below conceptualizes how variable ISIs resolve the problem of overlapping BOLD signals, which is the core problem this guide addresses.

Resolving BOLD Signal Overlap

Frequently Asked Questions (FAQs)

Q1: What is the minimum Inter-Stimulus Interval (ISI) achievable in event-related fMRI designs? With proper experimental design, ISIs can be significantly shorter than in traditional paradigms. While fixed ISIs of less than 15 seconds result in severe statistical inefficiency, using properly jittered or randomized ISIs allows for presentation rates as fast as 500 ms while maintaining considerable efficiency. Designs with variable ISI can show more than 10 times greater efficiency than fixed ISI designs [2]. Advanced studies have successfully detected neural representations of images shown for 100 ms with inter-stimulus intervals as short as 32 ms [22].

Q2: How can I calibrate out vascular delays to improve temporal accuracy in fast fMRI? The latency of fMRI signals is confounded by local cerebral vascular reactivity (CVR), which varies across brain locations. To address this:

- Use a breath-holding (BH) task to map hemodynamic latency across the brain, as it modulates cerebral blood flow without an accompanying change in cerebral metabolic rate of oxygen [23].

- Perform CVR calibration by subtracting the CVR latency (measured via BH task) from the task-related fMRI signal latency [23].

- Employ fast fMRI protocols with high sampling rates (e.g., 10 Hz) to reliably delineate these sub-second temporal features [23].

Q3: My design has non-randomized, alternating event sequences (e.g., cue-target). How can I optimize it? For paradigms where event order is fixed (e.g., CTCTCT...), standard randomization is impossible. Optimization strategies include:

- Manipulating the ISI and the proportion of null events within the sequence [20].

- Using a deconvolution approach with a realistic model of nonlinearity and noise to separate overlapping BOLD signals [20].

- Employing specialized toolboxes like deconvolve (Python) to simulate and identify optimal design parameters for your specific alternating sequence [20].

Q4: What are the key preprocessing steps for cleaning fast fMRI data? Independent Component Analysis (ICA) is a common data-driven method for noise removal.

- For resting-state data, single-subject ICA is run on each session separately using tools like FSL's FEAT [24].

- Turn off spatial smoothing and ensure proper registration to standard space if using automated classifiers like FIX [24].

- Although more common for resting-state fMRI, ICA-based cleaning can also be applied to task-based data [24].

Troubleshooting Guides

Issue: Low Detection Power or Estimation Efficiency in Rapid Designs

Problem: The statistical power to detect activations or estimate hemodynamic responses is low despite using rapid event-related designs.

| Solution | Description | Key Parameters/Considerations |
|---|---|---|
| Jitter or Randomize ISIs [2] [20] | Avoid fixed ISIs; use variable timing between stimuli. Statistical efficiency improves monotonically with decreasing mean ISI when ISI is randomized. | Efficiency of jittered designs can be >10x that of fixed ISI designs. |
| Incorporate Null Events [20] | Introduce trials with no stimulus to improve the estimation of overlapping hemodynamic responses. | Optimize the proportion of null events relative to active trials. |
| Account for BOLD Nonlinearities [20] | Use models that capture the nonlinear and transient properties of the BOLD signal, especially for events close in time. | Implement using Volterra series or similar approaches in simulation tools. |

Issue: Poor Separation of Temporally Overlapping BOLD Responses

Problem: In complex paradigms, BOLD responses from successive events overlap significantly, making it difficult to isolate the neural correlates of individual cognitive processes.

Solutions:

- Leverage Multivariate Pattern Analysis: Instead of relying on univariate response amplitudes, use probabilistic pattern classifiers (e.g., multinomial logistic regression) trained on isolated events. These classifiers can be applied to sequence trials to detect the content and order of rapidly presented items, even with substantial temporal overlap [22].

- Employ Data-Driven Deconvolution: Use advanced analysis tools like GLMsingle [20] to estimate single-trial responses. This tool uses techniques such as data-driven denoising and appropriate HRF fitting to deconvolve events that are close together in time.

- Pre-simulate Your Design: Before collecting data, use a toolbox like deconvolve [20] to simulate your specific paradigm, including its alternating structure and expected noise. This allows you to pre-emptively optimize parameters like ISI and null event ratio for the best possible estimation efficiency.

Issue: Inaccurate Inference of Neural Timing Due to Vascular Confounds

Problem: Differences in fMRI signal timing between brain regions may reflect variations in local vascular reactivity rather than the sequence of underlying neural activity.

Solutions:

- Measure and Calibrate with a Breath-Holding Task: Acquire a separate dataset where participants perform a breath-holding task. This task characterizes the CVR latency for each voxel [23].

- Calibrate Task fMRI Latency: Subtract the CVR latency map derived from the breath-holding task from the latency map obtained during your cognitive task (e.g., a visuomotor task). This calibration step helps isolate the neural component of the timing differences [23].

- Use Ultra-Fast Acquisition: Whenever possible, use acquisition protocols with a high sampling rate (e.g., TR = 100 ms or 600 ms) to improve the resolution of temporal features and make latency estimation more reliable [23] [25].

Experimental Protocols & Methodologies

Protocol 1: Detecting Sub-Second Activation Sequences

This protocol is adapted from a study that successfully decoded sequences of visual representations with images shown for 100 ms and separated by inter-item intervals as short as 32 ms [22].

- Stimuli: Use a set of distinct images (e.g., cat, chair, face, house, shoe).

- Experimental Design:

- Slow Trials: Present images individually with long ISIs (~2.5 s). Use these trials to train the pattern classifier.

- Fast Trials: Present images in rapid sequences. The order can be random (sequence trials) or include repetitions (repetition trials).

- Presentation Parameters: Image presentation time can be 100 ms with inter-item intervals as short as 32 ms.

- fMRI Acquisition: Standard BOLD fMRI.

- Analysis:

- Classifier Training: Train a multinomial logistic regression classifier (one-vs-rest) on fMRI activation patterns from the slow trials for each image category.

- Sequence Application: Apply the trained classifiers to the fast sequence trials to obtain a time course of probabilistic classification for each image.

- Order Detection: Analyze these classifier time courses to detect the presence and order of neural representations within the fast sequence.

Protocol 2: Calibrating fMRI Latency for Sub-Second Timing

This protocol details the method to calibrate vascular delays for more accurate neural timing inference, using a visuomotor task as an example [23].

- Tasks:

- Breath-Holding (BH) Task: To map CVR. Use a block design (e.g., paced breathing -> exhalation -> breath-hold for 15s). Repeat for 4 blocks.

- Visuomotor (VM) Task: To measure task-related latency. Participants press a button with the thumb corresponding to the hemifield of a flashing checkerboard stimulus (e.g., right stimulus -> right hand). Use randomized inter-stimulus intervals.

- fMRI Acquisition: Use an ultra-fast fMRI sequence (e.g., Simultaneous-multi-slice inverse imaging (SMS-InI)) with a high sampling rate (e.g., TR = 100 ms, 10 Hz). This high temporal resolution is critical [23].

- Analysis:

  - Latency Estimation: For both the BH and VM tasks, estimate the signal latency at each voxel as the temporal shift of a reference function that maximizes its correlation with the voxel's time series.

  - CVR Calibration: Subtract the BH latency map from the VM latency map, voxel by voxel. This yields a CVR-calibrated VM latency map.

  - Sequence Analysis: On the calibrated map, confirm the expected neural activation sequence (e.g., lateral geniculate nucleus (LGN) -> visual cortex -> sensorimotor cortex).
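The latency-estimation and calibration steps can be sketched with simulated data. This is a minimal sketch under stated assumptions: each voxel's signal is an exact, noise-free delayed copy of a smoothed random reference, each hypothetical region has a made-up vascular delay (shared by both tasks) plus a neural delay (VM task only), and latency is the shift that maximizes Pearson correlation. The helpers `estimate_lag` and `delayed` and the region delays are hypothetical, not taken from [23].

```python
import numpy as np

TR = 0.1  # seconds; 10 Hz sampling as in the protocol


def estimate_lag(ts, ref, max_lag):
    """Shift (in samples) of `ref` that maximizes Pearson r with `ts`.

    A positive value means the voxel time series lags the reference.
    """
    n = len(ts)
    best_lag, best_r = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            a, b = ts[lag:], ref[: n - lag]
        else:
            a, b = ts[: n + lag], ref[-lag:]
        r = np.corrcoef(a, b)[0, 1]
        if r > best_r:
            best_lag, best_r = lag, r
    return best_lag


def delayed(base, delay, n, pad):
    """n samples of `base` delayed by `delay` samples (hypothetical simulation helper)."""
    return base[pad - delay : pad - delay + n]


rng = np.random.default_rng(0)
n, pad, max_lag = 600, 20, 10
kernel = np.ones(5) / 5  # light smoothing so the signals are not white noise
bh_base = np.convolve(rng.standard_normal(n + 2 * pad), kernel, mode="same")
vm_base = np.convolve(rng.standard_normal(n + 2 * pad), kernel, mode="same")
bh_ref, vm_ref = delayed(bh_base, 0, n, pad), delayed(vm_base, 0, n, pad)

# Hypothetical regions: (vascular delay, neural delay) in samples.
regions = {"LGN": (5, 0), "visual": (2, 1), "sensorimotor": (0, 3)}
raw, calibrated = {}, {}
for name, (vascular, neural) in regions.items():
    bh_lag = estimate_lag(delayed(bh_base, vascular, n, pad), bh_ref, max_lag)
    vm_lag = estimate_lag(delayed(vm_base, vascular + neural, n, pad), vm_ref, max_lag)
    raw[name] = vm_lag * TR
    calibrated[name] = (vm_lag - bh_lag) * TR  # VM latency minus BH (vascular) latency

print(raw)         # LGN appears latest before calibration
print(calibrated)  # LGN < visual < sensorimotor after calibration
```

In this toy case the uncalibrated VM latencies put the LGN last, while the calibrated map recovers the neural order, which is the point of the subtraction step.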

The Scientist's Toolkit

Table: Essential Materials and Reagents for Ultrafast fMRI Research

| Item | Function/Application in Research |
|---|---|
| 3T or Higher MRI Scanner | Higher field strength improves the signal-to-noise ratio, which helps detect the subtle effects targeted by fast fMRI. |
| Multi-Channel Head Coil | Coils with many receive channels (e.g., 32-channel) increase SNR and support the parallel-imaging acceleration that fast sequences rely on. |
| Ultra-Fast fMRI Sequence | Sequences such as simultaneous-multi-slice (SMS) or inverse imaging (InI) enable sub-second temporal resolution (TR < 1 s) [23] [25]. |
| Stimulus Presentation Software | Software such as Psychtoolbox [23] for precise control of stimulus timing and synchronization with the MRI scanner. |
| Physiological Monitoring Equipment | A photoplethysmograph for the cardiac cycle and a respiratory belt for respiration; essential for correcting physiological noise in the BOLD signal [23]. |
| Pattern Classifier | Multinomial logistic regression [22] or other multivariate classifiers to decode rapidly changing neural representations from fMRI patterns. |
| Deconvolution Toolbox | Tools such as deconvolve [20] or GLMsingle [20] to optimize designs and estimate single-trial responses from overlapping BOLD signals. |
| Automated ICA Cleaning Tool | FSL's FIX [24] for automated, ICA-based denoising of fMRI data, particularly useful for resting-state data. |

Workflow & Signaling Diagrams

Figure: CVR Calibration Workflow

Figure: Stimulus Optimization Logic

Frequently Asked Questions (FAQs)

FAQ 1: Why are long fMRI scan times necessary for precision mapping of individual brains? Group-averaged data obscures subject-specific features of functional brain organization. Achieving a high temporal signal-to-noise ratio for reliable individual-specific network estimation requires several hours of data per person, as individual brain networks are more detailed than group-average networks and contain unique features that are lost in group analyses [26].
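Temporal SNR (tSNR) is commonly computed per voxel as the mean of its time series divided by the standard deviation of that time series. The sketch below assumes a preprocessed array shaped (voxels, time points); the function name `tsnr` is illustrative.

```python
import numpy as np


def tsnr(data):
    """Voxelwise temporal SNR: temporal mean divided by temporal standard deviation.

    `data` is assumed to be a preprocessed (n_voxels, n_timepoints) array.
    """
    data = np.asarray(data, dtype=float)
    return data.mean(axis=1) / data.std(axis=1, ddof=1)


print(tsnr([[9.0, 10.0, 11.0], [18.0, 20.0, 22.0]]))  # [10. 10.]
```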

FAQ 2: Can I use task-based fMRI data instead of resting-state data for precision functional mapping? Yes. Research shows that whole-brain within-individual networks can be estimated exclusively from task data. Correlation matrices from task data show strong similarity to those derived from resting-state data, suggesting an underlying stable network architecture that persists across task states. The largest factor affecting similarity is the amount of data, not whether it comes from rest or tasks [27].
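A common way to quantify the similarity of two correlation matrices is to correlate their unique off-diagonal edges. The sketch below simulates (as an assumption) "rest" and "task" runs that share one latent network structure, with a small block-design evoked signal added to the task run, and a third run with unrelated structure for comparison. The helper names and simulation parameters are hypothetical.

```python
import numpy as np


def fc_matrix(ts):
    """Region-by-region Pearson correlation matrix from (n_regions, n_timepoints) data."""
    return np.corrcoef(ts)


def fc_similarity(fc_a, fc_b):
    """Pearson r between the unique off-diagonal edges of two FC matrices."""
    iu = np.triu_indices_from(fc_a, k=1)
    return np.corrcoef(fc_a[iu], fc_b[iu])[0, 1]


rng = np.random.default_rng(0)
n_regions, n_sources, n_tp = 20, 3, 2000
mixing = rng.standard_normal((n_regions, n_sources))  # shared "network architecture"
rest = mixing @ rng.standard_normal((n_sources, n_tp)) + rng.standard_normal((n_regions, n_tp))
block = np.where((np.arange(n_tp) // 20) % 2 == 0, 1.0, -1.0)  # on/off task blocks
task = (
    mixing @ rng.standard_normal((n_sources, n_tp))
    + 0.3 * np.outer(rng.standard_normal(n_regions), block)  # small evoked component
    + rng.standard_normal((n_regions, n_tp))
)
other = (
    rng.standard_normal((n_regions, n_sources)) @ rng.standard_normal((n_sources, n_tp))
    + rng.standard_normal((n_regions, n_tp))
)

same = fc_similarity(fc_matrix(rest), fc_matrix(task))
diff = fc_similarity(fc_matrix(rest), fc_matrix(other))
print(round(same, 2), round(diff, 2))  # shared structure gives much higher similarity
```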

FAQ 3: What is the minimum amount of fMRI data required for reliable individual-specific mapping? Precisely mapping an individual's brain typically requires 40-60 minutes of resting-state data, although supervised methods can produce individual-specific networks from less data (e.g., 20 minutes). The ABCD study, for example, collects 20 minutes of resting-state data plus 40 minutes of task fMRI data per participant, which can be combined for individual-specific mapping [28].

FAQ 4: How does inter-stimulus interval (ISI) optimization improve paradigm design? Parameter optimization, including ISI, is crucial for eliciting optimal neural responses. For somatosensory gating paradigms, research has identified that an ISI of 200-220 ms produces optimal suppression of sensory input. Proper ISI selection ensures more robust detection of neural phenomena and higher paradigm sensitivity [29].
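Sensory gating is often summarized as the ratio of the response to the second stimulus of a pair to the response to the first (S2/S1), so lower ratios mean stronger suppression. Assuming "optimal" means the strongest suppression, ISI selection reduces to picking the ISI with the lowest mean ratio. The amplitudes below are made-up numbers used only to exercise the code, not data from [29], and the function names are hypothetical.

```python
def gating_ratio(s1, s2):
    """Paired-stimulus gating ratio S2/S1; smaller values indicate stronger suppression."""
    return s2 / s1


def best_isi(pairs_by_isi):
    """Return the ISI (ms) with the lowest mean S2/S1 ratio, plus all mean ratios.

    pairs_by_isi maps ISI in ms -> list of (S1, S2) response amplitudes.
    """
    means = {
        isi: sum(gating_ratio(s1, s2) for s1, s2 in pairs) / len(pairs)
        for isi, pairs in pairs_by_isi.items()
    }
    return min(means, key=means.get), means


# Made-up amplitudes, used only to exercise the code.
amplitudes = {
    100: [(2.0, 1.6), (2.2, 1.7)],
    200: [(2.0, 0.9), (2.1, 1.0)],
    500: [(2.0, 1.4), (2.0, 1.2)],
}
isi, ratios = best_isi(amplitudes)
print(isi)  # 200
```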

Troubleshooting Guides

Issue 1: Inadequate Signal-to-Noise for Individual Network Detection

Problem: Unable to detect clear individual-specific network features despite following standard protocols.

Solutions:

- Increase data acquisition time: Collect multiple scanning sessions per individual, aiming for 5+ hours of total data [26]

- Pool task and resting-state data: Combine both data types to increase statistical power for network estimation [27]

- Optimize acquisition timing: Standardize scan times (e.g., nighttime scanning) to minimize circadian effects [26]

- Implement censoring procedures: Use framewise displacement thresholds (<0.2 mm) to exclude high-motion data segments [28]
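Framewise displacement (FD) is widely computed, following Power et al., as the sum of the absolute frame-to-frame changes in the six rigid-body realignment parameters, with rotations converted to millimetres of arc on a 50 mm sphere. The sketch below assumes the columns are three translations (mm) followed by three rotations (radians); column order and units differ between realignment packages, so check yours. The motion values are illustrative.

```python
import numpy as np


def framewise_displacement(params, radius=50.0):
    """Framewise displacement (FD) from rigid-body realignment parameters.

    params: (n_volumes, 6) array; columns assumed to be 3 translations (mm)
    followed by 3 rotations (radians). Rotations are converted to arc length
    on a sphere of `radius` mm. The first volume is assigned FD = 0.
    """
    params = np.asarray(params, dtype=float)
    deltas = np.abs(np.diff(params, axis=0))
    deltas[:, 3:] *= radius
    return np.concatenate([[0.0], deltas.sum(axis=1)])


motion = [
    [0.0, 0, 0, 0.0, 0, 0],
    [0.1, 0, 0, 0.0, 0, 0],    # 0.1 mm translation
    [0.1, 0, 0, 0.002, 0, 0],  # 0.002 rad rotation -> 0.1 mm at 50 mm
    [0.4, 0, 0, 0.002, 0, 0],  # 0.3 mm translation
]
fd = framewise_displacement(motion)
keep = fd < 0.2  # threshold from the censoring guideline above
# FD is about [0, 0.1, 0.1, 0.3]; only the last volume exceeds 0.2 mm.
```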

Issue 2: Suboptimal Paradigm Design for Clinical Populations

Problem: Task paradigms show ceiling/floor effects in heterogeneous clinical populations.

Solutions:

- Simplify instructions: Use language-free paradigms with minimal verbal instructions [30]

- Employ multi-sensory stimuli: Incorporate auditory and visual stimuli to engage multiple processing networks [30]

- Adjust difficulty dynamically: Modify task conditions, presentation speed, or difficulty levels for impaired patients [31]

- Include deep encoding tasks: Use semantic judgment tasks (e.g., pleasant/unpleasant decisions) during memory encoding [32]

Issue 3: Inconsistent Network Topography Across Analysis Methods

Problem: Different analysis techniques yield varying individual network maps.

Solutions:

- Apply multiple community detection methods: Use complementary approaches including Infomap, template matching, and non-negative matrix factorization [28]

- Establish consensus mappings: Generate consensus across edge densities and thresholds [28]

- Validate with independent data: Confirm network estimates predict functional dissociations in independent task data [27]

- Use probabilistic atlases: Reference population probability atlases while maintaining individual-specific features [28]
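The idea behind template matching can be sketched as assigning each vertex to the network whose group template correlates best with that vertex's connectivity profile. This is a simplified, correlation-based variant written for illustration; the published procedure of Gordon et al. may differ in details such as thresholding and the similarity metric. Network names, templates, and noise levels are hypothetical.

```python
import numpy as np


def zscore_rows(a):
    """Standardize each row to mean 0, SD 1 (population SD)."""
    a = np.asarray(a, dtype=float)
    return (a - a.mean(axis=1, keepdims=True)) / a.std(axis=1, keepdims=True)


def template_match(vertex_fc, templates):
    """Assign each vertex the network whose template best correlates with its FC profile.

    vertex_fc: (n_vertices, n_targets) individual connectivity profiles.
    templates: dict mapping network name -> (n_targets,) group template profile.
    """
    names = list(templates)
    tmpl = np.vstack([templates[k] for k in names])
    r = zscore_rows(vertex_fc) @ zscore_rows(tmpl).T / tmpl.shape[1]  # Pearson r matrix
    return [names[i] for i in r.argmax(axis=1)]


rng = np.random.default_rng(1)
templates = {name: rng.standard_normal(200) for name in ["default", "frontoparietal", "visual"]}
truth = ["default", "visual", "frontoparietal", "default"]
vertex_fc = np.vstack([templates[t] + 0.5 * rng.standard_normal(200) for t in truth])
print(template_match(vertex_fc, templates))
```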

Table 1: Precision fMRI Data Acquisition Recommendations

| Parameter | Minimum for Basic Mapping | Optimal for High-Fidelity Mapping | Key Considerations |
|---|---|---|---|
| Total Scan Time | 20 minutes resting-state [28] | 5+ hours combined data [26] | Pool resting-state and task data [27] |
| Session Structure | Single session | 10+ sessions over time [26] | Standardize time of day [26] |
| Task fMRI | 10-minute paradigm [30] | 6 hours of diverse tasks [26] | Include multiple contrast conditions [30] |
| ISI Optimization | 200-500 ms (general range) [29] | 200-220 ms (somatosensory gating) [29] | Paradigm-specific optimization needed |
| Motion Censoring | FD < 0.3 mm | FD < 0.2 mm [28] | Use framewise displacement metrics |

Table 2: Memory Encoding fMRI Paradigm Specifications

| Component | Stimulus Type | Duration / Timing | Cognitive Process | Expected Activation |
|---|---|---|---|---|
| Auditory Stimuli | Environmental sounds; Vocal sounds | 538-2771 ms [30] | Sensory encoding | Auditory cortex; Voice-selective regions [30] |
| Visual Stimuli | Faces; Spatial scenes | Block design [30] | Face/scene processing | Fusiform face area; Parahippocampal place area [30] |
| Encoding Task | Pleasant/unpleasant judgments | Event-related [32] | Deep semantic encoding | Medial temporal lobe; Hippocampus [32] |
| Recognition Test | Old/New items | Post-scan [30] | Memory retrieval | Hippocampus; Precuneus [30] |

Table 3: Key Research Reagents & Computational Tools

| Resource Type | Specific Tool/Resource | Function/Purpose |
|---|---|---|
| Precision Atlases | MIDB Precision Brain Atlas [28] | Individual-specific network topography reference |
| Datasets | Midnight Scan Club (MSC) Data [26] | High-fidelity individual connectome benchmark |
| Analysis Methods | Infomap (IM) Algorithm [28] | Network community detection using information theory |
| Template Matching | Gordon et al. Template Matching [28] | Individual network assignment via template correlation |
| Overlap Mapping | OMNI (Overlapping MultiNetwork Imaging) [28] | Identifies regions with multiple network membership |

Methodological Workflows

Figure: Precision fMRI Analysis Pipeline

Figure: Data Quantity-Quality Relationship

FAQs on fMRI Challenges and Solutions

Q1: What are the primary sources of head motion artifacts in fMRI, and why are they problematic? Head motion changes tissue composition within a voxel, distorts the magnetic field, and disrupts steady-state magnetization recovery. This leads to signal dropouts and artifactual amplitude changes in the BOLD signal, which can cause distance-dependent biases in inferred signal correlations and compromise the validity of functional connectivity analysis [33].

Q2: How is test-retest reliability measured in fMRI studies, and what is considered acceptable? Test-retest reliability is most commonly measured using the Intraclass Correlation Coefficient (ICC). The ICC represents the proportion of total measured variance attributable to differences between individuals. A common historical rule of thumb categorizes ICC as:

- Poor: <0.4

- Fair: 0.4-0.59

- Good: 0.6-0.74

- Excellent: ≥0.75 [34]

Q3: Why is resting-state fMRI (rs-fMRI) particularly valuable for pediatric neuroimaging? rs-fMRI is valuable for pediatric populations because (a) it equalizes measurement conditions by removing the influence of individual differences in task performance and competence, and (b) data acquisition is relatively easy and fast, requiring less cooperation from the participant [35].

Q4: What factors can improve the reliability of fMRI measures? Research indicates that the reliability of both task-based activation and functional connectivity is higher with shorter test-retest intervals and also depends on the type of task used [34].

Troubleshooting Guides

Guide 1: Mitigating Motion Artifacts

Problem: Subject motion is contaminating the fMRI signal, leading to unreliable functional connectivity measures.

Solutions:

- Prospective Motion Correction: Use real-time head-motion tracking systems (e.g., MoCAP) that rely on structured light or optical tracking to adjust the MR imaging gradients as the head moves. This approach can substantially improve image quality in both structural and functional MRI [36].

- Retrospective Motion Correction with Censoring: Identify and remove (censor) volumes with high frame-by-frame motion, along with directly adjoining frames. To address the data discontinuity caused by censoring, advanced methods like structured low-rank matrix completion can recover missing entries by exploiting the implicit structure in time series data [33].

- Advanced Regression: Beyond standard 6-parameter rigid body correction, consider voxelwise retrospective motion regressors. This approach has been shown to improve temporal signal-to-noise ratio (tSNR) by 40% in cases of linear drift motion and 11% in realistic motion scenarios compared to standard 6-parameter models [36].
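Censoring high-motion volumes "along with directly adjoining frames" can be expressed as a boolean keep-mask over volumes. The sketch below assumes per-volume FD values are already available and flags one frame on each side of every supra-threshold frame by default; the function name and defaults are illustrative. Recovering the censored entries (e.g., with structured low-rank matrix completion [33]) is a separate step not shown here.

```python
import numpy as np


def censor_mask(fd, threshold=0.2, n_before=1, n_after=1):
    """Keep-mask that drops high-motion frames plus adjoining frames.

    fd: per-volume framewise displacement (mm). Frames with FD >= threshold are
    flagged, as are `n_before` frames before and `n_after` frames after each one.
    """
    bad = np.asarray(fd, dtype=float) >= threshold
    flagged = bad.copy()
    for s in range(1, n_before + 1):
        flagged[:-s] |= bad[s:]   # frame t is flagged if frame t + s is bad
    for s in range(1, n_after + 1):
        flagged[s:] |= bad[:-s]   # frame t is flagged if frame t - s is bad
    return ~flagged


fd = [0.0, 0.1, 0.5, 0.1, 0.1, 0.1, 0.3, 0.1]
keep = censor_mask(fd)
print(keep.tolist())  # [True, False, False, False, True, False, False, False]
```

The mask can then be applied to a (voxels, volumes) array as `data[:, keep]`.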

Guide 2: Improving Test-Retest Reliability

Problem: Univariate fMRI measures (voxel/region-level task activation, edge-level functional connectivity) show poor test-retest reliability.

Solutions:

- Optimize Design and Analysis: Consider multivariate approaches, which may improve both reliability and validity, and keep test-retest intervals short where possible, since shorter intervals can enhance reliability [34].

- Ensure Proper Measurement: When calculating ICC, carefully consider your model choice. ICC(2,1) is often an ideal starting point for most univariate fMRI reliability studies, as it reflects absolute agreement and includes a random facet for repeated measurements over time [34].

- Account for Developmental Factors: In developmental populations, recognize that age-related changes in connection strength are specific to neurodevelopmental stages and that the functional specialization of brain networks increases with age. Five years of age appears to be a milestone at which connectivity strengthens [35].
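ICC(2,1) (two-way random effects, absolute agreement, single measurement) can be computed from a two-way ANOVA decomposition of a subjects-by-sessions matrix. The sketch below also maps the result onto the rule-of-thumb categories listed in Q2. As a check it uses the classic 6-target by 4-rater example from Shrout and Fleiss (1979), for which ICC(2,1) is about 0.29; in a test-retest study, rows would be subjects and columns sessions. Function names are illustrative.

```python
import numpy as np


def icc_2_1(data):
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    data: (n_subjects, k_sessions) array, e.g. one ROI's activation per subject and session.
    """
    y = np.asarray(data, dtype=float)
    n, k = y.shape
    grand = y.mean()
    ss_rows = k * ((y.mean(axis=1) - grand) ** 2).sum()    # between subjects
    ss_cols = n * ((y.mean(axis=0) - grand) ** 2).sum()    # between sessions
    ss_err = ((y - grand) ** 2).sum() - ss_rows - ss_cols  # residual
    ms_r = ss_rows / (n - 1)
    ms_c = ss_cols / (k - 1)
    ms_e = ss_err / ((n - 1) * (k - 1))
    return (ms_r - ms_e) / (ms_r + (k - 1) * ms_e + k * (ms_c - ms_e) / n)


def icc_category(icc):
    """Rule-of-thumb labels (Poor < 0.4 <= Fair < 0.6 <= Good < 0.75 <= Excellent)."""
    if icc < 0.4:
        return "Poor"
    if icc < 0.6:
        return "Fair"
    if icc < 0.75:
        return "Good"
    return "Excellent"


scores = [[9, 2, 5, 8], [6, 1, 3, 2], [8, 4, 6, 8], [7, 1, 2, 6], [10, 5, 6, 9], [6, 2, 4, 7]]
icc = icc_2_1(scores)
print(round(icc, 2), icc_category(icc))  # 0.29 Poor
```

Because ICC(2,1) treats session as a random effect, systematic shifts between sessions (large `ms_c`) lower the value, which is what "absolute agreement" means here.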

Table 1: ICC Reliability Benchmarks for fMRI Measures

| fMRI Measure | Typical ICC Range | Reliability Category | Key Influencing Factors |
|---|---|---|---|
| Voxel-level Task Activation | <0.4 | Poor | Task type, test-retest interval |
| Region-level Task Activation | <0.4 | Poor | Task type, test-retest interval |
| Edge-level Functional Connectivity | <0.4 | Poor | Test-retest interval, motion |
| Multivariate Approaches | >0.6 | Good to Excellent | Analysis method, dimensionality |

Table 2: Motion Correction Technique Comparison

| Technique | Principle | Advantages | Limitations |
|---|---|---|---|
| Prospective Correction (e.g., MoCAP) [36] | Real-time motion tracking with gradient adjustment | Significantly reduces motion artifacts | Requires specialized hardware |
| Censoring (Volume Removal) [33] | Excising high-motion volumes from analysis | Simple to implement | Creates data discontinuities, data loss |
| Structured Low-Rank Matrix Completion [33] | Recovery of censored entries using signal structure | Compensates for data loss from censoring | Computationally intensive |
| Navigator-Based Methods [36] | Using orbital navigators for motion estimation | Effective for 3D-EPI fMRI | Sensitive to physiological motion |

Experimental Protocols

Protocol 1: Motion-Compensated Recovery Using Structured Matrix Prior

Purpose: To recover high-quality fMRI time series from motion-corrupted data [33].

Methodology:

- Data Acquisition: Acquire unprocessed fMRI volumes (Yi) with recorded motion parameters.