Optimizing ICA for EEG Artifact Removal: A Strategic Guide for Biomedical Research

Abstract

Independent Component Analysis (ICA) is a cornerstone technique for isolating and removing artifacts from electroencephalography (EEG) data, a critical step in neuroimaging for drug development and clinical research. This article provides a comprehensive framework for optimizing ICA component selection, moving from foundational principles to advanced validation. We explore the core assumptions of ICA and the challenges posed by motion and physiological artifacts. The guide details current automated and semi-automated classification methods, including ICLabel and novel deep-learning approaches, and offers troubleshooting strategies for common pitfalls like overcleaning and motion artifact handling. Finally, we present rigorous validation methodologies and comparative analyses with emerging techniques like iCanClean and ASR, empowering researchers to enhance data integrity and accelerate biomarker discovery.

Understanding ICA: Core Principles and the Challenge of Artifacts

Independent Component Analysis (ICA) is a fundamental blind source separation technique widely used across various scientific fields, including biomedical signal processing and drug development. For researchers focused on artifact removal, a deep understanding of the basic ICA model is the first critical step toward optimizing its performance. This technical resource center addresses the core concepts and common experimental challenges associated with implementing ICA, providing targeted troubleshooting and proven methodologies to enhance the reliability of your results.

Frequently Asked Questions (FAQs)

1. What is the core mathematical principle behind ICA? ICA operates on the principle that observed signals are linear mixtures of statistically independent sources. The model is formulated as X = AS, where X is the observed data matrix, A is the mixing matrix, and S contains the independent source signals [1]. The goal is to find a demixing matrix W (where W = A⁻¹) to recover the source estimates via S = WX. The solution relies on maximizing the non-Gaussianity or statistical independence of the components, often using algorithms like Infomax or FastICA [2].
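To make the notation concrete, the following minimal NumPy sketch builds a toy mixture and checks that the ideal demixing matrix recovers the sources. The two synthetic sources and the mixing matrix `A` are illustrative assumptions; in a real recording `A` is unknown and `W` has to be estimated by an algorithm such as Infomax or FastICA.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 1000

# Two hypothetical non-Gaussian sources (rows of S): a sine wave and uniform noise.
t = np.linspace(0, 1, n_samples)
S = np.vstack([np.sin(2 * np.pi * 10 * t), rng.uniform(-1, 1, n_samples)])

# Hypothetical mixing matrix A (unknown in a real recording).
A = np.array([[1.0, 0.5],
              [0.3, 1.0]])

X = A @ S              # observed channel data, X = AS
W = np.linalg.inv(A)   # ideal demixing matrix, W = A^-1
S_hat = W @ X          # recovered sources, S = WX

print(np.allclose(S_hat, S))
```

The sketch only illustrates the model; it does not perform the blind estimation step that ICA algorithms carry out.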

2. Why is determining the correct number of Independent Components (ICs) crucial? Selecting the optimal number of ICs is vital to avoid under-decomposition (where artifacts and neural signals remain mixed) or over-decomposition (where neurogenic activity is split into multiple, less meaningful components, potentially distorting the signal) [3] [4]. An incorrect number can severely hamper effective source separation and subsequent artifact removal, leading to unreliable data [2].

3. What is "whitening" and why is it an essential pre-processing step? Whitening (or sphering) is a preprocessing step that removes correlations between the data channels by transforming the data so that its covariance matrix becomes the identity matrix [1]. Geometrically, this rescales the data cloud to unit variance in every direction, after which ICA only needs to perform a rotation to find the independent components. This simplifies the computation and is a standard first step in most ICA algorithms [1].
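As a rough illustration of whitening, the sketch below applies a symmetric whitening transform built from the eigendecomposition of the channel covariance. The function name `whiten`, the toy mixing matrix, and the Laplace-distributed sources are assumptions made for this example; toolboxes may use a different but equivalent whitening matrix.

```python
import numpy as np

def whiten(X):
    """Whiten channels x samples data so that its covariance is the identity."""
    Xc = X - X.mean(axis=1, keepdims=True)        # remove channel means
    cov = np.cov(Xc)                              # channel covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)        # cov = E diag(d) E^T
    V = eigvecs @ np.diag(eigvals ** -0.5) @ eigvecs.T   # symmetric whitening matrix
    return V @ Xc, V

rng = np.random.default_rng(1)
mixing = np.array([[2.0, 1.0, 0.0],
                   [0.5, 1.5, 0.3],
                   [0.0, 0.7, 1.0]])              # hypothetical mixing matrix
X = mixing @ rng.laplace(size=(3, 5000))          # correlated "channel" data
Z, V = whiten(X)

print(np.round(np.cov(Z), 3))                     # approximately the identity matrix
```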

4. My ICA-corrected data still shows residual artifacts. What is the most likely cause? Residual artifacts are often a sign of undercorrection. With commonly used settings, Infomax ICA can leave artifacts in the data and even distort neurogenic activity [5]. This can be mitigated by optimizing key pipeline parameters, such as:

- The high-pass and low-pass filters applied to the training data.

- Massively overweighting the proportion of training data containing prominent artifacts like saccadic spike potentials (SP) [5].

5. How can I objectively evaluate the quality of my ICA decomposition for artifact removal? Correction quality should be quantified in terms of both undercorrection (residual artifacts) and overcorrection (removal of neurogenic activity) [5]. Using objective measures like an eye-tracker can help quantify residual artifacts. Furthermore, decomposition quality can be evaluated using metrics like:

- The mutual information between components.

- The proportion of brain, muscle, and 'other' components identified.

- The residual variance of brain components [4].

Troubleshooting Guides

Problem 1: Poor ICA Decomposition Quality

Symptoms: High mutual information between components, a low proportion of identified brain components, or a high residual variance in brain components after decomposition [4].

| Investigation Step | Action & Diagnostic |
|---|---|
| Check Data Cleaning | Evaluate the impact of automatic sample rejection. Tools like the AMICA algorithm's built-in rejection function can iteratively remove bad samples based on model fit (log-likelihood) [4]. |
| Assess Data Quality | Determine if excessive movement artifacts are present. Note that increased movement intensity in mobile experiments can significantly decrease decomposition quality [4]. |
| Optimize Parameters | Systematically vary and optimize key parameters: (1) high-pass/low-pass filter cutoffs for training data, (2) overweighting data sections with strong artifacts, and (3) the threshold for component rejection [5]. |

Problem 2: Determining the Optimal Number of ICs

Challenge: It is difficult to know how many Independent Components (ICs) to extract, and an incorrect number leads to either under- or over-decomposition.

Solution A: Employ a Block-Similarity Method (e.g., TSS-ICA or CW_ICA)

This method is suitable for datasets of various sizes and does not require prior knowledge of the source signals [3] [2].

- Step 1: Use a flexible blocking strategy (like two-stage sampling) to divide the observed signals into two representative sub-blocks.

- Step 2: Run ICA separately on each sub-block, extracting different numbers of ICs.

- Step 3: Calculate the similarity between the ICs extracted from the two blocks. A rank-based correlation can serve as the similarity measure, and hypothesis testing (with Bonferroni correction) can identify "remarkably similar" components [3].

- Step 4: The optimal number of ICs is the largest number at which corresponding components between blocks remain highly similar. A sudden drop in similarity indicates that more components are being extracted than the data can support [3] [2].
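The matching in Steps 3 and 4 can be sketched as follows. This is not the published TSS-ICA or CW_ICA implementation: it assumes that component profiles from both blocks can be compared along a shared axis, uses a plain Spearman-style rank correlation without tie correction, and omits the hypothesis test. The function names and synthetic components are hypothetical.

```python
import numpy as np

def rank_rows(M):
    """Replace each row by the ranks of its entries (ties are not averaged)."""
    return np.argsort(np.argsort(M, axis=1), axis=1).astype(float)

def best_match_similarity(comps_a, comps_b):
    """For each component (row) of block A, return the highest absolute
    rank correlation with any component (row) of block B."""
    k = comps_a.shape[0]
    corr = np.corrcoef(rank_rows(comps_a), rank_rows(comps_b))[:k, k:]
    return np.abs(corr).max(axis=1)

rng = np.random.default_rng(3)
comps_a = rng.standard_normal((3, 200))              # hypothetical ICs from block 1
comps_b = np.vstack([
    -comps_a[1] + 0.05 * rng.standard_normal(200),   # sign-flipped copy of IC 1
    comps_a[0] + 0.05 * rng.standard_normal(200),    # reordered copy of IC 0
    rng.standard_normal(200),                        # unrelated component
])
similarity = best_match_similarity(comps_a, comps_b)
print(np.round(similarity, 2))
```

Taking the absolute value makes the comparison insensitive to the sign ambiguity of ICA, and taking the row-wise maximum handles the arbitrary ordering of components. Repeating this for increasing numbers of extracted ICs and watching for the first low best-match value mirrors the "sudden drop" criterion.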

Solution B: Leverage the Durbin-Watson (DW) Criterion

This method uses the residual signal after reconstructing the data with a set number of ICs [2].

- Procedure: Calculate the DW statistic for the residual matrix. A DW value tending towards 0 indicates strongly autocorrelated residuals, meaning that structured signal remains and further components are needed (under-decomposition). A value close to 2 indicates that the residual is essentially uncorrelated noise, so extracting additional components risks over-decomposition [2].
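For reference, the DW statistic of a residual series e is the sum of squared successive differences divided by the sum of squares. The short sketch below computes it for two synthetic residuals; the signals and the function name are illustrative assumptions, and a real analysis would apply the statistic to each row of the residual matrix.

```python
import numpy as np

def durbin_watson(residual):
    """DW = sum((e_t - e_{t-1})^2) / sum(e_t^2) for a 1-D residual series."""
    e = np.asarray(residual, dtype=float)
    return np.sum(np.diff(e) ** 2) / np.sum(e ** 2)

rng = np.random.default_rng(2)
noise = rng.standard_normal(5000)                        # uncorrelated residual
structured = np.sin(2 * np.pi * np.arange(5000) / 500)   # smooth, structured residual

dw_noise = durbin_watson(noise)
dw_structured = durbin_watson(structured)
print(round(dw_noise, 2), round(dw_structured, 4))
```

The uncorrelated series yields a value near 2, while the smooth series yields a value near 0, matching the interpretation given in the procedure.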

Experimental Protocols for Key Cited Studies

Protocol 1: Optimizing ICA for Ocular Artifact Removal in Free-Viewing Experiments [5]

- Data Collection: Combine EEG with eye-tracking during tasks with unconstrained eye movements (e.g., visual search or sentence reading).

- Parameter Optimization: Orthogonally vary four key parameters in your ICA pipeline:

  - High-pass filter cutoff for training data.

  - Low-pass filter cutoff for training data.

  - Proportion of training data containing saccadic spike potentials (SP).

  - Threshold for eye tracker-based component rejection.

- Quality Assessment: Use the eye-tracker to objectively quantify correction quality, measuring both residual artifacts (undercorrection) and the removal of neurogenic activity (overcorrection).

- Benchmarking: Compare the performance of your optimized Infomax ICA against an alternative spatial filter like Multiple Source Eye Correction (MSEC).

Protocol 2: Evaluating the Impact of Data Cleaning on ICA Decomposition [4]

- Dataset Selection: Acquire EEG datasets with varying degrees of participant mobility (from stationary to mobile setups).

- Apply Cleaning: Process the data using the AMICA algorithm, systematically varying the cleaning strength (number of cleaning iterations and rejection thresholds).

- Quality Metrics: For each decomposition, calculate:

  - Mutual information between components.

  - The proportion of components classified as brain, muscle, or 'other'.

  - Residual variance of brain components.

  - Signal-to-noise ratio in specific conditions.

- Analysis: Statistically analyze how movement intensity and cleaning strength affect the decomposition quality metrics.

Table 1. Effects of Data Cleaning on ICA Decomposition Quality (AMICA Algorithm) [4]

| Movement Intensity | Cleaning Strength | Effect on Decomposition Quality |
|---|---|---|
| High (within a study) | Not Applied | Significant decrease in quality |
| Variable (across studies) | Not Applied | Effect not consistently significant |
| Any | Low (e.g., 1-2 iterations) | Minor improvement |
| Any | Moderate (e.g., 5-10 iterations) | Likely significant improvement |
| Any | High | Improvement smaller than expected; AMICA is robust with limited cleaning |

Table 2. Methods for Determining the Optimal Number of ICs

| Method | Principle | Key Metric | Pros | Cons |
|---|---|---|---|---|
| TSS-ICA/CW_ICA [3] [2] | Block Similarity | Maximum rank-based correlation between ICs from two data sub-blocks | No prior knowledge needed; suitable for small samples; statistically rigorous | Requires data segmentation |
| Durbin-Watson (DW) [2] | Residual Analysis | DW statistic of residual signals (0=under, 2=over) | Conceptually simple | Can be unstable with real-world, non-linear signals |
| KMO-ICA [3] | Partial Correlation | Kaiser-Meyer-Olkin index of the residual matrix | Does not require source prior knowledge | Struggles with unstructured data; matrix inversion issues with high correlation |

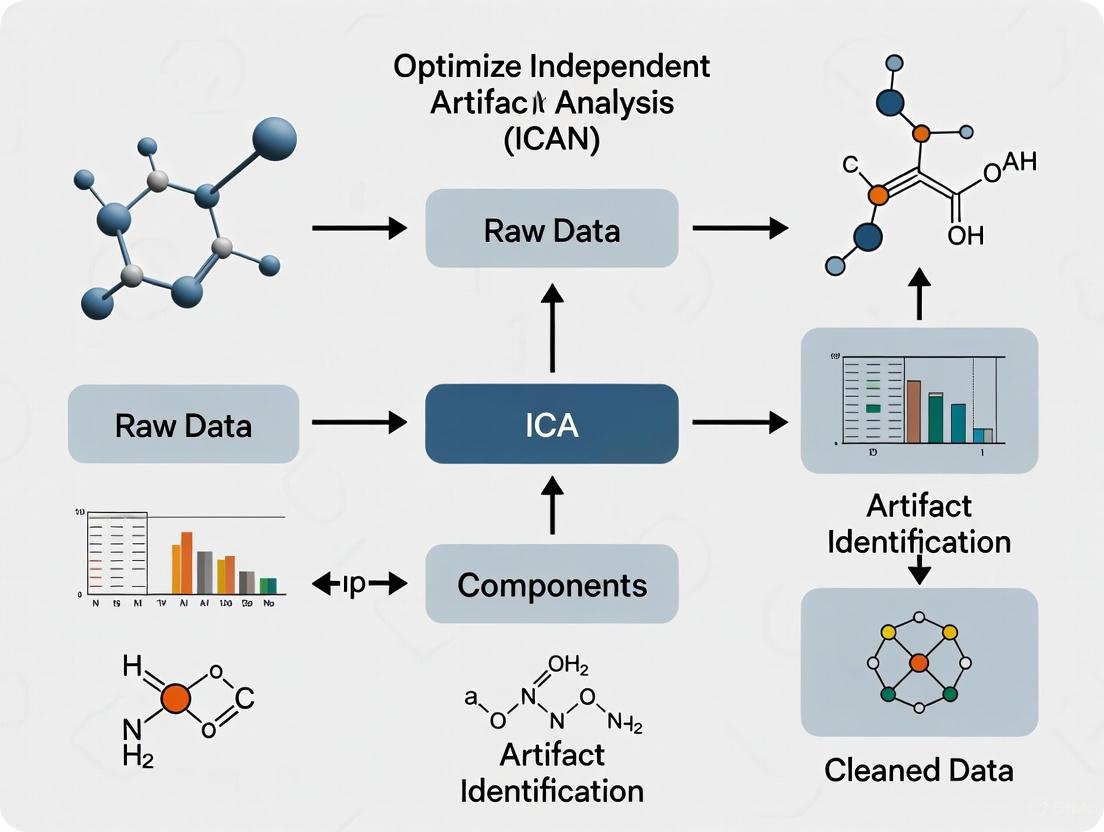

Workflow Visualization

ICA Artifact Removal Workflow

Optimal IC Number Determination

The Scientist's Toolkit

Table 3. Essential Research Reagents & Computational Tools

| Item Name | Function & Application | Key Notes |
|---|---|---|
| Infomax ICA [1] | A common algorithm for performing ICA decomposition. | Minimizes mutual information between output components. Available in toolboxes like EEGLAB. |
| AMICA Algorithm [4] | A powerful ICA algorithm with integrated automatic sample rejection. | Can iteratively reject bad samples during decomposition based on model log-likelihood, improving results. |
| FastICA Algorithm [2] | A widely-used algorithm for ICA that maximizes non-Gaussianity. | Known for its computational efficiency. |
| Eye Tracker [5] | Objective validation tool for quantifying ocular artifacts pre- and post-ICA correction. | Critical for benchmarking artifact removal efficacy and optimizing pipeline parameters. |
| CW_ICA / TSS-ICA [3] [2] | Methods to determine the optimal number of ICs via data blocking and similarity testing. | TSS-ICA is designed to work well with small-scale datasets, a common challenge. |
| Durbin-Watson Criterion [2] | A statistical metric used to determine the optimal number of ICs via residual analysis. | Values near 0 suggest under-decomposition; values near 2 suggest over-decomposition. |

FAQ: A Researcher's Guide to EEG Artifacts

Q1: What is an EEG artifact and why is its removal critical for research? An EEG artifact is any recorded signal that does not originate from neural activity. These unwanted signals contaminate the recording and can obscure the underlying brain signals, which have a very low amplitude, typically a few to tens of microvolts [6]. Ensuring clean signals is a fundamental preliminary step in EEG analysis because artifacts can compromise data quality, lead to misinterpretation of results, and in clinical contexts, even cause misdiagnosis (e.g., by mimicking epileptiform activity) [6] [7].

Q2: What are the main categories of EEG artifacts? EEG artifacts are broadly categorized into two groups based on their origin [6] [7]:

- Physiological Artifacts: These originate from the subject's own body. Key examples include ocular artifacts (EOG from eye blinks and movements), muscle artifacts (EMG from jaw clenching, swallowing), cardiac artifacts (ECG from heartbeats), and artifacts from perspiration or respiration [6].

- Non-Physiological Artifacts: These are technical artifacts stemming from external sources. Common types are electrode "pop" from sudden impedance changes, cable movement, AC power line interference (50/60 Hz), and incorrect reference electrode placement [6].

Q3: How do different artifacts impact the ICA component selection process? Independent Component Analysis (ICA) works by separating mixed signals into statistically independent components [8]. Successful identification and rejection of artifact-related components are crucial. Each artifact type has a distinct "signature" that can be recognized in the ICA component's properties [9]:

- Ocular (EOG) Components: Exhibit a characteristic scalp topography with strong frontal projections. Their activity time course shows high-amplitude, low-frequency deflections corresponding to blinks or saccades, and the power spectrum shows a smoothly decreasing pattern [9].

- Muscle (EMG) Components: Often show a focal, irregular topography. Their activity is high-frequency and non-rhythmic, and the power spectrum is broad and dominates the beta and gamma frequency ranges [6].

- Cardiac (ECG) Components: Typically show a rhythmic waveform in the component time course that correlates with the heart rate. The topography may be prominent in channels close to the neck [6].

Q4: What are the specific challenges of artifact removal in modern wearable EEG studies? Wearable EEG systems, often using dry electrodes and fewer channels (typically below 16), face unique challenges [10] [11]. The uncontrolled, "in-motion" recording conditions introduce more and stronger motion artifacts. Furthermore, the low number of channels limits the effectiveness of standard source separation methods like ICA, making artifact removal more difficult and driving the need for novel, tailored algorithms [10].

The following table summarizes the key characteristics of the most common physiological artifacts, providing a reference for their identification.

Table 1: Characteristics of Common Physiological EEG Artifacts

| Artifact Type | Origin | Time-Domain Signature | Frequency-Domain Signature | Impact on ICA |
|---|---|---|---|---|
| Ocular (EOG) | Corneo-retinal potential dipole; eye blinks and movements [6]. | Sharp, high-amplitude deflections (100-200 µV), especially over frontal electrodes (Fp1, Fp2) [6]. | Dominant in low frequencies (Delta: 0.5-4 Hz, Theta: 4-8 Hz), potentially mimicking cognitive processes [6]. | Components with strong frontal topography and low-frequency, high-amplitude activity are likely ocular and should be flagged for rejection [9]. |
| Muscle (EMG) | Electrical activity from muscle contractions (e.g., jaw, neck, face) [6]. | High-frequency, sharp activity superimposed on the EEG; amplitude proportional to contraction strength [6]. | Broadband noise, dominating Beta (13-30 Hz) and Gamma (>30 Hz) ranges, masking cognitive signals [6]. | Components with a focal topography and high-frequency, non-stationary activity are characteristic of muscle artifacts. |
| Cardiac (ECG) | Electrical signal from heartbeats [6]. | Rhythmic, periodic waveforms recurring at the heart rate, often visible in central or neck-adjacent channels [6] [12]. | Overlaps several EEG bands; may show a peak at the heart rate frequency (typically ~1-1.7 Hz) [6]. | Components showing a consistent, periodic pattern that correlates with a simultaneously recorded ECG channel are likely cardiac. |
| Motion | Gross motor activity disrupting the electrode-skin interface (head/body movements) [6]. | Large, slow baseline drifts or sudden, non-linear noise bursts [6] [13]. | Can introduce power at the frequency of movement (e.g., gait frequency and its harmonics during walking/running) [13]. | Motion artifacts can severely compromise the quality of the ICA decomposition itself, making it harder to isolate clean brain or other artifact components [13]. |

Experimental Protocols for Artifact Management

Protocol 1: Standard ICA for Ocular Artifact Removal

This protocol outlines the standard methodology for using ICA to identify and remove ocular artifacts, such as blinks, from multi-channel EEG data [8] [9].

Methodology:

- Data Preprocessing: Load the EEG dataset. Rejecting bad channels and bad data segments before running ICA is recommended, although the decomposition can also be run directly on data that are already reasonably clean [9].

- Channel Selection: Select the EEG channels to be used for the decomposition. Typically, all scalp EEG channels are included, while non-EEG channels (like dedicated EOG/EMG) are excluded [9].

- ICA Execution: Run the ICA decomposition using an algorithm such as Infomax ICA (runica). The algorithm assumes the data is a linear mix of statistically independent, non-Gaussian sources and calculates an "unmixing matrix" (W) to separate them such that S = WX, where X is the measured data and S is the matrix of independent components [8].

- Component Inspection: Inspect the resulting independent components using tools that visualize the component's scalp topography, activity time course, and power spectrum [9].

- Artifact Identification & Rejection: Identify components corresponding to ocular artifacts based on their typical frontal topography, high-amplitude deflections in the time course that match blinks, and a smoothly decreasing power spectrum [9].

- Data Reconstruction: Reconstruct the artifact-corrected EEG signal by projecting the components back to the sensor space, excluding (zeroing out) the artifact components identified in the previous step [8].
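The reconstruction step can be sketched in a few lines of NumPy. The example below assumes an unmixing matrix `W` is already available (here it is simply the inverse of a hypothetical mixing matrix) and that component 0 has been judged ocular; the function name `remove_components` is an assumption for illustration, not an EEGLAB function.

```python
import numpy as np

def remove_components(X, W, artifact_idx):
    """Back-project all components except those listed in artifact_idx."""
    S = W @ X                        # component activations, S = WX
    A = np.linalg.pinv(W)            # mixing matrix (columns are scalp maps)
    S_clean = S.copy()
    S_clean[artifact_idx, :] = 0.0   # zero out the artifact components
    return A @ S_clean               # cleaned data in sensor space

rng = np.random.default_rng(4)
S_true = rng.laplace(size=(3, 500))              # three hypothetical sources
A_true = np.array([[1.0, 0.4, 0.2],
                   [0.6, 1.0, 0.3],
                   [0.1, 0.5, 1.0]])             # hypothetical mixing matrix
X = A_true @ S_true
W = np.linalg.inv(A_true)                        # stands in for an estimated W

X_clean = remove_components(X, W, artifact_idx=[0])
print(np.allclose(X_clean, A_true[:, 1:] @ S_true[1:]))
```

Zeroing a row of S before back-projection is equivalent to subtracting that component's contribution from every channel, which is why the cleaned data equal the mixture of the remaining sources.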

Protocol 2: Targeted QRS-Complex Based ECG Artifact Removal

This protocol describes a method to specifically remove ECG artifacts by detecting the QRS complex in a synchronized ECG recording and applying a filter only to the contaminated segments, thus minimizing the loss of neural information [12].

Methodology:

- Acquire Reference Signal: Simultaneously record a reference ECG signal alongside the EEG.

- Detect R-Peaks: Apply an R-peak detection algorithm (e.g., the open-source R_peak_detect.m function in MATLAB) to the ECG signal to identify the location of each heartbeat [12].

- Define QRS Complexes: Use the detected R-peaks as reference points to define the time windows of the QRS complexes [12].

- Apply Targeted Filtering: Instead of filtering the entire EEG signal, apply a zero-phase filter (or another suitable filter) only to the EEG segments that correspond to the identified QRS complex windows. This preserves the integrity of the EEG signal outside of these specific periods [12].

- Analyze Cleaned EEG: Proceed with the analysis of the cleaned EEG signal.
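The windowing and targeted filtering steps might look like the following sketch. It assumes the R-peak sample indices have already been detected, and it uses a symmetric moving average as a simple stand-in for the zero-phase filter; the window lengths, kernel width, sampling rate, and function names are all illustrative assumptions rather than values from the cited study.

```python
import numpy as np

def qrs_mask(r_peaks, n_samples, fs, pre_s=0.05, post_s=0.05):
    """Boolean mask that is True inside a window around each R-peak."""
    mask = np.zeros(n_samples, dtype=bool)
    pre, post = int(pre_s * fs), int(post_s * fs)
    for r in r_peaks:
        mask[max(r - pre, 0):min(r + post + 1, n_samples)] = True
    return mask

def filter_inside_mask(eeg, mask, width=9):
    """Smooth with a symmetric (zero-phase) moving average, replacing
    only the samples where mask is True."""
    kernel = np.ones(width) / width
    smoothed = np.convolve(eeg, kernel, mode="same")
    out = eeg.copy()
    out[mask] = smoothed[mask]
    return out

fs = 250                                   # sampling rate in Hz (assumed)
rng = np.random.default_rng(5)
eeg = rng.standard_normal(1000)            # placeholder single-channel EEG
r_peaks = [200, 450, 700]                  # hypothetical R-peak sample indices
mask = qrs_mask(r_peaks, len(eeg), fs)
cleaned = filter_inside_mask(eeg, mask)
```

Samples outside the QRS windows are copied through untouched, which is the property the protocol relies on to preserve neural information.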

The workflow for this targeted approach is outlined below.

Protocol 3: Advanced Processing for Motion Artifacts in Mobile EEG

For movement-intensive studies (e.g., locomotion), specialized preprocessing before ICA is often necessary. This protocol compares two modern approaches: Artifact Subspace Reconstruction (ASR) and iCanClean [13].

Methodology:

- Data Acquisition: Record EEG data during dynamic tasks (e.g., running) using a mobile EEG system. The use of "dual-layer" sensors, which include a noise-reference electrode, is ideal for iCanClean [13].

- Approach Selection:

  - Artifact Subspace Reconstruction (ASR): This method uses a sliding-window Principal Component Analysis (PCA) to identify and remove high-variance signal components that exceed a user-defined threshold (standard deviation 'k') compared to a clean baseline calibration period. A higher k value (e.g., 20-30) is less aggressive, while a lower value cleans more aggressively [13].

  - iCanClean: This method uses Canonical Correlation Analysis (CCA) to identify subspaces in the scalp EEG that are highly correlated with subspaces in a noise reference. The correlated noise components are then subtracted. The noise reference can come from dual-layer electrodes or be created as a "pseudo-reference" from the raw EEG itself (e.g., by notch-filtering below 3 Hz to isolate motion artifact). The aggressiveness is controlled by an R² threshold [13].

- Evaluation of Cleaning: The success of these methods can be evaluated by assessing the dipolarity of the resulting ICA components, the reduction of spectral power at the gait frequency and its harmonics, and the ability to recover expected event-related potentials (ERPs) like the P300 [13].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Datasets for EEG Artifact Removal Research

| Tool/Resource | Function/Benefit | Example Use-Case |
|---|---|---|
| EEGLAB | An interactive MATLAB toolbox for processing EEG data. It provides a comprehensive framework for ICA, including running algorithms and component inspection tools [9]. | The primary platform for implementing Protocol 1 (Standard ICA), allowing researchers to visualize component topographies, spectra, and time courses to make informed rejection decisions [9]. |
| ICLabel | An EEGLAB plugin that automatically classifies ICA components into categories (e.g., "Brain", "Eye", "Muscle", "Heart", "Line Noise", "Channel Noise", "Other") using a classifier trained on a large set of labeled components [13]. | Provides an initial, automated labeling of components to assist researchers, particularly those new to ICA, in the component selection process. Note: it may be less effective on data with strong motion artifacts [13]. |
| EEGdenoiseNet | A benchmark dataset provided as a semi-synthetic library of clean EEG, EOG, and EMG signals. Researchers can mix these to create datasets with known ground truth [14]. | Invaluable for training and evaluating new deep learning models (like CLEnet) for artifact removal, as it allows for quantitative performance measurement via SNR and Correlation Coefficient [14]. |
| Deep Learning Models (e.g., CLEnet) | Emerging models that combine architectures like CNN and LSTM in an end-to-end network to separate artifacts from EEG signals without requiring manual component selection [14]. | Used for automated, robust artifact removal from multi-channel EEG data, showing superior performance in removing mixed and unknown artifacts compared to traditional models [14]. |
| Fixed Frequency EWT + GMETV | A novel method designed for single-channel EEG that automates the removal of EOG artifacts by decomposing the signal and filtering out contaminated components [15]. | A specialized solution for the growing field of portable, single-channel EEG devices where multi-channel techniques like ICA are not applicable [15]. |

FAQs: Understanding the Core Challenge

Q1: Why is it so difficult to automatically distinguish brain signal components from artifact components in ICA? The core challenge lies in the significant overlap in the statistical and physiological characteristics of brain and artifact components. ICA separates data into maximally independent sources, but it is a purely statistical technique and cannot distinguish between neural and non-neural sources based on the intended research goal. Therefore, components representing, for example, brain activity and muscle activity can appear similarly non-Gaussian and statistically independent, making them hard to separate using simple, automated thresholds [16] [17] [18].

Q2: What are the specific characteristics where this overlap occurs? The overlap primarily manifests in four key domains, which are typically used to classify components:

- Spatial Topography: While eye blink artifacts often have a characteristic frontal distribution, other artifacts like muscle tension can have topographies that overlap with brain regions of interest [9] [17].

- Temporal Activity: The time courses of some high-frequency brain oscillations (e.g., gamma) can be mistaken for the high-frequency, burst-like activity of muscle artifacts (EMG) [17].

- Spectral Profile: The power spectrum of an artifact can sometimes mimic that of brain signals. For instance, the smoothly decreasing spectrum of an eye artifact can overlap with the spectral profile of certain brain rhythms [9].

- Statistical Properties: Measures of non-Gaussianity, like kurtosis, which are used to find independent components, can be high for both brain-derived and artifactual sources. A component with a peaky distribution could be an eye blink or an epileptic spike [18] [19].

Q3: In what specific experimental paradigms is ICA-based artifact removal most likely to be unreliable? ICA performance can be compromised when the artifact has low trial-to-trial variability. Research on Transcranial Magnetic Stimulation (TMS)-evoked potentials has shown that if an artifact repeats very similarly after each TMS pulse, it can break the statistical independence assumption of ICA. This can cause the algorithm to inaccurately separate components, potentially removing brain signals along with the artifact and biasing the results [16].

Q4: How can researchers estimate the reliability of their ICA decomposition for a given dataset? Even without a ground truth, one can assess reliability. A study on TMS-EEG suggested that the trial-to-trial variability of the identified artifact components can be measured after ICA is run. Low variability in a component may indicate a higher risk of an unreliable decomposition and spurious cleaning [16]. Furthermore, using tools like the RELICA plugin in EEGLAB allows researchers to assess the stability of their ICA results through bootstrapping, revealing which components are robustly identified across multiple runs [9].

The following tables consolidate quantitative findings from research on artifact detection and removal.

Table 1: Performance Comparison of Automatic Artifact Detection Methods

| Detection Method | Key Features Used | Average Performance (Mean Squared Error) | Notes |
|---|---|---|---|
| ICA + Renyi's Entropy [19] | Renyi's entropy (order 2) | 7.4% Error | Outperformed the kurtosis/Shannon entropy method, and was able to detect muscle artifacts. |
| ICA + Kurtosis & Shannon's Entropy [19] | Kurtosis and Shannon's Entropy | 31.3% Error | Failed to detect muscle activity in many cases. |
| Linear Classifier (LPM) [17] | 6 optimized features (spectral, spatial, temporal) | <10% MSE (on Reaction Time data) | Performance was on par with inter-expert disagreement. |
| Linear Classifier (LPM) [17] | Same pre-calculated classifier as above | 15% MSE (on Auditory ERP data) | Demonstrated good generalization to a different paradigm. |

Table 2: Impact of Artifact Variability on ICA Cleaning Accuracy [16]

| Artifact Trial-to-Trial Variability | Impact on ICA Independence Assumption | Expected Cleaning Accuracy |
|---|---|---|
| Low (Deterministic) | Breaks the assumption of statistical independence between components. | Low; high risk of also removing non-artifactual brain data. |
| High (Stochastic) | Better fulfills the assumption of statistical independence. | High; more reliable isolation and removal of the artifact. |

Experimental Protocols

Protocol 1: Systematic Component Inspection and Labeling

This methodology outlines the manual procedure for identifying and classifying ICA components, which is considered a gold standard against which automated methods are measured [9].

1. ICA Decomposition:

- Data Preparation: Load the continuous or epoched EEG dataset. Ensure data is high-pass filtered (e.g., 1 Hz cutoff) to remove slow drifts that can impair ICA performance [20].

- Algorithm Selection: Run ICA decomposition using an algorithm such as Infomax, FastICA, or Picard. Infomax is a common default (for example, in EEGLAB) and is well suited to super-Gaussian sources [9] [20].

2. Multi-Domain Component Visualization: For each independent component, generate and inspect the following plots [9]:

- Spatial Topography: Plot the 2-D scalp map of the component's back-projection (a column of the inverse weight matrix, W⁻¹). Look for characteristic patterns (e.g., frontal dipoles for eye blinks, temporal/neck patterns for muscle noise).

- Time Course: Scroll through the component's activation (a row of the source matrix, S). Look for stereotypical temporal patterns (e.g., infrequent, large-amplitude spikes for blinks; high-frequency, burst-like activity for muscle noise).

- Power Spectrum: Plot the frequency spectrum of the component's activation. Look for distinctive spectral signatures (e.g., low-frequency dominance for eye movements; broad, high-frequency power for muscle artifacts).

- ERPimage: (For epoched data) Create an ERPimage plot to see if the component's activity is time-locked to events. This can help identify cognitive components or stimulus-related artifacts.

3. Expert Labeling: Based on the confluence of evidence from all three domains (spatial, temporal, spectral), an expert labels each component as "Brain," "Artifact" (and specifies type, e.g., "EOG," "EMG," "ECG"), or "Mixed/Uncertain."

Protocol 2: Assessing ICA Reliability Using the RELICA Method

This protocol describes a procedure to evaluate the stability of an ICA decomposition, which is crucial for understanding the confidence in component selection [9].

1. Data Resampling:

- Perform multiple ICA decompositions (e.g., 100 runs) on bootstrapped versions of the original data. Bootstrapping involves creating new datasets by randomly sampling segments from the original data with replacement.

2. Cluster Analysis:

- For each run of ICA, the components are computed. Subsequently, components from all runs are clustered based on similarity of their scalp topographies and/or time courses.

3. Reliability Measurement:

- The stability and reliability of each component from the original decomposition are measured by how consistently it is re-identified across the bootstrapped runs and how tightly its replicas cluster together. Components that do not form a tight, consistent cluster are considered unreliable and should be interpreted with caution.
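The resampling step can be illustrated with a small sketch that draws fixed-length segments with replacement. This is not RELICA's own code: the segment length, the function name `bootstrap_segments`, and the toy data are assumptions, and the subsequent ICA runs and clustering are omitted.

```python
import numpy as np

def bootstrap_segments(X, seg_len, rng):
    """Resample fixed-length segments of channels x samples data
    with replacement and concatenate them in the drawn order."""
    n_ch = X.shape[0]
    n_segs = X.shape[1] // seg_len
    segs = X[:, :n_segs * seg_len].reshape(n_ch, n_segs, seg_len)
    picks = rng.integers(0, n_segs, size=n_segs)   # sampling with replacement
    return segs[:, picks, :].reshape(n_ch, -1), picks

rng = np.random.default_rng(6)
X = np.arange(24, dtype=float).reshape(2, 12)      # toy data: 2 channels, 12 samples
boot, picks = bootstrap_segments(X, seg_len=3, rng=rng)
print(picks)
print(boot)
```

Each bootstrapped dataset would then be decomposed separately, and the resulting components clustered to see which ones reappear consistently.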

Signaling Pathways & Workflows

ICA Component Inspection Workflow

Artifact Variability Impact on ICA

The Scientist's Toolkit

Table 3: Essential Software Tools and Algorithms for ICA Research

| Tool/Algorithm | Function | Key Application in ICA Research |
|---|---|---|
| EEGLAB [9] | An interactive MATLAB toolbox for processing EEG data. | Provides a complete ecosystem for running ICA (multiple algorithms), visualizing components (topography, time-course, spectrum), and manually labeling them. Plugins like RELICA assess reliability. |
| MNE-Python [20] | An open-source Python package for exploring, visualizing, and analyzing neurophysiological data. | Offers implementations of ICA (FastICA, Picard, Infomax) with tight integration for creating and applying ICA solutions to Raw and Epochs objects. Includes functions for automatically detecting EOG/ECG artifacts. |
| FastICA Algorithm [21] [20] | A computationally efficient ICA algorithm based on a fixed-point iteration scheme to maximize non-Gaussianity. | A standard algorithm for performing the decomposition. Its efficiency is beneficial for high-density EEG systems or for running multiple decompositions (e.g., in RELICA). |
| Infomax Algorithm [9] [20] | An ICA algorithm that maximizes the mutual information between the inputs and outputs of a neural network. | Another standard algorithm, particularly effective for super-Gaussian sources. The extended-Infomax version can also find sub-Gaussian sources, making it robust for various data types. |
| RELICA Plugin [9] | An EEGLAB plugin for assessing the reliability of ICA components. | Used to measure the stability and reliability of components identified by ICA through bootstrapping, helping researchers identify which components are robust and which are uncertain. |

Troubleshooting Guide: ICA Component Selection

Q: What are the key metrics for selecting biologically relevant vs. artifactual ICA components? A: The selection relies on multiple metrics assessing the component's topography, time-course statistics, and spectral properties. No single metric is sufficient; components should be evaluated through a convergent validation approach.

Metric 1: Topographic Dipolarity

- Purpose: Assesses the biological plausibility of a component. Brain-originating components should have a scalp map compatible with a single equivalent dipole.

- Interpretation: A low residual variance (RV) indicates a good fit. Components with high dipolarity are likely cortical in origin.

- Quantitative Threshold: Components with an RV of less than 10% are typically considered near-dipolar and thus likely of cerebral origin [22].
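The residual variance check can be sketched in a few lines. This assumes the dipole model's projected scalp map has already been obtained from a dipole-fitting tool; the function name and the example values are hypothetical, and only the 10% threshold comes from the text above.

```python
def residual_variance(scalp_map, dipole_projection):
    """Fraction of the scalp map's power left unexplained by the dipole model."""
    if len(scalp_map) != len(dipole_projection):
        raise ValueError("both maps must cover the same channels")
    residual_power = sum((m - p) ** 2 for m, p in zip(scalp_map, dipole_projection))
    map_power = sum(m ** 2 for m in scalp_map)
    return residual_power / map_power


# Made-up 4-channel example: the model misses the map on one channel only.
component_map = [3.0, 4.0, 0.0, 0.0]
model_map = [3.0, 4.0, 1.0, 0.0]

rv = residual_variance(component_map, model_map)
print(f"RV = {rv:.1%}, near-dipolar: {rv < 0.10}")
```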

Metric 2: Time-Course Kurtosis

- Purpose: Measures the "peakedness" of the component time-course distribution. Artifacts like eye blinks or muscle activity often have highly peaked, non-Gaussian distributions.

- Interpretation: High kurtosis can indicate the presence of stereotypical artifacts. Because ICA works by maximizing non-Gaussianity, such strongly non-Gaussian sources are often isolated into their own components [23].
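To make the kurtosis metric concrete, here is a minimal sketch that computes excess kurtosis from the second and fourth central moments. The two time courses are synthetic and chosen only to contrast a smooth oscillation with a sparse, blink-like signal; they are not real component activations.

```python
import math


def excess_kurtosis(samples):
    """Excess kurtosis (0 for a Gaussian) from the 2nd and 4th central moments."""
    n = len(samples)
    mean = sum(samples) / n
    m2 = sum((x - mean) ** 2 for x in samples) / n
    m4 = sum((x - mean) ** 4 for x in samples) / n
    return m4 / (m2 ** 2) - 3.0


# Synthetic time courses (assumed data, for illustration only).
oscillation = [math.sin(2 * math.pi * k / 50) for k in range(1000)]
blink_like = [10.0 if k % 100 == 0 else 0.0 for k in range(1000)]

print(excess_kurtosis(oscillation))  # negative: flatter than a Gaussian
print(excess_kurtosis(blink_like))   # large and positive: rare, large deflections
```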

Metric 3: Spectral Features

- Purpose: Identifies components based on their frequency content.

- Interpretation:

  - Ocular Artifacts: Dominated by low-frequency power.

  - Muscle Artifacts: Exhibit high-frequency "broadband" power.

  - Neural Components: Often show activity in canonical bands like alpha, beta, or theta.
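One simple way to summarize a component's frequency content is the share of spectral power in a few bands. The sketch below uses a plain FFT periodogram. The band edges in `BANDS` are illustrative assumptions rather than thresholds from this guide, and the 10 Hz test signal is synthetic.

```python
import numpy as np


def band_power_fractions(time_course, sfreq, bands):
    """Fraction of total (non-DC) spectral power that falls in each band."""
    x = np.asarray(time_course, dtype=float)
    x = x - x.mean()
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(x.size, d=1.0 / sfreq)
    total = power[freqs > 0].sum()
    return {
        name: float(power[(freqs >= low) & (freqs < high)].sum() / total)
        for name, (low, high) in bands.items()
    }


# Illustrative band edges in Hz (assumptions, not values from the article).
BANDS = {"low (0.5-4)": (0.5, 4.0), "alpha (8-12)": (8.0, 12.0), "high (20-100)": (20.0, 100.0)}

sfreq = 250.0
t = np.arange(1000) / sfreq
alpha_like = np.sin(2 * np.pi * 10.0 * t)  # synthetic 10 Hz oscillation
print(band_power_fractions(alpha_like, sfreq, BANDS))
```

A component dominated by the low band would point toward ocular activity, and one dominated by the high band toward muscle activity, in line with the interpretation above.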

Metric 4: Temporal Entropy (Mutual Information Reduction)

- Purpose: Measures the statistical independence of a component from all others. A successful ICA decomposition maximizes the independence between components.

- Interpretation: Algorithms that achieve a higher Mutual Information Reduction (MIR) are more effective at separating the data into true underlying sources. The remaining Pairwise Mutual Information (PMI) between component time courses should be low [22].
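Pairwise mutual information between two component time courses can be approximated with a joint histogram. This is a simple estimator chosen for illustration and is not necessarily the one used in [22]; the bin count and the random test signals are assumptions.

```python
import numpy as np


def pairwise_mutual_information(x, y, bins=16):
    """Histogram estimate of mutual information (in nats) between two signals."""
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)  # marginal of x, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)  # marginal of y, shape (1, bins)
    nonzero = pxy > 0
    return float(np.sum(pxy[nonzero] * np.log(pxy[nonzero] / (px @ py)[nonzero])))


rng = np.random.default_rng(0)
source_a = rng.standard_normal(5000)
source_b = rng.standard_normal(5000)     # independent of source_a
mixed = 0.7 * source_a + 0.3 * source_b  # still contains source_a

print(pairwise_mutual_information(source_a, source_b))  # close to zero
print(pairwise_mutual_information(source_a, mixed))     # clearly larger
```

Histogram estimates carry a small positive bias, so values for independent signals are near zero rather than exactly zero.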

Q: My ICA decomposition fails to capture clear ocular artifacts. What could be wrong? A: This issue can stem from data quality or algorithmic settings.

- Data Preprocessing: Ensure your data is properly high-pass filtered (e.g., at 0.5-1 Hz) to remove slow drifts that can hinder ICA performance [24].

- Algorithm Choice: Different ICA algorithms (e.g., Infomax, FastICA, AMICA) can yield varying results. AMICA and other likelihood/mutual information-based methods have been shown to produce a higher number of dipolar components, which may improve separation [22].

- Number of Components: Specifying an incorrect number of components can lead to poor unmixing. If you suspect the default is too low, try increasing it, as this can sometimes better capture artifacts [24].

Q: After ICA cleaning, my TMS-evoked potentials (TEPs) seem altered. Is this expected? A: Yes, this is a known risk. Research shows that if the TMS-induced artifact has low trial-to-trial variability, ICA may incorrectly identify it as a stable, independent source. This can cause the algorithm to remove not just the artifact but also parts of the brain-generated TEP, leading to biased results [25]. Always measure the variability of the removed artifact components to estimate cleaning reliability.

Quantitative Metrics for ICA Component Evaluation

The table below summarizes the key metrics, their targets, and interpretation guidelines.

| Metric | What It Measures | Target for Brain Components | Indication of Artifact |

|---|---|---|---|

| Dipolarity [22] | Fit of component scalp topography to a single equivalent dipole. | Residual Variance < 10% | High residual variance; irregular scalp map. |

| Kurtosis [23] | "Heavy-tailedness" or peakedness of the amplitude distribution. | Varies; generally moderate. | Extreme positive or negative values. |

| Spectral Features | Power distribution across frequency bands. | Peak in a canonical band (e.g., Alpha: 8-12 Hz). | Dominant low-freq. (ocular) or high-freq. (muscle). |

| Temporal Entropy / MIR [22] | Statistical independence from other component time-courses. | High independence (low mutual information with others). | High dependency on other components (high PMI). |

Experimental Protocol: Validating an ICA Decomposition

This protocol provides a step-by-step method to evaluate the quality of an ICA decomposition for artifact removal, based on best practices from recent literature.

1. Data Preprocessing & Decomposition:

- Apply a high-pass filter (e.g., 1 Hz cutoff) to the continuous EEG data [24].

- Perform ICA decomposition using a chosen algorithm (e.g., Infomax, AMICA) on the filtered data.

- Do not perform aggressive artifact rejection prior to ICA, as this can disrupt the statistical assumptions of the algorithm [26].

2. Component Evaluation & Metric Calculation:

- For each component, calculate the four key metrics:

  - Dipolarity: Compute the residual variance (RV) between the component's scalp map and the projection of the best-fitting single equivalent dipole [22].

  - Kurtosis: Calculate the kurtosis of the component's time-course.

  - Spectral Features: Generate the power spectral density for the component.

  - Temporal Entropy: Use the decomposition's overall Mutual Information Reduction (MIR) and the pairwise mutual information (PMI) between components as quality measures [22].

3. Decision & Validation:

- Classify components as "Brain" or "Artifact" based on convergent evidence from all metrics.

- Critical Check for TEPs: If working with TMS-EEG data, calculate the trial-to-trial variability of the artifact components. Low variability suggests a higher risk that brain activity was also removed [25].

- Subtract the selected artifact components from the data and visually inspect the cleaned EEG to confirm artifact removal and preservation of neural signals.

Logical Workflow for ICA Component Selection

[Diagram: decision-making process for classifying ICA components]

The table below lists essential computational tools and metrics used in ICA-based EEG analysis.

| Tool / Resource | Function / Description |

|---|---|

| Mutual Information Reduction (MIR) | A core metric to evaluate the overall success of an ICA decomposition in producing statistically independent components [22]. |

| Residual Variance (RV) | The key quantitative measure for dipolarity, indicating how well a component's scalp map fits a single equivalent dipole source [22]. |

| AMICA Algorithm | An ICA algorithm shown in comparisons to achieve high mutual information reduction and yield a high number of dipolar components [22]. |

| Trial-to-Trial Variability Measure | A crucial check for TMS-EEG studies to assess the reliability of ICA cleaning and avoid unintended removal of brain signals [25]. |

From Manual to Automated: Modern ICA Component Classification Techniques

Troubleshooting Guides & FAQs

Installation and Setup

Q1: How do I install the ICLabel classifier in EEGLAB?

The ICLabel plugin can be installed directly through the EEGLAB plugin manager, which is the easiest method. Alternatively, you can install it manually from its GitHub repository. If choosing manual installation, note that the project includes matconvnet as a submodule. When cloning the repository, use the command git clone --recursive https://github.com/lucapton/ICLabel.git to ensure all dependencies are included. If downloading as a ZIP file, you must separately download the required version of matconvnet and place it in the ICLabel folder [27].

Q2: I have installed ICLabel, but nothing happens when I run it. Is this a bug?

This is likely not a bug. The ICLabel plugin itself does not include built-in plotting functions. After it finishes processing, it displays "Done" in the MATLAB command window. To visualize the components and their classifications, it is highly recommended to install the complementary Viewprops plugin. If Viewprops is installed, it opens automatically and displays the components after ICLabel finishes its classification [27].

Interpretation of Results

Q3: How do I access and interpret the output probabilities from ICLabel?

ICLabel stores its results in the EEG structure under EEG.etc.ic_classification.ICLabel.classifications. This is a matrix in which each row corresponds to an independent component (IC) and each column holds the probability that the component belongs to a specific category. The column order is defined in EEG.etc.ic_classification.ICLabel.classes. For example, EEG.etc.ic_classification.ICLabel.classifications(5, :) returns the vector of class probabilities for the fifth IC. The seven output categories are: Brain, Muscle, Eye, Heart, Line Noise, Channel Noise, and Other [27] [28].

Q4: What probability threshold should I use to automatically reject components?

While the optimal threshold can depend on your specific research goals, ICLabel is designed to provide probabilistic outputs to guide your decision. A common approach for artifact removal research is to use the pop_icflag function to automatically flag components based on predefined probability thresholds. For instance, you could set a rule to flag any component with a probability of being in "Muscle," "Line Noise," or "Channel Noise" categories greater than a certain value (e.g., 0.8) for rejection. You can then review the flagged components before finalizing the rejection to ensure validity [27].
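The thresholding rule described above can be sketched independently of EEGLAB. The Python example below is not the pop_icflag implementation. It assumes the classification matrix has been exported as a list of rows whose columns follow the category order given in Q3, and the probabilities shown are invented. Note that Python indices start at 0, whereas MATLAB indices start at 1.

```python
CLASSES = ["Brain", "Muscle", "Eye", "Heart", "Line Noise", "Channel Noise", "Other"]


def flag_components(probabilities, reject_classes, threshold=0.8):
    """Return (component index, class, probability) for every flagged component."""
    flagged = []
    for ic_index, row in enumerate(probabilities):
        for name, prob in zip(CLASSES, row):
            if name in reject_classes and prob > threshold:
                flagged.append((ic_index, name, prob))
    return flagged


# Hypothetical class probabilities for three components (each row sums to 1).
probabilities = [
    [0.90, 0.02, 0.03, 0.01, 0.01, 0.01, 0.02],  # mostly Brain
    [0.05, 0.85, 0.02, 0.01, 0.02, 0.02, 0.03],  # mostly Muscle
    [0.02, 0.01, 0.95, 0.00, 0.00, 0.01, 0.01],  # mostly Eye
]

for ic_index, name, prob in flag_components(
    probabilities, reject_classes={"Muscle", "Line Noise", "Channel Noise"}
):
    print(f"IC {ic_index}: flagged as {name} (p = {prob:.2f})")
```

With these example classes, the eye component is left unflagged even though its probability is high, which shows why the choice of rejected categories matters as much as the threshold itself.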

Performance and Integration in Mobile EEG Research

Q5: Does ICLabel perform well with mobile EEG data containing strong motion artifacts?

It is important to know that the standard ICLabel classifier was not explicitly trained on mobile EEG data. The presence of large motion artifacts can contaminate the ICA decomposition itself, potentially reducing ICLabel's classification accuracy [13]. For Mobile Brain/Body Imaging (MoBI) studies, it is often necessary to employ advanced preprocessing techniques before ICA to mitigate motion artifacts. Research indicates that using tools like iCanClean (which leverages canonical correlation analysis with noise references) or Artifact Subspace Reconstruction (ASR) can lead to a higher-quality ICA decomposition with more dipolar brain components, which in turn should improve ICLabel's performance on such data [13].

The quality of the ICA decomposition is paramount for accurate component labeling. A recent study systematically evaluated the impact of data cleaning on the AMICA algorithm, one of the most powerful ICA algorithms. The findings suggest that:

- Increased participant movement can negatively impact decomposition quality within a study.

- Automatic sample rejection, a feature built into the AMICA algorithm, can significantly improve the decomposition.

- The study recommends using a moderate cleaning strength, such as 5 to 10 iterations of the AMICA sample rejection function, for most datasets to improve decomposition, regardless of motion intensity [4]. Ensuring a high-quality decomposition is a critical step before applying ICLabel.

Experimental Protocols for Decomposition Quality

Evaluating the Impact of Preprocessing on ICA Decomposition

Objective: To systematically assess how different preprocessing pipelines affect ICA decomposition quality and subsequent ICLabel classification accuracy, particularly in datasets with high artifact load.

Methodology:

- Data Selection: Use open-access EEG datasets that encompass a range of motion intensities, from stationary to full-body movement (MoBI). Ensure datasets have high channel counts (e.g., >58 channels) and known electrode locations [4].

- Preprocessing Variations: Apply different preprocessing techniques to the same raw datasets:

  - Pipeline A: Minimal high-pass filtering (e.g., 1-2 Hz).

  - Pipeline B: Moderate automatic sample rejection (e.g., 5-10 iterations of AMICA's built-in rejection) [4].

  - Pipeline C: Advanced artifact removal using iCanClean or Artifact Subspace Reconstruction (ASR) with an appropriate threshold (e.g., k=20-30 for ASR) [13].

- ICA Decomposition: Perform ICA decomposition using a consistent algorithm (e.g., AMICA) on all preprocessed versions of the data.

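- Quality Metrics: Evaluate decomposition quality using the measures described elsewhere in this guide, for example the mutual information between components, the residual variance of brain components, and the signal-to-noise ratio [4].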

- ICLabel Analysis: Run ICLabel on each decomposition and compare the proportion of components classified as Brain, Muscle, and Noise across the different pipelines.

Workflow for Optimized ICA Component Selection

[Diagram: recommended workflow for leveraging ICLabel within an optimized ICA component selection pipeline, integrating the troubleshooting advice and experimental protocols outlined above]

Key Research Reagent Solutions

The following table details essential software tools and their functions for research involving ICLabel and optimized ICA decomposition.

| Research Reagent | Function in Experiment | Key Parameter Considerations |

|---|---|---|

| ICLabel Classifier [27] [28] | Automated classification of ICA components into physiological and non-physiological source categories. | Provides probability outputs (Brain, Muscle, Eye, etc.). Use pop_icflag to set automated rejection thresholds. |

| AMICA Algorithm [4] | High-performance ICA decomposition, includes built-in sample rejection to improve decomposition quality. | Enable sample rejection with 5-10 iterations for moderate cleaning. Robust to artifacts but sensitive to data length/quality. |

| iCanClean [13] | Motion artifact removal using Canonical Correlation Analysis (CCA) with noise references; ideal for MoBI. | Can use dual-layer electrode noise or create pseudo-references from EEG. An R² threshold of ~0.65 is recommended for walking data. |

| Artifact Subspace Reconstruction (ASR) [13] | Statistical method to identify and remove high-variance, high-amplitude artifacts from continuous EEG. | Performance is highly sensitive to the threshold parameter k. A k of 20-30 is often recommended to avoid over-cleaning. |

| Viewprops Plugin [27] | Visualization of IC properties (topography, spectrum, etc.) for manual verification of ICLabel output. | Essential for troubleshooting and validating automated classifications. Displays multiple IC properties in a single figure. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My ICA cleaning appears to be removing brain signals along with artifacts. What could be causing this?

A: This is a known issue, particularly when artifact waveforms have low trial-to-trial variability [25]. When artifacts repeat with very similar morphology across trials, it can create dependencies between underlying components, causing ICA to perform unreliably. To diagnose this, measure the variability of your artifact components post-ICA; low variability often predicts this type of cleaning error [25].

Q2: For mobile EEG experiments with significant motion, how much data cleaning should I do before running ICA?

A: A 2024 study suggests that while data cleaning improves ICA decomposition, its effect is smaller than expected [4]. The AMICA algorithm is robust even with limited data cleaning. For most datasets, moderate cleaning (5-10 iterations of AMICA's sample rejection function) is sufficient, regardless of motion intensity [4].

Q3: Can ICA effectively remove all types of EEG artifacts?

A: ICA has proven effective for various artifacts including eye blinks, eye movements, ECG, muscle activity, and line noise [29] [8]. However, its performance depends on factors like the statistical independence of the sources and artifact variability [25]. Its main advantages over regression techniques are that it requires no reference signal and is less prone to removing brain activity along with the artifact [8].

Q4: How can I objectively measure the success of my ICA-based artifact removal?

A: Multiple quantitative measures exist: (1) Calculate the normalized correlation coefficient between pre- and post-cleaning signals to ensure minimal distortion of interictal activity [29]; (2) Evaluate mutual information between components; (3) Analyze residual variance of brain components; and (4) Calculate signal-to-noise ratios in cleaned versus uncleaned data [4].

Q5: What are the limitations of using ICA for TMS-evoked potentials?

A: For TMS-evoked potentials, ICA becomes unreliable when TMS-induced artifacts repeat similarly after each pulse [25]. This low trial-to-trial variability can cause ICA to incorrectly eliminate brain-derived EEG data along with artifacts, particularly affecting the early (0-30 ms) TEP components [25].

Common Experimental Issues and Solutions

Problem: Poor ICA decomposition quality in mobile EEG experiments

- Solution: Enable moderate automatic sample rejection during the AMICA decomposition (5-10 iterations). Increased movement significantly decreases decomposition quality, but moderate cleaning improves it without requiring excessive data removal [4].

Problem: Uncertainty in identifying artifactual components after ICA

- Solution: Combine multiple evaluation metrics rather than relying on a single measure. Examine component mutual information, topographies, power spectra, and time-course characteristics to distinguish brain from non-brain components more reliably [4].

Problem: ICA fails to separate artifacts from brain signals in TEP data

- Solution: Measure artifact component variability post-ICA. If variability is low, consider alternative cleaning methods or use the variability measure to estimate cleaning reliability even without ground-truth data [25].

Table 1: ICA Performance Across Different Artifact Types

| Artifact Type | Removal Efficacy | Key Considerations | Quantitative Measures |

|---|---|---|---|

| Eye Movements/ Blinks | High [8] | Can increase cognitive load if subjects are instructed to suppress blinks [8] | Normalized correlation coefficient shows minimal signal change [29] |

| Muscle Activity (EMG) | Effective [29] [4] | More prevalent in mobile EEG; excessive cleaning may remove important data [4] | Proportion of muscle components in decomposition [4] |

| Cardiac (ECG) | Effective [29] | Can be identified and removed without reference signal [8] | Component topography and time-course analysis [29] |

| Line Noise | Effective [29] | Electrical artifacts from equipment or power lines [4] | Spectral analysis of components [4] |

| TMS-Induced | Variable Reliability [25] | Becomes unreliable with low trial-to-trial artifact variability [25] | Artifact variability measurement predicts cleaning accuracy [25] |

| Electrode Movement | Challenging [4] | Large transient spikes easier to detect than cable sway [4] | Sample rejection based on log-likelihood in AMICA [4] |

Table 2: Impact of Data Cleaning on ICA Decomposition Quality

| Experimental Condition | Decomposition Quality | Recommended Cleaning | Effect of Cleaning Strength |

|---|---|---|---|

| Stationary EEG | High [4] | Minimal cleaning required | Smaller effect on quality [4] |

| Mobile EEG (Low Motion) | Moderate to High [4] | 5 iterations of sample rejection | Significant improvement [4] |

| Mobile EEG (High Motion) | Lower [4] | 5-10 iterations of sample rejection | Significant improvement [4] |

| TMS-EEG (Variable Artifacts) | Dependent on artifact variability [25] | Measure component variability first | Can decrease reliability if variability is low [25] |

Detailed Experimental Protocols

Protocol 1: ICA-Based Artifact Removal for Standard EEG

This protocol implements ICA for removing common artifacts (eye movements, ECG, muscle) from standard EEG recordings [29] [8].

Data Preparation: Use short EEG samples with evident artifacts and spikes. Ensure data meets ICA assumptions: linear mixing of statistically independent, non-Gaussian sources [29] [8].

ICA Calculation: Apply Joint Approximate Diagonalization of Eigen-matrices (JADE) algorithm to calculate independent components [29].

Component Identification: Analyze components to identify those related to artifacts based on topography, time-course, and spectral characteristics [8].

Signal Reconstruction: Reconstruct the signal without the artifact-related components by setting their activations to zero and back-projecting the remaining components through the mixing matrix [8].

Validation: Calculate normalized correlation coefficient between original and cleaned signals to measure changes. Have multiple examiners independently identify changes in morphology and location of discharges and artifacts [29].
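The reconstruction and validation steps of this protocol can be sketched with plain matrix operations. The example assumes the mixing matrix and source activations are already available from an ICA run. The function names, the toy two-channel data, and the exact form of the normalized correlation (a mean-removed, per-channel correlation) are assumptions made for illustration and may differ from the definition used in [29].

```python
import numpy as np


def remove_components(mixing, sources, artifact_indices):
    """Back-project the sources after zeroing the artifact rows: X_clean = A @ S_kept."""
    kept = np.array(sources, dtype=float)  # copy, so the input is left untouched
    kept[list(artifact_indices), :] = 0.0
    return np.asarray(mixing, dtype=float) @ kept


def normalized_correlation(original, cleaned):
    """Per-channel correlation between original and cleaned signals (1.0 = same shape)."""
    x = np.asarray(original, dtype=float)
    y = np.asarray(cleaned, dtype=float)
    x = x - x.mean(axis=1, keepdims=True)
    y = y - y.mean(axis=1, keepdims=True)
    return np.sum(x * y, axis=1) / np.sqrt(np.sum(x**2, axis=1) * np.sum(y**2, axis=1))


# Toy data: 2 channels, 2 sources (a rhythm and a spiky artifact); values are made up.
n_samples = 500
t = np.arange(n_samples) / 250.0
rhythm = np.sin(2 * np.pi * 10 * t)
artifact = np.where(np.arange(n_samples) % 100 == 0, 5.0, 0.0)
sources = np.vstack([rhythm, artifact])
mixing = np.array([[1.0, 0.8], [0.5, 0.1]])
recorded = mixing @ sources

cleaned = remove_components(mixing, sources, artifact_indices=[1])
print(normalized_correlation(recorded, cleaned))
```

Channels that carried more of the artifact change more when it is removed, so their correlation with the original recording is lower. Values close to 1 indicate that the cleaning altered the signal very little.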

Protocol 2: Evaluating ICA Cleaning Accuracy for TMS-Evoked Potentials

This protocol measures accuracy of ICA-based cleaning specifically for TMS-evoked potentials where artifacts may mask early (0-30 ms) TEPs [25].

Simulated Artifacts: Impose simulated artifacts with varying variability on measured artifact-free TEPs. Systematically vary artifact waveform variability from deterministic to stochastic [25].

ICA Processing: Apply ICA decomposition to simulated data using standard parameters.

Accuracy Measurement: Measure cleaning accuracy for each level of artifact variability by comparing to ground-truth artifact-free data.

Variability Quantification: Calculate variability of artifact components using ICA-derived components. Establish relationship between measured variability and cleaning accuracy [25].

Reliability Prediction: Use measured variability to predict cleaning reliability even without ground-truth data [25].
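One simple way to quantify trial-to-trial variability is to compare the power of each trial's deviation from the mean waveform with the power of the mean waveform itself. This index is an assumption made for illustration and is not necessarily the measure used in [25]; the waveform and amplitudes below are synthetic.

```python
import numpy as np


def relative_variability(trials):
    """Across-trial deviation power relative to the power of the mean waveform.

    trials: array of shape (n_trials, n_times) holding one component's
    activation after each pulse. A perfectly repeating waveform gives 0.
    """
    trials = np.asarray(trials, dtype=float)
    mean_wave = trials.mean(axis=0)
    deviation_power = np.mean(np.sum((trials - mean_wave) ** 2, axis=1))
    return float(deviation_power / np.sum(mean_wave ** 2))


# Synthetic decaying artifact waveform (made-up values).
wave = np.exp(-np.arange(30) / 5.0)
deterministic = np.tile(wave, (20, 1))                # identical on every trial
variable = np.linspace(0.5, 1.5, 20)[:, None] * wave  # amplitude changes per trial

print(relative_variability(deterministic))
print(relative_variability(variable))
```

Under this index, a value near zero corresponds to the low-variability situation in which ICA cleaning of TEPs is reported to be least reliable [25].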

Protocol 3: Optimizing ICA for Mobile EEG with Automatic Cleaning

This protocol applies AMICA's integrated automatic sample rejection to mobile EEG data with significant motion artifacts [4].

Dataset Selection: Use high-density EEG datasets (≥58 channels) with varying movement levels, from stationary to Mobile Brain/Body Imaging (MoBI) setups [4].

Parameter Variation: Apply AMICA algorithm with varying sample rejection criteria (number of iterations, SD thresholds). Use integrated AMICA sample rejection based on log-likelihood [4].

Quality Assessment: Evaluate decomposition quality using multiple measures: mutual information between components, proportion of brain/muscle/other components, residual variance of brain components, and signal-to-noise ratio [4].

Optimization: Determine optimal cleaning parameters that maximize decomposition quality while preserving data. Moderate cleaning (5-10 iterations) typically provides best results [4].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ICA Artifact Removal Research

| Tool/Resource | Function/Purpose | Application Context |

|---|---|---|

| AMICA Algorithm | Adaptive Mixture ICA; currently one of the most powerful decomposition algorithms [4] | Mobile and stationary EEG artifact removal |

| JADE Algorithm | Joint Approximate Diagonalization of Eigen-matrices; calculates independent components [29] | Standard EEG artifact removal |

| Artifact Subspace Reconstruction (ASR) | Identifies artifactual time periods based on artifact subspaces [4] | Initial data cleaning before ICA |

| Sample Rejection (AMICA) | Model-driven rejection of bad samples based on log-likelihood during decomposition [4] | Automatic data cleaning integrated with ICA |

| Variability Measurement Tool | Quantifies trial-to-trial variability of artifact components [25] | Predicting ICA cleaning accuracy for TMS-EEG |

| Normalized Correlation Coefficient | Measures changes caused by artifact component suppression [29] | Validating preservation of neural signals |

Experimental Workflows and System Architecture

[Diagram: ICA-Based Artifact Removal Workflow]

[Diagram: Automatic Artifact Detection System Logic]

[Diagram: Mobile EEG Preprocessing Pipeline]

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of integrating covariates into ICA compared to conventional ICA? Integrating covariates like behavioral scores directly into the ICA decomposition process can uncover stronger and more robust brain-behavior relationships. Unlike conventional ICA, which performs decomposition and correlation analysis sequentially, the augmented approach allows the behavioral data to directly influence how the brain connectivity patterns are separated into independent components, often leading to more significant and stable correlations in independent test datasets [30] [31].

Q2: My dataset has a limited sample size. Can I still use this method? Small sample sizes pose a challenge, particularly for methods that require data splitting. However, newer algorithms like TSS-ICA (Two-Stage Sampling ICA) are being developed specifically to handle small-scale and unstructured datasets more effectively [3]. These methods use a flexible blocking and similarity testing strategy to determine the optimal number of reliable components from limited data.

Q3: What types of covariates can be integrated into the ICA framework? The method is versatile and can incorporate various continuous or categorical measures. The featured research used cognitive performance metrics from standardized tests like the Woodcock-Johnson (WJ) Tests of Cognitive Abilities [30] [31]. In principle, clinical scores, symptom severity ratings, or other behavioral or physiological measures can also be included as covariates.

Q4: How does integrating covariates affect the artifact removal process in EEG analysis? While the primary literature focuses on using covariate-enhanced ICA to find brain-behavior relationships, the underlying principle could refine artifact removal. By making the decomposition informed by a relevant behavioral covariate, the resulting components might more cleanly separate brain signals from non-brain artifacts, though this specific application is an area for further research.

Q5: What are the consequences of selecting too many or too few ICA components? Selecting an incorrect number of components directly impacts result quality:

- Under-decomposition (too few components): Results in components that are poorly separated mixtures of multiple underlying sources, obscuring the true regulatory or artifactual structure and leading to inaccurate interpretations [32].

- Over-decomposition (too many components): Leads to splitting of true sources into multiple components and the creation of biologically meaningless components, often dominated by a single gene or signal feature, which introduces noise [32].

Troubleshooting Guides

Problem 1: Weak or Non-Significant Correlation Between ICA Components and Behavioral Measures

Potential Causes and Solutions:

Cause: Inadequate decomposition quality.

- Solution: Ensure the input data for ICA is clean. For EEG data, apply a high-pass filter (e.g., 1 Hz cutoff) before ICA to remove slow drifts that can reduce the independence of sources [20]. Consider using automatic sample rejection algorithms, like the one built into the AMICA algorithm, to iteratively remove bad samples that negatively impact the model fit [4].

Cause: Using a conventional ICA approach.

- Solution: Shift from the conventional two-step method (ICA then correlation) to an augmented ICA approach in which the behavioral data are included directly in the data matrix being decomposed. This allows the covariate to guide the separation of brain connectivity patterns [30].

Cause: The number of ICA components is not optimal.

- Solution: Employ a dimensionality selection method like OptICA, which aims to find the highest dimension that produces a low number of over-decomposed (e.g., single-gene dominated) components, thus avoiding both under- and over-decomposition [32].

Problem 2: Determining the Optimal Number of Components for Decomposition

Recommended Procedure (TSS-ICA Method):

This method is designed to find reliable components, even with small sample sizes [3].

- Create Sub-Blocks: Use a two-stage sampling method on your observed data to create two representative sub-blocks.

- Run ICA Separately: Perform ICA on each of the two sub-blocks.

- Similarity Measurement: Identify corresponding Independent Components (ICs) between the two sub-blocks using a novel similarity measurement.

- Hypothesis Testing: Determine which components are "remarkably similar" using a statistical testing approach with corrections for multiple comparisons (e.g., Bonferroni correction).

- Determine Optimal Number: The optimal number of ICs is the number of pure components that are consistently and significantly similar across the sub-blocks.

Problem 3: Handling Missing Data in Multimodal Fusion with ICA

The Problem: Missing data in one or more modalities (e.g., some subjects lack MRI or PET scans) drastically reduces sample size if only complete cases are used, leading to biased results and information loss [33].

Recommended Solution: Full Information Linked ICA (FI-LICA)

FI-LICA handles missing data under the Linked ICA framework without discarding subjects [33].

- Principle: Built upon complete cases, it uses all available data to recover the missing latent information.

- Advantage: More accurate and reliable than complete case analysis or simple zero-filling, leading to better performance in downstream tasks like classification and prediction.

- Implementation: The algorithm employs a full information approach to estimate the shared subject loadings across all modalities, even for subjects with missing data.

Experimental Protocols & Data Presentation

Protocol 1: Augmented ICA with Integrated Covariates

This protocol details the method for integrating behavioral data directly into the ICA decomposition of EEG connectivity data [30] [31].

1. Data Collection and Preprocessing

- Participants: 175 patients with a range of neuropsychiatric conditions.

- EEG Data: Collect 5-minute resting-state EEG with a 19-channel system (10-20 placement). Preprocess data using software like NeuroGuide: apply automated artifact rejection, band-pass filter (1-30 Hz), and re-reference to linked ears.

- Behavioral Covariate: Administer the Woodcock-Johnson (WJ) Tests of Cognitive Abilities to obtain the General Intellectual Ability (GIA) score.

- Connectivity Analysis: Compute functional connectivity measures (e.g., lagged coherence in the upper alpha band) between brain regions using source localization like swLORETA.

2. ICA Decomposition Methods

- Method 1 (Conventional ICA): Perform ICA (e.g., using Infomax algorithm) solely on the EEG connectivity data. Then, correlate the resulting independent components with the WJ GIA scores.

- Method 2 (Augmented ICA): Create an augmented data matrix that combines both the EEG connectivity data and the behavioral covariate (WJ scores). Perform ICA on this augmented matrix to allow the behavioral data to directly influence the decomposition.

3. Analysis and Validation

- Compare the strength and statistical significance of the correlations between EEG connectivity components and cognitive performance found by the two methods.

- Validate the robustness of the correlations using an independent test dataset.
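The augmented data matrix used in Method 2 can be sketched as a column-wise concatenation of connectivity features and the behavioral score. The cited study's exact construction (scaling, weighting, orientation of the matrix) is not given here, so the z-scoring step, the function names, and the random placeholder data below are all assumptions.

```python
import numpy as np


def zscore_columns(values):
    """Standardize each column to zero mean and unit variance."""
    values = np.asarray(values, dtype=float)
    return (values - values.mean(axis=0)) / values.std(axis=0)


def build_augmented_matrix(connectivity, covariate):
    """Stack connectivity features and covariate column(s), one row per subject."""
    conn = zscore_columns(connectivity)
    cov = zscore_columns(np.asarray(covariate, dtype=float).reshape(conn.shape[0], -1))
    return np.hstack([conn, cov])


# Placeholder data: 10 subjects, 5 connectivity features, 1 behavioral score.
rng = np.random.default_rng(0)
connectivity = rng.standard_normal((10, 5))
scores = rng.standard_normal(10)

augmented = build_augmented_matrix(connectivity, scores)
print(augmented.shape)  # one extra column for the covariate
```

Standardizing first keeps the covariate on the same scale as the connectivity features, so that neither dominates the decomposition purely because of its units.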

Comparative Analysis: Conventional vs. Augmented ICA

The table below summarizes the key differences and outcomes between the two methodological approaches based on the cited study [30] [31].

| Feature | Conventional ICA | Augmented ICA with Covariates |

|---|---|---|

| Data Input | EEG connectivity data only | Augmented matrix of EEG connectivity + behavioral scores |

| Analytical Sequence | 1. Decompose EEG data; 2. Correlate components with behavior | Simultaneous decomposition of EEG and behavioral data |

| Influence of Covariate | Indirect (post-hoc correlation) | Direct (guides the decomposition) |

| Reported Outcome | Standard correlations | Stronger, more significant, and robust correlations |

| Key Advantage | Simplicity and established methodology | Enhanced ability to uncover brain-behavior relationships |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function / Application in ICA Research |
|---|---|
| EEG System (19-channel) | Records raw brain electrical activity from the scalp according to the 10-20 system, providing the primary input signal for decomposition [30] [31]. |
| Woodcock-Johnson (WJ) Tests | A standardized battery of cognitive assessments used to obtain behavioral covariates (e.g., General Intellectual Ability score) for integration with EEG data [30] [31]. |
| NeuroGuide Software | Quantitative EEG (qEEG) analysis software used for automated artifact rejection, filtering, and computation of functional connectivity metrics like lagged coherence [30]. |
| Infomax ICA Algorithm | A specific ICA algorithm used to decompose mixed signals into statistically independent components by maximizing the information transfer between mixed signals and components [30]. |
| AMICA Algorithm | (Adaptive Mixture ICA) A powerful ICA algorithm that includes an iterative, model-driven sample rejection function to improve decomposition quality by removing artifactual samples during computation [4]. |
| swLORETA | (Standardized weighted Low-Resolution Electromagnetic Tomography) Used for source localization and calculating functional connectivity metrics between brain regions from EEG signals [30]. |

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing Poor ICA Decomposition Quality in High-Motion Data

Problem: Independent components appear non-dipolar or fail to separate neural activity from motion artifacts during running/jogging paradigms.

Symptoms:

- Low dipolarity scores in ICA components

- High power remains at gait frequency and harmonics after processing

- Failure to recover expected ERP components (e.g., missing P300 effects)

Solutions:

Step 1: Implement Advanced Preprocessing

Use either iCanClean or Artifact Subspace Reconstruction (ASR) before ICA decomposition:

- iCanClean with pseudo-reference signals: Apply with R² threshold of 0.65 and 4-second sliding windows when dual-layer noise sensors are unavailable [13].

- Artifact Subspace Reconstruction: Keep the k threshold between 10 and 30 to avoid over-cleaning; k = 20-30 is recommended to balance artifact removal against signal preservation [13].

Step 2: Validate Component Quality

- Calculate dipolarity metrics for brain independent components

- Check power reduction at gait frequency (1.5-3 Hz) and harmonics

- Verify recovery of expected ERP latencies in stimulus-locked tasks [13]
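
One way to quantify the gait-frequency check is to compare band power before and after cleaning. The sketch below uses a plain NumPy periodogram on synthetic signals; the function names, the 250 Hz sampling rate, and the decibel summary are illustrative choices, not part of the cited pipeline [13].

```python
import numpy as np

def band_power(signal, fs, f_lo, f_hi):
    """Mean periodogram power of a 1-D signal between f_lo and f_hi (Hz)."""
    signal = np.asarray(signal, dtype=float)
    signal = signal - signal.mean()
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(signal)) ** 2 / (fs * signal.size)
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    return psd[mask].mean()

def gait_power_reduction_db(raw, cleaned, fs, band=(1.5, 3.0)):
    """Positive values mean less gait-band power after cleaning."""
    ratio = band_power(raw, fs, *band) / band_power(cleaned, fs, *band)
    return 10.0 * np.log10(ratio)

# Synthetic demo: a 10 Hz "alpha" rhythm plus a 2 Hz "gait" artifact whose
# amplitude drops tenfold after a (hypothetical) cleaning step.
fs = 250
t = np.arange(5000) / fs
alpha = np.sin(2 * np.pi * 10 * t)
raw = alpha + 5.0 * np.sin(2 * np.pi * 2 * t)
cleaned = alpha + 0.5 * np.sin(2 * np.pi * 2 * t)
reduction = gait_power_reduction_db(raw, cleaned, fs)
print(f"gait-band power reduction: {reduction:.1f} dB")
```

On real recordings a Welch estimate averaged over channels would be less noisy than a single periodogram, and the same function can be evaluated at the harmonics by changing `band`.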

Step 3: Optimize Dimensionality Selection

Use the CW_ICA method to determine the optimal number of components:

- Divide mixed signals into two blocks

- Apply ICA separately to each block

- Calculate rank-based correlation matrix between blocks

- Select component count that maximizes meaningful separation [2]
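
The rank-correlation step can be sketched as follows. This is a simplified illustration rather than the published CW_ICA implementation [2]: it assumes the components from both blocks have already been estimated and share the same time axis, and the 0.8 cutoff used to count reproducible components is a hypothetical value chosen for the demo.

```python
import numpy as np

def rank_rows(x):
    """Rank the values in each row from 1 to n (ties broken by position)."""
    x = np.asarray(x, dtype=float)
    order = np.argsort(x, axis=1)
    ranks = np.empty(x.shape, dtype=float)
    rows = np.arange(x.shape[0])[:, None]
    ranks[rows, order] = np.arange(1, x.shape[1] + 1)
    return ranks

def columnwise_max_rank_corr(comps_a, comps_b):
    """Max |Spearman rho| of each block-B component against all block-A components."""
    ra, rb = rank_rows(comps_a), rank_rows(comps_b)
    ra -= ra.mean(axis=1, keepdims=True)
    rb -= rb.mean(axis=1, keepdims=True)
    ra /= np.linalg.norm(ra, axis=1, keepdims=True)
    rb /= np.linalg.norm(rb, axis=1, keepdims=True)
    rho = ra @ rb.T                 # (n_a, n_b) rank-correlation matrix
    return np.abs(rho).max(axis=0)  # one value per column

# Synthetic stand-ins for the two blocks' components: block B holds the same
# three sources (permuted, sign-flipped, rescaled) plus one pure-noise component.
rng = np.random.default_rng(1)
s = rng.laplace(size=(3, 2000))
comps_a = s + 0.05 * rng.normal(size=s.shape)
comps_b = np.vstack([-s[2], s[0], 2.0 * s[1], rng.normal(size=2000)])

col_max = columnwise_max_rank_corr(comps_a, comps_b)
n_reproducible = int((col_max > 0.8).sum())  # 0.8 is an illustrative cutoff
print(np.round(col_max, 2), n_reproducible)
```

Taking the absolute value makes the comparison insensitive to the sign and ordering ambiguities inherent in ICA, which is why a rank-based, column-wise maximum is a natural summary here.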

Table 1: Performance Comparison of Motion Artifact Removal Approaches

| Method | ICA Dipolarity | Gait Frequency Power Reduction | ERP Component Recovery | Key Parameters |
|---|---|---|---|---|
| iCanClean (pseudo-reference) | High | Significant | P300 congruency effects recovered | R²=0.65, 4s window |
| ASR | Moderate-High | Significant | Similar latency to standing task | k=20-30 |
| Traditional ICA Only | Low | Minimal | Poor or inconsistent | - |

Guide 2: Automated Artifact Classification Failure

Problem: Automated classifiers misclassify neural components as artifactual or fail to identify motion-contaminated components.

Symptoms:

- Loss of neurologically relevant signals

- Residual artifacts in cleaned data

- Poor generalization across different motion paradigms

Solutions:

Step 1: Feature Selection Optimization

Implement an optimized feature subset that includes:

- Frequency domain features: Spectral characteristics in the δ (1-3 Hz), θ (4-7 Hz), and α (8-13 Hz) bands

- Spatial domain features: Component scalp topography patterns

- Temporal domain features: Time-course characteristics and correlations [34]
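
To make the three feature domains concrete, the sketch below computes one simple feature of each kind for a single component. The specific features (relative band power, excess kurtosis, and a "focality" ratio of the scalp map) and their names are assumptions for illustration; they are not the optimized feature subset reported in [34].

```python
import numpy as np

BANDS = {"delta": (1.0, 3.0), "theta": (4.0, 7.0), "alpha": (8.0, 13.0)}

def component_features(time_course, topography, fs):
    """Return a small dict of spectral, temporal, and spatial features."""
    x = np.asarray(time_course, dtype=float)
    x = x - x.mean()
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(x)) ** 2
    total = psd[(freqs >= 1.0) & (freqs <= 40.0)].sum()

    feats = {}
    # Spectral: share of 1-40 Hz power that falls in each band.
    for name, (lo, hi) in BANDS.items():
        feats[f"rel_{name}"] = psd[(freqs >= lo) & (freqs <= hi)].sum() / total
    # Temporal: excess kurtosis (large for spiky, blink-like activity).
    feats["kurtosis"] = np.mean(x ** 4) / np.mean(x ** 2) ** 2 - 3.0
    # Spatial: how concentrated the scalp map is on a single channel.
    w = np.abs(np.asarray(topography, dtype=float))
    feats["focality"] = w.max() / w.sum()
    return feats

# Synthetic component: a pure 10 Hz oscillation with a focal 4-channel map.
fs = 250
t = np.arange(5000) / fs
feats = component_features(np.sin(2 * np.pi * 10 * t), [0.1, 0.1, 0.7, 0.1], fs)
print({k: round(float(v), 3) for k, v in feats.items()})
```

A real pipeline would compute many more candidates per domain and then select the subset that best reproduces expert labels.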

Step 2: Channel Attention Mechanism

For OPM-MEG or high-density EEG:

- Employ randomized dependence coefficient (RDC) to evaluate component-reference correlation

- Integrate global average pooling (GAP) and global max pooling (GMP) layers

- Use correlation thresholds to construct training datasets [35]

Step 3: Cross-Paradigm Validation

- Test classifier on data from different experimental paradigms

- Verify preservation of discriminant information for target applications

- Ensure <10% mean squared error compared to expert ratings [34]

Frequently Asked Questions

Q1: How do I determine the optimal number of independent components for my high-motion EEG dataset?

A: The CW_ICA method provides robust dimensionality determination:

- Split your mixed signals into two blocks

- Apply ICA separately to each block

- Compute Spearman correlations between components from different blocks

- Use the column-wise maximum rank-based correlations to determine optimal component count

- This method avoids both under-decomposition (too few components) and over-decomposition (too many components) that can obscure meaningful neural signals [2]

Q2: What are the practical differences between using iCanClean with pseudo-reference signals versus actual dual-layer sensors?

A: The key differences are:

- Dual-layer sensors: Physically separate noise references provide cleaner artifact separation; ideal for new experimental setups

- Pseudo-references: Created by applying notch filters to raw EEG to identify noise subspaces; practical for existing datasets where physical noise sensors weren't recorded

- Effectiveness: Both approaches significantly improve ICA dipolarity and reduce motion artifacts, though dual-layer sensors may provide marginal advantages in extreme motion conditions [13]

Q3: Will aggressive artifact removal damage neural signals of interest?

A: Over-cleaning is a valid concern. Evidence suggests:

- Using ASR with k-values below 10 may remove neural signals along with artifacts

- iCanClean with R² thresholds around 0.65 preserves neural signals while removing motion artifacts

- Always validate against known neural markers (e.g., P300 in flanker tasks) to ensure neural preservation [13]

- The combination of artifact correction and rejection doesn't significantly improve decoding performance in most cases, but correction remains essential to minimize artifact-related confounds [36]

Q4: How can I validate that my automated classification is working properly?

A: Implement a multi-metric validation approach:

- Quantitative metrics: Component dipolarity, power reduction at gait frequencies

- Functional metrics: Recovery of expected ERP components (latency and amplitude effects)

- Decoding metrics: Preservation of task-relevant neural information for MVPA

- Comparative metrics: Performance relative to expert manual classification (target <10% MSE) [13] [34]
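
The comparative metric is straightforward to compute once expert labels are available. In the sketch below the labels are made up for illustration, with 1 meaning "artifact" and 0 meaning "neural"; with binary labels the mean squared error equals the fraction of components on which classifier and expert disagree.

```python
import numpy as np

def mse_vs_expert(predicted, expert):
    """Mean squared error between classifier outputs and expert ratings."""
    predicted = np.asarray(predicted, dtype=float)
    expert = np.asarray(expert, dtype=float)
    if predicted.shape != expert.shape:
        raise ValueError("need one prediction per expert rating")
    return float(np.mean((predicted - expert) ** 2))

# Hypothetical ratings for 20 components; the classifier misses one artifact.
expert = np.array([1, 0, 0, 1, 0, 1, 0, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0, 0, 1])
predicted = expert.copy()
predicted[5] = 0

mse = mse_vs_expert(predicted, expert)
meets_target = mse < 0.10  # the <10% target quoted above
print(f"MSE vs. expert: {mse:.2%}, meets target: {meets_target}")
```

The same function accepts probabilistic classifier outputs between 0 and 1, in which case it penalizes confident errors more heavily than uncertain ones.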

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Tool/Resource | Function/Purpose | Implementation Notes |
|---|---|---|
| iCanClean Algorithm | Motion artifact removal using reference noise signals | Use pseudo-reference signals when dual-layer sensors unavailable; R²=0.65 optimal |
| Artifact Subspace Reconstruction (ASR) | Identifies and removes high-variance artifact components | k=20-30 optimal; avoid k<10 to prevent over-cleaning |
| CW_ICA Method | Determines optimal ICA dimensionality | Prevents under/over-decomposition using rank-based correlations |
| Automated Component Classifier | Identifies artifactual components using spatial, spectral, and temporal features | Linear classifiers with optimized feature subsets achieve <10% MSE vs. experts |
| ICLabel | ICA component classification | Not trained on mobile EEG; limited for high-motion paradigms |
| Stationary Wavelet Transform + Savitzky-Golay | Motion artifact mitigation in physiological signals | Preserves critical morphological features (e.g., QRS complex in ECG) |

Experimental Protocol: Validating Artifact Removal in High-Motion Paradigms

Purpose: To evaluate the effectiveness of automated artifact classification and removal methods during high-motion conditions.

Materials:

- Mobile EEG system with minimum 32 channels

- Flanker task or similar cognitive paradigm

- Treadmill or open space for locomotor tasks

Procedure:

Data Acquisition:

- Record EEG during both static standing and dynamic (jogging/running) conditions

- Implement identical cognitive tasks in both conditions

- Include sufficient trials for ERP analysis (minimum 800 trials recommended)

Preprocessing Pipeline:

- Apply either iCanClean (R²=0.65, 4s window) or ASR (k=20)

- Band-pass filter 1.5-40 Hz

- Segment data into epochs time-locked to stimuli

ICA Decomposition:

- Determine optimal component count using CW_ICA

- Perform ICA using FastICA, Infomax, or JADE algorithms

Automated Component Classification:

- Extract spatial, spectral, and temporal features

- Apply pre-trained linear classifier with optimized feature subset

- Remove components classified as artifactual
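
The classification and removal steps can be sketched with the X = AS model from the introduction: score each component with a linear rule, then back-project only the retained components. The feature values, weights, and bias below are hypothetical placeholders rather than a trained classifier, and the sketch assumes a square, invertible unmixing matrix.

```python
import numpy as np

def remove_components(data, unmixing, artifact_idx):
    """Back-project data without the listed components.

    data:     (n_channels, n_samples) recording X.
    unmixing: (n_components, n_channels) matrix W, assumed square here.
    """
    sources = unmixing @ data           # S = W X
    mixing = np.linalg.inv(unmixing)    # A = W^-1
    keep = np.setdiff1d(np.arange(unmixing.shape[0]), artifact_idx)
    return mixing[:, keep] @ sources[keep]

# Synthetic recording built from three known sources.
rng = np.random.default_rng(0)
true_sources = rng.laplace(size=(3, 500))
mixing_true = np.array([[1.0, 0.5, 2.0],
                        [0.3, 1.0, 1.5],
                        [0.2, 0.4, 1.0]])
data = mixing_true @ true_sources
unmixing = np.linalg.inv(mixing_true)   # stands in for an ICA estimate

# Hypothetical linear classifier: one row of features per component.
features = np.array([[0.1, 0.2], [0.3, 0.1], [0.9, 0.8]])
weights, bias = np.array([1.0, 1.0]), -1.0
artifact_idx = np.flatnonzero(features @ weights + bias > 0)

cleaned = remove_components(data, unmixing, artifact_idx)
print(artifact_idx, cleaned.shape)
```

Because removal is a linear projection, the cleaned data keep the original channel layout, so downstream ERP or connectivity code does not need to change.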

Validation Metrics:

- Calculate dipolarity of remaining components

- Compare power spectral density at gait frequency pre-/post-cleaning

- Extract ERP components and compare latency/amplitude to static condition

- Verify expected cognitive effects (e.g., P300 congruency effects)
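
For the ERP comparison, a minimal sketch is to average the epochs per condition and locate the largest positive peak in a search window. The 250-500 ms window, the single-channel layout, and the simulated data are assumptions of this example; adapt them to your own P300 definition and montage.

```python
import numpy as np

def erp_peak(epochs, times, window=(0.25, 0.50)):
    """Latency (s) and amplitude of the largest positive ERP peak in a window.

    epochs: (n_trials, n_samples) single-channel, stimulus-locked data.
    times:  (n_samples,) time axis in seconds relative to stimulus onset.
    """
    erp = np.asarray(epochs, dtype=float).mean(axis=0)
    in_window = np.flatnonzero((times >= window[0]) & (times <= window[1]))
    idx = in_window[np.argmax(erp[in_window])]
    return float(times[idx]), float(erp[idx])

# Simulated epochs: a Gaussian "P300" bump plus noise, 200 trials per condition.
fs = 250
times = np.arange(-50, 200) / fs
rng = np.random.default_rng(0)

def simulate(peak_s):
    bump = 5.0 * np.exp(-0.5 * ((times - peak_s) / 0.05) ** 2)
    return bump + 0.5 * rng.normal(size=(200, times.size))

static_lat, static_amp = erp_peak(simulate(0.352), times)
dynamic_lat, dynamic_amp = erp_peak(simulate(0.372), times)
latency_shift_ms = 1000.0 * (dynamic_lat - static_lat)
print(f"static {static_lat:.3f} s, dynamic {dynamic_lat:.3f} s, "
      f"shift {latency_shift_ms:.0f} ms")
```

Running the same function separately on congruent and incongruent trials gives the amplitude difference needed to check the expected congruency effect.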

Expected Outcomes:

- Significant reduction in power at gait frequency and harmonics

- Recovery of ERP components with similar latency to static condition

- Preservation of expected cognitive modulation (e.g., larger P300 for incongruent stimuli)

- High dipolarity scores in retained components [13]

Workflow Visualization

Workflow Description: This diagram illustrates the comprehensive pipeline for processing high-motion EEG data, from acquisition through validation. The color-coded sections represent major processing stages: data acquisition (white), preprocessing (green), ICA and component classification (blue), and validation (red). The dashed lines indicate optional feedback loops for parameter optimization based on validation metrics.

Advanced Technical Notes

For researchers implementing these methods in novel paradigms:

Cross-Paradigm Generalization: When applying classifiers trained on one paradigm to another:

- Test on data from different experimental designs (e.g., auditory ERP, motor imagery BCI)

- Verify that performance stays below 15% mean squared error relative to expert ratings

- Ensure preservation of task-relevant discriminant information [34]

Computational Efficiency: CW_ICA provides significant computational advantages over bootstrap resampling or cross-validation methods while maintaining robustness for signals with different characteristics [2].

Real-Time Applications: For BCI or neurofeedback applications requiring real-time processing:

- iCanClean and ASR can be implemented in online pipelines

- Automated classification enables immediate artifact removal

- Maintains signal quality without the latency of manual component inspection [13] [34]

Solving Real-World Problems: An Optimization Guide for Challenging Data

Addressing the Specific Challenge of Motion Artifacts in Mobile EEG

Frequently Asked Questions

What are the main types of motion artifacts in mobile EEG? Motion artifacts originate from two primary sources: electrode movement and cable movement. Electrode movement artifacts occur when changes in pressure on the gel layer modify the electrode-tissue interface, altering the electrode's offset. Cable movement artifacts result from changing capacitive coupling as cables move within an electrical field. These artifacts are particularly challenging because their frequency band often overlaps with useful EEG signals, they may be uncorrelated in electrode space, and both EEG and artifacts are non-stationary [37] [38].

Why are motion artifacts particularly problematic for ICA decomposition? Large motion artifacts degrade decomposition quality because they interfere with ICA's ability to identify maximally independent sources; while they remain in the data, ICA may fail to separate brain signals from artifactual sources. Furthermore, standard component classification tools like ICLabel were not trained on mobile EEG data and do not adapt to the dataset at hand, making them less reliable for identifying motion-related artifacts [13] [39].

Which preprocessing methods are most effective for motion artifact removal before ICA? Research comparing artifact removal approaches during running found that iCanClean (using pseudo-reference noise signals) and Artifact Subspace Reconstruction (ASR) were particularly effective. Both methods led to the recovery of more dipolar brain independent components, significantly reduced power at the gait frequency and its harmonics, and produced ERP components similar in latency to those identified in stationary tasks. iCanClean was somewhat more effective than ASR in certain analyses, particularly for capturing expected P300 ERP congruency effects [13] [39].

Troubleshooting Guides

Problem: Poor ICA Decomposition Quality During Movement