Optimizing High-Density EEG: A Comprehensive Guide to Channel Selection Algorithms for Research and Clinical Applications

Abstract

This article provides a thorough examination of channel selection algorithms for high-density Electroencephalography (HD-EEG) montages, a critical step for enhancing signal quality, reducing computational complexity, and improving the accuracy of downstream applications. Tailored for researchers, scientists, and drug development professionals, the content explores the fundamental principles, mathematical foundations, and diverse methodological approaches for electrode selection. It delves into advanced optimization and troubleshooting strategies, including automated algorithms and handling of artifacts, and offers a rigorous framework for validating and comparing algorithm performance. By synthesizing the latest research, this guide serves as a vital resource for optimizing EEG-based studies and clinical tools, from motor imagery classification and epilepsy source localization to neuromarketing and pharmaceutical efficacy testing.

Understanding HD-EEG Channel Selection: Principles, Challenges, and Mathematical Foundations

Frequently Asked Questions

1. Why is channel selection necessary if I already have a high-density EEG system? Channel selection is critical for several reasons. It helps to reduce computational complexity and avoid model overfitting by eliminating redundant data, which can ultimately lead to higher classification accuracy [1]. Furthermore, selecting an optimal subset of channels significantly decreases setup time, making experiments more efficient and improving the practicality of HD-EEG, especially in repeated-measures or clinical settings [1].

2. What is a typical performance trade-off when reducing the number of EEG channels? A small number of optimally selected channels can often maintain high performance. For instance, one study found that for single-source localization, optimal subsets of just 6 to 8 electrodes achieved equal or better accuracy than a full 231-channel HD-EEG montage in a majority of cases [2]. In motor imagery tasks, excellent performance can often be achieved using only 10–30% of the total channels [1].

3. How do electrode placement errors affect my data? Inaccurate electrode positioning is not a trivial issue. One study demonstrated that an average electrode localization error of 6.8 mm, which can occur with common electromagnetic digitizers, led to a severe degradation in beamformer performance. This can significantly reduce the output signal-to-noise ratio (SNR) and potentially cause a failure to detect low-SNR signals from deeper brain structures [3].

4. What are the main methods for selecting an optimal subset of channels? Two common approaches are:

- Algorithmic and Classification-Based Methods: These techniques use defined criteria to select channel subsets that maximize performance for a specific task, such as motor imagery classification. They can be based on spatial filters, correlation metrics, or sequential selection [1].

- Evolutionary Optimization Methods: Methods like the Non-dominated Sorting Genetic Algorithm II (NSGA-II) formulate channel selection as a multi-objective optimization problem. NSGA-II concurrently minimizes the number of electrodes and the source localization error, automatically finding optimal combinations for a given brain activity [2].

5. I'm getting a weird signal from my reference electrode. What should I check? A problematic reference or ground electrode can affect all channels. Follow this systematic troubleshooting guide [4]:

- First, check electrode connections: Ensure all plugs are secure, re-clean and re-apply the problematic electrode, and add conductive gel or pressure if using a cap.

- Second, check hardware and software: Restart the acquisition software, then restart the amplifier and computer. Check that all necessary cables (e.g., Ethernet) are firmly connected.

- Third, check the headbox: If possible, swap out the headbox with a known working one to see if the problem persists.

- Finally, check participant-specific factors: Remove all metal accessories from the participant. Try placing the ground electrode on a different location (e.g., the participant's hand, sternum, or even the experimenter's hand) to see if the signal improves. This can help identify issues like "oversaturation" [4].

Troubleshooting Guides

Guide 1: Troubleshooting Common EEG Signal Quality Issues

- Problem: Poor signal quality across multiple or all channels.

- Solution: A systematic approach is required to isolate the cause [4].

- Inspect Electrodes/Cap: Verify all connections from the cap to the amplifier. Re-apply electrodes with proper skin preparation (cleaning and mild abrasion). For caps, ensure no hair is under the sensors and check for conductive gel bridging between electrodes.

- Check Hardware & Software: Restart the acquisition software. If the issue continues, perform a full restart of the computer and the amplifier unit.

- Test the Headbox: Swap the headbox with another one from a working system. If the problem is resolved, the original headbox may be faulty.

- Isolate Participant/Electrode Interaction: Remove participant jewelry and metal objects. Sweep the room for potential electromagnetic interference. As a last resort, try different ground electrode placements (e.g., hand, sternum) to rule out individual skin conductivity issues [4].

Guide 2: Implementing an Automated Channel Selection Protocol

This guide outlines the methodology for using a Genetic Algorithm (GA) to find optimal low-density channel subsets for source localization [2].

- Objective: To identify the minimum number of EEG electrodes and their optimal locations that retain the source localization accuracy of a full high-density (HD-EEG) montage for a specific neural source.

- Experimental Protocol:

- Inputs Required: You will need the EEG/ERP data containing the source activity to analyze, a realistic head model, and the ground-truth location of the neural source (for validation).

- Optimization Loop: The process combines the NSGA-II algorithm with a source reconstruction method (e.g., wMNE, sLORETA) [2].

- Workflow: The algorithm generates candidate electrode subsets, which are weighted and used to perform source reconstruction. The localization error for each subset is calculated and fed back to the algorithm, which then evolves the population of candidates to find the best solutions [2].

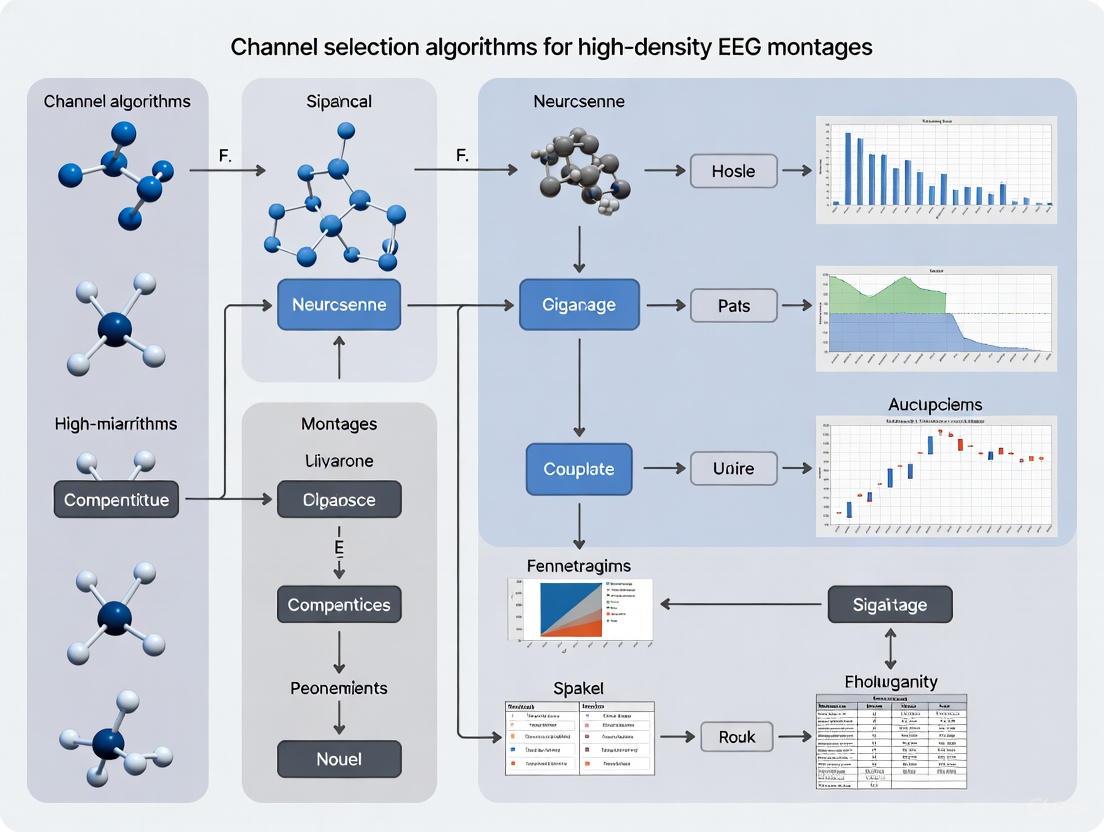

The diagram below illustrates this automated workflow.

Guide 3: Minimizing Electrode Placement Error

- Problem: Inaccurate coregistration of EEG electrode positions with the subject's MRI leads to source localization errors [3].

- Solution:

- Use High-Accuracy Digitization: When possible, use high-accuracy 3D digitization methods (e.g., optical scanners) instead of traditional electromagnetic digitizers, which can have higher mean errors [3].

- Careful Fiducial Registration: When using an electromagnetic digitizer, take extreme care in manually annotating the fiducial locations (nasion, pre-auricular points) on the subject's MRI. This step is prone to inter-experimenter variability [3].

- Incorporate Headshape Data: Acquiring a digitized headshape along with electrode positions can help reduce coregistration error by improving the fit to the MRI-derived scalp surface [3].

Data and Performance Tables

Table 1: Performance of Optimized Low-Density Electrode Subsets vs. Full HD-EEG

This table summarizes findings from a study that used a Genetic Algorithm to find optimal channel combinations for source localization [2].

| Number of Sources | Number of Optimized Electrodes | Performance vs. 231-Channel HD-EEG (Synthetic Data) | Performance vs. 231-Channel HD-EEG (Real Data) |
|---|---|---|---|
| Single Source | 6 | Equal or better accuracy in >88% of cases | Equal or better accuracy in >63% of cases |
| Single Source | 8 | Equal or better accuracy in >88% of cases | Equal or better accuracy in >73% of cases |
| Three Sources | 8 | Equal or better accuracy in 58% of cases | Not Reported |
| Three Sources | 12 | Equal or better accuracy in 76% of cases | Not Reported |
| Three Sources | 16 | Equal or better accuracy in 82% of cases | Not Reported |

Table 2: Impact of Electrode Coregistration Error on Beamformer Performance

This table compares the coregistration accuracy of different 3D digitization methods and their impact on the signal-to-noise ratio (SNR) during source reconstruction, using highly accurate fringe projection scanning as ground truth [3].

| Digitization Method | Mean Coregistration Error | Impact on Beamformer Output SNR |
|---|---|---|
| "Flying Triangulation" Optical Sensor | 1.5 mm | Less severe degradation |
| Electromagnetic Digitizer (Polhemus Fastrak) | 6.8 mm | Severe degradation (penalties of several decibels) |

The Scientist's Toolkit

Table 3: Essential Reagents and Materials for HD-EEG Channel Selection Research

| Item | Function in Research |
|---|---|
| High-Density EEG System (64-256 channels) | Provides the high-resolution spatial data required as a baseline for evaluating and selecting optimal channel subsets [5]. |
| 3D Electrode Digitizer | Accurately measures the 3D spatial coordinates of each EEG electrode on the subject's head, which is crucial for building accurate forward models for source localization [3]. |
| Realistic Head Model | A computational model (often 3-layer BEM) that estimates how electrical currents in the brain are projected to the scalp electrodes. It is a core component for source reconstruction and channel selection optimization [2]. |
| Genetic Algorithm Optimization Toolbox | Software library (e.g., NSGA-II) used to automate the search for optimal electrode subsets by minimizing both channel count and localization error [2]. |
| Source Reconstruction Software | Algorithms such as weighted Minimum Norm Estimation (wMNE), sLORETA, or Multiple Sparse Priors (MSP) used to estimate the location of brain sources from scalp potentials [2]. |

Troubleshooting Guide & FAQs

This section addresses common practical challenges in research on channel selection algorithms for high-density EEG montages.

Frequently Asked Questions

Q1: My classification accuracy drops after channel selection. Is this normal, and how can I address it? A drop in accuracy can occur if the channel selection algorithm removes channels containing neurophysiologically relevant information. This is not the desired outcome. To address it:

- Re-evaluate Selection Criteria: Channel selection should aim to discard only redundant or noisy channels. Ensure your evaluation criterion (e.g., a specific feature's power or a mutual information measure) is strongly correlated with your application's goal (e.g., seizure detection, motor imagery) [6].

- Validate the Subset: Use a separate validation set to test the performance of the selected channel subset before finalizing it. Consider using wrapper or embedded techniques, which use a classification algorithm itself to evaluate channel subsets, potentially leading to better performance than filter methods [6].

Q2: How can I effectively manage different types of artifacts in my high-density EEG data before channel selection? Artifacts, if not handled, can misguide channel selection algorithms. Different artifacts require specific strategies [7]:

- Ocular & Muscular Artifacts: Techniques like wavelet transforms and Independent Component Analysis (ICA) are frequently used. For a modern, end-to-end approach, deep learning models like the Artifact Removal Transformer (ART) can simultaneously address multiple artifact types in multichannel data [8].

- Motion & Instrumental Artifacts: ASR-based (Artifact Subspace Reconstruction) pipelines are widely applied. Consider using auxiliary sensors like Inertial Measurement Units (IMUs) to enhance detection under movement conditions, though these are currently underutilized [7].

Q3: My computational resources are overwhelmed by high-density data. What are my options? This is a primary reason to employ channel selection. The process reduces the data dimensionality for subsequent processing [6].

- Use Filtering Techniques: For high speed and scalability, use filtering techniques for channel selection. These methods use an independent evaluation criterion (like a distance measure) and are not tied to a computationally intensive classifier [6].

- Leverage Public Data: When developing new algorithms, use public datasets to benchmark your methods and support reproducibility, reducing the need for constant, resource-heavy new data collection [7].

Q4: What is the practical difference between Filter, Wrapper, and Embedded channel selection methods? The table below summarizes the key differences based on their evaluation approach [6]:

Table 1: Comparison of Channel Selection Evaluation Techniques

| Technique | Evaluation Method | Key Advantage | Key Disadvantage |
|---|---|---|---|
| Filter | Uses an independent measure (e.g., distance, information) | High speed, classifier-independent, scalable | Lower accuracy, ignores channel combinations |
| Wrapper | Uses a specific classification algorithm's performance | Higher accuracy, considers channel interactions | Computationally expensive, prone to overfitting |
| Embedded | Selection is part of the classifier's learning process | Good balance of performance and speed, less overfitting | Tied to a specific classifier's mechanics |
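To make the filter versus wrapper distinction concrete, here is a minimal sketch on synthetic data, assuming NumPy is available. The function names (`filter_rank`, `centroid_accuracy`, `wrapper_forward_select`), the Fisher-like score, and the nearest-centroid classifier are illustrative assumptions, not the methods used in [6]. Each channel is reduced to one hypothetical feature per trial (for example, band power).

```python
import numpy as np

def filter_rank(features, labels):
    """Filter method: score each channel on its own with a Fisher-like ratio."""
    a, b = features[labels == 0], features[labels == 1]
    between = (a.mean(axis=0) - b.mean(axis=0)) ** 2
    within = a.var(axis=0) + b.var(axis=0) + 1e-12
    return np.argsort(between / within)[::-1]  # best channel first

def centroid_accuracy(train_x, train_y, test_x, test_y):
    """Stand-in classifier: assign each trial to the nearest class centroid."""
    c0 = train_x[train_y == 0].mean(axis=0)
    c1 = train_x[train_y == 1].mean(axis=0)
    d0 = np.linalg.norm(test_x - c0, axis=1)
    d1 = np.linalg.norm(test_x - c1, axis=1)
    return float(((d1 < d0).astype(int) == test_y).mean())

def wrapper_forward_select(train_x, train_y, val_x, val_y, n_select):
    """Wrapper method: greedily add the channel that most improves validation accuracy."""
    chosen, remaining = [], list(range(train_x.shape[1]))
    while len(chosen) < n_select:
        scores = [
            centroid_accuracy(train_x[:, chosen + [ch]], train_y,
                              val_x[:, chosen + [ch]], val_y)
            for ch in remaining
        ]
        best = remaining[int(np.argmax(scores))]
        chosen.append(best)
        remaining.remove(best)
    return chosen

# Synthetic example: 200 trials, 8 channels, only channels 2 and 5 carry class information.
rng = np.random.default_rng(0)
labels = rng.integers(0, 2, size=200)
features = rng.normal(size=(200, 8))
features[:, [2, 5]] += 3.0 * labels[:, None]

ranking = filter_rank(features, labels)
selected = wrapper_forward_select(features[:100], labels[:100],
                                  features[100:], labels[100:], n_select=2)
```

The filter ranking needs one pass over the channels, while the wrapper retrains the classifier for every candidate at every step, which is where its extra computational cost comes from.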

Artifact Management Protocols

Protocol: Handling Ocular and Muscular Artifacts using ICA and Wavelet Transforms

Application: This protocol is suited for the initial cleaning of high-density EEG data to prepare it for channel selection and feature extraction [7].

Methodology:

- Data Preparation: Apply a band-pass filter (e.g., 1-40 Hz) to the raw data.

- Independent Component Analysis (ICA): Run ICA on the filtered data to separate it into statistically independent components.

- Component Classification: Identify artifact-laden components based on their temporal, spectral, and topographic characteristics (e.g., a component with high power in the frontal electrodes and time-locked to eye blinks is likely an ocular artifact).

- Artifact Removal: Remove the identified artifact components from the data.

- Wavelet-Augmented Cleaning (Optional): For residual, non-stationary muscular artifacts, apply a wavelet transform (e.g., using a Daubechies wavelet) to the reconstructed data. Identify and threshold coefficients associated with high-frequency, short-duration bursts typical of muscle noise.

- Signal Reconstruction: Reconstruct the clean EEG signal from the remaining wavelet coefficients.
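The artifact-removal step of the ICA stage above amounts to zeroing the flagged components and back-projecting the rest to the channels. The sketch below shows only that linear-algebra step, assuming NumPy and assuming a mixing and unmixing matrix have already been estimated by an ICA routine (here they are simply constructed for the synthetic example). `remove_components` is a hypothetical helper name, and the ICA estimation and wavelet stage are not shown.

```python
import numpy as np

def remove_components(data, mixing, unmixing, artifact_idx):
    """Zero the flagged components and project the remainder back to channel space.

    data:     (n_channels, n_samples) filtered EEG
    mixing:   (n_channels, n_components) matrix from an ICA decomposition
    unmixing: (n_components, n_channels) matrix from the same decomposition
    """
    sources = unmixing @ data              # component time courses
    sources[list(artifact_idx), :] = 0.0   # discard artifact components
    return mixing @ sources                # cleaned channel data

# Synthetic example: component 0 plays the role of an eye-blink artifact.
rng = np.random.default_rng(0)
n_samples = 500
t = np.arange(n_samples) / 250.0
blink = np.zeros(n_samples)
blink[::100] = 50.0
true_sources = np.vstack([blink, np.sin(2 * np.pi * 10 * t), np.sin(2 * np.pi * 6 * t)])

mixing = rng.normal(size=(4, 3))           # assumed known here; ICA would estimate it
unmixing = np.linalg.pinv(mixing)
data = mixing @ true_sources

cleaned = remove_components(data, mixing, unmixing, artifact_idx=[0])
expected = mixing[:, 1:] @ true_sources[1:]
```

In practice the decomposition is only an estimate, so some neural signal is usually removed together with the artifact component.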

The Scientist's Toolkit

Table 2: Essential Research Reagents & Materials for EEG Channel Selection Research

| Item / Solution | Function / Explanation |
|---|---|
| High-Density EEG System | Acquisition system with a large number of electrodes (e.g., following the 10-10 or 10-5 extensions of the standard 10-20 system). Provides the high-dimensional data input required for channel selection research [6]. |
| Public EEG Datasets | Pre-recorded, often annotated datasets (e.g., for seizure, motor imagery). Crucial for algorithm development, benchmarking, and ensuring reproducibility without new costly acquisitions [7]. |
| Independent Component Analysis (ICA) | A blind source separation technique. Used as a core method for isolating and removing physiological artifacts like eye blinks and heart signals from the EEG data before channel selection [7]. |
| Artifact Removal Transformer (ART) | A deep learning model for EEG denoising. An emerging end-to-end solution that uses a transformer architecture to remove multiple artifact types simultaneously, reconstructing a cleaner multichannel signal [8]. |
| Wrapper-Based Evaluation Classifier | A classification algorithm (e.g., SVM, LDA) used within a wrapper technique. It directly evaluates the performance of a selected channel subset, helping to identify the most relevant channels for a specific task [6]. |

Experimental Workflows and Data

Channel Selection Algorithm Workflow

The following diagram illustrates the general workflow for selecting an optimal subset of channels from a high-density EEG montage, which helps mitigate computational burden and the curse of dimensionality.

Artifact Removal Impact Assessment

To quantify the effect of artifact removal on subsequent analysis, the following performance metrics are commonly used, especially when a clean reference signal is available [7].

Table 3: Key Metrics for Assessing Artifact Management Performance

| Performance Metric | Description | Typical Use Case |
|---|---|---|
| Accuracy | The degree to which the processed signal matches a clean reference. Reported by 71% of studies in a systematic review when a clean ground truth is available [7]. | Validating the fidelity of the signal reconstruction after artifact removal. |
| Selectivity | The ability of an algorithm to remove artifacts while preserving the underlying neural signal. Assessed by 63% of studies with respect to the physiological signal of interest [7]. | Evaluating whether neurophysiologically relevant information is retained. |
| Mean Squared Error (MSE) | A direct measure of the difference between the processed and a clean reference signal. Used in comprehensive validations of deep learning models like ART [8]. | Benchmarking the performance of different denoising algorithms. |
| Signal-to-Noise Ratio (SNR) | Measures the level of the desired neural signal relative to background noise and artifacts. A key metric for evaluating the effectiveness of artifact removal transformers [8]. | Quantifying the improvement in signal quality after processing. |
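As a rough illustration of the last two metrics, the sketch below computes MSE and SNR against a clean reference, assuming NumPy. SNR definitions vary between studies; the version here (reference power over residual power, in decibels) is one common choice and is an assumption, not necessarily the definition used in [7] or [8].

```python
import numpy as np

def mse(clean, processed):
    """Mean squared error between a clean reference and the processed signal."""
    clean = np.asarray(clean, dtype=float)
    processed = np.asarray(processed, dtype=float)
    return float(np.mean((clean - processed) ** 2))

def snr_db(clean, processed):
    """SNR in dB, treating (processed - clean) as the residual noise."""
    clean = np.asarray(clean, dtype=float)
    residual = np.asarray(processed, dtype=float) - clean
    return float(10.0 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2)))

# Toy example: a unit-amplitude signal with a constant 0.1 residual offset.
clean = np.array([1.0, -1.0, 1.0, -1.0])
processed = clean + 0.1

example_mse = mse(clean, processed)     # 0.01
example_snr = snr_db(clean, processed)  # 20 dB
```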

Core Mathematical Concepts in EEG Channel Selection

What is the fundamental role of covariance matrices in HD-EEG analysis?

Covariance matrices are fundamental in quantifying the spatial relationships and dependencies between signals from different EEG channels. In spatial filtering and source localization, the covariance matrix of the array output data is critical. For an array with M sensor elements, the sample covariance matrix is computed from the multichannel EEG data. Eigenvalue decomposition (EVD) of this covariance matrix is a key step in subspace methods like MUSIC, which separates the data into signal and noise subspaces to estimate signal parameters [9]. The performance of adaptive spatial filters and subspace methods is highly dependent on having a sufficient number of samples to accurately estimate the covariance matrix. Performance degrades when the number of samples is less than the number of array sensor elements, leading to rank deficiency problems when inverting the matrix, particularly with coherent signal sources [9].
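The rank-deficiency issue can be seen directly in a small sketch, assuming NumPy and random data standing in for EEG. With fewer samples than channels, the sample covariance matrix cannot be full rank and therefore cannot be inverted without regularization. The function name `sample_covariance` is illustrative.

```python
import numpy as np

def sample_covariance(data):
    """Sample covariance of multichannel data with shape (n_channels, n_samples)."""
    centered = data - data.mean(axis=1, keepdims=True)
    return centered @ centered.T / data.shape[1]

rng = np.random.default_rng(0)
n_channels = 16

# Fewer samples than channels: the covariance estimate is rank deficient.
cov_few = sample_covariance(rng.normal(size=(n_channels, 8)))
# Many more samples than channels: the estimate is full rank.
cov_many = sample_covariance(rng.normal(size=(n_channels, 500)))

rank_few = int(np.linalg.matrix_rank(cov_few))
rank_many = int(np.linalg.matrix_rank(cov_many))
```

Reducing the number of channels is one way to bring the sample count back above the matrix dimension for a fixed recording length.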

How does spatial filtering improve source localization in HD-EEG?

Spatial filtering techniques act as beamformers that process signals from sensor arrays in the presence of interference and noise. The core concept involves applying weight vectors to the incoming data to optimize performance under various constraints [9]. The Minimum Variance Distortionless Response (MVDR) beamformer is a well-known adaptive spatial filtering approach that is data-dependent [9]. A key theoretical advancement is the concept of an "optimal spatial filter" that can completely eliminate noise while simultaneously separating signals arriving from different directions in space [9]. The Spatial Signal Focusing and Noise Suppression (SSFNS) algorithm operationalizes this concept by formulating the solution for the optimal spatial filter as an optimization problem solved through iterative constraint introduction [9]. This approach enables Direction-of-Arrival (DOA) estimation even under demanding conditions including single-snapshot scenarios, low signal-to-noise ratio, coherent sources, and unknown source counts [9].
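For reference, the standard MVDR weight vector is w = R^{-1} a / (a^T R^{-1} a), where R is the data covariance and a is the steering (lead-field) vector of the target source. The sketch below is a minimal real-valued version, assuming NumPy; the diagonal-loading term, the function name `mvdr_weights`, and the synthetic steering vectors are assumptions added for illustration. It does not implement SSFNS.

```python
import numpy as np

def mvdr_weights(cov, steering, loading=1e-3):
    """Real-valued MVDR weights with light diagonal loading for numerical stability."""
    n = cov.shape[0]
    loaded = cov + loading * (np.trace(cov) / n) * np.eye(n)
    r_inv_a = np.linalg.solve(loaded, steering)
    return r_inv_a / (steering @ r_inv_a)

rng = np.random.default_rng(1)
n_channels, n_samples = 8, 2000

steering = rng.normal(size=n_channels)    # hypothetical lead field of the target source
interferer = rng.normal(size=n_channels)  # hypothetical lead field of an interfering source

data = (np.outer(steering, rng.normal(size=n_samples))
        + 3.0 * np.outer(interferer, rng.normal(size=n_samples))
        + 0.5 * rng.normal(size=(n_channels, n_samples)))
cov = data @ data.T / n_samples

weights = mvdr_weights(cov, steering)
gain_target = float(weights @ steering)       # distortionless constraint: equals 1
gain_interferer = float(weights @ interferer)
```

The unit gain toward the target holds by construction, while the gain toward the interferer depends on the data and on how well the covariance is estimated.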

What optimization criteria are used for channel selection?

Channel selection algorithms employ various optimization criteria to identify optimal electrode subsets. These approaches can be categorized into five main evaluation techniques [6]:

Table: Evaluation Techniques for EEG Channel Selection

| Technique | Evaluation Basis | Advantages | Limitations |
|---|---|---|---|
| Filtering | Independent measures (distance, information, dependency, consistency) [6] | High speed, classifier independence, scalability [6] | Lower accuracy, ignores channel combinations [6] |
| Wrapper | Classification algorithm performance [6] | Potentially higher accuracy | Computationally expensive, prone to overfitting [6] |
| Embedded | Criteria from classifier learning process [6] | Good interaction between selection and classification, less prone to overfitting [6] | Tied to specific classifier |
| Hybrid | Combination of independent measures and mining algorithms [6] | Leverages strengths of both approaches | Increased complexity |
| Human-based | Specialist experience and feedback [6] | Incorporates domain knowledge | Subjective, expertise-dependent |

Multi-objective optimization approaches have been successfully applied to channel selection, particularly the Non-dominated Sorting Genetic Algorithm II (NSGA-II), which concurrently minimizes both localization error and the number of required EEG electrodes [2]. This method searches for Pareto-optimal solutions that provide the best trade-offs between these competing objectives.
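The idea of Pareto optimality can be illustrated with a small non-dominated filter in plain Python. This is only the dominance test at the heart of the approach, not a full NSGA-II (there is no crowding distance, crossover, or mutation), and the candidate values are invented for illustration rather than taken from [2].

```python
def dominates(a, b):
    """True if a is no worse than b in every objective and strictly better in one.

    Both objectives are minimized: (number of electrodes, localization error).
    """
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates."""
    return [c for c in candidates if not any(dominates(o, c) for o in candidates)]

# Hypothetical (n_electrodes, localization_error_mm) pairs.
candidates = [(4, 12.0), (6, 5.0), (8, 5.5), (8, 3.0), (16, 2.9), (16, 4.0)]
front = pareto_front(candidates)
# front == [(4, 12.0), (6, 5.0), (8, 3.0), (16, 2.9)]
```

Every point on the front is a defensible trade-off: moving along it buys lower error only at the cost of more electrodes.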

Troubleshooting Common Experimental Issues

How can researchers address spatial under-sampling in standard EEG montages?

Standard low-density EEG (LD-EEG) montages often suffer from spatial under-sampling, particularly for brain regions below the circumferential limit of standard coverage. Case studies demonstrate that "whole head" HD-EEG electrode placement significantly improves visualization of inferior and medial brain surfaces [10]. In one case, a 6-year-old boy with possible left occipital interictal epileptiform discharges (IEDs) showed only minimal evidence on standard LD-EEG, with activity evident in just one channel (O1) [10]. However, HD-EEG with 128 channels revealed a well-defined occipital IED with an expansive field to electrodes below the inferior circumferential limit of standard LD-EEG [10]. Similarly, in a 67-year-old man with longstanding epilepsy, HD-EEG provided improved localization of IEDs in frontal basal regions that were poorly captured by standard montages [10]. To mitigate spatial under-sampling, researchers should consider HD-EEG with expanded coverage beyond the standard 10-20 system, particularly when investigating temporal, inferior frontal, or occipital regions.

What approaches overcome the problem of falsely generalized discharges?

Falsely generalized IEDs can be accurately lateralized and localized using HD-EEG with precise coregistration to structural imaging. In an 11-year-old boy with tuberous sclerosis complex and refractory epilepsy, standard LD-EEG revealed rare IEDs with maximal amplitude at the midline (Cz) and no evident lateralization [10]. However, visual analysis of HD-EEG recording showed a clear left hemispheric predominance [10]. Electrical Source Imaging (ESI) of the IED peaks using HD-EEG data localized to a single large calcified tuber in the left posterior cingulate gyrus, which was not achievable with LD-EEG data [10]. This accurate localization is particularly important for sources close to the midline, where LD-EEG spatial resolution is insufficient for lateralization. The methodology requires:

- HD-EEG recording with sufficient channels (typically 64-128)

- Precise digitization of electrode positions

- Accurate co-registration with structural MRI (e.g., T1-weighted MEMPRAGE)

- Electrical Source Imaging using appropriate forward models (e.g., boundary element method)

- Source analysis software (e.g., MNE-C with Freesurfer) [10]

How can computational complexity be managed in HD-EEG analysis?

The computational burden of HD-EEG processing can be addressed through optimized channel selection and efficient algorithms. The high-dimensional nature of structural models presents significant computational challenges [11]. Exhaustively evaluating every possible electrode subset requires solving the inverse problem 2^C - 1 times for the single-source case with C channels [2]. For 128 electrodes, this amounts to roughly 3.4×10^38 evaluations, which is infeasible [2]. The NSGA-II algorithm reduces this computational cost to approximately O(P^2), where P is the population size [2]. This approach has been successfully applied to identify minimal electrode subsets that maintain accurate source localization while dramatically reducing computational requirements [2].
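The size of the exhaustive search space is easy to check with Python's arbitrary-precision integers. The helper name `n_subsets` and the choice of 8 electrodes for the fixed-size count are illustrative.

```python
import math

def n_subsets(n_channels):
    """Number of non-empty electrode subsets of a montage: 2^C - 1."""
    return 2 ** n_channels - 1

total_128 = n_subsets(128)
print(f"{total_128:.1e}")  # prints 3.4e+38

# Restricting the search to subsets of exactly 8 electrodes still leaves
# a very large number of combinations to evaluate.
fixed_size_8 = math.comb(128, 8)
```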

Experimental Protocols & Methodologies

Protocol for optimal electrode subset selection using NSGA-II

The NSGA-II-based methodology for identifying optimal electrode subsets involves a systematic multi-stage process [2]:

Optimal Electrode Selection Workflow

The optimization process combines source reconstruction algorithms with the multi-objective genetic algorithm. Key implementation considerations include:

- Input Requirements: EEG/ERP data for the source(s) to analyze, appropriate head model, and ground-truth source locations for error calculation [2]

- Algorithm Configuration: Population size, termination criteria, and fitness function definition based on localization error metrics [2]

- Inverse Problem Solution: Integration with source reconstruction algorithms (wMNE, sLORETA, MSP) for fitness evaluation [2]

- Validation: Evaluation on both synthetic and real EEG datasets with known ground truth [2]

Experimental results demonstrate that optimal subsets with only 6-8 electrodes can attain equal or better accuracy than the full 231-channel HD-EEG montage for single-source localization in 63-88% of cases, depending on whether real or synthetic data are used [2].

Protocol for HD-EEG with electrical source imaging

The methodology for clinical HD-EEG with source localization involves specific technical procedures [10]:

HD-EEG Source Imaging Protocol

This protocol requires specific technical resources and methodological steps:

- HD-EEG Acquisition: Minimum 64-channel system (128+ preferred), 1000 Hz sampling rate, simultaneous video recording [10]

- Electrode Localization: Precise 3D digitization of electrode positions using systems like Fastrak (Polhemus Inc.), alignment using nasion and auricular points as fiducial markers [10]

- MRI Co-registration: Structural imaging (T1-weighted MEMPRAGE), cortical surface reconstruction using Freesurfer, three-layer Boundary Element Model generation using watershed algorithm [10]

- Source Analysis: IED identification and averaging (minimum 10 recommended), Electrical Source Imaging using software packages (MNE-C, Geosource), localization to anatomical features [10]

The Scientist's Toolkit: Research Reagents & Materials

Table: Essential Resources for HD-EEG Channel Selection Research

| Resource Category | Specific Examples | Function/Purpose | Technical Specifications |
|---|---|---|---|
| EEG Systems | 128-channel ANT-neuro waveguard cap with Natus amplifier [10] | HD-EEG data acquisition | 1000 Hz sampling rate, 128+ channels [10] |
| Electrode Localization | Fastrak 3D digitizer (Polhemus Inc.) [10] | Precise electrode positioning | 3D spatial coordinate measurement [10] |
| Structural Imaging | T1-weighted multi echo MEMPRAGE [10] | Anatomical reference | High-resolution structural data for source modeling [10] |
| Source Analysis Software | MNE-C package [10], Geosource 2.0 [10] | Electrical Source Imaging | Cortical surface reconstruction, forward model computation, source estimation [10] |
| Optimization Algorithms | Non-dominated Sorting Genetic Algorithm II (NSGA-II) [2] | Multi-objective channel selection | Minimizes localization error and channel count simultaneously [2] |
| Reference Datasets | Synthetic EEG with known sources [2], Real EEG with intracranial validation [2] | Method validation | Ground truth for performance evaluation [2] |

Advanced Technical Reference

Quantitative performance of optimized electrode subsets

Empirical studies provide quantitative evidence for the effectiveness of optimized channel selection:

Table: Performance of Optimized Electrode Subsets for Source Localization

| Scenario | Electrode Count | Performance Comparison to HD-EEG | Success Rate | Notes |
|---|---|---|---|---|
| Single Source (Synthetic) | 6 electrodes | Equal or better accuracy than 231 electrodes | 88% of cases [2] | Optimized for specific source |
| Single Source (Real EEG) | 6 electrodes | Equal or better accuracy than 231 electrodes | 63% of cases [2] | Validation with real data |
| Single Source (Synthetic) | 8 electrodes | Equal or better accuracy than 231 electrodes | 88% of cases [2] | Improved consistency |
| Single Source (Real EEG) | 8 electrodes | Equal or better accuracy than 231 electrodes | 73% of cases [2] | Better real-data performance |
| Multiple Sources (3, Synthetic) | 8 electrodes | Equal or better accuracy than 231 electrodes | 58% of cases [2] | More challenging scenario |
| Multiple Sources (3, Synthetic) | 12 electrodes | Equal or better accuracy than 231 electrodes | 76% of cases [2] | Improved multi-source performance |
| Multiple Sources (3, Synthetic) | 16 electrodes | Equal or better accuracy than 231 electrodes | 82% of cases [2] | Near-HD performance with far fewer channels |

These results demonstrate that optimized low-density subsets can potentially outperform standard HD-EEG montages for specific localization tasks, while dramatically reducing computational requirements and experimental complexity [2]. The key insight is that electrode positioning is more critical than absolute electrode count, with optimal placement being highly dependent on the specific neural sources of interest.

Frequently Asked Questions

What are the primary goals of channel selection in high-density EEG research? The core objectives are threefold: to reduce computational complexity by lowering data dimensionality, to improve classification accuracy by mitigating overfitting from redundant or noisy channels, and to decrease setup time, which enhances practical usability and subject comfort [1] [6].

Why is subject-specific channel selection often necessary? The optimal number and location of EEG channels vary significantly between individuals. A channel subset that works for one subject is unlikely to produce the same performance for another, due to anatomical and functional differences. Automatic subject-specific selection methods are therefore crucial for optimal performance [12].

My classification accuracy is low despite using many channels. What could be wrong? This is a classic symptom of overfitting, where your model learns noise from irrelevant channels rather than the underlying neural signal. This is a primary reason for employing channel selection. We recommend using a wrapper-based technique with your classifier (e.g., SVM, CNN) or a filter-based method like correlation analysis to identify and retain only the most informative channels for your specific task and subject [1] [6] [12].

I am getting inconsistent results when replicating a channel selection protocol. How can I troubleshoot? First, systematically rule out technical issues. Follow the signal path: check electrode/cap connections, restart acquisition software and hardware, and try a different headbox if available [4]. If the hardware is functional, ensure your protocol accounts for subject-specificity. A method that selects channels based on a population average may not be stable for an individual. Consider implementing a subject-specific selection criterion [12].

How can I evaluate if my channel selection method is successful? Success should be measured against the three core objectives. Compare your results using the selected channel subset against the full channel set using the following metrics:

- Classification Accuracy: There should be a comparable or improved accuracy rate [1] [12].

- Computational Efficiency: Measure the reduction in time and memory required for feature extraction and model training [6].

- Setup Time: The time taken to apply the electrode montage should be significantly reduced.

Troubleshooting Guides

Guide 1: Addressing Poor Classification Accuracy

Symptoms: Model performance plateaus or decreases even as you add more channels; high variance in performance across different subjects.

Methodology & Protocols: This guide utilizes a filter-based channel selection approach, which is fast, scalable, and independent of the classifier.

Protocol: Correlation-Based Channel Selection

- Select a Reference Channel: Choose a channel in the brain region known to be relevant to your task (e.g., for motor imagery, use Cz, C3, or C4 as references) [12].

- Calculate Correlation Coefficients: Compute the Pearson correlation coefficient between the time-series signals of the reference channel and every other channel.

- Set a Correlation Threshold: Retain only those channels that show a correlation above a defined threshold (e.g., 0.7) with the reference channel. This selects channels with similar task-related activity [12].

- Validate the Subset: Perform your standard classification (e.g., using Common Spatial Patterns (CSP) with an LDA or SVM classifier) on this reduced channel set and compare the accuracy to the full set.

Expected Outcome: Studies have demonstrated that this method can achieve a channel reduction of over 65% while improving classification accuracy by >5% for motor imagery tasks [12].
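The correlation protocol above can be sketched in a few lines of NumPy. This is a minimal illustration on synthetic data, not the implementation used in the cited study; the function name `select_by_correlation`, the 250 Hz sampling rate, and the simulated signals are all assumptions made for the example.

```python
import numpy as np

def select_by_correlation(eeg, ch_names, reference, threshold=0.7):
    """Keep channels whose Pearson r with the reference channel exceeds the threshold.

    eeg: array of shape (n_channels, n_samples); ch_names: matching list of labels.
    """
    ref_idx = ch_names.index(reference)
    r = np.corrcoef(eeg)[ref_idx]        # Pearson r between the reference and every channel
    return [name for name, value in zip(ch_names, r) if value > threshold]

# Synthetic demo (assumed data): three channels share a 10 Hz rhythm, two are pure noise.
rng = np.random.default_rng(0)
t = np.arange(1000) / 250.0              # 4 s at an assumed 250 Hz sampling rate
mu = np.sin(2 * np.pi * 10 * t)
ch_names = ["C3", "Cz", "C4", "Fp1", "O1"]
eeg = np.vstack(
    [mu + 0.3 * rng.standard_normal(t.size) for _ in range(3)]
    + [rng.standard_normal(t.size) for _ in range(2)]
)
selected = select_by_correlation(eeg, ch_names, reference="Cz")
print(selected)
```

In practice the correlation would usually be computed on band-pass filtered, task-related epochs rather than on raw continuous data, and the threshold would be tuned per subject.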

Guide 2: Improving Computational Efficiency

Symptoms: Long feature extraction and model training times; system runs out of memory; impractical for real-time or portable BCI applications.

Methodology & Protocols: This protocol uses a wrapper-based technique to find the smallest subset of channels that maintains performance.

Protocol: Sequential Feature Selection with a Classifier

- Subset Generation: Use a sequential search algorithm (e.g., forward selection or backward elimination) to generate candidate channel subsets.

- Subset Evaluation: For each candidate subset, perform the following:

  - Extract features (e.g., band power, CSP features).

  - Train and test your chosen classifier (e.g., SVM, Deep Neural Networks) using cross-validation.

  - Record the classification accuracy for that subset.

- Stopping Criterion: Continue the search until adding or removing channels no longer provides a significant improvement in accuracy, or a pre-defined number of channels is reached.

- Result: The algorithm identifies a minimal channel set. Research indicates that often only 10–30% of the total channels are needed to achieve performance on par with, or even better than, using all channels [1].

Expected Outcome: A significant reduction in the dimensionality of the data, leading to faster computation and lower memory requirements, making the system more suitable for real-time use [1] [6].
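As a rough sketch of the wrapper protocol above, the following code runs sequential forward selection over channels. It assumes one feature per channel (for example log band power) and uses a nearest-class-mean classifier as a lightweight stand-in for the SVM or neural network mentioned in the protocol; `cv_accuracy`, `forward_select`, and the `min_gain` stopping threshold are hypothetical names and choices.

```python
import numpy as np

def cv_accuracy(X, y, n_folds=5):
    """Cross-validated accuracy of a nearest-class-mean classifier (stand-in for SVM/LDA)."""
    idx = np.arange(len(y))
    correct = 0
    for test in np.array_split(idx, n_folds):
        train = np.setdiff1d(idx, test)
        classes = np.unique(y[train])
        means = np.array([X[train][y[train] == c].mean(axis=0) for c in classes])
        dist = ((X[test][:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
        correct += np.sum(classes[dist.argmin(axis=1)] == y[test])
    return correct / len(y)

def forward_select(features, y, max_channels=None, min_gain=0.01):
    """Greedily add the channel that most improves accuracy; stop when the gain is too small."""
    n_channels = features.shape[1]
    max_channels = min(max_channels or n_channels, n_channels)
    selected, best = [], 0.0
    while len(selected) < max_channels:
        scores = {c: cv_accuracy(features[:, selected + [c]], y)
                  for c in range(n_channels) if c not in selected}
        candidate = max(scores, key=scores.get)
        if scores[candidate] - best < min_gain:   # stopping criterion
            break
        selected.append(candidate)
        best = scores[candidate]
    return selected, best

# Synthetic demo (assumed data): 80 trials, 8 channels, channels 2 and 5 carry class information.
rng = np.random.default_rng(1)
y = np.tile([0, 1], 40)
features = rng.standard_normal((80, 8))
features[:, 2] += 3.0 * y
features[:, 5] -= 3.0 * y
selected, accuracy = forward_select(features, y, max_channels=4)
print(selected, round(accuracy, 3))
```

Each step retrains the classifier once per remaining channel, which is why wrapper methods become expensive on full HD-EEG montages.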

Experimental Protocols for Systematic Evaluation

To rigorously evaluate any channel selection algorithm, you must measure its performance against the three defined objectives. The table below outlines key metrics and methodologies.

Table 1: Evaluation Framework for Channel Selection Algorithms

| Evaluation Objective | Core Metric | Measurement Methodology | Interpretation of Results |

|---|---|---|---|

| Classification Accuracy | Accuracy, F1-Score, Kappa | Compare classifier performance on the selected channel subset vs. the full montage using cross-validation [1] [12]. | A maintained or improved score with a smaller subset indicates successful selection of informative channels and reduced overfitting. |

| Computational Efficiency | Feature Extraction & Model Training Time | Record the time taken to extract features and train the model for the subset vs. the full channel set [6]. | A significant reduction in processing time demonstrates improved efficiency and practicality for portable systems. |

| Setup Time & Usability | Number of Channels, Montage Setup Time | Report the absolute number of channels selected and the estimated time saved in applying the smaller montage [1]. | Fewer channels directly translate to faster setup and improved subject comfort, enhancing the usability of the BCI. |

The Researcher's Toolkit: Essential Materials & Reagents

Table 2: Key Research Reagents and Computational Tools for EEG Channel Selection Research

| Item Name | Function / Explanation |

|---|---|

| High-Density EEG System | Acquisition hardware with 64+ channels for recording scalp potentials. Provides the raw data for channel selection algorithms. |

| EEG Cap (10-20/10-10 System) | Electrode headset with standardized placements (e.g., C3, C4, Cz) ensuring consistent and replicable data collection across subjects. |

| EEGLAB / BCILAB | MATLAB toolboxes that provide an interactive environment for processing EEG signals, including visualization, preprocessing, and ICA [13]. |

| Python (Scikit-learn, MNE) | Programming environment with libraries for implementing machine learning classifiers (SVM, LDA) and signal processing pipelines for channel evaluation [1] [12]. |

| Common Spatial Patterns (CSP) | A signal processing algorithm used to compute spatial filters that maximize the variance of one class while minimizing the variance of the other, crucial for feature extraction in MI-based BCI [12]. |

| Pearson Correlation Coefficient | A statistical measure used in filter-based channel selection to identify and retain channels with high temporal similarity to a reference channel [12]. |

Workflow Diagram: Channel Selection Evaluation

The following diagram illustrates the logical workflow and key decision points for evaluating channel selection algorithms against the three core objectives.

Channel Selection Evaluation Workflow

A Taxonomy of Channel Selection Algorithms: From CSP to Deep Learning

For researchers in neuroscience and drug development working with high-density Electroencephalography (EEG) montages, the Common Spatial Pattern (CSP) algorithm is a cornerstone technique for feature extraction in Motor Imagery (MI) based Brain-Computer Interface (BCI) systems [14]. Its primary function is to design spatial filters that maximize the variance of one class of EEG signals (e.g., imagination of left-hand movement) while minimizing the variance of the other class (e.g., right-hand movement), effectively highlighting the event-related desynchronization (ERD) and synchronization (ERS) phenomena characteristic of motor imagery [14] [15].

Despite its widespread use, the traditional CSP algorithm has notable limitations, including sensitivity to outliers, a propensity for overfitting, especially with high channel counts, and a focus limited to the spatial domain while neglecting informative, subject-specific spectral details [14] [16] [15]. To address these challenges, several powerful variants have been developed. This guide focuses on two major variants—Filter Bank CSP (FBCSP) and Sparse CSP (SCSP)—providing troubleshooting and methodological details to assist in their successful implementation for your research.

Comparison of CSP Algorithm Variants

The table below summarizes the core characteristics, strengths, and weaknesses of the standard CSP algorithm and its key variants to help you select the most appropriate method.

Table 1: Overview of Common Spatial Pattern Algorithms and Variants

| Algorithm | Core Principle | Key Advantages | Common Challenges |

|---|---|---|---|

| Common Spatial Pattern (CSP) | Finds spatial filters that maximize variance ratio between two classes [14]. | Simplicity; high performance with clean, well-defined data. | Sensitive to noise/outliers; prone to overfitting; ignores spectral information [14] [16]. |

| Filter Bank CSP (FBCSP) | Applies CSP across multiple subject-specific frequency sub-bands (e.g., within 8-30 Hz) [16] [17]. | Leverages spectral information; improves feature discrimination; allows for automated band selection. | Increased computational complexity; requires effective feature selection to avoid dimensionality explosion [16]. |

| Sparse CSP (SCSP) | Introduces sparsity constraints (e.g., L1/L2 norm) to the CSP projection matrix, forcing it to focus on the most relevant channels [17]. | Built-in channel selection; robust to noise; improves model interpretability. | Requires careful tuning of the sparsity parameter r; optimization process is computationally more intensive [17]. |

| Variance Characteristic Preserving CSP (VPCSP) | Adds a graph Laplacian-based regularization to preserve local variance and reduce abnormality in the projected features [14]. | Increases robustness of extracted features; improves classification accuracy. | Introduces an additional user-defined parameter (l, the graph connection interval) that needs tuning [14]. |

| Adaptive Spatial Pattern (ASP) | A new paradigm that minimizes intra-class energy while maximizing inter-class energy after spatial filtering, complementing CSP [15]. | Distinguishes overall energy characteristics; can be combined with CSP features (FBACSP) for enhanced performance [15]. | Requires iterative optimization (e.g., Particle Swarm Optimization), increasing computational load [15]. |

Frequently Asked Questions (FAQs) and Troubleshooting

Here are solutions to common problems encountered when implementing CSP and its variants.

FAQ 1: My CSP model is overfitting, especially with a high-density EEG montage. What can I do?

- Problem: High channel counts relative to training trials lead to models that do not generalize.

- Solutions:

- Employ Regularized CSP (RCSP): Add a regularization term, such as a ridge-style (Tikhonov) penalty, to the CSP cost function to penalize overly complex spatial filters [15].

- Use Sparse CSP (SCSP): This variant inherently performs channel selection by forcing the spatial filter to focus on a subset of the most discriminative channels, effectively reducing the feature dimensionality and mitigating overfitting [17].

- Adopt a Filter Bank Approach (FBCSP): Combine CSP with a filter bank and follow it with a robust feature selection algorithm (e.g., Mutual Information-based selection) to retain only the most informative features from the most relevant frequency bands [16].

FAQ 2: How can I improve the signal-to-noise ratio (SNR) and robustness of my CSP features?

- Problem: Features are distorted by artifacts and outliers in the EEG signal.

- Solutions:

- Implement Robust Sparse CSP (RSCSP): Replace the standard covariance matrix calculation with a more robust estimator like the Minimum Covariance Determinant (MCD). The MCD algorithm finds a subset of the data with the smallest covariance determinant, effectively ignoring outliers that would otherwise distort the spatial filter [17].

- Apply Variance Characteristic Preserving (VPCSP): This method adds a regularization term that smooths the projected feature space, reducing the impact of abnormal points and preserving the local variance structure of the signal [14].

FAQ 3: The performance of my standard CSP is suboptimal. How can I leverage frequency information?

- Problem: Standard CSP operates on a broad, pre-defined frequency band, missing subject-specific discriminative information in narrower bands.

- Solution:

- Switch to Filter Bank CSP (FBCSP): Decompose the EEG signal into multiple narrow frequency sub-bands (e.g., 4 Hz bands from 8-30 Hz). Extract CSP features from each sub-band independently, then use a feature selection algorithm like Mutual Information-based Best Individual Feature (MIBIF) to select the most subject-specific and discriminative features for classification [16] [17].

FAQ 4: How can I extend these methods, which are designed for two-class problems, to multiple MI tasks (e.g., left hand, right hand, and foot)?

- Problem: Standard CSP and many of its variants are fundamentally two-class methods: the spatial filters are defined by contrasting the variance of exactly two conditions.

- Solution:

- Use a One-vs-Rest (OvR) or Pairwise Framework: Decompose the multi-class problem into multiple binary problems. For OvR, you train one CSP model for each class against all others. For pairwise, you train a model for every possible pair of classes. The Filter Bank Maximum a-Posteriori CSP (FB-MAP-CSP) framework has been successfully extended this way for multi-condition classification [16].
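The two decompositions described above differ only in which binary problems are generated. The short sketch below enumerates them for three motor imagery classes; the helper names `one_vs_rest` and `pairwise` are illustrative, and fitting a CSP model for each binary problem is left out.

```python
from itertools import combinations

def one_vs_rest(classes):
    """One binary problem per class: (target class, all remaining classes)."""
    return [(c, [other for other in classes if other != c]) for c in classes]

def pairwise(classes):
    """One binary problem per unordered pair of classes."""
    return list(combinations(classes, 2))

classes = ["left_hand", "right_hand", "foot"]
ovr_problems = one_vs_rest(classes)
pair_problems = pairwise(classes)
print(ovr_problems)
print(pair_problems)
```

For K classes, OvR needs K binary models and the pairwise scheme needs K(K-1)/2, so the two coincide at three classes and diverge quickly beyond that.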

Experimental Protocols for Key CSP Variants

This section provides detailed methodologies for implementing the core variants discussed, ensuring you can replicate and adapt these approaches in your experiments.

Protocol 1: Implementing Filter Bank CSP (FBCSP)

FBCSP enhances CSP by incorporating spectral filtering and selection [16] [17].

- Signal Preprocessing: Bandpass filter the raw EEG data to a wide range of interest (e.g., 8-30 Hz encompassing μ and β rhythms). Apply any necessary artifact removal (e.g., for eye movements).

- Filter Bank Decomposition: Divide the preprocessed signal into multiple overlapping or non-overlapping frequency sub-bands. A common approach is to use 4 Hz bands (e.g., 8-12 Hz, 12-16 Hz, ..., 24-28 Hz).

- Spatial Filtering: For each sub-band, calculate the CSP spatial filters and extract features as per the standard algorithm. Typically, the log-variance of the first and last m projected components is taken, resulting in a 2m-dimensional feature vector per sub-band.

- Feature Selection: Concatenate the features from all sub-bands. Use a feature selection algorithm (e.g., Mutual Information-based Best Individual Feature - MIBIF) to select the most discriminative features for the classification task.

- Classification: Train a classifier (e.g., Linear Discriminant Analysis - LDA) on the selected feature set.
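The filter bank, spatial filtering, and log-variance steps above can be sketched with NumPy alone. This is an illustrative outline under several assumptions: the band-pass is a crude FFT mask standing in for a proper IIR/FIR filter bank, CSP is solved by whitening plus an eigendecomposition, the data are synthetic, and the MIBIF selection and classifier steps are omitted. All function names are hypothetical.

```python
import numpy as np

def bandpass_fft(trials, fs, lo, hi):
    """Crude zero-phase band-pass: zero all FFT bins outside [lo, hi) Hz."""
    freqs = np.fft.rfftfreq(trials.shape[-1], d=1.0 / fs)
    spec = np.fft.rfft(trials, axis=-1)
    spec[..., (freqs < lo) | (freqs >= hi)] = 0.0
    return np.fft.irfft(spec, n=trials.shape[-1], axis=-1)

def mean_cov(trials):
    """Average trace-normalized spatial covariance over trials."""
    return np.mean([x @ x.T / np.trace(x @ x.T) for x in trials], axis=0)

def csp_filters(trials_a, trials_b, m=1):
    """Return 2m spatial filters (rows): the m lowest and m highest class-a variance ratios."""
    ca, cb = mean_cov(trials_a), mean_cov(trials_b)
    evals, evecs = np.linalg.eigh(ca + cb)
    whiten = evecs @ np.diag(evals ** -0.5) @ evecs.T     # (Ca + Cb)^(-1/2)
    _, u = np.linalg.eigh(whiten @ ca @ whiten.T)          # eigenvalues in ascending order
    w = u.T @ whiten                                       # rows satisfy W (Ca + Cb) W^T = I
    return np.vstack([w[:m], w[-m:]])

def log_var(trials, w):
    """Normalized log-variance of each spatially filtered component."""
    var = np.einsum("fc,tcs->tfs", w, trials).var(axis=-1)
    return np.log(var / var.sum(axis=1, keepdims=True))

def fbcsp_features(trials, y, fs, bands, m=1):
    """Concatenate 2m CSP features per sub-band (filters should be fit on training data only)."""
    feats = []
    for lo, hi in bands:
        xb = bandpass_fft(trials, fs, lo, hi)
        w = csp_filters(xb[y == 0], xb[y == 1], m)
        feats.append(log_var(xb, w))
    return np.hstack(feats)

# Synthetic demo (assumed data): a 10 Hz rhythm on channel 0 for class 0, channel 3 for class 1.
rng = np.random.default_rng(2)
fs, n_trials, n_channels, n_samples = 250, 40, 4, 500
y = np.tile([0, 1], n_trials // 2)
t = np.arange(n_samples) / fs
trials = rng.standard_normal((n_trials, n_channels, n_samples))
for i in range(n_trials):
    source = 0 if y[i] == 0 else 3
    trials[i, source] += 2.0 * np.sin(2 * np.pi * 10 * t + rng.uniform(0, 2 * np.pi))
bands = [(8, 12), (12, 16), (16, 20), (20, 24), (24, 28)]
features = fbcsp_features(trials, y, fs, bands, m=1)
print(features.shape)
```

The resulting matrix has one row per trial and 2m columns per sub-band; a feature selector would then keep only the most discriminative columns before classification.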

The following workflow diagram illustrates the FBCSP process:

Protocol 2: Implementing Sparse CSP (SCSP)

SCSP introduces sparsity to the spatial filters for automatic channel selection and improved robustness [17].

- Covariance Matrix Calculation: Compute the normalized covariance matrices $C_1$ and $C_2$ for the two classes of EEG trials, as in standard CSP.

- Formulate Optimization Problem: The SCSP objective function modifies the standard CSP problem by incorporating a sparsity penalty. It can be represented as:

$$\min_{W} \; (1-r)\left[\sum_{i=1}^{m} w_i C_1 w_i^T + \sum_{i=m+1}^{2m} w_i C_2 w_i^T\right] + r \sum_{i=1}^{2m} \frac{\|w_i\|_1}{\|w_i\|_2}$$

subject to $w_i (C_1 + C_2) w_i^T = 1$ and orthogonality constraints. Here, $w_i$ is the $i$-th spatial filter (row) of $W$, and $r$ is a sparsity parameter ($0 \le r \le 1$) that controls the trade-off between the original CSP objective and the sparsity of the filters.

- Solve for Sparse Filters: Employ an optimization algorithm to solve the above problem for the sparse spatial filters $W$. The $L_1/L_2$ norm promotes sparsity while being scale-invariant.

- Feature Extraction & Classification: Use the resulting sparse filters $W$ to project the original EEG data and extract features, following the same logarithmic variance transformation as standard CSP. Proceed to classification.
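To make the sparsity term in the SCSP objective concrete, the sketch below evaluates the penalty and the full objective for a given filter matrix. It only scores candidate filters; the constrained optimization itself is not shown. The function names and the toy covariance matrices are assumptions for illustration.

```python
import numpy as np

def l1_l2_ratio(w):
    """Sparsity measure ||w||_1 / ||w||_2: 1 for a one-channel filter, sqrt(n) for a uniform one."""
    return np.abs(w).sum() / np.linalg.norm(w)

def scsp_objective(W, C1, C2, r):
    """(1 - r) * CSP term + r * sparsity term for a filter matrix W with 2m rows."""
    m = W.shape[0] // 2
    csp_term = sum(w @ C1 @ w for w in W[:m]) + sum(w @ C2 @ w for w in W[m:])
    sparsity_term = sum(l1_l2_ratio(w) for w in W)
    return (1.0 - r) * csp_term + r * sparsity_term

sparse_filter = np.array([0.0, 0.0, 3.0, 0.0])   # uses a single channel
dense_filter = np.ones(4)                        # spreads weight over all channels
print(l1_l2_ratio(sparse_filter), l1_l2_ratio(dense_filter))

# Toy covariances (assumed): with r = 1 only the sparsity term remains.
C1, C2 = np.eye(4), 2.0 * np.eye(4)
W = np.vstack([sparse_filter, dense_filter])
print(scsp_objective(W, C1, C2, r=1.0))
```

Because the ratio is unchanged when a filter is rescaled, the penalty cannot be reduced simply by shrinking all weights, which is the practical meaning of scale invariance here.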

Protocol 3: Implementing Robust Sparse CSP (RSCSP)

For data with significant outliers, RSCSP combines sparsity with robust covariance estimation [17].

- Robust Covariance Estimation: Instead of the standard sample covariance matrix, use the Minimum Covariance Determinant (MCD) estimator to calculate $C_1$ and $C_2$. The MCD finds a subset $H$ of $h$ data points that minimizes the determinant of the covariance matrix, making it resistant to outliers:

$$C_w = \frac{1}{\alpha \, t \, n_w - 1} E_w E_w^T$$

where $\alpha$ is related to the breakdown point. Algorithms like FASTMCD can be used for efficient computation.

- Sparse Filter Optimization: With the robust covariance matrices, proceed to solve the Sparse CSP (SCSP) optimization problem outlined in Protocol 2.

- Proceed with Standard Steps: Complete the process with feature extraction and classification as described previously.
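The idea behind the MCD estimator can be illustrated with the concentration step ("C-step") that FASTMCD iterates: fit mean and covariance on a subset of h points, re-select the h points with the smallest Mahalanobis distance, and repeat. The sketch below is a simplified version with random restarts, not a full FASTMCD implementation, and it omits the consistency correction applied by production estimators. The function name `mcd_cstep` and the synthetic data are assumptions.

```python
import numpy as np

def mcd_cstep(X, h, n_starts=10, n_iter=20, seed=0):
    """Approximate MCD on X (n_samples, n_features); returns (location, covariance)."""
    rng = np.random.default_rng(seed)
    best_det, best_loc, best_cov = np.inf, None, None
    for _ in range(n_starts):
        subset = rng.choice(X.shape[0], size=h, replace=False)
        for _ in range(n_iter):
            loc = X[subset].mean(axis=0)
            cov = np.cov(X[subset], rowvar=False)
            diff = X - loc
            d2 = np.einsum("ij,jk,ik->i", diff, np.linalg.inv(cov), diff)  # squared Mahalanobis
            new_subset = np.argsort(d2)[:h]          # keep the h most central points
            if set(new_subset) == set(subset):       # converged
                break
            subset = new_subset
        loc = X[subset].mean(axis=0)
        cov = np.cov(X[subset], rowvar=False)
        det = np.linalg.det(cov)
        if det < best_det:
            best_det, best_loc, best_cov = det, loc, cov
    return best_loc, best_cov

# Synthetic demo (assumed data): 180 clean samples plus 20 artifact-like outliers.
rng = np.random.default_rng(3)
inliers = rng.standard_normal((180, 3))
outliers = 8.0 + 0.5 * rng.standard_normal((20, 3))
X = np.vstack([inliers, outliers])
robust_loc, robust_cov = mcd_cstep(X, h=150)
sample_cov = np.cov(X, rowvar=False)
print(np.linalg.det(robust_cov), np.linalg.det(sample_cov))
```

For EEG, rows of `X` would be time samples and columns would be channels for one class; the robust covariance would then replace the sample covariance before the sparse CSP step.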

The Scientist's Toolkit: Essential Research Reagents and Materials

The table below lists key computational tools and concepts essential for conducting research in CSP-based MI-BCI systems.

Table 2: Key Reagents and Computational Solutions for CSP Research

| Item / Concept | Function / Description | Relevance in CSP Research |

|---|---|---|

| High-Density EEG Montage | A standardized arrangement of many EEG electrodes (e.g., 64 channels) on the scalp, placed according to the 10-20 system or its higher-density 10-10 extension [18]. | Provides the high-dimensional spatial input signal required for effective spatial filtering. The foundation for all CSP analysis. |

| Common Spatial Pattern (CSP) | A spatial filtering algorithm that maximizes the variance difference between two classes of EEG signals [14]. | The core feature extraction technique from which all variants (FBCSP, SCSP) are derived. |

| Filter Bank | An array of bandpass filters that decomposes the EEG signal into multiple frequency sub-bands [16] [17]. | A critical component of FBCSP, enabling the extraction of spectrally localised CSP features. |

| Mutual Information (MI) Feature Selection | A filter-based feature selection method that ranks features based on the mutual information with the target class label [16] [17]. | Used in FBCSP to select the most discriminative features from the high-dimensional feature vector across all sub-bands. |

| Sparsity Penalty (L1/L2 Norm) | A regularization term added to an optimization problem to encourage a sparse solution, where many coefficients become zero [17]. | The core mechanism behind Sparse CSP (SCSP), which forces the spatial filter to use only a subset of relevant EEG channels. |

| Minimum Covariance Determinant (MCD) | A robust estimator of multivariate location and scatter, which is less influenced by outliers [17]. | Used in Robust Sparse CSP (RSCSP) to compute covariance matrices that are not skewed by anomalous data points. |

| Particle Swarm Optimization (PSO) | A computational method that optimizes a problem by iteratively trying to improve a candidate solution with regard to a given measure of quality [15]. | Can be used to solve for complex spatial filters in advanced variants like Adaptive Spatial Pattern (ASP) [15]. |

Workflow for Method Selection and Implementation

To successfully implement these algorithms, a structured workflow is recommended. The following diagram outlines the key decision points and paths for selecting and applying the appropriate CSP variant:

Frequently Asked Questions (FAQs)

Q1: What is the core difference between filter, wrapper, and embedded feature selection methods?

The core difference lies in how they evaluate and select features.

- Filter Methods evaluate features based on their intrinsic statistical properties (e.g., correlation with the target variable) and are independent of any machine learning model [19] [20] [21].

- Wrapper Methods use the performance of a specific predictive model to evaluate the usefulness of a feature subset. They "wrap" themselves around a model and are computationally more expensive [19] [22] [23].

- Embedded Methods integrate the feature selection process directly into the model training phase, allowing the model itself to decide which features are most important [19] [24] [25].

Q2: Why is feature selection critical in the context of high-density EEG (HD-EEG) research?

Feature selection, often termed channel selection in EEG analysis, is crucial for several reasons [6] [2]:

- Computational Efficiency: It reduces the computational cost and time required for processing signals from hundreds of electrodes, which is vital for developing portable or real-time medical devices.

- Performance Improvement: It mitigates overfitting by removing irrelevant or noisy channels, which can lead to more accurate seizure detection, motor imagery classification, and other diagnostic applications.

- Practical Setup: It decreases the setup time and improves patient comfort by identifying a minimal set of electrodes without sacrificing signal quality, facilitating longer monitoring periods.

Q3: My wrapper method is taking an extremely long time to run. What is causing this and what can I do?

This is a common issue due to the fundamental nature of wrapper methods. The long runtime is caused by the repeated training and evaluation of a machine learning model across numerous potential feature subsets [19] [21] [23]. To address this:

- Switch Algorithms: For an initial exploration, use a less computationally expensive search algorithm like Sequential Forward Selection (SFS) instead of exhaustive search methods [19].

- Leverage Hybrid Methods: Consider using a hybrid approach that uses a fast filter method to pre-reduce the number of features before applying the wrapper method [6].

- Use Embedded Methods: For large datasets, embedded methods like LASSO or tree-based models are highly recommended as they are more efficient and often provide comparable or better results [20] [25].

Q4: I've applied a filter method, but my final model's performance is poor. Why might this be?

This can occur because filter methods evaluate each feature in isolation [22] [21]. A feature that appears irrelevant on its own might be highly predictive when combined with others. Filter methods fail to capture these feature interactions. To resolve this:

- Combine Methods: Use the output of the filter method (a reduced feature set) as a starting point for a wrapper or embedded method.

- Try a Different Category: Move directly to a wrapper or embedded method, which are designed to account for interactions between features and often yield superior model performance [19] [25].

Q5: How do I know which feature selection technique is best for my specific EEG analysis task?

There is no single "best" technique; the choice depends on your specific constraints and goals [20] [23]. The following table summarizes key decision factors:

| Criterion | Filter Methods | Wrapper Methods | Embedded Methods |

|---|---|---|---|

| Computational Cost | Low [22] [20] | Very High [19] [21] | Medium (comparable to a single model training) [24] [25] |

| Model Consideration | No (model-agnostic) [21] [24] | Yes (model-specific) [19] [23] | Yes (model-specific) [24] [25] |

| Risk of Overfitting | Low [21] | High [20] [21] | Medium [25] |

| Handles Feature Interactions | No [22] [21] | Yes [19] [25] | Yes [24] [25] |

| Best Suited For | Initial data exploration, very large datasets [22] [20] | Small to medium datasets where model performance is critical [20] | A balanced approach for efficiency and accuracy [20] [25] |

Troubleshooting Guides

Issue: Inconsistent Channel Selection Results in HD-EEG

Problem: The selected optimal electrode subset varies significantly when the algorithm is run multiple times or on different data segments from the same subject, leading to unreliable conclusions.

Solution:

- Increase Data Sampling: Ensure you are averaging a sufficient number of events (e.g., interictal epileptiform discharges, IEDs) for analysis. One study noted that although averaging 10 IEDs is typical, this is not always feasible when discharges are rare, which can lead to unstable results [10].

- Apply Stability Selection: Incorporate techniques like stability selection with subsampling when using wrapper or embedded methods. This improves the robustness of the selected features by identifying those that consistently appear across multiple subsamples of the data [22].

- Validate with Ground-Truth: Whenever possible, validate the selected channels against a known ground-truth. For example, in one case, the source localization from HD-EEG was validated against a patient's known calcified tuber, confirming the accuracy of the selected channels [10].
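Stability selection, as suggested above, can be sketched as repeated selection on random subsamples followed by counting how often each channel is chosen. For brevity the base selector here is a simple filter score (absolute correlation with the class label) rather than a wrapper or embedded method; the function names, the subsample fraction, and the synthetic data are assumptions.

```python
import numpy as np

def label_correlation(X, y):
    """Absolute Pearson correlation between each channel feature and the class label."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    return np.abs(Xc.T @ yc) / (np.linalg.norm(Xc, axis=0) * np.linalg.norm(yc))

def stability_selection(X, y, k, n_rounds=50, frac=0.5, seed=0):
    """Fraction of subsampling rounds in which each channel ranks among the top k."""
    rng = np.random.default_rng(seed)
    counts = np.zeros(X.shape[1])
    for _ in range(n_rounds):
        idx = rng.choice(len(y), size=int(frac * len(y)), replace=False)
        top = np.argsort(label_correlation(X[idx], y[idx]))[-k:]
        counts[top] += 1
    return counts / n_rounds

# Synthetic demo (assumed data): channels 1 and 4 are informative, the rest are noise.
rng = np.random.default_rng(5)
y = np.tile([0, 1], 50)
X = rng.standard_normal((100, 8))
X[:, 1] += 3.0 * y
X[:, 4] -= 3.0 * y
frequency = stability_selection(X, y, k=2)
print(np.round(frequency, 2))
```

Channels with a selection frequency close to 1 are stable choices; channels that appear only occasionally are likely artifacts of a particular data segment.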

Issue: Genetically Optimized Electrode Subsets Fail to Generalize

Problem: An electrode subset identified by a genetic algorithm (like NSGA-II) as optimal for one task or subject performs poorly when applied to a new task or a different subject.

Solution:

- Problem-Specific Optimization: Understand that an electrode subset is often optimal for a specific brain activity and source localization problem [2]. The methodology should be re-run for new experimental paradigms or clinical questions.

- Incorporate Head Geometry: Ensure the optimization process uses an accurate, subject-specific head model derived from their MRI. The coregistration of electrode coordinates with the anatomical model is critical for reliable source estimation and, by extension, for identifying meaningful electrode subsets [10] [2].

- Multi-Subject Framework: For group-level studies, implement a framework that finds a consensus subset that works well across multiple subjects, rather than relying on a single subject's optimized set [2].

Experimental Protocols

Protocol 1: Implementing a Filter-Wrapper Hybrid for EEG Channel Selection

This protocol combines the speed of filter methods with the accuracy of wrapper methods for effective channel selection [6].

Methodology:

- Feature Extraction: For each EEG channel, extract relevant signal features (e.g., power in specific frequency bands, wavelet coefficients) from the epoched data.

- Initial Filtering: Apply a filter method (e.g., Fisher score, mutual information) to rank all channels based on their relevance to the target variable (e.g., seizure vs. non-seizure).

- Subset Generation: Retain the top K channels from the filter ranking. The value of K can be based on a threshold or a predetermined number.

- Wrapper Refinement: Use a sequential search wrapper method (e.g., Sequential Forward Selection) on the reduced set of K channels. Train and cross-validate your classifier (e.g., SVM, Random Forest) on different subsets to find the final, optimal channel set.

- Validation: Report the final classification performance (e.g., accuracy, AUC-ROC) on a held-out test set using only the selected channels.
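The filtering stage of this hybrid protocol (ranking channels and keeping the top K) might look like the sketch below, which uses the Fisher score. It assumes one feature per channel and synthetic data; `fisher_score` and `top_k_channels` are illustrative names, and the wrapper refinement and held-out validation steps are not shown.

```python
import numpy as np

def fisher_score(X, y):
    """Between-class scatter divided by within-class scatter, computed per channel feature."""
    overall = X.mean(axis=0)
    between = np.zeros(X.shape[1])
    within = np.zeros(X.shape[1])
    for c in np.unique(y):
        Xc = X[y == c]
        between += len(Xc) * (Xc.mean(axis=0) - overall) ** 2
        within += len(Xc) * Xc.var(axis=0)
    return between / within

def top_k_channels(X, y, k):
    """Indices of the k highest-scoring channels, best first."""
    return np.argsort(fisher_score(X, y))[::-1][:k]

# Synthetic demo (assumed data): 16 channels, of which 3, 7 and 12 separate the classes.
rng = np.random.default_rng(6)
y = np.tile([0, 1], 60)
X = rng.standard_normal((120, 16))
for ch in (3, 7, 12):
    X[:, ch] += 2.5 * y
kept = top_k_channels(X, y, k=4)
print(kept)
```

Choosing K slightly larger than the expected number of useful channels leaves room for the wrapper stage to discard the weakest candidates.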

Protocol 2: Automated Optimal Electrode Selection using Genetic Algorithm (GA)

This protocol details the use of a multi-objective genetic algorithm to find minimal electrode subsets that maintain source localization accuracy, as demonstrated in recent research [2].

Methodology:

- Define the Optimization Problem:

  - Objectives: Minimize (1) the source localization error and (2) the number of electrodes used.

  - Variables: The presence or absence of each electrode in the HD-EEG montage.

- Algorithm Setup: Employ the Non-dominated Sorting Genetic Algorithm II (NSGA-II).

  - Inputs: HD-EEG data, a head model, and the ground-truth location of the brain activity (if available).

- Fitness Calculation: For each candidate electrode subset (a "chromosome" in the GA), solve the EEG inverse problem (e.g., using wMNE or sLORETA) and compute the localization error.

- Iteration and Selection: The GA iterates through generations, using crossover and mutation to create new candidate subsets. It selects subsets that form a "Pareto front," representing the best trade-offs between the two objectives.

- Output: The algorithm outputs a set of non-dominated optimal electrode subsets (e.g., a 6-electrode set, an 8-electrode set) that achieve accuracy comparable to the full HD-EEG setup.
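The "Pareto front" in this protocol is the set of candidate subsets that no other candidate beats on both objectives at once. The sketch below extracts that set from a list of (electrode count, localization error) pairs; the numbers are invented purely for illustration and do not come from the cited study, and the genetic operators and inverse solver are not shown.

```python
import numpy as np

def pareto_front(points):
    """Indices of non-dominated points when every objective is to be minimized."""
    pts = np.asarray(points, dtype=float)
    front = []
    for i, p in enumerate(pts):
        # p is dominated if some point is no worse everywhere and strictly better somewhere
        dominated = np.any(np.all(pts <= p, axis=1) & np.any(pts < p, axis=1))
        if not dominated:
            front.append(i)
    return front

# Hypothetical candidates: (number of electrodes, localization error in mm)
candidates = [(4, 22.0), (6, 9.5), (8, 7.0), (8, 12.0), (16, 6.8), (32, 7.5), (6, 15.0)]
front = pareto_front(candidates)
print([candidates[i] for i in front])
```

NSGA-II applies this kind of dominance comparison in every generation, then uses a crowding measure to keep the surviving solutions spread along the front.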

Workflow and Relationship Diagrams

Feature Selection Technique Classification

Genetic Algorithm for Electrode Selection

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Channel Selection Research |

|---|---|

| High-Density EEG System (e.g., 128-256 channels) | Provides the high spatial resolution signal data required as the ground truth for evaluating and optimizing low-density electrode subsets [10] [2]. |

| 3D Digitizer (e.g., Polhemus Fastrak) | Precisely records the 3D spatial coordinates of each EEG electrode on the subject's scalp, which is crucial for accurate co-registration with MRI and reliable source localization [10]. |

| Boundary Element Model (BEM) | A head model constructed from MRI that computes the forward solution, estimating how electrical currents in the brain manifest as signals on the scalp. Essential for source localization algorithms [10]. |

| sLORETA / wMNE / MSP | Inverse problem solvers. These algorithms estimate the location and activity of brain sources from the scalp EEG data. They are used to compute the fitness (localization error) in optimization protocols [2]. |

| Non-dominated Sorting Genetic Algorithm II (NSGA-II) | A multi-objective evolutionary algorithm used to efficiently search the vast space of possible electrode combinations to find those that minimize both channel count and localization error [2]. |

High-density Electroencephalography (HD-EEG) systems, with up to 256 electrodes, provide unparalleled spatial resolution for analyzing brain activity. However, this wealth of data comes with significant challenges, including increased computational complexity, setup time, and potential for redundant information. Channel selection has therefore emerged as a critical preprocessing step to identify optimal electrode subsets that maintain signal fidelity while drastically reducing resource requirements. Within this context, evolutionary and metaheuristic approaches, particularly Genetic Algorithms (GAs) and their multi-objective variants like NSGA-II, have established themselves as powerful tools for navigating the vast search space of possible channel combinations. These methods systematically evolve solutions that balance competing objectives: maximizing informative content for specific neurophysiological tasks while minimizing the number of required electrodes. This technical support document provides comprehensive guidance for researchers implementing these advanced optimization techniques within EEG experimental frameworks, addressing common methodological challenges and providing validated troubleshooting protocols.

FAQs & Troubleshooting Guides

Q1: Our GA converges too quickly to a suboptimal channel subset. How can we improve exploration?

- Problem Identification: Premature convergence often indicates insufficient population diversity or excessive selection pressure.

- Solution Pathway:

- Implement Sparsity-Driven Initialization: Instead of random initialization, use a sparse initialization strategy that prioritizes anatomically dispersed channels. This sparsifies the search space and accelerates convergence toward more globally optimal solutions [26].

- Adjust Genetic Operator Rates: Increase the mutation probability to reintroduce lost genetic material and prevent homogeneity. The Enhanced NSGA-II (E-NSGA-II) framework employs an intra-population evolution-based mutation operator that dynamically shrinks the search space to help escape local optima [26].

- Hybrid Filter-Wrapper Approach: Use a filter method (e.g., a statistical t-test) to seed the initial population so that critical features are preserved. This guides the wrapper method (GA) and reduces the computational cost of searching irrelevant regions [26] [27].
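Two of the levers above, a sparse initial population and the mutation rate, can be sketched as operations on binary channel masks. This is a generic illustration, not the E-NSGA-II operators from the cited work: the initialization here is simply low-density random sampling (it does not account for anatomical dispersion), and the function names and parameter values are assumptions.

```python
import numpy as np

def sparse_init(pop_size, n_channels, p_on, rng):
    """Random binary masks in which each channel is switched on with low probability p_on."""
    pop = rng.random((pop_size, n_channels)) < p_on
    rows = np.flatnonzero(~pop.any(axis=1))                      # masks with no channel selected
    pop[rows, rng.integers(n_channels, size=rows.size)] = True   # give them one random channel
    return pop

def bit_flip(pop, rate, rng):
    """Flip each gene independently with probability `rate`; higher rates add diversity."""
    return pop ^ (rng.random(pop.shape) < rate)

rng = np.random.default_rng(4)
population = sparse_init(pop_size=50, n_channels=128, p_on=0.05, rng=rng)
mutated = bit_flip(population, rate=0.02, rng=rng)
print(population.sum(axis=1).mean(), mutated.sum(axis=1).mean())
```

Raising `rate` counteracts premature convergence at the cost of slower refinement, so it is often worth monitoring population diversity (for example the mean Hamming distance between masks) while tuning it.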

Q2: How do we effectively formulate the fitness function for a multi-objective channel selection problem?

- Problem Identification: A poorly defined fitness function fails to balance the trade-offs between channel count and classification/localization accuracy.

- Solution Pathway:

- Standard Multi-objective Formulation: For NSGA-II, define two primary objectives: a) Maximize the performance metric (e.g., classification accuracy, or the negative of source localization error), and b) Minimize the number of selected channels [28] [29] [26].

- Incorporate Domain Knowledge: Specific studies have successfully combined GAs with classifiers like Logistic Regression (GALoRIS) or Linear SVM to create a fitness function that directly evaluates the predictive power of a channel subset [30] [31].

- Address Data Heterogeneity: To mitigate EEG signal variability, introduce a normalization procedure before fitness evaluation. One proposal uses different fitness functions that consider performance measures (accuracy/silhouette score) alone or combined with the number of features, weighted by specific parameters [31].
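The two formulations discussed above can be written down compactly: an objective pair for a multi-objective solver, and a weighted single score that trades performance against channel count. The exact weighting scheme in the cited proposals may differ; the linear form, the `weight` value, and the function names below are assumptions.

```python
import numpy as np

def nsga_objectives(accuracy, mask):
    """Objective pair for a minimizing multi-objective solver: (-accuracy, channel count)."""
    return (-accuracy, int(np.sum(mask)))

def weighted_fitness(accuracy, mask, weight=0.8):
    """Single-score alternative: reward accuracy, penalize the fraction of channels used."""
    return weight * accuracy - (1.0 - weight) * np.mean(mask)

# Hypothetical candidate: 8 of 64 channels selected, 90% cross-validated accuracy.
mask = np.zeros(64, dtype=bool)
mask[:8] = True
objectives = nsga_objectives(0.9, mask)
score = weighted_fitness(0.9, mask)
print(objectives, score)
```

The objective pair keeps the trade-off explicit and lets the Pareto front show it, whereas the weighted score commits to one trade-off in advance through `weight`.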

Q3: The computational cost of our wrapper-based GA is prohibitive for HD-EEG data. How can we reduce runtime?

- Problem Identification: Evaluating every potential channel subset with a complex classifier or source localization algorithm is computationally expensive.

- Solution Pathway:

- Leverage a Hybrid Methodology: Use a fast filter method (e.g., correlation-based, statistical testing with Bonferroni correction) for a preliminary, aggressive reduction of the channel pool. This creates a smaller, more relevant search space for the subsequent GA [26] [27].

- Adopt a Surrogate Model: In the initial generations, use a less computationally expensive model (e.g., Linear Discriminant Analysis) to evaluate fitness, switching to a more complex model only for promising solutions in later generations.

- Implement a Greedy Repair Strategy: As used in E-NSGA-II, a final-phase greedy repair strategy can quickly refine feature subsets to enhance performance without running additional, full generations [26].
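As a rough illustration of a final-phase refinement, the sketch below performs greedy backward elimination with a memoized fitness function. It is a generic stand-in, not the specific repair strategy of E-NSGA-II [26], whose details are not given here; `greedy_backward_repair`, `memoize`, and `tolerance` are hypothetical names.

```python
import functools

def memoize(score_fn):
    """Cache fitness values so that repeated subsets are never re-evaluated.

    The wrapped function must be called with hashable masks (tuples).
    """
    return functools.lru_cache(maxsize=None)(score_fn)

def greedy_backward_repair(mask, score_fn, tolerance=0.0):
    """Drop channels one at a time while the score stays within `tolerance`
    of the best score seen so far. At least one channel is always kept."""
    mask = list(mask)
    baseline = score_fn(tuple(mask))
    changed = True
    while changed and sum(mask) > 1:
        changed = False
        best_idx, best_score = None, None
        for i, bit in enumerate(mask):
            if not bit:
                continue
            trial = tuple(mask[:i] + [0] + mask[i + 1:])
            score = score_fn(trial)
            if score >= baseline - tolerance and (best_score is None or score > best_score):
                best_idx, best_score = i, score
        if best_idx is not None:
            mask[best_idx] = 0
            baseline = max(baseline, best_score)
            changed = True
    return mask
```

Each pass costs at most one fitness evaluation per remaining channel, which is usually far cheaper than running further full generations of the wrapper.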

Q4: How can we validate that an optimized low-density montage retains the accuracy of the original HD-EEG?

- Problem Identification: It is essential to ensure that the selected minimal channel subset does not sacrifice critical neurophysiological information.

- Solution Pathway:

- Benchmark Against Ground Truth: Use synthetic EEG data with known source locations or real data from invasive recordings (e.g., stereotactic EEG) as a ground truth for validation [28] [32].

- Comparative Performance Metrics: Rigorously compare key metrics—such as source localization error, classification accuracy, True Acceptance Rate (TAR), and True Rejection Rate (TRR)—between the optimized subset and the full HD-EEG setup. The goal is to achieve equal or better performance with fewer channels [28] [29].

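- Statistical Validation: Apply statistical tests across multiple subjects or trials (e.g., paired t-tests) to compare the optimized subset with the full montage. Note that a non-significant difference alone does not demonstrate equivalence; a non-inferiority or equivalence test against a pre-specified margin gives stronger evidence that performance has not degraded.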
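One way to make the statistical comparison concrete is a paired non-inferiority check. The sketch below uses a percentile bootstrap rather than the paired t-test mentioned above, purely so that it runs with the standard library; the function name, the `margin` parameter, and the default settings are assumptions for illustration.

```python
import random
import statistics

def paired_noninferiority(full_scores, subset_scores, margin,
                          n_boot=5000, alpha=0.05, seed=0):
    """Bootstrap a one-sided lower bound on mean(subset - full).

    Scores are paired per subject (higher = better). The subset is declared
    non-inferior if the lower bound exceeds -margin, i.e. it is at most
    `margin` worse than the full montage at confidence level 1 - alpha.
    """
    if len(full_scores) != len(subset_scores):
        raise ValueError("scores must be paired per subject")
    diffs = [s - f for s, f in zip(subset_scores, full_scores)]
    rng = random.Random(seed)
    n = len(diffs)
    boot_means = sorted(
        statistics.fmean(rng.choices(diffs, k=n)) for _ in range(n_boot)
    )
    lower = boot_means[int(alpha * n_boot)]
    return {
        "mean_diff": statistics.fmean(diffs),
        "lower_bound": lower,
        "non_inferior": lower > -margin,
    }
```

The margin should be fixed before looking at the data (for instance, an accuracy loss that would be clinically or practically negligible). For error-type metrics such as localization error, negate the scores first so that higher still means better.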

Detailed Experimental Protocols

Protocol 1: NSGA-II for EEG Source Localization

This protocol is designed to find the minimal electrode set that preserves the source localization accuracy of a full HD-EEG montage [28] [32].

- 1. Input Preparation:

- Data: HD-EEG recordings (synthetic or real) with known ground-truth source locations.

- Head Model: A realistic head model (e.g., from MRI) is required for the forward solution.

- Inverse Solver: Select an algorithm (e.g., wMNE, sLORETA, MSP).

- 2. Optimization Loop Setup:

- Chromosome Encoding: Each chromosome is a binary string representing all candidate channels (e.g., 1=selected, 0=deselected).

- Objective Functions:

- Minimize the Localization Error (LE) for the target source(s).

- Minimize the Number of Selected Electrodes.

- Algorithm Core: Configure NSGA-II with standard selection, crossover, and mutation operators.

- 3. Evaluation & Validation:

- For each chromosome (channel subset), solve the inverse problem and calculate the LE against the ground truth.

- The algorithm outputs a Pareto-optimal front of solutions, representing the best trade-offs between channel count and accuracy.

- Validate optimal subsets by comparing their mean LE and standard deviation against the full HD-EEG montage on independent data.
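The ranking step at the heart of this protocol can be sketched as follows: localization error is taken here as the Euclidean distance between estimated and true source positions, and candidate subsets are sorted into Pareto fronts using the standard fast non-dominated sorting procedure. The inverse solver itself is out of scope; the objective tuples are assumed to have been computed already, and all function names are illustrative.

```python
import math

def localization_error(estimated_xyz, true_xyz):
    """Euclidean distance between estimated and true source positions."""
    return math.dist(estimated_xyz, true_xyz)

def dominates(a, b):
    """True if a is no worse than b on every objective and strictly better
    on at least one (all objectives are minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def non_dominated_sort(objs):
    """Return a list of fronts (lists of indices into objs).

    Front 0 is the Pareto-optimal set; each later front is optimal once
    the earlier fronts are removed.
    """
    n = len(objs)
    dominated_by = [[] for _ in range(n)]   # solutions that p dominates
    domination_count = [0] * n              # how many solutions dominate p
    fronts = [[]]
    for p in range(n):
        for q in range(n):
            if dominates(objs[p], objs[q]):
                dominated_by[p].append(q)
            elif dominates(objs[q], objs[p]):
                domination_count[p] += 1
        if domination_count[p] == 0:
            fronts[0].append(p)
    i = 0
    while fronts[i]:
        next_front = []
        for p in fronts[i]:
            for q in dominated_by[p]:
                domination_count[q] -= 1
                if domination_count[q] == 0:
                    next_front.append(q)
        fronts.append(next_front)
        i += 1
    return fronts[:-1]  # drop the trailing empty front
```

For example, with objective pairs of (localization error in mm, electrode count), `non_dominated_sort([(4.0, 8), (7.0, 6), (6.0, 8), (9.0, 10), (12.0, 4)])` places the first, second, and fifth subsets on the Pareto front, since none of them is beaten on both objectives at once.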

Protocol 2: Multi-objective Channel Selection for Subject Identification

This protocol optimizes channels for a biometric system capable of identifying subjects and rejecting intruders [29].

- 1. Input Preparation:

- Data: ERP or resting-state EEG data from multiple subjects.

- Feature Extraction: Use Empirical Mode Decomposition (EMD) to extract sub-bands. From each sub-band, compute features like instantaneous energy and fractal dimensions for each channel.

- 2. Multi-Layer Optimization:

- Chromosome Encoding: Extends beyond channel selection to include classifier hyperparameters (e.g., `nu` and `gamma` for a one-class SVM with RBF kernel).

- Objective Functions:

- Maximize Subject Identification Accuracy (multi-class SVM).

- Maximize True Acceptance Rate (TAR).

- Maximize True Rejection Rate (TRR).

- Minimize Number of Selected Channels.

- Algorithm Core: Utilize NSGA-II or NSGA-III to handle the four-objective optimization.

- 3. System Validation:

- Train a one-class SVM for intruder detection and a multi-class SVM for subject identification using the selected channels and hyperparameters.

- Report final performance metrics (Accuracy, TAR, TRR) on a held-out test set.
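To make the encoding and the acceptance metrics concrete, the sketch below decodes a mixed chromosome and computes TAR and TRR from boolean decisions. The chromosome layout (channel bits followed by `nu` and `gamma`) is an assumption for illustration, not necessarily the layout used in [29]; TAR is taken as the fraction of genuine attempts accepted and TRR as the fraction of intruder attempts rejected.

```python
def decode(chromosome, n_channels):
    """Split a mixed chromosome into (channel mask, nu, gamma).

    Assumed layout (hypothetical): n_channels binary genes, then two
    real-valued genes holding the one-class SVM hyperparameters.
    """
    mask = [int(b) for b in chromosome[:n_channels]]
    nu, gamma = chromosome[n_channels], chromosome[n_channels + 1]
    return mask, nu, gamma

def tar_trr(is_genuine, accepted):
    """Compute (TAR, TRR) from paired boolean lists.

    is_genuine[i]: attempt i comes from an enrolled subject.
    accepted[i]:   the system accepted attempt i.
    """
    genuine = [a for g, a in zip(is_genuine, accepted) if g]
    intruder = [a for g, a in zip(is_genuine, accepted) if not g]
    if not genuine or not intruder:
        raise ValueError("need at least one genuine and one intruder attempt")
    tar = sum(genuine) / len(genuine)
    trr = sum(1 for a in intruder if not a) / len(intruder)
    return tar, trr

def objective_vector(accuracy, tar, trr, n_selected):
    """Four objectives in minimization form for NSGA-II or NSGA-III."""
    return (-accuracy, -tar, -trr, n_selected)
```

Negating the three "maximize" objectives lets a minimizing optimizer handle all four uniformly.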

Protocol 3: GA for Cognitive Workload Feature Selection

This protocol selects optimal features from multi-channel EEG data to classify cognitive workload states [30] [31].

- 1. Input Preparation:

- Data: EEG data recorded during tasks inducing low and high cognitive workload (e.g., driving simulation).

- Feature Extraction: Compute a wide variety of features (e.g., power spectral density in delta, theta, alpha, beta, gamma bands) from all channels in time, frequency, and time-frequency domains. Normalize the data to mitigate inter-subject heterogeneity.

- 2. GA Configuration (GALoRIS):

- Chromosome Encoding: Represents a subset of the extracted features.

- Fitness Function: A combination of Genetic Algorithms and Logistic Regression (LoR) is used. The fitness of a feature subset is based on the performance of the LoR classifier in distinguishing workload states.

- Selection & Crossover: Use standard GA operators, with a focus on selecting features that contribute most to the model's predictive power.

- 3. Model Building & Testing:

- The dataset produced by the GA is used to train and test various classifiers (e.g., SVM, k-NN).

- Validate the model's precision and report the reduction in the original dataset's size (e.g., >50%).
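The overall loop in this protocol can be sketched as a small single-objective GA. This is a generic illustration, not the GALoRIS implementation: in practice `fitness` would wrap a cross-validated Logistic Regression score on the selected features, whereas here it is left as a caller-supplied function, and the operator choices (binary tournament, one-point crossover, bit-flip mutation, single-individual elitism) are assumptions.

```python
import random

def run_ga(fitness, n_genes, pop_size=20, generations=30,
           cx_rate=0.8, mut_rate=0.05, seed=0):
    """Evolve binary feature masks; returns (best_mask, best_fitness_history)."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_genes)] for _ in range(pop_size)]

    def tournament(population):
        # Binary tournament: the fitter of two random individuals wins.
        a, b = rng.sample(population, 2)
        return a if fitness(a) >= fitness(b) else b

    best = max(pop, key=fitness)
    history = [fitness(best)]
    for _ in range(generations):
        children = [best[:]]  # elitism: carry the current best forward
        while len(children) < pop_size:
            p1, p2 = tournament(pop), tournament(pop)
            if n_genes > 1 and rng.random() < cx_rate:
                cut = rng.randint(1, n_genes - 1)  # one-point crossover
                child = p1[:cut] + p2[cut:]
            else:
                child = p1[:]
            child = [1 - g if rng.random() < mut_rate else g for g in child]
            children.append(child)
        pop = children
        best = max(pop, key=fitness)
        history.append(fitness(best))
    return best, history
```

Because the best individual is always copied into the next generation, the recorded best fitness never decreases. The fraction of zeros in the returned mask gives the dataset size reduction to report.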

Performance Data & Comparative Analysis

Table 1: Quantitative Performance of Genetic Algorithm-based Channel/Feature Selection Methods

| Application Domain | Algorithm Used | Optimal Subset Size | Reported Performance | Reference |

|---|---|---|---|---|

| Source Localization | NSGA-II | 6-8 electrodes | Accuracy equal to or better than 231-channel HD-EEG in >88% of cases (synthetic data) and >73% of cases (real data) | [28] [32] |

| Subject Identification | NSGA-II | 3 channels | Accuracy: 0.83, TAR: 1.00, TRR: 1.00 | [29] |

| Subject Identification | NSGA-II | 12 channels | Accuracy: 0.93, TAR: 0.93, TRR: 0.95 | [29] |

| Cognitive Workload | GALoRIS (GA + LoR) | <50% original features | Precision >90% for workload classification | [30] |

| Motor Imagery | Statistical Filter + DL | Significant reduction | Accuracy improvements of 3.27% to 42.53% over baselines | [27] |

Workflow & Signaling Pathway Diagrams

NSGA-II for EEG Channel Selection

Diagram Title: NSGA-II Optimization Workflow for EEG Channel Selection

Experimental Validation Protocol

Diagram Title: Experimental Validation Workflow for Optimized EEG Montages

Research Reagent Solutions

Table 2: Essential Computational Tools for GA-based EEG Channel Selection

| Reagent / Tool | Type | Primary Function in Workflow | Exemplary Use Case |

|---|---|---|---|

| NSGA-II | Multi-objective Algorithm | Finds Pareto-optimal trade-offs between channel count and accuracy. | Core optimizer in source localization and subject identification [28] [29]. |

| sLORETA / wMNE | Inverse Problem Solver | Estimates the location of neural sources from scalp potentials. | Used in the fitness function to calculate localization error [28] [32]. |

| SVM (Linear/RBF) | Classifier | Evaluates the discriminative power of a selected channel subset. | Acts as the fitness evaluator in identification/authentication tasks [30] [29]. |

| Empirical Mode Decomposition (EMD) | Signal Decomposition | Extracts intrinsic oscillatory modes from non-stationary EEG signals. | Used for feature extraction prior to channel selection [29]. |

| Power Spectral Density (PSD) | Feature Extraction | Quantifies signal power in different frequency bands (Delta, Theta, Alpha, etc.). | Used to create features for cognitive state classification [30] [31]. |

| Logistic Regression (LoR) | Classifier | Simple, effective model for probabilistic classification. | Integrated with GA in GALoRIS for feature selection fitness evaluation [30]. |

FAQs: Core Concepts and Applications

Q1: What is EEG channel reconstruction, and why is it important for research? EEG channel reconstruction refers to the process of using computational methods, such as Convolutional Neural Networks (CNNs), to generate or restore data from missing or unused EEG channels. In research, this is crucial for mitigating the challenges of high-density EEG montages, which can be hampered by noisy signals, artifact contamination, or practical limitations on the number of electrodes that can be used. By intelligently reconstructing channels, researchers can effectively reduce computational complexity, improve the spatial resolution of brain signals, and decrease equipment costs and setup time without sacrificing critical neural information [33].

Q2: How do CNNs specifically outperform traditional methods like spherical spline interpolation for channel reconstruction? CNNs learn the complex, non-linear statistical distributions of cortical electrical fields from vast amounts of real EEG data. In contrast, traditional spherical spline interpolation is a mathematical technique that does not incorporate this learned neurophysiological knowledge. Studies directly comparing the two methods have shown that CNN-based upsampling produces results that experienced clinical neurophysiologists rate as more realistic than those generated by interpolation. Furthermore, the performance of CNNs improves with the amount of training data, whereas interpolation does not learn from data [34].

Q3: In a typical CNN-based channel reconstruction workflow, what are the key input and output parameters? A typical workflow involves using a generative CNN to upsample or restore channels. For instance, a network might be trained to:

- Input: A reduced set of channels (e.g., 4 or 14 channels) or a 21-channel EEG with one dynamically missing channel [34].

- Output: A full, higher-density montage (e.g., 21 channels) [34].

The network learns a mapping function to predict the values of missing channels based on the spatial relationships and patterns learned from the training data.

Q4: What are the primary performance metrics used to validate CNN-based channel reconstruction models? Validation is multi-faceted and involves both quantitative and qualitative measures:

- Statistical Metrics: Direct comparison of the reconstructed signal against the ground truth using measures like Mean Squared Error (MSE) and Structural Dissimilarity (DSSIM) [35].

- Clinical/Expert Evaluation: The "gold standard" in clinical neurophysiology involves having board-certified experts visually assess the reconstructed EEG traces. A successful model produces outputs that experts cannot distinguish from real data and deem suitable for clinical assessment [34].
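The two statistical metrics can be computed as in the sketch below. It assumes the common definition DSSIM = (1 - SSIM) / 2 and evaluates SSIM over a single global window on a one-dimensional signal; published pipelines such as [35] may instead use local windows or image-like representations, so treat the parameter choices (`k1`, `k2`, `data_range`) as illustrative defaults.

```python
import statistics

def mse(truth, recon):
    """Mean squared error between two equal-length signals."""
    return statistics.fmean([(a - b) ** 2 for a, b in zip(truth, recon)])

def dssim(truth, recon, data_range, k1=0.01, k2=0.03):
    """Structural dissimilarity, (1 - SSIM) / 2, over one global window.

    data_range is the expected amplitude span of the signals (e.g. max - min
    of the ground truth); k1 and k2 are the usual SSIM stabilizing constants.
    Returns 0 for identical signals and approaches 1 for inverted ones.
    """
    mx, my = statistics.fmean(truth), statistics.fmean(recon)
    vx = statistics.fmean([(a - mx) ** 2 for a in truth])
    vy = statistics.fmean([(b - my) ** 2 for b in recon])
    cov = statistics.fmean([(a - mx) * (b - my) for a, b in zip(truth, recon)])
    c1 = (k1 * data_range) ** 2
    c2 = (k2 * data_range) ** 2
    ssim = ((2 * mx * my + c1) * (2 * cov + c2)) / (
        (mx ** 2 + my ** 2 + c1) * (vx + vy + c2)
    )
    return (1 - ssim) / 2
```

MSE is sensitive to amplitude offsets, while DSSIM emphasizes whether the waveform shape is preserved, which is why the two are often reported together.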

Q5: Can this technology be used to create a completely new "virtual" electrode at a location that was not originally recorded? Yes, this is a primary application. CNNs can function as "virtual EEG-electrodes," performing spatial upsampling to create a higher-density channel map from a lower-density recording. This allows researchers to effectively generate data for electrode locations that were not physically used during the recording session, based on the learned spatial correlations between electrodes [34].

Troubleshooting Guides

Guide 1: Addressing Poor Reconstruction Accuracy

Problem: Your CNN model is producing reconstructed EEG channels with high error (e.g., high MSE) compared to the ground truth signals.

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Insufficient Training Data | Check the number of subjects and recording hours in your training set. | Increase the diversity and volume of training data. Performance has been shown to improve significantly as the number of training subjects increases, particularly in the range of 5 to 100 subjects [34]. |

| Inadequate Model Capacity | Analyze model architecture depth and complexity compared to state-of-the-art. | Consider a more complex generative network architecture that combines residual CNN paths for shallow features with transformer blocks for long-range dependencies, which has proven effective for signal reconstruction tasks [35]. |

| Data Preprocessing Issues | Verify the preprocessing pipeline: filtering, artifact removal, and normalization. | Implement a robust preprocessing protocol including bandpass filtering (e.g., 1–35 Hz) and artifact rejection using algorithms like Independent Component Analysis (ICA) to ensure the training data is clean [36]. |