Optimizing EEG Channel Selection for Motor Imagery BCI: Methods, Applications, and Future Directions

Abstract

Electroencephalography (EEG)-based Brain-Computer Interfaces (BCIs) for motor imagery (MI) hold transformative potential in neurorehabilitation and assistive technology. However, the high dimensionality of multi-channel EEG data presents significant challenges, including computational complexity, prolonged setup time, and potential performance degradation due to redundant or noisy channels. This article provides a comprehensive analysis of optimized EEG channel selection strategies designed to overcome these hurdles. We explore the foundational principles of MI-BCI and the critical need for channel selection, review cutting-edge methodological approaches from filter-based techniques to deep learning-embedded selection, address key troubleshooting and optimization challenges for real-world application, and present a rigorous comparative analysis of algorithm performance and validation paradigms. The synthesis of current research indicates that strategic channel reduction not only maintains but often enhances classification accuracy—achieving gains of over 24% in some studies—while drastically improving system portability and efficiency, paving the way for more practical and accessible BCI systems.

The Foundation of Motor Imagery BCI and the Critical Need for Channel Selection

Core Principles of Motor Imagery BCI

Motor Imagery (MI) in Brain-Computer Interfaces (BCIs) enables users to control external devices through the mental rehearsal of physical movements without any motor output. This technology relies on detecting characteristic patterns in neural activity associated with imagining specific movements, making it particularly valuable for neurorehabilitation and assistive technology applications [1] [2].

The foundation of MI-BCI operation rests on the phenomenon of Sensorimotor Rhythms (SMRs), which are oscillatory patterns generated by neuronal populations in the cortex during motor imagery tasks. These rhythms are categorized into distinct frequency bands: the mu rhythm (7-13 Hz), the beta rhythm (13-30 Hz), and the gamma rhythm (30-200 Hz). During motor imagery, these rhythms exhibit predictable changes known as Event-Related Desynchronization (ERD) and Event-Related Synchronization (ERS), which serve as the primary control signals for MI-BCI systems [1].

MI-BCIs represent an "active" BCI paradigm, distinguishing them from reactive systems that depend on external stimuli. This characteristic makes MI-BCIs particularly suitable for applications requiring voluntary, self-paced control, such as neuroprosthetics and stroke rehabilitation [1] [2].

Technical Implementation and Signaling Pathways

The standard MI-BCI processing pipeline involves multiple stages from signal acquisition to command execution. Understanding this workflow is essential for implementing effective MI-BCI systems.

Information Processing Pathway in MI-BCI Systems

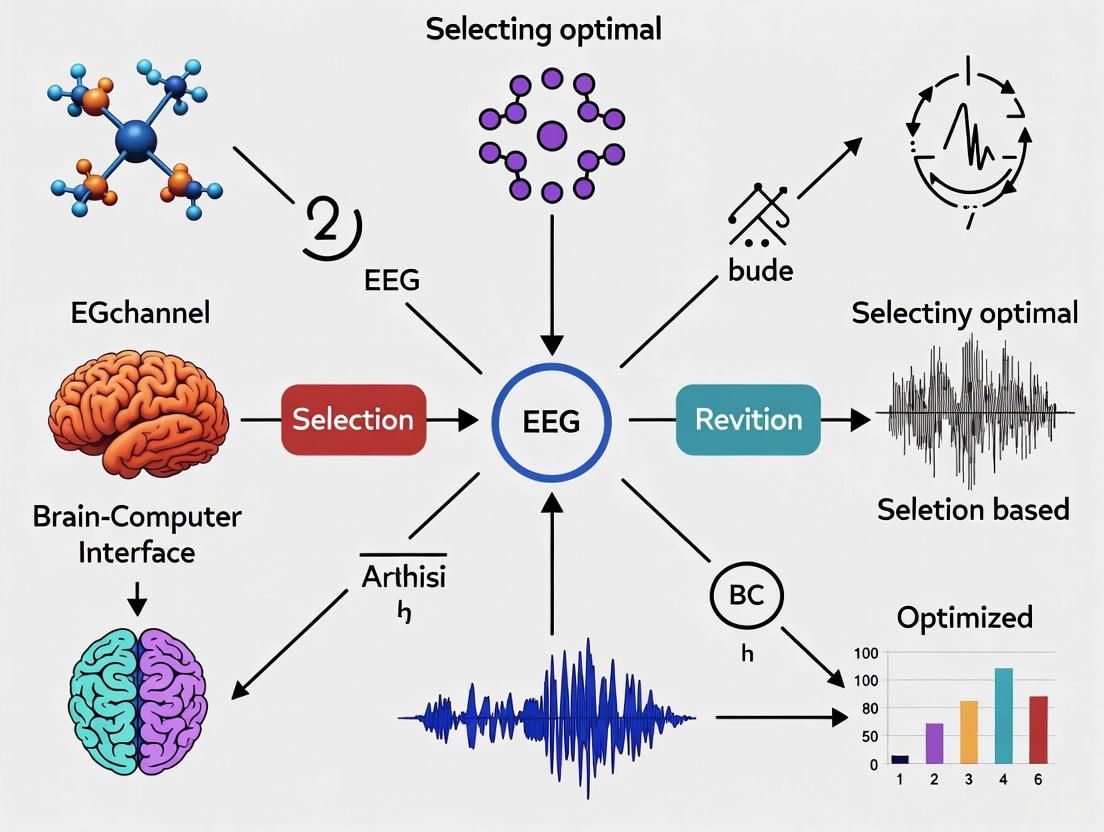

The diagram below illustrates the complete processing pathway from user intention to device control in a typical MI-BCI system:

Key Algorithmic Approaches in MI Classification

Table 1: Comparative Performance of MI Classification Algorithms

| Algorithm Type | Key Features | Reported Accuracy | Applications | References |
|---|---|---|---|---|
| EEGEncoder (Transformer-TCN) | Dual-Stream Temporal-Spatial Blocks; parallel processing | 86.46% (subject-dependent); 74.48% (subject-independent) | Multi-class MI tasks | [3] |
| Hybrid Optimization (WSO + ChOA) | Two-tier deep learning (CNN + M-DNN); MRMR channel selection | 95.06% | Binary MI classification | [4] |
| TCACNet | Temporal and channel attention convolutional network | 11.4% improvement vs. baseline | General MI tasks | [4] |
| Fisher Score + Local Optimization | Channel selection based on CSP features | 79.37% (average of 11 of 22 channels) | Portable BCI systems | [5] |

EEG Channel Selection Methodologies

Optimized EEG channel selection represents a critical advancement for enhancing MI-BCI performance while improving system portability and usability. Channel selection methods reduce computational complexity while maintaining or improving classification accuracy by identifying the most informative electrode locations.

Channel Selection Workflow

The following diagram outlines a standardized workflow for optimized EEG channel selection in MI-BCI systems:

Channel Selection Performance Metrics

Table 2: EEG Channel Selection Performance Comparison

| Method | Average Channels Selected | Classification Accuracy | Improvement vs. Full Channels | Computational Efficiency |
|---|---|---|---|---|
| Fisher Score + Local Optimization [5] | 11 | 79.37% | +6.52% | High |
| MRMR + Hybrid Optimization [4] | Not specified | 95.06% | Significant | Medium |
| TCACNet Attention [4] | 50% reduction | +11.4% vs. baseline | Reduced training data by 50% | High |

Experimental Protocols for MI-BCI Research

Standardized MI-BCI Experimental Setup

Protocol 1: Basic MI-BCI Data Acquisition and Processing

Participant Preparation

- Apply the EEG cap according to the international 10-20 system

- Ensure electrode impedances < 5 kΩ

- Conduct a brief MI ability screening

Experimental Paradigm

- Implement cue-based trials with random inter-trial intervals

- Use visual cues for MI tasks (left hand, right hand, feet, tongue)

- Record 4-6 second trials with a baseline period

Signal Acquisition Parameters

- Sampling rate: 250 Hz

- Bandpass filter: 0.5-60 Hz

- Notch filter: 50/60 Hz line noise

Data Processing Pipeline

- Preprocessing: Bandpass filter 8-30 Hz for mu/beta rhythms

- Artifact removal: Automated EOG/EMG rejection

- Feature extraction: Common Spatial Patterns (CSP)

- Classification: Linear Discriminant Analysis (LDA) or SVM
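
A minimal two-class sketch of this processing chain (8-30 Hz band-pass, CSP, LDA) is shown below. It assumes NumPy and SciPy, uses a hand-written Fisher LDA rather than a library classifier, and runs on hypothetical synthetic trials (`make_synthetic`) instead of real recordings; artifact rejection and the SVM alternative are omitted.

```python
import numpy as np
from scipy.linalg import eigh
from scipy.signal import butter, sosfiltfilt


def bandpass(x, fs, low=8.0, high=30.0, order=4):
    """Zero-phase Butterworth band-pass along the time (last) axis."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, x, axis=-1)


def fit_csp(x, y, n_pairs=2):
    """Two-class CSP filters. x: (trials, channels, samples), y in {0, 1}."""
    covs = []
    for label in (0, 1):
        # Trace-normalised spatial covariance, averaged over the class's trials
        c = np.mean([t @ t.T / np.trace(t @ t.T) for t in x[y == label]], axis=0)
        covs.append(c)
    # Generalised eigenproblem C0 w = lambda (C0 + C1) w; eigh sorts ascending
    _, vecs = eigh(covs[0], covs[0] + covs[1])
    # Filters at both ends of the spectrum maximise variance for one class
    idx = np.r_[np.arange(n_pairs), np.arange(-n_pairs, 0)]
    return vecs[:, idx].T  # shape: (2 * n_pairs, channels)


def csp_features(w, x):
    """Normalised log-variance of the spatially filtered trials."""
    z = np.einsum("fc,tcs->tfs", w, x)
    var = z.var(axis=-1)
    return np.log(var / var.sum(axis=1, keepdims=True))


def fit_lda(f, y):
    """Two-class Fisher LDA with a midpoint threshold."""
    m0, m1 = f[y == 0].mean(axis=0), f[y == 1].mean(axis=0)
    sw = np.cov(f[y == 0], rowvar=False) + np.cov(f[y == 1], rowvar=False)
    w = np.linalg.solve(sw + 1e-6 * np.eye(len(m0)), m1 - m0)
    return w, -w @ (m0 + m1) / 2


def predict_lda(w, b, f):
    return (f @ w + b > 0).astype(int)


def make_synthetic(rng, n_per_class=40, n_ch=8, fs=250, dur=2.0):
    """Hypothetical toy data: a 10 Hz source on channel 0 (class 0) or 1 (class 1)."""
    t = np.arange(int(fs * dur)) / fs
    x, y = [], []
    for label in (0, 1):
        for _ in range(n_per_class):
            trial = rng.standard_normal((n_ch, t.size))
            trial[label] += 3 * np.sin(2 * np.pi * 10 * t + rng.uniform(0, 2 * np.pi))
            x.append(trial)
            y.append(label)
    return np.array(x), np.array(y)


fs = 250
rng = np.random.default_rng(0)
x_tr, y_tr = make_synthetic(rng)
x_te, y_te = make_synthetic(rng)
x_tr, x_te = bandpass(x_tr, fs), bandpass(x_te, fs)
w_csp = fit_csp(x_tr, y_tr)
w_lda, b_lda = fit_lda(csp_features(w_csp, x_tr), y_tr)
accuracy = np.mean(predict_lda(w_lda, b_lda, csp_features(w_csp, x_te)) == y_te)
print(f"held-out accuracy: {accuracy:.2f}")
```

Keeping the CSP filters from both ends of the eigenvalue spectrum gives features that rise for one class and fall for the other, which is what makes a linear classifier sufficient here.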

Advanced Deep Learning Implementation

Protocol 2: EEGEncoder Framework for MI Classification [3]

Data Preprocessing

- Input: EEG segments (1125 timepoints × 22 channels)

- Downsampling Projector: 3 convolutional layers with ELU activation

- Batch normalization between layers

Model Architecture

- Dual-Stream Temporal-Spatial Blocks (DSTS)

- Parallel processing branches with dropout regularization

- Temporal Convolutional Networks (TCN) for temporal features

- Transformer modules for global dependencies

Training Parameters

- Optimization: Adam optimizer

- Learning rate: 0.001 with decay scheduling

- Regularization: Dropout (p=0.5) and L2 weight decay

Research Reagent Solutions and Materials

Table 3: Essential Research Materials for MI-BCI Development

| Category | Specific Tools/Solutions | Function/Purpose | Example Applications |
|---|---|---|---|
| EEG Hardware | Emotiv EPOC X [1] | Low-cost EEG acquisition (14 channels) | Proof-of-concept studies |
| Signal Processing | MATLAB EEGLAB, Python MNE | Preprocessing and visualization | General EEG analysis |
| Deep Learning Frameworks | PyTorch, TensorFlow with custom EEG layers [3] | MI classification model development | EEGEncoder implementation |
| Benchmark Datasets | BCI Competition IV-2a [3] [5] | Algorithm validation and comparison | Standardized performance testing |
| Optimization Algorithms | War Strategy Optimization (WSO), Chimp Optimization (ChOA) [4] | Channel selection and parameter tuning | Hybrid optimization approaches |
| Performance Metrics | SONIC Benchmark [6] | Standardized BCI performance evaluation | Information Transfer Rate (bits/sec) |
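
The Information Transfer Rate in the last row is commonly computed with Wolpaw's definition, B = log2 N + P log2 P + (1 - P) log2((1 - P)/(N - 1)) bits per selection for N classes at accuracy P. The sketch below assumes that definition (whether the SONIC benchmark [6] uses exactly this formula is not stated here) and converts to bits/s by dividing by the time per selection; the function names are illustrative.

```python
import math


def wolpaw_bits_per_trial(n_classes: int, accuracy: float) -> float:
    """Bits per selection under Wolpaw's ITR definition.

    Assumes equiprobable classes and errors spread evenly over the
    remaining n_classes - 1 classes; accuracy at or below chance gives 0.
    """
    if n_classes < 2:
        raise ValueError("need at least two classes")
    p, n = accuracy, n_classes
    if p <= 1.0 / n:
        return 0.0
    bits = math.log2(n) + p * math.log2(p)
    if p < 1.0:
        bits += (1 - p) * math.log2((1 - p) / (n - 1))
    return bits


def itr_bits_per_second(n_classes: int, accuracy: float, trial_seconds: float) -> float:
    """ITR in bits/s for one selection every `trial_seconds` seconds."""
    return wolpaw_bits_per_trial(n_classes, accuracy) / trial_seconds


bits = wolpaw_bits_per_trial(4, 0.7937)
rate = itr_bits_per_second(4, 0.7937, trial_seconds=4.0)  # 4 s per trial is hypothetical
print(f"{bits:.3f} bits/selection, {rate:.3f} bits/s")
```

For the 4-class accuracy of 79.37% reported in [5], this gives about 0.94 bits per selection, or roughly 0.23 bits/s under the assumed 4 s trial length; the trial length is an illustrative value, not a figure from the cited study.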

Clinical Applications and Implementation Guidelines

MI-BCIs show particular promise in neurorehabilitation, especially for stroke recovery. Evidence-based recommendations emphasize:

- Patient-Centered Approach: MI-based interventions must be tailored to individual preferences, needs, and goals through interdisciplinary teams [2]

- Progressive Training Structure: Begin with simple, gross movements and gradually add complexity through additional movement features or cognitive demands [2] [7]

- Multimodal Feedback: Combine visual, haptic, and proprioceptive feedback to enhance MI vividness and learning [2]

The integration of Virtual Reality (VR) with MI-BCI creates immersive environments that boost engagement and facilitate more vivid motor imagery, potentially reducing BCI inefficiency, which affects 15-30% of users [2].

Current Challenges and Future Directions

Despite significant advances, MI-BCI research faces several challenges:

- Inter-subject Variability: Classification performance varies significantly between individuals [1]

- Signal Quality Constraints: Consumer-grade EEG hardware presents technical limitations for reliable performance [1]

- BCI Inefficiency: A substantial proportion of users (15-30%) struggle to achieve effective BCI control [2]

Future research directions include developing more adaptive deep learning architectures, improving real-time processing capabilities, and creating more standardized benchmarking frameworks like the SONIC protocol [6] to enable direct comparison between different MI-BCI approaches.

Electroencephalography (EEG) serves as a critical, non-invasive tool for recording brain activity, with extensive applications in brain-computer interface (BCI) systems, cognitive neuroscience, and clinical diagnosis. Its high temporal resolution, portability, and relatively low cost make it a preferred modality for real-time systems such as motor imagery (MI)-based BCIs, which translate imagined movements into control commands for external devices [8] [9]. However, multi-channel EEG systems introduce significant challenges that can impede performance and practicality. Noise and artifacts from physiological (e.g., eye movements, muscle activity) and non-physiological sources dilute the neural signals of interest, and redundancy across numerous channels increases data volume without a proportional gain in informative content. The result is high computational cost, which complicates the development of real-time, portable, and clinically viable systems [8] [9] [10]. This document, framed within the context of optimized EEG channel selection for motor imagery BCI research, details these challenges and provides structured application notes and experimental protocols to address them.

Quantitative Comparison of Channel Selection Methods

Channel selection is a critical preprocessing step to mitigate redundancy, improve signal quality, and enhance computational efficiency. The table below summarizes the performance of various channel selection methods as reported in recent literature.

Table 1: Performance Comparison of EEG Channel Selection and Classification Methods

| Method Category | Specific Method/Model | Dataset(s) Used | Key Metric (Accuracy %) | Number of Channels Used (Reduction) | Key Advantage |
|---|---|---|---|---|---|
| Filter + DL | STA-EEGNet with ANOVA | 2D/3D VR EEG | 99.78% | 51 (from 118) | Integrates spatial-temporal attention [11] |
| Statistical Filter + DL | t-test + Bonferroni + DLRCSPNN | BCI Competition III IVa, IV 1 | >90% (all subjects) | Significant reduction (Corr. <0.5 excluded) | High accuracy across subjects [8] [12] |
| Wrapper | SPEA-II + RCSP | BCI Competition | Comparable to full set | ~50% reduction | Multi-objective optimization; user comfort [13] |
| Filter | Wavelet-Packet Energy Entropy (WPEE) | BCI Competition IV 2a, PhysioNet | 86.81%, 86.64% | 16 (from 22; 27% reduction) | Computationally efficient; preserves info [14] |
| DL with EOG | 1D-CNN with EOG & EEG | BCI Competition IV IIa, Weibo | 83% (4-class), 61% (7-class) | 6 total (3 EEG, 3 EOG) | Leverages EOG's neural info; fewer EEG channels [10] |

Detailed Experimental Protocols

This section provides step-by-step protocols for implementing key channel selection methodologies, enabling researchers to replicate and build upon advanced practices in the field.

Protocol 1: ANOVA-Based Channel Selection for Deep Learning

This protocol is adapted from studies achieving high classification accuracy by identifying the most statistically relevant channels before model training [11].

1. Objective: To select a subset of EEG channels that significantly differ between experimental conditions (e.g., 2D vs. 3D VR, or different MI tasks) to improve the performance of a subsequent deep learning model.

2. Materials and Reagents:

- Raw multi-channel EEG data from a controlled experiment.

- Computing environment with statistical software (e.g., Python with SciPy, MATLAB) and deep learning frameworks (e.g., PyTorch, TensorFlow).

3. Procedure:

   1. Data Preprocessing: Apply standard preprocessing steps to the raw EEG data. This typically includes:
      - Bandpass filtering (e.g., 0.5-40 Hz) to remove drift and high-frequency noise.
      - Notch filtering (e.g., 50/60 Hz) to remove line noise.
      - Artifact removal using techniques such as Independent Component Analysis (ICA) or Artifact Subspace Reconstruction (ASR) [15] [16].

   2. Epoching: Segment the continuous data into trials (epochs) time-locked to the relevant event (e.g., onset of the motor imagery cue).

   3. Feature Extraction (for ANOVA): For each channel and each trial, extract a relevant feature. Common features include band power in specific frequency bands (e.g., μ-rhythm: 8-13 Hz, β-rhythm: 13-30 Hz for MI) or signal variance.

   4. One-Way ANOVA: For each channel, perform a one-way ANOVA test.
      - Input: The extracted feature (e.g., μ-band power) from all trials, grouped by experimental condition (e.g., motor imagery class).
      - Output: A p-value for each channel: the probability of observing differences between condition means at least as large as those measured if the channel's feature had the same mean in every condition.

   5. Channel Selection: Rank channels by p-value (a lower p-value indicates stronger evidence of a condition effect). Select the top k channels, or all channels with a p-value below a significance threshold (e.g., p < 0.05 after correction for multiple comparisons). Compute these statistics on the training trials only, so that the held-out test set does not influence the selection.

   6. Model Training:
      - Use only the selected subset of channels from the training data.
      - Train a deep learning model such as STA-EEGNet, which incorporates spatial-temporal attention blocks to dynamically weight the features from the selected channels [11].

   7. Validation: Evaluate the trained model on a held-out test set using only the same selected channels.
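
Steps 3 to 5 of this protocol can be sketched as follows, assuming NumPy and SciPy. Band power is estimated with Welch's method (`scipy.signal.welch`), each channel gets a one-way ANOVA (`scipy.stats.f_oneway`), and Bonferroni is used as the multiple-comparison correction; the protocol does not prescribe a specific correction, so that choice is an assumption. The toy data and helper names are hypothetical.

```python
import numpy as np
from scipy.signal import welch
from scipy.stats import f_oneway


def band_power(x, fs, band=(8.0, 13.0)):
    """Mean Welch PSD inside `band`. x: (trials, channels, samples)."""
    freqs, psd = welch(x, fs=fs, nperseg=min(256, x.shape[-1]), axis=-1)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return psd[..., in_band].mean(axis=-1)  # shape: (trials, channels)


def anova_select(features, y, alpha=0.05, k=None):
    """Rank channels by Bonferroni-corrected one-way ANOVA p-values.

    Returns the top-k channel indices if k is given, otherwise every
    channel whose corrected p-value is below alpha.
    """
    classes = np.unique(y)
    n_ch = features.shape[1]
    pvals = np.array([
        f_oneway(*(features[y == c, ch] for c in classes)).pvalue
        for ch in range(n_ch)
    ])
    p_corr = np.minimum(pvals * n_ch, 1.0)  # Bonferroni correction
    order = np.argsort(p_corr, kind="stable")
    selected = order[:k] if k is not None else order[p_corr[order] < alpha]
    return selected, p_corr


# Hypothetical toy data: 3 classes, 16 channels; only channel 3 carries a
# class-dependent 10 Hz (mu-band) amplitude.
rng = np.random.default_rng(1)
fs, n_per_class, n_ch, n_samp = 250, 30, 16, 500
t = np.arange(n_samp) / fs
x = rng.standard_normal((3 * n_per_class, n_ch, n_samp))
y = np.repeat(np.arange(3), n_per_class)
x[:, 3, :] += (1 + y)[:, None] * np.sin(2 * np.pi * 10 * t)
selected, p_corr = anova_select(band_power(x, fs), y)
print(selected)
```

In a real pipeline `x` and `y` would be the training epochs only, and the returned indices would then be applied unchanged to the test epochs.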

Protocol 2: Hybrid Statistical-DL Framework for Motor Imagery

This protocol outlines a hybrid approach that combines statistical tests with a Bonferroni correction for robust channel selection, followed by a specialized feature extraction and classification pipeline [8] [12].

1. Objective: To develop a computationally efficient and accurate pipeline for classifying motor imagery tasks by selecting non-redundant, task-related channels.

2. Materials and Reagents:

- Publicly available MI datasets (e.g., BCI Competition III IVa, BCI Competition IV 1).

- Software for signal processing and machine learning (e.g., Python with MNE, Scikit-learn).

3. Procedure:

   1. Data Preprocessing: Load and preprocess the data as described in Protocol 1, Step 3.1.

   2. Channel Selection via t-test and Bonferroni Correction:
      - For each channel and each subject, perform two-sample t-tests comparing feature values (e.g., band power) between the two MI classes.
      - Apply the Bonferroni correction to the obtained p-values to control the family-wise error rate across the multiple channels tested.
      - Calculate the correlation coefficients between channels and exclude any channel with a correlation coefficient below a threshold (e.g., 0.5), so that only statistically significant and non-redundant channels are retained [8] [12].

   3. Feature Extraction with DLRCSP: Instead of traditional Common Spatial Patterns (CSP), use a regularized CSP variant, such as R-CSP or the DLRCSP formulation used in [8]. Regularization shrinks the covariance matrix estimate toward the identity matrix, improving generalization and stability, especially when few trials are available relative to the number of channels [8] [13].

   4. Classification with Neural Networks: Feed the features extracted by DLRCSP into a standard Neural Network (NN) or Recurrent Neural Network (RNN) for final classification of the MI task.
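
The t-test and Bonferroni part of step 2 can be sketched with `scipy.stats.ttest_ind`, applied column-wise to a (trials × channels) feature matrix. This is a hypothetical illustration: the feature (e.g., log band power) is assumed to be precomputed, and the correlation-based exclusion is left out because the protocol does not specify which signals the correlation is computed against.

```python
import numpy as np
from scipy.stats import ttest_ind


def ttest_bonferroni_select(features, y, alpha=0.05):
    """Two-class channel screening with Bonferroni correction.

    features: (trials, channels), e.g. precomputed log band power.
    y: labels in {0, 1}. Returns indices of surviving channels, sorted
    by corrected p-value, plus the corrected p-values themselves.
    """
    result = ttest_ind(features[y == 0], features[y == 1], axis=0)
    n_ch = features.shape[1]
    p_corr = np.minimum(result.pvalue * n_ch, 1.0)  # Bonferroni
    order = np.argsort(p_corr, kind="stable")
    return order[p_corr[order] < alpha], p_corr


# Hypothetical toy features: 10 channels, only channels 2 and 5 differ
rng = np.random.default_rng(4)
y = np.repeat([0, 1], 40)
features = rng.standard_normal((y.size, 10))
features[y == 1, 2] += 1.5
features[y == 1, 5] += 1.5
selected, p_corr = ttest_bonferroni_select(features, y)
print(selected)
```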

Signaling Pathways and Workflows

The following diagram illustrates the logical workflow of a comprehensive EEG processing pipeline that integrates channel selection, a core strategy for addressing the challenges of noise, redundancy, and cost.

EEG Processing Pipeline with Channel Selection

The Scientist's Toolkit: Essential Research Reagents and Materials

This table lists key computational tools and data resources essential for conducting research on multi-channel EEG analysis and channel selection.

Table 2: Key Research Reagents and Solutions for EEG Channel Selection Research

| Item Name | Specifications / Example | Primary Function in Research |
|---|---|---|
| Public EEG Datasets | BCI Competition III IVa, IV 2a, IV 1; PhysioNet MI Dataset | Provides standardized, annotated data for developing, training, and benchmarking new algorithms. |
| Signal Processing Toolkits | MNE-Python, EEGLAB, FieldTrip | Offers built-in functions for preprocessing, filtering, artifact removal, and source localization. |
| Deep Learning Models | EEGNet, STA-EEGNet, ShallowConvNet, TCN-based architectures | Serves as state-of-the-art baselines or customizable frameworks for end-to-end EEG classification. |
| Spatial Filtering Algorithms | Common Spatial Patterns (CSP), Regularized CSP (R-CSP) | Extracts discriminative features from multi-channel data, often used as a precursor or component of channel selection. |
| Optimization Algorithms | Strength Pareto Evolutionary Algorithm II (SPEA-II), Multi-Objective PSO (MOPSO) | Used in wrapper-based channel selection to search for the optimal channel subset that maximizes accuracy and minimizes channel count. |
| Statistical Analysis Software | Python (SciPy, StatsModels), R, MATLAB | Performs statistical tests (e.g., ANOVA, t-test) for filter-based channel selection and result validation. |

Event-Related Desynchronization (ERD) and Event-Related Synchronization (ERS) represent fundamental neural oscillatory phenomena that form the cornerstone of modern Motor Imagery (MI)-based Brain-Computer Interface (BCI) systems. ERD manifests as a relative power decrease in specific electroencephalogram (EEG) frequency bands, while ERS represents a relative power increase following movement or imagery tasks [17]. These sensorimotor rhythms (SMRs) originate from neuronal populations in the cortex and are categorized into three primary types: the mu rhythm (7-13 Hz), beta rhythm (13-30 Hz), and gamma rhythm (30-200 Hz), with most non-invasive BCI research focusing on mu and beta bands due to technical limitations in measuring gamma activity with EEG [1].

During motor execution and motor imagery, these rhythms show characteristic patterns that can be decoded to infer user intent. The reliability of ERD as a BCI control signal depends on understanding which conditions produce strong desynchronization, and this remains a central challenge in improving MI-BCI systems [17]. These neurophysiological principles are particularly important for optimizing EEG channel selection, because identifying the channels with the strongest ERD/ERS responses can improve classification accuracy while reducing computational complexity in practical BCI applications [8].
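
ERD and ERS are usually quantified as a percentage change in band power relative to a reference interval, ERD% = (A - R) / R × 100, where R is the mean power during rest and A the mean power during the task; negative values indicate ERD and positive values ERS. The single-channel sketch below assumes NumPy and SciPy, a 4th-order Butterworth band-pass, and squared-amplitude power averaged over trials (one of several common estimators); the window choices and the synthetic data are hypothetical.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt


def erd_percent(trials, fs, band, baseline, task):
    """ERD/ERS percentage for one channel: (A - R) / R * 100.

    trials: (n_trials, n_samples), epochs aligned to the same event.
    band: (low, high) in Hz; baseline, task: (start, end) in seconds
    from epoch start. Negative values indicate ERD, positive values ERS.
    """
    sos = butter(4, band, btype="bandpass", fs=fs, output="sos")
    power = sosfiltfilt(sos, trials, axis=-1) ** 2  # instantaneous band power
    mean_power = power.mean(axis=0)                  # average over trials

    def window_mean(span):
        i0, i1 = int(span[0] * fs), int(span[1] * fs)
        return mean_power[i0:i1].mean()

    r = window_mean(baseline)  # reference (rest) power R
    a = window_mean(task)      # activity (imagery) power A
    return 100.0 * (a - r) / r


# Hypothetical toy epochs: a 10 Hz mu rhythm whose amplitude halves at 2.5 s
rng = np.random.default_rng(2)
fs, dur, n_trials = 250, 5.0, 30
t = np.arange(int(fs * dur)) / fs
amp = np.where(t < 2.5, 2.0, 1.0)
phases = rng.uniform(0, 2 * np.pi, size=(n_trials, 1))
trials = amp * np.sin(2 * np.pi * 10 * t + phases) + 0.5 * rng.standard_normal((n_trials, t.size))
erd = erd_percent(trials, fs, band=(8, 13), baseline=(0.5, 2.0), task=(3.0, 4.5))
print(f"mu-band ERD: {erd:.1f}%")
```

Halving the amplitude quarters the power, so the toy example yields an ERD of roughly -75%; the windows are kept away from the amplitude step so filter smearing does not bias A or R.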

Neurophysiological Mechanisms and Experimental Evidence

Neural Oscillatory Dynamics

ERD/ERS phenomena reflect complex sensorimotor processes within the brain's motor planning and execution networks. Mu-rhythm ERD (8-13 Hz) occurs consistently during motor planning, execution, and imagery of hand/finger movements, while beta rhythm ERD (14-30 Hz) demonstrates similar patterns during voluntary execution and imagery [17]. Research indicates that beta band activity attenuates during voluntary movements but increases during steady contractions, suggesting it may reflect the "maintenance of status quo" in sensorimotor circuits [17].

The strength of ERD appears to reflect the time differentiation of hand postures in motor planning, or the variation of proprioception resulting from hand movements, rather than downstream motor commands that recruit motor neurons [17]. This has direct implications for channel selection strategies: it suggests that electrodes should be placed over the brain regions most involved in motor planning and proprioceptive processing.

Experimental Modulation of ERD Strength

Systematic investigations have revealed how kinematic and kinetic parameters modulate ERD strength. A comprehensive study examining repetitive hand grasping movements at different speeds (Hold, 1/3 Hz, and 1 Hz) and grasping loads (0, 2, 10, and 15 kgf) demonstrated that both mu and beta-ERD during task periods were significantly weakened under Hold conditions, where participants maintained isometric contraction [17]. This suggests that movement dynamics rather than static force production drive ERD phenomena.

Table 1: Experimental Modulation of ERD Parameters [17]

| Experimental Parameter | Levels Tested | Effect on Mu-ERD | Effect on Beta-ERD |
|---|---|---|---|
| Movement Speed | Hold (isometric) | Significantly weakened | Significantly weakened |
| | Slow (1/3 Hz) | Salient ERD | Slightly weak ERD |
| | Fast (1 Hz) | Salient ERD | Slightly weak ERD |
| Grasping Load | 0, 2, 10, 15 kgf | No significant difference | No significant difference |
| Interaction Effect | Speed × Load | Not observed | Not observed |

These findings indicate that kinematic parameters (movement speed) rather than kinetic parameters (motor loads) primarily modulate ERD strength, informing both experimental design and channel selection criteria for MI-BCI systems.

ERD/ERS Measurement Protocols and Channel Selection

Standardized Experimental Protocol

The following protocol outlines a standardized approach for ERD/ERS measurement optimized for channel selection studies, synthesized from multiple research methodologies [8] [17] [1]:

Participant Preparation: Recruit right-handed participants without neurological disorders. Position participants in a comfortable chair with arm support to minimize muscle artifacts. Apply EEG cap according to the 10-20 system, focusing on C3, C4, and surrounding electrodes over primary motor and somatosensory cortices.

Signal Acquisition Setup: Use between 8 and 36 electrodes, the range suggested for real-time applications [1]. Record at a sampling rate of 512-1000 Hz with band-pass filtering between 0.3 and 100 Hz. Apply bipolar spatial filtering during offline analysis to enhance signal quality.

Experimental Trial Structure:

- Rest Period: 8-10 seconds (randomized duration to prevent anticipatory responses)

- Preparation Period: 1 second (visual cue presentation)

- Task Period: 6 seconds (motor imagery execution)

Motor Imagery Tasks: Implement binary classification of right hand vs. right foot MI [8], or expand to multiclass paradigms including left hand, right hand, tongue movement, and lateral bending imagery [1]. Each condition should include 20-280 trials balanced across classes.

Data Acquisition: Record EEG signals throughout all periods, with particular focus on the transition from rest to task execution to capture ERD/ERS dynamics.
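
The trial structure above can be turned into a cue schedule before recording. The sketch below uses only Python's standard library, draws each rest period uniformly from 8-10 s, and shuffles a balanced set of labels; the `Trial` fields, function name, and label strings are hypothetical, and stimulus presentation and amplifier triggering are not shown.

```python
import random
from dataclasses import dataclass


@dataclass
class Trial:
    label: str
    rest_onset: float  # seconds from session start
    cue_onset: float   # start of the 1 s preparation (cue) period
    task_onset: float  # start of motor imagery
    task_end: float


def make_schedule(labels, trials_per_class, rest_range=(8.0, 10.0),
                  prep_s=1.0, task_s=6.0, seed=None):
    """Balanced, shuffled cue schedule with a randomised rest period."""
    rng = random.Random(seed)
    order = [lab for lab in labels for _ in range(trials_per_class)]
    rng.shuffle(order)  # random class order prevents anticipating the cue
    schedule, t = [], 0.0
    for lab in order:
        cue = t + rng.uniform(*rest_range)
        onset = cue + prep_s
        end = onset + task_s
        schedule.append(Trial(lab, t, cue, onset, end))
        t = end
    return schedule


schedule = make_schedule(["right_hand", "right_foot"], trials_per_class=20, seed=42)
print(len(schedule), round(schedule[-1].task_end / 60, 1), "min")
```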

Channel Selection Methodology

Optimized channel selection represents a critical step in enhancing MI-BCI performance. A novel hybrid approach combining statistical tests with Bonferroni correction-based channel reduction has demonstrated significant improvements in classification accuracy [8]:

Initial Channel Evaluation: Apply statistical t-tests and p-value analysis to identify task-related EEG channels.

Correlation-based Filtering: Discard channels with correlation coefficients below 0.5 to ensure statistical significance and minimize redundancy.

Feature Extraction: Implement Regularized Common Spatial Patterns (DLRCSP) with covariance matrix shrinkage toward the identity matrix, automatically determining the γ regularization parameter using Ledoit and Wolf's method [8].

Classification: Utilize Neural Networks (NN) or Recurrent Neural Networks (RNN) for final MI task classification.
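
The Feature Extraction step determines the shrinkage parameter γ with Ledoit and Wolf's method. A sketch of that estimator for one trial, assuming NumPy only, is shown below: it shrinks the sample covariance S toward μI, where μ = tr(S)/p is the average channel variance, using the closed-form Ledoit-Wolf intensity. It is intended to follow the same formula as scikit-learn's `sklearn.covariance.ledoit_wolf`; whether [8] uses a scaled (μI) or unscaled (I) identity target is an assumption here.

```python
import numpy as np


def ledoit_wolf_cov(trial):
    """Ledoit-Wolf shrinkage of one trial's spatial covariance.

    trial: (channels, samples). Returns (shrunk covariance, gamma), where
    the shrunk estimate is (1 - gamma) * S + gamma * mu * I.
    """
    x = (trial - trial.mean(axis=1, keepdims=True)).T  # (samples, channels)
    n, p = x.shape
    s = x.T @ x / n                     # sample covariance S
    mu = np.trace(s) / p                # average variance (target scale)
    target = mu * np.eye(p)
    delta = np.sum((s - target) ** 2) / p
    # Sampling-noise term: scaled sum of ||x_k x_k^T - S||_F^2 over samples k
    beta = (np.sum(np.sum(x ** 2, axis=1) ** 2) / n - np.sum(s ** 2)) / (n * p)
    gamma = 0.0 if delta == 0 else min(beta, delta) / delta
    return (1 - gamma) * s + gamma * target, gamma


# Hypothetical short trial: 22 channels but only 10 samples, so S is singular
rng = np.random.default_rng(5)
cov, gamma = ledoit_wolf_cov(rng.standard_normal((22, 10)))
print(f"gamma = {gamma:.3f}, smallest eigenvalue = {np.linalg.eigvalsh(cov).min():.3g}")
```

The toy case shows why shrinkage matters for CSP: with fewer samples than channels the raw covariance is singular, while the shrunk estimate stays positive definite and can be used in the generalised eigenproblem.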

Table 2: Performance Comparison of Channel Selection Methods [8]

| Method | Dataset | Reported Result | Key Advantages |
|---|---|---|---|
| Proposed Hybrid Method | BCI Competition III IVa | Accuracy improvement of 3.27% to 42.53% (individual subjects) | Highest accuracy (>90%), reduced computational complexity |
| | BCI Competition IV-1 | Accuracy improvement of 5% to 45% | Effective channel reduction, maintained performance |
| | BCI Competition IV-2a | Accuracy improvement of 1% to 17.47% | Generalization across subjects |
| TSCNN with DGAFF [8] | Multiple | Accuracy of 73.41% to 97.82% | Subject-specific optimization |
| DB-EEGNET with MPJS [8] | Multiple | Accuracy of 83.9% | Multi-objective optimization |
| CDCS with CSP/LDA [8] | BCI Competition | Accuracies of 77.57% and 66.06% | Cross-domain applicability |

This method has demonstrated exceptional performance, achieving accuracy above 90% for all subjects across three real-time EEG-based BCI datasets while significantly reducing the number of channels required for classification [8].

Visualization of ERD/ERS Pathways and Experimental Workflows

Neurophysiological Pathway from Motor Imagery to BCI Command

Experimental Workflow for Channel Selection and Validation

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials for ERD/ERS MI-BCI Research

| Category | Specific Items | Function & Application |
|---|---|---|
| Signal Acquisition | EEG Cap (10-20 system) [1], Active Dry Electrodes [17], NuAmps System [18], Emotiv EPOC X [1] | Non-invasive neural signal recording with optimal electrode-scalp contact |
| | Microelectrode Arrays (Utah Array, Neuralace) [19], ECoG Grids [20], Stentrode [19] | Invasive/high-resolution signal acquisition for clinical applications |
| Signal Processing | Band-pass Filters (0.1-100 Hz) [17], Notch Filters (50/60 Hz) [20], Analog-to-Digital Converters [20] | Noise reduction and signal conditioning |
| Feature Extraction | Common Spatial Patterns (CSP) [8], Hilbert-Huang Transform (HHT) [21], Permutation Conditional Mutual Information (PCMICSP) [21] | Spatial and temporal feature identification from EEG signals |
| Classification Algorithms | Neural Networks (NN) [8], Back Propagation NN with Honey Badger Algorithm (BPNN-HBA) [21], Regularized CSP with NN (DLRCSPNN) [8] | Machine learning classification of MI tasks from extracted features |
| Experimental Paradigms | Visual Cue Systems, 3D Tetris Environment [18], Motor Imagery Training Protocols [1] | Elicitation and enhancement of ERD/ERS responses through engaging tasks |

Advanced Applications and Performance Optimization

Enhanced Classification Techniques

Recent advances in classification algorithms have substantially improved ERD/ERS detection for MI-BCI systems. One example is the Back Propagation Neural Network optimized with the Honey Badger Algorithm (BPNN-HBA), which exploits the algorithm's chaotic and ergodic behavior to determine the weights and thresholds of the error backpropagation neural network [21]. This approach improves global convergence and helps avoid becoming trapped in local optima, achieving a maximum accuracy of 89.82% on the EEGMMIDB dataset [21].

The integration of chaotic disturbances further refines this solution, improving model accuracy and convergence rates. When combined with sophisticated preprocessing techniques like Hilbert-Huang Transform (HHT) for non-linear, non-stationary EEG analysis and Permutation Conditional Mutual Information Common Spatial Pattern (PCMICSP) for feature extraction, these classification methods demonstrate superior performance compared to traditional approaches [21].

Gamification and Training Protocols

Training approaches based on gamification principles have shown promise in enhancing users' ability to produce ERD/ERS. Studies comparing 3D Tetris environments with traditional 2D screen games found that groups performing MI in immersive 3D environments showed larger improvements in generating MI-associated ERD/ERS [18]. Game scores showed a clear upward trend in the 3D Tetris environment but not in the 2D screen game, suggesting that rich-control environments improve the associated mental imagery and enhance MI-based BCI skills [18].

Body awareness training protocols integrating mindfulness and physical exercises have also demonstrated value in improving MI proficiency, particularly for multiclass BCI systems classifying six mental states: resting state, left and right hand movement imagery, tongue movement, and left and right lateral bending [1].

The strategic optimization of EEG channel selection based on ERD/ERS principles represents a critical advancement in MI-BCI research. The neurophysiological mechanisms underlying event-related desynchronization and synchronization provide a robust foundation for identifying optimal channel subsets that maximize classification performance while minimizing computational requirements. The methodologies and protocols detailed in this document offer researchers comprehensive frameworks for implementing these principles in both experimental and clinical settings.

Future research directions should focus on further refining channel selection algorithms through advanced machine learning techniques, expanding multiclass MI paradigms for enhanced BCI control dimensionality, and developing more engaging training protocols to improve user proficiency. As these neurotechnologies continue to evolve, the precise application of ERD/ERS principles will remain essential for creating effective, reliable brain-computer interfaces that translate neural signatures of movement intention into functional control signals.

The Impact of Channel Selection on System Performance and Practical Usability

Electroencephalography (EEG)-based Brain-Computer Interfaces (BCIs) using Motor Imagery (MI) have emerged as a transformative technology for enabling communication and control without physical movement. In these systems, users imagine limb movements, generating discernible patterns in brain signals that can be translated into commands for external devices. The performance and practical deployment of these systems are critically dependent on the number and placement of EEG electrodes. While high-density electrode arrays can provide comprehensive spatial coverage, they introduce significant challenges including prolonged setup time, increased computational complexity, and greater susceptibility to noise. Consequently, EEG channel selection has become an indispensable process in MI-BCI design, aiming to identify an optimal subset of channels that preserves essential information while enhancing system efficiency and user comfort [22] [9].

The central challenge in channel selection lies in balancing a trade-off: using too few channels may discard discriminative information, while too many introduce noise and redundancy. Research has demonstrated that selecting the correct channels can not only maintain but often improve classification accuracy by eliminating irrelevant data sources that might otherwise contribute to overfitting. Furthermore, from a practical standpoint, streamlined electrode setups are crucial for developing portable BCI systems suitable for home or clinical use, reducing setup time from hours to minutes, and improving overall user experience [22] [23]. This application note examines the impact of channel selection on both system performance and practical usability, providing structured data comparisons, experimental protocols, and analytical tools to guide researchers in optimizing their MI-BCI designs.

Performance Comparison of Channel Selection Methods

The efficacy of channel selection methods is typically evaluated based on their ability to achieve high classification accuracy with a minimal number of channels. The table below summarizes the performance of various state-of-the-art channel selection methods on standard MI datasets.

Table 1: Performance of Channel Selection Methods on Standard MI Datasets

| Method | Core Approach | Number of Channels Selected | Classification Accuracy | Dataset Used |
|---|---|---|---|---|
| Fisher Score + Local Optimization [5] | Filter-based (Fisher Score) with local search | Average of 11 | 79.37% (4-class) | BCI Competition IV-2a |
| ECA-CNN [23] | Embedded (Efficient Channel Attention) | 8 (of 22) | 69.52% (4-class) | BCI Competition IV-2a |
| Wavelet-Packet Energy Entropy (WPEE) [24] | Filter-based (Energy Entropy) | ~16 (removes 27%) | 86.81% | BCI Competition IV-2a |
| Common Channel Selection (Arpaia et al.) [25] | Not Specified | 6 (2-class); 10 (4-class) | 77-83% (2-class); >60% (4-class) | BCI Competition IV-2a |
| Entropy-Based CSP [26] | Filter-based (Shannon Entropy) | Not Specified | Surpassed cutting-edge techniques | BCI Competition III-IV(A), IV-I |
| Shallow CNN (SCNN) [27] | Embedded (Temporal/Pointwise Conv.) | Not Specified | 72.01% | BCI Competition IV-2a |

A key finding across multiple studies is that a significantly reduced channel set, often between 10-30% of the total channels, can deliver performance comparable or superior to using the full channel set [22]. For instance, on the widely used BCI Competition IV-2a dataset, the method employing Fisher Score and Local Optimization achieved a notable 79.37% accuracy in a four-class classification task using only 11 channels on average, which was a 6.52% improvement over using all 22 channels [5]. Similarly, deep learning approaches that integrate attention mechanisms, such as the ECA-CNN, can autonomously learn and rank channel importance, facilitating the creation of personalized, optimal channel subsets for each subject [23].

Categorization of Channel Selection Methods and Workflows

Channel selection algorithms can be broadly classified into three main categories based on their evaluation strategy and integration with the classifier: Filter, Wrapper, and Embedded methods [9].

Filter Methods: These techniques evaluate channels based on intrinsic characteristics of the signal, such as entropy, variance, or mutual information, without involving a classifier. They are computationally efficient and independent of the learning model. Examples include algorithms based on Fisher Score [5] and Wavelet-Packet Energy Entropy [24] [26]. Their main disadvantage is that they ignore interactions between channels and do not account for the biases of the classifier that will ultimately use the selected channels.

Wrapper Methods: These methods use the performance of a specific classifier (e.g., SVM, CNN) as the objective function to evaluate selected channel subsets. While they can yield highly optimized subsets, they are computationally intensive and prone to overfitting, especially with a large number of channels or limited data [9].

Embedded Methods: These techniques integrate the channel selection process directly into the classifier training. Deep learning models are particularly suited for this, as they can learn channel importance through mechanisms like attention modules [23] or 1x1 convolutions [27]. They offer a good balance between performance and computational cost by jointly optimizing channel selection and model parameters.

The following diagram illustrates a typical experimental workflow integrating data augmentation, channel selection, and classification, as seen in modern MI-BCI pipelines.

Figure 1: A Unified Workflow for MI-BCI System with Channel Selection.

Detailed Experimental Protocols

To ensure reproducibility and provide a clear guide for implementation, this section outlines detailed protocols for two prominent channel selection methods: a filter-based approach and an embedded deep learning approach.

Protocol 1: Filter-Based Selection using Fisher Score and Local Optimization

This protocol is adapted from the method proposed by Luo et al. (2024) [5], which achieved high performance on a standard dataset.

- Objective: To select a subject-specific optimal subset of EEG channels for motor imagery classification.

- Dataset: BCI Competition IV Dataset IIa (22 channels, 4-class MI).

- Software/Materials: MATLAB or Python with scikit-learn; EEG processing toolbox (e.g., MNE-Python).

Procedure:

Preprocessing:

- Bandpass filter the raw EEG data to the 8-30 Hz range to capture sensorimotor rhythms (Mu and Beta bands).

- Segment the data into epochs time-locked to the motor imagery cue (e.g., 0-4 seconds post-cue).
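The two preprocessing steps can be sketched in Python with SciPy as below. This is a minimal sketch, not the pipeline of [5]: the fourth-order zero-phase Butterworth design, the helper names bandpass and epoch, and the assumption that cue onsets are already available as sample indices are illustrative choices. The 250 Hz sampling rate matches BCI Competition IV-2a.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt


def bandpass(eeg, fs, low=8.0, high=30.0, order=4):
    """Zero-phase Butterworth band-pass along the time axis.

    eeg: continuous EEG of shape (n_channels, n_samples).
    """
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, eeg, axis=-1)


def epoch(eeg, fs, cue_samples, tmin=0.0, tmax=4.0):
    """Cut windows from tmin to tmax seconds after each cue onset (given in samples).

    Returns an array of shape (n_trials, n_channels, n_times).
    """
    start, stop = int(round(tmin * fs)), int(round(tmax * fs))
    return np.stack([eeg[:, c + start:c + stop] for c in cue_samples])


# Usage on synthetic data: 22 channels, 60 s at 250 Hz (the IV-2a sampling rate)
fs = 250
rng = np.random.default_rng(0)
raw = rng.standard_normal((22, 60 * fs))
cues = [2 * fs, 10 * fs, 20 * fs]          # hypothetical cue onsets
epochs = epoch(bandpass(raw, fs), fs, cues)
print(epochs.shape)                        # (3, 22, 1000)
```

Filtering the continuous recording before cutting epochs avoids filter edge effects at the start and end of every trial.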

Feature Extraction:

- For each frequency band of interest and for each trial, extract spatial features using the Common Spatial Patterns (CSP) algorithm. CSP finds spatial filters that maximize the variance of the projected signal for one class while minimizing it for the other.

- The result is a set of CSP features for each channel and trial.
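A minimal two-class CSP sketch follows, assuming NumPy/SciPy, a single frequency band, trace-normalized covariances, and log-normalized-variance features; the function names fit_csp and csp_features are hypothetical. The resulting features are indexed by spatial filter rather than by electrode, so how they are mapped back to individual channels for the Fisher Score ranking follows the original method [5] and is not reproduced here.

```python
import numpy as np
from scipy.linalg import eigh


def fit_csp(X, y, n_pairs=2):
    """Two-class CSP filters for X of shape (n_trials, n_channels, n_times), y in {0, 1}.

    Returns W of shape (2 * n_pairs, n_channels).
    """
    def mean_cov(trials):
        # Trace-normalized spatial covariance, averaged over trials
        return np.mean([t @ t.T / np.trace(t @ t.T) for t in trials], axis=0)

    c0, c1 = mean_cov(X[y == 0]), mean_cov(X[y == 1])
    # Generalized eigenproblem c0 w = lambda (c0 + c1) w; eigenvalues ascending.
    # Small lambda -> variance dominated by class 1, large lambda -> class 0.
    _, vecs = eigh(c0, c0 + c1)
    n = vecs.shape[1]
    idx = np.r_[0:n_pairs, n - n_pairs:n]
    return vecs[:, idx].T


def csp_features(X, W):
    """Log of the normalized variance of each CSP-projected signal."""
    Z = np.einsum("fc,nct->nft", W, X)
    var = Z.var(axis=-1)
    return np.log(var / var.sum(axis=1, keepdims=True))


# Synthetic check: class 0 is stronger on channel 0, class 1 on channel 1
rng = np.random.default_rng(0)
y = np.repeat([0, 1], 20)
X = rng.standard_normal((40, 6, 200))
X[y == 0, 0] *= 3.0
X[y == 1, 1] *= 3.0
W = fit_csp(X, y)
F = csp_features(X, W)
print(F.shape)   # (40, 4)
```

Keeping filters from both ends of the eigenvalue spectrum gives features that respond to each class, which is why n_pairs filters are taken from each end.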

Fisher Score Ranking:

- Calculate the Fisher Score for each channel based on the extracted CSP features. The Fisher Score for a channel is defined as \( F = \frac{(\mu_1 - \mu_2)^2}{\sigma_1^2 + \sigma_2^2} \), where \( \mu_1, \mu_2 \) and \( \sigma_1^2, \sigma_2^2 \) are the means and variances of the features for the two classes, respectively.

- Rank all channels in descending order of their Fisher Scores. Channels with higher scores have better discriminative power.
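The Fisher Score vectorizes directly over channels. The sketch below assumes one scalar feature per channel and trial (for example, the log-variance of each band-pass-filtered channel), binary labels, and synthetic data in which only channel 2 carries class information; fisher_scores is a hypothetical helper name.

```python
import numpy as np


def fisher_scores(F, y):
    """Per-channel Fisher Score for F of shape (n_trials, n_channels), binary y."""
    f1, f2 = F[y == 0], F[y == 1]
    num = (f1.mean(axis=0) - f2.mean(axis=0)) ** 2   # squared mean difference
    den = f1.var(axis=0) + f2.var(axis=0)            # summed within-class variance
    return num / den


rng = np.random.default_rng(1)
y = np.repeat([0, 1], 50)
F = rng.standard_normal((100, 5))       # synthetic per-channel features
F[y == 1, 2] += 3.0                     # only channel 2 separates the classes
ranking = np.argsort(fisher_scores(F, y))[::-1]   # descending Fisher Score
print(ranking[0])                       # channel 2 ranks first
```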

Local Optimization:

- Start with an empty set of selected channels.

- Iteratively add channels from the top of the ranked list.

- After each addition, evaluate the classification accuracy (e.g., using a Linear Discriminant Analysis (LDA) classifier) via cross-validation.

- The final channel subset is determined at the point where the accuracy plateaus or begins to decrease. This step identifies a compact yet highly discriminative set of channels.
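The local optimization step amounts to a forward search along the fixed Fisher ranking. The sketch below uses scikit-learn's LinearDiscriminantAnalysis with cross_val_score, as suggested above. The stopping rule (stop after `patience` consecutive additions without improvement and keep the best prefix) and the helper name incremental_selection are assumptions standing in for "accuracy plateaus or begins to decrease".

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import cross_val_score


def incremental_selection(F, y, ranking, cv=5, patience=2):
    """Add channels in ranked order and keep the prefix with the best CV accuracy.

    F: (n_trials, n_channels) per-channel features; ranking: channel indices,
    best first. Stops after `patience` consecutive additions without improvement.
    """
    best_acc, best_k, misses = -np.inf, 0, 0
    for k in range(1, len(ranking) + 1):
        cols = list(ranking[:k])
        acc = cross_val_score(LinearDiscriminantAnalysis(), F[:, cols], y, cv=cv).mean()
        if acc > best_acc:
            best_acc, best_k, misses = acc, k, 0
        else:
            misses += 1
            if misses >= patience:
                break
    return list(ranking[:best_k]), best_acc


rng = np.random.default_rng(2)
y = np.repeat([0, 1], 60)
F = rng.standard_normal((120, 6))
F[y == 1, 0] += 2.0
F[y == 1, 1] += 2.0
selected, acc = incremental_selection(F, y, ranking=[0, 1, 2, 3, 4, 5])
print(selected, round(acc, 2))
```

A patience larger than one tolerates a single noisy dip in accuracy before the search stops.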

Protocol 2: Embedded Selection using Efficient Channel Attention (ECA)

This protocol is based on a study published in Frontiers in Neuroscience (2023) [23] and exemplifies a modern deep-learning approach.

- Objective: To leverage a deep neural network to automatically learn and rank channel importance for subject-specific optimal channel selection.

- Dataset: BCI Competition IV dataset 2a.

- Software/Materials: Python, TensorFlow/PyTorch, GPU acceleration recommended.

Procedure:

Data Preparation:

- Apply a bandpass filter (e.g., 1-40 Hz) to remove DC drift and high-frequency noise.

- Normalize the continuous data using an exponential moving average.

- Segment the data into 4-second trials.
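The normalization step is not defined precisely above; one common reading, sketched below, is exponential moving standardization, in which each sample is centered and scaled with an exponentially weighted running mean and variance so that only past and current samples are used. The smoothing factor of 0.001, the initialization from the first 1000 samples, the floor eps, and the function name are assumed values, not parameters reported in [23].

```python
import numpy as np


def exp_moving_standardize(x, factor_new=1e-3, init_block=1000, eps=1e-4):
    """Standardize continuous EEG of shape (n_channels, n_samples) causally.

    Running mean m and variance v are updated per sample as
        m_t = a * x_t + (1 - a) * m_{t-1}
        v_t = a * (x_t - m_t)**2 + (1 - a) * v_{t-1}
    and the output is (x_t - m_t) / max(sqrt(v_t), eps), with a = factor_new.
    """
    x = np.asarray(x, dtype=float)
    mean = x[:, :init_block].mean(axis=1)   # initialize from the first block
    var = x[:, :init_block].var(axis=1)
    out = np.empty_like(x)
    for t in range(x.shape[1]):
        mean = factor_new * x[:, t] + (1 - factor_new) * mean
        var = factor_new * (x[:, t] - mean) ** 2 + (1 - factor_new) * var
        out[:, t] = (x[:, t] - mean) / np.maximum(np.sqrt(var), eps)
    return out


rng = np.random.default_rng(3)
raw = 100.0 + 10.0 * rng.standard_normal((3, 20000))   # offset 100, scale 10
z = exp_moving_standardize(raw)
print(z[:, 10000:].mean().round(2), z[:, 10000:].std().round(2))  # near 0 and 1
```

Because the statistics are causal, the same function can run sample by sample in an online BCI, unlike per-trial z-scoring.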

Model Architecture and Training:

- Design a Convolutional Neural Network (CNN) based on a standard architecture such as DeepConvNet or EEGNet.

- Integrate Efficient Channel Attention (ECA) modules between the convolutional layers. The ECA module uses global average pooling to squeeze global spatial information, followed by a 1D convolution to efficiently capture cross-channel interactions and generate channel weights.

- Train the entire network (CNN + ECA modules) end-to-end on the subject's training data for the MI classification task.
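The ECA computation described above can be illustrated with a NumPy forward pass. This is a sketch under assumptions: the module acts on a (trials, channels, time) feature map whose channel axis corresponds to electrodes, the adaptive kernel-size rule (γ = 2, b = 1) is taken from the original ECA-Net design rather than from [23], and the 1D kernel is passed in instead of being learned. In the trained network the kernel is learned by backpropagation and the input is an intermediate feature map rather than raw EEG, whose time average is close to zero after band-pass filtering.

```python
import math

import numpy as np


def eca_kernel_size(n_channels, gamma=2, b=1):
    """ECA-Net kernel size: truncate (log2(C) + b) / gamma, then bump even values to odd."""
    t = int(abs((math.log2(n_channels) + b) / gamma))
    return t if t % 2 else t + 1


def eca_weights(x, kernel):
    """Channel-attention weights for a feature map x of shape (n_trials, n_channels, n_times).

    1) global average pooling over time -> (n_trials, n_channels)
    2) 1-D convolution across neighbouring channels (zero padding, no bias)
    3) sigmoid -> one weight in (0, 1) per trial and channel
    """
    pooled = x.mean(axis=-1)
    k = len(kernel)
    padded = np.pad(pooled, ((0, 0), (k // 2, k // 2)))
    windows = np.stack([padded[:, i:i + pooled.shape[1]] for i in range(k)], axis=-1)
    return 1.0 / (1.0 + np.exp(-(windows @ np.asarray(kernel, dtype=float))))


k = eca_kernel_size(22)                       # 3 for the 22 channels of IV-2a
rng = np.random.default_rng(4)
feature_map = np.abs(rng.standard_normal((5, 22, 100)))   # stand-in for a CNN feature map
kernel = rng.standard_normal(k)                            # would be learned in training
w = eca_weights(feature_map, kernel)
reweighted = feature_map * w[:, :, None]      # channels rescaled by their attention weight
```

The 1D convolution is what makes ECA lightweight: each weight depends only on k neighbouring channels, so the module adds k parameters instead of a fully connected layer.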

Channel Weight Extraction and Ranking:

- After training, forward-pass the training data and extract the channel weights learned by the ECA modules.

- Average these weights across all training trials to get a stable importance score for each channel.

- Rank the channels in descending order based on their average weights.

Subset Formation and Evaluation:

- Form a new channel subset by selecting the top k channels from the ranking. The value of k can be adjusted based on performance requirements or hardware constraints.

- Retrain and evaluate a classification model using only the data from the selected k channels to validate the performance of the subset.
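Averaging the weights, ranking, and forming the top-k subset reduce to a few NumPy operations. The sketch assumes the per-trial ECA weights have already been extracted into an array of shape (n_trials, n_channels); the helper name, electrode names, and weight values are illustrative only.

```python
import numpy as np


def rank_and_select(weights, names, k):
    """Average per-trial attention weights (n_trials, n_channels), rank channels,
    and return the top-k names, their indices, and the mean importance."""
    importance = weights.mean(axis=0)          # stable score per channel
    order = np.argsort(importance)[::-1]       # descending importance
    top = order[:k]
    return [names[i] for i in top], top, importance


names = ["C3", "Cz", "C4", "Fz"]               # illustrative subset of electrodes
weights = np.array([[0.2, 0.5, 0.9, 0.1],
                    [0.4, 0.5, 0.7, 0.1]])     # illustrative ECA weights, 2 trials
top_names, top_idx, importance = rank_and_select(weights, names, k=2)
print(top_names)                               # ['C4', 'Cz']
# The retraining step would then use only these channels, e.g. X[:, top_idx, :]
```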

For researchers seeking to implement channel selection methods, the following table catalogues key computational tools and datasets as essential "research reagents."

Table 2: Key Resources for EEG Channel Selection Research

| Resource Name | Type | Key Features / Function | Example Use in Context |
|---|---|---|---|
| BCI Competition IV-2a [5] [23] | Public Dataset | 22 EEG channels, 4-class MI, 9 subjects. | Standard benchmark for validating and comparing channel selection algorithms. |
| WBCIC-MI Dataset [28] | Public Dataset | 59 EEG channels, 62 subjects, multi-session, 2 & 3-class MI. | Ideal for testing cross-session stability and generalizability of channel selection. |
| MNE-Python [27] | Software Toolbox | Open-source Python package for EEG/MEG data analysis. | Used for data preprocessing, filtering, epoching, and visualization. |
| Efficient Channel Attention (ECA) [23] | Algorithm | Lightweight attention module for CNNs; captures cross-channel interactions. | Embedded within a CNN to automatically learn and rank channel importance. |
| Common Spatial Patterns (CSP) [5] [26] | Algorithm | Spatial filter that maximizes variance between two classes. | Used as a feature extractor before applying filter-based channel selection. |
| Wavelet-Packet Decomposition [24] | Algorithm | Signal processing method for time-frequency analysis. | Used to calculate energy entropy for channel ranking and for data augmentation. |

The strategic selection of EEG channels is not merely a preprocessing step but a critical determinant of the overall performance and real-world viability of Motor Imagery BCI systems. As evidenced by the data and protocols presented, sophisticated channel selection methods—ranging from statistically driven filter approaches to learned embedded techniques—enable reductions of 50-70% in the number of channels while maintaining or even enhancing classification accuracy. This directly translates to reduced computational load, faster setup times, and improved user comfort, thereby addressing significant barriers to the practical adoption of BCI technology. The continued development and refinement of these methods, particularly those offering personalized and interpretable channel subsets, will be instrumental in transitioning MI-BCIs from controlled laboratory environments into reliable, everyday assistive and rehabilitative technologies.

In electroencephalography (EEG)-based motor imagery (MI) Brain-Computer Interface (BCI) research, the selection of an optimal subset of EEG channels is a critical preprocessing step. This process aims to identify the most informative channels to improve system performance while enhancing practicality. The evaluation of any channel selection method rests upon three fundamental metrics: classification Accuracy, Computational Load, and Setup Time. This document details these metrics, provides protocols for their measurement, and situates them within the context of a thesis on optimized EEG channel selection for MI-BCI research.

Core Evaluation Metrics

The performance of an EEG channel selection strategy is quantitatively assessed against the triad of metrics defined in the table below.

Table 1: Core Evaluation Metrics for EEG Channel Selection Methods

| Metric | Definition | Quantitative Measures | Significance in Channel Selection |
|---|---|---|---|
| Accuracy | The ability of the BCI system to correctly classify MI tasks from EEG signals using the selected channels [8]. | Classification Accuracy (%), Sensitivity, Specificity, F1-Score [8] [29]. | Directly measures the informational sufficiency of the selected channel set. Redundant or noisy channels can degrade accuracy [8]. |
| Computational Load | The amount of computational resources required for both the channel selection process and the subsequent model training and inference [29]. | Feature Extraction Time, Model Training Time, Number of Features/Channels, Algorithm Complexity [8] [29]. | Determines the feasibility for real-time BCI operation. Fewer channels reduce feature dimensionality, lowering computational cost [8] [29]. |
| Setup Time | The time required to prepare the BCI system for use, primarily driven by the number of electrodes that need to be placed [8]. | Electrode Application Time (minutes), Total Preparation Time [8]. | Critical for practical deployment and user acceptance, especially in clinical or daily-life settings. Channel selection minimizes setup time [8]. |

Quantitative Benchmarking Data

The following table synthesizes performance data from recent studies, illustrating the impact of channel selection on the defined metrics.

Table 2: Performance Benchmarks from Recent Channel Selection Studies

| Study (Citation) | Channel Selection Method | Dataset(s) Used | Key Results |
|---|---|---|---|
| Khanam et al. (2025) [8] [12] | Statistical t-test with Bonferroni correction & DLRCSPNN | BCI Competition III-IVa, BCI Competition IV-1 [8] | Accuracy: Achieved >90% for all subjects, improving by up to 42.53% for individual subjects compared to baseline algorithms. Setup: Method reduces number of channels, directly decreasing setup time [8]. |
| Degirmenci et al. (2025) [29] | Statistical-significance based feature and channel selection | Proprietary Finger MI Dataset | Accuracy: Max 59.17% (subject-dependent) and 39.30% (subject-independent) for 5-finger + idle state classification. Computational Load: Feature selection reduces irrelevant/redundant features, lowering computational complexity and improving generalization [29]. |
| World Robot Contest Dataset (2025) [28] | Deep Learning Models (EEGNet, DeepConvNet) | WBCIC-MI (2-class & 3-class) | Accuracy: Provided high-quality benchmark data, achieving 85.32% (2-class) and 76.90% (3-class) mean accuracy, demonstrating dataset reliability for evaluating methods [28]. |

Detailed Experimental Protocols

To ensure reproducible evaluation of channel selection methods, the following protocols are recommended.

Protocol for Assessing Classification Accuracy

This protocol outlines the steps for evaluating the end-to-end classification performance of a BCI system utilizing a selected channel set.

Title: Accuracy Evaluation Workflow

Materials:

- EEG Dataset: A public benchmark (e.g., BCI Competition IV 2a [30]) or a proprietary dataset with multiple subjects and sessions.

- Computing Environment: Standard workstation with MATLAB or Python.

- Software Tools: EEG processing toolbox (e.g., MNE-Python, EEGLAB), machine learning libraries (e.g., scikit-learn, TensorFlow).

Procedure:

- Data Partitioning: Divide the dataset into training and testing sets using a subject-specific k-fold cross-validation scheme (e.g., 5-fold) to ensure robust results [29].

- Pre-processing: Apply a bandpass filter (e.g., 8-30 Hz to cover Mu and Beta rhythms) and perform artifact removal (e.g., ocular, muscular) on the continuous data [8].

- Channel Selection: Execute the channel selection algorithm on the training data to identify the optimal channel subset. The selection criteria (e.g., statistical significance, genetic algorithms) are defined by the method under test [8] [29].

- Feature Extraction: From the selected channels, extract relevant features. Common Spatial Pattern (CSP) is a standard for MI-BCI [8] [30], but time-frequency or nonlinear features can also be used [29].

- Model Training & Evaluation: Train a classifier (e.g., Linear Discriminant Analysis, Support Vector Machine, Neural Network) using the features from the training set. Apply the trained model to the test set and calculate performance metrics: Accuracy, F1-Score, and Kappa coefficient [8] [29].
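As the Channel Selection step notes, selection runs on the training data only; in a cross-validated estimate this means refitting it inside every fold, since ranking channels on all trials leaks test information into the score. The scikit-learn sketch below makes this explicit by wrapping selection and classification in one pipeline. It assumes one log-variance feature per channel (so selecting a column selects a channel) and uses SelectKBest with the ANOVA F-test as a stand-in for the method under test; k = 4, the fold count, the helper name evaluate, and the synthetic data are illustrative.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.metrics import accuracy_score, cohen_kappa_score, f1_score
from sklearn.model_selection import StratifiedKFold
from sklearn.pipeline import make_pipeline


def evaluate(F, y, k=4, n_splits=5, seed=0):
    """Cross-validate 'select k channels -> LDA', refitting the selection in every fold.

    F: (n_trials, n_channels) with one feature per channel, so keeping a column
    keeps a channel. Returns fold-averaged accuracy, macro F1 and Cohen's kappa.
    """
    scores = {"accuracy": [], "f1": [], "kappa": []}
    folds = StratifiedKFold(n_splits=n_splits, shuffle=True, random_state=seed)
    for train, test in folds.split(F, y):
        model = make_pipeline(SelectKBest(f_classif, k=k), LinearDiscriminantAnalysis())
        model.fit(F[train], y[train])          # channel ranking sees training trials only
        pred = model.predict(F[test])
        scores["accuracy"].append(accuracy_score(y[test], pred))
        scores["f1"].append(f1_score(y[test], pred, average="macro"))
        scores["kappa"].append(cohen_kappa_score(y[test], pred))
    return {name: float(np.mean(vals)) for name, vals in scores.items()}


rng = np.random.default_rng(5)
y = np.repeat([0, 1], 60)
F = rng.standard_normal((120, 22))             # synthetic 22-channel features
F[y == 1, :3] += 1.5                           # channels 0-2 carry the class signal
print(evaluate(F, y))
```

Kappa is reported alongside accuracy because it corrects for chance agreement, which matters when comparing 2-class and 4-class results.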

Protocol for Quantifying Computational Load

This protocol measures the processing time and resource demands of the channel selection and classification pipeline.

Title: Computational Load Profiling

Materials:

- Profiling Tools: Python's cProfile module, timeit, or MATLAB's tic/toc and Profiler.

- System Monitoring: Task Manager (Windows), htop (Linux), or Activity Monitor (macOS).

Procedure:

- Modular Timing: Isolate and independently time the three core modules of the BCI pipeline:

- Channel Selection Algorithm: Time the process of determining the optimal channel subset from the pre-processed data.

- Feature Extraction: Time the process of generating features from the selected channels.

- Model Training: Time the classifier training process [29].

- Resource Monitoring: Run the entire pipeline while monitoring the system's CPU and RAM usage. Record the peak memory consumption.

- Averaging: Repeat the timing measurements (e.g., 10 times) and report the average and standard deviation to account for system variability. The reduction in computational load is demonstrated by comparing these metrics against a baseline that uses all available channels [8].
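A lightweight Python version of the timing and memory steps is sketched below with the standard-library time.perf_counter and tracemalloc modules. tracemalloc only sees allocations reported to Python's tracer (recent NumPy versions report their array buffers), so the OS monitors listed above remain the reference for whole-process peak memory. The profile helper, the log-variance stage, and the array sizes are illustrative assumptions; 288 trials matches one BCI Competition IV-2a session.

```python
import time
import tracemalloc

import numpy as np


def profile(stage, *args, repeats=10):
    """Run stage(*args) `repeats` times.

    Returns (mean seconds, std seconds, peak traced bytes). Peak memory comes
    from tracemalloc, which only sees allocations reported to Python's tracer.
    """
    times = []
    tracemalloc.start()
    for _ in range(repeats):
        t0 = time.perf_counter()
        stage(*args)
        times.append(time.perf_counter() - t0)
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    return float(np.mean(times)), float(np.std(times)), peak


def log_variance(X):
    """Example stage: log-variance feature per trial and channel."""
    return np.log(X.var(axis=-1))


rng = np.random.default_rng(6)
X = rng.standard_normal((288, 22, 500))        # 288 trials, as in one IV-2a session
full = profile(log_variance, X)                # all 22 channels
subset = profile(log_variance, X[:, :8, :])    # 8 selected channels
print(f"all: {full[0] * 1e3:.1f} ms, subset: {subset[0] * 1e3:.1f} ms")
```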

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials for EEG Channel Selection Research

| Item | Function / Relevance | Example / Specification |
|---|---|---|
| Public EEG Datasets | Serves as a standard benchmark for fair comparison and validation of new channel selection algorithms. | BCI Competition III/IV [8] [30], WBCIC-MI [28]. Multi-session, multi-subject datasets are preferred. |
| Statistical Analysis Tools | To perform initial filter-based channel selection and validate the significance of results. | T-test, ANOVA, Bonferroni correction [8]. |
| Feature Extraction Algorithms | To transform raw EEG from selected channels into discriminable features for classification. | Common Spatial Patterns (CSP) [8], Wavelet Transforms [29], nonlinear dynamics [29]. |
| Machine Learning Classifiers | To evaluate the ultimate classification performance of the selected channel set. | Support Vector Machines (SVM) [29], Neural Networks (NN) [8], Linear Discriminant Analysis (LDA) [30]. |
| High-Performance Computing | To handle the intensive computations of search-based channel selection and deep learning models. | Workstations with powerful CPUs/GPUs for rapid prototyping and hyperparameter tuning. |

A Review of Advanced Channel Selection Algorithms and Their Workflows

Electroencephalogram (EEG)-based Brain-Computer Interfaces (BCIs) offer a direct communication pathway between the brain and external devices, showing significant promise in neuroprosthetics and rehabilitation for individuals with motor disabilities [12]. Motor Imagery (MI), the mental rehearsal of a motor act without physical execution, induces distinct brain activity patterns, such as Event-Related Desynchronization (ERD) and Synchronization (ERS), which can be decoded from EEG signals to control assistive technologies [31] [26].

A central challenge in developing practical MI-BCI systems is the inherent complexity of multi-channel EEG data. While high-density electrode arrays improve spatial resolution, they also introduce redundant information and noise, increase computational costs, and prolong system setup time, thereby hindering rapid system response and everyday usability [5] [31]. Consequently, optimized EEG channel selection is a critical preprocessing step to enhance BCI performance. By identifying and retaining only the most task-relevant channels, researchers can improve classification accuracy, reduce computational overhead, and facilitate the development of more portable and user-friendly systems [31] [12].

This application note focuses on three prominent filter-based channel selection techniques—Fisher Score, Correlation, and Statistical Testing. These methods rank channels based on specific discriminative criteria without involving a classifier, offering computational efficiency and strong theoretical foundations [32] [26]. We provide a detailed comparative analysis, experimental protocols, and practical toolkits to guide researchers in implementing these methods for optimized EEG channel selection in MI-BCI research.

Comparative Analysis of Filter-Based Techniques

The table below summarizes the core principles, performance, and applications of the three primary filter-based channel selection techniques.

Table 1: Comparative Analysis of Filter-Based Channel Selection Techniques for MI-BCI

| Technique | Underlying Principle | Key Advantages | Reported Performance | Ideal Use Cases |
|---|---|---|---|---|
| Fisher Score | Ranks channels based on the ratio of inter-class variance to intra-class variance of features [5]. | Enhances separability between MI classes; improves signal quality for subsequent spatial filtering [5]. | Avg. of 11 channels selected; 79.37% accuracy (6.52% improvement over all 22 channels) on BCI Competition IV IIa [5]. | Binary or multi-class MI tasks requiring high discriminative power from a minimal channel set. |
| Correlation-Based | Selects channels exhibiting high inter-trial signal correlation, assuming MI-related channels share common information [32] [33]. | Strong neurophysiological basis; effective noise reduction by removing uncorrelated channels [32]. | 78% to 91.3% accuracy across datasets; often locates channels near C3, Cz, C4 [32]. | Subject-specific BCI designs and investigations into functional brain connectivity during MI. |
| Statistical Testing | Employs hypothesis tests (e.g., t-test) to identify channels with statistically significant signal differences between MI tasks [12]. | Provides a rigorous, probabilistic framework for channel significance; reduces model overfitting [12]. | Accuracy improvements of 3.27% to 45% reported across subjects and datasets versus using all channels [12]. | High-stakes applications requiring robust and statistically validated channel sets. |

Experimental Protocols for Channel Selection

This section details standardized protocols for implementing each filter-based channel selection method.

Protocol for Fisher Score with Local Optimization

This method effectively identifies a minimal channel set with high discriminative power for MI tasks [5].


Detailed Procedure:

Data Preprocessing:

- Dataset: Utilize a standard MI dataset such as BCI Competition IV Dataset IIa.

- Filtering: Apply a band-pass filter (e.g., 8-30 Hz) to retain μ and β rhythms crucial for MI.

- Segmentation: Extract epochs time-locked to the MI cue presentation (e.g., 0-4 seconds post-cue).

Feature Extraction:

Fisher Score Calculation:

- For each channel \( i \), calculate the Fisher Score, which for a binary classification task is \( F(i) = \frac{(\mu_1(i) - \mu_2(i))^2}{\sigma_1^2(i) + \sigma_2^2(i)} \), where \( \mu_1(i) \) and \( \mu_2(i) \) are the mean values of the features (e.g., CSP variance) for channel \( i \) across trials of class 1 and class 2, respectively, and \( \sigma_1^2(i) \) and \( \sigma_2^2(i) \) are the corresponding variances [5].

Channel Ranking and Selection:

- Rank all channels in descending order based on their calculated Fisher scores.

- Employ a local optimization strategy. Start with the highest-ranked channel and iteratively add the next best channel from the ranked list. Evaluate the performance (e.g., classification accuracy) after each addition using a simple classifier on a validation set. The final channel subset is selected when performance peaks or a predefined number of channels is reached [5].

Protocol for Correlation-Based Channel Selection (CCS)

This method selects channels based on the inter-trial correlation, capitalizing on the premise that task-related channels will exhibit consistent, correlated activity [32].


Detailed Procedure:

Data Preprocessing:

- Follow the same preprocessing steps as in the Fisher Score protocol (filtering and epoching).

Correlation Matrix Computation:

- For a given channel, calculate the pairwise correlation coefficients (e.g., Pearson correlation) between all possible pairs of trials within the same MI task class. This results in a correlation matrix for each channel.

Mean Correlation Calculation:

- For each channel, compute the average of all the pairwise correlation coefficients from the matrix generated in the previous step. This mean correlation value serves as the channel's relevance score [32].

Channel Selection:

- Rank the channels based on their mean correlation scores in descending order.

- Select the top K channels from this ranked list. The value of K can be predetermined based on computational constraints or optimized through cross-validation. Studies have shown that this method often selects channels over the sensorimotor cortex (e.g., C3, Cz, C4), which aligns with the neurophysiology of MI [32].
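The correlation-based score can be sketched with np.corrcoef, which returns the full trial-by-trial Pearson matrix for one channel. Two details are assumptions not fixed by the text above: only the upper triangle is averaged (so self-correlations and duplicate pairs are excluded), and per-class means are averaged across classes to give one score per channel. The helper name and the synthetic 10 Hz pattern are illustrative.

```python
import numpy as np


def correlation_scores(X, y):
    """Mean inter-trial Pearson correlation per channel.

    X: (n_trials, n_channels, n_times). For each channel and class, all pairs of
    same-class trials are correlated; the per-class means are then averaged.
    """
    scores = np.zeros(X.shape[1])
    for ch in range(X.shape[1]):
        per_class = []
        for c in np.unique(y):
            R = np.corrcoef(X[y == c, ch, :])      # trial-by-trial correlation matrix
            iu = np.triu_indices_from(R, k=1)       # each unordered pair once
            per_class.append(R[iu].mean())
        scores[ch] = np.mean(per_class)
    return scores


rng = np.random.default_rng(7)
y = np.repeat([0, 1], 15)
t = np.arange(500) / 250
X = rng.standard_normal((30, 6, 500))
X[:, 1, :] += 2 * np.sin(2 * np.pi * 10 * t)   # channel 1 repeats a 10 Hz pattern
K = 2
top_k = np.argsort(correlation_scores(X, y))[::-1][:K]
print(top_k[0])                                # channel 1 ranks first
```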

Protocol for Statistical Testing with Bonferroni Correction

This method uses rigorous statistical tests to identify channels with significant differences between MI task conditions, controlling for false discoveries [12].


Detailed Procedure:

Data Preprocessing and Feature Extraction:

- Preprocess the data as described in previous protocols.

- Extract a relevant feature for each trial and channel. A common feature is the log-variance of the band-pass filtered signal or the power within specific frequency bands.

Hypothesis Testing:

- For each EEG channel, formulate the null hypothesis that the distribution of features is the same for two different MI tasks (e.g., left-hand vs right-hand imagery).

- Perform an independent samples t-test (or a non-parametric alternative like the Mann-Whitney U test if normality assumptions are violated) for each channel to test this hypothesis, obtaining a p-value for each channel.

Multiple Comparison Correction:

- Apply the Bonferroni correction to control the family-wise error rate. The corrected significance threshold is \( \alpha_{\text{corrected}} = \alpha / N \), where \( \alpha \) is the original significance level (e.g., 0.05) and \( N \) is the total number of channels tested; for the 22 channels of BCI Competition IV-2a and \( \alpha = 0.05 \), this gives \( \alpha_{\text{corrected}} \approx 0.0023 \).

- Compare each channel's p-value to this stricter \( \alpha_{\text{corrected}} \).

Channel Selection:

- Retain only those channels for which the p-value is less than the Bonferroni-corrected significance threshold. This ensures that only channels showing statistically significant differences between the MI tasks are selected [12].
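The testing and correction steps map onto scipy.stats.ttest_ind, which accepts an axis argument and therefore tests all channels at once. The sketch assumes one scalar feature per trial and channel (e.g., log-variance), binary labels, and Student's t-test with equal variances (SciPy's default); for the non-parametric route, scipy.stats.mannwhitneyu can be substituted. The helper name and the synthetic data are illustrative.

```python
import numpy as np
from scipy.stats import ttest_ind


def bonferroni_channels(F, y, alpha=0.05):
    """Channels whose two-class t-test p-value survives Bonferroni correction.

    F: (n_trials, n_channels) one feature per trial and channel; y: binary labels.
    """
    _, p = ttest_ind(F[y == 0], F[y == 1], axis=0)   # one test per channel
    alpha_corrected = alpha / F.shape[1]
    return np.flatnonzero(p < alpha_corrected), p


rng = np.random.default_rng(8)
y = np.repeat([0, 1], 50)
F = rng.standard_normal((100, 22))
F[y == 1, 7] += 1.5                               # two synthetic informative channels
F[y == 1, 11] += 1.5
selected, p = bonferroni_channels(F, y)
print(selected)
```

Bonferroni is conservative; with many correlated EEG channels it may discard weakly informative channels, which is the price of strict family-wise error control.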

The Scientist's Toolkit: Research Reagent Solutions

The table below outlines the essential computational tools and data resources required for implementing the described channel selection protocols.

Table 2: Essential Research Reagents and Resources for EEG Channel Selection

| Resource Category | Specific Tool / Resource | Function / Application | Key Notes |
|---|---|---|---|
| Public EEG Datasets | BCI Competition IV Dataset IIa [5] [34] | Benchmark dataset for validating and comparing channel selection algorithms. | Contains 22-channel EEG data for 4 MI tasks from 9 subjects. |
| | BCI Competition III Dataset IVa [32] | Another standard dataset for evaluating binary MI classification. | Used in correlation-based and other selection method validations. |
| Feature Extraction Algorithms | Common Spatial Patterns (CSP) [5] [32] | Extracts spatial features that maximize discrimination between two MI classes. | Foundation for many channel selection and classification pipelines. |
| | Filter Bank CSP (FBCSP) [32] [26] | Extends CSP by optimizing spatial filters in multiple frequency bands. | Captures frequency-specific MI patterns for improved performance. |
| Classification Models | Support Vector Machine (SVM) [32] [26] | Classifies extracted features into MI tasks after channel selection. | Popular for its effectiveness in high-dimensional, non-linear problems. |
| | Neural Networks (NN) / Deep Learning [12] [21] | Can be used for end-to-end classification or as part of a feature extraction pipeline. | Shown to achieve high accuracy (>90%) when combined with effective channel selection [12]. |
| Statistical & ML Libraries | Scikit-learn (Python) | Provides SVM, LDA, cross-validation, and univariate feature-scoring tools (e.g., the ANOVA F-test); the Fisher Score itself takes only a few lines on top of NumPy. | Enables rapid prototyping of the described protocols. |
| | EEGLAB / MNE-Python | Specialized toolboxes for EEG preprocessing, visualization, and analysis. | Facilitates handling of EEG data structure and preprocessing steps. |

Electroencephalography (EEG)-based Motor Imagery Brain-Computer Interfaces (MI-BCIs) enable users to control external devices through mental imagination of movements without physical execution. During MI tasks, the brain produces event-related synchronization (ERS) and event-related desynchronization (ERD) patterns at specific scalp locations, which serve as the fundamental basis for BCI classification [23]. While modern EEG systems can record from over 100 channels, excessive channels introduce computational complexity, increase setup time, and risk overfitting without necessarily improving performance [31] [22]. Channel selection has therefore emerged as a crucial preprocessing step that enhances system portability, reduces computational burden, and can improve classification accuracy by eliminating redundant or noisy channels [9].

Wrapper and hybrid methods represent advanced approaches to channel selection that leverage intelligent search algorithms and combination strategies to identify optimal channel subsets. These methods offer significant advantages over traditional filter methods by evaluating channel subsets based on their actual classification performance rather than relying solely on general statistical criteria [9]. This application note provides detailed protocols and implementation guidelines for sequential floating search and hybrid optimization methods, enabling researchers to effectively apply these techniques in MI-BCI research.

Technical Foundations: Classification of Channel Selection Methods

EEG channel selection methods are broadly categorized into three main approaches, each with distinct characteristics and implementation considerations:

Filter Methods: These approaches select channels based on general statistical characteristics of the data (such as entropy or correlation) without involving a classifier [26]. They are computationally efficient but may yield suboptimal results for specific classification tasks.

Wrapper Methods: These methods utilize a specific classifier's performance as the evaluation criterion for channel subsets, typically employing search algorithms like Sequential Floating Search to navigate the channel space [35] [9]. While computationally intensive, they often produce superior results by optimizing directly for classification accuracy.

Hybrid Methods: Combining elements of both filter and wrapper approaches, hybrid methods leverage initial filtering to reduce the search space before applying wrapper techniques [4] [36]. This balanced approach mitigates computational demands while maintaining performance-oriented selection.

Table 1: Comparison of Channel Selection Method Categories

| Method Type | Evaluation Criteria | Computational Cost | Advantages | Limitations |
|---|---|---|---|---|
| Filter Methods | Statistical measures (entropy, correlation) | Low | Fast execution, classifier-independent | May not optimize classification accuracy |
| Wrapper Methods | Classifier performance | High | Accuracy-optimized, considers channel interactions | Computationally intensive, risk of overfitting |
| Hybrid Methods | Combined statistical and classification measures | Moderate | Balanced approach, reduced computation | Complex implementation, parameter tuning required |

Sequential Floating Search Methods: Principles and Protocols

Theoretical Foundation and Implementation Variants

Sequential Floating Search represents a sophisticated wrapper approach that dynamically adjusts the number of channels during the selection process by alternating between inclusion (forward) and exclusion (backward) phases. The Sequential Backward Floating Search (SBFS) variant has demonstrated particular effectiveness for MI-BCI applications, achieving significantly higher classification accuracy (p < 0.001) compared to using all channels or conventional MI channels (C3, C4, Cz) across multiple public datasets [35].

The fundamental principle behind SBFS involves iteratively removing the least significant channels while continuously re-evaluating the contribution of previously eliminated channels. This floating mechanism enables the algorithm to recover from potentially premature eliminations, resulting in more robust channel subsets. A modified SBFS approach further enhances computational efficiency by leveraging neuroanatomical principles—selecting symmetrical channel pairs during each iteration to reduce the search space while maintaining coverage of motor cortex regions [35].
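The symmetric-pair idea behind the modified SBFS can be made concrete with a small helper that groups electrodes into left/right mirror pairs, which a backward search could then remove as single units. This is a minimal sketch, assuming standard 10-20 naming (odd numbers over the left hemisphere, even numbers over the right, a trailing `z` on the midline); the function name `symmetric_groups` is hypothetical and is not taken from [35].

```python
import re


def symmetric_groups(channels):
    """Group 10-20 channel names into left/right mirror pairs.

    Assumes standard naming: odd numbers = left hemisphere, even numbers =
    right hemisphere, trailing 'z' = midline (e.g., C3 <-> C4, Cz alone).
    Returns a list of tuples, each holding one or two channel names.
    """
    present = set(channels)
    groups, seen = [], set()
    for ch in channels:
        if ch in seen:
            continue
        m = re.fullmatch(r"([A-Za-z]+)(\d+)", ch)
        if m:
            prefix, num = m.group(1), int(m.group(2))
            # Odd (left) electrodes mirror to num + 1, even (right) to num - 1
            mirror = f"{prefix}{num + 1 if num % 2 else num - 1}"
            if mirror in present:
                groups.append((ch, mirror))
                seen.update((ch, mirror))
                continue
        # Midline channels (e.g., 'Cz') or channels without a mirror stay single
        groups.append((ch,))
        seen.add(ch)
    return groups
```

For a montage such as the 22-channel BCI Competition IV 2a layout, pairs like C3/C4 and CP1/CP2 would be grouped, while midline electrodes such as Cz and Pz remain single channels.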

Detailed Experimental Protocol: SBFS for MI-BCI

Equipment and Software Requirements

- EEG recording system with international 10-20 electrode placement

- MATLAB or Python with Signal Processing and Machine Learning toolboxes

- BCI datasets (BCI Competition IV dataset 2a, BCI Competition III dataset IIIa)

- Computing hardware: Minimum 8GB RAM, multi-core processor recommended

Step-by-Step Implementation Procedure

Data Preprocessing

- Apply a bandpass filter (8-30 Hz) to raw EEG signals to capture mu and beta rhythms relevant to MI tasks [35]

- Segment data into trial epochs aligned to the trial timing (e.g., the 3-6 s window after trial onset for BCI Competition IV 2a, during which the MI task is performed)

- Perform artifact removal, e.g., with independent component analysis (ICA), and normalize the signals, e.g., with exponential moving average normalization [23]
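The filtering and epoching steps could look like the following with SciPy. This sketch assumes continuous data stored as a `(n_channels, n_times)` NumPy array and trial onsets given as sample indices; the helper names `bandpass` and `epoch` are hypothetical, and a 4th-order zero-phase Butterworth design is one common choice rather than the filter used in [35]. ICA-based artifact removal is omitted.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt


def bandpass(eeg, fs, low=8.0, high=30.0, order=4):
    """Zero-phase Butterworth band-pass (mu + beta by default).

    eeg: (n_channels, n_times) array; fs: sampling rate in Hz.
    """
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    # Forward-backward filtering avoids phase distortion of the rhythms
    return sosfiltfilt(sos, eeg, axis=-1)


def epoch(eeg, fs, onsets, tmin, tmax):
    """Cut epochs [onset + tmin, onset + tmax) seconds from continuous data.

    onsets: sample indices of trial starts.
    Returns (n_trials, n_channels, n_samples).
    """
    start, stop = int(round(tmin * fs)), int(round(tmax * fs))
    return np.stack([eeg[:, o + start:o + stop] for o in onsets])
```

For BCI Competition IV 2a (sampled at 250 Hz), `epoch(bandpass(raw, 250), 250, onsets, 3.0, 6.0)` would yield 750-sample epochs covering 3-6 s after each trial onset.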

Feature Extraction

- Extract spatial features using Common Spatial Patterns (CSP) for each channel subset candidate

- Calculate log-variance of CSP projections to generate feature vectors for classification

- Standardize each feature to zero mean and unit variance, fitting the scaling parameters on training folds only to avoid information leakage
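A compact way to tie CSP, log-variance, standardization, and LDA together is a scikit-learn pipeline, so that the CSP filters and the scaler are refit inside every cross-validation fold. The sketch below assumes two classes labelled 0 and 1, epochs shaped `(n_trials, n_channels, n_times)`, and at least two channels per subset; `CSPLogVar` and `subset_accuracy` are hypothetical names, and MNE-Python's `mne.decoding.CSP` is a more complete alternative.

```python
import numpy as np
from scipy.linalg import eigh
from sklearn.base import BaseEstimator, TransformerMixin
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


class CSPLogVar(BaseEstimator, TransformerMixin):
    """Two-class CSP followed by normalized log-variance features."""

    def __init__(self, n_pairs=2):
        self.n_pairs = n_pairs

    def fit(self, X, y):
        y = np.asarray(y)
        covs = []
        for c in (0, 1):
            # Trace-normalized spatial covariance, averaged over trials of class c
            covs.append(np.mean([t @ t.T / np.trace(t @ t.T) for t in X[y == c]], axis=0))
        # Generalized eigenproblem C0 w = lambda (C0 + C1) w, eigenvalues ascending
        _, vecs = eigh(covs[0], covs[0] + covs[1])
        n = vecs.shape[1]
        # Filters at both ends of the spectrum maximize variance for one class
        idx = np.r_[0:self.n_pairs, n - self.n_pairs:n]
        self.filters_ = vecs[:, idx].T
        return self

    def transform(self, X):
        Z = np.einsum("fc,nct->nft", self.filters_, X)  # project each trial
        var = Z.var(axis=2)
        return np.log(var / var.sum(axis=1, keepdims=True))


def subset_accuracy(X, y, channel_idx, n_folds=10, seed=0):
    """Mean k-fold CV accuracy of CSP + standardization + LDA on a channel subset."""
    n_pairs = 2 if len(channel_idx) >= 4 else 1
    pipe = make_pipeline(CSPLogVar(n_pairs=n_pairs), StandardScaler(),
                         LinearDiscriminantAnalysis())
    cv = StratifiedKFold(n_splits=n_folds, shuffle=True, random_state=seed)
    return cross_val_score(pipe, X[:, list(channel_idx), :], y, cv=cv).mean()
```

Because CSP is fit inside the pipeline, the spatial filters never see the test fold, which matters when the same score is used to compare many candidate subsets.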

SBFS Algorithm Initialization

- Start with the complete channel set S = {ch_1, ch_2, ..., ch_N}, where N is the total number of channels

- Initialize performance benchmark using all channels with cross-validation

- Set stopping criterion (e.g., maximum iterations, performance degradation threshold)

Iterative Floating Search Process

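- Backward Phase: Remove the channel whose exclusion yields the highest cross-validated accuracy for the remaining subset

- Forward Phase: Conditionally re-add the most useful previously removed channel, but only if the enlarged subset outperforms the best subset previously found at that size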

- Evaluation: Assess each subset using 10-fold cross-validation with Linear Discriminant Analysis (LDA) classifier

- Continue until stopping criterion is met
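The initialization and floating-search steps can be sketched as a generic SBFS routine that accepts any subset-scoring function, for example the mean 10-fold cross-validated accuracy of a CSP + LDA pipeline restricted to that subset. This is a minimal sketch under stated assumptions: scores are memoized because wrapper evaluations are expensive, the search simply runs down to `min_size`, and the caller picks a final size from the per-size record (one simple stopping rule among those listed above). The name `sbfs` is hypothetical.

```python
def sbfs(channels, score, min_size=1):
    """Sequential Backward Floating Search (generic sketch).

    channels: iterable of channel names.
    score: callable(frozenset of names) -> float, higher is better.
    Returns {subset_size: (best_score, best_subset)} for every size visited.
    """
    full = frozenset(channels)
    cache = {}

    def J(subset):
        # Memoize: each wrapper evaluation (e.g., 10-fold CV) is expensive
        if subset not in cache:
            cache[subset] = score(subset)
        return cache[subset]

    current = full
    best = {len(full): (J(full), full)}
    while len(current) > min_size:
        # Backward phase: drop the channel whose removal leaves the best subset
        current = max((current - {c} for c in current), key=J)
        k = len(current)
        if k not in best or J(current) > best[k][0]:
            best[k] = (J(current), current)
        # Forward (floating) phase: re-add an excluded channel only while the
        # enlarged subset beats the best subset already recorded at that size
        while len(current) < len(full):
            cand = max((current | {c} for c in full - current), key=J)
            if J(cand) > best[len(cand)][0]:
                current = cand
                best[len(cand)] = (J(cand), cand)
            else:
                break
    return best
```

Recording the best subset per size is what makes the forward phase conditional: a channel comes back only when the enlarged subset beats everything previously seen at that size, and because each such step strictly improves a recorded score, the search is guaranteed to terminate.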

Validation and Subset Selection

- Validate final channel subset on held-out test data

- Compare performance against full channel set and conventional MI channels

- Document selected channels and corresponding performance metrics

Table 2: Performance Comparison of SBFS on BCI Competition Datasets

| Dataset | Subjects | Full Channels | Conventional MI Channels | SBFS Selected Channels | Accuracy Improvement |
|---|---|---|---|---|---|
| BCI Competition IV 2a | 9 | 22 channels | C3, C4, Cz (3 channels) | 8-12 channels (average 11) | +6.52% [5] |
| BCI Competition III IIIa | 3 | 60 channels | C3, C4, Cz (3 channels) | ~14 channels (subject-specific) | Significant (p<0.001) [35] |
| BCI Competition IV 1 | 4 | 59 channels | C3, C4, Cz (3 channels) | ~16 channels (subject-specific) | Significant (p<0.001) [35] |

Workflow Visualization: SBFS Channel Selection

Hybrid Optimization Methods: Integrated Approaches

Theoretical Framework and Algorithmic Strategies

Hybrid optimization methods combine the efficiency of filter techniques with the performance orientation of wrapper methods through multi-stage processing or integrated objective functions. These approaches typically employ an initial filtering stage to reduce the search space, followed by refined wrapper-based selection on the promising channel candidates [4] [36]. Recent advances have incorporated metaheuristic optimization algorithms like War Strategy Optimization (WSO) and Chimp Optimization Algorithm (ChOA) to enhance search efficiency in high-dimensional channel spaces [4].

The fundamental advantage of hybrid methods lies in their ability to balance computational demands with selection quality. By leveraging fast filtering for initial screening and applying more computationally intensive wrapper evaluation only to promising subsets, these methods achieve performance comparable to pure wrapper approaches with significantly reduced processing time [36]. Advanced implementations have demonstrated the capability to select optimal channel subsets representing just 10-30% of total channels while maintaining or even improving classification accuracy compared to full-channel configurations [31] [22].

Detailed Experimental Protocol: Hybrid Filter-Wrapper Approach

Equipment and Software Requirements

- EEG acquisition system with standard electrode placement

- MATLAB with Optimization and Deep Learning toolboxes

- Python libraries: Scikit-learn, TensorFlow/PyTorch, SciPy

- High-performance computing resources recommended for large datasets

Step-by-Step Implementation Procedure

Data Preparation and Preprocessing

- Load EEG recordings from MI tasks (e.g., BCI Competition IV dataset 2a)

- Apply 1-40 Hz bandpass filtering and notch filtering at 50 Hz to remove line noise [23]

- Segment data into 4-second epochs time-locked to MI cues

- Apply exponential moving average normalization (decay factor 0.999) per channel
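Exponential moving average normalization can be written as a causal, per-channel running standardization. In this sketch a decay factor of 0.999 means each new sample contributes 0.1% to the running mean and variance; the initialization from the first sample, the variance update, and the `eps` floor are assumptions, and `ema_standardize` is a hypothetical name.

```python
import numpy as np


def ema_standardize(x, decay=0.999, eps=1e-4):
    """Exponential moving standardization, applied independently per channel.

    x: (n_channels, n_times). Running mean and variance are updated sample by
    sample with weight (1 - decay) on the newest sample, so the output at time
    t depends only on samples up to t (no look-ahead).
    """
    x = np.asarray(x, dtype=float)
    alpha = 1.0 - decay
    mean = x[:, 0].copy()          # initialize the running mean at the first sample
    var = np.zeros(x.shape[0])
    out = np.empty_like(x)
    for t in range(x.shape[1]):
        mean = decay * mean + alpha * x[:, t]
        var = decay * var + alpha * (x[:, t] - mean) ** 2
        # Floor the standard deviation to avoid division by ~0 early on
        out[:, t] = (x[:, t] - mean) / np.maximum(np.sqrt(var), eps)
    return out
```

Because the statistics are causal, the same function can be applied unchanged in an online BCI loop, which is one reason this normalization is preferred over whole-recording z-scoring.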

Initial Filter-Based Channel Ranking

- Calculate Fisher scores for each channel based on CSP features to assess class separability [5]

- Compute Shannon entropy for each channel and rank by information content [26]

- Select top-k channels (e.g., 50% of total) based on combined filter criteria

- Alternatively, use MRMR (Minimum Redundancy Maximum Relevance) algorithm for initial selection [4]
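The filter-stage ranking can be prototyped with NumPy alone. This sketch makes several assumptions not specified in the protocol: the per-channel feature for the Fisher score is the log-variance of each band-passed epoch (a simplification of CSP-based scoring), higher histogram entropy is treated as more informative, and the two criteria are combined by summing rank positions. `fisher_scores`, `shannon_entropy`, and `rank_channels` are hypothetical names; MRMR is not shown.

```python
import numpy as np


def fisher_scores(F, y):
    """Two-class Fisher score per column of F (n_trials, n_channels)."""
    a, b = F[y == 0], F[y == 1]
    return (a.mean(0) - b.mean(0)) ** 2 / (a.var(0) + b.var(0) + 1e-12)


def shannon_entropy(signal, bins=32):
    """Histogram estimate of the Shannon entropy (bits) of one channel."""
    counts, _ = np.histogram(signal, bins=bins)
    p = counts[counts > 0] / counts.sum()
    return float(-(p * np.log2(p)).sum())


def rank_channels(X, y, keep=0.5):
    """Keep the top fraction of channels by combined Fisher + entropy rank.

    X: (n_trials, n_channels, n_times) band-passed epochs; y: labels in {0, 1}.
    Returns channel indices, best first.
    """
    logvar = np.log(X.var(axis=2))  # one simple feature per trial and channel
    fs = fisher_scores(logvar, y)
    ent = np.array([shannon_entropy(X[:, c].ravel()) for c in range(X.shape[1])])
    # Rank position 0 = best under each criterion; sum the two positions
    combined = np.argsort(np.argsort(-fs)) + np.argsort(np.argsort(-ent))
    k = max(1, int(round(keep * X.shape[1])))
    return np.argsort(combined, kind="stable")[:k]
```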

Hybrid Optimization Setup

- Initialize hybrid algorithm (e.g., ECCSPSOA: Enhanced Chaotic Crow Search and PSO) [37]

- Define objective function combining classification accuracy and channel count

- Set algorithm parameters: population size, iteration count, convergence criteria
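To illustrate an objective that trades classification accuracy against channel count, the sketch below uses a plain binary particle swarm optimizer with a sigmoid transfer function as a stand-in. It is not ECCSPSOA [37], which, per its name, combines chaotic crow search with PSO. The weighting `alpha = 0.9`, the swarm parameters, and the callable `accuracy_fn(mask)` (for example, cross-validated accuracy on the channels where `mask` is True) are assumptions; `fitness` and `binary_pso` are hypothetical names.

```python
import numpy as np


def fitness(mask, accuracy_fn, alpha=0.9):
    """Weighted objective: reward accuracy and reward using fewer channels."""
    if not mask.any():
        return -np.inf  # an empty subset is invalid
    return alpha * accuracy_fn(mask) + (1 - alpha) * (1 - mask.mean())


def binary_pso(n_channels, accuracy_fn, n_particles=20, n_iter=50,
               w=0.7, c1=1.5, c2=1.5, alpha=0.9, seed=0):
    """Binary PSO over boolean channel masks; returns (best_mask, best_fitness)."""
    rng = np.random.default_rng(seed)
    pos = rng.random((n_particles, n_channels)) < 0.5
    vel = rng.uniform(-1.0, 1.0, (n_particles, n_channels))
    pbest = pos.copy()
    pbest_fit = np.array([fitness(p, accuracy_fn, alpha) for p in pos])
    g = pbest[pbest_fit.argmax()].copy()
    g_fit = pbest_fit.max()
    for _ in range(n_iter):
        r1, r2 = rng.random(vel.shape), rng.random(vel.shape)
        # Velocity is pulled toward each particle's best and the swarm's best mask
        vel = (w * vel + c1 * r1 * (pbest.astype(float) - pos)
               + c2 * r2 * (g.astype(float) - pos))
        vel = np.clip(vel, -4.0, 4.0)
        # Sigmoid transfer: velocity -> probability that a channel is selected
        pos = rng.random(vel.shape) < 1.0 / (1.0 + np.exp(-vel))
        fit = np.array([fitness(p, accuracy_fn, alpha) for p in pos])
        improved = fit > pbest_fit
        pbest[improved] = pos[improved]
        pbest_fit[improved] = fit[improved]
        if pbest_fit.max() > g_fit:
            g = pbest[pbest_fit.argmax()].copy()
            g_fit = pbest_fit.max()
    return g, g_fit
```

Raising `alpha` toward 1 favors accuracy at the cost of larger subsets; lowering it pushes the swarm toward fewer channels, which is the performance-complexity trade-off the final selection step has to settle.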

Wrapper-Based Refinement

- Evaluate candidate channel subsets using deep learning classifiers (CNN or modified DNN) [4]

- Employ k-fold cross-validation (k=10) to assess generalization performance

- Apply regularization techniques to prevent overfitting to training data

- Iterate until convergence or maximum iterations reached

Final Selection and Validation

- Select channel subset with optimal performance-complexity tradeoff

- Validate on completely held-out test set not used during selection

- Compare against baseline methods (full channels, conventional MI channels)

- Perform statistical significance testing on results across multiple subjects
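For the significance test, a paired non-parametric test across subjects is a reasonable default when only a handful of subjects are available (for example, 9 in BCI Competition IV 2a). The sketch assumes one accuracy per subject for each configuration, in matching subject order; the Wilcoxon signed-rank test is one choice among several (a paired t-test or a permutation test are alternatives), and `compare_configs` is a hypothetical name.

```python
import numpy as np
from scipy.stats import wilcoxon


def compare_configs(acc_selected, acc_baseline):
    """Paired, two-sided Wilcoxon signed-rank test across subjects.

    Each argument holds one accuracy per subject, in the same subject order.
    """
    a, b = np.asarray(acc_selected, float), np.asarray(acc_baseline, float)
    stat, p = wilcoxon(a, b)
    return {"mean_diff": float(np.mean(a - b)),
            "statistic": float(stat),
            "p_value": float(p)}
```

With 9 subjects and no ties, the smallest two-sided p-value the exact test can produce is 2/2^9 ≈ 0.0039, so a per-dataset test on 9 subjects cannot reach p < 0.001 with this method.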

Table 3: Performance of Hybrid Methods on MI-BCI Tasks

| Hybrid Method | Components | Dataset | Channels Selected | Classification Accuracy | Key Advantage |
|---|---|---|---|---|---|
| WSO-ChOA with MRMR [4] | MRMR pre-selection, War Strategy & Chimp Optimization, CNN-DNN classifier | BCI Competition IV 2a | Subject-specific subsets | 95.06% | High accuracy with minimal channels |
| Fisher Score with Local Optimization [5] | Fisher score ranking, local optimization | BCI Competition IV 2a | ~11 channels (average) | 79.37% (+6.52% improvement) | Balanced performance and efficiency |
| ECA-DeepNet [23] | Efficient Channel Attention, DeepNet architecture | BCI Competition IV 2a | 8 channels | 69.52% | Automatic subject-specific selection |

Workflow Visualization: Hybrid Channel Selection

Table 4: Essential Research Resources for EEG Channel Selection Studies

| Resource Category | Specific Examples | Function/Purpose | Implementation Notes |
|---|---|---|---|
| EEG Datasets | BCI Competition IV 2a [23], BCI Competition III IIIa [35], BCI Competition IV 1 [35] | Benchmark evaluation, method comparison | Publicly available, standardized protocols for performance comparison |
| Signal Processing Tools | Bandpass filters (8-30 Hz) [35], Common Spatial Patterns (CSP) [5], Independent Component Analysis | Feature extraction, noise reduction | MATLAB Signal Processing Toolbox, Python SciPy and MNE libraries |
| Classification Algorithms | Linear Discriminant Analysis (LDA) [35], Support Vector Machines (SVM) [26], Convolutional Neural Networks (CNN) [4] | Performance evaluation of channel subsets | Scikit-learn for traditional ML, TensorFlow/PyTorch for deep learning |
| Optimization Frameworks | Particle Swarm Optimization (PSO) [37], War Strategy Optimization (WSO) [4], Chimp Optimization Algorithm (ChOA) [4] | Efficient search through channel combinations | Often custom-implemented; PSO is also available in some toolboxes (e.g., MATLAB Global Optimization Toolbox) |
| Evaluation Metrics | Classification accuracy, Kappa coefficient, F1-score, Computational time | Performance assessment and method comparison | Essential for comprehensive evaluation beyond pure accuracy |
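The classification metrics listed in the last row can be computed with scikit-learn. Cohen's kappa is worth reporting alongside accuracy because it corrects for chance agreement, which differs between two-class and four-class MI tasks such as BCI Competition IV 2a. `summarize` is a hypothetical helper, and macro-averaged F1 is one of several averaging options.

```python
from sklearn.metrics import accuracy_score, cohen_kappa_score, f1_score


def summarize(y_true, y_pred):
    """Accuracy, chance-corrected Cohen's kappa, and macro-averaged F1."""
    return {
        "accuracy": accuracy_score(y_true, y_pred),
        "kappa": cohen_kappa_score(y_true, y_pred),
        "macro_f1": f1_score(y_true, y_pred, average="macro"),
    }
```

A classifier at chance level scores a kappa near 0 regardless of the number of classes, whereas its accuracy would be about 50% for two classes and 25% for four.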

Wrapper and hybrid methods represent sophisticated approaches to EEG channel selection that offer significant advantages for MI-BCI systems. Sequential Floating Search methods provide systematic, performance-driven channel elimination with floating recovery mechanisms, while hybrid optimization techniques balance computational efficiency with selection quality through integrated filter-wrapper architectures. The protocols detailed in this application note enable researchers to implement these advanced methods effectively, accelerating the development of more efficient and practical BCI systems.

Future research directions include the development of more efficient hybrid algorithms with reduced computational demands, enhanced adaptive selection methods that accommodate non-stationary EEG characteristics, and integration of transfer learning approaches to leverage information across subjects. As these methodologies mature, they will continue to advance the practicality and performance of MI-BCI systems for both clinical and non-clinical applications.

Electroencephalogram (EEG)-based Brain-Computer Interfaces (BCIs) offer a direct communication pathway between the brain and external devices, showing particular promise for applications in motor rehabilitation and assistive technologies [38] [8]. Motor Imagery (MI), the mental rehearsal of a motor act without its physical execution, is a prevalent paradigm in non-invasive BCIs. However, the use of high-density EEG electrode arrays introduces practical challenges including prolonged setup time, user discomfort, and computational complexity during signal processing [39] [40]. Furthermore, multichannel EEG signals often contain redundant information, and task-irrelevant channels can introduce noise that degrades BCI performance [39] [8].

Channel selection addresses these issues by identifying and retaining the most informative EEG channels for a specific task. This process reduces data dimensionality, mitigates the risk of overfitting, and can enhance classification accuracy while decreasing the computational burden [8]. Recently, deep learning approaches have demonstrated significant potential in automating and optimizing channel selection. This Application Note details protocols employing Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and attention mechanisms for embedded and deep learning-driven channel selection in MI-BCI research.

Deep Learning Architectures for Channel Selection and Analysis

Attention Mechanisms for Channel Weighting

Attention mechanisms have emerged as a powerful tool for channel selection by learning to assign importance weights to different EEG channels based on their contribution to the task.

Protocol: Attention-based Channel Weight Assignment [41] [42]

- Objective: To reconstruct bad EEG channels or assign importance weights for selection using a data-driven approach that does not rely on physical electrode distance.

- Procedure:

  - Input Preparation: Use EEG data from known good channels. Apply a channel masking (CM) technique to randomly omit some good channel data during training, forcing the model to learn from multiple channels and disperse attention.

  - Model Architecture: Implement an attention mechanism model (AMACW) as follows:

    - Represent each channel's data with a multidimensional vector to capture inter-channel relationships in a high-dimensional space.

    - Use attention mechanisms to compute correlations between these channel vectors.

    - Replace the standard Softmax function with a simpler normalization function. Because Softmax maps every score to a strictly positive weight, this replacement lets the model also use information from negatively correlated channels.

  - Weight Allocation: Transform the learned inter-channel correlations into a set of channel weights.

  - Output: The model outputs reconstructed data for bad channels or a weight score for each channel, indicating its importance.

- Applications: Bad channel interpolation (especially for channels with unknown locations) and channel selection for downstream MI classification tasks.
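The attention step can be illustrated with a forward pass that embeds each channel as a vector, scores channel pairs by scaled dot products, and normalizes without Softmax. This is not the AMACW implementation from [41] [42]: the linear projections `W_q` and `W_k`, the L1 row normalization used in place of Softmax, the exclusion of self-attention, the reconstruction as `attn @ x`, and importance as mean absolute received attention are all assumptions, and training is omitted. `channel_mask` and `attention_channel_weights` are hypothetical names.

```python
import numpy as np


def channel_mask(x, drop_prob, rng):
    """Channel masking (CM): randomly zero out whole channels during training."""
    keep = rng.random(x.shape[0]) >= drop_prob
    return x * keep[:, None], keep


def attention_channel_weights(x, W_q, W_k):
    """Forward pass of channel-wise attention without Softmax.

    x: (n_channels, n_times); W_q, W_k: (n_times, d) projections that embed each
    channel as a d-dimensional vector (learned in practice, random in a demo).
    Returns (attn, reconstruction, importance).
    """
    q, k = x @ W_q, x @ W_k
    scores = q @ k.T / np.sqrt(q.shape[1])
    # Exclude self-attention so each channel is estimated from the other channels
    np.fill_diagonal(scores, 0.0)
    # L1 row normalization instead of Softmax: weights keep their sign, so
    # negatively correlated channels can still contribute
    attn = scores / (np.abs(scores).sum(axis=1, keepdims=True) + 1e-12)
    reconstruction = attn @ x
    # Channel importance: mean absolute attention each channel receives
    importance = np.abs(attn).mean(axis=0)
    return attn, reconstruction, importance
```

Ranking channels by `importance` and keeping the top entries is one simple way to turn the learned weights into a channel subset for downstream MI classification.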

Hybrid CNN-RNN Models with Attention

Combining CNNs, RNNs, and attention mechanisms allows for the joint modeling of spatial, spectral, and temporal features in EEG, which is crucial for identifying optimal channels.

Protocol: ACGRU for Cross-Patient Seizure Prediction [41]