Optimizing Brain-Computer Interfaces: A Guide to Particle Swarm Optimization for Parameter Tuning and Performance Enhancement

Abstract

This article provides a comprehensive overview of the application of Particle Swarm Optimization (PSO) for tuning parameters in Brain-Computer Interface (BCI) systems. Tailored for researchers and biomedical professionals, it covers the foundational principles of PSO, details its methodological application in key BCI areas like channel selection and feature optimization, and addresses advanced troubleshooting and hybridization techniques to overcome common pitfalls like premature convergence. Finally, it presents a framework for validating PSO-enhanced BCI performance through clinical trials and comparative analysis against other algorithms, highlighting its significant potential to improve the accuracy, efficiency, and clinical applicability of neural interfaces.

The Foundation of PSO in BCI: Core Principles and Why It Matters for Neural Decoding

Particle Swarm Optimization (PSO) is a population-based stochastic optimization technique inspired by the social behavior of bird flocking or fish schooling. Since its introduction in 1995 by Dr. Eberhart and Dr. Kennedy, PSO has gained prominence across numerous fields due to its simple implementation, rapid convergence characteristics, and minimal hyperparameter requirements [1] [2]. In the specialized domain of brain-computer interface (BCI) research, PSO has emerged as a powerful tool for addressing complex optimization challenges, particularly in parameter tuning and feature selection for motor imagery-based BCI systems [3] [2]. The algorithm's ability to balance exploration of new solution regions with exploitation of promising areas makes it exceptionally well-suited for optimizing the high-dimensional, noisy parameter spaces common in neural signal processing [1] [4].

The fundamental appeal of PSO lies in its conceptual elegance and computational efficiency. Unlike traditional optimization methods that require differentiable problems or well-defined starting points, PSO operates without gradient information, making it applicable to non-differentiable, discontinuous, and noisy optimization landscapes [1]. This characteristic is particularly valuable in BCI applications, where relationships between parameters and system performance are often complex and non-linear. Furthermore, PSO's population-based approach enables effective navigation of multi-modal search spaces, reducing the likelihood of becoming trapped in local optima—a common limitation of many conventional optimization techniques [4].

Fundamental Principles of PSO

Core Algorithm and Mechanics

PSO operates by maintaining a population of candidate solutions, called particles, which navigate the search space according to simple mathematical rules. Each particle $i$ has a position $x_i(k)$ and a velocity $v_i(k)$ at iteration $k$; the position encodes a candidate solution to the optimization problem. The algorithm updates these particles by tracking two essential values: the personal best position ($pbest_i$) found by the individual particle, and the global best position ($gbest$) found by any particle in the swarm [1] [2].

The velocity and position update equations form the core of the PSO algorithm:

- $v_i(k+1) = w\,v_i(k) + c_1 r_1\,(pbest_i - x_i(k)) + c_2 r_2\,(gbest - x_i(k))$

- $x_i(k+1) = x_i(k) + v_i(k+1)$

Here, $w$ is the inertia weight controlling the influence of the previous velocity, $c_1$ and $c_2$ are acceleration coefficients (the cognitive and social parameters, respectively), and $r_1$, $r_2$ are random numbers drawn uniformly from [0, 1] at each update [2]. The cognitive component $c_1 r_1\,(pbest_i - x_i(k))$ attracts particles toward their own historical best positions, while the social component $c_2 r_2\,(gbest - x_i(k))$ draws them toward the swarm's global best solution [1].
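The two update rules above translate almost line for line into array code. The following is a minimal sketch, not an implementation from the cited works: the function name `pso_minimize`, the toy `sphere` objective, the search bounds, and the default values of `w`, `c1`, and `c2` are all illustrative assumptions.

```python
import numpy as np

def pso_minimize(objective, dim, n_particles=30, n_iters=100,
                 w=0.7, c1=1.5, c2=1.5, bounds=(-5.0, 5.0), seed=0):
    """Global-best PSO for minimization (illustrative sketch)."""
    rng = np.random.default_rng(seed)
    low, high = bounds
    x = rng.uniform(low, high, size=(n_particles, dim))  # positions x_i
    v = np.zeros((n_particles, dim))                      # velocities v_i
    pbest = x.copy()
    pbest_val = np.array([objective(p) for p in x])
    g = np.argmin(pbest_val)
    gbest, gbest_val = pbest[g].copy(), pbest_val[g]

    for _ in range(n_iters):
        # Fresh random factors for every particle and dimension.
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        # Velocity update: inertia + cognitive pull + social pull.
        v = w * v + c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x)
        # Position update, kept inside the search bounds.
        x = np.clip(x + v, low, high)

        vals = np.array([objective(p) for p in x])
        improved = vals < pbest_val
        pbest[improved] = x[improved]
        pbest_val[improved] = vals[improved]
        g = np.argmin(pbest_val)
        if pbest_val[g] < gbest_val:
            gbest, gbest_val = pbest[g].copy(), pbest_val[g]
    return gbest, gbest_val

def sphere(p):
    """Toy objective with its minimum at the origin."""
    return float(np.sum(p ** 2))

best_x, best_val = pso_minimize(sphere, dim=5)
```

In a BCI setting the `objective` would be replaced by a (negated) performance measure such as cross-validated classification error, which is far more expensive to evaluate than this toy function.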

PSO Optimization Process

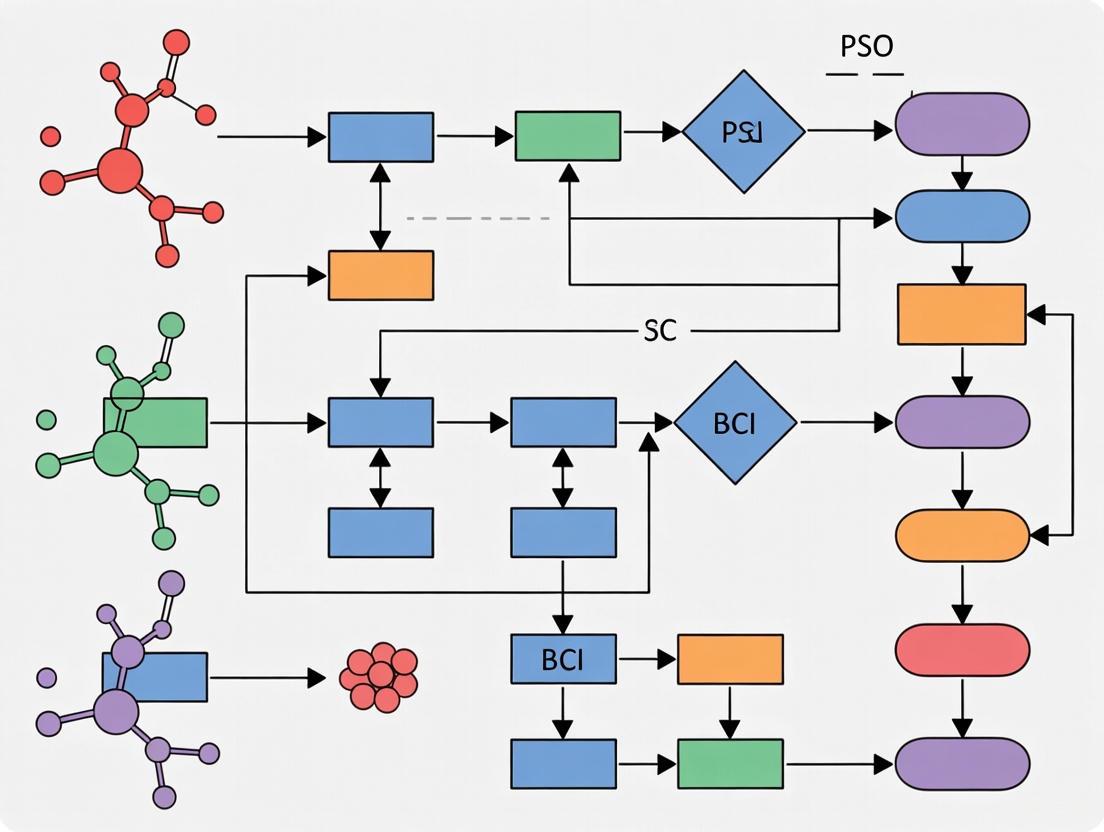

The following diagram illustrates the standard PSO workflow:

Balancing Exploration and Exploitation

The effectiveness of PSO largely depends on properly balancing exploration (searching new areas) and exploitation (refining known good areas). This balance is controlled primarily through the inertia weight w and acceleration coefficients c1 and c2 [1]. A higher inertia weight (typically w > 0.8) promotes exploration by maintaining larger velocities, allowing particles to explore more of the search space. Conversely, a lower inertia weight (w < 0.6) facilitates exploitation by dampening velocity, enabling finer search around promising solutions [1].

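The acceleration coefficients c1 and c2 determine the influence of cognitive and social components, respectively. When c1 > c2, particles are more influenced by their personal best positions, resulting in more individualistic behavior and better exploration. When c2 > c1, particles converge more rapidly toward the global best, enhancing exploitation but potentially increasing the risk of premature convergence [1]. A commonly recommended static setting comes from constriction-factor analysis: choosing c1 = c2 = 2.05, so that c1 + c2 > 4, gives a constriction coefficient of about 0.72984 [1]. This coefficient scales the entire velocity update, so in the inertia-weight form of the update equation it corresponds to w ≈ 0.72984 with effective acceleration coefficients of about 1.496 each, rather than to w = 0.72984 combined with c1 = c2 = 2.05.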
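The figure 0.72984 quoted above matches the constriction coefficient from Clerc and Kennedy's convergence analysis evaluated at c1 = c2 = 2.05. The short sketch below reproduces the number, on the assumption that this is where the setting originates; the function name is hypothetical.

```python
import math

def constriction_coefficient(c1, c2):
    """Constriction coefficient chi; only defined for c1 + c2 > 4."""
    phi = c1 + c2
    if phi <= 4:
        raise ValueError("constriction analysis requires c1 + c2 > 4")
    return 2.0 / abs(2.0 - phi - math.sqrt(phi * phi - 4.0 * phi))

chi = constriction_coefficient(2.05, 2.05)
w_equiv = chi          # equivalent inertia weight
c_equiv = chi * 2.05   # equivalent acceleration coefficient in inertia-weight form
print(round(chi, 5), round(c_equiv, 5))
```

Under that reading, the constriction form with c1 = c2 = 2.05 behaves like the inertia-weight update with w ≈ 0.72984 and acceleration coefficients of about 1.496.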

Advanced PSO implementations often employ dynamic parameter adjustment strategies, starting with higher w and c1 values to promote exploration early in the optimization process, then gradually decreasing w and c1 while increasing c2 to enhance exploitation as the swarm converges toward optimal regions [1]. This adaptive approach has demonstrated superior performance across various optimization problems, including those in BCI parameter tuning.
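One simple way to realize such a schedule is linear interpolation over the run. In the sketch below the inertia range 0.9 to 0.4 is a common convention, while the ranges for c1 and c2 and the helper names are assumptions chosen for illustration.

```python
def linear_schedule(start, end, iteration, max_iters):
    """Linearly interpolate from start (first iteration) to end (last iteration)."""
    frac = iteration / max(max_iters - 1, 1)
    return start + (end - start) * frac

def pso_parameters(iteration, max_iters):
    """Return (w, c1, c2) for the given iteration."""
    # Assumed ranges: w 0.9 -> 0.4, c1 2.5 -> 0.5, c2 0.5 -> 2.5.
    w = linear_schedule(0.9, 0.4, iteration, max_iters)
    c1 = linear_schedule(2.5, 0.5, iteration, max_iters)
    c2 = linear_schedule(0.5, 2.5, iteration, max_iters)
    return w, c1, c2

w_first, c1_first, c2_first = pso_parameters(0, 100)
w_last, c1_last, c2_last = pso_parameters(99, 100)
```

Early iterations then favor inertia and the cognitive term (exploration), while late iterations favor the social term (exploitation).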

PSO in BCI Parameter Tuning: Applications and Protocols

Channel Selection for Motor Imagery BCI

One of the most successful applications of PSO in BCI research is optimized channel selection for motor imagery tasks. The CFC-PSO-XGBoost (CPX) pipeline represents a cutting-edge approach that leverages PSO to identify optimal EEG channel configurations [3]. This methodology addresses a critical challenge in practical BCI implementation: reducing the number of required EEG channels while maintaining classification accuracy.

Experimental Protocol: PSO for Channel Selection

- Objective: Identify the minimal channel subset that maximizes motor imagery classification accuracy.

- PSO Configuration:

  - Particle Encoding: Each particle represents a potential channel subset, typically as a binary vector where each dimension corresponds to a specific EEG channel (1 = included, 0 = excluded).

  - Fitness Function: Classification accuracy using features extracted from the selected channels, evaluated via cross-validation.

  - Swarm Size: 20-50 particles, depending on the total number of available channels.

  - Parameters: constriction-style setting with w = 0.72984 and c1 = c2 = 2.05 (equivalent to effective acceleration coefficients of about 1.496 in the inertia-weight update), maximum iterations = 100.

- Implementation Steps:

  - Preprocess EEG data using bandpass filtering (e.g., 8-30 Hz for mu and beta rhythms).

  - Extract relevant features (e.g., cross-frequency coupling features, band power) from all available channels.

  - Initialize PSO with random binary particles representing channel subsets.

  - Iterate until convergence or maximum iterations:

    - Evaluate fitness of each particle by training a classifier (e.g., XGBoost) using only the selected channels.

    - Update personal best and global best positions.

    - Update velocities and positions using the PSO equations, mapping each velocity component to a bit through a transfer function (e.g., a sigmoid) so that positions remain binary.

  - Select the channel configuration corresponding to the global best solution.

- Key Finding: The CPX pipeline achieved 76.7% classification accuracy using only 8 optimized EEG channels, outperforming traditional methods that used significantly more channels [3].
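The protocol can be sketched end to end on synthetic data. The code below is a hedged illustration rather than the CPX pipeline itself: it substitutes a nearest-centroid classifier and a single hold-out split for XGBoost with cross-validation, uses a sigmoid transfer function to keep positions binary, and invents a 16-channel dataset in which only three channels are informative.

```python
import numpy as np

rng = np.random.default_rng(42)

# Synthetic stand-in for per-channel features: 200 trials x 16 channels.
# Only channels 2, 5 and 11 carry class information (an assumption).
n_trials, n_channels = 200, 16
y = rng.integers(0, 2, n_trials)
X = rng.normal(size=(n_trials, n_channels))
X[:, [2, 5, 11]] += 1.5 * y[:, None]
train, test = np.arange(0, 140), np.arange(140, n_trials)

def accuracy(mask):
    """Hold-out accuracy of a nearest-centroid classifier on the selected channels."""
    idx = np.flatnonzero(mask)
    if idx.size == 0:
        return 0.0  # an empty channel set cannot classify anything
    Xtr, Xte = X[np.ix_(train, idx)], X[np.ix_(test, idx)]
    c0 = Xtr[y[train] == 0].mean(axis=0)
    c1 = Xtr[y[train] == 1].mean(axis=0)
    pred = np.linalg.norm(Xte - c1, axis=1) < np.linalg.norm(Xte - c0, axis=1)
    return float(np.mean(pred.astype(int) == y[test]))

def binary_pso(fitness, dim, n_particles=20, n_iters=30,
               w=0.7, c1=1.5, c2=1.5, v_max=4.0):
    """Binary PSO (maximization) with a sigmoid transfer function."""
    pos = rng.integers(0, 2, (n_particles, dim))
    vel = rng.uniform(-1, 1, (n_particles, dim))
    pbest = pos.copy()
    pbest_fit = np.array([fitness(p) for p in pos])
    g = np.argmax(pbest_fit)
    gbest, gbest_fit = pbest[g].copy(), pbest_fit[g]
    for _ in range(n_iters):
        r1, r2 = rng.random(vel.shape), rng.random(vel.shape)
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        vel = np.clip(vel, -v_max, v_max)
        # The velocity sets the probability that each bit becomes 1.
        pos = (rng.random(vel.shape) < 1.0 / (1.0 + np.exp(-vel))).astype(int)
        fit = np.array([fitness(p) for p in pos])
        better = fit > pbest_fit
        pbest[better], pbest_fit[better] = pos[better], fit[better]
        g = np.argmax(pbest_fit)
        if pbest_fit[g] > gbest_fit:
            gbest, gbest_fit = pbest[g].copy(), pbest_fit[g]
    return gbest, gbest_fit

best_mask, best_acc = binary_pso(accuracy, n_channels)
print("selected channels:", np.flatnonzero(best_mask), "accuracy:", best_acc)
```

Because the fitness here is plain accuracy, nothing pushes the swarm toward small subsets; adding a channel-count penalty to the fitness is the usual way to obtain compact montages.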

Feature Selection and Optimization

PSO has been extensively applied to feature selection problems in BCI systems, where the goal is to identify the most discriminative feature subset while eliminating redundant or irrelevant features that may impair classification performance [2].

Experimental Protocol: Multilevel PSO for Feature Selection

- Objective: Select optimal feature subsets from high-dimensional EEG feature spaces.

- PSO Configuration:

  - Particle Encoding: Binary vector representing feature subsets (similar to channel selection).

  - Fitness Function: Combination of classification accuracy and feature reduction ratio, often formulated as a multi-objective optimization.

  - Swarm Size: 30-100 particles, depending on feature space dimensionality.

  - Specialized Strategy: Multilevel PSO (MLPSO) involves running the optimizer multiple times to mitigate stagnation issues [2].

- Implementation Steps:

  - Extract comprehensive feature sets from EEG signals (e.g., using Modified Stockwell Transform, power spectral density, or time-frequency representations).

  - Initialize PSO population with random binary vectors.

  - Execute MLPSO procedure:

    - Perform global search to identify promising regions in feature space.

    - Execute local search around promising solutions by running PSO with restricted search radius.

    - Combine results from multiple runs to identify robust feature subsets.

  - Validate selected features on independent test datasets.

- Key Finding: One PSO-based feature selection scheme achieved 99% classification accuracy while using less than 10.5% of the original features, reducing test time by more than 90% compared to methods without optimization [2].
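How the results of multiple runs are combined is not detailed above. One plausible option, shown here purely as an assumption and not as the method of [2], is to keep the features that were selected in at least half of the runs. The function name and the toy masks are hypothetical.

```python
import numpy as np

def consensus_features(masks, min_fraction=0.5):
    """Keep features selected in at least `min_fraction` of the PSO runs."""
    masks = np.asarray(masks, dtype=bool)
    frequency = masks.mean(axis=0)   # per-feature selection rate across runs
    return frequency >= min_fraction, frequency

# Best binary masks from three hypothetical PSO runs over six features.
runs = [
    [1, 0, 1, 0, 0, 1],
    [1, 0, 0, 0, 1, 1],
    [1, 1, 1, 0, 0, 1],
]
selected, freq = consensus_features(runs)
print(np.flatnonzero(selected))   # indices of the consensus features
```

Features that appear in only one run are treated as unstable and dropped, which trades a little accuracy on the training data for a subset that is less sensitive to the random seed.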

PSO for Classifier Parameter Tuning

Beyond channel and feature selection, PSO has been successfully applied to optimize parameters for various classifiers used in BCI systems, including Support Vector Machines (SVMs), neural networks, and fuzzy inference systems [5] [4].
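For continuous hyperparameters, each particle dimension is usually mapped onto a parameter range before the classifier is trained. The sketch below assumes an RBF-kernel SVM with hypothetical search ranges for C and gamma, searched on a logarithmic scale; the constant and function names are illustrative.

```python
import numpy as np

# Hypothetical search ranges (log10 scale) for an RBF-kernel SVM.
LOG_C_RANGE = (-2.0, 3.0)       # C in [1e-2, 1e3]
LOG_GAMMA_RANGE = (-4.0, 1.0)   # gamma in [1e-4, 1e1]

def decode_particle(position):
    """Map a particle in [0, 1]^2 to (C, gamma) on a logarithmic scale."""
    u = np.clip(np.asarray(position, dtype=float), 0.0, 1.0)
    log_c = LOG_C_RANGE[0] + u[0] * (LOG_C_RANGE[1] - LOG_C_RANGE[0])
    log_gamma = LOG_GAMMA_RANGE[0] + u[1] * (LOG_GAMMA_RANGE[1] - LOG_GAMMA_RANGE[0])
    return 10.0 ** log_c, 10.0 ** log_gamma

C, gamma = decode_particle([0.4, 0.6])
```

Searching in log space lets the swarm cover several orders of magnitude evenly; the fitness function would then train the classifier with the decoded values and return its cross-validated accuracy.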

Advanced PSO Variants in BCI Research

Hybrid and Quantum-Inspired Approaches

Recent advances have introduced sophisticated PSO variants that enhance performance through hybridization with other algorithms or incorporation of quantum computing principles:

Quantum-Inspired Gravitationally Guided PSO (QIGPSO): This novel approach combines Quantum PSO (QPSO) with Gravitational Search Algorithm (GSA) to address limitations of conventional optimization methods. QIGPSO replaces acceleration factors with an absolute Gaussian random variable, improving search capability and convergence speed while balancing exploration and exploitation more effectively [4].

ANFIS-FBCSP-PSO Hybrid: This interpretable framework combines Filter Bank Common Spatial Pattern (FBCSP) feature extraction with Adaptive Neuro-Fuzzy Inference Systems (ANFIS) optimized via PSO. The approach provides transparent fuzzy IF-THEN rules while maintaining competitive accuracy (68.58%) for motor imagery classification [5].

Comparative Performance Analysis

Table 1: Performance Comparison of PSO-Based Methods in BCI Applications

| Method | Application | Performance | Key Advantage |
|---|---|---|---|
| CFC-PSO-XGBoost (CPX) [3] | Motor Imagery Classification | 76.7% accuracy with 8 channels | Optimized channel selection using cross-frequency coupling |
| Multilevel PSO with BLDA [2] | Motor Imagery Classification | 99% accuracy with <10.5% of features | >90% test time reduction while maintaining high accuracy |
| ANFIS-FBCSP-PSO [5] | Motor Imagery Classification | 68.58% accuracy (within-subject) | Interpretable fuzzy rules with physiological relevance |
| QIGPSO-SVM [4] | Medical Data Classification | High accuracy rates across NCD datasets | Balanced exploration-exploitation with faster convergence |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for PSO in BCI Research

| Resource | Specifications | Application in PSO-BCI Research |
|---|---|---|
| BCI Competition IV-2a Dataset [3] [5] | 22 EEG channels, 9 subjects, 4-class motor imagery | Standard benchmark for evaluating PSO-optimized BCI algorithms |
| BCI Competition III Dataset I [2] | 8×8 ECoG grid, 278 training trials, 100 test trials | Validation of PSO-based channel and feature selection methods |
| Modified Stockwell Transform [2] | Frequency range: 1-35 Hz, adjustable Gaussian window | Time-frequency feature extraction for PSO-based optimization |
| XGBoost Classifier [3] | Gradient boosting framework with tree-based models | High-performance classification for PSO fitness evaluation |
| Support Vector Machine (SVM) [4] | Kernel-based classifier with regularization | Wrapper-based feature selection with PSO optimization |
| Adaptive Neuro-Fuzzy Inference System (ANFIS) [5] | Fuzzy logic with neural network adaptation | Interpretable modeling with PSO-optimized parameters |

Integrated PSO-BCI Optimization Framework

The comprehensive workflow below illustrates how PSO integrates into a complete BCI optimization pipeline:

As BCI technologies evolve toward real-world applications, PSO continues to adapt to emerging challenges. Future research directions include multi-objective optimization approaches that simultaneously optimize accuracy, computational efficiency, and user comfort [6]. The integration of PSO with deep learning architectures, particularly transformer-based models that have shown promise in EEG decoding, represents another frontier for investigation [7]. Additionally, the development of subject-adaptive PSO frameworks that can dynamically adjust to inter-subject variability in EEG signals will be crucial for practical BCI deployment [7].

Quantum-inspired PSO variants like QIGPSO demonstrate the potential for further algorithmic enhancements, particularly in handling high-dimensional optimization landscapes common in modern BCI systems [4]. As BCI applications expand beyond clinical settings to consumer technology, demand for efficient optimization techniques such as PSO is likely to grow.

In conclusion, PSO's computational efficiency and flexibility have made it a widely used tool in BCI research. From channel selection to classifier optimization, the PSO-based approaches reviewed here report competitive or improved performance relative to the baselines they were compared against, supporting more accurate, efficient, and practical brain-computer interfaces. As algorithm development continues and BCI technologies mature, PSO is likely to remain an important optimization method for neural interface systems.

Brain-Computer Interface (BCI) technology has emerged as a transformative tool in neurorehabilitation, assistive technologies, and cognitive assessment [8]. However, the path from experimental systems to robust, real-world applications is fraught with a central, pervasive challenge: the burden of parameter tuning. The performance of a BCI system is governed by a multitude of interdependent parameters, ranging from electrode selection and feature extraction methods to the hyperparameters of classification algorithms. The need to meticulously optimize these parameters for each individual user creates a significant bottleneck, hampering system robustness, scalability, and clinical translation [8] [9]. This parameter sensitivity stems from the high inter-subject variability inherent in brain signals, meaning that a model tuned for one user often performs poorly for another, necessitating lengthy and computationally expensive calibration sessions [8] [9]. This article examines the critical nature of this tuning bottleneck and explores how bio-inspired optimization algorithms, particularly Particle Swarm Optimization (PSO), provide a promising pathway toward automated, efficient, and high-performing BCI systems.

The Parameter Tuning Bottleneck: Quantitative Evidence

The performance of a BCI system is acutely sensitive to a wide array of parameters. Manual tuning of these parameters is not only time-consuming but also risks suboptimal performance. The following table synthesizes quantitative evidence from recent studies, demonstrating how systematic parameter optimization directly impacts key performance metrics.

Table 1: Impact of Parameter Optimization on BCI Performance

| Parameter Category | Specific Parameter Optimized | Optimization Method | Performance Improvement | Citation |
|---|---|---|---|---|
| Channel Selection | Optimal EEG electrode subset | Particle Swarm Optimization (PSO) | Achieved 76.7% accuracy with only 8 channels, outperforming methods using all channels [3]. | [3] [10] |
| Classifier Hyperparameters | Weights and thresholds of a Backpropagation Neural Network (BPNN) | Honey Badger Algorithm (HBA) | Achieved a maximum accuracy of 89.82% in MI classification on the EEGMMIDB dataset [11]. | [11] |
| Deep Learning Architecture | Kernel size, number of kernels, and layer structure of a 3D CNN | Architectural parameter optimization | Reduced number of parameters by 75.9% and computational operations by 16.3% while maintaining classification accuracy [12]. | [12] |
| Cross-Subject Generalization | Subject-specific feature modulation | Subject-conditioned lightweight CNNs | Improved generalization and enabled effective calibration with minimal data in ERP classification [9]. | [9] |

Experimental Protocols for PSO-Driven Parameter Optimization

To address the tuning bottleneck, structured experimental protocols are essential. The following section details a reproducible methodology for applying PSO to optimize critical components of a Motor Imagery (MI)-BCI system, based on recently published work.

Protocol: PSO for Channel Selection in MI-BCI Classification

This protocol outlines the procedure for using PSO to identify an optimal subset of EEG channels, thereby reducing system complexity and improving classification performance [3] [10].

- Objective: To identify a minimal set of EEG channels that maximizes the classification accuracy of motor imagery tasks.

- Dataset: A benchmark MI-BCI dataset (e.g., BCI Competition IV-2a) containing EEG recordings from multiple subjects performing tasks like left-hand and right-hand motor imagery [3].

- Preprocessing:

  - Apply a band-pass filter (e.g., 8-30 Hz) to retain mu and beta rhythms associated with motor imagery.

  - Segment EEG signals into epochs time-locked to the motor imagery cue.

- Feature Extraction: Utilize Cross-Frequency Coupling (CFC), specifically Phase-Amplitude Coupling (PAC), to extract features that capture nonlinear interactions between different neural oscillatory frequencies [3] [10].

- PSO Optimization Setup:

  - Particle Position: Each particle represents a potential solution as a vector of binary values, where each element indicates the inclusion (1) or exclusion (0) of a specific EEG channel.

  - Fitness Function: The objective for PSO is to maximize the classification accuracy (e.g., from a classifier like XGBoost) using only the channels selected by the particle. The fitness is evaluated as Fitness = Classification Accuracy.

  - PSO Hyperparameters: Use a swarm size of 30-50 particles, with cognitive (c1) and social (c2) parameters set to 2.0. Inertia weight can be linearly decreased from 0.9 to 0.4 over iterations.

- Classification and Validation:

  - For each particle's channel subset, extract CFC features and train an XGBoost classifier.

  - Evaluate performance using 10-fold cross-validation to ensure robustness.

  - The channel set with the highest cross-validated accuracy is selected as the optimal configuration.
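The cross-validated fitness used in this protocol can be sketched as follows. This is an assumption-laden illustration: a nearest-centroid classifier stands in for XGBoost, the per-channel features are synthetic rather than CFC features, and `cv_fitness` is a hypothetical helper name.

```python
import numpy as np

def nearest_centroid_predict(X_train, y_train, X_test):
    """Tiny stand-in classifier (the protocol itself uses XGBoost)."""
    classes = np.unique(y_train)
    centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in classes])
    dists = np.linalg.norm(X_test[:, None, :] - centroids[None, :, :], axis=2)
    return classes[np.argmin(dists, axis=1)]

def cv_fitness(X, y, mask, k=10, seed=0):
    """Mean k-fold accuracy using only the channels flagged in `mask`."""
    cols = np.flatnonzero(mask)
    if cols.size == 0:
        return 0.0  # no channels selected
    # A fixed seed gives every particle the same folds, so scores are comparable.
    order = np.random.default_rng(seed).permutation(len(y))
    folds = np.array_split(order, k)
    scores = []
    for i in range(k):
        test_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        pred = nearest_centroid_predict(X[np.ix_(train_idx, cols)], y[train_idx],
                                        X[np.ix_(test_idx, cols)])
        scores.append(np.mean(pred == y[test_idx]))
    return float(np.mean(scores))

# Synthetic data: 100 trials, 6 channels, channel 0 informative by construction.
rng = np.random.default_rng(1)
y = np.repeat([0, 1], 50)
X = rng.normal(size=(100, 6))
X[:, 0] += 3.0 * y
good = cv_fitness(X, y, [1, 0, 0, 0, 0, 0])
bad = cv_fitness(X, y, [0, 0, 0, 1, 1, 1])
```

Here `good` should come out well above `bad`, which is the signal the swarm follows when it moves toward informative channel subsets.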

Workflow Visualization: PSO-Optimized BCI Pipeline

The following diagram illustrates the integrated workflow of a BCI system that leverages PSO for parameter optimization, as described in the protocol above.

The Scientist's Toolkit: Research Reagent Solutions

Implementing the aforementioned protocols requires a suite of computational "reagents." The table below lists essential tools and their functions for developing and optimizing PSO-enhanced BCI systems.

Table 2: Essential Research Tools for BCI Parameter Optimization

| Tool / Resource | Category | Primary Function in BCI Research |
|---|---|---|
| BCI Competition IV-2a Dataset | Data | A standardized benchmark for evaluating MI-BCI algorithms, containing 4-class motor imagery data from 9 subjects [5]. |
| EEGNet | Software (Model) | A compact convolutional neural network architecture designed for EEG-based BCIs, serving as a strong deep learning baseline [12] [5]. |
| Particle Swarm Optimization (PSO) | Algorithm | A bio-inspired metaheuristic used to optimize discrete (e.g., channel selection) and continuous (e.g., classifier parameters) variables [3]. |
| XGBoost | Software (Model) | A gradient boosting classifier known for high performance and computational efficiency, often used as the final classifier in optimized pipelines [3] [10]. |
| Honey Badger Algorithm (HBA) | Algorithm | A more recent bio-inspired optimization algorithm used for tuning complex model parameters, such as neural network weights [11]. |
| Filter Bank Common Spatial Patterns (FBCSP) | Algorithm | A feature extraction method that separates EEG into frequency bands to find spatially discriminative patterns for MI [5]. |

The challenge of parameter tuning remains a critical bottleneck that impedes the reliability and widespread adoption of BCI technology. The high inter-subject variability of neural signals means that a one-size-fits-all approach is not feasible, creating a dependency on extensive calibration and expert intervention. However, as the protocols and data presented here demonstrate, computational intelligence strategies—particularly those leveraging bio-inspired optimizers like PSO—offer a powerful and automated solution. By systematically optimizing parameters from channel selection to classifier design, these methods significantly enhance BCI performance, reduce computational overhead, and pave the way for more scalable, robust, and user-friendly brain-computer interfaces. Future research will likely focus on hybrid optimization models and real-time adaptive tuning to further overcome this critical challenge.

Particle Swarm Optimization (PSO) has emerged as a powerful meta-heuristic technique for addressing complex optimization challenges in brain-computer interface (BCI) systems. By simulating social behavior patterns found in nature, PSO efficiently navigates high-dimensional parameter spaces to identify optimal or near-optimal solutions for BCI configuration. The application of PSO spans multiple critical dimensions of BCI systems, significantly enhancing their performance, usability, and implementation practicality. Through its adaptive optimization capabilities, PSO enables researchers to simultaneously address multiple competing objectives in BCI design, particularly the trade-offs between classification accuracy and system complexity.

The inherent complexity of BCI parameter optimization stems from the high-dimensional, non-stationary, and subject-specific nature of neural signals. Electroencephalography (EEG)-based BCIs must process multichannel data with temporal, spatial, and spectral features that exhibit significant variability across users and sessions. PSO's population-based search strategy is valuable in this context, as it can explore large sets of parameter combinations while being less prone to stalling in the local optima that can trap single-point, gradient-based methods. Furthermore, the stochastic terms in PSO's update equations maintain diversity in the swarm, and because the algorithm needs only fitness values rather than gradients, it tolerates the noisy fitness estimates that arise in BCI applications, where signal-to-noise ratios are typically low.

Key BCI Parameters Optimized by PSO

Channel Selection Parameters

Channel selection represents one of the most prominent applications of PSO in BCI optimization, with substantial implications for system performance and practicality. Careful channel selection increases BCI performance and user comfort while reducing computational cost and system setup time [13]. PSO-based channel selection methods systematically identify optimal electrode subsets that maximize discriminative information for specific BCI paradigms.

In motor imagery (MI)-BCI applications, PSO has been employed to identify compact channel montages that maintain high classification accuracy while significantly reducing the number of required electrodes. One study achieved a remarkable 61% reduction in channels without significant performance degradation by leveraging PSO-driven selection strategies [14]. Similarly, the CPX framework incorporating PSO identified an optimal 8-channel configuration that achieved 76.7% classification accuracy in MI tasks, demonstrating that carefully selected minimal channel sets can outperform full electrode arrays [3].

The optimization process typically employs binary PSO (BPSO), where each particle's position represents a binary vector indicating whether each channel is selected (1) or excluded (0). The fitness function for channel selection commonly combines classification accuracy with a penalty term for larger channel counts, effectively creating a multi-objective optimization that balances performance and practicality [13].

Table 1: Performance of PSO-Optimized Channel Selection in Different BCI Paradigms

| BCI Paradigm | Original Channels | PSO-Optimized Channels | Performance Impact | Citation |
|---|---|---|---|---|
| Motor Imagery | 62 | 24 (61% reduction) | No significant accuracy drop | [14] |
| P300-based BCI | Full set (varies) | Mean of 4.66 | Similar accuracy with far fewer channels | [13] |
| Motor Imagery | Not specified | 8 | 76.7% classification accuracy | [3] |
| Multiclass MI | Full set | Optimized subset | 99% accuracy with <10.5% of original features | [2] |

Feature Selection and Weights

PSO has demonstrated exceptional capability in optimizing feature selection and weighting processes, which are crucial for enhancing BCI classification performance. The high dimensionality of feature spaces in BCIs – resulting from multi-channel, multi-frequency, and temporal representations – creates a prime target for PSO-based optimization. By identifying the most discriminative feature subsets, PSO significantly improves classification accuracy while reducing computational overhead.

In one notable MI-BCI study, PSO-based feature selection achieved a remarkable 99% classification accuracy while using less than 10.5% of the original features, simultaneously reducing test time by more than 90% [2]. This demonstrates the profound impact of targeted feature optimization on both performance and efficiency. The PSO algorithm in this context operated as a wrapper-based feature selection method, evaluating feature subsets by their actual classification performance rather than relying solely on statistical properties.

For feature weighting applications, PSO optimizes the contribution of individual features to the classification process, effectively amplifying discriminative patterns while suppressing redundant or misleading information. This approach is particularly valuable in BCI systems where certain frequency bands or spatial patterns may have varying relevance across different subjects or sessions. The adaptive nature of PSO allows it to customize feature weights according to subject-specific characteristics, addressing the significant inter-subject variability that plagues many BCI systems [5].

Classifier Hyperparameters

Classifier hyperparameter optimization represents another critical application of PSO in BCI systems, where subtle parameter adjustments can substantially impact decoding accuracy. Different classification algorithms possess unique hyperparameters that govern their learning behavior, generalization capability, and ultimately their performance on BCI tasks.

The PSO-Sub-ABLD framework exemplifies this approach, where PSO optimizes the hyperparameters α, β, and η of the Sub-Alpha-Beta Log-Det Divergence algorithm for improved MI classification [15]. By fine-tuning these divergence parameters, PSO enhances the discrimination between different motor imagery classes, leading to significantly improved accuracy compared to default parameter settings. This optimization occurs in a continuous parameter space where PSO's real-valued search capabilities prove particularly advantageous.

Similarly, PSO has been employed to optimize hyperparameters in Adaptive Neuro-Fuzzy Inference Systems (ANFIS) for MI-EEG classification [5]. The optimization process adjusts the membership functions and rule parameters of the fuzzy inference system, creating a subject-specific classification model that adapts to individual EEG patterns. This approach combines the interpretability of fuzzy systems with the optimization power of PSO, resulting in models that are both high-performing and transparent in their decision-making processes.

Table 2: PSO Applications in Classifier Hyperparameter Optimization

| Classifier Type | Hyperparameters Optimized | Performance Improvement | Citation |
|---|---|---|---|
| Sub-ABLD | α, β, and η divergence parameters | Significant accuracy improvement over default parameters | [15] |
| ANFIS | Membership functions and rule parameters | Competitive accuracy while maintaining interpretability | [5] |
| Bayesian LDA | Regularization parameters | 99% accuracy in MI classification | [2] |
| XGBoost | Tree structure and learning parameters | 76.7% accuracy in MI-BCI classification | [3] |

Experimental Protocols for PSO-Based BCI Optimization

Protocol 1: PSO for Channel Selection in MI-BCI

Objective: To identify an optimal subject-specific channel set that maximizes classification accuracy while minimizing the number of electrodes for motor imagery BCI.

Materials and Setup:

- EEG recording system with full electrode cap (typically 64 channels)

- BCI2000 or similar BCI software platform

- MATLAB with Signal Processing Toolbox and custom PSO implementation

- Standardized electrode placement according to the 10-20 system

Procedure:

- Data Acquisition: Collect EEG data during motor imagery tasks using a standardized paradigm (e.g., BCI Competition IV-2a protocol). Ensure proper preprocessing including bandpass filtering (0.5-100 Hz) and notch filtering (50/60 Hz).

- Feature Extraction: Implement Filter Bank Common Spatial Patterns (FBCSP) to extract features across multiple frequency bands (8-30 Hz) from all channels.

- PSO Initialization:

  - Set swarm size to 40-60 particles

  - Define particle position as binary vector (1=channel included, 0=excluded)

  - Initialize particles randomly with 30-50% of channels selected

- Fitness Function Evaluation:

  fitness = classification_accuracy - α * (number_of_selected_channels / total_channels)

  where α is a weighting parameter (typically 0.1-0.3)

- PSO Execution:

  - Run for 50-100 iterations or until convergence

  - Update particle velocities and positions using standard BPSO equations

  - Evaluate fitness at each iteration using 5-fold cross-validation

- Validation: Apply optimized channel set to independent test dataset and compare performance against full channel set.

Expected Outcomes: This protocol typically achieves 60-80% of the full channel set's performance using only 30-50% of the original channels, significantly reducing system complexity while maintaining acceptable accuracy [13] [14].
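The penalized fitness and the sparse initialization from this protocol are small enough to write out directly. The helper names, the default α = 0.2, and the worked numbers are illustrative assumptions.

```python
import numpy as np

def penalized_fitness(accuracy, mask, alpha=0.2):
    """fitness = accuracy - alpha * (selected channels / total channels)."""
    mask = np.asarray(mask)
    return accuracy - alpha * mask.sum() / mask.size

def init_swarm(n_particles, n_channels, frac_range=(0.3, 0.5), seed=0):
    """Random binary particles with roughly 30-50% of channels switched on."""
    rng = np.random.default_rng(seed)
    swarm = np.zeros((n_particles, n_channels), dtype=int)
    for row in swarm:  # each row is a view, so the assignment edits `swarm`
        n_on = int(round(rng.uniform(*frac_range) * n_channels))
        row[rng.choice(n_channels, size=n_on, replace=False)] = 1
    return swarm

swarm = init_swarm(n_particles=40, n_channels=64)

# Worked example: 80% accuracy with 16 of 64 channels versus
# 82% accuracy with all 64 channels, both at alpha = 0.2.
compact = penalized_fitness(0.80, np.r_[np.ones(16), np.zeros(48)])
full = penalized_fitness(0.82, np.ones(64))
```

With these numbers the compact montage scores 0.80 - 0.2 × 0.25 = 0.75, while the full cap scores 0.82 - 0.2 = 0.62, so the swarm prefers the smaller set despite its slightly lower accuracy. Larger values of α push that trade-off further toward sparsity.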

Protocol 2: PSO for Feature Selection and Classifier Optimization

Objective: To simultaneously optimize feature subsets and classifier hyperparameters for enhanced MI-BCI performance.

Materials and Setup:

- High-quality EEG dataset (e.g., BCI Competition III Dataset I)

- MATLAB with Optimization Toolbox

- Custom PSO implementation with mixed variable representation

- High-performance computing workstation for efficient processing

Procedure:

- Data Preprocessing:

- Apply Modified Stockwell Transform for time-frequency representation

- Extract power spectral density features in 1-35 Hz range with 1 Hz resolution

- Normalize features using z-score standardization

Dual-Layer PSO Configuration:

- Upper Layer: Continuous PSO for classifier hyperparameters

- Lower Layer: Binary PSO for feature selection

- Implement hierarchical information exchange between layers

Fitness Function:

fitness = kappa_value + β*(1 - feature_ratio)

where β balances accuracy and feature reduction (typically 0.05-0.15)

Multi-Level PSO Execution:

- Implement local search capabilities to prevent premature convergence

- Run outer loop for hyperparameter optimization (30-40 iterations)

- Run inner loop for feature selection (20-30 iterations per hyperparameter set)

- Employ stagnation detection with random reinitialization when needed
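Stagnation detection with random reinitialization can be sketched as follows. The protocol does not specify the criterion, so the `patience`, `tol`, and `frac` parameters and the function name `reinit_if_stagnant` are assumptions. The sketch assumes a maximization problem and that personal and global bests are stored separately, so re-seeding positions does not lose the best solution found so far.

```python
import random

def reinit_if_stagnant(positions, history, patience=5, tol=1e-4,
                       frac=0.5, bounds=(0.0, 1.0), rng=None):
    """Re-seed a fraction of the swarm when the best fitness has stalled.

    history holds the best-so-far fitness after each iteration.
    Returns True when a reinitialization was performed.
    """
    rng = rng or random.Random()
    if len(history) <= patience:
        return False                      # not enough iterations to judge
    if history[-1] - history[-1 - patience] > tol:
        return False                      # still improving
    n_reset = max(1, int(frac * len(positions)))
    for i in rng.sample(range(len(positions)), n_reset):
        positions[i] = [rng.uniform(*bounds) for _ in positions[i]]
    return True

# Example: the best fitness has been flat for more than five iterations.
swarm = [[0.5, 0.5] for _ in range(10)]
flat_history = [0.80, 0.81, 0.81, 0.81, 0.81, 0.81, 0.81]
did_reset = reinit_if_stagnant(swarm, flat_history, rng=random.Random(1))
```

Re-seeding only part of the swarm restores diversity while the untouched particles keep exploiting the region around the current best.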

Validation: Evaluate optimized model using strict cross-validation procedures and compare against baseline methods without optimization.

Expected Outcomes: This advanced protocol can achieve classification accuracies up to 99% while using less than 10.5% of original features, dramatically reducing computational requirements [2] [16].

Visualization of PSO-Based BCI Optimization Workflows

The Scientist's Toolkit: Research Reagents and Materials

Table 3: Essential Research Materials for PSO-Based BCI Optimization

| Category | Specific Items | Function/Purpose | Example Sources/Alternatives |

|---|---|---|---|

| EEG Hardware | 64-channel EEG systems with active electrodes | High-quality signal acquisition for optimization | Biosemi ActiveTwo, BrainAmp, g.tec systems |

| BCI Datasets | BCI Competition III & IV datasets, Korea University EEG dataset | Benchmarking and validation | BCI Competition website, OpenNeuro |

| Software Platforms | MATLAB with Signal Processing Toolbox, Python (MNE, SciPy) | Signal processing and algorithm implementation | MathWorks, Python Package Index |

| Optimization Libraries | Global Optimization Toolbox, PySwarms | PSO implementation and variant algorithms | MathWorks, GitHub repositories |

| Feature Extraction Tools | BBCI Toolbox, MNE-Python | FBCSP, wavelet transforms, PSD calculation | Open-source GitHub repositories |

| Classification Algorithms | BLDA, SVM, Random Forest, XGBoost | Benchmarking PSO-optimized performance | Scikit-learn, XGBoost library |

| Performance Metrics | Kappa values, F1-score, Information Transfer Rate | Quantitative optimization assessment | Custom implementation based on literature |

PSO has established itself as a versatile and powerful optimization tool for enhancing BCI systems across multiple parameters, including channel selection, feature weighting, and classifier hyperparameter tuning. The protocols and applications detailed in this document demonstrate that PSO-driven optimization can simultaneously improve classification accuracy while reducing system complexity – a critical combination for developing practical BCI systems for real-world applications. As BCI technology continues to evolve toward more sophisticated and accessible implementations, PSO and other meta-heuristic algorithms will play an increasingly important role in balancing the multiple competing objectives inherent in brain-computer interface design. Future research directions will likely focus on multi-objective PSO variants that can explicitly address trade-offs between accuracy, computational efficiency, and user comfort, further advancing the field toward robust, subject-adaptive BCI systems.

Particle Swarm Optimization (PSO) is a population-based stochastic optimization technique inspired by social behavior patterns such as bird flocking and fish schooling [17]. First introduced by Kennedy and Eberhart in 1995, PSO operates by maintaining a population of candidate solutions, known as particles, which navigate the search space based on their own experience and the collective knowledge of the swarm [17] [18]. It has since become a widely used method for solving complex computational problems [17]. The algorithm's simplicity, robustness, and ability to handle nonlinear, multimodal, and high-dimensional optimization problems have made it particularly valuable across various domains, including engineering, computer science, and artificial intelligence [18].

Brain-Computer Interfaces (BCIs) establish a direct communication pathway between the human brain and external devices, offering transformative potential for individuals with motor impairments and advancing human-computer interaction paradigms [19]. These systems typically use electroencephalography (EEG) to record brain activity, creating high-dimensional data streams with inherent artifacts and complex signal characteristics [20]. The accurate classification of mental tasks, such as motor imagery, is crucial for effective BCI operation but presents significant challenges due to the noisy, non-stationary nature of EEG signals and the substantial variability across individuals [20] [19]. The optimization challenges within BCI systems are multifaceted, requiring sophisticated approaches for channel selection, feature extraction, parameter tuning, and classification [20].

The synergy between PSO's search mechanism and BCI's optimization landscape arises from their complementary characteristics. PSO's ability to efficiently explore high-dimensional spaces without requiring gradient information makes it exceptionally suited for addressing the complex, black-box optimization problems inherent in BCI systems [20] [21]. This alignment enables researchers to enhance BCI performance by systematically addressing key bottlenecks in the signal processing pipeline through PSO-driven optimization.

The Complex Optimization Landscape of BCIs

Fundamental Challenges in BCI Systems

BCI systems face numerous challenges that create a complex optimization landscape. The core issues include the curse of dimensionality with high-channel EEG data, low signal-to-noise ratio, intersubject variability, and the need for real-time processing [20]. EEG signals are contaminated with various artifacts including technical artifacts (electrode slippage, power line interference) and physiological artifacts (ocular movements, muscle activity, cardiac signals) that must be effectively removed or mitigated during pre-processing [20]. Additionally, the non-stationary nature of brain signals means that features that are discriminative for one subject may not be effective for another, and may even change within the same subject across sessions [19].

The high-dimensionality of EEG data presents a significant computational challenge. Modern EEG systems can have 64 to 256 channels, each sampling at rates of 256 Hz or higher, resulting in massive data streams [20]. However, not all channels contribute equally to specific mental task classification, and some may even introduce redundant or noisy information. Similarly, in the frequency domain, different brain rhythms (delta, theta, alpha, beta, gamma) carry distinct information relevant to various cognitive states, but identifying the most informative rhythms for a given task is non-trivial [22]. This complexity creates an ideal application domain for population-based metaheuristic optimization approaches like PSO.

Key Optimization Problems in BCI Pipelines

BCI optimization challenges span the entire processing pipeline, from data acquisition to classification. These can be formulated as distinct optimization problems with specific objectives and constraints [20]:

Table: Key Optimization Problems in BCI Systems

| Optimization Problem | Objective | Constraints | Impact on BCI Performance |

|---|---|---|---|

| Channel Selection | Identify minimal channel subset maximizing classification accuracy | Computational efficiency, Hardware limitations | Reduces setup time, improves comfort, enhances signal quality |

| Rhythm Selection | Determine optimal frequency bands for specific mental tasks | Physiological plausibility, Signal-to-noise ratio | Ensures task-discriminative information, reduces feature dimensionality |

| Feature Selection | Select most discriminative feature subset | Computational budget, Real-time requirements | Improves classification accuracy, reduces overfitting |

| Classifier Parameter Tuning | Optimize hyperparameters of classification algorithms | Model complexity, Generalization requirements | Enhances classification performance and system robustness |

| Artifact Removal | Optimize filter parameters to remove noise while preserving neural signals | Signal integrity, Computational efficiency | Improves signal quality, enhances feature discriminability |

Each of these optimization problems contributes to the overall BCI performance, and their interconnected nature means that suboptimal solutions at any stage can degrade the entire system's effectiveness [20]. The lack of domain knowledge for novel BCI paradigms further complicates these challenges, as the relationship between specific signal characteristics and mental states may not be well understood, making analytical solutions infeasible [20].

PSO's Search Mechanism: Principles and Advantages

Fundamental Algorithm and Operations

PSO operates through a swarm of particles that explore the search space by adjusting their trajectories based on individual and collective experiences [17] [18]. Each particle represents a potential solution to the optimization problem and possesses both position and velocity vectors. The algorithm's core mechanism involves updating these vectors iteratively based on three components: inertia, cognitive component, and social component [18] [23].

The velocity update equation captures the essence of PSO's search strategy:

\begin{equation} V_{d}^{(i)} = \omega V_{d}^{(i)} + c_{1} r_{1} \left(P_{d}^{(i)} - X_{d}^{(i)}\right) + c_{2} r_{2} \left(G_{d}^{(i)} - X_{d}^{(i)}\right) \end{equation}

Where:

- \(V_{d}^{(i)}\) represents the d-th dimension of the i-th particle's velocity

- \(\omega\) is the inertia weight controlling the influence of the previous velocity

- \(c_{1}\) and \(c_{2}\) are acceleration coefficients for the cognitive and social components

- \(r_{1}\) and \(r_{2}\) are random values drawn between 0 and 1

- \(P_{d}^{(i)}\) is the particle's personal best position

- \(G_{d}^{(i)}\) is the global best position found by the swarm

- \(X_{d}^{(i)}\) is the particle's current position [23]

The position is subsequently updated using:

\begin{equation} X_{d}^{(i)} = X_{d}^{(i)} + V_{d}^{(i)} \end{equation}

This update mechanism lets particles move toward promising regions of the search space while balancing exploration of new areas against exploitation of known good solutions [18] [23].
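The two update equations can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions: the sphere test function, the bounds, and the settings `w=0.7`, `c1=c2=1.5` are chosen for a stable toy run and are not taken from the cited studies. The variable names `V`, `X`, `P`, and `G` follow the symbols defined above.

```python
import random

def pso_minimize(f, dim, bounds, n_particles=30, n_iter=100,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    X = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    V = [[0.0] * dim for _ in range(n_particles)]
    P = [x[:] for x in X]                 # personal best positions
    P_val = [f(x) for x in X]
    g = min(range(n_particles), key=lambda i: P_val[i])
    G, G_val = P[g][:], P_val[g]          # global best position and value
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity update: inertia + cognitive pull + social pull.
                V[i][d] = (w * V[i][d]
                           + c1 * r1 * (P[i][d] - X[i][d])
                           + c2 * r2 * (G[d] - X[i][d]))
                # Position update, clipped to the search bounds.
                X[i][d] = min(hi, max(lo, X[i][d] + V[i][d]))
            val = f(X[i])
            if val < P_val[i]:
                P[i], P_val[i] = X[i][:], val
                if val < G_val:
                    G, G_val = X[i][:], val
    return G, G_val

def sphere(x):
    """Toy objective with its minimum (0) at the origin."""
    return sum(xi * xi for xi in x)

best_x, best_val = pso_minimize(sphere, dim=3, bounds=(-5.0, 5.0))
```

For a BCI problem, `f` would be replaced by a (negated) validation score, and each dimension of `X` by a tunable parameter such as a filter cutoff or a classifier hyperparameter.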

Strategic Advantages for BCI Optimization

PSO offers several distinct advantages that align particularly well with the challenges of BCI optimization:

Derivative-free Operation: PSO does not require gradient information, making it suitable for optimizing non-differentiable, discontinuous, or noisy objective functions commonly encountered in BCI systems [18]. This characteristic is crucial when dealing with real EEG data contaminated with various artifacts.

Global Search Capability: The collaborative nature of PSO allows it to explore complex search spaces and potentially avoid local optima, which is essential when dealing with multimodal BCI optimization landscapes where multiple channel or feature combinations might yield similar performance [17].

Adaptability to Dynamic Environments: PSO's ability to adapt to changing environments makes it suitable for non-stationary EEG signals, where the optimal parameters might shift over time due to changes in brain states or environmental conditions [17] [18].

Balance Between Exploration and Exploitation: Through careful parameter tuning (inertia weight, acceleration coefficients), PSO can effectively balance the exploration of new regions of the search space with the exploitation of known promising areas [18] [23]. This balance is critical for BCI optimization, where the search space is vast but computational resources are often limited.

Simplicity and Implementation Efficiency: PSO's algorithmic simplicity and ease of implementation make it accessible to researchers across various domains, while its potential for parallelization facilitates enhanced scalability for large-scale BCI optimization tasks [17] [18].

Application Protocols: Implementing PSO for BCI Optimization

Protocol 1: PSO for EEG Channel and Rhythm Selection

Objective: To identify optimal channel and rhythm combinations for EEG-based emotion recognition using a modified PSO approach [22].

Materials and Equipment:

- EEG acquisition system with minimum 16 channels

- Computing system with MATLAB/Python and signal processing toolbox

- DEAP dataset or subject-specific EEG recordings

- Pre-processing tools for filtering and artifact removal

Procedure:

Data Pre-processing:

- Apply low-pass filter to denoise raw EEG signals

- Perform rhythm extraction using Discrete Wavelet Transform (DWT) to decompose signals into standard frequency bands (delta, theta, alpha, beta, gamma)

- Convert each rhythm to time-frequency representation using Continuous Wavelet Transform (CWT)

Feature Extraction:

- Extract deep features using pre-trained MobileNetV2 model

- Generate feature vectors for each channel-rhythm combination

- Normalize features to zero mean and unit variance

PSO Implementation:

- Initialize swarm with particles representing channel-rhythm combinations

- Define fitness function as classification accuracy using Support Vector Machine (SVM)

- Configure PSO parameters: swarm size=50, ω=0.9, c₁=2.0, c₂=2.0, maximum iterations=200

- Implement visit table strategy to avoid redundant searches
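One plausible reading of a visit table is a lookup of already evaluated channel-rhythm masks, so that a revisited position does not trigger another classifier training run. The sketch below illustrates that idea only; it is not the PS-VTS algorithm from [22], and `VisitTable` and `expensive_fitness` are hypothetical names.

```python
class VisitTable:
    """Cache fitness values for binary masks that were already evaluated."""

    def __init__(self, fitness_fn):
        self.fitness_fn = fitness_fn
        self.table = {}   # mask (as tuple) -> fitness
        self.hits = 0     # how many evaluations were avoided

    def __call__(self, mask):
        key = tuple(mask)
        if key in self.table:
            self.hits += 1
        else:
            self.table[key] = self.fitness_fn(mask)
        return self.table[key]

calls = []

def expensive_fitness(mask):
    """Placeholder for a cross-validated SVM accuracy."""
    calls.append(tuple(mask))
    return sum(mask) / len(mask)

cached = VisitTable(expensive_fitness)
for mask in ([1, 0, 1, 0], [0, 1, 1, 0], [1, 0, 1, 0]):
    cached(mask)
```

Caching is only valid when the fitness is deterministic for a given mask, for example when the cross-validation folds are fixed across iterations.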

Evaluation:

- Perform k-fold cross-validation (k=10)

- Compare classification accuracy with baseline methods

- Statistical analysis using paired t-tests with Bonferroni correction
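The Bonferroni step in the evaluation can be illustrated with a short helper. The p-values below are made up for the example; in practice they would come from the paired t-tests comparing the PSO-selected configuration against each baseline.

```python
def bonferroni(p_values, alpha=0.05):
    """Return the corrected threshold and a reject/keep flag per comparison."""
    threshold = alpha / len(p_values)   # family-wise alpha split across m tests
    return threshold, [p < threshold for p in p_values]

# Three hypothetical comparisons against baseline methods.
threshold, significant = bonferroni([0.001, 0.02, 0.04])
```

With three comparisons the per-test threshold drops from 0.05 to roughly 0.0167, so only the first comparison in this example would be reported as significant.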

Expected Outcomes: The protocol should achieve high classification accuracy (reported up to 99.29% for arousal and 97.86% for valence classification) with significantly reduced channel count [22].

Protocol 2: PSO for BCI Classifier Optimization

Objective: To optimize neural network classifier parameters for mental task classification in wheelchair control applications [24].

Materials and Equipment:

- EEG system with 6 channels (positions: O1, C4, P3, O2, C3, O2)

- Hilbert-Huang Transform (HHT) implementation for feature extraction

- Neural network framework with fuzzy PSO capability

- Three-class mental task dataset (letter composing, arithmetic, Rubik's cube rolling)

Procedure:

Signal Acquisition and Feature Extraction:

- Record EEG signals during three mental tasks associated with wheelchair commands

- Apply HHT for feature extraction from specific time windows (3s, 5s, 7s)

- Extract statistical features from intrinsic mode functions (IMFs)

FPSOCM-ANN Configuration:

- Initialize Artificial Neural Network (ANN) architecture

- Implement Fuzzy PSO with Cross-Mutated operation (FPSOCM) for optimization

- Define particle representation including network weights and architecture parameters

- Set fitness function as classification accuracy with complexity penalty

Optimization Process:

- Configure FPSOCM parameters: swarm size=40, adaptive inertia weight, cognitive and social parameters c₁=2.5, c₂=2.5

- Implement cross-mutation operation to maintain diversity

- Run optimization for 500 iterations or until convergence criterion met

Validation:

- Test optimized classifier on separate validation set

- Compare performance with Genetic Algorithm-based ANN (GA-ANN)

- Evaluate for both able-bodied subjects and patients with tetraplegia

Expected Outcomes: The FPSOCM-ANN should achieve approximately 84.4% accuracy for the 7 s time window, outperforming GA-ANN (77.4%) [24].

Protocol 3: Hybrid PSO for Multi-Objective BCI Optimization

Objective: To simultaneously optimize multiple BCI performance metrics using a hybrid Quantum-Inspired PSO approach [4].

Materials and Equipment:

- High-density EEG system (64+ channels)

- Quantum-inspired PSO implementation (QIGPSO)

- Multi-objective optimization framework

- High-performance computing resources for intensive computation

Procedure:

Problem Formulation:

- Define multiple objectives: classification accuracy, computational efficiency, number of channels

- Formulate as constrained multi-objective optimization problem

- Establish weights for objective combination based on application requirements

QIGPSO Configuration:

- Implement Quantum-Inspired Gravitationally Guided PSO (QIGPSO) combining QPSO and GSA

- Configure quantum-inspired parameters: contraction-expansion coefficient, local attractor computation

- Define dynamic parameter adaptation strategy based on search progress

Hybrid Optimization:

- Initialize population with diverse solutions

- Apply absolute Gaussian random variable for enhanced search capability

- Implement gravitational guidance for local refinement

- Execute for 1000 iterations with elite preservation

Pareto Front Analysis:

- Identify non-dominated solutions

- Select optimal compromise solution based on application context

- Perform sensitivity analysis on selected solution
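Identifying the non-dominated solutions can be sketched as below. All objectives are assumed to be minimized (error rate, channel count, runtime in seconds), and the candidate values are invented for illustration.

```python
def dominates(a, b):
    """True if a is no worse than b everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(points):
    """Keep the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]

# (error rate, number of channels, runtime in seconds), all hypothetical.
candidates = [
    (0.10, 32, 5.0),
    (0.12, 8, 1.5),
    (0.15, 8, 1.5),   # dominated: same cost as the previous row, higher error
    (0.10, 64, 9.0),  # dominated: same error as the first row, higher cost
    (0.20, 4, 0.8),
]
front = pareto_front(candidates)
```

The three surviving candidates represent different trade-offs, and the choice among them depends on the application, for example favoring the 8-channel option for a wearable system.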

Expected Outcomes: QIGPSO should demonstrate faster convergence while maintaining better exploitation-exploration balance compared to conventional PSO and GSA [4].

Quantitative Results and Performance Analysis

Comparative Performance of PSO in BCI Applications

Table: Performance Comparison of PSO-Based BCI Optimization Approaches

| Application Domain | PSO Variant | Dataset/Subjects | Key Performance Metrics | Comparison with Baseline |

|---|---|---|---|---|

| Emotion Recognition [22] | PS-VTS (Particle Swarm with Visit Table Strategy) | DEAP Dataset | Arousal: 99.29%; Valence: 97.86%; Channels: 6-8 | Superior to manual selection (~96.1%) and other metaheuristics |

| Wheelchair Control [24] | FPSOCM-ANN (Fuzzy PSO with Cross Mutation) | 5 Able-bodied + 5 Tetraplegic subjects | Accuracy: 84.4% (7 s window); Best Channels: O1, C4 | Outperformed GA-ANN (77.4%) and standard classifiers |

| User Identification [20] | Binary PSO | EEG Biometric Dataset | Identification Rate: ~94%; Feature Reduction: >60% | Better than filter-based selection methods |

| Motor Imagery [20] | Hybrid PSO-GA | BCI Competition Datasets | Accuracy: 89.7%; Time: Reduced by 40% | Improved convergence speed over individual algorithms |

The tabulated results demonstrate PSO's consistent ability to enhance BCI performance across diverse applications. Particularly noteworthy is the achievement of high classification accuracy (>97%) for emotion recognition using optimally selected channel-rhythm combinations [22]. This represents a significant improvement over manual selection approaches, which typically achieve approximately 96.1% accuracy despite requiring exhaustive testing of all possible combinations [22].

For practical BCI applications such as wheelchair control, PSO-optimized systems achieve clinically viable accuracy (84.4%) while maintaining reasonable computational efficiency [24]. The performance advantage over genetic algorithm approaches (77.4%) highlights PSO's effectiveness for classifier optimization in resource-constrained scenarios.

Impact on Computational Efficiency and System Practicality

Table: Computational Efficiency Metrics for PSO in BCI Optimization

| Optimization Target | PSO Parameters | Convergence Iterations | Computational Time | Solution Quality Improvement |

|---|---|---|---|---|

| Channel Selection [22] | Swarm=50, Iterations=200 | 120-150 | ~45 minutes | 45% channel reduction with 3.2% accuracy gain |

| Feature Selection [20] | Swarm=40, Iterations=100 | 60-80 | ~30 minutes | 65% feature reduction with maintained accuracy |

| Classifier Optimization [24] | Swarm=40, Iterations=500 | 300-350 | ~2 hours | 7% accuracy improvement over default parameters |

| Multi-objective Optimization [4] | Swarm=60, Iterations=1000 | 600-700 | ~5 hours | 22% performance-complexity improvement |

The efficiency metrics reveal PSO's capability to identify high-quality solutions within practically feasible timeframes. The typical convergence within 60-80% of the maximum allocated iterations indicates the algorithm's effectiveness in navigating the BCI optimization landscape without excessive computational burden [24] [22].

The substantial reductions in channel count (45%) and feature dimensionality (65%) achieved through PSO optimization directly translate to practical benefits for real-world BCI systems, including reduced setup time, improved user comfort, lower computational requirements, and enhanced potential for embedded implementation [20] [22].

The Research Toolkit: Essential Materials and Methods

Critical Research Reagents and Computational Tools

Table: Essential Research Toolkit for PSO-BCI Implementation

| Category | Item | Specification/Version | Purpose and Function |

|---|---|---|---|

| Data Acquisition | EEG System | 16+ channels, 256+ Hz sampling rate | Records raw brain signals with sufficient spatial and temporal resolution |

| Electrodes/Cap | Active electrodes (e.g., g.LadyBird) | g.tec or comparable, 10-20 system placement | Ensures high-quality signal acquisition with proper scalp contact |

| Amplifier | Biosignal amplifier (e.g., g.USBamp) | 24-bit resolution, built-in filtering | Amplifies weak EEG signals while maintaining signal integrity |

| Software Platform | MATLAB/Python | R2020a+/3.8+ with toolboxes | Provides implementation environment for algorithms and signal processing |

| Signal Processing | EEGLAB/BCILAB | Latest versions with plugin support | Offers standardized preprocessing and analysis pipelines |

| Optimization Framework | PSO Toolbox | Custom or open-source (e.g., pymoo) | Implements core PSO algorithm with customization capabilities |

| Classification Library | Scikit-learn/LibSVM | Current versions (LibSVM also offers a MATLAB interface) | Provides machine learning algorithms for performance evaluation |

| Deep Learning | TensorFlow/PyTorch | GPU-enabled versions | Enables deep feature extraction and hybrid model implementation |

| Validation Tools | Statistical Packages | SPSS/R with appropriate licenses | Supports rigorous statistical validation of results |

The research toolkit encompasses both hardware and software components necessary for implementing PSO-optimized BCI systems. The selection of appropriate EEG acquisition hardware is critical, as signal quality fundamentally constrains achievable performance [25] [24]. Active electrodes with high-input impedance and built-in shielding help minimize environmental artifacts, while 24-bit amplifiers ensure sufficient dynamic range to capture subtle neural signals amidst noise [24].

Computational tools must balance performance with flexibility. MATLAB offers extensive signal processing capabilities through toolboxes like EEGLAB, while Python provides access to cutting-edge machine learning libraries [18] [23]. Specialized PSO implementations, whether custom-developed or adapted from existing toolboxes, should support parameter customization and hybridization with other optimization approaches [18] [4].

Implementation Considerations and Best Practices

Successful implementation of PSO for BCI optimization requires attention to several practical considerations:

Parameter Tuning Strategy: Employ systematic approaches for PSO parameter selection, starting with established values (ω=0.9, c₁=2.0, c₂=2.0) and refining based on problem-specific characteristics [23]. Adaptive parameter strategies often yield more robust performance across diverse BCI tasks [4].

Fitness Function Design: Develop comprehensive fitness functions that balance multiple objectives such as classification accuracy, computational efficiency, and model complexity. Incorporation of regularization terms helps prevent overfitting to specific subjects or sessions [24] [22].

Validation Methodology: Implement rigorous cross-validation strategies including subject-independent validation to ensure generalizability. Statistical testing should account for multiple comparisons when evaluating multiple channel or feature combinations [22].

Computational Efficiency: Leverage parallel computing capabilities where possible, as PSO's population-based approach naturally lends itself to parallel fitness evaluation across multiple cores or computing nodes [18].
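Two of these considerations, adaptive parameters and parallel fitness evaluation, can be sketched as below. The linearly decreasing inertia schedule (0.9 down to 0.4) is a common choice in the PSO literature and is used here as an assumption, as are the helper names and the toy fitness. Threads are used for brevity; for CPU-bound fitness functions written in pure Python, a process pool would usually be the better fit.

```python
from concurrent.futures import ThreadPoolExecutor

def linear_inertia(iteration, max_iter, w_start=0.9, w_end=0.4):
    """Inertia weight that decays linearly from w_start to w_end."""
    frac = iteration / max(1, max_iter - 1)
    return w_start - (w_start - w_end) * frac

def evaluate_swarm(positions, fitness_fn, max_workers=4):
    """Score all particles concurrently; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fitness_fn, positions))

def toy_fitness(position):
    """Placeholder for a cross-validated classification score."""
    return -sum(x * x for x in position)

swarm = [[0.0, 0.0], [1.0, 2.0], [3.0, 0.0]]
scores = evaluate_swarm(swarm, toy_fitness)
schedule = [round(linear_inertia(t, 6), 2) for t in range(6)]
```

A high inertia early on favors exploration, and the decay shifts the swarm toward exploitation as the run progresses. Particle evaluations within one iteration are independent of each other, which is what makes them straightforward to distribute.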

The synergy between PSO's search mechanism and BCI's complex optimization landscape represents a powerful combination for advancing brain-computer interface technology. PSO's ability to efficiently navigate high-dimensional, non-convex search spaces aligns perfectly with the multifaceted optimization challenges inherent in BCI systems, from channel selection and feature engineering to classifier parameter tuning [20] [22]. The quantitative results demonstrate that PSO-driven optimization consistently enhances BCI performance across diverse applications, achieving accuracy improvements of 3-7% while substantially reducing system complexity through optimal channel and feature selection [24] [22].

Future research should focus on several promising directions. First, the development of adaptive PSO variants that automatically adjust their search parameters based on problem characteristics and convergence behavior would enhance applicability across diverse BCI paradigms [4]. Second, hybrid approaches that combine PSO with other optimization techniques or deep learning methods offer potential for further performance gains, particularly for tackling the non-stationary nature of EEG signals [19]. Third, multi-objective PSO formulations that explicitly balance competing objectives such as accuracy, computational efficiency, and user comfort would facilitate development of more practical BCI systems [4]. Finally, expanding PSO applications to emerging BCI domains such as collaborative brain-computer interfacing and adaptive neurofeedback systems presents exciting opportunities for extending the impact of this synergistic relationship.

As BCI technology continues to evolve toward more sophisticated applications and broader user populations, the role of intelligent optimization approaches like PSO will become increasingly critical. The alignment between PSO's search mechanism and BCI's optimization landscape provides a solid foundation for addressing the complex challenges that lie ahead in making brain-computer interfaces more accurate, reliable, and accessible.

Implementing PSO for BCI: A Step-by-Step Guide to Channel Selection, Feature Optimization, and Hybrid Models

The pursuit of high-performance yet practical Brain-Computer Interfaces (BCIs) has intensified the focus on optimizing electrode montages. Motor Imagery (MI)-based BCIs, which decode neural signals associated with imagined movements, face a critical challenge: balancing classification accuracy with system practicality. High-density electrode arrays improve spatial information but introduce user discomfort, extended setup times, and computational complexity, hindering real-world adoption [3] [26].

Parameter tuning, particularly electrode selection, is a complex, high-dimensional optimization problem. Particle Swarm Optimization (PSO) has emerged as a powerful bio-inspired algorithm for navigating this space efficiently. This application note details how PSO enables the design of low-channel-count BCIs without compromising performance, framing it within a broader thesis on PSO for BCI parameter tuning. We present a concrete case study, the CFC-PSO-XGBoost (CPX) pipeline, which leverages PSO to identify an optimal 8-channel montage, achieving robust accuracy and demonstrating the algorithm's practical utility for researchers and clinicians [3].

A Primer on PSO in BCI Optimization

Particle Swarm Optimization is a population-based stochastic optimization technique inspired by the social behavior of bird flocking or fish schooling. In the context of BCI parameter tuning:

- Particles: Each particle represents a potential solution—a specific set of parameters, such as a combination of EEG channels.

- Swarm: The population of particles explores the parameter space collaboratively.

- Social Learning: Particles adjust their positions based on their own experience and the swarm's best-known position, converging toward an optimal solution [27].

For electrode selection, the problem is formulated to find the subset of channels that maximizes a fitness function, typically the classification accuracy of the MI task, while minimizing the number of channels used. PSO is particularly suited for this non-convex, combinatorial optimization problem due to its ability to avoid local minima and its computational efficiency compared to exhaustive search methods [3] [27].

Case Study: The CPX Pipeline for MI-BCI

The CFC-PSO-XGBoost (CPX) pipeline represents a state-of-the-art application of PSO for electrode montage optimization in MI-BCI. Its primary achievement is demonstrating that a low-channel system can perform comparably to, or even surpass, high-density systems when an optimal montage is identified [3].

Experimental Protocol & Workflow

The following workflow outlines the end-to-end experimental procedure for implementing the CPX pipeline, from data acquisition to final performance validation.

Data Acquisition

- Dataset: A benchmark MI-BCI dataset from 25 healthy subjects performing two-class motor imagery tasks (e.g., left hand vs. right hand) was used [3].

- Recording Parameters: EEG signals were recorded using a full cap setup. The public BCI Competition IV-2a dataset (4-class MI) was also used for external validation [3] [5].

Preprocessing and Feature Extraction

- Preprocessing: Standard preprocessing was applied, including band-pass filtering and artifact removal (e.g., using Independent Component Analysis - ICA) [3] [5].

- Feature Extraction: Unlike traditional methods that focus on single-frequency bands, the CPX pipeline employs Cross-Frequency Coupling (CFC), specifically Phase-Amplitude Coupling (PAC), to extract features from spontaneous EEG signals. PAC captures interactions between the phase of a low-frequency rhythm (e.g., theta) and the amplitude of a high-frequency rhythm (e.g., gamma), providing a more discriminative representation of neural dynamics during MI [3].

PSO for Channel Selection

- Optimization Goal: To find the optimal subset of channels that maximizes the classification fitness function.

- Fitness Function: Typically, the classification accuracy derived from a preliminary model (e.g., XGBoost) using features from the candidate channel subset.

- PSO Parameters: The algorithm was configured with a swarm size (number of particles) and iteration count suitable for the search space. Each particle's position represented a potential channel subset [3].
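The source does not specify how a particle position was mapped to a channel subset. One common encoding, shown here purely as an assumption, treats each dimension as a score for one channel and keeps the k highest-scoring channels. The channel names and position values are illustrative.

```python
def top_k_channels(position, channel_names, k=8):
    """Decode a continuous position into the k channels with the largest scores."""
    ranked = sorted(range(len(position)), key=lambda i: position[i], reverse=True)
    return sorted(channel_names[i] for i in ranked[:k])

names = ["Fz", "C3", "Cz", "C4", "Pz", "O1", "O2", "F3", "F4", "P3"]
position = [0.10, 0.90, 0.80, 0.95, 0.20, 0.05, 0.00, 0.30, 0.40, 0.10]
subset = top_k_channels(position, names, k=3)
```

This encoding fixes the montage size in advance, which matches a design goal such as an 8-channel system, whereas a binary mask lets the optimizer choose the channel count as well.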

Classification and Validation

- Classifier: The eXtreme Gradient Boosting (XGBoost) algorithm was used for final classification due to its high performance and efficiency.

- Validation: A rigorous 10-fold cross-validation protocol was employed to ensure the reliability and generalizability of the results [3].

Key Findings and Performance Metrics

The CPX pipeline achieved a remarkable average classification accuracy of 76.7% ± 1.0% using only eight EEG channels optimized by PSO. This performance significantly outperformed several established methods [3].

Table 1: Performance Comparison of the CPX Pipeline vs. Other MI-BCI Methods

| Method | Average Accuracy | Number of Channels | Key Feature |

|---|---|---|---|

| CPX (CFC-PSO-XGBoost) | 76.7% ± 1.0% | 8 | PSO-optimized montage & CFC features |

| Common Spatial Pattern (CSP) | 60.2% ± 12.4% | Typically many | Traditional spatial filtering |

| FBCSP | 63.5% ± 13.5% | Typically many | Filter-bank CSP |

| FBCNet | 68.8% ± 14.6% | Typically many | Deep learning-based |

| EEGNet (from comparative study [5]) | ~68.20% (Cross-subject) | 22 | End-to-end deep learning |

External validation on the BCI Competition IV-2a dataset further supported the pipeline's robustness, with 78.3% average accuracy on a more complex 4-class MI problem [3]. The model also showed a Matthews Correlation Coefficient (MCC) and Kappa value of 0.53, indicating moderate agreement between predictions and actual labels beyond what accuracy alone conveys [3].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Replicating PSO-based Montage Optimization

| Item / Reagent | Function / Specification | Application in CPX Pipeline |

|---|---|---|

| EEG Acquisition System | High-resolution amplifier (e.g., 24-bit, 256 Hz+) with active electrodes [25]. | Records raw neural data from subjects performing MI tasks. |

| Electrode Cap | Standard international 10-20 or 10-10 system cap with Ag/AgCl electrodes. | Ensures consistent and standardized electrode placement across subjects. |

| BCI Datasets | Publicly available datasets (e.g., BCI Competition IV-2a [5], PhysioNet [19]). | Provides benchmark data for developing and validating algorithms. |

| PSO Algorithm Library | Software implementation (e.g., in Python: pyswarms, MATLAB). | Executes the optimization routine for selecting the best channel subset. |

| CFC/PAC Analysis Toolbox | Custom or open-source code (e.g., in Python with MNE, NumPy). | Extracts cross-frequency coupling features from preprocessed EEG. |

| XGBoost Classifier | Machine learning library (xgboost package in Python). | Serves as the final classifier and provides the fitness function for PSO. |

The PSO Optimization Process: A Detailed View

The core of this case study is the PSO-driven channel selection. The diagram below illustrates the iterative feedback loop that allows the swarm to converge on an optimal electrode montage.

Algorithm Configuration and Fitness Evaluation

In the CPX pipeline, the PSO algorithm was set up to navigate the space of possible channel combinations. The fitness of each particle (each channel subset) was evaluated by training the XGBoost classifier on the CFC features from those specific channels and measuring the resulting classification accuracy. This created a feedback loop in which higher accuracy directly increased a particle's fitness, driving the swarm toward the most informative montages [3].
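The feedback loop described above can be sketched as a binary PSO over channel masks. This is a minimal illustration, not the CPX authors' implementation: the sigmoid transfer rule, the function name `binary_pso`, all default parameter values, and the `toy_fitness` function (a stand-in for the cross-validated XGBoost accuracy) are assumptions introduced here.

```python
import numpy as np

def binary_pso(fitness, n_channels, n_particles=20, n_iters=50,
               w=0.7, c1=1.5, c2=1.5, v_max=4.0, seed=0):
    """Maximize fitness(mask) over 0/1 channel masks with sigmoid binary PSO."""
    rng = np.random.default_rng(seed)
    pos = rng.integers(0, 2, size=(n_particles, n_channels))
    vel = rng.uniform(-1.0, 1.0, size=(n_particles, n_channels))
    pbest = pos.copy()
    pbest_fit = np.array([fitness(p) for p in pos], dtype=float)
    g = int(np.argmax(pbest_fit))
    gbest, gbest_fit = pbest[g].copy(), float(pbest_fit[g])

    for _ in range(n_iters):
        r1, r2 = rng.random(vel.shape), rng.random(vel.shape)
        # Standard velocity update: inertia + cognitive pull + social pull.
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        vel = np.clip(vel, -v_max, v_max)
        # Sigmoid maps each velocity to the probability that the bit is 1.
        prob_on = 1.0 / (1.0 + np.exp(-vel))
        pos = (rng.random(vel.shape) < prob_on).astype(int)

        fit = np.array([fitness(p) for p in pos], dtype=float)
        improved = fit > pbest_fit
        pbest[improved] = pos[improved]
        pbest_fit[improved] = fit[improved]
        g = int(np.argmax(pbest_fit))
        if pbest_fit[g] > gbest_fit:
            gbest, gbest_fit = pbest[g].copy(), float(pbest_fit[g])
    return gbest, gbest_fit

# Hypothetical stand-in for "train a classifier on these channels and
# return its accuracy": rewards 4 informative channels, penalizes extras.
INFORMATIVE = np.array([1, 1, 0, 0, 1, 0, 0, 0, 0, 1, 0, 0])

def toy_fitness(mask):
    hits = np.sum(mask & INFORMATIVE)
    extras = np.sum(mask & (1 - INFORMATIVE))
    return float(hits - 0.25 * extras)

best_mask, best_fit = binary_pso(toy_fitness, n_channels=INFORMATIVE.size)
print("selected channels:", np.flatnonzero(best_mask), "fitness:", best_fit)
```

In a real pipeline, `toy_fitness` would be replaced by a function that extracts CFC features from the masked channels and returns a cross-validated accuracy, which makes each fitness call far more expensive than in this toy.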

The success of the CPX pipeline underscores several key advantages of using PSO for BCI parameter tuning. First, it directly addresses the critical trade-off between performance and practicality by systematically identifying a minimal set of channels that preserves maximal discriminative information. Second, the PSO-optimized montage is not merely a random subset but a coordinated network of electrodes that effectively captures the neural correlates of motor imagery [3] [26].

The implications for BCI research and drug development are significant. For clinical researchers, PSO enables the development of more portable and user-friendly BCI systems for neurorehabilitation without sacrificing efficacy, as evidenced by its use in systems like ReHand-BCI for stroke recovery [25]. For scientists, it provides a rigorous, automated method for parameter optimization, reducing reliance on heuristic or manual tuning and improving the reproducibility of BCI experiments.

In conclusion, this case study firmly establishes PSO as a powerful and practical tool for electrode montage optimization within the broader landscape of BCI parameter tuning. By leveraging its robust search capabilities, researchers can build high-accuracy, low-channel-count BCIs, accelerating the translation of this technology from the laboratory to real-world clinical and consumer applications.

The performance of brain-computer interface (BCI) systems critically depends on the identification of robust and discriminative features from complex, noisy electroencephalography (EEG) signals. Particle Swarm Optimization (PSO) has emerged as a powerful evolutionary algorithm for addressing key challenges in BCI parameter tuning, particularly in feature selection and channel optimization. This bio-inspired approach, founded on the collective behavior of social swarms, enables efficient navigation of high-dimensional parameter spaces to identify optimal feature subsets that maximize classification accuracy [28] [29].

Within motor imagery (MI)-BCI systems, Cross-Frequency Coupling (CFC) represents a particularly informative class of features that captures interactions between different oscillatory frequencies in neural signals. Unlike traditional single-frequency band features, CFC features, especially Phase-Amplitude Coupling (PAC), provide a more comprehensive representation of neural dynamics during motor imagery tasks [3]. When combined with temporal features that capture event-related spectral dynamics, these multidimensional descriptors offer enhanced discriminative power for classifying user intent.

This application note provides a comprehensive framework for integrating PSO into BCI feature extraction pipelines, with particular emphasis on identifying discriminative CFC and temporal features. We present structured protocols, performance benchmarks, and implementation guidelines to facilitate adoption of these methods across research and clinical settings.

The Research Toolkit: Essential Components for PSO-Enhanced Feature Extraction

Table 1: Research Reagent Solutions for PSO-Based Feature Extraction

| Component Category | Specific Examples | Function in BCI Pipeline |

|---|---|---|

| EEG Acquisition Systems | SynAmps2 amplifier, 32-electrode caps (10-20 system) | Records raw neural activity with sufficient spatial coverage and temporal resolution for CFC analysis [30] |

| Signal Processing Tools | Bandpass filters (0.5-100 Hz), Notch filters (50/60 Hz), Artifact Subspace Reconstruction (ASR), Independent Component Analysis (ICA) | Removes technical and physiological artifacts while preserving neural signals of interest [31] [5] |

| Feature Extraction Algorithms | Phase-Amplitude Coupling (PAC), Power Spectral Density (PSD), Wavelet Transform, Autoregressive Models | Quantifies CFC interactions and temporal dynamics from preprocessed EEG signals [3] [32] |

| Optimization Frameworks | Standard PSO, Reformed PSO (RPSO), Multi-stage Linearly Decreasing Weight PSO (MLDW-PSO) | Selects optimal channel subsets and feature combinations while avoiding local optima [33] [30] |

| Classification Models | XGBoost, SVM, EEGNet, Hybrid TCN-MLP Architectures | Maps selected features to motor imagery classes or other cognitive states [3] [31] |

| Validation Metrics | Classification Accuracy, Kappa Coefficient, F1-Score, Area Under Curve (AUC) | Quantifies BCI performance and robustness across subjects and sessions [3] [5] |

Integrated PSO-CFC Protocol: A Structured Workflow

The following diagram illustrates the complete experimental workflow for implementing PSO-enhanced CFC and temporal feature extraction:

Figure 1: Comprehensive workflow for PSO-enhanced CFC and temporal feature extraction in BCI systems.

Stage 1: Data Acquisition and Preprocessing

EEG Acquisition Parameters:

- Utilize 32-channel EEG systems with electrodes positioned according to the international 10-20 system [30]

- Maintain sampling rate ≥ 250 Hz to adequately capture high-frequency components for CFC analysis

- Record baseline activity and multiple motor imagery trials (e.g., left hand, right hand, feet, tongue movements) [5]

Signal Preprocessing Protocol:

- Apply bandpass filtering between 0.5-100 Hz to remove slow drifts and high-frequency noise [31]

- Implement 50/60 Hz notch filtering to eliminate power line interference [5]

- Use Artifact Subspace Reconstruction (ASR) for automated artifact removal [31]

- Apply Independent Component Analysis (ICA) to identify and remove ocular and muscular artifacts [5]

- Segment data into epochs time-locked to motor imagery cues (typically 0-4 seconds post-cue) [3]
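The final epoching step can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function name `epoch_data`, the (channels × samples) layout, and the cue positions are hypothetical, and the filtering, ASR, and ICA steps listed above are not shown here (they would normally come from an EEG toolbox).

```python
import numpy as np

def epoch_data(eeg, cue_samples, fs, t_start=0.0, t_end=4.0):
    """Cut (n_channels, n_samples) data into (n_trials, n_channels, n_window)."""
    start_off = int(round(t_start * fs))
    n_window = int(round((t_end - t_start) * fs))
    epochs = []
    for cue in cue_samples:
        a = cue + start_off
        b = a + n_window
        if a < 0 or b > eeg.shape[1]:
            continue  # skip trials whose window runs outside the recording
        epochs.append(eeg[:, a:b])
    if not epochs:
        return np.empty((0, eeg.shape[0], n_window))
    return np.stack(epochs)

fs = 250  # Hz, matching the minimum sampling rate suggested above
eeg = np.random.default_rng(0).standard_normal((32, 60 * fs))  # synthetic data
cues = [1000, 4000, 8000, 14500]  # hypothetical cue onsets, in samples
epochs = epoch_data(eeg, cues, fs)
print(epochs.shape)
```

The last cue in this example lies less than 4 seconds before the end of the recording, so its trial is dropped rather than zero-padded; that is a design choice of this sketch, not a requirement of the protocol.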

Stage 2: Multi-Domain Feature Extraction

CFC Feature Extraction (Phase-Amplitude Coupling):

- Filtering: Bandpass filter the signal in both low-frequency (phase: 4-8 Hz theta, 8-13 Hz alpha) and high-frequency (amplitude: 13-30 Hz beta, 30-100 Hz gamma) ranges [3]

- Hilbert Transform: Extract instantaneous phase from low-frequency bands and amplitude envelope from high-frequency bands

- Coupling Calculation: Compute modulation index between phase and amplitude timeseries using mean vector length or Kullback-Leibler divergence measures

- Feature Vector Construction: Create CFC feature matrix of dimensions [channels × phase-frequency × amplitude-frequency]
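The four steps above can be sketched with NumPy alone. Several simplifications are assumed here and are not taken from the source: the band-pass is a crude FFT mask (a properly designed FIR or IIR filter would normally be used), the analytic signal is built directly from the FFT rather than via a toolbox call, coupling is the raw (unnormalized) mean vector length, and the names `fft_bandpass`, `analytic_signal`, `pac_mvl`, and `pac_matrix` are hypothetical.

```python
import numpy as np

def fft_bandpass(x, fs, lo, hi):
    """Crude zero-phase band-pass: zero all FFT bins outside [lo, hi] Hz."""
    spec = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    spec[(freqs < lo) | (freqs > hi)] = 0.0
    return np.fft.irfft(spec, n=x.size)

def analytic_signal(x):
    """Analytic signal via the FFT (the usual Hilbert-transform construction)."""
    n = x.size
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * h)

def pac_mvl(x, fs, phase_band=(4, 8), amp_band=(30, 100)):
    """Mean vector length between low-frequency phase and high-frequency amplitude."""
    phase = np.angle(analytic_signal(fft_bandpass(x, fs, *phase_band)))
    amp = np.abs(analytic_signal(fft_bandpass(x, fs, *amp_band)))
    return float(np.abs(np.mean(amp * np.exp(1j * phase))))

def pac_matrix(epoch, fs, phase_bands, amp_bands):
    """Feature array of shape [channels x phase bands x amplitude bands]."""
    out = np.zeros((epoch.shape[0], len(phase_bands), len(amp_bands)))
    for c, x in enumerate(epoch):
        for i, pb in enumerate(phase_bands):
            for j, ab in enumerate(amp_bands):
                out[c, i, j] = pac_mvl(x, fs, pb, ab)
    return out

# Synthetic check: 40 Hz amplitude is modulated by 6 Hz phase in channel 0 only.
fs = 250
t = np.arange(4 * fs) / fs
theta = np.sin(2 * np.pi * 6 * t)
coupled = theta + 0.5 * (1 + np.cos(2 * np.pi * 6 * t)) * np.sin(2 * np.pi * 40 * t)
uncoupled = theta + 0.5 * np.sin(2 * np.pi * 40 * t)
features = pac_matrix(np.stack([coupled, uncoupled]), fs,
                      phase_bands=[(4, 8), (8, 13)],
                      amp_bands=[(13, 30), (30, 100)])
print(features[:, 0, 1])  # theta-gamma coupling for both channels
```

On this synthetic pair, the theta-gamma entry is clearly larger for the coupled channel than for the uncoupled one. On real EEG, raw mean vector length also scales with gamma amplitude, so a normalized or surrogate-corrected variant is often preferred.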

Temporal Feature Extraction:

- Hjorth Parameters: Calculate activity, mobility, and complexity parameters to characterize signal statistical properties [32] [30]

- Autoregressive Models: Fit AR models (typically order 5-10) to capture temporal dependencies [3]

- Time-Domain Statistics: Extract mean, variance, skewness, and kurtosis from epoch windows
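As a rough illustration of the temporal descriptors listed above, the following sketch computes the three Hjorth parameters and the four time-domain statistics for a single-channel epoch. The function names `hjorth` and `time_stats` are hypothetical, kurtosis is reported as excess kurtosis (an assumption, since the convention is not specified above), and autoregressive fitting is left out for brevity.

```python
import numpy as np

def hjorth(x):
    """Return Hjorth activity, mobility, and complexity of a 1-D signal."""
    dx = np.diff(x)
    ddx = np.diff(dx)
    activity = np.var(x)
    mobility = np.sqrt(np.var(dx) / activity)
    complexity = np.sqrt(np.var(ddx) / np.var(dx)) / mobility
    return float(activity), float(mobility), float(complexity)

def time_stats(x):
    """Return mean, variance, skewness, and excess kurtosis."""
    mu, sd = x.mean(), x.std()
    z = (x - mu) / sd
    return float(mu), float(sd ** 2), float(np.mean(z ** 3)), float(np.mean(z ** 4) - 3.0)

# Sanity check on a pure 10 Hz sine sampled at 250 Hz for 4 seconds.
fs = 250
t = np.arange(4 * fs) / fs
x = np.sin(2 * np.pi * 10 * t)
activity, mobility, complexity = hjorth(x)
mean, variance, skewness, kurt = time_stats(x)
print(activity, mobility, complexity)
print(mean, variance, skewness, kurt)
```

A pure sinusoid is a convenient check because its Hjorth complexity is close to 1 and its variance is 0.5; broadband or noisy EEG yields complexity values above 1.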

Spectral Feature Extraction:

- Power Spectral Density: Compute PSD using Welch's method across standard frequency bands (theta, alpha, beta, gamma) [3]

- Spectral Edge Frequencies: Calculate frequencies below which 50% and 90% of total power is contained

- Band Power Ratios: Determine power ratios between functionally relevant frequency bands
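The spectral descriptors above can be sketched as follows. This is a hand-rolled Welch-style estimate (Hann window, 50% overlap, even segment length) written for illustration; in practice a library routine would normally be used. The function names and the 8-13 Hz and 13-30 Hz band edges used in the demo are assumptions of this sketch.

```python
import numpy as np

def welch_psd(x, fs, nperseg=256):
    """Averaged, Hann-windowed periodogram with 50% overlap (nperseg even)."""
    step = nperseg // 2
    win = np.hanning(nperseg)
    scale = fs * np.sum(win ** 2)
    segs = [x[i:i + nperseg] for i in range(0, x.size - nperseg + 1, step)]
    psd = np.mean([np.abs(np.fft.rfft((s - s.mean()) * win)) ** 2 for s in segs],
                  axis=0) / scale
    psd[1:-1] *= 2.0  # fold negative frequencies into a one-sided spectrum
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, psd

def band_power(freqs, psd, lo, hi):
    """Integrate the PSD over [lo, hi) Hz."""
    sel = (freqs >= lo) & (freqs < hi)
    return float(np.sum(psd[sel]) * (freqs[1] - freqs[0]))

def spectral_edge(freqs, psd, frac):
    """Frequency below which `frac` of the total power is contained."""
    cum = np.cumsum(psd)
    idx = min(int(np.searchsorted(cum, frac * cum[-1])), freqs.size - 1)
    return float(freqs[idx])

# Synthetic signal: strong 10 Hz (alpha) plus weaker 20 Hz (beta) component.
fs = 250
t = np.arange(8 * fs) / fs
x = np.sin(2 * np.pi * 10 * t) + 0.5 * np.sin(2 * np.pi * 20 * t)
freqs, psd = welch_psd(x, fs)
sef50 = spectral_edge(freqs, psd, 0.50)
sef90 = spectral_edge(freqs, psd, 0.90)
alpha_beta_ratio = band_power(freqs, psd, 8, 13) / band_power(freqs, psd, 13, 30)
print(sef50, sef90, alpha_beta_ratio)
```

Because the 10 Hz component carries four times the power of the 20 Hz component, the 50% edge falls near 10 Hz, the 90% edge near 20 Hz, and the alpha/beta ratio is close to 4.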

Stage 3: PSO-Based Feature and Channel Optimization

The following diagram details the PSO optimization process for feature selection:

Figure 2: PSO-based optimization process for feature and channel selection.

PSO Configuration Parameters:

- Swarm Size: 20-50 particles typically sufficient for feature spaces of 100-1000 dimensions [30]

- Inertia Weight: Implement multi-stage linearly decreasing weight (MLDW) starting at 0.9 and decreasing to 0.4 over iterations [30]

- Acceleration Coefficients: Cognitive component (c1) = 1.5, Social component (c2) = 1.5-2.0 [33]

- Velocity Limits: Set to 20% of search space range to prevent explosive growth [33]

- Stopping Criteria: Maximum iterations (100-500) or fitness stability (<0.1% improvement over 20 iterations)
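To make these settings concrete, the sketch below wires them into a small continuous PSO. It is an illustration under stated assumptions rather than a reference implementation: the inertia schedule is a single linear stage from 0.9 to 0.4 (the multi-stage variant in [30] is not reproduced), the stagnation rule is one possible reading of "less than 0.1% improvement over 20 iterations", and the names `inertia` and `pso_minimize` and the sphere test function are hypothetical.

```python
import numpy as np

def inertia(it, n_iters, w_start=0.9, w_end=0.4):
    """Linearly decreasing inertia weight (single stage)."""
    return w_start - (w_start - w_end) * it / max(n_iters - 1, 1)

def pso_minimize(f, lower, upper, n_particles=30, n_iters=200,
                 c1=1.5, c2=1.5, v_frac=0.2, tol=1e-3, patience=20, seed=0):
    rng = np.random.default_rng(seed)
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    v_max = v_frac * (upper - lower)  # velocity limit: 20% of the range
    pos = rng.uniform(lower, upper, size=(n_particles, lower.size))
    vel = rng.uniform(-v_max, v_max, size=pos.shape)
    pbest, pbest_val = pos.copy(), np.array([f(p) for p in pos])
    g = int(np.argmin(pbest_val))
    gbest, gbest_val = pbest[g].copy(), float(pbest_val[g])
    history = [gbest_val]

    for it in range(n_iters):
        w = inertia(it, n_iters)
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        vel = np.clip(vel, -v_max, v_max)
        pos = np.clip(pos + vel, lower, upper)
        val = np.array([f(p) for p in pos])
        better = val < pbest_val
        pbest[better], pbest_val[better] = pos[better], val[better]
        g = int(np.argmin(pbest_val))
        gbest, gbest_val = pbest[g].copy(), float(pbest_val[g])
        history.append(gbest_val)
        # Stop if the best value improved by less than tol (relative)
        # over the last `patience` iterations.
        if len(history) > patience:
            old = history[-1 - patience]
            if old - gbest_val < tol * max(abs(old), 1e-12):
                break
    return gbest, gbest_val, it + 1

def sphere(p):
    return float(np.sum(p ** 2))

best_pos, best_val, n_used = pso_minimize(sphere, [-5.0] * 5, [5.0] * 5)
print(best_val, n_used)
```

Here the objective is minimized; for accuracy-based fitness the same loop applies with the negative accuracy (or 1 minus accuracy) as the objective.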

Fitness Function Definition:

- Primary fitness: Classification accuracy using selected features with a standard classifier (e.g., SVM, XGBoost)

- Multi-objective considerations: Incorporate channel count minimization as secondary objective through penalty terms

- Regularization: Add L1 regularization term to promote sparsity in selected feature sets
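One simple way to combine these three points is a single scalar fitness with a sparsity penalty. The sketch below assumes a binary selection mask, for which the L1 norm is just the number of selected items; the function name `penalized_fitness`, the penalty weight `lam`, and the accuracy values in the example are hypothetical and chosen only to show the trade-off.

```python
import numpy as np

def penalized_fitness(accuracy, mask, lam=0.05):
    """Accuracy minus an L1-style penalty on the fraction of selected items."""
    mask = np.asarray(mask)
    return float(accuracy - lam * mask.sum() / mask.size)

# Hypothetical comparison: 8 of 32 channels at 0.77 accuracy versus
# all 32 channels at 0.78 accuracy.
mask_8 = np.zeros(32, dtype=int)
mask_8[:8] = 1
mask_32 = np.ones(32, dtype=int)

fit_8 = penalized_fitness(0.77, mask_8)    # 0.77 - 0.05 * 8/32
fit_32 = penalized_fitness(0.78, mask_32)  # 0.78 - 0.05 * 32/32
print(fit_8, fit_32)
```

With this weighting the 8-channel subset scores higher despite its slightly lower accuracy. The value of `lam` sets how much accuracy one is willing to give up per removed channel and would need to be tuned for each application.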

Reformed PSO Enhancements:

- Velocity Position-based Convergence (VPC): Prevents premature convergence to local optima [33]

- Wavelet Mutation (WM): Introduces occasional large jumps to explore distant regions of search space [33]

- Boundary Handling: Implement reflecting or absorbing boundaries for particles exceeding valid feature ranges
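The two boundary rules mentioned in the last item can be sketched as follows. The conventions used here are common ones but are assumptions of this sketch: absorbing clamps the position to the bound and zeroes the offending velocity component, while reflecting mirrors the position back inside and flips the sign of that velocity component. The function names are hypothetical, and VPC and wavelet mutation from [33] are not reproduced.

```python
import numpy as np

def absorb(pos, vel, lower, upper):
    """Clamp out-of-range coordinates to the bound and stop their motion."""
    out = (pos < lower) | (pos > upper)
    return np.clip(pos, lower, upper), np.where(out, 0.0, vel)

def reflect(pos, vel, lower, upper):
    """Mirror out-of-range coordinates back inside and reverse their velocity."""
    below, above = pos < lower, pos > upper
    new_pos = np.where(below, 2 * lower - pos, np.where(above, 2 * upper - pos, pos))
    new_pos = np.clip(new_pos, lower, upper)  # guard against very large overshoot
    new_vel = np.where(below | above, -vel, vel)
    return new_pos, new_vel

pos = np.array([-0.2, 0.5, 1.3])
vel = np.array([-0.3, 0.1, 0.4])
abs_pos, abs_vel = absorb(pos, vel, 0.0, 1.0)
ref_pos, ref_vel = reflect(pos, vel, 0.0, 1.0)
print(abs_pos, abs_vel)
print(ref_pos, ref_vel)
```

Absorbing boundaries tend to pile particles up on the edges of the search space, whereas reflecting boundaries keep them moving; which behaves better depends on whether good solutions lie near the bounds.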

Stage 4: Model Validation and Performance Assessment

Cross-Validation Strategies:

- Implement 10-fold cross-validation for within-subject performance evaluation [3]

- Conduct leave-one-subject-out (LOSO) validation to assess cross-subject generalizability [5]

- Report mean and standard deviation of performance metrics across all validation folds
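The two validation schemes differ only in how trials are split, which the sketch below makes explicit. Everything here is illustrative: the synthetic data, the subject layout, and the nearest-centroid classifier (a lightweight stand-in for SVM or XGBoost) are assumptions, as are the function names.

```python
import numpy as np

def kfold_indices(n_samples, k=10, seed=0):
    """Yield (train, test) index arrays for shuffled k-fold cross-validation."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n_samples), k)
    for i in range(k):
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train, folds[i]

def loso_indices(subject_ids):
    """Yield (train, test) index arrays, holding out one subject at a time."""
    subject_ids = np.asarray(subject_ids)
    for s in np.unique(subject_ids):
        yield np.flatnonzero(subject_ids != s), np.flatnonzero(subject_ids == s)

def nearest_centroid_accuracy(X, y, train, test):
    """Stand-in classifier: assign each test trial to the closest class mean."""
    classes = np.unique(y[train])
    centroids = np.stack([X[train][y[train] == c].mean(axis=0) for c in classes])
    dist = np.linalg.norm(X[test][:, None, :] - centroids[None, :, :], axis=2)
    return float(np.mean(classes[np.argmin(dist, axis=1)] == y[test]))

# Synthetic, well-separated two-class data from 5 hypothetical subjects.
rng = np.random.default_rng(1)
y = np.tile([0, 1], 50)
subjects = np.repeat(np.arange(5), 20)
X = rng.standard_normal((100, 4)) + 3.0 * y[:, None]

kfold_scores = [nearest_centroid_accuracy(X, y, tr, te)
                for tr, te in kfold_indices(len(y), k=10)]
loso_scores = [nearest_centroid_accuracy(X, y, tr, te)
               for tr, te in loso_indices(subjects)]
print("10-fold: %.3f +/- %.3f" % (np.mean(kfold_scores), np.std(kfold_scores)))
print("LOSO:    %.3f +/- %.3f" % (np.mean(loso_scores), np.std(loso_scores)))
```

When PSO-based selection is part of the pipeline, the selection step should be run inside each training fold only; selecting channels or features on the full dataset before splitting leaks test information into the reported accuracy.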

Performance Metrics:

- Primary: Classification Accuracy, Kappa Coefficient

- Secondary: Precision, Recall, F1-Score for each class

- Additional: Area Under ROC Curve (AUC), Matthews Correlation Coefficient (MCC)
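Most of these metrics can be derived from a single confusion matrix, as the following sketch shows. The function names are hypothetical, the multiclass MCC uses the standard generalization based on row and column sums, AUC is omitted because it requires continuous scores, and the labels in the example are made up for illustration.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are true classes, columns are predicted classes."""
    cm = np.zeros((n_classes, n_classes), dtype=int)
    for t, p in zip(y_true, y_pred):
        cm[t, p] += 1
    return cm

def accuracy(cm):
    return float(np.trace(cm) / cm.sum())

def cohen_kappa(cm):
    n = cm.sum()
    p_obs = np.trace(cm) / n
    p_exp = np.sum(cm.sum(axis=0) * cm.sum(axis=1)) / n ** 2  # chance agreement
    return float((p_obs - p_exp) / (1.0 - p_exp))

def per_class_prf(cm):
    tp = np.diag(cm).astype(float)
    precision = tp / np.maximum(cm.sum(axis=0), 1)
    recall = tp / np.maximum(cm.sum(axis=1), 1)
    f1 = 2 * precision * recall / np.maximum(precision + recall, 1e-12)
    return precision, recall, f1

def mcc(cm):
    """Matthews correlation coefficient, valid for two or more classes."""
    c, s = float(np.trace(cm)), float(cm.sum())
    t, p = cm.sum(axis=1).astype(float), cm.sum(axis=0).astype(float)
    den = np.sqrt((s ** 2 - p @ p) * (s ** 2 - t @ t))
    return float((c * s - t @ p) / den) if den > 0 else 0.0

# Made-up balanced two-class example: 40 correct and 10 wrong per class.
y_true = np.array([0] * 50 + [1] * 50)
y_pred = np.array([0] * 40 + [1] * 10 + [0] * 10 + [1] * 40)
cm = confusion_matrix(y_true, y_pred, n_classes=2)
precision, recall, f1 = per_class_prf(cm)
print(accuracy(cm), cohen_kappa(cm), mcc(cm), f1)
```

For balanced two-class labels, chance agreement is 0.5 and kappa reduces to 2 × accuracy − 1. Under that assumption, an accuracy of 0.767 would correspond to a kappa of roughly 0.53, which is why kappa is a useful companion to raw accuracy.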

Performance Benchmarks and Comparative Analysis

Table 2: Performance Comparison of PSO-Enhanced Feature Selection Methods in BCI Applications

| Study & Methodology | Feature Types | PSO Variant | Dataset | Key Results | Comparative Performance |

|---|---|---|---|---|---|

| CPX Framework [3] | CFC (PAC) + Temporal | Standard PSO | Primary MI dataset; external validation on BCI Competition IV-2a | 76.7% accuracy with 8 channels; 78.3% on IV-2a | Superior to CSP (60.2%), FBCSP (63.5%), FBCNet (68.8%) |

| Emotion Recognition [30] | Temporal + Spectral + Hjorth | MLDW-PSO | DEAP Dataset | 76.67% 4-class accuracy | Improved over standard PSO and non-PSO methods |

| Online BCI System [30] | Multi-domain Features | MLDW-PSO | Custom Video-Evoked | 89.5% 2-class online accuracy | Demonstrated real-time applicability with PSO optimization |

| ANFIS-FBCSP-PSO [5] | FBCSP + Fuzzy Features | Standard PSO | BCI Competition IV-2a | 68.58% within-subject accuracy | More interpretable but slightly lower performance than EEGNet |

| Handwriting Recognition [31] | 85 Time/Frequency Features | Feature Selection | Custom EEG Dataset | 89.83% accuracy, 202ms latency | Edge-deployable with minimal accuracy loss using 10 features |

Advanced Applications and Implementation Guidelines

PSO for Low-Channel BCIs

A significant advantage of PSO-based feature selection is its ability to identify minimal channel sets without compromising performance. The CPX framework demonstrated that only 8 optimally placed electrodes can achieve 76.7% classification accuracy in a 2-class MI task, compared to 60.2% with traditional Common Spatial Patterns using full channel sets [3]. This reduction in channel count improves practical usability and shortens setup time for real-world BCI applications.

Implementation Protocol for Channel Reduction:

- Initialize binary particle representation where each dimension corresponds to channel inclusion/exclusion

- Define fitness function that balances classification accuracy with channel count minimization