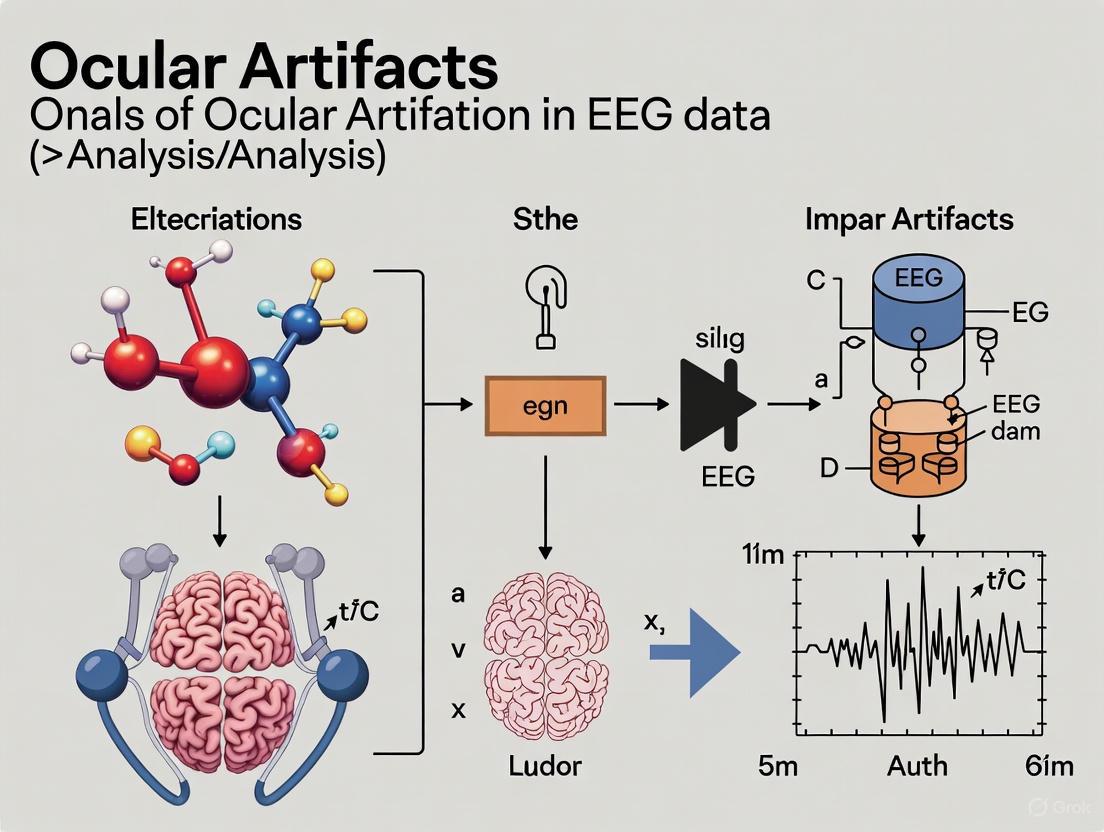

Ocular Artifacts in EEG Analysis: Impacts, Correction Methods, and Best Practices for Biomedical Research


Abstract

Ocular artifacts, including blinks and saccades, pose a significant challenge in electroencephalographic (EEG) data analysis by introducing large-amplitude, low-frequency signals that can obscure crucial neural information and lead to data misinterpretation. This article provides a comprehensive overview for researchers and drug development professionals, covering the foundational physiology of these artifacts, their specific impacts on signal integrity, and a detailed evaluation of both established and emerging correction methodologies. We further offer a practical guide for troubleshooting and optimizing artifact handling in diverse experimental setups, from traditional lab-based systems to modern wearable EEG, and conclude with a comparative analysis of validation metrics to inform robust analytical pipelines in clinical and translational neuroscience.

Understanding Ocular Artifacts: Physiological Origins and Their Impact on EEG Signal Integrity

The human eye is not merely a passive sensory organ but an active source of significant electrophysiological phenomena that profoundly impact electroencephalographic (EEG) research. Three primary physiological sources—the corneo-retinal dipole, eyelid movements, and extraocular muscle activity—generate electrical potentials that can contaminate EEG recordings, presenting substantial challenges for neuroscientists and clinical researchers. These ocular artifacts exhibit amplitudes that often dwarf genuine neural signals, with their frequency bandwidth (3–15 Hz) critically overlapping with diagnostically important brain rhythms such as theta and alpha waves [1]. Understanding the precise mechanisms through which these ocular structures generate artifacts is fundamental to developing effective correction methodologies, ensuring the integrity of neural data, and advancing both basic research and applied clinical studies, including drug development projects investigating neurophysiological outcomes.

Physiological Foundations of Ocular Artifacts

The Corneo-Retinal Dipole (CRD)

The corneo-retinal dipole represents a fundamental bioelectrical phenomenon central to understanding ocular artifacts in EEG. This dipole arises from the transmembrane potential differences between the positively charged cornea and the negatively charged retina, creating a stable electrical field that spans the eyeball [2] [1]. This potential difference, measuring approximately 6-10 mV in the resting state, transforms the entire eyeball into a biological electric dipole [3]. During any rotational movement of the eyeball, this dipole field rotates correspondingly within the conductive medium of the head. This movement generates widespread potential changes across the scalp that are detectable by EEG electrodes, with the frontal regions being most significantly affected due to their proximity to the ocular globes. Research has demonstrated that the CRD-related artifacts can be considered stationary for at least 1-1.5 hours, validating the feasibility of calibration-based correction approaches for both offline and online EEG analysis [2].
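The rotation argument can be illustrated with a toy forward model. The sketch below treats each eye as a single point current dipole (cornea positive) in an infinite homogeneous conductor and evaluates the standard dipole potential V = p·r / (4πσ|r|³) at F7 and F8. The conductivity, dipole strength, and all coordinates are illustrative assumptions rather than measured head geometry, and a realistic model would also include skull and scalp layers.

```python
import numpy as np

SIGMA = 0.33  # assumed homogeneous tissue conductivity (S/m), illustrative only

# Hypothetical head-frame coordinates in metres: +x = right, +y = anterior, +z = up.
EYES = {"left": np.array([-0.03, 0.08, 0.0]), "right": np.array([0.03, 0.08, 0.0])}
ELECTRODES = {"F7": np.array([-0.07, 0.06, 0.03]), "F8": np.array([0.07, 0.06, 0.03])}


def dipole_potential(moment, dipole_pos, electrode_pos, sigma=SIGMA):
    """Potential (V) of a point current dipole in an infinite homogeneous conductor."""
    r = electrode_pos - dipole_pos
    return float(np.dot(moment, r) / (4.0 * np.pi * sigma * np.linalg.norm(r) ** 3))


def gaze_moment(azimuth_deg, strength=1e-8):
    """Dipole along the line of sight (cornea positive); strength in A*m is arbitrary."""
    a = np.deg2rad(azimuth_deg)
    return strength * np.array([np.sin(a), np.cos(a), 0.0])


def frontal_potentials_uv(azimuth_deg):
    """Summed potential of both eye dipoles at F7 and F8, in microvolts."""
    m = gaze_moment(azimuth_deg)
    return {name: 1e6 * sum(dipole_potential(m, eye, pos) for eye in EYES.values())
            for name, pos in ELECTRODES.items()}


for az in (-20, 0, 20):
    v = frontal_potentials_uv(az)
    print(f"gaze {az:+d} deg: F7 {v['F7']:+.3f} uV, F8 {v['F8']:+.3f} uV")
```

Rotating the gaze to the right raises the potential at F8 and lowers it at F7, mirroring the opposing frontal polarities seen during horizontal eye movements; absolute values depend entirely on the assumed geometry.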

Eyelid Anatomy and Movement Artifacts

The eyelids are complex, multi-layered structures whose movements introduce substantial artifacts distinct from those generated by the CRD. Anatomically, the eyelids consist of three primary lamellae:

- Anterior Lamella: Comprising the thin skin (approximately 1 mm thick, among the thinnest in the human body) and the orbicularis oculi muscle, a concentric striated muscle responsible for eyelid closure [4].

- Middle Lamella: Containing the orbital septum (a fibrous membrane) and fat pads that provide structural separation [4].

- Posterior Lamella: Housing the tarsal plate (dense connective tissue providing mechanical support), retractors (levator palpebrae superioris and Müller's muscle), and the palpebral conjunctiva (a mucous membrane) [4].

The primary eyelid movements are controlled by two key muscular systems: the levator palpebrae superioris (innervated by CN III) for eyelid elevation, and the orbicularis oculi (innervated by CN VII) for eyelid closure [5] [6]. During blinking, the rapid movement of the eyelid across the corneal surface introduces high-amplitude potential field changes independent of eyeball rotation [2] [1]. The eyelid itself acts as a sliding conductive layer that modulates the electrical field generated by the underlying CRD, creating characteristic spike-like artifacts in the EEG signal that are particularly prominent in frontal electrodes.

Extraocular Muscles and Electromyogenic Artifacts

The extraocular muscles (EOMs) are a specialized group of seven skeletal muscles that control eyeball movement and eyelid elevation. They comprise the four rectus muscles (superior, inferior, medial, and lateral), the two oblique muscles (superior and inferior), and the levator palpebrae superioris [7] [8]. Their motor units are unusually small: the nerve-to-muscle fiber ratio is about 1:3 to 1:5, compared with 1:50 to 1:125 in other skeletal muscles. This enables precise control, but these muscles also generate substantial electrical activity during contraction [7].

Innervation is provided by three cranial nerves: the oculomotor nerve (CN III) supplies most EOMs, the trochlear nerve (CN IV) innervates the superior oblique, and the abducens nerve (CN VI) controls the lateral rectus [7] [8]. Contractions of these muscles during saccades, smooth pursuit, and fixation generate electromyographic (EMG) signals that appear as high-frequency bursts in the EEG, typically in the 30-100 Hz range, although their broadband spectra can extend into the lower frequencies that are crucial for brain-rhythm analysis [1].
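One simple way to quantify how much of a segment's power falls in the EMG range is a band-power fraction. The sketch below is a minimal illustration assuming a Hann-tapered periodogram and the 30-100 Hz band quoted above; the function name is hypothetical, and the 70 Hz sinusoid is only a crude stand-in for a genuinely broadband EMG burst.

```python
import numpy as np


def band_power_fraction(x, fs, band=(30.0, 100.0)):
    """Fraction of non-DC periodogram power (Hann-tapered) that falls inside `band` in Hz."""
    x = np.asarray(x, float)
    x = x - x.mean()
    spectrum = np.abs(np.fft.rfft(x * np.hanning(len(x)))) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return float(spectrum[in_band].sum() / spectrum[1:].sum())


fs = 500.0                                   # illustrative sampling rate (Hz)
t = np.arange(int(fs)) / fs                  # 1 s of data
alpha_like = np.sin(2 * np.pi * 10 * t)      # 10 Hz rhythm
emg_like = np.sin(2 * np.pi * 70 * t)        # crude 70 Hz stand-in for an EMG burst
print(band_power_fraction(alpha_like, fs), band_power_fraction(emg_like, fs))
```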

Table 1: Extraocular Muscles and Their Functions

| Muscle | Primary Action | Innervation | Artifact Type |
|---|---|---|---|
| Medial Rectus | Adduction | Oculomotor (CN III) | Saccades, pursuit |
| Lateral Rectus | Abduction | Abducens (CN VI) | Saccades, pursuit |
| Superior Rectus | Elevation | Oculomotor (CN III) | Vertical movements |
| Inferior Rectus | Depression | Oculomotor (CN III) | Vertical movements |
| Superior Oblique | Intorsion, depression | Trochlear (CN IV) | Torsional movements |
| Inferior Oblique | Extorsion, elevation | Oculomotor (CN III) | Torsional movements |
| Levator Palpebrae Superioris | Eyelid elevation | Oculomotor (CN III) | Blink-related |

| Levator Palpebrae Superioris | Eyelid elevation | Oculomotor (CN III) | Blink-related |

Quantitative Characterization of Ocular Artifacts

Ocular artifacts exhibit distinct electrophysiological properties that enable their identification and quantification in EEG signals. The characteristics vary significantly between the different physiological sources, necessitating tailored correction approaches for each artifact type.

Table 2: Quantitative Characteristics of Ocular Artifacts in EEG

| Parameter | CRD & Eyelid Artifacts | Extraocular Muscle Artifacts | Neural EEG (Comparison) |
|---|---|---|---|
| Amplitude Range | 50-200 μV (up to 10x EEG) [1] | 5-50 μV [1] | 5-20 μV (scalp) |
| Frequency Bandwidth | 3-15 Hz [1] | 30-100 Hz (fundamental) [1] | 0.5-70 Hz |
| Spatial Distribution | Anterior-maximum (frontal) [1] | Anterior-focused | Variable by rhythm |
| Duration | 100-400 ms (blinks) [1] | 10-100 ms (saccades) | Continuous |
| Frequency of Occurrence | 12-18 blinks/minute [1] | Variable by task | N/A |
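The amplitude and duration ranges in Table 2 suggest a naive blink detector for a single frontal channel. The sketch below flags runs where the absolute signal exceeds 50 μV and keeps those whose supra-threshold run lasts 100-400 ms. Treating the supra-threshold run length as the blink duration is a simplifying assumption (it is shorter than the full waveform), the function name is hypothetical, and the defaults would need tuning on real data.

```python
import numpy as np


def detect_blinks(fp_uv, fs, threshold_uv=50.0, min_ms=100.0, max_ms=400.0):
    """Flag runs where |frontal signal| exceeds `threshold_uv` for a blink-like duration.

    fp_uv: baseline-corrected frontal channel (e.g. Fp1) in microvolts.
    Returns a list of (onset, offset) sample indices; offset is exclusive.
    """
    above = np.abs(np.asarray(fp_uv, float)) > threshold_uv
    # Pad with False so that every supra-threshold run has a rising and a falling edge.
    edges = np.diff(np.concatenate(([False], above, [False])).astype(int))
    onsets = np.flatnonzero(edges == 1)
    offsets = np.flatnonzero(edges == -1)
    events = []
    for on, off in zip(onsets, offsets):
        duration_ms = (off - on) * 1000.0 / fs
        if min_ms <= duration_ms <= max_ms:
            events.append((int(on), int(off)))
    return events


fs = 250.0
signal = np.zeros(int(5 * fs))
signal[250:325] += 150.0 * np.hanning(75)   # 300 ms, 150 uV blink-like pulse
signal[800:805] += 120.0                    # 20 ms spike: too short to count as a blink
print(detect_blinks(signal, fs))
```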

Methodologies for Experimental Investigation

Calibration Protocols for Artifact Characterization

Establishing reliable experimental protocols is essential for systematic investigation of ocular artifacts. The sparse generalized eye artifact subspace subtraction (SGEYESUB) algorithm, which demonstrates state-of-the-art correction performance, utilizes a calibration data acquisition protocol requiring approximately five minutes per subject [2]. This protocol involves:

- Participant Preparation: Application of high-density EEG electrodes (typically 64+ channels) with simultaneous EOG recording to monitor ocular activity.

- Calibration Task: Participants perform a structured sequence of ocular movements including:

  - Voluntary blinks (10-15 repetitions at 3-second intervals)

  - Horizontal saccades (following a visual target between 10° left and right positions)

  - Vertical saccades (following a target between 10° up and down positions)

  - Smooth pursuit (tracking a slowly moving visual target in circular patterns)

- Data Acquisition: EEG is recorded at a minimum sampling rate of 500 Hz to adequately capture both slow (CRD) and fast (EMG) components, with trigger markers indicating movement onset.

This calibration data enables the construction of subject-specific artifact templates that account for individual anatomical variations in skull conductivity, eye socket geometry, and dipole strength [2].
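A calibration run of this kind can be scripted as a timed list of cues. The schedule builder below is hypothetical: the blink count and 3 s spacing and the ±10° saccade targets follow the protocol above, while the number of saccades, dwell time, pursuit speed and radius, inter-block gaps, and cue labels are illustrative assumptions. It is not the SGEYESUB authors' paradigm code.

```python
import math
from dataclasses import dataclass


@dataclass
class Cue:
    onset_s: float   # cue onset relative to the start of the run
    kind: str        # hypothetical event label, e.g. written out as a trigger marker
    x_deg: float     # target horizontal position (degrees of visual angle)
    y_deg: float     # target vertical position (degrees of visual angle)


def build_calibration_schedule(n_blinks=12, blink_interval_s=3.0, saccade_amp_deg=10.0,
                               n_saccades=10, dwell_s=1.5, pursuit_s=20.0,
                               pursuit_radius_deg=10.0, pursuit_hz=0.1,
                               pursuit_step_s=0.05, gap_s=1.0):
    cues, t = [], 0.0
    for _ in range(n_blinks):                       # voluntary blinks at fixed intervals
        cues.append(Cue(t, "blink", 0.0, 0.0))
        t += blink_interval_s
    t += gap_s
    for axis in ("horizontal", "vertical"):         # targets alternate between +/- amplitude
        for i in range(n_saccades):
            sign = 1.0 if i % 2 == 0 else -1.0
            if axis == "horizontal":
                x, y = sign * saccade_amp_deg, 0.0
            else:
                x, y = 0.0, sign * saccade_amp_deg
            cues.append(Cue(t, f"saccade_{axis}", x, y))
            t += dwell_s
        t += gap_s
    n_steps = int(round(pursuit_s / pursuit_step_s))
    for k in range(n_steps):                        # slow circular smooth-pursuit target
        phase = 2.0 * math.pi * pursuit_hz * k * pursuit_step_s
        cues.append(Cue(t, "pursuit", pursuit_radius_deg * math.cos(phase),
                        pursuit_radius_deg * math.sin(phase)))
        t += pursuit_step_s
    return cues


schedule = build_calibration_schedule()
print(len(schedule), "cues; last cue at", round(schedule[-1].onset_s, 2), "s")
```

With these defaults one pass lasts about 90 s, so it could be repeated to fill a roughly five-minute calibration block.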

Histological Examination Techniques

For fundamental research into ocular artifact mechanisms, histological analysis provides structural insights. Recent investigation of the radar/ultrasound analogy for retinal function employed the following methodology in a rabbit model [3]:

- Tissue Preparation: Bilateral retinal tissues were carefully excised and horizontally embedded in paraffin blocks to optimize visualization of all retinal layers.

- Staining Protocols: Sections were stained using:

  - Hematoxylin and Eosin (H&E) for general morphological analysis

  - Masson's Trichrome (MTC) for connective tissue differentiation

- Neuronal Density Assessment: Using the physical disector method, a stereological technique that provides unbiased estimates of particle number without assumptions about particle shape, size, or orientation [3].

- Layer-by-Layer Analysis: The ten distinct retinal layers were examined for structural analogies to radar/ultrasound system components, with the outermost layer compared to an acoustic lens and the ganglion cell layer analyzed for piezoelectric transducer-like properties [3].
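The physical disector estimates numerical density from counts of cells that appear in a reference section but not in a parallel look-up section a known distance h away: N_V = ΣQ⁻ / (n · A_frame · h), where n is the number of disectors and A_frame the counting-frame area. The sketch below applies that estimator to hypothetical counts; the frame area and section separation are placeholder values, not those of the cited study.

```python
def disector_numerical_density(q_minus_counts, frame_area_um2, disector_height_um):
    """Physical-disector estimate N_V = sum(Q-) / (n_disectors * frame_area * h).

    q_minus_counts: per-disector counts of cells seen in the reference section
    but not in the look-up section. Returns cells per cubic micrometre.
    """
    if not q_minus_counts:
        raise ValueError("at least one disector is required")
    sampled_volume_um3 = len(q_minus_counts) * frame_area_um2 * disector_height_um
    return sum(q_minus_counts) / sampled_volume_um3


counts = [3, 5, 4, 2]                        # hypothetical Q- counts from four disectors
nv = disector_numerical_density(counts, frame_area_um2=2500.0, disector_height_um=5.0)
print(f"{nv:.2e} cells/um^3 = {nv * 1e9:.3g} cells/mm^3")
```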

Advanced Signal Processing and Correction Methodologies

Algorithmic Approaches for Artifact Correction

Multiple computational approaches have been developed to address the challenge of ocular artifacts in EEG data, each with distinct strengths and applications:

Regression-Based Methods: These traditional approaches assume linearity, treating the recorded EEG signal as the sum of neural activity and artifacts [1]:

$$\mathrm{RawEEG}(n) = \mathrm{EEG}(n) + \mathrm{artifacts}(n)$$

They use electrooculographic (EOG) channels or frontal EEG electrodes as ocular artifact templates to estimate channel-specific weighting coefficients (β) that quantify the artifact's influence; the weighted template is then subtracted from the contaminated signal [1].

Independent Component Analysis (ICA): This blind source separation technique decomposes multichannel EEG data into statistically independent components, enabling identification and removal of ocular artifact-related components [1]. ICA is particularly effective with high-density EEG systems (40+ channels) and can successfully separate neural activity from both CRD and eyelid movement artifacts.
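The ICA workflow can be sketched end to end on a toy mixture. The code below implements symmetric FastICA with a tanh nonlinearity in plain NumPy (one of several ICA algorithms; EEGLAB's default, for instance, is Infomax) and then removes components whose absolute correlation with a reference EOG trace exceeds 0.7. The three-channel mixing matrix, the sources, and the correlation threshold are assumptions chosen for illustration; real recordings need many more channels, as noted above.

```python
import numpy as np


def _sym_decorrelate(W):
    """W <- (W W^T)^(-1/2) W, keeping the unmixing rows mutually orthogonal."""
    s, u = np.linalg.eigh(W @ W.T)
    return u @ np.diag(1.0 / np.sqrt(s)) @ u.T @ W


def fastica(X, n_iter=500, tol=1e-8, seed=0):
    """Symmetric FastICA (tanh nonlinearity). X: (n_channels, n_samples).

    Returns (sources, mixing) with X - mean(X) ~= mixing @ sources.
    """
    Xc = X - X.mean(axis=1, keepdims=True)
    d, E = np.linalg.eigh(np.cov(Xc))
    whitener = E @ np.diag(1.0 / np.sqrt(d)) @ E.T       # ZCA whitening matrix
    Z = whitener @ Xc
    W = _sym_decorrelate(np.random.default_rng(seed).standard_normal((Z.shape[0],) * 2))
    for _ in range(n_iter):
        G = np.tanh(W @ Z)
        # Fixed-point update: E{z g(w^T z)} - E{g'(w^T z)} w for every row at once.
        W_new = (G @ Z.T) / Z.shape[1] - np.diag((1.0 - G ** 2).mean(axis=1)) @ W
        W_new = _sym_decorrelate(W_new)
        converged = np.max(np.abs(np.abs(np.diag(W_new @ W.T)) - 1.0)) < tol
        W = W_new
        if converged:
            break
    unmixing = W @ whitener
    return unmixing @ Xc, np.linalg.inv(unmixing)


def remove_ocular_components(X, sources, mixing, eog, threshold=0.7):
    """Back-project all components except those strongly correlated with the EOG trace."""
    corr = np.array([abs(np.corrcoef(s, eog)[0, 1]) for s in sources])
    keep = corr < threshold
    cleaned = mixing[:, keep] @ sources[keep] + X.mean(axis=1, keepdims=True)
    return cleaned, np.flatnonzero(~keep)


rng = np.random.default_rng(1)
fs, n = 250.0, 5000
t = np.arange(n) / fs
s_alpha = np.sin(2 * np.pi * 10 * t)                 # "neural" 10 Hz rhythm
s_other = rng.uniform(-1.0, 1.0, n)                  # another non-ocular source
s_blink = np.zeros(n)
for start in range(100, n - 75, 500):                # blink-like pulses every 2 s
    s_blink[start:start + 75] += np.hanning(75)
S = np.vstack([s_alpha, s_other, s_blink])
A = np.array([[0.5, 0.3, 3.0],                       # hypothetical frontal channel
              [1.0, 0.5, 1.0],
              [0.8, 1.0, 0.2]])                      # hypothetical posterior channel
X = A @ S
eog = 2.0 * s_blink + 0.2 * s_alpha                  # reference EOG with slight neural leakage
sources, mixing = fastica(X)
cleaned, dropped = remove_ocular_components(X, sources, mixing, eog)
print("dropped component(s):", dropped)
```

Selecting components by correlation with an EOG channel is only one possible criterion; topography- and spectrum-based classifiers are common alternatives.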

Sparse Generalized Eye Artifact Subspace Subtraction (SGEYESUB): This advanced algorithm offers state-of-the-art correction performance by maximizing preservation of resting brain activity and event-related potentials while reducing residual correlations between corrected EEG channels and eye artifacts to below 0.1 [2]. Once fitted to calibration data (~5 minutes), the correction reduces to a simple matrix multiplication, enabling both offline and real-time application.
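SGEYESUB's sparse fitting procedure is not reproduced here. The sketch below only illustrates the general point that, once an eye-artifact subspace U has been estimated from calibration data, correction is a fixed matrix product with P = I - UUᵀ. Estimating U from the top singular vectors of the calibration data is an assumption for illustration, as are the simulated topographies and the function name.

```python
import numpy as np


def fit_eye_projector(calib_eye, n_components=2):
    """Return P = I - U U^T, with U spanning the dominant subspace of eye-movement calibration data.

    calib_eye: (n_channels, n_samples) recorded while the participant blinks and moves the eyes.
    Plain SVD is an illustrative stand-in for a proper (e.g. sparse) eye-artifact model.
    """
    x = calib_eye - calib_eye.mean(axis=1, keepdims=True)
    u, _, _ = np.linalg.svd(x, full_matrices=False)
    basis = u[:, :n_components]
    return np.eye(x.shape[0]) - basis @ basis.T


rng = np.random.default_rng(0)
n_ch = 8
topographies = rng.standard_normal((n_ch, 2))      # hypothetical horizontal/vertical eye maps
calib = topographies @ (50.0 * rng.standard_normal((2, 2000))) \
    + 0.01 * rng.standard_normal((n_ch, 2000))
P = fit_eye_projector(calib)

# Correcting new data is then a single matrix product.
neural = rng.standard_normal((n_ch, 1000))
eeg = neural + topographies @ (30.0 * rng.standard_normal((2, 1000)))
corrected = P @ eeg
```

A plain orthogonal projection also removes any brain activity that happens to lie in the same subspace, which is the kind of over-correction a more carefully constrained model aims to limit.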

Artifact Subspace Reconstruction (ASR): This adaptive method compares the principal-component statistics of short sliding windows with those of clean calibration data; components whose variance exceeds a statistical threshold are treated as artifactual and reconstructed from the retained components [1]. ASR is particularly valuable for continuous EEG recordings and real-time applications such as brain-computer interfaces.

Deep Learning-Based Approaches: Emerging methodologies employ deep neural networks trained on clean EEG signals to recognize and correct non-physiological patterns [1]. These show promise for handling complex, non-stationary artifacts but require substantial training data.

Impact on Multivariate Pattern Analysis

The influence of artifact correction extends to advanced analytical approaches such as multivariate pattern analysis (MVPA). Recent research examining support vector machines (SVM) and linear discriminant analysis (LDA) for EEG decoding found that combining artifact correction with artifact rejection did not improve decoding performance in most cases across seven common event-related potential paradigms (N170, mismatch negativity, N2pc, P3b, N400, lateralized readiness potential, and error-related negativity) [9]. Artifact correction nevertheless remains essential to minimize artifact-related confounds that could artificially inflate decoding accuracy and lead to incorrect conclusions about neural representations [9].
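The confound argument can be made concrete with a small simulation in which the two conditions have no neural difference at all, but blinks occur more often in one of them. A nearest-class-mean classifier (a deliberately simple stand-in for the SVM and LDA decoders mentioned above) with 5-fold cross-validation then decodes the condition from the blink topography alone. All numbers, including the blink probabilities and the frontal-heavy topography, are hypothetical, and this is not a reanalysis of [9].

```python
import numpy as np


def nearest_centroid_cv_accuracy(x, y, n_folds=5, seed=0):
    """K-fold cross-validated accuracy of a nearest-class-mean classifier for labels 0/1."""
    order = np.random.default_rng(seed).permutation(len(y))
    correct = 0
    for test_idx in np.array_split(order, n_folds):
        train_idx = np.setdiff1d(order, test_idx)
        centroids = np.stack([x[train_idx][y[train_idx] == c].mean(axis=0) for c in (0, 1)])
        dists = ((x[test_idx][:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        correct += int((dists.argmin(axis=1) == y[test_idx]).sum())
    return correct / len(y)


rng = np.random.default_rng(42)
n_per_class, n_channels = 100, 10
y = np.repeat([0, 1], n_per_class)
neural = rng.standard_normal((2 * n_per_class, n_channels))          # same in both conditions
topography = np.array([3.0, 2.5, 2.0, 1.0, 0.5, 0.0, 0.0, 0.0, 0.0, 0.0])  # hypothetical
blinks = rng.random(2 * n_per_class) < np.where(y == 1, 0.7, 0.1)    # more blinks in condition 1
contaminated = neural + blinks[:, None] * topography

acc_clean = nearest_centroid_cv_accuracy(neural, y)
acc_confounded = nearest_centroid_cv_accuracy(contaminated, y)
print(f"clean: {acc_clean:.2f}, with blink confound: {acc_confounded:.2f}")
```

Accuracy on the clean data stays near chance, while the contaminated data decode well above chance even though no neural information distinguishes the conditions.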

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Ocular Artifact Investigation

| Category | Specific Items | Function/Application |
|---|---|---|
| Recording Equipment | High-density EEG system (64+ channels) | Primary neural data acquisition |
| | EOG electrodes & amplifier | Ocular movement monitoring |
| | Electromagnetic shielding | Environmental noise reduction |
| Signal Processing | SGEYESUB algorithm [2] | State-of-the-art artifact correction |
| | ICA algorithms (e.g., Infomax, Extended Infomax) | Component-based artifact removal |
| | ASR implementation | Real-time artifact correction |
| Calibration Tools | Visual stimulation system | Controlled ocular movement elicitation |
| | Eye-tracking systems | Validation of ocular movement patterns |
| | Response trigger interface | Precise event marking |
| Histological Supplies | H&E stain [3] | General tissue morphology |
| | Masson's Trichrome stain [3] | Connective tissue differentiation |
| | Physical disector setup [3] | Stereological neuronal density estimation |
| Analysis Software | EEGLAB, FieldTrip | EEG processing pipeline implementation |
| | Custom decoding scripts (SVM, LDA) [9] | Multivariate pattern analysis |
| | Statistical packages (R, Python, MATLAB) | Quantitative outcome assessment |

The comprehensive understanding of corneo-retinal dipole physiology, eyelid movement dynamics, and extraocular muscle function provides the essential foundation for addressing one of the most persistent challenges in EEG research. The systematic characterization of these artifact sources enables the development of increasingly sophisticated correction methodologies that preserve neural signals while removing non-cerebral contaminants. As EEG technology expands into wearable devices and real-time brain-computer interfaces, the demand for robust, efficient artifact handling continues to grow. Future research directions include refining real-time correction algorithms, exploring novel biomedical sensing modalities for improved artifact detection, and developing standardized validation frameworks for artifact correction performance across diverse populations and recording conditions. For drug development professionals and clinical researchers, maintaining rigorous standards for ocular artifact management remains paramount for ensuring the validity and interpretability of electrophysiological biomarkers in both basic research and therapeutic applications.

Ocular artifacts are among the most significant sources of contamination in electroencephalography (EEG) data, posing substantial challenges for neuroscientific research and clinical applications. The electrical potentials generated by eye movements and blinks can overwhelm genuine neural signals and lead to misinterpretation of brain activity. As part of a broader examination of how ocular artifacts affect EEG data analysis, this section characterizes three primary ocular artifact types: blinks, saccades, and microsaccades. Understanding the origin, properties, and impact of these artifacts is fundamental to developing effective correction methodologies and ensuring the validity of EEG findings in both basic research and drug development applications.

Physiological Origins and Characteristics

Ocular artifacts originate from the electrical field created by the corneo-retinal dipole, in which the cornea carries a positive charge relative to the negatively charged retina [10]. When the eye moves or blinks, this dipole field shifts relative to the EEG electrodes, producing measurable electrical potentials that contaminate neural recordings.

Characterization of Blinks

Eye blinks appear as very high-amplitude waveforms over the bifrontal regions [10]. The underlying mechanism involves Bell's phenomenon: the eyes roll upward during a blink, bringing the positively charged cornea closer to the frontal electrodes Fp1 and Fp2 [10]. This movement produces a positive deflection that is most prominent in frontal leads, without significant spread to posterior regions. Blinks are a normal component of the awake EEG and typically last 100-400 ms [11]. Unlike cerebral discharges, blinks lack a posterior field, have no spike preceding the larger-amplitude wave, and leave the ongoing background activity largely undisturbed [10].

Characterization of Saccades

Saccades are rapid, conjugate eye movements that reorient the fovea to new spatial locations, occurring approximately 3 times per second [12]. These "ballistic" movements are fast and brief, and visual processing is largely suppressed while they occur. In EEG recordings, horizontal saccades produce opposite polarities at F7 and F8 because of the corneo-retinal dipole [10]. When the eyes look to the right, the corneas rotate toward F8 (producing a positive potential there) while the retinas rotate toward F7 (producing a negative potential), giving the characteristic "phase reversal" pattern [10]. The saccadic spike potential (SP) at saccade onset is particularly problematic because it can resemble synchronous neuronal gamma-band activity [13].
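Because F7 and F8 move in opposite directions, a bipolar horizontal EOG derivation (F8 - F7) doubles the saccadic step while cancelling activity common to both sites. The hypothetical sketch below flags abrupt steps in that derivation and labels their direction; the 30 μV step size and 50 ms comparison windows are illustrative assumptions, not values from the cited work.

```python
import numpy as np


def heog_saccades(f7, f8, fs, step_uv=30.0, win_ms=50.0):
    """Label abrupt steps in the bipolar HEOG (F8 - F7) as rightward or leftward saccades.

    A step is flagged when the mean of the next `win_ms` differs from the mean of the
    previous `win_ms` by more than `step_uv`. Returns (sample_index, direction) pairs.
    """
    heog = np.asarray(f8, float) - np.asarray(f7, float)
    w = max(1, int(round(win_ms * fs / 1000.0)))
    csum = np.concatenate(([0.0], np.cumsum(heog)))   # prefix sums for fast window means
    events, i = [], w
    while i <= len(heog) - w:
        delta = (csum[i + w] - csum[i]) / w - (csum[i] - csum[i - w]) / w
        if abs(delta) > step_uv:
            # Rightward gaze moves the corneas toward F8, so F8 - F7 steps upward.
            events.append((i, "right" if delta > 0 else "left"))
            i += 2 * w   # jump past both windows so one step is not counted twice
        else:
            i += 1
    return events


fs = 500.0
f8 = np.zeros(2000)
f8[500:] += 50.0      # gaze moves right at sample 500 ...
f8[1500:] -= 50.0     # ... and back to centre at sample 1500
f7 = -f8              # opposite polarity at the mirror-image electrode
print(heog_saccades(f7, f8, fs))
```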

Characterization of Microsaccades

Microsaccades are very small, involuntary eye movements (typically <1.0°) that occur during attempted visual fixation at an average rate of 1-2 per second [14] [12]. These tiny flicks are embedded within slower drifting movements and represent the most prominent contribution to fixational eye movements. Despite their small size, microsaccades generate significant artifacts through two primary mechanisms: extraocular muscle activity that propagates to the EEG as a saccadic spike potential, and genuine cortical activity manifested in the EEG 100-140 ms after movement onset [14]. This cortical response resembles the visual lambda response evoked by larger voluntary saccades, challenging the standard assumption that brain activity from saccades is precluded during fixation [14].

Table 1: Comparative Characteristics of Ocular Artifacts

| Feature | Blinks | Saccades | Microsaccades |
|---|---|---|---|
| Primary Origin | Eyelid movement, corneal dipole shift | Voluntary eye movement, corneal dipole shift | Involuntary fixation adjustment, corneal dipole shift |
| Typical Duration | 100-400 ms [11] | 30-100 ms [12] | 10-30 ms [12] |
| Amplitude in EEG | High amplitude (often >100 μV) [10] | Moderate to high amplitude | Low to moderate amplitude [14] |
| Spatial Distribution | Bifrontal (Fp1, Fp2), minimal posterior spread [10] | Frontal-temporal, opposing polarities [10] | Widespread, but often frontal emphasis [14] |
| Frequency Content | Predominantly low frequency (0-12 Hz) [11] | Broadband, with gamma band contamination [13] | Broadband, with gamma band contamination [14] |
| Functional Role | Corneal lubrication, cognitive modulation [15] | Visual reorientation, scene sampling | Fixation maintenance, perceptual stabilization [16] |

Impact on EEG Data Analysis

Signal Contamination Mechanisms

Ocular artifacts compromise EEG data through multiple mechanisms. Blink artifacts primarily contaminate the low-frequency EEG bands (0-12 Hz) that are associated with critical cognitive processes including hand movements, attention levels, and drowsiness [11]. The high amplitude of blink artifacts can saturate amplifier inputs, causing transient signal loss and distorting event-related potentials. Saccadic movements generate spike potentials that manifest as broadband artifacts in the EEG spectrum, particularly problematic in the gamma frequency range where they can mimic induced gamma band activity [13]. Microsaccades present a more insidious challenge because they occur frequently during fixation tasks and generate both myogenic artifacts from extraocular muscles and genuine cortical responses that are difficult to disentangle from stimulus-related activity [14].

Consequences for Research Interpretation

The presence of ocular artifacts can lead to systematic biases in EEG analysis. In event-related potential studies, blink artifacts time-locked to stimuli can distort component amplitudes and latencies, particularly for frontal components. For frequency domain analyses, saccade-related spike potentials can artificially inflate power estimates in gamma band ranges, potentially leading to false conclusions about neural synchronization [13]. Microsaccades introduce additional confounding factors because their probability often varies systematically with experimental conditions; for example, microsaccade probability modulates according to the proportion of target stimuli in oddball tasks, causing artifactual modulations of late stimulus-locked ERP components [14].

Table 2: Research Reagent Solutions for Ocular Artifact Management

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Eye Tracking Systems | SMI eye tracking glasses [15], EyeLink 1000 Plus [12], IView-X Hi-Speed [14] | Precise measurement of eye movements simultaneous with EEG recording |
| Artifact Detection Algorithms | k-means clustering with SSA [11], Velocity-based microsaccade detection [14], Scalp topography-based ML [17] | Identification and isolation of artifact-contaminated epochs in EEG data |
| Artifact Removal Techniques | Independent Component Analysis (ICA) [11] [18], Singular Spectrum Analysis (SSA) [11], Adaptive filtering [11] | Separation and subtraction of artifact components from neural signals |
| Machine Learning Classifiers | Artificial Neural Networks (ANN) [17], Support Vector Machines (SVM) [18] | Automated classification and removal of artifact-contaminated segments |
| Experimental Controls | Fixation points [14], Chin/forehead rests [14], Trial rejection protocols [15] | Minimization of artifact generation during data acquisition |

Experimental Protocols for Characterization

Protocol for Blink Artifact Investigation

The characterization of blink artifacts and their relationship to perceptual processes requires carefully controlled paradigms. In one experimental design, participants view ambiguous plaid stimuli while continuously reporting their perceptual experience [15]. The stimulus consists of moving gratings superimposed over each other, creating bistable perception where viewers alternate between seeing unidirectional coherent motion or bidirectional component movement [15]. During testing, participants are seated in a dark room 40 cm from the display with heads stabilized using a chin rest. Binocular eye movements are recorded at 120 Hz using eye tracking glasses synchronized with EEG acquisition [15]. Participants provide continuous perceptual reports via response buttons, with button lifts indicating perceptual switches. This protocol enables precise correlation between blink events, neural activity, and perceptual changes.

Protocol for Microsaccade Detection and Analysis

High-quality recording of microsaccades requires specialized equipment and analytical approaches. In a typical fixation paradigm, participants maintain gaze on a central fixation point during 10-second trials while being instructed to avoid blinks and large eye movements [14]. Stimuli may include checkerboard patterns or face images presented on a monitor. Eye movements are recorded monocularly from one eye using infrared video-based eye trackers with high sampling rates (500 Hz or greater) and high spatial resolution (0.01° or better) [14]. Microsaccades are detected using velocity-based algorithms that identify outliers in two-dimensional velocity space [14]. The critical parameters include a velocity threshold set to 5 median-based standard deviations of the velocity values, minimum duration of 6 ms, maximum magnitude of 1°, and minimum temporal separation of 50 ms from previous microsaccades [14]. EEG segments are then extracted around microsaccade onset and baseline-corrected for analysis.
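The velocity-based procedure described above can be sketched as follows. The code follows the general scheme of Engbert and Kliegl's algorithm (moving-average velocity, median-based standard deviation, elliptic threshold at λ = 5) and applies the listed duration, magnitude, and separation criteria. Some simplifications are assumptions: amplitude is taken as the onset-to-offset displacement, the 50 ms separation is measured from the offset of the previous accepted event, and no binocular check is applied because the recording is monocular.

```python
import numpy as np


def detect_microsaccades(x, y, fs, lam=5.0, min_dur_ms=6.0, max_amp_deg=1.0, min_isi_ms=50.0):
    """Velocity-threshold microsaccade detection for one eye.

    x, y: gaze position in degrees sampled at fs Hz.
    Returns a list of (onset, offset) sample indices (offset inclusive).
    """
    pos = np.column_stack([np.asarray(x, float), np.asarray(y, float)])
    n = len(pos)
    vel = np.zeros_like(pos)
    # 5-sample moving-average velocity estimate (degrees per second).
    vel[2:n - 2] = (pos[4:] + pos[3:n - 1] - pos[1:n - 3] - pos[:n - 4]) * fs / 6.0
    # Median-based standard deviation per dimension, then an elliptic threshold.
    sigma = np.sqrt(np.median(vel ** 2, axis=0) - np.median(vel, axis=0) ** 2)
    above = ((vel / (lam * sigma)) ** 2).sum(axis=1) > 1.0
    edges = np.diff(np.concatenate(([0], above.astype(int), [0])))
    onsets = np.flatnonzero(edges == 1)
    offsets = np.flatnonzero(edges == -1) - 1
    min_samples = int(np.ceil(min_dur_ms * fs / 1000.0))
    min_gap = min_isi_ms * fs / 1000.0
    events, last_offset = [], None
    for on, off in zip(onsets, offsets):
        if off - on + 1 < min_samples:
            continue                                  # too short
        if np.linalg.norm(pos[off] - pos[on]) > max_amp_deg:
            continue                                  # too large for a microsaccade
        if last_offset is not None and on - last_offset < min_gap:
            continue                                  # too close to the previous event
        events.append((int(on), int(off)))
        last_offset = off
    return events


fs = 500.0
n = int(2 * fs)
t = np.arange(n) / fs
idx = np.arange(n)
x = 0.05 * np.sin(2 * np.pi * 0.5 * t)                # slow fixational drift (degrees)
y = 0.05 * np.cos(2 * np.pi * 0.5 * t)
x = x + 0.3 * np.clip((idx - 500) / 10.0, 0.0, 1.0)   # 0.3 deg microsaccade over 20 ms
x = x + 3.0 * np.clip((idx - 750) / 20.0, 0.0, 1.0)   # 3 deg saccade: rejected by magnitude
print(detect_microsaccades(x, y, fs))
```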

Diagram 1: Experimental Workflow for Ocular Artifact Characterization

Detection and Removal Methodologies

Traditional and Machine Learning Approaches

Multiple computational approaches have been developed to identify and remove ocular artifacts from EEG signals. For single-channel EEG, a combined k-means and Singular Spectrum Analysis (SSA) method has demonstrated efficacy by extracting eye-blink artifacts based on time-domain features without modifying uncontaminated regions of the EEG signal [11]. This approach involves mapping the single-channel EEG signal into a multivariate data matrix, computing time-domain features (energy, Hjorth mobility, kurtosis, and range), applying k-means clustering to identify artifact components, processing these with SSA, and finally subtracting the estimated artifact from the contaminated signal [11].
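The steps above can be sketched in NumPy. This is a simplified reading of the described pipeline, not the published implementation: the embedding window (32 samples), two clusters initialised at the lowest- and highest-energy columns, the rule that the higher-energy cluster is the artifact, and keeping four SSA components are all assumptions. Non-artifact columns are zeroed before the SVD, so they contribute nothing to the estimated artifact.

```python
import numpy as np


def _column_features(X):
    """Energy, Hjorth mobility, kurtosis and range of each column of X (shape L x K)."""
    eps = 1e-12
    energy = (X ** 2).sum(axis=0)
    mobility = np.sqrt(np.diff(X, axis=0).var(axis=0) / (X.var(axis=0) + eps))
    centered = X - X.mean(axis=0)
    kurtosis = (centered ** 4).mean(axis=0) / ((centered ** 2).mean(axis=0) ** 2 + eps)
    value_range = X.max(axis=0) - X.min(axis=0)
    return np.column_stack([energy, mobility, kurtosis, value_range])


def _kmeans_two(F, n_iter=100):
    """Lloyd's k-means with k = 2, initialised at the lowest- and highest-energy rows."""
    centroids = F[[F[:, 0].argmin(), F[:, 0].argmax()]].copy()
    labels = np.zeros(len(F), dtype=int)
    for _ in range(n_iter):
        labels = ((F[:, None, :] - centroids[None]) ** 2).sum(axis=2).argmin(axis=1)
        updated = np.array([F[labels == k].mean(axis=0) if np.any(labels == k)
                            else centroids[k] for k in range(2)])
        if np.allclose(updated, centroids):
            break
        centroids = updated
    return labels


def remove_blinks_kmeans_ssa(x, window=32, n_components=4):
    """Return (cleaned, artifact) for a single-channel signal x."""
    x = np.asarray(x, float)
    n_cols = len(x) - window + 1
    # 1. Map the channel into an L x K trajectory (Hankel) matrix.
    X = np.stack([x[j:j + window] for j in range(n_cols)], axis=1)
    # 2-3. z-score the time-domain features and split the columns into two clusters.
    F = _column_features(X)
    F = (F - F.mean(axis=0)) / (F.std(axis=0) + 1e-12)
    labels = _kmeans_two(F)
    energy = (X ** 2).sum(axis=0)
    artifact_label = int(energy[labels == 1].mean() > energy[labels == 0].mean())
    # 4. Keep only artifact columns and take a rank-limited SSA (truncated SVD) approximation.
    X_art = np.where(labels == artifact_label, X, 0.0)
    U, s, Vt = np.linalg.svd(X_art, full_matrices=False)
    X_low = (U[:, :n_components] * s[:n_components]) @ Vt[:n_components]
    # 5. Diagonal averaging turns the matrix back into a one-dimensional artifact estimate.
    artifact = np.zeros_like(x)
    counts = np.zeros_like(x)
    for j in range(n_cols):
        artifact[j:j + window] += X_low[:, j]
        counts[j:j + window] += 1.0
    artifact /= counts
    # 6. Subtract the estimated artifact from the contaminated signal.
    return x - artifact, artifact


fs = 128.0
t = np.arange(int(8 * fs)) / fs
clean = 10.0 * np.sin(2 * np.pi * 10 * t)      # stand-in for artifact-free EEG (uV)
blink = np.zeros_like(t)
blink[384:422] = 100.0 * np.hanning(38)        # ~300 ms blink-like pulse
cleaned, artifact = remove_blinks_kmeans_ssa(clean + blink)
```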

Comparative studies of machine learning classifiers have identified Artificial Neural Networks (ANN) as particularly effective when combined with scalp topography features for eye-blink artifact detection [17]. Other classifiers including Support Vector Machines (SVM) and Linear Discriminant Analysis (LDA) have shown varying levels of performance across different feature sets [18] [17]. Importantly, research indicates that the combination of artifact correction and rejection does not necessarily enhance decoding performance in multivariate pattern analysis, though artifact correction remains essential to minimize confounds that might artificially inflate decoding accuracy [18].

Validation Frameworks

Robust validation of artifact removal techniques requires both synthetic and real EEG data. Synthetic datasets are constructed by combining artifact-free EEG segments with manually extracted eye-blink artifacts, creating ground truth data where the precise artifact contribution is known [11]. Performance metrics including power spectrum ratio (Γ) and mean absolute error (MAE) quantify the effectiveness of artifact removal while assessing potential distortion of neural signals [11]. For microsaccade-related artifacts, validation includes correlation with high-resolution eye tracking and assessment of residual artifacts in the gamma frequency range where saccadic spike potentials are most prominent [14] [13].
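A minimal version of this semi-synthetic evaluation is sketched below, assuming a sinusoid as the artifact-free segment and a Hann-shaped pulse as the extracted blink, so the ground truth is known by construction. MAE is computed against the clean signal. The exact definition of the power spectrum ratio Γ in [11] is not reproduced here; the alpha-band power ratio shown instead is an illustrative distortion check in which 1.0 means the band's power is preserved.

```python
import numpy as np


def mean_absolute_error(estimate, reference):
    return float(np.mean(np.abs(np.asarray(estimate, float) - np.asarray(reference, float))))


def band_power(x, fs, lo, hi):
    """Summed periodogram power of x between lo (inclusive) and hi (exclusive) Hz."""
    x = np.asarray(x, float)
    spec = np.abs(np.fft.rfft(x - x.mean())) ** 2
    f = np.fft.rfftfreq(len(x), d=1.0 / fs)
    return float(spec[(f >= lo) & (f < hi)].sum())


fs = 250.0
t = np.arange(int(4 * fs)) / fs
clean = 10.0 * np.sin(2 * np.pi * 10 * t)      # stand-in for an artifact-free segment (uV)
blink = np.zeros_like(t)
blink[400:475] = 120.0 * np.hanning(75)        # stand-in for an extracted blink template
contaminated = clean + blink                   # ground truth known by construction


def evaluate(corrected):
    """MAE against the clean reference and alpha-band (8-13 Hz) power relative to it."""
    return {"mae": mean_absolute_error(corrected, clean),
            "alpha_ratio": band_power(corrected, fs, 8, 13) / band_power(clean, fs, 8, 13)}


print("no correction:", evaluate(contaminated))
print("ideal correction:", evaluate(clean))
```

In practice the output of each candidate method is passed to `evaluate` in place of the two reference cases shown here.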

Diagram 2: Ocular Artifact Propagation Pathway in EEG Research

Blinks, saccades, and microsaccades present distinct yet interconnected challenges for EEG research, each with characteristic generation mechanisms, topographic distributions, and methodological implications. Blinks produce high-amplitude frontal potentials, saccades generate spike potentials that contaminate gamma band activity, and microsaccades create both myogenic artifacts and genuine cortical responses. Comprehensive characterization of these artifacts enables development of more effective detection and removal strategies, including advanced machine learning approaches and signal processing techniques. Future research should focus on standardized validation frameworks and the integration of multimodal recording approaches to further disentangle ocular artifacts from neural signals of interest. For drug development professionals and neuroscientists, rigorous attention to ocular artifacts remains essential for ensuring the validity and interpretability of EEG findings across basic and applied research contexts.

Electroencephalography (EEG) is a fundamental tool for non-invasively investigating brain function, with applications spanning from basic cognitive neuroscience to clinical drug development. However, the low amplitude of neural signals (typically in the microvolt range) makes EEG highly susceptible to contamination from various sources of noise, collectively known as artifacts [19]. Among these, ocular artifacts (OAs)—generated by eye blinks and movements—represent one of the most pervasive and methodologically challenging problems in EEG data analysis. These artifacts introduce significant confounding signals that can obscure or mimic genuine neural activity, potentially compromising research validity and leading to erroneous conclusions [19] [9]. This technical guide examines the core issue of spectral and spatial contamination caused by ocular artifacts, with a specific focus on their overlap with the delta, theta, and alpha frequency bands. Furthermore, it details advanced methodological frameworks for their identification and removal, providing researchers with practical tools to enhance data integrity in neuroscientific and clinical research.

Spectral and Spatial Characteristics of Ocular Artifacts

Spectral Overlap with Neural Oscillations

The principal challenge of ocular artifacts lies in their extensive spectral overlap with key brain rhythms of interest. Unlike narrowband noise sources (e.g., powerline interference), OAs generate broadband signals that directly contaminate the canonical EEG frequency bands.

- Dominant Frequency Bands: Ocular artifacts exhibit peak spectral power in the delta (0.5–4 Hz) and theta (4–8 Hz) bands [19]. This is particularly problematic as these bands are critical for studying cognitive processes such as decision-making, memory, and attention, as well as clinical conditions like encephalopathy and sleep [20].

- Extended Spectral Influence: The spectral profile of ocular artifacts is not strictly limited to low frequencies. The box-shaped deflection caused by saccadic eye movements contains frequency components that can extend up to 20 Hz [21], overlapping substantially with the alpha (8–13 Hz) band, the hallmark rhythm of the awake, relaxed brain and a key metric in many neuropharmacological studies [20].
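The band overlap can be checked numerically. The sketch below computes the share of periodogram power between 0.5 and 30 Hz that a blink-shaped pulse and a box-shaped, saccade-like deflection place in each canonical band. Both waveforms are idealised assumptions (a 300 ms Hann pulse and a 400 ms rectangle), so the exact fractions are illustrative only.

```python
import numpy as np

BANDS = {"delta": (0.5, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_fractions(x, fs, bands=BANDS):
    """Share of periodogram power between 0.5 and 30 Hz that falls in each band."""
    x = np.asarray(x, float)
    spec = np.abs(np.fft.rfft(x)) ** 2
    f = np.fft.rfftfreq(len(x), d=1.0 / fs)
    total = spec[(f >= 0.5) & (f < 30.0)].sum()
    return {name: float(spec[(f >= lo) & (f < hi)].sum() / total)
            for name, (lo, hi) in bands.items()}


fs = 250.0
n = int(4 * fs)
blink = np.zeros(n)
blink[500:575] = 100.0 * np.hanning(75)   # 300 ms smooth, blink-like pulse (uV)
box = np.zeros(n)
box[400:500] = 50.0                       # 400 ms box-shaped, saccade-like deflection (uV)

for name, sig in (("blink", blink), ("box", box)):
    print(name, {k: round(v, 4) for k, v in band_fractions(sig, fs).items()})
```

The smooth blink concentrates almost all of its power in delta and theta, whereas the sharp edges of the box spread noticeably more power into alpha and beyond.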

Table 1: Spectral Characteristics of Ocular Artifacts and Overlapping Neural Functions

| EEG Band | Frequency Range (Hz) | Primary Neural Correlates | Ocular Artifact Impact |
|---|---|---|---|
| Delta | 0.5–4 | Slow-wave sleep, attention, brain injury | High-amplitude contamination from blinks; can mimic pathological slowing [19] [20] |
| Theta | 4–8 | Drowsiness, memory encoding, cognitive control | Significant contamination from blinks and saccades; can confound studies of meditation or cognitive effort [19] [20] |
| Alpha | 8–13 | Relaxed wakefulness, posterior dominant rhythm | Contamination from saccadic eye movements; can distort the baseline power metric [21] [20] |
| Beta | 13–30 | Active thinking, motor processing | Minimal direct overlap, but can be affected by residual artifact components [21] |

Spatial Topography and Amplitude

The spatial distribution of ocular artifacts is determined by the corneo-retinal potential dipole. When the eye moves or the eyelid closes during a blink, this dipole shifts, creating a large electrical field measurable across the scalp [19] [21].

- Maximum Impact Zone: The artifact is most prominent over frontal and prefrontal electrodes (e.g., Fp1, Fp2, F7, F8), where amplitudes can reach 100–200 µV—an order of magnitude larger than underlying cortical EEG [19] [21].

- Far-Field Effects: Although the voltage distribution follows a distance-dependent gradient, the artifact's influence is not confined to frontal regions. The propagated signal can significantly contaminate central and even parietal sites, complicating the analysis of neural generators outside the frontal lobe [21].

Diagram 1: Spectral and Spatial Contamination Pathways of Ocular Artifacts. This figure illustrates how artifacts from the corneo-retinal dipole propagate across key EEG frequency bands and scalp regions.

Methodologies for Ocular Artifact Detection and Removal

A range of techniques exists to mitigate ocular contamination, from classical regression-based approaches to modern data-driven and deep learning methods. The choice of method depends on factors such as the number of EEG channels, availability of EOG recordings, and computational resources.

Classical and Modern Signal Processing Approaches

- Independent Component Analysis (ICA): ICA is a widely used blind source separation technique that decomposes multi-channel EEG data into statistically independent components (ICs). Ocular artifacts, due to their distinct spatial and temporal properties, often segregate into specific ICs, which can be manually or automatically identified and removed from the data [22] [21]. ICA is highly effective but can be computationally intensive and may require expert supervision for component selection.

- Regression-Based Methods: These techniques use simultaneously recorded electrooculogram (EOG) signals to model the artifact's contribution to each EEG channel. The modeled artifact is then subtracted from the contaminated EEG. These methods require dedicated EOG channels and risk over-correction: the EOG electrodes also pick up some cortical activity, so subtracting a scaled EOG can remove part of the genuine neural signal [21].

- Advanced Decomposition and Filtering: Newer hybrid methods demonstrate robust performance, particularly for challenging scenarios like single-channel EEG. One prominent example is the Fixed Frequency Empirical Wavelet Transform (FF-EWT) combined with a Generalized Moreau Envelope Total Variation (GMETV) filter [23]. This approach automatically decomposes the signal, identifies artifact-laden components using metrics like kurtosis and dispersion entropy, and applies a specialized filter to remove the artifact while preserving underlying brain activity [23].

- Spectral Signal Space Projection (S3P): This frequency-domain framework creates frequency-specific spatial projectors to suppress artifacts that have distinct topographies in narrowband oscillations. This is particularly advantageous for removing noise whose spatial pattern changes across frequencies, offering a targeted approach for denoising specific bands like delta, theta, or alpha [24].
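The ICA route can be sketched in a few lines. The block below is a minimal, self-contained illustration, not a production implementation; EEGLAB or MNE-Python would normally be used. It does the following:

- Implements a bare-bones symmetric FastICA with a tanh nonlinearity in NumPy.
- Flags components whose time course correlates strongly with a hypothetical EOG reference (the 0.7 threshold is an assumption).
- Zeroes the flagged components and back-projects the rest.

Without an EOG channel, components would instead be judged by topography, time course, and spectrum. The three-channel mixture of two sinusoidal "neural" sources and a sparse blink train is simulated.

```python
import numpy as np


def fastica(x, n_iter=500, tol=1e-8, seed=0):
    """Minimal symmetric FastICA (tanh nonlinearity).

    x has shape (n_channels, n_samples). Returns (sources, mixing, mean) such that
    x is approximately mixing @ sources + mean.
    """
    rng = np.random.default_rng(seed)
    mean = x.mean(axis=1, keepdims=True)
    xc = x - mean
    # Whitening: transform the data so that its channel covariance is the identity.
    d, e = np.linalg.eigh(np.cov(xc))
    whitener = e @ np.diag(1.0 / np.sqrt(d)) @ e.T
    z = whitener @ xc

    def sym_decorrelate(w):
        # W <- (W W^T)^(-1/2) W keeps the rows of W orthonormal.
        s, u = np.linalg.eigh(w @ w.T)
        return u @ np.diag(1.0 / np.sqrt(s)) @ u.T @ w

    w = sym_decorrelate(rng.standard_normal((z.shape[0], z.shape[0])))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        # Fixed-point update: E[g(Wz) z^T] - diag(E[g'(Wz)]) W, with g' = 1 - tanh^2.
        w_new = sym_decorrelate((g @ z.T) / z.shape[1] - np.diag((1.0 - g**2).mean(axis=1)) @ w)
        # Converged when each unmixing row keeps its direction (up to sign).
        done = np.max(np.abs(np.abs(np.sum(w_new * w, axis=1)) - 1.0)) < tol
        w = w_new
        if done:
            break
    unmixing = w @ whitener
    return unmixing @ xc, np.linalg.pinv(unmixing), mean


def remove_ocular_ics(x, eog, threshold=0.7):
    """Zero ICs whose time course correlates with the EOG reference, then back-project."""
    sources, mixing, mean = fastica(x)
    r = np.array([abs(np.corrcoef(s, eog)[0, 1]) for s in sources])
    bad = np.flatnonzero(r > threshold)
    sources[bad] = 0.0
    # Channel means are restored unchanged (in practice EEG is high-pass filtered first).
    return mixing @ sources + mean, bad


# Simulated example: sources, mixing weights, and noise levels are all hypothetical.
rng = np.random.default_rng(1)
fs = 250.0
t = np.arange(0, 60.0, 1.0 / fs)
alpha = 10.0 * np.sin(2 * np.pi * 10.0 * t)
theta = 8.0 * np.sin(2 * np.pi * 6.0 * t + 0.3)
blink = np.zeros_like(t)
for onset in np.cumsum(rng.uniform(2.0, 4.0, size=25)):
    if onset < t[-1]:
        blink += 15.0 * np.exp(-0.5 * ((t - onset) / 0.1) ** 2)
true_sources = np.vstack([alpha, theta, blink])
true_mixing = np.array([[1.0, 0.5, 8.0],   # frontopolar: blink-dominated
                        [0.8, 1.0, 3.0],   # frontal-central
                        [1.0, 0.7, 0.5]])  # parietal
eeg = true_mixing @ true_sources + 0.2 * rng.standard_normal((3, t.size))
veog = blink + 0.5 * rng.standard_normal(t.size)  # hypothetical vertical EOG reference

cleaned, removed = remove_ocular_ics(eeg, veog)
print("removed components:", removed)
```

Only the blink-dominated component is removed. The retained components still span the neural subspace even if they do not separate the two sinusoids perfectly from each other.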

Deep Learning Architectures

Recent advances in artificial intelligence have led to the development of calibration-free, end-to-end models for artifact removal.

- EEGOAR-Net: This deep learning model is based on a U-Net architecture and is trained to map contaminated EEG signals to their clean counterparts. A key innovation is its montage-independent design, achieved through a training methodology that masks different channels, making it flexible for use with various EEG setups. It operates without the need for subject-specific calibration or EOG reference channels, making it highly practical for real-time applications like brain-computer interfaces [25].
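A simple augmentation step can illustrate the channel-masking idea behind a montage-independent model. This is an assumption-laden illustration, not EEGOAR-Net's published training procedure. For each training example, it zeroes a random subset of channels (up to a hypothetical `max_masked`). It also returns the boolean mask, so that a training loop could tell the network, or the loss function, which channels are present.

```python
import numpy as np


def random_channel_mask(batch, max_masked, rng):
    """Zero a random subset of channels in each example.

    batch: array of shape (n_examples, n_channels, n_samples).
    Returns the masked copy and a boolean mask (True = channel kept).
    """
    n_examples, n_channels, _ = batch.shape
    keep = np.ones((n_examples, n_channels), dtype=bool)
    for i in range(n_examples):
        n_drop = rng.integers(0, max_masked + 1)  # between 0 and max_masked channels
        keep[i, rng.choice(n_channels, size=n_drop, replace=False)] = False
    masked = batch.copy()
    masked[~keep] = 0.0  # dropped channels become flat zeros
    return masked, keep


rng = np.random.default_rng(0)
batch = rng.standard_normal((4, 16, 500))  # 4 simulated epochs, 16 channels, 500 samples
masked, keep = random_channel_mask(batch, max_masked=6, rng=rng)
print("channels kept per example:", keep.sum(axis=1))
```

Because every batch presents a different subset of channels, a network trained this way cannot rely on any fixed electrode being present. That property is what the montage-independent design aims for.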

Table 2: Comparison of Ocular Artifact Removal Methodologies

| Method | Core Principle | Key Advantages | Key Limitations |
|---|---|---|---|
| ICA [22] [21] | Blind source separation into independent components | Does not require EOG reference; effective for multiple artifact types | Computationally intensive; component selection can be subjective |
| Regression [21] | EOG-based subtraction of artifact waveform | Simple conceptual framework; well-established | Requires EOG channels; may remove neural signals (over-correction) |
| FF-EWT + GMETV [23] | Wavelet decomposition & targeted filtering | Automated; suitable for single-channel EEG | Parameter tuning may be required for new datasets |
| S3P [24] | Frequency-specific spatial projection | Optimized for narrowband oscillatory analysis | Complex implementation; requires noise subspace definition |
| EEGOAR-Net (DL) [25] | Deep learning-based reconstruction | Montage-independent; no calibration or EOG needed | Requires large, diverse training datasets; "black box" nature |

Diagram 2: Workflow of Ocular Artifact Removal Methodologies. This diagram outlines the pathways from raw, contaminated EEG to a cleaned signal using different processing techniques.

Table 3: Key Research Reagents and Solutions for EEG Artifact Research

| Tool / Resource | Category | Primary Function | Example Use Case |
|---|---|---|---|
| High-Density EEG System (e.g., 128-channel EGI) [26] | Hardware | High-resolution spatial sampling of brain activity | Enables precise source localization and effective ICA by providing sufficient spatial channels [26] |
| Portable EEG System (e.g., BrainVision LiveAmp) [27] | Hardware | EEG data acquisition in naturalistic settings | Facilitates research on brain function in ecologically valid environments (homes, schools) [27] |
| EOG Electrodes [21] | Hardware | Record reference signals for eye movements and blinks | Provides a dedicated channel for regression-based correction methods [21] |
| ICA Algorithm (e.g., in EEGLAB) [22] [26] | Software | Separate neural and non-neural signal sources | The cornerstone of many preprocessing pipelines for isolating and removing ocular components [22] |
| Advanced Filtering Toolboxes (e.g., for FF-EWT) [23] | Software | Implement specialized signal decomposition and filtering | Critical for single-channel EEG analysis or when EOG references are unavailable [23] |
| Deep Learning Models (e.g., EEGOAR-Net) [25] | Software | End-to-end artifact attenuation | Provides a calibration-free, montage-flexible solution for rapid preprocessing in BCI applications [25] |
| Standardized Preprocessing Pipelines (e.g., in BrainVision Analyzer) [21] | Software | Structured, reproducible workflow for artifact handling | Ensures consistency and efficiency in data cleaning across large datasets or multi-site studies [21] |

Failure to adequately address ocular artifacts can have profound consequences for data interpretation. Artifacts can reduce the signal-to-noise ratio, decreasing statistical power for detecting genuine neural effects [9]. More critically, they can introduce systematic confounds, potentially leading to false positives. For instance, a condition that elicits more frequent blinks (e.g., due to fatigue or cognitive load) could be misinterpreted as showing enhanced delta or theta power [19]. Recent research evaluating multivariate pattern analysis (decoding) has shown that while artifact correction does not always improve decoding performance, it remains essential to prevent artifact-related confounds from artificially inflating accuracy metrics and leading to incorrect conclusions [9].

Ocular artifacts present a formidable challenge in EEG research due to their significant spectral overlap with diagnostically and cognitively relevant frequency bands and their widespread spatial propagation across the scalp. A thorough understanding of their properties is a prerequisite for selecting an appropriate mitigation strategy. While established techniques like ICA and regression continue to be valuable, emerging methods from signal processing (e.g., FF-EWT) and deep learning (e.g., EEGOAR-Net) offer powerful, automated, and flexible alternatives. As EEG technology evolves toward greater portability and use in naturalistic settings [27], the development and adoption of robust, scalable artifact handling protocols will be paramount. By rigorously addressing the problem of ocular contamination, researchers in neuroscience and drug development can enhance the validity, reliability, and interpretability of their EEG data, solidifying the role of electrophysiology as a cornerstone tool for understanding brain function and its modification by pharmacological agents.

Electroencephalography (EEG) research is fundamentally constrained by the pervasive challenge of physiological artifacts, with ocular artifacts representing a predominant source of contamination. These artifacts introduce significant confounding variability, potentially biasing experimental outcomes in cognitive neuroscience, clinical diagnosis, and pharmaceutical development. This technical guide delineates the biophysical mechanisms through which ocular artifacts disproportionately affect frontal EEG channels, quantifies their impact on signal integrity, and evaluates contemporary methodological frameworks for their mitigation. Emphasis is placed on the implications for analysis reliability and the critical importance of targeted artifact management in upholding the validity of neuroscientific and clinical research findings.

Electroencephalography (EEG) provides unparalleled millisecond-scale temporal resolution for investigating brain dynamics, but its utility is contingent upon signal quality. The recorded microvolt-scale signals are exceptionally susceptible to contamination from non-neural sources, collectively termed artifacts [28] [19]. Among these, ocular artifacts (OAs)—generated by eye blinks and movements—are particularly problematic due to their high amplitude and spectral overlap with neural signals of interest [28] [29]. The inherent properties of OAs, including their generation via a robust bioelectric dipole and volume conduction through the head, result in a characteristic topographical distribution. This distribution is most pronounced over the frontal and frontopolar regions (e.g., Fp1, Fp2, F7, F8), which are also critical for assessing cognitive functions such as executive control and decision-making [30] [21]. Consequently, the accurate interpretation of frontal EEG activity is intimately linked to the effective management of OAs. Failure to address this contamination can lead to the misattribution of artifact-derived signals to neural processes, thereby compromising the integrity of research conclusions, from basic cognitive studies to clinical trials assessing neurotherapeutics.

The Core Biophysical Mechanisms

The profound impact of ocular artifacts on frontal channels is explained by two fundamental principles: the genesis of a high-amplitude electrical field and its projection via volume conduction.

The Corneo-Retinal Dipole

The primary source of ocular artifacts is the corneo-retinal potential, a steady electrical potential difference across the human eye. The cornea is electrically positive relative to the negatively charged retina, creating a stable electric dipole [19] [21]. During ocular events such as blinks or saccades, this dipole undergoes significant displacement and orientation changes.

- Eye Blinks: The closure of the eyelid causes a rapid movement of the corneal positivity towards the frontal scalp, inducing a large, positive-going slow potential deflection in the EEG recording [21].

- Eye Movements: Lateral saccades rotate the entire dipole. Electrodes on the side toward which the eyes move become more positive, while those on the opposite side become more negative. Because the eyes hold their new position until the next saccade, the result is a characteristic box-shaped waveform [21].

This dipole is robust. At frontal sites it produces deflections of roughly 100–200 µV, substantially larger than the cortical EEG signals it obscures, which typically remain below 100 µV [19] [30].

Volume Conduction and Projection to the Scalp

The electrical field generated by the corneo-retinal dipole propagates effectively instantaneously through the head's conductive tissues (e.g., brain, cerebrospinal fluid, skull, skin) via volume conduction [30]. This process can be approximated as the passive spread of current from a dipolar source. In an idealized homogeneous conductor, the potential recorded at an electrode falls off approximately with the inverse square of its distance from the dipole. It also depends on the electrode's angle relative to the dipole axis. Because frontal electrodes lie closest to the ocular dipole, they record the strongest signal. The amplitude declines with distance, but the artifact remains measurable, though attenuated, over posterior regions [21]. The following diagram illustrates this propagation pathway.
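The inverse-square behaviour can be checked numerically with the textbook expression for a current dipole in an infinite, homogeneous conductor, $V = \frac{\mathbf{p}\cdot(\mathbf{r}-\mathbf{r}_0)}{4\pi\sigma\lvert\mathbf{r}-\mathbf{r}_0\rvert^3}$. This is a deliberately idealized sketch. A real head is bounded and has layers of differing conductivity. The conductivity and dipole moment below are arbitrary assumptions, so only the relative values are meaningful.

```python
import numpy as np


def dipole_potential(electrode, dipole_pos, moment, sigma=0.33):
    """Potential of a current dipole in an infinite homogeneous conductor.

    electrode, dipole_pos: 3-D positions in metres; moment: dipole moment vector (A*m);
    sigma: conductivity in S/m (0.33 is an assumed, commonly used tissue value).
    """
    r = np.asarray(electrode, dtype=float) - np.asarray(dipole_pos, dtype=float)
    dist = np.linalg.norm(r)
    return float(np.dot(moment, r) / (4.0 * np.pi * sigma * dist**3))


eye = np.zeros(3)
p = np.array([1e-6, 0.0, 0.0])  # arbitrary moment pointing forward (cornea positive)
v_ref = dipole_potential([0.03, 0.0, 0.0], eye, p)
for d in (0.03, 0.06, 0.12):
    v = dipole_potential([d, 0.0, 0.0], eye, p)  # electrode on the dipole axis
    print(f"distance {d:.2f} m -> relative potential {v / v_ref:.4f}")
# An electrode perpendicular to the dipole axis ideally records zero potential.
print(dipole_potential([0.0, 0.05, 0.0], eye, p))
```

Doubling the on-axis distance quarters the potential (relative values 1, 0.25, 0.0625). The perpendicular electrode records zero, which shows why orientation matters as much as distance.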

Quantitative Impact and Signal Characteristics

The contamination of frontal channels by ocular artifacts is not merely topographic but has distinct, quantifiable signatures in both time and frequency domains, which are critical for detection and analysis.

Table 1: Characteristics of Ocular Artifacts in Frontal Channels

| Domain | Characteristic Signature | Quantitative Impact |
|---|---|---|
| Time Domain | High-amplitude, slow deflections. Blinks show monophasic peaks; saccades show box-shaped waveforms [21]. | Amplitudes of 100–200 µV, dwarfing cortical EEG (typically < 100 µV) [19] [30]. |
| Frequency Domain | Dominant spectral power in the delta (0.5–4 Hz) and theta (4–8 Hz) bands [19] [21]. | Masks genuine neural oscillations critical for studying sleep, drowsiness, and certain cognitive tasks. |
| Spatial Topography | Maximum amplitude over frontopolar sites (Fp1, Fp2), with strong projection to frontal (F7, F8) and central sites [30] [21]. | Can be measured and used for topographic identification and rejection algorithms. |

The quantitative disparity is stark. As noted in a 2025 study on dry EEG, the standard deviation of the signal—a measure of variability—can be dramatically reduced through effective artifact cleaning, underscoring the disproportionate influence of these contaminants on data metrics [31].

Methodologies for Investigation and Removal

A variety of experimental and computational methodologies have been developed to study and mitigate ocular artifacts. The choice of method often depends on the experimental setup, such as the number of available EEG channels.

Experimental Protocols for Ocular Artifact Analysis

Research into ocular artifacts often employs structured paradigms to elicit them in a controlled manner.

- Motor Execution Paradigm: A common protocol involves instructing participants to perform specific movements (e.g., hand, foot, or tongue movements) while EEG is recorded. This allows researchers to investigate the interaction between movement-related artifacts and ocular artifacts, particularly in mobile or dry EEG systems [31]. The protocol typically includes fixation crosses and cue arrows to standardize timing, with EEG recorded from a high-density cap (e.g., 64 channels) to capture full topographic spread.

- Controlled Elicitation: Simpler paradigms directly instruct participants to perform periodic eye blinks or saccades according to a visual cue. This generates a clean dataset of artifact events that can be used to train machine learning classifiers or validate removal algorithms [29].

Removal Techniques and Workflows

The workflow for handling ocular artifacts has evolved from simple rejection to sophisticated decomposition and machine learning approaches.

- Blind Source Separation (BSS) Techniques: Methods like Independent Component Analysis (ICA) are considered gold standards for multi-channel data. ICA decomposes the multi-channel EEG signal into statistically independent components (ICs). Ocular artifacts are typically isolated into one or a few ICs based on their characteristic topography (frontal focus), time course, and spectral properties. The artifactual ICs are then excluded (their activations set to zero), and the signal is reconstructed from the remaining components [31] [32] [30]. Studies show that targeted artifact reduction within components, rather than wholesale component subtraction, better protects against the artificial inflation of effect sizes (e.g., in ERPs) and source localization biases [32].

- Advanced Single-Channel Methods: For portable or single-channel systems where ICA is not feasible, complex pipelines have been developed. One state-of-the-art method integrates a Support Vector Machine (SVM) to first identify artifact-contaminated segments. These segments are then decomposed using Genetic Algorithm-optimized Variational Mode Decomposition (GA-VMD), followed by further source separation using the Second-Order Blind Identification (SOBI) algorithm. Components identified as artifacts via an approximate entropy threshold are removed before signal reconstruction [29]. The general workflow for such a pipeline is illustrated below.
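The approximate-entropy step of such a pipeline is easy to prototype. The sketch below computes Pincus' ApEn(m, r) directly from its definition with NumPy. The defaults (m = 2, r = 0.2 × the signal's standard deviation) are common heuristics, not the parameters of the cited pipeline [29]. The direction of the threshold is also an assumption: components whose ApEn falls below it are flagged, on the reasoning that slow, stereotyped ocular waveforms are more regular than background EEG.

```python
import numpy as np


def approximate_entropy(x, m=2, r=None):
    """Approximate entropy ApEn(m, r) of a 1-D signal (O(N^2) memory; fine for short segments)."""
    x = np.asarray(x, dtype=float)
    if r is None:
        r = 0.2 * np.std(x)  # common heuristic tolerance

    def phi(k):
        # All overlapping templates of length k, shape (N - k + 1, k).
        templates = np.lib.stride_tricks.sliding_window_view(x, k)
        # Chebyshev (max-abs) distance between every pair of templates; self-matches included.
        dist = np.max(np.abs(templates[:, None, :] - templates[None, :, :]), axis=2)
        c = np.mean(dist <= r, axis=1)
        return np.mean(np.log(c))

    return phi(m) - phi(m + 1)


def flag_low_apen(components, threshold):
    """Hypothetical rule: flag components whose ApEn is below `threshold` as ocular."""
    return [i for i, comp in enumerate(components) if approximate_entropy(comp) < threshold]


rng = np.random.default_rng(0)
t = np.arange(0, 4.0, 1.0 / 250.0)  # 1000 samples at an assumed 250 Hz
blink_like = 100.0 * np.exp(-0.5 * ((t - 2.0) / 0.1) ** 2) + 2.0 * rng.standard_normal(t.size)
background = 10.0 * rng.standard_normal(t.size)  # crude stand-in for background EEG
print("ApEn blink-like:", round(approximate_entropy(blink_like), 3))
print("ApEn background:", round(approximate_entropy(background), 3))
```

In a full pipeline, this function would be applied to each GA-VMD/SOBI component of a contaminated segment. The threshold would need to be calibrated on labeled data rather than fixed by hand.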

Table 2: The Scientist's Toolkit: Key Reagents and Resources for Ocular Artifact Research

| Item Name | Type | Function in Research |
|---|---|---|
| High-Density Dry EEG System (e.g., 64-channel) | Hardware | Enables recording in ecological scenarios with rapid setup; particularly prone to motion and ocular artifacts, making it a key platform for method development [31]. |
| eego Amplifier & waveguard touch Cap | Hardware | Example of a commercial research-grade system used for acquiring high-fidelity EEG data for artifact analysis [31]. |
| Independent Component Analysis (ICA) | Algorithm | A foundational blind source separation method for isolating and removing ocular and other artifacts from multi-channel data [31] [32] [30]. |
| RELAX Pipeline | Software/Plugin | A freely available EEGLAB plugin that implements a targeted artifact reduction method to minimize false positives and protect neural signals [32]. |
| SVM-GA-VMD-SOBI Pipeline | Algorithmic Pipeline | An advanced, automated framework specifically designed for the removal of ocular artifacts from single-channel EEG data [29]. |
| Semi-Synthetic Benchmark Datasets | Data Resource | Publicly available datasets (e.g., from EEGdenoiseNet) that combine clean EEG with recorded artifacts, enabling standardized testing and validation of new algorithms [33]. |
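The semi-synthetic construction behind such benchmarks can be sketched as follows: scale a recorded artifact and add it to clean EEG at a chosen signal-to-noise ratio. The sketch assumes the RMS-ratio definition SNR = 10·log10(RMS(clean) / RMS(λ·artifact)). Check the documentation of the specific dataset, because a different convention (for example, one based on power) changes the scale factor λ. The signals below are simulated placeholders, not benchmark data.

```python
import numpy as np


def rms(v):
    """Root-mean-square amplitude."""
    return float(np.sqrt(np.mean(np.square(v))))


def mix_at_snr(clean, artifact, snr_db):
    """Return (clean + lam * artifact, lam) with lam chosen so that
    10 * log10(rms(clean) / rms(lam * artifact)) == snr_db (assumed RMS-ratio convention)."""
    lam = rms(clean) / (rms(artifact) * 10.0 ** (snr_db / 10.0))
    return clean + lam * artifact, lam


rng = np.random.default_rng(0)
t = np.arange(0, 2.0, 1.0 / 256.0)                # assumed 2 s segment at 256 Hz
clean = 20.0 * rng.standard_normal(t.size)        # placeholder for clean EEG
artifact = np.exp(-0.5 * ((t - 1.0) / 0.1) ** 2)  # placeholder blink waveform
for snr_db in (-7.0, -3.0, 0.0, 2.0):             # a sweep of hypothetical SNR levels
    contaminated, lam = mix_at_snr(clean, artifact, snr_db)
    print(f"SNR {snr_db:+.0f} dB -> lambda = {lam:.2f}")
```

Because the clean segment is known exactly, any denoising algorithm applied to `contaminated` can be scored directly against `clean` at each SNR level.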

The vulnerability of frontal EEG channels to ocular artifacts is an immutable consequence of basic biophysics, driven by the high-amplitude corneo-retinal dipole and volume conduction. This phenomenon poses a persistent and significant challenge, threatening the validity of findings across neuroscience and drug development. Quantifying the impact—through amplitude, spectral, and topographic analysis—is a critical first step. Fortunately, the methodological arsenal available to researchers is powerful and evolving, ranging from well-established BSS techniques for dense-array data to innovative, machine-learning-driven pipelines for single-channel applications. The ongoing refinement of these methods, particularly those that move beyond simple subtraction to targeted cleaning, is paramount. Ensuring that EEG-based research conclusions are driven by neural signals, rather than ocular artifacts, requires diligent application of these sophisticated tools and a fundamental understanding of the principles of amplitude and projection.

In electroencephalography (EEG) analysis, the conventional wisdom holds that artifacts are a source of noise that obscures neural signals and diminishes analytical power. However, within the specific context of multivariate pattern analysis (MVPA), or decoding, a more complex and counterintuitive narrative emerges: systematic artifacts can artificially inflate decoding accuracy, leading to invalid conclusions about brain function. This whitepaper examines the mechanisms by which this inflation occurs, presents quantitative evidence of its effects, and provides methodological guidance for ensuring the validity of EEG decoding research, with a particular focus on ocular artifacts.

The core of the problem lies in the nature of decoding algorithms themselves. Unlike univariate analyses that assess signals at individual electrodes or time points, decoders like Support Vector Machines (SVMs) and Linear Discriminant Analysis (LDA) are designed to find any consistent pattern in the multidimensional data that distinguishes experimental conditions [18] [9]. If an artifact—such as an eye blink or muscle movement—occurs in a time-locked or condition-specific manner, the decoder can learn this artifactual pattern rather than, or in addition to, the underlying neural signal. Consequently, what appears to be successful decoding of a cognitive state may in fact be the successful decoding of a non-neural confound [34].

Mechanisms: How Artifacts Become Confounds

The Anatomy of an Inflated Decoding Result

Systematic artifacts inflate decoding performance through a direct confounding mechanism. For an artifact to cause inflation, two conditions must be met:

- The artifact must be large-amplitude and structured, possessing a consistent spatial or temporal signature that a classifier can detect.

- The artifact's occurrence must be correlated with the experimental conditions or task labels used to train the decoder.

Ocular artifacts are particularly potent confounds due to their high amplitude and the fact that eye movements and blinks are often intrinsically linked to cognitive tasks. For instance, in a visual attention task where a stimulus appears in the left versus right hemifield, participants may systematically make saccades toward the stimulus location. The resulting horizontal eye movement artifacts will be strongly correlated with the task condition labels (left vs. right). A decoder may then achieve high accuracy by simply learning the characteristic pattern of the eye movement from frontal and temporal electrodes, rather than decoding the neural correlates of attention from occipital or parietal cortices [34] [21].

Table 1: Characteristics of High-Risk Artifacts in EEG Decoding

| Artifact Type | Spectral Profile | Spatial Distribution | Common Paradigms at Risk |
|---|---|---|---|
| Ocular Blinks | Delta/Theta (0.5–5 Hz) [35] | Bilateral, Frontal-Dominant [21] | P3b, N400, any long-duration task |
| Saccades / Eye Movements | Delta/Theta (0.5–5 Hz) [35] | Lateralized, Temporal [21] | N2pc, visual search, spatial attention |
| Muscle Artifacts (EMG) | Broadband, high-freq. (20–300 Hz) [21] | Focal, Temporal/Nuchal | Motor tasks, speech, LRP paradigms |
| Pulse Artifact | ~1 Hz (Heart Rate) [21] | Focal, Temporal/Mastoid | Resting-state, patient studies |

Experimental Evidence from Multiverse Analyses

Recent large-scale, systematic studies provide compelling quantitative evidence for artifact-induced inflation. A 2025 multiverse analysis published in Communications Biology systematically varied preprocessing steps across seven common EEG paradigms and assessed their impact on decoding performance using EEGNet and time-resolved logistic regression [34].

A critical finding was that artifact correction steps, including ICA, consistently reduced decoding performance. The authors identified specific scenarios where this was most pronounced:

- In the N2pc experiment, the removal of ocular artifacts strongly negatively impacted decoding performance for target position. This is because eye movements toward the target hemifield provided a systematic, non-neural signal that the decoder exploited [34].

- In the Lateralized Readiness Potential (LRP) experiment, removing muscle artifacts reduced decoding performance for hand-motor responses, suggesting that muscle activity related to the button press was itself informative to the classifier [34].

This demonstrates that when artifacts are systematically linked to the task, they become a reliable source of information for the decoder. Removing them reveals the true, and often lower, performance of the decoder when relying solely on neural signals.

Quantitative Impact: Assessing the Magnitude of Inflation

To understand the real-world impact, it is essential to examine how artifact correction influences key decoding metrics. The following table summarizes findings from studies that directly compared decoding performance with and without rigorous artifact correction.

Table 2: Impact of Artifact Correction on EEG Decoding Performance

| Experimental Paradigm | Classifier Type | Performance Metric | Without Artifact Correction | With Artifact Correction | Key Finding |
|---|---|---|---|---|---|
| N2pc (Hemifield Decoding) | EEGNet [34] | Balanced Accuracy | Inflated | Significantly Reduced | Ocular artifacts from target-directed saccades were predictive. |
| LRP (Hand Response) | EEGNet [34] | Balanced Accuracy | Inflated | Significantly Reduced | EMG from button presses was a primary decoder feature. |
| Seven ERP Paradigms | SVM & LDA [18] [9] | Binary/Multi-class Accuracy | No significant improvement from correction in most cases | Correction did not enhance performance | Highlights correction's role in validity, not performance. |
| Multiple Paradigms | Time-resolved Logistic Regression [34] | T-sum Statistic | Lower with minimal preprocessing | Increased with filtering but reduced with ICA | Simple preprocessing helped, but artifact removal reduced confounds. |

These findings indicate that the inflation is not necessarily minor: it can be substantial enough to form the primary basis for a decoder's success. Relying on uncorrected data in these scenarios leads to a fundamentally incorrect interpretation of what the decoder has learned.

Methodological Protocols for Controlled Investigation

For researchers seeking to validate their own findings, here are detailed protocols for conducting a controlled assessment of artifact impact.

Protocol A: The Artifact Inclusion/Exclusion Test

This protocol is the most direct way to test for artifact inflation [18] [34].

- Data Processing: Preprocess your EEG data through two parallel pipelines.

  - Pipeline 1 (Artifact-Retained): Apply only minimal preprocessing (e.g., filtering, re-referencing). Do not perform ICA, artifact rejection, or other artifact removal procedures.

  - Pipeline 2 (Artifact-Corrected): Apply a comprehensive artifact removal strategy. This should include ICA for ocular and muscle artifacts and potentially automated artifact rejection (e.g., Autoreject) for other large, non-stereotyped noises [34].

- Decoding Analysis: Train and test your chosen decoder (e.g., SVM, LDA) identically on the datasets from both pipelines, using the same cross-validation splits.

- Comparison: Statistically compare the decoding accuracy between the two pipelines.

- Interpretation: If accuracy is significantly higher in the artifact-retained pipeline, it is strong evidence that artifactual signals are contributing to decoding performance. The corrected pipeline's accuracy is a more valid estimate of neural decoding.
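Protocol A can be illustrated end to end on simulated data. In the hypothetical simulation below, the two conditions have no genuine neural difference. However, one condition elicits a saccade whose EOG amplitude leaks into the EEG with frontal-to-posterior propagation weights (all values are assumptions). Pipeline 1 keeps the artifact. Pipeline 2 stands in for artifact correction by regressing the EOG out of every channel. A nearest-class-mean classifier serves as a simple stand-in for the SVM or LDA decoders discussed above, and both pipelines are scored on the same cross-validation folds.

```python
import numpy as np


def nearest_centroid_cv_accuracy(features, labels, folds):
    """Mean accuracy of a nearest-class-mean classifier over fixed CV folds."""
    accs = []
    for test_idx in folds:
        train = np.ones(labels.size, dtype=bool)
        train[test_idx] = False
        centroids = np.stack([features[train & (labels == c)].mean(axis=0) for c in (0, 1)])
        dists = np.linalg.norm(features[test_idx][:, None, :] - centroids[None, :, :], axis=2)
        accs.append(np.mean(dists.argmin(axis=1) == labels[test_idx]))
    return float(np.mean(accs))


rng = np.random.default_rng(42)
n_trials, n_channels = 200, 8
labels = rng.integers(0, 2, n_trials)
# Hypothetical features: one value per trial and channel (e.g., mean amplitude in a window).
eog = 50.0 * labels + 5.0 * rng.standard_normal(n_trials)  # condition 1 elicits a saccade
propagation = np.linspace(1.0, 0.05, n_channels)           # frontal to posterior weights
neural = 10.0 * rng.standard_normal((n_trials, n_channels))  # no genuine condition effect
eeg = neural + eog[:, None] * propagation[None, :]

# Pipeline 2 (artifact-corrected): regress the EOG out of each channel.
beta = np.linalg.lstsq(eog[:, None], eeg, rcond=None)[0]   # shape (1, n_channels)
eeg_corrected = eeg - eog[:, None] * beta

folds = np.array_split(rng.permutation(n_trials), 5)       # identical splits for both pipelines
acc_retained = nearest_centroid_cv_accuracy(eeg, labels, folds)
acc_corrected = nearest_centroid_cv_accuracy(eeg_corrected, labels, folds)
print(f"artifact-retained: {acc_retained:.2f}, artifact-corrected: {acc_corrected:.2f}")
```

In this simulation the conditions differ only through the artifact. The retained pipeline therefore decodes almost perfectly, while the corrected pipeline falls to roughly chance. In real data the gap is rarely this clean, which is why the comparison should be tested statistically.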

Protocol B: Source-Specific Artifact Analysis

This protocol helps identify which type of artifact is responsible for inflation.

- Targeted Correction: Instead of one comprehensive correction pipeline, run multiple pipelines, each selectively removing specific artifacts.

  - Pipeline A: Remove only ocular components via ICA.

  - Pipeline B: Remove only muscular components via ICA.

  - Pipeline C: Remove both.

  - Pipeline D: No artifact removal (baseline).

- Differential Impact: Compare the decoding performance of each pipeline against the baseline (Pipeline D). A significant drop after removing only ocular components (Pipeline A) points to ocular artifacts as the primary confound. A significant drop after Pipeline B implicates muscle artifacts.
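The comparison step of Protocol B needs a statistical test. One simple, assumption-light option is a paired sign-flip permutation test on per-participant accuracy differences between each pipeline and the baseline (Pipeline D). This is our choice, not one prescribed by the cited studies. The per-participant accuracies below are simulated placeholders that mimic an ocular confound. They are not results from any study.

```python
import numpy as np


def paired_sign_flip_test(acc_baseline, acc_pipeline, n_perm=10000, seed=0):
    """Two-sided sign-flip permutation test on paired accuracy differences.

    Returns (mean drop, p-value); a positive drop means the pipeline scored lower than baseline.
    """
    diff = np.asarray(acc_baseline, dtype=float) - np.asarray(acc_pipeline, dtype=float)
    observed = diff.mean()
    rng = np.random.default_rng(seed)
    # Under the null hypothesis each participant's difference is equally likely to have either sign.
    signs = rng.choice([-1.0, 1.0], size=(n_perm, diff.size))
    null = (signs * diff).mean(axis=1)
    p = (np.sum(np.abs(null) >= abs(observed)) + 1) / (n_perm + 1)
    return float(observed), float(p)


def compare_to_baseline(results, baseline="D"):
    """results maps pipeline name -> per-participant accuracies (same participant order)."""
    return {name: paired_sign_flip_test(results[baseline], acc)
            for name, acc in results.items() if name != baseline}


rng = np.random.default_rng(7)
n_participants = 20
acc_d = 0.80 + 0.03 * rng.standard_normal(n_participants)            # D: no removal
results = {
    "D": acc_d,
    "A": acc_d - 0.15 + 0.02 * rng.standard_normal(n_participants),  # ocular ICs removed
    "B": acc_d + 0.01 * rng.standard_normal(n_participants),         # muscle ICs removed
    "C": acc_d - 0.16 + 0.02 * rng.standard_normal(n_participants),  # both removed
}
for name, (drop, p) in compare_to_baseline(results).items():
    print(f"Pipeline {name}: mean drop = {drop:.3f}, p = {p:.4f}")
```

With several pipelines compared against one baseline, the p-values should be corrected for multiple comparisons before interpretation.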

Figure 1: Experimental workflow for Protocol A, the Artifact Inclusion/Exclusion Test. Comparing decoder performance between artifact-retained and artifact-corrected pipelines reveals potential inflation.

The Scientist's Toolkit: Key Reagents and Computational Solutions

To implement the protocols outlined above, researchers can leverage a suite of established software tools and methods.

Table 3: Essential Research Reagents for Artifact Management in Decoding

| Tool / Method | Primary Function | Role in Mitigating Inflation | Implementation Notes |
|---|---|---|---|
| Independent Component Analysis (ICA) | Blind source separation to isolate artifact components [35] [36]. | Identifies and allows removal of stereotyped artifacts (ocular, muscle, cardiac) before decoding. | Gold standard for multi-channel data; requires careful component classification [37]. |
| Support Vector Machine (SVM) | A multivariate classifier for decoding analysis [18] [9]. | The primary tool whose output accuracy is tested for vulnerability to artifact inflation. | Its performance should be compared before and after artifact correction. |
| Artifact Subspace Reconstruction (ASR) | An automated method for detecting and reconstructing artifact-contaminated data segments [35] [38]. | Useful for non-stereotyped and large-amplitude artifacts in real-time or wearable EEG applications. | Particularly relevant for mobile EEG with motion artifacts [38]. |
| Linear Regression (EOG) | Models and subtracts EOG influence from EEG channels [35] [37]. | Directly corrects for ocular artifacts using EOG reference channels. | Simpler than ICA but risks over-correction and removing neural signal [37]. |
| Autoreject Package | Python-based tool for automated artifact rejection and bad channel interpolation [34]. | Handles non-biological and high-amplitude transient artifacts that ICA may not capture. | Reduces trial loss via interpolation, improving decoder training [34]. |

The pursuit of high decoding accuracy must not come at the cost of scientific validity. As evidenced, systematic artifacts, particularly ocular artifacts, pose a direct threat to the interpretability of EEG decoding studies by providing a non-neural pathway to high classification performance. To ensure robustness, researchers should adopt the following best practices:

- Always Report Correction Methods: Clearly detail the artifact correction procedures (or lack thereof) in any publication. This is a minimum standard for reproducibility and critical evaluation [39].

- Perform Control Analyses: Implement Protocols A and B above as standard controls. Demonstrating that your results hold after rigorous artifact correction strengthens their validity.

- Prioritize Interpretability over Raw Performance: A slightly lower decoding accuracy based on genuine neural signals is far more valuable than a higher accuracy driven by confounds. As stated in [34], "uncorrected artifacts may increase decoding performance, this comes at the expense of interpretability and model validity."

- Leverage Advanced Tools: Use the toolkit summarized in Table 3 (Essential Research Reagents for Artifact Management in Decoding). For most research-grade EEG decoding, a combination of ICA for correction and SVM for decoding, followed by a comparison with uncorrected data, represents a robust methodological approach.

By acknowledging and actively controlling for this phenomenon, the field can move beyond artifact-driven results and ensure that EEG decoding provides genuine insights into brain function.

A Practical Guide to Ocular Artifact Correction: From Traditional ICA to Deep Learning

Electroencephalographic (EEG) signals are perpetually vulnerable to contamination by ocular artifacts, the electrical potentials generated by eye movements and blinks. These artifacts present a significant challenge in neurophysiological research and drug development for three primary reasons. First, their power spectrum (3–15 Hz) overlaps with the EEG theta and alpha bands. Second, they occur too frequently to permit simple epoch rejection without substantial data loss. Third, their amplitudes are dramatically larger than neural signals, potentially leading to misinterpretation of brain activity [1]. In pharmacological studies, where EEG may serve as a biomarker for drug efficacy, undetected ocular artifacts can confound results by obscuring true neurophysiological signals or creating illusory treatment effects.

Numerous methods have been developed to correct these artifacts, ranging from simple rejection to advanced computational approaches. Among these, the regression-based procedure introduced by Gratton, Coles, and Donchin (1983) represents a foundational methodology that continues to influence contemporary EEG preprocessing pipelines [40]. This whitepaper provides an in-depth technical examination of the Gratton and Cole algorithm, detailing its underlying principles, practical implementation workflow, and limitations within modern research environments.

Principles of the Gratton and Coles Regression Method

The Gratton and Coles algorithm, formally termed the Eye Movement Correction Procedure (EMCP), rests on a core linearity assumption. The signal recorded at any EEG electrode is treated as an additive combination of true brain activity and artifact contributions, which can be separated using a calculated propagation factor [40] [1].

Core Mathematical Model

The model is mathematically described as follows. For a given electrode $e_i$ at time point $n$:

$$\text{RawEEG}_{e_i}(n) = \text{EEG}_{e_i}(n) + \beta_{e_i} \cdot \text{artifacts}(n)$$

Here, $\beta_{e_i}$ represents the artifact propagation factor specific to each electrode, quantifying how strongly the ocular artifact manifests at that recording site [1]. This factor varies across the scalp, typically exhibiting higher magnitudes at frontal sites closest to the eyes and decreasing toward parietal regions [40]. The procedure's key innovation was computing these propagation factors after removing stimulus-linked variability from both EEG and electrooculogram (EOG) traces, and deriving separate factors for blinks and eye movements based on data from the experimental session itself rather than a separate calibration [40].
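An ordinary least-squares estimate of the propagation factor can be derived as follows. The derivation makes two illustrative assumptions: the recorded EOG trace stands in for $\text{artifacts}(n)$, and both signals are zero-mean. It shows where a propagation factor comes from, not the exact EMCP computation. The second line also shows why brain activity shared between the EOG and EEG channels biases the estimate.

```latex
% Least-squares estimate for electrode e_i (zero-mean signals; EOG(n) stands in for artifacts(n))
\hat{\beta}_{e_i}
  = \arg\min_{\beta} \sum_{n} \bigl( \text{RawEEG}_{e_i}(n) - \beta \cdot \text{EOG}(n) \bigr)^2
  = \frac{\sum_{n} \text{EOG}(n)\, \text{RawEEG}_{e_i}(n)}{\sum_{n} \text{EOG}(n)^2}

% Substituting RawEEG_{e_i}(n) = EEG_{e_i}(n) + \beta_{e_i} \cdot EOG(n):
\hat{\beta}_{e_i}
  = \beta_{e_i} + \frac{\sum_{n} \text{EOG}(n)\, \text{EEG}_{e_i}(n)}{\sum_{n} \text{EOG}(n)^2}
```

The second term vanishes only when brain activity at $e_i$ is uncorrelated with the EOG trace. Cerebral crosstalk into the EOG electrodes violates exactly this condition.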

Comparative Advantages in Research Settings

This approach offers distinct advantages for research contexts, particularly in clinical populations or paradigms where eye movements are part of the experimental task:

- Data Retention: Enables retention of all experimental trials, crucial for maintaining statistical power in studies with limited trials or populations characterized by frequent artifacts [40]

- Session-Specific Calibration: Computes propagation factors from experimental data rather than separate calibration sessions, enhancing ecological validity [40]

- Differential Correction: Applies separate correction factors for blinks and saccades, acknowledging their different electrical characteristics and scalp distributions [40]
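Differential correction can be sketched by fitting one least-squares factor on blink samples and another on the remaining (saccade) samples, then subtracting each factor only where it applies. This is a minimal illustration under several assumptions: a single EOG channel, a precomputed boolean blink mask, and simulated signals. The function name `differential_correction` and all amplitudes are hypothetical.

```python
import numpy as np


def differential_correction(raw_eeg, eog, blink_mask):
    """Fit and remove separate propagation factors for blink and non-blink samples.

    raw_eeg: (n_channels, n_samples); eog: (n_samples,); blink_mask: bool (n_samples,).
    Returns the corrected EEG and a dict of per-electrode factors.
    """
    corrected = np.array(raw_eeg, dtype=float)   # working copy
    betas = {}
    for name, mask in (("blink", blink_mask), ("saccade", ~blink_mask)):
        # Center both signals within this subset (least squares with an intercept)
        x = eog[mask] - eog[mask].mean()
        y = raw_eeg[:, mask] - raw_eeg[:, mask].mean(axis=1, keepdims=True)
        beta = (y @ x) / (x @ x)                 # one factor per electrode
        corrected[:, mask] -= np.outer(beta, eog[mask])
        betas[name] = beta
    return corrected, betas


# Hypothetical simulation: 2 electrodes, 20 s at 250 Hz, one blink per second,
# square-wave "saccades" (fixation shifts) between blinks
fs, n = 250.0, 5000
rng = np.random.default_rng(0)
idx = np.arange(n)
blink_mask = np.zeros(n, dtype=bool)
blinks = np.zeros(n)
for c in range(125, n, 250):
    blink_mask[np.abs(idx - c) <= 30] = True
    blinks += 150.0 * np.exp(-0.5 * ((idx - c) / 10.0) ** 2)
saccades = 30.0 * np.sign(np.sin(2 * np.pi * 0.7 * idx / fs))
eog = np.where(blink_mask, blinks, saccades)

beta_blink_true = np.array([0.45, 0.10])
beta_sacc_true = np.array([0.20, 0.05])
brain = rng.normal(0.0, 5.0, (2, n))
propagation = np.where(blink_mask, beta_blink_true[:, None], beta_sacc_true[:, None])
raw = brain + propagation * eog

corrected, betas = differential_correction(raw, eog, blink_mask)
```

Because the simulated propagation differs between blink and saccade periods, a single pooled factor would under-correct one artifact type and over-correct the other. Fitting the two subsets separately recovers both factors.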

Workflow and Implementation

Implementing the Gratton and Coles algorithm requires a systematic approach to ensure valid artifact correction. The following workflow synthesizes the original methodology with modern implementations found in contemporary toolkits like MNE-Python [41].

Data Preparation and Preprocessing

Proper data preparation is crucial for successful regression-based artifact correction:

- EEG Referencing: Apply a consistent reference scheme (e.g., average reference) before artifact correction [41]

- Filtering: Apply band-pass filtering (e.g., 0.3-40 Hz) to both EEG and EOG channels to remove slow drifts and high-frequency noise that can affect regression stability [41]

- Channel Selection: Identify appropriate EOG channels (vertical and horizontal) and EEG channels for correction
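The referencing and filtering steps above can be sketched with NumPy alone. This minimal illustration assumes EEG stored as a channels-by-samples array in microvolts and a single bipolar EOG trace that is filtered but not re-referenced. The band-pass is an idealized zero-phase frequency-domain mask chosen for brevity; it is not the FIR filter a toolkit such as MNE-Python would normally apply, and the helper names are hypothetical. The important design point is that EEG and EOG pass through identical filter settings, so the later regression compares like with like.

```python
import numpy as np


def average_reference(eeg):
    """Re-reference EEG (n_channels, n_samples) to the common average."""
    return eeg - eeg.mean(axis=0, keepdims=True)


def ideal_bandpass(x, fs, l_freq=0.3, h_freq=40.0):
    """Zero-phase band-pass by zeroing FFT bins outside [l_freq, h_freq] (illustrative only)."""
    n = x.shape[-1]
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    spectrum = np.fft.rfft(x, axis=-1)
    spectrum[..., (freqs < l_freq) | (freqs > h_freq)] = 0.0
    return np.fft.irfft(spectrum, n=n, axis=-1)


def preprocess(eeg, eog, fs, l_freq=0.3, h_freq=40.0):
    """Average-reference the EEG, then filter EEG and EOG with identical settings."""
    eeg_f = ideal_bandpass(average_reference(eeg), fs, l_freq, h_freq)
    eog_f = ideal_bandpass(eog, fs, l_freq, h_freq)
    return eeg_f, eog_f


# Usage on synthetic data: a 0.05 Hz drift plus a 10 Hz rhythm (20 s at 250 Hz)
fs = 250.0
t = np.arange(5000) / fs
drift = 80.0 * np.sin(2 * np.pi * 0.05 * t)
rhythm = 5.0 * np.sin(2 * np.pi * 10.0 * t)
eeg = np.vstack([drift + rhythm, 0.5 * drift - rhythm, 0.2 * drift])
eog = drift + rhythm
eeg_f, eog_f = preprocess(eeg, eog, fs)
```

In this synthetic case the drift is removed from the EOG and the 10 Hz rhythm passes unchanged. A real pipeline would substitute a properly designed FIR filter to avoid the ringing an ideal mask introduces on non-periodic data.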

Table 1: Essential Research Reagents and Materials

| Item | Specification/Function |
|---|---|
| EEG System | Multi-channel system with capability for simultaneous EOG recording |
| EOG Electrodes | Bipolar placement for monitoring vertical and horizontal eye movements |
| Processing Software | Implementation environment (e.g., MNE-Python, EEGLAB, custom scripts) |
| Filtering Algorithms | Digital band-pass filters for pre-processing (e.g., 0.3-40 Hz FIR filters) |
| Regression Calculator | Computational implementation for estimating propagation factors |

Figure 1: Complete workflow of the Gratton and Coles regression method for ocular artifact correction, from data acquisition through validation.

Core Regression Procedure

The algorithm follows a structured procedure to estimate and remove ocular artifacts:

- Artifact Detection: Identify periods containing ocular artifacts (blinks and saccades) in the EOG channel [42]

- Propagation Factor Calculation: For each EEG electrode, compute the propagation factor ($\beta_{e_i}$) that describes the relationship between EOG and EEG traces during artifact periods, after first removing stimulus-locked variability from both signals [40]

- Artifact Subtraction: Remove the estimated artifact component from the continuous EEG data, with the recorded EOG trace serving as the measured estimate of $\text{artifacts}(n)$:

$$\text{CorrectedEEG}_{e_i}(n) = \text{RawEEG}_{e_i}(n) - \beta_{e_i} \cdot \text{EOG}(n)$$

- Validation: Verify correction efficacy by comparing data before and after correction, typically through visual inspection of evoked potentials or quantitative metrics [41]
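The four steps can be strung together in a short NumPy sketch. It relies on several simplifying assumptions:

- a single EOG channel;
- a hypothetical fixed amplitude threshold for detection;
- one least-squares propagation factor per electrode, fitted only on the detected artifact samples;
- correlation with the EOG as a simple validation metric.

It omits the stimulus-locked subtraction and the separate blink and saccade factors of the full procedure. All function names, thresholds, and simulated amplitudes are illustrative.

```python
import numpy as np


def detect_artifacts(eog, threshold_uv):
    """Step 1: flag samples whose absolute EOG amplitude exceeds a (hypothetical) threshold."""
    return np.abs(eog) > threshold_uv


def estimate_propagation(raw_eeg, eog, mask):
    """Step 2: least-squares propagation factor per electrode, fitted on flagged samples only."""
    x = eog[mask] - eog[mask].mean()
    y = raw_eeg[:, mask] - raw_eeg[:, mask].mean(axis=1, keepdims=True)
    return (y @ x) / (x @ x)


def subtract_artifact(raw_eeg, eog, beta):
    """Step 3: CorrectedEEG_ei(n) = RawEEG_ei(n) - beta_ei * EOG(n), over the continuous record."""
    return raw_eeg - np.outer(beta, eog)


def eog_correlation(eeg, eog):
    """Step 4 (one simple check): per-channel Pearson correlation with the EOG trace."""
    return np.array([np.corrcoef(ch, eog)[0, 1] for ch in eeg])


# Simulated record: 3 electrodes (frontal -> parietal), one blink per second, 20 s at 250 Hz
rng = np.random.default_rng(0)
n = 5000
idx = np.arange(n)
eog = sum(150.0 * np.exp(-0.5 * ((idx - c) / 20.0) ** 2) for c in range(125, n, 250))
beta_true = np.array([0.5, 0.2, 0.05])
brain = rng.normal(0.0, 5.0, (3, n))
raw = brain + np.outer(beta_true, eog)

mask = detect_artifacts(eog, threshold_uv=50.0)
beta_hat = estimate_propagation(raw, eog, mask)
corrected = subtract_artifact(raw, eog, beta_hat)
before, after = eog_correlation(raw, eog), eog_correlation(corrected, eog)
```

The simulated factors decrease from frontal to parietal sites, mirroring the scalp gradient described earlier. In practice, validation would also inspect evoked potentials rather than rely on a single correlation metric.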

Methodological Variations

Modern implementations have introduced variations to enhance the original algorithm:

- Gratton's Evoked Response Subtraction: Subtract the evoked potential from each epoch before computing regression coefficients to isolate artifact-related variance [41]

- Croft & Barry's Blink Epoching: Create epochs time-locked to blink events and compute regression on the averaged blink response to improve coefficient estimation [41]
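Both variations change which data the least-squares fit sees. The sketch below assumes epoched arrays shaped `(n_epochs, n_channels, n_times)` for EEG and `(n_epochs, n_times)` for a single EOG channel. The function names and simulated signals are hypothetical, and the code is not the MNE-Python implementation.

```python
import numpy as np


def beta_evoked_subtracted(eeg_epochs, eog_epochs, conditions):
    """Gratton-style variant: subtract each condition's average (evoked response)
    from every epoch, then fit one least-squares factor per channel on the residuals."""
    eeg_res = np.array(eeg_epochs, dtype=float)
    eog_res = np.array(eog_epochs, dtype=float)
    for c in np.unique(conditions):
        sel = conditions == c
        eeg_res[sel] -= eeg_epochs[sel].mean(axis=0)
        eog_res[sel] -= eog_epochs[sel].mean(axis=0)
    x = eog_res.reshape(-1)                                     # pooled residual samples
    y = eeg_res.transpose(1, 0, 2).reshape(eeg_res.shape[1], -1)
    return (y @ x) / (x @ x)


def beta_from_average_blink(eeg_blink_epochs, eog_blink_epochs):
    """Croft & Barry-style variant: fit on the blink-locked average response."""
    eeg_avg = eeg_blink_epochs.mean(axis=0)                     # (n_channels, n_times)
    eog_avg = eog_blink_epochs.mean(axis=0)                     # (n_times,)
    x = eog_avg - eog_avg.mean()
    y = eeg_avg - eeg_avg.mean(axis=1, keepdims=True)
    return (y @ x) / (x @ x)


rng = np.random.default_rng(0)
n_epochs, n_ch, n_times = 60, 2, 200
t = np.arange(n_times)
bump = np.exp(-0.5 * ((t - 100) / 15.0) ** 2)
beta_true = np.array([0.4, 0.1])
conditions = np.repeat([0, 1], n_epochs // 2)

# Stimulus-locked activity appears in both the EEG (an ERP) and the EOG channel (crosstalk);
# trial-varying eye activity is the part that genuinely propagates to the EEG
eye = rng.normal(0.0, 10.0, (n_epochs, n_times))
eog_epochs = eye + 20.0 * bump
eeg_epochs = (rng.normal(0.0, 5.0, (n_epochs, n_ch, n_times))
              + 8.0 * bump[None, None, :]
              + beta_true[None, :, None] * eye[:, None, :])
beta_sub = beta_evoked_subtracted(eeg_epochs, eog_epochs, conditions)

# Blink-locked epochs with variable blink amplitude for the averaging variant
blink = 150.0 * np.exp(-0.5 * ((t - 100) / 20.0) ** 2)
eog_blinks = rng.uniform(0.7, 1.3, (40, 1)) * blink[None, :]
eeg_blinks = (rng.normal(0.0, 5.0, (40, n_ch, n_times))
              + beta_true[None, :, None] * eog_blinks[:, None, :])
beta_avg = beta_from_average_blink(eeg_blinks, eog_blinks)
```

In the first simulation the stimulus-locked waveform is present in both channels, so it would enter a fit made without the subtraction step. Removing the condition averages leaves only the trial-to-trial eye activity to drive the estimate. The averaging variant instead suppresses background EEG before fitting.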

Performance and Limitations

While foundational, the Gratton and Coles algorithm has specific limitations that researchers must consider when selecting artifact correction methods.

Comparative Overview of Correction Methods

Table 2: Comparative Performance of Ocular Artifact Correction Methods

| Method | EEG Data Requirements | Key Advantages | Key Limitations |
|---|---|---|---|
| Regression-Based (Gratton & Coles) | Requires EOG channels | Simple implementation; preserves all trials; session-specific factors [40] | Assumes linear, time-invariant propagation; EOG channel may contain brain signals [1] |
| Independent Component Analysis (ICA) | High-density EEG recommended (>40 channels) [1] | Does not require EOG channels; handles non-linear components [42] | Subjective component selection; computationally intensive; may distort spectral power [43] |
| Artifact Subspace Reconstruction (ASR) | Multi-channel EEG | Effective for real-time applications; handles various artifact types | May over-clean data; requires parameter tuning |
| Deep Learning Approaches | Large training datasets | Adaptive to complex patterns; minimal manual intervention | Black box nature; requires extensive computational resources |

Specific Limitations and Considerations

The regression approach faces several critical limitations that affect its application in modern research:

- Crosstalk Contamination: EOG electrodes record not only ocular artifacts but also cerebral activity, particularly from frontal regions, potentially leading to over-correction and removal of genuine neural signals [41]

- Linearity Assumption: The method assumes a linear, time-invariant relationship between EOG and EEG channels, which may not fully capture the complex electromagnetic propagation of artifacts [1]

- Spatial Specificity: A single propagation factor per electrode may inadequately represent artifact dynamics across different experimental conditions or time points [40]

- Practical Constraints: The method is generally recommended for EEG rather than MEG data, as the relationship between artifact and signal differs in magnetic field recordings [41]
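The crosstalk limitation can be made concrete with a small simulation. Purely for illustration, it assumes the following:

- a frontal 6 Hz theta rhythm that reaches the EOG electrode at half its scalp amplitude;
- blink activity that propagates to the frontal EEG site with a factor of 0.6;
- a single least-squares propagation factor fitted over the whole record.

All values and the helper names are hypothetical.

```python
import numpy as np


def regress_out(eeg, eog):
    """Fit a single least-squares propagation factor and subtract beta * EOG."""
    x = eog - eog.mean()
    y = eeg - eeg.mean()
    beta = (x @ y) / (x @ x)
    return eeg - beta * eog, beta


fs, n = 250.0, 5000
t = np.arange(n) / fs
rng = np.random.default_rng(0)
idx = np.arange(n)
blinks = sum(150.0 * np.exp(-0.5 * ((idx - c) / 20.0) ** 2) for c in range(125, n, 250))
theta = 10.0 * np.sin(2 * np.pi * 6.0 * t)            # genuine frontal brain signal

eog = blinks + 0.5 * theta                             # EOG picks up cerebral activity (crosstalk)
fp1 = theta + 0.6 * blinks + rng.normal(0.0, 2.0, n)   # frontal EEG: brain + propagated blinks

corrected, beta_hat = regress_out(fp1, eog)


def theta_amplitude(x):
    """Amplitude of the 6 Hz component, via projection onto the known sinusoid."""
    return 2.0 * np.mean(x * np.sin(2 * np.pi * 6.0 * t))


theta_before = theta_amplitude(fp1)
theta_after = theta_amplitude(corrected)
```

Because the theta rhythm is present in the EOG reference, subtracting the scaled EOG also subtracts part of the theta. In this setup roughly 30% of the genuine 6 Hz amplitude at the frontal site is removed along with the blinks.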

Comparative studies have revealed that while regression effectively reduces ocular artifacts, it may not match the performance of other methods in certain contexts. For instance, Wallstrom et al. (2004) found that adaptive filtering improved regression-based correction, and that PCA-based methods effectively reduced artifacts with minimal spectral distortion, while ICA sometimes distorted power in specific frequency bands [43].

Impact on Advanced Analytical Approaches

The choice of artifact correction method can significantly influence downstream analyses, particularly sophisticated analytical approaches increasingly used in pharmaceutical and cognitive neuroscience research.

Implications for Multivariate Pattern Analysis

Recent evidence suggests that artifact correction strategies interact critically with multivariate analytical approaches:

- Decoding Performance: A 2025 study examining SVM- and LDA-based decoding of EEG signals found that artifact correction combined with artifact rejection generally did not improve decoding performance across seven common ERP paradigms [9]

- Confound Management: Despite not enhancing performance, artifact correction remains essential to minimize the risk of artificially inflated decoding accuracy caused by artifact-related confounds rather than genuine neural signals [9]

- Trial Count Considerations: For multivariate analysis, preserving trial count through correction may be more advantageous than aggressive rejection, as sufficient trials are crucial for training robust decoders [9]

Figure 2: Relationship between artifact correction strategies and multivariate pattern analysis (MVPA) outcomes in EEG research.
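The confound argument can be illustrated with simulated epochs in which brain activity has the same distribution in both conditions but blinks occur only in one. For brevity, the sketch makes several substitutions:

- a nearest-centroid classifier in place of the SVM and LDA decoders mentioned above;
- epoch-mean amplitude per channel as the feature;
- single-factor regression as the correction.

Every name and parameter is hypothetical.

```python
import numpy as np


def nearest_centroid_cv(features, labels, n_folds=5, seed=0):
    """k-fold cross-validated accuracy of a nearest-centroid classifier (stand-in decoder)."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(labels))
    n_correct = 0
    for test_idx in np.array_split(order, n_folds):
        train_idx = np.setdiff1d(order, test_idx)
        centroids = np.stack([features[train_idx][labels[train_idx] == c].mean(axis=0)
                              for c in (0, 1)])
        dists = np.linalg.norm(features[test_idx][:, None, :] - centroids[None, :, :], axis=2)
        n_correct += np.sum(dists.argmin(axis=1) == labels[test_idx])
    return n_correct / len(labels)


rng = np.random.default_rng(1)
n_trials, n_ch, n_times = 200, 4, 50
labels = np.repeat([0, 1], n_trials // 2)
t = np.arange(n_times)
blink = 100.0 * np.exp(-0.5 * ((t - 25) / 5.0) ** 2)
eog = labels[:, None] * blink[None, :]                   # blinks occur only in condition 1
beta = np.array([0.5, 0.3, 0.1, 0.02])                   # frontal -> posterior propagation
brain = rng.normal(0.0, 5.0, (n_trials, n_ch, n_times))  # same distribution in both conditions
raw = brain + beta[None, :, None] * eog[:, None, :]

# Single-factor regression correction, fitted on all samples pooled across trials
x = eog.ravel()
y = raw.transpose(1, 0, 2).reshape(n_ch, -1)
beta_hat = (y @ x) / (x @ x)
corrected = raw - beta_hat[None, :, None] * eog[:, None, :]

acc_raw = nearest_centroid_cv(raw.mean(axis=2), labels)
acc_corrected = nearest_centroid_cv(corrected.mean(axis=2), labels)
```

Decoding the uncorrected features separates the conditions almost perfectly, even though the simulated brain activity carries no condition information. After correction, accuracy falls to around chance, which is the pattern the confound argument predicts.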

The Gratton and Coles regression algorithm represents a historically significant and methodologically straightforward approach to ocular artifact correction in EEG research. Its core principles of linear artifact propagation and session-specific calibration continue to offer utility in specific research contexts, particularly those prioritizing trial retention or relying on low-channel-count montages.

Based on our technical examination, we recommend:

- Contextual Method Selection: Reserve regression-based methods for studies with limited channel counts or when maximal trial retention is paramount [1]

- Validation and Verification: Implement rigorous post-correction validation, particularly when using regression-corrected data in multivariate decoding paradigms [9]

- Hybrid Approaches: Consider combining regression with complementary techniques—using regression for initial ocular artifact reduction followed by other methods for remaining artifacts

- Transparent Reporting: Clearly document artifact correction procedures and parameters in research publications to enable proper evaluation and replication

Within the broader thesis of how ocular artifacts affect EEG data analysis, the Gratton and Coles algorithm highlights a fundamental tension in electrophysiological research: the imperative to remove confounding signals while preserving genuine neural data. As analytical techniques grow more sophisticated, the interaction between artifact correction strategies and research outcomes will continue to demand careful consideration, particularly in pharmaceutical development contexts where EEG may serve as a sensitive biomarker for treatment effects.

Independent Component Analysis (ICA) has established itself as a fundamental technique in the preprocessing pipeline for high-density electroencephalography (EEG). By separating mixed signals into statistically independent sources, ICA enables the effective identification and removal of pervasive ocular artifacts—such as blinks and eye movements—that would otherwise obscure neural signals. This technical guide explores the core principles of ICA, provides detailed experimental protocols for its application, and quantitatively demonstrates its efficacy in preserving data integrity. Framed within the broader challenge of how ocular artifacts affect EEG data analysis, this review underscores ICA's critical role in ensuring the validity and reliability of neuroscientific and clinical research.

Electroencephalography (EEG) provides unparalleled millisecond-scale temporal resolution for studying brain dynamics, but its signal is notoriously susceptible to contamination from non-neural sources. Of these, ocular artifacts are among the most prevalent and challenging. Generated by the corneo-retinal dipole potential, eye blinks and movements produce large electrical potentials that spread across the scalp and overwhelm the much smaller, microvolt-level brain signals [44] [45]. These artifacts are especially problematic in cognitive experiments where participants are visually engaged or are not explicitly instructed to refrain from blinking, as such instructions can themselves alter brain activity [44].

The impact of these artifacts extends beyond simply adding noise; they can severely distort quantitative analyses and lead to spurious findings. For instance, artifact-contaminated signals can artificially inflate the apparent synchronization between channels or create false event-related potentials. Consequently, effective artifact remediation is not merely a technical preprocessing step but a foundational requirement for any rigorous EEG research. While various methods exist, from simple regression to advanced machine learning, Independent Component Analysis (ICA) has emerged as a particularly powerful and widely adopted solution for high-density EEG systems.

Theoretical Foundations of ICA

Core Mathematical Principle

Independent Component Analysis is a blind source separation (BSS) technique that aims to decompose a multivariate signal into additive, statistically independent sub-components. The fundamental assumption for EEG is that the signals recorded at the scalp (X) are linear, instantaneous mixtures of underlying brain and non-brain source activities (S), combined via an unknown mixing matrix (A). This relationship is formalized as:

$$X = A S$$

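The goal of ICA is to estimate an unmixing matrix (W) that inverts this process. W approximates $A^{-1}$ only up to the ordering and scaling of its rows, so the recovered sources are estimates of the originals up to permutation and scale:

$$\hat{S} = W X$$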
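The mixing model, its inversion, and the usual removal step can be sketched with a minimal symmetric FastICA written in NumPy. The removal step zeroes an artifact component and back-projects through the estimated mixing matrix. This is an illustrative re-implementation under stated assumptions, not the EEGLAB or MNE-Python code path. It assumes:

- a tanh contrast;
- whitening by eigendecomposition;
- synthetic sources;
- a hypothetical highest-kurtosis rule for picking the blink component.

In practice, component selection is often partly manual.

```python
import numpy as np


def _sym_decorrelate(w):
    """Symmetric orthogonalization: W <- (W W^T)^(-1/2) W."""
    s, u = np.linalg.eigh(w @ w.T)
    return u @ np.diag(1.0 / np.sqrt(s)) @ u.T @ w


def fastica(x, n_iter=1000, tol=1e-6, seed=0):
    """Minimal symmetric FastICA with a tanh contrast; x has shape (channels, samples).

    Returns an unmixing matrix W and the channel means, so that S_hat = W @ (x - mean).
    """
    mean = x.mean(axis=1, keepdims=True)
    xc = x - mean
    d, e = np.linalg.eigh(np.cov(xc))
    whiten = e @ np.diag(1.0 / np.sqrt(d)) @ e.T     # decorrelate and scale to unit variance
    z = whiten @ xc
    rng = np.random.default_rng(seed)
    w = _sym_decorrelate(rng.standard_normal((z.shape[0], z.shape[0])))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        # Fixed-point update E[g(Wz) z^T] - diag(E[g'(Wz)]) W, then re-orthogonalize
        w_new = _sym_decorrelate((g @ z.T) / z.shape[1] - np.diag((1.0 - g ** 2).mean(axis=1)) @ w)
        delta = np.max(np.abs(np.abs(np.einsum("ij,ij->i", w_new, w)) - 1.0))
        w = w_new
        if delta < tol:
            break
    return w @ whiten, mean


def remove_components(x, unmixing, mean, bad):
    """Zero the selected components and back-project through the estimated mixing matrix."""
    sources = unmixing @ (x - mean)
    sources[bad] = 0.0
    return np.linalg.pinv(unmixing) @ sources + mean


def excess_kurtosis(s):
    """Per-row excess kurtosis; sparse, peaky sources such as blinks score high."""
    z = (s - s.mean(axis=1, keepdims=True)) / s.std(axis=1, keepdims=True)
    return (z ** 4).mean(axis=1) - 3.0


# Synthetic sources (20 s at 250 Hz): 10 Hz rhythm, uniform background, jittered blinks
rng = np.random.default_rng(0)
n = 5000
idx = np.arange(n)
alpha = 10.0 * np.sin(2 * np.pi * 10.0 * idx / 250.0)
background = rng.uniform(-10.0, 10.0, n)
base = np.arange(125, n, 250)
centers = base + rng.integers(-40, 41, size=base.size)
blinks = sum(150.0 * np.exp(-0.5 * ((idx - c) / 10.0) ** 2) for c in centers)
S = np.vstack([alpha, background, blinks])
A = np.array([[1.0, 0.5, 0.9],    # frontal channel: largest blink weight
              [0.8, 1.0, 0.4],
              [0.6, 0.7, 0.05]])
X = A @ S                         # X = A S (linear, instantaneous mixing)

W, mu = fastica(X)
S_hat = W @ (X - mu)
blink_comp = int(np.argmax(excess_kurtosis(S_hat)))   # hypothetical selection rule
X_clean = remove_components(X, W, mu, [blink_comp])
brain_part = A[:, :2] @ S[:2]                          # ground truth without blinks
```

Production implementations add safeguards this sketch omits, such as dimensionality reduction before unmixing, rank checks, and more careful convergence handling. The core idea carries over: estimate W, set the artifact rows of $\hat{S}$ to zero, and back-project through the estimated mixing matrix.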