Neuronal Network Simulation Benchmarks: A Comprehensive Guide for Biomedical Research and Drug Development


Abstract

This article provides a comprehensive overview of the current state and critical importance of benchmarking in neuronal network simulations, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles and urgent need for standardization in the field, exemplified by emerging frameworks like NeuroBench. The content delves into practical methodological approaches for implementing benchmarks on both conventional high-performance computing (HPC) systems and neuromorphic hardware, covering key metrics from simulation speed to biological fidelity. It further addresses common troubleshooting and performance optimization challenges, including scaling pitfalls and data accuracy. Finally, the article examines advanced validation and comparative analysis techniques essential for ensuring model reliability, reproducibility, and their ultimate utility in accelerating biomedical discoveries and therapeutic development.

The Why and What: Establishing Foundational Principles for Neuronal Simulation Benchmarks

Defining the Benchmarking Crisis in Computational Neuroscience

Computational neuroscience increasingly relies on complex simulations to understand brain function in health and disease. This endeavor depends critically on sophisticated simulation technology that can leverage modern high-performance computing (HPC) systems. However, the field now faces a benchmarking crisis—a critical lack of standardized, reproducible methods for evaluating the performance of simulation technologies. This crisis impedes development, compromises reproducibility, and hinders the community's ability to make informed decisions about tool selection and hardware investment.

The core of this crisis stems from the challenging complexity of benchmarking itself. As highlighted by Jordan et al. (2022), benchmarking experiments in simulation science span five complex dimensions: "Hardware configuration," "Software configuration," "Simulators," "Models and parameters," and "Researcher communication" [1]. The absence of standardized specifications for measuring simulator performance on HPC systems means that maintaining comparability between benchmark results is exceptionally difficult [1]. This article analyzes the roots of this crisis, presents quantitative performance landscapes, outlines standardized experimental methodologies, and provides a toolkit for researchers to navigate these challenges.

The Dimensions of the Benchmarking Crisis

Multifaceted Challenges in Performance Evaluation

The benchmarking crisis in computational neuroscience arises from several interconnected challenges:

- Lack of Standardization: A pronounced lack of standardized specifications exists for measuring the scaling performance of simulators on HPC systems [1]. This absence of common standards makes direct comparisons between studies nearly impossible and complicates reproducibility.

- Diverse Simulator Landscape: The field utilizes numerous simulators with different design philosophies, including CPU-based simulators (NEST, NEURON, Brian), GPU-accelerated platforms (GeNN, Brian2CUDA, NeuronGPU), and neuromorphic systems (SpiNNaker, BrainScaleS) [1] [2] [3]. This diversity, while beneficial for innovation, creates substantial benchmarking complexity.

- Hardware and Software Heterogeneity: Benchmarking studies are conducted on different contemporary compute clusters and supercomputers with varying architectures, configurations, and software environments [1]. This heterogeneity can lead to unwanted optimization toward specific machine types rather than general performance improvements.

- Validation Versus Efficiency Tension: A fundamental tension exists between model validation (whether results are correct) and efficiency validation (whether results are computed efficiently) [1]. This tension is compounded by the chaotic nature of neuronal network dynamics, where minimal deviations in algorithms, numerical precision, or random number generators can lead to significantly different activity patterns [1].

The Reproducibility Gap

Neuroscientific simulation studies are already notoriously difficult to reproduce, and benchmarking adds another layer of complexity [1]. Reported benchmarks may differ not only in the structure and dynamics of employed neuronal network models but also in:

- The type of scaling experiment (strong vs. weak scaling)

- Software and hardware versions and configurations

- Analysis methodologies and presentation of results

- Measurement of different efficiency metrics (time-to-solution, energy-to-solution, memory consumption) [1]

This reproducibility gap represents a significant crisis for a field whose foundation rests on the reliability and comparability of computational results.

Quantitative Landscape of Simulation Performance

Performance Metrics and Benchmark Results

Table 1: Key Performance Metrics in Neuronal Network Benchmarking

| Metric Category | Specific Metrics | Definition/Interpretation | Relevance |
|---|---|---|---|
| Time Efficiency | Time-to-solution | Total wall-clock time for simulation completion | Determines feasibility of large-scale/long-time simulations |
| | Real-time performance | Simulated time equals wall-clock time | Essential for robotics and closed-loop applications |
| | Sub-real-time performance | Wall-clock time < simulated time (simulation runs faster than real time) | Enables studies of slow processes (learning, development) |
| Resource Efficiency | Energy-to-solution | Total energy consumption for simulation | Important for economic and environmental sustainability |
| | Memory consumption | Peak memory usage during simulation | Constrains maximum model size on given hardware |
| Scaling Performance | Strong scaling | Fixed model size, increasing resources | Reveals limiting time-to-solution for existing models |
| | Weak scaling | Model size grows with resources | Assesses capability for larger-scale simulations |
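The time-efficiency rows of Table 1 can be made concrete with a real-time factor, defined here as wall-clock time divided by simulated time. The following is a minimal sketch; the function names and the tolerance parameter are illustrative assumptions, not part of any simulator's API.

```python
def real_time_factor(wall_clock_s: float, simulated_s: float) -> float:
    """Wall-clock seconds spent per second of simulated (biological) time."""
    if simulated_s <= 0:
        raise ValueError("simulated_s must be positive")
    return wall_clock_s / simulated_s


def classify(rtf: float, tol: float = 1e-9) -> str:
    """Label a real-time factor; `tol` is an arbitrary illustrative tolerance."""
    if abs(rtf - 1.0) <= tol:
        return "real-time"
    # A factor below 1 means the simulation finishes faster than the
    # biological time it represents.
    return "sub-real-time" if rtf < 1.0 else "slower than real time"


if __name__ == "__main__":
    # Hypothetical measurement: 10 s of model time simulated in 5 s of wall-clock time.
    rtf = real_time_factor(wall_clock_s=5.0, simulated_s=10.0)
    print(rtf, classify(rtf))
```

A factor below 1 is what makes long simulated durations, such as those needed for learning or development studies, practical to run.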

Table 2: Representative Performance Comparisons Across Simulators

| Simulator | Hardware Target | Reported Performance Gains | Supported Features |
|---|---|---|---|
| Brian2CUDA | NVIDIA GPUs | Up to 3 orders of magnitude acceleration vs. CPU [3] | Full Brian feature set including arbitrary models, plasticity, heterogeneous delays |
| Brian2GeNN | NVIDIA GPUs | Comparable to Brian2CUDA [3] | Limited to common feature set of Brian and GeNN |
| NEST | CPU clusters | Extensive scaling documentation up to largest HPC systems [1] | Focus on network dynamics, size, and structure |
| NEURON | CPU/GPU | Performance advances via code generation for GPUs [4] | Specialization in morphologically detailed neurons |
| SpiNNaker | Neuromorphic | Real-time simulation capability [2] | Low-power embodied simulations |

Historical Context and Performance Trajectories

The computational capabilities available to neuroscientists have grown exponentially. Supercomputing performance has increased from ~10 TeraFLOPS in the early 2000s to above 1 ExaFLOPS in 2022—a 100,000-fold increase representing almost 17 doublings of computational capability in 22 years [4] [5]. This staggering growth has necessitated continuous software adaptations, with simulators having to "reinvent themselves and change substantially to embrace this technological opportunity" [4].
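The arithmetic behind these figures can be checked directly, taking ~10 TeraFLOPS as 10^13 FLOPS and 1 ExaFLOPS as 10^18 FLOPS. The implied doubling time in the last step is a derived value that assumes steady exponential growth over the whole period; it is not stated in the cited sources.

```latex
\frac{10^{18}\,\text{FLOPS}}{10^{13}\,\text{FLOPS}} = 10^{5},
\qquad
\log_2\!\left(10^{5}\right) = 5 \log_2 10 \approx 16.6 \ \text{doublings},
\qquad
\frac{22\ \text{years}}{16.6} \approx 1.3\ \text{years per doubling}
```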

This performance explosion has transformed the scientific questions accessible to computational neuroscientists. The field has progressed from balanced random network models to biologically realistic network models representing mammalian cortical circuitry at full scale, with neuron and synapse numbers increasing by an order of magnitude [4] [5]. This scaling removes uncertainties about how emerging network phenomena scale with network size, addressing a long-standing theoretical challenge [4].

Standardized Experimental Protocols for Benchmarking

Core Methodological Framework

To address the benchmarking crisis, the community requires standardized experimental protocols. The following methodologies provide a foundation for comparable performance evaluation:

Weak-Scaling Experiments: The network model size increases proportionally to computational resources, maintaining a fixed workload per compute node under perfect scaling [1]. This approach assesses the capability to simulate increasingly large networks. A critical consideration is that scaling neuronal networks inevitably alters network dynamics, complicating comparisons between scales [1].

Strong-Scaling Experiments: The model size remains unchanged while computational resources increase [1]. This methodology identifies the limiting time-to-solution for existing models and is particularly relevant for network models of natural size that describe the correlation structure of neuronal activity.

Model Complexity Gradients: Benchmarks should employ network models with different complexity levels, from simple balanced random networks to complex multi-area models with biological realism [1]. This gradient helps identify performance bottlenecks specific to certain model characteristics.
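The two scaling experiments are usually summarized by speedup and parallel efficiency. The sketch below uses the standard textbook definitions; the function names and the timing values in the usage example are hypothetical.

```python
def strong_scaling(times: dict[int, float]) -> dict[int, tuple[float, float]]:
    """Speedup and efficiency for a FIXED model size.

    `times` maps node count -> wall-clock seconds. The smallest node count
    serves as the baseline.
    """
    base_nodes = min(times)
    base_time = times[base_nodes]
    result = {}
    for nodes, t in sorted(times.items()):
        speedup = base_time / t
        # Ideal speedup equals the factor by which resources were increased.
        efficiency = speedup / (nodes / base_nodes)
        result[nodes] = (speedup, efficiency)
    return result


def weak_scaling_efficiency(times: dict[int, float]) -> dict[int, float]:
    """Efficiency when model size grows in proportion to node count.

    Under perfect weak scaling the wall-clock time stays constant, so the
    efficiency is baseline time divided by measured time.
    """
    base_time = times[min(times)]
    return {nodes: base_time / t for nodes, t in sorted(times.items())}


if __name__ == "__main__":
    # Hypothetical timings in seconds, for illustration only.
    print(strong_scaling({1: 100.0, 2: 50.0, 4: 40.0}))
    print(weak_scaling_efficiency({1: 10.0, 4: 12.5}))
```

Because scaling a network changes its dynamics, weak-scaling efficiencies compare workloads that are not strictly identical, so they should be read alongside a description of how the model was scaled.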

The beNNch Framework: A Reference Implementation

As a response to the benchmarking crisis, Jordan et al. (2022) developed beNNch, an open-source software framework implementing a generic benchmarking workflow decomposed into unique segments consisting of separate modules [1]. This framework provides:

- Standardized configuration, execution, and analysis of benchmarks for neuronal network simulations

- Unified recording of benchmarking data and metadata to foster reproducibility

- Identification of performance bottlenecks across network models with different complexity levels

- Guidance for development toward more efficient simulation technology [1]

The implementation of such frameworks represents a critical step toward resolving the benchmarking crisis by providing much-needed standardization.
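To illustrate what unified recording of benchmarking data and metadata can look like, the sketch below gathers one record per run across the hardware, software, simulator, and model dimensions. It is not beNNch's actual format or API; every field name and the `collect_metadata` helper are assumptions made for illustration.

```python
import json
import platform
import sys
from datetime import datetime, timezone


def collect_metadata(simulator, simulator_version, model, parameters, scaling_type):
    """Build one JSON-serializable record describing a benchmark run."""
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "hardware": {
            "machine": platform.machine(),
            "processor": platform.processor(),
            "hostname": platform.node(),
        },
        "software": {
            "os": platform.platform(),
            "python": sys.version.split()[0],
        },
        "simulator": {"name": simulator, "version": simulator_version},
        "model": {"name": model, "parameters": parameters},
        "scaling_type": scaling_type,  # e.g. "strong" or "weak"
    }


if __name__ == "__main__":
    # Hypothetical run description; the names and values are placeholders.
    record = collect_metadata(
        simulator="toy-simulator",
        simulator_version="0.0",
        model="balanced_random_network",
        parameters={"n_neurons": 1000},
        scaling_type="strong",
    )
    print(json.dumps(record, indent=2))
```

Storing such a record next to every timing result keeps the measurement and its context together, which is the property that makes later comparisons possible.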

Visualization of Benchmarking Workflows and Relationships

Dimensions of HPC Benchmarking Experiments

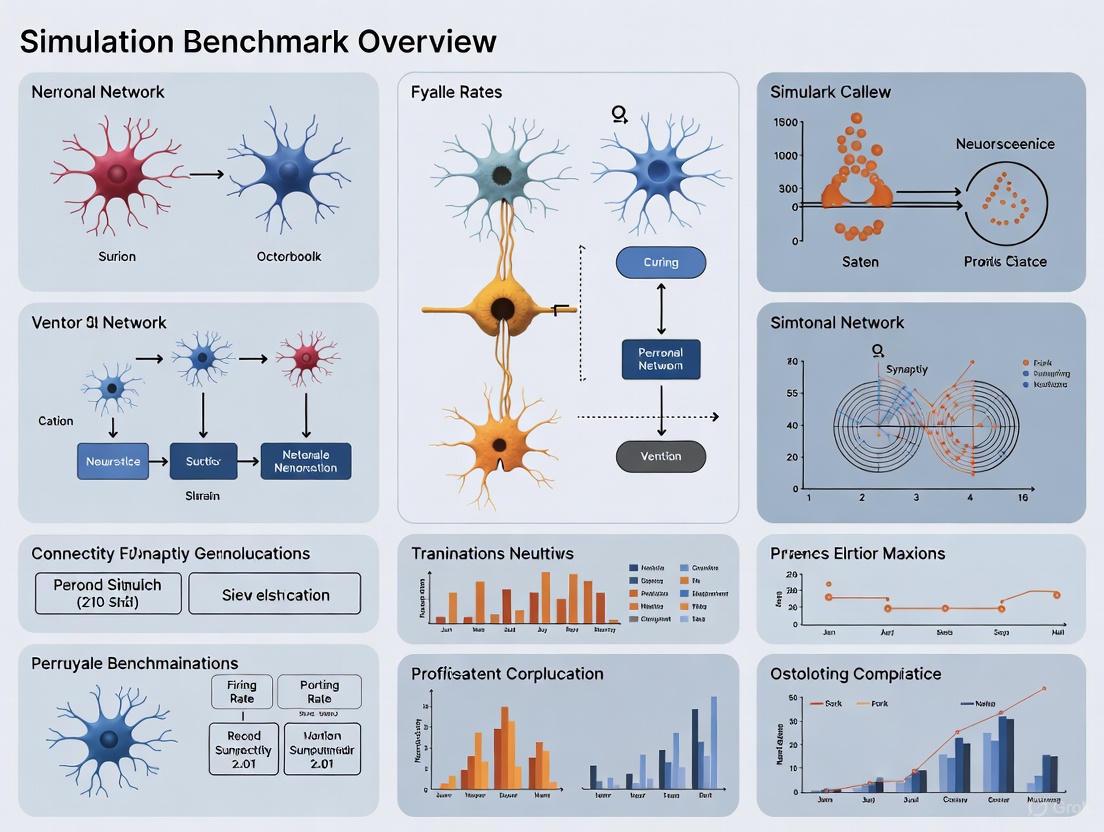

Figure 1: The five main dimensions of HPC benchmarking experiments in computational neuroscience, with examples from neuronal network simulations [1].

Benchmarking Workflow Architecture

Figure 2: Modular workflow for performance benchmarking of neuronal network simulations, illustrating the segmentation of the benchmarking endeavor into distinct phases [1].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Neuronal Network Benchmarking

| Tool Category | Specific Tools | Function/Purpose | Access Model |
|---|---|---|---|
| Simulation Engines | NEST [2], NEURON [4], Brian [3] | Core simulation technology for spiking neuronal networks | Open source |
| | Brian2CUDA [3], Brian2GeNN [3] | GPU-accelerated simulation backends | Open source |
| | Arbor [1] | Simulation of morphologically detailed neurons | Open source |
| Benchmarking Frameworks | beNNch [1] | Configuration, execution, and analysis of benchmarks | Open source |
| Workflow Tools | PyNN [2] | Simulator-independent language for building network models | Open source |
| | NESTML [2] | Domain-specific language for neuron model specification | Open source |
| Hardware Platforms | HPC CPU clusters [1] | Large-scale network simulations | Institutional access |
| | GPU systems [3] | Massively parallel simulation acceleration | Varying access |
| | Neuromorphic systems (SpiNNaker, BrainScaleS) [4] [2] | Energy-efficient brain-inspired computing | Research access |

Future Directions and Community Solutions

Emerging Approaches and Technologies

Resolving the benchmarking crisis requires community-wide efforts and technological innovations:

Sustainability and Portability: With scientific software life spans potentially exceeding 40 years, sustainability and portability are increasingly important [4]. Modernization of complex scientific software benefits from robust continuous integration, testing, and documentation workflows [4].

Algorithmic Reconsideration: After 15 years of intense research, no consensus exists on whether event-driven or clock-driven approaches to simulating spiking neuronal networks are more efficient [4]. This suggests there may be no general answer, and hybrid approaches may be necessary.

Embracing Architectural Evolution: The community must continue adapting to rapidly changing hardware systems, including progressively parallel processor architectures, GPUs with thousands of simple cores, and emerging neuromorphic computing platforms [4].

Analysis Package Development: A discrepancy exists between advanced simulation capabilities and analysis tools. While HPC methods for simulation are increasingly sophisticated, similar advancements are needed for analyzing the resulting data [4].

Community-Wide Standardization Efforts

Addressing the benchmarking crisis requires coordinated community action:

- Development and adoption of standardized benchmark models across multiple complexity levels

- Agreement on key performance metrics and reporting standards

- Implementation of container technologies for reproducible software environments [4]

- Creation of curated repositories for executable model descriptions and benchmarking results [4]

- Enhanced documentation of benchmarking methodologies and result interpretation

The International Neuroinformatics Coordinating Facility (INCF) has played a crucial role in developing standards and best practices since 2007 [4]. Expanding these efforts specifically toward benchmarking standards represents a promising path forward.

The benchmarking crisis in computational neuroscience represents a critical challenge for a field increasingly dependent on complex simulations of neuronal networks. This crisis manifests through inadequate standardization, reproducibility challenges, and difficulties in comparative performance evaluation across diverse hardware and software environments.

Addressing this crisis requires community-wide adoption of standardized benchmarking frameworks, such as the modular workflow implemented in beNNch, which decomposes the benchmarking process into reproducible segments [1]. Furthermore, the field must embrace sustainable software development practices to ensure the long-term viability of simulation technologies [4].

As computational capabilities continue to evolve—with exascale computing, specialized AI accelerators, and neuromorphic systems becoming increasingly available [4]—resolving the benchmarking crisis becomes ever more critical. Through coordinated community effort, standardized methodologies, and shared benchmarking resources, computational neuroscience can overcome this crisis and more effectively leverage advancing computational capabilities to understand brain function in health and disease.

Benchmarking serves as a cornerstone of scientific progress, providing the standardized frameworks necessary for validating methods, ensuring reproducibility, and guiding future development. In the computationally intensive field of neuronal network simulations, benchmarking is particularly critical for navigating the trade-offs between model biological fidelity, simulation performance, and interpretability. This whitepaper examines the core objectives of benchmarking, detailing its methodologies, applications in neuromorphic computing and feature selection, and its indispensable role in fostering reproducible, cumulative scientific advancement. We present standardized protocols, quantitative comparisons, and community-driven initiatives that together create a foundation for reliable and transparent research.

In computational research, the proliferation of methods and algorithms creates a critical challenge for scientists: selecting the most appropriate tool for a given analysis. Benchmarking addresses this challenge through the rigorous, head-to-head comparison of different methods using well-characterized datasets and consistent evaluation criteria [6]. Its core objectives are multifaceted, aiming to:

- Ensure Reproducibility and Rigor: By defining standard experimental conditions and evaluation metrics, benchmarking allows independent verification of results, which is a fundamental tenet of science [6] [7].

- Provide Objective Performance Assessment: Neutral benchmarking studies, conducted independently of method development, offer unbiased evaluations of a method's strengths and weaknesses under controlled conditions [6].

- Guide Method Selection and Development: Benchmarks equip researchers with evidence-based recommendations for choosing analytical tools, while also highlighting limitations that inspire and direct future methodological innovations [6] [8].

- Measure Technological Advancement: In fields like high-performance computing and neuromorphic hardware, benchmarking tracks progress over time, quantifying improvements in metrics such as simulation speed, energy efficiency, and model scale [9] [10] [11].

Within neuronal network research, benchmarking is indispensable for reconciling the field's competing demands for biological realism and computational tractability. As simulations scale toward whole-brain models [9] [12], robust benchmarks are the only way to objectively assess whether increasing complexity translates to genuine scientific insight.

Foundational Principles of Rigorous Benchmarking

A high-quality benchmarking study is built upon a foundation of careful design and transparent reporting. The following principles are essential for generating accurate, unbiased, and informative results [6].

Defining Purpose and Scope

The benchmark's purpose must be clearly defined at the outset. Is it a neutral comparison of existing methods or a performance demonstration for a new method? The scope determines the comprehensiveness of the study, influencing the number of methods and datasets included [6]. A neutral benchmark should strive to be as comprehensive as possible within resource constraints.

Selection of Methods and Datasets

Method selection should be justified and avoid perceived bias. Neutral benchmarks often aim to include all available methods for a specific analysis, or at least define clear, justified inclusion criteria (e.g., software availability, usability) [6].

Dataset selection is equally critical. Benchmarks typically use two types of data:

- Simulated Data: Allows for a known "ground truth," enabling quantitative measurement of a method's ability to recover the true signal [6] [8]. A key challenge is ensuring simulations accurately reflect properties of real data.

- Real Data: Provides authenticity and tests performance under real-world conditions, though the ground truth may be unknown or only partially known [6].

Evaluation Criteria and Metrics

Performance must be evaluated using predefined, quantitative metrics that are relevant to the scientific question. These often include:

- Key Performance Metrics: Accuracy, precision, recall, or F1 score for classification tasks; error rates for regression.

- Secondary Measures: Computational efficiency (runtime, memory usage), scalability, and usability (ease of installation, quality of documentation) [6] [10].
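For classification tasks, the key metrics listed above follow directly from confusion-matrix counts. A minimal sketch using the standard definitions (the function name is an illustrative choice):

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Precision, recall, and F1 from true-positive, false-positive,
    and false-negative counts. Undefined ratios are reported as 0.0."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


if __name__ == "__main__":
    # Hypothetical counts for illustration.
    print(precision_recall_f1(tp=6, fp=2, fn=2))
```

Reporting all three values together avoids the situation where a method looks strong on one metric only because it trades away another.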

Reproducibility and Reporting

For a benchmark to be valuable, it must be reproducible. This requires detailed reporting of software versions, parameters, and computational environment, alongside making code and data available [6] [10]. The complexity of benchmarking in simulation science is illustrated by the multiple dimensions that must be documented, as shown in the workflow below.

Diagram 1: Multidimensional benchmarking workflow for simulation science.

Table 1: Essential Guidelines for Benchmarking Design and Their Associated Challenges [6].

| Principle | Essentiality | Potential Pitfalls |
|---|---|---|
| Defining Purpose & Scope | High (+++) | Scope too broad/narrow; unrepresentative results |
| Selection of Methods | High (+++) | Excluding key methods; introducing selection bias |
| Selection of Datasets | High (+++) | Unrepresentative datasets; overly simplistic simulations |
| Parameter & Software Versions | Medium (++) | Uneven parameter tuning across methods |
| Key Quantitative Metrics | High (+++) | Metrics that don't reflect real-world performance |
| Secondary Measures (e.g., runtime) | Medium (++) | Subjectivity in qualitative measures; hardware dependence |
| Reproducible Research Practices | Medium (++) | Tools/software becoming inaccessible over time |

Benchmarking Methodologies and Experimental Protocols

Translating benchmarking principles into actionable experiments requires standardized protocols. This section outlines specific methodologies for different computational domains.

Protocol for Benchmarking Neural Simulators

Benchmarking high-performance spiking neural network simulators focuses on metrics like time-to-solution, energy-to-solution, and memory consumption [10]. The protocol involves:

- Model Selection: Choosing representative network models, such as balanced random networks with excitatory and inhibitory populations (e.g., the Brunel model) [10].

- Scaling Experiment Design:

  - Strong Scaling: The problem size (network model) is fixed, and the number of processors is increased. The goal is to find the fastest time-to-solution for a given model [10].

  - Weak Scaling: The problem size per processor is kept constant as the number of processors is increased. This tests the simulator's ability to handle larger models with more resources [10].

- Measurement: The simulation is executed on the target HPC system, and the wall-clock time for both the network setup phase and the simulation phase is recorded. Multiple runs are performed to ensure reliability.
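The measurement step can be sketched as a small timing harness that records the setup and simulation phases separately over several repetitions. The `build` and `simulate` callables below are toy stand-ins, not calls into any real simulator, and the choice of mean and minimum as summary statistics is an assumption.

```python
import statistics
import time


def time_phases(build, simulate, repeats=5):
    """Time network setup and simulation separately over `repeats` runs.

    `build` returns a network object; `simulate` consumes it.
    Returns per-phase mean and minimum wall-clock times in seconds.
    """
    build_times, sim_times = [], []
    for _ in range(repeats):
        t0 = time.perf_counter()
        network = build()
        t1 = time.perf_counter()
        simulate(network)
        t2 = time.perf_counter()
        build_times.append(t1 - t0)
        sim_times.append(t2 - t1)
    return {
        "build": {"mean": statistics.mean(build_times), "min": min(build_times)},
        "simulate": {"mean": statistics.mean(sim_times), "min": min(sim_times)},
    }


def toy_build():
    # Stand-in for network construction.
    return list(range(10_000))


def toy_simulate(network):
    # Stand-in for state propagation.
    return sum(network)


if __name__ == "__main__":
    print(time_phases(toy_build, toy_simulate, repeats=3))
```

Keeping the two phases apart matters because setup cost and simulation cost often scale differently with network size and node count.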

Protocol for Benchmarking Feature Selection Methods

Benchmarking Feature Selection (FS) methods, particularly for non-linear signals, requires carefully constructed synthetic data with known ground truth [8]. A standard protocol is:

- Dataset Generation: Create synthetic datasets where a few features non-linearly determine the output, and many other features are irrelevant decoys. Example datasets include (see Diagram 2):

  - RING: Features define a circular decision boundary.

  - XOR: The classic non-linear problem where individual features are uninformative.

  - Complex Compositions: Merging RING and XOR features to create more challenging benchmarks [8].

- Method Execution: Run a suite of FS methods on these datasets, for example traditional methods such as Random Forests and Lasso, and deep-learning-based methods such as LassoNet and DeepPINK.

- Performance Quantification: Evaluate how well each method ranks the true predictive features over the decoys, using metrics like area under the precision-recall curve.
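The protocol above can be sketched in a few lines: generate an XOR-style dataset with decoy features, then score a method's feature ranking against the known ground truth. The generator is a simplified stand-in, not the dataset construction used in [8], and average precision is used here as one common estimate of the area under the precision-recall curve. All function names are illustrative.

```python
import random


def make_xor_dataset(n_samples, n_decoys, seed=0):
    """Features 0 and 1 determine the label via XOR of their signs;
    the remaining `n_decoys` features are irrelevant noise."""
    rng = random.Random(seed)
    X, y = [], []
    for _ in range(n_samples):
        row = [rng.uniform(-1.0, 1.0) for _ in range(2 + n_decoys)]
        X.append(row)
        y.append(int((row[0] > 0) != (row[1] > 0)))
    return X, y


def average_precision(ranked_features, relevant):
    """Average precision of a ranking (best first) against the set of
    truly predictive features."""
    relevant = set(relevant)
    hits = 0
    precision_sum = 0.0
    for rank, feature in enumerate(ranked_features, start=1):
        if feature in relevant:
            hits += 1
            precision_sum += hits / rank
    return precision_sum / len(relevant) if relevant else 0.0


if __name__ == "__main__":
    X, y = make_xor_dataset(n_samples=200, n_decoys=8)
    # Hypothetical ranking produced by some FS method (feature indices).
    ranking = [3, 0, 7, 1, 2, 4, 5, 6, 8, 9]
    print(average_precision(ranking, relevant={0, 1}))
```

A ranking that places both true features first scores 1.0, and the score drops as decoys are ranked above them.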

Diagram 2: Workflow for benchmarking feature selection methods.

A suite of standardized software, hardware, and datasets forms the essential "reagents" for conducting benchmarking research in computational neuroscience and machine learning.

Table 2: Key Research Reagent Solutions for Neuronal Network Simulation and Benchmarking.

| Tool / Resource | Type | Primary Function | Relevance to Benchmarking |
|---|---|---|---|
| NEST [10] | Simulator Software | Simulation of large-scale spiking neuron networks | A standard tool for creating baseline performance metrics; often used in scaling studies on HPC systems. |
| NEURON [13] | Simulator Software | Simulation of biologically detailed, multi-compartment neurons | Provides a reference for functional correctness and simulation fidelity for models of single neurons and microcircuits. |
| EDEN [13] | Simulator Software | High-performance, NeuroML-compatible neural simulator | Serves as a benchmark for simulation speed and efficiency, often compared against NEST and NEURON. |
| NeuroML [13] | Modeling Standard | Community-standard model description language | Ensures model portability and reproducibility across different simulation platforms. |
| NeuroBench [11] | Benchmark Framework | Standardized framework for benchmarking neuromorphic algorithms and systems | Provides a common set of tools and methodologies for fair and inclusive measurement of neuromorphic approaches. |
| Balanced Random Network (Brunel) [10] | Benchmark Model | A standardized spiking network model with balanced excitation/inhibition | A widely used reference model for assessing simulator performance and scaling. |
| Supercomputer Fugaku [12] | HPC Hardware | One of the world's fastest supercomputers | Platform for extreme-scale benchmarks, such as the microscopic-level simulation of a mouse cortex. |

Quantitative Benchmarking in Action: Case Studies

Benchmarking Whole-Brain Simulation Feasibility

A prime example of benchmarking for forecasting is the systematic estimation of mammalian whole-brain simulation feasibility. By analyzing technological trends in supercomputing, transcriptomics, and connectomics, researchers have projected the following timelines [9]:

Table 3: Projected Feasibility Timeline for Mammalian Whole-Brain Cellular-Level Simulations [9].

| Species | Brain Scale | Projected Feasibility Date |
|---|---|---|
| Mouse | ~70 million neurons | Around 2034 |
| Marmoset | ~600 million neurons | Around 2044 |
| Human | ~86 billion neurons | Likely later than 2044 |

These projections rely on benchmarking current simulation capabilities and extrapolating exponential improvements in computing power and neural measurement technologies [9].

Benchmarking Simulator Performance

Rigorous comparisons of neural simulators reveal significant performance differences. The EDEN simulator, for instance, was benchmarked against the established NEURON simulator using a variety of NeuroML models. The study demonstrated that EDEN ran one to nearly two orders-of-magnitude faster than NEURON on a typical desktop computer, a critical metric for research productivity [13]. Such benchmarks not only guide tool selection but also drive development by highlighting inefficiencies in existing technology.

Benchmarking in Neuromorphic Computing

The NeuroBench initiative exemplifies the community-driven approach to benchmarking. It provides an evaluation framework for neuromorphic algorithms and systems with both a hardware-independent track and a hardware-dependent track [11]. By establishing common tasks, datasets, and metrics, NeuroBench aims to objectively quantify the advantages of neuromorphic approaches, such as energy efficiency and real-time processing capabilities, over conventional computing methods. This is vital for steering the development of this promising field.

Benchmarking is far more than a technical exercise; it is a fundamental practice that underpins research reproducibility, objectivity, and progress. In the complex and rapidly evolving field of neuronal network simulations, standardized benchmarks provide the necessary compass to navigate methodological choices, validate extraordinary claims—such as the feasibility of whole-brain simulation—and ensure that increasing computational scale translates to genuine biological insight. As community-wide efforts like NeuroBench gain traction, benchmarking will continue to be the critical link between ambitious scientific questions and reliable, reproducible answers.

The rapid advancement of artificial intelligence has exposed the limitations of conventional computing architectures, particularly in terms of energy efficiency and computational scalability. Neuromorphic computing has emerged as a promising alternative, drawing inspiration from the brain's structure and function to create more efficient computing paradigms [11]. However, the field has faced a significant obstacle: the lack of standardized benchmarks. This deficiency has made it difficult to accurately measure technological progress, compare performance against conventional methods, and identify the most promising research directions [11] [14]. Without common standards, the neuromorphic research community risks fragmentation, inefficiency, and inability to demonstrate clear advances over traditional approaches.

The benchmarking challenge extends across multiple dimensions of neuromorphic research. For algorithm development, researchers need hardware-independent ways to evaluate novel brain-inspired approaches like spiking neural networks (SNNs). For system implementation, standardized metrics are required to assess complete neuromorphic hardware systems in real-world scenarios. Furthermore, the field encompasses diverse approaches from simulated neuronal networks on high-performance computing (HPC) systems to dedicated neuromorphic chips, each requiring appropriate benchmarking methodologies [1]. This complex landscape has driven the community to develop comprehensive solutions that can keep pace with rapid innovation while providing objective performance evaluation.

NeuroBench: A Community-Driven Benchmarking Framework

NeuroBench represents a collaborative effort from an open community of researchers across industry and academia to address the standardization gap in neuromorphic computing. Established as a benchmark framework for neuromorphic algorithms and systems, it provides a common set of tools and systematic methodology for inclusive benchmark measurement [11] [14] [15]. The framework is designed to deliver an objective reference for quantifying neuromorphic approaches through two complementary tracks: a hardware-independent algorithm track for evaluating brain-inspired algorithms, and a hardware-dependent system track for assessing complete neuromorphic systems [14]. This dual-track approach recognizes the different evaluation needs at various stages of neuromorphic technology development.

The design philosophy behind NeuroBench emphasizes collaborative development and iterative improvement. Unlike previous benchmarking attempts that saw limited adoption, NeuroBench was specifically designed to be inclusive, actionable, and adaptable to the rapidly evolving neuromorphic landscape [14]. The framework is intended to continually expand its benchmarks and features to track and foster progress made by the research community. By providing standardized evaluation methodologies, NeuroBench aims to accelerate innovation in neuromorphic computing while enabling direct comparison between different approaches and against conventional computing baselines.

Core Architecture and Metrics

NeuroBench employs a structured approach to benchmarking through carefully defined metrics and evaluation protocols. The framework introduces a comprehensive set of metrics that capture the unique characteristics of neuromorphic systems, going beyond traditional computing benchmarks to include neuromorphic-specific considerations such as temporal dynamics and event-based processing [14] [16].

Table 1: NeuroBench Evaluation Metrics Categories

| Metric Category | Specific Metrics | Application Context |
|---|---|---|
| Correctness Metrics | Task accuracy, precision, recall | Algorithm and system tracks |
| Complexity Metrics | Footprint, connection sparsity, activation sparsity, synaptic operations | Hardware-independent evaluation |
| System Performance Metrics | Time-to-solution, energy-to-solution, memory consumption | Hardware-dependent evaluation |
| Efficiency Metrics | Energy per inference, computational density | Cross-platform comparisons |

The architecture of NeuroBench supports both post-processing of existing results and on-the-fly evaluation during model or system operation [14]. This flexibility allows researchers to integrate NeuroBench into their existing workflows with minimal disruption. The framework's tools are designed to be accessible to the broader research community while providing sufficiently detailed metrics for in-depth analysis of neuromorphic approaches.
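Two of the complexity metrics in the table, connection sparsity and activation sparsity, can be illustrated with simplified definitions: the fraction of zero-valued weights, and the fraction of zero-valued neuron outputs over a run. The sketch below is plain Python and does not use the NeuroBench tooling; the function names and input layouts are assumptions.

```python
def connection_sparsity(weights):
    """Fraction of zero entries in a weight matrix given as a list of rows."""
    flat = [w for row in weights for w in row]
    if not flat:
        raise ValueError("weights must not be empty")
    return sum(1 for w in flat if w == 0) / len(flat)


def activation_sparsity(activations):
    """Fraction of zero outputs across all neurons and timesteps.

    `activations` is a list of timesteps, each a list of neuron outputs,
    where 0 means the neuron was silent at that step.
    """
    total = sum(len(step) for step in activations)
    if total == 0:
        raise ValueError("activations must not be empty")
    zeros = sum(1 for step in activations for a in step if a == 0)
    return zeros / total


if __name__ == "__main__":
    # Hypothetical 2x2 weight matrix and two timesteps of four neurons.
    print(connection_sparsity([[0, 1.5], [0, 0]]))
    print(activation_sparsity([[0, 1, 0, 0], [0, 0, 0, 1]]))
```

Both values lie between 0 (fully dense) and 1 (all zeros), which makes them comparable across models of different size.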

Evaluation Workflow and Implementation

The NeuroBench evaluation process follows a systematic workflow that ensures consistent application across different platforms and use cases. The process begins with benchmark task selection, covering multiple application domains relevant to neuromorphic computing, such as few-shot continual learning and event camera object detection [14] [16]. For each task, researchers configure the appropriate metrics based on their evaluation track (algorithm or system) and specific research questions.

Figure: Core NeuroBench evaluation workflow.

Implementation of NeuroBench benchmarks involves integrating the framework's tools with the target algorithm or system. For the algorithm track, this typically involves running standardized tasks on simulated neuromorphic approaches using conventional hardware, with NeuroBench measuring relevant metrics without hardware-specific optimizations. For the system track, the same tasks are executed on complete neuromorphic systems, with additional measurements for energy consumption, real-time performance, and other system-level characteristics [14]. This structured approach enables meaningful comparisons across different neuromorphic approaches and against conventional computing baselines.

Community-Driven Benchmarking Initiatives

Complementary Benchmarking Approaches

While NeuroBench provides a comprehensive framework for neuromorphic computing evaluation, several community-driven initiatives address specific aspects of the benchmarking challenge. These complementary approaches include specialized tools for particular simulation environments, performance analysis on high-performance computing systems, and collaborative research models that indirectly advance benchmarking through community engagement.

The beNNch framework represents an open-source software solution specifically designed for configuring, executing, and analyzing benchmarks for neuronal network simulations [1]. Unlike the broader scope of NeuroBench, beNNch focuses specifically on the performance of simulation engines across different network models with varying complexity levels. The framework employs a modular workflow that decomposes the benchmarking process into distinct segments, each consisting of separate modules for configuration, execution, and analysis [1]. This modular approach enhances reproducibility by recording benchmarking data and metadata in a unified way.

Another significant community effort is the Potjans-Diesmann cortical microcircuit model (PD14), which has emerged as an informal but widely adopted benchmark for neuromorphic systems [17]. Originally developed to understand how cortical network structure shapes dynamics, this model of early sensory cortex representing ~77,000 neurons and ~300 million synapses has become a reference model for testing simulation technology, including CPU-based, GPU-based, and neuromorphic simulators [17]. The widespread adoption of PD14 demonstrates how community-driven model sharing can advance benchmarking even in the absence of formal standards.

Collaborative Research Models

Massively collaborative projects represent another approach to community-driven benchmarking advancement. The Collaborative Modeling of the Brain (COMOB) project exemplifies this model, bringing together researchers from multiple countries to collaboratively investigate spiking neural network models for sound localization [18]. While not a benchmarking framework per se, this approach facilitates informal benchmarking through shared code bases and common research questions.

The COMOB project established a public Git repository with code for training SNNs to solve sound localization tasks via surrogate gradient descent, inviting anyone to use this code as a starting point for their own investigations [18]. This model provides hands-on research experience to early-career researchers while creating opportunities for comparing different approaches to similar problems—an informal benchmarking process that complements formal frameworks like NeuroBench.

Workflow for Modular Benchmarking

The beNNch framework implements a sophisticated workflow for managing the complexity of benchmarking experiments in computational neuroscience. This workflow addresses five main dimensions of benchmarking: hardware configuration, software configuration, simulators, models and parameters, and researcher communication [1].

Figure: Modular workflow for neuronal network simulation benchmarking.

This modular approach enables researchers to systematically explore the performance of simulation technologies across different combinations of hardware and software configurations. By maintaining clear separation between configuration, execution, and analysis phases, the framework enhances reproducibility and enables more meaningful comparisons between different benchmarking studies [1].
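The separation between configuration, execution, and analysis can be sketched in a few lines of Python. This is a minimal illustration of the idea, not the beNNch implementation: `BenchmarkConfig`, `execute`, `analyze`, and `toy_simulation` are hypothetical names, and the "simulation" is a stand-in loop.

```python
import time
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class BenchmarkConfig:
    """Configuration module: everything needed to repeat a run (hypothetical fields)."""
    model: str
    num_neurons: int
    sim_time_ms: float
    num_threads: int


def execute(config, simulate):
    """Execution module: run one configuration and record its wall-clock time."""
    start = time.perf_counter()
    simulate(config)
    wall_time_s = time.perf_counter() - start
    return {"config": asdict(config), "wall_time_s": wall_time_s}


def analyze(records):
    """Analysis module: reduce raw records to wall time per network size."""
    return {r["config"]["num_neurons"]: r["wall_time_s"] for r in records}


def toy_simulation(config):
    """Stand-in workload; a real study would call a simulator here."""
    total = 0
    for i in range(config.num_neurons):
        total += i
    return total


configs = [BenchmarkConfig("toy", n, 100.0, 1) for n in (1_000, 10_000)]
records = [execute(c, toy_simulation) for c in configs]
summary = analyze(records)
print(summary)
```

Because each record carries its full configuration, the analysis step can be rerun later, or by another group, without access to the machine that produced the timings.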

Experimental Protocols and Evaluation Methodologies

Standardized Benchmarking Protocols

Implementing effective benchmarks for neuromorphic computing requires careful attention to experimental design and protocol standardization. NeuroBench and complementary frameworks establish specific methodologies to ensure valid, reproducible results across different platforms and implementations.

For system track evaluations, the protocol involves deploying complete neuromorphic systems in realistic application scenarios while measuring multiple performance dimensions simultaneously [14]. This includes measuring time-to-solution (the wall-clock time required to complete a specific computation), energy-to-solution (the total energy consumed during computation), and memory consumption throughout the execution [1]. These measurements provide insights into the trade-offs between different neuromorphic approaches and their conventional counterparts.
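A minimal sketch of how two of these quantities might be captured for a Python workload is given below, using only the standard library. It measures wall-clock time with `time.perf_counter` and peak Python-level allocations with `tracemalloc`; the helper `measure` and the toy workload are hypothetical. Energy-to-solution is deliberately omitted because it requires hardware counters or external power meters, and `tracemalloc` does not see memory allocated outside the Python allocator (for example by a C++ simulation kernel).

```python
import time
import tracemalloc


def measure(workload, *args, **kwargs):
    """Run a workload once and return its result plus timing and memory figures."""
    tracemalloc.start()
    start = time.perf_counter()
    result = workload(*args, **kwargs)
    elapsed_s = time.perf_counter() - start
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()
    metrics = {
        "time_to_solution_s": elapsed_s,
        "peak_python_memory_bytes": peak_bytes,
    }
    return result, metrics


def toy_workload(n):
    """Stand-in computation that allocates a list and reduces it."""
    return sum([i * i for i in range(n)])


result, metrics = measure(toy_workload, 10_000)
print(metrics)
```

Tracing allocations slows execution, so in practice timing and memory runs are often kept separate.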

For algorithm track evaluations, the focus shifts to hardware-independent metrics that capture the fundamental efficiency of neuromorphic approaches. The protocol involves running standardized tasks on simulated neuromorphic algorithms while measuring metrics such as connection sparsity, activation sparsity, and synaptic operations [14]. These metrics highlight the potential advantages of neuromorphic algorithms even before hardware implementation.
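To make the synaptic operations metric concrete, the sketch below counts event-driven operations for a single layer and compares them with the count a dense, clock-driven implementation would incur. The definition used here (one operation per spike per nonzero outgoing synapse) is an assumption chosen for illustration, and the function name and toy arrays are hypothetical.

```python
import numpy as np


def synaptic_operations(weights, spikes):
    """Return (effective, dense) synaptic operation counts for one layer.

    weights: array of shape (n_pre, n_post)
    spikes:  binary array of shape (time_steps, n_pre)
    """
    fan_out = np.count_nonzero(weights, axis=1)   # nonzero synapses per presynaptic neuron
    spike_counts = spikes.sum(axis=0)             # spikes emitted by each presynaptic neuron
    effective = int(spike_counts @ fan_out)       # operations triggered by actual spikes
    dense = int(spikes.shape[0] * weights.size)   # every synapse evaluated at every step
    return effective, dense


weights = np.array([[0.5, 0.0, 0.2],
                    [0.0, 0.0, 0.0],
                    [0.1, 0.3, 0.0]])
spikes = np.array([[1, 0, 0],
                   [0, 1, 1],
                   [1, 0, 1],
                   [0, 0, 0]])

effective, dense = synaptic_operations(weights, spikes)
print(f"effective = {effective}, dense = {dense}")
```

The gap between the two counts reflects the combined effect of connection sparsity and activation sparsity.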

Case Study: The PD14 Model as a Benchmark

The Potjans-Diesmann cortical microcircuit model (PD14) provides a compelling case study of how community-adopted benchmarks emerge and drive progress. Originally developed to understand the relationship between cortical network structure and dynamics, PD14 has become a standard benchmark for evaluating simulation technology [17].

The experimental protocol for using PD14 as a benchmark involves simulating the defined network model—representing ~77,000 neurons and ~300 million synapses under 1 mm² of early sensory cortex—on target hardware or simulation software while measuring performance metrics [17]. The model's well-defined architecture and reproducible dynamics make it ideal for comparing different simulation approaches, from high-performance computing clusters to dedicated neuromorphic hardware.

The widespread adoption of PD14 demonstrates several important principles for effective benchmarking: the benchmark must be scientifically relevant, computationally challenging but feasible, easily implementable across different platforms, and supported by a clear reference implementation. These principles have guided the development of more formal benchmarking frameworks like NeuroBench.

Research Reagents and Tools

Essential Benchmarking Frameworks and Platforms

The neuromorphic benchmarking ecosystem comprises several specialized frameworks and platforms, each designed to address specific aspects of performance evaluation. The table below summarizes key tools available to researchers in the field.

Table 2: Essential Benchmarking Tools for Neuromorphic Computing Research

| Tool/Framework | Primary Function | Key Features | Application Context |
|---|---|---|---|
| NeuroBench | Comprehensive benchmarking of neuromorphic algorithms and systems | Dual-track approach (algorithm/system), standardized metrics, community-driven development | General-purpose neuromorphic computing evaluation |
| beNNch | Performance benchmarking of neuronal network simulations | Modular workflow, unified data storage, reproducibility focus | HPC simulation performance analysis |
| SpikeSim | Compute-in-memory hardware evaluation for SNNs | Hardware fidelity modeling, memory resource management, architecture exploration | SNN hardware design space exploration |
| SpikingJelly | SNN training and evaluation framework | Multi-dataset support, energy efficiency optimization, GPU acceleration | SNN algorithm development and comparison |
| NEST Simulator | Large-scale neuronal network simulations | Multi-scale modeling, parallel execution, diverse neuron models | Neuroscience-inspired model simulation |

Simulation Engines and Modeling Tools

Beyond dedicated benchmarking frameworks, researchers rely on various simulation engines and modeling tools that incorporate benchmarking capabilities. These tools enable both model development and performance evaluation within integrated environments.

The NEST Simulator represents a cornerstone technology in this category, enabling large-scale simulations of heterogeneous networks of point neurons or neurons with few electrical compartments [1]. NEST has been extensively used for benchmarking studies, particularly for evaluating scaling performance on high-performance computing systems. Similarly, Brian provides a flexible environment for simulating SNNs on CPUs, while GeNN and NeuronGPU focus on GPU-accelerated simulations [1].

For dedicated neuromorphic hardware platforms, specialized tools enable mapping neural networks onto physical systems. The SpiNNaker system, for example, provides software stacks for deploying and benchmarking neural models on its massive parallel architecture [1]. These platform-specific tools complement general benchmarking frameworks by providing detailed performance insights for particular hardware implementations.

Future Directions and Community Impact

Evolving Benchmarking Needs

As neuromorphic computing continues to mature, benchmarking frameworks must evolve to address new challenges and applications. Future developments in NeuroBench and related initiatives will likely focus on several key areas: expanding benchmark tasks to cover emerging application domains, refining metrics to better capture real-world performance trade-offs, and enhancing support for novel neuromorphic architectures [14] [16].

An important direction involves standardizing data formats and interfaces to improve interoperability between different neuromorphic systems and conventional computing platforms [16]. The field must also develop specialized benchmarks for application-specific domains such as biomedical signal processing, autonomous systems, and edge AI applications. These domain-specific benchmarks will help demonstrate the practical value of neuromorphic computing beyond laboratory environments.

Broader Impact on Research and Development

Standardized benchmarking frameworks like NeuroBench are already having a transformative effect on neuromorphic computing research and development. By providing objective performance evaluation criteria, these frameworks help identify the most promising research directions, allocate resources more effectively, and demonstrate concrete progress to funding agencies and stakeholders [11] [14].

The community-driven nature of these benchmarking initiatives fosters collaboration across institutional and geographical boundaries, accelerating collective progress. As noted in the NeuroBench publication, the framework was "collaboratively designed from an open community of researchers across industry and academia" [11], representing a shared investment in the future of neuromorphic computing. This collaborative model ensures that benchmarking standards remain relevant, comprehensive, and adaptable to the rapidly evolving landscape of neuromorphic technologies.

Looking forward, the continued development and adoption of standardized benchmarks will be crucial for transitioning neuromorphic computing from research laboratories to practical applications. By enabling objective comparison between different approaches and demonstrating clear advantages over conventional computing in specific domains, frameworks like NeuroBench will play a vital role in establishing neuromorphic computing as a viable paradigm for next-generation intelligent systems.

Neuronal network simulation is a cornerstone of modern neuroscience, enabling researchers to formulate and test hypotheses about brain function. The field spans a wide range of spatial and temporal scales, from the detailed biophysics of single neurons to the system-level dynamics of entire brains. This section surveys the methodologies, tools, and validation frameworks needed for robust simulation studies across those scales. Progress has been fueled by simultaneous advances in computational power, such as the Fugaku supercomputer, capable of over 400 quadrillion operations per second [19], and in experimental techniques for measuring neural structure and function. Simulations now serve as platforms for investigating normal brain function, modeling disease states such as Alzheimer's disease and epilepsy [19], and testing potential therapeutic interventions in silico before clinical application. The aim is to give researchers, scientists, and drug development professionals a working understanding of this multi-scale domain, from foundational single-neuron models to the emerging frontier of whole-brain simulation.

Single Neuron Modeling

Biophysical Detailing and Parameter Optimization

At the most fundamental level, single neuron models simulate the electrical and chemical behavior of individual neurons. These models range from simplified integrate-and-fire models to morphologically detailed biophysical models that incorporate dendritic arbors, ion channels, and synaptic inputs. A central challenge in detailed modeling has been parameter identification, as it is rarely possible to directly measure all relevant properties with sufficient precision [20].

The recent development of differentiable simulators such as Jaxley has made gradient-based parameter estimation practical for detailed biophysical models. Unlike traditional gradient-free approaches (e.g., genetic algorithms), these tools use automatic differentiation and GPU acceleration to optimize parameters efficiently in high-dimensional spaces [20]. For example, Jaxley can train biophysical models with up to 100,000 parameters to perform computational tasks or match experimental recordings [20].

Table 1: Single Neuron Simulation Approaches

| Model Type | Key Characteristics | Typical Applications | Computational Demand |
|---|---|---|---|
| Point Neuron (e.g., Integrate-and-Fire) | Simplified electrical properties; no morphological detail | Large-scale network studies; theoretical analysis | Low |
| Single-Compartment Biophysical | Incorporates ion channel dynamics; limited spatial structure | Studies of intrinsic excitability; channelopathies | Medium |
| Multi-Compartment Biophysical | Detailed morphology; spatially distributed channels and synapses | Dendritic integration; synaptic plasticity studies | High |

Experimental Protocols for Single Neuron Model Validation

A standard protocol for validating single neuron models involves fitting model parameters to intracellular recordings [20]:

- Electrophysiological Recording: Obtain whole-cell patch-clamp recordings from the neuron of interest, using step-current or noisy-current injections to probe various firing patterns.

- Model Construction: Create a morphologically detailed reconstruction of the neuron, incorporating appropriate ion channel types in different cellular compartments (soma, dendrites, axon).

- Parameter Optimization: Use gradient descent to minimize the difference between simulated and recorded voltage traces. The loss function typically incorporates summary statistics such as the mean and standard deviation of the voltage in specific time windows, or differentiable measures like Dynamic Time Warping (DTW) for longer recordings [20].

- Model Validation: Test the optimized model with stimulus protocols not used during the fitting process to assess generalizability.
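The parameter optimization step can be illustrated on a deliberately tiny problem. The sketch below fits the amplitude and time constant of a passive membrane step response by gradient descent on a loss built from windowed means and standard deviations of the voltage. Several simplifications are assumptions made for brevity: the "recording" is synthetic, the model is a single passive compartment rather than a morphologically detailed neuron, and central finite differences stand in for the automatic differentiation that a differentiable simulator would provide. All names and numerical values are hypothetical.

```python
import numpy as np

# Time axis in ms: 200 samples covering 0 to 100 ms.
T = np.linspace(0.0, 100.0, 200, endpoint=False)


def simulate(params):
    """Passive step response: V(t) = amplitude * (1 - exp(-t / tau))."""
    amplitude_mv, tau_ms = params
    return amplitude_mv * (1.0 - np.exp(-T / tau_ms))


def summary_stats(trace, n_windows=5):
    """Mean and standard deviation of the voltage in consecutive time windows."""
    windows = trace.reshape(n_windows, -1)
    return np.concatenate([windows.mean(axis=1), windows.std(axis=1)])


def loss(params, target_stats):
    """Mean squared difference between simulated and target summary statistics."""
    return float(np.mean((summary_stats(simulate(params)) - target_stats) ** 2))


def numerical_gradient(params, target_stats, eps=1e-4):
    """Central finite differences (a stand-in for automatic differentiation)."""
    grad = np.zeros_like(params)
    for i in range(params.size):
        step = np.zeros_like(params)
        step[i] = eps
        grad[i] = (loss(params + step, target_stats)
                   - loss(params - step, target_stats)) / (2.0 * eps)
    return grad


# Synthetic "recording" generated with amplitude 10 mV and tau 20 ms.
target_stats = summary_stats(simulate(np.array([10.0, 20.0])))

params = np.array([6.0, 10.0])          # deliberately poor initial guess
initial_loss = loss(params, target_stats)
for _ in range(3000):
    params = params - 0.2 * numerical_gradient(params, target_stats)
final_loss = loss(params, target_stats)

print(f"loss: {initial_loss:.4f} -> {final_loss:.6f}, fitted params: {params}")
```

In a real workflow the fitted model would then be tested on stimulus protocols held out from the fit, as the validation step requires.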

Mesoscale Circuit Modeling

Data-Driven Dynamics and Computational Benchmarks

Mesoscale circuit modeling investigates how ensembles of neurons transform inputs into goal-directed outputs, a process known as neural computation. The Computation-through-Dynamics Benchmark (CtDB) provides a standardized framework for developing and validating data-driven models that infer latent neural dynamics from recorded neural activity [21]. CtDB addresses critical gaps in the field by providing: (1) synthetic datasets reflecting computational properties of biological neural circuits, (2) interpretable performance metrics, and (3) standardized training and evaluation pipelines [21].

This framework emphasizes that neural computation occurs across three conceptual levels: the computational level (what goal the system accomplishes), the algorithmic level (how neural dynamics implement the computation), and the implementation level (how these dynamics are embedded in biological neural circuits) [21].

Benchmarking Methodology

The CtDB validation protocol involves several critical stages [21]:

- Task-Trained Model Generation: Create synthetic datasets by training dynamical systems to perform specific computational tasks (e.g., 1-bit memory flip-flop), ensuring these proxies reflect goal-directed computation.

- Data-Driven Model Training: Train data-driven models to reconstruct neural activity from the synthetic datasets.

- Multi-Metric Evaluation: Assess model performance using metrics sensitive to specific failure modes, going beyond simple reconstruction accuracy to evaluate how well inferred dynamics match ground truth.

Table 2: Neural Computation Benchmarking Metrics

| Performance Criterion | What It Measures | Detection Capability |
|---|---|---|
| Trajectory Accuracy | Similarity between true and inferred latent states | Overall dynamical fidelity |
| Fixed Point Alignment | Correspondence between attractors in true and inferred dynamics | Correct identification of stable states |
| Input-Output Mapping | Fidelity in replicating computational transformations | Preservation of computational function |
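One way to quantify the trajectory accuracy criterion is sketched below: fit an affine map from inferred to true latent states by least squares, then report the coefficient of determination. This is an illustrative assumption about how such a score could be computed, not the CtDB implementation; `aligned_r2` and the synthetic trajectories are hypothetical. The affine alignment is needed because inferred latent spaces are generally only defined up to an invertible transformation.

```python
import numpy as np


def aligned_r2(true_latents, inferred_latents):
    """R^2 of true latents predicted from inferred latents via an affine map."""
    design = np.column_stack([inferred_latents, np.ones(len(inferred_latents))])
    coeffs, *_ = np.linalg.lstsq(design, true_latents, rcond=None)
    predicted = design @ coeffs
    ss_res = np.sum((true_latents - predicted) ** 2)
    ss_tot = np.sum((true_latents - true_latents.mean(axis=0)) ** 2)
    return float(1.0 - ss_res / ss_tot)


rng = np.random.default_rng(0)
t = np.linspace(0.0, 2.0 * np.pi, 200)
true_latents = np.column_stack([np.sin(t), np.cos(t)])   # ground-truth limit cycle

# A "good" model recovers the trajectory up to rotation, scaling, and offset.
rotation = np.array([[0.0, -1.0], [1.0, 0.0]])
good_latents = (true_latents @ rotation) * 2.0 + 0.5
# A "bad" model returns latents unrelated to the true dynamics.
bad_latents = rng.standard_normal(true_latents.shape)

r2_good = aligned_r2(true_latents, good_latents)
r2_bad = aligned_r2(true_latents, bad_latents)
print(f"R^2 good = {r2_good:.3f}, R^2 bad = {r2_bad:.3f}")
```

A high score on this measure alone does not establish that fixed points or input-output behavior are recovered, which is why the table lists those as separate criteria.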

Whole-Brain Modeling

Large-Scale Biophysical Simulations

Whole-brain modeling integrates multiple spatial scales to simulate the entire brain or major brain systems. Recent advances have enabled the creation of biophysically realistic simulations of complete brain regions, such as the landmark simulation of an entire mouse cortex containing almost ten million neurons and 26 billion synapses [19]. These simulations incorporate both form and function, with 86 interconnected brain regions based on detailed anatomical data from resources like the Allen Cell Types Database and Allen Connectivity Atlas [19].

Such large-scale simulations enable researchers to ask previously intractable questions about disease propagation, seizure dynamics, and network-level effects of focal perturbations [19]. The computational demands are immense, requiring supercomputing resources like Fugaku, but these models provide unprecedented opportunities to observe emergent brain dynamics in silico.

Connectome-Based Network Modeling

Complementing detailed biophysical simulations, connectome-based modeling uses mathematical frameworks to understand how large-scale brain organization influences dynamics and function. These models typically employ cortical hierarchy and excitability gradients to explain observed phenomena, such as the finding that high-order brain regions show stronger responses to electrical stimulation than low-order regions [22].

The methodology for building these models involves [22]:

- Structural Connectome Construction: Using diffusion-weighted MRI tractography or tract-tracing methods to map the anatomical connections between brain regions.

- Neural Mass Model Implementation: Representing each brain region with simplified neural dynamics, such as Wilson-Cowan or FitzHugh-Nagumo models.

- Parameter Optimization: Fitting model parameters to match empirical data, such as resting-state functional MRI or electrophysiological recordings.

- Virtual Perturbation Experiments: Using the optimized model to perform in-silico interventions that would be difficult or unethical in real subjects, such as "virtual dissections" of specific network connections [22].
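The steps above can be sketched with a toy network of Wilson-Cowan-style units. The example below couples four regions through a small symmetric connectome, integrates the dynamics with forward Euler, and then repeats the simulation after removing one connection as a simple virtual lesion. The connectome, the coupling constants, and the function names are hypothetical values chosen for illustration; no parameter fitting is performed.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def simulate_wilson_cowan(connectome, coupling=0.5, steps=2000, dt=0.1, tau=10.0):
    """Forward-Euler integration of one excitatory/inhibitory pair per region."""
    n_regions = connectome.shape[0]
    exc = np.full(n_regions, 0.1)
    inh = np.full(n_regions, 0.1)
    history = np.empty((steps, n_regions))
    for k in range(steps):
        long_range = coupling * (connectome @ exc)        # input from other regions
        exc_target = sigmoid(1.5 * exc - 1.0 * inh + long_range - 0.5)
        inh_target = sigmoid(1.0 * exc - 0.5 * inh - 0.5)
        exc = exc + dt / tau * (exc_target - exc)
        inh = inh + dt / tau * (inh_target - inh)
        history[k] = exc
    return history


# Toy symmetric structural connectome for four regions (illustrative weights).
connectome = np.array([[0.0, 1.0, 0.5, 0.0],
                       [1.0, 0.0, 0.0, 0.5],
                       [0.5, 0.0, 0.0, 1.0],
                       [0.0, 0.5, 1.0, 0.0]])

# Virtual lesion: remove the connection between regions 0 and 1.
lesioned = connectome.copy()
lesioned[0, 1] = lesioned[1, 0] = 0.0

intact_activity = simulate_wilson_cowan(connectome)
lesioned_activity = simulate_wilson_cowan(lesioned)
effect = intact_activity[-1] - lesioned_activity[-1]
print("change in final excitatory activity per region:", effect)
```

In a full study the connectome would come from imaging data and the coupling parameters would be fitted to empirical recordings before any perturbation is interpreted.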

Table 3: Whole-Brain Simulation Scales and Projections

| Brain Scale | Current Capabilities | Projected Timeline | Key Challenges |
|---|---|---|---|
| Mouse Cortex | ~10 million neurons, 26 billion synapses (achieved) [19] | N/A (achieved) | Integration of cellular diversity; multiscale validation |
| Mouse Whole-Brain | Regional simulations integrated | ~2034 (projected cellular level) [9] | Complete connectome; cross-regional specialization |
| Marmoset Whole-Brain | Partial connectome models | ~2044 (projected) [9] | Expanded computational resources; cross-species validation |
| Human Whole-Brain | Simplified large-scale network models | Later than 2044 (projected) [9] | Massive computational demands; ethical considerations |

Emerging Trends and Future Projections

Technological Advancements

The field of neuronal simulation is rapidly evolving, driven by several converging technological trends:

- Differentiable Simulation: Tools like Jaxley leverage automatic differentiation and GPU acceleration to enable gradient-based optimization of biophysical parameters, dramatically improving fitting efficiency for detailed models [20].

- AI-Powered Simulation: Artificial intelligence is being integrated into simulation workflows for real-time insights, results summarization, and even conversational interaction with models [23]. Reinforcement learning integration allows agents to explore strategies within simulated environments [23].

- Cloud-Based Simulation Platforms: Cloud infrastructure enables global collaboration, centralized data management, and access to powerful computing resources without local hardware constraints [23] [24]. Emerging platforms allow model building and editing directly in web browsers [23].

- Digital Twins with Real-Time Data: Lightweight protocols like MQTT enable real-time data streaming between simulations and physical systems, creating dynamic digital twins that accurately mirror real-world assets [23].

Future Projections for Whole-Brain Simulation

Systematic analysis of technological trends suggests that mouse whole-brain simulation at the cellular level could be realized around 2034, with marmoset simulations following around 2044, and human whole-brain simulations likely becoming feasible later than 2044 [9]. These projections are based on exponential improvements in supercomputing performance, transcriptomics, connectomics, and neural activity measurement technologies [9].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Research Reagent Solutions for Neuronal Network Simulation

| Tool/Platform | Type | Primary Function | Key Features |
|---|---|---|---|
| Jaxley | Software Library | Differentiable biophysical simulation | GPU acceleration; automatic differentiation; Python-based [20] |
| NEURON | Software Environment | Biophysical simulation of neurons and networks | Extensive model library; multi-compartment support; HPC compatibility [20] |
| Computation-through-Dynamics Benchmark (CtDB) | Benchmark Framework | Standardized evaluation of neural dynamics models | Synthetic datasets; interpretable metrics; input-output transformation focus [21] |
| Allen Cell Types Database | Data Resource | Cellular properties for model constraining | Morphological and electrophysiological data; cross-species comparison [19] |
| Brain Modeling ToolKit | Software Framework | Construction and simulation of brain models | Modular architecture; community-driven model sharing [19] |
| AnyLogic | Simulation Platform | Multimethod simulation modeling | Discrete-event, agent-based, and system dynamics modeling in unified environment [23] |
| Supercomputer Fugaku | Computing Infrastructure | Large-scale simulation execution | 400+ petaflops performance; massive parallelization [19] |

The spectrum of neuronal simulation scales represents a rapidly advancing frontier in computational neuroscience. From biophysically detailed single neurons to system-level whole-brain models, each scale offers unique insights and presents distinct challenges. The development of standardized benchmarking frameworks like CtDB enables more rigorous validation and comparison of neural dynamics models [21], while technological advances in differentiable simulation [20] and supercomputing [19] continue to expand what is computationally feasible.

Future progress will depend on continued collaboration across disciplines, sharing of data and models, and the development of increasingly sophisticated theoretical frameworks. As these tools mature, they promise to transform our understanding of neural computation and accelerate the development of treatments for neurological disorders. For researchers and drug development professionals, familiarity with this multi-scale simulation landscape is becoming increasingly essential for cutting-edge neuroscience research.

Implementation in Practice: Methodologies and Real-World Applications in Biomedicine

The field of computational neuroscience is increasingly reliant on complex large-scale neuronal network models to understand brain function in health and disease. This progress is coupled with advances in network theory and growing availability of detailed brain connectivity data. As models grow in scale and complexity to study interactions across multiple brain areas or long-timescale phenomena like system-level learning, the development of efficient simulation technology becomes paramount [25]. The critical process driving this development is benchmarking—the systematic measurement of simulation performance—which identifies performance bottlenecks and guides progress toward more efficient simulation technology [25].

However, the field currently lacks standardized benchmarks, making it difficult to accurately measure progress, compare different approaches, and identify promising research directions [11]. Maintaining comparability of benchmark results is particularly challenging due to the absence of standardized specifications for measuring how simulators perform and scale on modern high-performance computing (HPC) systems [25]. This article addresses these challenges by presenting a comprehensive modular workflow for benchmarking neuronal network simulations, from initial configuration through final analysis, providing researchers with a systematic methodology for rigorous performance evaluation.

Core Principles of Neuronal Network Benchmarking

Benchmarking in computational neuroscience serves two primary purposes: guiding the development of simulation technology and providing objective comparisons between different neuromorphic approaches [11] [25]. Effective benchmarking must encompass both hardware-independent assessment of algorithms and hardware-dependent evaluation of full system implementations [11].

The benchmarking process must account for the diverse scales and levels of biological detail present in neuronal network models, from simplified point neurons to morphologically detailed models [26]. Benchmarks should represent scientifically relevant network models that stress different aspects of simulation technology, providing complementary information about performance characteristics [25].

A critical challenge in neuronal network benchmarking is the rapidly evolving ecosystem of models and technologies. Continuous benchmarking approaches, inspired by continuous integration practices in software engineering, help address this challenge by automatically testing performance across code versions and hardware configurations [26]. This enables early detection of performance regressions and fosters collaborative model refinement across research groups.
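A continuous benchmarking check can be as simple as comparing the timings of a candidate code version with a stored baseline and flagging slowdowns beyond a tolerance. The sketch below illustrates the idea; the function name, the 10% tolerance, and the timing values are hypothetical, and a production setup would typically use more repetitions and a statistical test.

```python
from statistics import median


def detect_regression(baseline_times, candidate_times, tolerance=0.10):
    """Flag a regression when the median run time grows by more than `tolerance`."""
    base = median(baseline_times)
    cand = median(candidate_times)
    slowdown = (cand - base) / base
    return slowdown > tolerance, slowdown


# Wall-clock times in seconds from repeated runs (illustrative numbers).
baseline = [12.1, 11.9, 12.0]
candidate = [13.6, 13.4, 13.5]

regressed, slowdown = detect_regression(baseline, candidate)
print(f"regression detected: {regressed} (slowdown {slowdown:.1%})")
```

Using the median rather than the mean makes the check less sensitive to a single run disturbed by other load on the machine.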

The Modular Benchmarking Workflow

The benchmarking workflow can be decomposed into three sequential phases with distinct modules within each phase. This modular design enhances flexibility, reproducibility, and comparability across different benchmarking studies.

Phase 1: Configuration and Setup

The initial phase establishes the foundation for reproducible benchmarking through careful specification of all benchmark components.

- Network Model Selection: Choose scientifically relevant network models with different complexity levels to stress various aspects of simulation technology. Models should span different spatial and temporal scales, from microcircuits to multi-area networks [25].

- Hardware Specification: Document complete system configuration including CPU type and core count, memory architecture, storage subsystem, and network interconnect for HPC systems [25].

- Software Environment: Precisely record software versions, compiler options, environment variables, and dependency versions that may affect performance [26].

- Parameter Configuration: Define all simulation parameters including numerical integration methods, time steps, and network connectivity patterns.

Phase 2: Execution and Data Collection

This phase involves running benchmarks and systematically collecting performance data.

- Execution Module: Run simulations across different hardware configurations and parameter combinations. Ensure consistent conditions by controlling system load and resource allocation [25].

- Performance Monitoring: Track key metrics during execution including time-to-solution, memory usage, energy consumption, and scaling efficiency [25].

- Metadata Collection: Automatically record comprehensive metadata including benchmark identifiers, software versions, execution timestamps, and system configuration details [25].
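Automatic metadata capture can be sketched with the Python standard library alone. The helper below gathers a benchmark identifier, a UTC timestamp, and basic system information into a JSON-serializable record; the function name, the field names, and the example identifier are hypothetical, and a real workflow would add simulator versions, compiler flags, and job scheduler details.

```python
import json
import os
import platform
import sys
from datetime import datetime, timezone


def collect_metadata(benchmark_id, extra=None):
    """Return a JSON-serializable description of the current execution context."""
    metadata = {
        "benchmark_id": benchmark_id,
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "python_version": platform.python_version(),
        "platform": platform.platform(),
        "machine": platform.machine(),
        "cpu_count": os.cpu_count(),
        # Threading-related environment variable; None if it is not set.
        "omp_num_threads": os.environ.get("OMP_NUM_THREADS"),
        "command_line": list(sys.argv),
    }
    if extra:
        metadata.update(extra)
    return metadata


record = collect_metadata("example-benchmark", {"simulator_version": "unknown"})
serialized = json.dumps(record, indent=2, sort_keys=True)
print(serialized)
```

Storing this record next to the raw timing data keeps results interpretable after the software environment has changed.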

Phase 3: Analysis and Reporting

The final phase transforms raw performance data into actionable insights.

- Data Processing: Calculate performance metrics from raw timing and monitoring data. Generate visualizations of scaling behavior and resource utilization [25].

- Comparative Analysis: Compare results across different software versions, hardware configurations, or simulation scales to identify trends and bottlenecks [25].

- Reporting: Generate comprehensive reports with performance summaries, metadata documentation, and visualizations that facilitate reproducibility and knowledge transfer [25].

Figure: The complete benchmarking workflow and the interconnections between its modules.

Experimental Protocols for Benchmarking

Functional Benchmarking Protocols

Functional benchmarking assesses how well simulated networks reproduce established neuronal dynamics and behaviors. The following protocols provide standardized assessment methodologies:

Table 1: Functional Benchmarking Protocols

| Protocol Name | Purpose | Key Metrics | Validation Reference |
|---|---|---|---|
| Rallpack Benchmarks | Measure basic neuronal electrical properties | Cable equation accuracy, compartmental integration fidelity | [25] |
| Network Oscillation Analysis | Quantify synchronized network behavior | Oscillation frequency, amplitude, synchronization index | [25] |
| Spike Pattern Reproduction | Verify temporal precision of output spikes | Spike timing accuracy, rate coding fidelity | [25] |

Implementation of functional benchmarks requires careful specification of reference models, numerical tolerance levels, and comparison methodologies. For example, Rallpack benchmarks compare simulator output against analytical solutions or high-precision reference implementations to quantify numerical accuracy [25].
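To make the idea of comparing simulator output against an analytical solution concrete, the sketch below integrates a single passive RC compartment with forward Euler and measures its root-mean-square deviation from the closed-form step response. It is a deliberately simplified stand-in, not one of the Rallpack models: the parameter values, the `tolerance_mv` threshold, and all function names are assumptions chosen for illustration.

```python
import math


def analytic_voltage(t, v_rest, i_inj, r_m, tau):
    """Closed-form response of a passive RC membrane to a current step at t=0."""
    return v_rest + i_inj * r_m * (1.0 - math.exp(-t / tau))


def euler_voltage_trace(dt, n_steps, v_rest, i_inj, r_m, tau):
    """Forward-Euler integration of tau * dV/dt = -(V - v_rest) + r_m * i_inj."""
    v = v_rest
    trace = [v]
    for _ in range(n_steps):
        v += dt * (-(v - v_rest) + r_m * i_inj) / tau
        trace.append(v)
    return trace


def rms_error(simulated, reference):
    """Root-mean-square difference between two equally sampled traces."""
    squared = [(s - r) ** 2 for s, r in zip(simulated, reference)]
    return math.sqrt(sum(squared) / len(squared))


# Illustrative parameters (mV, nA, MOhm, ms); not taken from the Rallpack suite.
params = dict(v_rest=-65.0, i_inj=0.1, r_m=100.0, tau=10.0)
t_stop = 50.0
errors = {}
for dt in (0.1, 0.01):
    n_steps = round(t_stop / dt)
    simulated = euler_voltage_trace(dt, n_steps, **params)
    reference = [analytic_voltage(k * dt, **params) for k in range(n_steps + 1)]
    errors[dt] = rms_error(simulated, reference)
    print(f"dt={dt} ms  RMS error={errors[dt]:.6f} mV")

tolerance_mv = 0.05  # hypothetical acceptance threshold
print("pass" if errors[0.01] < tolerance_mv else "fail")
```

Repeating the comparison at two step sizes also shows how the error shrinks as the time step is refined, which is a quick check that the integrator converges at the expected order.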

Performance Benchmarking Protocols

Performance benchmarking focuses on computational efficiency and resource utilization across different hardware and software configurations:

Table 2: Performance Benchmarking Metrics

| Metric Category | Specific Metrics | Measurement Methodology |
|---|---|---|
| Execution Performance | Time-to-solution, Simulation rate (ms/s), Parallel efficiency | Wall-clock measurement, Scaling analysis |
| Memory Utilization | Peak memory usage, Memory bandwidth utilization | System monitoring tools, Custom memory tracking |
| Energy Efficiency | Energy consumption, Energy-delay product | Hardware counters, External power measurement |
| Scaling Behavior | Strong scaling efficiency, Weak scaling efficiency | Multi-node execution with varying core counts |

Performance benchmarks should be conducted using standardized network models that represent scientifically relevant use cases. The beNNch framework provides reference implementations of such networks with different complexity levels, from simple balanced random networks to multi-area models with intricate connectivity [25].
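Several of the metrics in Table 2 reduce to simple ratios of measured quantities. The sketch below uses the common textbook definitions: strong scaling efficiency as baseline core-seconds divided by current core-seconds for a fixed problem size, and weak scaling efficiency as baseline time divided by current time when the problem grows with the core count. The timings and the energy value are invented for illustration and are not measurements from any simulator.

```python
def simulation_rate(model_time_ms, wall_time_s):
    """Simulated milliseconds advanced per wall-clock second."""
    return model_time_ms / wall_time_s


def strong_scaling_efficiency(base_cores, base_time_s, cores, time_s):
    """Fixed problem size: 1.0 means perfect speed-up relative to the baseline."""
    return (base_cores * base_time_s) / (cores * time_s)


def weak_scaling_efficiency(base_time_s, time_s):
    """Problem size grows with core count: 1.0 means constant run time."""
    return base_time_s / time_s


def energy_delay_product(energy_j, wall_time_s):
    """Energy-delay product in joule-seconds; lower is better."""
    return energy_j * wall_time_s


# Invented example data: wall-clock seconds to simulate 10 s of model time.
model_time_ms = 10_000.0
strong_timings = {32: 100.0, 64: 55.0, 128: 32.0}
base_cores = min(strong_timings)
base_time = strong_timings[base_cores]

strong_eff = {
    cores: strong_scaling_efficiency(base_cores, base_time, cores, t)
    for cores, t in strong_timings.items()
}
for cores, t in strong_timings.items():
    rate = simulation_rate(model_time_ms, t)
    print(f"{cores:4d} cores: {rate:7.1f} ms/s, efficiency {strong_eff[cores]:.3f}")

# Hypothetical energy reading for the baseline run.
edp = energy_delay_product(energy_j=15_000.0, wall_time_s=base_time)
print(f"energy-delay product: {edp:.0f} J*s")
```

Reporting efficiency relative to the smallest configuration that fits the model in memory is a pragmatic choice when a single-core baseline is not feasible for large networks.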

Implementation Frameworks and Tools

The beNNch Framework

The beNNch framework serves as a reference implementation of the modular benchmarking workflow, providing open-source tools for configuration, execution, and analysis of neuronal network benchmarks [25]. Key features include:

- Modular Design: Separate modules for network generation, simulation execution, performance monitoring, and data analysis

- Metadata Management: Unified recording of benchmarking data and metadata to foster reproducibility

- HPC Compatibility: Support for large-scale benchmarking on high-performance computing systems

- Multi-simulator Support: Capability to benchmark various simulation engines using the same network models

beNNch enables systematic comparison of simulator performance across different versions, helping identify performance regressions or improvements during development [25].

NeuroBench: A Community Standard

NeuroBench represents a community-led initiative to establish standardized benchmarks for neuromorphic computing algorithms and systems. This framework introduces a common set of tools and systematic methodology for inclusive benchmark measurement [11]. NeuroBench addresses both hardware-independent assessment of algorithms and hardware-dependent evaluation of complete systems, providing an objective reference framework for quantifying neuromorphic approaches [11].

Continuous Benchmarking Systems

Recent advances include the development of continuous benchmarking systems that apply principles of continuous integration to neuronal network simulation [26]. These systems automatically execute benchmarks when code changes are made, providing immediate feedback on performance implications. Key innovations include:

- Automated Artifact Generation: Configurations, environments, and results generated automatically without manual intervention

- Unified Workflow Specification: Decoupling benchmark execution from researcher-specific configurations and hardware details

- Centralized Result Storage: Facilitating comparison across platforms and code versions

- Lowered Entry Barrier: Making benchmarking accessible to first-time users through standardized procedures

This approach addresses the significant reproducibility challenges posed by individual setup configurations across different laboratories [26].
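The core decision a continuous benchmarking system makes after each code change can be illustrated with a small comparison against stored results. The sketch below is an assumption-laden toy: the in-memory `result_store` stands in for centralized storage, the commit labels and timings are invented, and the 5% tolerance is an arbitrary choice rather than a recommendation from the cited work.

```python
import statistics


def detect_regression(baseline_times, candidate_times, rel_tolerance=0.05):
    """Flag a regression when the median run time grows beyond the tolerance."""
    base = statistics.median(baseline_times)
    cand = statistics.median(candidate_times)
    change = (cand - base) / base
    return {
        "baseline_s": base,
        "candidate_s": cand,
        "relative_change": change,
        "regression": change > rel_tolerance,
    }


# Hypothetical central store: wall-clock times (s) keyed by code version.
result_store = {
    "commit-a": [120.4, 119.8, 121.1],
    "commit-b": [131.0, 129.5, 130.2],
}

report = detect_regression(result_store["commit-a"], result_store["commit-b"])
status = "REGRESSION" if report["regression"] else "ok"
print(f"{status}: {report['relative_change']:+.1%} change in median time-to-solution")
```

Using the median of repeated runs rather than a single measurement makes the check less sensitive to occasional outliers caused by system noise.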

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Benchmarking Tools and Frameworks

| Tool/Framework | Type | Primary Function | Application Context |
|---|---|---|---|
| beNNch [25] | Software framework | Configuration, execution and analysis of benchmarks | Generic neuronal network simulations |
| NeuroBench [11] | Benchmark standard | Common tools for neuromorphic algorithm/system assessment | Neuromorphic computing evaluation |
| NEST [25] | Simulation engine | Large-scale spiking neuronal network simulation | Computational neuroscience research |
| SpikingJelly [27] | SNN framework | Training and evaluation of spiking neural networks | Energy-efficient AI applications |
| Arbor [25] | Simulation library | Morphologically-detailed neural network simulation | Biophysically detailed modeling |
| CARLsim [25] | GPU-accelerated library | Creation of neurobiologically detailed SNNs | GPU-optimized network simulation |

Experimental Design and Data Analysis

Designing Effective Benchmarking Experiments

Robust benchmarking experiments require careful experimental design to ensure results are statistically valid and scientifically meaningful:

- Variable Isolation: Systematically vary one parameter at a time (network size, connectivity, neuron model complexity) while holding others constant to isolate effects

- Replication and Randomization: Execute multiple runs with different random seeds to account for variability and random initialization effects

- Control Conditions: Include reference simulations with established tools to provide baseline performance measures

- Progressive Complexity: Start with simple network models and progressively increase complexity to identify scaling bottlenecks
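The design principles above translate directly into a run matrix. The following sketch builds one under simple assumptions: a baseline configuration, one parameter varied at a time, every configuration repeated with several random seeds, and sweep values listed in ascending order so that complexity increases progressively. The parameter names and values are hypothetical examples.

```python
def one_factor_at_a_time(baseline, sweeps, seeds):
    """Build a run list that varies a single parameter per run around a baseline."""
    # Baseline runs double as the control condition for every sweep.
    runs = [{**baseline, "seed": seed} for seed in seeds]
    for name, values in sweeps.items():
        for value in values:
            if value == baseline[name]:
                continue  # already covered by the baseline runs
            for seed in seeds:
                runs.append({**baseline, name: value, "seed": seed})
    return runs


# Hypothetical parameters; sweep values are ordered from simple to complex.
baseline = {"n_neurons": 10_000, "connection_prob": 0.1, "neuron_model": "lif"}
sweeps = {
    "n_neurons": [10_000, 20_000, 40_000],
    "connection_prob": [0.05, 0.1, 0.2],
}
seeds = [1, 2, 3]

runs = one_factor_at_a_time(baseline, sweeps, seeds)
print(f"{len(runs)} runs planned")
for run in runs[:4]:
    print(run)
```

Keeping the seed inside each run description means the full matrix can be logged alongside the results and replayed exactly.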

The following diagram illustrates the experimental design process for benchmarking studies:

Statistical Analysis of Benchmark Results

Proper statistical analysis is essential for drawing valid conclusions from benchmarking data:

- Descriptive Statistics: Calculate mean, median, standard deviation, and confidence intervals for performance metrics across multiple runs

- Variance Analysis: Use ANOVA or similar techniques to determine if performance differences across configurations are statistically significant

- Scaling Analysis: Fit performance models to strong and weak scaling data to quantify parallel efficiency

- Correlation Analysis: Identify relationships between network parameters (connection density, firing rates) and performance metrics
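As a minimal illustration of the descriptive and variance analysis steps, the sketch below summarizes repeated timings and computes a one-way ANOVA F statistic by hand using only the standard library. Two caveats are assumptions of this sketch: the confidence interval uses a normal approximation, which is optimistic for very small samples where a t-distribution would be more appropriate, and no p-value is computed because that requires the F distribution from a statistics package. The timing data are invented.

```python
import math
import statistics


def summarize(times, confidence=0.95):
    """Mean, median, sample standard deviation, and a normal-approximation CI."""
    mean = statistics.mean(times)
    stdev = statistics.stdev(times)
    z = statistics.NormalDist().inv_cdf(0.5 + confidence / 2.0)
    half_width = z * stdev / math.sqrt(len(times))
    return {
        "mean": mean,
        "median": statistics.median(times),
        "stdev": stdev,
        "ci_low": mean - half_width,
        "ci_high": mean + half_width,
    }


def anova_f_statistic(groups):
    """One-way ANOVA F: between-group variance over within-group variance."""
    all_values = [x for group in groups for x in group]
    grand_mean = statistics.mean(all_values)
    k, n_total = len(groups), len(all_values)
    ss_between = sum(
        len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups
    )
    ss_within = sum((x - statistics.mean(g)) ** 2 for g in groups for x in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))


# Invented wall-clock times (s) for two hypothetical configurations.
config_a = [100.2, 101.1, 99.8, 100.5]
config_b = [104.9, 105.6, 104.2, 105.1]

summary_a = summarize(config_a)
summary_b = summarize(config_b)
f_stat = anova_f_statistic([config_a, config_b])
print(f"A: mean={summary_a['mean']:.2f} s, "
      f"CI=[{summary_a['ci_low']:.2f}, {summary_a['ci_high']:.2f}]")
print(f"B: mean={summary_b['mean']:.2f} s, "
      f"CI=[{summary_b['ci_low']:.2f}, {summary_b['ci_high']:.2f}]")
print(f"F statistic: {f_stat:.1f}")
```

A large F value indicates that the difference between configurations is large relative to run-to-run variability; turning it into a significance statement still requires the degrees of freedom and the corresponding F distribution.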

Applications in Neuromorphic Computing and Drug Development

The modular benchmarking workflow finds important applications in neuromorphic computing and pharmaceutical research, enabling quantitative comparison of different approaches and platforms.

Benchmarking Neuromorphic Systems

Neuromorphic computing applies brain-inspired principles and shows significant promise for improving the efficiency and capabilities of AI applications [11]. Comprehensive benchmarking of neuromorphic systems requires evaluation across multiple dimensions:

- Algorithm Assessment: Hardware-independent evaluation of neuromorphic algorithms including spiking neural networks (SNNs) and learning rules [11]

- System Performance: Hardware-dependent measurement of energy efficiency, computational throughput, and real-time processing capabilities [11]

- Application-Specific Metrics: Task-specific performance measures for target applications such as pattern recognition, sensory processing, or motor control

Recent studies have conducted comprehensive multimodal benchmarking of leading SNN frameworks including SpikingJelly, BrainCog, Sinabs, SNNGrow, and Lava [27]. These evaluations integrate quantitative metrics (accuracy, latency, energy consumption) across diverse datasets (image, text, neuromorphic event data) with qualitative assessments of framework adaptability and community engagement [27].

Applications in Neuroscience and Drug Development

Benchmarking workflows enable more reliable simulation of neuronal dynamics relevant to drug development and disease modeling:

- Mechanotransduction Mapping: Advanced benchmarking facilitates characterization of neuronal responses to mechanical forces, relevant to neurological disorders like epilepsy and Alzheimer's disease [28]

- Network Pathology Modeling: Standardized models enable comparison of drug effects on pathological network dynamics across different research groups

- High-Throughput Screening: Optimized simulation platforms allow computational screening of compound effects on neuronal network function

Benchmarked simulation platforms can model hyper-sensitive mechanotransduction in neuronal networks, characterizing how subtle physical forces influence neuronal signaling—a process implicated in various neurological disorders [28]. These models integrate multi-scale simulations from molecular dynamics of mechanosensitive ion channels to network-level activity patterns [28].

The field of neuronal network benchmarking continues to evolve with several promising directions for future development:

- Standardized Benchmark Suites: Community adoption of standardized benchmark collections representing diverse neuroscience use cases

- Automated Performance Regression Testing: Integration of benchmarking into development workflows to automatically detect performance regressions

- Cross-Platform Comparability: Enhanced methodologies for fair comparison across diverse hardware architectures including neuromorphic systems

- Reproducibility Enhancements: Improved metadata standards and containerization technologies to ensure benchmark reproducibility

The modular workflow approach presented in this article provides a systematic methodology for benchmarking neuronal network simulations, from initial configuration through final analysis. By decomposing the complex benchmarking process into well-defined segments with standardized interfaces, this approach enhances reproducibility, comparability, and utility of performance measurements [25]. As the field continues to advance with increasingly complex models and diverse computing platforms, rigorous benchmarking will remain essential for guiding development of more efficient simulation technology and enabling scientific progress in computational neuroscience and neuromorphic computing.

In the pursuit of understanding the brain's computational principles, large-scale neuronal network simulations have become an indispensable tool for neuroscientists and drug development professionals. The scale and complexity of these simulations are projected to grow exponentially, with estimates suggesting that mouse whole-brain simulations at the cellular level could be feasible by the mid-2030s, followed by marmoset and human whole-brain simulations after 2044 [9] [29]. This rapid advancement is driven by parallel developments in supercomputing, neural measurement technologies, and increasingly sophisticated simulation software.

Selecting an appropriate simulation engine is a critical strategic decision that directly impacts research feasibility, performance, and biological interpretability. This technical guide compares four prominent simulators (NEST, NEURON, Brian, and Arbor) in the context of neuronal network simulation benchmarking. We examine their architectural paradigms, performance characteristics, and suitability for different research domains, supported by quantitative benchmarking data and experimental protocols.

Core Architectural Paradigms

NEST specializes in simulating large-scale networks of point neurons, optimizing for efficiency when modeling hundreds of thousands to millions of simplified neuronal models [30]. Its architecture is designed for distributed computing environments, leveraging MPI for parallel execution across high-performance computing (HPC) systems.

NEURON represents the established standard for modeling neurons with detailed morphological complexity. Originally developed in the 1980s, it enables researchers to create biologically realistic neuron models using its dedicated NMODL description language [31]. It has evolved to support parallelization while maintaining its focus on biophysical accuracy at the single-cell and microcircuit level.

Brian emphasizes ease of use and flexibility with a "write the equations" approach implemented in Python. Its design philosophy prioritizes scientist productivity, allowing for rapid prototyping of novel neuron and synapse models without requiring low-level implementation work [32]. Brian automatically checks for dimensional consistency and provides warnings about potentially unstable solvers.

Arbor is a modern simulator library designed for contemporary HPC architectures, focusing on networks of morphologically detailed neurons. It combines a Python frontend with heavily optimized execution on multi-core CPUs and GPUs, aiming to provide performance portability across different hardware backends [31] [33]. Arbor represents a next-generation approach that balances biological detail with computational efficiency.

Table 1: Simulation Engine Capabilities and Specializations

| Simulator | Primary Abstraction Level | Morphological Detail | Scalability Focus | Programming Interface |
|---|---|---|---|---|
| NEST | Point neurons | Limited | Large-scale networks | Python, C++ |
| NEURON | Multi-compartment neurons | Extensive | Single cells to microcircuits | Python, HOC, NMODL |
| Brian | Flexible (point to simple multi-compartment) | Moderate | Small to medium networks | Python |
| Arbor | Multi-compartment neurons | Extensive | Large-scale networks | Python, C++ |

Performance Benchmarks and Scaling Characteristics

Experimental Protocols for Benchmarking