NeuroBench: The Community-Driven Framework Benchmarking Neuromorphic Computing

Abstract

NeuroBench is a standardized, open-source benchmark framework designed to address the critical lack of comparability in the rapidly advancing field of neuromorphic computing. Developed by a broad collaboration of academic and industry researchers, it provides a common methodology and toolset for the fair evaluation of both neuromorphic algorithms and hardware systems. This article explores NeuroBench's foundational principles, its dual-track methodology for hardware-independent and hardware-dependent evaluation, and its suite of application-specific tasks and metrics. We detail how researchers can utilize NeuroBench for development and optimization, and examine its role in validating performance against conventional approaches. For professionals in biomedical research and drug development, this framework offers a reliable pathway to assess neuromorphic technologies for applications such as neural prosthetics and real-time biosignal analysis.

What is NeuroBench? Addressing the Benchmarking Crisis in Neuromorphic Computing

The Pressing Need for Standardization in Neuromorphic Research

The rapid growth of artificial intelligence (AI) and machine learning has resulted in increasingly complex and large models, with a computation growth rate that exceeds efficiency gains from traditional technology scaling [1]. This looming limit to continued advancements intensifies the urgency for exploring new resource-efficient and scalable computing architectures. Neuromorphic computing has emerged as a promising area to address these challenges by porting computational strategies employed in the brain into engineered computing devices and algorithms [1]. However, the field currently lacks standardized benchmarks, making it difficult to accurately measure technological advancements, compare performance with conventional methods, and identify promising research directions [1] [2].

The absence of standardization poses significant risks to the field's development, including fragmentation with incompatible systems from different vendors, inefficiencies from inconsistent data formats and protocols, and potential security vulnerabilities in sensitive application domains [3]. Prior neuromorphic computing benchmark efforts have not seen widespread adoption due to a lack of inclusive, actionable, and iterative benchmark design and guidelines [4]. This article examines how the NeuroBench framework addresses these critical standardization challenges through a community-driven approach to benchmarking neuromorphic algorithms and systems.

The Standardization Challenge in Neuromorphic Computing

The Diversity of Neuromorphic Approaches

Neuromorphic computing research encompasses a wide spectrum of brain-inspired computing techniques at algorithmic, hardware, and system levels [1]. The term initially referred specifically to approaches emulating the biophysics of the brain by leveraging physical properties of silicon, as proposed by Mead in the 1980s [1]. However, it has since expanded to include diverse approaches:

- Neuromorphic algorithms: Neuroscience-inspired methods that strive toward expanded learning capabilities, such as predictive intelligence, data efficiency, and adaptation, including spiking neural networks (SNNs) and primitives of neuron dynamics, plastic synapses, and heterogeneous network architectures [1].

- Neuromorphic systems: Algorithms deployed to hardware that seek greater energy efficiency, real-time processing capabilities, and resilience compared to conventional systems, utilizing biologically-inspired approaches like analog neuron emulation, event-based computation, non-von-Neumann architectures, and in-memory processing [1].

Critical Standardization Barriers

Multiple challenges hinder standardization efforts in neuromorphic computing. The field's rapid innovation pace threatens to render standards obsolete quickly, while industry fragmentation with competing priorities among vendors and research groups complicates establishing unified standards [3]. Additionally, practitioners face the persistent challenge of balancing flexibility and regulation, where overly rigid standards may stifle innovation while too much flexibility leads to inconsistencies [3]. The field's nascent stage also means fewer large-scale deployments exist to guide standardization efforts, and security concerns persist as non-standardized systems may introduce exploitable vulnerabilities, especially in sensitive domains like healthcare or defense [3].

NeuroBench: A Standardized Framework for Neuromorphic Benchmarking

NeuroBench is a collaboratively designed effort from an open community of researchers across industry and academia, aiming to provide a representative structure for standardizing the evaluation of neuromorphic approaches [1] [4]. The framework introduces a common set of tools and a systematic methodology for inclusive benchmark measurement, delivering an objective reference framework for quantifying neuromorphic approaches in both hardware-independent (algorithm track) and hardware-dependent (system track) settings [4].

The framework is designed to be community-driven, with open-source tools and resources available on GitHub [5], and is structured to evolve iteratively, incorporating new benchmarks and features to track progress made by the research community [4]. This addresses the challenge of rapid innovation by allowing the framework to adapt as the field advances.

Core Architecture and Components

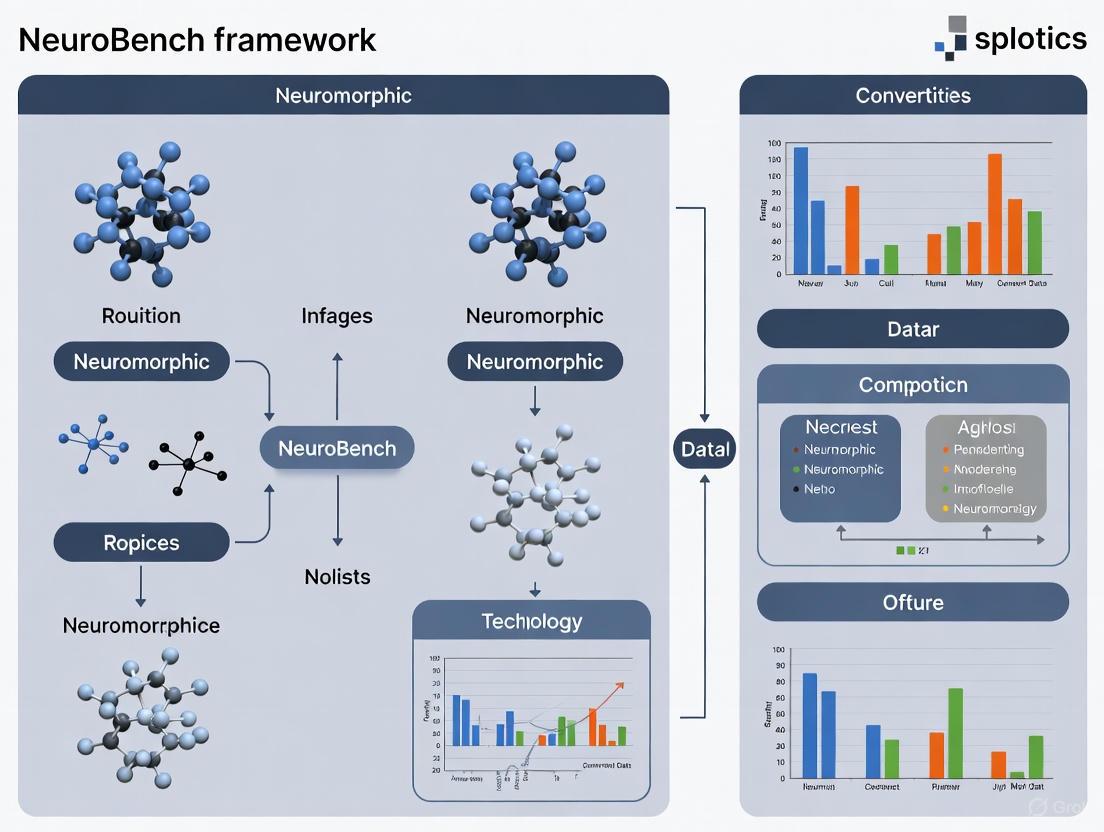

The NeuroBench framework comprises several integrated components that work together to standardize evaluation. The following diagram illustrates the complete NeuroBench evaluation workflow:

The Dataset component provides standardized data formats: PyTorch tensors of shape (batch, timesteps, features*) are the expected format, where features* may span any number of dimensions, though special cases exist for sequence-to-sequence prediction tasks [6]. Pre-processors handle data preprocessing and accept (data, targets) tuples of PyTorch tensors, returning similarly structured output [6]. The framework supports various Model types that accept data tensors and return predictions, which can be final shapes for target comparison or arbitrary shapes for post-processing [6]. Post-processors accumulate predictions and handle postprocessing, accepting prediction tensors and returning results that should match data targets for comparison [6]. The Metrics system includes both static metrics (computable from the model alone) and workload metrics (requiring model predictions and targets) [6].
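The roles described above can be sketched as a small data flow. The following is a minimal illustration in plain Python, not the NeuroBench API: every function name here is hypothetical, nested lists stand in for PyTorch tensors, and the timestep dimension is omitted for brevity.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-ins for the component roles; NOT the NeuroBench API.
Batch = Tuple[List[List[float]], List[int]]  # (data, targets), data is [batch][features]


def scale_preprocessor(batch: Batch) -> Batch:
    """Pre-processor: accepts (data, targets) and returns the same structure."""
    data, targets = batch
    return [[x / 10.0 for x in sample] for sample in data], targets


def mean_model(data: List[List[float]]) -> List[float]:
    """Model: maps each sample to a raw score (here, the mean of its features)."""
    return [sum(sample) / len(sample) for sample in data]


def to_label(scores: List[float]) -> List[int]:
    """Post-processor: converts raw scores into labels comparable with targets."""
    return [1 if s >= 0.5 else 0 for s in scores]


def accuracy(predictions: List[int], targets: List[int]) -> float:
    """Workload metric: needs both predictions and targets."""
    return sum(p == t for p, t in zip(predictions, targets)) / len(targets)


def run_pipeline(
    batches: List[Batch],
    preprocessors: List[Callable[[Batch], Batch]],
    model: Callable[[List[List[float]]], List[float]],
    postprocessors: List[Callable],
) -> float:
    """Chain pre-processors, model, and post-processors, then score the result."""
    all_preds: List[int] = []
    all_targets: List[int] = []
    for batch in batches:
        for pre in preprocessors:
            batch = pre(batch)
        data, targets = batch
        preds = model(data)
        for post in postprocessors:
            preds = post(preds)
        all_preds.extend(preds)
        all_targets.extend(targets)
    return accuracy(all_preds, all_targets)


batches = [
    ([[9.0, 7.0], [1.0, 2.0]], [1, 0]),
    ([[6.0, 6.0], [8.0, 0.0]], [1, 1]),
]
result = run_pipeline(batches, [scale_preprocessor], mean_model, [to_label])
print(result)
```

A static metric, by contrast, would be computed from the model object alone, without iterating over any batches.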

Benchmark Tasks and Evaluation Metrics

NeuroBench incorporates a comprehensive suite of benchmark tasks that represent real-world neuromorphic applications. The available benchmarks include:

Table 1: NeuroBench Benchmark Tasks and Applications

| Benchmark Task | Application Domain | Description |
|---|---|---|
| Few-shot Class-incremental Learning (FSCIL) [7] | Continual Learning | Evaluates ability to learn new classes from few examples while retaining previous knowledge |
| Event Camera Object Detection [7] | Computer Vision | Object detection using event-based vision sensors |
| Non-human Primate (NHP) Motor Prediction [7] | Neuroprosthetics | Predicting motor commands from neural activity |
| Chaotic Function Prediction [7] | Time Series Prediction | Forecasting chaotic temporal patterns |
| DVS Gesture Recognition [7] | Human-Computer Interaction | Recognizing gestures from dynamic vision sensor data |
| Google Speech Commands (GSC) Classification [7] | Audio Processing | Keyword classification from audio input |

The evaluation metrics in NeuroBench are systematically organized into multiple categories that collectively provide a comprehensive assessment of neuromorphic solutions:

Table 2: NeuroBench Evaluation Metrics Taxonomy

| Metric Category | Specific Metrics | Evaluation Focus |
|---|---|---|
| Accuracy Metrics [7] | Classification Accuracy | Task performance and correctness |
| Efficiency Metrics [7] [3] | Footprint, Synaptic Operations (Effective MACs/ACs) | Computational and memory efficiency |
| Sparsity Metrics [7] | Connection Sparsity, Activation Sparsity | Biological plausibility and potential hardware efficiency |

These metrics enable direct comparison between different neuromorphic approaches and conventional methods, providing a standardized way to quantify trade-offs between accuracy, efficiency, and biological plausibility.
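To make the two sparsity metrics concrete, the sketch below computes each as the fraction of zero entries, which is the usual reading of these terms. It is an illustration on nested lists, not the harness implementation; the function names are hypothetical, and the real harness works on model layers and recorded activations rather than hand-written lists.

```python
from typing import List


def zero_fraction(rows: List[List[float]]) -> float:
    """Return the fraction of entries that are exactly zero."""
    total = sum(len(row) for row in rows)
    zeros = sum(1 for row in rows for value in row if value == 0)
    return zeros / total


def connection_sparsity(weights: List[List[float]]) -> float:
    """Static metric: share of zero weights, computable from the model alone."""
    return zero_fraction(weights)


def activation_sparsity(activations: List[List[float]]) -> float:
    """Workload metric: share of zero activations observed while running data."""
    return zero_fraction(activations)


# A 2x3 weight matrix in which half the weights have been pruned to zero.
weights = [[0.5, 0.0, -0.2], [0.0, 0.0, 1.0]]

# Spikes from 5 neurons over 4 timesteps: only 2 of 20 entries are active.
spikes = [
    [0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],
    [0, 0, 0, 0, 0],
]

print(connection_sparsity(weights), activation_sparsity(spikes))
```

The split mirrors the static versus workload distinction: connection sparsity needs only the weights, while activation sparsity depends on the inputs the model is run on.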

Implementation and Experimental Protocols

Standardized Evaluation Methodology

The NeuroBench framework implements a rigorous methodology for benchmarking neuromorphic algorithms and systems. The general design flow for using the framework involves: (1) training a network using the train split from a particular dataset; (2) wrapping the network in a NeuroBenchModel; (3) passing the model, evaluation split dataloader, pre-/post-processors, and a list of metrics to the Benchmark and executing run() [7].

The framework's API specifications ensure consistency across evaluations. Data is expected in PyTorch tensor format with shape (batch, timesteps, features), where features can be any number of dimensions [6]. Datasets must output (data, targets) tuples of PyTorch tensors with matching batch dimensions, or 3-tuples with kwargs for metadata in specialized cases like object detection [6]. This standardization enables fair comparison across different models and approaches.
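The batch contract described above can be expressed as a small validation routine. This is a sketch under stated assumptions: `check_batch` is a hypothetical helper, not part of NeuroBench, and nested lists laid out as [batch][timesteps][features] stand in for PyTorch tensors.

```python
from typing import Any, List, Tuple


def check_batch(batch: Tuple[Any, ...]) -> int:
    """Validate one dataset item and return its batch size.

    Accepts (data, targets) or (data, targets, kwargs), where data is laid
    out as nested lists [batch][timesteps][features...].
    """
    if len(batch) not in (2, 3):
        raise ValueError("expected (data, targets) or (data, targets, kwargs)")
    data, targets = batch[0], batch[1]
    if len(data) != len(targets):
        raise ValueError(
            f"batch dimension mismatch: {len(data)} samples vs {len(targets)} targets"
        )
    if len(batch) == 3 and not isinstance(batch[2], dict):
        raise ValueError("third element must be a dict of metadata")
    # Every sample in a batch should cover the same number of timesteps.
    if len({len(sample) for sample in data}) > 1:
        raise ValueError("samples in a batch must share the same number of timesteps")
    return len(data)


# Two samples, three timesteps, two features per timestep.
data: List[List[List[float]]] = [
    [[0.1, 0.2], [0.0, 0.4], [0.3, 0.3]],
    [[0.5, 0.1], [0.2, 0.2], [0.0, 0.9]],
]
targets = [1, 0]

batch_size = check_batch((data, targets))
batch_size_with_meta = check_batch((data, targets, {"sample_ids": ["a", "b"]}))
print(batch_size, batch_size_with_meta)
```

Checking the contract at the dataset boundary means a shape mistake surfaces before any metric is computed, rather than as a silently wrong score.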

Example Experimental Protocol: Google Speech Commands

A concrete example of the NeuroBench methodology can be seen in the Google Speech Commands (GSC) classification benchmark, which provides demonstrated results for both artificial neural networks (ANNs) and spiking neural networks (SNNs) [7]. The experimental workflow for this benchmark involves:

Data Preparation: The GSC dataset is automatically downloaded and preprocessed. The dataset contains audio recordings of spoken commands for keyword classification.

Model Training: Networks are trained using the training split of the dataset. The example provides separate scripts for ANN and SNN approaches.

Benchmark Execution: The trained network is wrapped in a NeuroBenchModel (either TorchModel or SNNTorchModel depending on the network type). The evaluation is performed using the Benchmark class with appropriate pre-processors, post-processors, and metrics.

Result Calculation: The framework computes a comprehensive set of metrics including footprint, connection sparsity, classification accuracy, activation sparsity, and synaptic operations [7].

The expected results from the demonstration show the characteristic trade-offs between ANN and SNN approaches: while the ANN achieves slightly higher classification accuracy (86.5% vs 85.6%), the SNN demonstrates significantly higher activation sparsity (96.7% vs 38.5%), highlighting potential efficiency advantages for event-based hardware [7].

The Researcher's Toolkit: Essential Components

Implementing standardized neuromorphic research requires specific tools and components. The following table details key elements from the NeuroBench framework:

Table 3: Essential Research Components for Standardized Neuromorphic Research

| Component | Function | Implementation in NeuroBench |
|---|---|---|
| Benchmark Harness [5] | Core evaluation framework | Open-source Python package available via PyPI (pip install neurobench) |
| Data Loaders [7] | Standardized data access | Integrated datasets with consistent formatting and pre-processing |
| Model Wrappers [6] | Unified model interface | NeuroBenchModel base class with specific implementations for PyTorch and snnTorch |
| Pre-processors [6] | Data preparation and feature extraction | Configurable processing pipelines for spike conversion and data normalization |
| Post-processors [6] | Output interpretation and aggregation | Methods for combining spiking outputs and generating final predictions |
| Metric Calculators [6] | Performance quantification | Comprehensive suite of accuracy, efficiency, and sparsity metrics |

Impact and Future Directions

Advancing Neuromorphic Research Through Standardization

NeuroBench addresses critical standardization challenges in neuromorphic computing by providing a unified framework for evaluation. The framework's community-driven design helps overcome industry fragmentation by bringing together researchers from academia and industry to develop shared understanding of best practices [3]. Its balanced approach to benchmarking, focusing on task-level evaluation with hierarchical metric definitions, allows for flexible implementation while maintaining standardized assessment [3].

The framework's open-source nature and collaborative development model help address intellectual property concerns while encouraging transparency and adoption [5]. By providing objective performance measurement, NeuroBench enables researchers to quantitatively demonstrate advancements in neuromorphic computing, facilitating comparison with conventional approaches and helping identify the most promising research directions [1].

Integration with Broader Standardization Initiatives

NeuroBench complements other standardization efforts in neuromorphic computing, including NIST's work on performance benchmarking and device characterization, IEEE's development of hardware interfaces and software frameworks, and ISO's focus on ethical considerations and data formats [3]. The framework's benchmarking metrics provide a foundation for objective comparisons across different neuromorphic systems and algorithms [3].

[Diagram: relationship between NeuroBench and other standardization components]

Future Development and Community Adoption

NeuroBench is designed as an evolving standard that will expand its benchmarks and features to foster and track progress made by the research community [4]. The framework intends to continually incorporate new tasks and evaluation methodologies as the field advances. Community contribution is actively encouraged through development of new benchmarks, improvements to the harness, and submission of results [5] [7].

The long-term vision for standardization in neuromorphic computing includes achieving interoperability between neuromorphic systems and traditional computing architectures, scalability for large-scale applications, robust security protocols, and ethical deployment frameworks [3]. NeuroBench is positioned to play a key role in realizing this vision by providing the necessary tools and framework for standardized evaluation and collaboration.

The pressing need for standardization in neuromorphic research is effectively addressed by the NeuroBench framework, which provides a comprehensive, community-driven approach to benchmarking neuromorphic algorithms and systems. By offering standardized metrics, evaluation methodologies, and tools, NeuroBench enables objective comparison across different approaches, accelerates research progress, and helps identify the most promising directions for the field. As neuromorphic computing continues to evolve, frameworks like NeuroBench will play an increasingly critical role in ensuring that advancements are measurable, comparable, and translatable to real-world applications across various domains, from edge computing and robotics to healthcare and scientific research.

The rapid growth of artificial intelligence (AI) and machine learning has resulted in increasingly complex and large models, with computation growth rates now exceeding efficiency gains from traditional technology scaling [1]. This escalating computational demand has intensified the urgency for exploring new resource-efficient and scalable computing architectures, positioning neuromorphic computing as a particularly promising solution. By implementing brain-inspired principles, neuromorphic technology aims to unlock key hallmarks of biological intelligence—including exceptional energy efficiency, real-time processing capabilities, and adaptive learning [1]. However, despite nearly a decade of concentrated research and development, the neuromorphic research field has faced a significant impediment: the absence of standardized benchmarks.

This lack of standardized evaluation methods has made it difficult to accurately measure technological advancements, compare performance against conventional approaches, or identify the most promising research directions [1] [4]. Prior benchmarking efforts failed to achieve widespread adoption due to insufficiently inclusive, actionable, and iterative designs [4]. To address this critical gap, the neuromorphic research community initiated a collaborative project to develop NeuroBench—a comprehensive benchmark framework for neuromorphic computing algorithms and systems that represents the first successful community-wide standardization effort in this field [1] [4].

The NeuroBench Initiative: A Community-Driven Approach

Collaborative Origins and Development

NeuroBench stands apart from previous benchmarking attempts through its fundamentally collaborative development model. The initiative brought together an extensive community of researchers from both industry and academia, creating a framework specifically designed to be "collaborative, fair, and representative" [8]. This unprecedented collaboration involved over 100 researchers from more than 50 academic and industrial institutions worldwide, representing a comprehensive cross-section of the neuromorphic research ecosystem [9] [10].

The project began in 2022 as a response to a critical question: How could neuromorphic engineering gain significant traction while still lacking established benchmarks and metrics for fair evaluations, including comparisons against conventional machine learning approaches? [10] The initiative was ignited through the leadership of researchers including Jason Yik, Charlotte Frenkel, and Vijay Janapa Reddi, with key support from Korneel Van den Berghe and many others [10]. The wide range of approaches and design schools in neuromorphic research, while a source of innovation, had previously led to fragmentation that hindered the establishment of common benchmarks [10]. NeuroBench addressed this challenge by creating a forum where the community could collectively agree on representative benchmarks.

Addressing the Benchmarking Gap

The core challenge NeuroBench addressed was the rich diversity of techniques employed in neuromorphic research, which had resulted in a lack of clear standards for benchmarking [8]. This absence made it difficult to effectively evaluate the advantages and strengths of neuromorphic methods compared to traditional deep-learning-based approaches [8]. NeuroBench established itself as a community-driven solution with three fundamental pillars:

- Collaborative Design: The framework was developed by the community, for the community, ensuring broad representation across different neuromorphic approaches [8].

- Fair Evaluation: The benchmarks provide objective comparisons between neuromorphic and conventional approaches across multiple application domains [4].

- Representative Tasks: The selected benchmarks cover a wide spectrum of neuromorphic applications, from edge computing to neuroscientific exploration [1] [7].

This inclusive approach has positioned NeuroBench as a unifying force in the field, driving technological progress through standardized evaluation [8].

NeuroBench Technical Framework Architecture

Dual-Track Benchmarking Methodology

NeuroBench introduces a sophisticated dual-track framework that enables comprehensive evaluation of neuromorphic technologies across different maturity levels and implementation strategies [1] [4]. This structured approach allows researchers to quantify neuromorphic advantages in both theoretical and practical contexts.

Table 1: NeuroBench Dual-Track Benchmarking Structure

| Track | Evaluation Focus | Key Metrics | Target Applications |
|---|---|---|---|
| Algorithm Track | Hardware-independent performance [1] | Accuracy, activation sparsity, synaptic operations [7] | Algorithm exploration, model development [1] |
| System Track | Hardware-dependent performance [1] | Energy efficiency, throughput, latency [1] | Hardware deployment, edge computing [1] |

Core Benchmark Tasks and Metrics

The NeuroBench framework includes carefully selected benchmark tasks that represent diverse neuromorphic application domains. These benchmarks are designed to stress-test the unique capabilities of neuromorphic approaches while providing meaningful comparisons with conventional methods.

Table 2: NeuroBench v1.0 Benchmark Tasks and Specifications

| Benchmark Task | Domain | Data Modality | Key Challenge |
|---|---|---|---|
| Keyword Few-shot Class-incremental Learning (FSCIL) [7] | Continual learning | Audio | Adapting to new classes with limited examples |
| Event Camera Object Detection [7] | Computer vision | Event-based data | Processing sparse, asynchronous visual data |
| Non-human Primate Motor Prediction [7] | Neuroscience | Neural signals | Decoding neural activity into motor commands |
| Chaotic Function Prediction [7] | Time series | Numerical data | Predicting complex, chaotic dynamics |
| DVS Gesture Recognition [7] | Gesture recognition | Event-based vision | Recognizing human gestures from event cameras |
| Google Speech Commands Classification [7] | Audio processing | Audio | Keyword spotting from audio commands |

The framework employs a comprehensive set of metrics that capture the unique advantages of neuromorphic approaches. For the algorithm track, these include footprint (the memory required to represent the model), connection sparsity, classification accuracy, activation sparsity, and synaptic operations (separated into effective MACs and ACs) [7]. This metric selection enables multidimensional comparison between conventional and neuromorphic approaches.
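The distinction between effective MACs and effective ACs can be illustrated on a single dense layer. The sketch below assumes a common reading of these terms: an operation is "effective" only when both the input activation and the weight are nonzero, and it counts as an accumulate (AC) when the inputs are binary spikes and as a multiply-accumulate (MAC) otherwise. The function and dictionary keys are hypothetical and are not the harness's own code.

```python
from typing import Dict, List, Union

Number = Union[int, float]


def synaptic_ops(weights: List[List[float]], inputs: List[Number]) -> Dict[str, int]:
    """Count dense and effective synaptic operations for one dense layer.

    weights is laid out as [outputs][inputs]; inputs is one activation vector.
    """
    dense = len(weights) * len(inputs)
    # An operation is skipped when either the weight or the activation is zero.
    effective = sum(
        1 for row in weights for w, x in zip(row, inputs) if w != 0 and x != 0
    )
    # Binary (spike) inputs need no multiplication, so they count as ACs.
    is_binary = all(x in (0, 1) for x in inputs)
    return {
        "dense": dense,
        "effective_macs": 0 if is_binary else effective,
        "effective_acs": effective if is_binary else 0,
    }


weights = [
    [0.5, 0.0, -1.0, 0.2],
    [0.0, 0.3, 0.7, 0.0],
]

ann_ops = synaptic_ops(weights, [0.2, 0.0, 1.5, 0.4])  # real-valued activations
snn_ops = synaptic_ops(weights, [1, 0, 0, 1])          # binary spikes
print(ann_ops, snn_ops)
```

Because an AC is typically cheaper than a MAC in hardware, the two counts are reported separately rather than summed into one number.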

The NeuroBench Harness: Implementation Framework

A key innovation of NeuroBench is the development of an open-source Python package called the "NeuroBench harness" that provides standardized tools for benchmark implementation [11] [7]. This harness allows researchers to consistently run benchmarks and extract comparable metrics across different approaches.

The technical architecture of the NeuroBench harness includes several integrated components [7]:

- Benchmark Definitions: Standardized workload metrics and static metrics

- Datasets: Curated benchmark datasets with consistent preprocessing

- Model Frameworks: Support for popular frameworks like PyTorch and snnTorch

- Pre-processing: Tools for data preparation and conversion to spikes

- Post-processors: Methods for combining and interpreting spiking outputs

The design flow for using the framework follows a systematic process: (1) train a network using the train split from a benchmark dataset; (2) wrap the network in a NeuroBenchModel; (3) pass the model, evaluation split dataloader, pre-/post-processors, and metrics to the Benchmark and run the evaluation [7]. This standardized workflow ensures consistent evaluation across different research groups and approaches.
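As one example of what a post-processor for spiking outputs might do, the sketch below turns output spike trains into class predictions by counting spikes per class over time and picking the most active class. It is a hypothetical illustration on nested lists laid out as [batch][timesteps][classes], not the harness's own post-processor.

```python
from typing import List


def predict_by_spike_count(spikes: List[List[List[int]]]) -> List[int]:
    """Return, per sample, the class index whose output neuron spiked most.

    spikes is laid out as [batch][timesteps][classes]; ties resolve to the
    lowest class index.
    """
    predictions = []
    for sample in spikes:
        n_classes = len(sample[0])
        # Accumulate spikes over the time dimension for each output neuron.
        counts = [sum(step[c] for step in sample) for c in range(n_classes)]
        predictions.append(counts.index(max(counts)))
    return predictions


output_spikes = [
    [[0, 1, 0], [0, 1, 1], [1, 1, 0]],  # class 1 fires on every timestep
    [[1, 0, 0], [0, 0, 1], [0, 0, 1]],  # class 2 fires twice
]
labels = predict_by_spike_count(output_spikes)
print(labels)
```

The result has one label per sample, which is the shape needed for comparison against the dataset targets when computing classification accuracy.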

Experimental Protocols and Evaluation Methodologies

Standardized Benchmark Execution

NeuroBench provides detailed experimental protocols to ensure reproducible and comparable results across studies. The following workflow diagram illustrates the standard benchmark execution process:

Diagram 1: NeuroBench Experimental Workflow

A concrete example of this protocol in practice is demonstrated in the Google Speech Commands keyword classification benchmark, which provides baseline results for both artificial neural networks (ANNs) and spiking neural networks (SNNs) [7]. The experimental protocol for this benchmark includes:

- Data Preparation: Download and preprocess the Google Speech Commands dataset

- Model Training: Train either an ANN or SNN using the training split

- Benchmark Setup:

  - Wrap the trained model in a NeuroBenchModel

  - Load the evaluation split dataloader

  - Configure pre-processors and post-processors

  - Define the metrics list including footprint, connection sparsity, classification accuracy, activation sparsity, and synaptic operations

- Evaluation: Execute the benchmark.run() function to compute all metrics

- Comparison: Compare results against established baselines

The expected results for this benchmark demonstrate the trade-offs between approaches: while the ANN achieves 86.5% accuracy with higher MAC operations, the SNN achieves 85.6% accuracy with higher activation sparsity (96.7%) and uses AC operations instead of MACs [7].

Baseline Establishment and Performance Comparison

NeuroBench has established comprehensive baselines across both neuromorphic and conventional approaches, enabling meaningful comparison of performance advantages. The baseline results reveal characteristic trade-offs between different approaches:

For the Google Speech Commands benchmark, the ANN baseline demonstrates [7]:

- Footprint: 109,228 bytes

- Classification Accuracy: 86.5%

- Activation Sparsity: 38.5%

- Synaptic Operations: 1,728,071 Effective MACs

In comparison, the SNN baseline shows [7]:

- Footprint: 583,900 bytes

- Classification Accuracy: 85.6%

- Activation Sparsity: 96.7%

- Synaptic Operations: 3,289,834 Effective ACs

These results highlight a key neuromorphic advantage: SNNs achieve significantly higher activation sparsity (96.7% vs 38.5%), which can translate to energy efficiency in specialized hardware, though this SNN baseline has a larger memory footprint (583,900 vs 109,228) [7].
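The trade-offs in these baselines are easier to see as ratios. The short calculation below uses only the figures reported above; the dictionary layout and variable names are illustrative assumptions, not the harness's output format.

```python
# Baseline figures as reported above for the Google Speech Commands benchmark.
ann = {"footprint": 109_228, "accuracy": 0.865, "activation_sparsity": 0.385}
snn = {"footprint": 583_900, "accuracy": 0.856, "activation_sparsity": 0.967}

# How much larger the SNN footprint is than the ANN footprint.
footprint_ratio = snn["footprint"] / ann["footprint"]

# Accuracy gap in percentage points (ANN minus SNN).
accuracy_gap_pp = (ann["accuracy"] - snn["accuracy"]) * 100

# Sparsity is the share of zero activations, so 1 - sparsity is the active share.
ann_active = 1 - ann["activation_sparsity"]
snn_active = 1 - snn["activation_sparsity"]
active_ratio = ann_active / snn_active

print(f"SNN footprint is {footprint_ratio:.2f}x the ANN footprint")
print(f"ANN leads accuracy by {accuracy_gap_pp:.1f} percentage points")
print(f"ANN has {active_ratio:.1f}x the active-activation share of the SNN")
```

The synaptic operation counts are left out of the ratios on purpose: the ANN figure counts effective MACs and the SNN figure counts effective ACs, so dividing one by the other would compare different kinds of operation.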

Implementing NeuroBench benchmarks requires specific tools and resources that collectively form the researcher's toolkit for neuromorphic evaluation.

Table 3: Essential NeuroBench Research Resources

| Resource | Type | Function | Access |
|---|---|---|---|
| NeuroBench Harness | Software Package | Core framework for running benchmarks and extracting metrics [11] [7] | Python Package Index (PyPI): pip install neurobench [7] |
| Benchmark Datasets | Data | Curated datasets for each benchmark task with standardized splits [7] | Through NeuroBench harness and associated repositories [7] |
| Example Scripts | Code | Reference implementations for each benchmark [7] | GitHub repository examples folder [7] |
| Pre-processing Tools | Software | Data transformation and spike conversion utilities [7] | Included in NeuroBench harness [7] |
| Model Wrappers | Software | Adapters for different model types (PyTorch, snnTorch) [7] | Included in NeuroBench harness [7] |

The NeuroBench harness is designed for easy adoption through multiple pathways. Researchers can install the core package directly from PyPI using pip install neurobench [7]. For development and contribution, the project uses Poetry for dependency management and supports Python ≥3.9 [7]. The open-source nature of the project encourages community extension and development of additional features, programming frameworks, and metrics [7].

Future Directions and Community Impact

Ongoing Development and Expansion

NeuroBench is designed as a living framework that continually expands its benchmarks and features to track progress made by the research community [4]. The current roadmap includes several important enhancements:

- Improved Support for Analog Approaches: Expanding beyond digital neuromorphic systems to include analog and mixed-signal implementations [10]

- Co-design Track: Developing benchmarks that explicitly evaluate algorithm-hardware co-design strategies [10]

- Open Platforms: Creating more accessible benchmarking platforms for broader community participation [10]

- Additional Benchmarks: Continuously adding new benchmark tasks that represent emerging neuromorphic applications

The framework maintains forward compatibility through its modular architecture, allowing new benchmarks, metrics, and evaluation methodologies to be incorporated as the field evolves [4] [7].

Impact on Neuromorphic Research

Since its introduction, NeuroBench has already begun to significantly influence the neuromorphic computing landscape. The framework has been adopted in prominent research contexts, including the IEEE BioCAS 2024 Grand Challenge on Neural Decoding [10]. By providing the first widely-accepted standardization for neuromorphic evaluation, NeuroBench enables:

- Objective Comparison: Direct, quantitative comparison between different neuromorphic approaches and against conventional baselines

- Progress Tracking: Clear measurement of technological advancements over time

- Research Direction Identification: Data-driven identification of the most promising research directions

- Industrial Adoption: Lowered barriers for industry evaluation of neuromorphic technologies

The community-driven nature of NeuroBench ensures that it remains relevant and representative of the diverse approaches within neuromorphic computing, while simultaneously providing the common ground needed to drive the field forward [8] [10].

NeuroBench represents a watershed moment for neuromorphic computing research. By successfully addressing the long-standing lack of standardized benchmarks through an unprecedented collaborative effort across academia and industry, the framework provides the objective reference needed to quantify advances in neuromorphic algorithms and systems [1] [4]. The dual-track approach enables comprehensive evaluation spanning from theoretical algorithm development to practical system implementation, while the open-source tools lower barriers to adoption and participation [11] [7].

As a community-driven initiative, NeuroBench embodies the collective expertise and diverse perspectives of the neuromorphic research field, ensuring that the benchmarks remain fair, representative, and relevant [8]. The establishment of this standardized evaluation framework marks a crucial step toward maturing neuromorphic computing from exploratory research to measurable technological advancement, ultimately accelerating progress toward more efficient and capable AI systems inspired by the computational principles of the brain [1] [4].

The field of neuromorphic computing, which leverages brain-inspired principles to create more efficient and capable computing systems, has experienced significant growth and diversification in recent years [1]. However, this rapid innovation has occurred in the absence of standardized benchmarking methodologies, creating a critical challenge for the research community. Without consistent evaluation standards, it becomes difficult to accurately measure technological progress, compare neuromorphic approaches against conventional methods, or identify the most promising research directions [1] [4]. The NeuroBench framework emerges as a direct response to this challenge, representing a collaborative community effort from researchers across academia and industry to establish fair, reproducible, and representative benchmarks for neuromorphic computing algorithms and systems [8].

NeuroBench aims to provide a common set of tools and a systematic methodology for inclusive benchmark measurement, delivering an objective reference framework for quantifying neuromorphic approaches [1]. The framework is specifically designed to be a collaborative, fair, and representative benchmark suite developed by the community, for the community [8]. This positioning addresses the shortcomings of previous benchmarking attempts that failed to achieve widespread adoption due to insufficiently inclusive, actionable, and iterative design principles [4]. By establishing standardized evaluation practices, NeuroBench enables meaningful comparisons across different neuromorphic approaches and against conventional deep-learning-based methods, thereby accelerating progress in the field [8].

The Critical Need for Standardization in Neuromorphic Computing

The expansion of artificial intelligence and machine learning has led to increasingly complex and large models, with computation growth rates now exceeding the efficiency gains realized through traditional technology scaling [1]. This efficiency crisis is particularly acute for resource-constrained edge devices, intensifying the urgency for exploring new resource-efficient computing architectures [1]. Neuromorphic computing approaches this challenge by porting computational strategies employed in the brain into engineered computing devices, aiming to achieve the scalability, energy efficiency, and real-time capabilities characteristic of biological neural systems [1].

The term "neuromorphic" has evolved significantly since Mead's original conception in the 1980s, which focused on emulating brain biophysics using silicon properties [1]. The field now encompasses a diverse range of brain-inspired techniques at algorithmic, hardware, and system levels [1]. This diversity, while intellectually rich, has created fundamental challenges for comparative assessment:

- Algorithm diversity: Neuromorphic algorithms include spiking neural networks (SNNs), plastic synapses, and heterogeneous network architectures, often evaluated on conventional hardware before deployment to specialized systems [1].

- Hardware heterogeneity: Neuromorphic hardware implements varied biologically-inspired approaches including analog neuron emulation, event-based computation, non-von-Neumann architectures, and in-memory processing [1].

- Application variability: Neuromorphic systems target applications ranging from neuroscientific exploration to low-power edge intelligence and datacenter-scale acceleration [1].

This heterogeneity has historically prevented the emergence of clear standards for benchmarking, hindering effective evaluation of neuromorphic advantages compared to traditional methods [8]. NeuroBench directly addresses this fragmentation by creating a unified evaluation framework that accommodates diversity while enabling fair comparison.

Core Objectives of NeuroBench

Ensuring Fair Comparison Across Paradigms

The first core objective of NeuroBench is to establish a standardized evaluation foundation that enables fair comparison across diverse neuromorphic approaches and with conventional computing methods. The framework achieves this through its dual-track structure, which accommodates both hardware-independent and hardware-dependent evaluation contexts [1] [4]. The algorithm track focuses on hardware-independent assessment of neuromorphic algorithms, allowing researchers to compare algorithmic innovations without the confounding variables introduced by different hardware platforms [1]. This is particularly valuable during early research and development phases where algorithmic exploration typically occurs on conventional CPUs and GPUs. Conversely, the systems track addresses hardware-dependent evaluation, recognizing that the full potential of neuromorphic approaches emerges when algorithms are co-designed with and deployed to specialized hardware [1].

This two-track approach keeps benchmarks appropriate to the context and research questions being investigated. For algorithm comparisons, the framework neutralizes hardware variables that could skew results, while for system comparisons, it enables holistic assessment of integrated algorithm-hardware performance. The framework further promotes fairness through its collaborative development process, which engages a broad community of stakeholders to limit bias toward any particular institutional or commercial approach [8]. This community-driven design helps ensure that the benchmark tasks, metrics, and methodologies represent diverse perspectives and use cases, rather than reflecting the priorities of a single organization or research group.

Enabling Research Reproducibility

The second core objective of NeuroBench is to establish the methodological rigor and transparency necessary for reproducible research. The framework provides detailed specifications for benchmark tasks, evaluation metrics, and reporting requirements, ensuring that results can be consistently replicated across different research environments [4]. This commitment to reproducibility is operationalized through the NeuroBench harness, an open-source Python package that standardizes the evaluation process across different research groups and institutions [11]. By providing shared tools and interfaces, the harness reduces implementation variability that often compromises reproducibility in experimental neuromorphic computing research.

The framework's dedication to reproducibility extends to its comprehensive documentation and contribution guidelines, which establish clear standards for experimental methodology [12]. These guidelines include specifications for testing practices, documentation formats, and code quality controls that collectively enhance the reliability and replicability of published results. The framework employs pre-commit hooks and automated testing protocols to ensure that contributions adhere to project standards before integration, maintaining consistency across the benchmark suite [12]. Furthermore, the framework's design as a living benchmark that continually expands its tasks and features ensures that reproducibility standards evolve alongside the field, addressing new research directions and methodologies as they emerge [4].

Guiding Future Research Directions

The third core objective of NeuroBench is to provide actionable insights that guide future research directions and resource allocation in the neuromorphic computing field. By establishing standardized performance baselines across both neuromorphic and conventional approaches, the framework creates a reference point for measuring progress over time [4]. This longitudinal perspective enables the community to identify which research directions are yielding diminishing returns and which are demonstrating accelerating progress, informing strategic decisions about research investments.

The framework's structured evaluation methodology produces comparable performance data across multiple dimensions including accuracy, efficiency, latency, and energy consumption [4]. This multidimensional assessment prevents over-optimization on any single metric and encourages balanced advancement across the various attributes that define useful computing systems. By highlighting performance gaps and trade-offs, the benchmarks help researchers identify the most promising opportunities for breakthrough innovations. The framework's design as an expandable benchmark suite allows it to incorporate new tasks, metrics, and application domains as the field evolves, ensuring its continued relevance for guiding research direction [4].
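One way to make the multidimensional comparison concrete is a Pareto filter: a result is kept only if no other result is at least as good on every metric and strictly better on one. The sketch below is illustrative only. The `Result` type, the three chosen metrics, and all numbers are assumptions made for the example, not NeuroBench definitions or measured data.

```python
from typing import NamedTuple


class Result(NamedTuple):
    name: str
    accuracy: float    # higher is better
    energy_mj: float   # lower is better
    latency_ms: float  # lower is better


def dominates(a: Result, b: Result) -> bool:
    """True if a is no worse than b on every metric and strictly better on one."""
    no_worse = (a.accuracy >= b.accuracy
                and a.energy_mj <= b.energy_mj
                and a.latency_ms <= b.latency_ms)
    strictly_better = (a.accuracy > b.accuracy
                       or a.energy_mj < b.energy_mj
                       or a.latency_ms < b.latency_ms)
    return no_worse and strictly_better


def pareto_front(results: list[Result]) -> list[Result]:
    """Keep every result that no other result dominates."""
    return [r for r in results if not any(dominates(o, r) for o in results)]


# Made-up numbers, for illustration only.
results = [
    Result("dense_ann", 0.95, 12.0, 4.0),
    Result("snn_small", 0.91, 1.5, 6.0),
    Result("snn_large", 0.94, 3.0, 5.0),
    Result("snn_slow", 0.90, 2.0, 7.0),
]
front = pareto_front(results)
print([r.name for r in front])  # ['dense_ann', 'snn_small', 'snn_large']
```

Here `snn_slow` drops out because `snn_small` beats it on all three metrics, while the other three each win on at least one axis. Reporting the whole front, rather than a single winner, is what discourages over-optimizing one metric.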

Table 1: NeuroBench Benchmark Tracks and Characteristics

| Track | Focus | Evaluation Context | Primary Metrics | Target Applications |
|---|---|---|---|---|
| Algorithm Track | Neuromorphic algorithms | Hardware-independent | Accuracy, algorithmic efficiency, learning capabilities | Spiking neural networks, plastic synapses, heterogeneous networks |
| Systems Track | Integrated algorithms and hardware | Hardware-dependent | Energy efficiency, throughput, latency, real-time performance | Edge intelligence, datacenter acceleration, neuroscientific exploration |

Implementation and Methodology

NeuroBench Framework Architecture

The NeuroBench framework implements its core objectives through a structured architecture consisting of benchmark definitions, evaluation tools, and reporting standards. The framework includes four defined algorithm benchmarks in its initial release, with algorithmic complexity metric definitions and baseline results [11]. These benchmarks are designed to represent common tasks and challenges in neuromorphic computing, providing a balanced assessment of capabilities across different application domains. The system track benchmarks are defined but remain under active development, reflecting the greater complexity involved in standardizing hardware evaluation methodologies [11].

A key architectural element is the clear separation between benchmark specifications (the formal definitions of tasks, metrics, and conditions) and benchmark implementations (the concrete software tools for evaluation). This separation allows the framework to maintain stable specification standards while enabling continuous improvement of the tools that support them. The framework employs a modular design that facilitates the addition of new benchmark tasks as the field evolves, with clearly defined interfaces for integrating novel neuromorphic approaches and application domains [4]. This extensibility ensures that the framework remains relevant despite the rapid pace of innovation in neuromorphic computing.
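The separation between specification and implementation can be sketched in a few lines. This is a minimal illustration under stated assumptions: `BenchmarkSpec`, `METRIC_IMPLS`, and `evaluate` are hypothetical names invented for this example and are not taken from the NeuroBench codebase.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class BenchmarkSpec:
    """Formal definition: which task, which version, which metrics to report."""
    task: str
    version: str
    metric_names: tuple[str, ...]


# A metric implementation maps (predictions, targets) to a single number.
MetricFn = Callable[[Sequence[int], Sequence[int]], float]


def accuracy(preds: Sequence[int], targets: Sequence[int]) -> float:
    correct = sum(p == t for p, t in zip(preds, targets))
    return correct / len(targets)


# Implementation registry: entries can be improved or replaced
# without changing any BenchmarkSpec that refers to them by name.
METRIC_IMPLS: dict[str, MetricFn] = {"accuracy": accuracy}


def evaluate(spec: BenchmarkSpec, preds: Sequence[int],
             targets: Sequence[int]) -> dict[str, float]:
    """Compute every metric the spec asks for using the registered implementations."""
    return {name: METRIC_IMPLS[name](preds, targets) for name in spec.metric_names}


spec = BenchmarkSpec(task="keyword_spotting", version="1.0",
                     metric_names=("accuracy",))
report = evaluate(spec, preds=[1, 0, 2, 2], targets=[1, 0, 1, 2])
print(report)  # {'accuracy': 0.75}
```

Because the spec is frozen and refers to metrics only by name, a faster or more careful implementation can be registered later without invalidating previously published specifications.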

Development Workflow and Contribution Process

The NeuroBench project maintains rigorous development workflows to ensure the quality and consistency of its benchmarking tools and methodologies. The process begins with community discussion of proposed changes or additions, typically initiated through the project's issue tracker [12]. This transparent discussion phase ensures that modifications receive input from diverse stakeholders and align with the framework's overarching goals. Once consensus emerges, contributors implement changes following a standardized workflow involving forking the repository, creating descriptive branches, and developing features with comprehensive testing and documentation [12].

The project enforces code quality through automated pre-commit hooks that run on all contributions, performing formatting checks, linting, and other validations before code can be committed [12]. This automated quality gate maintains consistency across the codebase despite the distributed nature of development. The workflow further requires that all modifications be thoroughly tested using the pytest framework, with tests placed in the designated neurobench/tests directory [12]. The final step involves opening a pull request to merge contributions into the development branch of the main repository, with clear and informative descriptions of the changes made [12]. This structured yet flexible process balances community inclusion with methodological rigor, ensuring that the framework evolves without compromising its reliability.
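A contributed test in this workflow might look like the following sketch. The file name and the `firing_rate` helper are hypothetical, chosen only to show the shape of a pytest-style test. pytest collects any function whose name starts with `test_`, so the file needs no pytest import.

```python
# Hypothetical file: neurobench/tests/test_example_metric.py
import math


def firing_rate(spike_train: list[int], dt_s: float) -> float:
    """Mean firing rate in Hz of a binary spike train sampled every dt_s seconds."""
    if not spike_train:
        raise ValueError("spike_train must not be empty")
    return sum(spike_train) / (len(spike_train) * dt_s)


def test_firing_rate_counts_spikes():
    # 2 spikes over 4 steps of 1 ms each is 500 Hz.
    assert math.isclose(firing_rate([1, 0, 1, 0], dt_s=1e-3), 500.0)


def test_firing_rate_rejects_empty_input():
    try:
        firing_rate([], dt_s=1e-3)
    except ValueError:
        return
    raise AssertionError("expected ValueError for empty input")
```

Running `pytest` from the repository root would discover and execute both functions. The second test checks the error path, which is often where implementations from different contributors diverge.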

Benchmark Evaluation Methodology

The NeuroBench evaluation methodology employs a systematic approach to ensure consistent and comparable results across different neuromorphic platforms and algorithms. The process begins with task specification, which clearly defines the input data, expected outputs, and evaluation conditions for each benchmark [4]. This specification includes details on dataset usage, preprocessing requirements, and any data augmentation techniques that should be applied. For the algorithm track, the methodology focuses on hardware-agnostic metrics that isolate algorithmic performance from platform-specific characteristics, while the systems track incorporates hardware-dependent measurements that capture the full system behavior [1].

The actual evaluation is conducted through the NeuroBench harness, which provides standardized interfaces for executing benchmarks and collecting results [11]. This harness automates the process of running multiple trials, aggregating results, and computing the specified metrics, reducing manual intervention and potential human error. The harness supports both software simulations (for algorithm development) and hardware deployment (for system evaluation), with appropriate adaptation to each context. For system benchmarking, the methodology includes precise specifications for measurement techniques and instrumentation requirements to ensure consistent data collection across different hardware platforms. The final output includes comprehensive performance profiles that capture multiple dimensions of system behavior rather than reducing performance to single-number summaries.
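The trial-and-aggregate step described above can be sketched as follows. This is not the NeuroBench harness API. `run_trials` and `toy_evaluation` are hypothetical stand-ins, and the metric values are synthetic, generated from a seeded random number generator so that runs are repeatable.

```python
import random
import statistics
from typing import Callable


def run_trials(evaluate_once: Callable[[int], dict[str, float]],
               seeds: list[int]) -> dict[str, dict[str, float]]:
    """Run one evaluation per seed; report mean, sample std-dev, and count per metric."""
    per_metric: dict[str, list[float]] = {}
    for seed in seeds:
        for name, value in evaluate_once(seed).items():
            per_metric.setdefault(name, []).append(value)
    return {
        name: {
            "mean": statistics.mean(vals),
            "stdev": statistics.stdev(vals) if len(vals) > 1 else 0.0,
            "n": len(vals),
        }
        for name, vals in per_metric.items()
    }


def toy_evaluation(seed: int) -> dict[str, float]:
    """Stand-in for a real benchmark run; returns synthetic metric values."""
    rng = random.Random(seed)
    return {
        "accuracy": 0.90 + rng.uniform(-0.01, 0.01),
        "latency_ms": 5.0 + rng.uniform(-0.5, 0.5),
    }


summary = run_trials(toy_evaluation, seeds=[0, 1, 2, 3, 4])
for metric, stats in summary.items():
    print(f"{metric}: mean={stats['mean']:.4f} stdev={stats['stdev']:.4f} n={stats['n']}")
```

Keeping every metric as a mean with a spread, instead of collapsing results to one score, matches the goal of reporting a performance profile rather than a single-number summary.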

Table 2: Essential Research Reagent Solutions in NeuroBench

| Component | Function | Implementation Examples |
|---|---|---|
| Benchmark Harness | Standardized evaluation execution | Python package with unified API for all benchmarks |
| Pre-commit Hooks | Code quality enforcement | Automated formatting, linting, and validation checks |
| Testing Framework | Verification of benchmark implementations | Pytest integration with comprehensive test suites |
| Documentation Tools | Methodology specification and dissemination | Google docstrings format with Sphinx documentation |
| Metric Calculators | Performance quantification | Standardized implementations of evaluation metrics |

Collaborative Development Model

The NeuroBench framework distinguishes itself through its genuinely collaborative development model, which engages researchers from across academia and industry in an open community process [8]. This model directly supports the core objectives of fair comparison and representative benchmarking by ensuring that the framework reflects diverse perspectives rather than being dominated by any single institution or commercial interest. The project maintains transparency through its public repository and open discussion channels, allowing any researcher to contribute to the evolution of the benchmarks [13]. This inclusivity is particularly important for a field as interdisciplinary as neuromorphic computing, where progress depends on collaboration between specialists in neuroscience, computer architecture, algorithm design, and application domains.

The community-driven nature of NeuroBench is evident in its author list, which includes contributors from dozens of institutions including Harvard University, Delft University of Technology, Forschungszentrum Jülich, Intel Labs, and many others [9]. This broad participation ensures that the benchmark tasks represent real-world applications and research challenges rather than artificial or narrowly academic exercises. The framework specifically aims to overcome the limitations of previous benchmarking efforts that failed to achieve widespread adoption due to insufficient community input [4]. By building consensus across the field, NeuroBench creates a common language and evaluation standard that enables more effective collaboration and knowledge transfer between research groups.

Future Directions and Impact

The NeuroBench framework is designed as a living standard that will evolve alongside the neuromorphic computing field [4]. The current version includes established algorithm benchmarks with baseline results, while system track benchmarks remain under active development [11]. This phased rollout reflects a pragmatic approach to benchmark development, prioritizing areas where community consensus is more readily achievable while continuing to work on more challenging evaluation domains. The framework's architecture specifically accommodates future expansion through its modular design and versioning system, allowing the addition of new benchmark tasks, metrics, and application domains as the field progresses.

The long-term impact of NeuroBench extends beyond mere performance comparison to shaping the trajectory of neuromorphic computing research [4]. By establishing standardized evaluation methodologies, the framework enables more meaningful comparison between research results, accelerating the identification of promising approaches. The comprehensive nature of NeuroBench assessments encourages holistic optimization across multiple system attributes rather than narrow focus on isolated metrics. Furthermore, the framework's emphasis on real-world applications and efficiency metrics helps bridge the gap between academic research and practical deployment, potentially accelerating the translation of neuromorphic technologies from laboratory demonstrations to fielded systems [1]. As the framework gains adoption, it will generate increasingly comprehensive data on performance trends and trade-offs, providing valuable insights for guiding future research investments and policy decisions in the computing field.

The field of neuromorphic computing, inspired by the architecture and operation of the brain, has emerged as a promising avenue to enhance the efficiency of machine learning pipelines and advance computing capabilities using brain-inspired principles [1] [14]. This paradigm aims to mimic the brain's exceptional energy efficiency and real-time processing capabilities through novel hardware and algorithms that depart from traditional von Neumann computing architecture [15]. However, the rapid growth of this field has exposed a critical challenge: the lack of standardized benchmarks [1]. Prior to NeuroBench, this absence made it difficult to accurately measure technological advancements, compare performance against conventional methods, and identify promising future research directions [4]. This gap hindered both academic research and industrial adoption, as objective comparisons between different neuromorphic approaches were nearly impossible to achieve in a consistent manner.

The NeuroBench framework represents a collaboratively-designed effort from an open community of researchers across industry and academia to address these challenges [1] [4]. It serves as a benchmark framework specifically designed for neuromorphic algorithms and systems, providing a common set of tools and systematic methodology for inclusive benchmark measurement [16]. By delivering an objective reference framework for quantifying neuromorphic approaches, NeuroBench enables researchers to systematically evaluate both the performance and efficiency of brain-inspired computing technologies [17]. This framework is particularly vital as neuromorphic computing shows increasing promise for enabling AI applications in resource-constrained environments where energy efficiency and low latency are critical requirements [14].

NeuroBench Framework Architecture

Dual-Track Benchmarking Approach

NeuroBench introduces a sophisticated dual-track approach that recognizes the distinct requirements for evaluating algorithmic innovations versus complete system implementations. This architecture enables comprehensive assessment across different stages of neuromorphic technology development, from conceptual algorithms to deployed systems. The framework's structure consists of two complementary tracks:

Hardware-Independent (Algorithm Track): This track focuses on evaluating neuromorphic algorithms in isolation from specific hardware constraints [4]. By running algorithms on conventional hardware such as CPUs and GPUs, researchers can assess fundamental algorithmic advances and drive design requirements for next-generation neuromorphic hardware [1]. This approach allows for direct comparison of algorithmic efficiency without the confounding variables introduced by specialized hardware architectures.

Hardware-Dependent (System Track): This track evaluates complete neuromorphic systems, including both algorithms and the specialized hardware they run on [4]. This holistic approach is essential because neuromorphic systems consist of algorithms deployed to hardware, with the goal of greater energy efficiency, real-time processing capabilities, and resilience than conventional systems offer [1]. The system track acknowledges that true neuromorphic advantages often emerge from the co-design of algorithms and hardware.

Core Metrics and Evaluation Methodology

NeuroBench employs a comprehensive set of metrics designed to capture the unique characteristics and advantages of neuromorphic computing. These metrics enable multi-dimensional comparison across different approaches and provide a complete picture of performance trade-offs. The framework's evaluation methodology spans multiple critical dimensions:

Table: NeuroBench Core Evaluation Metrics

| Metric Category | Specific Metrics | Description |
|---|---|---|
| Accuracy | Task accuracy, Temporal precision | Performance on target applications and time-sensitive tasks |
| Efficiency | Energy consumption, Latency, Throughput | Resource utilization and processing speed |
| Hardware Utilization | Area efficiency, Memory usage, Computational density | Silicon footprint and resource efficiency |
| Robustness | Noise immunity, Stability under variation | Performance consistency in real-world conditions |

The evaluation process described in [18] combines quantitative performance metrics with qualitative assessments, assigning approximately 70% of the overall weight to the quantitative metrics [18]. This weighting keeps the comparison rigorous while still accounting for practical implementation factors. For spiking neural networks (SNNs), specific considerations include temporal dynamics, spike-based communication patterns, and event-driven processing efficiency [18]. The benchmarking process is designed to be extensible, allowing for continuous expansion of benchmarks and features to track progress made by the research community [4].

Benchmarking Neuromorphic Algorithms

Algorithmic Scope and Evaluation Criteria

The algorithmic track of NeuroBench encompasses a diverse range of brain-inspired computing approaches, with particular focus on spiking neural networks (SNNs) and related neuromorphic algorithms [1]. SNNs, often referred to as the third generation of neural networks, mimic the discrete spiking behavior of biological neurons and enable asynchronous, event-driven processing [18]. This paradigm offers potential for significant energy savings and real-time processing capabilities, making SNNs particularly attractive for engineering applications requiring both energy efficiency and temporal precision [18]. The algorithmic scope extends beyond SNNs to include various neuroscience-inspired methods that strive toward goals of expanded learning capabilities, such as predictive intelligence, data efficiency, and adaptation [1].

Evaluation of neuromorphic algorithms focuses on their computational properties and performance characteristics independent of specific hardware implementation. Key criteria include computational efficiency, temporal processing capabilities, learning and adaptation mechanisms, and scalability to complex problems. The algorithms are assessed on their ability to leverage neuromorphic principles such as sparse, event-driven computations; temporal coding strategies; and biologically plausible learning rules [15]. For SNNs specifically, evaluation includes their inherent recurrent nature due to memory elements in spiking neurons, making them suitable for real-world sequential tasks [14].
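Sparse, event-driven computation can be quantified directly from a spike raster. The sketch below assumes a binary raster indexed as `[time_step][neuron]` and a uniform `fan_out` per neuron. Both are simplifications made for illustration, and the function names are hypothetical rather than taken from any benchmark tool.

```python
def activation_sparsity(spikes: list[list[int]]) -> float:
    """Fraction of zero entries in a [time_steps][neurons] binary spike raster."""
    total = sum(len(step) for step in spikes)
    if total == 0:
        raise ValueError("empty raster")
    nonzero = sum(1 for step in spikes for s in step if s != 0)
    return 1.0 - nonzero / total


def event_driven_ops(spikes: list[list[int]], fan_out: int) -> int:
    """Synaptic updates when work is done only for neurons that spiked."""
    return sum(sum(step) for step in spikes) * fan_out


def dense_ops(spikes: list[list[int]], fan_out: int) -> int:
    """Synaptic updates when every neuron is processed at every time step."""
    return sum(len(step) for step in spikes) * fan_out


# 4 time steps x 4 neurons, 3 spikes in total.
raster = [
    [0, 0, 1, 0],
    [0, 0, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 1],
]
print(activation_sparsity(raster))           # 0.8125
print(event_driven_ops(raster, fan_out=8))   # 24
print(dense_ops(raster, fan_out=8))          # 128
```

The ratio between the two operation counts is a hardware-independent proxy. Whether it turns into real energy savings depends on the platform, which is the question the system track is meant to answer.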

Experimental Protocols and Methodologies

The evaluation of neuromorphic algorithms follows rigorous experimental protocols designed to ensure fair comparison and reproducible results. These methodologies encompass multiple aspects of algorithm performance and behavior:

Training Method Comparison: Algorithms are evaluated across different training approaches including surrogate gradient methods, ANN-to-SNN conversion, and biologically plausible local learning rules [18]. Each method presents distinct trade-offs between accuracy, training efficiency, and biological plausibility that must be systematically assessed.

Temporal Dynamics Analysis: For spiking neural networks, comprehensive evaluation across varying time steps is essential [18]. This analysis captures the fundamental trade-offs between temporal resolution and computational efficiency that characterize SNN performance.

Multi-Modal Task Evaluation: Algorithms are tested across diverse data modalities including static images, text data, and neuromorphic sensor data (e.g., event-based camera outputs) [18]. This multi-modal approach ensures robust assessment of algorithmic capabilities beyond narrow domains.

Noise Immunity Testing: Performance under varying noise conditions is evaluated to assess algorithmic robustness [18]. This testing is particularly important for real-world applications where clean data cannot be guaranteed.

The experimental workflow for algorithm benchmarking follows a structured pipeline from data preparation through performance analysis, with strict controls on hardware configuration and software environment to ensure comparability [18].
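The time-step trade-off mentioned under temporal dynamics analysis can be seen with a single discrete-time leaky integrate-and-fire (LIF) neuron. The sketch below is a textbook-style model with a hard reset. The parameter values (`beta`, `threshold`, and the two input currents) are arbitrary choices for illustration and do not come from any benchmark specification.

```python
def lif_spike_count(input_current: float, time_steps: int,
                    beta: float = 0.9, threshold: float = 1.0) -> int:
    """Simulate one leaky integrate-and-fire neuron driven by a constant input.

    At each step the membrane potential decays by `beta`, integrates the
    input, and is reset to zero whenever it reaches `threshold`.
    """
    v = 0.0
    spikes = 0
    for _ in range(time_steps):
        v = beta * v + input_current
        if v >= threshold:
            spikes += 1
            v = 0.0  # hard reset after a spike
    return spikes


# Compare a weaker and a stronger input over different simulation lengths.
for t in (2, 4, 12, 16):
    weak = lif_spike_count(0.3, t)
    strong = lif_spike_count(0.5, t)
    print(f"T={t:2d}  weak={weak}  strong={strong}")
```

With these parameters the two inputs yield identical spike counts at T = 2 and T = 4 and only become distinguishable at T = 12 and beyond. Short windows are cheaper but carry less rate information, which is why results should be reported across several time-step settings.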

Key Algorithmic Benchmarks and Baseline Performance

NeuroBench establishes standardized benchmarks across multiple application domains to enable consistent algorithmic evaluation. These benchmarks span traditional machine learning tasks adapted for neuromorphic implementations as well as tasks specifically designed to leverage neuromorphic advantages:

Table: Representative Algorithm Benchmarks and Performance Ranges

| Benchmark Task | Data Modality | Key Metrics | Performance Range |
|---|---|---|---|
| Gesture Recognition | Event-based vision | Accuracy, Latency | Varies by approach & dataset |
| Keyword Spotting | Audio/Events | Accuracy, Energy per inference | Varies by approach & dataset |
| Image Classification | Static frames | Accuracy, Time steps needed | Varies by approach & dataset |
| Object Detection | Event-based vision | mAP, Processing latency | Varies by approach & dataset |

Performance baselines established through NeuroBench reveal fundamental characteristics of neuromorphic algorithms. Directly trained SNNs often demonstrate advantages in energy efficiency and latency for temporal tasks, while ANN-to-SNN converted models may achieve higher accuracy on static image classification at the cost of increased latency [18]. Separately, [19] reports energy-efficiency gains of 6× to 300× when hardware-aware optimizations are applied, relative to unoptimized approaches.

Benchmarking Neuromorphic Systems

System Components and Architecture Evaluation

Neuromorphic systems benchmarking encompasses the integrated evaluation of complete systems comprising specialized hardware architectures and the algorithms they execute. This holistic approach is critical because neuromorphic systems target a wide range of applications, from neuroscientific exploration to low-power edge intelligence and datacenter-scale acceleration [1]. System benchmarking evaluates multiple architectural approaches including digital neuromorphic chips (e.g., Intel Loihi, IBM TrueNorth, SpiNNaker), analog/mixed-signal designs, and emerging technologies based on memristive devices, spintronic circuits, and photonic processors [15].

The system evaluation examines key architectural features including:

- Event-driven data-flow processing that enables sparse, asynchronous computation [19]

- Near-/in-memory computing architectures that overcome von Neumann bottlenecks [14]

- Parallel processing elements that mimic the brain's distributed computation [15]

- Network-on-chip communication fabrics for efficient inter-neuron connectivity [19]

Each architectural approach presents distinct trade-offs between flexibility, efficiency, and bio-realism. Digital neuromorphic chips offer programmability and reliability but may sacrifice energy efficiency compared to analog approaches. Memristive and emerging technology-based systems promise greater density and energy efficiency but face challenges with device variability and manufacturing consistency [15].

Hardware-Software Co-Design Assessment

A critical aspect of neuromorphic systems benchmarking is the evaluation of hardware-software co-design, where algorithms are optimized for specific hardware characteristics and vice versa. This co-design is essential for realizing the full potential of neuromorphic computing, as demonstrated by specialized techniques such as:

- Spike-grouping methods that process spikes in batches to reduce energy consumption and latency of event-based processing [19]

- Event-driven depth-first convolution that lowers memory requirements and processing latency for CNN inference on neuromorphic processors [19]

- Precision optimization using lower precision data types for weights and neuron states to reduce memory usage [19]

- Mapping efficiency optimization to maximize utilization of fragmented on-chip memory in near-memory processing architectures [19]

These optimizations have demonstrated significant improvements, with reported gains of 6× to 300× in energy efficiency, 3× to 15× in latency reduction, and 3× to 100× in area efficiency compared to unoptimized approaches [19]. The benchmarking process evaluates both the final performance and the efficiency of the co-design process itself.
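Of the techniques listed above, precision optimization is the simplest to illustrate. The sketch below applies symmetric per-tensor quantization of weights to signed 8-bit integers. This is one common scheme, chosen here as an assumption; the source does not specify which scheme [19] uses. The weight values are made up.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization of floats to the range [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map quantized integers back to approximate float values."""
    return [v * scale for v in q]


weights = [0.50, -0.25, 0.125, -1.0, 0.0]  # illustrative values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

max_err = max(abs(a - b) for a, b in zip(weights, restored))
fp32_bytes = 4 * len(weights)  # 32-bit floats
int8_bytes = 1 * len(weights)  # 8-bit integers (the single scale factor is ignored)

print(f"scale={scale:.6f}  max_error={max_err:.6f}")
print(f"memory: {fp32_bytes} B -> {int8_bytes} B")
```

The rounding error per weight is bounded by half the scale, while weight storage shrinks by a factor of four. Whether the accuracy cost is acceptable has to be measured on the task itself.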

System-Level Metrics and Performance Baselines

System-level benchmarking employs comprehensive metrics that capture the end-to-end performance of neuromorphic systems deployed in practical scenarios. These metrics extend beyond algorithmic performance to include implementation-specific characteristics:

- Energy Efficiency: Measured as energy per inference or synaptic operations per joule, with digital neuromorphic chips demonstrating 100× to 1000× improvements over conventional processors on suitable tasks [15]

- Latency: Critical for real-time applications, with event-driven systems achieving sub-millisecond response times for sensor processing tasks [19]

- Area Efficiency: Measured as performance per unit silicon area, highlighting the trade-offs between different hardware approaches [19]

- Thermal Characteristics: Particularly important for embedded and edge deployment scenarios

- Scalability: Ability to maintain efficiency as system size increases, evaluated through multi-chip and multi-core configurations

The benchmarking framework also assesses practical deployment considerations including software toolchain maturity, programming model usability, and integration with conventional computing systems. These factors significantly influence the real-world applicability of neuromorphic systems beyond raw performance metrics.

Advanced Methodologies for Specialized Domains

Benchmarking for Robotic Vision Applications

Robotic vision represents a particularly demanding application domain for neuromorphic computing, requiring low-latency processing of dynamic visual information under severe power constraints. NeuroBench addresses these specialized requirements through benchmarks inspired by biological systems such as Drosophila (fruit fly), which achieves remarkable navigation capabilities with approximately 100,000 neurons operating on just a few microwatts of power [14]. The benchmarking approach for this domain emphasizes:

- Integration of specialized sensing using event-based cameras that generate asynchronous, sparse streams of binary events at high temporal resolution (10 μs vs 3 ms for conventional cameras) with low power consumption (10 mW vs 3 W) [14]

- Real-time processing capabilities for tasks such as optical flow estimation, depth estimation, and obstacle avoidance in dynamic environments

- System-level performance metrics that combine sensing, processing, and actuation in closed-loop configurations

Vision-based drone navigation (VDN) serves as an exemplary application driver for these benchmarks, requiring holistic scene understanding through underlying perception tasks while operating under severe computational and energy constraints [14]. The benchmarking methodology captures the interplay between event-based sensing, spiking neural network processing, and specialized neuromorphic hardware in achieving biological levels of efficiency and responsiveness.
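To show what an asynchronous, sparse event stream looks like in practice, the sketch below accumulates events into fixed-duration frames. This is a common preprocessing step before feeding event data to a network. The `Event` layout (timestamp in microseconds, pixel coordinates, polarity of +1 or -1) is an assumed generic format, not that of a particular camera or dataset, and the events are assumed to be sorted by time.

```python
from typing import NamedTuple


class Event(NamedTuple):
    t_us: int      # timestamp in microseconds
    x: int         # pixel column
    y: int         # pixel row
    polarity: int  # +1 for brightness increase, -1 for decrease


def events_to_frames(events: list[Event], width: int, height: int,
                     window_us: int) -> list[list[list[int]]]:
    """Sum signed polarities per pixel within consecutive time windows.

    Returns frames indexed as frames[window][y][x]. Assumes `events`
    is sorted by timestamp.
    """
    if not events:
        return []
    t0 = events[0].t_us
    n_frames = (events[-1].t_us - t0) // window_us + 1
    frames = [[[0] * width for _ in range(height)] for _ in range(n_frames)]
    for e in events:
        idx = (e.t_us - t0) // window_us
        frames[idx][e.y][e.x] += e.polarity
    return frames


# Four events on a 2x2 sensor, binned into 1 ms windows.
events = [
    Event(0, 0, 0, +1),
    Event(10, 1, 0, +1),
    Event(20, 1, 0, -1),
    Event(1005, 0, 1, +1),
]
frames = events_to_frames(events, width=2, height=2, window_us=1000)
print(frames)  # [[[1, 0], [0, 0]], [[0, 0], [1, 0]]]
```

Binning discards the microsecond timing that makes event cameras attractive, so the window length is itself a parameter worth reporting. Fully event-driven pipelines avoid this step by processing each event as it arrives.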

Framework and Toolchain Evaluation

NeuroBench includes comprehensive evaluation of the software frameworks and toolchains that enable neuromorphic system development. This assessment covers multiple dimensions including:

- Framework Adaptability to different neural models, learning rules, and hardware targets

- Training Efficiency for various learning approaches including supervised, unsupervised, and reinforcement learning

- Hardware Compatibility and deployment workflow efficiency

- Community Engagement and ecosystem maturity [18]

Recent benchmarking of five leading SNN frameworks—SpikingJelly, BrainCog, Sinabs, SNNGrow, and Lava—revealed distinct specialization patterns. SpikingJelly excels in overall performance and energy efficiency, while BrainCog demonstrates robust performance on complex tasks. Sinabs and SNNGrow offer balanced performance in latency and stability, with Lava showing limitations in large-scale dataset adaptability [18]. This framework evaluation provides crucial guidance for researchers selecting development tools for specific application requirements.

Key Research Reagents and Solutions

The advancement of neuromorphic computing research relies on a sophisticated ecosystem of hardware platforms, software frameworks, and datasets. This "research toolkit" enables experimental investigation across the neuromorphic computing stack:

Table: Essential Research Resources for Neuromorphic Computing

| Resource Category | Specific Examples | Function and Purpose |
|---|---|---|
| Neuromorphic Hardware | Intel Loihi, IBM TrueNorth, SpiNNaker | Physical implementation of neuromorphic architectures |
| SNN Frameworks | SpikingJelly, BrainCog, Lava, Sinabs | Software environment for SNN development and training |
| Neuromorphic Sensors | Event-based cameras (DAVIS, Prophesee) | Bio-inspired sensing for sparse, asynchronous data |
| Benchmark Datasets | Neuromorphic MNIST, DVS Gesture, N-Caltech | Standardized data for evaluation and comparison |
| Benchmark Tools | NeuroBench framework | Standardized evaluation metrics and procedures |

Experimental Setup and Configuration Guidelines

To ensure reproducible and comparable results across different research efforts, NeuroBench provides detailed guidelines for experimental setup and configuration. These guidelines cover:

- Hardware Configuration: Standardized computational resources (e.g., AMD EPYC 9754 128-core CPU, RTX 4090D GPU, 60 GB RAM) for fair algorithm comparison [18]

- Software Environment: Consistent software versions (e.g., PyTorch 2.1.0, CUDA 11.8) and dependency management [18]

- Evaluation Protocols: Standardized train/test splits, hyperparameter settings, and evaluation metrics across benchmark tasks

- Reporting Standards: Comprehensive documentation of all experimental parameters and conditions

These standardized configurations enable meaningful comparison across different research efforts while still allowing investigation of specialized optimizations for particular hardware platforms or application domains.
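One way to act on the reporting guideline is to store the environment and parameters alongside every result. The sketch below uses only the Python standard library; the `ExperimentRecord` class and its field names are hypothetical and do not reflect a NeuroBench reporting schema, and the package versions are simply the example values quoted above.

```python
import json
import platform
import sys
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class ExperimentRecord:
    """Hypothetical record of the settings needed to reproduce one run."""
    task: str
    seed: int
    hyperparameters: Dict[str, float]
    # Environment details are captured automatically when the record is created.
    python_version: str = field(default_factory=lambda: sys.version.split()[0])
    machine: str = field(default_factory=platform.machine)
    os: str = field(default_factory=platform.platform)
    # Third-party package versions are supplied by the caller.
    packages: Dict[str, str] = field(default_factory=dict)

    def to_json(self) -> str:
        """Serialize with sorted keys so that records are easy to diff."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


record = ExperimentRecord(
    task="example-task",  # placeholder task name
    seed=0,
    hyperparameters={"learning_rate": 1e-3},
    packages={"torch": "2.1.0", "cuda": "11.8"},
)
report = record.to_json()
```

Writing `report` next to the measured results lets a later reader check whether two runs were made under the same conditions.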

Implementation Workflow and Visual Guide

The NeuroBench benchmarking process follows a structured workflow that ensures comprehensive evaluation while maintaining comparability across different neuromorphic approaches. The following diagram illustrates the key stages and decision points in this process:

Future Directions and Community Impact

Evolving Benchmark Challenges

As neuromorphic computing continues to advance, NeuroBench faces ongoing challenges in maintaining relevant and comprehensive benchmarks. Key areas for future development include:

- Multi-modal integration benchmarks that evaluate systems processing diverse sensor data (vision, audio, tactile) in coordinated frameworks

- Lifelong learning evaluation assessing capabilities for continuous adaptation without catastrophic forgetting of previous knowledge

- Scalability metrics for increasingly large and complex neural architectures approaching brain-scale complexity

- Standardized power measurement methodologies that enable fair comparison across diverse hardware platforms

- Ethical and safety benchmarks for autonomous systems employing neuromorphic intelligence

These evolving challenges reflect the dynamic nature of neuromorphic computing and the need for benchmarks that anticipate future developments rather than simply documenting current capabilities.

Roadmap for Widespread Adoption

The broader impact and adoption of NeuroBench depend on several critical factors that the framework addresses through its community-driven development model:

- Inclusive Design: Collaborative development across industry and academia ensures relevance to diverse research priorities and application domains [1]

- Extensibility: The open-source framework allows community contributions of new benchmarks, metrics, and evaluation methodologies [4]

- Actionable Guidance: Detailed protocols and standardized reporting enable practical implementation rather than theoretical comparison

- Iterative Refinement: Regular updates incorporate community feedback and technological advancements [4]

The ongoing development of NeuroBench represents a crucial enabling technology for the neuromorphic computing field, providing the standardized evaluation framework necessary to accelerate progress from laboratory research to practical deployment across diverse application domains. By establishing common ground for comparison and collaboration, NeuroBench helps transform neuromorphic computing from a collection of isolated advances into a cohesive technological paradigm with clearly demonstrated capabilities and advantages over conventional approaches.

How NeuroBench Works: A Dual-Track Framework for Algorithms and Systems

Neuromorphic computing, which leverages brain-inspired principles to advance computing efficiency and artificial intelligence (AI) capabilities, has emerged as a promising solution to the looming limitations of conventional computing architectures. The rapid growth of AI and machine learning has led to increasingly complex models whose computational demands exceed the efficiency gains predicted by traditional technology scaling laws [1]. Neuromorphic systems, inspired by the biophysics of the brain, aim to reproduce the high-level performance, energy efficiency, and real-time processing capabilities of biological neural systems [1]. However, the field has historically suffered from a critical impediment to progress: the lack of standardized benchmarks.

Prior to initiatives like NeuroBench, the neuromorphic research landscape was fragmented, making it difficult to accurately measure technological advancements, compare performance against conventional methods, or identify the most promising research directions [1] [4]. This absence of common evaluation standards hindered the collaborative development and commercial adoption of neuromorphic technologies. The NeuroBench framework was collaboratively designed by an open community of over 100 researchers from more than 50 academic and industrial institutions to address this challenge [9]. It provides a common set of tools and a systematic methodology for benchmarking neuromorphic approaches through a two-track evaluation model that distinguishes between hardware-independent and hardware-dependent assessment [1] [4]. This section provides an in-depth technical examination of this two-track model, detailing its protocols, metrics, and application within the broader NeuroBench framework for researchers and scientists in neuromorphic computing and related fields.

Foundations of the NeuroBench Framework

NeuroBench is conceived as a community-driven, open-source framework that delivers an objective reference for quantifying neuromorphic approaches. Its development was motivated by the recognition that neuromorphic computing optimizes for different goals—such as energy efficiency, real-time processing, and event-driven computation—than conventional systems, thus necessitating novel benchmarking methods [20]. The framework is designed to be inclusive, actionable, and iterative, allowing for continuous expansion and refinement as the field evolves [4].

The core structure of NeuroBench is built around two complementary tracks, which are summarized in the table below.

Table 1: The NeuroBench Two-Track Evaluation Model

| Feature | Hardware-Independent (Algorithm Track) | Hardware-Dependent (System Track) |
|---|---|---|
| Primary Goal | Evaluate algorithmic innovations and computational principles [1] [4] | Assess performance of full systems integrating algorithms and hardware [1] [4] |
| Focus Area | Model performance, learning capabilities, data efficiency [1] | Energy efficiency, latency, throughput, real-time capabilities [1] |
| Key Metrics | Accuracy, precision, recall, F1-score [21] | Energy consumption, latency, throughput, computational density [1] |
| Execution Environment | Simulation on conventional hardware (CPUs, GPUs) [1] | Deployment on specialized neuromorphic hardware [1] |
| Typical Use Case | Driving design requirements for next-generation hardware [1] | Benchmarking complete solutions for edge intelligence or datacenter acceleration [1] |

This two-track approach allows researchers to dissect the contributions of algorithms and hardware platforms separately, enabling clearer insights into the sources of performance and efficiency gains. The following diagram illustrates the logical relationship and workflow between these two tracks within the NeuroBench framework.

The Hardware-Independent (Algorithm) Track

Core Objectives and Experimental Protocol

The hardware-independent track, often termed the algorithm track, is designed to evaluate the efficacy of neuromorphic algorithms, such as spiking neural networks (SNNs) and other neuroscience-inspired models, independently of the characteristics of any physical hardware platform [1]. The primary goal is to assess intrinsic algorithmic properties like learning capabilities, data efficiency, and adaptability [1]. This track is crucial for driving the design requirements of next-generation neuromorphic hardware by first establishing which algorithms are most promising.

The experimental protocol for this track follows a systematic methodology:

- Algorithm Implementation: The neuromorphic algorithm (e.g., an SNN) is implemented using a software framework that supports neuromorphic modeling, such as the open-source harness provided by NeuroBench [5].

- Dataset and Task Selection: The algorithm is applied to standardized benchmark tasks across various domains like image recognition, audio processing, or neural decoding. NeuroBench has established several such tasks to ensure fair comparisons [22].

- Simulated Execution: The model is executed in a simulated environment on conventional hardware like CPUs or GPUs. This isolation allows for a pure evaluation of the algorithm's computational principles [1].

- Performance Measurement: Quantitative metrics are collected based on the algorithm's output, without measuring physical resource consumption.
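The four steps above can be sketched as a small evaluation loop. This is an illustrative outline only: `run_algorithm_benchmark`, `threshold_model`, and the toy data are hypothetical names and values invented for this example, and the code does not use or reproduce the actual NeuroBench harness API.

```python
from typing import Callable, Dict, Sequence

Model = Callable[[Sequence[float]], int]
Metric = Callable[[Sequence[int], Sequence[int]], float]


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def run_algorithm_benchmark(model: Model,
                            samples: Sequence[Sequence[float]],
                            labels: Sequence[int],
                            metrics: Dict[str, Metric]) -> Dict[str, float]:
    """Run the model on every sample, then score its outputs with each metric."""
    predictions = [model(sample) for sample in samples]  # simulated execution on a CPU
    return {name: fn(labels, predictions) for name, fn in metrics.items()}


def threshold_model(sample: Sequence[float]) -> int:
    """Stand-in for an implemented algorithm: predicts 1 if the summed input exceeds 1.0."""
    return int(sum(sample) > 1.0)


# Toy stand-in for a standardized benchmark dataset.
samples = [[0.2, 0.1], [0.9, 0.8], [0.6, 0.7], [0.1, 0.3]]
labels = [0, 1, 1, 1]

results = run_algorithm_benchmark(threshold_model, samples, labels,
                                  {"accuracy": accuracy})
# results == {"accuracy": 0.75}: the last sample is misclassified
```

Because metrics are passed in by name, the same loop can score different models on the same task without changing the measurement code.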

Key Evaluation Metrics and Methodologies

The evaluation in this track relies heavily on established quantitative metrics from machine learning, adapted as needed for neuromorphic contexts. The following table catalogs the primary metrics and their significance.

Table 2: Key Quantitative Metrics for the Hardware-Independent Track

| Metric | Computational Formula | Evaluation Purpose |
|---|---|---|
| Accuracy | (TP + TN) / (TP + TN + FP + FN) [21] | Measures the overall proportion of correct predictions. |
| Precision | TP / (TP + FP) [21] | Evaluates the model's ability to avoid false positives. |
| Recall | TP / (TP + FN) [21] | Evaluates the model's ability to identify all relevant instances (avoid false negatives). |
| F1-Score | 2 * (Precision * Recall) / (Precision + Recall) [21] | Provides a harmonic mean of precision and recall, useful for imbalanced datasets. |
| AUC-ROC | Area Under the Receiver Operating Characteristic Curve [21] | Measures the model's capability to distinguish between classes. |
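As a worked illustration of the first four formulas in Table 2, the sketch below derives them from confusion-matrix counts for a binary task. The function name `classification_metrics` and the convention of returning 0.0 when a denominator is zero are assumptions made for this example. AUC-ROC is omitted because it needs continuous scores rather than hard predictions.

```python
from typing import Dict, Sequence


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int],
                           positive: int = 1) -> Dict[str, float]:
    """Compute accuracy, precision, recall and F1 for a binary task."""
    tp = fp = tn = fn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive:
            if truth == positive:
                tp += 1
            else:
                fp += 1
        else:
            if truth == positive:
                fn += 1
            else:
                tn += 1

    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    # Assumed convention: a ratio with a zero denominator is reported as 0.0.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Imbalanced toy example: 3 positives, 5 negatives.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
scores = classification_metrics(y_true, y_pred)
# TP=2, FN=1, FP=1, TN=4: accuracy is 0.75, while precision, recall and F1 are each 2/3
```

The example shows why accuracy alone can flatter a model on imbalanced data: the many true negatives raise accuracy above the precision and recall achieved on the positive class.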

To ensure robustness, evaluation methodologies such as k-fold cross-validation are employed. In this technique, the dataset is randomly partitioned into k subsets (folds) of approximately equal size. The model is trained k times, each time holding out a different fold as the validation set and training on the remaining k-1 folds; the k validation scores are then averaged. This process does not by itself prevent overfitting, but it exposes overfitting to a particular split and provides a more reliable estimate of performance on unseen data [21]. The choice of metrics should be guided by the specific problem domain; for example, precision and recall are often more informative than accuracy in binary classification problems with class imbalance [21].
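The partitioning step of k-fold cross-validation can be sketched in a few lines of standard-library Python. The generator name `k_fold_indices` and the fixed default seed are choices made for this illustration; established libraries offer equivalent utilities.

```python
import random
from typing import Iterator, List, Tuple


def k_fold_indices(n_samples: int, k: int,
                   seed: int = 0) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, validation_indices) for each of the k folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)  # seeded so the split is reproducible
    # Striding by k gives folds whose sizes differ by at most one sample.
    folds = [indices[i::k] for i in range(k)]
    for i, validation in enumerate(folds):
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, validation


splits = list(k_fold_indices(n_samples=10, k=5))
for train_idx, val_idx in splits:
    # A model would be trained on train_idx and scored on val_idx here;
    # the k validation scores are then averaged.
    pass
```

Each sample appears in exactly one validation fold, so every data point contributes once to the averaged score.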

The Hardware-Dependent (System) Track

Core Objectives and Experimental Protocol

The hardware-dependent track, or the system track, evaluates the performance of complete neuromorphic systems, where algorithms are deployed on specialized brain-inspired hardware [1]. This track is critical for assessing the real-world benefits of neuromorphic computing, such as high energy efficiency, low latency, and resilience, which arise from the co-design of algorithms and hardware [1] [20].

The experimental protocol for this track is inherently more complex, involving the full stack of computation:

- System Configuration: The neuromorphic algorithm is deployed onto a target neuromorphic hardware platform. This may involve platform-specific compilation and mapping of the neural network onto the hardware's physical fabric.

- Workload Execution: The system executes the benchmark task, which may involve processing real-time, event-based data streams.

- Physical Measurement: Specialized tools are used to measure not only task performance (e.g., accuracy) but also physical quantities like power draw and execution time.

- Data Collection and Analysis: The raw physical measurements are processed to compute the system-level metrics defined below.
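The final analysis step can be illustrated with a small post-processing sketch that turns raw power samples into system-level figures. The function names, the trapezoidal integration, and the sample values are all assumptions made for this example; real measurement setups and NeuroBench's own procedures may differ. The mean latency shown is only meaningful if inferences run strictly one after another.

```python
from typing import Dict, Sequence


def energy_joules(timestamps_s: Sequence[float],
                  power_w: Sequence[float]) -> float:
    """Integrate sampled power over time with the trapezoidal rule."""
    if len(timestamps_s) != len(power_w) or len(timestamps_s) < 2:
        raise ValueError("need at least two matching (time, power) samples")
    energy = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        energy += 0.5 * (power_w[i] + power_w[i - 1]) * dt
    return energy


def system_metrics(timestamps_s: Sequence[float], power_w: Sequence[float],
                   n_inferences: int) -> Dict[str, float]:
    """Derive per-inference energy, mean latency and throughput for one run."""
    if n_inferences <= 0:
        raise ValueError("n_inferences must be positive")
    duration = timestamps_s[-1] - timestamps_s[0]
    if duration <= 0:
        raise ValueError("timestamps must span a positive duration")
    energy = energy_joules(timestamps_s, power_w)
    return {
        "energy_per_inference_j": energy / n_inferences,
        # Assumes sequential, non-overlapping inferences.
        "mean_latency_s": duration / n_inferences,
        "throughput_per_s": n_inferences / duration,
    }


# Made-up trace: five power samples over two seconds, covering 100 inferences.
timestamps = [0.0, 0.5, 1.0, 1.5, 2.0]
power = [0.010, 0.012, 0.012, 0.012, 0.010]  # watts
metrics = system_metrics(timestamps, power, n_inferences=100)
```

For this trace the integrated energy is 0.023 J, which gives about 0.23 mJ per inference, a mean latency of 20 ms, and a throughput of 50 inferences per second.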

Key Evaluation Metrics and Methodologies

The system track employs a distinct set of metrics that reflect the overarching goals of neuromorphic computing. These metrics collectively provide a holistic view of a system's efficiency and capability.

Table 3: Key Quantitative Metrics for the Hardware-Dependent Track