Neural Population Dynamics Optimization: A Brain-Inspired Framework for Biomedical Innovation

Abstract

This article introduces Neural Population Dynamics Optimization Algorithms (NPDOAs), a novel class of brain-inspired meta-heuristic methods. We explore the foundational principles of how interconnected neural populations perform efficient computations and make optimal decisions, drawing parallels to dynamical systems in theoretical neuroscience. The article details core algorithmic strategies, including attractor trending for exploitation and coupling disturbance for exploration, and examines their application in solving complex optimization problems in drug discovery and biomedical research. We further address key implementation challenges, compare NPDOAs with established optimization methods, and validate their performance through benchmark tests and real-world case studies. This resource is tailored for researchers, scientists, and drug development professionals seeking to leverage cutting-edge computational techniques for accelerating biomedical innovation.

The Brain as an Optimizer: Unpacking the Neuroscience Behind the Algorithm

The study of neural population dynamics represents a paradigm shift in neuroscience, moving from a focus on individual neurons to understanding how collective neural activity gives rise to brain function. This framework conceptualizes neural computations as being performed by the coordinated, time-varying activity of entire neural populations, governed by underlying network constraints and dynamical systems principles [1] [2]. Significant experimental, computational, and theoretical work has identified rich structure within this coordinated activity, with an emerging challenge being to uncover the nature of the associated computations, how they are implemented, and what role they play in driving behavior [1].

The core mathematical framework describes neural population dynamics using dynamical systems theory, where the evolution of neural population states follows the form dx/dt = f(x(t), u(t)), with x representing an N-dimensional vector of neural firing rates and u representing external inputs to the circuit [1]. This perspective has proven powerful for understanding processes ranging from motor control to decision-making, and has recently inspired novel computational approaches, including the development of optimization algorithms that mirror these biological principles [3].
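
As a minimal sketch of the state equation above, the snippet below integrates dx/dt = f(x, u) with a forward-Euler step. The leaky rule f(x, u) = -x + u, the step size, and the function names are illustrative assumptions rather than anything specified in [1]; they were chosen only because the resulting fixed point (x = u) is easy to check.

```python
def leaky_dynamics(x, u):
    """Assumed toy rule f(x, u) = -x + u: each rate relaxes toward its input."""
    return [-xi + ui for xi, ui in zip(x, u)]


def euler_integrate(f, x0, u, dt=0.01, steps=2000):
    """Forward-Euler integration of dx/dt = f(x, u) with a constant input u."""
    x = list(x0)
    trajectory = [list(x)]
    for _ in range(steps):
        dx = f(x, u)
        x = [xi + dt * dxi for xi, dxi in zip(x, dx)]
        trajectory.append(list(x))
    return trajectory


# Three "neurons" starting from different rates, driven by a constant input.
inputs = [1.0, 0.5, 0.0]
trajectory = euler_integrate(leaky_dynamics, x0=[0.0, 2.0, -1.0], u=inputs)
final_state = trajectory[-1]  # settles close to the input vector
```

Any other rule f can be passed to `euler_integrate` in place of the toy one; the list of states it returns is the neural trajectory in state space.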

Biological Foundations of Neural Population Dynamics

Empirical Evidence for Constrained Neural Trajectories

Groundbreaking experimental work has provided compelling evidence that neural population activity follows constrained trajectories that reflect underlying network architecture. In a crucial experiment, researchers used a brain-computer interface (BCI) to challenge non-human primates to violate the naturally occurring sequences of neural population activity in the motor cortex [4] [2]. This included prompting subjects to traverse a natural sequence of neural activity in a time-reversed manner—essentially going the wrong way on a hypothesized "one-way path" [4].

Despite providing visual feedback and reward incentives, animals were unable to alter the fundamental sequences of their neural activity, supporting the view that stereotyped activity sequences arise from constraints imposed by the underlying neural circuitry [4] [2]. This robustness suggests that the observed neural trajectories are not merely epiphenomena but reflect fundamental computational mechanisms implemented by the network [2].

The Role of Network Architecture

In network models, the time evolution of activity is shaped by the network's connectivity [2]. The activity of each node at a given point in time is determined by the activity of every node at the previous time point, the network's connectivity, and the inputs to the network [2]. This architecture gives rise to characteristic flow fields that reflect the specific computations performed by the network [2]. The empirical observation that neural activity follows such flow fields, and that these paths are difficult to violate, forges a link between activity time courses observed in empirical studies and the network-level computational mechanisms they are believed to support [2].

Computational Frameworks for Modeling Neural Dynamics

Linear Dynamical Systems Approaches

Linear dynamical models provide a foundational framework for modeling neural population activity due to their interpretability and mathematical tractability. A low-rank autoregressive approach has demonstrated particular effectiveness for capturing the essential dynamics while respecting the low-dimensional structure of neural data [5]. This model can be formulated as:

x_{t+1} = Σ_{s=0}^{k-1} (A_s x_{t-s} + B_s u_{t-s}) + v

where the matrices A_s and B_s are parameterized as diagonal plus low-rank components, capturing both individual neuron properties and population-level interactions [5].
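
The one-step prediction can be sketched as follows. This is an illustrative reading of the formula, not the implementation from [5]: the helper names, the dense-matrix representation, and the toy numbers are assumptions, and the input u is taken to have the same dimension as x so that B_s can be diagonal plus low-rank.

```python
def matvec(matrix, vector):
    """Dense matrix-vector product on plain Python lists."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def diag_plus_low_rank(diag, left, right):
    """Return the dense matrix diag(d) + L R^T for n x r factors L and R."""
    n, rank = len(diag), len(left[0])
    return [
        [(diag[i] if i == j else 0.0)
         + sum(left[i][k] * right[j][k] for k in range(rank))
         for j in range(n)]
        for i in range(n)
    ]


def predict_next(x_history, u_history, A, B, v):
    """One step of x_{t+1} = sum_s (A_s x_{t-s} + B_s u_{t-s}) + v.

    x_history[s] and u_history[s] hold x_{t-s} and u_{t-s} (most recent first).
    """
    nxt = list(v)
    for A_s, B_s, x_lag, u_lag in zip(A, B, x_history, u_history):
        ax, bu = matvec(A_s, x_lag), matvec(B_s, u_lag)
        nxt = [n + a + b for n, a, b in zip(nxt, ax, bu)]
    return nxt


# Toy example (all values assumed): 3 neurons, k = 2 lags, rank-1 coupling.
zero = [[0.0], [0.0], [0.0]]
A = [diag_plus_low_rank([0.5] * 3, [[0.1], [0.0], [0.0]], [[0.0], [1.0], [0.0]]),
     diag_plus_low_rank([0.1] * 3, zero, zero)]
B = [diag_plus_low_rank([1.0] * 3, zero, zero),
     diag_plus_low_rank([0.0] * 3, zero, zero)]
x_next = predict_next(
    x_history=[[1.0, 2.0, 3.0], [1.0, 1.0, 1.0]],
    u_history=[[0.0, 0.0, 1.0], [0.0, 0.0, 0.0]],
    A=A, B=B, v=[0.1, 0.1, 0.1],
)
```

The diagonal term acts on each neuron separately, while the rank-1 factor adds a single shared interaction (here, neuron 2 driving neuron 1).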

For modeling interactions across brain regions, Cross-population Prioritized Linear Dynamical Modeling (CroP-LDM) has been developed to specifically prioritize learning dynamics shared across neural populations while preventing them from being confounded by within-population dynamics [6]. This prioritized learning approach has proven more accurate for identifying cross-region interactions compared to methods that jointly maximize likelihood for both shared and within-region activity [6].

Geometric Deep Learning for Neural Manifolds

Recent advances in geometric deep learning have enabled more sophisticated modeling of neural population dynamics that explicitly accounts for the manifold structure of neural activity. The MARBLE (MAnifold Representation Basis LEarning) framework decomposes dynamics into local flow fields and maps them into a common latent space using unsupervised geometric deep learning [7].

This approach operates by:

- Approximating the unknown neural manifold using a proximity graph

- Defining tangent spaces around each neural state to enable parallel transport between nearby vectors

- Decomposing the vector field into local flow fields (LFFs) that encode local dynamical context

- Mapping LFFs to latent vectors using a geometric deep learning architecture with gradient filter layers and inner product features [7]

MARBLE discovers emergent low-dimensional latent representations that parametrize high-dimensional neural dynamics during cognitive processes like gain modulation and decision-making, enabling consistent comparison of cognitive computations across different neural networks and animals [7].

Behavior-Guided Modeling via Knowledge Distillation

The BLEND framework addresses the common challenge of imperfectly paired neural-behavioral datasets by treating behavior as privileged information during training [8]. This approach uses knowledge distillation where a teacher model that takes both behavior observations and neural activities as inputs trains a student model that uses only neural activity during inference [8].

This model-agnostic framework enhances existing neural dynamics modeling architectures without requiring specialized model development, demonstrating over 50% improvement in behavioral decoding and over 15% improvement in transcriptomic neuron identity prediction after behavior-guided distillation [8].

Experimental Methodologies and Protocols

Active Learning for Neural System Identification

Traditional approaches to modeling neural population dynamics involve recording activity during natural behavior and then fitting models to this data, which provides correlational insights but limited causal inference [5]. Active learning techniques combined with two-photon holographic optogenetics have revolutionized this process by enabling experimenters to design causal perturbations that efficiently reveal system dynamics [5].

The active stimulation design procedure follows this protocol:

- Initial Data Collection: Record baseline neural population responses to random photostimulation patterns targeting groups of 10-20 neurons [5]

- Model Fitting: Estimate a low-rank autoregressive model from initial data to capture low-dimensional structure [5]

- Stimulation Optimization: Compute photostimulation patterns that maximize information gain about model parameters, targeting the low-dimensional structure [5]

- Iterative Refinement: Alternate between stimulation, recording, and model updating to progressively refine estimates of neural dynamics [5]

This approach has demonstrated up to a two-fold reduction in the amount of data required to reach a given predictive power compared to passive stimulation approaches [5].

BCI Paradigms for Probing Neural Constraints

Brain-computer interface paradigms provide powerful methods for causally testing hypotheses about neural computation through dynamics [2]. The experimental protocol for probing neural trajectory constraints involves:

- Neural Recording: Implant multi-electrode arrays in motor cortex and record from ~90 neural units [2]

- Dimensionality Reduction: Transform neural activity into 10-dimensional latent states using causal Gaussian process factor analysis (GPFA) [2]

- BCI Mapping: Map neural states to 2D cursor position (not velocity) to provide direct visual feedback of neural trajectory [2]

- Projection Identification: Identify both movement-intention (MoveInt) and separation-maximizing (SepMax) projections of the neural state space [2]

- Task Design: Challenge animals to perform tasks requiring specific neural trajectories, including time-reversed paths [2]

This protocol has revealed that neural trajectories are remarkably constrained, as animals cannot volitionally alter fundamental sequence dynamics even when provided with direct visual feedback and strong incentives [2].

Table 1: Quantitative Performance Comparison of Neural Dynamics Modeling Approaches

| Method | Key Innovation | Application Domain | Performance Improvement |
|---|---|---|---|
| NPDOA [3] | Brain-inspired metaheuristic with attractor trending, coupling disturbance, and information projection strategies | Global optimization problems | Outperformed 9 other meta-heuristic algorithms on benchmark problems |
| MARBLE [7] | Geometric deep learning for manifold-structured neural dynamics | Within- and across-animal decoding | State-of-the-art decoding accuracy with minimal user input |
| BLEND [8] | Behavior-guided knowledge distillation | Neural activity and behavior modeling | >50% improvement in behavioral decoding, >15% improvement in neuron identity prediction |
| Active Learning LDS [5] | Active stimulation design for low-rank system identification | Causal circuit perturbation | Up to 2-fold data efficiency improvement over passive approaches |
| CroP-LDM [6] | Prioritized learning of cross-population dynamics | Multi-region neural interactions | More accurate than static methods and non-prioritized dynamic approaches |

The Neural Population Dynamics Optimization Algorithm (NPDOA)

Algorithm Formulation and Mechanisms

The Neural Population Dynamics Optimization Algorithm (NPDOA) represents a direct translation of neural computational principles to optimization methodology [3]. This brain-inspired metaheuristic treats each solution as a neural state and incorporates three key strategies derived from neural population dynamics:

Attractor Trending Strategy: Drives neural populations toward optimal decisions, ensuring exploitation capability by mimicking how neural activity converges to stable states associated with favorable decisions [3]

Coupling Disturbance Strategy: Deviates neural populations from attractors through coupling with other neural populations, improving exploration ability analogous to neural interference mechanisms [3]

Information Projection Strategy: Controls communication between neural populations, enabling transition from exploration to exploitation by regulating information transmission [3]

In NPDOA, each decision variable represents a neuron, with its value corresponding to the neuron's firing rate. The algorithm simulates activities of interconnected neural populations during cognition and decision-making, with neural states transferring according to neural population dynamics [3].

Performance and Applications

Extensive testing on benchmark and practical engineering problems has verified NPDOA's effectiveness, demonstrating distinct benefits for addressing single-objective optimization problems compared to existing metaheuristic approaches [3]. The algorithm successfully balances exploration and exploitation—a fundamental challenge in optimization—by directly implementing mechanisms observed in biological neural systems.

Research Reagent Solutions and Experimental Tools

Table 2: Essential Research Tools for Neural Population Dynamics Investigation

| Tool/Technology | Function | Application Context |
|---|---|---|
| Two-photon Holographic Optogenetics [5] | Precise photostimulation of experimenter-specified neuron groups | Causal perturbation of neural circuits during active learning |
| Multi-electrode Arrays [2] | Simultaneous recording from large neural populations (~90 units) | Measuring population activity dynamics in motor cortex |
| Causal GPFA [2] | Dimensionality reduction for neural trajectories | Real-time visualization of low-dimensional neural states in BCI |
| Brain-Computer Interfaces (BCI) [4] [2] | Closed-loop neural activity monitoring with feedback | Testing neural constraints and rehabilitation applications |
| Geometric Deep Learning Frameworks [7] | Modeling manifold-structured neural dynamics | MARBLE implementation for interpretable latent representations |

Signaling Pathways and Computational Workflows

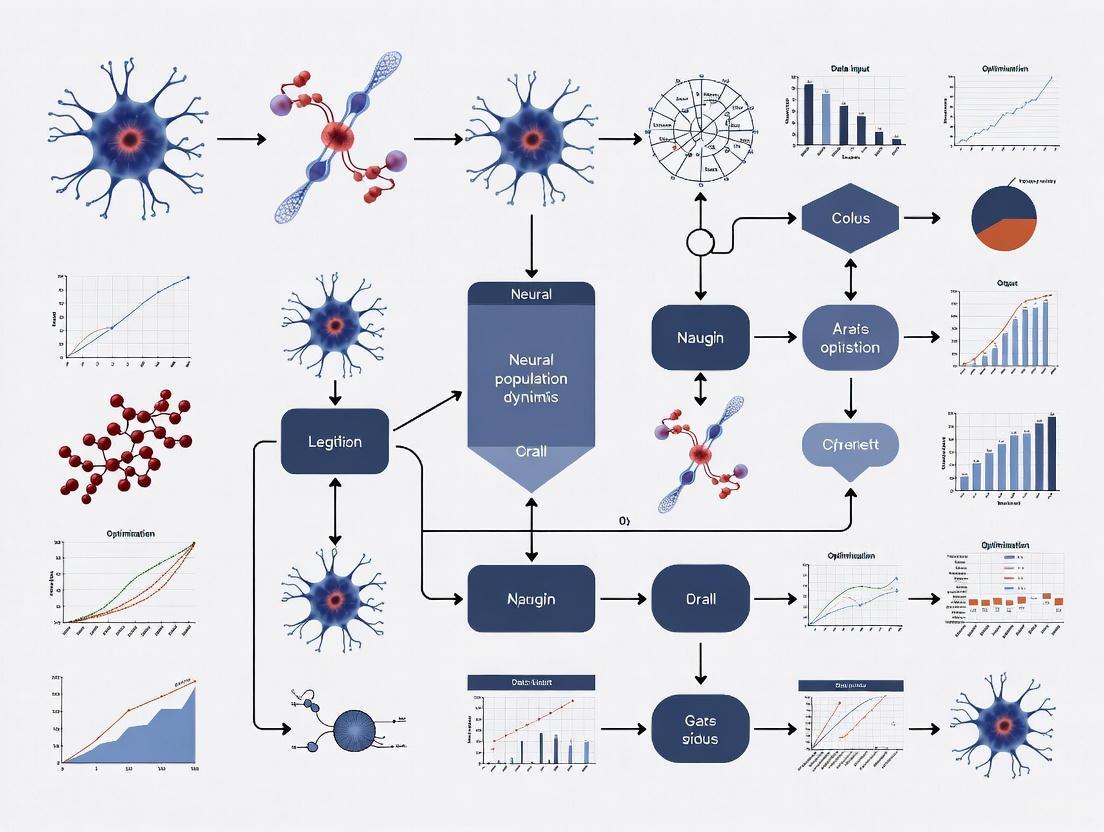

Diagram 1: Workflow Integrating Biological Discovery and Computational Modeling

Diagram 2: Neural Population Dynamics Optimization Algorithm (NPDOA) Workflow

Future Directions and Applications

The integration of neural population dynamics principles with computational modeling continues to evolve, with several promising research directions emerging. Multi-scale modeling approaches that span from molecular to systems levels represent an important frontier, enabled by advances in single-cell technologies and omics data integration [9]. Digital twin methodologies that create comprehensive computational models of biological systems for simulating disease progression and treatment response show particular promise for therapeutic development [9].

In clinical applications, understanding neural constraints has significant implications for neurorehabilitation. As Grigsby notes, "If we have an understanding of how constrained this activity is, we may be able to positively impact patient care and recovery. The idea is that we can maybe help them regain some motor control by using optimized learning that takes into account the constraints of neural activity sequence" [4]. This approach could lead to more effective BCI-based rehabilitation strategies that work with, rather than against, the intrinsic dynamics of neural circuits.

Further development of brain-inspired algorithms also presents opportunities for advancing artificial intelligence and optimization methods. The NPDOA demonstrates that principles extracted from neural computation can yield practical benefits for solving complex optimization problems, suggesting fertile ground for continued cross-disciplinary collaboration between neuroscience and computer science [3].

The study of neural population dynamics has transformed from descriptive characterization to mechanistic computational modeling, creating a virtuous cycle where biological insights inspire algorithmic innovations that in turn generate new hypotheses about neural function. The empirical observation that neural trajectories follow constrained paths shaped by network architecture [4] [2] has profound implications for both basic neuroscience and clinical applications.

The development of sophisticated modeling approaches like MARBLE [7], BLEND [8], and CroP-LDM [6], combined with active learning paradigms [5], continues to enhance our ability to infer neural computations from population activity data. Meanwhile, the translation of these biological principles to optimization algorithms like NPDOA [3] demonstrates the practical value of this research beyond neuroscience.

As measurement technologies continue to improve, enabling larger-scale neural recordings with greater precision, and computational methods become increasingly sophisticated, the interplay between biological networks and computational models will likely yield further insights into one of the most complex systems in nature—the brain.

This technical guide delineates the core principles of attractors, coupling, and information projection in neural systems, framing these concepts within the context of the novel Neural Population Dynamics Optimization Algorithm (NPDOA). The NPDOA is a brain-inspired meta-heuristic that translates the computational capabilities of interconnected neural populations into an efficient optimization framework [10]. We provide a qualitative comparison with established algorithm classes, detail the experimental protocols for benchmarking, and describe its core mechanisms. Aimed at researchers and scientists, this guide serves as a foundational reference for understanding and applying these brain-inspired principles to complex optimization problems in fields including computational biology and drug development.

Neural population dynamics refer to the collective activity of interconnected neurons in the brain during sensory, cognitive, and motor computations [10]. The human brain excels at processing diverse information and making optimal decisions, a capability that inspires computational models. The dynamics of neuron populations often evolve on low-dimensional manifolds, meaning that the high-dimensional activity of many neurons can be described by a much smaller number of underlying variables [7].

The Neural Population Dynamics Optimization Algorithm (NPDOA) is a novel swarm intelligence meta-heuristic that directly translates these brain activities into an optimization method. In the NPDOA, a potential solution to an optimization problem is treated as the neural state of a neural population. Each decision variable in the solution represents a neuron, and its value corresponds to the neuron's firing rate [10]. This conceptual framework allows the algorithm to simulate the cognitive and decision-making processes of the brain through three core strategies, which will be explored in detail in this guide.

Defining Attractors in Neural Systems

Theoretical Foundations

An attractor is a fundamental concept in dynamical systems theory, describing a set of states toward which a system naturally evolves from a wide range of starting conditions [11]. Imagine a landscape of hills and valleys: a ball placed on any point of a hill will roll down into the valley below. The valley is the attractor—a stable state that "attracts" nearby states [11].
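
The hill-and-valley picture can be made concrete with a one-dimensional toy system. In the sketch below, the double-well landscape V(x) = (x^2 - 1)^2, the step size, and the function names are assumptions chosen for illustration; the state simply follows the downhill direction until it settles in one of the two valleys at x = -1 or x = +1.

```python
def potential_gradient(x):
    """Gradient of the assumed double-well landscape V(x) = (x**2 - 1)**2."""
    return 4.0 * x * (x * x - 1.0)


def roll_downhill(x0, dt=0.01, steps=5000):
    """Follow dx/dt = -dV/dx from x0 until the state settles in a valley."""
    x = x0
    for _ in range(steps):
        x -= dt * potential_gradient(x)
    return x


left_valley = roll_downhill(-0.3)   # starts left of the hilltop at x = 0
right_valley = roll_downhill(1.8)   # starts on the right-hand slope
```

Every start to the left of the hilltop ends in the same valley, and every start to the right ends in the other, which is what it means for each valley to attract a range of initial conditions.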

Attractor networks are a popular computational construct used to model different brain systems, allowing for elegant computations that represent various aspects of brain function [11]. They exhibit properties like robustness against damage (structural stability), pattern completion (the ability to recall a full memory from a partial cue), and pattern separation (the ability to distinguish between similar inputs) [11].

Types of Attractors in Neural Circuits

The brain employs several geometrically distinct types of attractors, each suited for representing different kinds of information [11]:

- Point Attractors: A single, stable equilibrium state. This is the simplest type of attractor, useful for modeling decisions where activity settles on one final outcome, such as in memory recall or perceptual decision-making [11].

- Ring Attractors: A continuous set of stable states arranged in a circle. This topology is ideal for representing cyclical variables without boundaries, such as head direction. The activity forms a stable "bump" that can move around the ring, tracking the variable it encodes [11].

- Line Attractors: A continuous line of stable states, suitable for representing a variable with a range, like the position of an eye [11].

- Plane Attractors: An extension to two dimensions, useful for representing spatial location. When the topology of the plane is transformed into a torus, it can model the periodic spatial patterns of grid cells [11].

Table 1: Types of Neural Attractors and Their Functional Roles

| Attractor Type | Geometric Structure | Proposed Neural Correlate | Computational Function |
|---|---|---|---|
| Point Attractor | A single stable state | Memory patterns, decision outcomes | Discrete memory storage, pattern completion, final decision state |
| Ring Attractor | A continuous circle of states | Head-direction cells | Encoding of cyclical variables (e.g., heading direction) |
| Plane Attractor | A 2D sheet of states | Place cells, grid cells | Spatial navigation and mapping |

In the context of the NPDOA, the attractor trending strategy drives the neural populations (potential solutions) towards optimal decisions, thereby ensuring the algorithm's exploitation capability. It guides the neural states to converge towards stable states associated with favorable decisions [10].

The Role of Coupling in Neural Dynamics

Conceptual Framework

Coupling in neural systems refers to the structured connectivity and interactions between neurons or distinct neural populations. These connections, defined by synaptic weights, determine how the activity of one neuron influences another. The specific pattern of coupling is what gives rise to the rich attractor dynamics described in the previous section [11].

For instance, in a model of head-direction cells, nearby neurons on the conceptual "ring" are connected by strong excitatory synapses, which reinforce each other's activity. In contrast, neurons that are far apart on the ring are connected with inhibitory synapses, which suppress each other's activity. This specific coupling architecture is what allows a stable "bump" of activity—the attractor—to form and persist [11].
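
A coupling pattern of this kind can be sketched with a simple distance-dependent kernel. The cosine-minus-constant form, the parameter values, and the absence of self-connections are assumptions made for illustration and are not taken from [11]; with the default values, pairs closer than about 60 degrees on the ring excite each other and more distant pairs inhibit each other.

```python
import math


def ring_weights(n_neurons, excitation=1.0, inhibition=0.5):
    """Coupling matrix for neurons placed evenly on a ring.

    Assumed kernel: w_ij = excitation * cos(theta_i - theta_j) - inhibition.
    """
    angles = [2.0 * math.pi * i / n_neurons for i in range(n_neurons)]
    weights = []
    for i, theta_i in enumerate(angles):
        row = []
        for j, theta_j in enumerate(angles):
            if i == j:
                row.append(0.0)  # no self-connection (assumption)
            else:
                row.append(excitation * math.cos(theta_i - theta_j) - inhibition)
        weights.append(row)
    return weights


W = ring_weights(16)
neighbor_coupling = W[0][1]  # adjacent neurons on the ring
opposite_coupling = W[0][8]  # diametrically opposite neurons
```

Because the weight depends only on the angular distance between two neurons, the matrix is symmetric and looks the same from every position on the ring, which is what lets an activity bump sit at any heading.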

Coupling as a Disturbance Mechanism in NPDOA

The NPDOA co-opts this biological principle through its coupling disturbance strategy. While the attractor trending strategy pulls populations toward stability, the coupling disturbance strategy intentionally disrupts this process. It deviates neural populations from their current attractors by simulating coupling with other neural populations [10].

This mechanism is crucial for maintaining the algorithm's exploration ability. By preventing populations from converging too quickly to a single point, it helps the algorithm avoid becoming trapped in local optima and encourages a broader search of the solution space [10]. This reflects a computational interpretation of the dynamic and adaptive couplings observed in biological neural networks, which can be shaped by learning [12].

Information Projection and Regulation

Information projection is the process that controls communication and information flow between different neural populations. In the brain, this is analogous to the function of specific neural pathways that relay processed information from one brain region to another to guide behavior and perception.

Within the NPDOA, the information projection strategy acts as a regulatory mechanism that balances the opposing forces of the attractor trending (exploitation) and coupling disturbance (exploration) strategies [10]. It governs how populations influence one another, effectively controlling the transition from a broad exploratory search to a focused exploitation of promising regions.

This strategy enables a dynamic balance, which is critical for the performance of any meta-heuristic algorithm. Without effective regulation, an algorithm may either converge prematurely to a suboptimal solution (too much exploitation) or fail to converge at all (too much exploration) [10].

The Neural Population Dynamics Optimization Algorithm (NPDOA)

Integrated Framework and Workflow

The NPDOA integrates the three core principles into a cohesive optimization framework. The algorithm treats each potential solution as a neural population and iteratively updates these populations by applying the three core strategies [10].
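
The loop below is a schematic of how three such strategies could interact. It is not the published NPDOA: the text here does not reproduce the update equations of [10], so the trending rule, the disturbance rule, the linear projection schedule, the greedy acceptance step, and every name in the code are placeholder assumptions.

```python
import random


def sphere(x):
    """Simple test objective (minimum 0 at the origin)."""
    return sum(v * v for v in x)


def npdoa_like_search(objective, dim, bounds, n_pop=20, iters=200, seed=0):
    """Schematic three-strategy search; the update rules are assumptions."""
    rng = random.Random(seed)
    lo, hi = bounds
    pops = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_pop)]
    fitness = [objective(p) for p in pops]
    best_i = min(range(n_pop), key=fitness.__getitem__)
    best, best_fit = list(pops[best_i]), fitness[best_i]
    history = [best_fit]

    for t in range(iters):
        # Information projection (assumed linear schedule): the weight w
        # shifts influence from disturbance (early) to trending (late).
        w = (t + 1) / iters
        for i in range(n_pop):
            partner = pops[rng.randrange(n_pop)]
            candidate = []
            for d in range(dim):
                # Attractor trending: pull toward the best state found so far.
                trend = rng.random() * (best[d] - pops[i][d])
                # Coupling disturbance: push along the difference to a partner.
                disturb = rng.uniform(-1.0, 1.0) * (partner[d] - pops[i][d])
                value = pops[i][d] + w * trend + (1.0 - w) * disturb
                candidate.append(min(hi, max(lo, value)))
            cand_fit = objective(candidate)
            if cand_fit < fitness[i]:  # greedy acceptance (assumption)
                pops[i], fitness[i] = candidate, cand_fit
                if cand_fit < best_fit:
                    best, best_fit = list(candidate), cand_fit
        history.append(best_fit)
    return best, best_fit, history


best, best_fit, history = npdoa_like_search(sphere, dim=5, bounds=(-5.0, 5.0))
```

The returned `history` holds the best objective value after each iteration, which is the quantity usually plotted as a convergence curve.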

Diagram 1: NPDOA Core Workflow

Benchmarking and Quantitative Performance

The NPDOA has been systematically evaluated against other meta-heuristic algorithms on benchmark and practical engineering problems. The results demonstrate its distinct benefits in addressing many single-objective optimization problems [10].

Table 2: Comparative Analysis of Meta-heuristic Algorithms (Based on NPDOA Source)

| Algorithm Class | Representative Algorithms | Key Strengths | Common Drawbacks |
|---|---|---|---|
| Evolutionary Algorithms | Genetic Algorithm (GA), Differential Evolution (DE) | High efficiency, easy implementation, simple structures | Premature convergence, challenging problem representation, multiple parameters to tune |
| Swarm Intelligence | Particle Swarm Optimization (PSO), Artificial Bee Colony (ABC) | Cooperation and competition among individuals | Prone to local optima, slow convergence, high computational complexity in high dimensions |
| Physics-inspired | Simulated Annealing (SA), Gravitational Search Algorithm (GSA) | Versatile tools combining physics with optimization | Prone to local optima, premature convergence |
| Mathematics-inspired | Sine Cosine Algorithm (SCA), Gradient-Based Optimizer (GBO) | New perspective on search strategies | Poor trade-off between exploitation and exploration |
| Brain-inspired (NPDOA) | Neural Population Dynamics Optimization Algorithm (NPDOA) | Balanced exploration/exploitation via three novel strategies | Per the No-Free-Lunch theorem, may not excel on all problems |

Experimental Protocols and Research Toolkit

Benchmarking Protocol for Algorithm Validation

To validate the performance of algorithms like the NPDOA, researchers employ a standardized protocol using benchmark problems. The following methodology is adapted from common practices in the field and aligns with the experimental studies conducted for the NPDOA [10] [13].

- Problem Selection: A diverse set of benchmark problems is selected. This includes:

  - Classical Mathematical Functions: Unimodal and multimodal functions (e.g., Sphere, Rastrigin, Ackley) to test convergence and avoidance of local minima.

  - Practical Engineering Design Problems: Real-world constrained problems like the compression spring design, cantilever beam design, pressure vessel design, and welded beam design [10].

  - Synthetic Non-linear Datasets: For testing feature selection capabilities, datasets like RING (circular decision boundaries) and XOR (archetypal non-linear problem) can be used [13].

- Experimental Setup:

  - Platform: Experiments are run on a computational platform like PlatEMO [10].

  - Hardware: A standard computer configuration (e.g., Intel Core i7 CPU, 2.10 GHz, 32 GB RAM) is used for consistent timing [10].

  - Parameters: Population size, maximum iterations, and algorithm-specific parameters are defined and kept consistent across runs.

- Performance Metrics:

  - Solution Quality: Best, worst, average, and standard deviation of the final objective function value over multiple independent runs.

  - Convergence Speed: The number of iterations or function evaluations required to reach a predefined solution threshold.

  - Statistical Significance: Non-parametric statistical tests (e.g., Wilcoxon signed-rank test) are used to confirm the significance of performance differences.
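
A small harness along these lines is sketched below. The three functions use their standard textbook definitions; the random-search routine is only a placeholder optimizer standing in for the algorithm under test, and the run counts, evaluation budgets, and function names are assumptions rather than the settings used in [10].

```python
import math
import random
import statistics


def sphere(x):
    return sum(v * v for v in x)


def rastrigin(x):
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x)


def ackley(x):
    n = len(x)
    mean_sq = sum(v * v for v in x) / n
    mean_cos = sum(math.cos(2.0 * math.pi * v) for v in x) / n
    return -20.0 * math.exp(-0.2 * math.sqrt(mean_sq)) - math.exp(mean_cos) + 20.0 + math.e


def random_search(objective, dim, bounds, evaluations, rng):
    """Placeholder optimizer (not NPDOA): best of uniformly sampled points."""
    lo, hi = bounds
    return min(
        objective([rng.uniform(lo, hi) for _ in range(dim)])
        for _ in range(evaluations)
    )


def summarize_runs(objective, dim, bounds, runs=10, evaluations=500, seed=0):
    """Best, worst, mean, and standard deviation over independent seeded runs."""
    results = [
        random_search(objective, dim, bounds, evaluations, random.Random(seed + r))
        for r in range(runs)
    ]
    return {
        "best": min(results),
        "worst": max(results),
        "mean": statistics.mean(results),
        "std": statistics.stdev(results),
    }


summary = summarize_runs(rastrigin, dim=5, bounds=(-5.12, 5.12))
```

Seeding each run separately keeps the comparison reproducible. If SciPy is available, its `scipy.stats.wilcoxon` function is one way to run the signed-rank test on paired per-run results from two algorithms.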

The Scientist's Toolkit: Research Reagents & Materials

This table details key computational tools and concepts used in research on neural population dynamics and algorithms like the NPDOA.

Table 3: Essential Research Tools for Neural Dynamics and Optimization

| Tool / Concept | Type / Category | Function in Research |
|---|---|---|
| PlatEMO | Software Platform | A MATLAB-based platform for experimental evolutionary multi-objective optimization, used for running and comparing algorithms [10]. |
| Synthetic Datasets (e.g., XOR, RING) | Benchmark Data | Non-linearly separable datasets with known ground truth, used to quantitatively evaluate an algorithm's ability to identify complex feature relationships [13]. |
| Attractor Network Models | Theoretical Framework | Computational models (e.g., ring attractors) that provide the foundational inspiration for brain-inspired optimization strategies [11] [10]. |
| Low-Dimensional Manifold | Analytical Concept | The subspace in which high-dimensional neural population activity actually evolves; its structure is key to understanding neural computations [7]. |
| Variational Free Energy (VFE) | Mathematical Principle | A quantity that, when minimized, can explain the emergence of self-organizing attractor dynamics in a system, per the Free Energy Principle [12]. |

The principles of attractors, coupling, and information projection are not merely descriptive of brain function; they are powerful constructs that can be engineered into efficient computational algorithms. The Neural Population Dynamics Optimization Algorithm stands as a testament to this, translating the brain's ability to balance stability (via attractors) with flexibility (via coupling and regulation) into a robust optimization methodology. For researchers in fields from computational neuroscience to drug development, these principles offer a rich, brain-inspired framework for solving complex, non-linear problems. Future work will focus on extending these principles to multi-objective optimization and further validating their efficacy on large-scale, real-world biological datasets.

The brain functions as a complex, high-dimensional dynamical system. Understanding how neural populations (ensembles of interacting neurons) process information and generate behavior requires a shift from static snapshots to a dynamical systems framework. This framework posits that the computational capabilities of neural circuits are embedded within the temporal evolution of their population-level activity. The core concept is computation through dynamics (CTD), where the rules governing how a neural population's state changes over time (its dynamics) directly perform sensory, cognitive, and motor computations [1]. Formally, this is described by a differential equation: \( \frac{d\mathbf{x}}{dt} = f(\mathbf{x}(t), \mathbf{u}(t)) \). Here, \( \mathbf{x} \) is an N-dimensional vector representing the firing rates of N neurons at time \( t \), known as the neural population state. The function \( f \) embodies the dynamical rules dictated by the brain's circuitry, and \( \mathbf{u} \) represents external inputs to the circuit [1]. A primary goal of modern systems neuroscience is to infer these latent dynamical rules, \( f \), from recorded neural activity and to understand how they are optimized to drive goal-directed behavior, forming the basis for research into Neural Population Dynamics Optimization Algorithms (NPDOAs) [10].

Theoretical Foundations: Neural State Spaces and Dynamics

The Neural State Space

The neural population state, comprising the simultaneous activity of all recorded neurons, defines a point in a high-dimensional state space. Each dimension corresponds to the activity of one neuron. The evolution of this state over time traces a trajectory in this space, much like the path of a pendulum defined by its position and velocity [1]. While neural recordings can encompass hundreds of dimensions, the underlying dynamics often reside on a lower-dimensional manifold. Dimensionality reduction techniques are crucial for visualizing and analyzing these trajectories, as they allow researchers to project high-dimensional data into a 2D or 3D subspace that captures the majority of the variance, making the system's flow field interpretable [1].

Table 1: Key Concepts in Dynamical Systems Neuroscience

| Concept | Mathematical Representation | Neural Interpretation |
|---|---|---|
| Neural Population State | \( \mathbf{x}(t) = [x_1(t), x_2(t), \ldots, x_N(t)] \) | The firing rates of N neurons at a given time [1]. |
| Dynamical Rule | \( \frac{d\mathbf{x}}{dt} = f(\mathbf{x}(t), \mathbf{u}(t)) \) | The transformation performed by the neural circuit's wiring and biophysics [1]. |
| State Space Trajectory | The path of \( \mathbf{x}(t) \) over time | The time-course of population-wide neural activity [1]. |
| Input | \( \mathbf{u}(t) \) | External sensory stimuli or internal signals driving the circuit [1]. |
| Attractor | A state (or set of states) toward which the system evolves | Can represent stable network states, such as stored memories or decision outcomes [10]. |

The Challenge of High-Dimensional Parameter Spaces

Personalized brain modeling introduces a significant challenge: high-dimensional parameter spaces. Instead of using a few global parameters for an entire brain model, a more precise approach is to equip each brain region with its own local model parameter. This creates a model with over 100 free parameters that must be optimized simultaneously to fit empirical data [14]. Traditional parameter search methods become computationally intractable in such high-dimensional spaces. This necessitates the use of sophisticated mathematical optimization algorithms, such as Bayesian Optimization (BO) and the Covariance Matrix Adaptation Evolution Strategy (CMA-ES), to maximize the fit between simulated and empirical functional connectivity for individual subjects [14]. Navigating this high-dimensional space is crucial for uncovering the individual differences in brain dynamics that may relate to behavior and disease.

Methodologies for Modeling and Optimization

Data-Driven and Task-Trained Modeling Approaches

Two primary modeling approaches are used to infer neural dynamics. Data-driven (DD) models are trained to reconstruct recorded neural activity as the product of a low-dimensional dynamical system and an embedding function [15]. The goal is to infer the latent dynamics \( f \), embedding \( g \), and latent state \( \mathbf{z} \) directly from neural observations \( \mathbf{y} \). In contrast, task-trained (TT) models are trained to perform specific, goal-directed input-output transformations. These models are often used to generate synthetic benchmark datasets that reflect the computational properties of biological circuits, which are more suitable for validation than non-computational chaotic attractors [15].

The Neural Population Dynamics Optimization Algorithm (NPDOA)

Inspired by brain neuroscience, the NPDOA is a meta-heuristic algorithm that treats potential solutions as neural states of interconnected neural populations. It simulates decision-making and cognitive processes through three core strategies [10]:

- Attractor Trending Strategy: Drives the neural states of populations towards attractors associated with optimal decisions, ensuring exploitation capability.

- Coupling Disturbance Strategy: Deviates neural states from attractors through coupling with other populations, improving exploration ability.

- Information Projection Strategy: Controls communication between neural populations, enabling a transition from exploration to exploitation and balancing the two [10].

This brain-inspired approach offers a novel method for solving complex, nonlinear optimization problems common in engineering and scientific research.
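The interplay of the three strategies can be sketched as a small search loop. This is not the published NPDOA update rule, which is not reproduced in this article: the update equations, the linear schedule for the weight `w`, the greedy acceptance step, and the `sphere` test objective are all simplifying assumptions chosen to show the roles of trending, disturbance, and projection.

```python
import random

def sphere(x):
    """Toy objective (an assumption for illustration): minimum 0 at the origin."""
    return sum(v * v for v in x)

def npdoa_like_search(objective, dim=5, n_pop=20, iterations=200,
                      bounds=(-5.0, 5.0), seed=0):
    rng = random.Random(seed)
    lo, hi = bounds
    # Each candidate solution is one "neural population"; each variable is a firing rate.
    pops = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_pop)]
    best = min(pops, key=objective)[:]
    history = [objective(best)]
    for it in range(iterations):
        # Information projection (assumed linear schedule): 0 = explore, 1 = exploit.
        w = it / (iterations - 1)
        for i, x in enumerate(pops):
            partner = pops[rng.randrange(n_pop)]
            candidate = []
            for d in range(dim):
                trend = rng.random() * (best[d] - x[d])                 # attractor trending
                disturb = rng.uniform(-1.0, 1.0) * (partner[d] - x[d])  # coupling disturbance
                value = x[d] + w * trend + (1.0 - w) * disturb
                candidate.append(min(hi, max(lo, value)))
            if objective(candidate) < objective(x):  # greedy acceptance (an assumption)
                pops[i] = candidate
        current = min(pops, key=objective)
        if objective(current) < objective(best):
            best = current[:]
        history.append(objective(best))
    return best, history

best_solution, best_history = npdoa_like_search(sphere)
```

`best_history` records the best objective value after each iteration. Because candidates are only accepted when they improve, the sequence never increases.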

Table 2: Optimization Algorithms for High-Dimensional Neural Modeling

| Algorithm | Category | Key Mechanism | Application in Neural Dynamics |
|---|---|---|---|
| CMA-ES | Evolutionary Algorithm | Adapts the covariance matrix of a search distribution to fit the topology of the objective function [14]. | Optimizing up to 103 regional parameters in whole-brain models to fit empirical functional connectivity [14]. |
| Bayesian Optimization (BO) | Sequential Model-Based | Builds a probabilistic model of the objective function to direct the search towards promising parameters [14]. | Personalized fitting of whole-brain models in high-dimensional parameter spaces [14]. |
| Neural Population Dynamics Optimization (NPDOA) | Brain-Inspired Meta-heuristic | Mimics attractor trending, coupling disturbance, and information projection of neural populations [10]. | Solving general nonlinear single-objective optimization problems [10]. |

Figure 1: A workflow for inferring neural dynamics from high-dimensional data, illustrating the roles of dimensionality reduction, model optimization, and validation.

Experimental Protocols and Validation

A Protocol for High-Dimensional Whole-Brain Model Fitting

The following methodology outlines the process for fitting a whole-brain model in a high-dimensional parameter space, as demonstrated by Wischnewski et al. (2025) [14]:

- Model Selection: Choose a base dynamical model (e.g., a system of coupled phase oscillators) where each brain region is represented by a node.

- Parameterization: Define a high-dimensional parameter space by assigning a local model parameter (e.g., a coupling gain or intrinsic frequency) to each individual brain region, in addition to any global parameters.

- Empirical Data: Obtain subject-specific structural connectivity (SC) and resting-state functional connectivity (FC) data, typically from diffusion-weighted and functional MRI.

- Optimization Setup: Define the objective function, typically the correlation between the simulated FC (sFC) from the model and the empirical FC. Initialize the chosen optimization algorithm (BO or CMA-ES).

- Iterative Optimization: Run the optimization algorithm to iteratively propose new parameter sets. For each proposal, run the whole-brain simulation, compute the sFC, and evaluate the objective function. The algorithm uses this feedback to refine its search.

- Validation and Analysis: Upon convergence, validate the optimized model by analyzing the goodness-of-fit (GoF), the reliability of the sFC across runs, and the stability of the optimized parameters. The resulting parameters and GoF can then be used as features for downstream tasks like clinical classification [14].
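The optimization loop in this protocol can be sketched in a few lines. Everything here is a stand-in: `toy_simulated_fc` replaces a real whole-brain simulation with a simple product of structural weights and hypothetical regional gains, the "empirical" FC is synthetic, and a basic accept-if-better random search takes the place of BO or CMA-ES. Only the objective, the Pearson correlation between simulated and empirical FC, follows the text.

```python
import math
import random

def pearson(a, b):
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)

def upper_triangle(m):
    n = len(m)
    return [m[i][j] for i in range(n) for j in range(i + 1, n)]

def toy_simulated_fc(sc, gains):
    """Hypothetical surrogate for a whole-brain simulation (not a real model)."""
    n = len(sc)
    return [[sc[i][j] * gains[i] * gains[j] for j in range(n)] for i in range(n)]

def goodness_of_fit(gains, sc, empirical_fc):
    return pearson(upper_triangle(toy_simulated_fc(sc, gains)),
                   upper_triangle(empirical_fc))

def fit_regional_gains(sc, empirical_fc, iterations=500, step=0.1, seed=0):
    """Accept-if-better random search over one parameter per region."""
    rng = random.Random(seed)
    gains = [1.0] * len(sc)
    best = goodness_of_fit(gains, sc, empirical_fc)
    for _ in range(iterations):
        proposal = [max(0.05, g + rng.gauss(0.0, step)) for g in gains]
        score = goodness_of_fit(proposal, sc, empirical_fc)
        if score > best:
            gains, best = proposal, score
    return gains, best

rng = random.Random(42)
n_regions = 6
sc = [[0.0] * n_regions for _ in range(n_regions)]
for i in range(n_regions):
    for j in range(i + 1, n_regions):
        sc[i][j] = sc[j][i] = rng.uniform(0.1, 1.0)  # synthetic structural connectivity
true_gains = [rng.uniform(0.5, 1.5) for _ in range(n_regions)]
empirical_fc = toy_simulated_fc(sc, true_gains)  # stands in for measured FC

initial_gof = goodness_of_fit([1.0] * n_regions, sc, empirical_fc)
fitted_gains, fitted_gof = fit_regional_gains(sc, empirical_fc)
```

In a real study the surrogate would be replaced by the simulation of the chosen dynamical model, and the fitted parameters and goodness-of-fit would then feed the validation step.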

Benchmarking with the Computation-through-Dynamics Benchmark (CtDB)

To address the challenge of validating data-driven models, the Computation-through-Dynamics Benchmark (CtDB) provides a standardized platform. CtDB offers [15]:

- Synthetic Datasets: A library of datasets generated by task-trained models that reflect goal-directed computations, moving beyond simple chaotic attractors.

- Interpretable Metrics: A set of metrics that go beyond neural activity reconstruction to assess how accurately a model has inferred the underlying ground-truth dynamics \( f \). These metrics are sensitive to specific model failures.

- Standardized Pipeline: A public codebase for training and evaluating models, facilitating comparison and rapid iteration during development.

Table 3: Key Performance Criteria for Data-Driven Dynamics Models

| Performance Criterion | Description | Why It Matters |
|---|---|---|
| Reconstruction Accuracy | How well the model predicts recorded or simulated neural activity. | Necessary but not sufficient; high reconstruction does not guarantee accurate dynamics inference [15]. |
| Dynamics Identification | How accurately the model infers the underlying dynamical rules \( f \). | Core to the CTD framework; ensures the model has learned the correct computational algorithm [15]. |
| Generalization | How well the model predicts neural activity under conditions different from the training data (e.g., different inputs). | Tests the robustness and true predictive power of the inferred dynamics [15]. |
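A toy example shows why reconstruction accuracy alone is not sufficient. The functions below are illustrative assumptions and are not part of the CtDB codebase: the ground truth is a one-dimensional decay, and the hypothetical inferred model `model_f` is correct only in the region visited by the training trajectory.

```python
def true_f(x):
    return -x  # assumed ground-truth dynamics

def model_f(x):
    # Hypothetical inferred dynamics: right where training data lie (x >= 0), wrong elsewhere.
    return -x if x >= 0 else -3.0 * x

def rollout(f, x0, steps=200, dt=0.01):
    xs = [x0]
    for _ in range(steps):
        xs.append(xs[-1] + dt * f(xs[-1]))
    return xs

def r_squared(observed, predicted):
    mean = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

def dynamics_error(f_true, f_model, grid):
    """Mean squared difference between true and inferred flow on a grid of states."""
    return sum((f_true(x) - f_model(x)) ** 2 for x in grid) / len(grid)

# Reconstruction: the training trajectory starts at x = 1 and stays positive.
train_observed = rollout(true_f, 1.0)
reconstruction_r2 = r_squared(train_observed, rollout(model_f, 1.0))

# Dynamics identification: compare the flow itself across the whole state range.
grid = [i / 10.0 for i in range(-10, 11)]
identification_mse = dynamics_error(true_f, model_f, grid)

# Generalization: a new initial condition visits states never seen in training.
test_observed = rollout(true_f, -1.0)
generalization_r2 = r_squared(test_observed, rollout(model_f, -1.0))
```

The model reconstructs the training data perfectly, yet its flow field is wrong for negative states, so both the identification error and the held-out prediction expose the failure.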

This section details essential computational tools, algorithms, and resources for research in neural population dynamics.

Table 4: Essential Research Tools for Neural Dynamics

| Tool / Resource | Type | Function in Research |
|---|---|---|
| CMA-ES & Bayesian Optimization | Optimization Algorithm | Fitting high-dimensional, personalized whole-brain models to empirical neuroimaging data [14]. |
| Recurrent Neural Networks (RNNs) | Deep Learning Model | Serve as a parameterized dynamical system \( R_\theta(\mathbf{x}(t), \mathbf{u}(t)) \) for both data modeling and task-based modeling [1]. |
| Computation-through-Dynamics Benchmark (CtDB) | Benchmarking Platform | Provides synthetic datasets and metrics for validating data-driven dynamics models [15]. |
| NeuroMark Pipeline | Neuroimaging Tool | A hybrid functional decomposition tool for estimating subject-specific brain networks from fMRI data, useful for generating features for modeling [16]. |
| NPDOA | Meta-heuristic Algorithm | A novel optimization algorithm inspired by neural population dynamics for solving complex engineering problems [10]. |

Figure 2: The three core strategies of the NPDOA, showing how they interact to balance exploration and exploitation during the search for an optimal solution.

Applications and Future Directions

The dynamical systems framework has been successfully applied to elucidate computation in various domains, including motor control, decision-making, and working memory [1]. In clinical neuroscience, personalized whole-brain models optimized in high-dimensional spaces may improve the classification of neurological and psychiatric conditions. As a proof of principle outside the clinical setting, the coupling parameters and goodness-of-fit values from these high-dimensional models yielded significantly higher accuracy in sex classification than low-dimensional models, highlighting their sensitivity to individual differences [14]. The future of this field hinges on the development of more powerful and reliable data-driven models, the creation of richer benchmarks through CtDB, and the continued integration of optimization algorithms like NPDOA to navigate the complex, high-dimensional landscapes of the brain's dynamics. This convergence of computational neuroscience, optimization theory, and clinical application paves the way for a deeper understanding of brain function and dysfunction.

The brain functions as a highly efficient biological system that continuously solves complex optimization problems to link sensation with action. Through sophisticated neural computations, it transforms ambiguous sensory inputs into decisive motor commands, balancing competing goals such as speed and accuracy. This in-depth technical guide explores the core principles that the brain employs to achieve these optimization goals, focusing on the dynamics of neural populations across distributed circuits. Understanding these mechanisms—how the brain filters relevant sensory evidence, accumulates information over time, and prepares motor outputs—provides not only fundamental insights into cognition but also a framework for developing novel therapeutic interventions in neurological and psychiatric disorders. The following sections synthesize recent advances in large-scale neural recording, computational modeling, and theoretical frameworks that reveal how distributed neural dynamics are orchestrated to achieve behavioral optimization.

Neural Computations in Sensory Processing

Sensory processing involves filtering and transforming raw sensory input into behaviorally relevant representations. Recent brain-wide recording techniques reveal that these representations are surprisingly distributed across brain regions.

Widespread Encoding of Sensory Evidence

In trained mice performing a visual change detection task, neural responses to subtle, behaviorally relevant fluctuations in visual stimulus temporal frequency (TF) were observed across most brain areas [17]. Table 1 summarizes the distribution of TF-responsive neurons across major brain regions.

Table 1: Distribution of Visual Evidence (Temporal Frequency) Encoding Across Brain Areas

| Brain Region | Category | Percentage of TF-Responsive Neurons |
|---|---|---|
| Visual Cortex | Sensory Areas | Highest concentration |
| Frontal Cortex (MOs, ACA, mPFC) | Association Cortex | 5-25% |
| Basal Ganglia (CP, GPe, SNr) | Subcortical | 5-25% |
| Hippocampus (DG, CA1, CA3) | Medial Temporal Lobe | 5-25% |
| Midbrain (MRN, APN, SCm) | Midbrain | 5-25% |
| Cerebellum (Lob4/5, SIM, DCN) | Cerebellum | 5-25% |
| Medulla & Orofacial Motor Nuclei | Motor Output | Not significant |

These sensory representations are sparse, with only 5-25% of neurons in non-sensory areas encoding stimulus fluctuations, and they cannot be explained by movement artifacts, because fast or slow TF pulses did not trigger consistent movements [17].

Experimental Protocol: Identifying Sensory-Responsive Neurons

Objective: To identify neurons encoding sensory evidence and characterize their response properties during perceptual decision-making [17].

Task Design: Head-fixed mice observe a drifting grating stimulus whose speed fluctuates noisily every 50 ms around a baseline. Mice must report sustained speed increases by licking a reward spout while remaining stationary during evidence presentation.

Neural Recording: Simultaneously record brain-wide neural activity using dense silicon electrode arrays (Neuropixels) spanning 51 brain regions, complemented by high-speed videography of facial movements and pupil.

Statistical Modeling: Fit single-cell Poisson generalized linear models (GLMs) to neural activity using task-related events, stimuli, and behavioral parameters as predictors.

Cross-Validation: Use nested cross-validation tests (holding out predictors of interest) to identify neurons significantly encoding sensory evidence (stimulus TF) while accounting for variance from other task variables.

Response Characterization: For identified sensory-responsive neurons, quantify response properties including peak time and duration by aligning neural activity to fast TF pulses (50 ms stimulus samples).

This protocol reveals that sensory evidence is not confined to canonical sensory pathways but is widely distributed, enabling parallel processing across the brain [17].
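The statistical core of this protocol, fitting a Poisson GLM and testing whether a held-out predictor improves prediction on unseen data, can be sketched as follows. The data are synthetic, the weights in `true_w` are assumptions, plain gradient ascent replaces a production GLM solver, and a single train/test split stands in for the nested cross-validation used in the study.

```python
import math
import random

def poisson_sample(rate, rng):
    """Knuth's method; adequate for the small rates used here."""
    threshold, k, p = math.exp(-rate), 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1

def fit_poisson_glm(X, y, lr=0.05, epochs=300):
    """Gradient ascent on the Poisson log-likelihood with a log link."""
    w = [0.0] * len(X[0])
    n = len(X)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            rate = math.exp(sum(wj * xj for wj, xj in zip(w, xi)))
            for j, xj in enumerate(xi):
                grad[j] += (yi - rate) * xj
        w = [wj + lr * gj / n for wj, gj in zip(w, grad)]
    return w

def log_likelihood(w, X, y):
    total = 0.0
    for xi, yi in zip(X, y):
        eta = sum(wj * xj for wj, xj in zip(w, xi))
        total += yi * eta - math.exp(eta) - math.lgamma(yi + 1)
    return total

rng = random.Random(0)
true_w = [0.5, 1.2, 0.3]  # assumed intercept, stimulus-TF weight, movement weight
bins = []
for _ in range(800):
    tf, movement = rng.uniform(-1, 1), rng.uniform(-1, 1)
    rate = math.exp(true_w[0] + true_w[1] * tf + true_w[2] * movement)
    bins.append((tf, movement, poisson_sample(rate, rng)))
train, test = bins[:600], bins[600:]

def design(rows, with_tf):
    """Design matrix with or without the predictor of interest (stimulus TF)."""
    return [[1.0, tf, mv] if with_tf else [1.0, mv] for tf, mv, _ in rows]

def counts(rows):
    return [y for _, _, y in rows]

w_full = fit_poisson_glm(design(train, True), counts(train))
w_reduced = fit_poisson_glm(design(train, False), counts(train))
ll_full = log_likelihood(w_full, design(test, True), counts(test))
ll_reduced = log_likelihood(w_reduced, design(test, False), counts(test))
```

A neuron would be labeled TF-responsive when the full model predicts held-out spike counts better than the reduced model, here when `ll_full` exceeds `ll_reduced`.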

Optimization Through Evidence Accumulation in Decision-Making

Decision-making under uncertainty requires accumulating sensory evidence over time to reach a threshold for action selection. Neural population dynamics reveal how this computation is implemented across distributed brain circuits.
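Accumulation to a threshold is commonly formalized as a drift-diffusion process. The sketch below is one such textbook formalization, not a model fitted in the cited studies; the drift, noise level, bounds, and trial counts are arbitrary assumptions. It illustrates how the height of the bound trades speed against accuracy.

```python
import random

def accumulate_to_bound(drift, noise_sd, bound, rng, dt=0.01, max_time=5.0):
    """Integrate noisy evidence until it hits +bound or -bound (or time runs out)."""
    evidence, t = 0.0, 0.0
    while abs(evidence) < bound and t < max_time:
        evidence += drift * dt + noise_sd * (dt ** 0.5) * rng.gauss(0.0, 1.0)
        t += dt
    if evidence >= bound:
        choice = 1    # correct choice, because the drift is positive
    elif evidence <= -bound:
        choice = -1   # error
    else:
        choice = 0    # no commitment before max_time
    return choice, t

def summarize(bound, n_trials=1000, seed=0):
    rng = random.Random(seed)
    trials = [accumulate_to_bound(1.0, 1.0, bound, rng) for _ in range(n_trials)]
    accuracy = sum(1 for choice, _ in trials if choice == 1) / n_trials
    mean_time = sum(t for _, t in trials) / n_trials
    return accuracy, mean_time

fast_accuracy, fast_time = summarize(bound=0.5)  # low threshold: quick but error-prone
slow_accuracy, slow_time = summarize(bound=1.0)  # high threshold: slower but more accurate
```

Raising the bound makes decisions slower and more accurate, which is the speed-accuracy balance described above.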

Neural Dynamics of Evidence Integration

During perceptual decisions, neural populations exhibit dynamics consistent with evidence accumulation. Several key findings have emerged from recent studies:

Distributed Integration: Evidence integration emerges sparsely across most brain areas after learning, with integrated sensory representations driving movement-preparatory activity [17]. Visual responses evolve from transient activations in sensory areas to sustained representations in frontal-motor cortex, thalamus, basal ganglia, midbrain, and cerebellum, enabling parallel evidence accumulation [17].

Shared Dynamics for Evidence and Action: In evidence-accumulating regions, shared population activity patterns encode both visual evidence and movement preparation, distinct from movement-execution dynamics [17]. Activity in the movement-preparatory subspace is driven by evidence-integrating neurons and collapses at movement onset, allowing the integration process to reset [17].

Integrated Selection and Control: Theoretical models and neural evidence suggest that action selection and sensorimotor control are not implemented by distinct modules but represent two modes of an integrated dynamical system [18]. Dimensionality reduction of neural activity in premotor, primary motor, and prefrontal cortex, as well as the globus pallidus, reveals functionally interpretable components reflecting state transitions between deliberation and commitment [18].

Individual Variability in Neural Implementations

Despite common computational principles, individuals can employ different neural implementations to solve the same task. In rats performing a context-dependent auditory decision task, substantial heterogeneity was observed across individuals in both behavior and neural dynamics, despite uniformly good task performance [19]. Theoretical frameworks define a space of possible network solutions that can implement the required computation, with different individuals occupying different regions of this solution space [19].

Table 2: Individual Variability in Neural Implementations of Decision-Making

| Analysis Method | Key Finding | Theoretical Implication |
|---|---|---|
| Targeted Dimensionality Reduction | Similar choice axes across contexts | Parallel neural trajectories for different decisions |
| Model-Based TDR Analysis | Essentially one-dimensional dynamics during accumulation | Evidence accumulation along a line attractor |
| Cross-Individual Comparison | Heterogeneous neural dynamics despite similar performance | Multiple network solutions can implement same computation |
| Theory-Behavior Linking | Specific link between neural and behavioral signatures | Variability in solution space position drives joint neural-behavioral variability |

Experimental Protocol: Targeted Dimensionality Reduction for Decision Dynamics

Objective: To identify and visualize low-dimensional neural trajectories during evidence accumulation and decision formation [19].

Task Design: Rats perform a context-dependent auditory pulse task where they must determine either the prevalent location or frequency of auditory pulses based on a contextual cue. Pulse rates provide independent evidence for location and frequency decisions.

Neural Recording: Implant tetrodes in frontal orienting fields (FOF) and medial prefrontal cortex (mPFC) to record single-neuron activity during task performance.

Pseudo-Population Construction: Combine neurons recorded across different sessions into a single time-evolving N-dimensional neural vector, averaging across trials with identical pulse rates for each context and choice.

Subspace Identification: Apply targeted dimensionality reduction to identify orthogonal linear subspaces that best predict the subject's choice, momentary location evidence, or momentary frequency evidence.

Trajectory Visualization: Project noise-reduced neural trajectories onto the identified choice axis to visualize evidence accumulation dynamics during stimulus presentation.

This protocol reveals that choice-related information evolves along an essentially one-dimensional straight line in neural space during evidence accumulation, consistent with gradual integration of sensory evidence [19].
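The subspace-identification and projection steps can be illustrated with a stripped-down, single-variable version of targeted dimensionality reduction. This sketch assumes synthetic trial-averaged data with a hypothetical choice tuning vector, regresses only on choice, and omits the denoising and orthogonalization across several task variables that a full analysis would include.

```python
import math
import random

rng = random.Random(1)
n_neurons, n_time = 6, 20
choice_weights = [rng.uniform(-1.0, 1.0) for _ in range(n_neurons)]  # hypothetical tuning

def psth(choice):
    """Trial-averaged rates [time][neuron]: a choice-dependent ramp plus small noise."""
    return [[5.0 + choice * w * (t / (n_time - 1)) + rng.gauss(0.0, 0.02)
             for w in choice_weights]
            for t in range(n_time)]

right, left = psth(+1), psth(-1)

# With a balanced +1/-1 choice regressor, the least-squares coefficient of each neuron
# is half the difference between conditions; here it is averaged over time.
beta = [sum((right[t][i] - left[t][i]) / 2.0 for t in range(n_time)) / n_time
        for i in range(n_neurons)]
norm = math.sqrt(sum(b * b for b in beta))
choice_axis = [b / norm for b in beta]

def project(trajectory):
    """Project condition-mean-subtracted population states onto the choice axis."""
    return [sum(a * (state[i] - (right[t][i] + left[t][i]) / 2.0)
                for i, a in enumerate(choice_axis))
            for t, state in enumerate(trajectory)]

proj_right, proj_left = project(right), project(left)
```

The two projections diverge gradually from a common starting point, tracing the kind of one-dimensional accumulation trajectory the protocol aims to visualize.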

Motor Control as the Optimization Endpoint

The final stage of sensorimotor transformation involves converting decision signals into precisely timed motor commands. Neural population dynamics reveal how this transition is optimized.

From Deliberation to Commitment

Neural activity during decision-making transitions from a deliberation phase to commitment and movement execution. During deliberation, cortical activity unfolds on a two-dimensional "decision manifold" defined by sensory evidence and urgency [18]. At the moment of commitment, activity falls off this manifold into a choice-dependent trajectory leading to movement initiation [18]. The structure of this manifold varies across brain regions:

- In PMd, the decision manifold is curved

- In M1, it is nearly perfectly flat

- In dlPFC, it is almost entirely confined to the sensory evidence dimension

- In the pallidum, activity during deliberation is primarily defined by urgency [18]
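The two axes of the decision manifold, sensory evidence and urgency, can be combined in a simple urgency-gating sketch. The functional form used here (low-pass filtered evidence multiplied by a linearly growing urgency signal and compared with a fixed threshold) and every parameter value are assumptions for illustration, not quantities fitted to the recordings.

```python
def urgency_gated_commit(evidence_stream, threshold=1.0, urgency_slope=0.1, tau=5.0):
    """Return (choice, commit_step); choice is 0 if the threshold is never reached."""
    filtered = 0.0
    for t, e in enumerate(evidence_stream, start=1):
        filtered += (e - filtered) / tau        # low-pass filter the momentary evidence
        urgency = urgency_slope * t             # urgency grows linearly with elapsed time
        decision_variable = filtered * urgency  # evidence gated by urgency
        if abs(decision_variable) >= threshold:
            return (1 if decision_variable > 0 else -1), t
    return 0, len(evidence_stream)

strong_choice, strong_step = urgency_gated_commit([1.0] * 100)
weak_choice, weak_step = urgency_gated_commit([0.2] * 100)
opposite_choice, _ = urgency_gated_commit([-1.0] * 100)
```

Strong evidence leads to early commitment, while weak evidence still produces a commitment later on because the rising urgency signal eventually pushes the decision variable across the threshold.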

Geometric Deep Learning for Neural Dynamics

Recent advances in geometric deep learning enable more interpretable representations of neural population dynamics. The MARBLE (MAnifold Representation Basis LEarning) framework decomposes on-manifold dynamics into local flow fields and maps them into a common latent space using unsupervised geometric deep learning [7]. This approach:

- Discovers emergent low-dimensional latent representations that parametrize high-dimensional neural dynamics

- Enables consistent representation across neural networks and animals

- Provides robust comparison of cognitive computations

- Achieves state-of-the-art within- and across-animal decoding accuracy [7]

Cross-Regional Dynamics and Optimization Pathways

Complex behaviors require coordinated interactions across multiple brain regions. Understanding these cross-population dynamics is essential for elucidating how optimization is achieved through distributed computation.

Prioritized Learning of Cross-Population Dynamics

Cross-population prioritized linear dynamical modeling (CroP-LDM) addresses the challenge of identifying shared dynamics across neural populations that may be confounded by within-population dynamics [6]. This approach:

- Prioritizes learning dynamics shared across populations over within-population dynamics

- Supports both causal filtering (using only past data) and non-causal smoothing (using all data)

- Quantifies dominant interaction pathways across brain regions interpretably

- Reveals biologically consistent pathways, such as PMd better explaining M1 than vice versa [6]
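The idea of comparing interaction pathways by directional predictability can be illustrated with a much simpler stand-in than CroP-LDM: a one-step lagged linear regression in each direction. The two scalar signals below are synthetic, with an assumed ground truth in which the upstream signal drives the downstream one. Unlike CroP-LDM, this sketch does not model or separate within-population dynamics.

```python
import random

def lagged_r2(source, target):
    """R^2 of the one-step-ahead linear prediction target[t+1] ~ a * source[t] + b."""
    x, y = source[:-1], target[1:]
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return (sxy * sxy) / (sxx * syy)

rng = random.Random(0)
n_steps = 1000
pmd_like = [0.0]  # synthetic upstream signal (name is illustrative only)
m1_like = [0.0]   # synthetic downstream signal
for t in range(1, n_steps):
    # Assumed ground truth: the downstream signal follows the upstream one with a lag.
    m1_like.append(0.8 * pmd_like[t - 1] + rng.gauss(0.0, 0.3))
    pmd_like.append(0.5 * pmd_like[t - 1] + rng.gauss(0.0, 1.0))

r2_forward = lagged_r2(pmd_like, m1_like)   # upstream past predicts downstream future
r2_backward = lagged_r2(m1_like, pmd_like)  # downstream past predicts upstream future
```

The asymmetry between `r2_forward` and `r2_backward` is the kind of signature used to argue that one region explains another better than the reverse. Both predictions use only past samples, in the spirit of causal filtering.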

Signaling Pathways in Integrated Sensorimotor Decisions

The following diagram illustrates the integrated neural pathway from sensory evidence to motor execution, synthesizing findings from multiple studies of neural population dynamics during decision-making:

Integrated Pathway from Sensation to Action

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Methods for Studying Neural Population Dynamics

| Tool/Method | Function | Example Application |
|---|---|---|
| Neuropixels Probes | High-density electrode arrays for large-scale neural recording | Simultaneous recording from 51 brain regions in mice [17] |
| Poisson Generalized Linear Models (GLMs) | Identify neurons encoding task variables while accounting for covariates | Quantifying sensory, decision, and motor encoding [17] |
| Targeted Dimensionality Reduction (TDR) | Identify neural subspaces related to specific task variables | Visualizing choice-related neural trajectories [19] |
| Geometric Deep Learning (MARBLE) | Learn interpretable representations of neural dynamics | Comparing neural computations across subjects and species [7] |
| Cross-Population Prioritized LDM (CroP-LDM) | Model shared dynamics across neural populations | Identifying dominant interaction pathways between brain regions [6] |
| Urgency-Gating Model (UGM) | Computational model combining evidence and urgency | Accounting for speed-accuracy tradeoffs in decision-making [18] |

Advanced Analysis Framework for Neural Dynamics

The following diagram outlines the MARBLE framework workflow for extracting interpretable representations from neural population dynamics:

MARBLE Framework for Neural Dynamics Analysis

Implications for Drug Discovery and Therapeutic Development

Understanding neural computations and their optimization principles has significant implications for drug discovery, particularly for neurological and psychiatric disorders affecting decision-making and motor control.

Structure- and Dynamics-Based Drug Discovery

Computer-aided drug discovery (CADD) has evolved from static structure-based approaches to incorporate dynamics-based methods that account for target flexibility [20]. Key advances include:

Expanded Structural Data: Machine learning tools like AlphaFold have dramatically expanded the available structural data for drug targets, predicting over 214 million unique protein structures [20].

Dynamics-Based Methods: Molecular dynamics (MD) simulations and the Relaxed Complex Method enable sampling of target conformations, including cryptic pockets not evident in static structures [20].

Ultra-Large Virtual Screening: Combinatorial libraries of drug-like compounds have grown to billions of molecules, enabling unprecedented exploration of chemical space [20].

Targeting Neural Computation in Disorders

Disruptions in neural computations underlie many neuropsychiatric disorders. Understanding the normal optimization principles in sensory processing, decision-making, and motor control provides:

- Novel targets for restoring normal neural dynamics in conditions like schizophrenia, addiction, and anxiety disorders

- Biomarkers for assessing treatment efficacy based on normalization of neural dynamics

- Computational frameworks for predicting how pharmacological interventions alter neural population dynamics

The brain achieves remarkably efficient sensorimotor transformations through distributed neural computations that optimize behavior across multiple constraints. Evidence accumulation emerges as a fundamental optimization strategy implemented in parallel across brain regions, with shared dynamics linking sensory evidence to motor preparation. Individual variability in neural implementations reveals multiple solutions to the same computational problem, while cross-regional interactions coordinate these distributed processes. Advanced analytical approaches, including geometric deep learning and prioritized dynamical modeling, provide increasingly powerful tools for deciphering these neural optimization principles. These insights not only advance our fundamental understanding of brain function but also open new avenues for therapeutic interventions targeting disrupted neural computations in neurological and psychiatric disorders.

Implementing Brain-Inspired Optimization: Strategies and Real-World Applications

In the evolving landscape of computational optimization, meta-heuristic algorithms have gained significant popularity for their efficiency in solving complex, non-linear problems across diverse scientific fields. The Neural Population Dynamics Optimization Algorithm (NPDOA) is described as the first swarm intelligence optimization algorithm to use human brain activity mechanisms for computational optimization [10]. Unlike traditional algorithms inspired by animal behavior or evolutionary processes, NPDOA derives its foundational principles from theoretical neuroscience, specifically simulating the decision-making processes of interconnected neural populations during cognitive tasks [10] [21].

The algorithm operates on the population doctrine in theoretical neuroscience, treating each potential solution as a neural population where decision variables correspond to individual neurons and their values represent neuronal firing rates [10]. This bio-inspired approach allows NPDOA to effectively balance the critical characteristics of any successful meta-heuristic algorithm: exploration (identifying promising areas in the search space) and exploitation (thoroughly searching those promising areas) [10]. Without adequate exploration, algorithms converge prematurely to local optima, while insufficient exploitation prevents convergence altogether [10]. NPDOA addresses this fundamental challenge through three innovatively designed strategies working in concert: attractor trending, coupling disturbance, and information projection [10].

Core Architectural Components of NPDOA

Attractor Trending Strategy

The attractor trending strategy forms the exploitation backbone of NPDOA, responsible for driving neural populations toward optimal decisions by guiding them toward stable neural states associated with favorable decisions [10]. In neuroscience, attractors represent stable states in neural networks that correspond to specific decisions or memories; NPDOA computationally emulates this phenomenon to refine solutions toward optimality.

- Biological Foundation: This strategy mirrors how neural circuits in the brain settle into stable activity patterns during decision-making processes [10]. The mathematical implementation creates dynamic attractors within the solution space that gradually pull candidate solutions toward regions of higher fitness.

- Computational Implementation: The algorithm positions attractors at promising locations within the search space, typically at or near the current best solutions. Neural populations are then driven toward these attractors through position update equations that simulate the "gravitational pull" of promising regions [10].

- Role in Optimization: By systematically guiding solutions toward these attractors, NPDOA ensures intensive local search around high-quality solutions, enabling the algorithm to refine solutions and achieve high-precision convergence [10].

Coupling Disturbance Strategy

The coupling disturbance strategy provides the essential exploration mechanism in NPDOA by deliberately deviating neural populations from attractors through coupling with other neural populations [10]. This strategic disruption prevents premature convergence and maintains population diversity throughout the optimization process.

- Biological Foundation: This approach mimics the phenomenon where neural populations influence each other through inhibitory or excitatory connections, creating dynamic interactions that prevent neural networks from becoming trapped in single stable states [10].

- Computational Implementation: The strategy introduces calculated perturbations to solution vectors by coupling them with other population members, effectively creating controlled diversity within the population [10]. These disturbances are mathematically formulated to provide sufficient magnitude to escape local optima while maintaining the potential for discovering improved solutions.

- Role in Optimization: By preventing homogeneous convergence and maintaining solution diversity, coupling disturbance enables NPDOA to explore previously unvisited regions of the search space, significantly enhancing its capability to locate global optima in complex, multi-modal landscapes [10].

Information Projection Strategy

The information projection strategy serves as the regulatory mechanism in NPDOA, controlling communication between neural populations and facilitating the crucial transition from exploration to exploitation [10]. This component dynamically modulates the influence of the other two strategies based on algorithmic progress.

- Biological Foundation: This strategy computationally emulates the brain's ability to regulate information flow between different neural regions through specialized projection pathways, allowing for coordinated activity across distributed networks [10].

- Computational Implementation: Information projection operates by adjusting the weighting between attractor trending and coupling disturbance strategies throughout the optimization process [10]. Early iterations typically favor coupling disturbance to promote exploration, while later iterations progressively emphasize attractor trending to refine solutions.

- Role in Optimization: By dynamically balancing the influence of exploration and exploitation mechanisms, information projection enables NPDOA to maintain search diversity during initial phases while ensuring precise convergence during final stages [10]. This adaptive balancing represents a key innovation that addresses a fundamental limitation in many existing meta-heuristic algorithms.
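The opposing effects that information projection has to balance can be seen by applying each mechanism in isolation and measuring population spread. The update form below is an assumed simplification rather than the published equations, and `w` is a hypothetical projection weight (1 means attractor trending only, 0 means coupling disturbance only).

```python
import math
import random

def diversity(pops):
    """Mean Euclidean distance of population members to their centroid."""
    dim = len(pops[0])
    centroid = [sum(p[d] for p in pops) / len(pops) for d in range(dim)]
    return sum(math.dist(p, centroid) for p in pops) / len(pops)

def step(pops, attractor, w, rng):
    """One blended update of every population; returns a new list of states."""
    new_pops = []
    for x in pops:
        partner = rng.choice(pops)
        new_pops.append([
            xd
            + w * rng.random() * (ad - xd)                     # pull toward the attractor
            + (1.0 - w) * rng.uniform(-1.0, 1.0) * (pd - xd)   # push via a coupled partner
            for xd, ad, pd in zip(x, attractor, partner)
        ])
    return new_pops

rng = random.Random(0)
initial = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(30)]
attractor = [1.0, -2.0, 0.5]  # hypothetical location of the current best solution

trending_only, disturbance_only = initial, initial
for _ in range(10):
    trending_only = step(trending_only, attractor, 1.0, rng)
    disturbance_only = step(disturbance_only, attractor, 0.0, rng)

spread_initial = diversity(initial)
spread_trending = diversity(trending_only)
spread_disturbance = diversity(disturbance_only)
```

Trending alone collapses the population onto the attractor within a few updates, while disturbance alone keeps the population spread out and can even enlarge it. A weight that moves from disturbance toward trending over the run is one way to obtain broad search early and precise convergence late.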

Performance Evaluation: Quantitative Analysis

The efficacy of NPDOA has been rigorously validated through comprehensive testing on standard benchmark functions and practical engineering problems [10]. The algorithm demonstrates competitive performance against nine established meta-heuristic algorithms, offering distinct benefits for addressing single-objective optimization problems [10].

Table 1: Performance Comparison of NPDOA Against Other Meta-heuristic Algorithms

| Algorithm Category | Representative Algorithms | Key Advantages | Common Limitations | NPDOA Improvements |

|---|---|---|---|---|

| Evolutionary Algorithms | Genetic Algorithm (GA), Differential Evolution (DE) | Effective for diverse problem types | Premature convergence, parameter sensitivity | Reduced premature convergence through coupling disturbance [10] |

| Swarm Intelligence | PSO, ABC, WOA | Good exploration capabilities | Slow convergence, local optima trapping | Balanced exploration-exploitation through information projection [10] |

| Physics-inspired | SA, GSA | Simple implementation | Local optima trapping, premature convergence | Enhanced global search via brain-inspired mechanisms [10] |

| Mathematics-inspired | SCA, GBO | No metaphor requirement | Poor exploration-exploitation balance | Strategic balance through three specialized mechanisms [10] |

Table 2: NPDOA Performance on Engineering Design Problems

| Engineering Problem | Key Performance Metrics | Comparison with Traditional Methods | Notable Advantages |

|---|---|---|---|

| Compression Spring Design | High solution quality, convergence efficiency | Outperformed conventional mathematical approaches [10] | Handles nonlinear constraints effectively [10] |

| Cantilever Beam Design | Improved objective function values | Superior to other meta-heuristic algorithms [10] | Effective in structural optimization [10] |

| Pressure Vessel Design | Competitive constraint satisfaction | Better performance than established alternatives [10] | Reliable for complex engineering constraints [10] |

| Welded Beam Design | Optimized design parameters | Enhanced efficiency and solution quality [10] | Balanced exploration and exploitation [10] |

Implementation Protocols and Experimental Framework

Algorithm Initialization and Parameter Configuration

Successful implementation of NPDOA requires careful attention to initialization procedures and parameter configuration. The algorithm follows a structured initialization process:

- Population Initialization: Generate initial neural populations randomly across the search space or using problem-specific initialization techniques to provide comprehensive coverage of the solution landscape [10].

- Parameter Settings: Key parameters include population size (typically 30-100 neural populations), attraction coefficients (control attractor trending strength), disturbance factors (govern coupling disturbance intensity), and projection weights (regulate information projection influence) [10].

- Termination Criteria: Establish appropriate stopping conditions, including maximum function evaluations, convergence thresholds (minimal improvement over successive iterations), or computation time limits [10].
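The initialization and termination steps listed above might look as follows in code. This is a minimal sketch under stated assumptions: `init_populations` and `StopRule` are hypothetical names, uniform sampling is only one of the initialization options mentioned, and the defaults (`max_evals`, `tol`, `patience`) are illustrative values rather than settings taken from [10]. A wall-clock limit is omitted for brevity.

```python
import random


def init_populations(n_pop, lower, upper, seed=None):
    """Uniformly sample n_pop candidate states inside the box [lower, upper]."""
    rng = random.Random(seed)
    return [[rng.uniform(lo, hi) for lo, hi in zip(lower, upper)]
            for _ in range(n_pop)]


class StopRule:
    """Stop on an evaluation budget or when the best value stagnates."""

    def __init__(self, max_evals=10_000, tol=1e-8, patience=50):
        self.max_evals = max_evals  # maximum number of function evaluations
        self.tol = tol              # minimal improvement that counts as progress
        self.patience = patience    # stalled iterations tolerated before stopping
        self.best = float("inf")
        self.stalled = 0

    def should_stop(self, evals_used, best_value):
        # Reset the counter only when the best-so-far improves by more than tol.
        if self.best - best_value > self.tol:
            self.stalled = 0
        else:
            self.stalled += 1
        self.best = min(self.best, best_value)
        return evals_used >= self.max_evals or self.stalled >= self.patience


if __name__ == "__main__":
    pops = init_populations(30, lower=[-5.0, -5.0], upper=[5.0, 5.0], seed=0)
    print(len(pops), "populations; first state:", pops[0])
```

Keeping the stopping logic in its own object lets the same optimization loop be run under different budgets without changing the update code.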

Experimental studies validating NPDOA were conducted using PlatEMO v4.1 on a computer equipped with an Intel Core i7-12700F CPU (2.10 GHz) and 32 GB RAM [10]. Reporting the platform and hardware makes the benchmark setup easier to reproduce.

Enhanced Variants: INPDOA for Medical Applications

The fundamental NPDOA architecture has been successfully extended to create improved variants for specialized applications. The Improved Neural Population Dynamics Optimization Algorithm (INPDOA) represents an enhanced version specifically developed for Automated Machine Learning (AutoML) optimization in medical prognostics [22].

Table 3: INPDOA Application in Medical Prognostics: Experimental Configuration

| Component | Implementation Details | Medical Application Specifics |

|---|---|---|

| Dataset | 447 ACCR patients (2019-2024), 20+ parameters spanning biological, surgical, and behavioral domains [22] | Autologous costal cartilage rhinoplasty (ACCR) prognosis prediction [22] |

| Validation Method | 12 CEC2022 benchmark functions, clinical decision support system development [22] | Bidirectional feature engineering, SHAP values for variable contribution quantification [22] |

| Performance Metrics | Test-set AUC = 0.867 for 1-month complications, R² = 0.862 for 1-year ROE scores [22] | Decision curve analysis demonstrated net benefit improvement over conventional methods [22] |

| Clinical Impact | MATLAB-based CDSS development for real-time prognosis visualization [22] | Reduced prediction latency, improved alignment between surgical precision and patient-reported outcomes [22] |

The INPDOA-enhanced AutoML model performed strongly in predicting rhinoplasty outcomes, achieving a test-set AUC of 0.867 for 1-month complications and an R² of 0.862 for 1-year Rhinoplasty Outcome Evaluation (ROE) scores [22]. This medical application illustrates how NPDOA can be adapted to specialized domains with demanding performance requirements.

Visualization of NPDOA Architecture and Workflow

[Figure: NPDOA Algorithmic Workflow]

[Figure: Medical Application Framework Using INPDOA]

Table 4: Essential Research Reagents and Computational Tools for NPDOA Implementation

| Resource Category | Specific Tools/Platforms | Implementation Role | Application Context |

|---|---|---|---|

| Computational Platforms | PlatEMO v4.1 [10], MATLAB [22] | Experimental framework, algorithm development | Benchmark testing, clinical decision support systems [10] [22] |

| Performance Benchmarks | CEC2017, CEC2022 test suites [23] [22] [24] | Algorithm validation, comparative analysis | Standardized performance evaluation [23] [22] |

| Medical Data Sources | ACCR patient datasets (447 patients) [22] | Real-world validation, clinical model development | Prognostic prediction for rhinoplasty outcomes [22] |

| Statistical Validation Tools | Wilcoxon rank-sum test, Friedman test [23] [24] | Statistical significance testing | Performance comparison against competing algorithms [23] [24] |

| Visualization Frameworks | SHAP values [22], custom MATLAB interfaces [22] | Model interpretability, clinical interface design | Explainable AI for clinical decision support [22] |

The Neural Population Dynamics Optimization Algorithm represents a significant advancement in meta-heuristic optimization by leveraging principles from theoretical neuroscience. Its three-strategy architecture—attractor trending, coupling disturbance, and information projection—provides a sophisticated mechanism for balancing exploration and exploitation, addressing fundamental limitations of existing optimization approaches [10].

The algorithm's effectiveness has been demonstrated across multiple domains, from standard benchmark functions to practical engineering design problems [10] and specialized medical applications [22]. The successful implementation of INPDOA in medical prognostics particularly highlights the translational potential of this approach, enabling the development of robust predictive models with clinical relevance [22].

Future research directions for NPDOA include expansion to multi-objective optimization problems, hybridization with other optimization paradigms, adaptation to dynamic optimization environments, and exploration of additional domains where brain-inspired computation could provide distinctive advantages. As a novel brain-inspired meta-heuristic, NPDOA establishes a promising foundation for the next generation of bio-inspired optimization algorithms that leverage the profound computational capabilities of neural systems.

The identification of Drug-Target Interactions (DTIs) is a critical, early, and costly step in the drug discovery pipeline. Traditional biological experiments, while reliable, are expensive and time-consuming [25]. The Neural Population Dynamics Optimization Algorithm (NPDOA) is a brain-inspired meta-heuristic method designed to address complex optimization problems [10]. This case study positions NPDOA within the broader context of neural algorithm research and investigates its potential to enhance the training of deep neural networks (DNNs) for DTI prediction. We describe how NPDOA-trained models could improve performance by balancing the exploration of the vast chemical-biological space with the exploitation of known interaction patterns, offering a framework for accelerating in-silico drug discovery.

Background and Theoretical Foundations

The Drug-Target Interaction Prediction Landscape

DTI prediction has evolved from ligand-based and molecular docking methods to modern deep learning approaches [26]. Deep learning models, particularly those using chemogenomics, learn representations from the chemical structures of drugs and the genomic information of targets to predict interactions [25]. However, challenges persist, including handling the complex nonlinear relationship between drugs and targets, mitigating feature redundancy, and generating reliable, well-calibrated predictions to avoid overconfident and incorrect results [25] [27] [28].

Neural Population Dynamics Optimization Algorithm (NPDOA)

Inspired by brain neuroscience, NPDOA simulates the activities of interconnected neural populations during cognition and decision-making [10]. It operates on three core strategies:

- Attractor Trending Strategy: Drives neural populations towards optimal decisions, ensuring exploitation capability.

- Coupling Disturbance Strategy: Deviates neural populations from attractors by coupling with other neural populations, thus improving exploration ability.

- Information Projection Strategy: Controls communication between neural populations, enabling a transition from exploration to exploitation [10].

This balance makes NPDOA particularly suited for optimizing complex, non-convex objective functions inherent in training DNNs for DTI prediction.

Methodology: Implementing NPDOA for DTI Prediction

[Figure: Integrated experimental workflow for NPDOA-optimized DTI prediction]

Data Preparation and Feature Engineering

The model begins with the construction of a comprehensive feature set from drugs and targets.

- Drug Representation: Drugs are commonly encoded as molecular fingerprints (e.g., 881-dimensional PubChem fingerprints) or represented as 2D topological graphs and 3D spatial structures for more advanced models [27] [29].

- Target Representation: Protein sequences are encoded via evolutionary features extracted from Position-Specific Scoring Matrices (PSSMs). More sophisticated encoders use pre-trained protein language models such as ProtTrans to capture deeper semantic features [27] [29].

To address feature redundancy, techniques like Sparse Principal Component Analysis (SPCA) are employed to compress the features into a uniform vector space with reduced information redundancy [29] [28].

Neural Network Architecture and NPDOA Integration

A deep neural network architecture serves as the base predictor. The concatenated feature vector of a drug-target pair is fed into a multilayer feedforward network. The innovation lies in using NPDOA to optimize the training of this network.

NPDOA treats each candidate set of network weights as a "neural state" within a population. The attractor trending strategy guides weight updates towards regions that minimize loss (exploitation), while the coupling disturbance strategy introduces stochasticity to help the model escape local minima (exploration). The information projection strategy balances the influence of these two forces across training epochs, steering the search towards convergence [10]. This is particularly valuable for learning the features of the chemical space of drugs and the biological space of targets thoroughly without getting trapped in suboptimal solutions [25].
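To make the idea of weights as population states concrete, the sketch below trains a tiny logistic model with a population-based loop. It is a toy under explicit assumptions: the update rule, the linear schedule, and the greedy acceptance step are illustrative stand-ins and not the update equations of [10]; the logistic model stands in for a deep network; and the synthetic data in the demo is not DTI data. The names `log_loss` and `train` are hypothetical.

```python
import math
import random


def log_loss(weights, data):
    """Mean binary cross-entropy of a logistic model; the last weight is the bias."""
    total = 0.0
    for features, label in data:
        z = weights[-1] + sum(w * f for w, f in zip(weights, features))
        p = 1.0 / (1.0 + math.exp(-max(-30.0, min(30.0, z))))
        p = min(max(p, 1e-12), 1.0 - 1e-12)  # keep log() finite
        total -= label * math.log(p) + (1 - label) * math.log(1.0 - p)
    return total / len(data)


def train(data, dim, n_pop=30, iters=200, seed=0):
    """Population-based search over weight vectors (illustrative update rule)."""
    rng = random.Random(seed)
    pops = [[rng.uniform(-1, 1) for _ in range(dim + 1)] for _ in range(n_pop)]
    losses = [log_loss(p, data) for p in pops]
    best_i = min(range(n_pop), key=losses.__getitem__)
    best, best_loss = pops[best_i][:], losses[best_i]
    for t in range(iters):
        # Assumed linear schedule standing in for information projection.
        w_attr = t / max(1, iters - 1)
        for i in range(n_pop):
            partner = pops[rng.randrange(n_pop)]
            candidate = [
                x
                + w_attr * rng.random() * (b - x)                    # pull towards best state
                + (1.0 - w_attr) * rng.gauss(0.0, 1.0) * (q - x)     # perturb via a partner
                for x, b, q in zip(pops[i], best, partner)
            ]
            cand_loss = log_loss(candidate, data)
            if cand_loss < losses[i]:  # greedy acceptance (an assumption)
                pops[i], losses[i] = candidate, cand_loss
                if cand_loss < best_loss:
                    best, best_loss = candidate[:], cand_loss
    return best, best_loss


if __name__ == "__main__":
    # Synthetic, linearly separable toy data; not a DTI dataset.
    rng = random.Random(1)
    data = []
    for _ in range(40):
        f = [rng.uniform(-1, 1), rng.uniform(-1, 1)]
        data.append((f, 1 if f[0] + f[1] > 0 else 0))
    weights, loss = train(data, dim=2)
    print("best loss:", round(loss, 4))
```

Because the loop needs only loss evaluations and no gradients, the same structure would apply to any model whose weights can be flattened into a vector, although the cost of one evaluation per candidate grows quickly with network size.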

Experimental Protocol and Evaluation

Benchmarking and Experimental Setup

The NPDOA-trained DTI model is evaluated with a standardized experimental protocol:

- Datasets: Models are trained and validated on public benchmark datasets such as KIBA, Davis, and DrugBank [25] [27] [28]. These datasets are randomly split into training, validation, and test sets, typically in a ratio of 8:1:1 [27].

- Evaluation Metrics: A comprehensive set of metrics is used for evaluation [27]:

  - Classification Metrics: Accuracy (ACC), Precision, Recall, F1 Score, Matthews Correlation Coefficient (MCC).

  - Ranking Metrics: Area Under the ROC Curve (AUC) and Area Under the Precision-Recall Curve (AUPR).

  - Regression Metrics: Mean Square Error (MSE) and Concordance Index (CI) for binding affinity prediction [25].
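The 8:1:1 split and two of the metrics above can be sketched in plain Python. This is an illustrative sketch rather than the evaluation code of the cited studies: the helper names `split_8_1_1`, `mcc`, and `roc_auc` are hypothetical, and in practice a library such as scikit-learn would normally be used.

```python
import math
import random


def split_8_1_1(items, seed=0):
    """Shuffle and split into train/validation/test at roughly 8:1:1."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = len(shuffled) * 8 // 10
    n_val = len(shuffled) // 10
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])


def mcc(y_true, y_pred):
    """Matthews Correlation Coefficient for binary labels in {0, 1}."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


def roc_auc(y_true, scores):
    """AUC as the chance that a random positive outranks a random negative.

    Ties count as 0.5. The pairwise loop is O(P * N), fine for a sketch.
    """
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    train_set, val_set, test_set = split_8_1_1(range(100), seed=42)
    print(len(train_set), len(val_set), len(test_set))
    print(mcc([1, 1, 0, 0], [1, 0, 0, 0]))
    print(roc_auc([1, 0, 1, 0], [0.9, 0.8, 0.2, 0.1]))
```

MCC is often reported alongside accuracy for DTI data because it accounts for all four confusion-matrix cells and is therefore less sensitive to class imbalance.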

Performance Comparison

The table below summarizes a comparative analysis of DTI prediction models, illustrating the performance level an NPDOA-optimized model would aim to achieve.

Table 1: Performance Comparison of DTI Prediction Models on Benchmark Datasets

| Model | Dataset | Accuracy (Std) | MCC (Std) | AUC (Std) | AUPR (Std) |

|---|---|---|---|---|---|

| EviDTI [27] | DrugBank | 82.02% | 64.29% | - | - |

| EviDTI [27] | Davis | - | - | ~0.915 | ~0.635 |

| EviDTI [27] | KIBA | - | - | ~0.921 | - |

| DeepLSTM (SPCA) [29] | Nuclear Receptors | - | - | 0.9206 | - |

| OverfitDTI (Morgan-CNN) [25] | KIBA | - | - | - | - |

| DTI-MHAPR (HAN-PCA-RF) [28] | FSL Dataset | - | - | 0.995 | - |

Note: Performance gaps exist between models and datasets. The NPDOA framework is designed to deliver state-of-the-art (SOTA) or near-SOTA results across these varied benchmarks by improving training efficiency and model robustness. MCC: Matthews Correlation Coefficient; AUC: Area Under the ROC Curve; AUPR: Area Under the Precision-Recall Curve.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for DTI Prediction Experiments

| Item / Resource | Function in DTI Prediction |

|---|---|

| Benchmark Datasets (KIBA, Davis, DrugBank) | Provides gold-standard data for model training, validation, and benchmarking. |