Neural Information Preservation in EEG Artifact Removal: A 2025 Review of Deep Learning and Benchmarking


Abstract

This article provides a comprehensive analysis of state-of-the-art techniques for removing artifacts from electroencephalography (EEG) signals while preserving critical neural information. Tailored for researchers, scientists, and drug development professionals, it explores the foundational challenges of artifact contamination, details the latest methodological advances in deep learning, including State Space Models (SSMs) and hybrid architectures like CNN-LSTM networks, and offers practical guidance for troubleshooting and optimization. The review further establishes a rigorous framework for the validation and comparative benchmarking of artifact removal pipelines, synthesizing performance metrics and recent findings to guide method selection for clinical and biomedical research applications.

The Critical Challenge: Why Artifacts Threaten Neural Data Integrity in Modern Neuroscience

The pursuit of pristine neural data is a fundamental challenge in neuroscience and drug development. Biological artifacts, which originate from the subject's own body, and environmental artifacts, which come from external sources, can significantly distort electroencephalography (EEG) and other neurophysiological signals, potentially leading to erroneous interpretations of brain function and drug effects [1]. In wearable EEG systems, which enable brain monitoring in real-world environments, the problem is exacerbated by the relaxed constraints of the acquisition setup, including dry electrodes, reduced scalp coverage, and subject mobility [1]. These artifacts and noise sources are often unpredictable, and they significantly reduce the quality of EEG recordings, which poses critical challenges for accurate data analysis in both research and clinical applications [2]. Managing them effectively is not merely a technical exercise but a prerequisite for generating reliable, high-fidelity neural data that can inform scientific discovery and therapeutic development.

Neural recordings are susceptible to a diverse array of contaminating signals. Understanding their origins is the first step in developing effective removal strategies. These artifacts can be broadly categorized based on their source.

Table: Classification of Common Neural Signal Artifacts

| Category | Specific Source | Origin | Key Characteristics |

|---|---|---|---|

| Biological (Physiological) | Ocular (EOG) | Eye movements & blinks | High-amplitude, low-frequency |

| Biological (Physiological) | Muscle (EMG) | Head, neck, jaw muscle activity | Broadband, high-frequency |

| Biological (Physiological) | Cardiac (ECG) | Heartbeat | Periodic, consistent morphology |

| Biological (Physiological) | Vascular Pulsation | Blood flow in scalp arteries | Pulse-synchronous, localized |

| Environmental (Non-Physiological) | Motion Artifact | Head movement, cable sway | Time-locked to gait/movement, high-amplitude |

| Environmental (Non-Physiological) | Powerline Interference | Mains electricity (50/60 Hz) | Narrowband, steady frequency |

| Environmental (Non-Physiological) | Electrode Noise | Impedance changes, pop | Abrupt, non-stationary |

| Environmental (Non-Physiological) | Instrumentation Noise | Amplifier circuits | Broadband, low-level |
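Of the artifact classes in the table above, powerline interference is the easiest to illustrate because it is narrowband and sits at a known frequency. The following is a minimal sketch, not a recommended pipeline: it applies a zero-phase IIR notch using SciPy's `iirnotch` and `filtfilt`. The sampling rate, quality factor, and the toy 10 Hz "EEG" signal are assumptions chosen only for the demonstration.

```python
import numpy as np
from scipy import signal

fs = 250.0      # assumed sampling rate in Hz
f_line = 50.0   # mains frequency (use 60.0 where applicable)
t = np.arange(0, 4.0, 1.0 / fs)

rng = np.random.default_rng(0)
# Toy "EEG": a 10 Hz rhythm plus weak broadband noise (illustrative only)
eeg = np.sin(2 * np.pi * 10.0 * t) + 0.1 * rng.standard_normal(t.size)
contaminated = eeg + 2.0 * np.sin(2 * np.pi * f_line * t)

# Second-order IIR notch; Q = f_line / bandwidth controls how narrow the notch is
b, a = signal.iirnotch(w0=f_line, Q=30.0, fs=fs)
# filtfilt runs the filter forward and backward, so the result has no phase shift
cleaned = signal.filtfilt(b, a, contaminated)

def band_power(x, f_lo, f_hi):
    """Sum of the Welch PSD estimate between f_lo and f_hi (inclusive)."""
    freqs, psd = signal.welch(x, fs=fs, nperseg=512)
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    return psd[mask].sum()

line_ratio = band_power(cleaned, 48.0, 52.0) / band_power(contaminated, 48.0, 52.0)
alpha_ratio = band_power(cleaned, 8.0, 12.0) / band_power(contaminated, 8.0, 12.0)
print(f"power kept near mains: {line_ratio:.4f}, power kept at 8-12 Hz: {alpha_ratio:.4f}")
```

A notch of this kind only suits steady, narrowband interference. Broadband contaminants such as EMG overlap the neural spectrum and call for the methods discussed in the rest of this review.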

The specific features of artifacts in wearable EEG differ from those in traditional lab-based systems due to dry electrodes, reduced scalp coverage, and significant subject mobility [1]. For instance, whole-body movements like running produce motion artifacts that contaminate the EEG and degrade subsequent signal processing steps such as Independent Component Analysis (ICA) decomposition [3]. Furthermore, the reduced number of channels in wearable systems often limits the effectiveness of standard artifact rejection techniques that rely on source separation methods, such as Principal Component Analysis (PCA) and ICA [1].

Quantitative Comparison of Artifact Removal Techniques

A variety of algorithms have been developed to address the challenge of artifact contamination. The performance of these methods varies significantly depending on the artifact type, recording context (e.g., static vs. mobile), and the neural signal of interest. The following tables summarize the quantitative performance of several state-of-the-art techniques as reported in recent experimental studies.

Table 1: Performance Comparison on Semi-Synthetic Data (EMG & EOG Artifacts) [2]

| Model | SNR (dB) | CC | RRMSEt | RRMSEf |

|---|---|---|---|---|

| CLEnet (Proposed) | 11.498 | 0.925 | 0.300 | 0.319 |

| DuoCL | 10.912 | 0.901 | 0.325 | 0.334 |

| NovelCNN | 10.345 | 0.885 | 0.355 | 0.351 |

| 1D-ResCNN | 9.987 | 0.870 | 0.371 | 0.363 |

Table 2: Performance in Motion Artifact Removal During Running (Flanker Task) [3]

| Preprocessing Method | ICA Dipolarity | Power at Gait Freq. | Recovery of P300 Effect |

|---|---|---|---|

| iCanClean (w/ pseudo-reference) | High | Significantly Reduced | Yes |

| Artifact Subspace Reconstruction (ASR) | High | Significantly Reduced | Yes (Weaker) |

| No Preprocessing / ICA alone | Low | High | No |

Table 3: Performance by Stimulation Type in tES Artifact Removal [4]

| Stimulation Type | Best Performing Model | Key Metric (RRMSE) |

|---|---|---|

| tDCS (transcranial Direct Current Stimulation) | Complex CNN | Lowest Temporal & Spectral Error |

| tACS (transcranial Alternating Current Stimulation) | M4 (State Space Model) | Lowest Temporal & Spectral Error |

| tRNS (transcranial Random Noise Stimulation) | M4 (State Space Model) | Lowest Temporal & Spectral Error |

Experimental Protocols for Key Artifact Removal Methods

Protocol: Motion Artifact Removal with iCanClean and ASR

This protocol is designed for removing motion artifacts from EEG data collected during locomotion, such as running, based on a comparative study [3].

1. Data Acquisition:

- Record EEG from participants performing a dynamic task (e.g., an adapted Flanker task) during both static standing and dynamic jogging conditions.

- Use a mobile EEG system. If available, use dual-layer sensors where one layer contacts the scalp and a second, mechanically coupled layer records only motion-induced noise.

2. Signal Preprocessing:

- For iCanClean: If dual-layer noise sensors are unavailable, create pseudo-reference noise signals by applying a temporary notch filter (e.g., below 3 Hz) to the raw EEG to isolate noise subspaces.

- Set the canonical correlation analysis (CCA) parameters to an R² threshold of 0.65 and a sliding window of 4 seconds to identify and subtract noise subspaces correlated with the pseudo-reference [3].

- For Artifact Subspace Reconstruction (ASR): Calculate the root mean square (RMS) of 1-second sliding windows of the continuous EEG. Use a condensed Gaussian distribution to convert RMS values to z-scores. Define the calibration reference data as segments where z-scores fall within -3.5 to 5.0 for at least 92.5% of electrodes. Apply a k-threshold between 10 and 30 during cleaning to avoid "over-cleaning" [3].

3. Validation & Analysis:

- Perform Independent Component Analysis (ICA) and evaluate component dipolarity. A higher number of dipolar brain components indicates better decomposition quality.

- Compute spectral power at the step frequency and its harmonics. Effective cleaning shows significant power reduction at these frequencies.

- For event-related potential (ERP) studies, check for the recovery of expected components (e.g., P300 congruency effect) in the dynamic condition that match those in the static condition.
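The calibration-data selection described for ASR in step 2 can be sketched as follows. This is a simplified illustration under stated assumptions, not the procedure used in [3]: windows are non-overlapping rather than sliding, and a robust z-score (median and scaled median absolute deviation) stands in for the Gaussian fit described in the protocol. The function name and the simulated data are hypothetical.

```python
import numpy as np

def select_calibration_windows(eeg, fs, win_sec=1.0, z_lo=-3.5, z_hi=5.0, min_frac=0.925):
    """Return a boolean mask over windows that look clean enough for calibration.

    eeg: array of shape (n_channels, n_samples).
    A window is kept when at least `min_frac` of channels have an RMS z-score
    inside [z_lo, z_hi].
    """
    win = int(round(win_sec * fs))
    n_win = eeg.shape[1] // win
    # Cut into non-overlapping windows: (n_channels, n_win, win)
    segs = eeg[:, : n_win * win].reshape(eeg.shape[0], n_win, win)
    rms = np.sqrt(np.mean(segs ** 2, axis=2))
    # Robust z-score per channel; an assumption standing in for the distribution fit
    med = np.median(rms, axis=1, keepdims=True)
    mad = 1.4826 * np.median(np.abs(rms - med), axis=1, keepdims=True)
    z = (rms - med) / mad
    within = (z >= z_lo) & (z <= z_hi)
    return within.mean(axis=0) >= min_frac

# Simulated 8-channel recording, 60 s at 100 Hz, with a large burst in seconds 10-12
rng = np.random.default_rng(0)
fs = 100
eeg = rng.standard_normal((8, 60 * fs))
eeg[:, 10 * fs : 12 * fs] *= 20.0

mask = select_calibration_windows(eeg, fs)
print(f"{mask.sum()} of {mask.size} windows kept for calibration")
```

The asymmetric z-score range reflects the fact that unusually large RMS values are far more likely to be artifacts than unusually small ones. The k-threshold used during cleaning is a separate parameter and is not modeled here.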

Protocol: Deep Learning for General Artifact Removal with CLEnet

This protocol outlines the training and evaluation of the CLEnet model for removing various artifacts from multi-channel EEG data [2].

1. Dataset Preparation:

- Use semi-synthetic datasets created by adding known artifacts (e.g., EOG, EMG, ECG) to clean EEG recordings. This provides a ground truth for supervised learning.

- For real-world validation, use a dedicated multi-channel EEG dataset containing unknown artifacts collected during cognitive tasks (e.g., a 2-back task).

2. Model Architecture & Training:

- Implement the CLEnet architecture, which consists of:

- A Dual-Branch CNN with kernels of different scales to extract morphological features from the EEG.

- An Improved EMA-1D (One-Dimensional Efficient Multi-Scale Attention) module embedded in the CNN to enhance temporal feature preservation.

- An LSTM (Long Short-Term Memory) network following the CNN to capture the temporal dependencies of genuine EEG.

- Train the model in an end-to-end, supervised manner using Mean Squared Error (MSE) as the loss function to minimize the difference between the output and the clean ground-truth EEG.

3. Model Evaluation:

- Evaluate performance using multiple metrics on a held-out test set:

- Signal-to-Noise Ratio (SNR) and Correlation Coefficient (CC): Higher values are better.

- Relative Root Mean Square Error in the temporal (RRMSEt) and spectral (RRMSEf) domains: Lower values are better.

- Conduct ablation studies by removing the EMA-1D module to confirm its contribution to model performance.
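The four evaluation metrics listed in step 3 can be computed as in the sketch below. It follows the definitions commonly used with semi-synthetic denoising benchmarks. The Welch settings used for the spectral error and the toy signals are assumptions, and the exact formulas in [2] may differ in detail.

```python
import numpy as np
from scipy import signal

def rms(x):
    return np.sqrt(np.mean(np.square(x)))

def snr_db(clean, denoised):
    """Signal-to-noise ratio in dB, treating (clean - denoised) as residual noise."""
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum((clean - denoised) ** 2))

def cc(clean, denoised):
    """Pearson correlation coefficient between ground truth and output."""
    return np.corrcoef(clean, denoised)[0, 1]

def rrmse_t(clean, denoised):
    """Relative RMSE in the time domain."""
    return rms(denoised - clean) / rms(clean)

def rrmse_f(clean, denoised, fs, nperseg=256):
    """Relative RMSE between power spectral densities (Welch estimate)."""
    _, psd_clean = signal.welch(clean, fs=fs, nperseg=nperseg)
    _, psd_den = signal.welch(denoised, fs=fs, nperseg=nperseg)
    return rms(psd_den - psd_clean) / rms(psd_clean)

# Toy check: the same ground truth with light vs. heavy residual noise
fs = 256.0
t = np.arange(0, 4.0, 1.0 / fs)
rng = np.random.default_rng(0)
clean = np.sin(2 * np.pi * 10.0 * t)
good = clean + 0.1 * rng.standard_normal(t.size)
bad = clean + 0.5 * rng.standard_normal(t.size)

for name, est in (("light residual", good), ("heavy residual", bad)):
    print(f"{name}: SNR={snr_db(clean, est):.2f} dB, CC={cc(clean, est):.3f}, "
          f"RRMSEt={rrmse_t(clean, est):.3f}, RRMSEf={rrmse_f(clean, est, fs):.3f}")
```

All four metrics require a clean ground truth, which is why they are reported on semi-synthetic data rather than on real recordings with unknown artifacts.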

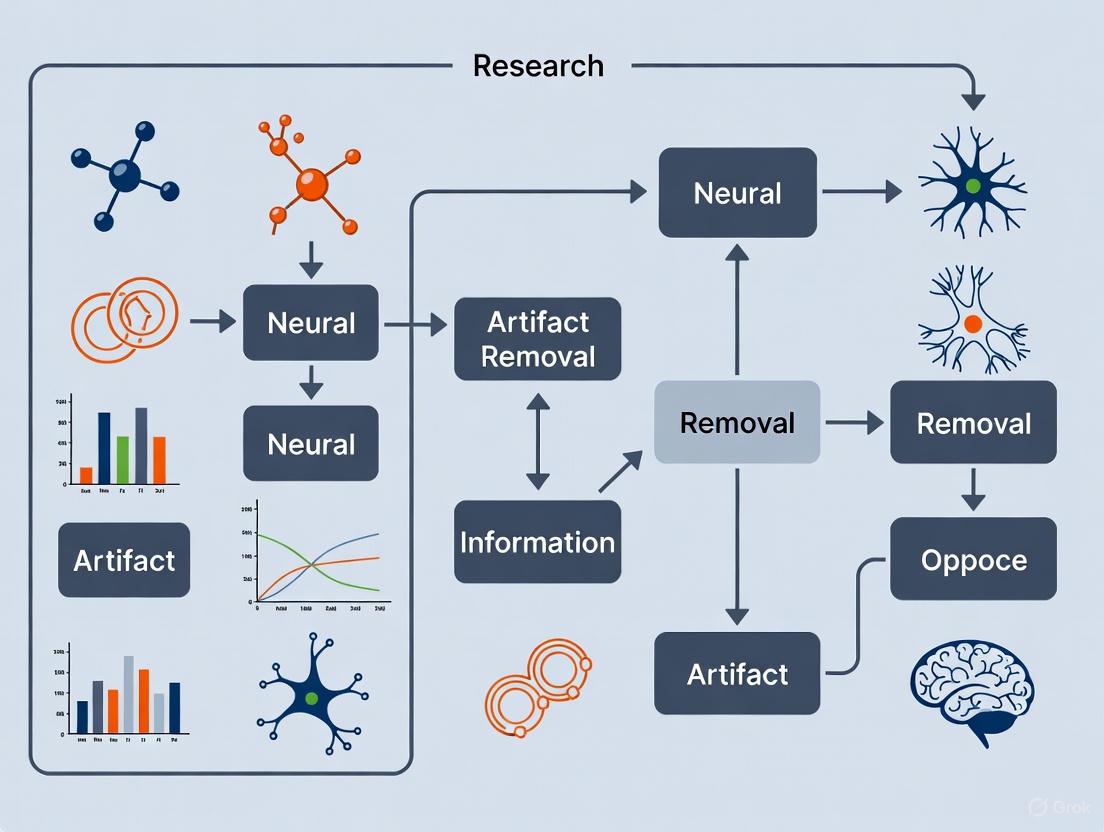

Signaling Pathways and Workflows

The following diagram illustrates the high-level workflow for processing neural signals, from acquisition to clean data, highlighting key decision points for artifact management.

This table details key computational tools, algorithms, and data resources essential for conducting rigorous artifact removal research.

Table: Key Research Reagents and Solutions for Artifact Removal

| Tool/Resource Name | Type | Primary Function | Application Context |

|---|---|---|---|

| ICLabel [3] | Software Plugin (EEGLAB) | Automates classification of ICA components (brain, eye, muscle, etc.). | Standard ICA-based cleaning pipelines for lab EEG. |

| Artifact Subspace Reconstruction (ASR) [3] | Algorithm | Removes high-amplitude, non-stationary artifacts using a sliding-window PCA approach. | Preprocessing for mobile EEG, motion artifact removal. |

| iCanClean [3] | Algorithm & Framework | Uses CCA with noise references (real or pseudo) to subtract motion artifact subspaces. | High-motion scenarios like walking or running; requires noise reference. |

| CLEnet [2] | Deep Learning Model | End-to-end removal of multiple artifact types using dual-scale CNN, LSTM, and attention. | Multi-channel EEG with mixed/unknown artifacts; no need for manual intervention. |

| EEGdenoiseNet [2] | Benchmark Dataset | Provides semi-synthetic data with clean EEG and added EOG/EMG artifacts. | Training, benchmarking, and comparative evaluation of denoising algorithms. |

| State Space Models (SSM) [4] | Algorithmic Framework | Excels at modeling and removing complex, structured noise like tACS and tRNS artifacts. | Cleaning EEG recorded during transcranial electrical stimulation (tES). |

| SpyKing / SNNs [5] | Framework & Model | Implements Spiking Neural Networks for energy-efficient, potentially more private, computation. | Emerging approach for secure, low-power neural data processing. |

The accurate extraction and preservation of neural information are fundamental to advancements in neuroscience, brain-computer interfaces (BCIs), and neuropharmaceutical development. Neural signals, which carry the brain's functional information, are invariably contaminated by various artifacts and noise during acquisition. The core objective of neural signal processing is therefore to remove these contaminants while maximally preserving the integrity of the underlying neural data. This balance is critical; over-aggressive filtering can discard vital neural information, whereas insufficient processing leaves artifacts that obscure true brain activity. As neural interfacing technologies evolve towards higher channel counts, exceeding thousands of electrodes, the development of efficient, real-time signal processing techniques that prioritize neural information preservation has become a central challenge in the field [6]. This guide provides a comparative analysis of current artifact removal techniques, evaluating their performance based on their efficacy in preserving neural information across different experimental contexts.

Neural Signals and the Contamination Challenge

Neural signals comprise several components, each with distinct characteristics and informational value. Action potentials (spikes) are rapid, all-or-none electrochemical impulses from individual neurons, typically lasting 1-2 ms with amplitudes ranging from tens to hundreds of microvolts. These are a primary source of information for prosthetic and rehabilitation applications. Local Field Potentials (LFPs) represent the low-frequency components (typically <300 Hz) resulting from the aggregated synaptic activity of neuronal populations. While sometimes informative, LFPs are often filtered out when the focus is on single-unit activity [6].
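The frequency split between LFPs and spiking activity described above can be illustrated with a pair of zero-phase Butterworth filters. This is a minimal sketch: the 30 kHz sampling rate, the filter order, and the toy sinusoids standing in for each band are assumptions, and the 300 Hz and 6 kHz band edges follow the ranges quoted in this section.

```python
import numpy as np
from scipy import signal

fs = 30_000.0  # assumed sampling rate for an intracortical recording, in Hz

# Butterworth filters in second-order-section form for numerical stability
sos_lfp = signal.butter(4, 300.0, btype="lowpass", fs=fs, output="sos")
sos_spk = signal.butter(4, [300.0, 6000.0], btype="bandpass", fs=fs, output="sos")

def split_bands(raw):
    """Return (lfp, spike_band) from a 1-D raw trace using zero-phase filtering."""
    lfp = signal.sosfiltfilt(sos_lfp, raw)
    spike_band = signal.sosfiltfilt(sos_spk, raw)
    return lfp, spike_band

# Toy trace: a slow 10 Hz component plus a 1 kHz component standing in for spike energy
t = np.arange(0, 1.0, 1.0 / fs)
slow = 200.0 * np.sin(2 * np.pi * 10.0 * t)    # microvolts
fast = 50.0 * np.sin(2 * np.pi * 1000.0 * t)   # microvolts
lfp, spike_band = split_bands(slow + fast)

print(f"corr(lfp, slow) = {np.corrcoef(lfp, slow)[0, 1]:.4f}")
print(f"corr(spike_band, fast) = {np.corrcoef(spike_band, fast)[0, 1]:.4f}")
```

Real spikes are brief transients rather than sinusoids, so this only demonstrates the band separation itself, not spike detection or sorting.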

These signals are susceptible to contamination from various sources:

- Physiological Artifacts: Include ocular artifacts (eye blinks and movements), muscle activity (EMG), and cardiac activity (ECG and pulse artifacts). These are particularly challenging as their frequency spectra often overlap with neural signals of interest [7].

- Environmental Artifacts: Arise from electromagnetic interference, faulty electrodes, or cable movements [7].

- Stimulation Artifacts: In closed-loop systems or during transcranial Electrical Stimulation (tES), the stimulation signal itself creates massive artifacts that can swamp native neural activity [4].

The following table summarizes the key signal types and their contaminants:

Table 1: Characteristics of Neural Signals and Common Artifacts

| Signal/Artifact Type | Frequency Range | Amplitude Range | Origin | Informational Value |

|---|---|---|---|---|

| Action Potentials | 300 Hz - 6 kHz | 50 - 500 μV | Firing of individual neurons | High; encodes neural computation |

| Local Field Potentials (LFP) | <300 Hz | 100 - 1000 μV | Aggregate synaptic activity | Context-dependent; network-level info |

| Ocular Artifact | 0 - 20 Hz | Often >1000 μV | Eye movements and blinks | Contaminant |

| Muscle Artifact (EMG) | 0 - >200 Hz | Highly variable | Head and neck muscle activity | Contaminant |

| Stimulation Artifact (tES) | Stimulation frequency | Can saturate amplifiers | Transcranial Electrical Stimulation | Contaminant |

Comparative Analysis of Artifact Removal Techniques

This section objectively compares the performance of major artifact removal methodologies, focusing on their ability to preserve neural information while effectively eliminating contaminants.

Signal Decomposition Techniques

Signal decomposition methods separate neural data into constituent components, allowing for the selective removal of artifactual elements.

Table 2: Comparison of Advanced Signal Decomposition Techniques

| Decomposition Method | Underlying Principle | Effectiveness on Noise | Computational Cost | Key Advantage | Key Limitation | Reported Accuracy |

|---|---|---|---|---|---|---|

| Empirical Mode Decomposition (EMD) | Adaptive, data-driven time-scale separation | High noise sensitivity, mode mixing | Moderate | Data-driven, no pre-defined basis | Susceptible to mode mixing | 94.2% (PQD Classification) [8] |

| Ensemble EMD (EEMD) | EMD over noise ensembles | Reduces mode mixing | High | Robustness to mode mixing | High computational load | 95.1% (PQD Classification) [8] |

| Complete EEMD with Adaptive Noise (CEEMDAN) | Complete reconstruction with adaptive noise | Better noise handling than EEMD | High | Minimal reconstruction error | Complex parameter tuning | 95.8% (PQD Classification) [8] |

| Variational Mode Decomposition (VMD) | Constrained optimization for mode extraction | High noise robustness | Moderate to High | Preserves signal non-stationarity | Requires preset mode number | 99.16% (PQD Classification) [8] |

| State Space Models (SSM) - M4 Network | Multi-modular deep learning architecture | Excels on complex tACS/tRNS artifacts | High (GPU-dependent) | Handles complex, non-linear artifacts | Requires substantial training data | Best for tACS/tRNS (EEG Denoising) [4] |

| Complex CNN | Deep convolutional neural network | Best for tDCS artifacts | High (GPU-dependent) | Learns complex spatial features | Black-box interpretation | Best for tDCS (EEG Denoising) [4] |

Classical vs. Machine Learning-Based Approaches

Traditional and modern approaches offer different trade-offs between interpretability, computational demand, and performance.

Table 3: Classical vs. Machine Learning-Based Removal Techniques

| Technique | Methodology | Best For | Neural Information Preservation | Hardware Efficiency |

|---|---|---|---|---|

| Regression | Subtract artifact estimated from reference channels | Ocular artifacts | Moderate; can remove neural signals | High; simple computation [7] |

| Blind Source Separation (BSS/ICA) | Statistically independent component separation | Muscle, ocular, and cardiac artifacts | High when components accurately classified | Moderate; depends on channel count [7] |

| Wavelet Transform | Multi-resolution time-frequency analysis | Transient artifacts and spikes | High with appropriate thresholding | Moderate [8] |

| Random Forest Classifier | Ensemble machine learning with feature extraction | Classifying multiple disturbance types | High when trained on clean data | Low for training, moderate for inference [8] |

| Deep Learning (CNN, SSM) | End-to-end feature learning and filtering | Complex, non-linear artifacts (e.g., tES) | Very High with proper training | Low for training, variable for inference [4] |
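The regression entry in the table above amounts to a least-squares fit of each EEG channel onto one or more reference channels, followed by subtraction of the fitted part. The sketch below illustrates this with NumPy. The function name, the blink shape, and the propagation weights are hypothetical and chosen only for the demonstration.

```python
import numpy as np

def regress_out(eeg, ref):
    """Subtract the least-squares projection of `eeg` onto `ref`.

    eeg: (n_channels, n_samples); ref: (n_refs, n_samples), e.g. EOG channels.
    Both are mean-centered before fitting.
    """
    ref_c = ref - ref.mean(axis=1, keepdims=True)
    eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
    # Solve ref_c.T @ coeffs ~= eeg_c.T; coeffs has shape (n_refs, n_channels)
    coeffs, *_ = np.linalg.lstsq(ref_c.T, eeg_c.T, rcond=None)
    return eeg_c - (ref_c.T @ coeffs).T

rng = np.random.default_rng(0)
n = 2000
neural = rng.standard_normal((2, n))               # placeholder for true brain activity
eog = np.zeros((1, n))
eog[0, 500:560] = 50.0 * np.hanning(60)            # one blink-like deflection
eeg = neural + np.array([[0.8], [0.3]]) * eog      # assumed propagation weights

cleaned = regress_out(eeg, eog)
residual_corr = abs(np.corrcoef(cleaned[0], eog[0])[0, 1])
print(f"|corr(cleaned, EOG)| = {residual_corr:.2e}")
```

The "Moderate" preservation rating follows from the same mechanism: any neural activity that is also picked up by the reference channel is fitted and subtracted along with the artifact.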

Experimental Protocols and Methodologies

To ensure reproducible comparisons, this section outlines standard experimental protocols for evaluating artifact removal techniques.

Protocol for Benchmarking Decomposition Techniques

Objective: To quantitatively compare the neural information preservation capabilities of EMD, EEMD, CEEMDAN, and VMD when coupled with a classifier.

Dataset Generation:

- Utilize a synthetic benchmark dataset (e.g., IEEE-1159 power quality disturbances, PQDs) comprising multiple signal classes to simulate various neural states and artifacts [8].

- For real-world validation, incorporate field data from a point of common coupling (e.g., with integrated photovoltaic systems to introduce realistic noise) [8].

Signal Processing:

- Apply each decomposition method (EMD, EEMD, CEEMDAN, VMD) to the dataset to generate Intrinsic Mode Functions (IMFs).

- Extract discriminative features (e.g., entropy, statistical moments) from the IMFs.

Classification and Validation:

- Implement a Random Forest Classifier (RFC) with hyperparameter tuning via grid search (optimizing number of trees, depth, features) [8].

- Employ 5-fold cross-validation to ensure robustness and compute confidence intervals for accuracy metrics.

- Perform paired t-tests to determine the statistical significance of performance differences between methods.
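The classification and validation steps above can be sketched with scikit-learn and SciPy. This is an illustrative outline only: the toy two-class signals, the feature sets, and the small hyperparameter grid are assumptions, and two feature sets stand in for the two decomposition methods being compared (no EMD or VMD is performed here).

```python
import numpy as np
from scipy import stats
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold, cross_val_score

rng = np.random.default_rng(0)

def make_signals(n_per_class=60, n_samples=256):
    """Two toy classes: a noisy sinusoid, with or without a short transient burst."""
    t = np.linspace(0.0, 1.0, n_samples, endpoint=False)
    X, y = [], []
    for label in (0, 1):
        for _ in range(n_per_class):
            x = np.sin(2 * np.pi * 8.0 * t) + 0.5 * rng.standard_normal(n_samples)
            if label == 1:
                start = rng.integers(0, n_samples - 32)
                x[start:start + 32] += rng.standard_normal(32)
            X.append(x)
            y.append(label)
    return np.array(X), np.array(y)

def features(X, with_kurtosis):
    """Statistical moments per signal; a stand-in for features extracted from IMFs."""
    cols = [X.var(axis=1), stats.skew(X, axis=1)]
    if with_kurtosis:
        cols.append(stats.kurtosis(X, axis=1))
    return np.column_stack(cols)

X, y = make_signals()
outer_cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
grid = {"n_estimators": [50, 100], "max_depth": [3, None]}

scores = {}
for name, flag in (("moments", False), ("moments+kurtosis", True)):
    # Grid search runs inside each outer fold, so tuning never sees the test split
    search = GridSearchCV(RandomForestClassifier(random_state=0), grid, cv=3)
    scores[name] = cross_val_score(search, features(X, flag), y, cv=outer_cv)
    print(f"{name}: mean accuracy {scores[name].mean():.3f}")

# Paired t-test over the same five folds
t_stat, p_value = stats.ttest_rel(scores["moments+kurtosis"], scores["moments"])
print(f"paired t-test: t={t_stat:.3f}, p={p_value:.3f}")
```

With only five folds the paired t-test has little power, and it returns NaN when both pipelines score identically on every fold, so fold-level scores are worth reporting alongside the p-value.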

Protocol for Deep Learning Model Evaluation

Objective: To benchmark deep learning models against classical methods for removing specific artifact types like tES noise.

Semi-Synthetic Dataset Creation:

- Record clean EEG data in resting state conditions.

- Synthetically generate tES artifacts (tDCS, tACS, tRNS) and add them to the clean EEG to create a ground truth dataset [4].

Model Training and Testing:

- Train multiple models (e.g., Complex CNN, M4 SSM network) and classical methods (e.g., regression, ICA) on the synthetic dataset.

- Test all techniques on held-out data and real tES-contaminated recordings.

Performance Metrics:

- Evaluate using Relative Root Mean Squared Error (RRMSE) in both temporal and spectral domains.

- Compute Correlation Coefficient (CC) between processed signals and the clean ground truth to quantify information preservation [4].
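The semi-synthetic dataset step above relies on mixing a clean recording with a scaled artifact so that the ground truth stays known. A minimal sketch of that mixing, assuming an amplitude-based SNR definition in dB, is shown below. The sinusoidal "tACS-like" artifact and the random placeholder for clean EEG are hypothetical and are not the artifact models used in [4].

```python
import numpy as np

def rms(x):
    return np.sqrt(np.mean(np.square(x)))

def mix_at_snr(clean, artifact, snr_db):
    """Scale `artifact` so that 20*log10(rms(clean) / rms(scaled artifact)) == snr_db.

    Returns the contaminated signal and the scale factor that was applied.
    """
    lam = rms(clean) / (rms(artifact) * 10.0 ** (snr_db / 20.0))
    return clean + lam * artifact, lam

fs = 250.0
t = np.arange(0, 2.0, 1.0 / fs)
rng = np.random.default_rng(0)
clean = rng.standard_normal(t.size)         # placeholder for clean resting-state EEG
tacs_like = np.sin(2 * np.pi * 10.0 * t)    # hypothetical sinusoidal stimulation artifact

noisy, lam = mix_at_snr(clean, tacs_like, snr_db=-10.0)
achieved = 20.0 * np.log10(rms(clean) / rms(noisy - clean))
print(f"scale factor {lam:.3f}, achieved SNR {achieved:.2f} dB")
```

Sweeping `snr_db` over a range of values gives training and test pairs at several contamination levels, each with its clean target preserved for computing RRMSE and CC.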

Diagram 1: Experimental Workflow for Technique Evaluation

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential computational tools and signal processing "reagents" critical for experiments in neural information preservation.

Table 4: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Example Use Case | Preservation Consideration |

|---|---|---|---|

| Microelectrode Arrays | High-density neural signal acquisition | Recording intra-cortical spiking activities | Density impacts spatial resolution; material affects signal-to-noise ratio [6] |

| Synthetic Benchmark Datasets | Controlled algorithm validation | Testing decomposition techniques (e.g., IEEE-1159) | Provides ground truth for quantifying information preservation [8] |

| Semi-Synthetic EEG + Artifact | Validation with known ground truth | Evaluating tES artifact removal | Enables rigorous benchmarking of deep learning models [4] |

| Random Forest Classifier | Machine learning-based signal classification | Classifying power quality disturbances | Hyperparameter tuning prevents overfitting, preserving generalizable info [8] |

| State Space Models (SSMs) | Deep learning for time-series modeling | Removing complex tACS/tRNS artifacts | Architecture designed to model temporal dependencies, preserving signal dynamics [4] |

| Variational Mode Decomposition | Adaptive signal decomposition | Feature extraction for classification | Constrained optimization helps separate noise from signal components [8] |

Diagram 2: Neural Information Preservation Pathway

The optimal technique for neural information preservation depends critically on the specific artifact type, signal characteristics, and application constraints. Based on comparative analysis:

- VMD paired with Random Forest currently offers the highest reported classification accuracy for signal disturbances (99.16% on the PQD classification benchmark), making it suitable for scenarios requiring precise identification of neural states amidst noise [8].

- Deep Learning approaches (Complex CNN, M4 SSM) excel in removing complex, non-linear artifacts such as those induced by transcranial electrical stimulation, outperforming classical methods in these specific domains [4].

- Classical methods (BSS/ICA, Regression) remain valuable for their computational efficiency and interpretability, particularly in resource-constrained implantable devices or for well-understood artifacts like ocular and cardiac contaminants [6] [7].

The selection of an artifact removal strategy must therefore be guided by a triage of the primary contamination source, the computational resources available, and the specific neural information features crucial for the downstream application. Future developments will likely focus on hybrid models that combine the interpretability of classical methods with the power of deep learning, all while maintaining the low-power, real-time operation required for next-generation high-density neural interfaces.

Wearable electroencephalography (EEG) has emerged as a transformative technology for brain monitoring, enabling neuroscientific research and clinical diagnostics to move from highly controlled laboratory settings into real-world environments [9]. This shift is driven by the development of portable, wireless systems that facilitate long-term recording while participants are out of the lab and moving about [9]. Unlike traditional high-density, wet-electrode EEG systems that require stationary subjects in shielded rooms, wearable EEG aims to capture brain activity during natural behaviors, including walking, cycling, and even running [9].

However, this transition presents three interconnected challenges that impact signal quality and the fidelity of neural information: the use of dry electrodes, vulnerability to motion artifacts, and operation with low channel counts. Dry electrodes, while enabling rapid setup and improving user comfort, typically exhibit higher electrode-skin impedance compared to gel-based wet electrodes, making them more susceptible to noise [10] [9]. Motion artifacts pose a significant threat to data integrity, as the amplitude of movement-induced noise can be an order of magnitude greater than the neural signals of interest [11]. Furthermore, the shift to low-density systems (often with 16 or fewer channels) limits the effectiveness of classical artifact removal techniques like Independent Component Analysis (ICA), which rely on high spatial resolution to separate neural activity from noise [12]. This article examines these unique challenges, evaluates the performance of current solutions, and discusses their implications for preserving critical neural information in real-world settings.

The Core Triad of Challenges

Dry Electrodes: A Trade-off Between Practicality and Signal Quality

Dry electrode technology eliminates the need for skin abrasion and conductive gel, making EEG systems suitable for user-applied, long-term home monitoring [13]. From a practical standpoint, setup time for dry systems averages just 4.02 minutes compared to 6.36 minutes for wet electrode systems, and comfort ratings remain acceptable during extended 4-8 hour recordings [13].

However, the primary technical challenge is the higher and more unstable electrode-skin impedance. To combat this, active electrodes have been developed. For instance, QUASAR’s dry electrode EEG sensors incorporate ultra-high impedance amplifiers (>47 GΩ) capable of handling contact impedances up to 1-2 MΩ, thereby producing signal quality comparable to wet electrodes [13]. Similarly, Naox ear-EEG devices use dry-contact electrodes with active electrode technology featuring 13 TΩ input impedance to minimize noise despite higher electrode-skin impedance (approximately 300 kΩ) [13].
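One way to see why a very high amplifier input impedance tolerates a high contact impedance is to treat the electrode and amplifier as a simple resistive voltage divider. The sketch below uses the impedance figures quoted above. The purely resistive model is a simplification that ignores capacitance and frequency dependence, and the 10 MΩ comparison case is hypothetical.

```python
def retained_fraction(z_contact_ohm, z_input_ohm):
    """Fraction of the source voltage that appears at the amplifier input
    for a resistive divider formed by contact and input impedance."""
    return z_input_ohm / (z_input_ohm + z_contact_ohm)

cases = {
    "2 MOhm contact, 47 GOhm input": (2e6, 47e9),
    "300 kOhm contact, 13 TOhm input": (300e3, 13e12),
    "2 MOhm contact, 10 MOhm input (hypothetical)": (2e6, 10e6),
}

for label, (z_contact, z_input) in cases.items():
    print(f"{label}: {retained_fraction(z_contact, z_input):.6f}")
```

With input impedance in the gigaohm range, the attenuation from a megaohm-level contact is negligible, and fluctuations in contact impedance change the recorded amplitude very little. In the hypothetical 10 MΩ case roughly one sixth of the signal is lost, and any change in contact impedance translates directly into an amplitude change.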

Table 1: Performance Comparison of Dry vs. Active Dry Electrodes

| Electrode Type | Electrode-Skin Impedance | Amplifier Input Impedance | Key Advantages | Primary Limitations |

|---|---|---|---|---|

| Passive Dry | High (≈1-2 MΩ) | Not Specified | Rapid setup, no gel, user-friendly | High motion artifact susceptibility, unstable contact |

| Active Dry [10] [13] | High (≈300 kΩ - 2 MΩ) | Very High (>47 GΩ to 13 TΩ) | Stabilizes signal, handles high impedance, motion-resistant | Higher power consumption, more complex hardware |

| Passive Wet [10] | Low (≈5-10 kΩ) | Not Specified | Stable low-impedance contact, gold-standard signal quality | Gel dries over time, long setup, skin preparation needed |

Experimental data underscore the importance of hardware-level solutions. A 2023 study directly compared passive dry, active dry, and passive wet electrodes during treadmill walking. Using the passive wet system as the benchmark, it found that only the active-electrode design largely corrected movement artifacts in dry-electrode recordings [10]. This finding suggests that a lightweight, minimally obtrusive dry EEG headset should include at least an active-electrode design to remain valid in real-world scenarios [10].

Motion Artifacts: The Dominant Noise Source

Motion artifacts are a critical challenge because their amplitude can be at least ten times greater than that of the underlying bio-signals, severely obscuring neural information [11]. These artifacts arise from several mechanisms, including electrode-tissue interface fluctuations, cable movement, and the movement of the electrodes themselves through ambient electromagnetic fields [9].

Motion artifact mitigation strategies can be categorized into hardware-based and software-based approaches:

- Hardware Solutions: These focus on improving the physical interface and electronics. Patented mechanical isolation designs in dry electrodes stabilize them for artifact-free recordings during movement [13]. Furthermore, novel electrode designs can record motion noise in addition to the EEG signal components, allowing this noise to be removed by software filtering [9].

- Software Solutions: Signal processing techniques are widely used. Artifact Subspace Reconstruction (ASR) is an adaptive method that can identify and remove components of the signal that exceed a statistical threshold of typical brain activity [10]. Independent Component Analysis (ICA) is a blind source separation technique that can isolate and discard artifact-laden components [12]. Deep learning approaches are also emerging as powerful tools for managing muscular and motion artifacts, with promising applications in real-time settings [12].

The efficacy of these software methods, however, is highly dependent on the number of EEG channels. A 2023 study demonstrated that the performance of the ASR pipeline was substantially compromised by limited electrodes [10]. This creates a particular vulnerability for low-density wearable systems, where the reduced spatial information makes it difficult to reliably distinguish brain signals from noise.

Low Channel Counts: Limiting Advanced Processing

The drive for user-friendly, wearable EEG has resulted in systems with drastically reduced channel counts, often below sixteen [12]. While this improves ease of use, affordability, and setup speed, it imposes significant constraints on data analysis.

The primary limitation is the impairment of source separation methods like ICA and Principal Component Analysis (PCA). These algorithms rely on having a sufficient number of spatial samples (i.e., electrodes) to disentangle the mixture of neural and non-neural sources that compose the scalp EEG signal [12]. With low-density setups, there are fewer channels than underlying sources, making it impossible to cleanly separate them. This bottleneck is now seen as a main hurdle to the wider take-up of wearable EEG [9].
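The channel-count limit can be made concrete with a small linear-algebra experiment. In the sketch below, a known mixing matrix maps a fixed set of sources onto a varying number of channels, and the pseudo-inverse of that matrix is used as an idealized unmixing step. This is a toy model under stated assumptions: the number of sources, the Laplace-distributed source signals, and the random mixing matrices are hypothetical, and no ICA algorithm is actually run.

```python
import numpy as np

rng = np.random.default_rng(0)
n_sources, n_samples = 6, 5000
sources = rng.laplace(size=(n_sources, n_samples))  # non-Gaussian toy sources

def ideal_unmixing_error(n_channels):
    """Mix the sources into n_channels, unmix with the pseudo-inverse of the
    true mixing matrix, and return (matrix rank, relative reconstruction error)."""
    mixing = rng.standard_normal((n_channels, n_sources))
    recorded = mixing @ sources
    recovered = np.linalg.pinv(mixing) @ recorded
    err = np.linalg.norm(recovered - sources) / np.linalg.norm(sources)
    return np.linalg.matrix_rank(mixing), err

for n_channels in (2, 4, 6, 8):
    rank, err = ideal_unmixing_error(n_channels)
    print(f"{n_channels} channels: rank {rank}, relative error {err:.3f}")
```

Even with perfect knowledge of the mixing, the sources cannot be recovered once there are fewer channels than sources, because the recording only spans as many dimensions as there are channels. A blind method such as ICA faces the same limit with less information available.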

Despite this, research has shown that even minimal systems can be effective for specific, well-defined applications. For example, a two-channel forehead-mounted mEEG system was able to capture and quantify the N200 and P300 event-related potential components during a visual oddball task [14]. Furthermore, a wearable reduced-channel system using only four sensors to create a 10-channel montage demonstrated clinical potential by allowing epileptologists to accurately identify patients experiencing electrographic seizures with 90% sensitivity and 90% specificity [15]. These findings confirm that while low-channel systems are not suitable for all research questions, they can provide reliable data for targeted applications.

Experimental Data & Performance Comparison

To illustrate the performance trade-offs in real-world scenarios, the following table summarizes quantitative findings from key studies that have directly addressed these challenges.

Table 2: Experimental Performance of Wearable EEG Systems Across Challenges

| Study / System | Primary Challenge Addressed | Experimental Protocol | Key Performance Metrics & Results |

|---|---|---|---|

| Yang et al. (2023) [10] | Dry Electrodes & Motion | 18 subjects performed an oddball task during treadmill walking (1-2 km/h). Simultaneous EEG with passive/active dry and passive wet electrodes. | Active dry electrodes rectified movement artifacts compared to passive dry. ASR performance was substantially compromised by low electrode count. |

| Frankel et al. (2021) [15] | Low Channel Count | 20 subjects wore a 4-sensor wireless system (10-channel montage) alongside traditional video-EEG in an EMU for up to 5 days. | Blinded review detected people with seizures with 90% sensitivity, 90% specificity. Individual seizure detection: 61% sensitivity, 0.002 false positives/hour. |

| Krigolson et al. (2025) [14] | Low Channel Count | Participants performed a visual oddball task while EEG was recorded with a two-channel forehead-mounted system ("Patch"). | The system successfully captured and quantified N200 and P300 ERP components from a minimal forehead array, confirming reliability for targeted ERP paradigms. |

To ensure the validity of findings from wearable EEG studies, rigorous experimental protocols and data processing pipelines are essential. Below is a detailed description of a typical methodology used to evaluate systems under realistic conditions.

- 1. Participant & Task Design: A cohort of participants (e.g., n=18-35) is recruited. They perform a standardized neurophysiological paradigm, such as a visual or auditory oddball task, which reliably evokes well-known Event-Related Potentials (ERPs) like the P300 [10] [16] [14].

- 2. Data Acquisition: EEG is recorded simultaneously using the wearable system under test and a high-density, laboratory-grade system as a benchmark [15]. Critically, data collection occurs during various motion conditions, such as treadmill walking at different speeds (e.g., 1-2 KPH), running, or cycling [10] [9].

- 3. Preprocessing: Data is processed using a standard pipeline in tools like EEGLAB or Brainstorm. This includes:

- 4. Artifact Processing & Analysis: The core analysis involves applying and comparing artifact removal methods, such as ASR or ICA, on the wearable system data [10] [12]. The cleaned data is then compared to the laboratory-grade benchmark using quantitative metrics like Signal-to-Noise Ratio (SNR), component amplitude and latency, and, for clinical studies, sensitivity/specificity for detecting pathological events [10] [15].

This workflow is summarized in the following diagram, which outlines the logical sequence from participant preparation to final quantitative comparison.
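The clinical metrics named in step 4 can be made concrete with a short calculation. The sketch below computes sensitivity and specificity from binary reviewer decisions; the function name and the labels are hypothetical and are not data from [15].

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels, where 1 = event present."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity


# Hypothetical example: 10 subjects with seizures and 10 without.
truth = [1] * 10 + [0] * 10
review = [1] * 9 + [0] + [0] * 9 + [1]  # one miss and one false alarm
sens, spec = sensitivity_specificity(truth, review)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}")
```

Sensitivity here is the fraction of true cases that the reviewer flags, and specificity is the fraction of non-cases that the reviewer correctly leaves unflagged.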

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Tools for Wearable EEG Research & Development

| Tool / Technology | Function | Example Use-Case in Research |

|---|---|---|

| Active Dry Electrodes [10] [13] | Stabilize high-impedance connection; reduce motion artifacts at the source. | Essential for obtaining usable EEG data during subject movement in dry-electrode systems. |

| Artifact Subspace Reconstruction (ASR) [10] [12] | Adaptive, online-capable method for removing high-amplitude, non-stationary artifacts. | Cleaning data in real-time BCI applications or during offline analysis of motion-corrupted segments. |

| Independent Component Analysis (ICA) [12] [16] | Blind source separation to isolate and remove artifact components (ocular, muscular). | Standard post-processing step for removing stereotyped artifacts after data collection. |

| Multivariate Pattern Analysis (MVPA) [16] | Machine learning technique to decode neural representations from high-dimensional EEG data. | Used to explore neural mechanisms in naturalistic paradigms, even with complex stimuli. |

| In-Ear EEG Platforms [13] [11] | Discreet form factor for recording from the ear canal; socially discreet monitoring. | Enables long-term, user-friendly brain monitoring in ecological settings. |

| fNIRS Integration [13] [17] | Measures blood oxygenation changes in the cortex; complements EEG with metabolic info. | Provides a multimodal picture of brain activity; more tolerant to movement than EEG. |

The unique challenges of wearable EEG (dry electrodes, motion artifacts, and low channel counts) are interconnected problems that require a systems-level approach. No single solution is sufficient; rather, preserving neural information demands a combination of hardware innovations, sophisticated software processing, and a clear understanding of the limitations imposed by electrode count. Active electrodes provide a foundational hardware solution for stabilizing the signal at the source [10]. For artifact removal, techniques like ASR and deep learning show promise, but their efficacy is inherently limited in low-channel systems, and the same low channel counts constrain the use of powerful spatial filters like ICA [10] [12].

The future of wearable EEG lies in the intelligent integration of hybrid technologies. Combining EEG with motion-tolerant modalities like fNIRS can provide a more robust, multimodal picture of brain function [17]. Furthermore, the development of advanced, channel-count-adaptive algorithms and the continued miniaturization of high-impedance electronics will be crucial. By acknowledging these challenges and leveraging the appropriate toolkit, researchers can effectively harness the power of wearable EEG to unlock the brain's mysteries in the dynamic environments of real life.

The integrity of neural signal data is a foundational pillar in neuroscience research and central nervous system (CNS) drug development. Artifacts—unwanted signals from non-neural sources—corrupt electrophysiological data, potentially leading to flawed interpretations and costly missteps in the development of new therapies. This guide provides an objective comparison of modern artifact removal techniques, detailing their experimental protocols and quantifying their performance to inform selection for high-stakes neurological research.

The Critical Role of Clean Neural Data in Drug Development

The global CNS therapeutics market is projected to grow to $410 million by 2035, fueled by the urgent need for treatments for conditions like Alzheimer's, Parkinson's, and multiple sclerosis [18]. Success in this high-failure-rate sector depends on reliable data. Artifacts in neural recordings introduce significant noise, obscuring true biomarkers and compromising the assessment of a drug's effect on brain activity.

The emergence of wearable EEG for real-world brain monitoring in clinical trials introduces new artifact challenges from motion, dry electrodes, and environmental noise [12]. Furthermore, techniques like Transcranial Electrical Stimulation (tES), used both as a therapeutic intervention and a research tool, generate massive artifacts that can swamp genuine neural signals [4]. Effective artifact removal is therefore not merely a data processing step but a crucial safeguard for ensuring the validity of preclinical and clinical findings.

Comparative Analysis of Neural Artifact Removal Techniques

Performance Benchmarking

Different artifact removal methods exhibit distinct strengths and weaknesses depending on the artifact type, recording modality, and data characteristics. The table below summarizes the quantitative performance of several advanced techniques.

Table 1: Performance Comparison of Modern Artifact Removal Algorithms

| Algorithm | Core Methodology | Best For Artifact Type | Reported Performance Metrics | Key Limitations |

|---|---|---|---|---|

| Complex CNN [4] | Deep Learning: Convolutional Neural Network | tDCS artifacts | Highest performance for tDCS (specific metrics not provided) [4] | Performance is stimulation-type dependent [4] |

| M4 Network [4] | Deep Learning: State Space Models (SSM) | tACS & tRNS artifacts | Highest performance for tACS and tRNS [4] | Performance is stimulation-type dependent [4] |

| CLEnet [2] | Deep Learning: Dual-scale CNN + LSTM + EMA-1D attention | Multi-artifact (EMG, EOG, ECG) & unknown artifacts | SNR: 11.498 dB; CC: 0.925; RRMSEt: 0.300; RRMSEf: 0.319 (Mixed artifacts) [2] | Complex architecture may increase computational cost [2] |

| ICA/PCA [12] [2] | Blind Source Separation | Ocular & muscular artifacts (in high-density EEG) | Widely applied but requires manual component inspection [2] | Requires many channels; struggles with low-density wearable EEG [12] |

| Wavelet Transform [12] | Signal Decomposition | Ocular & muscular artifacts | Among most frequently used techniques [12] | Requires expert knowledge for threshold setting [12] |

| ASR [12] | Statistical Reconstruction | Ocular, movement, & instrumental artifacts | — | — |

Key to Metrics: SNR (Signal-to-Noise Ratio) - higher is better; CC (Correlation Coefficient) - higher is better, max is 1.0; RRMSE (Relative Root Mean Squared Error) - lower is better, reported in the temporal domain (RRMSEt) and the frequency domain (RRMSEf).

Experimental Protocols for Benchmarking

The quantitative results in Table 1 were derived from rigorous, structured experiments. The following workflow generalizes the methodology used to evaluate and compare different artifact removal pipelines.

Diagram 1: Artifact Removal Evaluation Workflow

Detailed Protocol Steps:

Data Preparation (Semi-Synthetic Dataset Creation): This controlled approach is used in studies like [4] and [2].

- Clean EEG: Obtain artifact-free EEG recordings from public databases or controlled lab settings.

- Artifact Signals: Record pure artifact signals (e.g., EOG from eye blinks, EMG from jaw clenching) or generate synthetic tES artifacts [4].

- Mixing: Linearly combine clean EEG and artifact signals at known signal-to-noise ratios to create a contaminated dataset where the ground truth (original clean EEG) is known [2].

Algorithm Application: Apply the artifact removal techniques under evaluation (e.g., CLEnet, ICA, wavelet transform) to the contaminated semi-synthetic dataset.

Ground Truth Comparison: Compare the output of each algorithm (the "cleaned" signal) against the original, known-clean EEG signal.

Performance Metric Calculation: Calculate quantitative metrics to evaluate each algorithm's performance [4] [2]:

- Temporal Similarity: Use Correlation Coefficient (CC) and Relative Root Mean Squared Error in temporal domain (RRMSEt).

- Spectral Preservation: Use Relative Root Mean Squared Error in frequency domain (RRMSEf).

- Signal Quality Improvement: Measure the Signal-to-Noise Ratio (SNR).
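The four metrics listed above can be sketched in a few lines of NumPy. This is a minimal illustration, not the exact implementation used in [4] or [2]: the function names are hypothetical, and the spectral metric uses a plain periodogram as an assumed power-spectrum estimate where published work may use a different estimator such as Welch's method.

```python
import numpy as np


def rms(x):
    """Root mean square of a 1-D array."""
    return float(np.sqrt(np.mean(np.square(x))))


def snr_db(clean, denoised):
    """SNR in dB, treating (denoised - clean) as the residual noise."""
    noise = denoised - clean
    return float(10.0 * np.log10(np.sum(clean ** 2) / np.sum(noise ** 2)))


def correlation_coefficient(clean, denoised):
    """Pearson correlation between ground truth and algorithm output."""
    return float(np.corrcoef(clean, denoised)[0, 1])


def rrmse_temporal(clean, denoised):
    """RRMSEt: RMS of the time-domain error relative to RMS of the clean signal."""
    return rms(denoised - clean) / rms(clean)


def power_spectrum(x):
    """Periodogram (assumption: a stand-in for whatever PSD estimate a study uses)."""
    return np.abs(np.fft.rfft(x)) ** 2 / len(x)


def rrmse_spectral(clean, denoised):
    """RRMSEf: same ratio as RRMSEt, computed on power spectra."""
    p_clean, p_out = power_spectrum(clean), power_spectrum(denoised)
    return rms(p_out - p_clean) / rms(p_clean)


# Toy check: a "denoiser" whose only flaw is a 10% gain error.
t = np.arange(500) / 250.0                 # 2 s at an assumed 250 Hz
demo_clean = np.sin(2 * np.pi * 10 * t)
demo_out = 1.1 * demo_clean
print(snr_db(demo_clean, demo_out),        # 20 dB
      correlation_coefficient(demo_clean, demo_out),
      rrmse_temporal(demo_clean, demo_out),   # 0.10
      rrmse_spectral(demo_clean, demo_out))   # 0.21, since power scales by 1.1**2
```

The toy case shows why the metrics are reported together: a pure gain error leaves CC at 1.0 while RRMSEt and RRMSEf both register the distortion.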

The Scientist's Toolkit: Key Reagents & Computational Solutions

Successful implementation of artifact removal pipelines relies on both data and specialized computational tools.

Table 2: Essential Research Resources for Neural Signal Processing

| Item / Solution | Function / Description | Application in Research |

|---|---|---|

| Semi-Synthetic Benchmark Datasets [2] | Public datasets mixing clean EEG with known artifacts (e.g., EOG, EMG). | Provides a controlled ground truth for rigorous algorithm development, testing, and benchmarking. |

| Pre-trained Models (e.g., EMFF-2025) [19] | Neural network potentials trained on large datasets, usable via transfer learning. | Accelerates project setup by providing a foundational model that can be adapted to specific tasks with minimal new data. |

| Independent Component Analysis (ICA) | A blind source separation algorithm that decomposes multi-channel signals into independent components. | Identifies and isolates artifact components (e.g., from eyes, heart) for removal; most effective with high-channel count data [12]. |

| Wavelet Transform Toolboxes | Software libraries (e.g., in MATLAB, Python) for multi-resolution signal analysis. | Used to denoise signals by thresholding wavelet coefficients associated with artifacts [12]. |

| Artifact Subspace Reconstruction (ASR) | A statistical method that identifies and removes high-variance artifact components in multi-channel data. | Particularly useful for handling large-amplitude, transient artifacts like movement and electrode pops in wearable EEG [12]. |

| Digital Biomarkers & Wearables [20] | Sensors (e.g., IMU, EOG) and algorithms for continuous physiological monitoring. | Provides auxiliary data to improve the detection of motion and physiological artifacts in real-world settings [12]. |

Implications for Neurological Disorder Research & Therapy Development

The choice of an artifact removal strategy has direct, tangible consequences for drug development. The following diagram illustrates how this technical decision influences the entire R&D pipeline.

Diagram 2: Impact of Artifact Removal on Drug Development Outcomes

The implications are significant:

Ensuring Biomarker Fidelity: Reliable biomarkers are increasingly the cornerstone of modern CNS trials. The 2025 Alzheimer's drug development pipeline, for example, includes 182 trials, with biomarkers serving as primary outcomes in 27% of them [21]. Artifacts can masquerade as or mask genuine biomarker signals, leading to incorrect patient stratification or failure to detect a drug's biological effect.

Supporting Advanced Modalities: New therapeutic approaches like Antisense Oligonucleotides (ASOs) and stem cell therapies are emerging for CNS disorders [18]. Evaluating their precise mechanisms and effects often relies on sensitive neurophysiological recordings, making data purity paramount.

Enabling Real-World Monitoring: The shift towards wearable EEG for decentralized trials and long-term monitoring in conditions like Parkinson's demands artifact handling strategies that perform outside the controlled lab environment [12] [20]. Techniques that leverage auxiliary sensors and deep learning show promise in meeting this challenge.

Methodological Frontiers: From Traditional Filters to Advanced Deep Learning Architectures

In neural information research, non-invasive techniques like electroencephalography (EEG) provide critical insights into brain function but are frequently contaminated by physiological and environmental artifacts. Preserving the integrity of neural signals during artifact removal is paramount, as the loss of subtle neurophysiological information can compromise analyses in both clinical and research settings, from neuromodulation studies to drug development. Among the numerous available signal processing techniques, Principal Component Analysis (PCA), Independent Component Analysis (ICA), and Wavelet Transform have established themselves as foundational tools. This guide provides an objective comparison of these three traditional techniques, benchmarking their performance in artifact removal, with a specific focus on their efficacy in preserving underlying neural information. The evaluation is grounded in recent experimental data, detailing methodologies and outcomes to inform researchers and scientists in their selection of appropriate processing pipelines.

Theoretical Foundations and Applications

Principal Component Analysis (PCA)

PCA is a linear dimensionality reduction technique that transforms correlated variables into a set of uncorrelated principal components, ordered such that the first few retain most of the variation present in the original dataset [22] [23]. It operates by computing the eigenvectors and eigenvalues of the covariance matrix, identifying the directions of maximum variance in the data [22]. In the context of artifact removal, PCA is effective for separating signals based on their variance, often assuming that artifacts (like ocular movements) contribute a larger variance compared to neural signals. However, a significant limitation is that the resulting principal components are linear combinations of original variables and can lack direct physiological interpretability, making it challenging to relate them to underlying neural processes [24] [23]. Its application is most suitable for scenarios where the artifact is the dominant source of variance in the recorded signal.
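A minimal sketch of variance-based removal follows. It assumes the artifact dominates the variance, exactly the condition described above, and simply discards the highest-variance principal components. The function name, the four-channel layout, and the artifact topography are all hypothetical.

```python
import numpy as np


def pca_remove_top_components(data, n_remove=1):
    """Drop the n_remove highest-variance principal components.

    data: array of shape (n_channels, n_samples).
    Returns the reconstructed data and the eigenvalues in descending order.
    """
    mean = data.mean(axis=1, keepdims=True)
    centered = data - mean
    cov = centered @ centered.T / (centered.shape[1] - 1)
    eigvals, eigvecs = np.linalg.eigh(cov)      # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]           # re-sort to descending variance
    eigvecs = eigvecs[:, order]
    keep = eigvecs[:, n_remove:]                # basis of the retained subspace
    cleaned = keep @ (keep.T @ centered) + mean
    return cleaned, eigvals[order]


rng = np.random.default_rng(0)
n_samples = 2000
neural = rng.standard_normal((4, n_samples))        # stand-in for neural activity
artifact = 20.0 * rng.standard_normal(n_samples)    # illustrative high-variance artifact
topography = np.array([1.0, 0.8, 0.5, 0.2])         # hypothetical channel weights
contaminated = neural + np.outer(topography, artifact)

cleaned, variances = pca_remove_top_components(contaminated, n_remove=1)
err_before = np.sqrt(np.mean((contaminated - neural) ** 2))
err_after = np.sqrt(np.mean((cleaned - neural) ** 2))
print(f"RMS error before: {err_before:.2f}, after: {err_after:.2f}")
```

The residual error after removal is not zero: the discarded component also carries whatever neural activity lies along the same spatial direction. That is the interpretability and information-loss risk noted in the paragraph above.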

Independent Component Analysis (ICA)

ICA is a blind source separation (BSS) technique that decomposes a multivariate signal into statistically independent components [24] [25]. It operates on the assumption that the recorded signal is a linear mixture of independent sources, such as neural activity, eye blinks, and muscle noise. ICA aims to unmix these sources by maximizing the non-Gaussianity of the component distributions [25]. This method is particularly powerful for isolating and removing artifacts like electrooculogram (EOG) from multi-channel EEG data, as these artifacts often originate from independent physiological processes [26]. A key limitation is its requirement for multiple channels to function effectively and its reliance on the statistical independence of sources, which may not always hold in practice, potentially leading to the incomplete separation of neural data and artifacts [25] [26].
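The removal step that follows an ICA decomposition can be sketched independently of the estimation algorithm. The example below assumes an unmixing matrix is already available; to stay deterministic it uses the inverse of a known, hypothetical mixing matrix in place of one estimated by an ICA routine such as FastICA or Infomax.

```python
import numpy as np


def remove_components(data, unmixing, artifact_idx):
    """Zero selected components and back-project to channel space.

    data: (n_channels, n_samples); unmixing: (n_components, n_channels).
    """
    sources = unmixing @ data                 # component activations
    mixing = np.linalg.pinv(unmixing)         # back-projection matrix
    sources[artifact_idx, :] = 0.0            # discard artifact components
    return mixing @ sources


rng = np.random.default_rng(0)
blink = np.zeros(500)
blink[100:120] = 50.0                         # illustrative blink-like pulse
true_sources = np.vstack([rng.standard_normal(500),
                          rng.standard_normal(500),
                          blink])
# Hypothetical mixing matrix; in practice it is unknown and must be estimated.
mixing_true = np.array([[1.0, 0.5, 2.0],
                        [0.6, 1.0, 1.0],
                        [0.3, 0.8, 0.2]])
data = mixing_true @ true_sources
unmixing = np.linalg.inv(mixing_true)         # stands in for an ICA estimate

cleaned = remove_components(data, unmixing, artifact_idx=[2])
expected = mixing_true[:, :2] @ true_sources[:2]
print(np.allclose(cleaned, expected))
```

With a perfect unmixing matrix the neural contribution is recovered exactly. With an estimated one, any neural activity that leaks into the rejected component is lost, which is the incomplete-separation risk described above.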

Wavelet Transform

Wavelet Transform, particularly the Discrete Wavelet Transform (DWT), provides a time-frequency multi-resolution analysis of a signal [27]. It decomposes a signal into different frequency sub-bands using a set of basis functions (wavelets) localized in both time and frequency. This allows for the identification and manipulation of signal features at specific scales [27] [28]. For artifact removal, a common technique is wavelet denoising, which thresholds the detail coefficients produced by the DWT to suppress noise before reconstructing the signal [27] [28]. Because it handles non-stationary signals well, it is highly effective for preserving transient neural events and removing artifacts like muscle noise or baseline wander from single-channel recordings [27] [29] [26]. Variants like the Empirical Wavelet Transform (EWT) further adapt the decomposition to the specific modes present in the signal's spectrum [29] [26].
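Wavelet denoising by thresholding can be sketched with the Haar wavelet, chosen here only because it is short enough to write out in full. The cited studies use other wavelets and more elaborate schemes. The threshold is the standard universal threshold with a median-based noise estimate, and all function names are hypothetical.

```python
import numpy as np


def haar_dwt(x, levels):
    """Multi-level Haar DWT. Returns (approximation, [details, finest first])."""
    approx = np.asarray(x, dtype=float)
    if approx.size % (2 ** levels) != 0:
        raise ValueError("signal length must be divisible by 2**levels")
    details = []
    for _ in range(levels):
        even, odd = approx[0::2], approx[1::2]
        details.append((even - odd) / np.sqrt(2.0))
        approx = (even + odd) / np.sqrt(2.0)
    return approx, details


def haar_idwt(approx, details):
    """Inverse of haar_dwt."""
    for d in reversed(details):
        out = np.empty(2 * approx.size)
        out[0::2] = (approx + d) / np.sqrt(2.0)
        out[1::2] = (approx - d) / np.sqrt(2.0)
        approx = out
    return approx


def soft_threshold(coeffs, thr):
    """Shrink coefficients toward zero by thr."""
    return np.sign(coeffs) * np.maximum(np.abs(coeffs) - thr, 0.0)


def wavelet_denoise(x, levels=3):
    approx, details = haar_dwt(x, levels)
    sigma = np.median(np.abs(details[0])) / 0.6745   # noise estimate from finest scale
    thr = sigma * np.sqrt(2.0 * np.log(len(x)))      # universal threshold
    details = [soft_threshold(d, thr) for d in details]
    return haar_idwt(approx, details)


rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 1024, endpoint=False)
clean = np.sin(2 * np.pi * 2 * t)                    # slow illustrative waveform
noisy = clean + 0.3 * rng.standard_normal(t.size)
denoised = wavelet_denoise(noisy, levels=3)
print(np.mean((noisy - clean) ** 2), np.mean((denoised - clean) ** 2))
```

The wavelet family, decomposition depth, and threshold rule all affect how much genuine signal survives, which is the parameter sensitivity that optimization-based variants try to address.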

Performance Benchmarking

The performance of these techniques was evaluated using data from recent studies involving EEG artifact removal. Key metrics include Signal-to-Noise Ratio (SNR), Correlation Coefficient (CC), and Relative Root Mean Squared Error (RRMSE). Table 1 reports quantitative results for ICA and wavelet-based methods; PCA is covered qualitatively in Table 2.

Table 1: Performance Benchmarking in EEG Artifact Removal

| Technique | Artifact Type | Key Performance Metrics | Experimental Context |

|---|---|---|---|

| ICA | tES (tDCS) | Temporal RRMSE: ~0.45, Spectral RRMSE: ~0.55, CC: >0.9 [4] | Synthetic tES artifacts added to clean EEG [4]. |

| Wavelet (EWT-AF) | Ocular Artifacts | Avg. SNR Improvement: +9.21 dB, CC: 0.837 [29] | Real EEG data from BCI Competition 2008 [29]. |

| Wavelet (FF-EWT+GMETV) | Ocular Artifacts | Lower RRMSE, Higher CC vs. EMD/SSA [26] | Synthetic & real EEG datasets [26]. |

| Wavelet (DWT+NLM+NOA) | Baseline wander (BW), muscle artifact (MA), and electrode motion (EM) in ECG | Avg. SNR Improvement: +3.12 dB vs. second-best method [30] | Real-world noise on Physionet ECG datasets [30]. |

Table 2: Qualitative Strengths and Limitations for Neural Information Preservation

| Technique | Strengths | Limitations for Neural Research |

|---|---|---|

| PCA | Reduces data dimensionality; effective for high-variance artifacts [22] [23]. | Low physiological interpretability; risk of removing neural signal with high variance [24]. |

| ICA | Excellent separation of independent sources (e.g., eye blinks) in multi-channel data [4] [26]. | Requires multiple channels; performance depends on source independence [25] [26]. |

| Wavelet Transform | Preserves signal morphology; effective for single-channel data; handles non-stationary signals [27] [28] [29]. | Performance depends on parameter selection (e.g., wavelet type, decomposition level) [28] [30]. |

Detailed Experimental Protocols

Protocol 1: Benchmarking Deep Learning and Traditional Models for tES Artifact Removal

This study [4] provided a comparative benchmark of various models, including ICA, for removing artifacts induced by Transcranial Electrical Stimulation (tES) during EEG recordings.

- Data Preparation: A semi-synthetic dataset was created by combining clean EEG data with synthetic tES artifacts (tDCS, tACS, tRNS), ensuring a known ground truth for controlled evaluation.

- Method Implementation: Eleven artifact removal techniques were tested. ICA was implemented as one of the traditional benchmarks. The analysis focused on separating the mixed signal into independent components, with artifact-related components identified and removed.

- Performance Evaluation: Models were evaluated using Relative Root Mean Squared Error (RRMSE) in both temporal and spectral domains, and the Correlation Coefficient (CC) between the cleaned signal and the original clean EEG. Results indicated that for tDCS artifacts, a convolutional network outperformed others, while for tACS and tRNS, a State Space Model (SSM) was superior. ICA showed competitive performance, particularly in certain stimulation conditions [4].
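The data preparation step can be illustrated with a small mixing routine. The source does not specify how [4] scaled its synthetic artifacts, so the sketch below assumes one common convention: scale the artifact until the contaminated signal reaches a chosen SNR. The sinusoid standing in for a tACS-like artifact, the sampling rate, and the function name are illustrative assumptions.

```python
import numpy as np


def mix_at_snr(clean, artifact, snr_db):
    """Return (contaminated, scale) such that
    10*log10(power(clean) / power(scale * artifact)) equals snr_db."""
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    scale = np.sqrt(p_clean / (p_artifact * 10.0 ** (snr_db / 10.0)))
    return clean + scale * artifact, scale


rng = np.random.default_rng(0)
fs = 250                                     # assumed sampling rate in Hz
t = np.arange(1000) / fs
clean = rng.standard_normal(t.size)          # placeholder for artifact-free EEG
artifact = np.sin(2 * np.pi * 10 * t)        # illustrative tACS-like oscillation

contaminated, scale = mix_at_snr(clean, artifact, snr_db=-5.0)
achieved = 10.0 * np.log10(np.mean(clean ** 2) / np.mean((contaminated - clean) ** 2))
print(f"scale={scale:.3f}, achieved SNR={achieved:.2f} dB")
```

Because `clean` is retained alongside `contaminated`, every algorithm output can later be scored against a known ground truth.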

Protocol 2: EWT with Adaptive Filtering for Ocular Artifact Removal

This research [29] introduced a hybrid method combining Empirical Wavelet Transform (EWT) and Adaptive Filtering (AF) for removing ocular artifacts.

- Data Source: The study utilized the open-source EEGdenoiseNet dataset and real EEG data from the BCI Competition 2008 Graz dataset A.

- Method Implementation:

- Decomposition: The contaminated EEG signal was decomposed using EWT to extract modulated oscillations.

- Artifact Identification: The identified components were used to estimate reference artifact signals.

- Filtering: A Normalized Least Mean Square (NLMS)-based Adaptive Filter was applied to remove the identified artifacts from the signal.

- Performance Evaluation: The performance was measured using Signal-to-Noise Ratio (SNR) and Correlation Coefficient (CC). The EWT-AF model achieved an average SNR improvement of 9.21 dB and a CC value of 0.837, demonstrating its effectiveness in preserving neural information while removing artifacts [29].
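The filtering stage of this protocol can be sketched with a textbook NLMS noise canceller. This is not the implementation from [29]: the reference signal here is simulated directly, where the study derives it from the EWT decomposition, and the filter order, step size, and artifact path are hypothetical.

```python
import numpy as np


def nlms_cancel(primary, reference, order=4, mu=0.1, eps=1e-8):
    """Normalized LMS canceller.

    primary:   contaminated signal (EEG + filtered reference).
    reference: signal correlated with the artifact only.
    Returns the error signal (the cleaned estimate) and the final weights.
    """
    w = np.zeros(order)
    cleaned = np.zeros_like(primary, dtype=float)
    for n in range(len(primary)):
        # Most recent `order` reference samples, newest first, zero-padded at the start.
        x = np.zeros(order)
        k = min(order, n + 1)
        x[:k] = reference[n::-1][:k]
        y = w @ x                              # current artifact estimate
        e = primary[n] - y                     # residual = cleaned sample
        w += mu * e * x / (eps + x @ x)        # normalized weight update
        cleaned[n] = e
    return cleaned, w


rng = np.random.default_rng(1)
n = 4000
fs = 250                                       # assumed sampling rate in Hz
t = np.arange(n) / fs
eeg = 0.5 * np.sin(2 * np.pi * 10 * t)         # stand-in for neural activity
reference = rng.standard_normal(n)             # simulated artifact reference
path = np.array([0.8, -0.4, 0.2])              # hypothetical artifact propagation path
artifact = np.convolve(reference, path)[:n]
primary = eeg + artifact

cleaned, weights = nlms_cancel(primary, reference)
half = n // 2                                  # score after the filter has adapted
residual_rms = np.sqrt(np.mean((cleaned[half:] - eeg[half:]) ** 2))
artifact_rms = np.sqrt(np.mean(artifact[half:] ** 2))
print(f"artifact RMS={artifact_rms:.3f}, residual RMS={residual_rms:.3f}")
```

The step size `mu` trades convergence speed against steady-state error, so a larger value adapts faster but leaves more residual distortion in the cleaned signal.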

Protocol 3: Optimized DWT-NLM Framework for ECG (Conceptually Transferable)

While focused on ECG denoising, this study [30] showcases an advanced optimization of wavelet techniques that is conceptually transferable to neural signal processing.

- Data Source: Experiments were conducted on ECG signals from Physionet datasets contaminated with Additive White Gaussian Noise (AWGN), Baseline Wander (BW), Muscle Artifact (MA), and Electrode Motion Artifact (EM).

- Method Implementation:

- A hybrid DWT and Non-Local Means (NLM) framework was employed.

- The Nutcracker Optimization Algorithm (NOA) was integrated to dynamically optimize critical parameters, including wavelet decomposition levels, basis functions, and NLM parameters (e.g., patch size and bandwidth).

- A sigmoid-tuned threshold function was introduced to reduce constant deviation and pseudo-Gibbs phenomena.

- Performance Evaluation: The method was evaluated based on output SNR and RMSE. The NOA-optimized DWT+NLM framework provided an average SNR enhancement of 3.12 dB compared to the second-best method in real-world noise scenarios, highlighting the importance of parameter optimization in wavelet-based denoising [30].

Workflow and Signaling Pathways

The following diagram illustrates a generalized, high-level workflow that encapsulates the core steps of the artifact removal techniques discussed in this guide.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and methodological components essential for implementing the benchmarked artifact removal techniques.

Table 3: Essential Research Reagents for Artifact Removal Research

| Research Reagent | Function & Application | Example Use Case |

|---|---|---|

| Semi-Synthetic Datasets | Enable controlled, rigorous model evaluation by combining clean neural data with known artifact signatures [4] [26]. | Benchmarking ICA performance for tES artifact removal [4]. |

| Optimization Algorithms (e.g., NOA) | Dynamically tune critical parameters (e.g., decomposition levels, threshold) in composite denoising frameworks to prevent neural information loss [30]. | Optimizing DWT and NLM parameters for ECG denoising [30]. |

| Fixed Frequency EWT (FF-EWT) | An adaptive signal decomposition method that creates wavelet filters tailored to the specific spectral content of the input signal [26]. | Isolating fixed-frequency EOG artifacts from single-channel EEG [26]. |

| Adaptive Filters (e.g., NLMS) | Systematically remove artifact components identified during the decomposition stage using a recursive feedback mechanism [29]. | Removing ocular artifacts after EWT decomposition [29]. |

| Performance Metrics (SNR, RRMSE, CC) | Quantitatively evaluate the denoising performance and the degree of neural information preservation [4] [29] [30]. | Comparing the efficacy of EWT-AF against EMD-AF [29]. |

The benchmarking analysis reveals that no single technique is universally superior; the optimal choice depends on the specific research context. ICA excels in multi-channel setups where artifacts stem from statistically independent sources, such as ocular movements. PCA offers a straightforward approach for dimensionality reduction and is effective when artifacts account for the largest variance, though at the potential cost of physiological interpretability. Wavelet Transform, particularly in its advanced and optimized forms like EWT and DWT-NLM, is versatile and effective for both single and multi-channel data, preserving critical neural signal morphology while removing a wide spectrum of artifacts. For researchers whose primary focus is the preservation of neural information, wavelet-based methods, especially those enhanced by adaptive filtering and parameter optimization, currently present a robust choice, as indicated by the results reported in [26] [29] [30].

The accurate analysis of neural signals is fundamental to advancements in neuroscience, neuromodulation therapies, and drug development. However, a significant challenge in this domain is the presence of artifacts—unwanted noise that obscures genuine brain activity. These artifacts can originate from various sources, including muscle movement (EMG), eye blinks (EOG), and electrical stimulation therapies themselves. The emerging application of wearable EEG devices in real-world settings further amplifies this challenge due to motion artifacts and the use of dry electrodes [12]. Deep learning technologies, particularly Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Generative Adversarial Networks (GANs), are revolutionizing the preservation of neural information by providing sophisticated, data-driven solutions for artifact removal and data augmentation. This guide objectively compares the performance of these architectures within the critical context of neural signal processing.

Core Architectures and Their Functions

The three deep learning architectures excel in distinct roles for handling neural data:

Convolutional Neural Networks (CNNs) are specialized for processing data with spatial or topological structure. Their core operation, convolution, applies filters that extract local features, making them ideal for identifying patterns in multi-channel EEG data or the time-frequency representations of signals [31] [2]. In neural signal processing, they are predominantly used for discriminative tasks like artifact detection and signal classification.

Recurrent Neural Networks (RNNs), including their advanced variants like Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU), are designed for sequential data. They possess an internal memory that captures temporal dependencies, which is crucial for modeling the time-evolving nature of neural signals [32]. This makes them exceptionally well-suited for tasks that require understanding the dynamic progression of a brain signal over time.

Generative Adversarial Networks (GANs) consist of two competing neural networks: a Generator that creates synthetic data and a Discriminator that distinguishes between real and generated data [31]. This adversarial training framework is powerful for generative tasks, such as data augmentation to address data scarcity or generating clean neural signals from noisy inputs.
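The convolution operation at the core of CNNs can be shown in isolation. The sketch below applies two hand-set kernels of different lengths to each EEG channel, loosely mirroring the idea of extracting features at two temporal scales. A trained network would learn its kernels, and the kernel values, shapes, and function names here are purely illustrative.

```python
import numpy as np


def conv1d_valid(signal, kernel):
    """Slide the kernel along the signal (cross-correlation, as in CNN layers),
    keeping only positions where the kernel fully overlaps the signal."""
    return np.correlate(signal, kernel, mode="valid")


def dual_scale_features(eeg, small_kernel, large_kernel):
    """eeg: (n_channels, n_samples). Returns one feature map per kernel scale."""
    small = np.stack([conv1d_valid(ch, small_kernel) for ch in eeg])
    large = np.stack([conv1d_valid(ch, large_kernel) for ch in eeg])
    return small, large


edge_kernel = np.array([-1.0, 0.0, 1.0])       # responds to abrupt changes
smooth_kernel = np.full(15, 1.0 / 15.0)        # responds to slow trends

eeg = np.ones((2, 100))                        # two flat channels
eeg[1, 50:] = 3.0                              # abrupt step on the second channel
small, large = dual_scale_features(eeg, edge_kernel, smooth_kernel)
print(small.shape, large.shape)
print(int(np.argmax(small[1])), float(small[1].max()))
```

The short kernel fires only around the step on the second channel, while the long kernel returns a smoothed copy of each channel, showing how different kernel sizes capture local features at different scales.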

Comparative Analysis of Key Characteristics

Table 1: Comparative analysis of CNN, RNN, and GAN architectures.

| Feature | Convolutional Neural Network (CNN) | Recurrent Neural Network (RNN) | Generative Adversarial Network (GAN) |

|---|---|---|---|

| Primary Function | Feature Extraction & Classification [31] | Sequential Modeling & Prediction [33] | Data Generation & Augmentation [31] |

| Core Strength | Capturing spatial hierarchies and local patterns | Modeling temporal dependencies and long-term context | Learning and replicating complex data distributions |

| Typical Input | Images, Spectrograms, Multi-channel Data [31] | Time-Series Data, Signal Sequences [33] | Random Noise Vector (Generator) [34] |

| Common Use in Neuroscience | Artifact detection, Signal classification | Temporal feature extraction, Signal prediction | Synthetic data generation, Artifact removal [34] [4] |

| Training Paradigm | Supervised Learning [31] | Supervised Learning | Unsupervised/Adversarial Learning [31] |

Quantitative Performance in Neural Signal Processing

Performance in EEG Artifact Removal

Artifact removal is a critical step for preserving neural information in EEG analysis. Different deep learning models have been benchmarked against various artifact types.

Table 2: Performance comparison of deep learning models in EEG artifact removal tasks. Performance is measured using Relative Root Mean Squared Error (RRMSE) and Correlation Coefficient (CC); lower RRMSE and higher CC indicate better performance [4] [2].

| Model Architecture | Artifact Type | Key Metric 1 (RRMSE) | Key Metric 2 (CC) | Key Findings & Context |

|---|---|---|---|---|

| Complex CNN [4] | tDCS Artifacts | Lowest RRMSE (Study-specific) | Highest CC (Study-specific) | Excelled at removing transcranial Direct Current Stimulation (tDCS) artifacts in EEG recordings [4]. |

| Multi-modular SSM (M4) [4] | tACS & tRNS Artifacts | Lowest RRMSE (Study-specific) | Highest CC (Study-specific) | A State Space Model (SSM)-based network performed best for more complex oscillatory artifacts like tACS and tRNS [4]. |

| CLEnet (CNN + LSTM) [2] | Mixed EMG/EOG | RRMSEt: 0.300 | CC: 0.925 | A hybrid model combining dual-scale CNN and LSTM achieved superior performance in removing mixed physiological artifacts [2]. |

| CLEnet (CNN + LSTM) [2] | ECG | RRMSEt: 8.08% lower than baseline | CC: 0.75% higher than baseline | Demonstrated significant superiority in removing cardiac artifacts from EEG signals [2]. |

Performance in Data Augmentation and State Estimation

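Data scarcity is a common problem in neuroscience and neuropharmacology, where collecting extensive experimental data is costly and time-consuming. GANs offer a powerful solution for data augmentation. The quantitative evidence below comes from lithium-ion battery state estimation [34], a framework whose methodology is transferable to experimental neural data.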

Table 3: Performance of GAN-generated data in battery state estimation, demonstrating its utility for augmenting experimental datasets. Performance is measured using Root Mean Square Error (RMSE); lower values indicate better performance [34].

| Application Scenario | Model Trained With | State Estimated | Performance (RMSE) | Key Findings |

|---|---|---|---|---|

| Data Replacement [34] | GAN-Generated Data Only | State of Health (SOH) | Slightly higher than real data | Estimation accuracy decreased only slightly when real data were completely replaced with generated data [34]. |

| Data Enhancement [34] | Real + GAN-Generated Data | State of Health (SOH) | 0.69% | Augmenting the real dataset with synthetic data improved the estimator's accuracy beyond using real data alone [34]. |

| Data Enhancement [34] | Real + GAN-Generated Data | State of Charge (SOC) | 0.58% | Demonstrated the framework's high accuracy across different state estimation tasks [34]. |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for benchmarking, this section outlines the experimental methodologies cited in the performance tables.

Protocol 1: Benchmarking EEG Artifact Removal Models

This protocol is based on the comparative study of ML methods for tES artifact removal [4] and the development of CLEnet [2].

- 1. Dataset Preparation: A semi-synthetic dataset is created by combining clean, artifact-free EEG recordings with synthetically generated tES artifacts (for tDCS, tACS, tRNS) or recorded physiological artifacts (EMG, EOG, ECG). This provides a known ground truth for quantitative evaluation [4] [2].

- 2. Data Preprocessing: The raw signal is typically filtered and segmented. For models like CLEnet, data is formatted into epochs suitable for deep learning model input [2].

- 3. Model Training: Multiple deep learning models (e.g., Complex CNN, SSM-based models, LSTM, hybrid models) are trained on the artifact-contaminated EEG signals. The target output for the model is the clean EEG signal. A loss function like Mean Squared Error (MSE) is used to minimize the difference between the model's output and the ground truth [2].

- 4. Model Evaluation:

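- Primary Metrics: Processed signals are evaluated against the known ground truth using the Correlation Coefficient (CC) and the Relative Root Mean Square Error in the temporal and spectral domains (RRMSEt and RRMSEf), the metrics reported in the performance tables.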

- Secondary Metrics: Signal-to-Noise Ratio (SNR) improvement is also a common metric [2].
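
The dataset-preparation step in Protocol 1 can be sketched as follows. This is a minimal illustration, not the pipeline used in [2] or [4]: the function name `mix_at_snr`, the 256 Hz sampling rate, the sine wave standing in for clean EEG, and the white noise standing in for a recorded artifact are all assumptions. It shows how an artifact segment is scaled so that the contaminated epoch reaches a chosen SNR, while the clean epoch is retained as ground truth.

```python
import numpy as np

def mix_at_snr(clean, artifact, snr_db):
    """Scale `artifact` so that clean + scaled artifact has the requested SNR (dB)."""
    clean = np.asarray(clean, dtype=float)
    artifact = np.asarray(artifact, dtype=float)
    rms_clean = np.sqrt(np.mean(clean ** 2))
    rms_artifact = np.sqrt(np.mean(artifact ** 2))
    # SNR_dB = 20*log10(rms_clean / (lam * rms_artifact)); solve for lam
    lam = rms_clean / (rms_artifact * 10 ** (snr_db / 20))
    return clean + lam * artifact, lam

rng = np.random.default_rng(0)
fs = 256                                  # assumed sampling rate (Hz)
t = np.arange(2 * fs) / fs                # one 2-second epoch
clean = np.sin(2 * np.pi * 10 * t)        # stand-in for clean EEG (10 Hz rhythm)
artifact = rng.standard_normal(t.size)    # stand-in for a recorded EMG segment

noisy, lam = mix_at_snr(clean, artifact, snr_db=-3.0)

# Check the SNR actually achieved by the mixture
achieved = 20 * np.log10(
    np.sqrt(np.mean(clean ** 2)) / np.sqrt(np.mean((noisy - clean) ** 2))
)
```

Because the clean epoch is known, any denoising model's output can be scored directly against it, which is what makes the semi-synthetic design suitable for quantitative benchmarking.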

Protocol 2: Evaluating GANs for Data Augmentation

This protocol follows the W-DC-GAN-GP-TL framework used for lithium-ion battery data, a methodology transferable to experimental neural data [34].

- 1. Real Data Collection: A limited set of real experimental data is collected (e.g., voltage/current curves from battery cycling experiments).

- 2. GAN Training & Data Generation: A GAN model (incorporating Deep CNNs, Wasserstein distance, and Gradient Penalty) is trained on the real data. After training, the generator is used to produce a large volume of synthetic data that mirrors the statistical properties of the real dataset [34].

- 3. Experimental Scenarios:

- Data Replacement: A state estimation model (e.g., Bi-GRU for SOH) is trained exclusively on the generated data and then tested on a held-out set of real data.

- Data Enhancement: The state estimation model is trained on a combined dataset of real and generated data, then tested on real data.

- 4. Performance Quantification: The state estimation model's performance in both scenarios is evaluated using Root Mean Square Error (RMSE) and compared against a baseline model trained only on the limited real data [34].
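
For reference, the ingredients named in step 2 (Wasserstein distance and gradient penalty) correspond to the standard WGAN-GP objective, written out below together with the RMSE used in step 4. The exact loss used in [34] is not reproduced in this guide, so the formulation is the widely used textbook form and the symbols ($D$ for the critic, $G$ for the generator, $P_r$ and $P_g$ for the real and generated distributions, $\lambda$ for the penalty weight) are assumptions introduced here.

```latex
\begin{aligned}
L_D &= \mathbb{E}_{\tilde{x}\sim P_g}\big[D(\tilde{x})\big]
     - \mathbb{E}_{x\sim P_r}\big[D(x)\big]
     + \lambda\,\mathbb{E}_{\hat{x}\sim P_{\hat{x}}}
       \Big[\big(\lVert \nabla_{\hat{x}} D(\hat{x}) \rVert_2 - 1\big)^2\Big], \\
L_G &= -\,\mathbb{E}_{\tilde{x}\sim P_g}\big[D(\tilde{x})\big], \\
\hat{x} &= \epsilon\,x + (1-\epsilon)\,\tilde{x}, \qquad \epsilon \sim U[0,1], \\
\mathrm{RMSE} &= \sqrt{\frac{1}{N}\sum_{i=1}^{N}\big(\hat{y}_i - y_i\big)^2}.
\end{aligned}
```

Here $\hat{y}_i$ is the estimated state (SOH or SOC) and $y_i$ the measured value on the held-out real data, so the replacement and enhancement scenarios are compared on the same test set.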

Visualizing Experimental Workflows

The following diagrams illustrate the key experimental and model workflows discussed in this guide.

[Figure: GAN-Based Data Augmentation Workflow]

[Figure: Hybrid Deep Learning for Artifact Removal]

Table 4: Essential computational tools and datasets for deep learning-based neural signal processing.

| Item / Resource | Function / Description | Relevance in Research |

|---|---|---|

| Semi-Synthetic Datasets [2] | Datasets created by adding known artifacts to clean EEG signals. | Provides a ground truth for controlled development, training, and rigorous benchmarking of artifact removal algorithms [4] [2]. |

| W-DC-GAN-GP-TL Framework [34] | A GAN variant using Wasserstein distance, Deep Convolutions, Gradient Penalty, and Transfer Learning. | A reliable, generalized framework for enriching time-series experimental data, addressing the widespread data shortage problem in research [34]. |

| MEMCAIN Model [35] | A multi-task feature fusion model integrating a CNN-Attention network (CCANet) with a memory autoencoder. | Addresses class imbalance and limited feature representation in intrusion detection, a challenge analogous to identifying rare neural events [35]. |

| Independent Component Analysis (ICA) [12] | A blind source separation technique used as a traditional baseline method. | A standard against which the performance of new deep learning models is often compared to demonstrate improvement [12] [2]. |

| Explainable AI (XAI) Tools (e.g., SHAP, LIME) [36] | Post-hoc interpretation tools for complex deep learning models. | Provides insights into model decisions, increasing trust and transparency, which is critical for clinical and scientific validation [36]. |

Electroencephalography (EEG) is a fundamental tool in neuroscience and clinical diagnostics, providing unparalleled temporal resolution for monitoring brain activity. However, a significant challenge in EEG analysis lies in the pervasive contamination of signals by various artifacts—including ocular (EOG), muscular (EMG), cardiac (ECG), and environmental noise—which can obscure genuine neural information and compromise analytical integrity. The core thesis of modern artifact removal research centers on developing specialized computational architectures that can effectively eliminate these contaminants while maximally preserving the underlying neural signal, a balance critical for both research accuracy and clinical application. Traditional methods like regression, independent component analysis (ICA), and wavelet transforms often fall short in addressing non-stationary artifacts or require laborious manual intervention [2] [37] [38].

The emergence of deep learning has revolutionized this domain, enabling fully automated, end-to-end artifact removal systems. This guide provides a detailed comparison of three advanced neural architectures—CLEnet, M4 SSM, and AnEEG—each representing distinct algorithmic approaches to this challenge. CLEnet integrates convolutional networks with temporal modeling, the M4 model employs a novel state space framework, and AnEEG leverages adversarial training. We objectively evaluate their performance against standardized metrics, detail their experimental protocols, and situate their contributions within the broader research objective of achieving optimal fidelity in neural information preservation.

Architectural Breakdown and Methodologies

CLEnet: A Dual-Branch Hybrid for Temporal-Morphological Feature Extraction

CLEnet is engineered to address a key limitation of prior models: their specialization on specific artifact types and poor performance on multi-channel data containing unknown noise sources. Its architecture is a sophisticated dual-branch network that synergistically combines Convolutional Neural Networks (CNN) and Long Short-Term Memory (LSTM) networks, augmented with a custom attention mechanism [2].

- Dual-Scale CNN & EMA-1D: The first stage of CLEnet employs two convolutional kernels of different scales to extract morphological features from the EEG signal. This allows the model to identify and capture artifact patterns of varying sizes and shapes. Critically, an improved one-dimensional Efficient Multi-Scale Attention (EMA-1D) module is embedded within the CNN. This module captures pixel-level relationships through cross-dimensional interactions, enhancing the extraction of genuine EEG morphological features while simultaneously preserving and reinforcing the signal's temporal characteristics [2].

- Temporal Feature Extraction with LSTM: The features extracted by the CNN-EMA branch are then subjected to dimensionality reduction via fully connected layers to eliminate redundancy. Subsequently, an LSTM network processes this refined data to model the long-term temporal dependencies inherent in genuine brain activity, a step crucial for separating structured neural signals from irregular artifacts [2].

- End-to-End Reconstruction: The final stage involves flattening the fused and enhanced features and using fully connected layers to reconstruct them into artifact-free EEG. The entire model is trained in a supervised manner using Mean Squared Error (MSE) as the loss function, enabling an end-to-end learning process from noisy input to clean output [2].
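
The dual-scale idea in the first stage can be illustrated with a very small sketch. It is not the CLEnet implementation: the kernels in the real model are learned, whereas here two fixed moving-average kernels of assumed lengths 3 and 15 stand in for them, and the names `dual_scale_features` and `mse_loss` are hypothetical. The sketch shows only how two kernel sizes yield complementary views of the same epoch, and how the MSE training target is computed.

```python
import numpy as np

def dual_scale_features(epoch, small_kernel, large_kernel):
    """Filter one EEG epoch with two kernels of different lengths and stack the results."""
    fine = np.convolve(epoch, small_kernel, mode="same")    # responds to short, sharp patterns
    coarse = np.convolve(epoch, large_kernel, mode="same")  # responds to slow, broad patterns
    return np.stack([fine, coarse])                          # shape: (2, n_samples)

def mse_loss(pred, target):
    """Mean squared error between a reconstructed epoch and the clean ground truth."""
    return float(np.mean((np.asarray(pred) - np.asarray(target)) ** 2))

rng = np.random.default_rng(0)
epoch = rng.standard_normal(512)   # stand-in for one single-channel EEG epoch
k3 = np.ones(3) / 3                # assumed small-scale kernel
k15 = np.ones(15) / 15             # assumed large-scale kernel

feats = dual_scale_features(epoch, k3, k15)
```

In a trained network the stacked feature maps would pass through the attention module, the LSTM, and the fully connected reconstruction layers described above, with `mse_loss` computed between the reconstructed and clean epochs.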

The following diagram illustrates the workflow of the CLEnet model.

M4 SSM: A Multi-Modular State Space Model for Complex Stimulation Artifacts

The M4 model is designed to tackle a particularly stubborn class of artifacts: those induced by Transcranial Electrical Stimulation (tES), which can severely hinder the analysis of concurrent EEG recordings. Its innovation lies in its use of a State Space Model (SSM) as the core computational unit, offering a powerful alternative to traditional CNNs and Transformers [39] [4].

- State Space Model (SSM) Core: SSMs are designed to model long-range dependencies in sequential data through a system of linear ordinary differential equations. The M4 model utilizes a two-dimensional selective scan (SS2D) process, which involves unfolding the input in multiple directions, processing the sequences with a data-dependent SSM layer (S6 block), and then merging the outputs. This allows the model to capture global contextual information with computational complexity that scales linearly with sequence length, rather than quadratically as in Transformers, making it both effective and efficient for long signals like EEG [39].

- Multi-Modular Architecture: The M4 model is structured as a multi-modular network, specifically optimized for removing complex tES artifacts such as those from tACS (alternating current) and tRNS (random noise). This specialized design enables it to handle the unique characteristics of stimulation-induced noise, which can be highly structured and challenging to separate from neural activity [4].
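
The linear-time property of the SSM core can be made concrete with a small sketch. It is a simplified illustration under stated assumptions, not the M4 or S6 implementation: the state matrix is diagonal, the parameters are fixed rather than data-dependent, the step size of 1/256 s is arbitrary, and summing a forward and a backward scan is only a one-dimensional analogue of the multi-direction unfolding and merging in SS2D. All function names are hypothetical.

```python
import numpy as np

def discretize_zoh(a, b, delta):
    """Zero-order-hold discretization of a diagonal SSM h'(t) = a*h(t) + b*x(t)."""
    a_bar = np.exp(delta * a)
    b_bar = (a_bar - 1.0) / a * b
    return a_bar, b_bar

def ssm_scan(x, a_bar, b_bar, c):
    """Run h_k = a_bar*h_{k-1} + b_bar*x_k, y_k = c.h_k in a single pass over the sequence."""
    h = np.zeros_like(a_bar)
    y = np.empty(len(x))
    for k, x_k in enumerate(x):
        h = a_bar * h + b_bar * x_k   # constant work per sample, so O(L) overall
        y[k] = np.dot(c, h)
    return y

def bidirectional_scan(x, a_bar, b_bar, c):
    """Scan forward and backward, then merge by summation (assumed merge rule)."""
    fwd = ssm_scan(x, a_bar, b_bar, c)
    bwd = ssm_scan(x[::-1], a_bar, b_bar, c)[::-1]
    return fwd + bwd

a = np.array([-1.0, -5.0, -20.0])   # assumed decay rates of three state dimensions
b = np.ones(3)
c = np.array([0.5, 0.3, 0.2])
a_bar, b_bar = discretize_zoh(a, b, delta=1 / 256)

impulse = np.zeros(64)
impulse[0] = 1.0
y = ssm_scan(impulse, a_bar, b_bar, c)   # impulse response of the discretized system
```

Each sample costs a fixed amount of work regardless of how far back the state reaches, which is the source of the linear scaling; a selective (S6-style) layer would additionally make the step size and the input and output projections functions of the input.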

The logical flow of the SS2D process, which is central to the M4 model's encoder, is shown below.

AnEEG: An LSTM-Empowered Adversarial Network

AnEEG proposes a generative approach to artifact removal by leveraging a Long Short-Term Memory-based Generative Adversarial Network (LSTM-GAN). This architecture is designed to generate artifact-free EEG signals that maintain the original neural activity's temporal dynamics [37].

- Generative Adversarial Framework: The model consists of two core components: a Generator and a Discriminator. The Generator, built with a two-layer LSTM network, takes noisy EEG data as input and attempts to produce a clean, artifact-free version. The Discriminator, typically a one-dimensional convolutional network, then evaluates the generated signal, comparing it to ground-truth clean data and trying to distinguish between real and generated samples [37].

- LSTM for Temporal Integrity: The use of LSTM in the Generator is critical. Its ability to capture long-term temporal dependencies and contextual information makes it exceptionally well-suited for EEG data, ensuring that the generated signal preserves the natural dynamics of brain activity throughout the denoising process [37].

- Adversarial Training: The two networks are trained simultaneously in a competitive process. The Generator strives to produce increasingly realistic signals to fool the Discriminator, while the Discriminator becomes better at identifying synthetic data. This adversarial process drives the overall model to generate high-quality, artifact-free EEG [37].
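
The competitive process described above is commonly formalized as a minimax game. The objective below is the standard GAN formulation adapted to denoising, given here as an assumption: the exact loss used by AnEEG is not stated in this guide. The symbols are introduced for illustration, with $\tilde{y}$ denoting a noisy EEG epoch, $x$ a clean epoch, $G$ the LSTM Generator, and $D$ the Discriminator.

```latex
\min_{G}\,\max_{D}\;
\mathbb{E}_{x}\big[\log D(x)\big]
+ \mathbb{E}_{\tilde{y}}\big[\log\big(1 - D(G(\tilde{y}))\big)\big]
```

The Discriminator is rewarded for assigning high scores to clean epochs and low scores to generated ones, while the Generator is rewarded when its denoised output $G(\tilde{y})$ is scored as clean.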

Performance Comparison and Experimental Data

To objectively evaluate the performance of CLEnet, M4 SSM, and AnEEG, we summarize quantitative results from their respective studies using standardized metrics including Signal-to-Noise Ratio (SNR), Correlation Coefficient (CC), and Relative Root Mean Square Error in the temporal and frequency domains (RRMSEt and RRMSEf).
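
The four metrics can be sketched in a few lines. These are common definitions offered as an assumption, since the cited studies may differ in detail; in particular, the spectral error here uses a plain periodogram, whereas a Welch estimate is another frequent choice. The function names are hypothetical.

```python
import numpy as np

def rms(v):
    """Root mean square of a vector."""
    return np.sqrt(np.mean(np.square(v)))

def rrmse_t(clean, denoised):
    """Relative RMSE in the time domain."""
    return rms(denoised - clean) / rms(clean)

def psd(v):
    """Periodogram estimate of the power spectrum (assumed estimator)."""
    return np.abs(np.fft.rfft(v)) ** 2 / len(v)

def rrmse_f(clean, denoised):
    """Relative RMSE between power spectra."""
    return rms(psd(denoised) - psd(clean)) / rms(psd(clean))

def cc(clean, denoised):
    """Pearson correlation coefficient."""
    return np.corrcoef(clean, denoised)[0, 1]

def snr_db(clean, denoised):
    """Output SNR in dB, treating the residual as noise."""
    return 10 * np.log10(np.sum(clean ** 2) / np.sum((clean - denoised) ** 2))

t = np.arange(512) / 256
clean = np.sin(2 * np.pi * 10 * t)   # stand-in ground-truth epoch
denoised = 0.9 * clean               # toy output: correct shape, 10% amplitude loss
```

For this toy output the time-domain RRMSE is 0.1, the spectral RRMSE is 0.19, CC is 1, and SNR is 20 dB. The example shows that CC alone cannot detect a pure amplitude error, which is one reason the metrics are reported together.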

Table 1: Performance Comparison on Specific Artifact Types

| Model | Artifact Type | SNR (dB) | Correlation Coefficient (CC) | Temporal RRMSE | Spectral RRMSE |

|---|---|---|---|---|---|

| CLEnet [2] | Mixed (EMG + EOG) | 11.498 | 0.925 | 0.300 | 0.319 |

| CLEnet [2] | ECG | Not Reported | ~0.75* | ~8.08% lower than DuoCL | ~5.76% lower than DuoCL |

| M4 SSM [4] | tACS | Not Reported | Best Performance (vs 10 other methods) | Best Performance | Best Performance |

| M4 SSM [4] | tRNS | Not Reported | Best Performance (vs 10 other methods) | Best Performance | Best Performance |

| AnEEG [37] | Mixed Artifacts | Improved (vs Wavelet) | Improved (vs Wavelet) | Lower (vs Wavelet) | Lower (vs Wavelet) |

| 1D-ResCNN [2] | Mixed (EMG + EOG) | Lower than CLEnet | Lower than CLEnet | Higher than CLEnet | Higher than CLEnet |

| DuoCL [2] | Mixed (EMG + EOG) | Lower than CLEnet | Lower than CLEnet | Higher than CLEnet | Higher than CLEnet |

Note: Exact values for M4 SSM's SNR and RRMSE were not provided in the cited sources, but the model was identified as the top performer on CC and RRMSE against ten other methods for tACS and tRNS artifacts. *CLEnet's ECG values are approximate; its RRMSE results are reported as percentage reductions relative to DuoCL.

Table 2: Performance on Multi-Channel EEG with Unknown Artifacts

| Model | SNR (dB) | Correlation Coefficient (CC) | Temporal RRMSE | Spectral RRMSE |

|---|---|---|---|---|

| CLEnet [2] | Best Performance (2.45% improvement) | Best Performance (2.65% improvement) | Best Performance (6.94% decrease) | Best Performance (3.30% decrease) |

| 1D-ResCNN [2] | Lower | Lower | Higher | Higher |

| NovelCNN [2] | Lower | Lower | Higher | Higher |

| DuoCL [2] | Lower | Lower | Higher | Higher |

Analysis of Comparative Performance

- CLEnet for Biological Artifacts: CLEnet demonstrates superior and robust performance in removing common biological artifacts like EMG, EOG, and ECG, as well as mixed and unknown artifacts in multi-channel data. Its integrated design allows it to outperform other mainstream models like 1D-ResCNN and DuoCL across all key metrics (SNR, CC, RRMSE), highlighting its effectiveness in preserving neural information fidelity [2].

- M4 SSM for Stimulation Artifacts: The M4 SSM model excels in the specific niche of removing tES artifacts, particularly the complex waveforms of tACS and tRNS. It achieved the best results in its comparative study against ten other ML methods, including a top-performing convolutional network (Complex CNN). This establishes SSMs as a particularly powerful architecture for handling structured, stimulation-induced noise where traditional CNNs may be insufficient [4].

- AnEEG's General Enhancement: AnEEG shows promising results in general artifact removal, demonstrating improvements over traditional techniques like wavelet decomposition. It achieves lower Normalized Mean Square Error (NMSE) and RMSE values, indicating closer agreement with the original clean signal, along with higher CC, SNR, and Signal-to-Artifact Ratio (SAR) values [37].

Experimental Protocols and Methodologies

A critical aspect of comparing these architectures is understanding the experimental setups and datasets used for their validation.

Table 3: Key Research Reagents and Experimental Resources

| Resource Name | Type | Function in Evaluation | Source/Reference |

|---|---|---|---|

| EEGdenoiseNet | Dataset | Provides clean EEG segments and isolated EOG/EMG artifacts for creating semi-synthetic datasets. | [2] |

| MIT-BIH Arrhythmia Database | Dataset | Source of ECG signals for creating semi-synthetic ECG-contaminated EEG data. | [2] |