Neural Encoding and Decoding Frameworks: From BCI Foundations to Drug Discovery Applications

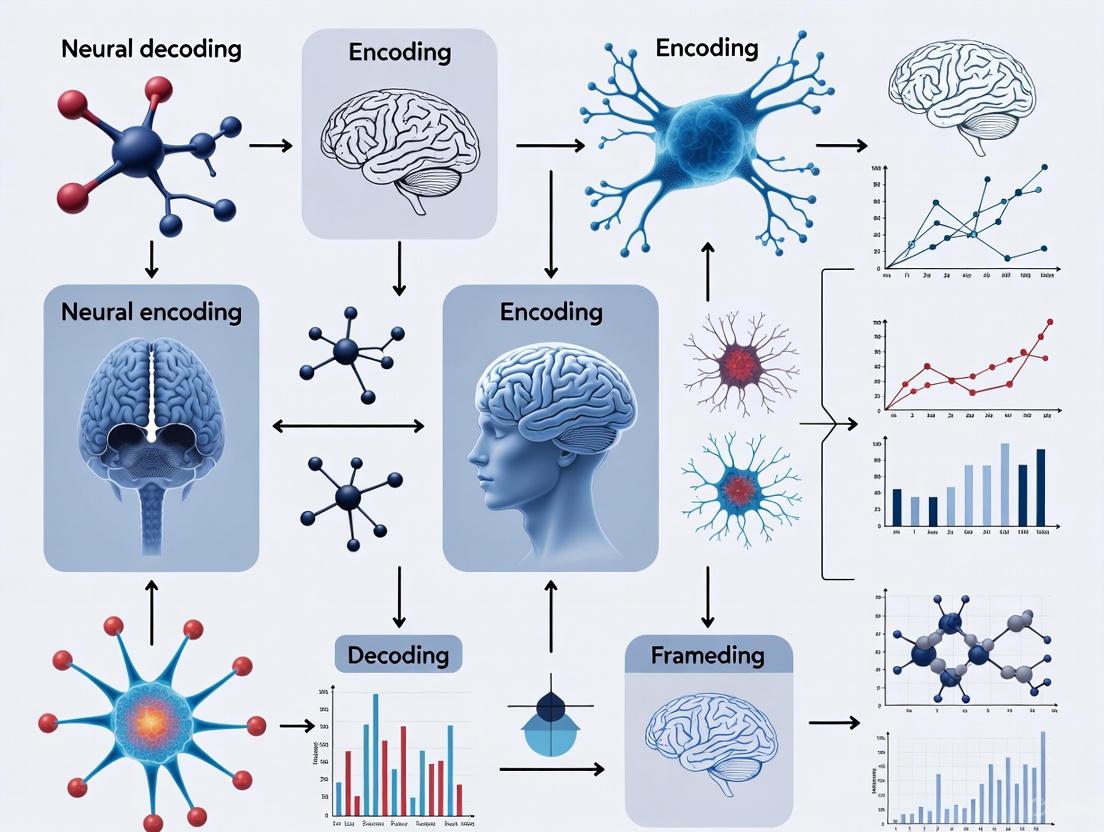

Abstract

This article provides a comprehensive overview of modern neural encoding and decoding frameworks, exploring their foundational principles, methodological advances, and transformative applications. It details how deep learning and machine learning models are revolutionizing brain-computer interfaces (BCIs) and computational drug discovery. The content systematically addresses core challenges in parameter optimization and model validation, while presenting comparative analyses of traditional versus modern decoding approaches. Designed for researchers, scientists, and drug development professionals, this review synthesizes cutting-edge developments from motor control and speech neuroprosthetics to innovative platforms like Pocket2Drug for ligand binding site prediction, offering practical insights for both neurological therapeutics and pharmaceutical development.

Core Principles of Neural Encoding and Decoding: From Biological Basis to Computational Frameworks

In neuroscience and brain-computer interface (BCI) research, neural encoding and decoding are the two fundamental frameworks for understanding how the brain processes information and generates behavior. Neural encoding refers to the mapping from external stimuli or internal cognitive states to neural responses, while neural decoding is the inverse mapping, from neural activity back to the stimuli or states that produced it [1]. These complementary processes form the core of modern systems neuroscience and are central to technologies that interface the brain with external devices, with applications ranging from restoring communication in paralyzed patients to treating neurological disorders [2] [3].

The distinction between encoding and decoding is not merely conceptual but represents fundamentally different mathematical and computational challenges. As formalized through Bayesian statistics, the relationship between these processes is captured by the equation: P(stimulus|response) = P(response|stimulus) × P(stimulus)/P(response) [1]. This framework highlights that decoding requires not only knowledge of the encoding scheme but also prior information about stimulus probabilities and the statistical properties of neural responses.

This technical guide provides a comprehensive overview of the core concepts, mathematical frameworks, experimental methodologies, and practical applications of neural encoding and decoding, with particular emphasis on current research in brain-computer interfaces.

Theoretical Foundations and Mathematical Frameworks

Fundamental Definitions and Relationships

At its core, neural encoding investigates how neurons transform information about stimuli or cognitive states into patterns of neural activity. This is represented by the conditional probability P(response|stimulus)—the probability of observing a particular neural response given a specific stimulus [1]. In contrast, neural decoding addresses the inverse problem: determining the probability that a particular stimulus or state occurred, given the observed neural response, represented by P(stimulus|response) [1].

The relationship between encoding and decoding is not a simple inverse operation. Rather, effective decoding requires integrating the encoding scheme with prior knowledge about the statistical regularities of the environment and the inherent variability of neural responses. This Bayesian perspective has become fundamental to modern neural decoding approaches, particularly in BCI applications where prior knowledge about likely user intentions can significantly improve decoding accuracy [1].

Neural Coding Schemes

The nervous system employs multiple coding schemes to represent information, each with distinct advantages for different types of neural computations:

Table: Primary Neural Coding Schemes in the Central Nervous System

| Coding Scheme | Definition | Key Characteristics | Representative Neural Systems |

|---|---|---|---|

| Rate Coding | Information encoded in firing rate measured over discrete time intervals | Tuning curves can be Gaussian, monotonic, or inhibitory; robust to variability in individual spike timing; simple to decode | Visual cortex (orientation tuning); motor cortex (direction and force); head direction cells |

| Temporal Coding | Information encoded in precise timing of spikes relative to stimuli or oscillations | Can represent information independently of firing rate; higher theoretical information capacity; requires precise spike timing measurements | Inferotemporal cortex (visual patterns); locust olfactory system (odor identity) |

| Population Coding | Information distributed across ensembles of neurons | Reduces ambiguity from single neuron variability; enables higher-dimensional representations; built-in redundancy provides robustness | Motor cortex (movement direction); hippocampal place cells |

Rate coding represents the most extensively studied neural coding scheme, characterized by tuning curves that describe how a neuron's firing rate varies with different stimulus features or movement parameters [1]. These tuning curves can take various forms, including Gaussian profiles for visual orientation tuning, monotonic functions for eye position representation, and inhibitory profiles for binocular disparity coding [1].
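To make the idea of a tuning curve concrete, the following is a minimal sketch of a Gaussian orientation tuning curve. The function name `gaussian_tuning` and all parameter values (preferred orientation, peak rate, width, baseline) are illustrative assumptions, not values taken from [1].

```python
import numpy as np

def gaussian_tuning(theta_deg, preferred_deg=90.0, r_max=40.0,
                    sigma_deg=20.0, baseline=2.0):
    """Mean firing rate (Hz) of a hypothetical orientation-tuned neuron."""
    theta = np.asarray(theta_deg, dtype=float)
    # Orientation is circular with a 180-degree period, so wrap the
    # difference from the preferred orientation into [-90, 90).
    delta = (theta - preferred_deg + 90.0) % 180.0 - 90.0
    return baseline + r_max * np.exp(-0.5 * (delta / sigma_deg) ** 2)

# Evaluate the curve at a few orientations (all parameter values are assumed).
orientations = np.arange(0, 180, 30)
rates = gaussian_tuning(orientations)
for theta, rate in zip(orientations, rates):
    print(f"{theta:3d} deg -> {rate:5.1f} Hz")
```

The rate peaks at the preferred orientation and falls off symmetrically on either side, which is the property a rate-based decoder exploits.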

Temporal coding schemes utilize the precise timing of action potentials to convey information, potentially independently of firing rate. For example, neurons in the inferotemporal cortex show distinct temporal response profiles to different visual patterns, even when overall firing rates are similar [1]. In the locust olfactory system, projection neurons fire phase-locked to oscillatory local field potentials, with the precise timing carrying information about odor identity [1].

Population coding emerges from the collective activity of neural ensembles, where information is represented in a distributed manner across many neurons. This scheme allows downstream structures to decode more precise information than would be possible from any single neuron, as demonstrated by the population vector algorithm for decoding movement direction from motor cortical activity [1].
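The population vector idea can be sketched in a few lines. The example below assumes idealized, noise-free cosine tuning and uniformly spaced preferred directions; the neuron count, baseline, and gain are hypothetical values chosen only for illustration.

```python
import numpy as np

def population_vector(rates, preferred_dirs_rad, baseline):
    """Decode movement direction (radians) as the rate-weighted vector sum
    of each neuron's preferred direction."""
    weights = np.asarray(rates, dtype=float) - baseline
    x = np.sum(weights * np.cos(preferred_dirs_rad))
    y = np.sum(weights * np.sin(preferred_dirs_rad))
    return np.arctan2(y, x) % (2 * np.pi)

# Simulated population: 16 cosine-tuned neurons (assumed, not recorded data).
preferred = np.linspace(0, 2 * np.pi, 16, endpoint=False)
true_dir = np.deg2rad(60.0)
baseline, gain = 20.0, 15.0
rates = baseline + gain * np.cos(true_dir - preferred)

decoded = population_vector(rates, preferred, baseline)
print(f"true: {np.rad2deg(true_dir):.1f} deg, decoded: {np.rad2deg(decoded):.1f} deg")
```

With real recordings, noise and non-uniform coverage of preferred directions would bias this estimate, which is one reason probabilistic decoders are often preferred.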

Experimental Methodologies and Protocols

Brain Signal Acquisition Technologies

The experimental study of neural encoding and decoding relies on technologies capable of recording brain signals at various spatial and temporal scales:

Table: Comparison of Neural Signal Acquisition Modalities for Encoding/Decoding Research

| Modality | Invasiveness | Spatial Resolution | Temporal Resolution | Primary Applications | Key Limitations |

|---|---|---|---|---|---|

| Microelectrode Arrays (MEA) | Fully invasive (implanted in tissue) | Single neurons | Millisecond | Single-unit encoding models, motor decoding | Tissue damage, signal degradation over time |

| Electrocorticography (ECoG) | Semi-invasive (surface of cortex) | ~1 mm (local field potentials) | Millisecond | Speech decoding, motor intention decoding | Limited to cortical surface, requires surgery |

| Electroencephalography (EEG) | Non-invasive | ~1-2 cm | Millisecond | Brain-state monitoring, evoked potentials | Low spatial resolution, poor signal-to-noise ratio |

| functional MRI (fMRI) | Non-invasive | ~1-3 mm | Seconds | Functional mapping, cognitive encoding | Poor temporal resolution, indirect neural measure |

| Magnetoencephalography (MEG) | Non-invasive | ~5-10 mm | Millisecond | Functional connectivity, network dynamics | Expensive, limited availability |

High-density electrode arrays have demonstrated significant advantages for decoding applications. A systematic comparison of standard and high-density ECoG grids found that high-density grids (2 mm diameter, 4 mm spacing) significantly outperformed standard grids (4 mm diameter, 10 mm spacing) in classifying six elementary arm movements, with error rates of 11.9% versus 33.1% respectively [4]. This improvement highlights how increased spatial sampling enhances the resolution of neural representations.

Protocol for Inner Speech Decoding

Recent advances in decoding inner speech (imagined speech without physical articulation) demonstrate the cutting edge of BCI research. The following protocol, adapted from a Stanford University study published in Cell, details the methodology for decoding inner speech from motor cortex activity [5] [6]:

Objective: To decode internally imagined speech from neural signals in the motor cortex for potential communication applications in patients with speech impairments.

Subjects: Four participants with severe speech and motor impairments due to ALS or stroke, implanted with microelectrode arrays in speech-related motor areas.

Neural Signal Acquisition:

- Microelectrode arrays (Utah arrays or similar) surgically implanted in motor cortical areas associated with speech production

- Signals recorded at high sampling rates (typically 2,000 Hz or higher) to capture both spike activity and local field potentials

- Common average referencing applied to reduce noise
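Common average referencing, mentioned in the last step above, can be illustrated with a short sketch. The array shapes and the simulated shared noise are assumptions for demonstration and do not reproduce the preprocessing pipeline of the cited study.

```python
import numpy as np

def common_average_reference(signals):
    """Subtract the across-channel mean from every channel at each sample.

    signals: array of shape (n_channels, n_samples).
    """
    signals = np.asarray(signals, dtype=float)
    return signals - signals.mean(axis=0, keepdims=True)

# Simulated data: independent channel activity plus noise shared by all channels.
rng = np.random.default_rng(0)
n_channels, n_samples = 8, 1000
shared_noise = rng.normal(0.0, 5.0, size=n_samples)
local_activity = rng.normal(0.0, 1.0, size=(n_channels, n_samples))
raw = local_activity + shared_noise

referenced = common_average_reference(raw)
print(f"std before: {raw.std():.2f}, after: {referenced.std():.2f}")
```

Any component that is identical on all channels is removed exactly; activity that is genuinely shared across channels is removed as well, which is the usual caveat of this method.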

Experimental Paradigm:

- Attempted Speech Condition: Participants attempt to physically speak words despite impairment, providing strong neural signals for initial decoder training

- Inner Speech Condition: Participants imagine speaking words without any physical movement

- Sentence Production: Participants imagine speaking whole sentences for real-time decoding evaluation

- Unintentional Speech Detection: Participants perform non-verbal cognitive tasks (sequence recall, counting) to test decoding of unintentional inner speech

Decoder Training and Implementation:

- Feature Extraction: Neural features are extracted from motor cortex activity, focusing on patterns associated with phonemes (the smallest units of speech)

- Machine Learning: Custom algorithms (typically deep learning models) are trained to map neural features to intended words or phonemes

- Vocabulary Sets: Testing with both limited (50-word) and extensive (125,000-word) vocabularies to assess scalability

- Real-time Implementation: Trained decoders run in real-time to provide immediate feedback

Privacy Protection Mechanisms:

- Selective Decoding: Training decoders to distinguish attempted speech from inner speech and silence the latter when appropriate

- Password Protection: Implementing a keyword unlocking system where the decoder only activates when a specific passphrase is imagined

Performance Metrics:

- Word error rates (14-33% for 50-word vocabulary; 26-54% for 125,000-word vocabulary)

- Real-time decoding speed (words per minute)

- Accuracy of privacy protection mechanisms (>98% detection of unlock phrase)
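For readers unfamiliar with the word error rate metric listed above, the following sketch computes it as the word-level edit distance divided by the reference length. This is the standard definition; the exact scoring procedure used in the cited study is not described here, and the example sentences are invented.

```python
def word_error_rate(reference, hypothesis):
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Dynamic-programming edit distance, keeping only the previous row.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from output
            insertion = curr[j - 1] + 1  # extra word in decoder output
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)

# Hypothetical decoder outputs.
print(word_error_rate("i want some water", "i want water"))
print(word_error_rate("i want some water", "i wand some water please"))
```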

This protocol demonstrates that inner speech evokes robust, decodable patterns in motor cortex, though with weaker signals than attempted speech. The study successfully established proof-of-principle for inner speech decoding while implementing crucial privacy safeguards [5] [6].

Protocol for Multi-DOF Movement Decoding

Decoding complex arm movements requires distinguishing neural patterns associated with different degrees of freedom (DOF). The following protocol details methodology from research comparing standard and high-density ECoG grids for movement decoding [4]:

Objective: To decode six elementary upper extremity movements from ECoG signals and compare performance between standard and high-density electrode grids.

Subjects: Three subjects with standard ECoG grids (4 mm diameter, 10 mm spacing) and three with high-density grids (2 mm diameter, 4 mm spacing) implanted over primary motor cortex.

Movement Set: Participants performed six elementary movements with the arm contralateral to the implant:

- Pincer grasp/release

- Wrist flexion/extension

- Forearm pronation/supination

- Elbow flexion/extension

- Shoulder internal/external rotation

- Shoulder forward flexion/extension

Data Acquisition:

- ECoG signals recorded at 2048 Hz sampling rate

- Movement trajectories measured using electrogoniometers and gyroscopes

- Synchronization of neural and movement data using common pulse train

Signal Processing:

- Frequency Band Separation: Signals decomposed into μ (8-13 Hz), β (13-30 Hz), low-γ (30-50 Hz), and high-γ (80-160 Hz) bands

- Power Calculation: Band-specific power computed for movement detection

- Feature Selection: Contrast index calculated as difference in high-γ power during movement versus idling
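The band-power and contrast-index steps above can be sketched as follows. The band edges and sampling rate come from the protocol; the periodogram-based power estimate, the function names, and the synthetic test signal are assumptions, since the source does not specify how power was estimated.

```python
import numpy as np

FS = 2048  # Hz, sampling rate from the protocol
BANDS = {"mu": (8, 13), "beta": (13, 30),
         "low_gamma": (30, 50), "high_gamma": (80, 160)}

def band_power(x, fs, band):
    """Mean periodogram power of x within band = (low_hz, high_hz)."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    power = np.abs(np.fft.rfft(x)) ** 2 / (fs * x.size)
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    mask = (freqs >= band[0]) & (freqs < band[1])
    return power[mask].mean()

def contrast_index(move, idle, fs=FS):
    """Difference in high-gamma power between movement and idling."""
    band = BANDS["high_gamma"]
    return band_power(move, fs, band) - band_power(idle, fs, band)

# Synthetic one-second segments: idling is weak noise; movement adds a
# 120 Hz component standing in for a high-gamma increase.
rng = np.random.default_rng(0)
t = np.arange(FS) / FS
idle = rng.normal(0.0, 0.1, size=FS)
move = idle + np.sin(2 * np.pi * 120.0 * t)
print(f"contrast index: {contrast_index(move, idle):.5f}")
```

In practice a windowed estimator or a band-pass filter followed by squaring is often used instead of a raw periodogram; the contrast computation is unchanged.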

Decoder Design:

- State Decoder: Binary classifier to detect presence/absence of movement

- Movement Decoder: Six-class classifier to identify which specific movement was performed

- Cross-validation: Performance evaluation using held-out test data
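The two-stage design above (state decoder followed by movement decoder) can be sketched on synthetic features. The source does not name the classifiers used, so a nearest-centroid classifier is substituted here purely for illustration; the feature dimensionality, class means, and noise level are invented.

```python
import numpy as np

class NearestCentroid:
    """Minimal stand-in classifier (not the method of the cited study)."""
    def fit(self, X, y):
        self.classes_ = np.unique(y)
        self.centroids_ = np.stack([X[y == c].mean(axis=0) for c in self.classes_])
        return self

    def predict(self, X):
        dist = np.linalg.norm(X[:, None, :] - self.centroids_[None, :, :], axis=2)
        return self.classes_[dist.argmin(axis=1)]

def two_stage_decode(X, state_clf, move_clf, idle_label=-1):
    """Stage 1 detects movement; stage 2 labels only the detected trials."""
    moving = state_clf.predict(X) == 1
    labels = np.full(len(X), idle_label)
    if moving.any():
        labels[moving] = move_clf.predict(X[moving])
    return labels

rng = np.random.default_rng(0)
N_FEAT, N_MOVES = 6, 6

def simulate(n_per_class):
    """Synthetic high-gamma features: idle near 0, each movement has its own peak."""
    X = [rng.normal(0.0, 0.5, size=(n_per_class, N_FEAT))]
    state, move = [0] * n_per_class, [-1] * n_per_class
    for k in range(N_MOVES):
        mean = np.full(N_FEAT, 2.0)
        mean[k] += 4.0
        X.append(mean + rng.normal(0.0, 0.5, size=(n_per_class, N_FEAT)))
        state += [1] * n_per_class
        move += [k] * n_per_class
    return np.vstack(X), np.array(state), np.array(move)

X_train, s_train, m_train = simulate(40)
X_test, s_test, m_test = simulate(20)  # held-out data
state_clf = NearestCentroid().fit(X_train, s_train)
move_clf = NearestCentroid().fit(X_train[s_train == 1], m_train[s_train == 1])
error_rate = np.mean(two_stage_decode(X_test, state_clf, move_clf) != m_test)
print(f"held-out error rate: {error_rate:.3f}")
```

The error rate printed here reflects only how separable the synthetic classes were made and says nothing about the performance reported in [4].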

Performance Metrics:

- Classification error rates for state detection and movement identification

- Statistical comparison between standard and high-density grid performance

- Analysis of movement confusion patterns (which movements are most frequently misclassified)

This study demonstrated significantly lower error rates for high-density grids (2.6% for state decoding, 11.9% for movement decoding) compared to standard grids (8.5% and 33.1% respectively), highlighting the importance of spatial resolution for complex movement decoding [4].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Materials and Technologies for Neural Encoding/Decoding Studies

| Tool Category | Specific Examples | Function/Application | Key Considerations |

|---|---|---|---|

| Electrode Arrays | Utah Array, Precision Layer 7, HD ECoG Grids | Neural signal acquisition from cortical tissue | Invasiveness, biocompatibility, signal quality, long-term stability |

| Signal Acquisition Systems | Neural signal processors, bioamplifiers, ADC systems | Amplification, filtering, and digitization of neural signals | Channel count, sampling rate, noise floor, input impedance |

| Decoding Algorithms | Deep neural networks, Kalman filters, linear discriminant analysis | Mapping neural signals to intended movements or speech | Computational complexity, training data requirements, generalization |

| Biocompatible Materials | Conductive polymers, carbon nanomaterials, flexible substrates | Interface between electronics and neural tissue | Biostability, mechanical compliance, chronic immune response |

| Neural Signal Simulators | Synthetic neural data generators, biophysical models | Algorithm validation and system testing | Biological realism, parameter tuning, noise modeling |

| Behavioral Task Suites | Custom software for motor tasks, speech paradigms, cognitive assays | Controlled elicitation of neural activity for encoding studies | Task design, timing precision, participant engagement |

Emerging hardware solutions are increasingly important for implementing practical decoding systems. Recent advances in low-power circuit design have enabled the development of specialized chips for BCI applications that can perform real-time decoding with minimal power consumption—a critical requirement for implantable devices [7]. These systems must balance computational complexity against power constraints, with the complexity of signal processing typically dominating power consumption in EEG and ECoG decoding circuits [7].

Flexible neural interfaces represent another significant advancement, with companies like Precision Neuroscience developing thin-film electrode arrays that conform to the cortical surface without penetrating brain tissue. These devices aim to provide high-quality signals with reduced tissue damage compared to penetrating electrodes [8].

Computational Frameworks and Implementation

Mathematical Foundations of Decoding Algorithms

The Bayesian framework provides a principled mathematical foundation for neural decoding, formalizing the relationship between encoding and decoding as:

P(stimulus|response) = P(response|stimulus) × P(stimulus) / P(response)

Where:

- P(stimulus|response) is the posterior probability—the decoder's estimate of the stimulus given neural response

- P(response|stimulus) is the likelihood—the encoding model describing how stimuli evoke neural responses

- P(stimulus) is the prior—expectations about stimuli before observing neural data

- P(response) is the evidence—a normalizing constant ensuring probabilities sum to one [1]

This framework reveals that decoding is not simply the inverse of encoding but requires integrating sensory evidence with prior knowledge. The prior term P(stimulus) embodies the statistical regularities of the environment, while the likelihood P(response|stimulus) captures the noisy relationship between stimuli and neural responses.
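A small worked example may make the role of the prior concrete. The numbers are hypothetical and chosen only for easy arithmetic: two stimuli with priors P(s_1) = 0.8 and P(s_2) = 0.2, and an observed response r with likelihoods P(r|s_1) = 0.1 and P(r|s_2) = 0.6.

\[
\begin{aligned}
P(r) &= P(r|s_1)P(s_1) + P(r|s_2)P(s_2) = 0.1 \times 0.8 + 0.6 \times 0.2 = 0.20 \\
P(s_1|r) &= \frac{0.1 \times 0.8}{0.20} = 0.40 \\
P(s_2|r) &= \frac{0.6 \times 0.2}{0.20} = 0.60
\end{aligned}
\]

With a flat prior the same likelihoods would give P(s_2|r) = 0.6 / (0.1 + 0.6) ≈ 0.86, so in this example the prior pulls the posterior for s_2 down from about 0.86 to 0.60 without changing which stimulus is most probable.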

Hardware Implementation Considerations

Implementing decoding algorithms in practical BCI systems requires careful consideration of hardware constraints and optimization strategies:

Input Data Rate (IDR) Requirements: The relationship between classification performance and input data rate can be empirically estimated, providing guidelines for sizing new BCI systems. Higher classification accuracy typically requires higher IDR, though with diminishing returns [7].

Power-Channel Tradeoffs: Counterintuitively, increasing the number of recording channels can simultaneously reduce power consumption per channel (through hardware sharing) and increase information transfer rate (by providing more input data). This creates favorable scaling properties for high-channel-count systems [7].

Algorithm-Hardware Co-design: Optimal implementation requires matching algorithm complexity to hardware capabilities. Simpler algorithms like linear discriminant analysis can provide satisfactory performance with significantly lower power consumption than more complex deep learning approaches, making them preferable for implanted applications with strict power constraints [7].

Neural encoding and decoding represent complementary frameworks for understanding how the brain represents information and translating this understanding to practical applications in brain-computer interfaces. The Bayesian formulation of the relationship between encoding and decoding highlights that effective decoding requires integrating sensory evidence with prior knowledge, rather than simply inverting encoding models.

Current research demonstrates increasingly sophisticated applications of these principles, from decoding inner speech for communication restoration to multi-degree-of-freedom movement control for prosthetic devices. These advances are enabled by improvements in neural interface technology, particularly high-density electrode arrays that provide enhanced spatial resolution, and specialized hardware implementations that enable real-time decoding with minimal power consumption.

Future progress will likely come from several directions: improved understanding of neural representations across different brain areas, more sophisticated decoding algorithms that leverage deep learning and other advanced machine learning techniques, and continued development of neural interface hardware with higher channel counts and better biocompatibility. As these technologies mature, they hold the potential to restore communication and mobility to people with severe neurological impairments, while also providing fundamental insights into how the brain represents and processes information.

The brain functions as a sophisticated information processing system, continually encoding incoming sensory data and decoding this information to plan and execute actions. Within the field of brain-computer interface (BCI) research, understanding these fundamental processes is paramount for developing technologies that can restore or replace impaired neurological functions [2]. Neural encoding refers to the transformation of external stimuli (e.g., visual scenes, sounds) into patterns of neural activity, primarily within sensory cortices. Conversely, neural decoding involves interpreting these neural activity patterns to predict stimuli, intentions, or behaviors [9]. Modern BCI systems, particularly bidirectional BCIs (BBCIs), leverage both principles to create closed-loop systems that not only interpret brain signals to control external devices but also provide sensory feedback through neural stimulation, effectively acting as "neural co-processors" for the brain [9]. This whitepaper details the biological mechanisms underlying sensory encoding and motor decoding, framing them within the context of advanced BCI research and development.

The Biological Basis of Sensory Encoding

Sensory encoding begins when specialized receptor organs transduce physical energy (light, sound, pressure) into electrochemical signals. These signals are relayed through thalamic nuclei to primary sensory areas of the neocortex, where feature extraction occurs.

Visual Stimulus Encoding in the Cortex

Recent research utilizing large-scale neuronal recordings has illuminated how intrinsic brain dynamics influence the encoding of visual stimuli. During passive viewing, the brain exhibits widespread, coordinated activity that plays out over multisecond timescales in the form of quasi-periodic spiking cascades [10]. These cascades involve up to 70% of neurons from various cortical and subcortical areas firing in highly structured temporal sequences that recur every 5–10 seconds [10]. The efficacy of visual stimulus encoding is systematically modulated during each cascade cycle, linked to fluctuating arousal states.

Table 1: Key Findings on Visual Stimulus Encoding from Large-Scale Recordings

| Aspect | Experimental Finding | Biological Implication |

|---|---|---|

| Cascade Persistence | Spiking cascades persist during visual stimulation, similar to rest [10] | Self-generated, intrinsic dynamics continuously shape sensory processing. |

| Arousal Modulation | Encoding accuracy is 23.0 ± 8.5% higher during high-arousal states (p = 2.1×10⁻¹⁰) [10] | Arousal level, indexed by pupil size and LFP power, directly determines encoding fidelity. |

| State-Dependent Encoding | High-efficiency encoding occurs during peak arousal, alternating with hippocampal ripples in low arousal [10] | The brain alternates between exteroceptive (sensory) and internal (mnemonic) operational modes. |

| Locomotion Effect | Active locomotion abolishes cascade dynamics, maintaining a high-arousal, high-efficiency state [10] | Active behavior engages a distinct neural regime optimized for sensory processing. |

The brain's internal state, defined by population spiking dynamics, strongly affects visual information encoding. Machine learning decoders (e.g., Support Vector Machines) show that the accuracy of predicting image identity from neuronal spiking activity exhibits a strong and robust linear association (r = 0.975, p = 3×10⁻²¹) with the internal state index [10]. This demonstrates that the brain's intrinsic, arousal-related dynamics fundamentally govern the reliability of sensory representations.

From Perception to Action: The Decoding of Information for Movement

The transformation of sensory representations into motor commands involves a complex network of brain areas, with the primary motor cortex (M1) serving as a critical node for decoding movement intentions.

Decoding Algorithms for Motor Control

State-of-the-art decoding algorithms for intracortical BCIs often employ linear decoders such as the Kalman filter [9]. The Kalman filter is a recursive estimator that combines a series of noisy measurements observed over time with a model of the system dynamics to estimate unknown variables; it is optimal in the minimum mean-squared-error sense when the dynamics and measurements are linear with Gaussian noise. In the context of motor decoding:

- State Vector (xₜ): Typically represents kinematic quantities to be estimated, such as hand position, velocity, and acceleration.

- Measurement Model: Specifies how the kinematic vector xₜ at time t relates linearly (via a matrix B) to the measured neural activity vector yₜ: yₜ = Bxₜ + mₜ [9].

- Dynamics Model: Specifies how xₜ changes linearly (via a matrix A) over time: xₜ = Axₜ₋₁ + nₜ [9].

- Noise Processes: nₜ and mₜ are zero-mean Gaussian noise processes representing state evolution and measurement uncertainty, respectively.

This framework allows for the continuous estimation of kinematic parameters from neural population activity, enabling real-time control of prosthetic devices.
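The predict and update recursion implied by these two models can be sketched as below. The matrices A and B follow the notation above; the noise covariance names `W` (for nₜ) and `Q` (for mₜ), the simulated population of 20 neurons, and all numeric values are assumptions made for illustration.

```python
import numpy as np

def kalman_decode(Y, A, B, W, Q, x0, P0):
    """Estimate states x_t from measurements y_t = B x_t + m_t,
    with dynamics x_t = A x_{t-1} + n_t, cov(n_t) = W, cov(m_t) = Q."""
    x, P = x0.copy(), P0.copy()
    identity = np.eye(len(x0))
    estimates = []
    for y in Y:
        # Predict: propagate the state estimate and its covariance.
        x = A @ x
        P = A @ P @ A.T + W
        # Update: correct the prediction with the new neural measurement.
        S = B @ P @ B.T + Q
        K = P @ B.T @ np.linalg.inv(S)  # Kalman gain
        x = x + K @ (y - B @ x)
        P = (identity - K @ B) @ P
        estimates.append(x)
    return np.array(estimates)

# Simulate a 1-D position/velocity state and 20 linearly tuned "neurons".
rng = np.random.default_rng(0)
dt, n_neurons, n_steps = 0.05, 20, 200
A = np.array([[1.0, dt], [0.0, 1.0]])
W = np.diag([1e-4, 1e-2])
B = rng.normal(size=(n_neurons, 2))
Q = np.eye(n_neurons)

x = np.zeros(2)
X_true, Y = [], []
for _ in range(n_steps):
    x = A @ x + rng.multivariate_normal(np.zeros(2), W)
    X_true.append(x)
    Y.append(B @ x + rng.multivariate_normal(np.zeros(n_neurons), Q))
X_true, Y = np.array(X_true), np.array(Y)

X_hat = kalman_decode(Y, A, B, W, Q, x0=np.zeros(2), P0=np.eye(2))
velocity_mse = np.mean((X_hat[:, 1] - X_true[:, 1]) ** 2)
print(f"velocity MSE: {velocity_mse:.4f}")
```

In a real decoder, A, B, W, and Q would be fit from calibration data rather than specified by hand, and the measurement vector would hold binned firing rates or similar features.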

Table 2: Experimental Protocols in Bidirectional BCI Research

| Study (Subject) | Decoding Method | Neural Signal Source | Encoding / Stimulation Method | Task & Outcome |

|---|---|---|---|---|

| Flesher et al. (Human) [9] | Linear decoder mapping M1 firing rates to movement velocities. | Multi-electrode recordings in M1. | Torque sensor data linearly mapped to pulse train amplitude in S1. | Continuous force matching with a robotic hand; success rate higher with stimulation feedback vs. vision alone. |

| Bouton et al. (Human) [9] | Six SVMs applied to mean wavelet power features. | 96-electrode array in hand area of M1. | Surface FES; stimulation intensity as piecewise linear function of decoder output. | Production of six different wrist and hand motions in a quadriplegic patient. |

| Ajiboye et al. (Human) [9] | Linear decoder (Kalman-like) mapping firing rates to % activation of muscle groups. | Neuronal firing rates and high-frequency LFP power in hand area of M1. | Functional Electrical Stimulation (FES) of arm muscles. | Tetraplegic subject performed multi-joint arm movements with 80-100% accuracy, including drinking coffee. |

| Klaes et al. (Non-Human Primate) [9] | Kalman filter decoding hand position, velocity, acceleration. | M1 recordings. | Intracortical microstimulation (ICMS) in S1 (300 Hz biphasic pulse train). | Match-to-sample task using a virtual arm; success rates of 70% to >90% (chance: 50%). |

Experimental Methodologies and Workflows

This section details the standard protocols for conducting experiments that investigate sensory encoding and motor decoding.

Protocol for Investigating Arousal-Dependent Sensory Encoding

Objective: To determine how intrinsic brain dynamics and arousal states modulate the encoding fidelity of sensory stimuli.

- Animal Preparation & Recordings: Use transgenic mice (e.g., C57BL/6J) expressing GCaMP6f in cortical neurons. Perform large-scale neuronal recordings using high-density Neuropixels probes targeting 44 brain regions, including visual cortex, hippocampus, and thalamus [10].

- Stimulus Presentation: During periods of passive viewing, present visual stimuli (e.g., natural scenes or drifting gratings) on a monitor for 250 ms, repeated 50 times each in random order [10].

- Behavioral State Monitoring: Simultaneously track locomotion via a rotary encoder and monitor arousal using pupil diameter and local field potential (LFP) power in delta (<4 Hz) and gamma (55-65 Hz) bands [10].

- Data Processing:

- Spike Sorting: Isolate single-unit activity from raw electrophysiological data.

- Cascade Detection: Order neurons by their principal delay profile to identify quasi-periodic spiking cascades [10].

- State Index Calculation: Compute an index summarizing the relative activation level of negative- and positive-delay neuronal subpopulations to define high- and low-arousal brain states [10].

- Encoding Analysis: Train a Support Vector Machine (SVM) decoder with 5-fold cross-validation to predict image identity from population spiking activity. Calculate decoding accuracy separately for trials occurring during high- and low-arousal states to quantify state-dependent encoding fidelity [10].
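The encoding analysis step can be sketched as 5-fold cross-validated decoding followed by a per-state accuracy split. Two loud assumptions apply: a nearest-centroid classifier stands in for the SVM named in the protocol, and the data are synthetic Poisson counts in which low-state responses are deliberately made less stimulus-selective, so the accuracy gap is built into the simulation and is not a reproduction of the result in [10].

```python
import numpy as np

def nearest_centroid_predict(X_train, y_train, X_test):
    """Stand-in classifier (the protocol itself uses an SVM)."""
    classes = np.unique(y_train)
    centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in classes])
    dist = np.linalg.norm(X_test[:, None, :] - centroids[None, :, :], axis=2)
    return classes[dist.argmin(axis=1)]

def cross_validated_predictions(X, y, n_folds=5, seed=0):
    """Predict every trial's label from a model trained on the other folds."""
    order = np.random.default_rng(seed).permutation(len(y))
    folds = np.array_split(order, n_folds)
    y_pred = np.empty_like(y)
    for k, test_idx in enumerate(folds):
        train_idx = np.concatenate([f for j, f in enumerate(folds) if j != k])
        y_pred[test_idx] = nearest_centroid_predict(
            X[train_idx], y[train_idx], X[test_idx])
    return y_pred

# Synthetic experiment: 4 images x 50 repeats, 30 neurons (assumed sizes).
rng = np.random.default_rng(1)
n_images, n_repeats, n_neurons = 4, 50, 30
tuning = rng.uniform(2.0, 10.0, size=(n_images, n_neurons))
y = np.repeat(np.arange(n_images), n_repeats)
high_state = rng.random(len(y)) < 0.5
# Assumed effect: low-state trials carry a weaker stimulus-specific signal.
gain = np.where(high_state, 1.0, 0.3)[:, None]
mean_counts = tuning.mean(axis=0) + gain * (tuning[y] - tuning.mean(axis=0))
X = rng.poisson(mean_counts).astype(float)

y_pred = cross_validated_predictions(X, y)
acc_high = np.mean(y_pred[high_state] == y[high_state])
acc_low = np.mean(y_pred[~high_state] == y[~high_state])
print(f"accuracy high state: {acc_high:.2f}, low state: {acc_low:.2f}")
```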

Protocol for Closed-Loop Bidirectional BCI Control

Objective: To enable a subject to control a prosthetic device or paralyzed limb using decoded motor commands while receiving sensory feedback via intracortical stimulation.

- Decoder Calibration (Open-Loop):

- Kinematic Data Collection: Record neural activity from M1 while the subject (human or non-human primate) observes or performs (via assisted control) specific motor tasks [9].

- Model Fitting: Fit a Kalman filter or linear regression model to map neural features (e.g., firing rates, LFP power) to observed kinematics (position, velocity, grip force) [9].

- Stimulator Calibration:

- Percept Mapping: For each electrode in somatosensory cortex (S1), determine the stimulation parameters (e.g., pulse frequency, amplitude) that elicit a percept on a specific body part (e.g., thumb, index finger) [9].

- Transduction Function: Define a linear function that maps sensor data from the prosthetic (e.g., grip force) to parameters of the stimulation pulse train [9].

- Closed-Loop Operation:

- Neural Recording: Continuously record and process neural signals from M1.

- Real-Time Decoding: Use the calibrated Kalman filter to decode intended kinematics in real-time.

- Device Control: Translate the decoded kinematics into commands for a prosthetic limb or Functional Electrical Stimulation (FES) of muscles [9].

- Sensory Encoding: Based on sensor data from the prosthesis, deliver corresponding intracortical microstimulation to S1 to provide tactile feedback. To manage stimulation artifacts, use an interleaved scheme (e.g., alternating 50 ms recording and 50 ms stimulation windows) [9].
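Two pieces of the closed-loop procedure lend themselves to a short sketch: the linear transduction function and the interleaved record/stimulate schedule. The 50 ms windows come from the protocol above; the function names, the force range, and the amplitude range in microamps are hypothetical placeholders, not clinically validated parameters.

```python
def force_to_amplitude(force_n, max_force_n=10.0,
                       min_amp_ua=20.0, max_amp_ua=80.0):
    """Linearly map sensed grip force (N) to stimulation amplitude (uA).

    All ranges are illustrative assumptions; the force is clipped to
    [0, max_force_n] so the amplitude stays within its limits.
    """
    fraction = min(max(force_n / max_force_n, 0.0), 1.0)
    return min_amp_ua + fraction * (max_amp_ua - min_amp_ua)

def window_mode(t_ms, record_ms=50, stim_ms=50):
    """Return which phase of the interleaved cycle time t_ms falls in."""
    return "record" if t_ms % (record_ms + stim_ms) < record_ms else "stimulate"

# Walk through 200 ms of the interleaved schedule with a constant 5 N grip.
for t_ms in range(0, 200, 25):
    mode = window_mode(t_ms)
    amp = force_to_amplitude(5.0) if mode == "stimulate" else 0.0
    print(f"t={t_ms:3d} ms  mode={mode:9s}  amplitude={amp:4.1f} uA")
```

Separating recording from stimulation in time keeps stimulation artifacts out of the decoder input at the cost of halving the effective duty cycle of each function.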

The following diagram illustrates the core workflow and brain areas involved in a bidirectional BCI system.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for Neural Encoding/Decoding Studies

| Item / Technology | Function / Application | Specific Examples / Properties |

|---|---|---|

| Neuropixels Probes [10] | High-density, large-scale recording of single-unit activity from hundreds of neurons across multiple brain areas simultaneously. | Silicon-based multielectrode arrays; used for capturing spiking cascades and population coding dynamics. |

| GCaMP Calcium Indicators [10] | Genetically encoded sensors for optical monitoring of neuronal activity via fluorescence changes in response to calcium influx. | Used in transgenic mice (e.g., Thy1-GCaMP6f) for large-scale functional imaging of neural populations. |

| Support Vector Machine (SVM) [10] | A supervised machine learning model used for classification tasks, such as decoding stimulus identity from population activity. | Applied to binned spike counts to predict which image was shown to the animal, yielding a measure of encoding accuracy. |

| Kalman Filter [9] | An optimal estimation algorithm for decoding continuous kinematic parameters (e.g., velocity, position) from neural activity. | Used in motor BCIs; models the relationship between neural signals and kinematics with linear Gaussian dynamics. |

| Intracortical Microstimulation (ICMS) [9] | Delivering small electrical currents via implanted microelectrodes to activate or inhibit local neural populations, providing artificial sensory feedback. | Biphasic pulse trains (e.g., 200-400 Hz) delivered to S1 to mimic tactile sensations in bidirectional BCI tasks. |

| Functional Electrical Stimulation (FES) [9] | Electrical stimulation of peripheral nerves or muscles to reanimate paralyzed limbs and restore functional movement. | Surface or implanted electrodes; stimulation intensity modulated by decoded motor commands. |

In brain-computer interface (BCI) research, the mathematical formalization of neural encoding and decoding provides the foundational framework for translating brain signals into actionable commands. Neural encoding refers to the processes by which external stimuli are translated into neural activity, while neural decoding aims to reconstruct these stimuli or the user's intentions from recorded brain signals [11] [12]. This bidirectional relationship forms the core of modern BCI systems, enabling direct communication between the brain and external devices for restoring impaired sensory, motor, and cognitive functions in neurological disorders [2] [13].

The mathematical relationship between encoding and decoding can be conceptualized through Bayesian principles. Formally, if we let (K) represent a vector of neural activity from (N) neurons and (x) represent a stimulus or behavioral variable, the encoding model describes (P(K|x)), that is, how neural responses depend on the stimulus. Conversely, decoding involves inverting this relationship to estimate (P(x|K)) using Bayes' theorem [12]. This statistical formulation enables researchers to quantify how information is transmitted within the nervous system and to develop algorithms that translate neural signals into device commands.

Fundamental Statistical Frameworks for Neural Coding

Core Mathematical Formulations

Neural coding research employs diverse statistical approaches to model the relationship between neural activity and external variables. The foundational encoding model represents the neural response of population (K) to stimulus (x) as:

[ P(K|x) ]

Here, (K) is a vector representing the activity of (N) neurons, with each entry typically representing spike counts in discrete time bins or rate responses [12]. This statistical relationship summarizes how neuronal populations respond to external events and forms the basis for predicting neural activity from known stimuli.

For decoding, the inverse problem is addressed through Bayesian inference:

[ P(x|K) = \frac{P(K|x)P(x)}{P(K)} ]

where (P(x|K)) is the posterior probability of the stimulus given the neural data, (P(K|x)) is the likelihood derived from encoding models, (P(x)) is the prior probability of the stimulus, and (P(K)) serves as a normalizing constant [12]. This Bayesian framework provides a principled approach to decoding that incorporates prior knowledge about the statistical structure of the environment.
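
As a minimal sketch of this inversion, the example below decodes a discrete stimulus from spike counts, assuming independent Poisson neurons as the encoding model. The Poisson assumption, the tuning values in `rates`, and the function names are illustrative choices, not details taken from the cited work.

```python
import numpy as np

def poisson_log_likelihood(counts, rates):
    """log P(K|x) for independent Poisson neurons, up to a constant.

    counts: (N,) observed spike counts K
    rates:  (S, N) expected counts of each neuron for each of S stimuli
    The log(k!) term is dropped because it does not depend on x.
    """
    return counts @ np.log(rates).T - rates.sum(axis=1)

def bayes_decode(counts, rates, prior):
    """Return the posterior P(x|K) over the S candidate stimuli."""
    log_post = poisson_log_likelihood(counts, rates) + np.log(prior)
    log_post -= log_post.max()       # stabilise before exponentiating
    post = np.exp(log_post)
    return post / post.sum()         # normalisation plays the role of P(K)

# Hypothetical tuning: 3 neurons, 2 stimuli, flat prior
rates = np.array([[10.0, 2.0, 5.0],
                  [2.0, 10.0, 5.0]])
prior = np.array([0.5, 0.5])
posterior = bayes_decode(np.array([9, 1, 5]), rates, prior)
print(posterior)
```

Working in log space avoids numerical underflow when the population is large, and a non-uniform `prior` shifts the estimate toward stimuli that are more common in the environment.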

Key Encoding Models

Table 1: Comparison of Major Neural Encoding Models

| Model Type | Mathematical Formulation | Key Advantages | Limitations |

|---|---|---|---|

| Linear Regression | (K = Wx + \epsilon) | Simple, interpretable parameters | Limited capacity for nonlinear relationships |

| Generalized Linear Models (GLMs) | (g(E[K|x]) = Wx) | Handles non-normal response distributions via link functions | Still limited to moderate nonlinearities |

| Artificial Neural Networks (ANNs) | (K = f(W_n \cdots f(W_2 f(W_1 x)))) | Universal function approximators, captures complex nonlinearities | Less interpretable, requires large datasets |

| Information Theory Models | (I(X;K) = \sum_{x,k} P(x,k) \log \frac{P(x,k)}{P(x)P(k)}) | Model-free, quantifies stimulus-response dependence without assuming a specific functional relationship | Computationally intensive for large populations |

Encoding models have evolved from simple linear regression to increasingly sophisticated approaches. Generalized Linear Models (GLMs) extend linear models by incorporating nonlinear link functions to accommodate diverse neural response distributions [12]. More recently, artificial neural networks (ANNs) have emerged as powerful nonlinear encoding models that can capture complex relationships between stimuli and neural responses through their hierarchical, integrative properties [12].
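
To make the role of the link function concrete, the following sketch fits a Poisson GLM with a log link to simulated spike counts using Newton's method. The simulated data, the true weights `w_true`, and the function name `fit_poisson_glm` are assumptions made for illustration only.

```python
import numpy as np

def fit_poisson_glm(X, k, n_iter=25):
    """Fit w in log E[k|x] = X @ w by Newton's method.

    X: (T, D) design matrix whose first column is assumed to be all ones
    k: (T,) spike counts
    """
    w = np.zeros(X.shape[1])
    w[0] = np.log(k.mean())                 # start the intercept at the mean rate
    for _ in range(n_iter):
        mu = np.exp(X @ w)                  # predicted mean count per bin
        grad = X.T @ (k - mu)               # gradient of the log-likelihood
        hess = X.T @ (X * mu[:, None])      # negative Hessian
        w += np.linalg.solve(hess, grad)
    return w

# Simulated neuron: log firing rate depends linearly on a scalar stimulus
rng = np.random.default_rng(0)
stim = rng.standard_normal(2000)
X = np.column_stack([np.ones_like(stim), stim])
w_true = np.array([1.0, 0.5])
k = rng.poisson(np.exp(X @ w_true))
w_hat = fit_poisson_glm(X, k)
print(w_hat)
```

The exponential inverse link keeps predicted rates positive, which an ordinary linear regression on spike counts cannot guarantee.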

Information-theoretic approaches provide a model-free framework for quantifying how much information neural responses convey about stimuli. The mutual information (I(X;K)) between stimuli (X) and neural responses (K) measures the reduction in uncertainty about the stimulus provided by the neural response [12] [14]. The Kullback-Leibler (KL) divergence offers another information-theoretic measure:

[ I(f,g) = \int f(x) \ln \frac{f(x)}{g(x)} dx ]

which quantifies the information lost when an approximating model (g) is used instead of the true distribution (f) [14]. This formalism is particularly valuable for comparing different encoding models and optimizing their parameters.
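
The mutual information defined above can be estimated directly from a table of stimulus-response co-occurrence counts. The sketch below uses the simple plug-in estimator with base-2 logarithms (bits); the function name and the toy count tables are illustrative assumptions.

```python
import numpy as np

def mutual_information(joint_counts):
    """Plug-in estimate of I(X;K) in bits.

    joint_counts: (S, R) table, entry [x, k] = number of trials on which
    stimulus x was shown and discretised response k was observed.
    """
    p_xk = np.asarray(joint_counts, dtype=float)
    p_xk = p_xk / p_xk.sum()
    p_x = p_xk.sum(axis=1, keepdims=True)    # marginal over responses
    p_k = p_xk.sum(axis=0, keepdims=True)    # marginal over stimuli
    nz = p_xk > 0                            # 0 * log 0 is treated as 0
    return float(np.sum(p_xk[nz] * np.log2(p_xk[nz] / (p_x @ p_k)[nz])))

perfect = mutual_information([[50, 0], [0, 50]])      # response identifies stimulus
useless = mutual_information([[25, 25], [25, 25]])    # response independent of stimulus
print(perfect, useless)
```

With limited trials the plug-in estimator is biased upward, so in practice a bias correction or shuffle control is usually applied before interpreting the value.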

Experimental Protocols and Methodologies

Signal Acquisition Modalities

Table 2: Brain Signal Acquisition Methods for Encoding/Decoding Studies

| Method | Spatial Resolution | Temporal Resolution | Invasiveness | Primary Applications |

|---|---|---|---|---|

| Electroencephalography (EEG) | ~10 mm | ~1-100 ms | Non-invasive | Basic research, clinical BCIs for communication |

| Electrocorticography (ECoG) | ~1 mm | ~1-10 ms | Semi-invasive (subdural) | Motor decoding, speech neuroprosthetics |

| Intracortical Microarrays | ~0.05 mm (single neurons) | <1 ms | Fully invasive | High-performance motor control, neural mechanisms |

| Functional MRI (fMRI) | ~1-3 mm | ~1-3 seconds | Non-invasive | Cognitive studies, brain mapping |

| Magnetoencephalography (MEG) | ~5 mm | ~1-100 ms | Non-invasive | Cognitive studies, clinical pre-surgical mapping |

The choice of signal acquisition method significantly impacts the type of encoding and decoding models that can be developed. Non-invasive methods like EEG provide widespread accessibility but suffer from limited spatial resolution and signal-to-noise ratio due to attenuation from intervening tissues [13] [15]. Invasive methods such as intracortical microarrays offer single-neuron resolution but require neurosurgical implantation and face challenges with long-term signal stability [13].

Recent advances have enabled large-scale neuronal recordings that capture the activity of hundreds to thousands of neurons simultaneously, dramatically expanding our understanding of population coding mechanisms [11] [12]. These technological developments have facilitated the shift from studying individual neurons to investigating how information is distributed across neuronal populations.

Motor Decoding Experimental Protocol

A representative experimental protocol for motor decoding involves several key stages: the BCI system acquires brain signals, extracts relevant features, translates these features into device commands, and provides output to external devices [13]. Diagram 1 outlines this workflow.

Diagram 1: Motor decoding experimental workflow

In a typical finger movement decoding experiment, subjects perform or imagine finger movements while neural activity is recorded. For ECoG-based approaches, subjects focus on a display and move the respective finger according to visual cues displayed for 2-3 seconds, followed by 2-3 seconds of rest [16]. Each finger is typically moved approximately 30 times across a 10-minute recording session per subject.

Statistical analysis begins with quality checks using box plots to identify outliers and noisy channels in the neural data [16]. Preprocessing algorithms remove artifacts and standardize the signals. The resulting cleaned dataset often exhibits dual polarity and Gaussian distribution properties, guiding the selection of appropriate activation functions (e.g., Tanh) for subsequent neural network models [16].
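
The box-plot quality check and standardization step can be sketched as follows. This is one plausible reading of the procedure, assuming that channels are screened by the box-plot (1.5 x IQR) rule applied to their log-variance; the function names and the simulated 16-channel recording are hypothetical.

```python
import numpy as np

def flag_noisy_channels(data, k=1.5):
    """Flag channels whose log-variance is a box-plot outlier.

    data: (channels, samples) array. A channel is flagged when its
    log-variance lies more than k * IQR outside the quartiles.
    """
    log_var = np.log(data.var(axis=1))
    q1, q3 = np.percentile(log_var, [25, 75])
    iqr = q3 - q1
    return (log_var < q1 - k * iqr) | (log_var > q3 + k * iqr)

def zscore_channels(data):
    """Standardise each channel to zero mean and unit variance."""
    mean = data.mean(axis=1, keepdims=True)
    std = data.std(axis=1, keepdims=True)
    return (data - mean) / std

# Simulated recording with one artificially noisy channel
rng = np.random.default_rng(0)
ecog = rng.standard_normal((16, 1000))
ecog[3] *= 20.0
noisy = flag_noisy_channels(ecog)
clean = zscore_channels(ecog[~noisy])
print(np.flatnonzero(noisy))
```

After standardization the signals are centred on zero and take both signs, which is consistent with choosing a symmetric activation such as Tanh in the downstream network.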

Speech Decoding Experimental Protocol

Speech neuroprosthetics represent an emerging application of neural decoding. In recent clinical trials, participants with severe motor impairments have electrode arrays implanted in the motor cortex areas controlling speech-related articulators (lips, tongue, larynx) [17]. Participants imagine speaking sentences presented to them while neural activity is recorded.

The system learns patterns of neural activity corresponding to intended speech sounds through supervised learning approaches. When participants imagine speaking, these neural patterns are converted into text on a screen or synthetic speech output [17]. This approach has demonstrated the feasibility of decoding continuous language from neural signals, with recent advances leveraging large language models (LLMs) for improved decoding performance [15].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Algorithms for Neural Decoding

| Tool Category | Specific Methods | Function | Application Examples |

|---|---|---|---|

| Classification Algorithms | Linear Discriminant Analysis (LDA), Support Vector Machines (SVM) | Distinguishes between discrete mental states | EEG-based spellers, movement classification |

| Regression Models | Kalman Filter, Linear Regression | Decodes continuous parameters | Finger trajectories, kinematic parameters |

| Deep Learning Architectures | Convolutional Neural Networks (CNN), Long Short-Term Memory (LSTM), Transformers | Handles complex spatiotemporal patterns | ECoG-based finger decoding, speech reconstruction |

| Dimensionality Reduction | PCA, Gaussian Process Factor Analysis | Reduces noise and reveals latent structure | Visualizing neural trajectories, pre-processing |

| Information Theory Metrics | Mutual Information, Kullback-Leibler Divergence | Quantifies information content | Model comparison, neural coding efficiency |

The experimental toolkit for neural encoding and decoding studies includes both hardware components and computational methods. For invasive approaches, microelectrode arrays like the Paradromics device (with thin, stiff platinum-iridium electrodes penetrating the cortical surface) enable recording from individual neurons [17]. Non-invasive approaches typically use multi-channel EEG systems with conductive gels or dry electrodes.

Computational tools range from traditional statistical models to modern deep learning approaches. The Kalman filter remains widely used for decoding continuous kinematic parameters, with both supervised and unsupervised variants [18]. Recent work has explored weakly supervised methods that leverage discovered symmetries between unsupervised decoding positions and ground-truth positions in motor tasks [18].

Deep learning architectures have shown particular promise in handling the complex spatiotemporal patterns in neural data. Convolutional Neural Networks (CNNs) extract features from neural signals, while Long Short-Term Memory (LSTM) networks capture temporal dependencies [16]. Incorporating dropout and regularization techniques makes these models more resilient to noise and variability in neural data [16].

Advanced Mathematical Frameworks

Dynamical Systems Approaches

Neural populations exhibit rich dynamical properties that can be formalized through state-space models:

[ x_t = A x_{t-1} + w_t ]

[ K_t = C x_t + v_t ]

where (x_t) represents the latent neural state at time (t), (K_t) is the observed neural activity, (A) is the state transition matrix, (C) is the observation matrix, and (w_t), (v_t) are noise processes [18]. These models capture the temporal evolution of neural population activity and have proven particularly effective for decoding continuous movement parameters.
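
For this linear-Gaussian state-space model, the Kalman filter gives the recursive estimate of the latent state from the observed activity. The sketch below is a textbook implementation on simulated data; the noise covariances `Q` and `R` (for the state and observation noise) and all simulation parameters are assumptions introduced for illustration.

```python
import numpy as np

def kalman_decode(K, A, C, Q, R, x0, P0):
    """Filtered state estimates for x_t = A x_{t-1} + w_t, K_t = C x_t + v_t.

    K: (T, N) observed activity; Q, R: covariances of w_t and v_t.
    Returns a (T, d) array of state estimates.
    """
    x, P = x0, P0
    estimates = []
    for k_t in K:
        # Predict the next state from the dynamics
        x = A @ x
        P = A @ P @ A.T + Q
        # Correct the prediction with the new observation
        S = C @ P @ C.T + R
        gain = P @ C.T @ np.linalg.inv(S)
        x = x + gain @ (k_t - C @ x)
        P = (np.eye(len(x)) - gain @ C) @ P
        estimates.append(x)
    return np.array(estimates)

# Simulate a 2-D latent state observed through 20 noisy channels
rng = np.random.default_rng(1)
d, N, T = 2, 20, 300
A, Q = 0.95 * np.eye(d), 0.05 * np.eye(d)
C, R = rng.standard_normal((N, d)), np.eye(N)
x_true = np.zeros((T, d))
x_prev = np.zeros(d)
for t in range(T):
    x_prev = A @ x_prev + np.sqrt(0.05) * rng.standard_normal(d)
    x_true[t] = x_prev
K = x_true @ C.T + rng.standard_normal((T, N))
x_hat = kalman_decode(K, A, C, Q, R, np.zeros(d), np.eye(d))
print(np.corrcoef(x_true[:, 0], x_hat[:, 0])[0, 1])
```

In a real decoder the matrices would not be known in advance; they are typically estimated from training data in which both kinematics and neural activity were recorded.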

Recent advances have extended these approaches to incorporate nonlinear dynamics through recurrent neural networks (RNNs) and switching dynamical systems. Multiplicative RNNs allow mappings from neural input to motor output to partially adapt to changes in neural activity sources, addressing the challenge of non-stationarity in chronic neural recordings [18].

Information-Theoretic Foundations

Information theory provides a fundamental framework for quantifying neural coding efficiency. The Kullback-Leibler divergence serves as a crucial measure for comparing encoding models:

[ I(f,g) = \sum_i f(i) \ln \frac{f(i)}{g(i)} ]

where (f) represents the true data distribution and (g) represents an approximating model [14]. This formalism allows researchers to measure information loss when using simplified models and optimize model complexity.
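
A short numerical sketch of the discrete form, using natural logarithms as in the formula. The function name and the example distributions are illustrative assumptions.

```python
import numpy as np

def kl_divergence(f, g):
    """I(f, g) = sum_i f(i) ln(f(i) / g(i)), in nats.

    Terms with f(i) = 0 contribute nothing; the result is infinite
    if g(i) = 0 anywhere that f(i) > 0.
    """
    f = np.asarray(f, dtype=float)
    g = np.asarray(g, dtype=float)
    nz = f > 0
    return float(np.sum(f[nz] * np.log(f[nz] / g[nz])))

f = [0.5, 0.5]          # "true" response distribution
g = [0.9, 0.1]          # approximating model
print(kl_divergence(f, g), kl_divergence(g, f))
```

The two printed values differ because the divergence is not symmetric: the information lost by approximating (f) with (g) is not the same as the loss in the opposite direction.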

The relationship between encoding and decoding can be understood through the concept of the "neural manifold", a low-dimensional space in which neural population activity evolves. While sensory information is implicitly encoded in high-dimensional sensory inputs, hierarchical processing in the brain transforms these representations into more explicit formats that are easily decoded by downstream areas [12]. For example, object identity that is non-linearly encoded in retinal activity becomes more linearly decodable in inferotemporal cortex representations [12].

Diagram 2 illustrates this conceptual relationship between encoding and decoding processes.

Diagram 2: Encoding-decoding conceptual framework

Future Directions and Challenges

The mathematical formalization of neural encoding and decoding continues to evolve with several promising research directions. Causal modeling approaches aim to move beyond correlational relationships to infer and test causality in neural circuits [12]. Large language models (LLMs) are increasingly being applied to linguistic neural decoding, leveraging their powerful information understanding and generation capabilities [15].

A significant challenge remains in improving the day-to-day and moment-to-moment reliability of BCI performance to approach the reliability of natural muscle-based function [13]. This requires advances in signal acquisition hardware, validation in long-term real-world studies, and addressing the individual differences in neural signals that currently challenge widespread BCI adoption [2].

The integration of normative models with deep learning approaches presents another promising direction. These models can incorporate structural and functional constraints of neural circuits to develop more biologically plausible decoding algorithms [12]. As recording technologies continue to scale, enabling measurements from increasingly large neuronal populations, our mathematical frameworks must similarly evolve to capture the full complexity of neural computation while remaining interpretable and useful for clinical applications.

The mathematical foundations of neural encoding and decoding will continue to play a crucial role in translating basic neuroscience discoveries into effective clinical interventions for neurological disorders, ultimately restoring communication and motor function for people with severe disabilities.

Brain-Computer Interfaces as a Practical Testbed for Encoding-Decoding Principles

Brain-Computer Interfaces (BCIs) have emerged as a powerful experimental framework for investigating neural encoding and decoding principles. By establishing a direct communication pathway between the brain and external devices, BCIs provide an unparalleled testbed for understanding how neural activity represents information (encoding) and how these representations can be translated into actionable commands (decoding) [2]. This bidirectional communication loop enables researchers to test fundamental hypotheses about neural computation while simultaneously developing practical applications for restoring function in patients with neurological disorders. The core BCI framework implements a closed-loop system where brain signals are acquired, processed to decode user intent, and used to control external devices, potentially including neuromodulation systems that provide feedback to the nervous system [19].

The evolution of BCI technologies has accelerated our understanding of neural coding principles across diverse brain regions and functions. Current BCI systems can be broadly categorized into invasive approaches, which record from intracortical microelectrodes or electrocorticography (ECoG) arrays placed on the cortical surface, and non-invasive approaches that primarily utilize electroencephalography (EEG) [2] [20]. Each modality offers distinct trade-offs between spatial and temporal resolution, signal-to-noise ratio, and practical implementation requirements, making them suitable for different research questions and applications.

Fundamental Encoding-Decoding Principles in BCI

Theoretical Foundations of Neural Coding

The theoretical foundation of BCIs rests on the principle that cognitive processes, motor intentions, and sensory experiences are represented by reproducible patterns of neural activity. These representations exist across multiple spatial and temporal scales, from individual neuron spiking activity to population-level field potentials. Neural encoding refers to the process by which external stimuli or internal states are transformed into these patterned neural responses, while neural decoding aims to reconstruct stimuli or intentions from the observed neural activity [15].

A critical insight from BCI research is that the brain maintains a systematic mapping between intention and neural activation, even in the absence of peripheral execution. For instance, motor imagery—the mental rehearsal of movement without physical execution—evokes patterns of neural activity in motor regions that share similarities with those observed during actual movement execution [2]. This preservation of intentional representations provides the fundamental basis for BCIs designed to restore motor function. Similarly, in the speech domain, both attempted speech and inner speech generate distinguishable patterns of activity in motor cortex regions, enabling the potential decoding of communication intent without overt vocalization [5] [21].

The BCI Closed-Loop Architecture

The canonical BCI system implements a complete closed-loop architecture that continuously cycles through signal acquisition, processing, decoding, and effector control. This architecture provides a practical framework for testing encoding-decoding theories in real-time. The core components include:

- Signal Acquisition: Recording neural signals through invasive or non-invasive methods

- Pre-processing: Enhancing signal quality through filtering and artifact removal

- Feature Extraction: Identifying informative characteristics in the neural signals

- Decoding Algorithm: Translating features into control commands

- Effector Application: Executing commands through external devices

- Feedback: Providing sensory information to the user to complete the control loop

This closed-loop architecture enables iterative refinement of both the decoding algorithms and the user's ability to modulate their neural activity, embodying the principles of neuroplasticity and adaptive control.

Experimental Paradigms and Methodologies

Signal Acquisition Modalities

Table 1: Comparison of BCI Signal Acquisition Modalities

| Modality | Spatial Resolution | Temporal Resolution | Invasiveness | Primary Applications |

|---|---|---|---|---|

| Microelectrode Arrays (MEA) | Single neuron | Millisecond | Invasive (intracortical) | Motor control, speech decoding |

| Electrocorticography (ECoG) | Millimeter | Millisecond | Invasive (cortical surface) | Motor imagery, speech decoding |

| Electroencephalography (EEG) | Centimeter | Millisecond | Non-invasive | Motor imagery, SSVEP, P300 |

| Functional MRI (fMRI) | Millimeter | Seconds | Non-invasive | Brain mapping, connectivity |

| Magnetoencephalography (MEG) | Millimeter | Millisecond | Non-invasive | Cognitive processing studies |

BCI research employs diverse signal acquisition modalities, each with distinct advantages for investigating specific encoding-decoding principles. Invasive approaches using microelectrode arrays (MEAs) implanted directly into brain tissue provide the highest spatial resolution, enabling recording of single-neuron activity [7]. These signals offer exquisite detail about neural coding principles but require substantial surgical intervention. Electrocorticography (ECoG), which places electrode arrays on the cortical surface, provides signals with high temporal resolution and better spatial resolution than non-invasive methods, while reducing the risks associated with penetrating brain tissue [7].

Non-invasive approaches, particularly electroencephalography (EEG), dominate practical BCI applications due to their safety and accessibility. EEG measures electrical activity from the scalp, providing millisecond temporal resolution but limited spatial resolution due to signal smearing through the skull and other tissues [20]. Recent advances in high-density EEG systems have improved spatial resolution, making them increasingly valuable for studying population-level neural coding principles. Functional MRI offers superior spatial resolution for mapping neural representations but suffers from poor temporal resolution due to the slow hemodynamic response, limiting its utility for real-time decoding applications [15].

Major Experimental Protocols

Motor Imagery and Execution Paradigms

Motor-related BCIs typically employ either motor imagery (MI) or motor execution (ME) paradigms. In MI protocols, participants imagine performing specific movements without actual muscle contraction, while ME protocols involve attempted or actual movement. Both approaches evoke modulations in sensorimotor rhythms (e.g., mu and beta rhythms) that can be decoded to control external devices. Standardized experimental designs include cue-based trials where visual or auditory prompts indicate the specific movement to imagine or execute, followed by rest periods. These paradigms have been fundamental for investigating how movement intention is encoded in neural populations and how these representations can be decoded for prosthetic control [2].
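
Sensorimotor rhythm modulations are commonly quantified as band power. The sketch below estimates power in the mu and beta bands with a plain periodogram; the band edges (8-12 Hz and 13-30 Hz), the sampling rate, and the synthetic signal are conventional but assumed values, not parameters from the cited studies.

```python
import numpy as np

def band_power(signal, fs, band):
    """Mean periodogram power of `signal` within `band` = (low_hz, high_hz)."""
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(signal)) ** 2 / (fs * signal.size)
    mask = (freqs >= band[0]) & (freqs <= band[1])
    return psd[mask].mean()

# Synthetic single-channel EEG: a 10 Hz rhythm plus broadband noise
rng = np.random.default_rng(0)
fs = 250.0
t = np.arange(500) / fs                       # 2 seconds of data
eeg = np.sin(2 * np.pi * 10.0 * t) + 0.5 * rng.standard_normal(t.size)
mu_power = band_power(eeg, fs, (8.0, 12.0))
beta_power = band_power(eeg, fs, (13.0, 30.0))
print(mu_power, beta_power)
```

Comparing such band-power features between cue and rest periods yields the inputs that a classifier then maps to movement intentions.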

The reliability of motor decoding has been demonstrated across multiple studies, with accuracy highly dependent on signal modality and decoding approach. Invasive methods typically achieve higher performance; for instance, MEA-based systems can decode continuous movement parameters with correlation coefficients of 0.7-0.9 in non-human primate studies, while human ECoG studies report classification accuracies of 80-95% for discrete movement directions [7]. Non-invasive EEG-based systems generally achieve lower performance, with typical classification accuracies of 70-85% for binary limb movement classification, though performance varies substantially across individuals and with training [20].

Visual Evoked Potential Paradigms

Visual evoked potential (VEP) paradigms leverage the brain's reliable response to visual stimuli. In code-modulated VEP (c-VEP) approaches, visual stimuli flicker according to specific pseudo-random binary sequences, evoking time-locked brain responses that can be decoded to determine which stimulus the user is attending to [22]. These paradigms provide a highly reliable signal for investigating how predictable sensory inputs are encoded in visual pathways and how these representations can be decoded for communication and control.
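
One common way to decode such time-locked responses is template matching: each target is assumed to use a circularly shifted copy of the same code, and the attended target is the shift whose template correlates best with the recorded response. The sketch below illustrates the idea under simplifying assumptions; a random binary sequence stands in for the pseudo-random code, the code itself stands in for the evoked-response template, and the shift values and noise level are hypothetical.

```python
import numpy as np

def decode_cvep(response, template, shifts):
    """Return the index of the best-matching shift and all correlation scores."""
    scores = [np.corrcoef(response, np.roll(template, s))[0, 1] for s in shifts]
    return int(np.argmax(scores)), scores

rng = np.random.default_rng(0)
code = rng.integers(0, 2, size=63).astype(float)   # stand-in for the stimulus code
shifts = [0, 8, 16, 24]                            # one circular shift per target

# Simulated response while the user attends the third target (index 2)
response = np.roll(code, shifts[2]) + 0.3 * rng.standard_normal(code.size)
target, scores = decode_cvep(response, code, shifts)
print(target)
```

In a real system the template would be learned by averaging recorded responses over repeated stimulus cycles rather than taken from the code directly.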

Recent research has optimized c-VEP parameters to balance performance and user experience. A systematic investigation of visual stimulus opacity found that semi-transparent stimuli (specifically 50% white and 100% black stimuli) maintained high classification accuracy (99.38%) while significantly reducing visual fatigue compared to traditional high-contrast stimuli (from 6.4 to 3.7 on a 10-point fatigue scale) [22]. This optimization demonstrates how understanding encoding principles (how visual stimuli are represented in neural activity) can lead to improved decoding approaches that enhance both performance and usability.

Inner Speech Decoding Protocols

Inner speech decoding represents a cutting-edge paradigm for investigating linguistic representations without overt articulation. In a landmark study by Kunz et al. (2025), participants with speech impairments due to ALS or stroke either attempted to speak or imagined saying words and sentences while neural activity was recorded from motor cortex using microelectrode arrays [5] [21]. The experimental protocol involved:

- Training Phase: Participants repeatedly produced or imagined a set of words while neural data was collected to train decoding models

- Closed-Loop Testing: Participants imagined speaking sentences while the BCI decoded in real-time

- Unintentional Speech Detection: Participants performed non-verbal tasks (sequence recall, counting) to test whether private inner speech could be decoded

- Privacy Protection Evaluation: Testing methods to prevent decoding of unintentional inner speech

This protocol revealed that attempted and inner speech evoked similar patterns of neural activity, though attempted speech produced stronger signals. The decoding system achieved error rates between 14% and 33% for a 50-word vocabulary and between 26% and 54% for a 125,000-word vocabulary [5]. Participants with severe speech weakness preferred using imagined speech over attempted speech due to lower physical effort, highlighting the practical importance of understanding different encoding strategies for clinical applications.

Advanced Linguistic Decoding Approaches

Beyond inner speech, broader linguistic neural decoding aims to reconstruct language information from brain activity during both perception and production. Experimental paradigms in this domain include:

- Stimulus Recognition: Identifying which linguistic stimulus (word, sentence) a person is processing from evoked brain activity

- Text Stimuli Reconstruction: Reconstructing text stimuli at the word or sentence level using classifiers, embedding models, and custom network modules

- Brain Recording Translation: Treating brain activity as a "source language" and translating it into understandable text, analogous to machine translation

- Speech Neuroprosthesis: Decoding inner or vocal speech based on human intentions, progressing from phoneme-level recognition to open-vocabulary sentence decoding [15]

These approaches leverage the finding that artificial neural networks, particularly large language models (LLMs), exhibit patterns of functional specialization similar to cortical language networks, enabling more accurate decoding of linguistic representations [15].

Technical Implementation and Performance Metrics

Decoding Algorithms and Architectures

Table 2: Comparison of Neural Decoding Approaches and Performance

| Decoding Approach | Signal Modality | Application Domain | Typical Performance | Computational Demand |

|---|---|---|---|---|

| Deep Learning (CNN, LSTM) | EEG, ECoG, MEA | Motor imagery, speech decoding | High accuracy (80-95%) | High |

| Linear Discriminant Analysis (LDA) | ECoG, MEA | Movement classification, speech | Moderate to high accuracy | Low |

| Canonical Correlation Analysis | EEG | SSVEP classification | High ITR (>100 bits/min) | Moderate |

| Support Vector Machine (SVM) | EEG, ECoG | Motor imagery, P300 | Moderate accuracy (70-85%) | Moderate |

| Convolutional Neural Networks | EEG | Motor imagery, emotion recognition | High accuracy (80-90%) | High |

BCI decoding algorithms range from classical machine learning approaches to sophisticated deep learning architectures. For motor decoding, common approaches include linear discriminant analysis (LDA), support vector machines (SVM), and convolutional neural networks (CNN), which learn the mapping between neural features (e.g., band power, spatial patterns) and movement intentions [7]. For speech decoding, recurrent architectures like long short-term memory (LSTM) networks have proven effective for sequence decoding, while transformer-based models are increasingly used for their contextual processing capabilities [15].
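
As an illustration of the classical end of this range, the following sketch implements two-class LDA on simulated feature vectors (for example, band-power features for rest versus movement). Equal class priors and a shared covariance are assumed, and the data, class labels, and function names are hypothetical.

```python
import numpy as np

def fit_lda(X0, X1):
    """Two-class LDA with a pooled covariance and equal priors.

    X0, X1: (trials, features) arrays for class 0 and class 1.
    Returns the weight vector w and bias b of the decision rule w @ x + b > 0.
    """
    mu0, mu1 = X0.mean(axis=0), X1.mean(axis=0)
    pooled = (np.cov(X0, rowvar=False) * (len(X0) - 1)
              + np.cov(X1, rowvar=False) * (len(X1) - 1))
    pooled /= len(X0) + len(X1) - 2
    w = np.linalg.solve(pooled, mu1 - mu0)
    b = -0.5 * w @ (mu0 + mu1)
    return w, b

def predict_lda(X, w, b):
    """Return 1 where the decision function is positive, else 0."""
    return (X @ w + b > 0).astype(int)

# Simulated features: 200 trials per class, 4 features
rng = np.random.default_rng(0)
rest = rng.standard_normal((200, 4))
move = rng.standard_normal((200, 4)) + 2.0
w, b = fit_lda(rest, move)
correct = np.concatenate([predict_lda(rest, w, b) == 0,
                          predict_lda(move, w, b) == 1])
accuracy = correct.mean()
print(accuracy)
```

The accuracy printed here is measured on the training trials; a held-out set or cross-validation is needed for an honest performance estimate.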

Recent advances have leveraged large language models (LLMs) for linguistic decoding, capitalizing on their powerful information understanding and generation capacities. Studies have demonstrated that representations in these models account for a significant portion of the variance observed in human brain activity during language processing, enabling more accurate reconstruction of perceived or produced language [15]. The scaling laws observed in both brain encoding models and pre-trained LLMs suggest that larger systems with more parameters can better bridge brain activity patterns and human linguistic representations, given sufficient data [15].

Performance Evaluation Metrics

The evaluation of BCI decoding approaches employs diverse metrics tailored to specific applications:

- Classification Accuracy: Percentage of correct classifications for discrete tasks

- Information Transfer Rate (ITR): Bits per minute communicated through the BCI, combining speed and accuracy

- Word Error Rate (WER): Common metric for speech decoding systems, measuring word-level accuracy

- Pearson Correlation Coefficient: Measures similarity between decoded and actual continuous signals

- BLEU/ROUGE Scores: Evaluate semantic similarity for language generation tasks [15]
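
Two of these metrics are simple enough to sketch directly. The ITR function below uses the widely used Wolpaw formula, which assumes equally likely targets and errors spread uniformly over the wrong targets; whether the cited studies use exactly this definition is an assumption. The WER function counts word-level substitutions, insertions, and deletions by edit distance. Function names and example inputs are illustrative.

```python
import math

def itr_bits_per_min(n_classes, accuracy, trial_seconds):
    """Wolpaw information transfer rate; requires 0 < accuracy <= 1."""
    n, p = n_classes, accuracy
    bits = math.log2(n)
    if p < 1.0:
        bits += p * math.log2(p) + (1 - p) * math.log2((1 - p) / (n - 1))
    return bits * 60.0 / trial_seconds

def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(prev[j] + 1,               # deletion
                           cur[j - 1] + 1,            # insertion
                           prev[j - 1] + (r != h)))   # substitution or match
        prev = cur
    return prev[-1] / len(ref)

print(itr_bits_per_min(4, 1.0, 2.0))
print(word_error_rate("i want some water", "i want water"))
```

Because ITR combines speed and accuracy, a slower but more accurate decoder can score the same as a faster, noisier one, so both quantities are usually reported alongside it.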

Hardware implementations introduce additional metrics focused on computational efficiency:

- Power Consumption per Channel (PpC): Critical for implantable and portable systems

- Input Data Rate (IDR): Relationship between data volume and classification performance

- Decoding Latency: Time delay between neural activity and command execution [7]

Counterintuitively, analysis of hardware systems reveals a negative correlation between power consumption per channel and information transfer rate, suggesting that increasing channel count can simultaneously reduce per-channel power consumption through hardware sharing and increase ITR by providing more input data [7]. For EEG and ECoG decoding circuits, power consumption is dominated by signal processing complexity rather than data acquisition itself.

Research Reagents and Tools

Table 3: Essential Research Materials for BCI Encoding-Decoding Studies

| Research Tool | Category | Primary Function | Example Applications |

|---|---|---|---|

| Microelectrode Arrays | Hardware | Record single-neuron activity | Motor decoding, speech neuroprosthetics |

| EEG Systems with Active Electrodes | Hardware | Non-invasive neural recording | Motor imagery, visual evoked potentials |

| ECoG Grids | Hardware | Cortical surface recording | Epilepsy monitoring, motor mapping |

| fNIRS Systems | Hardware | Hemodynamic activity measurement | Cognitive studies, clinical monitoring |

| Conductive Polymers/Hydrogels | Material | Improve electrode interface | Signal quality enhancement |

| Carbon Nanomaterials | Material | Enhance electrode performance | Biocompatibility, signal quality |

| Linear Discriminant Analysis | Algorithm | Feature classification | Motor imagery, movement classification |

| Convolutional Neural Networks | Algorithm | Spatial feature extraction | Signal classification, pattern recognition |

| Canonical Correlation Analysis | Algorithm | Multivariate correlation | SSVEP classification |

| Space-Time-Coding Metasurface | Experimental Apparatus | Secure visual stimulation | SSVEP with enhanced security [23] |

The development of advanced biomaterials has been crucial for improving BCI performance and biocompatibility. Conductive polymers and carbon nanomaterials enhance signal quality and biocompatibility at the electrode-tissue interface, addressing one of the key challenges in long-term BCI implementations [2]. Hydrogel-based interfaces show particular promise for creating stable, high-fidelity recording conditions for chronic implants.

For linguistic decoding research, specialized experimental setups integrate multiple technologies. The Brain Space-Time-Coding Metasurface (BSTCM) platform represents an advanced tool that combines visual stimulation for SSVEP-based BCIs with information interaction to the external environment, improving system compactness and reliability while enabling secure communication through harmonic-encrypted beams [23].

Visualization of Core BCI Principles

Diagram: The Encoding-Decoding Loop in BCI

Diagram: Inner Speech Decoding Experimental Workflow

Diagram: Hardware Implementation Trade-offs

Emerging Research Frontiers

The future of BCI as a testbed for encoding-decoding principles lies in several promising directions. First, the integration of large language models continues to enhance linguistic decoding capabilities, with evidence that both model scaling and increased training data improve alignment with neural representations [15]. Second, hardware advancements are progressing toward fully implantable, wireless systems that can record from larger neuronal populations while minimizing power consumption [7]. These systems will enable more naturalistic studies of neural coding principles over extended time periods.

Privacy and security represent critical frontiers for BCI research, particularly as decoding capabilities advance. The demonstration that private inner speech can be decoded raises important ethical considerations [5] [21]. Proposed solutions include training decoders to distinguish between attempted and inner speech, and implementing password-protection systems that only activate decoding when users intentionally "unlock" the system with a specific passphrase [21]. Simultaneously, physical-layer security approaches using technologies like space-time-coding metasurfaces can protect wireless BCI communications from interception [23].
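
The passphrase idea described above can be illustrated with a small gating layer placed after the decoder. This is a hypothetical sketch only: the class name, the text normalization, the example passphrase, and the explicit `lock()` method are assumptions for illustration and do not describe the system reported in [21].

```python
class PassphraseGate:
    """Releases decoded text only after the unlock passphrase has been decoded."""

    def __init__(self, passphrase):
        self._passphrase = self._normalize(passphrase)
        self.unlocked = False

    @staticmethod
    def _normalize(text):
        # Case- and whitespace-insensitive comparison (an assumption).
        return " ".join(text.lower().split())

    def process(self, decoded_text):
        """Return text to display, or None while the gate is locked."""
        if not self.unlocked:
            if self._normalize(decoded_text) == self._passphrase:
                self.unlocked = True
            # Nothing is released while locked, including the passphrase itself.
            return None
        return decoded_text

    def lock(self):
        """Return to the locked state (hypothetical convenience method)."""
        self.unlocked = False


gate = PassphraseGate("open sesame blue river")  # hypothetical passphrase
stream = ["i am hungry", "Open sesame  blue river", "please call my nurse"]
released = [gate.process(s) for s in stream]
print(released)
```

The design choice here is that decoder output is discarded, not buffered, while the gate is locked, so inner speech produced before the unlock event is never shown.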

Brain-Computer Interfaces provide an essential practical testbed for investigating neural encoding and decoding principles across domains ranging from motor control to linguistic communication. The closed-loop nature of BCI systems enables rigorous testing of hypotheses about how information is represented in neural activity and how these representations can be reliably decoded to restore communication and control for people with neurological disorders. As BCI technologies continue to advance, they are expected to yield further insights into fundamental neural coding principles while also delivering transformative clinical applications.

A brain-computer interface (BCI) fundamentally operates by establishing a direct communication pathway between the brain and an external device, bypassing the body's normal peripheral nerves and muscles [24]. Central to this process are the complementary frameworks of neural encoding and neural decoding. Neural encoding describes how external stimuli, intentions, or mental tasks are translated ("written") into specific patterns of neural activity. Conversely, neural decoding refers to the process of interpreting ("reading") these neural activity patterns to identify the original intention or stimulus, thereby enabling control of a BCI [24] [12]. The brain itself can be viewed as a series of cascading encoding and decoding operations, where sensory areas encode stimuli and downstream areas decode these representations into meaningful actions and perceptions [12] [25]. The efficacy of any BCI system is therefore contingent on the reliable detection and interpretation of key neural signals, which vary in their spatial and temporal resolution, invasiveness, and the specific aspects of neural activity they capture [2] [26].

Core Neural Signals and Their Characteristics

Neural signals used in BCI research can be broadly categorized based on the recording technique, which determines their spatial and temporal resolution, level of invasiveness, and the type of information they can decode.

Table 1: Comparison of Key Neural Signals for BCI Decoding

| Signal Type | Spatial Resolution | Temporal Resolution | Invasiveness | Primary Information Carried | Key BCI Applications |
|---|---|---|---|---|---|
| Spike Trains (SUA/MUA) | Single Neuron | Millisecond | Invasive | Discrete action potentials; coding of specific intent or stimulus features [26]. | High-performance prosthetic control, speech decoding [27] [28]. |
| Local Field Potentials (LFP) | Population (µm to mm) | Millisecond to Second | Invasive | Synaptic inputs and outputs of a neuronal population; oscillatory dynamics [24] [26]. | Movement planning, cognitive state monitoring [24]. |
| Electrocorticography (ECoG) | Population (cm) | Millisecond | Semi-Invasive | Cortical surface potentials; high-frequency activity related to motor and speech functions [24] [15]. | Motor control, speech neuroprosthetics, seizure focus localization [2] [15]. |
| Electroencephalography (EEG) | Population (cm) | Millisecond | Non-Invasive | Scalp-recorded voltage fluctuations; event-related potentials and oscillatory rhythms [24] [29]. | P300 speller, SSVEP, motor imagery BCIs [24] [2]. |
| Functional MRI (fMRI) | High (mm) | Second | Non-Invasive | Hemodynamic response (blood flow) correlated with neural activity [24]. | Brain mapping, neurofeedback therapy [2]. |
| Magnetoencephalography (MEG) | Population (cm) | Millisecond | Non-Invasive | Magnetic fields induced by neuronal electrical currents [24]. | Cognitive research, source localization of pathological activity [24]. |
| Functional NIRS (fNIRS) | Low (cm) | Second | Non-Invasive | Hemodynamic response based on optical absorption [24]. | Developing BCIs for daily use, monitoring cognitive load [24]. |

The choice of signal involves a critical trade-off. Invasive recordings such as spike trains and ECoG offer superior signal-to-noise ratio and spatiotemporal resolution, making them suitable for complex decoding tasks such as speech neuroprosthetics [15] [28]. Non-invasive methods such as EEG, while less precise, are safer and more practical for wider application, particularly for communication and basic control [24] [2].

Neural Coding Principles and Decoding Methodologies

Foundational Concepts of Neural Coding

Neural coding is the language the brain uses to represent information. Different signals employ distinct coding schemes. At the single-neuron level, information is often encoded in the firing rate (rate coding) or the precise timing of spikes (temporal coding) [12]. At the population level, information is distributed across the coordinated activity of many neurons, forming complex, high-dimensional representations that can be modeled as neural manifolds [12] [25]. The process of decoding involves building mathematical models to invert the encoding process, predicting the stimulus or intent from the observed neural activity [12].
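
The difference between rate coding and temporal coding can be made concrete with a toy example. The sketch below is illustrative only: the spike times are invented, and first-spike latency is used as just one simple stand-in for a temporal code.

```python
import numpy as np

def firing_rate(spike_times, t_start, t_stop):
    """Rate code: number of spikes in the window divided by its duration (Hz)."""
    spikes = np.asarray(spike_times)
    n = np.count_nonzero((spikes >= t_start) & (spikes < t_stop))
    return n / (t_stop - t_start)

def first_spike_latency(spike_times, t_stimulus):
    """Temporal code (one simple variant): delay from stimulus to first spike (s)."""
    spikes = np.asarray(spike_times)
    after = spikes[spikes >= t_stimulus]
    return float(after.min() - t_stimulus) if after.size else None

# Two invented trials (spike times in seconds, stimulus at t = 0).
trial_a = [0.012, 0.030, 0.051, 0.070, 0.095]
trial_b = [0.040, 0.055, 0.068, 0.081, 0.099]

rate_a = firing_rate(trial_a, 0.0, 0.1)
rate_b = firing_rate(trial_b, 0.0, 0.1)
latency_a = first_spike_latency(trial_a, 0.0)
latency_b = first_spike_latency(trial_b, 0.0)
print(rate_a, rate_b, latency_a, latency_b)
```

Both trials contain five spikes in the 100 ms window, so a rate-based readout cannot distinguish them, whereas the first-spike latency differs between the two.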

Mathematical Frameworks for Decoding

The mathematical foundation of decoding is the estimation of the probability of a stimulus or intent \( x \) given an observed neural response \( K \), a vector representing the activity of N neurons [12]. This can be formulated as:

\[ P(x \mid K) \]

where \( K \) represents features such as spike counts in a time bin or the rate response of each neuron. A wide array of models is used to approximate this relationship:

- Linear Models: Linear regression and linear discriminant analysis (LDA) provide a basic framework for predicting neural responses or classifying intents based on a linear relationship with stimulus features [12] [30].

- Generalized Linear Models (GLMs): Extend linear models by accommodating non-normal response distributions and nonlinear link functions, offering more flexibility for neural data [12].

- Machine Learning and Deep Learning Models: Non-linear models like Support Vector Machines (SVM), Convolutional Neural Networks (CNNs), and Recurrent Neural Networks (RNNs) have become powerful tools for decoding complex spatio-temporal patterns in brain signals [15] [29]. Large Language Models (LLMs) are now being leveraged for their powerful contextual understanding in tasks like linguistic neural decoding [15].

- Spiking Neural Networks (SNNs): As the third generation of neural networks, SNNs more closely mimic the brain's operation by processing information in the form of spikes over time. They are particularly suitable for real-time, causal decoding and offer remarkable energy efficiency, making them ideal for implantable BCI devices [29] [27].
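
To make P(x | K) concrete, the sketch below computes the posterior over a small set of discrete stimuli from a vector of spike counts using Bayes' rule. It assumes independent Poisson neurons with known mean firing rates; this is a common textbook simplification, not a model taken from the cited work. The tuning values, prior, and bin width are invented for the example.

```python
import numpy as np

def log_posterior(counts, tuning, prior, dt):
    """log P(x | K) for independent Poisson neurons.

    counts: (N,) spike counts K observed in one time bin
    tuning: (S, N) mean firing rate in Hz of each neuron for each stimulus x
    prior:  (S,) prior probability P(x)
    dt:     bin width in seconds
    """
    expected = tuning * dt  # expected spike counts per stimulus and neuron
    # Poisson log-likelihood; the log(K!) term is dropped because it does
    # not depend on x and cancels in the normalization.
    log_lik = (counts * np.log(expected) - expected).sum(axis=1)
    log_post = log_lik + np.log(prior)
    return log_post - np.logaddexp.reduce(log_post)  # normalize over x

# Invented example: 3 stimuli, 3 neurons, each neuron prefers one stimulus.
tuning = np.array([[20.0, 5.0, 2.0],
                   [5.0, 20.0, 5.0],
                   [2.0, 5.0, 20.0]])
prior = np.full(3, 1.0 / 3.0)
counts = np.array([2, 11, 3])  # observed K in a 0.5 s bin

log_post = log_posterior(counts, tuning, prior, dt=0.5)
decoded = int(np.argmax(log_post))
print(decoded, np.exp(log_post))
```

Working in log space avoids numerical underflow when many neurons are combined. The rates in `tuning` must be strictly positive for the logarithm to be defined.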

Experimental Protocols for Key BCI Paradigms

Motor Imagery (MI) Decoding with EEG

Objective: To decode a user's intention to perform a specific movement (e.g., hand grasping) from non-invasive EEG signals, enabling control of assistive devices [24] [29].

Protocol:

- Participant Preparation: Apply a multi-channel EEG cap according to the 10-20 system. Use conductive gel to ensure electrode impedance is below 5 kΩ.

- Experimental Setup: The participant sits in front of a screen. The protocol involves cued trials of grasp-and-lift movements of a small object [29].

- Data Acquisition:

  - Record continuous EEG from scalp electrodes (e.g., 32 channels).

  - Simultaneously record electromyography (EMG) from relevant forearm muscles (e.g., Flexor Digitorum, Common Extensor Digitorum) and kinematics from sensors on the wrist, thumb, and index finger to serve as ground truth for movement onset and trajectory [29].

- Signal Processing:

- Feature Extraction & Decoding:

  - Compute the envelope of the filtered EEG signals and downsample it.

  - Extract features such as the power in specific frequency bands or the signal amplitude over time.

  - Train a decoding model (e.g., a Brain-Inspired Spiking Neural Network, BI-SNN, or an SVM) to map the EEG features to the concurrent EMG/kinematic data [29]. The BI-SNN model, for instance, involves encoding signals into spike sequences, mapping them into a 3D reservoir, and using Spike-Timing-Dependent Plasticity (STDP) for learning [29].
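
The feature-extraction and decoding steps above can be sketched on synthetic data. This is a simplified illustration under stated assumptions: the input is assumed to be already band-filtered, the envelope is computed with an FFT-based analytic signal, downsampling is done by block averaging, and a ridge regression is swapped in for the BI-SNN or SVM decoder named in the protocol. All signal parameters and function names are invented for the example.

```python
import numpy as np

def analytic_envelope(x):
    """Amplitude envelope of each column via the FFT-based analytic signal."""
    n = x.shape[0]
    gain = np.zeros(n)
    gain[0] = 1.0
    if n % 2 == 0:
        gain[n // 2] = 1.0
        gain[1:n // 2] = 2.0
    else:
        gain[1:(n + 1) // 2] = 2.0
    analytic = np.fft.ifft(np.fft.fft(x, axis=0) * gain[:, None], axis=0)
    return np.abs(analytic)

def downsample_mean(x, factor):
    """Downsample by averaging non-overlapping blocks of `factor` samples."""
    n = (x.shape[0] // factor) * factor
    return x[:n].reshape(-1, factor, x.shape[1]).mean(axis=1)

def fit_ridge(features, target, alpha=1e-2):
    """Ridge regression with an unpenalized intercept (stand-in decoder)."""
    design = np.column_stack([features, np.ones(len(features))])
    penalty = alpha * np.eye(design.shape[1])
    penalty[-1, -1] = 0.0
    return np.linalg.solve(design.T @ design + penalty, design.T @ target)

def predict(features, weights):
    return np.column_stack([features, np.ones(len(features))]) @ weights

# Synthetic data (assumed): a slow "EMG" trace modulates the amplitude of a
# 20 Hz rhythm on channel 0; channel 1 carries only noise.
rng = np.random.default_rng(0)
fs, seconds, factor = 500, 10, 50
t = np.arange(fs * seconds) / fs
emg = 0.5 * (1.0 + np.sin(2 * np.pi * 0.5 * t))
eeg = np.column_stack([emg * np.sin(2 * np.pi * 20.0 * t), np.zeros_like(t)])
eeg = eeg + rng.normal(0.0, 0.1, eeg.shape)

features = downsample_mean(analytic_envelope(eeg), factor)
target = downsample_mean(emg[:, None], factor)[:, 0]

split = int(0.7 * len(features))  # train on the first 70 %, test on the rest
weights = fit_ridge(features[:split], target[:split])
held_out_r = np.corrcoef(predict(features[split:], weights), target[split:])[0, 1]
print(features.shape, held_out_r)
```

Evaluating on a held-out segment, as done here, is the minimum needed to avoid reporting an overfit decoder. Real EEG would additionally require artifact handling and cross-validation across trials.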

Speech Neuroprosthetics with ECoG

Objective: To decode attempted or imagined speech from intracranial brain signals to restore communication in paralyzed individuals [15] [28].

Protocol:

- Participant Preparation: An ECoG grid or strip is surgically implanted over cortical areas critical for speech, such as the ventral sensorimotor cortex and the superior temporal gyrus [15].

- Experimental Setup: The participant is shown prompts on a screen and asked to attempt to speak the words or imagine speaking them without vocalizing.

- Data Acquisition:

  - Record high-density ECoG signals (e.g., hundreds of electrodes) at a high sampling rate (≥1000 Hz) to capture broad spectral activity, including high-gamma activity (70-150 Hz) which is a robust marker of local cortical activation [15].

  - Synchronize neural recordings with the presented speech stimuli.

- Signal Processing:

  - Preprocess the ECoG data by filtering out line noise and artifacts.

  - Compute the temporal-spectral evolution, often by extracting the power of the high-gamma band.

- Feature Extraction & Decoding:

  - Align the high-gamma features with the phonemes, syllables, or words of the speech stimulus.

  - Train a sequence-to-sequence model, such as a recurrent neural network (RNN) or transformer, to map the neural activity features to a sequence of linguistic units (phonemes or words) [15]. Modern approaches may leverage large language models (LLMs) as a prior to constrain the decoding output to meaningful sentences, significantly improving accuracy [15].

  - Evaluate performance using word error rate (WER) or character error rate (CER) [15].
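
The evaluation metrics named in the final step can be computed with a standard edit-distance calculation. The sketch below uses the usual definitions (substitutions, insertions, and deletions divided by the reference length); the function names and example sentences are illustrative, and whitespace tokenization is an assumption.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences (lists or strings)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[-1]

def word_error_rate(reference, hypothesis):
    """WER: word-level edit distance divided by the number of reference words."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return edit_distance(ref_words, hypothesis.split()) / len(ref_words)

def character_error_rate(reference, hypothesis):
    """CER: character-level edit distance divided by the reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return edit_distance(reference, hypothesis) / len(reference)

wer = word_error_rate("i would like some water", "i would like water please")
cer = character_error_rate("cat", "cart")
print(wer, cer)
```

Because insertions count as errors, both metrics can exceed 1.0 when the decoded output is much longer than the reference.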

Table 2: Research Reagent Solutions for Neural Decoding Experiments

| Reagent / Material | Function in Experiment | Example Use Case |
|---|---|---|
| Microelectrode Arrays (MEAs) | Records spike trains and LFPs from populations of neurons. High-impedance electrodes can isolate single units [27]. | Implanted in motor cortex for dexterous prosthetic control [27]. |
| ECoG Grids/Strips | Records cortical surface potentials from the subdural space. Provides a balance of resolution and coverage [15]. | Placed over speech cortex for decoding attempted speech [15]. |
| EEG Cap with Ag/AgCl Electrodes | Records scalp potentials non-invasively. Conductive gel ensures low impedance [29]. | Used in motor imagery experiments to detect event-related desynchronization [29]. |
| Genetically Encoded Calcium Indicators (GECIs, e.g., GCaMP) | Fluorescent proteins that signal neural activity via changes in intracellular calcium concentration [30]. | Used in two-photon imaging in animal models to record from large populations of identified neurons at single-cell resolution [30]. |
| Optogenetic Actuators (e.g., Channelrhodopsin) | Light-sensitive ion channels used to stimulate specific neurons with temporal precision [30]. | Causal testing of neural encoding principles by stimulating defined neural populations and observing behavioral outcomes [30]. |
| Synchronized EMG & Kinematics | Provides ground truth data for motor output (muscle activation and movement trajectory) [29]. | Correlated with EEG or ECoG to train decoders for movement prediction [29]. |

Emerging Frontiers and Future Directions

The field of neural decoding is rapidly evolving, driven by several key trends. First, there is a push towards more causal and energy-efficient models. Spiking Neural Networks (SNNs), like the Spikachu framework, offer a promising path forward by providing causal processing suitable for real-time BCI use while consuming orders of magnitude less energy than traditional artificial neural networks (ANNs), making them ideal for implantable devices [27].
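
The causal, spike-based processing that SNNs rely on can be illustrated with a single leaky integrate-and-fire (LIF) neuron. This is a generic textbook model, not the Spikachu architecture, and the time constant, threshold, and input currents are assumed values chosen for the example.

```python
import numpy as np

def simulate_lif(input_current, dt=1e-3, tau=0.02, v_thresh=1.0, v_reset=0.0):
    """Simulate a leaky integrate-and-fire neuron with forward Euler steps.

    Each update uses only the present input and the current membrane state,
    so the output at time t never depends on future samples (causal).
    """
    v = v_reset
    spikes = np.zeros(len(input_current), dtype=bool)
    for step, current in enumerate(input_current):
        v += dt / tau * (-v + current)  # leak toward the input level
        if v >= v_thresh:
            spikes[step] = True         # emit a spike and reset
            v = v_reset
    return spikes

n_steps = 200  # 200 ms at dt = 1 ms
weak = simulate_lif(np.full(n_steps, 0.5))    # stays below threshold
medium = simulate_lif(np.full(n_steps, 2.0))
strong = simulate_lif(np.full(n_steps, 4.0))
print(weak.sum(), medium.sum(), strong.sum())
```

In this toy setting, stronger input produces more output spikes and subthreshold input produces none, so downstream computation is only triggered when spikes occur.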

Second, scaling laws are becoming evident in neural decoding. Just as in other AI domains, performance in decoding tasks improves with larger models and more training data. This has led to the development of foundation models trained on massive, multi-subject datasets, which can then be efficiently fine-tuned for new subjects or tasks with minimal data, a process known as few-shot transfer [15] [27].

Finally, decoding is moving beyond the motor cortex to tap into high-level cognitive signals. Researchers are successfully decoding internal dialogue (inner speech) and intentions from regions like the posterior parietal cortex, which is associated with planning and reasoning [28]. This, combined with AI-powered analysis, raises important ethical considerations regarding mental privacy and the need for robust data protection laws for neural data [28].

Deep Learning and Machine Learning Methods: Architectures and Cross-Domain Applications