Minimizing Neural Signal Loss in EEG Research: Advanced Strategies for Artifact Rejection and Correction

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to optimize electroencephalography (EEG) preprocessing by balancing artifact removal with the preservation of neural signals. It addresses the critical challenge of neural data loss during artifact rejection, a common pitfall that can compromise statistical power and lead to incorrect conclusions in both basic research and clinical trials. Drawing on the latest evidence, we explore the foundational principles of EEG artifacts, evaluate the efficacy of current methodological approaches like Independent Component Analysis (ICA) and machine learning, and provide practical troubleshooting guidance for complex scenarios such as wearable EEG and high-density systems. Furthermore, we present a rigorous framework for validating preprocessing pipelines, emphasizing the importance of metrics that go beyond simple artifact removal to ensure the biological validity of the retained signal. The goal is to empower scientists to design preprocessing workflows that maximize data quality and integrity.

Understanding the Trade-Off: Why Artifact Rejection Can Cost You 70% of Your Neural Signal

The ability to capture continuous neural recordings from wearable devices and implanted systems is a major advance for neuroscience research and clinical monitoring. However, these signals are vulnerable to contamination by artifacts: non-neural signals that can mimic genuine brain activity and obscure true neurophysiological patterns. Effective artifact management must therefore remove contamination while limiting the loss of genuine neural signal during rejection, so that both real-time analysis and downstream research findings remain valid. This guide provides troubleshooting methods for identifying and mitigating these deceptive signals.

FAQ: Understanding Neural Signal Artifacts

What are artifacts in neural recordings? Artifacts are unwanted signals that contaminate neural data, originating from both biological sources (eye movements, muscle activity, cardiac rhythms) and environmental sources (powerline interference, electrode movement, external electromagnetic interference) [1] [2]. In advanced deep brain stimulation (DBS) devices, an additional artifact occurs when detected voltage exceeds the device's maximum sensing capabilities, triggering a specific flag in the neural power stream [3] [4].

Why are artifacts particularly problematic for wearable EEG and chronic DBS recordings? Artifacts are a greater challenge in wearable and implanted systems because of uncontrolled environments, subject mobility, and dry electrodes, which provide less stable skin contact [2]. The reduced channel count of these systems also limits the effectiveness of traditional artifact rejection techniques such as Independent Component Analysis (ICA) [2]. In addition, artifacts are more frequent during physical activity [3] [4], which makes continuous real-world monitoring especially vulnerable to signal corruption.

How can I distinguish between artifacts and genuine neural signals? Different artifact types exhibit distinct spatial, temporal, and spectral characteristics. The table below summarizes key identifiers for common artifact types:

Table: Characteristics of Common Neural Recording Artifacts

| Artifact Type | Spectral Profile | Spatial Distribution | Common Causes |
|---|---|---|---|
| Ocular (EOG) | Low-frequency (< 4 Hz) [1] | Frontal regions | Eye blinks, movements [1] |
| Muscular (EMG) | High-frequency (> 13 Hz) [1] | Focal, temporal regions | Muscle contractions, jaw clenching [1] [2] |
| Powerline | Narrowband (50/60 Hz) | Global across channels | Electrical interference [1] |
| Motion | Broadband | Variable | Physical movement, electrode displacement [3] [2] |
| Overvoltage (DBS) | Flag value in power stream [3] | Device-specific | Voltage exceeding sensing capability [3] [4] |

What is the impact of inadequate artifact management on research outcomes? Failure to properly address artifacts can lead to misinterpretation of brain activity, potentially resulting in incorrect conclusions in both research and clinical practice. Artifacts can obscure genuine neural biomarkers and, in severe cases, may lead to misdiagnosis or inappropriate treatment decisions [1].

Troubleshooting Guides: Artifact Identification and Mitigation

Guide 1: Identifying Overvoltage Artifacts in Medtronic Percept DBS Data

Problem: Unexplained signal dropouts or flag values appear in longitudinal neural recordings from implanted DBS devices.

Background: The Medtronic Percept DBS device incorporates sensing capabilities that can capture neural signals during stimulation therapy. However, when the detected voltage exceeds the device's maximum sensing capabilities, it inserts a specific flag value into the neural power stream instead of the actual voltage measurement [3] [4].

Identification Protocol:

- Data Inspection: Systematically scan neural power stream data for predefined flag values that indicate overvoltage conditions.

- Lead Comparison: Note that overvoltage events are significantly more common in patients implanted with legacy Medtronic 3387 leads compared to newer Medtronic SenSight leads [3] [4].

- Contextual Correlation: Correlate overvoltage events with patient activity logs. In studies where patients concurrently wore Oura Rings, overvoltage events were more likely during periods of physical activity [3] [4].

Mitigation Strategy: Implement a principled data correction strategy for samples affected by overvoltage events. This involves identifying flagged samples and applying appropriate interpolation or reconstruction techniques to preserve data integrity for analysis [3].
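The identification and correction steps can be sketched as follows, assuming the power stream has been exported as an evenly sampled NumPy array. The overvoltage flag value is device-specific and is deliberately left as the hypothetical parameter `flag_value`; check the device documentation for the real value. Linear interpolation is used here only as one simple, transparent option and is not necessarily the correction strategy used in [3]. Long runs of flagged samples are left as NaN rather than filled, since interpolating across long gaps would invent data.

```python
import numpy as np

def interpolate_flagged(power, flag_value, max_gap=None):
    """Replace overvoltage-flagged samples in a power stream by linear interpolation.

    power: 1-D array of neural power samples, evenly spaced in time.
    flag_value: the device-specific overvoltage flag (hypothetical placeholder;
        the real value must come from the device documentation).
    max_gap: optional longest run of consecutive flagged samples to fill;
        longer runs are set to NaN instead of being interpolated.
    Returns (corrected array, boolean mask of flagged samples).
    """
    power = np.asarray(power, dtype=float)
    flagged = power == flag_value
    corrected = power.copy()
    good = ~flagged
    if good.sum() < 2:
        corrected[flagged] = np.nan  # too few valid samples to interpolate
        return corrected, flagged
    idx = np.arange(power.size)
    # np.interp fills interior gaps linearly and holds the nearest valid value at the edges.
    corrected[flagged] = np.interp(idx[flagged], idx[good], power[good])
    if max_gap is not None:
        # Find runs of consecutive flagged samples as half-open [start, stop) intervals.
        padded = np.concatenate(([False], flagged, [False]))
        edges = np.flatnonzero(np.diff(padded.astype(int)))
        for start, stop in zip(edges[::2], edges[1::2]):
            if stop - start > max_gap:
                corrected[start:stop] = np.nan
    return corrected, flagged
```

Keeping the returned mask lets you report the fraction of flagged samples per patient or per lead type, which supports the lead comparison described above.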

Guide 2: Managing Artifacts in Wearable EEG Systems

Problem: Signal quality degradation in wearable EEG systems used in real-world environments, complicating data interpretation.

Background: Wearable EEG devices face unique challenges including reduced electrode contact stability with dry electrodes, environmental electromagnetic interference, and motion artifacts from subject mobility [2]. These systems typically have fewer channels (<16), which limits spatial resolution and reduces effectiveness of conventional artifact rejection methods [2].

Identification Protocol:

- Spectral Analysis: Identify abnormal power distribution, particularly elevated low-frequency power from movement artifacts or high-frequency bursts from muscular activity [1].

- Auxiliary Sensor Integration: Utilize data from inertial measurement units (IMUs) or other motion sensors to identify periods of movement that correlate with signal artifacts [2].

- Automated Detection: Implement deep learning approaches that are increasingly effective for muscular and motion artifacts, with promising applications in real-time settings [2].

Mitigation Workflow: Adopt a multi-stage pipeline that includes artifact detection, categorization, and targeted removal strategies specific to each artifact type.
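A minimal sketch of the spectral and auxiliary-sensor checks above, assuming a single EEG channel as a NumPy array and, optionally, an IMU acceleration-magnitude trace covering the same duration. The band edges (0.5-4 Hz for slow, ocular- or movement-like power; 20-45 Hz for muscle-like power), the 2 s window, and the robust z-score threshold of 3 are illustrative assumptions to tune per device, not values taken from [1] or [2]. Each window receives a flag per category, which maps onto the detect-then-categorize workflow described here.

```python
import numpy as np
from scipy.signal import welch

def band_power(seg, fs, band):
    """Integrated Welch PSD of one window within [band[0], band[1]) Hz."""
    freqs, psd = welch(seg, fs=fs, nperseg=min(len(seg), int(fs)))
    sel = (freqs >= band[0]) & (freqs < band[1])
    return psd[sel].sum() * (freqs[1] - freqs[0])

def robust_z(values):
    """z-scores from the median and MAD, so artifact windows do not inflate the spread."""
    med = np.median(values)
    mad = 1.4826 * np.median(np.abs(values - med))
    return (values - med) / mad if mad > 0 else np.zeros_like(values)

def flag_windows(eeg, fs, win_s=2.0, imu=None, imu_fs=None, z_thresh=3.0,
                 low_band=(0.5, 4.0), high_band=(20.0, 45.0)):
    """Flag fixed-length windows of one EEG channel by artifact category.

    Returns a dict of boolean arrays with one entry per window:
      'low_freq'  - unusually high low-band power (ocular/movement-like)
      'high_freq' - unusually high high-band power (muscle-like)
      'motion'    - unusually variable IMU signal (only if imu is given)
    """
    n = int(win_s * fs)
    n_win = len(eeg) // n
    segs = [eeg[w * n:(w + 1) * n] for w in range(n_win)]
    low = np.array([band_power(s, fs, low_band) for s in segs])
    high = np.array([band_power(s, fs, high_band) for s in segs])
    flags = {
        "low_freq": robust_z(np.log(low)) > z_thresh,
        "high_freq": robust_z(np.log(high)) > z_thresh,
    }
    if imu is not None:
        m = int(win_s * imu_fs)
        motion = np.array([np.std(imu[w * m:(w + 1) * m]) for w in range(n_win)])
        flags["motion"] = robust_z(motion) > z_thresh
    return flags
```

Windows flagged in more than one category (for example, motion plus low-frequency power) can then be routed to the removal strategy that fits that artifact type.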

Guide 3: Implementing Deep Learning for Advanced Artifact Removal

Problem: Conventional artifact removal methods (regression, ICA, wavelet transforms) inadequately separate artifacts from neural signals, resulting in significant neural data loss.

Background: Deep learning models, particularly Generative Adversarial Networks (GANs) and Long Short-Term Memory (LSTM) networks, have been reported to remove artifacts effectively while preserving the underlying neural information [1]. These approaches can learn complex, non-linear relationships between artifactual and neural components.

Implementation Protocol:

- Model Selection: Choose an appropriate architecture, such as AnEEG (an LSTM-based GAN), which is designed to capture temporal dependencies in EEG data [1].

- Training Strategy: Train the model on diverse datasets containing EEG recordings with various artifacts, using clean EEG signals or semi-simulated data as ground truth [1].

- Validation: Assess performance using quantitative metrics including Normalized Mean Square Error (NMSE), Root Mean Square Error (RMSE), Correlation Coefficient (CC), Signal-to-Noise Ratio (SNR), and Signal-to-Artifact Ratio (SAR) [1].
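As a minimal sketch of the training-data and validation steps, the code below builds a semi-simulated example by adding an artifact to a clean segment at a chosen SNR, then scores a cleaned output against the clean ground truth. The scaling rule (choose λ so that 10·log10(P_clean / (λ²·P_artifact)) equals the target SNR) is a common convention for semi-simulated data; the NMSE form used here (error energy divided by clean-signal energy) is one common definition, and some papers report it in dB instead. SAR is not computed because its definition varies between papers; use the formula from the study you are comparing against.

```python
import numpy as np

def contaminate(clean, artifact, snr_db):
    """Add `artifact` to `clean`, scaled so the mixture has the target SNR (dB)."""
    p_clean = np.mean(clean ** 2)
    p_art = np.mean(artifact ** 2)
    lam = np.sqrt(p_clean / (p_art * 10 ** (snr_db / 10)))
    return clean + lam * artifact

def denoising_metrics(clean, cleaned):
    """RMSE, NMSE, correlation coefficient and output SNR relative to the clean signal."""
    err = cleaned - clean
    return {
        "RMSE": np.sqrt(np.mean(err ** 2)),
        "NMSE": np.sum(err ** 2) / np.sum(clean ** 2),
        "CC": np.corrcoef(clean, cleaned)[0, 1],
        "SNR_dB": 10 * np.log10(np.sum(clean ** 2) / np.sum(err ** 2)),
    }
```

In practice `cleaned` would be the output of the model under evaluation; scoring the contaminated signal itself gives the no-correction baseline against which improvements are measured.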

Performance Metrics: The table below summarizes the performance reported for deep learning approaches compared with traditional (wavelet-based) methods:

Table: Deep Learning Artifact Removal Performance Metrics

| Model | NMSE | RMSE | CC | SNR Improvement | Key Advantage |
|---|---|---|---|---|---|
| AnEEG (LSTM-GAN) | Lower values [1] | Lower values [1] | Higher values [1] | Improved [1] | Captures temporal dependencies [1] |
| GCTNet (GAN-CNN-Transformer) | N/A | 11.15% RRMSE reduction [1] | N/A | 9.81 dB improvement [1] | Captures global & temporal features [1] |
| Wavelet-Based Methods | Higher values [1] | Higher values [1] | Lower values [1] | Less improvement [1] | Traditional approach |

Table: Research Reagent Solutions for Neural Signal Processing

| Tool/Category | Specific Examples | Function & Application |
|---|---|---|
| Artifact Detection Algorithms | Wavelet Transforms, ICA with thresholding [2] | Identifies artifacts in EEG signals based on statistical properties or component separation |
| Deep Learning Models | AnEEG (LSTM-GAN), GCTNet (GAN-CNN-Transformer) [1] | Advanced artifact removal using neural networks to separate neural signals from artifacts |
| Hardware Platforms | Medtronic Percept DBS, Dry electrode EEG headsets, Ear-EEG systems [3] [5] | Neural signal acquisition hardware with varying susceptibility to artifacts |
| Reference Datasets | EEG Eye Artefact Dataset, BCI Competition IV2b, MIT-BIH Arrhythmia Dataset [1] | Benchmark datasets for developing and validating artifact removal algorithms |
| Performance Metrics | NMSE, RMSE, CC, SNR, SAR [1] | Quantitative assessment of artifact removal effectiveness and signal preservation |
| Auxiliary Sensors | IMU, Oura Ring, accelerometers [3] [2] | Provides contextual data for correlating artifacts with physical activity and movement |

Effective artifact management requires a nuanced approach that balances aggressive artifact removal with preservation of genuine neural signals. The methodologies outlined in this guide—from identifying DBS overvoltage events to implementing deep learning pipelines—provide researchers with structured approaches to mitigate one of the most significant challenges in modern neural signal processing. As wearable and implanted neural monitoring systems continue to evolve, developing more sophisticated artifact handling techniques will remain crucial for extracting meaningful insights from the brain's electrical activity.

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary trade-off when rejecting artifact-contaminated trials? The core trade-off is between signal quality and data quantity. Rejecting trials removes artifacts such as eye blinks or muscle activity, which can otherwise introduce confounds and add noise that reduces statistical power. However, rejection also discards a significant portion of your data, and fewer trials also reduce power. Crucially, recent evidence suggests that signals traditionally discarded as "noise" may carry meaningful biological information: one study reported that conventional artifact rejection can remove up to 70% of the task-relevant variance in EEG data [6].

FAQ 2: Does artifact correction alone guarantee improved decoding performance? Not necessarily. A large-scale evaluation found that the combination of artifact correction (using Independent Component Analysis, or ICA) and artifact rejection did not significantly improve decoding performance for Support Vector Machine (SVM) and Linear Discriminant Analysis (LDA) models in the vast majority of cases across a wide range of ERP paradigms. However, artifact correction remains critical to minimize artifact-related confounds that could artificially inflate decoding accuracy, even if it doesn't boost performance [7].

FAQ 3: When is trial rejection absolutely necessary? Trial rejection is particularly important when artifacts act as a systematic confound—for example, if participants blink more in one experimental condition than in another. In such cases, the artifact itself could create a false difference between conditions. Rejection is also necessary when artifacts prevent the analysis of the neural signal of interest, such as when a blink occurs at the exact time a visual stimulus is presented, interfering with the participant's ability to see it [8].

FAQ 4: Are there automated alternatives to manual artifact rejection? Yes, automated algorithms are being developed to standardize and speed up the process. For instance, ARTIST is a fully automated artifact rejection algorithm for single-pulse TMS-EEG data that uses a pattern classifier to identify and remove artifact components from Independent Component Analysis (ICA) with high accuracy. Such tools help reduce the subjectivity and time burden of manual cleaning [9].

Troubleshooting Guides

Guide 1: Diagnosing Performance Loss After Aggressive Trial Rejection

Problem: After rejecting a large number of trials, your multivariate pattern analysis (MVPA) or ERP component amplitude is weaker or non-significant.

| Possible Cause | Diagnostic Questions | Recommended Action |
|---|---|---|
| Insufficient Statistical Power | How many trials remain per condition? Is the trial count balanced across conditions? | Calculate power based on remaining trials. Consider using artifact correction instead of rejection for marginal cases [7]. |
| Loss of Biological Signal | Were the rejected artifacts purely noise, or could they have contained physiologically relevant information? | Re-analyze a subset of data with a less aggressive threshold. Compare results with and without rejection to quantify signal loss [6]. |
| Introduction of Bias | Did rejection disproportionately remove trials from one condition or participant group? | Check the distribution of rejected trials across conditions and subjects. If unbalanced, correction methods may be fairer [8]. |
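The bias check in the last row can be made quantitative with a contingency test. A minimal sketch, assuming one condition label and one rejected/kept flag per trial: it reports the rejection rate per condition and a chi-square test of independence via `scipy.stats.chi2_contingency`. Running it per participant, or passing participant IDs as the labels, checks balance across subjects as well.

```python
import numpy as np
from scipy.stats import chi2_contingency

def rejection_balance(conditions, rejected):
    """Rejection rate per condition and a chi-square test of independence.

    conditions: label per trial; rejected: True if that trial was rejected.
    Returns (rates dict, p-value). The p-value is NaN when nothing (or
    everything) was rejected, because the test is undefined for an empty column.
    """
    conditions = np.asarray(conditions)
    rejected = np.asarray(rejected, dtype=bool)
    labels = np.unique(conditions)
    # Contingency table: rows = conditions, columns = (rejected, kept).
    table = np.array([[np.sum(rejected & (conditions == c)),
                       np.sum(~rejected & (conditions == c))] for c in labels])
    rates = {str(c): table[i, 0] / table[i].sum() for i, c in enumerate(labels)}
    if table[:, 0].sum() == 0 or table[:, 1].sum() == 0:
        return rates, float("nan")
    _, p, _, _ = chi2_contingency(table)
    return rates, p
```

A small p-value indicates that rejection rates differ between conditions, which is the situation where rejection can itself create a condition difference.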

Guide 2: Choosing Between Artifact Correction and Rejection

Problem: You are unsure whether to correct for artifacts or reject trials containing them in your specific experimental context. The flowchart below outlines a systematic decision-making process.

Quantitative Data on Rejection Impact

The following tables summarize key quantitative findings from recent research on the effects of artifact rejection, providing an evidence-based reference for your experimental planning.

Table 1: Impact of Artifact Rejection on EEG Decoding and Synchronization

| Study Metric / Condition | Performance with Standard Rejection | Performance without Rejection (Whole-System) | Key Finding |
|---|---|---|---|
| Trial-Level Correlation (Phase Sync. vs. Voltage) [6] | r = 0.195 | r = 0.590 | Rejection reduced trial-level coupling roughly threefold, discarding meaningful signal. |
| Target vs. Non-Target Discrimination [6] | -0.4% | +0.6% | Discrimination reversed sign after rejection, potentially leading to wrong conclusions. |
| SVM/LDA Decoding Performance (Multiple Paradigms) [7] | No significant improvement over no rejection | Comparable | Rejection did not enhance performance in most cases, questioning its necessity for decoding. |

Table 2: When Rejection Improves Data Quality

| Scenario | Rejection Benefit | Quantitative Evidence |
|---|---|---|
| Extreme Voltage Deflections (from movement) [8] | Reduces uncontrolled variance and increases statistical power. | Benefits of removing high-noise trials outweigh the cost of having fewer trials for averaging [8]. |
| Donor-Derived Cell-Free DNA (dd-cfDNA) in Kidney Transplant Rejection [10] | Not applicable (biomarker in plasma). | Median dd-cfDNA was 2.9% during AMR vs. 0.3% in stable controls, making it a strong non-invasive biomarker [10]. |
| TMS-EEG Artifacts (e.g., scalp muscle activation) [9] | Essential for analyzing TMS-evoked potentials (TEPs). | Automated rejection algorithms (e.g., ARTIST) can achieve 95% classification accuracy vs. expert manual cleaning [9]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Neural Signal Processing and Artifact Management

| Item / Reagent | Function in Research | Application Note |
|---|---|---|
| Independent Component Analysis (ICA) | A blind source separation technique used to isolate and remove artifacts with stable scalp distributions (e.g., eyeblinks, heartbeats) from neural signals [7] [8]. | Effective for correcting ocular artifacts but may not eliminate the need for subsequent trial rejection in all cases [7]. |
| Donor-Derived Cell-Free DNA (dd-cfDNA) | A non-invasive biomarker released during cell death (e.g., organ transplant rejection). Quantified as a percentage of total cfDNA [10]. | A dd-cfDNA level >1% is a validated threshold for indicating a high probability of active kidney transplant rejection [10]. |
| Kuramoto Order Parameter (R) | A metric to measure global phase synchronization across multiple neural signals, which is distinct from traditional voltage (ERP) or coherence analyses [6]. | Useful for quantifying whole-system coordination that may be lost during conventional artifact rejection. |
| Automated Artifact Rejection Algorithms (e.g., ARTIST, MARA) | Supervised classifiers trained to automatically identify and reject artifact components from ICA, reducing manual labor and subjective bias [9]. | Particularly valuable for noisy datasets like TMS-EEG, and can perform on par with expert human reviewers [9]. |
| Anti-Seizure Medications (ASMs: Carbamazepine, Phenytoin) | Pharmacological tools used in microphysiological systems (e.g., DishBrain) to modulate neural network hyperactivity and study information processing [11]. | Carbamazepine at 200 µM significantly reduced mean firing rate and improved goal-directed performance in a neural culture model [11]. |
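For reference, the Kuramoto order parameter for N signals with instantaneous phases φ_j(t) is conventionally written as below. Obtaining the phases from the Hilbert transform of band-pass-filtered EEG is a common choice, but it is an assumption here and not necessarily the procedure used in [6].

```latex
R(t) = \left| \frac{1}{N} \sum_{j=1}^{N} e^{\,i\varphi_j(t)} \right|, \qquad 0 \le R(t) \le 1
```

R = 1 when all N phases coincide, and R approaches 0 when the phases are spread around the unit circle.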

Experimental Protocol: Evaluating Artifact Minimization Strategies

This protocol provides a step-by-step methodology for quantifying the impact of artifact correction and rejection in your own EEG/MEG studies, based on established research practices [7] [8].

Aim: To determine the optimal artifact minimization approach for a specific dataset by assessing its impact on both confounds and data quality.

1. Data Preprocessing:

- Acquire raw EEG data and apply band-pass filtering (e.g., 0.1-30 Hz) appropriate for your ERP components of interest.

- Segment the data into epochs time-locked to your events.

2. Create Parallel Processing Pipelines: Process the data through two distinct pipelines for comparison.

- Pipeline A (Correction & Rejection): Apply ICA to identify and remove components corresponding to ocular and other stable artifacts. Subsequently, reject epochs containing voltage deflections exceeding a threshold (e.g., ±100 µV).

- Pipeline B (Minimal Rejection): Apply only minimal rejection (e.g., for extreme drift or flat-line signals) or use only artifact correction without subsequent trial rejection.

3. Quantify Key Metrics: Calculate the following for each pipeline:

- Number of Retained Trials: Count trials per condition after processing.

- Data Quality Metric: Use a metric like Standardized Measurement Error (SME), which incorporates both single-trial noise and the number of trials, providing a direct link to statistical power [8].

- Confound Check: Test whether there are systematic differences in artifact presence (e.g., remaining EOG signal) between experimental conditions.

- Decoding/Analysis Performance: Run your primary analysis (e.g., SVM decoding, ERP component measurement) on the data from each pipeline.

4. Compare and Interpret: The flowchart below visualizes the logical relationship and outcomes of this experimental protocol.

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: My analysis pipeline has always involved rigorous artifact rejection, but my cognitive task results seem to lose statistical power. What could be happening?

Traditional preprocessing assumes that physiological signals such as eye movements and muscle activity are noise that obscures neural signals [12] [13]. However, emerging evidence challenges this assumption. A 2025 study reported that conventional artifact rejection can remove approximately 70% of task-relevant variance and can even reverse target discrimination outcomes (from +0.6% to -0.4%) [6]. This suggests that the physiological signals you are removing may carry meaningful information about whole-system cognitive processes. We recommend comparing results with and without artifact rejection to determine whether critical information is being lost [6].

Q2: How can I determine if eye blinks in my data contain cognitive information versus simply contaminating the signal?

The informative value of an artifact depends on your experimental context and research question. Eye blinks are known to momentarily alter visual processing in the brain [14]. If blinking occurs systematically in relation to your task paradigm (e.g., right after stimulus presentation), it may reflect a cognitive process rather than random noise [14]. You can analyze the temporal relationship between artifacts and task events, and compare phase synchronization metrics between conditions with and without artifacts preserved [6].

Q3: Are there automated methods that can distinguish between 'informative' and 'contaminating' physiological signals?

Fully automated algorithms are emerging, particularly for specialized applications like TMS-EEG and OPM-MEG [15] [9]. These typically use independent component analysis (ICA) combined with pattern classifiers that leverage spatio-temporal features of components [9]. A 2025 study achieved 98.52% accuracy in automatic artifact recognition using magnetic reference signals and a channel attention mechanism [15]. However, determining the cognitive relevance of these components still requires theoretical framing and experimental design that considers embodied cognition principles [6].

Q4: What practical first steps can I take to minimize unnecessary signal loss in my current research?

- Parallel Analysis: Run your analysis pipeline with and without artifact rejection and compare outcomes [6].

- Component Categorization: When using ICA, avoid bulk removal of all non-neural components. Instead, document the characteristics and trial-by-trial prevalence of removed components [12] [6].

- Theoretical Framework: Develop hypotheses about how specific physiological signals (e.g., eye movements, muscle tension) might contribute to your cognitive construct of interest [6].

- Reference Signals: Consider using dedicated sensors to record physiological signals (e.g., EOG, ECG) as references rather than for simple subtraction [15].

Troubleshooting Guides

Problem: Conventional artifact rejection weakens or reverses my experimental effects.

| Step | Action | Expected Outcome |
|---|---|---|
| 1 | Re-analyze a subset of data without any artifact rejection. | Preserved or strengthened effect suggests meaningful physiological signals were being removed [6]. |
| 2 | Calculate trial-level correlation between phase synchronization (e.g., Kuramoto Order Parameter) and voltage amplitude before and after artifact rejection. | A significant drop in correlation after rejection indicates loss of meaningful signal [6]. |
| 3 | Analyze the temporal relationship between artifact occurrence and task events. | Systematic patterns suggest functional role of physiological signals [6] [14]. |
| 4 | Implement a component classification approach that documents rather than blindly removes physiological components. | More nuanced understanding of which physiological signals contribute to your effects [15] [9]. |
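Step 2 can be sketched as below, assuming trial-wise epochs as NumPy arrays: one copy band-pass filtered to the frequency band of interest (for the phases) and one broadband copy (for the voltage). Phases come from the Hilbert transform, R is averaged over a time window per trial, and the function returns the Pearson correlation across trials between window-mean R and window-mean voltage at one channel. The window, channel, and filtering choices are assumptions; this is not a reimplementation of the analysis in [6].

```python
import numpy as np
from scipy.signal import hilbert

def kuramoto_r(phase_epochs):
    """Kuramoto order parameter R per trial and sample.

    phase_epochs: (n_trials, n_channels, n_times), band-pass filtered to the
    band of interest. Phases come from the analytic (Hilbert) signal.
    Returns an array of shape (n_trials, n_times) with values in [0, 1].
    """
    phases = np.angle(hilbert(phase_epochs, axis=-1))
    return np.abs(np.mean(np.exp(1j * phases), axis=1))

def trial_level_coupling(phase_epochs, erp_epochs, times, window, channel):
    """Pearson r across trials between window-mean R and window-mean voltage."""
    sel = (times >= window[0]) & (times <= window[1])
    r_per_trial = kuramoto_r(phase_epochs)[:, sel].mean(axis=1)
    volt_per_trial = erp_epochs[:, channel, sel].mean(axis=1)
    return np.corrcoef(r_per_trial, volt_per_trial)[0, 1]
```

Run it once on all trials and once on the trials that survive rejection, then compare the two coefficients.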

Problem: I need to maintain some artifact removal but want to minimize signal loss.

| Step | Action | Rationale |
|---|---|---|
| 1 | Use high-density EEG systems (64+ channels) to better separate neural from non-neural sources via ICA [6]. | More channels improve source separation capabilities [6]. |
| 2 | Implement automated artifact detection algorithms (e.g., ARTIST, MARA) that use multiple spatio-temporal features rather than simple amplitude thresholds [9]. | Reduces subjective bias and allows for consistent re-analysis with adjusted parameters [9]. |
| 3 | Preserve the timing information of removed artifacts for later analysis of their relationship to task events. | Enables post-hoc analysis of whether removed "artifacts" were systematically related to cognition [6]. |
| 4 | Consider using alternative metrics like phase synchronization that may be less affected by certain artifacts [6]. | Some cognitive processes may be better captured by phase relationships than voltage amplitude [6]. |
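For step 3, recording the onset times of whatever you remove makes a later check possible. A minimal sketch, assuming artifact onsets and task-event times are both in seconds: it builds a peri-event histogram of artifact onsets. A pronounced peak at a fixed latency (for example, blinks clustering shortly after stimulus onset) is the kind of systematic pattern described above; comparing against histograms built from shuffled event times would indicate whether the clustering exceeds chance.

```python
import numpy as np

def peri_event_histogram(artifact_onsets, event_times, window=(-0.5, 1.5), bin_s=0.1):
    """Count artifact onsets (s) in bins relative to each task event (s).

    Returns (counts per bin, bin edges in seconds relative to the event).
    """
    onsets = np.asarray(artifact_onsets, dtype=float)
    lags = []
    for ev in event_times:
        rel = onsets - ev
        lags.extend(rel[(rel >= window[0]) & (rel < window[1])])
    n_bins = int(round((window[1] - window[0]) / bin_s))
    edges = np.linspace(window[0], window[1], n_bins + 1)
    counts, _ = np.histogram(lags, bins=edges)
    return counts, edges
```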

Quantitative Evidence: Comparing Artifact Handling Approaches

Table 1: Performance Metrics of Conventional vs. Alternative Artifact Handling Methods

| Method | Trial-Level Correlation (R vs. ERP) | Target Discrimination | Automation Level | Key Advantage |
|---|---|---|---|---|
| Conventional Rejection [6] | 0.195 | -0.4% | Manual | Familiar, standardized |
| Whole-System Preservation [6] | 0.590 | +0.6% | Not applicable (no rejection step) | Avoids the ~70% loss of task-relevant variance attributed to conventional rejection |
| ICA + Manual Classification [12] | Varies | Varies | Semi-manual | Expert judgment |
| ARTIST Algorithm [9] | Similar to manual | Similar to manual | Full | 95% accuracy vs. experts |
| RDC + Attention Model [15] | N/A | N/A | Full | 98.52% accuracy |

Table 2: Temporal Characteristics of Cognitive Processes Revealed When Artifacts Are Preserved

| Frequency Band | Peak Latency (ms) | Postulated Cognitive Function | Effect of Artifact Removal |
|---|---|---|---|
| Theta (4-8 Hz) [6] | 169 | Orienting attention | Disrupts early attention processes |
| Alpha (8-13 Hz) [6] | 286 | Understanding (P300) | Reduces target discrimination |
| Beta (13-30 Hz) [6] | 777 | Consolidation | Impairs memory formation |
| Eye Movements [14] | Variable | Attention shifting | Alters visual processing |
| Muscle Activity [6] | Variable | Arousal, preparation | Reduces behavioral accuracy |

Experimental Protocols

Protocol 1: Testing the Embodied Resonance Hypothesis in P300 Tasks

This protocol tests whether physiological signals traditionally treated as artifacts contribute to cognitive performance in a target discrimination paradigm [6].

- Participants: 10+ healthy adults, right-handed preferred [6].

- Equipment: 64-channel EEG system, standard electrode cap, amplifier with 0.1-100 Hz bandpass filter [6].

- Stimuli: Use a P300 Speller paradigm or oddball task with target and non-target stimuli [6].

- Procedure:

- Parallel Processing:

- Analysis:

Protocol 2: Automated Artifact Recognition with Magnetic Reference Signals

This protocol uses dedicated reference sensors to automatically identify physiological artifacts in OPM-MEG data, applicable to EEG with appropriate electrical references [15].

- Participants: 16+ healthy volunteers [15].

- Equipment: 32+ OPM sensors (or high-density EEG), additional reference sensors for ocular and cardiac signals, magnetically shielded room [15].

- Stimuli: Auditory oddball paradigm with 800 trials, 200-300 ms pure tones, and a 2 s inter-stimulus interval [15].

- Procedure:

- Artifact Identification:

- Validation:

The Scientist's Toolkit

Table 3: Essential Research Reagents and Solutions for Artifact-Informed Research

| Item | Function | Application Notes |
|---|---|---|
| High-Density EEG System (64+ channels) [6] | Improved source separation via spatial sampling | Critical for effective ICA decomposition |
| DC-Coupled Amplifiers [9] | Prevents saturation from large artifacts | Essential for TMS-EEG studies |
| Dedicated Reference Sensors [15] | Records physiological signals for correlation analysis | Magnetic sensors for MEG, EOG/ECG for EEG |
| Artifact Subspace Reconstruction [16] | Removes transient artifacts while preserving data | Alternative to complete trial rejection |
| Independent Component Analysis [12] [16] | Separates mixed signals into components | Foundation for most advanced artifact handling |
| Kuramoto Order Parameter Algorithm [6] | Measures global phase synchronization | Captures different aspects of neural dynamics |
| Randomized Dependence Coefficient [15] | Quantifies linear and non-linear dependencies | Can detect non-linear relationships that Pearson correlation misses |
| Channel Attention Mechanism [15] | Automatically weights informative features | Improves artifact classification accuracy |

Methodological Workflows

What is the fundamental difference between a neural signal and an artifact? An artifact is any recorded activity (electrical in EEG, magnetic in MEG) that does not originate from cerebral activity. In EEG and MEG analysis, artifacts are treated as contaminants that hinder analysis of the neural signals of interest [17] [18].

Why is correctly identifying artifact type crucial for research? Correct identification is the first step in choosing the appropriate removal strategy. Misclassification can lead to the unnecessary loss of valid neural data or, conversely, the retention of confounding noise. This is critical for the integrity of research findings, especially in drug development where signal purity can influence conclusions about a compound's effect on brain activity [19].

Physiological Artifacts: Identification & Troubleshooting

Physiological artifacts are generated by the patient's own body, from sources other than the brain [17].

Electrooculogram (EOG) Artifacts

What are EOG artifacts and what do they look like? EOG artifacts are caused by eye movements and blinks. The eyeball acts as an electrical dipole with a positive cornea and a negative retina. Movement of this dipole generates a large electrical field detectable by frontal EEG electrodes [17] [18].

- Eye Blinks: Appear as high-amplitude, slow, positive-phase deflections over the frontopolar (Fp1, Fp2) electrodes. The waveform is broad and typically has no field in the posterior regions of the scalp [18].

- Lateral Eye Movements: Create opposing polarities in the frontal (F7 and F8) electrodes. For example, looking to the right causes a positive deflection at F8 and a negative deflection at F7 [17] [18].
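A rough sketch of the two signatures above using scalp channels only (no dedicated EOG electrodes), assuming high-pass filtered data in microvolts. The 75 µV blink threshold, the 30 µV horizontal threshold, and the 0.3 s minimum spacing between blinks are illustrative assumptions, not validated values.

```python
import numpy as np
from scipy.signal import find_peaks

def eog_events(fp1, fp2, f7, f8, fs, blink_uv=75.0, heog_uv=30.0, min_gap_s=0.3):
    """Rough blink and horizontal-gaze detection from scalp channels (microvolts).

    Returns (blink peak sample indices, per-sample gaze code: 1 right, -1 left, 0 neither).
    """
    # Blinks: broad positive deflection shared by the frontopolar pair.
    veog = (fp1 + fp2) / 2.0
    blinks, _ = find_peaks(veog, height=blink_uv, distance=int(min_gap_s * fs))
    # Looking right makes F8 positive and F7 negative, so F8 - F7 swings positive;
    # leftward gaze makes it swing negative.
    heog = f8 - f7
    gaze = np.zeros(heog.shape, dtype=int)
    gaze[heog > heog_uv] = 1    # rightward
    gaze[heog < -heog_uv] = -1  # leftward
    return blinks, gaze
```

Keeping blink and gaze timings, rather than only deleting the affected data, also supports the later check of whether these events are systematically related to task events.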

How can I prevent my participant's blinks from contaminating the data? Instruct participants to minimize blinking during critical stimulus presentation periods and provide defined, regular breaks where they are encouraged to blink freely. This is a proactive measure to reduce the occurrence of the artifact [20].

A blink artifact is easily confused with cerebral activity. How do I tell them apart? Frontal spike and wave cerebral activity, unlike blink artifacts, will typically have a broader electrical field that spreads into posterior regions and will often be preceded by a spike component. Eye blinks do not disrupt the underlying background brain rhythm [18].

Electromyogram (EMG) Artifacts

What causes EMG artifacts and how are they identified? EMG artifacts are caused by the contraction of muscles, most commonly from the frontalis (forehead), temporalis (temples), and jaw muscles. They are characterized by high-frequency, low-amplitude, irregular "spiky" waveforms that can obscure the underlying EEG [17] [18].

My participant is still. Why is there muscle artifact in the recording? Even without overt movement, muscle tension from clenching the jaw, frowning, or maintaining head position can generate low-level, persistent EMG artifact, particularly in the frontal and temporal channels [17].

Are there specific EMG patterns I should know? Yes. For instance, essential tremor or Parkinson's disease can produce rhythmic 4-6 Hz sinusoidal artifacts that may mimic cerebral activity. Chewing creates sudden bursts of generalized fast activity, while talking produces rhythmic muscle artifacts from the tongue and jaw [17].
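EMG is dominated by high-frequency power, so one quick screen is the fraction of a channel's spectral power above about 20 Hz. This is a minimal sketch. The 20 Hz cutoff mirrors the spectral description of muscle artifacts used later in this guide, the function name is hypothetical, and white noise stands in for real EMG.

```python
import numpy as np

def high_freq_power_fraction(x, fs, cutoff_hz=20.0):
    """Fraction of non-DC spectral power above cutoff_hz for one channel."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    return power[freqs > cutoff_hz].sum() / power[freqs > 0].sum()

fs = 250.0
t = np.arange(500) / fs                                       # 2 s of data
alpha_like = np.sin(2 * np.pi * 10 * t)                       # 10 Hz rhythm
emg_like = np.random.default_rng(0).standard_normal(500)      # broadband stand-in for EMG
frac_alpha = high_freq_power_fraction(alpha_like, fs)
frac_emg = high_freq_power_fraction(emg_like, fs)
print(f"alpha-like: {frac_alpha:.3f}, EMG-like: {frac_emg:.2f}")
```

The rhythmic 4-6 Hz tremor artifacts described above would not be caught by this measure. That is one reason visual review remains necessary.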

Electrocardiogram (ECG) & Other Physiological Artifacts

How does the heart cause an artifact? The electrical activity of the heart (the QRS complex) can be conducted to the scalp and picked up by EEG electrodes. The ECG artifact appears as a rhythmic, sharp waveform that is time-locked to the patient's heartbeat, often most prominent on the left side of the head [17] [18].

What is a pulse artifact? A pulse artifact occurs when an EEG electrode is placed over a pulsating blood vessel. The mechanical pulsation causes a slow, rhythmic wave that is time-locked to the heartbeat but occurs about 200-300 milliseconds after the QRS complex [17].
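Both cardiac artifacts are time-locked to the heartbeat. Averaging an EEG channel around R-peaks taken from an ECG reference channel therefore reveals them. A sharp deflection near 0 ms suggests ECG artifact. A slower wave peaking roughly 200-300 ms later suggests pulse artifact. The sketch assumes the R-peak sample indices are already available, since peak detection is not shown. The function name, window, and synthetic data are hypothetical.

```python
import numpy as np

def r_locked_average(eeg, r_peaks, fs, tmin=-0.2, tmax=0.6):
    """Average one EEG channel in windows time-locked to R-peak sample indices."""
    eeg = np.asarray(eeg, dtype=float)
    pre, post = int(round(-tmin * fs)), int(round(tmax * fs))
    segments = [eeg[r - pre:r + post] for r in r_peaks
                if r - pre >= 0 and r + post <= eeg.size]    # skip beats too close to the edges
    times = np.arange(-pre, post) / fs
    return times, np.mean(segments, axis=0)

# Synthetic example: a slow, pulse-like bump about 250 ms after each R-peak
fs = 250.0
rng = np.random.default_rng(1)
r_peaks = np.arange(100, 2300, 250)              # one beat per second
samples = np.arange(2500)
eeg = 0.1 * rng.standard_normal(samples.size)
for r in r_peaks:
    eeg += np.exp(-0.5 * ((samples - (r + 62)) / 10.0) ** 2)

times, avg = r_locked_average(eeg, r_peaks, fs)
latency = times[np.argmax(avg)]
print(f"peak latency after R: {latency * 1000:.0f} ms")
```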

What is that very slow, swaying baseline in my data? This is likely a sweat artifact. Electrolytes in sweat (mainly sodium chloride) change the electrical potential at the electrode-skin interface, producing very slow (often <0.5 Hz) baseline drifts [17] [18].

Table 1: Summary of Common Physiological Artifacts

| Artifact Type | Main Source | Key Identifying Features | Most Affected Channels |
|---|---|---|---|
| Eye Blink (EOG) | Eyeball dipole movement | High-amplitude, slow, positive deflection | Fp1, Fp2 |
| Lateral Eye Movement (EOG) | Eyeball dipole rotation | Opposing polarities at F7/F8 | F7, F8 |
| Muscle (EMG) | Muscle contraction | High-frequency, "spiky" fast activity | Frontal, temporal |
| Cardiac (ECG) | Heart electrical activity | Rhythmic sharp wave, time-locked to heartbeat | Left-sided; referential (earlobe) montages |
| Pulse | Arterial pulsation | Slow rhythmic wave, lagging the QRS complex by ~200-300 ms | Single electrode over a vessel |
| Sweat | Electrolyte-skin interaction | Very slow baseline drifts (<0.5 Hz) | Widespread, variable |

Non-Physiological Artifacts: Identification & Troubleshooting

Non-physiological (external) artifacts originate outside the body, from the recording equipment or the environment [17].

Electrode & Impedance Artifacts

What is an "electrode pop" and what causes it? An electrode pop appears as a sudden, very steep, high-voltage deflection that returns to baseline more slowly. It is caused by an abrupt change in impedance at a single electrode, often due to a loose connection, poor contact, or drying electrolyte gel [17] [18].

A single channel shows bizarre, high-amplitude noise. What should I do? This is a classic sign of a high-impedance electrode. The signal from that channel is unreliable and should be considered for exclusion from analysis. Preventing this involves ensuring good electrode-scalp contact before recording begins [21].
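Channels with extreme noise, as well as flat or disconnected channels, can be pre-screened with a robust outlier test on each channel's standard deviation before visual confirmation. The sketch uses a median/MAD z-score on log standard deviations. The 3.5 cut-off and the function name are assumptions for illustration, not a published criterion.

```python
import numpy as np

def flag_noisy_channels(data, z_thresh=3.5):
    """Indices of channels whose log standard deviation is a robust outlier.

    data: channels x samples. Median/MAD statistics keep the bad channels
    themselves from inflating the reference values.
    """
    log_sd = np.log(np.asarray(data, dtype=float).std(axis=1) + 1e-12)  # epsilon guards flat channels
    median = np.median(log_sd)
    mad = np.median(np.abs(log_sd - median))
    if mad == 0:
        return np.array([], dtype=int)
    robust_z = 0.6745 * (log_sd - median) / mad
    return np.flatnonzero(np.abs(robust_z) > z_thresh)

rng = np.random.default_rng(2)
data = rng.standard_normal((8, 1000))
data[5] *= 20.0          # simulate a high-impedance, very noisy channel
print(flag_noisy_channels(data))
```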

Environmental & Electrical Artifacts

What is 50/60 Hz artifact and how do I get rid of it? This is power line interference, manifesting as a high-frequency, monotone oscillation at exactly 50 Hz or 60 Hz. It is often caused by improper grounding, nearby electrical devices, or equipment sharing a power outlet with the EEG machine. Using a notch filter can remove it, but improving grounding and isolating equipment is a better solution [17] [22].
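If a notch filter is used, the sketch below shows one way to build it: a second-order IIR notch in the form given by the widely used RBJ audio-EQ cookbook, applied forward and backward so it adds no phase shift. The quality factor `q=30` and the function names are assumptions. A notch also removes any genuine activity at the line frequency, which is one more reason to prefer fixing grounding.

```python
import numpy as np

def notch_coefficients(f0, fs, q=30.0):
    """Second-order IIR notch (RBJ cookbook form), normalized so that a[0] == 1."""
    w0 = 2.0 * np.pi * f0 / fs
    alpha = np.sin(w0) / (2.0 * q)
    b = np.array([1.0, -2.0 * np.cos(w0), 1.0])
    a = np.array([1.0 + alpha, -2.0 * np.cos(w0), 1.0 - alpha])
    return b / a[0], a / a[0]

def biquad(b, a, x):
    """Filter a 1-D signal with one second-order section (direct form I)."""
    y = np.zeros(len(x))
    x1 = x2 = y1 = y2 = 0.0
    for n, xn in enumerate(x):
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y[n] = yn
    return y

def notch_zero_phase(x, f0, fs, q=30.0):
    """Forward-backward notch: zero phase shift, squared magnitude response."""
    b, a = notch_coefficients(f0, fs, q)
    forward = biquad(b, a, np.asarray(x, dtype=float))
    return biquad(b, a, forward[::-1])[::-1]

def amplitude(sig, f, fs, start=500, stop=1500):
    """Amplitude of the f-Hz component over samples [start, stop), away from edge transients."""
    ts = np.arange(start, stop) / fs
    return 2.0 * np.abs(np.mean(sig[start:stop] * np.exp(-2j * np.pi * f * ts)))

fs = 500.0
t = np.arange(2000) / fs
x = np.sin(2 * np.pi * 10 * t) + np.sin(2 * np.pi * 50 * t)    # 10 Hz "EEG" + 50 Hz line noise
y = notch_zero_phase(x, 50.0, fs)
print(f"50 Hz: {amplitude(x, 50, fs):.2f} -> {amplitude(y, 50, fs):.3f}; "
      f"10 Hz: {amplitude(x, 10, fs):.2f} -> {amplitude(y, 10, fs):.3f}")
```

Amplitudes are measured only in the central two seconds. A narrow IIR notch rings for a few hundred milliseconds at each edge of the record.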

My data has a sudden, large, step-like jump. What is it? In MEG, this is known as a SQUID jump—a sudden instability in the superconducting quantum interference device. In EEG, it can be caused by a sudden movement of the electrode cable or a large static discharge. These artifacts typically affect multiple channels simultaneously [23] [20].
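These jumps are abrupt and hit many channels at once. A simple screen therefore looks for sample-to-sample steps that exceed a threshold on a large fraction of channels. The 100 µV step threshold, the 50% channel fraction, and the function name are hypothetical choices for illustration.

```python
import numpy as np

def detect_jumps(data, thresh_uv=100.0, min_fraction=0.5):
    """Sample indices where at least `min_fraction` of channels change by more than
    `thresh_uv` between consecutive samples (data: channels x samples, µV)."""
    data = np.asarray(data, dtype=float)
    steps = np.abs(np.diff(data, axis=1))
    n_jumping = (steps > thresh_uv).sum(axis=0)
    return np.flatnonzero(n_jumping >= min_fraction * data.shape[0]) + 1

rng = np.random.default_rng(3)
data = 5.0 * rng.standard_normal((16, 1000))
data[:, 600:] += 500.0            # simulated step affecting every channel
print(detect_jumps(data))         # [600]
```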

Table 2: Summary of Common Non-Physiological Artifacts

| Artifact Type | Main Source | Key Identifying Features | Troubleshooting Tips |
|---|---|---|---|
| Electrode Pop | Poor electrode contact | Sudden, steep deflection at a single electrode | Check electrode connection and gel |
| High Impedance | Poor electrode-scalp contact | Noisy, distorted signal on a single channel | Ensure impedance is below 5 kΩ (wet systems) |
| 50/60 Hz Noise | Power line interference | Monotone 50/60 Hz oscillation | Check grounding, move electrical devices, use notch filter |
| SQUID Jump (MEG) | MEG sensor instability | Sudden, large step in signal across many channels | - |
| Movement | Patient or cable movement | Chaotic, high-amplitude, low-frequency swings | Secure cables, instruct participant to remain still |

Experimental Protocols for Artifact Handling

This section provides methodologies for addressing artifacts in research data.

Protocol 1: Visual and Manual Artifact Rejection

Visual inspection is a common first step for identifying and rejecting artifacts.

- Load Data: Read preprocessed, segmented (epoched) data into your analysis environment (e.g., MATLAB with FieldTrip toolbox) [20].

- Configure Tool: Use a function like ft_rejectvisual and select a method:

- 'trial': View all channels for one trial at a time to identify bad trials [20].

- 'channel': View all trials for one channel at a time to identify consistently noisy channels [20].

- 'summary': Get a statistical overview (e.g., variance, max/min) across all channels and trials to quickly identify outliers [20].

- Inspect and Mark: Manually scroll through the data presentation. Use the mouse to mark trials or channels that contain obvious artifacts (e.g., large drifts, jumps, muscle bursts) [20].

- Reject: The tool automatically removes the marked trials/channels and returns a cleaned data structure.

FAQs on Visual Rejection: Is visual rejection subjective? Yes. The criteria for what constitutes a "bad" trial vary between researchers and depend on the planned analysis (e.g., time-frequency analysis is more sensitive to muscle artifacts than ERP/ERF analysis) [20].

How can I ensure consistency across multiple datasets?

Define a standardized protocol for your study that specifies the ft_rejectvisual method and the specific types and amplitudes of artifacts to be rejected before you begin screening datasets [20].
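One way to make such a protocol reproducible is to encode the amplitude criterion itself. The sketch below applies a peak-to-peak threshold to epoched data in NumPy. It is a threshold-based alternative, not a reproduction of `ft_rejectvisual`, and the 100 µV value is a placeholder to be set per study.

```python
import numpy as np

def reject_peak_to_peak(epochs, threshold_uv=100.0):
    """Drop epochs in which any channel's peak-to-peak amplitude exceeds threshold_uv.

    epochs: trials x channels x samples (µV). Returns (kept_epochs, rejected_indices).
    """
    epochs = np.asarray(epochs, dtype=float)
    ptp = epochs.max(axis=2) - epochs.min(axis=2)      # trials x channels
    bad = (ptp > threshold_uv).any(axis=1)
    return epochs[~bad], np.flatnonzero(bad)

epochs = np.zeros((5, 3, 100))
epochs[2, 1, 50] = 150.0         # one large transient in trial 2, channel 1
kept, rejected = reject_peak_to_peak(epochs)
print(kept.shape, rejected)      # (4, 3, 100) [2]
```

Logging `rejected` for every dataset also gives a per-condition trial count, which is useful for checking the confound question raised later in this guide.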

Protocol 2: Automatic Artifact Removal with Independent Component Analysis (ICA)

ICA separates artifacts from neural activity by decomposing the data into independent source components, so that artifacts can be removed without discarding entire trials.

- Prerequisite: Ensure the data is continuous or contains long epochs. A proper ICA decomposition requires a large amount of data [21].

- Decompose: Run ICA on the multi-channel EEG data. The algorithm outputs a set of Independent Components (ICs), each with a time course and a scalp topography [21].

- Identify Artifactual ICs:

- Visual Inspection: Examine the topography and time course of each IC. Eye-blink components typically have a frontal, bilateral topography and a time course matching blinks. Muscle components have a focal, lateral topography and high-frequency, burst-like activity [21].

- Automatic Classification: Use algorithms that employ clustering (e.g., hierarchical clustering) based on features like topography, frequency spectrum, and correlation with reference channels (EOG, ECG) to automatically flag artifact-related ICs [21].

- Remove Components: Project the data back to the sensor space, excluding the components identified as artifacts [21].
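The decomposition, identification, and back-projection steps can be sketched end to end in Python with NumPy. This is a minimal illustration, not EEGLAB's `runica` or any published pipeline. It implements symmetric FastICA (one of the algorithms mentioned in this guide) on synthetic data, flags components by correlation with an EOG reference channel, and removes them by projecting the remaining components back through the pseudo-inverse of the unmixing matrix. The correlation threshold of 0.7 and all function names are assumptions.

```python
import numpy as np

def _sym_decorrelate(w):
    """Symmetric orthogonalization: W <- (W W^T)^(-1/2) W."""
    s, u = np.linalg.eigh(w @ w.T)
    return u @ np.diag(1.0 / np.sqrt(s)) @ u.T @ w

def fastica(x, n_components, n_iter=500, tol=1e-8, seed=0):
    """Symmetric FastICA with a tanh non-linearity on channels x samples data.

    Returns (unmixing, mean) such that sources = unmixing @ (x - mean).
    """
    mean = x.mean(axis=1, keepdims=True)
    xc = x - mean
    evals, evecs = np.linalg.eigh(np.cov(xc))
    top = np.argsort(evals)[::-1][:n_components]       # PCA: keep the strongest dimensions
    whitening = np.diag(1.0 / np.sqrt(evals[top])) @ evecs[:, top].T
    z = whitening @ xc
    rng = np.random.default_rng(seed)
    w = _sym_decorrelate(rng.standard_normal((n_components, n_components)))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        w_new = g @ z.T / z.shape[1] - np.diag((1.0 - g ** 2).mean(axis=1)) @ w
        w_new = _sym_decorrelate(w_new)
        # Converged when every new row is (anti)parallel to the previous one
        done = np.max(np.abs(np.abs(np.sum(w_new * w, axis=1)) - 1.0)) < tol
        w = w_new
        if done:
            break
    return w @ whitening, mean

def flag_by_reference(sources, reference, r_thresh=0.7):
    """Indices of components whose |correlation| with a reference channel exceeds r_thresh."""
    return [i for i, s in enumerate(sources)
            if abs(np.corrcoef(s, reference)[0, 1]) > r_thresh]

def remove_components(x, unmixing, mean, bad):
    """Back-project all components except those in `bad` to channel space."""
    sources = unmixing @ (x - mean)
    mixing = np.linalg.pinv(unmixing)                   # channels x components
    keep = [i for i in range(unmixing.shape[0]) if i not in set(bad)]
    return mean + mixing[:, keep] @ sources[keep]

# Synthetic demo: a 10 Hz "neural" source plus blink-like bumps, mixed into 4 channels
fs = 250.0
t = np.arange(2500) / fs
brain = np.sin(2 * np.pi * 10 * t)
blink = sum(5.0 * np.exp(-0.5 * ((t - c) / 0.05) ** 2) for c in (1, 3, 5, 7, 9))
mixing_true = np.array([[1.0, 3.0], [1.0, 2.0], [0.8, 0.5], [0.6, 0.1]])  # blink strongest frontally
x = mixing_true @ np.vstack([brain, blink])
eog = blink                                          # stand-in for a dedicated EOG channel

unmixing, mean = fastica(x, n_components=2)
bad = flag_by_reference(unmixing @ (x - mean), eog)
clean = remove_components(x, unmixing, mean, bad)
print("flagged components:", bad)
print("corr(clean channel 0, neural source):", round(np.corrcoef(clean[0], brain)[0, 1], 3))
```

Only the flagged component is subtracted, so every trial is retained. This is the property that distinguishes correction from rejection. On real data, a single correlation threshold is rarely sufficient on its own, and flagged components should still be checked against their topography and spectrum.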

FAQs on ICA: Can ICA remove all types of artifacts? ICA is highly effective for artifacts with stable, spatially fixed scalp distributions, such as blinks, eye movements, and cardiac activity. It is less effective for artifacts whose distribution varies across trials, such as some movement artifacts or irregular muscle bursts [19].

Should I do artifact rejection before or after ICA? It is often recommended to first remove severe, atypical artifacts (like SQUID jumps or large movement artifacts) using visual or threshold-based methods before running ICA. This improves the quality of the ICA decomposition [20].

A Critical Perspective: Rethinking "Contaminants" in Neural Signal Loss

Could our methods for removing artifacts be discarding meaningful biological signals? A 2025 preprint challenges the conventional model that equates artifacts with noise. The study proposes that cognition involves whole-body phase synchronization, meaning signals from eye movements, muscles, and the autonomic nervous system may be part of the cognitive process, not just contaminants [6].

What is the evidence for this claim? The study analyzed EEG data with and without standard artifact rejection. It found that removing artifacts reduced a key metric of neural synchronization (trial-level correlation) by approximately threefold (from 0.590 to 0.195) and reversed the sign of target discrimination accuracy. This suggests that signals conventionally discarded may contain a significant portion (up to ~70%) of the task-relevant variance [6].

What does this mean for my research on artifact rejection? This perspective does not mean abandoning artifact correction, but rather applying it more thoughtfully. The goal shifts from maximal removal to the prevention of confounds. Researchers should:

- Test whether systematic differences in artifacts (e.g., more blinking in one experimental condition) are creating spurious effects that artificially inflate results [19].

- Consider that for advanced analyses like multivariate pattern analysis (MVPA), the combination of ICA correction and artifact rejection may not improve decoding performance and the loss of trials can be more detrimental than the artifacts themselves [19].

- Acknowledge that in some contexts, what is considered "noise" may be a relevant part of the embodied cognitive signal [6].

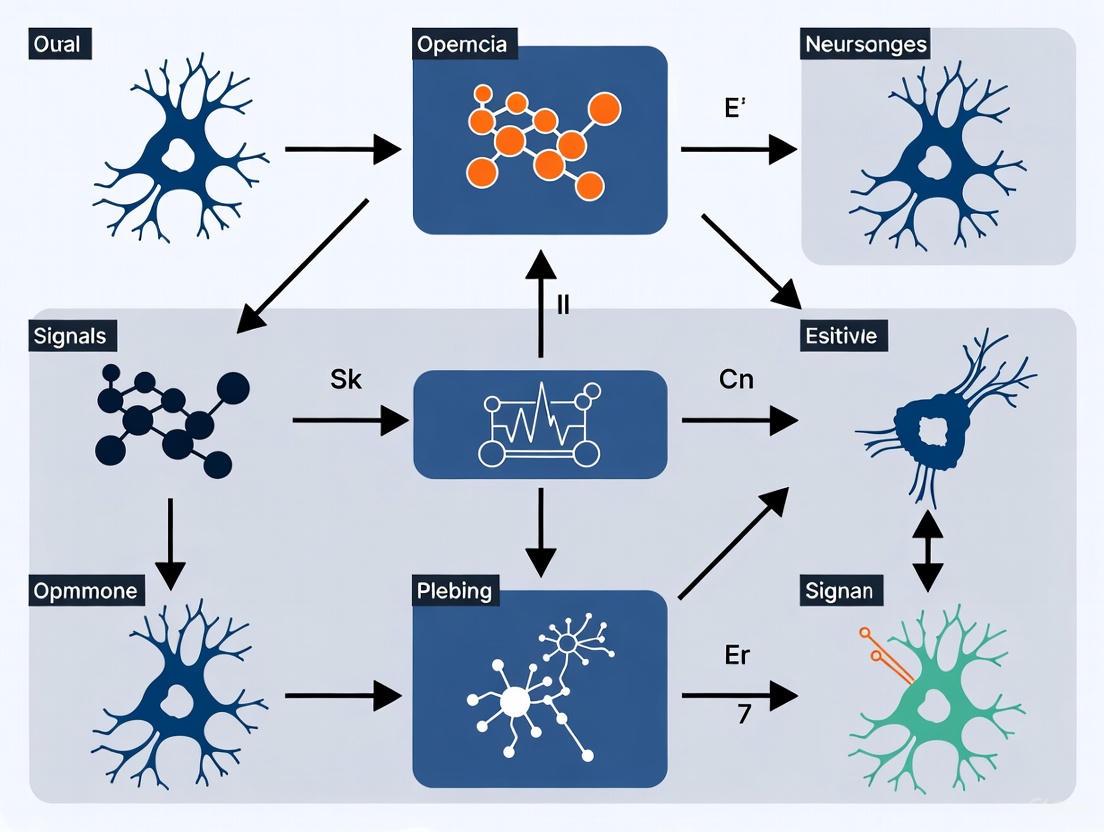

Visual Guide: Artifact Identification & Processing Workflows

Artifact Identification Key

Standard vs. Alternative Processing

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Tools for Artifact Management in Research

| Tool / Material | Primary Function | Application Note |
|---|---|---|
| High-Density EEG System (64+ channels) | Data Acquisition | Essential for high-quality ICA decomposition and better source separation of artifacts [21] [6]. |
| Abrasive Electrolyte Gels & Pastes | Ensure Good Electrode Contact | Critical for maintaining low electrode-scalp impedance (<5 kΩ), which minimizes non-biological artifacts [21]. |
| Electrode Impedance Checker | Quality Control | Verify impedance at each electrode before recording starts to prevent poor contact artifacts [21]. |
| Independent Component Analysis (ICA) | Artifact Correction | Algorithmic tool to separate and remove artifacts with consistent topographies (e.g., blink, ECG) without trial loss [19] [21]. |
| EOG & ECG Reference Electrodes | Artifact Monitoring | Dedicated channels to record eye and heart activity, providing reference signals for artifact identification and regression/ICA [23] [17]. |
| Faraday Cage / Shielded Room | Environmental Control | Attenuates external electromagnetic interference, reducing 50/60 Hz line noise and other environmental artifacts [17]. |
| Signal Processing Toolboxes (e.g., FieldTrip, EEGLAB) | Data Analysis | Software environments providing standardized, peer-reviewed functions for visual and automatic artifact rejection [23] [20]. |

Beyond Simple Rejection: A Practical Guide to Advanced Artifact Correction Techniques

Troubleshooting Guides & FAQs

FAQ: What is the primary rationale for using ICA in artifact correction? ICA is a blind source separation technique that decomposes EEG signals into statistically independent components. It is considered a gold standard because it can separate neural activity from non-neural artifacts (like those from eyes and muscles) that have distinct spatial, temporal, and spectral characteristics, even when their frequencies overlap. This allows for the selective removal of artifactual components while preserving underlying brain signals, which is crucial for reducing neural signal loss compared to simply discarding entire contaminated data segments [24] [25].

Troubleshooting: My ICA decomposition seems unreliable or unstable. What could be wrong? ICA requires certain preconditions for optimal performance. Ensure you have:

- Sufficient Data: ICA works best with large amounts of clean data. A common rule of thumb is that the number of time points should exceed the number of unmixing weights (N² for N components) by a sizeable multiplier k, i.e., at least k·N² samples. For high-density EEG, using runica's 'pca' option to reduce the number of components may therefore be necessary [25].

- Stationary Data: The statistical properties of the signals should be relatively constant over time.

- Correct Channel Setup: Only include EEG channels in the decomposition. Remove or exclude EMG, EOG, and other non-EEG channels, as ICA assumes instantaneous mixing, which may not hold for these signal types [25].
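The data-quantity guideline can be made concrete with a commonly cited rule of thumb. An N-component decomposition learns N² unmixing weights, so the number of time points should exceed N² by a multiplier k. The sketch below uses k = 20 purely as an illustrative assumption. It shows why reducing the number of components (e.g., via PCA) lowers the amount of data needed.

```python
def min_ica_samples(n_components, k=20):
    """Rule-of-thumb minimum number of time points: k * N**2 (k = 20 is an assumption)."""
    return k * n_components ** 2

def min_ica_minutes(n_components, fs, k=20):
    """The same requirement expressed as minutes of recording at sampling rate fs."""
    return min_ica_samples(n_components, k) / fs / 60.0

for n in (32, 64, 128):
    print(f"{n:>3} components: {min_ica_samples(n):>6} samples "
          f"= {min_ica_minutes(n, fs=500.0):.1f} min at 500 Hz")
```

Because the requirement grows with the square of N, halving the number of components cuts the data needed by a factor of four.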

FAQ: Does artifact correction via ICA actually improve decoding performance in analyses like MVPA? A recent large-scale evaluation found that while the combination of ICA-based artifact correction and artifact rejection (trial removal) did not significantly enhance decoding performance for SVM- and LDA-based classifiers in the vast majority of cases, artifact correction remains strongly recommended [7]. The primary benefit is that it minimizes artifact-related confounds that could artificially inflate decoding accuracy, leading to more robust and valid conclusions without necessarily boosting raw performance numbers [7].

Troubleshooting: How can I automatically classify ICA components as artifacts to save time? Manual component inspection is the traditional method, but several automated, machine learning-based approaches exist that use features from multiple domains to classify components. The table below summarizes key features used by automated classifiers [24] [26]:

Table: Feature Domains for Automated ICA Component Classification

| Domain | Description | Example Features |
|---|---|---|
| Spatial | Analyzes the topographic scalp map of the component. | Patterns indicative of eye blinks (far-frontal), lateral eye movements (bipolar), or muscle noise (focal, often temporal) [24] [25]. |
| Spectral | Examines the frequency profile of the component's activity. | Eye artifacts typically have low-frequency spectra (< 4 Hz), while muscle artifacts have high-frequency, broad-spectrum activity (> 20 Hz) [24]. |
| Temporal | Looks at the time-course characteristics of the component. | High-amplitude, steeply peaked deflections coinciding with eye blinks or movements [24]. |

These features can be used with classifiers like Linear Discriminant Analysis (LDA) or Support Vector Machines (SVM) to achieve accuracy levels comparable to expert agreement [24] [26].

Troubleshooting: After ICA, which components should I remove? There is no one-size-fits-all answer, as it requires expert judgment. However, you should inspect components and flag them as likely artifacts if they show:

- Scalp Map: A strong far-frontal projection typical of an eye artifact [25].

- Component Time Course: Individual blinks or eye movements visible in the component's ERP image (erpimage) [25].

- Power Spectrum: A smoothly decreasing EEG spectrum typical of an eye artifact, or a broad-spectrum, high-frequency profile typical of muscle noise [25].

Quantitative Data on Artifact Correction & Analysis

Table 1: Impact of Artifact Minimization on EEG/ERP Decoding Performance

| Aspect Evaluated | Key Finding | Implication for Research |
|---|---|---|
| Overall Impact on Decoding | Combination of artifact correction & rejection did not significantly improve decoding performance in the vast majority of cases [7]. | Raw decoding accuracy may not be the primary reason to use ICA. |
| Value of Artifact Correction | Recommended to minimize artifact-related confounds that might artificially inflate decoding accuracy [7]. | Critical for ensuring the validity of results and avoiding incorrect conclusions. |
| Scope of Evidence | Evaluation across seven common ERP paradigms (N170, MMN, N2pc, P3b, N400, LRP, ERN) and multi-way decoding tasks [7]. | Findings appear consistent across a wide range of experimental designs. |

Table 2: Characteristic Features of Major Artifactual ICA Components

| Artifact Type | Spatial Topography (Scalp Map) | Temporal Signature | Spectral Profile |
|---|---|---|---|
| Ocular (Blinks & Movements) | Strong, smooth frontal distribution; bipolar for lateral movements [25]. | Large, low-frequency, monophasic (blinks) or square-wave (movements) deflections [24]. | Low-frequency peak, power drops off sharply above ~4 Hz [24]. |
| Muscle (EMG) | Focal, often over temporal or neck muscles; patchy and irregular [24]. | High-frequency, irregular, "spiky" activity [24]. | Broad-spectrum, high-frequency power (>20 Hz) [24]. |
| Heart (ECG) | Widespread, often maximal over posterior or lateral head regions; can be right- or left-side dominant. | Stereotyped, periodic spikes corresponding to heart rate. | -- |

Experimental Protocols

Detailed Methodology: ICA for Ocular and Muscle Artifact Correction

Objective: To implement a standard ICA workflow for the identification and removal of ocular and muscle artifacts from continuous or epoched EEG data, thereby preserving neural signals of interest.

Materials & Software:

- EEG recording system with appropriate electrode montage.

- Computing environment (e.g., MATLAB, Python).

- EEGLAB toolbox or equivalent.

Procedure:

- Data Import and Preprocessing: Import raw EEG data. Apply a high-pass filter (e.g., 1 Hz cutoff) to remove slow drifts, which can improve ICA decomposition. It is critical to not use severe low-pass filtering or notch filters, as these can distort the independence of sources [25].

- Channel and Data Selection: Ensure channel locations are properly defined. Select only EEG channels for ICA decomposition. If working with a subset of data for computational efficiency, ensure the selected data portions are representative and contain examples of the artifacts (e.g., eye blinks) [25].

- ICA Decomposition: Run an ICA algorithm (e.g., Infomax, FastICA) on the preprocessed data. The Infomax algorithm in EEGLAB's runica is a common choice. For data with strong line noise, use the extended option to also detect sub-Gaussian sources [25]. Command-line example in EEGLAB. runica returns the unmixing weights and a sphering matrix, and the component activations are obtained by applying both to the data:

[weights, sphere] = runica(eeg_data, 'extended', 1, 'stop', 1e-7);
W = weights * sphere;      % full unmixing matrix (components x channels)
icaact = W * eeg_data;     % component activations (components x time points)

- Component Inspection and Labeling: Visually inspect the resulting components. For each component, examine:

- Its scalp topography (pop_topoplot).

- Its activity time course (pop_eegplot).

- Its power spectrum (pop_spectopo).

- (For epoched data) Its ERP image (pop_erpimage).

Label components based on the characteristic features outlined in Table 2 [25].

- Artifact Removal and Data Reconstruction: After identifying artifactual components (e.g., components 1 and 2), subtract their back-projected activity from the original data. Back-projection uses the mixing matrix, which is the (pseudo-)inverse of the unmixing matrix W, not W itself. This creates a cleaned dataset without the removed artifacts [25]. Command-line example in EEGLAB:

A = pinv(W);                                             % mixing matrix (channels x components)
clean_eeg = eeg_data - A(:, [1 2]) * icaact([1 2], :);   % remove components 1 and 2

When the decomposition is stored in an EEGLAB EEG structure, pop_subcomp performs the equivalent removal.

Advanced Protocol: Automated Component Classification with LDA

Objective: To employ a Linear Discriminant Analysis (LDA) classifier for the automated identification of artifactual ICA components, reducing manual workload [26].

Procedure:

- Feature Extraction: For each ICA component, extract a feature vector. Effective features can be derived using image processing algorithms applied to the component's scalp map, such as:

- Range Filter: Highlights local intensity variations.

- Local Binary Patterns (LBP): Captures texture information.

- Geometric Features: Describe the shape and distribution of the topography [26].

- Classifier Training: Train an LDA model on a pre-existing dataset of expert-labeled components (brain vs. artifact). The study by [26] achieved an 88% accuracy rate using range filter features.

- Classification: Apply the trained LDA model to the feature vectors of new, unlabeled components from a different study to automatically flag them for rejection [24] [26].
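The training and classification steps can be sketched with a two-class Fisher LDA written in NumPy. The features here are random stand-ins for the scalp-map features listed above (range filter, LBP, geometric). The function names, the small ridge term, and the synthetic data are assumptions. A real pipeline would validate on components from held-out participants.

```python
import numpy as np

def fit_lda(features, labels, ridge=1e-6):
    """Two-class Fisher LDA with pooled covariance and equal priors.

    features: n_components x n_features; labels: 0 = brain, 1 = artifact.
    """
    features = np.asarray(features, dtype=float)
    labels = np.asarray(labels)
    x0, x1 = features[labels == 0], features[labels == 1]
    m0, m1 = x0.mean(axis=0), x1.mean(axis=0)
    scatter = (x0 - m0).T @ (x0 - m0) + (x1 - m1).T @ (x1 - m1)
    pooled = scatter / (len(features) - 2)
    w = np.linalg.solve(pooled + ridge * np.eye(features.shape[1]), m1 - m0)
    b = -float(w @ (m0 + m1)) / 2.0          # boundary midway between the class means
    return w, b

def predict_lda(features, w, b):
    """1 = flag as artifact, 0 = keep as brain."""
    return (np.asarray(features, dtype=float) @ w + b > 0).astype(int)

# Hypothetical 3-dimensional feature vectors for expert-labeled components
rng = np.random.default_rng(4)
train_x = np.vstack([rng.normal(0.0, 1.0, (100, 3)), rng.normal(3.0, 1.0, (100, 3))])
train_y = np.repeat([0, 1], 100)
w, b = fit_lda(train_x, train_y)

# "New study": components drawn from the same hypothetical distributions
test_x = np.vstack([rng.normal(0.0, 1.0, (50, 3)), rng.normal(3.0, 1.0, (50, 3))])
test_y = np.repeat([0, 1], 50)
accuracy = float((predict_lda(test_x, w, b) == test_y).mean())
print(f"held-out accuracy: {accuracy:.2f}")
```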

Workflow Diagrams

ICA-Based Artifact Correction Workflow

Automated Component Classification Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for ICA Implementation in EEG Research

| Tool / Resource | Function / Description | Application Note |
|---|---|---|
| EEGLAB | An open-source MATLAB toolbox providing an interactive environment for processing EEG data, including comprehensive ICA functionalities [25]. | The primary platform for many EEG researchers to run ICA, visualize components, and remove artifacts. Supports multiple ICA algorithms. |
| ICA Algorithms (Infomax, FastICA) | The core computational engines that perform the blind source separation. Different algorithms may have slightly different performance characteristics [25]. | Infomax (runica) is a standard default. FastICA may require a separate plugin. The choice can be made within EEGLAB. |
| ICLabel EEGLAB Plugin | A plug-in that provides an automated classification of ICA components into categories like "Brain," "Eye," "Muscle," "Heart," "Line Noise," and "Other" [25]. | Greatly aids in the initial, rapid labeling of components, though expert verification is still recommended. |
| ADJUST EEGLAB Plugin | An automated tool for identifying artifactual components based on spatial and temporal features, specifically designed for event-related EEG data [24]. | Useful for a more hypothesis-driven approach to artifact detection in ERP studies. |
| Linear Discriminant Analysis (LDA) | A machine learning classifier that can be trained on expert-labeled components to automate the artifact identification process [26]. | Enables the development of custom, automated artifact removal pipelines, improving reproducibility and efficiency. |

Frequently Asked Questions

1. What is the core principle behind a hybrid artifact management workflow? The core principle is to first use artifact correction algorithms to clean the data, reserving minimal artifact rejection only for segments that are so severely corrupted they cannot be reliably corrected. This strategy aims to preserve the maximum amount of neural data and trial counts, which is crucial for the statistical power of subsequent analyses [27] [7].

2. My analysis pipeline already uses ICA. Why should I consider a hybrid approach? ICA is a powerful correction tool, and a hybrid approach makes its application more strategic. A 2025 preprint suggests that aggressive artifact removal, even via ICA, may inadvertently discard biologically meaningful signals. A hybrid workflow therefore encourages careful validation to ensure that the components marked for rejection are truly artifactual and not part of a whole-body cognitive process [6].

3. How do I decide whether to correct or reject a specific artifact? The decision can be based on the type and severity of the artifact. The following table summarizes a common classification and handling strategy, as demonstrated in fNIRS research [27]:

| Artifact Type | Key Characteristics | Recommended Handling Method |
|---|---|---|
| Baseline Shift (BS) | Slow, sustained signal drift due to head position change. | Spline interpolation to model and subtract the drift [27]. |
| Slight Oscillation | Lower-amplitude, higher-frequency noise from minor movement. | Dual-threshold wavelet-based method to reduce oscillation [27]. |
| Severe Oscillation | High-amplitude spikes from rapid head motion. | Cubic spline interpolation for correction, applied before BS removal [27]. |

4. Does artifact correction prior to analysis actually improve multivariate decoding performance? Evidence suggests that while the combination of correction and rejection may not always significantly boost decoding accuracy for simple tasks, applying artifact correction is still strongly recommended. It helps to minimize artifact-related confounds that could otherwise lead to inflated or spurious decoding results [7].

5. Are there automated tools for implementing a hybrid workflow? Yes, the field is moving toward automation. For example, the ARTIST algorithm provides a fully automated, ICA-based artifact rejection method for TMS-EEG data, achieving high accuracy compared to manual expert cleaning [9]. Furthermore, deep learning models like CLEnet are being developed for end-to-end artifact removal from multi-channel EEG data, reducing the need for manual intervention [28].

Troubleshooting Guides

Problem: Low Trial Count After Processing

- Symptoms: Your final analyzed dataset has a very small number of trials, leading to low statistical power in event-related potential (ERP) or decoding analyses.

- Potential Causes: Overly strict rejection thresholds that discard trials which could have been corrected instead.

- Solutions:

- Prioritize Correction: Re-process your data, focusing first on applying robust correction algorithms (e.g., wavelet, spline, or deep learning-based methods) to salvage corrupted segments [27] [28].

- Validate Rejection: If using ICA, re-inspect the components you are rejecting. A hybrid workflow suggests that components with a plausible neural topography and time course should be retained [6].

- Benchmark Performance: After correction, evaluate if the cleaned data produces the expected, physiologically plausible results (e.g., a clear P300 component) before deciding to reject entire trials [7].

Problem: Poor Signal-to-Noise Ratio (SNR) After Correction

- Symptoms: The neural signals of interest remain obscured by noise even after running artifact correction procedures.

- Potential Causes: The chosen correction method may not be optimal for the specific type of artifact in your data, or severe artifacts were incorrectly corrected instead of rejected.

- Solutions:

- Match Method to Artifact: Consult the artifact classification table above. Ensure you are using a method designed for your primary artifact type; for example, wavelet filters are effective for oscillations but not for baseline shifts [27].

- Apply Sequential Correction: For data with multiple artifact types, implement a sequential pipeline. One proven workflow is to first correct severe oscillations, then remove baseline shifts, and finally reduce slight oscillations [27].

- Consider Advanced Models: For complex or unknown artifacts, especially in multi-channel data, try a state-of-the-art deep learning model like CLEnet, which integrates CNNs and LSTMs to handle a wide variety of artifacts effectively [28].

Problem: Inconsistent Results Across Subjects or Sessions

- Symptoms: Your artifact processing pipeline works well for some datasets but fails on others.

- Potential Causes: Inconsistent manual intervention or failure of the algorithm to generalize across different artifact morphologies.

- Solutions:

- Standardize with Automation: Employ a standardized, fully automated algorithm like ARTIST (for TMS-EEG) or a pre-trained CLEnet model (for EEG) to ensure consistent processing across all your data [9] [28].

- Inspect Intermediate Steps: Check the output of each step in your hybrid workflow (e.g., after severe artifact correction, after BS removal) to identify which stage is failing for particular subjects.

- Validate on a Subset: Manually validate the automated pipeline's performance on a small subset of data from each subject or session to ensure generalizability before processing the entire batch.

Experimental Protocols & Data

Protocol: A Standardized Hybrid fNIRS Processing Workflow

This protocol, adapted from a 2022 study, provides a detailed method for combining correction and minimal rejection in fNIRS data [27].

Motion Artifact Detection:

- Calculate the two-sided moving standard deviation of the measured fNIRS signal.

- Use this moving SD to identify segments containing oscillations and baseline shifts automatically [27].
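A minimal sketch of moving-SD detection is shown below. The centered window, the rule of flagging samples whose moving SD exceeds k times its median, and the values of `half_window` and `k` are hypothetical. The exact thresholds of the cited study are not reproduced here.

```python
import numpy as np

def moving_std(x, half_window):
    """Two-sided (centered) moving standard deviation; the window shrinks at the edges."""
    x = np.asarray(x, dtype=float)
    out = np.empty(x.size)
    for i in range(x.size):
        lo, hi = max(0, i - half_window), min(x.size, i + half_window + 1)
        out[i] = x[lo:hi].std()
    return out

def flag_motion(x, half_window, k=5.0):
    """True where the moving SD exceeds k times its median (hypothetical rule)."""
    msd = moving_std(x, half_window)
    return msd > k * np.median(msd)

rng = np.random.default_rng(5)
signal = 0.01 * rng.standard_normal(1000)
signal[400:440] += np.sin(2 * np.pi * np.arange(40) / 8)   # brief, fast motion oscillation
mask = flag_motion(signal, half_window=10)
print(mask[420], mask[100])                                # True False
```

Using the median of the moving SD as the reference keeps the threshold anchored to the quiet portions of the recording, provided artifacts occupy less than half of it.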

Artifact Classification and Handling:

- Classify detected artifacts into three categories: Baseline Shift (BS), Slight Oscillation, and Severe Oscillation.

- Apply a sequential correction pipeline:

- Step 1: Severe Artifact Correction. Use cubic spline interpolation to correct the segments with severe oscillations.

- Step 2: BS Removal. Apply spline interpolation to model and subtract the baseline shift.

- Step 3: Slight Oscillation Reduction. Use a dual-threshold wavelet-based method to reduce the remaining slight oscillations [27].

Quality Control and Minimal Rejection:

- After the comprehensive correction, calculate quality metrics such as Signal-to-Noise Ratio (SNR) and Pearson’s correlation coefficient (R).

- Only reject data segments that, after all correction attempts, still fail to meet a pre-defined, stringent quality threshold.

Quantitative Performance of Artifact Handling Methods

The table below summarizes key performance metrics from validation studies for different artifact handling methods, allowing for direct comparison.

| Method / Model | Modality | Key Performance Metrics | Key Advantage |
|---|---|---|---|
| Hybrid fNIRS Approach [27] | fNIRS | Improved SNR and Pearson's R with strong stability. | Combines strengths of spline (for BS) and wavelet (for oscillation). |
| CLEnet [28] | EEG | SNR: 11.498 dB; CC: 0.925; RRMSEt: 0.300. | Effectively removes mixed and unknown artifacts from multi-channel EEG. |
| ARTIST [9] | TMS-EEG | 95% IC classification accuracy vs. expert. | Fully automated and accurate for noisy TMS-EEG data. |
| ICA-based Correction + Rejection [7] | EEG | No significant decoding performance gain in most cases. | Shows that correction remains essential to avoid confounds, even without large performance gains. |
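SNR, CC, and RRMSE values like those in the table are typically computed against a known clean reference. This requires semi-synthetic data, i.e., clean EEG with artifacts added. The sketch below uses common definitions of these metrics. Whether each cited study used exactly these formulas is an assumption.

```python
import numpy as np

def denoising_metrics(clean, estimate):
    """SNR (dB), correlation coefficient (CC) and temporal relative RMSE (RRMSE_t)
    of a denoised estimate against the known clean reference."""
    clean = np.asarray(clean, dtype=float)
    estimate = np.asarray(estimate, dtype=float)
    error = estimate - clean
    snr_db = 10.0 * np.log10(np.sum(clean ** 2) / np.sum(error ** 2))
    cc = np.corrcoef(clean, estimate)[0, 1]
    rrmse_t = np.sqrt(np.mean(error ** 2)) / np.sqrt(np.mean(clean ** 2))
    return float(snr_db), float(cc), float(rrmse_t)

t = np.arange(1000) / 250.0
clean = np.sin(2 * np.pi * 5 * t)
snr_db, cc, rrmse_t = denoising_metrics(clean, 0.9 * clean)       # estimate 10% too small
print(f"SNR {snr_db:.1f} dB, CC {cc:.3f}, RRMSE_t {rrmse_t:.3f}")  # SNR 20.0 dB, CC 1.000, RRMSE_t 0.100
```

The example also shows why several metrics are reported together. A uniformly scaled estimate has a perfect CC even though its amplitude error is visible in SNR and RRMSE_t.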

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Hybrid Workflows |
|---|---|
| Independent Component Analysis (ICA) | A blind source separation technique used to decompose data into independent components, which can then be classified as neural or artifactual for selective removal [9] [6]. |
| Wavelet-Based Methods | Effective for isolating and correcting abrupt, high-frequency motion artifacts and slight oscillations without affecting the entire signal [27]. |
| Spline Interpolation | Used to model and subtract slow, sustained baseline shifts and to correct high-amplitude severe oscillations [27]. |
| Deep Learning Models (e.g., CLEnet) | End-to-end neural networks that learn to map artifact-corrupted signals to clean ones, reducing reliance on manual feature engineering and component rejection [28]. |
| Kuramoto Order Parameter (R) | A metric for measuring global phase synchronization across channels. It can be used to validate that artifact rejection is not destroying meaningful cross-system coordination [6]. |
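The Kuramoto order parameter at time t is R(t) = |(1/N) Σ_k exp(iφ_k(t))|, where φ_k is the instantaneous phase of channel k. R is 1 when all channels are in phase and near 0 when phases are evenly spread. The sketch below estimates phases with an FFT-based analytic signal, the same construction as a Hilbert transform. It assumes the data have already been band-pass filtered to the band of interest, and the function names are hypothetical.

```python
import numpy as np

def analytic_signal(x):
    """FFT-based analytic signal along the last axis (same construction as a Hilbert transform)."""
    n = x.shape[-1]
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x, axis=-1) * h, axis=-1)

def kuramoto_order(data):
    """R(t) = |mean over channels of exp(i * phase)| for channels x samples data."""
    phases = np.angle(analytic_signal(np.asarray(data, dtype=float)))
    return np.abs(np.mean(np.exp(1j * phases), axis=0))

t = np.arange(1000) / 250.0                                  # 4 s: whole cycles of 10 Hz
aligned = np.vstack([np.cos(2 * np.pi * 10 * t)] * 4)        # four channels in phase
spread = np.vstack([np.cos(2 * np.pi * 10 * t + 2 * np.pi * k / 4) for k in range(4)])
r_aligned = kuramoto_order(aligned).mean()
r_spread = kuramoto_order(spread).mean()
print(round(r_aligned, 3), round(r_spread, 3))               # 1.0 0.0
```

To use R as a check on a pipeline, compare its distribution before and after artifact handling on the same data. A large drop warrants a closer look at what was removed.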

Workflow Visualization

Diagram 1: A strategic hybrid workflow for artifact management.

Diagram 2: A sequential correction pipeline for fNIRS artifacts.

Framed within a thesis on reducing neural signal loss, this guide addresses a central challenge in electrophysiological research: how to automatically identify and remove artifacts without discarding valuable neural data. Artifacts—unwanted signals from non-neural sources like muscle movement or eye blinks—can severely compromise data integrity. Traditional rejection methods often result in significant data loss, hampering analysis, especially in real-world, mobile studies. Deep learning models, particularly hybrid architectures like CNN-LSTM, offer a sophisticated solution by learning to distinguish artifacts from neural signals with high precision, thereby minimizing the loss of critical neurophysiological information [29] [2] [30].

Troubleshooting Guides

Model Performance and Training

Q1: My CNN-LSTM model for artifact identification is not converging during training. What could be wrong?

This is often related to data quality, model architecture, or hyperparameters.

- Problem: Insufficient or poor-quality training data.

- Solution: Ensure your dataset is large enough and accurately labeled. The EDABE dataset, for instance, provides 74 hours of expert-corrected EDA signals, which can serve as a benchmark. Using data augmentation techniques to generate more training samples can also significantly improve performance [29].

- Problem: Inadequate model architecture for the signal type.

- Solution: CNNs excel at extracting local features (such as waveform shape) within short signal windows, while LSTMs model longer-range temporal dependencies. A hybrid CNN-LSTM architecture is therefore well suited to time-series signals like EEG or EDA. Confirm that your model actually uses both capabilities. For example, check that the CNN output keeps a time axis for the LSTM to process, rather than collapsing it with global pooling [29] [30].

- Problem: Suboptimal hyperparameter selection.

- Solution: Systematically tune key hyperparameters such as learning rate, batch size, and the number of layers/filters, since performance can be highly sensitive to these choices. A learning rate that is too high is a frequent cause of diverging or oscillating training loss.
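
Q1 mentions data augmentation as a way to enlarge small training sets. The following is a minimal NumPy sketch, assuming labeled single-channel recordings. It segments a signal into overlapping windows, then creates augmented copies using random amplitude scaling and additive Gaussian noise. These particular augmentations, the function names, and the default parameters are illustrative assumptions, not the procedure used in [29] or [30].

```python
import numpy as np

def sliding_windows(signal, win_len, step):
    """Split a 1-D signal into overlapping windows, shape (n_windows, win_len)."""
    signal = np.asarray(signal)
    n_windows = (len(signal) - win_len) // step + 1
    # Row i holds sample indices [i*step, i*step + win_len)
    idx = step * np.arange(n_windows)[:, None] + np.arange(win_len)[None, :]
    return signal[idx]

def augment(windows, labels, n_copies=2, noise_std=0.05, scale_range=(0.8, 1.2), seed=0):
    """Return the original windows plus n_copies augmented versions of each.

    Each copy applies a random per-window amplitude scale and additive Gaussian
    noise whose std is noise_std times the overall std of the windows.
    Labels are copied unchanged, so the augmentations must not alter the class.
    """
    rng = np.random.default_rng(seed)
    xs, ys = [windows], [labels]
    for _ in range(n_copies):
        scale = rng.uniform(scale_range[0], scale_range[1], size=(len(windows), 1))
        noise = rng.normal(0.0, noise_std * windows.std(), size=windows.shape)
        xs.append(windows * scale + noise)
        ys.append(labels)
    return np.concatenate(xs), np.concatenate(ys)
```

Split recordings into training and test sets (ideally by subject) before windowing and augmenting. Otherwise, overlapping windows from the same recording can end up in both sets and inflate reported performance.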

Q2: After artifact correction, my signal seems distorted, and I suspect neural information is being lost. How can I verify this?

Preserving the signal of interest is paramount. The following methods can be used to validate your pipeline's integrity.

- Solution: Use a ground-truth validation protocol. If possible, collect data where the neural response is known, even amidst artifacts. For example, one study used Steady-State Visual Evoked Potentials (SSVEPs) elicited by a known stimulus. The preservation of the SSVEP response after artifact removal confirmed that the neural signal was retained [30].

- Solution: Employ quantitative metrics beyond simple accuracy. Calculate the Signal-to-Noise Ratio (SNR) of the signal of interest (e.g., the SSVEP) before and after processing; an effective correction should increase it. For EDA, compare the phasic components (e.g., Skin Conductance Responses) of automatically corrected signals against those corrected by human experts. The absence of a significant difference is consistent with successful preservation, although an equivalence test provides stronger evidence than a non-significant difference test [29] [30].
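
One common way to quantify SSVEP preservation, assumed in this sketch, is a spectral SNR. This is the power in the frequency bin at the stimulation frequency divided by the mean power of neighboring bins. The exact SNR definition used in [30] may differ. The function name and the default of five neighbors per side are illustrative choices.

```python
import numpy as np

def ssvep_snr_db(x, fs, f_stim, n_neighbors=5):
    """SNR in dB at f_stim: power in the stimulus frequency bin divided by the
    mean power of n_neighbors bins on each side of it (stimulus bin excluded)."""
    x = np.asarray(x, dtype=float)
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    k = int(np.argmin(np.abs(freqs - f_stim)))  # bin closest to the stimulus frequency
    if k - n_neighbors < 0 or k + n_neighbors >= len(power):
        raise ValueError("not enough neighboring bins around f_stim")
    neighbors = np.concatenate([power[k - n_neighbors:k], power[k + 1:k + 1 + n_neighbors]])
    return 10.0 * np.log10(power[k] / neighbors.mean())
```

Compute the SNR on the same epochs before and after correction. The frequency resolution (fs divided by the number of samples) must be fine enough to place the stimulus frequency in its own bin, so epochs need to be sufficiently long.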

Q3: Is artifact rejection always necessary for deep learning-based classification of EEG data?

Not necessarily. Research indicates that for some tasks, skipping artifact rejection may be feasible.

- Solution: Evaluate your end-goal. One study found that for a CNN-based classification of abnormal vs. normal EEGs, artifact rejection did not improve final classification performance, though it did speed up the training process. Consider whether your model can learn to ignore artifacts implicitly, which simplifies the processing pipeline [31].

Data & Pre-processing

Q4: I am working with wearable EEG/EDA data, which is notoriously noisy. Are there specific considerations for artifact handling in these environments?

Yes, artifacts in wearable systems have distinct features that require tailored approaches [2].

- Problem: Specific challenges of wearable acquisition setups (dry electrodes, subject mobility, reduced channel count) [2].

- Solution: Move beyond techniques designed for high-density lab EEG. Methods like Artifact Subspace Reconstruction (ASR) and deep learning models that do not rely heavily on source separation (like ICA) are more suitable for the low-channel-count data from wearable devices [2].

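- Problem: Underutilization of auxiliary sensors.

- Solution: Record auxiliary signals such as Inertial Measurement Units (IMUs) or EMG alongside EEG/EDA. IMUs capture motion directly and have considerable, largely untapped potential for improving motion artifact detection in ecological conditions [2].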
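
Below is a minimal sketch of using an IMU to flag motion-contaminated segments, so that the matching EEG/EDA windows can be corrected or reviewed rather than discarded wholesale. It assumes a 3-axis accelerometer sampled on the same clock as the physiological signal. The window length, the threshold rule (median plus k robust standard deviations), and the function name are hypothetical choices, not a method taken from [2].

```python
import numpy as np

def flag_motion_windows(acc, fs, win_s=1.0, k=5.0):
    """Flag non-overlapping windows with unusually high motion.

    acc: accelerometer array of shape (n_samples, 3).
    Returns one boolean per window: True where the std of the acceleration
    magnitude exceeds median + k * robust_std across all windows.
    """
    acc = np.asarray(acc, dtype=float)
    magnitude = np.linalg.norm(acc, axis=1)  # orientation-independent motion signal
    win = int(round(win_s * fs))
    n_win = len(magnitude) // win
    activity = magnitude[: n_win * win].reshape(n_win, win).std(axis=1)
    median = np.median(activity)
    # Median absolute deviation scaled to approximate a Gaussian standard deviation
    robust_std = 1.4826 * np.median(np.abs(activity - median))
    return activity > median + k * robust_std
```

Because the flags are computed per window, they map directly onto EEG samples when both streams share a clock. Window i covers samples i·win to (i+1)·win.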

Frequently Asked Questions (FAQs)

Q: What is the advantage of using a hybrid CNN-LSTM model over a standalone CNN or LSTM? A: A hybrid architecture combines the strengths of both networks. The CNN component acts as a feature extractor, identifying local patterns and robust features within short segments of the signal. The LSTM component then processes the sequence of these features, learning the temporal dynamics and context over time. This is ideal for physiological signals where both the shape of a waveform and its timing are critical for identification [29] [30].

Q: My artifact correction model works well in the lab but fails in real-world recordings. Why? A: This is likely due to a lack of generalization. Lab environments are controlled, while real-world settings introduce a wider variety of unpredictable artifacts and noise. To address this, train your models on datasets that reflect real-world conditions, such as the EDABE dataset collected during an immersive virtual reality task. Incorporating data from auxiliary sensors (IMU, EMG) can also make models more robust to the dynamics of uncontrolled environments [29] [2].

Q: How can I quantitatively evaluate the performance of my artifact identification model? A: Beyond accuracy, use metrics that remain informative under the class imbalance typical of artifact data:

- Sensitivity (Recall): The model's ability to correctly identify true artifacts.

- Precision: The proportion of identified artifacts that are truly artifacts.

- Area Under the Curve (AUC): Measures the overall separability between artifact and clean classes.

- Cohen's Kappa: Assesses agreement between the model and human experts, correcting for chance.

One study reported a model that achieved 88% accuracy and 72% sensitivity and outperformed competing methods in AUC and Kappa [29].
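
The metrics listed above can be computed from per-window labels, with 1 = artifact and 0 = clean. The pure-Python sketch below is illustrative, and the function names are hypothetical. AUC is computed with the pairwise (Mann-Whitney) formulation, which is quadratic in the number of windows. For large evaluation sets, library functions such as scikit-learn's `roc_auc_score` and `cohen_kappa_score` are more practical.

```python
def artifact_metrics(y_true, y_pred):
    """Accuracy, sensitivity, precision, and Cohen's kappa for binary labels (1 = artifact)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    n = tp + tn + fp + fn
    accuracy = (tp + tn) / n
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    precision = tp / (tp + fp) if tp + fp else float("nan")
    # Chance agreement: P(both say artifact) + P(both say clean) under independence
    p_chance = ((tp + fn) * (tp + fp) + (tn + fp) * (tn + fn)) / n ** 2
    kappa = (accuracy - p_chance) / (1 - p_chance) if p_chance < 1 else float("nan")
    return {"accuracy": accuracy, "sensitivity": sensitivity,
            "precision": precision, "kappa": kappa}

def auc(y_true, scores):
    """ROC AUC: probability that a random artifact window scores higher than a
    random clean window (ties count as 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > q else 0.5 if p == q else 0.0 for p in pos for q in neg)
    return wins / (len(pos) * len(neg))
```

For example, with 4 artifact and 6 clean windows, one miss, and one false positive, accuracy is 0.8 but kappa is only about 0.58. That gap shows why chance-corrected metrics matter for imbalanced data.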

Q: Are there public benchmarks available for developing and testing my models? A: Yes. Publicly available datasets like the EDABE dataset are crucial for benchmarking. Using such resources allows for direct, fair comparisons with state-of-the-art methods and ensures your research is reproducible and grounded in a common standard [29].

Experimental Protocols & Data

The table below summarizes key performance metrics from recent studies employing deep learning models for artifact management, providing a benchmark for your own experiments.

Table 1: Performance Metrics of Deep Learning Models for Artifact Handling

| Model/Approach | Application | Key Metrics | Reported Performance | Source |
|---|---|---|---|---|
| LSTM-1D CNN | EDA Artifact Recognition | Test Accuracy, Sensitivity | 88% Accuracy, 72% Sensitivity | [29] |
| CNN-based | EEG Clean vs. Artifact Classification | Test Accuracy, Recall, Precision | 85% Accuracy, 89% Recall, 82% Precision | [31] |
| Hybrid CNN-LSTM | EEG Muscle Artifact Removal | Qualitative & SNR-based | Effective removal & SSVEP preservation | [30] |
| CNN-based | EEG Abnormal vs. Normal Classification | Test Accuracy (with vs. without artifact rejection) | 84% (with and without rejection) | [31] |

Detailed Methodology: Hybrid CNN-LSTM for Muscle Artifact Removal

This protocol details a specific approach for removing muscle artifacts from EEG using a hybrid CNN-LSTM network with EMG reference signals [30].

1. Objective: To remove muscle artifacts from EEG signals while preserving neurologically relevant components, such as Steady-State Visual Evoked Potentials (SSVEPs).

2. Data Acquisition:

- Participants: 24 participants.

- Stimulus: Participants were presented with a light-emitting diode (LED) stimulus to elicit SSVEPs.

- Artifact Induction: Simultaneously, participants performed strong jaw clenching to induce significant muscle artifacts.

- Recordings: Simultaneous recording of EEG and facial/neck EMG signals.

3. Model Architecture & Workflow: The model uses a hybrid CNN-LSTM architecture. The workflow involves using the EMG signals as a reference to help the model identify and remove the muscle-based artifacts from the contaminated EEG signal, outputting a cleaned EEG signal.

4. Training with Data Augmentation:

- An augmented dataset was generated from the raw EEG and EMG recordings to create a diverse and robust training set for the neural network.

5. Validation & Evaluation:

- Comparison: The results were compared against common methods like Independent Component Analysis (ICA) and linear regression.

- Metric: The algorithm's effectiveness was assessed by its ability to remove artifacts while preserving the SSVEP response, using Signal-to-Noise Ratio (SNR) as a key metric [30].

- Domain Analysis: The quality of the cleaned signals was evaluated in both the time and frequency domains.
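
Step 5 compares the network against linear regression. Below is a minimal ordinary-least-squares version of that baseline. It assumes the EMG reference channels are time-aligned with the EEG and that the artifact enters each EEG channel as an instantaneous linear mixture of them, which real muscle artifacts only approximate. It is not the implementation from [30], and the function name is hypothetical.

```python
import numpy as np

def regress_out_reference(eeg, reference):
    """Remove the part of each EEG channel that is linearly explained by the
    reference (e.g., EMG) channels, using ordinary least squares.

    eeg: (n_samples, n_eeg_channels); reference: (n_samples, n_ref_channels).
    Returns the residual (cleaned, mean-centred) EEG and the fitted weights,
    shaped (n_ref_channels, n_eeg_channels).
    """
    eeg = np.asarray(eeg, dtype=float)
    ref = np.asarray(reference, dtype=float)
    eeg_c = eeg - eeg.mean(axis=0)
    ref_c = ref - ref.mean(axis=0)
    weights, *_ = np.linalg.lstsq(ref_c, eeg_c, rcond=None)
    return eeg_c - ref_c @ weights, weights
```

A known weakness of this baseline is that any neural activity that also appears in the reference channels (for example, EEG volume-conducted to the EMG electrodes) is subtracted as well. This is one route to the neural signal loss the hybrid network aims to avoid.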

CNN-LSTM Artifact Removal Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Resources for Automated Artifact Identification Research

| Item / Resource | Function / Description | Relevance to Experiment |
|---|---|---|
| EDABE Dataset | A public dataset of 74 hours of EDA signals from 43 subjects, collected in an immersive VR environment with expert manual corrections. | Serves as a ground-truth benchmark for developing and comparing EDA artifact correction models [29]. |
| Auxiliary EMG Sensors | Sensors placed on the face and neck to record muscle activity. | Provides a reference signal for muscle artifacts, enabling more precise removal from EEG using hybrid deep learning models [30]. |
| Inertial Measurement Units (IMUs) | Sensors that measure motion and orientation. | Used in wearable studies to capture motion artifacts directly, enhancing detection in real-world conditions [2]. |
| Blind Source Separation (BSS) Tools | Software toolkits for methods like Independent Component Analysis (ICA) and Canonical Correlation Analysis (CCA). | Provides a baseline or component for hybrid methods to separate neural signals from artifacts in multi-channel data [2] [30]. |
| Data Augmentation Pipelines | Computational methods to artificially expand training datasets. | Critical for generating diverse training data for deep learning models, improving their robustness and generalization [30]. |

FAQ: Troubleshooting Guide for Artifact Rejection

Q1: After artifact correction, my decoding performance hasn't improved. Is this normal? Yes, this can be normal. A 2025 study assessing SVM and LDA classifiers found that combining artifact correction and rejection did not significantly enhance decoding performance in the vast majority of cases across common ERP paradigms (N170, P3b, N400, etc.). However, the study strongly recommends applying artifact correction (such as ICA) before decoding analyses to minimize artifact-related confounds that could artificially inflate accuracy. The aim is to avoid incorrect conclusions, not necessarily to boost performance [7].

Q2: How can I handle artifacts when using a low-channel-count wearable EEG system? This is challenging because standard techniques such as ICA need many channels to separate sources and become less effective when only a few are available [2]. Consider the following:

- For muscular and motion artifacts, deep learning approaches are emerging as a promising solution [2].

- For general artifact detection, ASR-based pipelines are widely applied and can handle ocular, movement, and instrumental artifacts [2].

- Auxiliary sensors like Inertial Measurement Units (IMUs) are underutilized but have great potential for enhancing motion artifact detection in ecological conditions [2].

Q3: I'm concerned that rejecting artifact-contaminated Independent Components (ICs) is also removing neural signals. What are my options? Your concern is valid, as artifactual components often contain residual cerebral activity [32]. A hybrid methodology called REG-ICA addresses this exact problem. Instead of completely rejecting artifactual ICs, it applies a regression algorithm (like stable Recursive Least Squares, sRLS) to these components to remove only the ocular artifact patterns while preserving the underlying neural signals. This method has been shown to distort brain activity less than complete component rejection [32].
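
To make the regression step concrete, below is a minimal sketch of conventional exponentially weighted Recursive Least Squares (RLS) adaptive filtering. It is applied to one independent component, with EOG channels as the reference. This is an assumption-laden stand-in, not the stable RLS (sRLS) variant or the REG-ICA code from [32]. The forgetting factor `lam`, the initialization constant `delta`, and the function name are illustrative.

```python
import numpy as np

def rls_remove_reference(component, reference, lam=0.999, delta=100.0):
    """Standard exponentially weighted RLS adaptive noise cancellation.

    component: 1-D independent-component time course (artifact + residual neural activity).
    reference: EOG reference, shape (n_samples,) or (n_refs, n_samples).
    Returns the cleaned component (a priori error at each sample) and final weights.
    """
    component = np.asarray(component, dtype=float)
    ref = np.atleast_2d(np.asarray(reference, dtype=float))  # (n_refs, n_samples)
    n_refs, n = ref.shape
    w = np.zeros(n_refs)            # artifact weights, adapted over time
    P = delta * np.eye(n_refs)      # inverse correlation matrix estimate
    cleaned = np.empty(n)
    for t in range(n):
        x = ref[:, t]
        Px = P @ x
        gain = Px / (lam + x @ Px)
        e = component[t] - w @ x    # a priori error: component minus predicted artifact
        w = w + gain * e
        P = (P - np.outer(gain, Px)) / lam
        cleaned[t] = e
    return cleaned, w
```

Because only the part of the component that tracks the EOG reference is subtracted, the neural activity that is uncorrelated with the reference remains in the output. This is the property REG-ICA relies on instead of zeroing the whole component.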

Q4: Artifacts are causing a high false alarm rate in my automated seizure detection pipeline. How can I reduce this? Integrating a dedicated artifact detector with your seizure detector can significantly reduce false alarms. One 2024 study using a Gradient Boosted Tree classifier for wearable EEG devices showed that this integration reduced false alarms by up to 96% compared to using a seizure detector alone. This is because many artifacts, particularly muscular ones, have morphological similarities to seizures and can be misinterpreted by the detector [33].
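
In its simplest form, such an integration vetoes seizure alarms in windows that the artifact detector flags. The sketch below assumes both detectors output per-window probabilities on the same time grid. The thresholds and function names are hypothetical, and it does not reproduce the Gradient Boosted Tree models from [33].

```python
def gated_seizure_alarms(seizure_prob, artifact_prob, seizure_thr=0.5, artifact_thr=0.5):
    """Raise a seizure alarm for a window only if the seizure detector fires
    AND the artifact detector does not flag the same window."""
    return [p_seizure >= seizure_thr and p_artifact < artifact_thr
            for p_seizure, p_artifact in zip(seizure_prob, artifact_prob)]

def count_false_alarms(alarms, seizure_labels):
    """Number of alarmed windows that contain no annotated seizure."""
    return sum(1 for alarm, is_seizure in zip(alarms, seizure_labels)
               if alarm and not is_seizure)
```

Gating trades false alarms against sensitivity. Seizures that coincide with muscle artifact (for example, seizures with motor manifestations) can be vetoed too, so seizure sensitivity should be re-checked after adding the artifact detector.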

Detailed Experimental Protocol: REG-ICA for Ocular Artifact Correction

The following protocol is adapted from the REG-ICA methodology [32], which combines Blind Source Separation (BSS) and regression to minimize the loss of neural signals.

Objective: To remove ocular artifacts from EEG recordings while minimizing the distortion of the underlying cerebral activity.

Materials and Reagents:

| Item | Function in the Experiment |
|---|---|
| EEG Recording System | To acquire raw neural signal data from the scalp. |
| Electrooculogram (EOG) Electrodes | To record reference signals of ocular activity (vertical and horizontal EOG). |
| REG-ICA Algorithm | A hybrid algorithm (e.g., as a plugin for EEGLAB) that performs ICA followed by regression on components [32]. |
| Stable Recursive Least Squares (sRLS) Algorithm | The regression algorithm used within REG-ICA to filter artifacts from components. |
| Artificially Contaminated EEG Dataset | Optional, for validation and benchmarking of the method's performance [32]. |

Procedure:

- Data Acquisition & Preprocessing: Record continuous EEG and simultaneous EOG data. Apply a high-pass filter (e.g., with a 0.5-1 Hz cutoff) to remove slow drifts, then band-pass filter the data as your research question requires.

- Blind Source Separation (ICA): Use an ICA algorithm (e.g., the extended INFOMAX algorithm) to decompose the preprocessed EEG data into independent components (ICs).