Intracortical Brain-Machine Interfaces for Real-Time Robotic Arm Control: 2025 Advances and Clinical Applications

Abstract

This article comprehensively examines the current state of intracortical brain-machine interfaces (BMIs) for real-time robotic arm control, a rapidly advancing field poised to restore motor function for individuals with paralysis. Targeting researchers, scientists, and biomedical professionals, it explores the fundamental principles of invasive neural signal acquisition, the deep learning methodologies enabling dexterous control, and the optimization of system performance for clinical viability. The content synthesizes recent breakthroughs from human trials, including long-term high-accuracy communication and the restoration of touch sensation. A comparative analysis contrasts the performance and applications of leading intracortical systems from industry pioneers, providing a validated perspective on the technology's readiness for translation into therapeutic and assistive devices.

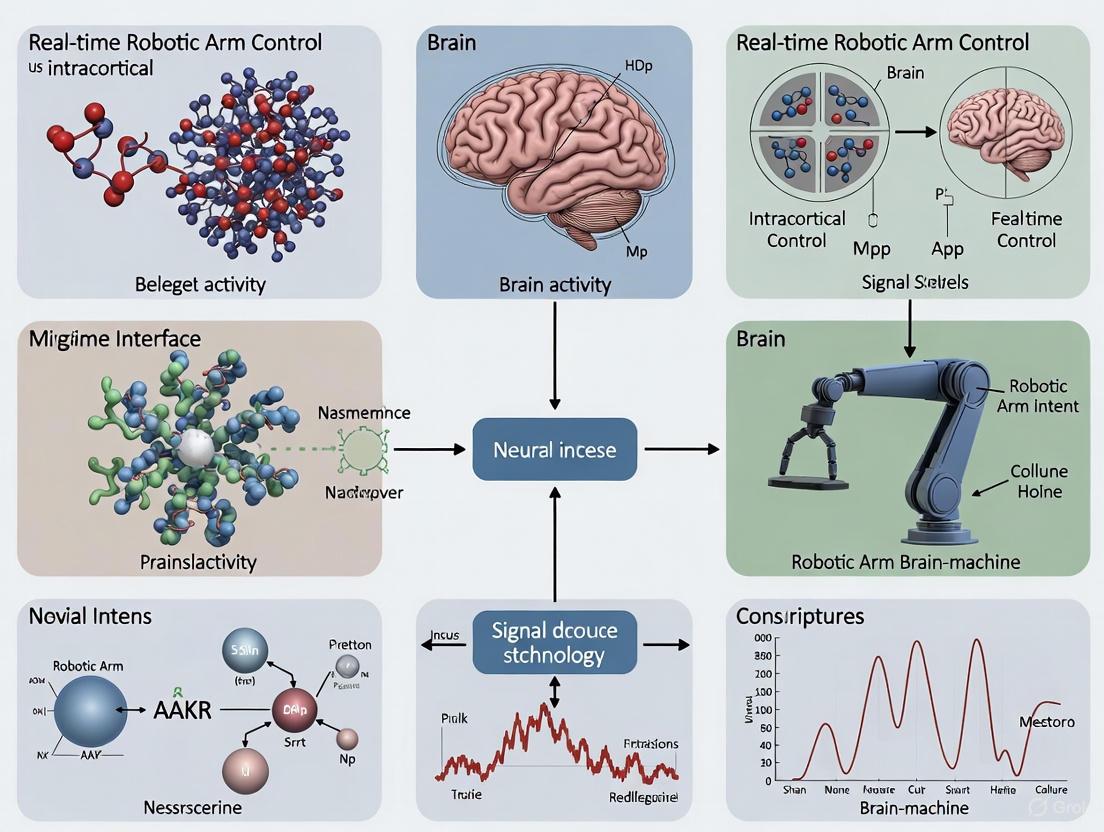

The Architecture of Thought-Controlled Movement: Core Principles of Intracortical BCIs

Intracortical brain-computer interfaces (iBCIs) represent a transformative technology for restoring communication and motor function to individuals with paralysis by creating a direct pathway between the brain and external devices. These systems translate neural activity recorded from the motor cortex into control commands for effectors such as robotic arms, computer cursors, or speech synthesizers [1]. The efficacy of iBCIs has been demonstrated in multiple clinical trials, including the landmark BrainGate pilot studies, where participants with tetraplegia achieved high-performance communication rates and dexterous robotic control [2] [3]. This document details the complete experimental pipeline, from neural signal acquisition to device command, providing application notes and protocols tailored for researchers and scientists working on real-time robotic control systems.

The iBCI Processing Pipeline: Components and Workflow

The iBCI pipeline is a multi-stage system that converts raw neural signals into smooth, intentional device control. The workflow can be conceptualized as a series of four transformations: neural signal acquisition, feature engineering, decoder mapping, and device command.

Neural Signal Acquisition

The process begins with the acquisition of neural signals using microelectrode arrays surgically implanted in the motor cortex.

- Implant Design: The BrainGate system and similar iBCIs typically use the Utah Array, a silicon-based microelectrode array with 96 to 100 electrodes arranged on a 4 mm by 4 mm platform [4]. Each electrode, approximately 1.5 mm long, penetrates the cortical tissue to record extracellular signals.

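- Signal Types: The arrays record two primary types of signals: action potentials (spikes) from neurons near the electrode tips, and local field potentials (LFPs), lower-frequency signals that reflect the summed activity of local neural populations.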

- Signal Processing: Raw neural signals are amplified and digitized. A headstage connected to a percutaneous pedestal on the scalp typically performs initial amplification. Signals are digitized at a high sampling rate (e.g., 30 kHz per channel) and transmitted to an external computer for real-time processing [4].

Feature Engineering

The next stage involves extracting informative features from the raw neural data that correlate with the user's movement intention.

Table 1: Common Neural Features in iBCI Systems

| Feature | Signal Origin | Bandwidth | Key Characteristics | Primary Application |
|---|---|---|---|---|
| Spike Firing Rate [1] | Action Potentials | 250 Hz–5 kHz | High temporal resolution, reflects single-neuron activity | Continuous kinematic control |
| Neural Activity Vector (NAV) [1] | Action Potentials | 250 Hz–6 kHz | Combines spatial & temporal information from multiple units | Reaching and grasping tasks |
| Spiking-Band Power (SBP) [1] | Action Potentials | 300 Hz–1 kHz | Robust, high spatial specificity | Finger kinematics decoding |
| Mean Wavelet Power (MWP) [1] | Full-band Signal | 0–3.75 kHz | Provides spectral & temporal information | Discrete movement classification |
| Local Motor Potential (LMP) [1] | Local Field Potential | <300 Hz | Stable signal, represents population activity | Hand kinematics decoding |

For continuous robotic arm control, spike-based features like binned firing rates are often preferred due to their rich kinematic information [1] [6]. The firing rate is calculated by counting the number of spikes detected on each electrode within a sliding time bin (typically 20-100 ms) [1] [4]. These binned counts from all electrodes are concatenated to form a feature vector that serves as the input to the decoder.
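As a minimal sketch, assuming spike (threshold-crossing) timestamps are already available per electrode in seconds, the binning step can be written in Python with NumPy. It uses non-overlapping 50 ms bins for simplicity; a sliding window would instead advance by less than one bin width. The function names are illustrative and not taken from any cited system.

```python
import numpy as np

def bin_spike_counts(spike_times, t_start, t_end, bin_ms=50.0):
    """Count spikes per electrode in non-overlapping bins.

    spike_times: list with one array of spike timestamps (s) per electrode.
    Returns (n_bins, n_electrodes): each row is one feature vector, i.e. the
    counts from all electrodes for that bin concatenated together.
    """
    bin_s = bin_ms / 1000.0
    n_bins = int(round((t_end - t_start) / bin_s))
    edges = t_start + bin_s * np.arange(n_bins + 1)
    counts = np.zeros((n_bins, len(spike_times)))
    for ch, times in enumerate(spike_times):
        counts[:, ch], _ = np.histogram(times, bins=edges)
    return counts

def to_firing_rates(counts, bin_ms=50.0):
    """Convert per-bin counts to firing rates in spikes per second."""
    return counts / (bin_ms / 1000.0)
```

The last row of the result is the most recent feature vector and would be the decoder's input for the current bin.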

Decoder Mapping

The decoder is the computational core of the iBCI, which establishes a mapping between neural features and the intended movement command.

Table 2: Decoding Algorithms for Motor iBCIs

| Decoder Type | Model Architecture | Output Type | Example Application | Performance Notes |
|---|---|---|---|---|
| Kalman Filter [2] [4] | Linear dynamic system | Continuous kinematics (e.g., velocity) | 2D cursor control, robotic arm reaching | High performance, smooth trajectories |
| Linear Discriminant Analysis (LDA) [1] | Linear classifier | Discrete states (e.g., grasp type) | Classification of hand postures | Simple, effective for closed-loop control |
| Support Vector Machine (SVM) [1] | Nonlinear classifier | Discrete states | Classification of imagined hand movements | Handles complex feature spaces |
| Recurrent Neural Network (RNN/LSTM) [5] [7] | Neural network with memory | Continuous kinematics or phoneme sequences | Speech decoding, complex kinematics | Models temporal dynamics, high accuracy |

For real-time robotic arm control, the Kalman Filter and its variants (e.g., the ReFIT Kalman Filter) are widely used for continuous decoding of arm velocity or position [2]. The decoder is calibrated to predict kinematics, such as the velocity of a robotic hand in 3D space, from the neural feature vector. The Kalman filter models this relationship as a linear dynamical system, providing robust and smooth control [4].
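The recursion behind Kalman decoding can be sketched as follows, assuming the parameters A and W (state model) and C and Q (observation model) have already been estimated. This is the textbook predict/update cycle only: the ReFIT variant changes how training kinematics are relabeled before fitting, not this recursion. Deployed systems often precompute a steady-state gain instead of updating the covariance every bin. The class name is hypothetical.

```python
import numpy as np

class KalmanDecoder:
    """Kalman filter decoder (hypothetical class name).

    State model:       x_t = A x_{t-1} + w_t,  w_t ~ N(0, W)   (kinematics)
    Observation model: y_t = C x_t + q_t,      q_t ~ N(0, Q)   (neural features)
    """

    def __init__(self, A, W, C, Q, x0, P0):
        self.A, self.W, self.C, self.Q = A, W, C, Q
        self.x = np.asarray(x0, dtype=float)
        self.P = np.asarray(P0, dtype=float)

    def step(self, y):
        """Consume one bin of neural features y and return the updated kinematic estimate."""
        # Predict: propagate the previous estimate through the movement dynamics.
        x_pred = self.A @ self.x
        P_pred = self.A @ self.P @ self.A.T + self.W
        # Update: weight the feature prediction error by the Kalman gain.
        S = self.C @ P_pred @ self.C.T + self.Q
        # K = P_pred C^T S^-1, computed with solve() (S and P_pred are symmetric).
        K = np.linalg.solve(S, self.C @ P_pred).T
        self.x = x_pred + K @ (np.asarray(y, dtype=float) - self.C @ x_pred)
        self.P = (np.eye(len(self.x)) - K @ self.C) @ P_pred
        return self.x
```

When observation noise Q is small relative to the predicted uncertainty, the estimate follows the neural evidence closely; when Q is large, it stays near the dynamics-based prediction, which is what produces smooth trajectories.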

Device Command

The final output from the decoder is translated into commands for an external device. For robotic arm control, this typically involves:

- Reaching: The decoded 3D velocity commands are sent to the robotic arm's controller to move the end-effector in space [3].

- Grasping: A separate discrete or continuous decoder output can control hand closure, often mapped to the firing rate of a specific neural population or a state-based classifier [3].
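How decoded velocities become arm motion depends on the robot's controller. One common pattern, assumed here, is to integrate each velocity command over the bin duration into a new end-effector target, cap the speed, and clip the target to a safe workspace. The workspace limits, speed cap, and the normalized grasp aperture below are hypothetical values for illustration, not parameters from [3].

```python
import numpy as np

# Hypothetical workspace limits (m) and speed cap (m/s); real values depend on the robot.
WORKSPACE_MIN = np.array([-0.4, -0.4, 0.0])
WORKSPACE_MAX = np.array([0.4, 0.4, 0.6])
V_MAX = 0.3

def next_endpoint_target(position, velocity, dt):
    """Integrate a decoded [Vx, Vy, Vz] over one bin into a new end-effector target."""
    velocity = np.asarray(velocity, dtype=float)
    speed = np.linalg.norm(velocity)
    if speed > V_MAX:  # scale down while preserving direction
        velocity = velocity * (V_MAX / speed)
    target = np.asarray(position, dtype=float) + velocity * dt
    return np.clip(target, WORKSPACE_MIN, WORKSPACE_MAX)

def next_aperture(aperture, omega, dt):
    """Integrate a decoded grasp velocity into a normalized aperture (0 = open, 1 = closed)."""
    return float(np.clip(aperture + omega * dt, 0.0, 1.0))
```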

Experimental Protocol: Real-Time Robotic Arm Control

This protocol outlines the key steps for establishing a closed-loop iBCI system for robotic arm control, based on methodologies from published clinical trials [2] [3].

Pre-experiment Setup: Decoder Calibration

Objective: To collect training data for building the initial neural decoder.

Procedure:

- Neural Recording: The participant is seated facing a monitor. Neural data (threshold crossings or multi-unit activity) are recorded from the implanted microelectrode arrays [4].

- Visual Guidance Task: The participant is instructed to observe a computer cursor or a virtual robotic arm performing a task. A typical paradigm is the "center-out" task, where the cursor moves from the center of the screen to peripheral targets [3].

- Data Collection: The following data are recorded synchronously over a block of trials (e.g., 5-10 minutes):

- Neural Features: The binned firing rates (20-100 ms bins) from all active electrodes.

- Kinematic Ground Truth: The velocity of the cursor or virtual arm provided by the task software.

- Decoder Training: A Kalman filter is trained to map the neural feature vectors to the observed kinematics. The filter parameters (the state-transition and observation matrices and their noise covariances) are estimated from this training data [2] [4].
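The calibration step can be sketched with ordinary least squares, a closed-form estimation commonly used for Kalman decoders: A and W come from regressing each kinematic state on the previous one, and C and Q from regressing neural features on simultaneous kinematics. This sketch assumes kinematics and features are already aligned bin by bin; lag selection, feature normalization, and ReFIT-style relabeling are omitted.

```python
import numpy as np

def fit_kalman(X, Y):
    """Least-squares Kalman parameters from aligned calibration data.

    X: (T, d) kinematics per bin (e.g., cursor velocity); Y: (T, n) neural features.
    Returns A (d, d), W (d, d), C (n, d), Q (n, n).
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    X_prev, X_next = X[:-1], X[1:]
    # State transition: lstsq solves X_prev @ A.T ≈ X_next.
    A = np.linalg.lstsq(X_prev, X_next, rcond=None)[0].T
    R_state = X_next - X_prev @ A.T
    W = R_state.T @ R_state / len(R_state)  # process-noise covariance
    # Observation (tuning) model: lstsq solves X @ C.T ≈ Y.
    C = np.linalg.lstsq(X, Y, rcond=None)[0].T
    R_obs = Y - X @ C.T
    Q = R_obs.T @ R_obs / len(R_obs)  # observation-noise covariance
    return A, W, C, Q
```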

Real-Time Closed-Loop Control Session

Objective: To enable the participant to control a robotic arm in real time using decoded neural signals.

Procedure:

- System Initialization: Load the trained decoder and establish a communication link with the robotic arm's application programming interface (API).

- Closed-Loop Operation:

- Neural Feature Extraction: In real-time, compute the neural feature vector (e.g., binned spike counts) from the latest neural data.

- Kinematic Decoding: Pass the feature vector through the Kalman filter to produce a velocity command (e.g., [Vx, Vy, Vz] for translation, [ω] for grasp).

- Command Execution: Stream the velocity command to the robotic arm controller.

- Visual Feedback: The participant uses visual feedback of the moving robotic arm to adjust their neural activity and correct trajectories [3].

- Performance Assessment: Conduct standardized tasks, such as the Action Research Arm Test (ARAT), to quantify performance. This involves timing the participant as they pick up and manipulate various objects [3].

Protocol for Incorporating Bidirectional Feedback

Advanced iBCI systems can provide artificial somatosensory feedback via Intracortical Microstimulation (ICMS) of the somatosensory cortex, significantly improving functional performance [3].

Supplementary Setup and Procedure:

- Sensory Mapping: Prior to functional tasks, determine the stimulation parameters (e.g., amplitude, frequency) for electrodes in area 1 of the somatosensory cortex that evoke tactile sensations perceived on specific parts of the hand (e.g., thumb, palm) [3].

- Sensor Integration: Equip the robotic hand with sensors (e.g., contact sensors, force transducers) that detect touch and grip force.

- Bidirectional Control Loop: In addition to the standard control loop, implement:

- Sensory Encoding: Map the robot's sensor output (e.g., contact = 1, no contact = 0) to the pre-calibrated ICMS parameters.

- Stimulation Delivery: In real-time, deliver ICMS to the corresponding site in the somatosensory cortex when the robot touches or grasps an object [3].
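The sensory-encoding step reduces to a lookup from robot sensors to calibrated stimulation settings. In the sketch below, the sensor names, electrode numbers, amplitudes, and frequencies are hypothetical placeholders standing in for values found during sensory mapping; only sensors reporting contact (1) produce a stimulation command.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class StimCommand:
    electrode: int        # S1 electrode whose projected field covers the sensor's hand region
    amplitude_ua: float   # microamperes, from sensory mapping
    frequency_hz: float

# Hypothetical sensory-mapping results: every number here is a placeholder.
SENSOR_TO_STIM = {
    "thumb": StimCommand(electrode=12, amplitude_ua=60.0, frequency_hz=100.0),
    "index": StimCommand(electrode=27, amplitude_ua=50.0, frequency_hz=100.0),
    "palm": StimCommand(electrode=40, amplitude_ua=70.0, frequency_hz=100.0),
}

def encode_contacts(contacts):
    """Map {sensor name: 0/1 contact state} to stimulation commands for this cycle."""
    return [
        SENSOR_TO_STIM[name]
        for name, state in contacts.items()
        if state == 1 and name in SENSOR_TO_STIM  # unmapped sensors are ignored
    ]
```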

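A bidirectional iBCI therefore runs two loops in parallel: a decoding loop from motor cortex to the robotic arm, and an encoding loop from the robot's sensors back to somatosensory cortex via ICMS.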

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for iBCI Research

| Item Name | Function/Application | Technical Specifications | Example Use Case |
|---|---|---|---|
| Utah Microelectrode Array [4] | Chronic neural signal recording from cortex. | 96 electrodes, 4x4 mm platform, 1.5 mm electrode length. | Primary sensor for acquiring action potentials and LFPs in motor cortex. |
| Parylene-C Coating [4] | Biocompatible insulation for electrodes. | Polymer coating applied to silicon shanks. | Reduces inflammatory response and improves long-term signal stability. |
| Percutaneous Pedestal [4] | Physical connector for signal transmission. | Titanium screw-fixed connector on the skull. | Provides a stable electrical interface between implanted arrays and external amplifiers. |
| Wireless Transmitter [4] | Transcutaneous neural data transmission. | Head-mounted unit, ~48 Mbps from 200 channels. | Enables cable-free operation, improving user mobility and reducing infection risk. |
| Kalman Filter Decoder [2] [4] | Real-time translation of neural features to kinematics. | Linear dynamical system model with steady-state gains. | Core algorithm for continuous control of robotic arm velocity. |
| Intracortical Microstimulation (ICMS) System [3] | Artificial sensory feedback generation. | Biphasic current pulses delivered to somatosensory cortex. | Provides tactile feedback during grasping tasks, closing the sensorimotor loop. |

Performance Metrics and Outcomes

Performance in real-time robotic control is rigorously quantified. Key metrics from clinical studies include:

- Trial Completion Time: In a bidirectional BCI study, the median time to complete an object transfer task on the ARAT was reduced from 20.9 seconds without feedback to 10.2 seconds with ICMS feedback—a 51% improvement [3].

- Grasp Time: The time spent attempting to grasp objects decreased by about 65% (from 13.3 s to 4.6 s) with artificial tactile sensations [3].

- Communication Rate: For communication applications, which share a core pipeline with robotic control, participants have achieved typing rates of up to 90 characters per minute and speech decoding at 62 words per minute [2] [7].

These results demonstrate that the iBCI pipeline, from precise neural decoding to the integration of sensory feedback, can restore functional motor capacity and provide a viable platform for advanced neuroprosthetic applications.

In the pursuit of real-time robotic arm control using intracortical brain-machine interfaces (BMIs), the method of neural signal acquisition is a foundational determinant of system performance. This Application Note provides a detailed comparison of two principal invasive recording technologies: intracortical Microelectrode Arrays (MEAs) and endovascular electrodes. The choice between these approaches involves a critical trade-off between signal fidelity and procedural invasiveness, impacting everything from the quality of robotic control to clinical viability and long-term stability [8]. This document synthesizes current research and protocols to guide researchers and scientists in selecting and implementing the appropriate signal acquisition methodology for high-performance BMI systems aimed at restoring motor function.

Technology Comparison at a Glance

The following table summarizes the core characteristics of microelectrode arrays and endovascular electrodes, providing a high-level comparison of their underlying principles, advantages, and limitations.

Table 1: Core Characteristics of Invasive Signal Acquisition Technologies

| Feature | Microelectrode Arrays (MEAs) | Endovascular Electrodes |
|---|---|---|
| Fundamental Principle | Penetrating cortical tissue to record near neural sources [9]. | Deploying electrode arrays within cerebral blood vessels (e.g., superior sagittal sinus) [10] [11]. |
| Primary Advantage | Superior signal resolution enabling single-neuron recording [9] [12]. | Minimally invasive implantation, avoiding open craniotomy [10] [11]. |
| Key Limitation | Provokes foreign body response (e.g., gliosis), leading to chronic signal degradation [10] [9]. | Lower spatial resolution compared to MEAs and theoretical risk of vascular complications (e.g., thrombosis) [10] [11]. |
| Clinical Translation | Demonstrated in human trials for complex robotic arm and hand control [13] [14]. | Early clinical feasibility demonstrated for communication in paralyzed patients [11]. |

Dimensional Framework for Technology Selection

A more nuanced understanding can be achieved by evaluating these technologies across two independent dimensions: the surgical procedure's invasiveness and the sensor's operating location [8]. This two-dimensional framework is instrumental for aligning a technology's profile with specific research or clinical goals.

Surgery Dimension: Invasiveness of Procedures

This dimension classifies the anatomical trauma associated with the implantation procedure [8]:

- Invasive: Procedures causing anatomically discernible trauma to brain tissue at the micron scale or larger. Microelectrode array implantation is classified here, as it requires a craniotomy and penetration of the cortical tissue [13].

- Minimally Invasive: Procedures that cause anatomical trauma but spare the brain tissue itself. Endovascular approaches fall into this category, as they involve catheter-based delivery through the vascular system without direct brain penetration [10] [8].

- Non-Invasive: Procedures that induce no anatomically discernible trauma (e.g., scalp EEG).

Detection Dimension: Operating Location of Sensors

This dimension classifies technologies based on the sensor's final location relative to the brain [8]:

- Implantation: Sensors are placed within human tissue. Microelectrode arrays are a prime example, as they are implanted directly into the gray matter [9].

- Intervention: Sensors leverage naturally existing cavities, such as blood vessels, without damaging the original tissue integrity. Endovascular stented electrodes are classified here [8].

- Non-Implantation: Sensors remain on the surface of the body (e.g., scalp).

Within this framework, microelectrode arrays are invasive implantation devices, whereas endovascular electrodes are minimally invasive intervention devices; this difference in surgical trauma and sensor placement underlies their trade-offs in signal quality and stability.

Quantitative Performance Data

The theoretical advantages and disadvantages of each technology translate into concrete differences in signal quality and decoding performance, which are critical for real-time robotic control. The following table summarizes key quantitative metrics reported in the literature.

Table 2: Performance Metrics for Robotic Control Applications

| Metric | Microelectrode Arrays | Endovascular Electrodes |
|---|---|---|
| Signal Type | Single- and multi-unit activity [9] [12]. | Local field potentials (LFPs) and electrocorticography (ECoG)-like signals [10] [11]. |
| Information Transfer Rate | < 3 bits/s for invasive BMIs in general [15]. | Data specific to finger-level control not yet available. |
| Finger Movement Decoding Accuracy | Continuous decoding of finger position with avg. correlation of ρ = 0.78 in non-human primates [14]. | Not yet reported for endovascular electrodes; for comparison, non-invasive EEG achieved real-time decoding of motor execution/intention for 2-finger (80.56%) and 3-finger (60.61%) tasks [16]. |
| Longevity & Stability | Chronic declines in signal quality over time; usable life may be limited to several years [9] [12]. | Stable long-term recordings demonstrated in ovine models and early human trials with minimal signal degradation [10] [11]. |
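Information transfer rates such as the one in Table 2 are often computed with the Wolpaw formula, which converts N-target selection accuracy P into bits per selection. Whether [15] used exactly this definition is not stated here, so treat the sketch as illustrative. The formula assumes equiprobable targets and errors spread evenly across the N-1 wrong targets.

```python
import math

def wolpaw_bits_per_selection(n_targets, accuracy):
    """Wolpaw ITR per selection: log2 N + P log2 P + (1-P) log2((1-P)/(N-1))."""
    n, p = n_targets, accuracy
    if n < 2 or not 0.0 <= p <= 1.0:
        raise ValueError("need N >= 2 targets and 0 <= P <= 1")
    if p <= 1.0 / n:
        return 0.0  # at or below chance: conventionally reported as 0 bits
    bits = math.log2(n) + p * math.log2(p)
    if p < 1.0:  # the error term vanishes at perfect accuracy
        bits += (1.0 - p) * math.log2((1.0 - p) / (n - 1))
    return bits

def bits_per_second(n_targets, accuracy, seconds_per_selection):
    """Convert bits per selection into bits per second given the time per selection."""
    return wolpaw_bits_per_selection(n_targets, accuracy) / seconds_per_selection
```

For example, 82% accuracy on a two-target task corresponds to about 0.32 bits per selection, which shows why adding targets matters as much as raising accuracy.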

Experimental Protocols

To ensure reproducible results in intracortical BMI research, standardized experimental protocols are essential. Below are detailed methodologies for key procedures involving both technologies.

Protocol: Robotic Implantation of Microelectrode Arrays

Application: Precise anatomical implantation of Utah Arrays into the hand and arm areas of the motor and somatosensory cortex for high-fidelity sensorimotor BMI studies [13].

Key Materials:

- Robotic Stereotactic System: e.g., ROSA (Zimmer Biomet) for high-accuracy trajectory guidance [13].

- Microelectrode Arrays: Utah Arrays (Blackrock Neurotech); e.g., 96-channel for motor cortex, 32-channel for somatosensory cortex [13].

- Pneumatic Insertion Device: For single-impact, consistent embedding of arrays into the parenchyma [13].

- Intraoperative Verification Tools: High-resolution camera and flexible endoscope for visual confirmation of complete array insertion [13].

Protocol: Endovascular Stentrode Deployment

Application: Minimally invasive placement of an electrode array in the superior sagittal sinus to record motor signals for device control [10] [11].

Key Materials:

- Stentrode Device: A self-expanding stent integrated with multiple recording electrodes [10] [11].

- Endovascular Delivery System: Includes introducer sheaths, microcatheters, and guidewires designed for navigation through the cerebral venous system [10].

- Anticoagulant/Antiplatelet Therapy: Essential to reduce the risk of stent thrombosis post-implantation [10].

Protocol: Real-time Decoding for Individual Finger Control

Application: Decoding individuated finger movements from neural signals to enable dexterous control of a robotic hand at the finger level [16] [14].

Key Parameters for Kalman Filter Decoding (MEAs) [14]:

- Hand State Vector: Xt = [p, v, a, 1]^T, where p is finger position, v is velocity, and a is acceleration.

- Neural Activity Vector: Yt = [y1, y2, ..., yn]^T, containing firing rates for each of n channels.

- Observation Model: Yt = C * Xt + et, where C is the matrix of kinematic tuning parameters and et is noise.

- State Transition Model: Xt = A * Xt-1 + wt, where A is the state transition matrix and wt is noise.
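Whatever procedure [14] used to obtain A, a constant-acceleration kinematic model offers one physically motivated choice for the state Xt = [p, v, a, 1]^T. The sketch also shows why the trailing 1 is useful: the last column of C acts as each channel's baseline firing rate. The bin width dt and the function names are assumptions for illustration.

```python
import numpy as np

def constant_acceleration_A(dt):
    """Transition matrix for X_t = [p, v, a, 1]^T under constant acceleration over dt seconds."""
    return np.array([
        [1.0, dt, 0.5 * dt ** 2, 0.0],  # p_t = p + v*dt + a*dt^2/2
        [0.0, 1.0, dt, 0.0],            # v_t = v + a*dt
        [0.0, 0.0, 1.0, 0.0],           # a_t = a
        [0.0, 0.0, 0.0, 1.0],           # the constant 1 is carried forward unchanged
    ])

def expected_rates(C, x):
    """Noise-free observation model Y_t = C X_t; C's last column is each channel's baseline rate."""
    return C @ np.asarray(x, dtype=float)
```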

Key Parameters for Deep Learning Decoding (EEG) [16]:

- Network Architecture: EEGNet-8,2, a compact convolutional neural network optimized for EEG-based BCIs.

- Fine-Tuning: Subject-specific model adaptation using first-half session data to improve online performance.

- Performance Metric: Majority voting accuracy calculated over multiple segments of a trial.
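Trial-level majority voting can be sketched in a few lines: each segment of a trial gets a predicted label, and the most frequent label becomes the trial's decision. The tie-breaking rule here (the earliest-seen label wins) is an assumption; [16] may resolve ties differently.

```python
from collections import Counter

def majority_vote(segment_labels):
    """Trial decision: the most frequent segment label; ties go to the earliest label."""
    if not segment_labels:
        raise ValueError("trial has no segments")
    counts = Counter(segment_labels)
    top = max(counts.values())
    return next(label for label in segment_labels if counts[label] == top)

def majority_vote_accuracy(trials, true_labels):
    """Fraction of trials whose majority-vote decision matches the true label."""
    hits = sum(majority_vote(segs) == y for segs, y in zip(trials, true_labels))
    return hits / len(true_labels)
```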

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Intracortical BMI Signal Acquisition Research

| Item | Function/Application | Example Specifications / Notes |
|---|---|---|
| Utah Array | Record single- and multi-unit activity from cortical tissue. | 96-electrode array; 4x4 mm footprint; 1.5 mm electrode length (for motor cortex) [13]. |
| Stentrode | Record ECoG-like signals from within a blood vessel. | Self-expanding stent electrode; deployed in superior sagittal sinus [10] [11]. |
| Cerebus System | Acquire and process high-channel-count neural data. | Real-time neural signal processor (e.g., from Blackrock Neurotech) [14]. |
| ROSA Robot | Perform precise stereotactic guidance for array implantation. | Provides sub-millimeter accuracy for trajectory planning and execution [13]. |
| Pneumatic Inserter | Ensure consistent, reliable implantation of MEAs. | Delivers a single impact force to embed all array shanks simultaneously [13]. |
| EEGNet | Decode neural signals in real-time using deep learning. | A compact convolutional neural network designed for EEG-based BCIs [16]. |
| Anti-platelet Therapy | Mitigate the risk of thrombosis for endovascular implants. | e.g., Dual antiplatelet therapy (DAPT) post-Stentrode implantation [10]. |

Intracortical brain-machine interfaces (iBMIs) aim to restore functional movement, such as robotic arm control, to individuals with paralysis by interpreting neural activity from the brain. The efficacy of these systems hinges on the precise decoding of motor intent and the integration of realistic sensory feedback to create a closed-loop control system. This application note details the critical cortical regions involved in these processes and provides standardized protocols for neural decoding and somatosensory feedback in the context of real-time robotic arm control.

Key Cortical Regions for iBMI

For iBMIs designed for robotic arm control, signals are typically decoded from a network of motor-related brain regions. Furthermore, providing somatosensory feedback requires engaging specific sensory areas. The table below summarizes the primary cortical targets.

Table 1: Key Cortical Regions for Motor Decoding and Somatosensory Feedback

| Cortical Region | Abbreviation | Primary Role in iBMI | Recorded Signal / Method | Key Findings/Function |
|---|---|---|---|---|
| Primary Motor Cortex | M1 | Executes motor commands; primary source for kinematic and kinetic parameter decoding. [17] [18] | Single- and multi-unit activity via Utah Array. [17] | Decodes arm position, velocity, force, and muscle activity. [17] Critical for movement execution despite downstream injury. [17] |
| Posterior Parietal Cortex | PPC | Plans movement intentions and goals; provides higher-level cognitive signals. [18] [19] | Functional Ultrasound (fUS), Local Field Potentials. [19] | Decodes planned movement direction (e.g., 8 directions achieved with fUS). [19] Offers stable decoding across sessions. [19] |
| Primary Somatosensory Cortex | S1 | Processes tactile and proprioceptive feedback; target for restoring sensation. [20] | Intracortical Microstimulation (ICMS). [20] | ICMS evokes artificial tactile sensations (e.g., pressure, tingle); improves grasp force accuracy in bidirectional BCIs. [20] |
| Dorsal Premotor Cortex | PMd | Involved in motor planning and preparation. [18] | Single- and multi-unit activity. [18] | Contributes unique movement-related information not always resolvable from M1 alone. [18] |

Together, these regions form a closed loop for robotic arm control: motor intent decoded from M1, PMd, and PPC drives the robotic arm, and tactile information from the arm is returned to S1 via ICMS.

Experimental Protocols

Protocol: Decoding Motor Intent for Multi-Directional Control

This protocol outlines the procedure for using fUS neuroimaging from the Posterior Parietal Cortex (PPC) to decode planned movement directions, enabling control of a robotic arm or computer cursor. [19]

- Objective: To establish a closed-loop fUS-BMI for online decoding of two to eight movement directions.

- Equipment:

- Miniaturized ultrasound transducer (e.g., 15.6 MHz).

- Real-time ultrafast ultrasound acquisition system.

- Primate or human subject with a cranial opening over the left PPC.

- Behavioral task setup with visual display and reward system.

- Procedure:

- Device Positioning: Position the ultrasound transducer normal to the brain above the dura mater, targeting the left PPC regions (LIP, MIP). [19]

- Initial Training Phase:

- Subject performs 100 successful memory-guided saccades or reach trials to cued targets.

- Stream fUS images at 2 Hz during the delay period preceding movement.

- Use this data to train an initial decoder (PCA + LDA). [19]

- Closed-Loop BMI Phase:

- Switch to BMI mode. The decoder's output, based on real-time fUS activity from the last three images of the memory period, dictates the task direction.

- Provide immediate visual feedback of the decoded direction.

- After each successful trial, add the new fUS data to the training set and retrain the decoder. [19]

- Cross-Session Stability (Pretraining):

- To enable immediate control on new days, align fUS data from a previous session to the current imaging plane using semiautomated rigid-body alignment.

- Pretrain the initial decoder with this aligned historical data.

- Continue with real-time retraining during the session to adapt to new conditions. [19]
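The decoder in this protocol (PCA followed by LDA, retrained after every successful trial) can be sketched with NumPy alone. The sketch assumes each trial is flattened into one feature vector (for example, the last three memory-period fUS frames concatenated); the number of principal components and the small covariance shrinkage are hypothetical settings, not values from [19].

```python
import numpy as np

class PcaLdaDecoder:
    """PCA for dimensionality reduction, then LDA with a shared (pooled) covariance."""

    def __init__(self, n_components=10, shrinkage=1e-3):
        self.n_components = n_components  # hypothetical setting
        self.shrinkage = shrinkage        # small ridge keeps the covariance invertible

    def fit(self, X, y):
        """X: (n_trials, n_features) flattened fUS features; y: direction labels."""
        self.X, self.y = np.asarray(X, dtype=float), np.asarray(y)
        self.mu = self.X.mean(axis=0)
        # PCA: top right-singular vectors of the centred training data.
        _, _, Vt = np.linalg.svd(self.X - self.mu, full_matrices=False)
        self.V = Vt[: self.n_components].T
        Z = (self.X - self.mu) @ self.V
        # LDA: per-class means plus one pooled within-class covariance.
        self.classes = np.unique(self.y)
        self.means = np.array([Z[self.y == c].mean(axis=0) for c in self.classes])
        resid = Z - self.means[np.searchsorted(self.classes, self.y)]
        dof = max(len(Z) - len(self.classes), 1)
        cov = resid.T @ resid / dof + self.shrinkage * np.eye(Z.shape[1])
        self.cov_inv = np.linalg.inv(cov)
        self.log_prior = np.log([np.mean(self.y == c) for c in self.classes])
        return self

    def predict(self, x):
        """Return the direction whose linear discriminant score is highest."""
        z = (np.asarray(x, dtype=float) - self.mu) @ self.V
        quad = np.einsum("ij,jk,ik->i", self.means, self.cov_inv, self.means)
        scores = self.means @ self.cov_inv @ z - 0.5 * quad + self.log_prior
        return self.classes[np.argmax(scores)]

    def add_trial_and_refit(self, x, label):
        """Closed-loop update: append the newest successful trial and retrain."""
        return self.fit(np.vstack([self.X, x]), np.append(self.y, label))
```

Pretraining on aligned data from an earlier session amounts to calling `fit` on that historical data before the first online trial and then continuing with `add_trial_and_refit`.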

Table 2: Key Performance Metrics from fUS-BMI Studies

| Metric | Reported Performance | Experimental Context |
|---|---|---|
| Online Decoding Accuracy | Reached 82% for 2 directions. [19] | Rhesus macaque performing memory-guided saccades. |
| Number of Decoded Directions | Up to 8 movement directions. [19] | fUS-BMI from posterior parietal cortex. |
| Decoder Stability | Significant accuracy achieved by Trial 7 with pretraining vs. Trial 55 without. [19] | Using pretrained decoder from a session months prior. |

Protocol: Implementing Bidirectional Control with ICMS Feedback

This protocol describes the implementation of a bidirectional BCI that decodes grasp commands from M1 and provides artificial tactile feedback via ICMS of S1. [20]

- Objective: To improve grasp force control of a virtual or robotic gripper by providing intracortical somatosensory feedback.

- Equipment:

- Implanted microelectrode arrays in hand area of M1 and area 1 of S1 (e.g., Utah Array).

- Neural signal processor (e.g., Neuroport Neural Signal Processor).

- Intracortical microstimulation system.

- Virtual reality environment with a gripper simulation.

- Procedure:

- Decoder Calibration:

- Present a virtual gripper applying "gentle," "medium," or "firm" forces to an object.

- The participant observes and imagines performing the same action.

- Record neural activity from M1 and fit a linear encoding model (Eq. 1) that relates each unit's spike rate r to grasp velocity (gv) and grasp force (gf) [20]:

$$r = b_0 + b_v \cdot \mathit{gv} + b_f \cdot \mathit{gf} \qquad \text{(Eq. 1)}$$

- Closed-Loop Grasping Task:

- Participant uses the BCI decoder to control the virtual gripper.

- Positive decoded gv closes the gripper; decoded gf commands the applied force. [20]

- ICMS Feedback Integration:

- Upon object contact, deliver ICMS to a single electrode in S1 at 100 Hz.

- Linearly map the applied grasp force (gfa) to the stimulation amplitude (e.g., 20 μA at 0.1 au to 90 μA at 16 au). [20]

- The evoked sensation (e.g., pressure, tingle) provides continuous feedback about the force.

- Experimental Conditions:

- Compare performance across conditions: Visual feedback only, ICMS feedback only, and combined feedback.

- Include a sham-ICMS condition to control for data loss during stimulation artifact blanking. [20]

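Fitting Eq. 1 is an ordinary least-squares regression per unit. The sketch below fits all units at once and, as one simple assumption about decoding (not necessarily the decoder used in [20]), inverts the fitted model across units to estimate gv and gf from a single bin of rates. Both function names are hypothetical.

```python
import numpy as np

def fit_encoding_model(rates, gv, gf):
    """Least-squares fit of Eq. 1, r = b0 + b_v*gv + b_f*gf, for every unit at once.

    rates: (T, n_units) spike rates; gv, gf: (T,) grasp velocity and grasp force.
    Returns B of shape (3, n_units) with rows b0, b_v, b_f.
    """
    gv = np.asarray(gv, dtype=float)
    design = np.column_stack([np.ones_like(gv), gv, np.asarray(gf, dtype=float)])
    B, *_ = np.linalg.lstsq(design, np.asarray(rates, dtype=float), rcond=None)
    return B

def decode_grasp(B, r):
    """Hypothetical decoder: least-squares inversion of Eq. 1 for one bin of rates r."""
    g, *_ = np.linalg.lstsq(B[1:].T, np.asarray(r, dtype=float) - B[0], rcond=None)
    return g  # array([gv_hat, gf_hat])
```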

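In this configuration, sensory information flows from the gripper back to the brain: the applied grasp force sets the ICMS amplitude delivered to S1, which evokes a sensation that tracks the force.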
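The linear force-to-amplitude mapping from the ICMS Feedback Integration step can be sketched as below. The endpoints (0.1 au mapped to 20 μA, 16 au mapped to 90 μA) come from the protocol; clamping forces outside that range and returning 0 (no stimulation) when the gripper is not in contact are assumptions. Stimulation frequency is fixed at 100 Hz and is not computed here.

```python
def icms_amplitude_ua(force_au, in_contact, f_lo=0.1, f_hi=16.0, a_lo=20.0, a_hi=90.0):
    """Linearly map applied grasp force (au) to ICMS amplitude (μA); 0.0 means do not stimulate.

    Endpoints follow the protocol (0.1 au -> 20 μA, 16 au -> 90 μA). Clamping and the
    no-contact rule are assumptions. Frequency is fixed (100 Hz) and not handled here.
    """
    if not in_contact:
        return 0.0
    f = min(max(force_au, f_lo), f_hi)  # clamp to the calibrated force range
    return a_lo + (f - f_lo) * (a_hi - a_lo) / (f_hi - f_lo)
```

For example, a mid-range force of 8.05 au maps to 55 μA, halfway between the two calibrated endpoints.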

Table 3: Impact of ICMS Feedback on BCI Performance

| Feedback Condition | Key Performance Outcome | Significance |
|---|---|---|
| Visual Feedback Only | Baseline for force control accuracy. | -- |
| ICMS Feedback | Improved overall applied grasp force accuracy compared to visual feedback alone. [20] | Demonstrates that artificial somatosensation can enhance fine motor control in BCI. |
| Sham-ICMS | No significant improvement in force accuracy. [20] | Confirms that performance gain is due to neurostimulation, not data blanking artifacts. |

The Scientist's Toolkit

Table 4: Essential Research Reagents and Materials for Intracortical BMI Research

| Item | Function / Application | Example / Specification |
|---|---|---|
| Utah Microelectrode Array | Chronic intracortical recording of single- and multi-unit activity. [17] | Blackrock/NeuroPort Array; 100 electrodes, 4.2x4.2mm, 1.0-1.5mm shank length. [17] |
| Neuroport Neural Signal Processor | Acquires, filters, and processes neural signals in real-time. [20] | Blackrock Microsystems; band-pass filtering (0.3–7500 Hz), spike detection. [20] |
| Functional Ultrasound (fUS) | Large-field-of-view neuroimaging for decoding movement plans. [19] | 15.6 MHz transducer; 100μm resolution; records coronal planes from PPC. [19] |
| Intracortical Microstimulation (ICMS) | Provides artificial sensory feedback by stimulating somatosensory cortex. [20] | 100 Hz stimulation frequency; amplitude modulated (e.g., 20-90 μA) by task parameter (e.g., force). [20] |
| Linear Discriminant Analysis (LDA) Decoder | Real-time classification of neural features into discrete movement directions. [19] | Classic, robust classifier; paired with PCA for fUS direction decoding. [19] |
| ReFIT-Kalman Filter | Adaptive decoding algorithm that improves performance and stability. [21] | Recalibrated Feedback Intention-Trained Kalman Filter; maintains >90% accuracy over months. [21] |

| Company | Primary Implant Type & Invasiveness | Key Technological Features | Recording Bandwidth / Electrode Count | Key Application in Motor Control | Human Trial Stage (as of 2025) |
|---|---|---|---|---|---|
| Neuralink [22] [23] [24] | Intracortical; Invasive | 1024 electrodes on flexible threads, wireless, implanted via robotic surgery [23] | 1024 electrodes per device; high bandwidth [22] [23] | Thought control of cursors, robotic arms for paralysis [23] | 7 patients implanted [24] |
| Blackrock Neurotech [22] [25] | Intracortical; Invasive | Utah Array; rigid electrodes implanted into cortex [25] | 100 electrodes per array (Utah); established lower bandwidth [22] [25] | Foundational research for prosthetic and computer control [22] [25] | Dozens of human implants since 2004 [22] |
| Paradromics [22] [24] | Intracortical; Invasive | "Connexus" BCI; high-density electrode array [22] [24] | Highest bandwidth among featured companies [22] | Aims for high-fidelity applications like speech decoding [22] | First-in-human surgery completed [24] |
| Precision Neuroscience [22] [26] [24] | Cortical Surface (Epicortical); Minimally Invasive | "Layer 7" array; thin film conforming to brain surface, inserted via 1mm micro-slit [26] | 1024 electrodes per array; modular design to cover large areas [26] | High-resolution data capture for intention decoding [26] | FDA clearance for interface; early human trials [24] |
| Synchron [22] [27] [28] | Endovascular; Minimally Invasive | "Stentrode"; stent-based electrode array delivered via blood vessels [22] | Lower bandwidth; suitable for discrete commands (clicks, scrolls) [22] [28] | Digital device control for daily tasks (email, texting) [28] | 10+ patients implanted [22] [27] |

Experimental Protocols for Intracortical BCIs in Robotic Control

The following protocols detail the methodology for conducting experiments with intracortical brain-computer interfaces (BCIs) to achieve real-time robotic arm control, synthesizing approaches from leading companies and academic research groups.

1. Participant Preparation and Surgical Implantation

This initial phase involves the precise placement of the neural interface.

- Candidate Screening: Recruit participants with tetraplegia resulting from spinal cord injury or amyotrophic lateral sclerosis (ALS). Secure informed consent and obtain ethical and regulatory approvals (e.g., FDA IDE) [29] [24].

- Surgical Implantation: Under sterile conditions, perform a craniotomy to access the primary motor cortex (M1). For Neuralink, a specialized robot inserts the flexible electrode threads into the cortical layers [23]. For Blackrock or Paradromics, the Utah array or similar high-density array is implanted into the hand knob area of M1 [25] [24]. The implant is connected to a percutaneous pedestal or a fully implanted, wireless transmitter [22].

2. Neural Signal Acquisition and Processing

This protocol covers the transition from raw brain signals to decoded control commands.

- Signal Acquisition: Record extracellular action potentials (spikes) and local field potentials (LFPs) from the implanted microelectrodes. Use a high-sample-rate amplifier and digitizer; spike-band recordings are commonly sampled at about 30 kHz per channel [29].

- Pre-processing: Apply a band-pass filter (e.g., 300-5000 Hz for spikes) and a notch filter to remove line noise. For Neuralink and Paradromics, this processing is handled by custom application-specific integrated circuits (ASICs) within the implantable device [22] [23].

- Feature Extraction: For spike-based decoding, detect spikes by setting an amplitude threshold on the filtered signal. Threshold crossings capture multi-unit activity; spike sorting can further isolate single units. Calculate the binned firing rate on each channel as the primary feature for decoding algorithms [29].
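To make the feature-extraction step concrete, here is a minimal Python sketch that detects threshold crossings on an already band-pass-filtered trace and converts them into binned firing rates. The function names, the -4.5 × RMS threshold, and the 20 ms bin width are illustrative assumptions, not values specified by the protocol above; filtering and line-noise removal are assumed to have happened upstream.

```python
import math
from typing import List, Sequence


def rms(signal: Sequence[float]) -> float:
    """Root-mean-square amplitude of a band-pass-filtered voltage trace."""
    return math.sqrt(sum(s * s for s in signal) / len(signal))


def threshold_crossings(signal: Sequence[float], k: float = -4.5) -> List[int]:
    """Indices where the trace first drops to or below k * RMS.

    Only the transition from above to at-or-below the (negative) threshold is
    counted, so a spike spanning several samples yields a single event.
    """
    thr = k * rms(signal)
    return [i for i in range(1, len(signal))
            if signal[i - 1] > thr >= signal[i]]


def binned_rates(crossings: Sequence[int], n_samples: int,
                 fs_hz: float, bin_ms: float) -> List[float]:
    """Count crossings in non-overlapping bins and convert counts to spikes/s."""
    bin_len = int(round(fs_hz * bin_ms / 1000.0))
    n_bins = n_samples // bin_len
    counts = [0] * n_bins
    for idx in crossings:
        if idx // bin_len < n_bins:  # drop events in a trailing partial bin
            counts[idx // bin_len] += 1
    return [c / (bin_ms / 1000.0) for c in counts]
```

For a 30 kHz recording, `binned_rates(threshold_crossings(trace), len(trace), 30000.0, 20.0)` returns one rate per 20 ms bin; stacking the rates of all channels for a bin gives the feature vector passed to the decoder.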

3. Calibration and Decoder Training

Here, the system learns to map neural activity to intended movement.

- Calibration Paradigm: Guide the participant through a "calibration mode." This may involve observing movements on a screen (observation paradigm) or attempting to move their own paralyzed hand while neural activity is recorded (attempted-movement or motor-imagery paradigm) [29].

- Decoder Training: The intended kinematic parameters (e.g., hand velocity, grip force) recorded during calibration are used as the training target. A decoding algorithm, such as a Kalman filter or a recurrent neural network (RNN), is trained to predict these parameters from the stream of neural features [29] [30]. Precision Neuroscience emphasizes the use of machine learning for this translation [26].
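The Kalman-filter option can be sketched as follows. This is a deliberately reduced, hypothetical version: the state is a single intended velocity, each channel's firing rate is modeled as a linear function of that velocity plus a baseline, and observation noise is assumed independent across channels (diagonal Q). Under that assumption the measurement update can be written in information form without any matrix inversion. Practical iBCI decoders typically use a multidimensional state (e.g., position, velocity, and a constant term) and a full noise covariance.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class Kalman1D:
    a: float        # state transition: x[t+1] ~ a * x[t]
    w: float        # process-noise variance
    h: List[float]  # per-channel tuning slope (rate change per unit velocity)
    b: List[float]  # per-channel baseline rate (intercept)
    q: List[float]  # per-channel observation-noise variance (diagonal Q)


def _fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares slope and intercept of y regressed on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def train(x: Sequence[float], y: Sequence[Sequence[float]]) -> Kalman1D:
    """x[t]: intended velocity in bin t; y[t][i]: firing rate of channel i in bin t."""
    pairs = list(zip(x[:-1], x[1:]))
    # Transition model: least squares of x[t+1] on x[t] (no intercept)
    a = sum(prev * nxt for prev, nxt in pairs) / sum(prev * prev for prev, _ in pairs)
    w = max(sum((nxt - a * prev) ** 2 for prev, nxt in pairs) / len(pairs), 1e-9)
    # Observation model: one independent linear fit per channel
    h, b, q = [], [], []
    for i in range(len(y[0])):
        yi = [row[i] for row in y]
        hi, bi = _fit_line(x, yi)
        resid = [yv - (hi * xv + bi) for xv, yv in zip(x, yi)]
        h.append(hi)
        b.append(bi)
        q.append(max(sum(r * r for r in resid) / len(resid), 1e-9))
    return Kalman1D(a, w, h, b, q)


def decode(m: Kalman1D, y: Sequence[Sequence[float]],
           x0: float = 0.0, p0: float = 1.0) -> List[float]:
    """Run the filter over binned rates and return the velocity estimate per bin."""
    x, p, out = x0, p0, []
    for row in y:
        # Predict step
        x_pred = m.a * x
        p_pred = m.a * m.a * p + m.w
        # Update step (information form, valid because Q is diagonal)
        info = 1.0 / p_pred + sum(hi * hi / qi for hi, qi in zip(m.h, m.q))
        p = 1.0 / info
        evidence = sum(hi * (yi - bi) / qi
                       for hi, bi, qi, yi in zip(m.h, m.b, m.q, row))
        x = p * (x_pred / p_pred + evidence)
        out.append(x)
    return out
```

In use, `train` is fit on the calibration data (intended velocity paired with binned rates), and `decode` is then run on new bins; the fitted `a`, `w`, `h`, `b`, and `q` play the roles of transition model, process noise, tuning, baseline, and observation noise.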

4. Real-Time Closed-Loop Control and Task Performance

This protocol establishes functional, feedback-driven control of the robotic device.

- Closed-Loop Setup: The trained decoder translates neural activity into continuous, real-time commands for a robotic arm in a closed-loop system. The participant receives visual feedback from their actions, which is critical for adaptive learning [29] [30].

- Task Performance Assessment: Quantify performance using standardized metrics. Key tasks include:

- Center-Out Reaching Task: The participant moves the cursor or robotic end effector from a central point to peripheral targets. Measure success rate, path efficiency, and movement time [22].

- Activities of Daily Living (ADL) Tasks: Assess the ability to perform functionally meaningful actions, such as grasping and moving objects. The Synchron BCI, for instance, is evaluated on tasks like managing email and online banking [28].
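The center-out metrics can be computed from logged trials along the lines of the sketch below. The trial record layout (a dict with `success`, `traj`, and `duration_s`) is hypothetical, and path efficiency is taken here as the straight-line start-to-end distance divided by the distance actually travelled; some analyses use the distance to the target center instead, so the definition should follow the study's analysis plan.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]


def path_length(traj: Sequence[Point]) -> float:
    """Total distance travelled along a sampled 2D trajectory."""
    return sum(math.dist(traj[i - 1], traj[i]) for i in range(1, len(traj)))


def path_efficiency(traj: Sequence[Point]) -> float:
    """Straight-line start-to-end distance / distance travelled (1.0 = straight)."""
    travelled = path_length(traj)
    if travelled == 0.0:
        return 0.0
    return math.dist(traj[0], traj[-1]) / travelled


def summarize(trials: List[Dict]) -> Dict[str, float]:
    """Success rate over all trials; efficiency and movement time over successes."""
    hits = [t for t in trials if t["success"]]
    n_hits = max(len(hits), 1)  # avoid division by zero if nothing succeeded
    return {
        "success_rate": len(hits) / len(trials),
        "mean_path_efficiency": sum(path_efficiency(t["traj"]) for t in hits) / n_hits,
        "mean_movement_time_s": sum(t["duration_s"] for t in hits) / n_hits,
    }
```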

Signaling Pathway & Experimental Workflow

The following diagrams illustrate the core signal processing pathway and the sequential experimental workflow for intracortical BCIs.

BCI Signal Decoding Pathway

BCI Experiment Workflow

The Scientist's Toolkit: Research Reagent Solutions

This table details key materials and technologies essential for developing and implementing intracortical BCIs for robotic control.

| Item / Technology | Function in BCI Research |
|---|---|
| High-Density Microelectrode Array (e.g., Utah Array, Neuralink's threads, Paradromics' Connexus) | The physical interface for recording neural signals; penetrates cortical tissue to capture action potentials from individual neurons or small groups of neurons [22] [25] [24]. |
| Flexible Thin-Film Substrate (e.g., Precision's Layer 7) | Forms the basis of conformable electrode arrays that minimize immune response and tissue damage, enabling stable long-term recordings [26]. |
| Robotic Surgical System | Enables precise, minimally invasive implantation of flexible electrode threads into specific cortical layers and regions, critical for high-quality signal acquisition [23]. |
| Low-Noise Neural Amplifier ASIC | An application-specific integrated circuit that amplifies and digitizes tiny neural signals (microvolts) at the source, minimizing signal degradation and power consumption [22] [23]. |
| Kalman Filter / Recurrent Neural Network (RNN) Decoder | A computational algorithm that translates temporal sequences of neural firing rates into smooth, continuous predictions of intended kinematic parameters (velocity, position) [29] [30]. |
| Biocompatible Hermetic Encapsulation | A protective coating or package that shields the implanted electronics from the corrosive biological environment while preventing leakage of potentially harmful materials into the body [29]. |

From Brainwaves to Actions: Deep Learning and Real-World Application Paradigms

Intracortical brain-machine interfaces (iBMIs) aim to restore motor function for individuals with paralysis by translating neural activity from the cerebral cortex into control signals for external devices, such as robotic arms. The core of this technology lies in the neural decoding algorithm, which interprets intention from recorded brain signals. While traditional methods often relied on linear models and hand-crafted features, recent advances have been powered by deep neural networks (DNNs). These models can learn complex, non-linear relationships from high-dimensional neural data, leading to substantial improvements in decoding accuracy and the realization of more dexterous, real-time robotic control. This document details the application of these advanced algorithms, providing structured protocols and resources for researchers in the field.

Application Notes: Deep Learning Architectures for iBMIs

Deep learning has revolutionized neural decoding by enabling end-to-end learning from raw or minimally processed neural signals. Below are key architectures and their applications in intracortical decoding for robotic control.

- Convolutional Neural Networks (CNNs): Architectures like EEGNet, though initially designed for non-invasive electroencephalography (EEG), have inspired CNN-based models for invasive signals. Their convolutional layers excel at extracting spatially localized features from multichannel neural data, which is crucial for decoding movement kinematics like velocity and position from motor cortex recordings [16] [31].

- Hybrid Spiking Neural Networks (SNNs): As a more biologically plausible alternative to traditional artificial neural networks (ANNs), SNNs process information using sparse, event-driven spikes. A recently proposed SNN model with feature fusion demonstrated higher accuracy and was "tens or hundreds of times more efficient" than ANNs in decoding motor-related intracortical signals in non-human primates. This makes SNNs exceptionally suitable for implantable, low-power BCI systems [32].

- Customized Deep Learning Pipelines: For complex tasks like continuous reach-and-grasp, subject-specific DNNs are trained to map motor imagery (MI) features to continuous control outputs. These pipelines can decode multiple degrees of freedom simultaneously, such as 2D movement and a discrete "click" signal for grasping, enabling naturalistic interaction with the environment [31].
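To illustrate what event-driven spiking computation means in practice, the sketch below simulates one layer of generic leaky integrate-and-fire (LIF) neurons driven by binned neural inputs. It is not the hybrid SNN with feature fusion from [32]; the decay factor, threshold, hard reset, and weights are assumptions chosen for readability. Zero-valued inputs are skipped when accumulating current, which is the property neuromorphic hardware exploits to save energy.

```python
from typing import List, Sequence


def lif_layer(inputs: Sequence[Sequence[float]],
              weights: Sequence[Sequence[float]],
              decay: float = 0.9, v_th: float = 1.0) -> List[List[int]]:
    """Simulate one layer of leaky integrate-and-fire neurons over time.

    inputs[t][j]  : input on channel j at time step t (e.g., a spike count)
    weights[k][j] : synaptic weight from input j to neuron k
    decay         : fraction of membrane potential kept per step (0 < decay < 1)
    v_th          : firing threshold; the membrane is reset to 0 after a spike
    Returns spikes[t][k] in {0, 1}.
    """
    v = [0.0] * len(weights)
    spikes = []
    for x in inputs:
        row = []
        for k, w_k in enumerate(weights):
            # Leak, then integrate only the non-zero (event) inputs
            current = sum(w * xj for w, xj in zip(w_k, x) if xj != 0.0)
            v[k] = decay * v[k] + current
            if v[k] >= v_th:
                row.append(1)
                v[k] = 0.0  # hard reset after firing
            else:
                row.append(0)
        spikes.append(row)
    return spikes
```

A trained readout would then map the output spikes to kinematics; that training step is outside this sketch.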

Table 1: Key Deep Learning Architectures for Intracortical Decoding

| Architecture | Primary Application in iBMIs | Key Advantage | Representative Citation |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) | Decoding continuous movement kinematics (e.g., cursor/robot velocity) from multi-electrode arrays. | Automatic spatial feature extraction from high-density neural recordings. | [16] [31] |
| Hybrid Spiking Neural Networks (SNNs) | Motor decoding with a focus on high energy efficiency and potential for fully-implantable systems. | High computational efficiency and low power consumption on neuromorphic hardware. | [32] |
| Subject-Specific Deep Models | Real-time, continuous control of robotic arms for complex tasks (e.g., reach, grasp, and place). | Customized decoding for individual users, improving performance and adaptation. | [31] |

Experimental Protocols

This section provides a detailed methodology for implementing a deep learning-based iBMI for real-time robotic arm control, from signal acquisition to closed-loop operation.

Protocol 1: Signal Acquisition and Preprocessing for iBMIs

Objective: To acquire high-quality intracortical signals from the motor cortex and prepare them for decoding.

Materials: Intracortical microelectrode array (e.g., Utah Array), neural signal amplifier, data acquisition system.

Procedure:

- Surgical Implantation: Implant a microelectrode array into the hand and arm area of the participant's primary motor cortex (M1) using standard sterile neurosurgical techniques.

- Signal Recording: Record neural signals, which can include action potentials (spikes) and local field potentials (LFPs). Spike detection requires broadband sampling on the order of 30 kHz, whereas LFPs are commonly analyzed at 1 kHz or higher [2].

- Feature Extraction: For spike-based decoding, extract features in real-time. Common features include:

- Thresholded Spike Counts: Bin neural spikes (e.g., in 20–100 ms windows) for each electrode.

- Neural Activity Vectors (NAV): Calculate firing rates across the array to form a feature vector [32].

- Feature Fusion (Optional): To enhance decoding, manually extracted NAV features can be fused with deep learning-derived features from a separate network, creating a richer input representation for the final decoder [32].
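One simple way to realize the optional feature-fusion step is to standardize each feature stream and concatenate them per time bin, as in this sketch. The z-scoring and plain concatenation are assumptions for illustration, and the fusion scheme used in [32] may differ.

```python
import statistics
from typing import List, Sequence


def zscore_columns(rows: Sequence[Sequence[float]]) -> List[List[float]]:
    """Standardize each feature column to zero mean and unit variance."""
    stats = []
    for col in zip(*rows):
        sd = statistics.pstdev(col) or 1.0  # constant column: avoid dividing by 0
        stats.append((statistics.fmean(col), sd))
    return [[(v - m) / s for v, (m, s) in zip(row, stats)] for row in rows]


def fuse_features(nav: Sequence[Sequence[float]],
                  learned: Sequence[Sequence[float]]) -> List[List[float]]:
    """Concatenate hand-crafted NAV features with learned features per time bin."""
    if len(nav) != len(learned):
        raise ValueError("feature streams must cover the same time bins")
    return [a + b for a, b in zip(zscore_columns(nav), zscore_columns(learned))]
```

In an online system the means and standard deviations would normally be computed once on calibration data and reused, rather than recomputed on each incoming block as this sketch does.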

Protocol 2: Decoder Training and Calibration

Objective: To train a subject-specific deep learning model that maps neural features to intended movement parameters.

Materials: Processed neural data, corresponding kinematic data (from a robot or cursor), training software (e.g., Python, TensorFlow/PyTorch).

Procedure:

- Calibration Task: The participant observes or attempts to mimic a visually guided task. For example, a cursor or robotic arm moves to random targets on a screen while neural data is recorded. This establishes the paired dataset {neural features, kinematic outputs} [31] [2].

- Model Selection and Training:

- For Continuous Control (e.g., Velocity): A CNN or ReFIT Kalman filter (a non-deep learning baseline) can be trained. The model learns to predict the velocity of the participant's intended movement in 2D or 3D space from the neural features [2].

- For Discrete Control (e.g., Grasping): A classifier (e.g., a Hidden Markov Model or a DNN) can be trained to detect the intention to "click" or grasp from specific neural patterns [31] [2].

- Fine-Tuning: To combat inter-session variability, a base model can be fine-tuned at the beginning of each session using a small amount of new calibration data, which significantly improves online performance [16].
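For the discrete click or grasp signal, an HMM-based detector can be sketched as a two-state forward filter over a scalar decoder output. Everything here is an assumption for illustration: the scalar score (e.g., a linear projection of the neural features learned during calibration), the Gaussian emission parameters, and the state-persistence probabilities are placeholders that would be fit to each participant's calibration data.

```python
import math
from typing import List, Sequence, Tuple


def gaussian_pdf(x: float, mean: float, sd: float) -> float:
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2.0 * math.pi))


def click_probability(scores: Sequence[float],
                      means: Tuple[float, float] = (0.0, 1.0),
                      sds: Tuple[float, float] = (0.5, 0.5),
                      p_stay: Tuple[float, float] = (0.95, 0.80)) -> List[float]:
    """Forward-filter a two-state HMM (state 0 = idle, state 1 = click).

    scores : one scalar per time bin (e.g., a calibrated projection of features)
    means, sds : Gaussian emission parameters for the idle and click states
    p_stay : probability of remaining in each state from one bin to the next
    Returns P(click state | scores observed so far) for every bin.
    """
    trans = [[p_stay[0], 1.0 - p_stay[0]],
             [1.0 - p_stay[1], p_stay[1]]]
    belief = [0.5, 0.5]
    out = []
    for s in scores:
        # Predict: propagate the belief through the transition matrix
        prior = [belief[0] * trans[0][j] + belief[1] * trans[1][j] for j in (0, 1)]
        # Update: weight by each state's emission likelihood, then renormalize
        post = [prior[j] * gaussian_pdf(s, means[j], sds[j]) for j in (0, 1)]
        z = post[0] + post[1]
        belief = [p / z for p in post] if z > 0.0 else prior  # guard underflow
        out.append(belief[1])
    return out
```

A click could then be issued when the returned probability stays above a chosen threshold for a minimum number of bins, which trades detection latency against false clicks.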

Protocol 3: Real-Time Closed-Loop Control

Objective: To enable the participant to control a robotic arm in real time using the trained decoder.

Materials: Trained decoder model, robotic arm system (e.g., a multi-fingered hand), real-time control software.

Procedure:

- System Integration: Integrate the trained decoder into a real-time processing pipeline. Neural features are continuously extracted, fed into the model, and the decoder's output is streamed as control commands to the robotic arm.

- Task Execution: The participant performs functional tasks using the BCI. In a "cup relocation" task, for instance, the participant uses decoded 2D velocity to move the robotic arm over a cup and a decoded "click" signal to initiate grasp. They then move the arm to a new location and issue another "click" to release [31].

- Performance Assessment: Quantify performance using metrics such as task success rate, completion time, and the number of objects successfully manipulated within a time limit [31].
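The cup-relocation logic reduces to integrating the decoded velocity into an end-effector position and toggling the gripper on each decoded click. The sketch below shows that mapping for a hypothetical planar arm; the `ArmState` class, the 50 ms update period, and the toggle semantics are assumptions, and a real system would add workspace limits, velocity smoothing, and safety interlocks before commands reach the robot.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ArmState:
    x: float = 0.0          # end-effector position (arbitrary units)
    y: float = 0.0
    holding: bool = False   # gripper closed on an object?
    log: List[str] = field(default_factory=list)


def step(state: ArmState, vx: float, vy: float, click: bool,
         dt: float = 0.05) -> ArmState:
    """Apply one decoder update to a hypothetical planar arm.

    The decoded 2D velocity is integrated into position over dt seconds, and
    each decoded click toggles the gripper (first click grasps, next releases).
    """
    state.x += vx * dt
    state.y += vy * dt
    if click:
        state.holding = not state.holding
        action = "grasp" if state.holding else "release"
        state.log.append(f"{action} at ({state.x:.2f}, {state.y:.2f})")
    return state
```

Calling `step` once per decoder bin with the decoded `(vx, vy, click)` reproduces the move, grasp, move, release sequence of the task.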

The following diagram illustrates the complete real-time decoding and control workflow.

Performance Benchmarking

The adoption of deep and spiking neural networks has led to measurable improvements in the performance of iBMI systems. The tables below summarize key quantitative outcomes.

Table 2: Decoding Performance of Advanced Algorithms

| Decoding Paradigm | Model Architecture | Performance Outcome | Citation |
|---|---|---|---|
| Individual Finger Movement (non-invasive EEG) | CNN (EEGNet with Fine-Tuning) | Online decoding accuracy of 80.56% (2-finger task) and 60.61% (3-finger task) for robotic hand control. | [16] |
| Intra-cortical Motor Decoding | Spiking Neural Network (SNN) | Achieved higher accuracy than traditional ANNs while being tens or hundreds of times more efficient in terms of computation. | [32] |
| Continuous Reach & Grasp | Subject-Specific Deep Model | Enabled users to grab, move, and place an average of 7 cups in a 5-minute run using a robotic arm. | [31] |
| High-Performance Communication | ReFIT Kalman Filter + HMM | Achieved a free-typing rate of 24.4 ± 3.3 correct characters per minute for a participant with paralysis. | [2] |

Table 3: Standard Evaluation Metrics for Neural Decoding

| Metric | Description | Application in iBMIs |
|---|---|---|
| Decoding Accuracy | Percentage of correct predictions in a classification task (e.g., finger movement). | Measures the precision of discrete intent decoding [16]. |
| Task Success Rate | Percentage of successfully completed trials in a functional task (e.g., object grasp). | Assesses the practical utility of the entire BCI system [31]. |
| Information Throughput | The rate of information transfer, often in bits per second (bps). | Quantifies the communication speed of the BCI system [2]. |
| Computational Efficiency | Processing speed and power consumption of the decoder. | Critical for the development of portable, implantable devices [32]. |
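Information throughput for discrete selection tasks is often reported with the Wolpaw formula, which converts the number of possible targets N and the selection accuracy P into bits per selection. The sketch below implements that formula; it assumes equally likely targets and errors spread evenly over the wrong targets, and continuous-control tasks are often scored with different definitions (e.g., Fitts's-law-based throughput), so the numbers are not directly interchangeable.

```python
import math


def wolpaw_bits(n_targets: int, accuracy: float) -> float:
    """Bits per selection: log2 N + P log2 P + (1 - P) log2((1 - P) / (N - 1))."""
    n, p = n_targets, accuracy
    if n < 2:
        raise ValueError("need at least two possible targets")
    if p <= 1.0 / n:
        return 0.0  # at or below chance: report zero information
    bits = math.log2(n) + p * math.log2(p)
    if p < 1.0:  # the error term vanishes when accuracy is perfect
        bits += (1.0 - p) * math.log2((1.0 - p) / (n - 1))
    return bits


def bits_per_second(n_targets: int, accuracy: float,
                    seconds_per_selection: float) -> float:
    """Information transfer rate, given the mean time per selection."""
    return wolpaw_bits(n_targets, accuracy) / seconds_per_selection
```

For example, 4 targets at 100% accuracy give 2 bits per selection, and 2 targets at 90% accuracy give about 0.53 bits.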

The Scientist's Toolkit

A successful iBMI experiment relies on a suite of specialized materials and reagents. The following table details essential components.

Table 4: Essential Research Reagents and Materials for iBMI Research

| Item Name | Function/Application | Specific Example / Note |
|---|---|---|
| Microelectrode Array | Records neural signals (spikes & LFPs) directly from the cortex. | Utah Array (e.g., 96-channel); surgically implanted in M1 [2]. |
| Neural Signal Amplifier | Amplifies microvolt-level neural signals for acquisition. | Systems from Blackrock Microsystems or Intan Technologies. |
| Deep Learning Framework | Provides environment for building and training decoder models. | TensorFlow, PyTorch; used to implement CNNs, SNNs, etc. [32] [31]. |
| Robotic Manipulator | The external device controlled by the decoded neural signals. | Multi-fingered robotic hands or arms for dexterous tasks [16] [31]. |
| Feature Fusion Module | Integrates handcrafted features with deep learning features. | A custom software component to combine NAV and DNN features for improved input to the decoder [32]. |

The logical relationships and data flow between these core components in an experimental setup are visualized below.

Intracortical brain-machine interfaces (iBMIs) represent a promising frontier for restoring motor function to individuals with severe paralysis. A significant challenge in this field has been advancing beyond the control of single effectors, like computer cursors or simple grippers, towards achieving the dexterous, multi-finger control necessary for complex tasks of daily living. This Application Note details the experimental protocols and key findings from seminal studies that have successfully demonstrated real-time, continuous decoding of individuated finger movements and reach-and-grasp tasks using iBMIs. The progression from offline decoding to closed-loop brain control of a virtual hand and a virtual quadcopter, as documented in recent high-impact studies, marks a critical evolution in the field, highlighting a trend towards more intuitive and high-performance systems [14] [33] [34].

Case Study 1: Neural Control of Finger Movement in a Virtual Environment

This foundational study demonstrated the first real-time brain control of finger-level fine motor skills in non-human primates (NHPs), establishing a benchmark for continuous decoding of precise finger movements [14] [34].

Experimental Protocol

- Subjects and Implantation: Four rhesus macaques were implanted with intracortical electrode arrays (96-channel Utah arrays) in the hand area of the primary motor cortex (M1), identified via surface landmarks relative to the arcuate and central sulci [14].

- Behavioral Task (Physical Control): Monkeys were trained to perform a virtual fingertip position acquisition task. A flex sensor was attached to the index finger to measure position. Monkeys made movements with all four fingers simultaneously to control the aperture of a virtual power grasp displayed on a screen. The task involved acquiring a spherical target that appeared in the path of the virtual finger and holding it for 100–500 ms [14].

- Data Acquisition: Broadband neural data was recorded at 30 kS/s. Neural spikes were detected by thresholding the high-pass filtered (250 Hz) signal. Thresholded spikes were streamed for both offline analysis and online decoding [14].

- Decoding Methodology:

- Kinematic State Vector: The hand state at time bin t was defined as \( X_t = [p, v, a, 1]^T \), where p was the measured finger position, and v and a were the derived velocity and acceleration [14].

- Neural Activity Vector: Firing rates for each channel were compiled into the vector \( Y_t \) [14].

- Decoder: A linear Kalman filter was used to decode continuous finger position from the thresholded neural spikes in both offline reconstruction and real-time brain control modes [14] [34].
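The kinematic state vector can be assembled from the sampled finger position as below. The source does not say how v and a were derived, so backward finite differences are an assumption here; the trailing constant 1 lets a linear observation model of the form \( Y_t = H X_t + q_t \) absorb each channel's baseline firing rate.

```python
from typing import List, Sequence


def kinematic_states(position: Sequence[float], dt: float) -> List[List[float]]:
    """Build X_t = [p, v, a, 1] for each bin from a sampled position trace.

    Velocity and acceleration use backward finite differences, so the first
    two bins (where those differences are undefined) are dropped.
    """
    states = []
    for t in range(2, len(position)):
        p = position[t]
        v = (position[t] - position[t - 1]) / dt
        v_prev = (position[t - 1] - position[t - 2]) / dt
        a = (v - v_prev) / dt
        states.append([p, v, a, 1.0])  # trailing 1 acts as an intercept term
    return states
```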

Key Findings and Quantitative Data

The study successfully transitioned from offline analysis to real-time brain control in two monkeys.

Table 1: Performance Metrics for NHP Finger Control Task [14]

| Metric | Offline Decoding (All 4 monkeys) | Online Brain Control (2 monkeys) |
|---|---|---|
| Decoding Performance | Average correlation (ρ) = 0.78 between actual and predicted position | Slight degradation compared to physical control |
| Task Performance | Not Applicable | Average target acquisition rate = 83.1% |
| Information Throughput | Not Applicable | Average = 1.01 bits/s |

Case Study 2: A High-Performance Finger BCI for Quadcopter Control

Building on earlier work, this study demonstrated a high-performance, continuous, finger-based iBCI in a human participant with tetraplegia, doubling the decoded degrees of freedom (DOF) and applying the control to a complex, recreational task [33].

Experimental Protocol

- Participant and Implantation: A 69-year-old right-handed man with C4 AIS C spinal cord injury was enrolled in the BrainGate2 pilot clinical trial. Two 96-channel microelectrode arrays were implanted in the hand ‘knob’ area of the left precentral gyrus [33].

- Finger Kinematics: The system decoded movements of three independent finger groups, yielding four DOF:

- Thumb: 2D movement (flexion-extension and abduction-adduction).

- Index-Middle Finger Group: 1D movement (flexion-extension).

- Ring-Little Finger Group: 1D movement (flexion-extension) [33].

- Calibration & Task Paradigm:

- Open-Loop Calibration: The participant attempted to move his fingers in sync with a moving hand avatar. Neural data (spike-band power, SBP) and target kinematics were used for initial decoder training [33].

- Closed-Loop Training: The decoder was refined using the assumption that decoded movements away from intended targets were errors [33].

- Control Tasks: The participant performed 2DOF (thumb and index-middle) and 4DOF tasks. In the 4DOF task, two of the three finger groups were randomly cued to new targets each trial, requiring simultaneous and individuated control [33].

- Decoding Methodology: A temporally convolved feed-forward neural network mapped SBP to finger velocities for real-time control of the virtual hand [33].
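The closed-loop refinement step can be sketched as intention relabeling. The source states only that decoded movements away from intended targets were treated as errors; the specific rule below (keep the decoded speed, point it toward the target, and set the intended velocity to zero once a finger group is within a tolerance of its target) is an assumption in the spirit of ReFIT-style retraining. The relabeled velocities would then serve as new training targets for the network.

```python
from typing import List, Sequence


def relabel_intended_velocity(pos: Sequence[float], vel: Sequence[float],
                              target: Sequence[float], tol: float) -> List[float]:
    """Infer intended velocity for each finger DOF from one decoded time bin.

    pos, vel, target : current position, decoded velocity, cued target per DOF
    tol              : distance within which a DOF counts as on-target
    """
    intended = []
    for p, v, tgt in zip(pos, vel, target):
        err = tgt - p
        if abs(err) <= tol:
            intended.append(0.0)  # on target: assume the user intends to hold
        else:
            direction = 1.0 if err > 0 else -1.0
            intended.append(direction * abs(v))  # same speed, toward target
    return intended
```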

Key Findings and Quantitative Data

The system achieved high-performance, continuous control, enabling complex tasks with decoded finger movements.

Table 2: Performance Metrics for Human 2DOF vs. 4DOF Finger Control [33]

| Metric | 2DOF Decoder / Task | 4DOF Decoder / Task (All Trials) | 4DOF Decoder / Task (Final Blocks) |
|---|---|---|---|
| Mean Acquisition Time | 1.33 ± 0.03 s | 1.98 ± 0.05 s | 1.58 ± 0.06 s |
| Target Acquisition Rate | 88 ± 6 targets/min | 64 ± 4 targets/min | 76 ± 2 targets/min |
| Trial Success Rate | 98.1% | 98.7% | 100% |
| Information Throughput | Not Specified | Not Specified | 2.60 ± 0.12 bps |

- Finger Individuation: During trials where only one finger group was cued, the mean velocity of non-cued fingers was substantially lower than that of the cued finger, demonstrating effective individuation [33].

- Application: Virtual Quadcopter Control: The decoded finger positions were used to control a virtual quadcopter, the participant's top restorative priority. This provided a compelling demonstration of dexterous navigation for recreation, addressing unmet needs for leisure and a sense of enablement [33].

Experimental Workflow and Signaling

The following diagram illustrates the core closed-loop workflow common to the described iBMI systems for dexterous finger control.

Figure 1: Closed-loop workflow for intracortical brain-machine interfaces (iBMIs) in dexterous control tasks. The process begins with the user's movement intention, creating a continuous feedback loop that enables real-time control and adaptation.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for iBMI Finger Decoding Studies

| Item | Function & Application | Specific Examples / Models |
|---|---|---|
| Intracortical Electrode Array | Records neural activity (spikes, local field potentials) from the motor cortex. | 96-channel Utah Array (Blackrock Microsystems) [14] [33] |
| Neural Signal Processor | Acquires, amplifies, and digitizes broadband neural data in real time. | Cerebus System (Blackrock Microsystems) [14] |
| Kinematic Tracking System | Measures physical hand/finger kinematics for decoder calibration. | Flex Sensor (e.g., Spectra Symbol FS-L-0073-103-ST) [14], Data Gloves, Optical Motion Capture |
| Virtual Reality Environment | Provides a controlled, interactive platform for task presentation and brain-controlled avatar manipulation. | Custom software using Unity [33] or MusculoSkeletal Modeling Software [14] |
| Decoding Algorithm | Translates neural signals into predicted or intended kinematic outputs. | Linear Kalman Filter [14] [34], Temporally Convolved Feed-Forward Neural Network [33] |

The progression from NHP studies to human clinical trials, and from basic finger control to the operation of complex virtual systems, underscores the rapid advancement in dexterous iBMI control. The protocols and data outlined herein provide a framework for developing high-performance systems that extend beyond restoration of basic communication to include intuitive control of multiple degrees of freedom, opening new possibilities for recreation, social connectedness, and enhanced independence for individuals with paralysis. Future work will focus on improving long-term decoding stability, incorporating tactile feedback, and further increasing the dimensionality of controlled movements.

Bidirectional brain-computer interfaces (BCIs) represent a paradigm shift in neuroprosthetics, moving beyond one-way communication to enable a closed-loop dialogue between the brain and external devices. Intracortical microstimulation (ICMS) serves as the critical feedback component in these systems, delivering artificial sensory information directly to the brain by electrically stimulating specific neural populations [35] [36]. This technology holds transformative potential for restoring sensation and enhancing motor control in patients with neurological disorders or limb loss, particularly when integrated with motor decoding for real-time robotic arm control [37] [36]. This Application Note provides a structured overview of ICMS principles, quantitative performance data, and detailed experimental protocols to support research in this rapidly advancing field.

Fundamental Principles of ICMS

ICMS operates by delivering low-current electrical pulses through microelectrodes implanted in target brain regions, primarily the primary somatosensory cortex (S1) for restoring tactile sensation [35]. Unlike earlier approaches that sought to override natural neural processing, modern ICMS aims to integrate with ongoing cortical processes: the response to stimulation is folded into natural processing rather than substituting for it, mimicking physiological modulatory effects such as those of attention or expectation [38].

Key biophysical parameters—including current amplitude, pulse width, frequency, and waveform—must be carefully optimized to achieve effective and safe neural activation [38] [35]. The phase of the local field potential at the moment of stimulation can significantly predict response amplitude, highlighting the importance of aligning stimulation with the brain's inherent rhythmic activity [38].
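The charge-balance requirement can be made concrete with a short sketch that builds a symmetric, cathodic-leading biphasic pulse and checks its charge. The roughly 3 μA amplitude echoes the low-current pulses reported in [38], while the 200 μs phase width is purely an assumed example value; safe limits for charge per phase and charge density depend on electrode material and geometry and must come from the safety literature, not from this sketch.

```python
from typing import List, Tuple

Segment = Tuple[float, float]  # (duration in microseconds, current in microamps)


def biphasic_pulse(amplitude_ua: float, phase_us: float,
                   interphase_us: float = 0.0) -> List[Segment]:
    """Symmetric, cathodic-leading biphasic pulse (negative current = cathodic)."""
    segments = [(phase_us, -amplitude_ua)]
    if interphase_us > 0.0:
        segments.append((interphase_us, 0.0))  # optional gap between phases
    segments.append((phase_us, amplitude_ua))
    return segments


def net_charge_pc(segments: List[Segment]) -> float:
    """Net injected charge in picocoulombs (1 uA x 1 us = 1 pC)."""
    return sum(duration * current for duration, current in segments)


def charge_per_phase_nc(amplitude_ua: float, phase_us: float) -> float:
    """Charge delivered in one phase, in nanocoulombs."""
    return amplitude_ua * phase_us / 1000.0
```

For instance, `biphasic_pulse(3.0, 200.0)` returns `[(200.0, -3.0), (200.0, 3.0)]`, whose net charge is 0 pC, with 0.6 nC delivered in each phase.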

Applications in Sensory Restoration and Motor Control

Sensory Guidance for Motor Tasks

Research in non-human primates has demonstrated that ICMS can deliver instruction signals for directional reaching tasks. In these experiments, microstimulation of S1 enabled rhesus monkeys to interpret artificial sensations as commands for controlling a computer cursor, achieving proficiency levels comparable to natural vibrotactile cues delivered to the skin [35].

Modulating Cortical Processing

Studies in the guinea pig auditory cortex reveal that ICMS can differentially modulate neural responses to sensory stimuli. When combined with an acoustic stimulus, low-current ICMS selectively enhanced long-latency induced responses while reducing evoked components. This supra-additive amplification mimics natural top-down feedback processes, suggesting ICMS can selectively enhance specific aspects of sensory processing [38].

Key Research Findings and Parameters

Table 1: Summary of Key ICMS Experimental Findings

| Application | Model System | Key Finding | ICMS Parameters |
|---|---|---|---|
| Sensory Guidance [35] | Rhesus monkey | ICMS in S1 instructed reach direction as effectively as peripheral vibrotactile stimulation. | Charge-balanced pulses; Electrode pairs in S1. |
| Cortical Modulation [38] | Guinea pig auditory cortex | ICMS supra-additively enhanced induced responses to acoustic stimuli, mimicking top-down modulation. | Low-current pulses (3.11 ± 0.74 μA); Biphasic, cathodic-leading. |
| Safety & Tissue Response [39] | Mouse model | ICMS induced rapid microglia process convergence and increased blood-brain barrier permeability, dependent on current amplitude. | Clinically relevant waveforms; Higher amplitudes increased effects. |

Experimental Protocols

Protocol: ICMS for Sensory Guidance in a Primate Model

This protocol outlines the procedures for using ICMS to deliver instructive sensory cues in a bidirectional BCI, based on methods validated in non-human primates [35].

Materials and Setup

- Animal Model: Rhesus macaque.

- Cortical Implants: Multiple 32-channel microelectrode arrays.

- Key Implantation Sites: Primary Motor Cortex (M1) for signal recording; Primary Somatosensory Cortex (S1) for ICMS delivery.

- BCI Apparatus: Computer display, joystick, juice reward system.

Procedure

- Surgical Implantation: Implant microelectrode arrays in M1 (for recording motor commands) and S1 (for ICMS delivery) under aseptic conditions.

- Task Training: Train the animal in a target choice task.

- The trial begins with the animal holding a cursor on a central target.

- An instruction period (0.5-2 s) follows, during which a directional instruction is given.

- Instruction Modalities:

- ICMS Condition: Deliver microstimulation to S1 to instruct reach direction.

- Control Condition: Use vibrotactile stimulation of the hand to instruct reach direction.

- Behavioral Response: Following the instruction period, the animal must move the cursor to the target corresponding to the instructed direction.

- Data Acquisition & Analysis:

- Record neuronal ensemble activity from M1.

- Decode movement intention to control the cursor.

- Compare task performance (accuracy, latency) between ICMS and vibrotactile instruction modalities.

Protocol: Investigating ICMS-Induced Cortical Modulation

This protocol describes methods for studying how ICMS modulates sensory processing at the network level, suitable for implementation in rodent models [38].

Materials and Setup

- Animal Model: Anesthetized guinea pig.

- Stimulation and Recording: Multielectrode array spanning all cortical layers; Acoustic stimulation system.

- Key Recording Site: Primary Auditory Cortex (A1).

Procedure

- Animal Preparation: Anesthetize the animal and surgically expose the auditory cortex.

- Electrode Placement: Insert a multielectrode array perpendicular to the cortical surface to record from all layers.

- Stimulation Paradigm: Apply three types of stimuli in a randomized order:

- Acoustic Only: 50 μs condensation clicks.

- ICMS Only: Low-current, biphasic pulses (e.g., ~3 μA).

- Combined: Acoustic click presented concurrently with ICMS pulse.

- Data Acquisition:

- Record extracellular local field potentials (LFPs) and multi-unit activity.

- Perform time-frequency analysis on the recorded signals to separate:

- Evoked Activity: Phase-locked to the stimulus.

- Induced Activity: Non-phase-locked to the stimulus.

- Data Analysis:

- Quantify and compare the power of evoked and induced responses across the three stimulation conditions.

- Analyze the relationship between the pre-stimulus LFP phase and the amplitude of the response to each stimulus type.
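The evoked/induced split can be illustrated with a simplified, time-domain sketch: at each sample, the power that survives averaging across trials is phase-locked (evoked), and the remainder of the mean single-trial power is non-phase-locked (induced). The cited analysis is time-frequency based; one common extension applies the same decomposition to complex wavelet coefficients, but this sketch assumes a single real-valued, band-passed signal per trial.

```python
from typing import List, Sequence, Tuple


def evoked_induced_power(trials: Sequence[Sequence[float]]) -> Tuple[List[float], List[float]]:
    """Split per-sample power into evoked (phase-locked) and induced parts.

    trials[k][t] : band-passed signal of trial k at sample t (equal lengths)
    evoked[t]    : (mean over trials of x_k[t]) ** 2, i.e. power of the average
    induced[t]   : mean over trials of x_k[t] ** 2, minus evoked[t]
    """
    n = len(trials)
    evoked, induced = [], []
    for samples in zip(*trials):  # iterate over time, across trials
        mean = sum(samples) / n
        total = sum(s * s for s in samples) / n
        evoked.append(mean * mean)
        induced.append(total - mean * mean)
    return evoked, induced
```

Comparing these traces across the acoustic-only, ICMS-only, and combined conditions (for example, testing whether combined induced power exceeds the sum of the two single-condition values) is one way to quantify the supra-additive effect.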

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagents and Solutions for ICMS Research

| Item/Category | Function/Application | Specific Examples/Notes |
|---|---|---|
| Microelectrode Arrays [35] [39] | Recording neural signals and delivering ICMS. | Polyimide-coated tungsten or stainless-steel electrodes; 32-channel arrays; 1 mm spacing between electrode pairs. |
| Charge-Balanced Stimulation [38] [35] | Safely delivering electrical current to neural tissue without causing damage. | Biphasic, cathodic-leading square-wave pulses; No interphase delay. |
| Biocompatible Materials [36] | Enhancing signal quality and long-term stability of implants. | Conductive polymers (e.g., PEDOT); Carbon nanomaterials (e.g., reduced graphene oxide). |
| Two-Photon Imaging [39] | Visualizing cellular-level responses to ICMS in real time. | Used in dual-reporter mice (e.g., GFP-labeled microglia, red fluorescent Ca²⁺ indicator for neurons). |
| Deep Learning Decoders [16] [36] | Translating recorded neural activity into control commands for external devices. | EEGNet; Convolutional Neural Networks (CNNs); Used for real-time decoding of movement intention. |

Safety and Biocompatibility Considerations

The long-term efficacy of ICMS-based bidirectional BCIs is contingent upon their safety and biocompatibility. Recent findings indicate that ICMS can trigger rapid biological responses in non-neuronal cells:

- Microglial Response: ICMS induces microglia process convergence (MPC) within 15 minutes of stimulation. This response is more pronounced at higher current amplitudes, indicating a dose-dependent effect [39].

- Blood-Brain Barrier (BBB) Integrity: Increased vascular dye penetration in stimulated tissue suggests that ICMS can temporarily increase BBB permeability, another amplitude-dependent effect [39].

These findings underscore the necessity for comprehensive characterization of tissue response to ICMS and the establishment of refined safety standards for chronic stimulation protocols.

Visual Appendix: Bidirectional BCI Closed-Loop System; ICMS Experimental Workflow

Intracortical Brain-Machine Interfaces (iBMIs) represent a transformative neurotechnology that establishes a direct communication pathway between the brain and external devices. For individuals with tetraplegia, amyotrophic lateral sclerosis (ALS), or brainstem stroke, these systems offer the potential to restore communication and control, thereby significantly improving quality of life and functional independence [29] [40]. While early proof-of-concept demonstrations have validated the feasibility of iBMIs, the transition to reliable, long-term home use has remained a significant clinical challenge. Key obstacles include the non-stationarity of neural signals, gradual degradation of electrode performance, and the need for frequent decoder recalibration [41] [42]. This application note synthesizes recent evidence from chronic human trials demonstrating that stable, high-accuracy iBMI performance over multiple years is now achievable, moving the technology from laboratory settings to practical, real-world application.

Case Study: Chronic Intracortical BCI for Communication and Computer Control

Recent findings from the BrainGate2 clinical trial provide compelling evidence for the long-term viability of intracortical BCIs. A pivotal case study involved a participant with tetraplegia due to ALS who utilized an implanted iBMI for over two years (over 4,800 hours) of independent home use [43].

Table 1: Performance Metrics from a Long-Term Home Use iBMI Study

| Performance Metric | Result | Details |
|---|---|---|
| Implant Duration | >2 years | Cumulative home use exceeding 4,800 hours [43] |
| Recording Arrays | 4 microelectrode arrays | Placed in the left ventral precentral gyrus [43] |
| Electrode Count | 256 channels | [43] |
| Communication Output | >237,000 sentences | Generated by the user via decoded speech [43] |
| Word Output Accuracy | Up to 99% | Achieved in controlled tests [43] |
| Communication Rate | ~56 words per minute | [43] |
| Recalibration Need | No daily recalibration | Performance maintained without daily recalibration [43] |
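Word-level accuracy like the figure in the table is commonly computed as 1 − word error rate (WER). WER is the word-level edit distance between the intended sentence and the decoded sentence, divided by the number of intended words. This sketch assumes that convention; [43] may define its metric differently. The function names are hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] = edit distance between the reference words processed so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion (reference word missing)
                curr[j - 1] + 1,         # insertion (extra decoded word)
                prev[j - 1] + (r != h),  # substitution, or match at no cost
            )
        prev = curr
    return prev[-1] / max(len(ref), 1)


def word_accuracy(reference: str, hypothesis: str) -> float:
    """Assumed convention: accuracy = 1 - WER (can go negative with many insertions)."""
    return 1.0 - word_error_rate(reference, hypothesis)


print(word_accuracy("i would like some water please", "i would like some water"))
```

For example, dropping one word from a six-word sentence gives a WER of 1/6, or about 83% word accuracy.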

The participant achieved full-time control of a personal computer, enabling work and communication with loved ones. This case demonstrates that implanted BCIs can provide dependable communication and digital access over multi-year periods, a critical milestone for clinical viability [43].

Quantitative Evidence of Long-Term Stability and Performance

Long-term iBMI stability relies on addressing neural instabilities and decoder drift. Analysis of longitudinal data from tetraplegic participants using fixed decoders reveals periods of stable performance followed by fluctuation, necessitating monitoring and recalibration strategies [41].

Table 2: Quantitative Evidence of Neural Instability and Decoder Performance

| Measure | Participant T11 | Participant T5 | Context |
|---|---|---|---|
| Study Duration | 142 days | 28 days | Using fixed decoders for cursor control [41] |
| Stable Performance Period | First 3 months | First 3 sessions | Measured by low Angle Error (AE) [41] |
| Median Angle Error (Stable) | 26.8° ± 22.6° | 39.6° ± 23.9° | [41] |
| Median Angle Error (Unstable) | 88.4° ± 46.1° | 58.8° ± 31.7° | [41] |
| MINDFUL Correlation | Pearson r = 0.93 | Pearson r = 0.72 | Correlation between neural instability score and cursor performance [41] |
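Angle error can be computed per time bin as the angle between the decoded cursor velocity and the vector from the cursor to the target. This sketch assumes that definition. The exact definition in [41] may differ, for example in how bins near the target are excluded. `angle_error_deg` is a hypothetical helper. Bins with zero decoded velocity return NaN, so a NaN-aware median is used to summarize.

```python
import numpy as np


def angle_error_deg(decoded_vel, cursor_pos, target_pos):
    """Per-bin angle (deg) between decoded velocity and the cursor-to-target vector.

    All inputs have shape (n_bins, n_dims). Bins where either vector has zero
    length are returned as NaN.
    """
    to_target = target_pos - cursor_pos
    dot = np.sum(decoded_vel * to_target, axis=1)
    norms = np.linalg.norm(decoded_vel, axis=1) * np.linalg.norm(to_target, axis=1)
    with np.errstate(invalid="ignore", divide="ignore"):
        cos = np.clip(dot / norms, -1.0, 1.0)  # clip guards against rounding past +/-1
    return np.degrees(np.arccos(cos))


vel = np.array([[1.0, 0.0], [0.0, 2.0], [-1.0, 0.0], [0.0, 0.0]])
pos = np.zeros((4, 2))
target = np.tile([1.0, 0.0], (4, 1))
ae = angle_error_deg(vel, pos, target)
print(ae, np.nanmedian(ae))
```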

The MINDFUL method quantifies instabilities in neural data by calculating the statistical distance between neural activity patterns during a target period and a reference period with known good performance. Its strong correlation with decoding performance enables the determination of when recalibration is necessary without knowledge of the user's true movement intentions [41].
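The core quantity can be sketched as a Kullback-Leibler divergence between Gaussians fitted to neural features from the target period and the reference period. Several details are assumptions of this sketch, not of the published MINDFUL method [41]: the Gaussian fit, the direction of the divergence (target relative to reference), the small diagonal `ridge` term added for numerical stability, and the name `gaussian_kl`. The closed form for two k-dimensional Gaussians is used.

```python
import numpy as np


def gaussian_kl(x_target, x_ref, ridge=1e-6):
    """KL( N(mu0, S0) || N(mu1, S1) ) for Gaussians fitted to target and reference features.

    x_target, x_ref: arrays of shape (n_samples, n_features), n_samples > n_features.
    Closed form: 0.5 * [tr(S1^-1 S0) + (mu1-mu0)^T S1^-1 (mu1-mu0) - k + ln(det S1 / det S0)].
    """
    k = x_target.shape[1]
    mu0, mu1 = x_target.mean(axis=0), x_ref.mean(axis=0)
    s0 = np.cov(x_target, rowvar=False) + ridge * np.eye(k)  # ridge keeps S invertible
    s1 = np.cov(x_ref, rowvar=False) + ridge * np.eye(k)
    s1_inv = np.linalg.inv(s1)
    diff = mu1 - mu0
    _, logdet0 = np.linalg.slogdet(s0)
    _, logdet1 = np.linalg.slogdet(s1)
    return 0.5 * (np.trace(s1_inv @ s0) + diff @ s1_inv @ diff - k + logdet1 - logdet0)


rng = np.random.default_rng(1)
reference = rng.standard_normal((2000, 4))      # features from a good-performance period
same = rng.standard_normal((2000, 4))           # no drift
drifted = rng.standard_normal((2000, 4)) + 1.0  # mean shift on every feature
print(gaussian_kl(same, reference), gaussian_kl(drifted, reference))
```

A score near zero means the current feature distribution resembles the reference period. A rising score flags a distribution shift, without requiring any labels of the user's intended movements.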

Experimental Protocols for Long-Term iBMI Deployment

Protocol 1: Surgical Implantation and Initial Setup

Objective: To chronically implant microelectrode arrays in the motor cortex for long-term neural signal acquisition.

- Pre-surgical Planning: Use high-resolution MRI to identify the target implantation zone: typically the hand knob region of the precentral gyrus for motor control tasks, or the ventral precentral gyrus for speech decoding [40] [43].

- Array Implantation: Surgically implant multiple microelectrode arrays (e.g., Utah Arrays). In the featured long-term study, four arrays were implanted in the left ventral precentral gyrus, providing recordings from 256 electrodes [43].

- Signal Verification: After surgery, verify the quality and amplitude of neural signals, including single-unit activity, multi-unit activity, and local field potentials [44] [45].

- Decoder Calibration (Initial): Conduct initial decoder calibration sessions where the user performs cued motor imagery or attempted movements while neural data and task labels are recorded to train the initial decoding algorithm [45] [41].
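Initial calibration can be illustrated with a ridge-regularized linear filter that maps binned neural features to the cued cursor velocity. This is a simplified stand-in, not the decoder used in the cited trials. The helper names, the `alpha` value, and the synthetic data are hypothetical.

```python
import numpy as np


def _with_bias(features):
    """Append a constant column so the decoder can learn an offset."""
    return np.hstack([features, np.ones((features.shape[0], 1))])


def fit_linear_decoder(features, velocity, alpha=1.0):
    """Ridge regression from (n_bins, n_channels) features to (n_bins, n_dims) velocity."""
    X = _with_bias(features)
    reg = alpha * np.eye(X.shape[1])
    reg[-1, -1] = 0.0  # leave the bias term unpenalized
    return np.linalg.solve(X.T @ X + reg, X.T @ velocity)


def decode(features, W):
    return _with_bias(features) @ W


# Synthetic calibration block: binned counts paired with the cued 2-D velocity.
rng = np.random.default_rng(2)
n_bins, n_channels = 2000, 32
true_W = rng.standard_normal((n_channels, 2))
feats = rng.poisson(3.0, size=(n_bins, n_channels)).astype(float)
cued_vel = feats @ true_W + 0.1 * rng.standard_normal((n_bins, 2))
W = fit_linear_decoder(feats, cued_vel)
pred = decode(feats, W)
r2 = 1 - np.sum((cued_vel - pred) ** 2) / np.sum((cued_vel - cued_vel.mean(axis=0)) ** 2)
print(round(r2, 4))
```

Training-set R² is optimistic. In practice the fit would be checked on held-out calibration bins before closed-loop use.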

Protocol 2: Daily Use and Performance Tracking

Objective: To enable users to operate a personal computer for communication and control in a home environment.

- System Setup: Provide users with an integrated system for home use, including the implanted interface, a percutaneous connector, signal processing hardware, and a personal computer with assistive software [43].

- Multimodal Decoding: Implement decoders for multiple functions. The featured system decoded both attempted speech (into text) and attempted hand movements (into computer cursor movements and clicks) [43].

- Continuous Performance Monitoring: Log continuous usage data, including computer control commands, communication output, and neural signals. Implement algorithms like MINDFUL to monitor signal stability and predict performance degradation without requiring ground-truth labels from the user [41].

Protocol 3: Decoder Recalibration with Minimal Data

Objective: To maintain high decoding performance while minimizing the burden of frequent recalibration sessions.

- Instability Detection: Use a method like MINDFUL to calculate a statistical distance (e.g., Kullback-Leibler divergence) between current neural feature distributions and a reference distribution from a high-performance period. A rising score indicates the need for recalibration [41].

- Advanced Recalibration Techniques: Employ transfer learning to leverage historical data and minimize new data requirements.

- Model Architecture: Use an Active Learning-Domain Adversarial Neural Network (AL-DANN). This model uses a domain adversarial strategy to align features between historical (source) and new (target) data distributions, reducing calibration effort [42].

- Data Collection: Collect a very small amount of new, labeled data (e.g., as few as four samples per category) from the user during a brief, cued task [42].

- Model Update: Fine-tune the pre-trained decoder using the combination of historical data and the newly acquired minimal dataset. This approach has been shown to reduce recalibration time by over 80% while maintaining performance [42].
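The detection-then-recalibration loop can be sketched as follows. This does not implement AL-DANN. As a much simpler stand-in, it adapts a nearest-centroid classifier by shrinking each historical class centroid toward the few new labelled samples for that class. That captures the idea of combining historical data with a very small new dataset. The trigger rule (score above a threshold for several consecutive sessions), `prior_strength`, the drift scenario, and all function names are hypothetical.

```python
import numpy as np


def needs_recalibration(scores, threshold, window=3):
    """Hypothetical trigger: the last `window` instability scores all exceed `threshold`."""
    recent = np.asarray(scores[-window:], dtype=float)
    return recent.size == window and bool(np.all(recent > threshold))


def adapt_centroids(hist_centroids, X_new, y_new, prior_strength=4.0):
    """Shrink each historical class centroid toward a few new labelled samples.

    The historical centroid counts as `prior_strength` pseudo-samples, so with
    4 new samples and prior_strength=4 the result is halfway between old and new means.
    """
    adapted = hist_centroids.astype(float).copy()
    for c in range(hist_centroids.shape[0]):
        Xc = X_new[y_new == c]
        if len(Xc):
            adapted[c] = (prior_strength * hist_centroids[c] + Xc.sum(axis=0)) / (
                prior_strength + len(Xc))
    return adapted


def predict(X, centroids):
    """Nearest-centroid classification (squared Euclidean distance)."""
    d = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return d.argmin(axis=1)


# Synthetic drift: both class means move by (2.5, 2) relative to the historical decoder.
rng = np.random.default_rng(4)
hist = np.array([[0.0, 0.0], [4.0, 0.0]])
drifted_means = hist + np.array([2.5, 2.0])


def sample(n_per_class):
    X = np.vstack([m + 0.5 * rng.standard_normal((n_per_class, 2)) for m in drifted_means])
    return X, np.repeat([0, 1], n_per_class)


trigger = needs_recalibration([0.2, 0.3, 1.4, 1.6, 1.9], threshold=1.0)
X_cal, y_cal = sample(4)                      # four labelled samples per class
adapted = adapt_centroids(hist, X_cal, y_cal)
X_test, y_test = sample(200)
acc_old = (predict(X_test, hist) == y_test).mean()
acc_new = (predict(X_test, adapted) == y_test).mean()
print(trigger, acc_old, acc_new)
```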

Diagram 1: Workflow for long-term iBMI maintenance, integrating stability monitoring and minimal-data recalibration.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for Chronic iBMI Research

| Item | Function/Description | Example/Note |
|---|---|---|
| Microelectrode Arrays | Chronic neural signal recording; implanted in cortical tissue. | Utah Array; e.g., 96 electrodes per array, or 256 electrodes in total across the four arrays in [43] [41]. |
| Percutaneous Connector System | Provides physical and electrical connection between implanted arrays and external systems. | Allows for long-term chronic use in home environments [43]. |
| Neural Signal Processor | Amplifies, filters, and digitizes raw neural signals from electrodes. | Essential for real-time decoding; often uses threshold-crossing spikes or spike power as features [41]. |
| Kinematic Decoders | Algorithms that translate neural signals into control commands. | Includes Recurrent Neural Networks (RNNs) and linear filters for cursor velocity [41]. |
| Speech Decoding Network | Converts neural activity from speech motor cortex into text or audio. | Deep learning models trained on neural data during attempted speech [40] [43]. |
| Stability Monitoring Algorithm (MINDFUL) | Quantifies instability in neural data to predict performance degradation. | Uses Kullback-Leibler divergence on neural feature distributions [41]. |
| Transfer Learning Model (AL-DANN) | Enables rapid decoder recalibration with minimal new data. | Active Learning-Domain Adversarial Neural Network [42]. |
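Threshold-crossing features of the kind listed for the neural signal processor can be sketched as binned counts of downward crossings of a per-channel threshold. The threshold is set at a multiple of each channel's RMS. Several settings here are assumptions: the −4.5 × RMS default and the 20 ms bins (values in this range are common in the iBCI literature, but the cited systems may use different settings), and the input being already high-pass filtered. `threshold_crossing_counts` is a hypothetical helper.

```python
import numpy as np


def threshold_crossing_counts(raw, fs, bin_ms=20, thresh_mult=-4.5):
    """Binned counts of downward threshold crossings per channel.

    raw: (n_samples, n_channels) voltage, assumed already high-pass filtered.
    The per-channel threshold is thresh_mult * RMS (negative for the -4.5 default).
    """
    rms = np.sqrt(np.mean(raw ** 2, axis=0))
    below = raw < thresh_mult * rms                 # (n_samples, n_channels) bool
    # A crossing is a sample below threshold whose predecessor was not.
    onsets = np.zeros_like(below)
    onsets[1:] = below[1:] & ~below[:-1]
    bin_len = int(round(fs * bin_ms / 1000))
    n_bins = raw.shape[0] // bin_len
    trimmed = onsets[: n_bins * bin_len]            # drop the incomplete last bin
    return trimmed.reshape(n_bins, bin_len, raw.shape[1]).sum(axis=1)


rng = np.random.default_rng(3)
fs = 30_000
raw = rng.standard_normal((fs, 2))       # 1 s of unit-variance noise on 2 channels
spike_idx = np.arange(1000, fs, 3000)    # 10 synthetic spikes on channel 0
raw[spike_idx, 0] = -10.0
counts = threshold_crossing_counts(raw, fs)
print(counts.shape, counts.sum(axis=0))
```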

The convergence of robust intracranial hardware, advanced multimodal decoding algorithms, and intelligent recalibration frameworks has propelled iBMIs into a new era of clinical practicality. Evidence from long-term human trials confirms that stable, high-accuracy communication and computer control in a home environment is not only possible but can be sustained for multiple years. The implementation of protocols for stability monitoring and minimal-data recalibration is critical for managing the inherent non-stationarity of neural interfaces, reducing user burden, and ensuring reliable daily performance. These advances mark a significant step toward the widespread clinical adoption of iBMIs as a restorative technology for individuals with severe paralysis.

Navigating Clinical Hurdles: Safety, Longevity, and Performance Optimization

For brain-machine interfaces (BMIs) aimed at real-time robotic arm control, the long-term stability of intracortical implants is a paramount concern. The functional longevity of these devices is intrinsically linked to the biological response they elicit from brain tissue. This application note synthesizes current research data and protocols on implant biocompatibility and chronic recording stability, providing a framework for researchers and developers to enhance the safety and durability of next-generation neuroprosthetics [46].

The foreign body response (FBR)—characterized by glial scar formation, chronic inflammation, and neuronal loss—remains a primary obstacle to sustainable intracortical recording and stimulation. This document provides a synthesized overview of quantitative stability data, detailed experimental methodologies for assessing biocompatibility, and visualizations of key biological processes to guide the development of chronically stable brain-machine interfaces.

Quantitative Data on Stability and Biocompatibility

The long-term performance of intracortical electrodes is influenced by a combination of material properties, biological responses, and implant location. The data below summarize key quantitative findings from recent studies.

Table 1: Chronic Stability Metrics of Intracortical Implants

| Metric | Study Findings | Implication for Chronic Stability | Source |
|---|---|---|---|
| Single-Unit Recording Stability | Identifiable neural units can change within a single day, though some remain stable for weeks or months. | BCI decoders must adapt to a shifting neural population to maintain performance. | [47] |
| Performance Instability (MINDFUL Score) | A measure of neural distribution shift (Kullback–Leibler divergence) correlated strongly with degraded cursor control performance (Pearson r = 0.93 and 0.72 in two participants). | Neural distribution shifts can predict BCI performance degradation, enabling timely recalibration. | [41] |
| Layer-Dependent Stimulation Stability | Intracortical microstimulation (ICMS) detection thresholds in rats were most stable in cortical layers 4 and 5 over 40 weeks, while layers 1 and 6 showed consistent increases. | Implant depth significantly impacts long-term stimulation stability. | [48] |
| Biocompatibility Coating Efficacy | Polyimide electrodes with covalently bound dexamethasone released the anti-inflammatory drug for over two months, significantly reducing immune response and scar tissue in animal models. | Localized, slow-release anti-inflammatory coatings can extend functional implant lifespan. | [49] |

Table 2: Foreign Body Response and Layer-Dependent Effects

| Aspect of FBR | Experimental Findings | Significance | Source |
|---|---|---|---|
| Astrocytic Glial Scar | The area of astrocytic scarring peaked in cortical layers 2/3. | Scarring is non-uniform, acting as a bio-insulating layer that impairs signal transmission. | [48] |