Implementing NeuroBench Algorithm Track: A Complete Guide for Neuromorphic Computing Research

This comprehensive guide provides researchers and scientists with practical knowledge for implementing the NeuroBench algorithm track, a standardized framework for benchmarking neuromorphic computing algorithms.

Abstract

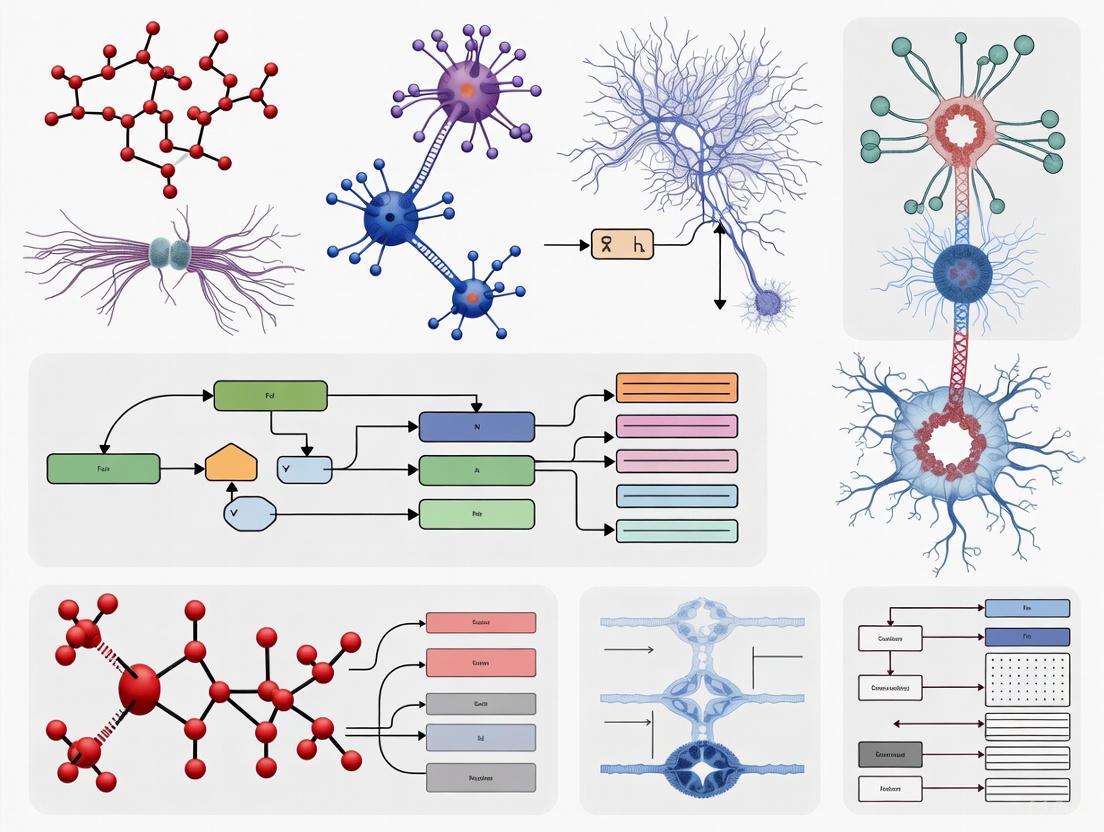

This comprehensive guide provides researchers and scientists with practical knowledge for implementing the NeuroBench algorithm track, a standardized framework for benchmarking neuromorphic computing algorithms. Covering foundational concepts to advanced implementation strategies, it details how to leverage NeuroBench's open-source tools for hardware-independent evaluation of spiking neural networks and brain-inspired algorithms. The article explores the framework's methodology, application across domains, optimization techniques, and comparative analysis approaches to objectively quantify neuromorphic algorithm advancements against conventional methods.

Understanding NeuroBench: The Foundation for Neuromorphic Algorithm Benchmarking

The Benchmarking Gap in Neuromorphic Computing

The rapid advancement of artificial intelligence (AI) and machine learning has led to increasingly complex and large models, with computational growth rates that exceed efficiency gains from traditional technology scaling [1]. This creates a pressing need for new, resource-efficient computing architectures. Neuromorphic computing, which draws inspiration from the brain's computational principles, has emerged as a promising avenue for achieving scalable, energy-efficient, and real-time embodied computation [1] [2].

However, the field faces a significant challenge: the absence of standardized benchmarks. This lack makes it difficult to accurately measure progress, compare performance fairly against conventional methods, and identify promising research directions [1] [3]. Prior efforts to create benchmarks failed to achieve widespread adoption due to designs that were not inclusive, actionable, or iterative [3]. This "benchmarking gap" hinders the coordinated development and objective assessment of neuromorphic technologies.

NeuroBench was conceived as a community-driven solution to this problem. It provides a common framework for evaluating neuromorphic computing algorithms and systems, aiming to deliver an objective reference for quantifying advancements in both hardware-independent and hardware-dependent settings [1] [4].

The NeuroBench Framework: Structure and Core Principles

NeuroBench is a collaboratively designed framework involving researchers from across industry and academia. Its core mission is to provide a representative structure for standardizing the evaluation of neuromorphic approaches [3] [5].

Dual-Track Evaluation Approach

A foundational principle of NeuroBench is its dual-track structure, which ensures comprehensive assessment across different stages of research and development.

- Algorithm Track (Hardware-Independent): This track focuses on evaluating brain-inspired algorithms, such as Spiking Neural Networks (SNNs), in a hardware-agnostic simulated environment [1] [3]. The primary goal is to assess intrinsic algorithmic advancements, such as data efficiency, learning capabilities, and adaptation, before deployment on specialized hardware. This allows researchers to drive design requirements for next-generation neuromorphic systems [1].

- System Track (Hardware-Dependent): This track evaluates full systems where algorithms are deployed on neuromorphic hardware [1] [3]. It aims to measure real-world performance hallmarks, including energy efficiency, latency, and throughput, thereby quantifying the benefits of dedicated neuromorphic hardware that utilizes event-based computation and non-von-Neumann architectures [1].

Collaborative and Evolving Design

NeuroBench is an open, community-driven project. Its design is intended to be inclusive and to continually expand its benchmarks and features to foster and track the progress of the entire research community [3] [6]. This ensures that the framework remains relevant and can adapt to new research breakthroughs.

The following workflow illustrates the end-to-end process for conducting an evaluation using the NeuroBench framework:

NeuroBench in Practice: Metrics, Benchmarks, and Protocols

Comprehensive Performance Metrics

NeuroBench employs a comprehensive set of metrics to ensure a holistic evaluation beyond just task accuracy. These metrics provide a multi-faceted view of a model's performance and efficiency [6].

Table 1: Core Performance Metrics in NeuroBench

| Metric Category | Specific Metric | Description |
|---|---|---|
| Task Performance | Classification Accuracy | Standard accuracy on the given benchmark task [6]. |
| Computational Efficiency | Synaptic Operations | Measures the number of effective Multiply-Accumulate (MAC) and Accumulate (AC) operations [6]. |
| Computational Efficiency | Activation Sparsity | Measures the sparsity of neuronal activations, a key factor for energy savings in event-driven systems [6]. |
| Hardware Efficiency | Footprint | Model and synapse memory footprint [6]. |
| Hardware Efficiency | Connection Sparsity | Sparsity of synaptic connections in the network [6]. |
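The two sparsity metrics in the table can be illustrated with a small, library-free sketch. It assumes sparsity means "fraction of entries that are exactly zero"; the function names and toy data are hypothetical and are not taken from the NeuroBench harness, whose own implementation may differ in detail.

```python
def connection_sparsity(weights):
    """Fraction of synaptic weights that are exactly zero."""
    flat = [w for row in weights for w in row]
    return sum(1 for w in flat if w == 0) / len(flat)


def activation_sparsity(activations):
    """Fraction of recorded neuron outputs that are zero (no spike / no activation)."""
    flat = [a for step in activations for a in step]
    return sum(1 for a in flat if a == 0) / len(flat)


# Toy 2x3 weight matrix with two pruned (zero) connections.
weights = [[0.5, 0.0, -0.2],
           [0.0, 0.1, 0.3]]

# Toy spike raster: 4 timesteps x 3 neurons, 1 = spike.
spikes = [[0, 0, 1],
          [0, 0, 0],
          [1, 0, 0],
          [0, 0, 0]]

conn = connection_sparsity(weights)  # 2 zero weights out of 6
act = activation_sparsity(spikes)    # 10 silent entries out of 12
print(f"connection sparsity: {conn:.3f}")
print(f"activation sparsity: {act:.3f}")
```

A higher activation sparsity means fewer events to process, which is where event-driven hardware is expected to save energy.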

Available Benchmarks and Baselines

The framework includes a growing suite of benchmark tasks designed to probe different capabilities of neuromorphic algorithms and systems. The following table summarizes key benchmarks available in NeuroBench.

Table 2: Exemplar NeuroBench Benchmarks and Baseline Performance

| Benchmark Task | Domain | Description | Example Baseline (ANN) | Example Baseline (SNN) |
|---|---|---|---|---|
| Google Speech Commands (GSC) [6] | Audio | Keyword classification from audio data. | Footprint: 109,228; Accuracy: 86.5% [6] | Footprint: 583,900; Accuracy: 85.6% [6] |
| DVS Gesture Recognition [6] | Vision | Action recognition from event-based camera data. | Under development | Under development |
| Event Camera Object Detection [6] | Vision | Object detection using event-based camera inputs. | Under development | Under development |
| NHP Motor Prediction [6] | Biomedical | Predicting limb movement from neural data. | Under development | Under development |

Implementing NeuroBench research requires a suite of software tools and datasets. The following "Research Reagent Solutions" table details these key components.

Table 3: Key Research Reagent Solutions for NeuroBench Implementation

| Tool / Resource | Type | Function in Research |
|---|---|---|
| NeuroBench Python Package [6] | Software Framework | The core harness for running evaluations, calculating metrics, and ensuring consistent methodology. |
| PyTorch / snnTorch [6] | Software Framework | Supported machine learning frameworks for building and training models (ANNs and SNNs). |
| Event-Camera Datasets (e.g., DVS Gesture) [6] | Data | Provides biologically plausible, asynchronous input data ideal for testing SNNs. |
| NHP Motor Datasets [6] | Data | Enables benchmarking on real neural decoding tasks, bridging the gap to biomedical applications. |

Detailed Experimental Protocol for the Algorithm Track

This protocol provides a step-by-step guide for evaluating a model on a NeuroBench algorithm benchmark, using the Google Speech Commands (GSC) classification task as an example.

Software Environment Setup

- Create a Python environment (Python ≥ 3.9 is required).

- Install the NeuroBench package from PyPI with `pip install neurobench` [6]. Alternatively, for development, clone the GitHub repository and use Poetry: run `pip install poetry` followed by `poetry install` [6].

Model Training and Preparation

- Dataset Acquisition: The framework will typically automatically download the benchmark dataset (e.g., Google Speech Commands) when the example script is run for the first time [6].

- Model Training: Train your network using the official training split of the dataset. The NeuroBench repository provides example scripts (`benchmark_ann.py` for ANNs and `benchmark_snn.py` for SNNs) that demonstrate this process [6].

- Model Wrapping: Wrap your trained model in a `NeuroBenchModel` wrapper. This standardizes the interface, ensuring the model can be properly called by the benchmark harness for evaluation [6].

Benchmark Execution and Analysis

- Configure the Benchmark: Instantiate the `Benchmark` class by passing:

  - The wrapped `NeuroBenchModel`.

  - A DataLoader for the evaluation split of the data.

  - The necessary pre-processors and post-processors (e.g., for converting data to spikes or decoding output spikes).

  - The list of metrics to be computed (e.g., `['Footprint', 'ConnectionSparsity', 'ClassificationAccuracy', 'ActivationSparsity', 'SynapticOperations']`) [6].

- Run the Evaluation: Call the `run()` method on the benchmark object. This will perform inference on the test data and compute all specified metrics [6].

- Interpret Results: The `run()` method returns a dictionary of results. Compare your results against the published baselines and leaderboards available on the NeuroBench website [5]. This allows you to quantify your model's performance and efficiency against the state of the art.
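The configure, run, and interpret steps above follow a simple pattern: a harness object receives a model, data, processors, and metrics, and `run()` returns a results dictionary. The sketch below mimics that pattern with standard-library Python only. `ToyModel`, `ToyBenchmark`, and every helper are hypothetical stand-ins written for illustration; they are not the NeuroBench classes, and the real package's signatures should be taken from its documentation.

```python
class ToyModel:
    """Stand-in for a wrapped model: one callable interface for the harness."""

    def __init__(self, weights):
        self.weights = weights  # one weight row per output class

    def __call__(self, features):
        return [sum(w * x for w, x in zip(row, features)) for row in self.weights]


class ToyBenchmark:
    """Hypothetical harness: model + data + processors + metrics -> results dict."""

    def __init__(self, model, data, preprocessors, postprocessors, metrics):
        self.model = model
        self.data = data
        self.preprocessors = preprocessors
        self.postprocessors = postprocessors
        self.metrics = metrics

    def run(self):
        predictions, labels = [], []
        for features, label in self.data:
            for pre in self.preprocessors:    # e.g. feature scaling or spike encoding
                features = pre(features)
            output = self.model(features)
            for post in self.postprocessors:  # e.g. decoding outputs to a class index
                output = post(output)
            predictions.append(output)
            labels.append(label)
        return {name: fn(self.model, predictions, labels)
                for name, fn in self.metrics.items()}


def scale_to_unit_max(features):
    peak = max(abs(v) for v in features) or 1.0
    return [v / peak for v in features]


def argmax(scores):
    return max(range(len(scores)), key=scores.__getitem__)


def accuracy(model, predictions, labels):
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def parameter_count(model, predictions, labels):
    return sum(len(row) for row in model.weights)


model = ToyModel([[1.0, 0.0], [0.0, 1.0]])
data = [([2.0, 1.0], 0), ([0.5, 3.0], 1), ([4.0, 1.0], 0), ([3.0, 2.0], 1)]
benchmark = ToyBenchmark(model, data, [scale_to_unit_max], [argmax],
                         {"accuracy": accuracy, "parameter_count": parameter_count})
results = benchmark.run()
print(results)
```

Because every model passes through the same loop and the same metric functions, two models evaluated this way differ only in the model object itself, which is the property that makes harness-based results comparable.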

NeuroBench addresses a critical bottleneck in the field of neuromorphic computing by providing a standardized, community-driven framework for evaluation. Its dual-track approach enables rigorous and comparable assessment of both algorithms and systems, guiding research toward more efficient and capable brain-inspired computing. By adopting NeuroBench, researchers and scientists can contribute to a cohesive and accelerated advancement of neuromorphic technology, ultimately helping to realize its potential for scalable and energy-efficient AI.

The NeuroBench Algorithm Track establishes a standardized framework for the hardware-independent evaluation of neuromorphic computing algorithms. This track is purposefully designed to assess the intrinsic capabilities of brain-inspired algorithms—such as Spiking Neural Networks (SNNs)—separately from the performance characteristics of any specific physical hardware. The primary objective is to enable fair and direct comparison between neuromorphic and conventional approaches (e.g., Artificial Neural Networks), and to identify promising algorithmic directions based on their own merits [1] [7]. By simulating execution on conventional hardware like CPUs and GPUs, researchers can isolate and quantify the advantages stemming from algorithmic innovations, such as novel neuron models, learning rules, or network architectures, thereby driving the design requirements for next-generation neuromorphic hardware [1].

This evaluation is crucial because the neuromorphic research field has historically suffered from a lack of standardized benchmarks, making it difficult to accurately measure progress, compare performance against conventional methods, and identify the most promising research trajectories [7] [3]. NeuroBench addresses the challenges of implementation diversity and rapid research evolution by providing a common, open-source harness that unites disparate tooling and allows for an iterative, community-driven benchmark framework [7].

Core Metrics and Quantitative Benchmarks

The hardware-independent evaluation under NeuroBench employs a comprehensive suite of metrics designed to quantify key performance characteristics of neuromorphic algorithms. These metrics are hierarchically defined to capture multiple facets of performance, from task correctness to computational and biological complexity.

Table 1: Summary of Core NeuroBench Algorithm Track Metrics

| Metric Category | Metric Name | Description | Quantitative Example |
|---|---|---|---|
| Correctness | Classification Accuracy | Proportion of correct predictions in classification tasks. | 86.53% (ANN), 85.63% (SNN) on Google Speech Commands [6] |
| Complexity | Footprint | Memory required to represent the model, in bytes [6]. | 109,228 (ANN), 583,900 (SNN) [6] |
| Complexity | Connection Sparsity | Proportion of zero-weight connections in the model [6]. | 0.0 (Dense Model) [6] |
| Complexity | Activation Sparsity | Proportion of inactive neurons over time or across data [6]. | 38.5% (ANN), 96.7% (SNN) [6] |
| Complexity | Synaptic Operations | Count of Multiply-Accumulates (MACs) and Accumulates (ACs) [6]. | ~1.73M Effective MACs (ANN), ~3.29M Effective ACs (SNN) [6] |
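To make the distinction between dense and effective synaptic operations concrete, here is a minimal sketch for a single fully connected layer. It assumes an operation is "effective" only when both the input activation and the weight are nonzero, and that it counts as an AC when the inputs are spikes (values in -1, 0, 1) and as a MAC otherwise. These counting rules and the function name are assumptions made for illustration; the harness's exact rules may differ.

```python
def synaptic_ops(inputs, weights):
    """Count synaptic operations for one dense layer and one input vector.

    inputs:  list of n_in activations
    weights: n_out x n_in weight matrix, given as a list of rows
    """
    dense = len(weights) * len(inputs)  # every connection, regardless of value
    effective = sum(
        1
        for row in weights
        for x, w in zip(inputs, row)
        if x != 0 and w != 0            # skipped when either factor is zero
    )
    is_spiking = all(x in (-1, 0, 1) for x in inputs)
    return {
        "dense": dense,
        "effective_macs": 0 if is_spiking else effective,
        "effective_acs": effective if is_spiking else 0,
    }


weights = [[0.2, 0.0, -0.4, 0.1],
           [0.0, 0.3, 0.5, 0.0]]

ann_ops = synaptic_ops([0.7, 0.0, 1.3, 0.2], weights)  # real-valued activations
snn_ops = synaptic_ops([1, 0, 0, 1], weights)          # binary spikes
print("ANN layer:", ann_ops)
print("SNN layer:", snn_ops)
```

MACs and ACs are reported separately because an accumulate is generally cheaper than a multiply-accumulate, so the two counts should not be compared as if they were the same unit.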

Table 2: NeuroBench v1.0 Standard Algorithm Benchmark Tasks

| Benchmark Task | Description | Domain |
|---|---|---|
| Keyword Few-shot Class-incremental Learning (FSCIL) | Combines few-shot learning with incremental class addition, testing adaptive learning [6]. | Audio / Continual Learning |
| Event Camera Object Detection | Object detection using dynamic vision sensor (event camera) data [6]. | Event-Based Vision |
| Non-human Primate (NHP) Motor Prediction | Decodes motor commands from neural activity data [6]. | Neuroprosthetics |
| Chaotic Function Prediction | Predicts the evolution of chaotic dynamical systems [6]. | Time Series Prediction |
| DVS Gesture Recognition | Recognizes human gestures from a Dynamic Vision Sensor [6]. | Event-Based Vision |
| Google Speech Commands (GSC) Classification | Keyword spotting in audio samples [6]. | Audio Processing |
| Neuromorphic Human Activity Recognition (HAR) | Classifies physical activities from sensor data [6]. | Sensor Data Processing |

Detailed Experimental Protocols

General Workflow for Benchmark Evaluation

The following diagram illustrates the standard end-to-end workflow for evaluating an algorithm using the NeuroBench harness.

The evaluation of a model follows a systematic workflow designed for reproducibility and fairness [6]:

- Model Training: Researchers first train their network using the officially designated training split of a NeuroBench benchmark dataset (e.g., Google Speech Commands).

- Model Wrapping: The trained model is then wrapped in a `NeuroBenchModel` class. This abstraction allows the framework to interact with models from different underlying libraries (e.g., PyTorch, snnTorch) in a consistent manner.

- Data Preparation: The evaluation split of the dataset is loaded using a standard DataLoader.

- Configuration: Pre-processors and post-processors are defined. Pre-processors handle tasks like data conversion to spikes, while post-processors combine spiking outputs over time to generate final predictions.

- Execution: The `Benchmark` class is instantiated with the model, dataloader, processors, and a list of desired metrics. Calling the `run()` method executes the evaluation and returns the computed metric scores.

- Reporting: Results can be submitted to the NeuroBench leaderboards for comparison with other approaches [6].
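The roles of the pre-processor and post-processor in the workflow above can be sketched as two small functions. The encoder below is a deterministic integrate-and-fire scheme chosen for reproducibility, and the decoder picks the output class with the most spikes. Both function names are hypothetical and both schemes are illustrative assumptions, not the processors shipped with NeuroBench.

```python
def integrate_and_fire_encode(value, n_steps):
    """Pre-processor sketch: turn a value in [0, 1] into a spike train.

    The value is accumulated every step; a spike is emitted each time the
    accumulator reaches 1, so the firing rate is proportional to the value.
    """
    accumulator, spikes = 0.0, []
    for _ in range(n_steps):
        accumulator += value
        if accumulator >= 1.0:
            spikes.append(1)
            accumulator -= 1.0
        else:
            spikes.append(0)
    return spikes


def predict_from_spike_counts(output_spikes):
    """Post-processor sketch: sum output spikes over time, pick the busiest class.

    output_spikes: list over timesteps of per-class spike indicators (0 or 1).
    """
    n_classes = len(output_spikes[0])
    counts = [sum(step[c] for step in output_spikes) for c in range(n_classes)]
    predicted = max(range(n_classes), key=counts.__getitem__)
    return predicted, counts


encoded = [integrate_and_fire_encode(v, 4) for v in (0.0, 0.25, 0.5, 1.0)]
print(encoded)

# Toy output layer: 5 timesteps x 3 classes.
output_spikes = [[0, 1, 0],
                 [0, 1, 0],
                 [1, 0, 0],
                 [0, 1, 1],
                 [0, 0, 0]]
predicted, counts = predict_from_spike_counts(output_spikes)
print(predicted, counts)
```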

Protocol for Google Speech Commands Benchmark

The Google Speech Commands (GSC) classification task is a foundational benchmark for keyword spotting. The following protocol provides a detailed methodology for this benchmark.

Research Reagent Solutions:

Table 3: Essential Materials for the GSC Benchmark

| Item Name | Function / Description |
|---|---|
| Google Speech Commands Dataset | A standardized dataset of one-second audio utterances of short commands, used for training and evaluating keyword spotting algorithms [6]. |
| NeuroBench Python Harness | The core open-source software tool that provides the `NeuroBenchModel` wrapper, `Benchmark` class, and metric calculators to standardize the evaluation process [6]. |
| PyTorch / snnTorch | Deep learning frameworks used for building, training, and wrapping models. The `NeuroBenchModel` interface ensures compatibility across different frameworks [6]. |
| Pre-processors | Data transformation modules that convert raw audio into a suitable format for the model (e.g., spectrograms for ANNs or spike trains for SNNs) [6]. |
| Post-processors | Modules that interpret the model's output. For SNNs, this often involves aggregating spike counts over time to produce a final classification decision [6]. |

Experimental Procedure:

- Data Acquisition and Partitioning: Download the Google Speech Commands dataset. Use the predefined training and evaluation splits as specified in the NeuroBench protocol to ensure comparable results.

- Model Design and Training:

  - ANN Baseline: Design a standard non-spiking neural network (e.g., a convolutional network). Train the model using backpropagation and the provided training split.

  - SNN Model: Design a spiking neural network. Train the model using a surrogate gradient method or other SNN-compatible learning rule on the same training split.

- Benchmark Execution:

  - Wrap both trained models using `NeuroBenchModel`.

  - Prepare the evaluation split dataloader.

  - Configure the necessary pre-processors (e.g., for feature extraction) and post-processors.

  - Instantiate the `Benchmark` class with the model, dataloader, processors, and the full list of metrics: `Footprint`, `ConnectionSparsity`, `ClassificationAccuracy`, `ActivationSparsity`, and `SynapticOperations`.

  - Execute the benchmark by calling `run()`.

- Data Analysis and Reporting: Collect the results dictionary. Compare the performance of the ANN and SNN across all metrics, paying particular attention to the trade-offs between accuracy, computational footprint, and activation sparsity. Report findings in the format required for the public leaderboard.
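The comparison step can be sketched with the baseline figures quoted earlier in this guide for the GSC task. The dictionary keys reuse the metric names listed in the protocol, but the exact layout of the results dictionary returned by the harness is an assumption here, and `compare` is a hypothetical helper.

```python
# Baseline values as quoted in the metrics table of this guide [6].
ann = {"Footprint": 109_228, "ClassificationAccuracy": 0.8653,
       "ActivationSparsity": 0.385, "ConnectionSparsity": 0.0}
snn = {"Footprint": 583_900, "ClassificationAccuracy": 0.8563,
       "ActivationSparsity": 0.967, "ConnectionSparsity": 0.0}


def compare(reference, candidate):
    """Summarize trade-offs of `candidate` relative to `reference`."""
    return {
        # How many times larger the candidate's footprint is.
        "footprint_ratio": candidate["Footprint"] / reference["Footprint"],
        # Accuracy lost by the candidate, in percentage points.
        "accuracy_gap_pp": (reference["ClassificationAccuracy"]
                            - candidate["ClassificationAccuracy"]) * 100,
        # Fraction of activations that are nonzero in each model.
        "reference_active_fraction": 1 - reference["ActivationSparsity"],
        "candidate_active_fraction": 1 - candidate["ActivationSparsity"],
    }


report = compare(ann, snn)
for name, value in report.items():
    print(f"{name}: {value:.4f}")
```

On these numbers the SNN baseline gives up under one percentage point of accuracy and has a footprint roughly five times larger, while only a few percent of its activations are nonzero; whether that trade is worthwhile depends on the target hardware.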

Implementation and Integration

Software Toolchain and Workflow

The practical implementation of the Algorithm Track relies on a specific software toolchain centered around the open-source NeuroBench harness. The following diagram depicts the integration of these components.

Integration into a research workflow is facilitated by the NeuroBench Python package, installable via PyPI (pip install neurobench) [6]. The design flow mandates that after training a network, it must be wrapped in a NeuroBenchModel to present a unified interface. The researcher then provides this wrapped model, along with the evaluation dataloader, any necessary pre-/post-processors, and a list of metrics to the Benchmark runner [6].

Example scripts for benchmarks, such as Google Speech Commands, are provided in the project's examples directory. These scripts demonstrate the complete process, from loading data to printing results, and can be executed from a Poetry-managed virtual environment [6]. The expected outputs for the provided ANN and SNN examples on the GSC task are quantitative results encompassing all core metrics, allowing for immediate comparison [6]. This structured approach ensures that all algorithms are evaluated under identical conditions, making results objectively comparable and fostering reproducible research.

Spiking Neural Networks (SNNs) represent the third generation of neural networks, distinguished by their use of discrete, asynchronous spikes for communication and their incorporation of temporal dynamics to process information [8] [9]. This biological plausibility makes them a cornerstone of neuromorphic computing, a field aiming to replicate the brain's exceptional energy efficiency and computational capabilities in engineered systems [1]. The NeuroBench framework emerges as a community-led initiative to address the lack of standardized benchmarks in this rapidly evolving field [1]. It provides a common methodology for fairly evaluating and comparing the performance of neuromorphic algorithms and systems, both in hardware-independent and hardware-dependent contexts, thus accelerating progress toward viable, brain-inspired artificial intelligence (AI) [1] [4].

Key Terminology and Biological Primitives

Understanding SNNs requires familiarity with both their biological inspirations and their computational models. The table below defines the core terminology.

Table 1: Key Terminology in Spiking Neural Networks

| Term | Biological Inspiration | Computational Model/Function |
|---|---|---|
| Spiking Neuron | Biological neuron that transmits information via action potentials [9]. | The basic computational unit of an SNN. Models include Leaky Integrate-and-Fire (LIF), Izhikevich, and Hodgkin-Huxley [8] [10]. |
| Membrane Potential (\(V_m\)) | The electrical potential difference across a neuron's cell membrane [9]. | A state variable representing the neuron's internal activation level. Incoming spikes increase or decrease it; it decays over time without input [8]. |
| Spike / Action Potential | A brief, all-or-nothing electrochemical pulse traveling along the axon [9]. | A binary event (1 or 0) transmitted to connected neurons. The primary information carrier in SNNs [8]. |
| Threshold (\(V_{th}\)) | The membrane potential level that must be exceeded to trigger an action potential [9]. | A predefined value. If the membrane potential \(V_m > V_{th}\), the neuron fires a spike and \(V_m\) is reset [8]. |
| Synapse | The junction between two neurons where neurotransmitters are released [9]. | A connection between two spiking neurons, characterized by a synaptic weight (\(w\)). The weight defines the strength and sign (excitatory/inhibitory) of the connection [10]. |
| Spike-Timing-Dependent Plasticity (STDP) | Hebbian learning principle: "neurons that fire together, wire together" [10]. | An unsupervised learning rule where the change in synaptic weight depends on the precise timing of pre- and post-synaptic spikes [11] [10]. |
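The membrane potential, threshold, and reset behaviour defined in the table can be seen in a minimal discrete-time Leaky Integrate-and-Fire simulation. The update rule used here (multiply by a decay factor `beta`, add the input, reset to zero after a spike) is one common simplification, and the parameter values are arbitrary choices for illustration.

```python
def simulate_lif(inputs, beta=0.9, v_th=1.0, v_reset=0.0):
    """Simulate one LIF neuron over a sequence of input currents.

    beta:    per-step decay factor of the membrane potential (0 < beta < 1)
    v_th:    firing threshold
    v_reset: value the membrane potential takes right after a spike
    Returns (spikes, trace), where trace holds V_m after each step.
    """
    v = 0.0
    spikes, trace = [], []
    for current in inputs:
        v = beta * v + current  # leak, then integrate the input
        if v > v_th:            # threshold crossing: emit a spike
            spikes.append(1)
            v = v_reset
        else:
            spikes.append(0)
        trace.append(v)
    return spikes, trace


# Constant drive: the neuron charges up, fires, resets, and repeats.
spikes, trace = simulate_lif([0.4] * 6)
print(spikes)

# A single input pulse followed by silence: V_m decays without firing.
silent_spikes, decay_trace = simulate_lif([0.8, 0.0, 0.0, 0.0])
print(silent_spikes, [round(v, 4) for v in decay_trace])
```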

Experimental Protocols for SNN Research

Adhering to standardized experimental protocols is essential for generating reproducible and comparable results, a core principle of the NeuroBench framework [1]. The following sections detail protocols for training and evaluating SNNs.

Protocol: Training a Deep SNN with Time-to-First-Spike Coding

This protocol outlines the procedure for training a high-performance, energy-efficient deep SNN using Time-to-First-Spike (TTFS) coding, based on the methodology achieving less than 0.3 spikes per neuron [12].

1. Objective: To train a deep SNN (e.g., for image classification) that matches the performance of an equivalent traditional Artificial Neural Network (ANN) while minimizing energy consumption through sparse spiking activity.

2. Materials and Dataset:

- Datasets: Standard image datasets: MNIST, Fashion-MNIST, CIFAR-10, CIFAR-100, or PLACES365 [12].

- Software Framework: A deep learning framework with SNN support, such as snnTorch [8] or BrainCog [13].

- Encoding: A TTFS input encoder.

3. Workflow: The end-to-end process for creating and validating a TTFS-SNN is summarized in the following workflow diagram.

4. Detailed Procedures:

- Step 1 - Input Encoding: Convert input data (e.g., pixel intensities) into spike latencies. For an input value \(x_j^{(0)} \in [0,1]\), calculate the spike time as \(t_j^{(0)} = t_{\text{max}}^{(0)} - \tau_c \cdot x_j^{(0)}\), where \(\tau_c\) is a time constant [12].

- Step 2 - Network Initialization: Initialize the SNN parameters. Critical: Use an identity mapping parameterization to ensure stable training dynamics and equivalence to an ANN with Rectified Linear Units (ReLU) [12].

- Step 3 - Forward Pass Simulation: Simulate the network dynamics for a predefined time window (\(t_{\text{min}}^{(n)}\) to \(t_{\text{max}}^{(n)}\)). For each neuron \(i\) in layer \(n\), integrate inputs using the membrane potential equation: \[ \tau_c \frac{dV_i^{(n)}}{dt} = A_i^{(n)} + \sum_j W_{ij}^{(n)} H\left(t - t_j^{(n-1)}\right) \] where \(H\) is the Heaviside step function. A spike is emitted the moment \(V_i^{(n)}\) crosses the threshold [12].

- Step 4 - Loss Calculation & Backward Pass: Compute the loss function based on output spike times. Perform backpropagation through time using the exact gradient of the spike-time-based loss, not a surrogate approximation [12].

- Step 5 - Weight Update: Update synaptic weights using a gradient descent optimization algorithm.

- Step 6 - Iteration: Repeat Steps 3-5 until the model converges.
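Steps 1 and 3 can be sketched for a single neuron. The encoder applies the latency formula from Step 1 directly. The spike-time function integrates the Step 3 equation exactly, using the fact that the membrane potential is piecewise linear between input spikes. It assumes the potential starts at zero when the window opens and a single fixed threshold, which simplifies the cited method [12]; the function names and parameter values are hypothetical.

```python
def ttfs_encode(values, t_max=1.0, tau_c=1.0):
    """Step 1: larger inputs spike earlier, t_j = t_max - tau_c * x_j."""
    return [t_max - tau_c * x for x in values]


def first_spike_time(input_times, weights, bias, threshold,
                     tau_c=1.0, t_start=0.0, t_end=2.0):
    """Step 3 for one neuron: earliest threshold crossing, or None.

    Integrates tau_c * dV/dt = bias + sum_j w_j * H(t - t_j) with V(t_start) = 0.
    Between input spikes the slope is constant, so each segment is linear.
    """
    v, t, drive = 0.0, t_start, bias
    events = sorted(zip(input_times, weights)) + [(t_end, 0.0)]
    for t_next, w in events:
        t_next = min(max(t_next, t_start), t_end)  # clamp to the window
        slope = drive / tau_c
        if slope > 0 and v + slope * (t_next - t) >= threshold:
            return t + (threshold - v) / slope     # crossing inside this segment
        v += slope * (t_next - t)
        t = t_next
        drive += w                                 # this input is now active
    return None


latencies = ttfs_encode([0.0, 0.25, 1.0])
print(latencies)

input_times = ttfs_encode([1.0, 0.5])  # input spikes at t = 0.0 and t = 0.5
t_spike = first_spike_time(input_times, weights=[1.0, 2.0], bias=0.0, threshold=1.0)
print(t_spike)

# Purely inhibitory input never reaches threshold, so no spike is emitted.
no_spike = first_spike_time([0.0], weights=[-1.0], bias=0.0, threshold=1.0)
print(no_spike)
```

Because each neuron emits at most one spike in this scheme, sparse activity follows directly from the coding itself.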

5. Key Measurements:

- Task Performance: Final classification accuracy on the test set [12].

- Energy Efficiency: Average number of spikes per neuron during inference (target: <0.3) [12].

- Training Stability: Monitor for vanishing or exploding gradients during the learning process [12].
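The spikes-per-neuron measurement reduces to a simple average over recorded spike counts. The sketch below assumes the counts have already been collected per neuron and per inference sample; the function name and toy data are hypothetical.

```python
def mean_spikes_per_neuron(spike_counts_per_sample):
    """Average spikes emitted per neuron per inference.

    spike_counts_per_sample: list over samples of per-neuron spike counts.
    """
    total_spikes = sum(sum(sample) for sample in spike_counts_per_sample)
    total_neurons = sum(len(sample) for sample in spike_counts_per_sample)
    return total_spikes / total_neurons


# Two inference samples over a 5-neuron network; each neuron fires at most once.
recorded = [[1, 0, 0, 0, 0],
            [0, 1, 0, 0, 0]]
rate = mean_spikes_per_neuron(recorded)
print(f"{rate:.2f} spikes/neuron, below 0.3 target: {rate < 0.3}")
```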

Protocol: Evolving a Brain-Inspired Small-World SNN

This protocol describes using neuroevolution to create recurrent SNNs (RSNNs) with brain-inspired topological properties for enhanced efficiency and versatility [14].

1. Objective: To evolve an RSNN, specifically a Liquid State Machine (LSM), that exhibits small-world topology and critical dynamics for efficient multi-task learning.

2. Materials and Dataset:

- Datasets: NMNIST, MNIST, Fashion-MNIST [14].

- Algorithm: Multi-objective Evolutionary Liquid State Machine (ELSM) algorithm [14].

- Software: Brain simulation platforms like NEST [8] or BrainCog [13] can be adapted for evolution.

3. Workflow: The cyclical process of evolving an SNN's architecture is illustrated below.

4. Detailed Procedures:

- Step 1 - Population Initialization: Create an initial population of RSNNs (LSMs) with random connectivity.

- Step 2 - Fitness Evaluation: For each RSNN in the population:

- Task Performance: Measure accuracy on the training tasks (e.g., image classification) [14].

- Structural Fitness: Calculate the small-world coefficient (measuring high clustering and short path length) of the network [14].

- Dynamical Fitness: Assess the network's proximity to a critical state, which is associated with optimal computational capability [14].

- Step 3 - Multi-Objective Selection: Select parent networks for reproduction based on a combination of high task performance, high small-world coefficient, and critical dynamics [14].

- Step 4 - Evolution: Create a new generation of RSNNs by applying crossover and mutation operations to the selected parents. Mutations alter the connectivity pattern.

- Step 5 - Iteration: Repeat Steps 2-4 for multiple generations until a network emerges that satisfies all objectives.
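The structural fitness in Step 2 depends on two graph quantities: the clustering coefficient and the average shortest path length. The sketch below computes both for an undirected graph and combines them into one common definition of the small-world coefficient, \(\sigma = (C / C_{rand}) / (L / L_{rand})\), where the random-graph reference values must be supplied by the caller. Whether the cited ELSM work [14] uses exactly this definition is not confirmed here, and all function names are hypothetical.

```python
from collections import deque


def ring_lattice(n, k):
    """Undirected ring of n nodes, each linked to its k nearest neighbours (k even)."""
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for d in range(1, k // 2 + 1):
            j = (i + d) % n
            adj[i].add(j)
            adj[j].add(i)
    return adj


def average_clustering(adj):
    """Mean fraction of each node's neighbour pairs that are themselves linked."""
    total = 0.0
    for nbrs in adj.values():
        deg = len(nbrs)
        if deg < 2:
            continue
        links = sum(1 for u in nbrs for v in nbrs if u < v and v in adj[u])
        total += 2 * links / (deg * (deg - 1))
    return total / len(adj)


def average_path_length(adj):
    """Mean shortest-path length over all reachable ordered node pairs (BFS)."""
    total, pairs = 0, 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs


def small_world_sigma(c, l, c_rand, l_rand):
    """sigma > 1 suggests small-world structure relative to the random reference."""
    return (c / c_rand) / (l / l_rand)


graph = ring_lattice(n=20, k=4)
clustering = average_clustering(graph)
path_length = average_path_length(graph)
print(f"clustering: {clustering:.3f}, average path length: {path_length:.3f}")
```

A regular ring lattice has high clustering but long paths; the evolutionary search described above is rewarded for keeping the clustering while shortening the paths.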

5. Key Measurements:

- Multi-Task Performance: Accuracy across all benchmark tasks (e.g., 97.23% on NMNIST, 98.12% on MNIST) [14].

- Evolved Topology: Presence of hub nodes, short paths, long-tailed degree distributions, and community structures in the final network [14].

- Versatility: The ability of a single evolved model to perform well on multiple different tasks without architectural changes [14].

The Scientist's Toolkit: Essential Research Reagents

The following table catalogs key software and methodological "reagents" required for contemporary SNN research, aligned with the NeuroBench vision.

Table 2: Essential Research Reagents for SNN Implementation

| Category | Item | Function / Application |
|---|---|---|
| Software Frameworks | snnTorch [8] | An open-source Python library for building and gradient-based training of SNNs using PyTorch. |
| Software Frameworks | BrainCog [13] | A comprehensive platform for brain-inspired AI and simulation, supporting various neurons, learning rules, and cognitive functions. |
| Software Frameworks | NEST [8] [15] | A simulator for large-scale, structurally complex SNNs in neuroscience research. |
| Training Methods | Surrogate Gradient Learning [8] [12] | Enables backpropagation in SNNs by using a differentiable approximation of the spike function in the backward pass. |
| Training Methods | ANN-to-SNN Conversion [12] [13] | Converts a pre-trained ANN to an SNN, preserving performance and enabling low-power deployment. |
| Encoding Schemes | Time-to-First-Spike (TTFS) [12] | An input encoding where information is represented by the latency of a single spike, enabling ultra-low-power inference. |
| Encoding Schemes | Rate Coding [8] | An input encoding where information is represented by the firing rate of a spike train over a time window. |
| Learning Rules | Spike-Timing-Dependent Plasticity (STDP) [11] [10] | An unsupervised, biologically plausible local learning rule that updates weights based on pre- and post-synaptic spike timing. |
| Hardware Systems | SpiNNaker [8] [1] | A neuromorphic computing architecture using massive parallelism for large-scale SNN simulation. |
| Hardware Systems | Loihi [8] [1] | An Intel research chip that implements online learning and adaptive SNNs in silicon. |

Performance Benchmarking and Data Presentation

Quantitative benchmarking is essential for tracking progress. The following tables consolidate key performance metrics from recent literature, providing a reference for evaluating models under the NeuroBench paradigm.

Table 3: Benchmarking SNN Performance on Image Classification Tasks

| Model / Approach | Dataset | Key Metric (Accuracy) | Key Metric (Efficiency) |
|---|---|---|---|
| Deep TTFS SNN [12] | CIFAR-10, CIFAR-100, PLACES365 | Matches equivalent ANN performance | < 0.3 spikes/neuron |
| Evolutionary LSM (ELSM) [14] | NMNIST | 97.23% | Evolved small-world topology for low energy consumption |
| Evolutionary LSM (ELSM) [14] | MNIST | 98.12% | Versatile structure for multiple tasks |
| SNN with COM & Attention [11] | Caltech 101 | Outperforms SOTA by ~20% | Hardware-efficient winner-take-all mechanism |

Table 4: Comparing SNN Software Platforms

| Framework | Primary Focus | Key Strengths | Brain Simulation Support |
|---|---|---|---|
| snnTorch [8] | Deep SNNs, Gradient-based Learning | PyTorch integration, user-friendly | Limited |
| BrainCog [13] | Brain-inspired AI & Simulation | Rich cognitive functions, versatile components | Extensive (Multi-scale) |
| NEST [8] [15] | Large-Scale Neuroscience | Optimized for big structural networks | Extensive |
| Brian 2 [8] [15] | Computational Neuroscience | Flexible and easy-to-use model definition | Moderate |

NeuroBench is a benchmark framework for neuromorphic computing algorithms and systems, collaboratively designed by an open community of researchers across industry and academia [1] [3]. It addresses a critical gap in the neuromorphic research field, which currently lacks standardized benchmarks, making it difficult to accurately measure technological advancements, compare performance with conventional methods, and identify promising future research directions [1]. The framework introduces a common set of tools and a systematic methodology for inclusive benchmark measurement, delivering an objective reference framework for quantifying neuromorphic approaches in both hardware-independent (algorithm track) and hardware-dependent (system track) settings [1] [4].

The rapid growth of artificial intelligence (AI) and machine learning (ML) has resulted in increasingly complex and large models, with computation growth rates exceeding efficiency gains from technology scaling [1]. Neuromorphic computing has emerged as a promising approach to address these challenges by leveraging brain-inspired principles to advance computing efficiency and capabilities of AI applications [1] [3]. NeuroBench aims to provide a representative structure for standardizing the evaluation of these neuromorphic approaches, fostering reproducible and comparable research outcomes.

NeuroBench Framework Architecture

Core Structural Components

The NeuroBench framework is structured around two primary evaluation tracks and a modular software architecture that enables comprehensive benchmarking. The framework's design facilitates both algorithm-level and system-level assessments through standardized components.

Table 1: NeuroBench Framework Components

| Component | Description | Primary Function |
|---|---|---|
| Algorithm Track | Hardware-independent evaluation | Measures algorithmic performance and efficiency |
| System Track | Hardware-dependent evaluation | Assesses full system performance including hardware |
| NeuroBench Harness | Open-source Python package | Executes benchmarks and extracts metrics |
| Pre-processors | Data transformation modules | Convert raw data to spike-compatible formats |
| Post-processors | Output processing modules | Combine and interpret spiking outputs |
| Metrics Package | Standardized evaluation metrics | Quantifies performance across multiple dimensions |

The algorithm track focuses on hardware-independent evaluation, allowing researchers to assess neuromorphic algorithms running on conventional hardware like CPUs and GPUs [1]. This approach drives design requirements for next-generation neuromorphic hardware by first exploring algorithms with readily available computing resources. Conversely, the system track encompasses hardware-dependent evaluation, assessing the performance of neuromorphic systems that comprise algorithms deployed to specialized brain-inspired hardware [1].

The Benchmarking Workflow

The NeuroBench framework implements a systematic workflow for benchmarking neuromorphic computing approaches. This workflow ensures consistent evaluation across different algorithms and systems.

Diagram 1: NeuroBench Benchmarking Workflow

The design flow for using the NeuroBench framework follows a structured process [6]. Researchers first train a network using the training split from a particular dataset. The trained network is then wrapped in a NeuroBenchModel to ensure compatibility with the benchmarking system. The evaluation process involves passing the model, evaluation split dataloader, pre-processors, post-processors, and a list of metrics to the Benchmark class and executing the run() method to obtain comprehensive performance evaluations [6].
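The flow described above can be pictured with a small stand-in written in plain Python. This is only a sketch of the pre-process, infer, post-process, measure sequence; it does not import the NeuroBench package, and `run_pipeline`, `threshold_encode`, `toy_model`, `vote`, and `accuracy` are hypothetical names invented for illustration rather than harness APIs.

```python
from typing import Callable, Dict, List, Tuple

Sample = Tuple[List[float], int]  # (features, label)


def threshold_encode(x: List[float]) -> List[int]:
    """Hypothetical pre-processor: emit a spike (1) where a feature exceeds 0.5."""
    return [1 if v > 0.5 else 0 for v in x]


def toy_model(spikes: List[int]) -> List[int]:
    """Hypothetical 'network': two output units, each summing half of the inputs."""
    half = len(spikes) // 2
    return [sum(spikes[:half]), sum(spikes[half:])]


def vote(outputs: List[int]) -> int:
    """Hypothetical post-processor: choose the most active output unit."""
    return max(range(len(outputs)), key=lambda i: outputs[i])


def accuracy(preds: List[int], labels: List[int]) -> float:
    """Fraction of predictions that match the labels."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def run_pipeline(
    model: Callable,
    data: List[Sample],
    preprocessors: List[Callable],
    postprocessors: List[Callable],
    metrics: Dict[str, Callable],
) -> Dict[str, float]:
    """Apply pre-processors, the model, and post-processors, then compute metrics."""
    preds, labels = [], []
    for x, y in data:
        for pre in preprocessors:
            x = pre(x)
        out = model(x)
        for post in postprocessors:
            out = post(out)
        preds.append(out)
        labels.append(y)
    return {name: fn(preds, labels) for name, fn in metrics.items()}


data = [
    ([0.9, 0.8, 0.1, 0.2], 0),
    ([0.1, 0.0, 0.7, 0.9], 1),
    ([0.6, 0.9, 0.6, 0.1], 0),
]
results = run_pipeline(toy_model, data, [threshold_encode], [vote], {"accuracy": accuracy})
print(results)
```

In the real harness the same roles are filled by the wrapped model, the evaluation dataloader, the pre- and post-processor lists, and the metric list passed to the Benchmark class.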

NeuroBench Tools and Implementation

NeuroBench Harness and Software Architecture

The NeuroBench harness is an open-source Python package that allows users to easily run benchmarks and extract relevant metrics [5] [6]. This software infrastructure provides the foundational tools for implementing the NeuroBench methodology in practice.

Table 2: NeuroBench Software Components

| Component | Implementation | Usage |

|---|---|---|

| Installation | PyPI package (pip install neurobench) | Quick deployment and dependency management |

| Development Environment | Poetry-based configuration | Consistent development and deployment environments |

| Model Interface | NeuroBenchModel wrapper | Standardized model integration |

| Pre-processing | Modular data transformation | Spike conversion and data preparation |

| Post-processing | Output aggregation methods | Interpretation of spiking outputs |

| Metrics Calculator | Comprehensive metrics package | Multi-dimensional performance assessment |

The harness is designed with modularity in mind, containing specific sections for benchmarks (including workload metrics and static metrics), datasets, framework support for Torch and SNNTorch models, pre-processing utilities for data conversion to spikes, and post-processors that handle spiking output combination [6]. This modular architecture enables researchers to extend the framework with new benchmarks, metrics, and processing methods while maintaining compatibility with the core evaluation system.

Research Reagent Solutions

The NeuroBench framework provides essential "research reagents" in the form of software tools and methodological components that enable standardized neuromorphic computing research.

Table 3: Essential NeuroBench Research Reagents

| Research Reagent | Function | Implementation Example |

|---|---|---|

| Standardized Datasets | Provides consistent input data for benchmarking | DVS Gesture, Google Speech Commands |

| Pre-processing Modules | Transforms raw data into spike-compatible formats | Data normalization, spike encoding |

| Model Wrapper | Standardizes model interfaces for evaluation | NeuroBenchModel base class |

| Metrics Calculator | Quantifies performance across multiple dimensions | Accuracy, sparsity, efficiency metrics |

| Benchmark Runner | Executes standardized evaluation pipelines | Benchmark.run() method |

| Data Loaders | Handles dataset loading and partitioning | PyTorch DataLoader compatibility |

These research reagents form the essential toolkit for conducting NeuroBench-compliant research, ensuring that different approaches can be fairly compared using consistent evaluation methodologies, datasets, and metrics [6]. The availability of these standardized components significantly reduces the implementation overhead for researchers while ensuring methodological consistency across the field.

Experimental Protocols and Benchmarking Methodology

Defined Benchmarks and Evaluation Tasks

NeuroBench includes multiple standardized benchmarks that cover diverse application domains relevant to neuromorphic computing. These benchmarks are designed to assess different capabilities of neuromorphic algorithms and systems.

Table 4: NeuroBench v1.0 Benchmark Tasks

| Benchmark Task | Domain | Application Context |

|---|---|---|

| Keyword Few-shot Class-incremental Learning (FSCIL) | Incremental learning | Adaptive learning scenarios |

| Event Camera Object Detection | Computer vision | Event-based visual processing |

| Non-human Primate (NHP) Motor Prediction | Motor neuroscience | Brain-machine interfaces |

| Chaotic Function Prediction | Time series analysis | Forecasting and prediction |

| DVS Gesture Recognition | Event-based vision | Gesture recognition from event cameras |

| Google Speech Commands (GSC) Classification | Audio processing | Keyword spotting |

| Neuromorphic Human Activity Recognition (HAR) | Motion analysis | Activity recognition from sensor data |

These benchmarks are carefully selected to represent common application domains for neuromorphic computing while providing diverse challenges that test different aspects of neuromorphic algorithms and systems [6]. The inclusion of both static and temporal tasks ensures comprehensive evaluation of neuromorphic approaches across different data modalities and processing requirements.

Comprehensive Metrics Framework

NeuroBench employs a multi-dimensional metrics framework that evaluates not only task performance but also computational efficiency and neuromorphic characteristics. This comprehensive approach ensures that benchmarks capture the full spectrum of considerations relevant to neuromorphic computing.

Table 5: NeuroBench Metrics Framework

| Metric Category | Specific Metrics | Evaluation Purpose |

|---|---|---|

| Task Performance | Classification Accuracy | Primary task competency |

| Computational Efficiency | Footprint, Synaptic Operations | Resource utilization |

| Sparsity | Connection Sparsity, Activation Sparsity | Neuromorphic characteristics |

| Energy Efficiency | Effective MACs, Effective ACs | Power and energy consumption |

The metrics framework is designed to balance traditional performance measures (like accuracy) with neuromorphic-specific considerations (like sparsity and energy efficiency) [6]. This dual focus ensures that benchmarks reward approaches that successfully leverage neuromorphic principles to achieve improved efficiency without compromising task performance.

Detailed Experimental Protocol

Implementing a complete NeuroBench evaluation requires following a detailed experimental protocol that ensures reproducible and comparable results. The protocol encompasses data preparation, model development, and systematic evaluation.

Diagram 2: NeuroBench Experimental Protocol

The experimental protocol begins with data preparation, where researchers select an appropriate benchmark dataset and apply the standard data splits and pre-processing procedures defined by NeuroBench [6]. This ensures consistent input data across different evaluations. During model development, researchers design and train their neuromorphic models using the training split, then wrap the trained model using the NeuroBenchModel interface. The evaluation phase involves configuring the benchmark with appropriate metrics, executing the benchmark run, and analyzing the comprehensive results across all measured dimensions.

Implementation Examples and Baseline Results

Practical Implementation Examples

NeuroBench provides concrete implementation examples that demonstrate how to use the framework for specific benchmark tasks. These examples serve as practical starting points for researchers implementing their own NeuroBench evaluations.

For the Google Speech Commands (GSC) keyword classification benchmark, NeuroBench offers both artificial neural network (ANN) and spiking neural network (SNN) implementation examples [6]. The ANN benchmark example reports a footprint of 109,228 bytes, connection sparsity of 0.0, classification accuracy of 86.5%, activation sparsity of 38.5%, and synaptic operations measured as 1,728,071 effective MACs [6]. The comparable SNN benchmark shows a different efficiency profile: a footprint of 583,900 bytes, classification accuracy of 85.6%, activation sparsity of 96.7%, and synaptic operations measured as 3,289,834 effective ACs with no MAC operations [6].

These examples highlight the framework's ability to capture meaningful differences between conventional and neuromorphic approaches, particularly in terms of activation sparsity and the types of synaptic operations performed. The higher activation sparsity in the SNN implementation demonstrates a key neuromorphic characteristic that potentially translates to energy efficiency during inference.

Community Adoption and Extension

The NeuroBench framework is designed as a community-driven project that welcomes further development from the neuromorphic research community [6]. The framework maintains contribution guidelines and encourages extensions to features, programming frameworks, metrics, and tasks. This open approach ensures that the benchmark ecosystem evolves alongside the field it aims to measure.

The project is maintained by a collaborative team from industry and academia, with technical contributions from numerous researchers across institutions [6]. This diverse development base helps ensure that the framework addresses the needs of different stakeholders in the neuromorphic computing landscape, from algorithm researchers focused on novel neural models to system engineers developing specialized neuromorphic hardware.

NeuroBench represents a critical step forward for the neuromorphic computing research community by providing a standardized, comprehensive framework for benchmarking algorithms and systems. Through its structured architecture, systematic methodology, and open-source implementation, NeuroBench addresses the pressing need for comparable and reproducible evaluation in this rapidly evolving field. The framework's dual-track approach (algorithm and system), comprehensive metrics, diverse benchmark tasks, and modular software architecture provide researchers with the necessary tools to quantitatively assess and compare neuromorphic approaches while maintaining methodological consistency. As the field continues to advance, NeuroBench is positioned to serve as the foundational benchmarking platform that enables accurate measurement of progress, identification of promising research directions, and fair comparison between different neuromorphic computing approaches.

The field of neuromorphic computing, which aims to advance computing efficiency and capabilities through brain-inspired principles, faces a significant challenge: the absence of fair and widely-adopted objective metrics and benchmarks. This lack of standardization hinders the research community's ability to measure technological advancement, compare novel approaches, and make evidence-based decisions on promising research directions [7]. NeuroBench emerges as a direct response to this challenge, conceived as a benchmark framework for neuromorphic computing algorithms and systems that is collaboratively designed by an open community of researchers across industry and academia [1] [4].

The development model of NeuroBench differs from earlier benchmarking efforts in neuromorphic computing. Unlike previous benchmarks that saw limited adoption due to insufficiently inclusive, actionable, and iterative designs, NeuroBench was created through a collaborative effort of nearly 100 co-authors across over 50 institutions in industry and academia [7]. This scale of collaboration helps the framework provide a representative structure for standardizing the evaluation of neuromorphic approaches while balancing the perspectives and needs of both academic research and industrial application.

NeuroBench Framework Architecture

Dual-Track Benchmarking Approach

NeuroBench implements a sophisticated dual-track architecture designed to accommodate the different development stages and evaluation needs within the neuromorphic computing ecosystem. This structure enables comprehensive assessment across the spectrum from algorithmic exploration to deployed systems [7].

Algorithm Track (Hardware-Independent): This track focuses on evaluating neuromorphic algorithms through simulated execution on conventional hardware such as CPUs and GPUs. The primary goal is to drive design requirements for next-generation neuromorphic hardware by exploring neuroscience-inspired methods that strive toward expanded learning capabilities, including predictive intelligence, data efficiency, and adaptation. This track encompasses approaches such as spiking neural networks (SNNs) and primitives of neuron dynamics, plastic synapses, and heterogeneous network architectures [1] [7].

System Track (Hardware-Dependent): This track evaluates complete neuromorphic systems composed of algorithms deployed to specialized hardware. The focus is on assessing real-world performance characteristics including energy efficiency, real-time processing capabilities, and resilience compared to conventional systems. This track encompasses hardware utilizing biologically-inspired approaches such as analog neuron emulation, event-based computation, non-von-Neumann architectures, and in-memory processing [1] [7].

Community Governance and Evolution Model

NeuroBench is an iterative, community-driven initiative designed to evolve over time so that it stays representative of, and relevant to, neuromorphic research. This evolution model addresses the risk that rapid innovation in neuromorphic computing renders existing standards obsolete [7] [16]. The framework is maintained through ongoing collaboration between industry and academic engineers and researchers, with core maintenance handled by researchers from multiple institutions [6]. The project uses structured versioning so that each release offers a stable basis for performance evaluation while leaving room for later revisions; NeuroBench v1.0 already includes four defined algorithm benchmarks, algorithmic complexity metric definitions, and algorithm baseline results [5].

NeuroBench Algorithm Track Protocol

Benchmark Tasks and Specifications

The NeuroBench algorithm track includes several carefully selected benchmark tasks that represent challenging problems where neuromorphic approaches may show particular promise. These benchmarks are designed to stress-test key capabilities of neuromorphic algorithms while enabling direct comparison with conventional approaches.

Table 1: NeuroBench v1.0 Algorithm Benchmark Tasks

| Benchmark Task | Problem Domain | Key Neuromorphic Relevance |

|---|---|---|

| Few-shot Class-incremental Learning (FSCIL) | Continuous learning with limited data | Data efficiency, adaptive learning without catastrophic forgetting |

| Event Camera Object Detection | Processing event-based vision data | Temporal processing, sparse asynchronous computation |

| Non-human Primate (NHP) Motor Prediction | Neural decoding and motor control | Real-time processing, biological signal processing |

| Chaotic Function Prediction | Temporal sequence forecasting | Temporal dynamics, predictive capability |

Additional algorithm benchmarks available in the framework include DVS Gesture Recognition, Google Speech Commands (GSC) Classification, and Neuromorphic Human Activity Recognition (HAR) [6].

Comprehensive Evaluation Metrics

NeuroBench employs a hierarchical metric definition that captures key performance indicators of interest for neuromorphic computing. These metrics are categorized to provide a multidimensional assessment of algorithm performance.

Table 2: NeuroBench Algorithm Track Evaluation Metrics

| Metric Category | Specific Metrics | Definition and Significance |

|---|---|---|

| Correctness Metrics | Classification Accuracy | Task performance accuracy measuring fundamental capability |

| Complexity Metrics | Footprint | Memory required to represent the model, in bytes (reflects parameters, quantization, and buffers) |

| Complexity Metrics | Connection Sparsity | Proportion of zero-valued connections in the network |

| Complexity Metrics | Activation Sparsity | Proportion of zero activations during inference |

| Complexity Metrics | Synaptic Operations | Effective MACs (multiply-accumulate operations) and ACs (accumulate operations) |

These metrics collectively enable a comprehensive evaluation that captures not only task performance but also computational efficiency and resource utilization characteristics that are particularly relevant for neuromorphic systems [6].
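To make the complexity metrics in the table concrete, the following plain-Python sketch computes them for a single fully connected layer. It is a simplified illustration under stated assumptions, not the harness implementation: the function names are hypothetical, inputs are treated as spikes when every value is -1, 0, or 1, and the harness's own accounting across layers, timesteps, and samples may differ.

```python
from typing import Dict, List


def connection_sparsity(weights: List[List[float]]) -> float:
    """Fraction of weights that are exactly zero."""
    flat = [w for row in weights for w in row]
    return sum(1 for w in flat if w == 0) / len(flat)


def activation_sparsity(activations: List[List[float]]) -> float:
    """Fraction of recorded activations that are zero (one row per timestep or sample)."""
    flat = [a for row in activations for a in row]
    return sum(1 for a in flat if a == 0) / len(flat)


def synaptic_operations(inputs: List[float], weights: List[List[float]]) -> Dict[str, int]:
    """Count operations for one dense layer; weights[i][j] links input i to output j.

    Dense counts every connection. An operation is 'effective' only when both the
    input activation and the weight are non-zero. Effective operations are reported
    as ACs for spike-like inputs and as MACs otherwise (assumption of this sketch).
    """
    dense = 0
    effective = 0
    for a, row in zip(inputs, weights):
        for w in row:
            dense += 1
            if a != 0 and w != 0:
                effective += 1
    spike_like = all(a in (-1, 0, 1) for a in inputs)
    return {
        "Effective_MACs": 0 if spike_like else effective,
        "Effective_ACs": effective if spike_like else 0,
        "Dense": dense,
    }


weights = [[0.5, 0.0], [0.0, -1.2], [0.3, 0.7]]
spikes = [1, 0, 1]           # binary input, counted as accumulates
analog = [0.4, 0.0, 2.0]     # real-valued input, counted as multiply-accumulates

print(connection_sparsity(weights))
print(activation_sparsity([spikes]))
print(synaptic_operations(spikes, weights))
print(synaptic_operations(analog, weights))
```

The same layer yields the same number of effective operations for both inputs here; what changes is whether each one needs a multiplication, which is the distinction the MAC/AC split is meant to expose.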

Experimental Implementation Protocols

Algorithm Benchmarking Workflow

The NeuroBench framework provides a standardized workflow for implementing and evaluating algorithms against the benchmark suite. The structured methodology ensures consistent, comparable results across different research efforts.

Data Preparation and Pre-processing

The benchmark workflow begins with data preparation using the standardized datasets incorporated in the NeuroBench framework. The protocol requires:

- Utilizing the official train/test splits provided for each benchmark task to ensure comparability

- Applying appropriate pre-processing techniques to convert raw data into formats suitable for neuromorphic processing

- For non-spiking native data (e.g., Google Speech Commands), implementing spike conversion encoders such as rate coding, temporal coding, or delta modulation

- Ensuring reproducible data loading through the standardized DataLoader interfaces provided by the framework
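Two of the spike conversion schemes named in the list above, rate coding and delta modulation, can be sketched in a few lines of plain Python. This is a minimal illustration assuming inputs normalized to [0, 1] for rate coding; `rate_encode` and `delta_encode` are hypothetical helpers, not NeuroBench pre-processors.

```python
import random
from typing import List


def rate_encode(values: List[float], steps: int, seed: int = 0) -> List[List[int]]:
    """Bernoulli rate coding: each value in [0, 1] is used as a per-step spike probability.

    Returns one list of 0/1 spikes per timestep. A fixed seed keeps the
    encoding reproducible across runs.
    """
    rng = random.Random(seed)
    return [[1 if rng.random() < v else 0 for v in values] for _ in range(steps)]


def delta_encode(signal: List[float], threshold: float) -> List[int]:
    """Delta modulation: emit +1 or -1 when the signal has moved at least
    `threshold` away from the last reference level, otherwise 0.

    The reference level is updated only when a spike is emitted.
    """
    spikes = []
    ref = signal[0]
    for x in signal[1:]:
        if x - ref >= threshold:
            spikes.append(1)
            ref = x
        elif ref - x >= threshold:
            spikes.append(-1)
            ref = x
        else:
            spikes.append(0)
    return spikes


train = rate_encode([0.0, 1.0, 0.5], steps=20)
print(sum(step[2] for step in train), "spikes in 20 steps for the 0.5 input")
print(delta_encode([0.0, 0.05, 0.3, 0.35, 0.05], threshold=0.2))
```

Rate coding trades latency for robustness because information is spread over many timesteps, whereas delta modulation stays silent while the signal is steady, which tends to produce sparser spike trains.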

Model Training and Validation

Researchers implement their neuromorphic models using supported frameworks (primarily PyTorch and SNNTorch), following these protocol requirements:

- Models must be trained exclusively on the designated training split of each benchmark dataset

- Validation using the designated validation split (where available) for hyperparameter tuning and model selection

- Documentation of all training parameters, including learning rates, optimization algorithms, and training epochs

- Implementation of appropriate regularization techniques specific to neuromorphic models, such as activity regularization to encourage sparsity

NeuroBench Model Integration

The trained model must be wrapped in a NeuroBenchModel interface to ensure standardized evaluation.

This wrapping step ensures consistent model behavior across different implementations and provides the framework with necessary hooks for extracting standardized metrics.
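The idea of a wrapper that exposes a uniform call interface and records what the metrics need can be illustrated with a plain-Python stand-in. The class below is hypothetical and is not the NeuroBench wrapper; it only shows the pattern of delegating to the underlying network while capturing outputs for later measurement.

```python
from typing import Callable, List


class WrappedModel:
    """Hypothetical stand-in for a model wrapper (not the NeuroBench class)."""

    def __init__(self, net: Callable[[List[float]], List[float]]):
        self.net = net
        # Outputs captured on every call so that metrics can inspect them later.
        self.recorded: List[List[float]] = []

    def __call__(self, batch: List[List[float]]) -> List[List[float]]:
        """Run the wrapped network on each sample and record the outputs."""
        outputs = [self.net(x) for x in batch]
        self.recorded.extend(outputs)
        return outputs

    def reset(self) -> None:
        """Clear recorded outputs between evaluation runs."""
        self.recorded.clear()


def shifted_relu(x: List[float]) -> List[float]:
    """Toy network: ReLU applied after subtracting 0.5 from each input."""
    return [max(0.0, v - 0.5) for v in x]


model = WrappedModel(shifted_relu)
out = model([[0.2, 0.9], [0.7, 0.1]])

# Example of a metric reading the recorded outputs: fraction of zero activations.
flat = [a for row in model.recorded for a in row]
zero_fraction = sum(1 for a in flat if a == 0) / len(flat)
print(zero_fraction)
```

Because every model is called through the same interface, the evaluation code never needs to know which framework produced the underlying network.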

Benchmark Execution and Evaluation

The core evaluation phase involves configuring and executing the benchmark using the NeuroBench harness.

The evaluation protocol requires:

- Using the official evaluation split for final metric computation

- Applying standardized pre-processors for consistent input formatting

- Implementing appropriate post-processors for interpreting spiking outputs

- Executing all defined metrics for comprehensive assessment

- Reporting complete results without selective omission
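The last two requirements, running every defined metric and reporting without omission, lend themselves to a simple automated check before results are shared. The sketch below assumes a results dictionary keyed like the GSC example output quoted in this guide; `REQUIRED_METRICS` and `missing_metrics` are hypothetical names, and the required set would differ for other benchmark tasks.

```python
from typing import Dict, List, Sequence

# Metric names taken from the GSC example output; other tasks report other metrics.
REQUIRED_METRICS = (
    "Footprint",
    "ConnectionSparsity",
    "ClassificationAccuracy",
    "ActivationSparsity",
    "SynapticOperations",
)


def missing_metrics(results: Dict[str, object], required: Sequence[str] = REQUIRED_METRICS) -> List[str]:
    """Return the required metric names that are absent from a results dictionary."""
    return [name for name in required if name not in results]


partial = {"ClassificationAccuracy": 0.856, "ActivationSparsity": 0.967}
print(missing_metrics(partial))
```

Running such a check in a reporting script makes selective omission visible early, before numbers reach a paper or a leaderboard submission.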

Research Reagent Solutions and Tools

Successful implementation of NeuroBench algorithm benchmarks requires specific computational tools and frameworks. The following table details the essential components of the research toolkit.

Table 3: Essential Research Reagents and Tools for NeuroBench Implementation

| Tool/Category | Specific Implementation | Function in Research Protocol |

|---|---|---|

| Core Framework | NeuroBench Python Package | Primary benchmark harness providing standardized evaluation infrastructure and metric computation |

| Neuromorphic Libraries | SNNTorch | Provides spiking neural network components, neuron models, and surrogate gradient training capabilities |

| Simulation Platforms | PyTorch | Enables hardware-independent algorithm development and testing on conventional computing resources |

| Data Management | Standardized DataLoaders | Ensures consistent data loading, preprocessing, and train/test split application across different research implementations |

| Model Interfaces | NeuroBenchModel Wrapper | Standardizes model integration into the benchmark framework enabling consistent evaluation across diverse implementations |

| Evaluation Components | Pre-processors and Post-processors | Handles input data formatting and output interpretation consistently across different models and tasks |

Community Collaboration Framework

The NeuroBench project embodies a sophisticated collaboration model that enables effective cooperation across institutional boundaries and between academic and industrial researchers. This framework provides multiple pathways for community participation and contribution.

Contribution Pathways and Protocols

The NeuroBench project has established clear pathways for community contribution across different levels of engagement:

Benchmark Implementation and Results Submission: Researchers can implement existing benchmarks and submit results to the public leaderboards, following the standardized evaluation protocols described under Experimental Implementation Protocols. This requires full disclosure of methodology and complete result reporting.

Framework Development and Extension: Contributors can participate in developing the core NeuroBench harness through the GitHub repository, including adding new features, supporting additional neuromorphic frameworks, or optimizing metric computation [6].

New Benchmark Proposal and Development: The community-driven evolution model allows researchers to propose and develop new benchmark tasks through a structured process involving concept papers, prototype implementations, and community review.

Standardization Working Groups: Participants can join specialized working groups focused on specific aspects of neuromorphic benchmarking, such as metric definition, hardware abstraction interfaces, or domain-specific benchmark development.

Governance and Decision-Making Protocols

The collaborative development of NeuroBench operates under a transparent governance model designed to balance inclusivity with technical rigor:

- Technical decisions are made through consensus-building among active contributors, with special weight given to domain experts in relevant subtopics

- The framework maintains a core maintenance team responsible for coordinating contributions and ensuring framework consistency [6]

- Benchmark inclusion follows an evidence-based protocol requiring demonstration of task relevance, evaluation soundness, and community value

- Regular workshops and community meetings provide forums for strategic planning and technical coordination

This governance approach addresses the challenge of industry fragmentation in neuromorphic computing by bringing together competing organizations and research groups to develop shared understanding of best practices [16].

Impact and Future Directions

The NeuroBench collaborative framework represents a significant advancement in neuromorphic computing research methodology. By providing standardized benchmarks and evaluation protocols, it enables direct comparison of different neuromorphic algorithms on common tasks, accelerating progress in areas like event-based vision, auditory processing, and motor control [16]. The community-driven development model ensures that the framework remains relevant and inclusive as the field evolves.

Future development directions for NeuroBench include expansion of benchmark tasks to encompass emerging application domains, refinement of evaluation metrics to better capture neuromorphic advantages, and enhanced support for various neuromorphic hardware platforms. The ongoing collaboration between industry and academic partners through this framework continues to drive the field toward more rigorous, comparable, and impactful research outcomes.

For researchers interested in contributing to or utilizing NeuroBench, the project website (neurobench.ai) provides current information, while the GitHub repository offers the open-source benchmark harness and detailed documentation for implementation [5] [6].

NeuroBench is a community-driven, open-source benchmark framework designed to standardize the evaluation of neuromorphic computing algorithms and systems [5] [1]. Developed through a collaborative effort of nearly 100 researchers across over 50 institutions in academia and industry, it addresses the critical lack of standardized benchmarks in the neuromorphic computing field [3] [17] [7]. The framework provides a common set of tools and a systematic methodology for fair and representative measurement of neuromorphic approaches, enabling researchers to quantify advancements and compare performance against conventional methods effectively [1] [17]. Its dual-track structure supports both hardware-independent algorithm development and hardware-dependent system implementation, fostering comprehensive progress in brain-inspired computing [7].

The following table summarizes the core official resources for accessing and utilizing the NeuroBench framework.

Table 1: Core NeuroBench Resources for Researchers

| Resource Type | Location/Identifier | Primary Function | Key Contents |

|---|---|---|---|

| Official Website | https://neurobench.ai/ | Central project hub and updates | Preprint links, mailing list signup, high-level project information [5]. |

| Documentation | https://neurobench.readthedocs.io/ | Technical reference and user guide | API overview, installation instructions, getting started tutorials, contributing guidelines [6]. |

| Source Code | https://github.com/neurobench | Code access and development | Benchmark harness, baseline results, system benchmark repositories [18]. |

| Academic Preprint | arXiv:2304.04640 [cs.AI] | Conceptual foundation and specifications | Detailed benchmark definitions, metric descriptions, methodology, and baseline results [3]. |

| Peer-Reviewed Publication | Nature Communications 16, 1545 (2025) | Validated academic reference | Peer-reviewed perspective on the framework's design and its role in the field [1]. |

The NeuroBench Framework Structure

The NeuroBench framework is strategically divided into two parallel tracks to cater to different stages of neuromorphic research and development [7].

Algorithm Track

The Algorithm Track is designed for hardware-independent evaluation of brain-inspired algorithms, primarily Spiking Neural Networks (SNNs) [7]. This allows researchers to benchmark the performance and efficiency of their models on conventional hardware (CPUs/GPUs) before deployment on specialized neuromorphic systems. The track emphasizes key neuromorphic metrics such as activation sparsity and synaptic operations [6].

System Track

The System Track focuses on hardware-dependent benchmarking, assessing the performance of algorithms deployed on physical neuromorphic hardware [5] [7]. This track is crucial for measuring real-world gains in areas like energy efficiency, latency, and throughput, which are key promises of neuromorphic computing [1].

Implementation Protocol: Algorithm Track Workflow

The standard workflow for implementing the NeuroBench algorithm track in a research project follows a structured sequence from data preparation to metric analysis.

Step-by-Step Experimental Protocol

- Environment Setup: Install the NeuroBench package via PyPI using the command `pip install neurobench` [6]. For development, clone the GitHub repository and use `poetry` to manage a consistent virtual environment [6].

- Model and Data Preparation: Train a network using the training split of a NeuroBench-supported dataset. Supported benchmarks include Keyword Few-shot Class-incremental Learning (FSCIL), Event Camera Object Detection, Non-human Primate (NHP) Motor Prediction, and Chaotic Function Prediction, among others [6].

- Framework Integration: Wrap the trained model as a `NeuroBenchModel` to ensure compatibility with the benchmark harness. Define any necessary pre-processors (for data conversion to spikes) and post-processors (for decoding spiking outputs) [6].

- Benchmark Execution: Pass the wrapped model, the evaluation split dataloader, pre/post-processors, and a list of desired metrics to the `Benchmark` class. Execute the evaluation by calling the `run()` method [6].

- Results Analysis: The `run()` method returns a dictionary of results. These metrics can be used for internal analysis or submitted for comparison on the public NeuroBench leaderboards to benchmark against community solutions [6].

Benchmark Tasks and Metrics

NeuroBench provides a suite of tasks and a hierarchical metrics system to ensure comprehensive evaluation of neuromorphic algorithms.

Table 2: Key Benchmark Tasks and Evaluation Metrics in NeuroBench v1.0

| Benchmark Category | Example Tasks | Core Performance Metrics | Core Efficiency Metrics |

|---|---|---|---|

| Classification | Google Speech Commands, DVS Gesture Recognition [6] | Classification Accuracy [6] | Footprint (model memory in bytes), Activation Sparsity [6] |

| Prediction | Non-human Primate Motor Prediction, Chaotic Function Prediction [6] | Mean Square Error (MSE), Pearson Correlation Coefficient | Synaptic Operations (Effective ACs/MACs) [6] |

| Incremental Learning | Keyword Few-shot Class-incremental Learning (FSCIL) [6] | Few-shot learning accuracy, Forgetting | Connection Sparsity [6] |

| Object Detection | Event Camera Object Detection [6] | Average Precision (AP) | Energy consumption (system track) |

Exemplar Experimental Implementation

The following protocol details a specific benchmark example to illustrate a complete experimental workflow.

Protocol: Google Speech Commands (GSC) Classification Benchmark

Objective: To benchmark the performance and efficiency of an ANN and SNN on the Google Speech Commands keyword classification task using NeuroBench.

Research Reagent Solutions:

Table 3: Essential Materials and Resources for GSC Benchmark

| Item | Function/Description | Source/Availability |

|---|---|---|

| Google Speech Commands Dataset | A dataset of one-second audio utterances of 30 keywords, used for simple keyword classification [6]. | Publicly available; automatically downloaded by the example script. |

| NeuroBench Harness (`neurobench`) | The core Python package that provides the benchmarking infrastructure, metrics, and model wrapping utilities [5] [6]. | PyPI (`pip install neurobench`) or GitHub. |

| Example Scripts (`benchmark_ann.py`, `benchmark_snn.py`) | Ready-to-run scripts that demonstrate the complete benchmark workflow for ANN and SNN models on the GSC task [6]. | Located in the /examples/gsc/ directory of the NeuroBench GitHub repository. |

| Pre-processors (included in examples) | Convert raw audio data into a format suitable for the model (e.g., feature vectors for ANN, spike trains for SNN) [6]. | Provided within the NeuroBench examples. |

| Post-processors (included in examples) | Decode the model's output (e.g., spike rates) into a final classification decision [6]. | Provided within the NeuroBench examples. |

Procedure:

- Setup: Open a terminal at the root of the cloned NeuroBench repository; the commands below reference the `examples/gsc` directory relative to that root.

- Run ANN Baseline: Execute `poetry run python examples/gsc/benchmark_ann.py`. This script will download the dataset, run the benchmark on an example Artificial Neural Network (ANN), and print results.

- Run SNN Baseline: Execute `poetry run python examples/gsc/benchmark_snn.py` to benchmark an example Spiking Neural Network (SNN) [6].

- Expected Results:

  - ANN Results: The script should output metrics similar to: `{'Footprint': 109228, 'ConnectionSparsity': 0.0, 'ClassificationAccuracy': 0.865, 'ActivationSparsity': 0.385, 'SynapticOperations': {'Effective_MACs': 1728071.1, 'Effective_ACs': 0.0, 'Dense': 1880256.0}}` [6].

  - SNN Results: The script should output metrics similar to: `{'Footprint': 583900, 'ConnectionSparsity': 0.0, 'ClassificationAccuracy': 0.856, 'ActivationSparsity': 0.967, 'SynapticOperations': {'Effective_MACs': 0.0, 'Effective_ACs': 3289834.3, 'Dense': 29030400.0}}` [6].

Interpretation: This exemplar experiment highlights the trade-offs between ANNs and SNNs. The SNN in this example has a Footprint roughly five times larger than the ANN's (583,900 vs. 109,228) and slightly lower accuracy (85.6% vs. 86.5%), but it achieves much higher Activation Sparsity (96.7% vs. 38.5%), a key neuromorphic efficiency metric. Synaptic Operations are reported as multiply-accumulates (MACs) for the ANN and accumulates (ACs) for the SNN. Because an AC omits the multiplication and is therefore a cheaper operation than a MAC, the two counts should be read with that difference in mind rather than as like-for-like totals [6]. This demonstrates how NeuroBench metrics enable quantitative, multi-faceted analysis of model performance.
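The comparison above can be made concrete with a few lines of plain Python. The sketch below is only an illustration: it copies the two result dictionaries listed in the expected results, and the helper name `executed_fraction` is a hypothetical name introduced here, not part of NeuroBench.

```python
# Result dictionaries copied from the expected ANN and SNN outputs listed above.
ann = {
    "Footprint": 109228, "ConnectionSparsity": 0.0,
    "ClassificationAccuracy": 0.865, "ActivationSparsity": 0.385,
    "SynapticOperations": {"Effective_MACs": 1728071.1, "Effective_ACs": 0.0, "Dense": 1880256.0},
}
snn = {
    "Footprint": 583900, "ConnectionSparsity": 0.0,
    "ClassificationAccuracy": 0.856, "ActivationSparsity": 0.967,
    "SynapticOperations": {"Effective_MACs": 0.0, "Effective_ACs": 3289834.3, "Dense": 29030400.0},
}


def executed_fraction(results):
    """Share of the dense synaptic operations that are actually executed.

    Hypothetical helper for this example: effective MACs and ACs are summed
    and divided by the dense operation count.
    """
    ops = results["SynapticOperations"]
    return (ops["Effective_MACs"] + ops["Effective_ACs"]) / ops["Dense"]


ann_fraction = executed_fraction(ann)
snn_fraction = executed_fraction(snn)
footprint_ratio = snn["Footprint"] / ann["Footprint"]

print(f"ANN executes {ann_fraction:.1%} of its dense operations")
print(f"SNN executes {snn_fraction:.1%} of its dense operations")
print(f"SNN footprint is {footprint_ratio:.2f}x the ANN footprint")
```

Read this way, the sparse SNN skips most of its dense operations while the ANN executes nearly all of them, even though the SNN's absolute operation count is higher.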

Hands-On Implementation: Setting Up and Running NeuroBench Evaluations

NeuroBench is a community-driven, open-source framework designed for benchmarking neuromorphic computing algorithms and systems [6]. It provides a standardized methodology and a common set of tools for the fair and representative evaluation of neuromorphic approaches, ranging from spiking neural networks (SNNs) to neuromorphic hardware [1] [5]. For researchers implementing the NeuroBench algorithm track, this harness offers an objective reference framework for quantifying progress in a hardware-independent setting [3]. This guide provides detailed protocols for installing the NeuroBench Python harness and executing initial benchmark experiments.

System Requirements and Installation

This section outlines the prerequisites and the procedure for installing the NeuroBench package.

Prerequisites

Before installation, ensure your system meets the following requirements:

- Python: Version 3.9 or higher is required [6].

- Package Manager: `pip` is used for installation from PyPI. For development, `poetry` is recommended [6].

- Operating System: The framework is designed to be cross-platform (Windows, Linux, macOS).

Installation Methods

You can install the NeuroBench harness via two primary methods.

Installation from PyPI (Recommended for Users)

For most users who simply wish to run benchmarks, install the package directly from the Python Package Index (PyPI) using pip [6]: `pip install neurobench`.

This command installs the latest stable release of NeuroBench and its core dependencies.

Installation from Source (Recommended for Developers)

For developers interested in contributing to the project or needing access to the latest development version, install directly from the source repository using poetry.

This method is necessary to run the example scripts located in the examples directory [6].

Key Components and Structure

Upon installation, you gain access to the core components of the NeuroBench framework, which are structured as follows [6]:

- `neurobench.benchmarks`: Contains workload metrics and static metrics.

- `neurobench.datasets`: Provides access to neuromorphic benchmark datasets.

- `neurobench.models`: Includes frameworks for Torch and SNNTorch models.

- Pre-processors: Tools for data pre-processing and conversion to spikes.

- Post-processors: Methods for combining and interpreting spiking outputs from models.
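To illustrate what the pre-processor and post-processor roles look like in practice, here is a minimal pure-Python sketch. Both functions, `delta_encode` and `decode_by_spike_count`, are hypothetical stand-ins written for this guide. They are not the processors shipped with NeuroBench, and the encoding scheme (a spike whenever the signal changes by at least a threshold) is just one simple assumption.

```python
def delta_encode(samples, threshold):
    """Toy pre-processor: emit a spike (1) whenever the signal changes by at
    least `threshold` between consecutive samples, otherwise 0."""
    spikes = [0]  # the first sample has no predecessor, so it never spikes
    for previous, current in zip(samples, samples[1:]):
        spikes.append(1 if abs(current - previous) >= threshold else 0)
    return spikes


def decode_by_spike_count(spike_trains):
    """Toy post-processor: pick the output neuron that spiked most often.

    spike_trains[t][c] is 1 if output neuron c spiked at timestep t, else 0.
    Ties are resolved in favour of the lowest class index.
    """
    n_classes = len(spike_trains[0])
    counts = [sum(step[c] for step in spike_trains) for c in range(n_classes)]
    return max(range(n_classes), key=counts.__getitem__)


# Encode a short analogue signal into a spike train.
encoded = delta_encode([0.0, 0.1, 0.6, 0.7, 0.1], threshold=0.4)

# Decode three timesteps of output spikes from a three-class model.
predicted_class = decode_by_spike_count([[0, 1, 0], [0, 1, 1], [1, 1, 0]])

print(encoded, predicted_class)
```

The processors provided with the NeuroBench examples play the same two roles, converting inputs for the model and turning its raw outputs into a decision.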

The NeuroBench Workflow: A Protocol for Algorithmic Benchmarking

The standard workflow for using the NeuroBench framework involves a sequence of steps from model training to metric evaluation. The following diagram illustrates this integrated process.

Step-by-Step Experimental Protocol

Protocol 1: Standard Benchmark Evaluation

- Data Preparation: Obtain the standard benchmark dataset (e.g., Google Speech Commands, DVS Gesture Recognition) [6]. Use the official train/validation/test splits as defined in the NeuroBench benchmarks to ensure comparable results.

- Model Training: Train your network using the training split from the dataset. This can be an Artificial Neural Network (ANN) or a Spiking Neural Network (SNN) implemented in a framework like PyTorch or SNNTorch [6].

- Model Wrapping: Wrap your trained model in a `NeuroBenchModel` object. This provides a standardized interface for the benchmark harness to interact with your model [6].

- Benchmark Setup:

  - Prepare the evaluation split dataloader.

  - Instantiate the necessary pre-processors and post-processors.

  - Select a list of metrics to be evaluated (e.g., footprint, activation sparsity, classification accuracy).

- Benchmark Execution: Pass the wrapped model, dataloader, processors, and metrics list to the `Benchmark` class and call the `run()` method [6].

- Results Analysis: The `run()` method returns a dictionary of results. Compare these results against the baselines provided on the NeuroBench leaderboards [6].
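The shape of this protocol can be sketched without any dependencies. The example below is a toy stand-in that mirrors the steps (wrap a model, set up processors and metrics, call `run()`, read a results dictionary). `ToyModel`, `ToyBenchmark`, and every function in it are hypothetical names invented for illustration, and the real NeuroBench classes and their signatures may differ.

```python
def normalize(features):
    """Toy pre-processor: scale a feature vector so its largest magnitude is 1."""
    peak = max(abs(x) for x in features) or 1.0
    return [x / peak for x in features]


def argmax(scores):
    """Toy post-processor: turn raw output scores into a class index."""
    return max(range(len(scores)), key=scores.__getitem__)


class ToyModel:
    """Hypothetical stand-in for a trained, wrapped model (one linear layer)."""

    def __init__(self, weights):
        self.weights = weights  # one row of weights per output class

    def __call__(self, features):
        return [sum(w * x for w, x in zip(row, features)) for row in self.weights]


def connection_sparsity(model):
    """Static metric: fraction of weights that are exactly zero."""
    flat = [w for row in model.weights for w in row]
    return flat.count(0.0) / len(flat)


class ToyBenchmark:
    """Hypothetical harness: runs the model over the data and collects metrics."""

    def __init__(self, model, data, preprocessors, postprocessors, static_metrics):
        self.model, self.data = model, data
        self.preprocessors, self.postprocessors = preprocessors, postprocessors
        self.static_metrics = static_metrics

    def run(self):
        # Static metrics depend only on the model, not on the data.
        results = {metric.__name__: metric(self.model) for metric in self.static_metrics}
        correct = 0
        for features, label in self.data:
            for pre in self.preprocessors:
                features = pre(features)
            output = self.model(features)
            for post in self.postprocessors:
                output = post(output)
            correct += int(output == label)
        results["classification_accuracy"] = correct / len(self.data)
        return results


model = ToyModel([[1.0, 0.0], [0.0, 1.0]])
data = [([2.0, 1.0], 0), ([0.5, 3.0], 1), ([1.0, 4.0], 1), ([1.0, 5.0], 0)]
results = ToyBenchmark(model, data, [normalize], [argmax], [connection_sparsity]).run()
print(results)
```

Keeping processors and metrics as interchangeable lists is what lets the same harness evaluate different models on the same task without changing the evaluation code.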

Exemplary Experimental Protocols

This section provides detailed, reproducible protocols for running baseline benchmarks included in the NeuroBench repository.

Protocol 2: Google Speech Commands (GSC) Classification Benchmark

Objective: To benchmark the performance and efficiency of a model on the Google Speech Commands keyword classification task [6].

Materials:

- Refer to Table 2 for the required research reagents (software components).

Method:

- Navigate to the examples directory: `cd neurobench/examples/gsc/` [6].

- Run the ANN benchmark baseline script, `benchmark_ann.py`.

- Run the SNN benchmark baseline script, `benchmark_snn.py`.

- The scripts will automatically download the GSC dataset, execute the full NeuroBench workflow, and print the results to the terminal.

Expected Results: The following table summarizes the expected baseline results from the NeuroBench examples [6].

Table 1: Expected Baseline Results for GSC Benchmark

| Metric | ANN Baseline | SNN Baseline |

|---|---|---|

| Footprint | 109,228 | 583,900 |

| Connection Sparsity | 0.0% | 0.0% |

| Classification Accuracy | 86.53% | 85.63% |

| Activation Sparsity | 38.54% | 96.69% |

| Synaptic Operations (Effective MACs) | 1,728,071.17 | 0.0 |

| Synaptic Operations (Effective ACs) | 0.0 | 3,289,834.32 |
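When reproducing these baselines, it can help to check a run against the table automatically. The following is a minimal sketch under stated assumptions: the expected values are the ANN column of the table above, while the `deviations` helper, the 2% relative tolerance, and the two example runs are arbitrary choices made for this illustration.

```python
import math

# ANN baseline values taken from the table above (percentages as fractions).
EXPECTED_ANN = {
    "Footprint": 109228,
    "ConnectionSparsity": 0.0,
    "ClassificationAccuracy": 0.8653,
    "ActivationSparsity": 0.3854,
}


def deviations(measured, expected, rel_tol=0.02):
    """Return the metrics whose measured value differs from the expected
    baseline by more than `rel_tol` (relative), as {name: (measured, expected)}."""
    off = {}
    for name, target in expected.items():
        value = measured[name]
        # abs_tol keeps the comparison meaningful when the target is exactly 0.
        if not math.isclose(value, target, rel_tol=rel_tol, abs_tol=1e-9):
            off[name] = (value, target)
    return off


# Hypothetical run that matches the baseline up to rounding.
matching_run = {"Footprint": 109228, "ConnectionSparsity": 0.0,
                "ClassificationAccuracy": 0.865, "ActivationSparsity": 0.385}

# Hypothetical run whose accuracy has drifted.
drifted_run = dict(matching_run, ClassificationAccuracy=0.80)

print(deviations(matching_run, EXPECTED_ANN))
print(deviations(drifted_run, EXPECTED_ANN))
```

An empty dictionary means every checked metric is within tolerance. Any entry points to a metric worth investigating before comparing against the leaderboard.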

Protocol 3: Extending to Other Benchmark Tasks

NeuroBench includes several other benchmarks suitable for different research foci. The methodology remains consistent across tasks, with changes primarily in the dataset and model architecture.

Table 2: Available NeuroBench v1.0 Benchmarks & Reagents

| Benchmark Task | Domain | Key Metrics | Research Reagents (Software) |

|---|---|---|---|

| Keyword Few-shot Class-incremental Learning (FSCIL) | Audio / Continual Learning | Accuracy, Footprint, Forward Transfer | neurobench.datasets, PyTorch Model |

| Event Camera Object Detection | Event-based Vision | mAP, Synaptic Operations, Activation Sparsity | Event-based Dataloader, Pre-processors |

| Non-human Primate (NHP) Motor Prediction | Biomedical / Time-series | Prediction Accuracy, Energy Efficiency | NHP Dataset, Post-processors |

| Chaotic Function Prediction | Dynamical Systems | Prediction Error, Computational Cost | neurobench.benchmarks |

| DVS Gesture Recognition | Neuromorphic Vision | Classification Accuracy, Activation Sparsity | DVS Gesture Dataset, SNNTorch |

Method:

- Identify the benchmark task from the list above.

- Locate the corresponding example script in the `examples` directory of the NeuroBench repository.

- Follow a protocol similar to Protocol 2, adapting the model architecture and training regimen to the specific task while using the standard NeuroBench evaluation harness.

Metrics and Analysis

NeuroBench evaluates models on a comprehensive set of metrics that go beyond mere task accuracy to capture computational efficiency and resource demands. The logical relationship between the model and the full suite of metrics it is evaluated against is shown below.

The metrics are categorized as follows [6]:

- Static Metrics: Measured independently of input data, such as Footprint (the memory, in bytes, required to represent the model) and Connection Sparsity (the fraction of zero-valued weights).

- Workload Metrics: Dependent on the input data, such as Classification Accuracy, Activation Sparsity (percentage of zeros in activations), and Synaptic Operations (effective multiply-accumulates for ANNs or accumulate operations for SNNs).
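A small worked example may make the workload metrics more tangible. The sketch below computes activation sparsity and synaptic operation counts for a single fully connected layer in plain Python. It rests on an assumption about the counting rule: an operation counts as effective only when both the input activation and the weight are non-zero, and it is labelled an AC when all inputs are binary spikes and a MAC otherwise. The function names are hypothetical, and the NeuroBench implementation may differ in detail.

```python
def activation_sparsity(activations):
    """Fraction of zeros across all recorded activations.

    activations is a list of per-layer (or per-timestep) activation vectors.
    """
    flat = [a for vector in activations for a in vector]
    return sum(1 for a in flat if a == 0) / len(flat)


def synaptic_operations(inputs, weights):
    """Count operations for one fully connected layer on one input vector.

    weights[j][i] connects input i to output neuron j. Dense counts every
    connection; effective counts only pairs where input and weight are non-zero.
    """
    dense = len(weights) * len(inputs)
    effective = sum(
        1 for row in weights for x, w in zip(inputs, row) if x != 0 and w != 0
    )
    # Binary (spike) inputs need only an accumulate; other inputs need a multiply too.
    binary = all(x in (0, 1) for x in inputs)
    kind = "Effective_ACs" if binary else "Effective_MACs"
    return {"Dense": dense, kind: effective}


weights = [[0.5, 0.2, 0.0, -0.3],
           [0.0, 0.1, 0.4, 0.7]]

spike_ops = synaptic_operations([1, 0, 0, 1], weights)           # spiking input
analog_ops = synaptic_operations([0.5, 0.0, 0.0, 2.0], weights)  # analogue input
sparsity = activation_sparsity([[1, 0, 0, 1], [0, 0, 0, 1]])

print(spike_ops, analog_ops, sparsity)
```

Both inputs touch the same three non-zero connections out of eight, but the spiking input is counted in accumulates and the analogue one in multiply-accumulates, which is the distinction the SynapticOperations breakdown reports.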

This multi-faceted evaluation is critical for a holistic understanding of a model's performance and its suitability for deployment on resource-constrained neuromorphic hardware. By following the protocols in this guide, researchers can consistently generate results that are directly comparable to those published on the official NeuroBench leaderboards [6].

NeuroBench is a community-driven, open-source benchmark framework specifically designed to evaluate neuromorphic computing algorithms and systems [1] [3]. The framework addresses a critical gap in the neuromorphic research field, which has historically lacked standardized benchmarks for accurately measuring technological advancements, comparing performance with conventional methods, and identifying promising research directions [1]. The algorithm track operates in a hardware-independent setting, focusing on evaluating algorithms based on both performance and computational efficiency metrics [3]. This standardized approach enables direct comparison between neuromorphic and conventional machine learning approaches, providing an objective reference framework for quantifying advancements in brain-inspired computing [1].

Experimental Setup and Installation

Environment Configuration

The NeuroBench framework is distributed as a Python package through PyPI and can be installed with a single command, `pip install neurobench` [6].

For development purposes or customized implementations, researchers can clone the repository directly from GitHub and use poetry to maintain a consistent virtual environment [6] [19].

This installation approach requires Python ≥3.9 and typically completes within a few minutes [6]. The framework is designed to be compatible with common deep learning libraries, particularly PyTorch and SNNTorch, providing flexibility for researchers working with both artificial and spiking neural networks [6].

Key Research Reagent Solutions

Table 1: Essential Research Components for NeuroBench Implementation

| Component | Type | Function | Implementation Example |

|---|---|---|---|

| NeuroBenchModel | Software Wrapper | Standardizes model interface for benchmarking | Wraps custom PyTorch/SNN models |

| DataLoaders | Data Interface | Provides standardized data loading for benchmarks | Evaluation split dataloader for specific tasks |

| Pre-processors | Data Processor | Handles data transformation and spike conversion | Pre-processing of data, conversion to spikes |

| Post-processors | Output Processor | Combines and interprets model outputs | Methods for combining spiking outputs |

| Metrics | Evaluation Module | Quantifies performance and efficiency | Classification accuracy, synaptic operations |

Core Benchmark Workflow

Comprehensive Workflow Architecture

The NeuroBench benchmark workflow follows a systematic methodology that ensures reproducible and comparable results across different neuromorphic algorithms [1] [3]. The complete process, from dataset preparation to metric extraction, can be visualized through the following workflow:

Dataset Loading and Preparation

NeuroBench provides integrated access to multiple standardized neuromorphic datasets, ensuring consistent evaluation across research efforts [6]. The current framework includes several benchmark tasks:

- Keyword Few-shot Class-incremental Learning (FSCIL): Evaluates continual learning capabilities

- Event Camera Object Detection: Tests performance on event-based vision tasks

- Non-human Primate (NHP) Motor Prediction: Assesses neural decoding performance

- Chaotic Function Prediction: Measures temporal processing capabilities

- DVS Gesture Recognition: Uses dynamic vision sensor data for gesture classification

- Google Speech Commands (GSC) Classification: Tests audio processing capabilities

- Neuromorphic Human Activity Recognition (HAR): Evaluates activity recognition from sensor data [6]

Researchers load datasets using the standardized data loaders provided in the framework, which automatically handle train/test splits and ensure consistent preprocessing across different models [6].

Model Definition and Training

The workflow supports various neuromorphic model architectures, including spiking neural networks (SNNs) and conventional artificial neural networks (ANNs) [1]. Researchers first define their model using their preferred framework (PyTorch or SNNTorch), then train it using the training split of the chosen benchmark dataset [6]. The training process follows standard practices for the specific model type, with the flexibility to incorporate neuromorphic principles such as sparse connectivity, event-based processing, and bio-plausible learning rules [1].

Model Wrapping and Preprocessing