ICA vs PCA for EEG Artifact Removal: A Comprehensive Guide for Biomedical Research

Abstract

Electroencephalogram (EEG) data is notoriously susceptible to contamination from physiological and non-physiological artifacts, posing a significant challenge in neuroscience research and drug development. This article provides a systematic comparison of two predominant blind source separation (BSS) techniques for artifact removal: Independent Component Analysis (ICA) and Principal Component Analysis (PCA). We explore their foundational principles, methodological applications, and comparative performance in isolating and removing artifacts such as ocular, muscle, and cardiac interference. Aimed at researchers and scientists, this review synthesizes current evidence, addresses practical troubleshooting, and discusses the emerging role of hybrid and deep learning methods to guide the selection and optimization of preprocessing pipelines for clean and reliable EEG data analysis.

EEG Artifacts and Blind Source Separation: Unveiling the Core Principles of ICA and PCA

The Critical Challenge of Artifacts in EEG Analysis for Clinical and Research Applications

Electroencephalography (EEG) is a crucial tool in clinical neurology and neuroscience research, providing millisecond-scale temporal resolution for studying brain dynamics. However, a significant challenge persists: EEG signals are exceptionally vulnerable to contamination by artifacts originating from both physiological and non-physiological sources. These unwanted signals can mimic pathological activity, obscure genuine neural phenomena, and ultimately compromise diagnostic validity and research conclusions [1].

Artifacts are broadly categorized as physiological or extrinsic. Physiological artifacts arise from bodily processes, including ocular artifacts from eye blinks and movements, muscle artifacts from head and facial muscle activity, and cardiac artifacts from heart electrical activity or pulse [1]. Extrinsic artifacts stem from environmental interference, instrument noise, or electrode issues [1]. The problem is particularly acute in emerging applications using wearable EEG systems and in mobile brain imaging paradigms, where motion artifacts introduce additional complexity [2] [3].

This article objectively compares the performance of two principal computational approaches for artifact management: Principal Component Analysis (PCA) and Independent Component Analysis (ICA), with additional context on modern hybrid techniques. We summarize published experimental data to guide researchers and clinicians in selecting appropriate methodologies for their specific applications.

Technical Foundations of PCA and ICA

Principal Component Analysis (PCA)

PCA is a multivariate analysis technique based on linear transformation. It decomposes a set of potentially correlated variables into a set of linearly uncorrelated variables called Principal Components (PCs). These components are estimated as projections onto the eigenvectors of the data's covariance or correlation matrix [4].

The primary objective of PCA is dimensionality reduction and noise reduction by retaining the fewest components that account for the most variance in the original data with minimal information loss [4]. In EEG applications, PCA's performance is highly dependent on the pre-normalization method applied to the data [4].
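The dependence on pre-normalization is easy to see on synthetic data. In the sketch below (Python with NumPy; the two-channel signal and its 20× scale difference are hypothetical, not data from [4]), PCA on raw data lets the large-scale channel dominate the first component. PCA after per-channel z-scoring, which is equivalent to PCA on the correlation matrix, weights both channels equally.

```python
import numpy as np

def pca(data):
    """PCA of a (channels x samples) array via eigendecomposition of the covariance.

    Returns eigenvalues in descending order and the matching eigenvectors as columns.
    """
    centered = data - data.mean(axis=1, keepdims=True)
    cov = centered @ centered.T / (centered.shape[1] - 1)
    evals, evecs = np.linalg.eigh(cov)            # eigh returns ascending order
    order = np.argsort(evals)[::-1]
    return evals[order], evecs[:, order]

def zscore(data):
    """Per-channel standardization; PCA on z-scored data equals PCA on the correlation matrix."""
    centered = data - data.mean(axis=1, keepdims=True)
    return centered / centered.std(axis=1, ddof=1, keepdims=True)

rng = np.random.default_rng(0)
shared = rng.standard_normal(5000)
# Two correlated channels; channel 0 is recorded on a 20x larger scale (hypothetical).
data = np.vstack([
    20.0 * (shared + 0.5 * rng.standard_normal(5000)),
    shared + 0.5 * rng.standard_normal(5000),
])

_, vecs_raw = pca(data)
_, vecs_z = pca(zscore(data))
print("raw PC1 loadings:     ", np.round(np.abs(vecs_raw[:, 0]), 3))
print("z-scored PC1 loadings:", np.round(np.abs(vecs_z[:, 0]), 3))
```

The raw first component loads almost entirely on channel 0, whereas the standardized one splits its loading evenly between the two channels.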

Independent Component Analysis (ICA)

ICA extends beyond PCA by separating multivariate signals into statistically independent components. Unlike PCA, which finds uncorrelated components, ICA finds components that are independent, a stronger statistical condition measured using higher-order statistics [4] [5].

ICA operates on the assumption that the recorded EEG signals represent linear mixtures of independent source signals from the brain and artifactual sources. The algorithm searches for a linear transformation that minimizes statistical dependency and mutual information between components [4]. In practice, ICA decomposition often begins with a whitening step using PCA or Singular Value Decomposition (SVD), which decorrelates the channels and scales them to unit variance (and, when low-variance components are discarded, can also suppress noise) before independent sources are identified [4].
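A minimal sketch of PCA whitening, assuming data arranged as a NumPy array of shape (channels, samples) and a hypothetical random mixing matrix: the data are rotated onto the covariance eigenvectors and each component is rescaled to unit variance. The whitened data then have an identity covariance matrix, so the remaining ICA step only has to find a rotation. The optional `n_components` argument (a name chosen for this sketch) marks where rank reduction would enter.

```python
import numpy as np

def pca_whiten(data, n_components=None):
    """Return (whitened, whitening_matrix) for a (channels x samples) array.

    If n_components is given, only the top components are kept, which
    reduces the rank of the data handed to ICA.
    """
    centered = data - data.mean(axis=1, keepdims=True)
    cov = centered @ centered.T / (centered.shape[1] - 1)
    evals, evecs = np.linalg.eigh(cov)
    order = np.argsort(evals)[::-1]                    # sort by decreasing variance
    evals, evecs = evals[order], evecs[:, order]
    if n_components is not None:
        evals, evecs = evals[:n_components], evecs[:, :n_components]
    whitening = np.diag(1.0 / np.sqrt(evals)) @ evecs.T   # (k x channels)
    return whitening @ centered, whitening

rng = np.random.default_rng(1)
mixing = rng.standard_normal((4, 4))          # hypothetical 4-channel mixing
sources = rng.laplace(size=(4, 10000))        # non-Gaussian stand-in sources
eeg = mixing @ sources

white, W_white = pca_whiten(eeg)
cov_white = white @ white.T / (white.shape[1] - 1)
print(np.round(cov_white, 6))                 # identity matrix up to rounding
```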

Table 1: Fundamental Differences Between PCA and ICA

| Feature | Principal Component Analysis (PCA) | Independent Component Analysis (ICA) |
|---|---|---|
| Primary Objective | Variance maximization, dimensionality reduction | Statistical independence maximization, source separation |
| Statistical Basis | 2nd-order statistics (covariance) | Higher-order statistics |
| Component Nature | Orthogonal, uncorrelated | Statistically independent |
| Output Ordering | By explained variance (decreasing) | No inherent ordering |
| Assumptions | Linear correlations exist in data | Sources are statistically independent and non-Gaussian |

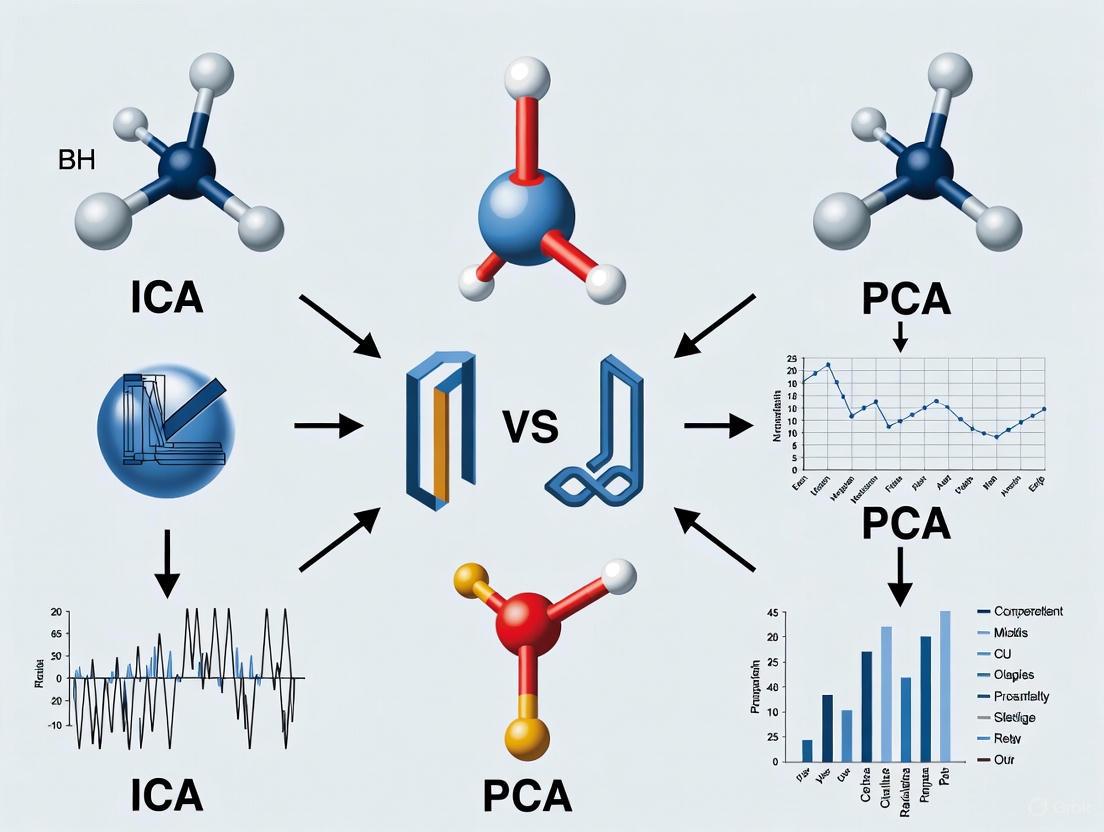

Figure 1: Typical ICA Workflow for EEG Artifact Removal. ICA typically incorporates PCA as a preprocessing whitening step before performing independent component decomposition.

Comparative Performance Analysis: PCA vs. ICA

Signal Separation Efficacy

Direct comparisons in controlled studies reveal fundamental performance differences. In PET imaging research, which shares similar multivariate analysis challenges with EEG, PCA demonstrated superior stability and produced better results than ICA, both qualitatively and quantitatively, in simulated images. PCA effectively extracted signals from noise and was insensitive to noise type, magnitude, and correlation when proper pre-normalization was applied [4].

Conversely, ICA excels in specific artifact separation tasks. ICA has proven particularly effective at isolating stereotyped artifacts including ocular movements (EOG), muscle activity (EMG), cardiac signals (ECG), and channel noise [6]. These artifacts often have statistical properties distinct from cerebral activity, making them good candidates for ICA separation.

Impact of Data Dimensionality Reduction

A critical consideration in ICA implementation is whether to apply preliminary dimension reduction by PCA. Evidence indicates that PCA rank reduction adversely affects subsequent ICA decomposition quality. One study demonstrated that reducing data rank by PCA to retain 95% of original variance decreased the mean number of recovered 'dipolar' independent components from 30 to 10 per dataset and reduced median component stability from 90% to 76% [6].
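To make the 95% criterion concrete, the sketch below counts how many components a PCA rank reduction would keep: the smallest k whose cumulative explained variance reaches the target. The function name and the synthetic per-source variances are hypothetical; the components beyond k are the ones that would be discarded before ICA.

```python
import numpy as np

def n_components_for_variance(data, target=0.95):
    """Smallest number of principal components whose cumulative variance >= target."""
    centered = data - data.mean(axis=1, keepdims=True)
    evals = np.linalg.eigvalsh(centered @ centered.T / (centered.shape[1] - 1))
    evals = np.sort(evals)[::-1]                    # descending variance
    cumulative = np.cumsum(evals) / evals.sum()
    # searchsorted returns the first index where cumulative >= target
    return min(int(np.searchsorted(cumulative, target)) + 1, len(evals))

rng = np.random.default_rng(2)
variances = np.array([70.0, 20.0, 7.0, 2.0, 1.0])   # hypothetical source variances
data = np.sqrt(variances)[:, None] * rng.standard_normal((5, 20000))
k = n_components_for_variance(data, 0.95)
print(k)   # 3 for this synthetic data
```

Here the first two components explain about 90% of the variance and the first three about 97%, so a 95% criterion keeps three of five dimensions.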

Furthermore, PCA rank reduction increased uncertainty in equivalent dipole positions and spectra of neural sources, and decreased the number of subjects represented in component clusters corresponding to specific brain activities [6]. These findings suggest that for EEG data, PCA rank reduction should be avoided or carefully tested before application as an ICA preprocessing step [6].

Table 2: Quantitative Performance Comparison of PCA and ICA for EEG Artifact Removal

| Performance Metric | PCA | ICA | Experimental Context |
|---|---|---|---|
| Stability | High | Variable, sensitive to noise levels | PET simulation study [4] |
| Dipolar Components (mean per dataset) | N/A | 30/set (no PCA reduction) | 72-channel EEG study [6] |
| Dipolar Components (mean per dataset) | N/A | 10/set (with PCA 95% variance) | 72-channel EEG study [6] |
| Component Stability (median) | N/A | 90% (no PCA reduction) | EEG reliability assessment [6] |
| Component Stability (median) | N/A | 76% (with PCA 95% variance) | EEG reliability assessment [6] |
| Motion Artifact Handling | Limited | Improved with specialized preprocessing | Mobile EEG during running [2] |

Advanced Methodologies and Experimental Protocols

Modern Preprocessing Techniques for Mobile EEG

Recent research addresses motion artifacts in ecological settings using advanced preprocessing methods before ICA:

iCanClean: This algorithm uses canonical correlation analysis (CCA) and reference noise signals to detect and correct noise subspaces. It can utilize dual-layer EEG setups where outward-facing noise electrodes provide reference artifact recordings [2] [7]. Optimal parameters determined through parameter sweeps include a 4-second window length and R² threshold of 0.65, which improved good-quality brain components from 8.4 to 13.2 (+57%) [7].
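The core idea described above, finding EEG subspaces that are strongly correlated with a noise reference and subtracting them, can be sketched with plain CCA. This is a simplified, single-window illustration in NumPy, not the published iCanClean implementation: the function name `cca_clean` is hypothetical, the synthetic signals are stand-ins, and the 0.65 default only mirrors the R² criterion quoted above. In practice the procedure would be repeated over sliding windows (e.g., 4 s).

```python
import numpy as np

def _inv_sqrt(cov):
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    evals, evecs = np.linalg.eigh(cov)
    return evecs @ np.diag(1.0 / np.sqrt(evals)) @ evecs.T

def cca_clean(eeg, noise_ref, r2_threshold=0.65):
    """Remove EEG canonical components whose squared canonical correlation with
    the noise reference exceeds r2_threshold (single window, simplified).

    eeg: (channels x samples); noise_ref: (ref_channels x samples).
    Returns the cleaned EEG and the squared canonical correlations.
    """
    x = eeg - eeg.mean(axis=1, keepdims=True)
    y = noise_ref - noise_ref.mean(axis=1, keepdims=True)
    n = x.shape[1]
    cxx, cyy, cxy = x @ x.T / n, y @ y.T / n, x @ y.T / n
    kx, ky = _inv_sqrt(cxx), _inv_sqrt(cyy)
    # SVD of the whitened cross-covariance gives canonical directions and correlations
    u, s, _ = np.linalg.svd(kx @ cxy @ ky, full_matrices=False)
    r2 = s ** 2
    variates = (kx @ u).T @ x                   # EEG canonical variates (k x samples)
    bad = variates[r2 > r2_threshold]
    if bad.shape[0] == 0:
        return eeg.copy(), r2
    # Least-squares regression of the EEG onto the flagged variates, then subtract
    coef = x @ bad.T @ np.linalg.inv(bad @ bad.T)
    return eeg - coef @ bad, r2

rng = np.random.default_rng(3)
n, fs = 4000, 250.0                                     # 16 s at a hypothetical 250 Hz
motion = np.sin(2 * np.pi * 1.5 * np.arange(n) / fs)    # gait-like 1.5 Hz artifact
brain = rng.standard_normal((3, n))                     # stand-in neural sources
eeg = rng.standard_normal((4, 3)) @ brain + np.array([[3.0], [2.0], [1.5], [2.5]]) * motion
noise_ref = (motion + 0.1 * rng.standard_normal(n))[None, :]   # one reference sensor
cleaned, r2 = cca_clean(eeg, noise_ref, r2_threshold=0.65)
print(np.round(r2, 3))
```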

Artifact Subspace Reconstruction (ASR): ASR employs a sliding-window PCA approach to identify and remove high-variance artifacts based on a calibration period [2]. Studies recommend a threshold parameter (k) of 20-30, with lower values producing more aggressive cleaning [2].
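The thresholding idea behind ASR can be sketched in NumPy as below. This is a simplified illustration, not the clean_rawdata implementation: the function name `asr_like_clean` is hypothetical, windows do not overlap, and plain means and standard deviations replace the robust statistics ASR uses. Each window's principal components are compared with the RMS that the calibration data show along the same directions; components above mean + k × std are treated as artifacts, and the window is rebuilt from the retained components through the calibration mixing matrix.

```python
import numpy as np

def _sqrtm_psd(cov):
    """Matrix square root of a symmetric positive semi-definite matrix."""
    evals, evecs = np.linalg.eigh(cov)
    return evecs @ np.diag(np.sqrt(np.clip(evals, 0.0, None))) @ evecs.T

def asr_like_clean(data, calib, k=20.0, win=250):
    """Simplified ASR-style cleaning of a (channels x samples) array.

    calib is assumed to be artifact-free. In each non-overlapping window,
    principal components whose RMS exceeds mean + k * std of the calibration
    RMS along the same direction are dropped, and the window is rebuilt from
    the remaining components through the calibration mixing matrix.
    """
    calib = calib - calib.mean(axis=1, keepdims=True)
    mixing = _sqrtm_psd(calib @ calib.T / calib.shape[1])
    n_cal = calib.shape[1] // win
    cleaned = data.astype(float)                        # copy of the input
    for start in range(0, data.shape[1] - win + 1, win):
        seg = data[:, start:start + win]
        seg_c = seg - seg.mean(axis=1, keepdims=True)
        evals, evecs = np.linalg.eigh(seg_c @ seg_c.T / win)
        # RMS of each window component, measured in every calibration window
        proj = (evecs.T @ calib[:, :n_cal * win]).reshape(len(evals), n_cal, win)
        cal_rms = np.sqrt((proj ** 2).mean(axis=2))
        threshold = cal_rms.mean(axis=1) + k * cal_rms.std(axis=1)
        keep = np.sqrt(np.clip(evals, 0.0, None)) <= threshold
        if keep.all():
            continue
        # Minimum-norm reconstruction from the retained subspace only
        recon = mixing @ np.linalg.pinv(keep[:, None] * (evecs.T @ mixing)) @ evecs.T
        cleaned[:, start:start + win] = recon @ seg
    return cleaned

rng = np.random.default_rng(4)
mix = rng.standard_normal((4, 4))                       # hypothetical channel mixing
calib = mix @ rng.standard_normal((4, 5000))            # clean calibration segment
data = mix @ rng.standard_normal((4, 2500))
burst = np.zeros_like(data)
burst[:, 500:750] = 50.0 * np.array([[1.0], [0.8], [-0.5], [0.3]]) * rng.standard_normal(250)
contaminated = data + burst
cleaned = asr_like_clean(contaminated, calib, k=20.0, win=250)
raw_rms = np.sqrt((contaminated[:, 500:750] ** 2).mean())
clean_rms = np.sqrt((cleaned[:, 500:750] ** 2).mean())
print(f"burst window RMS: {raw_rms:.1f} -> {clean_rms:.1f}")
```

Lowering `k` lowers the per-component threshold and flags more components, which corresponds to the more aggressive cleaning described above.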

Experimental Protocol for Method Comparison

A rigorous comparison between artifact removal approaches for mobile EEG during running employed this methodology:

- Data Acquisition: EEG recorded during dynamic jogging and static standing versions of a Flanker task [2].

- Preprocessing: Compared no preprocessing, ASR, and iCanClean with pseudo-reference noise signals [2].

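- Evaluation Metrics: ICA component dipolarity, spectral power at the gait frequency and its harmonics, and stimulus-locked ERP components such as the P300 [2].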

Results demonstrated that both iCanClean and ASR improved component dipolarity and reduced power at gait frequencies. However, only iCanClean consistently identified the expected P300 amplitude differences between congruent and incongruent flanker conditions during running [2].

Figure 2: Experimental Framework for Comparing Motion Artifact Removal Methods. Modern pipelines evaluate methods based on multiple criteria including component quality, spectral features, and functional neural metrics.

Table 3: Research Reagent Solutions for EEG Artifact Processing

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| EEGLAB | Software Environment | ICA implementation and component visualization | General EEG analysis [5] |
| MNE-Python | Software Library | ICA decomposition with multiple algorithms | Python-based EEG/MEG analysis [8] |
| ICLabel | Automated Classifier | Component classification using neural networks | Identifying brain vs. artifact components [7] |
| iCanClean | Algorithm | Motion artifact removal using CCA | Mobile EEG with motion artifacts [7] |
| Artifact Subspace Reconstruction (ASR) | Algorithm | PCA-based artifact removal | High-amplitude artifact correction [2] |
| Dual-Layer EEG Caps | Hardware | Reference noise recording | Mobile EEG studies [7] |

The critical challenge of artifacts in EEG analysis requires methodological choices informed by empirical evidence. PCA offers stability and effectiveness for general noise reduction, particularly in high-noise environments, and serves as a valuable whitening step for ICA. However, ICA provides superior separation of specific physiological artifacts when sufficient high-quality data is available and dimensionality is preserved.

Emerging approaches like iCanClean and ASR demonstrate that hybrid methods combining reference signals with advanced statistical techniques can address the persistent problem of motion artifacts in mobile settings. Future research directions should include standardized benchmarking across artifact types, development of real-time processing pipelines for clinical applications, and adaptive algorithms that automatically adjust to individual differences in artifact characteristics.

For researchers and clinicians, selection between PCA, ICA, and advanced hybrid methods should be guided by specific application requirements, data quality, and the particular artifact types most prevalent in their recording environments.

Defining Blind Source Separation (BSS) in Signal Processing

Blind Source Separation (BSS) is a computational technique for separating a set of source signals from a mixture of observed signals, with little to no prior knowledge about the source signals or the mixing process. [9] The problem is highly underdetermined, but BSS methods can yield useful solutions under a variety of conditions, making them invaluable in fields ranging from audio processing to biomedical signal analysis. [9] The classical example is the "cocktail party problem," where the goal is to isolate a single speaker's voice from a mixture of multiple conversations. [9]

In the context of electroencephalogram (EEG) artifact removal, BSS is a crucial unsupervised learning technique. [10] The core problem is modeled as:

X(t) = A · S(t) + N [10]

Where X(t) is the vector of observed mixed signals, S(t) is the vector of unknown source signals, A is the unknown mixing matrix, and N represents noise. [10] The objective is to find a demixing matrix W that approximates the inverse of A to recover the original sources: Ŝ(t) = W · X(t). [9] [10]
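A small numerical illustration of this model, with a hypothetical 2 × 2 mixing matrix and N = 0: if A were known, W = A⁻¹ would recover the sources exactly. BSS methods must estimate W from X alone, so the recovered sources are determined only up to scaling and permutation, which the last lines demonstrate.

```python
import numpy as np

t = np.arange(1000) / 250.0                        # 4 s at a hypothetical 250 Hz
sources = np.vstack([
    np.sin(2 * np.pi * 10 * t),                    # alpha-like 10 Hz "neural" source
    np.sign(np.sin(2 * np.pi * 0.5 * t)),          # square-wave "artifact" source
])
A = np.array([[1.0, 0.6],
              [0.4, 1.0]])                         # hypothetical mixing matrix
X = A @ sources                                    # observed channels, noise N = 0

W = np.linalg.inv(A)                               # ideal demixing matrix
S_hat = W @ X
print(np.allclose(S_hat, sources))                 # True

# A permuted, rescaled W yields outputs that are just as independent:
P = np.array([[0.0, 2.0],
              [-0.5, 0.0]])                        # swap the rows and rescale them
S_alt = (P @ W) @ X
print(np.allclose(S_alt[0], 2.0 * sources[1]))     # True
```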

Theoretical Comparison: ICA vs. PCA as BSS Techniques

While both Independent Component Analysis (ICA) and Principal Component Analysis (PCA) are used for BSS, their underlying principles and objectives differ significantly, as outlined in the table below.

Table 1: Fundamental Differences Between PCA and ICA for Blind Source Separation

| Feature | Principal Component Analysis (PCA) | Independent Component Analysis (ICA) |
|---|---|---|
| Core Principle | Orthogonal transformation to decompose correlated variables into spatially orthogonal, uncorrelated components (Principal Components). [10] | Decomposes multivariate data into statistically independent components (ICs) by minimizing mutual information or maximizing non-Gaussianity. [10] |
| Primary Goal | Variance maximization; reduce data dimensionality. [11] | Statistical independence; separate latent sources. [9] [11] |
| Statistical Criterion | Identifies uncorrelated components. [10] | Identifies independent, non-Gaussian components (assumed for sources). [10] |
| Assumptions on Sources | Sources are uncorrelated. | Sources are statistically independent. [10] |
| Limitations in EEG | Limited by frequency band interference and voltage similarity between artifact and neural signal; does not provide a complete solution for artifact removal. [10] | Computationally complex; can suffer from the permutation problem; may introduce signal distortion; manual component selection is often required. [10] |

Experimental Comparison in EEG Artifact Removal

Recent research directly compares the performance of ICA and other BSS-inspired methods for cleaning motion artifacts from EEG data during physical activities like running. The table below summarizes key performance metrics from a 2025 study.

Table 2: Experimental Performance of Artifact Removal Methods in Mobile EEG [2]

| Method | ICA Decomposition Quality (Component Dipolarity) | Power Reduction at Gait Frequency | Recovery of Expected P300 ERP Congruency Effect |
|---|---|---|---|
| ICA alone | Reduced quality due to motion artifact contamination. [2] | Not Applicable (Baseline) | Not Reported |
| ICA + Artifact Subspace Reconstruction (ASR) | Improved recovery of dipolar brain components. [2] | Significant reduction. [2] | Produced ERPs similar to standing task; did not capture the full P300 effect. [2] |
| ICA + iCanClean | Most effective in producing dipolar brain components. [2] | Significant reduction. [2] | Effectively captured the expected P300 congruency effect. [2] |

Detailed Experimental Protocols

The following workflow outlines the methodology used in the aforementioned comparative study on motion artifact removal during running.

EEG Artifact Removal Workflow

1. Data Acquisition: EEG is recorded from participants during dynamic activities (e.g., an adapted Flanker task during jogging) and a static control condition (the same task during standing). [2]

2. Preprocessing & Application of BSS Methods: The recorded EEG is then processed using different methods for comparison: [2]

- ICA-only: The raw data is decomposed via ICA without specific motion artifact preprocessing.

- ICA with Artifact Subspace Reconstruction (ASR): This method uses a sliding-window Principal Component Analysis (PCA) to identify and remove high-variance, high-amplitude artifacts from continuous EEG based on a calibration period. A key parameter is the standard deviation threshold k, with lower values (e.g., 10-30) leading to more aggressive cleaning. [2]

- ICA with iCanClean: This approach leverages canonical correlation analysis (CCA) to detect and subtract noise subspaces from the scalp EEG. It uses either dedicated noise sensors or, more commonly, "pseudo-reference" noise signals created from the raw EEG (e.g., by applying a notch filter below 3 Hz). A critical parameter is the correlation criterion R² (e.g., 0.65), which determines which noise components are subtracted. [2]

3. Performance Evaluation: The cleaned data is evaluated against three key metrics to assess the success of the underlying BSS process (ICA): [2]

- ICA Component Dipolarity: Measures the quality of the ICA decomposition. Physiological brain sources are dipolar, so a higher number of dipolar components indicates a more successful separation. [2]

- Spectral Power at Gait Frequency: A successful method will significantly reduce power at the frequency of the stepping motion and its harmonics. [2]

- Stimulus-locked Event-Related Potential (ERP) Components: The ultimate test is whether neural signatures, like the P300 wave during a cognitive task, can be recovered in the dynamic condition, matching those found in the static, artifact-free condition. [2]
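A minimal sketch of the spectral metric, assuming a single channel, a known sampling rate, and a known step frequency (all hypothetical here); this is not the analysis code used in [2]. It estimates the power spectrum with a Welch-style average of Hann-windowed FFT segments and sums the power in narrow bands around the step frequency and its harmonics. Comparing that value before and after cleaning gives the reduction at gait frequencies.

```python
import numpy as np

def welch_psd(x, fs, nperseg=512):
    """Average periodogram of 50%-overlapping Hann-windowed segments (Welch-style)."""
    step = nperseg // 2
    window = np.hanning(nperseg)
    scale = fs * (window ** 2).sum()
    segments = [x[i:i + nperseg] for i in range(0, len(x) - nperseg + 1, step)]
    spectra = [np.abs(np.fft.rfft(window * (s - s.mean()))) ** 2 / scale for s in segments]
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, np.mean(spectra, axis=0)

def gait_band_power(x, fs, step_hz, n_harmonics=3, half_width=0.5):
    """Sum PSD within +/- half_width Hz of the step frequency and its harmonics."""
    freqs, psd = welch_psd(x, fs)
    mask = np.zeros_like(freqs, dtype=bool)
    for h in range(1, n_harmonics + 1):
        mask |= np.abs(freqs - h * step_hz) <= half_width
    return psd[mask].sum()

fs, step_hz = 250.0, 1.5                           # hypothetical sampling and step rates
t = np.arange(int(60 * fs)) / fs                   # 60 s of data
rng = np.random.default_rng(5)
neural = rng.standard_normal(t.size)               # stand-in for neural activity
gait = 3.0 * np.sin(2 * np.pi * step_hz * t) + 1.0 * np.sin(2 * np.pi * 2 * step_hz * t)
before = gait_band_power(neural + gait, fs, step_hz)
after = gait_band_power(neural + 0.1 * gait, fs, step_hz)   # stand-in for a cleaned signal
print(f"power reduction at gait frequencies: {100 * (1 - after / before):.1f}%")
```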

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Tools and Algorithms for BSS in EEG Research

| Tool/Algorithm | Function in BSS Research |
|---|---|
| Independent Component Analysis (ICA) | The core BSS algorithm for decomposing multichannel EEG into statistically independent sources, enabling the identification and removal of artifactual components. [9] [10] |
| Principal Component Analysis (PCA) | Used for dimensionality reduction and as a preprocessing step for whitening data before ICA. Also the core of ASR for artifact removal. [2] [10] |
| Artifact Subspace Reconstruction (ASR) | An adaptive, data-driven method for removing high-amplitude motion artifacts from continuous EEG in real time, improving the subsequent performance of ICA. [2] |
| iCanClean Algorithm | A method that uses canonical correlation analysis (CCA) to identify and subtract motion artifact subspaces from EEG signals, particularly effective with pseudo-reference or dual-layer sensor noise signals. [2] |
| ICLabel | A classifier (often used as an EEGLAB plugin) that automates the labeling of ICA components as 'brain', 'muscle', 'eye', 'heart', 'line noise', 'channel noise', or 'other', streamlining the component selection process. [2] |
| JADE Algorithm | A specific ICA algorithm (Joint Approximate Diagonalization of Eigenmatrices) used for separating mixed signals, including images and multidimensional data. [9] |
| FastICA Algorithm | A computationally efficient ICA algorithm that maximizes the non-Gaussianity of components to achieve separation. [10] |

Blind Source Separation is a powerful framework for unraveling mixed signals, with ICA being a particularly effective method for the non-trivial problem of EEG artifact removal. While PCA serves as a foundational technique for decorrelation and dimensionality reduction, ICA's pursuit of statistical independence makes it better suited to isolating neural signals from physiologically distinct artifacts. Motion artifacts, however, degrade ICA decompositions on their own, and experimental evidence confirms that ICA's performance is significantly enhanced when combined with advanced preprocessing methods like iCanClean or ASR, which aggressively target motion-based noise. For researchers in drug development and neuroscience, this comparison underscores that a hybrid BSS approach is often essential for achieving the signal fidelity required to study brain dynamics in ecologically valid, real-world settings.

In electroencephalography (EEG) research, the analysis of neural signals is consistently challenged by the presence of various artifacts, unwanted signals originating from non-neural sources. These artifacts can stem from physiological processes like eye movements and muscle activity, or from technical issues such as electrode displacement and environmental interference. Effective artifact removal is therefore a critical preprocessing step to ensure the validity of subsequent neural signal analysis. Within this domain, Principal Component Analysis (PCA) has established itself as a fundamental, variance-based linear algebra technique for dimensionality reduction and artifact mitigation. This guide provides an objective comparative performance analysis of PCA against other prevalent methods, with a specific focus on its application in EEG artifact removal research.

PCA operates on a simple yet powerful principle: it transforms the original, potentially correlated variables (EEG channels or time points) into a new set of linearly uncorrelated variables called principal components. These components are ordered such that the first few retain most of the variation present in the original dataset. Artifact removal is achieved by projecting the data onto this new component space, identifying and discarding components that predominantly represent artifacts, and then reconstructing the signal from the remaining components. This method contrasts with Independent Component Analysis (ICA), which separates signals based on statistical independence rather than variance. The ensuing sections will evaluate PCA's efficacy using quantitative data, detail standard experimental protocols, and situate its performance within the modern EEG preprocessing toolkit.

Theoretical Foundations and Comparative Frameworks

Core Mechanism of PCA

PCA is a statistical procedure that uses an orthogonal transformation to convert a set of observations of possibly correlated variables into a set of values of linearly uncorrelated variables called principal components. This transformation is defined in such a way that:

- The first principal component has the largest possible variance.

- Each succeeding component, in turn, has the highest variance possible under the constraint that it is orthogonal to the preceding components.

The mathematical foundation of PCA lies in the eigenvalue decomposition of the data covariance matrix or singular value decomposition (SVD) of the data matrix itself. The principal components are the eigenvectors of the covariance matrix, and the eigenvalues represent the magnitude of variance captured by each component. In EEG applications, artifacts often manifest as high-variance events, causing them to be captured within the first few principal components, which can then be removed prior to signal reconstruction.
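The equivalence of the two routes can be checked numerically. For mean-centered data X (channels × time points) with SVD X = U S Vᵀ, the covariance XXᵀ/(N−1) has eigenvectors U and eigenvalues S²/(N−1), and the eigenvalues sum to the total channel variance. The sketch below uses random data with unequal channel variances as a stand-in for EEG.

```python
import numpy as np

rng = np.random.default_rng(6)
X = rng.standard_normal((8, 2000)) * np.arange(1, 9)[:, None]   # 8 channels, unequal variance
X = X - X.mean(axis=1, keepdims=True)                            # mean-center each channel
N = X.shape[1]

# Route 1: eigendecomposition of the covariance matrix
evals, evecs = np.linalg.eigh(X @ X.T / (N - 1))
order = np.argsort(evals)[::-1]
evals, evecs = evals[order], evecs[:, order]

# Route 2: SVD of the data matrix (singular values are returned in descending order)
U, S, Vt = np.linalg.svd(X, full_matrices=False)

print(np.allclose(evals, S ** 2 / (N - 1)))                     # True
# Eigenvectors agree up to sign, so compare absolute column-wise dot products
print(np.allclose(np.abs(np.sum(evecs * U, axis=0)), 1.0))      # True
# Eigenvalues partition the total variance across channels
print(np.isclose(evals.sum(), X.var(axis=1, ddof=1).sum()))     # True
```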

PCA vs. ICA: A Fundamental Comparison

While both PCA and ICA are blind source separation techniques, their underlying objectives and mechanisms differ significantly, as outlined in the table below.

Table 1: Fundamental Comparison of PCA and ICA

| Feature | Principal Component Analysis (PCA) | Independent Component Analysis (ICA) |
|---|---|---|
| Separation Criterion | Maximizes explained variance; components are orthogonal and uncorrelated | Maximizes statistical independence; components are non-Gaussian and independent |
| Mathematical Foundation | Eigenvalue decomposition, Singular Value Decomposition | Information-theoretic measures, entropy maximization |
| Component Ordering | Components are ordered by variance explained | No inherent ordering of components |
| Assumptions | Data lies on a linear subspace; Gaussianity is optimal | Source signals are statistically independent and non-Gaussian |
| Primary Strength | Efficient dimensionality reduction; optimal for Gaussian data | Effective separation of non-Gaussian sources (neural signals, artifacts) |
| Typical Artifact Targets | Large-amplitude transient artifacts, electrode pops | Ocular, cardiac, and muscle artifacts |

The selection between PCA and ICA often depends on the specific artifact characteristics and analysis goals. PCA is particularly effective for removing large-amplitude, transient artifacts that dominate the variance in the signal, whereas ICA is often more effective for separating persistent physiological artifacts like eye blinks and muscle activity that have distinct temporal structures but may not always be the highest-variance sources [12].

Performance Evaluation and Quantitative Comparison

Efficacy in Removing Specific Artifact Types

Different artifact removal techniques demonstrate variable performance depending on the nature of the contaminating signal. The following table summarizes quantitative performance metrics for PCA and other common methods across various artifact types.

Table 2: Performance Comparison of Artifact Removal Techniques for Different Artifact Types

| Artifact Type | Method | Key Performance Metrics | Reported Efficacy | Limitations |
|---|---|---|---|---|
| Ocular (EOG) | PCA | Reduces amplitude by ~70-80% in frontal channels | Effective for large-amplitude blinks | Risk of removing neural activity with similar variance [12] |
| | ICA | Component correlation with EOG >0.9 | High accuracy in separating blink components | Requires manual component inspection [12] |
| Muscle (EMG) | PCA | Broadband power reduction in 20-100 Hz range | Moderate efficacy | Less effective for diffuse muscle noise [3] |
| | ICA | Selectively reduces EMG components | Good for focal EMG artifacts | Performance decreases with channel count reduction [3] |
| Motion Artifacts | PCA | Power reduction at gait frequency harmonics | Limited effectiveness | Motion artifacts often non-stationary [2] |
| | ASR | η (artifact reduction): 86% ±4.13, SNR improvement: 20 ±4.47 dB | High effectiveness for motion | Requires calibration data [13] |
| Large Transients | PCA | Near-complete removal of spike artifacts | Excellent for large-amplitude transients | May oversmooth neural signals [12] |

Impact on Signal Integrity and Subsequent Analyses

Beyond mere artifact removal, a critical consideration is how these methods affect the integrity of the underlying neural signals and their impact on downstream analyses.

Table 3: Impact on Signal Integrity and Analysis Outcomes

| Method | Residual Artifact | Neural Signal Preservation | Effect on ERP Decoding | Component Dipolarity |
|---|---|---|---|---|
| PCA | Low for large artifacts | Moderate (risk of neural component removal) | Minor improvement in SNR | Not applicable |
| ICA | Low for physiological artifacts | High when properly classified | Minimal effect on decoding accuracy [14] | High for brain components [2] |
| ASR | Moderate for motion | Variable (depends on threshold) | Limited data available | Improved with optimal k (10-30) [2] |
| iCanClean | Low for motion | High with proper noise reference | Enables P300 detection during running [2] | Superior to ASR in locomotion [2] |

Notably, research has shown that for event-related potential decoding, extensive artifact correction through ICA combined with artifact rejection did not significantly improve support vector machine performance in the vast majority of cases across multiple paradigms including N170, mismatch negativity, N2pc, P3b, and N400 [14]. This suggests that while artifact removal is crucial for minimizing confounds, its impact on multivariate pattern analysis may be less pronounced than previously assumed.

Experimental Protocols and Methodologies

Standardized PCA Workflow for EEG Artifact Removal

The implementation of PCA for EEG artifact removal follows a systematic protocol to ensure reproducible results. The standard workflow for PCA-based artifact removal in EEG processing is as follows:

PCA-Based Artifact Removal Workflow

Step-by-Step Protocol:

Data Preparation and Filtering: Begin with raw EEG data, typically referenced to a common average or mastoid reference. Apply a bandpass filter (e.g., 1-40 Hz) to remove extreme frequency components that might dominate variance calculations. A high-pass filter of 1-2 Hz is particularly important to improve stationarity [12] [15].

Covariance Matrix Calculation: The preprocessed EEG data matrix $X$, of dimensions channels × time points and mean-centered per channel, is used to compute the covariance matrix $\Sigma = \frac{1}{N-1} X X^T$, where $N$ is the number of time points. This matrix captures the variance and covariance relationships between different EEG channels.

Eigenvalue Decomposition: Perform eigenvalue decomposition on the covariance matrix $\Sigma$ to obtain eigenvectors (principal components) and eigenvalues (variance explained by each component). The components are ordered by decreasing eigenvalue magnitude.

Component Selection and Projection: Identify components representing artifacts through various criteria:

- Variance thresholding: Discard components explaining an exceedingly high percentage of total variance, characteristic of large artifacts.

- Visual inspection: Examine component topographies and time courses for patterns typical of known artifacts.

- Automated detection: Use algorithmic approaches to flag components with specific spatial or temporal characteristics matching artifact templates.

Signal Reconstruction: Project the data back to the original sensor space using only the retained components, effectively reconstructing the EEG signal without the artifact-dominated components. The reconstructed data constitutes the cleaned EEG output for subsequent analysis.
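The protocol can be sketched in NumPy as below. Assumptions: the input is already filtered and referenced; component selection uses only the variance-thresholding criterion, with a hypothetical 50% cutoff that is not a recommendation from the cited work; visual inspection and template matching are not shown. The function name and the synthetic blink are illustrative.

```python
import numpy as np

def pca_artifact_removal(eeg, max_var_fraction=0.5):
    """Remove principal components that each explain more than max_var_fraction
    of the total variance, then reconstruct in sensor space.

    eeg: (channels x time points), assumed filtered and referenced.
    Returns (cleaned, removed_component_indices).
    """
    mean = eeg.mean(axis=1, keepdims=True)
    X = eeg - mean                                          # center each channel
    N = X.shape[1]
    cov = X @ X.T / (N - 1)                                 # covariance matrix
    evals, evecs = np.linalg.eigh(cov)                      # eigenvalue decomposition
    order = np.argsort(evals)[::-1]                         # decreasing variance
    evals, evecs = evals[order], evecs[:, order]
    fractions = evals / evals.sum()
    removed = np.flatnonzero(fractions > max_var_fraction)  # variance thresholding
    keep = np.setdiff1d(np.arange(len(evals)), removed)
    scores = evecs[:, keep].T @ X                           # project onto retained PCs
    cleaned = evecs[:, keep] @ scores + mean                # back-project to sensors
    return cleaned, removed

rng = np.random.default_rng(7)
fs = 250
background = rng.standard_normal((6, 10 * fs))              # hypothetical 6-channel background
blink = np.zeros(10 * fs)
blink[1000:1100] = 40.0 * np.hanning(100)                   # one large frontal transient
topography = np.array([1.0, 0.9, 0.5, 0.2, 0.1, 0.05])[:, None]
eeg = background + topography * blink
cleaned, removed = pca_artifact_removal(eeg)
print(removed)                                              # the blink dominates PC 0
```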

Integrated PCA-ICA Protocol for Comprehensive Cleaning

For optimal artifact removal, PCA is often combined with ICA in a sequential processing pipeline. The protocol below, adapted from a standardized EEG preprocessing framework, highlights this integrative approach [12]:

Integrated PCA-ICA Artifact Removal Protocol

This hybrid approach leverages the strengths of both methods:

- ICA is applied first to remove ocular artifacts, which it excels at identifying due to their consistent temporal structure [12].

- PCA is subsequently employed to target large-amplitude, transient artifacts (e.g., from muscle vibrations or electrode movement) that violate stationarity assumptions and may not be cleanly separated by ICA alone [12].

This sequential protocol assigns each artifact class to the method best suited to it: ICA isolates ocular artifacts without distorting neural signals that might share similar variance profiles, while PCA removes the large transient artifacts that ICA may not separate cleanly.

Table 4: Key Software and Analytical Resources for EEG Artifact Removal Research

| Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| EEGLAB | Software toolbox | Interactive MATLAB environment for EEG processing | Standard platform for implementing PCA, ICA, and ASR [2] [12] |
| AMICA Plugin | Algorithm plugin | Adaptive Mixture ICA for enhanced source separation | Alternative to Infomax ICA; includes sample rejection options [15] |
| clean_rawdata | EEGLAB plugin | Automatic artifact removal using ASR | Handles large-amplitude artifacts using PCA-based reconstruction [2] |
| ICLabel | EEGLAB plugin | Automated IC classification | Classifies ICA components as brain, muscle, eye, etc. [2] |
| iCanClean | Standalone algorithm | Motion artifact removal using CCA | Leverages noise references for optimal motion artifact cleaning [2] |

The comparative analysis presented in this guide demonstrates that PCA remains a valuable tool in the EEG preprocessing arsenal, particularly for addressing large-amplitude, transient artifacts that dominate signal variance. Its computational efficiency and straightforward interpretation make it well-suited for initial data cleaning stages and for integration with more complex methods like ICA.

However, PCA's limitations in handling physiological artifacts with complex temporal structures and its potential for removing neural activity sharing variance characteristics with artifacts necessitate a nuanced application. For contemporary EEG research, particularly involving mobile setups and real-world applications, the integration of PCA within a hybrid processing pipeline represents the most effective strategy. Researchers should consider the specific artifact profile of their dataset, the critical neural features of interest, and available computational resources when selecting and parameterizing artifact removal approaches.

Future developments in deep learning-based artifact removal show promise for handling unknown artifacts and adapting to multi-channel contexts [16], but established linear methods like PCA and ICA will continue to provide foundational approaches for ensuring EEG data quality in both clinical and research settings.

Electroencephalography (EEG) provides unparalleled temporal resolution for studying brain dynamics but is notoriously susceptible to contamination by various artifacts. Source separation techniques are fundamental for isolating neural signals from these unwanted interferences. Among the most prominent methods are Independent Component Analysis (ICA) and Principal Component Analysis (PCA), which leverage distinct statistical principles to decompose complex multichannel recordings. ICA separates mixed signals into components that are statistically independent, a property often exhibited by biologically distinct brain and artifact sources [5]. In contrast, PCA separates signals based on orthogonality and variance, identifying components that are uncorrelated and account for the greatest variance in descending order [17] [18]. This guide provides an objective, data-driven comparison of ICA and PCA, focusing on their application in EEG artifact removal for research and clinical applications.

Theoretical Foundations: ICA vs. PCA

The mathematical divergence between ICA and PCA underlies their differing performance in source separation. PCA is a linear dimensionality reduction technique that projects data onto a new set of axes (principal components) that are orthogonal and ranked by the amount of variance they explain from the original dataset [17] [18]. This makes PCA highly effective for data compression and removing correlated noise, but it does not necessarily separate physiologically distinct sources.

ICA, conversely, is a blind source separation (BSS) technique that assumes the observed multichannel EEG data is a linear mixture of underlying source signals. Its objective is to find a demixing matrix that recovers these sources by maximizing their statistical independence—a stronger condition than mere uncorrelation [5]. ICA algorithms achieve this by optimizing higher-order statistics, such as making the distributions of the extracted components as non-Gaussian as possible. This core theoretical difference makes ICA particularly suited for separating sources like brain activity, eye blinks, and muscle noise, which are often generated by physiologically independent processes.

Experimental Protocols for Performance Comparison

Common Validation Methodologies

Researchers typically evaluate the performance of artifact removal algorithms using both simulated and real-world EEG data. Key methodological approaches include:

- Ground-Truth Simulation: Studies often create datasets by adding known artifact templates (e.g., from eye movement or muscle recordings) to clean EEG baseline data. The accuracy of an algorithm is measured by how well it recovers the original, uncontaminated signal [19] [2].

- Recovery of Evoked Responses: In event-related potential (ERP) studies, the efficacy of an artifact removal method is gauged by its ability to recover known ERP components, such as the P300, after the data has been artificially contaminated or recorded in challenging conditions (e.g., during motion) [2].

- Signal Quality Metrics: Quantitative indices like Signal-to-Noise Ratio (SNR), residual variance, and component dipolarity (a measure of how well a component's scalp map can be explained by a single equivalent brain dipole) are standard measures for comparing performance [19] [20] [15].
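The ground-truth simulation and signal-quality metrics above can be sketched in a few lines. This is a minimal illustration, assuming a synthetic single-channel signal (a 10 Hz rhythm plus noise) and a hypothetical Gaussian-shaped blink template; the helpers `snr_db`, `rmse`, and `correlation` are illustrative names, and published studies may define SNR differently (for example, per frequency band).

```python
import numpy as np

def snr_db(clean, estimate):
    """SNR in dB of an estimate relative to the known clean signal."""
    residual = estimate - clean
    return float(10.0 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2)))

def rmse(clean, estimate):
    """Root-mean-square error between the estimate and the clean signal."""
    return float(np.sqrt(np.mean((estimate - clean) ** 2)))

def correlation(clean, estimate):
    """Pearson correlation between the estimate and the clean signal."""
    return float(np.corrcoef(clean.ravel(), estimate.ravel())[0, 1])

# Hypothetical ground-truth simulation: clean "EEG" plus a blink-like template
fs = 250
t = np.arange(1000) / fs                      # 4 s of data
rng = np.random.default_rng(0)
clean = np.sin(2 * np.pi * 10 * t) + 0.3 * rng.standard_normal(t.size)
blink = 5.0 * np.exp(-((t - 2.0) ** 2) / (2 * 0.05 ** 2))  # Gaussian bump centred at 2 s
contaminated = clean + blink
partially_cleaned = clean + 0.1 * blink       # stand-in for a method leaving 10% of the artifact

for name, est in [("contaminated", contaminated), ("partially cleaned", partially_cleaned)]:
    print(name, round(snr_db(clean, est), 1), round(rmse(clean, est), 3), round(correlation(clean, est), 3))
```

Because the residual artifact shrinks tenfold in amplitude, its power drops a hundredfold and the SNR rises by exactly 20 dB.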

Workflow for Method Comparison

The following diagram illustrates a typical experimental workflow for comparing ICA and PCA in an EEG artifact removal pipeline.

Comparative Performance Data

Extensive research has quantified the performance of ICA and PCA across various artifact types and experimental conditions. The tables below summarize key findings from the literature.

Table 1: Overall Performance Comparison of ICA and PCA for Common EEG Artifacts

| Artifact Type | ICA Performance | PCA Performance | Key Supporting Evidence |
|---|---|---|---|
| Ocular (Eye Blinks) | Highly effective; reliably separates and removes blinks without distorting underlying neural signals [21] [5]. | Moderate; can remove high-variance blink artifacts, but often with greater loss of neural signal [21] [20]. | ICA outperformed PCA in separating and removing ocular artifacts [21]. |
| Muscle (EMG) | Effective, particularly with advanced algorithms like AMICA; separates high-frequency muscle noise into distinct components [5] [15]. | Limited; muscle artifacts are often distributed across multiple principal components, making clean removal difficult [17]. | ICA was more effective at isolating EMG artifacts, while PCA risked removing more neural signal [20]. |
| Gradient (EEG-fMRI) | Recently developed ICA-based methods for 3T spiral in-out and EPI sequences outperform PCA, average artifact subtraction (AAS), and TS methods, especially in the theta and alpha bands [19]. | Modest; PCA-based methods show limited efficacy for gradient artifacts compared with these ICA methods [19]. | ICA-based methods recovered ERPs and resting EEG with high SNR (>4) below the beta band, outperforming PCA [19]. |
| Motion (Locomotion) | Robust when combined with preprocessing (e.g., AMICA sample rejection); improves component dipolarity and preserves ERPs during running [2] [15]. | Rarely used as a primary method; artifact subspace reconstruction (ASR), which uses PCA, is effective but may over-clean [2]. | ICA decompositions were significantly improved with automatic sample rejection, even in high-motion environments [15]. |

Table 2: Quantitative Recovery of Neural Signals After Artifact Removal

| Metric / Condition | ICA Results | PCA Results | Experimental Context |
|---|---|---|---|
| Signal-to-Noise Ratio (SNR) in Beta Band | Modest (SNR ~1) [19]. | Modest (SNR ~1) [19]. | Gradient artifact removal during fMRI [19]. |
| Residual Variance in Brain Components | Lower residual variance, indicating cleaner separation of brain and non-brain sources [15]. | Higher residual variance, suggesting less complete separation [18]. | Component analysis after decomposition [18] [15]. |
| P300 ERP Congruency Effect | Recovered effectively during running with iCanClean (a CCA-based method combined with ICA) [2]. | Not specifically reported for PCA; ASR (PCA-based) produced similar ERP latencies but not the amplitude effect [2]. | Flanker task during static standing vs. jogging [2]. |
| Component Dipolarity | More dipolar brain components, indicating a more physiologically plausible separation of brain sources [2] [15]. | Fewer dipolar components, because PCA separates by variance rather than by physiological origin [2]. | Quality assessment of decomposition during locomotion [2]. |

Implementing ICA effectively requires specific tools and considerations. The following table outlines key "research reagents" for conducting ICA in EEG studies.

Table 3: Essential Tools and Considerations for ICA Implementation

| Item | Function / Description | Examples & Notes |
|---|---|---|
| High-Density EEG | Provides sufficient spatial sampling for ICA to resolve independent sources. | 64+ channels are recommended; many studies use 32-256 channels [15] [22]. |
| ICA Algorithms | The computational engine that performs the source separation. | Infomax (runica), AMICA, FastICA, SOBI. AMICA is often among the best-performing for EEG [5] [15]. |
| Component Classification Tools | Automatically labels independent components as brain or artifact. | ICLabel is a standard EEGLAB plugin that uses a trained neural network to classify components [2] [22]. |
| Preprocessing Pipeline | Data preparation steps critical for a successful ICA decomposition. | Includes bad channel removal, high-pass filtering (e.g., 1-2 Hz), and bad segment rejection [5] [15]. |
| Computational Resources | ICA is computationally intensive, scaling with channel count and data length. | High-performance workstations or computer clusters are recommended for large datasets or for algorithms like AMICA [5]. |

Optimization and Advanced Hybrid Techniques

Preprocessing for Optimal ICA

The quality of ICA decomposition is highly dependent on data preprocessing and quality. Research shows that moderate automatic cleaning (e.g., 5-10 iterations of sample rejection within the AMICA algorithm) generally improves decomposition quality across both stationary and mobile EEG protocols [15]. Furthermore, applying a high-pass filter (e.g., 1 Hz or 2 Hz) before ICA computation increases data stationarity and improves component stability, though the resulting demixing matrix can often be successfully applied back to the less aggressively filtered data to preserve low-frequency neural content [22].
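The filter-then-transfer idea can be sketched as follows: the unmixing matrix is estimated on a high-passed copy of the data and then applied to the original recording. This is a minimal sketch under stated assumptions: the FFT brick-wall filter is a simplification (real pipelines use FIR or IIR filters), `unmix_with_filtered_fit` is a hypothetical helper, and `whitening_matrix` is only a placeholder standing in for a real ICA routine such as Infomax or AMICA, which it is not.

```python
import numpy as np

def fft_highpass(X, fs, cutoff_hz):
    """Zero-phase brick-wall high-pass filter via the FFT (illustrative only)."""
    spectrum = np.fft.rfft(X, axis=-1)
    freqs = np.fft.rfftfreq(X.shape[-1], d=1.0 / fs)
    spectrum[..., freqs < cutoff_hz] = 0.0
    return np.fft.irfft(spectrum, n=X.shape[-1], axis=-1)

def whitening_matrix(X):
    """Placeholder unmixing estimator (PCA whitening) standing in for an ICA algorithm."""
    evals, evecs = np.linalg.eigh(np.cov(X))
    return np.diag(evals ** -0.5) @ evecs.T

def unmix_with_filtered_fit(X_raw, fs, estimate_unmixing, cutoff_hz=1.0):
    """Fit the unmixing matrix on high-passed data, then apply it to the unfiltered data."""
    X_fit = fft_highpass(X_raw, fs, cutoff_hz)
    W = estimate_unmixing(X_fit)
    return W, X_fit, W @ X_raw

fs = 250
t = np.arange(2500) / fs                   # 10 s, so 0.2 Hz and 10 Hz fall on exact FFT bins
drift = np.sin(2 * np.pi * 0.2 * t)        # slow drift below the 1 Hz cutoff
alpha = np.sin(2 * np.pi * 10 * t)
rng = np.random.default_rng(0)
S = np.vstack([drift, alpha, rng.standard_normal(t.size), rng.standard_normal(t.size)])
X_raw = rng.standard_normal((3, 4)) @ S    # hypothetical 3-channel mixture of 4 sources
W, X_fit, sources = unmix_with_filtered_fit(X_raw, fs, whitening_matrix)
```

The slow drift is absent from the data used for fitting but still present in `sources`, which is the point of transferring the matrix back to the less aggressively filtered recording.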

Beyond Pure ICA: Hybrid Methods

To overcome the limitations of any single method, researchers have developed powerful hybrid processing techniques:

- ICA-Wavelet Combinations: Wavelet-based thresholding is applied to the time courses of artifact-laden independent components, rather than directly to raw EEG data. This allows for more precise removal of artifact elements within a component while preserving the underlying neural signal [23].

- ICA-EMD Fusion: Empirical Mode Decomposition (EMD) adaptively decomposes a signal into oscillatory modes. These modes can be used to denoise ICA components before signal reconstruction, helping to address the "mode-mixing" problem of EMD [23].

- iCanClean: This method leverages canonical correlation analysis (CCA) to identify and subtract noise subspaces from the EEG that are highly correlated with reference noise signals, either from dedicated sensors or derived from the EEG itself. It has been shown to be highly effective for motion artifact removal during locomotion [2].
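The CCA idea behind iCanClean can be illustrated with a single-window sketch: find linear combinations of the EEG that correlate strongly with the noise references, then regress those combinations out of every channel. This is a simplified illustration, not the published iCanClean algorithm (which, among other things, operates over sliding windows); the function name, the `r2_threshold` value of 0.5, and the simulated references are hypothetical.

```python
import numpy as np

def _inv_sqrt(C):
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    evals, evecs = np.linalg.eigh(C)
    return evecs @ np.diag(evals ** -0.5) @ evecs.T

def cca_noise_subtraction(X, R, r2_threshold=0.5):
    """Remove EEG subspaces whose canonical correlation with noise references is high.

    X: EEG (channels x samples); R: noise references (refs x samples).
    """
    Xc = X - X.mean(axis=1, keepdims=True)
    Rc = R - R.mean(axis=1, keepdims=True)
    T = X.shape[1]
    Cxx, Crr, Cxr = Xc @ Xc.T / T, Rc @ Rc.T / T, Xc @ Rc.T / T
    Kx = _inv_sqrt(Cxx)
    U, rho, _ = np.linalg.svd(Kx @ Cxr @ _inv_sqrt(Crr), full_matrices=False)
    Z = (Kx @ U).T @ Xc                  # EEG-side canonical variates: unit variance, uncorrelated
    bad = rho ** 2 > r2_threshold        # variates sharing too much variance with the references
    beta = Xc @ Z[bad].T / T             # regression of each channel on the flagged variates
    return Xc - beta @ Z[bad], rho

rng = np.random.default_rng(0)
T = 5000
motion = rng.standard_normal(T)
brain = rng.standard_normal((6, 5)) @ rng.standard_normal((5, T))
X = brain + 3.0 * np.outer(np.ones(6), motion)            # motion artifact on all 6 channels
R = np.vstack([motion + 0.1 * rng.standard_normal(T),     # hypothetical noisy motion sensor
               rng.standard_normal(T)])                   # hypothetical unrelated reference
X_clean, rho = cca_noise_subtraction(X, R)
```

Only the first canonical correlation exceeds the threshold here, so a single EEG subspace, essentially the motion time course, is removed from all channels.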

The logical relationship between core and hybrid methods is illustrated below.

The experimental data consistently demonstrate that ICA generally outperforms PCA for removing common physiological artifacts from EEG, particularly when the goal is to preserve the integrity of the underlying neural signal for cognitive or clinical analysis. Its strength lies in its foundation on statistical independence, which aligns well with the properties of biologically distinct sources. PCA, while excellent for dimensionality reduction and for handling certain structured noise, often falls short in cleanly separating brain activity from artifacts such as muscle or eye movement because it relies solely on variance and orthogonality.

The future of EEG artifact removal lies not in a single algorithm, but in systematic pipelines and intelligent hybrid methods. Best practices include using high-density EEG systems, ensuring rigorous data preprocessing, and leveraging advanced ICA algorithms like AMICA combined with automated component classification. For challenging recording environments, such as mobile brain-body imaging or simultaneous EEG-fMRI, hybrid approaches like iCanClean or ICA combined with wavelet denoising show significant promise. As the field moves towards more naturalistic neuroscience, the development and validation of these robust, automated artifact removal strategies will be paramount for generating reliable, high-fidelity neural data.

In electroencephalography (EEG) artifact removal research, the choice of signal separation algorithm can profoundly impact the quality of resultant neural data and the validity of subsequent neuroscientific or clinical conclusions. Two predominant theoretical frameworks employed for this purpose are Principal Component Analysis (PCA) and Independent Component Analysis (ICA). While both are linear transformation techniques, their underlying objectives and theoretical foundations are fundamentally distinct. PCA is grounded in the principle of orthogonality, seeking components that are uncorrelated and that maximize variance in the data. In contrast, ICA is rooted in the principle of statistical independence, a much stronger condition, aiming to separate mixed sources by maximizing the independence of their component distributions, often measured through higher-order statistics like kurtosis [17] [24]. This guide provides a structured, objective comparison of these frameworks, focusing on their application in EEG artifact removal, to aid researchers in selecting and implementing the most appropriate methodology for their specific investigative needs.

Theoretical Foundations: A Comparative Analysis

The core distinction between PCA and ICA lies in their statistical objectives and the type of data structure they seek to exploit. The following table delineates their fundamental theoretical differences.

Table 1: Core Theoretical Differences Between PCA and ICA

| Feature | Principal Component Analysis (PCA) | Independent Component Analysis (ICA) |
|---|---|---|
| Primary Objective | Variance maximization and dimensionality reduction [17] | Blind source separation of mixed signals [17] [25] |
| Component Relation | Uncorrelated (2nd-order statistics) [24] | Statistically independent (higher-order statistics) [24] |
| Statistical Basis | Orthogonality; diagonalizes the covariance matrix [17] | Non-Gaussianity; minimizes mutual information [17] [24] |
| Component Order | Ordered by explained variance (from largest to smallest) | No inherent order; components are not ranked [25] |
| Assumption on Sources | No distributional assumption, but second-order statistics fully characterize only Gaussian data | Requires statistically independent sources, at most one of which may be Gaussian [24] |

The Principle of Orthogonality in PCA

PCA operates by identifying a new set of orthogonal axes (principal components) in the data. The first component aligns with the direction of maximum variance, with each subsequent component capturing the next highest variance under the constraint of orthogonality to all previous components [17]. This process is equivalent to an eigenvalue decomposition of the data's covariance matrix, effectively identifying and ranking the directions of correlation. In the context of EEG, this allows PCA to compress data and isolate major patterns of variance. However, a key limitation is that uncorrelatedness (orthogonality) does not imply independence. Artifacts and neural signals can have complex, higher-order statistical dependencies that PCA, focusing only on second-order correlations, may fail to separate effectively [17] [23].
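The eigendecomposition view, and the gap between uncorrelatedness and independence, can be seen in a small example. This is a minimal sketch, assuming two synthetic independent sources (Laplacian and uniform) and a hypothetical 2 x 2 mixing matrix: the principal components come out exactly uncorrelated, yet each one is still a mixture of both sources.

```python
import numpy as np

def pca(X):
    """PCA via eigendecomposition of the channel covariance matrix.

    X: channels x samples. Returns eigenvalues (descending), eigenvectors (columns), scores.
    """
    Xc = X - X.mean(axis=1, keepdims=True)
    evals, evecs = np.linalg.eigh(np.cov(Xc))
    order = np.argsort(evals)[::-1]              # eigh returns ascending order
    evals, evecs = evals[order], evecs[:, order]
    return evals, evecs, evecs.T @ Xc

rng = np.random.default_rng(1)
T = 10_000
S = np.vstack([rng.laplace(size=T), rng.uniform(-np.sqrt(3), np.sqrt(3), size=T)])
A = np.array([[1.0, 0.6], [0.4, 1.0]])          # hypothetical mixing matrix
evals, evecs, scores = pca(A @ S)

print(np.round(np.corrcoef(scores), 3))             # off-diagonal ~0: PCs are uncorrelated
print(np.round(np.corrcoef(scores, S)[:2, 2:], 2))  # yet each PC correlates with both sources
```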

The Principle of Statistical Independence in ICA

ICA is designed to solve the "blind source separation" problem. It assumes that the observed multichannel EEG signal is a linear mixture of underlying source signals originating from the brain and various artifacts. The goal of ICA is to find a linear transformation that makes the output components (the estimated sources) as statistically independent as possible [25]. Independence is a stricter condition than uncorrelatedness, as it involves the entire probability distribution and relies on higher-order statistics (e.g., kurtosis). This enables ICA to separate sources based on the morphology of their time-course distributions, making it particularly powerful for isolating stereotypical artifacts like eye blinks, muscle activity, and line noise from neural signals, even when their frequency spectra overlap [17] [26].
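Kurtosis is one of the higher-order statistics mentioned above. As a minimal illustration, assuming a synthetic blink-like source built from sparse Hann-shaped deflections on a low-level background, its excess kurtosis is far from the Gaussian value of zero; ICA algorithms exploit this kind of non-Gaussianity, often through smoother contrast functions than raw kurtosis.

```python
import numpy as np

def excess_kurtosis(x):
    """Fourth standardized moment minus 3 (zero for a Gaussian)."""
    z = (x - x.mean()) / x.std()
    return float(np.mean(z ** 4) - 3.0)

rng = np.random.default_rng(2)
T = 20_000
gaussian = rng.standard_normal(T)

# Hypothetical blink-like source: 20 large, brief deflections on a small background
blinks = 0.2 * rng.standard_normal(T)
for onset in rng.choice(T - 100, size=20, replace=False):
    blinks[onset:onset + 100] += 5.0 * np.hanning(100)

print(excess_kurtosis(gaussian), excess_kurtosis(blinks))
```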

Figure 1: A theoretical workflow comparing the decomposition objectives of PCA and ICA when applied to a multichannel EEG signal.

Experimental Protocols in EEG Artifact Removal

The application of PCA and ICA in research follows well-established, yet distinct, experimental protocols. The following workflow generalizes the common steps for both methods in the context of EEG cleaning.

Figure 2: A generalized experimental workflow for artifact removal from EEG using PCA or ICA, highlighting key methodological differences.

Detailed Methodologies

PCA-Based Artifact Removal

After standard EEG preprocessing (filtering, bad channel removal), PCA is applied to the multichannel data. The resulting components are orthogonal and ordered by the amount of variance they explain. Artifacts with high amplitude, such as large eye movements or channel pops, often occupy the first few components due to their high variance. The experimental protocol involves:

- Decomposition: Performing Singular Value Decomposition (SVD) on the data matrix [17].

- Identification: Inspecting the component time courses and topographies. Components with topographies dominated by frontal regions (for ocular artifacts) or exceptionally high variance are flagged as artifacts. A scree plot can aid in identifying components with disproportionate variance [17].

- Removal: Projecting the data back to the sensor space while excluding the components identified as artifacts.
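The decomposition, identification, and removal steps above can be sketched with a single SVD. This is a minimal illustration, assuming that the artifact dominates the top component (the premise, and the risk, of variance-based cleaning), a hypothetical frontal blink topography, and `n_remove` chosen by hand rather than from a scree plot; `pca_artifact_removal` is an illustrative name.

```python
import numpy as np

def pca_artifact_removal(X, n_remove):
    """Drop the n_remove highest-variance principal components and back-project the rest.

    X: channels x samples. Returns the cleaned data and the fraction of variance
    explained by each component (the values a scree plot would show).
    """
    mean = X.mean(axis=1, keepdims=True)
    U, s, Vt = np.linalg.svd(X - mean, full_matrices=False)   # s is sorted in descending order
    explained = s ** 2 / np.sum(s ** 2)
    s_kept = s.copy()
    s_kept[:n_remove] = 0.0                                     # exclude the flagged components
    return U @ np.diag(s_kept) @ Vt + mean, explained

rng = np.random.default_rng(0)
fs, T = 250, 5000
t = np.arange(T) / fs
brain = rng.standard_normal((8, 8)) @ rng.standard_normal((8, T))
blink_tc = 50.0 * np.maximum(0.0, np.sin(2 * np.pi * 0.5 * t)) ** 16   # one sharp peak every 2 s
blink_tc -= blink_tc.mean()                                            # zero-mean, as after high-pass filtering
frontal_topo = np.array([1.0, 0.8, 0.5, 0.2, 0.1, 0.05, 0.0, 0.0])     # hypothetical blink scalp map
X = brain + np.outer(frontal_topo, blink_tc)
X_clean, explained = pca_artifact_removal(X, n_remove=1)
```

The cleaned data are much closer to `brain`, but not identical: whatever brain activity lay along the removed component's direction is discarded with the artifact.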

ICA-Based Artifact Removal

ICA has become a gold standard for artifact removal in EEG. Its protocol focuses more on the statistical properties of the components:

- Decomposition: Using an algorithm (e.g., FastICA, Infomax) to estimate independent components from the preprocessed EEG data [17] [24].

- Identification: This critical step uses component features to classify them as brain or non-brain:

- Automated Classification: Tools like ICLabel employ trained classifiers that use features such as time-course autocorrelation, power spectral density, and topography to label components as brain, eye, muscle, heart, line noise, or channel noise [27] [2].

- Feature-Based Detection: Components can be identified based on known characteristics: ocular artifacts have a frontal scalp map and a high-amplitude, low-frequency time course; muscle artifacts have a high-frequency, broadband spectral profile [28].

- Removal: The artifact components are subtracted from the data, and the remaining components are projected back to create the cleaned EEG signal [28].
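The feature-based detection and removal steps can be sketched as follows, assuming an unmixing matrix `W` is already available. The `flag_ocular` heuristic (frontal-dominant scalp map plus mostly sub-4 Hz power) and all its thresholds are hypothetical; in practice ICLabel or expert inspection would take its place. To keep the example checkable, `W` is taken as the pseudo-inverse of a known mixing matrix, standing in for an ICA decomposition that separated the sources perfectly.

```python
import numpy as np

def remove_components(X, W, bad):
    """Back-project every independent component except those flagged as artifacts.

    X: channels x samples; W: unmixing matrix (components x channels); bad: indices to drop.
    """
    S = W @ X
    A = np.linalg.pinv(W)                  # columns of A are the component scalp maps
    keep = np.setdiff1d(np.arange(W.shape[0]), bad)
    return A[:, keep] @ S[keep]

def flag_ocular(X, W, fs, frontal_idx, topo_ratio=2.0, low_hz=4.0, low_share=0.6):
    """Hypothetical heuristic: frontal-dominant scalp map plus mostly low-frequency power."""
    A = np.linalg.pinv(W)
    S = W @ X
    power = np.abs(np.fft.rfft(S, axis=1)) ** 2
    freqs = np.fft.rfftfreq(S.shape[1], d=1.0 / fs)
    other_idx = np.setdiff1d(np.arange(A.shape[0]), frontal_idx)
    flagged = []
    for k in range(W.shape[0]):
        topo = np.abs(A[:, k])
        is_frontal = topo[frontal_idx].mean() > topo_ratio * topo[other_idx].mean()
        is_slow = power[k, freqs < low_hz].sum() / power[k].sum() > low_share
        if is_frontal and is_slow:
            flagged.append(k)
    return flagged

# Synthetic example: 5 channels (0-1 frontal), 3 sources (blink, 10 Hz rhythm, noise)
fs, T = 250, 5000
t = np.arange(T) / fs
rng = np.random.default_rng(0)
S_true = np.vstack([np.maximum(0.0, np.sin(2 * np.pi * 0.5 * t)) ** 16,
                    np.sin(2 * np.pi * 10 * t),
                    rng.standard_normal(T)])
A_true = np.array([[5.0, 0.2, 0.5],
                   [4.0, 0.2, 0.3],
                   [0.5, 1.0, 0.6],
                   [0.3, 1.0, 0.9],
                   [0.2, 0.8, 0.4]])
X = A_true @ S_true
W = np.linalg.pinv(A_true)               # stand-in for an ICA unmixing matrix
bad = flag_ocular(X, W, fs, frontal_idx=[0, 1])
X_clean = remove_components(X, W, bad)
```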

Performance Comparison: Quantitative and Qualitative Data

The efficacy of PCA and ICA has been quantitatively compared across numerous studies. The following table synthesizes key performance metrics from experimental data.

Table 2: Experimental Performance Comparison in EEG Artifact Removal

| Metric | PCA Performance | ICA Performance | Supporting Evidence |
|---|---|---|---|
| Artifact Separation | Effective for high-variance artifacts (e.g., large eye blinks); struggles with sources that have overlapping variance with neural signals [23]. | Superior for separating biologically independent sources like ocular, muscle, and cardiac artifacts, even with spectral overlap [17] [26]. | A study on depression EEG classification found ICA outperformed PCA in separating artifacts from neural data, leading to better subsequent analysis [23]. |
| Signal Distortion | Risk of removing neural signals that are correlated with, or contribute high variance to, the data. | Lower signal distortion when artifact components are correctly identified, as it targets statistically independent sources [26]. | An adaptive CCA-ICA method better preserved inherent cognitive components in EEGs than other methods, leading to more reliable analysis [26]. |
| Dimensionality Reduction | Excellent for data compression, reducing dimensions while preserving maximum variance. | Not primarily designed for dimensionality reduction, though it can be used as such; focus is on source separation [24]. | PCA is explicitly analyzed from the viewpoint of data compression, while ICA's strength lies in recasting the problem as a decomposition into independent sources [17]. |
| Computational Load | Typically lower; relies on efficient eigenvalue decomposition. | Generally higher; involves iterative optimization to maximize non-Gaussianity or minimize mutual information. | The processing time for PCA is noted to be significantly lower than classical methods like wavelets [17]. |
| Classification Accuracy | Can improve classification but may be less effective than ICA if artifacts are not the dominant source of variance. | Often leads to higher post-cleaning classification accuracy in BCI and diagnostic tasks. | In an emotion classification task, an ICA-based artifact removal method significantly improved accuracy compared to other methods [26]. A depression EEG study also showed higher classification accuracy after ICA denoising [23]. |

Case Study: Motion Artifact Removal in Mobile EEG

A 2025 comparative study on motion artifact removal during overground running provides a concrete example of these methods in a challenging, ecologically valid context [29] [2]. The study evaluated preprocessing approaches by the dipolarity of the resulting ICA components, a key indicator of how well components represent neural sources. The findings showed that preprocessing the data with iCanClean (which uses canonical correlation analysis) and Artifact Subspace Reconstruction (ASR) before ICA led to the recovery of more dipolar brain independent components [2]. This underscores that while ICA is powerful, its performance depends on effective preprocessing, especially in high-motion environments. The study concluded that these methods were effective for reducing motion artifacts and identifying stimulus-locked ERP components during running [29].

The Scientist's Toolkit: Essential Research Reagents and Solutions

For researchers embarking on EEG artifact removal studies, the following tools and software libraries are indispensable.

Table 3: Essential Research Tools for PCA and ICA in EEG Research

| Tool/Solution | Function | Relevant Context |
|---|---|---|
| EEGLAB | An interactive MATLAB toolbox for processing EEG data. It provides a comprehensive framework for importing, preprocessing, and visualizing data, and includes implementations of Infomax ICA and tools for component analysis [2]. | Widely used for ICA-based artifact removal; includes plugins like ICLabel for automated component classification. |
| MNE-Python | An open-source Python package for exploring, visualizing, and analyzing human neurophysiological data. It supports both PCA and ICA for noise reduction [30]. | Commonly used in modern research for its scalability and integration with machine learning workflows. |
| ICLabel | An EEGLAB plugin that automatically classifies independent components into categories (Brain, Muscle, Eye, Heart, Line Noise, Channel Noise, Other) using a trained neural network [2]. | Reduces the subjectivity and time required for manual component inspection, standardizing the ICA process. |
| Artifact Subspace Reconstruction (ASR) | A preprocessing method that uses PCA to identify and remove high-variance, non-stereotypical artifacts in a sliding-window approach [2]. | Often used as a preprocessing step before ICA to improve decomposition quality, especially in mobile EEG studies [2]. |
| iCanClean | An algorithm that leverages reference noise signals (or pseudo-references) and canonical correlation analysis (CCA) to detect and correct motion-artifact subspaces in the EEG data [2]. | Particularly effective for motion artifact removal in mobile brain imaging during walking and running [29] [2]. |
| FastICA Algorithm | A computationally efficient algorithm for ICA that maximizes non-Gaussianity through a fixed-point iteration scheme [24]. | Available in toolboxes like MNE-Python and scikit-learn; popular for its speed and simplicity. |

The comparative analysis reveals that the choice between PCA and ICA is not a matter of one being universally superior, but rather a strategic decision based on the research question and data characteristics. PCA, with its foundation in orthogonality and variance, offers a robust and computationally efficient method for data compression and the removal of high-variance, stereotypical artifacts. However, its reliance on second-order statistics is a limitation when artifacts and neural signals share similar variance profiles. ICA, founded on the more potent principle of statistical independence, excels at blind source separation, making it the preferred choice for isolating and removing complex biological artifacts like those from eyes and muscles. The experimental evidence consistently shows that ICA, particularly when enhanced with modern preprocessing and automated classification tools, provides a more effective pathway to cleaner neural data, which in turn supports more accurate brain-computer interfacing and clinical diagnostics. For contemporary EEG research, especially in dynamic, real-world settings, ICA provides a more powerful and flexible theoretical and practical framework for artifact removal.

From Theory to Practice: A Step-by-Step Guide to Implementing ICA and PCA for Artifact Removal

In electroencephalography (EEG) research, Blind Source Separation (BSS) has become a cornerstone technique for isolating neural signals from various artifacts. The efficacy of BSS methods, notably Independent Component Analysis (ICA) and Principal Component Analysis (PCA), is not inherent but is profoundly dependent on the preprocessing pipeline applied to the raw data. These preprocessing steps—encompassing filtering, data cleaning, and re-referencing—fundamentally shape the quality of the source separation, thereby influencing all subsequent analyses. A recent systematic review underscored that most artifact processing pipelines integrate detection and removal phases but rarely separate their individual impact on final performance metrics [31] [3]. Furthermore, studies demonstrate that preprocessing choices can artificially inflate effect sizes in event-related potentials and bias source localization if not carefully applied [32]. This guide provides an objective comparison of methodologies and their performance, framing the discussion within the broader thesis of comparing ICA and PCA for EEG artifact removal. It is designed to equip researchers and drug development professionals with the empirical evidence needed to construct robust, reproducible preprocessing protocols for effective BSS.

Theoretical Foundations: ICA and PCA in EEG Processing

Core Principles and Assumptions

Independent Component Analysis (ICA) is a statistical and computational technique used to uncover hidden factors, or source signals, from a set of observed mixtures. Its core assumption is that the observed multichannel EEG data matrix $X \in \mathbb{R}^{N \times M}$ (where $N$ is the number of channels and $M$ is the number of samples) is a linear mixture of underlying, statistically independent source signals $S$. This relationship is expressed as $X = AS$, where $A$ is the mixing matrix. The goal of ICA is to find a demixing matrix $W$ (ideally $W = A^{-1}$) such that $S = WX$ yields the independent sources, recovered only up to their order, sign, and scale [15]. ICA's strength in EEG analysis lies in its ability to separate sources based on their statistical independence, making it highly effective for isolating non-Gaussian signals like eye blinks, muscle activity, and, crucially, brain rhythms.
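To make the $X = AS$, $S = WX$ model concrete, the sketch below implements symmetric FastICA with a tanh nonlinearity in plain NumPy, after PCA whitening. It is an illustrative assumption, not the algorithm used in the cited studies (which favor Infomax and AMICA); the three synthetic sources and the mixing matrix are hypothetical, and, like any ICA, the result matches the true sources only up to order, sign, and scale.

```python
import numpy as np

def fastica(X, n_iter=200, tol=1e-8, seed=0):
    """Symmetric FastICA with the tanh (log-cosh) contrast.

    X: channels x samples. Returns the unmixing matrix W (acting on centered data)
    and the estimated sources S = W @ Xc.
    """
    Xc = X - X.mean(axis=1, keepdims=True)
    evals, evecs = np.linalg.eigh(np.cov(Xc))
    K = np.diag(evals ** -0.5) @ evecs.T               # PCA whitening matrix
    Z = K @ Xc
    n, T = Z.shape
    B = np.linalg.qr(np.random.default_rng(seed).standard_normal((n, n)))[0]  # orthogonal start
    for _ in range(n_iter):
        G = np.tanh(B @ Z)
        # fixed-point update: E[z g(b^T z)] - E[g'(b^T z)] b, for every row b of B
        B_new = G @ Z.T / T - np.diag((1.0 - G ** 2).mean(axis=1)) @ B
        # symmetric decorrelation: B <- (B B^T)^(-1/2) B keeps the rows orthonormal
        d, E = np.linalg.eigh(B_new @ B_new.T)
        B_new = E @ np.diag(d ** -0.5) @ E.T @ B_new
        converged = np.max(np.abs(np.abs(np.diag(B_new @ B.T)) - 1.0)) < tol
        B = B_new
        if converged:
            break
    W = B @ K
    return W, W @ Xc

rng = np.random.default_rng(3)
T = 10_000
t = np.arange(T) / 250
S_true = np.vstack([rng.laplace(size=T),                # super-Gaussian source
                    rng.uniform(-1, 1, size=T),         # sub-Gaussian source
                    np.sin(2 * np.pi * 10 * t)])        # 10 Hz oscillation
A = np.array([[1.0, 0.5, 0.3], [0.2, 1.0, 0.6], [0.4, 0.3, 1.0]])  # hypothetical mixing matrix
W, S_est = fastica(A @ S_true)
```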

Principal Component Analysis (PCA), in contrast, is a dimensionality reduction technique that transforms the data to a new coordinate system where the greatest variance lies on the first coordinate (the first principal component), the second greatest variance on the second, and so on. It operates under the assumption that the components are orthogonal (uncorrelated). While PCA is excellent for denoising and reducing data dimensionality by discarding components with the least variance, it is less effective than ICA for artifact removal because neural and artifactual sources often share overlapping variances. Physiological artifacts are not merely "noise" with small variance; they can be high-amplitude signals, and removing high-variance components may also remove critical neural information.

A Workflow for BSS-Based EEG Cleaning

The following diagram illustrates a generalized preprocessing workflow for BSS, highlighting key decision points shared by ICA and PCA methodologies.

Comparative Performance Analysis: ICA vs. PCA

Quantitative Performance Metrics

The table below summarizes key performance metrics for ICA and PCA based on recent experimental studies, particularly in the context of wearable EEG systems.

Table 1: Quantitative Performance Comparison of ICA and PCA for EEG Artifact Removal

| Metric | ICA Performance | PCA Performance | Experimental Context | Source |
|---|---|---|---|---|
| Overall Usage Frequency | Among the most frequent techniques for ocular & muscular artifacts | Less frequently a primary technique | Systematic review of 58 wearable EEG studies | [31] [3] |
| Typical Accuracy | ~71% (when clean signal is reference) | Not prominently reported | Assessment with clean signal as ground truth | [31] |
| Residual Variance | Lower residual variance in brain components post-cleaning | Higher residual variance expected | Quality metric after AMICA decomposition | [15] |
| Impact on Decoding | Can decrease decoding performance if neural signal is removed | Not specifically quantified | Analysis of EEGNet & time-resolved decoding | [33] |
| Dependency on Preprocessing | Highly dependent; quality degrades with motion & improves with cleaning | Not specifically evaluated | Effect of data cleaning on AMICA decomposition | [15] |

Performance in Specific Artifact Domains

The effectiveness of BSS techniques varies significantly depending on the artifact type. Research indicates that ICA is a dominant method for managing specific physiological artifacts. A 2025 systematic review identified that wavelet transforms and ICA are among the most frequently used techniques for managing ocular and muscular artifacts in wearable EEG [31]. In contrast, PCA is less commonly featured as a standalone solution for these specific artifact categories in modern pipelines. ICA's success stems from its ability to separate sources like eye blinks and muscle activity, which often have non-Gaussian distributions and are statistically independent from cortical signals.

However, a critical caveat is that the subtraction of entire artifact components identified by ICA can sometimes remove neural signals alongside artifacts, potentially leading to false positive effects and biased source localization [32]. This has led to the development of more targeted methods, such as the RELAX pipeline, which focuses artifact reduction on specific temporal periods (for eye movements) or frequency bands (for muscle activity) within components, rather than subtracting components wholesale [32].
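The contrast with wholesale subtraction can be illustrated with a deliberately simple sketch: instead of discarding an artifact component everywhere, only the samples around its high-amplitude events are zeroed, and the cleaned component would then be back-projected with the others. This does not reproduce RELAX; the robust z-score rule, the `z_thresh` and `pad` values, and the hard zeroing are illustrative assumptions, and hard zeroing introduces edges that smoother methods avoid.

```python
import numpy as np

def targeted_component_cleaning(s, z_thresh=5.0, pad=25):
    """Zero an artifact component only around its high-amplitude periods.

    s: one component time course. Samples whose robust z-score exceeds z_thresh,
    widened by `pad` samples on each side, are set to zero; all others are kept.
    """
    med = np.median(s)
    mad_sd = 1.4826 * np.median(np.abs(s - med))     # MAD scaled to approximate the SD
    flagged = np.abs(s - med) / mad_sd > z_thresh
    kernel = np.ones(2 * pad + 1)
    mask = np.convolve(flagged.astype(float), kernel, mode="same") > 0
    cleaned = s.copy()
    cleaned[mask] = 0.0
    return cleaned, mask

rng = np.random.default_rng(8)
s = 0.5 * rng.standard_normal(5000)
for onset in (1000, 3000):                           # two hypothetical blink events
    s[onset:onset + 50] += 10.0 * np.hanning(50)
cleaned, mask = targeted_component_cleaning(s)
```

Most of the component survives untouched, so any neural activity that leaked into it outside the flagged periods is retained.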

Experimental Protocols and Methodological Insights

Detailed Protocol: Evaluating Preprocessing on ICA Decomposition

A 2024 study by T. Händel et al. provides a robust protocol for assessing how data cleaning impacts ICA decomposition quality, offering a model for rigorous experimentation [15].

Objective: To systematically investigate the effect of automatic time-domain sample rejection on the quality of the AMICA (Adaptive Mixture ICA) decomposition across datasets with varying levels of participant mobility.

Datasets: Eight open-access EEG datasets from six studies were included, representing a mobility gradient from stationary to Mobile Brain/Body Imaging (MoBI) setups. All datasets used at least 58 EEG channels and had a sampling rate of ≥250 Hz.

Independent Variables:

- Cleaning Intensity: Manipulated via the AMICA algorithm's built-in sample rejection function. The number of rejection iterations and the standard deviation (SD) threshold for rejection were varied.

- Motion Intensity: Datasets were categorized based on the level of participant movement during recording.

Dependent Variables (Measures of Decomposition Quality):

- Mutual Information (MI): Lower MI between components indicates better separation and higher independence.

- Component Classification: The proportion of components classified as 'Brain', 'Muscle', or 'Other' (e.g., using ICLabel).

- Residual Variance: The variance in the data not explained by the brain components after artifact removal.

- Exemplary Signal-to-Noise Ratio (SNR): Calculated to compare a standing condition versus a mobile condition within the same experiment.
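As an illustration of the first dependent variable, a minimal histogram-based estimate of pairwise mutual information between component time courses might look like the following. The binning estimator, the bin count, and the function name `pairwise_mutual_information` are assumptions made for this sketch; the study's own MI computation may differ.

```python
import numpy as np

def pairwise_mutual_information(U, bins=32):
    """Histogram estimate of mutual information (bits) between every pair of
    rows of U (components x samples). Symmetric, zero on the diagonal."""
    k = U.shape[0]
    mi = np.zeros((k, k))
    for i in range(k):
        for j in range(i + 1, k):
            joint, _, _ = np.histogram2d(U[i], U[j], bins=bins)
            p_xy = joint / joint.sum()
            p_x = p_xy.sum(axis=1, keepdims=True)    # marginal of row i
            p_y = p_xy.sum(axis=0, keepdims=True)    # marginal of row j
            nz = p_xy > 0                            # skip empty cells (0 log 0 = 0)
            ratio = p_xy[nz] / (p_x @ p_y)[nz]
            mi[i, j] = mi[j, i] = np.sum(p_xy[nz] * np.log2(ratio))
    return mi


rng = np.random.default_rng(0)
sources = rng.laplace(size=(2, 20000))                   # independent rows
mixed = np.array([[1.0, 0.6], [0.4, 1.0]]) @ sources     # dependent rows
mi_independent = pairwise_mutual_information(sources)[0, 1]
mi_mixed = pairwise_mutual_information(mixed)[0, 1]
```

Well-separated components should give values near the small positive bias of the estimator, as for `mi_independent`; residual mixing shows up as clearly larger values.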

Procedure:

- Data Preparation: Data were high-pass filtered at 1 Hz and bad channels were interpolated.

- AMICA Decomposition: AMICA was run on each dataset with different cleaning strengths (e.g., 0 to 15 rejection iterations, with SD thresholds of 3 or 5).

- Quality Assessment: The dependent variables were computed for each resulting decomposition.

- Statistical Analysis: Linear mixed models were used to analyze the effects of cleaning and mobility on decomposition quality.

Key Finding: While increased movement decreased decomposition quality within individual studies, the AMICA algorithm was robust to limited data cleaning. Cleaning strength significantly improved decomposition, but the effect was smaller than expected. The study concluded that moderate cleaning (5-10 iterations of AMICA sample rejection) is sufficient to improve most datasets, regardless of motion intensity [15].
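The iterative, SD-based rejection scheme varied in this protocol can be sketched in a few lines. This is a hypothetical, simplified illustration: AMICA's rejection operates on per-sample log-likelihoods and retrains its model between passes, whereas here a fixed array of scores stands in for those likelihoods, and `iterative_sd_rejection` is not an AMICA function.

```python
import numpy as np

def iterative_sd_rejection(scores, n_iter=5, sd_thresh=3.0):
    """Iteratively flag samples whose score lies more than `sd_thresh`
    standard deviations below the mean of the currently kept samples.

    `scores` stands in for a per-sample model fit (e.g. a log-likelihood);
    unlike AMICA, nothing is re-fitted between passes.
    Returns a boolean mask of kept samples."""
    keep = np.ones(scores.shape[0], dtype=bool)
    for _ in range(n_iter):
        mu = scores[keep].mean()
        sd = scores[keep].std()
        newly_bad = keep & (scores < mu - sd_thresh * sd)
        if not newly_bad.any():          # nothing left to reject: stop early
            break
        keep &= ~newly_bad
    return keep


rng = np.random.default_rng(1)
scores = rng.normal(0.0, 1.0, 5000)
scores[:20] = -15.0                      # simulated artifact samples with poor fit
keep = iterative_sd_rejection(scores, n_iter=10, sd_thresh=3.0)
```

Each pass recomputes the mean and SD on the surviving samples, so later passes can catch samples that were masked by gross outliers in the first pass.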

Protocol: A Modular Pipeline Comparison

A 2025 study by T. D. Almeida et al. took a modular approach to evaluate preprocessing choices, providing a framework for objective comparison [34].

Objective: To quantify the effect of different pre-processing strategies on EEG signal quality and analysis outcomes using quantitative metrics.

Experimental Design: The researchers constructed a modular pipeline and varied methods at key stages:

- Artifact Removal: Two ICA algorithms (SOBI and Extended Infomax) were compared.

- Re-referencing: Four methods were tested: Common Averaged Reference (CAR), robust CAR (rCAR), Reference Electrode Standardization Technique (REST), and Reference Electrode Standardization and Interpolation Technique (RESIT).
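Of the four re-referencing schemes, CAR is simple enough to show directly. The sketch below implements CAR and, for contrast, a median-based reference as one simple robust alternative; the median variant and both function names are assumptions for illustration, and the median is not necessarily the rCAR procedure used in the study. REST and RESIT require a head model and are not sketched.

```python
import numpy as np

def common_average_reference(X):
    """CAR: subtract the instantaneous mean across channels (rows of X)."""
    return X - X.mean(axis=0, keepdims=True)

def median_average_reference(X):
    """Illustrative robust variant: subtract the instantaneous channel median,
    so a single extreme channel has little influence on the reference."""
    return X - np.median(X, axis=0, keepdims=True)


rng = np.random.default_rng(2)
X = rng.normal(size=(8, 1000))           # 8 hypothetical channels
X[0] += 50.0                             # one channel with a large artifact offset
car = common_average_reference(X)
mar = median_average_reference(X)
```

With CAR, the artifact on channel 0 leaks into every other channel through the mean (about 50/8 here), which the median-based reference largely avoids.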

Evaluation Metrics: The impact of these pipelines was quantified using Event-Related Spectral Perturbation (ERSP) of the sensorimotor rhythm in an Action Observation and Motor Imagery protocol.
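For readers unfamiliar with ERSP, the sketch below computes a basic version: trial-averaged sliding-window (Hann-tapered FFT) power expressed in dB relative to the mean pre-event baseline. It is a simplified stand-in, not EEGLAB's `newtimef`; the function name, window length, hop, and the synthetic 10 Hz "sensorimotor rhythm" that weakens after the event are all assumptions chosen to show event-related desynchronization as a negative ERSP.

```python
import numpy as np

def ersp_db(trials, fs, win_len, step, baseline_end):
    """Minimal ERSP: Hann-windowed FFT power averaged over trials, in dB
    relative to the mean power of windows lying entirely before the event.

    trials       : (n_trials, n_samples); the event occurs at sample `baseline_end`
    win_len, step: window length and hop, in samples
    Returns freqs, window centres (samples) and ersp of shape (n_freqs, n_windows)."""
    n_trials, n_samples = trials.shape
    starts = np.arange(0, n_samples - win_len + 1, step)
    taper = np.hanning(win_len)
    segs = np.stack([trials[:, s:s + win_len] for s in starts], axis=1) * taper
    power = np.abs(np.fft.rfft(segs, axis=-1)) ** 2     # (trials, windows, freqs)
    mean_power = power.mean(axis=0).T                    # (freqs, windows)
    base = (starts + win_len) <= baseline_end            # fully pre-event windows
    baseline = mean_power[:, base].mean(axis=1, keepdims=True)
    freqs = np.fft.rfftfreq(win_len, d=1.0 / fs)
    return freqs, starts + win_len // 2, 10.0 * np.log10(mean_power / baseline)


fs, win, step = 250, 125, 25
n_trials, n_samples, onset = 30, 3 * fs, fs               # 1 s baseline, 2 s post-event
rng = np.random.default_rng(3)
t = np.arange(n_samples) / fs
amp = np.where(np.arange(n_samples) < onset, 1.0, 0.3)    # 10 Hz rhythm weakens after event
trials = amp * np.sin(2 * np.pi * 10 * t) + 0.5 * rng.standard_normal((n_trials, n_samples))
freqs, centres, ersp = ersp_db(trials, fs, win, step, baseline_end=onset)
f10 = np.argmin(np.abs(freqs - 10.0))
base = (centres - win // 2 + win) <= onset                # baseline windows
post = (centres - win // 2) >= onset                      # windows fully after the event
```

In this toy example the 10 Hz row of `ersp` sits near 0 dB during the baseline and drops well below zero after the event, which is the desynchronization pattern that pipeline choices can distort.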

Key Finding: The study revealed that signal segmentation significantly affects the cleaning procedure, while comparable results were obtained across the different ICA approaches. For re-referencing, CAR, REST, and RESIT produced similar topographical patterns, whereas rCAR showed the most divergent ERSP pattern [34]. This highlights that steps like segmentation and re-referencing can be as impactful as the choice of BSS algorithm itself.

Visualization of Method Selection and Impact

Decision Pathway for BSS Method Selection

The following diagram outlines the logical decision process for choosing and applying ICA or PCA within an EEG preprocessing pipeline, incorporating findings from recent comparative studies.

For researchers aiming to implement these preprocessing pipelines, a standardized set of tools and datasets is crucial for reproducibility and validation. The following table details key resources referenced in the cited studies.

Table 2: Essential Research Reagents and Resources for EEG BSS Preprocessing

| Resource Name | Type | Primary Function in Research | Example Use Case |
|---|---|---|---|
| AMICA Plugin | Software Algorithm | An advanced ICA algorithm for decomposing EEG data into independent components. | Used to evaluate the impact of time-domain cleaning on decomposition quality [15]. |
| RELAX Pipeline | Software Plugin (EEGLAB) | Implements targeted artifact reduction within ICA components to minimize neural signal loss. | Cleaning Go/No-Go and N400 task data while reducing effect size inflation [32]. |
| ERP CORE Dataset | Public Dataset | Provides standardized, openly available EEG data from multiple classic experimental paradigms. | Served as the primary data source for multiverse analysis of preprocessing on decoding [33]. |
| EEGdenoiseNet | Public Dataset | A semi-synthetic benchmark dataset for developing and testing EEG denoising algorithms. | Used to train and evaluate deep learning models like CLEnet for artifact removal [16]. |
| Clean_rawdata (ASR) | Software Function | An automatic method for identifying and removing bad data periods based on artifact subspaces. | Referenced as a common automatic cleaning method, though sensitive to threshold settings [15]. |

The evidence reviewed here indicates that selecting and applying BSS methods such as ICA and PCA is not a one-size-fits-all decision. ICA remains the dominant and more effective technique for artifact removal in high-density EEG systems, particularly for physiological artifacts such as ocular and muscle activity, with a reported accuracy of around 71% [31]. However, its performance depends heavily on rigorous preprocessing, including appropriate filtering and data cleaning; moderate automatic cleaning (e.g., 5-10 iterations of AMICA sample rejection) was sufficient to improve decomposition quality in most datasets [15].

A critical consideration is that aggressive artifact correction, while improving signal purity, can sometimes reduce downstream decoding performance. This suggests that classifiers may learn to exploit structured noise correlated with experimental conditions [33]. Therefore, the optimal pipeline must balance cleaning efficacy with the preservation of neural information, potentially employing targeted methods like RELAX [32]. Future research will likely see greater integration of deep learning models, such as hybrid CNN-LSTM networks (e.g., CLEnet), which show promise in handling unknown artifacts and multi-channel data without relying on the strict statistical assumptions of traditional BSS [16]. For now, a meticulous, empirically grounded approach to preprocessing remains the essential foundation for any effective BSS application in EEG research.

The pursuit of clean neural signals is a fundamental challenge in electroencephalography (EEG) research. Artifacts originating from ocular movements, muscle activity, cardiac rhythms, and environmental noise can significantly obscure brain-generated electrical signals, complicating data interpretation and analysis. Within this context, principal component analysis (PCA) and independent component analysis (ICA) have emerged as two prominent computational techniques for addressing the artifact contamination problem. While both are blind source separation (BSS) methods, their underlying principles and applications differ substantially. PCA is a statistical procedure that employs an orthogonal transformation to convert a set of observations of possibly correlated variables into a set of values of linearly uncorrelated variables called principal components. This transformation is defined such that the first principal component accounts for the largest possible variance in the data, and each succeeding component, in turn, has the highest variance possible under the constraint that it is orthogonal to the preceding components [6].

In contrast, ICA is a computational method for separating a multivariate signal into additive, statistically independent, non-Gaussian components. The core assumption of ICA is that the observed EEG signals are linear mixtures of independent source signals of neural and non-neural origin. ICA seeks a linear transformation that minimizes the statistical dependence between the components, thereby isolating artifacts into specific independent components (ICs) that can be removed before the signal is reconstructed [35] [5]. Comparing these two methods therefore remains an active question in computational neuroscience, as researchers seek to balance computational efficiency, artifact-removal efficacy, and the preservation of neurologically meaningful signals.

Theoretical Foundations and Procedural Implementation

The Mathematical Framework of PCA

The procedural implementation of PCA for artifact removal follows a systematic sequence. Given an EEG data matrix X with dimensions m×n (where m is the number of channels and n is the number of time points), PCA begins by mean-centering the data, subtracting each channel's mean. The sample covariance matrix C = XX^T / (n - 1) is then computed from the centered data, followed by an eigenvalue decomposition of C to obtain the eigenvectors (the principal directions, or spatial loadings) and the eigenvalues (the variance captured by each component). The projection can be expressed as Y = P^T X, where P is the m×m matrix whose columns are the eigenvectors sorted by descending eigenvalue and Y holds the component time courses [6]. Artifact removal then consists of identifying and discarding the components associated with artifacts and reconstructing the signal from the remaining ones, X_clean = P_k Y_k, where P_k and Y_k keep only the retained eigenvectors and their time courses (the channel means are added back afterwards).
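The steps above can be sketched with NumPy as follows. The function name `pca_artifact_removal` and the synthetic blink-like deflection are illustrative assumptions; in practice, deciding which components are artifactual (the `drop` argument) is the hard part and is simply supplied by the user here.

```python
import numpy as np

def pca_artifact_removal(X, drop):
    """PCA-based cleaning of X (channels x time points).

    drop : indices of principal components judged to be artifactual
    Returns the reconstructed data, eigenvalues (descending) and P."""
    mu = X.mean(axis=1, keepdims=True)
    Xc = X - mu                                   # mean-centre each channel
    C = Xc @ Xc.T / (Xc.shape[1] - 1)             # m x m sample covariance
    evals, P = np.linalg.eigh(C)                  # eigh returns ascending order
    order = np.argsort(evals)[::-1]
    evals, P = evals[order], P[:, order]          # sort by explained variance
    Y = P.T @ Xc                                  # component time courses
    keep = np.setdiff1d(np.arange(P.shape[1]), drop)
    X_clean = P[:, keep] @ Y[keep] + mu           # reconstruct without dropped PCs
    return X_clean, evals, P


rng = np.random.default_rng(4)
n = 2000
neural = 0.5 * rng.standard_normal((4, n))        # stand-in background EEG
blink = np.zeros(n)
blink[500:560] = 40.0                             # large, brief blink-like deflection
topo = np.array([[1.0], [0.8], [0.3], [0.1]])     # hypothetical frontal-heavy pattern
X = neural + topo @ blink[None, :]
X_clean, evals, P = pca_artifact_removal(X, drop=[0])
```

Because the blink dominates the variance, it lands in the first component, which illustrates the scenario in which PCA works well; an artifact with variance comparable to the neural background would not separate this cleanly.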

The following diagram illustrates the standard workflow for implementing PCA in EEG artifact removal:

The ICA Methodology

ICA operates under a different model, assuming that the observed signals are instantaneous linear mixtures of statistically independent sources. The model is formulated as X = AS, where X is the observed data matrix, A is the mixing matrix, and S contains the independent sources. Both A and S are estimated by finding a demixing matrix W such that U = WX recovers the independent components; in practice W approximates the inverse of A only up to the order, sign, and scale of the components. Multiple algorithms exist for this estimation, with Infomax and FastICA being among the most common in EEG analysis [35] [5]. Both optimize W iteratively: FastICA maximizes an approximation of negentropy (a measure of non-Gaussianity), whereas Infomax maximizes the joint entropy of nonlinearly transformed outputs, which is equivalent to maximum-likelihood estimation of the unmixing matrix.
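To show what iterative optimization of W looks like in practice, here is a compact symmetric FastICA sketch in NumPy: whitening, a fixed-point update with a tanh nonlinearity, and symmetric decorrelation. It is an illustrative sketch under stated assumptions, not EEGLAB's Infomax (`runica`) or scikit-learn's `FastICA`; the function names are hypothetical and the two synthetic sources are chosen only to be clearly non-Gaussian.

```python
import numpy as np

def whiten(X):
    """Centre each channel and whiten so the output covariance is the identity."""
    Xc = X - X.mean(axis=1, keepdims=True)
    C = Xc @ Xc.T / (Xc.shape[1] - 1)
    d, E = np.linalg.eigh(C)
    V = E @ np.diag(1.0 / np.sqrt(d)) @ E.T       # symmetric whitening matrix
    return V @ Xc, V

def sym_decorrelate(W):
    """Return (W W^T)^(-1/2) W, which makes the rows of W orthonormal."""
    d, E = np.linalg.eigh(W @ W.T)
    return E @ np.diag(1.0 / np.sqrt(d)) @ E.T @ W

def fastica(X, n_iter=200, tol=1e-6, seed=0):
    """Symmetric FastICA with g = tanh. Returns activations U and the
    unmixing matrix W_total with U = W_total @ (X - channel means)."""
    Z, V = whiten(X)
    m, n = Z.shape
    rng = np.random.default_rng(seed)
    W = sym_decorrelate(rng.standard_normal((m, m)))
    for _ in range(n_iter):
        G = np.tanh(W @ Z)                         # g(w_i^T z) for every row i
        G_prime = 1.0 - G ** 2                     # g'(w_i^T z)
        # Fixed-point update for all rows: w <- E{z g(w^T z)} - E{g'(w^T z)} w
        W_new = (G @ Z.T) / n - np.diag(G_prime.mean(axis=1)) @ W
        W_new = sym_decorrelate(W_new)
        # Converged when each new row is (anti)parallel to the old one
        change = np.max(np.abs(np.abs(np.diag(W_new @ W.T)) - 1.0))
        W = W_new
        if change < tol:
            break
    W_total = W @ V
    U = W_total @ (X - X.mean(axis=1, keepdims=True))
    return U, W_total


rng = np.random.default_rng(1)
n = 5000
t = np.arange(n) / 250.0
S = np.vstack([np.sign(np.sin(2 * np.pi * 3 * t)),   # sub-Gaussian square wave
               rng.laplace(size=n)])                   # super-Gaussian, spiky source
A = np.array([[1.0, 0.6], [0.5, 1.0]])                 # hypothetical mixing matrix
X = A @ S
U, W = fastica(X)
# Recovery holds only up to order, sign and scale, so compare absolute correlations
corr = np.abs(np.corrcoef(np.vstack([U, S]))[:2, 2:])
```

The absolute correlations in `corr` make the permutation and sign ambiguity explicit: each recovered component should match exactly one source, in no particular order.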

The table below summarizes the core analytical differences between PCA and ICA:

Table 1: Fundamental Theoretical Distinctions Between PCA and ICA

| Feature | Principal Component Analysis (PCA) | Independent Component Analysis (ICA) |
|---|---|---|
| Primary Objective | Variance Maximization | Statistical Independence Maximization |
| Statistical Basis | 2nd-Order Statistics (Correlation) | Higher-Order Statistics |
| Component Orthogonality | Enforces Orthogonality | No Orthogonality Constraint |
| Component Interpretation | Mathematical Constructs with Maximal Variance | Putative Source Signals |
| Gaussianity Assumption | Optimal for Gaussian Distributions | Requires Non-Gaussian Sources (at most one Gaussian source) |
| Output Order | Ordered by Explained Variance | No Inherent Ordering |

Comparative Analysis: Experimental Evidence and Performance Data

Efficacy in Artifact Removal and Signal Preservation

Research directly comparing PCA and ICA for artifact removal has yielded consistent findings regarding their respective strengths and limitations. One study showed that applying PCA for dimensionality reduction before ICA decomposition degraded the quality of the resulting independent components: reducing the data rank with PCA to retain 95% of the original variance decreased the mean number of recovered 'dipolar' ICs per dataset from 30 to 10 and reduced median IC stability from 90% to 76% [6]. This degradation is attributed to a conflict between the two objectives: PCA keeps the directions of largest variance, whereas ICA separates sources by statistical independence, so discarding low-variance dimensions can merge neurophysiologically distinct sources into single principal components.
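The 95% variance criterion amounts to choosing the smallest number of leading principal components whose cumulative share of variance reaches the target. A minimal sketch, with a hypothetical helper name and made-up eigenvalues:

```python
import numpy as np

def n_components_for_variance(eigenvalues, target=0.95):
    """Smallest number of leading principal components whose cumulative
    share of total variance reaches `target`."""
    ev = np.sort(np.asarray(eigenvalues, dtype=float))[::-1]   # descending
    cum = np.cumsum(ev) / ev.sum()
    return int(np.searchsorted(cum, target) + 1)   # first index with cum >= target


eigenvalues = [60, 25, 8, 4, 2, 1]                 # hypothetical variances, total 100
k95 = n_components_for_variance(eigenvalues)       # 60+25+8+4 = 97% -> 4 components
```

Every dimension beyond `k95` is discarded before ICA runs, even though, as the study above suggests, such low-variance dimensions may still carry distinct, independent sources.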

For specific artifact types, ICA generally performs better at separating physiological artifacts such as eye blinks, cardiac signals, and muscle activity, because these sources produce signals that are largely statistically independent of brain activity [5]. The following workflow illustrates how ICA is typically applied for artifact removal, with the option to use PCA as a preliminary step:

However, PCA can be effective when pronounced artifacts dominate the variance structure of the data, or when computational efficiency is paramount. For movement artifacts in mobile EEG studies, hybrid approaches that combine PCA with other methods have shown promise. One study found that artifact subspace reconstruction (ASR), which applies PCA to short sliding windows of data, effectively reduced motion artifacts during running, particularly with a k threshold between 20 and 30 [2].

Quantitative Performance Comparison

The table below summarizes experimental findings from studies that have evaluated PCA and ICA for various EEG processing tasks:

Table 2: Experimental Performance Comparison of PCA and ICA in EEG Applications

| Study/Application | Method | Key Performance Metric | Result | Limitations/Notes |
|---|---|---|---|---|
| General Decomposition Quality [6] | PCA + ICA | Number of Dipolar Components | Reduced from 30 to 10 (with 95% variance retention) | PCA rank reduction adversely affects IC quality |
| General Decomposition Quality [6] | ICA Alone | Component Stability | 90% median stability | Higher reliability in source identification |
| Epilepsy Detection [36] | PCA + SVM | Classification Accuracy | 87.4% | Lower than LDA+SVM (96.2%) |
| Epilepsy Detection [36] | ICA + SVM | Classification Accuracy | 84.3% | Performance varies with ICA algorithm |
| Motion Artifact Removal [2] | ASR (PCA-based) | Dipolar Components & Power at Gait Frequency | Effective reduction with optimal parameters | Performance parameter-dependent |
| SVM Decoding [37] | Various PCA Approaches | Decoding Accuracy | Frequently reduced vs. no PCA | Not consistently beneficial |

Advantages and Limitations in Research Applications

Advantages of PCA

- Computational Efficiency: PCA is mathematically straightforward and computationally less intensive than ICA, making it suitable for rapid preprocessing or applications with limited computational resources [6].