ICA for EEG Artifact Removal: A Comprehensive Guide for Biomedical Researchers


Abstract

Independent Component Analysis (ICA) has become a cornerstone technique for cleaning electroencephalography (EEG) data of confounding artifacts, which is a critical preprocessing step in both neuroscience research and clinical drug development. This article provides a comprehensive resource for researchers and scientists, covering the foundational principles of ICA, detailed methodological pipelines for its application on common artifacts like ocular, cardiac, and muscle activity, and advanced strategies for optimizing performance in challenging scenarios like free-viewing and mobile experiments. It further synthesizes empirical evidence on the validation of ICA's efficacy, compares it with alternative artifact removal methods, and discusses its critical impact on the reliability of downstream analyses, such as EEG microstates and event-related potentials, thereby ensuring data integrity for robust scientific and clinical conclusions.

Understanding ICA and EEG Artifacts: Core Principles for Effective Cleaning

Demystifying the Blind Source Separation Technique

Independent Component Analysis (ICA) is an advanced signal processing technique designed to solve the "blind source separation" problem. Its core purpose is to recover a set of independent, non-Gaussian source signals from their observed linear mixtures, without prior knowledge of the mixing process or the sources themselves [1] [2]. This capability makes it a powerful tool across various fields, including audio processing, financial analysis, and particularly biomedical signal analysis, where it has become a standard approach to electroencephalography (EEG) artifact removal [3].

The classic analogy used to explain ICA is the "Cocktail Party Problem," where multiple microphones in a room, each picking up a different mixture of voices, are used to isolate the individual speech of each talker [1] [2]. Similarly, in EEG applications, ICA treats the signals recorded from each electrode as a linear mixture of underlying independent sources, which include both brain activity and various artifacts like eye blinks, muscle movement, and heart activity [3]. By identifying and separating these sources, ICA enables researchers to isolate and remove contaminants while preserving the neural signals of interest.

Mathematical Foundation and Core Principles

Theoretical Framework

The mathematical model of ICA assumes that the observed data matrix X (representing multichannel EEG recordings) is generated by a linear mixing of statistically independent source components S (neural and artifactual sources) via an unknown mixing matrix A [1] [2]. This relationship is expressed as:

X = A S

The goal of ICA is to find an "unmixing" matrix W that approximates the inverse of A, thereby recovering the independent sources:

S = W X

where S contains the estimated independent components, and W is the unmixing matrix that separates the sources [1] [3]. The columns of the inverse matrix W⁻¹ represent the scalp topographies of the components, providing crucial information about their physiological origins [3].
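The relations above can be checked numerically. A minimal sketch in Python with NumPy, assuming a hypothetical three-channel, three-source toy model whose mixing matrix is known (in real EEG, A is unknown and W has to be estimated):

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy model: 3 independent, non-Gaussian (Laplacian) sources, 1000 samples each.
S = rng.laplace(size=(3, 1000))

# Hypothetical mixing matrix A: column j is the projection of source j onto the 3 channels.
A = np.array([[1.0, 0.5, 0.2],
              [0.3, 1.0, 0.4],
              [0.1, 0.6, 1.0]])
X = A @ S                          # observed channel data: X = A S

# With the ideal unmixing matrix W = A^-1, the sources are recovered exactly.
W = np.linalg.inv(A)
S_hat = W @ X                      # S = W X

# The columns of W^-1 are the component scalp maps (here, the columns of A).
topographies = np.linalg.inv(W)
print(np.allclose(S_hat, S), np.allclose(topographies, A))  # True True
```

In practice ICA recovers W only up to the scaling, sign, and ordering of its rows, so estimated components can match the true sources only up to those ambiguities.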

Key Assumptions and Requirements

For ICA to successfully separate sources, several critical assumptions must be met:

- Statistical Independence: The source signals must be statistically independent of each other, meaning that knowing the value of one source provides no information about the value of another [2].

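- Non-Gaussian Distribution: The source signals must have non-Gaussian distributions (at most one source may be Gaussian). The joint distribution of independent Gaussian sources is rotationally symmetric: any rotation of them is again a set of independent Gaussians, so the mixing cannot be identified [2].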

- Linear Mixing: The sources are assumed to mix linearly at the sensors, and propagation delays from sources to electrodes are considered negligible [3].

These assumptions are generally reasonable for EEG data, making ICA particularly well-suited for neurophysiological applications [3].
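The non-Gaussianity requirement can be made concrete with excess kurtosis, a classic measure of non-Gaussianity used in ICA contrast functions: mixing independent sources pushes the result toward a Gaussian (the central-limit effect), and ICA works in the opposite direction. A minimal sketch, assuming Laplacian (super-Gaussian) sources as a stand-in for spiky artifact activity:

```python
import numpy as np
from scipy.stats import kurtosis

rng = np.random.default_rng(1)
n = 200_000

# Two independent Laplacian sources; the Laplace distribution has excess kurtosis 3.
s1 = rng.laplace(size=n)
s2 = rng.laplace(size=n)

# Equal-weight, variance-preserving mixture, as one electrode might record it.
x = (s1 + s2) / np.sqrt(2)

# scipy's default is Fisher (excess) kurtosis, which is 0 for a Gaussian.
k_sources = (kurtosis(s1), kurtosis(s2))
k_mixture = kurtosis(x)            # expected to be about half the sources' value
k_gaussian = kurtosis(rng.normal(size=n))
print(k_sources, k_mixture, k_gaussian)
```

For two equally weighted independent sources, the mixture's excess kurtosis is about half that of each source and shrinks further as more sources are mixed; ICA searches for unmixing directions that make the outputs as non-Gaussian as possible.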

ICA in EEG Artifact Removal: Mechanisms and Workflows

How ICA Separates EEG Artifacts

In EEG analysis, ICA operates as a spatial filter that decomposes multichannel scalp recordings into temporally independent and spatially fixed components [3]. Each resulting component consists of a time course of activation and an associated scalp map that shows its projection strength at each electrode [3]. Artifacts such as eye blinks, eye movements, muscle activity, and cardiac signals typically generate components with distinctive characteristics:

- Eye Blinks: Produce components with large, punctate activations that project strongly to frontal sites [3].

- Eye Movements: Generate components with low-frequency time courses that also project mainly to frontal electrodes [3].

- Muscle Activity: Creates components with high-frequency spectral content (above 20 Hz) that project to temporal sites [3].

- Cardiac Artifacts: Show periodic activations synchronized with the heartbeat [4].

Once identified, artifactual components can be "zeroed out" by setting their contributions to zero and reconstructing the EEG signals from only the neural components, effectively removing the artifacts without discarding valuable data epochs [1].
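A minimal sketch of the zeroing-out step, assuming the mixing matrix A = W⁻¹ and the component activations S have already been estimated; remove_components is a hypothetical helper, and the random matrices only stand in for a real decomposition:

```python
import numpy as np

def remove_components(A, S, artifact_idx):
    """Back-project all components except those listed in artifact_idx.

    A: (n_channels, n_components) mixing matrix W^-1; columns are scalp maps.
    S: (n_components, n_samples) component activations, S = W X.
    """
    S_clean = S.copy()
    S_clean[artifact_idx, :] = 0.0     # zero out the artifactual activations
    return A @ S_clean                 # reconstruct channel data from the rest

rng = np.random.default_rng(2)
A = rng.normal(size=(4, 4))            # hypothetical mixing matrix
S = rng.laplace(size=(4, 500))         # hypothetical activations
X = A @ S

X_clean = remove_components(A, S, artifact_idx=[0])   # e.g., component 0 is a blink

# Same result as keeping only the "neural" columns of A and rows of S.
keep = [1, 2, 3]
print(np.allclose(X_clean, A[:, keep] @ S[keep, :]))   # True
```

No time points are discarded: each channel loses only the activity that the rejected components project onto it.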

Advantages Over Traditional Methods

ICA offers significant advantages compared to conventional artifact removal techniques:

- No Data Loss: Unlike epoch rejection methods that discard contaminated data segments, ICA retains every time point; only the activity attributed to the rejected components is subtracted [1] [3].

- No Reference Channels Required: Unlike regression techniques, ICA does not require separate reference channels (like EOG for eye artifacts), which themselves often contain brain signals that would be inadvertently removed [1] [3].

- Versatility: ICA can remove various artifact types without specialized reference signals, including muscle noise, electrode artifacts, and line noise that lack clear reference channels [3].

Table 1: Performance of ICA in EEG Artifact Removal

| Artifact Type | Removal Efficacy | Key Identifying Features | Clinical Validation |
|---|---|---|---|
| Eye Movements & Blinks | High efficacy in isolating and removing [3] | Frontal projection, low-frequency or punctate activations [3] | Evident clearing of signals with minimal spike distortion [4] |
| Muscle Artifacts | Effective separation from neural activity [3] | Temporal projection, spectral peak >20 Hz [3] | Successful removal while preserving interictal activity [4] |
| ECG (Heart) Artifacts | Can be effectively identified and removed [4] | Periodic activations synchronized with heartbeat | Correlation analysis shows minimal signal distortion [4] |
| Line Noise | Effective reduction of 60 Hz and aliased frequencies [3] | Narrow frequency band components | Improved signal-to-noise ratio in corrected EEG [3] |

Experimental Protocols and Implementation

Data Collection Requirements

Successful ICA application depends on appropriate experimental design and data collection practices. Recent research has quantified the relationship between data quantity and decomposition quality, providing evidence-based guidance for researchers [5].

- Data Quantity: Studies using the AMICA algorithm, considered a benchmark in the field, demonstrate that decomposition quality (measured by mutual information reduction and component near-dipolarity) generally increases with more data [5]. While common heuristic thresholds exist (often expressed as a multiple of the squared number of channels), benefits may continue to increase with additional data collection beyond these thresholds [5].

- Experimental Considerations: For locomotion studies where motion artifacts are prevalent, researchers should design tasks with careful consideration of cognitive and locomotive demands to balance ecological validity with data quality [6]. Low-intensity locomotion (e.g., slow walking) typically produces more analyzable data than high-intensity movements (e.g., running) due to reduced motion artifacts [6].
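One widely quoted rule of thumb expresses the minimum data length as k × (number of channels)² samples, since an N-channel unmixing matrix has N² weights to learn. A minimal sketch that converts the rule into recording time; the helper names are hypothetical, the default k = 20 is an assumption (values of roughly 20 to 30 are commonly quoted), and the AMICA findings above suggest more data can still help beyond such thresholds:

```python
import math

def min_samples_for_ica(n_channels: int, k: float = 20.0) -> int:
    """Rule-of-thumb minimum number of time points: k * n_channels**2.

    k is an assumed multiplier, not a fixed standard.
    """
    return math.ceil(k * n_channels ** 2)

def min_minutes(n_channels: int, sfreq: float, k: float = 20.0) -> float:
    """Convert the sample requirement into minutes at sampling rate sfreq (Hz)."""
    return min_samples_for_ica(n_channels, k) / sfreq / 60.0

# Example: 64 channels recorded at 250 Hz.
print(min_samples_for_ica(64))            # 81920
print(round(min_minutes(64, 250.0), 2))   # 5.46
```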

Step-by-Step ICA Implementation Protocol

The following workflow outlines a standardized protocol for implementing ICA in EEG artifact removal:

ICA Implementation Workflow for EEG Artifact Removal

Step 1: Data Preparation and Preprocessing

- Begin with raw multichannel EEG data that has been properly collected with appropriate sampling rates (typically 200-500 Hz for standard systems) [6].

- Apply bandpass filtering (e.g., 1-100 Hz) and re-referencing as needed for your specific research questions.

- Ensure the data support a stable decomposition: sufficient data length (far more time points than channels) and continuous recording where possible.

Step 2: ICA Decomposition

- Select an appropriate ICA algorithm based on your data characteristics and computational resources. Common choices include FastICA, extended Infomax, AMICA, and JADE [3] [4].

- Apply the selected algorithm to the preprocessed data to obtain the unmixing matrix W and independent components S.

Step 3: Component Identification and Classification

- Analyze the resulting components using both their time courses and scalp topographies.

- Apply established heuristics for identifying common artifacts [3]:

- Eye blinks: Frontal projection, large punctate activations

- Eye movements: Frontal projection, low-frequency time course

- Muscle artifacts: Temporal projection, high-frequency content

- Cardiac artifacts: Periodic waveform synchronized with EKG

- Document the criteria used for classifying each component as neural or artifactual.
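The heuristics above can be turned into two simple per-component features: the share of spectral power above 20 Hz and the share of the squared scalp projection on frontal channels. This is a sketch under stated assumptions: the channel layout, the thresholds in the hypothetical rough_label helper, and the synthetic components are illustrative, and in practice trained classifiers such as ICLabel and expert review are used:

```python
import numpy as np
from scipy.signal import welch

def component_features(activation, scalp_map, sfreq, frontal_idx):
    """Two illustrative features for one independent component.

    activation: 1-D component time course.
    scalp_map: 1-D mixing-matrix column (projection strength at each channel).
    frontal_idx: indices of frontal channels (assumed known from the montage).
    """
    freqs, psd = welch(activation, fs=sfreq, nperseg=int(2 * sfreq))
    hf_ratio = psd[freqs > 20].sum() / psd.sum()                  # share of power above 20 Hz
    weights = scalp_map ** 2
    frontal_ratio = weights[frontal_idx].sum() / weights.sum()    # share of frontal projection
    return hf_ratio, frontal_ratio

def rough_label(hf_ratio, frontal_ratio, hf_thresh=0.5, frontal_thresh=0.6):
    """Hypothetical thresholds for illustration; not a validated classifier."""
    if frontal_ratio > frontal_thresh and hf_ratio < hf_thresh:
        return "eye-like"
    if hf_ratio > hf_thresh:
        return "muscle-like"
    return "other"

sfreq = 250.0
t = np.arange(0, 20, 1 / sfreq)
rng = np.random.default_rng(3)
slow = np.sin(2 * np.pi * 1.0 * t) + 0.05 * rng.normal(size=t.size)  # slow, eye-like
fast = rng.normal(size=t.size)  # white noise: flat spectrum to 125 Hz, mostly above 20 Hz
frontal_map = np.array([1.0, 0.9, 0.1, 0.1])    # channels 0 and 1 assumed frontal
temporal_map = np.array([0.1, 0.1, 1.0, 0.9])   # channels 2 and 3 assumed temporal

labels = []
for act, scalp_map in [(slow, frontal_map), (fast, temporal_map)]:
    hf, fr = component_features(act, scalp_map, sfreq, frontal_idx=[0, 1])
    labels.append(rough_label(hf, fr))
print(labels)  # ['eye-like', 'muscle-like']
```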

Step 4: Signal Reconstruction and Validation

- Reconstruct artifact-corrected EEG signals by projecting only the neural components back to sensor space: clean_data = W⁻¹(:,neural) * activations(neural,:) [3].

- Quantitatively validate the results by comparing original and corrected data using metrics like correlation analysis to ensure minimal distortion of neural signals [4].

- Visually inspect the corrected data to confirm artifact removal and preservation of neural activity.
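A minimal sketch of the correlation check, assuming original and corrected data as (channels × samples) arrays and an idealized, synthetic blink removal. Channels that the artifact barely reaches should keep a correlation near 1, while lower values are expected where the artifact was strong; channelwise_correlation is a hypothetical helper:

```python
import numpy as np

def channelwise_correlation(original, corrected):
    """Pearson correlation between original and corrected data for each channel."""
    o = original - original.mean(axis=1, keepdims=True)
    c = corrected - corrected.mean(axis=1, keepdims=True)
    num = (o * c).sum(axis=1)
    den = np.sqrt((o ** 2).sum(axis=1) * (c ** 2).sum(axis=1))
    return num / den

rng = np.random.default_rng(4)
n_samples = 5000
neural = rng.normal(size=(3, n_samples))           # stand-in for brain activity
blink = np.zeros(n_samples)
blink[::500] = 50.0                                # sparse, large deflections
blink = np.convolve(blink, np.hanning(100), mode="same")
projection = np.array([1.0, 0.3, 0.0])             # frontal > central > occipital

original = neural + np.outer(projection, blink)
corrected = neural                                 # idealized removal for the demo

r = channelwise_correlation(original, corrected)
print(np.round(r, 2))
```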

Python Implementation Example

For researchers implementing ICA in Python, here is a basic framework using the FastICA algorithm from scikit-learn:
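A minimal sketch, assuming the EEG is available as a NumPy array of shape (n_channels, n_samples) that has already been filtered; synthetic sources stand in for a recording, and flagging the blink by maximum kurtosis is an illustrative simplification rather than a validated criterion:

```python
import numpy as np
from scipy.stats import kurtosis
from sklearn.decomposition import FastICA

rng = np.random.default_rng(5)
sfreq, n_samples, n_channels = 250.0, 10_000, 8
t = np.arange(n_samples) / sfreq

# Synthetic stand-in for a recording: four independent sources mixed into 8 channels.
blink_events = (rng.random(n_samples) > 0.998) * 20.0
sources = np.vstack([
    np.sin(2 * np.pi * 10 * t),                              # alpha-like rhythm
    rng.uniform(-1, 1, size=n_samples),                      # sub-Gaussian background
    rng.laplace(size=n_samples),                             # super-Gaussian background
    np.convolve(blink_events, np.hanning(60), mode="same"),  # sparse "blinks"
])
mixing = rng.normal(size=(n_channels, sources.shape[0]))
eeg = mixing @ sources                                       # (n_channels, n_samples)

# scikit-learn expects (n_samples, n_features), hence the transposes.
ica = FastICA(n_components=4, random_state=0, max_iter=1000)
activations = ica.fit_transform(eeg.T)                       # (n_samples, n_components)
topographies = ica.mixing_                                   # (n_channels, n_components)

# Illustrative heuristic only: treat the most kurtotic (spikiest) component as the blink.
blink_idx = int(np.argmax(kurtosis(activations, axis=0)))

# Zero that component and project the rest back to channel space.
cleaned_activations = activations.copy()
cleaned_activations[:, blink_idx] = 0.0
eeg_clean = ica.inverse_transform(cleaned_activations).T     # (n_channels, n_samples)
```

In real data, component selection would rely on scalp maps, spectra, and tools such as ICLabel rather than on kurtosis alone.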

Advanced Applications and Recent Developments

Covariate-Integrated ICA

Recent advances in ICA methodology have introduced approaches that integrate behavioral or clinical covariates directly into the decomposition process. A 2025 study demonstrated that incorporating cognitive performance metrics from the Woodcock-Johnson Cognitive Abilities Test during ICA decomposition strengthened and stabilized the correlations between EEG connectivity measures and cognitive performance [7]. This augmented ICA approach provides a more powerful multivariate framework for uncovering brain-behavior relationships than conventional ICA followed by post-hoc correlation analysis [7].

Comparative Analysis with Other Techniques

Table 2: ICA vs. Alternative Artifact Removal Methods for EEG

| Method | Mechanism | Advantages | Limitations | Best Use Cases |
|---|---|---|---|---|
| Independent Component Analysis (ICA) | Blind source separation based on statistical independence [2] | No reference channels needed, preserves neural data, handles multiple artifact types [1] [3] | Requires sufficient data, computationally intensive, component identification can be subjective [2] | Research studies with high channel counts, multiple artifact types [3] |
| Regression Methods | Uses reference signals to estimate and subtract artifact contributions | Simpler implementation, computationally efficient | Requires clean reference channels, removes correlated brain signals [3] | Studies with clear reference signals available |
| Principal Component Analysis (PCA) | Separates components based on variance [2] | Computationally efficient, objective component ordering | Orthogonal, variance-ranked components generally mix neural and artifactual sources [2] | Initial data exploration, dimensionality reduction [2] |
| Epoch Rejection | Discards contaminated data segments | Simple implementation, guarantees artifact removal | Significant data loss, reduces statistical power [3] | Studies with rare artifacts and abundant data |
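For contrast with ICA, the regression method in Table 2 can be sketched as an ordinary least-squares fit of each EEG channel on a recorded EOG reference, followed by subtraction. This toy example assumes one EOG channel that also records some brain activity, which reproduces the limitation listed in the table; regress_out is a hypothetical helper:

```python
import numpy as np

def regress_out(eeg, eog):
    """Least-squares removal of a reference (EOG) signal from each EEG channel.

    eeg: (n_channels, n_samples); eog: (n_samples,)
    Returns the corrected data and one propagation coefficient per channel.
    """
    eog_c = eog - eog.mean()
    eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
    beta = eeg_c @ eog_c / (eog_c @ eog_c)
    return eeg - np.outer(beta, eog_c), beta

rng = np.random.default_rng(6)
n = 20_000
brain = rng.normal(size=n)                      # shared neural activity
ocular = 5.0 * rng.laplace(size=n)              # large ocular source
eog = ocular + 0.5 * brain                      # the EOG channel also records brain signal
eeg = np.vstack([brain + 0.8 * ocular,          # frontal channel, strong ocular spread
                 brain + 0.1 * ocular])         # posterior channel, weak ocular spread

corrected, beta = regress_out(eeg, eog)

# Gain of the true brain signal in each corrected channel (1.0 = fully preserved).
gains = [np.cov(ch, brain)[0, 1] / np.var(brain, ddof=1) for ch in corrected]
print(np.round(gains, 2))
```

In this toy setup the frontal channel keeps only about 60% of its brain signal amplitude, because the brain activity contained in the EOG reference is scaled by the regression coefficient and subtracted along with the ocular activity.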

Essential Research Reagents and Tools

Table 3: Research Reagent Solutions for ICA-EEG Studies

| Tool Category | Specific Examples | Function in ICA-EEG Research |
|---|---|---|
| ICA Algorithms | JADE, FastICA, AMICA, Extended Infomax [3] [4] | Perform the core decomposition of mixed signals into independent components |
| EEG Processing Toolboxes | EEGLAB, MNE-Python [1] | Provide integrated environments for implementing ICA and visualizing components |
| Quality Metrics | Mutual Information Reduction (MIR), Component Near-Dipolarity [5] | Quantify decomposition quality and guide data collection requirements |
| Visualization Tools | Topographic maps, component activations, power spectra [3] | Facilitate identification and classification of artifactual components |
| Validation Tools | Correlation analysis, spectral comparison, expert rating [4] | Quantify artifact removal efficacy and neural signal preservation |

Independent Component Analysis represents a powerful approach for blind source separation that has proven particularly valuable in EEG artifact removal. By leveraging the statistical properties of underlying sources, ICA can effectively separate and remove contaminants while preserving neural signals of interest. The technique continues to evolve with advancements like covariate-integrated ICA offering new possibilities for exploring brain-behavior relationships [7]. When implemented with careful attention to data requirements and component identification protocols, ICA provides researchers with a robust tool for enhancing EEG data quality and enabling more accurate neuroscientific and clinical investigations.

Electroencephalography (EEG) is a fundamental tool in clinical neurology, neuroscience research, and increasingly in non-clinical domains such as brain-computer interfaces (BCIs), wellness tracking, and neuroergonomics [8]. However, the EEG signal is highly susceptible to contamination by various non-neural artifacts, which can obscure cerebral activity and lead to misinterpretation of data. Effective artifact management is therefore a critical prerequisite for valid EEG analysis, particularly in studies investigating neural correlates of cognition, behavior, or drug effects [9]. The challenge is especially pronounced in the context of wearable EEG systems, which utilize dry electrodes, reduced channel counts, and record in uncontrolled environments, making artifacts more frequent and pronounced [8].

This application note establishes a detailed taxonomy of the three primary biological artifacts (ocular, muscular, and cardiac), framed within the methodological context of Independent Component Analysis (ICA), a dominant approach for artifact removal. We provide a structured classification, quantitative summaries, experimentally validated protocols, and practical toolkits to support researchers in developing robust EEG preprocessing pipelines.

A Detailed Taxonomy of Major EEG Artifacts

Artifacts in EEG signals are typically categorized based on their origin. The following sections detail the characteristics of ocular, muscle, and cardiac artifacts, which are the most common biological contaminants.

Ocular Artifacts

Ocular artifacts originate from eye movements and blinks. The primary mechanism is the movement of the corneo-retinal dipole, which creates an electrical field that propagates across the scalp [10].

- Eye Blinks: Characterized by high-amplitude, low-frequency deflections, typically in the 0.5–2 Hz range, with a broad, symmetric frontal scalp topography [11] [10].

- Saccadic Eye Movements: Generate sharp, lateralized potentials in the frontal and fronto-polar regions due to the rapid rotation of the eyeball.

- Slow Eye Movements: Produce slow, drifting potentials that can be mistaken for slow cortical oscillations.

These artifacts are particularly problematic for the analysis of event-related potentials (ERPs) and low-frequency brain signals.

Muscle Artifacts

Muscle artifacts, or electromyogenic (EMG) artifacts, are caused by the electrical activity of cranial, facial, and neck muscles [8].

- Spectral Signature: EMG artifacts have a broadband spectral profile that can overwhelm EEG activity across all frequencies, but is most prominent in the high-frequency range (>20 Hz).

- Topographical Distribution: Their topography depends on the muscle group activated (e.g., frontalis muscle tension affects frontal channels, while temporalis muscle activity affects temporal channels).

- Amplitude and Duration: They manifest as high-frequency, low-amplitude bursts or sustained tonic activity, making them challenging to separate from neural gamma-band activity.

Cardiac Artifacts

Cardiac artifacts, or electrocardiographic (ECG) artifacts, result from the electrical activity of the heart.

- Primary Manifestation: The most common signature is the QRS complex, which appears as a stereotyped, periodic spike in the EEG trace, synchronized with the heartbeat.

- Propagation Mechanisms:

- Direct Propagation: Volume conduction from the heart, often visible in ear lobe or temporal electrodes when the subject is lying down.

- Pulse Artifacts: Caused by the pulsation of nearby blood vessels against an electrode, leading to slow, rhythmic waves at the heart rate.

Table 1: Taxonomic Summary of Major EEG Artifacts

| Artifact Category | Spectral Domain | Spatial Topography | Temporal Signature | Key Identifying Features |
|---|---|---|---|---|
| Ocular (EOG) | Low-frequency (0.5–2 Hz for blinks; up to 10 Hz for saccades) [11] | Bilateral, fronto-polar maxima [10] | High-amplitude, smooth deflections (blinks); sharp, lateralized potentials (saccades) | Corneo-retinal dipole; strongly correlated with EOG channel |
| Muscular (EMG) | Broadband, dominant in high-frequencies (>20 Hz) [8] | Focal over specific muscle groups (frontal, temporal) | High-frequency, irregular, burst-like or tonic | Non-stereotyped, spatially focal; ICs often have "spiky" spectra |
| Cardiac (ECG) | Pulse artifact: <1-3 Hz; QRS: broadband [12] | Widespread, but often maximal in temporal/earlobe electrodes | Stereotyped, periodic QRS complexes; slow pulse waves | Precise temporal locking to heartbeat; can be identified via ECG channel |
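The table notes that ocular components correlate with an EOG channel and cardiac components can be identified via an ECG channel. A minimal screening sketch, assuming a recorded reference channel and component activations as (components × samples); the 0.5 threshold, the helper name flag_by_reference, and the Gaussian-pulse ECG stand-in are hypothetical:

```python
import numpy as np

def flag_by_reference(activations, reference, threshold=0.5):
    """Indices of components whose |Pearson r| with a reference channel exceeds threshold.

    activations: (n_components, n_samples); reference: (n_samples,), e.g., EOG or ECG.
    """
    r = np.array([np.corrcoef(comp, reference)[0, 1] for comp in activations])
    return np.flatnonzero(np.abs(r) > threshold), r

sfreq = 250.0
t = np.arange(0, 30, 1 / sfreq)
rng = np.random.default_rng(7)

# Crude ECG stand-in: one narrow peak per second (60 beats per minute).
ecg = np.exp(-((t % 1.0) - 0.5) ** 2 / (2 * 0.01 ** 2))

activations = np.vstack([
    rng.normal(size=t.size),                      # background component
    -2.0 * ecg + 0.1 * rng.normal(size=t.size),   # cardiac component (ICA sign is arbitrary)
    np.sin(2 * np.pi * 10 * t),                   # alpha-like component
])
flagged, r = flag_by_reference(activations, ecg)
print(flagged)  # [1]
```

Using the absolute correlation matters because ICA does not fix the sign of a component.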

ICA as a Core Strategy for Artifact Removal

Independent Component Analysis (ICA) is a blind source separation technique that decomposes multi-channel EEG data into maximally independent components (ICs) [10]. The underlying assumption is that the recorded EEG is a linear mixture of independent neural and non-neural sources. ICA solves the "unmixing" problem, allowing for the identification and removal of artifact-laden components before signal reconstruction [10].

The ICA Workflow for Artifact Removal

The standard ICA-based artifact removal pipeline involves several key stages, from data preparation to component rejection and signal reconstruction.

Advanced ICA Considerations and Emerging Methods

While standard ICA is powerful, several critical considerations and novel extensions have been developed to enhance its efficacy.

- Data Requirements: ICA requires a substantial amount of data for stable decomposition. It is recommended to use more trials than channels, and the data should be as clean as possible beforehand [10].

- The "Over-Cleaning" Pitfall: A critical, often overlooked issue is that ICs are rarely purely artifactual or neural. Subtracting entire components deemed "artifactual" can inadvertently remove neural signals, leading to artificially inflated effect sizes in ERP and connectivity analyses, and biasing source localization [9] [13].

- Targeted Artifact Reduction: To mitigate this, advanced methods like the RELAX pipeline perform targeted cleaning. Instead of subtracting entire components, it selectively removes artifact-dominated periods (for eye movements) or frequency bands (for muscle noise) within components, better preserving neural information [9] [13].

- Challenges in Wearable EEG: The effectiveness of ICA can be compromised in wearable EEG systems with low channel counts (often <16), as it limits the spatial resolution needed for optimal source separation [8].
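The contrast between whole-component subtraction and targeted reduction can be sketched on single component time courses. This is a simplified illustration of the idea described above, not the RELAX implementation: the amplitude threshold, padding, 15 Hz low-pass cutoff, and helper names are hypothetical choices:

```python
import numpy as np
from scipy.signal import butter, filtfilt

def suppress_artifact_periods(activation, thresh, pad):
    """Eye-like component: zero samples near large deflections, keep all others."""
    mask = np.abs(activation) > thresh
    # Extend each flagged sample by `pad` samples on both sides.
    kernel = np.ones(2 * pad + 1)
    widened = np.convolve(mask.astype(float), kernel, mode="same") > 0
    cleaned = activation.copy()
    cleaned[widened] = 0.0
    return cleaned, widened

def suppress_high_frequencies(activation, sfreq, cutoff=15.0, order=4):
    """Muscle-like component: keep the low-frequency part, attenuate power above cutoff."""
    b, a = butter(order, cutoff, btype="low", fs=sfreq)
    return filtfilt(b, a, activation)

sfreq = 250.0
t = np.arange(0, 10, 1 / sfreq)
rng = np.random.default_rng(8)
neural_part = np.sin(2 * np.pi * 6 * t)               # activity worth preserving

blink_train = np.zeros_like(t)
blink_train[[500, 1500]] = 1.0
eye_comp = neural_part + 10.0 * np.convolve(blink_train, np.hanning(75), mode="same")
eye_clean, zeroed = suppress_artifact_periods(eye_comp, thresh=2.0, pad=40)

muscle_comp = neural_part + 0.8 * rng.normal(size=t.size)   # broadband "EMG"
muscle_clean = suppress_high_frequencies(muscle_comp, sfreq)
```

Outside the zeroed periods the eye-like component is untouched, and the 6 Hz activity survives low-pass filtering of the muscle-like component; subtracting either whole component would have removed that activity as well.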

Experimental Protocols for Artifact Management

Protocol 1: ICA-Based Ocular Artifact Removal with RELAX

This protocol details the steps for implementing the RELAX pipeline, an advanced ICA-based method for targeted ocular and muscle artifact reduction [9] [13].

- Data Acquisition: Record EEG using a standard high-density (e.g., 64-channel) system. Ensure synchronization with EOG and EMG channels if available.

- Preprocessing:

- Apply a high-pass filter at 1 Hz and a low-pass filter at 80 Hz.

- Remove bad channels via visual inspection or automated methods (e.g., high-frequency noise, flat-line signals).

- Reject grossly contaminated data segments.

- ICA Decomposition: Perform ICA using the Infomax algorithm (runica in EEGLAB) with extended options to capture sub-Gaussian sources [10].

- Component Labeling: Use an automated classifier like ICLabel to obtain preliminary labels for each IC (e.g., "Brain," "Eye," "Muscle") [14].

- Targeted Cleaning with RELAX:

- Install the RELAX plugin for EEGLAB from its public repository (https://github.com/NeilwBailey/RELAX).

- Configure the cleaning parameters. For ocular artifacts, RELAX will identify eye-movement-related ICs and subtract only the blink and saccade periods from the component time course.

- For muscle artifacts, RELAX will identify muscle-related ICs and apply a frequency-domain filter to remove the high-frequency artifact power while preserving lower-frequency neural activity within the same component.

- Data Reconstruction: Reconstruct the EEG signal from the modified ICs.

- Validation: Quantify the success of artifact reduction by comparing the correlation between EEG and EOG/EMG channels before and after processing. For ERPs, verify that expected components (e.g., N400, P300) are preserved without inflation [9].

Protocol 2: Motion Artifact Removal for Mobile EEG

This protocol is tailored for EEG recorded during locomotion (e.g., walking, running), where motion artifacts are severe [14].

- Setup: Use a mobile EEG system with active electrodes. If available, integrate inertial measurement units (IMUs) to track head motion.

- Preprocessing with Advanced Algorithms:

- Option A: Artifact Subspace Reconstruction (ASR). Apply ASR (e.g., the clean_rawdata EEGLAB plugin) with a threshold parameter k typically set between 10 and 30. A lower k is more aggressive and suitable for high-motion scenarios like running [14].

- Option B: iCanClean. If pseudo-reference or dedicated noise sensors are available, use iCanClean with a canonical correlation threshold (R²) of 0.65 and a 4-second sliding window, which has been shown to be effective for walking data [14].

- ICA Decomposition: Perform ICA on the preprocessed data.

- Component Evaluation: Assess the quality of the decomposition by calculating the dipolarity of the resulting components. A higher proportion of dipolar components suggests a successful separation of brain sources from non-physiological motion artifacts [14].

- Validation:

- Examine the power spectrum at the gait frequency and its harmonics; effective cleaning should show a reduction in power at these frequencies.

- For task-based studies, confirm that expected ERPs (e.g., P300 in a Flanker task) can be recovered with the correct latency and topography [14].
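The gait-frequency check can be sketched with a Welch power spectrum, summing power in narrow bands around the step frequency and its harmonics before and after cleaning. The 1.8 Hz step frequency, the band half-width, the helper name harmonic_power, and the synthetic signals are assumptions for illustration:

```python
import numpy as np
from scipy.signal import welch

def harmonic_power(x, sfreq, f0, n_harmonics=4, half_width=0.2):
    """Sum Welch PSD in bands of +/- half_width Hz around f0, 2*f0, ..., n_harmonics*f0."""
    freqs, psd = welch(x, fs=sfreq, nperseg=int(8 * sfreq))   # 0.125 Hz resolution
    total = 0.0
    for k in range(1, n_harmonics + 1):
        band = np.abs(freqs - k * f0) <= half_width
        total += psd[band].sum()
    return total

sfreq, f0 = 250.0, 1.8             # assumed step frequency of about 1.8 Hz
t = np.arange(0, 120, 1 / sfreq)
rng = np.random.default_rng(9)
brain = rng.normal(size=t.size)

# Gait-locked motion artifact: fundamental plus three harmonics.
motion = sum(np.sin(2 * np.pi * k * f0 * t) / k for k in range(1, 5))
before = brain + 2.0 * motion
after = brain + 0.2 * motion       # stand-in for a channel after cleaning

ratio = harmonic_power(after, sfreq, f0) / harmonic_power(before, sfreq, f0)
print(ratio < 1.0)                 # True: less power at the gait frequency and harmonics
```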

Table 2: Key Research Reagents and Resources for EEG Artifact Management

| Resource Name | Type/Category | Primary Function in Research | Key Features & Applications |
|---|---|---|---|
| EEGLAB | Software Environment | Provides a comprehensive framework for EEG processing, including ICA. | Core platform for running ICA; hosts essential plugins like ICLabel, RELAX, and ASR [9] [10]. |
| RELAX Pipeline | Software Plugin (for EEGLAB) | Implements targeted artifact reduction within ICA components. | Mitigates effect size inflation and source localization bias; superior to full-component rejection [9] [13]. |
| ICLabel | Software Plugin (for EEGLAB) | Automates the classification of ICA components. | Uses a trained dataset to label components as Brain, Muscle, Eye, Heart, etc., streamlining the review process [14]. |
| ICanClean | Software Algorithm | Reduces motion artifacts using reference noise signals. | Leverages canonical correlation analysis (CCA); effective for motion artifacts in mobile brain imaging [14]. |
| EGI HydroCel Geodesic Sensor Net | Hardware (EEG Cap) | Standardized high-density EEG acquisition. | Ensures consistent electrode placement; critical for high-quality ICA and spatial analysis [15]. |
| Child Mind Institute Healthy Brain Network (HBN) Biobank | Public Dataset | Provides normative EEG data for method development and validation. | Includes resting-state EEG (eyes open/closed) from a large pediatric cohort; useful for establishing baselines [15]. |
| EEGOAR-Net | Deep Learning Model | Calibration-free removal of ocular artifacts. | Montage-independent U-Net model; does not require EOG channels or subject-specific calibration data [16]. |

A rigorous and nuanced understanding of EEG artifact taxonomy is indispensable for neuroscientific and clinical research. While ICA remains a cornerstone technique for managing these artifacts, researchers must be aware of its limitations, particularly the risk of neural signal removal when using simplistic component-subtraction approaches. The adoption of targeted cleaning methods like the RELAX pipeline, along with specialized tools for motion artifact correction, represents the current best practice. As EEG applications expand into real-world, mobile, and wearable domains, continued refinement of these protocols will be essential to ensure the validity and reliability of electrophysiological findings in studies of brain function, therapeutic interventions, and drug development.

Theoretical Foundation of ICA in EEG

Independent Component Analysis (ICA) has become a cornerstone technique in electroencephalography (EEG) signal processing due to its powerful ability to separate mixed signals into their underlying source components. The core principle of ICA rests on the assumption that the observed EEG signals represent a linear mixture of statistically independent source signals originating from the brain and various non-neural sources.

The mathematical model for ICA defines the observed EEG signal matrix X as a linear mixture of independent source signals S through a mixing matrix A, such that:

X = A S

The goal of ICA is to estimate an unmixing matrix W that separates the observed signals into statistically independent components:

S = W X

where S represents the independent components [17]. ICA algorithms operate under the fundamental assumption that the source components are statistically independent and have non-Gaussian distributions (with the exception that at most one component can be Gaussian) [17] [18].

This mathematical framework makes ICA particularly well-suited for EEG analysis because it effectively addresses the blind source separation problem - the challenge of recovering original source signals without prior knowledge of the mixing process. The physiological basis for this approach lies in the recognition that EEG recordings capture superimposed electrical activity from multiple distinct generators, including cortical neurons, ocular movements, cardiac activity, and muscle contractions, which can reasonably be assumed to originate from statistically independent processes [4].

ICA Implementation Protocols for EEG Artifact Removal

Data Preparation and Preprocessing

Successful application of ICA for EEG artifact removal requires careful data preprocessing to meet the statistical assumptions of the algorithm:

High-Pass Filtering: ICA is sensitive to low-frequency drifts, requiring data to be high-pass filtered prior to fitting. A cutoff frequency of 1 Hz is typically recommended to remove slow drifts while preserving neural signals of interest [19].

Data Collection Requirements: The quality of ICA decomposition depends on adequate data quantity. For optimal results using advanced algorithms like AMICA, sufficient data must be collected, as benefits to decomposition quality may continue to increase with more data beyond common heuristic thresholds [5].

Handling Mobile EEG: For experiments with participant movement, moderate data cleaning (5-10 iterations of sample rejection in AMICA) improves decomposition quality, particularly for datasets with significant motion artifacts [18].

Baseline Considerations: When working with epoched data, apply high-pass filtering but avoid baseline correction before fitting ICA, as it can negatively impact ICA performance [19].
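Because a 1 Hz high-pass also removes slow activity that some analyses need, a common practice is to estimate the unmixing matrix on a high-pass-filtered copy and apply it to less-filtered data. A minimal sketch with SciPy and scikit-learn's FastICA, assuming synthetic rank-4 data in 6 channels; the filter order and the sources are illustrative choices:

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt
from sklearn.decomposition import FastICA

rng = np.random.default_rng(10)
sfreq, n_samples = 250.0, 15_000
t = np.arange(n_samples) / sfreq

# Synthetic sources, including slow drift that a 1 Hz high-pass removes.
sources = np.vstack([
    0.05 * np.cumsum(rng.normal(size=n_samples)),   # random-walk drift
    np.sin(2 * np.pi * 10 * t),                      # alpha-like rhythm
    rng.laplace(size=n_samples),                     # super-Gaussian source
    rng.uniform(-1, 1, size=n_samples),              # sub-Gaussian source
])
raw = rng.normal(size=(6, 4)) @ sources              # (n_channels, n_samples)

# 1) Fit ICA on a 1 Hz high-passed copy of the data.
sos = butter(4, 1.0, btype="highpass", fs=sfreq, output="sos")
raw_hp = sosfiltfilt(sos, raw, axis=1)
ica = FastICA(n_components=4, random_state=0, max_iter=1000).fit(raw_hp.T)

# 2) Apply the learned unmixing matrix to the unfiltered data.
W = ica.components_                                  # (n_components, n_channels)
A = ica.mixing_                                      # (n_channels, n_components)
offset = raw.mean(axis=1, keepdims=True)
acts = W @ (raw - offset)                            # component activations

# 3) Back-project; an artifact component would be zeroed in `acts` first.
reconstructed = A @ acts + offset
```

With no component zeroed, the back-projection reproduces the unfiltered data, drift included, which shows that an unmixing matrix learned on filtered data transfers to the original signal.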

ICA Decomposition Workflow

The following protocol outlines a standardized approach for ICA-based artifact removal in EEG research:

Protocol 1: Standardized ICA for EEG Artifact Removal

Data Input Preparation: Format continuous or epoched EEG data with all channels included except reference electrodes.

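Algorithm Selection: Choose an appropriate ICA algorithm based on data characteristics (see the ICA Algorithm Selection Guide in Table 1 below).

Parameter Configuration: Set the key parameters listed in that table, such as n_components and max_iter, and extended=True when using Infomax.

Model Fitting: Execute ICA decomposition on the preprocessed data.

Component Classification: Identify artifactual components using validated methods, such as automated classification (e.g., ICLabel [14]) and correlation with EOG or ECG reference channels.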

Artifact Removal: Reconstruct signals excluding artifactual components using ICA.apply() [19].

Table 1: ICA Algorithm Selection Guide

| Algorithm | Best Use Case | Key Parameters | Advantages |
|---|---|---|---|
| FastICA | Standard EEG recordings | n_components, max_iter | Computational efficiency [19] |
| Infomax/Picard | Noisy data, or data with sub-Gaussian sources (extended Infomax) | extended=True (for Infomax) | Robustness to noise [19] [17] |
| AMICA | High-quality decomposition for research | Data quantity, cleaning iterations | Considered benchmark for quality [5] [18] |
| JADE | Short EEG samples with evident artifacts | Component count | Effective for clear artifact separation [4] |

Specialized Protocol for TMS-Evoked Potentials

For TMS-EEG artifact removal, specific considerations apply:

Protocol 2: ICA for TMS-Evoked Potentials (TEPs)

Data Characteristics: Recognize that TMS-induced artifacts typically mask early (0-30 ms) TEP components [20].

Variability Assessment: Measure trial-to-trial artifact variability using ICA-derived components, as low variability can lead to unreliable cleaning and potential removal of brain-derived activity [20].

Accuracy Validation: Estimate cleaning reliability by measuring artifact component variability, which predicts cleaning accuracy even without clean ground-truth data [20].

Component Exclusion: Apply conservative exclusion criteria, particularly for components with low trial-to-trial variability, to minimize unintended removal of neural signals [20].
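One simple way to quantify trial-to-trial variability of an artifact component is to compare its across-trial variance with the power of its trial-averaged waveform. This is a hypothetical proxy for illustration, assuming component activations epoched as (trials × samples); it is not the specific measure used in [20]:

```python
import numpy as np

def relative_trial_variability(epochs):
    """Mean across-trial variance divided by the mean power of the averaged waveform.

    epochs: (n_trials, n_samples) activations of one component, time-locked to the pulse.
    Values near 0 indicate an almost identical (low-variability) artifact on every trial.
    """
    evoked = epochs.mean(axis=0)
    across_trial_var = epochs.var(axis=0, ddof=1).mean()
    return across_trial_var / np.mean(evoked ** 2)

rng = np.random.default_rng(11)
n_trials, n_samples = 100, 300
template = np.exp(-np.arange(n_samples) / 20.0)        # decaying pulse-like artifact

stable = template + 0.01 * rng.normal(size=(n_trials, n_samples))
gains = rng.uniform(0.5, 1.5, size=(n_trials, 1))      # amplitude varies per trial
variable = gains * template + 0.01 * rng.normal(size=(n_trials, n_samples))

print(relative_trial_variability(stable) < relative_trial_variability(variable))  # True
```

A value near zero means the artifact is nearly identical on every trial; under the protocol above, such components call for more conservative exclusion.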

Advanced ICA Applications in Neuroscience Research

ICA with Integrated Covariates

A novel approach enhances ICA's utility for cognitive neuroscience by integrating behavioral measures directly into the decomposition process:

Protocol 3: Covariate-Integrated ICA for Brain-Behavior Relationships

Data Preparation: Create a combined dataset incorporating both EEG connectivity measures and behavioral assessment scores (e.g., Woodcock-Johnson Cognitive Abilities Test) [17].

Dual Decomposition Approach:

Validation: Compare correlation strength and robustness between extracted components and cognitive performance measures across independent test datasets [17].

Interpretation: Identify components that show significant relationships with cognitive performance, potentially serving as biomarkers for clinical and cognitive deficits [17].

Table 2: Quantitative Metrics for ICA Quality Assessment

| Metric | Calculation Method | Interpretation | Optimal Range |
|---|---|---|---|
| Mutual Information Reduction (MIR) | Reduction in mutual information achieved by the decomposition (channel signals vs. component signals) | Higher values indicate better component separation [5] [18] | Higher is better; values may keep increasing without a plateau [5] |
| Near Dipolarity | How closely component scalp maps match the projection of a single equivalent dipole | Higher values indicate more physiologically plausible sources [5] | Component-dependent; higher is generally better |
| Residual Variance | Variance of a component scalp map left unexplained by its fitted equivalent dipole | Lower values indicate a better dipole model fit [18] | Study-dependent; lower is generally better |
| Component Class Proportion | Ratio of brain:muscle:other components | A higher proportion of brain components suggests a better decomposition [18] | Application-dependent |
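MIR can be computed from the unmixing matrix alone, because the joint-entropy terms cancel: with $s = Wx$, $\mathrm{MIR} = \sum_i H(x_i) - \sum_i H(s_i) + \log\lvert\det W\rvert$. The sketch below estimates the marginal entropies with plain histograms, which is a simplifying assumption (published comparisons use more careful density estimators), so absolute values from it should not be compared with reported MIR figures.

```python
import numpy as np


def hist_entropy(signal, bins=100):
    """Histogram estimate of the differential entropy (nats) of a 1-D signal."""
    counts, edges = np.histogram(signal, bins=bins)
    p = counts[counts > 0] / signal.size
    bin_width = edges[1] - edges[0]
    return float(-np.sum(p * np.log(p)) + np.log(bin_width))


def mutual_information_reduction(x, unmixing, bins=100):
    """MIR = sum_i H(x_i) - sum_i H(s_i) + log|det W|, with s = W x.

    x: (n_channels, n_samples) data; unmixing: square (n_channels, n_channels) W.
    """
    s = unmixing @ x
    h_channels = sum(hist_entropy(row, bins) for row in x)
    h_sources = sum(hist_entropy(row, bins) for row in s)
    _, logabsdet = np.linalg.slogdet(unmixing)
    return h_channels - h_sources + logabsdet


rng = np.random.default_rng(1)
sources = np.vstack([rng.uniform(-1, 1, 20000), rng.laplace(size=20000)])
mixing = np.array([[1.0, 0.8], [0.8, 1.0]])
x = mixing @ sources
mir_true = mutual_information_reduction(x, np.linalg.inv(mixing))   # ideal unmixing
mir_identity = mutual_information_reduction(x, np.eye(2))           # no unmixing at all
print(mir_true, mir_identity)
```

Leaving the data unmixed gives an MIR of exactly zero, while the true unmixing matrix removes the strong dependence between the two mixed channels, which is the sense in which "higher is better" in the table.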

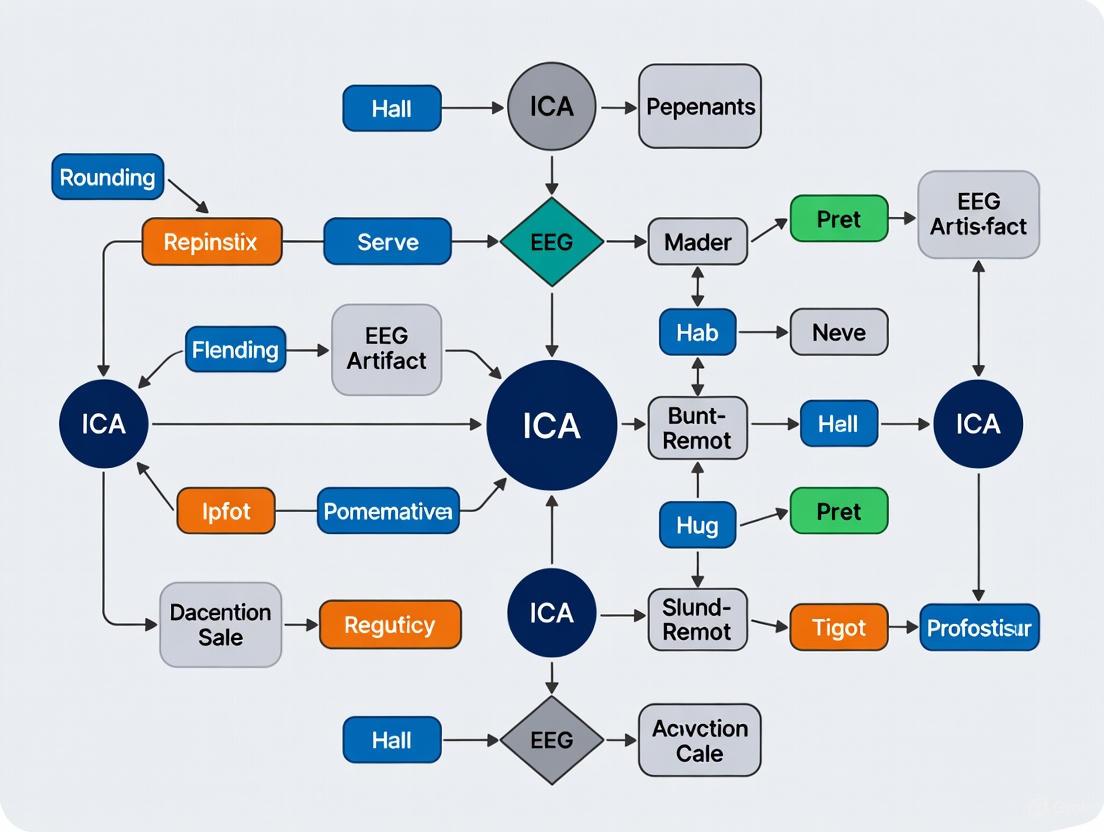

Visualization of ICA Workflows

Figure 1: Comprehensive ICA workflow for EEG artifact removal

Figure 2: Mathematical principles of ICA for source separation

Research Reagent Solutions for ICA-EEG Studies

Table 3: Essential Research Materials for ICA-EEG Investigations

| Resource Category | Specific Tools/Solutions | Research Function | Implementation Notes |
|---|---|---|---|
| Software Libraries | MNE-Python ICA module | Complete ICA implementation including fitting, component selection, and signal reconstruction [19] | Provides multiple algorithms (FastICA, Infomax, Picard) and artifact detection methods |
| EEG Systems | 19+ channel systems with 10-20 placement | Data acquisition with sufficient spatial sampling for effective source separation [17] | Higher channel counts (e.g., 71 channels) enable better decomposition quality [5] |
| ICA Algorithms | AMICA, FastICA, Infomax, JADE | Core decomposition engines with different performance characteristics [19] [4] [18] | AMICA considered benchmark; choice depends on data quality and research goals [5] [18] |
| Artifact Detection | MNE's `find_bads_*` methods, SVM classification | Automated identification of artifactual components after decomposition [19] [21] | Can be combined with manual inspection for validation |
| Connectivity Metrics | swLORETA, lagged coherence | Functional connectivity analysis for covariate-integrated ICA approaches [17] | Lagged coherence reduces volume conduction effects [17] |
| Quality Metrics | Mutual Information Reduction, Near Dipolarity | Quantitative assessment of decomposition quality [5] [18] | Essential for method validation and optimization |

Validation and Quality Control Protocols

Quantitative Performance Validation

Protocol 4: ICA Decomposition Quality Assessment

Metric Calculation:

Component Categorization:

- Classify components as brain, muscle, or 'other' based on spatial and temporal characteristics [18]

- Use multiple criteria including topography, frequency spectrum, and time-course properties

Signal-to-Noise Assessment:

- Compare SNR in conditions of interest before and after ICA cleaning [18]

- Validate preservation of neural signals while removing artifacts
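The protocol leaves the SNR definition open. As one hypothetical choice, the sketch below compares the power of the trial-averaged ERP in a post-stimulus window with its power in the pre-stimulus baseline, and applies it to synthetic epochs before and after an artifact is removed. The window limits, the synthetic peak, and the offset-style artifact are all assumptions made for illustration.

```python
import numpy as np


def erp_snr_db(epochs, times, signal_window, baseline_window):
    """Hypothetical ERP SNR: mean power of the trial-averaged ERP inside the
    signal window divided by its mean power in the baseline window, in dB.

    epochs: (n_trials, n_times) single-channel epochs; times: (n_times,) in s.
    """
    erp = np.asarray(epochs, dtype=float).mean(axis=0)
    in_signal = (times >= signal_window[0]) & (times < signal_window[1])
    in_baseline = (times >= baseline_window[0]) & (times < baseline_window[1])
    return float(10 * np.log10(np.mean(erp[in_signal] ** 2) / np.mean(erp[in_baseline] ** 2)))


rng = np.random.default_rng(3)
times = np.arange(-0.2, 0.8, 1 / 250)
true_erp = 5.0 * np.exp(-((times - 0.35) ** 2) / (2 * 0.05 ** 2))  # synthetic P300-like peak
clean = true_erp + rng.standard_normal((60, times.size))
# Stand-in for residual artifact: a sustained offset whose size varies by trial
contaminated = clean + rng.normal(5.0, 2.0, size=(60, 1))
snr_before = erp_snr_db(contaminated, times, (0.25, 0.45), (-0.2, 0.0))
snr_after = erp_snr_db(clean, times, (0.25, 0.45), (-0.2, 0.0))
print(snr_before, snr_after)
```

An SNR gain alone does not show that neural signal was preserved, so in practice it would be paired with the second check above, for example by comparing ERP waveforms in conditions of interest before and after cleaning.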

Limitations and Considerations

Despite its powerful capabilities, researchers must recognize important limitations of ICA:

Trial-to-Trial Variability: When artifacts show minimal variability across trials (as in TMS-EEG), ICA may become unreliable and potentially remove brain-derived activity along with artifacts [20].

Algorithm Selection: Different ICA algorithms may yield varying results, requiring careful selection based on specific research needs and data characteristics [19] [18].

Data Requirements: Decomposition quality improves with increased data quantity, with benefits potentially continuing beyond common heuristic thresholds [5].

Linearity Assumption: ICA assumes linear mixing of sources, which may not fully capture the complex volume conduction properties of head tissues, though it remains an effective approximation for most EEG applications [17].

Independent Component Analysis (ICA) has become a cornerstone technique in electroencephalogram (EEG) preprocessing, particularly for the critical task of artifact removal. Its efficacy, however, is not guaranteed and hinges on several foundational prerequisites. A recurring theme in ICA-based artifact-removal research is that the method's success is intrinsically linked to the quality and structure of the input data. This application note details the core prerequisites (data quality, channel count, and stationarity assumptions) that researchers must satisfy to ensure reliable and interpretable results. Failure to adhere to these principles can lead to incomplete artifact separation, the unintended removal of neural signals, or fundamentally invalid decompositions [20]. We synthesize current research to provide structured quantitative data, experimental protocols, and practical tools to guide researchers in optimizing their ICA workflows.

Quantitative Prerequisites for ICA

The following tables summarize the key quantitative findings from the literature regarding data requirements and performance for ICA in EEG.

Table 1: Data Quantity and Channel Configuration Requirements

| Factor | Key Finding | Quantitative Evidence | Source |
|---|---|---|---|
| Data Quantity | Benefits of increased data may extend beyond common heuristic thresholds; no clear plateau observed. | Mutual Information Reduction (MIR) and near-dipolarity continued to improve with more data beyond common benchmarks in a 71-channel study. | [5] |
| Channel Count | An intermediate number of channels is optimal; too few or too many degrade performance. | 5–8 channels were identified as the optimal range for motor imagery BCI applications, balancing information and noise. | [22] |
| Artifact Removal Performance | ICA can effectively clean various artifacts with minimal distortion to neural signals. | A quantitative study reported minimal distortion of interictal activity (measured via correlation analysis) after removing EKG, eye movement, and muscle artifacts. | [4] |

Table 2: ICA Performance and Error Metrics in Validation Studies

| Study Context | Performance Metric | Result | Implications | Source |
|---|---|---|---|---|
| General Artifact Classification | Mean Squared Error (MSE) vs. Expert Labeling | <10% MSE on reaction time data; 15% MSE on an auditory ERP paradigm. | Automated classification can perform on par with inter-expert disagreement levels. | [23] |
| TMS-Evoked Potentials (TEP) Cleaning | Cleaning Accuracy | ICA becomes unreliable and may remove brain signals when artifact trial-to-trial variability is small. | Highlights a critical violation of the independence assumption in specific paradigms. | [20] |

Experimental Protocols for Prerequisite Validation

Protocol: Assessing Data Sufficiency and Channel Setup

This protocol is designed to empirically determine the optimal amount of data and channel configuration for a given experimental setup.

- Data Preparation: Begin with a raw, continuous EEG dataset that has been acquired with a high channel count (e.g., 64 or 128 channels) and is of substantial duration (e.g., 20+ minutes).

- Subsampling Data Quantity: Randomly subsample the data to create multiple datasets of varying lengths. The data length should be expressed as a ratio of the total number of data frames (samples) to the number of channels (F/C). Test a wide range of F/C ratios (e.g., from 100 to 10000) [5].

- Subsampling Channel Count: From the full channel set, create multiple channel subsets. These should include:

  - Very sparse sets (e.g., 3-5 channels over motor cortex).

  - Intermediate sets (e.g., 5-8 channels, selected automatically or based on literature [22]).

  - A high-density set (e.g., 32+ channels).

- ICA Decomposition: Run a chosen ICA algorithm (e.g., AMICA [5] or Infomax [10]) on all combinations of the data quantity and channel count subsets.

- Quality Assessment: Evaluate the quality of each resulting decomposition using metrics such as:

  - Mutual Information Reduction (MIR): Measures the independence of the components [5].

  - Near-Dipolarity: The proportion of components with a dipolar scalp topography, which is indicative of a compact cortical source [5].

  - Component Reliability: Use a framework like RAICAR (Ranking and Averaging Independent Component Analysis by Reproducibility) to assess the stability of components across multiple ICA runs with different initializations [24].

- Optimal Point Determination: Plot the quality metrics against both the F/C ratio and the number of channels. The point where quality metrics begin to asymptote or peak indicates the optimal, efficient data configuration for your specific paradigm.
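The subsampling steps can be organized as a grid of (channel subset, F/C ratio) combinations, each of which is then decomposed and scored. The sketch below draws frames at random without replacement, which assumes an ICA algorithm that treats samples as exchangeable (as Infomax-type algorithms do, since they ignore temporal order). The subset names, channel indices, and ratios are placeholders, not recommendations.

```python
import numpy as np


def subsample_frames(data, fc_ratio, rng):
    """Randomly draw fc_ratio * n_channels frames (columns) from (n_channels, n_samples) data."""
    n_channels, n_samples = data.shape
    n_frames = int(round(fc_ratio * n_channels))
    if n_frames > n_samples:
        raise ValueError(f"need {n_frames} frames, only {n_samples} available")
    idx = np.sort(rng.choice(n_samples, size=n_frames, replace=False))
    return data[:, idx]


def build_grid(data, channel_subsets, fc_ratios, seed=0):
    """Return {(subset_name, ratio): subsampled array}, skipping unreachable ratios."""
    rng = np.random.default_rng(seed)
    grid = {}
    for name, channels in channel_subsets.items():
        subset = data[channels, :]
        for ratio in fc_ratios:
            if ratio * len(channels) <= data.shape[1]:
                grid[(name, ratio)] = subsample_frames(subset, ratio, rng)
    return grid


# Placeholder recording: 32 channels x 50,000 frames of noise
data = np.random.default_rng(1).standard_normal((32, 50000))
subsets = {"sparse": [0, 1, 2, 3], "intermediate": list(range(8)), "dense": list(range(32))}
grid = build_grid(data, subsets, fc_ratios=[100, 1000])
for key, arr in grid.items():
    print(key, arr.shape)   # each entry would be passed to ICA and then scored
```

Skipping combinations that the recording cannot supply makes the limiting factor visible: dense montages reach high F/C ratios only with long recordings.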

Protocol: Evaluating Stationarity and Trial-to-Trial Variability

This protocol tests the critical ICA assumption of statistical independence between sources, which can be violated by highly stereotypical artifacts.

- Simulated Artifact Injection: Start with a clean, artifact-free TMS-Evoked Potential (TEP) dataset or similar event-related potential data. If such data is unavailable, use resting-state EEG from which major artifacts have been meticulously removed.

- Generate Artifacts: Create simulated artifacts (e.g., a TMS pulse artifact or eye blink template) with controlled levels of trial-to-trial variability.

  - Low-Variability Condition: Add the artifact to each trial with nearly identical waveform and amplitude.

  - High-Variability Condition: Add the artifact with significant random variations in amplitude, latency, or morphology across trials [20].

- ICA Processing: Apply ICA to both the low-variability and high-variability datasets.

- Accuracy Assessment: Compare the ICA-cleaned data to the original artifact-free ground truth. Calculate the accuracy of artifact removal, for example, by measuring the correlation or mean squared error between the cleaned data and the true neural signal [20].

- Measure Component Variability: For real data where the ground truth is unknown, calculate the trial-to-trial variability of the artifact-dominated independent component itself. This can be a predictor of cleaning reliability [20].

- Interpretation: If accuracy is low and the identified artifact component shows very low variability, the results should be treated with caution, as ICA may have inaccurately partitioned the neural signal.
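The injection and scoring steps of this protocol can be prototyped as follows. This is a sketch under stated assumptions: the decaying-pulse template, the random scalp pattern, and the Gaussian amplitude jitter are hypothetical stand-ins for a real TMS or blink artifact model, and the ICA cleaning step itself is left to whichever toolbox is being evaluated.

```python
import numpy as np


def inject_artifact(clean, template, pattern, amp_jitter, max_shift, rng):
    """Add a simulated artifact to every trial with controlled variability.

    clean:    (n_trials, n_channels, n_times) ground-truth data.
    template: (n_times,) artifact waveform; pattern: (n_channels,) scalp weights.
    amp_jitter: std of the multiplicative amplitude change (0 = identical).
    max_shift:  maximum latency jitter in samples (0 = identical latency).
    """
    out = clean.copy()
    for trial in out:                      # each `trial` is a view into `out`
        gain = 1.0 + amp_jitter * rng.standard_normal()
        shift = int(rng.integers(-max_shift, max_shift + 1))
        # np.roll wraps samples around the epoch edge; keep templates short
        trial += gain * np.outer(pattern, np.roll(template, shift))
    return out


def cleaning_accuracy(cleaned, truth):
    """Return (Pearson correlation, mean squared error) against the ground truth."""
    r = np.corrcoef(cleaned.ravel(), truth.ravel())[0, 1]
    mse = np.mean((cleaned - truth) ** 2)
    return float(r), float(mse)


rng = np.random.default_rng(7)
truth = rng.standard_normal((50, 8, 100))          # placeholder "neural" epochs
template = np.exp(-np.arange(100) / 5.0)           # stylized decaying pulse (assumed)
pattern = rng.standard_normal(8)                   # hypothetical scalp projection
low_var = inject_artifact(truth, template, pattern, amp_jitter=0.0, max_shift=0, rng=rng)
high_var = inject_artifact(truth, template, pattern, amp_jitter=0.3, max_shift=5, rng=rng)
# After running ICA on low_var / high_var, score each cleaned result with:
# cleaning_accuracy(cleaned, truth)
```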

The following diagram illustrates the logical workflow and decision points for setting up a successful ICA analysis based on the aforementioned protocols.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Algorithmic Tools for ICA Research

| Tool Name | Type | Primary Function in ICA Research | Key Reference / Source |
|---|---|---|---|
| EEGLAB | Software Environment | Provides a comprehensive framework for ICA decomposition, visualization, and component rejection. | [10] |
| AMICA Plugin | Algorithm/Plugin | An adaptive mixture ICA algorithm considered a benchmark for decomposition quality; computationally demanding. | [5] |
| JADE Algorithm | Algorithm | An ICA algorithm based on Joint Approximate Diagonalization of Eigen-matrices; effective for artifact removal. | [4] |
| FastICA Algorithm | Algorithm | A widely used, computationally efficient ICA algorithm based on fast fixed-point iteration. | [25] |
| Infomax Algorithm | Algorithm | A popular ICA algorithm that maximizes the mutual information between inputs and outputs. | [10] |
| RAICAR Framework | Methodology | A framework for ranking and averaging ICA components by reproducibility across multiple realizations. | [24] |
| CW_ICA Method | Methodology | A recently proposed method for automatically determining the optimal number of ICs. | [26] |

The successful application of ICA for EEG artifact removal is a deliberate process grounded in rigorous data preparation. Researchers must treat it not as a one-size-fits-all filter but as a powerful tool with specific requirements. The evidence clearly demonstrates that success depends on: providing sufficient high-quality data per channel, configuring an optimal number of electrodes for the given task, and critically evaluating the stationarity assumptions of the underlying sources, particularly in paradigms with stereotypical artifacts like TMS. By adhering to the validated protocols and utilizing the tools outlined in this document, researchers can significantly enhance the reliability and interpretability of their ICA results, thereby strengthening the validity of their subsequent neuroscientific and clinical findings.

A Step-by-Step Guide to Implementing ICA for EEG Cleaning

Independent Component Analysis (ICA) has become a cornerstone technique in electroencephalography (EEG) research for separating neural activity from various artifacts. The quality of ICA decomposition, however, is profoundly influenced by specific preprocessing steps applied to the data beforehand. Proper preprocessing is not merely a procedural formality but a critical determinant of ICA performance, directly impacting the algorithm's ability to isolate biologically plausible neural sources and effectively remove artifacts such as ocular movements, cardiac signals, and muscle activity. This application note provides detailed protocols for the essential preprocessing steps of filtering and referencing, framed within the context of preparing EEG data for optimal ICA decomposition in artifact removal research.

Theoretical Foundations: Preprocessing for Optimal ICA

The effectiveness of ICA relies on certain statistical assumptions about the input data, primarily that the underlying sources are statistically independent and mixed linearly through volume conduction. Preprocessing aims to condition the recorded EEG signals to better satisfy these assumptions.

The Impact of Filtering on ICA

Filtering choices directly influence ICA performance by controlling the frequency content available for decomposition. High-pass filtering is particularly crucial because EEG data exhibits a 1/f power spectral density, meaning lower frequencies dominate the signal amplitude [27]. Since ICA is biased toward higher amplitude activity when working with finite data lengths, excessive low-frequency content can cause the algorithm to focus on slow drifts rather than neurologically relevant activity in higher bands. Furthermore, low-frequency signals below 1 Hz are often contaminated by physiological artifacts such as sweating, which introduces spatiotemporal non-stationarity that violates ICA assumptions [27].

The official MNE documentation explicitly warns that "ICA is sensitive to low-frequency drifts and therefore requires the data to be high-pass filtered prior to fitting. Typically, a cutoff frequency of 1 Hz is recommended" [19]. Empirical evidence supports this recommendation, with studies concluding that "high-pass filtered data at 1-2Hz works best for ICA" in terms of signal-to-noise ratio and component quality [27].

The Role of Reference Choice in ICA

The reference electrode problem is fundamental to EEG interpretation, as there is no electrically neutral point on the body or head—a concept known as the "no-Switzerland principle" [28]. The choice of reference scheme affects the spatial structure of EEG data, which in turn influences the ICA decomposition process.

Different reference techniques transform the ideal infinite reference recording (VInf) through specific transformation matrices [28]. The average reference (AVG), while popular, results in a zero-sum constraint across all channels, which affects the spatial topographies of independent components [28]. Research comparing reference techniques for ICA has found that the Reference Electrode Standardization Technique (REST), which approximates a reference at infinity, shows "overall superiority" for ICA analysis, particularly in preserving both temporal ERP characteristics and spatial topographies of components [28].

Table 1: Common EEG Reference Techniques and Their Properties

| Reference Technique | Mathematical Formulation | Impact on ICA | Best Use Cases |
|---|---|---|---|
| Linked Mastoids/Ears (LM) | $V_{LM} = (I - \tilde{T}_{LM}) V_{Inf}$ [28] | Standard approach, may introduce bias from non-neutral sites | Clinical protocols, tradition-based pipelines |
| Average Reference (AVG) | $V_{AVG} = (I - \tilde{T}_{AVG}) V_{Inf}$ [28] | Imposes zero-sum constraint on component topographies | High-density arrays, studies with uniform scalp coverage |
| Reference Electrode Standardization Technique (REST) | $V_{REST} = T_{REST} V_{Inf}$ [28] | Approximates ideal reference, often superior for ICA | Source localization, studies aiming for optimal component separation |
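Under the usual definition of the average reference, the transformation in the table is a fixed projection: subtracting $\frac{1}{N}\mathbf{1}\mathbf{1}^\top V$ from $N$-channel data makes every sample sum to zero across channels and removes one dimension of rank. The sketch below checks both properties numerically on random data. The rank loss is a practical reason pipelines commonly fit at most $N-1$ components after average referencing.

```python
import numpy as np


def average_reference(data):
    """Re-reference (n_channels, n_samples) data to the common average.

    Equivalent to left-multiplying by (I - 11^T / N): each sample then sums to
    zero across channels, and the referenced data have rank at most N - 1.
    """
    n_channels = data.shape[0]
    projector = np.eye(n_channels) - np.ones((n_channels, n_channels)) / n_channels
    return projector @ data


rng = np.random.default_rng(0)
data = rng.standard_normal((32, 2000))      # placeholder 32-channel recording
referenced = average_reference(data)
print(np.linalg.matrix_rank(data), np.linalg.matrix_rank(referenced))
```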

Experimental Protocols

Protocol 1: Data Preparation and Filtering for ICA

This protocol outlines the critical steps for preparing raw EEG data to ensure optimal ICA decomposition, with specific attention to filtering parameters.

Materials and Equipment

- Raw EEG data recorded according to experimental requirements

- Computing environment with EEGLAB or MNE-Python software

- Data storage with adequate space for processed data files

Step-by-Step Procedure

Data Import and Integrity Check

- Import raw EEG data into your preferred analysis environment (EEGLAB/MNE-Python).

- Verify that the data is continuous without inherent segmentation that might disrupt stationarity.

- Check for channels filled entirely with zeros or clearly biologically implausible values, as these will severely compromise ICA and should be removed before decomposition [27].

Downsampling (Optional but Recommended)

- Downsample data to approximately 250 Hz if the original sampling rate is significantly higher [27].

- Rationale: Reduces computational load and data size while maintaining neurologically relevant frequency content. Modern downsampling algorithms automatically apply anti-aliasing filters.

High-Pass Filtering

- Apply a high-pass filter with a cutoff frequency of 1-2 Hz. In practice, a pass-band edge of 1 Hz (equivalent to a -6 dB cutoff at 0.5 Hz) is recommended [27].

- Rationale: This critical step removes slow drifts that violate ICA's stationarity assumption and bias the decomposition toward high-amplitude, low-frequency artifacts [19] [27].

- Filter type: Use a zero-phase filter (e.g., a linear-phase FIR filter whose delay is compensated, or a forward-backward IIR filter) to prevent temporal distortion of event-related potentials.
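To make the filter specification concrete, the sketch below builds a Hamming-windowed sinc high-pass FIR filter with its -6 dB point at 0.5 Hz and a 1 Hz transition band (so the pass band starts near 1 Hz), and applies it without phase shift. The "3.3 / transition width" length rule is the usual Hamming-window approximation. This illustrates the principle only and is not a replacement for `mne.filter.filter_data()` or `pop_eegfiltnew()`.

```python
import numpy as np


def highpass_fir(signal, sfreq, cutoff_hz=0.5, transition_hz=1.0):
    """Zero-phase windowed-sinc (Hamming) high-pass FIR filter.

    cutoff_hz is the -6 dB point; the pass band begins roughly
    transition_hz / 2 above it (about 1 Hz for the defaults).
    """
    # Hamming window: transition width is roughly 3.3 * sfreq / n_taps Hz
    n_taps = int(np.ceil(3.3 * sfreq / transition_hz)) | 1   # force odd length
    n = np.arange(n_taps) - (n_taps - 1) / 2
    lowpass = np.sinc(2 * cutoff_hz / sfreq * n) * np.hamming(n_taps)
    lowpass /= lowpass.sum()                   # unit gain at DC
    highpass = -lowpass
    highpass[(n_taps - 1) // 2] += 1.0         # spectral inversion: delta - lowpass
    # Symmetric kernel + 'same' mode keeps the output centred: no phase shift
    return np.convolve(signal, highpass, mode="same")


sfreq = 250.0
t = np.arange(0, 40, 1 / sfreq)                # 40 s toy recording
alpha = np.sin(2 * np.pi * 10 * t)             # 10 Hz "neural" signal
raw = 5.0 + 0.1 * t + alpha                    # plus DC offset and slow linear drift
filtered = highpass_fir(raw, sfreq)
interior = slice(1000, -1000)                  # skip filter edge effects
print(np.max(np.abs(filtered[interior] - alpha[interior])))
```

Because the kernel is symmetric and sums to zero, the DC offset and the linear drift in the toy signal are removed away from the edges, while the 10 Hz component passes essentially unchanged. Roughly half the kernel length (about 1.6 s here) at each end is affected by edge effects.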

Low-Pass Filtering (Optional)

- Apply a low-pass filter with a cutoff of 40-50 Hz to reduce high-frequency noise, including muscle artifacts and line noise interference [29].

- For studies specifically investigating high-frequency activity (e.g., gamma oscillations), adjust this parameter accordingly or omit this step.

Bad Channel Identification and Interpolation

- Identify channels with excessive noise, flat signals, or consistent artifacts using automated algorithms and visual inspection.

- Interpolate identified bad channels using spherical spline or other spatially appropriate methods.

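- Note: Interpolated channels are linear combinations of their neighbors and add no independent information to the decomposition. Interpolating before ICA keeps the channel set complete, but record which channels were interpolated and consider excluding them from the ICA fit (or reducing the number of components accordingly) to avoid a rank-deficient decomposition.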

Quality Control and Verification

- Visual Inspection: Plot filtered data to verify the removal of slow drifts while maintaining physiological signal characteristics.

- Spectral Analysis: Confirm appropriate frequency content using power spectral density plots.

- Stationarity Check: Ensure the filtered data exhibits relatively constant variance over time, without systematic trends or abrupt jumps.

Protocol 2: Referencing and ICA Decomposition

This protocol details the application of different reference techniques and the subsequent execution of ICA.

Materials and Equipment

- EEG data preprocessed according to Protocol 1

- EEGLAB or MNE-Python with ICA functionality

- Head model information (if using REST reference)

Step-by-Step Procedure

Reference Application

- Apply your chosen reference scheme to the preprocessed and filtered data. Common options include:

- Note: Each method has theoretical advantages and limitations, with REST often providing superior results for ICA decomposition [28].

Data Segmentation for ICA (Critical Step)

- Select a stationary segment of data for ICA training. This segment should:

  - Contain representative artifacts (especially ocular movements if removing ocular artifacts).

  - Be free of large-amplitude, transient non-stationary artifacts (e.g., large muscle spikes, electrode pops) [29].

- In practice, this can be a dedicated "artifact training period" where participants perform systematic eye blinks and movements, or a clean segment extracted from the experimental data.

ICA Decomposition

- Execute ICA on the segmented, referenced data using your preferred algorithm (Infomax, FastICA, or Picard).

- Set the `n_components` parameter based on your data: use a fixed number (e.g., 20-30 for standard EEG arrays) or a float (e.g., 0.999) to keep as many components as are needed to explain 99.9% of the variance [19].

- For MNE users, fitting the ICA object to the data produces the `mixing_matrix_` and `unmixing_matrix_` attributes [19].
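As described above, a float `n_components` selects the number of principal components needed to explain that fraction of the variance. The NumPy sketch below reproduces the idea so the effect of different thresholds can be inspected on one's own data. It is not MNE's code, and MNE's handling of rank and edge cases may differ.

```python
import numpy as np


def n_components_from_variances(variances, fraction):
    """Smallest k such that the k largest variances explain >= `fraction` of the total."""
    v = np.sort(np.asarray(variances, dtype=float))[::-1]
    cumulative = np.cumsum(v) / v.sum()
    k = int(np.searchsorted(cumulative, fraction) + 1)
    return min(k, v.size)                      # guard against rounding near 1.0


def n_components_for_data(data, fraction=0.999):
    """Apply the rule to the PCA variances of (n_channels, n_samples) data."""
    return n_components_from_variances(np.linalg.eigvalsh(np.cov(data)), fraction)


# Toy spectrum: cumulative explained variance is 0.6, 0.9, 0.99, 1.0
for frac in (0.5, 0.95, 0.999):
    print(frac, n_components_from_variances([6, 3, 0.9, 0.1], frac))
```

The toy spectrum shows why 0.999 is a conservative setting: a component carrying only 1% of the variance is still retained.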

Component Application to Full Dataset

- Apply the computed ICA weights from the training segment to the entire continuous dataset, including portions that may contain non-stationary artifacts [29].

- This transfers the spatial filters learned from clean data to all experimental data.
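Applying training-segment weights to the full recording amounts to a pair of matrix products: unmix, zero the artifactual components, and back-project through the mixing matrix. The minimal sketch below assumes a square, full-rank unmixing matrix and synthetic sources. MNE's `ICA.apply()` performs the equivalent operation on Raw or Epochs objects, including the PCA bookkeeping omitted here.

```python
import numpy as np


def remove_components(data, unmixing, exclude):
    """Project data onto ICs, drop the excluded ones, and back-project.

    data:     (n_channels, n_samples), e.g. the full continuous recording.
    unmixing: (n_components, n_channels) weights learned on a training segment.
    exclude:  indices of artifactual components to remove.
    """
    mixing = np.linalg.pinv(unmixing)          # columns are component scalp maps
    activations = unmixing @ data              # IC time courses for ALL samples
    keep = np.setdiff1d(np.arange(unmixing.shape[0]), exclude)
    return mixing[:, keep] @ activations[keep]


rng = np.random.default_rng(5)
sources = rng.laplace(size=(3, 1000))          # rows 0-1 "brain", row 2 "blink" (synthetic)
mixing_true = rng.standard_normal((3, 3)) + 3 * np.eye(3)
data = mixing_true @ sources
unmixing = np.linalg.inv(mixing_true)          # stands in for weights fitted on a clean segment
cleaned = remove_components(data, unmixing, exclude=[2])
```

With the ideal unmixing matrix, the cleaned data equal the projection of the two "brain" sources alone; with real weights, the result is only as good as the decomposition learned on the training segment.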

Quality Control and Verification

- Component Topography: Examine component scalp maps for physiologically plausible patterns.

- Time Courses: Review component activations for correspondence with known artifacts (e.g., eye blinks, saccades, muscle bursts).

- Diagnostic Tools: Use automated artifact detection (e.g., `find_bads_eog`, `find_bads_ecg` in MNE) to supplement visual inspection [19].
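The idea behind correlation-based detectors such as `find_bads_eog` can be sketched in a few lines: correlate each component time course with the EOG signal and flag components whose absolute correlation is an outlier by z-score. This is a simplified illustration. MNE's implementation adds preprocessing (such as filtering the EOG signal) and refinements to the outlier step, so its results can differ. The threshold of 3 and the synthetic data are assumptions.

```python
import numpy as np


def find_eog_components(activations, eog, threshold=3.0):
    """Flag ICs whose |correlation| with an EOG signal is a z-score outlier.

    activations: (n_components, n_samples) IC time courses.
    eog:         (n_samples,) EOG channel (or a frontal proxy).
    Returns (flagged indices, z-scores).
    """
    r = np.array([np.corrcoef(comp, eog)[0, 1] for comp in activations])
    abs_r = np.abs(r)
    z = (abs_r - abs_r.mean()) / abs_r.std()
    return np.flatnonzero(z > threshold), z


rng = np.random.default_rng(2)
n_samples = 5000
blink = rng.standard_normal(n_samples)                      # shared ocular activity (synthetic)
activations = rng.standard_normal((20, n_samples))          # 20 unrelated components
activations[7] = blink + 0.1 * rng.standard_normal(n_samples)
eog = blink + 0.2 * rng.standard_normal(n_samples)
flagged, z = find_eog_components(activations, eog)
print(flagged)
```

With very few components, a single outlier cannot reach a high z-score (the maximum is $\sqrt{n-1}$ for $n$ components), so the threshold should be chosen with the component count in mind.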

Data Presentation and Analysis

Quantitative Comparison of Filtering Parameters

Table 2: Comparative Analysis of High-Pass Filter Cutoffs for ICA Decomposition

| Filter Cutoff (Hz) | Effect on ICA Stability | Residual Ocular Artifacts | Use Case Recommendation |
|---|---|---|---|
| 0.1 Hz | Suboptimal due to increased low-frequency drift | Poor removal | Studies specifically investigating infraslow activity |
| 0.5-1.0 Hz | Optimal stability and component quality | Effective removal | Standard ERP and spectral analysis; recommended default |
| 2.0 Hz | Good stability, may attenuate some neural signals | Effective removal | Studies focusing on beta/gamma bands or with strong drift |

Table 3: Key Computational Tools and Functions for ICA Preprocessing

| Tool/Resource | Function/Purpose | Implementation Example |
|---|---|---|
| Zero-Phase FIR Filter | Removes low-frequency drifts without temporal distortion | mne.filter.filter_data() or EEGLAB's pop_eegfiltnew() |
| ICA Algorithm (Infomax) | Core decomposition engine identifying independent sources | mne.preprocessing.ICA(method='infomax') or EEGLAB runica() |
| Spherical Spline Interpolation | Reconstructs bad channels using spatial neighborhood information | The interpolate_bads() method of MNE data objects (e.g., raw.interpolate_bads()) or EEGLAB eeg_interp() |
| REST Reference Toolbox | Converts data to approximate infinity reference | EEGLAB plugins or standalone REST implementation |
| Automated Artifact Detection | Identifies component correlates of biological artifacts | ICA.find_bads_eog() and ICA.find_bads_ecg() in MNE |

Workflow Visualization

The following diagram illustrates the complete preprocessing pipeline for ICA, integrating both filtering and referencing steps:

Diagram 1: Complete ICA Preprocessing Workflow. Critical steps (red/green) require precise parameter selection, while yellow boxes indicate input/output stages.

Proper preprocessing is an essential prerequisite for successful ICA decomposition in EEG artifact removal. The protocols detailed in this application note provide evidence-based guidelines for the critical steps of filtering and referencing. Specifically, high-pass filtering at 1-2 Hz effectively removes slow drifts that bias ICA, while careful selection of reference technique (with REST offering theoretical advantages) optimizes the spatial structure of components. By implementing these standardized protocols, researchers in both basic neuroscience and drug development can enhance the reliability of ICA for isolating neural signals from contaminants, thereby improving the quality of electrophysiological biomarkers in clinical research.

Independent Component Analysis (ICA) has become a cornerstone technique in electroencephalography (EEG) preprocessing for isolating neural activity from various artifacts. ICA is a blind source separation method that decomposes multichannel EEG recordings into statistically independent components, each with a fixed scalp topography and a time course of activation [31]. Within neuroscience research, this capability is crucial for identifying and removing confounding signals from eye movements, muscle activity, and cardiac rhythms without discarding valuable data segments [9] [10]. The success of this decomposition, however, depends critically on the choice of algorithm and the careful execution of the protocol. This application note provides detailed methodologies for implementing two prominent ICA algorithms—Infomax and AMICA—within the context of EEG artifact removal, offering researchers a structured framework for obtaining optimal results.

Core ICA Algorithms for EEG: Infomax vs. AMICA

Algorithm Comparison and Selection

For EEG decomposition, the selection of an ICA algorithm involves trade-offs between computational efficiency, decomposition quality, and stability. The following table summarizes the key characteristics of Infomax and AMICA, the latter being widely considered a benchmark for performance [32].

Table 1: Key Characteristics of Infomax and AMICA Algorithms

| Feature | Infomax ICA | AMICA |
|---|---|---|
| Core Principle | Maximizes information transfer (infomax) or minimizes mutual information between components [31]. | Uses adaptive mixtures of generalized Gaussians to model source densities; can fit multiple models to stationary data subsets [32] [33]. |
| Typical Performance | Robust and reliable for super-Gaussian sources; performance can be enhanced with the 'extended' option for sub-Gaussian sources like line noise [10]. | Often outperforms other ICA algorithms on quantitative metrics like mutual information reduction and component dipolarity [32]. |
| Computational Demand | Moderate; suitable for standard computing resources. | High; often requires substantial computation time and resources, potentially benefiting from compiled versions or high-performance computing [33]. |
| Stability | Decompositions can vary slightly between runs due to random initial weight matrices [10]. | Known for producing high-quality, stable decompositions. |
| Best Use Cases | Standard artifact removal tasks; a good general-purpose choice. | Research requiring the highest possible decomposition quality; analysis of complex source interactions. |
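The 'extended' option mentioned in the table works by letting each component switch between a super-Gaussian and a sub-Gaussian source model during training. The sketch below computes only that switching sign, using the extended Infomax criterion $k_i = \operatorname{sign}\left(E[\operatorname{sech}^2 u_i]\,E[u_i^2] - E[u_i \tanh u_i]\right)$; it is not a full Infomax implementation. In MNE this mode is requested with `ICA(method='infomax', fit_params=dict(extended=True))`, and EEGLAB's `runica` exposes it through its `extended` option.

```python
import numpy as np


def extended_infomax_signs(activations):
    """Per-component switching signs of extended Infomax.

    k_i = sign(E[sech^2(u_i)] * E[u_i^2] - E[tanh(u_i) * u_i])
    +1 -> super-Gaussian source model, -1 -> sub-Gaussian source model.
    activations: (n_components, n_samples) current unmixed activations u.
    The statistic is scale-dependent; it is meant for unmixed activations.
    """
    u = np.asarray(activations, dtype=float)
    sech2 = 1.0 / np.cosh(u) ** 2
    stat = sech2.mean(axis=1) * (u ** 2).mean(axis=1) - (np.tanh(u) * u).mean(axis=1)
    return np.sign(stat)


rng = np.random.default_rng(4)
n = 20000
uniform = rng.uniform(-np.sqrt(3), np.sqrt(3), n)        # unit variance, sub-Gaussian
laplace = rng.laplace(scale=1 / np.sqrt(2), size=n)      # unit variance, super-Gaussian
signs = extended_infomax_signs(np.vstack([uniform, laplace]))
print(signs)
```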

Quantitative Data Requirements for Stable Decomposition

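A critical factor for a successful ICA decomposition is having a sufficient amount of data. The required data quantity scales with the square of the number of EEG channels, because the unmixing matrix to be estimated contains one weight per channel-component pair ($N^2$ parameters for $N$ channels). A common heuristic is the $\kappa$ value, defined as: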

$$\kappa = \frac{\text{number of data frames}}{(\text{number of channels})^2}$$

While a $\kappa$ value of 20 is often recommended heuristically, recent empirical investigations using AMICA suggest that decomposition quality, as measured by Mutual Information Reduction (MIR) and component dipolarity, continues to improve with more data beyond this threshold, showing no clear plateau [32]. The following table quantifies this relationship based on a study using a 71-channel EEG setup.

Table 2: Data Quantity and ICA Decomposition Quality (AMICA on 71 channels)

| $\kappa$ Ratio | Impact on Mutual Information Reduction (MIR) | Impact on Near-Dipolar Components |
|---|---|---|
| Low $\kappa$ | Lower MIR, indicating less effective separation of sources [32]. | Fewer components with residual variance < 10% when fitted with an equivalent dipole [32]. |
| Increasing $\kappa$ | Asymptotic increase in MIR observed [32]. | General increasing trend in the number of near-dipolar components [32]. |
| $\kappa = 20$ | Often used as a heuristic minimum, but may not represent a performance plateau [32]. | Benefits of collecting additional data are shown to extend beyond this common threshold [32]. |
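To make the heuristic concrete, the arithmetic below converts a target $\kappa$ into a number of frames and a recording length. The 250 Hz sampling rate in the example is an assumption; because $\kappa$ counts frames, it should be computed at the sampling rate of the data actually passed to ICA.

```python
def frames_for_kappa(n_channels, kappa=20.0):
    """Frames needed so that frames / n_channels**2 equals kappa."""
    return kappa * n_channels ** 2


def minutes_for_kappa(n_channels, sfreq, kappa=20.0):
    """Continuous recording time (minutes) giving `kappa` at sampling rate `sfreq`."""
    return frames_for_kappa(n_channels, kappa) / sfreq / 60.0


for n in (32, 64, 71, 128):
    print(n, frames_for_kappa(n), round(minutes_for_kappa(n, sfreq=250.0), 1))
```

For example, 71 channels at 250 Hz need 100,820 frames (about 6.7 minutes) to reach $\kappa = 20$; downsampling the same recording lowers $\kappa$ proportionally, and the quadratic term makes high-density montages far more data-hungry.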

Experimental Protocols for ICA Decomposition

Preprocessing for ICA

The quality of the ICA decomposition is heavily dependent on proper data preprocessing. The procedure aims to prepare the data such that ICA can model the most meaningful sources, rather than trivial noise or artifacts [34].

- Data Loading and Channel Selection: Load the continuous or epoched dataset into the processing environment (e.g., EEGLAB). ICA is typically run on EEG channels only, as other bio-signals like EMG involve propagation delays that violate ICA's assumption of instantaneous mixing [10].

- Filtering: Apply a high-pass filter (e.g., 0.1 Hz or 1 Hz cutoff) to remove slow drifts that can adversely affect the ICA solution [34]. A low-pass filter can also be applied to reduce high-frequency noise.

- Bad Channel and Segment Rejection: Identify and remove or interpolate consistently noisy or flat channels. It is also crucial to remove sections of data with infrequent, large-amplitude artifacts (e.g., SQUID jumps in MEG, electrode pops) before ICA. These atypical artifacts can "use up" components that would otherwise model brain activity or common artifacts [34]. Tools like `ft_databrowser` in FieldTrip or EEGLAB's `eegplot` can be used for this manual rejection. The removed sections can be replaced with NaN (Not a Number) values to maintain the data's continuous structure [34].

- (Optional) Data Reduction: For high-density datasets or to improve computational efficiency, data can be downsampled. However, be aware that this can reduce the quality of separation for high-frequency artifacts like muscle activity [34].
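Replacing rejected stretches with NaN keeps the time axis intact while ensuring that those samples never reach the decomposition. The sketch below automates only a crude first pass, flagging fixed windows whose peak-to-peak amplitude exceeds a threshold on any channel. The window length and threshold are hypothetical placeholders, not values from [34], and visual review with the tools named above remains the reference method.

```python
import numpy as np


def mark_bad_segments(data, sfreq, ptp_threshold, win_sec=1.0):
    """Return a float copy of (n_channels, n_samples) data with NaN in every
    window whose peak-to-peak amplitude exceeds ptp_threshold on any channel."""
    out = data.astype(float)                  # astype returns a copy
    win = int(round(win_sec * sfreq))
    for start in range(0, data.shape[1], win):
        seg = data[:, start:start + win]
        if np.any(np.ptp(seg, axis=1) > ptp_threshold):
            out[:, start:start + win] = np.nan
    return out


def training_frames(marked):
    """Keep only samples without NaN, i.e. the frames actually given to ICA."""
    return marked[:, ~np.isnan(marked).any(axis=0)]


rng = np.random.default_rng(11)
sfreq = 100.0
data = 10.0 * rng.standard_normal((4, 1000))   # 10 s of toy data in microvolts
data[2, 450] += 500.0                           # simulated electrode pop
marked = mark_bad_segments(data, sfreq, ptp_threshold=200.0)
train = training_frames(marked)
print(train.shape)
```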

Protocol A: Running Infomax ICA in EEGLAB

Infomax ICA is a standard algorithm available in EEGLAB and provides a robust, general-purpose decomposition [10].

- Launch the ICA Function: After preprocessing, select Tools → Decompose data by ICA → Run ICA.

- Algorithm Selection: In the pop-up window, select Infomax (`runica`) from the algorithm dropdown menu.

- Parameter Configuration:

- Execution: Click Ok to run the decomposition. The command window will display iterative output showing the learning rate and weight change until convergence is reached [10].

- Post-processing: Once finished, the ICA weights are stored in the EEG structure and can be visualized and analyzed.

Protocol B: Running AMICA

AMICA is a high-performance algorithm that can be run as a standalone program or from within MATLAB/EEGLAB via a plugin [33].

- Plugin Installation: Install the AMICA and `postAmicaUtility` plugins for EEGLAB from the official plugin repository [33].

- Data Preparation: Follow the standard preprocessing steps outlined above under Preprocessing for ICA. AMICA benefits from large amounts of high-quality data.

- Running AMICA: AMICA creates its own set of menus within EEGLAB. Navigate to the AMICA menu to configure and launch the decomposition.

- Model Configuration: A key feature of AMICA is its ability to estimate multiple ICA models for different stationary subsets of the data. The number of models and other parameters can be configured at this stage.

- Execution and Loading: The computation may take a significant amount of time. After completion, use the `postAmicaUtility` functions to load the computed models and components back into the EEGLAB environment for further inspection [33].

The following workflow diagram summarizes the key steps for preprocessing and running ICA.

Table 3: Key Software Tools and Resources for ICA Research

| Tool/Resource | Function in ICA Research | Access/Reference |
|---|---|---|
| EEGLAB | A collaborative, open-source MATLAB environment providing a comprehensive framework for ICA analysis, visualization, and artifact removal [10]. | https://sccn.ucsd.edu/eeglab/ |
| AMICA Plugin | An EEGLAB plugin that implements the Adaptive Mixture ICA algorithm, often yielding superior decomposition quality [32] [33]. | Available via the EEGLAB plugin manager. |
| RELAX Pipeline | An EEGLAB plugin for a targeted artifact reduction method that cleans artifact periods/frequencies, helping to avoid the artificial inflation of effect sizes [9]. | GitHub: NeilwBailey/RELAX |
| FieldTrip Toolbox | An alternative open-source MATLAB toolbox for advanced EEG/MEG analysis that includes its own implementations of ICA and other preprocessing tools [34]. | https://www.fieldtriptoolbox.org/ |
| DIPFIT | An EEGLAB extension used to fit equivalent dipole models onto ICA component scalp maps, aiding in the validation of their biological plausibility [32]. | Included in standard EEGLAB distributions. |

The effective application of ICA for EEG artifact removal hinges on a meticulous experimental approach. Researchers must choose an algorithm aligned with their quality and resource constraints, with Infomax serving as a robust default and AMICA offering potentially superior results at a higher computational cost. Crucially, the data itself must be prepared with care, ensuring that sufficient, clean data is presented to the algorithm—a requirement quantified by the $\kappa$ parameter. By adhering to the detailed protocols and leveraging the tools outlined in this document, researchers can reliably harness ICA to isolate neural signals, thereby enhancing the validity and interpretability of their EEG findings in both basic neuroscience and applied drug development research.

The accurate separation of neural activity from non-neural artifacts is a fundamental prerequisite for electroencephalography (EEG) research. Independent Component Analysis (ICA) has emerged as a powerful blind source separation technique that decomposes multi-channel EEG data into maximally independent components (ICs). A critical challenge lies in reliably identifying which of these components represent brain activity and which reflect various artifacts. This application note provides a comprehensive framework for classifying artifactual ICs based on their distinct topographical, temporal, and spectral signatures, equipping researchers with standardized protocols for enhancing EEG data quality in basic and clinical research.

Theoretical Foundations of ICA for EEG Decomposition

ICA operates on the principle of separating mixed signals into statistically independent sources without prior knowledge of their nature or mixing process. In the context of EEG, the recorded signals from scalp electrodes represent linear mixtures of underlying neural, ocular, muscular, and technical sources. The ICA algorithm estimates a demixing matrix that separates these sources into independent components, each characterized by a fixed topography (spatial distribution across electrodes), a time course of activation, and a spectral profile.

The validity of ICA decomposition relies on key assumptions: that the source signals are statistically independent and non-Gaussian, that the mixing process is linear and instantaneous, and that the number of recorded channels equals or exceeds the number of independent sources. Following decomposition, each IC can be examined through multiple feature domains to determine its origin, enabling researchers to exclude artifactual components before reconstructing the cleaned EEG signal [35].
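In matrix form, the recorded data $X$ (channels × samples) are modeled as $X = AS$, where the columns of the mixing matrix $A$ are the IC topographies and the rows of $S$ are the IC time courses. ICA estimates an unmixing matrix $W \approx A^{-1}$ so that $S = WX$. Excluding artifactual components then amounts to back-projecting only the retained set $\mathcal{K}$: $X_{\text{clean}} = A_{:,\mathcal{K}} S_{\mathcal{K},:}$. The numpy sketch below assumes a square, full-rank unmixing matrix; the function name and the toy data are hypothetical.

```python
import numpy as np


def remove_components(x, unmixing, reject):
    """Back-project all ICs except those listed in `reject`.

    x        : (channels, samples) data
    unmixing : (n, n) square unmixing matrix W, so sources = W @ x
    reject   : indices of artifactual ICs to drop
    """
    sources = unmixing @ x                    # IC time courses, one per row
    mixing = np.linalg.inv(unmixing)          # columns = IC scalp topographies
    keep = np.setdiff1d(np.arange(unmixing.shape[0]), reject)
    return mixing[:, keep] @ sources[keep, :]


# Usage: three sources, the last one a toy "blink"; pretend ICA found the exact W.
rng = np.random.default_rng(1)
true_sources = rng.laplace(size=(3, 2000))
blink = np.zeros(2000)
blink[500:600] = 50.0
true_sources[2] = blink
mixing_true = np.array([[1.0, 0.5, 0.3],
                        [0.2, 1.0, 0.4],
                        [0.1, 0.3, 1.0]])
eeg = mixing_true @ true_sources
w = np.linalg.inv(mixing_true)
cleaned = remove_components(eeg, w, reject=[2])
```

With an empty rejection list the function reproduces the input exactly, which is a convenient sanity check on any real pipeline.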

Signature Profiles of Major Artifact Classes

Systematic identification of artifactual components requires multidimensional assessment across spatial, temporal, and frequency domains. The tables below summarize the characteristic signatures of common artifact types encountered in EEG research.

Table 1: Topographical, Temporal, and Spectral Signatures of Physiological Artifacts

| Artifact Type | Topographical Signature | Temporal Signature | Spectral Signature |
|---|---|---|---|
| Ocular (Blink) | Bifrontal focus (Fp1, Fp2); Symmetrical distribution [36] | High-amplitude, slow deflections (200-400 ms) time-locked to blink events [37] | Dominant in delta (0.5-4 Hz) and theta (4-8 Hz) bands [37] |
| Lateral Eye Movements | Asymmetric frontal distribution; Phase reversal between F7/F8 [36] | Sawtooth waveform with opposing polarities at F7 and F8 [36] | Broadband with low-frequency emphasis |
| Muscle (EMG) | Focal over temporal regions (temporalis); Diffuse for neck/shoulder muscles [37] | High-frequency, low-amplitude bursts; Irregular pattern [37] | Broadband with peak power in beta (13-30 Hz) and gamma (>30 Hz) [37] |
| Cardiac (ECG) | Maximum over left temporal/occipital regions; Often unilateral [36] | Stereotyped waveform repeating at heart rate (60-100 bpm) [37] | Multiple harmonics of heart rate frequency |

Table 2: Signature Profiles of Technical and Motion Artifacts

| Artifact Type | Topographical Signature | Temporal Signature | Spectral Signature |
|---|---|---|---|
| Electrode Pop | Highly localized to single electrode; No field distribution [36] | Sudden, high-amplitude transient with steep onset [37] | Broadband, non-stationary noise [37] |
| Line Noise | Global across all electrodes; May vary with electrode impedance [37] | Persistent 50/60 Hz oscillation; Constant amplitude [37] | Sharp peak at 50 Hz (Europe) or 60 Hz (North America) [37] |
| Head Movement | Widespread, non-stationary topography; Affects multiple electrodes [38] | High-amplitude, low-frequency drifts; Irregular bursts [38] | Dominant low-frequency content (<2 Hz) |
| Sweat Artifact | Widespread, shifting distribution; Often anterior emphasis [36] | Very slow drifts (<0.5 Hz); Non-stationary baseline [37] | Power concentrated at very low frequencies (0.1-0.5 Hz), at or below the lower edge of the delta band [37] |

Experimental Protocols for IC Identification

Standardized IC Classification Workflow

Protocol 1: Comprehensive IC Evaluation

Objective: Systematically classify ICs using multi-domain features.

Materials:

- ICA-decomposed EEG data

- Visualization software (EEGLAB, MNE-Python, FieldTrip)

- Computing workstation with sufficient RAM for data handling

Procedure:

- Generate Topographical Maps
  - Plot IC scalp distributions using spline interpolation
  - Note focal extremes and spatial patterns
  - Reference against canonical artifact topographies [36]

- Analyze Temporal Characteristics
  - Visualize IC time courses across entire recording
  - Identify stereotyped waveforms repeating at physiological frequencies
  - Detect irregular, high-amplitude transients

- Examine Spectral Properties
  - Compute power spectral density for each IC
  - Identify spectral peaks at characteristic frequencies
  - Note broadband versus narrowband properties

- Cross-Domain Correlation
  - Correlate spatial and temporal features
  - Confirm consistency across signature domains
  - Make final classification decision
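The spectral step can be turned into simple numeric features. The sketch below computes a plain periodogram per IC with numpy and reports the fraction of 0.5-45 Hz power in each band. The delta, theta, beta, and gamma edges follow Table 1, alpha (8-13 Hz) is added for completeness, and the names BANDS and band_fractions are hypothetical. High delta fractions point toward ocular components and high beta/gamma fractions toward muscle, but any thresholds on these numbers are assumptions to be validated, not a substitute for a trained classifier such as ICLabel.

```python
import numpy as np

# Band edges in Hz as half-open intervals [lo, hi).
BANDS = {
    "delta": (0.5, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 30.0),
    "gamma": (30.0, 45.0),
}


def band_fractions(ic_activations, fs):
    """Fraction of each IC's 0.5-45 Hz power falling in each band.

    ic_activations : (n_components, n_samples) IC time courses
    fs             : sampling rate in Hz
    """
    freqs = np.fft.rfftfreq(ic_activations.shape[1], d=1.0 / fs)
    power = np.abs(np.fft.rfft(ic_activations, axis=1)) ** 2  # raw periodogram
    total = power[:, (freqs >= 0.5) & (freqs < 45.0)].sum(axis=1)
    return {
        name: power[:, (freqs >= lo) & (freqs < hi)].sum(axis=1) / total
        for name, (lo, hi) in BANDS.items()
    }


# Usage: a slow, blink-like IC and a fast, muscle-like IC (synthetic sinusoids).
fs = 250
t = np.arange(2500) / fs
ics = np.vstack([np.sin(2 * np.pi * 2 * t), np.sin(2 * np.pi * 40 * t)])
fractions = band_fractions(ics, fs)
print(fractions["delta"].round(3), fractions["gamma"].round(3))
```

For real recordings, a Welch estimate (averaging periodograms over shorter windows) gives less noisy spectra than the single periodogram used here.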

Duration: Approximately 30-45 minutes per dataset depending on recording length and number of components.

Protocol 2: Ocular and Cardiac Artifact Specific Identification

Objective: Specifically identify and remove ocular and cardiac artifacts.

Materials:

- ICA-decomposed EEG data

- Simultaneously recorded EOG/ECG channels (if available)

- EOG/ECG template maps for correlation

Procedure:

- Template Matching
  - Correlate IC topographies with canonical ocular artifact templates [35]
  - Identify components with the highest absolute correlation coefficients (|r| > 0.7); because ICA leaves the sign of each component arbitrary, the sign of r is not informative

- Time-Course Analysis
  - Create EOG/ECG epochs synchronized to events (blinks, saccades, heartbeats)
  - Average IC activation locked to these events
  - Verify a consistent temporal relationship

- Spectral Validation
  - Confirm peaks in the expected frequency ranges (delta for blinks, heart rate for ECG)
  - Check for harmonic patterns in cardiac components

- Selective Removal
  - Mark identified artifactual components for exclusion
  - Preserve components with ambiguous characteristics
  - Reconstruct data and verify artifact reduction [35]
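The template-matching and time-course steps can be sketched in a few lines of numpy. The 5-channel montage, the template values, and the function names are hypothetical; a real analysis would use a full montage and a published or data-derived blink template.

```python
import numpy as np


def template_matches(topographies, template, threshold=0.7):
    """Return (indices, r) for ICs whose scalp map correlates with `template` at |r| > threshold.

    topographies : (n_channels, n_components) mixing matrix, one IC map per column
    template     : (n_channels,) canonical artifact map
    """
    r = np.array([np.corrcoef(topographies[:, k], template)[0, 1]
                  for k in range(topographies.shape[1])])
    # IC polarity is arbitrary, so compare |r| rather than r.
    return np.flatnonzero(np.abs(r) > threshold), r


def event_locked_average(activation, event_samples, pre, post):
    """Average one IC time course over windows [event - pre, event + post).

    Events too close to either edge of the recording are skipped.
    """
    epochs = [activation[e - pre:e + post] for e in event_samples
              if e - pre >= 0 and e + post <= activation.size]
    return np.mean(epochs, axis=0)


# Usage with a hypothetical 5-channel montage (Fp1, Fp2, F7, F8, Oz).
blink_map = np.array([1.0, 1.0, 0.5, 0.5, 0.05])     # bifrontal, symmetric
lateral_map = np.array([1.0, -1.0, 0.5, -0.5, 0.0])  # left/right phase reversal
topographies = np.column_stack([-2.0 * blink_map, lateral_map])
idx, r = template_matches(topographies, blink_map)   # IC 0 matches despite its flipped sign

activation = np.zeros(1000)
for e in (100, 400, 700):
    activation[e:e + 20] += 5.0                      # stereotyped blink-like deflection
avg = event_locked_average(activation, [100, 400, 700, 990], pre=10, post=30)
```

The event at sample 990 is dropped because its window would run past the end of the recording; a consistent deflection in the average (here 20 samples after each event onset) is the "consistent temporal relationship" the protocol asks for.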

Quality Control: Verify artifact reduction by comparing pre- and post-correction data using variance metrics and visual inspection.
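One simple variance metric is the share of each channel's original variance carried by the signal that was removed. The definition below is an assumption made for illustration, and the function name is hypothetical.

```python
import numpy as np


def percent_variance_removed(before, after):
    """Per-channel share (%) of the original variance carried by the removed signal.

    before, after : (channels, samples) data before and after IC rejection
    Channels with zero variance before correction would divide by zero.
    """
    removed = before - after
    return 100.0 * removed.var(axis=1) / before.var(axis=1)


# Usage with tiny hand-made data: channel 0 loses an artifact, channel 1 is untouched.
after = np.array([[1.0, -1.0, 1.0, -1.0],
                  [1.0, -1.0, 1.0, -1.0]])
artifact = np.array([[1.0, 1.0, -1.0, -1.0],
                     [0.0, 0.0, 0.0, 0.0]])
before = after + artifact
print(percent_variance_removed(before, after))  # 50% for channel 0, 0% for channel 1
```

Large values on frontal channels after blink removal are expected; large values spread across posterior channels would warrant a second look at the rejected components.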

The Researcher's Toolkit

Table 3: Essential Tools and Resources for ICA-Based Artifact Removal

| Tool/Resource | Function | Implementation Notes |
|---|---|---|
| MNE-Python | Open-source Python package for EEG/MEG analysis | Provides comprehensive ICA implementation with multiple algorithms (FastICA, Picard, Infomax) [35] |
| EEGLAB | Interactive MATLAB toolbox for EEG processing | Offers extensive IC visualization tools and plugin architecture for component classification [39] |
| FieldTrip | MATLAB toolbox for advanced EEG/MEG analysis | Includes ICA preprocessing and component rejection pipelines [34] |
| ICLabel | Automated IC classifier | Provides probabilistic classification of components into brain, muscle, eye, heart, line noise, channel noise, and other categories [14] |
| ADJUST | Automated artifact detector | Specifically designed for identifying blinks, vertical and horizontal eye movements, and generic discontinuities in event-related paradigms [40] |

Special Considerations for Challenging Populations

Pediatric and Infant EEG

ICA application in infant EEG presents unique challenges due to limited recording durations and rapid developmental changes in brain activity. Recent evidence suggests that standard ICA approaches may require modification for pediatric populations [40]. The minimum data requirement for effective ICA decomposition is $\kappa N^2$ samples, which corresponds to a minimum clean recording duration of $t_{\min} = \kappa N^2 / f_s$ seconds, where $N$ is the number of channels, $f_s$ is the sampling frequency in Hz, and $\kappa$ is a multiplier (typically ≥30) [40]. For high-density infant EEG (128 channels, 500 Hz) with $\kappa = 30$, this gives $30 \times 128^2 / 500 \approx 983$ s, or approximately 16 minutes of clean data, which is often difficult to obtain from restless infants.
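The rule of thumb is easy to evaluate for a planned montage. This sketch names the multiplier kappa and defaults it to 30, the lower end of the typical range quoted above; the function name is hypothetical.

```python
def min_ica_duration_s(n_channels, fs, kappa=30):
    """Minimum clean data (seconds) for ICA under the kappa * N**2 samples rule."""
    return kappa * n_channels ** 2 / fs


for n_channels in (32, 64, 128):
    seconds = min_ica_duration_s(n_channels, fs=500)
    print(f"{n_channels} channels @ 500 Hz: {seconds:.0f} s ({seconds / 60:.1f} min)")
# 32 channels @ 500 Hz: 61 s (1.0 min)
# 64 channels @ 500 Hz: 246 s (4.1 min)
# 128 channels @ 500 Hz: 983 s (16.4 min)
```

Because the requirement grows with the square of the channel count, halving the montage cuts the required clean data by a factor of four, which is one practical lever when infant recordings are short.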

Comparative studies indicate that while ICA effectively corrects eye-movement artifacts in infant EEG (sensitivity = 0.89), it may distort clean signals more than alternative methods such as Artifact Blocking (AB) (specificity = 0.72 for ICA vs. 0.81 for AB) [40]. Researchers working with pediatric populations should consider these trade-offs when selecting artifact removal strategies.

Mobile EEG and Motion Artifacts

Studies involving movement, such as locomotion or naturalistic behaviors, introduce high-amplitude motion artifacts that challenge conventional ICA. Recent advances in artifact removal algorithms like iCanClean and Artifact Subspace Reconstruction (ASR) show promise for mobile EEG applications [14].

iCanClean leverages reference noise signals and canonical correlation analysis (CCA) to detect and correct motion-artifact subspaces, and is particularly effective when used with dual-layer electrodes [14]. When benchmarked against ASR, iCanClean showed superior performance in recovering dipolar brain components (a 15.3% improvement in dipolarity index over ASR) and in restoring the expected P300 event-related potential pattern during locomotion tasks [14].
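The core idea described above can be sketched with plain CCA: find the EEG subspace that is highly correlated with reference noise channels, then project it out. This is not the iCanClean implementation, which processes sliding windows and has its own parameters. The single-window processing, the r² threshold of 0.5, and the function names are illustrative assumptions.

```python
import numpy as np


def _inv_sqrt(cov):
    """Inverse matrix square root of a symmetric positive-definite covariance."""
    vals, vecs = np.linalg.eigh(cov)
    return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T


def remove_noise_subspace(eeg, noise_ref, r2_threshold=0.5):
    """Regress out EEG canonical components whose squared canonical correlation
    with the noise reference exceeds `r2_threshold` (one data window).

    eeg       : (n_eeg, n_samples) EEG channels
    noise_ref : (n_ref, n_samples) reference noise channels
    Returns (mean-centered cleaned EEG, canonical correlations in descending order).
    """
    x = eeg - eeg.mean(axis=1, keepdims=True)
    y = noise_ref - noise_ref.mean(axis=1, keepdims=True)
    n = x.shape[1]
    kx, ky = _inv_sqrt(x @ x.T / n), _inv_sqrt(y @ y.T / n)
    # CCA via SVD of the whitened cross-covariance matrix.
    u, corr, _ = np.linalg.svd(kx @ (x @ y.T / n) @ ky, full_matrices=False)
    variates = (kx @ u).T @ x            # EEG-side canonical components (rows)
    bad = variates[corr ** 2 > r2_threshold]
    if bad.shape[0] == 0:
        return x, corr
    # Least-squares fit of each EEG channel on the flagged components, then subtract.
    coef, *_ = np.linalg.lstsq(bad.T, x.T, rcond=None)
    return x - coef.T @ bad, corr


# Usage: 4 EEG channels sharing one motion signal; 2 noise electrodes record it too.
rng = np.random.default_rng(2)
n_samples = 5000
motion = rng.standard_normal(n_samples)
eeg = rng.standard_normal((4, n_samples)) + 3.0 * motion
noise_ref = np.vstack([motion + 0.1 * rng.standard_normal(n_samples),
                       0.5 * motion + 0.1 * rng.standard_normal(n_samples)])
cleaned, corr = remove_noise_subspace(eeg, noise_ref)
```

Projecting out the flagged canonical component also removes whatever brain activity lies along it, which is why the correlation threshold matters: a lower threshold cleans more aggressively at the cost of more neural signal.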

Validation and Quality Control

Robust validation of artifact removal efficacy is essential for ensuring data integrity. Recommended quality control measures include:

- Dipolarity Assessment: Calculate the percentage of brain ICs with dipolar scalp distributions (>70% expected in clean data) [14]

- Spectral Validation: Verify reduction of artifact-specific spectral peaks (e.g., >3dB reduction at gait frequency harmonics in locomotion studies) [14]

- Topographical Stability: Ensure microstate topographies remain stable across different ICA preprocessing strategies [41]

- Statistical Power: Confirm that experimental effects maintain or increase statistical power after artifact removal [41]
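A minimal sketch of the spectral-validation check follows. It assumes the gait (step) frequency is known and that the nearest FFT bin to each harmonic is an adequate estimate; a real analysis would typically average over windows (Welch). The function name and the synthetic signals are hypothetical.

```python
import numpy as np


def power_change_db(before, after, fs, freqs_hz):
    """Power change (dB) from `before` to `after` at the FFT bins nearest `freqs_hz`.

    Negative values mean the power was reduced by the correction.
    """
    bins = np.fft.rfftfreq(before.size, d=1.0 / fs)
    p_before = np.abs(np.fft.rfft(before)) ** 2
    p_after = np.abs(np.fft.rfft(after)) ** 2
    idx = [int(np.argmin(np.abs(bins - f))) for f in freqs_hz]
    return 10.0 * np.log10(p_after[idx] / p_before[idx])


# Usage: a 10 Hz "brain" rhythm plus a 2 Hz gait artifact with harmonics at 4 and 6 Hz;
# the hypothetical correction halves the artifact amplitude.
fs = 250
t = np.arange(2500) / fs
gait = sum(a * np.sin(2 * np.pi * f * t) for f, a in [(2, 1.0), (4, 0.5), (6, 0.25)])
brain = np.sin(2 * np.pi * 10 * t)
change = power_change_db(brain + gait, brain + 0.5 * gait, fs, [2, 4, 6])
print(change.round(2))              # about -6.02 dB at each harmonic
print(bool(np.all(change < -3.0)))  # True: meets a "> 3 dB reduction" criterion
```

Halving an artifact's amplitude quarters its power, which is 10·log10(0.25) ≈ -6.02 dB, comfortably past a 3 dB reduction benchmark.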

Quantitative benchmarks should be established a priori and reported in methodology sections to enhance reproducibility and cross-study comparisons.

Systematic identification of artifactual components through their topographical, temporal, and spectral signatures provides a robust foundation for EEG data cleaning. The protocols and frameworks presented in this application note empower researchers to implement standardized, transparent artifact removal procedures that preserve neural signals of interest while effectively mitigating contamination. As ICA methodologies continue to evolve, particularly for challenging recording scenarios and special populations, the multi-dimensional signature approach offers a flexible yet principled framework for adapting to new developments in the field.