Hybrid Methods for EEG Artifact Removal: A Comparative Analysis for Enhanced Biomedical Research


Abstract

This article provides a comprehensive comparative analysis of hybrid artifact removal methods for electroencephalography (EEG) signals, a critical preprocessing step for researchers and drug development professionals utilizing EEG data. It explores the foundational need for these methods over single-technique approaches, details specific hybrid methodologies and their applications across clinical and research settings, addresses key implementation challenges and optimization strategies, and presents a rigorous validation framework for comparing method performance. By synthesizing the latest research, this review serves as a guide for selecting and optimizing artifact removal pipelines to improve the reliability of neural data in studies ranging from neuromarketing to neuroprosthetics and clinical diagnostics.

Why Hybrid Methods? Overcoming the Limits of Single-Technique Artifact Removal

Electroencephalography (EEG) provides unparalleled temporal resolution for monitoring brain activity, making it invaluable for both clinical diagnostics and neuroscience research. However, the utility of EEG is fundamentally constrained by a pervasive challenge: artifacts—unwanted signals originating from non-neural sources—that contaminate the recordings. The removal of these artifacts is particularly complicated not merely by their presence, but by their significant spectral and spatial overlap with genuine neural signals [1]. Physiological artifacts such as ocular movements (EOG), muscle activity (EMG), and cardiac rhythms (ECG) possess frequency profiles that seamlessly blend with the standard EEG bands of interest, from delta (<4 Hz) to beta (13-30 Hz) [1]. For instance, eye blinks manifest in the low-frequency delta range, while muscle tension produces high-frequency noise that encroaches on the beta band. This spectral entanglement means simple frequency-based filtering is often ineffective, as it would remove crucial neural information alongside the artifacts [2].

Simultaneously, the spatial distribution of these artifacts across the scalp further complicates their isolation. Due to the phenomenon of volume conduction, artifacts from a localized source (like the eyes or neck muscles) propagate widely through the conductive tissues of the head, affecting multiple EEG channels [1] [3]. This spatial overlap means that the same electrode will capture a mixture of cerebral activity and artifactual signals, making it difficult to distinguish brain-derived components. Consequently, artifact removal in EEG analysis is not a simple preprocessing step but a central, unresolved problem that demands sophisticated solutions to untangle this complex spatio-spectral interplay without compromising the integrity of the underlying neural data.

A Comparative Framework for Artifact Removal Methodologies

A diverse array of computational techniques has been developed to address the challenge of EEG artifact removal. These methods can be broadly categorized into traditional approaches, modern deep learning models, and hybrid systems that combine multiple strategies. The following sections provide a detailed comparison of these methodologies, supported by experimental data and protocols.

Traditional and Hybrid Methods

Traditional approaches often rely on signal decomposition and statistical analysis to separate neural activity from artifacts. Independent Component Analysis (ICA) is a widely used blind source separation technique that decomposes multi-channel EEG data into statistically independent components, allowing for the manual or semi-automatic identification and removal of artifactual sources [1] [4]. Empirical Mode Decomposition (EMD) is an adaptive, data-driven method that decomposes non-stationary signals into intrinsic mode functions (IMFs), which can then be filtered or thresholded to remove noise [5]. Wavelet Transform provides a multi-resolution analysis of signals in both time and frequency domains, enabling the selective filtering of artifactual components across different frequency bands [5] [6].
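
As a rough illustration of the wavelet route described above, the sketch below decomposes a signal with a hand-written multi-level Haar transform, soft-thresholds the detail coefficients, and reconstructs. The Haar basis, the universal threshold, and the function names are assumptions chosen for brevity; they are not taken from the cited studies, which typically use richer wavelet families.

```python
import numpy as np

def haar_dwt(x, levels):
    """Multi-level Haar decomposition; len(x) must be divisible by 2**levels."""
    approx = np.asarray(x, dtype=float)
    details = []
    for _ in range(levels):
        even, odd = approx[0::2], approx[1::2]
        details.append((even - odd) / np.sqrt(2))  # high-frequency part
        approx = (even + odd) / np.sqrt(2)         # low-frequency part
    return approx, details

def haar_idwt(approx, details):
    """Invert haar_dwt, coarsest level first."""
    for d in reversed(details):
        out = np.empty(2 * approx.size)
        out[0::2] = (approx + d) / np.sqrt(2)
        out[1::2] = (approx - d) / np.sqrt(2)
        approx = out
    return approx

def soft_threshold(coeffs, thr):
    """Shrink coefficients toward zero by thr."""
    return np.sign(coeffs) * np.maximum(np.abs(coeffs) - thr, 0.0)

def wavelet_denoise(x, levels=4):
    approx, details = haar_dwt(x, levels)
    # Noise scale estimated from the finest details (assumed heuristic).
    sigma = np.median(np.abs(details[0])) / 0.6745
    thr = sigma * np.sqrt(2 * np.log(len(x)))
    return haar_idwt(approx, [soft_threshold(d, thr) for d in details])

# Toy demo: slow oscillation plus broadband noise (synthetic, not real EEG).
rng = np.random.default_rng(0)
t = np.arange(1024) / 1024
clean = np.sin(2 * np.pi * 2 * t)
noisy = clean + 0.3 * rng.standard_normal(t.size)
denoised = wavelet_denoise(noisy)

rmse_noisy = np.sqrt(np.mean((noisy - clean) ** 2))
rmse_denoised = np.sqrt(np.mean((denoised - clean) ** 2))
roundtrip = haar_idwt(*haar_dwt(noisy, 4))
```

Thresholding small coefficients suppresses broadband noise. Removing high-amplitude artifacts such as blinks would instead target unusually large coefficients, which is a different rule from the one sketched here.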

More recently, hybrid methods that combine the strengths of multiple traditional approaches have shown improved performance. For example, the Automatic Wavelet Common Component Rejection (AWCCR) method first employs wavelet decomposition to break down EEG signals into frequency sub-bands, then identifies artifactual components using kurtosis and entropy measures, and finally applies Common Component Rejection (CCR) to remove artifacts shared across channels [6] [3]. Another hybrid approach, EMD-DFA-WPD, combines Empirical Mode Decomposition with Detrended Fluctuation Analysis (DFA) for mode selection, followed by Wavelet Packet Decomposition (WPD) for signal extraction [5].
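
The kurtosis criterion mentioned for AWCCR can be sketched as follows. This is only a minimal illustration of flagging peaky components by an outlier rule; the z-score threshold, the function names, and the synthetic data are assumptions, and the published method also uses entropy and operates on wavelet sub-bands.

```python
import numpy as np

def excess_kurtosis(x):
    """Excess kurtosis of each row (approximately 0 for Gaussian data)."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean(axis=-1, keepdims=True)
    var = (centered ** 2).mean(axis=-1)
    return (centered ** 4).mean(axis=-1) / var ** 2 - 3.0

def flag_artifactual(components, z_thresh=2.0):
    """Flag rows whose kurtosis is an outlier relative to the other rows."""
    k = excess_kurtosis(components)
    z = (k - k.mean()) / k.std()
    return z > z_thresh  # z_thresh is an assumed, tunable value

# Synthetic components: Gaussian rows, one with a blink-like burst.
rng = np.random.default_rng(0)
components = rng.standard_normal((8, 2000))
components[3, 500:520] += 25.0
flags = flag_artifactual(components)
```

A transient, high-amplitude burst gives a heavy-tailed amplitude distribution, which is why kurtosis is a common marker for blink-like or electrode-pop components.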

Table 1: Performance Comparison of Traditional and Hybrid Artifact Removal Methods

| Method | Artifact Types | Key Metrics | Performance Results | Experimental Protocol |
|---|---|---|---|---|
| AWCCR [6] [3] | White noise, eye blink, muscle, electrical shift | NRMSE, PCC | Outperformed ICA and AWICA on semi-simulated data; ~10% increase in motor imagery classification accuracy | Resting-state EEG contaminated with simulated artifacts; real motor imagery EEG |
| EMD-DFA-WPD [5] | Ocular and muscle artifacts | SNR, MAE, Classification Accuracy | SNR: Improved; MAE: Lower; RF/SVM Accuracy: 98.51%/98.10% | Real EEG from depression patients; comparison of classifiers with/without denoising |
| Fingerprint + ARCI + SPHARA [4] | Dry EEG artifacts from movement | SD (μV), SNR (dB) | SD: 6.72 μV (from 9.76); SNR: 4.08 dB (from 2.31) | Dry EEG during motor execution paradigm; combined spatial and temporal denoising |

Deep Learning Approaches

The field of EEG artifact removal has been transformed by the emergence of deep learning techniques, which can learn complex, non-linear mappings from artifact-contaminated to clean EEG signals without requiring manual feature engineering. Convolutional Neural Networks (CNNs) excel at extracting spatial and morphological features from multi-channel EEG data [2] [7]. Long Short-Term Memory (LSTM) networks are particularly effective for modeling temporal dependencies in EEG time-series data [2] [8]. Generative Adversarial Networks (GANs) employ a dual-network architecture where a generator creates denoised signals while a discriminator learns to distinguish them from genuine clean EEG [8].

More sophisticated architectures combine these elements. CLEnet integrates dual-scale CNNs with LSTM layers and an improved EMA-1D (One-Dimensional Efficient Multi-Scale Attention) mechanism to simultaneously capture morphological features and temporal dependencies [2]. AnEEG incorporates LSTM layers within a GAN framework to leverage both temporal modeling and adversarial training [8]. Another approach, M4, based on State Space Models (SSMs), has shown particular effectiveness for removing complex artifacts such as those induced by transcranial Electrical Stimulation (tES) [7].

Table 2: Performance Comparison of Deep Learning Artifact Removal Methods

| Method | Network Architecture | Artifact Types | Key Results | Experimental Dataset |
|---|---|---|---|---|
| CLEnet [2] | Dual-scale CNN + LSTM + EMA-1D | EMG, EOG, ECG, unknown | Mixed artifacts: SNR=11.50dB, CC=0.93; ECG: RRMSEt reduced by 8.08% | EEGdenoiseNet, MIT-BIH, real 32-channel EEG |
| AnEEG [8] | LSTM-based GAN | Ocular, muscle, environmental | Lower NMSE/RMSE, higher CC, improved SNR and SAR vs. wavelet methods | Multiple public EEG datasets |
| M4 (SSM) [7] | State Space Models | tES artifacts (tDCS, tACS, tRNS) | Best for tACS/tRNS; superior RRMSE and CC metrics | Synthetic datasets (clean EEG + tES artifacts) |
| EEGDNet [7] | Transformer-based | EOG, EMG | Excels at EOG removal; less effective on other artifacts | Semi-synthetic datasets with EOG/EMG |

Comparative Analysis of Methodologies

When comparing these diverse approaches, distinct patterns emerge regarding their strengths and limitations. Traditional methods like ICA and wavelet transforms offer interpretability and have established theoretical foundations, but often require manual intervention or prior knowledge about artifact characteristics [1]. Hybrid methods such as AWCCR and EMD-DFA-WPD demonstrate improved performance by addressing the limitations of individual techniques, showing particularly strong results for specific artifact types and in applications like motor imagery classification [5] [3].

Deep learning approaches generally achieve state-of-the-art performance, especially for complex artifacts and in scenarios with unknown noise characteristics [2] [8]. Their advantages include end-to-end processing without need for manual component selection, and adaptability to various artifact types through training. However, they typically require large amounts of training data and computational resources, and their "black box" nature can limit interpretability.

The choice of optimal method depends heavily on the specific research context: the types of artifacts present, the available computational resources, the need for interpretability versus performance, and whether the application requires real-time processing. For clinical applications where interpretability is crucial, hybrid methods may be preferred, while for BCI applications requiring high accuracy, deep learning approaches might be more suitable.

Experimental Protocols and Benchmarking

Robust evaluation of artifact removal methods requires standardized experimental protocols and benchmarking frameworks. Researchers have developed both semi-synthetic and real-world experimental paradigms to quantitatively assess performance across methodologies.

Semi-Synthetic Data Generation

The semi-synthetic approach involves adding simulated artifacts to clean EEG recordings, enabling precise quantification of removal performance since the ground truth is known [2] [3]. Typical protocols involve:

- Artifact Simulation: Generating realistic artifact waveforms for ocular blinks (slow, monophasic waves), muscle activity (high-frequency, random patterns), cardiac interference (pulse-like waveforms), and electrical shifts [3].

- Mixing Procedure: Combining clean EEG with simulated artifacts at controlled signal-to-noise ratios (SNRs) using linear addition:

EEG_contaminated = EEG_clean + γ * Artifact, where γ controls the contamination level [3].

- Performance Metrics: Calculating quantitative measures including:

- Signal-to-Noise Ratio (SNR): Measures the relative power of signal versus noise [5] [2]

- Correlation Coefficient (CC): Quantifies the linear similarity between cleaned and ground truth EEG [2]

- Root Mean Square Error (RMSE): Assesses the magnitude of differences between cleaned and original signals [2] [8]

- Relative RMSE (RRMSE): Normalized RMSE in temporal or spectral domains [2] [7]
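
The mixing rule and metrics listed above can be sketched in a few lines of NumPy. The function names, the toy "clean" signal, and the Gaussian-bump blink are illustrative assumptions; the choice of γ follows directly from defining SNR as the ratio of clean power to scaled artifact power.

```python
import numpy as np

def mix_at_snr(clean, artifact, target_snr_db):
    """Return clean + gamma * artifact, with gamma set to hit the target SNR."""
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    gamma = np.sqrt(p_clean / (p_artifact * 10 ** (target_snr_db / 10)))
    return clean + gamma * artifact, gamma

def snr_db(reference, estimate):
    """SNR in dB, treating (estimate - reference) as noise."""
    noise = estimate - reference
    return 10 * np.log10(np.sum(reference ** 2) / np.sum(noise ** 2))

def rmse(reference, estimate):
    return np.sqrt(np.mean((estimate - reference) ** 2))

def rrmse(reference, estimate):
    """RMSE normalized by the RMS of the reference (temporal domain)."""
    return rmse(reference, estimate) / np.sqrt(np.mean(reference ** 2))

def cc(reference, estimate):
    """Pearson correlation coefficient."""
    return np.corrcoef(reference, estimate)[0, 1]

# Toy signals (synthetic stand-ins, not real EEG or EOG).
fs = 250
t = np.arange(0, 4, 1 / fs)
clean = np.sin(2 * np.pi * 10 * t) + 0.5 * np.sin(2 * np.pi * 22 * t)
blink = np.exp(-((t - 2.0) ** 2) / (2 * 0.1 ** 2))  # slow monophasic bump
contaminated, gamma = mix_at_snr(clean, blink, target_snr_db=-3.0)

print(f"gamma={gamma:.2f}  SNR={snr_db(clean, contaminated):.2f} dB  "
      f"RRMSE={rrmse(clean, contaminated):.3f}  CC={cc(clean, contaminated):.3f}")
```

Evaluating a denoiser then amounts to calling the same metric functions with the denoised output in place of `contaminated`.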

Real-World Experimental Paradigms

Real-world validation employs task-based EEG recordings where neural responses are well-characterized, enabling assessment of how artifact removal preserves biological signals of interest:

- Motor Execution/Imagery Paradigms: Participants perform or imagine specific movements (e.g., hand, feet, tongue) while EEG is recorded [4]. The quality of artifact removal is assessed by measuring the improvement in task classification accuracy or the preservation of expected event-related desynchronization/synchronization in sensorimotor rhythms [3].

- Resting-State Studies: EEG is recorded during eyes-open and eyes-closed conditions, with artifact removal performance evaluated by the enhancement of expected physiological patterns (e.g., posterior alpha power increase during eyes-closed) [9].

- Dry EEG Validation: Specifically for dry electrode systems, protocols involve recording during body movements with performance benchmarking against gel-based systems or assessment of signal quality metrics (standard deviation, SNR) after processing [4].
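
For the resting-state check described above, one simple quantity is the ratio of alpha-band power between eyes-closed and eyes-open segments. The sketch below uses a plain periodogram; the 8-13 Hz band limits, the function name, and the synthetic signals are assumptions made for illustration.

```python
import numpy as np

def band_power(x, fs, f_lo, f_hi):
    """Mean periodogram power of x within [f_lo, f_hi] Hz."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    spectrum = np.abs(np.fft.rfft(x)) ** 2 / len(x)
    freqs = np.fft.rfftfreq(len(x), d=1 / fs)
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    return spectrum[mask].mean()

# Synthetic stand-ins: eyes-closed adds a 10 Hz rhythm to background noise.
fs = 250
t = np.arange(0, 10, 1 / fs)
rng = np.random.default_rng(1)
eyes_open = rng.standard_normal(t.size)
eyes_closed = eyes_open + 2.0 * np.sin(2 * np.pi * 10 * t)

alpha_ratio = (band_power(eyes_closed, fs, 8, 13)
               / band_power(eyes_open, fs, 8, 13))
print(f"alpha power ratio (closed/open): {alpha_ratio:.1f}")
```

If artifact removal preserves neural activity, this ratio at posterior channels should stay well above 1 after cleaning; a collapse toward 1 would suggest the method is also removing alpha activity.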

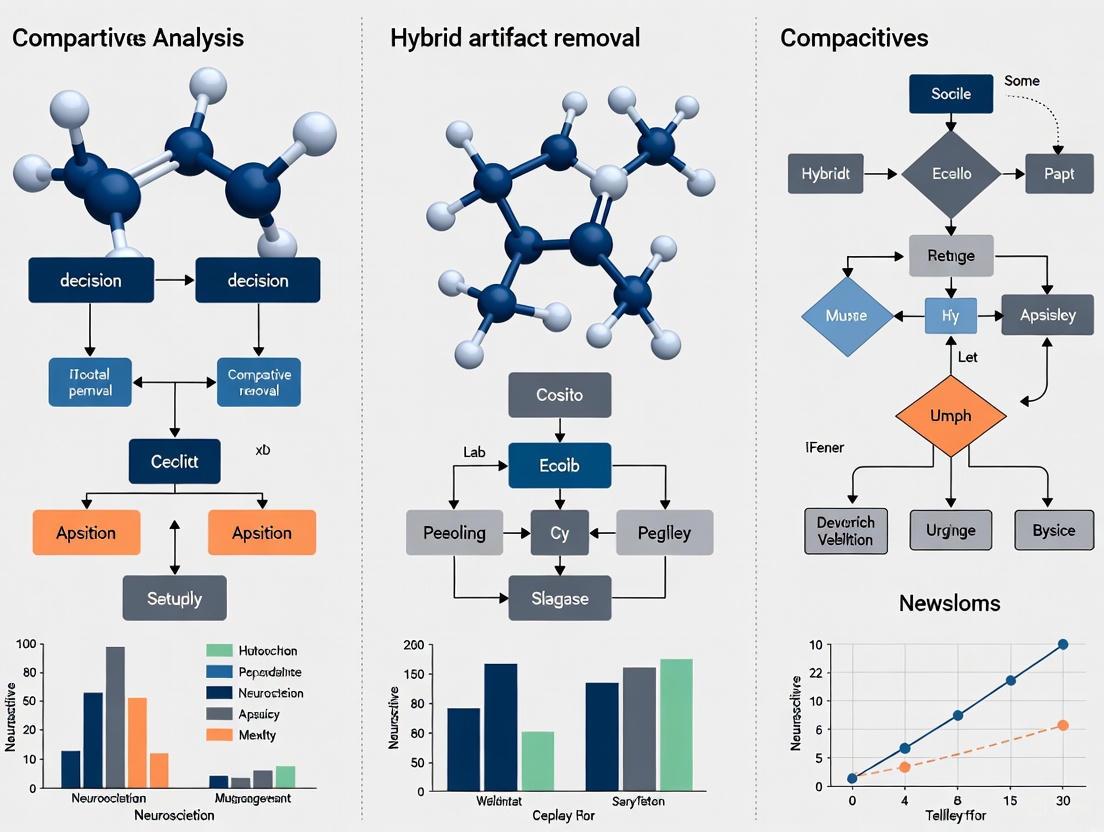

Visualization of Method Workflows

Understanding the workflow of hybrid artifact removal methods is crucial for their implementation and optimization. The following diagram illustrates the typical processing pipeline for advanced artifact removal systems:

This workflow demonstrates the multi-stage approach of hybrid methods, where signals undergo sequential processing in temporal, spectral, and spatial domains to effectively separate artifacts from neural activity while preserving the integrity of the underlying brain signals.

Implementing effective EEG artifact removal requires both computational tools and experimental resources. The following table details key solutions and their functions in artifact removal research:

Table 3: Essential Research Resources for EEG Artifact Removal Studies

| Resource Category | Specific Examples | Function in Research | Key Characteristics |
|---|---|---|---|
| Reference Datasets | EEGdenoiseNet [2], PhysioNet Motor Imagery [8] | Algorithm training/benchmarking | Semi-synthetic mixtures; known ground truth |
| Software Toolboxes | EEGLAB [1], MNE-Python | Implementation of ICA, wavelet methods | Open-source; standardized implementations |
| Deep Learning Frameworks | TensorFlow, PyTorch [2] [8] | Custom model development | Flexible architectures for novel methods |
| Specialized Hardware | Carbon-Wire Loops (CWL) [10], Dry EEG systems [4] | Reference artifact recording; mobile applications | MR-compatible; motion artifact characterization |

The challenge of EEG artifacts, rooted in their fundamental spectral and spatial overlap with neural signals, continues to drive innovation in signal processing methodology. Our comparative analysis reveals that while traditional methods provide interpretability and established performance, hybrid approaches that combine multiple processing strategies typically achieve superior results by addressing the multi-faceted nature of artifacts. Meanwhile, deep learning methods represent the emerging frontier, offering state-of-the-art performance particularly for complex artifacts and unknown noise sources, though at the cost of interpretability and computational demands.

The future of EEG artifact removal will likely involve several key developments: increased use of domain adaptation techniques to improve model generalization across different recording conditions; development of more interpretable deep learning models that maintain performance while providing insights into their decision processes; and creation of standardized benchmarking frameworks that enable more rigorous comparison across studies. As EEG technology continues to evolve toward dry electrode systems and mobile applications, artifact removal methods must similarly advance to address the unique challenges posed by these emerging recording modalities. Through continued methodological innovation and rigorous comparative validation, the field will overcome the inherent challenges of artifact contamination, unlocking the full potential of EEG for understanding brain function and dysfunction.

Electroencephalography (EEG) provides a non-invasive window into brain dynamics with millisecond temporal resolution, serving as a vital tool in neuroscience research, clinical diagnosis, and brain-computer interface (BCI) development [11]. However, the EEG signal, typically measured in microvolts (µV), is exceptionally vulnerable to contamination from various sources of noise collectively known as artifacts [12]. These unwanted signals can originate from physiological processes such as eye movements (EOG), muscle activity (EMG), and cardiac activity (ECG), as well as from non-physiological sources like electrode interference and power line noise [12] [13]. The presence of artifacts can obscure genuine neural activity, reduce the signal-to-noise ratio (SNR), and potentially lead to misinterpretation of data—a critical concern in clinical settings where artifacts might be mistaken for epileptiform activity [12].

Addressing these contaminants is therefore a fundamental prerequisite for reliable EEG analysis. For decades, researchers have relied on standalone classical methods—primarily regression, Blind Source Separation (BSS), and filtering—to tackle this problem. While these techniques have formed the bedrock of EEG preprocessing, they each carry inherent limitations that restrict their effectiveness, particularly as EEG applications expand into more complex and real-world scenarios. This article provides a comparative analysis of these limitations, framing them within the broader research context that is increasingly turning to hybrid methods for more robust solutions [11].

The table below summarizes the core principles and fundamental limitations of the three primary categories of standalone artifact removal methods.

Table 1: Comparison of Standalone EEG Artifact Removal Methods

| Method | Core Principle | Key Advantages | Fundamental Limitations |
|---|---|---|---|
| Regression | Estimates and subtracts artifact contribution using reference channels (e.g., EOG, ECG) [13]. | Simple, computationally efficient, intuitive model [13] [11]. | Requires additional reference channels; assumes constant propagation; risks over-correction and neural signal loss [11]. |
| Blind Source Separation (BSS) | Separates recorded signals into statistically independent components (e.g., ICA) [13]. | Reference-free; effective for separating temporally independent sources like EMG [12] [13]. | Struggles with non-stationary or complex artifacts (e.g., muscle); component classification is challenging and often manual [11]. |
| Filtering | Removes signal components in specific frequency bands (e.g., 50/60 Hz line noise) [13]. | Highly effective for removing narrowband, non-physiological noise [13]. | Ineffective for artifacts with overlapping frequency content (e.g., EMG vs. Gamma waves); can distort waveform morphology [12]. |
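
To make the regression row concrete, the sketch below estimates one propagation weight per EEG channel by ordinary least squares and subtracts the scaled reference. The function name, the toy weights, and the synthetic data are assumptions; a single time-invariant weight per channel is exactly the "constant propagation" assumption listed as a limitation.

```python
import numpy as np

def regress_out(eeg, ref):
    """Subtract the least-squares contribution of reference channels.

    eeg: (n_channels, n_samples), ref: (n_refs, n_samples).
    Returns the cleaned (mean-centered) EEG and the estimated weights.
    """
    eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
    ref_c = ref - ref.mean(axis=1, keepdims=True)
    # Solve ref_c.T @ B ~= eeg_c.T for B with shape (n_refs, n_channels).
    B, *_ = np.linalg.lstsq(ref_c.T, eeg_c.T, rcond=None)
    weights = B.T
    return eeg_c - weights @ ref_c, weights

# Synthetic example: three channels with decreasing ocular leakage.
rng = np.random.default_rng(2)
neural = rng.standard_normal((3, 5000))
eog = 50.0 * rng.standard_normal((1, 5000))
true_weights = np.array([[0.8], [0.3], [0.05]])
eeg = neural + true_weights @ eog

cleaned, est_weights = regress_out(eeg, eog)
```

In this toy case the reference contains no neural activity, so the subtraction is nearly perfect. With real EOG channels, which also pick up some brain signal, the same subtraction removes part of the neural activity, which is the over-correction risk noted in the table.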

The following diagram illustrates the common challenges and decision points that lead to the failure of these standalone methods when processing a contaminated EEG signal.

Common Failure Pathways of Standalone Methods

Quantitative Performance Benchmarks

The theoretical limitations of these methods are borne out in practical performance. The following table synthesizes quantitative data from experimental benchmarks, illustrating how different methods perform in terms of key metrics like Signal-to-Noise Ratio (SNR) improvement and accuracy when tackling specific artifacts.

Table 2: Experimental Performance Benchmarks of Various Artifact Removal Methods

| Method Category | Specific Method | Artifact Target | Key Performance Metric | Reported Result | Experimental Context |
|---|---|---|---|---|---|
| Hybrid Deep Learning | CNN-LSTM (with EMG reference) | Muscle Artifacts (Jaw Clenching) | SNR Increase (vs. raw EEG) | ~4-5 dB Improvement [14] | SSVEP preservation in 24 subjects [14] |
| Hybrid Deep Learning | Artifact Removal Transformer (ART) | Multiple Artifacts | Signal Reconstruction (MSE) | Outperformed other DL models [15] | Validation on open BCI datasets [15] |
| Hybrid Model | BiGRU-FCN + Multi-scale STD | Motion Artifacts in BCG | Classification Accuracy | 98.61% [16] | Sleep monitoring in 10 patients [16] |
| Standalone BSS | Independent Component Analysis (ICA) | Muscle Artifacts | Component Classification | Often requires manual intervention [11] | Widespread real-world use [13] [11] |
| Standalone Regression | Linear Regression (with EOG) | Ocular Artifacts | Risk of Neural Signal Loss | High (Over-correction) [11] | Widespread real-world use [13] [11] |

Detailed Experimental Protocols and Data

To understand the quantitative benchmarks, it is essential to consider the experimental methodologies from which they were derived. The following protocols from key studies highlight the rigorous approaches used to evaluate artifact removal techniques.

Protocol 1: Evaluating a CNN-LSTM Hybrid for Muscle Artifact Removal

A 2025 study introduced a hybrid CNN-LSTM model specifically designed to remove muscle artifacts while preserving Steady-State Visual Evoked Potentials (SSVEPs), a critical brain response for many BCI applications [14].

- Data Acquisition: EEG and EMG data were simultaneously recorded from 24 participants. To create a controlled artifact, subjects performed strong jaw clenching while being presented with an LED stimulus designed to elicit SSVEPs [14].

- Model Architecture & Training: The hybrid model used Convolutional Neural Networks (CNN) to extract spatial features from the signals, followed by Long Short-Term Memory (LSTM) layers to model temporal dependencies. A key innovation was the use of additional facial and neck EMG recordings as inputs to guide the denoising process. The model was trained on an augmented dataset generated from the raw recordings to ensure robustness [14].

- Evaluation Metric: The study used an increase in the Signal-to-Noise Ratio of the SSVEP response as the primary metric for success. This directly measured the method's ability to remove noise (muscle artifacts) while preserving the signal of interest (SSVEP) [14].

- Comparative Analysis: The performance of the CNN-LSTM model was benchmarked against standalone methods, including Independent Component Analysis and linear regression, demonstrating superior artifact removal and signal preservation [14].
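
The SSVEP signal-to-noise metric used in this protocol can be approximated as the power at the stimulus frequency relative to the mean power of neighboring frequency bins. The exact definition used in [14] is not given here, so the neighbor count, the skipped bins, and the toy "cleaned" signal below are assumptions.

```python
import numpy as np

def ssvep_snr_db(x, fs, f_stim, n_neighbors=10, skip=1):
    """Power at f_stim relative to the mean of nearby bins, in dB."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1 / fs)
    k = int(np.argmin(np.abs(freqs - f_stim)))
    # Neighbor bins on both sides, skipping those adjacent to the peak.
    lo = np.arange(k - skip - n_neighbors, k - skip)
    hi = np.arange(k + skip + 1, k + skip + 1 + n_neighbors)
    idx = np.concatenate([lo, hi])
    idx = idx[(idx > 0) & (idx < power.size)]
    return 10 * np.log10(power[k] / power[idx].mean())

# Toy data: a 15 Hz response buried in broadband "muscle" noise.
fs = 250
t = np.arange(0, 4, 1 / fs)
rng = np.random.default_rng(3)
ssvep = np.sin(2 * np.pi * 15 * t)
emg = 3.0 * rng.standard_normal(t.size)
contaminated = ssvep + emg
cleaned = ssvep + 0.5 * emg  # stand-in for a denoiser that halves the noise

snr_gain_db = (ssvep_snr_db(cleaned, fs, 15)
               - ssvep_snr_db(contaminated, fs, 15))
print(f"SSVEP SNR gain: {snr_gain_db:.1f} dB")
```

Because the metric is anchored to a known stimulus frequency, it rewards noise reduction only when the evoked response itself survives, which is what makes it suitable for judging signal preservation.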

Protocol 2: Benchmarking a Transformer Model on Multichannel EEG

Another 2025 study developed the Artifact Removal Transformer (ART), an end-to-end model for denoising multichannel EEG data [15].

- Data Preparation and Training: Because real-world EEG has no perfectly clean ground truth, the researchers constructed a proxy. They applied Independent Component Analysis to real EEG data and reconstructed "pseudo-clean" signals by removing the components identified as artifacts. These pseudo-clean signals then served as training targets for the transformer model in a supervised learning framework [15].

- Model Architecture: The model leveraged a transformer architecture, which is exceptionally good at capturing long-range dependencies and transient dynamics in time-series data, making it well-suited for the millisecond-scale features of EEG [15].

- Validation Strategy: The model was rigorously validated on a wide range of open BCI datasets. Performance was assessed using standard metrics like Mean Squared Error and SNR, as well as through more sophisticated techniques like source localization and classification of EEG components, confirming its effectiveness in restoring neural information [15].
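
The pseudo-clean reconstruction step in this protocol amounts to projecting the data into component space, dropping the artifact components, and projecting back. The sketch below shows only that back-projection; the unmixing matrix here comes from a known toy mixing matrix rather than from an actual ICA fit, and the function name is an assumption.

```python
import numpy as np

def remove_components(data, unmixing, artifact_idx):
    """Back-project data after dropping the listed components.

    data: (n_channels, n_samples); unmixing: W with sources = W @ data.
    """
    sources = unmixing @ data
    mixing = np.linalg.pinv(unmixing)
    keep = np.ones(sources.shape[0], dtype=bool)
    keep[list(artifact_idx)] = False
    return mixing[:, keep] @ sources[keep]

# Toy example with a known mixing matrix standing in for an ICA result.
rng = np.random.default_rng(4)
sources = rng.standard_normal((3, 1000))
sources[2] = 20.0 * (rng.random(1000) > 0.98)  # sparse artifact-like source
mixing = np.array([[1.0, 0.5, 0.2],
                   [0.3, 1.0, 0.4],
                   [0.1, 0.6, 1.0]])
data = mixing @ sources

pseudo_clean = remove_components(data, np.linalg.inv(mixing), artifact_idx=[2])
expected = mixing[:, :2] @ sources[:2]
```

In practice the unmixing matrix would be estimated by an ICA implementation and the artifact indices chosen by a classifier or by inspection, so the quality of the pseudo-clean target is bounded by the quality of that decomposition.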

The workflow for such an experimental benchmark, from data preparation to model evaluation, is summarized below.

EEG Artifact Removal Evaluation Workflow

The Scientist's Toolkit: Key Research Reagents and Solutions

The advancement of artifact removal methods relies on a suite of computational tools and data resources. The following table details essential "research reagents" commonly used in this field.

Table 3: Essential Research Tools for EEG Artifact Removal Research

| Tool Name | Type/Category | Primary Function in Research | Example Use Case |
|---|---|---|---|
| Independent Component Analysis (ICA) | Software Algorithm (BSS) | Blind separation of multi-channel EEG into independent source components for manual or automatic artifact rejection [13] [11]. | Decomposing EEG to identify and remove components correlated with eye blinks or muscle noise [12] [13]. |
| ICLabel | Software Classifier | Automated deep learning-based classifier for ICA components; labels components as brain, eye, muscle, heart, etc. [14]. | Reducing manual workload and subjectivity in classifying ICA components after BSS decomposition [14]. |
| OMol25-Trained Neural Network Potentials (NNPs) | Pre-trained AI Model | Predicts molecular energy and properties; used in methodological development and benchmarking of computational approaches [17]. | Serving as a benchmark for comparing the accuracy of low-cost computational methods like DFT in predicting charge-related properties [17]. |
| PyTorch / TensorFlow | Deep Learning Framework | Provides the programming environment for building, training, and testing complex neural network models like CNN-LSTM and Transformers [16]. | Implementing a custom BiGRU-FCN hybrid model for motion artifact detection in biomedical signals [16]. |
| Simulated & Augmented Data | Data Resource | Artificially generated or modified data used to create large, diverse training sets where the "ground truth" clean signal is known [14] [15]. | Training an Artifact Removal Transformer (ART) using pseudo-clean data generated via ICA [15]. |

The limitations of standalone artifact removal methods—regression, BSS, and filtering—are fundamental and stem from their core assumptions, which are often violated by the complex, non-stationary, and overlapping nature of artifacts and neural signals in real-world EEG data [12] [11]. While these classical methods remain useful for specific, well-defined problems, the quantitative benchmarks and experimental protocols detailed in this guide clearly demonstrate their inadequacy for advanced applications requiring high-fidelity signal recovery.

The field is now decisively shifting toward hybrid and deep learning approaches [14] [16] [15]. By integrating multiple techniques or leveraging the powerful pattern recognition capabilities of neural networks, these next-generation methods overcome the siloed limitations of their predecessors. They offer a more holistic, adaptive, and effective solution for purifying the brain's electrical signals, thereby unlocking greater reliability for EEG in clinical diagnostics, cognitive neuroscience, and high-performance Brain-Computer Interfaces.

In the face of growing demands for precision and efficiency across scientific and industrial fields, a powerful paradigm is emerging: the strategic combination of different methodological approaches. Hybrid methods, which integrate disparate techniques—often marrying mechanistic models with data-driven algorithms or coupling physical sensors with computational analytics—are demonstrating profound advantages over traditional, singular approaches. This comparative analysis examines the performance of these hybrid methods against conventional alternatives in the critical, interconnected domains of separation and preservation. The objective evidence, gathered from fields ranging from chemical engineering to biomedical signal processing, consistently reveals that hybrid frameworks achieve superior outcomes in accuracy, efficiency, and resource utilization, establishing a new standard for research and development.

Performance Comparison: Hybrid Methods vs. Conventional Alternatives

The following tables summarize quantitative performance data from experimental studies across different application domains, demonstrating the measurable advantages of hybrid methodologies.

Table 1: Performance in Chemical Separation Processes

This table compares a hybrid modelling approach for chemical separations against traditional evaporation and standalone nanofiltration, based on data from industrial-scale simulations [18].

| Separation Technology | Average Energy Reduction | Average CO₂ Emissions Reduction | Key Performance Metric (Rejection) |
|---|---|---|---|
| Hybrid Nanofiltration Modelling | 40% (vs. evaporation) | 40% (vs. evaporation) | Predictive accuracy (R²) of 0.89 for solute rejection [18] |
| Standalone Evaporation | Baseline | Baseline | Not Applicable |
| Standalone Nanofiltration | Variable (0-36%) | Variable | Rejection threshold >0.6 required to outperform evaporation [18] |
| Hybrid Chromatographic Modelling | Computational cost reduced by 97% [19] | Not Reported | Accurately captures nonlinear dynamics for process optimization [19] |

Table 2: Performance in Signal Preservation (Artifact Removal)

This table compares the effectiveness of a hybrid CNN-LSTM approach for removing muscle artifacts from EEG signals against other common signal processing techniques [14].

| Artifact Removal Method | Primary Mechanism | Performance in Preserving SSVEP Signals | Key Limitations |
|---|---|---|---|
| Hybrid CNN-LSTM (with EMG) | Deep Learning + Auxiliary Signal | Excellent performance; effectively removes artifacts while retaining useful components [14] | Requires additional EMG data recording [14] |
| Independent Component Analysis (ICA) | Blind Source Separation | Effective for multichannel data | Limited effectiveness with low-density or single-channel wearable EEG [20] |
| Linear Regression | Reference Channel Modeling | Effective but requires a simultaneous reference channel [14] | Performance depends on quality of reference signal [14] |
| FF-EWT + GMETV Filter | Wavelet Transform + Filtering | High performance on single-channel EEG; validated on synthetic & real data [21] | Designed specifically for EOG (eye-blink) artifacts [21] |

Experimental Protocols and Methodologies

The superior performance of hybrid methods is validated through rigorous, domain-specific experimental protocols. The detailed methodologies below illustrate how these experiments are conducted and how the comparative data is generated.

Protocol for Chemical Separation Technology Selection

This protocol, used to generate the data in [18], provides a framework for selecting the most efficient separation technology for a given application.

- Objective: To holistically compare the energy consumption and emissions of chemical separation technologies (nanofiltration, evaporation, extraction, and hybrid configurations) for a specific solute-solvent mixture.

- Hybrid Model Workflow:

- Data Compilation: The NF-10K dataset, containing 9,921 nanofiltration measurements for 1,089 small organic solutes, is used as the foundation [18].

- Machine Learning Prediction: A Message-Passing Graph Neural Network (GNN) is trained on the NF-10K dataset. The model takes inputs of molecular structure, solvent, membrane, and process parameters to predict a key performance indicator: solute rejection (R) [18].

- Techno-Economic and Environmental Modeling: The predicted solute rejection and solvent permeance are fed into mechanistic, first-principle models. These models calculate the energy demand and carbon dioxide equivalent emissions for a specific separation task (e.g., concentrating a solution from 1% to 95%) [18].

- Comparison and Thresholding: The energy and emissions of nanofiltration and hybrid systems are compared against baseline evaporation (with varying levels of heat integration) and liquid-liquid extraction. The analysis establishes clear threshold parameters (e.g., a rejection value of 0.6) to guide technology selection [18].

- Outcome: The model identifies the optimal technology, achieving an average of 40% reduction in energy and emissions, with pharmaceutical purification seeing reductions up to 90% [18].

Protocol for EEG Signal Preservation with a Hybrid Deep Learning Approach

This protocol, detailed in [14], outlines the steps for removing muscle artifacts to preserve neurologically relevant signals.

- Objective: To remove muscle artifacts from electroencephalography (EEG) signals while preserving critical brain responses, such as Steady-State Visual Evoked Potentials (SSVEPs).

- Experimental Setup:

  - Data Collection: EEG signals and simultaneous Electromyography (EMG) signals from facial and neck muscles are recorded from 24 participants.

  - Stimulation and Artifact Induction: Participants are presented with a visual stimulus (a flickering LED) to elicit SSVEPs in the brain. Simultaneously, they perform strong jaw clenching to induce significant muscle artifacts that contaminate the EEG signal [14].

- Hybrid CNN-LSTM Workflow:

  - Data Augmentation: An augmented dataset of EEG and EMG recordings is generated to create a diverse training set for the neural network.

  - Model Architecture: A hybrid neural network is constructed.

    - The Convolutional Neural Network (CNN) component extracts spatial features from the input data.

    - The Long Short-Term Memory (LSTM) component models the temporal dependencies in the signal.

  - Training: The model is trained to learn the complex, non-linear relationship between the recorded EMG (artifact source) and the corresponding muscle artifacts in the EEG signal.

  - Artifact Removal and Evaluation: The trained model processes contaminated EEG signals, subtracting the predicted artifact component. The cleaned signal is evaluated in both time and frequency domains, with the Signal-to-Noise Ratio (SNR) of the SSVEP response used as a key quantitative metric for preservation quality [14].
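
The SSVEP SNR used in the evaluation step can be illustrated with a minimal sketch. The exact formula used in [14] is not given here, so the code assumes one common convention: the ratio of spectral power at the stimulus frequency to the mean power of the neighbouring frequency bins. The function name `ssvep_snr_db`, the 250 Hz sampling rate, the 12 Hz stimulus, and the neighbour count are all illustrative assumptions, and the signals are synthetic.

```python
import numpy as np

def ssvep_snr_db(signal, fs, stim_freq, n_neighbors=10):
    """SNR (dB) of the SSVEP peak: power at stim_freq vs. mean power of nearby bins."""
    signal = np.asarray(signal, dtype=float)
    spectrum = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    target = int(np.argmin(np.abs(freqs - stim_freq)))
    lo = max(target - n_neighbors, 1)                 # skip the DC bin
    hi = min(target + n_neighbors, spectrum.size - 1)
    # Neighbouring bins on both sides, excluding the target bin itself
    neighbors = np.r_[spectrum[lo:target], spectrum[target + 1:hi + 1]]
    return 10.0 * np.log10(spectrum[target] / neighbors.mean())

# Synthetic illustration (assumed values, not data from [14])
fs = 250
t = np.arange(0, 4, 1 / fs)
rng = np.random.default_rng(0)
ssvep = np.sin(2 * np.pi * 12 * t)                          # hypothetical 12 Hz response
contaminated = ssvep + 3.0 * rng.standard_normal(t.size)    # strong broadband "EMG"
cleaned = ssvep + 0.3 * rng.standard_normal(t.size)         # residual noise after cleaning

snr_before = ssvep_snr_db(contaminated, fs, 12)
snr_after = ssvep_snr_db(cleaned, fs, 12)
```

A higher value after cleaning suggests that the stimulus-locked response was preserved while broadband noise was reduced. It does not by itself show that other neural activity survived.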

Research Workflow and Signaling Pathways

The following diagrams illustrate the logical workflows of the two key hybrid methods analyzed, highlighting the synergistic flow of information and processes.

Diagram 1: Workflow for Hybrid Chemical Separation Modelling

Diagram 2: Workflow for Hybrid EEG Artifact Removal

The Scientist's Toolkit: Key Research Reagent Solutions

The development and implementation of advanced hybrid methods rely on a suite of essential reagents, software, and hardware. This table details critical components used in the featured experiments.

Table 3: Essential Materials for Hybrid Method Development

| Item Name | Function / Role in the Workflow | Specific Example from Research |

|---|---|---|

| Graph Neural Networks (GNNs) | Data-driven model for predicting complex system parameters (e.g., solute rejection) from molecular structures and process conditions [18]. | Used to predict 7.1 million solute rejections for chemical separation technology selection [18]. |

| Message-Passing Neural Network | A type of GNN that operates on graph-structured data, allowing atoms and bonds in a molecule to exchange information [18]. | The specific architecture used to achieve an R² of 0.89 for solute rejection prediction [18]. |

| Hybrid CNN-LSTM Architecture | A deep learning model that combines spatial feature extraction (CNN) with time-series analysis (LSTM) for processing sequential data like signals [14]. | The core model for removing muscle artifacts from EEG signals while preserving SSVEPs [14]. |

| Auxiliary EMG Sensors | Hardware components that provide a reference signal for artifact sources, enabling supervised learning for signal cleaning [14]. | Simultaneously recorded with EEG to provide a precise noise reference for the CNN-LSTM model [14]. |

| Fixed Frequency EWT (FF-EWT) | A signal processing technique that adaptively decomposes a signal into components for targeted analysis and manipulation [21]. | Used to decompose single-channel EEG signals to isolate and remove EOG artifacts [21]. |

| Stable-Isotope Standards (SIS) | Labeled peptide internal standards used in hybrid LC/MS assays for highly accurate and sensitive protein quantification [22]. | Critical for the precise quantitative measurement of low-abundance proteins like CTLA-4 in T cells [22]. |

| Anti-Peptide Antibodies | Antibodies used in the SISCAPA method to immunocapture specific surrogate peptides from a complex digest, enhancing assay sensitivity [22]. | Used to selectively extract the target peptide for CTLA-4 measurement prior to LC/MS analysis [22]. |

The extraction of meaningful information from complex, multi-source data is a fundamental challenge across scientific disciplines, from astrophysics to biomedical engineering. Traditional signal processing techniques, such as Blind Source Separation (BSS), provide a foundational framework for this task by enabling the recovery of original source signals from observed mixtures without prior knowledge of the mixing process or the sources themselves [23]. Conventional BSS methods, including Independent Component Analysis (ICA) and Non-negative Matrix Factorization (NMF), rely on statistical assumptions like independence or non-negativity to achieve separation [24]. However, these methods often struggle with real-world data characterized by dynamic dependencies, non-stationary behavior, and high noise levels [24].

The integration of machine learning (ML), particularly deep learning, with classical BSS and decomposition algorithms has given rise to a powerful hybrid paradigm. These approaches combine the theoretical guarantees and interpretability of traditional signal processing with the adaptability and representational power of learned models [25]. This comparative analysis examines the performance of these hybrid artifact removal methods, evaluating them against classical techniques and providing a detailed examination of their experimental protocols, performance metrics, and applicability in research settings.

Theoretical Foundations of Hybrid BSS Methods

Classical BSS and Its Limitations

Blind Source Separation aims to retrieve a set of N independent source signals, denoted as s, from M observed mixed signals, x, where x = As, and A is an unknown mixing matrix [23]. The "blind" nature of the problem signifies a lack of prior knowledge about both the sources and the mixing process. Established algorithms include:

- Independent Component Analysis (ICA): Separates sources by maximizing their statistical independence, typically using higher-order statistics [23] [24].

- FastICA: A computationally efficient implementation of ICA [26] [27].

- Non-negative Matrix Factorization (NMF): Factorizes a non-negative data matrix into two non-negative matrices, useful for parts-based representation [24].

- Joint Approximate Diagonalization of Eigenmatrices (JADE): Utilizes the eigenmatrices of cumulant tensors for separation [26].

A significant limitation of these classical methods is their reliance on fixed assumptions (e.g., statistical independence, non-negativity) which may not hold in complex, real-world scenarios involving dynamic coupling or non-stationary signals [24]. Furthermore, they often lack adaptability to specific data types and can be sensitive to noise.
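
To make the mixing model x = As concrete, the following sketch builds two synthetic sources, mixes them with a known matrix, and applies centering and whitening, a preprocessing step commonly used before ICA-type algorithms. The sources, the matrix `A`, and all variable names are illustrative assumptions. In a real BSS problem, `A` and `s` are unknown.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples = 5000

# Two hypothetical non-Gaussian sources (rows of s)
s = np.vstack([
    np.sign(np.sin(2 * np.pi * 0.01 * np.arange(n_samples))),  # square wave
    rng.laplace(size=n_samples),                                # heavy-tailed noise
])

A = np.array([[1.0, 0.6],
              [0.4, 1.0]])       # mixing matrix (known only because this is a simulation)
x = A @ s                        # observed mixtures, x = As

# Centering and whitening: decorrelate the mixtures and scale them to unit variance
x_centered = x - x.mean(axis=1, keepdims=True)
eigvals, eigvecs = np.linalg.eigh(np.cov(x_centered))
whitener = eigvecs @ np.diag(eigvals ** -0.5) @ eigvecs.T
z = whitener @ x_centered

cov_z = np.cov(z)                # should be close to the identity matrix
```

Whitening only removes second-order correlations. Recovering the sources still requires an additional rotation, which is where criteria such as independence or sparsity come in.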

The Machine Learning Enhancement

Machine learning, particularly deep learning, enhances BSS by learning representations directly from the data. This reduces the reliance on rigid a priori assumptions and allows models to adapt to the inherent structure of the signals [28] [25]. Key concepts include:

- Learned Sparse Representations: Replacing fixed wavelet dictionaries with learned, wavelet-like transforms (e.g., Learnlets) that can better capture the morphological diversity of sources [25].

- End-to-End Learning: Using deep neural networks, such as Convolutional Neural Networks (CNNs), to directly model the separation process, often leading to superior performance in noisy conditions [27].

- Hybrid Architectures: Combining optimization-based BSS loops with learned components, thereby preserving interpretability while gaining the expressiveness of deep learning [25].

Comparative Performance Analysis of Hybrid Methods

The following tables summarize the performance of various hybrid BSS methods compared to classical and pure ML alternatives, based on experimental data from the literature.

Table 1: Comparative Performance of BSS Algorithms on Signal Separation Tasks

| Algorithm | Type | Key Principle | Reported Performance Metric | Result | Computational Complexity |

|---|---|---|---|---|---|

| LCS (Learnlet Component Separator) [25] | Hybrid (Sparsity + DL) | Learnlet transform for sparse representation | Average gain in Signal-to-Noise Ratio (SNR) | ~5 dB gain over state-of-the-art | Moderate (requires training) |

| MODMAX [26] | Classical-Hybrid | Maximizes expected modulus for constant-envelope signals | Bit Error Rate (BER) & MAE | BER < 10⁻⁴ at 12 dB SNR; MAE: 4.27% | Low (hardware-friendly) |

| Time-Delayed DMD [24] | Decomposition-based | Dynamic Mode Decomposition with time-delay embedding | Superior separation of dynamic/non-stationary signals | Qualitative and quantitative superiority over ICA | Moderate |

| Improved FastICA [27] | Classical (Enhanced) | Enhanced whitening process for stability | Mean Absolute Error (MAE) | MAE: 4.27% (vs. 14.58% for original) | Low |

| c-FastICA/nc-FastICA [26] | Classical | Maximizes negentropy/kurtosis | Separation Performance & Convergence | Poor performance with non-circular complex signals (c-FastICA) | Low |

| JADE [26] | Classical | Diagonalizes high-order cumulant matrix | Separation Performance & Convergence | Good performance, limited by high computational cost | High |

Table 2: Application-Based Suitability of BSS Methodologies

| Application Domain | Exemplar Method | Key Advantage | Experimental Validation |

|---|---|---|---|

| Astrophysical Image Separation (CMB, Foregrounds) [25] | LCS | Adapts to complex, non-Gaussian signal morphologies | Superior reconstruction of CMB and SZ effects vs. GMCA |

| Communication Signals [26] | MODMAX | High efficiency and robustness for constant-envelope modulations (GMSK, OQPSK) | Achieves near-ideal BER with significantly lower complexity |

| Biomedical Signal Analysis (EEG) [24] | Time-Delayed DMD | Effectively handles non-stationary signals and dynamic coupling | Validated on EEG artifact removal |

| Unmanned Aerial Vehicle (UAV) Classification [27] | HCCSANet (CNN with Attention) | High recognition accuracy from radar cross-section (RCS) data | 96.30% accuracy in classifying 8 UAV types |

| General-Purpose Audio/Image Separation [24] | Traditional ICA (FastICA) | Simplicity and speed for well-defined, independent sources | Baseline performance, struggles with complex dependencies |

Detailed Experimental Protocols and Methodologies

The LCS Framework for Astrophysical Component Separation

The Learnlet Component Separator (LCS) is a novel framework that embeds a learned sparse representation into a classical BSS iterative process [25].

- Core Innovation: Replaces fixed wavelet dictionaries (e.g., Starlet) in algorithms like the Generalised Morphological Component Analysis (GMCA) with a Learnlet transform. This is a structured Convolutional Neural Network (CNN) designed to emulate a wavelet-like, multiscale decomposition but with filters learned from data [25].

- Workflow:

  - Input: Multi-channel observational data.

  - Iterative Estimation: The algorithm alternates between:

    - Sparse Coding: Representing the current source estimates in the Learnlet domain.

    - Thresholding: Applying a shrinkage operator to promote sparsity.

    - Mixing Matrix Update: Re-estimating the mixing matrix based on the refined sources.

  - Output: Separated source components and the estimated mixing matrix.

- Validation: Tested on synthetic data with structured scenes (e.g., airplane, boat) and real astrophysical data, including X-ray supernova remnants and Cosmic Microwave Background (CMB) extraction. Performance was measured via Signal-to-Noise Ratio (SNR) gain over methods like GMCA [25].
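
The alternating structure of the workflow above can be sketched in a few lines. This is not the LCS implementation: the learned Learnlet transform is replaced here by the identity transform (sources assumed sparse in the sample domain), and the function names, threshold, and iteration count are hypothetical choices for illustration only.

```python
import numpy as np

def soft_threshold(coeffs, thresh):
    """Shrinkage operator that promotes sparsity."""
    return np.sign(coeffs) * np.maximum(np.abs(coeffs) - thresh, 0.0)

def sparse_bss(x, n_sources, n_iter=50, thresh=0.1, seed=0):
    """GMCA-style alternating estimation of sources s and mixing matrix a (x ~ a @ s)."""
    rng = np.random.default_rng(seed)
    a = rng.standard_normal((x.shape[0], n_sources))
    a /= np.linalg.norm(a, axis=0, keepdims=True)
    for _ in range(n_iter):
        s = np.linalg.pinv(a) @ x            # least-squares source estimate
        s = soft_threshold(s, thresh)        # sparse coding + thresholding (identity transform)
        a = x @ np.linalg.pinv(s)            # mixing matrix update
        norms = np.maximum(np.linalg.norm(a, axis=0, keepdims=True), 1e-12)
        a /= norms                           # unit-norm columns fix the scale ambiguity
        s *= norms.T                         # move the scale into the sources
    return a, s

# Synthetic illustration with sparse sources (assumed data)
rng = np.random.default_rng(1)
n = 2000
s_true = rng.laplace(size=(2, n)) * (rng.random((2, n)) < 0.05)
a_true = rng.standard_normal((4, 2))
x = a_true @ s_true + 0.01 * rng.standard_normal((4, n))
a_est, s_est = sparse_bss(x, n_sources=2)
```

In the LCS setting described in [25], the thresholding would act on Learnlet coefficients rather than directly on samples, which is what allows the method to adapt to the morphology of each source.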

The following diagram illustrates the core workflow of the LCS algorithm:

The MODMAX Algorithm for Communication Signals

The MODMAX algorithm addresses the need for low-complexity, high-performance BSS in communication systems [26].

- Core Innovation: Exploits the constant-envelope property of many digital communication signals (e.g., GMSK, OQPSK). It minimizes the variance of the estimated signals' envelopes using a complex Newton's method under a unitary constraint for numerical stability [26].

- Experimental Protocol:

  - Signal Generation: Source signals are generated using constant-envelope modulation schemes.

  - Mixing: Signals are instantaneously mixed via a complex-valued matrix.

  - Separation: MODMAX is compared against c-FastICA, nc-FastICA, and JADE.

- Evaluation Metrics:

  - Separation Performance: Measured by the quality of the recovered constellation diagram.

  - Bit Error Rate (BER): Calculated after demodulation.

  - Computational Complexity: Counted as the number of multiplications required.

- Key Findings: MODMAX achieved a BER of less than 10⁻⁴ at an SNR of 12 dB, matching or exceeding the performance of more complex algorithms while requiring fewer computational operations, making it suitable for hardware implementation [26].
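
The constant-envelope property that MODMAX exploits can be illustrated without the solver itself. The sketch below only evaluates an envelope-variance criterion on synthetic unit-modulus signals. It does not implement the complex Newton iteration or the unitary constraint from [26], and the random-phase sources and mixing matrix are assumptions standing in for real GMSK or OQPSK signals.

```python
import numpy as np

def envelope_variance(y):
    """Variance of the signal envelope |y|; near zero for a constant-envelope signal."""
    return float(np.var(np.abs(y)))

rng = np.random.default_rng(0)
n = 2000

# Two hypothetical unit-modulus sources with random phases
s = np.exp(1j * rng.uniform(0, 2 * np.pi, size=(2, n)))

# Instantaneous complex-valued mixing (assumed matrix)
a = np.array([[1.0 + 0.0j, 0.5 + 0.3j],
              [0.4 - 0.2j, 1.0 + 0.0j]])
x = a @ s

var_source = envelope_variance(s[0])                         # constant envelope
var_mixed = envelope_variance(x[0])                          # mixing destroys it
var_unmixed = envelope_variance((np.linalg.inv(a) @ x)[0])   # ideal demixing restores it
```

Because mixing raises the envelope variance and correct demixing brings it back to zero, the criterion gives a separation algorithm a well-defined target even when the mixing matrix is unknown.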

Time-Delayed Dynamic Mode Decomposition (DMD) for Dynamic Signals

This approach extends the Dynamic Mode Decomposition (DMD) method to handle BSS for signals with strong temporal dynamics, where traditional ICA fails [24].

- Core Innovation: Incorporates time-delayed coordinates into the DMD framework. This embeds the temporal structure of the signals directly into the separation model, enhancing its ability to handle non-stationary behavior and dynamic coupling [24].

- Workflow:

  - Data Matrices: The observed data is arranged into two matrices, X and Y, where Y is a time-shifted version of X.

  - Linear Operator: The best-fit linear operator A that maps X to Y is computed (A = YX†).

  - Eigendecomposition: The dynamic modes are derived from the eigenvectors and eigenvalues of A.

  - Source Reconstruction: The sources are reconstructed from these dynamic modes.

- Validation: Applied to separate audio signals and remove artifacts from EEG data, demonstrating superior performance in capturing and separating dynamic components compared to conventional ICA [24].
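
The first three workflow steps can be sketched on a toy signal. The example below is a minimal illustration rather than the method of [24]: it embeds a single synthetic 5 Hz cosine with two delays, fits A = YX† with a pseudoinverse, and reads the oscillation frequency from the eigenvalues. The helper names and signal parameters are assumptions, and source reconstruction is omitted.

```python
import numpy as np

def delay_embed(signal, n_delays):
    """Stack n_delays time-shifted copies of a 1D signal into a Hankel-style matrix."""
    signal = np.asarray(signal, dtype=float)
    n_cols = signal.size - n_delays + 1
    return np.vstack([signal[i:i + n_cols] for i in range(n_delays)])

def dmd_eigs(h):
    """Eigenvalues and modes of the best-fit one-step linear operator A = Y X^+."""
    x, y = h[:, :-1], h[:, 1:]        # Y is X shifted by one time step
    a = y @ np.linalg.pinv(x)
    return np.linalg.eig(a)

fs = 100.0                             # assumed sampling rate (Hz)
t = np.arange(0, 2, 1 / fs)
h = delay_embed(np.cos(2 * np.pi * 5.0 * t), n_delays=2)
eigvals, modes = dmd_eigs(h)

# Each eigenvalue encodes a frequency (its angle) and a growth/decay rate (its modulus)
est_freq = np.abs(np.angle(eigvals)) * fs / (2 * np.pi)
```

Without the delay embedding, a single real-valued channel would yield a 1x1 operator that cannot represent an oscillation. The stacked delays are what give the linear model enough state to capture it.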

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Tools for Hybrid BSS Development and Testing

| Tool / Solution | Category | Function in Research | Exemplar Use |

|---|---|---|---|

| Learnlet Transform [25] | Learned Representation | Provides a data-adaptive, wavelet-like sparse basis for representing sources. | Core of the LCS algorithm for astrophysical image separation. |

| Gramian Angular Field (GAF) [27] | Data Preprocessing | Encodes 1D time-series data (e.g., Radar Cross-Section) into 2D images for CNN processing. | Converting UAV RCS sequences into images for classification in HCCSANet. |

| Hybrid Cross-Channel & Spatial Attention (HCCSA) Module [27] | Neural Network Component | Enhances CNN's feature representation by focusing on informative spatial and channel dimensions. | Integrated into HCCSANet to boost UAV classification accuracy to 96.30%. |

| Complex Newton's Method with Unitary Constraint [26] | Optimization Solver | Enables stable and efficient maximization of objective functions for complex-valued signals. | Used in MODMAX to achieve low BER with reduced complexity. |

| Time-Delay Embedding [24] | Signal Preprocessing | Reconstructs the phase space of a dynamical system to capture temporal dependencies. | Critical for the Time-Delayed DMD method to handle non-stationary signals. |

| FastICA / JADE [26] [24] | Classical Algorithm | Serves as a baseline for benchmarking the performance of novel hybrid methods. | Used in comparative studies across numerous papers [26] [24]. |

Integrated Workflow and Signaling Pathways in Hybrid BSS

The logical relationship and workflow between classical techniques, decomposition methods, and machine learning in a hybrid BSS system can be synthesized as follows. The process begins with raw mixed signals, which undergo standard preprocessing like centering and whitening. The core hybrid separation engine then operates by leveraging a synergy between a physical model (e.g., a linear mixing assumption) and a data-driven prior (e.g., a learned sparse representation or a deep neural network). The physical model provides a structural constraint, while the data-driven prior guides the solution towards realistic source configurations. This synergy is often implemented through an iterative optimization loop that refines estimates of both the sources and the mixing process, ultimately yielding the separated components.

The following diagram maps this integrated signaling and workflow pathway:

This comparative analysis demonstrates that hybrid approaches to Blind Source Separation, which strategically combine classical signal processing, decomposition techniques, and machine learning, consistently outperform traditional methods. The evidence shows that hybrid models like LCS, MODMAX, and Time-Delayed DMD offer significant advantages in terms of separation accuracy, robustness to noise, and adaptability to complex, real-world data dynamics [26] [24] [25].

The choice of the optimal hybrid method is highly application-dependent. Researchers working with structured image data, such as in astrophysics, may find the LCS framework most beneficial. For communication systems with constant-envelope signals, MODMAX provides an optimal balance of performance and efficiency. When dealing with non-stationary time-series data like EEG, Time-Delayed DMD offers a powerful solution. Ultimately, the synergy between well-understood physical models and data-driven learning defines the state-of-the-art in BSS, providing researchers and scientists with a powerful toolkit for unraveling complex mixed signals.

Inside the Toolbox: A Deep Dive into Modern Hybrid Methodologies and Their Applications

Electroencephalogram (EEG) analysis is perpetually challenged by contamination from muscle artifacts (EMG), which exhibit broad spectral overlap with neural signals and high amplitude variability. Hybrid blind source separation (BSS) techniques, which integrate sophisticated signal decomposition algorithms with source separation methods, have emerged as a powerful solution to this problem. This guide provides a comparative analysis of two leading hybrid methodologies: Variational Mode Decomposition with Canonical Correlation Analysis (VMD-CCA) and Ensemble Empirical Mode Decomposition with Independent Component Analysis (EEMD-ICA). We objectively evaluate their performance against standardized metrics, detail experimental protocols, and present a scientific resource toolkit to inform method selection for research and clinical applications.

Muscle artifacts pose a significant threat to EEG data integrity because their frequency spectrum (0.01–200 Hz) substantially overlaps with that of cerebral activity [29]. This complicates the use of simple linear filters. Hybrid BSS methods address this by first breaking down single-channel EEG into multiple components, thereby creating a "pseudo-multi-channel" dataset. This enriched dataset provides a superior foundation for subsequent source separation algorithms to isolate and remove artifactual components [30] [31].

- VMD-CCA: This method leverages the mathematical robustness of Variational Mode Decomposition (VMD) to decompose an EEG signal into a finite number of band-limited intrinsic mode functions (IMFs). Canonical Correlation Analysis (CCA) then targets and removes components with low autocorrelation—a characteristic feature of muscle noise [30] [32].

- EEMD-ICA: This approach utilizes the adaptive nature of Ensemble Empirical Mode Decomposition (EEMD) for signal decomposition. Subsequently, Independent Component Analysis (ICA) is employed to separate components based on statistical independence, often requiring manual or automated inspection to identify and discard artifact-laden sources [31].

The core distinction lies in their operational principles: VMD-CCA exploits the temporal structure (autocorrelation) of the signal, whereas EEMD-ICA relies on statistical independence. This fundamental difference dictates their performance, computational efficiency, and suitability for various research scenarios.

Performance Comparison & Experimental Data

Evaluations using semi-simulated and real EEG datasets demonstrate the distinct performance characteristics of each method. The following table summarizes quantitative results from controlled experiments.

Table 1: Quantitative Performance Comparison of Hybrid Methods

| Metric | VMD-CCA | EEMD-ICA | Experimental Context |

|---|---|---|---|

| Relative Root Mean Square Error (RRMSE) | Lower values reported, ~0.3 under low SNR [30] | Higher than VMD-CCA [30] | Reconstruction error on semi-simulated data with muscle artifacts [30] |

| Average Correlation Coefficient (CC) | Superior performance, remains high (>0.9) even at SNR < 2 dB [30] [31] | Good, but outperformed by VMD-CCA [30] | Measures similarity between cleaned and ground-truth clean EEG [30] |

| Computational Speed | Faster due to VMD's mathematical formulation [30] | Slower; EEMD requires numerous iterations for ensemble averaging [31] | Execution time on identical datasets; critical for real-time processing [31] |

| Stability & Robustness | High; VMD is less prone to mode mixing and is more noise-robust [30] [33] | Moderate; EEMD can suffer from mode aliasing despite ensemble averaging [30] | Consistency of decomposition results across different signal-to-noise ratios [30] |

| Performance with Few Channels | Effective even with limited EEG channels, although results become less consistent as the channel count decreases [30] [32] | Performance can degrade with fewer channels [30] | Testing with varying numbers of EEG electrodes [30] |

Detailed Experimental Protocols

Protocol for VMD-CCA

The VMD-CCA workflow is designed to leverage the high autocorrelation of neural signals to separate them from muscle noise.

- Signal Decomposition via VMD: Each channel of the raw, contaminated EEG signal is decomposed into a pre-defined number (K) of band-limited Intrinsic Mode Functions (IMFs). VMD solves a constrained variational problem to ensure each IMF is compact around a central frequency [30]. A common practice is to set K to at least 5 to avoid over-segmentation that results in negligible noise components [32].

- Initial Artifact IMF Selection: The delay-1 autocorrelation coefficient is calculated for each IMF. Genuine EEG components, being more rhythmic and structured, exhibit high autocorrelation (e.g., >0.95), while muscle artifact components demonstrate lower values. IMFs with autocorrelation below a set threshold are selected for further cleaning [30] [32].

- Source Separation via CCA: The selected artifact-prone IMFs from all channels are grouped into a new data matrix. CCA decomposes this matrix into uncorrelated components. The autocorrelation of these CCA components is again assessed; those with low autocorrelation are identified as residual artifacts [30].

- Reconstruction of Clean EEG: The artifact CCA components are set to zero. The remaining components are then projected back to the IMF domain. Finally, the cleaned IMFs are combined with the initially retained "clean" IMFs to reconstruct the artifact-free EEG signal [30].
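
The autocorrelation criterion used in the selection steps above can be sketched as follows. VMD and CCA themselves are not implemented here: the two "modes" are synthetic stand-ins (a 10 Hz sinusoid and white noise), the function names are hypothetical, and the 0.95 threshold is simply the example value quoted in the protocol.

```python
import numpy as np

def lag1_autocorr(x):
    """Delay-1 autocorrelation coefficient of a 1D signal."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    return float(np.dot(x[:-1], x[1:]) / np.dot(x, x))

def flag_artifact_modes(modes, threshold=0.95):
    """Return a boolean array: True where a mode's autocorrelation falls below threshold."""
    return np.array([lag1_autocorr(m) < threshold for m in modes])

fs = 250                                   # assumed sampling rate (Hz)
t = np.arange(0, 4, 1 / fs)
rng = np.random.default_rng(0)
alpha_like = np.sin(2 * np.pi * 10 * t)    # rhythmic, EEG-like component
emg_like = rng.standard_normal(t.size)     # broadband, muscle-like component

flags = flag_artifact_modes(np.vstack([alpha_like, emg_like]))
```

Note that the lag-1 autocorrelation of an oscillation depends on the ratio between its frequency and the sampling rate, so a fixed threshold such as 0.95 would need to be re-tuned for a different sampling rate or frequency band of interest.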

Protocol for EEMD-ICA

The EEMD-ICA method uses a noise-assisted approach to achieve a clean decomposition before isolating independent sources.

- Signal Decomposition via EEMD: The EEG signal is decomposed using EEMD. This involves:

  - Adding multiple realizations of white noise to the original signal.

  - Applying Empirical Mode Decomposition (EMD) to each noise-added signal to obtain IMFs.

  - Ensemble-averaging the corresponding IMFs across all trials to produce the final EEMD-based IMFs, which mitigates mode mixing [31].

- Channel Reconstruction & Component Pooling: All IMFs from all EEG channels (or a selected set of artifact-like IMFs based on simple metrics) are pooled together to form a multi-channel dataset [31].

- Source Separation via ICA: ICA is applied to this pooled dataset to find statistically independent components. The underlying assumption is that neural and artifactual sources are independent.

- Artifact Component Identification & Removal: This critical step often requires manual inspection by an expert to visually identify and reject components corresponding to artifacts. Alternatively, automated classifiers based on features like entropy, kurtosis, or spectral properties can be used [34]. The remaining components are used to reconstruct the cleaned EEG.
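
As a minimal sketch of the automated alternative mentioned in the last step, the code below flags components by excess kurtosis, one of the features listed above. It is not the classifier of [34]: the threshold of 5.0, the function names, and the synthetic components are illustrative assumptions, and EEMD and ICA are assumed to have been run beforehand.

```python
import numpy as np

def excess_kurtosis(x):
    """Excess kurtosis (0 for a Gaussian); large values indicate transient, peaky activity."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean()
    var = np.mean(centered ** 2)
    return float(np.mean(centered ** 4) / var ** 2 - 3.0)

def flag_components(components, kurt_threshold=5.0):
    """Indices of components whose absolute excess kurtosis exceeds the threshold."""
    return [i for i, c in enumerate(components)
            if abs(excess_kurtosis(c)) > kurt_threshold]

rng = np.random.default_rng(0)
neural_like = rng.standard_normal(5000)          # roughly Gaussian background activity
burst_like = 0.1 * rng.standard_normal(5000)
burst_like[1000:1050] += 10.0                    # brief high-amplitude burst

flagged = flag_components([neural_like, burst_like])
```

A single feature like this is rarely sufficient in practice, which is why the protocol mentions combining several features or falling back on expert inspection.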

Workflow Visualization

The following diagrams illustrate the core procedural pathways for both the VMD-CCA and EEMD-ICA methods, highlighting their logical structure and key differences.

VMD-CCA Workflow

EEMD-ICA Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for Hybrid BSS Research

| Item / Solution | Function / Description | Relevance in Hybrid BSS |

|---|---|---|

| High-Density EEG System | Multi-electrode array (e.g., 19-channel based on 10-20 system) for recording scalp potentials. | Provides the raw, contaminated signals for processing. A sufficient number of channels is crucial for effective BSS [30] [33]. |

| Synchronized EMG Array | Multiple EMG electrodes placed on facial/neck muscles. | Provides a reference signal for adaptive filtering, significantly improving artifact removal in extended methods like EEMD-CCA with adaptive filtering [29]. |

| VMD Algorithm | A computational tool for adaptive, quasi-orthogonal signal decomposition. | Core to the VMD-CCA method. Its parameters, especially the number of modes (K), must be optimized for the dataset [30] [32]. |

| EEMD Algorithm | A noise-assisted data analysis method for non-stationary signals. | Core to the EEMD-ICA method. The number of ensemble trials and the amplitude of added noise are key parameters [31]. |

| CCA & ICA Algorithms | CCA: Finds uncorrelated sources. ICA: Finds statistically independent sources. | The second-stage BSS engines. CCA uses second-order statistics, while ICA uses higher-order statistics [30] [31]. |

| Semi-Simulated Datasets | Clean EEG artificially contaminated with real muscle artifacts at known SNRs. | Enables rigorous, quantitative validation and benchmarking of denoising performance where ground truth is available [30] [31]. |

| Performance Metrics (RRMSE, CC) | RRMSE: Relative Root Mean Square Error. CC: Correlation Coefficient. | Quantitative standards for evaluating the fidelity of the cleaned EEG compared to a ground truth signal [30] [31]. |
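
The two metrics in the last table row can be written down compactly. The definitions below follow the usual convention (RMS of the error divided by RMS of the ground truth, and the Pearson correlation coefficient). They are assumed to match those used in [30] [31], which is not confirmed here, and the test signals are synthetic.

```python
import numpy as np

def rrmse(clean, denoised):
    """Relative root mean square error of the denoised signal against the ground truth."""
    clean = np.asarray(clean, dtype=float)
    denoised = np.asarray(denoised, dtype=float)
    return float(np.sqrt(np.mean((denoised - clean) ** 2)) / np.sqrt(np.mean(clean ** 2)))

def correlation_coefficient(clean, denoised):
    """Pearson correlation between the denoised signal and the ground truth."""
    return float(np.corrcoef(clean, denoised)[0, 1])

# Synthetic illustration
rng = np.random.default_rng(0)
clean = np.sin(np.linspace(0, 20 * np.pi, 1000))
denoised = clean + 0.1 * rng.standard_normal(clean.size)

err = rrmse(clean, denoised)
cc = correlation_coefficient(clean, denoised)
```

Lower RRMSE and higher CC both indicate better fidelity, but they require a ground-truth signal, which is why the semi-simulated datasets listed in the table are needed for this kind of benchmarking.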

The comparative analysis reveals a nuanced performance landscape. VMD-CCA demonstrates superior performance in terms of reconstruction error (RRMSE), signal fidelity (CC), and computational speed, making it a robust choice for scenarios requiring automated, efficient processing, such as in real-time BCI applications or studies involving large datasets [30]. Its reliance on autocorrelation provides a principled, often automated, criterion for artifact rejection.

Conversely, EEMD-ICA, while potentially slower and less automatable, remains a powerful and widely understood tool. Its strength lies in its ability to separate sources based on statistical independence, which can be effective for various artifact types beyond muscle activity. However, its dependence on manual component rejection or complex automated classifiers introduces subjectivity and complexity [34].

The selection between VMD-CCA and EEMD-ICA is not a matter of one being universally better, but rather which is more appropriate for the specific research context. For focused, efficient, and highly effective muscle artifact suppression, VMD-CCA is the leading candidate. For studies dealing with multiple, diverse artifact types where manual inspection is feasible, EEMD-ICA offers valuable flexibility. Future directions point toward deep learning-based end-to-end denoising models like CLEnet and AnEEG, which show promise in handling unknown artifacts and complex multi-channel data [35] [8], potentially setting a new benchmark in the ongoing evolution of EEG artifact removal.

The analysis of electroencephalography (EEG) signals is a cornerstone of neuroscientific research and clinical diagnostics. However, a persistent challenge in this field is the contamination of these signals by physiological artifacts, primarily originating from muscle activity (Electromyography, or EMG) and eye movements (Electrooculography, or EOG). These artifacts can severely obscure neural information, leading to inaccurate analyses and interpretations. Traditional artifact removal methods often rely solely on the EEG data itself, facing limitations when artifact and neural signal frequencies overlap. The emergence of deep learning has catalyzed the development of more sophisticated denoising approaches. Among these, hybrid architectures combining Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) networks have shown significant promise. A particularly advanced strand of this research explores the fusion of CNN-LSTM models with auxiliary EMG or EOG inputs, creating a multimodal system that leverages direct information about the interference sources to achieve superior artifact removal. This guide provides a comparative analysis of these hybrid artifact removal methods, evaluating their performance against traditional and other deep-learning techniques to inform researchers and development professionals about the current state-of-the-art.

Performance Comparison of Artifact Removal Methods

The following tables summarize the performance of various artifact removal methods, including hybrid CNN-LSTM approaches with auxiliary inputs, as reported in recent literature. Performance is measured using key metrics such as Signal-to-Noise Ratio (SNR), Correlation Coefficient (CC), and Relative Root Mean Square Error in the temporal and frequency domains (RRMSEt and RRMSEf).

Table 1: Performance on Mixed (EMG + EOG) Artifact Removal (Single-Channel EEG)

| Method | Architecture Type | Auxiliary Input | SNR (dB) | CC | RRMSEt | RRMSEf |

|---|---|---|---|---|---|---|

| CLEnet [2] | CNN-LSTM with attention (Dual-scale) | Not specified | 11.498 | 0.925 | 0.300 | 0.319 |

| DuoCL [2] | CNN-LSTM | Not specified | 10.123 | 0.894 | 0.335 | 0.342 |

| NovelCNN [2] | CNN | Not specified | 9.854 | 0.882 | 0.351 | 0.358 |

| 1D-ResCNN [2] | CNN | Not specified | 9.521 | 0.871 | 0.365 | 0.370 |

Table 2: Performance on ECG Artifact Removal (Single-Channel EEG)

| Method | Architecture Type | Auxiliary Input | SNR (dB) | CC | RRMSEt | RRMSEf |

|---|---|---|---|---|---|---|

| CLEnet [2] | CNN-LSTM with attention (Dual-scale) | Not specified | 13.451 | 0.938 | 0.274 | 0.301 |

| DuoCL [2] | CNN-LSTM | Not specified | 12.795 | 0.931 | 0.298 | 0.320 |

| 1D-ResCNN [2] | CNN | Not specified | 12.301 | 0.925 | 0.315 | 0.335 |

Table 3: Performance on Multi-Channel EEG with Unknown Artifacts

| Method | Architecture Type | Auxiliary Input | SNR (dB) | CC | RRMSEt | RRMSEf |

|---|---|---|---|---|---|---|

| CLEnet [2] | CNN-LSTM with attention (Dual-scale) | Not specified | 9.523 | 0.892 | 0.312 | 0.338 |

| DuoCL [2] | CNN-LSTM | Not specified | 9.296 | 0.869 | 0.335 | 0.350 |

| 1D-ResCNN [2] | CNN | Not specified | 9.101 | 0.851 | 0.349 | 0.361 |

Table 4: Comparison with Non-CNN-LSTM State-of-the-Art

| Method | Architecture Type | Auxiliary Input | Key Performance Highlight |

|---|---|---|---|

| ART (Artifact Removal Transformer) [15] | Transformer | No | Outperforms other deep-learning models in restoring multichannel EEG; improves BCI performance. |

| ICA (Independent Component Analysis) [14] | Blind Source Separation | No | Commonly used benchmark; outperformed by hybrid CNN-LSTM with EMG in SSVEP preservation [14]. |

| Linear Regression [14] | Regression | Requires reference channel | Outperformed by hybrid CNN-LSTM with EMG in SSVEP preservation [14]. |

Detailed Experimental Protocols

To ensure the reproducibility of the results summarized above, this section details the core experimental methodologies common to the cited studies.

Data Acquisition and Preprocessing

The development and validation of hybrid models require high-quality, well-annotated datasets.

- Data Sources: Experiments typically use both semi-synthetic and real-world EEG datasets. Semi-synthetic data are created by mixing clean EEG recordings from public databases (e.g., EEGdenoiseNet [2]) with artifact signals (EMG, EOG, ECG) at known signal-to-noise ratios. This allows for precise ground-truth comparison [2]. Real-world data involves simultaneous recording of EEG and auxiliary EMG/EOG signals from participants under controlled tasks, such as responding to visual stimuli while performing jaw clenching (to induce EMG artifacts) [14] or conducting conversation tasks [36].

- Preprocessing: Raw signals are first band-pass filtered to remove extreme frequency noise. A critical step for CNN-LSTM models is segmentation, where continuous signals are divided into short, overlapping time windows or epochs [37]. These epochs serve as the input samples for the network. For some approaches, time-frequency representations (e.g., spectrograms) are computed from these epochs to be used as 2D inputs [38] [37].
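The two data-preparation steps described above (contaminating clean EEG at a known SNR, then cutting the result into overlapping epochs) can be sketched in NumPy as follows. This is a minimal illustration rather than the procedure of any cited study: the function names `mix_at_snr` and `segment`, the power-based SNR definition, the synthetic signals, and the window and step sizes are all assumptions made for the example.

```python
import numpy as np


def mix_at_snr(clean, artifact, snr_db):
    """Add `artifact` to `clean`, scaled so the mixture has the target SNR.

    Assumption: SNR (dB) = 10 * log10(P_clean / P_scaled_artifact).
    """
    clean = np.asarray(clean, dtype=float)
    artifact = np.asarray(artifact, dtype=float)
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    # Solve P_clean / (scale^2 * P_artifact) = 10^(snr_db / 10) for scale.
    scale = np.sqrt(p_clean / (p_artifact * 10 ** (snr_db / 10)))
    return clean + scale * artifact


def segment(signal, window, step):
    """Split a 1D signal into overlapping windows of length `window`."""
    windows = np.lib.stride_tricks.sliding_window_view(signal, window)
    return windows[::step].copy()


# Synthetic stand-ins for a clean EEG trace and an EMG-like artifact.
rng = np.random.default_rng(0)
fs = 256                                   # sampling rate in Hz (assumed)
t = np.arange(4 * fs) / fs                 # 4 seconds of samples
clean_eeg = np.sin(2 * np.pi * 10 * t)     # 10 Hz oscillation
emg_like = rng.standard_normal(t.size)     # broadband noise

noisy_eeg = mix_at_snr(clean_eeg, emg_like, snr_db=-3.0)
epochs = segment(noisy_eeg, window=fs, step=fs // 2)  # 1 s epochs, 50% overlap
```

Each row of `epochs` is one input sample for the network; the matching rows of `segment(clean_eeg, ...)` would serve as training targets.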

Model Architecture and Training

The core innovation lies in the fusion of architectures and data modalities.

- CNN-LSTM Hybrid Design: The standard pipeline involves CNN layers first, which extract salient spatial or morphological features from each input epoch. The output of the CNN is then fed into LSTM layers, which model the temporal dependencies between the features across successive time points in the epoch [14] [2]. An advanced variant is CLEnet, which uses a dual-branch CNN with different kernel sizes to extract multi-scale features, an improved attention mechanism (EMA-1D) to enhance relevant features, and then an LSTM for temporal modeling [2].

- Fusion with Auxiliary Inputs: The key differentiator is the integration of EMG/EOG data. In one approach, the auxiliary signals are processed alongside the EEG within the same hybrid network. The model is trained to learn the complex, non-linear relationships between the specific muscle or eye movement patterns (from EMG/EOG) and their corresponding artifact signatures in the EEG signal [14]. Another method uses the auxiliary signals to create a reference-based training set, where the CNN-LSTM is trained to map "contaminated" EEG signals to their "clean" counterparts, with the auxiliary data helping to inform the augmentation process [14].

- Training Regime: Models are trained in a supervised manner. The loss function is typically the Mean Squared Error (MSE) between the model's output and the ground-truth clean EEG signal [2]. The Adam optimizer is commonly used to minimize this loss. To prevent overfitting, techniques like dropout and early stopping are employed, and the dataset is split into separate training, validation, and test sets.
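The CNN-then-LSTM pipeline and the MSE/Adam training regime described above can be sketched in PyTorch as follows. This is a hedged illustration only: the class name `CNNLSTMDenoiser`, the helper `train_step`, and all layer sizes are assumptions, not the architecture of [14] or CLEnet [2]. Setting `in_channels=2` is one simple way to feed an auxiliary EMG channel alongside the EEG channel.

```python
import torch
from torch import nn


class CNNLSTMDenoiser(nn.Module):
    """Illustrative CNN-LSTM denoiser; all sizes are assumed, not from the cited papers."""

    def __init__(self, in_channels=1, conv_channels=16, hidden_size=32, dropout=0.2):
        super().__init__()
        # CNN stage: extracts local morphological features from each epoch.
        self.cnn = nn.Sequential(
            nn.Conv1d(in_channels, conv_channels, kernel_size=7, padding=3),
            nn.ReLU(),
            nn.Dropout(dropout),
        )
        # LSTM stage: models temporal dependencies across time points.
        self.lstm = nn.LSTM(conv_channels, hidden_size, batch_first=True)
        # Linear head: maps each hidden state to one cleaned EEG sample.
        self.head = nn.Linear(hidden_size, 1)

    def forward(self, x):
        # x: (batch, in_channels, time)
        feats = self.cnn(x)                 # (batch, conv_channels, time)
        feats = feats.transpose(1, 2)       # (batch, time, conv_channels)
        out, _ = self.lstm(feats)           # (batch, time, hidden_size)
        return self.head(out).squeeze(-1)   # (batch, time)


def train_step(model, optimizer, contaminated, clean):
    """One supervised update minimizing MSE against the clean target."""
    model.train()
    optimizer.zero_grad()
    loss = nn.functional.mse_loss(model(contaminated), clean)
    loss.backward()
    optimizer.step()
    return loss.item()


torch.manual_seed(0)
model = CNNLSTMDenoiser(in_channels=2)          # channel 0: EEG, channel 1: EMG
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
contaminated = torch.randn(8, 2, 128)           # random stand-in batch
clean = torch.randn(8, 128)                     # random stand-in targets
loss = train_step(model, optimizer, contaminated, clean)
```

In practice the validation loss would be tracked after each epoch and training stopped once it no longer improves, which is the early-stopping safeguard mentioned above.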

Evaluation Metrics

The performance of these models is quantified using standardized metrics that assess both signal fidelity and artifact removal efficacy.

- Signal-to-Noise Ratio (SNR): Measures the power ratio between the clean signal and the residual noise. A higher SNR indicates better artifact removal [14] [2].

- Correlation Coefficient (CC): Quantifies the linear correlation between the cleaned signal and the ground-truth clean signal. A value closer to 1 indicates better preservation of the original neural information [2].

- Relative Root Mean Square Error (RRMSE): Calculates the normalized difference between the cleaned and ground-truth signals, both in the time domain (RRMSEt) and frequency domain (RRMSEf). Lower values indicate higher reconstruction accuracy [2].
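For concreteness, the four metrics can be written in a few lines of NumPy. This is a sketch under stated assumptions: the function names are illustrative, and `rrmse_f` compares unnormalized FFT power spectra, whereas individual papers may estimate the power spectral density differently (for example with Welch's method).

```python
import numpy as np


def _rms(x):
    return np.sqrt(np.mean(np.square(x)))


def snr_db(clean, denoised):
    """Power ratio (dB) between the clean signal and the residual error."""
    residual = clean - denoised
    return 10 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2))


def correlation_coefficient(clean, denoised):
    """Pearson correlation between the denoised and ground-truth signals."""
    return np.corrcoef(clean, denoised)[0, 1]


def rrmse_t(clean, denoised):
    """Relative RMSE in the time domain."""
    return _rms(denoised - clean) / _rms(clean)


def rrmse_f(clean, denoised):
    """Relative RMSE between power spectra (assumed: squared FFT magnitude)."""
    psd_clean = np.abs(np.fft.rfft(clean)) ** 2
    psd_denoised = np.abs(np.fft.rfft(denoised)) ** 2
    return _rms(psd_denoised - psd_clean) / _rms(psd_clean)


# Toy check: a "denoised" signal that is the clean signal with a 10% gain error.
t = np.arange(512) / 256
clean = np.sin(2 * np.pi * 10 * t)
denoised = 1.1 * clean

metrics = {
    "SNR (dB)": snr_db(clean, denoised),
    "CC": correlation_coefficient(clean, denoised),
    "RRMSEt": rrmse_t(clean, denoised),
    "RRMSEf": rrmse_f(clean, denoised),
}
```

The toy case shows why the metrics are reported together: a pure gain error leaves CC at 1 while SNR and both RRMSE values still register the amplitude mismatch.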

Workflow and Architecture Diagrams

Experimental Workflow for EEG-EMG Fusion

The following diagram illustrates the end-to-end process for training and evaluating a hybrid CNN-LSTM model with auxiliary EMG inputs for EEG artifact removal.

CLEnet Dual-Branch Hybrid Architecture

The following diagram details the architecture of CLEnet, a state-of-the-art dual-branch CNN-LSTM model that incorporates an attention mechanism for enhanced performance.

Table 5: Key Resources for EEG Artifact Removal Research

| Category | Item | Function and Application |

|---|---|---|

| Datasets | EEGdenoiseNet [2] | A semi-synthetic benchmark dataset containing clean EEG and artifact (EMG, EOG) signals for training and evaluating denoising algorithms. |

| | MIT-BIH Arrhythmia Database [2] [39] | A public database of ECG signals, often used as a source of cardiac artifact to contaminate EEG for model testing. |

| Software & Libraries | TensorFlow / PyTorch | Open-source deep learning frameworks used to build, train, and deploy CNN-LSTM hybrid models. |

| | FusionBench [40] | A comprehensive benchmark suite for evaluating the performance of various deep model fusion techniques across multiple tasks. |

| | MULTIBENCH++ [41] | A large-scale, unified benchmarking framework for multimodal fusion, useful for rigorous cross-domain evaluation. |

| Hardware | High-Density EEG Systems | Capture brain electrical activity with high spatial resolution from multiple scalp locations (e.g., 32, 64, or 128 channels). |

| | Surface EMG/EOG Sensors | Record reference signals for muscle (EMG) and ocular (EOG) activity simultaneously with EEG for multimodal fusion models. |

| Signal Processing Tools | Independent Component Analysis (ICA) [14] [15] | A blind source separation method used for both traditional artifact removal and for generating pseudo-clean training data for deep learning models. |

| | Canonical Correlation Analysis (CCA) [14] | A statistical method used to remove components of a signal that are correlated with artifacts. |

The accurate interpretation of neural signals is fundamental to advancing brain-computer interfaces (BCIs), neuroprosthetics, and clinical diagnostics. These signals, however, are frequently contaminated by physiological artifacts—such as those from eye movements (EOG) and muscle activity (EMG)—which can obscure crucial information and degrade system performance. Hybrid methods, which combine multiple algorithmic strategies or data sources, have emerged as a powerful solution to this challenge. By leveraging the complementary strengths of individual techniques, these approaches achieve a superior balance between artifact removal efficacy and the preservation of underlying neural information, a balance that single-method strategies often fail to attain. The evolution of these methods is particularly critical for applications like SSVEP-based BCIs and diagnostic systems, where signal integrity is paramount [42] [14]. This guide provides a comparative analysis of contemporary hybrid artifact removal methods, detailing their experimental protocols, performance data, and suitability for specific research and clinical applications.

Comparative Analysis of Hybrid Artifact Removal Methods

The following table summarizes the core methodologies, applications, and key performance metrics of several advanced hybrid approaches.

Table 1: Comparison of Hybrid Artifact Removal Methods

| Method Name | Core Hybridization Strategy | Primary Application | Key Performance Metrics | Reported Performance |

|---|---|---|---|---|

| CNN-LSTM with EMG [14] | Deep Learning (CNN-LSTM) + Additional EMG Reference Signal | Cleaning muscle artifacts from SSVEP-EEG | Signal-to-Noise Ratio (SNR) Improvement | Excellent performance; effectively retains SSVEP responses [14] |

| ART (Transformer-based) [15] | Transformer Architecture + ICA-generated Training Data | End-to-end denoising of multichannel EEG | Mean Squared Error (MSE), Signal-to-Noise Ratio (SNR) | Surpasses other deep-learning models; sets a new benchmark [15] |

| FF-EWT + GMETV Filter [21] | Fixed Frequency EWT + Generalized Moreau Envelope TV Filter | EOG artifact removal from single-channel EEG | Relative Root Mean Square Error (RRMSE), Correlation Coefficient (CC) | Substantial improvement on synthetic and real data [21] |

| SSA-CCA [14] | Singular Spectrum Analysis (SSA) + Canonical Correlation Analysis (CCA) | Muscle artifact removal from EEG | Not specified in detail | Effective removal by leveraging autocorrelation properties [14] |

| Mixed Template CCA [42] | CCA with a novel mixed reference template | Identifying non-control states in SSVEP-BCI | Accuracy, Information Transfer Rate (ITR) | Average online accuracy: 93.15%; ITR: 31.612 bits/min [42] |

Detailed Experimental Protocols and Workflows

Hybrid CNN-LSTM Model with EMG Reference

This approach addresses the challenge of removing muscle artifacts while preserving evoked potentials like SSVEPs.

- Aim: To remove muscle artifacts from EEG signals while preserving crucial components such as Steady-State Visual Evoked Potentials (SSVEPs) [14].

- Data Acquisition: EEG and EMG data were recorded from 24 participants. Participants were presented with an LED stimulus to elicit SSVEPs while performing strong jaw clenching to induce significant muscle artifacts [14].

- Hybrid Workflow:

  - Simultaneous Recording: EEG signals and EMG signals from facial and neck muscles were recorded concurrently.

  - Data Augmentation: An innovative strategy for generating augmented EEG and EMG data was developed to create a diverse training dataset.

  - Model Training: A hybrid Convolutional Neural Network-Long Short-Term Memory (CNN-LSTM) architecture was trained to learn the mapping between the contaminated EEG+EMG inputs and the clean EEG signals.

  - Signal Reconstruction: The trained model processes new, contaminated data to output a cleaned EEG signal.

- Validation Metric: The method was evaluated by the change in Signal-to-Noise Ratio (SNR) of the SSVEP response, which checks that artifact removal did not degrade the neural signal of interest [14].
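One way to make the validation metric concrete is a narrowband SNR at the stimulation frequency, computed before and after cleaning. The sketch below assumes a common convention (power in the stimulus bin divided by the mean power of neighboring bins); the exact definition used in [14] is not given here, so the function names, the neighbor count, and the synthetic signals are illustrative assumptions.

```python
import numpy as np


def ssvep_snr_db(signal, fs, stim_freq, n_neighbors=5):
    """Narrowband SNR (dB): stimulus-bin power over mean neighboring-bin power."""
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1 / fs)
    k = int(np.argmin(np.abs(freqs - stim_freq)))   # bin closest to the stimulus
    lo = max(k - n_neighbors, 0)
    hi = min(k + n_neighbors, len(power) - 1)
    neighbors = np.concatenate([power[lo:k], power[k + 1:hi + 1]])
    return 10 * np.log10(power[k] / neighbors.mean())


def snr_change_db(before, after, fs, stim_freq):
    """Positive values mean the SSVEP stands out more after cleaning."""
    return ssvep_snr_db(after, fs, stim_freq) - ssvep_snr_db(before, fs, stim_freq)


# Synthetic stand-ins: a 12 Hz SSVEP buried in strong vs. weak broadband noise.
rng = np.random.default_rng(0)
fs, stim_freq = 250, 12.0
t = np.arange(4 * fs) / fs
ssvep = np.sin(2 * np.pi * stim_freq * t)
noise = rng.standard_normal(t.size)
contaminated = ssvep + 2.0 * noise     # stands in for EMG-contaminated EEG
cleaned = ssvep + 0.2 * noise          # stands in for the model output

change = snr_change_db(contaminated, cleaned, fs, stim_freq)
```

A cleaning method that suppresses the artifact but also attenuates the SSVEP would show little or no gain on this measure, which is why it complements waveform-level metrics.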

The following diagram illustrates the experimental workflow for this method:

Mixed Template CCA for SSVEP-BCI

This method enhances the classic Canonical Correlation Analysis (CCA) algorithm for more intuitive, self-paced BCI control.
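For orientation, the classic CCA baseline that this method builds on can be sketched as follows: the multi-channel EEG epoch is correlated against sine/cosine reference templates at each candidate frequency, and the frequency with the largest canonical correlation is selected. The code covers only standard CCA; the mixed template and idle-state detection of [42] are not reproduced, and all names, channel weights, and parameters are assumptions for illustration.

```python
import numpy as np


def canonical_correlation(X, Y):
    """Largest canonical correlation between column spaces of X and Y.

    X, Y: arrays of shape (n_samples, n_features). Uses QR + SVD.
    """
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    Qx, _ = np.linalg.qr(X)
    Qy, _ = np.linalg.qr(Y)
    singular_values = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return float(np.clip(singular_values[0], 0.0, 1.0))


def sine_reference(freq, fs, n_samples, n_harmonics=2):
    """Sine/cosine templates at `freq` and its harmonics, shape (n_samples, 2*n_harmonics)."""
    t = np.arange(n_samples) / fs
    columns = []
    for h in range(1, n_harmonics + 1):
        columns.append(np.sin(2 * np.pi * h * freq * t))
        columns.append(np.cos(2 * np.pi * h * freq * t))
    return np.column_stack(columns)


def detect_frequency(eeg, fs, candidates):
    """Return the best-matching candidate frequency and all scores."""
    scores = {
        f: canonical_correlation(eeg, sine_reference(f, fs, eeg.shape[0]))
        for f in candidates
    }
    return max(scores, key=scores.get), scores


# Synthetic 4-channel epoch containing a 10 Hz SSVEP plus noise.
rng = np.random.default_rng(0)
fs, n_samples = 250, 500
t = np.arange(n_samples) / fs
weights = np.array([1.0, 0.8, 0.6, 0.4])           # assumed channel gains
eeg = np.outer(np.sin(2 * np.pi * 10 * t), weights)
eeg += rng.standard_normal(eeg.shape)

best, scores = detect_frequency(eeg, fs, candidates=[8.0, 10.0, 12.0])
```

Plain CCA always returns one of the candidate frequencies, so it has no way to report that the user is idle; that limitation is what the mixed-template approach addresses.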

- Aim: To create a BCI system that can directly identify a user's non-control (idle) state without requiring additional biological signals or complex paradigms [42].

- Protocol: An improved CCA model was developed that uses a mixed template as a reference signal. This innovative approach allows the algorithm to distinguish between intentional control commands and idle states based solely on the EEG signal, a process known as "self-paced" control [42].

- Experimental Tasks:

  - Online Task 1: Participants controlled a UAV in a virtual reality environment. The system successfully translated non-control states into a "hover" command.

  - Online Task 2: Participants performed a free-trajectory mission, flying the UAV from a start point to a destination according to their own will, necessitating real-time, self-paced control.

- Performance Metrics: The system's performance was evaluated using Accuracy and Information Transfer Rate (ITR), key metrics for BCI usability. The system achieved an average accuracy of 93.15% and an ITR of 31.612 bits/min in the first task, demonstrating high effectiveness [42].
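ITR is conventionally computed with the Wolpaw formula, which combines the number of selectable targets, the classification accuracy, and the time per selection. The sketch below assumes that formula; the target count and trial duration used in [42] are not stated in this section, so the example values are hypothetical and are not meant to reproduce the reported 31.612 bits/min.

```python
import math


def itr_bits_per_min(n_targets, accuracy, trial_seconds):
    """Wolpaw information transfer rate in bits per minute.

    bits/selection = log2(N) + P*log2(P) + (1 - P)*log2((1 - P) / (N - 1))
    """
    if n_targets < 2:
        raise ValueError("n_targets must be at least 2")
    if not 0.0 < accuracy <= 1.0:
        raise ValueError("accuracy must be in (0, 1]")
    if trial_seconds <= 0:
        raise ValueError("trial_seconds must be positive")

    bits = math.log2(n_targets)
    if accuracy < 1.0:
        # The error terms vanish when accuracy is exactly 1.
        bits += accuracy * math.log2(accuracy)
        bits += (1 - accuracy) * math.log2((1 - accuracy) / (n_targets - 1))
    return bits * 60.0 / trial_seconds


# Hypothetical setup: 4 targets, 93.15% accuracy, 3 s per selection.
example_itr = itr_bits_per_min(n_targets=4, accuracy=0.9315, trial_seconds=3.0)
```

Because the rate scales inversely with selection time, two systems with identical accuracy can report very different ITR values, so both metrics should be read together.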

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of hybrid methods requires specific materials and data processing tools. The following table lists key components used in the featured research.

Table 2: Key Research Reagents and Materials for Hybrid Method Implementation

| Item Name / Type | Function / Application in Research | Example from Featured Experiments |

|---|---|---|

| Multi-channel EEG System | Records electrical brain activity from the scalp; fundamental signal for BCI and diagnostics. | Used across all studies for primary data acquisition [42] [14] [15]. |

| EMG Recording Equipment | Records muscle activity as a reference signal for deep learning models to identify and remove EMG artifacts. | Facial and neck EMG used in the CNN-LSTM hybrid method [14]. |

| Visual Stimulation Apparatus | Presents flickering stimuli at specific frequencies to evoke SSVEPs in the brain. | LED stimuli used to elicit SSVEPs for BCI experiments [42] [14]. |

| ICA-derived Training Data | Provides "pseudo-clean" data pairs for supervised training of deep learning denoising models. | Used to generate training data for the ART (Transformer) model [15]. |

| Virtual Reality (VR) Platform | Creates an immersive, controlled environment for testing BCI applications like UAV control. | BCI-VR hybrid system for testing UAV control based on SSVEP [42]. |

Workflow Visualization of a Generalized Hybrid Method

The logical flow of a generalized hybrid artifact removal method, synthesizing common elements from the analyzed studies, can be visualized as follows:

Discussion and Comparative Outlook

The comparative analysis reveals that the choice of a hybrid method is highly dependent on the specific application and the nature of the target artifact. For instance, the CNN-LSTM with EMG reference is particularly suited for scenarios where muscle artifacts severely corrupt signals containing evoked potentials, as its use of an additional biosignal provides a strong foundation for precise artifact separation [14]. In contrast, the Mixed Template CCA offers a computationally efficient solution for real-time BCI systems, enabling more natural, self-paced interaction by reliably detecting user intent without extra hardware [42].

The emergence of end-to-end deep learning models like the ART transformer signifies a trend towards methods that require less hand-crafted feature engineering and can handle multiple artifact types simultaneously [15]. However, these methods often demand large, high-quality training datasets. Meanwhile, signal processing-based hybrids like FF-EWT + GMETV are particularly valuable for portable, single-channel EEG systems, where computational simplicity and channel independence are critical [21].