HPC Benchmarking for Neuronal Networks: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on conducting high-performance computing (HPC) benchmarking experiments for neuronal networks. It covers foundational principles of neuromorphic computing and spiking neural networks (SNNs), explores established and emerging benchmarking methodologies like the NeuroBench framework, details optimization strategies for enhanced performance and energy efficiency, and presents comparative analyses of leading software tools and hardware platforms. The content is tailored to address the growing computational demands in biomedical research, offering practical insights for deploying efficient and scalable neuronal network simulations in scientific discovery and therapeutic development.

Neuromorphic Computing and SNNs: Foundations for Biomimetic HPC

The Computational Paradigm Shift: From ANNs to SNNs

Artificial Neural Networks (ANNs) have driven many breakthroughs in artificial intelligence, but their high computational and energy costs limit scalability and deployment in resource-constrained environments like edge devices [1]. Brain-inspired computing addresses these limitations by drawing inspiration from the brain's architecture and efficiency.

Spiking Neural Networks (SNNs) represent the third generation of neural networks, offering greater biological plausibility and potentially better energy efficiency than earlier ANN generations [1]. They process information through discrete electrical signals called spikes and operate in continuous time, with event-driven computation that does work only when a spike arrives [1]. This sparsity and temporal coding allow SNNs to approach the energy efficiency found in biological systems.
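To make the spiking mechanism concrete, the following is a minimal sketch of a leaky integrate-and-fire (LIF) neuron, a common textbook spiking model. The parameter values (membrane time constant, threshold, reset) and the function name `simulate_lif` are illustrative assumptions and are not taken from the cited works.

```python
def simulate_lif(drive_mv, duration_ms=100.0, dt_ms=0.1,
                 tau_ms=20.0, v_rest=-65.0, v_thresh=-50.0, v_reset=-65.0):
    """Forward-Euler simulation of a leaky integrate-and-fire neuron.

    drive_mv is the constant input expressed as a steady-state depolarization
    in millivolts (input resistance times input current). All parameter values
    are illustrative assumptions.
    """
    v = v_rest
    spike_times_ms = []
    n_steps = int(round(duration_ms / dt_ms))
    for step in range(n_steps):
        # Leak pulls v back to rest; the drive pushes it up.
        v += (dt_ms / tau_ms) * (-(v - v_rest) + drive_mv)
        if v >= v_thresh:
            # A spike is a discrete event: record its time, then reset.
            spike_times_ms.append((step + 1) * dt_ms)
            v = v_reset
    return spike_times_ms


if __name__ == "__main__":
    for drive in (0.0, 10.0, 20.0):
        print(f"drive={drive:5.1f} mV -> {len(simulate_lif(drive))} spikes in 100 ms")
```

The output of the neuron is a short list of spike times rather than a dense activation value, and a drive that never reaches threshold produces no events at all. That is the property event-driven hardware exploits.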

The transition to neuromorphic computing is motivated by the slowing of Moore's Law and the growing energy demands of conventional AI hardware. As Professor Dmitri Strukov notes, "AI needs new hardware, not just new algorithms... This energy consumption mainly comes from data traffic between memory and processing units" [2]. Neuromorphic computing addresses this by merging memory and processing, inspired by the brain's architecture where "synapses provide a direct memory access to the neurons that process information" [2].

Performance Benchmarking: Quantitative Comparisons

Benchmarking computational frameworks is essential for evaluating performance improvements. The tables below summarize key performance metrics for SNNs and related technologies compared to conventional approaches.

Table 1: Performance Benchmarking of SNN Implementations

| Network Model / Hardware | Task / Dataset | Performance Metric | Comparison or Notes |
|---|---|---|---|
| Memristor-based TMSNN [3] | MNIST classification | Competitive classification accuracy | High energy efficiency in theory |
| Memristor-based TMSNN [3] | Fashion-MNIST classification | Competitive classification accuracy | High energy efficiency in theory |
| Automated ANN-to-SNN Conversion [4] | Multiple DNN/CNN architectures | 2.65% average accuracy penalty | 82.71% reduction in energy-latency product |
| Proposed Graph-Partitioning [4] | SNN mapping | 79.74% latency decrease, 14.67% energy reduction | 82.71% lower energy-latency product |
| Predictive Coding (PC) Networks [5] | CIFAR-10 (ResNet-18) | Near-backpropagation (BP) accuracy | Performance decreases with deeper networks |
| Predictive Coding (PC) Networks [5] | CIFAR-100 & Tiny ImageNet | Matches BP on 5/7-layer CNNs | Falls behind on 9-layer CNNs and ResNets |
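The energy-latency product figure in Table 1 follows arithmetically from the separate latency and energy reductions reported for [4]. The short sketch below shows that relationship. The function name is an illustrative assumption, and the calculation assumes both reductions are measured against the same baseline.

```python
def energy_latency_product_reduction(latency_reduction, energy_reduction):
    """Fractional reduction of the energy-latency product (ELP).

    Both inputs are fractions in [0, 1] measured against the same baseline.
    The remaining ELP is the product of the remaining latency and the
    remaining energy.
    """
    remaining = (1.0 - latency_reduction) * (1.0 - energy_reduction)
    return 1.0 - remaining


if __name__ == "__main__":
    # Figures quoted in Table 1 for the graph-partitioning approach [4].
    reduction = energy_latency_product_reduction(0.7974, 0.1467)
    print(f"ELP reduction: {reduction * 100:.2f}%")
```

With the table's values, the remaining product is 0.2026 × 0.8533 ≈ 0.1729, which gives the 82.71% reduction listed in the table.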

Table 2: Neuromorphic Hardware Platforms

| Hardware Platform | Type | Key Features | Neuron Capacity |
|---|---|---|---|
| Loihi (Intel) [2] | Neuromorphic Chip | Self-learning, programmable synaptic learning rules | 130,000 neurons |
| Loihi 2 System (Intel) [2] | Neuromorphic System | - | 50 million neurons |
| Pohoiki Springs (Intel) [2] | Neuromorphic System | - | 100 million neurons |
| Upcoming Intel System [2] | Neuromorphic System | - | 1+ billion neurons |
| SpiNNaker [1] [6] | Neuromorphic Hardware | - | - |
| TrueNorth [1] | Neuromorphic Hardware | - | - |
| NeuroGrid [1] | Neuromorphic Hardware | - | - |

Experimental Protocols for HPC Benchmarking

Protocol 1: Benchmarking Predictive Coding Networks

Objective: Compare the performance of Predictive Coding (PC) networks against standard backpropagation (BP) on image classification tasks [5].

Materials: PCX library (JAX-based), standard image datasets (CIFAR-10, CIFAR-100, Tiny ImageNet), GPU-enabled computing resources [5].

Procedure:

- Network Configuration: Implement identical network architectures (e.g., VGG-7, ResNet-18) for both PC and BP training

- Hyperparameter Tuning: Use PCX library for efficient hyperparameter search

- Training: Train networks on identical dataset splits

- Energy Monitoring: Measure energy consumption during training and inference phases

- Scalability Testing: Evaluate performance across increasing network depths (5, 7, 9 layers)

- Analysis: Record test accuracy, training time, and energy consumption

Key Measurements:

- Test accuracy across dataset categories

- Energy consumption during training and inference

- Training time to convergence

- Layer-wise energy distribution analysis

Protocol 2: ANN-to-SNN Conversion and Benchmarking

Objective: Transform ANNs to SNNs and evaluate performance on neuromorphic hardware [4].

Materials: SNN Tool Box (SNN-TB), CARLsim simulator, graph-partitioning algorithms, network-on-chip (NoC) tools [4].

Procedure:

- Network Conversion: Use SNN-TB to automatically convert pre-trained ANNs to SNNs

- Graph Partitioning: Apply novel graph-partitioning algorithm (SNN-GPA) to handle large-scale graphs (>100,000 vertices)

- Hardware Mapping: Map partitioned SNNs to NoC architecture using placement tools

- Performance Evaluation: Execute benchmark tasks on converted SNNs

- Metrics Collection: Record accuracy, latency, energy consumption, and communication overhead

Key Measurements:

- Classification accuracy penalty compared to original ANN

- Inter-synaptic and intra-synaptic communication reduction

- Latency decrease and energy reduction

- Energy-latency product improvement
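The conversion step in Protocol 2 rests on a general principle that can be sketched without any particular toolbox: an integrate-and-fire neuron driven by a constant input fires at a rate that approximates a ReLU activation. The code below is a minimal illustration of that principle only. It assumes reset-by-subtraction and activations normalized to at most the threshold, and it is not the SNN-TB implementation or its API.

```python
def integrate_and_fire_rate(activation, n_steps=1000, threshold=1.0):
    """Firing rate of a non-leaky integrate-and-fire neuron with constant input.

    Assumptions (illustrative, not from the cited tools): reset by subtraction,
    and activation <= threshold so that at most one spike occurs per step.
    """
    membrane = 0.0
    spike_count = 0
    for _ in range(n_steps):
        membrane += activation
        if membrane >= threshold:
            membrane -= threshold  # keep the residual charge
            spike_count += 1
    return spike_count / n_steps


if __name__ == "__main__":
    for a in (-0.5, 0.0, 0.3, 0.8):
        relu = max(a, 0.0)
        print(f"activation={a:+.1f}  ReLU={relu:.2f}  "
              f"spike rate={integrate_and_fire_rate(a):.3f}")
```

The rate estimate improves with the number of simulation steps, which is one reason converted networks trade latency against the accuracy penalty measured in this protocol.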

Protocol 3: NeuroBench Standardized Evaluation

Objective: Provide standardized benchmarking of neuromorphic algorithms and systems using the NeuroBench framework [7].

Materials: NeuroBench framework, benchmark tasks, conventional and neuromorphic hardware platforms.

Procedure:

- Hardware-Independent Assessment: Evaluate algorithm performance abstracted from hardware

- Hardware-Dependent Assessment: Measure full system performance on neuromorphic platforms

- Comparative Analysis: Compare against conventional AI systems

- Efficiency Metrics: Collect measurements on energy consumption, computational speed, and accuracy

- Application Testing: Evaluate across various applications (vision, robotics, scientific computing)

Key Measurements:

- Time-to-solution and energy-to-solution

- Task-specific accuracy metrics

- Memory consumption and computational efficiency

- Real-time processing capabilities

Visualization of Workflows and Architectures

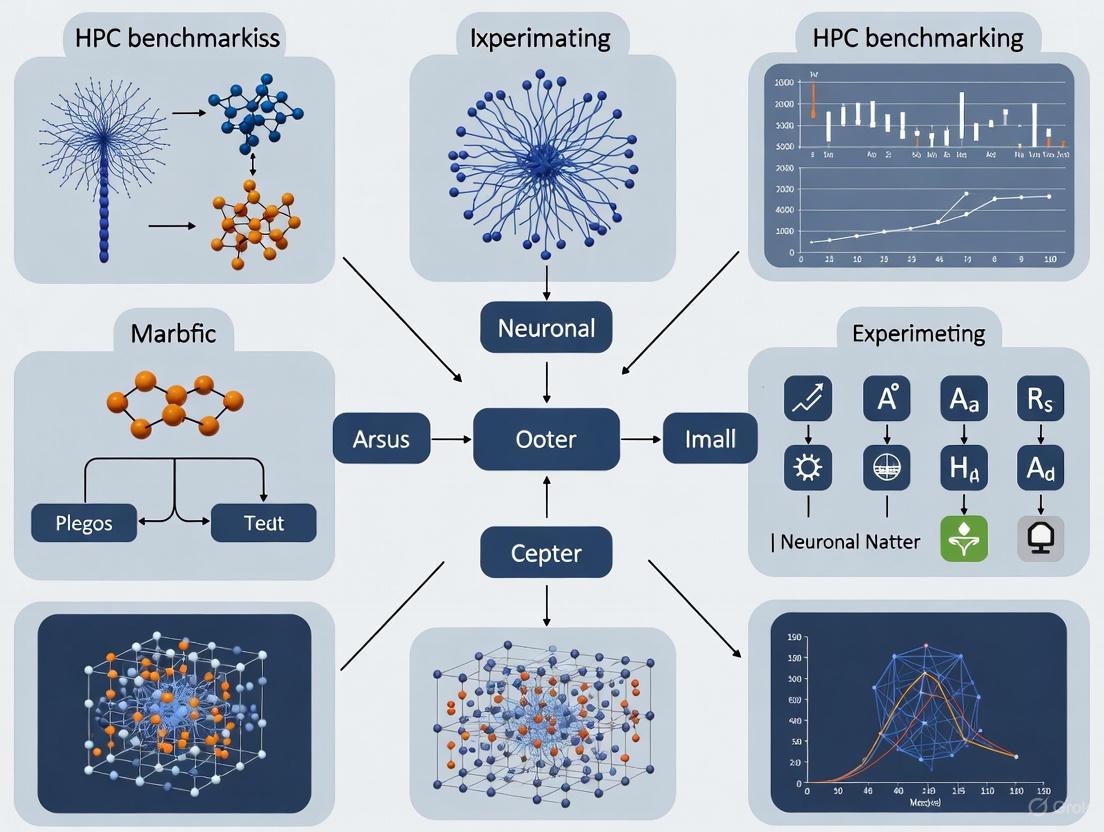

Figure 1: Comprehensive workflow for converting ANNs to SNNs and deployment on neuromorphic hardware, illustrating the transformation from continuous processing to event-driven computation with specialized hardware implementation.

Table 3: Research Reagent Solutions for Neuromorphic Computing

| Tool / Resource | Type | Function | Application in Research |
|---|---|---|---|
| PCX Library [5] | Software Framework | JAX-based library for predictive coding networks | Accelerated training and benchmarking of PC networks |
| NeuroBench [7] | Benchmark Framework | Standardized evaluation of neuromorphic algorithms/systems | Comparative performance analysis across platforms |
| SNN Tool Box (SNN-TB) [4] | Conversion Tool | Automated transformation of ANNs to SNNs | Network conversion for neuromorphic implementation |
| CARLsim [4] | Simulation Environment | GPU-accelerated SNN simulation | Large-scale SNN training and testing |
| Loihi Neuromorphic Chip [2] | Hardware Platform | Self-learning neuromorphic processor | Energy-efficient SNN implementation and testing |
| Memristor Crossbars [3] | Hardware Component | In-memory computing substrate | Efficient synaptic weight implementation in SNNs |
| Graph-Partitioning Algorithm [4] | Computational Tool | Partitioning large SNN graphs for NoC mapping | Optimizing neural placement for reduced communication |
| NEST Simulator [6] | Simulation Environment | Large-scale spiking network simulation | Network dynamics study and model validation |

Figure 2: HPC benchmarking architecture for neuronal networks, showing the comprehensive evaluation pipeline from input models through hardware platforms to standardized performance metrics using the NeuroBench framework.

Spiking Neural Networks (SNNs), often regarded as the third generation of neural network models, offer advantages rooted in their biological plausibility. They process information through discrete, event-driven spikes over time, unlike the continuous activation values of traditional Artificial Neural Networks (ANNs). Their core computational principles (temporal dynamics, sparsity, and event-driven processing) make them well suited to energy-efficient processing of temporal data. In high-performance computing (HPC) and demanding fields like drug development, these characteristics can translate into gains in efficiency, scalability, and the ability to process complex spatio-temporal data. SNNs leverage dynamical sparsity, where neurons activate sparsely to minimize data communication, which is critical for overcoming bandwidth limitations between memory and processor in hardware implementations [8]. Because computation is triggered only when a spike arrives, SNNs can potentially deliver orders-of-magnitude gains in energy efficiency, especially when deployed on neuromorphic hardware such as Intel's Loihi or SynSense's Speck [9].

Core Advantages and Quantitative Benefits

The following table summarizes the key advantages of SNNs and their practical implications for research and application development, particularly in HPC environments.

Table 1: Core Advantages of Spiking Neural Networks

| Advantage | Core Principle | Key Benefit for HPC & Research | Quantitative Improvement |
|---|---|---|---|
| Event-Driven Processing & Sparsity | Computation occurs only upon receipt of a spike, leading to sparse, asynchronous data flow. | Drastically reduces energy consumption and computational load; enables efficient deployment on neuromorphic hardware. | Can replace costly multiply-accumulate operations with simple accumulations, enabling orders-of-magnitude efficiency gains on neuromorphic processors [9]. |
| Inherent Temporal Dynamics | Neurons are stateful, with membrane potentials that integrate inputs over time, providing implicit recurrence. | Ideal for processing temporal sequences and time-series data without complex recurrent architectures; capable of extracting temporal features in feed-forward networks. | Enables comparable results to LSTM networks with a smaller number of parameters, demonstrating superior parameter efficiency [10]. |
| Enhanced Energy Efficiency | Combines event-driven computation with sparse activity to minimize power-intensive operations. | Reduces the energy footprint of large-scale AI model training and inference, a critical concern for HPC centers. | Achieved via sparse, event-driven computation on low-power neuromorphic hardware [9] [8]. |
| Delay & Recurrent Learning | Synaptic and axonal delays can be incorporated and learned, enriching the network's temporal processing capabilities. | Increases network capacity and computational richness; allows for optimization of temporal pathways. | Learnable delays can enhance accuracy; recurrent delays are particularly beneficial in small networks [11]. |
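The first row of the table, replacing multiply-accumulates (MACs) with accumulations (ACs), can be illustrated by counting operations for one fully connected layer. The sketch below uses hypothetical layer sizes and activity levels chosen only for illustration; it is a counting exercise, not a measurement from [9].

```python
def dense_layer_macs(n_inputs, n_outputs):
    """A dense ANN layer performs one MAC per weight per forward pass."""
    return n_inputs * n_outputs


def spiking_layer_accumulates(spikes_per_step, n_outputs):
    """An event-driven layer performs one accumulate per (spike, target) pair.

    spikes_per_step lists how many input neurons fired at each time step.
    """
    return sum(spikes_per_step) * n_outputs


if __name__ == "__main__":
    n_in, n_out, n_steps = 1000, 100, 10  # hypothetical sizes
    for activity in (0.02, 0.10, 0.50):
        spikes = [int(activity * n_in)] * n_steps
        acs = spiking_layer_accumulates(spikes, n_out)
        macs = dense_layer_macs(n_in, n_out)
        print(f"activity={activity:.0%}: {acs:,} ACs over {n_steps} steps "
              f"vs {macs:,} MACs for one dense pass")
```

The count makes the dependence on sparsity explicit: the spiking layer needs fewer operations only while the total number of input spikes across all time steps stays below the number of inputs, which is why activity levels belong in any benchmark report alongside the operation counts.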

Experimental Protocols for HPC Benchmarking

To validate the advantages of SNNs within an HPC benchmarking framework, the following detailed experimental protocols are proposed. These methodologies are designed to be reproducible and provide clear, quantitative metrics for comparison against traditional ANN models.

Protocol: Benchmarking Temporal Sequence Processing

This protocol evaluates the SNN's ability to process and understand temporal dependencies, a task critical for analyzing dynamic biological processes in drug development, such as protein folding or cellular signaling pathways.

- Objective: To quantify the accuracy and parameter efficiency of SNNs in modeling long-range temporal dependencies and compare them against recurrent ANNs like LSTMs.

- Dataset: DVS-Gesture-Chain (DVS-GC). This event-based action recognition dataset demands an understanding of the precise order of events, unlike its predecessor (DVS-Gesture), which could be solved without temporal feature extraction [10].

- Network Architecture:

  - Experimental Group: A feed-forward SNN and a recurrent SNN.

  - Control Group: An LSTM network.

- HPC Setup & Training:

  - Implement the SNNs using the mlGeNN library [11] and the LSTM using a standard framework like PyTorch.

  - Utilize surrogate gradient methods (e.g., as implemented in mlGeNN) for training the SNNs, as they allow for gradient-based optimization of the non-differentiable spike function [11] [9].

  - Train all models on an HPC cluster with multiple GPU nodes. Monitor and log computational load, memory usage, and energy consumption using profiling tools.

- Key Metrics:

  - Primary: Classification accuracy on the DVS-GC test set.

  - Secondary: Number of trainable parameters, total energy consumption (Joules), and training time (hours).

- Expected Outcome: The recurrent SNN is expected to achieve comparable accuracy to the LSTM while using a significantly smaller number of parameters, demonstrating the parameter efficiency of SNNs for temporal tasks [10].
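For the parameter-count metric in this protocol, it helps to see where the difference between an LSTM and a recurrent SNN layer of the same width comes from. The sketch below is structural arithmetic under stated assumptions: a standard single-layer LSTM with one bias vector per gate, and a recurrent spiking layer with input and recurrent weight matrices and no biases. The layer sizes are hypothetical and the code does not reproduce the architectures of [10].

```python
def lstm_layer_parameters(n_inputs, n_hidden):
    """Standard LSTM layer: four gates, each with input weights,
    recurrent weights, and one bias vector (assumption: single bias per gate)."""
    per_gate = n_hidden * (n_inputs + n_hidden) + n_hidden
    return 4 * per_gate


def recurrent_snn_layer_parameters(n_inputs, n_hidden):
    """Recurrent spiking layer with input and recurrent weights only
    (assumption: no biases, neuron constants such as tau are not trained)."""
    return n_inputs * n_hidden + n_hidden * n_hidden


if __name__ == "__main__":
    n_in, n_h = 128, 256  # hypothetical sizes
    lstm = lstm_layer_parameters(n_in, n_h)
    rsnn = recurrent_snn_layer_parameters(n_in, n_h)
    print(f"LSTM: {lstm:,} parameters")
    print(f"Recurrent SNN: {rsnn:,} parameters")
    print(f"Ratio: {lstm / rsnn:.2f}x")
```

At equal width the LSTM carries roughly four times the weights because of its gate blocks, so a fair benchmark should report accuracy together with the parameter count rather than matching only the hidden size.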

Protocol: Benchmarking Efficiency via Model Pruning

This protocol assesses the interaction between the inherent dynamical sparsity of SNNs and static sparsity induced by pruning, a key technique for model deployment on resource-constrained hardware.

- Objective: To achieve extreme levels of sparsity in an SNN with minimal performance degradation, highlighting its compatibility with efficient hardware deployment.

- Dataset: DVS128 Gesture [8].

- Pruning Methodology: Apply a spatio-temporal pruning algorithm [8]:

  - Spatial Pruning: Use the Layer-Adaptive Magnitude-based Pruning Score (LAMPS) technique to prune synaptic connections based on weight magnitudes, adjusting the pruning scale per layer to prevent bottlenecks.

  - Temporal Pruning: Dynamically reduce the number of time steps used during inference for redundant input samples, based on an analysis of the accumulated membrane potential.

- HPC Setup & Training:

  - Implement the SNN and pruning algorithm in an HPC environment.

  - Train the model pre-pruning, then apply the spatio-temporal pruning iteratively.

  - Fine-tune the pruned model to recover any lost accuracy.

- Key Metrics:

  - Primary: Model accuracy pre- and post-pruning.

  - Secondary: Percentage reduction in parameters and computational operations (e.g., 98.18% parameter reduction [8]).

- Expected Outcome: Successful pruning should result in a dramatic reduction in model size and computational load with negligible loss or even a slight improvement in accuracy on event-based datasets [8].
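The spatial pruning step can be sketched with one common formulation of a layer-adaptive magnitude score: each weight's squared magnitude is divided by the sum of squared magnitudes of all weights in the same layer that are at least as large. This is an assumption about the scoring rule; the exact LAMPS variant used in [8] may differ, and the function names are illustrative.

```python
def layer_adaptive_scores(layer_weights):
    """Score each weight by w^2 / (sum of v^2 over weights with |v| >= |w|).

    The largest weight in every layer scores 1.0, so global pruning by score
    cannot empty a layer before all other layers are nearly empty.
    """
    order = sorted(range(len(layer_weights)),
                   key=lambda i: abs(layer_weights[i]), reverse=True)
    scores = [0.0] * len(layer_weights)
    running_sum = 0.0
    for i in order:  # visit weights from largest to smallest magnitude
        squared = layer_weights[i] ** 2
        running_sum += squared
        scores[i] = squared / running_sum if running_sum > 0.0 else 0.0
    return scores


def prune_globally(layers, sparsity):
    """Zero the lowest-scoring fraction of weights across all layers."""
    scored = [(score, li, wi)
              for li, weights in enumerate(layers)
              for wi, score in enumerate(layer_adaptive_scores(weights))]
    scored.sort()
    pruned = [list(weights) for weights in layers]
    for _, li, wi in scored[:int(sparsity * len(scored))]:
        pruned[li][wi] = 0.0
    return pruned


if __name__ == "__main__":
    layers = [[3.0, 1.0, 2.0], [0.1, 0.2]]  # two tiny flattened layers
    print(prune_globally(layers, sparsity=0.4))
```

In the toy example the second layer has much smaller weights than the first, yet it keeps its largest connection. A plain global magnitude threshold would have removed the whole layer, which is the bottleneck the per-layer adjustment is meant to prevent.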

The Scientist's Toolkit: Research Reagent Solutions

The following table outlines essential software and hardware tools required for conducting advanced SNN research within an HPC benchmarking context.

Table 2: Essential Tools for SNN Research and HPC Benchmarking

| Tool Name | Type | Primary Function in SNN Research |
|---|---|---|
| mlGeNN [11] | Software Framework | A spike-based machine learning library built on the GPU-optimized GeNN simulator. It facilitates efficient training and simulation of SNNs on HPC-grade GPUs, supporting advanced features like delay learning. |
| EventProp Algorithm [11] | Training Algorithm | An algorithm for calculating exact gradients in SNNs, using a hybrid approach of differential equations and event-based backward passes. It is memory-efficient and enables training on long sequences. |
| NeuroBench [7] | Benchmarking Framework | A community-developed framework for standardized benchmarking of neuromorphic computing algorithms and systems, ensuring objective comparison in both hardware-independent and hardware-dependent settings. |
| Lava [9] | Software Framework | An open-source software framework for developing neuromorphic applications, compatible with platforms like Intel's Loihi 2. |
| Spatio-Temporal Pruning [8] | Optimization Algorithm | A pruning algorithm that reduces both spatial (synaptic) and temporal redundancy in SNNs, crucial for deploying models on memory- and compute-constrained neuromorphic hardware. |

Workflow and System Diagrams

The following diagrams illustrate key experimental workflows and the structural relationship between SNN advantages and their applications.

SNN Advantage Application Pathway

Delay Learning Experiment Workflow

Neuromorphic computing represents a fundamental departure from the traditional von Neumann architecture: it co-locates memory and processing and uses event-driven, asynchronous circuits inspired by the biological brain [12] [13]. For researchers in neuronal networks and drug development, this paradigm offers the potential to simulate complex neural dynamics with orders-of-magnitude greater energy efficiency than conventional high-performance computing (HPC) systems [13]. The energy demands of modern artificial intelligence systems, where training a model like GPT-3 can consume as much energy as powering 120 homes for a year, have created an urgent need for more efficient computing paradigms [13]. Neuromorphic hardware achieves these efficiency gains through spiking neural networks (SNNs) that mimic the brain's sparse, event-driven communication, operating with power budgets as low as 20 watts, comparable to that of the human brain [13].

This landscape encompasses two complementary approaches: digital neuromorphic chips that use conventional CMOS technology to emulate neural networks with high programmability, and emerging devices that leverage novel physical properties to naturally emulate neuro-synaptic functions [12]. For computational neuroscience and pharmaceutical research, these platforms enable real-time simulation of neural circuits, accelerated drug discovery through efficient pattern matching, and detailed modeling of neurological mechanisms with unprecedented biological fidelity [12] [14]. The maturation of frameworks like NeuroBench now provides standardized methodologies for objectively quantifying neuromorphic system performance, enabling rigorous comparison against conventional HPC platforms for specific research applications [7].

Digital Neuromorphic Chips

Architecture and Performance Specifications

Digital neuromorphic chips implement spiking neural networks using conventional digital CMOS technology, providing flexible, programmable platforms for neural simulation. These chips typically consist of multiple neurosynaptic cores that operate in parallel, communicating via asynchronous spike messages [12] [15]. Unlike conventional processors, they employ event-driven computation where energy consumption scales with neural activity rather than operating continuously at peak power [13].

Table 1: Comparison of Major Digital Neuromorphic Platforms

| Platform | Intel Loihi 2 | SpiNNaker 2 | IBM TrueNorth |
|---|---|---|---|
| Release Year | 2021 [15] | 2019 (2nd gen) [12] | 2014 [12] |
| Neuron Capacity | 1 million neurons per chip [15] | No neuron count given; 10 million ARM cores planned for the full machine [12] | 1 million neurons per chip [12] |
| Synapse Capacity | 120 million maximum [15] | Billions of synapses [12] | 256 million synapses [12] |
| Power Consumption | ~1 Watt [15] | Adaptive power management [12] | ~70 milliwatts [12] |
| Technology Node | Intel 4 process [15] | 22nm process with 3D integration [12] | 28nm process [12] |
| On-Chip Learning | Supported [15] | Limited [12] | Not supported [12] |
| Key Features | Programmable neuron models, graded spikes, asynchronous NoC [15] | Massive parallelism, ARM cores, custom network [12] | Fully digital, fixed neural model [12] |

Intel's Loihi 2 architecture exemplifies modern digital neuromorphic design, featuring 128 neural cores and 6 embedded x86 processors connected via an asynchronous network-on-chip [15]. The neural cores are fully programmable digital signal processors optimized for emulating biological neural dynamics, supporting not only standard leaky integrate-and-fire models but also user-defined neuron behaviors through microcode instructions [15]. This programmability enables researchers to implement more biologically realistic neuron models with various resonance, adaptation, threshold, and reset functions critical for accurate neural simulations [15].

Experimental Protocol: Benchmarking Digital Neuromorphic Performance

Objective: Quantify the computational efficiency and accuracy of digital neuromorphic platforms (Loihi 2, SpiNNaker) for simulating biologically realistic neural networks, comparing against conventional HPC and GPU-based simulations.

Materials and Setup:

- Neuromorphic Hardware: Intel Loihi 2 system (Kapoho Point board) or SpiNNaker machine [15]

- Control System: Conventional HPC node with NVIDIA GPU (for baseline measurements)

- Software Framework: Intel Lava framework for Loihi 2 or SpiNNaker software tools [15]

- Monitoring Equipment: Precision power meter (e.g., Yokogawa WT210) for real-time power measurement

Procedure:

- Network Configuration: Implement the Izhikevich neuron model on Loihi 2, using its microcode programming capability to define the neuron dynamics [15]. Configure an identical network on the GPU control system using a GPU-capable simulator (for example NEST GPU or GeNN; standard NEST runs on CPUs).

- Workload Definition: Design a benchmark network with 10,000 neurons and 1 million synapses, incorporating multiple neuron types (regular spiking, fast spiking, intrinsically bursting) and synaptic plasticity (STDP) [15].

- Execution Protocol:

  - Stimulate the network with identical Poisson spike trains on both platforms

  - Record the wall-clock simulation time for 10 seconds of biological time

  - Measure power consumption at 100 ms intervals using the power meter

  - Capture output spike trains and membrane potentials of 100 representative neurons

- Data Collection:

  - Record total energy consumption (Joules)

  - Measure simulation execution time (seconds)

  - Calculate synaptic operations per second (SOPS)

  - Extract spike timing precision (milliseconds)
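As a software reference for the neuron model named in the procedure, the following is a minimal sketch of the Izhikevich model with the standard published parameter sets for the three cell types in the workload. The integration step, input current, and function name are illustrative assumptions; this is a plain Python reference, not Loihi 2 microcode or simulator code.

```python
# Standard Izhikevich parameter sets (a, b, c, d) for the three cell types.
CELL_TYPES = {
    "regular_spiking": {"a": 0.02, "b": 0.2, "c": -65.0, "d": 8.0},
    "fast_spiking": {"a": 0.1, "b": 0.2, "c": -65.0, "d": 2.0},
    "intrinsically_bursting": {"a": 0.02, "b": 0.2, "c": -55.0, "d": 4.0},
}


def simulate_izhikevich(cell_type, input_current, duration_ms=1000.0, dt_ms=0.5):
    """Forward-Euler simulation; returns spike times in milliseconds.

    v is the membrane potential (mV) and u the recovery variable. The step
    size and constant input current are illustrative assumptions.
    """
    p = CELL_TYPES[cell_type]
    v = -65.0
    u = p["b"] * v
    spike_times_ms = []
    for step in range(int(round(duration_ms / dt_ms))):
        if v >= 30.0:
            # Spike: record the event, reset v and bump the recovery variable.
            spike_times_ms.append(step * dt_ms)
            v = p["c"]
            u += p["d"]
        dv = 0.04 * v * v + 5.0 * v + 140.0 - u + input_current
        du = p["a"] * (p["b"] * v - u)
        v += dt_ms * dv
        u += dt_ms * du
    return spike_times_ms


if __name__ == "__main__":
    for name in CELL_TYPES:
        spikes = simulate_izhikevich(name, input_current=10.0)
        print(f"{name}: {len(spikes)} spikes in 1 s")
```

A reference like this gives the "spike timing difference versus reference simulation" comparison a defined baseline, provided the same step size and numerical precision are documented for every platform.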

Validation Metrics:

- Energy Efficiency: joules per synaptic operation [12]

- Computational Throughput: synaptic operations per second (SOPS) [12]

- Temporal Accuracy: spike timing difference versus reference simulation

- Biological Fidelity: ability to reproduce characteristic neural dynamics (bursting, adaptation) [15]
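The energy and throughput metrics above can be derived from the raw measurements with a few lines of arithmetic. The sketch below assumes power samples taken at a fixed interval (100 ms in the procedure) and integrates them with the trapezoidal rule; the function names and sample values are illustrative.

```python
def energy_joules(power_samples_w, interval_s):
    """Integrate evenly spaced power samples (watts) with the trapezoidal rule."""
    if len(power_samples_w) < 2:
        return 0.0
    total = 0.0
    for p0, p1 in zip(power_samples_w, power_samples_w[1:]):
        total += 0.5 * (p0 + p1) * interval_s
    return total


def synaptic_ops_per_second(synaptic_ops, wall_time_s):
    """Throughput in synaptic operations per second (SOPS)."""
    return synaptic_ops / wall_time_s


def joules_per_synaptic_op(energy_j, synaptic_ops):
    """Energy efficiency; lower is better."""
    return energy_j / synaptic_ops


if __name__ == "__main__":
    # Hypothetical run: 5 samples at 100 ms spacing cover 0.4 s of wall time.
    samples_w = [0.9, 1.1, 1.0, 1.2, 1.0]
    energy = energy_joules(samples_w, interval_s=0.1)
    ops = 2_000_000  # hypothetical synaptic event count from the simulator
    print(f"energy: {energy:.3f} J")
    print(f"throughput: {synaptic_ops_per_second(ops, 0.4):.3e} SOPS")
    print(f"efficiency: {joules_per_synaptic_op(energy, ops):.3e} J/op")
```

For a fair comparison, idle power should be recorded and reported separately on both platforms, since subtracting or including it can change joules per operation considerably.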

Diagram 1: Digital Neuromorphic Benchmarking Workflow

Emerging Memristor-Based Devices

Memristors (memory resistors) represent the most mature category of emerging neuromorphic devices, leveraging reversible resistance changes to naturally emulate synaptic plasticity [14] [16]. These two-terminal electronic devices remember their resistance state based on the history of applied voltage/current, enabling them to implement synaptic weight storage co-located with computation [12]. This intrinsic property makes them ideal for building dense crossbar arrays that perform analog matrix-vector multiplication, the fundamental operation in neural networks, directly in physics through Ohm's law and Kirchhoff's current law [12].

Memristor-based neuromorphic systems typically employ crossbar arrays where memristive devices at the intersections between row and column electrodes serve as synaptic weights [14]. When input voltages are applied to rows, the currents summing at each column naturally compute the weighted sum through the memristive conductances, enabling massively parallel, fast, and energy-efficient computation that bypasses the von Neumann bottleneck [12]. This in-memory computing approach can achieve high energy efficiency and density, with experimental demonstrations showing orders-of-magnitude improvement over conventional approaches for specific workloads like pattern recognition and associative memory [12].
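The crossbar operation described above can be written out for an ideal array: each device contributes a current equal to voltage times conductance (Ohm's law), and the currents on a column add (Kirchhoff's current law). The sketch below models that ideal case only. It ignores wire resistance, sneak paths, and device non-linearity, and the conductance values are hypothetical.

```python
def crossbar_column_currents(row_voltages, conductances):
    """Ideal crossbar read: I_j = sum_i V_i * G[i][j].

    row_voltages: one voltage (V) per row.
    conductances: matrix in siemens, indexed [row][column].
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for v_row, g_row in zip(row_voltages, conductances):
        for col, g in enumerate(g_row):
            currents[col] += v_row * g  # Ohm's law per device, summed per column
    return currents


if __name__ == "__main__":
    voltages = [0.1, 0.2]                      # volts applied to two rows
    g_matrix = [[1e-6, 2e-6],                  # siemens, hypothetical values
                [3e-6, 4e-6]]
    print(crossbar_column_currents(voltages, g_matrix))
```

Every column current is produced in the same read step, which is where the parallelism of the analog approach comes from.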

Table 2: Memristor Device Characteristics for Neuromorphic Applications

| Parameter | Typical Range | Impact on Neural Network Performance |
|---|---|---|
| Resistance Ratio (HRS/LRS) | 10-1000 [16] | Determines readout margin and classification accuracy [14] |
| Switching Speed | Nanoseconds to microseconds [16] | Limits maximum spike rate and network throughput [14] |
| Endurance | 10^6-10^12 cycles [14] | Affects usable lifetime and online learning capability [14] |
| Retention Time | Seconds to years [16] | Determines volatility and need for refresh operations [14] |
| Variability | 5-20% cycle-to-cycle [14] | Impacts training convergence and inference accuracy [14] |
| Energy per Switch | Femtojoules to picojoules [16] | Contributes to overall system energy efficiency [12] |

Material systems for memristors have diversified significantly, including 2D materials (MoS₂), perovskite compounds (CsPbI₃), phase-change materials (GST), and metal oxides (TiO₂, HfO₂) [16]. Each material system offers different trade-offs between switching speed, endurance, retention, and energy efficiency. For instance, 2D material-based memristors like MoS₂ devices demonstrate stable resistive switching with high ON/OFF ratios due to their atomic-scale thickness and defect-free interfaces [16], while perovskite-based devices can exhibit volatile switching behavior suitable for temporal signal processing [16].

Experimental Protocol: Characterizing Memristor-Based Synaptic Arrays

Objective: Evaluate the performance and reliability of memristor crossbar arrays for implementing synaptic weights in spiking neural networks, quantifying the impact of device non-idealities on network accuracy.

Materials and Setup:

- Memristor Array: 128×128 crossbar array with 1T1R (one-transistor-one-memristor) cells [14]

- Test Equipment: Semiconductor parameter analyzer (Keysight B1500A) with pulse generation unit

- Control System: FPGA controller for implementing neural network and learning algorithms

- Characterization Software: Custom AHaH (Anti-Hebbian and Hebbian) framework for device evaluation [14]

Procedure:

- Initial Characterization:

  - Apply DC voltage sweeps (-2V to +2V) to a random sample of devices to extract I-V characteristics

  - Measure HRS (high resistance state) and LRS (low resistance state) distributions across the array

  - Quantify cycle-to-cycle variability through 100 successive switching cycles

- Synaptic Emulation:

  - Implement the STDP (spike-timing-dependent plasticity) learning rule using an identical pulse scheme

  - Apply pre- and post-synaptic spike patterns with varying temporal differences

  - Measure conductance change as a function of spike timing

  - Characterize weight update linearity and symmetry

- Network Implementation:

  - Map trained weights from a pattern recognition SNN to memristor conductances

  - Apply input pattern voltages to rows, read output currents from columns

  - Perform inference on the MNIST dataset or an equivalent benchmark

  - Record classification accuracy versus the software baseline

- Reliability Testing:

  - Subject the array to extended operation (10^5 inference cycles)

  - Monitor accuracy degradation over time

  - Characterize failure modes (stuck-at faults, conductance drift)
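The synaptic emulation step measures conductance change as a function of spike timing. A common reference curve to compare those measurements against is the pair-based exponential STDP window, sketched below. The amplitudes and time constants are illustrative assumptions, not values from [14], and a real device may show a different, asymmetric shape.

```python
import math


def stdp_weight_change(delta_t_ms, a_plus=0.01, a_minus=0.012,
                       tau_plus_ms=20.0, tau_minus_ms=20.0):
    """Pair-based exponential STDP window.

    delta_t_ms = t_post - t_pre. Pre-before-post (positive delta) potentiates,
    post-before-pre (negative delta) depresses. Parameter values are
    illustrative assumptions.
    """
    if delta_t_ms > 0.0:
        return a_plus * math.exp(-delta_t_ms / tau_plus_ms)
    if delta_t_ms < 0.0:
        return -a_minus * math.exp(delta_t_ms / tau_minus_ms)
    return 0.0


if __name__ == "__main__":
    for dt in (-40.0, -10.0, -1.0, 1.0, 10.0, 40.0):
        print(f"delta_t={dt:+6.1f} ms -> dw={stdp_weight_change(dt):+.5f}")
```

Fitting the measured conductance changes to a curve of this form yields effective amplitudes and time constants per device, which gives the linearity and symmetry characterization a quantitative basis.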

Evaluation Metrics:

- Programming Precision: achieved versus target conductance states [14]

- Inference Accuracy: classification accuracy on benchmark dataset [14]

- Energy Efficiency: energy per synaptic operation [12]

- Noise Resilience: performance degradation with device variability [14]

- Endurance: cycles until 10% accuracy degradation [14]
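Programming precision and noise resilience can be explored in simulation before committing array time. The sketch below maps a signed weight onto a pair of conductances and perturbs them with multiplicative Gaussian noise. The differential-pair encoding, the conductance range, and the noise model are all assumptions made for illustration; they are not described in the source protocol.

```python
import random


def weight_to_conductance_pair(weight, w_max, g_min, g_max):
    """Encode a signed weight as (G_plus, G_minus); assumed differential pair."""
    scale = (g_max - g_min) / w_max
    g_plus = g_min + scale * max(weight, 0.0)
    g_minus = g_min + scale * max(-weight, 0.0)
    return g_plus, g_minus


def conductance_pair_to_weight(g_plus, g_minus, w_max, g_min, g_max):
    """Decode the effective weight from the conductance difference."""
    return (g_plus - g_minus) * w_max / (g_max - g_min)


def with_variability(conductance, relative_sigma, rng):
    """Apply multiplicative Gaussian variability (assumed noise model)."""
    return max(0.0, conductance * (1.0 + rng.gauss(0.0, relative_sigma)))


if __name__ == "__main__":
    rng = random.Random(0)
    g_min, g_max, w_max = 1e-6, 1e-4, 1.0  # hypothetical device range
    target = 0.5
    g_p, g_m = weight_to_conductance_pair(target, w_max, g_min, g_max)
    for sigma in (0.0, 0.05, 0.20):  # spans the 5-20% range in Table 2
        noisy = conductance_pair_to_weight(
            with_variability(g_p, sigma, rng),
            with_variability(g_m, sigma, rng),
            w_max, g_min, g_max)
        print(f"sigma={sigma:.2f}: target={target:.3f} achieved={noisy:.3f}")
```

Repeating the perturbation over all weights of a trained network and re-running inference gives a simulated accuracy-versus-variability curve to set beside the measured one.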

Diagram 2: Memristor Characterization and Testing Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Materials for Neuromorphic Experiments

| Research Reagent | Function/Application | Example Specifications |
|---|---|---|
| Intel Loihi 2 Platform | Digital neuromorphic research | 1M neurons, 120M synapses, Lava framework [15] |
| SpiNNaker System | Large-scale neural simulation | 10M ARM cores, custom interconnect [12] |
| Memristor Crossbar Arrays | Synaptic weight implementation | 128×128 1T1R, HfO₂ or MoS₂ based [14] [16] |
| AHaH Evaluation Framework | Memristor performance assessment | Noise injection, degradation modeling [14] |
| NeuroBench Suite | Standardized benchmarking | Hardware-agnostic metrics [7] |
| Semiconductor Parameter Analyzer | Device I-V characterization | Keysight B1500A with pulse generator [14] |
| Event-Based Vision Sensor | Neuromorphic sensory input | DVS (Dynamic Vision Sensor) [17] |

The neuromorphic hardware landscape presents researchers with diverse options for accelerating neuronal network simulations and computational neuroscience research. Digital neuromorphic chips like Loihi 2 and SpiNNaker offer programmable, flexible platforms for simulating complex neural dynamics with high energy efficiency, while emerging memristor-based devices provide unprecedented density and efficiency for synaptic operations through in-memory computing [12] [15] [16].

For the HPC benchmarking community, standardized frameworks like NeuroBench are critical for objectively comparing these emerging platforms against conventional computing systems [7]. The experimental protocols outlined provide methodologies for quantifying key performance metrics including energy efficiency, computational throughput, temporal accuracy, and biological fidelity. As these technologies mature, they promise to enable new frontiers in real-time neural simulation, brain-inspired computing, and energy-efficient intelligent systems for scientific research and pharmaceutical development.

The commercial trajectory of neuromorphic technologies points toward increased adoption in specialized applications where energy efficiency, real-time processing, and adaptive learning are paramount [18] [19]. With the development of more accessible programming models and standardized toolchains, these brain-inspired computing systems are poised to become invaluable tools for researchers exploring the complexities of neural networks and seeking to accelerate computational drug discovery and development.

The Critical Need for Standardized Benchmarking in Neuromorphic Research

The rapid growth of artificial intelligence (AI) and machine learning has produced increasingly large and complex models, whose compute requirements are now growing faster than the efficiency gains delivered by traditional technology scaling [7]. This has intensified the urgency of exploring new resource-efficient computing architectures, positioning neuromorphic computing as a promising solution that adapts biological neural principles to build high-efficiency computational devices [18]. However, the absence of standardized benchmarks presents a fundamental barrier to quantifying technological advancements, comparing performance with conventional methods, and identifying promising research directions [7] [20].

The neuromorphic research field currently suffers from a massive infrastructure gap compared to conventional machine learning. While ML researchers benefit from mature ecosystems with standardized benchmarks, frameworks, and deployment tools, neuromorphic researchers operate in a fragmented landscape where a simple implementation that takes 10 minutes in PyTorch can require 2 weeks to port to neuromorphic hardware [20]. This fragmentation stems from diverse hardware platforms with unique interfaces, limited and inconsistent datasets, and isolated toolchains that collectively hinder reproducible research and measurable progress [20].

For the field to advance systematically and transition effectively from academic research to commercial applications, the community must unite around common benchmarking standards that deliver an objective reference framework for quantifying neuromorphic approaches across hardware-independent and hardware-dependent settings [7]. This is particularly crucial for high-performance computing (HPC) applications in neuronal network research, where accurate performance and energy efficiency measurements are essential for guiding architectural decisions and resource allocation.

The Current State of Neuromorphic Benchmarking

Existing Benchmark Frameworks and Their Limitations

Several benchmarking initiatives have emerged to address the measurement challenges in neuromorphic computing, though they remain fragmented across research groups. The NeuroBench framework represents one of the most comprehensive community-driven efforts to establish standardized evaluation methodologies [7]. Developed collaboratively by researchers across industry and academia, NeuroBench introduces a common set of tools and systematic methodology for inclusive benchmark measurement, covering both algorithmic and system-level performance [7].

Another significant contribution is SNABSuite (Spiking Neural Architecture Benchmark Suite), which focuses on cross-platform benchmarking using backend-agnostic implementations of spiking neural networks coupled to platform-specific configurations [21]. This suite supports simulations across various platforms including NEST (CPU), GeNN (GPU), SpiNNaker, and BrainScaleS, allowing direct comparison of benchmark-specific performance metrics [21].

However, these frameworks face several limitations. Most evaluations focus on single-modality assessments (e.g., visual tasks only) with incomplete coverage of training paradigms, and they lack unified evaluation standards across frameworks [22]. Furthermore, the field suffers from a shortage of specialized analysis tools comparable to MLflow or TensorBoard in conventional ML, with researchers often relying on general-purpose solutions that don't capture unique characteristics of spiking neural networks [20].

The Hardware Diversity Challenge

The neuromorphic landscape encompasses dramatically different hardware architectures, creating inherent benchmarking challenges:

- Digital neuromorphic chips such as Intel's Loihi and the University of Manchester's SpiNNaker use standard transistor technology to implement large spiking neural networks with programmable connectivity. They offer flexibility, but may consume more energy when reproducing rich neural dynamics [12].

- Memristive and analog systems leverage physical properties of electronic devices to naturally emulate neuron/synapse behavior, potentially offering greater energy efficiency but facing challenges with device variability and noise [12].

- Emerging technologies including spintronic, photonic, and 2D material-based devices present additional benchmarking complexities due to their novel operating principles and evaluation requirements [12].

This architectural diversity means that benchmarks must be carefully designed to account for fundamental differences in computation paradigms, precision, and operational constraints across platforms.

Standardized Benchmarking Metrics and Framework

Core Performance Metrics

A comprehensive neuromorphic benchmarking framework must integrate multiple metric categories to fully characterize system performance. Based on analysis of current research, the following metric categories have been identified as essential:

Table 1: Essential Metric Categories for Neuromorphic Benchmarking

| Metric Category | Specific Measurements | Research Context Importance |

|---|---|---|

| Computational Performance | Throughput (samples/sec, inferences/sec), Latency (time-to-solution, real-time factor), Computational density (synaptic ops/sec/area) | Critical for HPC-scale neuronal network simulations requiring real-time or faster-than-real-time performance [21] |

| Energy Efficiency | Energy per inference, Power consumption under load, Energy-delay product | Essential for evaluating sustainability and deployment potential in resource-constrained environments [21] [22] |

| Network Characterization | Spike bandwidth, Fan-in/fan-out capabilities, Synaptic memory capacity, Neuron parameter flexibility | Determines applicable network architectures and models for neuronal research [21] |

| Application Performance | Accuracy on standardized tasks (image classification, signal processing), Noise immunity, Generalization capability | Provides comparative performance assessment against conventional approaches [22] |

| Algorithmic Efficiency | Task completion accuracy, Learning speed (for online learning scenarios), Data efficiency (samples required for convergence) | Measures how effectively algorithms leverage neuromorphic principles [7] |
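As a rough illustration of how the computational and energy metrics in Table 1 relate to raw measurements, the sketch below derives throughput, energy per inference, and the energy-delay product from one run. The `RunMeasurement` record and its field names are hypothetical, and per-inference latency is approximated as wall time divided by the number of inferences, which only holds when samples are processed one after another.

```python
from dataclasses import dataclass


@dataclass
class RunMeasurement:
    """Raw measurements from one benchmark run (hypothetical record layout)."""
    n_inferences: int    # number of samples processed
    wall_time_s: float   # elapsed wall-clock time in seconds
    energy_j: float      # energy consumed during the run in joules


def throughput(run: RunMeasurement) -> float:
    """Inferences processed per second of wall-clock time."""
    return run.n_inferences / run.wall_time_s


def energy_per_inference(run: RunMeasurement) -> float:
    """Average energy in joules spent on one inference."""
    return run.energy_j / run.n_inferences


def energy_delay_product(run: RunMeasurement) -> float:
    """Energy-delay product per inference, in joule-seconds.

    Assumes sequential processing, so the mean latency per inference
    is the wall time divided by the number of inferences.
    """
    latency_s = run.wall_time_s / run.n_inferences
    return energy_per_inference(run) * latency_s


if __name__ == "__main__":
    # Illustrative numbers only, not measured data.
    run = RunMeasurement(n_inferences=1000, wall_time_s=2.0, energy_j=5.0)
    print(throughput(run), energy_per_inference(run), energy_delay_product(run))
```

Keeping the raw quantities (counts, seconds, joules) in the record and deriving everything else makes it easier to recompute metrics later if a definition changes.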

The NeuroBench Framework Proposal

The NeuroBench framework has emerged as a community-driven response to the benchmarking challenge, proposing a structured approach to evaluation [7]. The framework encompasses:

- Hardware-independent metrics for assessing algorithmic advances separate from their implementation

- Hardware-dependent metrics for evaluating full system performance

- Standardized datasets and tasks to ensure fair comparisons across platforms

- Energy measurement methodologies that account for different power profiles and operational modes

This framework aims to provide researchers with a common foundation for quantifying neuromorphic approaches while accommodating the diversity of hardware platforms and research objectives [7].

Experimental Protocols for Neuromorphic Benchmarking

Cross-Platform Benchmarking Methodology

To ensure reproducible and comparable results across diverse neuromorphic platforms, researchers should adhere to a standardized experimental protocol:

Table 2: Experimental Protocol for Cross-Platform Neuromorphic Benchmarking

| Protocol Phase | Key Activities | Documentation Requirements |

|---|---|---|

| 1. Platform Characterization | Measure baseline performance metrics (idle power, thermal profile), Validate neuron model fidelity against reference implementations, Characterize communication bandwidth and latency [21] | Platform specifications (process technology, clock rates), Configuration parameters, Calibration data |

| 2. Network Mapping | Implement standardized network models (e.g., cortical microcircuits, winner-take-all networks), Apply platform-specific optimizations, Validate functional correctness [21] | Mapping methodology, Optimization techniques employed, Verification results |

| 3. Benchmark Execution | Execute standardized workloads with fixed hyperparameters, Monitor performance counters and power consumption, Record spike outputs and temporal dynamics [21] [22] | Raw performance data, Environmental conditions, Measurement instrumentation details |

| 4. Data Analysis | Calculate standardized metrics (Table 1), Compare against reference implementations, Perform statistical analysis on results [7] [22] | Analysis scripts, Statistical significance tests, Comparative visualizations |
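The statistical analysis in the final protocol phase can start from something as simple as summarizing repeated runs. The sketch below is one possible approach, not a prescribed method: it reports the mean, sample standard deviation, and an approximate confidence interval. The function name `summarize` is illustrative, and the interval uses a normal approximation, which tends to be too narrow for a small number of repetitions (a Student's t interval would be more appropriate there).

```python
import math
import statistics


def summarize(samples, confidence=0.95):
    """Summarize repeated measurements of one metric.

    Returns the mean, sample standard deviation, and an approximate
    confidence interval for the mean (normal approximation).
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two repetitions to estimate spread")
    mean = statistics.mean(samples)
    stdev = statistics.stdev(samples)  # sample standard deviation (n - 1)
    # Two-sided critical value of the standard normal distribution.
    z = statistics.NormalDist().inv_cdf(0.5 + confidence / 2)
    half_width = z * stdev / math.sqrt(n)
    return {
        "n": n,
        "mean": mean,
        "stdev": stdev,
        "ci_low": mean - half_width,
        "ci_high": mean + half_width,
    }


if __name__ == "__main__":
    # Illustrative wall-clock times in seconds from five repeated runs.
    print(summarize([12.1, 11.8, 12.4, 12.0, 12.2]))
```

Reporting the interval alongside the mean makes it clearer whether a difference between two platforms exceeds run-to-run variation.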

Workflow for Neuromorphic Benchmarking Implementation

The following diagram illustrates the standardized workflow for implementing neuromorphic benchmarks across diverse hardware platforms:

Energy Measurement Protocol

Given the critical importance of energy efficiency in neuromorphic systems, a precise measurement methodology is essential:

- Instrumentation Setup: Utilize precision power meters (e.g., Yokogawa WT210, National Instruments PXIe) with sampling rates sufficient to capture dynamic power variations [21].

- Baseline Measurement: Record power consumption in idle state for all components, including cooling and support systems.

- Workload Execution: Execute benchmarks while continuously monitoring power across all relevant voltage rails, ensuring synchronization between power data and computational events.

- Data Processing: Integrate power measurements over time to calculate total energy consumption, subtracting baseline power where appropriate.

- Normalization: Express results in standardized units such as energy per inference or energy per synaptic operation to enable cross-platform comparison [21].

This protocol should be supplemented with thermal measurements where applicable, as temperature variations can significantly impact both performance and energy efficiency in neuromorphic hardware.
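The data-processing and normalization steps above can be sketched as follows, assuming the power meter exports timestamped samples in seconds and watts. The function names are illustrative, and trapezoidal integration is one reasonable choice among several for turning sampled power into energy.

```python
def integrate_energy(timestamps_s, power_w, baseline_w=0.0):
    """Integrate (power - baseline) over time with the trapezoidal rule.

    timestamps_s: strictly increasing sample times in seconds
    power_w:      power readings in watts, one per timestamp
    baseline_w:   idle power to subtract (0.0 keeps total energy)
    Returns energy in joules.
    """
    if len(timestamps_s) != len(power_w):
        raise ValueError("timestamps and power samples must have equal length")
    if len(timestamps_s) < 2:
        raise ValueError("need at least two samples to integrate")
    energy_j = 0.0
    for i in range(1, len(timestamps_s)):
        dt = timestamps_s[i] - timestamps_s[i - 1]
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        p_prev = power_w[i - 1] - baseline_w
        p_curr = power_w[i] - baseline_w
        energy_j += 0.5 * (p_prev + p_curr) * dt
    return energy_j


def energy_per_unit(energy_j, n_units):
    """Normalize energy by a count such as inferences or synaptic operations."""
    if n_units <= 0:
        raise ValueError("n_units must be positive")
    return energy_j / n_units


if __name__ == "__main__":
    # Illustrative samples: constant 10 W for 2 s with a 4 W idle baseline.
    e = integrate_energy([0.0, 1.0, 2.0], [10.0, 10.0, 10.0], baseline_w=4.0)
    print(e, energy_per_unit(e, n_units=6))
```

Whether to subtract the baseline depends on the question being asked: total energy reflects facility cost, while baseline-subtracted energy isolates the workload's dynamic contribution.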

The Scientist's Toolkit: Research Reagent Solutions

To facilitate reproducible neuromorphic research, the following table outlines essential "research reagents" – key hardware platforms, software frameworks, and datasets that constitute the fundamental tools for benchmarking experiments:

Table 3: Essential Research Reagents for Neuromorphic Benchmarking

| Reagent Category | Specific Examples | Function in Research Context |

|---|---|---|

| Hardware Platforms | Intel Loihi [12], SpiNNaker [12] [21], BrainScaleS [21], IBM TrueNorth [12] | Provide target systems for benchmarking with diverse architectures (digital, mixed-signal) and scaling properties |

| Software Frameworks | SpikingJelly [22], BrainCog [22], NEST [21], GeNN [21], Lava [20] [22] | Enable model development, simulation, and deployment with varying degrees of biological realism and hardware targeting |

| Benchmark Suites | NeuroBench [7], SNABSuite [21], Multimodal SNN Framework Benchmark [22] | Provide standardized evaluation methodologies and metrics for cross-platform comparison |

| Datasets | DVS128, N-MNIST [20], Tonic datasets [20], Custom conversions from traditional datasets (e.g., ImageNet, CIFAR) [22] | Supply temporal, event-driven data for training and evaluation, representing various modalities (vision, audio, etc.) |

| Analysis Tools | Custom power monitoring setups [21], Specialized SNN visualization tools, Statistical analysis packages | Enable performance profiling, energy measurement, and results validation |

Impact on High-Performance Computing for Neuronal Network Research

Standardized benchmarking directly addresses critical challenges in HPC environments for neuronal network research:

Performance and Efficiency Quantification

Without standardized benchmarks, comparing the performance of different neuromorphic approaches for large-scale neuronal network simulations is virtually impossible. The implementation of common metrics enables researchers to:

- Make informed decisions about hardware acquisition and resource allocation

- Accurately estimate time-to-solution for complex neuronal simulations

- Optimize energy consumption in HPC facilities where neuromorphic systems operate alongside traditional computing resources

- Validate that neuromorphic implementations maintain biological fidelity while achieving performance targets [21]

Research Reproducibility and Collaboration

The establishment of benchmarking standards directly enhances the reproducibility of neuronal network research by:

- Providing common baselines for comparing novel neuromorphic architectures

- Enabling multi-institution collaborations through shared evaluation methodologies

- Facilitating the peer-review process through standardized performance reporting

- Accelerating knowledge transfer across computational neuroscience and neuromorphic engineering communities [20]

The critical need for standardized benchmarking in neuromorphic research stems from the field's transition from isolated demonstrations to integrated high-performance computing solutions. The current fragmented landscape, characterized by incompatible toolchains, isolated benchmarks, and non-reproducible results, fundamentally limits scientific progress and commercial adoption [20].

The community-driven development of frameworks like NeuroBench and SNABSuite represents a crucial step toward addressing these challenges [7] [21]. By adopting common metrics, standardized protocols, and shared evaluation methodologies, researchers can finally quantify the true potential of neuromorphic computing for neuronal network research and applications.

For HPC benchmarking of neuronal networks more broadly, standardized neuromorphic evaluation provides an essential foundation for comparing architectural approaches, quantifying performance-per-watt advantages, and making informed decisions about future research directions. The ongoing collaboration between industry and academia in developing these standards will be instrumental in shaping the next generation of efficient, brain-inspired computing systems [23].

High-Performance Computing (HPC) has become indispensable for neuronal network research, enabling large-scale simulations that bridge cellular-level mechanisms and brain-wide functions. The field is increasingly moving towards creating detailed digital twins of brain circuitry, integrating vast anatomical and physiological datasets to conduct virtual experiments that are infeasible in live organisms [24]. A central focus in this domain is the simulation of the canonical cortical microcircuit, a conserved local network architecture found across the mammalian neocortex [24]. Benchmarking these complex simulations requires a careful evaluation of traditional and brain-inspired computing metrics, balancing raw computational power with the pursuit of the extreme energy efficiency characteristic of biological systems [12] [25].

This document outlines core performance metrics and experimental protocols for HPC benchmarking in neuronal network research. We focus on Spiking Neural Network (SNN) models, which are inspired by the event-driven, sparse communication principles of the brain and often demonstrate superior energy efficiency compared to traditional artificial neural networks [4]. The application notes and protocols that follow are designed to give researchers, scientists, and drug development professionals a standardized framework for evaluating computational performance, energy consumption, and simulation accuracy in this rapidly evolving field.

Core HPC Performance Metrics

Evaluating hardware for neuronal network simulation involves a combination of traditional HPC metrics and specialized measures tailored to the characteristics of brain-inspired computation. The table below summarizes these key performance indicators.

Table 1: Core HPC Performance Metrics for Neuronal Network Simulations

| Metric | Description | Interpretation in Neuronal Network Context |

|---|---|---|

| FLOPS (Floating Point Operations Per Second) [26] | Measures raw computational throughput, crucial for matrix multiplications in training and simulation. | Less predictive for sparse, event-driven SNNs on neuromorphic hardware; more relevant for GPU/CPU-based training of large models [26]. |

| Real-Time Factor (RTF) [24] | Ratio of wall-clock time to simulated model time (\(q_{RTF} = T_{wall} / T_{model}\)). | RTF > 1: Simulation is slower than real-time. RTF < 1: Simulation is faster than real-time, enabling rapid experimentation [24]. |

| Synaptic Events per Second [24] | The total number of synaptic events processed per second of wall-clock time. | A more meaningful throughput measure for SNN simulation than FLOPS, reflecting the capacity to handle network communication [24]. |

| Energy per Synaptic Event [24] | Total energy consumed during the state-propagation phase divided by the number of processed synaptic events. | A primary metric for energy efficiency; lower values indicate hardware better suited for large-scale, low-power neuromorphic systems [24]. |

| Latency | Delay in processing and communicating spikes between neurons. | Critical for real-time interactive simulations and closed-loop robotic applications; measured in milliseconds or microseconds [4]. |

| Energy-Latency Product [4] | The product of total energy consumption and execution latency. | A composite metric assessing the trade-off between speed and efficiency; lower values are desirable [4]. |

Performance Benchmarking and Current Hardware Landscape

The pursuit of simulating biologically detailed neuronal networks has spurred innovation across hardware platforms. The performance of these systems is best evaluated using standardized benchmark models, such as the Potjans-Diesmann (PD14) cortical microcircuit model, which represents ~80,000 neurons and ~300 million synapses [24].

Table 2: Performance Comparison on the PD14 Cortical Microcircuit Model (Simulating 1 Second of Biological Time) [24]

| Hardware Platform | Simulation Technology | Real-Time Factor (RTF) | Energy per Synaptic Event |

|---|---|---|---|

| Traditional Server | CPU-based (NEST Simulator) | ~ 100 (100x slower than real-time) | ~ 10 µJ |

| GPU-Accelerated System | CUDA-based (GeNN) | ~ 10 (10x slower than real-time) | ~ 1 µJ |

| Many-Core System | SpiNNaker Board | ~ 1.0 (Real-time) | ~ 1 µJ |

| Neuromorphic System | BrainScaleS-2 | < 0.001 (>1000x faster than real-time) | ~ 0.1 µJ |

The data reveals a clear trajectory: specialized neuromorphic architectures can achieve significant gains in both simulation speed and energy efficiency compared to traditional HPC platforms. The energy consumption of these systems can be orders of magnitude lower, making them promising candidates for large-scale simulations and deployment in resource-constrained environments [24].

The broader HPC and AI accelerator market, valued at nearly $150 billion in 2024 and projected to exceed $370 billion by 2030, is driven by these specialized hardware demands [26]. While GPUs currently dominate for training large AI models, the market includes innovative startups and established players developing architectures specifically for AI workloads, including dataflow processors, wafer-scale systems, and processing-in-memory technologies [26].

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential software, hardware, and model resources required for conducting HPC benchmarking experiments for neuronal networks.

Table 3: Essential Tools and Resources for Neuronal Network Benchmarking

| Tool/Resource | Type | Function and Application |

|---|---|---|

| NeuroBench Framework [7] | Benchmarking Suite | A community-developed, standardized framework for evaluating neuromorphic algorithms and systems in both hardware-independent and hardware-dependent settings. |

| PD14 Cortical Microcircuit [24] | Standardized Model | A de facto standard benchmark model comprising a full-density spiking neural network of a cortical microcircuit; enables direct cross-platform performance comparisons. |

| SNN Tool Box (SNN-TB) [4] | Software Tool | Facilitates the conversion of traditional Artificial Neural Networks (ANNs) to Spiking Neural Networks (SNNs) for deployment on neuromorphic hardware. |

| CARLsim [4] | Software Library | A C++ library for simulating large, biologically detailed SNNs, capable of leveraging multiple CPUs and GPUs simultaneously. |

| SpiNNaker / Loihi 2 [12] [24] | Neuromorphic Hardware | Digital neuromorphic platforms designed for massively parallel, event-driven simulation of SNNs with low power consumption. |

| Memristive Crossbar Arrays [12] | Emerging Hardware | Analog/mixed-signal neuromorphic devices that perform in-memory computing, potentially offering extreme energy efficiency for synaptic operations. |

Experimental Protocols for Benchmarking

Protocol 1: Measuring Real-Time Factor and Energy Efficiency

This protocol measures the core performance metrics when simulating a standardized neuronal network model on a target platform.

- Platform Setup: Install and configure the target hardware/software platform. For neuromorphic systems, ensure the software toolchain (e.g., NxSDK for Loihi, SpiNNaker software) is correctly installed [12] [18].

- Benchmark Model Loading: Load the PD14 cortical microcircuit model or another standardized benchmark (e.g., from NeuroBench) [7] [24]. The network initialization phase must be completed before timing.

- Power Measurement Initiation: Connect a power meter to the system under test (at the rack or chip level, where possible). Begin logging power draw at a high frequency (≥1 kHz).

- State-Propagation Execution: Execute the simulation for a defined period of biological model time (e.g., \(T_{model} = 10\) seconds). Precisely record the wall-clock time (\(T_{wall}\)) for this phase, excluding initialization.

- Data Collection and Calculation:

- Calculate the Real-Time Factor: \(q_{RTF} = T_{wall} / T_{model}\) [24].

- Calculate total energy consumed during state propagation: \(E_{total} = \int P(t)\, dt\).

- Obtain the total number of synaptic events processed (\(N_{syn}\)) from the simulator's output logs.

- Calculate the Energy per Synaptic Event: \(E_{\text{per-synapse}} = E_{total} / N_{syn}\) [24].
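The calculations in the last step of Protocol 1 reduce to a few divisions. The sketch below is a minimal version, assuming the wall-clock time, total energy, and synaptic event count have already been collected. The function names and the example values are hypothetical and are not measurements from any platform.

```python
def real_time_factor(t_wall_s, t_model_s):
    """RTF = T_wall / T_model; values above 1 mean slower than real time."""
    if t_model_s <= 0:
        raise ValueError("model time must be positive")
    return t_wall_s / t_model_s


def synaptic_events_per_second(n_syn, t_wall_s):
    """Synaptic events processed per second of wall-clock time."""
    if t_wall_s <= 0:
        raise ValueError("wall-clock time must be positive")
    return n_syn / t_wall_s


def energy_per_synaptic_event(e_total_j, n_syn):
    """Energy of the state-propagation phase divided by processed events."""
    if n_syn <= 0:
        raise ValueError("synaptic event count must be positive")
    return e_total_j / n_syn


if __name__ == "__main__":
    # Hypothetical run: 10 s of model time took 25 s of wall-clock time.
    t_model_s, t_wall_s = 10.0, 25.0
    e_total_j, n_syn = 500.0, 1_000_000_000
    print("RTF:", real_time_factor(t_wall_s, t_model_s))
    print("events/s:", synaptic_events_per_second(n_syn, t_wall_s))
    print("J/event:", energy_per_synaptic_event(e_total_j, n_syn))
```

In this made-up run the RTF is 2.5, meaning the simulation is 2.5 times slower than real time under the definition used in this protocol.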

Protocol 2: Benchmarking SNN Training and Inference

This protocol assesses performance during the training and deployment of a Spiking Neural Network for a practical task, such as image classification or anomaly detection.

- Dataset and Model Selection: Choose a benchmark dataset (e.g., MedMNIST for medical images, VisA for industrial anomaly detection) [27]. Select an SNN architecture (e.g., a converted VGG16 or ResNet18) [4] [27].

- Model Conversion/Sparsification: Use an automated tool flow (e.g., SNN-TB) to convert a pre-trained ANN to an SNN [4]. Alternatively, apply sparsification techniques (e.g., the SET method) to create a sparse SNN for efficient inference [27].

- Training/Inference Execution: Run the training or inference process on the target hardware. For training, use surrogate gradient methods or other SNN-compatible learning rules [12] [18].

- Performance Monitoring: Record key metrics:

- Training Accuracy/Loss: Track convergence over epochs.

- Inference Time: Measure the time to process a single batch or the entire test set.

- Energy Consumption: Measure total energy used during inference.

- Sparsity Analysis: Report the achieved sparsity level and its impact on model size [27].

- Comparison and Analysis: Compare the final accuracy, latency, and energy consumption against a dense baseline model and other hardware platforms.
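For the inference-time measurement in Protocol 2, one possible timing harness is sketched below. It assumes the model is exposed as a plain Python callable (`infer`) and that batches fit in a list; both are simplifications for illustration. Warm-up calls are excluded so that one-time costs such as caching or compilation do not distort the result.

```python
import statistics
import time


def time_inference(infer, batches, warmup=2, repeats=5):
    """Time repeated calls of `infer` on each batch.

    infer:   callable taking one batch (hypothetical model interface)
    batches: list of input batches
    warmup:  number of untimed calls made before measuring
    repeats: how many timed passes to make over all batches
    Returns per-call timing statistics in seconds.
    """
    if not batches:
        raise ValueError("need at least one batch")
    # Untimed warm-up calls.
    for i in range(warmup):
        infer(batches[i % len(batches)])
    per_call_s = []
    for _ in range(repeats):
        for batch in batches:
            start = time.perf_counter()
            infer(batch)
            per_call_s.append(time.perf_counter() - start)
    return {
        "n_calls": len(per_call_s),
        "median_s": statistics.median(per_call_s),
        "total_s": sum(per_call_s),
    }


if __name__ == "__main__":
    # Stand-in "model" that just sums its inputs.
    stats = time_inference(lambda batch: sum(batch), [[1, 2], [3, 4]])
    print(stats)
```

On accelerators that execute asynchronously, the timed call must block until the result is actually available (for example by synchronizing the device inside `infer`), otherwise only the launch overhead is measured.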

Analysis and Visualization of Benchmarking Data

Effective analysis requires visualizing the relationships and trade-offs between different performance metrics. The energy-latency product is a key composite metric that captures the balance between speed and efficiency [4].
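One way to examine these trade-offs is to compute the energy-latency product for each platform and then identify which results are Pareto-optimal, meaning no other result is at least as good in both energy and latency and strictly better in one. The sketch below uses hypothetical platform labels and values purely for illustration.

```python
def energy_latency_product(energy_j, latency_s):
    """Composite metric in joule-seconds; lower is better."""
    return energy_j * latency_s


def pareto_front(results):
    """Return the names of results not dominated in (energy, latency).

    results: dict mapping a platform name to (energy_j, latency_s).
    A result is dominated if another one is no worse in both
    dimensions and strictly better in at least one.
    """
    front = []
    for name, (energy, latency) in results.items():
        dominated = any(
            e2 <= energy and l2 <= latency and (e2 < energy or l2 < latency)
            for other, (e2, l2) in results.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return sorted(front)


if __name__ == "__main__":
    # Hypothetical (energy in J, latency in s) pairs, not measured data.
    results = {
        "A": (1.0, 4.0),
        "B": (2.0, 2.0),
        "C": (3.0, 3.0),
        "D": (4.0, 1.0),
    }
    for name, (e, l) in results.items():
        print(name, energy_latency_product(e, l))
    print("Pareto front:", pareto_front(results))
```

In this example A, B, and D share the same product yet represent different trade-offs, which is why a single composite number is best reported together with the underlying energy and latency values.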

Adhering to these protocols and utilizing the provided toolkit will enable reproducible and comparable benchmarking of HPC systems for neuronal network research. This structured approach is critical for driving progress in computational neuroscience and the development of next-generation neuromorphic computing platforms.

Benchmarking Methodologies and Frameworks for Neuromorphic Systems

The rapid evolution of artificial intelligence (AI) and machine learning has led to increasingly complex models, whose growing computational demands outpace the efficiency gains from traditional technology scaling [7]. This challenge is particularly acute for resource-constrained edge devices, intensifying the need for new, resource-efficient computing architectures. Neuromorphic computing has emerged as a promising solution, aiming to replicate the brain's exceptional efficiency, scalability, and real-time processing capabilities through brain-inspired hardware and algorithms [7].

However, the neuromorphic research field has historically suffered from a critical gap: the lack of standardized benchmarks. This absence has made it difficult to objectively measure technological progress, compare the performance of neuromorphic approaches against conventional methods, or identify the most promising future research directions [7] [28]. Prior benchmarking efforts saw limited adoption due to designs that were not inclusive, actionable, or iterative [28].

To address these shortcomings, NeuroBench was introduced. It is a collaborative, community-driven benchmark framework developed by nearly 100 researchers across over 50 institutions in industry and academia [28] [29]. Its mission is to provide a common set of tools and a systematic methodology for fairly and representatively evaluating neuromorphic algorithms and systems. By offering an objective reference framework, NeuroBench enables the quantitative comparison of neuromorphic approaches in both hardware-independent and hardware-dependent settings, fostering continued progress in the field [7] [28].

NeuroBench Framework Architecture

NeuroBench is designed with a structured architecture to comprehensively address the different facets of neuromorphic computing evaluation. Its core innovation lies in a dual-track approach that separates the evaluation of algorithms from the assessment of complete hardware systems.

The Dual-Track Evaluation Model

The framework is organized into two parallel tracks to cater to different stages of research and development:

- Algorithm Track (Hardware-Independent): This track focuses on evaluating computational models and algorithms—such as Spiking Neural Networks (SNNs) and other neuroscience-inspired methods—using simulations on conventional hardware like CPUs and GPUs [7] [28]. The primary goal is to assess the intrinsic computational capabilities, data efficiency, and adaptability of algorithms, driving the design requirements for future neuromorphic hardware [7]. Performance is measured using standardized metrics that are agnostic to the underlying hardware.

- System Track (Hardware-Dependent): This track evaluates full systems where algorithms are deployed on specialized neuromorphic hardware [7] [28]. It aims to measure real-world performance characteristics such as energy efficiency, real-time processing speed, and system resilience. This track leverages biologically-inspired hardware approaches like analog neuron emulation, event-based computation, and in-memory processing [7].

Table: NeuroBench Dual-Track Evaluation Focus

| Track | Evaluation Focus | Primary Metrics | Target |

|---|---|---|---|

| Algorithm Track | Computational performance, learning capabilities | Accuracy, activation sparsity, synaptic operations | Algorithms & Models |

| System Track | Energy efficiency, latency, throughput | Power consumption, inference time, cost | Hardware Systems |

The following diagram illustrates the logical structure and workflow of the NeuroBench framework, showing the relationship between its core components and the two evaluation tracks:

Key Performance Metrics

NeuroBench employs a comprehensive suite of metrics to ensure a holistic evaluation of neuromorphic approaches. These metrics are categorized based on their application across the two tracks.

Table: Core NeuroBench Performance Metrics

| Metric Category | Specific Metric | Description | Applicable Track |

|---|---|---|---|

| Static Metrics | Footprint | Memory required to represent the model, in bytes | Algorithm |

| Static Metrics | Connection Sparsity | Proportion of zero-weight connections | Algorithm |

| Workload Metrics | Activation Sparsity | Proportion of inactive neurons over time | Algorithm |

| Workload Metrics | Synaptic Operations | Number of effective Multiply-Accumulates (MACs) or Accumulates (ACs) | Algorithm |

| Workload Metrics | Classification Accuracy | Task-specific prediction performance | Algorithm |

| System Metrics | Energy Consumption | Total energy used per task (e.g., Joules) | System |

| System Metrics | Latency | Time from input to output (e.g., milliseconds) | System |

| System Metrics | Throughput | Processing rate (e.g., samples/second) | System |
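To make the sparsity and synaptic-operation metrics in the table concrete, the sketch below computes them by hand for a single fully connected layer stored as nested lists. This is an illustrative re-implementation under simplifying assumptions, not the NeuroBench code, and all function names are hypothetical.

```python
def connection_sparsity(weights):
    """Fraction of weights that are exactly zero (weights is a 2D list)."""
    flat = [w for row in weights for w in row]
    return sum(1 for w in flat if w == 0) / len(flat)


def activation_sparsity(activations):
    """Fraction of zero activations over all time steps and neurons.

    activations: list over time steps, each a list of neuron outputs.
    """
    flat = [a for step in activations for a in step]
    return sum(1 for a in flat if a == 0) / len(flat)


def effective_synaptic_ops(weights, inputs_over_time):
    """Count operations where both the input and the weight are non-zero.

    weights[j][i] is the weight from input i to output neuron j.
    With binary spike inputs these are accumulates (ACs); with
    real-valued inputs they would be multiply-accumulates (MACs).
    """
    ops = 0
    for x in inputs_over_time:
        for row in weights:
            ops += sum(1 for w, xi in zip(row, x) if w != 0 and xi != 0)
    return ops


if __name__ == "__main__":
    weights = [[0.5, 0.0, -0.2],
               [0.0, 0.0, 1.0]]
    spikes = [[1, 0, 1],   # time step 0
              [0, 0, 0]]   # time step 1
    print(connection_sparsity(weights))             # 0.5
    print(activation_sparsity(spikes))              # 4 of 6 entries are zero
    print(effective_synaptic_ops(weights, spikes))  # 3
```

Counting only operations with non-zero operands is what lets sparse, event-driven models report far fewer effective operations than the dense operation count would suggest.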

NeuroBench Benchmarks and Protocols

NeuroBench provides a set of standardized benchmarks and a rigorous experimental protocol to ensure fair and reproducible evaluation across different neuromorphic solutions.

Standardized Benchmark Tasks

The framework includes a growing suite of benchmark tasks designed to represent challenging real-world problems for neuromorphic computing. These benchmarks are publicly available through the NeuroBench Python package [30].

Table: NeuroBench v1.0 Benchmark Tasks

| Benchmark Task | Domain | Description | Dataset/Source |

|---|---|---|---|

| Keyword Few-shot Class-incremental Learning (FSCIL) | Audio, Continual Learning | Classifies keywords from audio with limited examples and incremental new classes | Google Speech Commands (GSC) |

| Event Camera Object Detection | Computer Vision | Detects objects from event-based camera data | Gen1 Automotive Detection |

| Non-human Primate (NHP) Motor Prediction | Brain-Computer Interfaces | Predicts limb movement from neural recording data | Non-human primate neurophysiology |

| Chaotic Function Prediction | Time-Series Analysis | Predicts the evolution of chaotic dynamical systems | Lorenz, Mackey-Glass |

| DVS Gesture Recognition | Neuromorphic Vision | Classifies human gestures from Dynamic Vision Sensor (DVS) data | DVS128 Gesture Dataset |

| Neuromorphic Human Activity Recognition (HAR) | Embedded Sensing | Recognizes human activities from event-based sensor data | Neuromorphic HAR Dataset |

Experimental Workflow Protocol

The experimental workflow for using the NeuroBench framework follows a systematic, multi-stage process designed to ensure consistency and reproducibility. The following diagram outlines the key stages from data preparation to result generation:

The detailed protocol for a NeuroBench evaluation is as follows:

- Data Preparation: Obtain the designated dataset for the chosen benchmark (e.g., Google Speech Commands for audio classification). Split the data into training and evaluation sets as defined by the benchmark specification [30].

- Model Training: Train the neural network model (e.g., an SNN or ANN) using only the training split of the data. The choice of architecture and training algorithm (e.g., surrogate gradient learning for SNNs) is left to the researcher, promoting innovation [30].

- Model Wrapping: Integrate the trained model into the NeuroBench framework by wrapping it as a `NeuroBenchModel`. This standardizes the interface for subsequent evaluation [30].

- Benchmark Configuration and Execution: Create a `Benchmark` object by specifying:

- The wrapped `NeuroBenchModel`.

- The evaluation split dataloader.

- Any required pre-processors (for input data) and post-processors (to interpret model outputs).

- The comprehensive list of metrics to compute (e.g., footprint, accuracy, sparsity) [30].

- Execute the evaluation by calling the `run()` method.

- Results Analysis and Reporting: The framework returns a dictionary of results for all specified metrics. Researchers can submit these results to the public NeuroBench leaderboards to compare their solutions against other state-of-the-art approaches [30].
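The wrap, configure, and run pattern described in this protocol can be illustrated with a toy harness. The classes below (`WrappedModel`, `ToyBenchmark`) are hypothetical stand-ins written for this sketch and are not the NeuroBench classes or their signatures; the package documentation [30] remains the reference for the actual API.

```python
class WrappedModel:
    """Uniform call interface around any trained model (toy stand-in)."""

    def __init__(self, predict_fn):
        self._predict_fn = predict_fn

    def __call__(self, batch):
        return self._predict_fn(batch)


def accuracy(predictions, labels):
    """Fraction of predictions that match their labels."""
    return sum(int(p == y) for p, y in zip(predictions, labels)) / len(labels)


class ToyBenchmark:
    """Minimal harness: pre-process, run the model, post-process, score."""

    def __init__(self, model, dataloader, preprocessors, postprocessors, metrics):
        self.model = model
        self.dataloader = dataloader          # iterable of (inputs, labels)
        self.preprocessors = preprocessors    # applied to inputs, in order
        self.postprocessors = postprocessors  # applied to outputs, in order
        self.metrics = metrics                # dict: name -> fn(preds, labels)

    def run(self):
        all_preds, all_labels = [], []
        for inputs, labels in self.dataloader:
            for pre in self.preprocessors:
                inputs = pre(inputs)
            outputs = self.model(inputs)
            for post in self.postprocessors:
                outputs = post(outputs)
            all_preds.extend(outputs)
            all_labels.extend(labels)
        return {name: fn(all_preds, all_labels) for name, fn in self.metrics.items()}


def build_example():
    """Assemble a tiny two-batch evaluation with made-up data."""
    model = WrappedModel(lambda batch: [sum(x) for x in batch])
    data = [([[0.2, 0.3], [0.9, 0.8]], [0, 1]),
            ([[0.6, 0.7], [0.1, 0.1]], [1, 1])]
    clip = lambda batch: [[min(max(v, 0.0), 1.0) for v in x] for x in batch]
    to_class = lambda outs: [int(o > 1.0) for o in outs]
    return ToyBenchmark(model, data, [clip], [to_class], {"accuracy": accuracy})


if __name__ == "__main__":
    print(build_example().run())  # {'accuracy': 0.75}
```

Separating the model from the pre-processing, post-processing, and metric steps is what allows the same evaluation to be repeated unchanged across different models.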

Example Protocol: Google Speech Commands Classification

To illustrate a concrete implementation, below is the specific protocol for the Google Speech Commands (GSC) classification benchmark, a common task for keyword spotting.

- Objective: To classify 1-second audio clips of spoken commands into one of 35 word classes with high accuracy and efficiency.

- Dataset: Google Speech Commands v2 [30].

- Pre-processing:

- Audio waveforms are converted into Mel-frequency cepstral coefficients (MFCCs) or similar features.

- For Spiking Neural Networks (SNNs), these features are often converted into spike trains using encoding techniques like rate coding or latency coding.

- Model Wrapping:

- Post-processing: The spiking or non-spiking outputs from the model are aggregated over the temporal duration of the audio sample to produce a single classification decision.

- Metrics: The benchmark is evaluated against the standard NeuroBench metric suite, including:

- Classification Accuracy: Primary measure of task performance.

- Footprint: Memory required to represent the model, in bytes (e.g., 109,228 for an example ANN, 583,900 for an example SNN) [30].

- Activation Sparsity: Proportion of silent neurons (e.g., 38.5% for an example ANN, 96.7% for an example SNN) [30].

- Synaptic Operations: Computational cost, reported as Effective MACs (for ANNs) or Effective ACs (for SNNs) [30].
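
The spike encoding and temporal aggregation steps in this protocol can be illustrated with a short sketch. It assumes features already normalized to [0, 1]; the function names (`rate_encode`, `latency_encode`, `aggregate_spike_counts`, `activation_sparsity`) are hypothetical helpers written for this example and are not taken from NeuroBench or snnTorch.

```python
import random
from typing import List

SpikeTrain = List[List[int]]  # indexed as [time step][neuron], values 0 or 1


def rate_encode(features: List[float], num_steps: int, rng: random.Random) -> SpikeTrain:
    """Rate coding: a feature in [0, 1] is the per-step spike probability."""
    return [[1 if rng.random() < f else 0 for f in features] for _ in range(num_steps)]


def latency_encode(features: List[float], num_steps: int) -> SpikeTrain:
    """Latency coding: larger values spike earlier; each input spikes once."""
    train = [[0] * len(features) for _ in range(num_steps)]
    for i, f in enumerate(features):
        t = round((1.0 - f) * (num_steps - 1))
        train[t][i] = 1
    return train


def aggregate_spike_counts(output_spikes: SpikeTrain) -> int:
    """Post-processing: sum output spikes over time, pick the most active class."""
    num_classes = len(output_spikes[0])
    counts = [sum(step[c] for step in output_spikes) for c in range(num_classes)]
    return max(range(num_classes), key=counts.__getitem__)


def activation_sparsity(spikes: SpikeTrain) -> float:
    """Fraction of (time step, neuron) entries that are silent."""
    total = sum(len(step) for step in spikes)
    active = sum(sum(step) for step in spikes)
    return 1.0 - active / total


features = [0.0, 0.5, 1.0]  # illustrative normalized feature values
rate_train = rate_encode(features, num_steps=10, rng=random.Random(0))
latency_train = latency_encode(features, num_steps=10)

# Hand-written output spikes for two classes over three time steps.
decision = aggregate_spike_counts([[0, 1], [1, 1], [0, 1]])

print("rate-coded spike counts:", [sum(step[i] for step in rate_train) for i in range(3)])
print("latency-coded sparsity:", activation_sparsity(latency_train))
print("decision:", decision)
```

Latency coding emits one spike per input, so its sparsity is high by construction, while rate coding trades more spikes for robustness to the timing of any single spike.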

The Scientist's Toolkit

Implementing and evaluating models with NeuroBench requires a specific set of software tools and resources. The following table lists these "research reagent solutions" and how to obtain them.

Table: Essential Research Reagents and Tools for NeuroBench

| Tool / Resource | Type | Function | Access Method |
|---|---|---|---|
| NeuroBench Python Package | Software Framework | Core harness for running benchmarks, computing metrics, and ensuring evaluation consistency [30] [31]. | pip install neurobench [30] |
| PyTorch / snnTorch | Machine Learning Library | Primary framework for building, training, and wrapping models for evaluation in NeuroBench [30]. | Python package install |
| Standard Datasets (e.g., GSC, DVS Gestures) | Data | Curated, benchmark-specific datasets for training and evaluation, ensuring fair comparisons [30]. | Downloaded automatically via benchmark scripts |
| Pre-processors & Post-processors | Code Module | Handle data formatting, spike encoding, and output decoding, standardizing the input/output pipeline [30]. | Part of NeuroBench API |
| Metrics Suite | Evaluation Code | Standardized implementations of all NeuroBench metrics (footprint, sparsity, accuracy, etc.) [30]. | Part of NeuroBench API |
| Neuromorphic Hardware Simulators (e.g., Nengo, Brian2GeNN) | Software Simulator | Enable hardware-independent algorithm testing and prototyping for the system track [32]. | Various independent installations |

NeuroBench represents a pivotal community-driven effort to standardize the evaluation of neuromorphic computing algorithms and systems. By providing a unified framework with a dual-track approach, comprehensive metrics, and standardized benchmarks, it directly addresses the critical lack of comparable and reproducible evaluation methods that has hindered the field.

For researchers in high-performance computing (HPC) and neuronal networks, NeuroBench offers a robust, fair, and actionable toolkit. It enables the direct comparison of novel neuromorphic approaches against each other and conventional methods, illuminating true performance advancements. Its ongoing, collaborative nature ensures it will evolve alongside the field, continually providing the reference framework needed to quantify and guide progress in brain-inspired computing.

In high-performance computing (HPC) for neuronal networks research, benchmarking is the cornerstone practice for evaluating the performance of algorithms, software, and hardware. Benchmarks provide a standardized method to assess relative performance across different systems and architectures, which is critical for driving progress in computationally intensive fields like biomedical data analysis [33]. The choice between synthetic benchmarks, which use specially created programs to test specific components, and application benchmarks, which run real-world programs, carries significant implications for predicting real-world performance and guiding research and procurement decisions [33]. This article explores the dichotomy between these benchmarking approaches, with a specific focus on their application in HPC experiments for neuronal networks and biomedical research.

Defining the Benchmarking Landscape

A benchmark is the act of running a computer program, a set of programs, or other operations to assess the relative performance of an object, normally by running several standard tests and trials against it [33]. In the context of machine learning and HPC, predictive benchmarking has evolved into a central epistemic practice, typically comprising a learning problem, a standardized dataset split, an evaluation metric, and a public leaderboard for ranking models [34].

Characteristics of Benchmark Types

- Synthetic Benchmarks are designed to mimic a particular type of workload on a component or system using specially created programs. A classic example in HPC is the LINPACK benchmark, which measures floating-point computing power by solving a dense system of linear equations and is used to rank the TOP500 supercomputers globally [33]. These benchmarks are often abstract, focusing on isolating and measuring the performance of specific subsystems, such as a CPU's floating-point operation performance or a hard disk's read/write speed.

- Application Benchmarks run real-world programs on the system. In biomedical research, this could involve running an actual neuronal network training pipeline on a dataset of histological images to identify cancerous tissue [35]. While these benchmarks usually provide a better measure of real-world performance, they can be more complex and time-consuming to set up and execute [33].
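
To make the synthetic category concrete, the following sketch times a dense linear solve in the spirit of LINPACK. It is an illustration under stated assumptions, not the LINPACK benchmark itself: `solve_dense` and `synthetic_benchmark` are hypothetical names, the solver is pure Python, and the operation count uses the conventional 2/3·n³ + 2·n² estimate for a dense solve.

```python
import random
import time
from typing import Dict, List


def solve_dense(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    a = [row[:] for row in a]  # work on copies so the inputs stay intact
    b = b[:]
    for k in range(n):
        # Partial pivoting: move the largest remaining entry in column k up.
        pivot = max(range(k, n), key=lambda r: abs(a[r][k]))
        a[k], a[pivot] = a[pivot], a[k]
        b[k], b[pivot] = b[pivot], b[k]
        for r in range(k + 1, n):
            factor = a[r][k] / a[k][k]
            for c in range(k, n):
                a[r][c] -= factor * a[k][c]
            b[r] -= factor * b[k]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(a[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (b[r] - s) / a[r][r]
    return x


def synthetic_benchmark(n: int, seed: int = 0) -> Dict[str, float]:
    """Time one random n x n solve and report an estimated MFLOP/s rate."""
    rng = random.Random(seed)
    a = [[rng.uniform(-1.0, 1.0) for _ in range(n)] for _ in range(n)]
    b = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    start = time.perf_counter()
    x = solve_dense(a, b)
    elapsed = time.perf_counter() - start
    flops = (2.0 / 3.0) * n**3 + 2.0 * n**2
    # Check the answer: a fast but wrong solve is not a valid measurement.
    residual = max(abs(sum(a[r][c] * x[c] for c in range(n)) - b[r]) for r in range(n))
    return {"n": n, "seconds": elapsed, "mflops": flops / elapsed / 1e6, "residual": residual}


report = synthetic_benchmark(60)
print(report)
```

An application benchmark would keep the same timing-and-validation structure but replace the random solve with the actual research pipeline, which is why its numbers track real workloads more closely and port less easily across systems.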

The table below summarizes the core distinctions between these two benchmark categories.

Table 1: Core Characteristics of Synthetic and Application Benchmarks

| Feature | Synthetic Benchmarks | Application Benchmarks |
|---|---|---|
| Definition | Specially created programs to impose a specific workload [33] | Real-world programs run on the system [33] |
| Primary Goal | Isolate and measure performance of individual components | Measure end-to-end performance on practical tasks |
| Examples | LINPACK, Dhrystone, Whetstone [33] | Training a deep learning model for protein folding prediction [35] [34] |
| Advantages | Controlled, repeatable, good for hardware comparisons | High relevance to actual research workloads |
| Disadvantages | May not correlate well with real-world application performance [36] | Can be complex, time-consuming, and less portable |

The Critical Role of Benchmarking in Biomedical Neural Network Research

Biomedical research generates vast amounts of complex data, from numerical biomarker concentrations to time-series data and high-content bioimages [35]. The analysis of this data, particularly for tasks like biomarker identification in bioimages, is increasingly reliant on deep neural networks [35]. Benchmarking is indispensable in this field for several reasons:

- Measuring Engineering Progress: Benchmarks like ImageNet have served as proxy signals for measuring methodological progress on abstract tasks like image classification, which is directly transferable to biomedical image analysis [34].

- Informing Model Selection for Deployment: Beyond pure research, benchmarks help policymakers and clinicians select models for deployment in clinical settings. For instance, benchmarking the effectiveness and efficiency of deep learning models for clinical semantic textual similarity helps validate their usefulness in real-time applications [37].

- Establishing Trust through Explainability: The "black box" nature of complex neural networks hinders their clinical adoption. Explainable AI (XAI) techniques are emerging as a crucial component of model evaluation, helping to understand model predictions and develop trust among practitioners and patients [38]. Benchmarking XAI methods is thus becoming a research frontier in itself.

A well-constructed benchmark must adhere to key principles to be scientifically useful. These include relevance, representativeness, equity, repeatability, cost-effectiveness, scalability, and transparency [33]. Furthermore, drawing substantial scientific inferences from benchmark scores requires construct validity, which involves making explicit assumptions about the theoretical structure of the learning problems, evaluation functions, and data distributions [34].

Case Study: Benchmarking Clinical Deep Learning Models

A validation study on Semantic Textual Similarity (STS) in the clinical domain provides a concrete example of a rigorous application-level benchmark [37]. The study did not just report the highest Pearson correlation; it provided a comprehensive evaluation of top-performing deep learning models.

Table 2: Benchmarking Results for Clinical Semantic Textual Similarity Models [37]

| Model | Average Pearson Correlation | Relative Inference Time | Key Observation |
|---|---|---|---|
| BioSentVec | 0.8497 | 1x (Baseline) | Highest effectiveness in 3 of 4 measures |
| BioBERT | 0.8481 | ~50x slower than BioSentVec | Struggled with highly similar sentences containing negations |
| Convolutional Neural Network (CNN) | Not Specified | ~2.5x slower than BioSentVec | Good balance of performance and efficiency |
| Random Forest (Baseline) | Not Specified | Not Specified | Used for comparative purposes |

Experimental Protocol for Benchmarking Clinical DL Models

The following protocol outlines the methodology used in the clinical STS study, which can be adapted for benchmarking models in other biomedical domains [37].

Objective: To benchmark the effectiveness and efficiency of top-ranked deep learning models for semantic textual similarity in the clinical domain.

Materials: Expertly annotated STS dataset from the OHNLP Consortium, standardized training and testing splits.

- Model Selection: Select top-performing models relevant to the domain (e.g., BioSentVec, BioBERT, ClinicalBERT, CNN).

- Baseline Establishment: Include a baseline model (e.g., Random Forest).

- Experimental Repetition: Repeat the experiment multiple times (e.g., N=10) using the official training and testing sets to ensure statistical robustness.

- Effectiveness Measurement: Calculate primary and secondary metrics on the test set.

- Primary Metric: Pearson correlation (official metric).

- Secondary Metrics: Spearman correlation, R², Mean Squared Error (MSE).

- Efficiency Measurement: Record the inference time for each model under standardized hardware and software conditions.

- Robustness Analysis: Analyze model performance across different sub-populations of the data (e.g., sentence pairs of varying similarity levels).

- Statistical Analysis: Report 95% confidence intervals for the average Pearson correlation and running time, and compare models using robust statistical tests (e.g., Wilcoxon rank-sum test).
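
The effectiveness, efficiency, and statistical steps of this protocol can be sketched with the standard library alone. All function names below are hypothetical helpers, the scores are placeholder values and not results from [37], and the interval is a percentile bootstrap chosen for brevity in this sketch rather than the procedure of the cited study.

```python
import math
import random
import time
from typing import Callable, List, Sequence, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share their mean rank."""
    order = sorted(range(len(values)), key=values.__getitem__)
    out = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    return pearson(ranks(x), ranks(y))


def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def bootstrap_ci(values: Sequence[float], n_resamples: int = 2000,
                 alpha: float = 0.05, seed: int = 0) -> Tuple[float, float]:
    """Percentile bootstrap interval for the mean of repeated-run scores."""
    rng = random.Random(seed)
    means = sorted(
        sum(rng.choices(values, k=len(values))) / len(values)
        for _ in range(n_resamples)
    )
    low = means[int((alpha / 2) * n_resamples)]
    high = means[int((1 - alpha / 2) * n_resamples) - 1]
    return low, high


def time_inference(predict: Callable[[float], float],
                   inputs: Sequence[float], repeats: int = 5) -> float:
    """Median wall-clock seconds for one full pass over the inputs."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        for x in inputs:
            predict(x)
        timings.append(time.perf_counter() - start)
    return sorted(timings)[len(timings) // 2]


# Placeholder gold similarity scores and model predictions (illustrative only).
gold = [0.0, 1.0, 2.5, 3.0, 4.5, 5.0]
pred = [0.2, 1.1, 2.0, 3.4, 4.0, 4.9]
# Placeholder Pearson scores from ten repeated runs (illustrative only).
run_scores = [0.80, 0.82, 0.81, 0.79, 0.83, 0.80, 0.82, 0.81, 0.80, 0.82]

summary = {
    "pearson": pearson(gold, pred),
    "spearman": spearman(gold, pred),
    "r2": r_squared(gold, pred),
    "mse": mse(gold, pred),
    "ci95": bootstrap_ci(run_scores),
    "seconds": time_inference(lambda v: v * 2.0, gold),
}
print(summary)
```

Reporting the median of several timed passes reduces the influence of one-off system noise; comparing two models' timings on the same hardware then gives the relative inference time used in the table above the protocol.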

Workflow Visualization

The diagram below illustrates the structured workflow of this benchmarking protocol.

The Scientist's Toolkit: Research Reagent Solutions

To implement rigorous benchmarks in neuronal network research, specific software tools and frameworks are essential. The table below lists the key resources referenced in this section.

Table 3: Essential Research Reagents for Neural Network Benchmarking

| Tool/Framework | Primary Function | Relevance to Benchmarking |
|---|---|---|
| TensorFlow / PyTorch [35] | Deep Learning Frameworks | Primary platforms for developing, training, and evaluating neural network models. |
| scikit-learn (sklearn) [35] | Traditional Machine Learning | Provides baseline models (e.g., Random Forest) and utilities for data preprocessing. |
| BioBERT / BioSentVec [37] | Domain-Specific Language Models | Pre-trained models that serve as state-of-the-art benchmarks in clinical and biological NLP tasks. |
| COCO Protocol [39] | Black-Box Optimization Benchmark | Provides a rigorous protocol for numerical optimization, including statistical analysis and reporting. |
| XAI Techniques [38] | Explainable Artificial Intelligence | Methods to interpret model predictions, crucial for validating models in a clinical context. |
| Color Contrast Analyzers [40] [41] | Accessibility Validation | Tools to ensure that any visualizations or dashboards created meet accessibility standards (WCAG AA). |

The choice between synthetic and application benchmarks is not a matter of which is universally superior, but of selecting the right tool for the specific question at hand. As Carl Nelson notes, "You shouldn't be using one benchmark to determine the performance of a system anyway" [36]. For HPC benchmarking in neuronal networks research, a multi-faceted approach is critical.