Harnessing Neural Population Dynamics: From Theoretical Foundations to Optimized Biomedical Applications

Abstract

This article synthesizes the latest theoretical and methodological advances in neural population dynamics to provide a roadmap for optimizing computational models and their biomedical applications. We first examine the foundational principles that reveal neural dynamics as robust, network-constrained processes, then turn to methodologies such as geometric deep learning and foundation models that capture these dynamics across sessions and individuals. A core focus is troubleshooting key challenges, such as distinguishing cross-population signals and managing heterogeneous time scales, with targeted optimization strategies. Finally, we establish a rigorous framework for validating and comparing dynamic models, assessing their predictive power and biological interpretability. This integrative overview is tailored for researchers, scientists, and drug development professionals seeking to leverage neural dynamics for enhanced therapeutic discovery and closed-loop neurotechnologies.

The Core Principles: Defining Neural Populations and Their Constrained Dynamics

For decades, neuroscientists have largely defined neural populations by anatomical landmarks—brain regions, cortical layers, and cytoarchitectonic boundaries. While this approach has provided a necessary organizational framework, it inherently limits our understanding of neural computation, which arises from dynamic, coordinated activity that does not respect these arbitrary borders. The computation through dynamics (CTD) framework posits that neural computations are implemented by the temporal evolution of population activity within a neural state space [1]. This perspective necessitates a shift from purely anatomical definitions of neural populations toward definitions grounded in shared dynamic function.

This paradigm shift is critical for optimization research, particularly in drug development, where understanding the precise dynamics of neural circuits can lead to more targeted interventions with fewer off-target effects. By defining populations by their functional signatures—such as shared information encoding, coordinated temporal dynamics, or common output pathways—we can identify the fundamental computational units that drive behavior and cognition.

Theoretical Foundation: Neural Population Dynamics

The Dynamical Systems Framework

At its core, the dynamical systems framework models neural population activity as a trajectory through a high-dimensional state space. The state of a population of N neurons can be represented as an N-dimensional vector, x(t), where each element represents the firing rate of one neuron at time t [1]. The evolution of this state through time is governed by:

\[ \frac{dx}{dt} = f(x(t), u(t)) \]

where f is a function capturing the intrinsic circuit dynamics, and u(t) represents external inputs to the circuit [1]. This formulation reveals that neural population responses reflect underlying dynamics resulting from intracellular properties, interneuronal connectivity, and external inputs.

Table 1: Key Concepts in Neural Population Dynamics

| Concept | Mathematical Representation | Computational Interpretation |
|---|---|---|
| Neural Population State | x(t) = [x₁(t), x₂(t), ..., xₙ(t)] | Instantaneous snapshot of population activity |
| State Space | N-dimensional space spanned by neuronal firing rates | Landscape of all possible population states |
| Neural Trajectory | Temporal evolution of x(t) through state space | Implementation of a neural computation |
| Dynamics Function | f(x(t), u(t)) | Captures circuit connectivity and intrinsic neural properties |

Low-Dimensional Manifolds and Computational Processing

A critical insight from this framework is that neural activity is often constrained to low-dimensional manifolds within the high-dimensional state space. While we might record from hundreds of neurons, their coordinated activity typically evolves within a subspace of only 10-20 dimensions [1]. This phenomenon reflects the fundamental organizing principles of neural circuits and reveals that the relevant computational variables are these latent dimensions rather than individual neuron activities.
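One way to make the low-dimensionality claim concrete is to count how many principal components are needed to explain most of the population variance. The sketch below uses synthetic data, not recordings: the 200 neurons, the 10 shared latent signals, the noise level, and the 90% threshold are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical synthetic data: 200 neurons driven by 10 shared latent signals.
n_neurons, n_latents, n_timepoints = 200, 10, 2000
latents = rng.standard_normal((n_timepoints, n_latents))
loading = rng.standard_normal((n_latents, n_neurons))
rates = latents @ loading + 0.1 * rng.standard_normal((n_timepoints, n_neurons))

def n_dims_for_variance(data, threshold=0.90):
    """Number of principal components needed to reach `threshold` of total variance."""
    centered = data - data.mean(axis=0)
    # Squared singular values of the centered data are proportional to PCA variances.
    singular_values = np.linalg.svd(centered, compute_uv=False)
    variance = singular_values ** 2
    cumulative = np.cumsum(variance) / variance.sum()
    return int(np.searchsorted(cumulative, threshold) + 1)

dims = n_dims_for_variance(rates)
print(f"{dims} of {n_neurons} dimensions capture 90% of the variance")
```

On real recordings the estimate depends on the threshold, the noise level, and the number of trials, so it is best read as a rough summary rather than a fixed property of the circuit.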

Defining Populations by Projection-Specific Function

Specialized Population Codes in Output Pathways

Recent research demonstrates that functional specialization according to output pathways provides a powerful principle for defining neural populations. In the posterior parietal cortex (PPC), neurons projecting to different target areas (e.g., anterior cingulate cortex [ACC], retrosplenial cortex [RSC], or contralateral PPC) form distinct population codes with specialized correlation structures that enhance information transmission [2].

These projection-defined subpopulations exhibit:

- Distinct temporal activity profiles: ACC-projecting neurons show higher activity early in trials, while RSC-projecting neurons activate later during decision execution [2].

- Structured correlation networks: Neurons sharing a projection target exhibit stronger pairwise correlations arranged in information-enhancing motifs [2].

- Behavior-dependent reorganization: The specialized correlation structure appears only during correct behavioral choices, not error trials [2].

Table 2: Properties of Projection-Defined Neural Populations in PPC

| Projection Target | Temporal Activation Profile | Correlation Structure | Information Specialization |
|---|---|---|---|
| Anterior Cingulate Cortex (ACC) | Early trial activity | Information-enhancing motifs | Sample cue encoding, early decision processing |
| Retrosplenial Cortex (RSC) | Late trial activity | Information-enhancing motifs | Choice encoding, navigation planning |
| Contralateral PPC | Uniform across trial | Information-enhancing motifs | Integrated task variables |

These findings demonstrate that projection-defined populations constitute biologically real functional units with specialized computational properties that cannot be identified through anatomical location alone.

Communication Subspaces for Inter-Area Communication

The communication between functionally defined populations can be understood through the concept of communication subspaces (CS). When two brain areas communicate, the sending population's activity is transformed through a communication subspace—a set of dimensions that selectively extracts features to propagate to the downstream area [3]. This CS may not align with the principal components of neural activity but instead may communicate activity along low-variance dimensions critical for specific computations [3].
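A common way to estimate such a subspace is reduced-rank regression from source-area activity to target-area activity. The sketch below assumes that technique (the text above does not say which estimator [3] uses) and runs it on synthetic data in which a hypothetical high-variance dimension stays private while a low-variance dimension drives the target. All sizes, variances, and names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical source population: 20 neurons, one high-variance private
# dimension and one low-variance dimension that drives the target area.
n_samples, n_source, n_target = 5000, 20, 15
basis, _ = np.linalg.qr(rng.standard_normal((n_source, n_source)))
private_dim, shared_dim = basis[:, 0], basis[:, 1]
private = 5.0 * rng.standard_normal(n_samples)   # large variance, not communicated
shared = 1.0 * rng.standard_normal(n_samples)    # small variance, communicated
source = (np.outer(private, private_dim) + np.outer(shared, shared_dim)
          + 0.1 * rng.standard_normal((n_samples, n_source)))
readout = rng.standard_normal(n_target)
target = np.outer(shared, readout) + 0.1 * rng.standard_normal((n_samples, n_target))

def reduced_rank_regression(x, y, rank):
    """Least-squares map from x to y, constrained to the given rank."""
    b_ols, *_ = np.linalg.lstsq(x, y, rcond=None)
    # Principal axes of the OLS prediction give the best rank-r output subspace.
    _, _, vt = np.linalg.svd(x @ b_ols, full_matrices=False)
    v_r = vt[:rank].T
    return b_ols @ v_r @ v_r.T

b_rrr = reduced_rank_regression(source, target, rank=1)
# The source-side communication dimension is the leading left singular vector.
comm_dim = np.linalg.svd(b_rrr, full_matrices=False)[0][:, 0]
top_pc = np.linalg.svd(source - source.mean(axis=0), full_matrices=False)[2][0]

print("overlap with low-variance shared dimension:", abs(comm_dim @ shared_dim))
print("overlap with top principal component:", abs(comm_dim @ top_pc))
```

In this toy setting the recovered dimension aligns with the low-variance shared direction rather than with the top principal component, which is the situation described in the paragraph above.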

Methodological Toolkit for Identifying Functional Populations

Experimental Approaches and Reagent Solutions

Table 3: Essential Research Reagents and Tools for Defining Functional Populations

| Reagent/Tool | Function | Application Example |
|---|---|---|
| Retrograde Tracers (e.g., fluorescent conjugates) | Identify neurons projecting to specific target areas | Labeling PPC neurons projecting to ACC, RSC, or contralateral PPC [2] |
| Multi-Electrode Arrays | Record simultaneous activity from hundreds of neurons | Monitoring network bursting in hippocampal slices [4] |
| Two-Photon Calcium Imaging | Measure activity of identified neuronal populations | Imaging hundreds of neurons simultaneously in PPC during decision-making [2] |
| Optogenetic Tools (e.g., Channelrhodopsin) | Precisely manipulate specific neuronal populations | Within-manifold perturbations to test computational roles [3] |
| Vine Copula Models | Analyze multivariate dependencies in neural and behavioral data | Isolating contribution of task variables while controlling for movement [2] |

Statistical and Analytical Frameworks

Population Tracking Model

The population tracking model provides a scalable statistical method for characterizing neural population activity with only N² parameters, making it suitable for large populations. This model matches the population rate (number of synchronously active neurons) and the probability that each neuron fires given the population rate, effectively capturing key features of population dynamics without requiring exponentially large datasets [5].
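The two ingredients named above, the distribution of the population rate K and the probability that each neuron is active given K, can be estimated directly from binarized data. The following is a minimal sketch using plain empirical frequencies on synthetic spikes; it omits any regularization or priors that a full implementation such as [5] may use, and the data-generating choices are assumptions.

```python
import numpy as np

rng = np.random.default_rng(2)

# Hypothetical binarized spike data: time bins x neurons (1 = active in that bin).
n_bins, n_neurons = 5000, 30
drive = rng.random((n_bins, 1))                     # shared drive synchronizes neurons
spikes = (rng.random((n_bins, n_neurons)) < 0.4 * drive).astype(int)

def fit_population_tracking(binary):
    """Estimate p(K) and p(neuron i active | K) from binary activity."""
    n_cells = binary.shape[1]
    k = binary.sum(axis=1)                          # population rate in each time bin
    counts = np.bincount(k, minlength=n_cells + 1)
    p_k = counts / counts.sum()
    # Rows for population rates that were never observed stay NaN.
    p_active_given_k = np.full((n_cells + 1, n_cells), np.nan)
    for rate in np.flatnonzero(counts):
        p_active_given_k[rate] = binary[k == rate].mean(axis=0)
    return p_k, p_active_given_k

p_k, p_active_given_k = fit_population_tracking(spikes)
print("parameters stored:", p_k.size + p_active_given_k.size)  # grows roughly as N^2
```

A useful sanity check is that, for every observed K, the conditional probabilities sum to K across neurons.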

Vine Copula Models for Multivariate Dependencies

Nonparametric vine copula (NPvC) models enable researchers to estimate the multivariate dependencies among a neuron's activity, task variables, and movement variables. This approach expresses multivariate probability densities as the product of a copula (quantifying statistical dependencies) and marginal distributions. The NPvC method offers advantages over generalized linear models by capturing nonlinear dependencies and better handling collinearities between task and behavioral variables [2].

Network Science Approaches

Network science provides powerful tools for analyzing population recordings by treating neurons as nodes and their interactions as links. This approach enables researchers to:

- Quantify system-wide changes following manipulations

- Track changes in dynamics over time

- Quantitatively define theoretical concepts like cell assemblies [6]

Key network metrics include degree distributions (revealing dominant neurons), local clustering (revealing locked dynamics), and efficiency (measuring population cohesion) [6].
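These three metrics can be computed from a thresholded correlation matrix with a few lines of NumPy. The sketch below assumes an undirected, unweighted network built by thresholding absolute pairwise correlations at 0.3; that construction, the synthetic data, and the threshold are illustrative choices rather than the procedure of [6].

```python
import numpy as np

rng = np.random.default_rng(3)

# Hypothetical activity: 12 neurons, the first 4 share a common drive.
n_timepoints, n_neurons = 3000, 12
activity = rng.standard_normal((n_timepoints, n_neurons))
activity[:, :4] += 2.0 * rng.standard_normal((n_timepoints, 1))

# Nodes are neurons; an undirected link exists where |correlation| exceeds 0.3.
corr = np.corrcoef(activity, rowvar=False)
adjacency = (np.abs(corr) > 0.3).astype(int)
np.fill_diagonal(adjacency, 0)

degree = adjacency.sum(axis=1)

def local_clustering(adj):
    """Fraction of each node's neighbor pairs that are themselves linked."""
    triangles = np.diag(adj @ adj @ adj) / 2
    possible = adj.sum(axis=1) * (adj.sum(axis=1) - 1) / 2
    return np.divide(triangles, possible, out=np.zeros(len(adj)), where=possible > 0)

def global_efficiency(adj):
    """Mean inverse shortest-path length over node pairs (0 for unreachable pairs)."""
    n = len(adj)
    dist = np.where(adj > 0, 1.0, np.inf)
    np.fill_diagonal(dist, 0.0)
    for k in range(n):  # Floyd-Warshall shortest paths
        dist = np.minimum(dist, dist[:, [k]] + dist[[k], :])
    off_diagonal = ~np.eye(n, dtype=bool)
    return (1.0 / dist[off_diagonal]).mean()

clustering = local_clustering(adjacency)
efficiency = global_efficiency(adjacency)
print("degree:", degree)
print("clustering:", clustering)
print("efficiency:", efficiency)
```

Here the four co-driven neurons form a fully connected group with high degree and clustering, while the low global efficiency reflects that the remaining neurons are disconnected.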

Dynamical Systems Identification

Linear dynamical systems (LDS) models provide a foundation for modeling neural population dynamics:

\[ x(t + 1) = A x(t) + B u(t) \]

\[ y(t) = C x(t) + d \]

where x(t) is the neural population state, y(t) is the measured neural activity, A is the dynamics matrix capturing intrinsic dynamics, B is the input matrix, u(t) represents inputs, C is the observation matrix, and d is a constant offset [3]. For more complex computations, recurrent neural networks (RNNs) can model nonlinear dynamics:

\[ \frac{dx}{dt} = R_\theta(x(t), u(t)) \]

where \(R_\theta\) is an RNN with parameters \(\theta\) [1].
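To illustrate the linear model, the sketch below simulates x(t + 1) = A x(t) + B u(t) and y(t) = C x(t) + d with hypothetical matrices, then recovers A and B by least squares. It assumes the latent state is observed directly, which is not the case for real recordings, where the state must first be inferred (for example with expectation-maximization or subspace identification).

```python
import numpy as np

rng = np.random.default_rng(4)

# Hypothetical ground truth: 2-D rotational latent state, 1-D input, 10 neurons.
theta = 0.1
A = 0.98 * np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta),  np.cos(theta)]])
B = np.array([[1.0], [0.5]])
C = rng.standard_normal((10, 2))
d = rng.standard_normal(10)

n_steps = 2000
u = rng.standard_normal((n_steps, 1))
x = np.zeros((n_steps + 1, 2))
for t in range(n_steps):
    x[t + 1] = A @ x[t] + B @ u[t]      # x(t+1) = A x(t) + B u(t)
y = x[:-1] @ C.T + d                    # y(t) = C x(t) + d

# With the latent state known, A and B follow from one regression of
# x(t+1) on the stacked regressors [x(t), u(t)].
regressors = np.hstack([x[:-1], u])
coef, *_ = np.linalg.lstsq(regressors, x[1:], rcond=None)
A_hat, B_hat = coef[:2].T, coef[2:].T

print("max error in A:", np.abs(A_hat - A).max())
print("max error in B:", np.abs(B_hat - B).max())
```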

Experimental Protocols for Functional Population Analysis

Protocol 1: Recording and Analyzing Network Activity with Multi-Electrode Arrays

This protocol details steps for recording and analyzing network bursting activity in acute brain slices [4]:

- Slice Preparation: Prepare acute hippocampal slices from mouse brain, maintaining physiological conditions throughout the process.

- Activity Induction: Induce neuronal network bursting activity using appropriate pharmacological agents or stimulation protocols.

- Recording: Record bursting activity with multi-electrode arrays (MEAs), ensuring proper signal acquisition and minimal noise.

- Analysis: Analyze network activity using burst detection algorithms that identify synchronized population events based on timing and amplitude thresholds.

Protocol 2: Population Decoding with Support Vector Machines

This protocol enables decoding of behavioral variables from population activity [7]:

- Data Preparation: Compute z-scored spike counts in behaviorally relevant time windows (e.g., 0-500 ms after stimulus onset).

- Classifier Selection: Implement a linear support vector machine (SVM) for binary classification problems.

- Cross-Validation: Use Monte-Carlo cross-validation with 80/20 training/test splits repeated 100 times.

- Weight Analysis: Examine SVM weights to identify neurons making significant contributions to decoding.
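The four steps above can be sketched end to end on synthetic data. To keep the example self-contained, a small linear SVM trained by subgradient descent on the hinge loss stands in for a library implementation; that substitution, the simulated spike counts, and every hyperparameter below are assumptions for illustration, not the settings of [7].

```python
import numpy as np

rng = np.random.default_rng(5)

# Hypothetical data: spike counts (trials x neurons) in a 0-500 ms window,
# with the first 5 neurons carrying information about a binary variable.
n_trials, n_neurons = 200, 40
labels = rng.choice([-1, 1], size=n_trials)
rates = np.full((n_trials, n_neurons), 5.0)
rates[:, :5] += 4.0 * (labels[:, None] == 1)
counts = rng.poisson(rates).astype(float)

def zscore(train, test):
    """Z-score both sets using statistics from the training set only."""
    mean, std = train.mean(axis=0), train.std(axis=0)
    std[std == 0] = 1.0
    return (train - mean) / std, (test - mean) / std

def fit_linear_svm(x, y, reg=0.01, lr=0.1, n_epochs=200):
    """Linear SVM fit by batch subgradient descent on the regularized hinge loss."""
    w, b = np.zeros(x.shape[1]), 0.0
    for _ in range(n_epochs):
        violating = y * (x @ w + b) < 1           # trials inside the margin
        grad_w = reg * w - (y[violating, None] * x[violating]).sum(axis=0) / len(y)
        grad_b = -y[violating].sum() / len(y)
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b

accuracies, weights = [], []
for _ in range(100):                               # Monte-Carlo cross-validation
    order = rng.permutation(n_trials)
    n_train = int(0.8 * n_trials)                  # 80/20 training/test split
    train, test = order[:n_train], order[n_train:]
    x_train, x_test = zscore(counts[train], counts[test])
    w, b = fit_linear_svm(x_train, labels[train])
    accuracies.append(np.mean(np.sign(x_test @ w + b) == labels[test]))
    weights.append(w)

mean_accuracy = float(np.mean(accuracies))
mean_abs_weight = np.abs(np.mean(weights, axis=0))  # per-neuron contribution
print("mean decoding accuracy:", mean_accuracy)
print("neurons ranked by weight:", np.argsort(mean_abs_weight)[::-1][:5])
```

Computing the z-scoring statistics on the training split only avoids leaking test-set information into the classifier.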

Protocol 3: Within-Manifold Neural Perturbations

This advanced protocol tests causal roles of neural dynamics [3]:

- Manifold Identification: First characterize the low-dimensional manifold of natural population activity using dimensionality reduction.

- Perturbation Design: Design stimulation patterns that displace the neural state within the identified manifold.

- Precision Stimulation: Deliver spatiotemporally precise stimulation (optogenetic or electrical) to induce the desired state displacement.

- Effect Quantification: Measure how dynamics respond and how behavior is affected compared to outside-manifold perturbations.
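The manifold identification and perturbation design steps can be sketched as a projection problem: any candidate stimulation pattern splits into a part that lies within the manifold and a part orthogonal to it. The example below assumes a linear manifold estimated by PCA on synthetic activity; the dimensions and the candidate pattern are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(6)

# Hypothetical activity confined (plus small noise) to a 3-D manifold in 50-D space.
n_neurons, n_dims, n_samples = 50, 3, 1000
mixing = rng.standard_normal((n_dims, n_neurons))
activity = rng.standard_normal((n_samples, n_dims)) @ mixing
activity += 0.05 * rng.standard_normal(activity.shape)

# Manifold identification: the top principal axes (rows are orthonormal).
centered = activity - activity.mean(axis=0)
manifold = np.linalg.svd(centered, full_matrices=False)[2][:n_dims]

def split_perturbation(pattern, axes):
    """Decompose a stimulation pattern into within- and outside-manifold parts."""
    within = axes.T @ (axes @ pattern)
    return within, pattern - within

# Perturbation design: keep only the within-manifold part of a candidate pattern.
candidate = rng.standard_normal(n_neurons)
within, outside = split_perturbation(candidate, manifold)
print("within-manifold norm:", np.linalg.norm(within))
print("outside-manifold norm:", np.linalg.norm(outside))
```

The outside-manifold component provides the matched control perturbation mentioned in the last step of the protocol.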

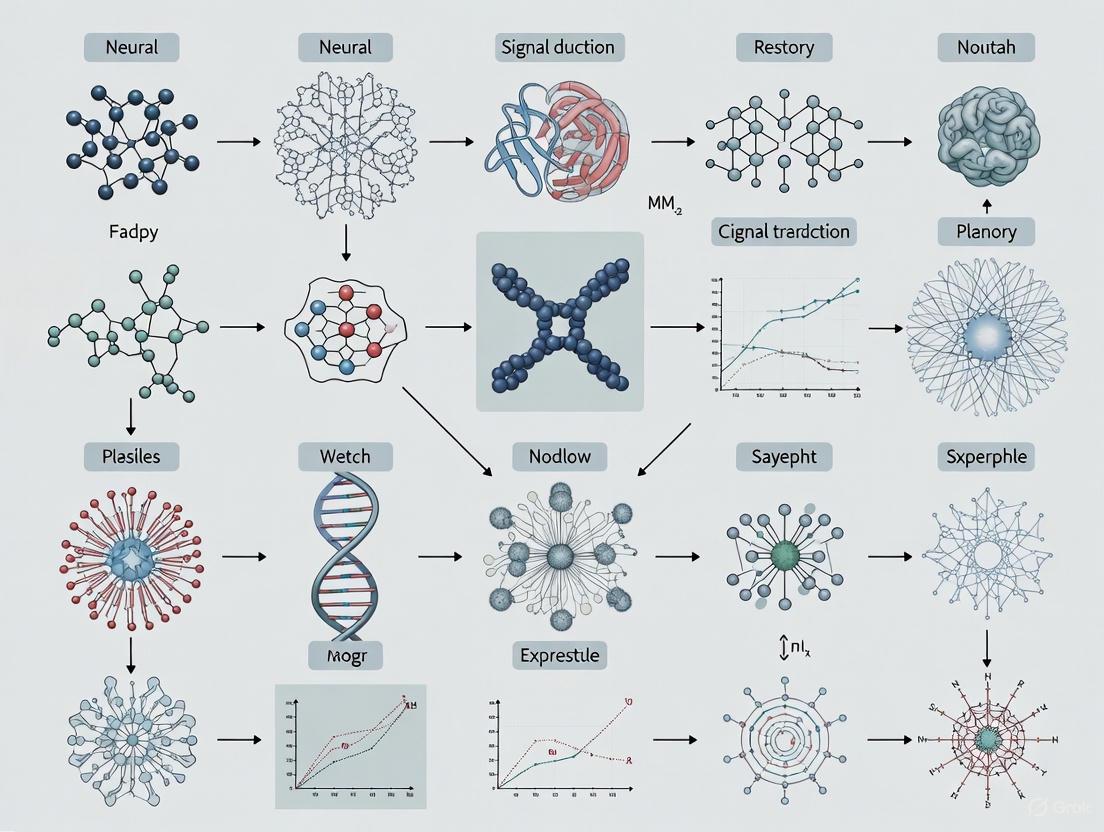

Visualization of Functional Population Concepts

[Figure: Neural Population Dynamics in State Space]

[Figure: Communication Between Functional Populations]

Implications for Optimization Research and Therapeutics

Defining neural populations by dynamic function rather than anatomical position has profound implications for optimization research and drug development:

- Target Identification: Functional populations defined by specific computational roles provide more precise therapeutic targets than broadly defined brain regions.

- Treatment Optimization: Understanding the dynamic properties of pathological neural circuits enables optimization of stimulation parameters for neuromodulation therapies.

- Biomarker Development: Dynamic signatures of functional populations can serve as sensitive biomarkers for treatment response and disease progression.

- Circuit-Based Therapeutics: Interventions can be designed to specifically modulate information processing within identified functional populations rather than broadly affecting entire brain regions.

The functional approach to defining neural populations represents a fundamental shift in neuroscience that bridges the gap between neural activity and computation. By focusing on how neurons collectively process information through coordinated dynamics, regardless of anatomical proximity, we can identify the true computational units of the brain and develop more effective, targeted interventions for neurological and psychiatric disorders.

The brain functions as a dynamical system, where thoughts, decisions, and actions are generated by the evolution of population-wide neural activity through time—a concept formalized as computation through neural population dynamics [1]. Within this framework, neural trajectories—the paths that neural population activity follows in a high-dimensional state space—are fundamental to understanding how the brain performs computations [1]. The robustness of these trajectories, that is, their ability to withstand or rapidly recover from perturbations, is a critical determinant of reliable sensorimotor control and cognitive function. This review synthesizes recent empirical evidence, with a focus on the motor cortex, to elucidate the mechanisms that confer robustness upon neural trajectories. Understanding these principles not only advances fundamental neuroscience but also provides a biological blueprint for the next generation of robust optimization algorithms and adaptive control systems, as seen in brain-inspired meta-heuristic methods like the Neural Population Dynamics Optimization Algorithm (NPDOA) [8].

Theoretical Framework: Computation Through Dynamics

The dynamical systems perspective models the collective activity of a neural population as a state vector, \(\mathbf{x}(t)\), whose components represent the firing rates of N simultaneously recorded neurons. The temporal evolution of this neural population state is governed by:

\[ \frac{d\mathbf{x}}{dt} = f(\mathbf{x}(t), \mathbf{u}(t)) \]

where the function \(f\) captures the intrinsic dynamics arising from the underlying neural circuitry, and \(\mathbf{u}(t)\) represents external inputs [1]. A neural trajectory is the path traced by \(\mathbf{x}(t)\) through this N-dimensional state space over time.

In motor cortex, these trajectories are not mere epiphenomena; they are the physical implementation of the computation that plans and executes movement. Preparatory activity sets the initial condition of the neural state, and the subsequent dynamics, which often take the form of rotations or "neural oscillations", drive the movement itself [9]. The robustness of this process can be defined as the ability of the trajectory to return to its intended path following an internal or external perturbation, ensuring the accurate and timely execution of a motor plan.

Empirical Evidence of Robustness in Motor Cortex

Differential Effects of Perturbation in Static vs. Dynamic Contexts

A 2025 study provides direct empirical evidence for the context-dependent robustness of motor cortical dynamics. In that study, monkeys were trained to perform delayed center-out reaches under two conditions: to a static target and to a rotating target requiring interception [9].

- Experimental Perturbation: Intracortical microstimulation (ICMS) was applied in the primary motor cortex (M1) or dorsal premotor cortex (PMd) during the late delay period. This served as a controlled, focal perturbation to the ongoing neural dynamics.

- Behavioral Findings: ICMS consistently prolonged reaction times (RTs) in the static target condition. In stark contrast, the same perturbation did not increase RTs in the moving target condition; in some cases, it even shortened them [9].

- Neural Correlates: Analysis of the neural population activity post-perturbation revealed that in the moving condition, the neural state diverged less from its intact trajectory and exhibited a faster recovery rate compared to the static condition [9].

Table 1: Key Experimental Findings on Trajectory Robustness [9]

| Aspect | Static Target Condition | Moving Target Condition |
|---|---|---|
| ICMS Effect on RT | Prolonged | Unaffected or Shortened |
| Neural State Divergence | Larger | Smaller |
| Recovery Rate | Slower | Faster |
| Neural State Preparation | Largely completed before GO cue | Involves continuous transformation |

This dissociation indicates that the neural dynamics underlying interception are inherently more resilient to perturbation. The authors attributed this robustness to the nature of the computation being performed. Reaching to a static target relies on a motor plan that is largely finalized before movement onset, making its preparatory state vulnerable. In contrast, intercepting a moving target requires continuous sensorimotor transformation, where the motor plan is continuously updated based on ongoing visual feedback [9]. This continuous inflow of external input appears to actively stabilize the neural dynamics, allowing them to rapidly correct for errors introduced by the ICMS perturbation.

The Stabilizing Role of Continuous Input and Optimal Control

The empirical findings are supported by computational modeling. A neural network model developed to mirror the experiment demonstrated that continuous feedback inputs are the key mechanism that rapidly corrects perturbation-induced errors in the dynamic reaching condition [9]. This aligns with the broader theoretical principle that external inputs, \(\mathbf{u}(t)\), can contextualize a computation by changing the dynamical regime of the circuit [1].
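The stabilizing effect of a continuous corrective input can be illustrated with a toy linear system. The sketch below is not the network model of [9]: it assumes a two-dimensional rotational circuit, a 100 ms perturbation pulse, and a hypothetical proportional feedback input u(t) that pulls the state toward its unperturbed reference. It then compares peak divergence and recovery time with and without that input.

```python
import numpy as np

def divergence(feedback_gain, dt=0.001, n_steps=1000):
    """Distance between a perturbed trajectory and its unperturbed reference."""
    omega, leak = 2 * np.pi * 2.0, 2.0        # hypothetical 2 Hz rotation and leak
    A = np.array([[-leak, -omega], [omega, -leak]])
    reference = np.array([1.0, 0.0])
    x = reference.copy()
    distance = np.zeros(n_steps)
    for i in range(n_steps):
        # 100 ms perturbation pulse between 200 ms and 300 ms.
        pulse = np.array([5.0, 0.0]) if 200 <= i < 300 else np.zeros(2)
        u = feedback_gain * (reference - x)   # continuous corrective input u(t)
        x = x + dt * (A @ x + u + pulse)
        reference = reference + dt * (A @ reference)
        distance[i] = np.linalg.norm(x - reference)
    return distance

def half_recovery_time(distance, dt=0.001, pulse_end_step=300):
    """Time after pulse offset for the divergence to fall to half its offset value."""
    after = distance[pulse_end_step - 1:]
    return np.flatnonzero(after <= 0.5 * after[0])[0] * dt

no_feedback = divergence(feedback_gain=0.0)
with_feedback = divergence(feedback_gain=20.0)
print("peak divergence:", no_feedback.max(), "vs", with_feedback.max())
print("half-recovery time (s):",
      half_recovery_time(no_feedback), "vs", half_recovery_time(with_feedback))
```

In this toy setting the same pulse produces a smaller divergence and a faster recovery when the corrective input is present, qualitatively matching the pattern reported for the moving-target condition.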

Furthermore, the framework of Nonlinear Optimal Control Theory provides a normative explanation for how such robust control might be implemented. When applied to a bistable neural population model, optimal control strategies find the most cost-efficient input to switch the system between states (e.g., from an "up" to a "down" state). These strategies often exploit the intrinsic dynamics of the system, applying a minimal pulse to push the state just across the boundary of the target's basin of attraction, from where the system's own dynamics complete the transition [10]. This principle—using minimal control effort to harness intrinsic dynamics—is a likely candidate for how the brain economically ensures robust trajectory control.
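The idea of a minimal pulse that only just crosses the basin boundary can be shown on a one-dimensional bistable system. The sketch below assumes the textbook double-well dynamics dx/dt = x - x^3 + u(t), with stable states at -1 ("down") and +1 ("up") and a basin boundary at 0, and uses a simple bisection over the amplitude of a fixed-duration rectangular pulse. This brute-force search stands in for a true optimal control solver such as that of [10], and the model is not the population model used there.

```python
def simulate_pulse(amplitude, duration=1.0, dt=0.01, t_end=30.0):
    """Euler-integrate dx/dt = x - x**3 + u(t) from the 'down' state (x = -1)."""
    n_pulse = int(round(duration / dt))
    x, x_at_pulse_end = -1.0, None
    for i in range(int(round(t_end / dt))):
        if i == n_pulse:
            x_at_pulse_end = x                 # state when the input switches off
        u = amplitude if i < n_pulse else 0.0
        x += dt * (x - x**3 + u)
    return x_at_pulse_end, x

def minimal_switching_amplitude(lo=0.0, hi=5.0, tol=1e-4):
    """Bisection for the smallest pulse amplitude that ends in the 'up' state."""
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if simulate_pulse(mid)[1] > 0:
            hi = mid
        else:
            lo = mid
    return hi

amplitude = minimal_switching_amplitude()
x_end, x_final = simulate_pulse(amplitude)
print("minimal amplitude:", amplitude)
print("state at pulse offset:", x_end)   # barely past the boundary at x = 0
print("final state:", x_final)           # intrinsic dynamics finish the switch
```

The state at pulse offset sits only slightly beyond the boundary, and the remainder of the transition is carried by the system's own dynamics with no further input.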

Experimental Protocols for Investigating Neural Trajectories

Intracortical Microstimulation (ICMS) Perturbation Protocol

The following methodology was used to generate the key findings on robustness [9]:

- Animal Model & Task: Two monkeys (G and L) were trained to perform a delayed center-out reaching task in two contexts: static targets and rotating targets requiring interception. Delay periods were 200 ms (short) or 900 ms (long).

- Neural Recording & Stimulation: Neural activity was recorded from M1 and PMd using 64-channel linear probes. A single electrode on the probe was used for delivering biphasic, sub-threshold ICMS.

- Stimulation Parameters:

  - Timing: Applied during late delay (PreGO: from 100 ms before the "go cue" (GO) to GO; PreMO: from GO to 150 ms after GO).

  - Duration: 100 ms trains.

  - Frequency: 300 Hz.

  - Amplitude: Set 5–10 μA below the movement-elicitation threshold.

- Trial Structure: Stimulated (ST) and non-stimulated (NS) trials were randomly interleaved to allow for paired comparisons.

- Data Analysis:

  - Behavior: Change in reaction time, \(\Delta RT = RT_{ST} - RT_{\text{matched NS}}\), was the primary behavioral metric.

  - Neural States: Neural population activity was analyzed by projecting high-dimensional recordings into a lower-dimensional state space using dimensionality reduction techniques (e.g., PCA or jPCA). The divergence and recovery of neural trajectories post-ICMS were quantified.

Neural Population Dynamics and Dimensionality Reduction

The general workflow for analyzing neural trajectories from population recordings is as follows [1]:

- Data Acquisition: Simultaneously record spike counts or firing rates from dozens to hundreds of neurons in motor cortex during a behavioral task.

- State Space Construction: For each time bin, construct a neural state vector \(\mathbf{x}(t)\) where each component is the activity of one neuron.

- Dimensionality Reduction: Apply techniques like Principal Component Analysis (PCA) to find the low-dimensional (e.g., 2-3D) subspace that captures the majority of the variance in the population activity.

- Trajectory Visualization and Analysis: Plot the evolution of the neural state within this subspace to visualize the neural trajectories. Analyze properties such as direction, speed, and geometry in relation to behavior and perturbation.

The diagram below illustrates the core concepts and the experimental workflow for probing the robustness of neural trajectories.

Diagram 1: Conceptual framework of neural trajectory robustness. Continuous external input, in dynamic contexts, stabilizes intrinsic circuit dynamics to enable rapid recovery from perturbations.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Materials and Tools for Neural Dynamics Research

| Item / Technique | Function in Research |
|---|---|
| High-Density Neural Probes (e.g., 64-chan. linear arrays) | Simultaneously record action potentials from dozens to hundreds of neurons in a local population. |
| Intracortical Microstimulation (ICMS) | Apply controlled, localized electrical perturbations to neural circuits to test causal relationships. |
| Electromyography (EMG) | Record muscle activity to ensure perturbations are sub-threshold and do not directly cause movement. |
| Dimensionality Reduction (e.g., PCA, jPCA) | Analyze high-dimensional neural data by extracting the dominant, low-dimensional latent factors (neural trajectories). |
| Recurrent Neural Network (RNN) Models | Serve as task-based or data-driven models of neural population dynamics to test computational hypotheses. |
| Nonlinear Optimal Control Theory | A mathematical framework to identify the most efficient inputs for steering neural dynamics, predicting control strategies. |

The empirical evidence reviewed here indicates that neural trajectories in motor cortex are not fragile pathways but robust computational entities. Their resilience is not fixed; it is regulated by the behavioral context, with continuous sensorimotor transformation during tasks like interception enhancing robustness through ongoing feedback. This is mechanistically enabled by the interplay between the intrinsic dynamics of the cortical circuit and continuous external inputs that can rapidly correct deviations. The principles of robust neural trajectory control (harnessing intrinsic dynamics, leveraging continuous feedback, and employing optimal control strategies) can inform the design of more adaptive and resilient artificial optimization algorithms and engineered systems.

Significant experimental, computational, and theoretical work has identified rich structure within the coordinated activity of interconnected neural populations. An emerging challenge is to uncover the nature of the associated computations, how they are implemented, and what role they play in driving behavior. The framework that addresses this challenge, termed computation through neural population dynamics, aims to reveal general motifs of neural population activity and quantitatively describe how neural population dynamics implement computations necessary for driving goal-directed behavior [1]. The dynamical systems perspective posits that neural population responses reflect underlying dynamics resulting from intracellular dynamics, circuitry connecting neurons, and external inputs to the circuit [1]. This stands in contrast to purely feedforward or single-neuron-centric views of neural processing.

In this framework, a neural population constitutes a dynamical system that, through its temporal evolution, performs computations to generate and control movement, make decisions, and maintain working memory [1]. The mathematical foundation for this perspective comes from dynamical systems theory, where the evolution of a neural population's state can be described by differential equations that capture how current states and inputs determine future states [1]. This approach has proven particularly powerful for understanding cortical responses dominated by intrinsic neural dynamics rather than sensory input dynamics [1].

Table: Key Concepts in Neural Population Dynamics

| Concept | Mathematical Representation | Neural Interpretation |
|---|---|---|
| State Space | N-dimensional space where each axis represents one neuron's firing rate | Complete description of population activity at a moment in time |
| Flow Field | Vector field showing how the state evolves from any position | Governing dynamics that transform neural activity over time |
| Attractor | States toward which the dynamics evolve from nearby states | Memory states, decision outcomes, or stable behavioral outputs |
| Trajectory | Path through state space over time | Evolution of population activity during computation |

For optimization research, this perspective offers powerful approaches for understanding how biological systems solve complex problems in real-time. The neural implementation of optimization algorithms reveals principles that can inform artificial systems, while the analysis of neural dynamics provides novel frameworks for solving dynamic optimization problems [11].

Theoretical Foundations of Neural Population Dynamics

Mathematical Framework of Computation Through Dynamics

The fundamental mathematical description of computation through neural population dynamics can be expressed as:

\[ \frac{dx}{dt} = f(x(t), u(t)) \]

Where \(x\) is an N-dimensional vector describing the firing rates of all neurons in a population (the neural population state), \(dx/dt\) is its temporal derivative, \(f\) is a potentially nonlinear function capturing the circuit dynamics, and \(u\) is a vector describing external inputs to the neural circuit [1].

This formulation means that the current state of the neural population \(x(t)\) and any inputs \(u(t)\) determine how the population activity will change in the next moment. The function \(f\) embodies the computational capacity of the circuit, transforming inputs into trajectories that ultimately drive behavior. Different computational paradigms (such as integration, stability, selection, or transformation) emerge from different instantiations of \(f\) [1].

To build intuition, consider a physical analogy: a pendulum. A pendulum is a two-dimensional dynamical system whose state variables are position and velocity. When released from different initial conditions, the pendulum traces out different trajectories in state space. The flow field shows what the pendulum would do if it started in any given position, providing a convenient summary of the overall dynamics [1]. Similarly, neural population dynamics can be visualized and analyzed through state space plots and flow fields, though typically in higher dimensions.
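The pendulum analogy translates directly into code: the flow field is the dynamics function evaluated on a grid of states, and a trajectory is one path obtained by following that field from an initial condition. The sketch below assumes a frictionless pendulum with a hypothetical gravity-to-length ratio and integrates it with the midpoint method; the grid ranges and step size are illustrative.

```python
import numpy as np

G_OVER_L = 9.81   # hypothetical pendulum: gravity / length, in 1/s^2

def flow(state):
    """Pendulum dynamics f(x) for state = (angle, angular velocity)."""
    angle, velocity = state[..., 0], state[..., 1]
    return np.stack([velocity, -G_OVER_L * np.sin(angle)], axis=-1)

# Flow field: evaluate f on a grid of states, independent of any one trajectory.
angles, velocities = np.meshgrid(np.linspace(-np.pi, np.pi, 21),
                                 np.linspace(-8.0, 8.0, 21))
field = flow(np.stack([angles, velocities], axis=-1))   # one arrow per grid point

def trajectory(initial_state, dt=0.001, n_steps=5000):
    """Integrate the flow from one initial condition with the midpoint method."""
    states = np.empty((n_steps + 1, 2))
    states[0] = initial_state
    for i in range(n_steps):
        half_step = states[i] + 0.5 * dt * flow(states[i])
        states[i + 1] = states[i] + dt * flow(half_step)
    return states

# Different initial conditions trace different trajectories through the same field.
small_swing = trajectory([0.5, 0.0])
large_swing = trajectory([2.5, 0.0])
print("field shape:", field.shape)
print("max angle, small vs large swing:",
      np.abs(small_swing[:, 0]).max(), np.abs(large_swing[:, 0]).max())
```

Plotting `field` as arrows (for example with a quiver plot) and overlaying the two trajectories gives the kind of state space picture described above.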

Attractor Dynamics as a Computational Primitive

Attractors are fundamental states toward which a dynamical system evolves, and they provide a powerful framework for understanding neural computation. Different attractor types support different computational functions:

- Point Attractors: Single stable states useful for memory maintenance and selection. The system converges to the same state from various initial conditions.

- Line Attractors: Continuous sets of stable states that can encode continuous variables such as head direction, eye position, or the intensity of an internal state [12].

- Ring Attractors: Circular manifolds of stable states that can represent periodic variables like heading direction.

Recent causal evidence has demonstrated line attractor dynamics in the hypothalamus encoding an aggressive internal state [12]. In these experiments, neurons exhibited approximate line-attractor dynamics during both engagement in and observation of aggressive encounters, with the integration dimension maintaining persistent activity aligned across attack sessions [12]. This provides a clear example of how continuous attractors can encode continuously varying internal states.

Table: Attractor Types and Their Computational Functions

| Attractor Type | Mathematical Structure | Computational Function | Experimental Evidence |
|---|---|---|---|
| Point Attractor | Single stable fixed point | Selection, decision-making, memory | Motor planning in cortical areas |
| Line Attractor | Continuous line of fixed points | Encoding continuous variables (intensity, position) | Aggressive internal state in hypothalamus [12] |
| Ring Attractor | Circle of fixed points | Representing periodic variables | Head direction systems |
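A line attractor can be built in a linear system by giving the dynamics a zero eigenvalue along one direction and negative eigenvalues elsewhere. The sketch below is a hypothetical two-dimensional example, not a model fitted to the hypothalamic data of [12]: a transient input is integrated and held along the line, while the orthogonal component decays with an assumed time constant.

```python
import numpy as np

# Hypothetical 2-D linear circuit: eigenvalue 0 along `line` (no decay, so input
# is integrated) and eigenvalue -1/tau along the orthogonal direction (fast decay).
line = np.array([1.0, 1.0]) / np.sqrt(2)
orthogonal = np.array([1.0, -1.0]) / np.sqrt(2)
tau = 0.1
W = -np.outer(orthogonal, orthogonal) / tau

dt, n_steps = 0.001, 3000
x = np.zeros(2)
along, across = np.zeros(n_steps), np.zeros(n_steps)
for i in range(n_steps):
    # A 0.5 s input pulse pushes the state both along and across the line.
    u = np.array([1.0, 0.2]) if 500 <= i < 1000 else np.zeros(2)
    x = x + dt * (W @ x + u)                  # Euler step of dx/dt = W x + u
    along[i], across[i] = x @ line, x @ orthogonal

print("along-line value, pulse end vs 2 s later:", along[999], along[-1])
print("across-line value, pulse end vs 2 s later:", across[999], across[-1])
```

The persistent along-line value after the input ends is the signature of integration that distinguishes a line attractor from a point attractor, where both components would decay.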

Methodological Approaches for Analyzing Neural Dynamics

Flow-Field Inference from Neural Data

A significant challenge in studying neural population dynamics is estimating the underlying flow fields from experimental data. FINDR (Flow-field Inference from Neural Data using deep Recurrent networks) is an unsupervised deep learning method that infers low-dimensional nonlinear stochastic dynamics underlying neural population activity [13] [14].

The FINDR method models observed population spike trains \(y\) as non-homogeneous Poisson processes with rates \(\lambda\) conditioned on a latent variable \(z\):

\[ y \mid z \sim \text{PoissonProcess}(\lambda = r(z)) \]

The latent dynamics evolve according to a stochastic differential equation:

\[ \tau \, dz = \mu(z, u) \, dt + \Sigma \, dW \]

Where \(\tau\) is a fixed time constant, \(\mu\) is the drift function, \(\Sigma\) is the noise covariance, and \(W\) is a Wiener process [13]. FINDR uses a gated neural stochastic differential equation (gnSDE) to approximate the drift function \(\mu\), combining the expressive power of neural networks with appropriate dynamical constraints [13].
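The generative side of this model can be sketched with an Euler-Maruyama simulation of the latent SDE followed by Poisson spike generation. The code below is not FINDR and performs no inference: the drift function, the exponential rate map r(z), the noise matrix, and all constants are hand-picked assumptions, whereas FINDR learns the drift from data.

```python
import numpy as np

rng = np.random.default_rng(7)

tau, dt, n_steps = 0.1, 0.01, 500          # hypothetical time constant (s), step (s), length
Sigma = 0.1 * np.eye(2)                    # hypothetical noise matrix
n_neurons = 20
C = rng.standard_normal((n_neurons, 2))    # hypothetical readout weights
b = np.log(10.0)                           # baseline rate of 10 spikes/s

def mu(z, u):
    """Hand-picked drift (a double well in z[0]); FINDR learns this function instead."""
    return np.array([z[0] - z[0] ** 3 + u, -z[1]])

def r(z):
    """Map the latent state to non-negative firing rates (spikes/s)."""
    return np.exp(C @ z + b)

z = np.zeros((n_steps + 1, 2))
spikes = np.zeros((n_steps, n_neurons), dtype=int)
for t in range(n_steps):
    u = 0.5 if t < 100 else 0.0                    # brief input favoring the positive well
    dW = np.sqrt(dt) * rng.standard_normal(2)      # Wiener increment with variance dt
    # Euler-Maruyama step of tau dz = mu(z, u) dt + Sigma dW.
    z[t + 1] = z[t] + (mu(z[t], u) * dt + Sigma @ dW) / tau
    spikes[t] = rng.poisson(r(z[t + 1]) * dt)      # Poisson counts in each time bin

print("final latent state:", z[-1])
print("total spikes:", spikes.sum())
```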

FINDR is implemented as a variational autoencoder (VAE) with a sequential structure that minimizes two objectives: one for neural activity reconstruction and another for flow-field inference [13]. This architecture allows it to disentangle task-relevant and task-irrelevant components of neural population activity, which is crucial for interpreting the resulting dynamics.

Real-Time Adaptive Experimental Paradigms

Traditional neuroscience experiments often involve predetermined hypotheses and post-hoc analysis, but understanding neural dynamics increasingly requires adaptive experimental designs. The improv software platform enables flexible integration of modeling, data collection, analysis pipelines, and live experimental control under real-time constraints [15].

improv uses a modular architecture based on the "actor model" of concurrent systems, where each independent function is handled by a separate actor that communicates with others via message passing [15]. This design allows for:

- Real-time preprocessing of large-scale neural recordings

- Continual updating of model parameters as new data arrives

- Closed-loop experimental manipulations based on current model states

- Interactive visualization and experimenter oversight

This approach is particularly valuable for causal experiments that directly intervene in neural systems, such as targeted optogenetic stimulation based on real-time functional characterization of neurons [15]. For optimization research, such platforms enable more efficient experimental designs by allowing models to select the most informative tests to conduct.
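
To make the actor pattern concrete, here is a minimal sketch using only the Python standard library. It does not use or reproduce improv's API: the `Actor` class, `run_pipeline`, the three stage functions, and the 0.5 threshold are hypothetical names and values chosen for illustration.

```python
import queue
import threading

class Actor(threading.Thread):
    """One independent processing step with its own inbox; talks only via messages."""
    def __init__(self, fn, outbox=None):
        super().__init__(daemon=True)
        self.fn, self.inbox, self.outbox = fn, queue.Queue(), outbox

    def run(self):
        while True:
            msg = self.inbox.get()
            if msg is None:                      # sentinel: pass it on, then stop
                if self.outbox is not None:
                    self.outbox.put(None)
                break
            result = self.fn(msg)
            if self.outbox is not None:
                self.outbox.put(result)

def run_pipeline(frames):
    results = queue.Queue()
    state = {"n": 0, "mean": 0.0}                # touched only by the model actor

    def preprocess(frame):                       # stand-in for baseline subtraction
        return frame - 1.0

    def update_model(x):                         # running mean as a stand-in "model"
        state["n"] += 1
        state["mean"] += (x - state["mean"]) / state["n"]
        return state["mean"]

    def decide(mean):                            # closed-loop rule on the model state
        return ("stim" if mean > 0.5 else "wait", mean)

    controller = Actor(decide, results)
    model = Actor(update_model, controller.inbox)
    pre = Actor(preprocess, model.inbox)
    for actor in (controller, model, pre):
        actor.start()
    for frame in list(frames) + [None]:
        pre.inbox.put(frame)
    out = []
    while (item := results.get()) is not None:
        out.append(item)
    return out

decisions = run_pipeline([1.0, 2.0, 3.0])
```

Because each actor owns its state and communicates only through queues, a stage can be swapped or slowed without changing the others, which is the property the actor model is chosen for.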

Figure: Real-Time Adaptive Experimental Pipeline.

Experimental Evidence and Protocols

Causal Evidence for Line Attractor Dynamics

A recent landmark study provided direct causal evidence for line attractor dynamics in the mammalian hypothalamus [12]. The experimental protocol involved:

Animal Model and Preparation:

- Mice expressing Esr1 in the ventrolateral subdivision of the ventromedial hypothalamus (VMHvl)

- Expression of jGCaMP7s (calcium indicator) and ChRmine (opsin) in Esr1-expressing VMHvl (VMHvl^Esr1) neurons

- Head-fixed mice observing aggression to enable two-photon imaging and perturbation

Identification of Attractor-Contributing Neurons:

- Record calcium activity during observation of aggression

- Apply recurrent switching linear dynamical systems (rSLDS) analysis to identify integration dimension (x1) and orthogonal dimension (x2)

- Select neurons with highest weighting to x1 dimension as attractor-contributing neurons

Optogenetic Perturbation Protocol:

- Target 5 identified x1 neurons per field of view using holographic optogenetics

- Deliver repeated pulses of optogenetic stimulation (2 s, 20 Hz, 5 mW) with a 20 s interstimulus interval

- Measure population-level response in integration dimension

Key Findings:

- Stimulation of x1 neurons yielded robust integration of activity in the entire x1 dimension

- Progressively increasing peak activity after each consecutive pulse

- Slow decay of activity after each peak without returning to pre-stimulus baseline

- Off-manifold perturbations (targeting x2 neurons) resulted in rapid relaxation back to attractor

This study provided the first direct evidence that continuous attractor dynamics can encode an internal state in the mammalian brain, bridging circuit and manifold levels of analysis [12].
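
The qualitative signature reported above (a staircase along the integration dimension, rapid relaxation off it) can be reproduced with a toy leaky integrator. This is a sketch under stated assumptions and not a fit to the data in [12]: the two time constants (100 s for x1, 0.5 s for x2) and the unit pulse amplitude are invented for illustration; only the 2 s pulse and 20 s interval follow the protocol.

```python
import numpy as np

def pulse_response(tau, n_pulses=4, pulse_s=2.0, isi_s=20.0, dt=0.01):
    """Euler-integrate dx/dt = -x / tau + drive(t) for a train of square pulses."""
    pulse_steps = int(round(pulse_s / dt))
    period_steps = pulse_steps + int(round(isi_s / dt))
    x = np.zeros(n_pulses * period_steps + 1)
    for k in range(len(x) - 1):
        drive = 1.0 if (k % period_steps) < pulse_steps else 0.0
        x[k + 1] = x[k] + dt * (-x[k] / tau + drive)
    # Activity at the end of each pulse, and just before the next pulse begins
    peaks = x[[p * period_steps + pulse_steps for p in range(n_pulses)]]
    troughs = x[[(p + 1) * period_steps for p in range(n_pulses)]]
    return peaks, troughs

# Slow dimension (x1, along the line attractor) versus fast dimension (x2)
peaks_on, troughs_on = pulse_response(tau=100.0)
peaks_off, troughs_off = pulse_response(tau=0.5)
```

With the slow time constant, each pulse starts from an elevated level, so peaks climb across pulses; with the fast one, activity returns to baseline before the next pulse arrives.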

Comparative Dynamics of Different Network Architectures

Recent work has investigated the computational potential of networks that rely solely on synapse modulation during inference to process task-relevant information—the multi-plasticity network (MPN) [16]. Unlike traditional recurrent neural networks (RNNs) that use fixed weights and recurrent activity to maintain information, MPNs use transient synaptic modifications.

Experimental Protocol for MPN-RNN Comparison:

- Train both MPN and RNN on integration-based tasks using standard supervised learning

- For MPN: Implement two forms of modulation mechanisms (pre/postsynaptic-dependent and presynaptic-only)

- Analyze low-dimensional dynamics using dimensionality reduction techniques

- Characterize attractor structure and stability

Key Findings:

- MPNs operate with qualitatively different dynamics and attractor structure than RNNs

- MPNs use a task-independent, single point-like attractor with dynamics slower than task-relevant timescales

- MPNs outperform RNNs on several neuroscience-relevant measures including catastrophic forgetting and flexibility

- MPNs achieve comparable performance to RNNs on 19 NeuroGym tasks despite having no recurrent connections [16]

This work demonstrates that synaptic modulations alone can support rich computational capabilities with distinct dynamical signatures from traditional recurrent networks.
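
The core idea, that memory can live in transient synaptic modulations rather than in recurrent activity, can be sketched as follows. The multiplicative form `W * (1 + M)` and the decaying Hebbian update of `M` are assumptions made in the spirit of [16], not a verified reproduction of that model; `ToyMPN`, `lam`, and `eta` are hypothetical names.

```python
import numpy as np

class ToyMPN:
    """Feedforward layer whose effective weights are transiently modulated."""
    def __init__(self, n_in, n_hidden, lam=0.95, eta=0.1, seed=0):
        rng = np.random.default_rng(seed)
        self.W = rng.normal(scale=1.0 / np.sqrt(n_in), size=(n_hidden, n_in))
        self.lam, self.eta = lam, eta          # modulation decay and learning rate
        self.M = np.zeros_like(self.W)         # modulation state (the "memory")

    def step(self, x):
        # No recurrent activity: the output depends on x and on the current M only
        h = np.tanh((self.W * (1.0 + self.M)) @ x)
        # Assumed update: decaying trace of pre/post co-activity
        self.M = self.lam * self.M + self.eta * np.outer(h, x)
        return h

mpn = ToyMPN(n_in=4, n_hidden=6)
W_before = mpn.W.copy()
x = np.array([1.0, -0.5, 0.25, 2.0])
h_first = mpn.step(x)
h_second = mpn.step(x)   # same input, different response: history is stored in M
```

The trained weights `W` stay fixed during inference, yet the response to a repeated input changes, which is the sense in which information is carried by synaptic state.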

Table: Comparison of Neural Network Models for Temporal Computation

| Feature | Recurrent Neural Network (RNN) | Multi-Plasticity Network (MPN) |

|---|---|---|

| Information Storage | Recurrent neuronal activity | Synaptic modulation states |

| Weight Changes During Inference | Fixed | Continuously modified |

| Attractor Structure | Task-specific manifolds | Single point-like attractor |

| Biological Basis | Recurrent connectivity | STSP, STDP, other synaptic plasticity |

| Performance on Integration Tasks | High | Comparable or superior in some measures |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Research Tools for Neural Dynamics Studies

| Tool/Reagent | Function | Example Use Cases |

|---|---|---|

| jGCaMP7s | Genetically encoded calcium indicator | Monitoring neural activity with high signal-to-noise ratio [12] |

| ChRmine | Sensitive opsin for optogenetic manipulation | Holographic stimulation of multiple neurons simultaneously [12] |

| FINDR | Software for flow field inference | Estimating underlying dynamics from spike train data [13] [14] |

| improv | Software platform for adaptive experiments | Real-time modeling and closed-loop experimental control [15] |

| rSLDS | Recurrent switching linear dynamical systems | Identifying latent dynamics and attractor dimensions [12] |

| MPN Framework | Multi-plasticity network model | Studying computation through synaptic modulations [16] |

Applications to Optimization Research

Accelerated Neural Dynamics Models for Dynamic Optimization

The principles of neural population dynamics have inspired new approaches to solving dynamic optimization problems. The Accelerated Neural Dynamics (AND) model builds on the Zeroing Neural Dynamics (ZND) framework to solve dynamic nonlinear optimization (DNO) problems with finite-time convergence [11].

The AND model introduces a dynamic coefficient, defined as a function of time or of the error norm, that accelerates error convergence [11]. This approach:

- Achieves finite-time convergence to theoretical solutions, unlike exponential convergence in original ZND

- Can be transformed into a scalar-based dynamic mode that treats subsystems uniformly

- Maintains high robustness to various perturbations in computational environments

- Eliminates multilayer nonlinear treatment structures in favor of global nonlinear adaptive weighting

The AND model has demonstrated practical utility in applications such as acoustic-based time difference of arrival (TDOA) localization, showing superior performance compared to traditional models [11].
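
The difference between exponential and finite-time convergence can be seen on a scalar tracking problem. The sketch below does not implement the AND coefficient from [11]; it contrasts the standard ZND design rule, e' = -γe, with a generic finite-time rule, e' = -γ sign(e)|e|^(1/2), chosen here purely as an illustrative assumption. The target sin(t) and all parameter values are also assumptions.

```python
import numpy as np

def track(activation, gamma=2.0, dt=1e-3, t_end=3.0, x0=1.0):
    """Euler-integrate x' = cos(t) - gamma * activation(e), with e = x - sin(t).

    This choice of x' enforces the error dynamics e' = -gamma * activation(e).
    """
    n = int(round(t_end / dt))
    x, err = x0, np.empty(n + 1)
    for k in range(n + 1):
        t = k * dt
        e = x - np.sin(t)
        err[k] = e
        x += dt * (np.cos(t) - gamma * activation(e))
    return err

def linear(e):
    return e                                  # exponential decay of the error

def sign_root(e):
    return np.sign(e) * np.sqrt(abs(e))       # reaches zero in finite time

err_exp = track(linear)
err_ft = track(sign_root)
settle_time = int(np.argmax(np.abs(err_ft) < 1e-4)) * 1e-3
```

For the finite-time rule with unit initial error, the continuous-time settling time is 2/γ, that is 1 s with γ = 2, whereas the exponential rule only approaches zero asymptotically.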

Dynamics-Enabled Optimization in Neural Systems

Neural systems solve optimization problems constantly—from reward maximization to energy-efficient control—and understanding their native optimization algorithms provides insights for artificial systems. Several key principles emerge:

Robustness Through Dynamics: Neural systems maintain functionality despite noise and perturbations. AND models demonstrate how dynamical systems can maintain robustness while achieving accelerated convergence [11], providing insights for engineering systems that must operate reliably in uncertain environments.

Multi-Timescale Adaptation: The brain operates across multiple temporal scales, from milliseconds for spike generation to seconds for decision-making. MPNs demonstrate how synaptic modulations at different timescales can support flexible computation [16], inspiring multi-timescale optimization algorithms.

Resource-Efficient Computation: Neural systems achieve remarkable computational power with limited energy resources. The efficiency of attractor-based computation [12] [16] suggests principles for resource-constrained optimization in artificial systems.

Figure: Dynamics-Based Optimization Approach.

The computational role of dynamics—from attractors to flow fields—represents a fundamental shift in how we understand neural processing and its applications to optimization research. As measurement technologies continue to improve, allowing simultaneous recording of larger neural populations, and as analytical methods become more sophisticated, we can expect further insights into how dynamical systems principles underlie neural computation.

Key future directions include:

- Developing more accurate methods for inferring high-dimensional dynamics from limited neural data

- Bridging gaps between molecular/cellular mechanisms and population-level dynamics

- Applying principles of neural dynamics to more complex optimization problems in engineering and artificial intelligence

- Creating unified theoretical frameworks that span neural computation and mathematical optimization

The convergence of theoretical modeling, experimental perturbation, and real-time adaptive paradigms promises to yield deeper insights into how dynamics give rise to computation. As these fields advance, they will continue to inform each other—with neural systems inspiring new optimization algorithms, and mathematical frameworks providing new ways to understand neural computation.

The evidence presented in this review demonstrates that neural computation is fundamentally dynamical in nature, implemented through attractor dynamics and flow fields that transform information across time. This perspective not only advances our understanding of neural systems but also provides powerful approaches for solving complex optimization problems across scientific and engineering domains.

In systems neuroscience, a fundamental challenge lies in reconciling the vast dimensionality of neural circuits—comprising millions of neurons—with the relatively low-dimensional nature of behavior and cognition. Neural manifolds provide a powerful theoretical and analytical framework to resolve this paradox. They are defined as low-dimensional subspaces within the high-dimensional state space of neural population activity, within which the network's dynamics are constrained and evolve over time [17] [18]. The core premise of the neural manifold framework is that the patterns of activity generated by a population of neurons are not random excursions throughout the entire possible state space. Instead, due to the network's intrinsic circuitry and the functional requirements of behavior, neural activity is confined to a much lower-dimensional, structured surface—the manifold [17]. This concept has transitioned from a mathematical buzzword to a foundational paradigm for understanding how the brain orchestrates complex functions, offering a conceptually appropriate level of analysis for systems neuroscientists [17].

The investigation of neural manifolds represents a significant paradigm shift from single-neuron analysis to a population-centric view of brain function. Since the 1960s, neuroscientists have been captivated by two key observations: first, that simple tasks engage large populations of neighboring neurons, and second, that individual neurons exhibit "mixed selectivity," responding to a multitude of sensory, cognitive, and motor features [17]. The neural manifold framework offers a parsimonious explanation: information is not uniquely encoded in the spike trains of individual neurons but is rather specified by their coordinated activity [17]. By analogy, individual words carry meaning, but the full informational content of a paragraph emerges only from their collective organization. This population-level perspective is essential because the fundamental processes that guide animal behavior are emergent properties of the collective neural population, necessitating observation of the population as a whole to be properly described [17].

Theoretical Foundations and Mathematical Definition

Conceptual and Mathematical Formalism

To conceptualize a neural manifold, one can imagine a state space where each axis represents the firing rate of a single neuron. For a population of N neurons, this defines an N-dimensional state space. During a behavior, such as reaching for an object, the instantaneous activity of all neurons defines a single point in this space. Over time, the evolving neural activity traces a trajectory—a path—through the state space [17]. The crucial observation is that these trajectories do not randomly explore the full N-dimensional volume but are instead confined to a lower-dimensional surface, the neural manifold [17] [18].

Mathematically, a manifold is a topological space that is locally Euclidean, meaning that around every point, the space resembles a patch of a simpler, flat space (like a line or a plane) [18]. In practical neuroscientific terms, the "neural manifold" is the low-dimensional subspace within the higher-dimensional neural activity space that explains the majority of the variance of the neural dynamics [18]. This characterization depends on a description where the system's state is continuous and determined by the instantaneous firing rates of each neuron, and what is observed experimentally is typically a "point cloud" of neural activity states that samples the underlying topological manifold [18].
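
As a concrete illustration of the "majority of the variance" criterion, the sketch below builds a synthetic point cloud in which 50 simulated neurons are driven by a 2-dimensional latent trajectory, then measures how much variance the leading principal components capture. The latent trajectory, the random readout, and the noise level are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(1)
T, N, D = 1000, 50, 2                        # time points, neurons, latent dims

t = np.linspace(0, 4 * np.pi, T)
latent = np.column_stack([np.cos(t), np.sin(2 * t)])   # 2-D latent trajectory
readout = rng.normal(size=(D, N))                        # how latents drive neurons
rates = latent @ readout + 0.1 * rng.normal(size=(T, N)) # observed point cloud

# PCA via SVD of the mean-centered activity matrix
centered = rates - rates.mean(axis=0)
singular_values = np.linalg.svd(centered, compute_uv=False)
var_explained = singular_values ** 2 / np.sum(singular_values ** 2)
cumulative = np.cumsum(var_explained)
```

Here `cumulative[1]` is the fraction of variance captured by the first two components, which is close to one because the cloud was built to sit near a 2-dimensional linear subspace.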

Key Assumptions and Misconceptions

The definition of neural manifolds rests on three key assumptions [17]:

- Confinement to a Surface: Neural population activity is confined to a surface with a specific geometry.

- Smoothness and Continuity: This surface is smooth (differentiable) and continuous, meaning activity will follow this surface over time.

- Low Intrinsic Dimensionality: The surface's intrinsic dimensionality is smaller than the full population dimensionality.

Several misconceptions surround this framework. First, neural manifolds are not synonymous with dimensionality reduction. While manifolds are currently estimated using dimensionality reduction techniques, the framework encompasses more than just the technique; it involves considering the properties of the surface, including its topology and geometry [17]. Second, the term "low-dimensional" requires careful interpretation. It is vital to distinguish between the embedding dimensionality (the number of dimensions needed to fully characterize the manifold in neural space) and the intrinsic dimensionality (the number of degrees of freedom needed to describe the neural activity states on the manifold itself) [17]. The neural manifold framework posits only that the intrinsic dimensionality is smaller than the number of neurons, not that the embedding dimensionality is necessarily low. Finally, the manifold view does not dismiss single neurons. It incorporates the fact that the activity of any given neuron is best understood in relation to the activity of other neurons that provide its inputs [17].

Table 1: Key Dimensionality Concepts in Neural Manifold Analysis

| Term | Definition | Interpretation in Neuroscience |

|---|---|---|

| Population Dimensionality (N) | The number of recorded neurons; the full dimension of the recorded state space. | The apparent complexity of the system from the measurement perspective. |

| Embedding Dimensionality | The number of dimensions required to embed the manifold in the neural state space without distortion. | Reflects the distributed nature of the computation across the population. |

| Intrinsic Dimensionality (D) | The number of independent variables or degrees of freedom needed to describe activity on the manifold. | Represents the underlying complexity of the computation or behavior being generated. |
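
The gap between intrinsic and embedding dimensionality is easy to demonstrate with a simulated ring. In the sketch below, activity is fully determined by one angle (intrinsic dimensionality 1), yet a linear method needs several components to capture it. The bump-shaped tuning curves and the width parameter `kappa = 8` are illustrative assumptions.

```python
import numpy as np

theta = np.linspace(0, 2 * np.pi, 400, endpoint=False)   # the single latent variable
preferred = np.linspace(0, 2 * np.pi, 60, endpoint=False)
kappa = 8.0                                               # tuning sharpness (assumed)

# Each neuron fires most when theta matches its preferred angle
rates = np.exp(kappa * (np.cos(theta[:, None] - preferred[None, :]) - 1.0))

centered = rates - rates.mean(axis=0)
singular_values = np.linalg.svd(centered, compute_uv=False)
cumulative = np.cumsum(singular_values ** 2) / np.sum(singular_values ** 2)

# Number of principal components needed to reach 95% of the variance
n_pcs_95 = int(np.searchsorted(cumulative, 0.95) + 1)
```

The count `n_pcs_95` is a linear estimate of embedding dimensionality and comes out well above 1, even though a single angle describes every state on the ring.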

Analytical Methods for Neural Manifold Learning

Dimensionality Reduction Techniques

Neural Manifold Learning (NML) describes a subset of machine learning algorithms that take a high-dimensional neural activity matrix (of N neurons across T time points) and embed it into a lower-dimensional matrix while preserving key information content [18]. These algorithms can be broadly categorized as linear or non-linear.

Linear methods, such as Principal Component Analysis (PCA), identify mutually orthogonal directions of maximum variance in the data. PCA is widely used due to its simplicity and interpretability, but it may distort the true structure of data that lies on a non-linear manifold [17] [18]. Related linear criteria, such as the Participation Ratio (PR) and Parallel Analysis (PA), operate on the eigenvalue spectrum of the data covariance and provide more principled ways of choosing the number of components to retain than an ad hoc variance threshold [19].
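
Both criteria are short to state in code. The sketch below uses the common definition PR = (Σλ)² / Σλ², where λ are covariance eigenvalues, and a simple shuffle-based form of Parallel Analysis; the function names, the 95th-percentile threshold, and the synthetic data are assumptions for illustration and are not taken from [19].

```python
import numpy as np

def covariance_spectrum(X):
    """Eigenvalues of the neuron-by-neuron covariance, largest first."""
    return np.linalg.eigvalsh(np.cov(X, rowvar=False))[::-1]

def participation_ratio(X):
    lam = covariance_spectrum(X)
    return lam.sum() ** 2 / np.sum(lam ** 2)

def parallel_analysis(X, n_shuffles=20, percentile=95, rng=None):
    """Count leading eigenvalues that exceed those of column-shuffled data."""
    rng = np.random.default_rng() if rng is None else rng
    real = covariance_spectrum(X)
    null = np.array([
        covariance_spectrum(np.column_stack([rng.permutation(col) for col in X.T]))
        for _ in range(n_shuffles)
    ])
    above = real > np.percentile(null, percentile, axis=0)
    # argmin on a boolean array gives the index of the first False
    return int(len(above) if above.all() else np.argmin(above))

rng = np.random.default_rng(0)
T, N, D = 2000, 40, 3
Q, _ = np.linalg.qr(rng.normal(size=(N, D)))        # orthonormal embedding
X = rng.normal(size=(T, D)) @ Q.T + 0.05 * rng.normal(size=(T, N))

pr = participation_ratio(X)
pa = parallel_analysis(X, rng=rng)
```

Both estimates should land near the three latent dimensions used to generate `X`; PR is a continuous quantity that noise pushes slightly upward, while PA returns an integer count.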

Non-linear methods are often better suited for neural data, given the high degree of recurrence and non-linearities in neural circuits [17]. These include:

- Isomap: Embeds data by preserving geodesic distances (distances along the manifold) between all pairs of points.

- Locally Linear Embedding (LLE): Represents each data point as a linear combination of its nearest neighbors and finds a low-dimensional representation that best preserves these local relationships.

- t-SNE: Emphasizes the preservation of local structure, making it excellent for visualization but less so for quantitative analysis.

- Uniform Manifold Approximation and Projection (UMAP): Often provides a better balance between local and global structure preservation compared to t-SNE and is computationally more efficient [18].
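
To show what "preserving geodesic distances" means in practice, here is a bare-bones Isomap written with NumPy only. It is a teaching sketch, assuming a connected neighbor graph and a small dataset, and is not a substitute for a maintained library implementation; the arc-shaped test data are also an assumption.

```python
import numpy as np

def isomap(X, n_neighbors=8, n_components=1):
    n = len(X)
    d = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)

    # k-nearest-neighbor graph (column 0 of the argsort is the point itself)
    graph = np.full((n, n), np.inf)
    nearest = np.argsort(d, axis=1)[:, 1:n_neighbors + 1]
    rows = np.repeat(np.arange(n), n_neighbors)
    graph[rows, nearest.ravel()] = d[rows, nearest.ravel()]
    graph = np.minimum(graph, graph.T)
    np.fill_diagonal(graph, 0.0)

    # Floyd-Warshall: shortest paths along the graph approximate geodesics
    for k in range(n):
        graph = np.minimum(graph, graph[:, [k]] + graph[[k], :])

    # Classical MDS on the geodesic distance matrix
    J = np.eye(n) - 1.0 / n
    B = -0.5 * J @ (graph ** 2) @ J
    vals, vecs = np.linalg.eigh(B)
    top = np.argsort(vals)[::-1][:n_components]
    return vecs[:, top] * np.sqrt(np.maximum(vals[top], 0.0))

# A 270-degree arc: one intrinsic coordinate (arc length s), curved in 2-D
s = np.linspace(0, 1.5 * np.pi, 150)
X = np.column_stack([np.cos(s), np.sin(s)])
embedding = isomap(X)
arc_correlation = abs(np.corrcoef(embedding[:, 0], s)[0, 1])
```

The one-dimensional embedding tracks arc length almost perfectly, because distances are measured along the curve rather than straight across it.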

A critical challenge in applying these methods is accurately estimating the intrinsic dimensionality. Linear algorithms tend to overestimate the dimensionality of non-linearly embedded data, while most algorithms, both linear and non-linear, overestimate dimensionality in the presence of high noise [19]. To address the noise problem, denoising techniques like the Joint Autoencoder—a deep learning-based method—have been developed to significantly improve subsequent dimensionality estimation [19].

Quantifying Manifold Geometry for Classification

Beyond identifying the manifold, a key analytical advancement has been the development of a geometrical framework that links manifold properties to computational function, particularly classification capacity. This theory establishes that the ability to linearly separate distinct object manifolds (e.g., neural activity patterns for different categories) depends on three fundamental geometric properties [20]:

- Manifold Radius (R_M): The effective spread or extent of a manifold in neural state space, normalized by the distance between manifold centers.

- Manifold Dimension (D_M): The effective number of dimensions along which a manifold varies.

- Inter-manifold Correlation: The degree of alignment between the dominant axes of different manifolds.

The manifold classification capacity (α_c) is the maximum number of object manifolds per neuron (P/N) that can be linearly separated. It is maximized when manifolds have a small radius, low intrinsic dimensionality, and low correlation with each other [20]. This provides a direct, quantitative link between the geometry of neural representations and their computational utility.
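
The three geometric quantities can be approximated from point clouds with simple statistics. The sketch below uses crude proxies (total standard deviation relative to center distance, participation ratio, and alignment of leading principal axes); these are assumptions made for illustration and are not the estimators defined by the theory in [20]. `manifold_proxies` and `make_clouds` are hypothetical helper names.

```python
import numpy as np
from itertools import combinations

def manifold_proxies(clouds):
    """clouds: list of (n_points, N) arrays, one point cloud per object manifold."""
    centers = np.array([c.mean(axis=0) for c in clouds])
    center_dist = np.mean([np.linalg.norm(a - b) for a, b in combinations(centers, 2)])
    radii, dims, axes = [], [], []
    for cloud, center in zip(clouds, centers):
        _, s, vt = np.linalg.svd(cloud - center, full_matrices=False)
        var = s ** 2 / len(cloud)                          # variance per principal axis
        radii.append(np.sqrt(var.sum()) / center_dist)     # proxy for R_M
        dims.append(var.sum() ** 2 / np.sum(var ** 2))     # proxy for D_M
        axes.append(vt[0])                                 # dominant axis
    correlation = np.mean([abs(a @ b) for a, b in combinations(axes, 2)])
    return np.array(radii), np.array(dims), correlation

def make_clouds(spread, rng, n_manifolds=5, n_points=200, N=100, latent_dim=3):
    """Synthetic manifolds: each varies along 3 random directions around a center."""
    clouds = []
    for _ in range(n_manifolds):
        center = rng.normal(size=N)
        basis = rng.normal(size=(latent_dim, N)) / np.sqrt(N)
        clouds.append(center + spread * rng.normal(size=(n_points, latent_dim)) @ basis)
    return clouds

rng = np.random.default_rng(0)
radii_tight, dims_tight, corr_tight = manifold_proxies(make_clouds(0.5, rng))
radii_wide, dims_wide, corr_wide = manifold_proxies(make_clouds(2.0, rng))
```

Under the theory summarized above, the tighter set of manifolds would be expected to support a higher classification capacity, since its radii are smaller at the same dimension.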

Figure 1: Workflow for Neural Manifold Analysis. This diagram outlines the key steps in processing neural data to extract and quantify neural manifolds, from initial recording through to the calculation of functional classification capacity.

Experimental Evidence and Protocols

Key Experimental Paradigms

The neural manifold framework has been validated and applied across diverse experimental paradigms, species, and brain regions. An early demonstration in 2003 characterized olfactory encoding in the locust, and the approach has since become ubiquitous [17]. A foundational causal experiment used a brain-computer interface (BCI) learning paradigm in which researchers precisely controlled the mapping from neural population activity in monkey motor cortex to an output behavior. Learning proved to be constrained by the existing neural manifold: monkeys readily learned to produce population activity patterns that lay within the pre-existing manifold but struggled to generate patterns that lay outside it. This provided causal evidence that neural manifolds constrain behavioral learning and plasticity [17].

Another major area of research involves studying neural population dynamics during motor tasks, such as reaching. In these experiments, neural activity in motor cortical areas traces out low-dimensional trajectories within the manifold that correspond to preparation, initiation, and execution of movement. The smooth, predictable nature of these dynamics within the manifold supports the idea that the manifold captures the fundamental computational logic of the circuit [18].

Protocol: Characterizing Object Manifolds in Deep Neural Networks

Deep Neural Networks (DNNs) serve as a powerful testbed for the neural manifold theory. The following protocol, adapted from [20], details how to characterize object manifolds in a DNN:

- Stimulus Presentation: Process a large set of labeled images (e.g., from ImageNet) through the DNN. For each object class (e.g., "cats," "dogs"), collect the population activation vectors (the firing rates of the artificial neurons) from a specific layer of the network in response to all images of that class. This collection of points for one class forms its point-cloud object manifold.

- Manifold Sampling: Define different levels of variability for analysis. For instance, create a "low-variability" manifold using only the top 10% of images with the highest classification score for that class, and a "high-variability" manifold using all available images for the class.

- Dimensionality Reduction and Geometric Analysis: For each layer of the network, apply the geometrical analysis framework to the set of object manifolds. Calculate the manifold radius (R_M), dimension (D_M), and inter-manifold correlations.

- Capacity Calculation: Compute the linear classification capacity ((α_c)) for the object manifolds in each layer.

- Comparison Across Layers and Conditions: Track how (R_M), (D_M), and (α_c) change from the input layer to the output layer. Compare a fully trained network against an untrained network (with random weights) and against a control condition where image labels are shuffled to destroy the object-manifold relationship.

Key Findings from this Protocol: The geometry of object manifolds becomes progressively more separable across the layers of a trained DNN. This is orchestrated through a reduction in manifold radius and dimension, and a weakening of inter-manifold correlations. Consequently, the classification capacity increases along the network hierarchy. Untrained networks show minimal improvement, indicating that learning, not just architecture, shapes this beneficial geometry [20].

Table 2: Key Reagents and Computational Tools for Neural Manifold Research

| Research Tool | Type | Primary Function in Manifold Research |

|---|---|---|

| Multi-electrode Arrays / Calcium Imaging | Experimental Hardware | Enables simultaneous recording of action potentials or fluorescence from hundreds to thousands of neurons, providing the high-dimensional activity data. |

| Principal Component Analysis (PCA) | Linear Algorithm | A baseline method for linear dimensionality reduction; useful for initial exploration and when manifolds are approximately linear. |

| UMAP / t-SNE | Non-linear Algorithm | Non-linear dimensionality reduction techniques powerful for visualization and for uncovering complex manifold structures. |

| Joint Autoencoder | Denoising Algorithm | A deep-learning based denoiser used to preprocess noisy neural data, improving the accuracy of subsequent dimensionality estimation. |

| Manifold Capacity Estimation Code | Analytical Tool | Software implementations of the theoretical framework for calculating (R_M), (D_M), and classification capacity (α_c) from point-cloud data. |

| Brain-Computer Interface (BCI) | Experimental Paradigm | Allows causal testing of manifold constraints by mapping neural activity to an output, and monitoring how learning alters activity within the manifold. |

Applications in Artificial Intelligence and Machine Learning

The principles of neural manifolds have significantly influenced artificial intelligence, particularly in the domain of self-supervised learning (SSL). SSL aims to learn useful representations from unlabeled data, often by creating "positive pairs" through augmentations (e.g., different cropped or rotated views of the same image) and "negative" samples from different images. A recent framework, Contrastive Learning As Manifold Packing (CLAMP), explicitly recasts this problem as a neural manifold packing problem [21].

In CLAMP, each image and its augmentations form a local "augmentation sub-manifold" in the representation space. The learning objective is not just to pull positive points together and push negative points apart, but to optimally pack these sub-manifolds to minimize overlap. The loss function is inspired by the physics of short-range repulsive particle systems, like those in simple liquids or jammed packings, which naturally leads to a uniform and well-separated arrangement of manifolds [21]. This manifold-centric approach provides a more geometric and interpretable foundation for SSL, connecting it to physical principles and yielding representations that achieve state-of-the-art performance in image classification tasks. It demonstrates that the efficiency of learned representations in artificial systems can be understood and improved through the geometry of neural manifolds.
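
The packing idea can be sketched as a pairwise overlap energy between sub-manifolds. The code below is an assumption-laden illustration and not the loss defined in [21]: it summarizes each augmentation sub-manifold by a centroid and an RMS radius, and penalizes pairs whose centroids are closer than the sum of their radii with a quadratic, short-range repulsion. `packing_loss` is a hypothetical name.

```python
import numpy as np

def packing_loss(Z):
    """Z: (n_images, n_views, d) embeddings; each image's views form one sub-manifold."""
    centers = Z.mean(axis=1)                                            # (n, d)
    radii = np.sqrt(((Z - centers[:, None, :]) ** 2).sum(-1).mean(1))   # RMS spread
    dist = np.linalg.norm(centers[:, None, :] - centers[None, :, :], axis=-1)
    contact = radii[:, None] + radii[None, :]      # distance at which two would touch
    overlap = np.clip(1.0 - dist / contact, 0.0, None)   # zero once separated
    np.fill_diagonal(overlap, 0.0)
    return 0.5 * np.sum(overlap ** 2)              # each pair counted once

rng = np.random.default_rng(0)
base = rng.normal(size=(4, 1, 8))                  # one anchor point per image
views = 0.1 * rng.normal(size=(4, 16, 8))          # augmentation jitter

loss_separated = packing_loss(10.0 * base + views)   # sub-manifolds far apart
loss_crowded = packing_loss(0.01 * base + views)     # sub-manifolds overlapping
```

Because the energy vanishes as soon as two sub-manifolds stop overlapping, minimizing it spreads them just far enough apart rather than pushing them away indefinitely, which is the behavior the particle-packing analogy is meant to capture.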

Applications in Disease Research and Drug Development

The neural manifold framework offers a novel lens for understanding and diagnosing neurological and neuropsychiatric disorders. The hypothesis is that the pathophysiological mechanisms of disease alter the fundamental dynamics of neural circuits, which should be observable as changes in the geometry and trajectories of neural manifolds [18].

Research is exploring this in several contexts:

- Alzheimer's Disease (AD): Simulation studies of mouse models of AD suggest that neural manifold analysis could reveal the circuit-level consequences of molecular and cellular neuropathology, potentially identifying biomarkers for early detection or monitoring progression [18].

- Motor Disorders: Analysis of neural population dynamics in motor cortex during reaching tasks can be used to investigate disorders that impact motor control, with the potential to identify specific distortions in the neural manifold that correlate with symptoms [19].

- Neuropsychiatric Disorders: Investigating neural populations in the medial prefrontal cortex that are active during social decision-making may generate testable hypotheses for disorders like Autism Spectrum Disorder (ASD) and Schizophrenia, where social cognition is impaired [18].

In the pharmaceutical industry, the manifold concept is being leveraged for drug discovery. For instance, Roche has partnered with Manifold Bio to use an AI-driven platform that measures how thousands of potential "shuttles" can transport drugs across the blood-brain barrier (BBB) in living organisms [22]. This in vivo testing creates a high-dimensional dataset of biological interactions, and analyzing such data may draw on concepts similar to manifold learning to identify successful candidates. Furthermore, a "Manifold Medicine" schema has been proposed, which conceptualizes disease states as multidimensional vectors and designs combination drug therapies ("manifold drug cocktails") to counter these pathological vectors across multiple body-wide axes simultaneously [23]. This represents a move away from single-target drugs towards systems-level, multi-dimensional treatment strategies.

Figure 2: Manifold Framework in Disease and Therapy. This diagram illustrates the conceptual model of neurological disease as a distortion of the healthy neural manifold and how therapeutic interventions, informed by drug discovery platforms that use manifold learning, aim to restore healthy dynamics.

The neural manifold framework has established itself as a fundamental paradigm for bridging the gap between the high-dimensional nature of neural activity and the low-dimensional essence of behavior and cognition. By providing a mathematically rigorous yet conceptually intuitive platform, it allows researchers to describe the population-level dynamics that underlie brain function. The framework's power is demonstrated by its broad applicability, from explaining fundamental biological processes in motor control and perception to driving innovations in artificial intelligence and offering new pathways for understanding and treating brain disorders. The continued development of analytical tools for quantifying manifold geometry and the formulation of theories that link this geometry to computational function promise to yield even deeper insights into the operating principles of complex neural systems.

Linking Single-Cell Properties to Population-Level Computation

Understanding how the properties of individual neurons give rise to sophisticated computations at the population level is a fundamental challenge in neuroscience. The framework of neural population dynamics has emerged as a powerful paradigm for addressing this challenge, positing that computational functions are implemented through the coordinated, time-evolving activity of neural ensembles [1]. This technical guide synthesizes current methodologies and findings that explicitly bridge single-cell characteristics—including genetic identity, projection target, and response properties—with the collective dynamics that underlie computation. This bridge is critical for advancing theoretical models of brain function and for informing targeted therapeutic interventions, as many neurological and psychiatric disorders are increasingly understood as dysfunctions of specific cell types within larger circuit dynamics [24].

Core Theoretical Framework: Computation Through Dynamics

The computation through dynamics (CTD) framework formalizes neural population activity as a trajectory in a high-dimensional state space. The core mathematical formulation is that of a dynamical system:

dx/dt = f(x(t), u(t))

Here, x is an N-dimensional vector representing the state of the neural population (e.g., the firing rates of N neurons), and f is a function that captures the intrinsic circuit dynamics, which governs how the population state evolves over time, influenced by external inputs u(t) [1]. Within this framework, single-cell properties shape the function f by determining the intrinsic dynamics and connectivity patterns of the circuit. The population-level computation is then read out from the trajectory of the system's state over time.
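
The formulation above can be integrated numerically once a specific f is chosen. The sketch below assumes a standard rate-network form, τ dx/dt = -x + W tanh(x) + B u; this particular f, the time constant, and the example matrices are illustrative assumptions rather than a model taken from [1].

```python
import numpy as np

def f(x, u, W, B, tau=0.1):
    """Assumed flow field: tau * dx/dt = -x + W tanh(x) + B u."""
    return (-x + W @ np.tanh(x) + B @ u) / tau

def integrate(x0, inputs, W, B, dt=1e-3):
    """Forward-Euler integration; returns the state trajectory, one row per step."""
    x = np.empty((len(inputs) + 1, len(x0)))
    x[0] = x0
    for k, u in enumerate(inputs):
        x[k + 1] = x[k] + dt * f(x[k], u, W, B)
    return x

N = 5
B = np.arange(1.0, N + 1).reshape(N, 1)     # each neuron weights the input differently
inputs = np.ones((2000, 1))                 # constant input for 2 s
# With W = 0 the circuit has no recurrence, so the state relaxes to B u
trajectory = integrate(np.zeros(N), inputs, W=np.zeros((N, N)), B=B)
```

Replacing the zero matrix with a structured `W` changes the flow field and therefore the trajectory, which is how connectivity shapes computation in this framework.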

Table 1: Key Concepts in Neural Population Dynamics

| Concept | Mathematical Representation | Computational Role |

|---|---|---|

| Neural Population State | Vector x(t) of N firing rates | Represents the instantaneous state of the population in an N-dimensional space [1] |

| Dynamical System | dx/dt = f(x(t), u(t)) | Governs the temporal evolution of the population state, implementing the computation [1] |

| State Space | N-dimensional coordinate system | Provides a visualization and analysis framework for population trajectories [1] |

| Attractor Dynamics | Stable states (e.g., points, lines, rings) toward which dynamics evolve | Enables robust maintenance of information, as in working memory or integration [24] [25] |

Paradigms for Linking Single-Cell and Population Levels

Projection-Defined Subpopulations and Structured Correlations

Recent research demonstrates that neurons defined by their common axonal projection target can form specialized population codes that are not apparent in undifferentiated populations. In the mouse posterior parietal cortex (PPC) during a decision-making task, neurons projecting to the same area (e.g., anterior cingulate cortex or retrosplenial cortex) exhibit elevated pairwise activity correlations structured into specific network motifs [2].

These motifs consist of pools of neurons with enriched information-enhancing (IE) interactions within pools. This structured correlation architecture enhances the information the population encodes about the animal's choice, particularly for larger population sizes. Crucially, this specialized structure is unique to identified projection neurons and is present only during correct behavioral choices, linking a single-cell property (projection identity) to a population-level code that directly supports accurate behavior [2].

Experimental Protocol for Identifying Projection-Specific Codes

- Retrograde Labeling: Inject fluorescent retrograde tracers conjugated to different dyes into target areas (e.g., ACC, RSC).

- In Vivo Calcium Imaging: Perform two-photon calcium imaging in the source area (e.g., PPC) to record the activity of hundreds of neurons simultaneously during a behavioral task.

- Cell Identification: Post-hoc, identify imaged neurons as belonging to a specific projection group based on their tracer fluorescence.

- Information Analysis: Use statistical models, such as nonparametric vine copula (NPvC) models, to estimate the mutual information between a neuron's activity and task variables, conditioning on movement and other confounding variables [2].

- Correlation Network Analysis: Analyze pairwise correlation structures within and across projection-defined populations and relate these structures to behavioral output.
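The final step of the protocol, comparing correlations within and across projection-defined groups, can be sketched as follows. This is a minimal illustration on synthetic data, assuming one shared fluctuation per projection group. It uses plain Pearson correlations and does not implement the NPvC information analysis; the function name `mean_pairwise_corr` and the group labels are hypothetical.

```python
import numpy as np

def mean_pairwise_corr(activity, labels, same_group=True):
    """Mean Pearson correlation over neuron pairs within (or across) label groups.

    activity: (n_trials, n_neurons) array; labels: (n_neurons,) group ids.
    """
    corr = np.corrcoef(activity, rowvar=False)
    i, j = np.triu_indices(len(labels), k=1)   # each unordered pair once
    same = labels[i] == labels[j]
    mask = same if same_group else ~same
    return corr[i, j][mask].mean()

# Synthetic stand-in data: two projection groups, each with its own shared signal.
rng = np.random.default_rng(1)
n_trials, n_per_group = 500, 20
labels = np.repeat([0, 1], n_per_group)        # e.g., 0 = ACC-projecting, 1 = RSC-projecting
shared = rng.normal(size=(n_trials, 2))        # one shared fluctuation per group
activity = 0.7 * shared[:, labels] + rng.normal(size=(n_trials, 2 * n_per_group))

within = mean_pairwise_corr(activity, labels, same_group=True)
across = mean_pairwise_corr(activity, labels, same_group=False)
```

In this toy setting `within` exceeds `across` by construction. With real recordings, the comparison would additionally need to control for movement and other confounds, as the protocol describes.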

Cell-Type-Specific Dynamics via Transcriptomic Identity

Integrating transcriptomic cell typology with dynamical systems modeling offers a powerful path to mechanistic understanding. This approach was applied to the medial habenula, where distinct cell types encode different aspects of reward [24].

- TH+ neurons were found to encode reward-predictive cues.

- Tac1+ neurons encoded reward outcome and reward history.

The population dynamics of Tac1+ neurons were analyzed using an optimized nonlinear dynamical systems model, which revealed activity patterns consistent with a line attractor—a dynamical regime ideal for integrating information over time, such as tracking reward history [24]. This integration of molecular identity, recording, and modeling illustrates how single-cell properties can dictate the computational algorithms implemented at the population level.
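To illustrate why a line attractor suits integration, the sketch below builds a two-unit linear system with a zero eigenvalue along one direction and a strongly decaying eigenvalue along the orthogonal one. It is a toy model under assumed parameters (`b`, the decay rate, the pulse times), not the fitted model from [24].

```python
import numpy as np

# Directions in state space: `line` is the attractor axis, `off` is orthogonal to it.
line = np.array([1.0, 1.0]) / np.sqrt(2)
off = np.array([1.0, -1.0]) / np.sqrt(2)

# Eigenvalue 0 along `line` (no decay), eigenvalue -5 along `off` (fast decay).
A = -5.0 * np.outer(off, off)

dt, n_steps = 0.01, 3000
rewards = np.zeros(n_steps)
rewards[[500, 1200, 2000]] = 1.0 / dt   # three brief, unit-area reward pulses
b = np.array([1.0, 0.3])                # assumed input weights

x = np.zeros(2)
position = np.empty(n_steps)
for t in range(n_steps):
    x = x + dt * (A @ x + b * rewards[t])
    position[t] = x @ line              # coordinate along the attractor
```

Each pulse shifts the state along the line by `b @ line`, and that shift persists, so `position` ends at three times that value while the off-line component decays away. The persistent coordinate acts as a running count of past rewards.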

Behavioral State Modulation of Population Dynamics

Behavioral states, such as locomotion, can dynamically reconfigure single-cell and population-level response properties. In the mouse primary visual cortex (V1), locomotion induces a shift in single-neuron temporal dynamics from transient to more sustained response modes [26]. This single-cell change is coupled with an acceleration in the stabilization of stimulus-evoked noise correlations and a simplification of the latent trajectories of population activity, which make more direct transitions to stimulus-encoding states [26]. Collectively, these state-dependent changes enable faster, more stable, and more efficient sensory encoding during locomotion.

Table 2: Summary of Key Experimental Findings

| Neural System | Single-Cell Property | Impact on Population Dynamics | Computational Function |
|---|---|---|---|
| Posterior Parietal Cortex [2] | Common projection target | Structured correlation networks with information-enhancing motifs | Enhances choice information and guides accurate behavior |
| Medial Habenula [24] | Transcriptomic type (TH+ vs. Tac1+) | Distinct dynamics; Tac1+ populations form a line attractor | Encodes reward-predictive cues (TH+) and integrates reward history (Tac1+) |
| Primary Visual Cortex [26] | Locomotion state | Shift to sustained firing; faster correlation stabilization; simplified latent trajectories | Enables faster, more stable sensory encoding |
| Recurrent Neural Network Model [25] | Functional specialization via learning | Emergence of modular ring and control attractors | Solves path integration on a ring |

Computational and Analytical Methods

Dimensionality Reduction and Latent Variable Models

A critical first step in analyzing high-dimensional neural data is dimensionality reduction. Techniques such as Factor Analysis (FA) project the activity of hundreds of neurons into a lower-dimensional "latent space" that captures most of the variance shared across neurons [1] [26]. The trajectories of population activity within this latent space are then analyzed as the manifestation of the underlying computation, allowing researchers to visualize and quantify how neural states evolve over time during a behavior [26].
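The projection into a latent space can be sketched in a few lines. To keep the example dependency-free, it uses principal component analysis via the singular value decomposition as a simpler stand-in for Factor Analysis; unlike FA, PCA does not separate shared from neuron-specific variance. The synthetic data and the helper name `pca_latents` are assumptions for illustration.

```python
import numpy as np

def pca_latents(rates, n_components):
    """Project (n_timepoints, n_neurons) activity onto its top principal components."""
    centered = rates - rates.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = (s ** 2) / np.sum(s ** 2)      # variance fraction per component
    return centered @ vt[:n_components].T, explained[:n_components]

# Synthetic population: 100 neurons driven by a 2-D rotating latent plus noise.
rng = np.random.default_rng(2)
t = np.linspace(0, 4 * np.pi, 400)
latent = np.column_stack([np.cos(t), np.sin(t)])
loading = rng.normal(size=(2, 100))
rates = latent @ loading + 0.1 * rng.normal(size=(400, 100))

trajectory, explained = pca_latents(rates, n_components=2)
```

Here `trajectory` is the low-dimensional path of the population over time, and `explained` reports how much variance each retained dimension accounts for.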

Dynamical Systems Modeling with Recurrent Neural Networks

Recurrent Neural Networks (RNNs) have become a central tool for modeling the function f that governs neural population dynamics. These networks can be trained in two main ways:

- Data Modeling: The RNN is trained to replicate recorded neural population data, thereby identifying a potential underlying dynamical system [1].

- Task-Based Modeling: The RNN is trained to perform a specific computational task (e.g., path integration). The solutions and dynamics that emerge in the trained network can then generate testable hypotheses about how biological neural circuits might solve similar problems [25].

For example, training an RNN on a ring-based path integration task can lead to the self-organization of a modular architecture: one subpopulation forms a stable ring attractor to maintain the integrated position, while another subpopulation forms a dissipative control unit that processes velocity inputs [25]. This shows how general learning objectives can give rise to structured, interpretable dynamics in a population.
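The data-modeling route can be illustrated in its simplest form by replacing the RNN with a linear dynamical system fitted by least squares. This is a deliberately reduced stand-in: it recovers a matrix A such that x[t+1] is approximately A x[t], whereas an RNN would learn a nonlinear f. The ground-truth system and noise level below are assumptions.

```python
import numpy as np

def fit_linear_dynamics(states):
    """Least-squares estimate of A in x[t+1] ~= A @ x[t] from a (T, d) trajectory."""
    past, future = states[:-1], states[1:]
    coeffs, *_ = np.linalg.lstsq(past, future, rcond=None)  # solves past @ coeffs ~= future
    return coeffs.T

# Hypothetical ground truth: a slowly decaying rotation observed with small noise.
theta = 0.1
A_true = 0.99 * np.array([[np.cos(theta), -np.sin(theta)],
                          [np.sin(theta),  np.cos(theta)]])
rng = np.random.default_rng(3)
states = np.empty((500, 2))
states[0] = [1.0, 0.0]
for k in range(499):
    states[k + 1] = A_true @ states[k] + 0.01 * rng.normal(size=2)

A_hat = fit_linear_dynamics(states)
```

The eigenvalues of `A_hat` then summarize the inferred dynamics (here, a damped rotation). The same fit-then-inspect logic applies when a trained RNN is analyzed for attractors.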

Diagram 1: RNN self-organization for path integration.

Advanced Statistical Methods for Information Analysis

The nonparametric vine copula (NPvC) model is an advanced method for quantifying how much information a neuron carries about a task variable while controlling for other covariates, such as movement [2]. This method expresses multivariate probability densities using a copula, which quantifies statistical dependencies without making strong assumptions about the form of the relationships. This provides a more accurate and robust estimate of neuronal information, especially in the presence of nonlinear tuning, compared to conventional methods like generalized linear models (GLMs) [2].
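The core idea behind any copula approach, separating the dependence structure from the marginal distributions, can be shown with a rank transform. The sketch below covers only this first ingredient, the empirical copula transform. It is not an implementation of NPvC and estimates no mutual information; the variable names are hypothetical.

```python
import numpy as np

def to_copula(samples):
    """Map each column to (0, 1) pseudo-observations via its empirical ranks."""
    ranks = samples.argsort(axis=0).argsort(axis=0) + 1
    return ranks / (samples.shape[0] + 1)

rng = np.random.default_rng(4)
drive = rng.normal(size=1000)                        # e.g., a task variable
activity = drive + 0.5 * rng.normal(size=1000)       # e.g., a neuron's response

raw = np.column_stack([drive, activity])
warped = np.column_stack([np.exp(drive), activity ** 3])  # monotone re-scalings

u_raw, u_warped = to_copula(raw), to_copula(warped)
rank_corr = np.corrcoef(u_raw, rowvar=False)[0, 1]   # Spearman-type dependence
```

Because strictly increasing transformations preserve ranks, `u_raw` and `u_warped` are identical: the dependence measured in copula space does not change when the marginals are nonlinearly distorted. This is why copula-based estimates are less sensitive to the form of a neuron's tuning.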

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Tools

| Reagent / Tool | Function | Example Application |
|---|---|---|
| Retrograde Tracers (e.g., fluorescent conjugates) | Labels neurons based on their axonal projection target. | Identifying PPC neurons projecting to ACC or RSC to study projection-specific codes [2]. |
| Genetically Encoded Calcium Indicators (e.g., GCaMP) | Reports neural activity as changes in fluorescence. | Large-scale calcium imaging of hundreds of neurons in PPC or V1 during behavior [2] [26]. |
| High-Density Electrophysiology Probes (e.g., Neuropixels 2.0) | Records action potentials from hundreds of neurons simultaneously. | Dense sampling of single-unit activity in mouse V1 across behavioral states [26]. |
| Vine Copula (NPvC) Models | Statistical model for estimating mutual information from multivariate data. | Isolating a neuron's information about a task variable while controlling for movements [2]. |
| Recurrent Neural Network (RNN) Models | Parameterized dynamical system for modeling or task-solving. | Identifying line attractor dynamics in Tac1+ habenula cells or ring attractors in navigation models [24] [25]. |
| Factor Analysis | Dimensionality reduction technique. | Extracting latent trajectories of population activity in V1 [26]. |

The paradigms and methods outlined herein provide a robust roadmap for linking the properties of single cells to the computational functions of neural populations. The key insight is that single-cell properties—be they molecular, anatomical, or physiological—fundamentally constrain and shape the emergent population dynamics. Future progress will depend on the continued integration of large-scale neural recordings, precise cell-type manipulation, and data-driven computational modeling. This integrated approach will not only refine our theoretical understanding of neural computation but also pave the way for identifying specific cell populations and dynamic motifs that could be targeted for treating neurological disorders.

Advanced Methods for Modeling and Applying Neural Dynamics

A fundamental challenge in neuroscience is understanding how cognitive computations emerge from the collective dynamics of neural populations. These dynamics often evolve on low-dimensional, smooth subspaces known as neural manifolds [27]. The ability to infer consistent latent dynamics from neural recordings is crucial for comparing cognitive strategies across individuals and sessions, a process complicated by representational drift and differing neural embeddings across subjects [27] [28].

The MARBLE (MAnifold Representation Basis LEarning) framework is a geometric deep learning method designed to overcome this challenge. It learns interpretable and consistent latent representations of neural population dynamics by explicitly leveraging their underlying manifold structure [27] [29]. By decomposing neural dynamics into constituent flow motifs, MARBLE can identify whether different animals or artificial networks use the same computational strategies during cognitive tasks, without requiring supervised behavioral labels [27] [28].

Theoretical Foundations of MARBLE

The Neural Manifold Hypothesis

Neural population activity underlying computations such as decision-making or motor control is not random high-dimensional noise. Instead, it is constrained to evolve on low-dimensional smooth subspaces or manifolds [27]. The geometry and topology of these manifolds are thought to be fundamental to neural computation [27].

Limitations of Existing Methods

Existing methods for analyzing neural population dynamics face significant limitations:

- Linear Methods (PCA, CCA): Assume linear subspace structure and struggle with nonlinear manifolds. CCA requires trial-averaged dynamics and linear alignment across sessions [27].

- Nonlinear Manifold Learning (t-SNE, UMAP): Do not explicitly represent temporal dynamics, focusing only on state density [27].

- Dynamical Systems Methods (LFADS): Infer latent dynamics but often require alignment via linear transformations, which may not be meaningful if subjects employ different neural strategies [27].

- Supervised Methods (CEBRA): Can find consistent representations but require behavioral supervision, potentially introducing bias [27].

MARBLE addresses these limitations by providing an unsupervised framework that explicitly learns dynamical flows on nonlinear manifolds and provides a mathematically rigorous similarity metric for comparing dynamics across systems [27].

The MARBLE Methodology

Core Mathematical Framework

MARBLE treats neural population dynamics as a collection of flow fields over an unknown manifold. For a set of d-dimensional neural time series {x(t; c)} recorded under experimental condition c, MARBLE represents the dynamics as a vector field F_c = (f_1(c), ..., f_n(c)) anchored to a point cloud X_c = (x_1(c), ..., x_n(c)) of sampled neural states [27].

The method involves these key steps:

1. Manifold Approximation: The unknown neural manifold is approximated by constructing a proximity graph from the neural state point cloud X_c. This graph defines local tangent spaces and a notion of smoothness (parallel transport) between nearby vectors [27].

2. Flow Field Denoising: A learnable vector diffusion process denoises the estimated flow field while preserving its fixed-point structure, using the graph structure to define smoothness constraints [27].

3. Local Flow Field (LFF) Decomposition: The global vector field is decomposed into Local Flow Fields around each neural state, each defined as the vector field within a graph distance p of that state. This lifts d-dimensional neural states to an O(d^(p+1))-dimensional space encoding local dynamical context [27].

4. Unsupervised Geometric Deep Learning: A geometric deep learning architecture maps LFFs to a common latent space, with specific components ensuring invariance to different neural embeddings [27].
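The data structures behind steps 1 and 3 can be sketched with NumPy alone. The example below is a simplified illustration, not the MARBLE library's API. It anchors finite-difference velocity vectors to sampled states, builds a k-nearest-neighbour proximity graph, and extracts a one-hop patch of vectors as a crude local flow field. The helper names, the choice of k, and the toy spiral trajectory are all assumptions; denoising and the learned embedding are omitted.

```python
import numpy as np

def flow_field(trajectory):
    """Anchor finite-difference velocity vectors to the sampled states."""
    points = trajectory[:-1]
    vectors = np.diff(trajectory, axis=0)
    return points, vectors

def knn_graph(points, k):
    """Indices of the k nearest neighbours of each point (excluding itself)."""
    d2 = np.sum((points[:, None, :] - points[None, :, :]) ** 2, axis=-1)
    np.fill_diagonal(d2, np.inf)               # a point is not its own neighbour
    return np.argsort(d2, axis=1)[:, :k]

def local_flow_field(vectors, neighbours, i):
    """Vectors at state i and at its graph neighbours (a one-hop patch)."""
    return vectors[np.concatenate([[i], neighbours[i]])]

# Toy trajectory: a decaying spiral in 3-D standing in for neural states.
t = np.linspace(0, 6 * np.pi, 300)
trajectory = np.column_stack([np.exp(-0.05 * t) * np.cos(t),
                              np.exp(-0.05 * t) * np.sin(t),
                              0.1 * t])

X, F = flow_field(trajectory)                  # point cloud X_c and vector field F_c
neighbours = knn_graph(X, k=5)
patch = local_flow_field(F, neighbours, i=10)  # shape (k + 1, d)
```

The dense pairwise-distance matrix is adequate for a few hundred states. Larger recordings would call for a spatial index or an approximate nearest-neighbour search.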

Neural Network Architecture

MARBLE's architecture consists of three specialized components [27]:

- Gradient Filter Layers: Provide the best p-th-order approximation of the Local Flow Field around each neural state.

- Inner Product Features: Create features invariant to local rotations in the LFFs, making the representations independent of specific neural embeddings.

- Multilayer Perceptron: Maps the processed features to a final latent vector z_i for each neural state.

The network is trained using an unsupervised contrastive learning objective that leverages the continuity of LFFs over the manifold—adjacent LFFs are more similar than non-adjacent ones [27].
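The role of inner product features can be checked numerically. Pairwise inner products between vectors are unchanged when all vectors undergo the same orthogonal transformation, which is the basic reason such features do not depend on the orientation of the embedding. The sketch below demonstrates only this invariance on random vectors; it does not reproduce MARBLE's actual feature construction, and the function name is hypothetical.

```python
import numpy as np

def inner_product_features(patch):
    """Upper-triangular entries of the Gram matrix of a patch of vectors."""
    gram = patch @ patch.T
    return gram[np.triu_indices(len(patch))]

rng = np.random.default_rng(5)
patch = rng.normal(size=(6, 3))                # six hypothetical flow vectors in 3-D

# Random orthogonal matrix (a rotation, possibly combined with a reflection).
Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
rotated = patch @ Q.T

features = inner_product_features(patch)
same = np.allclose(features, inner_product_features(rotated))
```

Here `same` evaluates to `True`: the features computed before and after the transformation agree to numerical precision, even though the raw vector coordinates differ.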

MARBLE Workflow

Diagram: The complete MARBLE processing pipeline, from neural recordings to latent representations.

Experimental Validation and Benchmarking

Experimental Protocols

MARBLE has been rigorously validated across multiple neural systems:

1. Primate Reaching Task: Recordings from premotor cortex of macaques during a reaching task were used to test MARBLE's ability to decode arm movements and identify consistent dynamics across animals [27] [28].

2. Rodent Navigation Task: Hippocampal recordings from rats during spatial navigation in a maze were analyzed to discover shared dynamical motifs during spatial memory tasks [27] [29].

3. Recurrent Neural Networks (RNNs): MARBLE analyzed high-dimensional dynamical flows in RNNs trained on cognitive tasks, detecting subtle changes related to gain modulation and decision thresholds not captured by linear methods [27].