Filtering Motion Artifacts with Canonical Correlation Analysis: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive examination of Canonical Correlation Analysis (CCA) as a powerful multivariate method for filtering motion artifacts and enhancing signal quality in biomedical data. Tailored for researchers, scientists, and drug development professionals, it explores CCA's foundational principles in identifying correlated patterns across multi-view data, details methodological implementations including regularized variants for high-dimensional datasets, addresses critical troubleshooting aspects for optimal performance, and presents rigorous validation frameworks. Drawing from recent applications in neuroimaging, multi-omics integration, and brain-computer interfaces, this guide offers practical strategies for improving data quality and analytical robustness in preclinical and clinical research settings.

Understanding CCA Fundamentals: From Correlation Theory to Artifact Identification

Canonical Correlation Analysis (CCA) serves as a powerful multivariate statistical technique for identifying and quantifying the relationships between two sets of variables. Within biomedical engineering and neuroscience, researchers have leveraged CCA and its extensions, such as sparse CCA (SCCA), to tackle the persistent challenge of motion artifacts in mobile neuroimaging technologies like electroencephalography (EEG) and functional near-infrared spectroscopy (fNIRS). This application note details the core principles of CCA, summarizes its reported performance in denoising experiments, and outlines a step-by-step protocol for its application in motion artifact correction, supported by data visualization and essential reagent solutions.

Canonical Correlation Analysis (CCA) is a classical multivariate technique designed to uncover the underlying relationships between two multidimensional datasets [1]. Imagine you have two sets of observations collected from the same subjects, denoted as X (an n × p₁ matrix) and Y (an n × p₂ matrix). The fundamental goal of CCA is to find two linear combinations—one from the first set and one from the second set—called canonical variables, such that the correlation between these two new variables is maximized [1].

The mathematical objective can be summarized as finding weight vectors u (of dimension p₁ × 1) and v (of dimension p₂ × 1) that solve the following problem:

$$\max_{u,v}\ q = u^\top X^\top Y v \quad \text{subject to} \quad u^\top X^\top X u = 1,\ \ v^\top Y^\top Y v = 1$$ [1]

Here, q is the resulting canonical correlation (assuming the columns of X and Y are mean-centred), a measure of the strength of the relationship. The process can be repeated to find additional pairs of canonical variables that are uncorrelated with previous pairs, thus revealing multiple, distinct modes of association between the two datasets [1].
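As a concrete illustration of the objective above, the following is a minimal NumPy sketch, assuming mean-centred data and many more observations than variables (so that XᵀX and YᵀY are invertible). It whitens each view with a Cholesky factor and takes an SVD of the whitened cross-product: the singular values are the canonical correlations q, and the recovered weights satisfy the two unit-variance constraints. The function name `cca` and the synthetic data are illustrative assumptions, not part of any cited method.

```python
import numpy as np

def cca(X, Y):
    """Classical CCA of X (n x p1) and Y (n x p2), rows = observations.

    Returns weights U (p1 x k), V (p2 x k) and canonical correlations rho (k,),
    with k = min(p1, p2) and rho in descending order.
    """
    X = X - X.mean(axis=0)            # the objective assumes centred columns
    Y = Y - Y.mean(axis=0)
    Lx = np.linalg.cholesky(X.T @ X)  # X^T X = Lx Lx^T
    Ly = np.linalg.cholesky(Y.T @ Y)  # Y^T Y = Ly Ly^T
    # Whitened cross-product K = Lx^{-1} X^T Y Ly^{-T}
    K = np.linalg.solve(Ly, np.linalg.solve(Lx, X.T @ Y).T).T
    P, rho, Qt = np.linalg.svd(K, full_matrices=False)
    # Undo the whitening so that u^T X^T X u = 1 and v^T Y^T Y v = 1
    U = np.linalg.solve(Lx.T, P)
    V = np.linalg.solve(Ly.T, Qt.T)
    return U, V, rho

# Usage: one latent signal z shared by column 0 of X and column 1 of Y
rng = np.random.default_rng(0)
n = 500
z = rng.standard_normal(n)
X = np.column_stack([z + 0.5 * rng.standard_normal(n), rng.standard_normal(n)])
Y = np.column_stack([rng.standard_normal(n), z + 0.5 * rng.standard_normal(n),
                     rng.standard_normal(n)])
U, V, rho = cca(X, Y)
Xc, Yc = X - X.mean(axis=0), Y - Y.mean(axis=0)
print(rho)                                            # largest value reflects the shared z
print(np.corrcoef(Xc @ U[:, 0], Yc @ V[:, 0])[0, 1])  # matches rho[0]
```

The Cholesky/SVD route is chosen here because it keeps everything real and symmetric; an eigen-decomposition of the product of covariance matrices gives the same correlations but can return tiny spurious imaginary parts.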

In the context of high-dimensional neuroimaging data, where the number of variables (voxels or channels) far exceeds the number of observations, traditional CCA breaks down: XᵀX and YᵀY become singular, so the constraints above no longer define a unique solution and perfect but meaningless canonical correlations can be obtained. This limitation has been successfully addressed by Sparse CCA (SCCA), which incorporates regularization penalties to yield sparse canonical vectors [1]. These sparse vectors have many coefficients set to zero, which enhances interpretability by pinpointing the most relevant variables, such as specific brain regions or signal components, that drive the relationship between the datasets, a crucial feature for artifact removal pipelines [1].

CCA in Motion Artifact Research: Application and Data

Motion artifacts pose a significant threat to data quality in mobile neuroimaging, such as wearable EEG, which is increasingly used in real-world brain-computer interface (BCI) applications, naturalistic sports research, and clinical monitoring [2] [3] [4]. These artifacts stem from muscle activity, electrode movement, and cable swings, often obscuring the neural signals of interest [4]. CCA has emerged as a powerful component in sophisticated signal processing pipelines designed to mitigate these artifacts.

A prominent application is the hybrid Wavelet Packet Decomposition with CCA (WPD-CCA) method for cleaning single-channel EEG and fNIRS signals [5]. This two-stage approach first uses WPD to break the corrupted signal down into multiple sub-bands or nodes. CCA is then applied to these nodes to identify and separate motion artifact components from the underlying neurophysiological signal based on their correlational structure [5].

The table below summarizes quantitative performance data for the WPD-CCA method in correcting motion artifacts from a benchmark dataset, demonstrating its effectiveness compared to a single-stage WPD approach.

Table 1: Performance of WPD-CCA in Motion Artifact Correction for EEG and fNIRS Signals

| Signal Modality | Method | Wavelet Packet | Average ΔSNR (dB) | Average Artifact Reduction, η (%) |
|---|---|---|---|---|
| EEG | Single-Stage WPD | db2 | 29.44 | 53.48 |
| EEG | Two-Stage WPD-CCA | db1 | 30.76 | 59.51 |
| fNIRS | Single-Stage WPD | fk4 | 16.11 | 26.40 |
| fNIRS | Two-Stage WPD-CCA | db1 (ΔSNR) / fk8 (η) | 16.55 (db1) | 41.40 (fk8) |

Source: Adapted from [5]. Performance metrics are defined as: ΔSNR (Improvement in Signal-to-Noise Ratio); η (Percentage Reduction in Motion Artifacts).

The data shows that the two-stage WPD-CCA method consistently outperforms the single-stage WPD technique, providing greater improvement in signal quality and a higher percentage of artifact reduction for both EEG and fNIRS signals [5]. This establishes CCA as a valuable component in a robust denoising workflow.

Experimental Protocols

Protocol 1: Implementing Sparse CCA (SCCA) for High-Dimensional Neuroimaging Data

This protocol is adapted from methods used to analyze multivariate similarities in pharmacological MRI data and is suitable for high-dimensional datasets where variable selection is critical [1].

Data Preparation and Standardization:

- Collect your two multidimensional datasets, X and Y (e.g., brain imaging data from two different conditions or time points).

- Standardize each column (variable) of X and Y to have a mean of zero and a standard deviation of one.

Parameter Tuning and Optimization:

- The core of SCCA is the introduction of sparsity parameters (often denoted as c₁ and c₂) that control the number of non-zero weights in the canonical vectors u and v.

- Critically, optimize sparsity parameters for each dataset independently using a method like permutation testing. This provides the model flexibility to account for differing properties between the two views, such as the number of activated voxels or data smoothness [1].

Model Fitting:

- Apply the SCCA algorithm, using the optimized parameters, to find the first pair of sparse canonical vectors u and v that maximize the correlation between Xu and Yv.

Subsequent Component Extraction:

- To find more than one set of relationships, apply the SCCA algorithm again to the residual data after subtracting the variance explained by the first pair of canonical vectors [1].
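The protocol steps above can be sketched in NumPy as follows. This is a simplified variant in the spirit of the penalized matrix decomposition (PMD) approach to sparse CCA, which treats the within-set covariances as identity after standardization. For brevity, each soft-threshold is set as a fraction (`frac_u`, `frac_v`, hypothetical parameters) of the largest coefficient instead of tuning the L1 bounds c₁ and c₂ by permutation testing, and the "residual" step is implemented by deflating the cross-correlation matrix, one common way of realizing it. All names are illustrative.

```python
import numpy as np

def soft(a, t):
    """Elementwise soft-thresholding: shrink toward zero by t."""
    return np.sign(a) * np.maximum(np.abs(a) - t, 0.0)

def sparse_cca(X, Y, frac_u=0.5, frac_v=0.5, n_components=1, n_iter=200, tol=1e-10):
    """Simplified PMD-style sparse CCA (within-set covariances treated as identity).

    frac_u / frac_v set each soft-threshold as a fraction of the largest
    coefficient, a stand-in for properly tuned sparsity parameters c1, c2.
    """
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)      # step 1: standardise columns
    Ys = (Y - Y.mean(axis=0)) / Y.std(axis=0)
    K = Xs.T @ Ys / len(Xs)                        # p1 x p2 cross-correlation matrix
    us, vs, ds = [], [], []
    for _ in range(n_components):
        v = np.linalg.svd(K, full_matrices=False)[2][0]   # dense starting point
        u = np.zeros(K.shape[0])
        for _ in range(n_iter):
            a = K @ v
            u_new = soft(a, frac_u * np.abs(a).max())
            u_new /= np.linalg.norm(u_new)
            b = K.T @ u_new
            v_new = soft(b, frac_v * np.abs(b).max())
            v_new /= np.linalg.norm(v_new)
            done = np.allclose(u_new, u, atol=tol) and np.allclose(v_new, v, atol=tol)
            u, v = u_new, v_new
            if done:
                break
        d = u @ K @ v
        K = K - d * np.outer(u, v)                 # deflate: remove this pair's contribution
        us.append(u)
        vs.append(v)
        ds.append(d)
    return np.column_stack(us), np.column_stack(vs), np.array(ds)

# Usage: only a few columns of each view carry the shared signal z
rng = np.random.default_rng(1)
n = 300
z = rng.standard_normal(n)
X = rng.standard_normal((n, 50))
X[:, :3] += z[:, None]          # X columns 0-2 carry z
Y = rng.standard_normal((n, 40))
Y[:, :2] += z[:, None]          # Y columns 0-1 carry z
U, V, d = sparse_cca(X, Y)
print(np.flatnonzero(U[:, 0]), np.flatnonzero(V[:, 0]))
```

Because the threshold is relative, at least one coefficient always survives, which avoids the all-zero vectors a fixed absolute threshold can produce; the trade-off is that sparsity is no longer controlled by an explicit L1 budget.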

Protocol 2: Two-Stage Motion Artifact Correction using WPD-CCA for Single-Channel EEG

This protocol provides a detailed workflow for cleaning motion artifacts from a single-channel EEG recording, based on the method validated in [5].

Signal Decomposition:

- Input: A single-channel EEG signal corrupted with motion artifacts.

- Using Wavelet Packet Decomposition (WPD), decompose the signal down to a pre-defined level (e.g., level 4) using a suitable wavelet family (e.g., Daubechies 'db1').

- This results in a set of wavelet packet nodes, each containing a specific time-frequency representation of the original signal.

Component Separation via CCA:

- Treat the set of wavelet packet nodes as your multivariate dataset.

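- Apply CCA to these nodes to transform them into a new set of canonical components; in the blind-source-separation form of CCA commonly used for artifact removal, the second dataset is a one-sample-delayed copy of the node signals, so each component is ranked by its autocorrelation. The underlying logic is that components representing motion artifacts will exhibit a distinct correlational structure compared to neural signal components.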

Artifact Removal and Signal Reconstruction:

- Identify and zero out the canonical components corresponding to motion artifacts.

- Apply the inverse CCA transformation.

- Finally, reconstruct the cleaned EEG signal using the Inverse Wavelet Packet Decomposition on the modified nodes.
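The three stages of this protocol can be sketched end to end in NumPy. The sketch makes several assumptions: it uses the db1 (Haar) wavelet, written out by hand so no wavelet library is needed; the signal length must be divisible by 2^level; CCA is applied in its blind-source-separation form between the node signals and their one-sample-delayed copy; and which components to zero is passed in explicitly (`drop`). Dropping the most autocorrelated component is only an example for a slow motion transient, not the selection rule of [5]. All function names are hypothetical.

```python
import numpy as np

SQRT2 = np.sqrt(2.0)

def haar_split(c):
    """One db1 (Haar) analysis step: low-pass and high-pass halves."""
    return (c[0::2] + c[1::2]) / SQRT2, (c[0::2] - c[1::2]) / SQRT2

def haar_merge(a, d):
    """Exact inverse of haar_split."""
    out = np.empty(2 * a.size)
    out[0::2] = (a + d) / SQRT2
    out[1::2] = (a - d) / SQRT2
    return out

def wpd(x, level):
    """Full wavelet packet tree down to `level`; returns the 2**level terminal nodes."""
    nodes = [np.asarray(x, dtype=float)]
    for _ in range(level):
        nodes = [half for c in nodes for half in haar_split(c)]
    return nodes

def iwpd(nodes):
    """Inverse WPD: merge sibling pairs level by level back to one signal."""
    while len(nodes) > 1:
        nodes = [haar_merge(nodes[i], nodes[i + 1]) for i in range(0, len(nodes), 2)]
    return nodes[0]

def node_signals(x, level):
    """Reconstruct each terminal node alone at full length; the rows sum to x."""
    nodes = wpd(x, level)
    rows = [iwpd([c if i == k else np.zeros_like(c) for i, c in enumerate(nodes)])
            for k in range(len(nodes))]
    return np.vstack(rows)

def bss_cca(Z):
    """CCA between Z(t) and Z(t-1); returns unmixing W and lag-1 canonical correlations."""
    Zc = Z - Z.mean(axis=1, keepdims=True)
    A, B = Zc[:, 1:], Zc[:, :-1]
    La = np.linalg.cholesky(A @ A.T)
    Lb = np.linalg.cholesky(B @ B.T)
    K = np.linalg.solve(Lb, np.linalg.solve(La, A @ B.T).T).T
    P, rho, _ = np.linalg.svd(K)
    return np.linalg.solve(La.T, P), rho   # sources W.T @ Zc, ordered by decreasing rho

def wpd_cca_denoise(x, level=3, drop=(0,)):
    """Hypothetical helper: WPD -> CCA -> zero the `drop` components -> inverse CCA -> sum."""
    Z = node_signals(x, level)
    mean = Z.mean(axis=1, keepdims=True)
    W, _ = bss_cca(Z)
    S = W.T @ (Z - mean)
    for k in drop:
        S[k, :] = 0.0
    Z_clean = np.linalg.solve(W.T, S) + mean   # inverse CCA transform
    return Z_clean.sum(axis=0)                 # summing node signals = inverse WPD

# Usage on a synthetic signal (all values are illustrative)
rng = np.random.default_rng(0)
t = np.arange(1024) / 256.0                         # 4 s at an assumed 256 Hz
neural = np.sin(2 * np.pi * 10 * t)                 # stand-in for EEG activity
motion = 3.0 * np.exp(-((t - 2.0) / 0.3) ** 2)      # synthetic slow motion transient
noisy = neural + motion + 0.2 * rng.standard_normal(t.size)
cleaned = wpd_cca_denoise(noisy, level=3, drop=(0,))
```

With `drop=()` the pipeline returns the input unchanged, which is a useful sanity check that the forward and inverse transforms are consistent before any components are removed.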

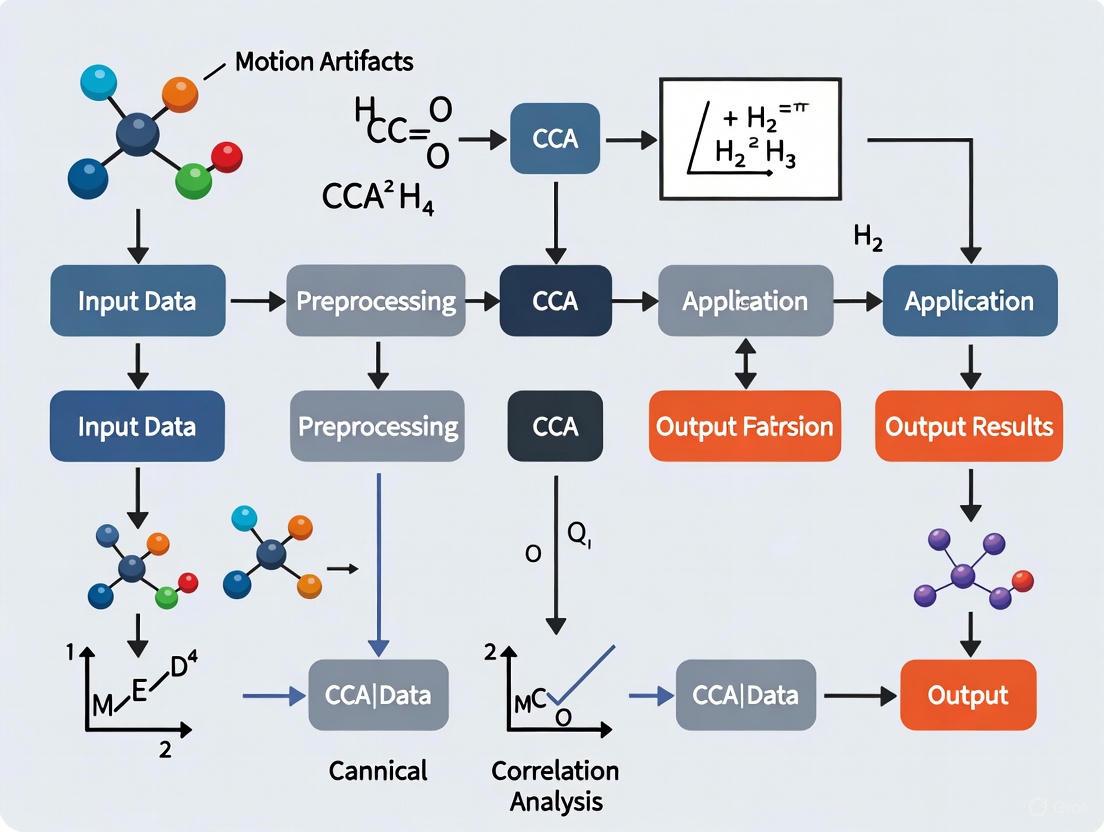

The following workflow diagram illustrates this two-stage process:

The Scientist's Toolkit: Research Reagent Solutions

The effective application of CCA and related methods requires a suite of computational tools and data resources. The following table lists key "reagent solutions" essential for research in this field.

Table 2: Essential Research Tools for CCA-based Motion Artifact Research

| Tool / Resource | Type | Function in Research |
|---|---|---|
| Science-Grade Wearable EEG [3] [4] | Hardware | Acquires neural signals in real-world, mobile conditions. Essential for generating ecologically valid data containing motion artifacts. |
| Accelerometer (ACC) / Inertial Measurement Unit (IMU) [2] [5] | Hardware | Provides auxiliary motion data. Used to synchronize with and validate motion artifact peaks in EEG signals, improving detection accuracy. |
| Benchmark EEG/fNIRS Datasets with Motion Artifacts [5] | Data | Publicly available datasets containing recorded or induced motion artifacts. Critical for developing, testing, and benchmarking denoising algorithms like WPD-CCA. |
| SPAD / SAS / R / Python (with scikit-learn) [1] [6] | Software | Statistical software and programming languages with dedicated libraries for performing multivariate analyses, including CCA, SCCA, and other statistical operations. |
| Wavelet Toolbox (e.g., in MATLAB) | Software | Provides specialized functions for implementing signal decomposition techniques like Wavelet Packet Decomposition (WPD), which is a key pre-processing step for hybrid methods like WPD-CCA [5]. |

Visualization and Data Presentation

Effective communication of CCA results and data is crucial. The following diagram illustrates the core conceptual workflow of a Canonical Correlation Analysis, showing the transformation of original datasets to find maximally correlated components.

When creating visualizations for publication, adhere to these best practices for accessibility and clarity [7] [8]:

- Color and Contrast: Use tools like ColorBrewer or Viz Palette to select colorblind-safe palettes. Ensure sufficient contrast between elements.

- Font and Labels: Use sans-serif fonts for chart titles and labels. Directly label data series where possible instead of relying solely on legends.

- Accuracy and Integrity: Ensure visual representations accurately reflect the underlying data to avoid misinterpretation.

Within the broader thesis on canonical correlation analysis (CCA) for filtering motion artifacts, this document details the mathematical formulation of optimization objectives and constraint solutions. The research focuses on developing robust algorithms for motion artifact correction from single-channel EEG and fNIRS signals, which are inherently non-stationary and susceptible to corruption from patient movement during acquisition using wearable devices [9]. Successful detection of various neurological and neuromuscular disorders depends critically on clean EEG and fNIRS signals, making the reduction of motion artifacts a matter of utmost importance [9]. This paper provides application notes and experimental protocols for implementing novel artifact removal techniques, particularly focusing on the mathematical framework of optimization problems involved in single-stage and two-stage denoising approaches.

Theoretical Background and Mathematical Framework

Canonical Correlation Analysis (CCA) Fundamentals

Canonical Correlation Analysis is a multivariate data-driven approach that derives latent components (LCs), which are optimal linear combinations of the original data, by maximizing correlation between two data matrices [10]. Given two multivariate datasets X and Y, CCA finds weight vectors aᵢ and bᵢ such that the linear combinations aᵢᵀX and bᵢᵀY are maximally correlated. The mathematical formulation involves solving the optimization problem:

$$\max_{a_i, b_i}\ \rho_i = \operatorname{corr}(a_i^\top X,\, b_i^\top Y) \quad \text{subject to} \quad \operatorname{var}(a_i^\top X) = \operatorname{var}(b_i^\top Y) = 1,\quad \operatorname{cov}(a_i^\top X, a_j^\top X) = \operatorname{cov}(b_i^\top Y, b_j^\top Y) = 0 \ \text{for } i \ne j$$

This fundamental CCA optimization provides the mathematical basis for its application in motion artifact correction, where it helps separate neural signals from motion-induced noise components by maximizing the correlation between different signal representations [9] [10].

Wavelet Packet Decomposition (WPD) Mathematical Formulation

Using the WPD technique, signals can be decomposed into a wavelet packet basis at multiple scales [9]. For a j-level decomposition, the signal is represented by 2ʲ terminal nodes, and the wavelet packet bases are produced recursively from the scaling and wavelet functions. The recursive formulation is defined as:

$$\psi_{j+1}^{2i}[n] = \sum_k h[k]\,\psi_j^{i}[2n-k], \qquad \psi_{j+1}^{2i+1}[n] = \sum_k g[k]\,\psi_j^{i}[2n-k]$$

where h[k] and g[k] are quadrature mirror filters associated with the scaling function and wavelet function, respectively. This decomposition creates a complete binary tree structure where each node represents a specific subspace with corresponding basis functions, allowing for optimal signal representation for artifact removal [9].
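To make the recursion concrete, the sketch below applies it literally, with periodic (circular) boundary handling as an assumption, using the db2 filters as an example QMF pair: g is built as the alternating flip of h. Node (j, i) has children (j+1, 2i) and (j+1, 2i+1), matching the indices in the equation (this is the natural tree order, not frequency order). For an orthogonal filter pair the energy of the terminal nodes equals the energy of the input, which is the Parseval property listed among the constraints below. Function names are illustrative.

```python
import numpy as np

# db2 scaling (low-pass) filter h; the wavelet (high-pass) filter g is its
# alternating flip g[k] = (-1)**k * h[L-1-k], which makes (h, g) a QMF pair.
S3 = np.sqrt(3.0)
H_DB2 = np.array([1 + S3, 3 + S3, 3 - S3, 1 - S3]) / (4 * np.sqrt(2.0))

def qmf_highpass(h):
    """Alternating flip of a low-pass filter."""
    L = len(h)
    return np.array([(-1) ** k * h[L - 1 - k] for k in range(L)])

def analysis_step(c, f):
    """child[n] = sum_k f[k] * c[(2n - k) mod m]: filter, then keep every other sample."""
    m = len(c)
    return np.array([sum(f[k] * c[(2 * n - k) % m] for k in range(len(f)))
                     for n in range(m // 2)])

def wavelet_packet_tree(x, h, level):
    """Return {(j, i): coefficients of node psi_j^i} for the full binary tree."""
    g = qmf_highpass(h)
    tree = {(0, 0): np.asarray(x, dtype=float)}
    for j in range(level):
        for i in range(2 ** j):
            parent = tree[(j, i)]
            tree[(j + 1, 2 * i)] = analysis_step(parent, h)      # low-pass child
            tree[(j + 1, 2 * i + 1)] = analysis_step(parent, g)  # high-pass child
    return tree

x = np.random.default_rng(0).standard_normal(64)
tree = wavelet_packet_tree(x, H_DB2, level=3)
leaves = [tree[(3, i)] for i in range(8)]
print(np.sum(x ** 2), sum(np.sum(c ** 2) for c in leaves))   # agree to float precision
```

The explicit double loop mirrors the summation in the equation rather than aiming for speed; a production implementation would use a wavelet library with a chosen boundary mode.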

Optimization Objectives in Motion Artifact Correction

Primary Optimization Objectives

The fundamental optimization problem in motion artifact correction aims to maximize signal fidelity while minimizing noise components. For artifact removal from physiological signals, the objective function can be formulated as:

minimize J(x) = ‖y − x‖² + λ‖Wx‖² subject to Cx ≤ d

where y represents the observed noisy signal, x is the clean signal to be estimated, W is a transformation matrix (e.g., wavelet transform), λ is a regularization parameter, and Cx ≤ d represents constraints on the solution [9].

Performance Metrics as Optimization Targets

The optimization process specifically targets the improvement of well-established performance metrics used in artifact correction [9]:

TABLE 1: Key Performance Metrics for Optimization Targets

| Metric | Formula | Optimization Objective |
|---|---|---|
| Difference in Signal-to-Noise Ratio (ΔSNR) | ΔSNR = SNRₒᵤₜ − SNRᵢₙ (dB) | Maximize |
| Percentage Reduction in Motion Artifacts (η) | η = (‖Aᵢₙ‖ − ‖Aₒᵤₜ‖)/‖Aᵢₙ‖ × 100% | Maximize |

The research demonstrates that through appropriate optimization, these metrics can be significantly improved, with the proposed WPD-CCA method achieving ΔSNR values of 30.76 dB for EEG and 16.55 dB for fNIRS signals, and η values of 59.51% for EEG and 41.40% for fNIRS signals [9].
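The two metrics in the table can be computed as below. This is a minimal sketch that assumes an artifact-free reference signal is available (as in benchmark recordings with a clean reference) and defines the artifact A as the deviation from that reference, before (Aᵢₙ) and after (Aₒᵤₜ) denoising; the exact definitions used in [9] may differ in detail. Function names are illustrative.

```python
import numpy as np

def snr_db(reference, signal):
    """SNR of `signal` relative to an artifact-free `reference`, in dB."""
    return 10.0 * np.log10(np.sum(reference ** 2) / np.sum((signal - reference) ** 2))

def delta_snr(reference, noisy, denoised):
    """ΔSNR = SNR_out - SNR_in, in dB."""
    return snr_db(reference, denoised) - snr_db(reference, noisy)

def artifact_reduction(reference, noisy, denoised):
    """η = (||A_in|| - ||A_out||) / ||A_in|| * 100, with A = signal - reference."""
    a_in = np.linalg.norm(noisy - reference)
    a_out = np.linalg.norm(denoised - reference)
    return 100.0 * (a_in - a_out) / a_in

reference = np.ones(4)
noisy = reference + 1.0        # artifact of norm 2
denoised = reference + 0.5     # residual artifact of norm 1
print(delta_snr(reference, noisy, denoised))           # 10*log10(4) ≈ 6.02 dB
print(artifact_reduction(reference, noisy, denoised))  # 50.0
```

Halving the artifact norm gives η = 50% but a ΔSNR of about 6 dB, because SNR is a power ratio on a log scale; the two metrics therefore reward improvements differently.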

Constraint Solutions for Single-Channel Denoising

Mathematical Constraints in Signal Decomposition

The application of WPD-CCA for motion artifact correction involves several mathematical constraints that ensure effective signal separation [9]:

- Orthogonality Constraint: Wavelet basis functions must satisfy ∫ ψⱼₖ(t)ψⱼₘ(t)dt = δₖₘ, where δₖₘ is the Kronecker delta function

- Energy Preservation: The decomposition must satisfy Parseval's identity ‖x‖² = ∑ⱼ∑ₖ|⟨x, ψⱼₖ⟩|²

- Canonical Correlation Maximization: For CCA stage, the constraint is to find weight vectors that maximize correlation ρ = corr(wₓᵀX, wᵧᵀY) subject to ‖wₓ‖ = 1 and ‖wᵧ‖ = 1

Optimization Algorithms for Constraint Satisfaction

The solution to the constrained optimization problem involves:

- Multi-level Decomposition: Implementing WPD to level 4-8 using specific wavelet families (dbN, symN, coifN, fkN)

- Thresholding Operation: Applying soft or hard thresholding to wavelet coefficients using minimax or SURE (Stein's unbiased risk estimate) threshold selection rules

- CCA Application: Performing CCA on reconstructed signals from different wavelet nodes to maximize correlation between signal components

- Reconstruction: Inverse transformation to obtain denoised signal while maintaining temporal structure
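The thresholding operation in the list above can be sketched as follows. Soft and hard thresholding are standard; the noise level is estimated from the finest detail coefficients with the median-absolute-deviation rule, and the threshold uses the minimax approximation that, to our understanding, MATLAB's `thselect` applies for its 'minimaxi' option. The SURE and Birgé-Massart rules are not implemented here, and all function names are illustrative.

```python
import numpy as np

def soft_threshold(w, t):
    """Shrink every coefficient toward zero by t; zero those with |w| <= t."""
    return np.sign(w) * np.maximum(np.abs(w) - t, 0.0)

def hard_threshold(w, t):
    """Keep coefficients with |w| > t unchanged; zero the rest."""
    return np.where(np.abs(w) > t, w, 0.0)

def noise_sigma(finest_detail):
    """Robust noise estimate: median(|d|) / 0.6745 on the finest-scale details."""
    return np.median(np.abs(finest_detail)) / 0.6745

def minimax_threshold(n, sigma):
    """Minimax threshold approximation for n coefficients (zero for n <= 32)."""
    return 0.0 if n <= 32 else sigma * (0.3936 + 0.1829 * np.log2(n))

w = np.array([-3.0, -0.5, 2.0])
print(soft_threshold(w, 1.0))   # [-2.  -0.  1.]
print(hard_threshold(w, 1.0))   # [-3.  0.  2.]
```

Soft thresholding shrinks surviving coefficients and so yields smoother reconstructions, whereas hard thresholding preserves their amplitude at the cost of more abrupt changes.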

Experimental Protocols for WPD-CCA Implementation

Materials and Research Reagent Solutions

TABLE 2: Essential Research Materials and Computational Tools

| Item | Function/Specification | Application Context |
|---|---|---|
| Wavelet Families | Daubechies (db1-db3), Symlets (sym4-sym6), Coiflets (coif1-coif3), Fejer-Korovkin (fk4, fk6, fk8) | Multi-resolution analysis for signal decomposition |
| Benchmark Dataset | Publicly available EEG and fNIRS data with motion artifacts | Performance validation and method comparison |
| CCA Algorithm | Multivariate statistical analysis implementation | Maximizing correlation between signal components |
| Performance Metrics | ΔSNR and η calculations | Quantitative evaluation of denoising efficacy |

Detailed Step-by-Step Protocol

Protocol Title: Two-Stage Motion Artifact Removal Using WPD-CCA

Purpose: To remove motion artifacts from single-channel EEG and fNIRS signals using wavelet packet decomposition combined with canonical correlation analysis.

Materials Preparation:

- Acquire single-channel EEG/fNIRS data with motion artifacts

- Select appropriate wavelet family based on signal characteristics (db1 for EEG, fk4/fk8 for fNIRS recommended)

- Prepare computational environment with signal processing toolbox supporting wavelet transforms and CCA

Procedure:

Stage 1: Wavelet Packet Decomposition

- Perform 5-level wavelet packet decomposition using selected wavelet family

- Apply thresholding to detail coefficients using the Birgé-Massart strategy

- Reconstruct signals from selected nodes

Stage 2: Canonical Correlation Analysis

- Form multivariate datasets from different wavelet node reconstructions

- Apply CCA to find linear combinations that maximize correlation

- Select canonical variates representing neural activity components

Signal Reconstruction

- Reconstruct artifact-corrected signal from selected canonical variates

- Calculate performance metrics (ΔSNR and η) for quality assessment

Validation:

- Compare performance with existing methods (DWT, EMD, EEMD, VMD)

- Statistical analysis of performance metrics across multiple recordings

- Visual inspection of reconstructed signals for residual artifacts

Data Presentation and Performance Analysis

Quantitative Performance Comparison

TABLE 3: Performance Comparison of Artifact Removal Techniques for EEG Signals

| Method | Wavelet Type | Average ΔSNR (dB) | Average η (%) |
|---|---|---|---|
| WPD (Single-Stage) | db2 | 29.44 | 53.48 |
| WPD (Single-Stage) | db1 | 28.95 | 55.12 |
| WPD-CCA (Two-Stage) | db1 | 30.76 | 59.51 |
| WPD-CCA (Two-Stage) | db2 | 29.89 | 57.23 |
| EMD-CCA (existing method) | n/a | 24.15 | 47.32 |

TABLE 4: Performance Comparison of Artifact Removal Techniques for fNIRS Signals

| Method | Wavelet Type | Average ΔSNR (dB) | Average η (%) |
|---|---|---|---|
| WPD (Single-Stage) | fk4 | 16.11 | 26.40 |
| WPD (Single-Stage) | fk8 | 15.89 | 25.17 |
| WPD-CCA (Two-Stage) | db1 | 16.55 | 39.87 |
| WPD-CCA (Two-Stage) | fk8 | 16.32 | 41.40 |
| EEMD-ICA (existing method) | n/a | 14.25 | 32.15 |

The tabular data presentation follows recommended practices for scientific communication, organizing complex quantitative information for easy comparison and interpretation [11]. The results show that the best two-stage WPD-CCA configurations outperform the single-stage approaches and the listed existing methods on both metrics, for both EEG and fNIRS signals [9].

Visual Representation of Methodologies

WPD-CCA Algorithm Workflow

Mathematical Optimization Framework

The mathematical formulation of optimization objectives and constraint solutions for CCA-based motion artifact filtering presents a robust framework for enhancing signal quality in physiological monitoring. The WPD-CCA approach demonstrates significant improvements in both ΔSNR and artifact reduction percentage metrics compared to existing methods, validating its efficacy for both EEG and fNIRS signal types. The explicit formulation of optimization targets and constraint satisfaction strategies provides researchers with a reproducible methodology for implementing advanced artifact correction techniques in neurological and neuromuscular disorder detection applications.

The Role of Canonical Variables in Extracting Shared Signal Patterns

Canonical Correlation Analysis (CCA) is a multivariate statistical method designed to identify and quantify the relationships between two sets of variables. In the context of signal processing, particularly for filtering motion artifacts from physiological signals like electroencephalography (EEG) and functional near-infrared spectroscopy (fNIRS), CCA serves as a powerful blind source separation technique. It extracts underlying components, known as canonical variates, that represent shared signal patterns between different data sets. The primary objective of CCA is to find linear combinations of the variables in each set—termed canonical variables—such that the correlation between these combinations is maximized. Formally, given two multidimensional variables X and Y, CCA finds weight vectors a and b to create canonical variates U = a'X and V = b'Y, maximizing the correlation ρ = corr(U, V) [12] [13]. The strength of this approach lies in its ability to separate artifact-laden components from clean neural signals based on their correlation structure with a reference signal, making it exceptionally suitable for motion artifact removal in mobile health monitoring and neuroimaging studies [14] [5].

Theoretical Foundations of Canonical Variables

Mathematical Formulation

The mathematical solution for CCA involves a generalized eigenvalue problem. For two standardized data sets X and Y, with within-set covariance matrices $\Sigma_{XX}$ and $\Sigma_{YY}$, and between-set covariance matrix $\Sigma_{XY}$, the canonical correlations ρ and weight vectors a and b are found by solving the equations $\Sigma_{XX}^{-1}\Sigma_{XY}\Sigma_{YY}^{-1}\Sigma_{YX}\,a = \rho^2 a$ and $\Sigma_{YY}^{-1}\Sigma_{YX}\Sigma_{XX}^{-1}\Sigma_{XY}\,b = \rho^2 b$ [12] [13]. The resulting eigenvalues $\rho^2$ represent the squared canonical correlations, which are typically ordered in descending magnitude, with the first pair of canonical variates exhibiting the highest correlation. Each subsequent pair of canonical variates is uncorrelated with the previous pairs, ensuring that they capture distinct dimensions of the shared variability between the two data sets [13]. The number of possible canonical variable pairs is limited by the smaller dimensionality of the two input data sets.
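A quick numerical check of the eigenvalue equations above, using synthetic data as an assumption: the eigenvalues of $\Sigma_{XX}^{-1}\Sigma_{XY}\Sigma_{YY}^{-1}\Sigma_{YX}$ should equal the squared singular values of the whitened cross-covariance, and there are min(p, q) of them. The matrix products are formed with linear solves instead of explicit inverses. Using covariances or correlations (standardized data) gives the same ρ values.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 1000
z = rng.standard_normal(n)
X = np.column_stack([z + rng.standard_normal(n), rng.standard_normal(n)])
Y = np.column_stack([z + rng.standard_normal(n), rng.standard_normal(n),
                     rng.standard_normal(n)])

C = np.cov(np.hstack([X, Y]), rowvar=False)   # joint (p+q) x (p+q) covariance
p = X.shape[1]
Sxx, Syy, Sxy = C[:p, :p], C[p:, p:], C[:p, p:]

# Sigma_XX^{-1} Sigma_XY Sigma_YY^{-1} Sigma_YX, built with linear solves
M = np.linalg.solve(Sxx, Sxy @ np.linalg.solve(Syy, Sxy.T))
rho_sq = np.sort(np.linalg.eigvals(M).real)[::-1]

# Cross-check: singular values of the whitened cross-covariance are the rho values
Lx, Ly = np.linalg.cholesky(Sxx), np.linalg.cholesky(Syy)
K = np.linalg.solve(Ly, np.linalg.solve(Lx, Sxy).T).T
rho = np.linalg.svd(K, compute_uv=False)
print(rho_sq, rho ** 2)
```

Here p = 2 and q = 3, so only two canonical pairs exist, as the paragraph above states.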

Interpretation of Canonical Variables

In motion artifact filtration, the canonical variables are interpreted as latent sources that generate the observed signals. The first few pairs of canonical variates often capture the motion artifacts due to their high temporal correlation with the artifact components, while subsequent pairs retain the neural signals of interest [15] [5]. The weights (a and b) indicate the contribution of each original signal channel to the canonical variate, facilitating the identification of which channels are most affected by artifacts. Furthermore, the loadings, which are correlations between the original signals and the canonical variates, provide a more interpretable measure for understanding which original variables drive the relationships uncovered by CCA [16].
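The difference between weights and loadings can be shown with a small sketch. The helper `canonical_loadings` is hypothetical, and the weight vector here is chosen by hand for illustration rather than estimated by CCA. Column 2 is constructed to contain column 0, so the variate X·w reduces to the negative of column 2's own noise: column 0 has a weight of 1 yet an almost-zero loading, which is why loadings are the safer guide to which channels drive a component.

```python
import numpy as np

def canonical_loadings(X, weights):
    """Pearson correlation of each column of X (n x p) with each variate X @ weights."""
    Xc = X - X.mean(axis=0)
    V = Xc @ weights
    Xn = Xc / np.linalg.norm(Xc, axis=0)
    Vn = V / np.linalg.norm(V, axis=0)
    return Xn.T @ Vn        # (p x k) matrix of loadings

rng = np.random.default_rng(4)
X = rng.standard_normal((200, 3))
X[:, 2] += X[:, 0]                      # channels 0 and 2 are correlated
w = np.array([[1.0], [0.0], [-1.0]])    # hypothetical weight vector
L = canonical_loadings(X, w)
print(L.ravel())
```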

Application Notes: CCA for Motion Artifact Removal

Performance Comparison of CCA-Based Filtering Techniques

The following table summarizes the performance of various CCA-based methods for motion artifact removal from physiological signals, as reported in recent studies:

Table 1: Performance of CCA-based motion artifact removal methods

| Method | Signal Type | Performance Metrics | Key Findings |
|---|---|---|---|
| Gaussian Elimination CCA (GECCA) [15] | EEG | Reduced computation cost, improved DSNR, RMSE | Replacing matrix inversion with backslash operator in CCA reduced computation time while maintaining filtering efficiency. |
| CCA Filtering [14] | High-density EMG | Reduction in motion artifact frequency content, minimized signal reduction in myoelectric bands | Outperformed standard high-pass filtering and PCA by better removing artifacts in locomotion data while preserving true EMG signal. |
| WPD-CCA [5] | Single-channel EEG | Average ΔSNR: 30.76 dB, Average η (artifact reduction): 59.51% | Two-stage method combining wavelet packet decomposition and CCA effectively cleansed single-channel signals. |
| WPD-CCA [5] | fNIRS | Average ΔSNR: 16.55 dB, Average η (artifact reduction): 41.40% | Demonstrated the adaptability of the hybrid method for different physiological signal types. |
| EEMD-GECCA-SWT [15] | EEG | Evaluated with DSNR, λ, RMSE, elapsed time | A cascaded approach using an improved CCA method effectively suppressed motion artifacts with reduced computational cost. |

Advantages of CCA over Other Techniques

CCA provides several distinct advantages over other artifact removal techniques like Independent Component Analysis (ICA) and Principal Component Analysis (PCA). Studies have concluded that CCA is more accurate and faster than ICA for removing eye-blink artifacts from EEG [15]. Furthermore, for muscle artifact removal, CCA performs better because these artifacts often lack stereotyped topography [15]. Compared to PCA, CCA filtering provided a greater reduction in signal content at frequency bands associated with motion artifacts in high-density EMG, while also minimizing signal reduction in frequency bands constituting the true myoelectric signal [14]. This makes CCA particularly valuable for analyzing data from dynamic movements like walking and running.

Experimental Protocols

Protocol 1: Motion Artifact Removal from Single-Channel EEG using WPD-CCA

This protocol describes a two-stage method for cleaning single-channel EEG signals corrupted by motion artifacts [5].

1. Signal Decomposition via Wavelet Packet Decomposition (WPD):

- Input: Single-channel raw EEG signal `X(t)`.

- Procedure: Decompose `X(t)` into multiple components using a selected wavelet packet family (e.g., Daubechies `db1`).

- Output: A set of wavelet packet components `WPC₁, WPC₂, ..., WPCₙ`.

2. Constructing the Multivariate Input for CCA:

- Reference 1: Create a delayed version of the original signal, `X(t-1)`.

- Reference 2: Create a temporally correlated signal using a linear convolution mask, e.g., `Y = conv2(X, [1 0 1])` [15].

- CCA Input Matrix: Combine the WPCs and the reference signals into a multivariate matrix `Z = [WPCs; X(t-1); Y]`.

3. Canonical Correlation Analysis:

- Procedure: Perform CCA on the constructed matrix `Z` to find canonical variates. The first few variates, which are highly correlated with the references, are identified as artifact components.

- Artifact Removal: Remove the artifact-laden canonical variates.

4. Signal Reconstruction:

- Procedure: Reconstruct the cleaned signal from the remaining canonical variates and the inverse WPD transform.

- Validation: Calculate performance metrics like ΔSNR and artifact reduction percentage η to evaluate efficacy [5].

Figure 1: Workflow for single-channel EEG artifact removal using WPD-CCA.
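Step 2 of this protocol, building `Z = [WPCs; X(t-1); Y]`, can be sketched in NumPy as below. Two assumptions are labeled: the `[1 0 1]` mask is applied with `np.convolve(..., mode="same")` so that `Y[t] = x[t-1] + x[t+1]` keeps the signal's length (MATLAB's `conv2` defaults to the longer 'full' output), and all rows are trimmed to t = 1 … N−1 so the delayed copy lines up with the other rows. The protocol does not specify which two variable sets CCA then pairs within `Z`, so only the construction is shown; `build_cca_input` is a hypothetical name.

```python
import numpy as np

def build_cca_input(wpcs, x):
    """Stack [WPCs; X(t-1); Y] with samples aligned on t = 1 .. N-1.

    wpcs: (k x N) wavelet packet components; x: (N,) raw single-channel signal.
    """
    x = np.asarray(x, dtype=float)
    # Y[t] = x[t-1] + x[t+1]: the [1 0 1] mask, centred ('same') so Y has length N
    y = np.convolve(x, [1.0, 0.0, 1.0], mode="same")
    delayed = x[:-1]                      # X(t-1) for t = 1 .. N-1
    return np.vstack([wpcs[:, 1:], delayed[None, :], y[None, 1:]])

x = np.arange(10.0)
wpcs = np.vstack([x, 2 * x])              # placeholder components for illustration
Z = build_cca_input(wpcs, x)
print(Z.shape)                            # (4, 9)
```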

Protocol 2: GECCA for Enhanced Computational Efficiency

This protocol uses Gaussian Elimination (GE) to speed up the CCA computation, which is beneficial for processing long-duration signals or in real-time applications [15].

1. Input Signal Vector Formation:

- Primary Input: `X[n]`, a multidimensional signal (e.g., from EEMD of a single-channel EEG).

- Temporally Correlated Vector: Generate `Y[n]` by applying a 2-D valid convolution operator to `X[n]` with a mask such as `[1 0 1]`, such that `Y = conv2(X, [1 0 1])` [15].

- Merging: Merge both vectors into a composite matrix `Z = [X; Y]`.

2. Covariance Matrix Calculation:

- Procedure: Calculate the covariance matrix `C = cov(Z')`.

3. Eigenvalue Solution via Gaussian Elimination:

- Standard CCA Problem: The solution involves linear systems of the form `Cxx * A = Cxy` and `Cyy * B = Cyx`, which are conventionally solved through explicit matrix inversion (e.g., `A = inv(Cxx) * Cxy`).

- GECCA Enhancement: Replace the matrix inverse operation (`inv`) with the left matrix division (backslash `\`) operator, `A = Cxx \ Cxy` and `B = Cyy \ Cyx`, which solves these linear systems directly by factorization instead of forming an inverse, and does so more efficiently [15].

- Output: Obtain the demixing matrix `W` and the estimated source components `S[n] = W' * p[n]`, where `p[n]` are the canonical variates.

4. Artifact Component Identification and Reconstruction:

- Procedure: Identify and remove canonical variates corresponding to motion artifacts.

- Output: Reconstruct the cleaned signal from the remaining sources.
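The GECCA substitution in step 3 can be illustrated in NumPy, where `np.linalg.solve` plays the role of MATLAB's backslash (it calls LAPACK's LU-based `gesv`, i.e., Gaussian elimination with partial pivoting). The sketch builds the CCA matrix both ways and checks that they agree; the smoothed random rows standing in for EEMD components, and the function names, are assumptions for illustration only.

```python
import numpy as np

def cca_matrix_inv(Cxx, Cyy, Cxy):
    """Cxx^-1 Cxy Cyy^-1 Cyx with explicit inverses (conventional route)."""
    return np.linalg.inv(Cxx) @ Cxy @ np.linalg.inv(Cyy) @ Cxy.T

def cca_matrix_solve(Cxx, Cyy, Cxy):
    """Same matrix from linear solves: Cxx A = Cxy and Cyy B = Cyx."""
    A = np.linalg.solve(Cxx, Cxy)     # NumPy counterpart of MATLAB's Cxx \ Cxy
    B = np.linalg.solve(Cyy, Cxy.T)   # Cyy \ Cyx
    return A @ B

rng = np.random.default_rng(3)
noise = rng.standard_normal((4, 2000))
# Smoothed rows stand in for EEMD components (they must be autocorrelated)
X = np.vstack([np.convolve(r, np.ones(5) / 5, mode="same") for r in noise])
Y = np.vstack([np.convolve(r, [1.0, 0.0, 1.0], mode="same") for r in X])
C = np.cov(np.vstack([X, Y]))         # rows are variables, like MATLAB's cov(Z')
p = X.shape[0]
Cxx, Cyy, Cxy = C[:p, :p], C[p:, p:], C[:p, p:]
print(np.allclose(cca_matrix_inv(Cxx, Cyy, Cxy), cca_matrix_solve(Cxx, Cyy, Cxy)))  # True
```

The eigenvectors of this matrix give the demixing matrix `W`; solving rather than inverting avoids forming `inv(Cxx)` and is generally both cheaper and numerically more accurate.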

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential materials and tools for CCA-based motion artifact research

| Item Name | Function/Description | Example Use Case |
|---|---|---|
| High-Density Electrode Arrays | Multi-electrode setups for recording spatial signal properties. | Essential for capturing sufficient data for CCA from muscles (HD-EMG) or scalp (HD-EEG) [14]. |
| Mobile EEG/fNIRS Systems | Wearable, ambulatory systems for signal acquisition in naturalistic settings. | Enables collection of data during movement where motion artifacts are prevalent [2]. |
| Accelerometer/Gyroscope | Inertial Measurement Units (IMUs) to quantify subject movement. | Provides a reference signal to inform CCA about the timing and intensity of motion artifacts [5]. |
| Wavelet Toolbox | Software library for signal decomposition (e.g., WPD). | Used in pre-processing to create multivariate input from single-channel data for CCA [5]. |
| CCA Software Implementation | Code libraries for performing CCA (e.g., in Python, MATLAB, R). | Core computational engine. Python's scikit-learn CCA class or MATLAB's canoncorr are commonly used [12] [16]. |

Visualization and Advanced Interpretation

Logical Workflow for CCA Filtering

The following diagram illustrates the general decision-making and data flow process involved in applying CCA filtering to a physiological signal, integrating multiple techniques from the protocols.

Figure 2: General decision workflow for CCA-based motion artifact removal.

Statistical Inference and Validation

When applying CCA, proper statistical validation is crucial. Simple permutation tests, as often used to identify significant modes of shared variation, can produce inflated error rates. This is especially true when data have been adjusted for nuisance variables (e.g., age, sex), as residualization introduces dependencies that violate the exchangeability assumption of permutation tests [17]. Validated statistical methods, such as transforming residuals to a lower-dimensional basis or using stepwise estimation of canonical correlations, should be employed to ensure reliable inference [17]. Furthermore, the performance of artifact removal should be quantified using established performance metrics such as delta signal-to-noise ratio (DSNR), root mean square error (RMSE), and artifact reduction percentage (η) [15] [5].
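For reference, the simple permutation test that the paragraph cautions about can be sketched as follows. It permutes the rows of Y and compares the first canonical correlation against the permutation null; it is only appropriate when rows are exchangeable, so it should not be used on residualized data, and the corrected procedures of [17] are not implemented here. Function names and data are illustrative.

```python
import numpy as np

def first_canonical_corr(X, Y):
    """Largest canonical correlation via whitening + SVD (columns are variables)."""
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    Lx = np.linalg.cholesky(X.T @ X)
    Ly = np.linalg.cholesky(Y.T @ Y)
    K = np.linalg.solve(Ly, np.linalg.solve(Lx, X.T @ Y).T).T
    return np.linalg.svd(K, compute_uv=False)[0]

def permutation_pvalue(X, Y, n_perm=999, seed=0):
    """Naive test of the first canonical correlation by permuting the rows of Y.

    Valid only if rows are exchangeable; NOT valid after nuisance regression.
    """
    rng = np.random.default_rng(seed)
    observed = first_canonical_corr(X, Y)
    null = np.array([first_canonical_corr(X, Y[rng.permutation(len(Y))])
                     for _ in range(n_perm)])
    return (1 + np.sum(null >= observed)) / (n_perm + 1), observed

rng = np.random.default_rng(5)
n = 200
z = rng.standard_normal(n)
X = np.column_stack([z + rng.standard_normal(n), rng.standard_normal(n)])
Y = np.column_stack([z + rng.standard_normal(n), rng.standard_normal(n)])
pval, r1 = permutation_pvalue(X, Y, n_perm=199)
print(pval, r1)
```

The "+1" in numerator and denominator counts the observed statistic as one member of the null distribution, so the smallest attainable p-value is 1/(n_perm + 1).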

The accurate separation of biological signals from motion-induced noise is a fundamental challenge in biomedical research and clinical diagnostics. Motion artifacts present a significant obstacle, particularly in wearable healthcare devices and long-term monitoring scenarios, as they can obscure vital physiological information and lead to erroneous interpretations. This document establishes a theoretical framework for understanding the sources of motion artifacts and presents advanced signal processing techniques, with a particular emphasis on Canonical Correlation Analysis (CCA) and its variants, for effective artifact removal. The principles outlined are applicable to a range of biological signals, including electroencephalography (EEG), electrocardiography (ECG), and functional near-infrared spectroscopy (fNIRS). The objective is to provide researchers and drug development professionals with a structured approach to preserving signal integrity in the presence of movement, thereby enhancing the reliability of data collected in both controlled and ambulatory settings.

Theoretical Foundations of Motion Artifacts and CCA

The Nature of Motion Artifacts

Motion artifacts are signal contaminations originating from the relative movement between sensors and the biological source or from the movement of the subject itself. Unlike physiological noise, these artifacts are non-biological in origin and can manifest with amplitudes an order of magnitude greater than the signal of interest, dramatically reducing the signal-to-noise ratio (SNR). In EEG recordings, for instance, motion artifacts can be caused by muscle contractions, electrode displacement, or cable movement [18] [19]. For PPG and ECG signals, artifacts often arise from sensor-tissue decoupling and variations in pressure or contact impedance [20] [18]. These artifacts typically exhibit non-stationary and nonlinear properties, making them difficult to model and remove with simple frequency-domain filtering, especially when their spectral content overlaps with that of the underlying biological signal.

Canonical Correlation Analysis: A Core Mathematical Framework

Canonical Correlation Analysis (CCA) is a multivariate statistical method designed to uncover the underlying correlations between two sets of variables. In the context of motion artifact removal, CCA is repurposed as a blind source separation (BSS) technique. The fundamental principle involves treating the observed multichannel biological data as one set of variables and a temporally delayed version of the same data as the second set. CCA then finds linear combinations of the original signals and their time-lagged versions that are maximally autocorrelated [13] [19].

Formally, given two datasets ( Y_1 \in \mathbb{R}^{N \times p_1} ) and ( Y_2 \in \mathbb{R}^{N \times p_2} ), where ( N ) is the number of observations and ( p_k ) is the number of features in set ( k ), CCA finds canonical coefficient vectors ( u_1 ) and ( u_2 ) that maximize the correlation ( \rho ):

[ \max_{u_1, u_2} \rho = \text{corr}(Y_1 u_1, Y_2 u_2) = \frac{u_1^T \Sigma_{12} u_2}{\sqrt{u_1^T \Sigma_{11} u_1} \sqrt{u_2^T \Sigma_{22} u_2}} ]

where ( \Sigma_{11} ) and ( \Sigma_{22} ) are the within-set covariance matrices and ( \Sigma_{12} ) is the between-set covariance matrix [13]. The solution involves solving an eigenvalue problem, and the resulting components are ordered by their correlation coefficients. Components with low correlation, indicative of white noise or muscle artifacts due to their low autocorrelation, can be identified and removed [19]. The cleaned signal is subsequently reconstructed from the remaining components.
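One standard way to solve this maximization is to whiten each set with ( \Sigma_{kk}^{-1/2} ) and take the singular value decomposition of the whitened cross-covariance. Its singular values are the canonical correlations, which is algebraically equivalent to the eigenvalue formulation. The NumPy sketch below assumes more observations than features and well-conditioned covariance matrices (no regularization); `cca` and `inv_sqrt` are illustrative names, not a library API. For BSS-CCA, ( Y_1 ) would hold the multichannel samples and ( Y_2 ) the same samples delayed by one step, with observations as rows.

```python
import numpy as np


def inv_sqrt(c):
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    vals, vecs = np.linalg.eigh(c)
    return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T


def cca(y1, y2):
    """Canonical correlations and coefficient matrices for Y1 (N x p1) and Y2 (N x p2)."""
    y1 = y1 - y1.mean(axis=0)
    y2 = y2 - y2.mean(axis=0)
    n = y1.shape[0]
    s11 = y1.T @ y1 / (n - 1)          # within-set covariance Sigma_11
    s22 = y2.T @ y2 / (n - 1)          # within-set covariance Sigma_22
    s12 = y1.T @ y2 / (n - 1)          # between-set covariance Sigma_12
    w1, w2 = inv_sqrt(s11), inv_sqrt(s22)
    # Singular values of the whitened cross-covariance are the canonical correlations
    u, rho, vt = np.linalg.svd(w1 @ s12 @ w2, full_matrices=False)
    u1 = w1 @ u                        # columns: canonical coefficients u_1 for Y1
    u2 = w2 @ vt.T                     # columns: canonical coefficients u_2 for Y2
    return rho, u1, u2


rng = np.random.default_rng(0)
latent = rng.standard_normal(500)
y1 = np.column_stack([latent + 0.5 * rng.standard_normal(500), rng.standard_normal(500)])
y2 = np.column_stack([rng.standard_normal(500), latent + 0.5 * rng.standard_normal(500),
                      rng.standard_normal(500)])
rho, u1, u2 = cca(y1, y2)
# The first canonical correlation equals the correlation of the projected data
print(rho[0], np.corrcoef(y1 @ u1[:, 0], y2 @ u2[:, 0])[0, 1])
```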

Advanced CCA Frameworks and Hybrid Techniques

The basic CCA framework has been extended into several advanced variants to address specific challenges in motion artifact correction.

Gaussian Elimination CCA (GECCA)

A key innovation to reduce the computational cost of traditional CCA is the use of Gaussian elimination and backslash operations for solving the linear equations involved in calculating eigenvalues. This approach replaces the more computationally intensive matrix inverse operations, thereby reducing computation cost without sacrificing performance. This method has been successfully applied in EEG motion artifact removal, demonstrating improved efficiency in highly noisy environments [15].

Higher-Order CCA (HOCCA)

Standard CCA focuses on second-order correlations. Higher-Order CCA (HOCCA) generalizes this concept to capture higher-order statistical dependencies between datasets. This is particularly useful for complex data like natural images or spatio-chromatic signals, where HOCCA can jointly model both the spatial structure and adaptive changes, such as those caused by varying illumination conditions [21].

Multiset CCA (MCCA)

When dealing with more than two data modalities, Multiset CCA (MCCA) is employed. MCCA extends the CCA framework to find a shared latent representation across multiple datasets simultaneously. This is highly relevant in neuroscience, where data may be collected from various sources like EEG, fMRI, and genetic information, allowing for a comprehensive analysis of their joint relationships [13].
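Several MCCA formulations exist. One classical choice is MAXVAR: it seeks a shared representation ( G ) with orthonormal columns that every dataset predicts as well as possible by linear regression. ( G ) is given by the leading eigenvectors of the sum of the datasets' projection matrices. The sketch below implements that formulation only. It assumes centered, full-column-rank datasets with the same observations in the same order. The function name is hypothetical, and [13] may use a different MCCA variant.

```python
import numpy as np


def mcca_maxvar(datasets, n_components=1):
    """MAXVAR multiset CCA for a list of (N x p_k) arrays sharing the same N observations.

    Returns shared components G (N x n_components, orthonormal columns), one weight matrix
    W_k per dataset such that X_k W_k approximates G, and the leading eigenvalues of the
    summed projection matrices (for two datasets the first equals 1 + rho_1).
    """
    centered = [x - x.mean(axis=0) for x in datasets]
    # Orthonormal basis of each dataset's column space (assumes full column rank)
    bases = [np.linalg.svd(x, full_matrices=False)[0] for x in centered]
    # Leading left singular vectors of the concatenated bases span the shared space
    g, sv, _ = np.linalg.svd(np.hstack(bases), full_matrices=False)
    g = g[:, :n_components]
    weights = [np.linalg.lstsq(x, g, rcond=None)[0] for x in centered]
    return g, weights, sv[:n_components] ** 2


rng = np.random.default_rng(1)
shared = rng.standard_normal((400, 1))
views = [np.hstack([shared + 0.3 * rng.standard_normal((400, 1)), rng.standard_normal((400, 2))])
         for _ in range(3)]
g, weights, eig = mcca_maxvar(views)
print(eig, [abs(np.corrcoef(v @ w[:, 0], g[:, 0])[0, 1]) for v, w in zip(views, weights)])
```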

Hybrid CCA-Signal Decomposition Methods

To enhance artifact removal, CCA is often combined with other signal decomposition techniques in a cascaded or hybrid fashion. These methods first decompose the signal to create a multichannel representation, which is then processed by CCA.

- Wavelet Packet Decomposition with CCA (WPD-CCA): This two-stage method first decomposes a single-channel signal (e.g., EEG or fNIRS) into multiple frequency sub-bands using WPD. CCA is then applied to these sub-bands to isolate and remove artifact components. This approach has shown superior performance, achieving an average SNR improvement of 30.76 dB for EEG and 16.55 dB for fNIRS signals [5].

- Ensemble Empirical Mode Decomposition with CCA (EEMD-CCA): EEMD adaptively decomposes a non-stationary signal into Intrinsic Mode Functions (IMFs). CCA is subsequently used to segregate artifact-laden IMFs from those containing the biological signal of interest [15] [5].

- CCA with Spectral Slope Analysis: This method augments traditional CCA by analyzing the spectral slope of the derived components. Tonic muscle artifacts often have a spectral power that increases with frequency, a characteristic that can be used to differentiate them from neural signals, which typically have a steeper spectral roll-off. This combination allows for more accurate identification and removal of muscle contamination from EEG [19].

The following diagram illustrates the logical workflow of a generic hybrid CCA-based artifact removal process.

Quantitative Performance of CCA-Based Techniques

The efficacy of CCA-based artifact removal is quantitatively assessed using established performance metrics, primarily the improvement in Signal-to-Noise Ratio (ΔSNR) and the percentage reduction in motion artifacts (η). The following tables summarize the performance of various techniques across different biological signals.

Table 1: Performance of CCA-Based Techniques for EEG Motion Artifact Removal

| Technique | Key Feature | Reported Performance (ΔSNR / η) | Key Advantage |
|---|---|---|---|
| WPD-CCA [5] | Two-stage decomposition & correlation | ΔSNR: 30.76 dB, η: 59.51% | Effective for single-channel EEG |
| GECCA [15] | Gaussian elimination for eigenvalue calculation | Improved DSNR & reduced RMSE | Lower computational cost |
| CCA-Spectral Slope [19] | Spectral feature for component rejection | Comparable to ICA | Effective for tonic muscle artifacts |

Table 2: Performance of CCA-Based Techniques for Other Biosignals

| Signal Type | Technique | Reported Performance (ΔSNR / η) | Key Advantage |
|---|---|---|---|
| fNIRS [5] | WPD-CCA | ΔSNR: 16.55 dB, η: 41.40% | Effective for optical signals |
| ECG [20] | Rd-ICA (Multichannel) | Improved waveform preservation | Uses redundant chest & back leads |

Table 3: Comparison with Non-CCA Artifact Removal Methods

| Method | Principle | Advantages | Limitations |
|---|---|---|---|
| Wavelet Filtering [22] | Multi-resolution analysis | Excellent for spike removal | Poor at correcting baseline shifts |
| Spline Interpolation [22] | Models artifact segments | Effective for baseline shifts | Leaves high-frequency spikes |
| ICA [19] | Statistical independence | Powerful for source separation | High computational complexity |
| Deep Learning (Motion-Net) [2] | Convolutional Neural Network | High accuracy (η: 86%) | Requires large training datasets |

Experimental Protocols for CCA-Based Artifact Removal

This section provides detailed, step-by-step protocols for implementing key CCA-based artifact removal techniques.

Protocol 1: Motion Artifact Removal from EEG using WPD-CCA

This protocol is designed for processing single-channel EEG data corrupted by motion artifacts [5].

Signal Decomposition:

- Select a wavelet packet family (e.g., Daubechies 'db1') and a decomposition level.

- Decompose the single-channel EEG signal, ( X(t) ), into a set of wavelet packet components (WPCs) using the Wavelet Packet Decomposition (WPD) algorithm. This creates a multichannel representation of the signal.

Formulate Data Matrices for CCA:

- Let the matrix of WPCs be ( Y ).

- Create a time-lagged version of this matrix, ( Y_{\text{lag}} ), using a linear convolution mask such as [1, 0, 1].

Apply CCA:

- Perform CCA on the matrix pair ( (Y, Y_{\text{lag}}) ).

- Obtain the canonical components ( p[n] ) and the demixing matrix ( W ).

Identify and Remove Artifact Components:

- Analyze the correlation coefficients of the canonical components. Components with low correlation coefficients are likely artifacts.

- Set the artifact-related components to zero.

Signal Reconstruction:

- Reconstruct the artifact-corrected WPCs by applying the inverse of the demixing matrix to the cleaned components: ( S[n] = W^{-1} p_{\text{clean}}[n] ).

- Apply the Inverse Wavelet Packet Transform to the corrected WPCs to obtain the cleaned single-channel EEG signal.
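The protocol can be sketched in NumPy as follows. To stay dependency-free, the sketch hand-codes a Haar ('db1') wavelet packet decomposition. It turns each terminal node into a full-length time-domain WPC, so summing the WPCs reproduces the signal; for an orthogonal wavelet this is equivalent to the inverse transform. Several choices here are assumptions rather than details taken from [5]:

- It uses a plain one-sample delay instead of the [1, 0, 1] mask.
- It removes a fixed number of components (`n_remove`) rather than applying a correlation threshold.
- It requires the signal length to be divisible by 2^level.

All function names are hypothetical. In practice, a wavelet toolbox such as PyWavelets would supply the decomposition.

```python
import numpy as np


def haar_split(x):
    """One Haar (db1) analysis step: approximation and detail coefficients."""
    return (x[0::2] + x[1::2]) / np.sqrt(2), (x[0::2] - x[1::2]) / np.sqrt(2)


def haar_merge(a, d):
    """One Haar synthesis step, the exact inverse of haar_split."""
    x = np.empty(2 * len(a))
    x[0::2] = (a + d) / np.sqrt(2)
    x[1::2] = (a - d) / np.sqrt(2)
    return x


def wpd_components(x, level):
    """Full-length contribution (WPC) of each terminal wavelet-packet node; they sum to x."""
    if level == 0:
        return [x.copy()]
    a, d = haar_split(x)
    comps = [haar_merge(c, np.zeros_like(c)) for c in wpd_components(a, level - 1)]
    comps += [haar_merge(np.zeros_like(c), c) for c in wpd_components(d, level - 1)]
    return comps


def inv_sqrt(c):
    vals, vecs = np.linalg.eigh(c)
    return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T


def bss_cca(y):
    """Demixing matrix W and canonical correlations between zero-mean y(t) and y(t-1)."""
    cur, lag = y[:, 1:], y[:, :-1]
    wc, wl = inv_sqrt(cur @ cur.T), inv_sqrt(lag @ lag.T)
    u, rho, _ = np.linalg.svd(wc @ (cur @ lag.T) @ wl)
    return u.T @ wc, rho                               # rho is sorted in decreasing order


def wpd_cca(x, level=3, n_remove=2):
    """Zero the n_remove least-autocorrelated CCA components of the WPCs of x."""
    wpcs = np.vstack(wpd_components(np.asarray(x, dtype=float), level))
    mean = wpcs.mean(axis=1, keepdims=True)
    demix, rho = bss_cca(wpcs - mean)
    sources = demix @ (wpcs - mean)                    # canonical components p[n]
    if n_remove > 0:
        sources[-n_remove:] = 0.0                      # lowest-correlation components
    cleaned_wpcs = np.linalg.inv(demix) @ sources + mean   # S[n] = W^-1 p_clean[n]
    return cleaned_wpcs.sum(axis=0), rho               # summing WPCs inverts the Haar WPD


rng = np.random.default_rng(0)
t = np.arange(1024) / 256.0
x = np.sin(2 * np.pi * 4 * t) + 0.5 * rng.standard_normal(1024)
cleaned, rho = wpd_cca(x, level=3, n_remove=2)
print(np.round(rho, 3))
```

With `n_remove=0`, the function returns the input unchanged, which is a useful sanity check before tuning how many components to reject.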

Protocol 2: Motion Artifact Removal using CCA with Spectral Slope

This protocol is optimized for removing tonic muscle artifacts from multi-channel EEG data [19].

Apply BSS-CCA:

- For a multi-channel EEG recording ( X ), create a one-sample delayed version ( X_{\text{lag}} ).

- Perform CCA on ( (X, X_{\text{lag}}) ) to extract canonical components.

Component Analysis:

- Calculate the correlation coefficient ( \rho ) for each canonical component.

- Compute the spectral slope for each component by fitting a linear regression to the log-power spectrum.

Component Rejection:

- Establish a threshold for the spectral slope. Components with a shallow or positive spectral slope (indicating increasing power with frequency) are likely muscle artifacts.

- Reject components identified as artifacts based on their spectral characteristics, even if their correlation coefficient is not the lowest.

Signal Reconstruction:

- Reconstruct the EEG signal using only the retained (brain-dominated) components.
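A sketch of the spectral-slope criterion follows. It assumes the canonical components (rows of `sources`) and the mixing matrix ( A = W^{-1} ) have already been obtained with BSS-CCA. The raw periodogram, the 2-45 Hz fitting range and the slope threshold of -1.0 are illustrative assumptions, not values from [19]. The function names are hypothetical.

```python
import numpy as np


def spectral_slopes(sources, fs, fmin=2.0, fmax=45.0):
    """Slope of log10(power) versus log10(frequency) for each row of `sources`."""
    freqs = np.fft.rfftfreq(sources.shape[1], d=1.0 / fs)
    power = np.abs(np.fft.rfft(sources, axis=1)) ** 2          # raw periodogram
    band = (freqs >= fmin) & (freqs <= fmax)
    # polyfit accepts one column per series; row 0 of the result holds the slopes
    coeffs = np.polyfit(np.log10(freqs[band]), np.log10(power[:, band]).T, deg=1)
    return coeffs[0]


def reject_by_slope(sources, mixing, fs, threshold=-1.0):
    """Zero components whose spectrum is flat or rising (slope > threshold), then reconstruct."""
    slopes = spectral_slopes(sources, fs)
    keep = slopes <= threshold
    cleaned = mixing @ (sources * keep[:, None])
    return cleaned, slopes, keep


rng = np.random.default_rng(0)
fs = 250.0
brain_like = np.cumsum(rng.standard_normal(2500))   # steep, 1/f^2-like spectrum
muscle_like = rng.standard_normal(2500)             # flat, white-like spectrum
sources = np.vstack([brain_like, muscle_like])
cleaned, slopes, keep = reject_by_slope(sources, np.eye(2), fs)
print(np.round(slopes, 2), keep)
```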

Protocol 3: Gaussian Elimination CCA (GECCA) for Enhanced Computation

This protocol modifies the core CCA calculation for reduced computational time [15].

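Steps 1-2: Follow Protocol 1, steps 1 and 2, to obtain the two data matrices (denoted here ( X ) and ( Y ), e.g., the WPCs and their time-lagged version). Stack them as ( Z = [X; Y] ) and compute the covariance matrix ( C ), partitioned into the blocks ( C_{xx} ), ( C_{xy} ), ( C_{yx} ), and ( C_{yy} ).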

Solve Linear Equations with Gaussian Elimination:

- Instead of computing ( A = C_{xx}^{-1} C_{xy} ) with an explicit matrix inverse, solve the linear system ( C_{xx} A = C_{xy} ) for ( A ) by Gaussian elimination, e.g., with the backslash operator (A = Cxx \ Cxy in MATLAB).

- Similarly, solve ( C_{yy} B = C_{yx} ) for ( B ) (B = Cyy \ Cyx).

Eigenvalue Calculation:

- Form the matrix ( G = A \cdot B ).

- Calculate the eigenvalues ( \rho^2 ) and eigenvectors ( a ) of ( G ). The eigenvectors are the canonical coefficients.

Steps 4-5: Follow Protocol 1, steps 4 and 5, to remove artifacts and reconstruct the signal.
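In NumPy, the backslash step corresponds to `np.linalg.solve`, which relies on an LU factorization (Gaussian elimination with partial pivoting) rather than forming an explicit inverse. The minimal sketch below assumes ( X ) and ( Y ) are approximately zero-mean with channels as rows, for example ( X(t) ) and its one-sample-delayed copy. The function name is hypothetical, and the eigenvectors are returned unnormalized.

```python
import numpy as np


def gecca(x, y):
    """Canonical correlations and X-side coefficients via linear solves, not explicit inverses."""
    n = x.shape[1]
    cxx, cyy = x @ x.T / n, y @ y.T / n
    cxy = x @ y.T / n
    cyx = cxy.T
    a = np.linalg.solve(cxx, cxy)      # solves Cxx A = Cxy  (MATLAB: A = Cxx \ Cxy)
    b = np.linalg.solve(cyy, cyx)      # solves Cyy B = Cyx  (MATLAB: B = Cyy \ Cyx)
    g = a @ b                          # G = Cxx^-1 Cxy Cyy^-1 Cyx, eigenvalues rho^2
    eigvals, eigvecs = np.linalg.eig(g)
    order = np.argsort(eigvals.real)[::-1]
    rho = np.sqrt(np.clip(eigvals.real[order], 0.0, None))
    return rho, eigvecs.real[:, order]     # columns: canonical coefficients a


rng = np.random.default_rng(0)
data = rng.standard_normal((4, 2001))
data[0] = np.convolve(data[0], np.ones(5) / 5, mode="same")   # one autocorrelated source
data -= data.mean(axis=1, keepdims=True)
rho, coef = gecca(data[:, 1:], data[:, :-1])                   # X(t) against X(t-1)
print(np.round(rho, 3))     # the first value should stand well above the rest
```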

The workflow for these experimental protocols is summarized in the following diagram.

The Scientist's Toolkit: Essential Reagents and Materials

Table 4: Key Research Reagent Solutions for Motion Artifact Research

| Item Name | Function/Application | Specifications/Notes |
|---|---|---|
| Wearable EEG/fNIRS System [18] | Ambulatory acquisition of neural signals. | Systems with active dry electrodes can reduce motion artifacts at the source. |
| Inertial Measurement Unit (IMU) [20] | Provides reference signal for motion. | Triaxial accelerometers/gyroscopes to measure head/body movement. |
| Redundant ECG Electrodes [20] | Provides additional information for artifact rejection. | Electrodes placed on both chest and back for multichannel processing. |
| Neuromuscular Blockade Agents [19] | Creates a ground-truth, EMG-free dataset for validation. | Used in controlled studies to validate muscle artifact removal algorithms. |
| Synthetic Signal Datasets [22] [23] | Algorithm validation with known ground truth. | Adds known motion artifacts or HRFs to clean signals for performance testing. |

This framework establishes a comprehensive theoretical and practical foundation for distinguishing biological signals from motion artifacts using advanced correlation-based techniques. CCA, in its standard and variant forms, provides a powerful, flexible, and mathematically robust approach for tackling the pervasive challenge of motion artifacts. The quantitative data and detailed protocols provided herein offer researchers a clear pathway for implementation and validation. As the field moves towards more ambulatory and wearable monitoring solutions, the refinement of these methods—particularly their integration with machine learning and deep learning models—will be crucial for unlocking the full potential of continuous, real-world physiological monitoring in both clinical and research settings.

The analysis of electrophysiological signals such as electroencephalography (EEG) and electromyography (EMG) is fundamental to neuroscience research and clinical diagnostics. However, these signals are invariably contaminated by motion artifacts, which are particularly problematic in mobile brain-body imaging and ambulatory monitoring applications. Motion artifacts arise from various sources including head movement, electrode displacement, cable sway, and muscle contractions, leading to signal distortions that can obscure underlying neural activity and compromise interpretation. Traditional filtering approaches have provided foundational solutions but present significant limitations when artifact frequencies overlap with the signal of interest. This application note explores the conceptual and practical advantages of Canonical Correlation Analysis (CCA) over traditional filtering methods for artifact removal, providing researchers with a structured comparison and detailed protocols for implementation.

Conceptual Framework: CCA Versus Traditional Methods

Traditional Filtering Approaches

Traditional filtering methods for artifact removal typically rely on fixed frequency-domain operations:

- High-pass and low-pass filters utilize predefined cutoff frequencies to attenuate unwanted signal components. While crucial for removing basic noise, their efficacy is severely limited when motion artifacts spectrally overlap with brain signals, as these artifacts can contaminate a broad frequency range [2].

- Notch filters target specific frequency bands (e.g., 50/60 Hz line noise) but are ineffective against motion artifacts which often exhibit broadband characteristics [18].

The fundamental limitation of these conventional approaches is their reliance on frequency separation between signal and artifact. In real-world mobile recording scenarios, the frequency content of motion artifacts often significantly overlaps with physiological signals of interest, making complete separation via traditional filtering impossible without substantial signal loss [2] [18].

Canonical Correlation Analysis Fundamentals

CCA is a multivariate statistical method that identifies and separates components based on their temporal correlation structure rather than merely their spectral properties:

- Blind source separation principle: CCA operates under the assumption that recorded signals are linear mixtures of underlying sources, including both neural activity and artifactual components [24].

- Temporal correlation maximization: Unlike ICA, which maximizes statistical independence, CCA seeks linear combinations of variables that maximize their correlation. This makes it particularly effective for identifying components with specific temporal characteristics [24].

- Component rejection: Once artifactual components are identified (for example, by their low autocorrelation or by their high correlation with a noise reference), they can be removed before signal reconstruction, preserving the neural signals of interest [14] [24].

This temporal-structure-based approach allows CCA to separate artifacts even when they occupy the same frequency bands as the physiological signals, providing a significant advantage over conventional filtering methods.

Quantitative Performance Comparison

Table 1: Comparative Performance of Artifact Removal Methods Across Modalities

| Method | Signal Type | Performance Metrics | Key Advantages | Limitations |
|---|---|---|---|---|
| CCA Filtering | High-density EMG during running | Greater reduction at motion artifact frequencies; Minimal signal reduction at true EMG bands [14] | Superior artifact-source separation; Optimal for dynamic movements | Requires multiple channels; Computational complexity |
| Traditional High-Pass Filtering | High-density EMG during running | Standard 20 Hz cutoff; Limited motion artifact removal [14] | Simple implementation; Established standards | Inadequate for motion artifacts overlapping with signal |
| ICA | EEG with muscle artifacts | Effective for less noisy data with single cortical sources [24] | Established BSS method; Component classification available | Performance degrades with highly contaminated data |
| EMD | EEG with muscle artifacts | Outperforms others for highly contaminated data [24] | Data-driven adaptation; Single-channel capability | Mode mixing issues; Computational intensity |
| iCanClean (CCA-based) | EEG during running | Improved dipolar components; Better P300 congruency effects [25] | Leverages noise references; Effective for locomotion data | Requires specialized hardware or pseudo-reference creation |

Table 2: Performance Metrics of Advanced CCA-Integrated Approaches

| Method | Application | ΔSNR (dB) | Artifact Reduction (%) | Implementation Complexity |
|---|---|---|---|---|
| WPD-CCA [5] | Single-channel EEG | 30.76 | 59.51 | Moderate |
| WPD-CCA [5] | fNIRS signals | 16.55 | 41.40 | Moderate |
| iCanClean [25] | EEG during running | - | - | High |
| CCA Filtering [14] | High-density EMG | Superior to high-pass and PCA | Superior to high-pass and PCA | Moderate |

Detailed Experimental Protocols

Protocol 1: CCA for Motion Artifact Removal from High-Density EMG During Locomotion

This protocol adapts the methodology from [14] for removing motion artifacts from high-density EMG recordings during dynamic movements.

Research Reagent Solutions

Table 3: Essential Research Materials and Equipment

| Item | Specification | Function/Purpose |
|---|---|---|
| EMG Electrode Array | High-density grid (e.g., 8×8 configuration) | Spatial sampling of muscle activity |
| Reference Sensors | Accelerometers/gyroscopes | Motion reference signal acquisition |
| Amplification System | High-input impedance, wireless preferred | Signal acquisition with minimal cable artifacts |
| Signal Processing Software | MATLAB, Python with SciPy | Implementation of CCA algorithms |
| CCA Implementation | Custom scripts or toolboxes (e.g., EEGLAB) | Blind source separation of artifacts |

Step-by-Step Procedure

Signal Acquisition and Preprocessing

- Apply high-density EMG electrode arrays to target muscles (e.g., gastrocnemius or tibialis anterior) following skin preparation guidelines to minimize impedance.

- Secure motion reference sensors (accelerometers) adjacent to EMG arrays to capture movement dynamics.

- Record data during locomotor tasks (walking, running) with synchronized EMG and motion sensor acquisition.

- Apply basic band-pass filtering (10-500 Hz) to remove extreme out-of-band noise, leaving in-band artifacts for CCA to address.
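With SciPy, the 10-500 Hz band-pass step might look like the sketch below. The fourth-order Butterworth design, zero-phase application with `sosfiltfilt`, and the 2048 Hz sampling rate are assumptions chosen for illustration. Whatever design is used, the sampling rate must exceed 1000 Hz for a 500 Hz upper edge.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt


def bandpass(data, fs, low=10.0, high=500.0, order=4):
    """Zero-phase Butterworth band-pass along the last axis (channels x samples)."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, data, axis=-1)


fs = 2048.0                                   # must exceed 2 x 500 Hz
t = np.arange(int(2 * fs)) / fs
slow_drift = np.sin(2 * np.pi * 2 * t)        # below the pass band
in_band = np.sin(2 * np.pi * 100 * t)         # inside the pass band
filtered = bandpass(np.vstack([slow_drift, in_band]), fs)
print(filtered.std(axis=1))                   # drift strongly attenuated, 100 Hz tone retained
```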

CCA Implementation

- Format data into a multivariate dataset where each channel represents a time-series recording.

- Perform CCA decomposition to identify canonical components representing maximally correlated patterns across the dataset.

- Calculate correlation coefficients between each canonical component and the motion sensor references to identify artifact-dominated components.

- Establish a correlation threshold (typically determined empirically through pilot data) for component rejection.
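One way to sketch the decomposition and reference-correlation steps is shown below. It assumes BSS-CCA (channels against their one-sample delay) supplies the canonical components, and that the accelerometer channels are sampled synchronously with the EMG. The 0.3 threshold and the function names are hypothetical placeholders for the empirically determined values the protocol calls for. The implementation in [14] may decompose and score components differently.

```python
import numpy as np


def inv_sqrt(c):
    vals, vecs = np.linalg.eigh(c)
    return vecs @ np.diag(1.0 / np.sqrt(vals)) @ vecs.T


def bss_cca_demix(x):
    """Demixing matrix from CCA between zero-mean x(t) and x(t-1) (channels x samples)."""
    cur, lag = x[:, 1:], x[:, :-1]
    wc, wl = inv_sqrt(cur @ cur.T), inv_sqrt(lag @ lag.T)
    u, _, _ = np.linalg.svd(wc @ (cur @ lag.T) @ wl)
    return u.T @ wc


def remove_motion_components(emg, accel, threshold=0.3):
    """Zero components whose |corr| with any motion reference exceeds threshold."""
    mean = emg.mean(axis=1, keepdims=True)
    x = emg - mean
    demix = bss_cca_demix(x)
    sources = demix @ x
    refs = np.atleast_2d(accel)
    k = len(sources)
    # Correlation block: canonical components (rows) versus reference channels (columns)
    corr = np.corrcoef(np.vstack([sources, refs]))[:k, k:]
    score = np.abs(corr).max(axis=1)
    reject = score > threshold
    sources[reject] = 0.0
    cleaned = np.linalg.inv(demix) @ sources + mean
    return cleaned, score, reject


rng = np.random.default_rng(0)
fs, n = 1000.0, 5000
t = np.arange(n) / fs
motion = np.sin(2 * np.pi * 1.5 * t)                     # slow, strongly autocorrelated
emg = rng.standard_normal((6, 6)) @ np.vstack([motion, rng.standard_normal((5, n))])
accel = motion + 0.05 * rng.standard_normal(n)           # synchronized motion reference
cleaned, score, reject = remove_motion_components(emg, accel)
print(np.round(score, 2), reject)
```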

Signal Reconstruction and Validation

- Reconstruct the EMG signal excluding components identified as motion artifacts.

- Validate performance by comparing power spectral density before and after processing at frequency bands associated with motion artifacts (typically <20 Hz) and EMG signals (typically 20-500 Hz).

- Quantify performance using signal-to-noise ratio improvement and reduction in motion-related components.
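The spectral validation can be sketched with `scipy.signal.welch`, comparing band power below 20 Hz (motion-dominated) and between 20 and 500 Hz (EMG-dominated) before and after cleaning. The band edges follow the text. The segment length, pooling over channels, and the decibel summary are assumptions, and the toy "after" signal is an idealized cleaned output, not a real CCA result.

```python
import numpy as np
from scipy.signal import welch


def band_power_change_db(before, after, fs, bands=((0.0, 20.0), (20.0, 500.0)), nperseg=1024):
    """Welch band-power change (after relative to before, dB), pooled over channels."""
    f, p_before = welch(before, fs=fs, nperseg=nperseg, axis=-1)
    _, p_after = welch(after, fs=fs, nperseg=nperseg, axis=-1)
    changes = {}
    for lo, hi in bands:
        sel = (f >= lo) & (f < hi)
        changes[(lo, hi)] = 10.0 * np.log10(p_after[..., sel].sum() / p_before[..., sel].sum())
    return changes


rng = np.random.default_rng(0)
fs, n = 2048.0, 8192
t = np.arange(n) / fs
emg = rng.standard_normal((2, n))                   # broadband stand-in for EMG
before = emg + 5.0 * np.sin(2 * np.pi * 3 * t)      # plus a 3 Hz motion artifact
after = emg                                         # idealized cleaned output
print(band_power_change_db(before, after, fs))
```

A large negative change in the low band combined with a change near 0 dB in the EMG band is the pattern the protocol looks for.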

Protocol 2: WPD-CCA for Single-Channel EEG Motion Artifact Removal

This protocol implements the hybrid WPD-CCA approach validated in [5] for situations requiring single-channel artifact removal.

Research Reagent Solutions

Table 4: Essential Materials for EEG Artifact Removal

| Item | Specification | Function/Purpose |
|---|---|---|
| EEG Acquisition System | Mobile EEG with dry/wet electrodes | Signal acquisition in dynamic environments |
| Wavelet Toolbox | MATLAB Wavelet Toolbox or PyWavelets | Time-frequency decomposition |
| CCA Implementation | Custom scripts for single-channel application | Artifact component identification |
| Validation Dataset | Benchmark data with known artifacts | Method performance quantification |

Step-by-Step Procedure

Wavelet Packet Decomposition

- Select an appropriate wavelet packet (db1, db2, or fk4 based on [5] findings) for signal decomposition.

- Decompose the single-channel EEG signal into multiple nodes representing different frequency sub-bands.

- Apply a thresholding technique to identify nodes potentially contaminated by motion artifacts.

CCA-Based Artifact Removal

- Reconstruct potential artifact signals from the identified nodes.

- Apply CCA between the reconstructed artifact signals and the original EEG to identify correlated components.

- Remove components exceeding a predefined correlation threshold.

Signal Reconstruction and Validation

- Reconstruct the cleaned EEG signal from the remaining components.

- Quantify performance using artifact reduction percentage (η) and SNR improvement (ΔSNR) metrics as defined in [5].

- Compare against traditional methods (wavelet-only, EMD, ICA) to validate superior performance.

Implementation Tools and Visualizations

CCA Workflow Diagram

Figure 1: CCA-Based Artifact Removal Workflow

Comparative Method Analysis

Figure 2: Comparative Analysis of Artifact Removal Approaches

Discussion and Implementation Considerations

The comparative analysis demonstrates that CCA-based approaches offer significant advantages for motion artifact removal in scenarios where traditional filtering methods fail, particularly when artifact and signal frequencies overlap. The integration of CCA with other signal decomposition techniques (WPD-CCA, EMD-CCA) further enhances performance for single-channel applications [5].

Critical implementation considerations include:

- Reference Signals: CCA performance is enhanced with appropriate reference signals. When dedicated motion sensors are unavailable, pseudo-references can be created from the data itself through techniques like temporary notch filtering or identifying artifact-dominated channels [25].

- Computational Complexity: CCA requires greater computational resources than traditional filtering, which may impact real-time implementation. Optimization strategies include channel selection and dimensionality reduction.

- Parameter Optimization: CCA performance depends on proper parameter selection including window size, correlation thresholds, and component rejection criteria, which should be optimized for specific applications.

For research involving mobile brain imaging and ambulatory monitoring, CCA and its hybrid implementations provide powerful tools for maintaining data quality in ecologically valid environments where motion artifacts are inevitable.

Canonical Correlation Analysis represents a significant advancement over traditional filtering methods for motion artifact removal in physiological signal processing. By leveraging temporal correlation structure rather than relying solely on frequency separation, CCA effectively addresses the fundamental limitation of conventional approaches when artifacts and signals occupy overlapping spectral bands. The quantitative comparisons and detailed protocols provided in this application note equip researchers with the necessary framework to implement CCA-based artifact removal in their experimental paradigms, ultimately enhancing data quality and reliability in mobile electrophysiological research.

CCA Implementation Strategies: Techniques for Effective Motion Artifact Filtering

The efficacy of Canonical Correlation Analysis (CCA) in filtering motion artifacts from biomedical signals is profoundly influenced by the preprocessing pipeline applied to the raw data. Proper data scaling, centering, and dimension alignment are not merely preliminary steps but are foundational to ensuring that CCA can accurately separate neural signals from contamination originating from muscle activity, eye movement, and body motion [26]. These artifacts often exhibit amplitudes that surpass the cortical signals of interest, potentially leading to biased analysis and misinterpretation if not adequately addressed [26]. This application note provides a detailed protocol for establishing a robust preprocessing framework, contextualized within CCA-based motion artifact research for electrophysiological signals like EEG and fNIRS.

The core challenge in artifact removal lies in the spectral overlap between noise and brain activity, rendering simple frequency filters ineffective as they suppress informative neural signatures [26]. CCA, a blind source separation technique, excels in this context by exploiting the autocorrelation properties of signals to isolate artifacts [26]. However, its performance is contingent on the proper conditioning of input data. Scaling ensures that features on different numerical scales contribute equally to the analysis, preventing variables with larger variances from dominating the canonical correlation structure [27]. Centering, which adjusts the mean of each feature to zero, is critical for algorithms like CCA and Principal Component Analysis (PCA) to function correctly, as it aligns the data around a common origin [27]. Finally, dimension alignment guarantees that multi-channel datasets are structured appropriately for CCA to identify correlated sources across observations.

Theoretical Foundation

The Role of Centering and Scaling in CCA

Centering and scaling are crucial preprocessing steps that ensure the stability and interpretability of CCA results when applied to high-dimensional biomedical data.

Centering: This process adjusts the data so that each feature has a mean of zero. For a feature vector (X), the centered value (X') is calculated as: [ X' = X - \mu ] where (\mu) is the mean of the feature [27]. Centering removes bias introduced by non-zero means, ensuring that the first principal component describes the direction of maximum variance rather than the direction of the mean. This is particularly important in CCA, which seeks to maximize correlation between datasets; non-centered data can lead to skewed components that do not reflect the true underlying relationship [27].

Scaling: This adjusts the range of the data so that all features contribute comparably to the analysis. Without scaling, a feature with a naturally large range (e.g., 0-10,000) would disproportionately influence the correlation structure compared with a feature with a small range (e.g., 0-100). This matters most for regularized or sparse CCA variants and for any PCA-based dimensionality reduction applied before CCA. The canonical correlations of standard, unregularized CCA do not change when individual features are rescaled, although scaling can still improve numerical conditioning. Several scaling methods are commonly employed:

- Standardization (Z-score Normalization): This method transforms data to have a mean of 0 and a standard deviation of 1. The formula for a feature value (X) is: [ X_{\text{standardized}} = \frac{X - \mu}{\sigma} ] where (\sigma) is the standard deviation of the feature [27]. It is well suited to models that assume Gaussian-distributed inputs and is highly recommended for CCA, PCA, and Support Vector Machines (SVMs).

- Min-Max Scaling: This technique squeezes data into a fixed range, typically [0, 1]: [ X_{\text{scaled}} = \frac{X - X_{\min}}{X_{\max} - X_{\min}} ] It is beneficial when the model requires bounded input, such as neural networks with sigmoid activation functions [27].

- MaxAbs Scaling: This scales data by its maximum absolute value, preserving the sign of the original data and maintaining sparsity. It is calculated as: [ X_{\text{scaled}} = \frac{X}{\max \lvert X \rvert} ] This is useful for data containing both positive and negative values [27].
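Each of these rules is a one-liner in NumPy. The sketch below applies them column-wise, assuming features are columns and observations are rows; the function names are illustrative. Centering is the first half of `standardize`. The sample standard deviation (`ddof=1`) is an assumption; some libraries use the population form instead.

```python
import numpy as np


def standardize(x):
    """Z-score each column: zero mean, unit (sample) standard deviation."""
    return (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)


def min_max(x):
    """Rescale each column to the range [0, 1]."""
    x_min, x_max = x.min(axis=0), x.max(axis=0)
    return (x - x_min) / (x_max - x_min)


def max_abs(x):
    """Divide each column by its largest absolute value; keeps signs and zeros."""
    return x / np.abs(x).max(axis=0)


features = np.array([[1.0, -200.0], [2.0, 0.0], [3.0, 400.0], [6.0, 0.0]])
print(standardize(features).mean(axis=0))   # approximately [0, 0]
print(min_max(features))
print(max_abs(features))                    # second column becomes [-0.5, 0, 1, 0]
```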

Table 1: Comparison of Common Data Scaling Techniques

| Method | Formula | Best Used For | Pros | Cons |
|---|---|---|---|---|
| Standardization | ( X' = \frac{X - \mu}{\sigma} ) | Gaussian-distributed data; CCA, PCA, SVM [27] | Ensures equal feature contribution; stable convergence. | Sensitive to outliers. |
| Min-Max Scaling | ( X' = \frac{X - X_{\min}}{X_{\max} - X_{\min}} ) | Bounded data; neural networks [27] | Preserves original distribution in a fixed range. | Distorted by strong outliers. |
| MaxAbs Scaling | ( X' = \frac{X}{\max \lvert X \rvert} ) | Sparse data; data with positive/negative values [27] | Maintains sparsity and sign; computationally efficient. | Sensitive to large-magnitude outliers. |

Dimension Alignment via Canonical Correlation Analysis

Dimension alignment refers to the process of projecting multiple datasets into a shared, low-dimensional space where their correlated structures are maximized. CCA is a powerful statistical method for this purpose. Given two datasets (X) and (Y), CCA finds linear combinations of their variables, called canonical variates (U = X^T a) and (V = Y^T b), such that the correlation between (U) and (V) is maximized [26] [28].

This is achieved by solving a generalized eigenvalue problem involving the covariance matrices (\Sigma_{XX}), (\Sigma_{YY}), and the cross-covariance matrix (\Sigma_{XY}) [28]. In the context of motion artifact removal, the observed multi-channel EEG signal (X(t)) is considered a linear mixture of underlying source signals (S(t)), including both cerebral and artifactual components [26]. CCA decomposes (X(t)) into these sources, which can be automatically classified and removed based on features like autocorrelation before reconstructing the cleaned signal [26].

Experimental Protocols for CCA-Based Motion Artifact Removal

Protocol 1: CCA with Feature Extraction and GMM Clustering

This protocol outlines a method for real-time artifact removal from multichannel EEG signals, suitable for cognitive research applications [26].

Research Reagent Solutions:

- EEG Recording System: A wired EEG cap with multiple Ag/AgCl electrodes (e.g., 62 electrodes), a SynAmps2 system, and recording software [26].

- Computing Environment: Software for signal processing (e.g., MATLAB, Python with SciPy) capable of performing CCA, feature extraction, and GMM clustering.

- Visual Stimulation Setup: A monitor for presenting visual-evoked potential (VEP) and steady-state VEP (SSVEP) tasks to validate the method [26].

Step-by-Step Procedure:

- Data Acquisition and Preprocessing:

- Source Separation via CCA:
  - Let the preprocessed EEG signals be \(X(t) = [x_1(t), x_2(t), \ldots, x_M(t)]^T\), where \(M\) is the number of channels.
  - CCA is applied to \(X(t)\) and its time-delayed version \(Y(t) = X(t-1)\) to find the demixing matrix \(W\) that yields the sources \(\hat{S}(t) = W X(t)\) [26].
  - The derived components \(\hat{S}(t)\) are ordered by their autocorrelation coefficients, with artifacts typically having lower autocorrelation [26].

- Feature Extraction:
  - Extract representative features from each CCA-derived component. Useful features include spectral powers and temporal features such as fractal dimension and higher-order statistics, which are effective for identifying ocular artifacts [26].

- Artifact Classification with GMM:
  - Use the extracted features to train a Gaussian Mixture Model (GMM). The GMM clusters the features into groups corresponding to artifacts and brain signals without requiring manually labeled data [26].
  - Components identified as artifacts are removed by setting their corresponding columns in the mixing matrix \(A = W^{-1}\) to zero, yielding \(\tilde{A}\) [26].

- Signal Reconstruction:
  - Reconstruct the artifact-free EEG signals \(\hat{X}(t)\) using the corrected mixing matrix: \(\hat{X}(t) = \tilde{A}\,\hat{S}(t)\) [26].
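The source-separation and reconstruction steps can be sketched in Python with NumPy and SciPy. This is a minimal illustration under stated assumptions, not the implementation from [26]:

- It uses a lag of one sample.
- It ranks components by the canonical correlation between each source and its delayed copy.
- For brevity, it replaces the feature-extraction and GMM stages with a fixed threshold on that correlation. The `threshold=0.5` value and the helper names `bss_cca` and `remove_components` are hypothetical.
- The synthetic data mix two sinusoidal "brain" sources with a white-noise stand-in for a low-autocorrelation artifact.

```python
import numpy as np
from scipy.linalg import eigh


def bss_cca(X, delay=1):
    """CCA between X(t) and X(t - delay); X has shape (channels, samples).

    Returns the demixing matrix W (sources = W @ centered X) and the canonical
    correlations rho, both sorted from most to least autocorrelated.
    """
    Xc = X - X.mean(axis=1, keepdims=True)
    X_now, X_lag = Xc[:, delay:], Xc[:, :-delay]
    n = X_now.shape[1]
    C_xx = X_now @ X_now.T / (n - 1)
    C_yy = X_lag @ X_lag.T / (n - 1)
    C_xy = X_now @ X_lag.T / (n - 1)
    # Symmetric generalized eigenproblem: C_xy C_yy^{-1} C_yx w = rho^2 C_xx w
    lhs = C_xy @ np.linalg.solve(C_yy, C_xy.T)
    evals, evecs = eigh(lhs, C_xx)
    order = np.argsort(evals)[::-1]
    rho = np.sqrt(np.clip(evals[order], 0.0, 1.0))
    W = evecs[:, order].T
    return W, rho


def remove_components(X, threshold=0.5):
    """Zero the mixing-matrix columns of components whose rho < threshold."""
    mean = X.mean(axis=1, keepdims=True)
    W, rho = bss_cca(X)
    S_hat = W @ (X - mean)        # estimated sources
    A_tilde = np.linalg.inv(W)    # mixing matrix A = W^{-1}
    A_tilde[:, rho < threshold] = 0.0
    return A_tilde @ S_hat + mean, rho


# Synthetic 3-channel example (all values are illustrative)
fs, n_samples = 250, 2000
t = np.arange(n_samples) / fs
brain = np.vstack([np.sin(2 * np.pi * 10 * t), np.sin(2 * np.pi * 6 * t)])
artifact = np.random.default_rng(0).standard_normal(n_samples)
A_true = np.array([[1.0, 0.5, 0.8], [0.3, 1.0, 0.6], [0.7, 0.4, 1.0]])
X = A_true @ np.vstack([brain, artifact])
X_clean, rho = remove_components(X)
reference = A_true[:, :2] @ brain   # each channel without the artifact
print(np.round(rho, 3))
print([round(np.corrcoef(X_clean[c], reference[c])[0, 1], 3) for c in range(3)])
```

In a real recording, the fixed threshold would give way to the feature-based GMM classification described in the protocol.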

The following workflow diagram illustrates this multi-stage process for artifact removal:

Diagram 1: CCA-GMM Artifact Removal Workflow

Protocol 2: Wavelet Packet Decomposition with CCA (WPD-CCA) for Single-Channel Signals

This protocol describes a two-stage method for correcting motion artifacts in single-channel EEG and fNIRS signals, where standard CCA is not directly applicable [5].

Research Reagent Solutions:

- Single-Channel Recorder: A wearable EEG or fNIRS sensor.

- Wavelet Toolbox: Access to wavelet packet decomposition functions (e.g., in MATLAB or Python's PyWavelets).

- Benchmark Dataset: A publicly available dataset containing motion-artifact-corrupted signals with ground-truth clean segments for validation [5].

Step-by-Step Procedure:

- Signal Decomposition using WPD:
  - Select a wavelet packet family (e.g., Daubechies (db1), Symlets (sym4), or Fejer-Korovkin (fk4)) and a decomposition level.
  - Decompose the single-channel signal into multiple nodes or sub-bands using Wavelet Packet Decomposition (WPD). This acts as a first stage of noise separation [5].

- Create Multichannel Input for CCA:
  - Select a set of relevant WPD nodes (e.g., the most artifact-contaminated nodes) to form a new multichannel signal. This step artificially creates a multi-dimensional dataset from a single channel, enabling the use of CCA [5].

- Apply CCA for Artifact Removal:
  - Apply CCA to the newly formed multichannel signal. CCA identifies and isolates the artifact components within this derived dataset [5].
  - Remove the components identified as artifacts.

- Signal Reconstruction:
  - Reconstruct the WPD nodes from the remaining clean CCA components, then apply the inverse WPD transform to obtain the denoised signal.
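This is a compact sketch of the four WPD-CCA stages. It uses PyWavelets (`pywt.WaveletPacket`) and the same lag-one CCA source separation described in Protocol 1. The choices here are assumptions for illustration, not the settings of [5]:

- All nodes at decomposition level 3 serve as pseudo-channels.
- Components are removed with a hypothetical autocorrelation threshold.
- `wpd_cca` and `bss_cca` are illustrative helper names.

```python
import numpy as np
import pywt
from scipy.linalg import eigh


def bss_cca(X, delay=1):
    """CCA between rows of X(t) and X(t - delay); returns demixing W and rho (descending)."""
    Xc = X - X.mean(axis=1, keepdims=True)
    now, lag = Xc[:, delay:], Xc[:, :-delay]
    n = now.shape[1]
    C_xx, C_yy, C_xy = now @ now.T / (n - 1), lag @ lag.T / (n - 1), now @ lag.T / (n - 1)
    evals, evecs = eigh(C_xy @ np.linalg.solve(C_yy, C_xy.T), C_xx)
    order = np.argsort(evals)[::-1]
    return evecs[:, order].T, np.sqrt(np.clip(evals[order], 0.0, 1.0))


def wpd_cca(x, wavelet="db1", level=3, threshold=0.5):
    """Hypothetical WPD-CCA sketch for a single-channel signal x."""
    x = np.asarray(x, dtype=float)
    # Stage 1: wavelet packet decomposition
    wp = pywt.WaveletPacket(data=x, wavelet=wavelet, mode="symmetric", maxlevel=level)
    nodes = wp.get_level(level, order="natural")
    # Stage 2: stack node coefficients as pseudo-channels
    N = np.vstack([node.data for node in nodes])
    mean = N.mean(axis=1, keepdims=True)
    # Stage 3: CCA source separation and removal of low-autocorrelation components
    W, rho = bss_cca(N)
    S_hat = W @ (N - mean)
    A_tilde = np.linalg.inv(W)
    A_tilde[:, rho < threshold] = 0.0
    N_clean = A_tilde @ S_hat + mean
    # Stage 4: inverse WPD from the cleaned nodes
    rebuilt = pywt.WaveletPacket(data=None, wavelet=wavelet, mode="symmetric", maxlevel=level)
    for node, coeffs in zip(nodes, N_clean):
        rebuilt[node.path] = coeffs
    return rebuilt.reconstruct(update=False)[: len(x)]


rng = np.random.default_rng(0)
t = np.arange(1024) / 256.0
x = np.sin(2 * np.pi * 2 * t) + 0.3 * rng.standard_normal(t.size)
denoised = wpd_cca(x)
print(denoised.shape)
```

Calling `wpd_cca(x, threshold=0.0)` removes nothing and returns `x` up to rounding. This is a quick sanity check of the decomposition and reconstruction round trip.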


The diagram below illustrates this two-stage denoising approach for single-channel signals:

Diagram 2: WPD-CCA Single-Channel Denoising

Performance Metrics and Comparative Analysis

The performance of artifact removal pipelines is quantitatively evaluated using standardized metrics. The following table summarizes the reported efficacy of different CCA-based methods discussed in the protocols.

Table 2: Performance Comparison of CCA-Based Artifact Removal Methods

| Method | Signal Type | Key Performance Metrics | Reported Results | Reference |
|---|---|---|---|---|
| CCA with GMM | Multichannel EEG | Qualitative/visual inspection of temporal and spectral preservation | Effective removal of blinks, head/body movement, and chewing artifacts while preserving spectral features important for cognitive research | [26] |
| WPD-CCA | Single-channel EEG | \(\Delta \mathrm{SNR}\) (dB): improvement in signal-to-noise ratio; \(\eta\) (%): percentage reduction in motion artifacts | \(\Delta \mathrm{SNR} = 30.76\) dB, \(\eta = 59.51\%\) (db1 wavelet) | [5] |
| WPD-CCA | Single-channel fNIRS | \(\Delta \mathrm{SNR}\) (dB), \(\eta\) (%) | \(\Delta \mathrm{SNR} = 16.55\) dB, \(\eta = 41.40\%\) (fk8 wavelet) | [5] |
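The ΔSNR metric can be computed on data with a known clean reference, as sketched below. The exact definitions used in [5] are not reproduced here. This sketch assumes the common definition of SNR as the ratio, in dB, of clean-signal power to residual-error power, and the function names are hypothetical. η is omitted because its formula is not given in this section.

```python
import numpy as np


def snr_db(clean, estimate):
    """SNR (dB) of `estimate` against the clean reference: signal power / error power."""
    clean = np.asarray(clean, dtype=float)
    error = np.asarray(estimate, dtype=float) - clean
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum(error ** 2))


def delta_snr_db(clean, corrupted, denoised):
    """Improvement in SNR (dB) achieved by the artifact-removal step."""
    return snr_db(clean, denoised) - snr_db(clean, corrupted)


t = np.arange(1000) / 250.0
clean = np.sin(2 * np.pi * 10 * t)
noise = np.random.default_rng(1).standard_normal(t.size)
corrupted = clean + noise
denoised = clean + 0.1 * noise        # error power reduced 100-fold
delta = delta_snr_db(clean, corrupted, denoised)
print(delta)   # 20 dB, up to floating-point rounding
```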

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for CCA Filtering Experiments

| Item | Function/Application | Example Specifications |
|---|---|---|
| High-Density EEG System | Captures electrical brain activity with high spatial resolution for effective source separation. | 60+ Ag/AgCl electrodes; impedance < 5 kΩ; sampling rate ≥ 1 kHz [26]. |
| Mobile EEG (mo-EEG) | Enables data collection in naturalistic settings, where motion artifacts are prevalent. | Wearable, fewer electrodes, accelerometer for motion tracking [2]. |
| Visual Stimulation System | Provides controlled stimuli to evoke brain responses for validating artifact removal methods. | Monitor with precise flicker frequencies (e.g., 1 Hz for VEP, 15 Hz for SSVEP) [26]. |
| Computational Software | Platform for implementing CCA, wavelet transforms, and machine learning models. | MATLAB, Python (with scikit-learn, PyWavelets, MNE-Python). |
| Wavelet Packets | Base functions for decomposing signals in the WPD-CCA method. | Daubechies (db1, db2), Symlets (sym4), Fejer-Korovkin (fk4, fk8) [5]. |

Critical Considerations and Best Practices

- Pipeline Order is Critical: Preprocessing steps are often linear projections. Performing them in a modular, sequential manner (e.g., motion regression followed by temporal filtering) can reintroduce artifacts previously removed. Where possible, combine nuisance regressions into a single projection or use sequential orthogonalization [29].

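- Data Scaling is Algorithm-Dependent: Centering is required for CCA. Rescaling individual variables leaves the canonical correlations of standard CCA unchanged and changes only the weights. Scaling does, however, affect regularized CCA, and it is crucial for distance- and margin-based methods such as k-NN and SVM. It is unnecessary for tree-based algorithms like Random Forests. Always select preprocessing based on the underlying model [27].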

- Validation with Realistic Data: Use datasets that include real-world motion artifacts and, if possible, ground-truth clean signals or known evoked potentials (like VEP) to objectively assess the performance of the artifact removal pipeline without compromising neural signals of interest [26] [2] [5].
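The pipeline-order point can be checked on synthetic signals. In this sketch, the signals and the 5 Hz Butterworth low-pass are illustrative assumptions:

- A nuisance regressor with slow and fast parts is regressed out, and the residual is then low-pass filtered. The filtering step reintroduces correlation with the nuisance regressor.
- Applying the same filter to the regressor before regression keeps the residual orthogonal to the filtered regressor. This is one form of the combined approach described in [29].

```python
import numpy as np
from scipy.signal import butter, filtfilt

fs = 100.0
t = np.arange(1000) / fs                  # 10 s at 100 Hz
slow = np.sin(2 * np.pi * 0.5 * t)        # slow part of the nuisance regressor
fast = np.sin(2 * np.pi * 20.0 * t)       # fast part of the nuisance regressor
signal = np.sin(2 * np.pi * 1.5 * t)      # signal of interest
z = slow + fast                           # nuisance regressor (e.g., a motion trace)
y = signal + fast                         # data contaminated only by the fast part

b, a = butter(4, 5.0, btype="low", fs=fs)  # 4th-order 5 Hz low-pass


def lowpass(v):
    """Zero-phase low-pass filtering."""
    return filtfilt(b, a, v)


def regress_out(data, regressor):
    """Residual of `data` after least-squares regression on [1, regressor]."""
    design = np.column_stack([np.ones_like(regressor), regressor])
    beta, *_ = np.linalg.lstsq(design, data, rcond=None)
    return data - design @ beta


def corr(u, v):
    return np.corrcoef(u, v)[0, 1]


residual = regress_out(y, z)
sequential = lowpass(residual)                   # regress, then filter
combined = regress_out(lowpass(y), lowpass(z))   # filter data and regressor, then regress
print(corr(residual, z))            # ~0 right after regression
print(corr(sequential, z))          # clearly non-zero: nuisance correlation reintroduced
print(corr(combined, lowpass(z)))   # ~0: orthogonal to the filtered regressor
```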

Canonical Correlation Analysis (CCA) is a powerful multivariate statistical method used to uncover relationships between two sets of variables. In biomedical research, CCA has proven particularly valuable for data fusion and multimodal integration, allowing researchers to find shared information across different data types or modalities [30]. The core objective of CCA is to identify and quantify the associations between two multidimensional variable sets by finding linear combinations that exhibit maximum correlation with each other. These linear combinations, known as canonical variates, provide insights into the underlying relationships between the datasets.

The application of CCA has expanded significantly in biomedical contexts, including neuroimaging studies where it facilitates the fusion of functional magnetic resonance imaging (fMRI), electroencephalography (EEG), and structural MRI (sMRI) data [30]. More recently, CCA has been successfully implemented in motion artifact removal pipelines for mobile EEG systems, demonstrating its practical utility in addressing challenging signal processing problems in real-world biomedical recordings [25]. The method's flexibility allows it to accommodate data from various experimental paradigms, including resting state studies and naturalistic settings where traditional model-based approaches often prove insufficient.

Theoretical Foundation of CCA

Mathematical Formulation

Given two centered datasets, X ∈ ℝ^{n×p} and Y ∈ ℝ^{n×q}, representing two different views of the same n observations, CCA seeks to find weight vectors a ∈ ℝ^p and b ∈ ℝ^q such that the correlation between the canonical variates u = Xa and v = Yb is maximized. This leads to the optimization problem:

$$\rho = \max_{a, b} \operatorname{corr}(Xa, Yb) = \max_{a, b} \frac{a^T \Sigma_{xy} b}{\sqrt{a^T \Sigma_{xx} a}\,\sqrt{b^T \Sigma_{yy} b}}$$

where \(\Sigma_{xx}\) and \(\Sigma_{yy}\) are the within-set covariance matrices of X and Y, respectively, and \(\Sigma_{xy}\) is the between-set covariance matrix.

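The solution involves solving the eigenvalue equations

$$\Sigma_{xx}^{-1} \Sigma_{xy} \Sigma_{yy}^{-1} \Sigma_{yx}\, a = \rho^2 a$$

$$\Sigma_{yy}^{-1} \Sigma_{yx} \Sigma_{xx}^{-1} \Sigma_{xy}\, b = \rho^2 b$$

where \(\Sigma_{yx} = \Sigma_{xy}^T\).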

Subsequent canonical variates are uncorrelated with previous ones and account for the remaining correlation structure. The number of canonical variate pairs is min(rank(X), rank(Y)).
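Here is a quick numerical check of the two eigenvalue equations, on simulated data with one shared latent component. Sizes and seed are arbitrary. Both matrices share the same nonzero eigenvalues \(\rho^2\). The larger matrix has p - q additional eigenvalues near zero, consistent with at most min(p, q) canonical pairs for full-rank data.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p, q = 500, 4, 3
latent = rng.standard_normal(n)              # component shared by both sets
X = rng.standard_normal((n, p))
X[:, 0] += latent
Y = rng.standard_normal((n, q))
Y[:, 0] += latent
X -= X.mean(axis=0)                          # centered, as the formulation assumes
Y -= Y.mean(axis=0)

S_xx = X.T @ X / (n - 1)
S_yy = Y.T @ Y / (n - 1)
S_xy = X.T @ Y / (n - 1)
S_yx = S_xy.T

# Left-hand matrices of the two eigenvalue equations
M_a = np.linalg.solve(S_xx, S_xy) @ np.linalg.solve(S_yy, S_yx)   # p x p
M_b = np.linalg.solve(S_yy, S_yx) @ np.linalg.solve(S_xx, S_xy)   # q x q
ev_a = np.sort(np.linalg.eigvals(M_a).real)[::-1]
ev_b = np.sort(np.linalg.eigvals(M_b).real)[::-1]

print("rho:", np.round(np.sqrt(ev_b), 3))
print("shared eigenvalues agree:", np.allclose(ev_a[:q], ev_b))
print("extra eigenvalues of M_a:", ev_a[q:])   # p - q values, numerically ~0
```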

Table 1: Key Mathematical Components of Standard CCA

| Component | Symbol | Description | Dimension |
|---|---|---|---|
| Dataset 1 | X | First set of variables (e.g., brain signals) | n×p |
| Dataset 2 | Y | Second set of variables (e.g., motion sensors) | n×q |
| Canonical weights | a, b | Linear transformation vectors | p×1, q×1 |
| Canonical variates | u, v | Projected data: u = Xa, v = Yb | n×1 |
| Canonical correlation | ρ | Correlation between u and v | Scalar |

Assumptions and Considerations

Successful application of CCA requires attention to several key assumptions. CCA captures only linear relationships between the two variable sets. Multivariate normality and homoscedasticity are needed mainly for classical significance tests such as Bartlett's test, not for computing the canonical variates themselves. Outliers can strongly affect the results, making robust preprocessing essential. CCA also requires adequate sample sizes; a common recommendation is at least 10-20 observations per variable for stable solutions. Multicollinearity within either dataset can destabilize the weight estimates, which may call for regularization in the high-dimensional settings common to biomedical data.
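To see why sample size matters, this sketch computes the first canonical correlation between two sets of 10 mutually independent variables. The data are simulated, and sizes and seed are arbitrary. The true canonical correlation is zero. Even so, with n = 30 observations the sample estimate is large, while with n = 3000 it is close to zero.

The canonical correlations are computed as the singular values of \(Q_X^T Q_Y\), where \(Q_X\) and \(Q_Y\) are orthonormal bases from QR decompositions of the centered data. This is a standard, numerically stable alternative to inverting the covariance matrices.

```python
import numpy as np


def canonical_corrs(X, Y):
    """Canonical correlations as singular values of Qx^T Qy (QR of centered data)."""
    Qx, _ = np.linalg.qr(X - X.mean(axis=0))
    Qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    return np.linalg.svd(Qx.T @ Qy, compute_uv=False)


rng = np.random.default_rng(42)
p = q = 10
first_rho = {}
for n in (30, 3000):
    X = rng.standard_normal((n, p))   # X and Y are independent by construction
    Y = rng.standard_normal((n, q))
    first_rho[n] = canonical_corrs(X, Y)[0]
    print(n, round(first_rho[n], 2))
```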

Standard CCA Protocol for Biomedical Data

Preprocessing and Data Preparation

Step 1: Data Collection and Feature Extraction

Collect raw data from your target modalities. For motion artifact correction in EEG, this typically includes scalp EEG recordings and reference noise signals, either from dedicated noise sensors or created as pseudo-references from the EEG itself [25]. Extract relevant features or use the full multidimensional data, depending on your research question. For temporal signals such as EEG, apply appropriate windowing.
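For continuous recordings, windowing can be as simple as cutting the multichannel signal into overlapping segments before CCA is applied to each segment. The 2 s window and 1 s step below are hypothetical defaults, not values from the cited work.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def make_windows(signal, fs, win_s=2.0, step_s=1.0):
    """Split a (channels, samples) array into overlapping windows.

    Returns an array of shape (n_windows, channels, window_samples).
    """
    win = int(round(win_s * fs))
    step = int(round(step_s * fs))
    views = sliding_window_view(signal, win, axis=-1)   # (channels, samples - win + 1, win)
    return np.transpose(views[:, ::step, :], (1, 0, 2))


eeg = np.random.default_rng(0).standard_normal((4, 2500))   # 4 channels, 10 s at 250 Hz
windows = make_windows(eeg, fs=250)
print(windows.shape)   # (9, 4, 500)
```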

Step 2: Data Cleaning and Normalization

Center each variable by subtracting its mean. Consider standardizing variables to unit variance if they are measured on different scales, though this decision should be guided by domain knowledge. Address missing values through appropriate imputation or exclusion. Detect and treat outliers to prevent skewed results.
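The sketch below standardizes variables. It also illustrates that, once the data are centered, rescaling or shifting individual variables leaves the canonical correlations of standard CCA unchanged; only the weights change. Standardization therefore matters mainly for interpreting weights and for regularized CCA. The data and scale factors are arbitrary.

```python
import numpy as np


def standardize(Z):
    """Center each column and scale it to unit variance."""
    return (Z - Z.mean(axis=0)) / Z.std(axis=0, ddof=1)


def canonical_corrs(X, Y):
    """Canonical correlations of the (internally centered) data via QR + SVD."""
    Qx, _ = np.linalg.qr(X - X.mean(axis=0))
    Qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    return np.linalg.svd(Qx.T @ Qy, compute_uv=False)


rng = np.random.default_rng(0)
n = 200
latent = rng.standard_normal(n)
X = np.column_stack([latent + rng.standard_normal(n), rng.standard_normal(n)])
Y = np.column_stack([latent + rng.standard_normal(n), rng.standard_normal(n)])
X_units = X * np.array([100.0, 0.01]) + 50.0   # same variables in other units/offsets

print(canonical_corrs(X, Y))
print(canonical_corrs(X_units, Y))               # same values up to rounding
print(canonical_corrs(standardize(X_units), Y))  # same again
```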

Step 3: Data Integration and Alignment

Ensure temporal alignment between datasets when working with time-series data. Verify subject-wise correspondence in multi-subject studies. For feature-level fusion, create matched feature matrices X and Y with equal numbers of observations (subjects or time points).
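Here is a minimal sketch of temporal alignment between EEG and a lower-rate motion sensor such as an accelerometer. The sensor is linearly interpolated onto the EEG time base, and both are cropped to their common time span. The sampling rates, the 0.2 s offset, and the helper `align_to_reference` are hypothetical. Real recordings would rely on shared hardware triggers or timestamps to establish the common time base.

```python
import numpy as np


def align_to_reference(t_ref, t_other, other):
    """Interpolate `other` (samples x channels, sampled at t_other) onto t_ref.

    Only reference samples inside the overlapping time span are kept, so the
    returned arrays have matching numbers of rows.
    """
    start, stop = max(t_ref[0], t_other[0]), min(t_ref[-1], t_other[-1])
    keep = (t_ref >= start) & (t_ref <= stop)
    aligned = np.column_stack(
        [np.interp(t_ref[keep], t_other, other[:, j]) for j in range(other.shape[1])]
    )
    return keep, aligned


t_eeg = np.arange(2500) / 250.0                      # EEG: 10 s at 250 Hz
t_acc = 0.2 + np.arange(900) / 100.0                 # accelerometer: 100 Hz, starts 0.2 s later
acc = np.column_stack([t_acc, 2 * t_acc, -t_acc])    # toy 3-axis trace
eeg = np.random.default_rng(0).standard_normal((2500, 8))

keep, acc_aligned = align_to_reference(t_eeg, t_acc, acc)
X, Y = eeg[keep], acc_aligned                        # matched rows for CCA
print(X.shape, Y.shape)
```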

Core CCA Implementation

Step 4: Covariance Matrix Computation

Calculate the covariance matrices \(\Sigma_{xx} = X^T X/(n-1)\), \(\Sigma_{yy} = Y^T Y/(n-1)\), and \(\Sigma_{xy} = X^T Y/(n-1)\). For high-dimensional data where p or q exceeds n, the corresponding within-set covariance matrix is singular; consider regularization approaches to ensure invertibility.
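When p or q exceeds n, the sample covariance matrix is singular, as the rank check below shows. A common remedy is ridge-type regularization, which adds \(\lambda I\) to \(\Sigma_{xx}\) and \(\Sigma_{yy}\) before inversion.

In this sketch, λ is set to a fraction of each set's average variance; the fraction `shrink` is a hypothetical parameter. In practice λ is usually tuned by cross-validation. The regularized correlation returned is always below 1. Without regularization, a perfect in-sample correlation is trivially attainable when p exceeds n.

```python
import numpy as np


def first_canonical_corr_ridge(X, Y, shrink=0.5):
    """First regularized canonical correlation and weights.

    Adds lambda * I to each within-set covariance, with lambda equal to
    `shrink` times the average variance of that set (a hypothetical choice).
    """
    n = X.shape[0]
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    S_xx, S_yy, S_xy = X.T @ X / (n - 1), Y.T @ Y / (n - 1), X.T @ Y / (n - 1)
    S_xx = S_xx + shrink * np.trace(S_xx) / S_xx.shape[0] * np.eye(S_xx.shape[0])
    S_yy = S_yy + shrink * np.trace(S_yy) / S_yy.shape[0] * np.eye(S_yy.shape[0])
    M = np.linalg.solve(S_xx, S_xy) @ np.linalg.solve(S_yy, S_xy.T)
    evals, evecs = np.linalg.eig(M)
    i = np.argmax(evals.real)
    a = evecs[:, i].real
    b = np.linalg.solve(S_yy, S_xy.T @ a)
    return np.sqrt(evals.real[i]), a, b


rng = np.random.default_rng(0)
n, p, q = 50, 200, 150                     # more variables than observations
X = rng.standard_normal((n, p))
Y = rng.standard_normal((n, q))
Xc = X - X.mean(axis=0)
rank_xx = np.linalg.matrix_rank(Xc.T @ Xc)
print(rank_xx, "of", p)                    # unregularized Sigma_xx is singular
rho, a, b = first_canonical_corr_ridge(X, Y)
print(rho)
```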

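Step 5: Eigenvalue Decomposition

Solve the CCA eigenvalue problem through (generalized) eigenvalue decomposition. Ready-made routines exist in most scientific computing environments, for example cancor in R, canoncorr in MATLAB, and the CCA class in scikit-learn's sklearn.cross_decomposition module for Python. The scikit-learn class uses an iterative algorithm rather than an explicit eigendecomposition. A direct implementation typically involves: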

- Computing \(\Sigma_{xx}^{-1}\) and \(\Sigma_{yy}^{-1}\) (or regularized equivalents)
- Forming the matrix \(M = \Sigma_{xx}^{-1} \Sigma_{xy} \Sigma_{yy}^{-1} \Sigma_{yx}\)
- Performing eigenvalue decomposition on \(M\) to obtain eigenvalues \(\rho_i^2\) and eigenvectors \(a_i\)

Step 6: Canonical Variate Calculation

Compute the canonical variates for both datasets: \(u_i = X a_i\) and \(v_i = Y b_i\) for \(i = 1, \ldots, k\), where \(k = \min(\mathrm{rank}(X), \mathrm{rank}(Y))\). The vectors \(b_i\) are obtained as \(b_i = \Sigma_{yy}^{-1} \Sigma_{yx} a_i / \rho_i\).
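Steps 4 to 6 can be sketched directly with NumPy on simulated data. One latent variable is shared by the first column of each set, and sizes and seed are arbitrary. The check at the end confirms that each pair of canonical variates built with \(b_i = \Sigma_{yy}^{-1}\Sigma_{yx} a_i / \rho_i\) has correlation \(\rho_i\). For production use, a QR/SVD-based solver is numerically preferable to forming \(M\) explicitly.

```python
import numpy as np


def cca_eig(X, Y):
    """Steps 4-6: covariances, eigendecomposition of M, weights and variates."""
    n = X.shape[0]
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    S_xx, S_yy, S_xy = X.T @ X / (n - 1), Y.T @ Y / (n - 1), X.T @ Y / (n - 1)
    M = np.linalg.solve(S_xx, S_xy) @ np.linalg.solve(S_yy, S_xy.T)
    evals, evecs = np.linalg.eig(M)
    k = min(np.linalg.matrix_rank(X), np.linalg.matrix_rank(Y))
    order = np.argsort(evals.real)[::-1][:k]
    rho = np.sqrt(np.clip(evals.real[order], 0.0, None))
    A = evecs[:, order].real                       # columns a_i
    B = np.linalg.solve(S_yy, S_xy.T @ A) / rho    # b_i = S_yy^{-1} S_yx a_i / rho_i
    return rho, A, B, X @ A, Y @ B                 # U = XA, V = YB (centered data)


rng = np.random.default_rng(1)
n = 500
latent = rng.standard_normal(n)
X = rng.standard_normal((n, 4))
X[:, 0] += latent
Y = rng.standard_normal((n, 3))
Y[:, 0] += latent
rho, A, B, U, V = cca_eig(X, Y)
print(np.round(rho, 3))
print([round(np.corrcoef(U[:, i], V[:, i])[0, 1], 3) for i in range(len(rho))])
```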

Step 7: Correlation and Significance Testing

Examine the canonical correlations \(\rho_i\), each of which is the correlation between a pair of canonical variates. Determine the number of significant canonical correlations with Bartlett's test or with permutation testing.
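Both significance tests from Step 7 are sketched below on simulated data:

- The Bartlett statistic uses the sequential form, in which the i-th test asks whether the i-th and all later canonical correlations are zero. P-values come from SciPy's chi-squared survival function.
- The permutation test shuffles the rows of Y to break the pairing between the datasets.

The helper names and the permutation counts are illustrative choices.

```python
import numpy as np
from scipy.stats import chi2


def canonical_corrs(X, Y):
    """Canonical correlations via QR of the centered data and an SVD."""
    Qx, _ = np.linalg.qr(X - X.mean(axis=0))
    Qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    return np.linalg.svd(Qx.T @ Qy, compute_uv=False)


def bartlett_tests(rho, n, p, q):
    """Sequential Bartlett tests; row i tests H0: rho_i = ... = rho_k = 0."""
    scale = -(n - 1 - (p + q + 1) / 2)
    rows = []
    for i in range(1, len(rho) + 1):
        stat = scale * np.sum(np.log(1 - rho[i - 1:] ** 2))
        df = (p - i + 1) * (q - i + 1)
        rows.append((i, stat, df, chi2.sf(stat, df)))
    return rows


def permutation_pvalue(X, Y, n_perm=999, seed=0):
    """P-value for the first canonical correlation under row-shuffling of Y."""
    rng = np.random.default_rng(seed)
    observed = canonical_corrs(X, Y)[0]
    null = np.array([canonical_corrs(X, Y[rng.permutation(len(Y))])[0]
                     for _ in range(n_perm)])
    return (1 + np.sum(null >= observed)) / (n_perm + 1)


rng = np.random.default_rng(2)
n, p, q = 200, 3, 2
latent = rng.standard_normal(n)
X = rng.standard_normal((n, p))
X[:, 0] += latent
Y = rng.standard_normal((n, q))
Y[:, 0] += latent
rho = canonical_corrs(X, Y)
for i, stat, df, pval in bartlett_tests(rho, n, p, q):
    print(f"i={i}: chi2={stat:.2f}, df={df}, p={pval:.3g}")
print("permutation p =", permutation_pvalue(X, Y))
```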

Table 2: CCA Implementation Steps with Key Equations

| Step | Key Operation | Mathematical Formula | Implementation Notes |
|---|---|---|---|
| Covariance Computation | Matrix multiplication | \(\Sigma_{xy} = X^T Y/(n-1)\) | Handle missing values appropriately |
| Eigenvalue Solution | Generalized EVD | \(M = \Sigma_{xx}^{-1} \Sigma_{xy} \Sigma_{yy}^{-1} \Sigma_{yx}\) | Use regularization if matrices are singular |
| Variate Calculation | Linear projection | \(u_i = X a_i\), \(v_i = Y b_i\) | Center data before projection |
| Significance Testing | Hypothesis testing | Bartlett's \(\chi^2 = -\left(n - 1 - \frac{p+q+1}{2}\right) \sum_{j=i}^{k} \ln(1 - \rho_j^2)\) | df = \((p-i+1)(q-i+1)\) when testing the i-th and all subsequent correlations |

Interpretation and Validation

Step 8: Result Interpretation

Interpret the canonical weight vectors a and b to understand which variables contribute most to the relationships. Examine the canonical loadings (correlations between original variables and canonical variates) for more stable interpretations, especially under multicollinearity.
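Canonical loadings can be obtained by correlating each original variable with the canonical variates. In this simulated example, with arbitrary sizes and noise levels, two nearly collinear X variables both carry the shared signal. The weights can split between them unstably, whereas both loadings are high. The QR/SVD route to the weights is a standard alternative to the eigenvalue formulation, and the helper names are illustrative.

```python
import numpy as np


def cca_qr(X, Y):
    """Canonical correlations, weights and variates via QR + SVD of centered data."""
    Xc, Yc = X - X.mean(axis=0), Y - Y.mean(axis=0)
    Qx, Rx = np.linalg.qr(Xc)
    Qy, Ry = np.linalg.qr(Yc)
    Ux, rho, Vt = np.linalg.svd(Qx.T @ Qy, full_matrices=False)
    A = np.linalg.solve(Rx, Ux)        # X weights (columns a_i)
    B = np.linalg.solve(Ry, Vt.T)      # Y weights (columns b_i)
    return rho, A, B, Xc @ A, Yc @ B


def loadings(Z, variates):
    """Pearson correlations between the columns of Z and the canonical variates."""
    Zs = (Z - Z.mean(axis=0)) / Z.std(axis=0)
    Vs = (variates - variates.mean(axis=0)) / variates.std(axis=0)
    return Zs.T @ Vs / Z.shape[0]


rng = np.random.default_rng(3)
n = 300
latent = rng.standard_normal(n)
X = np.column_stack([latent + 0.1 * rng.standard_normal(n),   # two nearly collinear
                     latent + 0.1 * rng.standard_normal(n),   # copies of the signal
                     rng.standard_normal(n)])
Y = np.column_stack([latent + rng.standard_normal(n), rng.standard_normal(n)])
rho, A, B, U, V = cca_qr(X, Y)
print("weights a_1:    ", np.round(A[:, 0], 2))
print("loadings on u_1:", np.round(loadings(X, U)[:, 0], 2))
```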

Step 9: Cross-Validation and Reproducibility

Assess the stability of CCA results through cross-validation or split-sample replication. Apply the estimated weights to independent data and recompute the canonical correlations to check generalizability and guard against overfitting to a specific dataset.
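Here is a minimal split-sample check. The weights are estimated on one half of the data, and the correlation of the first canonical pair is evaluated on the other half. The held-out correlation is typically lower than the in-sample value, and the gap indicates how much the fit exploits noise. The 50/50 split, the simulated data, and the helper `cca_weights` are illustrative assumptions; k-fold cross-validation follows the same pattern.

```python
import numpy as np


def cca_weights(X, Y):
    """Canonical correlations and weights (QR + SVD of centered data)."""
    Qx, Rx = np.linalg.qr(X - X.mean(axis=0))
    Qy, Ry = np.linalg.qr(Y - Y.mean(axis=0))
    Ux, rho, Vt = np.linalg.svd(Qx.T @ Qy, full_matrices=False)
    return rho, np.linalg.solve(Rx, Ux), np.linalg.solve(Ry, Vt.T)


rng = np.random.default_rng(4)
n = 400
latent = rng.standard_normal(n)
X = rng.standard_normal((n, 5))
X[:, 0] += latent
Y = rng.standard_normal((n, 4))
Y[:, 0] += latent

train = np.arange(n) < n // 2
test = ~train
rho_train, A, B = cca_weights(X[train], Y[train])
# Project held-out data with the training means and weights
u_test = (X[test] - X[train].mean(axis=0)) @ A[:, 0]
v_test = (Y[test] - Y[train].mean(axis=0)) @ B[:, 0]
rho_test = np.corrcoef(u_test, v_test)[0, 1]
print(f"in-sample rho_1 = {rho_train[0]:.2f}, held-out rho_1 = {rho_test:.2f}")
```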

Step 10: Visualization and Reporting

Create scatterplots of canonical variate pairs, heatmaps of canonical loadings, and other relevant visualizations to communicate findings effectively. Report effect sizes (canonical correlations) and statistical significance for complete interpretation.

Application to Motion Artifact Removal in EEG