Ensuring Real-World Reliability: A Comprehensive Framework for Robustness Assessment in Neural Interfaces

Abstract

This article provides a systematic review of strategies for evaluating and enhancing the robustness of neural interfaces in real-world environments. Tailored for researchers, scientists, and drug development professionals, it bridges the gap between controlled laboratory validation and the unpredictable conditions of chronic, deployed use. The scope spans from foundational principles and signal acquisition challenges to advanced methodological adaptations for signal disruption, targeted troubleshooting for biological and technical failures, and rigorous validation metrics. By synthesizing current research on flexible electrodes, automatic error detection, and adaptive machine learning, this article offers a consolidated reference to guide the development of next-generation, clinically viable brain-computer interfaces.

Defining Robustness: From Lab Bench to Real-World Challenges for Neural Interfaces

The Critical Imperative of Robustness for Chronic BCI Usability

For brain-computer interfaces (BCIs) to transition from laboratory demonstrations to chronic, real-world usage, robustness stands as the most critical imperative. Chronic BCI systems are likely to encounter various signal disruptions due to biological, material, and mechanical issues that can corrupt neural data [1] [2]. Unlike controlled laboratory environments, real-world applications demand systems that can operate reliably despite these challenges without constant technician intervention or daily recalibration. The robustness challenge spans multiple dimensions: maintaining signal integrity against physical degradation of sensors, preserving decoding accuracy amid non-stationary brain signals, and ensuring security against adversarial manipulations—all while protecting user privacy [3] [4]. This comparison guide examines current approaches for assessing and ensuring BCI robustness, providing researchers with experimental data and methodologies for evaluating neural interfaces under real-world conditions.

Comparative Analysis of BCI Robustness Approaches

Table 1: Comparison of BCI Robustness Enhancement Approaches

| Approach | Core Methodology | Robustness Target | Key Performance Metrics | Experimental Results |
|---|---|---|---|---|
| Statistical Process Control (SPC) with Channel Masking [1] [2] | Automated detection of disrupted channels using SPC; masking layer for removal; unsupervised weight updates | Signal disruptions from channel failures | Maintained performance with corrupted channels; computation time; data storage requirements | Maintained high performance with corrupted channels; minimized computation and storage needs |
| Augmented Robustness Ensemble (ARE) [4] | Data alignment, augmentation, adversarial training, and ensemble learning integrated with privacy-preserving transfer learning | Data scarcity, adversarial attacks, privacy concerns | Classification accuracy on benign samples; accuracy under attack; privacy protection level | Outperformed 10+ baseline methods in accuracy and robustness across 3 privacy scenarios |
| Attention-Based Network Defense [5] | Evaluating and hardening attention-based deep learning models for EEG classification | Adversarial perturbations on MI-EEG signals | Classification accuracy; kappa score under attack | Clean data: 87.15% accuracy, 0.8287 kappa; Under attack: 9.07% accuracy, -0.21 kappa |
| Shared Control with AR [6] | User-centric evaluation combining quantitative and qualitative assessments in real-world tasks | Real-world usability with minimal mental effort | Task completion rate; user experience; system reliability | Comprehensive framework for iterative robustness improvements |

Table 2: Real-World Deployment Challenges and Mitigation Strategies

| Deployment Challenge | Impact on Chronic Usability | Current Mitigation Approaches | Limitations |
|---|---|---|---|
| Signal Disruptions [1] [2] | Performance degradation from corrupted channels; requires recalibration | SPC monitoring; channel masking; transfer learning | Computational overhead; may require historical data |
| Adversarial Vulnerability [4] [5] | Malicious manipulation of BCI outputs; safety concerns | Adversarial training; robust ensemble methods; detection mechanisms | Often reduces clean data accuracy; increased model complexity |
| Daily Variability [1] | Signal non-stationarity requires frequent recalibration | Unsupervised updates; deep learning on historical data | User surveys indicate unwillingness for daily retraining |
| Privacy Concerns [4] | Exposure of sensitive neural data; regulatory non-compliance | Source-free transfer learning; federated learning; data perturbation | Potential accuracy trade-offs; implementation complexity |

Experimental Protocols for Robustness Assessment

Statistical Process Control for Channel Disruption Detection

The SPC methodology for automatic channel disruption detection involves a multi-stage protocol [1] [2]:

Data Collection: Continuously monitor key channel health metrics including impedance values and channel correlations over extended time periods (demonstrated with 5-year clinical data).

Baseline Establishment: Calculate baseline behavior and variability measures from historical neural data during normal operation.

Control Chart Implementation: Create control charts for four array-level metrics specifically designed for neural signal monitoring.

Disruption Flagging: Apply statistical criteria to identify sessions with potential disruptions, classifying channels as "out-of-control" when they deviate significantly from established baselines.

Grubbs' Test Application: Perform formal statistical testing to confirm channel disruptions while controlling for multiple comparisons.

This protocol identifies problematic channels automatically, without user intervention, so that they can subsequently be masked and the decoder adapted.
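
The baseline and flagging steps can be illustrated with a small sketch. It is a simplified stand-in rather than the method of [1] [2]: it assumes a single per-channel metric (impedance), plain Shewhart limits of the baseline mean plus or minus three standard deviations, and invented values, and it omits both the four array-level metrics and the Grubbs' test stage. The function names and data are hypothetical.

```python
from statistics import mean, stdev


def control_limits(baseline, k=3.0):
    """Return (lower, upper) limits: baseline mean +/- k standard deviations."""
    center = mean(baseline)
    spread = stdev(baseline)
    return center - k * spread, center + k * spread


def flag_out_of_control(baseline_by_channel, current_by_channel, k=3.0):
    """Return sorted channel ids whose current value lies outside its own limits."""
    flagged = []
    for channel, history in baseline_by_channel.items():
        lower, upper = control_limits(history, k)
        value = current_by_channel[channel]
        if value < lower or value > upper:
            flagged.append(channel)
    return sorted(flagged)


# Hypothetical impedance histories (kOhm) for three channels over past sessions.
baseline = {
    0: [50.0, 52.0, 49.0, 51.0, 50.5],
    1: [60.0, 61.0, 59.5, 60.5, 60.2],
    2: [45.0, 44.0, 46.0, 45.5, 44.5],
}
# Hypothetical values for today's session; channel 1 jumps far above its history.
today = {0: 50.8, 1: 250.0, 2: 45.2}

disrupted = flag_out_of_control(baseline, today)
print(disrupted)
```

In a fuller pipeline, the flagged channel ids would feed the masking and decoder-adaptation stages described above.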

Augmented Robustness Ensemble for Multi-Threat Protection

The ARE algorithm addresses three challenges simultaneously through an integrated workflow [4]:

Data Alignment: Apply Euclidean Alignment (EA) to reduce inter-subject variability and distribution discrepancy.

Data Augmentation: Generate diversified training samples to improve model generalization despite limited data.

Adversarial Training: Incorporate adversarial samples crafted from training data to enhance robustness against malicious attacks.

Ensemble Learning: Combine multiple models to produce more stable and accurate predictions.

Privacy Integration: Implement one of three privacy frameworks: centralized source-free transfer, federated source-free transfer, or source data perturbation.

Experimental validation involves benchmarking against 10+ established methods across three public EEG datasets, with evaluation metrics including accuracy on clean data, accuracy under attack, and privacy preservation efficacy.
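
As an illustration of the data alignment step only, the sketch below applies Euclidean Alignment in its commonly described form: each trial is multiplied by the inverse square root of the mean spatial covariance of that subject's trials. The synthetic data, array shapes, and function name are assumptions, and the sketch does not reproduce the rest of the ARE pipeline from [4].

```python
import numpy as np


def euclidean_alignment(trials):
    """Whiten trials by the inverse square root of their mean spatial covariance.

    trials: array of shape (n_trials, n_channels, n_samples).
    """
    # Reference matrix: arithmetic mean of the per-trial products X X^T.
    ref = np.mean([x @ x.T for x in trials], axis=0)
    # Inverse matrix square root via eigendecomposition (ref is symmetric).
    eigvals, eigvecs = np.linalg.eigh(ref)
    ref_inv_sqrt = eigvecs @ np.diag(eigvals ** -0.5) @ eigvecs.T
    return np.array([ref_inv_sqrt @ x for x in trials])


rng = np.random.default_rng(0)
# Synthetic "subject": 20 trials, 4 channels, 200 samples, with channel mixing.
mixing = rng.normal(size=(4, 4))
trials = np.array([mixing @ rng.normal(size=(4, 200)) for _ in range(20)])

aligned = euclidean_alignment(trials)
# After alignment the mean of X X^T over trials should be the identity matrix.
mean_cov = np.mean([x @ x.T for x in aligned], axis=0)
print(np.allclose(mean_cov, np.eye(4), atol=1e-6))
```

Because every subject's aligned trials share the same identity reference matrix, data from different subjects become more comparable before a shared model is trained.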

Adversarial Robustness Assessment for Attention-Based Networks

The vulnerability assessment protocol for attention-based networks involves [5]:

Model Development: Design a high-performing attention-based deep learning model specifically for Motor Imagery EEG classification.

Baseline Performance: Establish baseline performance on clean data using BCI Competition IV Dataset 2a, reporting both accuracy and kappa scores.

Attack Strategy Implementation: Apply multiple adversarial attack strategies against the trained models, including white-box and black-box scenarios.

Robustness Metrics: Quantify performance degradation using accuracy and kappa scores under attack conditions.

Comparative Analysis: Compare vulnerability profiles with traditional CNN architectures to identify attention-specific vulnerabilities.

This protocol reveals that despite high performance on clean data (87.15% accuracy), attention-based models can suffer catastrophic failure under attack (dropping to 9.07% accuracy).
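
The accuracy and kappa values reported for this protocol are consistent with each other if chance agreement is 1/4, as it would be for a balanced four-class task. The short check below makes that arithmetic explicit. The balanced four-class setting is an assumption made here for illustration, and the function name is hypothetical.

```python
def kappa_from_accuracy(accuracy, n_classes):
    """Cohen's kappa when chance agreement equals 1 / n_classes (balanced classes)."""
    chance = 1.0 / n_classes
    return (accuracy - chance) / (1.0 - chance)


clean = kappa_from_accuracy(0.8715, 4)     # accuracy on clean data
attacked = kappa_from_accuracy(0.0907, 4)  # accuracy under attack

print(round(clean, 4), round(attacked, 2))  # → 0.8287 -0.21
```

A negative kappa means the attacked model agrees with the true labels less often than chance would, which is why the result is described as catastrophic rather than merely degraded.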

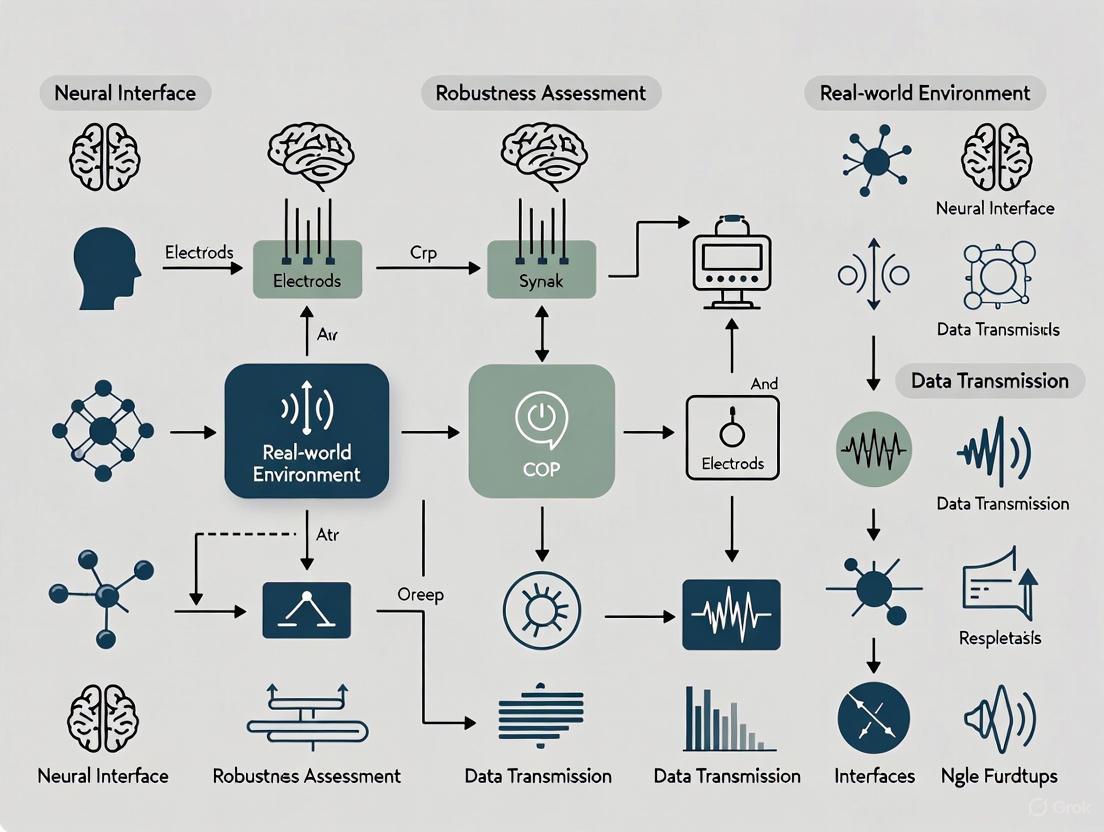

Visualization of Key Robustness Frameworks

[Figure: SPC Channel Correction Workflow]

[Figure: ARE Multi-Threat Protection Framework]

The Scientist's Toolkit: Research Reagents & Materials

Table 3: Essential Research Resources for BCI Robustness Assessment

| Resource/Reagent | Specifications & Variants | Research Function | Key Applications |
|---|---|---|---|
| Neural Signal Acquisition Systems | EEG (non-invasive); ECoG (partially invasive); Utah/Michigan arrays (invasive) | Record raw neural signals with varying spatial/temporal resolution | Signal quality assessment; artifact detection; baseline performance establishment |
| Statistical Process Control Software | Custom Python 3.6+ implementations; control chart generators; Grubbs' test packages | Automated monitoring of channel health metrics; disruption detection | Real-time signal quality assessment; chronic stability tracking |
| Adversarial Attack Libraries | EEG-specific adversarial sample generators; universal perturbation frameworks | System vulnerability assessment; robustness benchmarking | Stress-testing BCI classifiers; evaluating failure modes |
| Privacy Preservation Tools | Source-free transfer learning frameworks; federated learning platforms; data perturbation algorithms | Protect sensitive user data during model development and deployment | Compliance with GDPR; ethical BCI development; user trust establishment |
| Benchmark Datasets | BCI Competition IV Dataset 2a; other public EEG datasets; longitudinal clinical trial data | Standardized performance comparison; method validation | Algorithm benchmarking; reproducibility assurance |

The experimental data and comparative analysis presented demonstrate that robustness is not a single-dimensional property but a multifaceted requirement spanning signal integrity, algorithmic stability, security, and privacy. For chronic BCI usability to become a clinical and commercial reality, robustness must be designed into systems from inception rather than added as an afterthought. The most promising approaches emerging from current research include automated self-correction mechanisms like SPC with channel masking [1] [2], comprehensive frameworks like ARE that address multiple challenges simultaneously [4], and rigorous adversarial testing protocols that reveal previously overlooked vulnerabilities [5]. Future research directions should prioritize real-world validation in home environments, development of standardized robustness benchmarks, and exploration of novel materials science solutions to improve the biological stability of neural interfaces [7]. As BCIs expand beyond healthcare into smart home control, communication, and other daily applications [3], the imperative for robustness will only intensify, demanding continued interdisciplinary collaboration between neuroscientists, computer engineers, and clinical researchers.

Brain-Computer Interface (BCI) technology establishes a direct communication pathway between the human brain and external devices, representing a transformative advancement in human-machine interaction [8]. The efficacy of BCI systems hinges on the seamless integration of three fundamental components: signal acquisition, which detects neural activity; processing, which decodes this activity into commands; and output, which executes these commands as actionable functions [8] [9]. For researchers and clinicians, understanding the performance characteristics of each component is crucial for selecting appropriate technologies for specific real-world applications, particularly when assessing robustness in non-laboratory environments. This guide provides a structured comparison of current BCI methodologies, supported by experimental data, to inform development decisions in neural interface research.

Signal Acquisition Technologies

The signal acquisition module records cerebral signals, and the quality of this initial detection affects every subsequent stage [10] [8]. Acquisition technologies are broadly categorized by their level of invasiveness, which directly correlates with signal fidelity and clinical risk.

- Non-invasive Acquisition: Primarily using electroencephalography (EEG), this approach records electrical activity from electrodes placed on the scalp. It is safe, cost-effective, and portable but suffers from low spatial resolution and signal attenuation by the skull [11]. EEG signals are inherently weak, typically in the microvolt (µV) range, and susceptible to artifacts from muscle movement or environmental noise [8].

- Invasive Acquisition: These methods involve surgically placing electrode arrays, such as microchips or stents, to record neural activity directly from the cortex. They provide high-fidelity signals with excellent spatial and temporal resolution but carry higher surgical risks and potential for tissue scarring over time [12] [11].

Table 1: Comparison of Primary Neural Signal Acquisition Technologies

| Technology | Invasiveness | Spatial Resolution | Temporal Resolution | Key Advantages | Primary Limitations |
|---|---|---|---|---|---|
| Electroencephalography (EEG) [8] [11] | Non-invasive | Low (Scalp-level) | High (Milliseconds) | Safe, portable, low cost | Low signal-to-noise ratio, sensitive to artifacts |
| Electrocorticography (ECoG) [12] | Minimally Invasive | High (Cortical surface) | High (Milliseconds) | Higher fidelity than EEG, less risk than implants | Requires craniotomy, limited coverage |
| Endovascular Stentrode [12] | Minimally Invasive | Moderate | High | No open-brain surgery, stable signal | Position constraints, signal filtering by vessel wall |
| Utah Array [12] | Fully Invasive | Very High (Neuron-level) | Very High | High-bandwidth, single-neuron recording | Tissue damage, scarring risk over time |
| Neuralace [12] | Fully Invasive | Very High (Cortical layer) | Very High | Conformable, broad cortical coverage | New technology, long-term biocompatibility under evaluation |

[Figure: generalized workflow from signal acquisition to output in a closed-loop BCI system, integrating the components discussed in this article.]

Signal Processing and Decoding Methodologies

The processing component analyzes recorded brain activity to interpret the operator's intended action [8]. This stage is critical for managing noisy signals and high inter-subject variability. Advances in artificial intelligence (AI) and machine learning (ML) have dramatically improved the decoding of neural signals.

Algorithm Performance Comparison

The accurate classification of neural data, particularly for tasks like Motor Imagery (MI), is crucial for enhancing BCI performance [13]. Research evaluates a range of classifiers, from traditional machine learning to sophisticated deep learning and hybrid models.

Table 2: Performance Comparison of Processing Algorithms for EEG-Based Motor Imagery Classification

| Algorithm | Reported Accuracy | Key Characteristics | Best Suited For |
|---|---|---|---|
| Random Forest (RF) [13] | 91.0% | Ensemble method, robust to overfitting | A strong baseline for MI classification with good interpretability. |
| Support Vector Machine (SVM) [9] | Not reported | Effective in high-dimensional spaces | Scenarios with well-defined feature separability. |
| Convolutional Neural Network (CNN) [13] | 88.2% | Excels at extracting spatial features from multi-channel EEG. | Learning spatial patterns from electrode arrays. |
| Long Short-Term Memory (LSTM) [13] | 16.1% | Models temporal sequences and dependencies. | Best used in hybrids for capturing time-series dynamics. |
| CNN-LSTM Hybrid [13] | 96.1% | Combines spatial (CNN) and temporal (LSTM) feature extraction. | High-accuracy applications requiring robust spatiotemporal modeling. |
| GA-Optimized Transformer [14] | 89.3% | Evolved via genetic algorithm; self-attention mechanism. | Addressing inter-subject variability and noisy EEG signals. |

Key Experimental Protocols in Signal Processing

Adherence to standardized experimental protocols is essential for reproducibility and performance validation. The following methodologies are commonly employed in robust BCI research:

- Data Pre-processing Pipeline: Raw EEG signals undergo a multi-step cleaning process [13] [15]. This typically includes:
  - Band-pass Filtering: Isolating frequency bands of interest (e.g., mu band 8-13 Hz for motor imagery).
  - Artifact Removal: Using techniques like Independent Component Analysis (ICA) to separate and remove ocular or muscular artifacts.
  - Normalization: Scaling data to a standard range to facilitate model convergence.

- Feature Extraction Techniques: Transforming raw signals into meaningful features is critical for classification [13]. Common methods include:
  - Time-Frequency Analysis: Using Wavelet Transform or Power Spectral Density (PSD) to capture spectral changes over time.
  - Spatial Filtering: Applying algorithms like Common Spatial Patterns (CSP) to enhance discriminability between MI classes.
  - Riemannian Geometry: Classifying covariance matrices of EEG signals in a Riemannian manifold, which is often robust to noise and non-stationarities.

- Validation and Generalization Testing: To ensure robustness, models are evaluated using subject-independent cross-validation [14]. Performance metrics such as accuracy, kappa score, and F1-score are reported on held-out test sets to validate generalization to unseen data.
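
To make the normalization and spectral-feature steps concrete, the sketch below z-scores a synthetic single-channel trial and compares its mean spectral power in the mu band (8-13 Hz) with a higher band. It uses a plain FFT rather than a band-pass filter or any particular toolbox, and the sampling rate, signal, comparison band, and function names are all assumptions chosen for illustration.

```python
import numpy as np


def band_power(signal, fs, low, high):
    """Mean spectral power of `signal` between `low` and `high` Hz (inclusive)."""
    spectrum = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    in_band = (freqs >= low) & (freqs <= high)
    return spectrum[in_band].mean()


def zscore(signal):
    """Normalize to zero mean and unit variance."""
    return (signal - signal.mean()) / signal.std()


fs = 250  # Hz, hypothetical sampling rate
t = np.arange(0, 2.0, 1.0 / fs)
rng = np.random.default_rng(0)
# Synthetic trial: a 10 Hz rhythm (inside the mu band) plus broadband noise.
trial = zscore(np.sin(2 * np.pi * 10 * t) + 0.5 * rng.normal(size=t.size))

mu_power = band_power(trial, fs, 8, 13)
beta_power = band_power(trial, fs, 18, 26)
print(mu_power > beta_power)
```

Band powers computed this way per channel could serve as simple input features for the classifiers compared in Table 2, although the cited studies may use different feature pipelines.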

Output and Application Interfaces

The output component translates the decoded intent into a command to control an external device or software [12] [8]. This forms the tangible interface through which the user interacts with the world. The feedback component then closes the loop, informing the user of the system's interpretation, allowing for real-time adjustments [8] [9].

Table 3: Comparison of BCI Output Applications and Their Performance Metrics

| Application Domain | Output Device | Control Paradigm | Reported Performance |
|---|---|---|---|
| Communication [12] [8] | Computer Cursor / Speller | P300, Motor Imagery, Imagined Speech | Speech BCIs infer words at up to 99% accuracy with <0.25s latency [12]. |
| Motor & Mobility [13] | Robotic Arm / Wheelchair | Motor Imagery (Left/Right Hand, Feet) | Hybrid CNN-LSTM models enable control with 96% classification accuracy [13]. |
| Neurorehabilitation [16] [9] | Functional Electrical Stimulation (FES) | Closed-Loop Neurostimulation | Used for motor recovery in stroke; assessed via clinical scales and neuroplasticity biomarkers. |
| Cognitive Monitoring [9] | Alert System for Caregivers | Passive EEG Monitoring | AI-driven BCIs are being explored for longitudinal monitoring of cognitive decline in Alzheimer's. |

The Researcher's Toolkit: Essential Materials and Reagents

For scientists replicating or advancing BCI research, familiarity with key resources is fundamental. The following table details essential solutions and their functions.

Table 4: Key Research Reagent Solutions for BCI Experimentation

| Item | Function in BCI Research | Specific Examples / Notes |
|---|---|---|
| EEG Recording System | Acquires raw neural signals from the scalp. | Systems with high-input impedance amplifiers and wet/dry electrodes. Portability is a key research focus [11]. |
| Implantable Electrode Arrays | For high-fidelity invasive signal acquisition. | Utah Array (Blackrock), Stentrode (Synchron), Layer 7 (Precision) [12]. |
| Conductive Electrode Gel | Ensures low impedance between scalp and EEG electrodes. | Standard for wet EEG systems; crucial for signal quality [11]. |
| Standardized EEG Datasets | For algorithm training, benchmarking, and validation. | PhysioNet EEG Motor Movement/Imagery Dataset [14] [13], Berlin BCI Competition IV Dataset 2a [14]. |
| Signal Processing Toolboxes | Provide implemented algorithms for filtering, feature extraction, and classification. | EEGLAB, MNE-Python, BCILAB. |
| Deep Learning Frameworks | Enable the development and training of custom models like CNN-LSTM hybrids. | TensorFlow, PyTorch. |

Brain-Computer Interfaces (BCIs) represent a revolutionary technology that enables direct communication between the brain and external devices, offering transformative potential in healthcare, rehabilitation, and human-computer interaction [11] [12]. Within this domain, a fundamental dichotomy exists between invasive interfaces, which require surgical implantation, and non-invasive interfaces, which record neural activity from the scalp surface. The choice between these approaches involves significant trade-offs, particularly concerning their robustness—the ability to maintain performance amidst the challenges of real-world environments.

Robustness in BCI systems encompasses several dimensions: signal stability over time, resilience to biological and environmental noise, adaptability to user state changes, and consistent performance outside controlled laboratory settings [1] [6]. This analysis systematically compares invasive and non-invasive neuronal interfaces through the lens of robustness, synthesizing current research findings, experimental data, and methodological approaches to provide researchers and developers with a comprehensive assessment of their inherent capabilities and limitations.

Fundamental Divergences in Signal Characteristics

The core distinction between invasive and non-invasive BCIs originates from fundamental differences in the nature of the signals they acquire, which directly dictates their performance characteristics and robustness challenges.

Invasive interfaces record signals directly from the cortical surface or within brain tissue, providing access to high-frequency neural activity including action potentials (spikes) and local field potentials (LFPs) [17]. These signals emanate from localized neuronal populations, offering fine-grained information about neural computation with high spatial specificity and signal-to-noise ratio. The neurophysiological basis for this superiority lies in the physical proximity to neural sources, minimizing signal attenuation and distortion through intervening tissues [17].

Conversely, non-invasive interfaces, primarily electroencephalography (EEG), capture a spatially blurred summation of post-synaptic potentials from millions of neurons [17]. These signals must traverse several biological layers—cerebrospinal fluid, skull, and scalp—each acting as a spatial low-pass filter that attenuates high-frequency components and blurs anatomical specificity. Consequently, EEG predominantly reflects synchronized activity in large neuronal assemblies, with limited access to the rich high-frequency information available to invasive devices [11] [17].

Table 1: Fundamental Signal Characteristics Comparison

| Characteristic | Invasive BCIs | Non-Invasive BCIs (EEG) |
|---|---|---|
| Signal Sources | Action potentials, local field potentials | Post-synaptic potentials (summed) |
| Spatial Resolution | Micrometer-scale (single neurons) | Centimeter-scale (neuronal assemblies) |
| Temporal Resolution | Millisecond (up to kHz range) | Millisecond (effectively <90 Hz) |
| Information Bandwidth | High (multi-dimensional control) | Limited (lower-dimensional control) |
| Dominant Neuron Types | Diverse (pyramidal cells, interneurons) | Primarily cortical pyramidal cells |
| Anatomical Access | Deep and superficial structures possible | Superficial cortical regions only |

Signal Degradation Pathways

The robustness of each interface type is challenged by distinct signal degradation pathways. Invasive systems face biological integration issues, including the foreign body response that can lead to glial scarring and signal degradation over time [1] [17]. Electrode material degradation, miniaturization-related failures, and biofouling present additional robustness challenges for chronic implants.

Non-invasive systems contend primarily with environmental interference and biological artifacts. EEG signals are particularly susceptible to contamination from muscle activity (EMG), eye movements (EOG), cardiac signals (ECG), and environmental electromagnetic noise [11] [6]. This vulnerability necessitates sophisticated preprocessing and artifact removal algorithms, which themselves may introduce processing delays and potential signal distortions that impact real-time performance [6].

Quantitative Performance and Robustness Metrics

Direct comparison of performance metrics reveals how fundamental signal differences translate into functional capabilities with distinct robustness profiles.

Information Transfer Rates and Decoding Accuracy

Invasive BCIs consistently achieve higher information transfer rates (ITR) and decoding accuracy across multiple domains. In motor control applications, invasive systems using intracortical signals have enabled multi-dimensional control of robotic prosthetics with performance levels approaching natural movement [17] [12]. For communication applications, recent speech BCIs have demonstrated remarkable performance, decoding intended words from neural activity with accuracies up to 99% at latencies below 0.25 seconds [12].

Non-invasive systems exhibit more modest performance ceilings, typically achieving lower ITRs that limit their applicability for complex control tasks. The information bottleneck arises from the fundamental physiological constraints of EEG signals rather than algorithmic limitations [17]. While advanced signal processing and machine learning techniques can improve performance, they cannot overcome the inherent biophysical constraints of scalp-recorded signals.
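
The text does not say which ITR definition the cited studies use. A widely used one is the Wolpaw formula, which gives bits per selection as log2(N) + P log2(P) + (1 - P) log2((1 - P) / (N - 1)) for N equally likely targets and accuracy P. The sketch below assumes that definition; the function names and the example numbers are hypothetical.

```python
import math


def wolpaw_bits_per_selection(n_targets, accuracy):
    """Wolpaw information transfer in bits per selection."""
    if n_targets < 2 or not 0.0 <= accuracy <= 1.0:
        raise ValueError("need n_targets >= 2 and accuracy in [0, 1]")
    bits = math.log2(n_targets)
    # Terms with a zero coefficient are skipped to avoid evaluating log2(0).
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits


def itr_bits_per_minute(n_targets, accuracy, seconds_per_selection):
    """Scale bits per selection by the number of selections made per minute."""
    return wolpaw_bits_per_selection(n_targets, accuracy) * 60.0 / seconds_per_selection


# Hypothetical 4-class system at 80% accuracy, one selection every 4 seconds.
print(itr_bits_per_minute(4, 0.80, 4.0))
```

Under this definition, ITR depends on accuracy, the number of available commands, and selection speed together, so a modest accuracy gain does not raise ITR much if the command set stays small or selections stay slow.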

Table 2: Experimental Performance Metrics in Research Settings

| Performance Metric | Invasive BCIs | Non-Invasive BCIs (EEG) |
|---|---|---|
| Communication Rate | >100 characters/minute (speech decoding) | <30 characters/minute (P300 speller) |
| Motor Control Dimensions | High (7D continuous control demonstrated) | Limited (typically 2-3 discrete commands) |
| Decoding Accuracy | >90% (motor), >99% (speech) | 70-90% (highly user-dependent) |
| Signal-to-Noise Ratio | High (μV range) | Low (μV range buried in noise) |
| Adaptation Time | Days to weeks (closed-loop plasticity) | Weeks to months (user training required) |
| Long-Term Stability | Months to years (with signal drift) | Stable with proper setup |

Real-World Usability and Signal Stability

Robustness in real-world environments presents distinct challenges for each interface type. Invasive systems demonstrate remarkable long-term stability when successfully implanted, with some studies reporting functional recordings over multiple years [1]. However, they face robustness challenges from biological processes, including immune responses that can encapsulate electrodes and degrade signal quality over time [17]. Recent approaches using statistical process control (SPC) methods enable automated detection of disrupted channels, allowing neural decoders to adapt by reallocating signal processing to intact channels [1].

Non-invasive systems offer superior immediate usability but struggle with consistency across sessions. EEG exhibits significant inter-session and intra-individual variability, necessitating frequent recalibration to maintain performance [6]. The requirement for individualized calibration and user training creates substantial usability barriers that impact real-world robustness [6]. Environmental factors—such as electrical interference, user movement, and electrode displacement—further degrade performance in non-laboratory settings [11].

Methodological Approaches to Robustness Assessment

Evaluating BCI robustness requires specialized experimental protocols that assess performance under realistic conditions. Standardized assessment methodologies enable meaningful comparison across interface types.

Experimental Protocols for Real-World Validation

Comprehensive robustness evaluation extends beyond offline classification accuracy to include real-time performance metrics during functionally meaningful tasks:

Protocol 1: Sustained Performance Testing: Participants complete extended sessions (2+ hours) of continuous BCI operation to assess fatigue effects and signal stability [6]. Performance metrics (accuracy, latency, completion rate) are tracked across time blocks to quantify degradation patterns.

Protocol 2: Multi-Task Interference Assessment: Users perform primary BCI tasks while simultaneously engaging in secondary cognitive or motor activities (e.g., auditory discrimination, minor limb movements) [6]. This protocol evaluates robustness to divided attention scenarios common in real-world use.

Protocol 3: Environmental Stress Testing: Systems are operated in environments with controlled introduction of real-world challenges: electromagnetic interference, varying lighting conditions, and background noise [6]. Performance metrics compared to laboratory baselines quantify environmental robustness.

Protocol 4: Adaptive Decoder Evaluation: The Statistical Process Control (SPC) framework for invasive systems [1], or adaptive classification for non-invasive systems [6], is applied to quantify performance recovery following intentional signal disruption or channel failure.
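
For Protocol 1, one simple way to quantify degradation across time blocks is the least-squares slope of block accuracy against block index. The sketch below is only an illustration of that idea: the block size, the trial outcomes, and the choice of a linear trend are assumptions, not details taken from [6].

```python
def block_accuracies(outcomes, block_size):
    """Split trial outcomes (True = correct) into consecutive blocks and score each."""
    blocks = [outcomes[i:i + block_size] for i in range(0, len(outcomes), block_size)]
    return [sum(block) / len(block) for block in blocks]


def linear_slope(values):
    """Least-squares slope of `values` against their block index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two blocks to estimate a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


# Hypothetical session: four blocks of 10 trials, accuracy falling from 0.9 to 0.6.
outcomes = (
    [True] * 9 + [False] * 1
    + [True] * 8 + [False] * 2
    + [True] * 7 + [False] * 3
    + [True] * 6 + [False] * 4
)
acc = block_accuracies(outcomes, 10)
slope = linear_slope(acc)
print(acc, round(slope, 3))
```

A negative slope indicates accuracy lost per block. The same calculation applies to latency or completion rate tracked per block.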

Signal Processing and Decoding Methodologies

Robustness enhancement requires specialized algorithms tailored to each interface's vulnerability profile:

Invasive BCI Robustness Methods:

- Statistical Process Control (SPC) Framework: Implements quality-control principles to automatically detect signal disruptions by monitoring channel health metrics (impedance, signal power, cross-channel correlations) against established baselines [1].

- Channel Masking and Transfer Learning: Removes corrupted channels via a masking layer in neural network decoders without architectural changes, followed by unsupervised weight updates to maintain performance with reduced inputs [1].

- Data Augmentation with Dropout/Mixup: Increases model resilience to channel failure by training with randomly zeroed inputs (simulating channel loss) or linear combinations of training examples [1].
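
The masking and augmentation ideas in this list can be sketched on plain feature arrays. This is a simplified illustration, not the decoder from [1]: the mask is applied as an element-wise multiplication instead of a layer inside a neural network, and the shapes, values, and function names are hypothetical.

```python
import numpy as np


def mask_channels(features, bad_channels):
    """Zero out flagged channels; the input shape (n_samples, n_channels) is kept."""
    mask = np.ones(features.shape[1])
    mask[np.asarray(list(bad_channels), dtype=int)] = 0.0
    return features * mask


def random_channel_dropout(features, drop_prob, rng):
    """Training-time augmentation: zero each channel independently with prob drop_prob."""
    keep = rng.random(features.shape[1]) >= drop_prob
    return features * keep


def mixup(x_a, y_a, x_b, y_b, lam):
    """Convex combination of two examples and of their one-hot labels."""
    return lam * x_a + (1 - lam) * x_b, lam * y_a + (1 - lam) * y_b


features = np.arange(12.0).reshape(3, 4)        # 3 samples, 4 channels
masked = mask_channels(features, [1, 3])        # channels 1 and 3 flagged as disrupted

rng = np.random.default_rng(0)
augmented = random_channel_dropout(features, 0.25, rng)

x_mix, y_mix = mixup(np.ones(4), np.array([1.0, 0.0]),
                     np.zeros(4), np.array([0.0, 1.0]), lam=0.7)
print(masked[0], x_mix, y_mix)
```

Because masking preserves the input dimensionality, the downstream decoder needs no structural change, in line with the "without architectural changes" point above.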

Non-Invasive BCI Robustness Methods:

- Artifact Removal Algorithms: Sophisticated preprocessing using independent component analysis (ICA), regression methods, or adaptive filtering to separate neural signals from contamination sources [6].

- Transfer Learning Across Sessions: Leveraging data from previous sessions to reduce calibration requirements and mitigate inter-session variability [6].

- Shared Control Architectures: Combining limited BCI commands with environmental context and autonomous assistance to reduce cognitive load and improve overall system reliability [6].

Research Reagent Solutions and Experimental Materials

Advancing BCI robustness research requires specialized tools and methodologies. The following table catalogues essential research solutions with their specific applications in robustness assessment and enhancement.

Table 3: Essential Research Reagents and Experimental Materials

| Research Solution | Function in Robustness Research | Example Implementations |

|---|---|---|

| High-Density EEG Systems | Assess spatial resolution limits and signal quality in non-invasive paradigms | 64-256 channel systems with active electrodes [11] |

| Utah & Michigan Microelectrode Arrays | Provide high-resolution neural recording for invasive BCI development | Blackrock Neurotech Utah arrays (96 channels) [1] [12] |

| Statistical Process Control (SPC) Framework | Automated detection of signal disruptions in chronic recordings | Adapted Western Electric rules for neural data [1] |

| Adaptive Neural Network Decoders | Maintain performance with changing signal characteristics | Masking layers for channel dropout, unsupervised updates [1] |

| Artifact Removal Toolboxes | Mitigate contamination in non-invasive signals | ICA, regression methods, adaptive filtering implementations [6] |

| Shared Control Architectures | Reduce cognitive load and improve overall system reliability | Environment-aware action selection with limited BCI commands [6] |

| Standardized Performance Metrics | Enable cross-study robustness comparison | Information transfer rate, task completion accuracy, resilience scores [6] |
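As an illustration of the SPC entry in the table above, the following sketch applies two of the classic Western Electric rules to a monitored signal-quality metric. It is not the adapted rule set from [1]; the choice of metric, the baseline window, and the function name `western_electric_flags` are assumptions. Rule 1 flags any point more than three standard deviations from the baseline mean, and rule 4 flags eight consecutive points on the same side of it.

```python
import numpy as np

def western_electric_flags(values, baseline):
    """Flag rule 1 (|z| > 3) and rule 4 (8 in a row on one side) violations."""
    mu = np.mean(baseline)
    sigma = np.std(baseline, ddof=1)
    z = (np.asarray(values, dtype=float) - mu) / sigma

    rule1 = np.abs(z) > 3.0

    rule4 = np.zeros(z.size, dtype=bool)
    side = np.sign(z)
    for i in range(7, z.size):
        window = side[i - 7:i + 1]          # the current point and the 7 before it
        rule4[i] = np.all(window > 0) or np.all(window < 0)
    return rule1, rule4

# Hypothetical in-control baseline for one channel's daily metric.
rng = np.random.default_rng(0)
baseline = rng.normal(10.0, 1.0, size=200)
mu, sigma = baseline.mean(), baseline.std(ddof=1)

# New observations expressed as z-scores: one spike, then a sustained small shift.
z_pattern = np.array([0.5, -0.5, 4.0, -0.2] + [0.6] * 8)
values = mu + sigma * z_pattern

rule1, rule4 = western_electric_flags(values, baseline)
print("rule 1 violations at:", np.flatnonzero(rule1))
print("rule 4 violations at:", np.flatnonzero(rule4))
```

The two rules catch different failure signatures: rule 1 responds to abrupt disruptions, while rule 4 responds to small sustained shifts that never cross the three-sigma limit.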

The robustness trade-offs between invasive and non-invasive neural interfaces reflect fundamental biophysical constraints that cannot be fully overcome by technological advances alone. Invasive systems offer superior signal quality and information bandwidth but face challenges in long-term biological stability and require substantial surgical intervention [17] [12]. Non-invasive systems provide immediate accessibility and minimal risk but contend with inherent signal limitations that restrict their performance ceiling and real-world reliability [11] [6].

Future research directions focus on mitigating these trade-offs through several promising approaches. Hybrid BCI systems that combine complementary signals may leverage the strengths of each approach while minimizing their individual limitations [18]. Next-generation electrode designs emphasizing biocompatible, flexible materials aim to reduce foreign body responses and extend functional longevity of invasive devices [12]. Advanced decoding algorithms incorporating adaptive learning and environmental context awareness show potential for enhancing robustness in both interface types [1] [6].

The trajectory of BCI development suggests a future where interface selection will be application-specific rather than universally prescribed. Clinical applications requiring high-performance control may justify invasive approaches, while non-invasive systems may dominate in consumer applications where convenience and accessibility outweigh performance demands. As robustness enhancement strategies continue to evolve, both interface classes will play crucial roles in advancing brain-computer interaction technology, each finding its optimal domain within the increasingly sophisticated ecosystem of neural interfaces.

The transition of neural interfaces from controlled laboratory settings to real-world clinical and consumer applications demands a rigorous assessment of their robustness. In these dynamic environments, devices encounter significant challenges that can compromise their performance and longevity. Key among these are chronic signal disruptions, persistent biocompatibility issues, and algorithmic vulnerabilities to distribution shifts in neural data. These stressors collectively determine whether a neural interface can maintain stable, long-term operation and provide reliable therapeutic or communicative functions for users. This guide provides a systematic comparison of how different neural interface technologies perform when confronted with these real-world challenges, synthesizing current research findings and experimental data to inform development priorities and selection criteria for researchers and clinicians.

Stressor Analysis: Comparative Performance of Neural Interfaces

The performance of neural interfaces varies significantly across different technology categories when subjected to core real-world stressors. The table below provides a comparative analysis of non-invasive, minimally invasive, and fully invasive interfaces based on current research.

Table 1: Comparative Analysis of Neural Interface Technologies Under Real-World Stressors

| Interface Category | Signal Disruption Vulnerability | Biocompatibility & Foreign Body Response | Robustness to Distribution Shifts | Typical Longevity & Failure Modes |

|---|---|---|---|---|

| Non-Invasive (EEG) | High susceptibility to motion artifacts and electromagnetic interference [19] | Minimal biocompatibility concerns (non-implantable) | Moderate; requires frequent recalibration due to non-stationary signals [20] | Indefinite, but performance degrades without regular maintenance |

| Minimally Invasive (ECoG, µECoG) | Moderate; reduced artifact compared to EEG but susceptible to tissue encapsulation effects [21] | Moderate; reduced mechanical mismatch with flexible substrates [21] [22] | High; more stable signal characteristics over time [21] | Months to years; performance decline correlates with encapsulation |

| Fully Invasive (Intracortical MEAs) | High vulnerability to biological responses (glial scarring) [20] [23] | Significant challenges; chronic inflammation and glial scarring [23] [24] | Moderate; stable single-unit recording until encapsulation progresses [20] | Months to years; signal degradation due to biological encapsulation |

Experimental Insights on Biocompatibility and Signal Stability

Controlled studies demonstrate the direct relationship between biocompatibility and signal stability. Research on flexible electronics reveals that devices with Young's modulus matching neural tissue (1-10 kPa) significantly reduce chronic inflammatory responses compared to rigid implants (silicon ~10² GPa, platinum ~10² MPa) [23] [22]. One longitudinal investigation showed that ultrathin gold µECoG arrays with hexagonal metal complex architectures maintained low electrical impedance and high signal-to-noise ratios for extended periods by minimizing mechanical mismatch and inflammatory response [21].

Quantitative assessments of the foreign body response show that traditional rigid microelectrodes typically exhibit a progressive increase in impedance of 200-500 kΩ over several weeks, correlating with glial scar formation that can increase the electrode-neuron distance by 50-100 μm [24]. This underscores the critical relationship between material properties and long-term signal fidelity.

Signal Disruption Classification and Compensation Strategies

Neural interface signal disruptions can be systematically categorized based on their duration and amenability to intervention, enabling targeted compensation strategies.

Table 2: Signal Disruption Classification Framework and Compensatory Approaches

| Disruption Category | Duration & Characteristics | Root Causes | Compensation Strategies | Compensation Effectiveness |

|---|---|---|---|---|

| Transient Disruptions | Minutes to hours; often resolve spontaneously [20] | Micromotion, transient biological processes, external interference [20] | Robust neural decoder features, adaptive machine learning models [20] | High; can maintain >85% performance with proper algorithms |

| Reversible Disruptions | Persistent until intervention [20] | Protein fouling, localized inflammation [20] [24] | Statistical Process Control for detection, impedance spectroscopy [20] | Moderate; requires intervention but often fully recoverable |

| Irreversible Compensable | Persistent or progressive decline [20] | Partial electrode damage, progressive glial scarring [20] [23] | Information salvage techniques, adaptive decoding methods [20] | Variable; highly algorithm-dependent (30-70% performance recovery) |

| Irreversible Non-Compensable | Permanent signal loss [20] | Complete electrode failure, severe tissue damage [20] [23] | Device replacement required [20] | None; requires hardware intervention |

Experimental Protocols for Disruption Characterization

Research into signal disruptions typically employs multi-modal assessment protocols:

- Longitudinal impedance spectroscopy tracks interface degradation over time [20] [24]

- Histological analysis quantifies glial fibrillary acidic protein (GFAP) expression and neuronal density (NeuN) around implant sites [24]

- Simultaneous electrophysiology and imaging correlates signal quality metrics with biological responses [23]

- Accelerated aging tests evaluate material stability in physiological conditions [25] [24]

These methodologies enable researchers to systematically evaluate disruption mechanisms and test compensatory approaches under controlled conditions before clinical implementation.

Figure 1: Neural Signal Disruption Classification and Intervention Framework. This diagram illustrates the four categories of signal disruptions in neural interfaces, their root causes, and corresponding compensation strategies based on current research [20] [23] [24].

Biocompatibility Challenges and Material Innovations

The biological response to implanted neural interfaces represents a critical stressor that directly impacts device performance and longevity. The foreign body response triggers a cascade of events that ultimately compromises signal quality.

The Foreign Body Response Process

Upon implantation, neural electrodes initiate a complex biological response [24]:

- Acute Phase (0-48 hours): Blood-brain barrier disruption, microvascular damage, and activation of microglia and macrophages

- Subacute Phase (Days to Weeks): Recruitment of astrocytes, release of chemokines and neurotoxic factors

- Chronic Phase (Weeks to Months): Formation of dense glial scars, increased distance between electrodes and neurons, significant impedance elevation

This response creates a self-perpetuating cycle where mechanical mismatch triggers biological responses that further degrade signal acquisition capabilities.

Material Solutions and Experimental Validation

Recent research has focused on developing material strategies to mitigate these biocompatibility challenges:

Table 3: Advanced Material Strategies for Enhanced Biocompatibility

| Material Innovation | Mechanical Properties | Biocompatibility Performance | Signal Quality Outcomes |

|---|---|---|---|

| Conductive Polymers (PEDOT:PSS) | Flexible, moderate conductivity [25] | Reduced inflammatory response; improved cellular integration [25] | Lower electrode impedance; enhanced charge transfer [25] |

| Ultrathin Gold µECoG | Mechanically robust yet flexible [21] | Minimal inflammatory response; conformal tissue integration [21] | High signal-to-noise ratio; stable long-term recording [21] |

| Biodegradable Scaffolds (PLLA-PTMC) | Temporary support; degrades after repair [25] | Eliminates need for secondary removal; reduces infection risk [25] | Stable signals during critical healing phase [25] |

| Self-Healing Hydrogels | Dynamic repair of mechanical damage [25] | Excellent compliance with neural tissue [25] | Maintains stable interface during mechanical stress [25] |

Experimental validation of these materials typically involves:

- In vivo electrochemical impedance spectroscopy to track interface stability

- Histological analysis of glial fibrillary acidic protein (GFAP) for astrocyte activation

- Immunohistochemical staining for neuronal markers (NeuN) and microglial activation (IBA1)

- Longitudinal electrophysiological recording to correlate biological responses with signal quality metrics

Distribution Shifts and Algorithmic Adaptation

Neural interfaces face significant challenges from distribution shifts: changes in the statistical properties of neural signals between training and deployment environments that degrade decoding performance.

Categories of Distribution Shifts

Research identifies several key types of distribution shifts in neural interface applications:

- Cross-Session Variability: Signal characteristics change between recording sessions due to electrode migration, tissue changes, or hormonal variations [20]

- Cross-Task Generalization: Models trained on specific tasks fail to generalize to novel behaviors or cognitive states [19]

- Long-Term Non-Stationarity: Progressive changes in neural representation due to learning, plasticity, or disease progression [20]

- Contextual Variability: Changes in neural encoding based on environmental context, arousal state, or pharmacological influences [26]

Algorithmic Compensation Strategies

Several algorithmic approaches have demonstrated effectiveness in mitigating distribution shifts:

Table 4: Algorithmic Strategies for Handling Distribution Shifts in Neural Interfaces

| Algorithmic Approach | Mechanism | Implementation Requirements | Effectiveness Evidence |

|---|---|---|---|

| Adaptive Machine Learning | Continuous model updates using incoming data [20] | Substantial computational resources; careful overfitting prevention | Maintains performance with gradual shifts (70-90% baseline) [20] |

| Transfer Learning | Leverages pre-trained models adapted to new distributions [19] | Diverse initial training dataset; domain adaptation techniques | Reduces recalibration time by 30-60% [19] |

| Domain-Invariant Feature Learning | Extracts features robust to distribution changes [20] | Advanced neural network architectures; multi-domain training data | Improves cross-session generalization by 15-25% [20] |

| Ensemble Methods | Combines multiple specialized decoders [20] | Multiple model training; fusion algorithm development | Provides more stable performance across conditions [20] |

Figure 2: Distribution Shift Challenges in Neural Interfaces. This diagram illustrates the primary categories of distribution shifts that degrade neural decoding performance and the algorithmic strategies employed to mitigate these challenges [20] [19] [26].
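To make the idea of continuous adaptation concrete, the sketch below tracks the mean and variance of each input feature with an exponentially weighted update and standardizes incoming data against those running estimates. It is a deliberately simple baseline for gradual shifts, not one of the methods evaluated in [20] or [19]; the class name `RunningStandardizer`, the update rate, and the simulated drift are assumptions.

```python
import numpy as np

class RunningStandardizer:
    """Exponentially weighted running mean/variance for feature normalization."""

    def __init__(self, n_features, rate=0.01):
        self.mean = np.zeros(n_features)
        self.var = np.ones(n_features)
        self.rate = rate   # larger values adapt faster but are noisier

    def update_and_transform(self, x):
        delta = x - self.mean
        self.mean = self.mean + self.rate * delta
        self.var = (1.0 - self.rate) * self.var + self.rate * delta ** 2
        return (x - self.mean) / np.sqrt(self.var + 1e-8)

# Simulated features whose baseline drifts slowly from 0 to 5.
rng = np.random.default_rng(0)
n_steps, n_features = 5000, 4
drift = np.linspace(0.0, 5.0, n_steps)[:, None]
raw = drift + rng.standard_normal((n_steps, n_features))

scaler = RunningStandardizer(n_features, rate=0.01)
adapted = np.array([scaler.update_and_transform(x) for x in raw])

print("raw mean, last 1000 steps:    ", raw[-1000:].mean())
print("adapted mean, last 1000 steps:", adapted[-1000:].mean())
```

A fixed decoder fed the adapted features sees inputs that stay centered despite the drift. The same update rate that tracks slow drift will also partly absorb genuine task-related changes, which is one reason adaptive schemes need safeguards against overfitting.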

The Scientist's Toolkit: Essential Research Reagents and Materials

Advancing neural interface technology requires specialized materials and experimental tools. The following table details key solutions currently driving innovation in the field.

Table 5: Essential Research Toolkit for Neural Interface Development

| Material/Reagent | Composition/Type | Primary Function | Key Research Findings |

|---|---|---|---|

| PEDOT:PSS | Conductive polymer blend [25] | Flexible electrode coating; enhances charge transfer [25] | Reduces impedance by 60-80%; improves signal-to-noise ratio [25] |

| Ultrathin Gold Arrays | Hexagonal metal complex architecture [21] | Transparent, flexible neural electrodes [21] | Enables simultaneous electrical recording and optical modulation [21] |

| Biodegradable Scaffolds (PLLA-PTMC) | Polymer composites [25] | Temporary neural support; eliminates secondary surgery [25] | Promotes axon regeneration while gradually transferring load to healing tissue [25] |

| Graphene-Based Nanocomposites | 2D carbon nanomaterials [25] | High-surface-area electrode coating [25] | Enhances charge injection capacity; supports neural growth [25] |

| Self-Healing Hydrogels | Dynamic polymer networks [25] | Tissue-integrated electrode interface [25] | Maintains electrical continuity after mechanical deformation [25] |

| Impedance Spectroscopy Systems | Electrochemical characterization tools [20] [24] | Monitoring electrode-tissue interface stability [20] | Early detection of fouling and encapsulation (impedance increases of 200-500 kΩ signal trouble) [24] |

| Multi-Electrode Arrays (Neuropixels) | High-density silicon probes [22] | Large-scale neural activity mapping [22] | Records from 1000+ neurons simultaneously; tracks plasticity effects [22] |

The systematic assessment of neural interfaces under real-world stressors reveals a complex interplay between biological, material, and algorithmic factors. Signal disruptions, biocompatibility challenges, and distribution shifts collectively determine the translational potential of these technologies. Current evidence suggests that integrative approaches combining advanced materials science with adaptive algorithms offer the most promising path forward. Flexible, tissue-matched substrates significantly reduce foreign body responses, while sophisticated machine learning techniques mitigate performance degradation from distribution shifts. The development of standardized experimental protocols for robustness assessment will accelerate progress toward clinically viable neural interfaces that maintain performance across diverse real-world conditions. As these technologies evolve, continued focus on the fundamental stressors examined here will be essential for achieving the long-term stability and reliability required for widespread clinical adoption.

The Impact of Chronic Immune Responses and Glial Scarring on Long-Term Signal Stability

The long-term stability of neural interfaces is critically dependent on the biological response they elicit following implantation. A primary challenge is the foreign body response, which involves chronic inflammation and the formation of a glial scar, ultimately leading to a decline in recording quality and functional longevity of the device [27] [28]. This insulating barrier, composed of reactive glial cells and extracellular matrix proteins, increases the distance between electrodes and viable neurons, thereby attenuating neural signals and increasing impedance [29] [27]. This guide objectively compares the impact of different neural interface design strategies on mitigating these responses, providing a robustness assessment for researchers and development professionals.

Comparative Analysis of Key Factors Mitigating Immune Responses

The table below summarizes how critical design parameters influence the chronic immune response and subsequent signal stability.

Table 1: Comparison of Neural Interface Design Parameters and Their Impact on Stability

| Design Parameter | Impact on Glial Scarring & Chronic Inflammation | Effect on Long-term Signal Stability | Supporting Experimental Data |

|---|---|---|---|

| Probe Density [30] | Low-density (∼1.35 g/cm³) probes cause significantly smaller astrocytic scars and less microglial attachment than high-density (∼21.45 g/cm³) probes. | Reduced inertial forces lead to less chronic tissue reaction, preserving signal quality. | Astrocytic (GFAP) signal intensity significantly lower around low-density probes at 6 weeks post-implantation. |

| Probe Flexibility & Cross-section [29] [27] | Flexible materials with small cross-sections reduce mechanical mismatch and micromotion-induced damage, minimizing chronic inflammation. | Smaller, more flexible probes demonstrate nearly seamless integration and outstanding recording stability. | Carbon fiber electrodes (7 µm diameter) enable stable recording; thinner probes reduce glial scarring. |

| Surface Biocompatibility [31] | Antifouling coatings (e.g., piCVD polymer) reduce protein adsorption, significantly lowering glial scarring and increasing neuronal preservation. | Coated probes maintain high-quality neural recordings with improved signal-to-noise ratio (SNR) over 3 months. | 66.6% reduction in glial scarring; 84.6% increase in neuronal density; SNR improved from 18.0 to 20.7 over 13 weeks. |

| Implantation Strategy [29] | Distributed implantation of ultra-thin filaments minimizes acute injury and promotes healing, while unified implantation is better for deep brain structures. | Reduced acute injury translates to less chronic inflammation, supporting sustained signal quality. | NeuroRoots filamentous electrodes (7 µm wide) recorded signals for up to 7 weeks with minimal trauma. |

Experimental Protocols for Assessing Interface Robustness

To evaluate the robustness of neural interfaces in real-world environments, standardized experimental protocols are essential. The following methodologies are critical for assessing the chronic foreign body response and its functional consequences.

Protocol 1: Histological Quantification of Glial Scarring

This protocol measures the extent of the immune response at the tissue-electrode interface [30].

- Animal Model: Rat models are commonly used.

- Implantation: Probes are implanted untethered to the skull to isolate the effect of specific parameters (e.g., density) from tethering artifacts.

- Tissue Collection: At a chronic time point (e.g., 6 weeks post-implantation), brain tissue is collected and sectioned.

- Immunohistochemistry: Tissue sections are stained with the following antibodies:

  - Anti-GFAP: To label reactive astrocytes.

  - Anti-CD68 (ED1): To label activated microglia/macrophages.

  - Anti-NeuN: To label neuronal nuclei and assess neuronal density and proximity to the implant.

- Imaging and Analysis: Confocal microscopy is used to image the tissue surrounding the implant tract. The intensity of GFAP and ED1 staining is quantified as a function of distance from the implant site. Additionally, the number of NeuN-positive cells within defined regions of interest (e.g., 0–50 µm, 50–100 µm) is counted.
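The quantification step of this protocol can be sketched as follows. The example bins pixel intensity by distance from the implant center and counts cells per distance band. It assumes a single 2D image with a known pixel size and a list of already-detected cell positions; the function name `radial_profile`, the bin edges, and the synthetic image are hypothetical and do not reproduce the analysis in [30].

```python
import numpy as np

def radial_profile(image, center, pixel_um, bin_edges_um):
    """Mean intensity within each distance band around the implant center."""
    rows, cols = np.indices(image.shape)
    dist_um = np.hypot(rows - center[0], cols - center[1]) * pixel_um
    which = np.digitize(dist_um.ravel(), bin_edges_um) - 1
    n_bins = len(bin_edges_um) - 1
    valid = (which >= 0) & (which < n_bins)      # drop pixels beyond the last edge
    sums = np.bincount(which[valid], weights=image.ravel()[valid], minlength=n_bins)
    counts = np.bincount(which[valid], minlength=n_bins)
    return sums / np.maximum(counts, 1)

# Synthetic GFAP-like image: intensity decays with distance from the tract.
size, center = 201, (100, 100)
rows, cols = np.indices((size, size))
distance = np.hypot(rows - center[0], cols - center[1])
image = np.exp(-distance / 50.0)

bin_edges_um = np.array([0.0, 50.0, 100.0, 150.0])
profile = radial_profile(image, center, pixel_um=1.0, bin_edges_um=bin_edges_um)

# Hypothetical NeuN-positive cell distances (µm) from a separate detection step.
cell_dist_um = np.array([10.0, 20.0, 60.0, 120.0])
cells_per_band = np.histogram(cell_dist_um, bins=bin_edges_um)[0]
```

Because outer bands cover more area than inner ones, raw cell counts are usually converted to densities (cells per unit area) before bands are compared.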

Protocol 2: Functional Electrochemical and Electrophysiological Validation

This protocol correlates biological responses with the functional performance of the electrode [31].

- Chronic Recording: Neural signals (e.g., spontaneous activity and evoked potentials) are recorded from implanted electrodes over extended periods (e.g., 3 months).

- Signal Quality Metrics:

  - Signal-to-Noise Ratio (SNR): Calculated periodically to track recording quality degradation.

  - Electrode Impedance: Measured regularly at relevant frequencies (e.g., 1 kHz); a rise in impedance often correlates with glial scar formation.

- Correlative Histology: After the final recording session, the brain tissue is processed for histology (as in Protocol 1). The functional data (SNR, impedance) is then directly correlated with the quantitative histology (glial scarring, neuronal density) for the same device.
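A minimal sketch of the two computations in this protocol follows. SNR has several definitions in the literature; here it is taken, as an assumption, to be the peak-to-peak amplitude of the mean spike waveform divided by the standard deviation of a noise segment. The weekly impedance and SNR series are placeholder values constructed for illustration only and are not data from [31].

```python
import numpy as np

def waveform_snr(waveforms, noise):
    """Peak-to-peak amplitude of the mean waveform over the noise std.

    waveforms: (n_spikes, n_samples); noise: 1D segment without spikes.
    """
    mean_wf = waveforms.mean(axis=0)
    return (mean_wf.max() - mean_wf.min()) / noise.std()

# Toy inputs with a known answer: peak-to-peak 2, noise std 1.
waveforms = np.tile(np.array([0.0, 1.0, 0.0, -1.0, 0.0]), (10, 1))
noise = np.array([1.0, -1.0, 1.0, -1.0])
snr = waveform_snr(waveforms, noise)

# Placeholder longitudinal series: impedance rising while SNR falls.
weeks = np.arange(13)
impedance_kohm = 100.0 + 20.0 * weeks
snr_series = 10.0 - 0.3 * weeks
r = np.corrcoef(impedance_kohm, snr_series)[0, 1]   # Pearson correlation

print("SNR:", snr, "correlation:", r)
```

With real recordings the correlation would be computed per device across time points, and then compared with the histology measured on the same device.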

Visualization of Key Concepts and Workflows

The following diagrams illustrate the core mechanisms and experimental approaches discussed.

The Immune Response Cascade to Neural Implants

This diagram outlines the sequential biological events leading to glial scarring and signal degradation.

Experimental Workflow for Robustness Assessment

This diagram maps the standard workflow for evaluating the long-term stability and biocompatibility of neural interfaces.

The Scientist's Toolkit: Key Research Reagents and Materials

The table below details essential reagents and materials used in the featured experiments for investigating neural interface biocompatibility.

Table 2: Essential Research Reagents for Neural Interface Biocompatibility Studies

| Reagent/Material | Function in Experimental Protocol | Specific Example & Citation |

|---|---|---|

| Anti-GFAP Antibody | Labels reactive astrocytes in immunohistochemistry to visualize and quantify the astrocytic component of the glial scar. | Standard immunohistochemical staining of brain sections; used to show reduced scarring around low-density probes [30] and coated electrodes [31]. |

| Anti-Iba1/CD68 Antibody | Labels activated microglia and infiltrating macrophages to assess the innate immune response at the implant-tissue interface. | Anti-CD68 (ED1) used to quantify microglial activation on explanted probes and surrounding tissue [30]. |

| Anti-NeuN Antibody | Labels neuronal nuclei to quantify neuronal survival and density in the vicinity of the implant, correlating with recording potential. | Used to confirm presence of neurons near both high and low-density probes, indicating preserved recording targets [30]. |

| Parylene C | A biocompatible polymer used as a consistent, inert coating for neural probes to insulate conductors and provide a uniform surface. | Used to coat both platinum and carbon fiber probes to isolate the variable of density from underlying material chemistry [30]. |

| piCVD Co-polymer | An ultrathin anti-fouling coating applied via photoinitiated chemical vapor deposition to reduce protein adsorption and glial scarring. | Poly(2-hydroxyethyl methacrylate-co-ethylene glycol dimethacrylate) coating shown to significantly improve signal stability and reduce inflammation over 3 months [31]. |

| Carbon Fiber | A material for constructing low-density, small cross-section neural probes that minimize mechanical mismatch and inertial forces. | Hollow carbon fiber needles (density ~1.35 g/cm³) demonstrated significantly reduced glial scarring compared to platinum [30]. |

Methodologies for Resilience: Engineering Adaptive and Robust Neural Decoding Systems

The performance of signal processing systems in real-world settings is critically dependent on their robustness to noise. For researchers and drug development professionals, this is particularly pertinent when dealing with data from neural interfaces or biological sensors, where signal integrity is paramount. This guide provides a comparative analysis of contemporary methodologies for feature extraction and classification in noisy environments, framing them within the broader context of robustness assessment for neural interfaces. We objectively evaluate the performance of competing approaches, from novel spiking neural networks to advanced feature selection techniques, supported by experimental data and detailed protocols to inform your research and development efforts.

Comparative Analysis of Advanced Methods

The quest for robustness has led to several innovative approaches. The table below compares four advanced methods, detailing their core principles, strengths, and applicability.

Table 1: Comparison of Advanced Methods for Noisy Signal Processing

| Method Name | Core Principle | Reported Advantages | Best Suited For |

|---|---|---|---|

| Noise-Tolerant Robust Feature Selection (NTRFS) [32] | Uses (\ell_{2,1})-norm minimization & block-sparse projection to identify and leverage beneficial noise. | Enhances robustness, improves classification performance, eliminates parameter tuning [32]. | High-dimensional data analysis (e.g., bioinformatics, masked facial images). |

| Rhythm-SNN [33] | Employs oscillatory signals to modulate spiking neurons, creating sparse, synchronized firing patterns. | State-of-the-art accuracy, high energy efficiency, superior robustness to noise & adversarial attacks [33]. | Edge-AI, low-power temporal processing (e.g., neuromorphic hearing aids, speech recognition). |

| Noisy SNN (NSNN) with Noise-Driven Learning [34] | Incorporates noisy neuronal dynamics as a computational resource rather than a detriment. | Competitive performance, improved robustness, better reproduction of probabilistic neural coding [34]. | Probabilistic computation, models adapting to specialized neuromorphic hardware. |

| Feature Extraction with Noise Injection [35] | Augments training data with injected noise and uses Digital Signal Processing (DSP) for feature extraction. | Enhances data diversity, improves model generalization, effective with limited data [35]. | Time series classification (e.g., healthcare, finance, industrial monitoring). |

Performance Benchmarking

To quantify the performance of these methods, we summarize key experimental results reported across multiple studies. The following tables provide a snapshot of their classification accuracy and efficiency.

Table 2: Classification Accuracy on Benchmark Datasets

| Method / Dataset | SHD [33] | DVS-Gesture [33] | S-MNIST [33] | UCR Archive (Avg.) [35] |

|---|---|---|---|---|

| Rhythm-SNN | 92.5% | 99.2% | 99.5% | N/A |

| Noise Injection + DSP [35] | N/A | N/A | N/A | ≈5-10% improvement over baselines |

| Standard SNN (Baseline) | 89.5% | 97.8% | 98.9% | N/A |

Table 3: Energy Efficiency and Robustness Comparison

| Metric | Rhythm-SNN [33] | Standard SNN [33] | Deep Learning Model [33] |

|---|---|---|---|

| Relative Energy Cost | 1x | ~10x | >100x |

| Robustness to Perturbations | High | Medium | Low to Medium |

Experimental Protocols and Methodologies

NTRFS for Robust Feature Selection

The NTRFS method is designed to actively manage noise within high-dimensional data [32]. Its optimization process involves:

- Problem Formulation: The model treats class prototypes as optimization variables, moving beyond simple arithmetic means to achieve more accurate class representation in noisy conditions.

- Robust Loss Minimization: It employs (\ell_{2,1})-norm minimization for the loss function, which is less sensitive to noise than the traditional (\ell_2)-norm [32].

- Block-Sparse Projection: An (\ell_{2,0})-norm constraint is directly applied to enable block-sparse projection learning. This selects discriminative features directly on the subspace without the need for tuning sparse regularization parameters [32].

- Adaptive Anomaly Estimation: The mechanism adjusts the weight of each feature based on its informative saliency, leveraging noise-tolerant information to promote the discovery of true class prototypes [32].

- Iterative Optimization: A dedicated iterative algorithm is used to solve the non-convex trace ratio and NP-hard block sparsity problems, with studies demonstrating guaranteed convergence [32].
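The two matrix norms named in the steps above have standard definitions that are easy to compute. For a matrix whose rows correspond to features, the (\ell_{2,1})-norm is the sum of the Euclidean norms of the rows, and the (\ell_{2,0})-norm is the number of rows that are not entirely zero. The sketch below computes both and shows a simple row-wise hard threshold that keeps the `k` strongest rows. It illustrates what a block-sparse constraint means; it is not the NTRFS optimization algorithm from [32], and the function names are hypothetical.

```python
import numpy as np

def l21_norm(W):
    """Sum of the Euclidean norms of the rows of W."""
    return np.linalg.norm(W, axis=1).sum()

def l20_norm(W, tol=0.0):
    """Number of rows of W with Euclidean norm above tol."""
    return int(np.count_nonzero(np.linalg.norm(W, axis=1) > tol))

def keep_top_k_rows(W, k):
    """Zero all but the k rows with the largest norm (row-wise hard threshold)."""
    row_norms = np.linalg.norm(W, axis=1)
    keep = np.argsort(row_norms)[-k:]
    out = np.zeros_like(W)
    out[keep] = W[keep]
    return out, np.sort(keep)

# Toy projection matrix: 4 features (rows) mapped to 2 dimensions (columns).
W = np.array([[3.0, 4.0],
              [0.0, 0.0],
              [1.0, 0.0],
              [0.0, 2.0]])

print("l2,1 norm:", l21_norm(W))    # row norms are 5, 0, 1, 2
print("l2,0 norm:", l20_norm(W))
W_sparse, selected = keep_top_k_rows(W, k=2)
print("selected feature indices:", selected)
```

Because each row norm enters the (\ell_{2,1}) sum without being squared, a single corrupted row contributes linearly rather than quadratically, which is the usual explanation for its lower sensitivity to outliers.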

Rhythm-SNN for Temporal Processing

The Rhythm-SNN architecture is inspired by the neural oscillation mechanisms of the brain, which are key to robust biological computation [33]. The workflow is as follows:

Diagram 1: Rhythm-SNN Workflow

The core of the method involves modulating the neuronal dynamics with an oscillatory signal, ( m(t) ), often implemented as a square wave [33]. This signal rhythmically switches neurons between 'ON' states, where they update and fire normally, and 'OFF' states, where neuronal updates are halted. This process yields multiple benefits: it sparsifies neuronal activity (reducing energy cost), acts as a shortcut for gradient backpropagation (easing training), and helps preserve memory in neuronal states [33]. The use of heterogeneous oscillators with diverse periods and phases enables the network to process information across multiple timescales simultaneously.
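The ON/OFF gating described above can be illustrated with a single leaky integrate-and-fire neuron. This is a simplified sketch under stated assumptions, not the Rhythm-SNN implementation from [33]: the decay factor, threshold, reset-to-zero rule, and the helper names `square_wave` and `rhythm_lif` are all chosen for the example.

```python
import numpy as np

def square_wave(n_steps, period, duty=0.5, phase=0):
    """Binary modulation signal m(t): 1 during ON phases, 0 during OFF phases."""
    t = (np.arange(n_steps) + phase) % period
    return (t < duty * period).astype(float)

def rhythm_lif(inputs, m, decay=0.9, threshold=1.0):
    """Leaky integrate-and-fire neuron whose updates are gated by m(t)."""
    v = 0.0
    spikes = np.zeros(len(inputs))
    for t, (x, on) in enumerate(zip(inputs, m)):
        if on:
            v = decay * v + x          # normal leaky integration
            if v >= threshold:
                spikes[t] = 1.0
                v = 0.0                # reset after a spike
        # OFF phase: the membrane state is held and no spike is emitted
    return spikes

n_steps = 100
inputs = np.full(n_steps, 0.6)
m = square_wave(n_steps, period=10, duty=0.5)

spikes_always_on = rhythm_lif(inputs, np.ones(n_steps))
spikes_rhythmic = rhythm_lif(inputs, m)

print("spikes without modulation:", int(spikes_always_on.sum()))
print("spikes with modulation:   ", int(spikes_rhythmic.sum()))
```

Holding the membrane potential during OFF phases is what lets the state carry over unchanged across skipped steps, and giving different neurons different `period` and `phase` values would correspond to the heterogeneous oscillators mentioned in the text.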

Noise Injection and DSP for Time Series

This methodology enhances model generalization by artificially expanding the dataset and emphasizing key features [35]. The protocol involves three distinct stages:

Diagram 2: Noise Injection Pipeline

- Data Augmentation: Gaussian noise with a level set at 30% of the standard deviation of the original data is added to create an augmented dataset, typically increasing its size tenfold [35].

- Digital Signal Processing (DSP): The augmented data is processed using DSP techniques. This involves sampling, quantization, and transformation into the frequency domain using methods like the Fourier transform to extract salient frequency features [35].

- Model Training and Classification: The transformed features are used to train a classification model, such as an LSTM, GRU, or Temporal Convolutional Network (TCN), for the final task [35].
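The first two stages of this pipeline can be sketched with NumPy alone. The example below interprets "tenfold" as the original series plus nine noisy copies and uses the global standard deviation of the dataset to scale the noise; both are assumptions, as are the function names and the choice of FFT magnitudes as features. The classifier stage is omitted.

```python
import numpy as np

def augment_with_noise(X, y, noise_frac=0.3, n_copies=9, rng=None):
    """Append noisy copies of X; noise std is noise_frac times the data std."""
    rng = np.random.default_rng() if rng is None else rng
    sigma = noise_frac * X.std()
    noisy = [X + rng.normal(0.0, sigma, size=X.shape) for _ in range(n_copies)]
    X_aug = np.concatenate([X] + noisy, axis=0)
    y_aug = np.tile(y, n_copies + 1)       # labels repeat in the same order
    return X_aug, y_aug

def fft_magnitude_features(X):
    """Magnitude spectrum of each series (one-sided FFT along time)."""
    return np.abs(np.fft.rfft(X, axis=1))

# Synthetic stand-in dataset: 20 series of length 128, two classes.
rng = np.random.default_rng(0)
t = np.arange(128)
y = np.repeat([0, 1], 10)
freqs = np.where(y == 0, 4, 9)             # class-specific frequency
X = np.sin(2 * np.pi * freqs[:, None] * t / 128) + 0.1 * rng.standard_normal((20, 128))

X_aug, y_aug = augment_with_noise(X, y, noise_frac=0.3, n_copies=9, rng=rng)
features = fft_magnitude_features(X_aug)
print(X_aug.shape, y_aug.shape, features.shape)
```

The resulting `features` array would then be passed to a sequence or tabular classifier. To avoid leakage, augmentation should be applied only to the training split, never to held-out test data.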

The Scientist's Toolkit

Successful implementation of the aforementioned experiments relies on a suite of key computational tools and datasets.

Table 4: Essential Research Reagents and Resources

| Item Name | Type | Function / Application | Example Sources / Formats |

|---|---|---|---|

| NTRFS Framework | Algorithm | Robust feature selection for high-dimensional, noisy data. | Custom implementation based on NTRFS literature [32]. |

| Rhythm-SNN Codebase | Software Tool | Training and evaluating oscillation-modulated SNNs for temporal tasks. | Public Git repository; Python/PyTorch-based [33]. |

| Noise Injection & DSP Pipeline | Methodology | Data augmentation and feature extraction for time series classification. | Custom Python scripts (NumPy, SciPy) [35]. |

| UCR Time Series Archive | Dataset | Benchmarking for time series classification algorithms. | Publicly available archive [35]. |

| Spiking Heidelberg Digits (SHD) | Dataset | Benchmarking for neuromorphic and SNN models on auditory tasks. | Publicly available dataset [33]. |

| DVS-Gesture | Dataset | Event-based action recognition for SNN evaluation. | Publicly available dataset [33]. |

| Surrogate Gradient Learning | Algorithm | Training SNNs with non-differentiable components using backpropagation. | Code frameworks like SpyTorch [33]. |

This comparison guide demonstrates a paradigm shift in processing signals for noisy, real-world environments. Techniques like NTRFS that leverage the informational value within noise, and brain-inspired models like Rhythm-SNN and NSNN, are setting new benchmarks for robustness and energy efficiency. The experimental data and detailed protocols provided offer researchers and development professionals a clear pathway for selecting and implementing the most appropriate advanced signal processing strategies for their specific applications, particularly within the demanding context of neural interfaces and biomedical data analysis.

Leveraging Deep Learning and Historical Data for Decoder Stability

Intracortical brain-computer interfaces (iBCIs) hold significant promise for restoring motor function to individuals with paralysis by translating neural activity into control signals for external devices [36]. A paramount challenge hindering their clinical adoption is decoder instability, where the performance of the algorithm that maps neural signals to intended actions degrades over time due to recording instabilities [2] [36]. These instabilities arise from factors such as micro-movements of electrodes, biological reactions to the implant, and neuronal cell death, leading to a non-stationary relationship between the recorded signals and the user's intent [36]. Leveraging deep learning and historical data presents a transformative approach for mitigating this issue. This guide objectively compares emerging deep learning-based decoders that utilize historical data for stability against traditional methods, framing the comparison within the broader thesis of robustness assessment for neural interfaces in real-world environments.

Core Challenges in Neural Decoding and the Role of Deep Learning

The primary obstacle to robust iBCI performance is the non-stationarity of neural signals. In controlled lab settings, decoders are typically recalibrated daily using fresh, labeled data collected from the user [2] [36]. However, this process is burdensome and impractical for daily home use [2]. Deep learning models, particularly those trained on extensive historical data from multiple sessions, offer a solution. These models can learn underlying latent structures and dynamics of neural population activity that are more stable over time than the signals from individual neurons [36].

- Manifold Stability: Neural activity resides on a low-dimensional "manifold"—a structure representing the patterns of co-activation across many neurons. This manifold has a stable relationship with behavior over long periods [36]. Deep learning models can learn to project non-stationary, high-dimensional neural recordings from different days onto this consistent manifold.

- Temporal Dynamics: The brain processes information through dynamics—rules governing how neural activity evolves over time. Models incorporating dynamics, such as recurrent neural networks (RNNs), can achieve high-performance decoding and may also contribute to stability, as these dynamics are consistent across time [36].
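The manifold idea can be illustrated with a toy simulation. The sketch below is not the method of [36]: it assumes synthetic two-dimensional rotational latents, a random mixing matrix per day to mimic channel turnover, PCA as the manifold estimator, and a least-squares linear map fitted on time-matched samples as the alignment step. All function and variable names are hypothetical. It shows only that a decoder fitted on Day 0 latents fails on unaligned Day K latents but recovers once the two latent spaces are aligned.

```python
import numpy as np

rng = np.random.default_rng(0)

def simulate_day(latents, n_channels, rng, noise=0.05):
    """Project shared latents through a day-specific random mixing matrix."""
    mixing = rng.normal(size=(latents.shape[1], n_channels))
    noise_term = noise * rng.normal(size=(latents.shape[0], n_channels))
    return latents @ mixing + noise_term

def pca_scores(x, n_dims):
    """Top principal-component scores of x (time x channels)."""
    centered = x - x.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_dims].T

def r2(pred, true):
    """Coefficient of determination."""
    return 1.0 - np.sum((true - pred) ** 2) / np.sum((true - true.mean()) ** 2)

# Shared low-dimensional activity (assumed identical on both days).
t = np.linspace(0, 8 * np.pi, 2000)
latents = np.column_stack([np.cos(t), np.sin(t)])
behavior = latents @ np.array([1.5, -0.7])  # hypothetical 1-D behavior
behavior = behavior - behavior.mean()

# Same latents, different channel mixing on each day.
z0 = pca_scores(simulate_day(latents, 40, rng), 2)
zk = pca_scores(simulate_day(latents, 40, rng), 2)

# Decoder is fitted once, on Day 0 latents only.
decoder, *_ = np.linalg.lstsq(z0, behavior, rcond=None)

# Linear alignment of Day K latents onto Day 0 latents (needs matched samples).
align, *_ = np.linalg.lstsq(zk, z0, rcond=None)

r2_unaligned = r2(zk @ decoder, behavior)
r2_aligned = r2(zk @ align @ decoder, behavior)
print(f"R^2 without alignment: {r2_unaligned:.3f}")
print(f"R^2 with alignment:    {r2_aligned:.3f}")
```

The alignment here relies on time-matched samples across days, which is a simplification; distribution-based approaches avoid that requirement by matching statistics of the latent states instead.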

Comparative Analysis of Decoder Stabilization Approaches

The table below provides a high-level comparison of traditional recalibration methods against two advanced deep-learning frameworks that leverage historical data and latent structures for stability.

Table 1: Comparison of Decoder Stabilization Approaches for iBCIs

| Approach | Core Principle | Recalibration Requirement | Key Advantages | Key Limitations | Reported Performance (Representative) |
|---|---|---|---|---|---|
| Traditional Supervised Recalibration | Daily retraining of a decoder (e.g., Kalman filter) using new labeled data [2]. | Frequent (e.g., daily) supervised sessions [36]. | Simple, reliable in controlled settings. | High user burden, interrupts daily use [36]. | Performance degrades significantly without daily recalibration [36]. |
| NoMAD (Nonlinear Manifold Alignment with Dynamics) | Aligns non-stationary data to a stable neural manifold using a pre-trained dynamics model (LFADS) without behavioral labels [36]. | Unsupervised; no labeled data needed post-initial training [36]. | Long-term stability over months [36], incorporates temporal dynamics. | Complex architecture, computationally intensive training. | Maintained high decoding accuracy over 3 months in monkey motor cortex without recalibration [36]. |
| SPC with Channel Masking & Unsupervised Update | Uses Statistical Process Control (SPC) to automatically detect and mask corrupted signal channels, then updates the decoder without supervision [2]. | Unsupervised; triggered automatically by signal disruption [2]. | Targets specific channel failures, computationally efficient for deployment [2]. | Primarily addresses channel corruption, not full non-stationarity. | Maintained high performance with simulated disruption of 10-50 channels in a 96-electrode system [2]. |

Detailed Methodologies of Featured Approaches

NoMAD: A Framework for Dynamics-Based Stabilization

NoMAD leverages a modified Latent Factor Analysis via Dynamical Systems (LFADS) architecture, a type of sequential variational autoencoder, to model the underlying dynamics of neural population activity [36].

Experimental Protocol:

- Initial Supervised Training (Day 0): A dataset of neural activity and concurrent behavior (e.g., limb kinematics) is collected.

  - A modified LFADS model is trained. Key modifications include a low-dimensional read-in matrix and a behavioral readout.

  - The model's "Generator" (an RNN) learns a latent dynamical system that can predict both neural firing rates and behavior.

  - A separate, high-capacity decoder (e.g., a Wiener filter or RNN) is trained to map the Generator's states to the Day 0 behavior [36].

- Unsupervised Alignment (Day K): On a subsequent day, with potential recording instabilities, only unlabeled neural data is collected.

  - The weights of the pre-trained Generator RNN are frozen to preserve the learned dynamics.

  - An alignment network, the read-in matrix, and the rates readout are updated using two unsupervised objectives:

    - a) Maximizing the likelihood of the observed Day K neural data.

    - b) Minimizing the Kullback-Leibler (KL) divergence between the distributions of the Generator states on Day 0 and Day K. This aligns the latent dynamics of the new data with the stable original manifold [36].

- Decoding: Once aligned, the Day K neural data is passed through the aligned model, and the stable Day 0 decoder is used to predict behavior with high accuracy.
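The distribution-matching objective in the alignment step can be made concrete with a small numerical sketch. It assumes that the Generator states on each day are summarized by a multivariate Gaussian, for which the KL divergence has a closed form, and it takes the direction KL(Day K || Day 0) as an assumption rather than a detail confirmed by the source. The function name `gaussian_kl` and the synthetic states are hypothetical. In a full pipeline this quantity would be one term of a loss minimized by gradient descent, which is not shown here.

```python
import numpy as np

def gaussian_kl(states_k, states_0, eps=1e-6):
    """KL(N_k || N_0) between Gaussians fitted to two sets of states.

    Both inputs are arrays of shape (samples, dims). A small ridge `eps`
    keeps the covariance estimates invertible.
    """
    d = states_0.shape[1]
    mu_0, mu_k = states_0.mean(axis=0), states_k.mean(axis=0)
    cov_0 = np.cov(states_0, rowvar=False) + eps * np.eye(d)
    cov_k = np.cov(states_k, rowvar=False) + eps * np.eye(d)
    cov_0_inv = np.linalg.inv(cov_0)
    diff = mu_0 - mu_k
    _, logdet_0 = np.linalg.slogdet(cov_0)
    _, logdet_k = np.linalg.slogdet(cov_k)
    return 0.5 * (
        np.trace(cov_0_inv @ cov_k)   # covariance mismatch
        + diff @ cov_0_inv @ diff     # mean shift, scaled by Day 0 covariance
        - d
        + logdet_0 - logdet_k         # log-ratio of volumes
    )

rng = np.random.default_rng(0)
day0_states = rng.normal(size=(5000, 8))                # reference distribution
dayk_stable = rng.normal(size=(5000, 8))                # same distribution, new samples
dayk_drifted = 1.5 * rng.normal(size=(5000, 8)) + 0.8   # scaled and shifted states

kl_stable = gaussian_kl(dayk_stable, day0_states)
kl_drifted = gaussian_kl(dayk_drifted, day0_states)
print(f"KL, stable day:  {kl_stable:.4f}")
print(f"KL, drifted day: {kl_drifted:.4f}")
```

A value near zero indicates that the Day K state distribution already matches Day 0; a large value is the signal an alignment network would be trained to reduce.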

Figure: NoMAD alignment process.

SPC and Masking for Robustness to Channel Corruption

This approach focuses on maintaining performance when a subset of recording channels become corrupted, a common real-world failure mode [2].

Experimental Protocol:

- Corruption Detection: An adapted Statistical Process Control (SPC) framework monitors channel-level metrics (e.g., impedance, signal correlation) in real time. It establishes baseline tolerance bounds from historical data and flags channels that deviate as "out-of-control" [2].

- Channel Masking: A masking layer is inserted into a neural network decoder. When the SPC algorithm identifies a corrupted channel, its input is automatically set to zero ("masked") before being passed to the subsequent decoding layers. Because the network architecture itself does not change, the existing weights can be reused as the starting point for transfer learning [2].

- Unsupervised Update: The masking of channels triggers an unsupervised update to the decoder weights, reassigning importance to the remaining, healthy channels without requiring the user to perform a new calibration task [2].
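The detection and masking steps can be sketched in a few lines. This is a minimal illustration and not the implementation from [2]: it assumes a single per-channel health metric in arbitrary impedance-like units, Shewhart-style control limits at the baseline mean plus or minus three standard deviations, and synthetic data. The function names are hypothetical, and the unsupervised decoder update is not shown.

```python
import numpy as np

def fit_control_limits(baseline, n_sigma=3.0):
    """Per-channel control limits from historical sessions.

    baseline: array (sessions, channels) of a channel-health metric.
    """
    center = baseline.mean(axis=0)
    spread = baseline.std(axis=0, ddof=1)
    return center - n_sigma * spread, center + n_sigma * spread

def flag_out_of_control(metric, lower, upper):
    """Boolean mask of channels whose current metric leaves the control band."""
    return (metric < lower) | (metric > upper)

def mask_channels(features, corrupted):
    """Zero the flagged channels; features has shape (time, channels)."""
    return np.where(corrupted, 0.0, features)

rng = np.random.default_rng(0)
n_channels = 96

# Synthetic history: 30 sessions of a stable metric on every channel.
baseline = rng.normal(loc=100.0, scale=5.0, size=(30, n_channels))

# Today's reading, with two simulated hardware failures.
today = rng.normal(loc=100.0, scale=5.0, size=n_channels)
today[3] = 500.0   # e.g., a floating channel
today[40] = 0.0    # e.g., a shorted channel

lower, upper = fit_control_limits(baseline)
corrupted = flag_out_of_control(today, lower, upper)

# Masked features keep their shape, so the decoder input layer is unchanged.
features = rng.normal(size=(200, n_channels))
masked = mask_channels(features, corrupted)
print("Flagged channels:", np.flatnonzero(corrupted))
```

With limits estimated from a short history, an occasional healthy channel may also be flagged; in practice the limit width and the number of consecutive violations required before masking are tuning choices.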

Figure: Workflow for SPC-based corruption detection, channel masking, and unsupervised decoder update.

Quantitative Performance Comparison

The following table summarizes key experimental data from evaluations of the discussed methods, demonstrating their effectiveness in maintaining decoder stability.

Table 2: Experimental Performance Data for Stable Decoding Approaches

| Decoder Approach | Experimental Model / Data | Stability Challenge | Key Performance Metric & Result |
|---|---|---|---|
| NoMAD [36] | Monkey motor cortex during a 2D wrist force task. | 3-month duration without supervised recalibration. | Decoding Accuracy: Maintained high, stable performance over the entire 3-month period, outperforming previous state-of-the-art manifold alignment methods that did not incorporate dynamics. |
| SPC + Masking + Unsupervised Update [2] | Clinical BCI data and simulated disruptions from a 5-year study with an implanted Utah array. | Corruption (e.g., shorting, floating) of a subset of channels in a 96-electrode array. | Robustness to Channel Loss: Maintained high performance with the simulated removal of 10-50 of the most informative channels, minimizing performance decrements. |
| Multiplicative RNN (MRNN) with Augmentation [2] | Intracortical BCI data. | Simulated loss of the most informative electrodes. | Robustness to Electrode Zeroing: Tolerated zeroing of 3-5 of the most informative electrodes with only moderate performance drops. |
| Hysteresis Neural Dynamical Filter (HNDF) [2] | Intracortical BCI data (96- and 192-electrode systems). | Simulated loss of the most informative electrodes. | Robustness to Electrode Zeroing: Performance remained similar to baseline with removal of ~10 (96-el) to ~50 (192-el) of the most informative channels. |
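The electrode-zeroing stress test referred to in the table can be sketched as a simple evaluation harness. The example below is an assumption-laden illustration, not a reproduction of any cited study: it uses synthetic linearly tuned channels, an ordinary least-squares decoder with no retraining or adaptation, and the product of absolute decoder weight and channel standard deviation as a hypothetical proxy for how informative a channel is.

```python
import numpy as np

rng = np.random.default_rng(1)
n_samples, n_channels = 3000, 96

# Synthetic stand-in for neural data: each channel is linearly tuned
# to a 1-D intended velocity and carries independent noise.
velocity = rng.normal(size=n_samples)
tuning = rng.normal(size=n_channels)
rates = np.outer(velocity, tuning) + rng.normal(size=(n_samples, n_channels))

train, test = slice(0, 2000), slice(2000, None)

# Fixed linear decoder, fitted once on the training split.
weights, *_ = np.linalg.lstsq(rates[train], velocity[train], rcond=None)

def r2(pred, true):
    """Coefficient of determination."""
    return 1.0 - np.sum((true - pred) ** 2) / np.sum((true - true.mean()) ** 2)

# Rank channels from most to least informative (hypothetical proxy).
importance = np.abs(weights) * rates[train].std(axis=0)
ranked = np.argsort(importance)[::-1]

def zero_top_k(x, ranked, k):
    """Return a copy of x with the k highest-ranked channels set to zero."""
    out = x.copy()
    out[:, ranked[:k]] = 0.0
    return out

drop_counts = [0, 5, 10, 25, 50]
scores = {
    k: r2(zero_top_k(rates[test], ranked, k) @ weights, velocity[test])
    for k in drop_counts
}
for k, score in scores.items():
    print(f"zeroed {k:2d} channels -> R^2 = {score:.3f}")
```

Because this decoder is never updated, its degradation curve serves as a non-adaptive reference; a robust decoder would be judged by how much flatter its curve stays as more channels are zeroed.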

The table below details key computational tools and models used in the development of stable deep-learning decoders.

Table 3: Essential Reagents and Computational Tools for Decoder Stability Research

| Item / Resource | Function in Research | Relevant Context |
|---|---|---|
| LFADS (Latent Factor Analysis via Dynamical Systems) | A sequential VAE that models neural population activity via a generative RNN to infer latent dynamics and firing rates [36]. | Core component of the NoMAD framework for learning a stable dynamics model from initial supervised data [36]. |
| Recurrent Neural Network (RNN) | A class of neural networks with internal memory, ideal for modeling time-series data like neural dynamics [36]. | Used as the "Generator" network in LFADS/NoMAD to produce temporally coherent latent states [36]. |
| Statistical Process Control (SPC) | A quality-control framework using statistical methods to monitor and control a process; adapted to monitor neural signal health [2]. | Used to automatically detect corrupted recording channels by identifying deviations from established baselines [2]. |
| TensorFlow / PyTorch | Open-source deep learning frameworks that provide libraries for building and training complex neural network models [37]. | Foundational platforms for implementing and experimenting with models like LFADS, RNNs, and custom decoder architectures. |
| Variational Autoencoder (VAE) | A generative model that learns a latent probabilistic representation of input data, useful for dimensionality reduction [38]. | The underlying architecture for LFADS, which is a sequential extension designed for neural data [36]. |
| Kullback-Leibler (KL) Divergence | A statistical measure of how one probability distribution differs from a reference distribution. | Serves as a key loss function in NoMAD's unsupervised alignment step, driving the Day K data distribution to match the stable Day 0 manifold [36]. |

The integration of deep learning with principles of latent manifolds and neural dynamics marks a substantial change in how stable intracortical brain-computer interfaces are pursued. Framed within a robustness assessment for real-world environments, the comparison indicates that methods like NoMAD, which explicitly model and align temporal dynamics, offer a path to long-term stability over months without user intervention [36]. Complementary approaches that automatically detect and adapt to hardware-level signal corruption further enhance system resilience [2]. While computational complexity remains a consideration, these deep learning-driven strategies sustain performance without the frequent supervised recalibration that traditional decoders depend on [36]. This progress underscores the necessity of leveraging historical data and the stable computational principles of neural population activity to build BCIs that are not only high-performing but also reliable enough for long-term clinical and real-world use.

Automatic Disruption Detection with Statistical Process Control (SPC) for Channel Health Monitoring

For brain-computer interfaces (BCIs) to transition from controlled laboratory settings to viable long-term daily usage, they must achieve a critical level of robustness against signal disruptions. Such disruptions, arising from biological, material, and mechanical issues, frequently cause individual recording channels to fail while leaving others unaffected, significantly degrading system performance and user experience [1] [39]. Within the broader research thesis on robustness assessment of neural interfaces in real-world environments, automatic disruption detection emerges as a foundational pillar. This guide objectively compares the performance of a novel approach—Statistical Process Control (SPC) for channel health monitoring—against other algorithmic strategies for maintaining BCI functionality. We provide a detailed analysis of experimental protocols and quantitative results to equip researchers and developers with the data needed for informed technology selection.

Disruption Types and Compensatory Strategies

A critical first step in developing robust systems is understanding the nature of signal disruptions. A review by Downey et al. (2020) proposes a functional classification system that complements traditional etiology-based categories (biological, material, mechanical) by focusing on the impact on BMI performance and the appropriate compensatory response [39].

Table 1: Classification of Intracortical BMI Signal Disruptions

| Disruption Class | Duration of Impact | Intervention Required | Example Causes | Recommended Compensatory Strategies |

|---|---|---|---|---|