Ensemble Learning Methods to Prevent Overfitting in Brain-Computer Interfaces: A Guide for Biomedical Researchers

Abstract

This article provides a comprehensive analysis of ensemble learning methods to mitigate overfitting in Brain-Computer Interface (BCI) systems, with a specific focus on applications in neurotechnology and drug development research. It explores the foundational challenges of non-stationary EEG signals and covariate shift, details methodological implementations of adaptive ensemble algorithms, offers troubleshooting and optimization strategies for model robustness, and presents comparative validation of techniques against state-of-the-art benchmarks. Aimed at researchers and scientists, the content synthesizes current literature to guide the development of reliable, generalizable BCI models for clinical and research applications, highlighting future directions for biomedical innovation.

Understanding Overfitting and Non-Stationarity in BCI Systems

The Critical Challenge of Non-Stationary EEG Signals in BCI

Frequently Asked Questions

Q1: What are non-stationary EEG signals, and why are they problematic for Brain-Computer Interfaces? Non-stationarity in EEG signals refers to changes over time in the signals' statistical properties, such as mean and variance. These fluctuations are a serious problem for BCI performance and implementation: a model trained on data from one session often performs poorly on data from a later session. This forces frequent recalibration and encourages overfitting to session-specific noise [1].

Q2: What are the most common sources of artifacts that contribute to EEG non-stationarity? EEG signals are contaminated by various artifacts that introduce non-stationary noise. The main categories are [2]:

- Physiological Artifacts: Originate from the user's body and include:

  - Ocular activity: Eye blinks and movements, which are high-amplitude and low-frequency.

  - Muscle activity: From jaw, neck, or facial muscles, producing high-frequency, broadband noise.

  - Cardiac activity: Heartbeat signals that can create rhythmic artifacts.

  - Perspiration: Causes slow baseline drifts.

- Non-Physiological Artifacts: Arise from external sources, such as:

  - Electrode pop: Sudden impedance changes cause transient spikes.

  - Cable movement: Creates irregular or rhythmic waveform distortions.

  - AC power interference: Introduces 50/60 Hz line noise.

Q3: How can I quickly check if my EEG data is contaminated by artifacts? A simple, rule-based first check is to examine signal amplitude. Typical EEG lies in the microvolt range, while artifacts such as eye blinks or muscle activity are often much larger and sometimes reach the millivolt range. A common rule of thumb is to treat any signal exceeding 100 microvolts as suspect and to investigate it further [3].
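The amplitude rule above can be sketched as a small NumPy check. This assumes epoched data in an array of shape `(n_epochs, n_channels, n_samples)` already scaled to microvolts. It flags an epoch if any absolute sample exceeds the threshold; some pipelines use a peak-to-peak criterion instead. The function name `flag_high_amplitude_epochs` is hypothetical.

```python
import numpy as np


def flag_high_amplitude_epochs(epochs_uv: np.ndarray, threshold_uv: float = 100.0) -> np.ndarray:
    """Return a boolean mask of epochs whose absolute amplitude exceeds threshold_uv.

    epochs_uv: array of shape (n_epochs, n_channels, n_samples), in microvolts (assumed).
    """
    # Maximum absolute amplitude per epoch, taken over all channels and samples
    peak_abs = np.abs(epochs_uv).max(axis=(1, 2))
    return peak_abs > threshold_uv


rng = np.random.default_rng(0)
# Synthetic background activity with ~10 µV standard deviation (not real EEG)
epochs = rng.normal(0.0, 10.0, size=(5, 8, 250))
# Inject a blink-like 300 µV deflection into epoch 2, channel 0
epochs[2, 0, 100:130] += 300.0

mask = flag_high_amplitude_epochs(epochs)
print(mask)  # only epoch 2 should be flagged
```

Flagged epochs are candidates for closer inspection or for the correction methods below, not automatic rejections.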

Q4: How does overfitting relate to non-stationary EEG signals? Overfitting occurs when a model learns patterns—including noise and session-specific quirks—from its training data that do not generalize to new, unseen data [4]. Non-stationary EEG signals are a primary source of such deceptive patterns. A model may overfit by memorizing the specific noise signature of a training session, leading to poor performance when that noise changes in subsequent sessions [5] [1].

Q5: Can ensemble learning methods help with this issue? Yes. Ensemble models combine the predictions of several base models, for example by averaging or voting. They tend to resist overfitting because errors are spread across the individual models, so the overall system does not rely too heavily on any single, potentially misleading pattern in the data [4]. Hybrid and ensemble models such as EEGBoostNet have been applied successfully to tasks like seizure detection, achieving high accuracy by combining the strengths of different architectures [6].

Troubleshooting Guides

Guide 1: Identifying and Correcting Ocular Artifacts

Ocular artifacts (blinks and saccades) are a major source of non-stationary noise, overwhelming informative EEG features in the 3–15 Hz frequency range [7].

Detection & Correction Methods:

The table below summarizes commonly used techniques for correcting ocular blink artifacts.

| Method | Principle | Best For | Key Considerations |
|---|---|---|---|
| Regression-Based [7] | Models and subtracts the artifact contribution using a template (e.g., from an EOG channel). | Studies where a dedicated EOG channel is available. | Requires a calibration run; simpler but may remove neural signals correlated with the artifact. |
| Independent Component Analysis (ICA) [7] [2] | Decomposes the EEG signal into independent components; artifact components are identified and removed. | High-density EEG systems (e.g., >40 channels). | Computationally intensive; requires manual component inspection or automated classifiers. |
| Artifact Subspace Reconstruction (ASR) [7] | Detects and reconstructs the data subspace contaminated by artifacts in real time. | Real-time applications and mobile EEG. | An advanced, adaptive method suitable for online BCI. |
| Deep Learning-Based [7] | Uses trained neural networks (e.g., CNNs, autoencoders) to recognize and remove non-physiological patterns. | Large datasets; correcting various artifact types simultaneously. | Requires large amounts of training data but offers a powerful, integrated solution [1]. |

Experimental Protocol: ICA for Ocular Artifact Removal

- Data Preprocessing: Apply a band-pass filter (e.g., 1–50 Hz) to the raw EEG to eliminate slow drifts and high-frequency noise [7].

- ICA Decomposition: Run an ICA algorithm (e.g., Infomax, FastICA) on the preprocessed data to break it down into independent components.

- Component Classification: Identify components corresponding to ocular artifacts. These typically have high amplitudes, frontally dominant scalp distributions, and a high power in low-frequency bands [2].

- Artifact Removal: Remove the identified artifact components from the data.

- Signal Reconstruction: Reconstruct the clean EEG signal from the remaining components.
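The five steps above can be sketched end to end on synthetic data with NumPy, SciPy and scikit-learn's `FastICA`. The sources, the mixing matrix (channels 0-1 stand in for frontal sites), and the rule used to pick the ocular component are all illustrative assumptions. That rule picks the component with the largest share of power below 4 Hz, a simplification of step 3's criteria. For real recordings, MNE-Python's `mne.preprocessing.ICA` is a common choice, and component selection usually also weighs scalp topography and EOG correlation.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt
from sklearn.decomposition import FastICA

fs = 250.0                                   # sampling rate in Hz (assumed)
t = np.arange(0, 20, 1 / fs)
rng = np.random.default_rng(42)

# Synthetic sources: two "neural" rhythms plus a blink-like ocular source
alpha = np.sin(2 * np.pi * 10 * t)
beta = 0.5 * np.sin(2 * np.pi * 22 * t + 1.0)
blinks = sum(8.0 * np.exp(-((t - onset) ** 2) / (2 * 0.08 ** 2))
             for onset in np.arange(1.0, 20.0, 2.5))
sources = np.c_[alpha, beta, blinks]

# Mixing matrix (rows = channels); channels 0-1 receive most of the blink source
mixing = np.array([[0.3, 0.2, 1.0],
                   [0.4, 0.3, 0.8],
                   [1.0, 0.5, 0.1],
                   [0.8, 1.0, 0.05]])
eeg = sources @ mixing.T + 0.05 * rng.standard_normal((t.size, 4))

# 1) Preprocessing: zero-phase 1-50 Hz band-pass filter
sos = butter(4, [1.0, 50.0], btype="bandpass", fs=fs, output="sos")
eeg_filt = sosfiltfilt(sos, eeg, axis=0)

# 2) ICA decomposition; `components` has shape (n_samples, n_components)
ica = FastICA(n_components=3, random_state=0, max_iter=1000)
components = ica.fit_transform(eeg_filt)

# 3) Classification heuristic (assumption): largest fraction of power below 4 Hz
freqs = np.fft.rfftfreq(t.size, d=1 / fs)
power = np.abs(np.fft.rfft(components, axis=0)) ** 2
low_ratio = power[freqs < 4.0].sum(axis=0) / power.sum(axis=0)
ocular_idx = int(np.argmax(low_ratio))

# 4) Removal: zero the ocular component; 5) reconstruction back to channel space
cleaned_components = components.copy()
cleaned_components[:, ocular_idx] = 0.0
eeg_clean = ica.inverse_transform(cleaned_components)

# Sanity check: correlation of a frontal channel with the filtered blink source
blinks_filt = sosfiltfilt(sos, blinks)
corr_before = abs(np.corrcoef(eeg_filt[:, 0], blinks_filt)[0, 1])
corr_after = abs(np.corrcoef(eeg_clean[:, 0], blinks_filt)[0, 1])
print(f"ocular component {ocular_idx}: |r| before={corr_before:.2f}, after={corr_after:.2f}")
```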

Guide 2: Mitigating Cross-Session Non-Stationarity with Deep Learning

Non-stationarity across recording sessions is a major hurdle for reliable BCI operation, as it degrades model performance and requires recalibration [1].

Experimental Protocol: Supervised Autoencoder for Domain Adaptation

This protocol is based on a novel method that uses a supervised autoencoder to reduce session-specific information while preserving task-related signals [1].

- Objective: Compress high-dimensional EEG inputs and reconstruct them to mitigate non-stationary variability across sessions.

- Network Architecture: Design an autoencoder where the objective function includes:

  - Reconstruction Loss: Minimizes the error between the input and its reconstruction (unsupervised).

  - Session Identity Loss: A supervised term that penalizes latent (compressed) representations that carry information about which session the data came from.

  - Task Classification Loss: A second supervised term that optimizes the latent representations for the end task (e.g., motor imagery classification).

- Training: Train the network on data from multiple existing sessions. The model learns to create a session-invariant representation of the data.

- Evaluation: Test the model on held-out data from new sessions without any recalibration. This approach has been shown to outperform both naïve cross-session and within-session methods [1].
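One way to write the three-term objective above is sketched below. This is an assumption for illustration, not necessarily the formulation used in [1]. The session term is treated adversarially: a session classifier tries to predict the session from the latent code, while the encoder is trained to defeat it (for example, through a gradient-reversal layer). The weights α and β are hypothetical hyperparameters. The notation is:

- f: the encoder.
- g: the decoder.
- h: the task classifier.
- c: the session classifier.
- xᵢ: an EEG trial, with yᵢ its task label and sᵢ its session index.

$$
\min_{\theta_f,\,\theta_g,\,\theta_h}\;\max_{\theta_c}\;
\frac{1}{N}\sum_{i=1}^{N}\Big[\,\big\lVert x_i - g\big(f(x_i)\big)\big\rVert_2^2
\;+\;\alpha\,\ell_{\mathrm{CE}}\big(y_i,\,h(f(x_i))\big)
\;-\;\beta\,\ell_{\mathrm{CE}}\big(s_i,\,c(f(x_i))\big)\Big]
$$

Maximizing over θ_c means the session classifier minimizes its own cross-entropy. Minimizing the same bracket over the encoder pushes that cross-entropy up, which strips session information from f(x) while the reconstruction and task terms keep it informative.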

The following workflow diagram illustrates the supervised autoencoder protocol for handling multi-session data.

Guide 3: Designing Robust Models to Prevent Overfitting

The inherent non-stationarity of EEG signals makes BCI models highly susceptible to overfitting [5] [4].

Strategies to Prevent Overfitting:

| Strategy | Description | How it Addresses Non-Stationarity |
|---|---|---|
| Ensemble Learning [4] | Combines predictions from multiple models (e.g., Random Forest, custom hybrid models). | Averages out errors and session-specific noise captured by individual models, enhancing generalization [6]. |
| Transfer Learning (TL) [5] | Leverages patterns learned from one subject or task to another with minimal recalibration. | Directly tackles inter-subject and inter-session variability, a key manifestation of non-stationarity. |
| Regularization [4] | Techniques that reduce model complexity (e.g., dropout layers in neural networks). | Prevents the model from having the capacity to "memorize" noisy, non-stationary artifacts in the training data. |
| Early Stopping [4] | Halting the training process once performance on a validation set stops improving. | Stops the model before it starts learning session-specific noise patterns, preserving generalization. |
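The early-stopping rule in the table reduces to a "patience" counter over per-epoch validation losses. The framework-agnostic sketch below uses a hypothetical helper name and hypothetical loss values. Deep learning frameworks ship equivalents, for example Keras's `EarlyStopping` callback with `patience` and `restore_best_weights`.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of per-epoch validation losses.

    Training stops once the loss has failed to improve by more than `min_delta`
    for `patience` consecutive epochs; the weights from `best_epoch` are kept.
    """
    best_loss = float("inf")
    best_epoch = 0
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch, epoch
    # Patience never ran out: training ran to the final epoch
    return best_epoch, len(val_losses) - 1


# Hypothetical validation-loss curve: improves, then rises as the model overfits
losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61, 0.68, 0.75]
best, stop = early_stopping_epoch(losses, patience=3)
print(f"best epoch: {best}, stopped at epoch: {stop}")  # best epoch: 3, stopped at epoch: 6
```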

Experimental Protocol: Building an Ensemble for Motor Imagery Classification

- Model Selection: Choose a diverse set of base models. For EEG, effective architectures include [8] [6]:

  - Convolutional Neural Networks (CNNs) for spatial feature extraction.

  - Long Short-Term Memory (LSTM) or Bidirectional Gated Recurrent Unit (Bi-GRU) networks for modeling temporal dynamics.

  - Models enhanced with attention mechanisms to focus on task-relevant neural patterns.

- Training: Train each base model on the same training dataset.

- Ensemble Method: Combine the base models with a technique such as:

  - Averaging: Average the predicted values (regression) or the predicted class probabilities (soft voting, for classification).

  - Majority Voting: For classification tasks, take the class predicted by the majority of models.

  - Stacking: Use a meta-learner (like XGBoost) to learn how best to combine the base models' predictions [6].

- Validation: Evaluate the ensemble's performance on a separate validation set and compare it to individual base models to confirm improved robustness.
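The combination and validation steps can be sketched with scikit-learn. To keep the sketch small and dependency-light, classic classifiers (LDA, RBF-SVM, Random Forest) stand in for the CNN/LSTM/attention base models. A `LogisticRegression` meta-learner replaces XGBoost in the stacking variant. The features come from `make_classification` as a synthetic stand-in for extracted MI features such as CSP log-variances; they are not real EEG.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.ensemble import RandomForestClassifier, StackingClassifier, VotingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Synthetic stand-in for extracted motor-imagery features (not real EEG)
X, y = make_classification(n_samples=400, n_features=12, n_informative=6,
                           flip_y=0.1, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.3,
                                                  stratify=y, random_state=0)

base_models = [
    ("lda", LinearDiscriminantAnalysis()),
    ("svm", SVC(kernel="rbf", probability=True, random_state=0)),  # probabilities needed for soft voting
    ("rf", RandomForestClassifier(n_estimators=200, random_state=0)),
]

ensembles = {
    # Majority (hard) voting over predicted class labels
    "hard_vote": VotingClassifier(base_models, voting="hard"),
    # Soft voting: average of predicted class probabilities
    "soft_vote": VotingClassifier(base_models, voting="soft"),
    # Stacking: a meta-learner trained on out-of-fold base-model predictions
    "stacking": StackingClassifier(base_models, final_estimator=LogisticRegression(), cv=5),
}

# Validation: compare each base model with each ensemble on the same held-out set
scores = {}
for name, model in list(base_models) + list(ensembles.items()):
    model.fit(X_train, y_train)
    scores[name] = model.score(X_val, y_val)

for name, acc in scores.items():
    print(f"{name:>10s}: {acc:.3f}")
```

The voting and stacking wrappers clone their estimators internally, so fitting the base models individually for comparison does not interfere with the ensembles.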

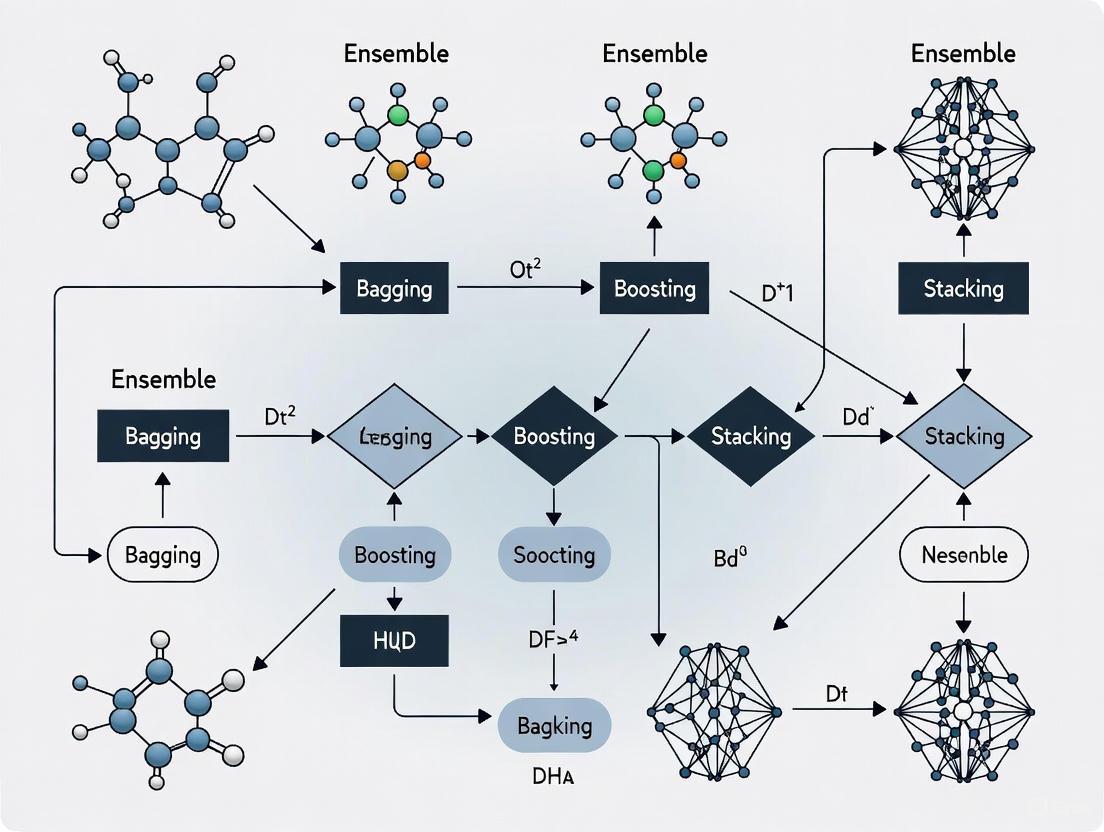

The diagram below illustrates the structure of a hierarchical ensemble model that integrates different types of neural networks for robust classification.

The Scientist's Toolkit: Key Research Reagents & Solutions

This table details essential computational tools and methodological approaches used in modern BCI research to combat non-stationarity and overfitting.

| Item / Solution | Function in BCI Research |
|---|---|
| Independent Component Analysis (ICA) [7] [2] | A blind source separation technique used to isolate and remove artifacts (ocular, muscle) from multi-channel EEG data. |
| Artifact Subspace Reconstruction (ASR) [7] | An advanced, adaptive algorithm for real-time detection and correction of artifact-contaminated segments in the EEG signal. |
| Supervised Autoencoders [1] | A deep learning architecture used for domain adaptation, designed to learn session-invariant feature representations, reducing the need for recalibration. |
| Convolutional Neural Networks (CNNs) [8] | Deep learning models specialized for extracting spatial features and patterns from raw EEG signals or their time-frequency representations. |
| Long Short-Term Memory (LSTM) Networks [8] | A type of recurrent neural network (RNN) designed to model temporal sequences and dependencies in EEG data over time. |
| Attention Mechanisms [8] | Modules integrated into neural networks that allow the model to dynamically focus on the most task-relevant spatial and temporal segments of the EEG signal. |
| Explainable AI (XAI) / SHAP [6] | A framework for interpreting complex model predictions, helping researchers understand which EEG channels and features drive the classification. |
| Transfer Learning (TL) [5] | A methodology that applies knowledge gained from solving one problem (or subject) to a different but related problem, mitigating inter-session/subject variability. |

In machine learning, particularly in sensitive domains like Brain-Computer Interface (BCI) research and drug development, a common assumption is that data encountered during a model's deployment will share the same statistical distribution as the data it was trained on. Covariate shift is a specific type of dataset drift that challenges this assumption. It occurs when the distribution of input features (covariates) changes between the training and operational environments, while the underlying conditional relationship between the inputs and outputs remains the same [9]. This phenomenon is a major source of model degradation in real-world, non-stationary systems, such as those analyzing electroencephalography (EEG) signals [10] [11]. For researchers using ensemble methods to prevent BCI overfitting, understanding and correcting for covariate shift is essential for building robust and generalizable models. This guide addresses the specific challenges and solutions related to covariate shift in an experimental context.

Troubleshooting Guides

Guide 1: Diagnosing a Sudden Drop in Model Performance During an Experimental Session

Problem: Your previously high-performing BCI model, trained to classify motor imagery from EEG signals, experiences a sharp decline in classification accuracy during a new experimental session or with a new cohort of subjects.

Explanation: A sudden performance drop is a classic symptom of covariate shift [9]. In the context of EEG-based BCIs, the non-stationary nature of brain signals means that the distribution of input features (e.g., power in specific frequency bands) can change between the training session (calibration) and testing session (operation), or even within a single session [10] [11]. The model, trained on the original input distribution, becomes ineffective when presented with data from a new distribution, even if the fundamental brain patterns for "left hand" or "right hand" imagery remain unchanged.

Steps for Diagnosis:

- Verify Data Quality: First, rule out hardware or data collection issues. Check for loose electrodes, excessive muscle artifacts, or amplifier drift in the raw EEG signals.

- Compare Feature Distributions: Extract the same features used by your model (e.g., Common Spatial Pattern (CSP) features, band power) from both your training dataset and a sample of the new, poorly performing data.

- Visualize the Shift: Plot the distributions of these key features for the two datasets. The presence of a covariate shift is often visually apparent as a misalignment between the two distributions. A statistical test like the two-sample Kolmogorov-Smirnov test can provide quantitative confirmation.

- Implement a Shift-Detection Test: For online, real-time systems, implement an automated detection method. The Exponentially Weighted Moving Average (EWMA) model has been used successfully to detect covariate shifts in streaming EEG features [10] [11]. It monitors feature statistics and flags significant deviations.
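The "compare feature distributions" step can be automated with SciPy's two-sample Kolmogorov-Smirnov test, run once per feature column. The sketch below assumes features are stored as `(n_trials, n_features)` arrays. The Bonferroni correction across features is an added choice, not part of the cited methods. The helper name and the simulated data are hypothetical.

```python
import numpy as np
from scipy.stats import ks_2samp


def compare_feature_distributions(train_feats, new_feats, alpha=0.01):
    """Run a two-sample KS test on each feature column.

    Returns a list of (feature_index, KS statistic, p-value, shift_flag) tuples.
    """
    n_features = train_feats.shape[1]
    # Bonferroni correction, since several features are tested at once (an added choice)
    corrected_alpha = alpha / n_features
    results = []
    for j in range(n_features):
        res = ks_2samp(train_feats[:, j], new_feats[:, j])
        results.append((j, res.statistic, res.pvalue, bool(res.pvalue < corrected_alpha)))
    return results


rng = np.random.default_rng(1)
# Hypothetical features (e.g., log band power): 300 calibration trials, 200 new trials
train = rng.normal(0.0, 1.0, size=(300, 4))
new = rng.normal(0.0, 1.0, size=(200, 4))
new[:, 2] += 0.8    # simulate a session-level offset in feature 2 only

for j, stat, p, shifted in compare_feature_distributions(train, new):
    print(f"feature {j}: D={stat:.3f}, p={p:.2e}, shifted={shifted}")
```

A significant KS result only says the marginal distribution of that feature moved. It should be read alongside the data-quality checks above, since a loose electrode can produce the same signature.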

Guide 2: Adapting an Ensemble Classifier to Session-to-Session Non-Stationarity in EEG

Problem: The performance of your ensemble classifier (e.g., Random Forest) degrades when applied to EEG data collected in a different session from the training data, due to inter-session covariate shift.

Explanation: Ensemble methods like bagging are powerful for preventing overfitting, but their static nature can be a limitation in non-stationary environments [12]. A fixed ensemble may not adequately represent the evolving data distribution. An active adaptation strategy is required.

Steps for Adaptation (CSE-UAEL Method):

This methodology integrates Covariate Shift Estimation with Unsupervised Adaptive Ensemble Learning [10].

- Detect the Shift: Use an EWMA-based control chart on the incoming stream of EEG features (e.g., CSP features) to detect when a covariate shift has occurred [10] [11].

- Validate the Shift: To minimize false alarms and unnecessary retraining, employ a two-stage process: initial detection followed by a validation step on subsequent data points [11].

- Update the Ensemble: Once a shift is validated, create a new classifier. This new classifier is trained on the most recent data, the distribution of which is assumed to represent the new regime.

- Incorporate the New Classifier: Add this new classifier to your existing ensemble. The combined ensemble now possesses knowledge of both the old and new data distributions, making it more robust to the observed non-stationarity [10].

- Update the Knowledge Base: Use transductive learning (e.g., a Probabilistic Weighted K-Nearest Neighbour method) to assign labels to the new, unlabeled data, allowing the system to update its knowledge base in an unsupervised manner [10].

The following workflow illustrates this adaptive process:

Frequently Asked Questions (FAQs)

What is the fundamental difference between covariate shift and concept shift?

Answer: Both are types of dataset drift, but they affect different parts of the learning problem.

- Covariate Shift: Defined as a change in the distribution of the input variables (P(x)) between training and testing, while the conditional distribution of the outputs given the inputs (P(y|x)) remains unchanged [9] [10]. For example, training a model on EEG data from young adults and testing on data from older adults, where the feature distributions differ but the meaning of a "left-hand imagination" signal is the same.

- Concept Shift: Refers to a change in the relationship between inputs and outputs, meaning the conditional distribution (P(y|x)) itself has changed [9]. For instance, if the neurological signature for a specific motor imagery task changes over long-term use, the same input features (x) would now correspond to a different mental command (y).

Why are ensemble methods particularly well-suited to handle covariate shift?

Answer: Ensemble methods, by their nature, combine multiple models, which introduces diversity and robustness [12]. In the context of covariate shift:

- Variance Reduction: Bagging-based ensembles (e.g., Random Forest) reduce model variance by averaging predictions, which can help smooth out erratic predictions caused by shifts in the input data [12].

- Adaptive Potential: As detailed in the troubleshooting guide, ensemble structures can be dynamically updated. New classifiers can be added to the ensemble to specifically learn from the new data distribution brought about by the covariate shift, creating a composite model that understands both old and new regimes [10]. This makes them more flexible than a single, static classifier.

What quantitative metrics should I track to evaluate the severity of a covariate shift?

Answer: Researchers should monitor the following metrics to quantify distributional changes:

| Metric | Description | Interpretation |
|---|---|---|
| Population Stability Index (PSI) | Measures the difference between two distributions by binning data and comparing proportions. | PSI < 0.1 indicates no significant shift; 0.1 to 0.25 indicates a moderate shift worth monitoring; PSI > 0.25 indicates a major shift. |
| Kullback-Leibler (KL) Divergence | An information-theoretic measure of how one probability distribution differs from a reference. | A value of 0 indicates identical distributions; higher values indicate greater divergence. KL is not symmetric, so the choice of reference distribution matters. |
| Feature Mean/Standard Deviation | Track the change in the average value and spread of key input features over time. | A significant drift in these basic statistics is a strong, direct indicator of covariate shift. |
| EWMA Control Chart Statistics | Plots the exponentially weighted mean of a feature over time against control limits. | A data point or trend crossing the control limits signals a statistically significant shift [10] [11]. |
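PSI and a binned KL divergence for a single feature can be sketched in NumPy as follows. Two choices here are assumptions, though common conventions: ten bins placed at the reference sample's quantiles, and a small floor on empty bins to avoid `log(0)`. The calibration and new-session samples are simulated.

```python
import numpy as np


def binned_fractions(reference, current, n_bins=10, eps=1e-6):
    """Bin both samples on the reference's quantiles; return normalized bin fractions."""
    # Interior edges only: values beyond the extremes fall into the first/last bin
    inner_edges = np.quantile(reference, np.linspace(0, 1, n_bins + 1)[1:-1])
    ref_counts = np.bincount(np.searchsorted(inner_edges, reference, side="right"), minlength=n_bins)
    cur_counts = np.bincount(np.searchsorted(inner_edges, current, side="right"), minlength=n_bins)
    # Floor empty bins to avoid log(0), then renormalize so each sums to 1
    ref_frac = np.clip(ref_counts / ref_counts.sum(), eps, None)
    cur_frac = np.clip(cur_counts / cur_counts.sum(), eps, None)
    return ref_frac / ref_frac.sum(), cur_frac / cur_frac.sum()


def psi(reference, current, n_bins=10):
    ref, cur = binned_fractions(reference, current, n_bins)
    return float(np.sum((cur - ref) * np.log(cur / ref)))


def kl_divergence(reference, current, n_bins=10):
    """Binned KL(current || reference); note that KL is not symmetric."""
    ref, cur = binned_fractions(reference, current, n_bins)
    return float(np.sum(cur * np.log(cur / ref)))


rng = np.random.default_rng(7)
baseline = rng.normal(0.0, 1.0, 5000)   # feature values from the calibration session
same = rng.normal(0.0, 1.0, 5000)       # new session, no shift
shifted = rng.normal(1.0, 1.0, 5000)    # new session, mean shifted by one standard deviation

print(f"PSI same={psi(baseline, same):.3f}, shifted={psi(baseline, shifted):.3f}")
print(f"KL  same={kl_divergence(baseline, same):.3f}, shifted={kl_divergence(baseline, shifted):.3f}")
```

On identical bins, PSI equals the sum of the two KL directions, KL(cur‖ref) + KL(ref‖cur). This makes PSI a symmetrized version of the KL row in the table.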

How can I design my BCI experiment to be more resilient to covariate shift from the start?

Answer: Proactive experimental design can mitigate the effects of covariate shift.

- Diverse Training Data: Collect training data that is as representative as possible of the expected operating conditions, for example by recording at different times of day, under varying levels of subject fatigue, and across multiple sessions [9].

- Feature Invariance: Prioritize feature extraction techniques that yield stable features across sessions. Common Spatial Pattern (CSP) is widely used, but its regularized variants (e.g., Regularized CSP) are designed to be more robust to noise and non-stationarities [13].

- Architect for Adaptation: Choose a machine learning framework that supports online learning or active adaptation from the outset. A static model deployment is likely to suffer performance decay in a non-stationary environment like BCI.

Experimental Protocols

Protocol 1: EWMA-Based Covariate Shift Detection in EEG Features

This protocol provides a detailed methodology for implementing a real-time covariate shift detection system, as used in state-of-the-art BCI research [10] [11].

Objective: To detect the point in a stream of EEG features where the input data distribution significantly deviates from the baseline (training) distribution.

Materials:

- Preprocessed EEG data stream.

- Extracted features (e.g., CSP features, band power from specific channels).

- Computational environment for statistical computing (e.g., Python, MATLAB).

Methodology:

- Establish a Baseline: Calculate the mean (μ₀) and standard deviation (σ₀) of the chosen feature from the baseline training data.

- Initialize the EWMA Statistic: Set the initial EWMA statistic (Z₀) to the baseline mean (μ₀).

- For each new feature value (xₜ) in the streaming data:

  a. Calculate the new EWMA statistic: Zₜ = λ · xₜ + (1 − λ) · Zₜ₋₁, where λ is a smoothing parameter (0 < λ ≤ 1).

  b. Calculate the control limits for the EWMA chart, where L is a multiplier chosen to achieve a desired false-alarm rate (often set to 3):

     - Upper Control Limit (UCL) = μ₀ + L · σ₀ · √(λ / (2 − λ))

     - Lower Control Limit (LCL) = μ₀ − L · σ₀ · √(λ / (2 − λ))

  c. Compare Zₜ to the control limits. If Zₜ falls outside the UCL or LCL, a covariate shift is flagged.

- Validation: Once a shift is flagged, continue monitoring the next few data points. If they consistently remain outside the control limits, the shift is confirmed, and adaptation protocols should be initiated.
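The methodology above, including the validation step, can be sketched for a single feature stream as follows. Confirmation is simplified here to "`confirm` consecutive out-of-control points". The default values λ = 0.2, L = 3 and `confirm=3` are assumptions to tune, and the function name is hypothetical. The limits are the asymptotic ones given in step b.

```python
import numpy as np


def ewma_shift_detector(stream, mu0, sigma0, lam=0.2, L=3.0, confirm=3):
    """EWMA control chart with a simple confirmation step.

    Returns (first_flag_index, confirmed_index). first_flag_index is the start of the
    out-of-control run that was confirmed (or of an unconfirmed run at stream end);
    confirmed_index is None if no shift was confirmed.
    """
    # Asymptotic control-limit half-width: L * sigma0 * sqrt(lam / (2 - lam))
    half_width = L * sigma0 * np.sqrt(lam / (2.0 - lam))
    ucl, lcl = mu0 + half_width, mu0 - half_width
    z = mu0                              # Z_0 initialized to the baseline mean
    first_flag, consecutive = None, 0
    for t, x in enumerate(stream):
        z = lam * x + (1.0 - lam) * z    # Z_t = lam * x_t + (1 - lam) * Z_{t-1}
        if z > ucl or z < lcl:
            if first_flag is None:
                first_flag = t
            consecutive += 1
            # Validation: confirm only after `confirm` consecutive out-of-control points
            if consecutive >= confirm:
                return first_flag, t
        else:
            # Back inside the limits: treat the earlier flag as a transient
            first_flag, consecutive = None, 0
    return first_flag, None


rng = np.random.default_rng(0)
# Baseline mu0 = 0, sigma0 = 1; the stream's mean jumps to 1.5 after 200 samples
stream = np.r_[rng.normal(0.0, 1.0, 200), rng.normal(1.5, 1.0, 100)]
print(ewma_shift_detector(stream, mu0=0.0, sigma0=1.0))
```

Smaller λ makes the chart more sensitive to small, persistent drifts but slower to react to abrupt jumps. Raising `confirm` trades detection delay for fewer false alarms.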

Protocol 2: Implementing an Adaptive Ensemble with CSE-UAEL

This protocol describes the process of creating and maintaining an adaptive ensemble classifier based on covariate shift estimation [10].

Objective: To create an ensemble learning model that dynamically updates itself in response to detected covariate shifts, maintaining high classification accuracy in non-stationary environments.

Materials:

- A baseline (initial) training dataset with labels.

- A stream of unlabeled test data (e.g., from an ongoing BCI session).

- A base classifier algorithm (e.g., Linear Discriminant Analysis, Decision Tree).

- Implemented EWMA shift-detection module (from Protocol 1).

Methodology:

- Initialization: Train the first base classifier on the initial labeled training dataset. This is the first member of the ensemble.

- Streaming and Monitoring: As new, unlabeled test data arrives, extract features and feed them into the EWMA shift-detection system.

- Shift Detection and Validation: Follow Protocol 1 to detect and validate a covariate shift.

- Classifier Creation: Upon successful shift validation, create a new classifier. Since the new data is unlabeled, use a transductive learning method like Probabilistic Weighted K-Nearest Neighbour (PWKNN) to estimate labels for the new data based on its similarity to the existing knowledge base [10]. Train the new classifier on this newly labeled data.

- Ensemble Update: Add the newly trained classifier to the ensemble. The prediction of the ensemble can be a simple majority vote or a weighted average of the constituent classifiers.

- Knowledge Base Update: Merge the newly labeled data (or a representative subset) into the knowledge base to enrich the data available for future retraining. The system then returns to the Streaming and Monitoring step, continuously watching for the next shift.
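The loop above can be sketched in a compact, hypothetical form with scikit-learn. This is not the reference implementation of CSE-UAEL from [10], and the following simplifications are assumptions:

- The monitored statistic is each trial's mean across features.
- Shift validation means at least three out-of-control EWMA points within a block.
- Distance-weighted `KNeighborsClassifier` stands in for PWKNN.
- The ensemble output averages the members' class probabilities.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.neighbors import KNeighborsClassifier


class AdaptiveEnsembleSketch:
    """Hypothetical CSE-UAEL-style loop; simplified, not the method of [10]."""

    def __init__(self, X0, y0, lam=0.2, L=3.0, min_flags=3):
        # Initialization: first member trained on the labeled baseline data
        self.members = [LinearDiscriminantAnalysis().fit(X0, y0)]
        self.X_kb, self.y_kb = X0.copy(), y0.copy()          # labeled knowledge base
        self.lam, self.L, self.min_flags = lam, L, min_flags
        stat = X0.mean(axis=1)                               # monitored statistic (assumption)
        self._reset_baseline(stat.mean(), stat.std(ddof=1))

    def _reset_baseline(self, mu0, sigma0):
        self.mu0, self.sigma0, self.z = mu0, sigma0, mu0

    def _shift_detected(self, X_block):
        # Monitoring + validation: EWMA chart, "validated" if >= min_flags points are out
        half_width = self.L * self.sigma0 * np.sqrt(self.lam / (2 - self.lam))
        n_out = 0
        for s in X_block.mean(axis=1):
            self.z = self.lam * s + (1 - self.lam) * self.z
            n_out += int(abs(self.z - self.mu0) > half_width)
        return n_out >= self.min_flags

    def predict(self, X):
        # Ensemble output: average of members' class probabilities
        proba = np.mean([m.predict_proba(X) for m in self.members], axis=0)
        return self.members[0].classes_[proba.argmax(axis=1)]

    def process_block(self, X_block):
        if self._shift_detected(X_block):
            # Classifier creation: pseudo-label the block (KNN as a stand-in for PWKNN)
            knn = KNeighborsClassifier(n_neighbors=7, weights="distance")
            y_est = knn.fit(self.X_kb, self.y_kb).predict(X_block)
            if np.unique(y_est).size > 1:                    # LDA needs at least two classes
                # Ensemble update and knowledge-base update
                self.members.append(LinearDiscriminantAnalysis().fit(X_block, y_est))
                self.X_kb = np.vstack([self.X_kb, X_block])
                self.y_kb = np.concatenate([self.y_kb, y_est])
            # Re-baseline the detector on the new regime before monitoring resumes
            stat = X_block.mean(axis=1)
            self._reset_baseline(stat.mean(), stat.std(ddof=1))
        return self.predict(X_block)


def make_trials(n, shift, rng):
    """Synthetic 2-class data: classes differ on features 0-1; `shift` is added to features 2-3."""
    y = rng.integers(0, 2, n)
    X = rng.normal(0.0, 0.5, (n, 4))
    X[:, :2] += np.where(y[:, None] == 1, 1.5, -1.5)
    X[:, 2:] += shift
    return X, y


rng = np.random.default_rng(3)
X0, y0 = make_trials(120, 0.0, rng)
ens = AdaptiveEnsembleSketch(X0, y0)
X_new, y_new = make_trials(60, 2.0, rng)     # covariate shift on features 2-3
pred = ens.process_block(X_new)
print(f"members: {len(ens.members)}, accuracy on shifted block: {(pred == y_new).mean():.2f}")
```

The labels `y_new` are used only to score the sketch; the adaptation itself never sees them. Pseudo-labeling can fail when a shift moves one class onto the other's region, which is why the `np.unique` guard skips adding a member trained on a single class.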

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and methodological "reagents" essential for experimenting with and mitigating covariate shift in BCI research.

| Item | Function in Experiment |
|---|---|
| Exponentially Weighted Moving Average (EWMA) Model | A statistical process control method used as the core engine for detecting covariate shifts in streaming feature data [10] [11]. |
| Common Spatial Pattern (CSP) & Regularized CSP (RCSP) | Feature extraction algorithms that enhance the discriminability of EEG signals for motor imagery tasks. RCSP variants are designed to reduce overfitting and improve stability with limited data [13]. |
| Probabilistic Weighted K-Nearest Neighbour (PWKNN) | A transductive learning algorithm used to assign probabilistic labels to new, unlabeled data after a shift is detected, enabling unsupervised model adaptation [10]. |
| Bagging Ensemble Framework | A machine learning meta-algorithm that trains multiple models on different data subsets. It reduces variance and provides a flexible structure into which new, adapted classifiers can be integrated [10] [12]. |
| Linear Discriminant Analysis (LDA) | A simple, fast, and robust classifier often used as the base learner in adaptive ensemble methods for BCI due to its good performance on EEG data [10] [14]. |

How Overfitting Manifests in Single-Classifier BCI Models

Frequently Asked Questions (FAQs)

1. What is overfitting and why is it a critical problem in BCI research? Overfitting occurs when a machine learning model learns the training data too well, including its noise and irrelevant patterns, but performs poorly on new, unseen data. In Brain-Computer Interface (BCI) systems, this means a model might achieve high accuracy on the EEG data it was trained on but fail to generalize to new sessions with the same subject or to different subjects altogether. This is a critical barrier to developing reliable BCIs for real-world applications, such as neuro-rehabilitation or communication devices, as it undermines the model's robustness and practical utility [15] [16].

2. What are the key symptoms that my single-classifier BCI model is overfitting? The primary symptom is a significant performance gap between training and test data. You might observe:

- High training accuracy but low test accuracy [15] [16].

- The model performs well on data from a specific dataset or session but fails on data from a new session or a different public dataset, a problem known as cross-dataset variability [17].

- Performance degradation due to the non-stationary nature of EEG signals across sessions and subjects [18].

3. What are the main causes of overfitting in motor imagery (MI)-BCI models? Overfitting in MI-BCI is primarily driven by the fundamental characteristics of EEG data and model design:

- Data Scarcity: Collecting a large number of high-quality EEG trials is difficult and costly. Models trained on small datasets are more likely to learn spurious patterns [19] [18].

- High-Dimensional, Noisy Data: EEG signals have a low signal-to-noise ratio (SNR). A complex model can easily learn the noise instead of the underlying brain activity pattern [14] [18].

- Subject Variability: EEG signals are unique to each individual (inter-subject variability) and can change for the same individual across different sessions (intra-subject variability). A model trained without accounting for this will not generalize [17] [18] [20].

Troubleshooting Guide: Identifying and Mitigating Overfitting

Problem: My model's performance is excellent on training data but poor on validation/test data.

| Step | Action | Expected Outcome & Diagnostic Tip |
|---|---|---|
| 1. Diagnose | Use K-Fold Cross-Validation: Split your data into k folds (e.g., 5 or 10). Train on k-1 folds and validate on the held-out fold. Repeat this process k times [14] [16]. | A significant difference between the average validation accuracy and the training accuracy indicates overfitting. This provides a more robust performance estimate than a single train-test split [14]. |
| 2. Validate Generalization | Test your model on a completely independent dataset or on data from a subject that was not included in the training set (subject-independent testing) [17]. | A sharp drop in accuracy on the independent dataset confirms the model has overfitted to the specific structure of your primary training dataset [17]. |
| 3. Mitigate with Data Augmentation | Artificially increase the size and diversity of your training set. For EEG data, consider methods like adding Gaussian noise, cropping, or advanced methods like Conditional Generative Adversarial Networks (cGANs) [19] [18]. | This helps the model learn more robust features. For example, studies have shown cGAN-based augmentation can significantly improve classifier performance on MI tasks [19]. |
| 4. Apply Regularization | Introduce techniques that constrain the model. For neural networks, use Dropout layers, which randomly zero a fraction of unit activations during training to prevent co-adaptation [15]. For other models, L1/L2 regularization adds a penalty for large weights [14]. | The model becomes less sensitive to specific weights and learns more generalizable features, reducing variance [15]. |
| 5. Simplify the Model | Reduce model complexity. For a neural network, this could mean using fewer layers or neurons. For a decision tree, limit the maximum depth [16]. | A simpler model has less capacity to memorize the training data and is forced to learn the broader, more relevant patterns. |
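Steps 1 and 5 can be sketched together with scikit-learn's `cross_validate`, which reports per-fold training and validation scores. The comparison is between an unconstrained decision tree and a depth-limited one. The dataset is a small, noisy synthetic stand-in for a limited number of EEG trials. The `max_depth=2` setting is an arbitrary illustration, not a recommended value.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.tree import DecisionTreeClassifier

# Small, noisy, high-dimensional synthetic data (not real EEG)
X, y = make_classification(n_samples=120, n_features=40, n_informative=4,
                           flip_y=0.2, random_state=0)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

summary = {}
for name, model in [("unconstrained tree", DecisionTreeClassifier(random_state=0)),
                    ("max_depth=2 tree", DecisionTreeClassifier(max_depth=2, random_state=0))]:
    res = cross_validate(model, X, y, cv=cv, return_train_score=True)
    train_acc, val_acc = res["train_score"].mean(), res["test_score"].mean()
    summary[name] = (train_acc, val_acc)
    # A large train/validation gap is the overfitting signature described in step 1
    print(f"{name}: train={train_acc:.2f}, val={val_acc:.2f}, gap={train_acc - val_acc:.2f}")
```

For the subject-independent check in step 2, one option is to swap `StratifiedKFold` for `GroupKFold` and pass subject IDs as `groups=` to `cross_validate`. No subject then appears in both training and validation folds.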

Experimental Protocol: Evaluating Model Generalization

Objective: To systematically test a single-classifier model for overfitting and cross-dataset variability.

Methodology:

- Dataset Selection: Utilize at least two public MI-EEG datasets (e.g., from BCI Competition IV). Ensure they have comparable paradigms (e.g., left vs. right-hand imagery) [17] [19].

- Data Preprocessing: Apply a standard preprocessing pipeline (e.g., bandpass filtering 8-30 Hz for Mu/Beta rhythms, select channels C3, Cz, C4). This ensures consistency across datasets [17].

- Feature Extraction: Extract Common Spatial Pattern (CSP) features, a common and effective method for MI-BCI that also reduces feature dimensionality [17] [18].

- Model Training & Evaluation:

  - Within-Dataset Performance: Train your model (e.g., SVM, LDA) on one part of Dataset A and test it on the held-out portion of Dataset A. Record the accuracy.

  - Cross-Dataset Performance: Train your model on the entire Dataset A. Then, evaluate its performance on the entire Dataset B without any retraining. Record the accuracy [17].

Interpretation: A model that generalizes well will maintain reasonably high accuracy in both the within-dataset and cross-dataset scenarios. A large drop in cross-dataset accuracy is a clear manifestation of overfitting to the training dataset's specific characteristics.
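The within- versus cross-dataset comparison can be sketched with a from-scratch CSP (a generalized eigendecomposition via `scipy.linalg.eigh`) and scikit-learn's LDA. Everything here is synthetic and hypothetical:

- Three channels loosely labeled C3, Cz and C4.
- Two task sources whose variance depends on the class, as a crude stand-in for ERD/ERS.
- A "Dataset B" whose source-to-channel geometry is rotated by 45°, an exaggerated proxy for cross-dataset variability.
- The data are assumed to be already band-pass filtered.

For real data, MNE-Python provides `mne.decoding.CSP`.

```python
import numpy as np
from scipy.linalg import eigh
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis


def fit_csp(X, y, n_filters=1):
    """X: (n_trials, n_channels, n_samples), y in {0, 1}. Returns (n_channels, 2*n_filters) filters."""
    covs = []
    for c in (0, 1):
        # Trace-normalized spatial covariance per trial, averaged within class c
        covs.append(np.mean([tr @ tr.T / np.trace(tr @ tr.T) for tr in X[y == c]], axis=0))
    # Generalized eigenproblem C0 w = lambda (C0 + C1) w; eigenvalues in ascending order
    _, evecs = eigh(covs[0], covs[0] + covs[1])
    # Filters at both ends maximize variance for one class relative to the other
    idx = np.r_[np.arange(n_filters), np.arange(-n_filters, 0)]
    return evecs[:, idx]


def csp_features(X, W):
    Z = np.einsum("ck,tcs->tks", W, X)          # spatially filtered signals W^T x
    var = Z.var(axis=2)
    return np.log(var / var.sum(axis=1, keepdims=True))


def simulate(n_trials, mixing, rng, n_samples=200):
    y = rng.integers(0, 2, n_trials)
    X = np.empty((n_trials, 3, n_samples))
    for i, label in enumerate(y):
        # Class 0 boosts source 0's variance, class 1 boosts source 1's
        scales = np.array([2.0, 1.0, 1.0]) if label == 0 else np.array([1.0, 2.0, 1.0])
        X[i] = mixing @ (rng.standard_normal((3, n_samples)) * scales[:, None])
    return X, y


rng = np.random.default_rng(0)
mix_A = np.array([[1.0, 0.3, 0.2],               # rows: C3, Cz, C4 (illustrative)
                  [0.4, 0.5, 1.0],
                  [0.2, 1.0, 0.3]])
th = np.pi / 4
R = np.array([[np.cos(th), -np.sin(th), 0.0], [np.sin(th), np.cos(th), 0.0], [0.0, 0.0, 1.0]])
mix_B = mix_A @ R                                 # Dataset B: rotated source geometry

XA, yA = simulate(200, mix_A, rng)
XB, yB = simulate(200, mix_B, rng)

# Within-dataset: train on the first 150 trials of A, test on the remaining 50
W = fit_csp(XA[:150], yA[:150])
lda = LinearDiscriminantAnalysis().fit(csp_features(XA[:150], W), yA[:150])
within_acc = lda.score(csp_features(XA[150:], W), yA[150:])

# Cross-dataset: train on all of A, evaluate on all of B without retraining
W_full = fit_csp(XA, yA)
lda_full = LinearDiscriminantAnalysis().fit(csp_features(XA, W_full), yA)
cross_acc = lda_full.score(csp_features(XB, W_full), yB)
print(f"within-dataset: {within_acc:.2f}, cross-dataset: {cross_acc:.2f}")
```

Dataset B still contains class information; it is simply encoded in a spatial pattern that Dataset A's CSP filters do not capture. That mismatch is the kind of dataset-specific overfitting this protocol is meant to expose.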

Quantitative Data on Overfitting Manifestations

The table below summarizes experimental results from the literature that demonstrate the overfitting problem in BCI models, particularly the challenge of cross-dataset generalization.

| Model / Context | Training Data | Test Data | Reported Performance | Key Insight / Manifestation of Overfitting |
|---|---|---|---|---|
| Deep Learning Models [17] | One MI Dataset | Different MI Dataset | "Significantly worse" performance | Demonstrates cross-dataset variability; a model optimal for one dataset fails on another. |
| Subject-Independent Inner Speech Classification [21] | All Subjects (Mixed) | Left-out Subjects | ~32% Accuracy | Highlights the difficulty of generalizing across different individuals with unique EEG patterns. |
| cWGAN-GP Data Augmentation on EEGNet [19] | BCI Competition IV IIa (Original) | BCI Competition IV IIa (Test Set) | 82.0% Accuracy | Baseline performance without augmentation on a within-dataset test. |
| cWGAN-GP Data Augmentation on EEGNet [19] | BCI Competition IV IIa (+ Augmented Data) | BCI Competition IV IIa (Test Set) | Improved from 82.0% | Adding artificially generated data helps mitigate overfitting caused by data scarcity, leading to better generalization on the same test set. |

The Scientist's Toolkit: Research Reagents & Materials

| Item / Technique | Function in BCI Research |
|---|---|
| Common Spatial Patterns (CSP) | A spatial filtering algorithm used to maximize the variance of one class while minimizing the variance of the other, essential for feature extraction in Motor Imagery BCIs [17] [18]. |
| EEGNet | A compact convolutional neural network architecture specifically designed for EEG-based BCIs. It is a common benchmark model for evaluating new methods [19] [18]. |
| Conditional GAN (cGAN/WGAN-GP) | A type of generative model used for data augmentation. It creates artificial EEG trials that mimic real data, helping to overcome overfitting by expanding the training dataset [19]. |
| Linear Discriminant Analysis (LDA) | A classic, lightweight classification algorithm often used as a baseline in BCI decoding due to its simplicity and effectiveness on high-dimensional data [19] [14]. |
| Support Vector Machine (SVM) | A powerful classifier that finds an optimal hyperplane to separate different classes in the feature space. It is widely used in BCI research but is prone to overfitting without proper regularization [21] [14]. |
| K-Fold Cross-Validation | A robust statistical method used to evaluate model performance and detect overfitting by repeatedly partitioning the data into training and validation sets [14] [16]. |

Workflow: Diagnosing Overfitting in a Single-Classifier BCI Model

The following diagram illustrates a systematic workflow for identifying overfitting in a BCI model, from initial training to final diagnosis.

The Impact of Noisy, High-Dimensional Data on Model Generalization

Troubleshooting Guides

Troubleshooting Guide 1: Diagnosing and Remedying Overfitting in BCI Models

Problem: My model achieves high accuracy on training data but performs poorly on unseen subject data.

Explanation: This is a classic sign of overfitting, where the model memorizes noise and subject-specific patterns in the high-dimensional training data instead of learning generalizable neural features. In BCI, this is often caused by the "curse of dimensionality," where the number of features (e.g., EEG channels, time points, frequency bands) vastly exceeds the number of observations, allowing the model to find spurious correlations [22] [23].

Solution Steps:

- Confirm Overfitting: Check for a significant gap between training and validation/test accuracy. Use cross-validation, not just a single train-test split [23].

- Apply Regularization: Integrate L1 (Lasso) or L2 (Ridge) regularization into your model. L1 can also perform feature selection by driving some feature coefficients to zero [22] [23].

- Implement Ensemble Learning: Train multiple models and aggregate their predictions. For instance, a Random Subspace Ensemble trains multiple weak learners (e.g., Linear Discriminant Analysis) on randomly selected feature subsets, reducing variance and improving generalization [24]. Bagging (Bootstrap Aggregating) is another effective method [25].

- Validate and Iterate: Use a held-out test set for final evaluation only after diagnostics and adjustments are complete.
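The sketch below illustrates the first two steps with scikit-learn. It uses a synthetic stand-in for a small, high-dimensional EEG feature matrix (make_classification, not real EEG). It compares training accuracy with cross-validated accuracy for three logistic regressions: weakly L2-regularized, strongly L2-regularized, and L1-regularized. The C values are illustrative assumptions. In scikit-learn, a smaller C means stronger regularization.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in: 80 trials x 500 features, only 10 of them informative.
X, y = make_classification(n_samples=80, n_features=500, n_informative=10,
                           n_redundant=0, random_state=0)

models = {
    "weak L2 (C=100)": LogisticRegression(C=100.0, max_iter=5000),
    "strong L2 (C=0.01)": LogisticRegression(C=0.01, max_iter=5000),
    "L1 (C=0.1)": LogisticRegression(penalty="l1", C=0.1, solver="liblinear"),
}
results = {}
for name, clf in models.items():
    # cross_validate with an integer cv uses stratified folds for classifiers.
    cv = cross_validate(make_pipeline(StandardScaler(), clf), X, y, cv=5,
                        return_train_score=True)
    train, val = cv["train_score"].mean(), cv["test_score"].mean()
    results[name] = (train, val)
    print(f"{name:20s} train={train:.2f} cv={val:.2f} gap={train - val:.2f}")

# L1 drives many coefficients exactly to zero (built-in feature selection).
l1_model = make_pipeline(StandardScaler(), models["L1 (C=0.1)"]).fit(X, y)
n_kept = np.count_nonzero(l1_model[-1].coef_)
print(f"L1 kept {n_kept} of {X.shape[1]} features")
```

A large train/CV gap is the overfitting signature described in the first step. The L1 model's count of nonzero coefficients shows its feature-selection effect.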

Troubleshooting Guide 2: Managing High-Dimensional Feature Spaces in Neurodata

Problem: The feature extraction process for my EEG/MEG signals has generated thousands of features, making the model slow and prone to overfitting.

Explanation: High-dimensional feature spaces are inherently sparse, meaning data points are spread far apart. This sparsity makes it difficult for models to learn robust patterns and increases the risk of fitting to noise [22] [23]. Training also becomes computationally expensive, and the resulting model tends to be unstable.

Solution Steps:

- Feature Selection: Identify and retain the most informative features.

- Filter Methods: Use statistical tests (e.g., correlation with the target variable) to select the top-k features [22].

- Wrapper Methods: Use Recursive Feature Elimination (RFE) to find the optimal feature subset by iteratively training models and removing the weakest features [22].

- Channel Selection: For MEG/EEG, use methods like correlation coefficient and variance entropy product (CC-VEP) to select the most task-relevant channels, suppressing noise and redundancy [26].

- Dimensionality Reduction: Project your data into a lower-dimensional space, for example with Principal Component Analysis (PCA) [22].

- Utilize Robust Algorithms: Employ algorithms that are inherently more resilient to high-dimensional data, such as Random Forests or regularized Support Vector Machines (SVM) [22].
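The sketch below shows these options in scikit-learn on a synthetic 2000-feature dataset, which stands in for extracted EEG/MEG features. An ANOVA F-test (f_classif) serves as the filter statistic in place of plain correlation. The k, the RFE target size, and the PCA dimension are illustrative. Keep each selection or projection step inside the pipeline: selecting features on the full dataset before cross-validation leaks information and inflates the scores.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFE, SelectKBest, f_classif
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC

# Synthetic stand-in: 120 trials x 2000 features, 15 informative.
X, y = make_classification(n_samples=120, n_features=2000, n_informative=15,
                           n_redundant=0, random_state=1)


def linear_svm():
    return LinearSVC(C=0.1, max_iter=10000)


candidates = {
    # Filter: univariate ANOVA F-test, keep the 50 top-ranked features.
    "filter (top-50 F-test)": make_pipeline(
        StandardScaler(), SelectKBest(f_classif, k=50), linear_svm()),
    # Wrapper: RFE removes 10% of the original feature count per round until 20 remain.
    "wrapper (RFE to 20)": make_pipeline(
        StandardScaler(), RFE(linear_svm(), n_features_to_select=20, step=0.1),
        linear_svm()),
    # Projection: PCA onto 20 components.
    "PCA (20 components)": make_pipeline(
        StandardScaler(), PCA(n_components=20), linear_svm()),
    # Algorithm that copes with many features without explicit selection.
    "random forest": RandomForestClassifier(n_estimators=200, random_state=0),
}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = {}
for name, model in candidates.items():
    # Selection/projection is re-fitted on each training fold only.
    scores[name] = cross_val_score(model, X, y, cv=cv).mean()
    print(f"{name:24s} {scores[name]:.2f}")
```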

Troubleshooting Guide 3: Handling Noisy EEG/MEG Signals to Improve Generalization

Problem: My BCI model's performance is inconsistent, likely due to the high noise-to-signal ratio in the brain signal data.

Explanation: EEG signals have a high noise-to-signal ratio, which is even more pronounced in paradigms like inner speech, where there are no external stimuli to trigger well-defined neural responses. Noise can come from muscle movements, eye blinks, environmental interference, or subject-specific variability [21] [27]. If not addressed, models will learn to fit this noise, harming generalization.

Solution Steps:

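- Advanced Preprocessing: Apply Independent Component Analysis (ICA) to separate out and remove artifact components such as eye blinks and muscle activity [21].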

- Feature Extraction Robust to Noise:

- Use the DivCSP algorithm with intra-class regularization terms for spatial filtering, which is more robust against noisy signals and outliers compared to standard Common Spatial Patterns (CSP) [26].

- Ensemble Learning for Robustness:

- Implement classifier fusion. Train multiple different classifiers (e.g., k-NN, SVM, Random Forest) and combine their probabilistic outputs using a multi-criteria decision-making fusion (MCDM-MCF) strategy. This leverages the strengths of different algorithms and averages out their errors, leading to more stable and reliable predictions on new data [26].
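This sketch does not reproduce the MCDM-MCF fusion rule from [26]. As a simpler stand-in, it fuses k-NN, SVM, and Random Forest by soft voting, which averages their predicted class probabilities, using scikit-learn's VotingClassifier. The data are synthetic with injected label noise, and the hyperparameters are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier, VotingClassifier
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for noisy trials: flip_y assigns a random class to ~15% of samples.
X, y = make_classification(n_samples=300, n_features=40, n_informative=8,
                           flip_y=0.15, random_state=2)

base = [
    ("knn", make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=15))),
    ("svm", make_pipeline(StandardScaler(), SVC(probability=True, random_state=0))),
    ("rf", RandomForestClassifier(n_estimators=200, random_state=0)),
]
# Soft voting averages the members' predicted class probabilities.
fusion = VotingClassifier(estimators=base, voting="soft")

scores = {name: cross_val_score(model, X, y, cv=5).mean()
          for name, model in base + [("soft-vote fusion", fusion)]}
for name, score in scores.items():
    print(f"{name:18s} {score:.2f}")
```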

Frequently Asked Questions (FAQs)

What are the most effective ensemble methods to prevent overfitting in BCI research?

The most effective ensemble methods are those that introduce diversity among the base models [25].

- Bagging (Bootstrap Aggregating): Models like Random Forests are excellent for this. They train multiple decision trees on different bootstrap samples of the data and aggregate their predictions, reducing variance and overfitting [25].

- Boosting: Methods like AdaBoost train models sequentially, where each new model focuses on the errors of the previous ones. This can yield high accuracy but requires care to avoid overfitting the training data [28] [25].

- Random Subspace Method: This involves training multiple models on random subsets of the features (e.g., EEG channels or frequency bands). This is particularly effective for high-dimensional BCI data and has been shown to enhance performance in fNIRS-BCIs [24].

How can I validate that my model generalizes well and isn't just overfitting?

Robust validation techniques are crucial.

- Stratified K-Fold Cross-Validation: This technique ensures that each fold of the data is a representative microcosm of the whole dataset. It provides a more reliable estimate of model performance than a single train-test split [23].

- Subject-Independent Validation: The most rigorous test for a BCI model is to train it on data from one set of subjects and test it on a completely held-out set of subjects. This directly measures how well the model generalizes across individuals, which is a core challenge in BCI [21].

- Use a Validation Set for Early Stopping: When training iterative models (e.g., neural networks), monitor performance on a validation set and stop training when validation performance plateaus or starts to degrade, even if training performance continues to improve [23].
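The sketch below contrasts two of these schemes on synthetic multi-subject data: six hypothetical subjects, each with its own feature offset (not real EEG). Stratified 5-fold CV mixes trials from the same subject into the training and validation folds. LeaveOneGroupOut instead holds out one whole subject per fold. The MLP uses scikit-learn's built-in early stopping (early_stopping, validation_fraction, n_iter_no_change). The layer size and patience are illustrative assumptions.

```python
import numpy as np
from sklearn.model_selection import LeaveOneGroupOut, StratifiedKFold, cross_val_score
from sklearn.neural_network import MLPClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_subjects, n_trials, n_features = 6, 60, 20
X_parts, y_parts = [], []
for _ in range(n_subjects):
    y_s = rng.integers(0, 2, n_trials)
    # Hypothetical subject-specific offset on every feature plus a shared class effect.
    X_s = rng.normal(0.0, 1.0, (n_trials, n_features)) + rng.normal(0.0, 1.5, n_features)
    X_s[:, 0] += 1.5 * y_s
    X_parts.append(X_s)
    y_parts.append(y_s)
X, y = np.vstack(X_parts), np.concatenate(y_parts)
groups = np.repeat(np.arange(n_subjects), n_trials)  # subject ID for each trial

# Early stopping holds out 20% of each training fold and stops once the
# validation score has not improved for 10 consecutive epochs.
model = make_pipeline(StandardScaler(), MLPClassifier(
    hidden_layer_sizes=(32,), early_stopping=True, validation_fraction=0.2,
    n_iter_no_change=10, max_iter=500, random_state=0))

pooled = cross_val_score(
    model, X, y, cv=StratifiedKFold(n_splits=5, shuffle=True, random_state=0))
per_subject = cross_val_score(model, X, y, groups=groups, cv=LeaveOneGroupOut())
print(f"stratified 5-fold (subjects mixed): {pooled.mean():.2f}")
print(f"leave-one-subject-out:              {per_subject.mean():.2f}")
```

When subject-specific offsets are strong, expect the leave-one-subject-out estimate to be the lower and more realistic of the two.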

My dataset is small; how can I possibly build a generalizable model with high-dimensional data?

A small sample size with high dimensionality is a prime scenario for overfitting. Your strategy must focus on maximizing the utility of limited data.

- Dimensionality Reduction is Key: Aggressively apply feature selection and dimensionality reduction (e.g., PCA, LDA) to reduce the number of features before model training [22] [23].

- Use Strong Regularization: Prioritize models with built-in L1 or L2 regularization. These penalties explicitly penalize model complexity to prevent the model from fitting the noise in your small dataset [22] [23].

- Opt for Simpler Models: Instead of complex, deep learning models, consider starting with simpler linear models (e.g., Linear SVM, LDA) that have less capacity to overfit. They can often perform better than overly complex models when data is scarce [23].
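One concrete way to combine the last two points is shrinkage LDA, sketched here with scikit-learn on synthetic data (60 trials and 200 features, chosen for illustration). Setting solver="lsqr" and shrinkage="auto" gives LDA with Ledoit-Wolf covariance shrinkage. This is a simple linear model with built-in regularization and is widely used for EEG decoding when trials are scarce. The unshrunk LDA appears only for comparison.

```python
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic small-sample regime: 60 trials, 200 features.
X, y = make_classification(n_samples=60, n_features=200, n_informative=10,
                           n_redundant=0, random_state=3)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

models = {
    "LDA (no shrinkage)": LinearDiscriminantAnalysis(solver="svd"),
    # Ledoit-Wolf shrinkage pulls the covariance estimate toward a scaled identity.
    "shrinkage LDA": LinearDiscriminantAnalysis(solver="lsqr", shrinkage="auto"),
}
scores = {name: cross_val_score(m, X, y, cv=cv).mean() for name, m in models.items()}
for name, s in scores.items():
    print(f"{name:20s} {s:.2f}")
```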

Are there specific techniques to handle subject-dependent variability in BCI data?

Yes, this is a central problem in practical BCI systems.

- Subject-Dependent Models: Train a separate model for each individual. This approach can yield higher accuracy (e.g., 46.6% average per-subject accuracy for inner speech) because the model fine-tunes to a specific subject's unique neural patterns [21].

- Subject-Independent Models: Train a single, generalized model on data from many subjects. While more challenging and often resulting in lower initial accuracy (e.g., 32% for inner speech), this is a more scalable and practical solution for real-world applications [21].

- Transfer Learning and Domain Adaptation: These are advanced techniques that aim to take a model trained on a source group of subjects and adapt it to a new, target subject with minimal calibration data.

Experimental Protocols & Data

Table 1: This table summarizes key quantitative results from recent BCI studies that employed ensemble and other methods to combat overfitting and improve generalization.

| Study / Model | BCI Paradigm | Key Method | Reported Accuracy | Generalization Context |
|---|---|---|---|---|
| BruteExtraTree [21] | Inner Speech (EEG) | Moderate stochasticity from ExtraTrees | 46.6% (avg per-subject) | Subject-Dependent |
| BruteExtraTree [21] | Inner Speech (EEG) | Moderate stochasticity from ExtraTrees | 32% | Subject-Independent |
| Subasi et al. [29] | Motor Imagery (EEG) | MSPCA, WPD & Ensemble Learning | 94.83% | Subject-Independent |
| Subasi et al. [29] | Motor Imagery (EEG) | MSPCA, WPD & Ensemble Learning | 98.69% | Subject-Dependent |
| Integrated MEG Framework [26] | Mental Imagery (MEG) | Channel Selection & Classifier Fusion | 12.25% improvement over base classifiers | N/A |
| Klosterman et al. [28] | Cognitive Workload (Hybrid BCI) | AdaBoost Ensemble (ANN, SVM, LDA) | Improved accuracy & reduced variance | Multi-day training paradigm |

Detailed Protocol: Implementing a Random Subspace Ensemble for fNIRS-BCI

This protocol is adapted from the research on random subspace ensemble learning for fNIRS-BCIs [24].

Objective: To improve the classification accuracy of a functional near-infrared spectroscopy (fNIRS) BCI task (e.g., mental arithmetic vs. idle state) by leveraging ensemble learning to mitigate overfitting.

Materials:

- Dataset: A preprocessed fNIRS dataset with trials from multiple subjects.

- Features: Extracted temporal features (e.g., mean, slope) of oxygenated and deoxygenated hemoglobin (Δ[HbO] and Δ[HbR]) from multiple channels and time windows.

- Software: Machine learning library (e.g., scikit-learn in Python).

Methodology:

- Feature Vector Construction: For each trial, create a high-dimensional feature vector by concatenating features like the mean (AVG) and slope (SLP) of Δ[HbO] and Δ[HbR] across all channels and time windows.

- Strong Learner Baseline:

- Train a single, sophisticated model (e.g., a Linear Support Vector Machine) using the entire, high-dimensional feature set.

- Evaluate its performance using cross-validation to establish a baseline accuracy.

- Random Subspace Ensemble Training:

- Choose a weak learner (e.g., Linear Discriminant Analysis).

- Define the number of weak learners in the ensemble (e.g., 100).

- For each weak learner:

- Randomly select a subset of features from the total feature pool (e.g., 50% of features).

- Train the weak learner on this random feature subset.

- Inference (Prediction):

- For a new test sample, each weak learner in the ensemble makes a prediction based on its own feature subset.

- The final prediction is determined by a majority vote (for classification) or averaging (for regression) of all weak learners' predictions.

- Validation: Compare the ensemble's cross-validated accuracy against the strong learner baseline. The random subspace ensemble is expected to yield higher and more robust generalization accuracy.
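The methodology can be sketched in Python with scikit-learn's LDA as the weak learner and an explicit hard majority vote. RandomSubspaceLDA is a hypothetical helper written for this illustration. The data are synthetic and stand in for the concatenated AVG/SLP features of Δ[HbO] and Δ[HbR], and the 5-fold split is an assumption.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import StratifiedKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC


class RandomSubspaceLDA:
    """Hypothetical helper: hard majority vote over LDAs on random feature subsets."""

    def __init__(self, n_learners=100, subspace_frac=0.5, random_state=0):
        self.n_learners = n_learners
        self.subspace_frac = subspace_frac
        self.random_state = random_state

    def fit(self, X, y):
        rng = np.random.default_rng(self.random_state)
        n_sub = max(1, int(self.subspace_frac * X.shape[1]))
        self.classes_ = np.unique(y)
        self.members_ = []
        for _ in range(self.n_learners):
            idx = rng.choice(X.shape[1], size=n_sub, replace=False)  # random subspace
            self.members_.append((idx, LinearDiscriminantAnalysis().fit(X[:, idx], y)))
        return self

    def predict(self, X):
        votes = np.stack([lda.predict(X[:, idx]) for idx, lda in self.members_])
        counts = np.stack([(votes == c).sum(axis=0) for c in self.classes_])
        return self.classes_[counts.argmax(axis=0)]  # majority vote per trial


# Synthetic stand-in for the high-dimensional fNIRS feature vectors.
X, y = make_classification(n_samples=80, n_features=240, n_informative=12,
                           n_redundant=20, random_state=4)

acc = {"strong learner (linear SVM)": [], "random subspace LDA": []}
for tr, te in StratifiedKFold(n_splits=5, shuffle=True, random_state=0).split(X, y):
    svm = make_pipeline(StandardScaler(), LinearSVC(C=1.0, max_iter=10000)).fit(X[tr], y[tr])
    ens = RandomSubspaceLDA().fit(X[tr], y[tr])
    acc["strong learner (linear SVM)"].append(np.mean(svm.predict(X[te]) == y[te]))
    acc["random subspace LDA"].append(np.mean(ens.predict(X[te]) == y[te]))
for name, values in acc.items():
    print(f"{name:28s} {np.mean(values):.2f}")
```

scikit-learn's BaggingClassifier offers a similar ensemble when given an LDA as its first argument with n_estimators=100, max_features=0.5, and bootstrap=False. However, it averages the members' predicted probabilities rather than counting hard votes.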

Visualizations

Diagram 1: From Noisy High-Dimensional Data to a Generalizable Model

Diagram 2: Random Subspace Ensemble Learning Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential computational and methodological "reagents" for developing robust BCI models.

| Tool / Technique | Function | Relevance to Preventing Overfitting |
|---|---|---|
| L1 (Lasso) & L2 (Ridge) Regularization | Adds a penalty to the model's loss function to shrink coefficients. | Limits model complexity by penalizing large coefficients; L1 can also perform feature selection [22] [23]. |
| Random Forest | An ensemble of decision trees trained on bootstrapped data and random feature subsets. | Reduces variance and overfitting by averaging many decorrelated trees [22] [25]. |
| Principal Component Analysis (PCA) | A linear dimensionality reduction technique that projects data into a lower-dimensional space. | Mitigates the curse of dimensionality by creating uncorrelated components that capture maximum variance [22]. |
| Independent Component Analysis (ICA) | A blind source separation method for separating multivariate signals into additive subcomponents. | Separates artifacts (e.g., eye blinks, muscle noise) from neural activity in EEG/MEG signals so they can be removed [21]. |
| Recursive Feature Elimination (RFE) | A wrapper method for feature selection that recursively removes the least important features. | Reduces the feature space by identifying and keeping the most salient features for the model [22]. |
| Stratified K-Fold Cross-Validation | A resampling procedure that splits data into 'k' folds while preserving the class distribution. | Provides a robust estimate of model performance and generalization error, guarding against over-optimism [23]. |

Frequently Asked Questions (FAQs)

1. What is the core theoretical principle that makes ensemble methods more robust? The core principle is the "wisdom of the crowd": combining multiple models (base learners) reduces the overall error because errors that the individual models do not share tend to cancel each other out. The expected error of a model can be decomposed into bias, variance, and irreducible error. Averaging-based ensembles such as bagging mainly target the variance component, which is a major cause of overfitting. By averaging multiple models, the ensemble smooths out extreme predictions, leading to better generalization on unseen data [12] [30].

2. How does the bias-variance tradeoff relate to ensemble robustness? The bias-variance tradeoff is a fundamental concept explaining ensemble robustness [30].

- Bias measures the difference between a model's average prediction (over different training sets) and the true values. High bias causes underfitting.

- Variance measures how much a model's predictions change when trained on different data samples. High variance causes overfitting.

Ensemble methods ease this tradeoff by combining multiple models to lower the overall variance without necessarily increasing bias. For instance, bagging is particularly effective at reducing high variance [12] [30].

3. Why is diversity among base models critical for ensemble methods? Diversity is the most important factor for a successful ensemble. If all base models make the same errors, combining them will not improve performance. Statistically diverse models—those that make incorrect predictions on different data samples—ensure that their strengths compensate for others' weaknesses. This diversity can be achieved by using different algorithms, different training data subsets (via bootstrapping), or different features for each model [31].
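A short calculation shows why diversity matters. Assume M base models whose predictions f_m(x) each have variance σ² and a common pairwise correlation ρ. This is a textbook simplification, not a property of any particular BCI ensemble. The variance of their average is then:

$$
\operatorname{Var}\!\left(\frac{1}{M}\sum_{m=1}^{M} f_m(x)\right)
= \frac{1}{M^2}\left[M\sigma^2 + M(M-1)\rho\sigma^2\right]
= \rho\sigma^2 + \frac{1-\rho}{M}\sigma^2 .
$$

Adding models shrinks only the second term. As M grows, the variance approaches ρσ², so the correlation between base models sets the floor. Lowering ρ through different algorithms, bootstrap samples, or feature subsets is what allows the ensemble to keep reducing variance.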

4. How do different ensemble techniques (bagging, boosting, stacking) contribute to robustness? Each technique enhances robustness through a distinct mechanism:

- Bagging (Bootstrap Aggregating): Trains many models in parallel on different random subsets of the data (bootstrapped samples) and averages their predictions. This directly reduces variance and is highly effective for models like Decision Trees that are prone to overfitting [12] [32] [31].

- Boosting: Trains models sequentially, where each new model focuses on correcting the errors of its predecessors. This primarily reduces bias. To prevent overfitting and ensure robustness, modern boosting algorithms incorporate regularization, learning rate shrinkage, and early stopping [12].

- Stacking: Combines the predictions of diverse models using a meta-learner. The meta-model learns how best to weight the base models' predictions, which can yield a more accurate mapping from the data than any single base model [12] [32].

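5. Can ensemble methods handle noisy data and outliers common in real-world datasets? Largely yes, although the mechanisms differ and boosting needs extra care [12]:

- Bagging averages predictions, which dampens the influence of outliers on the final output.

- Boosting is the most sensitive of the three. Algorithms such as AdaBoost increase the weight of misclassified points in each iteration, and noisy or mislabeled points are often among them. Shrinkage (a low learning rate), subsampling, early stopping, and robust loss functions reduce this sensitivity.

- Stacking combines multiple models, so the outlier-driven patterns of any single model are less likely to dominate the final prediction.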

Troubleshooting Guides

Problem 1: Your Ensemble Model is Overfitting

Potential Causes and Solutions:

Cause: Lack of Base Model Diversity

- Solution: Ensure your base learners are statistically diverse. Use different algorithms (e.g., SVM, Decision Trees, Logistic Regression) or train the same algorithm on different feature subsets or data samples. Avoid using models that all make the same types of errors [31].

Cause: Overly Complex Base Models in Bagging

- Solution: While bagging reduces variance, using base models that are too complex can still lead to overfitting. Apply techniques like max_depth restriction in Decision Trees or prune trees to control their complexity [12].

Cause: Boosting Iterated for Too Many Rounds

- Solution: Boosting can overfit if trained for too many sequential stages. Use early stopping by monitoring performance on a validation set and halting training when the validation error stops improving. Also, use a lower learning rate to make each model's contribution smaller and more conservative [12].

Problem 2: Your Ensemble Model is Underfitting

Potential Causes and Solutions:

Cause: Base Models are Too Weak (High Bias)

- Solution: In boosting, the sequential models need to be capable of learning from the errors. If the base models are too simple (e.g., stumps), they may not capture the necessary patterns. Slightly increase the complexity of the weak learners (e.g., allow deeper trees) [31].

Cause: Aggressive Regularization

- Solution: While regularization prevents overfitting, overly strong regularization parameters (like L1/L2 penalties) can cause underfitting. Systematically tune hyperparameters using cross-validation to find the right balance [12].

Problem 3: High Computational Cost and Long Training Times

Potential Causes and Solutions:

Cause: Ensemble Size is Too Large

- Solution: Using an excessive number of base models (e.g., thousands of trees) yields diminishing returns. Prune the ensemble by finding the smallest number of models that still delivers optimal performance. A study might find that 100 trees perform as well as 500 [12].

Cause: Use of Computationally Expensive Base Models

- Solution: Consider using a subset of features for training each model (like in Random Forest) to speed up individual model training. For stacking, you can use simpler models as the meta-learner [32].

The following table summarizes a typical experimental result on a synthetic regression dataset, showing how ensemble methods improve robustness over a single model. The single Decision Tree shows a large gap between training and test accuracy (0.96 vs. 0.75), a classic sign of overfitting. Both ensembles reach higher test accuracy (0.85 and 0.83) with a narrower train-test gap, and Random Forest narrows it the most, showing better generalization [33].

Table 1: Performance Comparison of Single Model vs. Ensemble Methods

| Model | Training Accuracy | Test Accuracy | Variance Reduction |
|---|---|---|---|
| Single Decision Tree | 0.96 | 0.75 | - |
| Random Forest (Bagging) | 0.96 | 0.85 | High |
| Gradient Boosting | 1.00 | 0.83 | Medium-High |
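The sketch below shows how such a comparison can be produced with scikit-learn on a synthetic regression problem (make_regression). It assumes that the "accuracy" reported for a regression task corresponds to the R² score returned by .score(). The numbers it prints will not match the table, which comes from [33].

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

X, y = make_regression(n_samples=500, n_features=10, noise=20.0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

models = {
    "Single Decision Tree": DecisionTreeRegressor(random_state=0),  # fully grown
    "Random Forest (Bagging)": RandomForestRegressor(n_estimators=200, random_state=0),
    "Gradient Boosting": GradientBoostingRegressor(random_state=0),
}
scores = {}
for name, model in models.items():
    model.fit(X_tr, y_tr)
    # .score() returns R^2 for scikit-learn regressors.
    scores[name] = (model.score(X_tr, y_tr), model.score(X_te, y_te))
    train, test = scores[name]
    print(f"{name:24s} train={train:.2f} test={test:.2f} gap={train - test:.2f}")
```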

Experimental Protocols

Protocol 1: Implementing a Basic Stacking Ensemble

This protocol outlines the steps to create a stacking ensemble, which combines multiple models via a meta-classifier [32].

- Split Data: Split the dataset into a training set and a hold-out test set.

- Create K-Folds: Split the training set into K folds (e.g., K=10).

- Train Base Models:

- For each base model (e.g., SVM, Decision Tree):

- Train the model on K-1 folds of the training data (9 folds when K=10).

- Use the model to make predictions on the remaining fold (the validation fold).

- Repeat this process so that every data point in the training set has a corresponding "out-of-fold" prediction from this model.

- Once done, fit each base model on the entire training set and use it to generate predictions for the test set.

- Build Meta-Features: The out-of-fold predictions from all base models are stacked together to form a new feature matrix (the meta-features) for the training set. The test set predictions form the meta-feature matrix for the test set.

- Train Meta-Model: A meta-classifier (e.g., Logistic Regression) is trained on the new meta-feature matrix derived from the training set.

- Final Prediction: The trained meta-model makes the final prediction using the meta-features from the test set.
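The protocol can be written out directly with scikit-learn's cross_val_predict, which returns out-of-fold predictions. The following sketch makes several illustrative choices: synthetic data, K=10, an SVM and a depth-limited decision tree as base models, and predicted class-1 probabilities (rather than hard labels) as meta-features.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_predict, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

X, y = make_classification(n_samples=400, n_features=30, n_informative=8, random_state=4)

# Step 1: hold-out test set.
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, stratify=y, random_state=0)
# Step 2: K = 10 folds over the training set.
kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)

base_models = {
    "svm": make_pipeline(StandardScaler(), SVC(probability=True, random_state=0)),
    "tree": DecisionTreeClassifier(max_depth=5, random_state=0),
}
meta_train, meta_test = [], []
for name, model in base_models.items():
    # Step 3: every training trial gets an out-of-fold class-1 probability,
    # produced by a clone trained on the other K-1 folds.
    oof = cross_val_predict(model, X_tr, y_tr, cv=kfold, method="predict_proba")[:, 1]
    meta_train.append(oof)
    # Refit on the full training set to produce the test-set meta-features.
    meta_test.append(model.fit(X_tr, y_tr).predict_proba(X_te)[:, 1])

# Step 4: stack the per-model columns into meta-feature matrices.
Z_tr, Z_te = np.column_stack(meta_train), np.column_stack(meta_test)
# Steps 5-6: train the meta-classifier, then predict the test set.
meta = LogisticRegression().fit(Z_tr, y_tr)
stack_acc = meta.score(Z_te, y_te)
print(f"stacked test accuracy: {stack_acc:.2f}")
```

scikit-learn's StackingClassifier, called with estimators=..., final_estimator=LogisticRegression(), and cv=10, automates the same out-of-fold scheme.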

Protocol 2: Preventing Overfitting in a Gradient Boosting Model

This protocol details key methodologies to ensure a boosting ensemble remains robust and does not overfit [12].

- Apply a Learning Rate: Instead of allowing each new tree to fully correct the errors, use a small learning rate (e.g., 0.1) to shrink its contribution. This makes the learning process more conservative and robust.

- Implement Early Stopping:

- Split the training data into a training subset and a validation subset.

- Train the boosting model iteratively.

- After each iteration (or a set of iterations), evaluate the model's performance on the validation set.

- Stop the training process when the validation performance has not improved for a pre-defined number of rounds (e.g., 50 rounds).

- Use Regularization: Many boosting implementations (like XGBoost) have built-in L1 (Lasso) and L2 (Ridge) regularization parameters. Tune these parameters to penalize overly complex trees.

- Subsample Data and Features: For each boosting round, train the new tree on a random subset (e.g., 80%) of the training data and/or a random subset of the features. This introduces randomness that improves robustness.
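The protocol maps roughly onto scikit-learn's GradientBoostingClassifier, as this sketch on synthetic data shows. The parameter values mirror the examples above and are not tuned. There are two caveats. First, max_features subsamples features at each split rather than once per boosting round. Second, this estimator has no explicit L1/L2 penalty, so the regularization step would need XGBoost's reg_alpha/reg_lambda or the l2_regularization parameter of HistGradientBoostingClassifier instead.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=600, n_features=40, n_informative=8,
                           flip_y=0.1, random_state=5)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, stratify=y, random_state=0)

gb = GradientBoostingClassifier(
    n_estimators=1000,        # upper bound; early stopping usually ends much sooner
    learning_rate=0.1,        # shrink each tree's contribution
    max_depth=3,              # keep individual trees simple
    subsample=0.8,            # each tree sees a random 80% of the training rows
    max_features=0.5,         # a random half of the features is considered at each split
    validation_fraction=0.2,  # internal validation split used for early stopping
    n_iter_no_change=50,      # stop after 50 rounds without validation improvement
    random_state=0,
).fit(X_tr, y_tr)

test_acc = gb.score(X_te, y_te)
print(f"trees kept: {gb.n_estimators_}, test accuracy: {test_acc:.2f}")
```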

Research Reagent Solutions

Table 2: Essential Software and Libraries for Ensemble Research

| Item / Library | Function / Application |
|---|---|
| Scikit-learn (Python) | Provides implementations for Bagging (BaggingClassifier/Regressor), Random Forests, AdaBoost, and Stacking, making it a versatile toolkit for classic ensemble methods [32]. |
| XGBoost (Python/R/Julia) | An optimized library for Gradient Boosting that includes regularization, handling missing values, and early stopping, essential for creating robust, high-performance boosted models [30]. |
| OHDSI PatientLevelPrediction (R) | An R package designed for building and evaluating prediction models, including ensembles, on standardized clinical data, facilitating reproducible research in healthcare [34]. |
| Random Forest | A specific bagging algorithm that trains decision trees on random subsets of data and features, introducing extra diversity to further decrease variance and overfitting [32] [34]. |
| AdaBoost | A pioneering boosting algorithm that works by increasing the weight of misclassified data points in each successive iteration, focusing the ensemble on harder-to-predict samples [32]. |

Methodological Visualizations

Ensemble Learning Workflow

How Bagging Reduces Variance

Implementing Adaptive Ensemble Learning for BCI Robustness

Active vs. Passive Adaptation Schemes for Non-Stationary Environments

Fundamental Concepts

What are active and passive adaptation schemes in the context of non-stationary BCIs?

In non-stationary Brain-Computer Interface environments, where electroencephalography (EEG) signal distributions change over time, two primary adaptation schemes are employed:

- Active Adaptation Schemes: These methods use a shift detection test to identify when significant changes (covariate shifts) occur in the streaming data. Adaptive actions, such as updating the classifier ensemble, are initiated only when a shift is confirmed [10].

- Passive Adaptation Schemes: These methods operate under the assumption that input data distributions shift continuously. Therefore, the system adapts to new data distributions continuously for every new incoming observation or batch of observations, without specifically detecting shifts [10].

The table below summarizes the core differences:

Table: Comparison of Active and Passive Adaptation Schemes

| Feature | Active Scheme | Passive Scheme |
|---|---|---|
| Adaptation Trigger | Detection of a statistically significant covariate shift [10] | Continuous; assumes data distribution is always shifting [10] |
| Computational Cost | Generally lower; updates occur only when necessary [10] | Generally higher; continuous model updates are required [10] |
| Implementation Example | Covariate Shift Estimation-based Unsupervised Adaptive Ensemble Learning (CSE-UAEL) [10] | Dynamically weighted ensemble classification (DWEC) or similar passive ensemble methods [10] |
| Advantage | More efficient; adds new classifiers to the ensemble only when a novel data distribution is detected [10] | Can be more responsive to very gradual, continuous changes without the need for a detection threshold [10] |
| Disadvantage | Relies on accurate shift detection; may lag if shifts are very sudden or subtle [10] | Higher risk of overfitting to noise and higher computational load due to constant updating [10] |
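Below is a minimal sketch of a passive scheme. It is not DWEC. It assumes that labeled feedback is available for every incoming batch (for example, cued trials), and it uses a hypothetical drifting 2-D feature stream rather than real EEG. A static SGDClassifier is trained once. The passive copy is updated with partial_fit on every batch, with no shift detector. A constant learning rate (eta0=0.01, an illustrative value) keeps it tracking the drift. Each batch is scored before it is used for updating (test-then-train).

```python
import numpy as np
from sklearn.linear_model import SGDClassifier

rng = np.random.default_rng(0)


def drifting_batch(t, n=40):
    """Hypothetical 2-D feature batch whose distribution slides along x over time."""
    y = rng.integers(0, 2, n)
    X = rng.normal(0.0, 1.0, (n, 2))
    X[:, 0] += np.where(y == 1, 1.5, -1.5) + 0.05 * t  # class separation + drift
    return X, y


def make_model():
    # Constant step size so the passive model keeps adapting instead of freezing.
    return SGDClassifier(learning_rate="constant", eta0=0.01, random_state=0)


X0, y0 = drifting_batch(0)
static, passive = make_model().fit(X0, y0), make_model().fit(X0, y0)

acc_static, acc_passive = [], []
for t in range(1, 100):
    X, y = drifting_batch(t)
    # Test-then-train: score each new batch before any update.
    acc_static.append(static.score(X, y))
    acc_passive.append(passive.score(X, y))
    passive.partial_fit(X, y)  # passive scheme: update on every batch

print(f"static: {np.mean(acc_static):.2f}  passive: {np.mean(acc_passive):.2f}")
```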

How do ensemble learning methods prevent overfitting in BCI research?

Ensemble methods combine multiple base models (learners) to create a single, more robust predictive model. They combat overfitting—where a model learns noise and specific patterns in the training data but fails to generalize to new data—through several mechanisms [33] [12]:

- Reducing Variance (Bagging): Algorithms like Random Forest train multiple models on different subsets of the data (bootstrapping) and aggregate their predictions (e.g., by averaging or majority vote). This process smooths out extreme predictions and prevents any single model from dominating, thereby stabilizing the output and reducing variance [33] [12].

- Reducing Bias (Boosting): Algorithms like AdaBoost or Gradient Boosting train models sequentially, with each new model focusing on correcting the errors of its predecessors. While powerful, boosting can be prone to overfitting. This is mitigated using techniques like a low learning rate to slow down the learning process, early stopping before the model starts to learn noise, and regularization to penalize model complexity [33] [12].

- Combining Strengths (Stacking): This method uses a meta-model to learn how to best combine the predictions from diverse base models (e.g., SVM, decision trees). By leveraging the unique strengths of each model and learning which model to trust for specific patterns, stacking creates a more balanced and generalized solution [12].

Troubleshooting Guides & FAQs

Performance Issues

Q: My BCI model achieves high accuracy on training data but performs poorly on unseen test data. What is the cause and how can I address it?

A: This is a classic symptom of overfitting. The model has likely become too complex and has memorized the training data, including its noise, rather than learning the underlying generalizable patterns [33].

Troubleshooting Steps:

- Implement Ensemble Methods: Transition from a single-classifier model to an ensemble method.

- To reduce variance, employ Bagging with a Random Forest algorithm [33] [12].

- If using Boosting, leverage its built-in regularization parameters: tune the learning_rate, use early_stopping_rounds based on a validation set, and apply L1/L2 regularization to prevent the sequential models from becoming overly complex [12].

- Hyperparameter Tuning: Use cross-validation to find the optimal parameters for your ensemble model. Key parameters include tree depth (max_depth), the number of base estimators (n_estimators), and the learning rate for boosting algorithms. Avoid using overly large ensembles to reduce unnecessary complexity [12].

- Validate with a Holdout Set: Always monitor your model's performance on a separate validation dataset that is not used during training. This provides the best indicator of generalization performance [12].

Q: The performance of my adaptive BCI system degrades significantly between recording sessions (inter-session) or even within a session (intra-session). Why does this happen?

A: This is primarily caused by the non-stationary nature of EEG signals, which leads to covariate shift: the input distribution during testing, P_test(x), differs from the training distribution, P_train(x), while the conditional distribution P(y|x) remains the same. This can be due to changes in user attention, fatigue, electrode impedance, or environmental factors [10].

Troubleshooting Steps:

- Diagnose Covariate Shift: Implement a shift detection method, such as an Exponentially Weighted Moving Average (EWMA) model, to monitor the common spatial pattern (CSP) features of the EEG signals for significant distribution changes [10].

- Choose the Correct Adaptation Scheme:

- If shifts are abrupt and identifiable (e.g., after a break), an Active Adaptation Scheme is more efficient. Upon detecting a shift, you can add a new classifier trained on post-shift data to your ensemble [10].

- If the data drifts continuously and smoothly, a Passive Adaptation Scheme that continuously updates the model with new incoming data might be more appropriate, though computationally more expensive [10].

- Utilize Unsupervised Adaptive Ensemble Learning: In an online setting, you can use a method like CSE-UAEL. When a covariate shift is estimated, a new classifier is added to the ensemble. Transductive learning (e.g., using a Probabilistic Weighted K-Nearest Neighbour method) can be used to label new data without supervision for this new classifier [10].
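The shift-detection step can be sketched with a standard EWMA control chart applied to a single feature stream, such as one CSP log-variance feature. This is a generic textbook EWMA chart, not the exact CSE-UAEL procedure from [10]. The smoothing factor λ=0.2 and limit width L=3 are common defaults assumed here, and the data are simulated.

```python
import numpy as np


def ewma_first_shift(x, mu0, sigma0, lam=0.2, L=3.0):
    """Index of the first sample at which an EWMA chart signals a shift, else None.

    mu0 and sigma0 describe the in-control (calibration) feature distribution.
    """
    z = mu0
    for t, value in enumerate(x, start=1):
        z = lam * value + (1.0 - lam) * z  # exponentially weighted moving average
        # Time-varying control-limit half-width of the standard EWMA chart.
        half_width = L * sigma0 * np.sqrt(
            lam / (2.0 - lam) * (1.0 - (1.0 - lam) ** (2 * t)))
        if abs(z - mu0) > half_width:
            return t - 1
    return None


rng = np.random.default_rng(1)
# Hypothetical stream of one CSP log-variance feature: calibration data, then a
# session segment whose mean moves up by one standard deviation after 150 trials.
calibration = rng.normal(0.0, 1.0, 200)
stream = np.concatenate([rng.normal(0.0, 1.0, 150), rng.normal(1.0, 1.0, 150)])
shift_at = ewma_first_shift(stream, calibration.mean(), calibration.std(ddof=1))
print("first alarm at sample:", shift_at)
```

In an active scheme, the returned index marks where you would train a new classifier on post-shift data and add it to the ensemble. With several CSP features, one option is to run one chart per feature.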

Implementation & Technical Issues

Q: I am experiencing unusual noise patterns, such as nearly identical, high-amplitude waveforms across all EEG channels. What could be the source of this problem?

A: Widespread, identical noise on all channels typically points to an issue with a common component shared across all channels, most often the reference (SRB2) or ground electrodes [35].

Troubleshooting Steps:

- Check Electrode Connections: Verify that the reference and ground earclip electrodes are securely connected. For a Cyton board with a Daisy module, ensure the bottom SRB2 pins on both boards are ganged together using a Y-splitter cable, which should then connect to a single earclip. The BIAS pin on the Cyton should be connected to a second earclip [35].

- Test and Replace Electrodes: Swapping or replacing the earclip electrodes is a recommended first step. Also, ensure the skin under the electrodes is properly abraded and that electrolyte gel is used to maintain good skin contact and impedance below 2000 kOhms [35].

- Reduce Environmental Noise:

- Unplug your laptop from its power source.

- Use a fully charged battery for the BCI amplifier.

- Sit away from the computer monitor and other sources of electromagnetic interference (EMI) [35].

- Verify Hardware Settings: In the acquisition software's hardware settings, confirm that SRB2 is set to ON for all channels [35].
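One way to put a number on "nearly identical waveforms across all channels" is the mean pairwise correlation between channels, which you can check before and after working through the steps above. A common-mode problem, such as a loose reference or ground, tends to push this value toward 1. The sketch below is a hypothetical diagnostic in Python/NumPy. The function names and the 0.9 threshold are illustrative assumptions, not OpenBCI recommendations.

```python
import numpy as np


def mean_interchannel_correlation(eeg):
    """Mean off-diagonal Pearson correlation of an (n_channels, n_samples) array."""
    corr = np.corrcoef(eeg)
    n = corr.shape[0]
    off_diag = corr[~np.eye(n, dtype=bool)]
    return float(off_diag.mean())


def looks_like_common_mode_noise(eeg, threshold=0.9):
    # Hypothetical threshold: flag recordings whose channels move almost in lockstep.
    return mean_interchannel_correlation(eeg) > threshold


rng = np.random.default_rng(1)
n_ch, n_s, fs = 8, 2500, 250
independent = rng.normal(0, 10, (n_ch, n_s))                      # channel-specific activity
shared = 200 * np.sin(2 * np.pi * 60 * np.arange(n_s) / fs)       # large artifact common to all channels
contaminated = independent + shared                               # same waveform added to every channel

print(looks_like_common_mode_noise(independent), looks_like_common_mode_noise(contaminated))
```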

Q: My data streaming is intermittent, with frequent packet loss warnings or "data streaming error" messages. How can I resolve this?

A: This is often related to USB connectivity, software latency, or environmental interference.

Troubleshooting Steps:

- Improve USB Connection: Do not plug the USB dongle directly into the computer's port. Instead, use a powered USB hub to connect the dongle. You can also try using a USB extension cord to move the dongle away from the computer to reduce EMI [35].

- Optimize Software Settings: Increase the SampleBlockSize parameter in your BCI software (e.g., BCI2000) to reduce the system update rate and associated processor load [36].

- Check Power Source: Ensure that the amplifier's battery is fully charged. A low battery can cause streaming failures [35].

- Close Background Applications: Resource-intensive applications like a web browser with multiple tabs (e.g., Google Chrome) can introduce delays and should be closed during experiments [35].
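The effect of SampleBlockSize comes down to simple arithmetic. BCI2000 processes data one block at a time, so the system update rate equals the sampling rate divided by the block size. The small Python helper below makes that trade-off explicit: larger blocks give fewer updates per second, and each block spans more time. The helper is hypothetical and not part of BCI2000, and the 256 Hz sampling rate is an illustrative assumption.

```python
def block_timing(sampling_rate_hz, sample_block_size):
    """Return (block duration in ms, system updates per second)."""
    duration_ms = 1000.0 * sample_block_size / sampling_rate_hz
    updates_per_s = sampling_rate_hz / sample_block_size
    return duration_ms, updates_per_s


for block in (8, 16, 32):
    ms, hz = block_timing(256, block)
    print(f"SampleBlockSize={block:>2}: {ms:.2f} ms per block, {hz:.0f} updates/s")
```

At 256 Hz, raising SampleBlockSize from 8 to 32 cuts the update rate from 32 to 8 blocks per second, with each block lasting 125 ms instead of 31.25 ms.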

Experimental Protocols & Methodologies

Protocol: Covariate Shift Estimation and Adaptive Ensemble Learning (CSE-UAEL)

This protocol outlines the methodology for implementing an active adaptation scheme to handle non-stationarities in motor imagery EEG data [10].

Objective: To create a BCI system that actively detects distribution shifts in streaming EEG features and updates a classifier ensemble accordingly, thereby maintaining robust performance in a non-stationary environment.

Materials:

- EEG Acquisition System: A multi-channel EEG amplifier with electrodes placed according to the international 10-20 system.

- Signal Processing Software: MATLAB or Python with toolboxes (e.g., MNE, EEGLAB) for preprocessing and feature extraction.

- Computational Environment: A computer capable of running real-time signal processing and machine learning models (e.g., in Python with scikit-learn).

Methodology:

- Signal Acquisition & Preprocessing: Acquire EEG signals during motor imagery tasks (e.g., imagining left vs. right hand movement). Apply band-pass filtering (e.g., 8-30 Hz for Mu and Beta rhythms) and artifact removal (e.g., using Independent Component Analysis, ICA).

- Feature Extraction: Extract Common Spatial Pattern (CSP) features from the preprocessed EEG signals to maximize the variance between two classes of motor imagery.

- Initial Training: Train an initial ensemble of classifiers (e.g., Linear Discriminant Analysis, LDA) on the first batch of calibration data.

- Shift Detection (CSE): For each new sample or batch of streaming data, estimate potential covariate shifts using an Exponentially Weighted Moving Average (EWMA) model on the extracted CSP features.

- Ensemble Update (UAEL): If a significant shift is detected:

- A new classifier is initialized and added to the existing ensemble.

- To train this new classifier without pre-labeled data, use transductive learning via a Probabilistic Weighted K-Nearest Neighbour (PWKNN) algorithm to label the new data based on its similarity to the existing training data.

- Classification: The final prediction is made by aggregating the outputs of all classifiers in the current ensemble.
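The band-pass filtering and CSP feature extraction steps of this methodology can be sketched with NumPy and SciPy alone. The sketch below is minimal and works under stated assumptions. Epochs arrive as an (n_trials, n_channels, n_samples) array, and artifact removal has already been done. CSP is computed as the generalized eigendecomposition of the two class-average covariance matrices, and features are log normalized variances. Toolboxes such as MNE provide more complete implementations, and the synthetic data here stands in for real motor imagery recordings.

```python
import numpy as np
from scipy.linalg import eigh
from scipy.signal import butter, sosfiltfilt


def bandpass(epochs, fs, low=8.0, high=30.0, order=4):
    # Zero-phase Butterworth band-pass over the time axis (8-30 Hz covers mu/beta).
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, epochs, axis=-1)


def fit_csp(epochs, labels, n_pairs=2):
    """Return (2*n_pairs, n_channels) spatial filters for a two-class problem."""
    covs = []
    for c in np.unique(labels):
        trials = epochs[labels == c]
        # Trace-normalized spatial covariance per trial, averaged over the class.
        covs.append(np.mean([x @ x.T / np.trace(x @ x.T) for x in trials], axis=0))
    # Generalized eigenproblem C_a w = lambda (C_a + C_b) w; eigenvalues ascend.
    _, eigvecs = eigh(covs[0], covs[0] + covs[1])
    # Filters at both ends of the spectrum maximize variance for one class
    # while minimizing it for the other.
    idx = np.concatenate([np.arange(n_pairs), np.arange(-n_pairs, 0)])
    return eigvecs[:, idx].T


def csp_features(epochs, filters):
    # Log of the normalized variance of each spatially filtered signal.
    projected = np.einsum("fc,tcs->tfs", filters, epochs)
    var = projected.var(axis=-1)
    return np.log(var / var.sum(axis=1, keepdims=True))


# Synthetic example: class 1 has extra 12 Hz power on channel 0, class 0 on channel 1.
rng = np.random.default_rng(2)
fs, n_trials, n_ch, n_s = 250, 40, 6, 500
t = np.arange(n_s) / fs
X = rng.normal(0, 1, (n_trials, n_ch, n_s))
y = np.repeat([0, 1], n_trials // 2)
X[y == 1, 0] += 3 * np.sin(2 * np.pi * 12 * t)
X[y == 0, 1] += 3 * np.sin(2 * np.pi * 12 * t)

Xf = bandpass(X, fs)
W = fit_csp(Xf, y)
F = csp_features(Xf, W)
print(F.shape)  # (40, 4)
```

The resulting feature matrix F is what an LDA ensemble would be trained on, and it is also the stream an EWMA detector would monitor for covariate shift.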

Protocol: Inner Speech Classification with Stochasticity to Prevent Overfitting

This protocol describes an approach for classifying inner speech EEG signals, which are particularly challenging due to high noise and variability, using a novel ensemble-like method designed to combat overfitting [21].

Objective: To achieve high accuracy in classifying inner speech (e.g., words like "up," "down") from EEG data in both subject-dependent and subject-independent settings, while mitigating overfitting.

Materials:

- EEG Dataset: A publicly available inner speech dataset, such as the "Thinking out Loud" dataset [21].

- Preprocessing Tools: Software for applying band-pass filters, notch filters, and artifact removal (e.g., ICA).

- Machine Learning Environment: Python with scikit-learn for implementing the BruteExtraTree classifier and other models.

Methodology:

- Data Preparation: Load the inner speech dataset, which typically contains multi-channel EEG recordings timed with the silent articulation of specific words.

- Preprocessing: Clean the data by applying a band-pass filter (e.g., 0.5-100 Hz) and a notch filter (e.g., 50/60 Hz) to remove line noise. Use ICA to remove ocular and muscular artifacts.

- Feature Extraction: Extract time-frequency features suited to non-stationary signals. Multi-wavelet analysis has been shown to be highly effective for capturing relevant information from inner speech signals [21].

- Model Training and Evaluation:

- For Subject-Dependent analysis (high accuracy goal): Train a model on data from a single subject. The proposed BruteExtraTree classifier, which relies on moderate stochasticity inherited from the Extremely Randomized Trees algorithm, has been reported to achieve a per-subject accuracy of 46.6% [21].

- For Subject-Independent analysis (better generalization): Train a model on data from multiple subjects to create a universal classifier. Deep learning models like ShallowFBCSPNet have shown promise in this more challenging scenario [21].

- Overfitting Mitigation: The BruteExtraTree classifier inherently combats overfitting through the randomness it introduces into tree building. For other models, standard techniques such as cross-validation, regularization, and early stopping should be employed.
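BruteExtraTree is not part of scikit-learn. The sketch below therefore uses scikit-learn's ExtraTreesClassifier, the Extremely Randomized Trees algorithm that BruteExtraTree builds on, as a stand-in. Synthetic features replace multi-wavelet features, and a per-subject offset mimics inter-subject variability. The sketch shows how the two evaluation settings above differ in their cross-validation splits: k-fold within one subject versus leave-one-subject-out across subjects. All sizes and hyperparameters are illustrative assumptions, not values from [21].

```python
import numpy as np
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.model_selection import LeaveOneGroupOut, StratifiedKFold, cross_val_score

rng = np.random.default_rng(3)
n_subjects, n_trials, n_features, n_classes = 4, 80, 20, 4
X = rng.normal(size=(n_subjects * n_trials, n_features))
y = np.tile(np.repeat(np.arange(n_classes), n_trials // n_classes), n_subjects)
subject = np.repeat(np.arange(n_subjects), n_trials)
X += rng.normal(0, 1, (n_subjects, n_features))[subject]  # synthetic per-subject offset
X[np.arange(len(y)), y] += 1.0                            # weak synthetic class signal


def make_model():
    # Stand-in for BruteExtraTree: randomized split thresholds add stochasticity.
    return ExtraTreesClassifier(n_estimators=200, max_features="sqrt", random_state=0)


# Subject-dependent: stratified k-fold CV inside one subject's trials.
mask = subject == 0
dep = cross_val_score(make_model(), X[mask], y[mask],
                      cv=StratifiedKFold(5, shuffle=True, random_state=0))

# Subject-independent: train on all but one subject, test on the held-out subject.
indep = cross_val_score(make_model(), X, y, groups=subject, cv=LeaveOneGroupOut())

print(f"subject-dependent   {dep.mean():.3f}")
print(f"subject-independent {indep.mean():.3f}")
```

Keeping whole subjects out of the training folds is what makes the subject-independent score a test of generalization. Shuffling trials from all subjects together would leak subject-specific patterns into training.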

The Scientist's Toolkit

Table: Essential Reagents and Tools for BCI Experimentation

| Item Name | Function / Application | Key Details / Rationale |
|---|---|---|
| CSP Feature Extraction | Spatial filtering to maximize variance between motor imagery classes [10]. | Foundational for effective MI-BCI; provides discriminative features that are monitored for covariate shifts. |
| EWMA Model | A statistical method for detecting covariate shifts in streaming data [10]. | Core component of active adaptation schemes; triggers ensemble updates when data distribution changes. |
| Probabilistic Weighted KNN (PWKNN) | A transductive learning algorithm for unsupervised labeling of new data [10]. | Enables model adaptation in real-time when no true labels are available for new data after a detected shift. |
| Random Forest | A bagging ensemble method to reduce variance and prevent overfitting [33] [12]. | A robust, out-of-the-box solution for creating a generalized model by averaging multiple decision trees. |
| Gradient Boosting (XGBoost) | A boosting ensemble method that sequentially corrects errors from previous models [33] [12]. | Effective for complex patterns; requires careful tuning of learning rate and use of early stopping to avoid overfitting. |
| BruteExtraTree Classifier | A highly stochastic tree-based model proposed for noisy inner speech classification [21]. | Relies on randomness to create diverse trees, reducing overfitting and improving generalization on subject-dependent data. |
| Multi-wavelet Analysis | A preprocessing and feature extraction technique for non-stationary signals like inner speech EEG [21]. | Captures time-frequency information effectively, leading to significantly higher classification accuracy. |
| Independent Component Analysis (ICA) | A blind source separation method for removing artifacts (e.g., eye blinks, muscle movement) from EEG [21]. | Critical for improving the signal-to-noise ratio before feature extraction and model training. |
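The bagging and boosting rows of the table differ mainly in how they are kept from overfitting. A random forest averages many decorrelated trees, and its out-of-bag samples give a validation estimate without extra data. Boosting instead relies on a small learning rate plus early stopping. The sketch below avoids a dependency on XGBoost and uses scikit-learn's GradientBoostingClassifier, a different implementation of the same boosting idea, with its built-in early stopping (validation_fraction, n_iter_no_change, tol). The data are synthetic, and every hyperparameter value is an illustrative assumption.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic stand-in for an EEG feature matrix (e.g., CSP log-variances).
X, y = make_classification(n_samples=400, n_features=16, n_informative=4, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.25, random_state=0, stratify=y)

# Bagging: average many bootstrap-trained trees; out-of-bag samples act as validation.
rf = RandomForestClassifier(n_estimators=300, oob_score=True, random_state=0).fit(X_tr, y_tr)

# Boosting: small learning rate plus early stopping on an internal validation split.
# Training halts once the validation loss fails to improve by at least tol
# for n_iter_no_change consecutive stages.
gb = GradientBoostingClassifier(
    learning_rate=0.05,
    n_estimators=1000,        # upper bound; early stopping may halt much sooner
    validation_fraction=0.2,
    n_iter_no_change=10,
    tol=1e-4,
    random_state=0,
).fit(X_tr, y_tr)

print(f"RF  OOB={rf.oob_score_:.3f} test={rf.score(X_te, y_te):.3f}")
print(f"GB  stages used={gb.n_estimators_} test={gb.score(X_te, y_te):.3f}")
```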

Covariate Shift Estimation with EWMA for Dynamic Model Updates

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving Excessive False Alarms in Shift Detection

Problem: My EWMA-based covariate shift detection system is generating too many false alarms, causing unnecessary model updates and resource consumption.

Diagnosis: Excessive false alarms typically occur when the EWMA control chart is overly sensitive to minor fluctuations in the input data stream that do not represent genuine distributional shifts [37].

Solution: Implement a two-stage shift-detection structure [37] [10]:

- First Stage: Use EWMA control chart in online mode to detect potential shift points

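- Second Stage: Validate detected shifts using statistical hypothesis tests, e.g., a Kolmogorov-Smirnov (K-S) test for univariate features or Hotelling's T-squared test for multivariate features [37]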

Verification: After implementation, monitor the false positive rate. A well-tuned system should maintain detection sensitivity while reducing false alarms by at least 30% compared to single-stage approaches [37].
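The sketch below shows the second stage for a single feature and assumes SciPy's two-sample Kolmogorov-Smirnov test (scipy.stats.ks_2samp). An EWMA alarm is confirmed only if the samples collected after the alarm are distributed differently from a pre-alarm reference window. The function name, window sizes, and significance level alpha=0.01 are illustrative assumptions. Multivariate CSP features would need a multivariate test such as Hotelling's T-squared, which is not shown here.

```python
import numpy as np
from scipy.stats import ks_2samp


def validate_shift(reference, candidate, alpha=0.01):
    """Hypothetical second-stage check: confirm an EWMA alarm by comparing a
    pre-alarm reference window with the samples collected after the alarm.
    Returns True only if the two-sample K-S test rejects equal distributions."""
    result = ks_2samp(reference, candidate)
    return bool(result.pvalue < alpha)


rng = np.random.default_rng(4)
reference = rng.normal(0.0, 1.0, 200)    # feature values before the alarm
transient = rng.normal(0.0, 1.0, 100)    # alarm caused by a transient: same distribution
real_shift = rng.normal(1.0, 1.0, 100)   # alarm caused by a genuine mean shift

print(validate_shift(reference, transient), validate_shift(reference, real_shift))
```

Only alarms that pass this check would trigger an ensemble update. That filtering is how the two-stage structure trades a short validation delay for fewer unnecessary retrainings.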

Guide 2: Addressing Performance Degradation in Non-Stationary BCI Environments

Problem: My BCI classification accuracy deteriorates during extended sessions due to non-stationary EEG signals.

Diagnosis: Covariate shift in EEG feature distributions between training and operational phases is a common challenge in BCI systems [10]. It manifests as $P_{\text{train}}(x) \neq P_{\text{test}}(x)$ while the conditional distribution $P_{\text{train}}(y \mid x) = P_{\text{test}}(y \mid x)$ remains unchanged [10].

Solution: Deploy CSE-UAEL (Covariate Shift Estimation-based Unsupervised Adaptive Ensemble Learning) [10]:

- Use EWMA to detect covariate shifts in Common Spatial Pattern (CSP) features [10]

- Create and update classifier ensembles when shifts are detected

- Employ transductive learning with Probabilistic Weighted K-Nearest Neighbour (PWKNN) to enrich training data during evaluation [10]

Expected Outcome: This active adaptation approach has shown significant performance improvements over passive schemes in motor imagery BCI tasks [10].
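The transductive labeling step can be sketched as a distance-weighted k-nearest-neighbour vote that turns neighbour weights into class probabilities. A pseudo-label is kept only when its probability clears a confidence threshold, and accepted samples can then enrich the training data for the new ensemble member. This is a hypothetical sketch, not the exact PWKNN formulation of [10]. The inverse-distance weighting, k=5, and the 0.8 threshold are all assumptions.

```python
import numpy as np


def weighted_knn_pseudo_labels(X_lab, y_lab, X_new, k=5, min_conf=0.8, eps=1e-12):
    """Hypothetical probability-weighted KNN labeling for transductive adaptation.

    Returns (labels, confidences, accepted_mask). Rejected samples still get a
    label, but they should not be used for training."""
    classes = np.unique(y_lab)
    # Pairwise Euclidean distances, shape (n_new, n_lab).
    d = np.linalg.norm(X_new[:, None, :] - X_lab[None, :, :], axis=-1)
    nn = np.argsort(d, axis=1)[:, :k]
    w = 1.0 / (np.take_along_axis(d, nn, axis=1) + eps)  # inverse-distance weights
    nn_labels = y_lab[nn]
    # Class probability = share of total neighbour weight carried by that class.
    probs = np.stack([(w * (nn_labels == c)).sum(axis=1) for c in classes], axis=1)
    probs /= probs.sum(axis=1, keepdims=True)
    labels = classes[np.argmax(probs, axis=1)]
    conf = probs.max(axis=1)
    return labels, conf, conf >= min_conf


rng = np.random.default_rng(5)
X_lab = np.vstack([rng.normal(-2, 0.5, (30, 2)), rng.normal(2, 0.5, (30, 2))])
y_lab = np.repeat([0, 1], 30)
X_new = np.array([[-2.0, -2.0], [2.0, 2.0], [0.0, 0.0]])

labels, conf, accepted = weighted_knn_pseudo_labels(X_lab, y_lab, X_new)
print(labels, np.round(conf, 2), accepted)
```

The confidence gate keeps ambiguous samples, such as the point midway between the clusters, from being added with a possibly wrong label, which would otherwise bias the new classifier.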

Frequently Asked Questions

Q1: How do I select the appropriate smoothing factor (λ) for EWMA in BCI applications?

A: The optimal λ value depends on your specific BCI paradigm and data characteristics [10]:

- For motor imagery BCI with CSP features: λ values between 0.05 and 0.2 have been effective [10]

- Start with λ=0.1 and adjust based on monitoring false alarm rates and detection delay [37]

- Higher λ (closer to 1) increases sensitivity to recent changes but may raise false alarms [37]
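The trade-off in choosing λ can be made concrete with the standard EWMA control-chart variance. For in-control observations with standard deviation σ, the EWMA statistic after t steps has variance σ²·λ/(2−λ)·[1−(1−λ)^(2t)], so limits set at L standard deviations widen as λ grows. The helper below evaluates that half-width. The function name and L=3 are illustrative assumptions, and [10] and [37] may set their limits differently.

```python
import numpy as np


def ewma_limit_halfwidth(sigma, lam, L=3.0, t=None):
    """Half-width of EWMA control limits: L*sigma*sqrt(lam/(2-lam)*[1-(1-lam)^(2t)]).
    With t=None the steady-state (t -> infinity) value is returned."""
    factor = lam / (2.0 - lam)
    if t is not None:
        factor *= 1.0 - (1.0 - lam) ** (2 * t)
    return L * sigma * np.sqrt(factor)


for lam in (0.05, 0.1, 0.2):
    print(f"lambda={lam:.2f}: steady-state half-width = {ewma_limit_halfwidth(1.0, lam):.3f} sigma")
```

With σ=1 and L=3, the steady-state half-widths are about 0.48, 0.69, and 1.00 for λ = 0.05, 0.1, and 0.2. A small λ averages over a longer effective window and has tighter limits, which is why it suits small, persistent shifts. A larger λ reacts faster to abrupt changes.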

Q2: What are the computational requirements for implementing real-time EWMA shift detection?

A: EWMA is computationally efficient for real-time applications [37]:

- Memory requirements: Minimal (only needs to store previous EWMA value)

- Computational cost: Low (simple recursive calculation)

- Suitable for deployment in embedded BCI systems and online adaptive learning frameworks [37]

Q3: How does EWMA compare to other shift detection methods like CUSUM for BCI applications?

A: Comparative advantages of EWMA include [37]:

- Superior performance in detecting small to moderate shifts

- Reduced detection delay compared to CUSUM and ICI (intersection of confidence intervals) methods

- Better handling of non-stationary time-series characteristics common in EEG

- Fewer false alarms when combined with two-stage validation [37]

Experimental Protocols & Methodologies

Protocol 1: EWMA-based Covariate Shift Detection in EEG Signals

Purpose: Detect distribution shifts in motor imagery BCI features to trigger model updates [10].

Materials:

- Multichannel EEG recording system

- Preprocessed EEG signals with extracted features (e.g., CSP features)

- Computing platform with mathematical computing software (MATLAB, Python)

Procedure:

- Feature Extraction: Calculate CSP features from preprocessed EEG signals [10]

- EWMA Initialization:

- Set initial EWMA value (EWMA₀) to mean of initial training data

- Select smoothing factor λ (typically 0.05-0.2 for EEG applications) [10]

- Online Monitoring:

- For each new observation xₜ at time t:

- Calculate EWMAₜ = λ × xₜ + (1 - λ) × EWMAₜ₋₁ [37]

- Flag a potential shift when the EWMA value exceeds control limits derived from the training distribution

- Shift Validation:

- When a potential shift is detected, collect subsequent samples

- Apply a statistical validation test (K-S test for univariate features, Hotelling's T-squared test for multivariate features) [37]

- Model Update:

- If shift validated, trigger ensemble classifier update

- Add new classifier trained on post-shift data to ensemble [10]
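The first stage of Protocol 1 (initialization, EWMA recursion, and control-limit check) can be sketched for a multivariate feature stream by running one EWMA per feature. The sketch stores only the previous EWMA vector, so its memory use is constant, as noted in Q2 above. It is a minimal sketch under stated assumptions. EWMA₀ is the training mean, σ is the training standard deviation, the limits are time-varying L-sigma limits with L=3, and an alarm is raised when any feature leaves its limits. The function name and the synthetic "CSP features" are hypothetical. In the protocol, the returned alarm index would then go to the second-stage validation test.

```python
import numpy as np


def ewma_shift_monitor(train_feats, stream, lam=0.1, L=3.0):
    """Per-feature EWMA control chart (first stage of shift detection).

    train_feats: (n_train, n_features) features from the training session.
    stream:      (n_stream, n_features) features arriving online.
    Returns the 0-based index of the first sample at which any feature's EWMA
    leaves its control limits, or None if no alarm is raised."""
    mu0 = train_feats.mean(axis=0)              # EWMA_0 = training mean
    sigma = train_feats.std(axis=0, ddof=1)
    z = mu0.copy()                              # only the previous EWMA value is stored
    for t, x in enumerate(stream, start=1):
        z = lam * x + (1.0 - lam) * z           # EWMA_t = lam*x_t + (1-lam)*EWMA_{t-1}
        half = L * sigma * np.sqrt(lam / (2 - lam) * (1 - (1 - lam) ** (2 * t)))
        if np.any(np.abs(z - mu0) > half):
            return t - 1
    return None


rng = np.random.default_rng(6)
train = rng.normal(0.0, 1.0, (200, 4))                     # e.g., 4 CSP log-variance features
stable = rng.normal(0.0, 1.0, (100, 4))
shifted = rng.normal(0.0, 1.0, (100, 4)) + [1.5, 0, 0, 0]  # feature 0 moves after a break
alarm = ewma_shift_monitor(train, np.vstack([stable, shifted]))
print(alarm)
```

Checking each feature separately is the simplest option. Because it runs several charts at once, it raises the overall false-alarm rate, which is one more reason to confirm alarms with a second-stage test before adding a classifier.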

Protocol 2: Adaptive Ensemble Learning with CSE-UAEL

Purpose: Maintain BCI classification performance under non-stationary conditions through active ensemble adaptation [10].

Procedure:

- Initial Ensemble Creation: Train multiple base classifiers on initial training data