Enhancing EEG Analysis: A Comprehensive Guide to SVM for Artifact Detection in Biomedical Research

Abstract

This article provides a comprehensive overview of Support Vector Machine (SVM) applications for Electroencephalography (EEG) artifact detection, specifically tailored for researchers and professionals in drug development and biomedical fields. We explore the foundational principles of EEG artifacts and SVM mechanics, detail methodological pipelines for implementation across various research contexts, address critical troubleshooting and optimization challenges specific to biomedical data, and present rigorous validation frameworks for performance comparison. By synthesizing current research and practical considerations, this guide aims to equip researchers with the knowledge to implement robust EEG artifact detection systems, thereby enhancing data quality for subsequent analysis in clinical trials, neuropharmacology studies, and therapeutic development.

Understanding the Landscape: EEG Artifacts and SVM Fundamentals for Biomedical Research

Electroencephalography (EEG) is a fundamental tool in neuroscience research and clinical diagnostics, prized for its high temporal resolution, non-invasiveness, and portability [1]. However, its utility is compromised by the persistent challenge of artifacts—any recorded signals that do not originate from cerebral neural activity [2]. These artifacts can significantly distort the EEG signal, leading to inaccurate data interpretation and potentially flawed scientific conclusions or clinical misdiagnoses [2]. In the specific context of developing Support Vector Machine (SVM) models for automated artifact detection, a precise understanding of these contaminating sources is the critical first step in feature selection, model training, and validation. Artifacts are broadly categorized into physiological artifacts, which originate from the subject's own body, and non-physiological artifacts, which arise from external technical or environmental sources [2]. This document provides a detailed overview of these artifacts, supported by quantitative data and experimental protocols, to inform robust SVM-based detection research.

Classification and Characterization of EEG Artifacts

The following sections delineate the primary artifact types, their origins, and their distinct signatures in both time and frequency domains, which are essential for designing feature extraction pipelines for SVM classifiers.

Physiological Artifacts

Physiological artifacts are electrical signals generated by the body's own activities. Their amplitude is often significantly larger than that of genuine brain activity, which is typically in the microvolt range [2].

Table 1: Characteristics of Common Physiological Artifacts

| Artifact Type | Biological Origin | Time-Domain Signature | Frequency-Domain Signature | Topographic Distribution |
|---|---|---|---|---|
| Ocular (EOG) | Corneo-retinal potential dipole; eye blinks and movements [2] | Slow, high-amplitude deflections (up to 100-200 µV) [2] | Dominant in delta (0.5-4 Hz) and theta (4-8 Hz) bands [2] | Primarily frontal electrodes (Fp1, Fp2, F7, F8) |
| Muscle (EMG) | Muscle fiber contractions (e.g., jaw, neck, face) [2] | High-frequency, low-amplitude, non-stationary noise [2] | Broadband, dominating beta (13-30 Hz) and gamma (>30 Hz) bands [2] | Widespread, but focused near temporal muscles |
| Cardiac (ECG) | Electrical activity of the heart [3] | Rhythmic, periodic QRS-like complexes [3] | Overlaps multiple EEG frequency bands [2] | Most prominent on left-side head electrodes |
| Pulse | Pulsation of scalp arteries beneath electrodes [3] | Slow, rhythmic baseline oscillations [3] | Very low frequency (< 2 Hz) | Localized to electrodes overlying blood vessels |
| Sweat | Changes in electrode-skin impedance from sweat gland activity [2] | Very slow baseline drifts [2] | Contaminates delta and theta bands [2] | Generalized, often affecting multiple electrodes |
| Respiration | Chest and head movement during breathing [2] | Slow, rhythmic waveforms synchronized with breath rate [2] | Very low frequency (e.g., 0.2-0.33 Hz for 12-20 breaths/min) [2] | Variable, often frontal or central |

Non-Physiological (Technical) Artifacts

Non-physiological artifacts stem from the recording environment, instrumentation, and equipment, and are unrelated to the subject's physiology.

Table 2: Characteristics of Common Non-Physiological Artifacts

| Artifact Type | External Origin | Time-Domain Signature | Frequency-Domain Signature | Topographic Distribution |
|---|---|---|---|---|
| Electrode Pop | Sudden change in electrode-skin impedance [2] | Abrupt, high-amplitude transient, often isolated to one channel [2] | Broadband, non-stationary noise [2] | Localized to a single faulty electrode |
| Cable Movement | Physical disturbance of electrode cables [2] | Sudden deflections or rhythmic drift if movement is periodic [2] | Can produce artificial peaks at low/mid frequencies [2] | Often affects a single channel or group of channels |
| AC Powerline | Electromagnetic interference from mains power (50/60 Hz) [2] | Persistent, high-frequency sinusoidal oscillation [2] | Sharp, narrow peak at 50 Hz or 60 Hz [2] | Global across all channels |
| Incorrect Reference | Poor contact or high impedance at the reference electrode site [2] | Abrupt, large shifts across all channels simultaneously [2] | Abnormally high power across all frequencies [2] | Global, affecting all channels |

Experimental Protocols for Artifact Analysis

To train and validate SVM models for artifact detection, standardized protocols for data acquisition and processing are paramount. The following protocols are synthesized from recent literature.

Protocol 1: Generation of a Semi-Synthetic Benchmark Dataset

This protocol is designed to create a ground-truthed dataset for supervised learning of artifact detection algorithms, such as those based on SVM [1] [4].

- Objective: To generate an EEG dataset with known artifacts, enabling precise training and quantitative performance validation of artifact detection and removal algorithms.

- Materials and Reagents:

- Clean EEG Data: Source from public repositories like EEGdenoiseNet [1] or the TUH EEG Corpus [5]. Ensure data is high-quality with minimal inherent artifacts.

- Artifact Signals: Obtain pure EOG and EMG recordings from dedicated experiments or databases (e.g., EEGdenoiseNet). For cardiac artifacts, use ECG from the MIT-BIH Arrhythmia Database [1].

- Procedure:

- Data Preprocessing: Bandpass filter all clean EEG and artifact signals to a standard range (e.g., 1-40 Hz) and resample to a uniform sampling rate (e.g., 250 Hz).

- Linear Mixing: Create semi-synthetic contaminated EEG signals by linearly mixing clean EEG with artifact signals: EEG_contaminated = EEG_clean + α * Artifact, where α is a scaling factor used to simulate varying levels of contamination [1].

- Dataset Splitting: Partition the dataset into training, validation, and testing sets, ensuring no data from the same subject or recording session appears in different splits.

- Performance Metrics: Quantify artifact removal performance using Signal-to-Noise Ratio (SNR), Average Correlation Coefficient (CC), and Relative Root Mean Square Error in temporal and frequency domains (RRMSEt, RRMSEf) [1].
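The linear mixing step can be sketched in a few lines of NumPy. This is a minimal illustration, not the EEGdenoiseNet code: the helper names `mix_at_snr` and `rrmse_temporal` are hypothetical, SNR is assumed to be a power ratio in dB, RRMSEt is assumed to be RMS(estimate - reference) / RMS(reference), and the sine and Gaussian bump are stand-ins for real EEG and EOG segments.

```python
import numpy as np

def mix_at_snr(clean, artifact, snr_db):
    """Return (contaminated, alpha) with EEG_contaminated = EEG_clean + alpha * Artifact.

    Assumption: SNR (dB) = 10 * log10(power(clean) / power(alpha * artifact)).
    """
    clean = np.asarray(clean, dtype=float)
    artifact = np.asarray(artifact, dtype=float)
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    alpha = np.sqrt(p_clean / (p_artifact * 10.0 ** (snr_db / 10.0)))
    return clean + alpha * artifact, alpha

def rrmse_temporal(estimate, reference):
    """Assumed definition of RRMSEt: RMS(estimate - reference) / RMS(reference)."""
    estimate = np.asarray(estimate, dtype=float)
    reference = np.asarray(reference, dtype=float)
    return np.sqrt(np.mean((estimate - reference) ** 2)) / np.sqrt(np.mean(reference ** 2))

# Stand-in signals (hypothetical): 2 s at 250 Hz
rng = np.random.default_rng(0)
fs = 250
t = np.arange(2 * fs) / fs
eeg_clean = np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
eog = np.exp(-((t - 1.0) ** 2) / 0.02)  # blink-like bump

contaminated, alpha = mix_at_snr(eeg_clean, eog, snr_db=-3.0)
achieved_snr_db = 10 * np.log10(np.mean(eeg_clean ** 2) / np.mean((alpha * eog) ** 2))
print(f"alpha = {alpha:.3f}, achieved SNR = {achieved_snr_db:.2f} dB")
```

Sweeping `snr_db` over a range of values yields the graded contamination levels the protocol describes, while the clean segment remains available as ground truth.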

Protocol 2: Rule-Based and Deep Learning Feature Extraction for SVM

This protocol outlines a hybrid approach where features are extracted for SVM classification using both established signal processing principles and insights from deep learning models.

- Objective: To extract discriminative features from EEG windows for SVM training to classify artifact types.

- Materials: Pre-processed EEG data (raw or semi-synthetic), segmented into epochs (e.g., 1s to 30s windows) [5].

- Procedure:

- Data Segmentation: Segment continuous EEG into non-overlapping windows. Note that optimal window length is artifact-dependent (e.g., 1s for non-physiological, 5s for muscle, 20s for eye artifacts) [5].

- Feature Extraction:

- Rule-Based Features: Calculate statistical (mean, variance, kurtosis), spectral (band power in delta, theta, alpha, beta, gamma), and nonlinear (entropy, Hjorth parameters) features for each channel and epoch [6].

- Deep Learning Features: Use a pre-trained lightweight Convolutional Neural Network (CNN) to automatically extract features from each epoch. The CNN's penultimate layer can serve as a high-level feature vector for the SVM [5].

- Feature Reduction: Apply a feature reduction matrix based on metaheuristic optimization algorithms, such as the Grey Wolf Optimizer (GWO), to select the most discriminative features and reduce computational complexity [6].

- Model Training: Train a hybrid SVM-Fuzzy system (or a standard SVM) on the reduced feature set. Optimization algorithms like the Goose Optimization Algorithm (GOA) can be employed to fine-tune the classifier's hyperparameters [6].
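The segmentation and rule-based feature steps above can be sketched as follows. This is an illustrative NumPy-only version under stated assumptions: the function names are hypothetical, the alpha band (8-13 Hz) and the 45 Hz upper gamma limit are conventional choices not given in the text, and band power is taken from a simple Hann-windowed periodogram rather than any specific method from the cited work.

```python
import numpy as np

# Band edges in Hz; alpha range and gamma upper limit are assumptions
BANDS = {"delta": (0.5, 4), "theta": (4, 8), "alpha": (8, 13),
         "beta": (13, 30), "gamma": (30, 45)}

def segment(signal, fs, win_s):
    """Split a 1-D signal into non-overlapping windows of win_s seconds (remainder dropped)."""
    n = int(round(win_s * fs))
    n_win = signal.size // n
    return signal[: n_win * n].reshape(n_win, n)

def hjorth(x):
    """Hjorth activity, mobility and complexity of one epoch."""
    dx = np.diff(x)
    ddx = np.diff(dx)
    activity = np.var(x)
    mobility = np.sqrt(np.var(dx) / activity)
    complexity = np.sqrt(np.var(ddx) / np.var(dx)) / mobility
    return activity, mobility, complexity

def band_powers(x, fs):
    """Relative band power from a Hann-windowed periodogram."""
    x = x - x.mean()
    spec = np.abs(np.fft.rfft(x * np.hanning(x.size))) ** 2
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    total = spec[(freqs >= 0.5) & (freqs < 45)].sum()
    return [spec[(freqs >= lo) & (freqs < hi)].sum() / total for lo, hi in BANDS.values()]

def epoch_features(x, fs):
    """Feature vector: mean, activity (variance), kurtosis, mobility, complexity, 5 band powers."""
    activity, mobility, complexity = hjorth(x)
    kurtosis = np.mean((x - x.mean()) ** 4) / x.std() ** 4  # non-excess kurtosis
    return np.array([x.mean(), activity, kurtosis, mobility, complexity] + band_powers(x, fs))

# Stand-in single-channel recording: 4 s of a 10 Hz rhythm at 250 Hz
fs = 250
t = np.arange(4 * fs) / fs
channel = np.sin(2 * np.pi * 10 * t)
epochs = segment(channel, fs, win_s=1.0)
features = np.vstack([epoch_features(e, fs) for e in epochs])
print(features.shape)  # one row per epoch, one column per feature
```

For multi-channel data the same function would be applied per channel and the resulting vectors concatenated, which is where the feature reduction step becomes relevant.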

Protocol 3: Targeted Artifact Reduction for ERP Analysis

This protocol, based on the RELAX pipeline, focuses on minimizing the introduction of false positives in Event-Related Potential (ERP) studies, a critical consideration when validating the output of an SVM artifact detector [7].

- Objective: To clean artifacts from EEG data while preserving neural signals and avoiding the artificial inflation of ERP effect sizes.

- Materials: Continuous EEG data with event markers.

- Procedure:

- Initial Preprocessing: Perform standard filtering and Independent Component Analysis (ICA) to decompose the data.

- Targeted Cleaning:

- For eye movement components, instead of subtracting the entire component, only subtract the activity during identified periods of eye movement [7].

- For muscle components, apply spectral filtering to remove only the high-frequency power above a certain threshold (e.g., >20 Hz) from the component, leaving the lower frequencies intact [7].

- Reconstruction: Reconstruct the data in the channel space using the modified components.

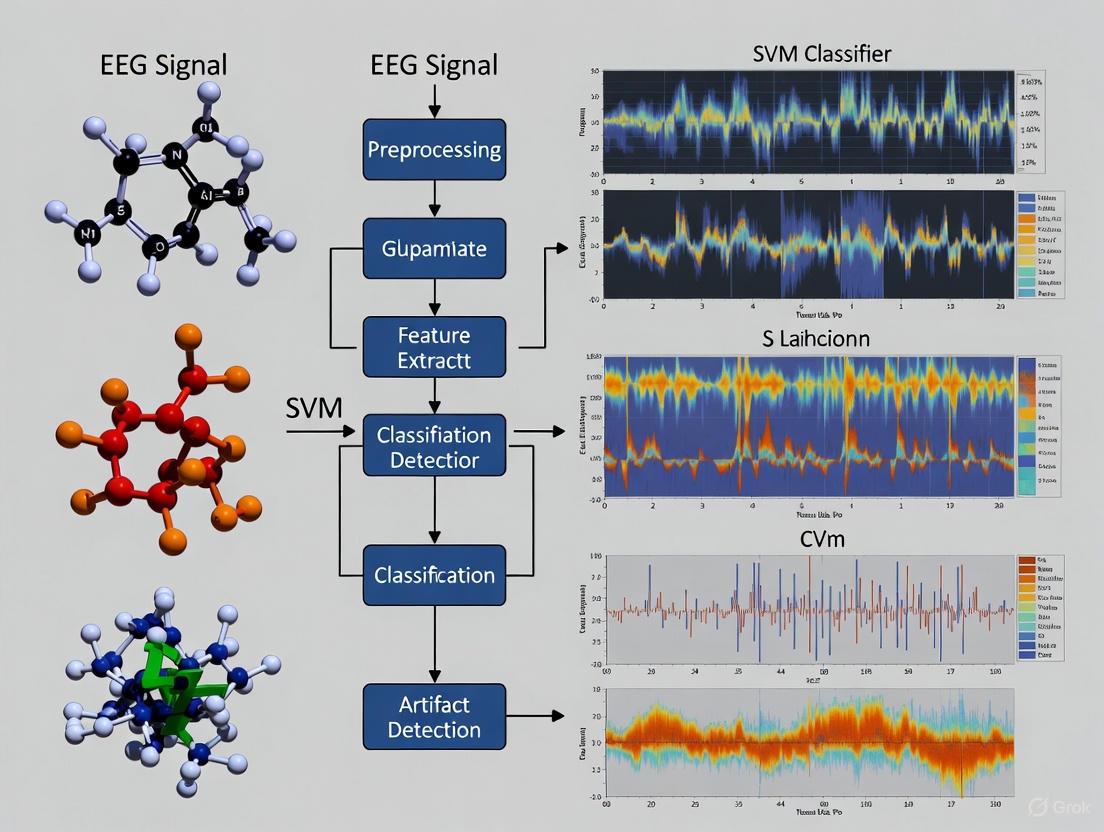

Workflow Visualization for SVM-Based Artifact Detection

The following diagram illustrates a proposed integrated workflow for SVM-based artifact detection and removal, incorporating elements from the experimental protocols.

The Scientist's Toolkit: Research Reagent Solutions

This table details essential computational tools, datasets, and algorithms that form the "research reagents" for developing EEG artifact detection systems.

Table 3: Essential Resources for EEG Artifact Research

| Resource Name | Type | Function/Benefit | Example Use Case |
|---|---|---|---|
| EEGdenoiseNet [1] | Benchmark Dataset | Provides clean EEG and recorded EOG/EMG for creating semi-synthetic data. | Training and benchmarking supervised models like SVM. |
| TUH EEG Artifact Corpus [5] | Clinical EEG Dataset | Large, real-world dataset with expert-annotated artifact labels. | Testing model generalizability to clinical data. |
| RELAX (EEGLAB Plugin) [7] | Software Pipeline | Implements targeted artifact reduction to minimize false positives in ERPs. | Post-detection cleaning to preserve neural signals. |
| Grey Wolf Optimizer (GWO) [6] | Metaheuristic Algorithm | Reduces feature dimensionality, lowering computational cost for SVM. | Optimizing feature selection from a large extracted set. |
| Goose Optimization Algorithm [6] | Metaheuristic Algorithm | Optimizes the parameters of a hybrid SVM-Fuzzy classifier. | Fine-tuning classifier hyperparameters for high accuracy. |
| Lightweight CNN [5] | Deep Learning Model | Acts as a feature extractor; provides discriminative inputs for SVM. | Transfer learning for feature extraction from EEG epochs. |
| Independent Component Analysis (ICA) [2] [7] | Blind Source Separation | Decomposes EEG into independent components for analysis. | Identifying artifact-laden components for removal. |

Support Vector Machines (SVMs) are supervised learning models widely used in electroencephalography (EEG) analysis, including the critical task of artifact detection. Developed at AT&T Bell Laboratories and grounded in the statistical learning (VC) theory of Vapnik and Chervonenkis, SVMs are max-margin models for classification and regression [8]. In EEG research, where distinguishing neural signals from artifacts remains challenging, SVMs offer two practical advantages: resilience to noisy data and strong generalization from limited samples [9] [8].

The application of SVM-based frameworks in EEG artifact detection has gained substantial research interest, particularly as wearable EEG technologies introduce new challenges with artifacts exhibiting specific features due to dry electrodes, reduced scalp coverage, and subject mobility [10]. Artifacts—unwanted signals originating from non-neural sources—can significantly compromise EEG interpretation and lead to clinical misdiagnosis if not properly identified [2]. SVM's capacity to handle high-dimensional, nonlinear data makes it particularly suited for differentiating subtle artifact patterns from genuine brain activity in complex EEG recordings [9] [11].

Core Theoretical Principles

Linear Separation and Maximum Margin Classification

The fundamental principle underlying SVM is the concept of optimal linear separation in a high-dimensional feature space. Given a training dataset of n points of the form {(x₁, y₁), ..., (xₙ, yₙ)}, where xᵢ represents the feature vectors and yᵢ ∈ {-1, 1} denotes class labels, SVM constructs a hyperplane that separates the two classes with maximum margin [8]. For EEG artifact detection, these two classes typically represent "clean EEG" versus "artifact-contaminated EEG," though multi-class extensions exist for identifying specific artifact types.

The optimal separating hyperplane satisfies the condition yᵢ(wᵀxᵢ - b) ≥ 1 for all i, where w is the weight vector normal to the hyperplane and b is the bias term. The margin width between classes is given by 2/‖w‖, which SVM maximizes while ensuring correct classification [8]. This maximum margin principle enhances the model's generalization capability—a critical advantage for EEG applications where data non-stationarity is common.

Table 1: Key Mathematical Components of Linear SVM

| Component | Mathematical Expression | Role in EEG Artifact Detection |
|---|---|---|
| Hyperplane | wᵀx - b = 0 | Decision boundary for artifact vs. clean EEG |
| Margin Width | 2/‖w‖ | Buffer zone maximizing robustness to EEG variability |
| Constraint | yᵢ(wᵀxᵢ - b) ≥ 1 | Ensures correct classification of training examples |
| Optimization | min_{w,b} ½‖w‖² | Maximizes the margin subject to the constraint above |

Soft Margin and Hinge Loss for Noisy EEG Data

In practical EEG applications, perfect linear separation is often impossible due to noise and overlapping class distributions. The soft-margin formulation addresses this through the introduction of slack variables (ξᵢ) and a regularization parameter (C) [8]. The optimization problem becomes:

min_{w,b,ξ} ½‖w‖² + C ∑ᵢ ξᵢ   subject to   yᵢ(wᵀxᵢ - b) ≥ 1 - ξᵢ and ξᵢ ≥ 0 for all i

The hinge loss function, defined as max(0, 1 - yᵢ(wᵀxᵢ - b)), quantifies the misclassification error [8]. The parameter C controls the trade-off between maximizing the margin and minimizing classification errors—a crucial consideration for EEG artifact detection where some artifacts may share characteristics with neural signals.
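A small worked example may make the soft-margin quantities concrete. The sketch below uses a hypothetical four-point dataset and a hand-picked hyperplane (not a trained model), keeps the wᵀx - b sign convention used above, and evaluates the margin width, the per-sample hinge losses, and the primal objective for two values of C.

```python
import numpy as np

def decision_values(X, w, b):
    """Signed scores w^T x - b (bias sign convention as in the text)."""
    return X @ w - b

def hinge_losses(X, y, w, b):
    """Per-sample hinge loss max(0, 1 - y_i (w^T x_i - b)); equals the optimal slack xi_i."""
    return np.maximum(0.0, 1.0 - y * decision_values(X, w, b))

def soft_margin_objective(X, y, w, b, C):
    """Primal objective 0.5 * ||w||^2 + C * sum_i xi_i."""
    return 0.5 * np.dot(w, w) + C * hinge_losses(X, y, w, b).sum()

# Hypothetical 2-D features: +1 = artifact, -1 = clean
X = np.array([[2.0, 0.0], [3.0, 1.0], [-2.0, 0.0], [0.5, 0.0]])
y = np.array([1, 1, -1, -1])
w = np.array([1.0, 0.0])  # hand-picked hyperplane, not fitted
b = 0.0

margin_width = 2.0 / np.linalg.norm(w)
losses = hinge_losses(X, y, w, b)
print("margin width:", margin_width)                                 # 2.0
print("hinge losses:", losses)                                       # [0.  0.  0.  1.5]
print("objective, C=1: ", soft_margin_objective(X, y, w, b, C=1.0))   # 2.0
print("objective, C=10:", soft_margin_objective(X, y, w, b, C=10.0))  # 15.5
```

Only the last point, a clean epoch on the artifact side of the boundary, contributes a nonzero slack. Raising C from 1 to 10 multiplies that penalty tenfold, so the optimizer would be pushed toward a narrower margin that accommodates the outlier.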

Kernel Methods for Nonlinear EEG Pattern Recognition

The kernel trick represents SVM's most powerful capability for handling nonlinear patterns in EEG data. By mapping input features to a higher-dimensional space without explicit transformation, SVMs can construct nonlinear decision boundaries [8]. For a kernel function K(xᵢ, xⱼ) = φ(xᵢ)ᵀφ(xⱼ), the dual optimization problem becomes:

max_{α} ∑ᵢ αᵢ - ½ ∑ᵢ ∑ⱼ αᵢ αⱼ yᵢ yⱼ K(xᵢ, xⱼ)   subject to   0 ≤ αᵢ ≤ C and ∑ᵢ αᵢ yᵢ = 0

Table 2: Common Kernel Functions in EEG Artifact Detection

| Kernel Type | Mathematical Form | Advantages for EEG Analysis |
|---|---|---|
| Linear | K(xᵢ, xⱼ) = xᵢᵀxⱼ | Interpretable, works well with high-dimensional features |
| Polynomial | K(xᵢ, xⱼ) = (γxᵢᵀxⱼ + r)ᵈ | Captures complex feature interactions in multi-channel EEG |
| Radial Basis Function (RBF) | K(xᵢ, xⱼ) = exp(-γ‖xᵢ - xⱼ‖²) | Handles nonlinear patterns common in artifact morphology |
| Multiple Kernel Learning | K(xᵢ, xⱼ) = ∑ₘdₘKₘ(xᵢ, xⱼ) | Combines heterogeneous EEG features optimally [9] |

Multiple Kernel Learning (MKL) represents an advanced approach where the kernel is defined as a linear combination of base kernels (e.g., polynomial and RBF), with weights dₘ optimized during training [9]. This approach has demonstrated promising results in EEG classification, achieving accuracies up to 99.20% for 2-class mental task discrimination [9].
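The kernel forms in the table can be written directly as Gram-matrix computations. The sketch below is illustrative only: the function names are hypothetical, the feature matrix is random stand-in data, and the kernel weights dₘ are fixed to uniform values rather than learned, so it shows how an MKL kernel is assembled, not how SimpleMKL optimizes it.

```python
import numpy as np

def polynomial_kernel(A, B, gamma=1.0, r=1.0, d=2):
    """K(x_i, x_j) = (gamma * x_i^T x_j + r)^d"""
    return (gamma * (A @ B.T) + r) ** d

def rbf_kernel(A, B, gamma=0.5):
    """K(x_i, x_j) = exp(-gamma * ||x_i - x_j||^2)"""
    sq_dist = (A ** 2).sum(axis=1)[:, None] + (B ** 2).sum(axis=1)[None, :] - 2.0 * (A @ B.T)
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))  # clip tiny negatives from rounding

def combined_kernel(kernels, weights):
    """K = sum_m d_m * K_m with non-negative weights d_m."""
    weights = np.asarray(weights, dtype=float)
    if np.any(weights < 0):
        raise ValueError("kernel weights must be non-negative")
    return sum(d_m * K_m for d_m, K_m in zip(weights, kernels))

# Stand-in feature matrix: 8 epochs x 3 features
rng = np.random.default_rng(1)
X = rng.standard_normal((8, 3))

K_poly = polynomial_kernel(X, X, d=2)
K_rbf = rbf_kernel(X, X, gamma=0.5)
M = 2
K_mkl = combined_kernel([K_poly, K_rbf], weights=[1.0 / M] * M)  # uniform d_m = 1/M

print(K_mkl.shape, np.linalg.eigvalsh(K_mkl).min())
```

Because a non-negative combination of positive semidefinite kernels is itself positive semidefinite, `K_mkl` can be passed to any SVM solver that accepts a precomputed Gram matrix.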

SVM Applications in EEG Analysis and Artifact Detection

Performance in EEG Classification Tasks

SVMs have demonstrated exceptional performance across diverse EEG classification tasks. In mental task classification, MKL-SVM has achieved average accuracies of 99.20%, 81.25%, 76.76%, and 75.25% for 2-class, 3-class, 4-class, and 5-class classifications respectively [9]. For epilepsy detection, hybrid SVM models combining kernel sparse representation have reached over 99% accuracy in binary classification tasks, with certain applications attaining 100% accuracy [11].

In the comparative studies cited here, SVMs generally outperform other classifiers such as Linear Discriminant Analysis (LDA) and Neural Networks (NN) for EEG-based Brain-Computer Interfaces (BCIs), particularly on problems with high dimensionality, nonlinearity, and small datasets [9] [12]. This advantage is attributed to SVM's structural risk minimization principle, which contrasts with the empirical risk minimization approach of neural networks [9].

Artifact Detection and Correction Protocols

The impact of artifact correction on SVM decoding performance has been systematically evaluated across multiple experimental paradigms. Research demonstrates that the combination of artifact correction and rejection does not significantly enhance decoding performance in most cases, though artifact correction remains essential to minimize artifact-related confounds that might artificially inflate decoding accuracy [13].

Independent Component Analysis (ICA) has emerged as a preferred method for ocular artifact correction prior to SVM classification, while artifact rejection effectively discards trials with large voltage deflections from other sources such as muscle artifacts [13]. This protocol balance is particularly important for maintaining sufficient trial counts for SVM training while minimizing artifact contamination.

Advanced SVM Architectures for EEG Analysis

Recent research has explored hybrid architectures integrating SVMs with other methodologies to enhance EEG analysis:

SVM-KSRC: A hybrid approach connecting SVM with Kernel Sparse Representation Classification using support vectors, demonstrating superior performance in epilepsy detection compared to either method used separately [11].

SVM-enhanced Attention Mechanisms: Integration of SVM's margin maximization objective directly into self-attention computation to improve interclass separability in motor imagery EEG classification [14].

Adaptive SVM (A-SVM): Online recursive updating of classifier parameters to address EEG non-stationarity, enabling the model to track changing feature distributions during prolonged recordings [12].

ORICA-CSP with A-SVM: Combination of Online Recursive Independent Component Analysis with Common Spatial Patterns and Adaptive SVM for robust feature extraction in motor imagery tasks [12].
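To give a feel for what "online recursive updating of classifier parameters" can look like, here is a generic sketch of a linear soft-margin SVM updated one labeled epoch at a time by subgradient descent on the regularized hinge loss. It is not the A-SVM algorithm of [12]; the class name, learning rate, and regularization strength are hypothetical, and it assumes labels for incoming epochs are available (for example from periodic annotation).

```python
import numpy as np

class OnlineLinearSVM:
    """Linear SVM updated per sample by subgradient steps on
    (lam/2) * ||w||^2 + max(0, 1 - y * (w^T x - b))."""

    def __init__(self, n_features, lam=0.01, lr=0.05):
        self.w = np.zeros(n_features)
        self.b = 0.0
        self.lam = lam
        self.lr = lr

    def decision(self, X):
        return np.atleast_2d(X) @ self.w - self.b

    def predict(self, X):
        return np.where(self.decision(X) >= 0, 1, -1)

    def partial_fit(self, x, y):
        margin = y * (x @ self.w - self.b)
        self.w *= 1.0 - self.lr * self.lam  # shrinkage from the regularizer
        if margin < 1:                      # margin violation: hinge subgradient step
            self.w += self.lr * y * x
            self.b -= self.lr * y

# Stand-in feature stream: two well-separated classes in 2-D
rng = np.random.default_rng(1)
n = 400
y = rng.choice([-1, 1], size=n)
X = 2.0 * y[:, None] + 0.5 * rng.standard_normal((n, 2))

model = OnlineLinearSVM(n_features=2)
for x_i, y_i in zip(X[:300], y[:300]):  # epochs arrive one at a time
    model.partial_fit(x_i, y_i)

accuracy = np.mean(model.predict(X[300:]) == y[300:])
print(f"accuracy on later epochs: {accuracy:.2f}")
```

Continuing to call `partial_fit` as new labeled epochs arrive lets the boundary follow gradual changes in the feature distribution, at the cost of some sensitivity to label noise and to the choice of step size.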

Experimental Protocols and Methodologies

Standardized Protocol for EEG Artifact Detection Using SVM

Objective: To detect and classify artifacts in EEG signals using Support Vector Machines while preserving neural signals of interest.

Materials and Equipment:

- EEG recording system (wet or dry electrodes)

- Reference electrodes for EOG/EMG monitoring

- Preprocessing software (EEGLAB, FieldTrip, or custom tools)

- Computing environment with SVM implementation (Python scikit-learn, LIBSVM)

Procedure:

- Data Acquisition and Preprocessing

- Record EEG using standard montages (10-20 system or wearable configurations)

- Apply bandpass filtering (0.5-45 Hz) to attenuate slow drifts and high-frequency noise

- Segment data into epochs relevant to experimental paradigm

- Re-reference to the common average or to a designated reference electrode

- Artifact Correction Phase

- Perform Independent Component Analysis (ICA) to separate neural and artifactual components

- Identify and remove components corresponding to ocular artifacts

- Apply correction to all channels while preserving neural activity [13]

- Feature Extraction

- Extract temporal features: variance, mean, amplitude, Hjorth parameters

- Calculate frequency-domain features: band power (delta, theta, alpha, beta, gamma)

- Compute complexity and time-frequency measures: entropy, fractal dimension, wavelet coefficients

- For multi-channel EEG: derive spatial features using CSP or Laplacian filters

- SVM Training and Validation

- Partition data into training (70%), validation (15%), and test (15%) sets, keeping all epochs from a given subject or recording session within a single set

- Select appropriate kernel function based on data characteristics

- Optimize hyperparameters (C, γ) using grid search with cross-validation

- Train SVM model on artifact-labeled training data

- Evaluate performance on held-out test set using accuracy, sensitivity, specificity

- Implementation Considerations

- For wearable EEG with limited channels (<16), prioritize feature selection to avoid dimensionality issues [10]

- For real-time applications, implement adaptive SVM with periodic model updates [12]

- For multi-class artifact identification, employ one-vs-one or one-vs-rest strategies
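The training and validation steps above might be sketched with scikit-learn as follows. This is one possible arrangement under stated assumptions, not a prescribed implementation: the data are synthetic stand-ins, the grid values are arbitrary, and instead of a fixed 70/15/15 split the sketch holds out whole subjects for testing and uses subject-wise cross-validation on the remainder for hyperparameter selection.

```python
import numpy as np
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import GridSearchCV, GroupKFold, GroupShuffleSplit
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for per-epoch feature vectors (1 = artifact, 0 = clean)
rng = np.random.default_rng(42)
n_subjects, epochs_per_subject, n_features = 10, 40, 6
X_parts, y_parts, g_parts = [], [], []
for subject in range(n_subjects):
    y_s = rng.integers(0, 2, size=epochs_per_subject)
    offset = rng.normal(0.0, 0.5, size=n_features)   # subject-specific baseline
    X_s = rng.normal(0.0, 1.0, size=(epochs_per_subject, n_features)) + offset
    X_s[y_s == 1, :2] += 3.0                         # artifacts inflate two features
    X_parts.append(X_s)
    y_parts.append(y_s)
    g_parts.append(np.full(epochs_per_subject, subject))
X, y, groups = np.vstack(X_parts), np.concatenate(y_parts), np.concatenate(g_parts)

# Hold out whole subjects so no subject appears in both training and test data
outer = GroupShuffleSplit(n_splits=1, test_size=0.3, random_state=0)
train_idx, test_idx = next(outer.split(X, y, groups))

# Scaling sits inside the pipeline so it is fitted on training folds only
pipe = make_pipeline(StandardScaler(), SVC(kernel="rbf", class_weight="balanced"))
param_grid = {"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01, 0.1]}
search = GridSearchCV(pipe, param_grid, cv=GroupKFold(n_splits=3), scoring="balanced_accuracy")
search.fit(X[train_idx], y[train_idx], groups=groups[train_idx])

y_pred = search.predict(X[test_idx])
tn, fp, fn, tp = confusion_matrix(y[test_idx], y_pred, labels=[0, 1]).ravel()
sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
print(search.best_params_, f"sensitivity={sensitivity:.2f}", f"specificity={specificity:.2f}")
```

Keeping the scaler inside the pipeline and grouping folds by subject both guard against optimistic estimates caused by information leaking from test data into training.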

Protocol for Multi-class Mental Task Classification Using MKL-SVM

Objective: To classify multiple cognitive states from EEG signals using Multiple Kernel Learning SVM [9].

Procedure:

- EEG Preprocessing

- Apply artifact correction using ICA

- Bandpass filter to 0.5-40 Hz range

- Segment data into task-specific epochs

- Multi-domain Feature Extraction

- Compute Wavelet Packet Entropy (WPE) for time-frequency analysis

- Calculate Granger causality for effective connectivity between channels

- Extract power spectral density across standard frequency bands

- Multiple Kernel Configuration

- Define base kernels: polynomial (degree 2-3) and RBF (varying γ)

- Initialize kernel weights uniformly: dₘ = 1/M for m = 1,...,M

- Implement gradient descent optimization to learn optimal kernel weights

- Model Training and Evaluation

- Employ K-fold cross-validation (typically K=10)

- Use SimpleMKL algorithm for efficient optimization [9]

- Evaluate using classification accuracy, F1-score, and confusion matrix analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for SVM-Based EEG Artifact Detection Research

| Resource Category | Specific Examples | Research Application |
|---|---|---|
| EEG Datasets | BCI Competition IV (Dataset 2a, 2b), University of Bonn Epilepsy Dataset, PhysioNet EEG Motor Movement/Imagery Dataset | Benchmarking SVM performance across different artifact types and EEG paradigms [11] [15] |
| Artifact Processing Tools | Independent Component Analysis (ICA), Artifact Subspace Reconstruction (ASR), Wavelet Transform Denoising | Preprocessing to isolate artifacts and enhance signal quality before SVM classification [13] [10] |
| Feature Extraction Algorithms | Common Spatial Patterns (CSP), Wavelet Packet Entropy (WPE), Granger Causality, Empirical Mode Decomposition (EMD) | Generating discriminative features for SVM classification of artifacts vs. neural signals [9] [12] [15] |
| SVM Implementations | LIBSVM, scikit-learn SVC, SimpleMKL, Adaptive SVM (A-SVM) | Core classification algorithms with varying kernel options and adaptation capabilities [9] [12] |
| Performance Metrics | Accuracy, Sensitivity, Specificity, F1-Score, Area Under ROC Curve (AUC) | Quantifying artifact detection performance and model comparison [10] [11] |
| Validation Methodologies | Leave-One-Subject-Out (LOSO) Cross-Validation, K-fold Cross-Validation, Hold-out Validation | Ensuring robust generalizability of SVM models across subjects and sessions [14] [15] |

Support Vector Machines provide a powerful framework for EEG artifact detection, offering robust performance through their maximum margin principle and kernel-based nonlinear mapping capabilities. From fundamental linear separation to advanced multiple kernel learning approaches, SVMs continue to evolve with hybrid architectures that address the unique challenges of EEG analysis. As wearable EEG technologies advance and artifact management grows more complex, SVM-based methodologies remain essential tools for researchers seeking to extract meaningful neural information from contaminated signals. The continued integration of SVMs with adaptive learning, deep learning architectures, and multimodal signal processing promises to further enhance their utility in both clinical and research applications.

Why SVM for EEG? Advantages in Handling Non-Stationary and High-Dimensional Neural Data

Electroencephalogram (EEG) signals represent one of the most complex biological datasets, characterized by inherent non-stationarity, high dimensionality, and low signal-to-noise ratio. These characteristics pose significant challenges for pattern recognition algorithms in brain-computer interfaces (BCIs), neurological disorder diagnosis, and cognitive state monitoring. Among various machine learning approaches, Support Vector Machines (SVM) have demonstrated consistent effectiveness across diverse EEG applications, from motor imagery classification to epileptic seizure detection and neurological disease diagnosis. The theoretical foundation of SVM, based on statistical learning theory and structural risk minimization, provides distinct advantages for EEG data analysis compared to other classification approaches [16] [17] [18].

Performance Comparison: SVM vs. Other Classification Methods

Table 1: Comparative Performance of SVM and Other Classifiers on EEG Tasks

| EEG Application | SVM Performance | Alternative Methods | Comparative Performance |
|---|---|---|---|
| Motor Imagery Classification | 91% accuracy [19] | Random Forest: 91% [19] | Equivalent top performance |
| Epileptic Seizure Detection | 99% accuracy [20] | Naïve Bayes: 96.47% [20] | SVM superior by ~2.5% |
| Epileptic Seizure Recognition | 99.42% accuracy (CNN-SVM-PCA) [20] | DNN alone: 96.91% [20] | Hybrid SVM superior by ~2.5% |
| Ictal EEG Detection | 97% sensitivity, 96.25% specificity [18] | EMD with other classifiers | State-of-the-art performance |
| Alzheimer's Detection | ~3% improvement with SVM classifier [21] | Deep learning models alone | Consistent performance enhancement |
| Artifact Correction | No significant improvement from artifact rejection [13] | Multiple artifact handling methods | Robust to artifact correction |

Table 2: SVM Performance Across EEG Datasets and Conditions

| Dataset | SVM Variant | Feature Extraction | Performance Metrics |
|---|---|---|---|
| BCI Competition III [16] | PSO-optimized SVM | Common Spatial Patterns | Significant improvement in classification accuracy |
| Bonn EEG Dataset [18] | Standard SVM | Empirical Mode Decomposition | 98% sensitivity, 99.4% specificity |
| Epileptic Seizure Recognition [20] | CNN-SVM-PCA | Deep Learning + PCA | 99.42% accuracy |
| Bonn Dataset [20] | CNN-SVM-PCA | Deep Learning + PCA | 99.96% accuracy |
| Clinical EEG Data [17] | Universum SVM | Wavelet Transform | 99% classification accuracy |

Theoretical Advantages of SVM for EEG Analysis

Handling High-Dimensional Data

EEG data typically involves recordings from multiple channels (often 32-256) across many time samples, creating feature spaces with very high dimensionality. SVM copes with such spaces through the kernel trick, which implicitly maps data to a higher-dimensional space where linear separation becomes feasible, without explicitly computing coordinates in that space [16] [17]. This allows SVM to model complex interactions between EEG channels and time points.

Managing Limited Training Samples

Unlike deep learning approaches that typically require large datasets, SVM performs effectively with relatively small training samples, which is particularly valuable in EEG research where data collection is often constrained by subject availability, fatigue, and experimental practicality [16] [22]. The structural risk minimization principle implemented in SVM seeks to minimize the upper bound of generalization error rather than just training error, enhancing performance on limited datasets [17].

Robustness to Non-Stationarity

EEG signals are inherently non-stationary, with statistical properties that change over time due to brain state transitions, artifacts, and other factors. SVM's margin maximization provides inherent robustness to certain types of variability and noise, as small perturbations in the input space typically do not significantly affect the optimal hyperplane [14] [13].

Global Optimization Solution

The convex optimization formulation of SVM guarantees a global optimum, avoiding the local minima problems that plague neural network approaches. This reliability is particularly valuable in clinical and research applications where consistent performance is essential [17].

Experimental Protocols for EEG Classification with SVM

Standard Protocol for Motor Imagery EEG Classification

Protocol Details:

Data Acquisition and Preprocessing

- Collect EEG signals using standard international 10-20 electrode placement system

- Apply band-pass filtering between 0.5 and 40 Hz to remove DC drift and high-frequency noise

- Perform artifact removal using Independent Component Analysis (ICA) or regression methods

- Segment data into epochs time-locked to motor imagery cues

Feature Extraction using Common Spatial Patterns (CSP)

- Calculate spatial covariance matrices for different motor imagery classes

- Perform simultaneous diagonalization of covariance matrices

- Select spatial filters that maximize variance for one class while minimizing for another

- Extract features as log-variance of CSP-filtered signals [16]
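The four CSP steps listed above can be sketched with NumPy alone. This is a minimal illustration under stated assumptions: the function names are hypothetical, trials are assumed to be band-pass filtered (so roughly zero-mean) arrays of shape channels x samples, and the simultaneous diagonalization is done by whitening the composite covariance and then eigendecomposing one class in the whitened space.

```python
import numpy as np

def normalized_cov(trial):
    """Spatial covariance of one trial (channels x samples), normalized by its trace."""
    c = trial @ trial.T
    return c / np.trace(c)

def fit_csp(trials_a, trials_b, n_pairs=1):
    """Return 2 * n_pairs spatial filters (rows) from both ends of the eigen-spectrum."""
    cov_a = np.mean([normalized_cov(t) for t in trials_a], axis=0)
    cov_b = np.mean([normalized_cov(t) for t in trials_b], axis=0)
    # Whiten the composite covariance, then diagonalize class A in the whitened space
    evals, evecs = np.linalg.eigh(cov_a + cov_b)
    whiten = np.diag(evals ** -0.5) @ evecs.T
    d, b = np.linalg.eigh(whiten @ cov_a @ whiten.T)  # eigenvalues ascending
    filters = b.T @ whiten
    # First rows favor class B variance, last rows favor class A variance
    pick = np.r_[np.arange(n_pairs), np.arange(len(d) - n_pairs, len(d))]
    return filters[pick]

def csp_features(trial, filters):
    """Log of the normalized variance of each CSP-filtered signal."""
    var = (filters @ trial).var(axis=1)
    return np.log(var / var.sum())

# Stand-in data: class A has extra variance on channel 0, class B on channel 1
rng = np.random.default_rng(3)
scale_a = np.array([3.0, 1.0, 1.0, 1.0])[:, None]
scale_b = np.array([1.0, 3.0, 1.0, 1.0])[:, None]
trials_a = rng.standard_normal((20, 4, 500)) * scale_a
trials_b = rng.standard_normal((20, 4, 500)) * scale_b

filters = fit_csp(trials_a, trials_b, n_pairs=1)
feats_a = np.array([csp_features(t, filters) for t in trials_a])
feats_b = np.array([csp_features(t, filters) for t in trials_b])
print(feats_a.mean(axis=0), feats_b.mean(axis=0))
```

The two feature columns move in opposite directions for the two classes, which is what makes the log-variance features easy for an SVM to separate. In practice the filters should be fitted on training trials only and then applied unchanged to validation and test trials.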

SVM Model Training and Optimization

- Utilize RBF kernel for nonlinear classification boundaries

- Optimize kernel parameters (γ) and regularization (C) using grid search or PSO

- Implement cross-validation strategies appropriate for EEG (e.g., leave-one-subject-out)

- Train final model on optimized parameters

Advanced Protocol: SVM-Enhanced Deep Learning for EEG

Protocol Details:

Hybrid Architecture Integration

- Implement CNN layers for spatial feature extraction from multichannel EEG

- Utilize LSTM or BiLSTM networks for temporal dynamics modeling

- Integrate SVM principles directly into attention mechanisms to enhance class separability

- Replace traditional softmax output with SVM-based margin classification [14]

SVM-Enhanced Attention Mechanism

- Embed margin maximization objective directly into self-attention computation

- Transform standard attention to maximize separation between class centroids

- Apply regularization that enforces large margin between different motor imagery classes

- This approach has demonstrated consistent improvements in accuracy, F1-score, and sensitivity compared to conventional attention mechanisms [14]

Implementation Considerations

- Use differentiable quadratic programming solutions for SVM integration

- Implement custom loss functions combining cross-entropy and margin terms

- Balance contributions of deep learning and SVM components through weighted losses
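
To make the weighted-loss idea concrete, here is a small NumPy sketch that combines softmax cross-entropy with a multiclass hinge (margin) term. It swaps in a plain Crammer-Singer style hinge loss rather than a differentiable quadratic programming layer, and it only evaluates the loss value; the names `combined_loss`, `alpha`, and `margin` are hypothetical and not taken from [14].

```python
import numpy as np


def combined_loss(logits, labels, alpha=0.5, margin=1.0):
    """Weighted sum of softmax cross-entropy and multiclass hinge loss.

    logits: (n_samples, n_classes) scores; labels: (n_samples,) class indices.
    alpha weights the cross-entropy term, (1 - alpha) the margin term.
    """
    n = logits.shape[0]
    rows = np.arange(n)

    # Cross-entropy via a numerically stable log-softmax
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    cross_entropy = -log_probs[rows, labels].mean()

    # Hinge term: penalize the strongest wrong class if it comes within
    # `margin` of the true-class score
    true_scores = logits[rows, labels]
    wrong = logits.copy()
    wrong[rows, labels] = -np.inf
    hinge = np.maximum(0.0, margin + wrong.max(axis=1) - true_scores).mean()

    return alpha * cross_entropy + (1.0 - alpha) * hinge


labels = np.array([0, 1])
confident = np.array([[5.0, 0.0, 0.0], [0.0, 5.0, 0.0]])
mistaken = np.array([[0.0, 5.0, 0.0], [5.0, 0.0, 0.0]])
print(combined_loss(confident, labels), combined_loss(mistaken, labels))
```

In a real hybrid model the same expression would be written in an automatic differentiation framework so that gradients flow through both terms.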

Research Reagent Solutions: Essential Tools for EEG-SVM Research

Table 3: Key Computational Tools and Algorithms for EEG-SVM Research

| Research Tool | Function | Application Context |

|---|---|---|

| Common Spatial Patterns (CSP) | Spatial filter optimization | Motor imagery feature extraction [16] |

| Regularized CSP (RCSP) | Improved covariance estimation | Small sample size scenarios [16] |

| Empirical Mode Decomposition (EMD) | Non-stationary signal decomposition | Epileptic seizure detection [18] |

| Particle Swarm Optimization (PSO) | Hyperparameter optimization | SVM kernel and parameter selection [16] |

| Universum SVM | Incorporation of prior knowledge | Seizure classification with interictal data [17] |

| Wavelet Transform | Time-frequency analysis | Feature extraction for neurological disorders [17] |

| Independent Component Analysis (ICA) | Artifact removal and source separation | EEG preprocessing [13] [4] |

| Riemannian Geometry | Manifold-based classification | Advanced feature space transformation [19] |

Discussion: SVM in Modern EEG Research Context

While deep learning approaches have gained prominence in EEG analysis, SVM continues to offer distinct advantages, particularly in scenarios with limited training data, a need for model interpretability, or computational constraints. The emergence of hybrid models that combine deep learning feature extraction with SVM classification demonstrates the ongoing relevance of SVM in advanced BCI and neurotechnology applications [14] [20].

Recent research has successfully integrated SVM with deep learning architectures, creating models that leverage the complementary strengths of both approaches. These hybrid systems typically use deep neural networks for automatic feature learning from raw or minimally processed EEG signals, while employing SVM in the final classification layer to provide robust decision boundaries with strong generalization capabilities [14] [20].

Furthermore, SVM's well-established theoretical foundation provides interpretability advantages over pure deep learning models, which often function as "black boxes." This interpretability is particularly valuable in clinical applications and scientific research where understanding the relationship between EEG features and classification outcomes is essential for validation and trust in the system [17] [18].

Support Vector Machines remain a powerful and relevant tool for EEG signal classification, offering proven performance across diverse applications from basic research to clinical diagnostics. Their theoretical foundations in statistical learning theory provide inherent advantages for handling the high-dimensional, non-stationary, and noisy nature of neural data. While pure SVM approaches deliver robust performance, particularly with appropriate feature engineering, the future trajectory points toward hybrid models that leverage deep learning for feature representation and SVM for robust classification. This synergistic approach combines the representational power of neural networks with the generalization guarantees of margin-based classifiers, pushing the boundaries of what's possible in EEG decoding and brain-computer interface technology.

The Critical Impact of Artifacts on Downstream Analysis in Drug Development Pipelines

In the high-stakes environment of drug development, the integrity of analytical data is paramount. Artifacts—systematic errors and non-biological noise—introduced during experimental procedures represent a critical, yet often undetected, threat to data reliability and subsequent decision-making. These artifacts can originate from various sources, including instrumental errors, environmental factors, and procedural inconsistencies, ultimately compromising the validity of downstream analyses and potentially derailing development pipelines. Traditional quality control (QC) methods, which primarily rely on control wells, have proven insufficient for detecting many spatial and systematic artifacts that specifically affect drug-treated samples [23].

This application note examines the profound impact of artifacts on drug development data, highlighting a novel QC metric—Normalized Residual Fit Error (NRFE)—that directly addresses this challenge. Furthermore, we explore the translational potential of advanced artifact detection methodologies, specifically Support Vector Machine (SVM)-based frameworks successfully applied in electroencephalography (EEG) signal processing, for enhancing reliability in pharmaceutical screening. We provide detailed protocols and resources to empower researchers to identify, quantify, and mitigate artifacts, thereby safeguarding data integrity from initial discovery through to regulatory submission.

The Hidden Problem: Undetected Artifacts in Drug Screening

Large-scale pharmacogenomic initiatives, such as the Cancer Cell Line Encyclopedia (CCLE) and Genomics of Drug Sensitivity in Cancer (GDSC), have significantly advanced our understanding of drug responses. However, concerns regarding inter-laboratory consistency and reproducibility persist, often traceable to undetected artifacts in high-throughput screening (HTS) data [23]. Conventional QC metrics like Z-prime factor (Z'), Strictly Standardized Mean Difference (SSMD), and signal-to-background ratio (S/B) focus exclusively on control wells. This fundamental limitation renders them blind to systematic errors—such as evaporation gradients, pipetting inaccuracies, or drug-specific precipitation—that manifest specifically in drug wells [23].

The normalized residual fit error (NRFE) metric was developed to overcome this blind spot. By analyzing deviations between observed and fitted dose-response values across all compound wells and applying a binomial scaling factor to account for response-dependent variance, NRFE directly evaluates data quality from the drug-treated wells themselves [23]. This control-independent approach is orthogonal to traditional methods, making it particularly effective at identifying spatial artifacts and systematic errors that conventional metrics miss.

Table 1: Comparison of Traditional and NRFE Quality Control Metrics

| Metric | Basis of Calculation | Primary Strength | Primary Weakness |

|---|---|---|---|

| Z'-factor | Variability and separation of positive/negative controls [23] | Simple, established industry standard for assay-wide technical issues [23] | Cannot detect artifacts in drug wells; blind to spatial patterns [23] |

| SSMD | Normalized difference between controls [23] | Robust metric for effect size between controls [23] | Fails to capture drug-specific or position-dependent errors [23] |

| S/B Ratio | Ratio of mean control signals [23] | Simple to calculate and interpret [23] | Ignores variability; weakest correlation with other QC metrics [23] |

| NRFE | Deviations between observed and fitted dose-response values in all compound wells [23] | Detects systematic spatial artifacts and drug-specific issues missed by control-based metrics [23] | Requires dose-response data; not a replacement for control-based metrics (complementary) [23] |
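
For reference, the control-based metrics in the table can be computed from control wells alone. The sketch below uses their commonly cited definitions (Z' = 1 - 3(σp + σn)/|μp - μn|, SSMD = (μp - μn)/√(σp² + σn²), S/B = μp/μn); the function names and the example well values are illustrative assumptions, and which control counts as "positive" depends on the assay.

```python
from statistics import mean, stdev


def z_prime(pos, neg):
    """Z'-factor from positive and negative control readings."""
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))


def ssmd(pos, neg):
    """Strictly standardized mean difference for independent control groups."""
    return (mean(pos) - mean(neg)) / (stdev(pos) ** 2 + stdev(neg) ** 2) ** 0.5


def signal_to_background(pos, neg):
    """Ratio of mean control signals; ignores variability entirely."""
    return mean(pos) / mean(neg)


# Made-up control readings for one plate
pos_controls = [100, 102, 98, 101, 99]
neg_controls = [10, 11, 9, 10, 10]

print(round(z_prime(pos_controls, neg_controls), 3))
print(round(ssmd(pos_controls, neg_controls), 2))
print(signal_to_background(pos_controls, neg_controls))
```

None of these functions ever sees a drug-treated well, which is the blind spot described above.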

The consequences of undetected artifacts are severe. Analysis of the PRISM dataset demonstrated that plates with elevated NRFE values (>15) exhibited a three-fold lower reproducibility among technical replicates compared to high-quality plates (NRFE <10) [23]. Furthermore, integrating NRFE into the QC process for matched data from the GDSC project improved the cross-dataset correlation from 0.66 to 0.76, underscoring its power to enhance data consistency and reliability [23]. The following workflow diagram illustrates how systematic artifacts undermine data integrity and how NRFE detects them.

Figure 1: Data Integrity Workflow. This diagram contrasts the ideal experimental workflow with the reality of how undetected artifacts lead to flawed conclusions, and how integrating NRFE into the QC process safeguards downstream analysis and reproducibility.

Translational Insights: SVM-Based Artifact Detection from EEG Research

The challenge of separating meaningful signal from complex noise is not unique to drug development. The field of neuroscience, particularly EEG analysis, has made significant strides in developing sophisticated computational methods for artifact detection and correction. The translational application of these methods holds great promise for pharmaceutical analytics.

SVM and Hybrid Models in EEG

In EEG research, artifacts from ocular, cardiac, and muscular activity profoundly complicate the interpretation of neural signals [24]. Support Vector Machines (SVMs) are a well-established machine learning technique for separating clean EEG signals from contaminated ones. Their ability to handle high-dimensional data makes them particularly suitable for this task [6]. Research has demonstrated that a hybrid SVM-Fuzzy system, optimized with nature-inspired algorithms, can achieve high accuracy (98.1%) in identifying epileptic seizures from EEG data, showcasing the value of combining SVM with complementary machine learning approaches for robust signal classification [6].

Furthermore, a critical study evaluating artifact minimization for EEG decoding concluded that while artifact correction (e.g., using Independent Component Analysis) is essential to avoid artificially inflated decoding accuracy, its combination with artifact rejection did not significantly enhance the performance of SVM-based decoders in most cases [13]. This highlights the robustness of SVM classifiers and underscores the primary importance of artifact correction prior to analysis.

The Power of Transfer Learning

A key advancement in artifact detection is the use of transfer learning. A seminal study demonstrated that an SVM artifact detection model trained on contact ECG data could be effectively optimized for a different signal modality—capacitively coupled ECG (ccECG)—using transfer learning [25]. This approach improved the classifier's accuracy on the new modality by 5-8%, requiring only a limited amount of newly labelled data (as few as 20 segments) for adaptation [25]. This methodology directly addresses a major bottleneck in drug development: the costly and time-consuming need to manually label large, modality-specific datasets for each new assay or instrument platform.

Integrated Application Protocol: Combining NRFE and SVM for Enhanced QC

This protocol outlines a hybrid quality control strategy that integrates the NRFE metric with an SVM classifier, leveraging the strengths of both approaches to maximize the detection of artifacts in drug screening data.

Protocol: NRFE-Guided SVM for Artifact Detection in HTS

Aim: To implement a two-tiered quality control pipeline for high-throughput drug screening that identifies both systematic spatial artifacts (via NRFE) and complex, non-linear signal contaminants (via SVM).

Materials and Reagents

- Drug Plates: 384-well or 96-well microplates containing test compounds and controls.

- Cell Lines: Relevant cancer or disease-specific cell lines.

- Viability/Range Readout Kit: e.g., CellTiter-Glo Luminescent Cell Viability Assay.

- Automated Liquid Handling System.

- Plate Reader: Compatible with the chosen readout kit.

- Software: R package plateQC for NRFE calculation [23]; Python with scikit-learn for SVM modeling; specialized tool for dose-response curve fitting (e.g., drc in R).

Procedure

Step 1: Experimental Setup & Data Acquisition

- Seed cells into microplates according to standard protocols for your cell line and assay.

- Dispense compounds across the plate using the liquid handling system, ensuring a logical dose-response pattern (e.g., serial dilutions across a row or column).

- Incubate for the designated time and develop the assay according to the readout kit's instructions.

- Acquire raw data (e.g., luminescence, fluorescence) from the plate reader.

Step 2: Calculate Traditional and NRFE QC Metrics

- Calculate traditional QC metrics (Z'-factor, SSMD) from the positive and negative control wells [23].

- Fit dose-response curves to the raw data for each compound-cell line combination.

- Using the plateQC R package, compute the NRFE value for each plate using the fitted and observed values [23].

- QC Checkpoint 1: Apply tiered NRFE thresholds. Flag plates with NRFE > 15 for exclusion or expert review. Plates with NRFE between 10-15 require additional scrutiny, while those with NRFE < 10 are considered acceptable [23].
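
The tiered thresholds at QC Checkpoint 1 reduce to a small lookup once NRFE values are available. In this sketch the function name `nrfe_tier` and the tier labels are hypothetical, and treating the boundary values 10 and 15 as "review" is an assumption, since the thresholds above do not state how exact boundary values are handled.

```python
def nrfe_tier(nrfe):
    """Map a plate-level NRFE value to a QC tier."""
    if nrfe > 15:
        return "flag"      # exclude or send for expert review
    if nrfe >= 10:
        return "review"    # additional scrutiny required
    return "accept"        # considered acceptable


# Made-up NRFE values keyed by plate ID
plate_nrfe = {"plate_01": 4.2, "plate_02": 12.7, "plate_03": 21.5}
tiers = {plate: nrfe_tier(value) for plate, value in plate_nrfe.items()}
print(tiers)
```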

Step 3: Prepare Data for SVM Classification

- From the raw well-level data, extract a set of signal quality features. These can include:

- Label a subset of data segments (e.g., wells or plate sectors) as "clean" or "contaminated" based on the NRFE analysis and visual inspection to create a training set.

Step 4: Train and Apply SVM Classifier

- Train an SVM model with a radial basis function (RBF) kernel on the labeled feature data from a source domain (e.g., a previously validated HTS dataset or a different modality).

- Optional Transfer Learning: If the model performance on a new target domain (e.g., a novel assay format) is suboptimal, apply transfer learning. Use a small set of labeled data from the new domain to adapt the pre-trained SVM model, fine-tuning its decision boundary [25].

- Use the trained (or adapted) SVM model to classify all wells or segments in the dataset.

Step 5: Integrated Decision Making

- Combine the results from the NRFE and SVM analyses. A plate should be considered for rejection or re-testing if it is flagged by either method.

- This integrated approach ensures the detection of both large-scale systematic artifacts (NRFE's strength) and localized or complex signal contaminants (SVM's strength).
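
The "flagged by either method" rule in Step 5 can be sketched as a logical OR over the two checks. The protocol does not say how well-level SVM labels roll up to a plate-level flag, so the `max_contaminated_fraction` cutoff below is purely an assumption, as are the function name and the label strings.

```python
def plate_decision(nrfe, well_labels, max_contaminated_fraction=0.1):
    """Combine the NRFE check and SVM well labels into one plate-level decision.

    well_labels: iterable of "clean" / "contaminated" SVM predictions.
    """
    labels = list(well_labels)
    nrfe_flag = nrfe > 15
    contaminated = sum(1 for label in labels if label == "contaminated")
    svm_flag = contaminated / len(labels) > max_contaminated_fraction
    return {
        "nrfe_flag": nrfe_flag,
        "svm_flag": svm_flag,
        # Flagged by either method -> candidate for rejection or re-testing
        "reject_or_retest": nrfe_flag or svm_flag,
    }


print(plate_decision(8.0, ["clean"] * 80 + ["contaminated"] * 20))
```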

The following workflow provides a visual summary of this integrated protocol.

Figure 2: Integrated NRFE-SVM QC Protocol. This workflow diagram outlines the parallel paths of NRFE-based plate assessment and SVM-based signal classification, which converge for a final, robust data quality assessment.

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 2: Essential Materials for Implementing Advanced Artifact Detection

| Item | Function/Description | Example/Supplier |

|---|---|---|

| plateQC R Package | Open-source software for calculating the NRFE metric and performing control-independent quality assessment of drug screening plates [23]. | Available at: https://github.com/IanevskiAleksandr/plateQC [23] |

| SVM Library | A robust programming library for creating and training Support Vector Machine classifiers for signal quality assessment. | Python's scikit-learn (sklearn.svm.SVC) |

| Dose-Response Curve Fitting Tool | Software for modeling the relationship between compound concentration and effect, which is a prerequisite for NRFE calculation. | R package drc |

| High-Quality Control Compounds | Well-characterized agonists/antagonists for establishing robust positive and negative controls, ensuring traditional QC metrics (Z', SSMD) are valid. | Supplier-specific (e.g., Tocris, Sigma-Aldrich) |

| Standardized Cell Viability Assay | A homogeneous, luminescent assay for quantifying viable cells, providing a reproducible readout for HTS. | CellTiter-Glo Luminescent Assay |

Artifacts pose a silent but critical threat to the integrity of the drug development pipeline. While traditional QC methods provide a foundational check, they are inadequate for detecting the full spectrum of systematic errors. The integration of novel, control-independent metrics like NRFE and the strategic adoption of robust machine learning classifiers, such as SVMs, offer a powerful, multi-layered defense. By learning from adjacent fields like EEG signal processing and implementing the detailed protocols and tools outlined in this document, drug development professionals can significantly enhance data reliability, improve reproducibility between studies, and de-risk the entire pipeline from discovery to clinical application.

From Theory to Practice: Implementing SVM Pipelines for EEG Artifact Detection

The efficacy of Support Vector Machine (SVM) models in electroencephalography (EEG) artifact detection hinges critically on appropriate data preprocessing. EEG signals are inherently non-stationary and contaminated by various biological and environmental artifacts, including ocular movements, muscle activity, and power line interference [4]. Without meticulous preprocessing, these artifacts can masquerade as genuine neural patterns, leading to misleading SVM classification results. Proper preprocessing transforms raw, artifact-laden EEG signals into a feature space where the SVM can construct an optimal hyperplane to distinguish genuine neural activity from artifacts, thereby enhancing the model's generalization capability and physiological interpretability [26]. This document outlines standardized protocols for filtering, segmentation, and feature extraction strategies specifically optimized for SVM-based artifact detection in EEG research.

Data Preprocessing Pipeline for SVM

Filtering and Artifact Removal

Table 1: Filtering Techniques for EEG Artifact Management

| Technique | Primary Function | Parameters | Impact on SVM Performance |

|---|---|---|---|

| Bandpass Filter | Removes non-physiological frequencies | 0.5-45 Hz for neural signals; 4-13 Hz for muscular artifacts [10] | Reduces high-frequency noise that can dominate SVM feature space |

| Wavelet Transform | Multi-resolution analysis for non-stationary artifacts | Mother wavelet: Daubechies; Threshold: Stein's Unbiased Risk Estimate [27] | Preserves transient neural features while removing artifacts |

| Independent Component Analysis (ICA) | Separates neural and artifactual sources | Infomax or Extended Infomax algorithm [10] | Isolates artifact-related components for rejection |

| Artifact Subspace Reconstruction (ASR) | Corrects large-amplitude artifacts | Cutoff: 20-30 standard deviations [10] | Handles movement and transient artifacts in continuous EEG |

| Deep Learning (AnEEG) | Artifact removal via LSTM-based GAN | Generator: 2-layer LSTM; Discriminator: 4-layer 1D-CNN [4] | Generates artifact-free signals while preserving neural dynamics |

Temporal Segmentation Strategies

Table 2: EEG Segmentation Methods for SVM Analysis

| Method | Principle | Parameters | SVM Compatibility |

|---|---|---|---|

| Fixed-Length Segmentation | Divides EEG into equal epochs | 0.5-2 second windows; 50% overlap optional [28] | Simple implementation; Consistent feature vector dimensions |

| Adaptive Segmentation (CTXSEG) | Creates variable-length segments based on statistical differences | Change point detection; Stationarity-based boundaries [28] | May require feature normalization for fixed SVM input dimensions |

| Functional Connectivity Segmentation | Segments based on network structure stability | Connectivity metric: PLV/PC; Graph distance threshold [29] | Captures cognitive state changes relevant to artifact detection |

| Event-Locked Segmentation | Epochs aligned to external events | Pre/post-event intervals; Baseline correction | Controls for event-related potentials in artifact detection |
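
Fixed-length segmentation, the simplest row in the table, might look like the following NumPy sketch. The function name `segment_fixed` and the `(n_channels, n_samples)` layout are assumptions; the window length and overlap defaults follow the ranges listed in the table.

```python
import numpy as np


def segment_fixed(data, sfreq, window_s=1.0, overlap=0.5):
    """Split continuous EEG into equal-length, optionally overlapping epochs.

    data: array of shape (n_channels, n_samples).
    Returns an array of shape (n_epochs, n_channels, window_samples).
    """
    win = int(round(window_s * sfreq))
    step = max(1, int(round(win * (1.0 - overlap))))
    starts = range(0, data.shape[1] - win + 1, step)
    # Trailing samples that do not fill a whole window are dropped
    return np.stack([data[:, s:s + win] for s in starts])


# 10 s of 8-channel data at 256 Hz (zeros stand in for real EEG)
eeg = np.zeros((8, 2560))
print(segment_fixed(eeg, sfreq=256).shape)
print(segment_fixed(eeg, sfreq=256, overlap=0.0).shape)
```

Because every epoch has the same shape, any per-epoch feature function yields vectors of identical length, which is the compatibility advantage noted in the table.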

Feature Extraction for SVM Optimization

Feature extraction transforms preprocessed EEG signals into discriminative representations that enable SVMs to effectively separate artifactual from neural components.

Time-Domain Features: Include statistical measures (variance, skewness, kurtosis) and amplitude-based features that capture artifact characteristics such as abrupt voltage deflections from ocular movements [27].

Frequency-Domain Features: Power spectral density estimates across standard bands (delta, theta, alpha, beta, gamma) help identify artifacts with distinct spectral signatures, such as muscle contamination in high-frequency bands [27].

Time-Frequency Features: Wavelet coefficients provide joint temporal and spectral information crucial for detecting transient artifacts like eye blinks that have both temporal localization and frequency content [27].

Nonlinear Features: Entropy measures (Fuzzy Entropy, Hierarchical Fuzzy Entropy) and fractal dimensions quantify signal complexity, with artifacts often exhibiting different regularity patterns compared to neural signals [30].

Spatial Features: For multi-channel EEG, common spatial patterns (CSP) and functional connectivity metrics capture topographic distributions that differentiate localized artifacts from distributed neural activity [12].

Experimental Protocols

Protocol 1: Comprehensive Artifact Processing Pipeline

Objective: Implement a complete artifact detection and correction pipeline for SVM-based EEG analysis.

Materials: Raw EEG data (minimum 16 channels), MATLAB/Python with EEGLAB, SVM library (scikit-learn), high-performance computing resources.

Procedure:

- Data Import: Load raw EEG data in standard format (e.g., .edf, .set).

- Bandpass Filtering: Apply 0.5-45 Hz zero-phase bandpass filter.

- Bad Channel Identification: Detect channels with excessive noise (>5 SD from mean).

- ICA Decomposition: Perform ICA using InfoMax algorithm.

- Artifact Component Classification: Use ICLabel to identify artifact-related components.

- Component Removal: Subtract ocular and muscular artifact components.

- Segmentation: Apply fixed-length segmentation (1-second epochs, no overlap).

- Feature Extraction: Compute time-domain, frequency-domain, and nonlinear features.

- SVM Training: Train linear SVM with 5-fold cross-validation.

- Performance Validation: Assess accuracy, sensitivity, specificity on held-out test set.

Troubleshooting: If SVM performance is suboptimal, consider alternative segmentation strategies or feature sets. For computational efficiency with large datasets, consider ASR as an alternative to ICA [10].
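
The last two steps of Protocol 1 (linear SVM with 5-fold cross-validation, then held-out evaluation) can be sketched with scikit-learn as below. The feature matrix is synthetic, the 75/25 split and `C=1.0` are assumptions, and label 1 is taken to mean "artifact".

```python
import numpy as np
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic epoch-level features: 1 = artifact, 0 = clean
rng = np.random.default_rng(1)
X = rng.standard_normal((300, 10))
y = rng.integers(0, 2, size=300)
X[y == 1, :3] += 1.5  # artifacts differ on the first three features

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Scaling lives inside the pipeline so it is refit within each CV fold
model = make_pipeline(StandardScaler(), SVC(kernel="linear", C=1.0))
cv_accuracy = cross_val_score(model, X_train, y_train, cv=5).mean()

# Final fit on the training split, then evaluate on the held-out test set
model.fit(X_train, y_train)
tn, fp, fn, tp = confusion_matrix(
    y_test, model.predict(X_test), labels=[0, 1]
).ravel()
accuracy = (tp + tn) / (tp + tn + fp + fn)
sensitivity = tp / (tp + fn)   # fraction of artifact epochs detected
specificity = tn / (tn + fp)   # fraction of clean epochs retained
print(round(cv_accuracy, 3), round(accuracy, 3))
print(round(sensitivity, 3), round(specificity, 3))
```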

Protocol 2: Adaptive Segmentation for Non-Stationary EEG

Objective: Implement and validate adaptive segmentation to improve artifact detection in continuous EEG.

Materials: Continuous EEG recording, MATLAB/Python with signal processing toolbox, custom segmentation algorithms.

Procedure:

- Preprocessing: Apply basic bandpass filter (0.5-45 Hz).

- Functional Connectivity Calculation: Compute phase locking value (PLV) between all channel pairs using sliding window (0.5s duration).

- Graph Distance Computation: Calculate network structure differences between consecutive windows using node centrality measures [29].

- Change Point Detection: Identify segmentation boundaries where graph distance exceeds 2 standard deviations from mean.

- Segment Validation: Ensure minimum segment duration of 0.5s for meaningful analysis.

- Feature Extraction: Compute feature vectors for each variable-length segment.

- Feature Normalization: Standardize the features (e.g., z-scoring) so that values computed from segments of different lengths are comparable and form fixed-dimension SVM inputs.

- SVM Classification: Train and test SVM on adaptive segments compared to fixed segments.

Validation: Compare classification performance between fixed and adaptive segmentation using ROC analysis.
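
The change point and segment validation steps of Protocol 2 can be illustrated on a precomputed graph-distance series. This sketch assumes that entry k of the series compares window k with window k+1 and that consecutive windows are offset by a hop of `step_s` seconds; both the hop and the function name `change_points` are hypothetical, while the 2 SD threshold and the 0.5 s minimum segment duration come from the procedure above.

```python
import numpy as np


def change_points(graph_distance, step_s=0.25, min_segment_s=0.5, n_sd=2.0):
    """Return boundary times (s) where graph distance exceeds mean + n_sd * SD.

    A candidate boundary is kept only if the segment it closes lasts
    at least min_segment_s seconds.
    """
    d = np.asarray(graph_distance, dtype=float)
    threshold = d.mean() + n_sd * d.std()
    boundaries, last_time = [], 0.0
    for idx in np.flatnonzero(d > threshold):
        t = float((idx + 1) * step_s)  # boundary between window idx and idx+1
        if t - last_time >= min_segment_s:
            boundaries.append(t)
            last_time = t
    return boundaries


# Mostly stable network structure with three abrupt changes
distance = np.full(20, 0.1)
distance[[5, 6, 15]] = 1.0
print(change_points(distance))
```

The second of the two adjacent peaks is discarded because it would create a segment shorter than the minimum duration.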

Visualization of Workflows

Figure: EEG Processing Pipeline for SVM

Figure: Artifact Detection Decision Protocol

Table 3: Critical Resources for EEG Artifact Detection Research

| Resource | Specification | Research Application |

|---|---|---|

| EEG Acquisition System | 64-channel wet electrode system with impedance <10 kΩ | Gold-standard reference data collection [10] |

| Wearable EEG Headset | Dry electrode system with motion sensors | Ecological artifact data collection [10] |

| EEGLAB Toolkit | MATLAB-based environment with ICA implementation | Standardized preprocessing and component analysis [13] |

| BCI Competition IV Dataset 2a | 9-subject, 4-class motor imagery data | Benchmark for artifact detection algorithms [12] |

| PhysioNet Motor Imagery Dataset | 64-channel EEG from 109 subjects | Large-scale validation of SVM approaches [4] |

| Custom SVM Implementation | Scikit-learn with linear and RBF kernels | Flexible model development and testing [26] |

Effective preprocessing pipelines are fundamental to robust SVM-based EEG artifact detection. The integration of advanced filtering techniques, adaptive segmentation approaches, and multidimensional feature extraction creates an optimized pathway for distinguishing artifacts from neural signals. Current evidence suggests that while artifact correction is essential to minimize confounds, the combination of correction and rejection does not necessarily enhance SVM decoding performance in all paradigms [13]. Future research directions should focus on real-time adaptive processing, deep learning integration for artifact removal [4], and standardized benchmarking across diverse EEG acquisition systems. The protocols outlined herein provide a foundation for reproducible, effective artifact detection in SVM-based EEG research.

Electroencephalography (EEG) is a vital tool in neuroscience and clinical diagnostics, but its signal quality is often compromised by artifacts—unwanted noise originating from both physiological and non-biological sources. Effective artifact detection is a critical preprocessing step, and Support Vector Machines (SVMs) have proven to be powerful classifiers for this task. The performance of an SVM model is heavily dependent on the features it receives; well-engineered features that capture the distinct characteristics of artifacts in the temporal, spectral, and spatial domains are paramount for high-accuracy detection. These features enable the SVM to construct optimal hyperplanes for separating artifact-contaminated EEG segments from clean brain activity. This document provides detailed application notes and protocols for feature engineering, framed within the context of a broader thesis on SVM-based artifact detection in EEG research.

Feature Engineering Domains and Quantitative Comparison

The following sections delineate feature extraction methodologies across the temporal, spectral and time-frequency, and spatial domains. The table below provides a comparative summary of their performance and characteristics.

Table 1: Comparative Analysis of Feature Domains for SVM-Based Artifact Detection

| Domain | Example Features | Best for Artifact Type | Key Advantage | Reported Performance (Accuracy) |

|---|---|---|---|---|

| Temporal | Statistical Moments (Variance, Skewness, Kurtosis), Amplitude Threshold | Eye-blink, High-amplitude Glitches | Computational simplicity, real-time applicability | >90% for eye-blink (with topography) [31] |

| Spectral | Power Spectral Density (PSD), Band Power (Delta, Theta, Alpha, Beta, Gamma), Spectral Entropy | Muscle, Electrode Pop, Powerline Noise | Directly captures oscillatory nature of EEG and artifacts | Varies by artifact; PSD is a foundational feature [32] [27] |

| Spatial | Scalp Topography, Phase Locking Value (PLV), Functional Connectivity Maps | Eye-blink, Muscle, Channel-specific Noise | Exploits multi-channel information and brain network dynamics | 97.61% (for emotion detection, indicative of high utility) [33] |

| Time-Frequency | Wavelet Coefficients, Marginal Hilbert Spectrum (MHS) | Muscle, Motion, Complex Transients | Resolves non-stationary signals; high joint time-frequency resolution | Effective for muscular and motion artifacts [34] [33] |

Temporal Domain Features

Temporal domain features are computed directly from the EEG signal amplitude over time. They are computationally efficient and effective for detecting artifacts with distinct amplitude or statistical properties.

- Statistical Moments: These are fundamental descriptors of the signal's amplitude distribution.

- Variance/Standard Deviation: Measures signal power. Artifacts like eye-blinks or muscle bursts often cause large deviations from the mean, resulting in high variance.

- Skewness: Quantifies the asymmetry of the amplitude distribution. A symmetric brain signal typically has skewness near zero, while artifacts can introduce asymmetry.

- Kurtosis: Reflects the "tailedness" of the distribution. Signals with sharp, transient artifacts (e.g., spikes) often exhibit high kurtosis.

- Amplitude-based Features: Simple thresholds on the absolute amplitude or the peak-to-peak amplitude in an epoch can flag high-amplitude artifacts like electrode pops or large eye movements.
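
The temporal features listed above are straightforward to compute per channel with NumPy and SciPy. The following sketch is illustrative only; the function name `temporal_features`, the column order, and the synthetic spike are assumptions.

```python
import numpy as np
from scipy.stats import kurtosis, skew


def temporal_features(epoch):
    """Per-channel temporal features for one epoch.

    epoch: array of shape (n_channels, n_samples).
    Returns (n_channels, 4): variance, skewness, excess kurtosis, peak-to-peak.
    """
    return np.column_stack([
        epoch.var(axis=1),
        skew(epoch, axis=1),
        kurtosis(epoch, axis=1),   # excess kurtosis, about 0 for Gaussian data
        np.ptp(epoch, axis=1),     # peak-to-peak amplitude
    ])


# Two channels of Gaussian noise; add one large spike to channel 0
rng = np.random.default_rng(0)
clean = rng.standard_normal((2, 1000))
spiky = clean.copy()
spiky[0, 500] += 50.0

print(temporal_features(clean).round(2))
print(temporal_features(spiky).round(2))
```

A single sharp transient leaves the variance only moderately changed but inflates kurtosis and peak-to-peak amplitude sharply, which is why these features pair well for spike-like artifacts.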

Spectral and Time-Frequency Domain Features

Spectral features characterize the frequency content of the EEG signal, which is crucial since both neural activity and artifacts occupy distinct frequency bands.

- Power Spectral Density (PSD): Estimates the power distribution across frequencies. It can be computed using methods like the Fast Fourier Transform (FFT) or Welch's method [27]. Muscle artifacts, for instance, manifest as a diffuse increase in high-frequency (Beta/Gamma) power.

- Band Power: The absolute or relative power within standard EEG bands (Delta: 0.5-4 Hz, Theta: 4-8 Hz, Alpha: 8-13 Hz, Beta: 13-30 Hz, Gamma: >30 Hz) is a highly informative feature [33] [32].

- Spectral Entropy: Measures the predictability or regularity of the power spectrum, with artifacts often introducing more disorder.
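
Spectral entropy can be sketched as the Shannon entropy of the normalized Welch PSD. Dividing by the log of the number of frequency bins to obtain a value between 0 and 1 is one common convention and an assumption here, as are the function name and the `nperseg` choice.

```python
import numpy as np
from scipy.signal import welch


def spectral_entropy(signal, sfreq):
    """Normalized Shannon entropy of the Welch power spectrum (0 to 1)."""
    _, psd = welch(signal, fs=sfreq, nperseg=min(len(signal), 256))
    p = psd / psd.sum()          # treat the PSD as a probability distribution
    p = p[p > 0]                 # avoid log(0)
    return float(-(p * np.log2(p)).sum() / np.log2(len(psd)))


sfreq = 256
t = np.arange(1024) / sfreq
sine = np.sin(2 * np.pi * 10 * t)                      # narrowband, low entropy
noise = np.random.default_rng(0).standard_normal(1024)  # broadband, high entropy

print(round(spectral_entropy(sine, sfreq), 3))
print(round(spectral_entropy(noise, sfreq), 3))
```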

For non-stationary signals where frequency content changes over time, time-frequency analysis is superior.

- Wavelet Transform (WT): Decomposes the signal into different frequency components while retaining temporal information. Features can be extracted from the coefficients of specific scales corresponding to artifact-prone frequencies [34] [27]. Discrete Wavelet Transform (DWT) is widely used for its computational efficiency.

- Marginal Hilbert Spectrum (MHS): A component of the Hilbert-Huang Transform, MHS provides a time-frequency-energy representation that is highly effective for analyzing non-linear and non-stationary signals like EEG, showing promise for artifact detection [33].

Spatial Domain Features

Spatial features leverage information from multiple EEG electrodes to capture the topographic distribution of brain activity and artifacts.

- Scalp Topography: This feature captures the voltage map across the scalp at a given time point. Artifacts have characteristic topographic patterns; for example, eye-blink artifacts manifest as a strong, smooth frontal field that reverses polarity, a pattern easily distinguished from most cortical potentials. Research has identified scalp topography as the most potent single feature for detecting eye-blink artifacts [31].

- Functional Connectivity: These features quantify the statistical interdependence between signals from different electrode pairs, capturing the network dynamics of the brain.

- Phase Locking Value (PLV): A non-linear measure that assesses the consistency of phase differences between two signals over trials or time. It is used to construct functional connectivity maps that can distinguish artifact-related from brain-related activity [33].

- Other Measures: Correlation, coherence, and mutual information are also used to define connectivity and identify abnormal spatial patterns caused by artifacts.

Experimental Protocols for Feature Extraction

Protocol 1: Extraction of Spectral Features using Welch's PSD

Objective: To compute Power Spectral Density (PSD) and band power features from multi-channel EEG data for SVM training.

Materials:

- Cleaned, continuous or epoched EEG data.

- Computing environment (e.g., Python with MNE, SciPy, and Scikit-learn libraries).

Procedure:

- Data Segmentation: Divide the continuous EEG signal into non-overlapping or overlapping epochs (e.g., 2-second segments).

- Detrending: Remove linear trends from each epoch to prevent low-frequency drift from distorting the PSD estimate.

- Tapering: Apply a window function (e.g., Hanning window) to each epoch to reduce spectral leakage.

- FFT Computation: For each channel and epoch, compute the FFT.

- PSD Estimation: Calculate the squared magnitude of the FFT and normalize by the sampling frequency and window power to obtain the periodogram.

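- Averaging: To reduce the variance of the estimate, split each epoch into overlapping sub-segments, apply the detrending, tapering, and periodogram steps above to each sub-segment, and average the resulting periodograms (Welch's method). This yields one PSD per channel and epoch.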

- Band Power Extraction: Integrate the PSD within the standard frequency bands (Delta, Theta, Alpha, Beta, Gamma) for each channel. These integrated values form the feature vector for each EEG segment.

SVM Integration: The band power values from all channels can be concatenated into a single feature vector for each data segment, which is then labeled (e.g., "clean" or "artifact") and used to train the SVM classifier [27].
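The procedure above can be sketched with SciPy's `welch` function, which handles detrending, tapering, and segment averaging in one call. This is a minimal illustration rather than a reference implementation: the band edges, the 1-second Welch segment length, the 50% overlap, and the function name `band_power_features` are all assumptions chosen for the example, and the input is a synthetic two-channel epoch.

```python
import numpy as np
from scipy.signal import welch

# Conventional band edges in Hz; exact limits vary between labs (assumption).
BANDS = {"delta": (0.5, 4), "theta": (4, 8), "alpha": (8, 13),
         "beta": (13, 30), "gamma": (30, 45)}

def band_power_features(epoch, sfreq, bands=BANDS):
    """Return band powers for one epoch of shape (n_channels, n_samples).

    The output is ordered band by band: all channels for delta, then theta, ...
    """
    nperseg = min(epoch.shape[1], int(sfreq))      # 1-second Welch segments
    freqs, psd = welch(epoch, fs=sfreq, window="hann", nperseg=nperseg,
                       noverlap=nperseg // 2, detrend="linear", axis=-1)
    df = freqs[1] - freqs[0]                       # frequency resolution in Hz
    features = []
    for low, high in bands.values():
        mask = (freqs >= low) & (freqs < high)
        # Integrate the PSD over the band (rectangle rule) for every channel.
        features.append(psd[:, mask].sum(axis=-1) * df)
    return np.concatenate(features)

# Synthetic 2-second, 2-channel epoch dominated by a 10 Hz rhythm.
sfreq = 250.0
t = np.arange(500) / sfreq
rng = np.random.default_rng(0)
epoch = np.vstack([np.sin(2 * np.pi * 10 * t)] * 2)
epoch = epoch + 0.1 * rng.standard_normal(epoch.shape)

feats = band_power_features(epoch, sfreq)
print(feats.shape)                                 # one value per band and channel
```

Stacking one such vector per labeled segment gives the feature matrix passed to the SVM. Log-transforming the band powers before scaling is a common additional step, although the protocol does not require it.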

Protocol 2: Extraction of Spatial Features using Functional Connectivity

Objective: To compute Phase Locking Value (PLV) between EEG channels and construct spatial feature vectors for artifact detection.

Materials:

- Preprocessed, band-pass filtered EEG data.

- Signal processing toolbox (e.g., MATLAB, Python with NumPy and MNE).

Procedure:

- Band Selection: Choose a frequency band of interest (e.g., Theta for eye-blinks, Gamma for muscle artifacts).

- Hilbert Transform: Apply the Hilbert transform to the band-pass filtered signal from each channel to extract the instantaneous phase, (\phi(t)), for each sample point.

- PLV Calculation: For each pair of channels (m) and (n), compute the PLV over a time window of (T) samples as: (PLV_{mn} = \left| \frac{1}{T} \sum_{t=1}^{T} e^{i(\phi_m(t) - \phi_n(t))} \right|), where (i) is the imaginary unit and (\phi_m(t)) is the instantaneous phase of channel (m). The result is a value between 0 (no phase synchronization) and 1 (perfect phase synchronization).

- Feature Vector Construction: The PLV values for all unique channel pairs are arranged into a symmetric connectivity matrix. The upper or lower triangle of this matrix (excluding the diagonal) can be unfolded into a feature vector that represents the spatial connectivity pattern for that time window [33].

SVM Integration: This PLV-based feature vector is labeled and used as input for the SVM. The classifier learns to distinguish the connectivity patterns associated with artifacts (e.g., highly synchronized frontal channels during an eye-blink) from those of clean, task-related brain activity.
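As a hedged illustration of Protocol 2, the sketch below computes the PLV matrix for one window and unfolds its upper triangle into a feature vector. It assumes the input has already been band-pass filtered; the function name `plv_features` and the synthetic three-channel window are hypothetical and serve only to make the example self-contained.

```python
import numpy as np
from scipy.signal import hilbert

def plv_features(window):
    """Compute PLV for a window of shape (n_channels, n_samples).

    Returns the symmetric PLV matrix and its upper triangle as a vector.
    """
    phase = np.angle(hilbert(window, axis=-1))     # instantaneous phase
    unit = np.exp(1j * phase)                      # unit-length phasors
    n_samples = window.shape[1]
    # Entry (m, n) equals |mean over t of exp(i * (phi_m(t) - phi_n(t)))|.
    plv = np.abs(unit @ unit.conj().T) / n_samples
    upper = np.triu_indices(window.shape[0], k=1)  # unique pairs, no diagonal
    return plv, plv[upper]

# Synthetic window: two phase-locked 6 Hz channels and one noise channel.
sfreq = 250.0
t = np.arange(0, 2, 1 / sfreq)
rng = np.random.default_rng(0)
window = np.vstack([
    np.sin(2 * np.pi * 6 * t),
    np.sin(2 * np.pi * 6 * t + 0.8),
    rng.standard_normal(t.size),
])

plv_matrix, plv_vector = plv_features(window)
print(np.round(plv_matrix, 2))
```

For C channels the feature vector has C(C-1)/2 entries, so high-density montages produce long vectors; feature selection or a restricted channel subset may be worth considering in that case.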

Workflow Visualization: From Raw EEG to SVM Classification

The following diagram illustrates the integrated workflow for feature engineering and SVM-based artifact detection.

The Scientist's Toolkit: Research Reagents and Solutions

Table 2: Essential Materials and Tools for EEG Artifact Detection Research

| Item | Function in Research | Example/Note |

|---|---|---|

| High-Density EEG System | Acquires neural data with high spatial resolution. | 64+ channels; systems with active electrodes reduce environmental noise. |

| Dry-Electrode Wearable EEG | Enables data collection in ecological, real-world settings. | Prone to specific motion artifacts; requires tailored feature extraction [34]. |

| Independent Component Analysis (ICA) | Blind source separation to isolate artifact components. | Used as a preprocessing step to generate inputs for spatial feature extraction [35]. |

| Wavelet Toolbox | Provides algorithms for time-frequency decomposition. | MATLAB Wavelet Toolbox or Python PyWavelets for DWT/CWT analysis [27]. |

| Public EEG Datasets | Benchmarks and validates feature extraction methods. | DEAP, SEED, or artifact-annotated datasets are crucial for training SVMs [33] [36]. |

| SVM Libraries | Provide optimized implementations of the classifier. | LIBSVM, Scikit-learn (Python); an RBF kernel typically performs well. |

Electroencephalography (EEG) is a critical tool in clinical and neuroscientific research for non-invasively monitoring brain activity. However, EEG signals are highly susceptible to artifacts: unwanted signals of ocular (EOG), muscular (EMG), cardiac (ECG), and environmental origin. These artifacts can obscure genuine neural activity and compromise the validity of subsequent analysis. This section provides application notes and protocols for designing, evaluating, and deploying an SVM-based pipeline that identifies and manages artifacts in EEG data. The workflow, which runs from model training and cross-validation through deployment, is intended to give researchers, scientists, and drug development professionals a reliable way to protect the integrity of EEG-derived biomarkers in clinical trials and neurological studies.

SVM Workflow for EEG Artifact Detection

The following diagram illustrates the comprehensive workflow for an SVM-based system designed to detect artifacts in EEG data, integrating data handling, model development, and deployment phases.

This workflow outlines the key stages for developing an SVM model to identify artifacts in EEG signals. The process begins with Raw EEG Data Acquisition, which is often contaminated with noise from various sources [37] [10]. The data then undergoes Signal Preprocessing, which may include bandpass filtering and specialized artifact removal techniques like Ensemble Empirical Mode Decomposition with Fast Independent Component Analysis (EEMD-FastICA) to effectively filter out EOG artifacts and other noise [37].

Subsequently, Feature Extraction is performed to capture discriminative characteristics from the preprocessed signals. For artifact detection, this often involves generating a comprehensive feature vector by integrating time-frequency features (e.g., using Wavelet Packet Transform - WPT) and nonlinear features (e.g., using Sample Entropy - SampEn) [37]. To enhance model efficiency and performance, Feature Selection techniques, such as Gray Wolf Optimization (GWO), can be employed to reduce dimensionality and select the most relevant features [6].

The core of the workflow is SVM Model Training, where a kernel function is selected, and hyperparameters are tuned. The model then undergoes rigorous Model Cross-Validation (e.g., k-Fold or Leave-One-Subject-Out - LOSO) to ensure performance generalizability and avoid overfitting [38] [39]. This is followed by comprehensive Model Evaluation using a suite of metrics like accuracy, precision, recall, F1-score, and AUC-ROC [40].

Finally, the validated model proceeds to Model Deployment, where it can be implemented for real-time artifact detection in wearable EEG systems [10] or for batch processing of recorded data, ultimately outputting Clean EEG Data or a detailed Artifact Report.
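The training and tuning stages of this workflow can be sketched with Scikit-learn as follows. This is an assumption-laden example, not the pipeline used in the cited studies: the feature matrix is synthetic, the hyperparameter grid is arbitrary, and feature selection (e.g., GWO) is left out because Scikit-learn does not provide it.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for extracted features: 200 segments x 10 features,
# with artifact segments (label 1) shifted relative to clean ones (label 0).
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, (140, 10)),
               rng.normal(1.5, 1.0, (60, 10))])
y = np.r_[np.zeros(140, dtype=int), np.ones(60, dtype=int)]

# Scaling lives inside the pipeline so it is re-fit on each training fold,
# which avoids leaking validation statistics into training.
pipe = make_pipeline(StandardScaler(),
                     SVC(kernel="rbf", class_weight="balanced"))

# Illustrative grid only; suitable ranges depend on the feature set.
param_grid = {"svc__C": [0.1, 1, 10],
              "svc__gamma": ["scale", 0.01, 0.1]}

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
search = GridSearchCV(pipe, param_grid, scoring="f1", cv=cv)
search.fit(X, y)

print(search.best_params_)
print(round(search.best_score_, 3))
```

`class_weight="balanced"` is used here because artifact segments are usually the minority class; whether it helps on a given dataset is an empirical question.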

Experimental Protocols & Methodologies

Detailed Protocol: EEMD-FastICA for Ocular Artifact Removal

Objective: To remove ocular (EOG) artifacts from raw EEG signals, resulting in cleaner neural data [37].

- Step 1: Signal Decomposition. Apply Ensemble Empirical Mode Decomposition (EEMD) to the raw multi-channel EEG signal. EEMD adds white noise to the signal multiple times and performs Empirical Mode Decomposition (EMD) on each noisy version, then averages the results to obtain a set of stable Intrinsic Mode Functions (IMFs). This mitigates mode mixing and provides a more robust decomposition than standard EMD [37].

- Step 2: Source Separation. Use Fast Independent Component Analysis (FastICA) on the obtained IMFs. FastICA is a blind source separation algorithm that maximizes the non-Gaussianity of the components to isolate statistically independent sources, effectively separating neural activity from artifact components [37].

- Step 3: Artifact Identification & Reconstruction. Identify the independent components that correspond to EOG artifacts based on their topographic maps and temporal characteristics. Remove these components and reconstruct the signal from the remaining ones to produce a clean EEG signal with minimal EOG contamination [37].
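The source-separation and reconstruction steps can be illustrated with Scikit-learn's `FastICA`. Note the simplifications: the EEMD stage is omitted, so ICA is applied directly to the channel data rather than to IMFs, and the artifact component is picked by correlation with a reference EOG trace instead of by inspecting topographies. The helper name `remove_component_by_reference`, the mixing matrix, and the synthetic sources are all assumptions made for this sketch.

```python
import numpy as np
from sklearn.decomposition import FastICA

def remove_component_by_reference(eeg, reference, n_components, seed=0):
    """Zero the ICA component most correlated with a reference trace.

    eeg: (n_channels, n_samples); reference: (n_samples,).
    Returns the reconstructed data and the index of the removed component.
    """
    ica = FastICA(n_components=n_components, random_state=seed, max_iter=1000)
    comps = ica.fit_transform(eeg.T)               # (n_samples, n_components)
    corr = [abs(np.corrcoef(comps[:, k], reference)[0, 1])
            for k in range(comps.shape[1])]
    bad = int(np.argmax(corr))
    comps[:, bad] = 0.0                            # drop the artifact source
    return ica.inverse_transform(comps).T, bad

# Synthetic data (illustrative only): blink-like pulses plus two rhythms.
rng = np.random.default_rng(0)
sfreq = 250.0
t = np.arange(0, 8, 1 / sfreq)
blink = sum(np.exp(-0.5 * ((t - t0) / 0.08) ** 2) for t0 in (1.0, 3.0, 5.0, 7.0))
alpha = np.sin(2 * np.pi * 10 * t)
beta = np.sin(2 * np.pi * 23 * t)
sources = np.vstack([blink, alpha, beta])

# Hypothetical mixing: the blink projects most strongly onto channel 0.
mixing = np.array([[5.0, 0.5, 0.3],
                   [2.0, 0.8, 0.4],
                   [0.5, 1.0, 0.6],
                   [0.1, 0.7, 1.0]])
eeg = mixing @ sources + 0.05 * rng.standard_normal((4, t.size))
eog_ref = blink + 0.1 * rng.standard_normal(t.size)

cleaned, removed = remove_component_by_reference(eeg, eog_ref, n_components=3)
corr_before = abs(np.corrcoef(eeg[0], blink)[0, 1])
corr_after = abs(np.corrcoef(cleaned[0], blink)[0, 1])
print(round(corr_before, 2), round(corr_after, 2))
```

On real recordings, a dedicated EOG channel may not be available, in which case the topography-based identification described in Step 3 is needed.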

Detailed Protocol: WPT-SampEn Feature Extraction for Fatigue Detection

Objective: To extract a robust set of features from preprocessed EEG signals for classifying driver fatigue states using an SVM [37].

- Step 1: Time-Frequency Decomposition. Perform Wavelet Packet Transform (WPT) on the artifact-reduced EEG signals. WPT provides a more detailed time-frequency representation than standard wavelet transform by decomposing both the approximation and detail coefficients, allowing for finer resolution across all frequency bands of interest (e.g., delta, theta, alpha, beta, gamma) [37].

- Step 2: Nonlinear Feature Calculation. Compute the Sample Entropy (SampEn) from the reconstructed EEG signals. SampEn is a measure of signal complexity and regularity. A decrease in SampEn is often associated with increased mental fatigue, providing a crucial nonlinear feature for the classifier [37].

- Step 3: Feature Vector Construction. Integrate the statistical features from WPT (e.g., energy, variance of coefficients) and the SampEn values into a comprehensive feature vector. This multi-feature fusion approach provides the SVM classifier with both time-frequency and nonlinear dynamic information, significantly improving detection accuracy for fatigue states [37].
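To make the nonlinear feature in Step 2 concrete, here is a small NumPy-only sketch of Sample Entropy. The embedding dimension `m=2` and tolerance of 0.2 times the signal's standard deviation are common defaults but are assumptions here, as is the function name; the WPT energy features from Step 1 are not computed and would be concatenated with this value in practice (for instance using PyWavelets).

```python
import numpy as np

def sample_entropy(x, m=2, r_factor=0.2):
    """Sample Entropy of a 1-D signal (O(N^2) memory; use short windows)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    r = r_factor * x.std()                         # tolerance for a "match"

    def count_matches(length):
        # Use the same N - m start points for both template lengths.
        templates = np.array([x[i:i + length] for i in range(n - m)])
        # Chebyshev distance between every pair of templates.
        dist = np.max(np.abs(templates[:, None, :] - templates[None, :, :]),
                      axis=2)
        # Count unordered pairs within tolerance, excluding self-matches.
        return (np.sum(dist <= r) - len(templates)) / 2

    b = count_matches(m)                           # matches of length m
    a = count_matches(m + 1)                       # matches of length m + 1
    return -np.log(a / b) if a > 0 and b > 0 else np.inf

# A regular rhythm should score lower than white noise.
rng = np.random.default_rng(0)
t = np.arange(500) / 250.0
se_sine = sample_entropy(np.sin(2 * np.pi * 10 * t))
se_noise = sample_entropy(rng.standard_normal(500))
print(se_sine < se_noise)
```

Lower values indicate a more regular signal, which matches the interpretation in Step 2 that SampEn tends to decrease with mental fatigue.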

Protocol: k-Fold Cross-Validation for Model Evaluation

Objective: To obtain a reliable and unbiased estimate of the SVM model's performance on unseen data [41] [39] [42].

- Step 1: Data Partitioning. Randomly shuffle the entire dataset and split it into 'k' equal-sized folds (a common choice is k=5 or k=10). For stratified k-fold, ensure each fold maintains the same proportion of class labels (e.g., artifact vs. clean) as the complete dataset, which is crucial for imbalanced data [41].

- Step 2: Iterative Training and Validation. For each fold 'i' (where i = 1 to k):

  - Use fold 'i' as the validation set.

  - Use the remaining k-1 folds as the training set.

  - Train the SVM model on the training set.

  - Validate the trained model on the validation set and record the chosen performance metric(s) (e.g., accuracy, F1-score) [39].

- Step 3: Performance Averaging. Calculate the final performance estimate by averaging the results from the 'k' iterations. The standard deviation of these results indicates the model's stability across different data subsets [42].
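The protocol above, together with the Leave-One-Subject-Out (LOSO) variant mentioned in the workflow, can be sketched with Scikit-learn's cross-validation utilities. The data are synthetic: six hypothetical subjects, each with its own baseline offset, so that the difference between pooled and subject-wise splitting is visible. Subject counts, feature dimensions, and effect sizes are arbitrary assumptions.

```python
import numpy as np
from sklearn.model_selection import (LeaveOneGroupOut, StratifiedKFold,
                                     cross_val_score)
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic features for 6 subjects x 40 segments x 8 features.
rng = np.random.default_rng(42)
n_subjects, n_per_subject = 6, 40
X_parts, y_parts = [], []
for _ in range(n_subjects):
    y_s = rng.integers(0, 2, n_per_subject)        # 0 = clean, 1 = artifact
    offset = rng.normal(0.0, 1.0, 8)               # subject-specific baseline
    X_s = rng.normal(0.0, 1.0, (n_per_subject, 8)) + offset + 2.0 * y_s[:, None]
    X_parts.append(X_s)
    y_parts.append(y_s)
X = np.vstack(X_parts)
y = np.concatenate(y_parts)
groups = np.repeat(np.arange(n_subjects), n_per_subject)

model = make_pipeline(StandardScaler(), SVC(kernel="rbf"))

# Stratified k-fold: segments from one subject can land in both train and test.
kfold = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
kfold_scores = cross_val_score(model, X, y, cv=kfold, scoring="f1")

# LOSO: every fold holds out all segments from one subject.
loso_scores = cross_val_score(model, X, y, groups=groups,
                              cv=LeaveOneGroupOut(), scoring="f1")

print(f"k-fold F1: {kfold_scores.mean():.3f} +/- {kfold_scores.std():.3f}")
print(f"LOSO   F1: {loso_scores.mean():.3f} +/- {loso_scores.std():.3f}")
```

When segments from the same subject are correlated, pooled k-fold estimates can be optimistic relative to LOSO, which is consistent with the gap between 10-fold and LOSO accuracy reported in [38].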

Performance Metrics & Quantitative Data

Core Model Evaluation Metrics

Table 1: Key classification metrics for evaluating SVM performance in EEG artifact detection.

| Metric | Formula | Interpretation & Use-Case |

|---|---|---|

| Accuracy | (TP+TN)/(TP+TN+FP+FN) | Overall correctness. Best used on balanced datasets [40]. |

| Precision | TP/(TP+FP) | Measures the purity of positive predictions. Crucial when the cost of False Positives (FP) is high (e.g., mistakenly labeling clean EEG as artifact) [40] [42]. |

| Recall (Sensitivity) | TP/(TP+FN) | Measures the completeness of positive predictions. Crucial when the cost of False Negatives (FN) is high (e.g., failing to detect a true artifact) [40] [42]. |

| F1-Score | 2 × (Precision × Recall)/(Precision + Recall) | The harmonic mean of Precision and Recall. Provides a single, balanced metric, especially useful for imbalanced datasets [40] [42]. |

| Specificity | TN/(TN+FP) | Measures the ability to identify negative cases correctly (true negative rate) [40]. |

| AUC-ROC | Area Under the ROC Curve | Measures the model's overall ability to discriminate between classes, independent of the classification threshold [40]. |
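As a worked example of the formulas in Table 1, the snippet below computes each count-based metric from a confusion matrix. The counts (40 true positives, 140 true negatives, 10 false positives, 10 false negatives) are invented for illustration, with "artifact" treated as the positive class. AUC-ROC is not included because it requires continuous decision scores rather than counts.

```python
def classification_metrics(tp, tn, fp, fn):
    """Compute the count-based metrics from Table 1."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
        "specificity": tn / (tn + fp),
    }

# Hypothetical counts for 200 EEG segments (artifact = positive class).
metrics = classification_metrics(tp=40, tn=140, fp=10, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
# accuracy: 0.900, precision: 0.800, recall: 0.800,
# f1: 0.800, specificity: 0.933
```

Here accuracy (0.900) is higher than F1 (0.800) because the majority clean class dominates the count of correct predictions, which is why Table 1 recommends F1 for imbalanced data.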

Reported Performance in EEG Studies

Table 2: Reported performance of SVM and hybrid models in recent EEG analysis studies.

| Study / Application | Methodology | Key Performance Outcome |

|---|---|---|

| Epileptic Seizure Detection [6] | Hybrid SVM-Fuzzy classifier with GWO feature selection | 98.1% Accuracy, 97.8% Sensitivity, 98.4% Specificity |

| Explainable Epilepsy Detection [38] | Feature engineering with kNN classifier (for context) | 99.61% Accuracy (10-fold CV), 79.92% Accuracy (LOSO CV) |

| Driver Fatigue Detection [37] | SVM with WPT and Sample Entropy features | Significant improvement in recognition accuracy compared to single-feature methods |

| Motor Imagery EEG Classification [14] | SVM-enhanced attention mechanism in a deep learning model | Consistent improvements in accuracy, F1-score, and sensitivity |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential computational tools and packages for implementing the SVM workflow.

| Tool / Solution | Function / Purpose | Example / Note |

|---|---|---|

| Signal Preprocessing Toolbox | Filtering, artifact removal, and basic time-frequency analysis. | EEGLAB, MNE-Python, FieldTrip (MATLAB) |

| Feature Extraction Library | Calculating complex, nonlinear, and entropy-based features. | PyEntropy (Python), Nonlinear Measures Toolbox |

| Machine Learning Framework | Implementing SVM, cross-validation, and hyperparameter tuning. | Scikit-learn (Python): provides SVC, cross_val_score, GridSearchCV [39] |

| Optimization Algorithm | For feature selection and hyperparameter optimization. | Gray Wolf Optimization (GWO), Goose Optimization Algorithm (GOA) [6] |