Deep Learning vs. Traditional Methods for EEG Artifact Removal: A Comprehensive Analysis for Biomedical Research

Abstract

This article provides a systematic comparison of deep learning (DL) and traditional signal processing techniques for electroencephalography (EEG) artifact removal, a critical preprocessing step in neuroscience and clinical diagnostics. Tailored for researchers and drug development professionals, it explores the foundational principles, methodological advances, and practical challenges in the field. We detail how DL models like CNNs, GANs, and LSTMs overcome limitations of traditional methods such as ICA and wavelet transforms, particularly for complex artifacts in mobile and wearable EEG. The content covers performance validation metrics, optimization strategies for real-world applications, and a forward-looking perspective on integrating these technologies to enhance the reliability of brain data in biomedical research.

The EEG Artifact Problem: From Classic Challenges to Modern Deep Learning Solutions

Electroencephalography (EEG) is a fundamental tool in neuroscience and clinical diagnostics, providing unparalleled temporal resolution for monitoring brain activity. However, the utility of EEG is critically dependent on signal quality, which is perpetually threatened by various artifacts that can obscure neural information. Artifacts are unwanted signals originating from multiple sources, broadly categorized as physiological (from the subject's body), motion-related (from movement), and technical (from equipment or environment). The expansion of EEG into real-world applications through wearable devices has further exacerbated artifact challenges, as uncontrolled environments and subject mobility introduce additional noise sources. Effective artifact management is therefore not merely a preprocessing step but a pivotal determinant of data validity, influencing applications ranging from clinical diagnosis to brain-computer interfaces (BCIs). This guide systematically defines the EEG artifact landscape, objectively compares the performance of traditional and deep learning removal methods, and provides experimental protocols to inform researcher selection for specific applications.

Defining the Artifact Landscape: A Taxonomy of EEG Contaminants

EEG artifacts can be classified based on their origin, characteristics, and impact on the signal. Understanding this taxonomy is essential for selecting appropriate removal strategies. The table below catalogs the primary artifact types, their sources, and key identifying features.

Table 1: Taxonomy of Common EEG Artifacts

| Artifact Category | Specific Type | Origin/Source | Key Characteristics | Impact on EEG Signal |
|---|---|---|---|---|
| Physiological | Ocular Artifacts [1] [2] | Eye blinks and movements (corneo-retinal dipole, eyelid movement) | High-amplitude, slow deflections (3-15 Hz); Frontally dominant | Overwhelms theta/alpha bands; obscures event-related potentials |
| Physiological | Muscle Artifacts (EMG) [3] [1] | Head, neck, jaw muscle contractions (talking, swallowing) | High-frequency bursts (>13 Hz to >200 Hz); broad spectral distribution | Masks beta/gamma activity; mimics epileptic spikes |
| Physiological | Cardiac Artifacts [1] | Heartbeat (pulse, ECG) | Periodic waveform (~1.2 Hz for pulse); characteristic spike pattern | Can be mistaken for pathological slow waves or spike-wave complexes |
| Motion | Head Movement [4] | Vertical head movements during walking/gait | Baseline shifts; periodic oscillations | Alters signal baseline and amplitude, corrupting steady-state potentials |
| Motion | Electrode Motion [4] | Displacement from scalp due to cable sway, heel strike | Sudden, high-amplitude transients (gait-related amplitude bursts) | Creates spike-like artifacts that mimic neural activity |
| Technical/Environmental | Powerline Interference [1] [5] | Alternating current from mains electricity | 50/60 Hz sinusoidal oscillation and harmonics | Obscures high-frequency neural activity |
| Technical/Environmental | Instrument Artifacts [1] | Faulty electrodes, high impedance, cable movements | Irregular, non-physiological patterns, sharp transients | Can cause signal dropouts or localized noise |

Diagram 1: A hierarchical taxonomy of common EEG artifacts, showing primary categories and their characteristic signal features.

Traditional vs. Deep Learning Approaches: A Comparative Analysis

Artifact removal pipelines generally consist of two phases: detection and removal. Traditional methods often rely on strong statistical or mathematical assumptions about the signal, while deep learning (DL) approaches learn these features directly from data.

Core Methodologies and Workflows

Traditional Workflow: Traditional methods typically involve a sequential process of signal decomposition, component identification, and reconstruction.

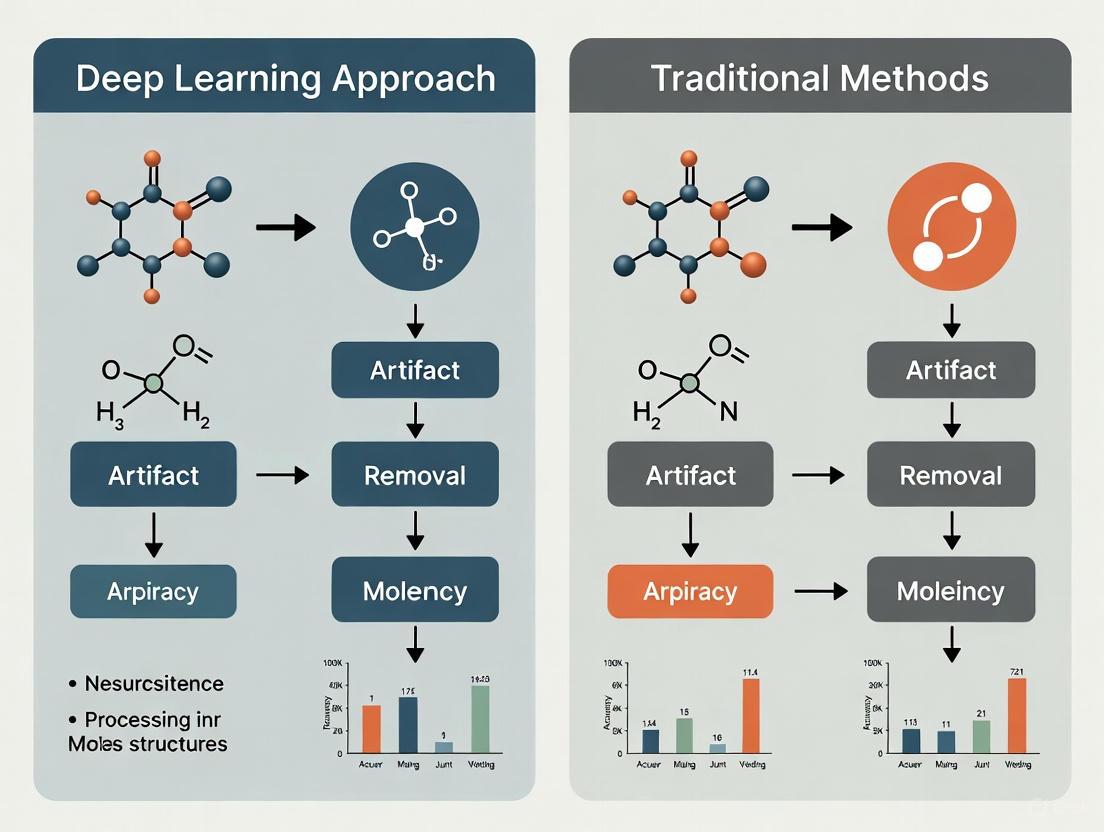

Diagram 2: The standard workflow for traditional artifact removal methods, a sequential, component-based process.

Deep Learning Workflow: DL models, particularly those using an end-to-end structure, learn to map contaminated input signals directly to cleaned outputs.

Diagram 3: The end-to-end workflow for deep learning-based artifact removal, showing the direct mapping learned during supervised training.
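The end-to-end idea can be illustrated with a deliberately tiny example. The sketch below is not any of the cited architectures: it assumes toy sinusoid windows as a stand-in for EEG, Gaussian noise as a stand-in for artifacts, and a one-hidden-layer network trained with plain gradient descent in NumPy. The names `make_pairs`, `train_denoiser`, and `denoise` are hypothetical.

```python
import numpy as np

def make_pairs(rng, n, length=32, noise_std=0.5):
    """Toy (contaminated, clean) window pairs: sinusoids plus Gaussian noise."""
    t = np.arange(length)
    freq = rng.uniform(0.05, 0.15, size=(n, 1))    # cycles per sample
    phase = rng.uniform(0.0, 2.0 * np.pi, size=(n, 1))
    clean = np.sin(2.0 * np.pi * freq * t + phase)
    noisy = clean + noise_std * rng.standard_normal(clean.shape)
    return noisy, clean

def denoise(params, x):
    """Forward pass: contaminated window -> estimated clean window."""
    W1, b1, W2, b2 = params
    return np.tanh(x @ W1 + b1) @ W2 + b2

def train_denoiser(rng, noisy, clean, hidden=16, lr=0.1, steps=500):
    """Fit the mapping noisy -> clean by minimizing mean squared error."""
    d = noisy.shape[1]
    W1 = 0.1 * rng.standard_normal((d, hidden))
    b1 = np.zeros(hidden)
    W2 = 0.1 * rng.standard_normal((hidden, d))
    b2 = np.zeros(d)
    losses = []
    for _ in range(steps):
        h = np.tanh(noisy @ W1 + b1)
        err = (h @ W2 + b2) - clean
        losses.append(float(np.mean(err ** 2)))
        # Backpropagate the MSE loss through both layers.
        g_out = 2.0 * err / err.size
        g_W2 = h.T @ g_out
        g_b2 = g_out.sum(axis=0)
        g_h = (g_out @ W2.T) * (1.0 - h ** 2)
        g_W1 = noisy.T @ g_h
        g_b1 = g_h.sum(axis=0)
        W1 -= lr * g_W1
        b1 -= lr * g_b1
        W2 -= lr * g_W2
        b2 -= lr * g_b2
    return (W1, b1, W2, b2), losses

rng = np.random.default_rng(0)
noisy, clean = make_pairs(rng, n=256)
params, losses = train_denoiser(rng, noisy, clean)
print(f"training MSE: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

The point of the sketch is the training signal: the network only ever sees contaminated inputs and clean targets, with no explicit decomposition or component selection step. Published models replace the small dense network with CNN, LSTM, GAN, or transformer components.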

Quantitative Performance Comparison

The following tables synthesize experimental data from recent studies to compare the performance of various artifact removal methods across different artifact types.

Table 2: Performance Comparison for Ocular and Muscle Artifact Removal

| Method | Category | Artifact Type | Key Metric Results | Experimental Context |
|---|---|---|---|---|
| Regression-based [1] [2] | Traditional | Ocular | N/A | Requires reference EOG channel; performance drops without reference; simple but limited. |
| ICA [3] [1] | Traditional | Ocular, Muscle | N/A | Effective with high-channel counts (>40); requires manual inspection; struggles with low-density wearable EEG. |
| Wavelet Transform [3] | Traditional | Ocular, Muscle | N/A | Commonly used with thresholding; integrated in many pipelines. |
| AnEEG (LSTM-GAN) [6] | Deep Learning | Muscle, Ocular, Environmental | Lower NMSE, RMSE; Higher CC, SNR, SAR vs. wavelet | Outperformed wavelet decomposition; strong agreement with ground truth. |
| CLEnet (CNN-LSTM) [5] | Deep Learning | EMG, EOG, Mixed | SNR: 11.50 dB, CC: 0.93 (Mixed Artifacts) | Excelled in removing mixed artifacts; effective on multi-channel data with unknown artifacts. |

Table 3: Performance Comparison for Motion and Complex Artifacts

| Method | Category | Artifact Type | Key Metric Results | Experimental Context |
|---|---|---|---|---|
| ASR [3] [2] | Traditional | Ocular, Movement, Instrumental | N/A | Widely applied; detects and reconstructs artifact subspaces; suitable for real-time. |
| Motion-Net (CNN) [4] | Deep Learning | Motion | Artifact Reduction (η): 86% ±4.13; SNR Improvement: 20 ±4.47 dB | Subject-specific model; used Visibility Graph features for accuracy on smaller datasets. |
| M4 (State Space Models) [7] | Deep Learning | tACS, tRNS | Best RRMSE, CC for complex tACS/tRNS | Multi-modular network excelled at removing complex transcranial stimulation artifacts. |
| Complex CNN [7] | Deep Learning | tDCS | Best RRMSE, CC for tDCS | Convolutional network performed best on direct current stimulation artifacts. |

Experimental Protocols and Benchmarking

To ensure fair and reproducible comparisons, researchers rely on standardized experimental protocols and benchmark datasets.

Common Experimental Protocols

- Semi-Synthetic Data Generation [6] [7] [5]: This widespread protocol artificially contaminates clean EEG segments with recorded artifact signals (e.g., EOG, EMG), which provides a known ground truth for quantitative evaluation. Key steps include:

  - Acquiring clean EEG and separate artifact signals.

  - Linearly or non-linearly mixing them at controlled signal-to-noise ratios (SNRs).

  - Using the original clean EEG as the ground truth for training and validation.

- Real Data with Expert Annotation [4]: In this protocol, real EEG data is collected while subjects perform artifact-inducing actions (e.g., walking, blinking). The ground truth is established from artifact-free intervals or from the output of established artifact removal techniques (e.g., ICA), verified by expert annotation.

- Performance Metrics: Standard quantitative metrics include the normalized mean squared error (NMSE), root mean squared error (RMSE), relative RMSE (RRMSE), correlation coefficient (CC), signal-to-noise ratio (SNR), and signal-to-artifact ratio (SAR), as reported in Tables 2 and 3.
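The linear mixing step of the semi-synthetic protocol can be sketched as follows. This is a minimal illustration, assuming SNR is defined as the power ratio of clean EEG to scaled artifact in decibels (individual benchmarks may define it differently, for example via RMS amplitudes); the function name `mix_at_snr` is hypothetical.

```python
import numpy as np

def mix_at_snr(clean, artifact, snr_db):
    """Return clean + scale * artifact, with scale chosen to hit snr_db.

    Assumes SNR_dB = 10 * log10(P_clean / P_scaled_artifact),
    where P is the mean squared amplitude of the segment.
    """
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    scale = np.sqrt(p_clean / (p_artifact * 10.0 ** (snr_db / 10.0)))
    return clean + scale * artifact, scale

# Toy example: a 10 Hz rhythm contaminated by a slow, blink-like drift.
fs = 256
t = np.arange(2 * fs) / fs
clean = np.sin(2 * np.pi * 10 * t)
artifact = np.exp(-((t - 1.0) ** 2) / 0.02)
contaminated, scale = mix_at_snr(clean, artifact, snr_db=-3.0)
```

Because `clean` is kept alongside `contaminated`, every downstream metric can be computed against an exact reference, which is the main appeal of this protocol.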

Table 4: Essential Resources for EEG Artifact Removal Research

| Resource/Solution | Function/Role in Research | Example Instances |
|---|---|---|
| Public Benchmark Datasets | Provides standardized data for training and fair comparison of algorithms. | EEGdenoiseNet [5], MIT-BIH Arrhythmia Database [5], PhysioNet Motor/Imagery Dataset [6] |
| Deep Learning Frameworks | Provides the software environment to build, train, and test complex neural network models. | TensorFlow, PyTorch |
| Signal Processing Toolkits | Offers implementations of traditional algorithms for preprocessing and baseline comparisons. | EEGLAB (ICA), ASR, FieldTrip |
| Semi-Synthetic Data Pipelines | Enables controlled validation of removal techniques by providing a known ground truth. | Custom pipelines using clean EEG + artifact libraries (EOG, EMG, ECG) [5] |

The artifact landscape in EEG is diverse, necessitating a nuanced approach to removal. Traditional methods like ICA, regression, and wavelet transforms remain effective for specific, well-defined artifacts, particularly in high-channel-count lab settings. However, their reliance on manual intervention, specific assumptions, and high channel counts limits their efficacy for the low-density, dynamic environments of wearable EEG.

Deep learning approaches represent a paradigm shift, demonstrating superior performance in handling complex artifacts like motion and mixed noise, adapting to unknown artifacts, and operating automatically in an end-to-end fashion. Models like CLEnet [5] and Motion-Net [4] show remarkable versatility and accuracy. The current consensus indicates that while traditional methods are sufficient for controlled settings, deep learning is increasingly critical for real-world, mobile applications. Future research will likely focus on developing more efficient, explainable DL models that require less data and are robust across diverse populations and recording conditions, further bridging the gap between laboratory research and real-world brain monitoring.

In both clinical diagnostics and brain-computer interface (BCI) applications, the presence of artifacts—unwanted signals that obscure data of interest—poses significant challenges to accuracy, reliability, and safety. Artifacts stem from diverse sources, including patient movement, equipment limitations, and environmental interference, and they can severely degrade the performance of medical imaging and neural signal processing systems. The pursuit of robust artifact removal methods has emerged as a critical research frontier, characterized by a fundamental tension between traditional signal processing techniques and modern deep learning approaches. This article examines the impact of artifacts across medical domains and provides a comparative analysis of removal methodologies, emphasizing experimental data and protocols to guide researchers and developers in selecting optimal strategies for their specific applications.

The Impact of Artifacts in Medical Imaging and Neural Data

Artifacts in Medical Imaging

In medical imaging, artifacts can lead to misdiagnosis, false positives, and false negatives. A recent systematic evaluation of Vision-Language Models (VLMs) on medical images containing artifacts revealed significant performance degradation. On original, unaltered images, VLMs achieved moderate accuracy: 0.645 for brain MRI, 0.602 for optical coherence tomography (OCT), and 0.604 for chest X-ray applications. However, the introduction of weak artifacts (images that are partially obscured but still interpretable) reduced accuracy by 3.34% (MRI), 9.06% (OCT), and 10.46% (X-ray) [8]. Furthermore, these models demonstrated a poor ability to identify strong artifacts (severely distorted, ungradable images), with detection rates as low as 0.128 for OCT and 0.115 for X-ray images [8]. This indicates that advanced AI models are not yet robust enough to perform reliably on artifact-laden medical images, a critical requirement for clinical deployment.

Artifacts in Brain-Computer Interfaces and EEG

For Brain-Computer Interfaces (BCIs) and electroencephalography (EEG), artifacts are a central problem. BCIs, which enable communication between the brain and external devices, are particularly vulnerable. Artifacts can lead to erroneous interpretations, poor model fitting, and subsequently reduced online performance [9]. This is especially critical for communication BCIs (cBCIs) designed for individuals with severe motor disabilities, where accuracy is paramount [9].

The amplitude and nature of EEG signals make them highly susceptible to contamination. Ocular artifacts from eye blinks can have amplitudes up to ten times greater than the underlying neural signal, while muscle activity introduces noise across a wide frequency range (0–200 Hz), distorting critical beta and gamma brain waves [10]. This contamination hinders the accurate analysis of brain activity, potentially leading to false alarms in clinical settings or errors in BCI control [10].

Comparative Analysis: Traditional vs. Deep Learning Artifact Removal

The evolution of artifact removal techniques has progressed from traditional model-based methods to data-driven deep learning approaches. The table below summarizes the core characteristics of these two paradigms.

| Feature | Traditional Methods | Deep Learning Methods |
|---|---|---|
| Core Principle | Relies on statistical assumptions and linear signal processing [10]. | Learns complex, non-linear mappings from noisy to clean data [10]. |
| Example Techniques | Regression, Independent Component Analysis (ICA), Wavelet Transform, Adaptive Filtering [10] [6]. | Convolutional Neural Networks (CNNs), Transformers, Autoencoders, Generative Adversarial Networks (GANs) [7] [11] [10]. |
| Key Strengths | Well-understood; computationally efficient for some applications [10]. | Superior at handling complex, non-stationary artifacts; end-to-end learning without manual feature engineering [7] [10]. |
| Major Limitations | Linear assumptions may not hold for real data; often requires manual parameter tuning and intervention [10]. | High computational cost; requires large datasets; "black box" nature can reduce interpretability [10]. |
| Generalizability | Often limited, as performance is tied to specific statistical assumptions [10]. | Higher potential, but can be dataset-specific; a key research challenge [10]. |

Performance Comparison of Specific Methods

Experimental data from recent studies allows for a direct comparison of the quantitative performance of various artifact removal methods. The following tables consolidate results from key experiments in EEG and MRI domains.

Table 1: Comparative Performance of Deep Learning Models on EEG Denoising (Semi-Synthetic Data)

| Model | Stimulation Type | Key Metric | Performance | Reference / Context |
|---|---|---|---|---|
| Complex CNN | tDCS | RRMSE (Temporal) | Best Performance | [7] |
| M4 (SSM-based) | tACS | RRMSE (Temporal) | Best Performance | [7] |
| M4 (SSM-based) | tRNS | RRMSE (Temporal) | Best Performance | [7] |
| ART (Transformer) | Multi-Artifact | MSE / SNR | Surpassed other DL models | [11] |
| AnEEG (GAN-LSTM) | Multi-Artifact | NMSE / RMSE / CC | Lower error, higher correlation than wavelet methods | [6] |
| GCTNet (GAN-CNN-Transformer) | Multi-Artifact | RRMSE / SNR | 11.15% reduction in RRMSE, 9.81 improvement in SNR | [6] |

Table 2: Performance of a Deep Learning Model on Knee MRI Motion Artifact Removal

| Model | Input Type | RMSE | PSNR | SSIM | Reference |
|---|---|---|---|---|---|
| De-Artifact Diffusion Model | Motion-corrupted Knee MRI | 11.44 ± 0.12 | 33.23 ± 0.22 | 0.968 ± 0.002 | [12] |
| ESR (Comparison Algorithm) | Motion-corrupted Knee MRI | 14.87 ± 0.11 | 30.85 ± 0.19 | 0.943 ± 0.003 | [12] |

The data shows that the performance of deep learning models can be highly dependent on the specific application and artifact type. For instance, in Transcranial Electrical Stimulation (tES) artifact removal, no single model was best for all stimulation types: Complex CNN excelled for tDCS, while an M4 model based on State Space Models was superior for tACS and tRNS [7]. Furthermore, models like the De-Artifact Diffusion Model for knee MRI demonstrate that deep learning can significantly outperform other advanced algorithms on real-world clinical data [12].

Experimental Protocols and Workflows

To ensure reproducible and valid results, rigorous experimental protocols are essential in artifact removal research. Below are detailed methodologies from key studies.

Protocol for Benchmarking EEG Denoising Models

This protocol, used to evaluate models for removing tES artifacts, highlights the use of semi-synthetic data for controlled evaluation [7].

- Step 1: Dataset Creation. A semi-synthetic dataset is created by combining clean, artifact-free EEG recordings with synthetically generated tES artifacts (simulating tDCS, tACS, and tRNS). This provides a known ground truth for rigorous evaluation.

- Step 2: Model Training. Multiple artifact removal models (eleven in the cited study) are trained on the generated dataset. The model's task is to learn the mapping from the noisy (EEG + artifact) signal to the clean EEG signal.

- Step 3: Quantitative Evaluation. Model performance is evaluated using metrics calculated against the known ground truth. Key metrics include:

  - Relative Root Mean Squared Error (RRMSE): Assesses accuracy in both temporal and spectral domains.

  - Correlation Coefficient (CC): Measures the linear relationship between the cleaned signal and the ground truth.
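The two metrics in Step 3 can be computed as in the sketch below. It assumes common definitions rather than the exact ones from the cited study: temporal RRMSE as the RMS error divided by the RMS of the ground truth, spectral RRMSE as the same ratio applied to a simple FFT-based power spectrum, and CC as the Pearson correlation. The function names are hypothetical.

```python
import numpy as np

def rms(x):
    """Root mean square of a 1-D array."""
    return np.sqrt(np.mean(x ** 2))

def rrmse_temporal(estimate, clean):
    """RMS of the time-domain error, relative to the RMS of the ground truth."""
    return float(rms(estimate - clean) / rms(clean))

def rrmse_spectral(estimate, clean):
    """Same ratio computed on power spectra (plain periodogram, an assumption)."""
    psd_est = np.abs(np.fft.rfft(estimate)) ** 2
    psd_clean = np.abs(np.fft.rfft(clean)) ** 2
    return float(rms(psd_est - psd_clean) / rms(psd_clean))

def correlation_coefficient(estimate, clean):
    """Pearson correlation between the cleaned signal and the ground truth."""
    return float(np.corrcoef(estimate, clean)[0, 1])

# Toy usage: a slightly noisy estimate of a 10 Hz sinusoid.
rng = np.random.default_rng(0)
t = np.arange(512) / 256.0
clean = np.sin(2 * np.pi * 10 * t)
estimate = clean + 0.1 * rng.standard_normal(clean.shape)
print(rrmse_temporal(estimate, clean),
      rrmse_spectral(estimate, clean),
      correlation_coefficient(estimate, clean))
```

Lower RRMSE and higher CC both indicate a closer match to the ground truth. Studies often estimate the spectrum with Welch's method instead of a raw periodogram, so absolute spectral values are not comparable across implementations.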

Protocol for Knee MRI Motion Artifact Removal

This protocol outlines a supervised deep learning approach for a clinically relevant problem [12].

- Step 1: Paired Data Collection. The model is trained using a dataset of 90 patients, each with two sets of images: one with motion artifacts and a corresponding "ground truth" image without artifacts, acquired via immediate rescanning.

- Step 2: Model Construction and Training. A supervised conditional diffusion model is constructed. This model is trained to learn the conditional distribution of clean images given the artifact-corrupted input.

- Step 3: Validation and Testing. The model is tested on internal and external test datasets from different time periods and hospitals to assess generalizability. Performance is evaluated with:

  - Objective Metrics: Root Mean Square Error (RMSE), Peak Signal-to-Noise Ratio (PSNR), and Structural Similarity Index (SSIM).

  - Subjective Assessment: Clinical experts rate the quality of the output images.
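Two of the objective image metrics are simple enough to sketch directly. The code below assumes the standard definitions of RMSE and PSNR; the `data_range` parameter (the maximum possible intensity) is an assumption, since the intensity scale used in the cited study is not stated here. SSIM is omitted because it requires a windowed computation that is better taken from an established image-processing library.

```python
import numpy as np

def image_rmse(estimate, reference):
    """Root mean square error between two images of equal shape."""
    diff = np.asarray(estimate, dtype=float) - np.asarray(reference, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))

def image_psnr(estimate, reference, data_range=255.0):
    """Peak signal-to-noise ratio in dB: 20 * log10(data_range / RMSE)."""
    err = image_rmse(estimate, reference)
    if err == 0.0:
        return float("inf")  # identical images
    return float(20.0 * np.log10(data_range / err))

# Toy usage: a synthetic "rescanned" image and a noisy restoration of it.
rng = np.random.default_rng(0)
reference = rng.uniform(0.0, 255.0, size=(64, 64))
restored = reference + 5.0 * rng.standard_normal(reference.shape)
print(image_rmse(restored, reference), image_psnr(restored, reference))
```

The rescanned image from Step 1 plays the role of `reference`, so both metrics measure how close the restored image is to an artifact-free acquisition of the same patient.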

Workflow Visualization

The following diagram illustrates a generalized supervised deep learning workflow for artifact removal, as applied in both EEG and MRI contexts.

Diagram: Supervised Deep Learning for Artifact Removal

The Scientist's Toolkit: Key Research Reagents and Materials

Successful experimentation in this field relies on specific data, software, and hardware. The following table details essential "research reagents" for developing and benchmarking deep learning-based artifact removal methods.

Table 3: Essential Research Reagents for Deep Learning-Based Artifact Removal

| Item Name | Function / Application | Specific Examples / Notes |
|---|---|---|
| Semi-Synthetic Datasets | Provides a known ground truth for controlled model training and evaluation. | Clean EEG + synthetic tES artifacts [7]; Clean EEG mixed with EOG/EMG artifacts [6]. |
| Paired Real-World Datasets | Enables supervised training on real artifacts where ground truth is available. | Paired motion-corrupted and immediately rescanned knee MRI [12]. |
| Public EEG Datasets | Serves as benchmark for training and evaluating generalizability. | PhysioNet Motor/Imagery Dataset [6]; EEG Eye Artefact Dataset [6]. |
| Independent Component Analysis (ICA) | Used for generating pseudo clean-noisy training data pairs or for pre-processing. | Enhanced training data generation for the ART transformer model [11]. |
| Deep Learning Frameworks | Provides the programming environment for building and training complex models. | TensorFlow, PyTorch. |
| High-Performance Computing (HPC) | Essential for training large models like transformers and diffusion models. | GPU clusters for reducing training time of deep neural networks. |
| Quantitative Evaluation Metrics | Standardized for objective performance comparison across studies. | RRMSE, CC, SNR, SAR (for EEG) [7] [6]; RMSE, PSNR, SSIM (for MRI) [12]. |

Artifacts present a profound and multi-faceted problem that directly impacts the accuracy of clinical diagnoses and the reliability of emerging technologies like BCIs. The research community's response has been a rapid transition from traditional, assumption-heavy methods toward powerful, data-driven deep learning models. Evidence shows that while no single solution is universally optimal, deep learning approaches—from CNNs and GANs to the latest transformers and diffusion models—consistently push the performance frontier, offering superior artifact suppression and signal restoration. However, challenges remain in computational efficiency, model interpretability, and real-world generalizability. The future of artifact removal lies in addressing these challenges through hybrid architectures, self-supervised learning, and a steadfast commitment to developing solutions with high online parity and clinical translatability.

The analysis of Electroencephalography (EEG) signals is fundamental to both neuroscience research and clinical diagnostics, providing unparalleled insights into brain function with millisecond-level temporal resolution [6]. However, the journey from raw EEG recordings to interpretable neural data is fraught with a major obstacle: artifacts. These unwanted signals, originating from biological sources like eye movements (EOG) and muscle activity (EMG), or environmental sources such as powerline interference, can severely obscure genuine brain activity, leading to misinterpretation and flawed conclusions [6] [13]. For decades, the field has relied on a suite of established traditional methods to combat this problem. Techniques based on regression, filtering, Blind Source Separation (BSS) such as Independent Component Analysis (ICA) and Principal Component Analysis (PCA), and the Wavelet Transform have formed the cornerstone of EEG preprocessing pipelines [14] [15].

These traditional methods are characterized by their strong mathematical foundations, relative computational simplicity, and well-understood behavior. Before the advent of deep learning, they were the definitive tools for enhancing EEG signal quality. This guide provides an objective comparison of these established techniques, detailing their operational principles, experimental performance, and suitability for different artifact types. This establishes a crucial baseline for understanding their enduring value and limitations within the broader thesis of deep learning versus traditional approaches in artifact removal research.

Methodologies and Experimental Protocols

A rigorous evaluation of artifact removal methods requires standardized experimental protocols and performance metrics. The following section outlines the core methodologies of traditional techniques and describes how they are benchmarked in experimental settings.

Core Methodological Principles

- Regression-Based Methods: These techniques employ a reference signal, typically from dedicated EOG or EMG channels, to model and subtract the artifact's contribution from the EEG data. The protocol involves calculating a transfer function between the reference artifact and the contaminated EEG channels, which is then used to estimate and remove the artifact component [14].

- Filtering Techniques (Bandpass/Adaptive):

  - Bandpass Filtering: A straightforward protocol where specific frequency bands associated with artifacts are attenuated. For example, a high-pass filter at 0.5 Hz can suppress slow drifts, while a notch filter at 50/60 Hz removes powerline interference [15].

  - Adaptive Filtering: This method uses a reference noise signal and an adaptive algorithm (e.g., Recursive Least Squares - RLS) to dynamically adjust filter coefficients, optimizing noise cancellation even when signal statistics change over time [16] [15].

- Blind Source Separation (BSS):

  - Independent Component Analysis (ICA): ICA is a statistical technique that decomposes multi-channel EEG data into independent components (ICs). The experimental protocol involves classifying these ICs by their temporal, spectral, and spatial patterns, either through visual inspection by a human expert or with an automated algorithm, to identify and remove those corresponding to artifacts [14] [15].

  - Principal Component Analysis (PCA): PCA decomposes the EEG signal into orthogonal components ordered by variance. The protocol assumes that high-variance components are likely artifacts. These components are then discarded before reconstructing the signal, preserving the lower-variance neural data [14].

- Wavelet Transform: This method uses a protocol of signal decomposition and thresholding. The EEG signal is decomposed into different frequency sub-bands using a mother wavelet. A threshold is then applied to the wavelet coefficients to suppress those associated with noise, after which the signal is reconstructed [14] [16].
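Of the techniques listed above, adaptive filtering is the one whose update rule is easiest to misread from prose, so a short sketch may help. The code below is a textbook exponentially weighted RLS noise canceller, not the implementation from the cited studies; the function name `rls_cancel` and the default values for `order`, `lam` (forgetting factor), and `delta` (initial inverse-correlation scale) are assumptions chosen for illustration.

```python
import numpy as np

def rls_cancel(primary, reference, order=4, lam=0.99, delta=100.0):
    """Adaptive noise cancellation with Recursive Least Squares.

    primary:   contaminated EEG channel (1-D array)
    reference: reference noise channel, e.g. EOG (1-D array, same length)
    Returns the a priori error signal, which is the cleaned EEG estimate.
    """
    n = len(primary)
    w = np.zeros(order)            # adaptive FIR filter weights
    P = delta * np.eye(order)      # running inverse correlation matrix
    padded = np.concatenate([np.zeros(order - 1), reference])
    cleaned = np.zeros(n)
    for i in range(n):
        u = padded[i:i + order][::-1]      # latest reference samples, newest first
        Pu = P @ u
        gain = Pu / (lam + u @ Pu)
        e = primary[i] - w @ u             # remove the predicted artifact
        w = w + gain * e
        P = (P - np.outer(gain, Pu)) / lam
        cleaned[i] = e
    return cleaned

# Toy usage: a slow rhythm contaminated by a scaled copy of the reference noise.
rng = np.random.default_rng(0)
t = np.arange(2000)
neural = np.sin(2 * np.pi * 0.01 * t)
reference = rng.standard_normal(t.size)
primary = neural + 2.0 * reference
cleaned = rls_cancel(primary, reference)
```

A forgetting factor below 1 lets the filter track slow changes in how the artifact couples into the EEG channel, at the cost of noisier weight estimates. The approach still depends on a reference channel that is correlated with the artifact and largely uncorrelated with the neural signal.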

Benchmarking and Evaluation Protocols

To quantitatively assess the performance of these methods, researchers use semi-synthetic or real EEG datasets with known ground truths. Common evaluation metrics include [6] [7] [15]:

- Temporal Similarity: Correlation Coefficient (CC) measures the linear relationship between the cleaned signal and the original clean signal. Root Mean Square Error (RMSE) and Relative RMSE (RRMSE) quantify the magnitude of error introduced by the cleaning process.

- Quality Metrics: Signal-to-Noise Ratio (SNR) and Signal-to-Artifact Ratio (SAR) gauge the improvement in signal quality post-processing.

- Classification Accuracy: In applied settings, the ultimate test is whether denoising improves the accuracy of downstream tasks, such as disease diagnosis using classifiers like Support Vector Machines (SVM) and Random Forests [14].
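SNR improvement, as reported for several methods in this guide, can be computed when a clean reference is available. The sketch below assumes the power-ratio definition of SNR in decibels and treats everything that differs from the clean reference as noise; the function names are hypothetical.

```python
import numpy as np

def snr_db(clean, observed):
    """SNR in dB, treating (observed - clean) as the residual noise."""
    noise = observed - clean
    return float(10.0 * np.log10(np.mean(clean ** 2) / np.mean(noise ** 2)))

def snr_improvement_db(clean, contaminated, denoised):
    """Gain in SNR achieved by the denoising step."""
    return snr_db(clean, denoised) - snr_db(clean, contaminated)

# Toy usage: a "denoiser" that leaves 10% of the artifact amplitude behind.
rng = np.random.default_rng(0)
clean = np.sin(2 * np.pi * np.arange(1024) / 64.0)
artifact = rng.standard_normal(clean.shape)
contaminated = clean + artifact
denoised = clean + 0.1 * artifact
gain = snr_improvement_db(clean, contaminated, denoised)
```

Reducing the residual artifact amplitude by a factor of ten corresponds to a 20 dB gain under this definition, which gives a sense of scale for reported improvements.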

The diagram below illustrates a generalized experimental workflow for benchmarking artifact removal methods.

Performance Data and Comparative Analysis

The following tables summarize the experimental performance of traditional methods against deep learning approaches and each other, based on published benchmarks.

Table 1: Comparative Performance of Traditional vs. Deep Learning Methods

| Method Category | Method Name | Artifact Type | Key Performance Metrics (vs. Traditional Baseline) | Reference |
|---|---|---|---|---|
| Traditional (Wavelet) | Wavelet Decomposition | Various | Used as a baseline for comparison. | [6] |
| Deep Learning | AnEEG (LSTM-GAN) | Various | Lower NMSE & RMSE, Higher CC, improvements in SNR and SAR. | [6] |
| Deep Learning | GCTNet | Ocular, EMG | 11.15% reduction in RRMSE, 9.81 improvement in SNR. | [6] |
| Traditional (Hybrid) | EMD-DFA-WPD | Ocular, EMG | Achieved classification accuracy of 98.51% for depression diagnosis. | [14] |
| Traditional (Adaptive) | Deep WVFLN (RLS) | Ocular | MSE: 0.18 μV², RMSE: 0.4242 μV. | [16] |

Table 2: Performance of Methods Specific to tES Artifact Removal

| Method | Stimulation Type | Temporal RRMSE | Spectral RRMSE | Correlation Coefficient (CC) |
|---|---|---|---|---|
| Complex CNN | tDCS | Best Performance | - | - |
| M4 Network (SSM) | tACS | Best Performance | - | - |
| M4 Network (SSM) | tRNS | Best Performance | - | - |
| Traditional Methods (e.g., ICA, Filtering) | tDCS/tACS/tRNS | Variable, generally outperformed by the best DL models. | - | - |

Table 3: Performance of Single-Channel EOG Removal Techniques

| Method | Artifact Type | Relative RMSE (RRMSE) | Correlation Coefficient (CC) |
|---|---|---|---|
| FF-EWT + GMETV | EOG (Eye Blink) | Lower | Higher |
| EMD | EOG (Eye Blink) | Higher | Lower |
| SSA | EOG (Eye Blink) | Higher | Lower |

Analysis of Comparative Performance

- Deep Learning Advantages: As shown in Table 1, modern deep learning models like AnEEG and GCTNet consistently demonstrate superior quantitative performance compared to traditional wavelet techniques, achieving lower error (NMSE, RMSE) and higher signal fidelity (CC, SNR) [6]. Their strength lies in learning complex, non-linear artifact patterns directly from data without requiring manual feature engineering.

- Enduring Strengths of Traditional Methods:

  - Hybrid Models: Traditional hybrid approaches remain highly competitive. The EMD-DFA-WPD model achieved a remarkable 98.51% accuracy in a clinical depression diagnosis task, proving that well-designed traditional pipelines can deliver state-of-the-art results in applied settings [14].

  - Specialized Scenarios: The performance of traditional methods is highly dependent on the artifact type and recording context (Tables 2 & 3). For example, adaptive filters like the WVFLN-RLS are very effective for specific, well-defined noise like ocular artifacts [16].

  - Computational Efficiency: Traditional methods like filtering and wavelet transforms are generally less computationally intensive than deep learning models, making them more suitable for real-time applications on devices with limited processing power [13].

Successful experimentation in EEG artifact removal relies on a suite of key resources, from datasets to software tools.

Table 4: Essential Research Resources for Artifact Removal Studies

| Resource Category | Specific Example | Function and Application |
|---|---|---|
| Public EEG Datasets | PhysioNet Motor/Imagery Dataset | Provides real EEG data for training and benchmarking artifact removal algorithms [6]. |
| Public EEG Datasets | Mendeley EEG Database | Source of clean and artifactual EEG data, used for validating specific methods like ocular artifact removal [16]. |
| Public EEG Datasets | EEG Eye Artefact Dataset | A dedicated dataset for developing and testing methods against ocular artifacts [6]. |
| Benchmarking Tools | ABOT (Artefact removal Benchmarking Online Tool) | An online tool that allows researchers to compare over 120 machine learning and traditional methods for artifact removal from various neuronal signals, complying with FAIR principles [13]. |
| Algorithmic Toolboxes | ICA (e.g., in EEGLAB) | Standard software implementation for running Independent Component Analysis on EEG data [14]. |
| Algorithmic Toolboxes | Wavelet Toolbox (e.g., in MATLAB) | Provides functions for performing wavelet decomposition and thresholding for denoising [14]. |

The established traditional methods of regression, filtering, and blind source separation form a robust, well-understood foundation for EEG artifact removal. While deep learning approaches are emerging as powerful alternatives, often outperforming traditional methods on quantitative metrics, traditional techniques are far from obsolete.

The future of EEG artifact removal lies not in a single victorious approach, but in the strategic combination of traditional and deep learning methods. Hybrid models that leverage the interpretability and efficiency of traditional techniques with the representational power of deep learning hold significant promise. Furthermore, the development of resources like the ABOT benchmarking tool is critical for providing objective, standardized comparisons that guide researchers in selecting the most appropriate method for their specific signal type, artifact, and application context [13]. As the field moves towards more mobile and real-world applications with wearable EEG, the development of efficient, robust, and hybrid denoising pipelines will be more important than ever.

The analysis of electrophysiological and medical imaging data is fundamental to neuroscience research and clinical diagnostics. However, the accurate interpretation of this data is consistently challenged by the presence of artifacts—unwanted signals that obscure genuine biological activity. Traditional approaches to artifact removal have primarily relied on statistical decompositions and linear filtering techniques. While useful in controlled environments, these methods contain inherent limitations rooted in their requirement for manual intervention and their foundational linear assumptions. The emergence of deep learning represents a paradigm shift, offering data-driven approaches that automatically learn to separate artifact from signal without relying on rigid linear models. This guide provides an objective comparison of these methodological approaches, supported by experimental data quantifying their performance across multiple domains.

Defining the Traditional Paradigm and Its Core Limitations

Traditional artifact removal techniques form the historical foundation of signal processing in biomedical data. These methods can be broadly categorized into several classes, each with specific operational principles and applications.

Regression-based methods rely on a reference channel and use a linear transformation to subtract the estimated artifact from the contaminated EEG. However, their performance degrades sharply when no reference signal is available, and recording a dedicated reference channel adds operational difficulty and cost [5]. Filtering methods, including notch filters for powerline interference, have relatively limited applicability because physiological artifacts (like EMG and EOG) overlap substantially in frequency with the useful components of biological signals [5].
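To make the regression idea concrete, the following is a minimal NumPy sketch, not taken from any of the cited studies. It assumes a single recorded reference channel (here a simulated EOG-like drift) and estimates one least-squares propagation coefficient per EEG channel. The function name `regress_out_reference` and the simulated data are illustrative assumptions.

```python
import numpy as np

def regress_out_reference(eeg, ref):
    """Remove the least-squares projection of a reference channel.

    eeg: array of shape (n_channels, n_samples)
    ref: array of shape (n_samples,), e.g. a recorded EOG channel
    Returns the mean-centered, cleaned EEG and the per-channel coefficients.
    """
    ref = ref - ref.mean()
    eeg_centered = eeg - eeg.mean(axis=1, keepdims=True)
    # Per-channel propagation coefficient b = <eeg, ref> / <ref, ref>
    b = eeg_centered @ ref / (ref @ ref)
    return eeg_centered - np.outer(b, ref), b

# Simulated example (assumption): white-noise "neural" data plus a slow drift
rng = np.random.default_rng(0)
n = 2000
neural = rng.standard_normal((3, n))
eog = np.cumsum(rng.standard_normal(n))       # stands in for an EOG recording
true_b = np.array([0.8, 0.4, 0.1])            # how strongly EOG leaks into each channel
contaminated = neural + np.outer(true_b, eog)

cleaned, b_hat = regress_out_reference(contaminated, eog)
```

The sketch also illustrates the limitation noted above: without `ref`, there is nothing to regress against.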

Blind Source Separation (BSS) techniques, including Independent Component Analysis (ICA), Principal Component Analysis (PCA), and Canonical Correlation Analysis (CCA), map artifact-contaminated signals into a new data space through mathematical transformations [3] [10]. These methods then remove components corresponding to artifacts using established criteria or manual intervention, reconstructing the remaining components to obtain clean data [5]. Decomposition techniques like Wavelet Transform, Empirical Mode Decomposition (EMD), and Singular Spectrum Analysis (SSA) break signals into constituent elements for selective artifact removal [15] [10].
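The decompose, reject, and reconstruct pattern shared by these methods can be sketched with PCA, computed here through a NumPy SVD. PCA is used only because it needs no extra library; ICA follows the same three steps with a different decomposition. The rejection rule (absolute correlation with a reference signal above a threshold) is an illustrative stand-in for the "established criteria or manual intervention" mentioned above, and all names and data are assumptions.

```python
import numpy as np

def remove_components_by_reference(eeg, ref, threshold=0.7):
    """PCA-based artifact removal: decompose, drop flagged components, reconstruct.

    eeg: (n_channels, n_samples); ref: (n_samples,) artifact reference.
    A component is dropped when |corr(component, ref)| >= threshold.
    """
    mean = eeg.mean(axis=1, keepdims=True)
    u, s, vt = np.linalg.svd(eeg - mean, full_matrices=False)
    # Rows of vt are the component time courses
    corr = np.array([abs(np.corrcoef(v, ref)[0, 1]) for v in vt])
    keep = corr < threshold
    cleaned = (u[:, keep] * s[keep]) @ vt[keep] + mean
    return cleaned, corr

# Simulated example (assumption): a strong 1 Hz artifact spread over 4 channels
rng = np.random.default_rng(1)
fs, n = 250, 2500
t = np.arange(n) / fs
artifact = 10.0 * np.sin(2 * np.pi * 1.0 * t)
weights = np.array([1.0, 0.8, 0.5, 0.2])
neural = rng.standard_normal((4, n))
contaminated = neural + np.outer(weights, artifact)

cleaned, corr = remove_components_by_reference(contaminated, artifact)
mse_before = np.mean((contaminated - neural) ** 2)
mse_after = np.mean((cleaned - neural) ** 2)
```

Removing a whole component also discards whatever neural activity lies along it, which is why the error after cleaning does not drop to zero in this example.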

The core limitations of these traditional approaches emerge from two fundamental constraints: their reliance on manual intervention and their foundation in linear assumptions. ICA and other BSS methods frequently require visual inspection and manual selection of components for rejection, introducing subjectivity and hindering automated processing pipelines [5]. Similarly, techniques like EMD and SSA often depend on manual parameter tuning or threshold-based heuristics, limiting their scalability and generalizability across diverse datasets [10].

The mathematical foundation of many traditional methods rests on the assumption that artifacts and biological signals combine in a linear, stationary manner [10]. This linear assumption fails to account for the complex, nonlinear interactions that characterize real-world biological systems, particularly in mobile acquisition environments [10].

Quantitative Performance Comparison: Traditional vs. Deep Learning Approaches

Experimental data from controlled studies provides objective evidence of the performance disparities between traditional and deep learning methods for artifact removal. The following tables summarize key findings across multiple data modalities and artifact types.

Table 1: Performance Comparison of Methods on EEG Artifact Removal Tasks (RRMSE: Relative Root Mean Square Error; CC: Correlation Coefficient)

| Method Category | Specific Method | Artifact Type | Performance Metrics | Key Limitations |

|---|---|---|---|---|

| Traditional BSS | ICA | Ocular, Muscle | Requires manual component selection [5] | Subjective, non-automated, struggles with low-channel counts [3] |

| Traditional Decomposition | Wavelet Transform | EOG | Depends on threshold parameters [10] | May not perfectly reconstruct original signal [15] |

| Traditional Decomposition | EMD | EOG | Suffers from mode mixing [15] | Can lead to loss of important signal components [15] |

| Deep Learning | Complex CNN | tDCS artifacts | Best performance for tDCS [7] | Specialized for specific artifact types [7] |

| Deep Learning | M4 (SSM-based) | tACS, tRNS artifacts | Best for tACS and tRNS [7] [17] | Higher computational complexity [7] |

| Deep Learning | CLEnet | Multi-artifact (EMG, EOG, unknown) | CC: 0.925, RRMSEt: 0.300 [5] | Complex architecture requiring significant data [5] |

| Deep Learning | AnEEG (GAN-LSTM) | Multiple biological artifacts | Lower NMSE/RMSE, higher CC vs. wavelet [6] | Training instability common in GAN architectures [6] |

Table 2: Performance Metrics for Single-Channel EOG Removal Techniques

| Method | Synthetic Data Performance | Real EEG Performance | Applicability to Single-Channel |

|---|---|---|---|

| FF-EWT + GMETV (Proposed) | Lower RRMSE, Higher CC [15] | Improved SAR and MAE [15] | Specifically designed for single-channel (SCL) EEG [15] |

| ICA | Not evaluated | Effective for multi-channel (MCL) EEG [15] | Limited effectiveness for single-channel (SCL) EEG [15] |

| Adaptive Filtering | Requires reference signal [15] | Dependent on reference quality [15] | Applicable but needs reference [15] |

| EMD | Component separation [15] | Mode mixing issues [15] | Applicable but imperfect reconstruction [15] |

Experimental Protocols and Methodologies

To ensure reproducibility and proper interpretation of the comparative data, this section outlines the standard experimental protocols used for evaluating artifact removal methods in the cited studies.

Semi-Synthetic Dataset Creation

A validated approach for rigorous benchmarking involves creating semi-synthetic datasets where clean signals are artificially contaminated with known artifacts, enabling precise ground truth comparisons [7] [5]. For EEG artifact removal studies, researchers typically:

- Source clean EEG segments from publicly available databases like EEGdenoiseNet [5]

- Record or simulate artifact signals (EOG, EMG, ECG, or tES artifacts) [7] [5]

- Linearly mix artifacts with clean EEG at controlled signal-to-noise ratios [5]

- Validate the realism of synthetic datasets against real contaminated recordings [7]
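The linear mixing step in this protocol can be sketched as follows. This is a minimal illustration that assumes the power-based convention SNR(dB) = 20·log10(RMS(clean) / RMS(λ·artifact)); conventions differ between papers, so the definition used by a given benchmark should be checked before comparing numbers. The helper names `rms` and `mix_at_snr` are hypothetical.

```python
import numpy as np

def rms(x):
    """Root mean square of a 1-D signal."""
    return np.sqrt(np.mean(np.square(x)))

def mix_at_snr(clean, artifact, snr_db):
    """Return clean + lam * artifact, with lam chosen to hit the target SNR.

    Assumed convention: snr_db = 20 * log10(rms(clean) / rms(lam * artifact)).
    """
    lam = rms(clean) / (rms(artifact) * 10 ** (snr_db / 20))
    return clean + lam * artifact, lam

# Example with random stand-ins for a clean EEG segment and an artifact segment
rng = np.random.default_rng(2)
clean = rng.standard_normal(512)
artifact = 5.0 * rng.standard_normal(512)

noisy, lam = mix_at_snr(clean, artifact, snr_db=-3.0)
achieved = 20 * np.log10(rms(clean) / rms(noisy - clean))
```

Because the clean segment is kept alongside the mixture, it serves as the ground truth for every metric computed later.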

Performance Evaluation Metrics

Standardized metrics enable objective comparison across methods. The most commonly employed metrics include:

- Temporal Domain Accuracy: Relative Root Mean Square Error in temporal domain (RRMSEt) and Correlation Coefficient (CC) between processed and ground truth signals [7] [5]

- Spectral Domain Accuracy: Relative Root Mean Square Error in frequency domain (RRMSEf) assesses preservation of spectral content [7] [5]

- Signal Quality Indices: Signal-to-Noise Ratio (SNR) and Signal-to-Artifact Ratio (SAR) quantify the effectiveness of artifact suppression [6] [5]

- Clinical Validation: For medical imaging, diagnostic performance compared to ground truth using expert ratings and clinical accuracy measures [12] [18]
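The temporal, spectral, and correlation metrics above can be written compactly. The sketch below is one plausible formulation rather than the exact code of any cited study: the spectral error uses a plain periodogram from `np.fft.rfft` as the power spectral density estimate, which is an assumption (published implementations may use Welch estimates instead), and the function names are illustrative.

```python
import numpy as np

def rms(x):
    return np.sqrt(np.mean(np.square(x)))

def rrmse_temporal(denoised, clean):
    """Relative RMSE in the time domain: RMS(error) / RMS(ground truth)."""
    return rms(denoised - clean) / rms(clean)

def periodogram(x):
    """Simple PSD estimate (assumption: unwindowed periodogram)."""
    return np.abs(np.fft.rfft(x)) ** 2 / len(x)

def rrmse_spectral(denoised, clean):
    """Relative RMSE between the PSDs of the denoised and clean signals."""
    return rms(periodogram(denoised) - periodogram(clean)) / rms(periodogram(clean))

def correlation_coefficient(denoised, clean):
    """Pearson correlation between denoised output and ground truth."""
    return np.corrcoef(denoised, clean)[0, 1]

def output_snr_db(denoised, clean):
    """SNR of the output in dB, treating (denoised - clean) as residual noise."""
    return 10 * np.log10(np.sum(clean ** 2) / np.sum((denoised - clean) ** 2))

# Example: a sinusoid as ground truth and a slightly perturbed "denoised" output
clean = np.sin(np.linspace(0, 20, 500))
denoised = clean + 0.1 * np.cos(np.linspace(0, 300, 500))

scores = {
    "RRMSEt": rrmse_temporal(denoised, clean),
    "RRMSEf": rrmse_spectral(denoised, clean),
    "CC": correlation_coefficient(denoised, clean),
    "SNR_dB": output_snr_db(denoised, clean),
}
```

Lower RRMSE and higher CC and SNR indicate output closer to the ground truth.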

Implementation Details for Deep Learning Methods

Training deep learning models for artifact removal follows a supervised learning paradigm:

- Network Architecture Selection: Choosing appropriate architectures (CNN, LSTM, GAN, Transformer, or hybrid) based on the signal characteristics [10]

- Loss Function Optimization: Typically using Mean Squared Error (MSE) to minimize differences between denoised output and ground truth [10]

- Parameter Optimization: Utilizing optimizers like Adam, RMSProp, or Stochastic Gradient Descent to update network weights [10]

- Validation Strategy: Employing k-fold cross-validation or hold-out validation sets to prevent overfitting and ensure generalizability [19]
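The supervised loop described above (MSE loss, Adam updates, hold-out validation) can be illustrated without a deep learning framework. The sketch below trains a deliberately tiny linear denoiser in NumPy with a hand-written Adam update. The linear model, the synthetic sinusoid data, and all hyperparameters are assumptions chosen for brevity; a real study would substitute a CNN, LSTM, or similar network in TensorFlow or PyTorch.

```python
import numpy as np

rng = np.random.default_rng(0)
L, n_train, n_val = 32, 512, 128          # window length and split sizes (assumed)
t = np.arange(L) / L

def make_pairs(n):
    """Synthetic (noisy, clean) pairs: random-phase sinusoids plus white noise."""
    amp = rng.uniform(0.5, 1.5, size=(n, 1))
    phase = rng.uniform(0, 2 * np.pi, size=(n, 1))
    clean = amp * np.sin(2 * np.pi * 2 * t + phase)
    noisy = clean + 0.5 * rng.standard_normal((n, L))
    return noisy, clean

x_train, y_train = make_pairs(n_train)
x_val, y_val = make_pairs(n_val)          # hold-out set, never used for updates

def mse(w, x, y):
    return np.mean((x @ w - y) ** 2)

w = np.eye(L)                             # start as a pass-through "denoiser"
m, v = np.zeros_like(w), np.zeros_like(w)
lr, beta1, beta2, eps = 0.01, 0.9, 0.999, 1e-8

val_before = mse(w, x_val, y_val)
for step in range(1, 1001):
    # Gradient of the MSE loss with respect to the weight matrix
    grad = 2.0 * x_train.T @ (x_train @ w - y_train) / y_train.size
    # Adam: exponential moving averages of the gradient and its square
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad ** 2
    m_hat = m / (1 - beta1 ** step)       # bias correction
    v_hat = v / (1 - beta2 ** step)
    w -= lr * m_hat / (np.sqrt(v_hat) + eps)
val_after = mse(w, x_val, y_val)
```

Comparing `val_before` and `val_after` on data the optimizer never saw is the simplest form of the hold-out check; k-fold cross-validation repeats the same procedure over several splits.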

Figure 1: Conceptual workflow comparing the fundamental approaches of traditional and deep learning methods for artifact removal, highlighting their core characteristics and resulting limitations or strengths.

The Scientist's Toolkit: Key Research Reagents and Computational Solutions

Table 3: Essential Resources for Advanced Artifact Removal Research

| Resource Category | Specific Tool/Solution | Function/Purpose | Example Applications |

|---|---|---|---|

| Benchmark Datasets | EEGdenoiseNet | Provides standardized clean/artifact pairs for training & evaluation [5] | Method comparison, model validation |

| Computational Frameworks | TensorFlow/PyTorch | Deep learning framework for model development & training [10] | Implementing CNN, LSTM, GAN architectures |

| Traditional Algorithms | ICA, Wavelet Transform, EMD | Baseline traditional methods for performance comparison [15] [10] | Establishing performance benchmarks |

| Evaluation Metrics | RRMSE, CC, SNR, SAR | Quantitative performance assessment [7] [6] [5] | Objective method comparison |

| Specialized Architectures | CNN-LSTM hybrids (CLEnet) | Capture both spatial and temporal features [5] | Multi-channel EEG artifact removal |

| Specialized Architectures | GAN with LSTM (AnEEG) | Adversarial training for artifact generation/removal [6] | Biological artifact removal |

| Specialized Architectures | State Space Models (M4) | Modeling sequential dependencies [7] [17] | tACS and tRNS artifact removal |

Figure 2: Standardized experimental workflow for developing and evaluating deep learning-based artifact removal methods, showing key stages from data preparation through performance validation.

The experimental evidence consistently demonstrates that deep learning approaches outperform traditional methods across multiple metrics and artifact types. The superiority is particularly evident in handling complex, real-world artifacts where nonlinear relationships and overlapping spectral characteristics prevail. While traditional methods maintain utility in specific, controlled scenarios with clear linear separability, their fundamental limitations of manual intervention and linear assumptions restrict their effectiveness in advanced research and clinical applications.

Deep learning models excel through their capacity for automated operation, preservation of signal integrity, and adaptability to diverse artifact types. However, researchers should consider that this enhanced performance comes with increased computational complexity and data requirements. The choice between methodological approaches should be guided by the specific application constraints, including available computational resources, the necessity for real-time processing, and the diversity of artifacts encountered. Future research directions likely involve developing more efficient architectures, incorporating self-supervised learning to reduce labeled data dependence, and enhancing model interpretability for clinical adoption.

The analysis of electroencephalography (EEG) signals is fundamental to neuroscience research, clinical diagnosis, and brain-computer interface (BCI) applications. However, the inherent vulnerability of EEG signals to contamination by various artifacts—from physiological sources like ocular and muscle activity to environmental interference—has long posed a significant challenge. Traditional artifact removal techniques have primarily operated on linear assumptions and often required manual parameter tuning, limiting their effectiveness and generalizability. The emergence of deep learning represents a paradigm shift in this domain, moving from linear filtering and heuristic-based approaches to data-driven models that learn complex, nonlinear mappings directly from noisy to clean EEG signals. This guide objectively compares the performance of this new deep learning paradigm against traditional methods, providing researchers with the experimental data and methodological insights needed to inform their artifact removal strategies.

Performance Benchmarking: Deep Learning vs. Traditional Methods

Deep learning models have demonstrated superior performance across multiple quantitative metrics compared to traditional artifact removal techniques. The table below summarizes key experimental findings from recent studies.

Table 1: Performance Comparison of EEG Denoising Methods

| Model/Method | Model Type | Key Performance Metrics | Artifact Types Targeted |

|---|---|---|---|

| AnEEG (LSTM-based GAN) [6] | Deep Learning (Generative) | Lower NMSE & RMSE; Higher CC, SNR & SAR values than wavelet techniques | General artifacts (muscle, ocular, environmental) |

| DHCT-GAN [20] | Deep Learning (Hybrid) | Outperforms state-of-the-art networks on 6 metrics for EMG, EOG, ECG, and mixed artifacts | EMG, EOG, ECG, Mixed |

| Deep Lightweight CNN [21] | Deep Learning (Discriminative) | F1-score improvements of +11.2% to +44.9% over rule-based methods | Eye movement, muscle, non-physiological |

| Complex CNN [7] | Deep Learning (Discriminative) | Best performance for tDCS artifact removal (RRMSE, CC) | tES artifacts (tDCS) |

| Multi-modular SSM (M4) [7] | Deep Learning (State Space) | Best performance for tACS and tRNS artifact removal (RRMSE, CC) | tES artifacts (tACS, tRNS) |

| Wavelet Decomposition [6] | Traditional (Thresholding) | Higher NMSE and RMSE, lower CC compared to AnEEG | General artifacts |

| Rule-Based Methods [21] | Traditional (Heuristic) | Lower F1-scores across all artifact categories | Eye movement, muscle, non-physiological |

The data consistently shows that deep learning models achieve a higher degree of signal fidelity. The lower Normalized Mean Square Error (NMSE) and Root Mean Square Error (RMSE) values indicate a better agreement with the original, clean signal, while higher Correlation Coefficient (CC) values reflect a stronger linear relationship with the ground truth [6]. Improvements in Signal-to-Noise Ratio (SNR) and Signal-to-Artifact Ratio (SAR) further confirm the effectiveness of deep learning in isolating neural activity from contamination [6].

Experimental Protocols in Deep Learning EEG Denoising

Data Preprocessing and Model Training

A critical factor in the success of deep learning models is a robust and standardized data preprocessing pipeline. A typical protocol, as used in studies like the lightweight CNN for artifact detection, involves several key stages [21]:

- Signal Standardization: Raw EEG signals are resampled to a uniform sampling rate (e.g., 250 Hz) and filtered with a bandpass (e.g., 1-40 Hz) and a notch filter (50/60 Hz) to remove line noise and out-of-band artifacts.

- Referencing and Normalization: Average referencing is applied to reduce common-mode noise, followed by global normalization (e.g., using RobustScaler) across all channels to standardize the input for stable model training.

- Adaptive Segmentation: The continuous EEG is segmented into non-overlapping windows. Research indicates that optimal window lengths are artifact-specific: 1s for non-physiological, 5s for muscle, and 20s for eye movement artifacts [21].

- Model Optimization: Models are typically trained using loss functions like Mean Squared Error (MSE) and optimized with algorithms such as Adam or RMSProp to minimize the divergence between the denoised output and the ground truth clean signal [10].
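The referencing, normalization, and segmentation stages can be sketched in NumPy as shown below. Resampling and the bandpass/notch filters are omitted because they would normally come from a signal processing library. The global median/IQR scaling imitates what a robust scaler does but is a hand-written stand-in, and the function names, channel count, and recording length are illustrative assumptions.

```python
import numpy as np

def average_reference(eeg):
    """Subtract the instantaneous mean across channels (eeg: channels x samples)."""
    return eeg - eeg.mean(axis=0, keepdims=True)

def robust_scale_global(eeg):
    """Scale with one median and one interquartile range shared by all channels."""
    median = np.median(eeg)
    q1, q3 = np.percentile(eeg, [25, 75])
    return (eeg - median) / (q3 - q1)

def segment(eeg, fs, window_s):
    """Cut into non-overlapping windows; returns (n_windows, channels, samples)."""
    win = int(round(fs * window_s))
    n_win = eeg.shape[1] // win
    trimmed = eeg[:, : n_win * win]       # drop the incomplete tail
    return trimmed.reshape(eeg.shape[0], n_win, win).transpose(1, 0, 2)

# Example: 22 channels, 60 s at 250 Hz, 5 s windows (the muscle-artifact setting)
rng = np.random.default_rng(0)
fs = 250
raw = rng.standard_normal((22, 60 * fs))

referenced = average_reference(raw)
scaled = robust_scale_global(referenced)
windows = segment(scaled, fs, window_s=5.0)
```

Changing `window_s` to 1.0 or 20.0 reproduces the other artifact-specific window lengths reported above.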

Architectures of State-of-the-Art Models

The deep learning paradigm encompasses a diverse set of neural network architectures, each with distinct strengths:

- Generative Adversarial Networks (GANs): Models like AnEEG and DHCT-GAN employ a generator network to produce denoised signals and a discriminator network to distinguish them from real clean EEG. This adversarial training encourages the generator to output highly realistic, artifact-free signals [6] [20].

- Convolutional Neural Networks (CNNs): These models, including the Deep Lightweight CNN, excel at extracting local spatial and temporal features from EEG data. Their hierarchical structure is well-suited for identifying pattern-based artifacts like muscle activity and electrode pops [21] [22].

- Hybrid Architectures: The current state-of-the-art trend involves combining architectural components. DHCT-GAN, for instance, uses a dual-branch hybrid CNN-Transformer network. The CNN branch captures local features, while the Transformer branch models long-range temporal dependencies, allowing the model to handle a wider variety of complex artifacts [20]. Another study combined CNNs for EEG with LSTMs for EDA in a multimodal approach to noise annoyance detection [22].

The following diagram illustrates the typical workflow and architecture of an advanced hybrid denoising model like DHCT-GAN:

Diagram 1: Workflow of a Hybrid Deep Learning Model for EEG Denoising

For researchers embarking on deep learning-based EEG denoising, the following tools, datasets, and models are essential components of the modern toolkit.

Table 2: Research Reagent Solutions for EEG Denoising

| Resource Type | Name/Example | Function & Application |

|---|---|---|

| Public Datasets | Temple University Hospital (TUH) EEG Artifact Corpus [21] | Provides expert-annotated artifact labels for training and validating detection models in realistic clinical scenarios. |

| Public Datasets | EEGdenoiseNet [6] | Contains clean EEG segments mixed with EMG and EOG artifacts, useful for semi-simulated validation. |

| Software & Libraries | EEGLAB/MATLAB [23] | Standard toolboxes for foundational EEG preprocessing, including filtering and re-referencing. |

| Software & Libraries | Python Deep Learning Frameworks (TensorFlow, PyTorch) | Essential for building, training, and deploying complex models like CNNs, GANs, and Transformers. |

| Model Architectures | Lightweight CNNs [21] | Ideal for real-time or resource-constrained applications requiring efficient artifact detection. |

| Model Architectures | Hybrid CNN-Transformers (e.g., DHCT-GAN) [20] | State-of-the-art for high-performance denoising, balancing local feature extraction with global context. |

| Evaluation Metrics | NMSE, RMSE, CC [6] [7] | Quantifies signal fidelity and similarity to ground truth. |

| Evaluation Metrics | SNR, SAR [6] | Measures the effectiveness of artifact suppression and neural signal preservation. |

| Hardware | Portable EEG Systems (e.g., BrainVision LiveAmp) [23] | Enables community-based and naturalistic data collection, expanding data diversity. |

| Hardware | Dry Electrode Headsets [24] | Facilitates comfortable, long-term monitoring, though may introduce unique artifact profiles. |

The evidence from recent studies solidifies the position of deep learning as a transformative paradigm in EEG artifact removal. By learning complex, nonlinear mappings from noisy to clean signals, models such as hybrid CNN-Transformers and GANs consistently surpass the capabilities of traditional linear methods and heuristic-based rules. The key advantages of this shift are superior denoising performance across a range of quantitative metrics, reduced reliance on manual expertise for parameter tuning, and enhanced adaptability to diverse and complex artifact types. For the research community, adopting this paradigm requires familiarity with new tools and datasets but offers the reward of more accurate, automated, and reliable EEG analysis, thereby strengthening findings in neuroscience, clinical diagnostics, and drug development.

A Deep Dive into Architectures: CNNs, GANs, and Hybrid Models in Action

Convolutional Neural Networks (CNNs) for Spatial and Morphological Feature Extraction

In artifact removal research, there is a persistent tension between traditional, often linear, methods and modern deep learning approaches. Convolutional Neural Networks (CNNs) have emerged as a powerful tool for spatial and morphological feature extraction, directly addressing the limitations of traditional techniques. This capability is crucial for distinguishing subtle features in complex datasets, from medical images to biological shapes. The core strength of CNNs lies in their hierarchical architecture, which uses convolution layers with filters to automatically learn and extract relevant spatial features (such as edges, textures, and shapes) from input data [25]. This review objectively compares the performance of CNN-based feature extraction methods against traditional alternatives across various scientific domains, providing researchers and drug development professionals with validated experimental data to guide their methodological choices.

Performance Comparison: CNNs vs. Traditional Methods

Quantitative comparisons reveal that CNN-based methods consistently outperform traditional techniques in extracting discriminative spatial and morphological features. The following tables summarize experimental results from multiple independent studies.

Table 1: Performance Comparison in Morphological Feature Extraction

| Method | Application Domain | Key Performance Metric | Result | Traditional Method Result |

|---|---|---|---|---|

| Morpho-VAE [26] | Primate Mandible Shape Analysis | Cluster Separation Index (CSI) | Well-separated clusters (CSI <1) | PCA: Overlapping clusters (CSI >1) |

| Morphological-Convolutional Neural Network (MCNN) [27] | Melanoma Classification | Area Under Curve (AUC) | 0.94 (95% CI: 0.91 to 0.97) | Popular CNNs (ResNet-18, etc.): Lower AUC |

| Classification CNN [28] | Pharmaceutical Excipient Morphology | Classification Accuracy | High Accuracy | N/A (Traditional classification not used) |

| Morphological Feature Extractor [29] | Dog Breed Identification | Qualitative Recognition Capability | Structured, interpretable feature analysis | Traditional CNN: Confused by background |

Table 2: Performance in Signal & Image Artifact Handling

| Method | Application | Comparison Baseline | Performance Advantage |

|---|---|---|---|

| Specialized Lightweight CNNs [30] | EEG Artifact Detection | Rule-Based Methods | F1-score improvement: +11.2% to +44.9% |

| Complex CNN [7] | tDCS Artifact Removal | Various ML Methods | Best performance for tDCS artifacts (RRMSE, Correlation Coefficient) |

| M4 Network (SSMs) [7] | tACS & tRNS Artifact Removal | Various ML Methods | Best performance for tACS/tRNS artifacts |

| PISC (Physics-Informed + CNN) [31] | CT Metal Artifact Reduction | NMAR, O-MAR, CNN-MAR, DuDoNet | Best qualitative scores, least new artifacts |

| DuDoNet [31] | CT Metal Artifact Reduction | FBP, NMAR, O-MAR, CNN-MAR | Best quantitative Artifact Index (AI) |

Experimental Protocols and Methodologies

Morphological Feature Extraction with Morpho-VAE

The Morpho-VAE framework represents a hybrid approach combining unsupervised and supervised learning for landmark-free morphological analysis [26].

- Data Preparation: The study used 147 mandible samples from seven families. Three-dimensional mandible data were projected from three directions to create two-dimensional input images for analysis [26].

- Architecture: The model integrates a Variational Autoencoder (VAE) with a classifier module. The encoder compresses input images into a low-dimensional latent space, and the decoder reconstructs images. The classifier ensures the extracted features are discriminative between classes [26].

- Loss Function: A weighted total loss function guides the training: $E_{\text{total}} = (1 - \alpha)E_{\text{VAE}} + \alpha E_C$, where $E_{\text{VAE}}$ is the VAE reconstruction and regularization loss, and $E_C$ is the classification loss. The hyperparameter $\alpha$ was optimized to 0.1 via cross-validation [26].

- Evaluation: Cluster separation in the latent space was quantified using a custom Cluster Separation Index (CSI) and compared against traditional Principal Component Analysis (PCA) and a standard VAE [26].
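The weighted loss in this protocol can be sketched numerically. The code below is an illustration under stated assumptions: the VAE term is taken as a reconstruction error plus the standard KL divergence to a unit Gaussian, and the classification term as cross-entropy. The text here does not specify the exact reconstruction loss used in [26], so the function names and the choice of terms are hypothetical.

```python
import numpy as np

def kl_to_standard_normal(mu, logvar):
    """KL( N(mu, exp(logvar)) || N(0, I) ), averaged over the batch."""
    return np.mean(-0.5 * np.sum(1 + logvar - mu ** 2 - np.exp(logvar), axis=1))

def cross_entropy(probs, labels):
    """Mean negative log-likelihood of the true class (probs: batch x classes)."""
    return -np.mean(np.log(probs[np.arange(len(labels)), labels] + 1e-12))

def morpho_vae_total_loss(recon_error, kl, class_error, alpha=0.1):
    """E_total = (1 - alpha) * E_VAE + alpha * E_C, with E_VAE = recon + KL."""
    e_vae = recon_error + kl
    return (1 - alpha) * e_vae + alpha * class_error

# Toy numbers (assumptions) to show how alpha trades off the two terms
mu = np.zeros((4, 3))
logvar = np.zeros((4, 3))
probs = np.array([[0.7, 0.2, 0.1]] * 4)
labels = np.array([0, 0, 0, 0])

e_total = morpho_vae_total_loss(
    recon_error=1.5,
    kl=kl_to_standard_normal(mu, logvar),
    class_error=cross_entropy(probs, labels),
    alpha=0.1,
)
```

With `alpha=0.1`, reconstruction and regularization dominate the objective, while the classifier contributes a smaller term that nudges the latent space toward class separation.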

Specialized CNNs for EEG Artifact Detection

This protocol outlines the development of specialized CNNs for detecting specific classes of artifacts in continuous Electroencephalography (EEG) signals [30].

- Data Source: The Temple University Hospital (TUH) EEG Corpus was used, containing expert-annotated artifacts. The dataset includes 158,884 annotations across 19 categories, with muscle, eye movement, and electrode artifacts being most common [30].

- Preprocessing:

- Signal Standardization: Recordings were resampled to 250 Hz and converted to a standardized 22-channel bipolar montage.

- Filtering: A bandpass filter (1-40 Hz) and notch filter (50/60 Hz) were applied to remove line noise.

- Referencing & Normalization: Average referencing was applied, followed by global normalization using RobustScaler.

- Segmentation: Data were segmented into non-overlapping windows of varying lengths (1s to 30s) to identify the optimal temporal context for each artifact type [30].

- Model Design and Training: Three distinct CNN systems were developed, each optimized for a specific artifact class (eye movement, muscle, non-physiological). Each system was tailored with an ideal temporal window size identified through experimentation: 20s for eye movements, 5s for muscle activity, and 1s for non-physiological artifacts [30].

- Comparison: The CNN systems were evaluated against standard rule-based clinical detection methods on a held-out test set using metrics like F1-score and ROC AUC [30].

CNN-based Metal Artifact Reduction (MAR) in CT

This protocol describes a comparative benchmark of MAR methods, including deep learning approaches, for CT images with neurovascular coils [31].

- Patient Cohort: 40 patients with intracranial aneurysms treated with endovascular coil embolization were included. Non-contrast brain CT scans were acquired using specific clinical protocols [31].

- Compared Methods: The study compared several algorithms:

- Traditional MAR: Normalized MAR (NMAR) and Metal Artifact Reduction for Orthopedic Implants (O-MAR).

- Deep Learning MAR: CNN-MAR and a Dual Domain Network (DuDoNet).

- Hybrid Method: Physics-Informed Sinogram Completion (PISC), which combines NMAR with physical correction [31].

- Evaluation:

- Quantitative Analysis: The Artifact Index (AI) was calculated by measuring the standard deviation (SD) of ROIs placed near metal coils and in artifact-free regions: $\text{AI} = \text{SD}_{\text{coil}} / \text{SD}_{\text{background}}$.

- Qualitative Analysis: Two blinded neuroradiologists independently evaluated the images using a five-point Likert scale, assessing metal artifact severity, diagnostic confidence, resolution, new artifacts, and soft tissue interfaces [31].
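The Artifact Index is simple to compute once the two ROIs are chosen. The following sketch uses a synthetic image and rectangular ROIs. The ROI placement, image values, and the function name `artifact_index` are illustrative assumptions, not the study's actual measurement protocol.

```python
import numpy as np

def artifact_index(image, roi_coil, roi_background):
    """AI = SD of the ROI near the metal coil / SD of an artifact-free ROI."""
    return np.std(image[roi_coil]) / np.std(image[roi_background])

# Synthetic example: uniform tissue with mild noise, plus a high-variance patch
rng = np.random.default_rng(3)
image = 40 + 5 * rng.standard_normal((128, 128))
image[50:70, 50:70] += 60 * rng.standard_normal((20, 20))   # streak-like region

coil_roi = (slice(50, 70), slice(50, 70))
background_roi = (slice(0, 20), slice(0, 20))
ai = artifact_index(image, coil_roi, background_roi)
```

An AI close to 1 means the region near the coil is no noisier than the background, so lower values after correction indicate better artifact reduction.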

Workflow and Architectural Diagrams

Workflow for Landmark-Free Morphological Analysis

The following diagram illustrates the integrated workflow of the Morpho-VAE model for extracting morphological features without manual landmark annotation.

Specialized CNN Pipeline for EEG Artifact Detection

This diagram outlines the specialized pipeline for detecting different classes of artifacts in EEG signals, highlighting the artifact-specific optimization.

For researchers aiming to implement CNN-based feature extraction or artifact removal, the following tools and datasets are essential.

Table 3: Essential Research Resources for CNN-Based Feature Extraction

| Resource Name | Type | Primary Function in Research | Example Use-Case |

|---|---|---|---|

| TUH EEG Corpus [30] | Datasets | Provides expert-annotated, real-world EEG data for training and validating artifact detection models. | Benchmarking EEG artifact detection algorithms. |

| Primate Mandible Image Data [26] | Datasets | Enables landmark-free morphological analysis of biological shapes. | Studying evolutionary and developmental biology. |

| SEM Images of Excipients [28] | Datasets | Allows quantitative evaluation of particle morphology for pharmaceutical materials. | Clustering and classifying excipients by particle shape. |

| Pre-trained Models (VGG16, ResNet) [25] | Software/Tools | Provides a feature extraction backbone via transfer learning, saving training time and computational resources. | Rapid prototyping for custom image classification tasks. |

| Morphological Regulated VAE (Morpho-VAE) [26] | Algorithm | A hybrid architecture for extracting discriminative morphological features in an unsupervised manner. | Analyzing shapes where homologous landmarks are difficult to define. |

| Physics-Informed Sinogram Completion (PISC) [31] | Algorithm | A hybrid method combining traditional physical correction with deep learning for superior artifact reduction. | Correcting metal artifacts in clinical CT images. |

| RobustScaler [30] | Preprocessing Tool | Global normalization method that preserves relative amplitude relationships, crucial for stable CNN training. | Preprocessing EEG signals before input into a CNN. |

Generative Adversarial Networks (GANs) and LSTM Integration for Temporal Dynamics

The removal of artifacts from biological signals represents a significant challenge in data analysis, particularly within neuroscience and clinical diagnostics. Traditional methods often rely on statistical assumptions and linear models, which struggle to capture the complex, non-linear, and temporal nature of artifacts contaminating signals like electroencephalography (EEG) or Heart Rate Variability (HRV). The integration of Generative Adversarial Networks (GANs) and Long Short-Term Memory (LSTM) networks has emerged as a powerful, model-free approach for this task. This hybrid architecture leverages the adversarial training of GANs to learn the underlying distribution of clean data, while the LSTM components excel at modeling long-range temporal dependencies inherent in time-series data. This synergy creates a robust framework for generating high-fidelity, artifact-free signals, moving beyond the limitations of conventional techniques and offering a new paradigm for data preprocessing in critical applications such as brain-computer interfaces (BCIs) and personalized healthcare monitoring [6] [11] [32].

Performance Comparison: GAN-LSTM vs. Alternative Methods

Experimental results across various domains demonstrate the superior performance of the GAN-LSTM fusion model compared to both traditional methods and other deep learning architectures. The following tables summarize quantitative findings from key studies in artifact removal and sequential data prediction.

Table 1: Performance Comparison in EEG Artifact Removal

| Model | Dataset | Key Metric 1 | Key Metric 2 | Key Metric 3 |

|---|---|---|---|---|

| AnEEG (LSTM-based GAN) [6] | Various EEG datasets | Lower NMSE & RMSE vs. wavelet methods | Higher Correlation Coefficient (CC) | Improved SNR and SAR |

| ART (Transformer) [11] | Multiple open BCI datasets | Superior Mean Squared Error (MSE) | Better Signal-to-Noise Ratio (SNR) | Sets new benchmark in EEG processing |

| GCTNet (GAN+CNN+Transformer) [6] | Semi-simulated & real datasets | 11.15% reduction in Relative RMSE | 9.81 improvement in SNR | Outperforms existing approaches |

| Wavelet Decomposition [6] | Various EEG datasets | Higher NMSE & RMSE | Lower CC | Lower SNR and SAR |

Table 2: Performance in Sequential Data Prediction Tasks

| Model | Application | Performance Metrics |

|---|---|---|

| GAN-LSTM [33] | Coiled Tubing Drilling Parameter Prediction | ~90% accuracy (≈17% higher than standalone GAN or LSTM) |

| LSTM [34] | Daily Streamflow Forecasting | Lowest NRMSE; Highest Nash-Sutcliffe efficiency (E, EH, EL) and correlation |

| Standalone GAN [33] | Coiled Tubing Drilling Parameter Prediction | Lower accuracy compared to the fused GAN-LSTM model |

| SVR (Support Vector Regression) [35] | Traffic Flow Prediction | Outperformed by hybrid deep learning models |

Table 3: Performance in Other Domains

| Model | Application | Key Findings |
|---|---|---|
| TL-LSTM-GAN [35] | Adaptive Traffic Signal Control | Reduces congestion and energy usage; superior to state-of-the-art methods |
| LSTM [36] | Schottky Diode Behavior Prediction | R² > 0.98; RMSE as low as 6.22 mA; reliable, cost-effective alternative to experiments |
| Adaptive LSTM [32] | HRV-based Psychosis Prediction | Mean F1 score of 0.9817 without artifact preprocessing |

Experimental Protocols and Methodologies

GAN-LSTM for Artifact Removal in EEG

The AnEEG model illustrates a representative protocol for using an LSTM-based GAN to remove artifacts from EEG signals. The generator is a two-layer LSTM network that processes the noisy EEG input sequence; this architecture is chosen specifically to capture the temporal dependencies and contextual information in the signal. The generator's role is to transform the noisy input into a cleaned version of the signal. The discriminator, often a one-dimensional convolutional neural network (1D-CNN), then judges whether its input is a real, clean signal from the training set or an output of the generator. This adversarial process, guided by a loss function that may include terms for temporal-spatial-frequency consistency, trains the generator to produce artifact-free EEG that preserves the underlying neural information [6]. The model is trained on datasets containing pairs or sets of EEG recordings with various artifacts (e.g., from the HaLT dataset or PhysioNet), allowing it to learn a robust mapping from noisy to clean signals [6].
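To make the generator's data flow concrete, the following is a minimal NumPy sketch of a two-layer LSTM forward pass followed by a per-time-step linear read-out. It is not the AnEEG implementation: the layer sizes, the random (untrained) weights, and all function names are assumptions chosen for illustration, and the discriminator and training loop are omitted.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def init_lstm_layer(rng, input_size, hidden_size):
    """Random weights for one LSTM layer; the four gates (i, f, g, o) are stacked row-wise."""
    scale = 1.0 / np.sqrt(hidden_size)
    return {
        "W": rng.uniform(-scale, scale, (4 * hidden_size, input_size)),
        "U": rng.uniform(-scale, scale, (4 * hidden_size, hidden_size)),
        "b": np.zeros(4 * hidden_size),
    }

def lstm_layer(x_seq, params):
    """Run one LSTM layer over a (time, features) sequence and return every hidden state."""
    hidden_size = params["U"].shape[1]
    h = np.zeros(hidden_size)
    c = np.zeros(hidden_size)
    outputs = []
    for x_t in x_seq:
        z = params["W"] @ x_t + params["U"] @ h + params["b"]
        i, f, g, o = np.split(z, 4)
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)   # forget old content, write new content
        h = sigmoid(o) * np.tanh(c)                    # gated view of the cell state
        outputs.append(h)
    return np.stack(outputs)

def generator_forward(noisy_eeg, layers, W_out, b_out):
    """Two stacked LSTM layers, then a linear map back to the EEG channel dimension."""
    hidden = noisy_eeg
    for params in layers:
        hidden = lstm_layer(hidden, params)
    return hidden @ W_out.T + b_out

rng = np.random.default_rng(0)
n_samples, n_channels, hidden_size = 128, 8, 16        # illustrative sizes only
layers = [init_lstm_layer(rng, n_channels, hidden_size),
          init_lstm_layer(rng, hidden_size, hidden_size)]
W_out = rng.normal(0, 0.1, (n_channels, hidden_size))
b_out = np.zeros(n_channels)

noisy_eeg = rng.normal(size=(n_samples, n_channels))   # stand-in for a contaminated epoch
cleaned = generator_forward(noisy_eeg, layers, W_out, b_out)
print(cleaned.shape)                                   # same (time, channels) shape as the input
```

With untrained weights the output is not a denoised signal. The sketch only shows that the generator maps a noisy epoch to an output of the same shape while carrying state across time steps.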

GAN-LSTM for Parameter Prediction in Engineering

In a different application, a GAN-LSTM fusion network was employed to predict multiple drilling parameters (e.g., circulation pressure, ROP, wellhead pressure) in coiled tubing operations. Here, the LSTM network serves as the core predictive model, processing the historical time-series data of drilling parameters. Its ability to capture long-term dependencies in sequential data is crucial for accurate forecasting. To overcome the challenge of multi-variable output and maintain high accuracy, the powerful generative model of a GAN is used to refine the LSTM's outputs. The low-dimensional data from the LSTM is fed into the GAN's generator, which produces the final, high-dimensional predictions. The discriminator then evaluates these predictions against real data. This combined approach mitigates the accuracy drop typically seen when LSTMs output multiple variables and leads to more reliable predictions for complex engineering systems [33].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Research Reagents and Computational Tools

| Item / Solution | Function / Explanation |
|---|---|
| EEG Datasets (e.g., PhysioNet, HaLT) | Provide the essential raw, often artifact-laden, biological signals required for training and validating deep learning models. [6] |
| Independent Component Analysis (ICA) | A blind source separation technique used as a preprocessing step to generate pseudo clean-noisy data pairs for supervised training of models like ART. [11] |
| Graph Data & Spatial Embeddings | Provide structural and relational context (e.g., sensor locations in a network) which can be fused with time-series data in advanced spatio-temporal models. [37] |
| Wearable Device HRV Recordings | Source long-term, real-world physiological time-series data for personalized health prediction models, often containing inherent artifacts. [32] |
| Pre-trained Models (e.g., ResNet-50 on ImageNet) | Used in Transfer Learning (TL) to initialize discriminators, leveraging learned feature representations to improve convergence and performance on new tasks. [35] |

Architectural Workflows and Signaling Pathways

The following diagram illustrates the typical workflow of a GAN-LSTM model for signal denoising, integrating the components and processes described in the experimental protocols.

GAN-LSTM Denoising Workflow

The logical flow begins with a Noisy Signal Input (e.g., artifact-contaminated EEG) fed into the LSTM Generator. The generator's role is to capture the temporal context and produce a Generated "Clean" Signal. This generated signal, along with a Real Clean Signal from the training dataset (ground truth), is presented to the Discriminator. The discriminator's task is to distinguish between the two. The result of this discrimination generates Adversarial Feedback (a gradient signal), which is propagated back to the LSTM Generator. This feedback loop forces the generator to continuously improve its output until it can produce a Clean Output that the discriminator can no longer distinguish from the real clean data. Throughout this process, the LSTM components are critical for understanding the temporal evolution of the signal, ensuring that the denoising is context-aware and not just a point-wise operation [6] [33].
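The adversarial feedback in this loop can be sketched as a pair of loss functions. The version below uses the standard binary cross-entropy GAN objective and adds a mean-squared-error reconstruction term weighted by a hypothetical factor `lam`; the reconstruction term, its weight, and the example probabilities are assumptions for illustration, not the loss reported in [6] or [33].

```python
import numpy as np

def bce(prob, target):
    """Binary cross-entropy averaged over a batch; probabilities are clipped for stability."""
    prob = np.clip(prob, 1e-7, 1 - 1e-7)
    return float(-np.mean(target * np.log(prob) + (1 - target) * np.log(1 - prob)))

def discriminator_loss(d_real, d_fake):
    """The discriminator should score real clean signals as 1 and generated ones as 0."""
    return bce(d_real, np.ones_like(d_real)) + bce(d_fake, np.zeros_like(d_fake))

def generator_loss(d_fake, generated, clean, lam=10.0):
    """Adversarial term (fool the discriminator) plus an assumed MSE reconstruction term."""
    adversarial = bce(d_fake, np.ones_like(d_fake))
    reconstruction = float(np.mean((generated - clean) ** 2))
    return adversarial + lam * reconstruction

# Toy values: discriminator probabilities for two generated epochs, and one short signal.
clean = np.array([0.0, 1.0, 0.0, -1.0])
generated = np.array([0.1, 0.9, 0.0, -0.8])

caught = generator_loss(np.array([0.1, 0.2]), generated, clean)   # discriminator spots the fakes
fooled = generator_loss(np.array([0.8, 0.9]), generated, clean)   # discriminator is fooled
print(f"generator loss when caught: {caught:.3f}, when fooling the discriminator: {fooled:.3f}")

# When the discriminator outputs 0.5 everywhere it can no longer separate real from generated.
print(f"discriminator loss at 0.5/0.5: {discriminator_loss(np.full(2, 0.5), np.full(2, 0.5)):.3f}")
```

In training, the gradient of the generator loss with respect to the generator's weights is the feedback signal described above. The two losses are minimized in alternation.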

Emerging Transformer Networks and Attention Mechanisms for Global Context

The quest to effectively capture global context—the complex, long-range dependencies within data—represents a central challenge in deep learning. For years, traditional architectures like Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) have been constrained in their ability to model these relationships, primarily due to their inductive biases toward local connectivity and sequential processing [38]. The introduction of the Transformer architecture in 2017, with its core self-attention mechanism, marked a paradigm shift by enabling direct, parallelized interactions between all elements in a sequence, regardless of their positional distance [39]. This foundational innovation unlocked unprecedented capabilities in modeling global context, establishing Transformers as the backbone of modern large language models and vision systems [40] [41].

However, the standard self-attention mechanism's computational and memory requirements scale quadratically with sequence length (O(L²)), creating a significant bottleneck for processing very long sequences [42]. This review explores the cutting-edge landscape of emerging Transformer networks and attention mechanisms specifically designed to overcome this limitation while preserving or even enhancing the model's capacity for global context understanding. We will objectively compare the performance of these novel architectures, analyze supporting experimental data, and frame their development within the applied context of artifact removal in biomedical signal processing, a domain where distinguishing global signal from local noise is paramount.

Foundational Principles and the Need for Evolution

Core Components of the Original Transformer

The original Transformer architecture, introduced in "Attention Is All You Need," relies on three key components to process information. The Self-Attention Mechanism allows the model to weigh the importance of all other tokens when encoding a specific token. It dynamically computes a weighted sum of value (V) vectors based on compatibility scores between a query (Q) and a set of keys (K), formally expressed as Attention(Q, K, V) = softmax(QKᵀ/√dₖ)V [39]. Multi-Head Attention extends this by running multiple self-attention operations in parallel, each with linearly projected versions of Q, K, and V. This allows the model to jointly attend to information from different representation subspaces at different positions, capturing diverse contextual relationships [40] [39]. Finally, Positional Encodings are added to the input embeddings to inject information about the order of the sequence, as the self-attention mechanism itself is permutation equivariant and therefore has no built-in notion of token order. The original model used fixed sine and cosine functions for this purpose [38] [39].
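The attention formula above translates almost line for line into NumPy. The sketch below implements single-head scaled dot-product attention and, as a simplifying assumption, uses the raw inputs as queries, keys, and values (no learned projections). It also checks that reordering the input tokens simply reorders the outputs, which is why order information has to be injected separately.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - np.max(x, axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(x)
    return e / np.sum(e, axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Attention(Q, K, V) = softmax(Q K^T / sqrt(d_k)) V for a single head."""
    d_k = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)               # (L, L) compatibility scores
    weights = softmax(scores, axis=-1)            # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 4))                       # 5 tokens with 4 features each
out, weights = scaled_dot_product_attention(X, X, X)

# Shuffle the tokens: the outputs are shuffled in exactly the same way.
perm = rng.permutation(5)
out_perm, _ = scaled_dot_product_attention(X[perm], X[perm], X[perm])
print(np.allclose(out_perm, out[perm]))
```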

The Quadratic Bottleneck and Its Implications

The fundamental constraint of the standard Transformer is its quadratic complexity. Calculating the attention scores for every token against every other token in a sequence of length L requires computing and storing an L×L matrix. This O(L²) complexity makes it computationally prohibitive and memory-intensive to process very long sequences, such as extended documents, high-resolution images, or lengthy biomedical time-series recordings [42]. This limitation has spurred a major research direction focused on developing more efficient architectures that can maintain strong performance on global context modeling while being scalable for long-context applications.
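A quick back-of-the-envelope calculation shows how fast the L×L score matrix grows. The helper below is a hypothetical illustration that assumes 4-byte (float32) entries and counts a single attention head in a single layer; real memory use depends on the implementation, and kernels that avoid materializing the full matrix reduce memory but still evaluate a quadratic number of scores.

```python
def attention_matrix_bytes(seq_len, bytes_per_element=4, num_heads=1):
    """Bytes needed to store the full L x L attention score matrix."""
    return seq_len * seq_len * bytes_per_element * num_heads

for seq_len in (1_000, 8_000, 64_000):
    gib = attention_matrix_bytes(seq_len) / 2**30
    print(f"L={seq_len:>6}: {gib:.2f} GiB per head")
```

Doubling the sequence length quadruples this figure. That matters for long EEG recordings, where a few minutes of data sampled at a few hundred hertz already yields tens of thousands of time steps per channel.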

Emerging Architectures and Mechanisms

The field has evolved along several innovative paths to address the quadratic bottleneck. The following table summarizes the core approaches and their representative models.

Table 1: Categories of Emerging Efficient Transformer Architectures

| Architecture Category | Core Innovation | Key Representative Models | Primary Advantage |
|---|---|---|---|
| Linearized Attention | Reformulates attention as linear operations via kernel approximation or recurrence. | Linear Transformer [42], Performer [42], RetNet [40], Mamba [40] | Linear O(L) time and memory complexity. |
| State Space Models (SSMs) | Replaces attention with structured state-space layers for sequence modeling. | Mamba [40] [7], S4 [7] | Efficient long-sequence handling; data-dependent reasoning (Mamba). |
| Hybrid & Alternative Models | Combines elements from different architectures or introduces novel mechanisms. | Hyena [40], RWKV [40], Multi-Range Attention [43] | Leverages strengths of multiple paradigms (e.g., convolution + attention). |
| Sparse Attention | Restricts attention computation to a selected subset of tokens. | Fixed-Pattern, Clustering-Based, Block-Sparse [42] | Reduces computation by ignoring presumably irrelevant token pairs. |

Linear Attention and Recurrent Formulations

Linear Attention methods aim to break the quadratic barrier by replacing the softmax with a kernel. The core idea is to find a feature map, φ(·), such that the similarity between a query and a key can be written as φ(q)ᵀφ(k). Because matrix multiplication is associative, the product (φ(Q)φ(K)ᵀ)V can then be evaluated as φ(Q)(φ(K)ᵀV): the small φ(K)ᵀV matrix is computed first, and the L×L attention matrix is never formed. For example, the Linear Transformer uses a positive feature map such as ELU(x)+1, while the Performer uses random feature maps to obtain an unbiased approximation of the softmax kernel [42]. A significant development in this space is the Retentive Network (RetNet), which employs a retention mechanism that mimics recurrence within Transformer blocks, enabling constant (O(1)) per-token inference cost while maintaining compatibility with Transformer-like APIs [40].
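The reordering that makes linear attention cheap can be checked numerically. The sketch below uses the ELU(x)+1 feature map mentioned above in a non-causal setting and compares the quadratic evaluation order with the linear one; the function names and tensor sizes are illustrative assumptions, and published implementations add causal masking and other details omitted here.

```python
import numpy as np

def elu_plus_one(x):
    """phi(x) = ELU(x) + 1: x + 1 for positive x, exp(x) otherwise (always positive)."""
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))

def linear_attention_quadratic(Q, K, V):
    """Reference order: builds the full L x L similarity matrix first."""
    phi_q, phi_k = elu_plus_one(Q), elu_plus_one(K)
    sim = phi_q @ phi_k.T                              # (L, L)
    return (sim @ V) / sim.sum(axis=1, keepdims=True)

def linear_attention_linear(Q, K, V):
    """Same result computed as phi(Q) (phi(K)^T V); no L x L matrix is ever formed."""
    phi_q, phi_k = elu_plus_one(Q), elu_plus_one(K)
    kv = phi_k.T @ V                                   # (d, d_v), independent of L
    k_sum = phi_k.sum(axis=0)                          # (d,), used for normalization
    return (phi_q @ kv) / (phi_q @ k_sum)[:, None]

rng = np.random.default_rng(0)
seq_len, d, d_v = 200, 8, 8
Q, K, V = (rng.normal(size=(seq_len, n)) for n in (d, d, d_v))

out_quadratic = linear_attention_quadratic(Q, K, V)
out_linear = linear_attention_linear(Q, K, V)
print(np.allclose(out_quadratic, out_linear))
```

The second function's intermediate results have sizes that depend only on the feature dimensions, so cost grows linearly with sequence length.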

State Space Models (SSMs)

State Space Models (SSMs) have emerged as a powerful alternative to attention, particularly for long sequences. Inspired by classical control theory, SSMs map a one-dimensional input sequence to an output via a hidden state, at a cost that scales linearly with sequence length. Mamba is a leading SSM architecture that enhances traditional linear SSMs by making their parameters input-dependent, allowing it to selectively propagate or forget information based on the current input. This makes Mamba capable of context-dependent reasoning, a capability previously dominated by attention-based models. Mamba has demonstrated high performance in domains like genomics, audio, and long-text modeling, reportedly offering 100x faster inference on sequences of 64k tokens compared to standard Transformers in some benchmarks [40] [7].
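The hidden-state mapping described above can be illustrated with a discrete, time-invariant linear SSM, h_t = A h_{t-1} + B x_t and y_t = C h_t. This is a deliberately simplified sketch: the matrices are arbitrary illustrative values, and Mamba's input-dependent parameters and hardware-aware scan are not modeled. The same output can also be obtained as a convolution with the kernel C Aᵏ B.

```python
import numpy as np

def ssm_recurrent(x, A, B, C):
    """Step through the sequence once, carrying a fixed-size hidden state."""
    h = np.zeros(A.shape[0])
    y = np.empty(len(x))
    for t, x_t in enumerate(x):
        h = A @ h + B * x_t          # state update
        y[t] = C @ h                 # read-out
    return y

def ssm_convolutional(x, A, B, C):
    """Equivalent view: convolve the input with the kernel K[k] = C A^k B."""
    seq_len = len(x)
    kernel = np.empty(seq_len)
    a_power_b = B.copy()             # holds A^k B, starting at k = 0
    for k in range(seq_len):
        kernel[k] = C @ a_power_b
        a_power_b = A @ a_power_b
    return np.convolve(x, kernel)[:seq_len]

rng = np.random.default_rng(0)
A = np.diag([0.9, 0.5, -0.3, 0.1])   # stable dynamics (all |eigenvalues| < 1)
B = rng.normal(size=4)
C = rng.normal(size=4)
x = rng.normal(size=64)              # a one-dimensional input sequence

y_rec = ssm_recurrent(x, A, B, C)
y_conv = ssm_convolutional(x, A, B, C)
print(np.allclose(y_rec, y_conv))
```

The recurrent form needs only the current state at inference time, which is where the linear scaling comes from. The convolutional form holds only because A, B, and C are fixed, so it does not carry over directly to input-dependent models.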

Multi-Range and Hybrid Attention Mechanisms

Beyond fully replacing attention, other approaches enhance it with more efficient or flexible patterns. Multi-Range Attention Mechanisms are a class of architectures that enable a single model to dynamically integrate features across multiple spatial, temporal, or semantic scales. They overcome the limitation of fixed-context attention by employing techniques like variable window sizes, hierarchical compression, and parallel multi-scale heads. For instance, Multi-Scale Window Attention (MSWA) assigns different window sizes to each attention head and layer, allowing fine-grained local and broad global contexts to be processed concurrently. Empirical results show that such multi-range approaches deliver better performance on language modeling perplexity and image super-resolution tasks than single-scale models at a comparable computational cost [43].
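The idea of giving each attention head its own window size can be sketched with banded masks. The code below is a loose illustration rather than the published MSWA implementation: it uses identity projections, splits the feature dimension evenly across heads, and applies the mask to a dense score matrix, so it shows the attention pattern but not the computational savings of a true windowed kernel. All names and sizes are assumptions.

```python
import numpy as np

def window_mask(seq_len, window):
    """True where |i - j| <= window // 2, i.e. inside a local window centred on token i."""
    idx = np.arange(seq_len)
    return np.abs(idx[:, None] - idx[None, :]) <= window // 2

def windowed_attention(Q, K, V, window):
    """Softmax attention restricted to a local window around each position."""
    scores = Q @ K.T / np.sqrt(Q.shape[-1])
    scores = np.where(window_mask(len(Q), window), scores, -np.inf)
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)                       # masked positions become exactly 0
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

def multi_scale_window_attention(X, head_windows):
    """Split features evenly across heads; each head attends within its own window size."""
    heads = np.split(X, len(head_windows), axis=-1)
    outputs = [windowed_attention(h, h, h, w)[0] for h, w in zip(heads, head_windows)]
    return np.concatenate(outputs, axis=-1)

rng = np.random.default_rng(0)
X = rng.normal(size=(12, 8))                       # 12 time steps, 8 features
out = multi_scale_window_attention(X, head_windows=(3, 11))   # one narrow head, one wide head
print(out.shape)
```

Here the first head only sees immediate neighbours while the second covers most of the sequence, so local and broader context are processed side by side.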

Hyena is another notable hybrid model that replaces attention with long convolutions and gated activations, reportedly delivering 100x faster inference on long sequences (64k tokens) while maintaining competitive accuracy on NLP benchmarks [40].

Performance Comparison and Experimental Data

To objectively evaluate these emerging architectures, we summarize their performance based on reported benchmarks. The following table synthesizes quantitative data from the literature.

Table 2: Comparative Performance of Emerging Architectures on Key Benchmarks

| Model / Architecture | Complexity (Seq. Length L) | Reported Performance Highlights | Key Experimental Findings |
|---|---|---|---|
| Standard Transformer | O(L²) | Baseline for tasks (e.g., LM, BV) | Becomes computationally infeasible for very long sequences (L > 50k). |
| Mamba (SSM) | O(L) | Language Modeling: Matches Transformer quality on Pile (800GB text) [40]. Long Context: 100x faster inference at 64k tokens [40]. Artifact Removal: SOTA on tACS/tRNS artifact removal (RRMSE, CC) [7] [17]. | Excels in long-context and data-dependent reasoning tasks; efficient on hardware. |