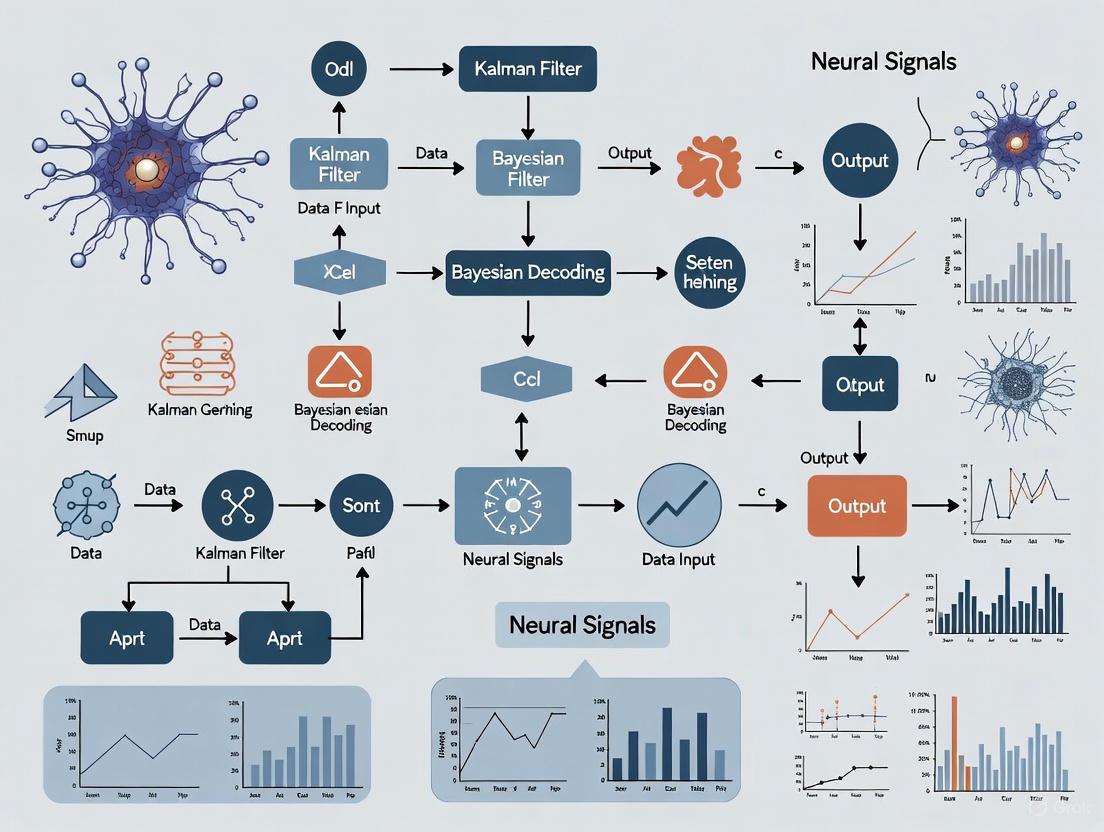

Decoding Neural Signals: A Comprehensive Guide to Kalman Filters and Bayesian Methods for Research and Drug Development

Abstract

This article provides a thorough exploration of Kalman filters and Bayesian decoding methods for interpreting neural signals, tailored for researchers, scientists, and drug development professionals. It covers foundational principles, from how neurons encode information to the mathematical basis of decoders. The piece delves into practical implementation, comparing traditional methods like the steady-state Kalman filter with modern machine learning and Bayesian approaches. It further addresses critical challenges in optimization and computational efficiency, and offers a rigorous comparison of decoder performance across different brain regions and applications. Finally, the article synthesizes key takeaways and discusses future implications, including the use of these methods in clinical trial design and novel therapeutic discovery, providing a vital resource for advancing biomedical and clinical research.

From Spikes to Signals: Foundational Principles of Neural Encoding and Decoding

The brain functions as a sophisticated distributed system where perception, cognition, and behavior emerge from the coordinated activity of neuronal populations. A fundamental principle governing this system is the continuous process of neural encoding and decoding, where information about environmental features and body states is represented in neural activity patterns and subsequently interpreted by downstream brain regions for decision-making and motor control [1]. This process forms the core computational framework through which the brain interacts with and adapts to its environment.

From an experimental perspective, "decoding the brain" holds dual significance: it refers both to the brain's inherent capacity to interpret its own internal signals and to researchers' ability to build algorithms that measure information represented in neural activity for basic scientific discovery and translational applications such as Brain-Computer Interfaces (BCIs) [1]. The mathematical relationship between encoding and decoding is intrinsically linked through Bayesian principles, where decoding involves inverting the encoding process to recover stimuli or cognitive states from observed neural activity [1].

Table: Key Concepts in Neural Encoding and Decoding

| Concept | Definition | Mathematical Representation |
|---|---|---|
| Neural Encoding | Process of representing sensory stimuli or cognitive variables in neural activity patterns | $P(K \mid x)$, where $K$ is neural activity and $x$ is the stimulus [1] |
| Neural Decoding | Process of interpreting or reconstructing information from neural activity patterns | $P(x \mid K)$, obtained by inverting the encoding relationship [1] |
| Population Coding | Information representation through coordinated activity of multiple neurons | Geometry defined by tuning curves of single neurons [2] |
| Kalman Filter | Traditional decoding algorithm that estimates system state from noisy measurements | Optimal for linear systems with Gaussian noise [3] [4] |

Theoretical Foundations and Geometric Principles

Geometric Framework of Neural Population Coding

Recent research has revealed that neural populations encode both sensory and dynamic cognitive variables through a unified geometric principle [2]. In this framework, population dynamics encode latent variables (such as decision variables during cognitive tasks), while individual neurons exhibit diverse tuning functions to these population states. This creates a population code where the heterogeneity of single-neuron responses arises from their varied sensitivity to the same underlying latent variable, rather than from complex individual dynamics [2].

This geometric principle was elegantly demonstrated in the primate premotor cortex during decision-making tasks, where population dynamics encoded a one-dimensional decision variable predicting choices, while individual neurons showed diverse tuning to this variable [2]. The stability of these tuning functions across different stimulus conditions suggests that stimuli affect the dynamics of the encoded variable but not the fundamental geometry of its neural representation [2].

The Encoding-Decoding Relationship as Bayesian Inference

The mathematical relationship between encoding and decoding can be formalized through Bayesian principles. While encoding models describe how neural responses depend on stimuli [P(K|x)], decoding models invert this relationship to estimate stimuli from neural activity [P(x|K)] [1]. This inversion naturally handles the inherent noise and ambiguity in neural representations, allowing for optimal estimation of external variables from noisy neural data.
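To make the inversion concrete, the sketch below applies Bayes' rule, $P(x \mid K) \propto P(K \mid x)\,P(x)$, on a discrete grid of candidate stimuli. It assumes independent Poisson spike counts and a made-up population of bell-shaped tuning curves; the function names, population size, and tuning parameters are illustrative choices, not taken from the cited work.

```python
import numpy as np

def log_likelihood(counts, expected):
    """log P(K | x) for every candidate x, dropping terms constant in x.

    counts:   (n_neurons,) observed spike counts K
    expected: (n_stimuli, n_neurons) expected counts under each candidate x
    """
    # Independent Poisson neurons: sum_i [K_i log(lambda_i) - lambda_i]
    return np.log(expected) @ counts - expected.sum(axis=1)

def posterior(counts, expected, prior):
    """P(x | K) on the candidate grid via Bayes' rule."""
    log_post = log_likelihood(counts, expected) + np.log(prior)
    log_post -= log_post.max()           # avoid overflow before exponentiating
    post = np.exp(log_post)
    return post / post.sum()

# Hypothetical population: 30 neurons with bell-shaped tuning on a circle.
stimuli = np.linspace(-np.pi, np.pi, 16, endpoint=False)
preferred = np.linspace(-np.pi, np.pi, 30, endpoint=False)
expected = 1.0 + 20.0 * np.exp(2.0 * (np.cos(stimuli[:, None] - preferred) - 1.0))
prior = np.full(stimuli.size, 1.0 / stimuli.size)

rng = np.random.default_rng(0)
counts = rng.poisson(expected[5])        # one noisy trial, true stimulus index 5
post = posterior(counts, expected, prior)
print("decoded index:", int(np.argmax(post)), "posterior mass:", post.max().round(3))
```

The posterior, rather than a single point estimate, is what carries the uncertainty: a flat posterior signals ambiguous neural evidence, while a sharply peaked one signals a confident decode.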

Methodological Approaches and Algorithms

Traditional Decoding Methods

Traditional neural decoding has relied heavily on methods such as Wiener filters and Kalman filters, which provide optimal state estimation for linear systems with Gaussian noise [4]. The Kalman Filter, in particular, has been widely used in BCI applications because it leverages the smooth, predictable nature of many neural processes and behaviors [3]. However, these methods face fundamental limitations when dealing with the complex, nonlinear dynamics inherent in many neural systems [3].

Modern Machine Learning Approaches

Modern machine learning has significantly advanced neural decoding capabilities. Neural networks, gradient boosting, and other ensemble methods have demonstrated superior performance compared to traditional approaches across multiple brain areas, including motor cortex, somatosensory cortex, and hippocampus [4]. These methods are particularly valuable when the primary research aim is maximizing predictive accuracy, as in engineering applications and BCIs [4].

The DeMaND (Decoding using Manifold Neural Dynamics) algorithm represents a recent advancement that overcomes limitations of the Kalman Filter by learning a map of how recorded signals evolve, then using this map to decode signals of interest through noise [3]. This approach offers greater flexibility for nonlinear systems while requiring less training data and computational power than many neural networks [3].

Multimodal Integration Frameworks

Recent frameworks have demonstrated that integrating multiple modalities can significantly enhance decoding performance. The HMAVD (Harmonic Multimodal Alignment for Visual Decoding) framework integrates EEG, image, and text data to improve decoding of visual neural representations by using text as a semantic bridge to enhance cross-modal alignment [5]. To address challenges of modality dominance, this approach employs a Modal Consistency Dynamic Balancing (MCDB) strategy that quantifies each modality's contribution and adaptively adjusts information weights in the shared representation [5].

Similarly, the NEDS (Neural Encoding and Decoding at Scale) framework enables simultaneous encoding and decoding through a multi-task masking strategy that alternates between neural, behavioral, within-modality, and cross-modality masking during training [6]. This approach has demonstrated state-of-the-art performance for both encoding and decoding when pretrained on multi-animal data and fine-tuned on new subjects [6].

Table: Comparison of Neural Decoding Algorithms

| Algorithm | Primary Use Case | Advantages | Limitations |
|---|---|---|---|
| Kalman Filter | State estimation in linear systems with Gaussian noise [3] [4] | Optimal for linear systems, computationally efficient | Limited for nonlinear systems [3] |
| Wiener Filter | Time series prediction [4] | Simple implementation, well-established theory | Limited to stationary processes |
| Neural Networks | Complex nonlinear decoding problems [4] | High performance, versatile architecture | Large data requirements, "black box" interpretation [3] [4] |
| DeMaND Algorithm | Nonlinear systems with complex dynamics [3] | Flexible model, requires less data, interpretable | Newer method with less extensive testing |
| Multimodal Frameworks (HMAVD, NEDS) | Integrating multiple data modalities [5] [6] | Enhanced performance, cross-modal alignment | Increased complexity, potential modality imbalance [5] |

Experimental Protocols and Applications

Visual Information Decoding from EEG Signals

Protocol: Decoding Color Information from Scalp EEG

Background: Decoding visual features from EEG signals presents particular challenges due to the low signal-to-noise ratio and source ambiguity of scalp measurements [7]. However, recent advances have made it possible to decode both spatial (orientation, location) and non-spatial (color) features of visual stimuli [7].

Experimental Setup:

- Participants: 30 healthy adults with normal or corrected-to-normal vision

- Visual Stimuli: Bilateral Gabor patches (sinusoidal gratings) presented for 300ms

- Color Space: 48 colors drawn from CIELAB color space with fixed lightness (L=54)

- Electrode Placement: Standard scalp arrays with emphasis on posterior sites

Multivariate Analysis:

- Method: Linear Discriminant Analysis (LDA) applied to patterns of EEG activity across electrodes

- Time Resolution: Analysis focused on specific time windows following stimulus presentation

- Validation: Comparison with orientation decoding and control for luminance artifacts
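As a rough illustration of the multivariate analysis step, the sketch below fits a linear discriminant classifier to synthetic "electrode pattern" data and scores it on held-out trials. It is a minimal NumPy version written for this example: the pooled covariance, equal class priors, shrinkage constant, split-half validation, and the synthetic data are all assumptions, and the cited study's actual pipeline may differ.

```python
import numpy as np

def fit_lda(X, y, shrinkage=1e-3):
    """Fit LDA with a pooled covariance and equal class priors (an assumption).

    X: (n_trials, n_features) EEG features, e.g. one voltage per electrode
    y: (n_trials,) integer class labels, e.g. colour category
    """
    classes = np.unique(y)
    means = np.stack([X[y == c].mean(axis=0) for c in classes])
    centered = X - means[np.searchsorted(classes, y)]
    cov = centered.T @ centered / (len(X) - len(classes))
    # Light shrinkage toward a scaled identity keeps the inverse well behaved.
    cov += shrinkage * np.trace(cov) / cov.shape[0] * np.eye(cov.shape[0])
    weights = np.linalg.solve(cov, means.T)              # (n_features, n_classes)
    bias = -0.5 * np.sum(means.T * weights, axis=0)
    return classes, weights, bias

def predict_lda(model, X):
    """Assign each trial to the class with the largest linear discriminant."""
    classes, weights, bias = model
    return classes[np.argmax(X @ weights + bias, axis=1)]

# Synthetic stand-in for single-time-window EEG patterns (not real data).
rng = np.random.default_rng(0)
n_per_class, n_electrodes, n_classes = 100, 8, 4
centers = 3.0 * rng.standard_normal((n_classes, n_electrodes))
y = np.repeat(np.arange(n_classes), n_per_class)
X = centers[y] + rng.standard_normal((y.size, n_electrodes))

train = np.arange(y.size) % 2 == 0                       # simple split-half validation
model = fit_lda(X[train], y[train])
accuracy = np.mean(predict_lda(model, X[~train]) == y[~train])
print(f"held-out accuracy: {accuracy:.2f}")
```

In a time-resolved analysis, the same fit-and-score step would be repeated independently for each time window after stimulus onset, giving a decoding-accuracy time course.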

Key Findings: Robust color decoding was achieved with characteristics indicating genuine visual processing rather than artifacts: (1) posterior contralateral electrode dominance, (2) parametric tuning to color space, and (3) successful decoding from multi-item displays [7]. The magnitude of color decoding was comparable to orientation decoding, establishing color as a viable dimension for tracking visual processing with EEG [7].

Decision Variable Decoding from Premotor Cortex

Protocol: Inferring Decision Dynamics from Population Spiking

Background: Decision-making represents a fundamental cognitive process where internal states evolve over time to form choices. Decoding these dynamic cognitive variables requires specialized approaches that can handle single-trial variability and heterogeneous neural responses [2].

Experimental Paradigm:

- Task: Perceptual decision-making where monkeys discriminate dominant color in checkerboard stimuli

- Stimulus Conditions: Varied difficulty levels (easy/hard) and response sides (left/right)

- Neural Recording: Linear multi-electrode arrays in dorsal premotor cortex (PMd)

- Behavioral Measure: Reaction-time task with touch response

Computational Framework: A flexible modeling approach was developed that simultaneously infers population dynamics and tuning functions:

Latent Dynamics Model: $\dot{x}=-D\frac{\mathrm{d}\Phi(x)}{\mathrm{d}x}+\sqrt{2D}\xi(t)$

- Where Φ(x) is a potential function defining deterministic forces

- D represents noise magnitude accounting for stochasticity [2]

Tuning Functions: Individual neurons modeled with unique nonlinear tuning functions $f_i(x)$ to the latent variable

Initial State Distribution: $p_0(x)$ represents starting states before stimulus onset

Spike Generation: Spikes modeled as inhomogeneous Poisson processes with rate $\lambda_i(t) = f_i(x(t))$

Inference Procedure: Simultaneous inference of $\Phi(x)$, $p_0(x)$, $f_i(x)$, and $D$ through maximum likelihood estimation, validated with synthetic data and optogenetic perturbations [2].
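The generative side of this framework can be sketched as a forward simulation: integrate the latent Langevin dynamics with an Euler-Maruyama step, then draw Poisson spike counts from each neuron's tuning function. The double-well potential, the three tuning functions, and all numerical values below are hypothetical choices for illustration; this does not implement the maximum likelihood inference procedure of [2].

```python
import numpy as np

def simulate_latent(phi_grad, D, x0, dt, n_steps, rng):
    """Euler-Maruyama integration of dx = -D * Phi'(x) dt + sqrt(2 D) dW."""
    x = np.empty(n_steps + 1)
    x[0] = x0
    increments = np.sqrt(2.0 * D * dt) * rng.standard_normal(n_steps)
    for k in range(n_steps):
        x[k + 1] = x[k] - D * phi_grad(x[k]) * dt + increments[k]
    return x

def simulate_spikes(x, tuning_fns, dt, rng):
    """Spike counts per time bin, Poisson with mean f_i(x(t)) * dt."""
    rates = np.stack([f(x) for f in tuning_fns])     # (n_neurons, n_bins)
    return rng.poisson(rates * dt)

# Hypothetical double-well potential Phi(x) = x^4/4 - x^2/2, wells at x = +/-1.
def phi_grad(x):
    return x**3 - x

# Hypothetical tuning functions; every neuron reads out the same latent x.
tuning_fns = [
    lambda x: 40.0 / (1.0 + np.exp(-4.0 * x)),       # prefers positive x
    lambda x: 40.0 / (1.0 + np.exp(4.0 * x)),        # prefers negative x
    lambda x: 5.0 + 15.0 * np.exp(-x**2 / 0.1),      # peaks near x = 0
]

rng = np.random.default_rng(1)
dt = 1e-3
x = simulate_latent(phi_grad, D=1.0, x0=0.0, dt=dt, n_steps=2000, rng=rng)
spikes = simulate_spikes(x, tuning_fns, dt, rng)
print(x.shape, spikes.shape, spikes.sum(axis=1))
```

Although the three simulated neurons have very different response profiles, all of their variability traces back to one shared latent trajectory, which is the sense in which heterogeneous single-neuron responses can arise from diverse tuning to a common variable.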

Key Findings: The approach revealed that PMd population dynamics encode a one-dimensional decision variable, with heterogeneous single-neuron responses arising from diverse tuning to this common variable rather than complex individual dynamics [2]. The inferred dynamics indicated an attractor mechanism for decision computation, with consistent tuning functions across stimulus conditions [2].

Large-Scale Neurobehavioral Modeling

Protocol: Simultaneous Neural Encoding and Decoding at Scale (NEDS)

Background: Large-scale neural and behavioral datasets require modeling approaches that can capture bidirectional relationships between neural activity and behavior across multiple animals and sessions [6].

Dataset:

- Source: International Brain Laboratory repeated site dataset

- Subjects: 83 mice performing standardized decision-making task

- Neural Recording: Neuropixels probes targeting consistent brain regions

- Behavioral Variables: Whisker motion, wheel velocity, choice, block prior

Model Architecture:

- Multimodal Transformer: Tokenized neural and behavioral data processed through shared transformer

- Multi-Task Masking: Alternating between neural, behavioral, within-modality, and cross-modality masking

- Training Objective: Learning conditional expectations between neural activity and behavior

Implementation:

- Pretraining: Data from 73 animals

- Fine-tuning: Held-out 10 animals for evaluation

- Benchmarking: Comparison with POYO+ and NDT2 models

Key Findings: NEDS achieved state-of-the-art performance for both encoding and decoding, with performance scaling meaningfully with pretraining data and model capacity [6]. The learned embeddings exhibited emergent properties, accurately predicting brain regions without explicit training [6].

Research Reagent Solutions and Essential Materials

Table: Essential Research Materials for Neural Decoding Studies

| Material/Resource | Function/Application | Example Use Cases |
|---|---|---|
| Neuropixels Probes [6] | High-density neural recording | Large-scale population recordings across multiple brain regions |
| Linear Multi-Electrode Arrays [2] | Population spiking activity recording | Decision-making studies in premotor cortex |
| Scalp EEG Systems [7] | Non-invasive brain activity recording | Visual feature decoding studies |
| CIELAB Color Space Standards [7] | Perceptually uniform color stimulus generation | Color decoding experiments |
| Kalman Filter Algorithms [3] [4] | Traditional state estimation | Baseline decoding performance comparison |
| DeMaND Algorithm [3] | Advanced decoding for nonlinear systems | Applications requiring flexible models with limited data |
| Multimodal Transformer Architectures [6] | Integrated neural and behavioral modeling | Large-scale neurobehavioral datasets |
| Linear Discriminant Analysis (LDA) [7] | Multivariate pattern classification | EEG-based feature decoding |

The field of neural decoding has evolved significantly from traditional linear methods like the Kalman filter to sophisticated machine learning approaches and multimodal frameworks that capture the complex, dynamic nature of neural representations [3] [4]. The geometric principle governing neural population coding appears to be conserved across sensory and cognitive domains, with diverse single-neuron responses arising from varied tuning to common latent variables rather than complex individual dynamics [2].

Future progress will likely involve increased emphasis on causal modeling approaches that move beyond correlation to test neural coding hypotheses through intervention [1], continued development of large-scale foundation models capable of generalizing across animals and tasks [6], and improved methods for balancing multimodal contributions to prevent dominant modality effects [5]. As these techniques advance, they will further enhance both our fundamental understanding of neural computation and our ability to develop effective translational applications in brain-computer interfaces and neurotechnology.

Within modern neural signals research, the ability to accurately decode intentions from brain activity forms the cornerstone of brain-machine interfaces and systems neuroscience. Bayesian decoding methods, particularly those employing Kalman filters, provide a powerful statistical framework for this purpose. These approaches allow researchers to transform noisy, high-dimensional neural data into meaningful estimates of behavioral variables and cognitive states. The core mathematical frameworks of Linear Regression, Generalized Linear Models (GLMs), and State-Space Models provide the foundational pillars supporting these advanced decoding techniques. This article details the specific applications, experimental protocols, and practical implementations of these frameworks within neural signal research, with particular emphasis on their role in Bayesian decoding pipelines.

Linear Regression Frameworks in Neural Decoding

Basic Principles and Applications

Linear regression establishes a fundamental relationship between neural activity and behavioral variables, typically modeling firing rates as a linear function of kinematic parameters such as hand position, velocity, or acceleration. In motor cortex decoding, this relationship is often expressed as $y = X\beta + \varepsilon$, where $y$ represents the neural firing rates, $X$ denotes the kinematic state matrix, $\beta$ contains the regression coefficients quantifying the relationship, and $\varepsilon$ is the residual noise [8]. This framework provides a simple yet effective approach for the initial characterization of neural tuning properties.

The standard linear regression approach serves as the foundation for more complex decoding algorithms, including the population vector algorithm and multiple linear regression methods for continuous state estimation [8]. Its computational efficiency makes it particularly valuable for real-time decoding applications where processing latency constrains algorithm selection. However, basic linear regression fails to capture the Poisson-like variability inherent in neural spiking activity and cannot readily incorporate history-dependent effects, limitations addressed by more sophisticated GLM frameworks.

Table 1: Linear Regression Applications in Neural Decoding

| Application Domain | Model Variants | Neural Signals | Decoded Variables |
|---|---|---|---|
| Motor Decoding | Population Vector Algorithm | M1 Spiking Activity | Hand Direction, Velocity |
| Cognitive State Monitoring | Multiple Linear Regression | EEG Band Power | Attention Level, Cognitive Load |
| Sensory Decoding | Stimulus Reconstruction | V1/LGN Firing Rates | Visual Stimulus Features |

Experimental Protocol: Tuning Property Characterization

Objective: To characterize the relationship between neural firing rates and kinematic parameters using linear regression.

Materials and Setup:

- Microelectrode arrays implanted in primary motor cortex (M1)

- Neural signal acquisition system (e.g., Cerebus System)

- Behavioral task apparatus for arm movement tracking

- Spike sorting software (e.g., Offline Sorter)

Procedure:

- Data Collection: Record simultaneous neural activity and behavioral kinematics during a random target pursuit task. For M1 decoding, sample hand position at 500Hz and compute velocity/acceleration via differentiation [8].

- Temporal Alignment: Account for neural processing delays by comparing neural activity in 50ms bins with kinematic measurements taken 100ms later [8].

- Feature Extraction: Calculate firing rates using spike counts in 50ms overlapping windows.

- Model Estimation: Solve for regression coefficients using least squares estimation: $\beta = (X^\top X)^{-1} X^\top y$.

- Model Validation: Assess prediction accuracy through cross-validation, measuring correlation between predicted and actual kinematics.
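The alignment and estimation steps above can be sketched as follows. With 50 ms bins, the 100 ms delay corresponds to a shift of two bins, so each row of firing rates is paired with the kinematics two bins later. The function name, array layout, and synthetic coefficients are assumptions for illustration, not values from [8].

```python
import numpy as np

def fit_linear_tuning(kinematics, rates, lag_bins=2):
    """Least-squares tuning fit with kinematics lagging neural activity.

    kinematics: (n_bins, n_kin) binned kinematic state
    rates:      (n_bins, n_neurons) binned firing rates
    lag_bins:   2 bins of 50 ms gives the 100 ms lag described above
    """
    n = len(kinematics) - lag_bins
    X = np.column_stack([np.ones(n), kinematics[lag_bins:]])  # intercept + lagged state
    Y = rates[:n]
    # lstsq gives the same solution as (X^T X)^{-1} X^T Y when X^T X is invertible.
    beta, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return beta                                               # (1 + n_kin, n_neurons)

# Synthetic check with made-up coefficients (not data from the cited study).
rng = np.random.default_rng(0)
n_bins, lag = 500, 2
kin = rng.standard_normal((n_bins, 2))              # e.g. x and y hand velocity
true_beta = np.array([[10.0, 5.0, 8.0],             # baseline rate per neuron
                      [2.0, -1.0, 0.0],             # dependence on x velocity
                      [0.5, 3.0, -2.0]])            # dependence on y velocity
rates = np.zeros((n_bins, 3))
design = np.column_stack([np.ones(n_bins - lag), kin[lag:]])
rates[: n_bins - lag] = design @ true_beta + 0.01 * rng.standard_normal((n_bins - lag, 3))

beta_hat = fit_linear_tuning(kin, rates, lag_bins=lag)
print(np.round(beta_hat, 2))
```

Using `np.linalg.lstsq` instead of forming $(X^\top X)^{-1}$ explicitly is a numerical-stability choice; it matters when kinematic regressors such as position and velocity are strongly correlated.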

Generalized Linear Models (GLMs) for Neural Encoding

Framework Fundamentals

Generalized Linear Models extend basic linear regression to better accommodate the statistical properties of neural spike trains by incorporating non-Gaussian noise models and nonlinear link functions. The point process GLM framework characterizes spiking activity through a conditional intensity function:

$$\lambda(t \mid H_t) = \lim_{\Delta \to 0} \frac{P\big(N(t+\Delta) - N(t) = 1 \mid H_t\big)}{\Delta}$$

where $N(t)$ is the number of spikes up to time $t$ and $H_t$ represents the spiking history and relevant covariates [9]. This formulation enables more accurate characterization of neural sensitivity by modeling spike trains as binary point processes rather than continuous firing rates.

The GLM framework provides particular value for modeling neurons in higher visual areas where receptive fields exhibit dynamic, time-varying properties influenced by both external sensory inputs and internal cognitive factors [9]. Standard time-invariant GLMs assume stationary neural response properties, making them inadequate for capturing the rapid modulation observed during tasks involving attention, reward expectation, or motor planning.
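In discrete time, with bins of width $\Delta$, the conditional intensity is commonly approximated by a Poisson GLM in which the expected count per bin is $\exp(Xw)$. The sketch below fits such a model by Newton's method with a simple step-halving safeguard. It is a minimal time-invariant example on synthetic data: the design matrix, the true weights, and the small damping term are assumptions, and in practice the columns of `X` would hold stimulus covariates and lagged spike counts standing in for $H_t$.

```python
import numpy as np

def poisson_log_lik(w, X, y):
    """Poisson log-likelihood for rate exp(X @ w), up to a constant in w."""
    eta = X @ w
    return y @ eta - np.exp(eta).sum()

def fit_poisson_glm(X, y, n_iter=50, damping=1e-6):
    """Maximum likelihood fit of y ~ Poisson(exp(X @ w)) by Newton's method."""
    w = np.zeros(X.shape[1])
    ll = poisson_log_lik(w, X, y)
    for _ in range(n_iter):
        mu = np.exp(X @ w)
        grad = X.T @ (y - mu)
        hess = X.T @ (X * mu[:, None]) + damping * np.eye(X.shape[1])
        step = np.linalg.solve(hess, grad)
        t = 1.0
        # Halve the step until the log-likelihood does not decrease.
        while poisson_log_lik(w + t * step, X, y) < ll and t > 1e-8:
            t *= 0.5
        w = w + t * step
        ll = poisson_log_lik(w, X, y)
    return w

# Synthetic data with made-up weights: intercept plus two covariates.
rng = np.random.default_rng(0)
n = 5000
X = np.column_stack([np.ones(n), rng.standard_normal((n, 2))])
w_true = np.array([0.5, 0.8, -0.5])
y = rng.poisson(np.exp(X @ w_true))

w_hat = fit_poisson_glm(X, y)
print(np.round(w_hat, 2))
```

Because the Poisson log-likelihood with an exponential nonlinearity is concave in `w`, the fit has a single optimum, which is one practical reason this model family is popular for spike train analysis.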

Time-Varying Extensions for Nonstationary Neural Responses

Time-varying GLM extensions address the limitation of stationary models by allowing parameters to evolve during different behavioral epochs. These approaches are essential for characterizing how neurons in higher visual areas dynamically adjust their sensory processing based on behavioral context, with changes occurring at millisecond timescales [9]. For example, neurons in area MT show rapid response modulation during saccadic eye movements, creating a time-varying relationship between visual stimuli and neural responses.

The flexibility of time-varying GLMs makes them particularly suitable for investigating the neural basis of various cognitive functions, including covert attention, working memory, and task rule implementation [9]. These models can capture how multiple behavioral variables interact and influence sensory processing on single trials, providing a powerful tool for linking physiological responses to cognitive phenomena.

Table 2: GLM Variants for Neural Encoding

| GLM Type | Link Function | Noise Model | Application Context |
|---|---|---|---|
| Poisson GLM | Log (exponential inverse link) | Poisson | Basic Spike Train Modeling |
| Bernoulli GLM | Logit | Bernoulli | Binary Spike Events |
| Time-Varying GLM | Log (exponential inverse link) | Poisson | Nonstationary Cognitive Tasks |
| Common-Input GLM | Log (exponential inverse link) | Poisson | Multidimensional Hidden States |

Experimental Protocol: Time-Varying Receptive Field Mapping

Objective: To characterize how neural receptive fields dynamically change during cognitive tasks using time-varying GLMs.

Materials and Setup:

- Multi-electrode recording array in higher visual area (e.g., V4, MT)

- Visual stimulus presentation system

- Eye tracking system for monitoring fixation and saccades

- Behavioral task control software

Procedure:

- Experimental Design: Implement a behavioral paradigm that incorporates cognitive factors (e.g., attention cues, working memory delays, or reward expectation).

- Data Collection: Simultaneously record neural responses, visual stimuli, and behavioral variables (eye position, task events) with millisecond precision.

- Model Specification: Construct a GLM with time-varying parameters that depend on behavioral state: $\lambda(t) = f\big(X_{\text{sensory}}\,\beta_{\text{sensory}}(t) + X_{\text{cognitive}}\,\beta_{\text{cognitive}}(t)\big)$.

- Parameter Estimation: Fit model parameters using maximum likelihood estimation with regularization to track parameter evolution.

- Model Validation: Compare time-varying and time-invariant models using cross-validated likelihood to quantify improvement in characterization accuracy.

State-Space Models and Kalman Filtering

Theoretical Foundation

State-space models provide a unified framework for neural decoding by modeling both the relationship between neural activity and behavioral states (observation model) and the temporal evolution of those states (state transition model). The Kalman filter implements recursive Bayesian estimation within this framework, providing optimal state estimates for linear Gaussian systems.

The basic state-space formulation comprises:

- Observation Model: $y_k = H x_k + q_k$, where $y_k$ represents neural activity, $x_k$ is the behavioral state, and $q_k$ is observation noise.

- State Transition Model: $x_{k+1} = A x_k + w_k$, where $A$ governs state dynamics and $w_k$ is process noise [8].

This formulation enables efficient, real-time decoding of continuous movement trajectories from population neural activity, making it particularly valuable for brain-machine interface applications.

Advanced Formulations: Hidden State Models

Recent extensions to the basic Kalman filter incorporate hidden states to account for unobserved behavioral, cognitive, or physiological variables that influence neural activity. The hidden state model formulation:

$$y_k = H x_k + G n_k + q_k$$

$$\begin{pmatrix} x_{k+1} \\ n_{k+1} \end{pmatrix} = A \begin{pmatrix} x_k \\ n_k \end{pmatrix} + w_k$$

includes both observable states $x_k$ (e.g., hand kinematics) and hidden states $n_k$ (e.g., attention, muscle activity, motivation) [8]. This approach provides a more appropriate representation of neural data and generates more accurate decoding compared to standard models.
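One way to read the hidden state model is as an ordinary linear Gaussian state-space model over a stacked state $z_k = (x_k, n_k)$, with observation matrix $C = [H \;\; G]$. The short sketch below checks that equivalence numerically; the dimensions and random matrices are hypothetical, chosen only to illustrate the bookkeeping.

```python
import numpy as np

def augment_hidden_state(H, G):
    """Return C = [H G] so that y_k = C z_k + q_k with z_k = [x_k; n_k]."""
    return np.hstack([H, G])

rng = np.random.default_rng(0)
n_neurons, n_kin, n_hidden = 20, 4, 3          # hypothetical sizes
H = rng.standard_normal((n_neurons, n_kin))    # maps kinematics to firing rates
G = rng.standard_normal((n_neurons, n_hidden)) # maps hidden states to firing rates
x_k = rng.standard_normal(n_kin)
n_k = rng.standard_normal(n_hidden)

C = augment_hidden_state(H, G)
z_k = np.concatenate([x_k, n_k])

# The stacked form reproduces the noise-free part of the observation model.
same = np.allclose(C @ z_k, H @ x_k + G @ n_k)
print("augmented model matches:", same)

# After filtering on z_k, the kinematic estimate is the leading n_kin entries.
x_from_z = z_k[:n_kin]
```

Under this view, a standard Kalman filter run on $z_k$ yields the kinematic estimate as the leading block of the state, while the trailing block absorbs structured variability that would otherwise be treated as observation noise.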

Figure 1: Hidden State Model Architecture. The Kalman filter with hidden states incorporates both observable behavioral variables and unobserved cognitive/physiological factors that influence neural activity.

Target-Informed Decoding Frameworks

Incorporating target information significantly improves decoding accuracy for goal-directed movements. The target-included model characterizes the hand state as an autoregressive process while representing the target as a linear Gaussian constraint on the movement endpoint [10]. This formulation introduces a drift term in the kinematic prior that guides estimates toward the intended target.

Forward-backward propagation algorithms efficiently compute target-informed state estimates by leveraging future target information during decoding [10]. This approach can be combined with time decoding methods that detect when specific movement landmarks (e.g., target acquisitions) occur, creating a coupled framework that leverages both continuous trajectory estimation and discrete event detection.

Figure 2: Target-Informed Decoding Framework. This coupled approach combines continuous trajectory estimation with discrete target time detection to improve decoding accuracy for stereotyped movements.

Experimental Protocol: Kalman Filter with Hidden States

Objective: To decode hand kinematics from motor cortical activity using a Kalman filter with hidden states.

Materials and Setup:

- 100-electrode silicon microelectrode array implanted in primary motor cortex

- 30kHz neural signal acquisition system with spike sorting capabilities

- Arm position tracking system (e.g., exoskeletal arm with joint angle sensors)

- Random target pursuit task presentation system

Procedure:

- Training Data Collection: Record simultaneous neural activity and hand kinematics during a random target pursuit task with 50ms binning [8].

- Model Identification: Estimate parameters $\theta = (H, G, Q, A, W, \mu, \Sigma)$ using the expectation-maximization (EM) algorithm to maximize the marginal log-likelihood $\log p(\{x_k, y_k\}; \theta)$ [8].

- State Estimation: Apply the Kalman filter recursion to decode hand states from neural activity:

  - Prediction step: $\hat{x}_{k \mid k-1} = A\hat{x}_{k-1 \mid k-1}$

  - Update step: $\hat{x}_{k \mid k} = \hat{x}_{k \mid k-1} + K_k\,(y_k - H\hat{x}_{k \mid k-1})$

- Performance Validation: Compare decoding accuracy between standard Kalman filter and hidden state extensions using variance-accounted-for metrics.
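The prediction and update steps can be sketched as a short NumPy routine. It follows the notation of this protocol ($A$ and $W$ for state dynamics and process noise covariance, $H$ and $Q$ for the observation matrix and observation noise covariance) and adds the standard covariance recursion and Kalman gain that the steps above leave implicit. The simulated system, the initial state `x0`, and its covariance `P0` are assumptions for illustration; in the protocol these parameters would come from the EM fit.

```python
import numpy as np

def kalman_filter(Y, A, W, H, Q, x0, P0):
    """Decode states from observations Y of shape (n_steps, n_obs).

    Model: x_{k+1} = A x_k + w_k, w_k ~ N(0, W);  y_k = H x_k + q_k, q_k ~ N(0, Q).
    x0, P0 describe the state estimate before the first observation.
    """
    n_steps, n_state = Y.shape[0], A.shape[0]
    x, P = x0.copy(), P0.copy()
    X_hat = np.empty((n_steps, n_state))
    I = np.eye(n_state)
    for k in range(n_steps):
        # Prediction step: propagate the mean and covariance through the dynamics.
        x_pred = A @ x
        P_pred = A @ P @ A.T + W
        # Update step: weigh the prediction against the new observation.
        S = H @ P_pred @ H.T + Q                      # innovation covariance
        K = np.linalg.solve(S, H @ P_pred).T          # gain K = P_pred H^T S^{-1}
        x = x_pred + K @ (Y[k] - H @ x_pred)
        P = (I - K @ H) @ P_pred
        X_hat[k] = x
    return X_hat

# Hypothetical 2-D state (position, velocity) observed through 10 noisy channels.
rng = np.random.default_rng(0)
A = np.array([[1.0, 0.05], [0.0, 0.95]])
W = np.diag([1e-4, 1e-2])
H = rng.standard_normal((10, 2))
Q = 0.5 * np.eye(10)

n_steps = 300
X_true = np.zeros((n_steps, 2))
for k in range(1, n_steps):
    X_true[k] = A @ X_true[k - 1] + rng.multivariate_normal(np.zeros(2), W)
Y = X_true @ H.T + rng.multivariate_normal(np.zeros(10), Q, size=n_steps)

X_hat = kalman_filter(Y, A, W, H, Q, x0=np.zeros(2), P0=np.eye(2))
rmse = np.sqrt(np.mean((X_hat - X_true) ** 2, axis=0))
print("RMSE per state dimension:", rmse)
```

For a hidden-state extension, the same routine applies unchanged once the state vector and matrices are augmented; only the leading kinematic entries of each row of `X_hat` would then be compared against measured kinematics.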

Table 3: State-Space Model Comparison for Neural Decoding

| Model Type | State Components | Parameter Estimation | Advantages | Limitations |
|---|---|---|---|---|
| Standard Kalman Filter | Hand kinematics only | EM Algorithm | Computational efficiency | Misses unobserved states |
| Hidden-State Model | Hand kinematics + Multidimensional hidden states | EM Algorithm | Accounts for cognitive/muscular factors | Increased parameter space |
| Target-Informed Model | Hand kinematics + Target position | Forward-Backward Propagation | Improved accuracy for goal-directed tasks | Requires target/timing information |
| Mixture of Trajectory Models | Multiple trajectory components | Expectation-Maximization | Captures movement variability | Model selection complexity |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Neural Decoding Research

| Research Reagent | Specification | Function | Example Application |
|---|---|---|---|
| Silicon Microelectrode Arrays | 100 platinized-tip electrodes | Record population neural activity | Simultaneous recording of 100+ M1 units [8] |
| Neural Signal Processor | Cerebus Acquisition System | Amplify, filter, and digitize neural signals | 30kHz sampling with real-time spike detection [8] |
| Spike Sorting Software | Offline Sorter (Plexon) | Isolate single-unit activity | Manual spike sorting using contours and templates [8] |
| Behavioral Task Control | KINARM System | Present targets and record movements | Random target pursuit with hand tracking [10] |
| Kinematic Tracking System | 500Hz position sensing | Measure hand/arm kinematics | Compute velocity/acceleration via differentiation [8] |

| Kinematic Tracking System | 500Hz position sensing | Measure hand/arm kinematics | Compute velocity/acceleration via differentiation [8] |

Integrated Experimental Protocol: Complete Neural Decoding Pipeline

Objective: To implement a complete neural decoding pipeline integrating GLM encoding models with Kalman filter decoding for closed-loop brain-machine interface applications.

Materials and Setup:

- All components listed in Table 4

- Real-time processing system with low-latency neural signal processing

- Closed-loop feedback interface (e.g., robotic arm or cursor display)

Procedure:

- Simultaneous Data Collection: Record baseline neural activity and behavior during a random target pursuit task spanning 400-550 trials [8] [10].

- Encoding Model Development: Fit time-varying GLMs to characterize the relationship between neural activity and behavioral variables, including both sensory and task-related factors [9].

- Decoder Training: Identify parameters for a hidden-state Kalman filter using the expectation-maximization algorithm on training data [8].

- Closed-Loop Implementation: Deploy the trained Kalman filter for real-time decoding in closed-loop control applications with neural activity processed in 50ms bins [8].

- Performance Quantification: Evaluate decoding accuracy using variance-accounted-for and correlation coefficients between decoded and actual kinematics.

- Model Refinement: Incorporate target information using forward-backward propagation when target positions are known [10].
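The performance quantification step can be sketched with two small helper functions. Definitions of variance-accounted-for differ slightly between papers; the version below, one minus the ratio of squared error to total variance about the mean, is an assumption and should be checked against the definition used in the study being replicated. The synthetic "decoded" trajectories are for illustration only.

```python
import numpy as np

def variance_accounted_for(actual, decoded):
    """VAF per kinematic dimension: 1 - squared error / variance about the mean."""
    resid = ((actual - decoded) ** 2).sum(axis=0)
    total = ((actual - actual.mean(axis=0)) ** 2).sum(axis=0)
    return 1.0 - resid / total

def pearson_r(actual, decoded):
    """Pearson correlation per kinematic dimension."""
    a = actual - actual.mean(axis=0)
    d = decoded - decoded.mean(axis=0)
    return (a * d).sum(axis=0) / np.sqrt((a**2).sum(axis=0) * (d**2).sum(axis=0))

# Synthetic example: two kinematic dimensions over 200 time bins.
rng = np.random.default_rng(0)
actual = rng.standard_normal((200, 2))
decoded = actual + 0.3 * rng.standard_normal((200, 2))

print("VAF:", np.round(variance_accounted_for(actual, decoded), 2))
print("r:  ", np.round(pearson_r(actual, decoded), 2))
```

The two metrics are worth reporting together because they penalize different errors: correlation ignores constant offsets and gain errors, whereas this form of VAF does not, so a decoder with a systematic bias can show a high correlation and still score poorly on VAF.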

Figure 3: Complete Neural Decoding Pipeline. This integrated workflow combines encoding model development with state-space decoding for closed-loop neural interface applications.

The integration of the Kalman Filter (KF) as a recursive Bayesian decoder represents a cornerstone technique in modern neural signal research, particularly for the estimation of motor kinematics from brain activity. By treating neural population activity as noisy measurements of an underlying kinematic state, the KF provides an optimal recursive algorithm for inferring intended movement parameters such as position, velocity, and acceleration. This application note details the theoretical foundation, practical implementation protocols, and experimental validation of the KF as a Bayesian decoder, with specific emphasis on its application in motor neuroscience and brain-computer interfaces (BCIs). The frameworks and methodologies presented herein are designed to enable researchers to accurately decode movement intentions from neural signals, thereby advancing both foundational neuroscience and therapeutic neurotechnology development.

Neural Representations of Motor Kinematics

A fundamental principle in neuroscience is that neurons in the sensorimotor cortex encode movement parameters through coordinated population activity [1]. Motor kinematics—the spatial and motion aspects of movement including position, velocity, acceleration, and direction—are robustly represented in the primary motor (M1) and somatosensory (S1) cortices [11]. Research involving non-human primates and humans has consistently demonstrated that these kinematic parameters can be decoded from various neural signals, including intracortical recordings, electrocorticography (ECoG), and functional magnetic resonance imaging (fMRI) [11]. The "hand knob" area of the sensorimotor cortex, which controls hand and finger movements, has been particularly fruitful for BCI applications due to its detailed representation of kinematic parameters [11].

The Bayesian Approach to Neural Decoding

Bayesian decoding provides a statistical framework for inferring motor intentions from neural activity by combining a prior distribution of movement states with a likelihood function that relates neural activity to those states [12] [13]. This approach allows for the integration of prior knowledge about movement dynamics with new neural evidence, resulting in posterior probability distributions over kinematic variables. The Bayesian framework naturally handles uncertainty in neural measurements and incorporates constraints such as movement smoothness, making it particularly suitable for decoding continuous kinematic trajectories [12].

The Kalman Filter as a Recursive Bayesian Decoder

Theoretical Foundation

The Kalman Filter is a recursive state estimation algorithm that operates within a Bayesian framework to estimate the hidden state of a dynamic system from noisy measurements [14]. In the context of motor kinematics decoding, the KF treats the intended movement parameters (e.g., hand position, velocity) as the hidden state and neural activity as the noisy measurements. The algorithm maintains an estimate of the probability distribution over the kinematic state, which it updates recursively as new neural data arrives.

The mathematical derivation of the KF can be approached through vector-space optimization or Bayesian optimal filtering [15]. Both approaches yield the same recursive update equations that optimally combine predictions from a dynamic model with new measurements, while providing a measure of estimation uncertainty [14] [15].

Mathematical Formulation

For motor kinematics decoding, the standard KF assumes linear Gaussian state and observation models:

State Transition Model: [ x_t = A x_{t-1} + w_t, \quad w_t \sim \mathcal{N}(0, Q) ]

Observation Model: [ y_t = C x_t + v_t, \quad v_t \sim \mathcal{N}(0, R) ]

Where:

- ( x_t ) represents the kinematic state vector (e.g., position, velocity) at time ( t )

- ( y_t ) represents the neural observation vector (e.g., spike counts, LFP features) at time ( t )

- ( A ) is the state transition matrix that encodes the dynamics of kinematic parameters

- ( C ) is the observation matrix that relates kinematic states to neural activity

- ( w_t ) and ( v_t ) are process and observation noise terms with covariance matrices ( Q ) and ( R ) respectively
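
To make the two model equations concrete, the following minimal sketch draws one synthetic trajectory from the linear-Gaussian model above. The function name `simulate_lgssm`, the bin width, and every matrix value are illustrative assumptions rather than parameters taken from any study.

```python
import numpy as np

def simulate_lgssm(A, C, Q, R, x0, n_steps, seed=0):
    """Draw one trajectory from x_t = A x_{t-1} + w_t and y_t = C x_t + v_t."""
    rng = np.random.default_rng(seed)
    n_state, n_obs = A.shape[0], C.shape[0]
    X = np.zeros((n_steps, n_state))
    Y = np.zeros((n_steps, n_obs))
    x = np.asarray(x0, dtype=float)
    for t in range(n_steps):
        # State transition with process noise w_t ~ N(0, Q)
        x = A @ x + rng.multivariate_normal(np.zeros(n_state), Q)
        X[t] = x
        # Observation with measurement noise v_t ~ N(0, R)
        Y[t] = C @ x + rng.multivariate_normal(np.zeros(n_obs), R)
    return X, Y

# Hypothetical example: state = [position, velocity], 50 ms bins, 5 simulated units.
dt = 0.05
A = np.array([[1.0, dt], [0.0, 0.95]])   # position integrates velocity; velocity decays
Q = np.diag([1e-4, 1e-2])
C = np.random.default_rng(1).normal(size=(5, 2))
R = 0.5 * np.eye(5)
X, Y = simulate_lgssm(A, C, Q, R, x0=[0.0, 0.0], n_steps=200)
```

Simulated data of this kind is mainly useful for checking a decoder implementation before it is applied to recordings, because the true state is known.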

The KF recursively applies two main steps:

- Prediction Step: Uses the dynamic model to predict the current state and covariance based on previous estimates

- Update Step: Incorporates the latest neural measurements to refine the state prediction

Experimental Protocols for Kalman Filter Decoding

Neural Data Acquisition and Preprocessing

Materials and Equipment:

- Multi-electrode arrays (Utah arrays, Neuropixels) for intracortical recordings

- Electrocorticography (ECoG) grids for surface recordings

- Amplification and digitization systems with appropriate sampling rates

- Neural signal processing software (MATLAB, Python with specialized toolboxes)

Protocol:

- Record neural activity during motor tasks with simultaneous kinematic tracking

- Preprocess neural signals: bandpass filter, spike sort, or extract local field potentials

- Bin neural activity into time windows (typically 20-100 ms) and count spikes or extract power features

- Record ground-truth kinematics using motion capture systems, data gloves, or robotic interfaces

- Synchronize neural and kinematic data with high temporal precision

- Normalize neural features (z-score) to account for baseline variations
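
The binning and normalization steps of this protocol can be sketched as follows. This is a minimal illustration that assumes spike times are already sorted into one array per unit; the helper names `bin_spikes` and `zscore` are hypothetical.

```python
import numpy as np

def bin_spikes(spike_times, t_start, t_stop, bin_width):
    """Count spikes per unit in fixed-width bins.

    spike_times: list of 1-D arrays of spike times in seconds, one per unit.
    Returns an array of shape (n_bins, n_units).
    """
    n_bins = int(round((t_stop - t_start) / bin_width))
    edges = t_start + bin_width * np.arange(n_bins + 1)
    counts = [np.histogram(st, bins=edges)[0] for st in spike_times]
    return np.stack(counts, axis=1).astype(float)

def zscore(features, mean=None, std=None):
    """Z-score each column; pass the training mean/std when transforming test data."""
    mean = features.mean(axis=0) if mean is None else mean
    std = features.std(axis=0) if std is None else std
    std = np.where(std > 0, std, 1.0)  # leave silent units at zero instead of dividing by 0
    return (features - mean) / std, mean, std

# Two toy units, 200 ms of data, 50 ms bins
spikes = [np.array([0.01, 0.02, 0.07, 0.12]), np.array([0.03, 0.16])]
counts = bin_spikes(spikes, t_start=0.0, t_stop=0.2, bin_width=0.05)
z, mu, sd = zscore(counts)
```

Returning the mean and standard deviation lets the same normalization be reused on held-out data, which avoids leaking test-set statistics into training.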

Kalman Filter Training Procedure

Protocol:

- Define state vector: Typically includes position, velocity, and optionally acceleration for each degree of freedom [ x_t = [p_x, p_y, v_x, v_y, a_x, a_y]^T ]

- Estimate model parameters from training data:

- Compute state transition matrix ( A ) from kinematic data using least squares: [ A = \left( \sum_{t=2}^T x_t x_{t-1}^T \right) \left( \sum_{t=2}^T x_{t-1} x_{t-1}^T \right)^{-1} ]

- Compute observation matrix ( C ) by relating neural activity to kinematics: [ C = \left( \sum_{t=1}^T y_t x_t^T \right) \left( \sum_{t=1}^T x_t x_t^T \right)^{-1} ]

- Estimate noise covariance matrices ( Q ) and ( R ) from residuals

- Validate model parameters on held-out data to prevent overfitting
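
The least-squares estimates above can be sketched in a few lines of NumPy. The function name `fit_kalman_parameters` is hypothetical, the synthetic data exist only to exercise the code, and normalizing the residual covariances by the number of samples is one common convention, assumed here.

```python
import numpy as np

def fit_kalman_parameters(X, Y):
    """X: (T, n_state) kinematics; Y: (T, n_obs) neural features."""
    X_prev, X_next = X[:-1], X[1:]
    # lstsq solves X_prev @ B ~= X_next, so A = B^T matches the closed form for A
    A = np.linalg.lstsq(X_prev, X_next, rcond=None)[0].T
    # Same idea for the observation model: X @ B ~= Y, so C = B^T
    C = np.linalg.lstsq(X, Y, rcond=None)[0].T
    W = X_next - X_prev @ A.T      # state-transition residuals
    V = Y - X @ C.T                # observation residuals
    Q = W.T @ W / W.shape[0]
    R = V.T @ V / V.shape[0]
    return A, C, Q, R

# Synthetic check with known (hypothetical) parameters
rng = np.random.default_rng(0)
A_true = np.array([[1.0, 0.05], [0.0, 0.9]])
C_true = rng.normal(size=(8, 2))
X = np.zeros((5000, 2))
for t in range(1, 5000):
    X[t] = A_true @ X[t - 1] + rng.normal(scale=[0.01, 0.1])
Y = X @ C_true.T + rng.normal(scale=0.5, size=(5000, 8))
A_hat, C_hat, Q_hat, R_hat = fit_kalman_parameters(X, Y)
```

Using `np.linalg.lstsq` rather than forming and inverting the sums explicitly is a numerical-stability choice; both give the same estimate when the kinematic covariance is well conditioned.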

Decoding and Performance Evaluation

Protocol:

- Initialize filter with appropriate initial state and covariance estimates

- Run recursive estimation on test data using the trained KF parameters

- Compare decoded kinematics to ground-truth measurements

- Quantify performance using standardized metrics:

Table 1: Performance Metrics for Kalman Filter Decoding

| Metric | Formula | Interpretation |

|---|---|---|

| Correlation Coefficient (CC) | ( \rho(\hat{x}, x) ) | Linear relationship between decoded and actual kinematics |

| Normalized Root Mean Square Error (nRMSE) | ( \frac{\sqrt{\frac{1}{T}\sum_{t=1}^T (\hat{x}_t - x_t)^2}}{x_{\max} - x_{\min}} ) | Normalized magnitude of decoding errors |

| Signal-to-Noise Ratio (SNR) | ( 10\log_{10}\left(\frac{\text{Var}(x)}{\text{Var}(\hat{x} - x)}\right) ) | Ratio of signal power to error power |
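
The three metrics in Table 1 translate directly into code. The sketch below handles a single kinematic dimension; the function name `decoding_metrics` and the toy values are illustrative only.

```python
import numpy as np

def decoding_metrics(x_true, x_hat):
    """Return (CC, nRMSE, SNR in dB) for one kinematic dimension."""
    x_true = np.asarray(x_true, dtype=float)
    x_hat = np.asarray(x_hat, dtype=float)
    err = x_hat - x_true
    cc = np.corrcoef(x_true, x_hat)[0, 1]
    # RMSE normalized by the range of the true signal
    nrmse = np.sqrt(np.mean(err ** 2)) / (x_true.max() - x_true.min())
    # Ratio of signal variance to error variance, in decibels
    snr_db = 10.0 * np.log10(np.var(x_true) / np.var(err))
    return cc, nrmse, snr_db

x_true = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
x_hat = np.array([0.5, 1.0, 2.0, 3.0, 3.5])
cc, nrmse, snr_db = decoding_metrics(x_true, x_hat)
```

For multi-dimensional states, the function can be applied per dimension and the results averaged, though which aggregation is appropriate depends on the study.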

Implementation Workflow

Diagram: Kalman Filter Implementation Workflow. The complete workflow for implementing a Kalman Filter decoder for motor kinematics.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials and Tools for Kalman Filter Decoding

| Category | Specific Item/Technique | Function/Purpose |

|---|---|---|

| Neural Recording | Utah multi-electrode arrays | Chronic recording from neuronal populations in motor cortex |

| Neural Recording | Electrocorticography (ECoG) grids | Surface recording of field potentials with high spatial resolution |

| Neural Recording | Neuropixels probes | High-density recording from hundreds to thousands of neurons |

| Kinematic Tracking | Optical motion capture (Vicon) | High-precision tracking of hand and arm position |

| Kinematic Tracking | Electromagnetic tracking (Polhemus) | Tracking without line-of-sight limitations |

| Kinematic Tracking | Exoskeleton robots (KINARM, MIT-Manus) | Precise measurement of joint angles and forces |

| Computational Tools | MATLAB with Statistics & Signal Processing Toolboxes | Implementation of KF algorithms and data analysis |

| Computational Tools | Python (NumPy, SciPy, scikit-learn) | Open-source platform for neural decoding |

| Computational Tools | Neural Signal Processing (OpenEphys, KiloSort) | Spike sorting and feature extraction |

| Experimental Paradigms | Center-out reaching task | Standardized protocol for studying motor control |

| Experimental Paradigms | Random target pursuit task | Testing continuous trajectory decoding |

| Experimental Paradigms | Brain-Computer Interface tasks | Real-time validation of decoding algorithms |

Bayesian Interpretation of Kalman Filter Components

Diagram: Bayesian Interpretation of the Kalman Filter. The relationship between Bayesian inference concepts and Kalman Filter components.

Performance Benchmarks and Applications

Quantitative Performance Metrics

Research studies implementing Kalman Filters for motor kinematics decoding have reported the following performance ranges across various experimental paradigms:

Table 3: Typical Performance of Kalman Filter Decoders for Motor Kinematics

| Kinematic Parameter | Correlation Coefficient (CC) | Normalized RMSE | Neural Signal Type |

|---|---|---|---|

| Hand Position (2D) | 0.75 - 0.95 | 0.15 - 0.35 | Intracortical spikes (M1) |

| Hand Velocity (2D) | 0.80 - 0.98 | 0.10 - 0.25 | Intracortical spikes (M1) |

| Joint Angles (Arm) | 0.65 - 0.90 | 0.20 - 0.40 | ECoG (Sensorimotor cortex) |

| Finger Flexion | 0.60 - 0.85 | 0.25 - 0.45 | ECoG (Hand knob area) |

| Grasp Force | 0.70 - 0.92 | 0.18 - 0.32 | Intracortical spikes (M1) |

Applications in Neuroscience and Neurotechnology

The Kalman Filter decoder has enabled numerous advances in both basic neuroscience and applied neurotechnology:

Basic Motor Neuroscience: Investigating how kinematic parameters are encoded in distributed neural populations and how these representations transform during learning and adaptation [11] [1]

Brain-Computer Interfaces: Enabling continuous control of computer cursors, robotic arms, and neuroprosthetics for individuals with paralysis [11]

Neurological Disorder Research: Quantifying alterations in motor encoding in conditions such as Parkinson's disease, stroke, and ALS

Neurorehabilitation: Providing real-time feedback for motor retraining and assessing recovery of function

Drug Development: Serving as a quantitative biomarker for evaluating therapeutic effects on motor system function in preclinical and clinical trials

Advanced Considerations and Future Directions

Extensions to the Basic Kalman Filter

While the standard Kalman Filter assumes linear dynamics and Gaussian noise, several extensions have been developed to address more complex scenarios:

- Extended Kalman Filter (EKF): Handles nonlinear systems through local linearization

- Unscented Kalman Filter (UKF): Uses deterministic sampling to approximate nonlinear transformations more accurately

- Ensemble Kalman Filter (EnKF): Uses Monte Carlo sampling for high-dimensional states

- Switching Kalman Filters: Models multiple dynamic regimes and transitions between them

Integration with Modern Machine Learning

Recent approaches have combined the recursive Bayesian framework of the KF with deep learning:

- Using neural networks to learn the observation model ( C ) from data

- Replacing KF components with learned networks while maintaining the recursive structure

- Combining KF with recurrent neural networks for modeling complex temporal dynamics

The Kalman Filter remains a fundamental tool in the neuroscience toolkit, providing an interpretable and computationally efficient framework for decoding motor intentions from neural activity, with optimality guarantees when its linear-Gaussian assumptions hold. Its strong theoretical foundation in Bayesian estimation continues to make it a benchmark against which newer machine learning approaches are evaluated in neural decoding applications.

Bayesian inference provides a principled, probabilistic framework for interpreting neural activity, formalizing how prior knowledge can be combined with new evidence to decode behavior and perceptual experiences. This approach conceptualizes the brain as a "Bayesian machine" that continuously performs probabilistic inference, a theory with profound implications for understanding neural computation [13]. The core of this methodology rests on Bayes' theorem, which mathematically describes how prior beliefs ( P(\text{hypothesis}) ) are updated with new sensory evidence ( P(\text{evidence} | \text{hypothesis}) ) to form a posterior belief ( P(\text{hypothesis} | \text{evidence}) ). In neural decoding, the "hypothesis" often represents a sensory stimulus, motor intent, or cognitive state, while the "evidence" constitutes the observed pattern of neural activity.

Two complementary perspectives dominate this field: Bayesian Encoding and Bayesian Decoding. Bayesian Encoding asks how neural circuits implement inference in an internal model, representing entire probability distributions over relevant variables. In contrast, Bayesian Decoding treats neural activity as given and focuses on how an external observer can optimally recover information about stimuli or behavior from this activity, emphasizing the statistical uncertainty of the decoder [13]. This application note focuses on the latter, detailing practical methodologies for decoding behavioral and perceptual variables from neural population data using Bayesian techniques, with particular emphasis on integration with Kalman filtering for dynamic state estimation.

Theoretical Foundation

Core Bayesian Framework

The Bayesian decoding framework operationalizes Bayes' theorem for neural data analysis:

[ P(s|\mathbf{r}) = \frac{P(\mathbf{r}|s)P(s)}{P(\mathbf{r})} ]

Here, ( P(s|\mathbf{r}) ) is the posterior probability of stimulus or state ( s ) given the observed population response ( \mathbf{r} ), ( P(\mathbf{r}|s) ) is the likelihood function describing the probability of observing response ( \mathbf{r} ) given state ( s ), ( P(s) ) is the prior probability representing knowledge about ( s ) before observing neural data, and ( P(\mathbf{r}) ) serves as a normalization constant [16] [13]. The likelihood is typically derived from neuronal tuning curves, which characterize the average response of each neuron to different states or stimuli.

Table: Core Components of Bayesian Decoding Framework

| Component | Mathematical Representation | Neural Correlate | Functional Role |

|---|---|---|---|

| Prior | ( P(s) ) | Previous experience, contextual knowledge | Encodes expectations before evidence arrival |

| Likelihood | ( P(\mathbf{r} \mid s) ) | Neuronal tuning curves + noise model | Relates neural activity to possible states |

| Posterior | ( P(s \mid \mathbf{r}) ) | Synthesis of prior and likelihood | Final belief distribution used for decoding |
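
As a minimal sketch of how prior, likelihood, and posterior combine in practice, the code below decodes a discrete state from spike counts. It assumes independent Poisson spiking around each neuron's tuning curve, which is a common modeling choice but an assumption made here, not something the framework requires. The function name, tuning values, and bin width are hypothetical.

```python
import numpy as np

def poisson_posterior(counts, tuning_hz, prior, dt):
    """Posterior over discrete states given one bin of spike counts.

    counts:    (n_neurons,) spike counts in the bin
    tuning_hz: (n_states, n_neurons) mean firing rate of each neuron in each state
    prior:     (n_states,) prior probabilities P(s)
    dt:        bin width in seconds
    """
    lam = np.maximum(tuning_hz * dt, 1e-12)      # expected counts per state and neuron
    # log P(r|s), dropping the log(k!) term, which does not depend on s
    log_lik = counts @ np.log(lam).T - lam.sum(axis=1)
    log_post = log_lik + np.log(prior)
    log_post -= log_post.max()                   # stabilize before exponentiating
    post = np.exp(log_post)
    return post / post.sum()                     # division by P(r)

tuning_hz = np.array([[20.0, 5.0, 1.0, 1.0],
                      [5.0, 20.0, 5.0, 1.0],
                      [1.0, 5.0, 20.0, 5.0]])
prior = np.full(3, 1.0 / 3.0)
counts = np.array([0, 2, 1, 0])
posterior = poisson_posterior(counts, tuning_hz, prior, dt=0.1)
decoded_state = int(posterior.argmax())
```

Working in log space avoids numerical underflow when the population is large, since the likelihood is a product over many neurons.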

Distinction Between Bayesian Encoding and Decoding

A crucial conceptual distinction exists between Bayesian Encoding and Bayesian Decoding approaches, which employ similar mathematics but address fundamentally different questions [13]:

Bayesian Decoding focuses on how an external observer can optimally read out information about a stimulus ( s ) from neural responses ( \mathbf{r} ) by computing ( P(s|\mathbf{r}) ). The "likelihood" in this context refers to ( P(\mathbf{r}|s) ) - the relationship between stimuli and neural responses.

Bayesian Encoding asks how neural circuits could compute and represent an approximation to a probability distribution over latent variables ( x ) in an internal generative model, typically the posterior ( P(x|I) ) where ( I ) represents sensory inputs. Here, the "likelihood" refers to ( P(I|x) ) - the relationship between internal model variables and sensory observations.

This application note focuses primarily on Bayesian Decoding methods, where the probabilistic framework is used as an analytical tool for interpreting neural population activity in relation to measurable variables.

Bayesian Methods for Neural Decoding

The Kalman Filter for Motor Cortical Decoding

The Kalman filter provides an efficient recursive method for Bayesian inference when the likelihood and prior are linear and Gaussian, making it particularly suitable for decoding continuous movement trajectories from motor cortical activity [17]. In this framework, the state transition (prior) and observation (likelihood) models are both linear with additive Gaussian noise:

[ \mathbf{x}_t = \mathbf{A}\mathbf{x}_{t-1} + \mathbf{w}_t, \quad \mathbf{w}_t \sim \mathcal{N}(0, \mathbf{Q}) ] [ \mathbf{y}_t = \mathbf{C}\mathbf{x}_t + \mathbf{v}_t, \quad \mathbf{v}_t \sim \mathcal{N}(0, \mathbf{R}) ]

where ( \mathbf{x}_t ) represents the kinematic state (e.g., hand position, velocity), ( \mathbf{y}_t ) is the observed neural activity (firing rates), ( \mathbf{A} ) is the state transition matrix, ( \mathbf{C} ) is the observation matrix, and ( \mathbf{w}_t ), ( \mathbf{v}_t ) are process and observation noise respectively [17]. The Kalman filter recursively computes the posterior probability of the state given all previous neural observations:

- Prediction step: Compute prior belief using state transition model

- Update step: Combine prior with new neural observations via Bayes' rule

This approach has demonstrated superior performance in reconstructing hand trajectories from multi-neuron recordings in primate motor cortex compared to previous methods, while providing a principled probabilistic model of motor cortical coding [17].

Diagram: Kalman Filter Recursive Decoding Workflow. The filter continuously cycles between prediction based on the movement model and updating based on new neural observations.

Advanced Kalman Filter Implementations

While the standard Kalman filter assumes linear Gaussian relationships, extensions have been developed to address more complex neural coding properties. The Unscented Kalman Filter (UKF) enables the use of non-linear (quadratic) neural tuning models, which can describe neural activity significantly better than linear models [18]. Additionally, the n-th order UKF incorporates a history of recent states, improving prediction by capturing relationships between neural activity and movement at multiple time offsets simultaneously [18]. These filters outperformed standard Kalman and Wiener filters both in off-line reconstruction of movement trajectories and in closed-loop, real-time BMI operation [18].
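
The core operation that distinguishes the UKF is the unscented transform, which pushes a small set of deterministically chosen sigma points through the non-linear tuning function. The sketch below shows the standard scaled form of that transform for a hypothetical quadratic tuning curve; the scaling parameters `alpha`, `beta`, `kappa` and all tuning coefficients are illustrative assumptions, and this is not the specific filter of [18].

```python
import numpy as np

def unscented_transform(mean, cov, func, alpha=1.0, beta=2.0, kappa=1.0):
    """Propagate a Gaussian (mean, cov) through func using 2n+1 sigma points."""
    mean = np.asarray(mean, dtype=float)
    n = mean.size
    lam = alpha ** 2 * (n + kappa) - n
    L = np.linalg.cholesky((n + lam) * np.asarray(cov, dtype=float))
    # Sigma points: the mean, plus and minus each column of the scaled square root
    sigma = np.vstack([mean, mean + L.T, mean - L.T])
    w_mean = np.full(2 * n + 1, 1.0 / (2.0 * (n + lam)))
    w_cov = w_mean.copy()
    w_mean[0] = lam / (n + lam)
    w_cov[0] = w_mean[0] + (1.0 - alpha ** 2 + beta)
    Z = np.array([np.atleast_1d(func(s)) for s in sigma])
    z_mean = w_mean @ Z
    dZ = Z - z_mean
    z_cov = (w_cov[:, None] * dZ).T @ dZ
    return z_mean, z_cov

# Hypothetical quadratic tuning of one neuron to 2-D velocity v
b0, b, b2 = 10.0, np.array([5.0, -3.0]), 2.0
def rate(v):
    return b0 + b @ v + b2 * (v @ v)

m = np.array([0.2, -0.1])
P = np.array([[0.04, 0.01], [0.01, 0.09]])
z_mean, z_cov = unscented_transform(m, P, rate)
# Closed-form expectation of a quadratic under a Gaussian, for comparison
analytic_mean = b0 + b @ m + b2 * (m @ m + np.trace(P))
```

For a quadratic function the transformed mean matches the closed-form expectation, whereas simply evaluating `rate(m)` would ignore the contribution of the state uncertainty.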

Bayesian Decoding for Calcium Imaging Data

Calcium imaging presents unique challenges for Bayesian decoding due to indirect measurement of neural activity, lower sampling frequencies, and uncertainty in exact spike timing. A specialized probabilistic framework has been developed that uses a simplified naive Bayesian classifier to infer behavior from calcium imaging recordings [16]. The method involves:

- Signal binarization: Discriminating periods of activity versus inactivity based on normalized calcium signals exceeding 2 standard deviations with positive first derivative

- Probability computation: Calculating ( P(A) ) (probability of neuron being active), ( P(S_i) ) (probability of behavioral state ( i )), and ( P(S_i \cap A) ) (joint probability of activity and state)

- Bayesian classification: Using these probability distributions to decode behavior

This approach has been successfully applied to decode spatial position from hippocampal CA1 place cell activity in mice, demonstrating robust inference despite the limitations of calcium imaging data [16].

Color Perception Decoding

Bayesian decoding approaches have also elucidated how color percepts are extracted from neuronal responses in inferior-temporal (IT) cortex [19]. IT neurons show narrow tuning to specific colors with peak responses scattered throughout color space. A winner-take-all decoding scheme based on the peak responses of these narrowly-tuned neurons approximates the performance of optimal Bayesian decoding that uses complete tuning curve information [19]. This suggests the brain may employ computationally efficient approximations to fully Bayesian inference.

Table: Quantitative Performance Comparison of Bayesian Decoding Methods

| Decoding Method | Neural Signal Type | Application Domain | Reported Performance | Key Advantages |

|---|---|---|---|---|

| Kalman Filter [17] | Multi-unit firing rates | Hand trajectory reconstruction | More accurate than previously reported results | Recursive, efficient, provides uncertainty estimates |

| Unscented Kalman Filter [18] | Multi-unit firing rates | BMI cursor control | Outperformed standard KF and Wiener filter in closed-loop tasks | Handles non-linear tuning, uses movement history |

| Naive Bayesian Classifier [16] | Calcium imaging (GCaMP) | Spatial position decoding | Robust inference despite sparse sampling | Works with binarized activity, handles photobleaching |

| Winner-Take-All [19] | IT cortex firing rates | Color perception | Approximates optimal Bayesian decoder | Computationally efficient, biologically plausible |

Experimental Protocols

Kalman Filter Decoding for Motor Cortical Signals

Objective: Decode continuous hand trajectory from multi-unit motor cortical activity using a Kalman filter.

Materials:

- Chronically implanted multielectrode microarray in primary motor cortex

- Neural signal acquisition system (≥30 kHz sampling per channel)

- Behavioral apparatus for measuring hand kinematics

- Computing system for real-time processing

Procedure:

Training Data Collection:

- Record simultaneous neural activity and hand kinematics during guided reaching tasks

- Extract firing rates by counting spikes in 25-100ms bins

- Preprocess kinematics (position, velocity) by smoothing and resampling to match neural data temporal resolution

Model Identification:

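- Estimate state transition matrix ( \mathbf{A} ) by least-squares regression of each kinematic state on the preceding state (equivalently, from the lag-0 and lag-1 autocovariances of the kinematic data)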

- Calculate observation matrix ( \mathbf{C} ) using linear regression between neural activity and kinematics

- Compute noise covariance matrices ( \mathbf{Q} ) and ( \mathbf{R} ) from residuals of these fits

Filter Implementation:

- Initialize state estimate ( \mathbf{x}_0 ) and error covariance ( \mathbf{P}_0 )

- For each time step ( t ):

- Prediction: [ \mathbf{x}_t^- = \mathbf{A}\mathbf{x}_{t-1} ] [ \mathbf{P}_t^- = \mathbf{A}\mathbf{P}_{t-1}\mathbf{A}^T + \mathbf{Q} ]

- Update: [ \mathbf{K}_t = \mathbf{P}_t^-\mathbf{C}^T(\mathbf{C}\mathbf{P}_t^-\mathbf{C}^T + \mathbf{R})^{-1} ] [ \mathbf{x}_t = \mathbf{x}_t^- + \mathbf{K}_t(\mathbf{y}_t - \mathbf{C}\mathbf{x}_t^-) ] [ \mathbf{P}_t = (\mathbf{I} - \mathbf{K}_t\mathbf{C})\mathbf{P}_t^- ]

- Output decoded trajectory ( \mathbf{x}_t )
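
The prediction and update recursion above maps onto a short loop. The following is a minimal sketch with a hypothetical function name `kalman_decode`; the synthetic data and all parameter values are assumptions used only to show the filter running end to end.

```python
import numpy as np

def kalman_decode(Y, A, C, Q, R, x0, P0):
    """Run the Kalman recursion over observations Y of shape (T, n_obs)."""
    n_state = A.shape[0]
    I = np.eye(n_state)
    x = np.asarray(x0, dtype=float)
    P = np.asarray(P0, dtype=float)
    X_hat = np.zeros((Y.shape[0], n_state))
    for t, y in enumerate(Y):
        # Prediction
        x_pred = A @ x
        P_pred = A @ P @ A.T + Q
        # Update: K = P_pred C^T S^{-1}, computed with a linear solve (S is symmetric)
        S = C @ P_pred @ C.T + R
        K = np.linalg.solve(S, C @ P_pred).T
        x = x_pred + K @ (y - C @ x_pred)
        P = (I - K @ C) @ P_pred
        X_hat[t] = x
    return X_hat

# Synthetic check: state = [position, velocity], 20 simulated units (all values hypothetical)
rng = np.random.default_rng(0)
A = np.array([[1.0, 0.05], [0.0, 0.95]])
Q = np.diag([1e-4, 1e-2])
C = rng.normal(size=(20, 2))
R = 0.1 * np.eye(20)
T = 500
X = np.zeros((T, 2))
Y = np.zeros((T, 20))
x = np.zeros(2)
for t in range(T):
    x = A @ x + rng.multivariate_normal(np.zeros(2), Q)
    X[t] = x
    Y[t] = C @ x + rng.normal(scale=np.sqrt(0.1), size=20)

X_hat = kalman_decode(Y, A, C, Q, R, x0=np.zeros(2), P0=np.eye(2))
cc_pos = np.corrcoef(X[:, 0], X_hat[:, 0])[0, 1]
cc_vel = np.corrcoef(X[:, 1], X_hat[:, 1])[0, 1]
```

Solving the linear system instead of explicitly inverting the innovation covariance is a deliberate choice, since the observation dimension (number of units) is usually much larger than the state dimension.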

Validation:

- Compute correlation coefficient between actual and decoded trajectories

- Calculate mean squared error of position and velocity estimates

- For real-time BMI applications, implement closed-loop control and measure task performance

Bayesian Decoding from Calcium Imaging Data

Objective: Decode behavioral states (e.g., spatial location) from calcium imaging data using a naive Bayesian classifier.

Materials:

- Microendoscope with GRIN lens for in vivo calcium imaging

- GCaMP-expressing animal model

- Behavioral tracking system

- Computing system for image processing and analysis

Procedure:

Data Preprocessing:

- Perform motion correction using recursive algorithms [16]

- Extract neuronal spatial footprints and associated calcium activity

- Temporally deconvolve calcium signals to approximate spike rates (optional)

- Synchronize behavioral and neural data timestamps

Signal Binarization:

- For each neuron, normalize calcium trace to zero mean and unit variance

- Identify activity periods where:

- Normalized signal amplitude > 2 standard deviations

- First derivative of signal is positive (transient rise period)

- Create binary activity matrix ( A_{n,t} ) where 1 = active, 0 = inactive
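
The binarization rule above (amplitude above 2 standard deviations and a positive first derivative) can be sketched as follows. The function name and the toy trace are illustrative; real traces would typically be filtered first, which is not shown here.

```python
import numpy as np

def binarize_calcium(traces, z_threshold=2.0):
    """traces: (n_neurons, n_frames). Returns a binary matrix of the same shape."""
    mu = traces.mean(axis=1, keepdims=True)
    sd = traces.std(axis=1, keepdims=True)
    sd = np.where(sd > 0, sd, 1.0)                 # guard against flat traces
    z = (traces - mu) / sd                         # zero mean, unit variance per neuron
    # Frame-to-frame difference; the first frame gets a derivative of zero
    dz = np.diff(z, axis=1, prepend=z[:, :1])
    return ((z > z_threshold) & (dz > 0)).astype(np.uint8)

# One toy trace with a single transient: only its rising phase should be marked active
trace = np.array([[0.0] * 20 + [1.0, 3.0, 5.0, 4.0, 3.0, 2.0, 1.0] + [0.0] * 20])
activity = binarize_calcium(trace)
active_frames = np.flatnonzero(activity[0])
```

Restricting activity to the rising phase excludes the slow decay of the indicator, during which the signal stays elevated although the neuron may no longer be firing.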

Probability Distribution Calculation:

- Compute prior probability of behavioral state ( i ): [ P(S_i) = \frac{\text{time in state } i}{\text{total time}} ]

- Calculate marginal likelihood of neural activity: [ P(A) = \frac{\text{time active}}{\text{total time}} ]

- Determine joint probability of state and activity: [ P(S_i \cap A) = \frac{\text{time active while in state } i}{\text{total time}} ]

Bayesian Decoding:

- For each time bin, compute posterior probability over all states: [ P(S_i|\mathbf{r}) \propto P(\mathbf{r}|S_i)P(S_i) ] where ( P(\mathbf{r}|S_i) ) is approximated using the product of individual neuron probabilities (naive Bayes assumption)

- Select state with maximum posterior probability as decoded output
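
The probability and decoding steps above can be sketched as a Bernoulli naive Bayes classifier over binarized activity. This is one standard way to realize the naive Bayes assumption and may differ in detail from the method in [16]; the add-one smoothing, the function names, and the simulated place-cell-like data are all assumptions.

```python
import numpy as np

def fit_naive_bayes(activity, states, n_states, pseudo=1.0):
    """activity: (n_neurons, n_frames) binary; states: (n_frames,) integer labels."""
    prior = np.bincount(states, minlength=n_states) / states.size    # P(S_i)
    p_active = np.zeros((n_states, activity.shape[0]))
    for i in range(n_states):
        in_state = states == i
        # P(A_n | S_i) = P(S_i and A_n) / P(S_i), with add-one smoothing (assumption)
        p_active[i] = (activity[:, in_state].sum(axis=1) + pseudo) / (in_state.sum() + 2 * pseudo)
    return prior, p_active

def decode_naive_bayes(activity, prior, p_active):
    """Return the maximum a posteriori state for every frame."""
    a = activity.T.astype(float)                   # (n_frames, n_neurons)
    log_post = (a @ np.log(p_active).T
                + (1.0 - a) @ np.log1p(-p_active).T
                + np.log(prior + 1e-12))
    return log_post.argmax(axis=1)

# Simulated data: each neuron is active mostly in one preferred state
rng = np.random.default_rng(0)
n_states, n_neurons, n_frames = 4, 12, 3000
states = rng.integers(0, n_states, size=n_frames)
preferred = np.arange(n_neurons) % n_states
p = np.where(preferred[:, None] == states[None, :], 0.6, 0.02)
activity = (rng.random((n_neurons, n_frames)) < p).astype(np.uint8)

train, test = slice(0, 2400), slice(2400, None)
prior, p_active = fit_naive_bayes(activity[:, train], states[train], n_states)
decoded = decode_naive_bayes(activity[:, test], prior, p_active)
accuracy = np.mean(decoded == states[test])
```

Fitting on the first part of the session and decoding the remainder keeps training and test frames separate, which matters because neighboring frames are strongly correlated.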

Validation:

- Compute decoding accuracy as percentage of correctly classified states

- Calculate mutual information between decoded and actual states

- Perform shuffled controls to establish significance
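
A shuffled control can be sketched as a label-permutation test on decoding accuracy. The version below only permutes the actual labels after decoding, which is a simplification; a stricter control would refit the decoder on shuffled data, and circular shifts are often preferred for autocorrelated behavior. The function name and the simulated labels are hypothetical.

```python
import numpy as np

def shuffle_control(decoded, actual, n_shuffles=1000, seed=0):
    """Compare observed accuracy with accuracies obtained after permuting the labels."""
    rng = np.random.default_rng(seed)
    observed = np.mean(decoded == actual)
    null = np.array([np.mean(decoded == rng.permutation(actual))
                     for _ in range(n_shuffles)])
    # Add-one correction keeps the p-value strictly positive
    p_value = (1 + np.sum(null >= observed)) / (1 + n_shuffles)
    return observed, null, p_value

# Simulated decoder output: correct labels with roughly 30% replaced at random
rng = np.random.default_rng(1)
actual = rng.integers(0, 4, size=400)
decoded = actual.copy()
corrupt = rng.random(400) < 0.3
decoded[corrupt] = rng.integers(0, 4, size=int(corrupt.sum()))

observed, null, p_value = shuffle_control(decoded, actual)
```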

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Bayesian Decoding Experiments

| Reagent/Resource | Function/Application | Example Specifications | Key Considerations |

|---|---|---|---|

| Multielectrode Arrays | Chronic neural recording for motor decoding | 96-256 electrodes, 400μm spacing, Utah array configuration | Biocompatibility, long-term stability, impedance characteristics |

| Genetically Encoded Calcium Indicators (GECIs) | Neural activity visualization via calcium imaging | GCaMP6/7 variants, AAV delivery, expressed in target regions | Expression specificity, kinetics, photostability, brightness |

| Microendoscopes | In vivo calcium imaging in freely behaving animals | GRIN lenses, 0.5-1mm diameter, compatible with head-mounted cameras | Minimizing tissue damage, light throughput, working distance |

| Neural Signal Acquisition Systems | Extracellular potential recording | 30kHz sampling/channel, 16-bit resolution, hardware filtering | Channel count, noise floor, common-mode rejection ratio |

| Behavioral Tracking Systems | Kinematic measurement or position tracking | High-speed cameras (>100fps), reflective markers, depth sensing | Temporal synchronization with neural data, spatial resolution |

| Computational Frameworks | Implementation of decoding algorithms | MATLAB, Python with SciPy/NumPy, real-time capable | Processing speed, compatibility with acquisition systems |

Data Analysis and Interpretation

Performance Metrics for Bayesian Decoders

Rigorous validation of decoding performance requires multiple complementary metrics:

- Correlation coefficient: Measures linear relationship between decoded and actual variables

- Mean squared error: Quantifies absolute decoding accuracy

- Mutual information: Captures general statistical dependence beyond linear correlations

- Confusion matrices: For discrete state decoding, visualizes classification patterns

- Significance testing: Comparing decoding accuracy to shuffled controls

For real-time BMI applications, additional metrics include task completion rate, path efficiency, and throughput (bits per second) to assess functional utility.
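
For discrete decoding, the confusion matrix and mutual information listed above can be computed together, since the normalized confusion matrix is an estimate of the joint distribution of actual and decoded states. This is a plug-in estimate, which is known to be biased upward when samples are few; the function names are hypothetical.

```python
import numpy as np

def confusion_matrix(actual, decoded, n_states):
    """Rows index the actual state, columns the decoded state."""
    cm = np.zeros((n_states, n_states), dtype=int)
    np.add.at(cm, (actual, decoded), 1)
    return cm

def mutual_information_bits(cm):
    """Plug-in mutual information (in bits) between actual and decoded states."""
    joint = cm / cm.sum()
    p_actual = joint.sum(axis=1, keepdims=True)
    p_decoded = joint.sum(axis=0, keepdims=True)
    nz = joint > 0                                  # skip empty cells (0 * log 0 = 0)
    return float(np.sum(joint[nz] * np.log2(joint[nz] / (p_actual @ p_decoded)[nz])))

# Perfect decoding of four equally frequent states carries log2(4) bits
actual = np.tile(np.arange(4), 5)
cm_perfect = confusion_matrix(actual, actual, n_states=4)
mi_perfect = mutual_information_bits(cm_perfect)

# A decoder that always outputs state 0 carries no information
cm_constant = confusion_matrix(actual, np.zeros_like(actual), n_states=4)
mi_constant = mutual_information_bits(cm_constant)
```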

Implementation Considerations

Successful implementation of Bayesian decoding methods requires careful attention to several practical considerations:

Prior Specification: The choice of prior significantly impacts results, particularly with limited data. Weakly informative priors can stabilize estimates without imposing strong assumptions, while informative priors based on previous experiments can improve decoding accuracy [20]. Sensitivity analysis should be performed to assess prior influence.

Neural Feature Selection: Decoding performance depends critically on which neural features are used as inputs. Options include:

- Raw spike counts in temporal bins

- Smoothed firing rates

- Binarized activity indicators (for calcium imaging)

- Deconvolved spike probabilities

- Spectral power in specific frequency bands

Model Order Selection: For state-space approaches like the Kalman filter, the dimensionality of the state vector and the model order (number of past states incorporated) must balance expressiveness and overfitting. Cross-validation procedures should guide these choices.

Real-Time Implementation: Closed-loop applications require efficient algorithms that can complete decoding within a single time bin (typically 20-100ms). Optimization techniques include:

- Pre-computing constant matrices

- Efficient matrix inversion methods (e.g., Cholesky decomposition)

- Fixed-point arithmetic for embedded systems
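
One way to pre-compute constant matrices is to use a steady-state Kalman filter: because the gain does not depend on the data, it can be iterated to convergence off-line, leaving one matrix-vector update per time bin at run time. The sketch below assumes the gain converges for the given model; the function names and parameter values are hypothetical.

```python
import numpy as np

def steady_state_gain(A, C, Q, R, tol=1e-10, max_iter=10000):
    """Iterate the covariance recursion until the Kalman gain stops changing."""
    n = A.shape[0]
    P = Q.copy()
    K_prev = None
    for _ in range(max_iter):
        P_pred = A @ P @ A.T + Q
        S = C @ P_pred @ C.T + R
        K = np.linalg.solve(S, C @ P_pred).T       # K = P_pred C^T S^{-1}
        P = (np.eye(n) - K @ C) @ P_pred
        if K_prev is not None and np.max(np.abs(K - K_prev)) < tol:
            break
        K_prev = K
    return K

def precompute_update(A, C, K):
    """With a fixed gain, x_t = M x_{t-1} + K y_t where M = (I - K C) A."""
    return (np.eye(A.shape[0]) - K @ C) @ A

rng = np.random.default_rng(0)
A = np.array([[1.0, 0.05], [0.0, 0.95]])
Q = np.diag([1e-4, 1e-2])
C = rng.normal(size=(10, 2))
R = 0.5 * np.eye(10)

K = steady_state_gain(A, C, Q, R)
M = precompute_update(A, C, K)

# Run-time step for one bin of (here random) neural features
x_prev, y = np.zeros(2), rng.normal(size=10)
x_new = M @ x_prev + K @ y
```

The linear solve inside the off-line loop could be replaced by a Cholesky-based solver, since the innovation covariance is symmetric positive definite; the run-time step is unaffected by that choice.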

Bayesian inference provides a powerful, principled framework for decoding behavior and perception from neural signals, formally incorporating prior knowledge while explicitly quantifying uncertainty. The integration of Kalman filtering with Bayesian principles has been particularly successful for decoding continuous variables like movement trajectories, while specialized approaches have been developed for challenging data modalities like calcium imaging. As neural recording technologies continue to advance, enabling measurement of increasingly large populations, Bayesian methods offer a mathematically coherent approach to harnessing this information for basic scientific discovery and clinical applications such as brain-machine interfaces. Future directions include developing more accurate neural tuning models, efficient approximate inference techniques for real-time implementation, and hierarchical Bayesian approaches that leverage structured prior knowledge about neural computation.

Neural decoding is a fundamental tool in neuroscience and neural engineering that uses recorded brain activity to infer information about external variables, stimuli, or behavioral states [21]. This process is mathematically framed as a regression problem when predicting continuous variables (e.g., hand position) or a classification problem when predicting discrete states (e.g., stimulus category) [21] [1]. The core aim is to learn a mapping function that transforms neural signals into meaningful representations of the outside world.

This decoding approach serves two primary purposes in research: (1) engineering applications such as brain-machine interfaces (BMIs) where improved predictive accuracy directly enhances device performance, and (2) scientific discovery to understand what information is contained within neural populations and how it relates to behavior and perception [21] [1]. Within the brain's own processing hierarchy, decoding occurs naturally as downstream neural circuits interpret and transform information encoded by upstream populations [1].

Mathematical Framework of Decoding

Core Principles

From a mathematical perspective, decoding involves inverting the encoding process. Given neural response data ( K ) (typically represented as a vector of spike counts or firing rates from ( N ) neurons), the goal is to estimate an external variable ( x ) by modeling the conditional probability ( P(x|K) ) [1]. This inversion is fundamentally guided by Bayes' theorem:

[ P(x|K) = \frac{P(K|x)P(x)}{P(K)} ]

where:

- ( P(K|x) ) is the likelihood (encoding model)

- ( P(x) ) is the prior probability of the stimulus

- ( P(K) ) serves as a normalization constant [1]
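
As a worked instance, assume (this is an illustration, not a requirement of the framework) that the ( N ) neurons fire independently with Poisson statistics, where ( f_i(x) ) is the tuning curve of neuron ( i ), ( k_i ) is its spike count in ( K ), and ( \Delta t ) is the bin width; these symbols are introduced here for the example. Taking the logarithm of Bayes' theorem then turns the posterior into a sum over neurons:

[ \log P(x|K) = \sum_{i=1}^{N} \left[ k_i \log\big(f_i(x)\,\Delta t\big) - f_i(x)\,\Delta t \right] + \log P(x) + \text{const} ]

The constant collects the terms ( -\log k_i! ) and ( -\log P(K) ), which do not depend on ( x ) and therefore do not affect which value of ( x ) maximizes the posterior.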

Comparison of Decoding Approaches

Table 1: Comparison of Neural Decoding Methodologies

| Method Category | Representative Algorithms | Key Assumptions | Typical Applications | Interpretability |

|---|---|---|---|---|

| Traditional Filters | Wiener filter, Kalman filter | Linear dynamics, Gaussian noise | Continuous kinematic decoding for BMIs | Moderate |

| Modern Machine Learning | Neural networks, Gradient boosted trees | Minimal assumptions, data-driven | High-performance decoding across domains | Low |

| Bayesian Methods | Bayesian linear regression, Particle filters | Explicit prior distributions, probabilistic relationships | Hippocampal place decoding, probabilistic inference | High |

| Linear Models | Ridge regression, Linear discriminant analysis | Linearity, Gaussian residuals | Baseline comparisons, interpretable decoding | High |

Experimental Protocols for Neural Decoding

Protocol 1: Building a Basic Neural Decoder

Objective: Implement a standardized pipeline for decoding external variables from neural population activity.

Materials and Equipment:

- Neural recording system (electrophysiology, fMRI, ECoG, or EEG)

- Behavioral task apparatus for ground truth measurement

- Computing environment with appropriate machine learning libraries

Procedure:

- Data Collection: Simultaneously record neural signals and corresponding external variables (e.g., movement parameters, sensory stimuli, cognitive states) with precise temporal alignment.

- Feature Extraction: Preprocess neural data to extract relevant features:

- For spike data: Bin spike counts in 20-100ms windows [21]

- For continuous signals: Extract spectral power in relevant frequency bands

- Data Partitioning: Split data into training (70%), validation (15%), and test (15%) sets, maintaining temporal structure if dealing with time series data.

- Model Selection: Train multiple candidate models (start with linear regression, Kalman filter, neural network, and gradient boosted trees).

- Hyperparameter Tuning: Use cross-validation on training data to optimize hyperparameters for each model type.

- Performance Evaluation: Assess final models on held-out test data using appropriate metrics (see Performance Metrics and Evaluation).
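
The partitioning step can be sketched as a chronological split, which keeps the 70/15/15 proportions from the procedure while preserving temporal order. The function name is hypothetical, and leaving no gap between the segments is a simplifying assumption.

```python
import numpy as np

def chronological_split(n_samples, train_frac=0.70, val_frac=0.15):
    """Return index arrays for contiguous train, validation, and test segments."""
    n_train = int(round(n_samples * train_frac))
    n_val = int(round(n_samples * val_frac))
    idx = np.arange(n_samples)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

train_idx, val_idx, test_idx = chronological_split(1000)
```

Splitting by contiguous blocks rather than by random sampling prevents temporally adjacent, highly correlated bins from landing in both the training and test sets, which would inflate the apparent decoding accuracy.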

Troubleshooting Tips:

- If models show poor performance, ensure temporal alignment between neural activity and external variables

- If overfitting occurs, increase regularization strength or simplify model architecture

- For unbalanced datasets, use stratified sampling or appropriate weighting schemes

Protocol 2: Comparative Performance Assessment

Objective: Systematically evaluate and compare different decoding algorithms on standardized datasets.

Procedure:

- Dataset Selection: Choose appropriate neural datasets with corresponding ground truth variables. Publicly available datasets include:

  - Monkey motor cortex during reaching tasks

  - Rat hippocampus during spatial navigation

  - Human ECoG during speech production

- Implementation: Apply multiple decoding methods to the same dataset using consistent preprocessing and evaluation frameworks.

- Benchmarking: Quantify performance using standardized metrics appropriate for the decoding problem (regression: R², MSE; classification: accuracy, F1-score).

- Statistical Comparison: Use paired statistical tests to determine significant performance differences between methods.
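
One possible form of the paired comparison in the last step is an exact sign-flip permutation test on per-fold score differences. The sketch below is only one option among several (a paired t-test or Wilcoxon signed-rank test are common alternatives); the function name and the per-fold scores are hypothetical and chosen purely for illustration.

```python
from itertools import product

def paired_sign_flip_test(scores_a, scores_b):
    """Exact two-sided sign-flip permutation test on paired differences.

    Under the null hypothesis that both decoders perform equally, each paired
    difference is equally likely to be positive or negative, so all 2**n sign
    assignments are enumerated. Practical only for small n.
    Returns (mean difference, p-value).
    """
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    observed = abs(sum(diffs)) / n
    count = 0
    for signs in product((1, -1), repeat=n):
        stat = abs(sum(s * d for s, d in zip(signs, diffs))) / n
        if stat >= observed - 1e-12:  # tolerance for floating-point ties
            count += 1
    return sum(diffs) / n, count / 2 ** n

# Hypothetical per-fold R^2 values for two decoders on the same 8 folds.
r2_network = [0.84, 0.81, 0.86, 0.79, 0.83, 0.85, 0.80, 0.82]
r2_kalman = [0.72, 0.74, 0.75, 0.70, 0.73, 0.71, 0.69, 0.74]
mean_diff, p_value = paired_sign_flip_test(r2_network, r2_kalman)
print(f"mean difference = {mean_diff:.4f}, p = {p_value:.4f}")
```

Pairing matters here because both decoders are scored on identical folds, so fold-to-fold variability cancels in the differences.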

Performance Metrics and Evaluation

Table 2: Quantitative Performance Comparison Across Studies

| Brain Area | Decoding Task | Kalman Filter Performance | Neural Network Performance | Performance Improvement | Reference |
|---|---|---|---|---|---|
| Motor Cortex | Hand position decoding | R² = 0.72 | R² = 0.84 | +16.7% | [21] |
| Somatosensory Cortex | Texture discrimination | Accuracy = 81% | Accuracy = 89% | +9.9% | [21] |
| Hippocampus | Spatial location decoding | MSE = 0.35 | MSE = 0.28 | +20.0% | [21] |
| Visual Cortex | Image classification | Accuracy = 75% | Accuracy = 88% | +17.3% | [1] |
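
The Performance Improvement column in Table 2 appears to be the relative change with respect to the Kalman filter baseline, with the sign flipped for MSE, where lower is better. That reading is an assumption rather than something the table states, but the short check below reproduces the tabulated percentages; the helper name `relative_improvement` is illustrative.

```python
def relative_improvement(baseline, new, higher_is_better=True):
    """Percentage improvement of `new` over `baseline`."""
    change = (new - baseline) if higher_is_better else (baseline - new)
    return 100.0 * change / baseline

# (brain area, Kalman filter score, neural network score, higher is better?)
rows = [
    ("Motor Cortex", 0.72, 0.84, True),          # R^2
    ("Somatosensory Cortex", 81.0, 89.0, True),  # accuracy in percent
    ("Hippocampus", 0.35, 0.28, False),          # MSE, lower is better
    ("Visual Cortex", 75.0, 88.0, True),         # accuracy in percent
]
for area, kalman, network, higher in rows:
    print(f"{area}: {relative_improvement(kalman, network, higher):+.1f}%")
```

Because the four rows use different metrics, the percentages are not directly comparable across brain areas.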

Evaluation Metrics by Task Type:

- Continuous Variables (Regression):

  - Mean Squared Error (MSE)

  - Coefficient of Determination (R²)

  - Pearson Correlation Coefficient

- Discrete Variables (Classification):

  - Accuracy

  - F1-Score

  - Area Under ROC Curve (AUC-ROC)

- Sequence Decoding:
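
For the regression metrics listed above, a minimal NumPy sketch is given below. The function name `regression_metrics` and the example values are illustrative assumptions.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Return MSE, R^2 and Pearson correlation for 1-D arrays."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_true - y_pred
    mse = float(np.mean(resid ** 2))
    ss_tot = float(np.sum((y_true - y_true.mean()) ** 2))
    r2 = 1.0 - float(np.sum(resid ** 2)) / ss_tot
    pearson = float(np.corrcoef(y_true, y_pred)[0, 1])
    return {"mse": mse, "r2": r2, "pearson": pearson}

# Hypothetical decoded values compared against ground truth.
metrics = regression_metrics([1.0, 2.0, 3.0, 4.0], [1.1, 1.9, 3.2, 3.8])
print(metrics)
```

R² and the Pearson coefficient answer different questions: R² penalizes offset and scaling errors in the decoded variable, whereas the correlation coefficient is insensitive to them, so reporting both is often informative.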

Research Reagent Solutions

Table 3: Essential Tools for Neural Decoding Research

| Research Reagent | Function | Example Applications |
|---|---|---|
| Generalized Linear Models (GLMs) | Model neural responses with non-normal distributions | Basic encoding models, hypothesis testing |
| Recurrent Neural Networks (RNNs) | Capture temporal dependencies in neural data | Decoding continuous movements from motor cortex |
| Convolutional Neural Networks (CNNs) | Extract spatial patterns from neural activity | Visual image reconstruction from V1/V4 activity |
| Gradient Boosted Trees (XGBoost) | High-performance tabular data prediction | Non-linear decoding with minimal hyperparameter tuning |
| Kalman Filters | Bayesian decoding with temporal priors | Tracking continuous states in dynamical systems |
| Support Vector Machines (SVMs) | Maximum-margin classification | Cognitive state decoding from prefrontal cortex |
| Large Language Models (LLMs) | Contextual semantic representation | Linguistic neural decoding [22] |

Workflow Visualization

[Figure: Neural Decoding Methodology Workflow]

Method Selection Framework

[Figure: Decoder Selection Decision Framework]

Implementation Considerations

Data Requirements and Preprocessing

Successful decoding implementation requires careful attention to data quality and preprocessing:

- Temporal Binning: Neural spike data should be binned appropriately (typically 20-100ms) to capture relevant information while minimizing noise [21]

- Feature Selection: Include relevant neural features such as firing rates, population vectors, or spectral power bands

- Cross-Validation: Use temporal (blocked) or k-fold cross-validation to detect overfitting and estimate how well the decoder generalizes

- Hyperparameter Optimization: Systematically tune model parameters using validation sets or cross-validation
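
For time-series recordings, one way to realize temporal cross-validation is to use contiguous test blocks and drop a few samples on either side of each block from the training set. The generator below is a sketch under that assumption; `blocked_kfold` and its `gap` parameter are hypothetical names, not part of a particular library.

```python
def blocked_kfold(n_samples, n_folds=5, gap=0):
    """Yield (train_idx, test_idx) pairs with contiguous test blocks.

    `gap` samples on each side of the test block are excluded from training
    to limit leakage between temporally autocorrelated neighbors.
    """
    fold_sizes = [n_samples // n_folds + (1 if i < n_samples % n_folds else 0)
                  for i in range(n_folds)]
    start = 0
    for size in fold_sizes:
        stop = start + size
        test_idx = list(range(start, stop))
        train_idx = [i for i in range(n_samples)
                     if i < start - gap or i >= stop + gap]
        yield train_idx, test_idx
        start = stop

folds = list(blocked_kfold(n_samples=100, n_folds=5, gap=2))
for train_idx, test_idx in folds:
    print(len(train_idx), test_idx[0], test_idx[-1])
```

A sensible `gap` depends on the bin width and on how slowly the neural and behavioral signals decorrelate, so it is best treated as a setting to check rather than a fixed constant.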

Cautions and Limitations

While modern machine learning methods often outperform traditional approaches, several important considerations apply:

- Interpretation Limitations: High decoding performance does not imply that the decoder's internal transformations mimic biological computation [21]

- Causal Inference: Successful decoding from a brain region does not necessarily indicate that region is causally involved in processing the decoded variable [21]

- Prior Information: Some decoders incorporate prior information about the decoded variable, entangling neural information with external assumptions [21]

- Dataset Scale: Decoder performance and generalizability depend on having sufficient data quantity and quality, with requirements varying by brain area and task complexity [1]

Modern machine learning approaches, particularly neural networks and gradient boosting, consistently outperform traditional methods like Kalman filters across multiple neural decoding tasks [21]. However, method selection should be guided by specific research goals, data constraints, and interpretability requirements rather than purely maximizing accuracy.

Implementing Decoders: From Traditional Filters to Modern Bayesian Machine Learning

The Steady-State Kalman Filter (SSKF) represents a significant computational optimization of the conventional Kalman filter, particularly valuable for real-time applications with limited processing resources. In time-invariant stochastic systems, the optimal Kalman gain typically converges to a constant value after a finite number of recursions, approaching its steady-state form rather than continuing as a time-varying matrix [23]. This convergence behavior enables a fundamental trade-off: by precomputing and fixing the Kalman gain at its steady-state value, implementation complexity is substantially reduced while preserving estimation accuracy in many practical scenarios [23] [24].

This approach is especially relevant in neural decoding applications, where the Kalman filter has become a cornerstone algorithm for estimating intended movement kinematics from motor cortical activity [23] [17]. As neural interface systems evolve toward processing larger neuronal ensembles and more complex signal types, the computational efficiency offered by the steady-state formulation becomes increasingly critical for feasible real-time implementation [23]. The following sections explore the theoretical foundations, practical implementation, and specific applications of SSKF, with particular emphasis on neural signal research within the broader context of Bayesian decoding methods.

Theoretical Foundations and Computational Advantages

Convergence Properties and Steady-State Behavior

The theoretical basis for the steady-state Kalman filter stems from the convergence behavior observed in linear time-invariant systems. Empirical studies using human motor cortical data demonstrate that the standard Kalman filter gain converges to within 95% of its steady-state value quickly, typically within 1.5 ± 0.5 seconds (mean ± s.d.) under realistic decoding conditions [23]. Furthermore, the difference in decoded movement velocity between the standard (time-varying) Kalman filter and its steady-state counterpart becomes negligible within approximately 5 seconds, with correlation coefficients reaching 0.99 over extended session lengths [23].

This rapid convergence validates the practical applicability of SSKF for continuous decoding tasks, as the performance penalty during initial iterations is minimal and short-lived. The stability of this steady-state solution can be formally guaranteed through theoretical conditions on system observability and the radius of ambiguity sets in distributionally robust formulations [25].
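
The convergence of the gain can be illustrated numerically by running the covariance recursion of the standard filter and tracking how far each gain is from its limiting value. The sketch below uses a toy two-state model whose matrices are entirely hypothetical, and it interprets "within 95% of steady state" as a relative Frobenius-norm error of at most 5%, which is an assumption about the criterion rather than the definition used in [23].

```python
import numpy as np

def gain_sequence(A, C, Q, R, P0, n_steps):
    """Return the time-varying Kalman gains K_1..K_n of the standard filter."""
    P = P0.copy()
    eye = np.eye(A.shape[0])
    gains = []
    for _ in range(n_steps):
        P_prior = A @ P @ A.T + Q              # a priori covariance
        S = C @ P_prior @ C.T + R              # innovation covariance
        K = np.linalg.solve(S, C @ P_prior).T  # K = P_prior C^T S^{-1}
        P = (eye - K @ C) @ P_prior            # a posteriori covariance
        gains.append(K)
    return gains

# Toy model: 2 states (position, velocity), 5 channels. All values hypothetical.
dt = 0.05  # bin width in seconds
A = np.array([[1.0, dt], [0.0, 0.95]])
C = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0], [0.5, 2.0]])
Q = np.diag([1e-3, 1e-2])
R = np.eye(5)

gains = gain_sequence(A, C, Q, R, P0=np.eye(2), n_steps=1000)
K_inf = gains[-1]  # treated as the steady-state gain after many recursions
rel_err = [np.linalg.norm(K - K_inf) / np.linalg.norm(K_inf) for K in gains]
steps_to_95 = next(i + 1 for i, e in enumerate(rel_err) if e <= 0.05)
print(f"gain within 5% of steady state after {steps_to_95} steps "
      f"({steps_to_95 * dt:.2f} s)")
```

The number of steps depends on the model matrices and on the initial covariance, so the figure printed for this toy system should not be compared with the 1.5 s reported for real recordings.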

Computational Complexity Analysis

The computational advantage of SSKF becomes particularly evident when analyzing algorithmic complexity relative to the standard Kalman filter implementation. The reduction in real-time operations is substantial, as illustrated in the following comparison:

Table 1: Computational Complexity Comparison Between KF and SSKF

| Operation | Standard KF Complexity | Steady-State KF Complexity |
|---|---|---|
| A priori state estimate | \(O(s^2)\) | \(O(s^2)\) |
| A priori covariance | \(O(s^3)\) | – |
| A posteriori state estimate | \(O(sn)\) | \(O(sn)\) |
| A posteriori covariance | \(O(s^2 + s^2n)\) | – |
| Kalman gain computation | \(O(s^2n + sn^2 + n^3)\) | – |
| Full recursion | \(O(s^3 + s^2n + sn^2 + n^3)\) | \(O(s^2 + sn)\) |

In neural decoding applications, where the number of observations (\(n\), neuronal units) typically far exceeds the number of states (\(s\), kinematic variables), the standard Kalman filter complexity effectively becomes \(O(n^3)\), while SSKF reduces to \(O(n)\) [23]. This complexity reduction translates to tangible performance gains; experimental implementations demonstrate that SSKF reduces the computational load (algorithm execution time) for decoding firing rates of 25 ± 3 single units by a factor of 7.0 ± 0.9 [23].

The relative efficiency of SSKF scales quadratically with ensemble size, making it particularly advantageous for resource-constrained neural interface systems facing increasing channel counts [23]. This efficiency enables longer battery life in wireless implantable systems and facilitates the practical implementation of future large-dimensional, multisignal neural interface systems [23].
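
The quadratic scaling can be read off from the full-recursion entries of the complexity table. As a rough leading-order sketch that ignores the constant factors hidden in the big-O terms, holding \(s\) fixed and letting \(n \gg s\) gives

\[
\frac{s^3 + s^2 n + s n^2 + n^3}{s^2 + s n} \;\approx\; \frac{n^3}{s n} \;=\; \frac{n^2}{s}.
\]

This ratio describes asymptotic growth only; measured speed-ups at small ensemble sizes, such as the factor of 7.0 reported for about 25 units, also depend on implementation constants.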

Implementation Protocols for Neural Signal Decoding

Experimental Setup and Data Acquisition

Implementing SSKF for neural decoding requires careful experimental setup and data acquisition protocols. In clinical trials such as BrainGate, intracortical microelectrode arrays (10×10 silicon microelectrodes) are typically implanted in the precentral gyrus contralateral to the dominant hand within the arm representation area [23]. These arrays protrude 1-1.5 mm from a 4×4 mm platform and record neural activity during structured behavioral tasks [23].

The behavioral paradigm generally involves two phases: filter-building (open-loop motor imagery) and closed-loop assessment. During filter-building, participants observe a training cursor moving on a screen while imagining controlling it with their own dominant hand [23]. Two primary task types are employed:

- Pursuit-tracking tasks: A training cursor moves from a starting location toward randomly placed targets, generating trajectories that span much of the screen area [23].

- Center-out tasks: The training cursor moves between central and peripheral targets (typically 4 or 8 radial locations) with Gaussian velocity profiles [23].

Neural data and simultaneous kinematic measurements (position, velocity) recorded during these sessions provide the training dataset for estimating the SSKF parameters prior to real-time decoding implementation.

SSKF Configuration and Training Protocol

The steady-state Kalman filter implementation for neural decoding follows a structured protocol:

System Identification Phase:

- Kinematic State Modeling: Define the state vector to include movement kinematics. A typical implementation includes hand position, velocity, and acceleration in 2D space, resulting in a 6-dimensional state vector: \( \mathbf{y}_t = [x_t, y_t, \dot{x}_t, \dot{y}_t, \ddot{x}_t, \ddot{y}_t]^T \) [17].

- Neural Observation Modeling: The observation vector comprises binned spike counts from each recorded unit, transformed into firing rates [23] [17].

- Model Estimation: Estimate the state transition matrix \(A\), observation matrix \(C\), process noise covariance \(Q\), and observation noise covariance \(R\) from training data using maximum likelihood or least-squares methods [17].
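
The model-estimation step can be sketched with ordinary least squares. The code below assumes mean-centered kinematics and firing rates and the linear-Gaussian model written in the docstring; the function name `fit_kalman_parameters` and the simulated data are illustrative, not the procedure of [17] or [23].

```python
import numpy as np

def fit_kalman_parameters(Y, Z):
    """Least-squares estimates of A, C, Q, R.

    Y: kinematic states, shape (T, s); Z: neural observations, shape (T, n).
    Assumed model: y_t = A y_{t-1} + w_t,  z_t = C y_t + v_t.
    """
    Y_prev, Y_next = Y[:-1], Y[1:]
    # lstsq solves Y_prev @ A.T ~= Y_next in the least-squares sense.
    A = np.linalg.lstsq(Y_prev, Y_next, rcond=None)[0].T
    C = np.linalg.lstsq(Y, Z, rcond=None)[0].T
    W = Y_next - Y_prev @ A.T   # state residuals
    V = Z - Y @ C.T             # observation residuals
    Q = W.T @ W / len(W)
    R = V.T @ V / len(V)
    return A, C, Q, R

# Simulated training data with known parameters (all values hypothetical).
rng = np.random.default_rng(0)
A_true = np.array([[0.95, 0.05], [0.0, 0.9]])
C_true = rng.normal(size=(6, 2))
T = 10000
Y = np.zeros((T, 2))
for t in range(1, T):
    Y[t] = A_true @ Y[t - 1] + rng.normal(scale=0.1, size=2)
Z = Y @ C_true.T + rng.normal(scale=0.5, size=(T, 6))

A_hat, C_hat, Q_hat, R_hat = fit_kalman_parameters(Y, Z)
print(np.round(A_hat, 3))
```

With real recordings the residual covariances are only as good as the linear model, so inspecting the residuals for structure is a reasonable check before computing the gain.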

Steady-State Gain Computation:

- Solve Discrete Algebraic Riccati Equation: Compute the steady-state error covariance \(P_\infty\) by solving the DARE: \( P_\infty = A P_\infty A^T - A P_\infty C^T (C P_\infty C^T + R)^{-1} C P_\infty A^T + Q \) [23].

- Compute Steady-State Gain: Calculate the constant Kalman gain: \( K_\infty = P_\infty C^T (C P_\infty C^T + R)^{-1} \) [23].
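
Both steps can be sketched with SciPy's `solve_discrete_are`. That routine is written for the control form of the equation, so the filtering form above is obtained by passing the transposes of \(A\) and \(C\). The wrapper name `steady_state_gain` and the toy matrices are hypothetical.

```python
import numpy as np
from scipy.linalg import solve_discrete_are

def steady_state_gain(A, C, Q, R):
    """Return (P_inf, K_inf) for y_t = A y_{t-1} + w_t, z_t = C y_t + v_t."""
    # Duality: the filtering DARE for (A, C) is the control DARE for (A^T, C^T).
    P_inf = solve_discrete_are(A.T, C.T, Q, R)
    S = C @ P_inf @ C.T + R
    K_inf = np.linalg.solve(S, C @ P_inf).T  # K = P C^T S^{-1}
    return P_inf, K_inf

# Toy model: 2 states, 5 channels. All values hypothetical.
A = np.array([[1.0, 0.05], [0.0, 0.95]])
C = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0], [0.5, 2.0]])
Q = np.diag([1e-3, 1e-2])
R = np.eye(5)

P_inf, K_inf = steady_state_gain(A, C, Q, R)
# Residual of the DARE as written in the text; it should be close to zero.
S = C @ P_inf @ C.T + R
residual = (A @ P_inf @ A.T
            - A @ P_inf @ C.T @ np.linalg.solve(S, C @ P_inf @ A.T)
            + Q - P_inf)
print(np.abs(residual).max())
```

Checking the residual against the equation in the text is a cheap safeguard against passing the matrices in the wrong orientation.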

Real-Time Decoding Phase:

- Initialization: Set initial state estimate \(\hat{\mathbf{y}}_0\) and initialize with constant gain \(K_\infty\).

- Prediction Step: \(\hat{\mathbf{y}}_t^- = A\hat{\mathbf{y}}_{t-1}\)

- Update Step: \(\hat{\mathbf{y}}_t = \hat{\mathbf{y}}_t^- + K_\infty(\mathbf{z}_t - C\hat{\mathbf{y}}_t^-)\), where \(\mathbf{z}_t\) is the observed neural activity vector at time \(t\) [23] [17].

This protocol eliminates the computationally intensive prediction and update of error covariance matrices during real-time operation, substantially reducing computational demands while maintaining decoding accuracy [23].
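
The real-time phase then reduces to two matrix-vector products per time step. The sketch below folds the prediction and update steps into a single precomputed matrix, which is algebraically identical to applying them in sequence; the function name `sskf_decode` and the scalar example are illustrative assumptions.

```python
import numpy as np

def sskf_decode(A, C, K_inf, Z, y0):
    """Decode states from observations Z of shape (T, n) with a fixed gain."""
    # y_t = A y_{t-1} + K (z_t - C A y_{t-1}) = (I - K C) A y_{t-1} + K z_t
    M = (np.eye(A.shape[0]) - K_inf @ C) @ A
    y = np.asarray(y0, dtype=float)
    decoded = np.empty((len(Z), A.shape[0]))
    for t, z in enumerate(Z):
        y = M @ y + K_inf @ z   # O(s^2 + s n) work per step
        decoded[t] = y
    return decoded

# Scalar toy example (hypothetical values): constant observation of 2.0.
A = np.array([[1.0]])
C = np.array([[1.0]])
K_inf = np.array([[0.5]])
Z = np.array([[2.0], [2.0], [2.0]])
decoded = sskf_decode(A, C, K_inf, Z, y0=[0.0])
print(decoded.ravel())  # [1.   1.5  1.75]
```

No covariance matrix appears in the loop, which is where the savings summarized in the complexity table come from.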

Performance Evaluation and Comparative Analysis

Quantitative Performance Metrics

Rigorous evaluation of SSKF performance encompasses multiple metrics that quantify both decoding accuracy and computational efficiency:

Table 2: Performance Metrics for Steady-State Kalman Filter Evaluation

| Metric Category | Specific Metrics | Measurement Methodology |

|---|---|---|

| Decoding Accuracy | Velocity correlation coefficient | Pearson correlation between decoded and actual hand velocity [23] |