Cross-Subject vs. Subject-Specific BCI Models: Validation Strategies, Clinical Applications, and Future Directions

Abstract

This article provides a comprehensive analysis of validation paradigms for brain-computer interface models, contrasting subject-specific and cross-subject approaches. We explore the fundamental principles, methodological innovations, and optimization strategies that address key challenges in BCI generalization, including inter-subject variability and signal non-stationarity. For researchers and drug development professionals, we present rigorous validation frameworks and comparative analyses that inform model selection for clinical translation. The synthesis covers emerging trends in transfer learning, domain adaptation, and transformer architectures that enhance BCI adaptability while maintaining decoding accuracy, offering critical insights for neurodegenerative disease monitoring and neurorehabilitation applications.

Fundamental Principles and Challenges in BCI Model Validation

Brain-Computer Interfaces (BCIs) face a fundamental challenge in model design: whether to create customized systems for individual users or develop universal systems that work across multiple users. This comparison guide examines the core conceptual differences between subject-specific and cross-subject BCI approaches, providing researchers with a comprehensive framework for selecting appropriate methodologies based on experimental requirements, target applications, and performance considerations. Through analysis of current literature and experimental data, we demonstrate that the choice between these paradigms represents a critical trade-off between model precision and practical scalability in BCI validation research.

The fundamental challenge in brain-computer interface research stems from significant individual variability in brain anatomy, neural activity patterns, and electrophysiological signals across different subjects [1]. This neurophysiological diversity has profound implications for BCI model development, forcing researchers to choose between creating customized solutions for individual users or developing universal systems that can generalize across populations.

Subject-specific approaches dominate traditional BCI research, relying on extensive calibration data collected from individual users to create highly personalized models. While this method often yields superior decoding performance for the target individual, it requires lengthy calibration procedures and substantial computational resources for each new user [2] [3]. This limitation has driven investigation into cross-subject approaches that aim to identify common neural representations across individuals, enabling faster deployment and broader applicability at the potential cost of some performance precision [1] [4].

The tension between these paradigms is particularly relevant for applications in clinical drug development and large-scale neurotechnology trials, where practical constraints often limit the feasibility of extensive subject-specific calibration. This guide systematically compares these approaches to inform research design decisions in both academic and industrial settings.

Conceptual Framework & Definitions

Subject-Specific BCI (SS-BCI)

Subject-Specific BCI systems are individually calibrated models trained exclusively on data from a single user. These systems leverage the unique neurophysiological signature of an individual to achieve optimized performance for that specific person. The underlying assumption is that neural patterns associated with specific tasks or states exhibit sufficient consistency within an individual but substantial variation between individuals, necessitating personalized model calibration [2] [5].

Cross-Subject BCI (CS-BCI)

Cross-Subject BCI approaches, also referred to as subject-independent (SI-BCI) or universal BCI models, are designed to generalize across multiple users without individual calibration. These systems aim to identify common neural features that remain stable across different individuals, creating a single model that can be applied to new users with minimal or no additional training [1] [4] [2]. The core innovation lies in extracting invariant neural representations while filtering out subject-specific variability.

Comparative Analysis: Core Conceptual Differences

Table 1: Fundamental Conceptual Differences Between Subject-Specific and Cross-Subject BCI Approaches

| Aspect | Subject-Specific BCI | Cross-Subject BCI |
|---|---|---|
| Core Philosophy | Personalization via individual calibration | Generalization through common features |
| Training Data | Single-subject data | Multi-subject data pooling |
| Model Output | Customized decoder for one user | Universal decoder for multiple users |
| Calibration Requirement | Extensive for each new user | Minimal to none for new users |
| Primary Strength | Optimized individual performance | Immediate usability & scalability |
| Primary Limitation | Poor generalization across users | Potential performance trade-off |
| Computational Load | Distributed across users | Concentrated in initial training |
| Ideal Application | High-precision individual control | Population-level studies & rapid deployment |

Fundamental Philosophical Divergence

The philosophical divergence between these approaches centers on how they address inter-subject variability. Subject-specific methods treat this variability as an insurmountable obstacle to generalization, thus requiring individual calibration. In contrast, cross-subject approaches treat inter-subject variability as noise that can be filtered out to reveal stable, transferable neural representations [1] [4].

This philosophical difference manifests in technical implementation. Subject-specific models typically employ individual feature spaces and customized classification boundaries, while cross-subject approaches utilize shared embedding spaces and domain adaptation techniques to align distributions across subjects [4] [6].

Methodological Implications for Research Design

The choice between these paradigms significantly impacts research design. Subject-specific approaches require repeated measures designs with extensive data collection from each participant, limiting sample sizes but enabling deep individual analysis. Cross-subject approaches facilitate larger between-subject designs with reduced per-subject data collection, enabling broader population inferences but potentially missing subtle individual differences [2] [3].

Experimental Evidence & Performance Comparison

Quantitative Performance Metrics

Table 2: Experimental Performance Comparison Across BCI Paradigms and Modalities

| Study | BCI Paradigm | Subject-Specific (SS) Accuracy | Cross-Subject (CS) Accuracy | Performance Gap (SS minus CS, percentage points) |
|---|---|---|---|---|
| Dos Santos et al. (2023) [2] | MI-EEG (LDA) | 76.85% | 80.30% | -3.45 |
| Dos Santos et al. (2023) [2] | MI-EEG (SVM) | 94.20% | 83.23% | +10.97 |
| CSDD (2025) [1] | MI-EEG | Baseline | +3.28% improvement | - |
| CSCL (2025) [4] | EEG-Emotion | Not reported | 97.70% (SEED) | - |
| Selective Pooling (2021) [3] | MI-EEG | Varies by subject | Comparable to subject-specific | Minimal |

Analysis of Performance Patterns

The experimental data reveal a mixed picture. Subject-specific approaches generally achieve higher peak performance for individual users, but recent cross-subject methods are competitive and in some configurations surpass subject-specific models: with an LDA classifier, the cross-subject model in [2] outperformed the subject-specific one by 3.45 percentage points, whereas with an SVM the subject-specific model led by 10.97 points. The performance gap therefore appears to depend on both the modality and the sophistication of the algorithm.

For motor imagery (MI) paradigms, subject-specific models typically maintain a performance advantage, though advanced cross-subject methods like the Cross-Subject DD (CSDD) algorithm have demonstrated progressive improvements [1]. In emotion recognition domains, cross-subject approaches using contrastive learning (CSCL) have achieved exceptionally high accuracy (97.70% on SEED dataset), suggesting that certain neural processes may contain more transferable patterns across individuals [4].

Technical Implementation & Methodologies

Subject-Specific BCI Implementation

Subject-specific implementations typically follow a standardized calibration pipeline centered on individual data collection and model optimization:

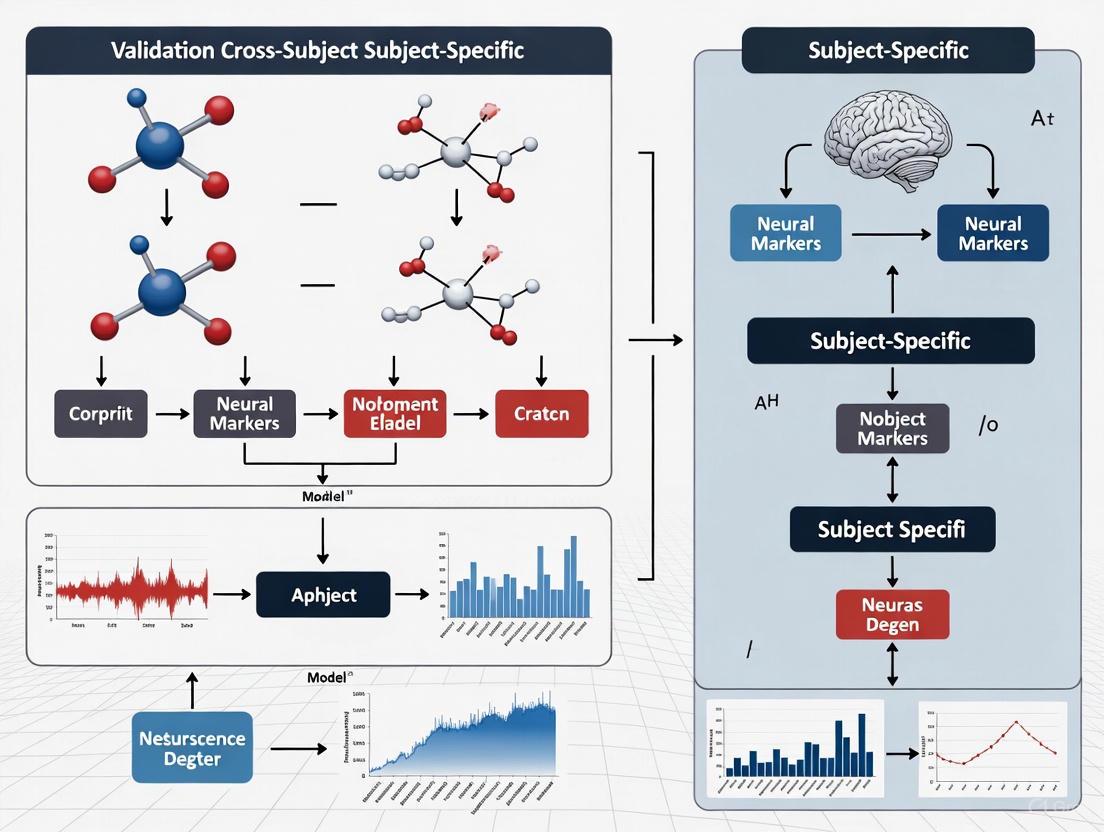

Figure 1: Subject-Specific BCI workflow emphasizing individual calibration and validation.

The subject-specific methodology relies heavily on individual calibration sessions where users perform predefined tasks while neural data is collected. Feature extraction methods like Common Spatial Patterns (CSP) are optimized for the individual's distinctive patterns, and classifiers are trained to recognize the subject's unique neural signatures [2] [5]. This approach requires substantial training data from each user but typically achieves superior performance for that specific individual.

Cross-Subject BCI Implementation

Cross-subject approaches employ more complex architectures designed to identify and leverage common neural patterns:

Figure 2: Cross-subject BCI workflow featuring multi-subject training and zero-shot validation.

Advanced cross-subject implementations utilize several sophisticated strategies:

- Selective Subject Pooling: Strategically choosing source subjects with transferable features rather than using all available data [3]

- Domain Alignment: Applying techniques to minimize distribution shifts between subjects [4] [7]

- Common Feature Extraction: Identifying neural representations that remain stable across individuals [1]

- Leave-One-Subject-Out (LOSO) Validation: Rigorous testing of generalization capability to unseen users [2] [6]
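As a rough illustration of the domain-alignment idea in the list above, the sketch below standardizes features separately within each subject so that per-subject offsets and scales are removed before pooling. This is one of the simplest possible alignment steps and is not the method of [4] or [7]; the function name `standardize_per_subject` and the toy feature values are assumptions made for the example.

```python
from statistics import mean, pstdev

def standardize_per_subject(features_by_subject):
    """Z-score every feature dimension within each subject separately."""
    aligned = {}
    for subject, trials in features_by_subject.items():
        n_dims = len(trials[0])
        mus = [mean(t[d] for t in trials) for d in range(n_dims)]
        # Fall back to 1.0 when a dimension is constant, to avoid dividing by zero.
        sds = [pstdev([t[d] for t in trials]) or 1.0 for d in range(n_dims)]
        aligned[subject] = [
            [(t[d] - mus[d]) / sds[d] for d in range(n_dims)] for t in trials
        ]
    return aligned

# Two hypothetical subjects whose features differ only in offset and scale.
raw = {
    "S01": [[1.0, 10.0], [3.0, 14.0], [5.0, 18.0]],
    "S02": [[101.0, 0.1], [103.0, 0.5], [105.0, 0.9]],
}
aligned = standardize_per_subject(raw)
```

After this step the two toy subjects occupy the same feature range, which is the property a pooled classifier relies on. Real inter-subject shifts are rarely this simple, which is why the cited methods use learned alignment.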

The CSDD algorithm exemplifies modern cross-subject approaches, implementing a four-stage process: (1) training personalized models for each subject, (2) transforming them into relation spectrums, (3) identifying common features through statistical analysis, and (4) constructing a universal model based on these common features [1].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Methodological Components for BCI Generalization Research

| Research Component | Function in BCI Research | Example Implementations |
|---|---|---|
| Leave-One-Subject-Out (LOSO) | Validation protocol for cross-subject generalization | [2] [6] |
| Common Spatial Patterns (CSP) | Spatial filtering for feature extraction | [2] [5] [3] |
| Domain Adaptation | Aligning feature distributions across subjects | SUTL [7], CSCL [4] |
| Transfer Learning | Leveraging knowledge from source to target subjects | SSTL-PF [1] |
| Contrastive Learning | Learning invariant representations across subjects | CSCL in hyperbolic space [4] |
| Selective Subject Pooling | Identifying optimal source subjects for transfer | Performance-based selection [3] |
| Relation Spectrum Analysis | Decomposing models to extract common features | CSDD algorithm [1] |

Application Contexts & Research Implications

When to Prefer Subject-Specific Approaches

Subject-specific BCI approaches are preferable in scenarios where:

- Maximum performance precision is required for individual users

- Long-term usage by a single individual justifies calibration investment

- Clinical applications demand optimized individual control

- Research focuses on deep individual differences in neural processing

- Subjects have atypical neural patterns not well-represented in population models

When to Prefer Cross-Subject Approaches

Cross-subject BCI approaches offer advantages in situations requiring:

- Rapid deployment to new users without calibration

- Large-scale studies with limited per-subject data collection

- Population-level inferences about neural mechanisms

- Clinical translation for patients unable to complete lengthy calibration

- BCI illiteracy mitigation for users who struggle with traditional paradigms [2] [3]

Future Directions & Market Outlook

The BCI field is experiencing rapid growth, with the global market projected to increase from $2.83 billion in 2025 to $8.73 billion by 2033, representing a 15.13% CAGR [8]. This expansion is driving increased investment in both approaches, though cross-subject methods are gaining prominence due to their scalability advantages.

Future research directions include:

- Hybrid approaches that combine subject-specific adaptation with cross-subject initialization

- Advanced domain adaptation techniques from general machine learning

- Multimodal integration to improve generalization across subjects [6]

- Federated learning frameworks to leverage distributed data while preserving privacy

- Neuromarker discovery for identifying stable cross-subject neural representations

Clinical translation efforts are increasingly emphasizing cross-subject methods, with companies like Synchron, Neuralink, and Precision Neuroscience developing solutions that minimize individual calibration requirements [9] [10]. This trend reflects the practical realities of clinical implementation where lengthy calibration procedures present significant barriers to adoption.

The choice between subject-specific and cross-subject BCI approaches represents a fundamental trade-off between individual optimization and practical scalability. Subject-specific methods continue to offer superior performance for individual users but at the cost of extensive calibration requirements. Cross-subject approaches provide immediate usability and broader applicability while rapidly closing the performance gap through advanced machine learning techniques.

For researchers and drug development professionals, selection between these paradigms should be guided by specific research questions, practical constraints, and application contexts. The evolving landscape suggests that hybrid approaches leveraging cross-subject initialization with minimal subject-specific adaptation may offer the most promising path forward for both scientific discovery and clinical translation.

The Critical Challenge of Inter-Subject Variability in Brain Signals

Inter-subject variability remains a primary obstacle in the development of robust brain-computer interface (BCI) systems, significantly limiting their practical application and commercialization. This challenge stems from substantial differences in brain anatomy, neurophysiology, and cognitive strategies among individuals, which cause machine learning models trained on one subject to perform poorly on others. The BCI research community has developed two principal approaches to address this fundamental issue: cross-subject generalized models that leverage data from multiple users to create systems requiring minimal calibration, and subject-specific adaptive models that employ transfer learning techniques to rapidly customize pre-trained models for individual users. This comprehensive analysis compares the performance, methodological frameworks, and practical implications of these competing approaches, providing researchers with evidence-based guidance for selecting appropriate strategies based on their specific application requirements, data availability, and performance targets.

The Core Problem: Quantifying Inter-Subject Variability in Neural Signals

Inter-subject variability in electroencephalography (EEG)-based BCIs presents a multi-faceted challenge affecting signal characteristics, feature distributions, and ultimately classification performance across different users. This variability arises from numerous sources, including anatomical differences in skull thickness and brain morphology, neurophysiological factors such as age and gender, and psychological factors including cognitive strategies and attention levels [11] [12]. As a result, EEG signals exhibit significant distribution shifts across subjects, violating the independent and identically distributed (i.i.d.) assumption that underlies most conventional machine learning algorithms [12].

Empirical investigations have quantified the substantial performance degradation that occurs when subject-independent models are applied to new users without adaptation. Studies implementing Leave-One-Subject-Out Cross-Validation (LOSOCV) – where models are trained on multiple subjects and tested on a completely unseen subject – typically report accuracy reductions of 10-30% compared to subject-specific models trained on the target user's data [13]. This performance gap represents the "inter-subject variability penalty" that BCI systems must overcome to achieve practical utility.

The phenomenon of BCI illiteracy further compounds this challenge, with approximately 10-30% of users unable to achieve effective control of standard BCI systems due to their inability to generate discriminative brain patterns [13]. Neurophysiological studies have identified correlates of this phenomenon, including significantly lower alpha peaks at motor cortex electrodes (C3 and C4) in poor performers compared to good performers [13].

Table 1: Manifestations and Impact of Inter-Subject Variability in BCI Systems

| Aspect of Variability | Manifestation in EEG Signals | Impact on BCI Performance |
|---|---|---|
| Spatial Topography | Differences in ERD/ERS patterns across sensorimotor areas | Reduced effectiveness of common spatial patterns across subjects |
| Temporal Dynamics | Variability in latency and amplitude of ERP components (e.g., P300) | Decreased classification accuracy for event-related paradigms |
| Spectral Properties | Differences in dominant frequency bands and power distribution | Compromised performance of frequency-based feature extraction |
| Session-to-Session Stability | Signal drift within the same subject across different sessions | Model degradation over time requiring recurrent recalibration |

Figure 1: The multi-faceted challenge of inter-subject variability in BCI systems, showing how diverse factors contribute to signal distribution shifts and ultimately degrade model performance.

Comparative Analysis of Solution Approaches

Cross-Subject Generalized Models

Cross-subject generalization approaches aim to create BCI systems that new users can operate immediately without extensive calibration. These methods typically leverage data from multiple subjects to train models that capture common neural patterns while remaining robust to inter-subject differences.

Selective Subject Pooling represents one promising strategy that moves beyond simply pooling all available subject data. This approach strategically selects subjects who yield reasonable BCI performance, excluding outliers or poor performers who might negatively impact model generalization [13]. Empirical studies have demonstrated that this selective approach significantly enhances cross-subject performance compared to using all available subjects indiscriminately [13].
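A minimal sketch of performance-based pooling follows, assuming each candidate source subject already has a within-subject accuracy estimate. The 0.70 cut-off, the function name `select_source_subjects`, and the accuracy values are illustrative assumptions rather than the selection rule used in [13].

```python
def select_source_subjects(subject_accuracy, min_accuracy=0.70):
    """Keep only source subjects whose own decoding accuracy clears the cut-off."""
    return sorted(
        subject for subject, acc in subject_accuracy.items() if acc >= min_accuracy
    )

# Hypothetical within-subject accuracies for five candidate source subjects.
candidate_accuracy = {
    "S01": 0.91,
    "S02": 0.58,  # poor performer, excluded from the pool
    "S03": 0.77,
    "S04": 0.66,  # excluded
    "S05": 0.84,
}
pool = select_source_subjects(candidate_accuracy)
```

The pooled model is then trained only on trials from the subjects in `pool`. The target subject must never contribute to this selection step, otherwise the cross-subject estimate is contaminated.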

Paradigm Optimization offers another pathway to improved cross-subject generalization. Research comparing different EEG paradigms has found that the Rapid Serial Visual Presentation (RSVP) paradigm evokes more similar ERP patterns across subjects than traditional matrix spellers [14]. With a cosine similarity threshold of 0.5, the average matching number between subjects' averaged ERP waveforms was more than three times higher for RSVP (20 matches) than for the matrix paradigm (6 matches) [14]. This greater similarity translates into a performance benefit: RSVP achieved an average Information Transfer Rate (ITR) of 43.18 bits/min, approximately 13% higher than the matrix paradigm [14].
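To make the similarity measure concrete, the sketch below counts how many pairs of subject-averaged ERP waveforms exceed a cosine similarity threshold of 0.5. Counting matches per subject pair is an assumed reading of the "matching number" in [14], and the waveforms are made-up five-sample vectors.

```python
import math
from itertools import combinations

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length waveforms."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def count_matches(waveforms, threshold=0.5):
    """Number of waveform pairs whose cosine similarity exceeds the threshold."""
    return sum(
        1 for a, b in combinations(waveforms, 2)
        if cosine_similarity(a, b) > threshold
    )

# Hypothetical subject-averaged ERPs (arbitrary units, five time samples each).
erps = {
    "S01": [0.0, 1.0, 2.0, 1.0, 0.0],
    "S02": [0.0, 0.9, 2.1, 1.2, 0.1],   # similar shape to S01
    "S03": [1.0, 0.0, -1.0, 0.0, 1.0],  # inverted shape
}
n_matches = count_matches(list(erps.values()))
```

Only the S01/S02 pair clears the threshold here, so `n_matches` is 1. A paradigm that evokes more stereotyped ERPs would raise this count across the cohort.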

Correlation Analysis Rank (CAR) Algorithm represents a novel method for improving cross-subject classification while minimizing training data requirements. When evaluated with 58 subjects – a substantially larger sample size than most BCI studies – the CAR algorithm achieved an AUC value of 0.8 in cross-subject classification, significantly outperforming traditional random selection approaches which achieved only 0.65 [14].
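For readers less familiar with the metric, the AUC values quoted above can be read as the probability that a randomly chosen target trial receives a higher classifier score than a randomly chosen non-target trial. The sketch below computes it by direct pairwise comparison; the labels and scores are invented, and this is not the CAR algorithm itself.

```python
def roc_auc(labels, scores):
    """Area under the ROC curve via pairwise comparison (ties count as half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

# Hypothetical classifier scores: 1 = target, 0 = non-target.
labels = [1, 0, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.3, 0.6, 0.2, 0.1]
auc = roc_auc(labels, scores)
```

In this toy case seven of the eight target/non-target pairs are ordered correctly, giving an AUC of 0.875. An AUC of 0.5 corresponds to chance-level ranking.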

Table 2: Performance Comparison of Cross-Subject Generalization Approaches

| Method | Key Mechanism | Reported Performance | Subjects | Limitations |
|---|---|---|---|---|
| Selective Subject Pooling [13] | Strategic selection of subjects with good BCI performance | Enhanced cross-subject performance vs. non-selective pooling | Public MI BCI datasets | Requires performance assessment for subject selection |
| RSVP Paradigm [14] | Evokes more similar ERP patterns across subjects | 43.18 bits/min ITR (13% higher than matrix) | 58 subjects | Limited to specific BCI applications |
| CAR Algorithm [14] | Optimizes training subject selection for new users | 0.8 AUC vs. 0.65 for random selection | 58 subjects | Algorithm complexity |
| Neural Manifold Analysis [5] | Identifies class-specific and subject-invariant intervals | Improved accuracy for poor performers | BCI Competition IV datasets | Computational intensity |
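The ITR figures in the table combine accuracy, number of selectable items, and selection speed into one number. A common way to compute ITR is the Wolpaw formula, sketched below; whether [14] used exactly this definition is an assumption, and the example inputs are hypothetical rather than taken from that study.

```python
import math

def wolpaw_bits_per_selection(n_classes, accuracy):
    """Wolpaw information per selection: log2 N + P log2 P + (1-P) log2((1-P)/(N-1))."""
    if not 0.0 < accuracy <= 1.0:
        raise ValueError("accuracy must be in (0, 1]")
    if accuracy == 1.0:
        return math.log2(n_classes)
    return (
        math.log2(n_classes)
        + accuracy * math.log2(accuracy)
        + (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_classes - 1))
    )

def itr_bits_per_min(n_classes, accuracy, seconds_per_selection):
    """Scale bits per selection by the number of selections made per minute."""
    return wolpaw_bits_per_selection(n_classes, accuracy) * 60.0 / seconds_per_selection

# Hypothetical speller: 36 symbols, 90% accuracy, one selection every 5 seconds.
example_itr = itr_bits_per_min(n_classes=36, accuracy=0.90, seconds_per_selection=5.0)
```

Because ITR depends on selection time as well as accuracy, two paradigms with identical accuracy can still differ substantially in bits per minute.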

Subject-Specific Adaptive Models

Subject-specific approaches embrace the uniqueness of individual neural signatures, employing various adaptation strategies to customize models for each user. These methods typically start with a base model – either untrained or pre-trained on multiple subjects – and then specialize it using target subject data.

Explicit Subject Conditioning represents a sophisticated framework for incorporating subject-specific characteristics directly into neural network architectures. Recent research has explored two primary conditioning mechanisms:

- Projection-based Conditioning: This approach performs subject-specific modulation in the feature space by computing the projection of extracted features onto a learned subject embedding vector, effectively learning a subject-specific receptive field where features aligned with the subject's learned direction receive amplification [15].

- Feature-wise Linear Modulation (FiLM): This more flexible approach performs affine transformations on extracted features using subject-specific scaling (γ) and bias (β) parameters, enabling heterogeneous feature modulation where each dimension can be independently scaled and shifted [15].
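The two conditioning mechanisms can be sketched on plain feature vectors as follows. FiLM applies a per-dimension scale and shift; the projection variant keeps the component of the features that lies along a subject embedding. How [15] feeds the projection back into the network is not specified here, so `project_onto_subject` shows only the projection itself, and all parameter values are illustrative assumptions (in practice γ, β, and the embedding are learned).

```python
def film(features, gamma, beta):
    """Feature-wise linear modulation: y_d = gamma_d * x_d + beta_d."""
    return [g * x + b for x, g, b in zip(features, gamma, beta)]

def project_onto_subject(features, subject_embedding):
    """Vector projection of the features onto the subject embedding direction."""
    dot = sum(x * e for x, e in zip(features, subject_embedding))
    norm_sq = sum(e * e for e in subject_embedding)
    return [dot / norm_sq * e for e in subject_embedding]

# Hypothetical two-dimensional features and subject-specific parameters.
features = [1.0, 2.0]
modulated = film(features, gamma=[2.0, 0.5], beta=[0.0, 1.0])
projected = project_onto_subject([1.0, 1.0], subject_embedding=[2.0, 0.0])
```

FiLM can scale and shift each dimension independently, whereas the projection is governed by a single learned direction. That difference is what the text above means by FiLM being the more flexible of the two.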

These conditioning approaches are particularly valuable in data-scarce BCI environments, as they enable rapid adaptation to new subjects with minimal calibration data. The experimental protocol typically involves a two-stage process: pre-training on multiple subjects followed by incremental fine-tuning using progressively more data from the target subject (from 1 to 4 batches of 60 trials each) [15].

Metric-Based Spatial Filtering Transformer (MSFT) represents a state-of-the-art subject-specific approach that leverages additive angular margin loss to enhance inter-class separability while enforcing intra-class compactness [16]. This method decouples the training of feature extractors and classifiers, enabling the extraction of more generalized and discriminative features. When evaluated on the BCI Competition IV-2a and 2b datasets, MSFT achieved remarkable performance:

- Subject-specific classification: 86.11% accuracy for 2a (4-class), 88.39% for 2b (2-class) [16]

- Cross-subject classification: 61.92% accuracy for 2a [16]

- Cross-task classification: 83.38% accuracy when training the feature extractor on 2a data and fine-tuning the classifier on 2b data [16]
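The additive angular margin loss mentioned above penalizes the target class by adding a margin to the angle between an embedding and its class prototype before the softmax. The sketch below shows that mechanism in isolation; the margin of 0.5 rad, the scale of 30, and the cosine values are illustrative assumptions and not the settings reported in [16].

```python
import math

def aam_logits(cosines, target, margin=0.5, scale=30.0):
    """Replace cos(theta) with cos(theta + margin) for the target class, then scale."""
    logits = []
    for k, c in enumerate(cosines):
        c = max(-1.0, min(1.0, c))  # guard acos against rounding outside [-1, 1]
        if k == target:
            c = math.cos(math.acos(c) + margin)
        logits.append(scale * c)
    return logits

def cross_entropy(logits, target):
    """Numerically stable softmax cross-entropy for a single example."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum - logits[target]

# Cosine similarity of one embedding to four class prototypes; class 0 is correct.
cosines = [0.8, 0.1, -0.2, 0.0]
loss_plain = cross_entropy(aam_logits(cosines, target=0, margin=0.0), target=0)
loss_margin = cross_entropy(aam_logits(cosines, target=0, margin=0.5), target=0)
```

The same embedding incurs a larger loss once the margin is applied, so training must pull it closer to its class prototype. This is the source of the intra-class compactness described above. Production implementations also handle the case where the angle plus margin exceeds π, which this sketch omits.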

Neural Manifold Analysis (NMA) offers an innovative approach to identifying optimal time intervals for feature extraction that capture both class-specific and subject-specific characteristics [5]. By constructing a multi-dimensional feature space to detect intervals with enhanced discriminability, this method has demonstrated significant improvements in classification accuracy, particularly for subjects with initially poor performance. When applied to the BCI Competition IV 2a (four-class) and 2b (two-class) motor imagery datasets from Graz, NMA-based pipelines surpassed state-of-the-art algorithms designed for MI tasks [5].

Figure 2: Workflow for subject-specific model adaptation, showing how limited subject data is combined with conditioning mechanisms to create customized models.

Experimental Protocols and Validation Frameworks

Robust experimental design is essential for meaningful comparison of BCI approaches addressing inter-subject variability. The research community has converged on several standard protocols and validation frameworks.

Leave-One-Subject-Out Cross-Validation (LOSOCV) represents the gold standard for evaluating cross-subject generalization performance [13]. In this rigorous framework, models are trained on data from all available subjects except one, and then tested on the completely unseen left-out subject. This process is repeated such that each subject serves as the test subject once, providing a comprehensive assessment of true cross-subject generalization without any data leakage from test subjects into training.
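The protocol can be sketched as a generator of train/test index splits, given one subject label per trial. The function name `loso_splits` and the toy subject labels are assumptions for illustration.

```python
def loso_splits(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for every subject."""
    for held_out in sorted(set(subject_ids)):
        train_idx = [i for i, s in enumerate(subject_ids) if s != held_out]
        test_idx = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train_idx, test_idx

# Hypothetical dataset: six trials recorded from three subjects.
trial_subjects = ["S01", "S01", "S02", "S02", "S03", "S03"]
folds = list(loso_splits(trial_subjects))
```

Every preprocessing step that is fitted to data (normalization statistics, spatial filters, hyperparameter selection) should be fitted on the training indices of each fold only. Otherwise information from the held-out subject leaks into training. scikit-learn's `LeaveOneGroupOut` splitter provides the same splitting behaviour when subject IDs are passed as groups.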

Incremental Fine-Tuning Methodology enables evaluation of data-efficient calibration approaches that minimize the amount of subject-specific data required for BCI calibration [15]. This protocol typically involves starting with just one batch (e.g., 60 trials) from the target subject's fine-tuning set and progressively increasing to multiple batches (e.g., up to four batches). Each model is fine-tuned and cross-validated using all possible permutations of the selected batches, providing robust performance estimates across different amounts of calibration data [15].
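One way to enumerate the fine-tuning conditions is shown below. It treats the "permutations" of batches as order-independent subsets, which is an assumption about the protocol in [15]; the batch identifiers are placeholders.

```python
from itertools import combinations

def batch_subsets(batch_ids, max_batches=4):
    """Map each calibration size k to every subset of k batches."""
    schedule = {}
    for k in range(1, min(max_batches, len(batch_ids)) + 1):
        schedule[k] = list(combinations(batch_ids, k))
    return schedule

# Four hypothetical fine-tuning batches of 60 trials each.
schedule = batch_subsets(["B1", "B2", "B3", "B4"])
n_models_per_size = {k: len(subsets) for k, subsets in schedule.items()}
```

With four batches this gives 4, 6, 4, and 1 fine-tuning runs for one to four batches respectively. Averaging over the runs at each size reduces the dependence of the learning curve on which particular trials were drawn.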

Temporal Splitting Strategies address potential confounding factors such as fatigue effects when combining multiple sessions from the same subject. A balanced approach involves temporally dividing held-out subject sessions into fine-tuning and test sets, counterbalancing potential fatigue effects by taking half of each session and merging it with the opposite half while preserving the original class-label distribution [15].
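The following sketch shows one possible reading of this counterbalanced split for two sessions: the early half of the first session is paired with the late half of the second for fine-tuning, and the remaining halves form the test set. The exact procedure in [15] may differ, and the class-label stratification it describes is deliberately left out to keep the example short.

```python
def counterbalanced_split(session_a, session_b):
    """Pair the early half of one session with the late half of the other."""
    half_a = len(session_a) // 2
    half_b = len(session_b) // 2
    fine_tune = session_a[:half_a] + session_b[half_b:]
    test = session_a[half_a:] + session_b[:half_b]
    return fine_tune, test

# Hypothetical trial indices, in recording order, for two sessions of one subject.
session_1 = [0, 1, 2, 3, 4, 5]
session_2 = [100, 101, 102, 103, 104, 105]
fine_tune_set, test_set = counterbalanced_split(session_1, session_2)
```

Both resulting sets contain early-session and late-session trials, so neither is systematically more affected by fatigue than the other.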

Hyperparameter Optimization with Class Imbalance Awareness is particularly crucial in BCI applications where datasets typically exhibit significant class imbalance (e.g., 1 Target for every 9 Non-Targets in ERP paradigms) [15]. Comprehensive optimization strategies must prioritize metrics that account for this imbalance, such as Matthews Correlation Coefficient (MCC), rather than raw accuracy [15].
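A short worked example shows why accuracy misleads at a 1:9 class ratio. The confusion-matrix counts below are hypothetical, and returning 0 when the MCC denominator is zero is a common convention adopted here as an assumption.

```python
import math

def accuracy(tp, tn, fp, fn):
    """Fraction of all trials classified correctly."""
    return (tp + tn) / (tp + tn + fp + fn)

def mcc(tp, tn, fp, fn):
    """Matthews Correlation Coefficient from confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom

# 100 trials with 10 targets and 90 non-targets (the 1:9 ratio described above).
always_non_target = dict(tp=0, tn=90, fp=0, fn=10)  # never detects a target
useful_detector = dict(tp=8, tn=81, fp=9, fn=2)     # finds 8 of the 10 targets

acc_trivial, mcc_trivial = accuracy(**always_non_target), mcc(**always_non_target)
acc_useful, mcc_useful = accuracy(**useful_detector), mcc(**useful_detector)
```

The trivial classifier reaches 90% accuracy with an MCC of 0, while the detector that actually finds targets scores slightly lower on accuracy (89%) but has an MCC of roughly 0.56. Model selection on accuracy would prefer the useless model, and selection on MCC would not.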

Table 3: Standard Experimental Protocols in Inter-Subject Variability Research

| Protocol | Implementation | Evaluation Focus | Advantages |
|---|---|---|---|
| LOSOCV [13] | Train on N-1 subjects, test on left-out subject; repeat for all subjects | True cross-subject generalization | Prevents data leakage, comprehensive assessment |
| Incremental Fine-Tuning [15] | Progressively increase target subject data (1 to 4 batches) | Data efficiency and calibration requirements | Models practical deployment scenarios |
| Temporal Splitting [15] | Combine halves from different sessions for fine-tuning/test sets | Controls for fatigue and session effects | Balances data distributions across sets |
| MCC-Based Early Stopping [15] | Use Matthews Correlation Coefficient for model selection | Robustness to class imbalance | More appropriate for skewed BCI datasets |

Advancing research in inter-subject variability requires specialized tools, datasets, and analytical resources. The following table summarizes key resources mentioned in the literature.

Table 4: Essential Research Resources for Inter-Subject Variability Studies

| Resource Category | Specific Examples | Function and Application | Availability |
|---|---|---|---|
| Public BCI Datasets | BCI Competition IV (2a, 2b) [5] [16], BrainForm [15], Continuous Pursuit Dataset [17] | Benchmarking algorithms, training generalized models | Publicly available |
| Signal Processing Toolboxes | MNE [15], EEGLAB [13], OpenBMI [13] | Preprocessing, feature extraction, visualization | Open source |
| Feature Extraction Algorithms | Common Spatial Patterns (CSP) [13] [12], FBCSP [5], Neural Manifold Analysis [5] | Identifying discriminative spatial and temporal patterns | Various implementations |
| Subject Conditioning Frameworks | Projection-based conditioning [15], FiLM layers [15] | Incorporating subject-specific characteristics into DNNs | Custom implementations |
| Validation Frameworks | Leave-One-Subject-Out Cross-Validation [13] | Assessing true cross-subject generalization | Standard practice |

Performance Benchmarking and Application-Specific Recommendations

Direct performance comparisons across studies must be interpreted cautiously due to differences in datasets, evaluation protocols, and experimental conditions. However, several trends emerge from the aggregated research.

For cross-subject generalization, the RSVP paradigm combined with the CAR algorithm has demonstrated particularly strong performance with an AUC of 0.8 across 58 subjects [14]. Selective subject pooling strategies have also shown consistent improvements over non-selective approaches, though the magnitude of improvement depends on the specific subject cohort [13].

For subject-specific adaptation, the MSFT framework with additive angular margin loss has achieved subject-specific accuracies of 86.11% (4-class, BCI Competition IV-2a) and 88.39% (2-class, IV-2b) [16]. The explicit subject conditioning approaches using projection or FiLM mechanisms enable rapid adaptation with minimal data, making them particularly suitable for real-world applications where extended calibration is impractical [15].

Application-specific recommendations can be derived from these performance comparisons:

- Clinical and Assistive Technologies: Where reliability is paramount and some calibration time is acceptable, subject-specific adaptive approaches (particularly MSFT and explicit conditioning) are recommended due to their superior final performance.

- Consumer Applications: For applications requiring immediate usability with no calibration, cross-subject approaches (particularly RSVP paradigms with selective pooling) represent the only feasible option, despite potential performance compromises.

- Research Environments: Neural Manifold Analysis offers powerful investigation tools for understanding the neural basis of inter-subject variability and identifying optimal feature extraction intervals [5].

The emerging approach of cross-task classification, exemplified by MSFT's ability to achieve 83.38% accuracy when trained on one task and fine-tuned on another, represents a promising direction for creating more flexible and generalizable BCI systems [16].

The critical challenge of inter-subject variability in brain signals continues to drive innovation in BCI research. The competing approaches of cross-subject generalization and subject-specific adaptation offer complementary strengths, with the former enabling zero-calibration systems and the latter achieving higher ultimate performance at the cost of some calibration data. The emerging trend toward hybrid approaches that leverage multi-subject pre-training followed by lightweight subject-specific adaptation represents a promising middle ground, offering improved initial performance for new users while maintaining the ability to specialize with minimal data.

Future progress will likely come from several directions: improved neural manifolds that better capture invariant neural representations across subjects [5], more sophisticated subject conditioning mechanisms that efficiently incorporate individual characteristics [15], and larger-scale publicly available datasets that enable training more robust base models [17]. Additionally, a deeper understanding of the neurophysiological basis of BCI illiteracy may enable targeted interventions to help poor performers generate more discriminative brain patterns [13] [12].

As these technological advances mature, they will gradually overcome the critical challenge of inter-subject variability, ultimately enabling robust, reliable BCIs suitable for real-world applications across diverse user populations.

Brain-Computer Interface (BCI) illiteracy, a significant challenge in neurotechnology, refers to the inability of a substantial portion of users to operate BCI systems effectively. This phenomenon affects approximately 15–30% of BCI users, who fail to achieve satisfactory control within standard training periods [18] [19] [3]. These individuals typically achieve classification accuracies below 70%, significantly impacting overall system performance and reliability [18] [19]. The existence of BCI illiteracy presents a fundamental obstacle to the development of robust, generalizable BCI models, particularly in the critical research area of cross-subject versus subject-specific model validation.

The core of the problem lies in the neurophysiological variability between individuals. Subjects labeled as BCI illiterate often fail to produce the distinct event-related desynchronization/synchronization (ERD/ERS) patterns required for reliable motor imagery (MI) classification [19]. Research indicates that poor performers generate lower alpha peaks at key motor cortex electrodes (C3 and C4) compared to good performers, highlighting fundamental differences in brain activity patterns [3]. This variability directly impacts the generalization capabilities of BCI algorithms, creating a pressing need for strategies that can bridge this performance gap and enable more inclusive BCI technologies.
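ERD/ERS is conventionally quantified as the percentage change in band power during the task relative to a reference (baseline) interval. The minimal sketch below illustrates that convention; the function name and the example power values are hypothetical and are not taken from the cited studies.

```python
def erd_percent(task_power, reference_power):
    """Percentage band-power change relative to a reference interval.

    Negative values indicate desynchronization (ERD); positive values
    indicate synchronization (ERS).
    """
    if reference_power <= 0:
        raise ValueError("reference_power must be positive")
    return 100.0 * (task_power - reference_power) / reference_power


# Hypothetical alpha-band power values (arbitrary units) at one electrode.
strong_responder = erd_percent(task_power=6.0, reference_power=10.0)
weak_responder = erd_percent(task_power=9.5, reference_power=10.0)
```

In this illustration the first user shows a clear power drop during imagery, while the second shows almost none, which is the kind of weak modulation that makes motor imagery classes hard to separate.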

Quantitative Prevalence and Performance Gap

The prevalence of BCI illiteracy and its impact on model performance is well-documented across multiple studies. The table below summarizes key quantitative findings:

Table 1: Documented Prevalence and Performance Impact of BCI Illiteracy

| Metric | Reported Value | Context & Dataset | Citation |
|---|---|---|---|
| Prevalence Rate | 15–30% of users | Proportion of users failing to achieve control | [18] [19] [3] |
| Performance Threshold | < 70% Accuracy | Typical classification accuracy for illiterate users | [18] [19] |
| High-Performer Accuracy | 85.32% (2-class), 76.90% (3-class) | Average using EEGNet/DeepConvNet on a 62-subject dataset | [20] |
| Correlation with Resting-State EEG | r = 0.53 (PSD at 10 Hz) | Correlation between resting-state alpha power and subsequent BCI performance | [21] |
| High vs. Low Performer Difference | Statistically Significant | In theta and alpha band powers during resting state | [3] |

The performance disparity is further exemplified by high-quality datasets, such as the one from the 2019 World Robot Conference Contest, which reported average accuracies of 85.32% for two-class and 76.90% for three-class motor imagery tasks across 62 subjects using state-of-the-art deep learning models [20]. This establishes a benchmark that BCI illiterate users struggle to meet, creating a significant performance gap that generalization strategies must address.

Impact on Model Generalization and Validation

The presence of BCI illiteracy fundamentally challenges the core assumptions of BCI model validation, creating a distinct divergence in strategy efficacy between subject-specific and cross-subject approaches.

The Subject-Specific vs. Cross-Subject Paradigm

- Subject-Specific Models: These models are trained on an individual user's data, allowing them to learn personalized neurophysiological patterns. This approach can mitigate the effects of illiteracy for that specific user but requires a lengthy and cumbersome calibration phase, which is often impractical for real-world applications [3].

- Cross-Subject (Subject-Independent) Models: These models are trained on data from a group of users and applied to new, unseen subjects. This approach aims for "zero-training" BCI use but is highly susceptible to performance degradation due to the high inter-subject variability that characterizes BCI illiteracy [3]. When data from BCI illiterate subjects is included in the training pool, the model learns suboptimal or noisy feature representations, which harms its generalization capability to new users [19] [3].

The Generalization Challenge

The neurophysiological characteristics of BCI illiterate users directly create what is known as a domain shift between the data distributions of high-performing and low-performing subjects. This shift means that features extracted from the brain signals of a BCI-literate "source" domain are not directly applicable to the BCI-illiterate "target" domain [19]. Furthermore, BCI illiteracy is often associated with poor repeatability of EEG patterns not just across subjects, but also across different recording sessions for the same subject, introducing cross-session variability that further complicates model generalization [19].

Strategies for Improving Generalization

Several advanced computational strategies have been developed to address the generalization challenge posed by BCI illiteracy. The leading solution paradigms, and how each relates to the core problem, are described below.

Selective Subject Pooling

This strategy involves curating the training dataset by selectively choosing data from subjects who yield reasonable BCI performance, rather than using all available subjects [3]. The hypothesis is that training a subject-independent model on a pool of consistently high-performing subjects provides a more stable foundation of discriminative neurophysiological features.

- Experimental Protocol: The typical leave-one-subject-out cross-validation (LOSOCV) is modified. A decision function g(X) evaluates a subject's specific BCI performance f(X) using their own data. If the performance meets a certain threshold, the subject is added to the selective source pool S. New test subjects are then evaluated using a model h(S) trained only on this curated pool [3].

- Supporting Evidence: This approach has shown promise on public MI BCI datasets. It leverages the finding that good and poor performers have notably different neurophysiological characteristics, and thus excluding the latter during training can enhance a model's generalizability [3].
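The pooling logic in this protocol can be sketched in a few lines. This is an illustrative outline only, not the implementation from [3]: the function names, the 0.70 accuracy threshold, and the example accuracies are assumptions, and the actual training of h(S) is left out.

```python
def select_source_pool(subject_accuracy, threshold=0.70):
    """Decision function g: keep subjects whose own accuracy f(X) meets the threshold."""
    return sorted(s for s, acc in subject_accuracy.items() if acc >= threshold)


def loso_with_selective_pool(subject_accuracy, threshold=0.70):
    """For each held-out test subject, return the curated source pool S.

    The held-out subject is removed before selection, so it can never
    contribute to the pool used to train its own model h(S).
    """
    folds = {}
    for test_subject in subject_accuracy:
        candidates = {s: a for s, a in subject_accuracy.items() if s != test_subject}
        folds[test_subject] = select_source_pool(candidates, threshold)
    return folds


# Hypothetical subject-specific accuracies f(X), e.g. from within-subject cross-validation.
accuracies = {"S01": 0.91, "S02": 0.58, "S03": 0.77, "S04": 0.64, "S05": 0.83}
folds = loso_with_selective_pool(accuracies)
```

Here the low-performing subjects S02 and S04 are still evaluated as test subjects, but they never appear in any training pool.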

Domain Adaptation and Semantic Style Transfer

These are feature-level approaches that aim to explicitly reduce the distributional discrepancy between different subjects or sessions.

- Subject-to-Subject Semantic Style Transfer (SSSTN): This method transfers the "classification style" from a high-performing source subject (a BCI "expert") to a target subject (a BCI illiterate user) [18]. It uses a loss function to align the feature distributions while preserving class-relevant semantic information from the target, effectively teaching the model to extract more literate-like features from illiterate subjects.

- Distribution Adaptation Framework: Another approach uses Multi-Kernel Maximum Mean Discrepancy (MK-MMD) to align the marginal distributions of source and target domain data in a high-dimensional Reproducing Kernel Hilbert Space (RKHS) [19]. The workflow for this method is detailed below.

- Experimental Protocol: The source domain data (e.g., from high-performers) is used to train a Multiple-Kernel Extreme Learning Machine (MK-ELM) to find a subspace that maximizes class separability. Then, MK-MMD is applied to align the distributions of the source and target (BCI illiterate) data within this new subspace. Finally, a robust classifier like Random Forest is trained on the adapted features [19].

- Performance: This MK-DA-RF framework has been validated on open datasets containing BCI illiterate subjects, showing a reduction in inter-domain differences and improved performance for both cross-subject and cross-session scenarios [19].
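To make the distribution-alignment idea concrete, the sketch below estimates a squared maximum mean discrepancy under a mixture of RBF kernels. It is a simplified illustration and not the MK-DA-RF implementation from [19]: the equal kernel weights, the bandwidth values, and the toy feature vectors are all assumptions, and plain Python is used to keep the example dependency-free.

```python
import math


def rbf_kernel(x, y, gamma):
    """Gaussian (RBF) kernel between two feature vectors."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)


def multi_kernel(x, y, gammas):
    """Equal-weight average of RBF kernels with different bandwidths (an assumption)."""
    return sum(rbf_kernel(x, y, g) for g in gammas) / len(gammas)


def mk_mmd2(source, target, gammas=(0.1, 1.0, 10.0)):
    """Biased estimate of the squared MMD between two samples."""
    def mean_kernel(a, b):
        total = sum(multi_kernel(x, y, gammas) for x in a for y in b)
        return total / (len(a) * len(b))

    return (mean_kernel(source, source)
            + mean_kernel(target, target)
            - 2 * mean_kernel(source, target))


# Toy 2-D feature vectors standing in for source and target subject features.
source = [(0.0, 0.0), (0.1, -0.1), (-0.1, 0.1)]
near = [(0.05, 0.0), (0.0, 0.1), (-0.1, -0.05)]
far = [(2.0, 2.0), (2.1, 1.9), (1.9, 2.1)]
```

A domain adaptation method would then seek a feature transformation that drives this discrepancy toward zero between the source and target subjects while keeping the classes separable.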

Foundation Models and Brain State-Aware Learning

A more recent advancement involves building large-scale EEG foundation models pre-trained on massive, diverse datasets.

- BrainPro Model: This model introduces "brain state-aware" representation learning. It uses a shared encoder alongside multiple parallel, brain-state-specific encoders (e.g., for motor, emotion) with a decoupling loss. This design explicitly disentangles shared neural features from those specific to certain processes, which may be dysregulated in BCI illiteracy [22].

- Experimental Protocol: The model is pre-trained self-supervised on a large EEG corpus. For downstream tasks like motor imagery, it can flexibly combine the shared encoder with the relevant process-specific encoder (e.g., motor), allowing it to adapt more effectively to individual users' neural signatures [22].

- Performance: BrainPro reports state-of-the-art generalization across nine public BCI datasets, indicating a promising path toward models that are inherently more robust to the variability underlying BCI illiteracy [22].

The Scientist's Toolkit: Key Research Reagents

The following table catalogues essential datasets, algorithms, and software tools frequently used in BCI illiteracy and generalization research.

Table 2: Essential Research Reagents for BCI Generalization Studies

| Reagent / Resource | Type | Primary Function in Research | Example Use Case |
|---|---|---|---|
| BCI Competition IV Datasets (2a & 2b) | Public Dataset | Benchmark for evaluating cross-subject & cross-session algorithms. | Used to validate SSSTN and other domain adaptation methods [18]. |
| WBCIC-MI Dataset (62 subjects) | Public Dataset | Provides large-scale, high-quality MI data for training generalizable models. | Used to achieve high subject-specific accuracies with EEGNet/DeepConvNet [20]. |
| Common Spatial Pattern (CSP) | Algorithm | Spatial filter for extracting discriminative MI features from multi-channel EEG. | A base feature extraction method in selective pooling studies [3]. |
| EEGNet | Deep Learning Model | Compact convolutional neural network for EEG-based BCIs. | Used to establish baseline performance on new datasets (e.g., 85.32% on WBCIC-MI) [20]. |
| Multi-Kernel MMD (MK-MMD) | Algorithm | Measures and minimizes distribution discrepancy between source and target domains in a high-dimensional space. | Core component of the distribution adaptation framework for tackling BCI illiteracy [19]. |
| Random Forest (RF) | Algorithm | Classifier robust to high-dimensional features without need for extensive hyperparameter tuning. | Used as the final classifier in the MK-DA-RF framework after domain adaptation [19]. |
| OpenBMI Toolbox | Software Toolbox | Provides pre-processing, feature extraction, and classification pipelines for MI-BCI. | Facilitates reproducible research and comparative studies on BCI illiteracy [3]. |

The challenge of BCI illiteracy underscores a critical trade-off in BCI model validation. Subject-specific models offer a personalized solution at the cost of practicality, while naive cross-subject models offer convenience but fail for a significant portion of the population. The emergence of sophisticated strategies like selective subject pooling, domain adaptation, and large foundation models represents a paradigm shift towards a third way: building subject-independent systems that are intrinsically designed to handle human neurophysiological diversity.

The quantitative data reveals that the performance gap is substantial but not insurmountable. The success of these advanced methods hinges on their ability to identify and leverage stable, transferable neural features while explicitly modeling or correcting for inter-subject variability. Future research directions will likely involve the creation of even larger, more diverse public datasets, the refinement of brain-state-aware foundation models, and the integration of these generalization techniques into real-time, closed-loop BCI systems. Ultimately, overcoming BCI illiteracy is not merely about improving average accuracy metrics; it is about developing truly inclusive and robust neurotechnology that is accessible and reliable for all potential users.

A foundational challenge in cognitive neuroscience and brain-computer interface (BCI) development lies in selecting appropriate neural signal acquisition modalities that align with research goals and validation frameworks. Electroencephalography (EEG), electrocorticography (ECoG), and functional magnetic resonance imaging (fMRI) represent three dominant neuroimaging techniques, each with distinct strengths and limitations in spatial resolution, temporal resolution, and invasiveness [23] [24]. The choice among these modalities becomes particularly critical when considering model validation approaches—whether to develop subject-specific models tailored to individual neural patterns or cross-subject models that generalize across populations [1] [25]. Subject-specific models often achieve higher accuracy for individuals but require extensive calibration, while cross-subject models offer plug-and-play functionality at the potential cost of performance [26]. This analysis systematically compares EEG, ECoG, and fMRI across technical specifications, validation paradigms, and experimental applications to guide researchers in aligning acquisition modalities with their specific validation requirements in BCI and cognitive neuroscience research.

Technical Comparative Analysis of Acquisition Modalities

The selection of a neural signal acquisition modality involves navigating fundamental trade-offs between spatial resolution, temporal resolution, and invasiveness. Table 1 provides a detailed comparison of the core technical characteristics of EEG, ECoG, and fMRI.

Table 1: Technical specifications of EEG, ECoG, and fMRI

| Feature | EEG | ECoG | fMRI |
|---|---|---|---|
| Spatial Resolution | Low (centimeter-scale) | High (millimeter-scale) | High (millimeter-scale) |
| Temporal Resolution | High (millisecond-scale) | High (millisecond-scale) | Low (second-scale) |
| Invasiveness | Non-invasive | Invasive (subdural) | Non-invasive |
| Measured Signal | Electrical potentials from pyramidal neurons | Electrical potentials from cortical surface | Hemodynamic (BOLD) response |
| Primary Signal Source | Post-synaptic potentials | Local field potentials | Blood oxygenation level-dependent changes |
| Typical Coverage | Whole cortex | Localized cortical regions | Whole brain |
| Signal-to-Noise Ratio | Low | High | Medium |
| Portability | High | Low (clinical setting) | Low |

EEG measures electrical activity via electrodes placed on the scalp, providing excellent temporal resolution but limited spatial resolution due to signal smearing through skull and tissues [23] [27]. In contrast, ECoG records electrical activity directly from the cortical surface, bypassing the skull barrier to achieve both high temporal and spatial resolution, but requiring invasive surgical implantation typically only available in clinical populations such as epilepsy patients [23] [28]. fMRI measures brain activity indirectly through the hemodynamic response, providing excellent spatial resolution but poor temporal resolution due to the slow nature of blood flow changes [23] [24].

The relationship between these modalities can be quantitatively characterized. Studies comparing fMRI with invasive electrophysiological recordings indicate that the Blood Oxygen Level Dependent (BOLD) signal correlates most strongly with local field potentials (LFPs) rather than spiking activity, with particularly strong relationships to high-frequency ECoG power (gamma band, 28-56 Hz, and high frequencies, 64-116 Hz) [23] [24] [29]. Interestingly, the correlation between fMRI and electrical activity displays frequency-dependent characteristics, with positive correlations for high-frequency power and negative correlations for low-frequency power (theta, 4-8 Hz, and alpha, 8-12 Hz) across multiple task-related cortical structures [24] [29].

Performance Across Validation Approaches

Subject-Specific Validation

Subject-specific models are trained and validated on data from the same individual, maximizing personalization while requiring significant per-subject calibration. EEG has been successfully applied in subject-specific models for motor imagery classification, with deep learning approaches such as EEGNet and shallow ConvNet achieving high accuracy [26] [27]. Similarly, subject-specific ECoG models have demonstrated remarkable performance in natural language decoding, with encoding models using contextual word embeddings from large language models accounting for significant variance in neural responses [28]. fMRI-informed EEG models have also shown promise in subject-specific reward processing detection, where combined EEG-fMRI recordings enable the creation of personalized fingerprints of ventral striatum activation [30].

The reliability of subject-specific responses varies across modalities. For naturalistic stimuli, single-subject ECoG demonstrates high repeat-reliability (inter-viewing correlation), whereas single-subject EEG and fMRI show more variable responses, often requiring grand-averaging across subjects to achieve comparable reliability [24] [29]. This suggests that while all modalities can support subject-specific modeling, ECoG provides more robust single-subject signals, while EEG and fMRI may benefit from complementary approaches to enhance signal quality.

Cross-Subject Validation

Cross-subject validation presents greater challenges due to inter-individual variability in brain anatomy, physiology, and cognitive strategies [1] [25]. Table 2 compares modality performance across validation paradigms.

Table 2: Modality performance in different validation approaches

| Modality | Subject-Specific Accuracy | Cross-Subject Accuracy | Primary Challenges in Cross-Subject Application |
|---|---|---|---|
| EEG | Variable (requires calibration) | Lower; domain generalization (DG) methods report an improvement of about 8.93% over baselines [26] | High inter-subject variability, low signal-to-noise ratio |
| ECoG | High (limited data availability) | Limited evidence (small patient cohorts) | Limited subject pools, ethical constraints |
| fMRI | High (spatially precise) | Moderate (similar spatial organization) | Low temporal resolution, high cost limiting sample sizes |

EEG cross-subject models face significant challenges due to distribution shifts across individuals [25] [26]. Domain generalization approaches have emerged to address this, with methods that combine correlation alignment and knowledge distillation achieving an accuracy improvement of approximately 8.93% on BCI competition datasets [26]. Transfer learning techniques that pre-train on multiple source subjects before fine-tuning on target subjects have also shown promise in reducing calibration time [1].

For fMRI, cross-subject validation benefits from more consistent spatial organization across individuals, enabling successful application of encoding models across subjects when aligned to common anatomical templates [31]. However, the low temporal resolution limits applicability for decoding rapidly evolving cognitive processes.

Cross-subject ECoG validation is less common due to limited patient cohorts and variable electrode placement determined by clinical needs [28]. However, when electrode locations can be normalized to functional or anatomical regions, ECoG signals show promising generalization, particularly for high-gamma activity during language processing [28].

Hybrid and Integrated Approaches

Multimodal integration offers promising avenues for enhancing both subject-specific and cross-subject validation. Simultaneous EEG-fMRI recording has been used to create EEG fingerprints of deep brain structures like the ventral striatum, enabling scalable monitoring of reward processing with temporal precision [30]. Similarly, novel transformer-based encoding models have successfully integrated MEG and fMRI data to estimate cortical source activity with high spatiotemporal resolution, demonstrating improved prediction of ECoG signals compared to models trained solely on ECoG [31].

Figure: Multimodal integration framework for cross-subject validation.

This integrated approach addresses individual variability through subject-specific forward models while leveraging shared representations in a common source space, potentially offering a robust solution for cross-subject generalization [31].

Experimental Protocols and Methodologies

EEG Cross-Subject Validation Protocol

Domain generalization approaches for EEG involve specific methodological considerations. A representative protocol for motor imagery EEG classification includes:

- Data Partitioning: Implementing subject-based data splits is crucial to avoid data leakage. Nested-Leave-N-Subjects-Out (N-LNSO) cross-validation provides more realistic performance estimates compared to sample-based approaches [25].

- Feature Extraction: Spectral features across multiple frequency bands are extracted, often using wavelet transformations or filter banks. Knowledge distillation frameworks can then capture invariant representations across subjects [26].

- Domain Invariant Learning: Correlation alignment (CORAL) methods minimize distribution shifts between source domains by aligning second-order statistics of feature distributions [26].

- Regularization: Distance regularization enhances dissimilarity between different types of invariant features to reduce redundancy and improve generalization [26].

This protocol has demonstrated an 8.93% accuracy improvement on the BCI Competition IV 2a dataset compared to baseline approaches, highlighting the importance of proper experimental design in cross-subject EEG analysis [26].
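The correlation alignment step in the protocol above can be illustrated with the commonly used CORAL loss, the squared Frobenius distance between two feature covariance matrices scaled by 1/(4d²). The sketch below is a plain-Python illustration under that assumption; it is not the implementation from [26], and the function names and toy feature values are hypothetical.

```python
def covariance(features):
    """Sample covariance matrix; rows are trials, columns are features."""
    n, d = len(features), len(features[0])
    means = [sum(row[j] for row in features) / n for j in range(d)]
    return [
        [
            sum((row[i] - means[i]) * (row[j] - means[j]) for row in features) / (n - 1)
            for j in range(d)
        ]
        for i in range(d)
    ]


def coral_loss(source, target):
    """Squared Frobenius distance between covariances, scaled by 1 / (4 * d^2)."""
    d = len(source[0])
    cov_s, cov_t = covariance(source), covariance(target)
    frobenius_sq = sum(
        (cov_s[i][j] - cov_t[i][j]) ** 2 for i in range(d) for j in range(d)
    )
    return frobenius_sq / (4 * d * d)


# Toy features for three hypothetical subjects.
subject_a = [(1.0, 2.0), (2.0, 1.0), (3.0, 4.0), (4.0, 3.0)]
subject_b = [(2 * x, 2 * y) for x, y in subject_a]    # same shape, larger spread
subject_c = [(x + 10.0, y - 5.0) for x, y in subject_a]  # same spread, shifted mean
```

Because only second-order statistics are compared, the loss between subject_a and subject_c is zero even though their means differ, whereas the rescaled subject_b is penalized. In a training loop this term would typically be added to the classification loss for each pair of source subjects.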

fMRI-EEG Integration Protocol

The development of fMRI-informed EEG models follows a structured methodology:

- Simultaneous Acquisition: EEG and fMRI data are collected concurrently while participants engage with carefully designed stimuli, such as pleasurable music for reward system activation [30].

- Feature Mapping: Spectro-temporal features from EEG signals are mapped to BOLD activation in target regions (e.g., ventral striatum) using multivariate regression models [30].

- Model Validation: The resulting EEG fingerprint (e.g., VS-EFP for ventral striatum) is tested for specificity against control regions and predictive validity across different reward paradigms [30].

- Cross-modal Generalization: Successful models demonstrate ability to predict BOLD activity in new subjects using EEG alone, enabling scalable neural monitoring [30].

This approach exemplifies how multimodal integration can leverage the complementary strengths of different acquisition modalities—using fMRI's spatial precision to inform EEG-based models with superior temporal resolution.

Naturalistic Stimulus Encoding Protocol

For studying complex cognitive processes, naturalistic paradigms have gained traction across all three modalities:

- Stimulus Design: Extended naturalistic stimuli (e.g., audio podcasts, movie clips) are presented to engage authentic cognitive processing [28] [31].

- Feature Extraction: Multiple feature streams representing the stimulus are extracted, including mel-spectrograms for acoustic properties, phoneme representations for speech units, and contextual word embeddings from language models [28] [31].

- Encoding Models: Linear or neural network encoding models learn mappings from stimulus features to neural responses, often with regularization to handle high-dimensional feature spaces [28].

- Cross-subject Alignment: Anatomical normalization and functional alignment techniques enable model transfer across subjects despite individual differences in brain organization [31].

This protocol has revealed striking correspondences between neural responses to natural language across modalities, with contextual word embeddings from large language models accounting for significant variance in ECoG, EEG, and fMRI signals [28] [31].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential research reagents and resources for multimodal neuroscience

| Resource Category | Specific Tools | Function/Purpose |
|---|---|---|
| Software Libraries | MNE-Python, EEGLAB, FieldTrip | Preprocessing, analysis, and visualization of EEG/MEG data |
| Deep Learning Frameworks | EEGNet, Transformers, BENDR | Specialized architectures for neural signal decoding |
| Stimulus Feature Extractors | GPT-2 embeddings, Penn Phonetics Lab Forced Aligner, Mel-spectrogram extraction | Representing stimuli at multiple linguistic and acoustic levels |
| Neuroimaging Datasets | "Podcast" ECoG Dataset [28], BCI Competition IV 2a [26], OpenNeuro datasets | Benchmarking, model development, and comparative analysis |
| Anatomical Registration Tools | FreeSurfer, FSL, SPM | Cross-subject alignment and source space construction |

The comparative analysis of EEG, ECoG, and fMRI reveals a complex landscape where modality selection must align with validation approach priorities. EEG offers practical advantages for cross-subject applications requiring scalability and temporal resolution, despite challenges with signal quality and inter-subject variability. ECoG provides unparalleled spatiotemporal resolution for subject-specific modeling but remains limited to clinical populations. fMRI excels in spatial precision and whole-brain coverage but is constrained by temporal resolution and cost. The emerging paradigm of multimodal integration represents a promising direction, leveraging the complementary strengths of each modality to overcome individual limitations. For BCI validation frameworks, subject-specific approaches currently deliver higher performance, while cross-subject methods offer greater practical utility—with the optimal choice dependent on application requirements. Future advances will likely stem from improved cross-modal integration, sophisticated domain adaptation techniques, and larger-scale naturalistic datasets that better capture the neural dynamics underlying complex cognition.

Brain-Computer Interface (BCI) technology has emerged as a transformative tool, enabling direct communication between the brain and external devices by decoding neural signals. A central challenge impeding its widespread adoption is the significant variability in brain anatomy and electrophysiological signals across individuals [1]. This inter-subject variability means that BCI models painstakingly calibrated for one user often fail to generalize to new users, necessitating extensive recalibration and limiting practical utility [3]. Research indicates that approximately 10–30% of users cannot achieve sufficient classification accuracy, a phenomenon termed "BCI illiteracy," further highlighting the impact of individual differences [3].

Addressing this challenge requires a deep exploration of two core neurocomputational concepts: neural plasticity and common neural representations. Neural plasticity—the brain's ability to reorganize its structure and function—provides the foundation for users to learn to control BCIs. Simultaneously, the identification of stable common features in neural activity across different individuals is crucial for building models that work reliably for new users without subject-specific training [1]. This guide objectively compares the performance of subject-specific and cross-subject BCI approaches, examining their theoretical bases, experimental performance, and implications for the future of neurotechnology.

Core Concepts: Subject-Specific vs. Cross-Subject BCI Models

Subject-Specific BCI Models

Subject-specific models are trained exclusively on the neural data of a single individual. This approach designs the model to capture the unique electrophysiological "fingerprint" of that user, thereby maximizing performance for that person. The primary advantage is the potential for high single-subject accuracy, as the model is finely tuned to a specific signal profile. Its main drawback is the lack of generalizability and the practical burden of collecting extensive labeled data for every new user, making it less suitable for widespread clinical or consumer application [1] [3].

Cross-Subject BCI Models

Cross-subject models aim to create a universal decoder that can perform effectively on new, unseen users. These models are trained on data pooled from multiple individuals and seek to identify stable neural representations that are invariant across the population [1]. The key advantage is scalability, as they can theoretically be deployed for new users without any calibration. The central challenge lies in successfully disentangling these common features from confounding subject-specific signal characteristics. Promising strategies to achieve this include:

- Transfer Learning & Domain Adaptation: Fine-tuning a pre-trained model with small amounts of data from a new user [1].

- Deep Learning: Using architectures like EEGNet to automatically learn hierarchical features from raw data [1].

- Explicit Common Feature Extraction: Novel methods, such as the Cross-Subject DD (CSDD) algorithm, which statistically analyzes multiple subject-specific models to isolate pure common features [1].

- Selective Subject Pooling: Strategically choosing which subjects to include in the training pool based on their performance or signal quality, rather than using all available data [3].

Comparative Performance Analysis

The performance gap between subject-specific and cross-subject approaches is narrowing with advanced algorithms. The tables below summarize key experimental findings from recent studies.

Table 1: Performance Comparison of Subject-Specific vs. Cross-Subject Models on the BCIC IV 2a Dataset (Motor Imagery)

| Model Type | Specific Model Name | Reported Accuracy | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Cross-Subject | CSDD (Cross-Subject DD) [1] | Not Specified (3.28% improvement over peers) | Extracts pure common features; promotes generalizability | Novel method requiring further validation |
| Cross-Subject | Selective Subject Pooling [3] | Performance varies with pool selection | Reduces negative transfer from dissimilar subjects | Requires criteria for "good source" subject selection |
| Cross-Subject | Contrastive Learning (Emotion Recognition) [4] | Up to 97.70% (SEED dataset) | Learns invariant features; robust to label noise | Complex training procedure |

Table 2: Impact of Model Evaluation Protocols on Reported Performance [32] [25]

| Evaluation Protocol | Reported Accuracy Impact | Risk of Data Leakage | Real-World Generalizability |
|---|---|---|---|
| Sample-Wise K-Fold Cross-Validation | Inflated (Overestimation up to 30.4%) | High | Poor |
| Block-Wise or Subject-Wise Cross-Validation | More Realistic (Lower) | Low | Good |
| Nested Leave-N-Subjects-Out (N-LNSO) [25] | Most Realistic & Reliable | Very Low | Best |
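The difference between these protocols comes down to what unit is held out. The sketch below shows one plausible reading of a nested leave-N-subjects-out scheme, in which an outer loop holds out test subjects and an inner loop holds out validation subjects from the remainder. It is an illustration only; the function names, fold sizes, and subject labels are assumptions and are not taken from [25].

```python
def subject_folds(subjects, n_held_out):
    """Split the subject list into consecutive groups of n_held_out subjects."""
    return [subjects[i:i + n_held_out] for i in range(0, len(subjects), n_held_out)]


def nested_lnso(subjects, n_test, n_val):
    """Yield (train, val, test) subject lists; no subject plays two roles in a split."""
    for test in subject_folds(subjects, n_test):
        remaining = [s for s in subjects if s not in test]
        for val in subject_folds(remaining, n_val):
            train = [s for s in remaining if s not in val]
            yield train, val, test


# Hypothetical six-subject dataset.
subjects = ["S1", "S2", "S3", "S4", "S5", "S6"]
splits = list(nested_lnso(subjects, n_test=2, n_val=2))
```

Because every trial from a given subject stays on one side of each split, model selection on the validation subjects and final scoring on the test subjects never see data from the same person, which is the leakage that sample-wise K-fold permits.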

Experimental Protocols and Methodologies

The CSDD Algorithm for Extracting Common Features

The Cross-Subject DD (CSDD) algorithm proposes a novel, multi-stage workflow to explicitly extract and model common features across subjects [1].

- Subject-Specific Model Training: A personalized BCI model (e.g., based on a convolutional neural network) is first trained for each individual in the source pool.

- Transformation to Relation Spectrum: Each trained model is transformed into a representation called a "relation spectrum," which captures the learned features and relationships within the data.

- Statistical Analysis for Common Features: The collection of relation spectra from all subjects undergoes statistical analysis to identify features that are consistently present and stable across the majority of individuals.

- Universal Model Construction: A final, generalized BCI model is constructed based solely on the identified common features. This model is designed to be directly applicable to new, unseen subjects.

Figure 1: CSDD Algorithm Workflow. A four-stage process for building a cross-subject BCI model by extracting common neural features.
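The statistical step of this workflow can be illustrated with a deliberately simplified stand-in. The source does not specify how a relation spectrum is computed, so the sketch below assumes each subject-specific model has already been summarized as a vector of feature scores and simply keeps the features that are strong in most subjects. The function name, thresholds, and scores are hypothetical, and this is not the CSDD algorithm from [1].

```python
def common_feature_indices(spectra, strength=0.5, min_fraction=0.75):
    """Return indices of features that are strong in most subjects.

    spectra: one score vector per subject (a stand-in for a relation spectrum).
    strength: minimum absolute score for a feature to count as present.
    min_fraction: fraction of subjects in which the feature must be present.
    """
    n_subjects = len(spectra)
    n_features = len(spectra[0])
    common = []
    for j in range(n_features):
        votes = sum(1 for scores in spectra if abs(scores[j]) >= strength)
        if votes / n_subjects >= min_fraction:
            common.append(j)
    return common


# Hypothetical score vectors for four subjects and five features.
spectra = [
    [0.9, 0.1, 0.7, 0.0, 0.6],
    [0.8, 0.6, 0.6, 0.1, 0.2],
    [0.7, 0.0, 0.8, 0.2, 0.7],
    [0.9, 0.2, 0.1, 0.0, 0.6],
]
common = common_feature_indices(spectra)
```

A universal model would then be restricted to the retained features, on the expectation that they transfer to unseen subjects better than features that appear in only one or two individuals.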

Selective Subject Pooling Strategy

This methodology challenges the conventional practice of using all available subject data for training cross-subject models. It posits that pooling data from subjects with poor BCI performance or highly dissimilar signals can degrade model performance [3].

- Source Subject Evaluation: Each potential source subject's data is used to train a subject-specific model, whose performance is then evaluated.

- Pool Selection: A decision function g(X) is applied to determine whether a subject is a "good source." This function can be based on performance thresholds (e.g., classification accuracy > 70%) or neurophysiological markers (e.g., strength of sensorimotor rhythms).

- Model Training with Selected Pool: A subject-independent model is trained using data only from the selected pool of "good" subjects.

- Testing: The model's generalization is tested on held-out subjects from the same dataset or different datasets.

Figure 2: Selective Subject Pooling Workflow. A framework for improving cross-subject generalization by curating the training pool.
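
The pool-selection step can be sketched as a simple accuracy threshold. In this minimal example, the decision function is applied to each subject's subject-specific accuracy rather than to the raw data X, and the helper names (`accuracy_threshold`, `select_pool`) and all accuracy values are hypothetical; [3] may define g(X) differently.

```python
from typing import Callable, Dict, List


def accuracy_threshold(min_acc: float) -> Callable[[float], bool]:
    """Build a decision function g that accepts accuracies above min_acc."""
    def g(subject_accuracy: float) -> bool:
        return subject_accuracy > min_acc
    return g


def select_pool(subject_acc: Dict[str, float], g: Callable[[float], bool]) -> List[str]:
    """Return the IDs of subjects that g marks as good sources (sorted for stability)."""
    return sorted(s for s, acc in subject_acc.items() if g(acc))


# Hypothetical subject-specific accuracies from the source-evaluation step.
subject_acc = {"S1": 0.82, "S2": 0.55, "S3": 0.71, "S4": 0.70, "S5": 0.64}
pool = select_pool(subject_acc, accuracy_threshold(0.70))
print(pool)  # ['S1', 'S3']
```

Only subjects outside the held-out test set should be passed to the selection step, so that the curated pool does not leak information about the subjects used for testing.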

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Resources for BCI Model Validation Research

| Category / Item | Specific Examples / Details | Primary Function in Research |
|---|---|---|
| Public EEG Datasets | BCIC IV 2a [1], SEED, CEED, FACED, MPED [4] | Provides standardized, annotated data for training and benchmarking models across different tasks (motor imagery, emotion). |
| Signal Processing & Feature Extraction Tools | Common Spatial Patterns (CSP) [3], Filter Bank CSP (FBCSP) [32], Power Spectral Density (PSD) [4] | Extracts discriminative spatiotemporal features from raw, noisy EEG signals for classification. |
| Deep Learning Architectures | EEGNet [1], ShallowConvNet, DeepConvNet [25] | Provides end-to-end models that automatically learn relevant features from raw or pre-processed EEG data. |
| Cross-Validation Frameworks | Nested Leave-N-Subjects-Out (N-LNSO) [25], Leave-One-Subject-Out Cross-Validation (LOSOCV) [3] | Ensures robust and realistic estimation of model performance on unseen subjects, preventing data leakage. |
| Domain Adaptation Algorithms | HDNN-TL [1], SCDAN [1] | Mitigates the distribution shift between data from different subjects or sessions, improving transferability. |

The pursuit of robust cross-subject BCI models is fundamentally a quest to understand the balance between neural plasticity and common neural representations. While subject-specific models currently offer high performance for calibrated individuals, cross-subject approaches are rapidly advancing and hold the key to scalable, practical BCI systems. The development of sophisticated algorithms like CSDD for explicit common feature extraction [1] and strategic frameworks like selective subject pooling [3] demonstrates significant progress.

Future research must prioritize several key areas:

- Standardized and Rigorous Evaluation: Widespread adoption of robust cross-validation schemes like Nested-LNSO is critical to obtain realistic performance estimates and foster reproducibility [25].

- Explainable AI: Moving beyond "black box" models to understand what the identified common features represent neurophysiologically will build trust and guide algorithm development.

- Hybrid Approaches: Combining the strengths of cross-subject foundation models with lightweight, rapid subject-specific adaptation (transfer learning) presents a promising path forward [1].

By grounding model development in the theoretical foundations of neural plasticity and shared representations, the field can overcome the generalization challenge, ultimately enabling BCI technologies that are accessible and effective for a broad population.

Methodological Innovations and Clinical Translation Pathways

Brain-Computer Interface (BCI) technology enables direct communication between the brain and external devices, offering transformative potential in neurorehabilitation, assistive technology, and cognitive enhancement [33]. Electroencephalography (EEG)-based BCIs are particularly prominent due to their non-invasive nature, cost-effectiveness, and high temporal resolution [33] [34]. However, a significant challenge impedes their widespread adoption: poor cross-subject generalization. Traditional BCI models trained on individual users often fail when applied to new subjects due to substantial inter-individual variability in brain anatomy, neural activity patterns, and electrophysiological signals [1] [4]. This limitation necessitates extensive user-specific calibration, which is time-consuming, computationally expensive, and impractical for real-world applications [1] [25].

To address this fundamental challenge, research has increasingly focused on developing algorithms that can learn robust, generalizable neural representations across diverse individuals. Two promising approaches have emerged: the Cross-Subject DD (CSDD) algorithm, which systematically extracts common features across subjects to build a universal model [1], and universal semantic feature extraction frameworks, which leverage advanced deep learning architectures to learn task-independent representations directly from EEG data [34]. This guide provides a comprehensive comparison of these innovative approaches, detailing their methodologies, experimental protocols, and performance relative to other paradigms, within the critical context of cross-subject versus subject-specific BCI model validation research.

Comparative Analysis of CSDD and Alternative Approaches

The following table summarizes the core characteristics, strengths, and limitations of CSDD alongside other prominent approaches in cross-subject BCI research.

Table 1: Comparison of Cross-Subject BCI Algorithms

| Algorithm | Core Methodology | Reported Performance | Key Advantages | Primary Limitations |
|---|---|---|---|---|
| CSDD (Cross-Subject DD) [1] | Extracts common neural features via personalized model transformation and statistical analysis of relation spectrums. | 3.28% performance improvement over existing similar methods on the BCIC IV 2a dataset. | Directly targets cross-subject commonalities; novel "system filter" concept similar to Fourier filtering. | Relies on initial per-subject models; multi-stage process may be computationally complex. |
| Universal Semantic Feature Extraction [34] | Unsupervised framework integrating CNNs, Autoencoders, and Transformers to capture task-independent semantic features. | Avg. 83.50% on BCIC IV 2a (MI), 98.41% on Lee2019-SSVEP, Avg. AUC 91.80% on ERP datasets. | Task independence; robustness to inter-subject variability; supports various downstream analyses. | High computational demand; data-intensive due to Transformer architecture. |
| Cross-Subject Contrastive Learning (CSCL) [4] | Employs dual contrastive losses in hyperbolic space to learn subject-invariant emotion representations. | 97.70% (SEED), 96.26% (CEED), 65.98% (FACED), 51.30% (MPED) for emotion recognition. | Effective for cross-subject emotion recognition; handles label noise; captures hierarchical relationships. | Primarily validated on emotion tasks; performance varies significantly across datasets. |
| Hybrid Feature Learning [35] | Combines STFT-based spectral features with functional/structural brain connectivity features. | 86.27% and 94.01% accuracy for cross-session inter-subject attention classification on two datasets. | Incorporates brain connectivity; high interpretability; effective feature selection. | Limited to attention tasks; may not capture complex non-stationarities as effectively as deep learning. |

Detailed Experimental Protocols and Methodologies

The CSDD Algorithm Workflow

The CSDD framework constructs a universal BCI model through a structured, four-stage pipeline designed to distill common neural features from multiple individuals [1].

- Subject-Specific Transfer Learning (SSTL-PF): First, personalized BCI models are trained for each subject in the source domain. This often involves a pre-training and fine-tuning strategy, beginning with a universal feature extraction (UFE) base, typically an end-to-end Convolutional Neural Network (CNN) that processes raw EEG signals (∈ ℝ^{N×C×T}), where N is the number of trials, C the number of electrodes, and T the number of time points [1].

- Transformation to Relation Spectrums (TPM-RS): Each trained personalized model is not used directly for decoding. Instead, it is transformed into a standardized representation called a "relation spectrum." This critical step converts the subject-specific parameters into a comparable format [1].

- Common Feature Extraction (ECF-SA): Statistical analysis is applied across the collection of relation spectrums to identify and isolate features that are consistently present and stable across the majority of subjects. This step effectively filters out idiosyncratic, subject-specific neural patterns [1].

- Universal Model Construction (BCSDM-CF): The final, universal cross-subject decoding model is built based exclusively on the set of common features identified in the previous step. This model is designed to be applied directly to new, unseen subjects without further calibration [1].

Figure 1: CSDD Algorithm Workflow
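
The common-feature extraction stage can be illustrated with a toy example. The sketch below assumes each subject's relation spectrum is a fixed-length list of numbers and treats a component as "common" when it is strong in a sufficient fraction of subjects; the representation, the function name `common_feature_indices`, the thresholds, and the values are all assumptions made for illustration, since the statistical test used in [1] is not described here.

```python
from typing import Dict, List


def common_feature_indices(
    spectra: Dict[str, List[float]], strength: float, min_fraction: float
) -> List[int]:
    """Indices of components whose absolute value is >= strength
    in at least min_fraction of the subjects."""
    n_subjects = len(spectra)
    n_features = len(next(iter(spectra.values())))
    keep = []
    for k in range(n_features):
        votes = sum(1 for s in spectra.values() if abs(s[k]) >= strength)
        if votes / n_subjects >= min_fraction:
            keep.append(k)
    return keep


# Made-up relation spectra for four subjects, five components each.
spectra = {
    "S1": [0.9, 0.1, 0.7, 0.0, 0.6],
    "S2": [0.8, 0.6, 0.6, 0.1, 0.1],
    "S3": [0.7, 0.0, 0.8, 0.2, 0.2],
    "S4": [0.9, 0.1, 0.1, 0.0, 0.7],
}
print(common_feature_indices(spectra, strength=0.5, min_fraction=0.75))  # [0, 2]
```

Components 1 and 4 are strong only in a minority of subjects, so they are treated as idiosyncratic and dropped; a universal model in this toy setting would be built from components 0 and 2 only.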

Universal Semantic Feature Extraction Framework

This framework aims to learn a universal, task-independent feature representation from EEG signals in an unsupervised manner, making it robust to inter-subject variability [34]. Its architecture consists of three integrated components:

- Encoder: Processes preprocessed EEG segments. It typically uses one-dimensional Convolutional Neural Networks (1D-CNNs) to capture local spatial-temporal patterns. The resulting features are then passed through a multi-head self-attention mechanism that models global dependencies and contextual relationships across the entire signal segment [34].

- Bridging Layer: This is the core of the framework. A Lambda layer converts the encoder's high-dimensional output into the final latent "semantic features." These features are designed to abstract away low-level, task-specific details and instead encapsulate high-level, meaningful neural representations that are consistent across different tasks and subjects [34].

- Decoder: Mirrors the encoder structure and aims to reconstruct the original EEG signals from the latent semantic features. The quality of reconstruction provides a self-supervised signal that guides the training process, ensuring the semantic features retain the essential information from the input [34].

Figure 2: Universal Semantic Feature Extraction Framework
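
As a rough illustration of how the encoder's attention step relates every time step to every other one, the sketch below implements single-head scaled dot-product self-attention in plain Python. It is a simplification under stated assumptions: the learned query, key, and value projections are replaced by the identity, only one head is used, and the input is a made-up feature sequence rather than the 1D-CNN output described in [34].

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: Matrix) -> Matrix:
    """Single-head scaled dot-product self-attention with Q = K = V = x.

    x has one row per time step and one column per feature.
    """
    d = len(x[0])
    out = []
    for q in x:
        # Similarity of this time step to every time step, scaled by sqrt(d).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in x]
        weights = softmax(scores)
        # Each output row is a weighted average of all input rows.
        out.append([sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)])
    return out


# Three hypothetical time steps with two features each.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(features):
    print([round(v, 3) for v in row])
```

Each output row mixes information from the whole segment, which is the sense in which attention captures global dependencies that a local convolution kernel cannot.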

Critical Validation Protocols in Cross-Subject Research

Robust experimental validation is paramount. A key finding across recent literature is that the choice of data partitioning and cross-validation scheme dramatically impacts reported performance and generalizability [32] [25].

Table 2: Impact of Cross-Validation Schemes on Reported Performance (Based on [32])

| Classification Algorithm | Performance with Standard K-Fold | Performance with Block-Wise Splits | Reported Performance Difference |
|---|---|---|---|
| Filter Bank Common Spatial Pattern (FBCSP) with LDA | Inflated accuracy | Realistic accuracy | Up to 30.4% |
| Riemannian Minimum Distance (RMDM) | Inflated accuracy | Realistic accuracy | Up to 12.7% |

- The Problem of Temporal Dependencies: Standard K-fold cross-validation, which randomly splits data into training and testing sets, often leads to over-optimistic performance estimates. This occurs because samples from the same continuous recording block can end up in both training and test sets, allowing the model to learn temporal dependencies and artifacts (e.g., from sensor drift, gradual drowsiness) rather than genuine, generalizable neural patterns [32].

- Recommended Practices: For credible cross-subject validation, researchers should adopt:

  - Subject-Based Splits: Data from the same subject must not appear in both the training and test sets. The Leave-One-Subject-Out (LOSO) or Nested-Leave-N-Subjects-Out (N-LNSO) methods are considered best practices [25].

  - Block-Wise Splits: When validating within a subject's data, the splitting should respect the experimental block structure, ensuring entire blocks of trials are assigned to either training or testing to prevent data leakage [32].
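
The difference between block-wise and sample-wise splitting can be checked directly. The following sketch uses only the Python standard library; the session layout (4 blocks of 10 trials), the helper names, and the 75/25 random split are hypothetical choices for illustration, not the setup of [32].

```python
import random
from typing import List, Sequence, Set, Tuple


def block_wise_split(
    block_ids: Sequence[int], test_blocks: Sequence[int]
) -> Tuple[List[int], List[int]]:
    """Return (train_idx, test_idx) so that each block lands on one side only."""
    held_out = set(test_blocks)
    train_idx = [i for i, b in enumerate(block_ids) if b not in held_out]
    test_idx = [i for i, b in enumerate(block_ids) if b in held_out]
    return train_idx, test_idx


def shared_blocks(
    block_ids: Sequence[int], train_idx: Sequence[int], test_idx: Sequence[int]
) -> Set[int]:
    """Blocks contributing trials to both train and test (a leakage indicator)."""
    return {block_ids[i] for i in train_idx} & {block_ids[i] for i in test_idx}


# Hypothetical session: 4 recording blocks with 10 trials each.
block_ids = [b for b in range(4) for _ in range(10)]

# Block-wise split: the whole of block 3 is held out.
train_idx, test_idx = block_wise_split(block_ids, test_blocks=[3])
print(shared_blocks(block_ids, train_idx, test_idx))  # set()

# Sample-wise split for contrast: shuffle trials, hold out 25% of them.
rng = random.Random(0)
idx = list(range(len(block_ids)))
rng.shuffle(idx)
rand_train, rand_test = idx[:30], idx[30:]
print(sorted(shared_blocks(block_ids, rand_train, rand_test)))
```

A non-empty result for the shuffled split means that trials recorded minutes apart in the same block sit on both sides of the split, which is the condition under which slow drifts can inflate accuracy.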

Table 3: Essential Resources for Cross-Subject BCI Research

| Resource Category | Specific Examples | Function and Application |
|---|---|---|
| Benchmark EEG Datasets | BCIC IV 2a [1], SEED, CEED, FACED, MPED [4] | Standardized public datasets for training and, most importantly, benchmarking algorithm performance against the state-of-the-art in a fair and reproducible manner. |
| Signal Processing & Feature Extraction | Short-Time Fourier Transform (STFT) [35], Discrete Wavelet Transform (DWT) [36], Power Spectral Density (PSD) [4], Functional Connectivity (e.g., PLV) [35] | Methods to convert raw, noisy EEG signals into meaningful, discriminative features for classification. Hybrid features (spectral + connectivity) show promise for cross-subject tasks [35]. |
| Core Machine Learning Models | EEGNet, ShallowConvNet, DeepConvNet [25], CNN-Autoencoder-Transformer [34], SVM [35] | Established model architectures that serve as strong baselines (EEGNet) or form the core of novel frameworks (CNN-Autoencoder-Transformer). |
| Validation & Statistical Tools | Nested-Leave-N-Subjects-Out (N-LNSO) Cross-Validation [25], Bootstrapped Confidence Intervals [32] | Critical protocols for obtaining realistic performance estimates, ensuring model generalizability, and providing statistically robust results. |
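
The bootstrapped confidence intervals listed in the table can be computed with a few lines of standard-library Python. This is a minimal percentile-bootstrap sketch that resamples per-subject accuracies with replacement; the function name `bootstrap_ci`, its defaults, and the accuracy values are illustrative assumptions, and [32] may use a different bootstrap variant.

```python
import random
from typing import Sequence, Tuple


def bootstrap_ci(
    values: Sequence[float], n_boot: int = 2000, alpha: float = 0.05, seed: int = 0
) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for the mean of `values`."""
    rng = random.Random(seed)
    n = len(values)
    means = []
    for _ in range(n_boot):
        # Resample n values with replacement and record the resampled mean.
        sample = [values[rng.randrange(n)] for _ in range(n)]
        means.append(sum(sample) / n)
    means.sort()
    lower = means[int((alpha / 2) * n_boot)]
    upper = means[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Made-up per-subject accuracies, e.g., one value per held-out subject.
per_subject_acc = [0.62, 0.71, 0.55, 0.80, 0.67, 0.74, 0.59, 0.69, 0.77]
low, high = bootstrap_ci(per_subject_acc)
print(f"mean={sum(per_subject_acc) / len(per_subject_acc):.3f}, 95% CI=({low:.3f}, {high:.3f})")
```

Resampling at the subject level, rather than the trial level, keeps the interval consistent with the subject-wise validation protocols recommended above.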