Cross-Participant Generalization in Neural Decoding: Advances, Challenges, and Future Directions


Abstract

This article provides a comprehensive analysis of cross-participant generalization for neural decoding models, a critical challenge in developing robust brain-computer interfaces (BCIs) and clinical neurotechnologies. We explore the foundational principles of neural decoding and the inherent barriers to subject-invariant model performance, including neural signal heterogeneity and inter-individual variability. The review covers cutting-edge methodological solutions—from self-supervised learning and transformer architectures to multimodal data fusion—that enhance model generalizability across diverse populations. We present rigorous validation frameworks and comparative performance benchmarks across decoding tasks, from inner speech recognition to visual reconstruction. Finally, we discuss persistent optimization challenges and future research directions aimed at creating truly generalizable neural decoding systems for transformative biomedical applications.

The Core Challenge: Understanding Cross-Participant Variability in Neural Signals

Defining Neural Decoding and the Generalization Problem

Neural decoding is a neuroscience field concerned with the reconstruction of sensory stimuli, cognitive states, or behavioral outputs from information that has already been encoded and represented in the brain by networks of neurons [1]. In essence, it is a mathematical mapping from brain activity to the outside world, serving as the inverse process of neural encoding, which maps the outside world to brain activity [2]. This "mind reading" capability enables researchers to predict what sensory stimuli a subject is receiving or what actions they intend to perform based purely on neural action potentials [1] [2].

The process operates on the fundamental principle that neurons encode information through varying spike rates or temporal patterns, and that these patterns contain decipherable information about external stimuli or internal states [1]. The relationship between encoding and decoding is formally represented in Bayesian terms, where decoding involves calculating P(stimulus|response) using knowledge of the encoding scheme P(response|stimulus), the probability of particular stimuli P(stimulus), and the general probability of neural responses P(response) [2].
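
As a minimal sketch of the Bayesian relationship above, the posterior can be written as P(stimulus|response) = P(response|stimulus) P(stimulus) / P(response). The Python below applies it to a single hypothetical neuron whose spike counts are assumed to be Poisson distributed. The tuning rates, the flat prior, and the function names are illustrative assumptions, not values or methods taken from the cited work.

```python
import math

def poisson_pmf(count, rate):
    """P(response | stimulus): probability of `count` spikes given a mean rate."""
    return math.exp(-rate) * rate ** count / math.factorial(count)

def decode_posterior(count, rates, prior):
    """Return P(stimulus | response) for every stimulus via Bayes' rule."""
    # Numerator: P(response | stimulus) * P(stimulus)
    joint = {s: poisson_pmf(count, rates[s]) * prior[s] for s in rates}
    # Denominator: P(response), summed over all candidate stimuli
    evidence = sum(joint.values())
    return {s: joint[s] / evidence for s in joint}

# Hypothetical mean spike counts per stimulus (assumed for illustration)
rates = {"left": 2.0, "right": 8.0}
prior = {"left": 0.5, "right": 0.5}

posterior = decode_posterior(7, rates, prior)
best = max(posterior, key=posterior.get)
print(best, round(posterior[best], 3))
```

With a population of neurons, the same rule would typically be applied to the product of per-neuron likelihoods, under an added assumption of conditional independence.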

The Cross-Participant Generalization Challenge

Defining the Problem

The generalization problem in neural decoding refers to the significant challenge of creating models that maintain performance when applied to new participants, experimental sessions, or tasks beyond those used during training. This challenge arises because neural representations exhibit substantial variability across individuals due to differences in neuroanatomy, functional organization, and cognitive strategies [3] [4].

Cross-participant generalization stands in contrast to within-participant approaches, where separate classifiers are built for each individual. While within-participant analyses identify brain regions with consistent functional roles within individuals, cross-participant approaches reveal aspects of brain organization that generalize across individuals [3]. Research indicates these approaches provide distinct information about brain function, with cross-participant analyses often implicating additional brain regions beyond those identified in within-participant studies [3].

Technical and Biological Foundations

The generalization problem is compounded by several technical and biological factors:

Spatial Resolution Limitations: The number of neurons needed to reconstruct stimuli with reasonable accuracy depends on recording methods and brain areas being recorded. Limited sampling problems mean researchers can never completely account for error associated with noisy data from stochastically functioning neurons [1].

Temporal Precision Requirements: Neural systems operate with millisecond precision throughout sensory and motor areas, demanding models that can perform at these temporal scales while maintaining generalization capabilities [1].

Representational Alignment: When applied to neural mass signals such as LFP, MEG, or fMRI, pattern generalization is susceptible to confounds due to spatial mixing, making it difficult to draw valid conclusions about underlying neural representations [4].

Model Architectures and Generalization Performance

Evolution of Neural Decoding Approaches

Traditional neural decoding models have evolved from simple probabilistic approaches to sophisticated deep learning architectures:

Probabilistic Decoders include spike train number coding, instantaneous rate coding, temporal correlation coding, and Ising decoders, which use statistical relationships to reconstruct stimuli from neural responses [1]. These approaches form the mathematical foundation for neural decoding but often lack the flexibility for robust cross-participant generalization.

Recurrent Neural Networks (RNNs) offer fast, low-latency inference on sequential data with strong task-specific performance but struggle with generalization to new subjects due to rigid input formats requiring fixed-size, time-binned inputs [5].

Transformer-based Architectures provide greater flexibility through adaptable neural tokenization approaches and have demonstrated impressive generalization capabilities through large-scale pretraining. However, they face challenges in real-time applications due to quadratic computational complexity [5].

Emerging Hybrid Architectures

Recent research has introduced hybrid models that combine the strengths of different architectural components:

POSSM (POYO-SSM) represents a novel hybrid architecture that combines individual spike tokenization via a cross-attention module with a recurrent state-space model (SSM) backbone [5]. This design enables fast, causal online prediction while supporting efficient generalization to new sessions, individuals, and tasks through multi-dataset pretraining.

The model operates at millisecond-level resolution by tokenizing individual spikes using both neural unit identity and precise timestamps, then processes these tokens through a cross-attention encoder that projects variable numbers of spikes to a fixed-size latent space before sequential processing via the SSM [5].
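
The sketch below illustrates only the general idea described above: one token per spike, followed by cross-attention that maps a variable number of tokens onto a fixed number of latent vectors. It is not the POSSM implementation. The sizes, the additive sinusoidal time code, the random weights, and the omission of key/value projections and of the SSM backbone are all simplifying assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
N_UNITS, D_MODEL, N_LATENTS = 32, 16, 4  # hypothetical sizes

# These would be learned in a real model; random here for illustration
unit_embedding = rng.normal(size=(N_UNITS, D_MODEL))
time_proj = rng.normal(size=(2, D_MODEL))
latent_queries = rng.normal(size=(N_LATENTS, D_MODEL))

def tokenize(unit_ids, timestamps, period=0.05):
    """One token per spike: unit embedding plus a sinusoidal timestamp code."""
    phase = 2 * np.pi * np.asarray(timestamps) / period
    time_feat = np.stack([np.sin(phase), np.cos(phase)], axis=1)
    return unit_embedding[np.asarray(unit_ids)] + time_feat @ time_proj

def cross_attention(tokens):
    """Project any number of spike tokens onto a fixed set of latent vectors."""
    scores = latent_queries @ tokens.T / np.sqrt(D_MODEL)  # (N_LATENTS, n_spikes)
    scores -= scores.max(axis=1, keepdims=True)            # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True)          # softmax over spikes
    return weights @ tokens                                # (N_LATENTS, D_MODEL)

# Three spikes and fifty spikes both map to the same latent shape
few = cross_attention(tokenize([3, 7, 7], [0.001, 0.004, 0.012]))
many = cross_attention(
    tokenize(rng.integers(0, N_UNITS, 50), rng.uniform(0, 0.05, 50))
)
print(few.shape, many.shape)
```

Because the output shape does not depend on the spike count, a downstream recurrent model can consume one fixed-size latent block per time chunk regardless of how many units fired.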

Table 1: Comparative Performance of Neural Decoding Architectures

| Model Architecture | Cross-Participant Generalization | Inference Speed | Computational Demand | Key Limitations |
|---|---|---|---|---|
| Traditional RNNs | Limited | Fast | Low | Fixed input formats; poor session transfer |
| Transformer-based | Strong through pretraining | Slow | High (quadratic complexity) | Computationally prohibitive for long sequences |
| POSSM (Hybrid SSM) | Strong through multi-dataset pretraining | Fast (up to 9× faster than Transformers) | Moderate | Emerging approach; requires validation across domains |

Quantitative Performance Comparison

Cross-Species and Cross-Task Generalization

Recent advances have demonstrated remarkable generalization capabilities across previously challenging domains:

Cross-Species Transfer: POSSM exhibits the ability to transfer knowledge from non-human primates to humans. When pretrained on diverse monkey motor-cortical recordings and fine-tuned on human data, the model achieves state-of-the-art performance decoding imagined handwritten letters from human cortical activity [5]. This highlights the transferability of neural dynamics across primate species and suggests the potential for leveraging abundant non-human data to augment limited human datasets.

Cross-Task Generalization: Hybrid architectures maintain performance across disparate tasks including intracortical decoding of monkey motor tasks, human handwriting decoding, and speech decoding [5]. The same architecture achieves decoding accuracy comparable to state-of-the-art Transformers while significantly reducing inference costs (up to 9× faster on GPU) across these varied applications [5].

Table 2: Generalization Performance Across Experimental Paradigms

| Generalization Type | Performance Metrics | Key Findings | Experimental Support |
|---|---|---|---|
| Cross-Subject | Matching or outperforming within-subject baselines | Linear transforms or brief fine-tuning sufficient for adaptation | [5] [6] |
| Cross-Species | State-of-the-art on human handwriting decoding | Pretraining on monkey data improves human decoding | [5] |
| Cross-Task | Maintained accuracy on motor, handwriting, and speech tasks | Up to 9× faster inference than Transformers on GPU | [5] |
| Cross-Modality | Speech-to-text with 8.3% WER on zero-shot tasks | Hierarchical GRU decoder with CTC supervision | [6] |
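
Word error rate (WER), the metric reported in the Cross-Modality row, is conventionally the word-level edit distance between the decoded sentence and the reference, divided by the number of reference words. The sketch below shows that standard definition; the example sentences are invented and are not drawn from any cited dataset.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] = edit distance between the reference prefix so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)

# One word dropped out of a five-word reference
wer = word_error_rate("i would like some water", "i would like water")
print(wer)
```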

Impact of Scaling Laws

Recent evidence suggests that neural decoding models, particularly those leveraging large language models (LLMs), follow scaling laws: performance increases with model size, training data, and computational budget [7]. Studies report that both brain encoding models and pre-trained LLMs improve as parameter counts grow, which suggests that larger systems, given sufficient data, are better able to bridge brain activity patterns and human linguistic representations [7].

Experimental Protocols and Methodologies

Cross-Subject Decoding for Speech BCIs

Objective: To investigate cross-subject generalization for speech brain-computer interfaces by training neural-to-phoneme decoders jointly across multiple participants and datasets [6].

Datasets: Utilize the two largest intracortical speech datasets (Willett et al. 2023; Card et al. 2024) with an independent inner-speech dataset (Kunz et al. 2025) for validation [6].

Alignment Method: Implement day- and dataset-specific affine transforms to align neural activity into a shared feature space across participants [6].

Model Architecture: Employ a hierarchical GRU decoder with intermediate Connectionist Temporal Classification (CTC) supervision and feedback connections to mitigate the conditional-independence assumption of standard CTC loss [6].

Evaluation: Assess performance on within-subject baselines, adaptation to unseen subjects using linear transforms or brief fine-tuning, and generalization to inner speech paradigms [6].
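
The alignment step in this protocol can be pictured as fitting one affine map per day or dataset that carries that session's features into a shared space. The sketch below fits such a map by ordinary least squares on synthetic paired data. This is an assumed stand-in chosen for brevity: the cited work presumably learns its transforms together with the decoder, and the dimensions, noise level, and function name here are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)

def fit_affine(source, target):
    """Least-squares W, b such that source @ W + b approximates target."""
    augmented = np.hstack([source, np.ones((len(source), 1))])  # bias column
    solution, *_ = np.linalg.lstsq(augmented, target, rcond=None)
    return solution[:-1], solution[-1]

# Synthetic stand-in: one session's 8-D features and their shared-space targets
session = rng.normal(size=(200, 8))
true_W = rng.normal(size=(8, 8))
shared = session @ true_W + 0.5 + 0.01 * rng.normal(size=(200, 8))

W, b = fit_affine(session, shared)
aligned = session @ W + b
error = np.mean((aligned - shared) ** 2)
print(W.shape, b.shape, error)
```

For an unseen participant, only `W` and `b` would need to be estimated while the shared decoder stays frozen, which is the intuition behind adaptation with linear transforms.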

Multivariate Pattern Analysis (MVPA) for Visual Chunk Memory

Objective: To identify neural correlates of chunk memory processes during visual statistical learning using time-resolved multivariate pattern analysis [8].

Experimental Design: Present visual statistical learning tasks while recording EEG, then analyze during both learning and decision-making phases [8].

Temporal Feature Extraction: Identify specific components in learning stages (P100, P200, P600) and decision-making phases (P100, P200, P400, P600) corresponding to distinct cognitive processes [8].

Analysis Approach: Combine univariate analysis (GFP) with multivariate pattern analysis (MVPA) to establish neural activity patterns of early chunk memory processes [8].

Validation: Correlate behavioral results with neural space representations during decision-making conditions to establish functional significance [8].
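
To make the two analysis steps concrete, the sketch below computes global field power (GFP, the standard deviation across channels at each time point) and a simple time-resolved decoding curve on synthetic epochs. The data, the nearest-class-mean classifier, and the leave-one-trial-out scheme are assumptions made for illustration; the cited study's classifier and cross-validation settings are not specified here.

```python
import numpy as np

rng = np.random.default_rng(2)
n_trials, n_channels, n_times = 80, 16, 50

# Synthetic epochs: class 1 carries an added spatial pattern from sample 20 on
labels = np.repeat([0, 1], n_trials // 2)
epochs = rng.normal(size=(n_trials, n_channels, n_times))
pattern = rng.normal(size=n_channels)
epochs[labels == 1, :, 20:] += pattern[:, None]

def global_field_power(evoked):
    """GFP: standard deviation across channels at each time point."""
    return evoked.std(axis=0)

def time_resolved_accuracy(X, y):
    """Leave-one-trial-out nearest-class-mean decoding at every time point."""
    acc = np.zeros(X.shape[2])
    for t in range(X.shape[2]):
        correct = 0
        for i in range(len(y)):
            train = np.arange(len(y)) != i
            means = [X[train & (y == c), :, t].mean(axis=0) for c in (0, 1)]
            dists = [np.linalg.norm(X[i, :, t] - m) for m in means]
            correct += int(np.argmin(dists) == y[i])
        acc[t] = correct / len(y)
    return acc

gfp = global_field_power(epochs[labels == 1].mean(axis=0))
accuracy = time_resolved_accuracy(epochs, labels)
print(accuracy[:20].mean(), accuracy[20:].mean())
```

Decoding accuracy should sit near chance before the pattern onset and rise afterwards, which is the kind of temporal profile that time-resolved MVPA is used to reveal.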

Signaling Pathways and Model Architecture

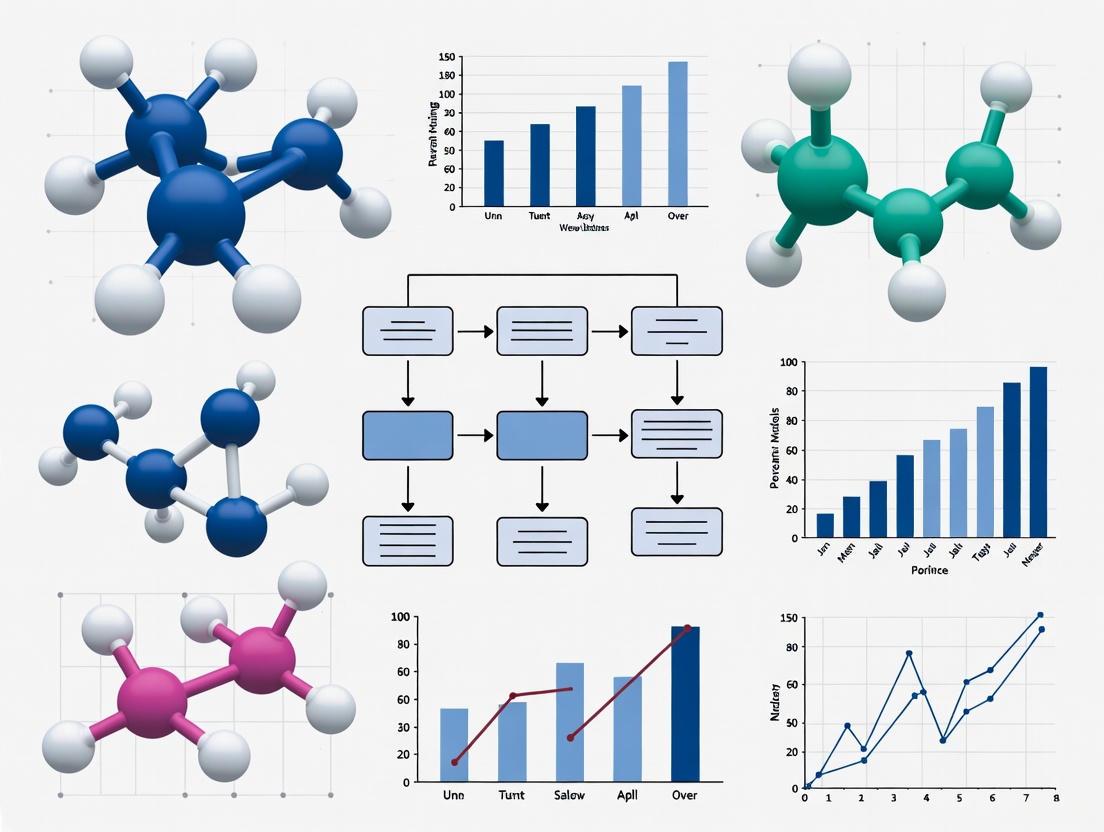

Neural Encoding-Decoding Loop

Cross-Participant Generalization Framework

Research Reagent Solutions Toolkit

Table 3: Essential Research Tools for Neural Decoding Studies

| Research Tool | Function | Application in Generalization Studies |
|---|---|---|
| High-Density Multi-Electrode Arrays | Record from hundreds of neurons simultaneously | Capture population coding across brain regions [1] |
| Electrocorticography (ECoG) | Measure electrical activity from cortical surface | High signal-to-noise ratio for speech decoding [7] [6] |
| Functional MRI (fMRI) | Measure blood oxygenation changes | Investigate distributed representations across participants [3] |
| Magnetoencephalography (MEG) | Measure magnetic fields from neural activity | Temporal precision for language decoding [7] |
| Spike Sorting Algorithms | Identify individual neuron spike times | Enable precise tokenization for models like POSSM [5] |
| POYO Tokenization | Represent spikes with unit ID and timestamp | Flexible input processing for cross-participant modeling [5] |
| Affine Transform Layers | Align neural features across participants | Create shared representation spaces [6] |
| Connectionist Temporal Classification (CTC) | Train sequence models without alignment | Speech decoding with variable input-output lengths [6] |

The generalization problem in neural decoding remains a significant challenge but shows promising pathways toward solutions through hybrid architectures, multi-dataset pretraining, and cross-species transfer learning. The emergence of models capable of maintaining performance across participants, tasks, and even species indicates progress toward clinically deployable brain-computer interfaces and foundational insights into neural computation.

Future research directions should focus on developing more sophisticated alignment techniques, expanding cross-species datasets, and establishing standardized evaluation benchmarks for generalization performance. As scaling laws suggest continued improvement with model and data size, the field appears poised to overcome many current limitations in cross-participant neural decoding.

Inter-subject variability, the natural differences in brain anatomy and function between individuals, presents a central challenge and opportunity in neuroscience. Far from being mere noise, this variability is increasingly recognized as a critical data source for understanding human abilities, disabilities, and differential treatment outcomes [9]. In the specific context of neural decoding models, which aim to interpret brain activity, this variability directly impacts cross-participant generalization performance—a core hurdle in developing robust brain-computer interfaces (BCIs) and clinical applications [10]. The brain functions as a noisy plastic system, where each individual embodies a unique parameterization shaped by genetics and experience, inevitably producing diverse neural responses to identical tasks or stimuli [9]. This article systematically compares the key sources of inter-subject variability—neuroanatomical, physiological, and cognitive—and details the experimental methodologies employed to quantify them, providing a foundational guide for researchers and drug development professionals working to advance personalized neuroscience.

Neuroanatomical variability forms the structural basis for functional differences observed across individuals. This encompasses differences in both the gray matter architecture, such as cortical thickness and density, and the white matter circuitry that defines neural pathways [9]. These structural differences are not merely academic; they directly constrain and shape the functional networks that underlie all cognitive processes.

Table 1: Key Neuroanatomical Factors Contributing to Inter-Subject Variability

| Factor Category | Specific Measures | Impact on Neural Decoding |
|---|---|---|
| Gray Matter Architecture | Cortical thickness, Gray matter density, Morphological anatomy [9] | Influences local processing capacity and signal strength. |
| White Matter Pathways | Tractography, Myelination, Callosal topography [9] | Affects speed and efficiency of communication between brain regions. |
| Network Topology | Functional connectivity, Structural connectivity [9] [10] | Determines the unique functional organization of large-scale brain networks. |
| Neurotransmitter Systems | Receptor/transporter distribution [11] | Modulates neural excitability and synaptic plasticity, affecting overall brain dynamics. |

Quantifying these anatomical differences is crucial for interpreting neuroimaging data. A transdiagnostic study of psychiatric disorders identified four robust neuroanatomical differential factors (ND factors) that capture shared patterns of gray matter volume variation across diagnoses [11]. This demonstrates that individual morphological profiles can be represented as a unique linear combination of common underlying factors, preserving inter-individual variation while identifying shared neurobiological mechanisms [11].

[Figure: workflow for analyzing individualized structural variations to identify common factors across a population]

Physiological variability refers to differences in the dynamic, often state-dependent, functions of the brain and body that influence neural signals. This includes fluctuations in brain rhythms, neurovascular coupling, and metabolic processes [9]. In practical applications like electroencephalography (EEG)-based brain-computer interfaces, this manifests as significant intra- and inter-subject variability in sensorimotor rhythms, creating a "covariate shift" in data distributions that severely impedes model transferability across sessions and subjects [10].

The non-stationarity of brain signals—meaning their statistical properties change over time—is a fundamental characteristic of a healthy, plastic brain but poses a substantial challenge for consistent neural decoding [10]. This is compounded by the fact that motor variability, often considered noise, is actually an integral part of the motor learning process itself [10].

Table 2: Experimental Protocols for Assessing Physiological Variability

| Experimental Paradigm | Primary Metrics | Data Modality | Key Insights |
|---|---|---|---|
| Stabilography | Ellipse area, Center of pressure path, Symmetry index [12] | Biomechanical force plate | Demonstrates diverse repeatability; ellipse area is least stable (%SD=45.79), symmetry is most stable (%SD=4.60) [12]. |
| Sensorimotor BCI | Event-related desynchronization/synchronization (ERD/S), Covariate shift magnitude [10] | EEG | Time-variant and individualized neurophysiological characteristics significantly impact BCI performance and generalization [10]. |
| Cross-Task EEG Decoding | Response time prediction accuracy, Psychopathology score regression [13] | EEG (HBN-EEG dataset) | Challenges in generalizing across subjects and cognitive paradigms (e.g., Resting State, Movie Watching, Symbol Search) [13]. |
| Cortical Microcircuit Simulation | Spike rates, Spike train irregularity, Correlations [14] | Simulated neural networks (SpiNNaker, NEST) | Benchmarks accuracy and performance of simulators in replicating biological variability for large-scale networks (~80k neurons) [14]. |

The complexity of capturing this variability is a key driver behind initiatives like the 2025 EEG Foundation Challenge, which focuses specifically on cross-task transfer learning and subject-invariant representation to build models that can generalize across different subjects and experimental conditions [13].

Cognitive strategies represent a higher-order source of inter-subject variability, where individuals employ different mental approaches to solve the same task. This is a dominant source of inter-subject variability and arises from degeneracy, the capacity for different neural pathways to produce the same functional output [9]. For example, when asked to calculate the sum of integers from 1 to 8, subjects may use at least three distinct strategies: step-by-step addition, a multiplication-based shortcut (8×9/2 = 36), or direct recall from memory [9]. Each strategy recruits distinct cognitive processes and neural activation patterns.

This strategic diversity has profound implications for experimental design and analysis. When data from subjects using different strategies are averaged, the result can be a hybrid activation map containing both false negatives (where variable features cancel out) and false positives (where feature combinations create illusory patterns) [9]. This is further complicated by individual differences in cognitive style, expectation, and subjective judgment, all of which modulate both brain function and underlying structure over time [9].

Methodological Approaches and The Scientist's Toolkit

Addressing inter-subject variability requires a multifaceted methodological approach. Normative modeling has emerged as a powerful technique to quantify individualized deviations by constructing reference models based on healthy population data, against which individual cases can be compared [11]. Similarly, Group Independent Component Analysis (GICA) provides a data-driven framework for identifying group-level spatial components that can be back-projected to estimate subject-specific components, effectively capturing between-subject differences in spatial, temporal, and amplitude domains [15].
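
At its simplest, the normative-modeling idea reduces to scoring each individual against the distribution of a reference cohort. The sketch below computes per-region z-scores from invented cortical-thickness values. It is a deliberately reduced illustration: published normative models usually also condition on covariates such as age and sex, and the region names, numbers, and the threshold of 2 are assumptions.

```python
import statistics

def deviation_scores(reference, individual):
    """Z-score each regional measure of one individual against a reference cohort."""
    scores = {}
    for region, values in reference.items():
        mean = statistics.mean(values)
        sd = statistics.stdev(values)  # sample standard deviation of the cohort
        scores[region] = (individual[region] - mean) / sd
    return scores

# Hypothetical cortical-thickness values in mm (invented for illustration)
reference = {
    "frontal": [2.5, 2.6, 2.4, 2.7, 2.5],
    "temporal": [2.8, 2.9, 2.7, 3.0, 2.8],
}
individual = {"frontal": 2.1, "temporal": 2.85}

z = deviation_scores(reference, individual)
flagged = [region for region, score in z.items() if abs(score) > 2]
print(flagged)
```

The resulting deviation map, one score per region per person, is the kind of individualized input that factorization methods can then decompose into shared factors.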

Simulation tools are indispensable for testing the limits of these methods. The SimTB toolbox allows researchers to generate simulated fMRI data with parameterized variability, enabling systematic evaluation of analytic methods under controlled conditions [15]. For large-scale neural network simulation, both software like NEST and neuromorphic hardware like SpiNNaker are used, with studies showing they can achieve similar accuracy in simulating full-scale cortical microcircuits, despite different underlying architectures and power consumption profiles [14].

Table 3: Research Reagent Solutions for Variability Research

| Tool / Resource | Type | Primary Function in Variability Research |
|---|---|---|
| SimTB Toolbox [15] | Software (MATLAB) | Generates simulated fMRI data with parameterized inter-subject variability to test analytic methods. |
| HBN-EEG Dataset [13] | Dataset | Provides EEG from 3,000+ participants across 6 tasks for evaluating cross-subject/model generalization. |
| Group ICA (GICA) [15] | Analytical Method | Decomposes multi-subject data into group and individual-level components to capture variability. |
| Non-negative Matrix Factorization (NMF) [11] | Analytical Method | Identifies underlying neuroanatomical factors from individualized deviation maps. |
| NEST Simulator [14] | Software | Simulates large-scale neural network models with biological time scales on HPC clusters. |
| SpiNNaker Hardware [14] | Neuromorphic Hardware | Enables real-time simulation of large neural networks with low power consumption. |
| Normative Modeling [11] | Analytical Framework | Constructs statistical models of normal brain function to quantify individual deviations. |

A critical advancement in the field is the move toward transfer learning and subject-invariant representations in neural decoding models. The 2025 EEG Foundation Challenge explicitly encourages the development of models that use unsupervised or self-supervised pretraining to capture general latent EEG representations, which can then be fine-tuned for specific supervised objectives to achieve generalization across subjects [13]. This approach is vital for reducing the reliance on tedious per-subject calibration sessions and moving toward plug-and-play BCIs.

Inter-subject variability in neuroanatomy, physiology, and cognition is not an obstacle to be overcome but a fundamental feature of human brain organization that must be embraced and understood. The future of neural decoding and its application in clinical and research settings depends on our ability to model this variability explicitly. Methodologies that account for individual differences—such as normative modeling, GICA, and transfer learning—are rapidly evolving and showing promise in improving cross-participant generalization performance. For drug development professionals and neuroscientists, recognizing and quantifying these sources of variability is essential for developing personalized interventions and understanding the spectrum of treatment responses. The scientific toolkit for this endeavor is rich and expanding, combining sophisticated computational models, large-scale datasets, and innovative analytical frameworks to turn the challenge of variability into a source of insight.

The Encoding-Decoding Dichotomy in Brain Signal Processing

In neuroscience, the processes of neural encoding and decoding represent a fundamental dichotomy that describes how the brain processes information. Neural encoding refers to the mapping of external stimuli or internal cognitive states to patterns of neural activity. It answers the question: How do neurons represent information about the world? Conversely, neural decoding involves inferring stimuli or cognitive states from recorded neural activity, essentially reading the brain's representations to determine what information is being processed [16]. This encoding-decoding framework serves as a powerful paradigm for understanding how the brain computes, perceives, and acts, with significant implications for brain-computer interfaces (BCIs), neuroprosthetics, and our fundamental understanding of neural computation.

The relationship between encoding and decoding is intrinsically linked to cross-participant generalization, a core challenge in neural decoding research. The ability to decode information accurately across different individuals depends critically on the consistency of neural encoding principles across brains. As research has revealed, the brain performs cascading encoding-decoding operations where upstream neural representations are transformed and processed by downstream areas to extract behaviorally relevant information [16]. This hierarchical processing enables increasingly explicit representations that facilitate simpler decoding at higher cortical levels, though this process involves complex, often nonlinear transformations distributed across specialized brain networks.

Comparative Analysis of Neural Decoding Approaches

Methodological Spectrum in Neural Decoding

Neural decoding methodologies span a broad spectrum from classical model-based approaches to modern deep learning techniques, each with distinct advantages for cross-participant generalization. Model-based approaches like Kalman filters, Wiener filters, and Generalized Linear Models (GLMs) directly characterize probabilistic relationships between neural firing and variables of interest, offering interpretability and stability with limited data [17] [16]. In contrast, machine learning approaches employ "black-box" neural networks that can capture complex nonlinear relationships but typically require larger datasets and come with significant computational costs [17]. The recent integration of large language models (LLMs) and foundation models pre-trained on non-EEG data has further expanded this methodological landscape, enabling improved cross-modal alignment and zero-shot generalization capabilities for EEG analysis [18].

Table 1: Comparative Performance of Neural Decoding Methodologies

| Method Category | Representative Algorithms | Key Advantages | Cross-Participant Generalization Challenges | Typical Applications |
|---|---|---|---|---|
| Classical Model-Based | Kalman Filter, Wiener Filter, Vector Reconstruction, Generalized Linear Models (GLMs) | High interpretability, stable with limited data, well-understood theoretical properties | Limited capacity for complex nonlinear mappings; may require participant-specific parameter tuning | Head direction decoding [17], motor control, basic sensory decoding |
| Traditional Machine Learning | Support Vector Machines (SVM), Linear Discriminant Analysis (LDA), Random Forests | Better handling of nonlinear relationships than classical methods; less data-intensive than deep learning | Performance degradation due to inter-subject variability; requires feature engineering | Stimulus recognition [7], basic classification tasks |
| Deep Learning | CNN (EEGNet), RNN, Transformers, Spectro-temporal Transformers | Automatic feature learning, state-of-the-art performance on complex tasks, handle raw signals | High data requirements; prone to overfitting to individual subjects; computationally intensive | Inner speech recognition [19], continuous language decoding [7] |
| Foundation Models & LLMs | Fine-tuned GPT, Spectro-temporal Transformers with wavelet decomposition, CLIP-inspired architectures | Powerful cross-modal transfer, zero-shot capabilities, leverage pre-trained knowledge | Domain shift from pre-training data to neural signals; architectural mismatch | EEG-to-text translation [18], cross-task transfer learning [13] |

Quantitative Performance Comparison Across Domains

Decoding performance varies significantly across application domains, with factors such as signal-to-noise ratio, neural representation consistency, and task complexity critically influencing cross-participant generalization capabilities. The table below synthesizes quantitative results from recent studies across major decoding domains.

Table 2: Cross-Domain Performance Comparison of Neural Decoding Approaches

| Application Domain | Stimulus/Behavior Type | Best Performing Method | Reported Performance Metrics | Cross-Participant Assessment |
|---|---|---|---|---|
| Inner Speech Decoding | 8 imagined words | Spectro-temporal Transformer with wavelet decomposition | 82.4% accuracy, Macro-F1: 0.70 [19] | Leave-one-subject-out (LOSO) validation |
| Linguistic Neural Decoding | Textual stimuli reconstruction | LLM-based approaches with contextual embeddings | BLEU: ~0.40-0.60, ROUGE: ~0.35-0.55 (highly task-dependent) [7] | Limited in current literature; primarily within-subject |
| Head Direction Decoding | Rodent head direction | Population vector-based methods, Kalman filter | ~85-95% accuracy (varies by brain region) [17] | Coherence maintained across simultaneously recorded cells |
| Visual Stimulus Reconstruction | Image viewing | GANs, Diffusion Models, VAEs combined with fMRI | PCC: ~0.70-0.85 (highly dependent on stimulus complexity) [20] | Emerging research; limited cross-subject results |
| Clinical Factor Prediction | Psychopathology dimensions | Foundation models with cross-task pretraining | Externalizing factor prediction (ongoing benchmark) [13] | Primary focus of 2025 EEG Foundation Challenge |
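
Leave-one-subject-out (LOSO) validation, listed in the table for inner speech decoding, holds out every trial from one participant per fold so that the test participant is never seen in training. A minimal sketch of the split logic follows; the subject labels are hypothetical.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for each subject."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test

# Hypothetical trial-to-subject assignment: one entry per trial
subject_ids = ["s1", "s1", "s2", "s2", "s2", "s3"]
folds = list(leave_one_subject_out(subject_ids))

for held_out, train, test in folds:
    print(held_out, train, test)
```

Any preprocessing statistics, such as normalization means, would also need to be computed on the training indices only, otherwise information from the held-out participant leaks into the model.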

Cross-Participant Generalization Performance

The critical challenge of cross-participant generalization manifests differently across neural recording modalities. For non-invasive approaches like EEG, significant inter-subject variability due to anatomical differences, electrode placement variations, and functional organization presents substantial obstacles [18] [13]. The 2025 EEG Foundation Challenge specifically addresses this through two competition tracks: cross-task transfer learning and subject-invariant representation for predicting clinical factors [13]. Recent approaches using foundation models pre-trained on large-scale non-EEG data have shown promising improvements in cross-subject generalization, leveraging their powerful representational capacity and cross-modal alignment mechanisms [18].

For invasive approaches such as ECoG and intracortical recordings, the fundamental encoding principles may demonstrate greater consistency across participants, though electrode placement variability remains challenging. Studies of head direction cells across thalamo-cortical circuits have revealed remarkable consistency in population coding principles across subjects, with simultaneously recorded HD cells maintaining coherent angular relationships [17]. This consistency in underlying neural representation facilitates more robust cross-participant decoding approaches for basic sensory and cognitive variables compared to higher-level cognitive states.
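
Population vector decoding, one of the methods cited for head direction, estimates an angle as the firing-rate-weighted circular mean of each cell's preferred direction. The sketch below uses eight evenly spaced preferred directions and cosine tuning to generate example rates. The tuning function, baseline, and gain are hypothetical choices made only to produce input data.

```python
import math

def population_vector(preferred_dirs_deg, rates):
    """Decode an angle as the rate-weighted circular mean of preferred directions."""
    x = sum(r * math.cos(math.radians(d)) for d, r in zip(preferred_dirs_deg, rates))
    y = sum(r * math.sin(math.radians(d)) for d, r in zip(preferred_dirs_deg, rates))
    return math.degrees(math.atan2(y, x)) % 360

def cosine_tuned_rates(true_dir_deg, preferred_dirs_deg, baseline=10.0, gain=8.0):
    """Hypothetical cosine tuning, used only to generate example firing rates."""
    return [baseline + gain * math.cos(math.radians(true_dir_deg - d))
            for d in preferred_dirs_deg]

preferred = [0, 45, 90, 135, 180, 225, 270, 315]
rates = cosine_tuned_rates(120.0, preferred)
decoded = population_vector(preferred, rates)
print(round(decoded, 3))
```

The decoder depends only on the relative angular relationships among cells, which is why coherent angular offsets across a recorded population make this approach comparatively robust.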

Experimental Protocols in Neural Decoding Research

Protocol for Oscillation-Based Communication Modeling

Recent research investigating rhythmic neural synchronization patterns employs sophisticated protocols to uncover fundamental encoding principles. One prominent study analyzed EEG resting-state recordings from 1,668 participants across five public datasets, including individuals with various neurological conditions (MDD, ADHD, OCD, Parkinson's, Schizophrenia) and healthy controls aged 5-89 [21]. The experimental workflow involved:

Signal Acquisition and Preprocessing: Two minutes of resting-state EEG signal were extracted from each dataset, excluding the first 5 seconds to avoid initial eye-closure effects. Electrodes were re-referenced to average reference, followed by standard denoising procedures including 60 Hz low-pass filtering, 50/60 Hz notch filtering, and 0.1 Hz high-pass filtering to remove slow drift [21].
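
The segment extraction, average re-referencing, and band-limiting described above can be sketched with NumPy alone. This is a minimal illustration under stated assumptions, not the pipeline used in [21]: the function names, the channels-by-samples array layout, and the brick-wall FFT mask are choices made here for brevity, and the 50/60 Hz notch step is omitted. A real analysis would normally use a properly designed filter.

```python
import numpy as np


def extract_and_rereference(eeg, fs, skip_s=5.0, keep_s=120.0):
    """Drop the first `skip_s` seconds, keep `keep_s` seconds, average-reference.

    eeg: array of shape (n_channels, n_samples); fs: sampling rate in Hz.
    (Array layout and argument names are assumptions for this sketch.)
    """
    start = int(round(skip_s * fs))
    stop = start + int(round(keep_s * fs))
    if eeg.shape[1] < stop:
        raise ValueError("recording is shorter than skip_s + keep_s")
    segment = eeg[:, start:stop]
    # Average reference: subtract the across-channel mean at every time point.
    return segment - segment.mean(axis=0, keepdims=True)


def fft_bandpass(x, fs, low_hz=0.1, high_hz=60.0):
    """Zero-phase brick-wall band-pass via FFT masking.

    Illustrative stand-in for the 0.1 Hz high-pass and 60 Hz low-pass steps;
    it is not the filter design used in the cited study.
    """
    spectrum = np.fft.rfft(x, axis=-1)
    freqs = np.fft.rfftfreq(x.shape[-1], d=1.0 / fs)
    keep = (freqs >= low_hz) & (freqs <= high_hz)
    return np.fft.irfft(spectrum * keep, n=x.shape[-1], axis=-1)
```

Calling `fft_bandpass(extract_and_rereference(raw, fs), fs)` on a `(n_channels, n_samples)` array returns a two-minute, average-referenced, band-limited segment of the same channel count.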

Time-Frequency Analysis: The time-frequency representation of each electrode's continuous EEG recording was calculated using the Stockwell transform with a time resolution of 0.002 s and frequency resolution of 0.3 Hz. Frequencies were averaged over electrodes for each lobe (frontal, temporal, parietal, occipital) to create time-frequency power modulation for each region [21].
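
The per-lobe averaging step can be sketched as follows, assuming the time-frequency power has already been computed for every electrode (the Stockwell transform itself is not implemented here). The function name, the array layout, and the lobe labels passed in are hypothetical.

```python
import numpy as np


def lobe_average(tf_power, channel_lobes):
    """Average time-frequency power over the electrodes of each lobe.

    tf_power: array of shape (n_channels, n_freqs, n_times).
    channel_lobes: one lobe label per channel, e.g. "frontal".
    Returns a dict mapping lobe label -> array of shape (n_freqs, n_times).
    """
    lobes = np.asarray(channel_lobes)
    if lobes.shape[0] != tf_power.shape[0]:
        raise ValueError("need exactly one lobe label per channel")
    return {
        str(lobe): tf_power[lobes == lobe].mean(axis=0)
        for lobe in np.unique(lobes)
    }
```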

Synchronization Quantification: The upper envelope of spectral signals was calculated using the Hilbert transform and downsampled to 100 Hz. Spearman correlation between hemispheric amplitude envelopes was computed using a running window approach to identify alternating patterns of synchronization and desynchronization states [21].

This protocol revealed a binary-like pattern of correlation states alternating between fully synchronized and desynchronized several times per second, likely resulting from beating between slightly different frequencies. This pattern was consistent across ages, states (eyes open/closed), and brain regions, suggesting a fundamental encoding mechanism for neural communication [21].
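
The beating interpretation mentioned above follows from a standard trigonometric identity. As a simplified illustration (two equal-amplitude cosines, which is an idealization and not the model fitted in [21]), the sum of oscillations at frequencies f_1 and f_2 is a fast carrier multiplied by a slow envelope:

```latex
\cos(2\pi f_1 t) + \cos(2\pi f_2 t)
  = 2\,\cos\!\big(\pi (f_1 - f_2)\, t\big)\,\cos\!\big(\pi (f_1 + f_2)\, t\big)
```

The envelope magnitude |2 cos(π (f_1 − f_2) t)| repeats |f_1 − f_2| times per second. With hypothetical values f_1 = 10 Hz and f_2 = 13 Hz, the envelope waxes and wanes three times per second, which is the order of magnitude of the "several times per second" alternation described in the text.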

Neural Synchronization Analysis Workflow: This diagram illustrates the experimental protocol for identifying binary synchronization patterns in neural oscillations, demonstrating the multi-stage approach from raw EEG acquisition to pattern identification and biomarker validation [21].

Protocol for Deep Learning-Based Inner Speech Decoding

Decoding inner speech (covert articulation without audible output) represents one of the most challenging frontiers in neural decoding research. A recent pilot study established a rigorous protocol for evaluating deep learning models on this task using a bimodal EEG-fMRI dataset [19]:

Participant Selection and Experimental Paradigm: Four healthy right-handed participants performed structured inner speech tasks involving eight target words divided into two semantic categories (social words: "child," "daughter," "father," "wife"; numerical words: "four," "three," "ten," "six"). Each word was presented in 40 trials, resulting in 320 trials per participant [19].

Data Preprocessing and Segmentation: EEG signals were preprocessed to remove artifacts and segmented into epochs time-locked to each imagined word. Strict quality control led to the exclusion of one participant (sub-04) due to excessive noise and poor signal quality, with more than 70% of epochs rejected because of persistent high-amplitude artifacts (> ±300 μV), electrode detachment, and flatline channels [19].

Model Architecture and Training: Two primary architectures were compared: EEGNet (a compact convolutional neural network) and a spectro-temporal Transformer. The Transformer incorporated wavelet-based time-frequency features and self-attention mechanisms. Models were trained using leave-one-subject-out (LOSO) cross-validation to rigorously assess cross-participant generalization [19].
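
Leave-one-subject-out cross-validation holds out every trial from one participant per fold, so the test subject is never seen during training. A minimal sketch of the index bookkeeping, assuming one subject label per trial (the function name and label format are hypothetical):

```python
import numpy as np


def loso_splits(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices), one fold per subject.

    subject_ids: sequence with one subject label per trial.
    """
    subject_ids = np.asarray(subject_ids)
    for held_out in np.unique(subject_ids):
        test_idx = np.flatnonzero(subject_ids == held_out)
        train_idx = np.flatnonzero(subject_ids != held_out)
        yield str(held_out), train_idx, test_idx
```

With the three participants retained after quality control, this produces three folds, each training on two participants and testing on the third.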

Performance Evaluation: Classification performance was assessed using accuracy, macro-averaged F1 score, precision, and recall. Ablation studies examined the contribution of individual Transformer components, including wavelet decomposition and self-attention mechanisms [19].
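
For reference, the macro-averaged F1 score is the unweighted mean of the per-class F1 scores. The sketch below is a plain NumPy implementation written for illustration; it is not taken from the cited study, and the convention of scoring a class with no true or predicted samples as 0 is an assumption.

```python
import numpy as np


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 over the classes present in y_true."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    scores = []
    for c in np.unique(y_true):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); defined as 0 here when the denominator is 0.
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))
```

Because every class is weighted equally, macro-F1 can fall below overall accuracy when some classes are decoded less reliably than others.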

This protocol demonstrated the superiority of the spectro-temporal Transformer, which achieved 82.4% classification accuracy and 0.70 macro-F1 score, substantially outperforming both standard and enhanced EEGNet models. The ablation studies confirmed that both wavelet-based frequency decomposition and attention mechanisms contributed significantly to this improved performance [19].

Protocol for Cross-Task and Cross-Subject EEG Foundation Challenges

The 2025 EEG Foundation Challenge has established standardized protocols for evaluating cross-participant generalization at scale [13]:

Dataset Composition: The challenge utilizes the HBN-EEG dataset containing recordings from over 3,000 participants across six distinct cognitive tasks, including both passive (Resting State, Surround Suppression, Movie Watching) and active tasks (Contrast Change Detection, Sequence Learning, Symbol Search) [13].

Evaluation Framework: Two primary challenges are defined: (1) Cross-Task Transfer Learning, requiring prediction of behavioral performance metrics (response time) from an active paradigm using EEG data, with suggestions to use passive tasks for pretraining; and (2) Externalizing Factor Prediction, requiring prediction of continuous psychopathology scores from EEG recordings across multiple experimental paradigms while maintaining subject invariance [13].

Generalization Metrics: Performance is evaluated based on regression accuracy for behavioral metrics and clinical factors, with emphasis on robustness across different subjects and experimental paradigms. The competition specifically encourages unsupervised or self-supervised pretraining strategies to learn generalizable neural representations before fine-tuning on specific supervised objectives [13].

This large-scale, standardized evaluation protocol represents a significant advancement in neural decoding research, directly addressing the critical challenge of cross-participant generalization while controlling for confounding factors through rigorous experimental design and comprehensive dataset composition.

Signaling Pathways and Computational Workflows

Binary Encoding Model for Neural Communication

Recent research has proposed a novel brain communication model in which frequency modulation creates binary messages encoded and decoded by brain regions for information transfer. This model suggests that alternating patterns of synchronization and desynchronization, observed as several transitions per second, form a digital-like encoding scheme for neural information transfer [21]. The signaling pathway for this binary encoding model can be visualized as follows:

Binary Neural Communication Pathway: This diagram illustrates the proposed model where interference between slightly different neural oscillation frequencies creates beating patterns that form binary synchronization states for neural information encoding and decoding [21].

Cross-Subject Neural Decoding Workflow

Modern approaches to cross-subject neural decoding employ sophisticated workflows that leverage foundation models and transfer learning to address the challenge of inter-subject variability. The following workflow represents state-of-the-art methodologies being applied in current research [18] [13]:

Cross-Subject Neural Decoding Workflow: This diagram illustrates the modern approach using foundation models pre-trained on non-EEG data and cross-modal alignment techniques to achieve subject-invariant representations for generalized neural decoding [18].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Resources for Neural Decoding Research

| Resource Category | Specific Tools & Technologies | Function/Purpose | Key Considerations for Cross-Participant Generalization |

|---|---|---|---|

| Data Acquisition Systems | EEG (128-channel systems), fMRI, MEG, ECoG, intracortical microelectrodes | Capture neural signals at appropriate spatiotemporal resolution | Standardized protocols minimize inter-system variability; electrode placement consistency critical |

| Public Datasets | HBN-EEG (3,000+ participants) [13], MODMA [21], OpenNeuro ds003626 (inner speech) [19] | Provide standardized benchmarks for method comparison | Large sample sizes essential for capturing population variability; multiple tasks enable cross-task evaluation |

| Signal Processing Tools | EEGLAB [21], FieldTrip [21], MNE-Python [21], Brainstorm | Preprocessing, artifact removal, feature extraction | Harmonization pipelines critical for cross-dataset and cross-site compatibility |

| Decoding Algorithms | EEGNet [19], Spectro-temporal Transformers [19], Kalman filters [17], GLMs [16] | Extract meaningful information from neural signals | Architecture choices balance complexity with generalizability; regularization techniques prevent overfitting |

| Foundation Models | Pre-trained LLMs (GPT, LLaMA) [18], Vision models (ViT) [18], Audio models (Wav2Vec) [18] | Enable cross-modal transfer and zero-shot learning | Domain adaptation techniques bridge gap between pre-training data and neural signals |

| Evaluation Frameworks | Leave-one-subject-out (LOSO) cross-validation [19], 2025 EEG Foundation Challenge [13] | Rigorous assessment of generalization performance | Standardized metrics enable cross-study comparisons; ablation studies identify critical components |

The encoding-decoding dichotomy provides a powerful framework for understanding neural information processing, with cross-participant generalization representing both a fundamental challenge and critical validation criterion for neural decoding approaches. The comparative analysis presented here reveals several key insights: first, that the methodological evolution from classical model-based approaches to modern deep learning and foundation models has progressively improved decoding performance, though often at the cost of interpretability; second, that performance varies substantially across application domains, with basic sensory and motor decoding generally achieving higher accuracy than complex cognitive states like inner speech; and third, that rigorous cross-participant evaluation protocols like LOSO validation and large-scale challenges are essential for meaningful performance assessment.

The most promising future directions appear to lie in hybrid approaches that leverage the interpretability of model-based methods with the representational power of deep learning, particularly through foundation models pre-trained on non-EEG data and carefully adapted to neural decoding tasks. As standardized large-scale datasets and evaluation frameworks continue to emerge, the field moves closer to clinically viable neural decoding systems that maintain robust performance across the natural variability of human brains, ultimately enabling more effective brain-computer interfaces, neuroprosthetics, and therapeutic interventions.

The pursuit of robust neural decoding models, particularly those capable of generalizing across participants, confronts a fundamental biological reality: neural signals are inherently heterogeneous. This heterogeneity manifests as non-stationarity (changing statistical properties over time), profound sensitivity to noise, and significant morphological differences between individuals and even within the same subject across sessions. Far from being mere noise, this heterogeneity is increasingly recognized as a core feature of neural computation. In the specific context of cross-participant generalization for neural decoding models, these variations present a formidable challenge, often causing models trained on one set of individuals to fail when applied to another. Research demonstrates that neural heterogeneity, spanning structural, genetic, and electrophysiological dimensions, is not a detriment but a fundamental characteristic that enhances information encoding and computational robustness in biological systems [22] [23]. Understanding and engineering these heterogeneous properties is therefore not just about managing a nuisance; it is about aligning artificial decoding systems with the core design principles of the brain itself to achieve true generalization.

Experimental Comparisons: Quantifying the Impact of Heterogeneity

The impact of neural heterogeneity on system performance has been quantitatively assessed across various studies, from simulated spiking neural networks (SNNs) to biological experiments. The following table summarizes key experimental findings.

Table 1: Experimental Data on Neural Heterogeneity and System Performance

| Study & System | Heterogeneity Type Introduced | Experimental Task | Key Performance Findings |

|---|---|---|---|

| SNN Simulation [22] [23] | External (input current), Network (coupling strength), Intrinsic (partial reset) | Curve fitting, Network reconstruction, Speech/image classification | Consistently improved learning accuracy and robustness across all three learning methods (RLS, FORCE, SGD), regardless of the heterogeneity source. |

| Spatially Extended E-I SNN [24] | Neuronal timescale (leakage, gain time constants) | Input-output mapping, Mackey-Glass signal representation | Timescale diversity disrupted intrinsic coherent patterns, reduced temporal rate fluctuations, and enhanced reliability of computation. |

| Electrosensory System (Weakly Electric Fish) [25] | On- and Off-type neuronal responses | Coding of envelope signals amidst stimulus-induced noise | Mixed On- and Off-type populations showed lower noise response similarity (~0.0) than same-type pairs (On-On: ~0.4, Off-Off: ~0.3), enabling better noise averaging and greater information transmission about the signal. |

| EEG Foundation Challenge [13] | Cross-subject and cross-task variability | Cross-task transfer learning, Subject-invariant representation | A primary goal is to create models that generalize across different subjects and cognitive paradigms, highlighting the field's focus on overcoming heterogeneity. |

The data reveal a consistent theme: properly structured heterogeneity enhances a system's computational capacity and resilience. In the electric fish, response heterogeneity makes population-level responses to noise more independent, facilitating a more reliable readout of the behaviorally relevant signal [25]. In SNNs, heterogeneity systematically improves performance across diverse tasks, suggesting it is a general principle for building robust neural models [22] [23].

Table 2: Impact of Neuronal Timescale Diversity on Network Dynamics

| Network Property | Homogeneous Network (στL = 0) | Heterogeneous Network (στL = 0.4) |

|---|---|---|

| Temporal Rate Fluctuations | High | Significantly Decreased |

| Synchronization | Strong pairwise synchrony | Substantially lower pairwise synchrony |

| Spike Count Correlation | Broad distribution, higher mean | Narrower distribution, lower mean |

| Collective Dynamics | Coherent spatiotemporal patterns | Disrupted patterns, robust asynchronous state |

| Firing Rate Distribution | Gaussian distribution | Broader, non-Gaussian distribution |

The transition from a homogeneous to a heterogeneous network, as shown in Table 2, fundamentally alters network dynamics. Heterogeneity disrupts widespread synchronization, leading to a more stable asynchronous state that is less dominated by intrinsic activity and more responsive to external input [24]. These "input-slaved" dynamics are crucial for reliable information processing.

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for evaluating heterogeneity, this section details the methodologies from key cited studies.

- Objective: To systematically evaluate the impact of external, network, and intrinsic heterogeneity on the learning accuracy and robustness of SNNs across multiple learning methods and tasks.

- Network Model: A network of coupled Izhikevich (IK) neuron models was used, balancing biological plausibility and computational efficiency [22] [23].

- Incorporating Heterogeneity:

- External Heterogeneity: Each neuron i receives a unique, constant external current ηi, drawn from a Lorentzian distribution.

- Network Heterogeneity: The electrical coupling strength gi for each neuron is varied according to a Lorentzian distribution.

- Intrinsic Heterogeneity: A neuron-specific partial reset mechanism is implemented via the reset coefficient θi, also Lorentzian-distributed.

- Learning & Tasks: The network was evaluated using three distinct learning methods: Ridge Least Square (RLS) for curve fitting, FORCE learning for complex tasks like chaotic system prediction, and Surrogate Gradient Descent (SGD) for real-world image and speech classification.

- Outcome Measures: Learning accuracy (e.g., error in curve fitting, classification accuracy) and robustness to parameter variations were quantified and compared against homogeneous baselines.
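
Drawing Lorentzian-distributed (Cauchy-distributed) neuron parameters, as in the heterogeneity scheme above, can be sketched with inverse-CDF sampling. The function name and the center and half-width arguments are hypothetical; the distribution parameters actually used in [22] [23] are not given in this text.

```python
import numpy as np


def sample_lorentzian(center, half_width, size, rng):
    """Draw `size` samples from a Lorentzian with the given center and half-width.

    Uses the inverse CDF: x = center + half_width * tan(pi * (u - 1/2)),
    with u uniform on [0, 1).
    """
    u = rng.uniform(0.0, 1.0, size=size)
    return center + half_width * np.tan(np.pi * (u - 0.5))
```

For example, `eta = sample_lorentzian(center, half_width, n_neurons, np.random.default_rng(0))` would give one external current per neuron. Setting `half_width` to zero recovers the homogeneous baseline in which every neuron shares the same value. Because the Lorentzian has heavy tails and no finite variance, the median and quartiles are more informative summaries of the samples than the mean and standard deviation.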

- Objective: To determine how On- and Off-type neuronal heterogeneities in the electrosensory system affect population coding of a behaviorally relevant envelope signal embedded in a noisy stimulus waveform.

- Biological Preparation: In-vivo recordings were performed from n = 41 (21 On-type, 20 Off-type) pyramidal neurons in the electrosensory lateral line lobe (ELL) of awake, behaving weakly electric fish (Apteronotus leptorhynchus).

- Stimulus Design: A composite stimulus was presented, consisting of a "noise" waveform (0-15 Hz) whose amplitude was modulated by a slow, sinusoidal "signal" envelope (1 Hz). The signal and noise were statistically independent.

- Data Analysis:

- Signal & Noise Response Similarity: Spike trains were binned into non-overlapping windows. Signal response similarity was calculated as the correlation between spike counts shuffled by the signal cycle. Noise response similarity was the correlation of residual spike counts (after subtracting the signal-cycle average).

- Information Transmission: The mutual information rate between single-neuron spiking activities and the sinusoidal signal was computed.

- Population Analysis: Similarity measures and information were compared for three population pair types: On-On, Off-Off, and On-Off.
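
The two similarity measures above can be sketched for a pair of neurons whose spike counts are arranged as one row per signal cycle and one column per time bin within the cycle. This is one possible reading of the description, not the analysis code of [25]: the array layout, function names, and the use of a random cycle permutation to implement the shuffle are assumptions.

```python
import numpy as np


def noise_similarity(counts_a, counts_b):
    """Correlation of residual spike counts after removing the signal-cycle average.

    counts_a, counts_b: arrays of shape (n_cycles, n_bins_per_cycle).
    """
    res_a = counts_a - counts_a.mean(axis=0, keepdims=True)
    res_b = counts_b - counts_b.mean(axis=0, keepdims=True)
    return float(np.corrcoef(res_a.ravel(), res_b.ravel())[0, 1])


def signal_similarity(counts_a, counts_b, rng):
    """Correlation of spike counts after shuffling the cycle order of one neuron.

    Shuffling cycles keeps each neuron locked to the signal phase but breaks
    the pairing of noise fluctuations that occurred in the same cycle.
    """
    shuffled_b = counts_b[rng.permutation(counts_b.shape[0])]
    return float(np.corrcoef(counts_a.ravel(), shuffled_b.ravel())[0, 1])
```

Under this reading, a pair with opposite signal tuning yields a negative signal similarity, while its noise similarity depends only on the cycle-to-cycle fluctuations the two neurons share.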

Signaling Pathways and Workflows

The mechanistic role of neural heterogeneity in enabling reliable computation and cross-subject generalization can be visualized as a cascading pathway.

Figure 1: Mechanistic Pathway from Heterogeneity to Improved Generalization. Heterogeneity disrupts intrinsic dynamics, forcing the network to be more driven by external inputs, which in turn creates more reliable and generalizable representations [24].

The Scientist's Toolkit: Research Reagent Solutions

This table catalogs key computational models, datasets, and analytical approaches essential for researching neural signal heterogeneity.

Table 3: Essential Research Tools for Investigating Neural Heterogeneity

| Tool Name / Concept | Type | Primary Function in Research | Key Application in Context |

|---|---|---|---|

| Spiking Neural Network (SNN) Models | Computational Model | Simulate biologically realistic neural dynamics with action potentials. | Platform for systematically introducing and testing the effects of parameter heterogeneity (e.g., in Izhikevich model parameters) on network performance [22] [24] [23]. |

| HBN-EEG Dataset | Dataset | A large-scale public dataset containing EEG from >3000 participants across 6 cognitive tasks, with psychometrics [13]. | Benchmark for evaluating cross-subject and cross-task generalization in decoding models, directly addressing heterogeneity challenges [13]. |

| Individual Adaptation Module | Algorithmic Component | Normalizes subject-specific patterns in neural data. | Core component in frameworks like NEED for achieving zero-shot cross-subject generalization by explicitly modeling and countering inter-subject morphological differences [26]. |

| Response Similarity Analysis | Analytical Method | Quantifies the correlation of neural responses (e.g., to signal vs. noise) across a population. | Measures how heterogeneity decorrelates population activity, as used in electrosensory studies to show noise averaging benefits [25]. |

| Cross-Task Transfer Learning | Training Paradigm | Trains a model on multiple tasks or paradigms to improve robustness. | Encourages the development of foundation models that extract latent representations invariant to specific tasks, a key strategy against non-stationarity and context-dependence [13]. |

| POYO/POSSM Architecture | Neural Decoder Model | A hybrid model using spike tokenization and state-space models for efficient, generalizable decoding [5]. | Demonstrates how flexible input processing and efficient sequence modeling can handle variable neural identities and spike timings across subjects. |

The journey toward neural decoding models that generalize across participants necessitates a fundamental shift in perspective: from treating neural signal heterogeneity as a problem to be eliminated, to recognizing it as a core design principle to be harnessed. Quantitative evidence from computational and biological experiments consistently shows that heterogeneity—whether in neuronal parameters, cell types, or network connectivity—is a powerful mechanism for enhancing computational capacity, robustness, and the reliable representation of external inputs. By disrupting strong, intrinsic synchrony and promoting input-slaved dynamics, heterogeneity helps create a neural substrate that is more stable and interpretable. Future progress in cross-participant generalization will likely depend on the development of new models and architectures, such as foundation models and hybrid encoders, that are explicitly designed to leverage, rather than fight, the rich and variable tapestry of the brain's activity [13] [5] [26].

The Impact of Varying Experimental Paradigms and Sensor Placements

A central challenge in modern neuroscience is developing neural decoding models that can generalize across different individuals and experimental conditions. Current models are typically trained on small numbers of subjects performing a single task, severely limiting their clinical applicability [27]. The fundamental obstacle lies in the signal heterogeneity introduced by various factors including non-stationarity, noise sensitivity, inter-subject morphological differences, varying experimental paradigms, and differences in sensor placement [28]. This heterogeneity creates a significant barrier to building robust models that can adapt to EEG data collected from diverse tasks and individuals without expensive recalibration.

The brain itself performs continuous encoding and decoding operations, where sensory areas encode stimuli and downstream areas decode these representations to build internal models of the environment and self [16]. Understanding how the brain achieves such robust decoding across varying conditions provides inspiration for computational approaches. The core principle is that neurons encode new information by decoding and transforming information from upstream neurons, creating a cascade of encoding-decoding operations that ultimately guide behavior [16]. This perspective highlights the fundamental interdependence of neural encoding and decoding processes that computational models must capture to achieve similar generalization capabilities.

Experimental Framework: Cross-Paradigm and Cross-Subject Decoding

The EEG Foundation Challenge as a Benchmarking Platform

The 2025 EEG Foundation Challenge: From Cross-Task to Cross-Subject EEG Decoding provides a structured framework for systematically evaluating generalization performance [28] [27]. Accepted to the NeurIPS 2025 Competition Track, this challenge addresses two critical aspects of generalization through distinct tasks:

Challenge 1: Cross-Task Transfer Learning - A supervised regression task requiring participants to predict behavioral performance metrics (response time) from an active experimental paradigm using EEG data, potentially leveraging passive activities as pretraining [28].

Challenge 2: Externalizing Factor Prediction - A supervised regression challenge requiring teams to predict continuous psychopathology scores from EEG recordings across multiple experimental paradigms while maintaining robustness across different subjects [28].

This competition uses an unprecedented, multi-terabyte dataset of high-density EEG signals (128 channels) recorded from over 3,000 subjects ranging from children to young adults, roughly an order of magnitude larger than typical EEG challenge datasets [27]. Each participant engaged in six distinct cognitive tasks, providing a rich multi-task, multi-condition collection of neural data that far exceeds the breadth and diversity of prior EEG competitions [27].

Dataset Composition and Experimental Paradigms

The Healthy Brain Network Electroencephalography (HBN-EEG) dataset forms the foundation for systematic comparison of generalization performance [28] [27]. The dataset includes six carefully designed experimental paradigms that probe different cognitive domains:

Table: Experimental Paradigms in the HBN-EEG Dataset

| Paradigm Type | Task Name | Cognitive Domain | Description |

|---|---|---|---|

| Passive | Resting State (RS) | Baseline | Eyes open/closed conditions with fixation cross |

| Passive | Surround Suppression (SuS) | Visual processing | Four flashing peripheral disks with contrasting background |

| Passive | Movie Watching (MW) | Naturalistic perception | Four short films with different themes |

| Active | Contrast Change Detection (CCD) | Visual attention | Identifying dominant contrast in co-centric flickering grated disks |

| Active | Sequence Learning (SL) | Memory | Memorizing and reproducing sequences of flashed circles |

| Active | Symbol Search (SyS) | Executive function | Computerized version of WISC-IV subtest |

Each participant's data is accompanied by four psychopathology dimensions derived from the Child Behavior Checklist (CBCL) and demographic information including age, sex, and handedness [28]. The data is formatted according to the Brain Imaging Data Structure (BIDS) standard and includes comprehensive event annotations using Hierarchical Event Descriptors (HED), making it particularly suitable for cross-task analysis and machine learning applications [27].

Comparative Performance Across Paradigms and Placements

Quantitative Performance Metrics

The evaluation framework for neural decoding generalization incorporates multiple quantitative metrics to assess model performance across different dimensions:

Table: Performance Metrics for Generalization Assessment

| Metric Category | Specific Metrics | Application Context |

|---|---|---|

| Cross-Task Transfer | Regression accuracy (R²), Mean squared error (MSE) | Transfer learning challenge [28] |

| Cross-Subject Generalization | Prediction correlation, Error variance | Subject-invariant representation [28] |

| Clinical Application | Psychopathology score prediction accuracy | Externalizing factor prediction [28] |

| Information Encoding | Mutual information, Decoding efficiency | Neural representation quality [16] [29] |
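
The two regression metrics named in the table, mean squared error and the coefficient of determination R², can be written in a few lines. This is a generic sketch of the textbook definitions; the challenge's official scoring code may differ.

```python
import numpy as np


def mse(y_true, y_pred):
    """Mean squared error between targets and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when y_true is constant")
    return float(1.0 - ss_res / ss_tot)
```

An R² of 0 means the model does no better than always predicting the mean of the targets, which makes it a useful reference point when evaluating on unseen subjects.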

The competition's unique zero-shot cross-domain generalization requirement means submitted models might be trained on a subset of tasks and then tested on data from held-out tasks or conditions, evaluating capacity to generalize without task-specific fine-tuning [27]. This approach directly addresses a critical gap in neurotechnology: decoding cognitive function from EEG without explicit behavioral labels.

Impact of Experimental Paradigm Diversity

Research indicates that the diversity of experimental paradigms used during training significantly impacts model generalization capability. The combination of active and passive tasks in the HBN-EEG dataset provides complementary information that enhances model robustness:

Passive paradigms (Resting State, Surround Suppression, Movie Watching) capture neural signatures with minimal cognitive load requirements, providing stable baseline measures less influenced by task engagement variability [28].

Active paradigms (Contrast Change Detection, Sequence Learning, Symbol Search) engage specific cognitive processes that may enhance decoding of task-relevant variables but introduce additional performance-related variability [28].

Evidence suggests that models trained on diverse paradigms learn more robust representations that capture invariant neural patterns across cognitive states. This paradigm diversity helps address the fundamental challenge that neural responses are rarely tuned to precisely one variable, as multiple stimulus dimensions influence responses in complex ways [29].

Methodological Approaches for Enhanced Generalization

Experimental Protocols for Generalization Assessment

The EEG Foundation Challenge implements standardized protocols to ensure consistent evaluation of generalization performance:

Protocol 1: Cross-Task Transfer Learning Assessment

- Model training on a subset of available tasks (e.g., passive tasks only)

- Zero-shot evaluation on held-out active tasks (e.g., Contrast Change Detection)

- Performance comparison against models trained and tested on the same task

- Statistical analysis of transfer efficiency across task types
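
The train/test partition in Protocol 1 can be sketched as a task-level split. The record format (a list of dicts with a "task" key) and the function name are hypothetical; the task abbreviations follow the paradigm table for the HBN-EEG dataset.

```python
PASSIVE_TASKS = {"RS", "SuS", "MW"}   # Resting State, Surround Suppression, Movie Watching
ACTIVE_TASKS = {"CCD", "SL", "SyS"}   # Contrast Change Detection, Sequence Learning, Symbol Search


def cross_task_split(records, train_tasks, test_tasks):
    """Split recordings so that no task appears in both training and test sets.

    records: iterable of dicts, each with at least a "task" key.
    """
    train_tasks, test_tasks = set(train_tasks), set(test_tasks)
    overlap = train_tasks & test_tasks
    if overlap:
        raise ValueError(f"tasks in both splits: {sorted(overlap)}")
    train = [r for r in records if r["task"] in train_tasks]
    test = [r for r in records if r["task"] in test_tasks]
    return train, test
```

For example, `cross_task_split(records, PASSIVE_TASKS, {"CCD"})` keeps all passive recordings for pretraining and reserves Contrast Change Detection for zero-shot evaluation. Combining this with a subject-level split would additionally keep test subjects unseen.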

Protocol 2: Cross-Subject Generalization Assessment

- Training on a diverse subject population with varying demographic characteristics

- Evaluation on completely unseen subjects with different demographic profiles

- Analysis of performance consistency across age groups, sex, and clinical profiles

- Assessment of subject-invariant representation quality

Protocol 3: Clinical Relevance Validation

- Prediction of transdiagnostic psychopathology factors (e.g., externalizing factor)

- Correlation of model predictions with standardized clinical assessments

- Evaluation of robustness across different recording sessions and states

- Assessment of potential clinical utility as biomarkers

These protocols enable systematic investigation of how varying experimental paradigms and subject characteristics impact decoding performance, providing insights into the fundamental limitations and opportunities for improvement in neural decoding models.

Neural Signal Processing Workflow

The generalized neural decoding process involves multiple stages, from signal acquisition to behavioral prediction, each contributing to overall system performance.

This workflow highlights the critical transition from encoding to decoding processes in neural data analysis. The encoding process involves mapping stimuli to neural responses, while decoding involves inferring stimuli or states from neural activity [16]. The interplay between these processes across varying paradigms and sensor configurations forms the foundation for assessing generalization capabilities.

The Researcher's Toolkit: Essential Materials and Methods

Successfully investigating the impact of varying experimental paradigms and sensor placements requires specific methodological tools and resources:

Table: Essential Research Reagents and Resources

| Resource Category | Specific Solution | Function in Research |

|---|---|---|

| Dataset | HBN-EEG Dataset [28] [27] | Provides standardized, large-scale EEG data across multiple paradigms and subjects |

| Data Standard | BIDS Format [27] | Ensures consistent data organization and facilitates reproducibility |

| Annotation Framework | HED Tags [27] | Enables precise event characterization across different experimental paradigms |

| Software Environment | BRAINet Framework [27] | Supports scalable analysis of large-scale EEG datasets |

| Evaluation Platform | EEG Challenge Starter Kit [28] | Provides standardized evaluation metrics and benchmark comparisons |

| Sensor Configuration | 128-channel EGI system [28] | Enables high-density spatial sampling for sensor placement optimization |

These resources collectively enable researchers to systematically investigate how experimental paradigms and recording parameters impact decoding generalization, providing the foundation for developing more robust neural decoding models.

The systematic investigation of how varying experimental paradigms and sensor placements impact neural decoding performance reveals both significant challenges and promising pathways forward. The heterogeneity introduced by different paradigms and subject characteristics remains a substantial barrier to clinical translation, but approaches that leverage diverse training data and explicitly optimize for invariance show considerable promise.

The fundamental insight from both computational and neuroscience perspectives is that robust decoding requires models that capture the essential computations the brain itself performs when extracting task-relevant variables from noisy, heterogeneous neural signals [16] [29]. As the field advances, integrating knowledge from large-scale challenges like the EEG Foundation Challenge with theoretical principles of neural computation will be essential for developing the next generation of neural decoding models capable of genuine generalization across paradigms and populations.

The potential clinical applications, particularly in computational psychiatry where identifying objective biomarkers for mental health conditions could revolutionize diagnosis and treatment, underscore the critical importance of addressing these generalization challenges [28] [27]. Continued progress will require collaborative efforts between machine learning researchers and neuroscientists to develop models that not only achieve high performance on specific tasks but maintain this performance across the rich variability inherent in real-world clinical applications.

The quest to understand neural codes—how information is represented and communicated by ensembles of neurons—is fundamental to neuroscience. A critical challenge in both basic science and clinical applications is transferable neural coding: creating models that can decode neural signals effectively across different subjects, recording sessions, or even related tasks. One obstacle to such transfer is catastrophic interference: in artificial neural networks, acquiring new knowledge can overwrite existing knowledge, and analogous retroactive interference occurs in humans [30].

This guide explores the information theory principles that govern neural code transferability, objectively comparing the performance of various neural decoding models. We focus specifically on their cross-participant generalization performance, a crucial requirement for viable Brain-Computer Interfaces (BCIs) and robust neural analysis tools. The transfer of knowledge is framed not just as a technical challenge but as a fundamental trade-off between the benefit of positive transfer (faster learning of new tasks) and the cost of interference (disruption of existing knowledge) [30].

Quantitative Comparison of Neural Decoding Models

The performance of neural decoding models is quantified using metrics like decoding accuracy, generalization across subjects/sessions, and data efficiency. The table below summarizes experimental data for various model types.

Table 1: Performance Comparison of Neural Decoding Models in Cross-Subject/Session Generalization

| Model Type | Key Features | Test Context | Reported Performance | Key Advantage |

|---|---|---|---|---|

| Generative Spike Synthesizer with Adapter [31] | Deep-learning GAN; maps kinematics to spikes; rapid session/subject adaptation | Motor BCI; Monkey reaching task | Accelerated decoder training; significantly improved generalization with limited new data | Data augmentation; overcomes need for large subject-specific datasets |

| Linear Neural Networks (Rich vs. Lazy Regimes) [30] | Two-layer linear ANNs; rich (overlapping reps) vs. lazy (distinct reps) | Continual learning of sequential rules | Rich: Better transfer, higher interference. Lazy: Worse transfer, lower interference. | Mimics human individual differences; clarifies transfer-interference trade-off |

| Statistical Model-Based Methods [17] | Kalman Filter, Vector Reconstruction, GLMs, Wiener Cascade | Decoding head direction from thalamo-cortical cells | High accuracy; direct probabilistic interpretation | Established, interpretable, less computationally intensive |

| Machine Learning "Black-Box" Methods [17] | Multi-layered neural networks | Decoding head direction from thalamo-cortical cells | Can capture complex relationships; accuracy comes with time cost | High performance for non-linear, complex relationships |

| Transfer Learning with Graph Neural Networks (GNNs) [32] | Adaptive readouts; pre-training on low-fidelity data | Molecular property prediction for drug discovery | Up to 8x performance improvement in low-data regimes | Leverages knowledge from related, larger datasets effectively |

| EEG-based Emotion Recognition TL [33] | Various Transfer Learning and Domain Adaptation methods | Cross-subject and cross-session EEG classification | Performs better than other approaches in average accuracy | Mitigates EEG non-stationarity (Dataset Shift problem) |

Table 2: Impact of Task Similarity on Transfer and Interference in Continual Learning [30]

| Rule Similarity Between Task A and B | Transfer (Learning Task B) | Interference (Retest on Task A) | Representation Strategy in ANNs |

|---|---|---|---|

| Same | Highest benefit | Not applicable (rules identical) | Reuse of identical neural subspaces |

| Near | Moderate benefit | Highest interference | Shared, overlapping neural subspaces |

| Far | Lowest benefit | Lowest interference | Separate, non-overlapping neural subspaces |

Experimental Protocols for Assessing Transferability

Cross-Subject & Cross-Session BCI Decoding

Objective: To develop a BCI decoder that generalizes to new recording sessions or new subjects with minimal recalibration [31].

Protocol:

- Data Collection: Record neural spiking activity (e.g., from primary motor cortex) and the corresponding behavioral kinematics (e.g., hand velocity) during a task (e.g., a reaching task). The data are then divided into time bins (e.g., 10 ms).

- Spike Synthesizer Training: Train a Generative Adversarial Network (GAN)-based spike synthesizer on a full dataset from one "source" session or subject. This model learns a mapping P(K|x) from hand kinematics x to spike trains K [16] [31].

- Adapter Training: For a new "target" subject or session, a small amount of neural data (e.g., 35 seconds) is used to adapt the pre-trained synthesizer to the new domain.

- Decoder Training & Evaluation: A BCI decoder (e.g., Wiener or Kalman filter) is trained on a combination of the limited real data from the target and a large amount of synthesized data from the adapted synthesizer. Performance is evaluated on held-out real data from the target session/subject and compared against a decoder trained only on the limited real data [31].

Key Insight: This protocol uses a generative model for smart data augmentation, effectively tackling the problem of data scarcity in clinical BCI applications [31].
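The decoder-training step of this protocol can be sketched with a Wiener-filter-style decoder, implemented here as ridge-regularized linear regression on lagged binned spike counts. This is a minimal illustration under stated assumptions, not the implementation from [31]: the `toy_session` simulator is a hypothetical stand-in for both the recorded data and the output of the adapted GAN synthesizer, and the lag count, ridge strength, and population size are arbitrary.

```python
import numpy as np

def lagged_design(spikes, n_lags):
    """Stack the current bin and the previous n_lags - 1 bins of every neuron.

    spikes: (n_bins, n_neurons) -> (n_bins - n_lags + 1, n_neurons * n_lags + 1),
    where the final column is a constant bias term.
    """
    n_bins = spikes.shape[0]
    rows = [spikes[t - n_lags + 1 : t + 1].ravel() for t in range(n_lags - 1, n_bins)]
    X = np.asarray(rows, dtype=float)
    return np.hstack([X, np.ones((X.shape[0], 1))])

def fit_wiener(datasets, n_lags=5, ridge=1e-3):
    """Fit decoder weights on a list of (spikes, kinematics) pairs.

    Lags are built per dataset so that no window spans two recordings.
    """
    X = np.vstack([lagged_design(s, n_lags) for s, _ in datasets])
    Y = np.vstack([k[n_lags - 1 :] for _, k in datasets])
    A = X.T @ X + ridge * np.eye(X.shape[1])
    return np.linalg.solve(A, X.T @ Y)

def r_squared(y_true, y_pred):
    """Coefficient of determination pooled over kinematic dimensions."""
    ss_res = ((y_true - y_pred) ** 2).sum()
    ss_tot = ((y_true - y_true.mean(axis=0)) ** 2).sum()
    return float(1.0 - ss_res / ss_tot)

def toy_session(rng, n_bins, tuning):
    """Hypothetical simulator: smooth 2-D kinematics drive Poisson spike counts."""
    kin = np.cumsum(rng.normal(size=(n_bins, 2)), axis=0)
    kin = (kin - kin.mean(axis=0)) / kin.std(axis=0)
    rates = np.exp(0.3 * kin @ tuning + 1.0)
    return rng.poisson(rates).astype(float), kin

n_lags = 5
rng = np.random.default_rng(0)
tuning = rng.normal(size=(2, 30))           # 30 simulated neurons
real_small = toy_session(rng, 40, tuning)   # limited real data from the target
synthetic = toy_session(rng, 2000, tuning)  # stand-in for adapted-synthesizer output
held_out = toy_session(rng, 500, tuning)    # held-out real data for evaluation

w_small = fit_wiener([real_small], n_lags)            # baseline: limited real data only
w_aug = fit_wiener([real_small, synthetic], n_lags)   # real + synthesized data

X_test = lagged_design(held_out[0], n_lags)
y_test = held_out[1][n_lags - 1 :]
r2_small = r_squared(y_test, X_test @ w_small)
r2_aug = r_squared(y_test, X_test @ w_aug)
```

Comparing `r2_aug` with `r2_small` mirrors the evaluation described above. The toy simulator uses the same generative process for real and synthetic data, so it says nothing about how faithful a real synthesizer would be; that fidelity is precisely what the adapter step is meant to secure.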

Continual Learning and the Transfer-Interference Trade-off

Objective: To systematically quantify how the similarity between sequentially learned tasks affects knowledge transfer and catastrophic interference in both humans and ANNs [30].

Protocol:

- Task Design: Learners (humans or ANNs) perform two sequential tasks (A then B) in a contextual setting. Each task involves applying a specific rule (e.g., a rotational offset) to map stimuli to outputs.

- Similarity Manipulation: The rule in task B is systematically varied relative to task A across experimental groups: Same, Near (small change), or Far (large change).

- Training and Testing: Learners are trained on task A to proficiency, then on task B, and finally retested on task A without further feedback.

- Quantification:

  - Transfer: Calculated as the improvement in initial performance on task B, attributable to knowledge of task A.

  - Interference: Quantified by fitting a mixture model to responses during the retest on task A to measure the probability of using the incorrect (task B) rule.

Key Insight: This protocol reveals a fundamental computational trade-off. Higher transfer for similar tasks comes at the cost of higher interference, a phenomenon governed by the degree of overlap in neural representations [30].
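The interference measure in this protocol can be illustrated with a simple mixture fit. The exact model used in [30] is not described here, so the sketch below assumes a two-component von Mises mixture over response angles, with one component centered on each rule, a shared and fixed concentration, and only the mixing weight estimated by expectation-maximization. The rule offsets, concentration, and simulated responses are all hypothetical.

```python
import numpy as np

def von_mises_pdf(x, mu, kappa):
    """Von Mises density on the circle (angles in radians)."""
    return np.exp(kappa * np.cos(x - mu)) / (2.0 * np.pi * np.i0(kappa))

def estimate_rule_b_probability(responses, rule_a, rule_b, kappa=8.0, n_iter=200):
    """Estimate P(response generated by the task B rule) during retest on task A.

    EM over the mixing weight only; component means and concentration are fixed.
    """
    like_a = von_mises_pdf(responses, rule_a, kappa)
    like_b = von_mises_pdf(responses, rule_b, kappa)
    p_b = 0.5
    for _ in range(n_iter):
        # E-step: posterior probability that each response used rule B
        resp_b = p_b * like_b / (p_b * like_b + (1.0 - p_b) * like_a)
        # M-step: the mixing weight is the mean responsibility
        p_b = resp_b.mean()
    return float(p_b)

# Simulated retest data (assumed): 30% of responses follow the task B rule.
rng = np.random.default_rng(1)
rule_a, rule_b = 0.0, np.pi / 2
used_b = rng.random(2000) < 0.3
responses = rng.vonmises(np.where(used_b, rule_b, rule_a), 8.0)
p_b_hat = estimate_rule_b_probability(responses, rule_a, rule_b)
```

When the two rules are close together, as in the Near condition, the components overlap heavily and the mixing weight becomes harder to estimate, so a fuller analysis would also report uncertainty on this estimate.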

Visualization of Core Concepts and Workflows

The Encoding-Decoding Framework in Neural Circuits

The brain itself can be viewed as a series of cascading encoding and decoding operations, which is also the principle behind building decoder algorithms for BCIs [16].

Generative Model Workflow for Cross-Subject BCI

This workflow demonstrates how a generative model can be rapidly adapted to new subjects to train high-performance decoders with minimal data [31].

The Scientist's Toolkit: Research Reagent Solutions

This section details key computational tools and modeling approaches essential for research into transferable neural codes.

Table 3: Essential Research Tools for Neural Code Transferability

| Tool / Method | Function | Relevance to Transferability |

|---|---|---|

| Generalized Linear Models (GLMs) [16] [17] | Statistical encoding models that predict neural spiking based on stimuli or covariates. | Provides a foundational, interpretable baseline for understanding what information is encoded in a population, a prerequisite for decoding. |

| Generative Adversarial Networks (GANs) [31] | Deep learning models that learn to synthesize realistic data from a training distribution. | Can generate unlimited, realistic neural data for new subjects/sessions after adaptation, overcoming data scarcity for decoder training. |

| Graph Neural Networks (GNNs) with Adaptive Readouts [32] | Neural networks that operate on graph-structured data; adaptive readouts use attention to aggregate node embeddings. | Crucial for effective transfer learning in molecular data; prevents underperformance and allows knowledge transfer from low-fidelity to high-fidelity tasks. |

| Linear Neural Networks (in Rich/Lazy Regimes) [30] | Simplified ANNs that help isolate fundamental computational principles of learning. | Serves as a "model organism" to study the transfer-interference trade-off and to model individual differences (lumpers vs. splitters) in humans. |

| Kalman & Wiener Filters [31] [17] | Classical statistical model-based decoders for estimating dynamic system states from noisy observations. | High-performing, interpretable benchmarks against which more complex machine learning decoders must be compared, especially for motor BCIs. |

Discussion: Trade-offs and Future Directions

The pursuit of transferable neural codes is inherently a balancing act. The most significant trade-off is between transfer and interference. Models that promote positive transfer by reusing and adapting existing neural representations for similar new tasks are often the most vulnerable to catastrophic interference, where new learning corrupts old knowledge [30]. Such reuse is computationally efficient but fragile. Conversely, models that create separate, non-overlapping representations for each task avoid interference, but they must learn each new task from scratch, which is slower and fails to build on previously acquired knowledge.

Future research must move towards causal modeling to infer and test causality in neural circuits, going beyond correlational decoding [16]. Furthermore, developing foundational internal world models that can learn hierarchical behavioral representations from rich, large-scale datasets is a promising direction. Such models could support flexible downstream decoding tasks that generalize robustly across contexts [16]. Finally, embracing and formally modeling individual differences in learning strategies—the natural variation between "lumpers" who generalize and "splitters" who separate—will be key to creating neural decoders that work reliably for everyone [30].

Architectural Solutions: Building Subject-Invariant Neural Decoders

The field of neural decoding, which aims to translate brain activity into interpretable information, has undergone a profound transformation. The shift from models relying on carefully hand-engineered features to those using deep learning to automatically discover representations has fundamentally altered the landscape of brain-computer interfaces and computational neuroscience. This revolution is particularly evident in the critical challenge of cross-participant generalization—the ability of a model trained on one set of individuals to perform accurately on entirely new subjects. This guide compares the performance, methodologies, and real-world applicability of these competing paradigms.

Defining the Paradigms: Handcrafted vs. Deep Learned Features

At its core, the difference between the two approaches lies in the origin of the features used for decoding.

- Hand-Engineered Features (Handcrafted): This traditional paradigm relies on domain expertise. Researchers manually extract specific, pre-defined characteristics from the neural signal. Common examples include spectral power in specific frequency bands, signal entropy, or waveform shapes. A model, such as a Support Vector Machine (SVM) or Linear Discriminant Analysis (LDA), is then trained on these curated features for classification or regression [34] [19].

- Learned Representations (Deep Learning): In this modern paradigm, deep neural networks (e.g., CNNs, Transformers) are fed raw or minimally processed neural data. The network's multiple layers automatically learn a hierarchy of features—from simple to complex—directly from the data, optimizing them for the end task without human intervention [7] [19].
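As a concrete illustration of the handcrafted route, the sketch below computes log band power per channel from a simple periodogram. The band edges, sampling rate, and function names are assumptions chosen for the example rather than values from the cited studies; the resulting feature vector is what would be passed to a classifier such as an SVM or LDA.

```python
import numpy as np

# Assumed frequency bands in Hz; conventions vary between studies.
BANDS = {"theta": (4, 8), "alpha": (8, 13), "beta": (13, 30)}

def band_power(signal, fs, band):
    """Mean periodogram power of a 1-D signal within [low, high) Hz."""
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(signal - signal.mean())) ** 2 / (fs * signal.size)
    low, high = band
    mask = (freqs >= low) & (freqs < high)
    return float(psd[mask].mean())

def handcrafted_features(epoch, fs, eps=1e-12):
    """epoch: (n_channels, n_samples) -> log band power, ordered channel by channel.

    eps guards against log(0) for bands with no power.
    """
    return np.array(
        [np.log(band_power(channel, fs, band) + eps)
         for channel in epoch
         for band in BANDS.values()]
    )

# Synthetic two-channel epoch: 10 Hz (alpha) and 20 Hz (beta) rhythms plus noise.
fs = 250
t = np.arange(2 * fs) / fs
rng = np.random.default_rng(0)
epoch = np.stack([
    np.sin(2 * np.pi * 10 * t) + 0.1 * rng.normal(size=t.size),
    np.sin(2 * np.pi * 20 * t) + 0.1 * rng.normal(size=t.size),
])
features = handcrafted_features(epoch, fs)  # [ch0 theta, alpha, beta, ch1 theta, alpha, beta]
```

Every choice in this pipeline (which bands, which channels, log scaling) is made by the researcher, whereas a deep network receiving the same epoch would be expected to learn comparable filters from data.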

The table below summarizes the fundamental distinctions between these two approaches.

| Characteristic | Hand-Engineered Features | Deep Learned Representations |

|---|---|---|