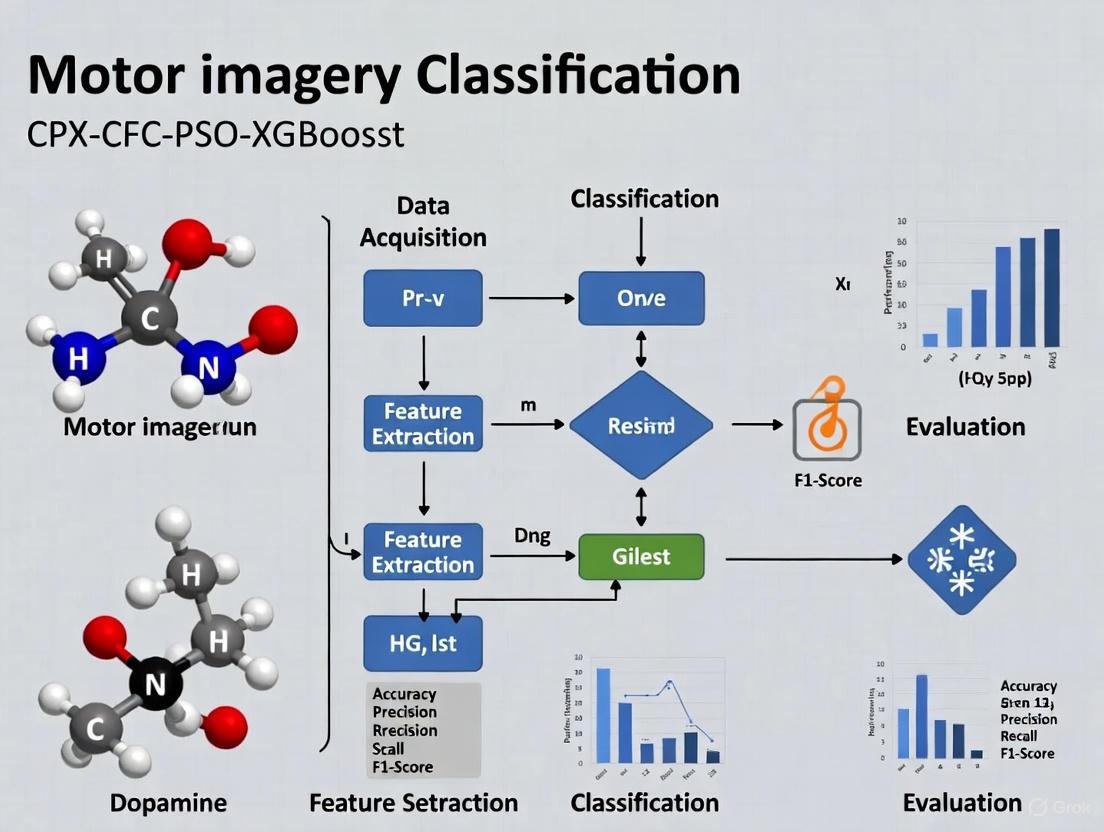

CPX CFC-PSO-XGBoost: An Optimized Framework for High-Accuracy Motor Imagery EEG Classification in Biomedical Applications

Abstract

This article introduces the CPX (CFC-PSO-XGBoost) framework, a computational approach designed to address two significant challenges in Motor Imagery (MI)-based Brain-Computer Interface (BCI) systems: the high inter-subject variability and the low signal-to-noise ratio of Electroencephalography (EEG) data. The framework combines Cross-Frequency Coupling (CFC) for feature extraction, Particle Swarm Optimization (PSO) for EEG channel selection, and the eXtreme Gradient Boosting (XGBoost) algorithm for classification. We detail its methodological foundation, provide experimental protocols that address common implementation pitfalls in biomedical signal processing, and compare it against contemporary deep learning and conventional machine learning models using public BCI competition datasets. The reported results indicate that the framework achieves competitive accuracy and robustness with a reduced channel count, offering a useful tool for researchers and developers in neuroinformatics and clinical rehabilitation.

Foundations of Motor Imagery BCI and the Need for Advanced Computational Frameworks

Core Principles of Motor Imagery Brain-Computer Interfaces

Motor Imagery Brain-Computer Interfaces (MI-BCIs) represent a transformative technology that enables direct communication between the human brain and external devices through the mental rehearsal of physical movements without any motor execution. This technology leverages the discovery that imagined movements activate similar neural substrates in the motor cortex as actual physical movements, particularly through modulations of sensorimotor rhythms (SMRs) [1]. These rhythmic patterns, which include mu rhythms (8-13 Hz) and beta rhythms (13-30 Hz), exhibit characteristic changes during motor imagery that can be detected and classified to control assistive devices, rehabilitation tools, and communication systems [1].

The fundamental neurophysiological phenomena underlying MI-BCI operation are Event-Related Desynchronization (ERD) and Event-Related Synchronization (ERS). ERD manifests as a decrease in oscillatory power in the mu and beta frequency bands over the sensorimotor cortex during motor imagery, reflecting an activated cortical state engaged in movement preparation [2]. Conversely, ERS typically occurs after movement cessation or during recovery, appearing as a relative power increase in these bands [2]. This ERD/ERS paradigm provides the primary neural correlates that MI-BCI systems decode to translate intention into action, creating a direct pathway from cognitive process to device control that bypasses compromised peripheral nerves and muscles [2] [1].

Table 1: Key Neurophysiological Signals in MI-BCIs

| Signal Type | Frequency Range | Cortical Location | Functional Correlation |
|---|---|---|---|
| Mu Rhythm (Lower) | 7-10 Hz | Sensorimotor Cortex | Movement inhibition, readiness |
| Mu Rhythm (Higher) | 10-13 Hz | Sensorimotor Cortex | Movement preparation |
| Beta Rhythm (Lower) | 12-20 Hz | Sensorimotor Cortex | Movement planning, execution |
| Beta Rhythm (Higher) | 20-30 Hz | Sensorimotor Cortex | Somatosensory processing |
| Gamma Rhythm | 30-200 Hz | Widespread | Higher cognitive processing |

The CPX CFC-PSO-XGBoost Framework: An Advanced Classification Approach

The CPX (CFC-PSO-XGBoost) framework represents a significant methodological advancement in MI-BCI signal classification, specifically designed to address the challenges of low signal-to-noise ratio and high inter-subject variability in EEG signals. This integrated pipeline combines three innovative components to achieve enhanced classification performance with reduced channel requirements [3].

The first component, Cross-Frequency Coupling (CFC), moves beyond traditional single-frequency band analysis by capturing interactions between different oscillatory rhythms. Specifically, the framework employs Phase-Amplitude Coupling (PAC) to examine how the phase of lower frequency oscillations (e.g., theta or alpha rhythms) modulates the amplitude of higher frequency oscillations (e.g., gamma rhythms). This approach recognizes that complex cognitive processes like motor imagery involve coordinated activity across multiple frequency bands, and CFC features provide a more comprehensive representation of these neural dynamics [3].

The second component implements Particle Swarm Optimization (PSO) for intelligent channel selection. This bio-inspired algorithm identifies an optimal subset of EEG channels (typically around eight) that contribute most significantly to classification accuracy, thereby reducing system complexity while maintaining performance. This optimization addresses a critical practical constraint in BCI applications by minimizing setup time and improving user comfort without compromising signal quality [3].

The final component utilizes the XGBoost (Extreme Gradient Boosting) classifier, a powerful machine learning algorithm that builds an ensemble of decision trees with regularization to prevent overfitting. This classifier demonstrates particular efficacy in handling the high-dimensional feature spaces derived from CFC analysis while providing interpretable feature importance metrics that offer insights into the most discriminative neural features for motor imagery classification [3].

In validation studies, the CPX framework has demonstrated 76.7% ± 1.0% classification accuracy for two-class MI problems using only eight EEG channels, outperforming traditional approaches like Common Spatial Patterns (CSP: 60.2% ± 12.4%) and FBCNet (68.8% ± 14.6%) [3]. This performance advantage highlights the value of integrating cross-frequency interactions with optimized channel selection and powerful classification algorithms.

Clinical Applications and Therapeutic Potential

MI-BCI technology holds particular promise for neurorehabilitation, where it can facilitate recovery through targeted activation of compromised neural circuits. In stroke rehabilitation, MI-BCI systems create a closed-loop environment where patients' motor imagery attempts are detected and translated into actuation by robotic exoskeletons, providing both physical movement and visual/auditory feedback that reinforces damaged sensorimotor pathways [2]. This approach harnesses the brain's inherent neuroplasticity by repeatedly engaging the motor network in a way that mimics actual movement, potentially driving cortical reorganization and functional recovery [2] [4].

Pilot studies have demonstrated the clinical feasibility of this approach. A 2025 investigation involving ischemic stroke patients showed that MI-BCI training combined with robotic hand assistance resulted in significant improvements in motor function across all participants [2]. EEG analysis confirmed the presence of event-related desynchronization in the high-alpha band power at motor cortex locations during training sessions, providing neural evidence of motor cortex engagement during the rehabilitation process [2].

Beyond stroke, MI-BCI applications are expanding to address a spectrum of neurological conditions. Research is exploring their potential for patients with cerebral palsy, Parkinson's disease, spinal cord injuries, and other conditions affecting motor function [5] [4]. The technology also shows promise for communication systems for individuals with complete locked-in syndrome, offering an alternative channel for interaction when all voluntary muscle control is lost [1].

Table 2: Clinical Applications of MI-BCI Technology

| Clinical Condition | Application Focus | Reported Outcomes |
|---|---|---|
| Ischemic Stroke | Upper limb rehabilitation | Significant motor function improvements, ERD patterns in motor cortex [2] |
| Spinal Cord Injury | Communication and environmental control | Restoring interaction capabilities, promoting neural plasticity |
| Cerebral Palsy | Motor function rehabilitation | Utilizing shared neural mechanisms between MI and ME [5] |
| Parkinson's Disease | Gait and movement rehabilitation | Potential for improving motor planning and execution [5] |
| Amyotrophic Lateral Sclerosis | Communication systems | Alternative channel for interaction in advanced disease stages |

Experimental Protocols and Methodologies

Participant Selection and Preparation

Robust MI-BCI research requires careful participant selection and standardization. Studies typically recruit right-handed participants with normal or corrected-to-normal vision and no history of neurological or psychiatric disorders. For clinical populations, specific inclusion criteria apply, such as confirmed ischemic stroke diagnosis via neuroimaging, Brunnstrom recovery stage ≤4 for upper limb function, and sufficient cognitive capacity (MMSE ≥18) to understand and execute tasks [2]. Prior to experimentation, participants receive comprehensive instructions about MI techniques, often supplemented by body awareness training protocols integrating mindfulness and physical exercises to enhance MI performance [1].

Data Acquisition Parameters

High-quality EEG acquisition forms the foundation of reliable MI-BCI systems. Research-grade systems typically employ 64-channel caps arranged according to the international 10-20 system, with sampling rates ≥250 Hz and appropriate impedance thresholds (<5 kΩ) [6]. Additional electrodes for electrooculogram (EOG) and electrocardiogram (ECG) recording are recommended for artifact identification and removal. The experimental environment should be electrically shielded and acoustically dampened to minimize external interference, with consistent lighting conditions maintained across sessions [6].

Motor Imagery Paradigm Design

Standardized MI paradigms typically employ cue-based designs with balanced trial structures. A common approach includes: (1) a pre-trial rest period (2.0-2.5 seconds) with fixation cross; (2) visual and/or auditory cue presentation (1.0-1.5 seconds) indicating the required imagery task; (3) motor imagery execution period (3.0-4.0 seconds); and (4) post-imagery rest period (2.0-3.0 seconds) [5] [6]. Tasks typically focus on unilateral hand movements (e.g., grasping, opening) or foot movements, with trial counts ranging from 40-100 per class per session to ensure adequate data for model training while minimizing fatigue effects [6].
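The trial structure above can be sketched as a small schedule generator. This is only an illustration: the function name `build_schedule`, the class labels, and the choice to jitter each phase uniformly within the quoted range are assumptions, not part of any cited protocol.

```python
import random

def build_schedule(classes, trials_per_class, seed=0):
    """Return a shuffled, class-balanced list of trials with phase durations in seconds."""
    rng = random.Random(seed)
    labels = [c for c in classes for _ in range(trials_per_class)]
    rng.shuffle(labels)  # randomize cue order while keeping classes balanced
    schedule = []
    for label in labels:
        schedule.append({
            "label": label,
            "rest_pre": rng.uniform(2.0, 2.5),   # fixation cross
            "cue": rng.uniform(1.0, 1.5),        # visual and/or auditory cue
            "imagery": rng.uniform(3.0, 4.0),    # motor imagery period
            "rest_post": rng.uniform(2.0, 3.0),  # post-imagery rest
        })
    return schedule

# Hypothetical two-class session with 40 trials per class.
schedule = build_schedule(["left_hand", "right_hand"], trials_per_class=40)
total_minutes = sum(
    sum(v for k, v in trial.items() if k != "label") for trial in schedule
) / 60.0
```

Summing the phase durations gives a quick estimate of session length, which helps when balancing trial count against participant fatigue.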

Essential Research Reagent Solutions

Table 3: Key Research Tools and Technologies for MI-BCI Development

| Category | Specific Solution | Function/Purpose |
|---|---|---|
| EEG Hardware | Neuracle EEG Systems (64-channel) | High-quality signal acquisition with portability [6] |
| EEG Hardware | Emotiv EPOC X | Low-cost, mobile neurotechnology applications [1] |
| Signal Processing | RxHEAL BCI Hand Rehabilitation System | Integrated MI-BCI training with robotic feedback [2] |
| Data Resources | WBCIC-MI Dataset (62 subjects, 3 sessions) | Cross-session and cross-subject algorithm validation [6] |
| Data Resources | BCI Competition IV-2a Dataset | Benchmark for multi-class MI classification [3] |
| Classification Algorithms | CPX (CFC-PSO-XGBoost) Pipeline | Enhanced accuracy with optimized channel selection [3] |
| Classification Algorithms | EEGNet | Deep learning approach for EEG classification [6] |
| Validation Framework | MOABB (Mother of All BCI Benchmarks) | Standardized performance comparison across algorithms |

Visualizing MI-BCI Workflows

[Figures not reproduced: CPX Framework Classification Pipeline; Motor Imagery Experimental Protocol; Clinical Rehabilitation Feedback Loop.]

Motor Imagery-based Brain-Computer Interfaces (MI-BCIs) represent a transformative technology that enables direct communication between the human brain and external devices by decoding neural activity associated with imagined movements [7]. Despite significant advances, two persistent challenges critically limit their widespread adoption and practical efficacy: inter-subject variability and the low signal-to-noise ratio (SNR) of electroencephalography (EEG) signals. Inter-subject variability refers to the significant differences in EEG patterns across different users, caused by factors such as age, gender, brain anatomy, and living habits, which severely degrade the generalization capability of machine learning models [8] [9]. Meanwhile, the inherently low SNR of non-invasive EEG signals, stemming from their weak amplitude and contamination by various biological and environmental artifacts, poses fundamental limitations on classification accuracy and system robustness [7] [3]. This application note examines these interconnected challenges within the context of the emerging CPX (CFC-PSO-XGBoost) framework and other contemporary solutions, providing detailed protocols and analytical tools to advance MI-BCI research.

Understanding the Fundamental Challenges

The Problem of Inter-Subject Variability

Inter-subject variability presents a fundamental obstacle to developing generalized MI-BCI systems. Research has demonstrated that the feature distribution of EEG signals changes significantly across individuals, meaning a model trained on one subject typically performs poorly on another [8]. This variability arises from neurophysiological factors including skull conductivity differences, cortical thickness variations, and unique brain topographies [9]. Studies have revealed that time-frequency responses of EEG signals are more consistent within the same subject across sessions than between different subjects, suggesting that cross-subject and cross-session transfer learning may require fundamentally different approaches [9]. The consequence is the "BCI inefficiency" problem, where approximately 10-50% of users cannot operate standard MI-BCI systems effectively [9].

The Low Signal-to-Noise Ratio Problem

EEG signals captured non-invasively from the scalp surface typically exhibit extremely low SNR, characterized by weak signal strength (microvolts) contaminated by multiple noise sources [7]. These noise sources include physiological artifacts (ocular movements, muscle activity, cardiac rhythms) and environmental interference (line noise, improper electrode contact). This noise contamination obscures the neural patterns of interest, particularly event-related desynchronization/synchronization (ERD/ERS) phenomena in sensorimotor rhythms that are crucial for MI detection [10]. The non-stationary nature of EEG signals further complicates this issue, as statistical properties change over time even within the same recording session [7].

Table 1: Quantitative Performance of Recent MI-BCI Frameworks Addressing Key Challenges

| Framework/Model | Core Innovation | Within-Subject Accuracy | Cross-Subject Accuracy | Key Application Advantage |
|---|---|---|---|---|
| HA-FuseNet [7] | Multi-scale feature fusion + hybrid attention | 77.89% (BCI IV-2A) | 68.53% (BCI IV-2A) | Robustness to spatial resolution variations |
| CPX (CFC-PSO-XGBoost) [3] | Cross-frequency coupling + optimized channel selection | 76.7% ± 1.0% | 78.3% (external validation on BCI IV-2A) | Effective with only 8 EEG channels |
| Dual-CNN with Cortical Mapping [11] | Cortex-based electrode projection + hemispheric difference | 96.36% (group-level, Physionet) | 98.88% (best individual) | High accuracy on individual subjects |
| DWGC-SVM fMRI Approach [12] | Dynamic Granger causality + effective connectivity | 69.3% (3-class) | N/A | Reduced latency in real-time decoding |

Integrated Methodological Approaches for Challenge Mitigation

The CPX Framework: CFC-PSO-XGBoost

The CPX pipeline represents an integrated methodology specifically designed to address both SNR limitations and inter-subject variability through a structured approach combining novel feature extraction and channel optimization.

Phase-Amplitude Coupling (PAC) for CFC Feature Extraction

Cross-frequency coupling (CFC) analysis moves beyond traditional single-frequency band features by capturing interactions between different oscillatory components in the EEG signal [3]. The protocol involves:

- Signal Preprocessing: Bandpass filtering raw EEG to isolate relevant frequency bands (mu: 8-13 Hz, beta: 14-30 Hz, gamma: >30 Hz)

- PAC Computation: For each channel, extract the phase of low-frequency oscillations and amplitude of high-frequency oscillations using Hilbert transform

- Coupling Quantification: Calculate modulation index between phase and amplitude sequences to generate CFC features

This approach captures non-linear neural dynamics that traditional power spectral features miss, providing more discriminative features for MI classification [3].
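The three PAC steps can be sketched for a single channel as follows. This is a minimal illustration, not the CPX authors' implementation: it assumes the entropy-based (Tort-style) modulation index, 18 phase bins, a zero-phase Butterworth filter, and synthetic test signals, none of which are specified in the source.

```python
import numpy as np
from scipy.signal import butter, filtfilt, hilbert

def bandpass(x, low, high, fs, order=4):
    """Zero-phase Butterworth band-pass filter."""
    b, a = butter(order, [low, high], btype="band", fs=fs)
    return filtfilt(b, a, x)

def modulation_index(x, fs, phase_band, amp_band, n_bins=18):
    """Entropy-based PAC: 0 means no coupling, larger values mean stronger coupling."""
    phase = np.angle(hilbert(bandpass(x, *phase_band, fs)))  # low-frequency phase
    amp = np.abs(hilbert(bandpass(x, *amp_band, fs)))        # high-frequency envelope
    edges = np.linspace(-np.pi, np.pi, n_bins + 1)
    bin_idx = np.clip(np.digitize(phase, edges) - 1, 0, n_bins - 1)
    sums = np.bincount(bin_idx, weights=amp, minlength=n_bins)
    counts = np.bincount(bin_idx, minlength=n_bins)
    mean_amp = sums / np.maximum(counts, 1)   # mean envelope per phase bin
    p = mean_amp / mean_amp.sum()             # normalize to a distribution
    entropy = -np.sum(p * np.log(p + 1e-12))
    return (np.log(n_bins) - entropy) / np.log(n_bins)

# Synthetic check: a 40 Hz rhythm whose amplitude follows a 10 Hz rhythm.
fs = 250
t = np.arange(0, 10, 1 / fs)
rng = np.random.default_rng(0)
slow = np.sin(2 * np.pi * 10 * t)
noise = 0.1 * rng.standard_normal(t.size)
x_coupled = slow + 0.5 * (1 + slow) * np.sin(2 * np.pi * 40 * t) + noise
x_uncoupled = slow + 0.5 * np.sin(2 * np.pi * 40 * t) + noise

mi_coupled = modulation_index(x_coupled, fs, (8, 13), (25, 55))
mi_uncoupled = modulation_index(x_uncoupled, fs, (8, 13), (25, 55))
```

The amplitude band is deliberately wide enough to contain the modulation sidebands (here 30 and 50 Hz); a band narrower than twice the phase frequency would filter out the very envelope fluctuations the index is meant to detect.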

Particle Swarm Optimization for Channel Selection

PSO addresses both computational efficiency and inter-subject variability by identifying optimal channel subsets:

- Initialization: Initialize particle population with random channel subsets (e.g., 8-channel combinations)

- Fitness Evaluation: Assess classification accuracy using XGBoost with CFC features for each subset

- Particle Update: Iteratively update particle positions toward locally and globally optimal solutions

- Convergence: Select final channel configuration when fitness improvement falls below threshold

This optimization reduces channel count while maintaining performance, directly addressing practical deployment constraints [3].
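The four PSO steps can be sketched as below. Several choices here are assumptions made for illustration rather than details from [3]: particles hold one continuous score per channel and the subset is decoded as the top-k scores, the inertia and acceleration constants are common defaults, and `toy_fitness` is a hypothetical stand-in for the cross-validated XGBoost accuracy that the real fitness evaluation would compute.

```python
import numpy as np

def pso_select_channels(fitness, n_channels, k=8, n_particles=20, n_iter=50,
                        w=0.7, c1=1.5, c2=1.5, tol=1e-6, patience=10, seed=0):
    """Search for a k-channel subset that maximizes fitness(subset_indices)."""
    rng = np.random.default_rng(seed)

    def decode(scores):
        return np.sort(np.argsort(scores)[-k:])  # top-k scores -> channel subset

    # Initialization: random positions, i.e. random channel subsets.
    pos = rng.random((n_particles, n_channels))
    vel = np.zeros_like(pos)
    pbest = pos.copy()
    pbest_fit = np.array([fitness(decode(p)) for p in pos])
    g = pbest_fit.argmax()
    gbest, gbest_fit = pbest[g].copy(), pbest_fit[g]

    stall = 0
    for _ in range(n_iter):
        # Particle update: pull toward personal and global bests.
        r1, r2 = rng.random(pos.shape), rng.random(pos.shape)
        vel = w * vel + c1 * r1 * (pbest - pos) + c2 * r2 * (gbest - pos)
        pos = pos + vel
        # Fitness evaluation for every particle's current subset.
        fit = np.array([fitness(decode(p)) for p in pos])
        improved = fit > pbest_fit
        pbest[improved], pbest_fit[improved] = pos[improved], fit[improved]
        g = pbest_fit.argmax()
        # Convergence: stop once the global best stalls for `patience` iterations.
        if pbest_fit[g] > gbest_fit + tol:
            gbest, gbest_fit, stall = pbest[g].copy(), pbest_fit[g], 0
        else:
            stall += 1
            if stall >= patience:
                break
    return decode(gbest), float(gbest_fit)

# Hypothetical fitness: fraction of selected channels that lie in an
# "informative" set. Replace with cross-validated classifier accuracy in practice.
informative = {7, 8, 9, 10, 11, 12, 13, 14}

def toy_fitness(subset):
    return len(informative.intersection(subset.tolist())) / len(subset)

channels, best_fit = pso_select_channels(toy_fitness, n_channels=22, k=8)
```

Because every fitness call would retrain a classifier in a real pipeline, the particle count and iteration budget dominate the cost of this step.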

HA-FuseNet Architecture for Robust Feature Learning

HA-FuseNet implements a dual-pathway architecture combining DIS-Net (CNN-based) and LS-Net (LSTM-based) to extract complementary spatio-temporal features [7]. The model incorporates:

- Multi-scale dense connectivity for capturing diverse temporal patterns

- Hybrid attention mechanisms for emphasizing task-relevant features

- Global self-attention modules for modeling long-range dependencies

- Lightweight design reducing computational overhead for potential real-time application

Diagram 1: Integrated MI-BCI Processing Pipeline Showing Key Stages from Signal Acquisition to Application

Experimental Protocols for Addressing MI-BCI Challenges

Protocol 1: Inter-Subject Variability Assessment

Objective: Quantify and characterize inter-subject variability in MI patterns to inform model development.

Materials and Setup:

- EEG acquisition system (64+ channels recommended, e.g., BrainAmp, g.tec)

- Electrode cap positioned according to 10-10 international system

- Visual cue presentation system (monitor or VR headset)

- BCI2000, OpenVibe, or custom stimulus presentation software

Procedure:

- Participant Preparation: Recruit 10+ subjects with balanced gender representation. Apply conductive gel to achieve electrode-scalp impedance <10 kΩ.

- Experimental Paradigm:

  - Implement cue-based MI task with random presentation of left hand, right hand, feet, and tongue imagery

  - Use fixed inter-trial intervals (4-6 s) with visual fixation cross between trials

  - Record minimum of 40 trials per MI class per subject

- Data Acquisition:

  - Sample at ≥256 Hz with appropriate hardware filtering (0.1-100 Hz)

  - Record continuous EEG with event markers synchronized to stimulus onset

- Variability Analysis:

  - Compute time-frequency representations (ERD/ERS) for each subject and class

  - Apply Common Spatial Patterns (CSP) and compare feature distributions across subjects

  - Perform statistical testing (ANOVA) on feature variance between within-subject and cross-subject conditions

Expected Outcomes: Quantitative measures of inter-subject variability in temporal, spectral, and spatial domains, informing personalized model adjustments.

Protocol 2: SNR Enhancement Through Cortical Mapping

Objective: Improve effective SNR through source reconstruction and cortical projection.

Materials and Setup:

- High-density EEG system (64+ channels)

- Structural MRI for individual head models (or use template like colin27)

- Boundary Element Method (BEM) forward model implementation

- Weighted Minimum Norm Estimation (WMNE) software

Procedure:

- Data Collection: Acquire standard MI-EEG data as in Protocol 1

- Forward Modeling:

  - Create individual head model with BEM incorporating scalp, skull, and brain compartments

  - Compute leadfield matrix defining sensitivity of each electrode to cortical sources

- Inverse Solution:

  - Apply WMNE to estimate cortical source activity from scalp potentials

  - Define Regions of Interest (ROIs) over sensorimotor cortex

- Virtual Electrode Creation:

  - Project sensor-level signals to cortical surface

  - Create symmetric left-right hemisphere ROI pairs for differential analysis

- Validation:

  - Compare classification accuracy between sensor-space and source-space features

  - Assess cross-subject consistency of source-space MI patterns

Expected Outcomes: Significant improvement in SNR and inter-subject consistency through cortical signal reconstruction [11].
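The inverse-solution step of Protocol 2 can be illustrated with a textbook weighted minimum-norm operator, K = R Lᵀ (L R Lᵀ + λI)⁻¹, where L is the leadfield and R is a diagonal source weighting. This is a sketch under stated assumptions: the leadfield is random rather than BEM-derived, the column-norm weighting and the trace-scaled regularization are common conventions rather than details from [11], and the ROI index ranges are hypothetical.

```python
import numpy as np

def wmne_inverse(leadfield, lam=0.1):
    """Weighted minimum-norm inverse operator.

    leadfield: (n_sensors, n_sources). Returns K with shape
    (n_sources, n_sensors) so that sources = K @ scalp_potentials.
    """
    col_norm = np.linalg.norm(leadfield, axis=0)
    R = np.diag(1.0 / col_norm ** 2)            # down-weights the bias toward strong (superficial) sources
    gram = leadfield @ R @ leadfield.T          # (n_sensors, n_sensors), symmetric
    reg = lam * np.trace(gram) / gram.shape[0]  # regularization scaled to the data
    regularized = gram + reg * np.eye(gram.shape[0])
    # solve(G, L).T equals L.T @ inv(G) because G is symmetric.
    return R @ np.linalg.solve(regularized, leadfield).T

rng = np.random.default_rng(0)
n_sensors, n_sources, n_samples = 32, 200, 100
L = rng.standard_normal((n_sensors, n_sources))  # stand-in for a BEM leadfield
scalp = rng.standard_normal((n_sensors, n_samples))

K = wmne_inverse(L, lam=1e-8)
sources = K @ scalp

# Hypothetical symmetric ROI pair: differential "virtual electrode" signal.
left_roi, right_roi = np.arange(0, 20), np.arange(20, 40)
roi_diff = sources[left_roi].mean(axis=0) - sources[right_roi].mean(axis=0)
```

With near-zero regularization the reconstructed sources reproduce the scalp data almost exactly; real recordings need a larger `lam` to avoid fitting sensor noise.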

Implementing effective MI-BCI research requires specialized tools and resources. The following table details critical components for establishing a capable research pipeline.

Table 2: Research Reagent Solutions for MI-BCI Implementation

| Reagent/Resource | Purpose | Example Products/Implementations |
|---|---|---|
| EEG Acquisition Systems | Signal recording with optimal temporal resolution | BrainAmp, g.tec, BioSemi, Emotiv, Neuroscan |
| Signal Processing Toolboxes | Preprocessing, feature extraction, classification | EEGLab, BCILAB, MNE-Python, OpenBMI |
| Cortical Mapping Tools | Source reconstruction for SNR improvement | BrainStorm, SPM, FieldTrip, NUTMEG |
| BCI Experiment Platforms | Stimulus presentation and data synchronization | BCI2000, OpenVibe, PsychToolbox, Unity |
| Machine Learning Libraries | Implementation of classification algorithms | Scikit-learn, XGBoost, TensorFlow, PyTorch |
| Validation Datasets | Benchmarking algorithm performance | BCI Competition IV-2A, Physionet EEGMMIDB |

Visualization and Analytical Tools

Diagram 2: CPX Framework Architecture Showing CFC Feature Extraction, PSO Optimization, and XGBoost Classification Stages

The dual challenges of inter-subject variability and low SNR continue to drive innovation in MI-BCI research. Frameworks like CPX and HA-FuseNet demonstrate that integrated approaches combining advanced feature extraction, channel optimization, and attention mechanisms can significantly improve both within-subject and cross-subject performance. The experimental protocols and analytical tools presented here provide a foundation for systematic investigation of these challenges. Future research directions should focus on adaptive learning systems that continuously adjust to individual user characteristics, hybrid approaches combining EEG with other modalities, and standardized benchmarking methodologies to enable direct comparison of solution strategies. Addressing these technical challenges will bring practical, robust MI-BCI systems for neurorehabilitation and human-computer interaction closer to deployment.

Sensorimotor rhythms (SMR) are oscillatory brain activities observed primarily over sensorimotor cortical areas. The most studied components include the rolandic mu rhythm (8–12 Hz, also termed "central alpha") and beta rhythms (13–30 Hz) [13] [14]. These rhythms exhibit characteristic power changes during motor and cognitive tasks, known as Event-Related Desynchronization (ERD) and Event-Related Synchronization (ERS) [13]. ERD represents a decrease in oscillatory power, correlating with cortical activation during processes like motor planning and execution. Conversely, ERS represents a power increase, often associated with cortical deactivation or idling, such as following movement termination [13] [14]. These phenomena are not limited to active movement but also occur during passive movement, motor imagery, and movement observation [13], suggesting a complex role beyond mere motor execution. Recent evidence indicates that ERD/ERS patterns are not purely motor phenomena but reflect broader mechanisms common to both motor and cognitive functions, such as working memory and focused attention [13].

Neurophysiological Mechanisms and Functional Significance

The functional role of sensorimotor beta oscillations has been reinterpreted beyond the classic "idling" hypothesis, which viewed ERS simply as an inhibitory state of the sensorimotor system [13]. Current theories propose that beta ERD serves to release cortical inhibition, enabling movement execution or cognitive processing, while beta ERS helps maintain the current motor or cognitive set [13]. Furthermore, a metabolic perspective suggests that beta modulation, particularly ERS amplitude, reflects energy consumption necessary for use-dependent plasticity and learning processes [13]. This view is supported by links between beta power changes and GABAergic activity and lactate changes [13].

From a topological perspective, movement-related beta ERD/ERS dynamics are observed not only over sensorimotor areas but also over frontal and pre-frontal areas [13]. This broader distribution reinforces the concept that these oscillations are not merely a reflection of motor activity but are involved in processes common to motor and cognitive functions, potentially serving as a mechanism for attention-related processes needed to filter out irrelevant information [13].

Table 1: Key Frequency Bands and Their Functional Correlates in Sensorimotor Processing

| Frequency Band | Common Terminology | Primary Functional Correlates | ERD/ERS Significance |
|---|---|---|---|
| 8–12 Hz | Mu Rhythm, Central Alpha | Somatosensory processing; generation linked to primary somatosensory cortex [15]. | ERD during motor execution, motor imagery, and somatosensory stimulation [14] [15]. |
| 13–30 Hz | Beta Rhythm | Motor processing; generation linked to primary motor cortex [15]. | ERD during motor preparation/execution; post-movement beta rebound (ERS) [13] [14]. |
| 30–200 Hz | Gamma Rhythm | Prokinetic processes | Power increase (synchronization) during movement planning and execution [13]. |

Experimental Protocols for ERD/ERS Investigation

Protocol for Investigating Tactile Imagery-Induced ERD

Objective: To quantify ERD induced by tactile imagery (TI) in the somatosensory cortex and compare it with ERD during real tactile stimulation [15].

Subject Preparation:

- Recruit right-handed healthy volunteers with no history of neurological disorders.

- Obtain informed consent following ethical committee approval.

- Use a standard 32-channel EEG system (e.g., EMOTIV EEG) with electrodes placed according to the international 10-20 system. Ensure impedances are kept below 5 kΩ [16] [17] [18].

Experimental Design:

- The session consists of four conditions: Tactile Stimulation (TS), Control, Learning of TI, and TI.

- Each condition comprises randomly mixed trials (e.g., 20 trials per type). Total session duration should not exceed 90 minutes.

- Vibrotactile stimuli are delivered to the right hand during TS trials.

- Visual cues (pictograms) indicate the trial type (TS, TI, control, or reference state).

- During TI trials, participants imagine the vibrotactile sensation without physical stimulation.

- During control trials, the TS pictogram is shown but no stimulus is delivered and TI is not performed.

- Reference state (rst) trials require participants to mentally count objects on the screen to establish a baseline.

Data Analysis:

- Preprocess EEG data: apply band-pass filtering (e.g., 8–30 Hz) and artifact removal.

- Calculate ERD/ERS using the percentage of power decrease/increase relative to a reference period (e.g., the rst trials) [15].

- Perform time-frequency analysis focused on the mu (8–12 Hz) and beta (13–30 Hz) bands.

- Compare topographical maps of ERD, particularly over the contralateral somatosensory area (C3 electrode) [15].
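The ERD/ERS percentage in the analysis steps above is conventionally computed as (A − R) / R × 100, where A is band power during the task and R is band power in the reference period, so negative values indicate ERD and positive values ERS. A minimal single-channel sketch follows; the band-power method (filter, square, average over trials), the filter order, and the synthetic data are assumptions for illustration.

```python
import numpy as np
from scipy.signal import butter, filtfilt

def erd_percent(task_trials, ref_trials, fs, band=(8, 12), order=4):
    """ERD/ERS time course in percent for one channel (e.g. C3).

    task_trials, ref_trials: arrays of shape (n_trials, n_samples).
    """
    b, a = butter(order, band, btype="band", fs=fs)
    # Band-pass, square to get instantaneous power, average across trials.
    task_power = (filtfilt(b, a, task_trials, axis=-1) ** 2).mean(axis=0)
    ref_power = (filtfilt(b, a, ref_trials, axis=-1) ** 2).mean()
    return (task_power - ref_power) / ref_power * 100.0

# Synthetic example: the 10 Hz rhythm halves in amplitude during the task,
# so band power drops to a quarter and ERD should be close to -75 %.
rng = np.random.default_rng(0)
fs, n_trials, n_samples = 250, 20, 750
t = np.arange(n_samples) / fs

def synth(amplitude):
    phases = rng.uniform(0, 2 * np.pi, size=(n_trials, 1))
    rhythm = amplitude * np.sin(2 * np.pi * 10 * t + phases)
    return rhythm + 0.2 * rng.standard_normal((n_trials, n_samples))

ref = synth(1.0)
task = synth(0.5)
erd = erd_percent(task, ref, fs)
mean_erd = float(erd.mean())
```

Repeating the calculation with `band=(13, 30)` gives the beta-band time course, and running it per electrode yields the values needed for the topographical comparison.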

Protocol for a Motor Imagery BCI Paradigm

Objective: To classify motor imagery (MI) tasks for a Brain-Computer Interface (BCI) using EEG signals, leveraging ERD/ERS features [3] [18].

Subject Preparation:

- Participants sit in a comfortable armchair facing a computer screen.

- Apply a multi-channel EEG cap (e.g., 32-channel). Systems with fewer channels (e.g., 8) can be optimized for practicality [3] [18].

Experimental Design:

- The paradigm involves two-class motor imagery tasks (e.g., left hand vs. right hand).

- Each trial lasts 6–8 seconds: a fixation cross appears first, followed by a visual cue indicating the imagined movement, then the motor imagery period, and finally a rest period.

- Multiple trials (e.g., 60–100 per class) are collected per session.

Signal Processing and Classification (CPX Framework):

- Preprocessing: Filter raw EEG signals (e.g., 8–30 Hz) and remove artifacts.

- Feature Extraction using Cross-Frequency Coupling (CFC): Extract Phase-Amplitude Coupling (PAC) features to capture interactions between different frequency bands of spontaneous EEG signals [3].

- Channel Selection using Particle Swarm Optimization (PSO): Optimize electrode montage to identify a compact set of channels (e.g., 8 channels) without compromising classification performance [3].

- Classification using XGBoost: Employ the XGBoost algorithm to classify the motor imagery tasks based on the extracted CFC features. Validate performance using 10-fold cross-validation [3].
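The 10-fold validation step can be sketched independently of the classifier. To keep the example dependency-free, a nearest-centroid model stands in for XGBoost; that substitution, the helper names, and the synthetic feature matrix are all assumptions. In an actual pipeline the `fit` and `predict` callables would wrap an XGBoost classifier trained on the CFC features of the PSO-selected channels.

```python
import numpy as np

def stratified_kfold_indices(y, n_splits=10, seed=0):
    """Split sample indices into n_splits test folds with balanced class counts."""
    rng = np.random.default_rng(seed)
    folds = [[] for _ in range(n_splits)]
    for cls in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == cls))
        for i, chunk in enumerate(np.array_split(idx, n_splits)):
            folds[i].extend(chunk.tolist())
    return [np.array(sorted(f)) for f in folds]

def cross_validate(X, y, fit, predict, n_splits=10, seed=0):
    """Return mean and standard deviation of accuracy across folds."""
    scores = []
    for test_idx in stratified_kfold_indices(y, n_splits, seed):
        train_mask = np.ones(len(y), dtype=bool)
        train_mask[test_idx] = False            # hold out the current fold
        model = fit(X[train_mask], y[train_mask])
        scores.append(np.mean(predict(model, X[test_idx]) == y[test_idx]))
    return float(np.mean(scores)), float(np.std(scores))

# Stand-in classifier (NOT XGBoost): assign each sample to the nearest class centroid.
def fit_centroids(X, y):
    classes = np.unique(y)
    return classes, np.stack([X[y == c].mean(axis=0) for c in classes])

def predict_centroids(model, X):
    classes, centroids = model
    dist = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
    return classes[dist.argmin(axis=1)]

# Synthetic two-class feature matrix: 60 trials per class, 8 features.
rng = np.random.default_rng(0)
X = np.vstack([rng.standard_normal((60, 8)), rng.standard_normal((60, 8)) + 1.5])
y = np.repeat([0, 1], 60)
folds = stratified_kfold_indices(y)
mean_acc, std_acc = cross_validate(X, y, fit_centroids, predict_centroids)
```

If channel selection is tuned on the same data, it should be run inside each training fold rather than once on the full dataset; otherwise the reported accuracy is optimistically biased.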

Table 2: Key Methodology and Performance in Recent ERD/ERS and BCI Studies

| Study Focus | Core Methodology | Key Outcome Metrics |
|---|---|---|
| Tactile Imagery ERD [15] | Comparison of EEG during real vs. imagined vibrotactile stimulation; analysis of mu and beta ERD. | Significant contralateral ERD in the mu-band during both real and imagined tactile stimulation, most prominent at C3. |
| MI-BCI Classification (CPX Framework) [3] | CFC feature extraction, PSO channel selection, XGBoost classifier on spontaneous EEG. | Average accuracy: 76.7% ± 1.0% (two-class); 78.3% on external BCI Competition IV-2a dataset. |
| MI-BCI with Reduced Electrodes [18] | Elastic Net regression to predict full-channel (22) EEG from few central channels (8) for MI classification. | Average classification accuracy: 78.16% (range: 62.30% to 95.24%). |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials and Tools for ERD/ERS and MI-BCI Research

| Item / Technique | Specification / Example | Primary Function in Research |
|---|---|---|
| EEG Recording System | 32-channel EMOTIV EEG; Ag-AgCl electrodes [16]. | Records scalp electrical activity with high temporal resolution; essential for capturing oscillatory dynamics. |
| Electrode Placement Standard | International 10–20 system [16] [17]. | Ensures consistent and anatomically precise electrode placement across subjects and studies. |
| Impedance Control | Impedance kept below 5 kΩ [17] [18]. | Maximizes signal-to-noise ratio and reduces artifacts in the recorded EEG data. |
| Stimulation & Cueing Software | PsychoPy software [16]. | Presents visual cues and controls the timing of stimuli and task paradigms with high precision. |
| Tactile Stimulator | Vibrotactile stimulation device [15]. | Delivers controlled somatosensory stimuli to investigate real and imagined sensation processing. |
| Quantitative EEG (QEEG) Analysis | Automated feature extraction for posterior dominant rhythm, reactivity, symmetry, etc. [17]. | Provides objective, quantitative measures of background EEG properties and event-related changes. |

Visualization of Experimental Workflows and Neurophysiological Concepts

ERD/ERS in Cortical Processing

General Workflow for ERD/ERS Experiments

Motor Imagery (MI)-based Brain-Computer Interfaces (BCIs) have traditionally relied on a processing pipeline incorporating Common Spatial Patterns (CSP) for feature extraction, followed by classifiers such as Linear Discriminant Analysis (LDA) and Support Vector Machines (SVM). While this paradigm has formed the backbone of MI-BCI research for years, its limitations in handling the non-stationary, low signal-to-noise ratio nature of electroencephalography (EEG) data are increasingly apparent. This application note details the specific constraints of CSP, LDA, and SVM, framing them within the context of modern BCI development. We further provide validated experimental protocols for quantifying these limitations and highlight how emerging approaches, such as the CFC-PSO-XGBoost (CPX) framework, which leverages Cross-Frequency Coupling (CFC) and Particle Swarm Optimization (PSO), address these shortcomings to achieve superior classification accuracy above 76% with reduced channel counts [3].

The standard MI-BCI classification pipeline involves preprocessing EEG signals, extracting discriminative features using CSP, and classifying these features using linear or kernel-based classifiers like LDA and SVM [19] [20]. Common Spatial Patterns (CSP) is a spatial filtering technique designed to maximize the variance of one class while minimizing the variance of the other, effectively highlighting the event-related desynchronization/synchronization (ERD/ERS) patterns central to MI [21]. The resulting features are typically fed into Linear Discriminant Analysis (LDA), which finds a linear combination of features that best separates two or more classes, or Support Vector Machines (SVM), which constructs a hyperplane or set of hyperplanes in a high-dimensional space for classification [19] [22].

Despite their widespread adoption, these methods possess inherent weaknesses. CSP's performance is critically dependent on subject-specific frequency band selection and is sensitive to noise and outliers [23]. LDA assumes linear separability and Gaussian-distributed features, conditions rarely met by real-world EEG signals [19]. SVM is more robust, but its performance depends heavily on kernel choice and careful hyperparameter tuning, and its training cost grows quickly with dataset size [19] [22]. The following sections dissect these limitations in detail and provide protocols for their empirical validation.

Limitations of Common Spatial Patterns (CSP)

CSP's fundamental objective is to find spatial filters that maximize the variance difference between two classes of EEG signals. However, this strength is also the source of its primary weaknesses.

Table 1: Key Limitations of the CSP Algorithm

| Limitation | Description | Impact on Performance |

|---|---|---|

| Frequency Band Sensitivity | CSP performance is highly dependent on the subject-specific reactive frequency band for MI. Manual or suboptimal band selection severely degrades results [21]. | Leads to inconsistent performance across subjects and sessions, requiring individual calibration. |

| Noise and Outlier Sensitivity | As a variance-based method, CSP is highly sensitive to artifacts and outlier trials, which disproportionately influence the covariance matrix estimation [23]. | Reduced robustness and generalization; spatial filters may not represent true neural activity. |

| Limited to Two-Class Problems | The standard CSP formulation is inherently binary. Extension to multi-class problems requires complex, often suboptimal, ensemble approaches like One-vs-Rest [23]. | Complicates applications requiring more than two commands (e.g., left hand, right hand, foot, tongue). |

| Amplitude-Only Focus | CSP utilizes only amplitude (band power) information, entirely ignoring the phase information of EEG signals, which contains valuable discriminative content [24]. | Fails to capture the full complexity of neural dynamics, limiting the feature space. |

| Fixed Spatial Filters | CSP produces static spatial filters for a given calibration dataset, unable to adapt to non-stationarities in the EEG signal over time [21]. | Performance drops during long-term use without recalibration. |

Experimental Protocol: Demonstrating CSP's Frequency Sensitivity

Objective: To quantify the performance degradation of CSP when using a fixed, broad frequency band versus subject-specific optimized bands.

Materials:

- Public BCI Competition IV Dataset 2a.

- EEG data from subjects performing left-hand, right-hand, foot, and tongue MI tasks.

- Computing environment with MATLAB or Python (with Scikit-learn, MNE-Python).

Methodology:

- Data Preparation: Select data from two classes (e.g., left-hand vs. right-hand MI). Use the trial timings marked in the dataset (typically 0-4 seconds after cue).

- Spatial Filtering:

- Condition A (Fixed Band): Apply a bandpass filter with a broad, fixed frequency range (e.g., 8-30 Hz). Apply CSP to extract 4 spatial filters per class (8 total).

- Condition B (Optimized Band): Use a filter bank approach (e.g., 4-8 Hz, 8-12 Hz, ..., 24-28 Hz). Apply CSP in each sub-band and select the most discriminative features using a feature selection algorithm like Mutual Information [21] [22].

- Feature Extraction & Classification: For both conditions, compute the log-variance of the spatially filtered signals. Train an LDA classifier using 10-fold cross-validation.

- Analysis: Compare the average cross-validation accuracy between Condition A and Condition B across all subjects. Statistical significance can be assessed using a paired t-test.

Expected Outcome: Condition B (optimized bands) is expected to yield a statistically significant improvement in classification accuracy, demonstrating CSP's dependency on appropriate frequency selection.
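
The CSP and log-variance steps of this protocol can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation used in the cited studies: trials are assumed to be already band-pass filtered and stored as arrays of shape (trials, channels, samples), and the function names `csp_filters` and `log_variance_features` are hypothetical. Filter-bank construction and mutual-information feature selection are not shown.

```python
import numpy as np


def csp_filters(trials_a, trials_b, n_pairs=2):
    """Return 2 * n_pairs CSP spatial filters (one per row).

    trials_a, trials_b: arrays of shape (n_trials, n_channels, n_samples),
    assumed to be band-pass filtered already.
    """
    def mean_covariance(trials):
        # Trace-normalised spatial covariance, averaged over trials.
        covs = [x @ x.T / np.trace(x @ x.T) for x in trials]
        return np.mean(covs, axis=0)

    cov_a = mean_covariance(trials_a)
    cov_b = mean_covariance(trials_b)

    # Whiten the composite covariance so that cov_a + cov_b becomes identity.
    evals, evecs = np.linalg.eigh(cov_a + cov_b)
    whitening = np.diag(evals ** -0.5) @ evecs.T

    # Eigenvectors of the whitened class-A covariance (ascending eigenvalues).
    _, rotation = np.linalg.eigh(whitening @ cov_a @ whitening.T)
    filters = rotation.T @ whitening

    # First rows favour class B variance, last rows favour class A variance.
    n = filters.shape[0]
    keep = np.r_[np.arange(n_pairs), np.arange(n - n_pairs, n)]
    return filters[keep]


def log_variance_features(trials, filters):
    """Project trials through the filters and return normalised log-variance."""
    projected = np.einsum("fc,ncs->nfs", filters, trials)
    variance = projected.var(axis=2)
    return np.log(variance / variance.sum(axis=1, keepdims=True))
```

In Condition B the same two functions would be applied once per sub-band, with the resulting feature columns concatenated before feature selection.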

Limitations of LDA and SVM Classifiers

Even with optimally extracted CSP features, the choice of classifier introduces another layer of constraints.

Table 2: Comparative Limitations of LDA and SVM Classifiers in MI-BCI

| Classifier | Core Principle | Key Limitations in MI-BCI |

|---|---|---|

| Linear Discriminant Analysis (LDA) | Finds a linear projection that maximizes between-class variance and minimizes within-class variance. | Assumes data is linearly separable and features are normally distributed with equal covariance matrices—assumptions often violated by EEG data [19]. Highly sensitive to noise and outliers. Simple model may underfit complex, high-dimensional EEG feature spaces. |

| Support Vector Machine (SVM) | Finds the optimal hyperplane that maximizes the margin between classes in a transformed feature space. | Performance is highly sensitive to kernel and parameter selection (e.g., C, gamma) [19] [22]. Computationally expensive for large datasets, potentially hindering real-time application. The "black box" nature of non-linear kernels offers limited interpretability. |

Experimental Protocol: Comparing Classifier Robustness to Non-Linearity

Objective: To evaluate the performance of LDA and SVM against a non-linear tree-based classifier (XGBoost) on non-linearly separable, high-dimensional CSP features.

Materials:

- The same dataset and CSP features from the preceding protocol (Demonstrating CSP's Frequency Sensitivity).

- LDA, SVM (with linear and RBF kernels), and XGBoost classifiers.

Methodology:

- Feature Generation: Use the optimized CSP features (log-variance) from the previous protocol.

- Model Training & Evaluation:

- Train three classifiers: LDA, SVM (with hyperparameter tuning via grid search), and XGBoost.

- Evaluate all models using a subject-independent "leave-one-subject-out" (LOSO) cross-validation scheme to test generalizability [19].

- Analysis: Compare the average LOSO accuracy, precision, recall, and F1-score across all classifiers. Use ANOVA with post-hoc tests to determine significant differences.

Expected Outcome: LDA is expected to show lower performance compared to tuned SVM and XGBoost, particularly in the subject-independent scenario, highlighting its limitations with complex, non-Gaussian data. The CPX framework's use of XGBoost is designed to overcome this by naturally handling complex non-linear relationships [3].
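
The leave-one-subject-out scheme used in this protocol can be illustrated as follows. This is a sketch only: the nearest-class-mean classifier is a deliberately simple stand-in so the example stays self-contained, and in practice it would be replaced by LDA, SVM, or XGBoost. The names `loso_splits`, `loso_accuracy`, and `nearest_mean_fit_predict` are hypothetical.

```python
import numpy as np


def loso_splits(groups):
    """Yield (subject, train_idx, test_idx), holding out one subject at a time."""
    groups = np.asarray(groups)
    for subject in np.unique(groups):
        held_out = groups == subject
        yield subject, np.flatnonzero(~held_out), np.flatnonzero(held_out)


def nearest_mean_fit_predict(x_train, y_train, x_test):
    """Stand-in classifier: assign each test trial to the closest class mean."""
    classes = np.unique(y_train)
    means = np.stack([x_train[y_train == c].mean(axis=0) for c in classes])
    distances = ((x_test[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
    return classes[distances.argmin(axis=1)]


def loso_accuracy(x, y, groups, fit_predict):
    """Return a dict mapping each held-out subject to its test accuracy."""
    scores = {}
    for subject, train_idx, test_idx in loso_splits(groups):
        predictions = fit_predict(x[train_idx], y[train_idx], x[test_idx])
        scores[subject] = float(np.mean(predictions == y[test_idx]))
    return scores
```

Because every test trial comes from a subject the model has never seen, the per-subject scores reflect cross-subject generalization rather than within-subject fit.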

The Research Toolkit

Table 3: Essential Research Reagents and Tools for MI-BCI Research

| Item / Technique | Function / Description | Application in MI-BCI |

|---|---|---|

| Common Spatial Pattern (CSP) | Spatial filtering algorithm to maximize class separability based on signal variance [21]. | Extracting discriminative spatial features from multi-channel EEG during motor imagery. |

| Filter Bank CSP (FBCSP) | Extension of CSP that operates on multiple frequency sub-bands to handle frequency variability [21] [22]. | Improving robustness across subjects by automating the selection of discriminative frequency bands. |

| Particle Swarm Optimization (PSO) | A population-based stochastic optimizer in which candidate solutions (particles) move through the search space, guided by each particle's best position so far and by the swarm's best position [3]. | Optimizing channel selection and hyperparameters for classifiers to enhance performance and reduce computational load. |

| XGBoost (eXtreme Gradient Boosting) | An advanced, scalable tree-boosting system known for its speed and performance [3]. | Classifying MI tasks by modeling complex, non-linear relationships in high-dimensional feature spaces. |

| Cross-Frequency Coupling (CFC) | A method to analyze interactions between different neural oscillation frequencies, such as Phase-Amplitude Coupling (PAC) [3]. | Extracting more robust features that capture complex neural dynamics beyond traditional band power. |

| Relief-F Algorithm | A feature selection algorithm that estimates the quality of features based on how well their values distinguish between instances that are near to each other [22]. | Reducing feature dimensionality by selecting the most discriminative CSP or CFC features for classification. |

Workflow Visualization: Conventional vs. CPX Framework

The diagram below contrasts the traditional MI-BCI pipeline with the modern CPX framework, illustrating the conceptual advance.

Comparative Workflow: Conventional vs. CPX Framework. The conventional pipeline relies solely on CSP and simple classifiers, creating key bottlenecks (red nodes). The CPX framework introduces advanced feature extraction via CFC, uses PSO to intelligently select a minimal channel set, and leverages XGBoost's non-linear classification power (green nodes), resulting in a more robust and accurate system [3].

Conventional methods based on CSP, LDA, and SVM have laid a strong foundation for MI-BCI research but are hampered by significant limitations in robustness, adaptability, and performance. These constraints—including sensitivity to noise and frequency bands, reliance on linear assumptions, and poor generalizability in subject-independent scenarios—are quantifiable using the provided experimental protocols.

The emerging CPX framework directly addresses these shortcomings. By replacing CSP with Cross-Frequency Coupling (CFC) for more robust feature extraction, using Particle Swarm Optimization (PSO) for optimal channel selection, and employing XGBoost for powerful non-linear classification, it represents a paradigm shift. This integrated approach, which has demonstrated superior accuracy of 76.7% with only eight EEG channels, provides a more effective pathway for developing practical, high-performance BCI systems for both clinical and consumer applications [3]. Future work should focus on the real-time implementation and further validation of such advanced frameworks across diverse user populations.

The Emergence of Hybrid and Deep Learning Models in EEG Analysis

The field of electroencephalogram (EEG) analysis has been transformed by the emergence of hybrid and deep learning models, which offer unprecedented accuracy in decoding complex brain signals. These advanced computational approaches have demonstrated remarkable success across various applications, from diagnosing neurological disorders to enabling brain-computer interfaces (BCIs). Traditional machine learning methods for EEG analysis often relied on manually engineered features and struggled with the non-stationary, high-dimensional nature of neural data. The integration of multiple architectural paradigms within hybrid models has overcome these limitations, providing robust solutions for real-time processing and classification of brain activity patterns. This evolution is particularly evident in motor imagery classification, where frameworks like CPX (CFC-PSO-XGBoost) demonstrate how strategically combined algorithms can significantly enhance BCI performance [3]. This article examines the current landscape of hybrid deep learning approaches in EEG analysis, with specific focus on their architectural innovations, performance benchmarks, and implementation protocols.

Performance Comparison of Hybrid EEG Analysis Models

Table 1: Comparative Performance of Recent Hybrid EEG Models

| Model Name | Architecture Type | Application Domain | Accuracy (%) | Key Innovations |

|---|---|---|---|---|

| CPX (CFC-PSO-XGBoost) [3] | Feature Extraction + Optimization + Classification | Motor Imagery BCI | 76.7 ± 1.0 | Cross-Frequency Coupling, PSO channel selection |

| Hybrid Deep Learning Model [25] | Hybrid Deep Learning | Cognitive State Classification | 93.0 (intra-subject), 88.0 (inter-subject) | Multi-architecture integration |

| Multi-Feature Fusion + SVM-AdaBoost [26] | Feature Fusion + Ensemble Learning | Motor Imagery BCI | 95.37 | Multi-wavelet features, WOA optimization |

| HA-FuseNet [27] | CNN-LSTM with Attention | Motor Imagery Classification | 77.89 (within-subject), 68.53 (cross-subject) | Multi-scale dense connectivity, hybrid attention |

| ACXNet [28] | Autoencoder-CNN-XGBoost | Mental Workload Estimation | 92.10 (SIMKAP), 89.94 (No task) | Neural manifolds, cross-task generalization |

| TCN-LSTM with XAI [29] | Temporal CNN-LSTM | Dementia Diagnosis | 99.7 (binary), 80.34 (multi-class) | Explainable AI, modified Relative Band Power |

Table 2: Input/Output Specifications for EEG Hybrid Models

| Model | Input Type | Number of Channels | Output Classes | Computational Efficiency |

|---|---|---|---|---|

| CPX [3] | Spontaneous EEG with CFC features | 8 (optimized) | 2 (Motor Imagery) | High (low-channel requirement) |

| Hybrid Cognitive Model [25] | Raw EEG signals | Not specified | 3 (Attention, Interest, Mental Effort) | Real-time feasible under lab hardware |

| Multi-Feature Fusion [26] | Multi-wavelet decomposed signals | Standard BCI montage | 4 (Motor Imagery actions) | Moderate (multiple feature extraction) |

| HA-FuseNet [27] | Raw MI-EEG signals | Standard montage | 4 (L hand, R hand, foot, tongue) | Lightweight design for real-time use |

| ACXNet [28] | Topographic & temporal neural manifolds | Not specified | 2 (Low/High Mental Workload) | Scalable for real-world applications |

| TCN-LSTM XAI [29] | Relative Band Power features | 19 electrodes | 3 (AD, FTD, Healthy) | Lightweight framework |

Experimental Protocols for Hybrid EEG Model Implementation

CPX Framework Protocol for Motor Imagery Classification

Objective: To implement the CFC-PSO-XGBoost (CPX) pipeline for classifying motor imagery tasks from spontaneous EEG signals [3].

Materials and Dataset:

- EEG data from 25 participants performing motor imagery tasks

- Benchmark MI-BCI dataset or BCI Competition IV-2a dataset

- Hardware: Standard EEG acquisition system with minimum 8 electrodes

- Software: MATLAB/Python with signal processing toolboxes

Procedure:

- Data Preprocessing:

- Apply bandpass filtering (0.5-45 Hz) to remove artifacts and DC drift

- Resample data to 250 Hz for standardization

- Apply artifact removal techniques (ASR/ICA) if needed

Feature Extraction using Cross-Frequency Coupling (CFC):

- Calculate Phase-Amplitude Coupling (PAC) between low-frequency phase and high-frequency amplitude

- Extract CFC features from all channel pairs

- Generate a comprehensive feature matrix representing neural interactions
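
As an illustration of the PAC step, the sketch below computes a normalised mean-vector-length coupling value for a single channel. It is one common PAC estimator, not necessarily the one used in [3]. The FFT-mask band-pass filter is a crude stand-in for a proper FIR/IIR design, and the function names and band choices are assumptions made for the example.

```python
import numpy as np


def analytic_signal(x):
    """Analytic signal via the FFT (same idea as a Hilbert transform)."""
    n = x.size
    weights = np.zeros(n)
    weights[0] = 1.0
    if n % 2 == 0:
        weights[n // 2] = 1.0
        weights[1:n // 2] = 2.0
    else:
        weights[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * weights)


def fft_bandpass(x, fs, low_hz, high_hz):
    """Crude band-pass: zero every FFT bin outside [low_hz, high_hz]."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    spectrum = np.fft.rfft(x)
    spectrum[(freqs < low_hz) | (freqs > high_hz)] = 0.0
    return np.fft.irfft(spectrum, n=x.size)


def pac_mean_vector_length(x, fs, phase_band, amp_band):
    """Normalised mean vector length between low-band phase and high-band amplitude."""
    phase = np.angle(analytic_signal(fft_bandpass(x, fs, *phase_band)))
    amplitude = np.abs(analytic_signal(fft_bandpass(x, fs, *amp_band)))
    return np.abs(np.mean(amplitude * np.exp(1j * phase))) / np.mean(amplitude)
```

A value near zero indicates that the high-frequency amplitude is unrelated to the low-frequency phase, while larger values indicate coupling. Repeating the computation over channels (or channel pairs) and band combinations would yield the feature matrix described above.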

Channel Selection using Particle Swarm Optimization (PSO):

- Initialize PSO with population size of 30-50 particles

- Define fitness function based on classification accuracy

- Iterate until convergence to identify optimal 8-channel subset

- Validate selected channels across participants
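
One way to realise PSO-based selection of a fixed-size channel subset is sketched below. It is a minimal sketch and not the exact PSO variant from [3]: each particle holds a continuous score per channel, and the `k` highest-scoring channels form the candidate subset. The `fitness` callable, the inertia and acceleration constants, and the function name are all assumptions. In a real pipeline `fitness` would return cross-validated classification accuracy for the given channel subset.

```python
import numpy as np


def pso_select_channels(fitness, n_channels, k, n_particles=30, n_iter=50,
                        inertia=0.7, c_cognitive=1.5, c_social=1.5, seed=0):
    """Search for a k-channel subset maximising `fitness(channel_indices)`."""
    rng = np.random.default_rng(seed)

    def decode(position):
        # The k largest scores define the selected channel subset.
        return np.sort(np.argsort(position)[-k:])

    positions = rng.random((n_particles, n_channels))
    velocities = np.zeros_like(positions)
    best_pos = positions.copy()
    best_fit = np.array([fitness(decode(p)) for p in positions])
    g = best_fit.argmax()
    global_pos, global_fit = best_pos[g].copy(), best_fit[g]

    for _ in range(n_iter):
        r1 = rng.random(positions.shape)
        r2 = rng.random(positions.shape)
        velocities = (inertia * velocities
                      + c_cognitive * r1 * (best_pos - positions)
                      + c_social * r2 * (global_pos - positions))
        positions = np.clip(positions + velocities, 0.0, 1.0)

        fit = np.array([fitness(decode(p)) for p in positions])
        improved = fit > best_fit
        best_pos[improved] = positions[improved]
        best_fit[improved] = fit[improved]
        if best_fit.max() > global_fit:
            g = best_fit.argmax()
            global_pos, global_fit = best_pos[g].copy(), best_fit[g]

    return decode(global_pos), global_fit
```

Because the fitness function is evaluated once per particle per iteration, an accuracy-based fitness makes the search expensive. Caching results for subsets that have already been evaluated is a common mitigation.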

Classification with XGBoost:

- Format optimized features for XGBoost input

- Set parameters: max_depth=6, learning_rate=0.1, n_estimators=100

- Implement 10-fold cross-validation

- Evaluate performance using accuracy, precision, recall, and F1-score

Validation:

- Compare against baseline methods (CSP, FBCSP, FBCNet, EEGNet)

- Perform statistical testing for significance (p<0.05)

- Report confusion matrices and ROC curves
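
The evaluation steps above (k-fold splitting and the accuracy, precision, recall, and F1 metrics) can be sketched independently of the classifier. The helper names `kfold_indices` and `binary_metrics` are hypothetical, and the split is a plain shuffled k-fold with no stratification, which is an assumption of this sketch.

```python
import numpy as np


def kfold_indices(n_samples, n_splits=10, seed=0):
    """Yield (train_idx, test_idx) pairs for a shuffled k-fold split."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n_samples), n_splits)
    for i in range(n_splits):
        train_idx = np.concatenate(folds[:i] + folds[i + 1:])
        yield train_idx, folds[i]


def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for a two-class problem."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = int(np.sum((y_pred == positive) & (y_true == positive)))
    fp = int(np.sum((y_pred == positive) & (y_true != positive)))
    fn = int(np.sum((y_pred != positive) & (y_true == positive)))
    tn = int(np.sum((y_pred != positive) & (y_true != positive)))

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / y_true.size,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }
```

Averaging the per-fold dictionaries gives the summary figures, and the four counts (tp, fp, fn, tn) are the entries of the two-class confusion matrix.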

Multi-Feature Fusion with SVM-AdaBoost Protocol

Objective: To implement a comprehensive feature fusion approach combined with ensemble learning for high-accuracy motor imagery classification [26].

Materials and Dataset:

- BCI competition dataset or equivalent MI-EEG data

- FIR filters for preprocessing

- Morlet and Haar wavelets for decomposition

Procedure:

- Signal Preprocessing:

- Apply FIR bandpass filter (16-32 Hz) to extract β rhythm

- Segment data into appropriate trial epochs

Multi-Wavelet Decomposition:

- Construct multi-wavelet framework using Morlet and Haar wavelets

- Perform three-level wavelet packet decomposition

- Generate combined wavelet coefficient matrices

Multi-Domain Feature Extraction:

- Energy Features: Calculate wavelet energy from coefficients

- CSP Features: Apply Common Spatial Patterns for spatial filtering

- AR Features: Extract Autoregressive model coefficients (order 10)

- PSD Features: Compute Power Spectral Density using Welch's method

- Feature Fusion: Concatenate all features and normalize using z-score
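
Two of the steps above, AR coefficient extraction and z-score feature fusion, are sketched below. The AR fit uses the Yule-Walker equations, which is one standard estimator; the source does not state which estimator [26] used, so this choice is an assumption, as are the function names.

```python
import numpy as np


def ar_coefficients(x, order=10):
    """Yule-Walker estimate of a_1..a_p in x[t] = sum_k a_k * x[t-k] + e[t]."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    n = x.size
    # Biased autocovariance estimates r[0..order].
    r = np.array([x[:n - k] @ x[k:] / n for k in range(order + 1)])
    # Toeplitz system R a = r[1:], with R[i, j] = r[|i - j|].
    lags = np.abs(np.subtract.outer(np.arange(order), np.arange(order)))
    return np.linalg.solve(r[lags], r[1:])


def fuse_features(blocks):
    """Concatenate feature blocks column-wise and z-score each column."""
    fused = np.concatenate(blocks, axis=1)
    std = fused.std(axis=0)
    std[std == 0] = 1.0  # leave constant columns unscaled
    return (fused - fused.mean(axis=0)) / std
```

When the fused features feed a cross-validated classifier, the mean and standard deviation would normally be estimated on the training folds only and then applied to the test fold, to avoid leaking test statistics into training.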

WOA-Optimized SVM-AdaBoost Classification:

- Initialize SVM with RBF kernel

- Optimize SVM parameters (C, γ) using Grid Search with Cross-Validation

- Configure AdaBoost with SVM as weak learner

- Apply Whale Optimization Algorithm (WOA) to optimize:

- Number of weak learners (10-100)

- Learning rate (0.01-1.0)

- Train final ensemble model and evaluate performance

Validation Metrics:

- Classification accuracy and Kappa value

- Comparative analysis against traditional methods

- Computational efficiency assessment

HA-FuseNet Protocol for End-to-End MI Classification

Objective: To implement an attention-based hybrid network for robust motor imagery classification with enhanced generalization [27].

Dataset: BCI Competition IV Dataset 2A

Procedure:

- Data Preparation:

- Load and preprocess 4-class MI data (left hand, right hand, foot, tongue)

- Apply minimal preprocessing: bandpass filtering and normalization

- Segment into trials without extensive manual feature extraction

Dual-Path Architecture Implementation:

- DIS-Net Path (Local Features):

- Implement inverted bottleneck layers

- Configure multi-scale dense connectivity

- Extract local spatio-temporal features

- LS-Net Path (Global Context):

- Implement LSTM architecture

- Capture long-range temporal dependencies

- Model global spatio-temporal patterns

Hybrid Attention Integration:

- Implement channel-wise attention modules

- Add spatial attention mechanisms

- Incorporate global self-attention module

- Fuse features from both paths with attention weighting

Lightweight Design Optimization:

- Apply model compression techniques

- Optimize for computational efficiency

- Ensure real-time inference capability

Validation:

- Evaluate within-subject and cross-subject accuracy

- Compare with EEGNet, ShallowConvNet, DeepConvNet

- Assess robustness to spatial resolution variations

Workflow Visualization

CPX and Comparative Hybrid Model Workflows

Table 3: Essential Research Resources for Hybrid EEG Model Development

| Resource Category | Specific Tools / Algorithms | Function in EEG Analysis | Application Examples |

|---|---|---|---|

| Feature Extraction Methods | Cross-Frequency Coupling (CFC) [3] | Captures interactions between different frequency bands | Phase-Amplitude Coupling in motor imagery |

| Feature Extraction Methods | Multi-Wavelet Decomposition [26] | Multi-resolution time-frequency analysis | Morlet-Haar combined framework for feature diversity |

| Feature Extraction Methods | Common Spatial Patterns (CSP) [26] | Enhances discriminability of spatial patterns | Motor imagery classification |

| Feature Extraction Methods | Relative Band Power (RBP) [29] | Quantifies power distribution across frequency bands | Dementia diagnosis using alpha, beta, gamma bands |

| Optimization Algorithms | Particle Swarm Optimization (PSO) [3] | Selects optimal channel subsets | Reduced 25 channels to 8 without performance loss |

| Optimization Algorithms | Whale Optimization Algorithm (WOA) [26] | Optimizes hyperparameters of ensemble models | Tuned AdaBoost learning rate and weak learner count |

| Optimization Algorithms | Grid Search with Cross-Validation [26] | Systematically explores parameter spaces | Optimized SVM penalty and kernel parameters |

| Classification Models | XGBoost [3] [28] | Gradient boosting with high efficiency and interpretability | Motor imagery and mental workload classification |

| Classification Models | SVM-AdaBoost [26] | Ensemble of weak learners with boosting | High-accuracy (95.37%) MI classification |

| Classification Models | Hybrid Deep Learning (CNN-LSTM) [29] [27] | Captures both spatial and temporal dependencies | HA-FuseNet for end-to-end MI classification |

| Explainability Frameworks | SHAP (SHapley Additive exPlanations) [29] | Provides model interpretability and feature importance | Understanding feature contributions in dementia diagnosis |

| Datasets | BCI Competition IV-2a [3] [27] | Benchmark for motor imagery classification | 4-class MI data for model validation |

| Datasets | STEW Dataset [28] | Simultaneous Task EEG Workload data | Mental workload estimation across tasks |

| Datasets | TUH EEG Corpus [30] | Large clinical EEG database | Training and validation of clinical applications |

Comparative Architecture Analysis

Comparative Architectures of Hybrid EEG Models

Discussion and Future Directions

The emergence of hybrid deep learning models represents a paradigm shift in EEG analysis, addressing fundamental challenges in brain signal interpretation. The CPX framework exemplifies how strategic integration of signal processing techniques (CFC), optimization algorithms (PSO), and modern machine learning (XGBoost) can create efficient systems with reduced channel requirements [3]. Similarly, feature-fusion approaches demonstrate that combining complementary feature types through ensemble methods can achieve exceptional accuracy [26]. The consistent theme across successful implementations is the synergistic combination of algorithms that compensate for each other's limitations.

Future development should focus on several critical areas. First, improving cross-subject generalization remains challenging, as evidenced by the performance gap between within-subject (77.89%) and cross-subject (68.53%) results in HA-FuseNet [27]. Second, explainable AI frameworks like SHAP need broader integration to enhance clinical acceptance [29]. Third, computational efficiency must be maintained as model complexity increases, particularly for real-time BCI applications. Finally, standardization of evaluation protocols and benchmarking across diverse datasets will accelerate clinical translation.

The progression toward lightweight, interpretable, and robust hybrid models points to a future where EEG-based technologies become ubiquitous in both clinical and consumer applications. As these frameworks mature, they will enable more natural human-computer interaction, personalized neurotherapy, and accessible cognitive monitoring systems.

Building the CPX CFC-PSO-XGBoost Framework: A Step-by-Step Methodology

Within the framework of CPX (CFC-PSO-XGBoost) research for motor imagery (MI) classification, the acquisition of clean electroencephalography (EEG) signals is paramount. EEG is susceptible to contamination by various physiological and non-physiological artifacts, which can severely compromise the extraction of meaningful Cross-Frequency Coupling (CFC) features and ultimately degrade the performance of the classifier [31] [3]. This document provides detailed application notes and protocols for effective EEG artifact removal, serving as a critical foundation for reliable MI-based brain-computer interface (BCI) development.

Quantitative Comparison of EEG Artifact Removal Techniques

Selecting an appropriate artifact removal method is a critical first step. The table below summarizes the performance of various contemporary techniques, providing a quantitative basis for selection.

Table 1: Performance Comparison of Deep Learning-Based Artifact Removal Models on Semi-Synthetic Data

| Model | Architecture Core | Artifact Types | SNR (dB) | CC | RRMSEt | RRMSEf |

|---|---|---|---|---|---|---|

| CLEnet [31] | Dual-scale CNN + LSTM + EMA-1D | Mixed (EMG+EOG) | 11.498 | 0.925 | 0.300 | 0.319 |

| 1D-ResCNN [31] | Multi-scale CNN | Mixed (EMG+EOG) | - | - | - | - |

| NovelCNN [31] | CNN | EMG | - | - | - | - |

| EEGDNet [31] | Transformer | EOG | - | - | - | - |

| DuoCL [31] | CNN + LSTM | Mixed (EMG+EOG) | - | - | - | - |

| ART [32] | Transformer | Multiple | - | - | - | - |

Abbreviations: SNR (Signal-to-Noise Ratio), CC (Correlation Coefficient), RRMSEt (Relative Root Mean Square Error in temporal domain), RRMSEf (RRMSE in frequency domain). A higher SNR/CC and lower RRMSE indicate better performance.
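
For readers who want to compute these four metrics on their own data, the sketch below uses definitions that are common in the EEG denoising literature. They are assumed here, since the source does not spell them out. The power spectrum is a plain periodogram, whereas published benchmarks often use Welch's method, so absolute RRMSEf values may differ. The function name is hypothetical.

```python
import numpy as np


def denoising_metrics(clean, denoised):
    """SNR (dB), correlation coefficient, and temporal/spectral RRMSE."""
    clean = np.asarray(clean, dtype=float)
    denoised = np.asarray(denoised, dtype=float)
    error = denoised - clean

    def rms(v):
        return np.sqrt(np.mean(v ** 2))

    def periodogram(v):
        return np.abs(np.fft.rfft(v)) ** 2 / v.size

    return {
        # Ratio of clean-signal energy to residual-error energy.
        "snr_db": 10.0 * np.log10(np.sum(clean ** 2) / np.sum(error ** 2)),
        # Pearson correlation between clean and denoised signals.
        "cc": float(np.corrcoef(clean, denoised)[0, 1]),
        # Relative RMS error in the time domain.
        "rrmse_t": rms(error) / rms(clean),
        # Relative RMS error between power spectra.
        "rrmse_f": rms(periodogram(denoised) - periodogram(clean))
                   / rms(periodogram(clean)),
    }
```

These metrics require a known clean reference, which is why they are reported on semi-synthetic data where artifacts are added to clean EEG at controlled levels.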

Table 2: Performance of Traditional and Single-Channel Techniques for EOG Removal

| Method | Core Principle | Best For | Key Metrics & Performance | Limitations |

|---|---|---|---|---|

| ICA [33] [34] | Blind Source Separation | Multi-channel data, Ocular artifacts | Effective ocular artifact correction without EOG channel [33] | Requires many channels, stationarity, manual component inspection |

| PCA [33] | Variance-based Separation | Large-amplitude transient artifacts | Effective removal of large-amplitude idiosyncratic components [33] | May distort neural signals if not carefully applied |

| FF-EWT + GMETV [35] | Adaptive Wavelet Transform + Filtering | Single-channel EOG artifacts | High CC, Low RRMSE, improved SAR on real data [35] | Mode mixing risk, parameter tuning |

| SSA [35] | Subspace Decomposition | Single-channel, Low-frequency noise | Effective oscillatory component separation [35] | Requires careful threshold setting |

Detailed Experimental Protocols

Protocol 1: Semi-Automatic Preprocessing with ICA/PCA

This protocol is designed for multi-channel EEG data and emphasizes step-by-step quality checking to ensure the removal of large-amplitude artifacts without an EOG channel [33].

Workflow Diagram: Semi-Automatic EEG Preprocessing

Step-by-Step Procedure:

Bandpass Filtering & Bad Channel Interpolation

- Apply a bandpass filter (e.g., 1-40 Hz) to the raw, continuous data. A relatively high high-pass cutoff (1-2 Hz) is critical for subsequent ICA decomposition [33].

- Optional but Recommended: Apply a notch filter (e.g., 50/60 Hz) to remove line noise.

- Identify and interpolate bad channels (e.g., based on abnormal variance or kurtosis).
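
A variance-based screen for bad channels might look like the sketch below. It uses a robust z-score of per-channel log-variance. The threshold, the use of variance alone (kurtosis could be screened the same way), and the function name are assumptions, and interpolation of the flagged channels is not shown.

```python
import numpy as np


def flag_bad_channels(data, z_thresh=5.0):
    """Flag channels whose log-variance is a robust outlier.

    data: array of shape (n_channels, n_samples).
    Returns (indices of flagged channels, robust z-score per channel).
    """
    log_var = np.log(data.var(axis=1))
    median = np.median(log_var)
    # Median absolute deviation, scaled to match the std of a normal distribution.
    mad = 1.4826 * np.median(np.abs(log_var - median))
    z = (log_var - median) / (mad + 1e-12)
    return np.flatnonzero(np.abs(z) > z_thresh), z
```

Median-based statistics are used so that one or two very noisy channels do not inflate the spread estimate and mask themselves.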

ICA-Based Ocular Artifact Correction

- Select a Stationary Segment: To ensure proper ICA decomposition, select a segment of data that is stationary (lacks large, abrupt jumps) but contains ocular artifacts (e.g., blinks, eye movements). This can be a dedicated calibration task or a clean segment from the main data [33].

- Run ICA: Perform ICA decomposition (e.g., using Extended Infomax or SOBI algorithms) on this selected segment. Studies show the choice of ICA algorithm has a relatively small effect compared to other pipeline steps [34].

- Identify and Remove Ocular Components: Manually inspect the resulting independent components (ICs). ICs corresponding to eye blinks and movements are typically characterized by their topography (fronto-polar focus), time course (high amplitude, slow deflections for blinks), and power spectrum (dominance of low frequencies). Remove these artifact-related components.

- Apply Weights: Apply the calculated ICA weights to the entire, continuously recorded dataset to remove the ocular artifacts.

PCA-Based Large-Amplitude Artifact Correction

- Following ICA correction, apply Principal Component Analysis (PCA) to target large-amplitude, non-specific transient artifacts (e.g., muscle vibrations, remaining noise) that lack consistent statistical properties and are difficult to extract via ICA [33].

- Identify and remove principal components dominated by these artifacts.

Export Processed Data

- Export the fully corrected, continuous data for subsequent analysis and epoching in the CPX pipeline.

Protocol 2: Single-Channel EOG Removal for Portable EEG

This protocol is tailored for single-channel (SCL) portable EEG systems, where traditional multi-channel methods like ICA are not feasible [35].

Workflow Diagram: Single-Channel EOG Removal

Step-by-Step Procedure:

Signal Decomposition using FF-EWT

- Decompose the contaminated single-channel EEG signal using Fixed Frequency Empirical Wavelet Transform (FF-EWT). This method adaptively decomposes the signal into six Intrinsic Mode Functions (IMFs) or sub-band signals (SBSs) corresponding to standard EEG frequency bands (e.g., 0-4 Hz, 4-8 Hz, 8-13 Hz, etc.) [35].

Feature Extraction for EOG Identification

- For each resulting IMF/SBS, calculate a set of features to automatically identify those contaminated by EOG artifacts. Key features include:

- Kurtosis (KS): EOG artifacts are high-amplitude, transient events, leading to a high kurtosis value in the contaminated component [35].

- Power Spectral Density (PSD): EOG artifacts are dominant in the low-frequency range (0.5-12 Hz) [35].

- Dispersion Entropy (DisEn): This metric helps characterize the complexity and randomness of the signal.

Automated Component Selection and Filtering

- Apply a pre-defined threshold to the extracted features to automatically identify and select the EOG-related components (IMFs).

- Apply a Generalized Moreau Envelope Total Variation (GMETV) filter to the selected components to suppress the artifact content while preserving the underlying neural signal [35].

Signal Reconstruction

- Reconstruct the clean EEG signal using the processed IMFs/SBSs (with EOG artifacts removed) and all other unprocessed components.
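
The decomposition and kurtosis-screening idea in this protocol can be illustrated with a simplified stand-in. The sketch below splits a single-channel signal into fixed frequency bands using FFT masks, which is not FF-EWT, and flags bands whose kurtosis exceeds a threshold. The band edges, the threshold, and the function names are assumptions. The GMETV filtering step is not implemented, so the sketch stops at component identification.

```python
import numpy as np

# Assumed band edges in Hz; the final band runs up to the Nyquist frequency.
BAND_EDGES_HZ = (0.0, 4.0, 8.0, 13.0, 30.0)


def split_into_bands(x, fs, edges=BAND_EDGES_HZ):
    """Split x into sub-band signals that sum back to the original."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    spectrum = np.fft.rfft(x)
    uppers = list(edges[1:]) + [np.inf]
    bands = []
    for low, high in zip(edges, uppers):
        mask = (freqs >= low) & (freqs < high)
        bands.append(np.fft.irfft(np.where(mask, spectrum, 0.0), n=x.size))
    return np.array(bands)


def kurtosis(x):
    """Pearson kurtosis (a Gaussian signal gives roughly 3)."""
    centered = x - x.mean()
    variance = np.mean(centered ** 2)
    return float(np.mean(centered ** 4) / variance ** 2) if variance > 0 else 0.0


def flag_eog_bands(bands, kurt_thresh=5.0):
    """Boolean mask of sub-bands with transient, high-kurtosis content."""
    return np.array([kurtosis(band) > kurt_thresh for band in bands])
```

Because the bands are disjoint and cover the full spectrum, processing only the flagged bands and summing all bands afterwards corresponds to the reconstruction step described above.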

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Algorithms for EEG Preprocessing

| Tool/Solution | Function in Preprocessing | Relevance to CPX Framework |

|---|---|---|

| ICA Algorithms (e.g., Infomax, SOBI) [33] [34] | Separates mixed signals into independent sources for manual or automated artifact rejection. | Critical for obtaining clean, multi-channel MI data required for high-quality CFC feature extraction. |

| FF-EWT + GMETV Framework [35] | Provides a fully automated pipeline for removing EOG artifacts from single-channel EEG. | Enables the use of low-channel, portable EEG systems for MI-BCI, aligning with CPX's goal of low-channel utilization [3]. |

| CLEnet Deep Learning Model [31] | End-to-end artifact removal for multi-channel EEG, effective against mixed and unknown artifacts. | Provides a state-of-the-art, automated method to ensure data quality prior to PSO-XGBoost classification. |

| Particle Swarm Optimization (PSO) [3] | Optimizes channel selection by identifying the most informative EEG electrodes. | Directly integrated into CPX; reduces data dimensionality and hardware requirements while maintaining classification accuracy [3]. |

| Transformer-based Models (e.g., ART) [32] | Uses self-attention mechanisms to capture long-range dependencies in EEG for denoising. | Represents the cutting-edge in artifact removal, potentially improving the signal quality for subsequent CFC analysis. |

Integration with the CPX Framework and Best Practices

The efficacy of the entire CPX (CFC-PSO-XGBoost) framework hinges on the quality of the input EEG signals. Effective artifact removal directly enhances the quality of CFC features, particularly Phase-Amplitude Coupling (PAC), which is sensitive to contamination from sources like EMG and EOG [3]. A clean signal allows the PSO algorithm to more accurately select physiologically relevant channels, rather than those dominated by artifact. Furthermore, it ensures that the XGBoost classifier models genuine brain activity patterns related to motor imagery, leading to more robust and accurate decoding [3].

For researchers implementing these protocols, visual and quantitative validation is essential. Always plot data before and after processing to verify artifact removal and neural signal preservation. For the CPX framework, it is critical to perform artifact removal before epoching data into trials for motor imagery. This ensures that the temporal structure of the data used for CFC analysis is not distorted. Finally, when comparing conditions or subjects, use the exact same preprocessing pipeline and parameters to maintain consistency and ensure that results reflect true neurological differences and not variations in data processing.

Within the CPX (CFC-PSO-XGBoost) framework for Motor Imagery (MI) classification, Covariance-based Feature Construction (CFC) serves as the foundational element for extracting discriminative spatial patterns from Electroencephalogram (EEG) signals. The primary objective of this component is to transform high-dimensional, noisy multi-channel EEG data into a lower-dimensional, informative feature set that maximizes the separability between different MI tasks. This is achieved by analyzing the covariance structure of the neural data, which captures the synergistic activity between different brain regions during mental tasks. The spatial filters derived from CFC are designed to enhance the signal-to-noise ratio by emphasizing neurophysiological patterns relevant to motor imagery, such as Event-Related Desynchronization (ERD) and Event-Related Synchronization (ERS), thereby providing optimized input for the subsequent PSO-based channel selection and XGBoost classification stages of the CPX pipeline [36] [3].

Theoretical Foundation and Algorithmic Variants

The core mathematical principle underlying CFC is the Common Spatial Pattern (CSP) algorithm and its modern derivatives. The standard CSP algorithm solves a generalized eigenvalue decomposition problem to find spatial filters that maximize the variance of one class while minimizing the variance of the other [21] [37]. Specifically, for multi-channel EEG data ( \mathbf{X}_i \in \mathbb{R}^{C \times T} ) (with ( C ) channels and ( T ) time points), the covariance matrix for class ( n ) is estimated as ( \mathbf{\Gamma}_n = \frac{1}{|\epsilon_n|} \sum_{i \in \epsilon_n} \mathbf{X}_i \mathbf{X}_i^\top ), where ( \epsilon_n ) is the set of trials belonging to class ( n ). The objective is to find a spatial filter ( \mathbf{w} ) that maximizes the Rayleigh quotient: ( \mathbf{w}_{\text{opt}} = \arg \max_{\mathbf{w}} \frac{\mathbf{w}^\top \mathbf{\Gamma}_1 \mathbf{w}}{\mathbf{w}^\top \mathbf{\Gamma}_2 \mathbf{w}} ) [37].
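
The link between this Rayleigh quotient and the generalized eigenvalue problem can be made explicit with a short derivation. The steps below are standard linear algebra rather than a result specific to [37]; the symbols ( J ), ( \mathcal{L} ), and ( \lambda ) are introduced here only for illustration.

```latex
% The quotient is invariant to rescaling of w, so the denominator can be fixed to 1.
J(\mathbf{w}) = \frac{\mathbf{w}^\top \mathbf{\Gamma}_1 \mathbf{w}}{\mathbf{w}^\top \mathbf{\Gamma}_2 \mathbf{w}}
\quad\Longleftrightarrow\quad
\max_{\mathbf{w}} \; \mathbf{w}^\top \mathbf{\Gamma}_1 \mathbf{w}
\quad \text{s.t.} \quad \mathbf{w}^\top \mathbf{\Gamma}_2 \mathbf{w} = 1

% Lagrangian of the constrained problem.
\mathcal{L}(\mathbf{w}, \lambda) = \mathbf{w}^\top \mathbf{\Gamma}_1 \mathbf{w}
  - \lambda \left( \mathbf{w}^\top \mathbf{\Gamma}_2 \mathbf{w} - 1 \right)

% Setting the gradient with respect to w to zero gives the generalized eigenvalue problem.
\nabla_{\mathbf{w}} \mathcal{L} = 2 \mathbf{\Gamma}_1 \mathbf{w} - 2 \lambda \mathbf{\Gamma}_2 \mathbf{w} = 0
\quad\Longrightarrow\quad
\mathbf{\Gamma}_1 \mathbf{w} = \lambda \, \mathbf{\Gamma}_2 \mathbf{w}

% At any such stationary point the quotient equals the eigenvalue.
J(\mathbf{w}) = \lambda
```

The eigenvectors with the largest eigenvalues therefore emphasize class 1 variance, while those with the smallest eigenvalues emphasize class 2 variance, which is why CSP typically keeps filters from both ends of the spectrum.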

Numerous enhanced variants of CSP have been developed to address limitations such as sensitivity to noise and outliers, and to improve feature robustness. The table below summarizes key CFC variants relevant to the CPX framework:

Table 1: Key Covariance-based Feature Construction Methods for MI-BCI

| Method | Core Innovation | Advantage | Reported Performance |

|---|---|---|---|

| Filter Bank CSP (FBCSP) [21] | Applies CSP across multiple frequency bands. | Captures frequency-specific MI features. | Baseline for many improvements [21]. |

| Adaptive Spatial Pattern (ASP) [21] | Minimizes intra-class energy matrix & maximizes inter-class matrix. | Distinguishes overall energy characteristics; complements CSP. | Contributed to accuracies of 74.61% (Dataset 2a) and 81.19% (Dataset 2b) [21]. |

| Variance Characteristics Preserving CSP (VPCSP) [37] | Adds graph theory-based regularization to preserve local variance. | Improves robustness against outliers in projected space. | Achieved 87.88% accuracy on BCI Competition III IVa [37]. |

| Temporal Stability Learning Method (TSLM) [38] | Optimizes spatial filters to enhance temporal feature stability. | Reduces instability across time periods, improving robustness. | Achieved 84.45% on BCI Competition IV 2a [38]. |

| Regularized CSP (RCSP) [37] | Incorporates regularization terms (e.g., Tikhonov) into the CSP objective. | Mitigates overfitting and improves generalization. | A foundational framework for robust CSP [37]. |

Experimental Protocols and Workflows

Standard CSP Feature Extraction Protocol

The following protocol details the steps for extracting CSP features from preprocessed EEG data, forming a baseline for the CPX framework.

- Input: Epoched EEG data for two classes (e.g., left-hand vs. right-hand MI). Shape: (n_trials, n_channels, n_timepoints).

- Covariance Matrix Calculation:

- For each trial ( i ) of class ( k ), calculate the sample covariance matrix: ( \mathbf{\Gamma}_i = \frac{\mathbf{X}_i \mathbf{X}_i^\top}{\text{trace}(\mathbf{X}_i \mathbf{X}_i^\top)} ) [37]. Normalization by trace makes the covariance invariant to the total signal power.

- Average the covariance matrices separately for each class to obtain ( \mathbf{\Gamma}_1 ) and ( \mathbf{\Gamma}_2 ).

- Generalized Eigenvalue Decomposition:

- Solve the generalized eigenvalue problem ( \mathbf{\Gamma}_1 \mathbf{w} = \lambda \mathbf{\Gamma}_2 \mathbf{w} ) implied by the Rayleigh quotient, and sort the resulting eigenvectors by eigenvalue to form the columns of the spatial filter matrix ( \mathbf{W} ).

- Feature Extraction:

- Project each trial onto the first and last ( m ) filters (e.g., ( m=3 )): ( \mathbf{Z} = \mathbf{W}^\top \mathbf{X} ).

- For each of the ( 2m ) projected components, compute the log-variance: ( f_p = \log(\text{var}(\mathbf{z}_p)) ). The resulting feature vector for the trial is ( \mathbf{f} = [f_1, f_2, ..., f_{2m}] ) [37].
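
The protocol above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the reference implementation from [37]: the function names (`normalized_covariance`, `fit_csp`, `csp_features`) and the synthetic data are hypothetical. The eigenproblem is solved against the composite covariance ( \mathbf{\Gamma}_1 + \mathbf{\Gamma}_2 ), a common and numerically convenient formulation that yields the same eigenvectors as the ( \mathbf{\Gamma}_1 ) versus ( \mathbf{\Gamma}_2 ) ratio.

```python
import numpy as np


def normalized_covariance(trial):
    """Trace-normalized spatial covariance of one trial (n_channels, n_timepoints)."""
    cov = trial @ trial.T
    return cov / np.trace(cov)


def fit_csp(trials_1, trials_2, m=3):
    """Return CSP filters as columns of an (n_channels, 2*m) matrix."""
    gamma_1 = np.mean([normalized_covariance(x) for x in trials_1], axis=0)
    gamma_2 = np.mean([normalized_covariance(x) for x in trials_2], axis=0)

    # Whiten the composite covariance so that P.T @ (G1 + G2) @ P = I.
    evals, evecs = np.linalg.eigh(gamma_1 + gamma_2)
    whitener = evecs / np.sqrt(evals)  # scales column j by 1/sqrt(evals[j])

    # In the whitened space the generalized problem is an ordinary symmetric one.
    lam, rotation = np.linalg.eigh(whitener.T @ gamma_1 @ whitener)
    order = np.argsort(lam)[::-1]  # largest class-1 variance ratio first
    filters = (whitener @ rotation)[:, order]

    # Keep the first and last m filters (most discriminative for each class).
    return np.hstack([filters[:, :m], filters[:, -m:]])


def csp_features(trial, filters):
    """Log-variance of each spatially filtered component for one trial."""
    projected = filters.T @ trial  # shape (2*m, n_timepoints)
    return np.log(np.var(projected, axis=1))


# Synthetic two-class data (assumption): each class has extra power on one channel.
rng = np.random.default_rng(0)
n_trials, n_channels, n_timepoints = 20, 8, 256
scale_1 = np.ones(n_channels)
scale_1[0] = 3.0
scale_2 = np.ones(n_channels)
scale_2[1] = 3.0
trials_1 = rng.standard_normal((n_trials, n_channels, n_timepoints)) * scale_1[:, None]
trials_2 = rng.standard_normal((n_trials, n_channels, n_timepoints)) * scale_2[:, None]

filters = fit_csp(trials_1, trials_2, m=2)
features_1 = np.array([csp_features(x, filters) for x in trials_1])
features_2 = np.array([csp_features(x, filters) for x in trials_2])
```

In a real pipeline the filters would be fitted on training trials only and then applied unchanged to held-out trials, so that the features passed to the classifier are not contaminated by test data.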

Integrated CFC Workflow in the CPX Framework

The CFC component is not applied in isolation but is integrated into the broader CPX pipeline. The workflow below illustrates how CFC interacts with other components, such as the Particle Swarm Optimization (PSO) for channel selection.

Diagram 1: Integrated CFC Workflow in CPX Framework. The CFC process (green) transforms raw EEG into spatial features, which are then evaluated within a PSO optimization loop (red) to select the most informative channels.
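
The optimization loop in this workflow can be illustrated with a binary PSO, in which each particle is a 0/1 mask over channels. The source does not specify how CPX encodes particles or scores them, so everything here is an assumption: the sigmoid-based binary update, the hyperparameter values, the function name `binary_pso`, and especially `toy_fitness`, which stands in for the cross-validated classification accuracy that a real pipeline would compute from CFC features on the selected channels.

```python
import numpy as np


def binary_pso(fitness, n_channels, n_particles=12, n_iterations=30,
               inertia=0.7, c1=1.5, c2=1.5, seed=0):
    """Maximize fitness(mask) over binary channel masks with a sigmoid-based PSO."""
    rng = np.random.default_rng(seed)
    positions = rng.integers(0, 2, size=(n_particles, n_channels))
    velocities = rng.normal(0.0, 1.0, size=(n_particles, n_channels))

    personal_best = positions.copy()
    personal_score = np.array([fitness(p) for p in positions], dtype=float)
    best = int(np.argmax(personal_score))
    global_best = personal_best[best].copy()
    global_score = personal_score[best]

    for _ in range(n_iterations):
        r1 = rng.random(positions.shape)
        r2 = rng.random(positions.shape)
        # Standard velocity update: inertia + cognitive pull + social pull.
        velocities = (inertia * velocities
                      + c1 * r1 * (personal_best - positions)
                      + c2 * r2 * (global_best - positions))
        # Binary variant: the velocity sets the probability of selecting a channel.
        probability = 1.0 / (1.0 + np.exp(-velocities))
        positions = (rng.random(positions.shape) < probability).astype(int)

        scores = np.array([fitness(p) for p in positions], dtype=float)
        improved = scores > personal_score
        personal_best[improved] = positions[improved]
        personal_score[improved] = scores[improved]

        best = int(np.argmax(personal_score))
        if personal_score[best] > global_score:
            global_best = personal_best[best].copy()
            global_score = personal_score[best]

    return global_best, global_score


def toy_fitness(mask):
    """Hypothetical stand-in for accuracy: channels 0 and 1 are informative,
    and every selected channel carries a small cost."""
    return float(mask[[0, 1]].sum() - 0.1 * mask.sum())


best_mask, best_score = binary_pso(toy_fitness, n_channels=8)
```

The per-channel cost in the toy fitness mirrors the trade-off described in the text: the swarm is rewarded for keeping informative channels and penalized for keeping extra ones, which pushes it toward low-channel solutions.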

Protocol for Advanced Regularized CSP (VPCSP)

For researchers requiring higher robustness against artifacts and outliers, the following protocol for Variance Characteristics Preserving CSP (VPCSP) is recommended [37].

- Input: Epoched EEG data for two classes.

- Graph Construction:

- For a projected component ( \mathbf{z} ), construct an adjacency matrix ( \mathbf{A} ) where ( A_{i,j} = 1 ) if ( |i-j| = l ) (a predefined interval, e.g., 3), otherwise 0. This connects points in the time series to model local smoothness.

- Laplacian Matrix Calculation:

- Compute the graph Laplacian ( \mathbf{L} = \mathbf{D} - \mathbf{A} ), where ( \mathbf{D} ) is the diagonal degree matrix.

- Modified Objective Function:

- The VPCSP objective incorporates a graph regularization term: ( \mathbf{w}_{\text{opt}} = \arg \max_{\mathbf{w}} \frac{\mathbf{w}^\top \mathbf{\Gamma}_1 \mathbf{w}}{\mathbf{w}^\top \mathbf{\Gamma}_2 \mathbf{w} + \alpha \cdot \mathbf{w}^\top \mathbf{X} \mathbf{L} \mathbf{X}^\top \mathbf{w}} ), where ( \alpha ) is a regularization hyperparameter and ( \mathbf{X} \in \mathbb{R}^{C \times T} ) follows the channels-by-time convention used above. Because the projected signal is ( \mathbf{z} = \mathbf{w}^\top \mathbf{X} ), this term equals ( \mathbf{z} \mathbf{L} \mathbf{z}^\top ); it penalizes large differences between connected points in the projected signal, preserving local variance characteristics and reducing sensitivity to outliers [37].

- Feature Extraction: Continue with steps 3-4 of the standard CSP protocol.
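
The graph construction and regularized objective can be sketched in NumPy as shown below. This is an illustration under stated assumptions rather than the implementation from [37]: the function names are hypothetical, the data are synthetic, the penalty matrix is averaged over all trials, and covariances are scaled by the number of time points instead of the trace so that both terms share a comparable scale.

```python
import numpy as np


def interval_laplacian(n_timepoints, interval=3):
    """Graph Laplacian L = D - A, with A[i, j] = 1 when |i - j| == interval."""
    idx = np.arange(n_timepoints - interval)
    adjacency = np.zeros((n_timepoints, n_timepoints))
    adjacency[idx, idx + interval] = 1.0
    adjacency[idx + interval, idx] = 1.0
    degree = np.diag(adjacency.sum(axis=1))
    return degree - adjacency


def graph_penalty(trials, laplacian):
    """Average of X @ L @ X.T over trials, giving an (n_channels, n_channels) matrix."""
    return np.mean([x @ laplacian @ x.T for x in trials], axis=0)


def solve_vpcsp(gamma_1, gamma_2, penalty, alpha):
    """Solve G1 w = lam * (G2 + alpha * penalty) w; filters are returned as columns."""
    denominator = gamma_2 + alpha * penalty
    evals, evecs = np.linalg.eigh(denominator)
    whitener = evecs / np.sqrt(evals)  # whitener.T @ denominator @ whitener = I
    lam, rotation = np.linalg.eigh(whitener.T @ gamma_1 @ whitener)
    order = np.argsort(lam)[::-1]  # largest regularized ratio first
    return (whitener @ rotation)[:, order], lam[order]


# Synthetic example (assumption): class 1 has extra power on channel 0.
rng = np.random.default_rng(1)
n_trials, n_channels, n_timepoints = 10, 6, 128
trials_1 = rng.standard_normal((n_trials, n_channels, n_timepoints))
trials_2 = rng.standard_normal((n_trials, n_channels, n_timepoints))
trials_1[:, 0, :] *= 2.0

alpha = 0.1  # regularization strength; the text notes it is often subject-specific
laplacian = interval_laplacian(n_timepoints, interval=3)
gamma_1 = np.mean([x @ x.T for x in trials_1], axis=0) / n_timepoints
gamma_2 = np.mean([x @ x.T for x in trials_2], axis=0) / n_timepoints
penalty = graph_penalty(np.concatenate([trials_1, trials_2]), laplacian) / n_timepoints

filters, ratios = solve_vpcsp(gamma_1, gamma_2, penalty, alpha)
```

For a single projected signal ( \mathbf{z} ), the quadratic form ( \mathbf{z} \mathbf{L} \mathbf{z}^\top ) equals the sum of squared differences between samples that are ( l ) steps apart, which is the smoothness penalty the protocol describes.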

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for CFC Implementation

| Item / Reagent | Specification / Function | Implementation Note |

|---|---|---|

| EEG Datasets | BCI Competition IV 2a & 2b [21] [39], Physionet [40]. | Provides standardized, labeled MI-EEG data for benchmarking CFC methods. |

| CSP Algorithm | Baseline for spatial filtering. | Maximizes variance ratio between two classes [37]. Implement using generalized eigenvalue solvers (e.g., scipy.linalg.eig). |

| Regularization Parameter (α) | Controls trade-off between class separation and feature smoothness/robustness. | Critical in VPCSP [37] and RCSP [37]; optimal value is often subject-specific. |

| Filter Bank | Set of bandpass filters (e.g., 4-40 Hz, multiple bands). | Used in FBCSP to decompose EEG into frequency bands before applying CSP [21]. |

| Optimization Solver | PSO (Particle Swarm Optimization). | Used in the CPX framework for channel selection [36] [3] and in ASP for spatial filter computation [21]. |

| Feature Vector | Log-variance of spatially filtered signals [37]. | The final constructed feature set delivered to the classifier. |

Performance and Validation

The performance of various CFC methods is quantitatively assessed on public benchmarks. The following table summarizes key results, demonstrating the progression from standard CSP to more advanced regularized and adaptive methods.

Table 3: Quantitative Performance Comparison of CFC Methods on Benchmark Datasets

| Method | Dataset | Key Metric | Performance | Comparative Outcome |

|---|---|---|---|---|

| Standard CSP [36] | BCI Competition IV 2a | Average Accuracy | 60.2% ± 12.4% | Baseline |

| FBCSP [36] | BCI Competition IV 2a | Average Accuracy | 63.5% ± 13.5% | Improvement over CSP |

| ASP + CSP (FBACSP) [21] | BCI Competition IV 2a | Average Accuracy | 74.61% | Outperformed FBCSP by 11.44% |

| VPCSP [37] | BCI Competition III IVa | Classification Accuracy | 87.88% | Superior to other reported CSP variants |

| TSLM [38] | BCI Competition IV 2a | Classification Accuracy | 84.45% | Outperformed state-of-the-art spatial filtering methods |

| CPX Framework (Integrated CFC) [36] [3] | Benchmark MI-BCI Dataset | Average Accuracy | 76.7% ± 1.0% | Validates the efficacy of the CFC-PSO-XGBoost pipeline |

These results validate that advanced CFC methods, which focus on robustness (VPCSP, TSLM) and complementary feature extraction (ASP), significantly enhance MI classification accuracy compared to traditional CSP, thereby forming a strong foundation for the overall CPX framework.

Electroencephalography (EEG) provides a non-invasive, high-temporal-resolution window into brain dynamics, making it indispensable for diagnosing neurological disorders, conducting cognitive neuroscience research, and developing brain-computer interfaces (BCIs). A significant challenge in EEG analysis lies in decoding these complex, high-dimensional, and non-stationary signals to extract meaningful information. Within the broader CPX CFC-PSO-XGBoost framework for motor imagery classification, the Extreme Gradient Boosting (XGBoost) classifier serves as a powerful and robust engine for final decision-making. Its ability to manage diverse feature sets, resist overfitting, and deliver highly accurate, interpretable results makes it a cornerstone component for translating processed neural data into reliable classifications.

Performance Benchmarks: XGBoost in EEG Analysis

XGBoost has demonstrated state-of-the-art performance across a wide spectrum of EEG classification tasks. The following table summarizes its efficacy as reported in recent, high-quality studies.

Table 1: Performance of XGBoost in Various EEG Classification Applications

| Application Domain | Key EEG Features / Input | Performance Metrics | Citation |

|---|---|---|---|

| Multimodal Affective State Classification | Temporal & spectral features from in-ear PPG & behind-the-ear EEG, selected via ReliefF. | Accuracy: 97.58%; Precision: 97.57%; Recall: 97.57%; F1-Score: 97.58% | [41] |

| ADHD Diagnosis | Power Spectral Density (PSD) from 19 channels across five frequency bands. | Accuracy: 90.81%; F1-Score: 0.9347 | [42] |

| Epileptic Seizure Detection in Neonates | Deep features from STFT spectrograms extracted via Inception-ResNetV2. | Accuracy: 98.75%; Precision: 98.56%; Sensitivity: 98.36%; Specificity: 98.91% | [43] |

| Disorders of Consciousness (DoC) Detection | A novel combined effective connectivity index. | Accuracy: 99.07%; AUC: 98.74%; Specificity: 99.77%; Sensitivity: 97.71% | [44] |

| Emotion Recognition (Arousal, Valence, Dominance) | Features from EEG spectrograms using a 2DCNN. | Accuracy: ~99.77% (for valence and dominance) | [45] |

These results underscore XGBoost's versatility and power. Its strong performance is consistently linked to two factors: the use of discriminative input features and careful hyperparameter tuning, often with advanced optimization techniques like Bayesian optimization [41] or Particle Swarm Optimization (PSO) [43].

Experimental Protocols for XGBoost in EEG Classification

This section provides a detailed, step-by-step methodology for replicating a high-performance XGBoost pipeline for EEG classification, as exemplified by recent studies.

Protocol: Affective State Classification with Optimized XGBoost