Comparing BCI Paradigms: A Comprehensive Analysis of P300, SSVEP, and Motor Imagery for Research and Clinical Applications

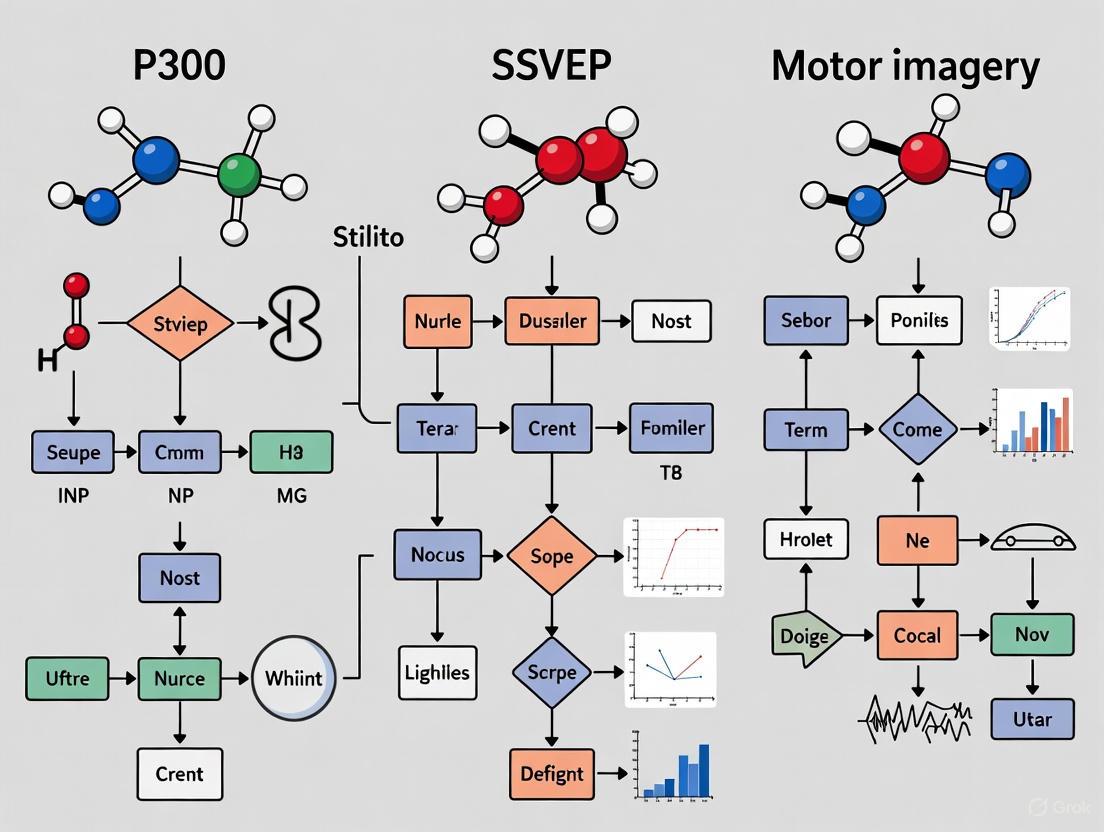

Abstract

This article provides a systematic comparison of the three primary non-invasive Brain-Computer Interface (BCI) paradigms: P300 event-related potentials, Steady-State Visual Evoked Potentials (SSVEP), and Motor Imagery (MI). Tailored for researchers and biomedical professionals, it explores the foundational neurophysiological principles, methodological implementations, and decoding algorithms for each approach. The review critically examines performance metrics including classification accuracy and Information Transfer Rate (ITR), addresses key challenges such as BCI illiteracy and signal variability, and highlights emerging hybrid systems that combine multiple paradigms. With a focus on practical applications in neurorehabilitation, assistive technology, and drug development, this analysis synthesizes current research to guide paradigm selection and future development in clinical neuroscience.

Neurophysiological Foundations: Understanding P300, SSVEP, and Motor Imagery Signals

Brain-Computer Interface (BCI) technology has emerged as a transformative tool in neuroscience, offering direct communication pathways between the brain and external devices. Among the various electroencephalography (EEG) signals utilized in BCIs, the P300 event-related potential (ERP) holds particular significance due to its robust nature and minimal training requirements. The P300 is a positive deflection in the EEG that occurs approximately 300 ms after the presentation of a rare, task-relevant stimulus within a sequence of frequent, standard stimuli, a classic setup known as the "Oddball Paradigm" [1] [2]. This cognitive potential is widely recognized as a neural correlate of attention and working memory updating, making it a valuable probe for investigating fundamental cognitive processes [3].

This article provides a systematic comparison of the P300 oddball paradigm against other prominent BCI paradigms, namely the Steady-State Visual Evoked Potential (SSVEP) and Motor Imagery (MI). The objective is to furnish researchers, scientists, and drug development professionals with a clear, data-driven overview of their performance characteristics, operational mechanisms, and optimal application contexts. By synthesizing recent experimental data and methodological insights, this guide aims to inform paradigm selection for both basic cognitive research and applied clinical or commercial development.

Comparative Analysis of BCI Paradigms: P300, SSVEP, and Motor Imagery

The landscape of non-invasive BCIs is largely dominated by three major paradigms, each with distinct neural correlates and operational requirements. Table 1 provides a consolidated comparison of their core attributes and performance metrics, synthesizing data from multiple studies.

Table 1: Performance Comparison of P300, SSVEP, and Motor Imagery BCI Paradigms

| Feature | P300 (Oddball) | Steady-State Visual Evoked Potential (SSVEP) | Motor Imagery (MI) |
|---|---|---|---|
| Underlying Signal | Endogenous event-related potential (ERP) [2] | Exogenous, periodic neural response to flicker [1] | Endogenous sensorimotor rhythm (de)synchronization [4] |
| Typical Eliciting Task | Mental count of rare target stimuli [5] [6] | Gaze at or focus on a flickering stimulus [1] | Imagination of body movement (e.g., hand squeeze) [4] [7] |
| Key Cognitive Process | Context updating, attention, and decision making [3] | Attentional modulation of visual cortex response [1] | Activation of sensorimotor cortex networks [4] |
| Training Requirements | Minimal to none [2] | Minimal to none [2] | Requires significant user training [4] [2] |
| Information Transfer Rate (ITR) | Moderate (e.g., ~12 bits/min in classic speller) to high in hybrids [2] [8] | High [1] [8] | Generally lower than P300 and SSVEP [4] |
| Typical Accuracy | High (e.g., >90% in spellers) [1] [2] | High (e.g., ~89% in spellers) [1] | Variable and user-dependent [4] |
| Major Advantage | Low training, intuitive, good for communication [2] | High ITR, robust signal, multiple commands [1] [8] | Does not require external stimulation; good for motor rehabilitation [4] |
| Major Disadvantage | Requires averaging; slower than SSVEP [9] | Can cause visual fatigue; risk of photosensitive epilepsy [8] | High "illiteracy" rate; requires extensive training [9] |

The P300 paradigm's primary strength lies in its low training demand and high accuracy for discrete command tasks, such as spelling [2]. However, its speed is constrained by the need for signal averaging across multiple trials to achieve a sufficient signal-to-noise ratio. In contrast, SSVEP-based systems often achieve higher ITRs but can induce visual fatigue and are unsuitable for individuals with photosensitivity [8]. Motor Imagery, while powerful for rehabilitation applications due to its engagement of sensorimotor circuits, suffers from variable performance across users and a steep learning curve [4] [9].

Experimental Protocols and Methodologies

A critical understanding of BCI paradigms requires insight into their standard experimental protocols. The following sections detail the methodologies for eliciting and analyzing the key signals.

The P300 Oddball Paradigm

The canonical P300 oddball paradigm involves presenting the user with a random sequence of two types of stimuli: a frequent "standard" and an infrequent "target." The subject is instructed to perform a mental task, such as silently counting, each time the target appears [5] [6].

- Stimuli and Probability: In a visual P300 speller, a matrix of characters is displayed and its rows and columns are flashed in random order. The user attends to one character; the row and column containing it are the rare targets, and the character at their intersection is selected. The low probability of a target row/column flash is key to eliciting a strong P300 response. Typical target probabilities range from 0.10 to 0.30 [5] [2].

- Timing Parameters: A single trial involves a stimulus presentation (e.g., a row flash) of short duration (typically 100-200 ms), followed by an inter-stimulus interval (ISI) of 500-800 ms before the next stimulus. The P300 component is typically analyzed in EEG epochs from 0 to 600 ms post-stimulus [4].

- EEG Recording and Preprocessing: EEG is recorded from multiple scalp electrodes (e.g., using the international 10-20 system). Key electrodes for P300 detection are often located over parietal and central sites (e.g., Pz, Cz). Standard preprocessing includes bandpass filtering (e.g., 0.1-30 Hz) and artifact removal for blinks and eye movements [4] [3].

- Signal Analysis: The recorded EEG is segmented into epochs time-locked to each stimulus. Epochs are then averaged separately for target and non-target stimuli to reveal the P300 wave, which peaks around 300-500 ms at the parietal cortex. Classification algorithms like Support Vector Machines (SVM) and Linear Discriminant Analysis (LDA) are used for single-trial detection in BCIs [1] [2].
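The epoching, averaging, and single-trial classification steps above can be sketched in Python with NumPy. This is a minimal illustration on synthetic data, not a validated pipeline: the 250 Hz sampling rate, the 240 ms spacing between flashes, the synthetic P300 shape, the decimation factor, and the shrinkage-regularized two-class LDA (written out by hand rather than taken from a library) are all assumptions chosen for the example.

```python
import numpy as np

FS = 250        # sampling rate in Hz (assumed for this sketch)
EPOCH_S = 0.6   # 0-600 ms post-stimulus window


def extract_epochs(eeg, onsets, fs=FS, length_s=EPOCH_S):
    """Cut epochs time-locked to stimulus onsets.

    eeg: (n_channels, n_samples) array, assumed already band-pass filtered.
    onsets: sample index of each flash.
    Returns an array of shape (n_epochs, n_channels, n_epoch_samples).
    """
    n = int(round(length_s * fs))
    return np.stack([eeg[:, onset:onset + n] for onset in onsets])


def epoch_features(epochs, step=10):
    """Decimate each epoch in time and flatten channels x samples into one vector."""
    return epochs[:, :, ::step].reshape(len(epochs), -1)


def fit_lda(X, y, reg=0.05):
    """Two-class Fisher LDA: w = Sw^-1 (mu1 - mu0), threshold halfway between the class means."""
    mu0, mu1 = X[y == 0].mean(axis=0), X[y == 1].mean(axis=0)
    centred = np.vstack([X[y == 0] - mu0, X[y == 1] - mu1])
    sw = centred.T @ centred / len(X)
    # Shrink the within-class scatter towards a scaled identity to keep it well conditioned.
    sw = (1 - reg) * sw + reg * np.trace(sw) / sw.shape[0] * np.eye(sw.shape[0])
    w = np.linalg.solve(sw, mu1 - mu0)
    return w, -w @ (mu0 + mu1) / 2


# Synthetic demo: 3 channels, about 1/6 of flashes are targets (as in a 6x6 row/column speller).
rng = np.random.default_rng(0)
n_ch, n_flash, soa = 3, 600, 60                  # soa: 60 samples = 240 ms between flash onsets
onsets = 50 + soa * np.arange(n_flash)
labels = (rng.random(n_flash) < 1 / 6).astype(int)
t = np.arange(int(round(EPOCH_S * FS))) / FS
p300 = 8.0 * np.exp(-((t - 0.35) ** 2) / (2 * 0.05 ** 2))   # positive peak near 350 ms
eeg = rng.normal(0.0, 5.0, size=(n_ch, onsets[-1] + len(t) + 50))
for onset in onsets[labels == 1]:
    eeg[:, onset:onset + len(t)] += p300

epochs = extract_epochs(eeg, onsets)
target_avg = epochs[labels == 1].mean(axis=0)      # averaged target ERP (shows the P300)
nontarget_avg = epochs[labels == 0].mean(axis=0)   # averaged non-target ERP

X = epoch_features(epochs)
w, b = fit_lda(X[:400], labels[:400])              # train on the first 400 flashes
pred = (X[400:] @ w + b > 0).astype(int)           # single-trial decisions on the rest
y_test = labels[400:]
balanced_acc = 0.5 * ((pred[y_test == 1] == 1).mean() + (pred[y_test == 0] == 0).mean())
print(f"balanced single-trial accuracy: {balanced_acc:.2f}")
```

Averaging makes the P300 visible in `target_avg`, while the LDA works on individual epochs. In an actual speller, the single-epoch scores for each row and column would typically be accumulated over repetitions before a character is chosen.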

The following diagram illustrates the logical structure and cognitive processes engaged by the oddball paradigm.

The Single-Stimulus P300 Paradigm

A notable variation is the "single-stimulus" paradigm, where only the target stimulus is presented overtly, and the standard stimulus is replaced by silence or a pause. Studies have shown that this paradigm produces P300 components with similar amplitudes and morphologies to the traditional oddball, with the inter-target interval being a critical factor. This suggests the brain's context-updating mechanism is driven by the temporal probability of a significant event, even without explicit, frequent standard stimuli [5] [6]. This paradigm is useful in applied settings where a very simple task is required [5].

Hybrid P300/SSVEP Paradigm

To overcome the limitations of single-paradigm systems, hybrid BCIs have been developed. A prominent example is the hybrid P300/SSVEP speller.

- Stimulus Paradigm: The interface is typically a grid of characters (e.g., 6x6). Each row and column is assigned a unique flickering frequency to evoke SSVEP (e.g., from 6.0 to 11.5 Hz). Simultaneously, the rows and columns flash in a pseudorandom sequence to elicit the P300 potential [1].

- Task: The user focuses on a target character. This action simultaneously evokes both an SSVEP response at the specific frequency assigned to its row and column, and a P300 potential when that row and column are flashed [1] [9].

- Signal Processing and Fusion: The SSVEP signal is typically analyzed using frequency-domain methods like Canonical Correlation Analysis (CCA) or task-related component analysis (TRCA). The P300 is detected in the time-domain using classifiers like SVM. The classification outcomes from both pathways are then fused using a weighted sum or a voting scheme to make a final decision, significantly improving accuracy over either single paradigm [1].
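The weighted-sum fusion step can be illustrated with a small sketch. It assumes each pathway has already produced one score per row and per column (for example, summed classifier outputs for the P300 and correlation coefficients for the SSVEP). The z-score normalization, the equal default weight, the 6x6 character layout, and the score values in the demo are hypothetical and are not taken from the cited FERC study.

```python
import numpy as np

# Hypothetical 6x6 character layout (rows 0-5, columns 0-5).
MATRIX = np.array(list("ABCDEFGHIJKLMNOPQRSTUVWXYZ123456789_")).reshape(6, 6)


def zscore(x):
    """Standardise one pathway's scores so P300 and SSVEP evidence share a common scale."""
    x = np.asarray(x, dtype=float)
    sd = x.std()
    return (x - x.mean()) / sd if sd > 0 else np.zeros_like(x)


def fuse_row_col(p300_scores, ssvep_scores, w_p300=0.5):
    """Weighted-sum fusion of P300 and SSVEP evidence.

    Both inputs are length-12 arrays: entries 0-5 score the rows, 6-11 the columns.
    Returns the (row, column) of the selected character.
    """
    fused = w_p300 * zscore(p300_scores) + (1 - w_p300) * zscore(ssvep_scores)
    return int(np.argmax(fused[:6])), int(np.argmax(fused[6:]))


# Hypothetical scores: P300 slightly prefers column 2, SSVEP clearly prefers column 1.
p300 = np.array([0.1, 0.2, 1.4, 0.0, 0.3, 0.1, 0.2, 0.9, 1.0, 0.1, 0.0, 0.2])
ssvep = np.array([0.20, 0.25, 0.61, 0.22, 0.18, 0.21, 0.19, 0.58, 0.30, 0.20, 0.24, 0.22])

row, col = fuse_row_col(p300, ssvep)
print(MATRIX[row, col])                                  # fused decision
print(MATRIX[fuse_row_col(p300, ssvep, w_p300=1.0)])     # P300 pathway alone
```

With these made-up scores, the fused decision is `N` while the P300 pathway alone would pick `O`, which shows how a confident SSVEP response can resolve an ambiguous P300 column decision. In practice the weight would be tuned on calibration data.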

Table 2: Key Research Reagents and Materials for a Hybrid P300/SSVEP BCI Experiment

| Item Category | Specific Examples & Functions |
|---|---|
| EEG Acquisition System | NVX52 DC amplifier [4], DSI-24 dry electrode headset [7], or similar wet electrode systems (e.g., BrainVision). Function: Records raw neural signals from the scalp. |
| Stimulus Presentation Hardware | Standard LCD monitor [2] or custom LED arrays [8]. Function: Presents visual flicker for SSVEP and flash patterns for P300. LED arrays offer more precise temporal control [8]. |
| Stimulus Presentation Software | Custom applications built in Unity [7], Python (using PsychoPy or Pygame), or MATLAB. Function: Controls stimulus timing, sequence, and paradigm logic. |
| Signal Processing & Classification Algorithms | P300: Support Vector Machine (SVM) [1], Linear Discriminant Analysis (LDA). SSVEP: Ensemble Task-Related Component Analysis (TRCA) [1], Canonical Correlation Analysis (CCA). Function: Extracts features and translates EEG signals into commands. |
| Data Streaming Framework | Lab Streaming Layer (LSL) [7]. Function: Synchronizes EEG data with stimulus markers for precise temporal analysis. |

The experimental workflow for a typical hybrid BCI system, integrating both hardware and software components, is depicted below.

Quantitative Performance Data

Empirical data from controlled studies provides the most compelling evidence for comparing BCI paradigms. Table 3 summarizes key performance metrics from recent research, highlighting the performance of individual paradigms and the synergistic effect of their integration.

Table 3: Experimental Performance Metrics from BCI Studies

| Study Paradigm | Reported Accuracy (%) | Information Transfer Rate (ITR) | Key Experimental Parameters |
|---|---|---|---|
| Classic P300 Speller (RCP) [2] | Up to 95% | ~12 bits/min | 6x6 matrix; row/column flashing; ~12 flashes per trial |
| P300 (Single-Stimulus Paradigm) [5] | Similar to oddball | Similar to oddball | Auditory stimuli; inter-target interval matched to oddball ISI |
| SSVEP Speller [1] | 89.13% (offline) | Not specified | 6x6 grid; frequency coding (6.0-11.5 Hz) |
| Motor Imagery BCI [4] | No overall % reported (MI distinguished from P300) | Lower than P300/SSVEP | Two stimuli; mental counting vs. motor imagery tasks |
| Hybrid P300/SSVEP (FERC Paradigm) [1] | 96.86% (offline); 94.29% (online) | 28.64 bits/min | 6x6 grid; frequency-enhanced RC paradigm; SVM + TRCA fusion |
| Hybrid P300/SSVEP (LED-based) [8] | 86.25% (online) | 42.08 bits/min | 4-direction control; LED stimuli; FFT + P300 peak detection |

The data clearly demonstrates that hybrid systems, particularly those combining P300 and SSVEP, can surpass the performance of either single paradigm, achieving higher accuracy and robust information transfer rates [1] [8]. This makes hybrid BCIs a compelling choice for advanced applications requiring high reliability.
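The ITR values above are expressed in bits per minute. The widely used Wolpaw definition computes bits per selection from the number of targets N and the accuracy P as B = log2 N + P log2 P + (1 - P) log2((1 - P)/(N - 1)), then scales by the number of selections per minute. The sketch below implements that formula. It assumes equiprobable targets and uniformly distributed errors; the studies in Table 3 may use different timing conventions (for example, whether pauses between selections are counted), so the sketch is not meant to reproduce their exact figures.

```python
import math


def wolpaw_bits(n_targets, accuracy):
    """Bits per selection under the Wolpaw ITR definition.

    Assumes all N targets are equally likely and errors are spread uniformly over
    the other N - 1 targets. By convention, returns 0 at or below chance accuracy (1/N).
    """
    n, p = n_targets, accuracy
    if p <= 1.0 / n:
        return 0.0
    bits = math.log2(n) + p * math.log2(p)
    if p < 1.0:                       # the error term vanishes at perfect accuracy
        bits += (1 - p) * math.log2((1 - p) / (n - 1))
    return bits


def itr_bits_per_min(n_targets, accuracy, seconds_per_selection):
    """Convert bits per selection into bits per minute."""
    return wolpaw_bits(n_targets, accuracy) * 60.0 / seconds_per_selection


# Example: a 36-character speller at 95% accuracy and a hypothetical 10 s per selection.
print(f"{wolpaw_bits(36, 0.95):.2f} bits/selection, "
      f"{itr_bits_per_min(36, 0.95, 10.0):.1f} bits/min")
```

Because the time per selection sits in the denominator, a paradigm with modest accuracy but fast selections (as in the LED-based hybrid) can post a higher ITR than a slower, more accurate one.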

The P300 oddball paradigm remains a cornerstone of cognitive neuroscience and BCI research due to its direct linkage to fundamental cognitive processes and its ease of use. While it offers high accuracy and minimal training, it is typically slower than the SSVEP paradigm. The choice of paradigm is ultimately application-dependent. For communication systems requiring high speed and where users can tolerate flickering stimuli, SSVEP may be optimal. For rehabilitation focusing on motor network plasticity, Motor Imagery is most appropriate. For general-purpose, intuitive, and high-accuracy control, the P300 paradigm is an excellent choice. The future of high-performance BCIs, however, appears to lie in hybrid systems. By combining the strengths of multiple paradigms like P300 and SSVEP, researchers can create systems that are more accurate, faster, and adaptable to a wider range of users and environments, thereby accelerating the translation of BCI technology from the laboratory to real-world applications.

Steady-State Visual Evoked Potentials (SSVEPs) are a cornerstone of non-invasive Brain-Computer Interface (BCI) technology, characterized by neural oscillations in the visual cortex that are entrained to the frequency of an external rhythmic visual stimulus [10] [11]. As a reactive BCI paradigm, SSVEP requires no user training and offers high information transfer rates (ITR) and robust performance, making it a popular choice for communication systems and assistive technologies [10] [12]. This guide provides a systematic comparison of the SSVEP paradigm against two other dominant non-invasive BCI approaches: the P300 event-related potential and Motor Imagery (MI). Framed within a broader thesis on BCI paradigms, this article objectively compares their performance using recent experimental data, details key methodologies, and outlines essential research tools, serving as a reference for researchers and scientists in the field.

Performance Comparison of BCI Paradigms

The performance of BCI paradigms is typically quantified by metrics such as classification accuracy, information transfer rate (ITR, in bits/min), and user comfort. The table below synthesizes experimental data from recent studies to provide a direct comparison of the SSVEP, P300, and Motor Imagery paradigms.

Table 1: Experimental Performance Comparison of Major Non-Invasive BCI Paradigms

| Paradigm | Reported Accuracy (%) | Information Transfer Rate (ITR) | Key Strengths | Major Limitations |
|---|---|---|---|---|
| SSVEP | 76.5% - 96.71% [13] [14] | 27.55 - 70.42 bits/min [13] [14] | High ITR, No user training required, Multi-target capability [10] [15] | Causes visual fatigue, Requires stable gaze, Performance depends on stimulus design [10] [13] |
| P300 | ~96% (in hybrid systems) [15] | Up to 27.10 bits/min (in hybrid systems) [13] | High accuracy, Reliable for spellers [15] | Lower ITR than SSVEP, Requires rare "oddball" stimuli, Can be slow [15] |
| Motor Imagery (MI) | Up to 97.5% (with novel cues) [16] | Not prominently reported | Stimulus-independent, More "natural" control, Effective for motor rehabilitation [17] [16] [15] | Requires extensive user training, "BCI-Illiteracy" in 15-30% of users, Lower accuracy without advanced processing [16] [18] |

The data reveals a trade-off between the high speed and accuracy of reactive paradigms (SSVEP, P300) and the autonomy offered by active paradigms like MI. SSVEP consistently achieves the highest ITRs, making it suitable for applications requiring fast, discrete commands. Recent innovations in SSMVEP (Steady-State Motion VEP) aim to mitigate its primary drawback—visual fatigue—by using moving stimuli instead of flickering lights, achieving an accuracy of 83.81% [10]. Furthermore, the integration of code-modulated VEP (c-VEP) with Mixed Reality (MR) has demonstrated performance parity with traditional screens (96.71% accuracy, 27.55 bits/min), highlighting a path toward practical, portable BCIs [13].

Detailed Experimental Protocols

To ensure the reproducibility of BCI experiments, this section outlines the standard methodologies for the primary paradigms, with a focus on the novel SSMVEP protocol.

SSVEP and SSMVEP Protocol

Objective: To evoke and record frequency-tagged neural responses using flickering or moving visual stimuli, and to classify the target based on these responses [10] [14].

Key Methodology Details:

Stimulus Design:

- Traditional SSVEP: Visual stimuli (e.g., squares) flicker at distinct frequencies (e.g., 15 Hz, 20 Hz) [15].

- Innovative SSMVEP (Bimodal Motion-Color): Stimuli consist of concentric "Newton's rings" that oscillate radially. A bimodal design integrates color contrast (e.g., red/green) with motion, with luminance carefully controlled to avoid flicker using the formula [10]:

  $$L(R, G, B) = C_1\,(0.2126R + 0.7152G + 0.0722B)$$

  The area ratio of the rings to the background is a key parameter, optimized at 0.6 in recent studies [10].
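The luminance formula can be used to choose an isoluminant red/green pair, so that alternating between the two colors carries color contrast without a luminance change. The sketch below assumes the weights are applied directly to the display's RGB values on a 0-255 scale, ignoring gamma (a real setup would normally verify luminance with a photometer). The constant C1 cancels when two colors are matched, so it is left at 1.

```python
# Luminance weights from the formula above (the Rec. 709 / sRGB coefficients).
W_R, W_G, W_B = 0.2126, 0.7152, 0.0722


def luminance(r, g, b, c1=1.0):
    """L(R, G, B) = C1 * (0.2126 R + 0.7152 G + 0.0722 B), applied directly to the given values."""
    return c1 * (W_R * r + W_G * g + W_B * b)


def isoluminant_green(red_level):
    """Green level whose luminance equals that of pure red at red_level (C1 cancels out)."""
    return luminance(red_level, 0, 0) / W_G


green = isoluminant_green(255)
print(f"red (255, 0, 0) matches green (0, {green:.1f}, 0)")
print(luminance(255, 0, 0), luminance(0, green, 0))
```

For full-intensity red, the matching green is about 75.8 on a 0-255 scale. Rounding to 76 for an 8-bit display leaves a small residual luminance difference, which is one reason photometric calibration matters for flicker-free designs.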

EEG Acquisition:

- Subjects: Typically 10-20 subjects with normal or corrected-to-normal vision [10] [13].

- Electrode Placement: 6-16 electrodes over the parietal and occipital lobes (e.g., Pz, O1, Oz, O2) according to the 10-20 system [10] [16].

- Equipment & Settings: EEG data is recorded using amplifiers like the g.USBamp at a 1200 Hz sampling rate. Data is band-pass filtered (2-100 Hz) and a notch filter (48-52 Hz) is applied to remove line noise [10].

Signal Processing & Classification:

- Feature Extraction: The Power Spectral Density (PSD) is computed using the Fast Fourier Transform (FFT) to identify the frequency component with the highest amplitude [10]. For MEG-based steady-state visual evoked fields (SSVEF), a Spatial Distribution Analysis (SDA) algorithm that uses the center of gravity of the synchronization index distribution has shown superior performance [19].

- Classification: The target is identified as the stimulus frequency whose fundamental or harmonic frequency matches the peak in the PSD. Machine learning classifiers like EEGNet (a deep learning model) are also employed for higher performance [10].
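The PSD-based target identification described above can be sketched with NumPy's FFT. This version sums power at the fundamental and second harmonic of each candidate frequency, which is one common variant of the peak-matching rule. The Hann window, the 4 s analysis window, the stimulus frequencies, and the synthetic 12 Hz response are assumptions for illustration; only the 1200 Hz sampling rate and six channels echo the setup described above.

```python
import numpy as np


def ssvep_fft_classify(eeg, fs, stim_freqs, n_harmonics=2, tol=0.25):
    """Return the index of the stimulus frequency with the largest harmonic power, plus all scores.

    eeg: (n_channels, n_samples) occipital EEG, assumed already band-pass and notch filtered.
    For each candidate f, the largest PSD bin within +/- tol Hz of f, 2f, ... is summed.
    """
    n = eeg.shape[-1]
    psd = np.abs(np.fft.rfft(eeg * np.hanning(n), axis=-1)) ** 2
    psd = psd.mean(axis=0)                             # average the spectra over channels
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    scores = []
    for f in stim_freqs:
        score = 0.0
        for h in range(1, n_harmonics + 1):
            band = np.abs(freqs - h * f) <= tol
            if band.any():                             # skip harmonics above Nyquist
                score += psd[band].max()
        scores.append(score)
    return int(np.argmax(scores)), np.array(scores)


# Synthetic demo: 6 channels at 1200 Hz, 4-s window (0.25 Hz resolution), target flickering at 12 Hz.
fs = 1200
t = np.arange(4 * fs) / fs
rng = np.random.default_rng(4)
stim_freqs = [8.0, 10.0, 12.0, 15.0]                   # hypothetical stimulus set
response = 0.5 * np.sin(2 * np.pi * 12.0 * t) + 0.2 * np.sin(2 * np.pi * 24.0 * t)
eeg = response + rng.normal(0.0, 1.0, size=(6, t.size))
idx, scores = ssvep_fft_classify(eeg, fs, stim_freqs)
print(f"detected {stim_freqs[idx]} Hz")
```

Longer windows sharpen the frequency resolution (1/window length) but slow each decision, which is the same speed/accuracy trade-off that drives ITR.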

Figure 1: SSVEP/SSMVEP Experimental Workflow. The process begins with visual stimulation and ends with a classified command, passing through key stages of signal acquisition and processing.

Motor Imagery (MI) Protocol

Objective: To decode the user's intent from sensorimotor rhythms (mu/beta waves) that are modulated when they imagine a movement without physically performing it [16] [18].

Key Methodology Details:

- Stimulus & Cue Design: Participants are cued to imagine specific movements (e.g., left hand, right hand). Recent studies have moved beyond simple arrow cues to pictures of hands or instructional videos to improve user immersion and accuracy [16].

- EEG Acquisition: EEG is recorded over the sensorimotor cortex (e.g., C3, Cz, C4) with systems such as the g.Nautilus PRO [16] or the Emotiv EPOC X [18].

- Signal Processing & Classification:

  - Preprocessing: Band-pass filtering in the mu (7-13 Hz) and beta (12-30 Hz) ranges [18].

  - Feature Extraction: Spatial filtering using Common Spatial Patterns (CSP) to maximize the variance ratio between two classes of MI tasks [16].

  - Classification: Standard classifiers like Linear Discriminant Analysis (LDA) and Support Vector Machines (SVM) are used [16]. Transfer learning from Motor Execution (ME) to MI tasks is an emerging approach that reduces calibration time [17].
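The CSP feature-extraction step can be written in a few lines of NumPy using the standard whitening-then-diagonalization derivation, followed by the normalized log-variance features that are typically passed to LDA or SVM. The synthetic two-class data (a random mixing matrix, with one attenuated source per class standing in for contralateral ERD) and all parameter values are hypothetical.

```python
import numpy as np


def csp_filters(trials_a, trials_b, n_pairs=1):
    """Common Spatial Patterns for two motor imagery classes.

    trials_*: (n_trials, n_channels, n_samples) band-pass filtered, zero-mean EEG.
    Returns W of shape (2 * n_pairs, n_channels): the first n_pairs rows maximise
    class-A variance relative to class B, the last n_pairs rows do the opposite.
    """
    def mean_cov(trials):
        return np.mean([x @ x.T / np.trace(x @ x.T) for x in trials], axis=0)

    ca, cb = mean_cov(trials_a), mean_cov(trials_b)
    # Whitening transform P for the composite covariance: P (Ca + Cb) P^T = I.
    evals, evecs = np.linalg.eigh(ca + cb)
    p = np.diag(evals ** -0.5) @ evecs.T
    # Eigenvectors of the whitened class-A covariance, sorted by descending eigenvalue.
    d, vecs = np.linalg.eigh(p @ ca @ p.T)
    w = vecs[:, np.argsort(d)[::-1]].T @ p
    return np.vstack([w[:n_pairs], w[-n_pairs:]])


def log_var_features(trials, w):
    """Normalised log-variance of the CSP-filtered signals."""
    z = np.einsum("fc,tcs->tfs", w, trials)
    var = z.var(axis=-1)
    return np.log(var / var.sum(axis=1, keepdims=True))


# Synthetic demo: 4 channels mixed from 4 sources; each class attenuates one source (ERD).
rng = np.random.default_rng(1)
mix = np.eye(4) + 0.3 * rng.normal(size=(4, 4))       # hypothetical volume-conduction mixing


def simulate(n_trials, attenuated_source):
    src = rng.normal(size=(n_trials, 4, 500))
    src[:, attenuated_source] *= 0.4
    return np.einsum("ij,tjs->tis", mix, src)


left = simulate(40, attenuated_source=2)    # stands in for right-hemisphere (C4) ERD
right = simulate(40, attenuated_source=0)   # stands in for left-hemisphere (C3) ERD
w = csp_filters(left, right, n_pairs=1)
fa, fb = log_var_features(left, w), log_var_features(right, w)
print(fa.mean(axis=0), fb.mean(axis=0))
```

The two features separate the classes in opposite directions, which is why only the extreme filters (largest and smallest eigenvalues) are usually kept.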

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful BCI research relies on a suite of specialized hardware and software tools. The following table catalogues the essential solutions used in the featured experiments.

Table 2: Key Research Reagent Solutions for BCI Experimentation

| Item Name | Function / Application | Example Use Case |
|---|---|---|
| g.USBamp Amplifier | High-quality EEG signal acquisition and analog-to-digital conversion. | Used in SSMVEP studies for 6-channel recording at 1200 Hz [10]. |
| g.Nautilus PRO | Portable, wireless EEG headset with up to 16 channels. | Employed in Motor Imagery paradigm research with healthy and post-stroke subjects [16]. |
| Emotiv EPOC X | Consumer-grade, research-validated EEG headset (14 channels). | Explored for scalable, multiclass MI-BCI systems [18]. |
| AR/MR Glasses | Presents visual stimuli in an augmented or mixed reality environment. | Enabled c-VEP BCI studies outside of traditional lab settings [13] [14]. |
| Newton's Rings Paradigm | Visual stimulus for SSMVEP that uses radial motion to reduce fatigue. | Served as the core stimulus in the bimodal motion-color SSVEP study [10]. |
| EEGNet | Deep learning convolutional neural network for EEG classification. | Achieved high accuracy in classifying SSMVEP responses [10]. |
| CAR + CSP Algorithm | Common Average Reference & Common Spatial Patterns for feature extraction. | Used to extract discriminative features from MI EEG data [16]. |

The comparative analysis underscores that the "best" BCI paradigm is inherently application-dependent. SSVEP, together with related visual evoked approaches such as SSMVEP and c-VEP, generally leads in raw speed, with reported ITRs exceeding 70 bits/min [14]. Its primary challenge of user fatigue is being actively addressed through innovative stimulus designs that integrate motion and controlled color contrast [10]. In contrast, Motor Imagery BCIs, while slower and requiring significant user training, offer a unique value proposition for neurorehabilitation and applications where external stimulation is impractical [17] [16]. The future of BCI lies not only in refining individual paradigms but also in developing intelligent hybrid systems that leverage the complementary strengths of SSVEP, P300, and MI to create more versatile, robust, and user-friendly brain-computer interfaces.

This guide provides a comparative analysis of three primary electroencephalography (EEG)-based Brain-Computer Interface (BCI) paradigms: Motor Imagery (MI), P300, and Steady-State Visual Evoked Potential (SSVEP). For researchers in drug development and neuroscience, understanding the performance characteristics, experimental protocols, and hardware requirements of these paradigms is critical for selecting the appropriate tool for specific applications, from cognitive monitoring to neurorehabilitation.

Performance Comparison of BCI Paradigms

The table below summarizes the core characteristics and performance metrics of the three major BCI paradigms, based on current research.

| Paradigm | Main Physiological Principle | Typical Control Signal | Key Performance Metrics (Reported Ranges) | Primary Advantages | Primary Challenges |
|---|---|---|---|---|---|
| Motor Imagery (MI) | Event-Related Desynchronization/Synchronization (ERD/ERS) of sensorimotor rhythms [9] | Imagination of body movement (e.g., hands, feet) [9] | Accuracy: varies significantly with user proficiency and algorithm choice [20]; ITR: generally lower than evoked potentials [2]; Training: extensive user training often needed [1] [2] | Does not require external stimulation; more endogenous control [2] | Significant "BCI illiteracy" problem; not all users can achieve proficient control [9] [20] |
| P300 | Positive deflection in EEG ~300 ms after a rare "target" stimulus in an oddball paradigm [9] [2] | Attention to an infrequent flash among a series of standard flashes [9] | Accuracy: up to 100% in some hybrid paradigms [9]; ITR: highly dependent on flash rate, with a user-specific optimum (e.g., 8-32 Hz) [21]; Training: minimal [9] | High accuracy achievable; minimal user training [9] [2] | Requires averaging over multiple stimulus repetitions, which reduces speed [9] |
| SSVEP | Periodic brain response in visual cortex synchronized to a flickering visual stimulus [9] [22] | Gaze at a visual stimulus flickering at a specific frequency [9] | Accuracy: can be high (e.g., 89.13% in offline tests) [1]; ITR: among the fastest BCIs [9] [1]; Training: minimal [22] | High information transfer rate (ITR); multiple commands with few electrodes [22] [1] | Can cause visual fatigue and discomfort; small risk of photosensitive seizures [9] |

Comparative Insight: While MI offers a more endogenous form of control, P300 and SSVEP paradigms generally provide higher accuracy and faster communication speeds with less user training, making them strong candidates for specific assistive technologies [9] [1] [2]. However, the development of hybrid systems that combine paradigms is a leading trend to overcome the limitations of single-method approaches [9] [1].

Detailed Experimental Protocols

To ensure reproducible results, the following sections detail the standard experimental methodologies for each paradigm.

Motor Imagery (MI) with ERD/ERS

The MI paradigm relies on the user's mental rehearsal of a movement without any physical execution. This activates neural networks in the sensorimotor cortex, leading to observable changes in oscillatory brain activity.

Procedure:

- Cue Presentation: A visual or auditory cue instructs the user on which movement to imagine (e.g., a left-hand or right-hand grasp).

- Imagery Period: The user performs the cued motor imagery for a defined period, typically 3-5 seconds. During this time, ERD (a power decrease) is observed in the mu (8-13 Hz) and beta (13-30 Hz) rhythms over the contralateral sensorimotor cortex.

- Rest Period: A break of several seconds is provided between trials to allow brain rhythms to return to baseline. A transient power increase (ERS), such as the post-movement beta rebound, is often observed shortly after the imagery ends.

- Data Acquisition: EEG is recorded throughout, with emphasis on electrodes over the sensorimotor cortex (e.g., C3, Cz, C4).
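ERD and ERS are commonly quantified as a percentage power change relative to a reference period, ERD% = (A - R)/R × 100, where A is the band power during imagery and R is the baseline band power; negative values indicate ERD. The sketch below applies this to a single channel in the 8-13 Hz mu band. It assumes equal-length windows and a plain periodogram estimate, and the synthetic 10 Hz rhythm and its amplitudes are hypothetical.

```python
import numpy as np


def band_power(x, fs, lo, hi):
    """Mean periodogram power of a 1-D signal within [lo, hi] Hz."""
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    psd = np.abs(np.fft.rfft(x - x.mean())) ** 2 / len(x)
    return psd[(freqs >= lo) & (freqs <= hi)].mean()


def erd_percent(baseline, task, fs, lo=8.0, hi=13.0):
    """ERD/ERS% = (A - R) / R * 100; negative values indicate ERD, positive values ERS.

    baseline and task must have the same length so their periodograms are comparable.
    """
    r = band_power(baseline, fs, lo, hi)
    a = band_power(task, fs, lo, hi)
    return (a - r) / r * 100.0


# Synthetic single channel (e.g., C3): 10 Hz mu rhythm that halves in amplitude during imagery.
fs = 250
t = np.arange(2 * fs) / fs
rng = np.random.default_rng(2)
baseline = 2.0 * np.sin(2 * np.pi * 10.0 * t) + 0.5 * rng.normal(size=t.size)
imagery = 1.0 * np.sin(2 * np.pi * 10.0 * t) + 0.5 * rng.normal(size=t.size)
erd = erd_percent(baseline, imagery, fs)
print(f"mu-band power change during imagery: {erd:.1f}%")
```

Halving the rhythm's amplitude quarters its power, so the result lands close to -75%. Real analyses usually average over many trials and may use Welch or wavelet estimates instead of a single periodogram.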

Key Analysis Method: A common challenge is improving decoding accuracy from EEG. Recent advances use Deep Learning (DL) with Transfer Learning (TL). For instance, a model can be trained on data from actual Motor Execution (ME), which produces clearer signals, and then applied to classify Motor Imagery (MI) without retraining, leveraging the shared neural patterns between execution and imagination [17].

P300 Evoked Potential

The P300 is an event-related potential elicited when a user attends to a rare target stimulus interspersed with frequent non-target stimuli.

Procedure:

- Stimulus Presentation: A matrix of characters or symbols is displayed. Rows and columns of the matrix flash in a pseudo-random sequence [2].

- User Task: The user focuses on a target character and mentally counts each time the row or column containing that character flashes.

- EEG Recording: EEG is recorded, typically from midline frontal, central, and parietal electrodes (e.g., Fz, Cz, Pz). The P300 appears as a positive peak around 300 ms post-stimulus at the Pz electrode when the target row or column flashes [21].

- Signal Averaging: Responses to multiple flashes are averaged to enhance the signal-to-noise ratio of the P300.

Key Parameter - Flash Rate: The stimulus presentation rate significantly affects performance. Lower flash rates (e.g., 4 Hz) generally produce larger P300 amplitudes and higher accuracy, but the optimal rate for maximizing characters per minute is user-specific and can range from 8 to 32 Hz [21].
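The speed side of this trade-off is simple arithmetic. In a 6x6 row/column speller, one sequence flashes all 6 rows and 6 columns once, so the time per selection is the number of averaged sequences times 12 flashes divided by the flash rate. The sketch assumes the flash rate is counted in flashes per second and that no pause between selections is used unless one is passed in; the 5-sequence setting is hypothetical.

```python
def seconds_per_selection(flash_rate_hz, n_sequences, flashes_per_sequence=12, pause_s=0.0):
    """Time to select one character in a row/column speller.

    One sequence flashes every row and column once (12 flashes for a 6x6 matrix), and
    responses are averaged over n_sequences sequences before a decision is made.
    pause_s is an optional, hypothetical gap between selections.
    """
    return n_sequences * flashes_per_sequence / flash_rate_hz + pause_s


# Hypothetical setting: 5 sequences per selection, no pause, at the flash rates discussed above.
for rate in (4, 8, 16, 32):
    s = seconds_per_selection(rate, n_sequences=5)
    print(f"{rate:>2} Hz: {s:6.3f} s per selection, {60 / s:5.1f} selections/min")
```

Faster rates raise selections per minute in direct proportion, but because P300 amplitude and accuracy tend to fall at higher rates [21], the effective throughput has to be judged with an accuracy-aware measure such as ITR.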

Steady-State Visual Evoked Potential (SSVEP)

SSVEPs are elicited by presenting a visual stimulus that flickers at a fixed frequency, inducing a brain response at the same (fundamental) frequency and its harmonics.

Procedure:

- Stimulus Presentation: Multiple visual stimuli (e.g., boxes on a screen), each flickering at a distinct frequency (e.g., from 6.0 to 11.5 Hz), are presented simultaneously [1].

- User Task: The user focuses their gaze on one target stimulus.

- EEG Recording: EEG is recorded from occipital electrodes (e.g., O1, Oz, O2, POz), where the visual cortex response is strongest.

- Frequency Recognition: The target is identified by finding which stimulus frequency elicits the strongest SSVEP response in the user's EEG, often using methods like Ensemble Task-Related Component Analysis (TRCA) or Canonical Correlation Analysis (CCA) [1].
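CCA-based frequency recognition correlates the multichannel EEG with sine/cosine references at each stimulus frequency and its harmonics, then picks the frequency with the largest canonical correlation. The sketch below computes the largest canonical correlation via QR decomposition and SVD. The 250 Hz sampling rate, 2 s window, two harmonics, frequency subset, and synthetic 9 Hz response are assumptions. Ensemble TRCA, which additionally learns spatial filters from calibration data, is not shown.

```python
import numpy as np


def reference_signals(freq, fs, n_samples, n_harmonics=2):
    """Sine and cosine references at freq and its harmonics, shape (2 * n_harmonics, n_samples)."""
    t = np.arange(n_samples) / fs
    rows = []
    for h in range(1, n_harmonics + 1):
        rows += [np.sin(2 * np.pi * h * freq * t), np.cos(2 * np.pi * h * freq * t)]
    return np.array(rows)


def max_canonical_corr(x, y):
    """Largest canonical correlation between x (p, n) and y (q, n), via QR + SVD."""
    qx, _ = np.linalg.qr((x - x.mean(axis=1, keepdims=True)).T)
    qy, _ = np.linalg.qr((y - y.mean(axis=1, keepdims=True)).T)
    return np.linalg.svd(qx.T @ qy, compute_uv=False)[0]


def cca_classify(eeg, fs, stim_freqs, n_harmonics=2):
    """Index of the stimulus frequency whose references correlate best with the EEG."""
    scores = np.array([max_canonical_corr(eeg, reference_signals(f, fs, eeg.shape[1], n_harmonics))
                       for f in stim_freqs])
    return int(np.argmax(scores)), scores


# Synthetic demo: 4 occipital channels (e.g., O1, Oz, O2, POz), 2-s window at 250 Hz, target at 9 Hz.
fs, n = 250, 500
stim_freqs = [6.0, 7.5, 9.0, 10.5]                       # hypothetical subset of a 6.0-11.5 Hz layout
rng = np.random.default_rng(3)
t = np.arange(n) / fs
gains, phases = rng.uniform(0.5, 1.0, 4), rng.uniform(0.0, 2 * np.pi, 4)
eeg = np.array([g * np.sin(2 * np.pi * 9.0 * t + ph) for g, ph in zip(gains, phases)])
eeg += rng.normal(0.0, 1.0, size=eeg.shape)
idx, scores = cca_classify(eeg, fs, stim_freqs)
print(f"detected {stim_freqs[idx]} Hz, correlations {np.round(scores, 2)}")
```

Because CCA learns a channel weighting per trial, it tolerates the different gains and phases across electrodes without calibration data, which is part of why it is a common training-free baseline.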

Innovation - SSMVEP: To reduce visual fatigue from traditional flickering, a Steady-State Motion Visual Evoked Potential (SSMVEP) paradigm can be used. This uses motion patterns, like expanding/contracting Newton's rings, instead of simple luminance flicker. Performance can be further enhanced by integrating color contrast, creating a bimodal motion-color stimulus that achieves higher accuracy (e.g., 83.81%) and improved user comfort [22].

Signaling Pathways and Experimental Workflow

The diagram below illustrates the logical workflow of a standard BCI experiment, from stimulus presentation to command output, and highlights the distinct neural pathways activated by different paradigms.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists essential materials and software tools used in BCI research, as cited in the literature.

| Item | Function in BCI Research | Example Use Case / Note |
|---|---|---|
| g.USBamp Amplifier (g.tec) | Multi-channel EEG signal acquisition and amplification [21] [22]. | Used in both P300 and SSVEP studies for high-quality data recording [21] [22]. |
| Electro-Cap International Cap | Holds EEG electrodes in standardized positions (10-20 system) on the scalp [21]. | Ensures consistent electrode placement across subjects and sessions [21]. |
| BCI2000 Software Platform | A general-purpose software platform for data acquisition, stimulus presentation, and protocol design [21]. | Widely used in academic research for controlling all aspects of BCI experiments [21]. |
| Support Vector Machine (SVM) | A machine learning algorithm for classifying EEG features, such as P300 potentials [1]. | Used for single-trial P300 detection, outperforming linear classifiers in some hybrid spellers [1]. |
| Ensemble Task-Related Component Analysis (TRCA) | A method for frequency recognition in SSVEP-based BCIs [1]. | Achieves higher classification accuracy for SSVEP than canonical correlation analysis (CCA) [1]. |
| EEGNet | A compact convolutional neural network (CNN) architecture for EEG-based BCIs [22]. | Used for classification of SSVEP and SSMVEP paradigms [22]. |
| Deep Learning (e.g., EEGSym) | Applies transfer learning for tasks like classifying Motor Imagery, potentially using data from Motor Execution [17]. | Can bridge the gap between different BCI paradigms and reduce calibration time [17]. |

In conclusion, the choice between Motor Imagery, P300, and SSVEP paradigms involves a direct trade-off between the endogenous control and hardware simplicity offered by MI, and the higher accuracy and speed provided by the evoked P300 and SSVEP responses. The ongoing development of hybrid systems and advanced machine learning decoders is a key frontier in overcoming the limitations of any single paradigm [9] [1] [17].

Distinct Neural Generators and Cortical Origins of Each Paradigm

Brain-Computer Interfaces (BCIs) translate brain activity into commands for external devices, offering groundbreaking potential in neurorehabilitation and assistive technologies. The efficacy of a BCI system is fundamentally determined by its underlying paradigm—the specific mental task or external stimulus used to generate a measurable neural signal. The P300 event-related potential, Steady-State Visual Evoked Potential (SSVEP), and Motor Imagery (MI) represent three of the most established and widely researched BCI paradigms. Each paradigm originates from distinct neurophysiological processes and engages separable cortical networks. This guide provides a detailed comparison of these three paradigms, focusing on their unique neural generators, cortical origins, and experimental performance metrics, to inform researchers and developers in selecting the optimal paradigm for specific applications.

The table below summarizes the core characteristics, neural generators, and performance metrics of the P300, SSVEP, and Motor Imagery BCI paradigms.

Table 1: Comparative Overview of P300, SSVEP, and Motor Imagery BCI Paradigms

| Feature | P300 | SSVEP | Motor Imagery |
|---|---|---|---|
| Paradigm Type | Evoked / Exogenous | Evoked / Exogenous | Spontaneous / Endogenous |
| Key Neural Marker | Positive ERP ~300 ms post-stimulus | Oscillatory EEG at stimulus frequency (and harmonics) | Event-Related Desynchronization/Synchronization (ERD/ERS) in mu/beta rhythms |
| Primary Cortical Origins | Temporo-Parietal Junction, Frontal Cortex [21] | Primary and Secondary Visual Cortex (V1, V2) [23] [24] | Contralateral Sensorimotor Cortex [25] [26] |
| Stimulus Requirement | Rare target stimuli within an oddball sequence | Repetitive visual flicker at constant frequency | Mental rehearsal of movement without external stimulus |
| Typical Accuracy | ~95% (Tactile P300) [27] | ~79-87% (Hybrid SSVEP-OSP) [28] | ~86.5% (Deep Learning Classification) [29] |
| Information Transfer Rate (ITR) | Varies with flash rate [21] | Up to ~53.8 bits/min [30] | Highly variable and user-dependent |
| User Training Load | Low / Minimal | Low / Minimal | High / Requires extensive user training |

Neural Generators and Cortical Origins

Understanding the distinct brain regions and neural circuits that generate each paradigm's signal is crucial for paradigm selection and signal interpretation.

P300 Paradigm

The P300 is an event-related potential (ERP) characterized by a positive deflection in the EEG signal occurring approximately 300 milliseconds after the presentation of a rare, task-relevant "target" stimulus within a stream of standard, non-target stimuli [21]. Its generation involves a distributed network. Key contributors include the temporo-parietal junction (TPJ), which is involved in attention and context updating, and frontal cortical areas. The P300 amplitude is highly sensitive to the "oddball" effect and is modulated by the stimulus presentation rate; lower flash rates (e.g., 4-8 Hz) generally produce larger amplitudes and higher classification accuracies compared to faster rates (e.g., 32 Hz) [21].

SSVEP Paradigm

The Steady-State Visual Evoked Potential is an oscillatory brain response entrained to the frequency of a repetitive visual stimulus. When a user gazes at a flickering light, the visual cortex produces EEG activity at the same frequency (the fundamental) and its harmonics [24]. The primary neural generators of the SSVEP are located in the primary (V1) and secondary (V2) visual cortices in the occipital lobe [23]. Evidence from electrocorticography (ECoG) studies confirms that SSVEPs can be reliably recorded directly from the cortical surface, with a single electrode over the primary visual cortex often sufficing for high-accuracy decoding [24]. Hybrid paradigms that combine SSVEP with other signals, such as the omitted stimulus potential (OSP), further enhance robustness by engaging additional temporal dynamics [28].

Motor Imagery Paradigm

Motor Imagery involves the mental simulation of a movement without any physical execution. This cognitive process activates brain regions that largely overlap with those involved in actual movement execution. The primary neural correlate is the Event-Related Desynchronization (ERD)—a decrease in power within the mu (8-12 Hz) and beta (13-30 Hz) frequency bands over the contralateral sensorimotor cortex [26]. For instance, imagining a right-hand movement would typically cause ERD over the left sensorimotor cortex. This paradigm directly engages the mirror neuron system and motor cortex, making it particularly suitable for motor rehabilitation applications [25]. Advanced deep learning models, such as transformer-based architectures, are now being employed to better capture the complex spatial-temporal features of MI-EEG, achieving high classification accuracies [29].
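Relative ERD is commonly quantified as the percentage change in band power during imagery (A) relative to a pre-cue reference interval (R), ERD% = (A − R) / R × 100. Sign conventions vary between papers. Here negative values indicate desynchronization. The sketch below is a minimal illustration under stated assumptions. Band power comes from a plain FFT periodogram. The 250 Hz sampling rate, the 2 s windows, the 8-12 Hz mu band and the synthetic "C3" signal are hypothetical choices, not parameters taken from the cited studies.

```python
import numpy as np

def band_power(x, fs, band):
    """Mean periodogram power of a 1-D signal within [band[0], band[1]] Hz."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(x)) ** 2 / x.size
    mask = (freqs >= band[0]) & (freqs <= band[1])
    return psd[mask].mean()

def erd_percent(reference, activity, fs, band=(8.0, 12.0)):
    """Relative band-power change (%) of an activity window versus a reference window.

    Negative values indicate ERD (power decrease); positive values indicate ERS.
    """
    r = band_power(reference, fs, band)
    a = band_power(activity, fs, band)
    return (a - r) / r * 100.0

# Synthetic C3 signal: a 10 Hz mu rhythm whose amplitude halves during imagery
fs = 250
t = np.arange(0, 2.0, 1.0 / fs)
rng = np.random.default_rng(0)
rest = 2.0 * np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
imagery = 1.0 * np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
print(round(erd_percent(rest, imagery, fs), 1))  # close to -75: halving amplitude quarters power
```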

Figure: Primary cortical origins of the neural signals for each BCI paradigm.

Detailed Experimental Protocols and Data

This section details the methodologies from key studies to provide a practical reference for experimental design.

A Typical P300 BCI Experiment (Stimulus Rate Investigation)

This study investigated how stimulus presentation rate affects P300 speller performance [21].

- Stimulus Paradigm: An 8×9 matrix of characters was presented. Rows and columns flashed in groups of 6, with the target character flashing as part of a rare "oddball" sequence.

- Parameters Tested: Four different flash rates (4, 8, 16, and 32 Hz) were compared. The duration of the flash was always half of the time between flash onsets.

- EEG Recording: Data was collected from 16 scalp electrodes (F3, Fz, F4, T7, C3, Cz, C4, T8, CP3, CP4, P3, Pz, P4, PO7, Oz, PO8), referenced to the right mastoid.

- Signal Processing & Classification: Data was filtered (0.5-30 Hz). Stepwise linear discriminant analysis (SWLDA) was applied to features from 8 channels (Fz, Cz, P3, Pz, P4, PO7, Oz, PO8) from a 0-800 ms post-stimulus window.

- Key Result: Lower flash rates (4 Hz and 8 Hz) produced significantly larger P300 amplitudes and higher classification accuracies, though the optimal rate for information transfer rate (ITR) varied among users.
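The epoching and feature steps of this protocol can be sketched as follows. This is a minimal illustration, not the study's pipeline, and it rests on several assumptions:

- The continuous EEG has already been band-pass filtered to 0.5-30 Hz.
- The sampling rate (256 Hz) and the decimation factor (12) are hypothetical.
- The sketch stops at the feature vector. The SWLDA classifier itself is not reproduced.

A target-minus-non-target difference wave, the usual way to visualize the P300, is included for completeness.

```python
import numpy as np

def extract_epochs(eeg, onsets, fs, tmax=0.8):
    """Cut epochs from 0 to tmax seconds after each stimulus onset.

    eeg: (n_channels, n_samples) continuous EEG, assumed already band-pass filtered.
    onsets: sample indices of flash onsets.
    Returns (n_epochs, n_channels, n_epoch_samples).
    """
    n = int(round(tmax * fs))
    return np.stack([eeg[:, s:s + n] for s in onsets])

def decimated_features(epochs, decim=12):
    """Average blocks of `decim` samples, then flatten channels x time into one vector per epoch."""
    n_ep, n_ch, n_t = epochs.shape
    n_keep = (n_t // decim) * decim          # drop trailing samples that do not fill a block
    blocks = epochs[:, :, :n_keep].reshape(n_ep, n_ch, n_keep // decim, decim)
    return blocks.mean(axis=3).reshape(n_ep, -1)

def difference_wave(epochs, is_target):
    """Target-average minus non-target-average ERP, shape (n_channels, n_epoch_samples)."""
    return epochs[is_target].mean(axis=0) - epochs[~is_target].mean(axis=0)

# Hypothetical recording: 8 channels (e.g. Fz, Cz, P3, Pz, P4, PO7, Oz, PO8), 20 s at 256 Hz
fs = 256
rng = np.random.default_rng(1)
eeg = rng.standard_normal((8, 20 * fs))
onsets = np.arange(0, 18 * fs, 64)           # one flash onset every 250 ms
epochs = extract_epochs(eeg, onsets, fs)
features = decimated_features(epochs)
print(epochs.shape, features.shape)          # (72, 8, 205) (72, 136)
```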

A Hybrid SSVEP-based BCI Experiment (Integrating Omitted Stimuli)

This study introduced a novel hybrid BCI that simultaneously leverages SSVEP and the Omitted Stimulus Potential (OSP) [28].

- Stimulus Paradigm: Four discs flickered from black to white at a fixed frequency (e.g., 15 Hz or 20 Hz). Periodically, a "flicker" was omitted (a "missing event"), creating a predictable deviation. Two patterns were tested: "missing black disc" and "missing white disc."

- EEG Recording: EEG was acquired from 10 occipital and parietal electrodes (O1, O2, Oz, PO3, POz, PO4, PO7, PO8, Pz, Cz) using a g.USBamp amplifier.

- Hybrid Feature Extraction & Classification:

  - SSVEP: Canonical Correlation Analysis (CCA) with sinusoidal reference signals was used to identify the target from the frequency content of the steady-state response.

  - OSP: A classifier combining Support Vector Machine (SVM) and Bayesian fusion was used in the time domain to detect the brain's response to the omitted stimulus.

- Key Result: The hybrid approach yielded an online accuracy of 86.82% and an ITR of 24.06 bits/min with the "missing white disc" pattern, demonstrating the feasibility and performance gain of combining temporal and frequency features.

A Motor Imagery BCI Experiment (with Neurofeedback)

This study explored how social context (single vs. competitive task) affects MI performance using a neurofeedback setup [26].

- Task: Participants were asked to imagine walking to move a humanoid robot. The experiment included blocks of action execution, MI without feedback, and MI with feedback.

- EEG Recording & Feature Extraction: A portable EEG headset (Smarting from mbt) was used. The key feature was the relative ERD calculated in the mu/beta bands from the sensorimotor cortex. The channel with the strongest ERD was used for neurofeedback.

- Neurofeedback & Classification: A Linear Discriminant Analysis (LDA) classifier was trained on data from the MI-without-feedback block. The classifier's output was translated into robot movement (1, 2, or 3 steps).

- Key Result: While no overall group difference was found between single and competitive conditions, inter-individual analysis revealed that some users performed better alone (single-gain group) while others benefited from competition, highlighting the importance of user-centered design in MI-BCI.
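The LDA step in this protocol can be illustrated with a two-class Fisher discriminant written directly in NumPy. This is a generic sketch that assumes two feature classes, for example rest versus imagery band-power features. Several pieces are hypothetical:

- the small ridge term;
- the equal-prior threshold;
- the `steps_from_score` mapping and its thresholds.

The study's exact translation from classifier output to 1, 2, or 3 robot steps is not described here.

```python
import numpy as np

class FisherLDA:
    """Two-class Fisher linear discriminant: w = S_w^{-1} (mu1 - mu0)."""

    def __init__(self, reg=1e-6):
        self.reg = reg  # small ridge term keeps the pooled covariance invertible

    def fit(self, X, y):
        X0, X1 = X[y == 0], X[y == 1]
        mu0, mu1 = X0.mean(axis=0), X1.mean(axis=0)
        # Pooled within-class covariance (LDA assumes equal class covariances)
        Sw = np.cov(X0, rowvar=False) * (len(X0) - 1) + np.cov(X1, rowvar=False) * (len(X1) - 1)
        Sw = Sw / (len(X) - 2) + self.reg * np.eye(X.shape[1])
        self.w = np.linalg.solve(Sw, mu1 - mu0)
        # Threshold halfway between the projected class means (equal priors assumed)
        self.b = -0.5 * self.w @ (mu0 + mu1)
        return self

    def decision_function(self, X):
        return X @ self.w + self.b  # positive values favour class 1

    def predict(self, X):
        return (self.decision_function(X) > 0).astype(int)

def steps_from_score(score, thresholds=(0.5, 1.5)):
    """HYPOTHETICAL mapping from a classifier score to 1, 2, or 3 robot steps."""
    return 1 + int(score > thresholds[0]) + int(score > thresholds[1])

# Synthetic 2-D features: class 0 = rest, class 1 = imagery (lower log band power)
rng = np.random.default_rng(2)
X = np.vstack([rng.normal([0.0, 0.0], 0.5, size=(100, 2)),
               rng.normal([-1.5, -1.5], 0.5, size=(100, 2))])
y = np.array([0] * 100 + [1] * 100)
lda = FisherLDA().fit(X, y)
accuracy = (lda.predict(X) == y).mean()
```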

The Scientist's Toolkit: Essential Research Reagents and Materials

The table below lists key materials and their functions as derived from the experimental protocols cited in this guide.

Table 2: Key Research Materials and Equipment for BCI Experimentation

| Item | Primary Function in BCI Research | Example Use Case |
|---|---|---|
| g.USBamp Amplifier (g.tec) | High-quality multichannel EEG signal acquisition and digitization. | Used in P300 [21] and SSVEP-OSP [28] studies for reliable data recording. |
| Electro-Cap International EEG Cap | Holds electrodes in standardized positions on the scalp for consistent EEG recording. | Employed in P300 studies to ensure proper electrode placement (10-20 system) [21]. |
| Psychophysics Toolbox 3.0 | A software library for precise visual stimulus presentation and timing control in MATLAB. | Used to control visual stimulators in SSVEP and P300 paradigms [28]. |
| Linear Discriminant Analysis (LDA) | A simple, efficient classification algorithm for distinguishing between two or more classes. | Applied for real-time classification in both P300 (stepwise LDA) [21] and Motor Imagery [26] paradigms. |
| Canonical Correlation Analysis (CCA) | A multivariate statistical method for detecting SSVEPs by measuring correlation between EEG and reference signals. | The standard method for target identification in SSVEP-based BCIs [28]. |
| Portable EEG Headset (e.g., mbt Smarting) | Mobile, easy-to-set-up EEG system for real-time neurofeedback and out-of-lab experiments. | Used in Motor Imagery neurofeedback studies involving movement and competition [26]. |
| Deep Learning Frameworks (e.g., for Transformers/TCNs) | Software libraries for building complex models that automatically learn features from raw or preprocessed EEG data. | Used to achieve state-of-the-art accuracy in Motor Imagery classification [29]. |

Inherent Strengths and Limitations of Each Signal Type

Brain-Computer Interface (BCI) paradigms based on P300 event-related potentials, Steady-State Visual Evoked Potentials (SSVEP), and Motor Imagery (MI) represent the three primary non-invasive approaches for translating brain signals into commands. Each paradigm possesses a unique profile of strengths and limitations, making them differentially suitable for specific applications, from communication spellers to motor rehabilitation. This guide provides an objective, data-driven comparison of these technologies, detailing their performance characteristics, underlying experimental protocols, and the essential reagents required for their implementation. Understanding these factors is crucial for researchers and developers selecting the optimal BCI paradigm for their specific use case, whether the priority is high information transfer rate, minimal user training, or continuous control.

Quantitative Performance Comparison

The following tables synthesize key performance metrics and characteristics based on contemporary research findings.

Table 1: Performance Metrics of BCI Paradigms

| Paradigm | Typical Accuracy (%) | Information Transfer Rate (ITR) | Number of Commands | Training Requirement |
|---|---|---|---|---|
| P300 | 75.29 - 95% [1] [2] [31] | 10.1 - 28.64 bits/min [1] [2] [31] | High (e.g., 36 in a 6×6 matrix) [32] [2] | Minimal user training [32] [33] |
| SSVEP | 86.13 - 98.19% [1] [34] | High (e.g., 27.02 bits/min with 0.8 s stimulus) [35] | Limited by available distinct frequencies [34] | Minimal to no user training [34] [33] |
| Motor Imagery (MI) | Varies significantly with user proficiency [33] | Lower than P300/SSVEP; ~1 selection/5 s at 70% accuracy [33] | Typically 2-3 classes (e.g., left/right hand) [33] | Extensive user training required [32] [33] |

Table 2: Characteristics and Applicability

| Paradigm | Key Strength | Primary Limitation | Ideal Application Context |
|---|---|---|---|
| P300 | High number of discrete commands [32] [2] | Slow due to need for signal averaging; susceptible to adjacency errors [2] [34] | Spelling systems, discrete menu selection [2] [31] |
| SSVEP | High ITR and accuracy; rapid response [34] [35] | Visual fatigue; limited command set without complex coding; seizure risk [9] [34] [33] | High-speed control, continuous control applications [33] |
| Motor Imagery (MI) | Does not require external stimulation; endogenous control [33] | "BCI Illiteracy" - does not work for a significant portion of users [9] [33] | Motor rehabilitation, neuroprosthetics [25] [31] |
| Hybrid (P300+SSVEP) | Improved accuracy and ITR over single paradigms [1] [9] | Increased system complexity; potential signal interference [1] [9] | Applications requiring high reliability and speed [1] [31] |

Detailed Experimental Protocols

To contextualize the data above, below are the standard methodologies for eliciting and detecting signals for each paradigm.

P300 Speller Protocol

The canonical P300 speller is based on the oddball paradigm.

- Stimulus Presentation: A 6x6 matrix of characters is displayed. Rows and columns are intensified (flashed) in a pseudo-random sequence. The user focuses on a target character and mentally counts each time it flashes [2] [31].

- EEG Data Acquisition: EEG is recorded from scalp electrodes, typically including central and parietal sites (e.g., Pz, Cz). The recording is time-locked to the onset of each flash.

- Signal Processing: The key challenge is detecting the weak P300 signal amidst noise. Supervised machine learning is standard.

  - Feature Extraction: Temporal features from the EEG epoch following each flash (e.g., 0-600 ms post-stimulus) are used.

  - Classification: A classifier, such as Linear Discriminant Analysis (LDA) or Support Vector Machine (SVM), is trained to distinguish target flashes (containing P300) from non-target flashes [1] [28]. Detection often requires averaging across multiple flash repetitions to achieve acceptable accuracy [1] [34].
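The final selection step of a row/column speller can be sketched as follows. Classifier scores for each row and column flash are averaged over repetitions, and the character at the intersection of the best row and the best column is chosen. The sketch rests on three assumptions:

- the classic A-Z, 1-9, "_" layout of the 6×6 matrix;
- scores arranged with columns 0-5 holding row flashes and columns 6-11 holding column flashes;
- scores that are generic classifier outputs, such as LDA decision values.

Real systems differ in layout and bookkeeping.

```python
import numpy as np

# Assumed 6x6 speller layout; actual layouts vary between systems
MATRIX = np.array(list("ABCDEFGHIJKLMNOPQRSTUVWXYZ123456789_")).reshape(6, 6)

def decode_character(scores):
    """Pick the attended character from per-flash classifier scores.

    scores: (n_repetitions, 12); columns 0-5 are row flashes, 6-11 column flashes
    (assumed ordering). Higher scores mean "more target-like".
    """
    mean_scores = scores.mean(axis=0)          # average over flash repetitions
    row = int(np.argmax(mean_scores[:6]))
    col = int(np.argmax(mean_scores[6:]))
    return MATRIX[row, col]

# Noise-free example: row 2 and column 3 carry the P300-like response
scores = np.zeros((5, 12))
scores[:, 2] = 1.0
scores[:, 6 + 3] = 1.0
print(decode_character(scores))  # P

# Noisy single-flash scores: averaging over 15 repetitions suppresses the noise
rng = np.random.default_rng(0)
noisy = rng.normal(0.0, 1.0, size=(15, 12))
noisy[:, 2] += 1.5
noisy[:, 9] += 1.5
print(decode_character(noisy))
```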

SSVEP Detection Protocol

SSVEP relies on the brain's resonant response to repetitive visual stimulation.

- Stimulus Presentation: Multiple visual stimuli (e.g., boxes, LEDs) are presented, each flickering at a distinct frequency (e.g., 6 Hz, 8 Hz, 10 Hz). The user focuses their gaze on one target stimulus [34] [35].

- EEG Data Acquisition: Signals are recorded primarily from the occipital lobe (e.g., O1, Oz, O2), which processes visual information.

- Signal Processing: Analysis targets the frequency content of the response to identify which stimulus frequency dominates.

  - Canonical Correlation Analysis (CCA): A widely used method that finds linear combinations of the EEG channels and of pre-defined sine-cosine reference signals (at each stimulus frequency and its harmonics) that are maximally correlated. The stimulus with the highest correlation is selected [34].

  - Task-Related Component Analysis (TRCA): An advanced method that improves signal-to-noise ratio by maximizing the reproducibility of SSVEP responses across trials. Recent enhancements like Spectrum-Enhanced TRCA (SE-TRCA) further boost performance by incorporating spectral features [34].
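The standard, training-free CCA procedure can be sketched in NumPy. Sine-cosine references are built for each candidate frequency. The largest canonical correlation with the multichannel EEG is computed through QR decompositions and an SVD, and the best-matching frequency is returned. The three harmonics, the 250 Hz sampling rate, the 2 s window and the synthetic three-channel occipital signal are assumptions for illustration.

```python
import numpy as np

def reference_signals(freq, fs, n_samples, n_harmonics=3):
    """Sine-cosine reference matrix (n_samples, 2*n_harmonics) for one stimulus frequency."""
    t = np.arange(n_samples) / fs
    refs = []
    for h in range(1, n_harmonics + 1):
        refs.append(np.sin(2 * np.pi * h * freq * t))
        refs.append(np.cos(2 * np.pi * h * freq * t))
    return np.column_stack(refs)

def max_canonical_corr(X, Y):
    """Largest canonical correlation between X (n, p) and Y (n, q), via QR + SVD."""
    qx, _ = np.linalg.qr(X - X.mean(axis=0))
    qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    # Singular values of Qx^T Qy are the canonical correlations, in descending order
    return np.linalg.svd(qx.T @ qy, compute_uv=False)[0]

def classify_ssvep(eeg, freqs, fs, n_harmonics=3):
    """Index of the stimulus frequency whose references correlate best with eeg (n_samples, n_channels)."""
    n = eeg.shape[0]
    rhos = [max_canonical_corr(eeg, reference_signals(f, fs, n, n_harmonics)) for f in freqs]
    return int(np.argmax(rhos)), rhos

# Synthetic 2 s recording at 250 Hz from hypothetical O1, Oz, O2 while attending 8 Hz
fs, n = 250, 500
t = np.arange(n) / fs
rng = np.random.default_rng(3)
source = np.sin(2 * np.pi * 8 * t) + 0.5 * np.sin(2 * np.pi * 16 * t)  # fundamental + 2nd harmonic
eeg = source[:, None] * np.array([0.8, 1.0, 0.7]) + rng.standard_normal((n, 3))
idx, rhos = classify_ssvep(eeg, [6.0, 8.0, 10.0], fs)
print(idx)  # 1, i.e. the 8 Hz stimulus
```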

Motor Imagery Protocol

MI is based on the modulation of sensorimotor rhythms.

- Stimulus & Task: Unlike evoked potentials, MI does not require an external "stimulus" in the same way. Users are cued (e.g., by a visual prompt) to kinesthetically imagine a specific motor action, such as moving their left hand or right hand, without performing any actual movement [33].

- EEG Data Acquisition: Signals are recorded over the sensorimotor cortex, specifically around the C3 and C4 electrode locations according to the international 10-20 system [33].

- Signal Processing: The key is detecting Event-Related Desynchronization (ERD) and Synchronization (ERS), the decreases and increases in specific frequency band power (e.g., mu rhythm 8-12 Hz, beta rhythm 13-30 Hz) associated with the imagery.

  - Feature Extraction: Band power features are extracted from specific frequency bands and channels.

  - Classification: Classifiers like LDA or SVM are trained to differentiate the spatial and spectral patterns corresponding to different motor imagery tasks [33]. Performance is highly dependent on user training and ability to produce distinct neural patterns.
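A minimal version of the band-power feature step can be sketched as follows. It takes log mean periodogram power in the mu and beta bands for each channel of a trial (here C3 and C4). The simple FFT periodogram, the 250 Hz sampling rate, the 2 s trial and the synthetic right-hand-imagery signals are assumptions. In the synthetic signals, C3 mu power is suppressed relative to C4. Many pipelines use Welch spectra or spatial filters such as CSP instead.

```python
import numpy as np

def log_band_power_features(trial, fs, bands=((8, 12), (13, 30))):
    """Log band-power features for one MI trial.

    trial: (n_channels, n_samples), e.g. rows C3 and C4.
    Returns a flat vector ordered channel by channel, band by band.
    """
    freqs = np.fft.rfftfreq(trial.shape[1], d=1.0 / fs)
    psd = np.abs(np.fft.rfft(trial, axis=1)) ** 2 / trial.shape[1]
    feats = []
    for ch_psd in psd:
        for lo, hi in bands:
            mask = (freqs >= lo) & (freqs <= hi)
            feats.append(np.log(ch_psd[mask].mean()))
    return np.array(feats)

# Synthetic right-hand imagery: mu ERD over C3 (left hemisphere), intact mu over C4
fs = 250
t = np.arange(0, 2.0, 1.0 / fs)
rng = np.random.default_rng(4)
c3 = 0.5 * np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
c4 = 2.0 * np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
feats = log_band_power_features(np.vstack([c3, c4]), fs)
print(feats.round(2))  # [C3 mu, C3 beta, C4 mu, C4 beta]
```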

Signaling Pathways and Experimental Workflows

Figure: P300 oddball paradigm workflow.

Figure: SSVEP frequency response pathway.

Figure: Motor imagery ERD/ERS pathway.

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential hardware, software, and analytical "reagents" required for BCI research.

Table 3: Essential Materials for BCI Research

| Item | Function | Example Specifications/Notes |
|---|---|---|
| EEG Acquisition System | Measures electrical brain activity from the scalp. | Multi-channel systems (e.g., 64-channels); g.USBamp (g.tec), Neuroscan SynAmps2 [28] [35]. Requires low impedance (<5 kΩ) [28]. |
| Electrode Cap | Holds electrodes in standardized positions. | Follows international 10-10 or 10-20 systems [35]. Material: Ag/AgCl for wet EEG; gold-cup for active systems. |
| Visual Stimulation Display | Presents paradigms to evoke P300/SSVEP. | Standard LCD/LED monitors; VR/AR headsets (e.g., PICO Neo3 Pro) for immersive environments [35]. Precise timing control is critical. |
| Stimulation Software | Controls stimulus presentation and timing. | Psychophysics Toolbox (for MATLAB) [28], Unity3D Engine [35]. Must sync with EEG via event markers. |
| Signal Processing Toolbox | Algorithms for feature extraction and classification. | OpenViBE [33], BCILAB, custom scripts in MATLAB/Python. Implements CCA, TRCA, LDA, SVM, etc. |
| Validation Metrics | Quantifies system performance. | Accuracy (%): Correct selections/Total attempts. ITR (bits/min): Accounts for speed and accuracy [1] [34]. |
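ITR is most often computed with Wolpaw's definition. Each selection carries B = log2 N + P log2 P + (1 − P) log2[(1 − P)/(N − 1)] bits, where N is the number of classes and P the accuracy. B is then scaled by the number of selections per minute. The definition assumes equiprobable targets, equal accuracy for every target, and errors spread evenly over the other N − 1 classes. A minimal sketch follows. The example values (N = 2, P = 0.70, one selection every 5 s) are an illustrative assumption loosely matching the motor imagery figures quoted earlier. They are not a result from a cited study.

```python
import math

def wolpaw_itr(n_classes, accuracy, seconds_per_selection):
    """Wolpaw information transfer rate in bits/min.

    Assumes equiprobable targets, the same accuracy for every target, and errors
    spread evenly over the remaining n_classes - 1 options.
    """
    if n_classes < 2 or not 0.0 < accuracy <= 1.0:
        raise ValueError("need n_classes >= 2 and 0 < accuracy <= 1")
    n, p = n_classes, accuracy
    bits = math.log2(n)
    if p < 1.0:  # at perfect accuracy the error terms vanish (0 * log 0 -> 0)
        bits += p * math.log2(p) + (1.0 - p) * math.log2((1.0 - p) / (n - 1))
    return bits * 60.0 / seconds_per_selection

print(round(wolpaw_itr(2, 0.70, 5.0), 2))  # 1.42
```

The low value shows why binary MI systems trail SSVEP and P300 systems in ITR. Both a small N and a long selection time cap the rate even at reasonable accuracy.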

Implementation and Applications: From Signal Acquisition to Real-World Deployment

Signal Acquisition Requirements and Electrode Placements

Brain-Computer Interface (BCI) technology establishes a direct communication pathway between the human brain and external devices, bypassing conventional neuromuscular channels [36]. The efficacy of a BCI system depends heavily on its signal acquisition module, which detects and records cerebral signals [37]. Electrode placement and montage design are pivotal components of this module, directly influencing signal quality, feature discriminability, and overall system performance.

Different BCI paradigms—P300, Steady-State Visual Evoked Potential (SSVEP), and Motor Imagery (MI)—elicit distinct neural responses originating from various cortical areas. Consequently, each paradigm necessitates specialized electrode placement strategies to optimize signal acquisition. This guide provides a comparative analysis of signal acquisition requirements and electrode placements for these major non-invasive BCI paradigms, synthesizing recent experimental data to inform researchers and development professionals in selecting appropriate methodologies for specific applications.

Comparative Performance of BCI Paradigms

Table 1: Performance Comparison of Major BCI Paradigms

| Paradigm | Typical Accuracy Range | Information Transfer Rate (ITR) | Key Strengths | Primary Limitations |
|---|---|---|---|---|
| SSVEP | 86.25% - 93.15% [38] [39] | 42.08 - 138.89 bits/min [38] [40] | High ITR, minimal user training, high signal-to-noise ratio [38] | Visual fatigue, risk of photosensitive epilepsy, unsuitable for users with visual impairments [38] |
| P300 | Comparable to SSVEP [38] | Comparable to SSVEP [38] | Requires only short training sessions, suitable for rapid user adaptation [38] | Requires a high level of concentration; significant individual differences [39] |
| Motor Imagery (MI) | Lower than SSVEP/P300 [39] [41] | Lower than SSVEP/P300; limited number of output commands [39] | Completely endogenous, no external stimuli needed [36] | Extensive training needed, lower accuracy, inherently user-specific [39] [41] |
| Hybrid (SSVEP+P300) | ~94.29% (Speller System) [38] | 28.64 bits/min (Speller System) [38] | Enhanced accuracy and reliability, reduced false positives through sequential validation [38] | Higher system complexity and cost [38] |

Table 2: Electrode Placement and Setup Requirements

| Paradigm | Primary Brain Regions Targeted | Typical Electrode Montage (10-20/10-10 System) | Minimal Channel Configurations | Setup Time & Complexity |
|---|---|---|---|---|
| SSVEP | Visual Cortex (Occipital) | O1, Oz, O2, POz, PO3, PO4, PO7, PO8 [42] [40] | 8-channel wet or dry electrodes [40]; Single Oz-Pz bipolar [42] | Wet (103 s), Dry (38 s) for 8-channel [40] |
| P300 | Parietal Cortex, Central Brain Regions | Pz, Cz, Fz (for P300 component) [38] | Often combined with SSVEP in hybrid systems [38] | Varies; generally requires precise timing |
| Motor Imagery (MI) | Primary Motor Cortex (Sensorimotor) | C3, Cz, C4 (over sensorimotor cortex) [36] | Multi-channel configurations common for source localization [41] | Requires individual calibration, increasing setup time [41] |
| cVEP | Broad Visual Cortex | Up to 16 electrodes across occipital-parietal region [42] | 6-electrode setup possible with retraining [42]; Single Oz [42] | Performance decline with fewer electrodes; retraining needed [42] |

Experimental Protocols and Methodologies

SSVEP with Fast System Setup

A 2025 study developed a wearable SSVEP-BCI system to explore simplified setups [40]. Fifteen healthy participants were tested using both dry and wet electrodes in a real-life scenario.

- Stimulation: Used visual stimuli at different flickering frequencies.

- Signal Acquisition: EEG signals were recorded using an 8-channel setup.

- Setup Time: The average system setup time was 38.40 seconds for dry electrodes and 103.40 seconds for wet electrodes, significantly shorter than traditional BCI experiments.

- Performance: Despite lower signal quality in the fast-setup condition, the system achieved an average ITR of 138.89 bits/min with wet electrodes and 70.59 bits/min with dry electrodes, demonstrating that rapid setup does not necessarily compromise performance [40].

Hybrid SSVEP + P300 System

A 2025 study presented a novel LED-based dual-stimulus apparatus integrating SSVEP and P300 paradigms [38]. The system was designed for directional control, with four frequencies (7, 8, 9, 10 Hz) corresponding to forward, backward, right, and left commands.

- Stimulation: Used green COB-LEDs for SSVEP elicitation and red LEDs for P300 evocation.

- Signal Processing: Real-time feature extraction was performed using concurrent analysis of maximum Fast Fourier Transform (FFT) amplitude and P300 peak detection.

- Performance: The system achieved a mean classification accuracy of 86.25% and an average ITR of 42.08 bits per minute, exceeding conventional accuracy thresholds for BCI systems [38].
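The SSVEP half of this decision rule can be sketched as follows. The sketch computes the FFT amplitude at each stimulus frequency and issues the command mapped to the largest one. The frequency-to-command mapping follows the description above. The rest is assumed: a single occipital channel, a 4 s Hann-windowed segment at 250 Hz, and a nearest-bin lookup. The P300 peak detection and the way the study fused the two signals are not reproduced.

```python
import numpy as np

# Frequency-to-command mapping as described for the LED-based hybrid system
COMMANDS = {7.0: "forward", 8.0: "backward", 9.0: "right", 10.0: "left"}

def fft_command(signal, fs):
    """Choose the command whose stimulus frequency has the largest FFT amplitude.

    signal: 1-D occipital EEG segment (e.g. Oz); uses the FFT bin nearest each frequency.
    """
    spectrum = np.abs(np.fft.rfft(signal * np.hanning(signal.size)))
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    amps = {f: spectrum[np.argmin(np.abs(freqs - f))] for f in COMMANDS}
    best = max(amps, key=amps.get)
    return COMMANDS[best], amps

# Synthetic 4 s Oz segment while attending the 9 Hz LED
fs = 250
t = np.arange(0, 4.0, 1.0 / fs)
rng = np.random.default_rng(5)
oz = np.sin(2 * np.pi * 9 * t) + 0.5 * rng.standard_normal(t.size)
command, amps = fft_command(oz, fs)
print(command)  # right
```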

Electrode Reduction in cVEP Paradigms

A 2025 online BCI study with thirty-eight participants investigated the effect of reducing electrode count from 16 to 6 in a code-modulated VEP (cVEP) paradigm [42].

- Conditions: Three configurations were tested: a baseline 16-electrode setup, a reduced 6-electrode setup without retraining, and a reduced 6-electrode setup with retraining.

- Findings: Performance generally declined with fewer electrodes. However, retraining the classification pipeline restored near-baseline mean ITR and accuracy for participants for whom the system remained functional. This highlights significant individual differences in cVEP response characteristics and suggests that minimal electrode setups require flexible, individualized classification methods [42].

Signaling Pathways and Experimental Workflows

Figure: BCI system workflow, the generalized signal processing pipeline of EEG-based BCI systems from signal acquisition to device output.

Figure: Paradigm signal processing, the specific pathways and feature extraction methods for the SSVEP, P300, and Motor Imagery paradigms.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Equipment for BCI Research

| Item | Function | Example Use Case & Notes |
|---|---|---|
| EEG Amplifier & Data Acquisition System | Records electrical brain activity from the scalp. | Foundational for all non-invasive EEG-based BCI paradigms. |
| Active or Passive Electrodes (Ag/AgCl) | Measures potential differences at the scalp surface. | Wet electrodes provide higher signal quality (ITR = 138.89 bits/min) [40]. |
| Dry Electrodes | Measures EEG signals without conductive gel. | Enables faster setup (38 s), suitable for practical applications, though with lower ITR (70.59 bits/min) [40]. |
| Visual Stimulation Apparatus (LCD/LED) | Presents flickering patterns to evoke SSVEP/VEP. | LED-based stimuli produce more robust SSVEP responses than LCDs due to superior temporal precision [38]. |
| Microcontroller (e.g., Teensy) | Precisely controls timing of visual stimuli. | Used to generate parallel outputs at distinct frequencies (7, 8, 9, 10 Hz) for SSVEP [38]. |
| Signal Processing Software (Python/MATLAB) | Implements pre-processing, feature extraction, and classification algorithms. | Key for methods like CCA, FFT, and P300 peak detection [38] [39]. |
| Hybrid Stimulation Design (COB-LEDs) | Integrates multiple stimulus types for hybrid paradigms. | Combines green COB-LEDs (SSVEP) with red LEDs (P300) in a single setup [38]. |

Brain-Computer Interface (BCI) technology has witnessed a paradigm shift in signal processing methodologies, moving from traditional machine learning algorithms to sophisticated deep learning architectures. This evolution is particularly evident across three major BCI paradigms: P300 event-related potentials, Steady-State Visual Evoked Potentials (SSVEP), and Motor Imagery (MI). Each paradigm presents unique challenges and opportunities for algorithm development, with performance metrics varying significantly based on the neural signals being decoded. The transition from classical approaches like Support Vector Machines (SVM) and Task-Related Component Analysis (TRCA) to contemporary deep learning models represents more than just incremental improvement—it constitutes a fundamental reshaping of how we extract meaningful patterns from complex neural data.

Traditional algorithms dominated BCI research for decades, offering interpretability and computational efficiency while requiring careful feature engineering. SVM, for instance, excelled at finding optimal hyperplanes to separate different neural states in high-dimensional spaces, while TRCA proved particularly effective for SSVEP-based systems by maximizing the reproducibility of task-related components. However, the emergence of deep learning has introduced models capable of automatic feature extraction from raw or minimally processed signals, often achieving superior performance at the cost of increased computational complexity and data requirements. This comprehensive analysis examines the performance characteristics, implementation requirements, and practical considerations of both traditional and deep learning approaches across the major BCI paradigms, providing researchers with evidence-based guidance for algorithm selection.

Traditional Algorithms: The Foundational Framework

Support Vector Machines (SVM) in BCI Applications

Support Vector Machines have established themselves as one of the most reliable workhorses for BCI classification tasks, particularly for P300 and Motor Imagery paradigms. The strength of SVM lies in its ability to find maximum-margin separating hyperplanes in high-dimensional feature spaces, which makes it robust to the noisy, non-stationary character of EEG signals. In practical BCI applications, SVM implementations typically achieve accuracy rates between 70% and 80% for binary classification tasks, though performance varies significantly with the feature extraction method and subject-specific calibration.

The mathematical foundation of SVM makes it particularly well-suited to handle the high-dimensional nature of EEG features extracted through spatial filtering techniques. When combined with kernel functions (linear, polynomial, or radial basis function), SVM can effectively model complex nonlinear relationships between features without requiring explicit feature transformation. Recent studies have demonstrated that SVM maintains competitive performance even when compared to more complex deep learning models, particularly in scenarios with limited training data. For instance, in one MI classification study, SVM served as a robust baseline against which more complex models were evaluated, demonstrating its enduring utility in BCI research pipelines [43].
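To make the hyperplane idea concrete, the sketch below trains a linear soft-margin SVM with Pegasos-style stochastic subgradient descent in NumPy. This solver is a swapped-in, deliberately simple choice for illustration. Established libraries such as LIBSVM solve the dual problem instead, which is also what enables kernels. The regularization strength, the iteration count, the appended constant feature used as a bias, and the synthetic two-class features are all assumptions.

```python
import numpy as np

def train_linear_svm(X, y, lam=0.01, n_iter=5000, seed=0):
    """Linear soft-margin SVM via Pegasos stochastic subgradient descent.

    X: (n_samples, n_features); y: labels in {-1, +1}.
    Minimises lam/2 * ||w||^2 + mean(max(0, 1 - y * (x . w))) over the augmented features.
    """
    rng = np.random.default_rng(seed)
    n, d = X.shape
    Xa = np.hstack([X, np.ones((n, 1))])   # constant column acts as a (lightly regularised) bias
    w = np.zeros(d + 1)
    for t in range(1, n_iter + 1):
        i = rng.integers(n)
        eta = 1.0 / (lam * t)              # Pegasos step-size schedule
        violated = y[i] * (Xa[i] @ w) < 1  # is the hinge loss active for this sample?
        w *= 1.0 - eta * lam               # step on the L2 penalty term
        if violated:
            w += eta * y[i] * Xa[i]        # step on the hinge-loss subgradient
    return w[:-1], w[-1]

# Synthetic two-class EEG features (e.g. left- vs right-hand imagery band powers)
rng = np.random.default_rng(6)
X = np.vstack([rng.normal(-1.0, 0.5, size=(100, 2)), rng.normal(1.0, 0.5, size=(100, 2))])
y = np.array([-1] * 100 + [1] * 100)
w, b = train_linear_svm(X, y)
acc = (np.sign(X @ w + b) == y).mean()
```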

Task-Related Component Analysis (TRCA) for SSVEP

Task-Related Component Analysis has emerged as a particularly powerful algorithm for SSVEP-based BCIs, with recent extensions enhancing its performance further. TRCA operates by maximizing the reproducibility of task-related components during stimulus presentation, effectively extracting stable SSVEP responses from background EEG activity. Unlike methods that rely solely on spectral power, TRCA leverages the trial-to-trial consistency of brain responses, making it exceptionally robust to non-task-related neural activity and artifacts.

The core mathematical principle behind TRCA is to find linear combinations of channels (spatial filters) that maximize the summed covariance between all pairs of trials from the same stimulus condition, normalized by the variance of the filtered signal. This approach has demonstrated remarkable efficacy in SSVEP classification, with recent implementations achieving information transfer rates (ITR) exceeding 200 bits/min in high-performance SSVEP-BCIs using LCD/LED displays [44]. For augmented reality SSVEP-BCIs, which present additional challenges due to their portable nature, TRCA-based methods have maintained robust performance, with one study reporting accuracies of approximately 87.5% using only 0.5 s of data [45]. The method's effectiveness has been further enhanced through filter bank extensions and integration with spatial filtering techniques, solidifying its position as a state-of-the-art approach for SSVEP classification.
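Formally, let X_h be the (channels × samples) data of training trial h for one stimulus. TRCA chooses the filter w that maximizes w^T S w / w^T Q w. Here S = Σ_{h1≠h2} X_{h1} X_{h2}^T sums the cross-trial covariances, and Q is the covariance of all trials concatenated. The solution is the leading eigenvector of Q^{-1} S. The sketch below implements this per-class filter and a simple correlation-with-template classifier in NumPy. It assumes centered data, a single filter per class (the ensemble variant that pools all classes' filters is not shown), and synthetic phase-locked 8 Hz and 10 Hz trials.

```python
import numpy as np

def trca_filter(trials):
    """Leading TRCA spatial filter for one stimulus class.

    trials: (n_trials, n_channels, n_samples) training data for a single target.
    """
    X = trials - trials.mean(axis=2, keepdims=True)   # centre each channel per trial
    summed = X.sum(axis=0)
    # Sum over ordered pairs h1 != h2 of X_h1 X_h2^T = (sum X)(sum X)^T - sum X_h X_h^T
    S = summed @ summed.T - np.einsum("hcs,hds->cd", X, X)
    concat = np.concatenate(list(X), axis=1)           # (n_channels, n_trials * n_samples)
    Q = concat @ concat.T
    eigvals, eigvecs = np.linalg.eig(np.linalg.solve(Q, S))
    return np.real(eigvecs[:, np.argmax(np.real(eigvals))])

def trca_classify(test, templates, filters):
    """Index of the class whose filtered template best correlates with the filtered test trial."""
    scores = [np.corrcoef(w @ test, w @ tmpl)[0, 1] for tmpl, w in zip(templates, filters)]
    return int(np.argmax(scores)), scores

# Synthetic training data: 10 trials x 4 channels x 1 s at 250 Hz per stimulus
fs, n_s, n_ch, n_tr = 250, 250, 4, 10
t = np.arange(n_s) / fs
rng = np.random.default_rng(7)
mixing = np.array([0.5, 1.0, 0.8, 0.3])               # hypothetical channel weights

def make_trials(freq):
    src = np.sin(2 * np.pi * freq * t)
    return mixing[None, :, None] * src[None, None, :] + rng.standard_normal((n_tr, n_ch, n_s))

train = [make_trials(8.0), make_trials(10.0)]
filters = [trca_filter(X) for X in train]
templates = [X.mean(axis=0) for X in train]
pred, scores = trca_classify(make_trials(10.0)[0], templates, filters)
print(pred)  # 1, i.e. the 10 Hz class
```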

Performance Comparison of Traditional Algorithms

Table 1: Performance Metrics of Traditional BCI Algorithms Across Paradigms

| Algorithm | Primary Paradigm | Average Accuracy | Key Strengths | Limitations |
|---|---|---|---|---|
| Support Vector Machine (SVM) | Motor Imagery, P300 | 70-80% (subject-dependent) [43] | Robust to noise, effective in high-dimensional spaces, few hyperparameters (e.g., C and kernel width) | Requires careful feature engineering, performance plateaus with complex data |
| Task-Related Component Analysis (TRCA) | SSVEP | 87.5% (0.5 s data) [45] | Maximizes trial-to-trial consistency, high ITR | Requires subject-specific calibration trials; primarily suited for SSVEP, limited applicability to other paradigms |
| Linear Discriminant Analysis (LDA) | Motor Imagery | 66.53% (2-class across datasets) [46] | Computational efficiency, simple implementation, works well with CSP features | Assumes normal distribution and equal covariance, struggles with complex patterns |
| Filter Bank Methods | SSVEP | >200 bits/min ITR [44] | Leverages harmonic components, enhances target identification | Requires multiple frequency bands, increased computational load |

Deep Learning Approaches: The New Frontier

Convolutional Neural Networks for Spatial-Temporal Feature Extraction

Convolutional Neural Networks (CNNs) have revolutionized EEG signal processing by automatically learning both spatial and temporal features from raw or minimally processed data. Unlike traditional methods that require manual feature engineering, CNNs employ hierarchical learning through multiple convolutional layers that detect increasingly abstract patterns. For MI classification, 2D-CNN architectures have demonstrated remarkable performance when applied to time-frequency representations of EEG signals. One notable implementation, the AMD-KT2D framework, achieved exceptional accuracy of 96.75% for subject-dependent and 92.17% for subject-independent classification by transforming EEG-MI signals into 2D spectrograms using an Optimized Short-Time Fourier Transform (OptSTFT) [47].
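To show the kind of input such 2D-CNNs consume, the sketch below builds per-channel STFT magnitude spectrograms with NumPy. It stacks them into a (channels × frequency × time) array. This is a plain Hann-window STFT. The optimization that distinguishes OptSTFT in AMD-KT2D is not reproduced. The window length, the hop size, the 250 Hz rate and the 22-channel, 4 s trial are assumptions.

```python
import numpy as np

def stft_spectrogram(x, fs, win_len=128, hop=32):
    """Magnitude spectrogram (n_freqs, n_frames) of a 1-D signal using a Hann window (plain STFT)."""
    window = np.hanning(win_len)
    n_frames = 1 + (x.size - win_len) // hop
    frames = np.stack([x[i * hop:i * hop + win_len] * window for i in range(n_frames)])
    spec = np.abs(np.fft.rfft(frames, axis=1)).T      # rows = frequency bins, columns = frames
    freqs = np.fft.rfftfreq(win_len, d=1.0 / fs)
    return freqs, spec

def trial_to_image(trial, fs, **kwargs):
    """Stack per-channel spectrograms into a (n_channels, n_freqs, n_frames) CNN input."""
    return np.stack([stft_spectrogram(ch, fs, **kwargs)[1] for ch in trial])

fs = 250
rng = np.random.default_rng(8)
trial = rng.standard_normal((22, 4 * fs))   # hypothetical 22-channel, 4 s MI trial
image = trial_to_image(trial, fs)
print(image.shape)  # (22, 65, 28)
```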

The AMD-KT2D framework exemplifies the modern approach to CNN-based BCI systems, incorporating a guide-learner architecture where Improved ResNet50 (IResNet50) extracts high-level spatial-temporal features while a Customized 2D CNN captures multi-scale patterns. This approach addresses one of the fundamental challenges in MI-BCI: the need for models that can generalize across subjects and sessions. By utilizing an Adaptive Margin Disparity Discrepancy (AMDD) loss function, the framework minimizes domain disparity between different subjects, enhancing cross-subject generalization without sacrificing subject-specific performance [47].

Transformer Architectures with Temporal Convolutional Networks

The integration of Transformer architectures with Temporal Convolutional Networks (TCNs) represents one of the most significant recent advances in BCI signal processing. This hybrid approach leverages the strengths of both architectures: the self-attention mechanism of Transformers effectively captures global dependencies and long-range interactions in EEG signals, while TCNs provide efficient local feature extraction through dilated causal convolutions. The EEGEncoder model, which employs a novel Dual-Stream Temporal-Spatial Block (DSTS), has demonstrated state-of-the-art performance on the BCI Competition IV-2a dataset, achieving an average accuracy of 86.46% for subject-dependent and 74.48% for subject-independent classification [29].
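The dilated causal convolution that TCN branches rely on can be sketched in a few lines of NumPy. This is a single-channel, loop-based illustration written for clarity, not EEGEncoder's implementation. It shows two properties: the output at time t never depends on samples after t, and stacking layers with dilations 1, 2, 4, 8 grows the receptive field exponentially (here 1 + (2 - 1)(1 + 2 + 4 + 8) = 16 samples with kernel size 2).

```python
import numpy as np


def dilated_causal_conv1d(x, w, dilation):
    """Single-channel dilated causal convolution.

    y[t] = sum_k w[k] * x[t - k * dilation]; samples before t = 0 are treated as
    zero, so y[t] never depends on future samples.
    """
    k = len(w)
    pad = (k - 1) * dilation
    xp = np.concatenate([np.zeros(pad), x])  # left padding only -> causal
    y = np.zeros(len(x), dtype=float)
    for t in range(len(x)):
        for i in range(k):
            y[t] += w[i] * xp[pad + t - i * dilation]
    return y


# Push an impulse through four layers (kernel size 2, dilations 1, 2, 4, 8)
x = np.zeros(32)
x[0] = 1.0
h = x
for d in (1, 2, 4, 8):
    h = dilated_causal_conv1d(h, np.ones(2), d)
print("receptive field:", np.count_nonzero(h), "samples")
```

With all-ones weights, every lag from 0 to 15 is reached exactly once, so the impulse response is 1 on the first 16 samples and 0 afterwards.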

The Transformer component in these hybrid models addresses a critical limitation of earlier deep learning architectures: modeling relationships between distant brain regions that activate simultaneously during cognitive tasks. By applying self-attention to EEG sequences, a Transformer learns data-dependent weights over time points and channels, so each output can draw on information from the whole trial rather than from a fixed local window. Meanwhile, the TCN branches capture hierarchical temporal patterns at multiple timescales, from millisecond-level oscillations to longer-lasting cognitive states. This combination has proven particularly effective for motor imagery tasks, where both immediate sensorimotor rhythms and longer-duration cognitive planning components contribute to the classifiable neural signature [29].
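The weighting of time points described above is scaled dot-product self-attention. The NumPy sketch below is a single-head version with random projection matrices standing in for learned weights (an assumption for illustration). The attention matrix has one row per output time step, and each row is a probability distribution over all input time steps.

```python
import numpy as np


def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.

    X: (T, d_model) sequence of EEG feature vectors, one per time step.
    Returns the attended sequence (T, d_v) and the (T, T) attention weights.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability for exp
    A = np.exp(scores)
    A /= A.sum(axis=-1, keepdims=True)  # softmax: each row sums to 1
    return A @ V, A


# Random stand-ins for learned projections (assumption)
rng = np.random.default_rng(0)
T, d_model, d_k = 50, 16, 8
X = rng.standard_normal((T, d_model))
Wq, Wk, Wv = (rng.standard_normal((d_model, d_k)) / np.sqrt(d_model) for _ in range(3))
out, A = self_attention(X, Wq, Wk, Wv)
print(out.shape, A.shape)
```

Because A is T x T, the cost grows quadratically with sequence length, which is one reason hybrid models often let convolutions shorten or summarize the sequence before attention is applied.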

Emerging Deep Learning Architectures and Performance

Table 2: Performance of Deep Learning Models in BCI Applications

| Model Architecture | BCI Paradigm | Reported Accuracy | Key Innovations | Computational Requirements |
|---|---|---|---|---|
| EEGEncoder (Transformer + TCN) | Motor Imagery | 86.46% (subject-dependent); 74.48% (subject-independent) [29] | Dual-Stream Temporal-Spatial blocks, parallel structures | High (requires GPU acceleration) |
| AMD-KT2D (2D-CNN with guide-learner) | Motor Imagery | 96.75% (subject-dependent); 92.17% (subject-independent) [47] | OptSTFT transformation, Adaptive Margin Disparity Discrepancy loss | Medium (pre-trained ResNet50 backbone) |
| Multiple 1D CNNs with EOG integration | Motor Imagery | 83% (4-class) with 6 total channels [48] | Channel reduction strategy, EOG information utilization | Low to medium (efficient 1D convolutions) |
| Signal Prediction with Elastic Net | Motor Imagery | 78.16% (with channel reduction) [43] | Signal prediction from reduced channels, elastic net regression | Low (traditional regression with preprocessing) |

Experimental Protocols and Methodologies

Standardized Evaluation Frameworks and Datasets

Robust evaluation of BCI algorithms requires standardized datasets and consistent validation methodologies. The field has largely coalesced around several publicly available datasets that enable direct comparison between algorithms. The BCI Competition IV Dataset 2a has emerged as a particularly important benchmark for motor imagery paradigms, containing EEG data from 9 subjects performing 4-class motor imagery tasks (left hand, right hand, feet, and tongue) [29] [48]. Similarly, the Weibo dataset provides a more challenging 7-class motor imagery benchmark that tests algorithms' capacity to discriminate between finer-grained motor commands [48].

Proper experimental protocol necessitates rigorous train-test splits, typically following a 70-15-15 (train-validation-test) partition to prevent overfitting and ensure generalizability [49]. For subject-independent evaluations, leave-one-subject-out cross-validation provides the most stringent test of an algorithm's capacity to generalize across individuals. The emergence of transfer learning and domain adaptation techniques has been particularly valuable in addressing the well-documented challenge of BCI illiteracy, where approximately 15-30% of users struggle to achieve proficient control of MI-based BCIs [43]. Modern evaluation protocols must also account for practical considerations such as computational efficiency and potential for real-time implementation, with information transfer rate (ITR) serving as a key metric for SSVEP paradigms that incorporates both classification accuracy and speed [44].
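Leave-one-subject-out evaluation maps directly onto scikit-learn's `LeaveOneGroupOut` splitter, with subject IDs passed as groups. The sketch below uses synthetic features in which each subject has a random offset, a hypothetical stand-in for inter-subject variability; the feature dimensions, effect size, and choice of LDA are illustrative assumptions. Each fold trains on all subjects but one and tests on the held-out subject.

```python
import numpy as np
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import LeaveOneGroupOut, cross_val_score

rng = np.random.default_rng(42)
n_subjects, n_trials, n_feat = 6, 40, 4
X_parts, y_parts, g_parts = [], [], []
for s in range(n_subjects):
    y_s = rng.integers(0, 2, n_trials)
    offset = rng.normal(0, 0.5, n_feat)  # subject-specific shift (synthetic)
    X_s = rng.standard_normal((n_trials, n_feat)) + offset
    X_s[:, 0] += 1.5 * y_s  # class information lives in feature 0
    X_parts.append(X_s)
    y_parts.append(y_s)
    g_parts.append(np.full(n_trials, s))  # subject ID for every trial
X, y, groups = map(np.concatenate, (X_parts, y_parts, g_parts))

# One fold per subject: train on the other five, test on the held-out one
scores = cross_val_score(LinearDiscriminantAnalysis(), X, y,
                         groups=groups, cv=LeaveOneGroupOut())
print("per-subject accuracy:", scores.round(2), "mean:", scores.mean().round(2))
```

Reporting the per-subject scores alongside the mean makes poorly served (for example, "BCI-illiterate") subjects visible instead of hiding them in an average.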

Critical Methodological Considerations in Algorithm Design

Several methodological factors significantly influence algorithm performance across BCI paradigms. The number and placement of EEG channels represents a fundamental design consideration, with recent research demonstrating that strategic channel reduction can enhance practicality without sacrificing performance. One innovative approach achieved 83% accuracy in 4-class MI classification using only 3 EEG and 3 EOG channels, challenging the conventional wisdom that more channels invariably yield better performance [48]. This channel reduction strategy not only improves system portability but also reduces computational requirements and setup time.

Another critical consideration involves addressing the non-stationary nature of EEG signals and substantial inter-subject variability. Techniques such as elastic net regression have proven effective for predicting full-channel EEG data from a limited subset of electrodes, achieving 78.16% accuracy in MI classification while significantly reducing hardware requirements [43]. For SSVEP paradigms, novel classification approaches like Spatial Distribution Analysis (SDA) have leveraged the high spatial resolution of MEG systems to achieve calibration-free operation with significantly enhanced accuracy and ITR across all window sizes [19]. These methodological innovations highlight the ongoing evolution from brute-force computational approaches toward more sophisticated, neurally-informed signal processing techniques.
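The idea of predicting full-channel EEG from a subset of electrodes can be illustrated with scikit-learn's `ElasticNet`, which accepts a multi-output target. This is a sketch under a strong assumption: the synthetic "EEG" is a linear mixture of a few latent sources plus noise, so the unobserved channels are largely predictable from the observed ones. The montage, `alpha`, and `l1_ratio` are hypothetical choices, not those of [43].

```python
import numpy as np
from sklearn.linear_model import ElasticNet

rng = np.random.default_rng(1)
n_samples, n_sources, n_channels = 2000, 4, 16
sources = rng.standard_normal((n_samples, n_sources))
mixing = rng.standard_normal((n_sources, n_channels))  # assumed volume-conduction mixing
eeg = sources @ mixing + 0.1 * rng.standard_normal((n_samples, n_channels))

observed = [0, 2, 4, 6, 8, 10, 12, 14]  # hypothetical reduced montage
missing = [c for c in range(n_channels) if c not in observed]

split = n_samples // 2  # first half to fit, second half to evaluate
model = ElasticNet(alpha=0.01, l1_ratio=0.5)  # mix of L1 and L2 penalties
model.fit(eeg[:split, observed], eeg[:split, missing])
pred = model.predict(eeg[split:, observed])

# Correlation between predicted and true signal for each missing channel
r = [np.corrcoef(pred[:, i], eeg[split:, m])[0, 1] for i, m in enumerate(missing)]
print(f"mean held-out correlation: {np.mean(r):.2f}")
```

The L1 part of the penalty can drive weights of uninformative observed channels to zero, while the L2 part keeps the fit stable when observed channels are strongly correlated, which is typical of EEG.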

Visualization of Algorithm Selection Workflow

Diagram 1: A structured workflow for selecting BCI algorithms based on paradigm requirements and practical constraints

Table 3: Essential Research Resources for BCI Algorithm Development

| Resource Category | Specific Tools/Datasets | Primary Application | Key Features/Availability |
|---|---|---|---|
| Public Datasets | BCI Competition IV-2a [29] [48] | Motor Imagery | 9 subjects, 4-class MI, standard benchmark |
| | Weibo Dataset [48] | Motor Imagery | 7-class MI, more complex classification |
| | Binocular AR-SSVEP Dataset [45] | SSVEP | 30 subjects, AR headset, binocular coding |
| Software Libraries | MOABB [46] | Multiple Paradigms | Standardized evaluation framework |
| | BNCI Horizon [46] | Multiple Paradigms | Dataset collection and tools |
| | Deep BCI [46] | Multiple Paradigms | Deep-learning-focused resources |
| Hardware Specifications | Emotiv Epoc Flex [47] | EEG Acquisition | 32 channels, saline-based, portable |
| | HoloLens 2 [45] | SSVEP Stimulation | AR headset, binocular stimulation |
| | Grael 4K EEG Amplifier [45] | High-quality Recording | 1024 Hz sampling, research-grade |
| Evaluation Metrics | Classification Accuracy | All Paradigms | Standard performance measure |
| | Information Transfer Rate (ITR) | SSVEP | Incorporates speed and accuracy |
| | Cohen's Kappa | All Paradigms | Chance-corrected accuracy measure |
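The two derived metrics in Table 3 are easy to compute. The sketch below uses the widely used Wolpaw definition of ITR, B = log2 N + P log2 P + (1 - P) log2((1 - P)/(N - 1)) bits per selection, scaled by 60/T for T seconds per selection, and a balanced-class form of Cohen's kappa. Both formulas assume equiprobable targets (and, for ITR, errors spread evenly over the wrong targets); the example numbers are hypothetical.

```python
import math


def wolpaw_itr(n_classes, accuracy, seconds_per_selection):
    """Information transfer rate in bits/min (Wolpaw formula).

    Assumes equiprobable targets and errors spread uniformly over the N - 1
    wrong targets.
    """
    n, p = n_classes, accuracy
    if p <= 1.0 / n:
        bits = 0.0  # at or below chance: conventionally reported as zero
    elif p >= 1.0:
        bits = math.log2(n)
    else:
        bits = (math.log2(n) + p * math.log2(p)
                + (1 - p) * math.log2((1 - p) / (n - 1)))
    return bits * 60.0 / seconds_per_selection


def cohen_kappa_balanced(accuracy, n_classes):
    """Chance-corrected accuracy, assuming balanced classes (p_e = 1 / N)."""
    p_e = 1.0 / n_classes
    return (accuracy - p_e) / (1 - p_e)


# Hypothetical 40-target SSVEP speller: 90% accuracy, 1.5 s per selection
itr = wolpaw_itr(40, 0.90, 1.5)
kappa = cohen_kappa_balanced(0.70, 4)  # hypothetical 4-class MI result
print(f"ITR: {itr:.1f} bits/min, kappa: {kappa:.2f}")
```

For the hypothetical speller this gives roughly 173 bits/min, which shows why shortening the selection time T raises ITR so sharply for many-target SSVEP systems. Kappa, by contrast, makes a 70% four-class result (0.6) comparable to results with different numbers of classes.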

The comparative analysis of traditional and deep learning algorithms across major BCI paradigms reveals a complex performance landscape without universal solutions. Traditional algorithms like SVM and TRCA maintain strong relevance for specific applications, offering computational efficiency, interpretability, and robust performance particularly in scenarios with limited data. Meanwhile, deep learning approaches have demonstrated remarkable capabilities for handling complex pattern recognition tasks, achieving state-of-the-art performance in subject-dependent configurations and showing increasing promise for cross-subject generalization through advanced architectures like transformers and hybrid models.

Strategic algorithm selection must consider multiple factors beyond raw classification accuracy, including computational requirements, data efficiency, implementation complexity, and alignment with specific BCI paradigm characteristics. For SSVEP-based systems, TRCA and its extensions continue to offer exceptional performance with minimal calibration, while motor imagery applications are increasingly leveraging sophisticated deep learning architectures that automatically extract discriminative spatial-temporal features. As BCI technology continues to evolve toward more practical applications, the integration of hybrid approaches—combining the strengths of traditional and deep learning methods—will likely drive the next generation of advances in neural signal processing and brain-computer communication.
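TRCA is named above as a strong choice for SSVEP. The following is a minimal sketch of the standard formulation on simulated data: for each stimulus, find the spatial filter w that maximizes inter-trial covariance (S w = lambda Q w), then classify a new trial by correlating filtered data with filtered class templates. The `ensemble=True` path stacks all class filters, as in eTRCA. The simulation (phase-locked sinusoids, fixed channel gains, white noise) is an assumption for illustration, and practical systems typically add filter banks and harmonic sub-bands.

```python
import numpy as np
from scipy.linalg import eigh


def trca_filter(trials):
    """trials: (n_trials, n_channels, n_samples) for ONE stimulus.

    Returns the spatial filter that maximizes covariance across trials.
    """
    X = trials - trials.mean(axis=2, keepdims=True)  # center each channel per trial
    U = X.sum(axis=0)
    Q = np.einsum("kcs,kds->cd", X, X)  # sum_k X_k X_k^T
    S = U @ U.T - Q                     # sum over j != k of X_j X_k^T
    evals, evecs = eigh(S, Q)           # generalized problem S w = lambda Q w
    return evecs[:, -1]                 # eigenvector with the largest eigenvalue


def trca_classify(test_trial, templates, filters, ensemble=True):
    """Return the index of the stimulus whose template best matches test_trial.

    templates: (n_classes, n_channels, n_samples) averaged training trials
    filters:   (n_classes, n_channels), one TRCA filter per stimulus
    """
    rho = []
    for c, template in enumerate(templates):
        W = filters if ensemble else filters[c:c + 1]  # eTRCA uses all filters
        a = (W @ test_trial).ravel()
        b = (W @ template).ravel()
        rho.append(np.corrcoef(a, b)[0, 1])
    return int(np.argmax(rho))


# Simulated SSVEP data (assumption): 3 stimuli, 8 channels, 1 s at 250 Hz
rng = np.random.default_rng(7)
fs, n_s, n_ch, n_train = 250, 250, 8, 6
t = np.arange(n_s) / fs
freqs = [8.0, 10.0, 12.0]
gains = rng.uniform(0.2, 1.0, n_ch)  # assumed spatial projection of the SSVEP source


def simulate(freq, n_trials):
    src = np.sin(2 * np.pi * freq * t)  # phase-locked across trials
    return gains[None, :, None] * src + rng.standard_normal((n_trials, n_ch, n_s))


train = [simulate(f, n_train) for f in freqs]
filters = np.stack([trca_filter(tr) for tr in train])
templates = np.stack([tr.mean(axis=0) for tr in train])
preds = [trca_classify(simulate(f, 1)[0], templates, filters) for f in freqs]
print(preds)
```

The sign of each eigenvector is arbitrary, but it cancels because the same filter is applied to both the test trial and the template before correlating.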

Brain-Computer Interface (BCI) technology has evolved significantly, offering multiple paradigms for translating brain signals into commands for communication and control. Among the most prominent are the P300 event-related potential, Steady-State Visual Evoked Potential (SSVEP), and Motor Imagery (MI). Each paradigm possesses distinct characteristics, advantages, and limitations, making them suitable for different applications and user populations. This guide provides a systematic comparison of these primary BCI paradigms, focusing on the critical performance metrics of accuracy, information transfer rate (ITR), and speed, to inform researchers and developers in selecting the optimal approach for specific use cases. The evaluation is contextualized within the broader thesis of comparing P300, SSVEP, and Motor Imagery BCI paradigms for research and practical applications.

Performance Metrics Comparison

The table below summarizes the core performance characteristics and typical experimental outcomes for the P300, SSVEP, and Motor Imagery BCI paradigms.

Table 1: Comparative Performance Metrics of Major BCI Paradigms

| Metric / Paradigm | P300 | SSVEP | Motor Imagery (MI) |
|---|---|---|---|
| Primary Signal Type | Event-Related Potential (ERP) | Visually Evoked Potential | Sensorimotor Rhythm (ERD/ERS) |
| Typical Accuracy Range | ~75%-96% [50] [2] | ~80%-100% [50] [51] | Varies widely; requires user training [52] |
| Reported High ITR | 28.64 bits/min (hybrid) [50] | Up to ~250 bits/min (high-end systems) [53] | Generally lower than evoked potentials [52] |
| Typical Speed (chars/min) | Slower (requires averaging) [9] | 20.9 chars/min (hybrid) [51] | Slower, dependent on user skill [36] |
| Stimulus Dependency | Exogenous (requires external stimulus) | Exogenous (requires flickering stimulus) | Endogenous (internally generated) |
| User Training Required | Minimal to none | Minimal to none | Significant training often required [52] |
| Key Advantage | High accuracy with minimal user training | Highest potential ITR and speed | Does not require external stimulus; natural control |

Detailed Analysis of BCI Paradigms

P300 Paradigm

The P300 is an event-related potential characterized by a positive deflection in the EEG signal approximately 300 ms after a rare, task-relevant stimulus is presented [9] [2]. Its classic application is the P300 speller, first introduced by Farwell and Donchin, which uses a 6x6 matrix of characters. Rows and columns flash in a random sequence, and the user's focus on a target character elicits a P300 response when its corresponding row or column flashes [2].
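The row/column logic of the speller reduces to two argmax operations once a classifier has scored each flash. In the sketch below, the 6x6 character layout (A-Z, 1-9, underscore) is an assumed layout, and the classifier scores are simulated; in practice they would come from, for example, an LDA or SVM applied to post-flash EEG epochs. Scores are averaged over repeated flash sequences before the decision.

```python
import numpy as np

# Assumed 6x6 layout: A-Z, 1-9 and '_'
MATRIX = np.array(list("ABCDEFGHIJKLMNOPQRSTUVWXYZ123456789_")).reshape(6, 6)


def decode_character(scores):
    """scores: (n_repetitions, 12) classifier outputs, one per flash.

    Columns 0-5 score the six row flashes, 6-11 the six column flashes;
    a higher score means a more P300-like response.
    """
    mean_scores = scores.mean(axis=0)  # average across repetitions
    row = int(np.argmax(mean_scores[:6]))
    col = int(np.argmax(mean_scores[6:]))
    return str(MATRIX[row, col])  # target sits at the row/column intersection


# Simulated scores: noise plus a P300 boost on the target's row and column
rng = np.random.default_rng(0)
target_row, target_col = 2, 3  # 'P' in this layout
scores = rng.standard_normal((15, 12))
scores[:, target_row] += 1.5
scores[:, 6 + target_col] += 1.5
print(decode_character(scores))
```

Only 12 flashes per repetition are needed to address 36 characters, because each flash tests a whole row or column at once.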

- Performance Profile: P300-based systems consistently achieve high classification accuracies, often reported in the range of 75% to 96% [50] [2]. However, to achieve this, the EEG responses from multiple flashes must be averaged to improve the signal-to-noise ratio, which inherently reduces the system's speed and overall ITR [9]. A hybrid P300-SSVEP speller demonstrated an online ITR of 28.64 bits/min [50].

- Advantages and Disadvantages: The primary advantage of the P300 paradigm is its minimal training requirement, making it accessible to new users [9]. A key limitation is the "adjacency problem," where flashes adjacent to the target can distract users and cause classification errors [2].
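The speed cost of averaging follows from a simple model: if each epoch contains the same time-locked P300 plus independent zero-mean noise, averaging N epochs leaves the P300 unchanged but shrinks the noise standard deviation by a factor of sqrt(N). The simulation below checks this with a toy Gaussian "P300" and white noise; the amplitudes and sampling rate are illustrative assumptions, and real background EEG is neither white nor fully independent across epochs, so practical gains are usually smaller.

```python
import numpy as np

fs = 250  # assumed sampling rate (Hz)
t = np.arange(0, 0.8, 1 / fs)
# Toy P300 template (assumption): 5 uV Gaussian bump peaking at 300 ms
p300 = 5.0 * np.exp(-((t - 0.3) ** 2) / (2 * 0.05 ** 2))
rng = np.random.default_rng(0)


def averaged_snr(n_epochs, noise_sd=10.0, n_sim=200):
    """Peak P300 amplitude divided by residual noise SD after averaging n_epochs."""
    residuals = []
    for _ in range(n_sim):
        # Each epoch = the same time-locked P300 + independent background noise
        epochs = p300 + noise_sd * rng.standard_normal((n_epochs, t.size))
        residuals.append(epochs.mean(axis=0) - p300)
    return p300.max() / np.std(residuals)


for n in (1, 4, 16):
    print(f"{n:2d} epochs: SNR ~ {averaged_snr(n):.2f}")
```

Quadrupling the number of flashes only doubles the SNR, which is why P300 systems trade speed for accuracy so steeply.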

Steady-State Visual Evoked Potential (SSVEP) Paradigm

SSVEPs are neural oscillations elicited in the visual cortex when a user focuses attention on a visual stimulus flickering at a constant frequency, typically greater than 6 Hz [28] [53]. The SSVEP response is frequency-locked to the stimulus and can be identified as a peak at the fundamental frequency and its harmonics in the power spectrum.
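A common way to exploit the fundamental-plus-harmonics structure is canonical correlation analysis (CCA) against sine/cosine reference signals, a standard SSVEP baseline that is swapped in here for illustration rather than taken from the cited studies. The sketch computes the largest canonical correlation from QR decompositions of the centered data, then picks the candidate frequency whose references correlate best with the multichannel EEG. The simulated 12 Hz response with a 24 Hz harmonic is an assumption.

```python
import numpy as np


def reference_signals(freq, fs, n_samples, n_harmonics=3):
    """Sine/cosine references at the stimulus frequency and its harmonics."""
    t = np.arange(n_samples) / fs
    refs = []
    for h in range(1, n_harmonics + 1):
        refs += [np.sin(2 * np.pi * h * freq * t), np.cos(2 * np.pi * h * freq * t)]
    return np.column_stack(refs)  # (n_samples, 2 * n_harmonics)


def max_canonical_corr(X, Y):
    """Largest canonical correlation between the column spaces of X and Y."""
    Qx, _ = np.linalg.qr(X - X.mean(axis=0))
    Qy, _ = np.linalg.qr(Y - Y.mean(axis=0))
    return np.linalg.svd(Qx.T @ Qy, compute_uv=False)[0]


def cca_classify(eeg, fs, candidate_freqs, n_harmonics=3):
    """eeg: (n_samples, n_channels). Returns the best-matching frequency and all scores."""
    rhos = [max_canonical_corr(eeg, reference_signals(f, fs, len(eeg), n_harmonics))
            for f in candidate_freqs]
    return candidate_freqs[int(np.argmax(rhos))], rhos


# Simulated 2 s trial (assumption): 12 Hz SSVEP with a 24 Hz harmonic on 8 channels
fs, n = 250, 500
t = np.arange(n) / fs
rng = np.random.default_rng(3)
source = np.sin(2 * np.pi * 12 * t) + 0.5 * np.sin(2 * np.pi * 24 * t + 0.7)
eeg = np.outer(source, rng.uniform(0.5, 1.0, 8)) + rng.standard_normal((n, 8))
freq, rhos = cca_classify(eeg, fs, [8.0, 10.0, 12.0, 15.0])
print(freq, np.round(rhos, 2))
```

No training data is needed, because the references are synthetic; this is what makes CCA a calibration-free baseline against which trained methods such as TRCA are usually compared.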

- Performance Profile: SSVEP-based BCIs are renowned for their high ITRs, among the fastest of all non-invasive BCIs [38] [53]. With advanced signal processing methods like Ensemble Task-Related Component Analysis (eTRCA), high ITRs can be achieved [50]. A hybrid SSVEP-EMG speller achieved an impressive average ITR of 96.1 bits/min and a speed of 20.9 characters per minute [51]. Classification accuracy is also generally high, often ranging from 80% to 100% [50] [51].