Breaking the Subject and Session Barrier: A Comprehensive Guide to Generalized Neural Decoders

Abstract

The development of neural decoders that generalize across subjects and recording sessions is a pivotal challenge in creating practical brain-computer interfaces and clinical neurotechnology. This article provides a systematic exploration of this field, addressing its foundational principles, the dataset shift problem caused by non-stationary EEG signals, and the resulting need for robust generalization. We survey state-of-the-art methodological solutions, including transfer learning, domain adaptation, and novel architectures like the NEED framework that enable zero-shot cross-subject and cross-task generalization. The content further delves into practical troubleshooting, parameter optimization frameworks, and fine-tuning strategies to overcome performance degradation. Finally, we present a rigorous comparative analysis of validation metrics and benchmarking practices essential for evaluating decoder performance in real-world scenarios. This resource is tailored for researchers, scientists, and drug development professionals seeking to build reliable, generalizable neural decoding systems for biomedical and clinical applications.

The Core Challenge: Understanding Dataset Shift and the Imperative for Generalization

FAQ: Core Concepts

F1: What is meant by "cross-subject" and "cross-session" generalization in neural decoding?

A1: In the context of neural decoders:

- Cross-Subject Generalization refers to the ability of a decoding model trained on data from a group of individuals (subjects) to perform accurately on data from new, unseen subjects. This is challenging due to the pronounced physiological differences between individuals [1] [2].

- Cross-Session Generalization refers to the ability of a model to maintain its performance on data from the same subject but recorded in different sessions, which could be separated by hours, days, or weeks. This is difficult because the non-stationary nature of EEG signals can lead to the Dataset Shift Problem, where the statistical properties of the brain signals change over time [2].

F2: Why is this generalization considered a critical bottleneck for neurotechnology?

A2: The lack of robust generalization currently limits the real-world deployment and scalability of neurotechnologies. Without it:

- No Plug-and-Play Systems: Decoders cannot be used "out-of-the-box" for new users. A new model would need to be trained for each individual, which is a time-consuming and inefficient process that requires collecting new calibration data [1].

- Unreliable Performance: A decoder's performance can degrade significantly over time for the same user, even within the same session, requiring frequent recalibration and making long-term use impractical [2].

- Hindered Clinical Adoption: The inability to generalize across subjects and sessions is a major barrier to developing robust clinical brain-computer interfaces (BCIs) and diagnostic tools that can be widely applied in healthcare settings [3].

F3: What are the primary technical causes of this bottleneck?

A3: The core issues stem from the inherent variability of brain data:

- The Dataset Shift Problem: The non-stationarity of EEG signals means their statistical properties change over time and across conditions, violating the common machine learning assumption that training and test data are drawn from the same distribution [2].

- Inter-subject Variability: Anatomical (e.g., skull thickness, brain morphology) and physiological differences (e.g., neural firing patterns) lead to significant variations in how brain activity is recorded from different individuals [2] [1].

- Intra-subject Variability: A single subject's brain signals can be affected by factors that change from session to session, such as fatigue, attention, hormonal cycles, and changes in electrode-scalp contact [2].

Troubleshooting Guide: Common Experimental Issues

Issue 1: Poor Model Accuracy on Unseen Subjects

- Symptoms: Your model achieves high accuracy during within-subject validation but performance drops drastically when tested on data from new subjects.

- Explanation: The model has overfitted to subject-specific patterns in the training data and has failed to learn domain-invariant features that are relevant to the task across the population.

- Solutions:

- Employ Transfer Learning: Utilize domain adaptation techniques to explicitly minimize the distributional differences between subjects in the feature space [2].

- Incorporate an Individual Adaptation Module: As demonstrated in the NEED framework, pretrain a module on multiple subjects to normalize subject-specific patterns, enabling better zero-shot generalization [4].

- Increase Data Diversity: Train your model on a larger and more diverse set of subjects. The EEG Foundation Challenge, for example, uses data from over 3,000 participants to encourage the learning of robust, generalizable representations [1].

Issue 2: Model Performance Degrades Over Time in the Same Subject

- Symptoms: A decoder calibrated at the start of a session performs well initially, but its accuracy declines as the session continues or in follow-up sessions.

- Explanation: This is a classic manifestation of the dataset shift problem caused by the non-stationary nature of EEG signals. The model is sensitive to changes in signal properties both within a session and between sessions [2].

- Solutions:

- Adaptive Feature Extraction: Implement feature extraction methods that are less sensitive to non-stationarities or can adapt to them over time. Combining this with transfer learning has shown promise [2].

- Session-to-Session Domain Adaptation: Treat data from different sessions of the same subject as separate domains and apply domain adaptation techniques to align them [2].

- Continuous Calibration: Design protocols for periodic, quick recalibration of the model during use to compensate for drift.

Issue 3: Inability to Generalize Across Different Cognitive Tasks

- Symptoms: A model trained to decode a specific cognitive task (e.g., motor imagery) fails to perform or adapt to a different but related task (e.g., attention).

- Explanation: The model has become overly specialized to the task-specific neural patterns present in its original training data. This is part of the conventional "one-task-per-model" paradigm that fails to leverage commonalities across different cognitive activities [1].

- Solutions:

- Train on Multiple Tasks: Use a multi-task learning framework that encourages the model to learn a shared representation across several cognitive tasks. This is a key objective of modern EEG foundation model research [1].

- Utilize a Unified Architecture: Implement a model architecture, like the NEED framework's dual-pathway design, that is explicitly designed to handle different visual domains through a unified inference mechanism [4]. The same principle may extend to other cognitive tasks.

Experimental Protocols & Data

Key Methodologies for Generalization Experiments

Protocol 1: Leave-One-Subject-Out (LOSO) Cross-Validation

This is the standard validation strategy for evaluating cross-subject generalization.

- Procedure: Iteratively select one subject as the test set, and use the data from all remaining subjects as the training set. Repeat this process until every subject has been used as the test subject once.

- Purpose: Provides an almost unbiased estimate of how a model will perform on a completely new, unseen subject from a similar population [2].

- Reporting: The final performance metric is the average of the performance scores across all test subjects.
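The LOSO procedure above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions: the data are arranged as a trials-by-features matrix `X` with labels `y` and a parallel array of subject IDs, and the nearest-class-mean decoder (`fit_ncm`, `predict_ncm`) is a hypothetical placeholder for whatever model you actually evaluate.

```python
import numpy as np


def fit_ncm(X, y):
    """Hypothetical placeholder decoder: store one mean feature vector per class."""
    classes = np.unique(y)
    return classes, np.stack([X[y == c].mean(axis=0) for c in classes])


def predict_ncm(model, X):
    """Assign each trial to the class whose mean is closest (Euclidean)."""
    classes, means = model
    d2 = ((X[:, None, :] - means[None, :, :]) ** 2).sum(axis=-1)
    return classes[d2.argmin(axis=1)]


def loso_scores(X, y, subjects, fit=fit_ncm, predict=predict_ncm):
    """Leave-one-subject-out: every subject serves as the test set exactly once."""
    scores = {}
    for s in np.unique(subjects):
        test = subjects == s
        model = fit(X[~test], y[~test])  # train on all remaining subjects
        scores[s] = float((predict(model, X[test]) == y[test]).mean())
    return scores


# Synthetic example: 5 subjects, each with its own feature offset (inter-subject shift)
rng = np.random.default_rng(0)
subjects = np.repeat(np.arange(5), 40)
y = np.tile([0, 1], 100)
offsets = rng.normal(scale=0.5, size=(5, 8))
X = rng.normal(size=(200, 8)) + 1.5 * y[:, None] + offsets[subjects]
scores = loso_scores(X, y, subjects)
vals = np.array(list(scores.values()))
print(f"LOSO accuracy: {vals.mean():.3f} +/- {vals.std():.3f}")
```

Printing the across-subject spread alongside the mean makes it visible when a few subjects dominate the average.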

Protocol 2: Cross-Session Validation

This protocol evaluates a model's stability over time for the same subject.

- Procedure: Train a model on data from one or more initial recording sessions from a subject. Then, test the model's performance on data from a subsequent session held out from training.

- Purpose: Directly measures the practical longevity of a decoder and its resistance to the non-stationarities of EEG signals over time [2].

- Reporting: Performance is reported for each subject individually, showing the change in accuracy from the training session(s) to the test session.
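A per-subject cross-session report can be sketched in the same way. Assumptions: each trial carries a subject ID and an integer session index, the latest session is the held-out one, and the least-squares linear decoder (`fit_lstsq`) is a hypothetical stand-in for your model. The training-session accuracy here is computed on the training trials themselves, so it is optimistic; held-out trials from the training sessions would give a fairer baseline.

```python
import numpy as np


def fit_lstsq(X, y):
    """Hypothetical linear decoder: least-squares fit to labels coded as -1/+1."""
    A = np.c_[X, np.ones(len(X))]  # append a bias column
    w, *_ = np.linalg.lstsq(A, 2.0 * y - 1.0, rcond=None)
    return w


def accuracy(w, X, y):
    return float(((np.c_[X, np.ones(len(X))] @ w > 0) == (y == 1)).mean())


def cross_session_report(X, y, subjects, sessions):
    """Per subject: train on all sessions but the last, test on the last one."""
    report = {}
    for s in np.unique(subjects):
        m = subjects == s
        last = sessions[m].max()
        train, test = m & (sessions < last), m & (sessions == last)
        w = fit_lstsq(X[train], y[train])
        # (accuracy on training sessions, accuracy on held-out session)
        report[s] = (accuracy(w, X[train], y[train]), accuracy(w, X[test], y[test]))
    return report


# Synthetic example: 3 subjects x 3 sessions x 40 trials, with slow drift across sessions
rng = np.random.default_rng(1)
subjects = np.repeat(np.arange(3), 120)
sessions = np.tile(np.repeat(np.arange(3), 40), 3)
y = rng.integers(0, 2, size=360)
drift = sessions[:, None] * rng.normal(scale=0.5, size=(1, 6))
X = rng.normal(size=(360, 6)) + y[:, None] + drift
report = cross_session_report(X, y, subjects, sessions)
for s, (acc_train, acc_test) in report.items():
    print(f"subject {s}: training sessions {acc_train:.2f} -> held-out session {acc_test:.2f}")
```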

Performance Comparison of Generalization Approaches

Table 1: Quantitative results from recent studies tackling the generalization bottleneck. SSIM (Structural Similarity Index Measure) is a metric for image reconstruction quality, where a value of 1 indicates perfect reconstruction.

| Study / Method | Generalization Type | Key Result | Reported Metric |
|---|---|---|---|
| NEED Framework [4] | Zero-shot Cross-Subject | Maintained 93.7% of within-subject classification performance on unseen subjects. | Relative Performance |
| NEED Framework [4] | Zero-shot Cross-Subject | Maintained 92.4% of visual reconstruction quality on unseen subjects. | Relative SSIM |
| NEED Framework [4] | Zero-shot Cross-Task (to Image Reconstruction) | Achieved direct transfer to a new task without fine-tuning. | SSIM = 0.352 |
| Modern ML (NN, Ensembles) [3] | Within-Subject / Cross-Session | Significantly outperformed traditional methods (Wiener/Kalman filters). | Decoding Accuracy |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential components for building generalizable neural decoders, as identified in the research.

| Item / Technique | Function in Research |
|---|---|
| Transfer Learning / Domain Adaptation [2] | A set of methods to adapt a model trained on a "source" domain (e.g., set of subjects) to perform well on a different but related "target" domain (e.g., new subjects), mitigating dataset shift. |
| Individual Adaptation Module [4] | A pretrained network component designed to normalize or filter out subject-specific neural patterns, allowing the core decoder to focus on task-relevant, domain-invariant features. |
| Dual-Pathway Architecture [4] | A model design that processes neural data through separate streams to capture both low-level visual/neural dynamics and high-level semantics, improving robustness for tasks like reconstruction. |
| Large-Scale, Multi-Subject Datasets [1] | Datasets comprising thousands of subjects (e.g., the EEG Foundation Challenge's 3,000+ subjects) are essential for training models to learn representations that generalize across population variability. |
| Zero-Shot Inference Mechanism [4] | A unified framework that allows a single model to be applied to different tasks (e.g., video and image reconstruction) without task-specific fine-tuning, enabling cross-task generalization. |

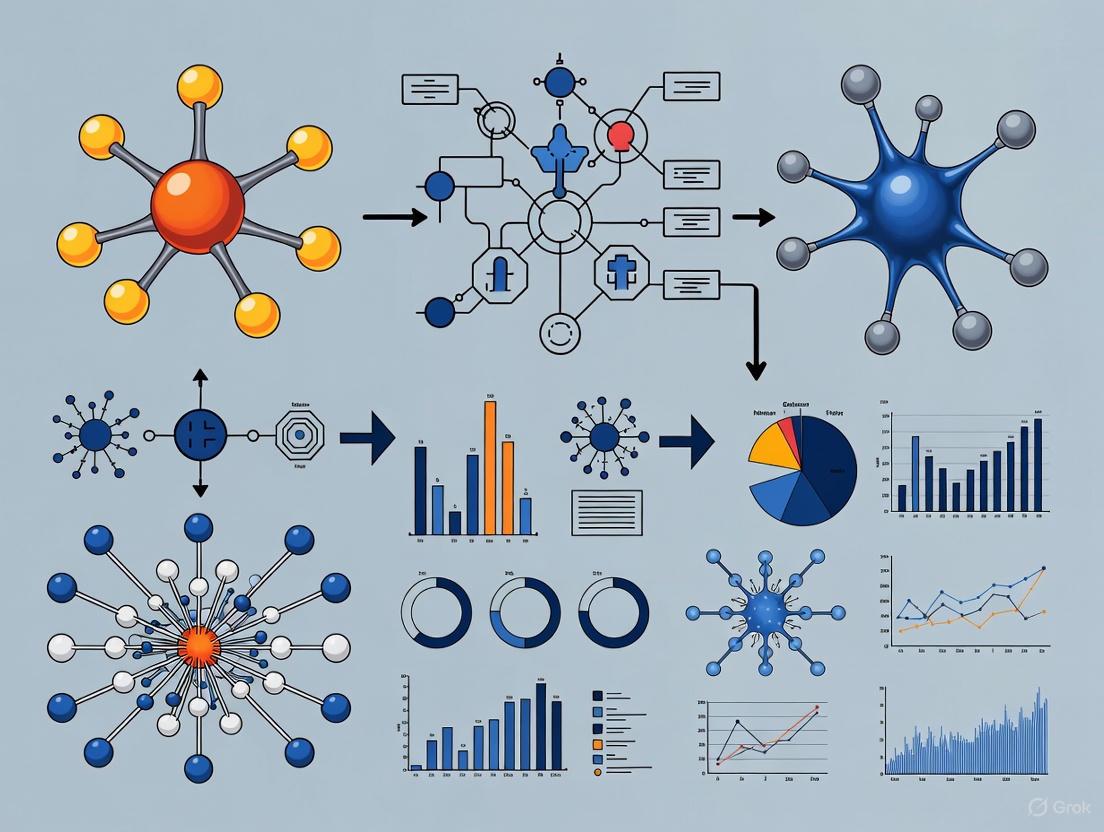

Diagnostic Diagrams

Diagram 1: The Domain Shift Problem in Neurotechnology

Diagram 2: A Framework for Cross-Subject Generalization

Theoretical Foundation: Why Brain Signals Inherently Cause Dataset Shift

What is the fundamental link between brain signal non-stationarity and dataset shift? Electroencephalography (EEG) and other brain signals are fundamentally non-stationary, meaning their statistical properties (like mean and variance) change over time [5] [6]. This inherent non-stationarity is a primary root cause of Dataset Shift in brain-computer interface (BCI) and neural decoder research [2]. When the data distribution changes between your training and testing environments, your model's performance degrades.

Aren't brain signals just noisy? Why can't we use standard linear methods? Brain signals are not just noisy; they are "3N" signals: Nonstationary, Nonlinear, and Noisy [5]. Using linear analysis methods (like FFT) on sufficiently long time intervals is inappropriate for such complex signals. The brain is a complex nonlinear system, and treating its signals as linear is a fundamental misunderstanding, akin to a geographer insisting the Earth is flat because their local measurements seem to form a plane [5].

Table: Core Properties of Brain Signals that Cause Dataset Shift

| Property | Description | Consequence for Neural Decoders |
|---|---|---|
| Non-Stationarity [5] [2] | Statistical properties change over time due to switching between metastable brain states. | Models trained on data from one recording session may fail on data from another session. |
| Non-Linearity [5] | The output is not proportional to the input and does not obey the superposition principle. | Linear models and analyses fail to capture the true underlying dynamics of the signal. |
| Subject Variability [7] | Major inter-subject differences in neural morphology and signal patterns. | A model that performs well for one subject may perform poorly on another without adaptation. |

Troubleshooting Guide: FAQs on Identifying and Solving Dataset Shift

FAQ 1: My model works perfectly in the lab but fails with new subjects. What happened?

You are likely experiencing Covariate Shift, a type of dataset shift where the distribution of input features (the EEG patterns) differs between your training lab subjects and new test subjects [8].

- Root Cause: The training data does not adequately represent the operating environment. This is often due to Sample Selection Bias during data collection or the inherent non-stationary environments of biological systems [8].

- Symptoms:

- High accuracy during cross-validation on your original dataset.

- Drastic drop in performance when the model is applied to data from a new session or a new subject.

- Solution:

- Apply Transfer Learning: Use techniques like domain adaptation to align the feature distributions of your source (training) and target (new subject) data [2].

- Utilize Subject-Invariant Features: Focus your feature engineering on discovering patterns that are robust across individuals [7].

- Lightweight Calibration: Incorporate a small amount of data (e.g., 10%) from the new subject for calibration. In an imagined speech detection task, this was reported to substantially boost performance, reaching an accuracy of 0.781 and an AUC of 0.801 [9].

FAQ 2: My decoder's performance drops over time, even for the same subject. How do I fix this?

This indicates Concept Drift, where the underlying relationship between the neural signals (input) and the decoded variable (output) has changed over time [8].

- Root Cause: The non-stationary nature of the brain means that the neural representations for the same task or state are not constant across sessions [5] [10].

- Symptoms:

- Gradual degradation of model performance over multiple recording sessions.

- The model becomes less reliable the longer it is in use.

- Solution:

- Generative Models for Data Augmentation: Train a generative model (e.g., a Generative Adversarial Network or GAN) on an initial session. This model can then be rapidly adapted to new sessions with limited data to synthesize realistic new spike trains, effectively augmenting your training data and improving the decoder's robustness [10].

- Continuous Learning Strategies: Implement algorithms designed for non-stationary data streams. Techniques such as Effective Learning Rate (ELR) re-warming periodically increase the effective learning rate, which can help the model "unlearn" outdated features and adapt to new ones [11].

- Session-Specific Calibration: Plan for regular, short re-calibration sessions to update the model parameters.

FAQ 3: How can I proactively test for dataset shift in my experimental pipeline?

Proactive detection is key to building robust models.

- Method: Statistical Distance Measures: Use metrics like the Kullback-Leibler (KL) divergence or Maximum Mean Discrepancy (MMD) to compare the probability distributions of your training data and your test (or deployment) data [8]. A large statistical distance is a clear warning sign of potential dataset shift.

- Method: Monitor Performance Metrics: Closely track loss and accuracy on a held-out validation set that is representative of your target deployment environment (e.g., containing multiple subjects). A growing gap between training and validation performance is a classic indicator of shift [8], although it can also reflect ordinary overfitting.
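The statistical-distance check can be sketched with a Gaussian-kernel MMD and a permutation test. This is a minimal sketch, assuming features (for example, band powers) have already been extracted into arrays of shape samples-by-features; the median-heuristic bandwidth and the 200 permutations are illustrative defaults, not values from the cited work.

```python
import numpy as np


def rbf_kernel(A, B, gamma):
    """Gaussian kernel matrix exp(-gamma * ||a - b||^2)."""
    d2 = (A**2).sum(1)[:, None] + (B**2).sum(1)[None, :] - 2 * A @ B.T
    return np.exp(-gamma * np.maximum(d2, 0.0))


def mmd2(X, Y, gamma):
    """Biased estimate of the squared MMD between samples X and Y."""
    return (rbf_kernel(X, X, gamma).mean() + rbf_kernel(Y, Y, gamma).mean()
            - 2 * rbf_kernel(X, Y, gamma).mean())


def mmd_shift_test(X, Y, n_perm=200, seed=0):
    """Permutation test of whether training and deployment features differ."""
    rng = np.random.default_rng(seed)
    Z = np.vstack([X, Y])
    # Median heuristic for the bandwidth (a common default, not the only choice)
    d2 = ((Z[:, None, :] - Z[None, :, :]) ** 2).sum(-1)
    gamma = 1.0 / np.median(d2[d2 > 0])
    observed = mmd2(X, Y, gamma)
    null = np.empty(n_perm)
    for k in range(n_perm):
        idx = rng.permutation(len(Z))  # shuffle domain labels under H0
        null[k] = mmd2(Z[idx[:len(X)]], Z[idx[len(X):]], gamma)
    p_value = (1 + np.sum(null >= observed)) / (1 + n_perm)
    return observed, p_value


rng = np.random.default_rng(0)
train_feats = rng.normal(size=(100, 4))
stat_same, p_same = mmd_shift_test(train_feats, rng.normal(size=(100, 4)))
stat_shift, p_shift = mmd_shift_test(train_feats, rng.normal(loc=0.8, size=(100, 4)))
print(f"no shift: MMD^2={stat_same:.4f}, p={p_same:.3f}")
print(f"shift:    MMD^2={stat_shift:.4f}, p={p_shift:.3f}")
```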

Table: Summary of Dataset Shift Types and Mitigation Strategies

| Type of Shift | Definition | Best Mitigation Strategies |
|---|---|---|
| Covariate Shift [8] | The distribution of input features (X) changes between training and test. | Domain Adaptation [2]; Importance Weighting; Subject-Invariant Representations [7] |
| Prior Probability Shift [8] | The distribution of the target variable (Y) changes. | Adjusting classification thresholds; re-sampling training data |
| Concept Drift [8] | The relationship between the inputs and outputs (X → Y) changes. | Continuous Learning/Adaptation [11]; generative models for data augmentation [10]; regular model re-calibration |
| Internal Covariate Shift | The distribution of inputs to hidden layers in a deep network changes during training. | Use of Batch Normalization layers [8] |
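Importance weighting for covariate shift can be sketched with a domain classifier: a model trained to distinguish source (training) features from target (new-subject) features yields odds that approximate the density ratio p_target(x) / p_source(x). This is a minimal NumPy sketch assuming features are already extracted; the plain gradient-descent logistic regression and its hyperparameters are illustrative choices, and `importance_weights` is a hypothetical helper name.

```python
import numpy as np


def importance_weights(X_src, X_tgt, n_iter=500, lr=0.1, l2=1e-3):
    """Estimate w(x) = p_tgt(x) / p_src(x) for each source sample.

    A logistic regression separates target (label 1) from source (label 0);
    its odds, corrected for the class-size ratio, approximate the density ratio.
    """
    Z = np.vstack([X_src, X_tgt])
    d = np.r_[np.zeros(len(X_src)), np.ones(len(X_tgt))]
    mu, sd = Z.mean(0), Z.std(0) + 1e-8
    A = np.c_[(Z - mu) / sd, np.ones(len(Z))]  # standardized features + bias
    w = np.zeros(A.shape[1])
    for _ in range(n_iter):  # gradient descent on L2-regularized log-loss
        p = 1.0 / (1.0 + np.exp(-A @ w))
        w -= lr * (A.T @ (p - d) / len(d) + l2 * w)
    p_src = 1.0 / (1.0 + np.exp(-A[:len(X_src)] @ w))
    ratio = (p_src / (1.0 - p_src)) * (len(X_src) / len(X_tgt))
    return ratio / ratio.mean()  # normalize so the weights average to 1


rng = np.random.default_rng(0)
X_src = rng.normal(size=(300, 2))                   # pooled training subjects
X_tgt = rng.normal(loc=[1.0, 0.0], size=(200, 2))  # new subject, shifted along feature 0
weights = importance_weights(X_src, X_tgt)
```

The weights would then multiply each source sample's loss when the decoder is retrained, so that source trials resembling the new subject count for more.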

Experimental Protocols for Robust Generalization

Protocol: Cyclic Inter-Subject Training for Cross-Subject Generalization

This protocol is designed to learn a robust, subject-invariant initial model.

Objective: To create a neural decoder that generalizes well to unseen subjects by reducing primacy bias and encouraging feature learning [9] [11].

Methodology:

- Data Preparation: Pool data from multiple (N) source subjects.

- Cyclic Training: Instead of training on each subject's data sequentially to completion, the model is trained by alternating between subjects in short segments. For example, the model might be trained on a few batches from Subject 1, then a few batches from Subject 2, and so on, repeating this cycle [9].

- Outcome: This approach is intended to prevent the model from over-specializing on the first subject it sees (primacy bias) and to encourage the development of generalized features that are useful across all subjects [11].
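The alternation scheme can be expressed as a batch schedule. In this sketch, which assumes each subject's trials are indexed 0..n-1, the generator visits the subjects in a fixed order for a few batches each and then repeats the cycle. The batch size, segment length, and cycle count are hypothetical values, and the model update for each batch (for example, a hypothetical `train_step(model, subject, idx)` call) is left out.

```python
import numpy as np


def cyclic_batches(trials_per_subject, batch_size=16, batches_per_visit=2,
                   n_cycles=3, seed=0):
    """Yield (subject, trial_indices) pairs, alternating among subjects in short segments.

    trials_per_subject: dict mapping subject ID -> number of trials for that subject.
    Each cycle visits every subject in turn for only `batches_per_visit` batches,
    instead of training on one subject to completion before moving on.
    """
    rng = np.random.default_rng(seed)
    for _ in range(n_cycles):
        for subject, n_trials in trials_per_subject.items():
            for _ in range(batches_per_visit):
                idx = rng.choice(n_trials, size=batch_size, replace=False)
                yield subject, idx


counts = {"S1": 100, "S2": 80, "S3": 120}
schedule = list(cyclic_batches(counts))
print([s for s, _ in schedule[:8]])
# ['S1', 'S1', 'S2', 'S2', 'S3', 'S3', 'S1', 'S1']
```

Shortening `batches_per_visit` increases how often the model switches subjects, which is the knob the protocol describes.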

Protocol: Generative Model Adaptation for Cross-Session Decoding

This protocol uses a data-driven approach to tackle session-to-session variability.

Objective: To rapidly adapt a decoder to a new recording session or a new subject using only a small amount of new data [10].

Methodology:

- Base Model Training: A deep-learning generative model (e.g., a GAN) is trained on a full dataset from one source session. It learns a mapping from behavioral variables (e.g., hand kinematics) to the associated neural spike trains [10].

- Rapid Adaptation: The pre-trained generative model is then fine-tuned on a very small amount of data (as little as 35 seconds) from a new target session or subject.

- Data Augmentation: The adapted model synthesizes a virtually unlimited number of new, realistic neural data points that emulate the properties of the new session.

- Decoder Training: The BCI decoder is trained on a combination of the limited real data and the large volume of synthesized data. This augmented dataset leads to a more robust and better-generalizing decoder than training on the limited data alone [10].
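The augmentation pipeline can be illustrated end to end with a much simpler generative model than a GAN. In this sketch, a log-linear Poisson tuning model (fitted crudely by least squares on log counts) stands in for the adapted spike synthesizer; the simulated neurons and the reading of "35 seconds" as 35 one-second bins are assumptions, and all function names are hypothetical. The structure mirrors the protocol: fit on a small target-session sample, synthesize many new samples, then train the decoder on real plus synthetic data.

```python
import numpy as np

rng = np.random.default_rng(0)


def fit_generator(kin, spikes):
    """Stand-in for the adapted GAN: per-neuron log-linear tuning to kinematics,
    fitted by least squares on log(1 + counts)."""
    A = np.c_[kin, np.ones(len(kin))]
    W, *_ = np.linalg.lstsq(A, np.log1p(spikes), rcond=None)
    return W


def synthesize(W, kin, rng):
    """Sample Poisson spike counts for new kinematics from the fitted model."""
    rates = np.expm1(np.c_[kin, np.ones(len(kin))] @ W).clip(min=1e-3)
    return rng.poisson(rates)


def ridge_decoder(spikes, kin, alpha=1.0):
    """Linear decoder from spike counts to kinematics (ridge, bias not penalized)."""
    A = np.c_[spikes, np.ones(len(spikes))]
    reg = alpha * np.eye(A.shape[1])
    reg[-1, -1] = 0.0
    return np.linalg.solve(A.T @ A + reg, A.T @ kin)


# Simulated "new session": 30 neurons log-linearly tuned to 2-D hand velocity
true_W = np.r_[rng.normal(scale=0.5, size=(2, 30)), np.ones((1, 30))]


def record(n):
    kin = rng.normal(size=(n, 2))
    return kin, rng.poisson(np.exp(np.c_[kin, np.ones(n)] @ true_W))


kin_real, spk_real = record(35)        # the small calibration sample
W = fit_generator(kin_real, spk_real)  # rapid adaptation step
kin_syn = rng.normal(size=(2000, 2))   # kinematics to condition the generator on
spk_syn = synthesize(W, kin_syn, rng)  # synthetic augmentation data
D = ridge_decoder(np.vstack([spk_real, spk_syn]), np.vstack([kin_real, kin_syn]))
```

A GAN would replace `fit_generator` and `synthesize` with a learned, far more flexible model of spike statistics; the surrounding steps stay the same.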

The Scientist's Toolkit: Key Research Reagents & Materials

Table: Essential Resources for Cross-Subject/Session EEG Research

| Resource / Reagent | Function / Application | Relevance to Generalization |
|---|---|---|
| HBN-EEG Dataset [7] | A large-scale public dataset with >3000 participants across 6 cognitive tasks. | Provides a benchmark for evaluating cross-task and cross-subject generalization. |
| Batch Normalization [8] | A layer in deep neural networks that standardizes its inputs. | Mitigates Internal Covariate Shift by stabilizing the distribution of inputs to hidden layers, accelerating training and improving performance. |
| Effective Learning Rate (ELR) Re-warming [11] | A training procedure that periodically increases the effective step size. | Counters primacy bias and loss of plasticity, helping models adapt to new data distributions in non-stationary environments. |
| Generative Adversarial Networks (GANs) [10] | A class of deep generative models that can learn to synthesize realistic data. | Used to create generative spike synthesizers for data augmentation, enabling robust decoder training with limited session-specific data. |
| Domain Adaptation Algorithms [2] | A suite of transfer learning methods designed to align feature distributions. | Directly addresses Covariate Shift by minimizing the discrepancy between source (training) and target (test) domains. |

A fundamental challenge in modern neuroscience and brain-computer interface (BCI) research lies in the significant variability between individuals. Every brain is unique, with its structural and functional organization shaped by genetic and environmental factors over the course of development [12]. This individuality directly translates into inter-subject variability in the location of functional brain areas and the network organization of structural connectivity [12]. For neural decoders—computational models that map brain signals to stimuli or behavior—this variability poses a major obstacle. Models that perform well for one subject often fail when applied to another, or even on the same subject in a different recording session [10]. This technical support center addresses the core experimental and computational issues in achieving robust cross-subject and cross-session generalization for neural decoders, providing troubleshooting guides and methodologies for researchers and drug development professionals.

FAQs: Core Concepts in Neural Alignment & Generalization

1. What are brain-network alignment and neural tracking, and why are they critical for cross-subject generalization?

- Brain-Network Alignment refers to the process of establishing a meaningful correspondence between the neural nodes (e.g., brain regions) or network features of different individuals. It is necessary because the structural and functional properties of a specific region in a standard brain atlas are not perfectly equivalent across subjects [12]. Without proper alignment, comparing or aggregating neural data across subjects is invalid.

- Neural Tracking is the phenomenon where cortical activity automatically follows the dynamics of external stimuli, such as the acoustic or linguistic features of speech [13]. Because the brain response is time-locked to the stimulus, neural tracking links brain recordings to the stimuli or behavior they represent, providing a temporally coherent signal for decoders to learn from.

- Together, they are foundational for generalization. Proper alignment ensures the decoder receives spatially comparable inputs across subjects, while neural tracking provides a temporally stable and consistent signal, enabling the model to learn universal mapping rules rather than subject-specific noise.

2. My subject-specific neural decoder performs well, but fails on new subjects. What is the primary cause?

The primary cause is the misalignment of feature spaces between subjects. Your decoder has likely learned features that are specific to the individual's neuroanatomy, electrode placement, or recording session characteristics. When applied to a new subject, these features may no longer carry task-relevant information. This can be caused by:

- Structural misalignment: Differences in brain anatomy and parcellation mean that the same atlas region may contain functionally different neuronal populations across subjects [12].

- Functional misalignment: Even aligned regions may have different tuning properties or represent information in distinct ways [10].

- Signal property shifts: Differences in signal-to-noise ratio (SNR), electrode impedance, or recording hardware can alter the input distribution to the decoder [13].

3. What data-driven approaches can improve alignment without requiring extensive new data for each subject?

Recent advances leverage unsupervised and generative models:

- Graph Matching: This technique aligns the structural connectomes of different subjects by finding a permutation of the nodes (brain regions) of one graph that minimizes its dissimilarity to another. Using spatial adjacency as a prior in this process has been shown to effectively reduce inter-subject variability [12].

- Generative Adversarial Networks (GANs): A spike-train synthesizer can be trained on data from one session or subject and then rapidly adapted to a new subject using a small amount of data (e.g., 35 seconds). This model can then generate a virtually unlimited amount of realistic, subject-specific neural data to augment the training of a decoder, significantly improving its performance and generalization [10].

- Unified Models: Architectures like UniBrain are designed from the ground up to be subject-invariant. They use techniques like voxel aggregation to handle variable fMRI signal lengths and adversarial training with a subject discriminator to force the extraction of subject-invariant features, enabling zero-shot decoding on unseen subjects [14].

Troubleshooting Guides: From Theory to Practice

Problem 1: Poor Cross-Subject Decoding Performance

Symptoms: High performance on training subjects, but a significant drop in accuracy when the model is applied to held-out subjects.

Diagnosis and Solutions:

| Potential Cause | Diagnostic Checks | Recommended Solution |
|---|---|---|
| Structural Misalignment | Check if node permutations from graph matching are largely non-identity [12]. | Apply an unsupervised graph matching algorithm (e.g., FAQ) with spatial adjacency initialization to align subject connectomes before decoder training [12]. |
| Subject-Specific Overfitting | Evaluate if your model uses subject-specific parameters or modules [14]. | Transition to a unified model architecture (e.g., UniBrain) that uses adversarial feature alignment to learn subject-invariant representations [14]. |
| Insufficient/Imbalanced Data | Analyze performance as a function of training set size for new subjects. | Use a generative model (e.g., GAN-based spike synthesizer) pre-trained on a source subject and adapted with minimal data from the target subject to augment your dataset [10]. |
| Incorrect Input Assumptions | Verify if the model assumes fixed-length fMRI inputs across subjects [14]. | Implement a group-based extractor that aggregates variable-length voxels into a fixed number of functionally coherent groups to standardize input size [14]. |

Problem 2: Unstable Performance Across Recording Sessions

Symptoms: Decoder accuracy degrades over time for the same subject, or varies significantly between sessions.

Diagnosis and Solutions:

| Potential Cause | Diagnostic Checks | Recommended Solution |
|---|---|---|
| Neural Attribute Shift | Compare firing rates, tuning curves, or position activity maps between sessions [10]. | Employ the same generative model adaptation strategy used for cross-subject generalization to rapidly recalibrate the decoder to the new session's neural attributes [10]. |
| Changed Electrode Properties | Inspect signal impedance and noise levels. | Incorporate a domain adaptation layer that is trained to be invariant to session-specific signal properties [10]. |
| Neural Plasticity | Check for gradual performance decline over long periods. | Implement a continuous learning framework that allows the decoder to slowly adapt to long-term changes without catastrophic forgetting of its original function. |

Experimental Protocols for Validation

Protocol 1: Validating Cross-Subject Alignment with Graph Matching

Objective: To quantify and reduce the misalignment of structural connectomes across a group of subjects.

- Data Preparation: Obtain structural connectivity matrices for all subjects derived from the same parcellation atlas [12].

- Algorithm Selection: Choose a graph matching algorithm such as the Fast Approximate Quadratic (FAQ) algorithm, known to be effective for brain connectivity networks [12].

- Initialization: Initialize the algorithm with a Spatial Adjacency prior, which has been shown to outperform random or identity initialization by encouraging biologically plausible, local permutations [12].

- Alignment: For each subject pair, compute the permutation matrix \(P_{ij}\) that minimizes the Frobenius norm \(\min_{P \in \mathcal{P}} \lVert G_i - P^\top G_j P \rVert_F\), where \(G_i\) and \(G_j\) are the adjacency matrices of subjects \(i\) and \(j\), and \(\mathcal{P}\) is the set of permutation matrices [12].

- Validation:

- Quantitative: Measure the reduction in the average Frobenius norm between subject connectomes after alignment. A significant decrease confirms improved group-wise similarity [12].

- Qualitative: Characterize the obtained permutations. Successful alignment typically involves permutations between neighboring parcels, not distant brain areas [12].
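The alignment step can be sketched with SciPy's `scipy.optimize.quadratic_assignment`, which implements the FAQ algorithm. Because permutations preserve the Frobenius norm, minimizing the objective above is equivalent to maximizing the trace overlap between the two adjacency matrices, which is what SciPy optimizes with `maximize=True`. The spatial-adjacency initialization here (a Gaussian kernel on parcel-centroid distances, Sinkhorn-normalized into the doubly stochastic matrix that `P0` requires) is an assumption about how such a prior could be built, not the exact prior of [12]; `coords`, `sigma`, and the helper names are hypothetical.

```python
import numpy as np
from scipy.optimize import quadratic_assignment


def sinkhorn(K, n_iter=500):
    """Scale a positive matrix until it is (approximately) doubly stochastic."""
    P = K.copy()
    for _ in range(n_iter):
        P /= P.sum(axis=1, keepdims=True)  # normalize rows
        P /= P.sum(axis=0, keepdims=True)  # normalize columns
    return P


def spatial_prior(coords, sigma=1.0):
    """Hypothetical spatial-adjacency init: nearby parcels get high initial match weight."""
    d2 = ((coords[:, None, :] - coords[None, :, :]) ** 2).sum(-1)
    return sinkhorn(np.exp(-d2 / (2 * sigma**2)))


def align_connectome(G_i, G_j, coords, sigma=1.0):
    """Permute the nodes of G_j to best match G_i with FAQ graph matching."""
    res = quadratic_assignment(G_i, G_j, method="faq",
                               options={"maximize": True, "P0": spatial_prior(coords, sigma)})
    perm = res.col_ind
    before = np.linalg.norm(G_i - G_j)  # Frobenius norm under the identity mapping
    after = np.linalg.norm(G_i - G_j[np.ix_(perm, perm)])
    if after > before:  # FAQ is approximate; keep the identity if it did not help
        perm, after = np.arange(len(G_j)), before
    return perm, before, after


rng = np.random.default_rng(0)
n = 8
coords = np.arange(n, dtype=float)[:, None]    # 1-D toy parcel positions
W = rng.random((n, n))
G_i = (W + W.T) / 2
np.fill_diagonal(G_i, 0.0)
true_perm = np.array([0, 1, 3, 2, 4, 6, 5, 7])  # swap two pairs of neighboring parcels
G_j = G_i[np.ix_(true_perm, true_perm)]
perm, before, after = align_connectome(G_i, G_j, coords)
print(f"Frobenius distance: {before:.3f} before, {after:.3f} after alignment")
```

The returned `perm` is also what the qualitative check inspects: entries that move a node only to a neighboring parcel are the biologically plausible case.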

Protocol 2: Evaluating Generalization with a Unified Model

Objective: To train and evaluate a neural decoder that generalizes to unseen subjects without subject-specific parameters.

- Model Architecture: Implement the UniBrain framework or similar [14]:

- Group-Based Extractor: Aggregate variable-length fMRI voxels into a fixed number of groups based on functional similarity.

- Mutual Assistance Embedder: A transformer-based module that decodes fMRI representations into both semantic and geometric embeddings in a coarse-to-fine manner.

- Bilevel Feature Alignment: Use adversarial training at the extractor level and CLIP-space alignment at the embedder level to enforce subject invariance.

- Training Regime:

- Train the model on data from a set of seen subjects.

- Critical Step: Do not use any subject-specific parameters or modules [14].

- Evaluation:

- In-Distribution (ID): Test on held-out data from the seen subjects. Performance should be comparable to state-of-the-art subject-specific models [14].

- Out-of-Distribution (OOD): Test the model in a zero-shot manner on completely unseen subjects. This is the true test of cross-subject generalization. Report standard metrics like SSIM for image reconstruction or accuracy for classification tasks [14].
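The idea behind the group-based extractor, mapping subjects with different voxel counts to a common fixed-size input, can be illustrated without any learning. In this sketch, plain k-means on each voxel's z-scored response profile stands in for UniBrain's learned grouping, and `n_groups=16` is an arbitrary choice; `group_voxels` is a hypothetical helper. It shows only how variable-length inputs become fixed-length, not how the actual extractor is trained.

```python
import numpy as np


def group_voxels(X, n_groups=16, n_iter=50, seed=0):
    """Map an (n_samples, n_voxels) fMRI matrix to (n_samples, n_groups).

    Voxels are clustered by their z-scored response profiles across samples
    (plain k-means, a non-learned stand-in for a learned grouping), and each
    group's signal is the mean of its member voxels.
    """
    rng = np.random.default_rng(seed)
    prof = (X - X.mean(0)) / (X.std(0) + 1e-8)  # z-score each voxel's profile
    V = prof.T                                  # one row per voxel
    centers = V[rng.choice(len(V), n_groups, replace=False)]  # copy, not a view
    for _ in range(n_iter):
        d2 = ((V[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
        labels = d2.argmin(1)
        for g in range(n_groups):
            members = V[labels == g]
            if len(members):  # keep the old center if a group empties out
                centers[g] = members.mean(0)
    out = np.zeros((X.shape[0], n_groups))
    for g in range(n_groups):
        if np.any(labels == g):
            out[:, g] = X[:, labels == g].mean(1)
    return out, labels


rng = np.random.default_rng(0)
X_a = rng.normal(size=(40, 300))  # subject A: 40 stimuli x 300 voxels
X_b = rng.normal(size=(40, 450))  # subject B: same stimuli, 450 voxels
feats_a, labels_a = group_voxels(X_a)
feats_b, labels_b = group_voxels(X_b)
print(feats_a.shape, feats_b.shape)  # (40, 16) (40, 16)
```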

Essential Visualizations

Diagram 1: Cross-Subject Neural Decoding Workflow

Diagram 2: Bilevel Feature Alignment in Unified Models

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and methodological "reagents" essential for experiments in cross-subject neural decoding.

| Research Solution | Function & Application | Key Characteristics |
|---|---|---|
| Graph Matching Algorithms (e.g., FAQ) [12] | Aligns the nodes of structural or functional connectomes from different subjects to a common reference. | Reduces inter-subject variability; Improves similarity when parcels >100; Works best with spatial priors. |
| Generative Adversarial Networks (GANs) [10] | Synthesizes realistic neural spike trains; used for data augmentation and rapid adaptation to new subjects/sessions. | Captures neural attributes (tuning curves, firing rates); Enables adaptation with minimal data (e.g., 35 sec). |
| Unified Model Frameworks (e.g., UniBrain) [14] | A single model for all subjects that eliminates subject-specific parameters for zero-shot generalization. | Uses voxel aggregation and adversarial alignment; Drastically reduces parameter count; Enables OOD decoding. |
| Adversarial Feature Alignment [14] | A training scheme that forces the feature extractor to learn representations that a discriminator cannot use to identify the subject. | Creates subject-invariant features; Core component for preventing overfitting to subject-specific patterns. |
| Mutual Assistance Embedder [14] | Decodes neural representations into both semantic and geometric embeddings, which assist each other for richer reconstruction. | Coarse-to-fine decoding; Aligns with CLIP text and image features; Improves guidance for stimulus reconstruction. |

This technical support center addresses common challenges in cross-subject and cross-session generalization for neural decoders, providing actionable guides for researchers and scientists.

Frequently Asked Questions

Q1: Why do my neural decoding models fail when applied to new subjects? Model failure on new subjects is primarily due to inter-subject variability [2] [15]. EEG and fMRI signals exhibit substantial individual differences caused by anatomical, physiological, and cognitive factors. Combined with the non-stationarity of the signals within each individual, this variability produces the Dataset Shift problem, where the data distribution differs between training and deployment environments [2]. To address this, employ subject-invariant feature learning methods such as adversarial training [16] or contrastive learning [15] that explicitly disentangle subject-specific noise from semantic content.

Q2: What is the difference between cross-session and cross-subject generalization?

- Cross-Session Generalization: Focuses on maintaining performance for the same subject across different recording sessions, combating within-subject signal non-stationarity [2].

- Cross-Subject Generalization: Aims to decode neural data from completely new subjects not seen during training, requiring models to handle much larger inter-individual variability [2] [1]. Cross-subject scenarios are generally more challenging but essential for scalable BCI systems.

Q3: How can I achieve good performance with minimal data from new subjects? Few-shot calibration is highly effective. One study reports that incorporating just 10% of a target subject's data for calibration achieved an accuracy of 0.781 and an AUC of 0.801 in imagined speech detection [9]. To build a robust base model, pretrain with cyclic inter-subject training: use shorter per-subject segments and alternate frequently among subjects [9].
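The two ideas in this answer can be sketched as data-scheduling helpers. This is an illustrative sketch only: `cyclic_schedule`, `calibration_split`, the segment length, and the choice to take the first 10% of trials (mimicking a short calibration run at the start of a session) are assumptions, not details taken from [9].

```python
def cyclic_schedule(trials_by_subject, segment_len):
    """Interleave short per-subject segments in round-robin order (hypothetical helper).

    trials_by_subject: dict mapping subject id -> list of trial indices.
    segment_len: trials taken from one subject before switching to the next.
    Returns a list of (subject, trial_indices) segments.
    """
    cursor = {s: 0 for s in trials_by_subject}
    active = list(trials_by_subject)
    schedule = []
    while active:
        still_active = []
        for s in active:
            start = cursor[s]
            segment = trials_by_subject[s][start:start + segment_len]
            if segment:
                schedule.append((s, segment))
            cursor[s] = start + len(segment)
            if cursor[s] < len(trials_by_subject[s]):
                still_active.append(s)   # subject still has unused trials
        active = still_active
    return schedule


def calibration_split(target_trials, frac=0.1):
    """Use the first `frac` of a new subject's trials for calibration, the rest for testing."""
    n_cal = max(1, round(frac * len(target_trials)))
    return target_trials[:n_cal], target_trials[n_cal:]


trials = {"S1": list(range(5)), "S2": list(range(3))}
print(cyclic_schedule(trials, 2))
# [('S1', [0, 1]), ('S2', [0, 1]), ('S1', [2, 3]), ('S2', [2]), ('S1', [4])]
```

Shorter segments mean the optimizer sees a new subject more often, which is the intent of "frequent alternation"; the right `segment_len` would have to be tuned empirically.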

Q4: What methods work best for zero-shot cross-subject decoding? For true zero-shot scenarios (no target subject data), the most promising approaches include:

- Explicit feature disentanglement to separate subject-related and semantic-related components [16]

- Adversarial training to learn subject-invariant representations [16]

- Contrastive learning in hyperbolic space to capture hierarchical relationships in neural data [15]

- Functional alignment techniques like ridge regression or hyperalignment to map subjects to a common space [17]

Q5: How do I choose between functional and anatomical alignment?

- Anatomical Alignment: Transforms individual brains to match a standard template using anatomical landmarks. Effective for larger brain structures but may lack precision for smaller, variable regions [17].

- Functional Alignment: Synchronizes brain activity patterns across individuals. Ridge regression has emerged as superior for fine-grained information decoding, outperforming other functional alignment techniques in cross-subject brain decoding studies [17].

Use functional alignment when dealing with high-level cognitive tasks or when precise functional localization is critical. Anatomical alignment suffices for basic spatial normalization.
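Ridge-regression functional alignment can be illustrated with a closed-form fit. The sketch assumes a hypothetical setup in which a new subject and a reference subject viewed the same shared stimuli in the same order; it learns a linear map from the new subject's voxels into the reference space, so a decoder trained in the reference space could then be reused. Shapes, `alpha`, and the synthetic data are illustrative, not values from [17].

```python
import numpy as np


def fit_ridge_alignment(X_new, X_ref, alpha=1.0):
    """Closed-form ridge map W minimizing ||X_new W - X_ref||^2 + alpha ||W||^2.

    X_new: (n_shared, v_new) responses of the new subject to the shared stimuli.
    X_ref: (n_shared, v_ref) reference-subject responses to the same stimuli, same order.
    Returns W with shape (v_new, v_ref).
    """
    gram = X_new.T @ X_new + alpha * np.eye(X_new.shape[1])
    return np.linalg.solve(gram, X_new.T @ X_ref)


# Synthetic example: the reference space is a noisy linear mix of the new subject's voxels.
rng = np.random.default_rng(0)
n_shared, n_heldout, v_new, v_ref = 200, 50, 30, 40
mix = rng.normal(size=(v_new, v_ref))
X_new = rng.normal(size=(n_shared + n_heldout, v_new))
X_ref = X_new @ mix + 0.1 * rng.normal(size=(n_shared + n_heldout, v_ref))

W = fit_ridge_alignment(X_new[:n_shared], X_ref[:n_shared], alpha=1.0)
projected = X_new[n_shared:] @ W      # held-out trials mapped into the reference space
r = np.corrcoef(projected.ravel(), X_ref[n_shared:].ravel())[0, 1]
print(f"held-out correlation in reference space: {r:.3f}")
```

In practice, responses are usually z-scored per voxel and `alpha` is chosen by cross-validation on the shared stimuli.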

Troubleshooting Guides

Problem: Poor Cross-Subject Generalization in EEG Emotion Recognition

Symptoms: Model performs well on training subjects but accuracy drops significantly (15-30%) on new subjects.

Solutions:

- Implement Cross-Subject Contrastive Learning (CSCL):

  - Employ dual contrastive objectives with emotion and stimulus contrastive losses

  - Use hyperbolic space instead of Euclidean space to better capture hierarchical relationships

  - Implement a triple-path encoder integrating spatial, temporal, and frequency information [15]

- Apply Dynamic Brain Functional Networks:

  - Construct time-varying functional connectivity using sliding windows

  - Extract network attributes: global efficiency, local efficiency, and local clustering coefficients

  - This approach achieved 91.17% accuracy in arousal classification on the DEAP dataset [18]
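The dynamic-network recipe above can be sketched in NumPy. Assumptions, not details from [18]: Pearson correlation is used as the connectivity measure, each window's graph is binarized by keeping the strongest 30% of edges, and the per-window features are the global efficiency plus the mean local efficiency and mean clustering coefficient across channels. All function names are hypothetical.

```python
import numpy as np


def sliding_window_connectivity(eeg, win, step):
    """Pearson-correlation matrices over sliding windows; eeg is (n_channels, n_samples)."""
    starts = range(0, eeg.shape[1] - win + 1, step)
    return np.stack([np.corrcoef(eeg[:, s:s + win]) for s in starts])


def binarize(conn, density=0.3):
    """Keep the strongest |r| edges so that roughly `density` of all edges survive."""
    w = np.abs(conn)
    np.fill_diagonal(w, 0.0)
    iu = np.triu_indices(w.shape[0], k=1)
    k = max(1, int(round(density * len(iu[0]))))
    threshold = np.sort(w[iu])[::-1][k - 1]
    adj = (w >= threshold).astype(float)
    np.fill_diagonal(adj, 0.0)
    return adj


def global_efficiency(adj):
    """Mean inverse shortest-path length over node pairs (Floyd-Warshall on a binary graph)."""
    n = adj.shape[0]
    dist = np.where(adj > 0, 1.0, np.inf)
    np.fill_diagonal(dist, 0.0)
    for k in range(n):
        dist = np.minimum(dist, dist[:, [k]] + dist[[k], :])
    inv = np.zeros_like(dist)
    reachable = np.isfinite(dist) & (dist > 0)
    inv[reachable] = 1.0 / dist[reachable]
    return inv.sum() / (n * (n - 1))


def local_efficiency(adj):
    """Global efficiency of each node's neighbourhood subgraph (0 if fewer than 2 neighbours)."""
    eff = np.zeros(adj.shape[0])
    for i in range(adj.shape[0]):
        nbrs = np.flatnonzero(adj[i])
        if len(nbrs) >= 2:
            eff[i] = global_efficiency(adj[np.ix_(nbrs, nbrs)])
    return eff


def clustering_coefficients(adj):
    """Binary clustering coefficient: closed triangles / possible neighbour pairs."""
    deg = adj.sum(axis=1)
    tri = np.diag(adj @ adj @ adj)
    denom = deg * (deg - 1)
    return np.divide(tri, denom, out=np.zeros_like(tri), where=denom > 0)


def network_features(eeg, win, step, density=0.3):
    """Per-window [global efficiency, mean local efficiency, mean clustering coefficient]."""
    feats = []
    for conn in sliding_window_connectivity(eeg, win, step):
        adj = binarize(conn, density)
        feats.append([global_efficiency(adj),
                      local_efficiency(adj).mean(),
                      clustering_coefficients(adj).mean()])
    return np.array(feats)
```

The resulting (n_windows, 3) matrix could feed any downstream classifier; window length, step, and density would all need tuning for a given dataset.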

Verification: Test on multiple benchmark datasets (SEED, DEAP, MPED) to ensure robustness. Cross-subject contrastive learning has shown 97.70% accuracy on SEED, 96.26% on CEED, and 65.98% on FACED datasets [15].

Problem: Limited Cross-Task Generalization

Symptoms: Model trained on one cognitive task (e.g., motor imagery) fails to decode other tasks (e.g., emotion recognition).

Solutions:

- Utilize Foundation Model Approaches

- Adopt Unified Frameworks

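Verification: Evaluate using zero-shot cross-task benchmarks. As a reference point, NEED retains 93.7% of its within-subject classification performance on unseen subjects and reaches an SSIM of 0.352 when transferred without fine-tuning from video to static image reconstruction [4].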

Problem: Subject-Specific Noise Overwhelming Semantic Content

Symptoms: Model learns to identify subjects rather than neural content, failing to extract task-relevant information.

Solutions:

- Apply an Explicit Disentanglement Framework:

  - Use Zebra's four-component decomposition: subject-invariant, subject-specific, semantic-specific, and semantically irrelevant features [16]

  - Implement residual decomposition and adversarial training to remove subject-specific noise [16]

  - Project semantic-specific features into a shared visual-semantic space aligned with CLIP embeddings [16]

- Leverage Multi-Subject Training with Alignment

Verification: Quantitative metrics should show significant improvement in semantic-relevant metrics (e.g., +0.084 PixCorr gain) while reducing subject identification accuracy.

Performance Comparison Tables

Table 1: Cross-Subject Emotion Recognition Performance Across Methods

| Method | Dataset | Accuracy | Key Innovation |

|---|---|---|---|

| CSCL (Contrastive Learning) [15] | SEED | 97.70% | Hyperbolic space, triple-path encoder |

| CSCL (Contrastive Learning) [15] | CEED | 96.26% | Emotion and stimulus contrastive losses |

| CSCL (Contrastive Learning) [15] | FACED | 65.98% | Region-aware learning mechanism |

| Dynamic Brain Network [18] | DEAP (Arousal) | 91.17% | Time-varying functional connectivity |

| Dynamic Brain Network [18] | DEAP (Valence) | 90.89% | Network attribute features |

| MdGCNN-TL [18] | DEAP | 65.89% | Graph neural network with transfer learning |

| MSRN+MTL [18] | DEAP | 71.29% | Multi-scale residual network |

Table 2: Zero-Shot Cross-Subject Decoding Performance

| Method | Modality | Task | Performance vs. Fine-Tuned | Key Metric |

|---|---|---|---|---|

| Zebra [16] | fMRI | Visual Decoding | Comparable to fully fine-tuned | SSIM: 0.384 (vs. 0.375 fine-tuned) |

| Zebra [16] | fMRI | Visual Decoding | +0.084 PixCorr gain | 0.153 vs. 0.069 baseline |

| NEED [4] | EEG | Video Reconstruction | 92.4% of within-subject quality | Cross-task SSIM: 0.352 |

| Ridge Regression Alignment [17] | fMRI | Image Reconstruction | 90% scan time reduction | Comparable to single-subject decoding |

Experimental Protocols

Protocol 1: Cross-Subject Contrastive Learning for EEG Emotion Recognition

This protocol implements the CSCL framework for robust cross-subject emotion recognition [15].

Materials:

- EEG recording system (128-channel recommended)

- Emotional stimulus presentation setup

- Standard preprocessing pipeline (filtering, artifact removal)

Procedure:

Data Preparation:

- Extract segments from EEG signals during emotional stimulus presentation

- Apply standard preprocessing: bandpass filtering, artifact removal

- Normalize data per subject

Feature Extraction:

- Implement triple-path encoder:

  - Spectral Path: Compute frequency-domain features such as power spectral density (PSD) and differential entropy (DE)

  - Temporal Path: Extract time-domain patterns

  - Spatial Path: Model inter-channel relationships

- Combine features using attention-based fusion

Contrastive Learning:

- Project features into hyperbolic space using Poincaré embeddings

- Apply dual contrastive losses:

  - Emotion Contrastive Loss: Pull samples of the same emotion class together and push samples of different classes apart

  - Stimulus Contrastive Loss: Maintain consistency across responses to the same stimuli

- Optimize using Riemannian optimization methods

Classification:

- Use simple classifier (MLP) on learned representations

- Evaluate using leave-one-subject-out cross-validation

Validation: Test on multiple datasets (SEED, CEED, FACED, MPED) to ensure generalizability.
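The hyperbolic contrastive step of this protocol can be illustrated with a supervised contrastive loss whose similarity is the negative Poincaré distance. This is a generic sketch, not the CSCL losses of [15]: it shows only an emotion-label objective (a stimulus objective could reuse it with stimulus IDs as `labels`), uses curvature `c=1` and temperature `tau=0.1` as assumed defaults, and leaves the Riemannian optimization to whatever autodiff framework is used for training.

```python
import numpy as np


def expmap0(v, c=1.0):
    """Exponential map at the origin: Euclidean vectors -> Poincare ball of curvature -c."""
    sqrt_c = np.sqrt(c)
    norm = np.clip(np.linalg.norm(v, axis=-1, keepdims=True), 1e-9, None)
    return np.tanh(sqrt_c * norm) * v / (sqrt_c * norm)


def poincare_dist(x, y, c=1.0):
    """Pairwise geodesic distances between the rows of x and the rows of y."""
    sq = np.sum((x[:, None, :] - y[None, :, :]) ** 2, axis=-1)
    den = (1 - c * np.sum(x ** 2, axis=-1))[:, None] * (1 - c * np.sum(y ** 2, axis=-1))[None, :]
    arg = 1 + 2 * c * sq / np.clip(den, 1e-9, None)
    return np.arccosh(np.clip(arg, 1.0, None)) / np.sqrt(c)


def hyperbolic_supcon_loss(z, labels, tau=0.1, c=1.0):
    """Supervised contrastive loss with negative Poincare distance as the similarity.

    z: (n, d) Euclidean encoder outputs; labels: (n,) class labels (e.g. emotions).
    """
    h = expmap0(z, c)
    n = len(labels)
    self_mask = np.eye(n, dtype=bool)
    sim = np.where(self_mask, -np.inf, -poincare_dist(h, h, c) / tau)
    # log-softmax over every other sample in the batch
    log_prob = sim - np.logaddexp.reduce(sim, axis=1, keepdims=True)
    positives = (labels[:, None] == labels[None, :]) & ~self_mask
    n_pos = positives.sum(axis=1)
    keep = n_pos > 0                       # anchors with at least one positive
    pos_log_prob = np.where(positives, log_prob, 0.0).sum(axis=1)
    return float(-(pos_log_prob[keep] / n_pos[keep]).mean())


rng = np.random.default_rng(0)
centers = np.array([[2.0, 0.0], [-2.0, 0.0]])
z = np.repeat(centers, 3, axis=0) + 0.05 * rng.normal(size=(6, 2))
good = hyperbolic_supcon_loss(z, np.array([0, 0, 0, 1, 1, 1]))   # labels match clusters
bad = hyperbolic_supcon_loss(z, np.array([0, 1, 0, 1, 0, 1]))    # labels cut across clusters
```

Embeddings that cluster by label give a lower loss than the same embeddings with mismatched labels, which is the signal the encoder would be trained on.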

Protocol 2: Zero-Shot fMRI Visual Decoding with Disentanglement

This protocol implements the Zebra framework for zero-shot cross-subject visual decoding [16].

Materials:

- fMRI data (7T recommended for visual decoding)

- Visual stimulus set (natural images)

- Pretrained ViT and CLIP models

Procedure:

Data Preprocessing:

- Convert fMRI data to 2D brain activation maps (256×256)

- Apply anatomical alignment to standard space

- Extract region-of-interest (especially visual cortex)

Subject-Invariant Feature Extraction:

- Use ViT-based fMRI encoder (fMRI-PTE) pretrained on multi-subject data

- Apply adversarial training with gradient reversal

- Implement residual decomposition to separate subject-specific variations

Semantic-Specific Feature Learning:

- Project features to CLIP space using diffusion prior

- Align with visual embeddings using contrastive loss

- Preserve semantic discriminability while removing subject information

Image Reconstruction:

- Use diffusion model for image generation from semantic embeddings

- Employ pre-trained Stable Diffusion with guided sampling

- Generate reconstructions from subject-invariant features only

Validation Metrics:

- Structural Similarity (SSIM)

- Pixel Correlation (PixCorr)

- AlexNet(5) accuracy for semantic content

- Subject identification accuracy (should be at chance level)
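Two of these metrics are simple enough to sketch. PixCorr is commonly computed as the Pearson correlation between the flattened reconstructed and ground-truth images (exact resizing and colour handling vary between papers), and the subject-identification check below uses a rough normal approximation to the binomial. `subject_id_above_chance` is a hypothetical helper; for SSIM, scikit-image provides `skimage.metrics.structural_similarity`.

```python
import numpy as np


def pixcorr(recon, target):
    """Pearson correlation between flattened reconstruction and ground-truth pixels."""
    return float(np.corrcoef(recon.ravel(), target.ravel())[0, 1])


def mean_pixcorr(recons, targets):
    """Average PixCorr over a set of (reconstruction, target) image pairs."""
    return float(np.mean([pixcorr(r, t) for r, t in zip(recons, targets)]))


def subject_id_above_chance(accuracy, n_subjects, n_test, z=1.96):
    """Rough check: is subject-ID accuracy above chance (1 / n_subjects)?

    Normal approximation to the binomial; a permutation test is more rigorous.
    """
    p0 = 1.0 / n_subjects
    se = np.sqrt(p0 * (1 - p0) / n_test)
    return bool(accuracy > p0 + z * se)
```

For a disentangled model the last check should return `False`: a subject discriminator trained on the decoder's features should not beat chance on held-out trials.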

Research Reagent Solutions

Table 3: Essential Tools for Neural Decoding Research

| Tool | Function | Example Use Cases |

|---|---|---|

| fMRI-PTE [16] | ViT-based fMRI encoder | Mapping fMRI to unified 2D representations |

| Dynamic Brain Networks [18] | Time-varying connectivity analysis | Capturing evolving neural patterns in emotion |

| CLIP Embeddings [16] | Semantic feature alignment | Bridging neural and visual semantic spaces |

| Hyperbolic Embeddings [15] | Hierarchical representation learning | Modeling complex relationships in neural data |

| Diffusion Prior [16] | Latent space transformation | Converting neural to visual embeddings |

| Adversarial Discriminators [16] | Subject-invariant learning | Removing subject-specific noise |

| Contrastive Loss Functions [15] | Representation enhancement | Learning invariant emotional features |

Methodological Diagrams

Diagram 1: Cross-Subject Generalization Framework

Diagram 2: Zero-Shot Disentanglement Pipeline

Diagram 3: Contrastive Learning for EEG Emotion Recognition

The Impact of Theoretical Models and Participant Screening on Decoder Performance

Troubleshooting Guides

FAQ 1: Why does my neural decoder perform poorly on new subjects, and how can I fix it?

Issue: A model trained on one set of subjects shows significantly degraded performance (e.g., lower classification accuracy or reconstruction quality) when applied to new, unseen subjects. This is a classic problem of poor cross-subject generalization.

Explanation: A primary cause is the high degree of inter-subject variability in brain anatomy and functional organization. Decoders often learn to rely on subject-specific neural patterns that do not transfer well. Furthermore, if the training data lacks sufficient subject diversity, the model fails to learn the underlying, invariant neural code.

Solutions:

- Adversarial Disentanglement: Implement frameworks like ZEBRA, which use adversarial training to explicitly disentangle fMRI representations into subject-related and semantic-related components. This forces the model to isolate subject-invariant features, enabling zero-shot generalization to new subjects without fine-tuning [19].

- Input Normalization: Employ an Individual Adaptation Module, pre-trained on multiple datasets, to help normalize subject-specific patterns in EEG data before the main decoding stage. This has been shown to maintain over 92% of visual reconstruction quality on unseen subjects [4].

- Multi-Subject Pretraining: Pretrain your model on data from a large number of subjects across diverse tasks. As demonstrated with transformer models on calcium imaging data, combining data from different sources builds more robust representations that can transfer to new subjects and even new brain regions [20].

FAQ 2: How can I improve my decoder's generalization across different tasks or experimental sessions?

Issue: A decoder trained for one specific cognitive task (e.g., listening to speech) fails to perform well on a different but related task (e.g., reading), limiting its practical utility.

Explanation: Models can become overly specialized to the statistical regularities of a single task or session. Variations in cognitive state, attention, and low-level sensory processing between tasks and sessions can render these models ineffective.

Solutions:

- Multi-Task Pretraining: Train a single model on multiple tasks simultaneously. Research on multi-task transformers shows that this approach allows the model to extract common information from diverse neural circuits, facilitating transfer to new tasks and sessions [20].

- Unified Architecture: Design a model with a flexible inference mechanism that can adapt to different visual or cognitive domains. The NEED framework, for example, uses a dual-pathway architecture to capture both low-level visual dynamics and high-level semantics, allowing it to handle both video and image reconstruction from EEG without task-specific fine-tuning [4].

- Leverage Foundation Models: Utilize large, pre-trained models, such as Large Language Models (LLMs), as a backbone. Their powerful, general-purpose representations of information can be aligned with neural activity, providing a strong prior that improves generalization to new tasks and contexts [13].

FAQ 3: My decoder works in offline analysis but is too slow for real-time use. How can I optimize for speed?

Issue: The model achieves good accuracy but has high latency and computational demands, making it unsuitable for real-time brain-computer interface (BCI) applications or closed-loop experiments.

Explanation: Complex architectures like Transformers, while accurate, often have significant computational overhead. The choice of model architecture and compiler settings directly impacts inference speed.

Solutions:

- Hybrid Architecture: Use a hybrid model like POSSM, which combines a recurrent State-Space Model (SSM) backbone with a cross-attention module for spike tokenization. This architecture is designed for causal, online prediction and has been shown to achieve accuracy comparable to Transformers while being up to 9x faster on GPU [21].

- Compiler and Precision Tuning: Utilize compiler flags to optimize the trade-off between speed and accuracy. For instance, on AWS Neuron, the --auto-cast flag can improve performance by using lower precision (BF16), though this may sometimes affect accuracy and requires careful validation [22].

- Model Warmup: For consistent low-latency inference, ensure the model is properly "warmed up" before processing critical requests. This can be done by sending a few dummy inputs through the system to initialize states and caches, mitigating slow initial responses [22].
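To see why a recurrent backbone suits online decoding, the sketch below uses a generic diagonal linear state-space model (not POSSM itself, whose spike tokenization and cross-attention are omitted). Each new time bin updates a fixed-size hidden state, so per-step cost stays constant, while the offline `convolution_view` recomputes the same outputs from the full history. Sizes, the decay range, and the Poisson toy input are assumptions.

```python
import numpy as np


class DiagonalSSM:
    """Causal diagonal linear SSM: h_t = a * h_{t-1} + B x_t,  y_t = C h_t."""

    def __init__(self, n_in, n_state, n_out, seed=0):
        rng = np.random.default_rng(seed)
        self.a = rng.uniform(0.5, 0.99, size=n_state)   # per-state decay; |a| < 1 keeps it stable
        self.B = rng.normal(scale=0.1, size=(n_state, n_in))
        self.C = rng.normal(scale=0.1, size=(n_out, n_state))
        self.h = np.zeros(n_state)

    def reset(self):
        self.h = np.zeros_like(self.h)

    def step(self, x_t):
        """Online update for one time bin; cost does not grow with history length."""
        self.h = self.a * self.h + self.B @ x_t
        return self.C @ self.h


def convolution_view(model, X):
    """Offline equivalent: y_t = C * sum_k a^(t-k) * B x_k, recomputed from scratch for each t."""
    U = X @ model.B.T                                    # (T, n_state)
    out = []
    for t in range(len(X)):
        powers = model.a[None, :] ** (t - np.arange(t + 1))[:, None]   # (t+1, n_state)
        out.append(model.C @ (powers * U[: t + 1]).sum(axis=0))
    return np.stack(out)


rng = np.random.default_rng(1)
X = rng.poisson(2.0, size=(50, 16)).astype(float)       # 50 time bins x 16 units of spike counts
model = DiagonalSSM(n_in=16, n_state=32, n_out=2)
streamed = np.stack([model.step(x) for x in X])         # what a real-time loop would compute
```

Both views give identical outputs; only the streaming one keeps latency flat as the session grows.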

FAQ 4: What is the impact of data preprocessing on decoder performance and generalization?

Issue: Decoding performance is highly sensitive to the specific preprocessing pipeline applied to the raw neural data (e.g., EEG), making results difficult to reproduce and generalize.

Explanation: Preprocessing steps directly shape the input features the decoder learns from. Certain steps may remove biologically relevant signals or, conversely, leave in structured noise that the model can exploit, leading to inflated but non-generalizable performance.

Solutions:

- Systematic Pipeline Evaluation: Adopt a "multiverse" approach, where you systematically test multiple preprocessing paths. One study varied filtering, artifact correction, and referencing, finding that choices like high-pass filter cutoff significantly influenced decoding accuracy [23].

- Caution with Artifacts: Be aware that artifact correction steps (e.g., ICA for ocular artifacts) can sometimes reduce decoding performance because the artifacts themselves may be systematically correlated with the task (e.g., eye movements in a visual attention task). The decision to correct must balance performance against the interpretability of the neural signal [23].

- Use Sensible Defaults: Start with established preprocessing defaults for your modality. For example, the multiverse study cited above recommends higher high-pass filter cutoffs and linear detrending, which consistently boosted decoding performance across multiple EEG experiments [23].
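A high-pass-then-detrend pipeline can be sketched with SciPy. Assumptions: the 0.1 Hz cutoff and 4th-order zero-phase Butterworth filter are illustrative choices within the range discussed above, filtering is applied to the continuous recording before epoching (to avoid edge artifacts on short epochs), and `preprocess` is a hypothetical helper.

```python
import numpy as np
from scipy.signal import butter, detrend, sosfiltfilt


def preprocess(raw, fs, onsets, epoch_len, hp_cutoff=0.1, order=4):
    """High-pass filter continuous EEG, cut epochs, then linearly detrend each epoch.

    raw: (n_channels, n_samples); onsets: epoch start samples; epoch_len: samples per epoch.
    Returns (n_epochs, n_channels, epoch_len).
    """
    sos = butter(order, hp_cutoff, btype="highpass", fs=fs, output="sos")
    filtered = sosfiltfilt(sos, raw, axis=-1)          # zero-phase: no latency shift
    epochs = np.stack([filtered[:, s:s + epoch_len] for s in onsets])
    return detrend(epochs, axis=-1, type="linear")     # remove per-epoch offset and slope


fs = 250
t = np.arange(60 * fs) / fs
raw = np.vstack([5 + 0.02 * t + np.sin(2 * np.pi * 10 * t),
                 -3 + 0.01 * t + np.cos(2 * np.pi * 6 * t)])
onsets = np.arange(fs, 50 * fs, 2 * fs)
epochs = preprocess(raw, fs, onsets, epoch_len=fs)
```

Whichever cutoff is chosen, it is worth reporting and, ideally, testing alternatives, since the multiverse results show the choice changes decoding accuracy.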

Experimental Protocols & Data

Key Experiment: Evaluating Preprocessing Choices

Objective: To quantitatively assess how different EEG preprocessing steps influence cross-subject decoding performance.

Methodology:

- Data: Use a publicly available dataset (e.g., ERP CORE) containing multiple experiments and participants [23].

- Multiverse Design: Systematically vary key preprocessing steps to create a "multiverse" of analysis pipelines. The steps should include:

  - High-pass and low-pass filter cutoffs

  - Artifact correction methods (ICA for ocular/muscle artifacts, Autoreject)

  - Reference scheme (e.g., average, Cz)

  - Baseline correction and detrending

- Decoding: Train and evaluate a decoder (e.g., EEGNet or time-resolved logistic regression) for each preprocessing path on each subject.

- Analysis: Use linear mixed models to estimate the marginal effect of each preprocessing step on decoding performance, isolating its impact from other steps.
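The multiverse design can be enumerated as a Cartesian product of options. The grid below mirrors the step list above, but its specific levels are illustrative assumptions, not the exact values used in [23].

```python
from itertools import product

# Hypothetical option grid; each key is one preprocessing decision.
MULTIVERSE = {
    "hpf_hz": [None, 0.01, 0.1, 0.5],
    "lpf_hz": [None, 20, 40],
    "ocular_ica": [False, True],
    "muscle_ica": [False, True],
    "autoreject": [False, True],
    "reference": ["average", "Cz"],
    "detrend": [False, True],
    "baseline_ms": [None, 200, 400],
}


def enumerate_pipelines(grid):
    """Yield one dict per combination of preprocessing choices."""
    keys = list(grid)
    for values in product(*(grid[k] for k in keys)):
        yield dict(zip(keys, values))


pipelines = list(enumerate_pipelines(MULTIVERSE))
print(len(pipelines))   # 4 * 3 * 2 * 2 * 2 * 2 * 2 * 3 = 1152
```

Each pipeline dictionary would be passed to your own preprocessing and decoding code; the resulting per-subject scores, with subject as a grouping factor, can then be analysed with a linear mixed model (for example, `statsmodels.formula.api.mixedlm`). The grid grows multiplicatively, so adding levels quickly raises compute cost.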

Key Quantitative Findings: The table below summarizes the average impact of specific preprocessing choices on decoding performance across several EEG experiments [23].

Table: Impact of EEG Preprocessing Steps on Decoding Performance

| Preprocessing Step | Option A | Effect on Performance | Option B | Effect on Performance |

|---|---|---|---|---|

| High-Pass Filter | Lower Cutoff (0.01 Hz) | ↓ Decrease | Higher Cutoff (0.1-1 Hz) | ↑ Increase |

| Low-Pass Filter | Lower Cutoff (20 Hz) | ↑ Increase (Time-resolved) | Higher Cutoff (40 Hz) | Mixed / Neutral |

| Ocular Artifact Correction | ICA Correction | ↓ Decrease | No Correction | ↑ Increase* |

| Muscle Artifact Correction | ICA Correction | ↓ Decrease | No Correction | ↑ Increase* |

| Detrending | Linear Detrending | ↑ Increase | No Detrending | ↓ Decrease |

| Baseline Correction | Longer Interval | ↑ Increase | No / Short Interval | ↓ Decrease |

*Performance increase may come from decoding structured noise (e.g., eye movements) correlated with the task, reducing result interpretability [23].

Key Experiment: Adversarial Training for Cross-Subject Generalization

Objective: To enable a visual decoding model to perform accurately on fMRI data from unseen subjects without any subject-specific fine-tuning.

Methodology:

- Model Architecture (ZEBRA): Design a framework with a shared feature encoder followed by two branches:

  - A semantic decoder for reconstructing images from brain activity.

  - A subject discriminator that tries to predict the subject identity from the features.

- Adversarial Training: Train the model with a dual objective:

  - The semantic decoder minimizes reconstruction loss.

  - The feature encoder is trained to fool the subject discriminator, using a gradient reversal layer. This encourages the encoder to produce features that are informative for reconstruction but uninformative about subject identity [19].

- Evaluation: Test the model in a zero-shot setting on held-out subjects and compare performance against subject-specific models.
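The gradient reversal mechanism can be written out by hand for a fully linear toy model. This sketch is a simplified stand-in, not ZEBRA: a scalar regression target replaces image reconstruction, subject identity is binary, and all names are hypothetical. The key line is the encoder gradient, where the discriminator's gradient arrives with its sign flipped and scaled by `lam`.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def dann_gradients(X, y, s, W, a, b, lam):
    """Gradients for a linear encoder W, task head a, and subject discriminator b.

    X: (n, d) inputs; y: (n,) task targets; s: (n,) binary subject labels.
    Task loss: mean squared error. Discriminator loss: mean binary cross-entropy.
    """
    n = len(X)
    Z = X @ W                                  # shared features
    g_task = 2.0 * (Z @ a - y) / n             # dL_task / d(prediction)
    g_disc = (sigmoid(Z @ b) - s) / n          # dL_disc / d(logit)
    grad_a = Z.T @ g_task
    grad_b = Z.T @ g_disc
    # Gradient reversal layer: the discriminator gradient reaches the encoder negated.
    grad_W = X.T @ (np.outer(g_task, a) - lam * np.outer(g_disc, b))
    return grad_W, grad_a, grad_b


def train_dann(X, y, s, k=4, lam=0.5, lr=0.05, steps=200, seed=0):
    rng = np.random.default_rng(seed)
    W = 0.1 * rng.normal(size=(X.shape[1], k))
    a = 0.1 * rng.normal(size=k)
    b = 0.1 * rng.normal(size=k)
    for _ in range(steps):
        gW, ga, gb = dann_gradients(X, y, s, W, a, b, lam)
        W -= lr * gW   # encoder: lower task loss, raise discriminator loss
        a -= lr * ga   # task head: lower task loss
        b -= lr * gb   # discriminator: lower its own loss
    return W, a, b


rng = np.random.default_rng(0)
X = rng.normal(size=(64, 10))
y = rng.normal(size=64)
s = rng.integers(0, 2, size=64).astype(float)
W, a, b = train_dann(X, y, s)
```

A single backward pass thus trains the discriminator to identify subjects while pushing the encoder in the opposite direction; in a deep-learning framework the same effect is obtained with a custom layer that is the identity on the forward pass and multiplies gradients by `-lam` on the backward pass.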

Diagrams & Workflows

Diagram: Adversarial Disentanglement for Cross-Subject Generalization

Diagram: Hybrid Model for Real-Time Decoding

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools and Methods for Neural Decoding Research

| Research Reagent / Method | Function & Explanation |

|---|---|

| Adversarial Training | A learning technique used to disentangle latent representations. It helps create subject-invariant features by forcing the model to fool a subject-classifier, improving cross-subject generalization [19]. |

| State-Space Models (SSMs) | A class of recurrent models known for efficient long-range sequence modeling. They form the backbone of hybrid architectures like POSSM, enabling fast, real-time neural decoding with low inference latency [21]. |

| Multi-Task Transformers | A flexible architecture trained on diverse datasets and tasks. It allows for transfer learning between different brain regions, cell types, and cognitive tasks, building large-scale, generalizable models of neural activity [20]. |

| Individual Adaptation Module | A pre-processing component designed to normalize subject-specific patterns in neural data (e.g., EEG). It acts as a subject "filter" to reduce inter-subject variability before the main decoding stage [4]. |

| Multiverse Analysis | A systematic grid-search methodology for evaluating multiple analysis pipelines (e.g., preprocessing steps). It quantifies the impact of analytical choices on outcomes like decoding performance, improving robustness and reproducibility [23]. |

Building Robust Decoders: Architectures and Transfer Learning Strategies for Real-World Application

Frequently Asked Questions

Q1: Why does my neural decoder's performance degrade when used on a different subject or in a new session? This is a classic problem of Dataset Shift, primarily caused by the non-stationary, non-linear, and non-Gaussian nature of neural signals [24]. Brain electrical activity varies between individuals due to differences in electrode placement, tissue characteristics, and inherent neuronal activity patterns [24]. Even for the same subject, physiological states and electrode conditions change over time, leading to significant distributional differences in the data between sessions [24]. Conventional models trained under the assumption that data is independently and identically distributed fail under these cross-subject/session conditions [24].

Q2: What is the fundamental difference between conventional approaches and Domain Adaptation? Conventional machine learning approaches train a decoder for a specific subject or session. In contrast, Domain Adaptation is a transfer learning technique that leverages knowledge from a labeled source domain (e.g., data from previous subjects or sessions) to improve learning and performance in a different but related target domain (a new subject or session), even when their joint probability distributions differ [24]. The core objective is to learn a decision function for the target domain that minimizes prediction error by aligning the distributions between domains [24].

Q3: My model works well on the training data but fails on new subjects. Is this an overfitting problem? While overfitting can be a factor, the primary issue is often model generalizability. A systematic review of EEG-based emotion recognition confirms that standard models suffer from the dataset shift problem in cross-subject and cross-session scenarios [2]. Transfer learning and domain adaptation methods are specifically designed to overcome this by improving the generalization of models to new, unseen data domains [2].

Q4: How much source domain data is typically needed for effective transfer learning? Requirements vary. Guidance from general-purpose time-series tooling outside neuroscience suggests more than three weeks of data for periodic signals, or a few hundred buckets for non-periodic data [25]; treat this only as a loose analogy. For neural decoding, a large EEG dataset used for cross-session studies contained 5 sessions per subject with 100 trials each, providing a robust foundation for adaptation algorithms [26].

Troubleshooting Guides

Issue 1: Poor Cross-Subject Generalization

Symptoms: High accuracy for the subject used in training, but a significant performance drop when the decoder is applied to new subjects.

| Step | Action | Technical Details |

|---|---|---|

| 1. Diagnosis | Check for covariate shift between subjects. | Use dimensionality reduction (e.g., t-SNE, PCA) to visualize feature distributions from different subjects. If subject clusters are separate, domain shift is confirmed. |

| 2. Solution | Apply Feature-Based Domain Adaptation [24]. | Transform source and target domain features into a shared space where distributions are aligned. Common methods include Correlation Alignment (CORAL) or Maximum Mean Discrepancy (MMD) minimization [24]. |

| 3. Implementation | Use an adversarial learning framework. | Train a feature extractor to generate domain-invariant features that can fool a simultaneous domain classifier [24]. |

| 4. Validation | Perform cross-subject validation. | Strictly leave one subject out for testing and report average performance across all left-out subjects, never mixing subject data [2]. |
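Correlation Alignment (CORAL) from step 2 can be sketched in a few lines: source features are whitened with their own covariance and re-coloured with the target covariance. The `eps` ridge follows the identity regularizer used in the original CORAL recipe; the feature matrices and function names are hypothetical. CORAL matches only second-order statistics, so mean shifts need separate handling (for example, per-subject centering).

```python
import numpy as np


def _sym_power(C, p):
    """Matrix power of a symmetric positive-definite matrix via eigendecomposition."""
    vals, vecs = np.linalg.eigh(C)
    return (vecs * vals ** p) @ vecs.T


def coral(Xs, Xt, eps=1.0):
    """Recolour source features so their covariance matches the target's.

    Xs: (n_s, d) source-subject features; Xt: (n_t, d) target-subject features.
    """
    d = Xs.shape[1]
    Cs = np.cov(Xs, rowvar=False) + eps * np.eye(d)
    Ct = np.cov(Xt, rowvar=False) + eps * np.eye(d)
    return Xs @ _sym_power(Cs, -0.5) @ _sym_power(Ct, 0.5)   # whiten, then recolour


rng = np.random.default_rng(0)
Xs = rng.normal(size=(500, 5)) * np.array([1.0, 2.0, 3.0, 0.5, 1.5])
Xt = rng.normal(size=(400, 5)) * np.array([2.0, 1.0, 0.5, 3.0, 1.0]) + 3.0
aligned = coral(Xs, Xt, eps=1e-10)
```

A classifier trained on `aligned` source features (with source labels) can then be applied to the target subject's features directly.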

Issue 2: Cross-Session Performance Instability

Symptoms: Decoder performance decays over time, or a model trained on day one performs poorly on data from the same subject collected days or weeks later.

| Step | Action | Technical Details |

|---|---|---|

| 1. Diagnosis | Assess performance drop using benchmark metrics. | One study reported within-session accuracy of 68.8% dropping to cross-session accuracy of 53.7% without adaptation [26]. |

| 2. Solution | Implement Partial Domain Adaptation (PDA) [27]. | PDA performs neural alignment only within the task-relevant latent subspace, disentangling it from task-irrelevant neural components that cause instability [27]. |

| 3. Implementation | Construct a causal dynamical system. | With pre-aligned short-time windows as input, use VAE-based representation learning and adversarial alignment to disentangle features [27]. |

| 4. Validation | Use Lyapunov theory. | Analytically validate the improved stability of the neural representations after alignment [27]. Experiments show PDA significantly enhances cross-session decoding performance [27]. |
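The Lyapunov check in step 4 can be illustrated for linear latent dynamics. This is a generic textbook test, not the analysis of [27]: fit a linear model z_{t+1} = A z_t to (aligned) latent trajectories by least squares, then solve the discrete Lyapunov equation A^T P A - P = -Q; with Q positive definite, the dynamics are asymptotically stable exactly when P is positive definite. Function names and the toy trajectory are hypothetical.

```python
import numpy as np
from scipy.linalg import solve_discrete_lyapunov


def fit_linear_dynamics(Z):
    """Least-squares fit of z_{t+1} = A z_t from a (T, k) latent trajectory."""
    M, *_ = np.linalg.lstsq(Z[:-1], Z[1:], rcond=None)   # Z[1:] ~ Z[:-1] @ M, so A = M.T
    return M.T


def lyapunov_stable(A, Q=None):
    """Return (is_stable, P) for V(z) = z^T P z, where A^T P A - P = -Q."""
    Q = np.eye(A.shape[0]) if Q is None else Q
    P = solve_discrete_lyapunov(A.T, Q)   # SciPy solves a X a^H - X + q = 0; here a = A^T
    P = (P + P.T) / 2                     # symmetrize against round-off
    return bool(np.all(np.linalg.eigvalsh(P) > 0)), P


# Toy latent trajectory driven by noise around stable dynamics.
rng = np.random.default_rng(0)
A_true = np.array([[0.9, 0.1], [0.0, 0.7]])
Z = np.zeros((5000, 2))
for t in range(1, len(Z)):
    Z[t] = A_true @ Z[t - 1] + 0.1 * rng.normal(size=2)

A_hat = fit_linear_dynamics(Z)
stable, P = lyapunov_stable(A_hat)
```

Comparing the certificate (or the eigenvalues of P) before and after alignment is one simple way to quantify a stability improvement; nonlinear latent dynamics would need a different analysis.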

Issue 3: Model Overfitting to Source Domain

Symptoms: The model performs excellently on the source domain data but fails to adapt to the target domain, even after fine-tuning.

| Step | Action | Technical Details |

|---|---|---|

| 1. Diagnosis | Check for overfitting during pre-training. | If the model is too complex or trained for too many epochs on the source data, it may learn features too specific to that domain. |

| 2. Solution | Apply Instance-Based DA [24]. | Strategically reduce the weights of labeled source domain samples that have a distribution significantly different from the target domain. This minimizes negative transfer [24]. |

| 3. Implementation | Sample weighting or selection. | Weight or select source domain samples based on their similarity to the target domain distribution before performing knowledge transfer [24]. |

| 4. Validation | Monitor target domain loss during fine-tuning. | A continuously high or increasing target domain loss indicates the model is struggling to adapt, possibly due to overfitting to the source. |
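Instance-based weighting can be sketched with a domain classifier: a logistic regression trained to tell target samples from source samples yields odds that are proportional to the density ratio p_target(x)/p_source(x). The hand-rolled NumPy fit, its learning rate, L2 penalty, and iteration count are assumptions; a library classifier would do the same job. Very large weights are often clipped in practice to limit variance.

```python
import numpy as np


def domain_classifier_weights(Xs, Xt, n_iter=500, lr=0.1, l2=1e-3):
    """Importance weights for source samples, proportional to p_target(x) / p_source(x).

    Fits a logistic-regression domain classifier (source = 0, target = 1) by
    gradient descent; its odds exp(logit) equal the density ratio up to the
    constant n_t / n_s, so the weights are normalized to mean 1.
    """
    X = np.vstack([Xs, Xt])
    d = np.r_[np.zeros(len(Xs)), np.ones(len(Xt))]
    Xn = (X - X.mean(axis=0)) / (X.std(axis=0) + 1e-12)
    Xn = np.c_[Xn, np.ones(len(X))]                     # bias column
    w = np.zeros(Xn.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(Xn @ w)))
        w -= lr * (Xn.T @ (p - d) / len(X) + l2 * w)
    weights = np.exp(Xn[: len(Xs)] @ w)                 # classifier odds per source sample
    return weights / weights.mean()


rng = np.random.default_rng(0)
Xs = rng.normal(0.0, 1.0, size=(300, 1))    # source subjects
Xt = rng.normal(2.0, 1.0, size=(200, 1))    # shifted target subject
weights = domain_classifier_weights(Xs, Xt)
```

These weights would multiply each source sample's loss term during training, so source samples that resemble the target subject dominate the fit.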

Performance Data and Benchmarks

Table 1: Benchmarking Performance of Different Learning Paradigms in a Motor Imagery EEG Dataset [26]

| Learning Paradigm | Description | Average Classification Accuracy |

|---|---|---|

| Within-Session (WS) | Model trained and tested on data from the same session. | 68.8% |

| Cross-Session (CS) | Model trained on one session and tested on another without adaptation. | 53.7% (Not significantly different from chance level) |

| Cross-Session Adaptation (CSA) | Model adapted using a small amount of target session data. | 78.9% |

Table 2: Categorization of Domain Adaptation Methods for Neural Decoding [24]

| Method Category | Core Principle | Typical Algorithms | Best For |

|---|---|---|---|

| Instance-Based | Weight or select source samples similar to the target domain. | Sample weighting, importance sampling. | Scenarios where parts of the source data are still relevant. |

| Feature-Based | Transform features to align source and target distributions. | CORAL, MMD, Adversarial Alignment. | Bridging significant distributional gaps between subjects/sessions. |

| Model-Based | Fine-tune a pre-trained model on a small target dataset. | Parameter sharing, fine-tuning of pre-trained layers. | When labeled target data is scarce but a large source dataset exists. |
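For the feature-based row, Maximum Mean Discrepancy (MMD) is a common divergence to minimize, or simply to monitor, between subjects. The sketch computes the biased squared-MMD estimate with a Gaussian kernel; the fixed bandwidth `sigma=1.0` is an assumption (the median pairwise distance is a common heuristic).

```python
import numpy as np


def rbf_mmd2(X, Y, sigma=1.0):
    """Biased estimate of squared MMD between samples X (n, d) and Y (m, d), Gaussian kernel."""
    def k(A, B):
        sq = np.sum(A ** 2, axis=1)[:, None] + np.sum(B ** 2, axis=1)[None, :] - 2 * A @ B.T
        return np.exp(-np.maximum(sq, 0.0) / (2 * sigma ** 2))
    return float(k(X, X).mean() + k(Y, Y).mean() - 2 * k(X, Y).mean())


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))              # features from subject A
Y = rng.normal(size=(200, 3))              # same distribution
Z = rng.normal(loc=1.5, size=(200, 3))     # shifted distribution
```

Here `rbf_mmd2(X, Z)` is much larger than `rbf_mmd2(X, Y)`; in a feature-based DA model this quantity, computed on encoder outputs, would be added to the training loss.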

Experimental Protocols for Validation

Protocol 1: Validating Cross-Subject Generalization

Objective: To evaluate how well a neural decoder trained on multiple subjects generalizes to a completely new subject.

Diagram: Cross-Subject Validation via Feature Alignment

- Data Partitioning: Reserve data from one or more subjects as the target domain. The remaining subjects form the source domain [2].

- Feature Alignment: Train a feature extractor to project data from both domains into a shared feature space where their distributions are aligned. This can be achieved by minimizing a divergence metric like MMD or through adversarial training [24].

- Decoder Training: Train the decoder model using the labeled data from the aligned source domain features.

- Validation: Test the final model on the held-out target subject's data. Repeat this process for all subjects (e.g., leave-one-subject-out cross-validation) [2].
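The leave-one-subject-out loop from the validation step can be sketched as a split generator; scikit-learn's `LeaveOneGroupOut` provides the same splits. `leave_one_subject_out` is a hypothetical helper name. Any normalization or alignment statistics should be fit only on the training subjects (plus unlabeled target data when the method explicitly allows it), never on labeled data from the held-out subject.

```python
import numpy as np


def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_idx, test_idx); each subject is tested exactly once."""
    subject_ids = np.asarray(subject_ids)
    for subj in np.unique(subject_ids):
        test_idx = np.flatnonzero(subject_ids == subj)
        train_idx = np.flatnonzero(subject_ids != subj)
        yield subj, train_idx, test_idx


ids = ["s1", "s1", "s2", "s3", "s2", "s3", "s3"]   # subject label of each trial
splits = list(leave_one_subject_out(ids))
```

Reporting the mean and spread of the per-subject scores, rather than pooling all test trials, shows how uneven generalization is across people.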

Protocol 2: Validating Cross-Session Stability

Objective: To assess the long-term stability of a neural decoder and the effectiveness of adaptation techniques across different sessions.

Diagram: Cross-Session Stability with Partial Domain Adaptation

- Longitudinal Data Collection: Collect data from the same subject across multiple sessions, with intervals of days or weeks (e.g., a 5-session dataset over 10 days) [26].

- Base Model Pre-training: Train an initial model on data from the first session.

- Partial Domain Adaptation: When new session data arrives, employ a PDA framework. Use a causal dynamical system model to disentangle task-relevant neural features from task-irrelevant components. Perform adversarial alignment only on the task-relevant latent subspace [27].

- Stability Analysis: Use analytical tools like Lyapunov theory to validate the improved stability of the neural representations [27].

- Performance Tracking: Evaluate the adapted decoder on all subsequent sessions to monitor performance stability over time.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Algorithms for Neural Decoding Research

| Item / Solution | Function / Purpose | Example Use Case |
|---|---|---|
| Large Public Neuroimaging Datasets (e.g., HCP [28], OpenNeuro [28]) | Provides extensive source domain data for pre-training deep learning models, which is crucial for transfer learning success. | Pre-training a whole-brain fMRI cognitive decoder on the Human Connectome Project dataset before fine-tuning on a smaller, study-specific dataset [28]. |
| EEG Motor Imagery Datasets (e.g., 5-session cross-session dataset [26]) | Offers benchmark data specifically designed for testing cross-session and cross-subject generalization. | Benchmarking a new domain adaptation algorithm against standard WS, CS, and CSA performance metrics [26]. |
| Adversarial Alignment Frameworks | A feature-based DA method that uses a domain classifier to force the feature extractor to learn domain-invariant representations [24]. | Creating a subject-invariant EEG feature space for emotion recognition, improving classifier performance on new subjects [24]. |
| Partial Domain Adaptation (PDA) | A specialized DA framework that identifies and aligns only task-relevant neural components, ignoring task-irrelevant noise [27]. | Achieving stable long-term decoding performance in BCI applications across different experimental days, countering non-stationarity [27]. |
| Common Spatial Patterns (CSP) & Filter Bank CSP (FBCSP) | Classic spatial filtering algorithms for feature extraction in Motor Imagery BCI [26]. | Used as a strong baseline feature extraction method before applying domain adaptation techniques [26]. |
| Deep ConvNets / EEGNet | End-to-end deep learning models for neural signal decoding that can learn complex features directly from raw or pre-processed data [26]. | Serving as a powerful backbone model that can be combined with DA techniques for improved end-to-end cross-subject decoding [24]. |
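Because CSP is listed as the standard baseline, a compact sketch may help. This is a minimal two-class CSP built on the generalized eigenproblem C_A w = λ (C_A + C_B) w. It assumes trials are already band-pass filtered and roughly zero-mean, omits the filter-bank stage of FBCSP and any regularization, and uses hypothetical function names.

```python
import numpy as np
from scipy.linalg import eigh


def trial_covariance(trial: np.ndarray) -> np.ndarray:
    """Trace-normalized spatial covariance of one trial, shape (channels, samples)."""
    c = trial @ trial.T
    return c / np.trace(c)


def fit_csp(trials_a, trials_b, n_pairs: int = 1) -> np.ndarray:
    """Return CSP spatial filters, shape (2 * n_pairs, channels)."""
    ca = np.mean([trial_covariance(t) for t in trials_a], axis=0)
    cb = np.mean([trial_covariance(t) for t in trials_b], axis=0)
    # Eigenvalues lie in [0, 1] and give the class-A share of variance per filter
    eigvals, eigvecs = eigh(ca, ca + cb)
    eigvecs = eigvecs[:, np.argsort(eigvals)[::-1]]
    # First filters maximize class-A variance, last filters maximize class-B variance
    picks = list(range(n_pairs)) + list(range(-n_pairs, 0))
    return eigvecs[:, picks].T


def csp_features(filters: np.ndarray, trial: np.ndarray) -> np.ndarray:
    """Normalized log-variance of the spatially filtered trial."""
    var = np.var(filters @ trial, axis=1)
    return np.log(var / var.sum())


# Hypothetical data: class A has extra power on channel 0, class B on channel 1
rng = np.random.default_rng(1)


def make_trials(boost_channel, n=40, channels=4, samples=256):
    trials = rng.standard_normal((n, channels, samples))
    trials[:, boost_channel, :] *= 3.0
    return trials


trials_a, trials_b = make_trials(0), make_trials(1)
filters = fit_csp(trials_a, trials_b)
feats_a = csp_features(filters, trials_a[0])
print(feats_a)
```

The resulting log-variance features are what a linear classifier (e.g., LDA) would typically consume in a CSP baseline.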

Q: What is the NEED framework and what core problem does it solve? A: NEED is presented as the first unified model for zero-shot cross-subject and cross-task generalization in EEG-based visual reconstruction. It addresses two limitations that have hindered previous neural decoding systems: poor generalization across subjects and restriction to specific visual tasks. A single NEED model can be applied to new subjects and to different visual tasks (such as video or static image reconstruction) without any subject-specific data or retraining [4].

Q: What are the main architectural components of NEED that enable this generalization? A: NEED tackles generalization through three key innovations [4]:

- Individual Adaptation Module: Pretrained on multiple EEG datasets to normalize subject-specific neural patterns.

- Dual-Pathway Architecture: Captures both low-level visual dynamics and high-level semantic content from EEG signals.

- Unified Inference Mechanism: Allows the model to adapt to different visual domains (e.g., video and images) within a single framework.
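NEED's Individual Adaptation Module is a learned, pretrained network, and its internals are not reproduced here. As a much simpler, non-learned illustration of what "normalizing subject-specific neural patterns" can mean, the sketch below uses Euclidean alignment, a separate published cross-subject EEG technique swapped in for illustration (not NEED's method). It whitens each subject's trials by the inverse square root of that subject's mean spatial covariance, so every subject's average covariance becomes the identity. Function names and data are hypothetical.

```python
import numpy as np


def inv_sqrt_spd(m: np.ndarray) -> np.ndarray:
    """Inverse matrix square root of a symmetric positive-definite matrix."""
    w, v = np.linalg.eigh(m)
    return (v / np.sqrt(w)) @ v.T


def euclidean_align(trials: np.ndarray) -> np.ndarray:
    """Align one subject's trials, shape (n_trials, channels, samples)."""
    # Per-trial spatial covariance X X^T / samples
    covs = np.einsum("ncs,nds->ncd", trials, trials) / trials.shape[2]
    r_inv_sqrt = inv_sqrt_spd(covs.mean(axis=0))
    # Apply the same whitening matrix to every trial of this subject
    return np.einsum("cd,nds->ncs", r_inv_sqrt, trials)


# Two hypothetical subjects whose signals pass through different mixing matrices
rng = np.random.default_rng(2)
sources = rng.standard_normal((30, 4, 200))
subject_1 = np.einsum("cd,nds->ncs", rng.standard_normal((4, 4)), sources)
subject_2 = np.einsum("cd,nds->ncs", rng.standard_normal((4, 4)), sources)

aligned_1, aligned_2 = euclidean_align(subject_1), euclidean_align(subject_2)
mean_cov_1 = np.mean([t @ t.T / t.shape[1] for t in aligned_1], axis=0)
mean_cov_2 = np.mean([t @ t.T / t.shape[1] for t in aligned_2], axis=0)
print(np.round(mean_cov_1, 6))
```

Because alignment needs only unlabeled trials from the new subject, it fits a zero-shot setting, although it captures far less subject variability than a learned module.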

Performance Data & Generalization Metrics

The table below summarizes the key quantitative results reported for the NEED framework [4], covering both cross-subject and cross-task performance.

Table 1: NEED Framework Performance Metrics

| Generalization Scenario | Metric | Performance | Significance |
|---|---|---|---|
| Cross-Subject (Unseen Subjects) | Retention of within-subject classification performance | 93.7% | The model retains most of its classification capability on new subjects without fine-tuning [4]. |
| Cross-Subject (Unseen Subjects) | Retention of visual reconstruction quality | 92.4% | Reconstruction quality on new subjects reaches 92.4% of that achieved on subjects seen during training [4]. |
| Cross-Task (Zero-shot transfer to static image reconstruction) | Structural Similarity Index (SSIM) | 0.352 | Indicates direct applicability to a new task (image reconstruction) without retraining [4]. |
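SSIM compares two images through their local means, variances, and covariance. For reference, the standard definition for a pair of image windows x and y is:

$$
\mathrm{SSIM}(x, y) = \frac{(2\mu_x\mu_y + C_1)\,(2\sigma_{xy} + C_2)}{(\mu_x^2 + \mu_y^2 + C_1)\,(\sigma_x^2 + \sigma_y^2 + C_2)}, \qquad C_1 = (K_1 L)^2,\quad C_2 = (K_2 L)^2
$$

Here μ, σ², and σ_xy are the window means, variances, and covariance, L is the dynamic range of the pixel values, and K₁ = 0.01, K₂ = 0.03 are the usual constants. The image-level score is the mean over windows and equals 1 only for identical images, so a value such as 0.352 reflects partial structural agreement with the ground truth.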

Experimental Protocols

Protocol 1: Validating Cross-Subject Generalization

This protocol outlines the steps to evaluate how well the NEED framework performs on EEG data from previously unseen subjects.

Table 2: Cross-Subject Validation Protocol

| Step | Action | Purpose | Key Inputs |
|---|---|---|---|
| 1. | Data Preparation | Ensure clean, standardized input data for the model. | Preprocessed EEG datasets from multiple subjects. |
| 2. | Subject Splitting | Simulate a real-world scenario with new users. | Subject labels; one or more subjects' data held out for testing. |
| 3. | Model Inference | Generate predictions for the unseen subject. | Trained NEED model; held-out subject's EEG data. |
| 4. | Performance Quantification | Measure generalization capability objectively. | Reconstructed images/videos and ground-truth stimuli, scored with SSIM and classification accuracy [4]. |
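Steps 2 to 4 can be sketched as a leave-one-subject-out loop. This is a minimal sketch: `loso_folds` and `retention` are hypothetical helpers, and treating "retention" as the ratio of cross-subject to within-subject score is an assumption about how the percentages in Table 1 are defined. scikit-learn's `LeaveOneGroupOut` provides an equivalent splitter if that library is already in use.

```python
import numpy as np


def loso_folds(subject_ids):
    """Yield (held_out_subject, train_idx, test_idx) for each subject."""
    subject_ids = np.asarray(subject_ids)
    for subject in np.unique(subject_ids):
        test_mask = subject_ids == subject
        yield subject, np.flatnonzero(~test_mask), np.flatnonzero(test_mask)


def retention(cross_subject_score: float, within_subject_score: float) -> float:
    """Fraction of within-subject performance kept on unseen subjects (assumed definition)."""
    return cross_subject_score / within_subject_score


# Hypothetical trial-to-subject labels
subject_ids = ["s1", "s1", "s2", "s2", "s2", "s3"]
folds = list(loso_folds(subject_ids))
for subject, train_idx, test_idx in folds:
    # Placeholder: train (or load) the decoder on train_idx, then evaluate on test_idx only
    print(subject, train_idx.tolist(), test_idx.tolist())
```

The key property is that no trial from the held-out subject appears in the training indices, which is what makes the evaluation a test of cross-subject rather than within-subject generalization.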

Protocol 2: Zero-Shot Cross-Task Transfer

This protocol describes the methodology for applying the NEED framework to a new visual task, such as moving from video to static image reconstruction, without any task-specific fine-tuning.

Table 3: Cross-Task Validation Protocol

| Step | Action | Purpose | Key Inputs / Outputs |
|---|---|---|---|
| 1. | Task Definition | Clearly define the new target domain. | Static image reconstruction stimuli and corresponding EEG data. |
| 2. | Direct Inference | Assess inherent model flexibility. | NEED model trained only on video reconstruction tasks. |
| 3. | Evaluation | Gauge quality of transfer. | SSIM score of 0.352 for image reconstruction, indicating effective zero-shot transfer [4]. |

Framework Architecture & Workflow

The following diagram illustrates the core architecture and data flow of the NEED framework, highlighting how it achieves cross-subject and cross-task generalization.

NEED Framework Core Architecture

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key computational tools and methodological components essential for implementing and experimenting with generalized neural decoders like the NEED framework.

Table 4: Essential Research Reagents for Neural Decoding Generalization

| Reagent / Method | Function | Application in NEED and Related Work |
|---|---|---|
| Individual Adaptation Module (IAM) | Normalizes subject-specific patterns in neural data. | Isolates subject-invariant, semantic-specific EEG representations for cross-subject generalization [4]. |
| Adversarial Training | A training scheme in which a feature encoder is optimized against an adversary network (e.g., a subject classifier) so that the adversary cannot recover nuisance information from the learned features. | Used in ZEBRA to explicitly disentangle subject-related and semantic-related components of fMRI representations [19]. |
| Dual-Pathway Architecture | A neural network structure that processes information through separate streams. | Captures both low-level visual dynamics and high-level semantics from EEG signals for robust feature extraction [4]. |
| Generative Adversarial Networks (GANs) | A class of AI models that learn to generate new data with the same statistics as the training set. | Can be used as a generative spike-train synthesizer to create synthetic neural data for augmenting BCI decoder training and improving cross-session generalization [10]. |
| Zero-Shot Learning | A paradigm where a model performs a task without having seen any example of that task during training. | The core of NEED's inference mechanism, allowing it to perform cross-task visual reconstruction (e.g., video to images) without fine-tuning [4]. |

Frequently Asked Questions (FAQs)

1. What is the primary advantage of using a Hilbert Transform over a Fourier Transform in neural decoding models? The Hilbert Transform (HT) offers two advantages for neural decoding. First, it produces an analytic signal with no negative-frequency components, which are redundant for real-valued signals; this avoids wasted spectrum and the artifacts caused by interactions between negative and positive frequencies [29]. Second, whereas the Fourier Transform analyzes the whole signal globally, the HT supports time-frequency analysis: instantaneous frequency can be computed at a resolution that reaches the sampling resolution of the observed signal [29]. This yields finer-grained temporal information about neural dynamics.
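Both properties can be seen in a few lines. This minimal NumPy sketch builds the analytic signal the standard FFT way (zero the negative-frequency bins, double the positive ones, keep DC and Nyquist), which is also how `scipy.signal.hilbert` constructs it. It then differentiates the unwrapped phase to obtain one instantaneous-frequency estimate per sample step. The function names are hypothetical.

```python
import numpy as np


def analytic_signal(x: np.ndarray) -> np.ndarray:
    """FFT-based analytic signal of a real 1-D array."""
    n = x.shape[-1]
    spectrum = np.fft.fft(x)
    h = np.zeros(n)
    h[0] = 1.0                      # keep DC
    if n % 2 == 0:
        h[n // 2] = 1.0             # keep Nyquist bin
        h[1:n // 2] = 2.0           # double positive frequencies
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(spectrum * h)  # negative-frequency bins are now zero


def instantaneous_frequency(x: np.ndarray, fs: float) -> np.ndarray:
    """Instantaneous frequency in Hz from the unwrapped analytic phase (length n - 1)."""
    phase = np.unwrap(np.angle(analytic_signal(x)))
    return np.diff(phase) * fs / (2.0 * np.pi)


fs = 1000.0                          # sampling rate in Hz
t = np.arange(2000) / fs
x = np.cos(2 * np.pi * 12.0 * t)     # 12 Hz test rhythm, exactly 24 cycles
z = analytic_signal(x)
f_inst = instantaneous_frequency(x, fs)
print(f_inst[:3])
```

The real part of the analytic signal reproduces the input, and the frequency estimate is available at every sample step rather than once per analysis window.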

2. My HTNet model generalizes poorly across subjects. What strategies can improve cross-subject performance? Poor cross-subject generalization often stems from model overfitting to subject-specific neural patterns. To address this:

- Adversarial Disentanglement: Implement frameworks like ZEBRA, which use adversarial training to explicitly decompose fMRI representations into subject-related and semantic-related components. This isolates subject-invariant, semantic-specific features, enabling zero-shot generalization to unseen subjects [19].

- Leverage Large Language Models (LLMs): Integrate pre-trained LLMs, for example by using their representations as features or decoding targets. LLM representations have been reported to account for a significant portion of the variance in human brain activity during language tasks, which can make decoders more robust [13].

- Check Data Alignment: Ensure temporal alignment of brain recordings with linguistic representations. A minor time shift can disrupt the association between language stimuli and neural patterns, harming generalization [13].
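One generic way to check temporal alignment is to find the lag that maximizes the correlation between a stimulus-derived time series and a neural signal. This minimal sketch uses plain cross-correlation; it is not the alignment procedure of [13], the stimulus feature is hypothetical (e.g., word onsets resampled to the neural rate), and it assumes both series share one sampling rate and a positive expected relationship.

```python
import numpy as np


def estimate_lag(stimulus_feature, neural_signal, max_lag):
    """Return (lag, corr); a positive lag means the neural signal trails the stimulus."""
    s = (stimulus_feature - stimulus_feature.mean()) / stimulus_feature.std()
    r = (neural_signal - neural_signal.mean()) / neural_signal.std()
    best_lag, best_corr = 0, -np.inf
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            a, b = s[: len(s) - lag], r[lag:]    # neural[t + lag] vs stimulus[t]
        else:
            a, b = s[-lag:], r[: len(r) + lag]   # neural[t] vs stimulus[t - lag]
        corr = float(np.corrcoef(a, b)[0, 1])
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return best_lag, best_corr


rng = np.random.default_rng(3)
stimulus = rng.standard_normal(1000)         # hypothetical stimulus-derived feature
true_delay = 7                               # samples
neural = np.concatenate([np.zeros(true_delay), stimulus[:-true_delay]])
neural += 0.5 * rng.standard_normal(neural.size)
lag, corr = estimate_lag(stimulus, neural, max_lag=20)
print(lag, round(corr, 2))
```

A consistent nonzero lag across runs suggests shifting the stimulus features by that amount before training; a lag that varies between sessions points to a timing problem in acquisition.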

3. How do brain-region projection layers in HTNet adapt to different behavioral states? Evidence from animal studies suggests that prefrontal cortex (PFC) subregions send highly specialized, state-dependent signals to posterior brain regions. For instance, the orbitofrontal cortex (ORB) and anterior cingulate area (ACA) selectively transmit information about arousal and motion to the primary visual cortex (VISp). These signals dynamically sharpen or suppress visual processing depending on the animal's arousal level and whether it is moving, and the two pathways counterbalance each other to enhance relevant stimuli and suppress irrelevant ones [30]. This evidence concerns biological signaling between brain regions rather than a mechanism built into HTNet; it suggests that region-level representations may shift with behavioral state.
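At the model level, a region projection can be sketched as a linear map from each subject's electrodes onto a shared set of regions of interest (ROIs). This is one way subjects with different electrode montages can feed a single decoder. The sketch below is illustrative only: the Gaussian distance weighting, `sigma_mm`, and the function names are assumptions, and HTNet's published weighting scheme may differ.

```python
import numpy as np


def projection_matrix(electrode_xyz, roi_xyz, sigma_mm=20.0):
    """Hypothetical Gaussian distance weights, shape (n_rois, n_electrodes)."""
    d2 = ((roi_xyz[:, None, :] - electrode_xyz[None, :, :]) ** 2).sum(axis=-1)
    w = np.exp(-d2 / (2.0 * sigma_mm ** 2))
    # Rows sum to ~1; the small constant guards against ROIs with no nearby electrode
    return w / (w.sum(axis=1, keepdims=True) + 1e-12)


def project_to_rois(features, w):
    """Map electrode features (n_electrodes, n_times) to ROI features (n_rois, n_times)."""
    return w @ features


rng = np.random.default_rng(4)
roi_xyz = rng.uniform(-60, 60, size=(10, 3))   # hypothetical shared ROI centroids (mm)
subj_a = rng.uniform(-60, 60, size=(64, 3))    # subject A electrode positions
subj_b = rng.uniform(-60, 60, size=(20, 3))    # subject B electrode positions
w_a, w_b = projection_matrix(subj_a, roi_xyz), projection_matrix(subj_b, roi_xyz)
roi_a = project_to_rois(rng.standard_normal((64, 500)), w_a)
roi_b = project_to_rois(rng.standard_normal((20, 500)), w_b)
print(roi_a.shape, roi_b.shape)
```

Both subjects end up in the same ROI space despite different electrode counts; state-dependent effects like those described above would have to be learned downstream of such a projection.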

4. What are the key evaluation metrics for a neural decoding model like HTNet in a cross-subject context? The choice of metric depends on your specific decoding task. The table below summarizes the most relevant metrics [13].

| Task Paradigm | Key Metric | Description |
|---|---|---|
| Stimuli Recognition | Accuracy | Percentage of stimulus instances correctly identified. |
| Brain Recording Translation | BLEU, ROUGE, BERTScore | Compare decoded text with reference text: BLEU and ROUGE score n-gram overlap, while BERTScore compares contextual embeddings and therefore emphasizes meaning over exact word matching. |
| Speech Neuroprosthesis | Word Error Rate (WER) | Word-level edit distance (substitutions, deletions, insertions) between decoded and reference transcripts, divided by the number of reference words; lower is better. Common for inner speech recognition. |
| Speech Wave Reconstruction | Pearson Correlation (PCC) | Measures the linear relationship between generated and reference speech signals. |
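WER and PCC are simple enough to compute directly. This minimal sketch uses a standard dynamic-programming edit distance over whitespace-split words; whitespace tokenization is a simplifying assumption, and evaluation toolkits may additionally normalize case and punctuation. Note that WER can exceed 1 when the hypothesis contains many insertions.

```python
import numpy as np


def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] = edit distance between the previous reference prefix and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(prev[j] + 1,          # deletion
                          curr[j - 1] + 1,      # insertion
                          prev[j - 1] + cost)   # substitution or match
        prev = curr
    return prev[-1] / max(len(ref), 1)


def pearson_correlation(generated, reference) -> float:
    """PCC between generated and reference waveforms of equal length."""
    return float(np.corrcoef(generated, reference)[0, 1])


print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))  # one deletion
```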

Troubleshooting Guides

Issue: Low Signal-to-Noise Ratio in Hilbert Transform Output

Problem: The analytic signal generated by the Hilbert Transform is too noisy, leading to unreliable instantaneous frequency estimates for neural data.

Solution: Follow this diagnostic workflow to identify and resolve the issue.

Diagnostic Steps:

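- Verify Signal Preprocessing: Ensure raw neural signals are properly preprocessed. Band-pass filter the signal to the rhythm of interest (e.g., a theta or alpha band rather than the full 0.5-40 Hz range of local field potentials) before applying the Hilbert Transform. Instantaneous phase and frequency are only well defined for narrowband signals, so broadband noise left in the input makes the estimates erratic. Filtering after acquisition cannot undo aliasing, which must be prevented at acquisition (see the sampling-rate check below).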

- Check Filtering Parameters: The HT itself is a linear operator and does not have "parameters" in the traditional sense. The noise likely originates from the input signal. Re-examine the parameters of your initial band-pass or notch filters.

- Inspect for Non-Stationarities: Neural data often contains non-stationary artifacts (e.g., from movement). Use techniques like artifact subspace reconstruction (ASR) or simply visually inspect your raw data to identify and remove these periods.

- Validate Sampling Rate: Confirm that your data was acquired at a sufficiently high sampling rate to satisfy the Nyquist criterion for the frequencies of interest. Inadequate sampling can distort the Hilbert Transform's phase estimates.
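The filtering and sampling-rate checks can be combined into a small preprocessing function. This minimal sketch uses SciPy and assumes a single-channel signal and a zero-phase Butterworth band-pass; the band, filter order, and function name are illustrative choices rather than recommendations from the cited work. The explicit check enforces that the upper band edge stays below the Nyquist frequency fs/2.

```python
import numpy as np
from scipy.signal import butter, hilbert, sosfiltfilt


def narrowband_hilbert(x, fs, band, order=4):
    """Band-pass x, then return (amplitude envelope, instantaneous frequency in Hz)."""
    low, high = band
    if not 0 < low < high < fs / 2:
        raise ValueError(f"band {band} must satisfy 0 < low < high < Nyquist ({fs / 2} Hz)")
    # Zero-phase (forward-backward) filtering avoids shifting the phase being estimated
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    analytic = hilbert(sosfiltfilt(sos, x))
    phase = np.unwrap(np.angle(analytic))
    return np.abs(analytic), np.diff(phase) * fs / (2 * np.pi)


# Hypothetical noisy 10 Hz rhythm sampled at 500 Hz
fs = 500.0
rng = np.random.default_rng(5)
t = np.arange(int(4 * fs)) / fs
x = np.sin(2 * np.pi * 10.0 * t) + 2.0 * rng.standard_normal(t.size)
envelope, f_inst = narrowband_hilbert(x, fs, band=(8.0, 12.0))
# Drop 1 s at each edge, where filter and Hilbert edge effects concentrate
core = f_inst[int(fs):-int(fs)]
print(round(float(np.median(core)), 2))
```

Trimming the edges matters because both the filter and the FFT-based Hilbert Transform are least reliable near the ends of a finite recording.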

Issue: Failure in Cross-Session Decoding Generalization

Problem: Your HTNet model, trained on data from one experimental session, performs poorly when applied to data from the same subject in a subsequent session.

Solution: This is often caused by representational drift, meaning subtle changes in how the brain encodes information over time. The following protocol helps mitigate it.

Experimental Protocol for Mitigating Representational Drift

- Objective: To align neural representations across sessions using adaptive stretching of task-relevant dimensions.

- Background: Research indicates that, when optimizing for a task, the brain adaptively stretches its representations along goal-relevant dimensions, making stimuli that differ along those dimensions more dissimilar, while compressing irrelevant dimensions [31]. The procedure below applies this principle to decoder recalibration.

- Procedure:

- Baseline Recording: Collect a baseline of neural data (e.g., from V4, MT, PFC) while the subject performs the task in the first session.

- Anchor Point Identification: Identify neural population activity patterns that correspond to key, well-separated stimuli or decisions in the task. These are your "anchors."

- Short Recalibration Block: In the new session, present a small set of trials containing these anchor stimuli. This block should be short to be practical.

- Representation Alignment: Compute the neural dissimilarity matrix for the anchor points from the new session. Then fit a linear or non-linear transformation that aligns these representations to the stretched configuration learned by the original model. The goal is to re-establish the task-relevant representational geometry.

- Model Adjustment: Apply the learned transformation to the feature space of your HTNet model, effectively adjusting its brain-region projection layers for the new session.
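The alignment and adjustment steps can be sketched with orthogonal Procrustes on matched anchor patterns. This is a minimal sketch under stated assumptions: anchors are matched row by row across sessions (same stimulus or decision per row), drift is approximately a rotation plus an offset, and the function names are hypothetical. Because an orthogonal map preserves distances, it restores the original coordinate frame while leaving the dissimilarity matrix unchanged; if the geometry itself has changed, a general linear map fitted by least squares, or a non-linear map, would be needed instead.

```python
import numpy as np


def procrustes_align(anchors_new, anchors_ref):
    """Orthogonal R minimizing ||(new - mu_new) @ R - (ref - mu_ref)||_F.

    anchors_*: (n_anchors, n_features), rows matched across sessions.
    """
    mu_new, mu_ref = anchors_new.mean(axis=0), anchors_ref.mean(axis=0)
    u, _, vt = np.linalg.svd((anchors_new - mu_new).T @ (anchors_ref - mu_ref))
    return u @ vt, mu_new, mu_ref


def apply_alignment(features_new, r, mu_new, mu_ref):
    """Map new-session features into the reference session's coordinate frame."""
    return (features_new - mu_new) @ r + mu_ref


def rdm(patterns):
    """Euclidean representational dissimilarity matrix, shape (n, n)."""
    diff = patterns[:, None, :] - patterns[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))


rng = np.random.default_rng(6)
anchors_ref = rng.standard_normal((8, 5))          # session-1 anchor patterns (hypothetical)
q, _ = np.linalg.qr(rng.standard_normal((5, 5)))   # simulated drift: orthogonal transform
anchors_new = anchors_ref @ q + 0.3                # session-2 anchors: drifted and offset
r, mu_new, mu_ref = procrustes_align(anchors_new, anchors_ref)
recovered = apply_alignment(anchors_new, r, mu_new, mu_ref)
print(np.abs(recovered - anchors_ref).max())
```

In practice the same `apply_alignment` call would be applied to all new-session features before they reach the decoder's downstream layers.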

Issue: Inaccurate Feature Extraction from Specific Brain Regions

Problem: The projection layers for a specific brain region (e.g., V4 for color, MT for motion) are not capturing the expected features, leading to poor decoding performance.

Solution: Systematically verify the anatomical and functional integrity of your input features.

Diagnostic Steps:

- Confirm Anatomical Mapping: If using invasive recordings, ensure your channel locations are correctly mapped to the brain region of interest using a standardized atlas. In animal studies, atlas-based anatomy tools such as DeepTraCE (for bulk-labeled axons) or DeepCOUNT (for cell bodies) can help verify the underlying anatomy [32].