Breaking the Communication Barrier: Strategies for Maximizing Information Transfer in Non-Invasive BCI Spellers

Abstract

This article provides a comprehensive analysis of strategies for optimizing the Information Transfer Rate (ITR) in non-invasive Brain-Computer Interface (BCI) spellers, a critical technology for restoring communication in patients with severe neuromuscular impairments. Aimed at researchers and biomedical professionals, it explores the fundamental principles of EEG-based spellers, including P300, SSVEP, and Motor Imagery paradigms. The review delves into advanced methodological innovations such as hybrid systems, asynchronous control, and novel decoding algorithms like CNN-fuzzy-attention networks. It further addresses key challenges in signal processing, user-state detection, and visual fatigue, while evaluating performance through rigorous benchmarking of ITR, accuracy, and usability. Finally, the article examines the transformative potential of integrating large language models for predictive text and discusses the clinical translation pathway for these rapidly evolving neurotechnologies.

The Fundamentals of Non-Invasive BCI Spellers: Principles, Paradigms, and Performance Metrics

Troubleshooting Guides and FAQs

P300 Speller Troubleshooting

Problem: Low online classification accuracy.

- Potential Cause 1: Suboptimal stimulus parameters. The color and type of visual stimulus significantly impact the evoked P300 amplitude and performance [1] [2].

- Solution: Implement a "Grey to Color" (GC) stimulus paradigm instead of the traditional "Grey to White" (GW). Research shows GC produces higher amplitude ERPs, leading to better accuracy and Information Transfer Rate (ITR) [2]. Test combinations like a red face with a white rectangle background (RFW), which has outperformed red or blue backgrounds [1].

- Potential Cause 2: Adjacency distraction and double-flash problems. The standard Row-Column Paradigm (RCP) can cause flashes adjacent to the target to distract the user and reduce accuracy [3] [1].

- Solution: Utilize a Checkerboard Paradigm (CBP) or other flash patterns based on binomial coefficients. These paradigms flash groups of characters in a way that minimizes the probability of adjacent flashes, reducing distraction and improving target identification [1].

Problem: User reports visual fatigue or discomfort.

- Potential Cause: High-contrast, repetitive visual stimuli. Prolonged exposure to intense flickering can cause fatigue [4].

- Solution: While maintaining contrast, experiment with different color pairs. Participant preference for a paradigm can also correlate with higher performance, so consider user feedback [2]. Ensure the stimulus frequency is not overly intrusive.

SSVEP Speller Troubleshooting

Problem: A decline in SSVEP amplitude and SNR over time.

- Potential Cause: Visual fatigue from prolonged stimulation. This is a common issue in SSVEP paradigms and can alter EEG patterns, degrading performance [4].

- Solution: Design your speller using stimuli in the beta frequency range (14–22 Hz). Studies indicate the beta band is less susceptible to fatigue-induced variability compared to the alpha range. This can help maintain stable signal quality and classification accuracy throughout the experiment [4].

Problem: Low classification accuracy for a multi-target SSVEP speller.

- Potential Cause: Inadequate frequency resolution or signal processing.

- Solution: Employ advanced classification algorithms like Filter Bank Canonical Correlation Analysis (FBCCA) or Task-Related Component Analysis (TRCA). For deep learning approaches, ensure you have a large, high-quality dataset for training. Using a calibration-based approach with individual template data can significantly boost performance [4].

Motor Imagery (MI) Speller Troubleshooting

Problem: Difficulty in achieving multi-class control for spelling.

- Potential Cause: Limited control commands from MI tasks. Directly mapping multiple MI tasks to many characters is challenging [5].

- Solution: Implement a hierarchical speller paradigm like "Oct-o-Spell." This interface uses a three-layer system where users select from eight blocks in the first layer, which are then unfolded in subsequent layers. A 2D cursor controlled by a combination of two MI tasks (e.g., left hand, right hand, foot) allows for efficient navigation and selection from a large character set [5].

Problem: Low performance in asynchronous BCI control.

- Potential Cause: Instability in maintaining a "no-control" state.

- Solution: For initial experiments, consider a synchronous control protocol. This method removes the need for a brain-actuated switch to start the system, which can improve efficiency and reliability for users still mastering MI control [5].

General BCI Speller Issues

Problem: The system is unsuitable for users with limited or no eye movement (e.g., CLIS patients).

- Potential Cause: Reliance on visual stimulation. Visual BCIs are ineffective if a user cannot fixate on visual stimuli [6].

- Solution: Develop or switch to an auditory BCI speller. These systems present letters as a stream of auditory utterances (e.g., letter pronunciations) through headphones. Users focus on the target letter sound, eliciting a P300 response. Some systems use spatial cues (left/right audio) to add an extra dimension to the classification [6].

Problem: Stagnant ITR despite algorithm improvements.

- Potential Cause: Approaching the bandwidth limit of conventional visual-evoked potentials.

- Solution: Explore novel stimulation methods like a broadband white noise (WN) stimulus. Recent research suggests that using a broader frequency band for stimulation can surpass the performance limits of traditional SSVEP BCIs, potentially achieving record ITRs [7].

Table 1: Performance Comparison of BCI Speller Paradigms

| Paradigm | Stimulus Type | Average Accuracy (%) | Average ITR (bits/min) | Key Advantages | Key Challenges |

|---|---|---|---|---|---|

| P300 (GC Stimulus) [2] | Visual (Grey to Color) | >90% (online) | Significantly higher than GW | Enhanced ERP amplitude, user preference | Visual fatigue, adjacency distraction in RCP |

| SSVEP (Beta Range) [4] | Visual (14-22 Hz) | High (calibration-based) | Not Specified | Lower visual fatigue, high SNR, suitable for multi-target spellers | Requires individual calibration, potential fatigue at lower frequencies |

| Motor Imagery (Oct-o-Spell) [5] | Mental Imagery | >70% (online) | Not Specified | Does not require visual focus, hierarchical menu enables complex control | Requires user training, generally lower ITR than VEP-based systems |

| Auditory (CharStream) [6] | Auditory (Letter Utterances) | 30% (avg) to 100% (max) | 2.38 (avg) to 8.14 (max) | Works without visual focus, intuitive | Lower average accuracy and ITR, requires high concentration |

Table 2: Impact of Color on P300 Speller Performance (Online Accuracy) [1]

| Stimulus Pattern | Description | Average Accuracy (%) |

|---|---|---|

| RFW | Red Face with White Rectangle | 96.94% |

| RFR | Red Face with Red Rectangle | 93.61% |

| RFB | Red Face with Blue Rectangle | 92.22% |

Experimental Protocols & Methodologies

Protocol 1: P300 Speller with Color Modulation

Objective: To evaluate the effect of different color combinations on the performance and evoked potentials of a P300 speller [1].

- Interface: A 6x6 matrix of characters is presented on a screen.

- Stimuli: Different stimulus patterns are tested, such as a red face superimposed on a colored (white, blue, red) rectangular background.

- Flash Pattern: Instead of the standard RCP, a pattern based on binomial coefficients (e.g., C(12,2)) is used to mitigate adjacency distraction. This results in 12 flashes per trial, where two matrix elements are intensified simultaneously [1].

- Task: Participants are instructed to focus on a pre-defined target character and silently count the number of times it flashes.

- EEG Recording & Processing: EEG is recorded from multiple scalp electrodes. Epochs time-locked to the stimulus onset are extracted.

- Classification: A Bayesian Linear Discriminant Analysis (BLDA) classifier is trained to distinguish between target and non-target epochs.

- Output: The system identifies the character that produces the strongest P300 response and types it out.

Protocol 2: SSVEP Speller with Beta-Range Stimulation

Objective: To collect SSVEP data using beta-frequency stimulation to minimize visual fatigue while maintaining high classification accuracy [4].

- Interface: A 5x8 speller matrix (40 classes) is presented. Each character flickers at a unique frequency.

- Stimulus Parameters: Flickering frequencies are set in the beta band (14.0 Hz to 21.8 Hz, incremented by 0.2 Hz) using Joint Frequency and Phase Modulation (JFPM).

- Task (Cue-Based): Each trial begins with a blank screen, followed by a visual cue indicating the target character. Participants focus on the cued character during the 5-second flickering period.

- Data Recording: EEG is recorded from 31 channels covering central-to-occipital regions. Participants complete multiple sessions with breaks to mitigate fatigue.

- Fatigue Assessment: Subjective fatigue ratings and EEG band power analyses (e.g., increases in alpha power) are conducted before and after the experiment to quantify fatigue.

- Classification: Methods like Canonical Correlation Analysis (CCA) or Filter Bank CCA (FBCCA) are used for target identification.
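The frequency layout in this protocol can be written out explicitly. The sketch below generates the 40 flicker frequencies (14.0 Hz to 21.8 Hz in 0.2 Hz steps) and renders one target as a per-frame luminance sequence. The 0.5π phase increment, the sampled-sinusoid rendering, and the 120 Hz refresh rate are assumptions made for illustration. The protocol above fixes only the frequency range and step, and the function names are hypothetical.

```python
import numpy as np

def jfpm_targets(n_targets=40, f0=14.0, df=0.2, phase_step=0.5 * np.pi):
    """Return (frequencies in Hz, phases in rad) for a JFPM-style layout.

    phase_step is an assumed value; the protocol only specifies f0 and df.
    """
    k = np.arange(n_targets)
    freqs = np.round(f0 + df * k, 10)            # 14.0, 14.2, ..., 21.8
    phases = np.mod(phase_step * k, 2 * np.pi)   # wrap phases into [0, 2*pi)
    return freqs, phases

def luminance_sequence(freq, phase, duration_s=5.0, refresh_hz=120):
    """Per-frame luminance in [0, 1] for one flickering target (sampled sinusoid)."""
    frames = np.arange(int(round(duration_s * refresh_hz)))
    return 0.5 * (1.0 + np.sin(2 * np.pi * freq * frames / refresh_hz + phase))

freqs, phases = jfpm_targets()
stim = luminance_sequence(freqs[0], phases[0])   # 5 s of frames for the first target
```

Each row of the 5x8 matrix would then draw its luminance from the sequence of the corresponding target on every screen refresh.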

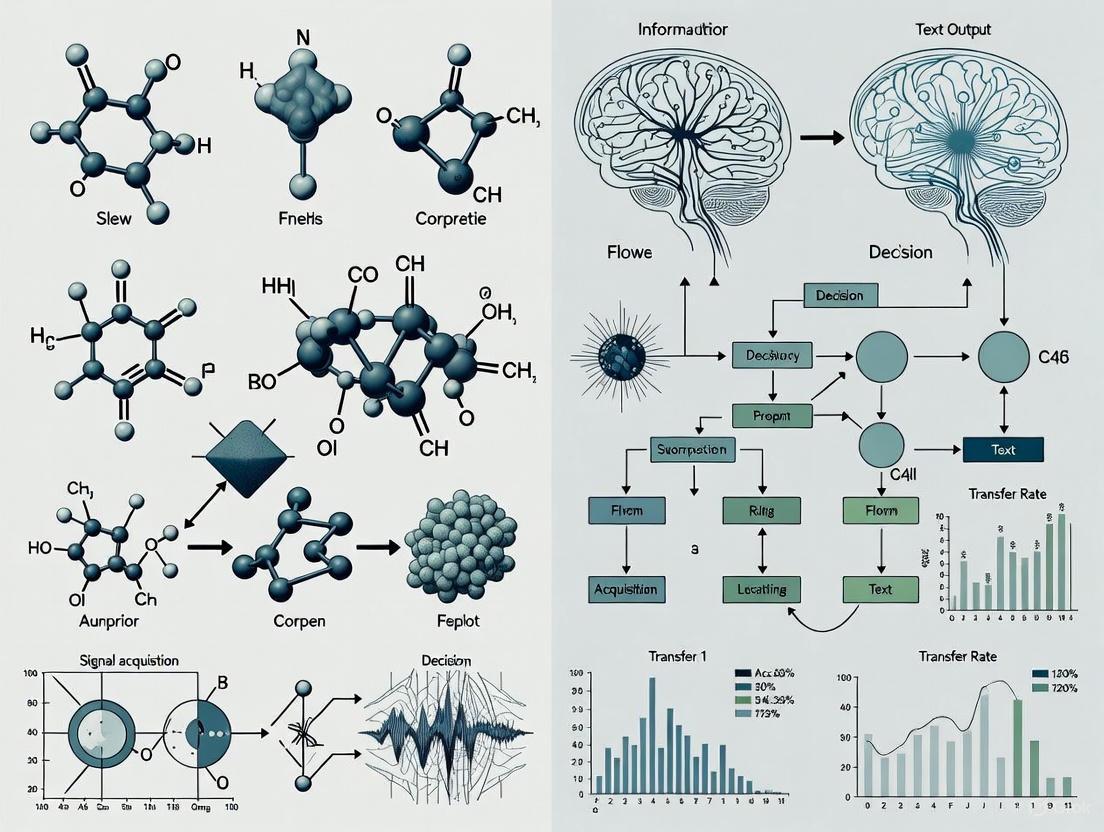

Experimental Workflow and Signaling Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for BCI Speller Research

| Item / Tool | Function / Description | Example from Literature |

|---|---|---|

| EEG Acquisition System | Records electrical brain activity from the scalp. | BioSemi ActiveTwo system with 31 Ag-AgCl wet electrodes [4]. Brain Products system with 16 active electrodes [6]. |

| Visual Stimulation Software | Presents the speller GUI and controls stimulus timing and properties. | MATLAB with Psychophysics Toolbox (PTB-3) [4]. "Qt Creater" software [1]. OpenViBE [6]. |

| Stimulus Presentation Hardware | Displays the visual stimuli to the user. | Standard LCD/LED monitors with high refresh rates (e.g., 120 Hz) for precise SSVEP frequency control [4]. |

| Auditory Stimulation Hardware | Presents auditory stimuli to the user. | Stereo headphones for delivering letter utterances and creating spatial cues [6]. |

| Classification Algorithms | Translates EEG features into device commands. | BLDA: For P300 classification [1]. CCA/FBCCA: For SSVEP frequency recognition [4]. CNN (e.g., EEGNet): For single-trial classification in auditory spellers [6]. SVM with CSP: For Motor Imagery task discrimination [5]. |

| Spatial Filtering | Enhances the signal-to-noise ratio of EEG signals. | Common Spatial Patterns (CSP): Used for Motor Imagery paradigms to maximize the variance between two classes of brain activity [5]. |

Technical Support Center

Troubleshooting Guides & FAQs

P300 Speller

- Q: Why is my P300 speller classification accuracy low?

- A: Low accuracy can stem from multiple sources. Please consult the following troubleshooting table.

| Issue | Possible Cause | Solution |

|---|---|---|

| Low Signal-to-Noise Ratio (SNR) | Excessive eye movements/blinks, muscle artifacts, poor electrode contact. | Instruct the user to minimize blinking during stimulus presentation. Ensure electrode impedances are below 5 kΩ. Apply artifact rejection algorithms (e.g., thresholding). |

| Weak P300 Amplitude | User fatigue/lack of attention, inappropriate stimulus parameters. | Keep session durations short. Use a salient stimulus and an inter-stimulus interval (ISI) of 150-250 ms. Ensure the user is actively counting the target stimuli. |

| Poor Classifier Performance | Insufficient training data, non-representative training data. | Increase the number of sequences per character during training (e.g., 10-15). Ensure the training set includes a balanced number of targets and non-targets. |

- Q: What is the optimal number of sequences for a balance between speed and accuracy?

- A: The optimal number is a trade-off. The following table summarizes the typical relationship based on recent studies aiming to optimize ITR.

| Number of Sequences | Expected Accuracy (%) | Approximate ITR (bits/min) | Use Case |

|---|---|---|---|

| 5-8 | 70-85 | 15-25 | High-Speed Speller (lower accuracy) |

| 10-15 | 85-98 | 20-35 | Balanced Speller |

| >15 | >98 | <20 | High-Accuracy Speller (e.g., clinical use) |

Experimental Protocol: P300 Speller Calibration

- Setup: Apply a 16-32 channel EEG cap, focusing on Cz, Pz, P3, P4, PO7, PO8, O1, O2. Impedances should be <5 kΩ.

- Stimulus Presentation: Use a 6x6 matrix of characters. The stimulus is a row/column intensification for 100 ms with an ISI of 150-250 ms.

- Task: Instruct the user to silently count the number of times their target character is intensified.

- Data Collection: Record EEG data while presenting 10-15 sequences of intensifications (each row and column flashes once per sequence).

- Training: Extract epochs from -100 ms to 600 ms around each flash. Apply a bandpass filter (0.1-20 Hz). Use a stepwise linear discriminant analysis (SWLDA) or support vector machine (SVM) classifier to build a model distinguishing target from non-target flashes.
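The filtering and epoching in the training step can be sketched as follows, assuming continuous EEG stored as a `(n_channels, n_samples)` NumPy array and flash onsets given as sample indices. The 4th-order zero-phase Butterworth filter and the pre-stimulus baseline correction are illustrative choices that the protocol does not specify, and the function names are hypothetical.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def bandpass(eeg, fs, low=0.1, high=20.0, order=4):
    """Zero-phase Butterworth band-pass; eeg is (n_channels, n_samples)."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, eeg, axis=-1)

def extract_epochs(eeg, onsets, fs, tmin=-0.1, tmax=0.6):
    """Cut (n_epochs, n_channels, n_times) windows around flash onsets."""
    start, stop = int(round(tmin * fs)), int(round(tmax * fs))
    if start >= 0:
        raise ValueError("tmin must be negative so a baseline interval exists")
    # Drop onsets whose window would run past either end of the recording
    keep = [o for o in onsets if o + start >= 0 and o + stop <= eeg.shape[1]]
    epochs = np.stack([eeg[:, o + start:o + stop] for o in keep])
    # Assumed step: subtract the mean of the pre-stimulus interval per channel
    baseline = epochs[:, :, :-start].mean(axis=-1, keepdims=True)
    return epochs - baseline

# Synthetic demo: 8 channels, 10 s at 250 Hz, three flash onsets (last one too late)
rng = np.random.default_rng(0)
fs = 250
raw = rng.standard_normal((8, 10 * fs))
epochs = extract_epochs(bandpass(raw, fs), onsets=[100, 500, 2490], fs=fs)
```

The resulting epochs, labelled as target or non-target, would then be flattened or downsampled into feature vectors for the SWLDA or SVM classifier.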

SSVEP Speller

Q: My SSVEP signal is weak at higher frequencies (>20 Hz). What can I do?

- A: The SSVEP response naturally attenuates at higher frequencies. Ensure your monitor has a high refresh rate (≥120 Hz) to render these frequencies effectively. Use a canonical correlation analysis (CCA)-based classifier, which is more robust for detecting weak SSVEPs compared to simple power spectral density analysis. Also, consider using reference signals at the fundamental and harmonic frequencies.

Q: How do I minimize visual fatigue in an SSVEP speller?

- A: Use a small set of higher-frequency stimuli (e.g., 12 Hz, 15 Hz, 20 Hz), which are less perceptually intrusive than low-frequency flicker. Implement a "gaze-shifting" paradigm where stimuli are grouped, reducing the need for large eye movements. Avoid overly bright stimuli, and avoid presenting flicker in a dark environment.

Research Reagent Solutions for SSVEP

| Item | Function |

|---|---|

| High Refresh Rate Monitor (≥120 Hz) | Accurately renders the rapid visual stimuli necessary for SSVEP. |

| Light-Emitting Diode (LED) Panels | Provides a brighter, more precise, and higher frequency flicker than monitors. |

| Canonical Correlation Analysis (CCA) | A multivariate statistical method that is the standard for robust SSVEP frequency detection. |

| Filter Bank CCA (FBCCA) | Enhances CCA by decomposing the EEG into sub-bands, improving performance for high-frequency SSVEP. |

Experimental Protocol: SSVEP Speller Calibration

- Setup: Apply EEG cap with electrodes over the visual cortex (POz, Oz, O1, O2, PO3, PO4, PO7, PO8). Ground at AFz, reference at Cz.

- Stimulus Presentation: Present at least 4 distinct visual stimuli (e.g., boxes on a screen) flickering at different frequencies (e.g., 8 Hz, 10 Hz, 12 Hz, 15 Hz).

- Task: Instruct the user to focus their gaze on one of the flickering targets for a 5-second trial.

- Data Collection: Record EEG data for the duration of each trial.

- Training: For each trial, apply a bandpass filter (e.g., 5-45 Hz). Extract signal segments. Use CCA or FBCCA to compute the correlation between the EEG data and reference signals (sine-cosine waves at the stimulus fundamental and harmonic frequencies). The frequency with the highest correlation coefficient is the target.
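The CCA step described above can be sketched with NumPy alone. The example builds sine-cosine references at the fundamental and harmonics of each candidate frequency, computes the largest canonical correlation with the EEG segment, and picks the best-scoring frequency. The QR-plus-SVD route to the canonical correlation, the choice of three harmonics, and the function names are assumptions made for illustration.

```python
import numpy as np

def reference_signals(freq, fs, n_samples, n_harmonics=3):
    """Sine-cosine references, shape (2 * n_harmonics, n_samples)."""
    t = np.arange(n_samples) / fs
    refs = []
    for h in range(1, n_harmonics + 1):
        refs.append(np.sin(2 * np.pi * h * freq * t))
        refs.append(np.cos(2 * np.pi * h * freq * t))
    return np.array(refs)

def max_canonical_corr(X, Y):
    """Largest canonical correlation between X (n_x, n) and Y (n_y, n)."""
    Xc = (X - X.mean(axis=1, keepdims=True)).T
    Yc = (Y - Y.mean(axis=1, keepdims=True)).T
    Qx, _ = np.linalg.qr(Xc)   # orthonormal basis of the EEG column space
    Qy, _ = np.linalg.qr(Yc)   # orthonormal basis of the reference column space
    s = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return float(np.clip(s[0], 0.0, 1.0))

def classify_ssvep(eeg, fs, candidate_freqs, n_harmonics=3):
    """Return (detected frequency, per-candidate correlation scores)."""
    scores = [
        max_canonical_corr(eeg, reference_signals(f, fs, eeg.shape[1], n_harmonics))
        for f in candidate_freqs
    ]
    return candidate_freqs[int(np.argmax(scores))], scores

# Synthetic demo: 8 channels, 5 s at 250 Hz, a 12 Hz response buried in noise
rng = np.random.default_rng(0)
fs, n_samples = 250, 1250
t = np.arange(n_samples) / fs
eeg = np.sin(2 * np.pi * 12.0 * t + 0.3) + rng.standard_normal((8, n_samples))
detected, scores = classify_ssvep(eeg, fs, [8.0, 10.0, 12.0, 15.0])
```

FBCCA would apply the same scoring to several band-passed copies of the segment and combine the squared correlations with sub-band weights.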

Motor Imagery (MI) Speller

Q: How can I improve the differentiation between left and right hand MI?

- A: Focus on the sensorimotor rhythms (8-30 Hz) over the contralateral motor cortex. Use Common Spatial Patterns (CSP) for feature extraction, as it maximizes the variance for one class while minimizing it for the other. Ensure users are performing kinesthetic motor imagery (feeling the movement) rather than visual motor imagery (seeing the movement).

Q: Why is the performance of my MI speller unstable across sessions?

- A: MI is highly susceptible to the user's psychological state and requires significant user training. Implement a session-to-session transfer learning framework. Calibrate the classifier with a small amount of new data from each session and use it to adapt a pre-existing model. This reduces calibration time and improves stability.

Experimental Protocol: MI Speller Calibration (Left vs. Right Hand)

- Setup: Apply EEG cap focusing on C3, Cz, C4, and surrounding electrodes (FC1, FC2, CP1, CP2). Use the international 10-20 system.

- Task: Present a visual cue (e.g., arrow pointing left or right). Instruct the user to imagine kinesthetically opening and closing the corresponding hand until the cue disappears (e.g., 4 seconds). This should be followed by a rest period.

- Data Collection: Record multiple trials (e.g., 60-100 per class) in a randomized order.

- Training: Apply a bandpass filter (8-30 Hz) to capture mu and beta rhythms. Extract epochs corresponding to the cue period. Use the CSP algorithm to find spatial filters that best discriminate the two classes. Then, log-transform the variances of the filtered signals to create features. Train a linear discriminant analysis (LDA) or SVM classifier.
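The CSP, log-variance, and LDA chain in the training step can be sketched as below, assuming the epochs are already band-passed to 8-30 Hz and stored as `(n_trials, n_channels, n_samples)` arrays. The trace-normalized covariances, the normalized log-variance features, and the hand-written two-class LDA are common variants chosen for illustration, not necessarily what any cited study used. All function names are hypothetical.

```python
import numpy as np
from scipy.linalg import eigh

def fit_csp(epochs_a, epochs_b, n_pairs=2):
    """Return CSP spatial filters W with shape (2 * n_pairs, n_channels)."""
    def mean_cov(epochs):
        covs = []
        for e in epochs:
            c = e @ e.T
            covs.append(c / np.trace(c))   # trace-normalize each trial covariance
        return np.mean(covs, axis=0)
    Ca, Cb = mean_cov(epochs_a), mean_cov(epochs_b)
    # Generalized eigenproblem Ca w = lambda (Ca + Cb) w, eigenvalues ascending
    _, vecs = eigh(Ca, Ca + Cb)
    picks = np.r_[np.arange(n_pairs), np.arange(-n_pairs, 0)]  # both extremes
    return vecs[:, picks].T

def csp_features(W, epochs):
    """Log of the normalized variance of each spatially filtered signal."""
    filtered = np.einsum("fc,tcs->tfs", W, epochs)
    var = filtered.var(axis=-1)
    return np.log(var / var.sum(axis=1, keepdims=True))

def fit_lda(Fa, Fb):
    """Two-class LDA with pooled scatter; score > 0 means class B."""
    mu_a, mu_b = Fa.mean(axis=0), Fb.mean(axis=0)
    Sw = np.cov(Fa, rowvar=False) + np.cov(Fb, rowvar=False)
    w = np.linalg.solve(Sw, mu_b - mu_a)
    b = -w @ (mu_a + mu_b) / 2
    return w, b

# Synthetic demo: class A has extra variance on channel 0, class B on channel 3
rng = np.random.default_rng(1)
def make_class(channel):
    x = rng.standard_normal((40, 4, 500))
    x[:, channel, :] *= 3.0
    return x
A, B = make_class(0), make_class(3)
W = fit_csp(A[:30], B[:30], n_pairs=1)
w, b = fit_lda(csp_features(W, A[:30]), csp_features(W, B[:30]))
score_a = csp_features(W, A[30:]) @ w + b   # held-out class A trials
score_b = csp_features(W, B[30:]) @ w + b   # held-out class B trials
accuracy = (np.sum(score_a < 0) + np.sum(score_b > 0)) / 20
```

With real recordings the filters and classifier should be fitted on calibration trials only and evaluated on held-out trials, as in the demo split.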

The Critical Role of Information Transfer Rate (ITR) as a Key Performance Indicator

In non-invasive Brain-Computer Interface (BCI) spellers, the Information Transfer Rate (ITR) serves as the crucial benchmark for quantifying system performance and communication efficiency. Measured in bits per minute (bpm) or bits per trial, ITR represents the effective speed at which a user can accurately transmit information through the BCI system [8]. For researchers developing BCI spellers for augmentative and alternative communication (AAC), optimizing ITR is paramount, as it directly determines whether a system transitions from a laboratory prototype to a clinically viable communication tool for individuals with severe speech and physical impairments (SSPI) [9].

A non-invasive BCI speller is a complex, closed-loop system where performance depends on the intricate relationship between user capabilities and technical parameters. The ITR encapsulates this relationship, functioning as a composite metric influenced by classification accuracy, the number of selectable targets, and the speed of selection [8]. Understanding and troubleshooting the factors that govern ITR is therefore fundamental to advancing the field of BCI research.

FAQs: Understanding ITR in BCI Spellers

What is ITR and why is it the most important metric for BCI spellers? ITR is a measure of how much information is communicated reliably per unit of time. It is calculated based on the number of possible commands (classes), the probability of correctly selecting a command (accuracy), and the time taken to make a selection (trial length) [8]. For a BCI speller, a high ITR means a user can spell words and sentences faster and with fewer errors, which is the ultimate goal of a communicative aid. While raw accuracy is important, a system with 95% accuracy that takes 5 seconds per character is inferior to a system with 85% accuracy that takes 1 second per character, as the latter will have a higher ITR and provide more efficient communication.
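The comparison above can be checked numerically. The sketch below assumes the widely used Wolpaw definition of ITR (equiprobable targets, errors spread uniformly over the remaining classes), which the text does not name explicitly. The 36-class speller is also an assumption, chosen to match a 6x6 matrix. Returning zero at or below chance level is a convention, not part of the formula.

```python
import math

def bits_per_selection(n_classes, accuracy):
    """Wolpaw bits per selection for an n_classes speller at the given accuracy."""
    if n_classes < 2 or not 0.0 <= accuracy <= 1.0:
        raise ValueError("need n_classes >= 2 and accuracy in [0, 1]")
    if accuracy <= 1.0 / n_classes:
        return 0.0  # convention: no information at or below chance
    bits = math.log2(n_classes)
    if accuracy < 1.0:
        bits += accuracy * math.log2(accuracy)
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_classes - 1))
    return bits

def itr_bits_per_min(n_classes, accuracy, seconds_per_selection):
    """ITR in bits/min: bits per selection times selections per minute."""
    return bits_per_selection(n_classes, accuracy) * 60.0 / seconds_per_selection

# The two systems from the paragraph above, both assumed to offer 36 targets
slow_accurate = itr_bits_per_min(36, 0.95, 5.0)
fast_less_accurate = itr_bits_per_min(36, 0.85, 1.0)
print(f"95% at 5 s: {slow_accurate:.1f} bits/min")
print(f"85% at 1 s: {fast_less_accurate:.1f} bits/min")
```

Under these assumptions the faster system comes out well ahead, because the fivefold gain in selections per minute outweighs the bits lost to the lower accuracy.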

Our system achieves high offline accuracy, but the real-time ITR is low. What could be wrong? This common issue often points to a problem with system latency or the feedback loop. High offline accuracy confirms that the core signal processing and classification algorithms are sound. However, in real-time operation, delays can be introduced from multiple sources:

- Data Acquisition and Processing Delays: The time taken to acquire an EEG data block, process it, and complete the classification must be shorter than the physical duration of the data block itself to maintain real-time operation [8] [10].

- Stimulation Failure Rate (SFR): In systems relying on neurostimulation to close the loop, a high SFR can drastically reduce the effective ITR, even with high classifier accuracy [8].

- Inefficient Paradigm Design: The design of the speller interface itself (e.g., flash duration, inter-stimulus interval) may not be optimized for speed, creating a bottleneck [8].
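The real-time constraint in the first bullet can be checked with a simple timing harness. The sketch below computes the duration of one data block from a block size and sampling rate, then compares it with the worst measured processing time. The `process_block` callable stands in for your own pipeline, and the block size of 32 samples at 256 Hz is an arbitrary example. None of this is part of any BCI platform's API.

```python
import time

def block_duration_s(sample_block_size, sampling_rate_hz):
    """Physical duration of one data block in seconds."""
    return sample_block_size / sampling_rate_hz

def meets_realtime(process_block, block, sample_block_size, sampling_rate_hz, n_runs=50):
    """Time process_block(block) and compare the worst case with the block duration.

    Returns (ok, worst_seconds, budget_seconds).
    """
    worst = 0.0
    for _ in range(n_runs):
        t0 = time.perf_counter()
        process_block(block)
        worst = max(worst, time.perf_counter() - t0)
    budget = block_duration_s(sample_block_size, sampling_rate_hz)
    return worst < budget, worst, budget

# Hypothetical pipeline stage: a trivial reduction over one 32-sample block
example_block = [0.0] * 32
ok, worst, budget = meets_realtime(sum, example_block, 32, 256)
print(f"budget {budget * 1000:.1f} ms, worst case {worst * 1000:.3f} ms, ok={ok}")
```

Using the worst case rather than the mean is deliberate: a single overrun is enough to delay feedback or drop a block in online operation.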

What are realistic ITR values we should aim for with non-invasive spellers? The field is rapidly evolving, but here is a summary of reported performances:

- Historical Benchmark: For years, a high ITR for a motor imagery-based BCI was around 35 bits/min [8].

- Current Standard: Recent advancements have pushed typical non-invasive BCI ITRs up to approximately 100 bits/min [8].

- State-of-the-Art: Some research groups have reported ITRs as high as 302 bits/min using optimized paradigms and algorithms [8]. It is critical to contextualize these values; a "respectable" value can also be considered as 1 bit/trial, independent of trial length, which allows for a more standardized comparison across different experimental paradigms [8].

Can a user's visual skills really impact BCI speller ITR? Yes, significantly. Most non-invasive BCI spellers (P300, VEP) rely on the user's ability to see, focus on, and distinguish between visual stimuli. This is a principle of "garbage in, garbage out" [9]. If a user has impaired visual skills (e.g., due to concomitant conditions in SSPI), they may not produce the expected brain signals, leading to poor classification and low ITR. What might be misdiagnosed as "BCI illiteracy" could in fact be a failure of the interface design to accommodate the user's visual capabilities [9]. Techniques like overt attention (looking directly at the target) have been shown to yield significantly better spelling performance than covert attention (looking away) for P300 spellers [9].

ITR Troubleshooting Guide

Low ITR and Accuracy

| Symptom | Possible Cause | Solution |

|---|---|---|

| Consistently low classification accuracy and ITR across users. | Suboptimal BCI parameters (window length, update rate) [8]. | Re-calibrate the BCI system for each user. Use self-calibrating BCIs that automate feature optimization to bypass time-intensive sessions [8]. |

| | Poor signal-to-noise ratio in EEG data [10]. | Check electrode connections and skin impedance. Ensure the ground and reference electrodes are properly attached. For Cyton boards, adjust the gain settings to a lower value (e.g., 8x, 12x) if a 'RAILED' error appears [10]. |

| High offline accuracy, but low online ITR. | High system latency [8]. | Profile your system to identify bottlenecks in data acquisition, processing, or stimulus presentation. Ensure the SampleBlockSize and SamplingRate parameters in BCI2000 are set appropriately to meet real-time constraints [11]. |

| | Inefficient stimulus presentation paradigm. | Optimize flash durations and inter-stimulus intervals. Consider using alternative stimuli like "face spellers," which have been shown to improve performance over traditional flashing letters [9]. |

System Latency and Timing Issues

| Symptom | Possible Cause | Solution |

|---|---|---|

| Unstable operation and dropped data packets. | Packet loss in the data stream [10]. | For RF systems (Cyton), use a USB extension cable to bring the dongle and board closer together. Use the 'AutoScan' or 'Change Channel' manual radio configuration to find a cleaner frequency [10]. |

| | Computer performance or resource contention. | Close unnecessary applications. Check the system console log for warnings or errors (e.g., in the OpenBCI GUI or BCI2000 Operator) [10]. |

| Inconsistent timing between stimulus and brain response. | Improper configuration of timing states. | Verify that state variables like StimulusTime and SourceTime are being recorded correctly in your data file to measure and correct for system timing issues [11]. |

| | Jitter in the visual presentation. | Use a high-performance graphics display and ensure your stimulus presentation code is optimized for precise timing, potentially using dedicated hardware synchronization. |

Quantitative Data for ITR Optimization

The following table summarizes key parameter relationships and their impact on ITR, based on simulation studies for non-invasive brain-to-brain interfaces (BBI), which share core components with BCI spellers [8].

Table 1: Key Parameter Effects on Information Transfer Rate

| Parameter | Optimal Value / Range | Impact on ITR |

|---|---|---|

| System Latency | ≤ 100 ms | Critical. Latency should be minimized. With optimal latency, the system can maintain near-maximum efficiency even with a 25% stimulation failure rate [8]. |

| Timeout Threshold | ≤ 2x System Latency | Should be set in relation to latency. A threshold longer than twice the latency value degrades ITR [8]. |

| Stimulation Failure Rate (SFR) | < 25% | Tolerable if latency and timeout are optimal. A high SFR can be compensated for by maximizing the number of trials per minute [8]. |

| Classifier Accuracy | Maximize | Direct relationship. Higher accuracy increases the ITR [8]. |

| Window Update Rate | Match to CBI parameters | The BCI's update rate should be reflected in the CBI's system latency and timeout threshold to maximize trial count [8]. |

| Number of Classes | Optimize for user | Increasing classes can raise the bits/trial ceiling, but may reduce accuracy. An optimal balance must be found for each user [8]. |

Experimental Protocols for ITR Optimization

Protocol: Calibrating for Maximum ITR

Objective: To determine the set of parameters that yields the highest possible ITR for an individual user.

Materials: EEG system (e.g., with BCI2000 or OpenBCI software), visual speller interface, subject chair.

Methodology:

- Subject Preparation: Apply EEG cap according to the International 10-20 system. Ensure impedances are below 5-10 kΩ for clean signal acquisition [8] [10].

- Initial Parameter Set: Begin with standard parameters for your paradigm (e.g., for a P300 speller: a 6x6 matrix, 62.5ms flash duration, 125ms inter-stimulus interval).

- Calibration Session: Run a copy-spelling task where the user is prompted to spell a predefined phrase.

- Iterative Optimization:

- Step 1: Adjust WindowLength and UpdateRate in the signal processing module to find the shortest possible window that maintains acceptable accuracy.

- Step 2: Based on the user's performance and the system's measured latency, adjust the TimeoutThreshold to be no more than twice the latency value [8].

- Step 3: If the paradigm allows, experiment with the number of sequences per trial to balance speed and accuracy.

- Validation: Run a new copy-spelling task with the optimized parameters and calculate the final ITR.

The workflow for this calibration and optimization process is outlined below.

Protocol: Assessing the Impact of Visual Acuity

Objective: To evaluate if a user's visual skills are a limiting factor in BCI speller ITR.

Materials: Standard BCI speller setup, eye-tracking system (optional), visual acuity chart.

Methodology:

- Baseline Visual Assessment: Conduct a basic test of visual acuity, contrast sensitivity, and ocular motility [9].

- Overt vs. Covert Attention Task: Have the user perform a spelling task under two conditions: a) looking directly at the target letter (overt attention), and b) looking at a fixation cross while attending to the target peripherally (covert attention) [9].

- Data Analysis: Compare the ITR and accuracy between the two conditions. A significantly lower performance in the covert condition may indicate a heavy reliance on foveal vision.

- Interface Adaptation: If visual deficits are suspected or identified, modify the interface. This can include increasing stimulus size, using high-contrast colors (e.g., following WCAG enhanced contrast guidelines of 7:1 for standard text [12]), reducing visual clutter, or exploring auditory BCI paradigms.
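The 7:1 contrast target mentioned in the last step can be checked for any stimulus and background color pair. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio for 8-bit sRGB colors. The function names and the example colors are illustrative.

```python
def _linearize(channel_8bit):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 8-bit channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(rgb_a, rgb_b):
    """WCAG contrast ratio, from 1:1 (identical) up to 21:1 (black vs. white)."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)

def meets_enhanced_contrast(rgb_a, rgb_b, threshold=7.0):
    """True if the pair reaches the enhanced 7:1 ratio for standard text."""
    return contrast_ratio(rgb_a, rgb_b) >= threshold

white_on_black = contrast_ratio((255, 255, 255), (0, 0, 0))
grey_on_black_ok = meets_enhanced_contrast((128, 128, 128), (0, 0, 0))
```

White on black reaches the maximum ratio, while a mid-grey character on a black background falls short of 7:1, which is relevant for spellers whose non-intensified characters are drawn in grey.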

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Non-Invasive BCI Speller Laboratory

| Item | Function in Research |

|---|---|

| Dry-Electrode EEG Headset | Provides a low-cost, consumer-grade option for EEG acquisition with sufficient spatial resolution for BCIs. Ideal for rapid prototyping and user studies [8]. |

| BCI2000 Software Platform | A general-purpose, open-source software platform for BCI research. It handles data acquisition, signal processing, and application presentation, and stores all parameters and states in a standardized data file [13] [11]. |

| International 10-20 System Cap | Standardizes EEG electrode positions relative to skull landmarks (nasion, inion), ensuring consistent and reproducible placement across subjects and sessions [8]. |

| Transcranial Focused Ultrasound (TFUS) | A non-invasive neuromodulation technique that can be used as a computer-brain interface (CBI) to close the loop in a brain-to-brain interface (BBI) system with lower power and space requirements than TMS [8]. |

| P300 Speller Matrix | A classic visual ERP paradigm where a matrix of characters flashes to elicit P300 event-related potentials. It is a standard benchmark for testing AAC-BCI systems [13] [9]. |

| OpenBCI Cyton/Ganglion Board | Open-source, versatile biosensing platforms that allow researchers to acquire high-quality EEG data. The GUI provides troubleshooting tools like the Console Log for debugging connectivity and data issues [10]. |

The relationships between these core components and the key performance metrics in a BCI speller system are illustrated in the following diagram.

Advantages and Inherent Limitations of Non-Invasive EEG vs. Invasive Approaches

For researchers focused on optimizing Information Transfer Rates (ITR) in non-invasive BCI spellers, the choice between invasive and non-invasive neural recording methods is foundational. Non-invasive Electroencephalography (EEG), which records brain activity from the scalp, and invasive approaches, such as Electrocorticography (ECoG) which requires surgical implantation of electrodes on the brain surface, present a fundamental trade-off between accessibility and signal fidelity [14] [15] [16]. This technical support article details the core characteristics, advantages, and limitations of each methodology to inform experimental design and troubleshooting in BCI speller research.

FAQ: Fundamental Comparisons

Q1: What are the primary technical differences between non-invasive EEG and invasive ECoG?

The core differences lie in the proximity to the neural source and the consequent impact on signal quality.

- Non-Invasive EEG measures electrical activity from the scalp surface. The signals pass through and are filtered by the cerebrospinal fluid, skull, and scalp, which act as a resistive barrier. This results in significant signal attenuation and spatial blurring [14] [16].

- Invasive ECoG records signals directly from the cortical surface. This proximity provides a much clearer and stronger signal, with higher spatial resolution and minimal contamination from non-cerebral artifacts like muscle activity [15] [16].

Table 1: Technical Comparison of Non-Invasive and Invasive BCI Approaches

| Feature | Non-Invasive EEG | Invasive ECoG |
|---|---|---|
| Spatial Resolution | Low (cm-range) [15] | High (mm-range) [15] |
| Temporal Resolution | High (Millisecond-level) [15] | High (Millisecond-level) [15] |
| Signal Strength | Very weak (microvolts), attenuated by skull [17] [16] | Strong (millivolts), direct neural access [16] |
| Typical ITR Range (for spellers) | P300: ~20-80 bits/min; SSVEP: ~30-300 bits/min [18] | Generally higher than non-invasive, but study-dependent |
| Artifact Vulnerability | High (sensitive to ocular, muscle, and environmental noise) [17] [15] | Low (largely immune to non-cerebral artifacts) [16] |
| Risk & Ethical Hurdles | Low (safe, no surgery) [15] | High (surgical risks of infection, tissue response) [15] |

Q2: What are the key advantages of non-invasive EEG for BCI speller research?

Despite its signal quality limitations, non-invasive EEG offers critical advantages that make it the predominant platform for BCI speller development:

- Safety and Accessibility: It is a completely safe method that does not require brain surgery, eliminating surgical risks like infection or tissue scarring [15] [16]. This makes it suitable for a wide participant pool, including healthy volunteers and patients without a clinical need for implantation.

- Cost-Effectiveness and Portability: EEG equipment is relatively inexpensive, highly portable, and easy to set up compared to ECoG systems or other neuroimaging tools like fMRI [19] [15]. This enables research outside controlled laboratory settings.

- High Temporal Resolution: EEG excels at capturing rapid changes in brain activity on the order of milliseconds, which is crucial for decoding fast cognitive processes like the P300 response used in many spellers [20] [15].

Q3: What inherent limitations of non-invasive EEG most directly impact speller ITR?

The following limitations are the primary bottlenecks for achieving high ITR in non-invasive BCI spellers:

- Poor Spatial Resolution: The skull scatters and blurs electrical signals, making it difficult to localize the precise origin of brain activity. This limits the number of distinct, reliably decodable commands, directly capping the potential ITR [14] [15].

- Low Signal-to-Noise Ratio (SNR): The brain signals of interest are very weak (in the microvolt range) and are easily contaminated by much stronger physiological artifacts (e.g., from eye movements, blinking, and muscle activity) and environmental noise [17] [21]. A low SNR requires more trials for reliable averaging, slowing down communication speed.

- Susceptibility to Artifacts: As noted, artifacts are a major challenge. Unlike ECoG, EEG is highly susceptible to non-cerebral signals. Effective artifact removal is a complex and critical preprocessing step, with all algorithms presenting trade-offs between noise removal and preservation of neural data [17].

Troubleshooting Guides

Problem: Low Signal-to-Noise Ratio (SNR) in EEG Recordings

A low SNR manifests as an inability to reliably detect the neural features (e.g., P300, SSVEP) that drive the speller, leading to high classification error and low ITR.

Recommended Solutions:

- Advanced Artifact Removal: Implement and test modern artifact removal algorithms.

- Deep Learning Models: Use state-of-the-art models like CLEnet, which integrates dual-scale CNN and LSTM with an attention mechanism. This model is designed to extract morphological and temporal features to separate EEG from artifacts effectively, even in multi-channel data with unknown noise sources [21].

- Blind Source Separation (BSS): Apply Independent Component Analysis (ICA) or Canonical Correlation Analysis (CCA) to separate artifact components from neural signals. Note: These methods often require manual inspection and sufficient prior knowledge for component rejection [21].

- Spatial Filtering: Apply Laplacian filtering or Common Average Reference (CAR) to enhance the local component of the signal and reduce widespread noise [18].

- Protocol Design: Minimize artifact generation at the source. Instruct participants to minimize blinks and body movements during critical trial periods. Use comfortable seating and a relaxed environment.
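As an illustration of the spatial-filtering step listed above, the following is a minimal sketch of a Common Average Reference (CAR). The data layout (a list of time points, each holding one value per channel) and the function name are assumptions made for this example. A real pipeline would typically operate on arrays from an EEG toolbox instead.

```python
from statistics import fmean

def common_average_reference(samples):
    """Apply a Common Average Reference (CAR) to multi-channel EEG.

    `samples` is a list of time points; each time point is a list with one
    value per channel (hypothetical layout chosen for this sketch).
    """
    referenced = []
    for time_point in samples:
        # The mean across channels at this instant approximates widespread noise.
        avg = fmean(time_point)
        # Subtracting it leaves the component that is local to each channel.
        referenced.append([value - avg for value in time_point])
    return referenced

# Toy data: two time points, three channels, values in microvolts.
raw = [[11.0, 9.0, 10.0], [12.0, 10.0, 8.0]]
car = common_average_reference(raw)
```

After CAR, the channel values at every time point sum to zero. Because CAR removes whatever all electrodes share, a single noisy electrode can leak into every channel, so bad channels are usually excluded before the average is computed.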

Problem: Low Information Transfer Rate (ITR) in Speller

The spelling speed is unacceptably slow, failing to meet practical communication needs.

Recommended Solutions:

- Paradigm Optimization:

- For P300 Spellers, reduce the number of stimulus flashes per character selection, as this directly speeds up the process (e.g., from 12 flashes to 9 or 7), but be aware of the trade-off with accuracy [19].

- Implement predictive text systems (like the T9 system used in mobile phones) to reduce the number of characters that need to be selected [19].

- Explore Hybrid BCIs that combine the strengths of multiple paradigms (e.g., P300 + SSVEP) to improve accuracy and speed [19].

- Personalized Classification: The features extracted for BCI control are often individualized. Ensure your classification model is calibrated to the specific user's brain signals for more accurate and efficient translation into commands [18].

- Channel Selection: Use feature dimensionality reduction methods to identify and use the minimal number of EEG channels required for accurate classification, which can simplify setup and improve processing efficiency [20].
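The speed side of the flash-count trade-off mentioned above can be estimated with simple arithmetic. The sketch below is illustrative only: the 100 ms flash duration, 100 ms inter-stimulus interval, and 10 sequences per character are assumed values, and the function names are hypothetical. It does not model the accuracy loss that usually accompanies fewer flashes.

```python
def selection_time_s(flashes_per_sequence, n_sequences, flash_s=0.1, isi_s=0.1):
    """Stimulation time (seconds) needed to select one character."""
    return flashes_per_sequence * n_sequences * (flash_s + isi_s)

def selections_per_minute(flashes_per_sequence, n_sequences, flash_s=0.1, isi_s=0.1):
    """Upper bound on selections per minute, ignoring pauses between characters."""
    return 60.0 / selection_time_s(flashes_per_sequence, n_sequences, flash_s, isi_s)

# Assumed setting: 10 sequences per character, comparing 12, 9, and 7 flashes.
N_SEQUENCES = 10
times = {flashes: selection_time_s(flashes, N_SEQUENCES) for flashes in (12, 9, 7)}
```

Under these assumptions, going from 12 to 7 flashes per sequence cuts stimulation time per character from about 24 s to about 14 s. Whether that gain survives depends on how much accuracy drops for the individual user.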

Experimental Protocols for Key BCI Paradigms

Protocol 1: P300 Speller Setup

This protocol is based on the classic paradigm introduced by Farwell and Donchin [19].

- Stimulus Presentation: A 6x6 matrix of letters and symbols is displayed on a screen. Rows and columns of the matrix are intensified in a random sequence.

- Participant Task: The user focuses attention on the desired character in the matrix and mentally counts how many times it flashes.

- EEG Recording: Record continuous EEG from scalp sites (e.g., using the 10-20 system). The P300 event-related potential is time-locked to the intensification of the row or column containing the target character.

- Signal Processing: The workflow for processing the EEG signal to generate a speller command is as follows:

Protocol 2: Movement-Related Cortical Potential (MRCP) for Spelling

This protocol describes an offline spelling task using executed movements, as investigated by recent research [18].

- Participant Task: The user performs a specific motor act (e.g., ballistic dorsiflexion of the foot) to select a letter displayed on a computer screen. Tasks can vary from repeating a single letter to spelling phrases, which introduces different levels of cognitive load.

- EEG Recording: Record EEG from sites centered around the Cz electrode (primary motor cortex). The MRCP is a slow negative cortical potential that begins up to 2 seconds before movement onset.

- Signal Analysis: The key is to detect the MRCP in single trials. This involves specialized processing to enhance the low-frequency signal.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for BCI Speller Research

| Item / Tool | Function / Description | Relevance to Speller Research |
|---|---|---|
| Dry Electrode EEG Headsets | EEG sensors that do not require conductive gel, using ultra-high impedance amplifiers for signal quality [22]. | Enables quicker setup and more comfortable, longer-term experiments, improving user compliance for repeated spelling trials [22]. |
| Ear-EEG Systems | Records EEG signals from within the ear canal using dry-contact electrodes [22]. | Provides a discreet and user-friendly form factor for real-world BCI speller applications, though with a different channel set than scalp EEG [22]. |
| EEGdenoiseNet | A semi-synthetic benchmark dataset containing EEG, EMG, and EOG signals for training and validating artifact removal algorithms [21]. | Crucial for developing and benchmarking new deep learning models for artifact removal, a key step in improving SNR and ITR [21]. |
| 1D-ResCNN & NovelCNN | Deep learning architectures (1D Convolutional Neural Networks) specifically designed for EEG artifact removal and feature extraction [21]. | Provides state-of-the-art tools for preprocessing EEG data to achieve cleaner signals for more accurate classification in spellers [21]. |
| Lab Streaming Layer (LSL) | A system for unified collection of measurement data across different devices and software [23]. | Critical for synchronizing EEG data with stimulus presentation markers (e.g., from PsychoPy) in real-time, ensuring accurate epoch extraction for P300 or MRCP analysis [23]. |

Brain-Computer Interface (BCI) spellers represent one of the most significant practical applications of BCI technology, offering a non-muscular communication channel for individuals with severe motor disabilities such as amyotrophic lateral sclerosis (ALS) and locked-in syndrome (LIS) [3] [19]. These systems convert brain signals into executable commands for typing characters, words, and full messages. The field has evolved substantially since the pioneering work of Farwell and Donchin in 1988, with researchers developing multiple paradigms to improve the information transfer rate (ITR), a key metric combining speed and accuracy [3] [24]. This technical support center addresses the primary paradigms—P300, SSVEP, and MI—along with their hybrid combinations, providing researchers with troubleshooting guidance and methodological protocols to optimize their experimental setups for maximum performance.

Section 1: Established Speller Paradigms and Their Mechanisms

P300-Based Spellers

The P300 speller uses the P300 event-related potential, a positive peak in the EEG that occurs approximately 300 ms after an unexpected, rare, or significant stimulus [25] [26].

- Farwell-Donchin (Row-Column) Paradigm: The original P300 speller presents a 6×6 matrix of letters and numbers. Rows and columns flash randomly while the user focuses on a target character. The system identifies the target by detecting P300 responses to the specific row and column containing that character [25] [27] [28].

- Region-Based Paradigm: Developed to mitigate the "adjacency-distraction error" of the row-column paradigm, this approach first flashes groups of characters. After the user selects a group, the individual characters within it are flashed for final selection, improving accuracy [25] [27].

- Checkerboard Paradigm (CBP): This method uses a virtual checkerboard pattern to assign characters to flash groups, ensuring that adjacent items never flash together. This successfully eliminates adjacency errors and the "double-flashing" problem where the same item flashes in quick succession [27].

- Single-Character Paradigm: Unlike flashing entire rows/columns, this paradigm intensifies individual characters. While it requires more flashes to cover all symbols, it elicits a higher P300 amplitude and offers greater flexibility in interface design [28].

Steady-State Visual Evoked Potential (SSVEP) Spellers

SSVEP spellers rely on brain signals elicited by visual stimuli flickering at constant frequencies. When a user gazes at a target, the visual cortex produces an EEG response at the same frequency (and harmonics) as the stimulus [3] [24]. These spellers typically offer higher ITRs than P300 systems due to a better signal-to-noise ratio. Modern implementations often use a QWERTY layout with joint frequency/phase modulation and advanced classification algorithms like Filter-Bank Canonical Correlation Analysis (FBCCA) to achieve high speeds [24].

Motor Imagery (MI) Spellers

MI spellers are based on the event-related desynchronization/synchronization (ERD/ERS) phenomenon that occurs when a user imagines a movement, such as moving their left or right hand. This mental imagination modulates sensorimotor rhythms in the brain [3] [19]. While they do not require external stimulation, they generally need more user training and yield lower ITRs compared to P300 and SSVEP spellers [3].

Hybrid Spellers

Hybrid BCIs combine two or more paradigms to overcome the limitations of a single system. Common combinations include P300-SSVEP and P300-MI. These spellers can achieve higher accuracy, reduce the training period, and improve overall robustness by leveraging the strengths of each approach [19].

Table 1: Comparison of Major Non-Invasive BCI Speller Paradigms

| Paradigm | EEG Signal Used | Stimulation Type | Key Advantage | Key Challenge | Typical Performance Range (Accuracy/ITR) |
|---|---|---|---|---|---|
| P300 (Row-Column) | P300 ERP | Visual (Row/Column Flash) | Well-established, high accuracy [28] | Susceptible to adjacency errors [27] | ~65-95% [27], ~10-27 bits/min [19] [28] |
| P300 (Region-Based) | P300 ERP | Visual (Region/Char. Flash) | Reduced perceptual error [25] | Requires two-level selection | Better accuracy vs. Row-Column [25] |
| P300 (Checkerboard) | P300 ERP | Visual (Pattern Flash) | Mitigates adjacency & double-flash errors [27] | More complex setup | High accuracy (>90%) reported [27] |
| SSVEP | Steady-State VEP | Visual (Flickering Stimuli) | High ITR, high SNR [24] | Requires gaze shifting, visual fatigue | ~90% [24], Up to ~325 bits/min (cued) [24] |
| Motor Imagery (MI) | ERD/ERS | Mental Imagination | No external stimulus needed [3] | Extensive training required [3] | Lower ITR vs. P300/SSVEP [3] |
| Hybrid (e.g., P300+SSVEP) | Multiple Signals | Multiple | Improved accuracy, shorter training [19] | Increased system complexity | Higher than single paradigms [19] |

Section 2: Troubleshooting Common Experimental Issues

FAQ: Addressing P300 Speller Performance Problems

Q1: My P300 speller has a high error rate, often selecting characters adjacent to the target. How can I mitigate this? A: This is a known "adjacency-distraction error" in the classic Row-Column Paradigm (RCP) [27]. We recommend the following:

- Switch Paradigms: Implement a Checkerboard Paradigm (CBP) or a Region-Based Paradigm. These are specifically designed to prevent adjacent items from flashing in the same group, effectively eliminating this error source [27].

- Optimize Stimulus Parameters: Adjust the Inter-Stimulus Interval (ISI) or stimulus duration. Temporal overlap of successive P300 responses can reduce their amplitude and contribute to errors. An ISI of at least 500 ms can help avoid attentional blink and repetition blindness [27].

Q2: The BCI speller is stuck on a single letter and does not progress through the word. What could be wrong? A: This is often a configuration or software issue. Based on a real case with the BCI2000 platform and an EMOTIV FLEX headset [29]:

- Check Parameter Mismatch: Ensure the `NumberOfSequences` parameter matches the `EpochsToAverage` parameter in your processing chain (e.g., within the BCI2000 `P3SignalProcessing` module) [29].

- Verify Script Configuration: If using a custom batch file or script, confirm that it correctly calls the signal processing module (e.g., `P3SignalProcessing` instead of `DummySignalProcessing`) [29].

- Inspect for Pause Commands: Check if your text input contains a specific character (like `<PAUSE>`) that the system interprets as a command to halt [29].

Q3: During free communication tasks, my system's ITR drops significantly compared to cued spelling. How can I improve performance? A: This is a common challenge as free communication imposes a higher cognitive load [24].

- Enhance Usability: Shift focus from raw speed to user experience. Implement a familiar QWERTY layout, reduce the number of flicker frequencies, and display the last few classified characters on the screen to reduce working memory load [24].

- Increase Classification Accuracy: Use a higher frequency range for visual stimuli (e.g., 10.0–15.4 Hz) to reduce interference from endogenous alpha oscillations. Extend the flicker period and flicker-free interval to improve the signal-to-noise ratio (SNR) [24].

- Incorporate an Error-Correction System (ECS): Integrate a system to detect Error-Related Potentials (ErrPs). When the user perceives an error in the system's selection, an ErrP is generated. Online detection of ErrPs can trigger an automatic correction, boosting effective accuracy [26].

Q4: I am getting a "no Qt platform plugin" error when trying to run the P300 classifier tool. How do I resolve this? A: This is a platform-specific dependency issue.

- Reinstall Software: A fresh installation of the BCI platform (e.g., BCI2000) can sometimes resolve missing libraries. Ensure you download the correct version for your operating system [29].

- Seek Community Support: For open-source platforms like OpenViBE and BCI2000, consult the user forums. Other researchers have likely encountered and solved this problem. Provide details about your OS and software version for targeted help [30].

Section 3: Experimental Protocols & Optimization

Standard Protocol for a P300 Speller Experiment

- Subject Preparation: Place EEG electrodes according to the international 10-20 system. Key electrodes for P300 detection include Fz, Cz, Pz, and Oz [26]. Ensure electrode impedances are below 5-10 kΩ.

- Paradigm Configuration:

- Stimulus: Configure the flashing pattern (e.g., row/column, checkerboard, single character). Standard flash duration is 100-125 ms with an inter-stimulus interval of 100-125 ms [26].

- Matrix: Set up the character matrix (e.g., 6x6 for classic RCP).

- Sequences: Set the `NumberOfSequences` parameter (flash repetitions per character). Start with 5-15 sequences, adjusting based on required accuracy [26].

- Data Acquisition & Calibration: In a copy mode (or calibration mode), instruct the subject to focus on a series of cued target characters. This data, with known labels, is used to train the classifier model [26]. Acquire a sufficient number of repetitions per character (e.g., 15-20) for robust model training [24].

- Classifier Training: Process the calibration data. Common steps include:

- Band-pass filtering (e.g., 0.1-30 Hz).

- Epoching (e.g., -100 to 600 ms around stimulus).

- Feature extraction (e.g., down-sampling, baseline correction).

- Training a classifier like Stepwise Linear Discriminant Analysis (SWLDA) or a support vector machine (SVM) [26].

- Online Testing: The system uses the trained model to classify brain signals in real-time. Testing can be done in a copy mode for performance evaluation or in a free-spelling mode for genuine communication [26].
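The final decision step of a row-column speller can be sketched as follows. The sketch assumes a trained classifier has already produced one score per flash, with higher scores meaning "more P300-like". The tuple layout, the matrix contents, and the function name are hypothetical and chosen only for illustration.

```python
def select_character(flash_scores, matrix):
    """Return the character at the row and column with the highest summed score.

    flash_scores: iterable of (kind, index, score) tuples, where kind is
    "row" or "col" and score is the classifier output for that flash.
    """
    n_rows, n_cols = len(matrix), len(matrix[0])
    row_totals = [0.0] * n_rows
    col_totals = [0.0] * n_cols
    # Accumulate evidence over all sequences (repetitions) of the flashes.
    for kind, index, score in flash_scores:
        if kind == "row":
            row_totals[index] += score
        else:
            col_totals[index] += score
    best_row = max(range(n_rows), key=row_totals.__getitem__)
    best_col = max(range(n_cols), key=col_totals.__getitem__)
    return matrix[best_row][best_col]

MATRIX = ["ABCDEF", "GHIJKL", "MNOPQR", "STUVWX", "YZ1234", "56789_"]

# Toy scores for two sequences in which the target "P" (row 2, column 3)
# receives a larger classifier output than every non-target flash.
scores = []
for _ in range(2):
    for i in range(6):
        scores.append(("row", i, 1.0 if i == 2 else 0.1))
        scores.append(("col", i, 1.0 if i == 3 else 0.2))

chosen = select_character(scores, MATRIX)
```

Summing scores across sequences is what makes additional repetitions improve accuracy: noise in single-flash scores averages out while the target row and column keep accumulating evidence.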

Protocol for a High-Performance SSVEP Speller Experiment

- Subject Preparation: Similar EEG setup as P300, with a focus on occipital electrodes (Oz, O1, O2) for capturing visual cortex activity.

- Paradigm Configuration:

- Layout: Use a QWERTY keyboard layout for user familiarity [24].

- Stimulus Flicker: Employ joint frequency/phase modulation for a larger set of unique stimuli. A typical frequency range is 10.0–15.4 Hz [24].

- Trial Structure: A stimulation period of 1.5 seconds followed by a flicker-free interval of 0.75 seconds is effective [24].

- Calibration & Template Generation: Record SSVEP responses for each flickering target. Use Filter-Bank CCA to generate individualized spatial filters and templates for each target frequency [24].

- Online Testing: The system acquires EEG data, applies the filter-bank, and uses CCA to compute the correlation between the signal and each template. The target with the highest correlation coefficient is selected.
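The "pick the best-matching target" logic in the online step can be illustrated with a much simpler stand-in than the Filter-Bank CCA used in [24]. The sketch below works on a single channel, omits the filter bank and individual templates, and scores each candidate frequency by projecting the signal onto sine and cosine references at the fundamental and second harmonic. The sampling rate, candidate frequencies, and function names are assumptions for this example.

```python
import math

def reference_score(signal, freq_hz, fs_hz, n_harmonics=2):
    """Sum of squared projections of `signal` onto sine/cosine references."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [x - mean for x in signal]
    score = 0.0
    for h in range(1, n_harmonics + 1):
        w = 2.0 * math.pi * h * freq_hz / fs_hz  # radians per sample
        s = sum(x * math.sin(w * t) for t, x in enumerate(centered))
        c = sum(x * math.cos(w * t) for t, x in enumerate(centered))
        # Combining sine and cosine makes the score insensitive to phase.
        score += (s * s + c * c) / (n * n)
    return score

def classify_ssvep(signal, candidate_freqs, fs_hz):
    """Return the candidate frequency with the highest reference score."""
    return max(candidate_freqs, key=lambda f: reference_score(signal, f, fs_hz))

# Synthetic 1.5 s trial at an assumed 250 Hz sampling rate: a 12 Hz response
# with an arbitrary phase plus 50 Hz interference.
FS = 250
trial = [
    math.sin(2 * math.pi * 12.0 * t / FS + 0.3)
    + 0.5 * math.sin(2 * math.pi * 50.0 * t / FS)
    for t in range(int(1.5 * FS))
]
detected = classify_ssvep(trial, [10.0, 12.0, 15.4], FS)
```

Because this score ignores phase, it cannot separate targets that share a frequency and differ only in phase. Joint frequency/phase designs therefore need template-based methods such as the one described above.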

Diagram 1: BCI Speller Experimental Workflow

Section 4: The Scientist's Toolkit

Research Reagent Solutions for BCI Speller Research

Table 2: Essential Materials and Software for BCI Speller Experiments

| Item Category | Specific Examples / Models | Critical Function |
|---|---|---|
| EEG Acquisition Hardware | EMOTIV FLEX, OpenBCI Cyton, g.tec amplifiers | Non-invasive recording of electrical brain activity. Multi-channel systems (32-64 ch) are common for research [29]. |
| Electrodes & Caps | Ag/AgCl electrodes, Electrode caps (10-20 system) | Signal transduction from the scalp. Wet electrodes require conductive gel; newer dry electrodes offer quicker setup [14]. |
| Signal Processing & BCI Platforms | BCI2000, OpenViBE, BCILAB | Provide a software framework for data acquisition, stimulus presentation, signal processing, and classifier training [29] [30]. |
| Stimulus Presentation Software | Psychtoolbox (for MATLAB), Presentation | Precisely control the timing and presentation of visual (or auditory) stimuli to evoke P300 or SSVEP responses. |
| Classification Algorithms | Stepwise LDA (SWLDA), Support Vector Machine (SVM), Convolutional Neural Networks (CNN), Filter-Bank CCA | Translate pre-processed EEG signals into intended commands. Algorithm choice depends on the paradigm (ERP vs. SSVEP) [24] [26]. |
| Performance Metrics | Information Transfer Rate (ITR), Character/Trial Accuracy | Quantify the speed and reliability of the speller system. ITR is the gold standard for comparing different BCI systems [3] [24]. |

Diagram 2: Logical Architecture of a BCI Speller System

Section 5: Performance Benchmarking and Data Presentation

Quantitative Performance of Various P300 Speller Designs

Table 3: Performance Metrics of Different P300 Speller Implementations

| Speller Paradigm / Study | Key Modification | Reported Accuracy | Reported ITR | Notes |
|---|---|---|---|---|
| Farwell & Donchin (1988) [19] [28] | Original 6x6 Row-Column | ~95% | ~12 bits/min | Baseline for comparison |
| Jin et al. (2010) [19] | Reduced flashes per sequence (9 flashes) | ~92.9% | ~14.8 bits/min | Optimized for speed |
| Jin et al. (2012) [19] | 7x12 keyboard matrix | ~94.8% | ~27.1 bits/min | Larger matrix, improved classification |
| Pires et al. (2012) [19] | Lateral single-character speller | ~89.9% | ~26.11 bits/min | Alternative to row-column |
| Akram et al. (2015) [19] | 3x3 matrix with T9 predictive text | N/A | Typing time reduced by 51.87% | Leveraged language models |
| Ganin et al. (2020) 'Neurochat' [19] | User-friendly interface & training | 63% (Session 1) to 92% (Session 10) | N/A | Shows importance of user training |

The development of BCI spellers has progressed from the seminal Farwell-Donchin matrix to a diverse ecosystem of paradigms including refined P300 approaches, SSVEP, MI, and hybrid systems. Optimizing ITR requires a holistic approach that balances paradigm selection, rigorous experimental protocol, and proactive troubleshooting of common hardware and software issues. The future of the field lies in creating more robust, user-centered systems that perform reliably not just in controlled cued-spelling tasks, but in the dynamic and cognitively demanding context of genuine free communication [24]. By leveraging the guidelines, protocols, and troubleshooting advice in this document, researchers can more effectively contribute to this vital and rapidly evolving area of assistive technology.

Methodological Breakthroughs: Algorithmic Advances and Novel Speller Paradigms for High-Speed BCI

Frequently Asked Questions & Troubleshooting

Q1: Why is the classification accuracy of my hybrid BCI system low, and how can I improve it? Low accuracy in a hybrid Brain-Computer Interface (BCI) system often stems from poor signal quality, inadequate feature extraction, or suboptimal classification algorithms. To enhance performance:

- For Motor Imagery (MI) signals, implement a denoising algorithm like the Complex-Valued Multivariate Iterative Filtering (CVMIF) to improve signal quality before feature extraction and classification [31].

- For cross-session stability, employ domain adaptation techniques like Correlation Alignment (CORAL). This method aligns the covariance matrices of training and test data, which has been shown to increase classification accuracy significantly, for example, from 81.54% to 94.29% in one study [32].

- Utilize robust classification algorithms. Bootstrap Aggregating (Bagging) has demonstrated high performance (up to 99.12% accuracy in controlled settings) and is particularly effective for managing the variable nature of EEG signals [32].

Q2: How can I reduce visual fatigue and safety risks for users in a system that uses SSVEP or P300 paradigms? Visual stimuli in SSVEP and P300 paradigms can cause eye strain, headaches, and pose risks for photosensitive individuals [32].

- Use safer stimulus frequencies. A 7 Hz flicker for SSVEP is outside the high-risk 15-25 Hz range and has been used as a "brain-controlled safety switch" to activate systems, thereby reducing fatigue and seizure risk [32].

- Implement a hybrid activation mechanism. Combine SSVEP with other inputs, such as Electrooculography (EOG) artifacts. Use the SSVEP response as a conscious activation switch, and then leverage EOG signals from eye movements for command generation. This two-stage process minimizes unintended commands and allows for less straining visual interaction after activation [32].

Q3: My system has a low Information Transfer Rate (ITR). What parameters should I optimize? The Information Transfer Rate is a key metric that depends on the speed and accuracy of selections. You can optimize it by adjusting task parameters [33].

- Adjust the number of targets (or commands). While accuracy may decline as the number of targets increases, the overall bit rate can be higher. For many users, four targets have been found to yield the maximum bit rate [33].

- Optimize the trial duration. Longer trial durations generally increase accuracy, but the optimal speed varies by user. Empirical testing is needed to find the best balance for each individual, with optimal movement times typically ranging from 2 to 4 seconds [33].
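To make the interaction between target count, accuracy, and trial duration concrete, the sketch below computes ITR with the widely used Wolpaw definition: bits per selection B = log2(N) + P·log2(P) + (1 − P)·log2((1 − P)/(N − 1)), scaled by selections per minute. The text does not state which ITR definition its cited figures use, so treating it as the Wolpaw formula is an assumption. The function names and example values are illustrative.

```python
import math

def bits_per_selection(n_targets, accuracy):
    """Wolpaw bits per selection for N equiprobable targets and accuracy P."""
    if n_targets < 2:
        raise ValueError("n_targets must be at least 2")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    bits = math.log2(n_targets)
    # Each term is skipped where its limit is zero (P = 0 or P = 1).
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits

def itr_bits_per_min(n_targets, accuracy, trial_s):
    """ITR in bits/min, where trial_s is the total time per selection in seconds."""
    return bits_per_selection(n_targets, accuracy) * 60.0 / trial_s

# Illustrative comparison: 4 targets, perfect accuracy, 2 s vs 4 s trials.
fast = itr_bits_per_min(4, 1.0, 2.0)
slow = itr_bits_per_min(4, 1.0, 4.0)
```

The formula assumes equiprobable targets and errors spread evenly over the wrong targets, and it is only meaningful at or above chance accuracy (P ≥ 1/N). Sweeping `n_targets` and `trial_s` with each user's measured accuracy is one way to locate the individual optimum described above.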

Q4: How can I design a system for effective multi-limb control or complex tasks in virtual reality (VR)? Achieving intuitive control beyond simple commands requires a multimodal approach to manage cognitive load.

- Integrate multiple control signals. Combine EEG with eye-tracking and manual controllers. For example, a system can apply a threshold to NeuroSky's eSense attention metric (e.g., >80% sustained for 300 ms) to trigger activation of a virtual hand, while gaze-driven targeting handles the selection [34].

- Implement conflict resolution algorithms. Use a soft maximum weighted arbitration algorithm to intelligently resolve conflicts between simultaneous manual and virtual inputs, achieving a high success rate in task execution [34].
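The attention-threshold trigger described above amounts to a dwell-time check. The following is a minimal sketch under stated assumptions: samples arrive as (timestamp in ms, attention value) pairs in chronological order, and the function name and sample spacing are hypothetical. It is not the implementation used in [34].

```python
def attention_trigger(samples, threshold=80.0, hold_ms=300.0):
    """Return the timestamp (ms) at which the trigger fires, or None.

    The trigger fires once the attention value has stayed above `threshold`
    for at least `hold_ms` without interruption.
    """
    run_start = None
    for t_ms, value in samples:
        if value > threshold:
            if run_start is None:
                run_start = t_ms  # start of a new above-threshold run
            if t_ms - run_start >= hold_ms:
                return t_ms
        else:
            run_start = None  # any dip below threshold resets the timer
    return None

# Toy streams sampled every 100 ms (assumed rate).
sustained = [(0, 70), (100, 85), (200, 90), (300, 88), (400, 91)]
interrupted = [(0, 85), (100, 85), (200, 70), (300, 90)]
fired_at = attention_trigger(sustained)
not_fired = attention_trigger(interrupted)
```

Requiring a sustained run rather than a single above-threshold sample is a simple guard against brief fluctuations activating the virtual hand unintentionally.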

Hybrid BCI Performance Data

The following tables summarize key quantitative findings from research on hybrid BCI systems, which can serve as benchmarks for your own experiments.

Table 1: Performance of Different Hybrid BCI Configurations

| BCI Modality Combination | Key Algorithm/Technique | Primary Application | Reported Performance |
|---|---|---|---|
| MI + SSVEP [31] | CVMIF denoising for MI | Robotic Arm Control | Average accuracy: 96.4%; Task success rate: >90% |
| SSVEP + EOG Artifacts [32] | CORAL + Bagging Classifier | System Activation & Command | Accuracy increased from 81.54% to 94.29% with CORAL |
| Attention (EEG) + Eye-Tracking [34] | Soft Maximum Weighted Arbitration | Tri-manual Control in VR | Virtual hand success rate: 87.5%; Conflict resolution: 92.4% success |

Table 2: Impact of Task Parameters on BCI Performance [33]

| Parameter | Impact on Performance | Optimization Guidance |
|---|---|---|
| Number of Targets | Accuracy decreases as target number increases. | For maximum bit rate, 4 targets is often optimal. |
| Trial Duration | Accuracy increases with longer trial times. | Optimal duration is user-specific; test between 2-4 seconds. |

Detailed Experimental Protocols

Protocol 1: MI-SSVEP Hybrid BCI for Robotic Arm Control This protocol is designed for multi-command, real-time control of a robotic arm [31].

- Signal Acquisition: Record EEG signals according to the international 10-20 system. For MI, use electrodes C3 and C4. For SSVEP, use occipital channels O1, Oz, O2, PO3, PO4, POz, PO7, and PO8.

- Paradigm Design: Create a fused paradigm where SSVEP stimuli are assigned as joint control commands (e.g., move up, down), and left/right hand motor imagery is assigned as the end-effector command (e.g., open/close gripper).

- Signal Processing:

- Process MI and SSVEP signals in parallel.

- For the MI pathway: Apply the CVMIF algorithm for preprocessing and denoising. Proceed with feature extraction and classification.

- For the SSVEP pathway: Use frequency-domain analysis (like Power Spectral Density) for feature extraction.

- System Control: Translate the classified outputs into control commands for the robotic arm. Use MI commands for critical task nodes to form a closed-loop control system.

Protocol 2: Two-Stage SSVEP-EOG Hybrid BCI for Safe Activation This protocol focuses on creating a robust and safe system with conscious activation [32].

- Stimuli Presentation: Present the user with a single screen showing a 7 Hz flickering LED and objects moving in different directions.

- Stage 1: Conscious Activation (SSVEP):

- The user must intentionally gaze at the 7 Hz LED to generate an SSVEP response.

- Detect this response using Power Spectral Density (PSD) and a classifier (e.g., Bagging). This step acts as a safety switch, activating the command stage only when intended.

- Stage 2: Command Input (EOG):

- Once activated, the user can issue commands by looking at moving objects.

- Detect the trajectory from EOG artifacts evident in the frontal lobe EEG channels.

- Extract time-domain features (power, energy) and classify them.

- Cross-Session Stabilization: To maintain performance across different sessions, apply the CORAL method to the feature data before the final classification to reduce inter-session variability.
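The two-stage logic of this protocol can be sketched as a small state machine. The sketch assumes that upstream processing already supplies, for each analysis window, an SSVEP detection score and an optional EOG-derived command. The class name, the threshold value, and the choice to re-arm the safety switch after each command are assumptions for illustration, not details reported in [32].

```python
class TwoStageController:
    """Gate EOG commands behind a conscious SSVEP activation step."""

    def __init__(self, activation_threshold):
        self.activation_threshold = activation_threshold
        self.active = False

    def update(self, ssvep_score, eog_command):
        """Return the command to execute for this window, or None."""
        if not self.active:
            # Stage 1: wait for a deliberate SSVEP response; EOG is ignored.
            if ssvep_score >= self.activation_threshold:
                self.active = True
            return None
        # Stage 2: accept one EOG command, then re-arm the safety switch
        # (re-arming after each command is an assumption of this sketch).
        if eog_command is not None:
            self.active = False
            return eog_command
        return None

ctrl = TwoStageController(activation_threshold=0.5)
outputs = [
    ctrl.update(0.1, "left"),  # not activated: eye movement is ignored
    ctrl.update(0.8, None),    # user gazes at the 7 Hz LED: system activates
    ctrl.update(0.0, None),    # activated, no eye movement yet
    ctrl.update(0.0, "up"),    # command accepted
    ctrl.update(0.0, "down"),  # switch re-armed: ignored until reactivation
]
```

Keeping the activation state explicit makes it straightforward to log how often eye movements occur outside the active state, which is a direct measure of the unintended commands the safety switch is meant to prevent.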

Experimental Workflow Diagram

The diagram below illustrates the logical flow of a generic hybrid BCI system, integrating multiple paradigms like SSVEP and MI.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Hybrid BCI Research

| Item | Function in Research | Example & Notes |
|---|---|---|
| EEG Acquisition System | Records electrical brain activity. | Research-grade: Multi-channel systems (e.g., Emotiv Flex [32]) for high-fidelity data. Consumer-grade: Single-channel systems (e.g., NeuroSky MindWave [34]) for accessible prototyping. |
| Visual Stimulation Hardware/Software | Presents SSVEP or P300 stimuli. | LEDs flickering at specific frequencies (e.g., 7 Hz for safety [32]) or monitors displaying visual paradigms. |
| Classification Algorithms | Translates processed signals into commands. | Bootstrap Aggregating (Bagging): For robust performance across sessions [32]. Support Vector Machine (SVM) and Random Forest (RF) are also commonly used [32]. |
| Domain Adaptation Tool | Improves system stability across sessions/days. | Correlation Alignment (CORAL): Aligns data distributions from different sessions, drastically improving accuracy [32]. |
| Hybrid Fusion Framework | Integrates commands from multiple signal sources. | Soft Maximum Weighted Arbitration: Resolves conflicts between simultaneous inputs in multimodal systems (e.g., BCI-VR) [34]. |
| Virtual Reality (VR) Platform | Provides an immersive environment for testing complex control. | Integrated with eye-tracking (e.g., Tobii, 120 Hz) and BCI for multi-limb coordination studies [34]. |

This technical support center is designed for researchers and scientists working on non-invasive Brain-Computer Interface (BCI) spellers. Our focus is on optimizing Information Transfer Rates (ITR) while addressing the critical challenge of asynchronous control—specifically, the 'Midas Touch' problem, where systems incorrectly interpret non-control state brain activity as intentional commands. The guidance below provides troubleshooting and methodological support for implementing high-performance, real-world BCI systems that reliably distinguish between Intentional Control (IC) and Non-Control (NC) states.

Frequently Asked Questions (FAQs)

FAQ 1: What is the 'Midas Touch' problem in BCI spellers? The 'Midas Touch' problem refers to a phenomenon where a BCI system cannot reliably distinguish between periods when a user intends to give a command (Intentional Control, or IC state) and periods when the user is resting, thinking, or not wishing to interact (Non-Control, or NC state). This results in the system executing random, unintended commands during breaks, severely degrading user experience and practical usability [35] [36].

FAQ 2: Why is asynchronous control critical for real-world BCI applications? Synchronous BCIs operate with fixed time slots for command input, forcing users to communicate at the system's pace. Asynchronous control, also known as self-paced control, allows users to initiate commands at their own convenience. This is essential for realistic communication, as it enables users to pause for thought, take breaks, or simply not interact with the system without it producing erroneous outputs [35].

FAQ 3: What is a typical target performance for robust NC state detection? State-of-the-art research has demonstrated asynchronous spellers that produce as few as 0.075 erroneous classifications per minute during the non-control state while maintaining a high average ITR of 122.7 bits/min. This is a significant improvement over previous systems, whose error rates could reach 0.49 per minute or more [35].

FAQ 4: What are the major BCI paradigms used in spellers? The three main non-invasive BCI speller paradigms are:

- P300 Speller: Relies on the P300 event-related potential (ERP) generated when a user attends to a rare or significant stimulus among a series of standard stimuli [37].

- Steady-State Visual Evoked Potential (SSVEP) Speller: Utilizes brain responses elicited by visual stimuli flickering at specific frequencies. The user's focus on a particular frequency can be decoded to select a target [35] [38] [37].

- Motor Imagery (MI) Speller: Based on the detection of sensorimotor rhythm changes caused by imagining body movements (e.g., moving hands or feet) without actual physical movement [37].

FAQ 5: Our lab's synchronous BCI speller achieves high ITRs, but performance drops in free-spelling tasks. Why? High ITRs in cued, repetitive spelling tasks (e.g., typing "HIGH SPEED BCI" multiple times) may not translate to genuine free communication. The latter involves a higher cognitive load from generating novel thoughts, planning phrases, spelling unfamiliar words, and locating characters on the keyboard. This can reduce both speed and accuracy. Performance evaluations should therefore include free communication scenarios for a realistic assessment [24].

Troubleshooting Guides

Problem: Poor ITR and Classification Accuracy

Potential Causes and Solutions:

- Cause 1: Suboptimal Signal-to-Noise Ratio (SNR)

- Cause 2: Inadequate Feature Extraction or Classification Algorithm

- Solution: For SSVEP-based spellers, implement Filter-Bank Canonical Correlation Analysis (FBCCA) or advanced methods like modified Power Spectral Density (PSD) analysis, which has been shown to achieve high accuracy with low computational overhead, making it suitable for real-time systems [24] [38].

- Cause 3: Stimulus Parameter Misconfiguration

- Solution: Optimize the flicker parameters. Using a higher frequency range (e.g., 10.0–15.4 Hz) can help reduce interference from endogenous alpha oscillations. Also, tailor the phase shift of sinusoid templates to each individual user for improved performance [24].
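To make the idea of frequency scoring and per-user phase concrete, the following is a minimal single-channel sketch. It is deliberately simpler than FBCCA or the modified PSD method cited above: it projects the signal onto a sine and a cosine at each candidate frequency, which yields both a score (amplitude) and the phase of the best-fitting sinusoid. The function names, the 250 Hz sampling rate, and the candidate frequencies are illustrative assumptions, not values from the cited studies.

```python
import math

def sincos_projection(signal, fs, freq):
    """Fit amplitude * sin(2*pi*freq*t + phase) by projecting onto sin/cos.

    The estimate is exact only when the window holds a whole number of
    cycles of `freq`; otherwise it is an approximation.
    """
    n = len(signal)
    mean = sum(signal) / n
    s = c = 0.0
    for i, x in enumerate(signal):
        angle = 2.0 * math.pi * freq * i / fs
        s += (x - mean) * math.sin(angle)
        c += (x - mean) * math.cos(angle)
    a, b = 2.0 * s / n, 2.0 * c / n
    return math.hypot(a, b), math.atan2(b, a)

def detect_frequency(signal, fs, candidates):
    """Return the candidate frequency with the largest fitted amplitude."""
    scores = {f: sincos_projection(signal, fs, f)[0] for f in candidates}
    return max(scores, key=scores.get), scores

# Synthetic demo (assumed values): 1 s of a 12 Hz response with phase 0.5 rad.
fs = 250
demo = [2.0 * math.sin(2 * math.pi * 12.0 * i / fs + 0.5) for i in range(fs)]
best, scores = detect_frequency(demo, fs, [10.0, 11.0, 12.0, 13.0, 14.0, 15.0])
print(best)  # 12.0
```

The recovered phase could be stored per user and per target during calibration, so that reference templates are built with that user's phase rather than a fixed one.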

Problem: Excessive False Activations During Non-Control State (The Midas Touch Problem)

Potential Causes and Solutions:

- Cause 1: Poorly Calibrated or Fixed Decision Threshold

- Solution: Implement a user-specific thresholding method for distinguishing between IC and NC states. This threshold should be calibrated for each individual during a dedicated training session that includes both control and non-control periods. Be aware that a threshold optimized purely for spelling speed may increase false activations during NC states [35].

- Cause 2: Lack of a Dedicated NC State Detection Model

- Solution: Do not rely solely on the target classification model. Develop a separate, robust classifier specifically designed to identify the NC state. This can involve methods that normalize frequency powers or use multi-class support vector machines to distinguish between multiple IC classes and one NC class [35].
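One way to picture the "normalized frequency power" idea is a decision rule that selects a target only when its power clearly dominates the others, and otherwise reports the NC state. The sketch below is a simple stand-in under that assumption; it is not the classifier from [35], and the function name and the threshold value are hypothetical.

```python
def classify_with_nc(powers, threshold):
    """Return the winning target, or None when the input looks like NC.

    powers: dict mapping target label -> non-negative spectral power at
            that target's stimulation frequency.
    threshold: minimum share of total power the winner must hold.
    """
    total = sum(powers.values())
    if total <= 0:
        return None
    best = max(powers, key=powers.get)
    share = powers[best] / total  # normalized power of the best candidate
    return best if share >= threshold else None

# One target dominates: treated as intentional control.
print(classify_with_nc({"A": 9.0, "B": 0.5, "C": 0.5}, threshold=0.6))  # A
# Power spread evenly: treated as non-control.
print(classify_with_nc({"A": 1.0, "B": 1.1, "C": 0.9}, threshold=0.6))  # None
```

Because the rule works on shares rather than absolute power, it is less sensitive to overall amplitude differences between users, but the threshold still needs per-user calibration.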

Problem: User Fatigue and Visual Discomfort

Potential Causes and Solutions:

- Cause 1: Prolonged or Intense Visual Stimulation

- Cause 2: Unfamiliar or Inefficient Keyboard Layout

- Solution: Use a highly familiar QWERTY or QWERTZ layout instead of a matrix layout with lexicographic order. This reduces cognitive load and the time needed to locate characters. Implementing word prediction and auto-completion can also significantly reduce the number of required selections [35] [24].
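The effect of word prediction on the number of selections can be estimated with a small sketch. The vocabulary, its frequency counts, and the function names below are hypothetical; a real system would use a proper language model.

```python
def suggest(prefix, vocabulary, k=3):
    """Return up to k words starting with prefix, most frequent first."""
    matches = [w for w in vocabulary if w.startswith(prefix)]
    matches.sort(key=lambda w: (-vocabulary[w], w))
    return matches[:k]

def selections_needed(word, vocabulary, k=3):
    """Selections to enter `word` when k suggestions follow each letter."""
    for typed in range(1, len(word)):
        if word in suggest(word[:typed], vocabulary, k):
            return typed + 1  # letters typed so far plus one suggestion pick
    return len(word)  # never suggested: spell every letter

# Hypothetical vocabulary with made-up frequency counts.
vocab = {"hello": 50, "help": 40, "helmet": 5, "want": 45, "water": 30}
print(selections_needed("hello", vocab, k=2))   # 2
print(selections_needed("helmet", vocab, k=2))  # 5
```

Frequent words benefit most: "hello" needs 2 selections instead of 5, while the rare "helmet" still needs 5 instead of 6.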

Experimental Protocols for High-Performance Asynchronous Spellers

Protocol 1: Establishing a Baseline with an SSVEP Speller

This protocol outlines the setup for a high-speed SSVEP speller, which forms the foundation for adding asynchronous control.

- Objective: To calibrate a system and obtain user-specific templates for a multi-target SSVEP speller.

- Materials: EEG amplifier with at least 8 channels, cap with electrodes positioned over the occipital lobe, display screen for visual stimuli.

- Stimuli: On-screen keyboard with 28-40 targets, each flickering at a distinct frequency in the 10-15 Hz range. Phase modulation can also be used [24] [38].

- Procedure:

- Preparation: Apply EEG gel to achieve impedances below 10 kΩ. Key electrode sites: POz, PO7, O1, Oz, O2, PO8, P8, Iz [39].

- Cued Template Training: Present each character target to the user in a sequential, cued manner (e.g., "please focus on the letter 'A'"). Each target should be highlighted and flicker for a set duration (e.g., 1.5 s), followed by a flicker-free rest period (e.g., 0.75 s). Repeat this for each target for 20 iterations to build robust classification templates [24].

- Data Recording: Record multi-channel EEG data synchronized with the onset of each stimulus flicker.

- Signal Processing:
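For session planning, the duration of the cued template training step follows directly from its timing parameters. The helper below is a small sketch using the example values from this protocol (1.5 s flicker, 0.75 s rest, 20 iterations); the function name is an assumption, and the estimate ignores setup time and breaks.

```python
def calibration_minutes(n_targets, iterations=20, flicker_s=1.5, rest_s=0.75):
    """Total cued-training time in minutes, excluding setup and breaks."""
    return n_targets * iterations * (flicker_s + rest_s) / 60.0

print(calibration_minutes(28))  # 21.0
print(calibration_minutes(40))  # 30.0
```

With 28 to 40 targets, cued training alone takes roughly 21 to 30 minutes, which is worth weighing against the fatigue concerns discussed earlier.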

Protocol 2: Integrating and Validating Asynchronous NC State Detection

This protocol adds the critical layer of self-paced control.

- Objective: To train and validate a model that distinguishes between IC and NC states.

- Procedure:

- NC State Data Collection: After template training, conduct a session where the user is instructed to not focus on any specific target (NC state). The user may be asked to relax, look away from the screen, or think about something else. This data is used to characterize the brain's activity during non-control.

- Threshold Determination: Analyze the classifier's output values (e.g., correlation coefficients from CCA) during both the IC state (from Protocol 1) and the NC state. Determine a user-specific threshold that maximizes the separation between these two states. The goal is to only classify a selection when the output confidence exceeds this threshold [35].

- Validation: Test the system under four conditions: sustained IC, sustained NC, transition from IC to NC, and transition from NC to IC. Performance is measured by the number of erroneous classifications per minute during the NC state and the detection rate during the IC state [35].
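The threshold determination and the NC-state error metric can be sketched as follows. Choosing the threshold that maximizes balanced accuracy is an assumption made for illustration; [35] may use a different criterion. The score values are synthetic, and the function names are hypothetical.

```python
def calibrate_threshold(ic_scores, nc_scores):
    """Pick the score threshold that best separates IC from NC windows.

    Criterion (assumed): balanced accuracy, the mean of the IC detection
    rate and the NC rejection rate. Ties keep the lowest threshold.
    """
    best_t, best_bal = None, -1.0
    for t in sorted(set(ic_scores) | set(nc_scores)):
        detect = sum(s >= t for s in ic_scores) / len(ic_scores)
        reject = sum(s < t for s in nc_scores) / len(nc_scores)
        balanced = (detect + reject) / 2.0
        if balanced > best_bal:
            best_t, best_bal = t, balanced
    return best_t, best_bal

def false_activations_per_minute(nc_scores, threshold, window_s):
    """Erroneous selections per minute, one score per NC decision window."""
    errors = sum(s >= threshold for s in nc_scores)
    return errors / (len(nc_scores) * window_s / 60.0)

# Synthetic classifier outputs (e.g., correlation coefficients).
ic = [0.62, 0.70, 0.55, 0.81, 0.66]
nc = [0.20, 0.31, 0.25, 0.40, 0.18]
threshold, balanced = calibrate_threshold(ic, nc)
print(threshold, balanced)                         # 0.55 1.0
print(false_activations_per_minute(nc, 0.3, 1.5))  # 16.0
```

The second call shows the trade-off noted earlier: a threshold set too low (0.3 here) speeds up selection but produces frequent false activations during the NC state.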

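The complete asynchronous BCI speller workflow chains both protocols: cued template training (Protocol 1), followed by NC state data collection, user-specific threshold determination, and validation (Protocol 2).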

Performance Data and Comparison

The table below summarizes key performance metrics from recent advanced BCI speller systems to serve as a benchmark for your experiments.

| System Paradigm | Key Feature | Avg. Accuracy (%) | Avg. ITR (bits/min) | NC State Performance | Citation |
|---|---|---|---|---|---|
| EEG2Code (Asynchronous) | Robust NC state detection, 32 targets | 99.3 | 122.7 | 0.075 errors/min | [35] |
| SSVEP (Single-Channel) | Modified PSD, low-complexity | 95.2 | 119.8 | N/A | [38] |
| Mind-Pinyin Speller | Imagined handwriting for Chinese | 75.7 | 160.0 | N/A | [40] |
| SSVEP (Filter-Bank CCA) | Optimized for free communication | 75.4 (on QWERTY) | 80.4 | N/A | [24] |
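When benchmarking your own system against figures like these, ITR is most often computed with the standard Wolpaw formula, which combines the number of targets, the selection accuracy, and the time per selection. The sketch below implements that formula; the function name is an assumption. The cited studies may define selection time or ITR differently (for example, for asynchronous or free-spelling operation), so this function is not expected to reproduce the table values.

```python
import math

def wolpaw_itr(n_targets, accuracy, seconds_per_selection):
    """Wolpaw ITR in bits/min.

    bits = log2(N) + P*log2(P) + (1-P)*log2((1-P)/(N-1)),
    scaled by the number of selections per minute. Meaningful for
    accuracies at or above chance level (1/N).
    """
    if n_targets < 2 or not 0.0 <= accuracy <= 1.0:
        raise ValueError("need n_targets >= 2 and 0 <= accuracy <= 1")
    p = accuracy
    bits = math.log2(n_targets)
    if p > 0.0:
        bits += p * math.log2(p)
    if p < 1.0:
        bits += (1.0 - p) * math.log2((1.0 - p) / (n_targets - 1))
    return bits * 60.0 / seconds_per_selection

# Perfect accuracy on 32 targets, one selection every 2 s.
print(wolpaw_itr(32, 1.0, 2.0))  # 150.0
```

At chance-level accuracy the formula yields 0 bits per selection, which is a quick sanity check for any implementation.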

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists critical hardware and software components for building a state-of-the-art non-invasive BCI speller research platform.

| Item Name | Type | Function / Application | Example Product/Model |
|---|---|---|---|
| High-Density EEG Amplifier | Hardware | Acquires brain electrical activity with high temporal resolution. Essential for capturing ERPs and SSVEPs. | Brain Products LiveAmp, actiCHamp [41] [39] |
| Active Electrode Cap | Hardware | Ensures high-quality signal acquisition with lower impedances. Snap-cap versions allow flexible montage. | actiCAP slim, BrainCap [41] [39] |
| Stimulus Presentation Software | Software | Presents visual flickering stimuli (for VEP/SSVEP) or oddball paradigms (for P300) in a time-locked manner. | PsychoPy, OpenVibe, Presentation |
| Signal Processing & BCI Framework | Software | Provides real-time data acquisition, feature extraction, classification, and translation algorithms. | mindaffectBCI, OpenVibe, BCILAB [39] |
| Lab Streaming Layer (LSL) | Software | A unified system for synchronizing and streaming multi-modal data (EEG, triggers, etc.), crucial for experimental integrity. | BrainVision LSL Viewer [41] [39] |

Troubleshooting Guide: FAQs and Solutions

FAQ 1: My hybrid CNN-Transformer model is not generalizing well to new subjects. What strategies can I use to improve cross-subject performance?

- Problem: A model trained on one set of individuals performs poorly on new, unseen subjects due to high inter-subject variability in EEG signals [42] [43].

- Solutions:

- Implement Subject-Independent Training: Use evaluation strategies like Leave-One-Subject-Out (LOSO) cross-validation during development. This ensures the model is evaluated on subjects never seen during training, forcing it to learn more generalized features [43].

- Incorporate Data Augmentation: Artificially increase the size and diversity of your training data using techniques such as temporal warping, adding noise, or frequency domain augmentations. This helps prevent overfitting and improves robustness [42] [44].

- Architectural Adjustments: Employ models specifically designed for subject independence, such as the EEGCCT (Compact Convolutional Transformer). These models are structured to enhance generalization from limited data and have been shown to outperform subject-dependent models in cross-subject scenarios [43].
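The Leave-One-Subject-Out scheme mentioned above can be sketched as a small split generator. The function name and the subject labels are illustrative assumptions; the key property is that no trial from the held-out subject appears in the training set.

```python
def loso_splits(subject_ids):
    """Yield (held_out, train_indices, test_indices) for each subject.

    subject_ids: one subject label per trial, in trial order.
    """
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test

# Six trials from three hypothetical subjects.
trial_subjects = ["s1", "s1", "s2", "s3", "s3", "s2"]
for held_out, train, test in loso_splits(trial_subjects):
    print(held_out, train, test)
```

Any per-subject preprocessing (normalization statistics, augmentation parameters) should be fitted on the training indices only, otherwise information from the held-out subject leaks into training.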

FAQ 2: The classification accuracy of my CSP-based system is low. How can I optimize the feature extraction process?

- Problem: The manually selected frequency band for the Common Spatial Pattern (CSP) algorithm is not optimal for a specific user, leading to poor feature discrimination [45].

- Solutions:

- Use Filter Bank CSP (FBCSP): Instead of a single frequency band, deploy a filter bank that decomposes the EEG signal into multiple frequency bands (e.g., 4-8 Hz, 8-12 Hz, ..., 36-40 Hz). The CSP algorithm is then applied to each band [45].

- Implement Automated Feature Selection: Follow the CSP stage with a feature selection algorithm, such as the Mutual Information-based Best Individual Feature (MIBIF), to autonomously select the most discriminative subject-specific frequency bands and CSP features for classification [45].

- Extend to Multi-class Problems: For classifying more than two motor imagery tasks (e.g., left hand, right hand, foot, tongue), use pairwise approaches. This involves applying the 2-class FBCSP to all possible pairs of classes and combining the results [45].
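The bookkeeping around FBCSP (which bands, which class pairs, how to combine pairwise results) can be sketched without the CSP computation itself. Majority voting over pairwise winners is one common way to combine results and is an assumption here, as are the function names and class labels.

```python
from collections import Counter
from itertools import combinations

def filter_bank(start_hz=4, stop_hz=40, width_hz=4):
    """Return adjacent (low, high) band edges: 4-8, 8-12, ..., 36-40 Hz."""
    return [(lo, lo + width_hz) for lo in range(start_hz, stop_hz, width_hz)]

def class_pairs(classes):
    """All unordered class pairs; each gets its own 2-class FBCSP model."""
    return list(combinations(classes, 2))

def vote(pair_winners):
    """Majority vote over pairwise winners (first-seen class wins ties)."""
    return Counter(pair_winners.values()).most_common(1)[0][0]

bands = filter_bank()
pairs = class_pairs(["left_hand", "right_hand", "foot", "tongue"])
print(len(bands), len(pairs))  # 9 6

# Hypothetical winners reported by the six pairwise classifiers.
winners = dict(zip(pairs, ["left_hand", "left_hand", "left_hand",
                           "right_hand", "tongue", "foot"]))
print(vote(winners))  # left_hand
```

Four classes therefore require six pairwise models, each operating on nine band-specific feature sets before feature selection.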

FAQ 3: My BCI speller has a high raw character selection rate, but the effective information transfer rate (ITR) during free communication is low. Why does this happen, and how can I improve it?

- Problem: The system performs well in cued, repetitive spelling tasks but degrades in real-world free-communication scenarios because of increased cognitive load and user behavior such as making corrections [24].

- Solutions:

- Optimize for Usability, Not Just Speed: Prioritize classification accuracy over minimizing single-character selection time. A slightly slower but more accurate system can lead to a higher effective ITR because users make fewer corrections [24].

- Design User-Centric Interfaces: Use familiar keyboard layouts (e.g., QWERTY) and provide real-time visual feedback of the last few classified characters. This reduces cognitive load and the number of saccades needed, improving the user experience and flow [24].

- Test in Realistic Settings: Evaluate your system with naïve users in genuine free-communication tasks, such as word association or conversational turn-taking, rather than only with cued phrases. This provides a more realistic performance appraisal [24].
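The accuracy-versus-speed trade-off can be illustrated with a simple correction model. It assumes that every wrong selection must be undone with a backspace, and that the backspace is selected with the same accuracy as any other key; under that assumption the expected number of selections per correct character is 1 / (2p - 1) for accuracy p > 0.5. The two system configurations below are hypothetical.

```python
import math

def selections_per_correct_char(accuracy):
    """Expected selections per correct character with backspace correction."""
    if accuracy <= 0.5:
        return math.inf  # errors accumulate faster than they are fixed
    return 1.0 / (2.0 * accuracy - 1.0)

def correct_chars_per_minute(accuracy, seconds_per_selection):
    """Effective spelling speed after accounting for corrections."""
    per_char_s = seconds_per_selection * selections_per_correct_char(accuracy)
    return 60.0 / per_char_s

fast = correct_chars_per_minute(0.75, 1.0)     # fast but error-prone
careful = correct_chars_per_minute(0.95, 1.4)  # slower but accurate
print(round(fast, 1), round(careful, 1))       # 30.0 38.6
```

Under these assumptions the slower configuration delivers more correct characters per minute, even though each individual selection takes 40% longer.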

FAQ 4: How can I effectively capture both local and global features in EEG signals for motor imagery classification?

- Problem: CNNs are good at extracting local temporal-spatial features but struggle with long-range dependencies, while pure Transformers can capture global dependencies but may overlook fine-grained local patterns [42] [44].

- Solutions:

- Adopt a Hybrid Architecture: Design an end-to-end model that sequentially combines CNNs and Transformers.

- Recommended Workflow:

- CNN Front-end: Use convolutional layers (e.g., based on EEGNet) to extract local temporal and spatial features from raw EEG trials [44] [43].

- Transformer Back-end: Feed the extracted features into a Transformer encoder module. The self-attention mechanism will model global dependencies and long-range interactions within the signal [42] [44].
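The division of labor between the two stages can be shown with a toy, dependency-free sketch: a 1-D convolution extracts local features, and scaled dot-product self-attention then lets every time step draw on all others. This is not EEGNet or any model from [42] [43] [44]; the kernels are fixed by hand, nothing is trained, and the attention uses the tokens directly as queries, keys, and values (no learned projections). A real model would be built in a deep-learning framework.

```python
import math

def conv1d(signal, kernel):
    """Valid-mode sliding dot product: each output sees only local samples."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

def softmax(values):
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens."""
    d = len(tokens[0])
    weights = [softmax([sum(a * b for a, b in zip(q, k)) / math.sqrt(d)
                        for k in tokens]) for q in tokens]
    outputs = [[sum(w * v[j] for w, v in zip(row, tokens)) for j in range(d)]
               for row in weights]
    return outputs, weights

def hybrid_forward(signal, kernels):
    """Front-end: one feature map per kernel. Back-end: attention over time."""
    maps = [conv1d(signal, k) for k in kernels]
    tokens = [list(step) for step in zip(*maps)]  # one token per time step
    return self_attention(tokens)

# Toy single-channel trial and two hand-picked kernels (edge, smoothing).
trial = [0.0, 0.5, 1.0, 0.5, 0.0, -0.5, -1.0, -0.5, 0.0, 0.5]
outputs, weights = hybrid_forward(trial, [[1.0, 0.0, -1.0], [1/3, 1/3, 1/3]])
print(len(outputs), len(outputs[0]))  # 8 2
```

Each row of `weights` sums to 1 and spans all time steps, which is what allows the back-end to relate distant parts of the trial that a small convolution kernel cannot connect.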