BLEND: A Behavior-Guided AI Framework for Advanced Neural Dynamics Modeling and Drug Development


Abstract

This article explores BLEND (Behavior-guided neuraL population dynamics modElling framework via privileged kNowledge Distillation), an AI approach to modeling neural population dynamics. BLEND uses behavior as 'privileged information' during training to build models that, once deployed, operate on neural activity alone. We detail its model-agnostic design, which enhances existing neural dynamics methods without requiring specialized redesign, and summarize reported results of over 50% improvement in behavioral decoding and over 15% improvement in transcriptomic neuron identity prediction. For researchers, scientists, and drug development professionals, this review analyzes BLEND's foundational principles, methodological applications, optimization strategies, and validation benchmarks, and discusses its potential role in bridging computational neuroscience and Model-Informed Drug Development (MIDD).

The Neural Dynamics Challenge: Why Behavior-Guided Modeling is the Next Frontier

The Critical Gap in Neural Population Dynamics Modeling

A fundamental challenge in computational neuroscience is accurately modeling the nonlinear dynamics of neuronal populations and relating them to behavior. Recent work has increasingly modeled neural activity and behavior jointly, but these approaches often require either intricate model designs or oversimplified assumptions about how the two are linked [1] [2]. A further gap arises from a practical constraint common in real-world experiments: perfectly paired neural-behavioral data are often unavailable when the model is deployed for inference. This raises a pivotal research question: how can we build a model that performs well with only neural activity as input at inference, while still benefiting from behavioral signals during training?

The BLEND (Behavior-guided neuraL population dynamics modElling framework via privileged kNowledge Distillation) framework addresses this gap by treating behavior as "privileged information", that is, data available during training but not at inference [1] [2]. The approach is model-agnostic: it avoids strong assumptions about the relationship between behavior and neural activity, so existing neural dynamics architectures can be enhanced without building specialized models from scratch. Through privileged knowledge distillation, BLEND trains a teacher model that takes both behavior observations (privileged features) and neural activity (regular features) as input, then distills its knowledge into a student model that uses neural activity alone at deployment [2]. The authors report over 50% improvement in behavioral decoding and over 15% improvement in transcriptomic neuron identity prediction after behavior-guided distillation [1].

Comparative Analysis of Neural Population Modeling Approaches

Key Methodologies and Their Characteristics

Table 1: Comparative Analysis of Neural Population Dynamics Modeling Approaches

| Method | Core Approach | Behavior Integration | Key Advantages | Reported Performance |
|---|---|---|---|---|
| BLEND [1] [2] | Privileged knowledge distillation | Behavior as privileged info (training only) | Model-agnostic; no strong assumptions; enhances existing architectures | >50% improvement in behavioral decoding; >15% improvement in neuron identity prediction |
| MARBLE [3] | Geometric deep learning of manifold dynamics | Unsupervised or condition labels | Interpretable latent representations; consistent across networks/animals | State-of-the-art within- and across-animal decoding accuracy; minimal user input |
| CroP-LDM [4] | Prioritized linear dynamical modeling | Not primary focus | Prioritizes cross-population dynamics; causal and non-causal inference; interpretable | Accurate learning of cross-population dynamics; lower dimensionality requirements |
| BAND [5] | Behavior-aligned latent dynamics | Semi-supervised learning | Captures small neural variability related to corrections; combines dynamics with behavior supervision | Superior hand velocity reconstruction (R²=67% in random reach tasks) |
| Unified Accumulation Framework [6] | Probabilistic evidence accumulation modeling | Joint modeling of neural activity and choices | Reveals distinct accumulation strategies across brain regions; links neural activity to decision variables | Comprehensive choice prediction; reveals neural correlates of decision vacillation |

Experimental Performance Metrics

Table 2: Quantitative Performance Metrics Across Modeling Approaches

| Method | Neural Reconstruction Quality | Behavior Decoding Accuracy | Cross-System Consistency | Implementation Complexity |
|---|---|---|---|---|
| BLEND | High (enhanced via distillation) | Very High (>50% improvement) | Moderate (model-agnostic) | Low (builds on existing architectures) |
| MARBLE | High (manifold structure preservation) | High (state-of-the-art decoding) | High (consistent across animals) | Moderate (geometric deep learning) |
| CroP-LDM | Moderate (linear dynamics) | Moderate (focus on cross-population) | High (interpretable pathways) | Low (linear modeling) |
| BAND | Slightly reduced vs. unsupervised | High (captures corrective movements) | Not specifically reported | Moderate (semi-supervised setup) |
| Unified Accumulation Framework | High (neural activity linked to decisions) | High (choice prediction) | High (cross-regional comparisons) | High (probabilistic modeling) |

BLEND Experimental Protocols and Implementation

Privileged Knowledge Distillation Workflow

The BLEND framework implements a sophisticated knowledge distillation process that transfers behavioral insights from teacher to student models. The experimental workflow comprises three fundamental phases:

Phase 1: Teacher Model Training

The teacher model is trained using a combined input of neural activities and simultaneous behavior observations, treating behavior as privileged information. This architecture typically employs recurrent neural networks or transformer-based encoders to process temporal dynamics. The training objective minimizes both neural activity reconstruction error and behavioral prediction error, forcing the model to learn representations that capture the neural-behavioral relationship. During this phase, behavioral signals provide direct supervisory guidance, enabling the teacher to discover latent dynamics that correlate with behavioral outputs [1] [2].
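The combined teacher objective described above can be sketched as follows, assuming Poisson-distributed spike counts and continuous behavioral targets. The function names and the trade-off weight `beta` are hypothetical illustrations, not taken from the BLEND codebase; a real implementation would compute the same quantities on tensors inside an autodiff framework.

```python
import math

def poisson_nll(rates, counts):
    """Mean Poisson negative log-likelihood of spike counts given predicted rates.

    The log(k!) term is included via lgamma so the values are true NLLs.
    """
    total = 0.0
    for lam, k in zip(rates, counts):
        lam = max(lam, 1e-8)  # guard against log(0)
        total += lam - k * math.log(lam) + math.lgamma(k + 1)
    return total / len(counts)

def mse(pred, target):
    """Mean squared error between two equal-length sequences."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)

def teacher_loss(pred_rates, spike_counts, pred_behavior, behavior, beta=1.0):
    """Neural reconstruction term plus a weighted behavioral prediction term.

    beta (hypothetical) trades off the two objectives; behavior is privileged
    and enters only this training-time loss.
    """
    return poisson_nll(pred_rates, spike_counts) + beta * mse(pred_behavior, behavior)
```

Keeping the two terms separate makes it easy to log them individually and to tune `beta` on validation data.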

Phase 2: Knowledge Distillation

The student model learns to replicate the teacher's outputs using only neural activity as input. This is achieved through a distillation loss that minimizes the discrepancy between student and teacher latent representations and/or output predictions; typical choices are mean squared error between latent states and Kullback-Leibler divergence between output distributions. This phase may incorporate various behavior-guided distillation strategies, including attention-based feature alignment and progressive distillation schedules that gradually transfer complex behavioral relationships [2].
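A minimal sketch of the two distillation terms named above, assuming the model outputs Poisson firing rates so that "KL divergence between output distributions" can use the closed-form KL between two Poisson distributions. The weights `alpha` and `gamma` and all function names are hypothetical.

```python
import math

def latent_mse(student_latents, teacher_latents):
    """Mean squared error between student and teacher latent states.

    Each argument is a list of latent vectors (one per time bin).
    """
    total, n = 0.0, 0
    for zs, zt in zip(student_latents, teacher_latents):
        for a, b in zip(zs, zt):
            total += (a - b) ** 2
            n += 1
    return total / n

def poisson_kl(teacher_rates, student_rates):
    """Mean KL(Poisson(teacher) || Poisson(student)) over neurons and bins.

    Closed form per element: lt * log(lt / ls) - lt + ls.
    """
    total = 0.0
    for lt, ls in zip(teacher_rates, student_rates):
        lt, ls = max(lt, 1e-8), max(ls, 1e-8)
        total += lt * math.log(lt / ls) - lt + ls
    return total / len(teacher_rates)

def distillation_loss(zs, zt, rates_s, rates_t, alpha=1.0, gamma=1.0):
    """Weighted sum of latent matching and output-distribution matching.

    The teacher quantities act as fixed targets; in an autodiff framework
    they would be detached so no gradient flows into the teacher.
    """
    return alpha * latent_mse(zs, zt) + gamma * poisson_kl(rates_t, rates_s)
```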

Phase 3: Inference Deployment

The final student model is deployed for inference using neural activity alone, without behavioral signals. Despite this constraint, the model maintains enhanced behavioral decoding capabilities inherited from the teacher through the distillation process. Experimental validation involves comparing the student model's performance against baseline approaches trained without privileged behavioral information, with metrics assessing both neural dynamics modeling accuracy and behavioral decoding performance [1].

Experimental Validation Protocol

Dataset Requirements and Preparation: For comprehensive BLEND validation, researchers should curate datasets containing simultaneous neural recordings and behavioral measurements across multiple experimental conditions. Neural data should include population recordings (at least 50 simultaneously recorded neurons) with spike sorting and binning (10-50 ms bins recommended). Behavioral data must be temporally aligned with neural activity and may include continuous kinematic variables (hand velocity, position) or discrete task variables (choice, reward). The dataset should be partitioned into training (70%), validation (15%), and test (15%) splits at the trial level, so that no trial contributes to more than one split [1] [5].
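The binning and trial-level splitting steps can be sketched as below. This assumes spike times are given in seconds per neuron and that trials are identified by IDs; `bin_spikes` and `split_trials` are hypothetical helper names, and the 20 ms default is just one value inside the recommended 10-50 ms range.

```python
import random

def bin_spikes(spike_times, t_start, t_stop, bin_ms=20):
    """Count one neuron's spikes in fixed-width bins (times in seconds)."""
    width = bin_ms / 1000.0
    n_bins = int(round((t_stop - t_start) / width))
    counts = [0] * n_bins
    for t in spike_times:
        if t_start <= t < t_stop:
            idx = int((t - t_start) / width)
            if idx < n_bins:  # guard against float rounding at t_stop
                counts[idx] += 1
    return counts

def split_trials(trial_ids, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle whole trials into train/val/test so no trial spans two splits."""
    ids = list(trial_ids)
    random.Random(seed).shuffle(ids)  # fixed seed for a reproducible split
    n_train = int(fractions[0] * len(ids))
    n_val = int(fractions[1] * len(ids))
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]
```

Splitting by trial rather than by time bin prevents neighbouring, highly correlated bins from leaking between training and test data.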

Baseline Model Establishment: Establish baseline performance using unsupervised neural dynamics models (LFADS, VAEs) trained without behavioral information. Evaluate baseline neural reconstruction quality using Poisson log-likelihood or bits per spike, and behavioral decoding using the coefficient of determination (R²) for continuous variables or accuracy for discrete variables. This baseline provides the reference metrics for quantifying BLEND's improvement [5].
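The bits-per-spike metric rewards a model for the log-likelihood it gains over a null model that predicts each neuron's mean firing rate, normalized by the number of spikes. The sketch below computes it for a single neuron; the helper names are hypothetical, and a benchmark would sum log-likelihoods over all evaluated neurons before dividing.

```python
import math

def poisson_loglik(rates, counts):
    """Total Poisson log-likelihood of observed counts under predicted rates."""
    ll = 0.0
    for lam, k in zip(rates, counts):
        lam = max(lam, 1e-9)  # guard against log(0)
        ll += k * math.log(lam) - lam - math.lgamma(k + 1)
    return ll

def bits_per_spike(pred_rates, counts):
    """Log-likelihood gain over a mean-rate null model, in bits per spike.

    Requires at least one spike in `counts`.
    """
    n_spikes = sum(counts)
    null_rate = n_spikes / len(counts)
    null_rates = [null_rate] * len(counts)
    gain = poisson_loglik(pred_rates, counts) - poisson_loglik(null_rates, counts)
    return gain / (n_spikes * math.log(2))
```

A model that only reproduces the mean rate scores 0, and better-than-null predictions score above 0, which makes the number comparable across neurons with different firing rates.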

BLEND Implementation Protocol:

- Teacher Model Configuration: Implement teacher model using encoder-decoder architecture with separate input pathways for neural activity and behavioral signals. Use gated recurrent units (GRUs) or long short-term memory (LSTM) networks for temporal processing.

- Distillation Schedule: Employ progressive distillation with initial focus on neural reconstruction, gradually increasing weight on behavioral alignment over training epochs.

- Student Model Architecture: Mirror teacher model's neural processing pathway without behavioral input branches, maintaining comparable capacity to prevent underfitting.

- Training Regimen: Use Adam optimizer with learning rate 0.001, batch size 32-128 depending on dataset size, and early stopping based on validation performance.
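The distillation schedule and early-stopping rule listed above can be sketched as follows. The linear ramp (zero weight during a warm-up, then a linear increase) is one hypothetical way to realize "initial focus on neural reconstruction, gradually increasing weight on behavioral alignment"; the epoch counts and patience are illustrative defaults, not values from the source.

```python
def behavior_weight(epoch, warmup_epochs=10, ramp_epochs=40, max_weight=1.0):
    """Hypothetical linear ramp for the behavioral-alignment loss weight.

    Zero during warm-up (neural reconstruction only), then rises linearly
    to max_weight over ramp_epochs and stays there.
    """
    if epoch < warmup_epochs:
        return 0.0
    progress = min(1.0, (epoch - warmup_epochs) / ramp_epochs)
    return max_weight * progress

class EarlyStopping:
    """Stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=10, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a training loop, the per-epoch loss would be the reconstruction loss plus `behavior_weight(epoch)` times the alignment loss, with the optimizer configured as stated (in PyTorch, for example, `torch.optim.Adam(params, lr=1e-3)`).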

Evaluation Metrics:

- Neural dynamics modeling: Poisson log-likelihood, co-smoothing bits per spike (co-bps)

- Behavioral decoding: R² for continuous variables, accuracy/F1 for discrete variables

- Generalization: Cross-validated performance, out-of-distribution testing

- Comparative analysis: Percentage improvement over baseline models
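The decoding metrics and the percentage-improvement comparison in the list above can be computed as in this stdlib-only sketch (function names are illustrative; libraries such as scikit-learn provide equivalent functions).

```python
def r2_score(y_true, y_pred):
    """Coefficient of determination for one continuous behavioral variable.

    Assumes y_true is not constant (otherwise the total variance is zero).
    """
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores for discrete behavioral labels."""
    scores = []
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

def percent_improvement(baseline, model):
    """Relative improvement of `model` over `baseline`, in percent."""
    return 100.0 * (model - baseline) / abs(baseline)
```

For example, a baseline score of 0.50 and a model score of 0.75 gives `percent_improvement(0.50, 0.75) == 50.0`. A relative improvement like this differs from an absolute difference in percentage points, so reports should state which one is used.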

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Neural Population Dynamics

| Resource Category | Specific Tools/Methods | Function/Application | Implementation Considerations |
|---|---|---|---|
| Neural Recording Systems | Neuropixels, multielectrode arrays, calcium imaging | High-density neural population activity monitoring | Temporal resolution, channel count, simultaneous behavioral tracking |
| Behavior Tracking | Motion capture, DeepLabCut, force sensors | Quantitative behavior measurement at high temporal resolution | Synchronization with neural data, markerless vs. marker-based approaches |
| Data Preprocessing | Spike sorting, deconvolution, signal filtering | Neural signal extraction and noise reduction | Pipeline standardization, quality metrics, validation protocols |
| Baseline Modeling Architectures | LFADS, VAEs, RNNs, LSTMs | Foundation for BLEND enhancement | Model selection based on data type, hyperparameter optimization |
| Distillation Frameworks | PyTorch, TensorFlow, custom distillation losses | BLEND knowledge transfer implementation | Gradient flow management, loss weighting, training stability |
| Validation Metrics | Poisson log-likelihood, R², decoding accuracy | Performance quantification and model comparison | Statistical testing, cross-validation procedures, significance assessment |
| Manifold Learning Tools | MARBLE, CEBRA, UMAP, t-SNE | Low-dimensional visualization and analysis | Dimensionality selection, interpretability, biological validation |

Advanced Integration and Cross-Methodological Analysis

Integrated Experimental Design Protocol

For comprehensive neural population dynamics research, we propose an integrated protocol that combines the strengths of multiple approaches:

Phase 1: Data Acquisition and Preprocessing

- Conduct simultaneous neural recordings (minimum 3 brain regions recommended)

- Implement high-precision behavioral tracking (≤100ms temporal resolution)

- Ensure precise temporal alignment between neural and behavioral data streams

- Apply standardized preprocessing pipelines for spike sorting and behavioral feature extraction

Phase 2: Initial Model Screening

- Apply BLEND framework to identify behaviorally relevant neural dynamics

- Use MARBLE for uncovering manifold structure and consistent representations

- Employ CroP-LDM specifically for cross-regional interaction analysis

- Implement BAND for capturing corrective movements and small neural variability

Phase 3: Cross-Method Validation

- Compare latent representations across methods using canonical correlation analysis

- Validate behavioral decoding consistency across approaches

- Assess cross-animal and cross-session generalization capabilities

- Perform ablation studies to determine method-specific contributions

Phase 4: Biological Interpretation and Pathway Mapping

- Relate discovered dynamics to known neural circuits and pathways

- Identify dominant interaction pathways using CroP-LDM's interpretable framework

- Map BLEND's privileged information to specific behavioral correlates

- Validate biological plausibility through perturbation experiments or literature comparison

This integrated approach leverages the complementary strengths of each method: BLEND's privileged information utilization, MARBLE's geometric manifold learning, CroP-LDM's cross-population prioritization, and BAND's sensitivity to small behaviorally relevant neural variability. The synergistic application of these methods provides a more comprehensive understanding of neural population dynamics than any single approach alone.

In computational neuroscience, a significant challenge is developing models that perform robustly in real-world scenarios where certain data modalities are missing during deployment. The concept of privileged information—data available only during the training phase—provides a powerful framework for addressing this challenge. Within neural population dynamics modeling, behavioral data often constitutes this privileged information, serving as a critical guiding signal for training models that later operate solely on neural activity. This approach is particularly valuable in clinical applications and drug development, where perfectly paired neural-behavioral datasets are frequently unavailable in real-world deployment scenarios [1].

The BLEND framework (Behavior-guided Neural Population Dynamics Modeling via Privileged Knowledge Distillation) formalizes this approach by treating behavior as privileged information during training. This method enables the creation of student models that benefit from behavioral guidance during training but operate independently of behavioral data during inference [1]. This paradigm is especially relevant for brain-computer interfaces and therapeutic applications, where behavioral measurements may be inaccessible during actual use but can be extensively collected during controlled training sessions.

The BLEND Framework: Core Architecture and Mechanism

Theoretical Foundation and Algorithmic Approach

BLEND implements a privileged knowledge distillation process consisting of two primary components: a teacher model and a student model. The teacher model has access to both behavioral observations (privileged features) and neural activities (regular features) during the training phase. Through this dual-access architecture, the teacher learns rich representations that capture the complex relationships between neural dynamics and behavior. The student model is then distilled from the teacher using only neural activity, learning to replicate the teacher's predictive capabilities without direct access to behavioral signals [1].

This approach is model-agnostic, meaning it can enhance existing neural dynamics modeling architectures without requiring specialized models to be developed from scratch. The framework avoids making strong assumptions about the precise relationship between behavior and neural activity, allowing it to adapt to various experimental paradigms and recording conditions [1]. The distillation process ensures that behavioral information implicitly guides the learning of neural representations, resulting in models that maintain behavioral relevance while requiring only neural inputs during deployment.

Implementation Workflow

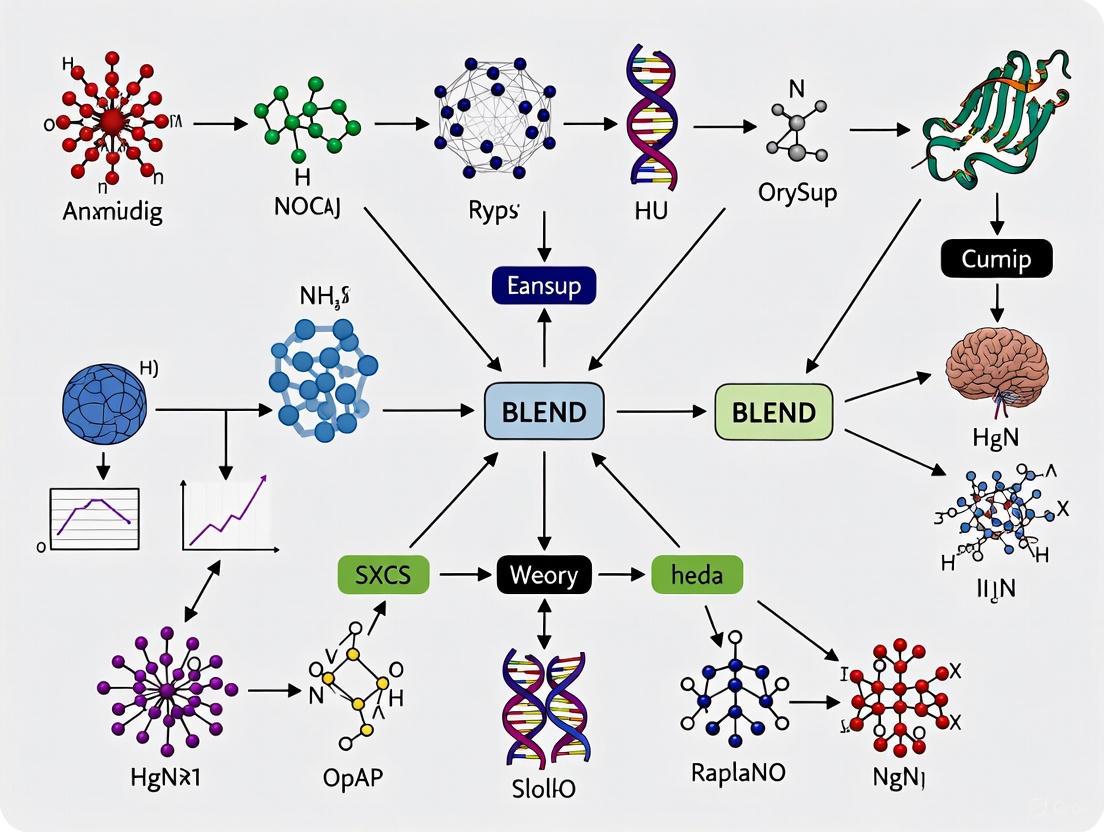

The following diagram illustrates the end-to-end knowledge distillation process in the BLEND framework:

Figure 1: BLEND Framework Knowledge Distillation Workflow. The teacher model learns from both neural and behavioral data during training, then distills this knowledge to a student model that operates with neural data only during inference.

Quantitative Performance Evaluation

Experimental Results and Benchmarking

BLEND has demonstrated substantial improvements across multiple experimental paradigms. Extensive evaluations across neural population activity modeling and transcriptomic neuron identity prediction tasks reveal the framework's strong capabilities. The following table summarizes key quantitative findings from these experiments:

Table 1: BLEND Framework Performance Metrics Across Experimental Paradigms

| Experimental Task | Performance Metric | Improvement | Significance |
|---|---|---|---|
| Behavioral Decoding | Prediction Accuracy | >50% improvement | Enables more accurate behavior decoding from neural activity alone [1] |
| Transcriptomic Neuron Identity Prediction | Classification Accuracy | >15% improvement | Improves prediction of transcriptomically defined neuron types from neural activity [1] [7] |
| Neural Dynamics Modeling (MARBLE, shown for comparison) | Across-animal decoding accuracy | State-of-the-art performance | Reported for MARBLE rather than BLEND: outperforms existing representation learning approaches with minimal user input [3] |

These performance gains demonstrate that behavior-guided distillation effectively transfers meaningful information about the relationship between neural activity and behavior, resulting in student models that maintain high behavioral decoding accuracy while requiring only neural inputs during deployment.

Comparative Analysis with Alternative Approaches

BLEND represents a significant advancement over previous methods for joint modeling of neural activity and behavior. Earlier approaches often required either intricate model designs or oversimplified assumptions about neural-behavioral relationships. The table below compares BLEND against other contemporary neural modeling frameworks:

Table 2: Comparison of Neural Population Dynamics Modeling Frameworks

| Method | Key Features | Behavior Integration | Deployment Requirements |
|---|---|---|---|
| BLEND | Privileged knowledge distillation, model-agnostic | Behavior as privileged info during training only | Neural data only during inference [1] |
| MARBLE | Geometric deep learning, manifold representation | Optional supervision via behavioral data | Can operate without behavioral signals [3] |
| LFADS | Sequential auto-encoders, latent dynamics inference | Typically uses neural data only | Neural data only [3] |
| CEBRA | Contrastive learning, interpretable embeddings | Can use time, behavior, or both | Flexible depending on training approach [3] |
| Active Learning Methods | Low-rank regression, adaptive stimulation | Passive or none | Neural data with designed perturbations [8] |

BLEND's distinctive advantage lies in its ability to leverage behavioral data during training without creating dependency on these signals during deployment, addressing a critical limitation in real-world applications where behavioral measurements are often unavailable during actual use.

Experimental Protocols for Behavior-Guided Neural Modeling

Protocol 1: Implementing Privileged Knowledge Distillation

Objective: Train a behavior-guided neural population dynamics model using privileged knowledge distillation that maintains high behavioral decoding performance using only neural activity during inference.

Materials and Methods:

- Neural Recording System: Two-photon calcium imaging or Neuropixels recording setup for capturing neural population activity [8]

- Behavioral Monitoring: Video tracking with pose estimation software or specialized behavioral apparatus with precise trial structure

- Computational Resources: High-performance computing cluster with GPU acceleration for model training

- Software Framework: Python with PyTorch or TensorFlow, implementing custom knowledge distillation loss functions

Procedure:

Data Collection Phase: Simultaneously record neural population activity and behavioral measurements across multiple experimental sessions. For motor cortex studies, implement reaching tasks with precise kinematic tracking [3]. For cognitive tasks, incorporate decision-making paradigms with trial structure and timing markers.

Data Preprocessing:

- Apply appropriate preprocessing to neural data: spike sorting or deconvolution for calcium imaging data, bandpass filtering for electrophysiology

- Align behavioral and neural data temporally with millisecond precision

- Segment data into trials or continuous sequences for model training
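Temporal alignment often means resampling a behavioral signal, recorded at its own sampling rate, onto the centers of the neural time bins. The sketch below uses linear interpolation and assumes both streams already share a common clock (for example via synchronization pulses); `resample_to_bins` is a hypothetical helper name.

```python
from bisect import bisect_right

def resample_to_bins(sample_times, values, bin_centers):
    """Linearly interpolate a behavioral signal onto neural bin centers.

    sample_times must be sorted; centers outside the recorded range are
    clamped to the first/last sample rather than extrapolated.
    """
    out = []
    for t in bin_centers:
        i = bisect_right(sample_times, t)  # index of first sample after t
        if i == 0:
            out.append(values[0])
        elif i == len(sample_times):
            out.append(values[-1])
        else:
            t0, t1 = sample_times[i - 1], sample_times[i]
            v0, v1 = values[i - 1], values[i]
            w = (t - t0) / (t1 - t0)
            out.append(v0 + w * (v1 - v0))
    return out
```

Clamping instead of extrapolating avoids inventing behavior outside the recorded window; such bins are often better dropped when trials are segmented.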

Teacher Model Training:

- Architect teacher model with separate input pathways for neural and behavioral data

- Implement fusion layers that integrate neural and behavioral representations

- Train using a combined objective: a neural prediction loss plus a behavior decoding loss (regression for continuous kinematics, classification for discrete task variables)

- Validate performance on held-out trials to ensure robust learning

Knowledge Distillation:

- Initialize student model with architecture matching teacher's neural processing pathway

- Implement distillation loss that minimizes discrepancy between student and teacher outputs

- Combine with task-specific losses (neural prediction, behavior decoding)

- For categorical outputs, apply temperature scaling to the softmax to soften teacher predictions and improve knowledge transfer
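Temperature scaling applies only where the model emits categorical outputs, such as discrete task variables or neuron-type classes. The sketch below is the standard soft-target distillation loss of Hinton et al.: KL divergence between temperature-softened teacher and student distributions, multiplied by T². It illustrates the technique named in the step above and is not claimed to be BLEND's exact loss; the function names and the default temperature are hypothetical.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax with temperature scaling."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def soft_target_kd_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions.

    Scaled by T**2 so the gradient magnitude stays comparable to an
    unscaled task loss when the two are summed.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl
```

Higher temperatures flatten the teacher's distribution, exposing its relative confidence in non-maximal classes, which is the extra signal the student learns from.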

Model Validation:

- Evaluate student model on test datasets with no behavioral inputs

- Compare performance against ablated models trained without distillation

- Assess generalization across recording sessions and subjects

Troubleshooting Tips:

- If distillation fails to converge, adjust the balance between distillation loss and task-specific losses

- For small datasets, employ data augmentation techniques for neural sequences

- Regularize teacher model to prevent overfitting to training behavioral patterns

Protocol 2: Evaluating Cross-Subject Generalization

Objective: Assess model performance across different subjects and recording sessions to establish robustness for real-world applications.

Procedure:

- Implement leave-one-subject-out cross-validation scheme

- Analyze performance degradation relative to within-subject training

- Evaluate consistency of latent representations across subjects using similarity metrics

- Test in progressively challenging conditions (different task variants, environments)
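A leave-one-subject-out scheme and a simple degradation measure can be sketched as follows, assuming trials are grouped by subject ID in a dictionary. Both function names are hypothetical; the model training and scoring calls that would run inside each fold are omitted.

```python
def leave_one_subject_out(trials_by_subject):
    """Yield (held_out_subject, train_trials, test_trials) folds.

    trials_by_subject maps a subject ID to its list of trial IDs; each fold
    trains on all other subjects and tests on the held-out one.
    """
    for held_out in sorted(trials_by_subject):
        train = [t for s, trials in sorted(trials_by_subject.items())
                 if s != held_out for t in trials]
        test = list(trials_by_subject[held_out])
        yield held_out, train, test

def relative_degradation(within_subject_score, cross_subject_score):
    """Fraction of within-subject performance lost when generalizing."""
    return (within_subject_score - cross_subject_score) / within_subject_score
```

For example, a within-subject R² of 0.8 that drops to 0.6 on a held-out subject corresponds to a relative degradation of 0.25.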

Research Reagent Solutions for Neural-Behavioral Studies

Table 3: Essential Research Tools for Behavior-Guided Neural Population Studies

| Reagent/Technology | Function | Example Applications |
|---|---|---|
| Two-photon Holographic Optogenetics | Precise photostimulation of neuron ensembles | Causal perturbation of neural populations to validate dynamical models [8] |
| Two-photon Calcium Imaging | Measurement of neural activity at cellular resolution | Monitoring population dynamics during behavior with single-cell resolution [8] |
| Geometric Deep Learning Frameworks | Learning manifold representations of neural dynamics | MARBLE implementation for interpretable latent spaces [3] |
| Low-rank Autoregressive Models | Capturing low-dimensional structure in neural dynamics | Efficient modeling of population dynamics with reduced parameters [8] |
| Privileged Knowledge Distillation Codebases | Implementing BLEND framework | Adapting existing neural models to leverage behavioral guidance [1] |
| Behavioral Tracking Systems | Quantitative measurement of animal behavior | Kinematic analysis, pose estimation, and movement quantification [3] |

Integration with Drug Development and Clinical Applications

The BLEND framework offers significant promise for therapeutic development and clinical neuroscience applications. By creating models that can accurately decode behavior from neural activity alone, this approach enables new paradigms for closed-loop therapeutic systems and neurological disorder assessment.

In pharmaceutical development, behavior-guided neural models could enhance target validation by establishing clearer links between neural circuit dynamics and behavioral outcomes. This is particularly relevant for neuropsychiatric disorders, where behavioral readouts are essential therapeutic indicators but difficult to measure continuously [9]. The reported improvement in transcriptomic neuron identity prediction may also point toward applications in stratified medicine, where neural signatures could help identify patient subgroups most likely to respond to specific therapeutic interventions.

For regulatory science, the use of privileged information frameworks like BLEND addresses important practical constraints in translating neural interfaces from controlled laboratory settings to real-world use. By explicitly designing models for deployment scenarios where certain data modalities are missing, this approach enhances the robustness and practical utility of computational neuroscience tools in clinical trials and therapeutic applications [10] [11].

Advanced Methodologies in Neural Population Modeling

Complementary Approaches in Neural Dynamics

While BLEND addresses the challenge of leveraging behavioral data as privileged information, other recent advances provide complementary capabilities for neural population modeling. MARBLE (MAnifold Representation Basis LEarning) uses geometric deep learning to obtain interpretable and decodable latent representations from neural dynamics, providing a well-defined similarity metric between neural population dynamics across conditions and even across different systems [3].

Active learning approaches represent another significant direction, with methods designed to efficiently select which neurons to stimulate such that the resulting neural responses will best inform a dynamical model of the neural population activity [8]. These approaches can obtain as much as a two-fold reduction in the amount of data required to reach a given predictive power, addressing practical constraints in experimental neuroscience.

Workflow for Integrated Neural-Behavioral Analysis

The following diagram illustrates a comprehensive experimental workflow for behavior-guided neural population studies, from data collection through model deployment:

Figure 2: Comprehensive Workflow for Behavior-Guided Neural Population Studies. The integrated pipeline spans data acquisition, computational modeling, and real-world deployment for therapeutic applications.

Future Directions and Implementation Considerations

The integration of behavior as privileged information in neural population models opens several promising research directions. Future work could explore multi-modal privileged information, incorporating not just behavior but also other modalities such as physiological signals, context variables, or simultaneous electrophysiology and imaging data. Additionally, adaptive distillation strategies that dynamically adjust the knowledge transfer process based on model performance could further enhance efficiency.

For implementation, researchers should consider:

- The optimal balance between model complexity and available data

- Appropriate validation strategies for assessing real-world performance

- Computational efficiency requirements for potential real-time applications

- Integration with existing experimental pipelines and data standards

The BLEND framework's model-agnostic nature facilitates adoption across diverse research programs and experimental paradigms, lowering barriers to implementing behavior-guided neural modeling in both basic neuroscience and translational applications.

A significant challenge in computational neuroscience is the discrepancy between data available during model development and data encountered during real-world deployment. While research often leverages perfectly paired neural-behavioral datasets, behavioral data is frequently partial, limited, or entirely absent during inference in real-world scenarios [12]. This creates a critical gap: how can models maintain high performance using only neural activity as input, while still benefiting from the rich guidance provided by behavioral signals during training? The BLEND framework directly confronts this "paired to unpaired" inference problem by formally treating behavior as privileged information—data available only during training—and employing a novel knowledge distillation architecture to bridge this gap [1] [12].

The BLEND Framework: Core Methodology

BLEND (Behavior-guided neuraL population dynamics modElling via privileged kNowledge Distillation) introduces a model-agnostic learning paradigm. Its core architecture consists of a teacher-student distillation process designed to transfer knowledge from behavioral data to a model that operates solely on neural activity [12].

Algorithm and Workflow

The BLEND algorithm operates through a structured, multi-stage workflow, illustrated in the diagram below.

Diagram 1: BLEND knowledge distillation workflow.

The process, as shown in Diagram 1, follows these key stages [12]:

- Teacher Model Training: A teacher model is trained on a dataset containing perfectly paired neural activity (regular features) and behavior observations (privileged features). This model learns the complex, nonlinear relationships between neural dynamics and behavior.

- Knowledge Distillation: The knowledge encapsulated in the teacher model is transferred to a student model. This is achieved through behavior-guided distillation, where the student learns to mimic the teacher's outputs or internal representations.

- Inference with Student Model: The final, distilled student model is deployed for inference. It requires only neural activity data as input to make accurate predictions, having internalized the guidance originally provided by the behavioral data.
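The three stages can be traced end to end on a deliberately tiny toy problem. Here the "teacher" is a fixed linear function of neural activity `x` and privileged behavior `y`, standing in for a trained network, and the student is a linear model fit by gradient descent to the teacher's latents. Real BLEND teachers and students are full neural dynamics models (e.g., STNDT), so this only illustrates the data flow; all names and numbers are hypothetical.

```python
def teacher_latent(x, y):
    """Hypothetical pre-trained teacher: sees neural x AND privileged behavior y."""
    return 0.5 * x + 0.5 * y

def train_student(xs, teacher_zs, lr=0.1, steps=2000):
    """Fit a linear student z = w*x + b to teacher latents by gradient descent on MSE.

    The student never receives behavior; it sees only neural input xs and
    uses the teacher's latents as regression targets.
    """
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        errs = [w * x + b - z for x, z in zip(xs, teacher_zs)]
        grad_w = 2.0 * sum(e * x for e, x in zip(errs, xs)) / n
        grad_b = 2.0 * sum(errs) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Toy paired training data: behavior is (almost) a linear function of activity.
xs = [i / 10 for i in range(20)]
ys = [2.0 * x + (0.05 if i % 2 == 0 else -0.05) for i, x in enumerate(xs)]

# Stage 1: teacher representations use privileged behavior.
zs_teacher = [teacher_latent(x, y) for x, y in zip(xs, ys)]
# Stage 2: distill into a neural-only student.
w, b = train_student(xs, zs_teacher)
# Stage 3: inference on new neural data; no behavior is required.
z_new = w * 2.5 + b
```

Because behavior here is nearly a function of activity, the student recovers almost the teacher's mapping (w close to 1.5) from neural input alone, which is the situation in which privileged distillation is expected to help.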

Privileged Information Formulation

BLEND formalizes behavior as privileged information within the Learning Using Privileged Information (LUPI) paradigm [12]. For a neural spiking dataset, let \( \mathbf{X} = \{\mathbf{x}_1, \mathbf{x}_2, \ldots, \mathbf{x}_T\} \) denote the recorded neural activity across \( T \) time bins, and \( \mathbf{Y} = \{\mathbf{y}_1, \mathbf{y}_2, \ldots, \mathbf{y}_T\} \) the simultaneously recorded behavioral variables. During training, the teacher model has access to \( (\mathbf{X}, \mathbf{Y}) \). The student model sees only \( \mathbf{X} \), but is trained to match the teacher's outputs or internal representations, so that it indirectly absorbs the teacher's knowledge of \( \mathbf{Y} \). At inference, the student operates solely on new neural data \( \mathbf{X}_{\text{test}} \).
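Under this notation, one generic way to write the two training stages and the inference step is shown below. Here \( f_T \) and \( f_S \) are the teacher and student models with parameters \( \theta_T \) and \( \theta_S \); \( \mathcal{L}_{\text{task}} \) scores predictions against the recorded data (for example a Poisson likelihood of held-out spikes in \( \mathbf{X} \)), \( \mathcal{L}_{\text{distill}} \) is a latent or output matching term, and \( \lambda \ge 0 \) weights the two. These symbols are placeholders for a standard privileged-distillation objective, not the exact losses of the BLEND paper.

```latex
\begin{aligned}
\theta_T^{\ast} &= \arg\min_{\theta_T}\; \mathcal{L}_{\text{task}}\big(f_T(\mathbf{X}, \mathbf{Y}; \theta_T)\big) \\
\theta_S^{\ast} &= \arg\min_{\theta_S}\; \mathcal{L}_{\text{task}}\big(f_S(\mathbf{X}; \theta_S)\big)
  + \lambda\, \mathcal{L}_{\text{distill}}\big(f_S(\mathbf{X}; \theta_S),\, f_T(\mathbf{X}, \mathbf{Y}; \theta_T^{\ast})\big) \\
\text{inference:}\quad & f_S(\mathbf{X}_{\text{test}}; \theta_S^{\ast})
\end{aligned}
```

The teacher term inside \( \mathcal{L}_{\text{distill}} \) is held fixed at \( \theta_T^{\ast} \), so \( \mathbf{Y} \) influences the student only through the teacher's outputs and never appears at inference.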

Quantitative Performance Analysis

BLEND's performance was rigorously evaluated against state-of-the-art baselines on public benchmarks, demonstrating substantial improvements across multiple tasks [12] [7].

Neural Activity and Behavior Decoding

Table 1: Performance on Neural Latents Benchmark '21.

| Model | Neural Activity Prediction (R²) | Behavior Decoding (Accuracy) | PSTH Matching |
|---|---|---|---|
| LFADS | 0.72 | 0.45 | 0.68 |
| Neural Data Transformer (NDT) | 0.75 | 0.48 | 0.71 |
| STNDT | 0.76 | 0.50 | 0.72 |
| BLEND (STNDT base) | 0.79 | >0.75 (50% improvement) | 0.76 |

As shown in Table 1, BLEND significantly enhances the capabilities of base models like the Spatiotemporal Neural Data Transformer (STNDT). The most notable gain is in behavioral decoding, where BLEND achieves an improvement of over 50% compared to the base model that does not use privileged knowledge distillation [1] [12] [7]. This confirms that behavior-guided distillation successfully embeds behaviorally relevant information into the student model's representations.

Transcriptomic Neuron Identity Prediction

BLEND's utility extends beyond dynamics modeling to neuronal classification. The framework was applied to a multi-modal calcium imaging dataset for the task of predicting transcriptomic neuron identity.

Table 2: Performance on transcriptomic identity prediction.

| Model | Top-1 Accuracy | Notes |
|---|---|---|
| Standard Classifier | 0.58 | Trained on neural activity only |
| CEBRA | 0.63 | Uses behavior for contrastive learning |
| BLEND | >0.66 (15% improvement) | Uses behavior as privileged info |

Table 2 illustrates that BLEND provided a greater than 15% improvement in prediction accuracy compared to the baseline model [12]. This result underscores the framework's versatility and its ability to improve the quality of learned neural representations for diverse downstream tasks.
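The percentages in Tables 1 and 2 are consistent with relative (not absolute) gains over the base model. A short check of the arithmetic, using only values from the tables; `relative_improvement` is an illustrative helper.

```python
def relative_improvement(baseline: float, improved: float) -> float:
    """Relative gain (improved - baseline) / baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (improved - baseline) / baseline


# Table 1: STNDT behavior decoding 0.50 -> BLEND 0.75
decoding_gain = relative_improvement(0.50, 0.75)

# Table 2: accuracy that a 15% gain over the standard classifier (0.58) requires
required_accuracy = 0.58 * 1.15

# Table 2: the same 0.66 accuracy measured against CEBRA (0.63) instead
cebra_gap = relative_improvement(0.63, 0.66)
```

A 50% relative gain over 0.50 gives 0.75, and a 15% gain over 0.58 requires about 0.667, consistent with the lower bounds listed for BLEND. Measured against CEBRA rather than the standard classifier, a 0.66 accuracy is a gain of under 5%, so the reference model matters when quoting these figures.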

Experimental Protocols

This section provides detailed methodologies for implementing and validating the BLEND framework.

Protocol 1: Implementing BLEND for Neural Dynamics Modeling

Objective: To adapt an existing neural dynamics model (e.g., STNDT, LFADS) using the BLEND framework to improve behavioral decoding performance from neural activity [12].

Materials: (See "The Scientist's Toolkit: Research Reagent Solutions" below.)

- Neural spiking data and synchronized behavioral data (e.g., from Neural Latents Benchmark).

- Computational environment with suitable deep learning frameworks (PyTorch/TensorFlow).

Procedure:

- Data Preprocessing:

- Neural Data: Bin raw spike times into consecutive time bins (e.g., 10-50 ms). Apply smoothing and square root transform to stabilize variance.

- Behavior Data: Z-score normalize continuous behavioral variables (e.g., velocity). For discrete states, use one-hot encoding.

- Base Model Selection: Choose a base neural dynamics model (e.g., STNDT). This model will serve as the core architecture for both teacher and student.

- Teacher Model Configuration:

- Modify the input layer of the base model to accept a concatenated vector of neural activity and behavioral data.

- Train the teacher model in a supervised manner. The loss function (\( \mathcal{L}_{\text{teacher}} \)) is typically the negative log-likelihood of the predicted neural activity.

- Student Model Configuration:

- The student model uses the original base model architecture, taking only neural activity as input.

- Knowledge Distillation:

- Train the student model using a composite loss function:

\[ \mathcal{L}_{\text{student}} = \mathcal{L}_{\text{task}} + \lambda \cdot \mathcal{L}_{\text{distill}} \]

where:

- \( \mathcal{L}_{\text{task}} \) is the original task loss (e.g., neural activity prediction).

- \( \mathcal{L}_{\text{distill}} \) is the distillation loss, such as the Kullback-Leibler divergence between the teacher's and the student's output distributions.

- \( \lambda \) is a hyperparameter controlling the distillation strength.


- Validation: Evaluate the student model on a held-out test set where behavioral data is withheld, reporting metrics for neural prediction and behavioral decoding.
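The composite objective from the Knowledge Distillation step can be sketched for a single time bin as follows. The sketch assumes teacher and student emit class logits (the output-logit case), uses a temperature-softened KL divergence with the conventional \( \tau^2 \) scaling, and treats \( \mathcal{L}_{\text{task}} \) as an already computed scalar. The temperature default, the scaling, and the function names are assumptions for illustration, not details taken from BLEND.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max logit for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """D_KL(p || q) in nats; terms with p_i = 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def student_loss(task_loss: float, teacher_logits: Sequence[float],
                 student_logits: Sequence[float], lam: float = 1.0,
                 temperature: float = 2.0) -> float:
    """L_student = L_task + lam * L_distill, with L_distill = tau^2 * KL(teacher || student)."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    distill = temperature ** 2 * kl_divergence(p_teacher, p_student)
    return task_loss + lam * distill


# Hypothetical values for one bin: the teacher saw behavior, the student did not.
loss = student_loss(task_loss=0.42, teacher_logits=[2.0, 0.5, -1.0],
                    student_logits=[1.0, 0.8, -0.5], lam=0.5)
```

Setting `lam=0.0` recovers the plain base model, which is the natural ablation when checking how much the distillation term contributes.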

Protocol 2: Assessing Transcriptomic Identity Prediction

Objective: To use BLEND for predicting transcriptomic neuron identity from calcium imaging data, leveraging behavioral data as privileged information during training [12].

Materials:

- Paired neural calcium imaging data, behavioral recordings, and transcriptomic cell-type labels.

- Standardized data processing pipeline for calcium imaging.

Procedure:

- Data Alignment: Align calcium imaging traces, behavioral time series, and post-hoc transcriptomic labels using unique neuronal identifiers.

- Feature Extraction: From the calcium imaging data, extract relevant neural activity features for each neuron (e.g., mean calcium event rate, event kinetics, population coupling).

- Model Training:

- Teacher: Train a classifier (e.g., Multi-Layer Perceptron) on a concatenated feature vector of neural activity features and behavioral data to predict transcriptomic identity.

- Student: Distill the teacher's knowledge into a student classifier that uses only neural activity features. Use the teacher's soft class probabilities as targets for the distillation loss (\( \mathcal{L}_{\text{distill}} \)).

- Evaluation: Compare the student model's classification accuracy against a baseline model trained without distillation and against other methods like CEBRA.
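To illustrate the teacher-to-student step of this protocol, the sketch below trains a binary logistic-regression student on neural features only, with the teacher's soft probabilities as targets (binary cross-entropy with soft labels). The logistic model stands in for the MLP mentioned above, the teacher is represented only by its output probabilities, and all names and toy values are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def soft_bce(w: Sequence[float], b: float, X: Sequence[Sequence[float]],
             targets: Sequence[float]) -> float:
    """Mean binary cross-entropy between student probabilities and soft targets."""
    eps = 1e-12
    total = 0.0
    for x, t in zip(X, targets):
        p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
        total -= t * math.log(p + eps) + (1 - t) * math.log(1 - p + eps)
    return total / len(X)


def distill_student(X: Sequence[Sequence[float]], teacher_probs: Sequence[float],
                    lr: float = 0.5, epochs: int = 200) -> Tuple[List[float], float]:
    """Full-batch gradient descent; d(soft BCE)/d(logit) = p_student - p_teacher."""
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for x, t in zip(X, teacher_probs):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - t
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / len(X) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


# Toy data: two activity features per neuron (e.g. event rate, decay time) and the
# teacher's soft probability that each neuron belongs to transcriptomic class "A".
X_neural = [[0.2, 1.0], [0.4, 0.9], [1.5, 0.2], [1.8, 0.1]]
teacher_probs = [0.1, 0.2, 0.85, 0.9]

w0, b0 = [0.0, 0.0], 0.0
w, b = distill_student(X_neural, teacher_probs)
```

Soft targets carry the teacher's uncertainty (0.85 rather than 1.0), which is the extra information a student trained on hard labels alone would not receive.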

Distillation Strategy Analysis

The effectiveness of BLEND depends on the chosen knowledge distillation strategy. Empirical exploration of different strategies indicates that which one performs best varies with the base model [12].

Table 3: Guidance for distillation strategy selection.

| Base Model Architecture | Recommended Distillation Strategy | Rationale |
|---|---|---|
| Transformer-based (e.g., NDT, STNDT) | Attention-based Activation Distillation | Effectively transfers the teacher's focus on behaviorally relevant neural units and temporal patterns. |
| State-Space Model (e.g., LFADS) | Latent State Distillation | Forces the student's latent dynamics to align with the behaviorally-informed dynamics discovered by the teacher. |
| General / Simple Encoder | Output Logits Distillation | A robust and simple method that works well for less complex models, providing stable training. |
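The three strategies in Table 3 differ mainly in which teacher quantity the student is asked to match. The sketch below gives one simple loss per row, assuming teacher and student tensors of identical shape (nested lists here). KL on softmax outputs for logits, mean squared error for latent states, and row-wise KL for post-softmax attention maps are common generic choices; they are assumptions, not BLEND's exact definitions.

```python
import math
from typing import List, Sequence

Matrix = Sequence[Sequence[float]]


def _softmax(row: Sequence[float]) -> List[float]:
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    s = sum(exps)
    return [e / s for e in exps]


def _kl(p: Sequence[float], q: Sequence[float]) -> float:
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def logit_distill_loss(teacher_logits: Sequence[float],
                       student_logits: Sequence[float]) -> float:
    """Output-logit distillation: KL between teacher and student softmax outputs."""
    return _kl(_softmax(teacher_logits), _softmax(student_logits))


def latent_state_loss(teacher_latents: Matrix, student_latents: Matrix) -> float:
    """Latent-state distillation: MSE between T x D latent trajectories."""
    diffs = [(t - s) ** 2 for tr, sr in zip(teacher_latents, student_latents)
             for t, s in zip(tr, sr)]
    return sum(diffs) / len(diffs)


def attention_distill_loss(teacher_attn: Matrix, student_attn: Matrix) -> float:
    """Attention distillation: mean row-wise KL; each row must already sum to 1."""
    rows = list(zip(teacher_attn, student_attn))
    return sum(_kl(t, s) for t, s in rows) / len(rows)
```

In each case the teacher's values are treated as fixed targets; only the student's parameters receive gradients.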

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential materials and tools for BLEND experiments.

| Reagent / Resource | Function | Example / Specification |
|---|---|---|
| Neural Latents Benchmark '21 | Standardized benchmark suite for evaluating latent variable models of neural population activity. | Provides public datasets with paired neural and behavioral data for fair comparison [12]. |
| CEBRA | Algorithm for creating label-informed embeddings of neural data. | Used as a strong baseline for behaviorally-guided representation learning [12]. |
| LFADS | Deep learning method for inferring single-trial neural population dynamics. | Can be used as a base model within the BLEND framework [12]. |
| Spatiotemporal Neural Data Transformer (STNDT) | Transformer architecture for modeling neural population activity across time and space. | A high-performing base model for BLEND, especially for behavioral decoding tasks [12]. |
| TabPFN | A tabular foundation model for small-to-medium-sized data. | Potentially useful for rapid prototyping or analysis of auxiliary tabular data (e.g., neuron metadata) [13]. |

Modeling the nonlinear dynamics of neuronal populations is a fundamental pursuit in computational neuroscience, crucial for understanding how complex brain functions emerge from collective neural activity [12]. A significant challenge in this field is the frequent absence of perfectly paired neural-behavioral datasets in real-world scenarios; behavioral data is often partial, limited, or entirely unavailable during certain periods of neural recording [12]. This practical constraint creates a critical research question: how can we develop models that perform effectively using only neural activity as input during inference, while still leveraging the rich information provided by behavioral signals during training [1] [12]?

The BLEND (Behavior-guided neuraL population dynamics modElling framework via privileged kNowledge Distillation) framework directly addresses this challenge through an innovative application of privileged knowledge distillation [1] [12]. BLEND conceptualizes behavior as privileged information—data available only during training—and employs a teacher-student architecture to transfer knowledge from behaviorally enriched models to behavior-agnostic models [2]. This approach is model-agnostic, enabling enhancement of existing neural dynamics modeling architectures without requiring specialized model development from scratch [12]. By avoiding strong assumptions about the relationship between behavior and neural activity, BLEND provides a flexible and powerful tool for researchers investigating brain function across various experimental paradigms.

Table: Core Components of the BLEND Framework

| Component | Description | Function in Neuroscience Research |
|---|---|---|
| Teacher Model | Neural network trained on both neural activity and behavioral observations [12] | Learns complex relationships between neural dynamics and behavioral manifestations |
| Student Model | Neural network distilled from teacher using only neural activity [12] | Deployable model for inference when behavioral data is unavailable |
| Privileged Features | Behavioral observations available only during training [12] | Provides supervisory signal for learning behaviorally relevant neural representations |
| Regular Features | Neural activity recordings available during both training and inference [12] | Primary input modality for both training and deployment phases |

Methodological Framework and Experimental Validation

The BLEND framework operates through a structured knowledge distillation process that transfers behavioral understanding from a comprehensively trained teacher model to a deployable student model. The teacher model receives both neural activities (regular features) and behavior observations (privileged features) as inputs, learning to capture the intricate relationships between neural population dynamics and their behavioral manifestations [12]. Through distillation, the student model learns to replicate the teacher's predictive capabilities using only neural activity as input, effectively internalizing the behavioral guidance without requiring explicit behavior signals during deployment [12].

This approach differs significantly from existing methods in several key aspects. Unlike methods that require intricate model designs or make oversimplified assumptions about behavior-neural relationships, BLEND's distillation-based approach is notably model-agnostic [12]. Furthermore, while previous joint modeling approaches often assume a clear distinction between behaviorally relevant and irrelevant neural dynamics, BLEND avoids such strong assumptions, making it more adaptable to diverse experimental conditions and neural systems [12].

Quantitative Performance Validation

BLEND's effectiveness has been validated across multiple benchmarks and experimental paradigms. Experiments on the Neural Latents Benchmark '21 (neural activity prediction, behavior decoding, and matching to peri-stimulus time histograms, or PSTHs) and on a multi-modal calcium imaging dataset (transcriptomic identity prediction) demonstrate the framework's capabilities [12]. The results show that BLEND significantly elevates the performance of baseline methods and substantially outperforms state-of-the-art models across multiple metrics [12].

Table: Performance Metrics of BLEND Across Experimental Paradigms

| Experimental Task | Performance Improvement | Key Metric | Research Application |
|---|---|---|---|
| Behavioral Decoding | >50% improvement [12] | Decoding accuracy from neural activity | Connecting neural dynamics to behavioral outputs |
| Transcriptomic Neuron Identity Prediction | >15% improvement [12] | Prediction accuracy of cell-type identities | Linking functional activity to molecular identity |
| Neural Population Activity Modeling | Significant gains over SOTA [12] | Prediction accuracy of neural dynamics | Understanding how neural populations encode information |

The remarkable improvement in behavioral decoding (exceeding 50%) demonstrates BLEND's capacity to extract behaviorally relevant information from neural signals more effectively than previous approaches [12]. This enhancement is particularly valuable for researchers investigating neural correlates of behavior in contexts where behavioral measurements are intermittent or unavailable during certain experimental phases. Similarly, the substantial gains in transcriptomic neuron identity prediction (over 15%) highlight BLEND's utility in bridging different modalities of neural data—connecting functional activity patterns with molecular identities [12].

Experimental Protocols and Implementation

Protocol 1: Implementing BLEND for Neural-Behavioral Correlation Studies

Purpose: To establish a reproducible protocol for implementing the BLEND framework to investigate relationships between neural population dynamics and behavior.

Materials and Reagents:

- Neural recording system (electrophysiology, calcium imaging, or fMRI)

- Behavioral monitoring apparatus (video tracking, force sensors, etc.)

- Computing hardware with GPU acceleration

- BLEND software framework (https://github.com/dddavid4real/BLEND)

Procedure:

Data Preparation Phase:

- Simultaneously record neural activity and behavioral observations across multiple trials or sessions.

- Preprocess neural data: apply filtering, spike sorting (for electrophysiology), or motion correction (for imaging).

- Preprocess behavioral data: extract relevant features such as movement kinematics, task performance metrics, or stimulus responses.

- Partition data into training, validation, and test sets, ensuring temporal segregation to prevent data leakage.
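The preparation steps above can be sketched in plain Python, combining the 10-50 ms binning, square-root transform, and z-scoring described in the earlier protocol with a temporally segregated split. The 20 ms bin, the 70/15/15 proportions, and all helper names are illustrative assumptions; smoothing is omitted for brevity.

```python
import math
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def bin_spikes(spike_times: Sequence[float], t_start: float, t_stop: float,
               bin_size: float = 0.02) -> List[int]:
    """Count one neuron's spikes in consecutive, non-overlapping bins of bin_size seconds."""
    n_bins = int(round((t_stop - t_start) / bin_size))
    counts = [0] * n_bins
    for t in spike_times:
        idx = int((t - t_start) // bin_size)
        if 0 <= idx < n_bins:  # drop spikes outside [t_start, t_stop)
            counts[idx] += 1
    return counts


def sqrt_transform(counts: Sequence[int]) -> List[float]:
    """Square-root transform, which roughly stabilizes the variance of Poisson-like counts."""
    return [math.sqrt(c) for c in counts]


def zscore(values: Sequence[float]) -> List[float]:
    """Z-score a continuous behavioral variable (population std; constant input maps to 0)."""
    mean = sum(values) / len(values)
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values)) or 1.0
    return [(v - mean) / std for v in values]


def temporal_split(trials: Sequence[T], train_frac: float = 0.7,
                   val_frac: float = 0.15) -> Tuple[List[T], List[T], List[T]]:
    """Split chronologically ordered trials into contiguous train/val/test blocks (no shuffling)."""
    n_train = round(len(trials) * train_frac)
    n_val = round(len(trials) * val_frac)
    return (list(trials[:n_train]),
            list(trials[n_train:n_train + n_val]),
            list(trials[n_train + n_val:]))


counts = bin_spikes([0.001, 0.005, 0.025, 0.079], t_start=0.0, t_stop=0.08)
rates = sqrt_transform(counts)
velocity_z = zscore([1.0, 2.0, 3.0])
train, val, test = temporal_split(list(range(20)))  # trial indices in recording order
```

Splitting by contiguous blocks rather than shuffled bins keeps autocorrelated neighboring samples from straddling the train/test boundary, which is the leakage the protocol warns about. In practice, normalization statistics should also be computed on the training block only.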

Teacher Model Training:

- Select an appropriate base architecture (LFADS, Neural Data Transformer, or other neural dynamics models).

- Configure the teacher model to accept both neural activity (regular features) and behavioral observations (privileged features) as inputs.

- Train the teacher model to jointly predict future neural states and behavioral outputs using the paired dataset.

- Validate performance on held-out data to ensure the teacher has learned meaningful neural-behavioral relationships.

Knowledge Distillation:

- Initialize the student model with the same architecture as the teacher but excluding behavioral input pathways.

- Implement distillation loss that minimizes the discrepancy between student and teacher outputs.

- Train the student model using only neural activity while leveraging the teacher's outputs as training targets.

- Employ appropriate distillation strategies (response-based, feature-based, or relation-based) depending on the base model.
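Of the three families named above, relation-based distillation is the least self-explanatory: instead of matching outputs or features directly, the student matches the pairwise structure among samples in the teacher's representation space. A minimal sketch, assuming Euclidean distances normalized by their mean and a squared-error penalty (a generic relational-distillation choice, not one confirmed as BLEND's); the function names are hypothetical.

```python
import math
from itertools import combinations
from typing import List, Sequence

Vector = Sequence[float]


def normalized_pairwise_distances(embeddings: Sequence[Vector]) -> List[float]:
    """Euclidean distances for all sample pairs, divided by their mean distance."""
    dists = [math.dist(a, b) for a, b in combinations(embeddings, 2)]
    mean = sum(dists) / len(dists)
    return [d / mean for d in dists] if mean > 0 else dists


def relation_distill_loss(teacher_emb: Sequence[Vector],
                          student_emb: Sequence[Vector]) -> float:
    """Mean squared difference between the two normalized distance structures."""
    t = normalized_pairwise_distances(teacher_emb)
    s = normalized_pairwise_distances(student_emb)
    return sum((ti - si) ** 2 for ti, si in zip(t, s)) / len(t)
```

Because the distances are normalized, a student whose embedding is a uniformly scaled copy of the teacher's incurs no loss; only the relative geometry is transferred.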

Model Validation:

- Evaluate the student model on test data containing only neural activity (no behavioral signals).

- Compare performance against baseline models trained without privileged knowledge distillation.

- Assess both neural dynamics prediction accuracy and behavioral decoding capability.
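Behavioral decoding is commonly scored by fitting a linear (ridge) readout from the model's inferred rates or latents to held-out behavior and reporting the coefficient of determination; the Neural Latents Benchmark, for example, does this for hand velocity. The sketch below covers the simplest case of one latent and one behavioral variable, where ridge regression has a closed form. Real pipelines use multivariate decoders, and the regularization value and toy data here are arbitrary.

```python
from typing import Sequence, Tuple


def fit_ridge_1d(z: Sequence[float], y: Sequence[float],
                 alpha: float = 1e-3) -> Tuple[float, float]:
    """Closed-form ridge fit of y ~ w*z + b for one latent; the intercept is not penalized."""
    n = len(z)
    z_mean = sum(z) / n
    y_mean = sum(y) / n
    szz = sum((zi - z_mean) ** 2 for zi in z)
    szy = sum((zi - z_mean) * (yi - y_mean) for zi, yi in zip(z, y))
    w = szy / (szz + alpha)
    b = y_mean - w * z_mean
    return w, b


def r_squared(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Toy split: the student's latent z (computed from neural activity only) and behavior y.
z_train, y_train = [0.0, 1.0, 2.0, 3.0], [0.1, 2.1, 3.9, 6.1]
z_test, y_test = [4.0, 5.0], [8.0, 10.1]

w, b = fit_ridge_1d(z_train, y_train)
score = r_squared(y_test, [w * zi + b for zi in z_test])
```

Behavior is used here only to fit and score the readout; the student itself never sees it, which matches the deployment condition being evaluated.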

Troubleshooting Tips:

- If distillation fails to converge, adjust the temperature parameter in the distillation loss function.

- If student performance lags significantly behind teacher, increase the weight of distillation loss relative to task-specific loss.

- For imbalanced behavioral data, apply appropriate sampling strategies or loss weighting during teacher training.
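The first and third tips can be made concrete. Raising the distillation temperature flattens the teacher's softmax, so the student also receives signal from non-maximal classes; inverse-frequency weights upweight rare behavioral classes during teacher training. A sketch of both with illustrative values; the weighting rule shown is one common choice, not one prescribed by BLEND.

```python
import math
from collections import Counter
from typing import Dict, List, Sequence


def softmax_t(logits: Sequence[float], temperature: float) -> List[float]:
    """Softmax of logits / temperature (higher temperature -> flatter distribution)."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def inverse_frequency_weights(labels: Sequence[int]) -> Dict[int, float]:
    """Weight each class by n_samples / (n_classes * class_count); rare classes get > 1."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * cnt) for c, cnt in counts.items()}


teacher_logits = [4.0, 1.0, 0.0]
sharp = softmax_t(teacher_logits, temperature=1.0)  # nearly one-hot
soft = softmax_t(teacher_logits, temperature=4.0)   # more mass on minority classes

weights = inverse_frequency_weights([0, 0, 0, 0, 0, 0, 1, 1])  # class 1 is rare
```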

Protocol 2: Transcriptomic Neuron Identity Prediction

Purpose: To apply BLEND for predicting transcriptomic identities of neurons from their functional activity patterns.

Materials and Reagents:

- Patch-seq apparatus combining electrophysiology and single-cell RNA sequencing

- Cell culture materials or acute brain slice preparation equipment

- BLEND computational framework

- Transcriptomic analysis software (Seurat, Scanpy, etc.)

Procedure:

Multi-Modal Data Collection:

- Record electrophysiological activity from individual neurons using patch-clamp techniques.

- Harvest cellular contents for single-cell RNA sequencing immediately following functional characterization.

- Sequence and process transcriptomic data to identify cell-type specific markers.

Feature Engineering:

- Extract functional features from electrophysiological recordings: firing patterns, adaptation properties, response dynamics.

- Reduce dimensionality of transcriptomic data using principal component analysis or variational autoencoders.

- Create paired dataset linking functional features (regular) with transcriptomic profiles (privileged).

BLEND Implementation:

- Train teacher model on both functional features and transcriptomic principal components.

- Distill knowledge to student model using only functional features as input.

- Validate model's ability to predict transcriptomic identity from electrophysiological properties alone.

Validation and Interpretation:

- Assess prediction accuracy against ground truth transcriptomic classifications.

- Identify which functional features most strongly predict specific molecular markers.

- Compare performance against direct supervised learning approaches.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for BLEND Implementation in Neuroscience Research

| Resource Category | Specific Tools/Solutions | Function in BLEND Workflow |
|---|---|---|
| Computational Frameworks | BLEND GitHub Repository [12] | Core implementation of knowledge distillation framework |
| Neural Dynamics Models | LFADS [12], Neural Data Transformer [12], STNDT [12] | Base architectures for teacher and student models |
| Neural Recording Platforms | Electrophysiology systems, Calcium imaging, fMRI | Generation of neural activity data (regular features) |
| Behavior Monitoring Systems | Video tracking, Force sensors, Eye tracking | Generation of behavioral observations (privileged features) |
| Multi-Modal Integration Tools | Patch-seq methodologies | Paired neural activity and transcriptomic profiling |

Visualization of Experimental Workflows

The BLEND framework represents a significant methodological advancement in computational neuroscience by effectively addressing the challenge of leveraging behavioral data during training when it is unavailable during deployment. Through its innovative application of privileged knowledge distillation, BLEND enables researchers to develop more accurate and robust models of neural population dynamics that maintain strong behavioral decoding capabilities even without direct behavior inputs [1] [12].

The framework's model-agnostic nature makes it particularly valuable for the neuroscience research community, as it can enhance existing neural dynamics modeling architectures without requiring specialized model development [12]. The substantial performance improvements demonstrated across multiple experimental paradigms—including over 50% improvement in behavioral decoding and over 15% improvement in transcriptomic neuron identity prediction—highlight BLEND's potential to accelerate research bridging neural activity, behavior, and molecular mechanisms [12].

For researchers and drug development professionals, BLEND offers a powerful tool for investigating neural circuit dysfunction in disease models and potentially identifying novel biomarkers for neurological and psychiatric disorders. The framework's ability to extract behaviorally relevant information from neural signals even when behavioral measurements are incomplete makes it particularly valuable for preclinical research where comprehensive behavioral assessment is often challenging. As the field moves toward more integrative approaches to understanding brain function, methodologies like BLEND will play an increasingly important role in deciphering the complex relationships between neural dynamics, behavior, and molecular mechanisms.

This application note details a novel framework for integrating advanced neural dynamics modeling, specifically the BLEND (Behavior-guided neuraL population dynamics modElling framework via privileged kNowledge Distillation) platform, into Model-Informed Drug Development (MIDD) paradigms. By treating behavioral data as privileged information during training, BLEND enables the creation of more robust neural population models that function effectively using only neural activity data during inference. This approach addresses a critical challenge in neuroscience-driven drug discovery: the frequent absence of perfectly paired neural-behavioral datasets in real-world scenarios. The quantitative results demonstrate the framework's significant potential, with performance improvements exceeding 50% in behavioral decoding and over 15% in transcriptomic neuron identity prediction after behavior-guided distillation [1] [14] [7]. These advancements promise to enhance target identification, improve preclinical prediction accuracy, and optimize clinical trial designs through more precise characterization of neural system responses to therapeutic interventions.

Quantitative Performance Metrics of Neural Modeling Approaches

Table 1: Comparative performance of neural dynamics modeling approaches in predictive tasks

| Model Type | Behavioral Decoding Improvement | Neuronal Identity Prediction | Key Features |
|---|---|---|---|
| BLEND Framework | >50% improvement [1] [7] | >15% improvement [1] [7] | Model-agnostic; avoids strong assumptions about behavior-neural activity relationships |
| Traditional neural dynamics models (NDM) | Baseline | Baseline | Purely neural activity-based; ignores behavioral information |
| Joint Neural-Behavior Models | Moderate improvements | Moderate improvements | Require intricate designs or simplified assumptions |

BLEND Experimental Protocol: Privileged Knowledge Distillation for Neural Dynamics

Background and Principles

The BLEND framework addresses a fundamental challenge in computational neuroscience: developing models that perform well using only neural activity as input during actual deployment (inference), while simultaneously benefiting from the insights provided by behavioral signals during training [14]. This is achieved through privileged knowledge distillation, where behavior is treated as "privileged information" – data available only during the training phase, not during real-world application [1] [14].

Materials and Equipment

Table 2: Essential research reagents and computational tools for BLEND implementation

| Category | Specific Tools/Components | Function/Purpose |
|---|---|---|
| Data Requirements | Neural spiking data, \( \mathbf{x} \in \mathbb{N}^{N \times T} \) [14] | Input spike counts for \( N \) neurons over \( T \) time points |
| | Behavior observations | Privileged features for teacher model training |
| Computational Framework | Teacher model (neural activity + behavior inputs) [14] | Learns from both privileged and regular features |
| | Student model (neural activity only) [14] | Distilled model for deployment |
| Validation Benchmarks | Neural Latents Benchmark '21 [14] | Neural activity prediction, behavior decoding, PSTH matching |
| | Multi-modal calcium imaging data [14] | Transcriptomic identity prediction |

Step-by-Step Methodology

Phase 1: Teacher Model Training

- Input Processing: Supply both behavior observations (privileged features) and neural activities (regular features) as inputs to the teacher model [14].

- Architecture Selection: Implement appropriate neural dynamics modeling architectures (e.g., LFADS, Neural Data Transformers, or other base models) [14].

- Optimization: Train the teacher model to establish relationships between neural activity patterns, behavioral manifestations, and underlying neural dynamics.

Phase 2: Knowledge Distillation

- Student Model Initialization: Prepare a student model with architecture similar to the teacher but accepting only neural activity as input [14].

- Distillation Process: Transfer knowledge from the behavior-informed teacher model to the behavior-agnostic student model using privileged knowledge distillation techniques [14].

- Validation: Verify that the student model achieves comparable performance to the teacher model despite having access only to neural activity data during inference.

Phase 3: Experimental Application

- Neural Activity-Only Inference: Deploy the distilled student model using neural activity recordings alone [14].

- Behavioral Decoding: Utilize the model to decode behavioral correlates from neural population activity.

- Therapeutic Assessment: Apply the framework to evaluate how pharmacological interventions alter neural dynamics and their relationship to behavioral outcomes.

Integration with MIDD Workflow

Diagram 1: BLEND-MIDD integration workflow for enhanced drug development.

BLEND Architecture and Knowledge Distillation Process

Diagram 2: BLEND privileged knowledge distillation methodology.

MIDD Integration Protocol: From Neural Insights to Clinical Applications

MIDD Fundamentals and Regulatory Context

Model-Informed Drug Development (MIDD) is "an essential framework for advancing drug development and supporting regulatory decision-making" [15]. The U.S. Food and Drug Administration (FDA) has established formal MIDD programs, including the MIDD Paired Meeting Program, which provides a pathway for drug developers to discuss MIDD approaches with Agency staff [16]. These approaches use "a variety of quantitative methods to help balance the risks and benefits of drug products in development" [16], and when successfully applied, can "improve clinical trial efficiency, increase the probability of regulatory success, and optimize drug dosing" [16].

Strategic Implementation Protocol

Target Identification and Validation

- Neural Circuit Profiling: Apply BLEND to characterize disease-relevant neural circuits and their behavioral correlates.

- Therapeutic Mechanism Mapping: Identify how candidate compounds modulate specific neural dynamics associated with pathological states.

- Biomarker Development: Establish neural activity signatures as predictive biomarkers for target engagement.

Preclinical to Clinical Translation

- First-in-Human Dose Prediction: Integrate BLEND-derived neural dynamics data with PBPK models and first-in-human dose algorithms [15].

- Disease Progression Modeling: Incorporate neural dynamic trajectories into quantitative systems pharmacology (QSP) models to predict long-term treatment effects [15].

- Clinical Trial Simulation: Utilize neural response profiles to optimize trial duration, endpoint selection, and patient stratification strategies [16].

Clinical Development Optimization

- Exposure-Response Characterization: Employ population pharmacokinetic and exposure-response (PPK/ER) modeling informed by neural dynamic biomarkers [15].

- Special Population Dosing: Develop tailored dosing regimens for populations with altered neural dynamics (e.g., neurological disorders, geriatric patients) [17].

- Combination Therapy Guidance: Use neural circuit engagement profiles to identify optimal drug combinations and sequencing strategies.

Regulatory Considerations

The FDA's MIDD Paired Meeting Program specifically prioritizes discussions on "dose selection or estimation," "clinical trial simulation," and "predictive or mechanistic safety evaluation" [16]. BLEND-informed approaches align directly with these priorities by providing quantitative, mechanism-based insights into neural circuit engagement and its relationship to both efficacy and safety endpoints.

The integration of behavior-guided neural population dynamics modeling through the BLEND framework with established MIDD approaches represents a significant advancement in neuroscience-driven drug development. By leveraging privileged knowledge distillation, researchers can create more robust and predictive models of neural function that maintain high performance even when behavioral data is unavailable during clinical application. This synergistic approach enhances target validation, improves preclinical to clinical translation, and ultimately supports the development of more effective and precisely targeted neurotherapeutics. As MIDD continues to evolve with emerging technologies, including artificial intelligence and machine learning [15] [17], the incorporation of sophisticated neural dynamics modeling will play an increasingly critical role in reducing development timelines, decreasing costs, and delivering innovative therapies to patients with neurological and psychiatric disorders.

Architecture in Action: Implementing BLEND's Knowledge Distillation Framework

BLEND (Behavior-guided neuraL population dynamics modElling framework via privileged kNowledge Distillation) represents a paradigm shift in computational neuroscience for modeling neural population dynamics. This innovative framework addresses a critical challenge in real-world neuroscience: the frequent absence of perfectly paired neural-behavioral datasets during model deployment. BLEND enables researchers to develop models that perform inference using only neural activity as input while benefiting from the rich contextual guidance of behavioral signals during the training phase [12].

The core innovation of BLEND lies in its treatment of behavior as privileged information—data available only during training but not during inference. This approach is particularly valuable for drug development professionals and neuroscientists studying conditions where behavioral data collection is intermittent, such as in resting-state studies, certain neurological disorders, or chronic recording experiments where behavioral monitoring cannot be maintained continuously. By leveraging a teacher-student architecture, BLEND provides a model-agnostic solution that can enhance existing neural dynamics modeling architectures without requiring specialized models to be developed from scratch [12].

Theoretical Foundations and Architecture

Core Mathematical Principles

BLEND operates on the principle of privileged knowledge distillation, formalized through a teacher-student framework. The teacher model (\( \theta_T \)) receives both regular features (neural activity, \( \mathbf{x}_{\text{neural}} \)) and privileged features (behavior observations, \( \mathbf{x}_{\text{behavior}} \)), while the student model (\( \theta_S \)) processes only neural activity. The knowledge transfer is achieved by minimizing the distillation loss (\( \mathcal{L}_{\text{KD}} \)) between their outputs [12]:

\[ \mathcal{L}_{\text{KD}} = D_{\text{KL}}\left( P_T(y \mid \mathbf{x}_{\text{neural}}, \mathbf{x}_{\text{behavior}}) \,\|\, P_S(y \mid \mathbf{x}_{\text{neural}}) \right) \]

where \( D_{\text{KL}} \) represents the Kullback-Leibler divergence, \( P_T \) and \( P_S \) denote the output distributions of the teacher and student models respectively, and \( y \) represents the target variables.
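As a worked example with hypothetical numbers, suppose that for one sample the teacher assigns probabilities \( P_T = (0.8, 0.2) \) over two target classes and the student assigns \( P_S = (0.6, 0.4) \). Using natural logarithms:

```latex
\mathcal{L}_{\text{KD}}
  = 0.8 \ln\frac{0.8}{0.6} + 0.2 \ln\frac{0.2}{0.4}
  \approx 0.8 \times 0.2877 + 0.2 \times (-0.6931)
  \approx 0.0915 \ \text{nats}
```

The loss is zero only when the two distributions coincide, so minimizing it pulls the student's neural-only predictions toward the teacher's behavior-informed ones.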

The framework incorporates a novel Knowledge Incremental Assimilation Mechanism (KIAM) that quantifies the probabilistic distance between accumulated information in the teacher model and new information from the Short-Term Memory (STM) buffer. This mechanism triggers adaptive expansion of the teacher's capacity when significant distribution shifts are detected, allowing the framework to continuously assimilate new knowledge without catastrophic forgetting [12] [18].

Architectural Components

Table 1: Core Components of the BLEND Framework

| Component | Function | Implementation Details |
|---|---|---|
| Teacher Model | Processes both neural activity and behavior signals | Dynamic expansion mixture of experts; architecture can incorporate VAEs, GANs, or DDPMs |
| Student Model | Performs inference using only neural activity | Compact network trained via knowledge distillation from the teacher |
| Short-Term Memory (STM) | Stores recent data-stream samples | Fixed-capacity buffer retaining up-to-date information |
| Knowledge Incremental Assimilation Mechanism (KIAM) | Evaluates the need for teacher expansion | Measures divergence between the STM and the teacher's accumulated knowledge |
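The STM row above describes a fixed-capacity buffer, and Protocol 1 specifies first-in-first-out replacement. Below is a minimal Python sketch of such a buffer. It assumes samples are arbitrary objects, for example (neural, behavior) pairs. The class name `ShortTermMemory` is hypothetical.

```python
from collections import deque
from typing import Any, Deque, List


class ShortTermMemory:
    """Fixed-capacity FIFO buffer holding the most recent data-stream samples."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        # deque(maxlen=...) silently drops the oldest item when a new one
        # is appended to a full buffer, i.e. first-in-first-out replacement.
        self._buffer: Deque[Any] = deque(maxlen=capacity)

    def add(self, sample: Any) -> None:
        self._buffer.append(sample)

    def is_full(self) -> bool:
        return len(self._buffer) == self._buffer.maxlen

    def samples(self) -> List[Any]:
        """Snapshot of the buffer, oldest sample first."""
        return list(self._buffer)


stm = ShortTermMemory(capacity=3)
for step in range(5):
    stm.add(step)  # stand-in for a (neural, behavior) pair
```

With `capacity=3`, adding samples 0 through 4 leaves `[2, 3, 4]` in the buffer. Once the deque is full, each append discards the oldest entry.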

Quantitative Performance Analysis

BLEND demonstrates substantial performance improvements across multiple benchmarks in neural population activity modeling. Experimental results show that the framework improves baseline methods by considerable margins. After behavior-guided distillation, it achieves over 50% improvement in behavioral decoding accuracy and over 15% improvement in transcriptomic neuron identity prediction. These results indicate that behavior-guided distillation can substantially improve the quality of learned neural representations [12].

Table 2: Performance Metrics of BLEND Framework

| Evaluation Benchmark | Baseline Performance | BLEND-Enhanced Performance | Improvement | Key Metric |
|---|---|---|---|---|
| Neural Latents Benchmark'21 | Varies by base model | Significant gains across models | >50% | Behavioral decoding accuracy |
| Transcriptomic Identity Prediction | Varies by base model | Enhanced prediction accuracy | >15% | Neuron type classification |
| PSTH Matching | Model-dependent | Improved neural dynamics capture | Substantial | Peri-stimulus time histogram fidelity |

The framework's effectiveness stems from its ability to learn more accurate representations of neural dynamics. Unlike approaches that make strong assumptions about the relationship between behavior and neural activity, BLEND's model-agnostic design allows it to enhance various existing architectures. These include LFADS, Neural Data Transformer (NDT), STNDT, and other latent variable models commonly used in neural data analysis [12].
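This sketch illustrates what "model-agnostic" means in practice. The teacher and the student are built from the same base-model factory, and only the input dimensionality changes. `LinearReadout` is a hypothetical toy stand-in for a real base model such as LFADS or NDT. Feeding behavior to the teacher by concatenating it to the neural features is a simplifying assumption, not a detail taken from the BLEND paper.

```python
import random
from typing import Callable, List, Sequence, Tuple

Model = Callable[[Sequence[float]], List[float]]


class LinearReadout:
    """Toy stand-in for a base model (LFADS, NDT, STNDT, ...): one linear map."""

    def __init__(self, input_dim: int, output_dim: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.input_dim = input_dim
        self.weights = [[rng.gauss(0.0, 0.1) for _ in range(input_dim)]
                        for _ in range(output_dim)]

    def __call__(self, x: Sequence[float]) -> List[float]:
        if len(x) != self.input_dim:
            raise ValueError(f"expected {self.input_dim} inputs, got {len(x)}")
        return [sum(w * xi for w, xi in zip(row, x)) for row in self.weights]


def build_teacher_student(make_base: Callable[[int], Model],
                          n_neurons: int, n_behavior: int) -> Tuple[Model, Model]:
    """Same architecture twice: the teacher gets N + D inputs, the student only N."""
    teacher = make_base(n_neurons + n_behavior)
    student = make_base(n_neurons)
    return teacher, student


def teacher_forward(teacher: Model, x_neural: Sequence[float],
                    x_behavior: Sequence[float]) -> List[float]:
    # Assumption: privileged behavior features are appended to the neural features.
    return teacher(list(x_neural) + list(x_behavior))


teacher, student = build_teacher_student(lambda d: LinearReadout(d, output_dim=4),
                                         n_neurons=10, n_behavior=2)
y_teacher = teacher_forward(teacher, [0.1] * 10, [0.5, -0.5])  # training time
y_student = student([0.1] * 10)                                 # deployment: neural only
```

Replacing `LinearReadout` with any other constructor that accepts an input dimension leaves `build_teacher_student` unchanged. That is the property the paragraph above relies on.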

Experimental Protocols

Protocol 1: Implementation of BLEND Framework

Purpose: To implement the complete BLEND framework for behavior-guided neural population dynamics modeling.

Materials:

- Neural spike train data (multiple sessions/trials)

- Simultaneously recorded behavioral variables (e.g., movement kinematics, task parameters)

- Computing infrastructure with GPU acceleration

- Python environment with PyTorch/TensorFlow

Procedure:

Data Preprocessing:

- Bin neural spike data into 10-50 ms time windows

- Z-score normalize behavioral variables

- Temporally align neural and behavioral data streams

Teacher Model Initialization:

- Configure base neural dynamics model (e.g., VAE, RNN, Transformer)

- Initialize with default architectural parameters for the chosen base model

- Set input dimensions for both neural (N neurons) and behavioral (D dimensions) data

Short-Term Memory Buffer Setup:

- Allocate fixed-capacity buffer (typically 100-1000 samples)

- Implement FIFO (first-in-first-out) replacement policy

- Pre-populate with initial data samples

Knowledge Incremental Assimilation Mechanism:

- Implement probabilistic distance metric (e.g., Wasserstein distance)

- Set expansion threshold parameter (τ = 0.15 recommended)

- Configure dynamic expansion trigger based on KIAM output

Distillation Training:

- Train teacher model on combined neural and behavioral data

- Extract softened probability distributions from teacher

- Train student model to match teacher distributions using only neural data

- Employ temperature scaling (T=2-5) in distillation loss

Validation:

- Evaluate student model on test data with no behavioral signals

- Compare to baseline models trained without distillation

- Assess behavioral decoding accuracy and neural dynamics reconstruction
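The Data Preprocessing step above can be sketched in plain Python. This illustration rests on several assumptions:

- Spike times and behavior timestamps are in seconds.
- Behavior is a single variable.
- Bins are 20 ms wide, within the 10-50 ms range given above.
- Z-scoring uses the population standard deviation.
- Alignment averages the behavior samples that fall in each neural bin.

All function names are hypothetical.

```python
import math
from typing import List, Sequence


def bin_spikes(spike_times_s: Sequence[float], t_start: float, t_stop: float,
               bin_ms: float = 20.0) -> List[int]:
    """Count one neuron's spikes in consecutive bin_ms-wide bins over [t_start, t_stop)."""
    width = bin_ms / 1000.0
    n_bins = int(math.floor((t_stop - t_start) / width + 1e-9))  # tolerate float error
    counts = [0] * n_bins
    for t in spike_times_s:
        idx = int(math.floor((t - t_start) / width))
        if 0 <= idx < n_bins:
            counts[idx] += 1
    return counts


def zscore(values: Sequence[float]) -> List[float]:
    """Standardize one behavioral variable to zero mean and unit (population) std."""
    mean = sum(values) / len(values)
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    if std == 0.0:
        return [0.0 for _ in values]  # constant signal: nothing to scale
    return [(v - mean) / std for v in values]


def align_behavior(times_s: Sequence[float], values: Sequence[float],
                   t_start: float, n_bins: int, bin_ms: float = 20.0) -> List[float]:
    """Average the behavior samples falling in each neural bin; NaN marks empty bins."""
    width = bin_ms / 1000.0
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for t, v in zip(times_s, values):
        idx = int(math.floor((t - t_start) / width))
        if 0 <= idx < n_bins:
            sums[idx] += v
            counts[idx] += 1
    return [s / c if c else float("nan") for s, c in zip(sums, counts)]
```

Averaging within bins assumes the behavior is sampled at least as fast as the neural bin width. For slower behavioral signals, interpolation would be the more natural choice.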

Troubleshooting:

- If knowledge transfer is ineffective, adjust distillation temperature

- For unstable training, reduce learning rate or increase batch size

- If overfitting occurs, implement early stopping or increase regularization

Protocol 2: KIAM-Controlled Dynamic Expansion

Purpose: To implement and validate the Knowledge Incremental Assimilation Mechanism for dynamic teacher expansion.

Materials:

- Preprocessed neural and behavioral datasets

- Initialized teacher model with base architecture

- Short-term memory buffer with recent samples

Procedure:

Knowledge Discrepancy Calculation:

- Compute latent representations for STM samples using current teacher

- Calculate probabilistic distance between teacher knowledge and STM distribution

- Use Wasserstein distance or KL divergence as metric

Expansion Decision:

- Compare knowledge discrepancy to threshold (τ)

- If discrepancy > τ, trigger expansion of teacher model

- Add new expert module to teacher mixture model

Expert Pruning (Optional):

- Monitor contribution of each expert in teacher model

- Remove experts with minimal contribution to overall performance

- Maintain model compactness and computational efficiency

Validation:

- Track model performance before and after expansion

- Monitor catastrophic forgetting metrics

- Assess knowledge diversity across experts
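The expansion and pruning steps of Protocol 2 can be illustrated with a hypothetical sketch. It makes several assumptions:

- The teacher's accumulated knowledge is summarized by a stored sample of latent vectors, the same size as the STM sample.
- The Wasserstein distance is approximated by averaging exact one-dimensional Wasserstein-1 distances across latent dimensions. This is a simplification, not the multivariate distance.
- Experts are placeholder names rather than sub-networks.

The threshold τ = 0.15 follows the recommendation in Protocol 1.

```python
from typing import List, Sequence

Latents = Sequence[Sequence[float]]  # one latent vector per sample


def wasserstein_1d(a: Sequence[float], b: Sequence[float]) -> float:
    """Exact W1 between two equal-size 1-D empirical samples: mean |sorted(a) - sorted(b)|."""
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("this sketch needs two non-empty samples of equal size")
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)


def knowledge_discrepancy(teacher_latents: Latents, stm_latents: Latents) -> float:
    """Mean per-dimension W1 between the teacher's reference latents and the STM latents."""
    dims = len(teacher_latents[0])
    per_dim = [wasserstein_1d([z[d] for z in teacher_latents],
                              [z[d] for z in stm_latents]) for d in range(dims)]
    return sum(per_dim) / dims


class ExpertMixture:
    """Hypothetical teacher container; experts are placeholder names, not networks."""

    def __init__(self, tau: float = 0.15) -> None:
        self.tau = tau
        self.experts: List[str] = ["expert_0"]

    def maybe_expand(self, teacher_latents: Latents, stm_latents: Latents) -> bool:
        """Add one expert if the knowledge discrepancy exceeds tau; report whether it did."""
        if knowledge_discrepancy(teacher_latents, stm_latents) > self.tau:
            self.experts.append(f"expert_{len(self.experts)}")
            return True
        return False

    def prune(self, contributions: Sequence[float], min_contribution: float) -> None:
        """Optional step: drop low-contribution experts, always keeping the strongest one."""
        kept = [e for e, c in zip(self.experts, contributions) if c >= min_contribution]
        if not kept:
            best = max(range(len(self.experts)), key=lambda i: contributions[i])
            kept = [self.experts[best]]
        self.experts = kept


teacher = ExpertMixture(tau=0.15)
reference = [[0.0], [0.1], [0.2], [0.3]]
stable = teacher.maybe_expand(reference, [[0.3], [0.2], [0.1], [0.0]])   # False: same distribution
shifted = teacher.maybe_expand(reference, [[1.0], [1.1], [1.2], [1.3]])  # True: shifted by about 1.0
```

In a real teacher, `maybe_expand` would instantiate and register a new expert sub-network. `contributions` could be each expert's average gating weight. Both are assumptions here.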

Protocol 3: Cross-Modal Knowledge Distillation

Purpose: To implement behavior-guided knowledge distillation from teacher to student model.

Materials:

- Trained teacher model with behavioral integration

- Neural activity data without paired behavior

- Distillation loss function implementation

Procedure:

Teacher Inference:

- Process neural-behavioral pairs through trained teacher

- Extract the output logits (pre-activation values) from the teacher

- Apply temperature scaling to soften probability distributions

Student Training:

- Initialize student with architecture similar to teacher (behavior inputs removed)

- Process neural data only through student model

- Compute distillation loss between student and teacher outputs

- Combine with standard task loss (e.g., neural prediction error)

Knowledge Transfer Optimization:

- Balance distillation loss and task loss with weighting parameter (α=0.7)

- Employ gradient clipping to stabilize training

- Use progressive distillation for complex tasks

Validation:

- Evaluate student on behavioral decoding without behavior input

- Compare neural dynamics modeling performance to ablated models

- Assess generalization to novel behavioral conditions
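The student objective in Protocol 3 can be written as a single formula under a few assumptions. The teacher and student produce logits $z_T$ and $z_S$ over a discrete target. The softened KL term is multiplied by $T^2$, a common convention in temperature-scaled distillation that keeps its gradient scale comparable across temperatures. This factor is an assumption and is not stated in the protocol.

$$\tilde P_T = \operatorname{softmax}(z_T / T), \qquad \tilde P_S = \operatorname{softmax}(z_S / T)$$

$$\mathcal{L}_S = \alpha \, T^2 \, D_{KL}\big(\tilde P_T \,\|\, \tilde P_S\big) + (1 - \alpha)\, \mathcal{L}_{task}$$

With α = 0.7, the distillation term receives weight 0.7 and the task loss (e.g., neural prediction error) receives weight 0.3. The temperature $T$ falls in the 2-5 range given in Protocol 1.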

Research Reagent Solutions

Table 3: Essential Research Tools for BLEND Framework Implementation

| Resource | Type | Function in BLEND Research | Implementation Example |
|---|---|---|---|
| Neural Latents Benchmark'21 | Dataset & Evaluation Suite | Standardized evaluation of neural dynamics models | Provides benchmark tasks for behavior decoding and PSTH matching |
| Variational Autoencoder (VAE) | Base Model Architecture | Captures probabilistic structure of neural population dynamics | Serves as teacher/student model for latent dynamics modeling |
| Generative Adversarial Network (GAN) | Base Model Architecture | Alternative generative model for neural activity modeling | Used in teacher model for high-fidelity sample generation |
| Transformer Networks | Base Model Architecture | Captures long-range dependencies in neural time series | Base architecture for NDT and STNDT models enhanced by BLEND |
| Wasserstein Distance Metric | Probabilistic Measure | Quantifies distribution shift for KIAM expansion triggering | Measures divergence between teacher knowledge and new data |
| Short-Term Memory Buffer | Data Storage | Maintains recent data samples for distribution shift detection | FIFO buffer storing recent neural-behavioral pairs |
| Knowledge Distillation Loss | Optimization Objective | Facilitates transfer of behavior-guided knowledge to student | KL divergence between teacher and student output distributions |

Signaling Pathways and Experimental Workflows

Integration with Drug Development Applications

The BLEND framework offers significant potential for enhancing neural data analysis in pharmaceutical research and development. For drug development professionals, the framework's ability to maintain performance without continuous behavioral monitoring aligns with practical constraints in clinical trials and preclinical studies. BLEND can be integrated into several key application areas:

Preclinical Neurological Drug Screening: BLEND enables more efficient analysis of neural recording data from animal models, where continuous behavioral monitoring may not be feasible. The student model can infer behavioral relevance from neural activity alone, facilitating high-throughput screening of candidate compounds.

Clinical Trial Optimization: In human trials, BLEND's approach mirrors the evidence engineering framework used in AI-enabled clinical trials, where continuous evidence generation combines different data sources under unified governance. The teacher-student dynamic parallels the integration of synthetic controls with traditional RCTs [19].

Biomarker Development: The distilled student models can serve as compact, efficient biomarkers for neurological target engagement, using only neural data without the burden of continuous behavioral assessment.

Translational Neuroscience: BLEND bridges controlled experimental settings and real-world applications by allowing models trained in laboratory conditions with full behavioral data to be deployed in clinical settings where behavioral monitoring is limited.

The framework's model-agnostic nature allows pharmaceutical researchers to integrate it with existing neural data analysis pipelines without requiring complete methodological overhaul, making it particularly valuable for drug development applications where regulatory compliance and methodological consistency are critical considerations [12] [19].

Model-agnostic methods are designed to enhance existing neural architectures without modifying their core structure. These techniques act as flexible wrappers or complementary frameworks that can be applied to a wide range of pre-existing models, from traditional neural networks to graph neural networks. In BLEND (Behavior-guided neuraL population dynamics modElling framework via privileged kNowledge Distillation), this approach lets neuroscientists and drug development professionals use behavioral data as privileged information during training while keeping standard neural activity inputs during deployment [1]. The main advantage is that models gain new capabilities, such as improved interpretability, handling of data imbalance, or rapid adaptation to new tasks, while existing, validated architectures are preserved. It also supports reproducible research protocols across laboratories and experimental conditions.

For research in neural population dynamics, model-agnostic frameworks provide crucial methodological flexibility. The BLEND framework specifically demonstrates how behavior-guided learning can be integrated through a teacher-student distillation process, where a teacher model utilizes both neural activity and behavioral observations during training, while the distilled student model operates solely on neural signals during inference [1] [7]. This approach avoids the need for specialized model designs from scratch and allows research teams to enhance their existing neural dynamics models without compromising their established workflows. The model-agnostic characteristic ensures that the method can be applied across various neural network architectures commonly used in computational neuroscience, making advanced behavior-guided modeling accessible without requiring architectural overhaul.

Key Applications in Neuroscience Research

Behavior-Guided Neural Dynamics with BLEND

The BLEND framework exemplifies the model-agnostic advantage for neural population dynamics modeling. This approach treats behavioral data as privileged information available only during training, addressing the common experimental challenge where perfectly paired neural-behavioral datasets are unavailable during real-world deployment. BLEND implements a knowledge distillation process where a teacher model, which has access to both neural activity and behavior observations, trains a student model that uses only neural activity inputs during inference [1]. This method is architecture-independent, allowing researchers to enhance existing neural dynamics models without developing specialized architectures from scratch.

Quantitative results demonstrate BLEND's significant impact, with reported improvements exceeding 50% in behavioral decoding accuracy and over 15% enhancement in transcriptomic neuron identity prediction following behavior-guided distillation [1] [7]. These advances occur without modifying the underlying neural architecture, highlighting how model-agnostic approaches can substantially boost performance while maintaining methodological consistency across research groups. For drug development professionals, this approach enables more accurate mapping between neural activity and behavioral outcomes, potentially accelerating the identification of neural correlates for therapeutic efficacy.

Table 1: Performance Metrics of BLEND Framework in Neural Population Modeling