Beyond the Algorithm: A Practical Framework for Validating AI Tools in Neuroradiology


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of validating AI tools for clinical neuroradiology integration. It moves from foundational concepts and regulatory requirements to detailed methodological approaches for performance assessment. The content addresses common troubleshooting scenarios, including algorithmic bias and workflow integration challenges, and establishes a framework for comparative analysis and real-world impact validation. By synthesizing current evidence and trends, this guide aims to equip stakeholders with the knowledge to ensure AI tools are not only accurate but also clinically effective, safe, and scalable.

The Neuroradiology AI Landscape: From Hype to Clinical Reality

The Current State of AI Adoption in Neuroradiology Practice

FAQs: Understanding AI Integration in Neuroradiology

What is the current adoption rate of AI in clinical neuroradiology practice? Although radiology leads medical AI adoption, real-world clinical integration in neuroradiology remains limited. Only about 30% of radiologists have integrated AI into routine workflows, and usage is largely confined to specific, narrow tasks rather than comprehensive diagnostic platforms [1]. While the U.S. had authorized approximately 777 AI-enabled radiology devices by 2025, only about 126 FDA-cleared products exist for neuroradiology, and few of these address specialized areas such as brain tumor imaging [2] [3].

What are the primary clinical applications of AI in neuroradiology? AI in neuroradiology focuses on triage, detection, and workflow enhancement. Key applications include detecting intracranial hemorrhages, cerebral aneurysms, large vessel occlusions in stroke, and spinal fractures [2]. Reported sensitivities for brain and spine triage algorithms range from 88% to 95% [2]. AI is also used for brain tumor volumetrics and other automated measurement tasks [2].

What are the most significant barriers to widespread AI adoption? Key barriers include lack of standardized reimbursement pathways, regulatory challenges, limited generalizability across diverse populations, "black box" opacity, workflow integration difficulties, and data privacy concerns [3] [1] [4]. Financial barriers are particularly pronounced in Europe, where reimbursement remains fragmented compared with the reimbursement pathways now developing in the U.S. [3].

How does AI impact radiologist workload and efficiency? AI has dual potential: it can automate repetitive tasks (like measurements and initial triage) to reduce workload, but may also increase it by requiring radiologists to double-check AI results [1]. Well-designed AI tools can enhance efficiency by accelerating image acquisition, automating report generation, and prioritizing critical cases [5]. Generative AI shows particular promise for reducing administrative burdens by turning dictated speech into structured reports [6].

Will AI replace neuroradiologists? Most experts agree AI will augment rather than replace neuroradiologists. AI serves as a supportive tool that enhances diagnostic capabilities but cannot replicate clinical reasoning, interdisciplinary consultation, or patient communication [1] [4]. The evolving role emphasizes AI as an assistant that handles repetitive tasks, allowing radiologists to focus on complex decision-making [5].

Troubleshooting Guides: Addressing AI Implementation Challenges

Problem: Poor Generalizability Across Patient Populations

Symptoms

- AI model performance degrades when applied to new institutions or scanner types

- Decreased accuracy for demographic groups underrepresented in training data

- Inconsistent results across geographic regions or healthcare systems

Solution: Implement Robust Validation Protocols

- Conduct local validation studies before clinical deployment to assess model performance using your institution's data [2]

- Evaluate model generalizability using metrics like Dice coefficient and Hausdorff distance to quantify performance variations [2]

- Establish continuous monitoring to detect performance drift across patient subgroups [7]

- Utilize diverse test datasets that represent the full clinical population, including variations in age, ethnicity, and clinical presentation [4]
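The Dice coefficient and Hausdorff distance named above can be computed directly from binary masks. The following is a minimal NumPy sketch, assuming 2D or 3D binary segmentation masks on the same voxel grid; the function names are illustrative, and the brute-force Hausdorff computation over all foreground voxels is only practical for small masks (dedicated toolkits typically work on surface voxels and are much faster).

```python
import numpy as np


def dice_coefficient(pred: np.ndarray, truth: np.ndarray) -> float:
    """Dice = 2|A ∩ B| / (|A| + |B|) for two binary masks."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    denom = int(pred.sum()) + int(truth.sum())
    if denom == 0:
        return 1.0  # both masks empty: treated as perfect agreement (a convention)
    return 2.0 * int(np.logical_and(pred, truth).sum()) / denom


def hausdorff_distance(pred: np.ndarray, truth: np.ndarray, spacing=None) -> float:
    """Symmetric Hausdorff distance between foreground voxels, in spacing units."""
    spacing = np.ones(pred.ndim) if spacing is None else np.asarray(spacing, dtype=float)
    a = np.argwhere(pred.astype(bool)) * spacing
    b = np.argwhere(truth.astype(bool)) * spacing
    if len(a) == 0 or len(b) == 0:
        return float("inf")  # undefined when either mask is empty
    # Pairwise Euclidean distances between every foreground voxel of a and b.
    d = np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1))
    # Largest nearest-neighbour distance, taken in both directions.
    return float(max(d.min(axis=1).max(), d.min(axis=0).max()))


pred = np.zeros((4, 4), dtype=np.uint8)
pred[1:3, 1:3] = 1
truth = np.zeros((4, 4), dtype=np.uint8)
truth[1:3, 1:4] = 1
print(dice_coefficient(pred, truth))    # 0.8
print(hausdorff_distance(pred, truth))  # 1.0
```

Passing the scanner's voxel spacing (for example in millimetres) via `spacing` makes the Hausdorff distance comparable across sites with different acquisition resolutions.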

Validation Framework

Problem: Lack of Explainability and Trust in AI Outputs

Symptoms

- Radiologists hesitate to accept AI recommendations without understanding the reasoning

- Difficulty reconciling conflicting AI and human interpretations

- Inability to explain AI findings to referring physicians or patients

Solution: Integrate Explainable AI (XAI) Methods

- Implement saliency maps (Grad-CAM, Layer-wise Relevance Propagation) to visualize features influencing AI decisions [8]

- Develop context-aware explanations tailored to different users (radiologists, technologists, referring physicians) [8]

- Create contrastive explanations that clarify why the AI reached its conclusion and not alternative diagnoses [8]

- Establish human-AI dialogue systems that allow radiologists to query the AI about specific findings [8]

Verification Protocol

When AI findings conflict with human interpretation:

- Review saliency maps to identify relevant features used by the AI

- Correlate with clinical context and patient history

- Consult additional imaging or advanced sequences if available

- Document the discrepancy and final resolution for quality assurance

- Use the case for continuous model improvement and training

Problem: Inefficient Workflow Integration

Symptoms

- AI tools require separate logins and interfaces outside primary PACS/RIS

- Increased time spent toggling between systems

- Disruption to established clinical workflows

- Resistance from staff due to added complexity

Solution: Optimize System Integration

- Select AI solutions with seamless PACS/RIS integration through DICOM standards [9]

- Implement unified worklists that incorporate AI findings and prioritization directly into existing workflows [5]

- Utilize middleware platforms that consolidate multiple AI applications through single sign-on [10]

- Design role-based interfaces tailored to specific users (radiologists, technologists, administrators) [5]

Integration Workflow

Quantitative Performance Data of AI in Neuroradiology

Table 1: Diagnostic Performance of AI Algorithms in Neuroradiology Applications

| Clinical Application | Modality | Reported Sensitivity | Reported Specificity | Key Metrics | FDA Clearance Status |
|---|---|---|---|---|---|
| Intracranial Hemorrhage Detection | CT | 88-95% [2] | 85-93% [2] | High accuracy for triage | 30+ cleared devices [3] |
| Large Vessel Occlusion Detection | CTA | 90-94% [2] | 88-92% [2] | Critical for stroke workflow | 20+ cleared devices [3] |
| Cerebral Aneurysm Detection | CTA/MRA | 87-93% [2] | 82-90% [2] | Reduced false positives | Limited clearance [2] |
| Cervical Spine Fracture | CT | 88-95% [2] | 90-96% [2] | RSNA challenge winner models | 15+ cleared devices [3] |
| Brain Tumor Segmentation | MRI | Variable by type [2] | Variable by type [2] | Dice coefficient 0.75-0.85 [2] | Limited clearance [2] |

Table 2: AI Impact on Operational Metrics in Radiology Departments

| Efficiency Metric | Traditional Workflow | AI-Enhanced Workflow | Improvement | Evidence Level |
|---|---|---|---|---|
| CT Exam Throughput | 20-25 patients/day [5] | 30+ patients/day [5] | 20-30% increase | Multi-site study [5] |
| MR Acquisition Time | Standard protocols | 30-50% reduction [2] | Significant time savings | Vendor data [2] |
| Report Turnaround Time for Critical Findings | 60-120 minutes [9] | 15-30 minutes [9] | 50-75% reduction | Clinical validation [9] |
| Time Spent on Structured Reporting | 3-5 minutes/case [6] | 1-2 minutes/case [6] | 60-70% reduction | User feedback [6] |
| Administrative Burden | High (43% report increased) [5] | Moderate reduction potential [6] | 25-40% estimated reduction | Physician survey [5] |

Experimental Protocols for AI Validation

Protocol 1: Model Generalizability Assessment

Purpose

Evaluate AI algorithm performance across diverse clinical environments and patient populations to ensure robustness before deployment.

Materials

- Multi-institutional datasets representing variation in scanner manufacturers, protocols, and patient demographics [2]

- Reference standard annotations established by expert neuroradiologist consensus [7]

- Performance metrics toolkit including Dice coefficient, Hausdorff distance, sensitivity, specificity [2]

- Statistical analysis software for subgroup performance analysis [7]

Methodology

Dataset Curation

- Collect retrospective imaging studies from ≥3 independent institutions

- Ensure representation of key demographic variables (age, sex, ethnicity)

- Include variety of scanner models and acquisition parameters

Performance Benchmarking

- Run AI algorithms on all datasets using standardized preprocessing

- Calculate performance metrics for overall population and subgroups

- Compare performance variation across sites using ANOVA testing
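As a sketch of the cross-site comparison, the snippet below computes a one-way ANOVA F statistic on per-case Dice scores grouped by site, using only the Python standard library. The site values are hypothetical. ANOVA suits continuous per-case metrics such as Dice; for binary detection outcomes (hit or miss) a test of proportions is usually the better fit. A p-value can then be obtained from the F distribution, for example with `scipy.stats.f.sf(f, df_between, df_within)`.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of per-site samples."""
    values = [v for g in groups for v in g]
    grand_mean = mean(values)
    k, n = len(groups), len(values)
    # Variation of site means around the grand mean, weighted by site size.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual cases around their own site mean.
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    df_between, df_within = k - 1, n - k
    f = (ss_between / df_between) / (ss_within / df_within)
    return f, df_between, df_within


# Hypothetical per-case Dice scores from three institutions.
site_a = [0.82, 0.85, 0.80]
site_b = [0.78, 0.80, 0.79]
site_c = [0.70, 0.72, 0.74]
f, df_b, df_w = one_way_anova_f([site_a, site_b, site_c])
print(f"F({df_b}, {df_w}) = {f:.1f}")
```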

Failure Analysis

- Identify cases with discordant AI and reference standard readings

- Analyze common characteristics of failed cases

- Determine root causes (technical, clinical, demographic)

Validation Criteria

- Performance degradation <5% across institutions

- No statistically significant performance differences across demographic subgroups

- Dice coefficient >0.80 for segmentation tasks [2]
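The validation criteria above can be encoded as an explicit pass/fail check. This is a minimal sketch under two stated assumptions: "performance degradation <5%" is read as an absolute drop of less than 5 percentage points in sensitivity relative to the development-site baseline, and each site's segmentation performance is summarized by its mean Dice. The site names and values are hypothetical, and the subgroup significance criterion needs a separate statistical test that is not covered here.

```python
def check_generalizability(baseline_sensitivity, site_metrics,
                           max_drop=0.05, min_dice=0.80):
    """Return {site: [failure reasons]}; an empty list means the site passes."""
    report = {}
    for site, m in site_metrics.items():
        reasons = []
        # Assumed reading of "<5% degradation": absolute drop versus baseline.
        drop = baseline_sensitivity - m["sensitivity"]
        if drop >= max_drop:
            reasons.append(f"sensitivity drop of {drop:.3f} (limit < {max_drop})")
        if m["dice"] <= min_dice:
            reasons.append(f"mean Dice {m['dice']:.2f} (limit > {min_dice})")
        report[site] = reasons
    return report


# Hypothetical external-site results against a 0.93 development-site sensitivity.
sites = {
    "site_a": {"sensitivity": 0.91, "dice": 0.84},
    "site_b": {"sensitivity": 0.86, "dice": 0.78},
}
for site, reasons in check_generalizability(0.93, sites).items():
    print(site, "PASS" if not reasons else "FAIL: " + "; ".join(reasons))
```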

Protocol 2: Clinical Workflow Impact Assessment

Purpose

Quantify the effect of AI integration on radiologist efficiency, report turnaround times, and diagnostic consistency.

Materials

- Integrated AI-PACS platform with automated worklist prioritization [5]

- Time-tracking software integrated into reading workflow

- Structured reporting system with AI-assisted dictation [6]

- Reader study framework with case mix reflecting clinical practice

Methodology

Baseline Assessment

- Measure current key performance indicators (KPIs) without AI

- Establish baseline reading times, report turnaround, accuracy rates

- Document workflow pain points and bottlenecks

Controlled Implementation

- Deploy AI tools for specific use cases (e.g., hemorrhage detection)

- Train users on AI interaction and interpretation

- Implement parallel reading for initial validation period

Impact Measurement

- Track time from exam completion to final report

- Measure time saved on automated tasks (measurements, segmentation)

- Assess diagnostic consistency using inter-reader agreement statistics

- Survey user satisfaction and perceived workload
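For the inter-reader agreement step, Cohen's kappa is one common statistic when two readers assign categorical labels to the same cases. The sketch below is a plain-Python implementation; the label lists are hypothetical (1 = finding present, 0 = absent), and studies with more than two readers would typically use a multi-rater statistic such as Fleiss' kappa instead.

```python
from collections import Counter


def cohens_kappa(reader_a, reader_b):
    """Cohen's kappa for two readers labelling the same cases."""
    if len(reader_a) != len(reader_b) or not reader_a:
        raise ValueError("readers must label the same non-empty set of cases")
    n = len(reader_a)
    # Observed agreement: fraction of cases with identical labels.
    observed = sum(a == b for a, b in zip(reader_a, reader_b)) / n
    # Chance agreement from each reader's marginal label frequencies.
    count_a, count_b = Counter(reader_a), Counter(reader_b)
    labels = set(count_a) | set(count_b)
    expected = sum(count_a[c] * count_b[c] for c in labels) / n ** 2
    if expected == 1.0:
        return float("nan")  # kappa is undefined when chance agreement is 1
    return (observed - expected) / (1 - expected)


reader_a = [1, 1, 0, 0, 1, 0, 1, 0]
reader_b = [1, 0, 0, 0, 1, 0, 1, 1]
print(cohens_kappa(reader_a, reader_b))  # 0.5
```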

Success Metrics

- ≥20% reduction in critical finding report turnaround time [9]

- ≥15% increase in reading throughput without accuracy degradation

- ≥80% user satisfaction with AI integration [5]
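The success metrics above are relative changes against the baseline phase, so it helps to compute them the same way every time. The following sketch uses hypothetical before/after values; the function and key names are illustrative, and the throughput criterion still requires a separate check that accuracy did not degrade.

```python
def relative_change(before, after):
    """Signed relative change; -0.25 means a 25% reduction."""
    return (after - before) / before


def evaluate_success_metrics(tat_before_min, tat_after_min,
                             reads_before, reads_after,
                             satisfied_users, surveyed_users):
    tat_reduction = -relative_change(tat_before_min, tat_after_min)
    throughput_gain = relative_change(reads_before, reads_after)
    satisfaction = satisfied_users / surveyed_users
    return {
        "critical TAT reduction >= 20%": tat_reduction >= 0.20,
        # Only meaningful if accuracy was separately shown not to degrade.
        "throughput increase >= 15%": throughput_gain >= 0.15,
        "user satisfaction >= 80%": satisfaction >= 0.80,
    }


# Hypothetical pilot: TAT 60 -> 45 min, 40 -> 44 reads/shift, 17 of 20 satisfied.
for criterion, met in evaluate_success_metrics(60, 45, 40, 44, 17, 20).items():
    print(f"{criterion}: {'met' if met else 'not met'}")
```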

Research Reagent Solutions for AI Validation

Table 3: Essential Components for Neuroradiology AI Validation

| Research Component | Function | Implementation Examples | Validation Role |
|---|---|---|---|
| Curated Reference Datasets | Ground truth for model training/validation | RSNA challenge datasets [2], Multi-institutional collections | Performance benchmarking and generalizability testing |
| Annotation Platforms | Expert lesion segmentation and labeling | 3D Slicer, ITK-SNAP, Commercial annotation tools | Creating gold standard for model training |
| Performance Metrics Toolkits | Quantitative algorithm assessment | Python libraries (scikit-learn, MedPy), Custom validation frameworks | Objective performance measurement across sites |
| Explainability (XAI) Frameworks | Model decision transparency | Grad-CAM, LRP, SHAP, Attention visualization [8] | Building clinical trust and identifying failure modes |
| Workflow Integration Middleware | Connects AI to clinical systems | DICOM routers, HL7 interfaces, PACS integration tools [10] | Real-world performance assessment |
| Bias Detection Tools | Identify performance disparities across subgroups | Fairness metrics (demographic parity, equalized odds) | Ensuring equitable performance across patient populations |
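The fairness metrics listed in the last row can be computed without a dedicated library. This sketch measures the equalized-odds gap, that is, the largest between-group difference in true positive rate and in false positive rate. The group labels and predictions are hypothetical, and it assumes every group contains both positive and negative reference-standard cases.

```python
def group_rates(y_true, y_pred):
    """True positive rate and false positive rate for binary labels."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)
    fp = sum(1 for t, p in zip(y_true, y_pred) if not t and p)
    tn = sum(1 for t, p in zip(y_true, y_pred) if not t and not p)
    return tp / (tp + fn), fp / (fp + tn)


def equalized_odds_gap(y_true, y_pred, groups):
    """Return (TPR gap, FPR gap, per-group rates) across subgroups."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, gi in enumerate(groups) if gi == g]
        rates[g] = group_rates([y_true[i] for i in idx], [y_pred[i] for i in idx])
    tprs = [tpr for tpr, _ in rates.values()]
    fprs = [fpr for _, fpr in rates.values()]
    return max(tprs) - min(tprs), max(fprs) - min(fprs), rates


groups = ["A"] * 4 + ["B"] * 4
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
tpr_gap, fpr_gap, per_group = equalized_odds_gap(y_true, y_pred, groups)
print(per_group)         # {'A': (1.0, 0.0), 'B': (0.5, 0.5)}
print(tpr_gap, fpr_gap)  # 0.5 0.5
```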

Clinical validation is a critical process in the development of artificial intelligence (AI) tools for neuroradiology, ensuring these technologies are not only technically sound but also effective and safe in real-world clinical practice. While technical performance metrics are important, true validation requires demonstrating that an AI tool improves diagnostic accuracy, enhances workflow efficiency, and ultimately leads to better patient outcomes [11]. This technical support center provides researchers, scientists, and drug development professionals with essential guidance, troubleshooting, and experimental protocols for robust clinical validation of neuroradiology AI tools.

FAQs: Core Concepts in Clinical Validation

What is the difference between technical accuracy and clinical validation for AI tools in neuroradiology?

Technical accuracy refers to an algorithm's performance on a specific, narrow task, such as detecting a condition in a curated dataset, and is often measured by metrics like sensitivity, specificity, and area under the curve (AUC) [11]. Clinical validation, however, is a broader evaluation that assesses whether the AI tool provides a net benefit when used by clinicians in the intended clinical setting and patient population. It focuses on the tool's impact on the diagnostic thinking and therapeutic decisions of physicians, and ultimately, on patient outcomes [11] [12]. A tool can be technically excellent but fail clinical validation if it does not fit into the clinical workflow or improve patient management.

Why is generalizability a major challenge in the clinical validation of neuroradiology AI?

AI algorithms, particularly those based on deep learning, are prone to a phenomenon called "overfitting," where they perform exceptionally well on their training data but see a significant drop in performance on external data from different hospitals [11]. This limited generalizability stems from several factors, including the high heterogeneity of medical data. Variations in MRI or CT scanner models, imaging protocols, and patient populations across different clinical sites can drastically alter an algorithm's performance [11] [13]. For instance, a study evaluating an AI tool for multiple sclerosis lesion assessment was conducted on a cohort of 112 patients scanned on 8 different MRI scanner models with varying protocols, a design crucial for a meaningful real-world validation [13].

What study designs are best for establishing the clinical utility of an AI tool?

Demonstrating clinical utility, which proves that using the AI tool improves patient outcomes, requires the most rigorous study designs [11].

- Randomized Clinical Trials (RCTs): These are considered the ideal for establishing clinical utility. In an RCT, patients are randomly assigned to groups where clinicians either use or do not use the AI tool, allowing researchers to directly measure the tool's impact on patient outcomes [11].

- Diagnostic Cohort Studies: This design tests the clinical validity/accuracy of the AI in samples that represent the target patient population in real-world clinical scenarios. It is a robust method for showing the tool's performance in a realistic setting before undertaking a larger and more complex RCT [11].

How does the V3 framework (Verification, Analytical Validation, Clinical Validation) structure the evaluation of medical AI?

The V3 framework provides a structured, three-component foundation for determining if a Biometric Monitoring Technology (BioMeT), a category that includes many AI tools, is fit-for-purpose [12].

- Verification: A systematic evaluation by hardware manufacturers to ensure the system is built correctly. This involves assessing sample-level sensor outputs "at the bench" (in silico and in vitro) [12].

- Analytical Validation: This step occurs at the intersection of engineering and clinical expertise. It evaluates the data processing algorithms that convert sensor measurements into physiological or clinical metrics, ensuring the tool correctly measures what it claims to measure in a controlled setting [12].

- Clinical Validation: This is typically performed by a clinical trial sponsor to demonstrate that the AI tool acceptably identifies, measures, or predicts a clinical state in the intended context of use, which includes the specific patient population and clinical setting [12].

Troubleshooting Common Clinical Validation Challenges

Problem: High False Positive Rates in Real-World Use

- Potential Cause: The algorithm's threshold for flagging a finding may be too sensitive for general clinical practice, or it may have been trained on data that lacks the diversity and "noise" found in real-world imaging.

- Solution: Recalibrate the algorithm's operating threshold based on real-world validation data to better align with clinical priorities. Conduct additional training with more diverse, multi-institutional data that reflects a wider range of imaging protocols and patient anatomies [11] [13]. A prospective study of an MS lesion AI tool noted low positive predictive values (0.35–0.65) due to false positive tendencies, highlighting the importance of post-market monitoring and re-validation [13].
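One simple way to recalibrate an operating threshold on local validation data is to choose the highest score cutoff that still meets a target sensitivity and then report the specificity and PPV that result. The sketch below assumes the tool exposes a continuous score per case (not all commercial products do); the scores, labels, and the 0.75 target are hypothetical.

```python
import math


def recalibrate_threshold(scores, labels, target_sensitivity):
    """Highest cutoff with sensitivity >= target; returns (cutoff, sens, spec, ppv)."""
    if not 0 < target_sensitivity <= 1:
        raise ValueError("target_sensitivity must be in (0, 1]")
    positives = sorted((s for s, y in zip(scores, labels) if y == 1), reverse=True)
    if not positives:
        raise ValueError("validation set contains no positive cases")
    # Number of positive cases that must be flagged (epsilon guards float error).
    k = max(1, math.ceil(target_sensitivity * len(positives) - 1e-9))
    cutoff = positives[k - 1]  # flag every case scoring at or above this value
    flagged = [s >= cutoff for s in scores]
    tp = sum(1 for f, y in zip(flagged, labels) if f and y == 1)
    fp = sum(1 for f, y in zip(flagged, labels) if f and y == 0)
    tn = sum(1 for f, y in zip(flagged, labels) if not f and y == 0)
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return cutoff, tp / len(positives), specificity, tp / (tp + fp)


scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
labels = [1, 1, 0, 1, 0, 1, 0, 0]
print(recalibrate_threshold(scores, labels, target_sensitivity=0.75))
```

Lowering the target sensitivity raises the cutoff and typically improves PPV, which is the trade-off the clinical team has to agree on before re-validation.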

Problem: AI Tool Fails to Integrate into Clinical Workflow

- Potential Cause: The tool was designed without sufficient input from end-users (neuroradiologists) or without considering existing hospital IT infrastructure and radiology workflow systems like PACS [14].

- Solution: Involve neuroradiologists and IT staff early in the design process. Utilize established communication standards like DICOM and IHE profiles to ensure seamless integration. Choose platforms that offer unified infrastructures to manage multiple AI applications, reducing inefficiencies [15] [14]. Pilot testing in a live clinical environment before a full-scale validation study is crucial to identify and resolve workflow bottlenecks.

Problem: Algorithm Performance Deteriorates at External Validation Sites

- Potential Cause: The model has poor generalizability due to differences in patient demographics, disease prevalence, or imaging equipment/protocols at external sites [11].

- Solution: From the outset, design validation studies to be multi-center and multi-vendor. Employ techniques such as domain adaptation during training and use a hold-out external test set from a completely different institution to get a true measure of generalizability [11] [2]. As one expert noted, an AI model that works in three hospitals may not work across the entire U.S. [2].

Experimental Protocols for Clinical Validation

Protocol 1: Prospective Clinical Validation for a Triage AI Tool

This protocol is designed to validate an AI tool that triages urgent findings, such as intracranial hemorrhage or large vessel occlusion.

- Study Design: Prospective, multi-center, blinded study.

- Participant Recruitment: Consecutively enroll patients from the emergency department who undergo a non-contrast head CT for suspected stroke. Predefine inclusion and exclusion criteria.

- Ground Truth Definition: Establish a consensus ground truth for each case using a panel of at least three expert neuroradiologists, blinded to the AI results. The panel will review all imaging and available clinical data.

- AI Workflow Integration: The AI tool runs automatically on all eligible scans within the PACS. The results are logged in a separate system and are not initially visible to the interpreting radiologist.

- Data Collection: Record the AI's findings (presence/absence of hemorrhage) and the time from scan completion to AI result. The initial clinical report by the on-call radiologist (without AI assistance) is also recorded.

- Outcome Measures:

- Primary: Sensitivity and specificity of the AI tool compared to the expert panel ground truth.

- Secondary: Time from scan to AI notification; the false positive rate; the rate of "missed" findings by the initial radiologist that were correctly flagged by the AI.
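For the primary outcome, sensitivity and specificity are usually reported with confidence intervals. This sketch computes both from the 2×2 counts against the expert-panel ground truth, using the Wilson score interval (one of several reasonable interval methods); the counts are hypothetical.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z ** 2 / n
    centre = (p + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


def sensitivity_specificity(tp, fn, tn, fp):
    """Point estimates and Wilson intervals versus the reference standard."""
    return {
        "sensitivity": (tp / (tp + fn), wilson_interval(tp, tp + fn)),
        "specificity": (tn / (tn + fp), wilson_interval(tn, tn + fp)),
    }


# Hypothetical counts: 100 hemorrhage-positive and 900 negative scans.
for name, (estimate, (low, high)) in sensitivity_specificity(90, 10, 850, 50).items():
    print(f"{name}: {estimate:.3f} (95% CI {low:.3f}-{high:.3f})")
```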

Protocol 2: Measuring AI's Impact on Radiologist Workflow and Efficiency

This protocol assesses whether an AI tool improves efficiency in a time-consuming task, such as quantifying multiple sclerosis (MS) lesions.

- Study Design: Prospective, randomized, crossover study.

- Participant Recruitment: Recruit neuroradiologists of varying experience levels.

- Case Selection: Curate a set of MS patient MRI exams, each including current and prior scans for comparison.

- Study Procedure: In the first phase, radiologists are randomized to read half the cases with AI assistance and half without. After a washout period, they read the other half under the opposite condition. The AI tool provides automated lesion counts and highlights new/enlarging lesions.

- Data Collection:

- Time Tracking: Record the time taken for assessment per case for both conditions.

- Accuracy: Compare the radiologist's findings (with and without AI) to a manually curated ground truth.

- User Feedback: Administer a post-study questionnaire using a Likert scale to assess the radiologists' perception of the tool's helpfulness, confidence, and integration ease.

- Outcome Measures: Mean assessment time saved; change in sensitivity/specificity for detecting new lesions; qualitative user feedback [13]. A 2025 study using a similar design found a mean reduction of 27 seconds per case when using AI for MS follow-up, although this difference was not statistically significant (p = 0.317), and radiologists found the AI helpful in 87% of cases [13].
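The time-saving outcome is a paired comparison, because the same reader assesses the same case with and without AI. The sketch below computes the mean per-case saving and a paired t statistic; it assumes reading times have already been matched by reader and case, and it ignores the period and carry-over effects that a full crossover analysis would model. The times are hypothetical. A p-value can be obtained from the t distribution (for example with `scipy.stats.ttest_rel`), which matters because a mean saving of tens of seconds can still be statistically non-significant.

```python
import math
from statistics import mean, stdev


def paired_time_saving(seconds_without_ai, seconds_with_ai):
    """Mean per-case saving (positive = faster with AI), paired t, and df."""
    if len(seconds_without_ai) != len(seconds_with_ai) or len(seconds_with_ai) < 2:
        raise ValueError("need at least two matched reader-case pairs")
    diffs = [a - b for a, b in zip(seconds_without_ai, seconds_with_ai)]
    mean_saving = mean(diffs)
    sd = stdev(diffs)
    if sd == 0:
        raise ValueError("identical differences; t statistic is undefined")
    t_stat = mean_saving / (sd / math.sqrt(len(diffs)))
    return mean_saving, t_stat, len(diffs) - 1


without_ai = [300, 280, 320, 310]
with_ai = [270, 260, 300, 300]
print(paired_time_saving(without_ai, with_ai))
```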

Performance Metrics and Data Presentation

Table 1: Key Performance Metrics for AI Clinical Validation

| Metric | Formula / Definition | Interpretation in Clinical Context |
|---|---|---|
| Sensitivity | True Positives / (True Positives + False Negatives) | The ability of the AI to correctly identify patients with the disease. A high value is critical for rule-out tests and triage of critical findings [11]. |
| Specificity | True Negatives / (True Negatives + False Positives) | The ability of the AI to correctly identify patients without the disease. A high value is important to avoid unnecessary follow-up tests and anxiety [11]. |
| Positive Predictive Value (PPV) | True Positives / (True Positives + False Positives) | The probability that a patient with a positive AI result actually has the disease. Highly dependent on disease prevalence [11] [13]. |
| Negative Predictive Value (NPV) | True Negatives / (True Negatives + False Negatives) | The probability that a patient with a negative AI result truly does not have the disease. A high NPV is valuable for triaging cases that may not need immediate attention [13]. |
| Area Under the ROC Curve (AUC/AUROC) | Area under the plot of sensitivity vs. (1 - specificity) across all decision thresholds | The mean sensitivity over all possible specificities. A general measure of discriminative ability, but should be interpreted with caution as it may not reflect performance at a clinically chosen threshold [11]. |
| Dice Similarity Coefficient | 2 × True Positives / (2 × True Positives + False Positives + False Negatives), counted voxel-wise | Measures the spatial overlap between an AI-generated segmentation (e.g., of a tumor) and a manually drawn ground truth. Common for segmentation tasks [11] [2]. |
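Because PPV and NPV depend on prevalence, the same sensitivity and specificity can yield very different predictive values at different sites. This sketch applies Bayes' theorem to show the effect; the sensitivity of 0.90, specificity of 0.95, and the two prevalences are illustrative values, not results from a specific study.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """PPV and NPV from sensitivity, specificity and prevalence (Bayes' theorem)."""
    tp = sensitivity * prevalence  # expected fraction of screened cases that are TP
    fn = (1 - sensitivity) * prevalence
    tn = specificity * (1 - prevalence)
    fp = (1 - specificity) * (1 - prevalence)
    return tp / (tp + fp), tn / (tn + fn)


for prevalence in (0.10, 0.02):
    ppv, npv = predictive_values(0.90, 0.95, prevalence)
    print(f"prevalence {prevalence:.0%}: PPV {ppv:.2f}, NPV {npv:.3f}")
```

At the lower prevalence, PPV falls from about 0.67 to about 0.27 while NPV rises, which is why PPV values measured at one institution rarely transfer directly to another.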

Table 2: Example Validation Results for an MS Lesion AI Tool [13]

| Measure | Radiologist Alone | Radiologist with AI | AI Alone |
|---|---|---|---|
| Mean Assessment Time | Baseline | -27 seconds (p=0.317) | N/A |
| Helpfulness to Radiologist | N/A | 87% of cases | N/A |
| Negative Predictive Value (NPV) for new lesions | N/A | 0.89 | N/A |
| Positive Predictive Value (PPV) for new lesions | N/A | 0.35 - 0.65 | N/A |

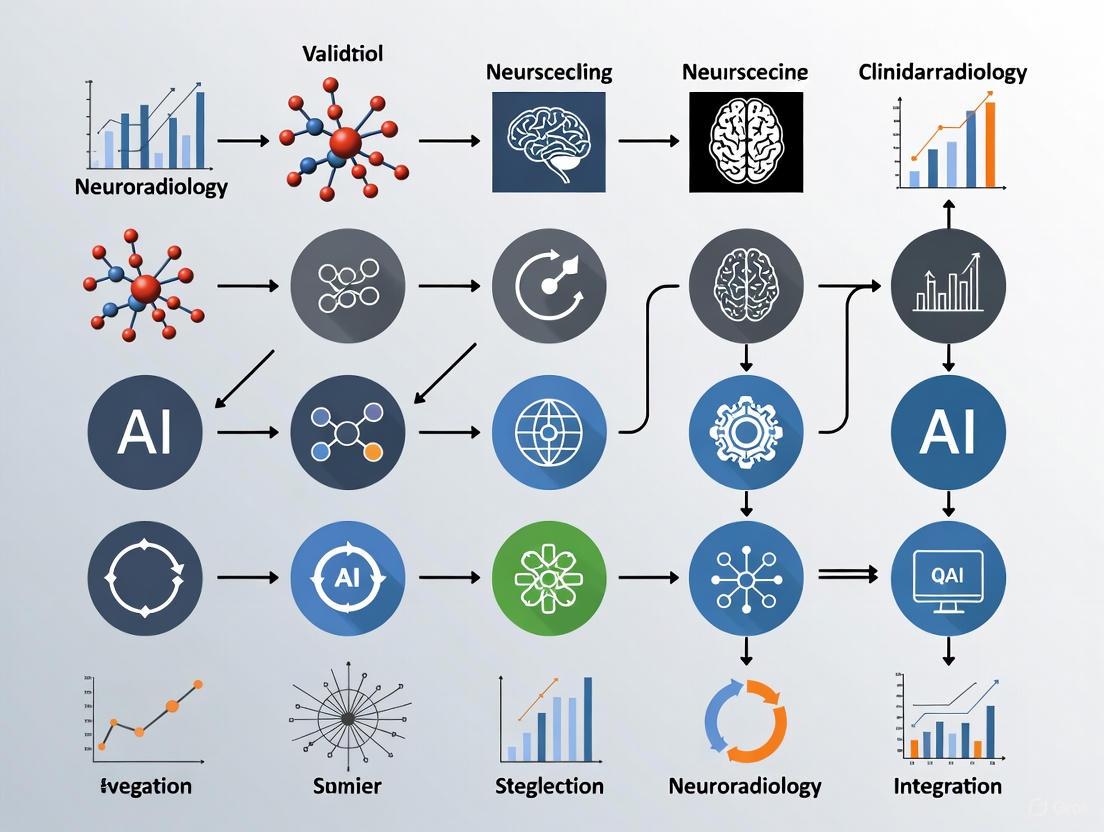

Workflow and Conceptual Diagrams

Diagram 1: The V3 Clinical Validation Workflow. This process, adapted from the V3 framework, outlines the foundational steps for establishing that an AI tool is fit-for-purpose, culminating in the demonstration of clinical utility [12].

Diagram 2: Relationship of Core Performance Metrics. This chart visualizes the relationship between the AI's predictions and the ground truth, defining the core components (TP, FP, TN, FN) used to calculate sensitivity, specificity, PPV, and NPV [11].

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for AI Validation

| Item | Function in Clinical Validation |
|---|---|
| Curated Datasets with Expert Ground Truth | Serves as the reference standard (gold standard) for training and initial testing. The quality of the ground truth (e.g., expert neuroradiologist annotations) is paramount [11] [12]. |
| External Test Sets (Multi-Center) | Independent datasets from different hospitals, used to evaluate the generalizability and real-world performance of the AI algorithm, testing for overfitting [11] [2]. |
| Performance Metric Calculators (e.g., Dice, AUC) | Software scripts or packages to calculate standardized performance metrics, ensuring consistent and comparable evaluation across different studies and tools [11]. |
| Clinical Data Integration Platform | A unified software infrastructure (e.g., an AI operating system) that allows for the seamless integration, deployment, and monitoring of multiple AI algorithms within a clinical workflow [15] [14]. |
| Structured Reporting Templates | Standardized templates, sometimes generated with the aid of large language models, that help convert free-text radiology reports into structured data for more consistent outcome measurement and data analysis [2]. |

This technical support center provides troubleshooting guides and FAQs for researchers and scientists conducting AI validation studies in neuroradiology. The content is framed within the context of validating AI tools for clinical integration research, focusing on key applications in stroke, hemorrhage, aneurysms, and spine imaging.

Troubleshooting Guides

Guide 1: Addressing AI Model Generalizability and Performance Gaps

Issue: AI model performance degrades when applied to external validation datasets or specific disease subtypes, threatening the validity of a clinical integration study.

Solution: Implement a rigorous, multi-faceted validation protocol.

Action 1: Subgroup Performance Analysis

- Procedure: Do not just calculate overall accuracy. Break down performance by clinically relevant subgroups, such as hemorrhage subtype, aneurysm location, or scanner manufacturer. This helps identify specific failure modes.

- Example: For an intracranial hemorrhage (ICH) detection AI, calculate sensitivity and specificity for each hemorrhage subtype (e.g., intraparenchymal, subdural, epidural). Research shows AI sensitivity for epidural hemorrhage can be as low as 75%, a critical detail masked by overall high performance [16].
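The subtype breakdown can be computed from reference-standard-positive cases alone. In the hypothetical data below, overall sensitivity is about 92% while epidural sensitivity is 75%, illustrating how a pooled figure can mask a weak subgroup; the labels and counts are illustrative and not taken from the cited meta-analysis.

```python
from collections import defaultdict


def sensitivity_by_subgroup(positive_cases):
    """positive_cases: (subgroup, ai_flagged) pairs for reference-standard-positive cases."""
    flagged, totals = defaultdict(int), defaultdict(int)
    for subgroup, ai_flagged in positive_cases:
        totals[subgroup] += 1
        flagged[subgroup] += int(bool(ai_flagged))
    return {g: flagged[g] / totals[g] for g in totals}


cases = ([("intraparenchymal", True)] * 19 + [("intraparenchymal", False)]
         + [("epidural", True)] * 3 + [("epidural", False)])
overall = sum(flag for _, flag in cases) / len(cases)
print(f"overall sensitivity: {overall:.2f}")  # 0.92
print(sensitivity_by_subgroup(cases))         # {'intraparenchymal': 0.95, 'epidural': 0.75}
```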

Action 2: External Testing on Diverse Data

- Procedure: Validate the algorithm using retrospective data from multiple institutions not involved in model training. Ensure the data encompasses different geographic locations, patient demographics, and imaging equipment.

- Rationale: An algorithm developed at a single academic center may not perform well in community hospitals or across different populations. A model's generalizability cannot be assumed [2] [17].

Action 3: Implement a Real-Time Monitoring Framework

- Procedure: For deployed models, use frameworks like the Ensembled Monitoring Model (EMM) to estimate prediction confidence in real-time without needing ground-truth labels. The EMM uses an ensemble of sub-models; the agreement level between these sub-models and the primary AI indicates confidence [18].

- Output: Categorize AI predictions into "increased confidence," "similar confidence," or "decreased confidence" tiers. This allows researchers to flag unreliable predictions for further review during validation studies, reducing cognitive burden and potential misdiagnoses [18].
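The agreement-based tiering can be illustrated in a few lines. This is a simplified sketch of the idea, not the published EMM implementation: it assumes binary outputs, a baseline agreement rate measured beforehand on validation data, and a hypothetical ±0.1 margin separating the three tiers.

```python
def confidence_tier(primary_positive, sub_model_votes, baseline_agreement, margin=0.10):
    """Place one primary-AI prediction into a confidence tier (illustrative only).

    primary_positive: the black-box AI's binary output for this case.
    sub_model_votes: binary outputs of the monitoring ensemble's sub-models.
    baseline_agreement: typical agreement rate seen on validation data (assumed).
    margin: hypothetical band around the baseline treated as "similar".
    """
    agreement = sum(v == primary_positive for v in sub_model_votes) / len(sub_model_votes)
    if agreement >= baseline_agreement + margin:
        return "increased confidence"
    if agreement <= baseline_agreement - margin:
        return "decreased confidence"
    return "similar confidence"


print(confidence_tier(True, [True, True, True, True, False], baseline_agreement=0.6))
print(confidence_tier(True, [True, False, False, False, False], baseline_agreement=0.6))
print(confidence_tier(True, [True, True, True, False, False], baseline_agreement=0.6))
```

Cases landing in the "decreased confidence" tier would be the ones routed for additional review during a validation study.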

Guide 2: Integrating AI into Clinical Workflows for Pragmatic Trials

Issue: An AI tool with high diagnostic accuracy in a controlled, retrospective study fails to demonstrate practical impact when integrated into a live clinical workflow for a prospective trial.

Solution: Design the validation study around key clinical workflow metrics and seamless integration.

Action 1: Measure Operational Efficiency Metrics

- Procedure: In addition to diagnostic performance, track time-based and workflow metrics. Key Performance Indicators (KPIs) should include:

- Door-to-treatment decision time

- Critical case notification time

- Triage accuracy

- Expected Outcome: Successful AI integration has been shown to reduce door-to-treatment decision time by 26% and critical case notification time by 57% [16].


Action 2: Ensure "Human-in-the-Loop" System Design

- Procedure: The AI should function as a decision-support tool, not an autonomous system. Design workflows that require radiologist confirmation for final diagnosis. This mitigates risks from AI "hallucinations" or over-reliance [17] [9].

- Analogy: "Think about AI like a toddler learning to ride a bike... Having an expert... to help support the bike while the child is learning is helpful" [17].

Action 3: Prioritize Seamless Technical Integration

- Procedure: Validate the AI tool within the existing clinical ecosystem (PACS, RIS). Tools that require radiologists to toggle between platforms create friction and reduce adoption. Seek solutions that embed results directly into the standard workflow via DICOM overlays or structured reports [9] [19].

Frequently Asked Questions (FAQs)

Q1: What are the realistic performance expectations for commercial AI in detecting intracranial hemorrhage? A: Based on a recent meta-analysis of 45 studies, commercial AI systems for ICH detection demonstrate high aggregate performance, but this varies significantly by hemorrhage subtype. You can expect pooled sensitivity of ~90% and specificity of ~95%. However, performance is not uniform; sensitivity for intraparenchymal hemorrhage is high (95%), but drops considerably for epidural hemorrhage (75%). This underscores the need for subtype-specific validation in your research [16].

Q2: Our validation study for a vertebral fracture AI shows high sensitivity but low PPV. How should this result be interpreted? A: This is a common finding. A study on the Nanox.AI HealthOST software revealed a similar pattern: at a >20% vertebral height reduction threshold, sensitivity was 92.0%, but PPV was only 16.5%. This indicates the AI is excellent at finding most true fractures (high sensitivity) but also generates a substantial number of false positives (low PPV). The clinical context should guide your response. For a screening tool where missing a fracture is unacceptable, this trade-off may be justified, provided a radiologist provides a secondary review of positive findings [20].

Q3: What methodologies exist for monitoring the performance of a "black-box" commercial AI model in real-time after deployment? A: The Ensembled Monitoring Model (EMM) framework is designed for this purpose. It operates without needing access to the internal workings of the commercial (black-box) AI. The EMM uses an ensemble of multiple sub-models trained for the same task. The agreement level between the EMM's sub-models and the primary AI's output serves as a proxy for confidence, allowing for real-time, case-by-case assessment without ground-truth labels [18].

Q4: How can AI be utilized to improve patient recruitment for stroke clinical trials? A: AI can significantly enhance trial recruitment in two key ways:

- Imaging-based Cohort Identification: Platforms such as AStrID (Acute Stroke Imaging Database) can automatically analyze MRI scans to identify stroke type and precise location. Researchers can then query the database for patients with very specific stroke attributes, enabling targeted recruitment for trials in which a treatment may be effective only for a particular stroke profile [21].

- Automated Screening: AI can continuously analyze brain and vessel imaging in emergency settings to alert physicians about potential participants who meet the imaging criteria for a trial, thereby accelerating enrollment [17].
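Imaging-based screening comes down to filtering AI-derived findings against a trial's imaging criteria. The sketch below is purely illustrative: the `ImagingFinding` fields (`stroke_type`, `territory`, `lvo`, `onset_to_scan_min`) and the eligibility rule are hypothetical and do not reflect the AStrID schema or any real trial protocol.

```python
from dataclasses import dataclass


@dataclass
class ImagingFinding:
    # Hypothetical fields an AI pipeline might emit for one patient's scan.
    patient_id: str
    stroke_type: str        # e.g. "ischemic" or "hemorrhagic"
    territory: str          # e.g. "MCA", "PCA"
    lvo: bool               # large vessel occlusion detected
    onset_to_scan_min: int  # minutes from symptom onset to imaging


def meets_criteria(f: ImagingFinding) -> bool:
    """Hypothetical rule: ischemic MCA stroke with LVO, imaged within 6 hours."""
    return (f.stroke_type == "ischemic" and f.territory == "MCA"
            and f.lvo and f.onset_to_scan_min <= 360)


def screen(findings):
    """Return IDs to flag for a research coordinator; a human still confirms eligibility."""
    return [f.patient_id for f in findings if meets_criteria(f)]


findings = [
    ImagingFinding("P001", "ischemic", "MCA", True, 120),
    ImagingFinding("P002", "hemorrhagic", "MCA", False, 90),
    ImagingFinding("P003", "ischemic", "PCA", True, 200),
    ImagingFinding("P004", "ischemic", "MCA", True, 420),
]
print(screen(findings))  # ['P001']
```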

Quantitative Data Tables

Table 1: Diagnostic Performance of AI for Intracranial Hemorrhage Detection

| Model Category | Pooled Sensitivity (95% CI) | Pooled Specificity (95% CI) | Number of Studies (Patients) |
|---|---|---|---|
| Research Algorithms | 0.890 (0.839–0.942) | 0.926 (0.899–0.954) | 29 (n = 185,847) |
| Commercial AI Systems | 0.899 (0.858–0.940) | 0.951 (0.928–0.974) | 16 (n = 94,523) |

Source: Adapted from a meta-analysis in Brain and Spine [16].

Table 2: AI Performance by Intracranial Hemorrhage Subtype

| Hemorrhage Subtype | AI Sensitivity | Detection Challenge (Difficulty Score) |
|---|---|---|
| Intraparenchymal | 95% | Low |
| Subarachnoid | 90% | Medium |
| Subdural | 85% | Medium |
| Epidural | 75% | High (0.251) |

Source: Adapted from a meta-analysis in Brain and Spine [16].

Table 3: Clinical Impact of AI Integration on Workflow Efficiency

| Workflow Metric | Before AI Implementation | After AI Implementation | Relative Improvement |
|---|---|---|---|
| Door-to-Treatment Decision Time | 92 minutes | 68 minutes | -26% |
| Critical Case Notification Time | 75 minutes | 32 minutes | -57% |
| Triage Accuracy | 86% | 94% | +9.3% (+8 percentage points) |

Source: Adapted from a meta-analysis in Brain and Spine [16].
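The time metrics in Table 3 are relative changes from baseline. Accuracy is already a percentage, so it can change either in percentage points or relatively: a move from 86% to 94% is an 8-point gain but about a 9.3% relative gain. Stating which one is reported avoids ambiguity. The helper names below are illustrative.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change as a fraction of the baseline (negative = reduction)."""
    return (after - before) / before


def point_change(before_pct: float, after_pct: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return after_pct - before_pct


# Values from Table 3.
print(f"Door-to-decision time: {relative_change(92, 68):+.1%}")
print(f"Notification time:     {relative_change(75, 32):+.1%}")
print(f"Triage accuracy:       {point_change(86, 94):+.0f} percentage points, "
      f"{relative_change(86, 94):+.1%} relative")
```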

Experimental Protocols

Protocol 1: Clinical Validation of a Vertebral Compression Fracture (VCF) AI Tool

This protocol is based on a real-world clinical validation study [20].

- Study Design: Retrospective analysis of outpatient chest and abdomen CT scans.
- Inclusion Criteria:
  - Outpatients >50 years of age.
  - CT scans performed for indications unrelated to vertebral fracture assessment.
- Exclusion Criteria:
  - Patients with spinal hardware.
  - Scans with excessive motion artifacts.
  - Inadequate visualization of the thoracic/lumbar spine.
- Ground Truth Establishment:
  - Two radiologists, including a senior musculoskeletal specialist, review all scans in consensus.
  - Employ the Genant semiquantitative (GSQ) grading scheme.
  - Resolve discrepancies through discussion or with a third expert in metabolic bone disease.
- AI Analysis:
  - Process de-identified CT DICOM data through the AI software (e.g., Nanox.AI HealthOST).
  - Test the AI's performance at different vertebral height reduction thresholds (e.g., >20% for mild, >25% for moderate fractures).
- Outcome Measures:
  - Calculate sensitivity, specificity, PPV, and NPV of the AI against the radiologist-established ground truth.
  - Compare original radiology reports with AI findings to determine the rate of previously missed fractures.
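The threshold sweep and outcome measures can be computed from paired labels. The sketch assumes the AI reports a fractional height loss per vertebra and that the reference standard is a binary fracture label derived from the consensus read. The data are hypothetical, and `genant_grade` uses the commonly cited Genant height-loss bands (mild about 20-25%, moderate about 25-40%, severe above 40%) as an approximation of what is, in practice, a visual semiquantitative assessment.

```python
def genant_grade(height_loss: float) -> int:
    """Approximate Genant SQ grade from fractional vertebral height loss (0.0-1.0)."""
    if height_loss <= 0.20:
        return 0  # normal
    if height_loss <= 0.25:
        return 1  # mild
    if height_loss <= 0.40:
        return 2  # moderate
    return 3      # severe


def diagnostic_metrics(y_true, y_pred):
    """Sensitivity, specificity, PPV and NPV from paired binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t and p for t, p in pairs)
    tn = sum((not t) and (not p) for t, p in pairs)
    fp = sum((not t) and p for t, p in pairs)
    fn = sum(t and (not p) for t, p in pairs)

    def ratio(a, b):
        return a / b if b else float("nan")

    return {"sensitivity": ratio(tp, tp + fn), "specificity": ratio(tn, tn + fp),
            "ppv": ratio(tp, tp + fp), "npv": ratio(tn, tn + fn)}


def sweep_thresholds(ai_height_loss, reference, thresholds=(0.20, 0.25)):
    """Dichotomise the AI's height-loss estimate at each threshold and score it."""
    return {thr: diagnostic_metrics(reference, [h > thr for h in ai_height_loss])
            for thr in thresholds}


# Hypothetical per-vertebra data.
ai_loss = [0.05, 0.22, 0.31, 0.18, 0.45, 0.21, 0.10, 0.27]    # AI estimates
ref_loss = [0.02, 0.15, 0.33, 0.12, 0.50, 0.24, 0.08, 0.19]   # consensus measurements
reference = [genant_grade(h) >= 1 for h in ref_loss]          # fracture = grade 1 or higher

for thr, m in sweep_thresholds(ai_loss, reference).items():
    print(f">{thr:.0%}:", {k: round(v, 2) for k, v in m.items()})
```

In this toy data, raising the threshold from 20% to 25% trades sensitivity (1.00 to 0.67) for specificity (0.60 to 0.80), which is the kind of trade-off the protocol's threshold comparison is meant to expose.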

Protocol 2: Real-Time Monitoring of a Black-Box ICH Detection AI

This protocol outlines the implementation of the Ensembled Monitoring Model (EMM) framework [18].

- Model Setup:
  - Primary Model: The commercial, black-box ICH detection AI to be monitored.
  - EMM Construction: Develop an ensemble of five sub-models with diverse neural network architectures, all trained on the same task (ICH detection) but with different initializations and data augmentations.
- Inference and Monitoring:
  - For each new head CT scan, the primary model and the five EMM sub-models process the image independently and in parallel.
  - Record the binary output (ICH-positive or ICH-negative) from the primary model and from each EMM sub-model.
- Confidence Scoring:
  - Calculate the agreement level between the EMM sub-models and the primary model using unweighted vote counting.
  - With five sub-models, the possible agreement levels are 0%, 20%, 40%, 60%, 80%, and 100%.
- Stratification and Action:
  - Define confidence tiers based on agreement levels and baseline AI performance (see diagram below for logic).
  - Increased Confidence: High agreement; the AI prediction is highly reliable.
  - Similar Confidence: Moderate agreement; use the AI output as standard.
  - Decreased Confidence: Low agreement; disregard the AI output and perform a conventional radiologist read.
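The confidence scoring step reduces to counting how many EMM sub-models agree with the primary model's binary call. The sketch assumes five sub-models, as in the protocol. The tier cut-offs (80% and 40%) are hypothetical placeholders: the protocol sets tiers from agreement levels together with baseline AI performance, so they would need calibration on local validation data.

```python
from enum import Enum


class Confidence(Enum):
    INCREASED = "increased"  # high agreement: AI call likely reliable
    SIMILAR = "similar"      # moderate agreement: use AI output as standard
    DECREASED = "decreased"  # low agreement: fall back to a conventional read


def agreement_level(primary_positive: bool, emm_votes: list[bool]) -> float:
    """Unweighted fraction of EMM sub-models whose ICH call matches the primary model."""
    if not emm_votes:
        raise ValueError("at least one EMM sub-model vote is required")
    return sum(v == primary_positive for v in emm_votes) / len(emm_votes)


def confidence_tier(agreement: float, high: float = 0.8, low: float = 0.4) -> Confidence:
    """Map agreement to a tier; `high` and `low` are hypothetical cut-offs."""
    if agreement >= high:
        return Confidence.INCREASED
    if agreement <= low:
        return Confidence.DECREASED
    return Confidence.SIMILAR


# One head CT: the primary AI says ICH-positive; 2 of 5 sub-models agree.
level = agreement_level(True, [True, True, False, False, False])
print(level, confidence_tier(level))
```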

Workflow and System Diagrams

AI Confidence Monitoring with EMM

Clinical Integration Pathway for Stroke AI

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Function in AI Validation Research | Example / Note |
|---|---|---|
| Ensembled Monitoring Model (EMM) | Provides real-time confidence scores for black-box AI predictions without needing ground-truth labels or model internals. | Critical for ongoing performance assurance in clinical integration studies [18]. |
| Structured Datasets & Challenges | Provide curated, expert-annotated datasets for benchmarking AI algorithm performance in a standardized way. | The RSNA 2025 Intracranial Aneurysm Detection AI Challenge offers a dataset from 18 sites across 5 continents for developing and testing detection models [22]. |
| Acute Stroke Imaging Database (AStrID) | An AI-driven platform that automatically identifies stroke type and location from MRI, facilitating patient stratification and recruitment for clinical trials. | Enables researchers to find patients with specific stroke attributes for precision trial enrollment [21]. |
| FDA-Cleared Commercial AI Software | Commercially available tools that have passed regulatory scrutiny; used as interventions in pragmatic trials to assess real-world impact. | Examples include ICH detection tools and vertebral fracture detection software (e.g., Nanox.AI HealthOST) [20] [16]. |
| Genant Semiquantitative (GSQ) Grading | A standardized method for radiologists to establish ground truth for vertebral compression fractures, against which AI performance is measured. | Essential for consistent labeling in validation studies for spine AI [20]. |

The integration of Artificial Intelligence (AI) tools into clinical neuroradiology requires rigorous validation and compliance with regional regulatory frameworks. For researchers and developers, understanding these pathways is crucial for designing studies that meet regulatory standards and facilitate clinical adoption. The U.S. Food and Drug Administration (FDA) and the European Union's CE marking represent two primary, distinct regulatory approaches for AI-based medical devices, including those for neuroradiology applications such as intracranial hemorrhage detection, large vessel occlusion identification, and image segmentation [23].

Navigating these frameworks is a fundamental part of the research and development process. This guide addresses common questions and troubleshooting issues that arise during the experimental validation of AI tools intended for this field.

FAQ: Core Regulatory Concepts

Q1: What is the fundamental difference between FDA clearance and CE marking?

The fundamental difference lies in the governing authority, geographical applicability, and the underlying regulatory philosophy.

FDA clearance or approval is granted by the U.S. Food and Drug Administration and is required before most medical devices can be marketed in the United States [24] [25]. It involves direct review and a decision by the FDA itself.

CE Marking is a manufacturer's declaration that a product complies with the applicable European Union legislation, allowing it to be freely marketed within the European Economic Area [26]. While often involving third-party "Notified Bodies" for higher-risk devices, it is not issued by a central EU authority [26] [23].

Table: Key Differences Between FDA Clearance and CE Marking

| Feature | FDA Clearance/Approval | CE Marking |
|---|---|---|
| Governing Authority | U.S. Food and Drug Administration (FDA) | Manufacturer's declaration (with Notified Bodies for higher-risk classes) [26] [23] |
| Geographical Scope | United States | European Economic Area (EU, Iceland, Liechtenstein, Norway) and Northern Ireland [27] |
| Primary Legal Basis | Federal Food, Drug, and Cosmetic Act [24] | EU Medical Device Regulation (MDR) [23] |
| Key Database | FDA's AI-Enabled Medical Devices List [28] | NANDO database for Notified Bodies [26] |

Q2: What is a 510(k) clearance, and how does it relate to AI neuroradiology tools?

A 510(k) is a premarket notification submitted to the FDA to demonstrate that a new device is "substantially equivalent" to a legally marketed existing device [24] [25]. This is the most common pathway for AI medical devices.

For an AI tool in neuroradiology, this means the manufacturer must identify a "predicate" device (a legally marketed device to which equivalence is claimed) and provide evidence that the new AI tool is at least as safe and effective. Many AI triage tools, such as those that prioritize CT scans with suspected strokes, have been cleared via the 510(k) pathway by referencing existing predicate devices [29] [30].

Q3: Is prospective clinical data always required for CE marking an AI medical device?

Not always. The need for prospective clinical data depends on the device's risk classification and intended purpose under the EU Medical Device Regulation (MDR) [31].

For some AI devices, particularly those with an indirect clinical benefit (e.g., an AI tool that provides accurate anatomical measurements to support a clinician's decision, rather than making a diagnosis itself), robust retrospective validation using existing datasets may be sufficient [31]. The manufacturer can justify that clinical data from prospective investigations is "not deemed appropriate" under Article 61(10) of the MDR, provided they can substantiate safety and performance through other means, such as performance evaluation and bench testing [31].

However, AI tools that make novel predictions or direct diagnoses will likely require clinical data from investigations involving human subjects to demonstrate safety and performance [31].

Q4: What are the common regulatory challenges when validating an AI tool for neuroradiology?

- Defining the "Intended Use": Precisely defining the tool's role in the diagnostic workflow is critical for regulatory submission and liability. Deviating from the approved "intended use" can increase medico-legal risk [23].

- The "Black-Box" Problem: Complex AI models can be difficult to interpret. Regulators and clinicians favor interpretable or explainable AI, especially when a wrong output could lead to patient harm [23].

- Post-Market Surveillance: Once a device is on the market, manufacturers must monitor its real-world performance. For AI devices, this is crucial to catch "model drift" or unexpected failures in new patient populations [23].

Troubleshooting Guide: Experimental Validation

Issue 1: Inconsistent Performance Across Datasets

Problem: Your AI model shows high accuracy on the internal validation set but performs poorly on external, multi-center data.

Solution:

- Action: Conduct rigorous external validation early in the development cycle.

- Protocol: Source retrospective imaging data from at least three independent clinical sites with different scanner models, protocols, and patient demographics. Ensure the dataset is representative of the intended use population.

- Documentation: Meticulously document all pre-processing steps, image acquisition parameters, and demographic information for all datasets. This demonstrates to regulators that you have understood and controlled for key sources of variability.
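Variability is easier to document when performance is always reported per site rather than pooled. The sketch groups case-level results by site and flags sites whose sensitivity falls more than a chosen margin below the internal-validation value. The record layout, the 0.05 margin, and the data are hypothetical; the same grouping can be applied to specificity or to scanner vendor.

```python
from collections import defaultdict


def per_site_sensitivity(records):
    """records: iterable of (site, ground_truth_positive, ai_positive)."""
    tp = defaultdict(int)
    pos = defaultdict(int)
    for site, truth, pred in records:
        if truth:
            pos[site] += 1
            tp[site] += int(pred)
    return {site: tp[site] / pos[site] for site in pos}


def flag_sites(site_sens, internal_sens, margin=0.05):
    """Sites whose sensitivity drops more than `margin` below the internal value."""
    return sorted(s for s, v in site_sens.items() if v < internal_sens - margin)


# Hypothetical external validation results from three sites (positive cases only).
records = (
    [("site_A", True, True)] * 18 + [("site_A", True, False)] * 2
    + [("site_B", True, True)] * 15 + [("site_B", True, False)] * 5
    + [("site_C", True, True)] * 19 + [("site_C", True, False)] * 1
)
sens = per_site_sensitivity(records)
print(sens, flag_sites(sens, internal_sens=0.92))
```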

Issue 2: Establishing Clinical Utility for Indirect Benefits

Problem: How to prove the clinical benefit of an AI tool that provides measurements but does not directly output a diagnosis.

Solution:

- Action: Build a validation strategy that links the tool's output to downstream benefits for patient management or public health [31].

- Protocol: Design a study to show that the AI tool improves measurement precision and consistency compared to manual methods. Then, use established clinical literature to link these improved measurements to better treatment planning or monitoring. Combine this with summative usability testing to prove the tool works effectively in the hands of the intended users [31].
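One way to quantify measurement precision is to measure each case twice with each method and compare repeatability. The sketch computes the within-subject standard deviation from duplicate measurements and the Bland-Altman repeatability coefficient (1.96 × √2 × within-subject SD, about 2.77 × SD), the value below which about 95% of repeat differences are expected to fall. The lesion volumes are hypothetical.

```python
import math


def within_subject_sd(first, second):
    """Within-subject SD from duplicate measurements: sqrt(sum(d^2) / (2n))."""
    if len(first) != len(second) or not first:
        raise ValueError("need paired, non-empty measurement lists")
    diffs = [a - b for a, b in zip(first, second)]
    return math.sqrt(sum(d * d for d in diffs) / (2 * len(diffs)))


def repeatability_coefficient(first, second):
    """Bland-Altman repeatability coefficient: 1.96 * sqrt(2) * within-subject SD."""
    return 1.96 * math.sqrt(2) * within_subject_sd(first, second)


# Hypothetical lesion volumes (mL), each case measured twice with each method.
manual_1 = [12.0, 30.5, 8.2, 45.0]
manual_2 = [13.1, 28.9, 9.0, 47.2]
ai_1 = [12.4, 29.8, 8.5, 46.1]
ai_2 = [12.5, 29.6, 8.6, 46.3]

print(f"manual RC = {repeatability_coefficient(manual_1, manual_2):.2f} mL")
print(f"AI RC     = {repeatability_coefficient(ai_1, ai_2):.2f} mL")
```

A smaller repeatability coefficient for the AI method would support the precision claim. Linking that gain to treatment decisions still relies on the clinical literature, as the protocol describes.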

Issue 3: Navigating UKCA vs. CE Marking After Brexit

Problem: Determining the correct marking to sell a device in the United Kingdom (UK) and the European Union (EU).

Solution:

- Action: Understand that two different markings are now required for full market access to both regions.

- Guidance: The UKCA mark is required for Great Britain (England, Scotland, Wales). The CE mark is required for the EU, the European Economic Area, and Northern Ireland [27].

- Current Status: While the UK currently recognizes CE marking indefinitely for many products, medical devices may require separate approvals under UKCA and CE marking due to differing regulations [27]. For the highest assurance, plan for separate conformity assessments with a UK Approved Body for UKCA and an EU Notified Body for CE marking.

Experimental Protocols for Regulatory Validation

Protocol 1: Performance Evaluation Against the Standard of Care

This protocol is designed to generate evidence for a 510(k) submission or CE marking technical file.

1. Objective: To demonstrate that the AI-based neuroradiology tool is non-inferior or superior to standard clinical practice (e.g., radiologist interpretation without AI assistance) for a specific task.

2. Methodology:
   - Study Design: Retrospective, multi-reader, multi-case (MRMC) study.
   - Data Set Curation:
     - Collect a minimum of 300 de-identified neuroradiology cases (e.g., non-contrast head CTs) with a prevalence of the target finding (e.g., hemorrhage) that reflects clinical reality.
     - Establish ground truth through an adjudicated panel of at least three expert neuroradiologists, blinded to the AI output.
   - Reader Study:
     - Recruit a cohort of board-certified radiologists with varying experience levels.
     - Each reader interprets the case set twice, once without and once with AI assistance, with a sufficient washout period between sessions.
     - Present cases in randomized order to minimize recall and order effects.

3. Data Analysis:
   - Compare sensitivity, specificity, and area under the ROC curve (AUC) for detection of the target finding between unassisted and AI-assisted reads.
   - Analyze the reader study with MRMC methods (e.g., Obuchowski-Rockette or Dorfman-Berbaum-Metz), which account for variability across both readers and cases. Per-reader paired comparisons can use McNemar's test for sensitivity and specificity and DeLong's test for AUC. A non-inferiority claim additionally requires a prespecified non-inferiority margin.
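For one reader's paired reads, McNemar's test uses only the discordant cases: b (correct unassisted, wrong with AI) and c (wrong unassisted, correct with AI). The exact version below computes a two-sided binomial p-value using only the standard library. The counts are hypothetical, and this per-reader test does not replace an MRMC analysis that generalizes across readers and cases.

```python
from math import comb


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from discordant pair counts b and c."""
    n = b + c
    if n == 0:
        return 1.0
    k = min(b, c)
    # Under H0 each discordant pair is equally likely to fall either way (p = 0.5).
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)


# Hypothetical hemorrhage-positive cases read by one radiologist:
# 3 detected only without AI (b), 12 detected only with AI (c).
print(f"p = {mcnemar_exact(3, 12):.4f}")
```

Run on positive cases, the test compares sensitivity; run on negative cases, it compares specificity.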

Protocol 2: Workflow Impact Analysis

This protocol quantifies the clinical utility of an AI tool in terms of efficiency, a key consideration for hospital adoption.

1. Objective: To measure the reduction in time-to-treatment and radiologist interpretation time using an AI triage tool.

2. Methodology:
   - Study Design: Prospective, randomized controlled trial in a live clinical environment.
   - Intervention:
     - Control Arm: Standard workflow for processing and interpreting scans.
     - Intervention Arm: AI tool automatically analyzes scans and alerts the radiologist to positive cases.
   - Data Collection:
     - Primary Endpoint: "Door-to-treatment" time for time-sensitive conditions such as stroke [30].
     - Secondary Endpoint: Time from scan completion to the radiologist's final report.
     - Capture cognitive burden metrics via standardized questionnaires.

3. Data Analysis:
   - Use a time-to-event analysis (e.g., Kaplan-Meier curves and log-rank test) to compare door-to-treatment times between the two arms.
   - Because the two arms contain different scans and patients, compare interpretation times with an independent-samples test: Welch's t-test for approximately normal data or the Mann-Whitney U test otherwise.
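Door-to-treatment time is a time-to-event outcome because some patients are never treated during follow-up; those observations are censored. A minimal Kaplan-Meier estimator shows how each arm's curve is built. The times are hypothetical. In practice, an established package such as `lifelines` in Python would also provide the log-rank test.

```python
def kaplan_meier(times, events):
    """Return [(t, S(t))] at each distinct event time.

    times:  minutes from arrival to treatment or to censoring
    events: True if treatment occurred at that time, False if censored
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        treated = censored = 0
        # Group all observations that share this time point.
        while i < len(data) and data[i][0] == t:
            if data[i][1]:
                treated += 1
            else:
                censored += 1
            i += 1
        if treated:
            survival *= 1 - treated / at_risk
            curve.append((t, survival))
        at_risk -= treated + censored
    return curve


# Hypothetical door-to-treatment times (minutes) for one trial arm.
times = [40, 55, 55, 70, 90, 120]
events = [True, True, False, True, True, False]
for t, s in kaplan_meier(times, events):
    print(f"t={t:>3} min  proportion still untreated={s:.3f}")
```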

Essential Research Reagent Solutions

This table outlines key components for building and validating an AI model for neuroradiology.

Table: Research Reagent Solutions for AI Neuroradiology Validation

| Item | Function in Validation |
|---|---|
| Curated, Multi-Center Image Dataset | Serves as the primary substrate for training and internal validation. Must be annotated by experts to establish reference standards. |
| External Validation Dataset | An independent dataset, ideally from different institutions and scanner types, used to test model generalizability and robustness [23]. |
| Adjudication Committee | A panel of expert neuroradiologists who establish the ground truth for complex cases, resolving discrepancies in annotations. |
| DICOM Conformance Tools | Software tools that verify the AI system correctly interfaces with Picture Archiving and Communication Systems (PACS) using standard DICOM protocols. |
| Performance Benchmarking Software | Tools to calculate standardized performance metrics (e.g., AUC, sensitivity, specificity) and compare them against pre-defined performance goals or predicate devices. |

Regulatory Workflow Visualization

The diagram below outlines the key stages and decision points in the regulatory pathway for an AI tool in neuroradiology.

Troubleshooting Guides

Guide 1: Addressing Algorithmic Bias in AI Models

Problem: AI model performance degrades or shows unfair outcomes across different patient demographics.

Solution: A comprehensive strategy involving bias detection, mitigation, and ongoing monitoring.

Step 1: Identify and Quantify Bias

- Action: Use open-source tools like IBM's AI Fairness 360 to run bias audits on your model [32].

- Methodology: Calculate fairness metrics such as demographic parity and equalized odds across subgroups defined by race, sex, age, etc. [33]. A 2023 review found that 50% of healthcare AI studies had a high risk of bias, often due to imbalanced datasets [33].
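Demographic parity compares the rate of positive predictions across subgroups. Equalized odds compares true-positive and false-positive rates; a common summary is the largest gap in either rate. The sketch computes both gaps in plain Python so the definitions are explicit. It does not use the AI Fairness 360 API, and the subgroup labels and predictions are hypothetical.

```python
from collections import defaultdict


def group_rates(groups, y_true, y_pred):
    """Per-group selection rate, TPR and FPR from 0/1 labels and predictions."""
    stats = defaultdict(lambda: {"n": 0, "pred_pos": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0})
    for g, t, p in zip(groups, y_true, y_pred):
        s = stats[g]
        s["n"] += 1
        s["pred_pos"] += p
        if t:
            s["pos"] += 1
            s["tp"] += p
        else:
            s["neg"] += 1
            s["fp"] += p
    return {
        g: {
            "selection_rate": s["pred_pos"] / s["n"],
            "tpr": s["tp"] / s["pos"] if s["pos"] else float("nan"),
            "fpr": s["fp"] / s["neg"] if s["neg"] else float("nan"),
        }
        for g, s in stats.items()
    }


def fairness_gaps(rates):
    """Largest between-group differences for demographic parity and equalized odds."""
    def gap(key):
        values = [r[key] for r in rates.values()]
        return max(values) - min(values)
    return {
        "demographic_parity_diff": gap("selection_rate"),
        "equalized_odds_diff": max(gap("tpr"), gap("fpr")),
    }


# Hypothetical predictions for two age groups.
groups = ["<65"] * 6 + [">=65"] * 6
y_true = [1, 1, 1, 0, 0, 0, 1, 1, 1, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0, 0, 0, 0, 0]
print(fairness_gaps(group_rates(groups, y_true, y_pred)))
```

Here the model misses two of three positives in the older group, so the equalized-odds gap (0.67) is driven by the difference in true-positive rates.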

Step 2: Mitigate Bias in Training Data

- Action: Curate diverse and representative training datasets.

- Methodology: Leverage consortia models like the National Clinical Cohort Collaborative (N3C), which harmonizes data from over 75 institutions, to access more inclusive data [32]. Prioritize datasets that reflect the demographic diversity of your intended patient population.

Step 3: Ensure Model Generalizability

- Action: Perform rigorous external validation.

- Methodology: Test the algorithm on data from multiple external hospitals beyond your development site. Evaluate performance using metrics like the Dice coefficient and Hausdorff distance for image segmentation tasks to ensure accuracy holds [2].
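For segmentation outputs, the Dice coefficient measures volumetric overlap, 2|A∩B| / (|A| + |B|), and the Hausdorff distance measures the worst-case boundary disagreement. The sketch represents masks as sets of voxel coordinates and uses brute force, which is fine for illustration but slow for real volumes. It works in voxel units over all foreground voxels, whereas many evaluation pipelines use surface voxels, physical spacing in millimetres, or a 95th-percentile variant. Scoring two empty masks as Dice 1.0 is a convention chosen here.

```python
import math


def dice(a: set, b: set) -> float:
    """Dice = 2|A ∩ B| / (|A| + |B|); sets hold foreground voxel coordinates."""
    if not a and not b:
        return 1.0  # convention: two empty masks agree perfectly
    return 2 * len(a & b) / (len(a) + len(b))


def hausdorff(a: set, b: set) -> float:
    """Symmetric Hausdorff distance (brute force, O(|A|*|B|), voxel units)."""
    if not a or not b:
        raise ValueError("Hausdorff distance is undefined for an empty mask")

    def directed(x, y):
        return max(min(math.dist(p, q) for q in y) for p in x)

    return max(directed(a, b), directed(b, a))


# Hypothetical 2-D masks (row, col): reference lesion and AI segmentation.
reference = {(r, c) for r in range(2, 6) for c in range(2, 6)}  # 4x4 square
predicted = {(r, c) for r in range(3, 7) for c in range(2, 6)}  # shifted down one row
print(f"Dice = {dice(reference, predicted):.3f}, "
      f"Hausdorff = {hausdorff(reference, predicted):.1f} voxels")
```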

Guide 2: Managing Data Privacy and Security

Problem: Ensuring patient data privacy during AI model training and deployment, in compliance with regulations.

Solution: Implement privacy-preserving technologies and robust data governance.

Step 1: Adopt Federated Learning

- Action: Train AI models across multiple institutions without moving or centralizing raw patient data [32].

- Methodology: Deploy the model to each institution's secure server. Only model parameter updates (not patient data) are shared and aggregated. The FeatureCloud project in Europe is a leading example of this approach [32].
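The aggregation step can be sketched as federated averaging (FedAvg). In this scheme the coordinator combines each site's parameter vector, weighted by that site's number of training cases. FedAvg is one common aggregation rule, not necessarily the one FeatureCloud uses. Here parameters are plain lists of floats, and the sites and numbers are hypothetical.

```python
def federated_average(site_updates):
    """Weighted average of model parameters.

    site_updates: list of (num_training_cases, parameter_vector) pairs.
    Only these vectors leave each site; images and labels stay local.
    """
    total = sum(n for n, _ in site_updates)
    if total == 0:
        raise ValueError("no training cases reported")
    dim = len(site_updates[0][1])
    if any(len(params) != dim for _, params in site_updates):
        raise ValueError("all sites must share the same model architecture")
    return [
        sum(n * params[i] for n, params in site_updates) / total
        for i in range(dim)
    ]


# Hypothetical round: three hospitals with different cohort sizes.
updates = [
    (100, [0.10, -0.20, 0.50]),
    (300, [0.20, -0.10, 0.40]),
    (600, [0.30, 0.00, 0.30]),
]
print(federated_average(updates))
```

Parameter updates can still leak information about training data, so production frameworks typically add safeguards such as secure aggregation or differential privacy.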

Step 2: Implement Data Anonymization and Encryption

Step 3: Update Legal Agreements

Guide 3: Ensuring Effective Human Oversight

Problem: Radiologists experience "automation bias," over-relying on AI outputs, or the AI system fails without detection.

Solution: Establish clear human oversight protocols and monitoring systems as mandated by regulations like the EU AI Act [36].

Step 1: Define and Implement Human Oversight Workflows

- Action: Ensure clinicians have the authority and responsibility to override AI predictions when their clinical judgment contradicts the AI output [36].

- Methodology: Create clear standard operating procedures (SOPs) that define which AI findings require mandatory radiologist confirmation and which can be accepted without review based on the clinical context and the tool's validated performance.

Step 2: Establish Logging and Monitoring

- Action: Activate and maintain system logs that capture key events and AI outputs.

- Methodology: Logs must be retained for a minimum of six months and be accessible for internal audits and incident reporting. This allows for tracking how the AI system is used and identifying potential performance drift or bias over time [36].
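A structured record per AI inference makes audits and drift analysis easier. The sketch serializes one event as a JSON line and checks whether a record is old enough to be purged under a retention policy. The field names and the example study UID are hypothetical. The 183-day constant simply encodes "at least six months"; local or national rules may require longer retention.

```python
import json
from datetime import datetime, timedelta, timezone

MIN_RETENTION = timedelta(days=183)  # assumption: ~6 months; local policy may be longer


def make_log_record(study_uid: str, model_version: str, ai_output: str,
                    radiologist_action: str) -> str:
    """Serialize one AI inference event as a JSON line (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "study_uid": study_uid,
        "model_version": model_version,
        "ai_output": ai_output,                    # e.g. "ICH-positive"
        "radiologist_action": radiologist_action,  # e.g. "confirmed", "overridden"
    }
    return json.dumps(record)


def may_purge(record_json: str, now: datetime, retention: timedelta = MIN_RETENTION) -> bool:
    """True only once a record is older than the retention period."""
    logged_at = datetime.fromisoformat(json.loads(record_json)["timestamp"])
    return now - logged_at > retention


line = make_log_record("1.2.3.4", "v2.1", "ICH-positive", "overridden")
print(line)
print(may_purge(line, now=datetime.now(timezone.utc)))  # a fresh record must be kept
```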

Step 3: Conduct Ongoing Quality Monitoring

- Action: Implement a program for continuous clinical validation [35].

- Methodology: Incorporate a "human in the loop" review and regular audits of AI recommendations. Performance should be benchmarked against clinical consensus and monitored for accuracy across all patient populations to detect emerging bias [35].
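Continuous monitoring can be as simple as tracking AI-radiologist agreement over a rolling window of recent cases and raising an alert when agreement falls below a baseline established during validation. The class name, window size, baseline, and tolerance below are hypothetical. To catch emerging bias, the same monitor could be run separately for each patient subgroup.

```python
from collections import deque


class RollingAgreementMonitor:
    """Track agreement between AI calls and confirmed radiologist reads."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 200):
        self.baseline = baseline              # agreement measured during local validation
        self.tolerance = tolerance            # allowed drop before alerting (hypothetical)
        self.results = deque(maxlen=window)   # oldest cases drop out automatically

    def add(self, ai_positive: bool, radiologist_positive: bool) -> bool:
        """Record one case; return True if rolling agreement triggers an alert."""
        self.results.append(ai_positive == radiologist_positive)
        if len(self.results) < self.results.maxlen:
            return False  # wait for a full window before judging
        agreement = sum(self.results) / len(self.results)
        return agreement < self.baseline - self.tolerance


monitor = RollingAgreementMonitor(baseline=0.95, window=20)
# Hypothetical stream: 20 concordant cases, then a run of discordant ones.
stream = [(True, True)] * 20 + [(True, False)] * 3
alerts = [monitor.add(ai, rad) for ai, rad in stream]
print(alerts.index(True))  # position of the first alert in the stream
```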

Frequently Asked Questions (FAQs)

Q1: What are the most common types of bias we should test for in our neuroradiology AI models? The most common biases originate from data, development, and human interaction [37]. Key types include:

- Data Bias: Training data that lacks diversity in race, ethnicity, age, or socioeconomic status, leading to models that underperform on underrepresented groups [33].

- Representation Bias: Datasets that over-represent certain populations (e.g., from high-income regions) [33]. A study of neuroimaging AI models for psychiatric diagnosis found 97.5% included subjects only from high-income regions [33].

- Human Biases: These include implicit bias (subconscious stereotypes) and systemic bias (structural inequities in healthcare) that can be reflected in the data used to train models [33].

Q2: Our model performs well in our hospital but fails elsewhere. How can we improve generalizability? This is a classic problem of generalizability. Solutions include:

- External Validation: Test your model on data from several external hospitals that use different imaging equipment and have different patient demographics [2].

- Diverse Training Data: Build training datasets from multiple institutions to ensure the model learns robust, generalizable features rather than site-specific noise [32].

- Federated Learning: This technique allows you to train on diverse datasets from multiple institutions without transferring sensitive patient data. The broader data exposure can improve generalizability, although it does not guarantee it [32].

Q3: What are our legal responsibilities if we use an FDA-cleared AI tool that fails to identify an abnormality? Legal liability for AI errors remains complex and somewhat ambiguous. However, the prevailing consensus is that the final responsibility for patient care and diagnosis rests with the clinical radiologist [38]. Relying on an FDA-cleared tool does not absolve the clinician of this responsibility. Healthcare organizations must ensure there is effective human oversight and that radiologists are trained to interpret and, when necessary, override AI outputs [36].

Q4: What specific obligations does the EU AI Act place on our research hospital using AI in neuroradiology? The EU AI Act classifies AI systems that are medical devices subject to Notified Body conformity assessment under the MDR as "high-risk," placing specific obligations on users (deployers) [36]:

- Ensure AI Literacy: Provide training so staff understand the capabilities and limitations of the AI systems they use [36].

- Human Oversight: Implement measures to prevent automation bias and allow for the overriding of AI decisions [36].

- Data Quality Control: Verify that input data (e.g., medical images) meets the quality specifications set by the AI provider [36].

- Logging and Monitoring: Maintain logs of the AI system's operation for at least six months for auditing and monitoring purposes [36].

Q5: How can we transparently communicate the use of AI to our patients? Transparency is key to maintaining patient trust.

- Update Policies: Develop clear organizational policies on AI use and transparency [35].

- Revise Consent Forms: Inform patients when AI tools may be used in their care, even if the use is apparent to the clinician [35]. Laws like Texas's TRAIGA (effective 2026) are making this a legal requirement [35].

- Educate Staff: Prepare clinical staff to answer patient questions about how AI is used in their diagnosis and treatment [35].

Quantitative Data on AI in Radiology

The table below summarizes key quantitative data from recent studies and reports on AI adoption, performance, and bias in radiology.

| Metric | Value / Finding | Source / Context |
|---|---|---|
| AI Adoption in Radiology (2015-2020) | Grew from 0% to 30% | American College of Radiology data [9] |
| FDA-cleared AI Medical Devices | 882 total, with 76% in radiology (as of May 2024) | FDA update [33] |
| Studies with High Risk of Bias (ROB) | 50% of sampled AI studies showed high ROB | Kumar et al., 2023 systematic evaluation [33] |
| AI for Brain Tumor Classification | Diagnosis in under 150 seconds vs. 20-30 min for conventional methods | NIH/National Library of Medicine study [9] |
| Radiation Dose Reduction with AI (Pediatric) | 36% to 70% reduction, with some up to 95% | 2022 study of 16 peer-reviewed papers [9] |
| Sensitivity of Brain/Spine Triage AI | Reported range of 88% to 95% | Commercially available algorithms [2] |

Research Reagent Solutions

This table details key tools, frameworks, and resources essential for the ethical development and validation of AI in neuroradiology.

| Reagent / Resource | Function / Purpose | Application in Research |
|---|---|---|
| AI Fairness 360 (AIF360) | An open-source toolkit to check for and mitigate bias in machine learning models. | Used to calculate fairness metrics and run bias audits on developed models [32]. |
| Federated Learning Framework | A decentralized machine learning approach that trains algorithms across multiple institutions without sharing raw data. | Enables training on diverse datasets while preserving patient privacy and improving model generalizability [32]. |
| Dice Coefficient | A statistical metric (range 0-1) used to gauge the similarity between two sets of data. | A standard metric for evaluating the performance of image segmentation models (e.g., tumor contouring) [2]. |
| Assess-AI Registry | A registry developed by the American College of Radiology for blinded reporting of AI-related safety events. | Allows for confidential sharing of AI errors or near-misses to drive industry-wide learning and safety improvements [35]. |
| Structured Reporting with NLP | Use of Natural Language Processing (NLP) models like GPT-4 to convert free-text reports into structured data. | Helps structure vast amounts of radiology data for easier analysis, though requires caution regarding data privacy and "hallucinations" [2]. |

Experimental Validation Workflow for AI in Neuroradiology

The diagram below outlines a comprehensive workflow for the experimental validation of an AI tool in neuroradiology, integrating ethical imperatives at each stage.

Building a Robust Validation Protocol: Metrics and Integration Strategies

Frequently Asked Questions (FAQs)

Q1: What are realistic sensitivity and specificity values I should expect from an AI tool for detecting intracranial hemorrhage? Real-world performance can differ from developer-reported metrics. A large-scale study on an FDA-cleared AI tool for detecting intracranial hemorrhage (ICH) on non-contrast CT scans demonstrated a sensitivity of 75.6% and a specificity of 92.1% [39]. For other acute conditions, such as brain aneurysms, vessel occlusion in stroke, and cervical spine fractures on CT, reported sensitivities from commercially available triage algorithms often range from 88% to 95% [2]. It is critical to validate these metrics within your own clinical environment, as prevalence of disease and patient population characteristics can significantly impact performance.

Q2: How can an AI tool that is highly specific still slow down my overall workflow? A highly specific tool produces relatively few false alarms per negative scan, but workflow impact depends on the positive predictive value (PPV), the percentage of AI-positive cases that are true positives. PPV depends on disease prevalence as well as on specificity: when most scans are negative, even a small false-positive rate can generate more false alerts than true ones. In the ICH detection study, the tool had a PPV of only 21.1%, meaning nearly 79% of its alerts were false positives [39]. Each false alarm requires a radiologist to spend extra time confirming that there is no real finding. The study found that interpreting these falsely flagged cases took over a minute longer than reading unremarkable scans, producing a net efficiency loss despite the tool's high specificity [39].
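The arithmetic behind this effect follows from Bayes' rule: PPV = sens × prev / (sens × prev + (1 - spec) × (1 - prev)). The sketch applies it to the reported sensitivity (75.6%) and specificity (92.1%). The 2.7% prevalence is an assumption chosen because it approximately reproduces the reported PPV; it is not a figure from the study. The one-minute cost per false alert is likewise a placeholder based on the "over a minute" finding.

```python
def ppv(sensitivity: float, specificity: float, prevalence: float) -> float:
    """Positive predictive value from Bayes' rule."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def alert_burden(sensitivity, specificity, prevalence, n_scans=1000,
                 extra_min_per_false_alert=1.0):
    """Expected true alerts, false alerts and extra reading minutes for n_scans."""
    true_alerts = sensitivity * prevalence * n_scans
    false_alerts = (1 - specificity) * (1 - prevalence) * n_scans
    return true_alerts, false_alerts, false_alerts * extra_min_per_false_alert


sens, spec = 0.756, 0.921  # reported in the ICH study above
prev = 0.027               # assumption: roughly reproduces the reported PPV
print(f"PPV = {ppv(sens, spec, prev):.3f}")
tp, fp, minutes = alert_burden(sens, spec, prev)
print(f"per 1,000 scans: {tp:.0f} true alerts, {fp:.0f} false alerts, ~{minutes:.0f} extra min")
for p in (0.01, 0.05, 0.10, 0.20):  # same tool, different case mix
    print(f"prevalence {p:.0%}: PPV {ppv(sens, spec, p):.2f}")
```

Under these assumptions, roughly 77 false alerts accompany about 20 true ones per 1,000 scans. The loop also shows why the same tool can look far more useful in a high-prevalence emergency setting than in a low-prevalence outpatient one.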

Q3: What key metrics should I use to evaluate an AI tool's impact on workflow speed? The primary quantitative metric is the change in average interpretation time, measured before and after AI integration [39]. However, a comprehensive evaluation should also consider qualitative factors. Evidence from systematic reviews is mixed, showing that AI does not always guarantee workflow efficiency gains [40]. Researchers should also assess the false positive rate's impact on radiologist cognitive load, system integration stability, and changes in report turnaround times for both AI-triaged and non-triaged studies.

Q4: Why is model generalizability a critical factor in multi-center trials? An AI model developed and validated at one hospital may not perform equally well across other institutions. This lack of generalizability can stem from differences in scanner manufacturers, imaging protocols, and patient population demographics [2]. For a multi-center trial, a drop in performance at a new site could introduce bias and invalidate key endpoints. It is essential to conduct site-specific validation of the AI tool prior to and during the trial to ensure consistent and reliable performance across all participating centers.

Q5: How do I assess the trustworthiness of an AI algorithm's output for a clinical trial? Establishing trust requires a multi-faceted approach. First, scrutinize the tool's regulatory status (FDA/CE clearance) and the clinical evidence from studies conducted in settings similar to your trial [41]. Second, inquire about the transparency and explainability of the AI's decisions. Some systems provide visual aids, like highlighting areas of concern, to help users understand the output [42]. Finally, evaluate the diversity and representativeness of the training data to ensure the algorithm is suitable for your trial's patient population and minimize the risk of biased performance [43] [44].

Performance Metrics for AI in Neuroradiology

The following tables summarize key quantitative metrics and methodological considerations for validating AI tools in neuroradiology, based on current literature and real-world implementations.

Table 1: Reported Performance Metrics for Selected AI Applications in Neuroradiology

| AI Application | Reported Sensitivity | Reported Specificity | Key Context / Notes |
|---|---|---|---|
| Intracranial Hemorrhage (ICH) Detection [39] | 75.6% | 92.1% | Real-world performance in a teleradiology setting; Positive Predictive Value (PPV) was 21.1%. |
| Brain & Spine Triage Algorithms [2] | 88% - 95% | Not Specified | Range includes detection of hemorrhage, stroke, aneurysms, and cervical spine fractures. |
| Cervical Spine Fracture Detection [2] | High Performance | High Performance | Winning algorithms from the RSNA 2022 Challenge demonstrated high detection and localization performance. |

Table 2: Experimental Protocol for Validating AI Workflow Impact

| Protocol Step | Description | Considerations for Researchers |
|---|---|---|
| 1. Study Design | Retrospective or prospective comparison of workflow metrics before and after AI integration. | A retrospective review of over 61,000 scans provides a robust model [39]. Prospective studies can capture real-time adaptations. |
| 2. Key Metrics | Measure average interpretation time (speed) and diagnostic accuracy (sensitivity/specificity). | Track time from opening the study to finalizing the report. Use expert panel consensus as a reference standard for accuracy [39]. |
| 3. Contextual Analysis | Analyze the impact of false positives and algorithm reliability on workflow efficiency. | Calculate the Positive Predictive Value (PPV). A low PPV can overwhelm radiologists with false alerts and slow down the net workflow [39]. |
| 4. Environment Assessment | Evaluate the tool's performance in the specific clinical environment where it will be used. | Disease prevalence and case-mix vary by site (e.g., emergency department vs. outpatient center) and can drastically alter a tool's practical value [39]. |
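Steps 3 and 4 are linked: for a fixed sensitivity and specificity, PPV falls as disease prevalence falls, which is why the same tool can be useful in an emergency department and burdensome in a low-prevalence outpatient setting. A minimal sketch using Bayes' theorem follows; the function name is hypothetical, the sensitivity and specificity are taken from the ICH row of Table 1, and the prevalence values are arbitrary illustrations rather than figures reported in [39].

```python
def ppv_at_prevalence(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV implied by Bayes' theorem for a given disease prevalence."""
    if not 0.0 < prevalence < 1.0:
        raise ValueError("prevalence must be strictly between 0 and 1")
    true_pos = sensitivity * prevalence                  # P(test+, disease)
    false_pos = (1.0 - specificity) * (1.0 - prevalence)  # P(test+, no disease)
    return true_pos / (true_pos + false_pos)


if __name__ == "__main__":
    # Sensitivity/specificity from Table 1 (ICH); prevalences are hypothetical.
    for prevalence in (0.02, 0.05, 0.10, 0.30):
        ppv = ppv_at_prevalence(0.756, 0.921, prevalence)
        print(f"prevalence {prevalence:.0%}: PPV {ppv:.1%}")
```

Running the loop shows PPV climbing steeply as prevalence rises, even though the algorithm itself is unchanged.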

Experimental Workflow and Metric Relationships

The following diagram illustrates the logical pathway and key relationships for evaluating AI performance metrics in a validation study.

AI Validation Metric Relationships

The Scientist's Toolkit: Research Reagents & Essential Materials

Table 3: Key Resources for AI Validation in Neuroradiology Research

| Resource Category | Specific Examples / Functions | Role in Experimental Validation |
|---|---|---|
| Validated Datasets | Retrospective collections of imaging studies (e.g., >60,000 non-contrast head CTs [39]) with expert-annotated ground truth. | Serves as the benchmark for conducting robust, large-scale retrospective evaluations of AI algorithm performance before prospective deployment. |
| Annotation & Analysis Software | Platforms for segmenting lesions (e.g., hemorrhages, tumors) and performing volumetric analysis [2]. | Used to generate high-quality ground truth labels for training and to conduct specialized analyses (e.g., tumor volumetrics) that AI tools may automate. |
| Teleradiology / PACS Platforms | Integrated clinical systems for managing and reading high volumes of studies, especially during off-hours [39]. | Provides a real-world, high-pressure environment to test AI's impact on workflow efficiency and diagnostic accuracy in an operational setting. |
| Performance Metric Tools | Software to calculate Dice coefficient, Hausdorff distance [2], sensitivity, specificity, and PPV [39]. | Provides quantitative, standardized measures for comparing AI performance against human experts and other algorithms. Essential for objective validation. |
| Computational Infrastructure | Powerful computing resources (GPUs) and advanced measurement techniques required for developing and running complex AI models [44]. | The foundational hardware and software required to train, test, and run deep learning models, particularly for image reconstruction and analysis tasks. |

Frequently Asked Questions

Q1: What is the fundamental difference between the Dice Coefficient and Hausdorff Distance? The Dice Coefficient (Dice-Sørensen Coefficient) measures the volumetric overlap, or spatial agreement, between two segmentations. In contrast, the Hausdorff Distance measures boundary agreement: for each point on one boundary it finds the distance to the nearest point on the other boundary, then reports the largest of these nearest-point distances (taken in both directions), capturing the worst-case mismatch [45] [46].
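Written out, the two definitions make the contrast explicit. Here A and B are the voxel sets of the AI and ground-truth segmentations, ∂A and ∂B their boundaries, and h is the directed distance; computing the Hausdorff distance between boundary sets is a common convention in segmentation evaluation, though some tools work on surface meshes instead.

```latex
\mathrm{DSC}(A,B) = \frac{2\,\lvert A \cap B \rvert}{\lvert A \rvert + \lvert B \rvert}

h(X,Y) = \max_{x \in X} \, \min_{y \in Y} \, \lVert x - y \rVert

\mathrm{HD}(A,B) = \max\bigl\{\, h(\partial A, \partial B),\; h(\partial B, \partial A) \,\bigr\}
```

Dice is a ratio of volumes and so is dominated by the bulk of the structure, whereas HD is a single maximum and is therefore set by the worst boundary point.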

Q2: My Dice score is high, but my Hausdorff Distance is also large. What does this indicate? This is a common scenario. A high Dice score confirms good overall volumetric overlap between your AI output and the ground truth. However, a large Hausdorff Distance signifies that there is at least one localized, severe error where a part of the AI's segmentation is far from the corresponding part in the ground truth [46]. This combination warrants a visual inspection of the segmentation boundaries to identify these specific outliers.
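The scenario in Q2 is easy to reproduce on a synthetic mask. The sketch below is illustrative only: it assumes 2D binary NumPy masks, extracts boundaries as the mask minus its binary erosion (SciPy's default connectivity), and measures distances in voxels. A single stray voxel far from a well-segmented square leaves Dice almost unchanged but sets the Hausdorff distance.

```python
import numpy as np
from scipy import ndimage
from scipy.spatial.distance import directed_hausdorff


def dice(a: np.ndarray, b: np.ndarray) -> float:
    a, b = a.astype(bool), b.astype(bool)
    return float(2.0 * np.logical_and(a, b).sum() / (a.sum() + b.sum()))


def boundary_points(mask: np.ndarray) -> np.ndarray:
    # Boundary = foreground voxels removed by one step of binary erosion.
    mask = mask.astype(bool)
    return np.argwhere(mask & ~ndimage.binary_erosion(mask)).astype(float)


def hausdorff(a: np.ndarray, b: np.ndarray) -> float:
    pa, pb = boundary_points(a), boundary_points(b)
    return max(directed_hausdorff(pa, pb)[0], directed_hausdorff(pb, pa)[0])


ground_truth = np.zeros((64, 64), dtype=bool)
ground_truth[10:40, 10:40] = True   # synthetic 30 x 30 "lesion"
prediction = ground_truth.copy()
prediction[60, 60] = True           # one isolated false-positive voxel far away

print(f"Dice: {dice(prediction, ground_truth):.4f}")
print(f"Hausdorff (voxels): {hausdorff(prediction, ground_truth):.2f}")
```

Here Dice stays above 0.999, while the Hausdorff distance is the roughly 29.7-voxel gap between the stray voxel and the nearest corner of the true lesion; visual inspection would immediately reveal the outlier.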

Q3: When evaluating an AI model for brain tumor segmentation, which metric should I prioritize? For clinical applications in neuroradiology, it is crucial to use both metrics in conjunction [2]. The Dice Coefficient is excellent for assessing the overall accuracy of tumor volume segmentation, which is vital for treatment planning. The Hausdorff Distance is critical for ensuring that the segmentation boundaries are precise everywhere, as large boundary errors could be disastrous for surgical navigation or radiation therapy targeting [2].

Q4: How can I implement the calculation of these metrics in Python? Basic implementations of the Dice Coefficient and Hausdorff Distance can be built with common scientific computing libraries such as NumPy and SciPy.
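One possible implementation is sketched below. It assumes binary masks stored as NumPy arrays of equal shape, voxel spacing supplied by the caller (e.g., in mm from the image header), and boundaries taken as the mask minus its binary erosion. Treating two empty masks as Dice = 1.0 is a convention chosen for this sketch, not a universal rule; validated toolkits may define these edge cases differently.

```python
import numpy as np
from scipy import ndimage
from scipy.spatial.distance import directed_hausdorff


def dice_coefficient(pred: np.ndarray, truth: np.ndarray) -> float:
    """Dice = 2|A ∩ B| / (|A| + |B|) for binary masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    if pred.shape != truth.shape:
        raise ValueError("masks must have the same shape")
    total = pred.sum() + truth.sum()
    if total == 0:
        return 1.0  # assumption: two empty masks count as perfect agreement
    return float(2.0 * np.logical_and(pred, truth).sum() / total)


def _boundary_coords(mask: np.ndarray, spacing) -> np.ndarray:
    """Physical coordinates of boundary voxels (mask minus its erosion)."""
    edge = mask & ~ndimage.binary_erosion(mask)
    return np.argwhere(edge) * np.asarray(spacing, dtype=float)


def hausdorff_distance(pred, truth, spacing=None) -> float:
    """Symmetric Hausdorff distance between mask boundaries, in spacing units."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    if not pred.any() or not truth.any():
        raise ValueError("Hausdorff distance is undefined for an empty mask")
    if spacing is None:
        spacing = (1.0,) * pred.ndim
    a = _boundary_coords(pred, spacing)
    b = _boundary_coords(truth, spacing)
    # directed_hausdorff returns (distance, index_in_a, index_in_b).
    return float(max(directed_hausdorff(a, b)[0], directed_hausdorff(b, a)[0]))


if __name__ == "__main__":
    truth = np.zeros((10, 10), dtype=bool)
    truth[2:6, 2:6] = True
    pred = np.zeros_like(truth)
    pred[3:7, 3:7] = True  # same square shifted by one voxel diagonally
    print(dice_coefficient(pred, truth))
    print(hausdorff_distance(pred, truth, spacing=(0.5, 0.5)))
```

Passing the physical voxel spacing matters: the same voxel offset corresponds to very different millimetre errors on a 0.5 mm and a 5 mm slice grid.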

Q5: What are the accepted interpretation guidelines for these metrics in medical imaging? While universal thresholds don't exist due to task-dependent variability, the following table offers general interpretation guidance for neuroradiology applications, based on common practices in the literature.

Table 1: Interpretation Guidelines for Segmentation Metrics in Neuroradiology

| Metric | Value Range | Common "Good" Threshold | Clinical Interpretation Guide |
|---|---|---|---|
| Dice Coefficient | 0 (No overlap) to 1 (Perfect overlap) | Often > 0.7 - 0.8 [47] | Indicates the overall volumetric agreement between AI segmentation and expert ground truth. |
| Hausdorff Distance | 0 to ∞ (smaller is better) | Task-dependent (e.g., < 5-10 mm) | Captures the largest boundary error, crucial for ensuring local accuracy in sensitive areas [46]. |

Troubleshooting Guides

Problem: Inconsistent or Unexpectedly Poor Dice Scores

- Potential Cause 1: Data Mismatch. The AI model was trained on data from one hospital or scanner type but is being evaluated on data from another, leading to a domain shift that reduces generalizability [2].

- Solution: Ensure your training and test datasets are representative of the clinical population and imaging protocols. Perform external validation on a dataset from a different source.

- Potential Cause 2: Incorrect Handling of Multiple Classes. When dealing with multi-class segmentation (e.g., tumor core, edema), calculating a single aggregate Dice score can mask poor performance in one class.

- Solution: Always calculate the Dice Coefficient separately for each class or structure of interest to get a precise performance breakdown [47].
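A per-class breakdown is straightforward when predictions and ground truth are integer label maps. The sketch below assumes that representation; the label convention (1 = tumor core, 2 = edema) and the toy arrays are hypothetical. It also shows how a pooled "lesion vs. background" Dice can look perfect while each individual class is only half right.

```python
import numpy as np


def per_class_dice(pred_labels, true_labels, class_ids):
    """Dice for each label value in class_ids; label maps must share a shape."""
    pred_labels = np.asarray(pred_labels)
    true_labels = np.asarray(true_labels)
    if pred_labels.shape != true_labels.shape:
        raise ValueError("label maps must have the same shape")
    scores = {}
    for cls in class_ids:
        p = pred_labels == cls
        t = true_labels == cls
        denom = int(p.sum() + t.sum())
        if denom == 0:
            scores[cls] = float("nan")  # class absent from both maps: undefined
        else:
            scores[cls] = float(2.0 * np.logical_and(p, t).sum() / denom)
    return scores


if __name__ == "__main__":
    # Hypothetical convention: 0 = background, 1 = tumor core, 2 = edema.
    truth = np.array([0, 1, 1, 2, 2])
    pred = np.array([0, 1, 2, 1, 2])  # core and edema partly swapped
    pooled = per_class_dice((pred > 0).astype(int), (truth > 0).astype(int), [1])
    print("pooled foreground Dice:", pooled[1])
    print("per-class Dice:", per_class_dice(pred, truth, [1, 2]))
```

Reporting NaN for a class missing from both maps, rather than 0 or 1, keeps such cases visible so they can be handled explicitly when averaging.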

Problem: Computationally Slow Calculation of Hausdorff Distance

- Potential Cause: Brute-Force Algorithm. A naive implementation that compares every point on one surface with every point on the other has a computational complexity of O(n·m), where n and m are the numbers of boundary points; this is prohibitively slow for high-resolution medical images [46].

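- Solution: For 3D surfaces, use optimized routines instead of a brute-force double loop. scipy.spatial.distance.directed_hausdorff implements an early-break algorithm that is typically much faster than brute force in practice, while KD-tree-based nearest-neighbor queries (e.g., scipy.spatial.cKDTree or Open3D's point-cloud distance functions) use spatial data structures to find nearest neighbors efficiently. Either approach drastically speeds up the calculation.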
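A KD-tree version can be sketched in a few lines with scipy.spatial.cKDTree. The function name is hypothetical, and the inputs are assumed to be (N, D) arrays of boundary coordinates already scaled to physical units (for example, np.argwhere of a boundary mask multiplied by the voxel spacing).

```python
import numpy as np
from scipy.spatial import cKDTree


def hausdorff_kdtree(points_a: np.ndarray, points_b: np.ndarray) -> float:
    """Symmetric Hausdorff distance between two (N, D) point sets via KD-trees."""
    tree_a, tree_b = cKDTree(points_a), cKDTree(points_b)
    # Nearest-neighbour distance from every point of A to B, and from B to A.
    d_ab, _ = tree_b.query(points_a, k=1)
    d_ba, _ = tree_a.query(points_b, k=1)
    return float(max(d_ab.max(), d_ba.max()))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    a = rng.random((5000, 3)) * 100.0             # stand-in for boundary coords (mm)
    b = a + rng.normal(scale=0.5, size=a.shape)   # slightly perturbed copy
    print(f"HD: {hausdorff_kdtree(a, b):.2f} mm")
```

Building each tree costs roughly O(n log n), and each nearest-neighbour query is typically logarithmic in low dimensions, so the total scales far better than the O(n·m) pairwise comparison. Because every nearest-neighbour distance is returned, the same arrays can also feed percentile-based variants.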

Problem: Hausdorff Distance is Overly Sensitive to a Single Outlier

- Potential Cause: The standard Hausdorff Distance (max distance) is, by definition, extremely sensitive to a single outlier point, which may not represent the overall boundary quality.

- Solution: Use a percentile-based variant, such as the 95th- or 99th-percentile Hausdorff Distance (HD95, HD99). Instead of the maximum, this metric sorts all the boundary-point distances and takes the 95th (or 99th) percentile value, making it more robust to isolated outliers while still capturing substantial boundary errors.
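The sketch below computes a percentile Hausdorff distance from a Euclidean distance transform, which gives every voxel's distance to the nearest boundary voxel of the other mask in one pass. It assumes binary NumPy masks and boundaries defined as the mask minus its erosion. It also pools the distances from both directions before taking the percentile; some toolkits instead take the percentile of each direction separately and report the larger value, so results can differ slightly between implementations.

```python
import numpy as np
from scipy import ndimage


def surface_distances(a: np.ndarray, b: np.ndarray, spacing) -> np.ndarray:
    """Distance from each boundary voxel of a to the nearest boundary voxel of b."""
    a_edge = a & ~ndimage.binary_erosion(a)
    b_edge = b & ~ndimage.binary_erosion(b)
    # EDT measures distance to the nearest zero, so zero out b's boundary.
    dist_to_b = ndimage.distance_transform_edt(~b_edge, sampling=spacing)
    return dist_to_b[a_edge]


def hausdorff_percentile(pred, truth, percentile=95.0, spacing=None) -> float:
    """Percentile Hausdorff distance (HD95 by default); 100 gives the standard HD."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    if not pred.any() or not truth.any():
        raise ValueError("undefined for an empty mask")
    if spacing is None:
        spacing = (1.0,) * pred.ndim
    d = np.concatenate([surface_distances(pred, truth, spacing),
                        surface_distances(truth, pred, spacing)])
    return float(np.percentile(d, percentile))


if __name__ == "__main__":
    truth = np.zeros((64, 64), dtype=bool)
    truth[10:40, 10:40] = True
    pred = truth.copy()
    pred[60, 60] = True  # single distant outlier voxel
    print("HD100:", hausdorff_percentile(pred, truth, 100.0))
    print("HD95: ", hausdorff_percentile(pred, truth, 95.0))
```

In this synthetic case the single outlier voxel fully determines the standard Hausdorff distance (about 29.7 voxels) but has no effect on HD95, which is 0 because every other boundary voxel coincides with the ground truth.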

Experimental Protocols for Metric Validation

Protocol 1: Benchmarking an AI Segmentation Model against Ground Truth

This protocol outlines the steps for a standard performance evaluation of a neuroradiology AI tool.

Table 2: Research Reagent Solutions for Segmentation Validation

| Reagent / Tool | Function / Description |
|---|---|
| Expert-Annotated Ground Truth | Manually segmented medical images by clinical experts; serves as the reference standard for validation. |
| NumPy & SciPy (Python libraries) | Core libraries for numerical computations and implementing/sourcing metric calculations. |
| ITK-SNAP / 3D Slicer | Software for visualizing segmentations in 3D, overlaying results with ground truth, and inspecting Hausdorff Distance outliers. |
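After per-case metrics have been computed, a benchmarking run usually needs a cohort-level summary plus a short list of cases to open in ITK-SNAP or 3D Slicer. The sketch below assumes results are stored as a dictionary mapping a case ID to a (Dice, Hausdorff in mm) pair; the function name, case IDs, and flagging thresholds (0.7 and 10 mm, loosely echoing the interpretation guidelines above) are hypothetical and should be set per task.

```python
import numpy as np


def summarize_cases(per_case, dice_floor=0.7, hd_ceiling_mm=10.0):
    """Cohort summary of per-case (dice, hd_mm) results plus cases to review."""
    ids = list(per_case)
    dice = np.array([per_case[c][0] for c in ids], dtype=float)
    hd = np.array([per_case[c][1] for c in ids], dtype=float)
    q1, median, q3 = np.percentile(dice, [25, 50, 75])
    summary = {
        "n_cases": len(ids),
        "dice_mean": float(dice.mean()),
        "dice_median": float(median),
        "dice_iqr": (float(q1), float(q3)),
        "hd_median_mm": float(np.median(hd)),
    }
    # Flag cases failing either threshold for visual inspection.
    flagged = [c for c, d, h in zip(ids, dice, hd) if d < dice_floor or h > hd_ceiling_mm]
    return summary, flagged


if __name__ == "__main__":
    # Invented results, for illustration only.
    results = {"case01": (0.91, 3.2), "case02": (0.64, 4.1), "case03": (0.86, 15.0)}
    summary, to_review = summarize_cases(results)
    print(summary)
    print("review in viewer:", to_review)
```

Reporting the median and interquartile range alongside the mean is a deliberate choice here, because per-case Dice distributions are bounded and often skewed by a few failures.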

Workflow Overview: The following diagram illustrates the key stages in the validation workflow for an AI segmentation model in neuroradiology.

Protocol 2: Inter-Model Comparison Study

This protocol is for comparing the performance of two or more different AI models.

Methodology:

- Dataset: Select a held-out test set with expert ground truth annotations.

- Execution: Run each AI model on the entire test set.

- Calculation: For each model and each image, compute both the Dice Coefficient and the Hausdorff Distance.

- Statistical Analysis: Perform appropriate statistical tests (e.g., paired t-test or Wilcoxon signed-rank test) on the results to determine if the performance differences between models are statistically significant.

- Visualization: Generate scatter plots or bar charts to visually compare the distribution of scores for each model.
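The statistical-analysis step can be sketched with scipy.stats, assuming per-case scores for two models on the same test cases in the same order. The function name and example scores are hypothetical. Per-case Dice is bounded and often skewed, which is one reason the Wilcoxon signed-rank test is frequently preferred over the paired t-test; when more than two models are compared, a multiple-comparison correction would also be needed.

```python
import numpy as np
from scipy import stats


def compare_models(scores_a, scores_b):
    """Paired comparison of per-case scores (e.g. Dice) for two models."""
    a = np.asarray(scores_a, dtype=float)
    b = np.asarray(scores_b, dtype=float)
    if a.shape != b.shape:
        raise ValueError("scores must be paired: same cases, same order")
    diff = b - a
    t_res = stats.ttest_rel(b, a)   # assumes roughly normal paired differences
    w_res = stats.wilcoxon(b, a)    # rank-based, no normality assumption
    return {
        "mean_difference": float(diff.mean()),
        "median_difference": float(np.median(diff)),
        "ttest_p": float(t_res.pvalue),
        "wilcoxon_p": float(w_res.pvalue),
    }


if __name__ == "__main__":
    # Invented per-case Dice scores for two models on ten shared cases.
    model_a = [0.70, 0.72, 0.75, 0.78, 0.80, 0.81, 0.83, 0.85, 0.86, 0.88]
    model_b = [0.73, 0.77, 0.77, 0.82, 0.86, 0.82, 0.88, 0.88, 0.88, 0.92]
    print(compare_models(model_a, model_b))
```

Reporting the effect size (mean or median paired difference) alongside the p-values helps judge whether a statistically significant difference is also clinically meaningful.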

Workflow Overview: The diagram below shows the comparative analysis process for evaluating multiple AI models.

The following table provides a consolidated overview of the two metrics for quick reference.

Table 3: Characteristics of Dice Coefficient and Hausdorff Distance

| Feature | Dice Coefficient | Hausdorff Distance |
|---|---|---|
| Primary Focus | Volumetric Overlap | Boundary Agreement / Maximum Error |
| Mathematical Range | 0 to 1 | 0 to ∞ |
| Interpretation | Higher is better | Lower is better |
| Sensitivity to Errors | Insensitive to small, localized errors | Highly sensitive to outliers |
| Best Used For | Assessing overall accuracy | Ensuring local precision and capturing worst-case errors |
| Clinical Relevance | Tumor volume measurement, treatment response assessment | Surgical planning, radiation therapy targeting [2] |

Designing Validation Studies for Real-World Generalizability

Frequently Asked Questions (FAQs)

FAQ 1: What constitutes a robust real-world dataset for validating an AI tool in neuroradiology? A robust real-world dataset should be representative of the intended clinical population and capture the full spectrum of clinical scenarios. Key considerations include:

- Data Diversity and Representativeness: The data must represent a broad socio-economic and ethnic population to minimize the risk of bias. It should capture data across various healthcare settings, scanner manufacturers, and protocols to ensure generalizability across different clinical environments [48] [2]. For instance, a dataset used for a multiple sclerosis monitoring tool included 397 scan pairs from three different scanner manufacturers (GE, Philips, Siemens) to test algorithm robustness [49].

- Longitudinal View: For chronic conditions like multiple sclerosis, data that covers a long period is essential for assessing disease progression and treatment response [48] [49].

- Fitness for Purpose: The data source must align with the research question. For example, administrative claims data are robust for financial metrics but may lack clinical details such as vital signs [48]. Real-world data are routinely collected data drawn from varied sources, including electronic health records (EHRs), medical claims data, and disease registries [50].
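One practical way to check whether performance holds across scanners, sites, or patient groups is a stratified analysis. The sketch below assumes each study is recorded as a (group, AI positive, reference positive) tuple; the function name and the example records are hypothetical, with manufacturer names used only as group labels. Subgroup counts are returned alongside the metrics so that small or empty strata are visible rather than hidden.

```python
from collections import defaultdict


def performance_by_group(records):
    """Per-subgroup sensitivity and specificity.

    records: iterable of (group, ai_positive, reference_positive) tuples.
    A metric is None when its denominator is zero.
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, ai_positive, reference_positive in records:
        c = counts[group]
        if reference_positive:
            c["tp" if ai_positive else "fn"] += 1
        else:
            c["fp" if ai_positive else "tn"] += 1
    results = {}
    for group, c in counts.items():
        n_pos = c["tp"] + c["fn"]
        n_neg = c["tn"] + c["fp"]
        results[group] = {
            "n_pos": n_pos,
            "n_neg": n_neg,
            "sensitivity": c["tp"] / n_pos if n_pos else None,
            "specificity": c["tn"] / n_neg if n_neg else None,
        }
    return results


if __name__ == "__main__":
    # Invented records, for illustration only.
    records = [
        ("GE", True, True), ("GE", False, True), ("GE", False, False),
        ("Philips", True, True), ("Philips", False, False),
        ("Siemens", True, True), ("Siemens", True, False),
    ]
    for group, m in performance_by_group(records).items():
        print(group, m)
```

A marked drop in one stratum is a signal to examine domain shift (protocol, scanner, population) before deployment at sites resembling that stratum.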

FAQ 2: How can I assess the real-world clinical impact of an AI tool beyond diagnostic accuracy? Evaluating real-world impact requires looking beyond traditional performance metrics. Essential factors include:

- Workflow Integration: The tool must be integrated into existing clinical systems, such as the Radiology Information System (RIS) and Picture Archiving and Communication System (PACS), without adding burden to clinicians. Successful integration can enable features like automated worklist prioritization [51] [52].

- Interpretation Time: A tool that increases radiologist interpretation times can be a significant adoption barrier, especially in high-volume practices. Studies have shown that AI tools can sometimes increase reading times, which may offset diagnostic benefits [51].

- Value for Different Users: The tool's benefit may vary. For example, one study found that an AI algorithm for detecting steno-occlusive lesions provided a statistically significant diagnostic benefit for less-experienced resident trainees but not for expert neuroradiologists [51].

- Return on Investment (ROI): From an administrative perspective, the marginal diagnostic benefit of a tool must be weighed against its cost, especially if it performs a task that an expert is already expected to do [51].

FAQ 3: What are the key regulatory considerations when designing a validation study? In the United States, understanding the U.S. Food and Drug Administration (FDA) framework is critical.

- FDA Classification: AI tools in radiology are typically not approved as autonomous devices. Many are classified as Computer Aided Triage and Notification (CADt) tools, while others may be Computer Aided Detection (CADe) or Computer Aided Diagnosis (CADx) devices. This classification reflects the extent of validation and determines the intended use [51].