Best Practices for Neural Decoding with Machine Learning: A Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide for researchers and scientists on implementing machine learning for neural decoding. It covers foundational principles, from defining neural decoding and its significance in understanding brain function to its translational applications in brain-computer interfaces (BCIs) and drug development. The guide details modern methodological approaches, including deep learning architectures and data handling for various neural signals. It further offers practical strategies for model optimization and troubleshooting and concludes with a framework for rigorous validation and comparative analysis of decoding algorithms. The content synthesizes the latest advances in the field to equip professionals with the knowledge to design robust and effective neural decoding systems.

Laying the Groundwork: Core Concepts and Neuroscience Principles of Neural Decoding

Neural decoding is a fundamental data analysis method in neuroscience that uses recorded neural activity to predict information about external stimuli, behavioral states, or cognitive processes [1] [2]. This approach operates on the principle that specialized neuronal populations encode relevant environmental and body-state features, enabling other brain areas—or external algorithms—to decode these representations for interpreting information and generating actions [3] [4]. In essence, neural decoding transforms neural signals into meaningful variables that can be analyzed to understand brain function or utilized for engineering applications such as brain-computer interfaces (BCIs) [2] [5].

The mathematical foundation of neural decoding involves estimating the relationship between neural activity patterns and specific variables of interest. Formally, this can be represented as predicting a stimulus or state variable $x$ from a neural activity vector $K$, which contains information from $N$ neurons [3]. The decoding process leverages statistical relationships to make predictions about external variables based on observed neural responses, typically using machine learning classifiers or regression models that are trained on known neural response patterns and tested on independent data to validate their predictive accuracy [1] [2].
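As a rough illustration of this formulation, the sketch below fits a ridge-regression decoder that predicts a scalar variable x from a trial-by-neuron activity matrix K and evaluates it on held-out trials. The synthetic data, the linear tuning model, the function names, and the penalty strength `alpha` are all assumptions made for the example; they are not taken from the cited studies.

```python
import numpy as np

def fit_ridge_decoder(K_train, x_train, alpha=1.0):
    """Fit weights w so that x is approximated by [K, 1] @ w, with an L2 penalty."""
    # Append a constant column so the decoder can learn an offset.
    design = np.hstack([K_train, np.ones((K_train.shape[0], 1))])
    penalty = alpha * np.eye(design.shape[1])
    penalty[-1, -1] = 0.0  # leave the offset term unpenalized
    # Closed-form ridge solution: (D^T D + penalty)^-1 D^T x
    return np.linalg.solve(design.T @ design + penalty, design.T @ x_train)

def predict(K, w):
    """Apply fitted decoder weights to new neural activity."""
    design = np.hstack([K, np.ones((K.shape[0], 1))])
    return design @ w

# Synthetic population (assumption): each neuron responds linearly to x plus noise.
rng = np.random.default_rng(0)
n_trials, n_neurons = 400, 20
x = rng.uniform(-1.0, 1.0, size=n_trials)
tuning = rng.normal(size=n_neurons)
K = np.outer(x, tuning) + 0.5 * rng.normal(size=(n_trials, n_neurons))

# Train on the first 300 trials, test on the remaining 100 independent trials.
w = fit_ridge_decoder(K[:300], x[:300], alpha=1.0)
x_hat = predict(K[300:], w)
test_corr = np.corrcoef(x_hat, x[300:])[0, 1]
print(f"held-out correlation: {test_corr:.2f}")
```

A linear decoder of this kind is usually treated as a baseline; the same train/test split can be reused when comparing it against non-linear models.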

Key Principles and Methodological Approaches

Core Concepts in Neural Decoding

The process of neural decoding relies on several key principles that ensure accurate interpretation of neural signals. Neural tracking ensures temporal alignment between brain recordings and linguistic or sensory representations, accounting for minor time shifts in information transfer and neural response [6]. This alignment facilitates serialized and temporal modeling of cortical activities, making decoding of continuous stimuli possible. Complementing this, neural prediction underscores how the brain integrates contextual information during perception, similar to how artificial language models use context to predict upcoming words [6]. This predictive characteristic is crucial for understanding how the brain processes ongoing speech streams and other continuous stimuli.

Information processing in the brain can be conceptualized as a series of cascading encoding-decoding operations where downstream neurons decode and transform information from upstream populations to extract increasingly abstract representations [3]. This hierarchical processing enables the brain to build internal models of the environment that ultimately guide behavior. The distinction between encoding models (which predict neural responses from stimuli) and decoding models (which predict stimuli from neural responses) provides a fundamental framework for analyzing neural representations, though both perspectives are complementary in understanding neural computation [3].

Taxonomy of Neural Decoding Tasks

Neural decoding encompasses diverse task paradigms tailored to different research objectives and experimental designs:

- Stimuli Recognition: The simplest form of decoding, involving differentiation of linguistic or sensory stimuli by analyzing evoked brain responses, typically with a modest candidate set and limited sequence length [6].

- Brain Recording Translation: Focused on open-vocabulary continuous decoding, where systems generate stimulus sequences in textual or speech form based on evoked brain responses, analogous to machine translation that treats brain activity as the source language [6].

- Speech Neuroprosthesis: Aims to generate inner speech or vocal intentions from spontaneous neural activation patterns, progressing from phoneme-level recognition to open-vocabulary sentence decoding [6] [7].

Table 1: Neural Decoding Task Paradigms and Characteristics

| Task Paradigm | Decoding Target | Typical Applications | Complexity Level |
|---|---|---|---|
| Stimuli Recognition | Discrete categories from evoked responses | Basic brain-computer interfaces, cognitive neuroscience | Low (classification) |
| Text Stimuli Reconstruction | Words or sentences | Language decoding, communication systems | Medium (sequence generation) |
| Speech Reconstruction | Speech envelope, MFCC, or waveforms | Speech neuroprosthetics, auditory neuroscience | High (continuous signal generation) |
| Brain Recording Translation | Continuous text or speech sequences | Natural language decoding, translational research | High (open-vocabulary) |
| Inner Speech Decoding | Imagined or attempted speech | Assistive technologies, cognitive monitoring | Medium to High (intention decoding) |

Quantitative Performance Benchmarks

Decoding Accuracy Across Modalities and Paradigms

Recent advances in neural decoding, particularly using deep learning approaches, have significantly improved performance across various paradigms. In linguistic decoding, modern pipelines can achieve up to 37% top-10 accuracy for decoding individual words from non-invasive recordings (EEG/MEG) with a retrieval set of 250 words, substantially outperforming linear models that achieve only about 6% accuracy under similar conditions [8]. The integration of transformer architectures at the sentence level provides approximately a 50% performance boost compared to earlier deep learning models [8].

Performance varies considerably based on recording modality and experimental protocol. MEG recordings generally yield higher decoding accuracy than EEG, attributed to better signal-to-noise ratios [8]. Similarly, decoding performance is typically better when subjects read rather than listen to sentences, potentially due to clearer segmentation of visual words and the availability of low-level visual features like word length that aid decoding [8]. These performance differences highlight the importance of selecting appropriate recording modalities and experimental designs based on specific decoding objectives.

Scaling Laws in Neural Decoding

Decoding performance follows predictable scaling relationships with data quantity and quality. Performance increases log-linearly with the amount of training data, demonstrating the scalability of decoding techniques with expanding datasets [8]. Similarly, test-time averaging of multiple neural responses to the same stimulus produces substantial improvements, with some datasets achieving nearly 80% top-10 accuracy after averaging just 8 predictions [8]. This strong dependence on averaging indicates that current decoding performance is primarily constrained by the low signal-to-noise ratio of neural recordings rather than fundamental limitations in decoding algorithms.
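The effect of test-time averaging can be illustrated with a small simulation. The sketch below assumes each decoder prediction equals the true target plus independent Gaussian noise, which is a simplification introduced here and not the model used in [8]. Under that assumption, the error of the averaged prediction shrinks roughly with the square root of the number of repeats.

```python
import numpy as np

def averaged_prediction_error(n_repeats, noise_sd=1.0, n_stimuli=2000, seed=0):
    """RMSE of the mean of n_repeats noisy predictions of the same targets."""
    rng = np.random.default_rng(seed)
    target = rng.normal(size=n_stimuli)
    # Assumption: every repeat is the target plus independent noise.
    predictions = target[None, :] + noise_sd * rng.normal(size=(n_repeats, n_stimuli))
    averaged = predictions.mean(axis=0)
    return float(np.sqrt(np.mean((averaged - target) ** 2)))

errors = {n: averaged_prediction_error(n) for n in (1, 2, 4, 8)}
for n, err in errors.items():
    print(f"{n} repeat(s): RMSE = {err:.3f}")
```

Real neural noise is often correlated across repeats, so the gain from averaging in practice may be smaller than this idealized case suggests.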

Table 2: Performance Benchmarks Across Decoding Approaches

| Decoding Approach | Recording Modality | Performance Metric | Reported Performance | Reference |
|---|---|---|---|---|
| Linear Models (Ridge Regression) | MEG/EEG | Top-10 Accuracy | ~6% | [8] |
| EEGNet | EEG | Top-10 Accuracy | ~10% (varies by dataset) | [8] |
| BrainModule with Subject Layer | MEG/EEG | Top-10 Accuracy | ~20% (average across datasets) | [8] |
| Transformer-Enhanced Pipeline | MEG/EEG | Top-10 Accuracy | Up to 37% | [8] |
| Inner Speech Decoding (CNN) | ECoG | Word-level Accuracy | 35.2% | [7] |
| Modern ML Methods (Neural Networks) | Spike Recordings | Decoding Accuracy | Significantly outperforms traditional filters | [2] [5] |

Experimental Protocols and Methodologies

Protocol for Non-Invasive Word Decoding from M/EEG

Objective: To decode individual words from non-invasive brain recordings during reading or listening tasks.

Materials and Setup:

- Participants: 723 participants pooled across multiple datasets, providing robust statistical power [8]

- Stimuli: Sentences presented visually (reading) or auditorily (listening) in the participant's native language

- Recording Devices: EEG or MEG systems with appropriate temporal resolution for capturing word-level processing

- Data Acquisition: Record brain activity while participants process linguistic stimuli, with precise alignment between stimulus presentation and neural recordings

Experimental Procedure:

- Stimulus Presentation: Present text or speech stimuli using standardized protocols (e.g., Rapid Serial Visual Presentation for reading tasks)

- Neural Recording: Acquire continuous M/EEG data with precise timestamping of stimulus onset

- Preprocessing: Apply artifact removal, normalization, and band-pass filtering to enhance signal quality [7]

- Feature Extraction: Use deep learning architectures (e.g., convolutional layers followed by transformers) to extract relevant features from neural signals

- Model Training: Train decoding models with contrastive learning objectives to align neural features with word embeddings

- Cross-Validation: Evaluate performance using appropriate cross-validation schemes to ensure generalizability
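The contrastive training step in the procedure above can be sketched as follows. This is an InfoNCE-style (CLIP-like) loss written in NumPy for clarity; the exact objective, the `temperature` value, and the function name are assumptions for illustration, since the source does not specify them. In practice the loss would be implemented in a deep learning framework so that gradients can flow into the feature extractor.

```python
import numpy as np

def contrastive_loss(neural_feats, word_embs, temperature=0.1):
    """InfoNCE-style loss: row i of neural_feats should match row i of word_embs."""
    # L2-normalize so the dot product is a cosine similarity.
    z = neural_feats / np.linalg.norm(neural_feats, axis=1, keepdims=True)
    e = word_embs / np.linalg.norm(word_embs, axis=1, keepdims=True)
    logits = (z @ e.T) / temperature  # shape: (batch, batch)
    # Numerically stable log-softmax over the candidate words in the batch.
    logits = logits - logits.max(axis=1, keepdims=True)
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # The correct word for each neural segment lies on the diagonal.
    return float(-np.mean(np.diag(log_probs)))

rng = np.random.default_rng(0)
embeddings = rng.normal(size=(8, 16))
# Well-aligned neural features (nearly equal to their word embeddings).
aligned_loss = contrastive_loss(embeddings + 0.01 * rng.normal(size=(8, 16)), embeddings)
# Misaligned features (each row paired with the wrong word).
shuffled_loss = contrastive_loss(embeddings[::-1], embeddings)
print(f"aligned: {aligned_loss:.3f}, shuffled: {shuffled_loss:.3f}")
```

The loss is low when each neural feature vector is closest to its own word embedding and high when the pairing is wrong, which is the property the training objective exploits.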

Analysis Pipeline:

- Implement subject-specific layers in the model architecture to account for individual differences

- Use retrieval-based evaluation metrics (top-k accuracy) to assess decoding performance

- Perform ablation studies to determine the contribution of different model components

- Analyze temporal dynamics of word representation in neural signals
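Retrieval-based top-k accuracy, as listed in the analysis pipeline above, can be computed along these lines. The sketch assumes the decoder outputs a vector in the same space as the candidate word embeddings and that candidates are ranked by cosine similarity; the function name and synthetic vocabulary are illustrative.

```python
import numpy as np

def top_k_accuracy(predicted, candidates, true_idx, k=10):
    """Fraction of trials whose true candidate is among the k most similar."""
    p = predicted / np.linalg.norm(predicted, axis=1, keepdims=True)
    c = candidates / np.linalg.norm(candidates, axis=1, keepdims=True)
    sims = p @ c.T  # cosine similarity, shape: (n_trials, n_candidates)
    # Indices of the k most similar candidates per trial (order within k is arbitrary).
    top_k = np.argpartition(-sims, k - 1, axis=1)[:, :k]
    hits = (top_k == np.asarray(true_idx)[:, None]).any(axis=1)
    return float(hits.mean())

# Synthetic retrieval set of 250 candidate embeddings (values are arbitrary).
rng = np.random.default_rng(0)
vocab = rng.normal(size=(250, 32))
labels = rng.integers(0, 250, size=500)

perfect = top_k_accuracy(vocab[labels], vocab, labels, k=10)
random_guess = top_k_accuracy(rng.normal(size=(500, 32)), vocab, labels, k=10)
print(f"perfect decoder: {perfect:.2f}, random decoder: {random_guess:.2f}")
```

With a retrieval set of 250 candidates, chance-level top-10 accuracy is 10/250 = 4%, which is the reference point against which reported accuracies should be read.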

Protocol for Inner Speech Decoding

Objective: To decode imagined or covert speech from neural signals for brain-computer interface applications.

Materials and Setup:

- Participants: Individuals with intact cognitive function or those with speech impairments for clinical applications

- Recording Modalities: ECoG for high signal quality or non-invasive EEG/MEG where appropriate

- Task Design: Carefully designed prompts for inner speech production with appropriate controls

Experimental Procedure:

- Task Instruction: Participants imagine speaking words or sentences without overt articulation

- Neural Recording: Acquire neural data using appropriate temporal resolution for capturing speech-related signals

- Signal Enhancement: Apply preprocessing techniques specifically optimized for inner speech signals, which typically have lower amplitude than overt speech

- Feature Classification: Use machine learning classifiers (SVMs, Random Forests, or CNNs) to distinguish between different inner speech categories

- Model Validation: Employ rigorous cross-validation and test on held-out data to ensure real-world applicability

Technical Considerations:

- Account for high inter-subject variability in inner speech representation

- Address the challenge of low signal-to-noise ratio in non-invasive recordings

- Implement strategies to handle the continuous nature of inner speech versus discrete classification

Essential Research Reagents and Tools

The Scientist's Toolkit for Neural Decoding

Successful implementation of neural decoding research requires specific tools and computational resources. The following table outlines essential components of the neural decoding research pipeline:

Table 3: Essential Research Reagents and Tools for Neural Decoding

| Category | Specific Tools/Resources | Function/Purpose | Examples/Notes |
|---|---|---|---|
| Recording Technologies | EEG Systems | Non-invasive recording of electrical brain activity | High temporal resolution, lower spatial resolution [8] |
| | MEG Systems | Non-invasive recording of magnetic brain activity | Better signal-to-noise ratio than EEG [8] |
| | ECoG Arrays | Invasive recording with high spatial and temporal resolution | Used in clinical settings with epilepsy patients [6] [7] |
| | fMRI | Functional imaging with high spatial resolution | Limited temporal resolution for language decoding [6] |
| Software Packages | NeuroDecodeR (R) | Modular package for running decoding analyses | Supports parallel processing, rich R ecosystem [1] |
| | Neural Decoding Toolbox (MATLAB) | Comprehensive decoding analysis framework | Mature codebase with extensive documentation [1] |
| | Python Decoding Packages (e.g., PyTorch, TensorFlow) | Custom deep learning implementations | Flexibility for implementing novel architectures [2] |
| Machine Learning Approaches | Linear Models (Ridge Regression) | Baseline decoding performance assessment | Ubiquitous in neuroscience, provides benchmark [8] |
| | Convolutional Neural Networks | Feature extraction from neural signals | Effective for spatial patterns in neural data [8] [7] |
| | Transformers | Contextual integration for sequence decoding | 50% performance boost for sentence-level decoding [8] |
| | Support Vector Machines | Classification of neural patterns | Traditional ML approach with good performance [7] |
| Evaluation Metrics | Top-k Accuracy | Retrieval-based assessment | Appropriate for open-vocabulary decoding [8] |
| | BLEU/ROUGE Scores | Semantic similarity for text generation | Used in brain recording translation tasks [6] |
| | Word Error Rate (WER) | ASR-inspired metric for speech decoding | Common in inner speech recognition [6] |
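For reference, word error rate is conventionally defined as the word-level edit distance (substitutions, deletions, and insertions) between the decoded and reference sentences, divided by the number of reference words. A minimal sketch using whitespace tokenization, which is an assumption made here for simplicity:

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference prefix and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion of a reference word
                curr[j - 1] + 1,     # insertion of an extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)

print(word_error_rate("the cat sat", "the dog sat"))
```

Because insertions are counted, WER can exceed 1.0 when the decoded sentence is much longer than the reference.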

Advanced Methodological Considerations

Machine Learning Implementation Best Practices

Implementing machine learning effectively for neural decoding requires careful attention to several methodological considerations. Proper cross-validation is essential, typically using k-fold approaches where data is split into k parts, with k-1 parts used for training and the remaining part for testing, repeating this process k times with different test sets [1]. This approach ensures that decoding accuracy measurements are aggregated across multiple test sets, providing a reliable estimate of model performance.
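The k-fold procedure described above can be sketched in plain Python as follows. The helper names and the user-supplied `fit_and_score` callback are hypothetical and introduced only for illustration; established packages provide equivalent, better-tested utilities.

```python
import random

def k_fold_indices(n_trials, k, seed=0):
    """Yield (train, test) index lists for k-fold cross-validation."""
    if not 2 <= k <= n_trials:
        raise ValueError("k must be between 2 and n_trials")
    order = list(range(n_trials))
    random.Random(seed).shuffle(order)       # shuffle trials once, reproducibly
    folds = [order[i::k] for i in range(k)]  # k roughly equal parts
    for i, test in enumerate(folds):
        # Training set: every trial that is not in the current test fold.
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test

def cross_validated_score(n_trials, k, fit_and_score):
    """Average a user-supplied fit_and_score(train, test) over all k folds."""
    scores = [fit_and_score(train, test) for train, test in k_fold_indices(n_trials, k)]
    return sum(scores) / len(scores)

for train, test in k_fold_indices(10, 5):
    print("train:", sorted(train), "test:", sorted(test))
```

Each trial appears in exactly one test fold, so the averaged score uses every trial for testing once while never testing on data used to fit that fold's model.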

Temporal decoding represents another important paradigm, particularly for time-series neural data recorded over fixed-length experimental trials. In this approach, classifiers are trained and tested at individual time points, with the procedure repeated across successive time points to reveal how information content fluctuates throughout a trial [1]. This method can track the flow of information through different brain regions over time and assess whether neural representations change across temporal intervals.
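A minimal sketch of time-resolved decoding is shown below. It assumes trial data arranged as (trials, channels, time points) and uses a simple nearest-centroid classifier as a stand-in for whichever classifier a study actually uses; the synthetic data contain class information only in time bins 10 to 14, so accuracy should rise above chance only there.

```python
import numpy as np

def nearest_centroid_accuracy(X_train, y_train, X_test, y_test):
    """Classify each test trial by its closest class-mean pattern."""
    classes = np.unique(y_train)
    centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in classes])
    # Squared Euclidean distance from every test trial to every centroid.
    dists = ((X_test[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    predicted = classes[dists.argmin(axis=1)]
    return float((predicted == y_test).mean())

def temporal_decoding(data, labels, train_idx, test_idx):
    """Train and test a separate classifier at each time point."""
    accuracies = []
    for t in range(data.shape[2]):
        X_t = data[:, :, t]  # (n_trials, n_channels) snapshot at time t
        accuracies.append(nearest_centroid_accuracy(
            X_t[train_idx], labels[train_idx], X_t[test_idx], labels[test_idx]))
    return np.array(accuracies)

# Synthetic data (assumption): a class-specific pattern is present in bins 10-14 only.
rng = np.random.default_rng(0)
labels = rng.integers(0, 2, size=200)
data = rng.normal(size=(200, 10, 20))
pattern = rng.normal(size=10)
data[:, :, 10:15] += 1.5 * labels[:, None, None] * pattern[None, :, None]

accuracy = temporal_decoding(data, labels, np.arange(150), np.arange(150, 200))
print(np.round(accuracy, 2))
```

A single train/test split is used here for brevity; in practice each time point would be evaluated with cross-validation.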

For interpreting what information neural populations contain, generalization analyses provide powerful insights. These analyses train classifiers on one set of conditions before testing on related but distinct conditions, revealing whether neural representations capture abstract or invariant features [1]. For example, training a decoder to discriminate objects shown at one retinal position then testing at different positions can assess position-invariant object information.

Cautions in Interpretation and Application

While neural decoding offers powerful insights, researchers must exercise caution in interpreting results. High decoding accuracy does not necessarily indicate that a brain area directly processes or is specialized for the decoded variable [2] [5]. For example, accurate image classification from retinal signals doesn't mean the retina's primary function is image classification, as the retina simply conveys visual information that could be used for multiple purposes.

Similarly, the mathematical transformations within machine learning decoders—even biologically-inspired neural networks—should not be interpreted as directly mimicking neural computations in the brain [2] [5]. The internal workings of these models are generally not designed for mechanistic interpretation, and high performance alone doesn't indicate biological plausibility.

When decoding incorporates prior information about variables (such as the overall probability of being in a location when decoding hippocampal place cells), researchers should recognize that the decoded output reflects both neural information and these priors [2]. Disentangling these sources is essential for accurate interpretation of what information is genuinely contained in neural populations versus what is contributed by the decoding algorithm itself.
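The influence of a prior can be made concrete with a small Bayesian decoder. The sketch below assumes independent Poisson spiking with known tuning curves, a common simplification; the tuning values, bin width `dt`, and priors are invented for the example. The same spike counts decode to different positions depending on the prior alone.

```python
import numpy as np

def posterior_over_positions(spike_counts, tuning_curves, prior, dt=0.1):
    """Posterior over positions given spike counts in one time bin.

    tuning_curves: (n_positions, n_neurons) firing rates in Hz (must be > 0).
    """
    expected = tuning_curves * dt  # expected spike counts per bin
    # Poisson log-likelihood, dropping terms that do not depend on position.
    log_lik = (spike_counts * np.log(expected) - expected).sum(axis=1)
    log_post = log_lik + np.log(prior)
    log_post -= log_post.max()  # for numerical stability before exponentiating
    post = np.exp(log_post)
    return post / post.sum()

# Three positions, three neurons; each neuron prefers one position.
tuning = np.array([[10.0, 2.0, 2.0],
                   [2.0, 10.0, 2.0],
                   [2.0, 2.0, 10.0]])
counts = np.array([0, 1, 0])  # weak evidence in favor of position 1
flat_prior = np.full(3, 1.0 / 3.0)
skewed_prior = np.array([0.8, 0.1, 0.1])  # e.g., animal spends most time at position 0

flat = posterior_over_positions(counts, tuning, flat_prior)
skewed = posterior_over_positions(counts, tuning, skewed_prior)
silent = posterior_over_positions(np.zeros(3), tuning, skewed_prior)
print("flat prior   ->", np.round(flat, 3))
print("skewed prior ->", np.round(skewed, 3))
```

Comparing the decoded output under a flat prior with the output under the behavioral prior is one simple way to see how much of the result is driven by the neural data.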

Core Principles and Quantitative Foundations

The brain functions as a complex, distributed system where information is processed through continuous cycles of neural encoding and decoding. Encoding refers to the process by which external stimuli or internal states are transformed into specific patterns of neural activity. Conversely, decoding uses these neural activity patterns to make predictions about the original stimuli or states [4]. This loop is not a serial process but a dynamic interaction of nested and parallel sensorimotor control circuits that continuously govern our interaction with the world [9].

Modern neuroscience has moved beyond a rigid view of brain areas having singular, specialized functions. Instead, research reveals broad distribution and mixing of functions; for example, movement-related activity is found not only in motor areas but widely across sensory and association regions [10]. This architecture prioritizes pragmatic outcomes and closed-loop feedback control over purely accurate internal representations [9].

Table 1: Key Metrics in Modern Neural Decoding Approaches

| Decoding Paradigm | Key Metric | Reported Performance | Context & Significance |
|---|---|---|---|
| Semantic Decoding [11] | Classification Accuracy | Up to 77% (15 categories); 97% (living vs. non-living) | Highest reported accuracy for decoding word categories from intracranial recordings, far exceeding chance (7%). |
| Movement Encoding [10] | Explained Variance (R²) | Medulla: 0.176; Midbrain: 0.104 (Embedding method) | Quantifies the proportion of neural activity predictable from movement. Shows a logical progression, with higher values closer to motor periphery. |
| Model Comparison [10] | Improvement in Explained Variance | End-to-end vs. Marker-based: +330%; End-to-end vs. Embedding: +76% | Demonstrates the superior predictive power of expressive, data-intensive models like deep learning over simpler approaches. |

The application of machine learning (ML), particularly deep learning, has been transformative for neural decoding. These tools can identify complex, non-linear patterns in high-dimensional neural data that traditional linear methods miss [2]. The performance gap is significant: in direct comparisons on datasets from motor cortex, somatosensory cortex, and hippocampus, modern methods like neural networks and gradient boosting "significantly outperform traditional approaches, such as Wiener and Kalman filters" [2]. Furthermore, the alignment between artificial neural networks and brain activity follows scaling laws, where larger models trained on more data show greater similarity to neural representations [6].

Experimental Protocols in Neural Decoding

This section details the core methodologies that enable researchers to map the encoding-decoding loop.

Protocol: Relating Neural Activity to Behavior Using Video-Based Feature Extraction

This protocol outlines methods for extracting behavioral features from video to model movement-related neural activity, as used in brain-wide encoding studies [10].

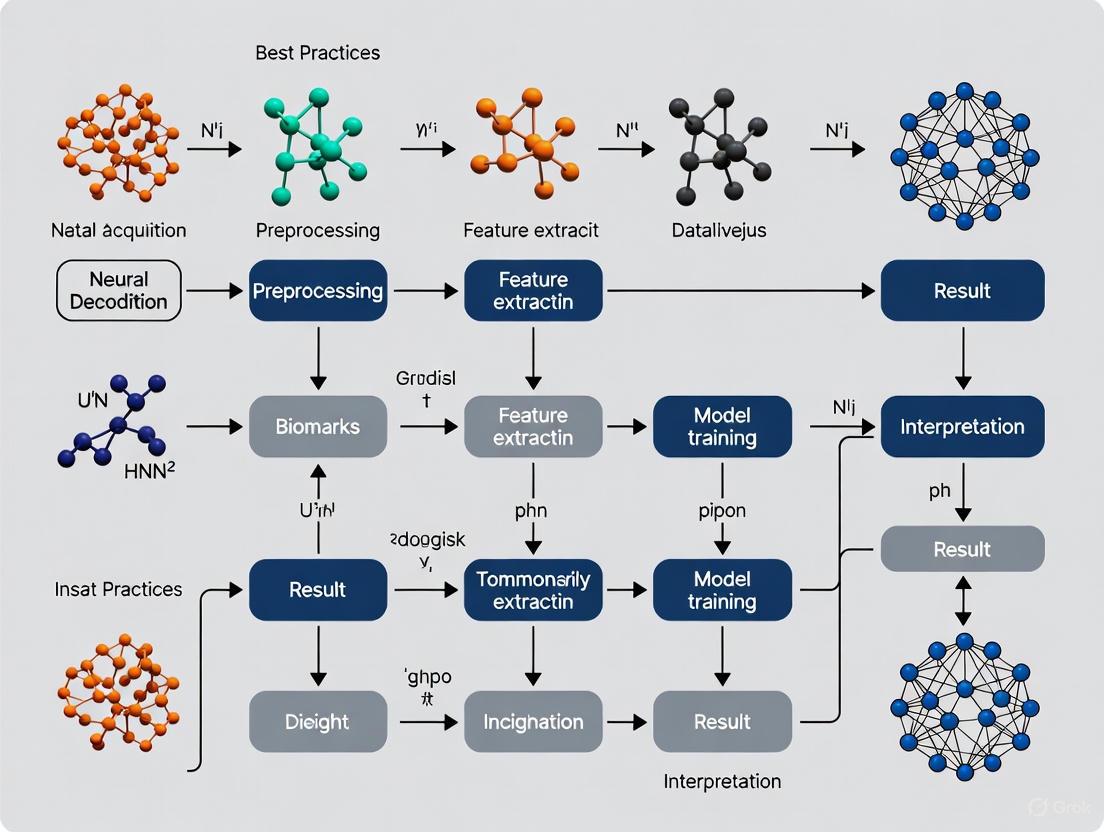

Workflow Diagram: Video-Based Neural Encoding Analysis

Materials and Reagents:

- Experimental Subject: Mouse performing a behavioral task (e.g., decision-making).

- Neural Recording System: Neuropixels probes for large-scale, simultaneous extracellular recording.

- Behavioral Monitoring: High-speed camera (e.g., 300 Hz) for videography.

- Computing System: Workstation with GPU for deep learning model training.

Procedure:

- Data Acquisition: Simultaneously record high-speed video of the animal's face and paws and neural population activity using implanted probes while the subject performs a task.

- Feature Extraction (Choose one approach):

- Marker-Based: Use DeepLabCut to track specific body parts (e.g., nose, tongue, jaw) in each video frame. The resulting 2D coordinate time-series serve as features.

- Embedding-Based: Train an autoencoder (a convolutional neural network encoder with a linear decoder) to compress each video frame into a low-dimensional vector (e.g., 16 dimensions). The vector time-series serves as features.

- End-to-End: Train a neural network (e.g., a convolutional neural network) to directly predict neural activity from raw video pixels.

- Model Training: Train a regression model (e.g., linear regression, neural network) to predict the time-varying activity of each neuron (or neuronal population) based on the extracted behavioral feature time-series.

- Model Validation & Analysis: Evaluate model performance by calculating the fraction of explained variance (R²) in neural activity on a held-out test dataset. Compare performance across brain areas, layers, or nuclei registered to a common anatomical atlas.
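The model training and validation steps above can be sketched with a linear encoding model evaluated by held-out explained variance. The behavioral features and neural activity here are synthetic, and the three simulated neurons (strongly, weakly, and not movement-driven) are assumptions chosen to show how R² separates them.

```python
import numpy as np

def explained_variance(y_true, y_pred):
    """Per-neuron R^2: 1 - residual sum of squares / total sum of squares."""
    residual = np.sum((y_true - y_pred) ** 2, axis=0)
    total = np.sum((y_true - y_true.mean(axis=0)) ** 2, axis=0)
    return 1.0 - residual / total

def with_intercept(features):
    """Append a constant column so each neuron gets a baseline term."""
    return np.hstack([features, np.ones((features.shape[0], 1))])

# Synthetic data: 16-dimensional behavioral features (e.g., a video embedding).
rng = np.random.default_rng(0)
features = rng.normal(size=(1000, 16))
# Neuron 0 is strongly movement-driven, neuron 1 weakly, neuron 2 not at all.
weights = rng.normal(size=(16, 3)) * np.array([1.0, 0.3, 0.0])
activity = features @ weights + rng.normal(size=(1000, 3))

train, test = slice(0, 800), slice(800, 1000)
coef, *_ = np.linalg.lstsq(with_intercept(features[train]), activity[train], rcond=None)
r2 = explained_variance(activity[test], with_intercept(features[test]) @ coef)
print("held-out R^2 per neuron:", np.round(r2, 3))
```

Held-out R² can be slightly negative for neurons with no relationship to the features, which is expected and indicates that the fitted model predicts worse than the mean.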

Protocol: Decoding Linguistic Content from Intracranial Recordings

This protocol describes the process of decoding semantic information (word categories) from human brain activity, a key approach for brain-computer interfaces (BCIs) [11].

Workflow Diagram: Semantic Content Decoding for BCIs

Materials and Reagents:

- Participants: Consenting human patients (e.g., epilepsy) undergoing invasive monitoring for clinical purposes.

- Recording Equipment: Clinical electrocorticography (ECoG) or stereo-EEG (sEEG) systems.

- Stimulus Presentation Software: For displaying word stimuli.

- Computing System: For data analysis and machine learning.

Procedure:

- Experimental Setup: Present consenting patients with a series of words from different semantic categories (e.g., tools, animals) visually or auditorily. Instruct them to think about the meaning of each word.

- Neural Data Acquisition: Record intracranial brain activity (e.g., ECoG, sEEG) time-locked to the stimulus presentation.

- Signal Preprocessing: Filter the neural data to remove artifacts and extract relevant features. Common features include spectral band power (e.g., alpha, beta, gamma rhythms) or time-frequency representations across the recorded electrodes.

- Classifier Training: Train a machine learning classifier (e.g., Support Vector Machine, neural network) to map the neural features from a specific time window to the corresponding word category.

- Validation and Testing: Evaluate decoding accuracy using cross-validation on held-out data. Report metrics like multiclass accuracy and the accuracy for distinguishing broad categories (e.g., living vs. non-living).
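The spectral feature extraction mentioned in the preprocessing step can be sketched as below. The band boundaries, sampling rate, and test signals are illustrative assumptions; band definitions vary between studies, and real pipelines typically add windowing and artifact handling.

```python
import numpy as np

def band_power(signal, fs, band):
    """Mean spectral power per channel within band = (low_hz, high_hz).

    signal: array of shape (n_channels, n_samples); fs: sampling rate in Hz.
    """
    n_samples = signal.shape[-1]
    freqs = np.fft.rfftfreq(n_samples, d=1.0 / fs)
    power = np.abs(np.fft.rfft(signal, axis=-1)) ** 2 / n_samples
    mask = (freqs >= band[0]) & (freqs < band[1])
    return power[..., mask].mean(axis=-1)

# Two synthetic channels: one dominated by 10 Hz, one by 80 Hz, plus noise.
fs = 1000
t = np.arange(fs) / fs  # one second of data
rng = np.random.default_rng(0)
signal = np.stack([np.sin(2 * np.pi * 10 * t), np.sin(2 * np.pi * 80 * t)])
signal = signal + 0.1 * rng.normal(size=signal.shape)

# Illustrative band edges in Hz (assumption).
bands = {"alpha": (8, 13), "beta": (13, 30), "gamma": (30, 100)}
features = np.log(np.column_stack([band_power(signal, fs, b) for b in bands.values()]))
print(np.round(features, 2))  # rows: channels, columns: alpha, beta, gamma
```

The resulting channel-by-band matrix, flattened per trial, is the kind of feature vector that would be passed to the classifier in the next step.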

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Technologies for Neural Decoding Research

| Tool / Technology | Type | Primary Function in Research |
|---|---|---|
| Neuropixels Probes [10] | Neural Recording | High-density electrodes for simultaneously recording action potentials from thousands of neurons across multiple brain regions. |
| DeepLabCut [10] | Software Tool | Markerless pose estimation based on deep learning to track animal body parts from video, generating behavioral feature data. |
| Autoencoders [10] | Algorithm / Model | Unsupervised deep learning model for compressing high-dimensional data (e.g., video frames) into lower-dimensional, informative feature vectors for encoding analysis. |
| Convolutional Neural Networks (CNNs) [10] [12] | Algorithm / Model | Class of deep neural networks ideal for processing structured grid data like images (from video) or spectrograms (from neural signals). |
| Support Vector Machines (SVM) [12] | Algorithm / Model | A versatile supervised learning model used for classification and regression, often applied in BCI settings for decoding categorical variables. |
| Long Short-Term Memory (LSTM) [12] | Algorithm / Model | A type of recurrent neural network (RNN) designed to model temporal sequences, useful for decoding continuous, time-varying signals like speech. |
| Allen Common Coordinate Framework (CCF) [10] | Atlas / Database | A standardized 3D reference atlas for the mouse brain, allowing integration and comparison of neural data from different experiments and labs. |

Application Notes: Integrating Decoding into Broader Research Goals

The protocols and tools described are not ends in themselves but are most powerful when integrated into a broader research strategy focused on understanding brain function and developing clinical applications.

Linking Causality: Beyond predicting neural activity from behavior, the encoding-decoding loop is crucial for establishing causality. The BRAIN Initiative highlights the need to "link brain activity to behavior with precise interventional tools that change neural circuit dynamics," progressing from observation to causation using optogenetics, chemogenetics, and other modulation techniques [13].

Clinical Translation: Reliable neural decoding is the cornerstone of Brain-Computer Interface (BCI) technology, which aims to restore function in neurological disorders like Parkinson's disease, stroke, and epilepsy [12]. Decoding movement intention can drive functional electrical stimulation (FES) of limbs, while decoding semantic content can provide new communication channels for paralyzed patients [11] [12].

Best Practices in Model Interpretation: A critical note of caution is that while ML decoders can achieve high predictive performance, the internal transformations of the model are not necessarily biologically interpretable. "High predictive performance is not evidence that transformations occurring within the ML decoder are the same as, or even similar to, those in the brain" [2]. Therefore, decoding results should be interpreted as demonstrating the information content within a neural population, not necessarily revealing the underlying biological computation.

Neural decoding is a fundamental tool in neuroscience and neuroengineering that involves using recorded brain activity to make predictions about external stimuli, intended actions, or cognitive states. The process relies on machine learning algorithms to interpret neural signals and translate them into actionable commands or meaningful interpretations. The field has evolved significantly from traditional linear methods to sophisticated modern machine learning approaches, particularly deep learning, which have dramatically improved decoding performance [5]. These advancements are driving progress across three primary domains: prosthetic device control, basic research into brain function, and communication systems for severely impaired individuals. Modern machine learning methods, including neural networks and ensemble methods, have demonstrated superior performance compared to traditional approaches like Wiener and Kalman filters, enabling more accurate and intuitive neural interfaces [5]. The following sections provide a comprehensive overview of the key applications, quantitative performance data, experimental protocols, and technical toolkits that define the current state of neural decoding research.

Table 1: Performance Metrics Across Key BCI Application Domains

| Application Domain | Neural Signal Modality | Key Performance Metrics | Reported Performance | Reference |
|---|---|---|---|---|
| Prosthetic Limb Control with Sensory Feedback | Intracortical microstimulation (ICMS) | Sensation localization accuracy, object identification | Stable, localized sensations over 1000+ days; ability to feel object boundaries and motion | [14] [15] [16] |
| Individual Finger Control (Non-invasive) | EEG-based movement execution & motor imagery | 2-finger vs. 3-finger classification accuracy | 80.56% (2-finger), 60.61% (3-finger) online decoding accuracy | [17] |
| Speech Neuroprosthetics | Intracortical microelectrode arrays | Word error rate (WER), character error rate (CER) | High accuracy for attempted speech; proof-of-concept for inner speech decoding | [18] |
| Wearable Non-invasive BCI | Microneedle scalp sensors | Classification accuracy for visual stimuli | 96.4% accuracy during movement; 12-hour stable operation | [19] |

Table 2: Comparison of Neural Signal Acquisition Modalities for BCI Applications

| Modality Type | Spatial Resolution | Temporal Resolution | Invasiveness | Key Applications | Limitations |
|---|---|---|---|---|---|
| fMRI | High (mm) | Low (seconds) | Non-invasive | Basic research, brain mapping | Bulky equipment, poor temporal resolution |
| EEG | Low (cm) | High (ms) | Non-invasive | Robotic control, communication | Limited spatial resolution, noise from volume conduction |
| MEG | Medium (~3-5 mm) | High (ms) | Non-invasive | Basic research, clinical | Expensive, bulky equipment |
| ECoG | High (mm) | High (ms) | Semi-invasive (surface implants) | Clinical monitoring, motor decoding | Requires craniotomy, limited coverage |
| Intracortical Microelectrodes | Very high (μm) | Very high (ms) | Invasive | High-performance motor prosthetics, sensory feedback | Tissue response, long-term stability challenges |

Application Notes & Experimental Protocols

Prosthetic Limb Control with Bidirectional Feedback

Application Objective: Restore both motor control and tactile sensation to prosthetic limbs through bidirectional brain-computer interfaces that decode movement intention and encode sensory feedback via intracortical microstimulation.

Background & Significance: Traditional prosthetic devices lack sensory feedback, requiring users to rely heavily on visual attention and resulting in clumsy, effortful operation. Research has demonstrated that providing somatosensory feedback through intracortical microstimulation (ICMS) significantly improves prosthetic control, enables more dexterous manipulation of objects, and creates a more embodied experience [14] [15]. Recent advances have moved beyond simple on/off contact sensations to enable users to feel complex spatiotemporal patterns such as object edges sliding across the skin and pressure changes [14].

Key Experimental Findings:

- Stable, Long-Term Sensations: Electrical stimulation of specific cortical neurons via implanted electrodes can evoke tactile sensations that remain stable in their perceived location on the hand for over 1,000 days, providing a consistent sensory reference for users [14] [15].

- Enhanced Grip Control: Participants receiving ICMS feedback could successfully prevent a slipping steering wheel from falling 69% more often than without feedback, demonstrating functional improvement in object manipulation [14].

- Complex Shape Perception: By stimulating overlapping pairs or clusters of electrodes with carefully orchestrated temporal patterns, researchers can create sensations of edges moving across the skin, enabling shape discrimination [14].

Protocol 1: Evoking Stable Tactile Sensations via ICMS

Objective: Create stable, precisely localized tactile sensations on the hand through intracortical microstimulation.

Procedure:

- Surgical Implantation: Place microelectrode arrays (e.g., Blackrock Neurotech arrays) in the area of the somatosensory cortex corresponding to hand sensation using standard stereotactic surgical techniques [14] [15].

- Sensory Mapping: Deliver short pulse trains (e.g., 200 Hz for 100-400 ms, at current amplitudes in the microampere range, typically no more than about 100 µA per electrode) to individual electrodes while participants report where and how strongly they feel each sensation.

- Create Sensory Map: Develop a detailed map correlating specific electrodes with perceived sensation locations on the hand.

- Stability Testing: Regularly test the same electrodes over extended periods (days to years) to confirm sensation location consistency.

- Multi-Electrode Stimulation: Stimulate closely spaced electrode pairs simultaneously to enhance sensation strength and clarity.

- Functional Integration: Incorporate the sensory feedback into closed-loop prosthetic control systems where sensors on a robotic hand trigger appropriate ICMS patterns during object manipulation.

Troubleshooting:

- If sensations weaken over time, check electrode impedance and consider slight current amplitude adjustments.

- If sensation locations shift significantly, recalibrate the sensory map.

- For poorly localized sensations, optimize electrode pairing configurations.
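The Functional Integration step of Protocol 1 can be pictured as a lookup from prosthetic sensor readings to stimulation commands. The sketch below is only illustrative: the `ElectrodeSite` fields, the `SENSORY_MAP` entries, the amplitude limits (in arbitrary stimulator units), and the linear force-to-amplitude mapping are all hypothetical assumptions, not values or methods taken from the cited studies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ElectrodeSite:
    electrode_id: int   # hypothetical channel index on the implanted array
    hand_region: str    # perceived location reported during sensory mapping
    min_amp: float      # hypothetical detection threshold (stimulator units)
    max_amp: float      # hypothetical upper amplitude limit (stimulator units)


# Hypothetical sensory map: prosthetic sensor location -> mapped electrode.
SENSORY_MAP = {
    "index_tip": ElectrodeSite(12, "index fingertip", 20.0, 80.0),
    "thumb_tip": ElectrodeSite(31, "thumb tip", 15.0, 70.0),
}


def icms_command(sensor_location, normalized_force, contact_threshold=0.05):
    """Map one normalized sensor reading (0..1) to (electrode_id, amplitude).

    Returns None when the sensor has no mapped electrode or when the reading
    is below the contact threshold, so no stimulation is delivered.
    """
    site = SENSORY_MAP.get(sensor_location)
    if site is None or normalized_force < contact_threshold:
        return None
    # Clamp the reading so the amplitude never exceeds the per-electrode limit.
    force = min(max(normalized_force, 0.0), 1.0)
    amplitude = site.min_amp + force * (site.max_amp - site.min_amp)
    return site.electrode_id, amplitude
```

Clamping the amplitude to a per-electrode range is a design choice made here for safety in the sketch; a real system would take its limits from the clinical stimulation protocol.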

Protocol 2: Creating Artificial Motion Sensations

Objective: Generate the perception of smooth motion across the skin using patterned microstimulation.

Procedure:

- Identify Overlapping Zones: Identify pairs or clusters of electrodes whose evoked sensation locations overlap on the hand.

- Design Stimulation Patterns: Create stimulation sequences that activate electrodes in progressive patterns across the sensory map.

- Parameter Optimization: Systematically vary stimulation parameters (pulse frequency, current amplitude, timing between sequential activations) to maximize the perception of smooth, continuous motion.

- Psychophysical Validation: Have participants report the quality and continuity of motion sensations and adjust parameters accordingly.

- Object Interaction Testing: Implement motion patterns that correspond to real-world interactions, such as an object sliding between fingers or along the palm.
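One way to think about the Design Stimulation Patterns and Parameter Optimization steps is as a timing schedule in which each electrode along a path starts before the previous one has finished. The following is a minimal sketch under that assumption; the function names, the electrode identifiers, and the default burst and step durations are hypothetical and would need to be tuned psychophysically.

```python
def motion_schedule(electrode_path, burst_ms=200.0, onset_step_ms=100.0):
    """Return (electrode_id, onset_ms, offset_ms) tuples for a sweep.

    Electrodes are activated in the order given by electrode_path. Each burst
    lasts burst_ms and starts onset_step_ms after the previous one, so bursts
    overlap in time whenever onset_step_ms < burst_ms.
    """
    if burst_ms <= 0 or onset_step_ms <= 0:
        raise ValueError("burst_ms and onset_step_ms must be positive")
    return [
        (electrode, i * onset_step_ms, i * onset_step_ms + burst_ms)
        for i, electrode in enumerate(electrode_path)
    ]


def overlap_ms(schedule):
    """Temporal overlap between consecutive bursts (negative values are gaps)."""
    return [prev[2] - nxt[1] for prev, nxt in zip(schedule, schedule[1:])]
```

Varying `onset_step_ms` while holding `burst_ms` fixed gives one simple axis along which participants could rate the continuity of the perceived motion.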

Non-invasive Robotic Hand Control at Individual Finger Level

Application Objective: Enable real-time control of robotic hands at the individual finger level using non-invasive EEG signals derived from actual or imagined finger movements.

Background & Significance: Most non-invasive BCIs for robotic control operate at the limb level, creating an unnatural mapping between intention and action. Individual finger control represents a significant advance for restoring dexterous manipulation, particularly for stroke survivors and others with hand impairments [17]. The challenge lies in decoding finger-specific signals from EEG data, as finger representations in the motor cortex are small and highly overlapping.

Key Experimental Findings:

- Real-time Control Feasibility: Using deep learning decoders, researchers achieved 80.56% online decoding accuracy for two-finger tasks and 60.61% for three-finger tasks using motor imagery [17].

- Fine-tuning Enhancement: Model performance significantly improved through session-specific fine-tuning, demonstrating the importance of adaptation to individual users and sessions [17].

- Multiple Feedback Modalities: Participants successfully used both visual feedback (on-screen displays) and physical feedback (robotic finger movements) to improve control performance.

Protocol 3: EEG-based Individual Finger Decoding for Robotic Control

Objective: Decode individual finger movements from EEG signals to control a robotic hand in real time.

Procedure:

- Participant Preparation: Apply high-density EEG caps (64+ electrodes) following standard preparation procedures (skin abrasion, conductive gel).

- Experimental Paradigm:

  - Movement Execution (ME): Participants physically move individual fingers when cued.

  - Motor Imagery (MI): Participants imagine moving individual fingers without physical movement.

- Data Collection:

  - Present visual cues indicating which finger to move or imagine moving.

  - Record EEG data during movement preparation and execution/imagery phases.

  - Include adequate inter-trial rest periods to avoid fatigue.

- Decoder Training:

  - Train EEGNet (a compact convolutional neural network) on collected data.

  - Use leave-one-out cross-validation to assess model performance.

- Real-time Implementation:

  - Process EEG data in real-time using sliding windows (e.g., 200-500 ms).

  - Apply preprocessing (filtering, artifact removal) and feature extraction.

  - Generate classification outputs to control corresponding robotic fingers.

- Online Fine-tuning:

  - Collect additional data at the beginning of each session.

  - Fine-tune the base model with new session-specific data.

  - Implement majority voting across multiple time segments to stabilize outputs.

Troubleshooting:

- For poor classification accuracy, increase model capacity or enhance feature engineering.

- If real-time performance is laggy, optimize window size and computational efficiency.

- For participant fatigue, shorten session duration or increase break frequency.
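The sliding-window and majority-voting steps of Protocol 3 can be sketched independently of the classifier itself. In the example below, the per-window labels are assumed to come from some trained decoder (such as EEGNet), which is not shown; the helper names, the vote count, and the tie-breaking rule are illustrative assumptions rather than details from the cited study.

```python
from collections import Counter, deque


def sliding_windows(n_samples, window, step):
    """Yield (start, stop) sample indices of every full window in a recording."""
    for start in range(0, n_samples - window + 1, step):
        yield start, start + window


class MajorityVoter:
    """Stabilize a stream of per-window class labels by majority vote."""

    def __init__(self, n_votes=5):
        # Only the most recent n_votes labels take part in the vote.
        self.history = deque(maxlen=n_votes)

    def update(self, label):
        """Add the newest label and return the current majority label."""
        self.history.append(label)
        counts = Counter(self.history)
        best = max(counts.values())
        # On a tie, prefer the most recent label among the tied classes.
        for candidate in reversed(self.history):
            if counts[candidate] == best:
                return candidate
```

For example, at a hypothetical 1000 Hz sampling rate, `sliding_windows(1000, 400, 200)` produces 400 ms windows that advance by 200 ms, and a `MajorityVoter(3)` suppresses a single spurious label in an otherwise consistent stream. Larger vote counts give steadier output at the cost of added latency.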

Speech Neuroprosthetics for Communication Restoration

Application Objective: Decode speech attempts or inner speech from cortical activity to restore communication abilities in individuals with severe paralysis.

Background & Significance: For people with conditions like amyotrophic lateral sclerosis (ALS) or brainstem stroke that cause complete paralysis, conventional communication methods become impossible. Speech neuroprosthetics aim to decode intended speech directly from brain activity, potentially enabling rapid, natural communication [18]. Recent research has expanded from decoding attempted speech movements to exploring inner speech (completely imagined speech without movement), which could be less fatiguing and more comfortable for users.

Key Experimental Findings:

- High-Accuracy Decoding: BCIs can decode attempted speech with high accuracy using microelectrode arrays implanted in speech-related motor areas [18].

- Inner Speech Feasibility: Inner speech produces detectable, though weaker, neural patterns compared to attempted speech, demonstrating feasibility in principle [18].

- Privacy Protection: Password-protection systems can effectively prevent accidental decoding of unintended inner thoughts, addressing significant privacy concerns [18].

Protocol 4: Inner Speech Decoding for Communication BCIs

Objective: Decode internally imagined speech from neural signals for communication applications.

Procedure:

- Surgical Implantation: Implant microelectrode arrays (e.g., Utah arrays) in speech motor cortex areas using standard neurosurgical techniques.

- Data Collection Paradigm:

  - Attempted Speech: Participants try to actually speak words or phrases.

  - Inner Speech: Participants imagine speaking words or phrases without any movement.

- Neural Feature Extraction: Record neural activity patterns and extract relevant features (firing rates, local field potentials, high-frequency band power).

- Phoneme-Level Decoding: Train machine learning models to recognize patterns associated with phonemes - the smallest units of speech.

- Sentence Reconstruction: Implement language models to stitch decoded phonemes into coherent words and sentences.

- Privacy Safeguards:

  - For attempted speech systems: Train decoders to ignore inner speech patterns.

  - For inner speech systems: Implement password protection where users must imagine a specific passphrase before decoding begins.
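The password-protection safeguard can be viewed as a small state machine placed between the decoder and the output device. The sketch below assumes the decoder emits one word at a time; the `PassphraseGate` class, the optional lock word, and the example passphrase are hypothetical illustrations, not the mechanism used in the cited work.

```python
class PassphraseGate:
    """Suppress decoded words until a passphrase has been decoded in sequence."""

    def __init__(self, passphrase_words, lock_word=None):
        if not passphrase_words:
            raise ValueError("passphrase must contain at least one word")
        self.passphrase = list(passphrase_words)
        self.lock_word = lock_word   # optional word that re-locks the output
        self.unlocked = False
        self._recent = []            # most recent decoded words while locked

    def feed(self, decoded_word):
        """Return the word if output is unlocked, otherwise None."""
        if self.unlocked:
            if self.lock_word is not None and decoded_word == self.lock_word:
                self.unlocked = False
                self._recent = []
                return None
            return decoded_word
        # While locked, only track whether the passphrase has just been decoded.
        self._recent.append(decoded_word)
        self._recent = self._recent[-len(self.passphrase):]
        if self._recent == self.passphrase:
            self.unlocked = True
        return None
```

With a gate built as `PassphraseGate(["open", "sesame"], lock_word="lock")`, words decoded before the passphrase are discarded, the passphrase itself is never forwarded, and output stops again once the lock word is decoded.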

Basic Research Applications

Application Objective: Use neural decoding to understand fundamental principles of neural computation, information representation, and brain function.

Background & Significance: Beyond clinical applications, neural decoding serves as a powerful tool for basic neuroscience research. By analyzing what information can be decoded from neural populations and how decoding performance varies across brain regions, conditions, or time, researchers can infer how the brain represents and processes information [5] [3].

Key Research Applications:

- Information Content Analysis: Determine how much information neural activity contains about external variables and how this differs across brain areas [5].

- Neural Code Investigation: Test hypotheses about how information is encoded in neural activity by comparing hypothesis-driven decoders against machine learning benchmarks [5].

- Brain-Computer Alignment: Study relationships between artificial neural network representations and brain activity patterns to understand brain computation [6] [3].

Protocol 5: Using Decoding for Basic Neuroscience Research

Objective: Apply neural decoding methods to investigate fundamental questions about neural representation.

Procedure:

- Experimental Design: Design tasks that systematically vary stimuli or behaviors of interest.

- Neural Recording: Record neural activity using appropriate methods (e.g., electrophysiology, fMRI, EEG) during task performance.

- Decoder Selection: Choose decoding algorithms based on research question:

  - Linear decoders for interpretability

  - Modern ML methods (neural networks, gradient boosting) for maximum performance benchmarking

- Cross-Validation: Implement rigorous cross-validation to avoid overfitting and ensure generalizable results.

- Comparative Analysis:

  - Compare decoding performance across brain regions

  - Compare performance across experimental conditions

  - Analyze how decoding information evolves over time

- Interpretation: Carefully interpret results in the context of neural mechanisms, recognizing that high decoding performance doesn't necessarily imply direct causal involvement [5].
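To make the Cross-Validation and Comparative Analysis steps concrete, the sketch below compares a linear decoder's cross-validated R² across two simulated "regions". Everything here is an assumption for illustration: the data are synthetic (one region is tuned to the variable, the other is pure noise), the decoder is ordinary least squares, and the folds are contiguous blocks of trials.

```python
import numpy as np


def fit_linear_decoder(X, y):
    """Least-squares weights (with intercept) mapping features X to variable y."""
    Xb = np.column_stack([X, np.ones(len(X))])
    weights, *_ = np.linalg.lstsq(Xb, y, rcond=None)
    return weights


def predict(X, weights):
    return np.column_stack([X, np.ones(len(X))]) @ weights


def r_squared(y_true, y_pred):
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return 1.0 - ss_res / ss_tot


def cross_validated_r2(X, y, n_folds=5):
    """Mean held-out R^2 over contiguous folds; each fold is tested exactly once."""
    indices = np.arange(len(y))
    scores = []
    for test_idx in np.array_split(indices, n_folds):
        train_idx = np.setdiff1d(indices, test_idx)
        weights = fit_linear_decoder(X[train_idx], y[train_idx])
        scores.append(r_squared(y[test_idx], predict(X[test_idx], weights)))
    return float(np.mean(scores))


# Synthetic example: region_a is tuned to the stimulus, region_b is not.
rng = np.random.default_rng(0)
n_trials, n_neurons = 400, 20
stimulus = rng.normal(size=n_trials)
tuning = rng.normal(size=n_neurons)
regions = {
    "region_a": np.outer(stimulus, tuning) + rng.normal(size=(n_trials, n_neurons)),
    "region_b": rng.normal(size=(n_trials, n_neurons)),
}
region_scores = {name: cross_validated_r2(X, stimulus) for name, X in regions.items()}
```

In this toy setting the tuned region yields a high held-out R² while the untuned region stays near or below zero. As the Interpretation step notes, such a difference shows where information is available, not which region causally uses it.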

Signaling Pathways and Experimental Workflows

Diagram: BCI System Operational Workflow

Diagram: Information Flow in the Brain

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools and Materials for Neural Decoding Experiments

| Category | Specific Tool/Technology | Function/Purpose | Example Use Cases |
|---|---|---|---|
| Neural Signal Acquisition | Microelectrode arrays (Blackrock Neurotech) | Record neural activity with high spatial and temporal resolution | Intracortical recording for motor decoding and sensory feedback |
| Neural Signal Acquisition | High-density EEG systems | Non-invasive recording of electrical brain activity | Finger movement decoding, motor imagery studies |
| Neural Signal Acquisition | Microneedle scalp sensors | Minimally invasive recording with improved signal quality | Wearable BCIs for continuous use |
| Stimulation Technology | Intracortical microstimulation (ICMS) systems | Deliver precise electrical stimulation to neural tissue | Creating artificial tactile sensations in prosthetic limbs |
| Computational Tools | EEGNet (Convolutional Neural Network) | Decode neural signals from EEG data | Individual finger movement classification |
| Computational Tools | Gradient boosting ensembles | High-performance decoding of various neural signals | Motor decoding, comparison studies |
| Computational Tools | Large Language Models (LLMs) | Decode and generate linguistic content | Speech neuroprosthetics, language decoding |
| Experimental Platforms | Robotic hand systems | Provide physical manifestation of decoding outputs | Prosthetic control validation, rehabilitation training |
| Experimental Platforms | AR/VR interfaces | Create controlled visual environments for BCI tasks | Hands-free communication systems, rehabilitation |
| Data Analysis Tools | Hyperparameter optimization frameworks | Automatically optimize decoder parameters | Maximizing decoding performance across subjects |

Neural decoding represents a rapidly advancing field with transformative applications in prosthetics, communication restoration, and basic neuroscience research. The protocols and applications detailed in this document demonstrate the remarkable progress in decoding increasingly sophisticated neural representations - from individual finger movements to inner speech. Critical to this progress has been the adoption of modern machine learning methods, which consistently outperform traditional approaches. As neural interfaces become more refined and decoding algorithms more sophisticated, the potential for restoring function to people with disabilities continues to expand. Future directions will likely focus on improving long-term stability, enhancing decoding resolution, developing less invasive recording methods, and creating more adaptive systems that evolve with users' changing needs and abilities.

Understanding brain function requires tools that can capture neural activity across multiple spatial and temporal scales. Neural recording techniques are broadly categorized into invasive and non-invasive methods, each with distinct trade-offs in signal quality, spatial resolution, and temporal resolution [20] [21]. Invasive methods, such as Electrocorticography (ECoG) and recordings of spiking activity, involve surgical implantation of electrodes directly onto the brain surface or into neural tissue. Non-invasive methods, such as Electroencephalography (EEG) and functional Magnetic Resonance Imaging (fMRI), measure brain activity externally through scalp electrodes or hemodynamic responses [22]. The choice of technique is critical and depends on the specific application, balancing the need for high-fidelity signals against considerations of safety, user comfort, and ethical constraints [20]. Within the context of modern neural decoding research, selecting the appropriate recording modality forms the foundational step for building effective machine learning models that can interpret brain activity for both scientific inquiry and engineering applications like brain-machine interfaces [5] [2].

Characteristics of Neural Signal Types

The performance and applicability of neural recording techniques are defined by their key characteristics. The table below provides a quantitative comparison of the most common invasive and non-invasive methods.

Table 1: Comparison of Invasive and Non-Invasive Neural Recording Techniques

| Technique | Spatial Resolution | Temporal Resolution | Invasiveness | Recorded Signal Origin | Primary Applications |
|---|---|---|---|---|---|
| EEG | ~1-3 cm (Low) [21] | ~1 ms (Excellent) [21] | Non-invasive [22] | Scalp-recorded electrical potentials from synchronized postsynaptic activity of cortical pyramidal neurons [21] | Diagnosis of epilepsy/sleep disorders, cognitive science research, basic BCIs [22] |
| fMRI | ~1 mm³ (Good) [21] | ~1-2 seconds (Poor) [21] | Non-invasive [21] | Blood Oxygen Level Dependent (BOLD) response, an indirect correlate of neural activity [21] | Mapping cognitive functions, clinical neuroimaging, pre-surgical planning |
| ECoG | ~1 cm (Medium) | ~1-5 ms (Excellent) [21] | Invasive (subdural) [21] | Electrical potentials from the cortical surface [21] | Refractory epilepsy monitoring, high-performance BCIs [22] [21] |
| Spiking (Intracortical) | Single Neuron (Excellent) | <1 ms (Excellent) | Highly Invasive (intracortical) [22] | Action potentials from individual neurons or small neuronal populations [21] | Fundamental neuroscience research, high-dexterity neuroprosthetics [20] [22] |

These characteristics directly influence the suitability of each technique for neural decoding. Invasive techniques provide high spatial and temporal resolution, which is crucial for decoding fine-grained motor commands or sensory details [20]. For instance, intracortical spiking signals are the gold standard for controlling complex robotic arms in brain-machine interfaces [22]. Conversely, non-invasive techniques like EEG, while noisier and spatially smeared, are safer and more accessible, making them suitable for communication devices, neurofeedback, and studying large-scale brain dynamics [20] [22]. The following diagram illustrates the fundamental signaling pathways for these recording modalities.

Diagram 1: Neural Signal Pathways

Experimental Protocols for Neural Recording

Protocol: Simultaneous EEG-fMRI for Multimodal Decoding

This protocol outlines a procedure for collecting complementary neural datasets, relating non-invasive signals to underlying neural population codes, as explored in recent research [23].

- Objective: To acquire temporally precise (EEG) and spatially precise (fMRI) data from the same participant during an identical cognitive task, enabling a comprehensive analysis of brain dynamics.

- Materials:

  - MRI-safe high-density EEG system (e.g., 64-channel cap).

  - 3T or higher MRI scanner.

  - Stimulus presentation system compatible with the MRI environment.

- Procedure:

  - Participant Preparation: Fit the participant with an MRI-safe EEG cap. Apply electrolyte gel to achieve electrode impedances below 10 kΩ. Brief the participant on the task and safety procedures.

  - Stimulus Presentation: Present the experimental paradigm. For object decoding studies, this involves rapid serial visual presentation (RSVP) of images from distinct categories (e.g., animals, chairs, faces) [23]. Each image is displayed for 200-500 ms, followed by an inter-stimulus interval.

  - Data Acquisition:

    - fMRI: Acquire whole-brain BOLD images (e.g., T2*-weighted EPI sequence) with a TR of 2 seconds. A high-resolution T1-weighted anatomical scan should also be collected for co-registration.

    - EEG: Record continuous EEG data at a high sampling rate (≥1000 Hz) synchronized with the stimulus triggers and scanner clock pulses to facilitate artifact correction.

  - Data Preprocessing:

    - fMRI: Perform standard preprocessing steps: slice-time correction, realignment, co-registration to the anatomical scan, normalization to standard space (e.g., MNI), and spatial smoothing.

    - EEG: Process data offline to remove MRI-related artifacts (gradient switch and ballistocardiogram) using template subtraction or independent component analysis (ICA). Filter data (e.g., 0.1-40 Hz) and epoch from -100 ms to +600 ms relative to stimulus onset [23].

- Neural Decoding Application: Preprocessed data can be subjected to multivariate pattern analysis (MVPA). A machine learning classifier (e.g., a support vector machine or neural network) can be trained on the trial-by-trial EEG patterns or fMRI activation patterns to decode the object category being viewed [23]. The temporal generalization of decoding from EEG can be directly compared to the spatial localization from fMRI.
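The epoching step in the EEG preprocessing above (-100 ms to +600 ms around stimulus onset) comes down to index arithmetic on the continuous recording. The following sketch assumes the data are already artifact-corrected and filtered, stored as a channels-by-samples array, with event onsets given as sample indices. The function name and the pre-stimulus baseline subtraction are illustrative choices, not steps stated in the protocol.

```python
import numpy as np


def epoch_eeg(data, event_samples, sfreq, tmin=-0.1, tmax=0.6):
    """Cut continuous EEG (n_channels, n_samples) into stimulus-locked epochs.

    Returns an array of shape (n_epochs, n_channels, n_times). Events whose
    window would run past either end of the recording are skipped.
    """
    first = int(round(tmin * sfreq))   # negative offset for pre-stimulus samples
    last = int(round(tmax * sfreq))
    n_times = last - first + 1         # both endpoints are included
    epochs = []
    for event in event_samples:
        start, stop = event + first, event + last + 1
        if start < 0 or stop > data.shape[1]:
            continue
        segment = data[:, start:stop].astype(float)
        if first < 0:
            # Subtract the mean of the pre-stimulus interval from each channel.
            baseline = segment[:, :-first].mean(axis=1, keepdims=True)
            segment = segment - baseline
        epochs.append(segment)
    if not epochs:
        return np.empty((0, data.shape[0], n_times))
    return np.stack(epochs)
```

At a 1000 Hz sampling rate each epoch contains 701 samples, and the resulting three-dimensional array is the usual input shape for trial-by-trial pattern classifiers.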

Protocol: ECoG Recording for High-Fidelity Signal Acquisition

This protocol details the methodology for recording cortical surface signals in a clinical setting, typically with patients undergoing monitoring for epilepsy surgery [21].

- Objective: To obtain high signal-to-noise ratio neural data with superior spatial and temporal resolution for decoding complex cognitive or motor representations.

- Materials:

  - Subdural grid or strip electrodes (e.g., 64-256 contact arrays).

  - Clinical-grade, high-resolution amplifier and data acquisition system.

- Procedure:

  - Clinical Procedure: Electrode implantation is performed by a neurosurgeon. The placement of electrode grids is determined entirely by clinical needs for localizing epileptogenic zones.

  - Experimental Task: After a post-operative recovery period, participants can perform tasks. These may include motor imagery, actual limb movement, speech, or passive sensory stimulation, depending on the research question and cortical coverage.

  - Data Acquisition: Record ECoG signals from all electrode contacts at a high sampling rate (typically ≥1000 Hz). The signal is often recorded in a wide frequency band (e.g., 0.1-500 Hz) to allow for offline analysis of both low-frequency activity and high-gamma (70-150 Hz) activity, the latter being a robust correlate of local cortical activation.

  - Data Preprocessing:

    - Visually inspect data and exclude channels with excessive noise or epileptiform activity.

    - Re-reference signals, commonly to a common average reference.

    - Filter data into frequency bands of interest for analysis (e.g., high-gamma band).

- Neural Decoding Application: The high-gamma power from ECoG signals provides a spatially precise and temporally dynamic feature set for machine learning decoders. For motor BMIs, a regression model (e.g., Kalman filter or neural network) can be trained to map high-gamma activity from motor cortex to continuous kinematic parameters (e.g., hand position or velocity) [5] [2].
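The re-referencing and high-gamma extraction steps can be sketched in a few lines. The example below uses a synthetic four-channel signal and estimates band power from a simple FFT periodogram. This is a deliberate simplification chosen for brevity: band-pass filtering followed by a Hilbert envelope is a common alternative when a time-resolved estimate is needed. The function names and the test signal are assumptions for illustration.

```python
import numpy as np


def common_average_reference(data):
    """Subtract the across-channel mean from every channel (n_channels, n_samples)."""
    return data - data.mean(axis=0, keepdims=True)


def band_power(data, sfreq, band=(70.0, 150.0)):
    """Mean periodogram power per channel inside the given frequency band."""
    n_samples = data.shape[1]
    freqs = np.fft.rfftfreq(n_samples, d=1.0 / sfreq)
    power = np.abs(np.fft.rfft(data, axis=1)) ** 2 / n_samples
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return power[:, in_band].mean(axis=1)


# Synthetic example: channel 0 carries 100 Hz activity, the others 10 Hz,
# and all channels share 60 Hz noise that re-referencing should remove.
sfreq = 1000.0
t = np.arange(1000) / sfreq
shared_noise = np.sin(2 * np.pi * 60 * t)
raw = np.stack(
    [np.sin(2 * np.pi * 100 * t) + shared_noise]
    + [np.sin(2 * np.pi * 10 * t) + shared_noise for _ in range(3)]
)
car = common_average_reference(raw)
high_gamma = band_power(car, sfreq)
```

Note that common average referencing spreads a fraction of each channel's activity onto the others, which is why the channels without 100 Hz activity still show a small amount of high-gamma power in this toy example.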

Machine Learning Decoding Workflows

The process of translating raw neural data into decoded variables follows a structured pipeline. Modern machine learning methods, including neural networks and gradient boosting, have been shown to significantly outperform traditional linear approaches like Wiener and Kalman filters in terms of decoding accuracy [5] [2]. The workflow for integrating these methods is outlined below.

Diagram 2: Neural Decoding Workflow

Best Practices for Machine Learning in Neural Decoding

- When to Use Machine Learning: ML is most beneficial when the primary research aim is to maximize predictive performance, such as in engineering applications like brain-machine interfaces [5] [2]. It is also critical for benchmarking, as a high-performing ML decoder provides a practical reference level (an approximate ceiling on achievable performance) against which to compare simpler, hypothesis-driven models [2].

- Model Selection and Testing:

  - Test Multiple Algorithms: Begin by evaluating a suite of methods, as their performance is task-dependent. Neural networks and ensemble methods (e.g., gradient boosted trees) often achieve top performance on neural data from motor cortex, somatosensory cortex, and hippocampus [5].

  - Rigorous Validation: Always use cross-validation to assess model performance and prevent overfitting. Strictly separate training, validation, and test datasets.

  - Hyperparameter Optimization: Systematically tune model hyperparameters (e.g., learning rate, network architecture, regularization strength) using the validation set to optimize decoding accuracy [5] [2].

- Cautions in Interpretation:

  - Mechanistic Insight: A high-performing ML decoder (e.g., a neural network) is a powerful predictive tool, but its internal transformations are not a direct model of brain function. Its "black box" nature makes it difficult to draw biological conclusions [2].

  - Information vs. Causation: High decoding accuracy confirms that information about a variable is present in the neural signal but does not imply that the recorded brain area is the primary processor of that variable. Causal interpretations require additional experimental designs [2].
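The separation of training, validation, and test data described above can be illustrated with a regularization search. The sketch below is one possible arrangement under stated assumptions: the data are synthetic, the split is chronological (a common precaution for time series, chosen here rather than prescribed by the text), the decoder is ridge regression, and the candidate `alphas` are arbitrary.

```python
import numpy as np


def chronological_split(n_samples, train_frac=0.7, val_frac=0.15):
    """Split sample indices into contiguous train, validation, and test blocks."""
    n_train = int(round(n_samples * train_frac))
    n_val = int(round(n_samples * val_frac))
    idx = np.arange(n_samples)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def ridge_fit(X, y, alpha):
    """Closed-form ridge regression weights (no intercept)."""
    return np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)


def mse(y_true, y_pred):
    return float(np.mean((y_true - y_pred) ** 2))


def select_alpha(X, y, alphas, split):
    """Choose alpha on the validation block, then score once on the test block."""
    train, val, test = split
    val_errors = {
        a: mse(y[val], X[val] @ ridge_fit(X[train], y[train], a)) for a in alphas
    }
    best = min(val_errors, key=val_errors.get)
    weights = ridge_fit(X[train], y[train], best)
    return best, val_errors, mse(y[test], X[test] @ weights)


# Synthetic neural features and a linearly related target variable.
rng = np.random.default_rng(1)
X = rng.normal(size=(1000, 30))
y = X @ rng.normal(size=30) + rng.normal(size=1000)
alphas = [0.01, 1.0, 100.0, 10000.0]
split = chronological_split(len(y))
best_alpha, val_errors, test_mse = select_alpha(X, y, alphas, split)
```

The key point of the design is that the test block is touched exactly once, after the hyperparameter has been fixed, so the reported test error is not biased by the search.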

The Scientist's Toolkit: Research Reagents & Materials

Table 2: Essential Materials for Neural Recording and Decoding Experiments

| Item | Function/Description | Example Use Case |
|---|---|---|
| High-Density EEG Cap | A headset with multiple electrodes (e.g., 64-128 channels) for recording scalp potentials. | Non-invasive brain-computer interfaces, cognitive event-related potential (ERP) studies. |
| MRI-Safe EEG System | Specially designed equipment that functions safely and effectively inside an MRI scanner. | Simultaneous EEG-fMRI studies for correlating electrical and hemodynamic brain activity [23]. |
| Subdural Electrode Grid | A sterile, flexible array of electrodes (e.g., 8x8 grid) implanted on the cortical surface. | Clinical ECoG recording for epilepsy monitoring and high-resolution BCIs [21]. |
| Intracortical Microelectrode | A fine, penetrating electrode (e.g., Utah array) for recording action potentials from individual neurons. | Decoding intended movement signals from motor cortex for controlling robotic limbs [20] [22]. |
| Data Acquisition System | Amplifier and hardware for digitizing and synchronizing analog neural signals with task stimuli. | Essential for all electrophysiological recordings (EEG, ECoG, Spiking). |
| Stimulus Presentation Software | Software (e.g., Psychophysics Toolbox) for precise control of visual/auditory stimuli and task flow. | Presenting controlled experimental paradigms during neural data collection [23]. |
| Neural Decoding Code Package | Open-source software libraries providing implemented decoding algorithms. | Rapid implementation and comparison of machine learning decoders (e.g., [5]). |

Information theory provides a powerful framework for quantifying how neural activity represents and transmits information about sensory stimuli, cognitive states, and motor outputs. This application note explores the intersection of information theory and neural decoding, with a specific focus on best practices for applying machine learning to decipher the neural code. We outline standardized protocols for decoding experiments, present quantitative performance comparisons across methodologies, and detail essential research reagents. Within the broader thesis on neural decoding with machine learning, this document serves as a practical guide for implementing rigorous, reproducible, and high-performance decoding pipelines in neuroscience research and therapeutic development.

The central nervous system can be conceptualized as an information processing network, where neurons encode features of the external world and internal states into patterns of electrical activity. Neural decoding refers to the process of inferring these stimuli or states from recorded neural signals, a critical capability for both basic neuroscience and applied brain-computer interfaces (BCIs) [3]. The synergy between information theory and modern machine learning (ML) has dramatically accelerated progress in this field, moving beyond traditional linear models to leverage deep learning and other non-linear approaches that can capture the complex statistical relationships inherent in neural population data [5].

This document outlines practical protocols and applications for neural decoding, grounded in information-theoretic principles. We focus on providing a structured methodology for researchers, covering the main decoding paradigms, performance metrics, and experimental workflows. The subsequent sections provide a detailed breakdown of decoding tasks, quantitative benchmarks, step-by-step protocols for implementation, and a curated list of research tools.

Neural Decoding Paradigms and Performance Metrics

Neural decoding tasks can be categorized based on the nature of the target variable being decoded. The choice of task dictates the experimental design, data processing pipeline, and evaluation metrics.

Table 1: Taxonomy of Neural Decoding Tasks and Associated Metrics

| Decoding Task | Definition | Common Modalities | Primary Evaluation Metrics | Application Context |
|---|---|---|---|---|
| Stimuli Recognition [6] | Identifying a specific stimulus from a predefined set based on evoked neural activity. | EEG, MEG, fMRI, ECoG | Accuracy: Percentage of correctly classified instances. | Basic neuroscience investigations of sensory processing. |
| Brain Recording Translation [6] | Decoding open-vocabulary, continuous language or semantic content from brain signals during perception. | ECoG, fMRI | BLEU, ROUGE, BERTScore: Measures of semantic similarity to a reference text. | Communication BCIs, studying language representation. |
| Speech Neuroprosthesis [6] [7] | Decoding intended (inner) or attempted speech from spontaneous neural activation patterns. | ECoG (high-density), intracranial arrays | Word Error Rate (WER), Character Error Rate (CER): Measures of sequence transcription accuracy. | Restorative communication BCIs for paralyzed patients. |
| Motor Decoding [5] | Predicting kinematic parameters (e.g., hand velocity, grip force) from motor cortex activity. | Utah arrays, ECoG | Correlation Coefficient (r), Normalized Root Mean Square Error (NRMSE): Measures of regression performance. | Control of prosthetic limbs, robotic arms, and computer cursors. |
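Of the metrics listed in Table 1, Word Error Rate and Character Error Rate are the least self-explanatory, so a short sketch may help. Both are assumed here to follow the usual definition: the edit distance (substitutions, insertions, deletions) between the decoded and reference sequences, divided by the reference length. The function names are illustrative.

```python
def edit_distance(reference, hypothesis):
    """Levenshtein distance between two sequences (lists or strings)."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_item in enumerate(reference, start=1):
        current = [i]
        for j, hyp_item in enumerate(hypothesis, start=1):
            current.append(
                min(
                    previous[j] + 1,                           # deletion
                    current[j - 1] + 1,                        # insertion
                    previous[j - 1] + (ref_item != hyp_item),  # substitution
                )
            )
        previous = current
    return previous[-1]


def word_error_rate(reference_text, decoded_text):
    """Word-level edit distance divided by the number of reference words."""
    reference_words = reference_text.split()
    if not reference_words:
        raise ValueError("reference text must contain at least one word")
    return edit_distance(reference_words, decoded_text.split()) / len(reference_words)


def character_error_rate(reference_text, decoded_text):
    """Character-level edit distance divided by the reference length."""
    if not reference_text:
        raise ValueError("reference text must not be empty")
    return edit_distance(reference_text, decoded_text) / len(reference_text)
```

For instance, decoding "i want water" when the reference is "i want some water" counts as one deletion out of four reference words, giving a WER of 0.25. Because insertions are counted, WER can exceed 1.0 for very poor outputs.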

Quantitative performance varies significantly across decoding methods. In the benchmarks summarized in Table 2, modern machine learning approaches outperform the traditional Wiener and Kalman filter baselines on both datasets.

Table 2: Comparative Performance of Decoding Algorithms on Representative Neural Datasets [5]

| Decoding Algorithm | Monkey Motor Cortex (Velocity Decoding, R²) | Rat Hippocampus (Position Decoding, R²) | Computational Complexity | Notes |
|---|---|---|---|---|
| Wiener Filter | 0.54 ± 0.03 | 0.41 ± 0.04 | Low | Traditional baseline; linear method. |
| Kalman Filter | 0.58 ± 0.03 | 0.45 ± 0.04 | Medium | Dynamic state-space model. |
| XGBoost (Gradient Boosting) | 0.62 ± 0.02 | 0.51 ± 0.03 | Medium-High | High performance, good interpretability. |
| Recurrent Neural Network (RNN) | 0.65 ± 0.02 | 0.55 ± 0.03 | High | Excels with temporal sequences. |
| Convolutional Neural Network (CNN) | 0.64 ± 0.02 | 0.53 ± 0.03 | High | Effective for spatial feature extraction. |
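For readers less familiar with the metric, the R² values in Table 2 are assumed here to follow the standard coefficient-of-determination definition. The table does not state how they were computed, so this is an assumption rather than a description of the cited benchmark.

```latex
R^2 = 1 - \frac{\sum_{t=1}^{T} \left( y_t - \hat{y}_t \right)^2}
               {\sum_{t=1}^{T} \left( y_t - \bar{y} \right)^2},
\qquad
\bar{y} = \frac{1}{T} \sum_{t=1}^{T} y_t
```

Here \( y_t \) is the true value of the decoded variable in time bin \( t \), \( \hat{y}_t \) is the decoder's prediction, and \( T \) is the number of held-out time bins. A value of 1 indicates perfect prediction, 0 matches a decoder that always outputs the mean, and negative values indicate performance worse than that. For multidimensional outputs such as two-dimensional velocity, one common convention (again an assumption here) is to compute R² per dimension and average.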

Experimental Protocols for Key Decoding Paradigms

Protocol: Decoding Inner Speech for Communication BCIs

Objective: To decode inner (covert) speech from non-invasive or invasive neural signals for use in a brain-computer interface [7].

Materials: See Table 3 (Essential Research Reagents for Neural Decoding with Machine Learning) for a list of essential research reagents.

Signal Acquisition:

- Fit the subject with a high-density EEG cap (e.g., 128+ channels) or, in clinical settings, use implanted ECoG grids.

- Record neural activity while the subject is cued to imagine (not vocalize) speaking specific words or syllables from a closed set (e.g., "left," "right," "up," "down").

- Duration: Collect a minimum of 50 trials per imagined word to ensure sufficient data for model training.

Data Preprocessing:

- Apply a band-pass filter (e.g., 0.5-100 Hz for EEG) to remove drift and high-frequency noise.

- Remove artifacts from eye blinks and muscle movement using algorithms like Independent Component Analysis (ICA).

- For EEG, re-reference signals to a common average reference.

- Segment data into epochs time-locked to the cue instructing the subject to begin inner speech.

Feature Engineering:

- Extract time-frequency features (e.g., power in specific frequency bands like Mu (8-12 Hz) and Beta (13-30 Hz)) using Morlet wavelets or similar methods.

- Alternatively, use raw time-series data as input for deep learning models, which can learn relevant features automatically.
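The time-frequency option in Feature Engineering can be sketched with a hand-written complex Morlet wavelet. This is a minimal illustration for a single-channel epoch: the number of cycles, the 1 Hz frequency grid, the unit-energy normalization, and the function names are assumptions, and a dedicated EEG library would normally be preferred in practice.

```python
import numpy as np


def morlet_power(signal, sfreq, freq, n_cycles=7.0):
    """Time-resolved power of a 1-D signal at one frequency via a Morlet wavelet."""
    sigma_t = n_cycles / (2.0 * np.pi * freq)          # temporal width in seconds
    t = np.arange(-4.0 * sigma_t, 4.0 * sigma_t + 1.0 / sfreq, 1.0 / sfreq)
    wavelet = np.exp(2j * np.pi * freq * t) * np.exp(-t ** 2 / (2.0 * sigma_t ** 2))
    wavelet /= np.sqrt(np.sum(np.abs(wavelet) ** 2))   # unit-energy normalization
    analytic = np.convolve(signal, wavelet, mode="same")
    return np.abs(analytic) ** 2


def band_features(epoch, sfreq, bands):
    """Average wavelet power over time and over 1 Hz steps within each band."""
    features = {}
    for name, (low, high) in bands.items():
        freqs = np.arange(low, high + 1.0, 1.0)
        features[name] = float(
            np.mean([morlet_power(epoch, sfreq, f).mean() for f in freqs])
        )
    return features


# Synthetic 2-second epoch containing a 10 Hz oscillation, sampled at 250 Hz.
sfreq = 250.0
t = np.arange(500) / sfreq
epoch = np.sin(2.0 * np.pi * 10.0 * t)
features = band_features(epoch, sfreq, {"mu": (8.0, 12.0), "beta": (13.0, 30.0)})
```

As expected for a 10 Hz test signal, the Mu-band feature dominates the Beta-band feature. With real data, the same function would be applied per channel and the resulting values concatenated into a feature vector.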

Model Training & Validation:

- Model Choice: Train a Convolutional Neural Network (CNN) to classify the epoch of neural data into one of the imagined words. CNNs are effective at capturing spatial and temporal patterns in neural signals [7].

- Validation: Use a stratified k-fold cross-validation (e.g., k=5) to ensure performance is not biased by specific data splits. Report average test accuracy across all folds.
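Stratification means that every fold preserves the class proportions of the full dataset, which matters when only about 50 trials per word are available. The following pure-Python sketch shows the idea; it is an illustrative implementation with a hypothetical function name, and established libraries provide equivalent, better-tested utilities.

```python
import random
from collections import defaultdict


def stratified_kfold(labels, k=5, seed=0):
    """Yield (train_indices, test_indices) with classes balanced across k folds."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class's trials round-robin so every fold gets an equal share.
        for position, index in enumerate(indices):
            folds[position % k].append(index)

    for i in range(k):
        test = sorted(folds[i])
        train = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield train, test
```

With four imagined words and 50 trials each, every test fold contains 10 trials per word, and the reported accuracy is the mean over the five held-out folds. Any preprocessing that is fitted to data (for example, normalization statistics) should be fitted on the training indices only.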

Decoding & Output:

- The output of the trained CNN classifier is the predicted intended word.

- This output can be used to control an external device, such as a spelling interface on a computer screen.

Protocol: Translating Perceived Speech from Cortical Activity

Objective: To reconstruct continuous language (words or sentences) a subject is listening to, from evoked brain activity [6].

Materials: Requires high signal-to-noise ratio data, typically from ECoG or fMRI.

Stimulus Presentation & Signal Acquisition:

- Present auditory stimuli (spoken sentences or stories) to the subject via headphones.

- Simultaneously record neural activity using ECoG from auditory and language-related cortical areas (e.g., Superior Temporal Gyrus) or with fMRI.

Stimulus Representation & Alignment:

- Convert the auditory speech stimuli into numerical representations using a hierarchical approach:

  - Low-Level: Mel-Frequency Cepstral Coefficients (MFCCs) or speech envelope.

  - High-Level: Embeddings from a pre-trained Large Language Model (LLM) to capture semantic content.

- Temporally align the neural data with the speech representations, accounting for the hemodynamic response delay in fMRI.

Encoding Model Training:

- Train a model (e.g., a linear regression or a recurrent neural network) to predict the neural activity based on the speech representations. This establishes how speech features are encoded in the brain.

Inversion for Decoding:

- Use the trained encoding model in an inverse fashion. Given a novel segment of neural data, the model searches for the speech stimulus that would most likely have generated the observed activity.

- This can be framed as a sequence-to-sequence translation problem, where the source sequence is the neural recording and the target sequence is the text or speech.
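One simple way to realize this inversion is a candidate search: predict the neural response to each candidate stimulus with the encoding model and keep the candidate whose prediction best matches the recording. The sketch below assumes a known linear encoding matrix `W` and a small random candidate set, both hypothetical stand-ins for a fitted model and a real stimulus vocabulary.

```python
import numpy as np

def decode_by_search(observed, candidates, W):
    """Return the index of the candidate whose predicted response
    correlates best with the observed response, plus all scores.

    observed:   (n_channels,) neural response to an unknown stimulus.
    candidates: (n_candidates, n_features) stimulus feature vectors.
    W:          (n_features, n_channels) encoding weights (stimulus -> neural).
    """
    predicted = candidates @ W                       # (n_candidates, n_channels)
    scores = np.array([np.corrcoef(p, observed)[0, 1] for p in predicted])
    return int(np.argmax(scores)), scores

rng = np.random.default_rng(1)
n_features, n_channels, n_candidates = 16, 40, 10
W = rng.normal(size=(n_features, n_channels))
candidates = rng.normal(size=(n_candidates, n_features))

# Simulate a recording evoked by candidate 7, corrupted by noise.
true_idx = 7
observed = candidates[true_idx] @ W + rng.normal(scale=0.5, size=n_channels)

best_idx, scores = decode_by_search(observed, candidates, W)
print(best_idx, np.round(scores, 2))
```

With continuous language the candidate set is far too large to enumerate, so practical systems usually let a language model propose a limited number of likely continuations and score only those.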

Evaluation:

- Evaluate the decoded text against the ground-truth transcript using metrics from machine translation, such as BLEU score, which measures n-gram overlap, and BERTScore, which captures semantic similarity [6].
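The n-gram overlap at the core of BLEU can be illustrated in pure Python. The sketch below computes only the clipped (modified) n-gram precision; it omits the brevity penalty and the geometric averaging over n-gram orders that full BLEU applies, so reported results should come from an established implementation. The example sentences are invented.

```python
from collections import Counter

def ngrams(tokens, n):
    """Return the list of n-grams (as tuples) in a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def modified_precision(reference, hypothesis, n):
    """Clipped n-gram precision, the building block of BLEU.

    Each hypothesis n-gram is credited at most as many times as it
    occurs in the reference.
    """
    hyp_counts = Counter(ngrams(hypothesis, n))
    ref_counts = Counter(ngrams(reference, n))
    total = sum(hyp_counts.values())
    if total == 0:
        return 0.0
    clipped = sum(min(count, ref_counts[gram]) for gram, count in hyp_counts.items())
    return clipped / total

reference = "the cat sat on the mat".split()
decoded = "the cat sat on a mat".split()

p1 = modified_precision(reference, decoded, 1)   # 5 of 6 unigrams match
p2 = modified_precision(reference, decoded, 2)   # 3 of 5 bigrams match
print(p1, p2)
```

Because this measure rewards exact word matches only, a decoded paraphrase with the right meaning can still score poorly, which is why a semantic metric such as BERTScore is reported alongside it.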

Workflow Visualization

The following diagram illustrates the core computational workflow for a modern neural decoding pipeline, integrating the protocols described above.

The Scientist's Toolkit: Research Reagent Solutions

Successful neural decoding experiments rely on a suite of hardware, software, and data processing tools. The following table details key components of a modern neural decoding pipeline.

Table 3: Essential Research Reagents for Neural Decoding with Machine Learning

| Category | Item / Solution | Function / Description | Example Tools / Models |
|---|---|---|---|
| Signal Acquisition | Electroencephalography (EEG) | Non-invasive recording of electrical activity from the scalp; high temporal resolution. | BioSemi, BrainVision, EGI Geodesic systems |
| Signal Acquisition | Electrocorticography (ECoG) | Invasive recording from the cortical surface; higher signal-to-noise ratio than EEG. | Ad-Tech Medical, Integra LifeSciences grids |
| Signal Acquisition | Functional MRI (fMRI) | Non-invasive measurement of hemodynamic activity; high spatial resolution. | Siemens, Philips, GE scanners |
| Data Preprocessing | Artifact Removal | Algorithms to remove non-neural noise (e.g., from eye blinks, muscle movement). | Independent Component Analysis (ICA) |
| Data Preprocessing | Signal Filtering | Isolates frequency bands of interest and removes line noise. | Band-pass, notch filters |
| Machine Learning Frameworks | Deep Learning Libraries | Flexible frameworks for building and training custom neural network decoders. | TensorFlow, PyTorch |
| Machine Learning Frameworks | Traditional ML & Gradient Boosting | High-performance libraries for tree-based models and ensembles. | XGBoost, scikit-learn |
| Specialized Models | Pre-trained Language Models (LLMs) | Provide powerful semantic representations of language stimuli for encoding models. | BERT, GPT models [6] |
| Specialized Models | Convolutional Neural Networks (CNNs) | Effective for decoding from data with spatial structure (e.g., ECoG grid, EEG topography). | Custom architectures [7] [5] |
| Specialized Models | Recurrent Neural Networks (RNNs) | Ideal for modeling temporal dependencies in neural and behavioral time series. | LSTM, GRU [24] [5] |
| Evaluation & Analysis | Neural Decoding Code Package | Standardized code for comparing multiple decoding algorithms on neural datasets. | Kording Lab Neural Decoding Toolbox [5] |
| Evaluation & Analysis | Metric Calculators | Code for computing standardized performance metrics (BLEU, WER, etc.). | NLTK, SacreBLEU |

Building Your Decoder: Machine Learning Methods and Real-World Implementation

In neural decoding, the core objective is to reconstruct stimuli, intentions, or behaviors from measured neural activity, forming a critical foundation for both scientific discovery and translational applications like Brain-Computer Interfaces (BCIs) [25] [5]. The choice between traditional Machine Learning (ML) and modern approaches, including Deep Learning and Large Language Models (LLMs), is pivotal and hinges on specific research goals, data modalities, and practical constraints. Traditional ML offers interpretability and efficiency with structured data, while modern AI provides superior power for complex, unstructured neural data but at the cost of transparency and computational resources [26] [27]. This Application Note provides a structured comparison and detailed protocols to guide researchers in selecting and implementing the appropriate model for their neural decoding research.

Core Comparative Analysis: Traditional Machine Learning vs. Modern AI

The fundamental distinction lies in their approach to problem-solving. Traditional ML requires humans to manually identify and extract relevant features from raw data before a model can learn from them. In contrast, Modern AI (especially deep learning) automates this feature extraction process, learning complex patterns directly from raw or minimally processed data [28] [29].

Structured Comparison of Approaches

Table 1: High-Level Comparison between Traditional ML and Modern AI for Neural Decoding

| Feature | Traditional Machine Learning | Modern AI (Deep Learning/LLMs) |
|---|---|---|
| Core Philosophy | Learns patterns from manually extracted features [29]. | Learns hierarchical features directly from raw data [28]. |
| Data Requirements | Works well with structured, tabular data or smaller datasets (<10,000 examples) [26] [28]. | Requires large, often unstructured datasets (100,000+ examples) [26] [30]. |
| Interpretability | High. Models like linear regression are often transparent and explainable [26] [31]. | Low (Black Box). Complex models are difficult to interpret, necessitating Explainable AI (XAI) techniques [26] [31]. |
| Computational Cost | Relatively low; can be run on standard CPUs [26]. | Very high; typically requires specialized hardware (GPUs/TPUs) [26] [28]. |
| Best-Suited Data Types | Numerical, categorical, or pre-processed feature vectors that fit in spreadsheets [26] [27]. | Raw, high-dimensional data like text, images, audio, and neural time series [26] [27]. |
| Typical Neural Decoding Use Cases | Initial benchmarking, hypothesis testing with linear mappings, decoding with limited data channels [5]. | Decoding complex perceptions, speech reconstruction from neural signals, multimodal data integration [6] [25]. |

Performance Comparison in Neural Decoding

Empirical evidence demonstrates that modern methods can significantly outperform traditional linear approaches in decoding accuracy across various brain areas.

Table 2: Empirical Performance Comparison of Decoding Models

| Brain Area / Task | Traditional ML Model (Performance) | Modern ML Model (Performance) | Key Finding |
|---|---|---|---|
| General Performance | Wiener Filter, Kalman Filter | Neural Networks, Gradient Boosting | Modern methods (NNs, ensembles) "significantly outperform traditional approaches" in decoding spiking activity from motor cortex, somatosensory cortex, and hippocampus [5]. |
| Speech Decoding (Non-invasive MEG) | Linear Baselines | CNN-Transformer Hybrids | Deep learning models consistently outperform linear baselines in tasks like object category decoding and semantic language reconstruction [25]. |
| Stimulus Recognition | Linear Classifiers (e.g., SVM) | Deep Neural Networks | For complex visual or semantic stimuli, DNNs leverage hierarchical processing for higher accuracy, though SVMs with careful feature engineering can be competitive in specific tasks [29]. |

Experimental Protocols for Neural Decoding

Protocol 1: Implementing a Traditional ML Decoding Pipeline

This protocol uses a linear model to decode a continuous variable (e.g., movement velocity) from neural spiking activity.

Objective: To establish an interpretable baseline for decoding an external variable from neural population activity.

Materials & Reagents:

- Neural Data: Extracted spike counts or local field potential (LFP) features from multiple channels over time bins.

- Behavioral Data: Time-synchronized continuous variable (e.g., hand position, velocity).

- Software: Python with Scikit-learn, NumPy, SciPy.

Procedure:

Data Preprocessing:

- Bin neural spike counts into non-overlapping time windows (e.g., 50 ms).

- Smooth the behavioral data if necessary and align it with the neural data bins.

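- Z-score neural features and the behavioral variable to standardize their distributions, using means and standard deviations estimated from the training data only so that no test-set information leaks into the model.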
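The binning and standardization steps above can be sketched with NumPy. The helper names `bin_spikes` and `zscore`, the firing rates, and the recording length are assumptions made for illustration; only the 50 ms bin width comes from the protocol.

```python
import numpy as np

def bin_spikes(spike_times, t_start, t_stop, bin_size):
    """Count spikes per neuron in non-overlapping bins.

    spike_times: list of 1-D arrays of spike times in seconds, one per neuron.
    Returns an array of shape (n_bins, n_neurons).
    """
    n_bins = int(round((t_stop - t_start) / bin_size))
    edges = t_start + bin_size * np.arange(n_bins + 1)
    counts = [np.histogram(st, bins=edges)[0] for st in spike_times]
    return np.stack(counts, axis=1)

def zscore(x, mean=None, std=None):
    """Z-score the columns of x.

    Pass the training-set mean and std to apply the same scaling to
    validation or test data.
    """
    mean = x.mean(axis=0) if mean is None else mean
    std = x.std(axis=0) if std is None else std
    std = np.where(std == 0, 1.0, std)   # avoid division by zero for silent units
    return (x - mean) / std, mean, std

rng = np.random.default_rng(0)
# Three synthetic neurons firing at 5, 20, and 40 Hz for 10 s (assumed values).
spike_times = [np.sort(rng.uniform(0, 10, size=int(rate * 10))) for rate in (5, 20, 40)]

counts = bin_spikes(spike_times, t_start=0.0, t_stop=10.0, bin_size=0.05)
z, mu, sigma = zscore(counts)
print(counts.shape)
```

Returning the mean and standard deviation from `zscore` makes it straightforward to reuse the training-set statistics on later data.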

Model Training & Validation:

- Use Ridge Regression (linear regression with L2 regularization) to prevent overfitting.

- Split data into training (70%), validation (15%), and test (15%) sets. For time-series data, preserve temporal continuity by splitting into contiguous blocks rather than shuffling individual samples.

- Train the model on the training set to learn the mapping: Neural Activity → Behavioral Variable.

- Use the validation set to tune the regularization hyperparameter (alpha).
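The training and tuning steps can be sketched with scikit-learn's `Ridge`. The data are synthetic and the grid of alpha values is an arbitrary assumption; the point is the chronological 70/15/15 split and the use of the validation set alone for choosing alpha.

```python
import numpy as np
from sklearn.linear_model import Ridge

rng = np.random.default_rng(0)
n_bins, n_neurons = 2000, 30
X = rng.normal(size=(n_bins, n_neurons))               # stand-in for z-scored spike counts
w_true = rng.normal(size=n_neurons)
y = X @ w_true + rng.normal(scale=2.0, size=n_bins)    # e.g. hand velocity

# Chronological 70/15/15 split: no shuffling, so later data are never seen in training.
i_train = n_bins * 70 // 100
i_val = n_bins * 85 // 100
X_train, y_train = X[:i_train], y[:i_train]
X_val, y_val = X[i_train:i_val], y[i_train:i_val]
X_test, y_test = X[i_val:], y[i_val:]

# Choose the alpha with the best validation R^2 (grid values are assumptions).
alphas = [0.01, 0.1, 1.0, 10.0, 100.0]
val_scores = [Ridge(alpha=a).fit(X_train, y_train).score(X_val, y_val) for a in alphas]
best_alpha = alphas[int(np.argmax(val_scores))]

# Refit with the chosen alpha and evaluate on the test set exactly once.
model = Ridge(alpha=best_alpha).fit(X_train, y_train)
test_r2 = model.score(X_test, y_test)
print(f"best alpha = {best_alpha}, test R^2 = {test_r2:.3f}")
```

The fitted `model.coef_` vector holds one weight per neuron, which is what makes this baseline easy to inspect.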

Model Evaluation:

- Apply the finalized model to the held-out test set.

- Quantify performance using the coefficient of determination (R²) and the Pearson Correlation Coefficient (PCC) between the predicted and actual behavioral variables.
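The two metrics answer different questions, which a short sketch makes concrete. R² is computed here as 1 - SS_res / SS_tot and penalizes errors in scale and offset, whereas PCC only measures how well the shapes of the two traces agree. The toy arrays are invented for illustration.

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return float(1.0 - ss_res / ss_tot)

def pearson_r(y_true, y_pred):
    """Pearson correlation coefficient between two 1-D arrays."""
    return float(np.corrcoef(y_true, y_pred)[0, 1])

y_true = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y_scaled = 0.5 * y_true        # correct shape, wrong gain

r2 = r_squared(y_true, y_scaled)
pcc = pearson_r(y_true, y_scaled)
print(r2, pcc)
```

In this example the prediction has the wrong gain, so R² drops to 0.25 while the correlation remains 1 up to floating-point rounding. Reporting both helps separate timing or shape errors from calibration errors.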

Troubleshooting:

- Low R²: The relationship may be non-linear; consider a modern ML approach. Ensure features and labels are properly aligned in time.

- Overfitting: Increase the regularization strength (alpha) if performance on the validation set is much worse than on the training set.

Protocol 2: Implementing a Modern AI (Deep Learning) Decoding Pipeline

This protocol uses a Recurrent Neural Network (RNN) to decode a sequence (e.g., spoken words from neural signals).

Objective: To decode sequential or complex, non-linear relationships from high-dimensional neural data.

Materials & Reagents:

- Neural Data: High-density neural recordings (e.g., ECoG, EEG, or MEG signals).

- Stimulus Data: Corresponding sequence labels (e.g., text transcript of spoken words).

- Software: Python with PyTorch or TensorFlow, GPU acceleration.

Procedure:

Data Preprocessing & Feature Engineering:

- Filter the raw neural data to relevant frequency bands (e.g., high-gamma for ECoG).

- Extract sequence chunks of neural data and align them with target output sequences (e.g., phonemes or words).

- Convert text labels into numerical tokens.
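A minimal sketch of the filtering and tokenization steps follows, using SciPy for a zero-phase band-pass filter and a plain dictionary for the vocabulary. The 70-150 Hz band, the filter order, the special tokens, and the helper names are assumptions for illustration; appropriate values depend on the recording setup.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, hilbert

def high_gamma_envelope(data, fs, band=(70.0, 150.0), order=4):
    """Band-pass each channel and return its analytic amplitude.

    data: array (n_channels, n_samples); fs: sampling rate in Hz.
    """
    sos = butter(order, band, btype="bandpass", fs=fs, output="sos")
    filtered = sosfiltfilt(sos, data, axis=-1)       # zero-phase filtering
    return np.abs(hilbert(filtered, axis=-1))

def build_vocab(transcripts):
    """Map each word to an integer id; ids 0-2 are reserved special tokens."""
    vocab = {"<pad>": 0, "<sos>": 1, "<eos>": 2}
    for sentence in transcripts:
        for word in sentence.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab

def encode(sentence, vocab):
    """Convert a sentence to token ids wrapped in <sos> ... <eos>."""
    words = sentence.lower().split()
    return [vocab["<sos>"]] + [vocab[w] for w in words] + [vocab["<eos>"]]

fs = 1000.0
t = np.arange(0, 2.0, 1.0 / fs)
# Two synthetic channels: a 100 Hz (in-band) and a 10 Hz (out-of-band) sinusoid.
data = np.stack([np.sin(2 * np.pi * 100 * t), np.sin(2 * np.pi * 10 * t)])
env = high_gamma_envelope(data, fs)

vocab = build_vocab(["hello world", "hello brain"])
tokens = encode("hello brain", vocab)
print(env[:, 500:1500].mean(axis=1).round(2), tokens)
```

The envelope stays near 1 for the in-band channel and near 0 for the out-of-band one, which is a quick sanity check that the filter passes the intended band.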

Model Architecture & Training:

- Implement an encoder-decoder RNN architecture (e.g., using LSTM or GRU layers).

- The encoder RNN processes the input sequence of neural features.

- The decoder RNN generates the output sequence (e.g., text) from the encoder's state.

- Use cross-entropy loss and train with the Adam optimizer.

- Employ teacher forcing during training to stabilize learning.
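The architecture and training loop described above can be sketched in PyTorch. This is a deliberately small illustration on random tensors, not a tuned decoder: the class name `Seq2SeqDecoder`, the layer sizes, the learning rate, and the number of steps are all assumptions, and a real pipeline would feed token sequences wrapped in start and end markers.

```python
import torch
import torch.nn as nn

class Seq2SeqDecoder(nn.Module):
    """GRU encoder over neural features, GRU decoder over output tokens."""

    def __init__(self, n_features, vocab_size, hidden=64, emb=32):
        super().__init__()
        self.encoder = nn.GRU(n_features, hidden, batch_first=True)
        self.embed = nn.Embedding(vocab_size, emb)
        self.decoder = nn.GRU(emb, hidden, batch_first=True)
        self.out = nn.Linear(hidden, vocab_size)

    def forward(self, neural, tokens_in):
        # The encoder's final hidden state summarizes the neural sequence.
        _, h = self.encoder(neural)
        # Teacher forcing: the decoder is fed the ground-truth previous tokens.
        dec_out, _ = self.decoder(self.embed(tokens_in), h)
        return self.out(dec_out)                      # (batch, T_out, vocab_size)

torch.manual_seed(0)
batch, t_in, n_features, vocab_size, t_out = 8, 50, 16, 12, 6
neural = torch.randn(batch, t_in, n_features)           # synthetic neural features
tokens = torch.randint(3, vocab_size, (batch, t_out))   # synthetic target token ids

model = Seq2SeqDecoder(n_features, vocab_size)
optimizer = torch.optim.Adam(model.parameters(), lr=1e-2)
loss_fn = nn.CrossEntropyLoss()

losses = []
for step in range(30):
    optimizer.zero_grad()
    # Decoder inputs are tokens[:-1]; targets are the same sequence shifted by one.
    logits = model(neural, tokens[:, :-1])
    loss = loss_fn(logits.reshape(-1, vocab_size), tokens[:, 1:].reshape(-1))
    loss.backward()
    optimizer.step()
    losses.append(loss.item())

print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

At inference time no ground-truth tokens are available, so the decoder must instead be run one step at a time on its own previous prediction. That mismatch with teacher-forced training is one reason validation should use free-running decoding.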

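Model Evaluation:

- Apply the trained model to a held-out test set and score the decoded sequences with sequence-level metrics such as Word Error Rate (WER) or BLEU score [6] [25].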
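Word Error Rate (WER), listed among the evaluation metrics in Table 3 below, can be sketched in a few lines as a word-level edit distance normalized by the reference length. The function name and example sentences are illustrative assumptions.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference length,
    computed as a word-level Levenshtein distance."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] is the edit distance between the ref prefix seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr[j] = min(prev[j] + 1,          # deletion
                          curr[j - 1] + 1,      # insertion
                          prev[j - 1] + cost)   # substitution or match
        prev = curr
    return prev[-1] / len(ref)

# One substitution (brown -> red) and one insertion (jumps) over 4 reference words.
wer = word_error_rate("the quick brown fox", "the quick red fox jumps")
print(wer)
```

Because insertions count as errors, WER can exceed 1.0 when the decoded sequence is much longer than the reference.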

Troubleshooting:

- Model fails to learn: Check gradient flow, reduce learning rate, and ensure data preprocessing is correct.

- Overfitting: Implement dropout layers, use L2 regularization, and employ early stopping on the validation set performance.
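Early stopping, mentioned in the last item, needs only a small amount of bookkeeping. The sketch below is framework-agnostic; the class name, the patience value, and the validation-loss curve are hypothetical.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, val_loss, epoch):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best, self.best_epoch, self.bad_epochs = val_loss, epoch, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience

# Hypothetical validation-loss curve: improves, then starts to overfit.
val_losses = [1.00, 0.80, 0.65, 0.60, 0.62, 0.66, 0.70, 0.75]

stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.step(loss, epoch):
        stopped_at = epoch
        break

print(stopped_at, stopper.best_epoch, stopper.best)
```

In practice the model weights from `best_epoch` are saved and restored after stopping, so the final model is the one with the lowest validation loss rather than the last one trained.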

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools and Models for Neural Decoding Research

| Tool Category | Specific Examples | Function & Application in Neural Decoding |
|---|---|---|
| Traditional ML Models | Linear/Ridge Regression, Wiener Filter, Kalman Filter [5] | Provides a simple, interpretable baseline for decoding continuous variables (e.g., kinematics). |
| Traditional ML Models | Support Vector Machines (SVM) [28] [29] | Effective for classification tasks (e.g., stimulus category) with structured feature sets. |
| Modern Deep Learning Models | Convolutional Neural Networks (CNNs) [25] | Process spatially structured neural data (e.g., from electrode arrays) or spectrograms of audio. |
| Modern Deep Learning Models | Recurrent Neural Networks (RNNs/LSTMs) [25] | Model temporal dependencies in neural time series for sequence decoding (e.g., speech). |
| Modern Deep Learning Models | Transformer Models [6] [25] | Capture long-range context in neural signals, useful for semantic decoding and language reconstruction. Leverage self-attention to weigh the importance of different neural signals over time. |
| Evaluation Metrics | R², Pearson Correlation Coefficient (PCC) [3] [25] | Assess decoding accuracy for continuous variables. |
| Evaluation Metrics | Word Error Rate (WER), BLEU Score [6] [25] | Standard metrics for evaluating the performance of speech or text decoding pipelines. |

Decision Framework and Visual Workflow

The choice between traditional and modern approaches is not a matter of which is universally better, but which is the most appropriate tool for the specific research question and context [30].

Interpretation of the Workflow

This workflow provides a systematic guide for researchers:

- Path A (Traditional ML) is triggered by data and constraints that favor simplicity and interpretability. It is the recommended starting point for well-defined problems with limited data [26] [30] [5].

- Path B (Modern AI) is necessary when dealing with the complexity and scale of data that defies manual feature engineering. Its power comes with significant computational and data demands [26] [28].

- The iterative loop emphasizes that model selection is rarely linear. If a traditional model fails to meet performance targets, it may indicate the need for a more complex modern approach. Conversely, if a modern model is overfitting, incorporating traditional feature engineering might be beneficial.