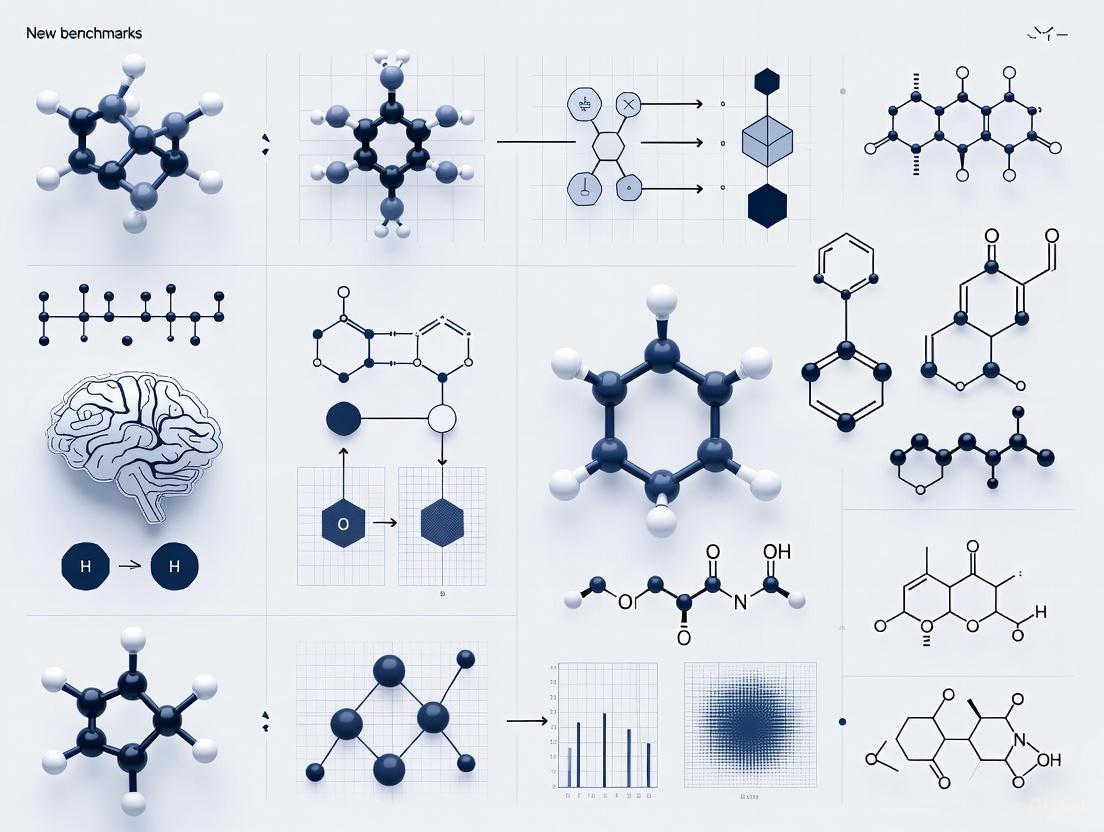

Benchmarking Predictive Coding Networks: New Tools and Benchmarks for Scalable, Biologically-Plausible AI in Drug Discovery

This article explores the latest advancements in benchmarking Predictive Coding Networks (PCNs), a class of biologically-plausible neural networks.

Abstract

This article explores the latest advancements in benchmarking Predictive Coding Networks (PCNs), a class of biologically-plausible neural networks. With the recent introduction of specialized tools like the PCX library, the field is tackling long-standing challenges of scalability and efficiency. We provide a comprehensive overview for researchers and drug development professionals, covering the foundational theory of PCNs, new methodological frameworks for their application, solutions for troubleshooting optimization, and rigorous validation against traditional models. The content synthesizes current research to highlight how robust PCN benchmarks can accelerate their adoption in critical areas like target validation and drug-target interaction (DTI) prediction, potentially leading to more efficient and cost-effective therapeutic development.

The Scalability Challenge in Predictive Coding: Why New Benchmarks Are Needed

Predictive coding (PC) has emerged as a dominant theoretical framework in neuroscience, proposing that the brain is fundamentally a prediction machine that actively anticipates incoming sensory inputs rather than passively processing them [1] [2]. This theory posits that the brain constantly maintains and updates internal generative models of the environment, using top-down connections to convey predictions and bottom-up connections to signal the mismatch between these predictions and actual sensory input—the prediction error [1] [3].

Originally developed to explain neural phenomena in sensory processing, particularly in the visual cortex, predictive coding provides a unifying principle for cortical function across domains, including language and interoception [1] [4]. The core computational objective is to minimize prediction error, which can be achieved either by updating internal models (perceptual learning) or by acting to change sensory input (active inference) [1]. This framework has since transcended its neuroscientific origins to inspire a class of biologically plausible machine learning algorithms that offer alternatives to traditional backpropagation-trained neural networks [5] [2].

This technical guide examines predictive coding from its theoretical foundations in neuroscience to its implementation in artificial neural networks, framing the discussion within the context of establishing new benchmarks for predictive coding network research. We synthesize recent experimental evidence, detail computational methodologies, and provide quantitative comparisons to equip researchers with the tools necessary to advance this rapidly evolving field.

Theoretical Foundations and Neural Evidence

Core Theoretical Framework

Predictive coding conceptualizes the brain as a hierarchical inference system organized to minimize surprise or free energy [1] [2]. The core architecture consists of:

- Top-down predictive signals: Generative models at higher hierarchical levels attempt to predict activities in lower levels.

- Bottom-up prediction errors: The discrepancy between actual input and top-down predictions is propagated upward to update internal models.

- Precision weighting: The brain estimates the reliability or predictability of sensory signals, weighting prediction errors accordingly—a process potentially linked to attention [1].

This framework inverts the classical view of perception as a bottom-up process, suggesting that perception is primarily driven by top-down predictions, with sensory inputs only shaping perception to the extent that they generate prediction errors [1].

Hierarchical Processing in the Cortex

The predictive coding architecture is instantiated in the brain as a cortical hierarchy, with different levels processing predictions and prediction errors at varying temporal and spatial scales [4]. A key study analyzing fMRI data from 304 participants listening to speech found that frontoparietal cortices predict higher-level, longer-range, and more contextual representations compared to temporal cortices [4]. This research demonstrated that enhancing deep language models with multi-timescale predictions improves their alignment with brain activity, revealing a hierarchical organization where higher-order areas generate predictions spanning longer temporal ranges (up to 8 words ahead, approximately 3.15 seconds) [4].

Table: Hierarchical Organization of Predictive Signals in Human Cortex During Speech Processing

| Cortical Region | Prediction Timescale | Representation Level | Key Function |
|---|---|---|---|
| Prefrontal Cortex | Longer-range (~8 words) | High-level, contextual | Predictive integration over extended contexts |
| Frontoparietal Cortices | Medium to long-range | Contextual semantic | Integration of meaning across sentences |
| Temporal Cortices | Short to medium-range | Syntactic & lexical | Local structure and word-level prediction |
| Auditory Cortex | Immediate | Acoustic-phonetic | Low-level speech sound processing |

Neural Signatures of Predictive Coding

Empirical support for predictive coding comes from various neural response phenomena:

Reduced BOLD signals for predictable stimuli: fMRI studies show decreased activity in sensory areas when stimuli are predictable, consistent with "explaining away" of predicted input [3]. For example, Alink et al. (2010) found lower BOLD responses in V1 for visual stimuli presented at predictable versus unpredictable time points [3].

Mismatch responses: Neural populations show characteristic responses to violated expectations, though the interpretation of these signals remains debated [6]. Some studies report null findings for prediction error signals in passive viewing paradigms, with error-like responses emerging primarily during active tasks [6].

Non-classical receptive field effects: The Rao and Ballard (1999) model explained how predictive coding accounts for phenomena like extra-classical receptive field effects, where a neuron's response to a stimulus in its receptive field is modulated by contextual information outside it [1] [6].

The following diagram illustrates the core hierarchical predictive coding circuitry and information flow:

Figure 1: Hierarchical Predictive Coding Circuit. Top-down predictions flow downward, while bottom-up prediction errors flow upward, with each level attempting to explain away activity at the level below.

Implementation in Artificial Neural Networks

From Biological Principles to Machine Learning

Translating predictive coding theory into artificial neural networks involves creating systems where:

- Hierarchical generative models attempt to predict inputs to lower levels

- Local weight updates minimize prediction error without backpropagation through time

- Dual populations of neurons represent predictions and prediction errors [5] [2]

This approach offers potential advantages over standard deep learning, including greater biological plausibility, local learning rules, and inherent capacity for unsupervised representation learning [5].

Predictive Coding Network Architecture

A standard predictive coding network (PCN) consists of \( L \geq 1 \) layers of latent variables, with each layer attempting to predict the state of the layer below [2]. The core components include:

- Weights \( \mathbf{W}^{(l)} \) from layer \( l+1 \) to layer \( l \)

- Preactivations \( \mathbf{a}^{(l)} = \mathbf{W}^{(l)} \mathbf{x}^{(l+1)} \)

- Predictions \( \hat{\mathbf{x}}^{(l)} = f^{(l)}(\mathbf{a}^{(l)}) \), where \( f^{(l)} \) is often a nonlinear function

- Prediction errors \( \boldsymbol{\varepsilon}^{(l)} = \mathbf{x}^{(l)} - \hat{\mathbf{x}}^{(l)} \)

The network minimizes the total squared prediction error, or energy: \( \mathcal{L} = \frac{1}{2} \sum_{l=0}^{L-1} \|\boldsymbol{\varepsilon}^{(l)}\|^2 \) [2].
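The definitions above can be sketched in a few lines of NumPy. This is only an illustration: the layer sizes, the tanh nonlinearity, the random initialization, and the helper name `pcn_energy` are assumptions made for the example and are not taken from any cited implementation.

```python
import numpy as np

def pcn_energy(xs, Ws, f=np.tanh):
    """Return (energy, errors) for activities xs[0..L] and weights Ws[0..L-1].

    Ws[l] maps layer l+1 to layer l, i.e. layer l+1 predicts layer l.
    """
    errors = []
    for l, W in enumerate(Ws):
        a = W @ xs[l + 1]              # preactivation a^(l)
        x_hat = f(a)                   # prediction of layer l
        errors.append(xs[l] - x_hat)   # prediction error eps^(l)
    energy = 0.5 * sum(float(e @ e) for e in errors)
    return energy, errors

rng = np.random.default_rng(0)
sizes = [6, 4, 3]  # assumed sizes: layer 0 (data) up to layer L = 2
xs = [rng.normal(size=n) for n in sizes]
Ws = [rng.normal(scale=0.5, size=(sizes[l], sizes[l + 1]))
      for l in range(len(sizes) - 1)]

energy, errors = pcn_energy(xs, Ws)
print(f"energy = {energy:.4f}")
```

When every layer exactly equals the prediction it receives from the layer above, all errors vanish and the energy is zero; any mismatch contributes a non-negative term.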

Algorithmic Variants and Performance

Recent research has produced several PC-inspired algorithms with varying biological plausibility and performance characteristics:

Table: Comparison of Predictive Coding-Inspired Neural Network Models

| Model/Algorithm | Key Innovation | Plausibility | Performance | Key Reference |
|---|---|---|---|---|
| PredNet | Combines CNN with LSTM and autoregressive prediction | Medium | State-of-the-art in video prediction | Lotter et al. [5] |
| Forward-Forward Algorithm | Replaces backpropagation with two forward passes | Medium | Comparable to backprop on simple tasks | Hinton (2022) [5] |
| Predictive Coding Light (PCL) | Spiking neural network that suppresses predictable spikes | High | Reproduces V1 receptive fields; classification | [7] |
| Whittington & Bogacz | Approximates backpropagation using local updates | Medium | Matches backprop on MNIST | [2] |

Experimental comparisons show that PC-inspired models, especially locally trained predictive models, exhibit key PC-like behaviors (mismatch responses, formation of priors, learning of semantic information) better than supervised or untrained recurrent neural networks [5]. These models also demonstrate that activity regularization evokes mismatch response-like effects, suggesting it may serve as a proxy for the energy-saving principles of PC [5].

Experimental Protocols and Methodologies

Evaluating Predictive Coding Signatures in Artificial Networks

Gütlin and Auksztulewicz (2025) established a rigorous protocol for assessing whether PC-inspired algorithms reproduce hallmark features of predictive processing [5]. Their methodology provides a template for benchmarking PC networks:

1. Mismatch Response Assessment

- Protocol: Train RNNs with PC-inspired objectives, then measure response amplification to unexpected versus expected inputs.

- Stimuli: Sequences with occasional deviants from established patterns.

- Metrics: Difference in activation strength between predicted and unpredicted stimuli.

- Key Finding: PC-inspired models show stronger mismatch responses than supervised models, with activity regularization enhancing this effect [5].

2. Prior Formation Testing

- Protocol: Examine how networks build internal representations after exposure to structured inputs.

- Method: Analyze hidden layer representations before and after training on datasets with statistical regularities.

- Result: PC models develop more structured internal representations that reflect environmental statistics [5].

3. Semantic Learning Evaluation

- Protocol: Assess whether networks learn meaningful feature representations without explicit labels.

- Approach: Use learned representations for downstream classification tasks.

- Outcome: PC models learn semantically rich representations supporting zero-shot generalization [5].
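As a rough illustration of the mismatch response metric from step 1, the sketch below computes the difference in mean activation strength between deviant and standard trials. The function name `mismatch_response`, the use of mean absolute activation as "activation strength", and the synthetic data are assumptions for this example, not the protocol used in [5].

```python
import numpy as np

def mismatch_response(activations, is_deviant):
    """Mean activation strength on deviant trials minus that on standard trials.

    activations: array of shape (n_trials, n_units) with unit responses.
    is_deviant:  boolean array of shape (n_trials,) marking unexpected stimuli.
    """
    activations = np.asarray(activations, dtype=float)
    is_deviant = np.asarray(is_deviant, dtype=bool)
    strength = np.abs(activations).mean(axis=1)  # per-trial activation strength
    return float(strength[is_deviant].mean() - strength[~is_deviant].mean())

# Synthetic stand-in for recorded network activity: every tenth trial is a
# deviant, and deviant responses are artificially doubled.
rng = np.random.default_rng(0)
is_deviant = np.arange(200) % 10 == 9
acts = rng.normal(size=(200, 32))
acts[is_deviant] *= 2.0

mmr = mismatch_response(acts, is_deviant)
print(f"mismatch response = {mmr:.3f}")
```

A positive value indicates stronger responses to unexpected inputs; comparing this quantity across training objectives is one simple way to operationalize the comparison described above.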

The experimental workflow for this comprehensive evaluation is summarized below:

Figure 2: Predictive Coding Model Evaluation Workflow. Comprehensive assessment protocol for comparing PC-inspired models against supervised approaches and biological benchmarks.

Predictive Coding Light: A Neuromorphic Implementation

The PCL network exemplifies a biologically constrained PC implementation [7]:

Network Architecture:

- Input: Event camera data with ON/OFF brightness events

- Simple cell layer: Detects basic visual features

- Complex cell layer: Builds position-invariant representations

- Inhibitory connections: Short- and long-range lateral inhibition plus top-down inhibition

Training Protocol:

- Learning rule: Spike-timing-dependent plasticity (STDP)

- Inhibitory STDP: Learns to suppress predictable spikes

- Dataset: Natural images for feature development

- Evaluation: Sinusoidal grating responses and classification tasks

Key Findings:

- Reproduces simple and complex cell receptive fields found in V1

- Exhibits surround suppression, orientation-tuned suppression, and cross-orientation suppression

- Achieves energy efficiency through predictable spike suppression [7]

Essential Computational Tools for Predictive Coding Research

Table: Quantitative Analysis Tools for Predictive Coding Research

| Tool/Platform | Primary Function | Relevance to PC Research | Key Features |
|---|---|---|---|
| PyTorch/TensorFlow | Deep learning framework | Implementing PCN architectures | Automatic differentiation, GPU acceleration |
| SPSS | Statistical analysis | Analyzing behavioral and neural data | Comprehensive statistical procedures, user-friendly interface |
| R/RStudio | Statistical computing | Data analysis and visualization | Extensive packages for neuroscience, reproducible research |
| MATLAB | Numerical computing | Neural data analysis and modeling | Signal processing toolbox, simulation capabilities |
| MAXQDA/NVivo | Qualitative data analysis | Coding neuroimaging metadata | AI-assisted coding, mixed methods support |

Experimental Paradigms for Human and Animal Studies

Sequence Learning Tasks (e.g., Solomon & Kohn study):

- Stimuli: Oriented gratings in predictable sequences with occasional deviants

- Measures: Neural response (fMRI, EEG, electrophysiology) to expected vs. unexpected stimuli

- Species: Human and non-human primates

- Key Consideration: Active vs. passive viewing conditions affect results [6]

Natural Speech Listening (e.g., Caucheteux et al. study):

- Stimuli: Audiobook narratives (4.6 hours total)

- Participants: 304 individuals

- Imaging: fMRI during passive listening

- Analysis: Linear mapping between deep language model activations and brain responses [4]
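The linear-mapping analysis in the speech paradigm can be illustrated with a small ridge-regression encoding model. This is a sketch on synthetic data only: the array shapes, the regularization strength, and the helper names `ridge_fit` and `brain_score` are assumptions, and the actual pipeline in [4] involves additional steps not shown here.

```python
import numpy as np

def ridge_fit(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha I)^-1 X^T Y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_features), X.T @ Y)

def brain_score(X_train, Y_train, X_test, Y_test, alpha=1.0):
    """Per-voxel Pearson correlation between predicted and held-out responses."""
    W = ridge_fit(X_train, Y_train, alpha)
    Y_pred = X_test @ W
    Yp = Y_pred - Y_pred.mean(axis=0)
    Yt = Y_test - Y_test.mean(axis=0)
    denom = np.linalg.norm(Yp, axis=0) * np.linalg.norm(Yt, axis=0)
    return (Yp * Yt).sum(axis=0) / denom

# Synthetic stand-ins: X plays the role of language-model activations,
# Y the role of voxel responses generated by a noisy linear map.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 20))
W_true = rng.normal(size=(20, 5))
Y = X @ W_true + 0.5 * rng.normal(size=(300, 5))

scores = brain_score(X[:200], Y[:200], X[200:], Y[200:])
print(np.round(scores, 3))
```

Fitting on one split and scoring on another keeps the correlation an out-of-sample measure, which is what makes such scores comparable across models.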

Event-Based Vision Paradigm (for PCL networks):

- Stimuli: Natural images from event-based cameras

- Measures: Spike patterns, receptive field properties, classification accuracy

- Evaluation: Comparison to biological V1 response properties [7]

The convergence of neuroscientific theory and machine learning implementation has positioned predictive coding as a foundational framework for understanding brain function and developing more biologically plausible artificial intelligence. Current evidence demonstrates that predictive coding networks can capture essential computational principles of neural processing while offering practical advantages for unsupervised representation learning [5] [7].

Moving forward, establishing new benchmarks for predictive coding research requires:

- Standardized evaluation protocols that assess both functional performance and biological plausibility

- Multi-scale validation linking computational models to neural data across different measurement modalities

- Energy efficiency metrics that account for both computational and biological constraints

- Cross-species comparisons to identify conserved predictive processing principles

As predictive coding continues to bridge neuroscience and artificial intelligence, it promises not only to unravel the computational bases of human cognition but also to inspire the next generation of energy-efficient, robust machine learning systems [5] [4] [7]. The frameworks, methodologies, and resources outlined in this technical guide provide a foundation for researchers to advance these complementary goals.

Backpropagation (BP) is the foundational algorithm that powers modern deep learning, enabling the training of sophisticated artificial intelligence systems including large language models. However, BP faces significant biological plausibility and hardware efficiency limitations, as it is energy-intensive and unlikely to be implemented in biological brains [8]. Predictive coding (PC), a brain-inspired computational framework, has emerged as a promising alternative that relies on local updates and predictive processes rather than global error propagation. Despite theoretical advantages, PC networks (PCNs) have historically struggled to match BP's performance in large-scale applications, creating a significant scalability bottleneck that has limited their practical adoption [9]. Recent research has identified this scalability problem as one of the most important open challenges in the field, galvanizing community efforts to bridge this performance gap [10] [11].

The core hypothesis of predictive coding originates from neuroscience, proposing that the brain computes predictions of observed input and compares these predictions to actual received input. The difference between prediction and reality (prediction error) drives learning through locally computed updates, requiring only local information and potentially enabling more efficient hardware implementations [12]. While this framework shows considerable promise for creating more biologically plausible and energy-efficient AI systems, its practical implementation has revealed fundamental scalability limitations that this whitepaper examines in detail.

Fundamental Scalability Limitations in Predictive Coding

Architectural and Computational Bottlenecks

Predictive coding networks encounter several fundamental limitations when scaled to deeper architectures. The primary issue stems from exponential decay of feedback signals during the iterative inference process. As errors propagate from the output layer back through multiple hierarchical layers, feedback signals diminish rapidly, resulting in vanishing updates for early layers [13]. This problem is compounded by the sequential dependency of PC's inference steps, which creates a computational bottleneck. Whereas backpropagation computes gradients in a single backward pass, PC requires multiple iterations of "guess-and-check" where neurons predict each other's activities and adjust their own activities to improve future predictions [14].

Another critical limitation identified in recent benchmarking efforts is the energy concentration problem. Research has demonstrated that in deep PCNs, energy becomes concentrated in the final layers, with the energy in the last layer being orders of magnitude larger than in the input layer. This imbalance persists even after performing multiple inference steps and creates exponentially small gradients as network depth increases, severely hampering training effectiveness [15]. The relationship between learning rates and this energy imbalance shows that while smaller learning rates lead to better performance, they simultaneously exacerbate the energy concentration problem, creating a difficult optimization landscape [15].

Comparative Performance Analysis

Table 1: Performance Comparison Between Predictive Coding and Backpropagation on Various Architectures and Datasets

| Architecture | Dataset | PC Accuracy | BP Accuracy | Performance Gap | Key Limitations Observed |
|---|---|---|---|---|---|
| VGG-7 | CIFAR-10 | Comparable to BP | Baseline | Minimal | PC matches BP performance on medium-depth networks [15] |
| VGG-7 | CIFAR-100 | Comparable to BP | Baseline | Minimal | PC competitive on complex datasets with medium architectures [11] |
| 9-Layer CNN | CIFAR-10 | Decreasing | Increasing | Significant | Performance degradation emerges with depth [11] |
| ResNet-18 | CIFAR-10 | ~65% | ~90% | Substantial | ~25 percentage-point accuracy drop in deeper residual networks [15] |
| 100+ Layer Networks | Simple Tasks | Previously Untrainable | Strong Performance | Critical | Historical inability to train very deep PCNs [9] |

The performance degradation in deeper networks highlights a fundamental divergence between PC and BP scaling properties. While backpropagation-enabled networks typically improve in performance with increased depth (up to a point), PC networks exhibit a troubling inverse relationship where additional layers degrade performance [15]. This represents a critical bottleneck that has prevented PC from competing with BP in large-scale settings and has recently been posed as a central challenge for the research community [9].

Recent Breakthroughs in Scaling Predictive Coding Networks

μPC: A Solution for Very Deep Networks

A significant breakthrough in scaling PCNs came with the development of μPC, a parameterization method based on Depth-μP that enables stable training of 100+ layer networks. This approach addresses key pathologies in standard PCNs that made deep networks practically untrainable. Through extensive analysis of PCN scaling behavior, researchers identified several instabilities that emerge with increasing depth, including gradient pathologies and activity divergence [9].

The μPC framework provides two crucial advantages for deep network training: First, it enables stable training of very deep (up to 128-layer) residual networks on classification tasks with competitive performance compared to current benchmarks. Second, it facilitates zero-shot transfer of both weight and activity learning rates across different network widths and depths, significantly reducing the need for extensive hyperparameter tuning [9]. This represents a substantial step forward in bridging the scalability gap between PC and BP.
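To give some intuition for why depth-aware parameterization matters, the sketch below compares forward signal norms in a deep linear residual stack with and without a depth-dependent branch multiplier. The 1/sqrt(L) multiplier is the scaling usually associated with Depth-μP; whether μPC applies exactly this rule, along with the helper name `residual_forward_norm`, the width, and the linear blocks, are assumptions for illustration and not the method of [9].

```python
import numpy as np

def residual_forward_norm(depth, width=64, branch_scale=1.0, seed=0):
    """Output norm of a linear residual stack x <- x + branch_scale * (W @ x)."""
    rng = np.random.default_rng(seed)
    x = rng.normal(size=width)
    x /= np.linalg.norm(x)  # start from a unit-norm input
    for _ in range(depth):
        W = rng.normal(scale=1.0 / np.sqrt(width), size=(width, width))
        x = x + branch_scale * (W @ x)
    return float(np.linalg.norm(x))

depth = 128
unscaled = residual_forward_norm(depth, branch_scale=1.0)
scaled = residual_forward_norm(depth, branch_scale=1.0 / np.sqrt(depth))
print(f"unscaled branches: {unscaled:.3e}")
print(f"1/sqrt(L) branches: {scaled:.3e}")
```

Without the multiplier, each block roughly doubles the squared norm, so activities blow up with depth; with it, the norm stays of order one regardless of how many blocks are stacked.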

Direct Kolen-Pollack Predictive Coding (DKP-PC)

Another innovative approach addressing PC's scalability limitations is DKP-PC, which simultaneously tackles both feedback delay and exponential decay problems. This method incorporates learnable feedback connections from the output layer to all hidden layers, establishing direct pathways for error transmission [13]. The theoretical improvement is substantial, reducing error propagation time complexity from O(L) to O(1), where L is network depth, enabling parallel parameter updates and significantly enhancing computational efficiency [13].

Table 2: Recent Algorithmic Improvements in Predictive Coding Scalability

| Method | Core Innovation | Theoretical Improvement | Empirical Results | Applicable Scope |
|---|---|---|---|---|
| μPC | Depth-μP parameterization | Enables 100+ layer training | Competitive performance on simple tasks with little tuning | Feedforward and residual networks [9] |
| DKP-PC | Learnable direct feedback connections | O(1) error propagation vs. O(L) | Performance comparable or better than standard PC with improved latency | Potentially generalizable to various architectures [13] |
| PCX Library | JAX-accelerated training | Significant speed-up for hyperparameter search | New SOTA results on multiple benchmarks using larger architectures | General PC research [10] [11] |
| Incremental PC | Modified inference process | Improved convergence properties | Better performance on image classification tasks | Standard PC architectures [11] |

Empirical results demonstrate that DKP-PC achieves performance at least comparable to, and often exceeding, standard PC while offering improved latency and computational performance. By enhancing both scalability and efficiency, this approach narrows the gap between biologically plausible learning algorithms and backpropagation, unlocking the potential of local learning rules for hardware-efficient implementations [13].

Experimental Protocols and Benchmarking Methodologies

Standardized Benchmarking Framework

The development of PCX, a specialized JAX library for accelerated predictive coding training, has enabled the comprehensive benchmarking needed to track progress in scalability. The library offers a user-friendly interface with a shallow learning curve, thanks to PyTorch-inspired syntax, extensive tutorials, and full compatibility with Equinox, a popular deep-learning extension of JAX [11]. Its efficiency gains are substantial: by leveraging JAX's Just-In-Time (JIT) compilation, it lets researchers test architectures much larger than those commonly used in prior literature [10].

The benchmarking framework employs standardized tasks, datasets, metrics, and architectures to enable consistent comparison across research efforts. The primary tasks focus on computer vision applications: image classification (supervised learning) and image generation (unsupervised learning). Key datasets progress from simpler to more complex: MNIST, FashionMNIST, CIFAR-10, CIFAR-100, and Tiny Imagenet. This graduated complexity allows researchers to test algorithms from the easiest models (feedforward networks on MNIST) to more challenging architectures (deep convolutional models) [11].

Experimental Workflow for Scalability Assessment

The experimental protocol for assessing predictive coding scalability follows a systematic workflow that incrementally increases model complexity while monitoring performance metrics. The process begins with dataset selection across a complexity gradient, proceeds through architecture configuration with increasing depth, implements specific PC algorithm variants, executes the two-phase PC training process (inference followed by learning), and concludes with comprehensive evaluation focused specifically on scaling properties [11] [9].

Critical measurements during evaluation include not only final accuracy but also energy distribution across layers, gradient flow patterns, and convergence speed. These metrics provide insight into the underlying scalability limitations and help diagnose specific failure modes in deeper architectures. The energy distribution metric has proven particularly valuable, as imbalances in energy distribution between layers strongly correlate with performance degradation in deep networks [15].
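A per-layer energy diagnostic of the kind described here might look like the following sketch. The helper names `layer_energy_profile` and `energy_imbalance`, the choice of a last-to-first ratio as the summary statistic, and the hypothetical error vectors are assumptions for illustration; [15] may define its metric differently.

```python
import numpy as np

def layer_energy_profile(errors):
    """Per-layer energies 0.5 * ||eps_l||^2 and each layer's share of the total."""
    energies = np.array([0.5 * float(np.dot(e, e)) for e in errors])
    total = energies.sum()
    shares = energies / total if total > 0 else np.zeros_like(energies)
    return energies, shares

def energy_imbalance(errors, floor=1e-30):
    """Ratio of last-layer to first-layer energy; large values signal concentration."""
    energies, _ = layer_energy_profile(errors)
    return float(energies[-1] / max(energies[0], floor))

# Hypothetical prediction errors whose magnitude grows tenfold per layer.
errors = [np.full(4, 10.0 ** l) for l in range(4)]
energies, shares = layer_energy_profile(errors)
ratio = energy_imbalance(errors)

print("energy share per layer:", np.round(shares, 6))
print(f"last/first energy ratio: {ratio:.1e}")
```

Logging this profile after the inference phase of each training step makes it easy to see whether energy stays concentrated near the output as depth increases.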

Research Reagent Solutions

Table 3: Essential Research Tools and Resources for Predictive Coding Research

| Research Tool | Function | Implementation Details | Accessibility |
|---|---|---|---|
| PCX Library | Accelerated training and benchmarking | JAX-based, Equinox-compatible, JIT compilation | Open-source (https://github.com/liukidar/pcax) [11] |
| Standardized Benchmarks | Performance comparison across studies | 6 tasks, 5 datasets, multiple architectures | Publicly available in PCX [10] |
| μPC Parameterization | Enables deep network training | Depth-μP based scaling rules | Implementation in accompanying code [9] |
| DKP-PC Algorithm | Reduces error propagation delay | Learnable direct feedback connections | Description in publication [13] |

The development of specialized tools and resources has been instrumental in advancing PC scalability research. The PCX library provides the computational foundation, while standardized benchmarks ensure consistent comparison across studies. The μPC parameterization and DKP-PC algorithm represent specific methodological advances that directly address core scalability limitations. These research "reagents" enable systematic investigation of the PC scalability problem and facilitate reproducible progress in the field [11] [9] [13].

Signaling Pathways and Computational Frameworks

Comparative Signaling Pathways: PC vs BP

The fundamental computational differences between backpropagation and predictive coding create distinct signaling pathways that directly impact their scalability properties. Backpropagation employs a sequential two-phase process: a single forward pass followed by a global backward pass that propagates error signals from output to input layers. This creates a tight coupling between forward and backward computations, requiring precise matching of operations and limiting parallelism [8] [13].

In contrast, predictive coding utilizes an iterative inference phase where neuron activities undergo multiple equilibration steps before weight updates occur. During this phase, each layer generates predictions for subsequent layers and computes local errors based on mismatches between predictions and actual activities. These local errors drive both activity adjustments during inference and weight updates during learning. While this localized approach offers theoretical advantages for parallel implementation and biological plausibility, it introduces iterative dependencies that create computational bottlenecks in deep networks [8] [14].
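The two-phase process can be sketched as follows for a small supervised network in which each layer predicts the next one, the input is clamped at the bottom, and the target is clamped at the top. This is a minimal NumPy illustration, not the PCX API: the function names, tanh nonlinearity, layer sizes, step sizes, and number of inference steps are all assumptions.

```python
import numpy as np

def forward_init(x0, Ws):
    """Initialise activities with a feedforward pass, so all errors start at zero."""
    xs = [x0]
    for W in Ws:
        xs.append(W @ np.tanh(xs[-1]))
    return xs

def energy(xs, Ws):
    """Total squared prediction error, with layer l predicting layer l+1."""
    return 0.5 * sum(float(np.sum((xs[l + 1] - W @ np.tanh(xs[l])) ** 2))
                     for l, W in enumerate(Ws))

def pc_train_step(x0, y, Ws, n_infer=20, lr_x=0.05, lr_w=0.01):
    """One PC update: iterative inference on activities, then local weight updates."""
    xs = forward_init(x0, Ws)
    xs[-1] = y.copy()                     # clamp the output layer to the target
    e_start = energy(xs, Ws)
    for _ in range(n_infer):              # phase 1: inference, weights held fixed
        eps = [xs[l + 1] - W @ np.tanh(xs[l]) for l, W in enumerate(Ws)]
        for l in range(1, len(xs) - 1):   # only hidden layers are free to move
            grad = eps[l - 1] - (1 - np.tanh(xs[l]) ** 2) * (Ws[l].T @ eps[l])
            xs[l] = xs[l] - lr_x * grad
    e_end = energy(xs, Ws)
    # phase 2: learning; each update uses only the local error and local input
    eps = [xs[l + 1] - W @ np.tanh(xs[l]) for l, W in enumerate(Ws)]
    new_Ws = [W + lr_w * np.outer(eps[l], np.tanh(xs[l])) for l, W in enumerate(Ws)]
    return new_Ws, e_start, e_end

rng = np.random.default_rng(0)
sizes = [5, 8, 8, 3]  # assumed layer sizes
Ws = [rng.normal(scale=1 / np.sqrt(sizes[l]), size=(sizes[l + 1], sizes[l]))
      for l in range(len(sizes) - 1)]
x0 = rng.normal(size=5)
y = np.array([1.0, 0.0, 0.0])

Ws, e_start, e_end = pc_train_step(x0, y, Ws)
print(f"energy before inference: {e_start:.4f}, after: {e_end:.4f}")
```

The inner loop is where the extra cost relative to backpropagation arises: every training step runs `n_infer` sequential activity updates before any weight changes.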

Error Propagation Pathways in Deep Networks

The critical scalability limitation in predictive coding stems from its error propagation pathway. In standard PC, error signals must travel from the output layer back to early layers through multiple iterative steps during the inference phase. As these errors propagate through the network hierarchy, they experience exponential decay, resulting in vanishing updates for early layers [13]. This problem compounds with network depth, explaining why traditional PCNs perform adequately on shallow networks but fail on deeper architectures.
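The delay and decay described above can be reproduced in a toy setting. The sketch below uses linear layers, a feedforward initialization, and synchronous inference updates, then counts how many inference steps pass before the first hidden layer registers any error. All names, sizes, the step size, and the detection tolerance are assumptions chosen for illustration.

```python
import numpy as np

def layer_errors(xs, Ws):
    """eps[l] is the error at layer l+1: x_{l+1} - W_l x_l."""
    return [xs[l + 1] - W @ xs[l] for l, W in enumerate(Ws)]

def inference_step(xs, Ws, lr=0.1):
    """One synchronous gradient step on hidden activities (input and output clamped)."""
    eps = layer_errors(xs, Ws)
    new_xs = [x.copy() for x in xs]
    for l in range(1, len(xs) - 1):
        new_xs[l] = xs[l] - lr * (eps[l - 1] - Ws[l].T @ eps[l])
    return new_xs

def steps_until_first_hidden_error(n_layers, width=8, seed=0, tol=1e-12):
    """Count inference steps until the first hidden layer's error exceeds tol."""
    rng = np.random.default_rng(seed)
    Ws = [rng.normal(scale=1 / np.sqrt(width), size=(width, width))
          for _ in range(n_layers)]
    xs = [rng.normal(size=width)]
    for W in Ws:                         # feedforward init: zero error everywhere
        xs.append(W @ xs[-1])
    xs[-1] = rng.normal(size=width)      # clamping a target creates an output error
    steps = 0
    while np.linalg.norm(layer_errors(xs, Ws)[0]) < tol:
        xs = inference_step(xs, Ws)
        steps += 1
    return steps, float(np.linalg.norm(layer_errors(xs, Ws)[0]))

for depth in (2, 4, 8):
    steps, arrival_norm = steps_until_first_hidden_error(depth)
    print(f"L={depth}: error reaches first hidden layer after {steps} steps, "
          f"norm on arrival {arrival_norm:.2e}")
```

In this setup the output error advances one layer per inference step, so the delay grows linearly with depth, and the signal that finally arrives shrinks by roughly a factor of the step size at each hop.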

Recent innovations like DKP-PC address this fundamental limitation by introducing direct feedback pathways that bypass the hierarchical propagation process. By establishing learnable feedback connections from the output layer directly to all hidden layers, these approaches create shortcut connections for error signals, reducing the effective propagation path from O(L) to O(1) [13]. Similarly, μPC addresses numerical instabilities that emerge in deep networks through careful parameterization that maintains stable signal propagation across layers [9].

The scalability gap between predictive coding and backpropagation represents both a significant challenge and opportunity for the machine learning research community. While substantial progress has been made through methods like μPC and DKP-PC that enable training of 100+ layer networks, significant work remains to achieve parity with backpropagation across diverse architectures and tasks [9] [13]. The development of standardized benchmarks and accelerated libraries like PCX provides the necessary infrastructure for systematic community progress on this problem [10] [11].

Future research must focus on several critical directions: First, extending current scaling successes to more complex architectures including transformers and graph neural networks. Second, demonstrating competitive performance on large-scale datasets beyond the current capabilities on CIFAR and Tiny Imagenet. Third, further elucidating the theoretical relationship between predictive coding and trust-region optimization methods to better understand PC's learning dynamics [8]. Finally, exploring hardware co-design opportunities that leverage PC's local update properties for more energy-efficient implementation [8] [15].

Bridging the scalability gap between predictive coding and backpropagation would represent a milestone in developing more biologically plausible and hardware-efficient learning algorithms. The recent progress documented in this whitepaper suggests that this goal is increasingly attainable, potentially unlocking new paradigms for efficient AI systems that more closely mirror the remarkable capabilities of biological neural computation.

Predictive coding (PC) has emerged as a prominent neuroscientific theory and a promising framework for machine learning, positing that the brain continuously generates predictions about sensory inputs and updates internal models based on prediction errors [5]. Despite significant theoretical interest and a growing body of research, the field faces a critical challenge: the absence of standardized benchmarks. Research in predictive coding networks (PCNs) has been characterized by isolated efforts where most works "propose their own tasks and architectures, do not compare one against each other, and focus on small-scale tasks" [11]. This lack of a common framework makes reproducibility difficult, impedes direct comparison of results across studies, and ultimately hinders progress toward solving the field's most significant open problem—scalability [16] [11] [15].

This whitepaper delineates the core dimensions of this benchmarking problem, arguing that inconsistent tasks, architectures, and evaluation criteria have created a fragmented research landscape. By synthesizing recent community-driven efforts to establish baselines, we provide a structured analysis of the current state and propose a pathway toward unified evaluation standards that can accelerate progress in predictive coding research.

The Core Dimensions of the Benchmarking Problem

Proliferation of Non-Comparable Tasks and Datasets

The predictive coding literature utilizes a wide array of tasks and datasets with varying complexities, making cross-study comparisons nearly impossible. This inconsistency obscures the true progress of the field and the relative merits of different proposed algorithms.

Table 1: Inconsistent Task and Dataset Usage in PC Research

| Research Domain | Common Tasks/Datasets | Typical Model Scale | Key Limitations |
|---|---|---|---|
| Computer Vision | MNIST, FashionMNIST, CIFAR-10, CIFAR-100, Tiny ImageNet [11] [15] | Small to Medium (e.g., VGG-7) [15] | Focus on small-scale tasks; performance degrades on deeper models [15] |
| Novelty Detection | Custom correlated patterns, natural images [17] | Varies (e.g., rPCN, hPCN) [17] | High capacity but lacks standardized benchmarks for comparison [17] |
| Brain Modelling | Mismatch responses, prior formation, semantic learning [5] | Simple RNN architectures [5] | Evaluated on plausibility, not performance; not scaled to complex tasks [5] |
| Theoretical Analyses | Abstract, minimal synthetic settings (e.g., linear environments) [18] | Deep Linear Networks [18] | High tractability but limited real-world applicability [18] |

Inconsistent Model Architectures and Algorithmic Variations

A significant barrier to comparison is the absence of standard model architectures. Researchers employ diverse network structures and PC algorithm variants, making it difficult to discern whether performance differences stem from the core principles of predictive coding or specific implementation choices.

- Architectural Inconsistency: Studies range from simple, analytically tractable models like Recurrent PCNs (rPCNs) to more complex Hierarchical PCNs (hPCNs) and deep convolutional models [11] [17]. There is no agreed-upon "model zoo" for controlled experimentation.

- Algorithmic Variations: Multiple PC-inspired training objectives exist, including standard PC, incremental PC, PC with Langevin dynamics, and nudged PC [11]. Other related algorithms like Equilibrium Propagation (EP) and the Forward-Forward algorithm are sometimes compared, but not systematically [5]. This "proliferation of variations" lacks a unified framework for evaluation [11].

Divergent Evaluation Criteria and Scalability Gaps

Evaluation metrics and the focus of analysis vary significantly across studies, leading to an incomplete understanding of PCNs' capabilities and limitations.

- Performance vs. Plausibility: Some studies focus primarily on task performance (e.g., classification accuracy, image generation quality) [16] [15], while others prioritize biological plausibility and the emergence of brain-like responses [5]. This divergence in goals complicates direct comparison.

- The Scalability Gap: A critical and recurring finding is that PCNs perform comparably to backpropagation-trained networks on small-scale tasks but fall short as model depth and complexity increase. For instance, while PC can match backprop on a 5-7 layer convolutional network, its performance decreases on deeper models like 9-layer networks or ResNets, whereas backprop's performance continues to improve [15]. This highlights scalability as a primary challenge that inconsistent benchmarking has obscured.

Community Response: Toward Unified Benchmarks

Recent collaborative efforts have sought to address these benchmarking problems directly. The development of the PCX library and associated benchmarks represents a significant step toward standardization [16] [11].

The PCX Library: A Tool for Standardization

PCX is an open-source library built on JAX, designed for accelerated training of PCNs. Its core contributions to solving the benchmarking problem are:

- Performance and Simplicity: It offers a user-friendly interface and leverages JAX's Just-In-Time (JIT) compilation for efficiency, enabling larger-scale experiments and hyperparameter searches that were previously impractical [11] [15].

- Modularity and Compatibility: Its modular, object-oriented design allows researchers to easily construct and compare different PCN architectures and algorithms within a consistent codebase [15].

- Reproducibility: By providing a common framework, PCX mitigates the issue of irreproducible results stemming from implementation details [11].

Proposed Standardized Benchmarks

The collaborative work around PCX proposes a uniform set of benchmarks to serve as a foundation for future research, primarily in computer vision [11] [15].

Table 2: Proposed Standardized Benchmarks for Predictive Coding Networks

| Benchmark Category | Proposed Datasets | Proposed Architectures | Key Evaluation Metrics | Purpose & Rationale |
|---|---|---|---|---|
| Image Classification (Supervised) | MNIST, CIFAR-10, CIFAR-100, Tiny ImageNet [11] [15] | Feedforward networks, Small/Medium CNNs (e.g., VGG-7), Deep CNNs (e.g., 9-layer), ResNet-18 [15] | Test Accuracy [15] | Test scalability from easiest (MNIST) to complex models where PC currently fails [11] |
| Image Generation (Unsupervised) | Colored image datasets beyond MNIST/FashionMNIST [11] | Generative architectures (to be defined) | Generation quality metrics | Extend PC beyond classification and simple generation tasks [11] |
| Comparative Baselines | Above datasets | Models of same complexity as PCNs | Performance gap to Backpropagation | Direct, controlled comparison against backprop and other bio-plausible methods [15] |

Experimental Protocols and Key Findings

Using the PCX library, extensive benchmarks have been run, providing new state-of-the-art baselines and illuminating persistent challenges. The following workflow outlines the standard experimental procedure for benchmarking a PCN.

Detailed Methodology for Benchmarking Experiments:

- Model Initialization: Construct a PCN with a defined hierarchical structure (L layers). The model is a hierarchical Gaussian generative model with parameters θ = {θ_0, θ_1, ..., θ_L} [11].

- Inference and Training Phase:

  - For each input, the network performs inference by minimizing its energy (a measure of total prediction error) through an iterative process of updating neuronal activities [15] [19].

  - Following inference, synaptic weights (parameters θ) are updated using a local plasticity rule that depends on the activities of pre- and post-synaptic neurons to minimize the network's energy [17].

- Evaluation:

  - Task Performance: Primary metrics like classification accuracy are calculated on a held-out test set [15].

  - Internal Dynamics Analysis: The energy (or precision) of prediction errors at different network layers is monitored. A key diagnostic is the "energy imbalance," the ratio of energies in subsequent layers, which is hypothesized to be linked to scalability [15].
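The inference and learning phases described in this methodology can be sketched on a toy model. This is a minimal illustration only and does not use the PCX API. It assumes a scalar chain in which layer l predicts w_l · tanh(x_{l-1}), with a squared-error energy F = ½ Σ ε_l². The function names (`forward_init`, `infer`, `learn`) and the hyperparameters (`gamma`, `lr`, the step counts) are hypothetical choices made for this example.

```python
import math

def forward_init(x0, weights):
    """Feedforward pass used to initialise activities (hidden errors start at zero)."""
    xs = [x0]
    for w in weights:
        xs.append(w * math.tanh(xs[-1]))
    return xs

def errors(xs, weights):
    """Prediction errors: entry i is x_{i+1} - w_{i+1} * tanh(x_i)."""
    return [xs[i + 1] - w * math.tanh(xs[i]) for i, w in enumerate(weights)]

def energy(xs, weights):
    """Total energy: half the sum of squared prediction errors."""
    return 0.5 * sum(e * e for e in errors(xs, weights))

def infer(xs, weights, gamma=0.1, steps=20):
    """Gradient descent on the energy w.r.t. hidden activities; input and output stay clamped."""
    xs = list(xs)
    for _ in range(steps):
        eps = errors(xs, weights)
        new_xs = list(xs)
        for l in range(1, len(xs) - 1):
            # dF/dx_l = eps_l - eps_{l+1} * w_{l+1} * tanh'(x_l)
            grad = eps[l - 1] - eps[l] * weights[l] * (1.0 - math.tanh(xs[l]) ** 2)
            new_xs[l] = xs[l] - gamma * grad
        xs = new_xs
    return xs

def learn(xs, weights, lr=0.05):
    """Local weight update: each weight uses only its own layer's error and its input activity."""
    eps = errors(xs, weights)
    return [w + lr * e * math.tanh(xs[i]) for i, (w, e) in enumerate(zip(weights, eps))]

x0, target = 1.0, 0.5          # one toy (input, label) pair
weights = [0.8, 0.8, 0.8]      # three layers on top of the input
prediction_before = forward_init(x0, weights)[-1]

# Effect of inference alone on the energy.
xs = forward_init(x0, weights)
xs[-1] = target                # clamp the output layer to the label
energy_before_inference = energy(xs, weights)
energy_after_inference = energy(infer(xs, weights), weights)

# Alternate inference and local weight updates.
for _ in range(200):
    xs = forward_init(x0, weights)
    xs[-1] = target
    xs = infer(xs, weights)
    weights = learn(xs, weights)

prediction_after = forward_init(x0, weights)[-1]
```

In this sketch the weight update for each layer depends only on that layer's own error and its input activity, which is the locality property the methodology refers to. Real PCNs use vector-valued layers and batched data, so the sketch should be read as a statement of the update structure rather than as a benchmark implementation.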

Key Insights from Standardized Benchmarking

This unified approach has yielded critical insights into the current state of PCNs:

- State-of-the-Art Performance: Standardized benchmarks have allowed PCNs to achieve new SOTA results on multiple tasks and datasets, demonstrating for the first time that PC can perform well on complex datasets like CIFAR-100 and Tiny Imagenet, reaching performance comparable to backprop in medium-sized architectures [16] [15].

- The Scalability Bottleneck Identified: The primary limitation preventing PC from scaling to very deep networks is an energy imbalance, where the energy in the last layer is orders of magnitude larger than in the input layers. This imbalance prevents effective credit assignment to earlier layers during inference, leading to a performance drop in deep models [15]. The relationship between this energy imbalance and performance can be visualized as follows.

The Scientist's Toolkit: Essential Research Reagents

To facilitate reproducible research, the following table details key computational "reagents" essential for conducting benchmarks in predictive coding.

Table 3: Essential Research Reagents for Predictive Coding Benchmarking

| Reagent / Tool | Function / Purpose | Example / Specification |
|---|---|---|
| PCX Library | Primary framework for building and training PCNs. Provides efficiency, modularity, and a standard interface [11] [15]. | JAX-based library; compatible with Equinox; offers functional and object-oriented interfaces [11]. |
| Standardized Datasets | Common ground for evaluating and comparing model performance across studies. | MNIST, CIFAR-10, CIFAR-100, Tiny ImageNet [11] [15]. |
| Reference Architectures | Baseline model designs to isolate the effect of algorithmic changes from architectural ones. | Feedforward nets, VGG-7, 9-layer CNN, ResNet-18 [15]. |
| PC Algorithm Suite | Implementations of different PC variants for controlled ablation studies. | Standard PC, Incremental PC (iPC), PC with Langevin dynamics, Nudged PC [11]. |
| Energy Diagnostic Tools | Code to monitor and analyze the energy distribution across network layers during training/inference. | Calculates layer-wise energy and energy imbalance ratios [15]. |

The inconsistent use of tasks, architectures, and evaluation criteria has been a major impediment to progress in predictive coding research. The recent community-driven initiative, exemplified by the PCX library and its associated benchmarks, provides a concrete foundation for addressing this problem. By adopting these standardized benchmarks, the field can move beyond isolated proofs-of-concept and focus collectively on the fundamental challenge of scalability. Future research must build upon these baselines, using the identified energy imbalance and other diagnostics to develop more robust and scalable PC algorithms. The pathway forward requires a continued commitment to reproducible, comparable, and scalable experimental practices.

The pursuit of brain-inspired, energy-efficient learning algorithms represents a major frontier in machine learning (ML) research. Predictive Coding Networks (PCNs), grounded in neuroscientific theory, offer a compelling alternative to backpropagation (BP), the dominant but computationally intensive algorithm powering most modern AI [15]. However, the field has been hampered by a critical bottleneck: the lack of a standardized, high-performance software framework to test PCNs' scalability and performance on complex, large-scale tasks. This has prevented rigorous comparison of results across studies and slowed collective progress [16]. To address this, researchers have introduced PCX, a Python library built on JAX specifically designed for the accelerated development and benchmarking of PCNs [20] [15]. This technical guide details how PCX serves as a foundational tool, enabling reproducible, state-of-the-art experiments that establish new benchmarks and clarify the path forward for scalable, bio-plausible learning algorithms.

PCX Library: Core Architecture and Features

PCX is engineered to overcome previous limitations in PCN research by prioritizing performance, modularity, and ease of use. Its architecture is designed for both flexibility and computational efficiency, which are crucial for extensive experimentation.

Foundational Design and Compatibility

- JAX-Based Foundation: PCX leverages JAX, a high-performance numerical computing library, which provides automatic differentiation and just-in-time (JIT) compilation. This foundation is key to PCX's efficiency, as JIT compilation can lead to significant speed-ups during the iterative inference and learning processes characteristic of PCNs [15].

- Functional and Object-Oriented Paradigms: The library offers a unified interface, supporting both a functional approach and an imperative object-oriented interface for building PCNs. This dual approach provides researchers with flexibility, making the library accessible to those familiar with frameworks like PyTorch while remaining fully compatible with the broader JAX ecosystem [15] [16].

PCX provides modular primitives that act as building blocks for constructing complex PCN architectures. This modularity is essential for testing novel variations of PC algorithms without rebuilding core components from scratch. Key primitives include [15]:

- A module class for creating network layers and components.

- Vectorised nodes for efficient parallel computation.

- Optimizers tailored for PCN training.

- Layers that can be combined to create a complete PCN.

Table: Core Components of the PCX Library

| Component | Primary Function | Key Advantage |
|---|---|---|
| JAX Backend | Provides accelerated linear algebra & gradient computation | Enables JIT compilation & hardware acceleration (GPU/TPU) [20] [15] |
| Module Class | Serves as a base class for all network layers | Promotes code reusability and modular design [15] |
| Functional API | Allows for stateless function calls for PCN operations | Offers flexibility and compatibility with JAX transformations [15] |
| Object-Oriented API | Provides an imperative interface for building PCNs | Eases adoption for researchers from other ML frameworks [15] |

Establishing New Benchmarks with PCX

The development of PCX has enabled the creation of a comprehensive set of benchmarks, providing the community with standardized tasks to evaluate and compare PCN variations systematically.

Experimental Design and Methodology

The benchmarking effort focuses on computer vision tasks, specifically image classification (supervised learning) and image generation (unsupervised learning), due to their established popularity and simplicity [15]. The core experimental protocol involves a controlled comparison:

- Dataset Selection: Benchmarks utilize established datasets of varying complexity, including FashionMNIST, CIFAR-10, CIFAR-100, and Tiny Imagenet [15].

- Model Architecture: PCNs are constructed to mirror the architecture of standard deep learning models trained with BP, ensuring a direct comparison of the learning algorithm, not the model structure. This includes convolutional networks with 5, 7, and 9 layers, as well as deeper ResNet architectures [15] [16].

- Training and Evaluation: PCNs are trained using existing PC algorithms (e.g., iPC) and adaptations of other bio-plausible methods. Performance is primarily evaluated based on test accuracy for classification tasks. The internal dynamics, such as energy distribution across layers, are also analyzed to diagnose scalability issues [15].

Key Benchmarking Results

Extensive tests conducted with PCX reveal both the promise and current limitations of PCNs. The results represent a new state-of-the-art for PCNs on the provided tasks and datasets [16].

Table: Benchmark Results of Predictive Coding Networks vs. Backpropagation

| Dataset | Model Architecture | PCN Test Accuracy | BP Test Accuracy | Performance Gap |
|---|---|---|---|---|
| CIFAR-10 | VGG-7 | ~91.5% | ~91.5% | Parity [15] |
| CIFAR-10 | ResNet-18 | ~85.0% | ~93.0% | -8.0% [15] |
| CIFAR-100 | Convolutional (5-7 layers) | Matches BP | Baseline | Parity [15] |
| Tiny ImageNet | Convolutional (5-7 layers) | Matches BP | Baseline | Parity [15] |
| (not specified) | Deeper models (e.g., 9-layer Conv, ResNet) | Performance decreases | Performance increases | Widening [15] |

The data demonstrates that PCNs can achieve performance on par with BP on small-to-medium-scale architectures (e.g., VGG-7 on CIFAR-10). However, a critical scalability problem emerges with deeper models. While BP's performance continues to improve with model depth, the performance of PCNs begins to decrease, highlighting a fundamental challenge for future research [15].

Figure: Experimental Benchmarking Workflow

Analysis of Scalability and Energy Dynamics

A key contribution of the research enabled by PCX is a diagnostic analysis of why PCNs fail to scale as effectively as BP. The primary issue identified is the imbalanced distribution of energy across the network's layers during learning [15].

The Energy Imbalance Problem

In a well-functioning multi-layer PCN, prediction errors (energy) should be effectively communicated from the top layers down to the bottom layers to guide learning. However, analysis reveals that the energy in the last layer is orders of magnitude larger than in the input layer. This imbalance persists even after several inference steps, making it difficult for the inference process to propagate energy effectively back to the first layers [15]. This problem is exacerbated as network depth increases, leading to exponentially small gradients in the earlier layers and hindering their ability to learn.

Relationship Between Learning Rate and Energy

Further investigation using PCX uncovered a critical relationship between the learning rate for the network's states (γ) and this energy imbalance. The analysis shows that:

- Small learning rates lead to better model performance but also result in larger energy imbalances between layers.

- Larger learning rates reduce the energy imbalance but degrade final model performance [15].

This creates a challenging trade-off: the hyperparameter settings that yield the best accuracy for a given architecture also induce the energy dynamics that prevent PCNs from scaling to deeper architectures as effectively as backpropagation. This insight, made possible by the efficient experimentation PCX allows, pinpoints a central issue that future PCN research must address.
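The link between the state learning rate and the energy ratio can be reproduced qualitatively on a toy problem. The sketch below is an illustrative assumption rather than the benchmark setup: it uses a linear scalar chain with unit weights, clamps the first and last layers, runs a fixed number of inference steps, and reports the ratio of last-layer to first-layer energy. The function names and all numeric settings are hypothetical. It does not model accuracy, so it speaks only to the energy side of the trade-off.

```python
def inference_energies(depth, gamma, steps=20, x0=1.0, target=2.0, w=1.0):
    """Run PC inference on a linear scalar chain and return the energy of each layer.

    Layer l predicts w * x_{l-1}; x_0 is clamped to the input and x_depth to the target.
    """
    # Feedforward initialisation: every hidden prediction error starts at exactly zero.
    xs = [x0 * w ** l for l in range(depth + 1)]
    xs[-1] = target  # clamp the output layer to the label
    for _ in range(steps):
        eps = [xs[l] - w * xs[l - 1] for l in range(1, depth + 1)]  # eps[l-1] is the error of layer l
        # Simultaneous gradient step on hidden activities: dF/dx_l = eps_l - w * eps_{l+1}
        hidden = [xs[l] - gamma * (eps[l - 1] - w * eps[l]) for l in range(1, depth)]
        xs = [xs[0]] + hidden + [xs[-1]]
    eps = [xs[l] - w * xs[l - 1] for l in range(1, depth + 1)]
    return [0.5 * e * e for e in eps]

def energy_ratio(energies):
    """Energy of the last layer divided by the energy of the first layer."""
    return energies[-1] / energies[0]

# Same depth, different state learning rates.
gamma_sweep = {g: energy_ratio(inference_energies(depth=6, gamma=g)) for g in (0.01, 0.05, 0.2)}

# Same state learning rate, different depths.
depth_sweep = {d: energy_ratio(inference_energies(depth=d, gamma=0.05)) for d in (4, 6, 8)}

for g, r in gamma_sweep.items():
    print(f"gamma={g}: E_L / E_1 = {r:.3g}")
for d, r in depth_sweep.items():
    print(f"depth={d}: E_L / E_1 = {r:.3g}")
```

In this toy setting the error injected at the output must travel one layer per inference step, attenuated by γ at each hop, so the ratio grows as γ shrinks or as depth increases. That is consistent with the direction of the effect reported above, although the magnitudes in a trained convolutional PCN will differ.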

Figure: Energy Dynamics in PCN Training

The Scientist's Toolkit: Essential Research Reagents

The following table details the key software and data "reagents" required to conduct PCN research and benchmarking using the PCX library.

Table: Essential Research Reagents for PCN Experimentation

| Tool/Reagent | Type | Function in Research | Key Features |
|---|---|---|---|
| PCX Library | Core Software Framework | Provides the primary environment for building, training, and analyzing PCNs. | JAX-based, modular primitives, object-oriented and functional APIs [20] [15]. |
| JAX | Numerical Computation Library | Serves as the foundational engine for PCX, enabling accelerated linear algebra and automatic differentiation. | JIT compilation, GPU/TPU support, automatic differentiation [20]. |
| Standard Image Datasets | Benchmarking Data | Serves as the standardized task for evaluating PCN performance and scalability. | CIFAR-10, CIFAR-100, Tiny ImageNet [15]. |
| Predictive Coding Algorithms | Algorithmic Implementations | The core learning rules being tested and compared (e.g., iPC). | Implemented as modular, swappable components within PCX [15] [16]. |
| Visualization Tools | Analysis Utilities | Used to diagnose internal network dynamics, such as energy flow and gradient distributions. | Custom scripts for plotting energy ratios and accuracy metrics [15]. |

The introduction of the PCX library marks a significant advancement in predictive coding research. By providing a high-performance, standardized framework, it enables rigorous benchmarking and has helped establish a new state-of-the-art for PCNs on a range of tasks. More importantly, its use has clearly illuminated the field's most pressing challenge: overcoming the energy imbalance that limits scalability in deep architectures. The work sets concrete milestones for the community, including training deep PCNs on complex datasets like ImageNet, applying PCNs to other modalities like graph neural networks and transformers, and ultimately demonstrating that a neuroscience-inspired algorithm can match the scaling properties of backpropagation [15]. PCX thus serves as the essential tool to galvanize community efforts toward achieving brain-like efficiency at scale.

Predictive Coding Networks (PCNs), inspired by neuroscientific theories of brain function, present a promising alternative to backpropagation-trained deep neural networks. Their potential for lower power consumption and greater biological plausibility makes them particularly attractive for next-generation AI hardware and drug discovery applications. However, a critical limitation hinders their widespread adoption: a consistent performance degradation as architectural depth increases. This whitepaper analyzes the fundamental causes behind this scalability issue, presents quantitative evidence from recent benchmarking studies, and outlines methodological approaches for diagnosing and addressing these limitations within a new benchmark-driven research framework. Understanding these constraints is essential for researchers and drug development professionals seeking to leverage PCNs for complex tasks such as molecular property prediction and pharmaceutical image analysis.

Empirical Evidence: Quantifying the Performance Degradation

Recent large-scale benchmarking efforts have systematically documented the inverse relationship between PCN depth and model performance. The following table synthesizes key findings from experiments conducted across standard computer vision datasets, providing a clear quantitative picture of this scalability challenge.

Table 1: Performance Comparison of PCNs vs. Backpropagation (BP) Across Model Depths

| Model Architecture | Dataset | PCN Test Accuracy | BP Test Accuracy | Performance Gap |
|---|---|---|---|---|
| VGG 5-Layer | CIFAR-100 | 74.3% | 75.1% | -0.8% |
| VGG 7-Layer | CIFAR-100 | 70.5% | 77.8% | -7.3% |
| VGG 9-Layer | CIFAR-100 | 61.2% | 79.5% | -18.3% |
| ResNet-18 | CIFAR-10 | ~65% | >90% | approx. -25% |

The data reveals a critical trend: while shallow PCNs (e.g., 5-layer VGG) can compete with backpropagation, their performance markedly declines as depth increases to 7 and 9 layers. In contrast, backpropagation-based models continue to improve with added depth [15]. This demonstrates that PCNs currently lack the stable scaling properties required for modern deep learning.
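The gap column is simply PCN accuracy minus BP accuracy, expressed in percentage points. As a small worked check, the following snippet recomputes the three CIFAR-100 gaps from the accuracies listed in the table and tests whether the gap widens with depth. The variable names are hypothetical, and the ResNet-18 row is omitted because its values are only approximate.

```python
# (architecture, PCN accuracy %, BP accuracy %) for CIFAR-100, copied from the table above.
rows = [
    ("VGG 5-Layer", 74.3, 75.1),
    ("VGG 7-Layer", 70.5, 77.8),
    ("VGG 9-Layer", 61.2, 79.5),
]

# Gap in percentage points: negative values mean the PCN trails backpropagation.
gaps = [round(pcn - bp, 1) for _, pcn, bp in rows]

# The gap "widens" if each deeper model trails BP by more than the previous one.
widening = all(later < earlier for earlier, later in zip(gaps, gaps[1:]))

for (name, _, _), gap in zip(rows, gaps):
    print(f"{name}: {gap:+.1f} points")
print("gap widens with depth:", widening)
```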

Table 2: Impact of Learning Rate on PCN Layer Energy and Performance

| State Learning Rate (γ) | Test Accuracy | Energy Ratio (Last Layer / First Layer) |
|---|---|---|
| 0.001 | 89.5% | 1,200 |
| 0.01 | 85.1% | 350 |
| 0.1 | 72.3% | 50 |

Further analysis indicates that the learning rate for neuronal states (γ) plays a crucial role. Lower rates yield better accuracy but create a significant energy imbalance between layers. This excessively high energy ratio indicates that the inference process fails to propagate error signals effectively to earlier layers, which is a primary cause of the performance drop in deep architectures [15].

Methodological Framework: Experimental Protocols for Diagnosing Limitations

To systematically investigate the root causes of performance degradation, researchers can adopt the following experimental protocols. These methodologies enable a granular analysis of the internal dynamics within deep PCNs.

Protocol 1: Energy Distribution Profiling

Objective: To quantify the energy imbalance across different layers of a deep PCN during the inference process.

- Model Setup: Train multiple PCN architectures (e.g., 5, 7, and 9-layer convolutional networks) on a standardized dataset like CIFAR-100.

- Inference Monitoring: During both training and evaluation, record the energy (sum of squared errors) for each layer after a fixed number of inference steps.

- Data Extraction: For each model, calculate the energy ratio between the final and first layers (E_L / E_1). This metric serves as a key indicator of energy flow health.

- Correlation Analysis: Correlate the energy ratio with the final test accuracy across different model depths and hyperparameter settings (especially the state learning rate γ) [15].
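The profiling steps of this protocol can be sketched with a few helper functions. The code below is a hedged illustration, not part of PCX: `layer_energies`, `energy_ratio`, and `pearson` are hypothetical helpers, and `logged_errors` is made-up data standing in for per-layer error vectors recorded after a fixed number of inference steps. The correlation at the end uses the three (energy ratio, accuracy) pairs from the table "Impact of Learning Rate on PCN Layer Energy and Performance". With only three points it shows the mechanics of the analysis rather than a statistically meaningful result.

```python
import math

def layer_energies(layer_errors):
    """Energy of each layer, taken as the sum of squared prediction errors."""
    return [sum(e * e for e in errs) for errs in layer_errors]

def energy_ratio(energies, floor=1e-12):
    """E_L / E_1; `floor` guards against dividing by a zero first-layer energy."""
    return energies[-1] / max(energies[0], floor)

def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long sequences."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical error vectors for a 3-layer model (first layer first, last layer last).
logged_errors = [[0.01, -0.02], [0.05, 0.1], [1.0, -2.0]]
energies = layer_energies(logged_errors)
ratio = energy_ratio(energies)

# Energy ratios and test accuracies as listed in the learning-rate table.
table_ratios = [1200, 350, 50]
table_accuracy = [89.5, 85.1, 72.3]

# Ratios span orders of magnitude, so correlate accuracy with log10 of the ratio.
r = pearson([math.log10(v) for v in table_ratios], table_accuracy)

print("layer energies:", energies)
print("E_L / E_1:", ratio)
print("correlation of log10(ratio) with accuracy:", round(r, 3))
```

Taking the logarithm of the ratio is a design choice for this sketch; a rank correlation would be a reasonable alternative when many runs are available.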

Protocol 2: Gradient Flow Analysis

Objective: To track how effectively learning signals propagate backwards through the network's layers.

- Gradient Tracking: Instrument the PCN code to log the norms of the gradients for the weight parameters in each layer throughout training.

- Vanishing Gradient Metric: Compute the ratio of gradient norms between early and late layers (‖∇W_1‖ / ‖∇W_L‖). A ratio that shrinks rapidly as depth increases signals a vanishing gradient problem.

- Comparative Baseline: Perform the same analysis on an identical network trained with backpropagation to isolate issues specific to the PCN learning algorithm [15].
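A minimal sketch of the vanishing-gradient metric is shown below. It assumes that the weight gradients of each layer are available as nested lists (one matrix per layer, first layer first), and the helper names are hypothetical. The same function could be applied to gradients logged from a PCN and from its backpropagation baseline so that the two histories can be compared.

```python
import math

def frobenius_norm(matrix):
    """Frobenius norm of a matrix given as a list of rows."""
    return math.sqrt(sum(v * v for row in matrix for v in row))

def gradient_norm_ratio(weight_grads, floor=1e-12):
    """Ratio of the first layer's gradient norm to the last layer's gradient norm."""
    norms = [frobenius_norm(g) for g in weight_grads]
    return norms[0] / max(norms[-1], floor)

# Made-up gradients for a two-layer model, chosen so the norms are 5 and 13.
example_grads = [
    [[3.0, 4.0]],               # first layer
    [[0.0, 5.0], [12.0, 0.0]],  # last layer
]

history = []  # one entry per logged training step
history.append(gradient_norm_ratio(example_grads))
print(history)
```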

Visualizing the Core Problem: Energy Imbalance in Deep PCNs

The following diagram illustrates the fundamental architectural flaw and the resulting energy imbalance that causes performance degradation in deep Predictive Coding Networks.

The diagram above, "Energy Imbalance in a Deep PCN," visually summarizes the core issue: energy (representing prediction error) becomes concentrated in the deeper layers (L4, L5) of the network. The feedback mechanism designed to propagate this energy backwards to earlier layers (L1, L2) is insufficient, creating a massive imbalance (e.g., E_5 / E_1 ≈ 1200). This prevents early layers from receiving adequate learning signals, causing their representations to fail to improve and leading to the overall performance drop [15].

To facilitate rigorous experimentation in PCN research, the following table details key software tools and methodological components.

Table 3: Essential Research Reagents and Tools for PCN Experimentation

| Tool/Component | Type | Primary Function | Implementation Notes |
|---|---|---|---|
| PCX Library | Software Library | Provides an accelerated, user-friendly framework for building and training PCNs in JAX. | Enables just-in-time compilation for significant speed-ups; offers both functional and object-oriented interfaces [15]. |
| Standardized Benchmarks (CIFAR-10/100, Tiny ImageNet) | Dataset & Task | Provides consistent and comparable tasks for evaluating PCN scalability and performance. | Essential for controlled comparisons against backpropagation; includes both classification and generation tasks [10] [15]. |
| Energy Profiler | Diagnostic Tool | Custom code instrumentation to track and log energy values per layer during training and inference. | Critical for calculating the energy ratio metric and diagnosing the imbalance issue [15]. |
| Gradient Norm Monitor | Diagnostic Tool | Tracks the norms of weight gradients for each layer throughout the training process. | Used to identify vanishing gradient problems specific to the PCN learning dynamics [15]. |

The performance drop in deeper PCN architectures is a significant barrier rooted in the fundamental problem of energy imbalance. The empirical evidence and diagnostic protocols presented provide a clear framework for researchers to quantify and address this issue. Future work must focus on developing novel architectural designs and learning rules that promote healthier energy flow across all layers, ultimately enabling PCNs to scale as effectively as backpropagation-based models. Overcoming this challenge is a critical milestone on the path to realizing the full potential of bio-plausible, energy-efficient learning systems in scientific and medical applications.

Implementing Modern PCN Benchmarks: Tools, Datasets, and Best Practices

The field of neuroscience-inspired machine learning has long been hampered by a significant challenge: the inability of predictive coding networks (PCNs) to scale as effectively as models trained with conventional backpropagation (BP). While PCNs have demonstrated promising results on smaller-scale tasks, their performance has historically degraded when applied to deeper architectures and more complex datasets [21] [15]. This scalability issue has persisted for several reasons, including the computational inefficiency of existing PCN implementations, the absence of specialized libraries, and a lack of standardized benchmarks that would enable reproducible comparison and iterative progress [21]. These factors have collectively impeded research into one of the most promising open problems in the field—achieving brain-like computational efficiency.

The PCX library represents a foundational effort to overcome these barriers. Developed as a collaborative initiative between the University of Oxford, Vienna University of Technology, and VERSES AI, PCX is an open-source, JAX-based library specifically designed for accelerated PCN training [21] [15]. Its creation is coupled with the introduction of a comprehensive set of benchmarks, providing the community with a unified framework for evaluating PCN variations. This toolkit enables researchers to perform extensive hyperparameter searches and run experiments on more complex models and datasets than was previously feasible [15]. By tackling the problems of efficiency, reproducibility, and standardized evaluation simultaneously, PCX lays the groundwork for galvanizing community efforts toward solving the scalability problem.

Architectural Design and Core Features

PCX is engineered with a focus on performance, versatility, and ease of adoption. Built upon JAX, it uses JAX's just-in-time (JIT) compilation to achieve significant computational speed-ups. This matters because hyperparameter searches for even small convolutional networks could previously take several hours [21] [15]. The library offers a user-friendly interface that balances functional and object-oriented programming paradigms, making it accessible to researchers familiar with popular deep-learning frameworks like PyTorch [21].

The library's architecture is built on several core principles:

- Compatibility: PCX is fully compatible with the broader JAX ecosystem, including libraries like Equinox, ensuring reliability and ease of integration with ongoing research developments [21].

- Modularity: It provides modular primitives—such as a module class, vectorized nodes, optimizers, and layers—that can be flexibly combined to construct a wide variety of PCN architectures [15].

- Efficiency: Extensive reliance on JIT compilation allows PCX to transform and optimize code for execution on CPUs, GPUs, and TPUs, making it a high-performance foundation for experimental research [15].

Installation and Reproducibility

PCX supports multiple installation methods designed to meet different research needs and ensure strict reproducibility [22]:

- Default PIP Installation: For general use, the library can be installed via PIP after first installing the appropriate JAX version for the target accelerator (e.g., CUDA 12.0+ or CPU-only).

- Poetry Installation: To guarantee a fully reproducible environment with managed dependencies, researchers can use Poetry. This method locks all package versions, preventing inadvertent changes in the computational environment that could affect results.

- Docker with Dev Containers: For the most automatic and consistent setup, a Docker image is provided. This is particularly recommended for users of VS Code, as it configures a containerized development environment with all necessary dependencies, eliminating conflicts with local system configurations.

Table 1: PCX Installation Methods Overview

| Method | Primary Use Case | Key Advantage | Command/Source |
|---|---|---|---|
| PIP | General Use & Quick Start | Simplicity and speed | pip install ... (from PyPI or wheel) |
| Poetry | Reproducible Research | Version-locked dependencies | poetry install --no-root |
| Docker | Automated & Consistent Setup | Pre-configured, isolated environment | Use VS Code "Dev Containers" extension |

Standardized Benchmarks for Predictive Coding Networks

Proposed Tasks, Datasets, and Architectures

To address the lack of uniform evaluation criteria in the field, the PCX initiative introduces a comprehensive set of benchmarks centered on canonical computer vision tasks: image classification and generation [21]. These tasks were selected for their simplicity and established popularity within the machine learning community, which facilitates direct comparison with other methods.

The benchmarks are structured as a progressive ladder of difficulty, enabling researchers to test algorithms from the simplest to the most complex scenarios. The proposed datasets include:

- MNIST: The foundational dataset for initial validation.

- FashionMNIST: A slightly more challenging grayscale image dataset.

- CIFAR-10 & CIFAR-100: Standard benchmarks for small-scale color image classification.

- Tiny ImageNet: A more challenging dataset that pushes the limits of current PCN capabilities [21] [15].

The model architectures are carefully chosen to align with those consistently used in related fields like equilibrium propagation and target propagation, thereby enabling direct cross-method comparisons. The architectural progression includes:

- Feedforward Networks: Basic models for MNIST.

- Convolutional Neural Networks (CNNs): Deeper 5, 7, and 9-layer convolutional models.

- ResNets: Deeper residual networks, such as ResNet-18, which currently represent a significant challenge for PCNs [15].

Implemented Learning Algorithms

The benchmarking effort encompasses a wide array of learning algorithms for PCNs. This includes not only standard Predictive Coding but also modern variations designed to improve performance and stability [21]:

- Standard PC: The foundational algorithm based on Rao and Ballard's formulation.

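- Incremental PC (iPC): A variation that updates the weights at every inference step alongside the states, rather than only after inference has settled, and that has shown strong performance on various tasks [21].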

- PC with Langevin Dynamics: Incorporates stochastic sampling into the inference process [21].

- Nudged PC: An adaptation inspired by the Equilibrium Propagation (Eqprop) literature, applied to PC models for the first time within this work [21].
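To make the Langevin variant more concrete, the sketch below contrasts a plain gradient step on a latent state with a noisy one. It uses the textbook unadjusted Langevin update (gradient step plus Gaussian noise scaled by the square root of twice the step size); whether PCX uses exactly this scaling is an assumption, and `langevin_state_update` and `temperature` are hypothetical names chosen for illustration.

```python
import numpy as np

def langevin_state_update(x, grad_energy, gamma, rng, temperature=1.0):
    """One noisy inference step on a latent state (illustrative, not the PCX API).

    With temperature=0 this reduces to the deterministic update of standard PC:
    x <- x - gamma * dF/dx.
    """
    noise = rng.standard_normal(x.shape)
    return x - gamma * grad_energy + np.sqrt(2.0 * gamma * temperature) * noise

rng = np.random.default_rng(0)
x = np.zeros(3)
grad = np.ones(3)  # stand-in for the energy gradient w.r.t. x

deterministic = langevin_state_update(x, grad, gamma=0.1, rng=rng, temperature=0.0)
stochastic = langevin_state_update(x, grad, gamma=0.1, rng=rng, temperature=1.0)
```

Setting the temperature to zero recovers the standard update, which makes it easy to compare both variants within one training loop.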

Experimental Protocols and Performance Analysis

Methodologies for Key Experiments

The experimental workflow for benchmarking with PCX involves a standardized process to ensure fair and reproducible evaluation across different models and algorithms. The following diagram illustrates the core inference and learning loop within a PCN, which is fundamental to all the conducted experiments.
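The inference and learning loop can also be sketched in a few lines of NumPy. This is a minimal illustration rather than PCX code: it assumes a squared-error energy, a tanh nonlinearity, layer sizes chosen arbitrarily, and hypothetical helper names (`inference_step`, `learning_step`, `init_states`). The input and output layers are clamped to the data and the label, and only the hidden states are relaxed.

```python
import numpy as np

def predictions(weights, states):
    """Layer l predicts layer l+1 as W_l @ tanh(x_l)."""
    return [W @ np.tanh(x) for W, x in zip(weights, states[:-1])]

def errors(weights, states):
    """Prediction errors e_{l+1} = x_{l+1} - W_l tanh(x_l), one per non-input layer."""
    return [x - mu for x, mu in zip(states[1:], predictions(weights, states))]

def energy(weights, states):
    """Global energy: half the summed squared prediction errors."""
    return 0.5 * sum(float(e @ e) for e in errors(weights, states))

def inference_step(weights, states, gamma):
    """One gradient-descent step on the energy w.r.t. the hidden states only."""
    errs = errors(weights, states)
    new_states = [s.copy() for s in states]
    for l in range(1, len(states) - 1):  # input (0) and output (-1) stay clamped
        # dF/dx_l = e_l - tanh'(x_l) * (W_l^T e_{l+1})
        grad = errs[l - 1] - (1.0 - np.tanh(states[l]) ** 2) * (weights[l].T @ errs[l])
        new_states[l] = states[l] - gamma * grad
    return new_states

def learning_step(weights, states, lr):
    """One gradient-descent step on the same energy w.r.t. the weights."""
    errs = errors(weights, states)
    # dF/dW_l = -outer(e_{l+1}, tanh(x_l)), so descent adds the outer product
    return [W + lr * np.outer(e, np.tanh(x)) for W, e, x in zip(weights, errs, states[:-1])]

def init_states(weights, x_in, y):
    """Forward pass to initialise hidden states, then clamp the output to the label."""
    states = [x_in]
    for W in weights[:-1]:
        states.append(W @ np.tanh(states[-1]))
    states.append(y)
    return states

rng = np.random.default_rng(0)
sizes = [4, 8, 8, 3]  # arbitrary toy architecture
weights = [rng.normal(0.0, 0.3, (n_out, n_in)) for n_in, n_out in zip(sizes[:-1], sizes[1:])]
x_in, y = rng.normal(size=4), np.array([1.0, 0.0, 0.0])

states = init_states(weights, x_in, y)
energy_start = energy(weights, states)
for _ in range(20):  # inference phase
    states = inference_step(weights, states, gamma=0.05)
energy_after_inference = energy(weights, states)

new_weights = learning_step(weights, states, lr=0.01)  # learning phase
energy_after_learning = energy(new_weights, states)
```

Both phases descend the same energy: inference lowers it by moving the hidden states, and learning lowers it further by moving the weights while the relaxed states are held fixed.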

The specific methodology for an image classification experiment, for instance, involves several key steps [21]:

- Model Instantiation: A PCN is constructed using PCX's modular primitives, with its depth and layer types defined according to the benchmark specification (e.g., VGG-7, ResNet-18).

- Inference Phase: For a given input (e.g., an image from CIFAR-10), the network's latent states are updated over a number of inference steps to minimize the network's global energy (the variational free energy).

- Learning Phase: After inference, the model's parameters (synaptic weights) are updated based on the stabilized states. This update is a form of gradient descent on the same energy.

- Evaluation: The model's performance is measured on a held-out test set using standard metrics like classification accuracy.

- Hyperparameter Tuning: An extensive search is performed over key hyperparameters, such as the learning rates for states (γ) and parameters, the number of inference steps, and the optimizer (SGD or Adam). Because each configuration requires its own training run, PCX's efficiency is critical at this stage.
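The hyperparameter search in the last step can be pictured as a grid over the four quantities named above. The sketch below enumerates such a grid with the standard library; the specific values and the key names (`state_lr`, `param_lr`, and so on) are made up for illustration and are not the ranges used in the PCX benchmarks.

```python
from itertools import product

# Hypothetical search space; the values are illustrative only.
search_space = {
    "state_lr": [0.01, 0.05, 0.1],   # learning rate for states (gamma)
    "param_lr": [1e-4, 1e-3],        # learning rate for weights
    "inference_steps": [8, 16, 32],
    "optimizer": ["sgd", "adam"],
}

def grid(space):
    """Yield one configuration dict per point of the Cartesian product."""
    keys = list(space)
    for values in product(*(space[k] for k in keys)):
        yield dict(zip(keys, values))

configs = list(grid(search_space))
print(len(configs))  # 3 * 2 * 3 * 2 = 36 configurations
```

Even this small grid implies 36 separate training runs per model and dataset, which illustrates why training speed matters for benchmarking.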

Quantitative Performance Results

Leveraging the efficiency of PCX, researchers achieved new state-of-the-art results for PCNs on multiple benchmarks. The table below summarizes key performance findings, particularly highlighting the comparison with backpropagation (BP) and the emerging scalability challenge.

Table 2: Predictive Coding Performance vs. Backpropagation on Image Classification

| Dataset | Model Architecture | PC (Best Variant) | Backpropagation (BP) | Performance Gap |
|---|---|---|---|---|
| CIFAR-10 | Convolutional (5-Layer) | Comparable to BP [15] | Baseline | Minimal |
| CIFAR-10 | Convolutional (7-Layer) | Comparable to BP [15] | Baseline | Minimal |
| CIFAR-100 | Convolutional (5/7-Layer) | Comparable to BP [15] | Baseline | Minimal |
| Tiny ImageNet | Convolutional (5/7-Layer) | Comparable to BP [15] | Baseline | Minimal |
| CIFAR-10 | Convolutional (9-Layer) | Performance decreases [15] | Performance increases | Significant |
| CIFAR-10 | ResNet-18 | Performance falls [15] | Performance increases | Significant |

The results show a clear trend: PCNs can match the performance of BP-trained models on small to medium-scale architectures (e.g., VGG-7) across a range of complex datasets. This is a notable achievement, showing that brain-inspired learning algorithms can be effective for non-trivial tasks. However, as model depth and complexity increase, the performance of PCNs degrades, whereas BP continues to improve with depth [15]. Closing this widening gap is the new frontier for PCN research.

Analysis of the Scalability Bottleneck: Energy Imbalance

A key analytical finding from the PCX benchmarking effort is the identification of an energy imbalance as a primary cause of the scalability bottleneck [15]. During learning, the energy (prediction error) in the network's final layers becomes orders of magnitude larger than the energy in the earlier layers. This imbalance impedes the effective backward propagation of error signals during inference, leading to exponentially small gradient updates in the lower layers of very deep networks.

This phenomenon was studied by analyzing the ratio of energies between subsequent layers in relation to hyperparameters like the state learning rate (γ). The analysis revealed that while smaller learning rates led to better overall performance, they also correlated with larger energy imbalances [15]. This creates a challenging trade-off and points to the need for new inference or learning algorithms that can stabilize energy propagation across many layers.
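The layer-to-layer energy ratio described here is straightforward to compute once per-layer energies are available. The sketch below is an assumption-laden illustration: `energy_ratios` is a hypothetical helper, and the energy values are invented to mimic an imbalance in which each layer carries far more energy than the one before it.

```python
import numpy as np

def energy_ratios(layer_energies, eps=1e-12):
    """Ratio E_{l+1} / E_l between subsequent layers (input side first)."""
    e = np.asarray(layer_energies, dtype=float)
    return e[1:] / (e[:-1] + eps)  # eps guards against division by zero

# Invented per-layer energies, ordered from the first hidden layer to the output.
energies = [1e-6, 1e-4, 1e-2, 1.0]

ratios = energy_ratios(energies)
orders_of_magnitude = np.log10(energies[-1] / energies[0])
```

Ratios far above 1 at every layer compound multiplicatively with depth, which is one way to see why the earliest layers of a deep network can receive vanishingly small updates.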

The Scientist's Toolkit: Essential Research Reagents

The following table details the core components of the PCX ecosystem, which constitute the essential "research reagents" for conducting modern research with predictive coding networks.

Table 3: Key Research Reagent Solutions in the PCX Ecosystem

| Item / Component | Function / Purpose | Implementation in PCX |
|---|---|---|
| JAX Backend | Provides a high-performance, accelerator-agnostic foundation for numerical computing, enabling JIT compilation, automatic differentiation, and vectorization. | Core dependency of the library [22]. |
| Modular Layer Primitives | Pre-built components (e.g., Dense, Conv2D) to construct complex PCN architectures without re-implementing low-level details. | Object-oriented abstraction in pcx.layers [15]. |
| Equinox Compatibility | Ensures reliable, extendable, and production-ready code by building on a popular and well-designed JAX library for deep learning. | Fully compatible interface [21]. |
| Pre-defined Benchmarks | Standardized tasks, datasets, and model architectures to ensure fair and reproducible comparison of different PCN algorithms. | Provided in the library's examples and documentation [21]. |
| Multi-Algorithm Support | Allows for the direct comparison of standard PC, iPC, PC with Langevin dynamics, and Nudged PC within the same codebase. | Unified training loop interface [21] [15]. |
| Reproducibility Configs | Locked dependency versions and containerized environments to guarantee that experimental results can be replicated exactly. | poetry.lock file and Docker Dev Container configuration [22]. |

The introduction of the PCX library and its associated benchmarks marks a significant inflection point for predictive coding research. By providing a tool that combines performance, simplicity, and reproducibility, it lowers the barrier to entry and enables the community to tackle the field's most critical open problem: scalability. The work has already yielded new state-of-the-art results for PCNs and, more importantly, has clearly diagnosed the energy imbalance issue that limits performance on deeper models like ResNets.

This foundation paves the way for several critical future research directions. The primary challenge is to develop new PCN variants—whether through improved inference schemes, optimized energy functions, or regularized learning rules—that can maintain balanced energy propagation across dozens or hundreds of layers. The ultimate milestones will be to train deep PC models on large-scale datasets like ImageNet, to extend these principles to other model classes such as Graph Neural Networks and Transformers, and to demonstrate the viability of PCNs on low-energy neuromorphic hardware. The PCX toolkit provides the standardized platform upon which these ambitious goals can now be pursued.

Benchmark datasets serve as the foundational currency for progress in machine learning, providing standardized platforms for training, evaluating, and comparing algorithmic performance. For the specialized field of predictive coding (PC) networks—biologically plausible models inspired by information processing in the brain—these benchmarks are particularly crucial for driving scalability and reproducibility. Predictive coding posits that the brain continuously generates predictions about incoming sensory inputs and updates internal models based on prediction errors. While this framework has inspired computationally attractive algorithms, research efforts have historically been fragmented by the use of custom tasks and architectures, hindering direct comparison and systematic advancement [11] [15].

The lack of standardized benchmarks has obscured one of the field's most significant challenges: scaling PC networks to match the performance of backpropagation-trained models on complex tasks. Although PC networks can rival standard deep learning models on smaller datasets like CIFAR-10, their performance traditionally degrades with increasingly deep architectures or more complex data [15]. Recent initiatives, such as the development of the PCX library, have begun addressing these limitations by establishing uniform benchmarks across computer vision tasks including image classification and generation [11] [15]. This guide details the core benchmarking datasets central to this effort, providing quantitative comparisons, experimental protocols, and resource guidance to galvanize community research toward solving PC's scalability problem.

Core Benchmarking Datasets: Specifications and Significance

Dataset Specifications and Comparative Analysis

The evolution of benchmarking for predictive coding networks has centered on progressively more challenging image classification datasets. The table below summarizes the key specifications of these core datasets.

Table 1: Core Benchmark Datasets for Predictive Coding Research

| Dataset Name | Total Images | Image Resolution | Number of Classes | Training Images | Test Images | Notable Characteristics |
|---|---|---|---|---|---|---|
| CIFAR-10 [23] [24] | 60,000 | 32x32 | 10 | 50,000 | 10,000 | Mutually exclusive classes; 6,000 images/class |
| CIFAR-100 [23] | 60,000 | 32x32 | 100 | 50,000 | 10,000 | 100 fine-grained classes grouped into 20 superclasses |
| Tiny ImageNet [11] [15] | Not specified | Not specified | 200 | Not specified | Not specified | More complex than CIFAR; used for medium-scale PC challenges |

CIFAR-10 provides the entry point for modern PC benchmarking, consisting of 60,000 32x32 color images across ten mutually exclusive classes such as airplanes, automobiles, birds, cats, deer, dogs, frogs, horses, ships, and trucks [23] [24] [25]. The dataset is standardized with 50,000 training images and 10,000 test images, with the training set typically divided into five batches for manageable processing [23]. Its relatively small image size and balanced class distribution make it ideal for rapid prototyping and initial algorithm validation.

CIFAR-100 maintains the same overall image count and dimensions as CIFAR-10 but introduces greater complexity through 100 fine-grained classes, with only 600 images per class [23]. This dataset incorporates a two-level hierarchical label structure where each image carries both a "fine" label (the specific class) and a "coarse" label (one of 20 superclasses) [23]. This hierarchical organization is particularly relevant for PC research, as it allows investigators to evaluate how well networks learn structured representations at multiple abstraction levels.
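One way to use the two-level labels is to score the same predictions at both levels. The sketch below is purely illustrative: the mapping covers only four fine classes from two superclasses, the predictions are invented, and `accuracy` is a hypothetical helper rather than part of any benchmark code.

```python
# Small illustrative excerpt of a fine -> coarse label mapping.
fine_to_coarse = {
    "maple_tree": "trees",
    "oak_tree": "trees",
    "rose": "flowers",
    "tulip": "flowers",
}

def accuracy(predicted, target):
    """Fraction of positions where the predicted label equals the target."""
    return sum(p == t for p, t in zip(predicted, target)) / len(target)

# Invented targets and predictions for four test images.
targets = ["maple_tree", "oak_tree", "rose", "tulip"]
preds = ["oak_tree", "oak_tree", "tulip", "maple_tree"]

fine_acc = accuracy(preds, targets)
coarse_acc = accuracy(
    [fine_to_coarse[p] for p in preds],
    [fine_to_coarse[t] for t in targets],
)
print(fine_acc, coarse_acc)  # 0.25 0.75
```

A model whose coarse accuracy is well above its fine accuracy, as in this toy case, confuses classes mostly within a superclass, which suggests it has captured the higher-level structure even where the fine distinctions are wrong.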

Tiny ImageNet represents a further step up in complexity, featuring 200 image classes in a reduced resolution format compared to the full ImageNet dataset [11] [15]. While specific image counts and resolutions vary across implementations, this dataset consistently serves as a bridge between simple academic datasets and real-world visual complexity. For predictive coding research, Tiny ImageNet has proven challenging, with current PC networks struggling to maintain performance parity with backpropagation-trained models at this scale [15].

Dataset Selection Rationale for Predictive Coding Research

These datasets form a structured progression path for evaluating predictive coding networks. CIFAR-10 enables researchers to verify basic functionality and compare against known baseline results, such as the approximately 11% test error achieved by convolutional neural networks with data augmentation [23]. CIFAR-100 introduces the challenge of learning from limited examples per class while navigating hierarchical relationships, testing network efficiency and representational capacity [23]. Finally, Tiny ImageNet pressures the scalability of PC algorithms, highlighting current limitations in energy propagation across deep network layers and motivating fundamental algorithmic improvements [11] [15].