Benchmarking Neuromorphic Efficiency: A Researcher's Guide to Metrics, Methods, and Medical Applications

Abstract

This article provides a comprehensive guide for researchers and biomedical professionals on measuring the energy efficiency of neuromorphic hardware. It covers foundational principles inspired by the brain's extreme efficiency, details current standardized benchmarking frameworks like NeuroBench, and explores actionable metrics for development. A significant focus is placed on troubleshooting common pitfalls in metric selection and hardware measurement, and the guide concludes with strategies for validating performance against traditional systems. The content is tailored to inform the development of ultra-low-power applications, particularly for implantable medical devices and edge-AI in clinical settings.

Why Efficiency Matters: The Biological and Technical Imperatives of Neuromorphic Computing

The rapid expansion of Artificial Intelligence (AI) capabilities has triggered an unprecedented surge in computational energy demands, creating a sustainability crisis that threatens to hinder further advancement. Data centers that power AI models have become significant drivers of increased electricity consumption and higher utility costs for consumers [1]. Meanwhile, the human brain performs remarkable feats of computation and learning while consuming a mere ~20 watts of power—a stark contrast to the megawatts required by AI supercomputers [2] [3]. This profound discrepancy has catalyzed the emerging field of neuromorphic computing, which seeks to develop brain-inspired computing hardware that could revolutionize AI energy efficiency [4] [5]. This technical guide frames this energy challenge within the broader context of measuring and advancing neuromorphic hardware energy efficiency research, providing researchers with quantitative frameworks, experimental methodologies, and benchmarking approaches essential for evaluating progress in this critical field.

The energy consumption disparity between biological and artificial systems is not merely academic—it has tangible economic and environmental consequences. Residential electricity prices have already increased significantly, with experts identifying data centers as a primary driver [1]. The U.S. Department of Energy estimates that data centers will consume 6.7% to 12% of total U.S. electricity by 2028, up from 4.4% in 2023 [1]. This guide provides researchers with the conceptual frameworks and methodological tools needed to quantify, evaluate, and advance neuromorphic hardware energy efficiency—a critical metric for sustainable AI development.

Quantitative Analysis: Biological vs. AI Energy Consumption

Comparative Performance Metrics

Table 1: Energy Consumption Comparison: Biological Brain vs. Artificial Intelligence Systems

| System | Power or Energy Consumption | Information Processing Capacity | Learning Efficiency | Energy Source |
|---|---|---|---|---|
| Human Brain | ~20 watts [2] [3] | ~86 billion neurons [6] | One-shot/few-shot learning [7] | Biochemical (glucose) |
| AI Data Centers | Gigawatts (billions of watts) [2] | Trillions of parameters/operations | Requires massive labeled datasets [8] | Electrical grid |
| GPT-4 Training | Hundreds of thousands of kilowatt-hours [6] | ~1.7 trillion parameters (unofficial estimate) | Thousands of examples per category [2] | Primarily fossil fuels & renewables |
| AI Inference | ~6000 joules per text response [4] | Varies by model size | Not applicable | Electricity |
| Neuromorphic Goal | Milliwatts to watts [6] | Millions to billions of artificial neurons [6] | Continuous online learning [5] | Electricity |

Table 2: U.S. Data Center Energy Projections and Impact (Source: International Energy Agency) [9]

| Metric | 2024 Value | 2030 Projection | Change | Contextual Comparison |
|---|---|---|---|---|
| Electricity Consumption | 183 TWh | 426 TWh | +133% | Equivalent to Pakistan's annual electricity demand (2024) |
| Share of U.S. Electricity | >4% | Projected higher | Increasing | - |
| Household Cost Impact | Current increases | +8% average by 2030 [9] | Rising | Up to 25% in high-demand regions like Virginia |
| Typical AI Hyperscale Center | Equivalent to 100,000 homes | New centers: 20x more | Dramatic increase | - |
| Primary Energy Sources | Natural gas (>40%), Renewables (~24%), Nuclear (~20%) [9] | Similar mix, potential nuclear increase | Evolving | - |

The quantitative disparity between biological and artificial computation is staggering. The human brain achieves its capabilities with approximately 86 billion neurons and consumes only 20 watts—enough power to run a dim light bulb [2] [6]. In contrast, training a single large AI model like GPT-4 can consume hundreds of thousands of kilowatt-hours of electricity—enough to power 50-150 average households for an entire year [6]. This efficiency gap becomes even more pronounced when examining learning capabilities: a child can recognize handwritten digits after seeing just a few examples, while AI systems typically require thousands of labeled examples to achieve similar recognition capabilities [7].

The energy demand from AI infrastructure is growing at an unsustainable rate. Data centers in the United States consumed 183 terawatt-hours (TWh) of electricity in 2024, representing more than 4% of total U.S. electricity consumption [9]. By 2030, this figure is projected to grow by 133% to 426 TWh, creating significant pressure on energy infrastructure and contributing to higher electricity costs for consumers [1] [9]. Some regions, particularly central and northern Virginia, could see electricity bills increase by more than 25% by 2030 due to data center concentration [9].
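The growth figures quoted above can be checked with a few lines of arithmetic. The sketch below uses only the 183 TWh and 426 TWh values from the text; the compound annual growth rate is a derived illustration that assumes constant year-on-year growth over six years, which the source does not claim.

```python
# Figures quoted in the text (U.S. data centers, source [9]).
consumption_2024_twh = 183.0
projection_2030_twh = 426.0


def percent_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    return (new - old) / old * 100.0


def compound_annual_growth(old: float, new: float, years: int) -> float:
    """Constant yearly growth rate (percent) that turns old into new.

    Assumes smooth exponential growth, which is an idealization.
    """
    return ((new / old) ** (1.0 / years) - 1.0) * 100.0


growth = percent_change(consumption_2024_twh, projection_2030_twh)
cagr = compound_annual_growth(consumption_2024_twh, projection_2030_twh, years=6)

print(f"Total growth 2024-2030: {growth:.0f}%")
print(f"Implied annual growth:  {cagr:.1f}%")
```

The total growth reproduces the +133% in Table 2; the annual rate is only as good as the constant-growth assumption.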

Neuromorphic Computing: Architectural Principles and Biological Inspiration

Core Principles of Brain-Inspired Computing

Neuromorphic computing represents a fundamental departure from traditional von Neumann architecture by emulating the brain's organizational principles. The field is built upon several key biological insights translated into engineering frameworks:

Co-location of Memory and Processing: In the brain, memory formation and information processing occur simultaneously through synaptic plasticity, eliminating the energy-intensive data movement that characterizes traditional computing [4] [3]. This in-memory computing approach radically reduces the power consumption associated with transferring data between separate memory and processing units [4].

Event-Driven Processing: Unlike clock-driven conventional processors that execute instructions continuously, the brain operates on an event-driven model where computation occurs primarily in response to neural spikes [6]. This sparse, asynchronous processing means that only relevant components consume significant power, while others remain in low-power states [5].

Massive Parallelism: The brain's ~86 billion neurons operate in parallel, enabling robust pattern recognition and fault tolerance [6]. Neuromorphic systems replicate this through interconnected networks of artificial neurons that distribute computational loads across many parallel units [6].

Analog Dynamics and Temporal Processing: Biological neural systems leverage precise timing relationships and analog electrochemical dynamics for computation. Neuromorphic devices implementing spiking neural networks (SNNs) encode information in the timing and frequency of discrete spikes rather than continuous values, making them particularly suitable for processing dynamic, real-world data [6].

Comparative Computing Architectures

Table 3: Architectural Comparison: Von Neumann vs. Neuromorphic Computing

| Characteristic | Von Neumann Architecture | Neuromorphic Computing | Biological Brain |
|---|---|---|---|
| Processing Model | Synchronous, sequential | Asynchronous, event-driven [6] | Asynchronous, event-driven |
| Memory & Processing | Physically separate [4] | Co-located (in-memory computing) [4] | Fully integrated |
| Data Movement | Constant bus traffic | Minimal data movement [4] | Localized signaling |
| Energy Profile | Watts to hundreds of watts [6] | Milliwatts to watts [6] | ~20 watts [2] |
| Learning Mechanism | Software-based, backpropagation | Hardware-based, synaptic plasticity [8] | Synaptic plasticity, Hebbian learning |
| Information Encoding | Binary (0s and 1s) | Temporal spikes [6] | Electrical & chemical spikes |

Experimental Protocols in Neuromorphic Hardware Research

Diffusive Memristor-Based Artificial Neurons

Research Objective: Develop artificial neurons that replicate the complex electrochemical behavior of biological neurons using diffusive memristors for energy-efficient neuromorphic computing [7].

Materials and Methods:

- Device Fabrication: Create diffusive memristors using silver ions in oxide substrates to emulate the ion dynamics of biological neurons [7].

- Characterization: Analyze ion motion and dynamic diffusion properties using electrical stimulation and imaging techniques.

- Circuit Design: Implement neuronal circuits where each artificial neuron requires only the footprint of a single transistor, dramatically reducing size and energy requirements [7].

Key Measurements:

- Ion diffusion kinetics and switching dynamics

- Energy consumption per spike event

- Device stability and endurance under continuous operation

- Integration density (devices per unit area)

Validation Metrics:

- Fidelity of biological neuron emulation

- Energy efficiency compared to conventional transistors

- Compatibility with existing semiconductor manufacturing processes

Magnetic Tunnel Junction (MTJ) Networks

Research Objective: Implement Hebbian learning ("cells that fire together, wire together") in neuromorphic systems using nanoscale magnetic tunnel junctions [8].

Materials and Methods:

- MTJ Fabrication: Create devices with two layers of magnetic material separated by an insulating layer through which electrons can tunnel [8].

- Network Integration: Connect MTJs in networks that mimic the brain's architecture for pattern learning and prediction.

- Protocol: Strengthen synaptic connections when coordinated firing occurs between artificial neurons [8].

Key Measurements:

- Tunnel magnetoresistance ratio

- Switching current density and speed

- Learning accuracy with minimal training computations

- Power consumption during pattern learning tasks

Validation Metrics:

- Reliability of binary switching for information storage

- Demonstration of autonomous learning without massive training datasets

- Scalability to larger network sizes
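The Hebbian principle behind this protocol ("cells that fire together, wire together") can be illustrated with a generic textbook update rule. The sketch below is not the MTJ device protocol from [8]; the learning rate, the weight ceiling, and the function name `hebbian_update` are assumptions chosen for illustration.

```python
def hebbian_update(weights, pre, post, lr=0.1, w_max=1.0):
    """Return updated weights after one Hebbian step.

    weights[i][j] connects pre-synaptic neuron j to post-synaptic neuron i.
    A weight grows only when both of its neurons are active (pre[j] and
    post[i] are both 1) and is clipped at w_max.
    """
    return [
        [min(w_max, w + lr * post[i] * pre[j]) for j, w in enumerate(row)]
        for i, row in enumerate(weights)
    ]


# Two post-synaptic neurons, three pre-synaptic neurons, all weights start at 0.
weights = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
pre = [1, 0, 1]   # pre-synaptic neurons 0 and 2 fire
post = [1, 0]     # only post-synaptic neuron 0 fires

for _ in range(3):  # present the same coincident pattern three times
    weights = hebbian_update(weights, pre, post)

print(weights)
```

Only the connections between co-active neurons strengthen; every other weight stays at zero. A binary MTJ synapse would replace the gradual increment with a switching event, which this sketch does not model.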

Electrochemical Ionic Synapses

Research Objective: Develop tunable electrochemical devices that mimic biological synapses by modulating conductivity through ion insertion [3].

Materials and Methods:

- Device Fabrication: Create electrochemical synapses using magnesium ions in tungsten oxide channels [3].

- Conductance Tuning: Precisely control electrical resistance through magnesium ion insertion into the metal oxide matrix.

- Characterization: Employ MATLAB-based data analysis and visualization to understand conductance changes at the atomic level [3].

Key Measurements:

- Ion insertion kinetics and reversibility

- Resistance switching dynamics and retention

- Energy consumption per synaptic operation

- Operational stability over multiple cycles

Validation Metrics:

- Synaptic plasticity emulation capability

- Energy efficiency compared to biological synapses

- Compatibility with CMOS manufacturing processes

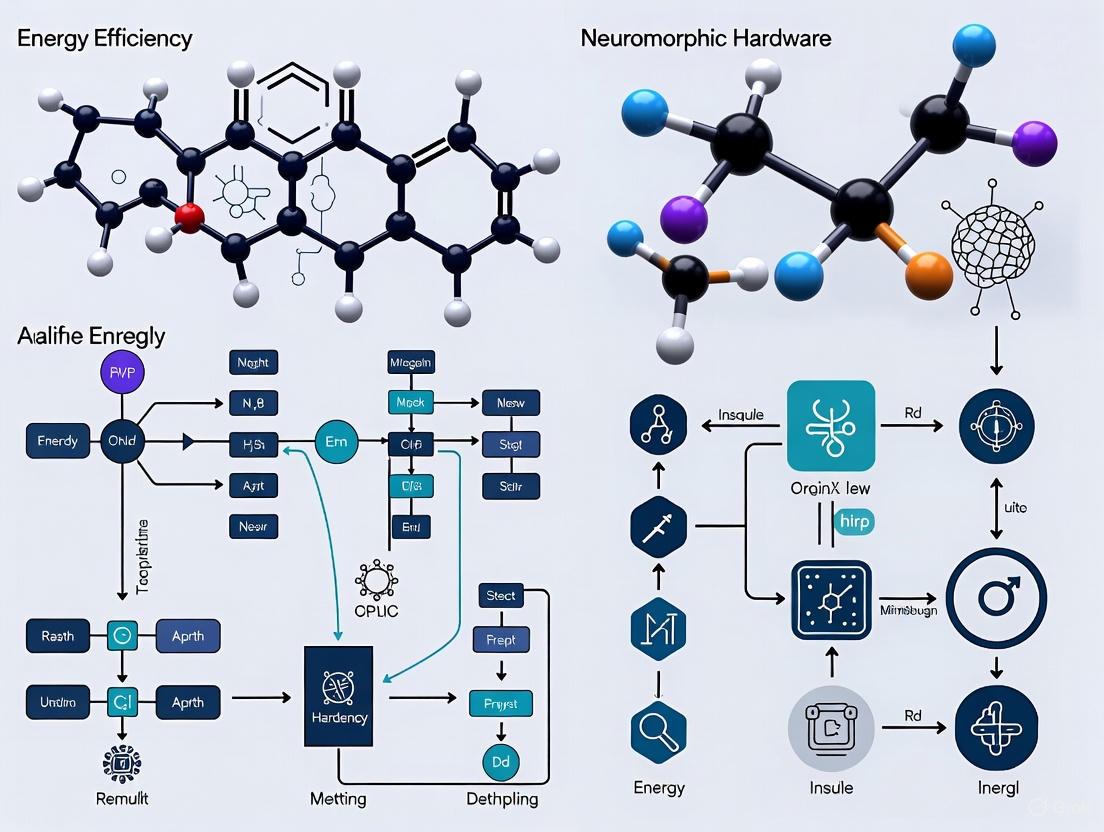

Visualization of Neuromorphic Architectures and Signaling Pathways

[Diagram: Von Neumann vs. Neuromorphic Architecture]

[Diagram: Diffusive Memristor Operation]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Materials for Neuromorphic Hardware Development

| Material/Component | Function | Research Application | Key Properties |
|---|---|---|---|
| Phase-Change Materials (PCMs) | Artificial synapses and neurons [4] | Electrical switching devices | Controllable conductivity switching, retention |
| Copper Vanadium Oxide Bronze | Neuromorphic chip substrate [4] | Synaptic plasticity emulation | Precise electrical switching properties |
| Magnetic Tunnel Junctions (MTJs) | Binary switching elements [8] | Pattern learning networks | Reliable information storage, nanoscale |
| Silver Ions in Oxide | Diffusive memristor foundation [7] | Artificial neuron implementation | Ion dynamics similar to biological systems |
| Magnesium Ions in Tungsten Oxide | Electrochemical synaptic device [3] | Tunable conductance channels | Stable ion insertion, precise resistance control |
| Niobium Oxide | Neuromorphic computing material [4] | Artificial neuron implementation | Advanced switching characteristics |
| Metal-Organic Frameworks | Complex neuromorphic structures [4] | Advanced computing substrates | Tunable electrical properties |

The human brain remains the undisputed gold standard for computational efficiency, performing remarkable feats of cognition, pattern recognition, and adaptive learning while consuming merely 20 watts of power [2]. The growing energy demands of conventional AI systems—with data centers projected to consume up to 12% of U.S. electricity by 2028—highlight the urgent need for more efficient computing paradigms [1]. Neuromorphic computing represents the most promising approach to bridging this efficiency gap by fundamentally reimagining computer architecture through biological inspiration.

For researchers evaluating neuromorphic hardware energy efficiency, several key metrics emerge as critical benchmarks: energy per synaptic operation (targeting biological levels of femtojoules to picojoules), learning efficiency (few-shot versus massive dataset requirements), computational density (artificial neurons per unit area), and operational lifetime (endurance under continuous learning conditions). The experimental protocols and material systems outlined in this guide provide a framework for systematically measuring progress against these benchmarks. As neuromorphic computing matures from laboratory prototypes to commercial applications—particularly in edge computing, autonomous systems, and biomedical devices—the rigorous assessment of energy efficiency will remain paramount for achieving truly sustainable artificial intelligence that approaches the brain's remarkable efficiency.

The von Neumann architecture, which separates memory and processing units, has formed the foundation of computing for decades. However, this design creates a critical performance and energy efficiency bottleneck in artificial intelligence (AI) applications, as it requires constant data movement between memory and processor [10]. This "von Neumann bottleneck" forces energy-intensive shuttling of data that can consume over 60% of the total system energy in data-intensive workloads [11]. As AI models grow exponentially—with training for GPT-3 consuming as much energy as powering 120 homes for a year and GPT-4 requiring an estimated 50 times more—addressing this inefficiency has become imperative [12].

Neuromorphic computing, inspired by the brain's exceptional efficiency, offers a transformative solution by fundamentally rearchitecting how computation and data storage interact [10]. The human brain performs cognitive tasks on roughly 20 watts—the power demand of a couple of standard LED bulbs—dramatically outperforming conventional computers in energy efficiency [12]. This bio-inspired approach leverages two key principles: in-memory computing (co-locating memory and processing) and event-driven processing (activating resources only when needed) [13]. Together, these mechanisms eliminate the von Neumann bottleneck, enabling parallel, energy-efficient computation that is particularly suited to the massive matrix multiplication operations dominant in AI workloads [10] [11].

Core Principles of Brain-Inspired Computing

In-Memory Computing: Co-Locating Memory and Processing

In-memory computing fundamentally restructures the traditional computing paradigm by integrating memory and processing functions. This architecture is inspired by the brain, where memory formation and learning are co-located in interconnected regions and circuits [10]. In neuromorphic systems, memory devices serve as artificial synapses, with technologies including resistive random-access memory (RRAM), phase-change memory (PCM), and ferroelectric memory enabling both data storage and computation within the same physical location [11].

The core advantage of this approach lies in eliminating energy-intensive data movement. In conventional processors, the limiting factor isn't computational speed but rather the energy and time required to transport data between memory and computing units [10]. IBM's NorthPole neuromorphic chip exemplifies the benefits of in-memory computing, demonstrating image classification at a fraction of the energy required by conventional systems while achieving fivefold speed improvements [12]. As Dharmendra Modha, IBM's chief scientist for brain-inspired computing, states: "Architecture trumps Moore's Law," highlighting that structural innovation yields greater efficiency gains than simply packing more transistors onto chips [12].

Event-Driven Processing: Sparsity and Temporal Dynamics

Event-driven processing mimics the brain's sparse, efficient communication mechanism through spiking neural networks (SNNs). Unlike conventional systems that operate continuously, SNNs transmit information only when necessary through electrical "spikes" similar to biological neurons [12]. These spikes are sudden voltage surges lasting 2-5 milliseconds, triggered by changes as neurons exchange signals [12].

This sparse, event-driven operation provides two key efficiency advantages. First, computational resources activate only when needed, significantly reducing energy consumption during idle periods [12]. Second, information encoding in temporal patterns—precise spike timing rather than continuous electrical signals—enables highly efficient information processing [12]. As researcher Ghazi Sarwat Syed explains, "Our nerve cells are communicating sparsely, which is why we're so efficient" [10]. This event-driven paradigm is particularly effective for real-time applications and temporal data processing, making it ideal for edge computing scenarios where power resources are constrained [14].
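To make the sparsity argument concrete, the sketch below counts how many synaptic updates a clock-driven layer and an event-driven layer would perform on the same input. The layer sizes and the 5% spike probability are assumed values, and the count is a proxy for work done, not a measurement of energy: one dense multiply-accumulate and one event-driven accumulate do not cost the same on real hardware.

```python
import random


def dense_update_count(n_inputs, n_outputs, n_steps):
    """Clock-driven baseline: every input-output pair is touched at every step."""
    return n_inputs * n_outputs * n_steps


def event_driven_update_count(spike_trains, n_outputs):
    """Event-driven: one synaptic update per spike per fan-out target."""
    total_spikes = sum(sum(train) for train in spike_trains)
    return total_spikes * n_outputs


random.seed(0)  # fixed seed so the run is repeatable
n_inputs, n_outputs, n_steps = 100, 50, 200
spike_probability = 0.05  # assumed: each input spikes in about 5% of time steps

# One list of 0/1 spike flags per input neuron.
spike_trains = [
    [1 if random.random() < spike_probability else 0 for _ in range(n_steps)]
    for _ in range(n_inputs)
]

dense = dense_update_count(n_inputs, n_outputs, n_steps)
sparse = event_driven_update_count(spike_trains, n_outputs)

print(f"dense updates:        {dense}")
print(f"event-driven updates: {sparse}")
print(f"fraction of dense:    {sparse / dense:.3f}")
```

The fraction tracks the input spike rate, which is why the efficiency advantage of event-driven hardware depends so strongly on how sparse the workload actually is.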

Table 1: Comparative Characteristics of Computing Architectures

| Characteristic | Von Neumann Architecture | Tensor Processors (GPUs) | Neuromorphic Computing |
|---|---|---|---|
| Memory-Processing Relationship | Separate | Separate (but optimized for parallel data) | Co-located/in-memory |
| Processing Style | Continuous, clock-driven | Continuous, massively parallel | Event-driven, sparse |
| Data Movement | High (von Neumann bottleneck) | High (but optimized for batches) | Minimal/none |
| Energy Efficiency | Low for sequential AI tasks | Moderate for parallel AI training | Very high for inference & real-time tasks |
| Primary AI Applications | General purpose computing | AI training, large model inference | Edge AI, real-time processing, adaptive learning |

Neuromorphic Hardware Implementations

Emerging Memory Technologies for Synaptic Emulation

The physical implementation of in-memory computing relies on advanced memory technologies that can serve as artificial synapses. These devices must exhibit characteristics such as non-volatility, analog programmability, endurance, and the ability to gradually modulate conductance—mimicking the strengthening and weakening of biological synapses [11].

Phase-Change Memory (PCM): PCMs switch between conductive and resistive phases using controlled electrical pulses, allowing synchronization of electrical oscillations similar to biological neural activity [13]. These materials retain their conductive or resistive phase even after electrical pulses cease, effectively holding memory of previous states. This enables gradual conductivity changes in response to repeated electrical pulses, mirroring how biological synapses strengthen through repeated activation [13].

Resistive Random-Access Memory (RRAM): In RRAM, an atomic filament sits between two electrodes within an insulator. During AI training, input voltage changes the filament's oxidation state, altering its resistance—this resistance is then read as a weight during inferencing [10]. These cells are arranged in crossbar arrays on chips, creating networks of synaptic weights that have shown promise for analog computation while remaining flexible to updates [10].
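The way a crossbar array performs analog computation can be sketched in a few lines: with input voltages applied to the rows and conductances stored at the crosspoints, each column current is a weighted sum (Ohm's law per cell, Kirchhoff's current law per column). The sketch below is an idealized model with made-up conductance values; it ignores wire resistance, device noise, and nonlinearity, and the function name `crossbar_mvm` is hypothetical.

```python
def crossbar_mvm(conductances, voltages):
    """Ideal crossbar matrix-vector product.

    conductances[i][j] is the conductance (siemens) between input row i and
    output column j; voltages[i] is the voltage (volts) applied to row i.
    Returns the column currents I_j = sum_i V_i * G_ij in amperes.
    """
    n_cols = len(conductances[0])
    return [
        sum(v * row[j] for v, row in zip(voltages, conductances))
        for j in range(n_cols)
    ]


# 3 input rows x 2 output columns; illustrative microsiemens-scale weights.
G = [
    [1e-6, 2e-6],
    [3e-6, 4e-6],
    [5e-6, 6e-6],
]
V = [0.1, 0.2, 0.0]  # read voltages on the three rows

currents = crossbar_mvm(G, V)
print([f"{i:.2e} A" for i in currents])
```

Because the summation happens in the physics of the array, the weights never leave the memory cells, which is the data-movement saving the text describes.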

Ferroelectric Memory and V-NAND Flash: Ferroelectric memories exhibit multi-level analog switching behaviors suitable for adaptive learning, though challenges remain in variability and integration scalability [11]. Meanwhile, commercial V-NAND flash memory offers maturity and high density for large-scale neuromorphic inference systems, despite limitations in analog programmability and endurance [11].

Architectural Implementation in Neuromorphic Processors

Several neuromorphic processors demonstrate the practical implementation of these principles:

IBM NorthPole: This brain-inspired chip integrates memory near compute in a distributed, modular core array with massive parallelism [12] [10]. NorthPole moves away from spiking neurons and asynchronous operation in favor of a synchronous design, and it has demonstrated superior performance on various tasks at a fraction of the energy cost of conventional architectures [12] [10].

Intel Loihi 2: Each Loihi 2 chip supports up to roughly 1 million neurons and employs a fully asynchronous, event-driven architecture; multi-chip systems built from it, such as Intel's Hala Point, scale to more than 1 billion neurons [12]. It supports dynamic on-chip learning and is designed for efficient SNN simulation [15] [11].

IBM Hermes: This analog chip incorporates millions of nanoscale PCM devices that function as analog versions of brain cells [10]. The PCM devices are assigned weights through electrical currents that physically change the state of chalcogenide glass, making it more or less conductive and thereby altering computation values [10].

Diagram 1: Computing architecture comparison

Measuring Energy Efficiency in Neuromorphic Hardware

Benchmarking Frameworks and Metrics

Evaluating neuromorphic hardware efficiency requires specialized benchmarking approaches that account for event-driven operation and in-memory computation. The Spiking Neural Architecture Benchmark Suite (SNABSuite) provides a cross-platform framework covering benchmarks from low-level characterization to high-level application evaluation [16]. This suite enables comparison of various neuromorphic systems, including mixed-signal and fully digital architectures, using benchmark-specific metrics [16].

Energy modeling within this framework allows researchers to estimate energy expenditure of neuromorphic systems by running simulations on standard hardware, with results closely matching published measurements [16]. These models help quantify the efficiency gap between neuromorphic systems and biological brains—revealing that current neuromorphic systems remain at least four orders of magnitude less efficient than the human brain, with two to three orders of magnitude improvement potentially achievable through modern fabrication processes [16].

Key Energy Efficiency Metrics

Standardized metrics are essential for comparative analysis of neuromorphic hardware:

- Energy per Synaptic Operation: Measures the energy consumed by one basic synaptic event, typically reported in joules (often picojoules) per synaptic operation.

- Power Density: Power consumption per unit area, particularly important for embedded and edge devices.

- Inference Energy: Total energy required to process a single input instance, crucial for deployment scenarios.

- Energy-Delay Product: The product of the energy consumed and the execution time for a task (joule-seconds), a combined metric that penalizes both high energy use and high latency.

- Static vs. Dynamic Power Consumption: Differentiates between idle power usage and active computation energy.
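The metrics in this list can all be derived from a handful of raw measurements. The sketch below shows one possible set of definitions; the function name, argument names, and example numbers are hypothetical, and two conventions are assumptions rather than standards: energy per synaptic operation is computed from dynamic energy only, and latency is taken as run time divided by inference count (inputs processed one after another).

```python
def efficiency_metrics(total_energy_j, idle_power_w, duration_s,
                       synaptic_ops, inferences, area_mm2):
    """Derive common efficiency metrics from one benchmark run."""
    static_energy_j = idle_power_w * duration_s          # energy spent just being on
    dynamic_energy_j = total_energy_j - static_energy_j  # energy attributable to the workload
    energy_per_inference_j = total_energy_j / inferences
    latency_s = duration_s / inferences                  # assumes sequential processing
    return {
        "energy_per_sop_j": dynamic_energy_j / synaptic_ops,
        "power_density_w_per_mm2": (total_energy_j / duration_s) / area_mm2,
        "inference_energy_j": energy_per_inference_j,
        "energy_delay_product_js": energy_per_inference_j * latency_s,
        "static_fraction": static_energy_j / total_energy_j,
    }


# Made-up example run: 2 J over 10 s, 50 mW idle, 1.5e9 synaptic ops,
# 1000 inferences, 50 mm^2 die.
example = efficiency_metrics(
    total_energy_j=2.0,
    idle_power_w=0.05,
    duration_s=10.0,
    synaptic_ops=1_500_000_000,
    inferences=1000,
    area_mm2=50.0,
)

for name, value in example.items():
    print(f"{name}: {value:.3e}")
```

Whichever conventions are chosen, reporting them alongside the numbers is what makes results comparable across platforms.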

A significant challenge in the field is the lack of standardized, actionable metrics that provide practical insights for SNN developers [17]. Current research focuses on bridging the gap between accessible and high-fidelity metrics, developing battery-aware measurements, and improving energy-performance tradeoff assessments [17].

Table 2: Energy Efficiency Comparison for AI Inference Tasks

| Hardware Platform | Reported Energy Efficiency | Task | Key Architectural Feature |
|---|---|---|---|
| IBM NorthPole [12] [10] | "Fraction of the energy" of conventional systems; "5x faster" | Image classification (ImageNet) | In-memory computing, low precision, massive parallelism |
| Human Brain [12] [13] | ~20W for cognitive tasks | Continuous perception & cognition | Massive parallelism, sparsity, co-located memory & processing |
| Traditional GPU (for comparison) | High energy consumption; "Unsustainable" for scaling AI [12] [18] | AI training & inference | Separate memory & processing (von Neumann bottleneck) |
| Intel Loihi 2 [12] | High efficiency for specialized SNN workloads | SNN simulation & optimization | Asynchronous, event-driven spiking neural networks |

Experimental Protocols for Neuromorphic Benchmarking

Cross-Platform Performance Evaluation

Rigorous experimental protocols are essential for meaningful comparison of neuromorphic architectures. The SNABSuite framework employs a backend-agnostic implementation of SNNs coupled with backend-specific configurations, enabling direct cross-platform comparisons [16]. Benchmark implementations include:

- Constraint Satisfaction Problems: Scalable implementations like Sudoku puzzles using winner-take-all networks evaluate system performance on computational tasks [16].

- Converted ANN-SNN Networks: Using pre-trained artificial networks converted to spiking networks with rate-based or time-to-first-spike encodings [16].

- Low-Level Characterization: Measuring basic system properties like spike bandwidth between neurons, which limits all network implementations regardless of theoretical considerations [16].

Protocols must account for platform-specific constraints including connectivity limitations, numerical precision variations between analog and digital implementations, and differences in temporal dynamics between simulated and physical systems [16].

Energy Measurement Methodology

Accurate energy assessment requires specialized methodologies:

- Platform-Specific Power Monitoring: Utilizing built-in current sensors or external measurement equipment to track dynamic power consumption during benchmark execution.

- Task-Based Energy Profiling: Isolating energy consumption for specific operations (e.g., per inference or per synaptic event) rather than full-system power.

- Scale-Dependent Efficiency Analysis: Evaluating how energy efficiency changes with network size and complexity, identifying optimal operating regions.

- Comparative Baseline Establishment: Comparing neuromorphic implementations against optimized conventional solutions for equivalent tasks.

These protocols enable meaningful comparison between radically different architectures and help identify the most suitable applications for neuromorphic approaches [16].
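Task-based energy profiling usually starts from a sampled power trace. The sketch below integrates such a trace with the trapezoidal rule, subtracts an idle baseline, and divides by the number of inferences. The sample values, the idle power, and the function names are illustrative assumptions; a real setup would read the samples from an on-board sensor or an external power analyzer.

```python
def trapezoid_energy(times_s, power_w):
    """Integrate a sampled power trace (watts over seconds) into joules."""
    return sum(
        0.5 * (p0 + p1) * (t1 - t0)
        for t0, t1, p0, p1 in zip(times_s, times_s[1:], power_w, power_w[1:])
    )


def energy_per_inference(times_s, power_w, idle_power_w, n_inferences):
    """Return (total, dynamic) energy per inference in joules.

    The dynamic figure subtracts the idle baseline over the whole window,
    isolating the energy attributable to the workload itself.
    """
    total_j = trapezoid_energy(times_s, power_w)
    duration_s = times_s[-1] - times_s[0]
    dynamic_j = total_j - idle_power_w * duration_s
    return total_j / n_inferences, dynamic_j / n_inferences


# Made-up trace: idle at 0.1 W, active at 0.3 W, sampled once per second.
times_s = [0.0, 1.0, 2.0, 3.0, 4.0]
power_w = [0.10, 0.30, 0.30, 0.30, 0.10]

total_per_inf, dynamic_per_inf = energy_per_inference(
    times_s, power_w, idle_power_w=0.10, n_inferences=20
)
print(f"total energy per inference:   {total_per_inf:.3f} J")
print(f"dynamic energy per inference: {dynamic_per_inf:.3f} J")
```

Reporting both figures guards against a common pitfall: a platform with low dynamic energy but high idle power can look efficient per operation and still drain a battery quickly.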

Diagram 2: Neuromorphic benchmark workflow

The Researcher's Toolkit: Essential Technologies and Methods

Table 3: Research Reagent Solutions for Neuromorphic Experimentation

| Tool/Category | Example Implementations | Function in Research |
|---|---|---|
| Neuromorphic Hardware Platforms | Intel Loihi 2, IBM NorthPole, SpiNNaker, BrainScaleS-2 | Physical implementation for testing SNNs and in-memory computing architectures [12] [15] [16] |
| Memory Technologies for Synapses | Phase-Change Memory (PCM), Resistive RAM (RRAM), Ferroelectric Memory | Serve as artificial synapses in neuromorphic systems; provide analog programmability and weight storage [10] [11] [13] |
| Benchmarking Suites | SNABSuite (Spiking Neural Architecture Benchmark Suite) | Enable cross-platform performance and efficiency comparison using standardized metrics [16] |
| Simulation Frameworks | NEST, GeNN, PyNN | Software tools for simulating spiking neural networks prior to hardware deployment [16] |
| Programming Models for SNNs | Gradient-based training (e.g., SNN backpropagation), Hand-wiring, Random architectures | Methods for configuring and training spiking neural networks for specific applications [15] |

In-memory computing and event-driven processing represent foundational shifts in computing architecture that directly address the von Neumann bottleneck, enabling dramatic improvements in energy efficiency for AI workloads. These brain-inspired approaches have demonstrated practical benefits in research settings, with neuromorphic chips like IBM's NorthPole and Intel's Loihi 2 showing order-of-magnitude efficiency gains for specific applications [12].

Despite these advances, significant research challenges remain. Current analog memory devices face limitations in precision and endurance, particularly for on-chip training [10]. Benchmarking methodologies require standardization to enable meaningful cross-platform comparisons [17] [16]. Programming models for neuromorphic systems need development to lower the barrier to entry and enable wider adoption [15]. And the efficiency gap with biological brains, estimated at four or more orders of magnitude for current systems, highlights the substantial headroom for continued innovation [16].

The roadmap for neuromorphic computing points toward heterogeneous hardware solutions tailored to specific application needs rather than one-size-fits-all architectures [18]. Key focus areas include leveraging sparsity through neural pruning strategies similar to biological brains [18], developing open frameworks and programming languages to foster collaboration [18], and continuing co-optimization of materials, devices, and algorithms. As AI energy consumption continues to grow unsustainably, neuromorphic computing offers a promising path toward more efficient and effective AI systems everywhere and anytime [18].

Quantifying the energy efficiency of neuromorphic hardware is a fundamental challenge in advancing brain-inspired computing. Unlike traditional processors where an "operation" is clearly defined (e.g., a floating-point operation or FLOP), neuromorphic systems process information through a complex interplay of discrete, event-driven actions: synaptic transmissions, somatic integrations, and spike generation. This inherent complexity creates a significant bottleneck for fair benchmarking and comparison. The energy efficiency claims for neuromorphic systems can vary by orders of magnitude, with some implementations demonstrating efficiencies ranging from tera-synaptic operations per second per watt (TOPS/W_synaptic) to giga-spiking neural operations per second per watt (GOPS/W_sn), often surpassing equivalent traditional hardware efficiency by factors of 10 to 1000 for specific workloads [19]. However, without a standardized definition of what constitutes an "operation," these figures remain ambiguous and often misleading. This guide deconstructs the core computational primitives of neuromorphic systems, provides a framework for their consistent measurement, and outlines detailed experimental protocols to equip researchers with the tools for rigorous, comparable energy efficiency analysis.

Deconstructing the Neuromorphic 'Operation'

An "operation" in a spiking neural network (SNN) is not a monolithic concept but a hierarchy of interdependent processes. Accurate measurement requires isolating and defining these components, as their energy costs and computational roles differ significantly.

The Synaptic Operation

The synaptic operation is the fundamental processing step that occurs when a pre-synaptic spike arrives at a synapse. Its biological inspiration is the release of neurotransmitters. In hardware, this involves:

- Spike Reception and Decoding: The synapse receives an incoming spike event, typically encoded as an address or a packet [20].

- Weight Application: The stored synaptic weight (efficacy) is retrieved from memory. This weight can be a digital value or an analog conductance, as in memristive devices [21].

- Post-Synaptic Effect Generation: The weight value is used to modulate the state of the post-synaptic neuron. In a simple model, this is an additive increase to a target conductance (g_target += w) [22]. In more complex, dynamic synapses, this might involve interaction with internal synaptic variables like short-term plasticity traces [22].
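The simple additive model above can be sketched in a few lines. This is an illustrative sketch only: the function name `apply_spike` and the dictionary layout for `weights` are assumptions made for the example, not part of any cited platform. It also shows how one incoming spike translates into one synaptic operation per outgoing synapse, which is the quantity counted later when computing efficiency metrics.

```python
from collections import defaultdict


def apply_spike(pre_id, weights, g_target):
    """Deliver one pre-synaptic spike and return the synaptic operations used.

    weights:  dict mapping pre_id -> list of (post_id, w) pairs (hypothetical layout).
    g_target: dict mapping post_id -> accumulated target conductance.
    """
    ops = 0
    for post_id, w in weights.get(pre_id, []):
        g_target[post_id] += w  # additive post-synaptic effect: g_target += w
        ops += 1                # one weight read + one accumulate = one synaptic op
    return ops


# Two pre-synaptic neurons; neuron 0 has two synapses, neuron 1 has one.
weights = {0: [(10, 0.5), (11, 0.25)], 1: [(10, 0.125)]}
g_target = defaultdict(float)

# Spike sequence: neuron 0, neuron 1, neuron 0 again.
total_ops = sum(apply_spike(pre, weights, g_target) for pre in [0, 1, 0])
```

Because the loop only runs when a spike arrives, the operation count scales with activity rather than with network size, which is the property the later metrics try to capture.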

A critical advancement in large-scale implementations is the separation of the synaptic plasticity adaptor array from the neuron array [20]. This architecture allows for a more generic and flexible handling of multiple plasticity rules (e.g., STDP, STDDP) without altering the core neural network structure. In such systems, the synaptic operation is performed by a dedicated adaptor, which updates the weight or delay value and sends a weighted or delayed pre-synaptic spike to the post-synaptic neuron [20].

The Somatic Integration Operation

The somatic integration operation occurs within the artificial neuron and is analogous to the integration of post-synaptic potentials in a biological neuron. Its primary function is to update the internal state of the neuron based on all received inputs. The core computational step is the numerical integration of the neuron's state equation, such as the Leaky Integrate-and-Fire (LIF) model:

τ_m * dV/dt = -V(t) + R_m * I_syn(t)

Where V(t) is the membrane potential, τ_m is the membrane time constant, R_m is the membrane resistance, and I_syn(t) is the total synaptic current. This integration is typically performed at every timestep (dt) in digital systems, or continuously in analog implementations [13] [23]. The energy cost of this operation scales with the complexity of the neuron model and the number of neurons updated per timestep.
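As a rough illustration of the per-timestep update performed by a digital system, the LIF equation above can be integrated with a forward-Euler step. The parameter values (`dt`, `tau_m`, `r_m`) and the function names are assumptions chosen for the example; thresholding and reset are deliberately left out because they belong to spike generation rather than somatic integration.

```python
def lif_step(v, i_syn, dt=1e-3, tau_m=20e-3, r_m=1.0):
    """One forward-Euler step of tau_m * dV/dt = -V + R_m * I_syn."""
    return v + (dt / tau_m) * (-v + r_m * i_syn)


def simulate(i_syn, steps, v0=0.0, **params):
    """Integrate a constant synaptic current and return the membrane trace."""
    v = v0
    trace = []
    for _ in range(steps):
        v = lif_step(v, i_syn, **params)
        trace.append(v)
    return trace


# With constant input the potential relaxes toward R_m * I_syn (here 1.0).
trace = simulate(i_syn=1.0, steps=200)
```

Every neuron pays this update cost on every timestep regardless of input activity, which is why the somatic term scales with neuron count and model complexity rather than with spike rate.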

The Spike Transmission Operation

Spike transmission is the event-driven communication of a binary spike from one neuron to its fan-out synapses. This is a defining feature of neuromorphic systems, enabling sparse, activity-dependent communication. The process involves:

- Spike Generation: The neuron's membrane potential exceeds a threshold, triggering a reset and the generation of a spike event.

- Routing: The spike event is routed through an on-chip or inter-chip network to all its target synapses [20] [23]. The energy cost of this operation is dominated by the routing overhead and scales with the average fan-out of neurons and the distance the spike must travel [23].
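The two steps above can be sketched as a single function that checks the threshold, resets the neuron, and emits one address event per target synapse. The names (`fire_and_route`, `fan_out`) and the tuple format of an event are hypothetical; real routers use platform-specific packet formats.

```python
def fire_and_route(v, threshold, v_reset, neuron_id, fan_out):
    """Return (new_v, events) for one neuron at one timestep.

    fan_out: dict mapping neuron_id -> list of target neuron ids (hypothetical layout).
    events:  list of (source_id, target_id) address events to be routed.
    """
    if v < threshold:
        return v, []  # no spike, so no communication cost at all
    events = [(neuron_id, target) for target in fan_out.get(neuron_id, [])]
    return v_reset, events


fan_out = {3: [7, 8, 9]}

# Supra-threshold neuron: reset and emit one event per target.
v_after, events = fire_and_route(1.2, threshold=1.0, v_reset=0.0, neuron_id=3, fan_out=fan_out)

# Sub-threshold neuron: state unchanged, nothing routed.
v_quiet, no_events = fire_and_route(0.4, threshold=1.0, v_reset=0.0, neuron_id=3, fan_out=fan_out)
```

The length of `events` is the fan-out term that drives routing energy; a distance-dependent cost would have to be added per event for a specific network-on-chip.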

Table 1: Taxonomy of Core Neuromorphic Operations

| Operation Type | Core Function | Key Parameters | Primary Energy Cost Drivers |

|---|---|---|---|

| Synaptic Operation | Apply synaptic weight to post-synaptic neuron. | Synaptic weight (w), plasticity rule. | Memory access (weight read), computational cost of plasticity rule, fan-in. |

| Somatic Integration | Update neuron's internal state. | Membrane potential (V), time constant (τ_m), input current (I_syn). | Complexity of neuron model, integration timestep. |

| Spike Transmission | Communicate spike event to target synapses. | Fan-out (number of target synapses), spike routing distance. | Network-on-chip traffic, routing logic. |

A Framework for Measurement and Metrics

Translating the defined operations into quantifiable metrics is the next critical step. The field currently lacks standardization, but a consensus is emerging around several key performance indicators.

Established and Emerging Metrics

The most common high-level metric is Energy Per Inference, which measures the total energy (in Joules) required to process a single data sample (e.g., one image from a dataset). This is a system-level metric that is easy to understand but obscures the underlying operational efficiency [19].

For a more granular view, metrics must be tied to the defined operations:

- Synaptic Operations Per Second (SOPS): The total number of synaptic operations performed per second. A more instructive variant is SOPS per Watt (SOPS/W), which directly measures the energy efficiency of the core processing element [19].

- Spiking Neural Operations Per Second (SNOPS): A broader metric that can encapsulate a combination of somatic and synaptic processing. Its corresponding efficiency metric is SNOPS per Watt (SNOPS/W) [19].

A significant challenge is that these "neuromorphic operations" are fundamentally different from the FLOPs of traditional hardware, making direct comparison difficult. A fair comparison requires defining equivalence at the task level, for instance, by comparing the energy per inference on the same benchmark task [19].
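The relationship between these metrics is simple arithmetic, sketched below with hypothetical function names and made-up measurement values. Note that SOPS/W reduces to synaptic operations per joule, since the measurement time cancels.

```python
def sops(synaptic_ops, seconds):
    """Synaptic operations per second."""
    return synaptic_ops / seconds


def sops_per_watt(synaptic_ops, seconds, energy_joules):
    """SOPS divided by average power; equals synaptic_ops / energy_joules."""
    average_power_w = energy_joules / seconds
    return sops(synaptic_ops, seconds) / average_power_w


def energy_per_inference(energy_joules, n_inferences):
    """Task-level metric: joules per processed sample."""
    return energy_joules / n_inferences


# Hypothetical run: 2e9 synaptic operations in 4 s, 0.5 J total, 1000 samples.
throughput = sops(2e9, 4.0)
efficiency = sops_per_watt(2e9, 4.0, 0.5)
per_sample = energy_per_inference(0.5, 1000)
```

When comparing against a CPU or GPU, only `energy_per_inference` is directly comparable, provided both systems run the same benchmark task at a comparable accuracy.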

Table 2: Comparative Energy Efficiency Metrics

| Metric Type | Traditional Computing (CPU/GPU) | Neuromorphic Computing | Key Characteristic |

|---|---|---|---|

| Operations/Watt | Giga FLOPs/Watt (GFLOPS/W) | Tera Synaptic OPS/W (TSOPS/W), Giga Spiking OPS/W (GSNOPS/W) | Focuses on computational throughput per unit energy. |

| Energy Per Inference | Microjoules to Millijoules | Nanojoules to Microjoules | Measures total task-level energy cost; most direct for application comparison. |

| Platform Throughput | Frames processed per second | Synaptic events per second, Real-time simulation speedup [23] | Measures processing capacity for the target data type. |

The Critical Role of Actionable Metrics

Current research indicates that while many existing metrics are useful for architectural comparisons, they often lack practical, actionable insights for developers trying to improve model efficiency [24]. To bridge this gap, metrics should be:

- Accessible: Obtainable early in the development cycle without requiring full hardware deployment.

- High-Fidelity: Accurately reflective of final deployment energy consumption.

- Actionable: Providing clear guidance on how to modify the model or hardware to improve efficiency [24].

Future research directions include developing more trend-based metrics, battery-aware metrics, and improved assessments of the energy-accuracy trade-off [24].

Experimental Protocols for Operational Energy Measurement

To ensure reproducible and comparable results, researchers should adhere to detailed experimental protocols. The following methodologies provide a template for rigorous measurement.

Protocol 1: Isolating Synaptic Operation Energy

Objective: To measure the energy consumed per synaptic operation, excluding somatic and spike transmission costs.

Workflow:

- Setup: Configure the system under test (e.g., SpiNNaker, Loihi, or an in-memory compute array) with a network of leaky integrate-and-fire (LIF) neurons.

- Network Topology: Create a single-layer feedforward network. The pre-synaptic population should be driven by a Poisson spike generator, while the post-synaptic population should have its spiking mechanism disabled, forcing it to operate as a pure integrator.

- Measurement: Use a high-resolution power meter (e.g., a Monsoon Solutions power monitor or chip-internal power monitors) to measure the total energy consumed by the system over a fixed time window (e.g., 10 seconds of simulated time).

- Calculation: The total number of synaptic operations is given by N_synaptic = (Pre-synaptic spike rate) * (Number of pre-synaptic neurons) * (Number of synapses per neuron) * (Measurement time). The energy per synaptic operation is E_synaptic = Total Measured Energy / N_synaptic.
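The calculation step of Protocol 1 can be sketched as follows. The function names and all numeric values are hypothetical. The optional `baseline_energy_j` argument is an assumption added here and is not part of the protocol as stated: it represents energy measured over the same window with the spike generator silenced, so that static power is not attributed to synaptic events.

```python
def synaptic_op_count(rate_hz, n_pre, synapses_per_neuron, duration_s):
    """Expected number of synaptic operations for Poisson input (Protocol 1 formula)."""
    return rate_hz * n_pre * synapses_per_neuron * duration_s


def energy_per_synaptic_op(total_energy_j, n_synaptic, baseline_energy_j=0.0):
    """Energy per synaptic operation in joules.

    Leave baseline_energy_j at 0.0 to reproduce the formula exactly as stated.
    """
    if n_synaptic <= 0:
        raise ValueError("n_synaptic must be positive")
    return (total_energy_j - baseline_energy_j) / n_synaptic


# Hypothetical numbers, for illustration only.
n_ops = synaptic_op_count(rate_hz=10, n_pre=1000, synapses_per_neuron=100, duration_s=10)
e_raw = energy_per_synaptic_op(total_energy_j=2.0, n_synaptic=n_ops)
e_net = energy_per_synaptic_op(total_energy_j=2.0, n_synaptic=n_ops, baseline_energy_j=1.5)
```

Because the Poisson rate only gives an expected count, using the number of spikes actually recorded during the run, where the platform exposes it, would give a tighter estimate than the formula.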

Protocol 2: Benchmarking with Standardized SNN Models

Objective: To evaluate overall system efficiency on a biologically relevant and computationally demanding benchmark.

Workflow:

- Benchmark Selection: Utilize a standardized model such as the cortical microcircuit model by Potjans and Diesmann [23]. This model has defined neuron counts, synapse counts, and expected activity rates.

- Configuration: Map the benchmark model onto the target hardware, optimizing for load balancing and communication efficiency. For example, employ multi-target partitioning strategies on platforms like SpiNNaker to separate neural and synaptic processing for higher throughput [23].

- Execution and Profiling: Run the simulation for a defined biological time (e.g., 10 seconds). Precisely measure the wall-clock time and the total system energy.

- Metrics Calculation:

- Real-time Factor: Simulated Biological Time / Wall-clock Time. A factor ≥ 1 indicates real-time (or faster) capability.

- Throughput: Total Synaptic Events Processed / Wall-clock Time (in SOPS).

- Energy Efficiency: Total Synaptic Events Processed / Total Energy Consumed (in synaptic operations per joule, which is numerically equal to SOPS/W).
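A minimal sketch of the Protocol 2 metric calculations, assuming the four raw quantities have already been measured. The function name, dictionary keys, and example values are hypothetical.

```python
def protocol2_metrics(bio_time_s, wall_time_s, synaptic_events, energy_j):
    """Derive the three Protocol 2 metrics from raw measurements."""
    return {
        # >= 1.0 means the hardware keeps up with (or outpaces) biological time.
        "real_time_factor": bio_time_s / wall_time_s,
        # Synaptic events per second of wall-clock time (SOPS).
        "throughput_sops": synaptic_events / wall_time_s,
        # Synaptic operations per joule (numerically equal to SOPS/W).
        "efficiency_sop_per_joule": synaptic_events / energy_j,
    }


# Hypothetical run: 10 s of biological time simulated in 20 s of wall-clock time.
metrics = protocol2_metrics(
    bio_time_s=10.0, wall_time_s=20.0, synaptic_events=3e9, energy_j=60.0
)
```

In this made-up example the real-time factor is 0.5, so the system would run at half biological speed even though its throughput figure looks large in isolation.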

Diagram 1: Experimental protocol workflow for measuring energy efficiency.

The Scientist's Toolkit: Essential Research Reagents

This table details key hardware platforms, software tools, and material systems that form the essential "research reagents" for conducting state-of-the-art neuromorphic energy efficiency research.

Table 3: Key Research Reagents for Neuromorphic Efficiency Experiments

| Reagent / Platform | Type | Primary Function in Research | Key Characteristics |

|---|---|---|---|

| SpiNNaker [23] | Digital Neuromorphic Hardware | Massively parallel simulation of large-scale SNNs in real-time. | Many-core architecture (ARM processors), designed for real-time simulation, efficient event-based communication. |

| Loihi 2 [15] | Digital Neuromorphic Research Chip | Exploring novel SNN algorithms and in-memory computing architectures. | Supports wide range of neuronal models, programmable synaptic learning rules. |

| Memristor Crossbar Arrays [21] | Analog/Mixed-Signal Hardware | Implementing in-memory computing and ultra-low-power synaptic operations. | Collocated memory and processing, analog computation, potential for picojoule-level synaptic events [19]. |

| Phase-Change Materials (PCMs) [13] | Functional Material | Building artificial neurons and synapses with adaptive firing. | Electrical conductivity can be switched, retains state, mimics synaptic strengthening. |

| snnTorch / SpikingJelly [24] | Software Framework (PyTorch-based) | Gradient-based training and simulation of SNNs on traditional hardware. | Enables modern ML-driven SNN design, though energy estimates may not reflect neuromorphic hardware gains. |

| Event Cameras (DVS) [21] | Neuromorphic Sensor | Generating real-world, event-based data streams for processing. | High temporal resolution, low latency, produces asynchronous spike streams, ideal for testing with real inputs. |

The path to unambiguous and comparable energy efficiency metrics in neuromorphic computing begins with a precise definition of the fundamental "operation." By deconstructing systems into their constituent synaptic, somatic, and spike transmission operations, and by adopting standardized, actionable metrics and experimental protocols, the research community can overcome a significant barrier to progress. This rigorous approach to measurement is not merely an academic exercise; it is the foundation for guiding hardware design, optimizing algorithms, and ultimately fulfilling the promise of neuromorphic technology: to deliver artificial intelligence capabilities with the profound efficiency of the biological brain.

The exponential growth of artificial intelligence (AI) has triggered an equally exponential increase in the energy consumption of computing infrastructure. Conventional von Neumann architectures, which physically separate memory and processing, face fundamental efficiency limitations—data transfer between memory and processors can consume 200 times more energy than the actual computation itself [25]. This energy challenge has catalyzed the development of neuromorphic computing, a brain-inspired paradigm that promises to redefine the landscape of energy-efficient computing.

Neuromorphic hardware is founded on principles observed in biological neural systems. Unlike traditional artificial neural networks (ANNs) that process information continuously using floating-point operations, neuromorphic systems implement spiking neural networks (SNNs) that communicate through discrete, event-driven binary spikes [24]. This event-driven operation, combined with collocated memory and processing, enables unprecedented energy efficiency gains. Current research and early commercial deployments demonstrate efficiency improvements ranging from 100 to 1000 times over conventional central processing units (CPUs) and graphics processing units (GPUs) for specific workloads [26] [25].

This technical guide examines the substantiation behind these efficiency claims, analyzes the architectural and materials innovations enabling them, and provides researchers with methodologies for rigorous energy efficiency assessment. Framed within the broader context of neuromorphic hardware energy efficiency research, this review serves as a foundation for evaluating the transformative potential of this emerging computing paradigm.

Quantifying the Efficiency Claims

The striking claims of 100x to 1000x efficiency improvements in neuromorphic hardware are supported by a growing body of empirical evidence from research institutions and industry developers. The table below summarizes key experimental findings and their associated efficiency metrics.

Table 1: Documented Energy Efficiency Improvements in Neuromorphic Hardware

| Platform/Technology | Efficiency Gain | Experimental Context | Key Metric | Citation |

|---|---|---|---|---|

| Intel Loihi (chip-to-chip) | 1000x more efficient | Sensor fusion and temporal processing tasks | Energy consumption per inference | [26] |

| Neuromorphic Circuits (2D material T-FETs) | 100x higher efficiency | AI inference tasks compared to 7nm CMOS | Energy efficiency (TOPS/W) | [25] |

| Memristor-based Systems | 100x lower energy | Learning to play Atari Pong | Energy consumption vs. GPU implementation | [25] |

| Intel Loihi (full system) | 2-3x more economical | Question-answering about previously told stories | Overall system energy consumption | [26] |

| Computational RAM (CRAM) | 2500x more energy-efficient | MNIST handwritten digit classification | Energy consumption vs. near-memory processing | [25] |

| BrainScaleS (hybrid analog) | Up to 101x gains | Compared to traditional ANNs on GPU hardware | Energy per operation or inference | [24] |

These efficiency gains stem from multiple architectural advantages. The event-driven operation of SNNs means that energy consumption occurs predominantly during spike events, with minimal power draw during idle periods [24]. Furthermore, the collocation of memory and processing in neuromorphic architectures eliminates the energy-intensive data shuffling that characterizes von Neumann systems. When combined with high parallelism and the use of simple accumulation operations rather than more computationally expensive multiply-accumulate (MAC) operations, these attributes create a foundation for radically improved energy efficiency [24].
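The interaction between sparsity and the cheaper accumulate operation can be made concrete with a back-of-the-envelope estimate. The per-operation energies below are assumptions: they are figures often quoted for 32-bit floating-point operations in a 45 nm process and serve only as placeholders. The function names and the network size are likewise hypothetical.

```python
E_MAC_PJ = 4.6  # assumed energy per multiply-accumulate, in picojoules (placeholder)
E_AC_PJ = 0.9   # assumed energy per accumulate, in picojoules (placeholder)


def ann_energy_pj(n_synapses):
    """Dense ANN layer: every synapse performs one MAC per inference."""
    return n_synapses * E_MAC_PJ


def snn_energy_pj(n_synapses, spike_rate, timesteps):
    """SNN layer: one AC per synapse only when its pre-synaptic neuron spikes.

    spike_rate is the average fraction of pre-synaptic neurons spiking per timestep.
    """
    return n_synapses * spike_rate * timesteps * E_AC_PJ


ann = ann_energy_pj(1_000_000)
snn = snn_energy_pj(1_000_000, spike_rate=0.05, timesteps=10)
ratio = ann / snn

# Break-even point: the SNN stops being cheaper once spike_rate * timesteps
# exceeds E_MAC_PJ / E_AC_PJ.
break_even = E_MAC_PJ / E_AC_PJ
```

Under these assumptions the advantage is roughly tenfold and depends entirely on the product of spike rate and timestep count. The estimate ignores memory access and routing, which often dominate on real hardware.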

Architectural Foundations of Efficiency

Core Principles

The extraordinary energy efficiency claims of neuromorphic hardware originate from fundamental architectural differences that distinguish it from conventional computing platforms. The human brain, the biological inspiration for neuromorphic systems, operates with remarkable efficiency, consuming roughly 0.3 to 0.5 kilowatt-hours daily (a continuous draw of about 12 to 20 watts), while a typical GPU consumes 10-15 kilowatt-hours daily [27]. This biological precedent demonstrates the potential for massive parallelism and event-driven computation to achieve extreme energy efficiency.

The von Neumann bottleneck—where data transfer between separate memory and processing units consumes the majority of energy—is eliminated in neuromorphic architectures through memory-processor collocation [28] [24]. In practical terms, this approach can reduce or eliminate the energy penalty associated with data movement, which in conventional systems can account for up to 80% of total processor power [29]. This architectural shift enables a transition from continuous computation to event-driven processing, where energy consumption becomes proportional to actual computational workload rather than operating at consistently high power levels regardless of workload [24].

Spiking Neural Networks

Spiking Neural Networks (SNNs) represent the algorithmic counterpart to neuromorphic hardware, fundamentally differing from traditional Artificial Neural Networks (ANNs) in their information representation and processing methods. While ANNs process information continuously using floating-point values, SNNs encode information in temporal sequences of binary spikes [24]. This temporal encoding creates sparse activity patterns, where only a small subset of neurons activate at any given time, significantly reducing computational overhead.

The Leaky Integrate-and-Fire (LIF) neuron model, initially developed by Lapicque in 1907 and implemented in neuromorphic hardware, maintains an internal membrane potential that integrates incoming spikes [24]. This model enables neurons to operate as temporal filters, responding selectively to specific patterns of input activity while ignoring noise or irrelevant inputs. The combination of sparse activity and temporal filtering creates the conditions for extreme energy efficiency, as demonstrated by implementations showing 100x lower energy consumption compared to equivalent ANN implementations on conventional hardware [24].
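The temporal-filtering behaviour described above can be illustrated with a discrete-time LIF neuron. This sketch swaps the continuous model for a common simplification with a per-step decay factor `beta`; the parameter values and function name are assumptions chosen so that an isolated input spike is ignored while two spikes in quick succession trigger an output.

```python
def lif_filter(inputs, beta=0.5, threshold=1.4, v_reset=0.0):
    """Discrete-time LIF: v <- beta * v + input; spike and reset when v >= threshold."""
    v = 0.0
    out = []
    for x in inputs:
        v = beta * v + x          # leak, then integrate the incoming spike
        if v >= threshold:
            out.append(1)
            v = v_reset           # reset after firing
        else:
            out.append(0)
    return out


# One isolated input spike, then a pair of consecutive spikes.
spikes_in = [1, 0, 0, 0, 1, 1, 0, 0]
spikes_out = lif_filter(spikes_in)
```

Only the second spike of the pair produces an output, so three input events yield a single output event. That reduction in downstream traffic is one source of the sparsity the paragraph refers to.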

Table 2: Comparison of Neural Network Paradigms

| Characteristic | Traditional ANNs | Spiking Neural Networks (SNNs) |

|---|---|---|

| Information Encoding | Continuous floating-point values | Discrete binary spikes across time |

| Operation Type | Continuous computation | Event-driven processing |

| Neuron Model | Weighted sum followed by a static activation function | Leaky Integrate-and-Fire (LIF) |

| Computational Primitive | MAC operations (energy-intensive) | Accumulate operations (energy-efficient) |

| Activity Pattern | Dense activation | Sparse activation |

| Memory-Processing Relationship | Separated (von Neumann) | Collocated (neuromorphic) |

Enabling Technologies and Materials

Emerging Materials and Devices

The realization of efficient neuromorphic hardware depends critically on advanced materials and device structures that can implement neural functions with minimal energy requirements. Memristors and other resistive switching devices have emerged as key enabling components, serving as synaptic crossbar arrays that can store weights and perform analog matrix multiplication in place [30]. These devices typically exhibit low switching voltages and short response times, enabling energy-efficient operation while supporting the dense connectivity required for large-scale neural networks.

Two-dimensional (2D) materials represent another promising material class for neuromorphic applications. Projects like the ENERGIZE consortium—a joint Korean-EU partnership—are exploiting the exceptional properties of 2D materials, including their high crystallinity, absence of dangling bonds, and compatibility with back-end-of-line (BEOL) semiconductor processes [28]. These characteristics enable the development of devices with ultra-low switching energy while facilitating integration with conventional semiconductor technologies.

Superconducting and Photonic Approaches

Beyond conventional CMOS-based approaches, more radical technological pathways are being explored to push energy efficiency beyond current limits. Superconducting electronics based on niobium Josephson Junctions represent one such approach, promising 100x to 1000x lower power than CMOS technologies while maintaining or exceeding their performance [27]. In these systems, binary representation shifts from voltage levels to the direction of current flow in superconducting loops, essentially eliminating the static power consumption that plagues conventional semiconductor devices.

Photonic computing offers another disruptive pathway, with demonstrated capabilities for completing machine-learning classification in under half a nanosecond while achieving 92% accuracy [25]. Photonic chips could reduce energy required for AI training by up to 1,000 times compared to conventional processors, with the additional advantage of generating minimal heat, thereby reducing cooling requirements and associated operational costs [25].

Experimental Methodologies for Efficiency Validation

Benchmarking Approaches

Rigorous assessment of neuromorphic hardware efficiency requires standardized benchmarking methodologies that enable fair comparison across different platforms. The Spiking Neural Architecture Benchmark Suite (SNABSuite) has emerged as a framework for cross-platform benchmarking, supporting systems including NEST (CPU), GeNN (GPU), SpiNNaker (digital neuromorphic), and BrainScaleS (analog neuromorphic) [16]. This suite covers benchmarks from low-level characterization to high-level application evaluation using benchmark-specific metrics, enabling comprehensive efficiency analysis across diverse hardware platforms.

Benchmarking activities have revealed characteristic efficiency patterns across different neuromorphic architectures. For instance, the Loihi chip demonstrated particular efficiency advantages for temporal processing tasks, with on-chip communication proving 1000 times more efficient than chip-to-chip communication because spikes that stay on-chip avoid inter-chip transmission overhead [26]. These findings highlight the importance of considering both on-chip efficiency and system-level communication costs when evaluating overall system performance.

Energy Measurement and Modeling

Accurately measuring energy consumption in neuromorphic systems presents unique challenges that require specialized approaches. Researchers have developed energy models that enable prediction of energy expenditure on target systems without direct hardware access [16]. These models combine benchmark performance metrics with energy efficiency considerations, allowing for comparative analysis between neuromorphic approaches and biological efficiency benchmarks.

When neuromorphic systems are compared with the biological paragon of the human brain, energy modeling reveals that current systems remain at least four orders of magnitude less efficient than their biological counterpart [16]. Even with modern fabrication processes, an efficiency gap of two to three orders of magnitude remains, highlighting both the impressive achievements of current neuromorphic technology and the substantial potential for future improvement.

Table 3: Essential Research Tools and Platforms for Neuromorphic Efficiency Research

| Tool/Platform | Type | Primary Function | Key Features | Accessibility |

|---|---|---|---|---|

| SNABSuite | Benchmarking Suite | Cross-platform performance and efficiency evaluation | Supports multiple neuromorphic backends; Energy modeling capabilities | Research community |

| SpiNNaker | Neuromorphic Hardware | Massively parallel digital neuromorphic system | 57,600 interconnected nodes; Real-time simulation capability | Available via EBRAINS |

| Intel Loihi/Loihi 2 | Neuromorphic Hardware | Research chip for SNN implementation | Event-driven asynchronous operation; Scalable neuromorphic architecture | Research partnerships |

| BrainScaleS | Neuromorphic Hardware | Hybrid analog-digital neuromorphic system | Physical emulation of neuron dynamics; High acceleration factor | Available via EBRAINS |

| snnTorch | Software Framework | SNN development and simulation | PyTorch integration; GPU acceleration | Open source |

| SpikingJelly | Software Framework | SNN development and analysis | Comprehensive neuron models; Hardware deployment support | Open source |

| EBRAINS | Research Infrastructure | Collaborative platform for brain-inspired research | Multiple neuromorphic systems; Data and tool sharing | Academic researchers |

Research Challenges and Future Directions

Measurement and Metric Challenges

Despite significant progress in neuromorphic hardware development, researchers face substantial challenges in accurately measuring and comparing energy efficiency across platforms. A primary issue is the lack of standardized, actionable metrics that can guide energy-efficient SNN development [24]. Current metrics often facilitate architecture comparison but provide limited practical insights for developers seeking to optimize energy performance.

The gap between accessible metrics (easily obtained through simulation) and high-fidelity metrics (requiring actual hardware deployment) presents another significant challenge [24]. This disconnect complicates early-stage energy assessment, potentially leading to suboptimal design choices that only become apparent after hardware implementation. Furthermore, there is a notable shortage of battery-aware metrics that reflect changes in power requirements over time, despite the critical importance of such considerations for edge deployment scenarios [24].

Commercialization and Scaling Hurdles

The path to widespread commercialization of neuromorphic hardware faces several significant obstacles. High development costs associated with specialized architectures, novel fabrication technologies, and new materials create substantial barriers to entry, particularly for smaller companies [30]. These economic challenges are compounded by technical hurdles related to uncertain long-term reliability of emerging neuromorphic components, creating adoption risks for potential users.

The timeline mismatch between neuromorphic technology development and alternative approaches to meeting AI's energy demand represents another consideration. While nuclear startups targeting AI power demand project first revenue between 2028 and 2030, neuromorphic systems are already being commercially deployed in research settings, with scaling expected between 2025 and 2027 [25]. This timeline advantage positions neuromorphic computing as a near-term solution to AI's energy challenges, though widespread adoption will require continued progress in scaling and integration with existing computing infrastructure.

Neuromorphic hardware represents a paradigm shift in computing architecture that directly addresses the escalating energy demands of artificial intelligence. The documented efficiency improvements of 100x to 1000x over conventional hardware are substantiated by growing experimental evidence from diverse research initiatives and early commercial deployments. These efficiency gains stem from fundamental architectural principles: event-driven processing, collocated memory and computation, and temporal information encoding in spiking neural networks.

While significant challenges remain in standardization, measurement methodologies, and commercialization, the trajectory of neuromorphic technology suggests a transformative impact on energy-efficient computing. As research continues to bridge the efficiency gap between synthetic systems and biological neural networks—which still maintain a four-order-of-magnitude advantage—neuromorphic hardware appears poised to play a crucial role in enabling sustainable AI expansion. For researchers and professionals engaged in drug development and biomedical research, these advances promise to unlock new possibilities for complex simulation and data analysis while containing energy consumption.

The Measurement Toolkit: From Standardized Benchmarks to Application-Specific Metrics

The rapid expansion of artificial intelligence (AI) and machine learning (ML) has led to increasingly complex models, yet the growth rate of computational demands for these models is surpassing the efficiency gains from traditional technology scaling [31]. This widening gap creates an urgent need for novel, resource-efficient computing architectures. Neuromorphic computing, drawing inspiration from the brain's architecture and principles, has emerged as a leading candidate to address these challenges, promising major advances in computing efficiency and capabilities [31] [32]. The field aims to replicate key hallmarks of biological intelligence—such as scalability, energy efficiency, and real-time embodied computation—by porting computational strategies from the brain into engineered devices and algorithms [31].

However, the absence of standardized benchmarks has significantly hindered the neuromorphic research field's progress. Without common standards, it becomes exceptionally difficult to measure technological advancements objectively, compare performance against conventional methods, or identify the most promising research directions [31] [33]. Prior benchmarking efforts have failed to achieve widespread adoption due to insufficiently inclusive, actionable, and iterative design principles [33]. To resolve this critical gap, the neuromorphic research community has collaboratively developed NeuroBench, a comprehensive benchmark framework for neuromorphic computing algorithms and systems. As an open community effort spanning industry and academia, NeuroBench provides a representative structure for standardizing the evaluation of neuromorphic approaches through a common set of tools and systematic methodology [31] [33].

The NeuroBench Framework: Core Architecture and Design Principles

NeuroBench is structured around two primary tracks that collectively enable end-to-end system evaluation: the Algorithm Track for hardware-independent assessment and the System Track for hardware-dependent evaluation [34] [33]. This dual-track approach recognizes the multifaceted nature of neuromorphic computing progress, which advances through both algorithmic innovations and hardware developments.

The framework's architecture consists of several integrated components that work together to provide comprehensive benchmarking capabilities. The benchmark harness is an open-source Python package that allows researchers to run evaluations consistently, while specialized sections handle datasets, pre-processing routines for converting data to spikes, and post-processors for interpreting spiking outputs [35]. This modular design ensures flexibility and extensibility as the field evolves.
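To give a sense of what a pre-processing routine for converting data to spikes can look like, here is a generic rate-coding sketch. It is not the NeuroBench harness API; the function name `rate_encode` and its parameters are hypothetical, and Bernoulli sampling per timestep is used as a simple stand-in for a Poisson spike generator.

```python
import random


def rate_encode(values, timesteps, seed=0):
    """Encode intensities in [0, 1] as binary spike trains.

    Returns a list with one entry per timestep; each entry holds one 0/1 spike
    per input value. A value v spikes with probability v at every timestep.
    """
    rng = random.Random(seed)  # seeded so runs are reproducible
    return [[1 if rng.random() < v else 0 for v in values] for _ in range(timesteps)]


# Three input intensities: silent, half-rate, and always firing.
spikes = rate_encode([0.0, 0.5, 1.0], timesteps=1000)
rates = [sum(step[i] for step in spikes) / len(spikes) for i in range(3)]
```

The measured firing rates approximate the input intensities, so stronger inputs cost proportionally more spikes and therefore more downstream synaptic operations.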

Core Design Principles and Community-Driven Development

NeuroBench embodies several key design principles that distinguish it from previous benchmarking attempts. The framework prioritizes collaborative development through an open community of researchers across industry and academia, ensuring broad representation and adoption [31] [36]. This community-driven approach is critical for establishing NeuroBench as a definitive standard rather than just another proprietary benchmark.

The framework emphasizes actionable benchmarking by providing metrics that offer practical insights to guide research and development decisions [24]. Unlike benchmarks that merely rank systems, NeuroBench aims to identify specific strengths and weaknesses to drive targeted improvements. Additionally, the framework supports inclusive measurement through a systematic methodology that accommodates diverse neuromorphic approaches while maintaining objective comparability [33].

NeuroBench maintains an iterative development model that allows continuous expansion of benchmarks and features to track and foster community progress [33]. This adaptability ensures the framework remains relevant as neuromorphic computing evolves. The project website, documentation, and GitHub repository provide central hubs for community engagement and framework updates [34] [35] [37].

NeuroBench Metrics and Evaluation Methodology

NeuroBench employs a comprehensive suite of metrics designed to capture the multifaceted performance characteristics of neuromorphic algorithms and systems. These metrics are categorized to evaluate different aspects of performance, with particular emphasis on energy efficiency—a crucial advantage promised by neuromorphic approaches.

Comprehensive Metric Taxonomy

The table below summarizes the core metric categories used in NeuroBench evaluations:

Table 1: NeuroBench Metric Categories and Examples

| Category | Specific Metrics | Description | Relevance to Energy Efficiency |
|---|---|---|---|
| Accuracy Metrics | Classification Accuracy [35] | Task performance measurement | Ensures efficiency gains don't compromise functionality |
| Sparsity Metrics | Activation Sparsity, Connection Sparsity [35] | Measures event-driven activity and network connectivity | Directly correlates with energy consumption in neuromorphic hardware |
| Computational Metrics | Synaptic Operations (Effective MACs/ACs) [35] | Counts multiply-accumulate and accumulate operations | Predicts computational energy requirements |
| Hardware Efficiency | Footprint (memory), Energy Consumption [35] | Resource utilization measurements | Quantifies actual hardware efficiency gains |
| System-level Metrics | Throughput, Latency [33] | Overall system performance | Captures real-world operational efficiency |
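
The two sparsity metrics in the table can be illustrated with a short sketch. It assumes the simplest possible definition, the fraction of entries that are exactly zero, applied to recorded activations and to weights respectively. The helper names and the toy data are hypothetical, and the NeuroBench harness may compute these quantities differently in detail.

```python
from typing import Sequence


def zero_fraction(rows: Sequence[Sequence[float]]) -> float:
    """Fraction of entries that are exactly zero across all rows."""
    total = sum(len(row) for row in rows)
    zeros = sum(1 for row in rows for value in row if value == 0)
    return zeros / total if total else 0.0


def activation_sparsity(activations: Sequence[Sequence[float]]) -> float:
    """Share of neuron outputs that stayed silent (zero) during inference."""
    return zero_fraction(activations)


def connection_sparsity(weights: Sequence[Sequence[float]]) -> float:
    """Share of synaptic weights that are zero (pruned or absent)."""
    return zero_fraction(weights)


# Toy data (illustrative only): two layers of recorded spikes, one dense weight matrix
recorded_spikes = [[0, 1, 0, 0], [0, 0, 0, 1]]
dense_weights = [[0.5, -0.2], [0.1, 0.3]]

act_sparsity = activation_sparsity(recorded_spikes)   # 6 of 8 entries are zero
conn_sparsity = connection_sparsity(dense_weights)    # no zero weights
print(act_sparsity, conn_sparsity)
```

A fully dense weight matrix yields a connection sparsity of zero, while activation sparsity depends on the input data and therefore has to be measured during inference.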

Energy Efficiency Metric Analysis

Energy efficiency assessment presents particular challenges in neuromorphic computing. Current research classifies energy metrics based on four key properties: Accessibility (ease of measurement), Fidelity (accuracy in reflecting real hardware performance), Actionability (ability to guide improvements), and Trend-based analysis (sensitivity to architectural changes) [24].

A significant challenge identified in recent studies is the gap between accessible metrics (easily measured but less accurate) and high-fidelity metrics (accurate but requiring specialized hardware) [24]. This gap is particularly problematic for early-stage development when hardware access may be limited. NeuroBench addresses this through its dual-track approach, allowing algorithm-level energy estimation while also supporting direct hardware measurement.

The framework also emphasizes the need for more actionable metrics that provide practitioners with specific guidance for improving energy efficiency, rather than merely enabling comparisons between architectures [24]. This includes developing trend-based metrics that reflect changes in power requirements, battery-aware metrics for embedded applications, and improved energy-performance tradeoff assessments.

Experimental Protocols and Benchmark Implementation

NeuroBench establishes standardized experimental protocols to ensure consistent, reproducible evaluations across different neuromorphic approaches. The general workflow follows a systematic methodology that encompasses data preparation, model evaluation, and metric computation.

Standardized Evaluation Workflow

The evaluation process in NeuroBench follows a structured workflow, summarized below.

This workflow begins with model training using standard training datasets, followed by wrapping the trained network in a NeuroBenchModel to standardize the interface [35]. The evaluation process then uses designated evaluation split dataloaders, pre-processors for data preparation and spike conversion, and post-processors for interpreting spiking outputs [35]. The framework executes model inference and computes a comprehensive set of metrics through the Benchmark class's run() method [35].
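
The sequence described above (dataloader, pre-processors, model inference, post-processors, metrics) can be sketched in plain Python. This is a hypothetical stand-in meant to show the control flow only: `ToyBenchmark`, `to_spikes`, `toy_model`, `choose_max`, and the data are invented for this example and do not reproduce the NeuroBench `Benchmark` or `NeuroBenchModel` interfaces.

```python
from typing import Callable, Dict, List


class ToyBenchmark:
    """Hypothetical stand-in for a benchmark harness (not the NeuroBench API)."""

    def __init__(self, model, dataloader, preprocessors, postprocessors, metrics):
        self.model = model
        self.dataloader = dataloader
        self.preprocessors = preprocessors
        self.postprocessors = postprocessors
        self.metrics: Dict[str, Callable] = metrics  # name -> f(predictions, labels)

    def run(self) -> Dict[str, float]:
        totals = {name: 0.0 for name in self.metrics}
        seen = 0
        for inputs, labels in self.dataloader:
            for pre in self.preprocessors:      # e.g. spike conversion
                inputs = pre(inputs)
            outputs = self.model(inputs)        # model inference
            for post in self.postprocessors:    # e.g. decode spikes to class labels
                outputs = post(outputs)
            for name, metric in self.metrics.items():
                totals[name] += metric(outputs, labels) * len(labels)
            seen += len(labels)
        # Sample-weighted average of each metric over the evaluation split
        return {name: total / seen for name, total in totals.items()}


def to_spikes(batch: List[List[float]]) -> List[List[int]]:
    """Pre-processor: threshold each value into a binary spike."""
    return [[1 if x > 0.5 else 0 for x in sample] for sample in batch]


def toy_model(batch: List[List[int]]) -> List[List[int]]:
    """Toy 'network': per-class spike counts from each half of the input."""
    half = len(batch[0]) // 2
    return [[sum(s[:half]), sum(s[half:])] for s in batch]


def choose_max(batch: List[List[int]]) -> List[int]:
    """Post-processor: pick the class with the most spikes."""
    return [max(range(len(c)), key=c.__getitem__) for c in batch]


def accuracy(predictions: List[int], labels: List[int]) -> float:
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


# Two illustrative batches of (inputs, labels)
dataloader = [
    ([[0.9, 0.8, 0.1, 0.2], [0.1, 0.0, 0.7, 0.9]], [0, 1]),
    ([[0.6, 0.9, 0.9, 0.1]], [1]),
]
results = ToyBenchmark(
    toy_model, dataloader, [to_spikes], [choose_max], {"accuracy": accuracy}
).run()
print(results)
```

Keeping the pre-processors, model, and post-processors as separate, swappable callables is what lets the same harness evaluate both ANN and SNN models on an identical data split.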

Implementation Example: Google Speech Commands Benchmark

To illustrate NeuroBench in practice, consider the Google Speech Commands (GSC) keyword classification benchmark. The implementation includes both Artificial Neural Network (ANN) and Spiking Neural Network (SNN) examples, with the following typical results:

Table 2: Sample GSC Benchmark Results (Adapted from [35])

| Metric | ANN Baseline | SNN Baseline | Significance |
|---|---|---|---|
| Classification Accuracy | 86.5% | 85.6% | Comparable task performance |
| Activation Sparsity | 38.5% | 96.7% | SNNs show much sparser activation |
| Synaptic Operations | 1.73M MACs | 3.29M ACs | Different operation profiles |
| Footprint (Memory, bytes) | 109,228 | 583,900 | SNN has a larger memory footprint |
| Connection Sparsity | 0% | 0% | Dense connectivity in baselines |

These results demonstrate how NeuroBench captures the fundamental tradeoffs in neuromorphic approaches. The SNN implementation shows significantly higher activation sparsity (96.7% vs. 38.5%), which is expected to translate into energy savings on event-driven neuromorphic hardware. It also has a larger memory footprint (583,900 vs. 109,228) and performs accumulate (AC) rather than multiply-accumulate (MAC) operations, so the two baselines cannot be ranked on operation count alone [35].
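
A rough, algorithm-level energy estimate shows why operation type matters alongside operation count. The sketch below multiplies the synaptic operation counts from Table 2 by per-operation energy costs. The per-operation values (`E_MAC_PJ`, `E_AC_PJ`) are illustrative placeholders assumed for this example. They are not measurements, are not from the cited benchmark results, and ignore memory access, data movement, and static power.

```python
# Assumed per-operation energies in picojoules (illustrative placeholders only)
E_MAC_PJ = 4.6   # one multiply-accumulate
E_AC_PJ = 0.9    # one accumulate


def estimate_energy_uj(macs: float, acs: float,
                       e_mac_pj: float = E_MAC_PJ,
                       e_ac_pj: float = E_AC_PJ) -> float:
    """Operation-count energy proxy, returned in microjoules."""
    total_pj = macs * e_mac_pj + acs * e_ac_pj
    return total_pj * 1e-6   # 1 microjoule = 1e6 picojoules


# Operation counts taken from Table 2
ann_energy_uj = estimate_energy_uj(macs=1.73e6, acs=0.0)
snn_energy_uj = estimate_energy_uj(macs=0.0, acs=3.29e6)

print(f"ANN estimate: {ann_energy_uj:.3f} uJ per inference")
print(f"SNN estimate: {snn_energy_uj:.3f} uJ per inference")
print(f"ANN/SNN ratio: {ann_energy_uj / snn_energy_uj:.2f}")
```

Under these assumed costs the SNN comes out cheaper despite executing more operations, because each accumulate is assumed to cost less than a multiply-accumulate. A proxy of this kind is accessible but low in fidelity, so it should be treated as a trend indicator and checked against direct hardware measurement.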

Current Benchmark Tasks and Application Domains

NeuroBench v1.0 includes several defined benchmarks spanning multiple application domains, each selected to represent important use cases for neuromorphic computing. These benchmarks enable researchers to evaluate their approaches against standardized tasks and compare performance with established baselines.

Defined Benchmark Tasks

The current NeuroBench algorithm benchmarks include [35]:

- Keyword Few-shot Class-incremental Learning (FSCIL): Evaluates continuous learning capabilities with limited examples

- Event Camera Object Detection: Tests performance with event-based vision sensors

- Non-human Primate (NHP) Motor Prediction: Challenges algorithms with biological neural data modeling

- Chaotic Function Prediction: Assesses temporal sequence processing capabilities

- DVS Gesture Recognition: Uses dynamic vision sensor data for gesture classification

- Google Speech Commands (GSC) Classification: Benchmarks keyword spotting accuracy and efficiency

- Neuromorphic Human Activity Recognition (HAR): Evaluates motion pattern classification from event-based sensors

These diverse tasks enable comprehensive evaluation across different neuromorphic computing strengths, including temporal processing, event-based sensing, and continuous learning. The benchmarks utilize various data modalities, from traditional audio to neuromorphic event-based vision sensors, ensuring broad coverage of application scenarios.

Implementing NeuroBench benchmarks requires familiarity with an ecosystem of tools, platforms, and datasets. The table below summarizes key resources for researchers entering the field:

Table 3: Essential Research Tools and Platforms for Neuromorphic Benchmarking

| Resource Category | Specific Tools/Platforms | Purpose/Function | Relevance to NeuroBench |
|---|---|---|---|
| Software Frameworks | SNNTorch [24], SpikingJelly [24] | SNN development and training | Primary algorithm development environments |
| Neuromorphic Hardware | Intel Loihi/Loihi 2 [24] [32], SpiNNaker [24] [32], BrainScaleS [24] | Specialized neuromorphic processors | System track evaluation platforms |
| Simulation Platforms | PyTorch-based simulation [24] | Algorithm development without hardware | Algorithm track evaluation |
| Datasets | Google Speech Commands [35], DVS Gesture [35] | Standardized benchmark data | Consistent task evaluation |
| Evaluation Tools | NeuroBench Python harness [35] [37] | Standardized metric computation | Core evaluation framework |
| Energy Measurement | Hardware-specific power monitors [24] | Direct power measurement | System track energy metrics |

The NeuroBench harness itself is available as a Python package installable via PyPI (pip install neurobench), with extensive documentation and examples provided through the project website and GitHub repository [35]. The framework integrates seamlessly with popular deep learning workflows while adding specialized capabilities for spiking neural network evaluation.

Future Directions and Community Impact

As neuromorphic computing advances toward commercial success, with potential applications in ultra-low-power battery-powered systems, IoT devices, and consumer wearables [15], standardized benchmarking becomes increasingly critical. NeuroBench is positioned to evolve alongside these technological developments, with several key expansion areas identified for future development.

The framework will continue to incorporate new benchmark tasks representing emerging application domains, particularly those emphasizing real-time processing, edge intelligence, and autonomous systems. There is also ongoing work to enhance system track benchmarks with more comprehensive hardware performance characterization, including reliability, thermal behavior, and scalability metrics [33].

For energy efficiency assessment—a core promise of neuromorphic computing—future NeuroBench developments aim to bridge the gap between accessible and high-fidelity metrics [24]. This includes creating more actionable metrics that provide specific guidance for improving energy efficiency, not just comparative rankings. Research directions include developing trend-based metrics that reflect changes in power requirements, battery-aware metrics for implantable devices [24], and improved energy-performance tradeoff assessments.

The long-term impact of NeuroBench extends beyond mere performance tracking. By establishing common evaluation standards, the framework enables more direct comparison between different neuromorphic approaches, facilitates technology transfer from research to industry, and helps identify the most promising directions for future investment and investigation [31] [33]. As the field addresses key challenges in programming models and deployment scalability [15], NeuroBench provides the necessary foundation for measuring progress toward commercially viable neuromorphic computing.

NeuroBench represents a critical infrastructure development for the neuromorphic computing research community, addressing the long-standing absence of standardized benchmarks that has hindered objective assessment of technological progress. Through its collaborative design, dual-track evaluation methodology, and comprehensive metric suite, the framework delivers an objective reference for quantifying neuromorphic approaches in both hardware-independent and hardware-dependent contexts.

As neuromorphic computing advances toward broader commercial adoption, with promising demonstrations showing orders-of-magnitude improvements in energy efficiency for suitable tasks [32] [38], NeuroBench provides the essential tools for tracking this progress and identifying the most promising research directions. The framework's open development model and community-driven governance ensure it will continue to evolve alongside the field, maintaining relevance as both neuromorphic algorithms and hardware mature.

For researchers, engineers, and stakeholders in neuromorphic computing, NeuroBench offers a standardized methodology for conducting rigorous, reproducible evaluations that capture the multifaceted performance characteristics of these brain-inspired systems. By adopting this common framework, the community can accelerate progress toward realizing the full potential of neuromorphic computing—ultra-efficient, scalable, and capable intelligent systems inspired by the most powerful computational entity known: the brain.

The pursuit of energy efficiency represents a central pillar in neuromorphic computing research, driven by the need to enable advanced artificial intelligence in power-constrained environments from edge devices to medical implants. As this brain-inspired computing paradigm advances, researchers face fundamental methodological decisions in how to quantify energy efficiency, primarily choosing between hardware-independent and hardware-dependent approaches. This distinction is not merely technical but strategic, affecting the validity, comparability, and practical relevance of research findings throughout the technology development pipeline. The selection between these metric classes must align with specific research stages—from early algorithm exploration to final hardware deployment—to ensure appropriate benchmarking without constraining innovation.

Within the broader context of measuring neuromorphic hardware energy efficiency, this guide establishes a structured framework for metric selection grounded in current research practices and collaborative community efforts. The emerging NeuroBench framework, developed through cross-institutional collaboration, provides a standardized methodology for inclusive benchmark measurement in both hardware-independent and hardware-dependent settings [31]. Similarly, the SNABSuite platform offers an overarching benchmark suite that spans from low-level characterization to high-level application evaluation using benchmark-specific metrics [16]. These initiatives reflect growing recognition that accurately quantifying the energy efficiency of neuromorphic systems requires specialized approaches distinct from traditional computing paradigms, as conventional metrics like FLOPS/watt fail to capture the event-driven, sparse, and temporal dynamics inherent to neuromorphic architectures [39].

Theoretical Framework: Defining the Metric Classes

Hardware-Independent Metrics

Hardware-independent metrics enable researchers to evaluate neuromorphic algorithms and architectures without direct access to physical hardware systems. These abstracted measures focus on computational and communication patterns that fundamentally influence energy consumption regardless of implementation specifics. This approach is particularly valuable during early research and development phases when hardware availability is limited or when comparing algorithmic approaches across different potential implementations.
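
As an illustration of such an abstracted measure, the sketch below counts synaptic operations for a single fully connected layer without reference to any hardware. It assumes that each nonzero input triggers one operation per outgoing connection, and that inputs restricted to 0 and 1 need only accumulates (ACs) while other inputs need multiply-accumulates (MACs). The function name `synaptic_ops` and these conventions are assumptions made for this example.

```python
from typing import Dict, Sequence, Union


def synaptic_ops(input_activations: Sequence[float],
                 fan_out: int) -> Dict[str, Union[int, str]]:
    """Count dense and effective synaptic operations for one
    fully connected layer with `fan_out` output neurons."""
    n_inputs = len(input_activations)
    n_active = sum(1 for a in input_activations if a != 0)
    # Binary spikes need no multiplication, only weight accumulation
    is_binary = all(a in (0, 1) for a in input_activations)
    return {
        "dense": n_inputs * fan_out,       # cost if every input were processed
        "effective": n_active * fan_out,   # cost when zero inputs are skipped
        "kind": "AC" if is_binary else "MAC",
    }


# Illustrative inputs: a sparse spike vector and a dense real-valued vector
spike_layer = synaptic_ops([0, 1, 0, 0, 1, 0, 0, 0], fan_out=4)
analog_layer = synaptic_ops([0.3, 0.0, 1.2, 0.7], fan_out=4)
print(spike_layer)
print(analog_layer)
```

The gap between the dense and effective counts is what an event-driven implementation can exploit in principle. How much of that gap turns into saved energy depends on the target hardware, which is why hardware-dependent measurements are still needed.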