Benchmarking Artifact Removal Algorithms: A Guide to Public Datasets and Best Practices for Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on benchmarking artifact removal algorithms using public datasets. It covers the foundational principles of biomedical artifacts and the critical role of public datasets in enabling reproducible research. The piece explores a wide array of methodological approaches, from traditional techniques to advanced deep learning models, and offers practical strategies for troubleshooting and optimizing benchmarking pipelines. Finally, it details robust validation frameworks and comparative analysis techniques, synthesizing key performance metrics and evaluation standards to empower scientists in selecting and developing the most effective artifact removal strategies for their specific applications.

Understanding Artifacts and The Critical Role of Public Datasets

In biomedical data analysis, an artifact is defined as any component of a recorded signal or image that does not originate from the biological phenomenon of interest but arises from external sources, potentially compromising data integrity and interpretation. These unwanted signals represent a fundamental challenge across electroencephalography (EEG), medical imaging, and other biosignal modalities, as they can obscure genuine physiological information and lead to erroneous conclusions in both research and clinical settings [1].

The susceptibility of biomedical data to contamination stems from its inherent nature. EEG signals, for instance, have microvolt-range amplitudes, making them highly vulnerable to physiological and non-physiological interference [2] [1]. Similarly, medical images can be affected by flaws introduced during acquisition, reconstruction, or processing. Identifying and removing these artifacts is not merely a technical preprocessing step but a prerequisite for reliable downstream analysis, accurate diagnosis, and the development of robust computational models [3] [2]. This guide provides a comparative analysis of contemporary artifact removal algorithms, benchmarking their performance against standardized experimental protocols and public datasets to help researchers select and apply them.

Defining and Classifying Biomedical Artifacts

Biomedical artifacts are broadly categorized by their origin. Physiological artifacts originate from the subject's body but are unrelated to the target signal, while non-physiological artifacts stem from technical, environmental, or experimental sources [1].

Table: Classification of Common EEG Artifacts

| Category | Type | Primary Sources | Key Characteristics | Impact on Data |
|---|---|---|---|---|
| Physiological | Ocular (EOG) | Eye blinks, movements [1] | High-amplitude, slow deflections (frontal channels) [1] | Dominates delta/theta bands [1] |
| Physiological | Muscle (EMG) | Jaw clenching, talking, neck tension [1] | High-frequency, broadband noise [1] | Obscures beta/gamma rhythms [1] |
| Physiological | Cardiac (ECG) | Heartbeat [1] | Rhythmic, pulse-synchronous waveforms [1] | Overlaps multiple EEG bands [1] |
| Physiological | Sweat | Perspiration [1] | Very slow baseline drifts [1] | Contaminates delta band [1] |
| Non-Physiological | Electrode Pop | Sudden impedance change [1] | Abrupt, high-amplitude transients (single channel) [1] | Broadband, non-stationary noise [1] |
| Non-Physiological | Power Line | AC electrical interference [1] | 50/60 Hz narrow spectral peak [1] | Masks neural activity at line frequency [1] |
| Non-Physiological | Motion | Head/body movement [4] [1] | Large, non-linear noise bursts [1] | Reduces ICA decomposition quality [4] |

Diagram 1: A taxonomy of common biomedical artifacts, categorized by origin and source.

Benchmarking Performance: Quantitative Comparison of Artifact Removal Algorithms

Evaluating the efficacy of artifact removal techniques requires a standardized set of metrics. For EEG denoising, common quantitative measures include the Signal-to-Noise Ratio (SNR), the average Correlation Coefficient (CC) between the denoised output and the ground-truth clean signal, and the Relative Root Mean Square Error in both the temporal (RRMSEt) and frequency (RRMSEf) domains [3]. Higher SNR and CC indicate better performance, while lower RRMSE values are desirable [3].
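As a concrete illustration of these metrics, the following is a minimal NumPy sketch, not the reference implementation of any cited study. It assumes single-channel 1-D arrays, a power-ratio definition of SNR, and a plain periodogram as the spectral estimate for RRMSEf; individual benchmarks may define these quantities slightly differently, and all function names are hypothetical.

```python
import numpy as np

def snr_db(clean, denoised):
    """SNR in dB, treating (denoised - clean) as the residual noise."""
    residual = denoised - clean
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2))

def correlation_coefficient(clean, denoised):
    """Pearson correlation between the ground-truth and denoised signals."""
    return float(np.corrcoef(clean, denoised)[0, 1])

def _rms(x):
    return np.sqrt(np.mean(np.abs(x) ** 2))

def rrmse_temporal(clean, denoised):
    """Relative RMSE in the time domain."""
    return _rms(denoised - clean) / _rms(clean)

def _periodogram(x):
    # Assumption: a simple periodogram stands in for the PSD estimate.
    return np.abs(np.fft.rfft(x)) ** 2 / x.size

def rrmse_spectral(clean, denoised):
    """Relative RMSE between the power spectra of the two signals."""
    psd_clean, psd_denoised = _periodogram(clean), _periodogram(denoised)
    return _rms(psd_denoised - psd_clean) / _rms(psd_clean)

# Toy check: a "denoised" signal that is the clean signal scaled by 1.1.
t = np.arange(512) / 256.0
clean = np.sin(2 * np.pi * 10 * t)
denoised = 1.1 * clean
metrics = {
    "snr_db": snr_db(clean, denoised),
    "cc": correlation_coefficient(clean, denoised),
    "rrmse_t": rrmse_temporal(clean, denoised),
    "rrmse_f": rrmse_spectral(clean, denoised),
}
```

In the toy check the residual is 10% of the clean signal, so the temporal RRMSE is 0.1 and the SNR is 20 dB, while CC stays at 1 because a pure scaling does not change the waveform shape. This is one reason the metrics are usually reported together.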

Table: Performance Comparison of Deep Learning Models for EEG Artifact Removal

| Algorithm | Architecture | Primary Target | SNR (dB) | CC | RRMSEt | RRMSEf | Key Strength |

|---|---|---|---|---|---|---|---|

| CLEnet [3] | Dual-Scale CNN + LSTM + EMA-1D | Mixed (EMG+EOG) | 11.50 | 0.925 | 0.300 | 0.319 | Best overall on mixed artifacts [3] |

| EEGDfus [5] | Conditional Diffusion (CNN+Transformer) | EOG | N/A | 0.983 | N/A | N/A | Highest CC for EOG [5] |

| ART [6] | Transformer | Multiple, Multichannel | N/A | N/A | N/A | N/A | Effective multichannel reconstruction [6] |

| MoE Framework [7] | Mixture-of-Experts (CNN+RNN) | EMG (High Noise) | Competitive | Competitive | Competitive | Competitive | Superior in high-noise settings [7] |

| 1D-ResCNN [3] | Multi-scale CNN | General | Lower | Lower | Higher | Higher | Baseline for CNN-based approaches [3] |

Table: Performance of Motion Artifact Removal Approaches During Locomotion

| Algorithm | Type | Key Parameter | Dipolarity | Power at Gait Freq. | P300 ERP Recovery | Primary Use Case |

|---|---|---|---|---|---|---|

| iCanClean [4] | CCA with Noise Reference | R² threshold (e.g., 0.65) | High | Significantly Reduced | Yes (Congruency effect) [4] | Mobile EEG with noise references [4] |

| Artifact Subspace Reconstruction (ASR) [4] | PCA-based | Standard deviation threshold (k=20-30) [4] | High | Significantly Reduced | Yes (Latency similar) [4] | General mobile EEG preprocessing [4] |

| Independent Component Analysis (ICA) [4] | Blind Source Separation | N/A | Reduced by motion | Present | Challenging | Standard lab-based EEG [4] |

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarking studies adhere to rigorous experimental protocols centered on standardized datasets and evaluation frameworks.

Standardized Datasets and Data Preparation

The use of public datasets is critical for unbiased benchmarking.

- Semi-Synthetic Datasets: The EEGdenoiseNet dataset is a key benchmark containing clean EEG segments and corresponding EOG and EMG artifacts, allowing for the controlled creation of semi-synthetic noisy data [3] [7] [5]. This enables precise calculation of metrics like SNR and CC because the ground-truth clean signal is known.

- Realistic Multi-Channel Datasets: Datasets containing real, multi-channel EEG data with inherent artifacts, such as the 32-channel dataset collected during a 2-back task in the CLEnet study, are essential for evaluating algorithm performance on complex, unknown artifacts in a realistic setting [3].

- Motion Artifact Benchmarks: For locomotion studies, datasets from Flanker tasks performed during jogging and standing are used to evaluate the recovery of stimulus-locked ERPs like the P300 after artifact removal [4].
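The semi-synthetic approach in the first item can be sketched as follows: a clean segment and an artifact segment are combined with a scaling factor chosen to hit a target SNR. This is a minimal illustration under stated assumptions, not the procedure of any specific dataset. SNR is taken here as the power ratio in dB, and `mix_at_snr` is a hypothetical helper name.

```python
import numpy as np

def mix_at_snr(clean, artifact, target_snr_db):
    """Return clean + lam * artifact, with lam chosen to reach the target SNR.

    Assumption: SNR = 10 * log10(P_clean / P_scaled_artifact).
    """
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    lam = np.sqrt(p_clean / (p_artifact * 10.0 ** (target_snr_db / 10.0)))
    return clean + lam * artifact, lam

# Toy segments standing in for a clean EEG epoch and an EMG-like artifact.
rng = np.random.default_rng(0)
clean = rng.standard_normal(512)
artifact = 3.0 * rng.standard_normal(512)

noisy, lam = mix_at_snr(clean, artifact, target_snr_db=-3.0)
achieved_snr_db = 10.0 * np.log10(
    np.mean(clean ** 2) / np.mean((noisy - clean) ** 2)
)
```

Because the clean segment is retained, every noisy example comes with its exact ground truth, which is what makes SNR, CC, and RRMSE computable on such data.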

The general workflow involves splitting the data into training, validation, and test sets. Models are trained in a supervised manner, often using Mean Squared Error (MSE) as the loss function to minimize the difference between the denoised output and the ground-truth clean signal [3] [2].
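A minimal sketch of this workflow step, assuming an 80/10/10 split (the fractions are illustrative and not taken from the cited studies) and hypothetical helper names:

```python
import numpy as np

def split_indices(n_samples, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle sample indices and split them into train/validation/test sets."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_test = int(round(n_samples * test_frac))
    n_val = int(round(n_samples * val_frac))
    test = order[:n_test]
    val = order[n_test:n_test + n_val]
    train = order[n_test + n_val:]
    return train, val, test

def mse_loss(denoised, clean):
    """Mean squared error between a denoised output and its ground truth."""
    return float(np.mean((denoised - clean) ** 2))

train_idx, val_idx, test_idx = split_indices(1000)
example_loss = mse_loss(np.ones(4), np.zeros(4))
```

When several segments come from the same subject, splitting by subject rather than by segment is a common way to avoid leakage between the training and test sets.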

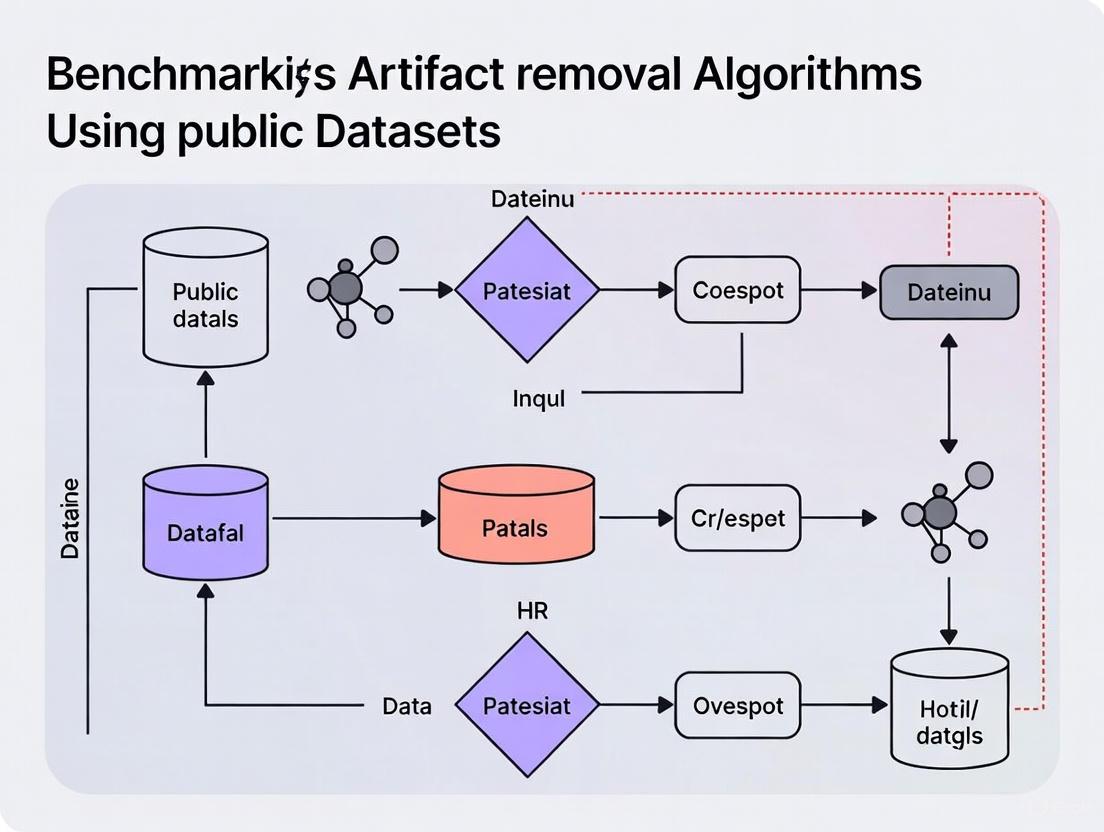

Diagram 2: Standardized experimental workflow for benchmarking artifact removal algorithms.

Detailed Methodologies of Featured Algorithms

CLEnet Protocol [3]: The methodology has three stages: 1) Morphological and Temporal Feature Enhancement: two convolutional kernels of different scales extract morphological features, with an embedded EMA-1D attention mechanism enhancing temporal features; 2) Temporal Feature Extraction: the features are reduced in dimensionality and fed into an LSTM network; 3) EEG Reconstruction: a final fully connected layer reconstructs the artifact-free signal. The model was tested on three datasets, including a proprietary 32-channel dataset, against baselines such as 1D-ResCNN and NovelCNN.

iCanClean vs. ASR Protocol for Motion [4]:

This study compared motion artifact removal during running using an adapted Flanker task. iCanClean was implemented using pseudo-reference noise signals (notch filter below 3 Hz) with a canonical correlation analysis (CCA) R² threshold of 0.65 and a 4-second sliding window. ASR was implemented with a specific algorithm for reference period selection and a sliding-window PCA with a recommended standard deviation threshold k of 20-30. Evaluation was based on ICA component dipolarity, power reduction at the gait frequency and harmonics, and the ability to recover the expected P300 congruency effect in Event-Related Potentials (ERPs).
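The core idea of reference-based CCA cleaning can be sketched for a single window. This is a simplified illustration only and is not the published iCanClean implementation: it finds the canonical components shared between the EEG window and a noise reference, then projects out those whose squared canonical correlation exceeds the R² threshold. The function name, the toy data, and the single-window processing are all assumptions; in practice the step would be applied over sliding windows.

```python
import numpy as np

def cca_clean_window(eeg, ref, r2_threshold=0.65):
    """Remove EEG components that correlate strongly with a noise reference.

    eeg: (n_samples, n_channels) window; ref: (n_samples, n_refs) reference.
    Returns the cleaned window and the squared canonical correlations.
    """
    eeg_mean = eeg.mean(axis=0)
    x = eeg - eeg_mean
    y = ref - ref.mean(axis=0)
    # Orthonormal bases for the column spaces of the EEG and the reference.
    qx, _ = np.linalg.qr(x)
    qy, _ = np.linalg.qr(y)
    # Singular values of qx.T @ qy are the canonical correlations.
    a, corr, _ = np.linalg.svd(qx.T @ qy, full_matrices=False)
    r2 = corr ** 2
    # Orthonormal canonical variates (EEG side) flagged as noise.
    variates = qx @ a[:, r2 > r2_threshold]
    cleaned = x - variates @ (variates.T @ x)
    return cleaned + eeg_mean, r2

# Toy window: 4 s at 250 Hz, 4 channels sharing one strong motion source.
rng = np.random.default_rng(0)
n = 1000
brain = rng.standard_normal((n, 4))
motion = rng.standard_normal((n, 1))
eeg = brain + 5.0 * motion
ref = motion + 0.1 * rng.standard_normal((n, 1))

cleaned, r2 = cca_clean_window(eeg, ref)
corr_before = abs(np.corrcoef(eeg[:, 0], ref[:, 0])[0, 1])
corr_after = abs(np.corrcoef(cleaned[:, 0], ref[:, 0])[0, 1])
```

A lower threshold removes more of the shared subspace, which cleans more aggressively at the cost of also removing more brain activity. That trade-off is why the threshold is treated as a key parameter.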

Table: Key Resources for Artifact Removal Research

| Resource | Type | Function in Research | Example |

|---|---|---|---|

| Standardized Public Datasets | Data | Provides benchmark for training & fair comparison of algorithms | EEGdenoiseNet [3], SSED [5] |

| Dual-Layer EEG Systems | Hardware | Provides dedicated noise sensors for reference-based algorithms (e.g., iCanClean) [4] | Systems with mechanically coupled noise sensors [4] |

| ICA Toolboxes | Software | Decomposes signals for analysis, used for generating training pairs or as a baseline method | ICLabel, EEGLAB plugins [4] |

| Deep Learning Frameworks | Software | Enables development and training of complex denoising models (CNNs, RNNs, Transformers) | TensorFlow, PyTorch |

| Evaluation Metric Suites | Analytical Scripts | Standardized quantitative assessment of denoising performance | Custom scripts for SNR, CC, RRMSE, etc. [3] |

| BIAS & BEAMRAD Guidelines | Reporting Tool | Ensures comprehensive and transparent reporting of datasets and challenge designs [8] | BEAMRAD tool for dataset documentation [8] |

The benchmark comparisons reveal a trade-off between specialization and generalization. While some models like CLEnet demonstrate robust performance across multiple artifact types [3], specialized frameworks like the Mixture-of-Experts (MoE) show promise for challenging scenarios like high-EMG noise [7]. The emergence of transformer-based models (ART) and diffusion models (EEGDfus) indicates a trend towards architectures capable of capturing complex, long-range dependencies in data for finer-grained reconstruction [5] [6].

Future progress hinges on several key factors: the development of more comprehensive and publicly available benchmark datasets, especially with real, labeled artifacts and multi-modal data [3] [8]; improved model generalizability to unseen noise and data from different acquisition systems [2]; and enhanced computational efficiency to enable real-time processing, particularly for brain-computer interfaces [2] [6]. Furthermore, the adoption of standardized documentation and reporting tools, such as the BEAMRAD tool, is critical for ensuring transparency, reproducibility, and the mitigation of bias in algorithm development [8]. By adhering to rigorous benchmarking protocols, researchers can continue to advance the field towards more reliable and clinically applicable artifact removal solutions.

In the rigorous domains of algorithm validation and scientific discovery, benchmarking serves as the fundamental mechanism for distinguishing genuine progress from unsubstantiated claims. It provides the standardized framework essential for the objective comparison of methods, technologies, and tools across diverse research environments. The absence of robust benchmarking invites a landscape fragmented by incompatible metrics, irreproducible results, and unquantifiable performance, ultimately stalling scientific and technological advancement. Nowhere is this imperative more critical than in the development of artifact removal algorithms and the validation of public datasets, where the integrity of the underlying data directly dictates the reliability of all subsequent findings. This guide objectively compares benchmarking practices and performance outcomes across two pivotal fields: biomedical signal processing for neural data and computational platforms for drug discovery. By synthesizing experimental data and detailed methodologies, we provide researchers and drug development professionals with a standardized framework for evaluating the tools that underpin their research.

Cross-Domain Benchmarking in Practice

Benchmarking methodologies, while universally valuable, require precise adaptation to the specific challenges and performance metrics of each research domain. The following section provides a detailed, data-driven comparison of benchmarking applications in two distinct fields: the analysis of neural signals and the discovery of new therapeutics.

Benchmarking Artifact Removal Algorithms for Neural Signals

In neuroengineering and mobile brain imaging, the removal of motion and stimulation artifacts from electroencephalography (EEG) signals is a prerequisite for accurate data interpretation. Researchers systematically evaluate artifact removal algorithms against a suite of performance metrics to identify the most effective approaches for specific recording conditions, such as those encountered during human locomotion or with high-channel-count prostheses [4] [9] [10].

Experimental Protocols & Performance Metrics: Key experiments in this field follow a structured validation pipeline. For motion artifact removal during running, EEG data is typically collected during dynamic tasks (e.g., a Flanker task performed while jogging) and a static control condition [4]. Algorithms are then evaluated based on:

- ICA Component Dipolarity: The quality of Independent Component Analysis (ICA) decomposition is assessed by calculating the number and proportion of components with a dipolar topography, a characteristic of brain-originating signals [4].

- Power Reduction at Artifact Frequencies: The algorithm's efficacy is quantified by the reduction in spectral power at the gait frequency and its harmonics [4].

- Recovery of Expected Neurophysiological Signals: Successful algorithms should allow for the identification of expected event-related potential (ERP) components, such as the P300 congruency effect, with a latency and amplitude comparable to those recorded in stationary conditions [4].
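The second criterion, power reduction at the gait frequency and its harmonics, can be sketched as below. This is an illustrative calculation using a plain periodogram; the 1.5 Hz gait frequency, the ±0.2 Hz band, the number of harmonics, and the function names are all assumptions made for the example.

```python
import numpy as np

def power_at_harmonics(x, fs, base_freq, n_harmonics=3, half_width=0.2):
    """Sum periodogram power in narrow bands around base_freq and its harmonics."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(x)) ** 2 / (fs * x.size)
    total = 0.0
    for k in range(1, n_harmonics + 1):
        band = np.abs(freqs - k * base_freq) <= half_width
        total += psd[band].sum()
    return total

def power_reduction_db(raw, cleaned, fs, base_freq):
    """Reduction in gait-related power (dB) achieved by the cleaning step."""
    before = power_at_harmonics(raw, fs, base_freq)
    after = power_at_harmonics(cleaned, fs, base_freq)
    return 10.0 * np.log10(before / after)

# Toy signals: a 10 Hz rhythm plus a gait-locked component at 1.5 Hz.
fs = 250
t = np.arange(5000) / fs
rhythm = np.sin(2 * np.pi * 10 * t)
raw = rhythm + 5.0 * np.sin(2 * np.pi * 1.5 * t)
cleaned = rhythm + 0.5 * np.sin(2 * np.pi * 1.5 * t)

reduction_db = power_reduction_db(raw, cleaned, fs, base_freq=1.5)
```

In the toy case the gait-locked amplitude falls by a factor of ten, which corresponds to a 20 dB reduction in power at the gait frequency.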

For electrical stimulation artifacts, as encountered in visual cortical prostheses, a different experimental approach is used. A simulated dataset containing both known neuronal activity and characterized stimulation artifacts is created to provide a "ground-truth" for validation [9]. Artifact removal methods are then benchmarked on their ability to:

- Recover Simulated Spikes and Multi-Unit Activity (MUA): The accuracy of reconstructing high-frequency neural firing patterns is a primary metric [9].

- Recover Local Field Potentials (LFP): The fidelity of reconstructing lower-frequency neural oscillations is also critical [9].

- Computational Complexity: The processing time and resource requirements are practical considerations for real-time applications [9].

Table 1: Performance Comparison of EEG Motion Artifact Removal Algorithms

| Algorithm | ICA Dipolarity | Power Reduction at Gait Frequency | P300 ERP Recovery | Key Experimental Finding |

|---|---|---|---|---|

| iCanClean (with pseudo-reference) [4] | High | Significant | Yes (with congruency effect) | Most effective for identifying stimulus-locked ERP components during running. |

| Artifact Subspace Reconstruction (ASR) [4] | High | Significant | Yes (latency similar to standing) | Effective but may not fully recover nuanced cognitive effects like the P300 amplitude difference. |

| Independent Component Analysis (ICA) [10] | Varies with signal quality | Moderate | Limited | Decomposition quality is reduced by the presence of large motion artifacts. |

Table 2: Performance Comparison of Stimulation Artifact Removal Methods for Neural Prostheses

| Algorithm | Spike/MUA Recovery | LFP Recovery | Computational Complexity | Conclusion |

|---|---|---|---|---|

| Polynomial Fitting [9] | High | Moderate | Low | Good trade-off for spike recovery and computational efficiency. |

| Exponential Fitting [9] | High | Moderate | Low | Good trade-off for spike recovery and computational efficiency. |

| Linear Interpolation [9] | Lower | High | Very Low | Effective for LFP recovery where precise spike timing is less critical. |

| Template Subtraction [9] | Lower | High | Medium | Effective for LFP recovery. |
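To make the simplest of these methods concrete, here is a minimal sketch of linear interpolation across stimulation artifacts: samples in a window around each stimulation pulse are replaced by a straight line between the last clean sample before the window and the first clean sample after it. The window lengths (`pre`, `post`) and the function name are illustrative assumptions, not values from the cited study.

```python
import numpy as np

def interpolate_artifacts(signal, stim_samples, pre=2, post=10):
    """Blank a window around each stimulation sample and bridge it linearly."""
    cleaned = np.asarray(signal, dtype=float).copy()
    n = cleaned.size
    for s in stim_samples:
        start = max(s - pre, 1)        # first blanked sample
        stop = min(s + post, n - 1)    # first clean sample after the window
        idx = np.arange(start, stop)
        if idx.size == 0:
            continue
        cleaned[idx] = np.interp(
            idx, [start - 1, stop], [cleaned[start - 1], cleaned[stop]]
        )
    return cleaned

# Toy example: a ramp with a large artifact injected at sample 50.
ramp = np.arange(100, dtype=float)
contaminated = ramp.copy()
contaminated[50:55] += 1000.0
restored = interpolate_artifacts(contaminated, stim_samples=[50])
```

Because the blanked window is replaced by a straight line, any fast activity inside it is lost while slow trends are preserved. This matches the table's pattern of high LFP recovery but lower spike recovery for this method.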

Neural Signal Benchmarking Workflow

Benchmarking in Pharmaceutical Research & Development

In the pharmaceutical industry, benchmarking is a critical tool for de-risking the complex, costly, and high-failure-rate process of drug development. It involves comparing a drug candidate's performance against historical data from similar drugs to assess its Probability of Success (POS) and to inform strategic decision-making regarding resource allocation and risk management [11] [12].

Experimental Protocols & Performance Metrics: The core methodology for generating industry benchmarks involves large-scale empirical analysis of historical drug development pipelines [12] [13]. This process includes:

- Data Aggregation: Compiling comprehensive data on clinical trials, including success rates, timelines, and reasons for failure, from sources like ClinicalTrials.gov and proprietary databases [12].

- Stratification and Filtering: Data is stratified across multiple dimensions to enable precise comparisons. These dimensions include therapeutic area, modality (e.g., small molecule, biologic), mechanism of action, line of treatment, and biomarker status [11].

- Calculation of Likelihood of Approval (LoA): The unbiased ratio of the number of drugs receiving first FDA approval to the number entering Phase I trials is calculated for a defined period (e.g., 2006-2022) [12].

- Evaluation of Computational Platforms: For computational drug discovery platforms like CANDO, benchmarking protocols use known drug-indication mappings from databases like the Comparative Toxicogenomics Database (CTD) and the Therapeutic Targets Database (TTD) as ground truth. Performance is measured by the platform's ability to rank known drugs highly for their associated indications, with metrics including recall and precision [13].
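The last two items reduce to simple ratios, sketched below in plain Python. The counts, drug names, and rankings are hypothetical toy data chosen for illustration, and the function names are assumptions rather than part of any cited platform.

```python
def likelihood_of_approval(n_phase1_entries, n_first_approvals):
    """Fraction of drugs entering Phase I that reach first approval."""
    return n_first_approvals / n_phase1_entries

def top_k_hit_rate(rankings, known_pairs, k=10):
    """Share of known (indication, drug) pairs ranked within the top k.

    rankings: dict mapping indication -> list of drugs, best candidate first.
    known_pairs: ground-truth (indication, drug) associations.
    """
    hits = sum(1 for indication, drug in known_pairs
               if drug in rankings[indication][:k])
    return hits / len(known_pairs)

# Hypothetical toy data.
loa = likelihood_of_approval(n_phase1_entries=1000, n_first_approvals=143)

rankings = {
    "indication_a": ["drug_3", "drug_1", "drug_2"],
    "indication_b": ["drug_4", "drug_5", "drug_9"],
}
known_pairs = [("indication_a", "drug_3"), ("indication_b", "drug_9")]
hit_rate = top_k_hit_rate(rankings, known_pairs, k=2)
```

Swapping `known_pairs` for a different ground-truth mapping changes the hit rate without any change to the platform itself, so the mapping used should always be reported alongside the score.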

Table 3: Benchmarking Success Rates in Pharmaceutical R&D

| Benchmarking Focus | Key Metric | Result / Finding | Implication |

|---|---|---|---|

| Industry-Wide LoA (2006-2022) [12] | Likelihood of First Approval (Phase I to FDA approval) | Average: 14.3% (Range: 8% - 23% across 18 leading companies) | Provides a realistic baseline for assessing portfolio risk and valuing new projects. |

| Computational Drug Discovery (CANDO Platform) [13] | % of known drugs ranked in top 10 candidates | CTD mapping: 7.4%; TTD mapping: 12.1% | Highlights the impact of the chosen "ground truth" database on perceived platform performance. |

| Traditional vs. Dynamic Benchmarking [11] | Data Completeness & POS Accuracy | Traditional methods often overestimate POS due to infrequent updates and simplistic phase-transition multiplication. | Dynamic benchmarks with real-time data and nuanced methodologies are essential for accurate decision-making. |

The Scientist's Toolkit: Essential Reagents for Robust Benchmarking

A successful benchmarking study relies on a foundation of high-quality data, validated tools, and clear methodologies. The following table details key "research reagents" essential for conducting rigorous evaluations in algorithm and dataset assessment.

Table 4: Key Research Reagents for Benchmarking Studies

| Reagent / Resource | Type | Function in Benchmarking |

|---|---|---|

| Public Datasets (e.g., MagicData340K) [14] | Dataset | Provides a large-scale, human-annotated benchmark with fine-grained labels (e.g., for image artifacts) for standardized algorithm training and testing. |

| Simulated Neural Data [9] | Dataset | Creates a "ground-truth" scenario where the uncontaminated neural signal is known, enabling precise validation of artifact removal methods for neuroprostheses. |

| ICLabel [4] | Software Tool | An EEGLAB plugin for automatically classifying Independent Components (ICs) as brain or artifact, used to evaluate the quality of ICA decomposition post-cleaning. |

| Artifact Subspace Reconstruction (ASR) [4] [10] | Algorithm | A robust method for removing high-amplitude artifacts from continuous EEG in real-time; used as both a preprocessing tool and a benchmark for comparison. |

| iCanClean [4] | Algorithm | An algorithm leveraging canonical correlation analysis (CCA) and noise references to remove motion artifacts from mobile EEG; a current state-of-the-art benchmark. |

| Therapeutic Targets Database (TTD) [13] | Database | Serves as a "ground truth" source of known drug-indication associations for benchmarking computational drug discovery and repurposing platforms. |

| Dynamic Benchmarks (e.g., Intelligencia AI) [11] | Methodology & Platform | A benchmarking solution that uses real-time data updates and advanced filtering to provide more accurate, current Probability of Success (POS) assessments than static methods. |

Generalized Benchmarking Logic Flow

Standardized evaluation underpins both scientific progress and effective resource allocation. As the cross-domain comparisons show, consistent benchmarking protocols let researchers move from isolated claims of efficacy to validated, comparable results. Whether optimizing an algorithm for a neural prosthesis or prioritizing a multi-million dollar drug development program, decisions must be grounded in empirical, benchmarked evidence. The continued development of large-scale, annotated public datasets, dynamic benchmarking platforms, and nuanced performance metrics is essential for accelerating innovation and delivering reliable outcomes in both technology and healthcare.

The rigorous benchmarking of artifact removal algorithms hinges upon access to high-quality, well-characterized public datasets. For researchers, scientists, and drug development professionals, selecting an appropriate dataset is a critical first step that can significantly influence the validity, reproducibility, and impact of their findings. The landscape of public data repositories is vast and heterogeneous, encompassing general-purpose aggregators and highly specialized collections tailored to specific scientific disciplines like medical imaging. This guide provides an objective comparison of key repositories and frameworks, with a particular focus on their application in benchmarking artifact removal algorithms, as exemplified by cutting-edge research in computed tomography (CT).

A notable example of a specialized benchmark is found in a 2025 study by Peters et al., which introduced a comprehensive framework for evaluating Metal Artifact Reduction (MAR) methods in CT imaging. This work highlights the essential components of a robust benchmarking dataset: a large volume of simulated training data and a clinically relevant evaluation benchmark with clearly defined metrics. The study utilized a clinical and a generic CT scanner geometry modeled in the open-access toolkit XCIST to simulate 14,000 metal artifact scenarios in the head, thorax, and pelvis regions. The resulting benchmark, which is publicly available, covers critical clinical use cases from small fiducial markers to large hip replacements and employs a suite of metrics assessing CT number accuracy, noise, image sharpness, and streak amplitude [15].

Repository Comparison at a Glance

The following table summarizes the key characteristics of major public dataset repositories, highlighting their primary applications and data attributes.

Table 1: Comparative Overview of Major Public Dataset Repositories

| Repository Name | Primary Use Case | Data Volume & Scope | Key Features & Integration | Notable Strengths | Notable Limitations |

|---|---|---|---|---|---|

| Kaggle [16] [17] | Real-world ML, data science competitions | Over 500,000 datasets across health, finance, biology, and more [17]. | Public notebooks, GPU/TPU access, user ratings, API access [17]. | Massive dataset variety; built-in code environment; access to real competition data and solutions [17]. | Dataset quality and documentation can be inconsistent [17]. |

| UCI ML Repository [16] [17] | Classic benchmarks, education, algorithm testing | 680+ datasets, typically smaller in scale (e.g., Iris, Wine Quality) [17]. | Datasets available in CSV, ARFF formats; searchable by task and data type [17]. | Comprehensive, trusted, and ideal for academic benchmarking [17]. | Some datasets are outdated; user interface is clunky; no modern workflow integration [17]. |

| OpenML [16] [17] | Reproducible ML experiments, AutoML | 21,000+ datasets with standardized metadata [17]. | Native integration with scikit-learn, mlr, WEKA; stores millions of model runs and hyperparameters [17]. | Rich metadata and consistent formatting; excellent for reproducibility and algorithm comparison [17]. | Interface can be overwhelming; less emphasis on massive, real-world datasets [17]. |

| Data.gov [16] | Data cleaning, public sector analysis | Over 290,000 datasets from US federal agencies (e.g., budgets, school performance) [16]. | US government open data; spans multiple agencies and topics. | Represents real-world public sector data with inherent complexity [16]. | Data often requires significant cleaning and domain research [16]. |

| Papers With Code [17] | Research-backed ML, state-of-the-art benchmarking | Curated datasets tied to peer-reviewed papers [17]. | Linked to papers, code, and leaderboards; dataset loaders for PyTorch/TensorFlow [17]. | Ideal for benchmarking against recent research; interconnected ecosystem of papers, code, and results [17]. | Not a broad directory; more research-focused than production-focused [17]. |

| Google Dataset Search [17] | Broad discovery of niche data | Indexes millions of datasets from global publishers (WHO, NASA, universities) [17]. | Search engine for dataset metadata; filters by format, license, and update date [17]. | Comprehensive for hard-to-find public data; no account required [17]. | Does not host data; dataset quality and link reliability vary [17]. |

| AWS/Google Public Datasets [16] | Large-scale data processing | Massive datasets (e.g., Common Crawl, GitHub activity, NOAA weather) [16]. | Hosted on cloud platforms (AWS, GCP); often accessible via SQL/BigQuery. | Demonstrate real-world data scale; integrated with cloud processing tools [16]. | Can incur costs for processing and querying large volumes of data [16]. |

Experimental Protocols for Benchmarking

When a repository hosts a benchmark for a specific task like artifact removal, the associated research paper typically details a standardized experimental protocol. Adhering to these protocols is crucial for fair and comparable results. Below is a generalized methodology derived from a specific MAR benchmark study.

Table 2: Key Experimental Metrics for a MAR Benchmark [15]

| Metric Category | Specific Metric | Function in Evaluation |

|---|---|---|

| Accuracy | CT Number Accuracy | Measures the deviation of CT numbers in specific regions from the ground truth, quantifying the algorithm's ability to restore correct values [15]. |

| Image Quality | Noise & Image Sharpness | Evaluates the level of introduced noise and the preservation (or enhancement) of edges and fine details [15]. |

| Artifact Reduction | Streak Amplitude | Directly quantifies the reduction in the intensity of streaking artifacts caused by metal objects [15]. |

| Structural Integrity | Structural Similarity Index | Assesses the preservation of overall image structure and the avoidance of introducing new structural distortions [15]. |

| Clinical Impact | Effect on Proton Therapy Range | For clinically relevant benchmarks, this evaluates the impact of the artifact reduction on downstream tasks like radiation therapy planning [15]. |

Detailed Methodology: MAR Benchmark Evaluation

Creating and using a benchmark for Metal Artifact Reduction (MAR) algorithms, as described in recent literature [15], follows a three-phase methodology: data generation and simulation, benchmark definition, and algorithm evaluation. The corresponding experimental protocol is detailed below.

1. Data Generation & Simulation:

- Toolkit: The experiments are performed using a CT simulator such as XCIST (CatSim) to model both clinical and generic scanner geometries [15].

- Input Data: The simulation utilizes public, artifact-free CT databases from head, thorax, and pelvis regions [15].

- Artifact Simulation: The tool is used to simulate a large number (e.g., 14,000) of 2D metal artifact scenarios. This involves introducing different metal types and geometries, ranging from small fiducial markers to large hip implants. The realism of the simulation is validated by comparing simulated data against real CT phantom scans, with a mean CT number deviation of less than 2% considered acceptable [15].

2. Benchmark Definition:

- Test Cases: A comprehensive set of clinical scenarios is defined, covering the most relevant use cases in the head, thorax, and pelvis [15].

- Evaluation Metrics: A suite of quantitative metrics is selected to provide a holistic assessment of algorithm performance. These metrics cover accuracy, image quality, artifact reduction, structural integrity, and clinical impact, as detailed in Table 2 [15].

3. Algorithm Evaluation:

- Processing: The MAR algorithms under evaluation are run on the defined benchmark test cases.

- Analysis: For each algorithm output, the selected metrics are computed against the ground-truth, artifact-free data.

- Ranking: Results are aggregated and algorithms are ranked on a public leaderboard, allowing for direct comparison of performance across the different clinical scenarios and metrics [15].
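The analysis and ranking steps can be sketched as follows. This is a minimal illustration and not the metric definitions of the cited benchmark: CT number deviation is taken as the mean difference from ground truth inside a region of interest, noise as the standard deviation of the residual in that region, and algorithms are ordered by absolute deviation. The image values, algorithm names, and function names are hypothetical.

```python
import numpy as np

def ct_number_deviation(image, truth, roi):
    """Mean CT number (HU) deviation from ground truth inside a boolean ROI."""
    return float(np.mean(image[roi] - truth[roi]))

def roi_noise(image, truth, roi):
    """Standard deviation of the residual inside the ROI (a simple noise proxy)."""
    return float(np.std(image[roi] - truth[roi]))

def rank_by_metric(results, metric):
    """Order algorithm names from best to worst by absolute metric value."""
    return sorted(results, key=lambda name: abs(results[name][metric]))

# Hypothetical 64x64 ground truth and two algorithm outputs with known bias.
truth = np.zeros((64, 64))
roi = np.zeros((64, 64), dtype=bool)
roi[24:40, 24:40] = True
outputs = {"alg_a": truth + 5.0, "alg_b": truth - 20.0}

results = {
    name: {
        "ct_deviation_hu": ct_number_deviation(img, truth, roi),
        "noise_hu": roi_noise(img, truth, roi),
    }
    for name, img in outputs.items()
}
ranking = rank_by_metric(results, "ct_deviation_hu")
```

A full benchmark would compute such values per test case and per metric before aggregating them, since an algorithm that ranks first on CT number accuracy may not rank first on sharpness or streak amplitude.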

The Scientist's Toolkit

For researchers working in the field of image artifact reduction, particularly with public benchmarks, the following tools and data solutions are essential.

Table 3: Essential Research Reagent Solutions for Image Artifact Reduction

| Tool / Reagent | Function / Application | Relevance to Benchmarking |

|---|---|---|

| XCIST (CatSim) Toolkit [15] | An open-access CT simulator for modeling scanner geometries and imaging physics. | Used to generate realistic training data and synthetic artifacts for algorithms when large-scale clinical ground truth is unavailable [15]. |

| LIU4K Benchmark [18] | A 4K resolution benchmark for evaluating single image compression artifact removal algorithms. | Provides a standardized dataset with diversified scenes and rich structures for benchmarking compression artifact algorithms [18]. |

| Standardized Metric Suite [15] | A pre-defined collection of full-reference, non-reference, and task-driven metrics. | Ensures consistent, objective, and comprehensive evaluation of algorithm performance against competitors [15]. |

| Public Repository (e.g., GitHub) | A platform for hosting code, datasets, and benchmark leaderboards. | Promotes reproducibility, collaboration, and allows researchers to compare their results directly against state-of-the-art methods. |

| Deep Learning Framework (e.g., PyTorch, TensorFlow) | Software libraries for building and training deep learning models. | Essential for implementing, training, and testing modern deep learning-based artifact reduction algorithms. |

In the rapidly evolving fields of computational imaging and text-to-image (T2I) generation, the presence of artifacts severely degrades output quality and limits practical application. A persistent challenge has been the lack of systematic, fine-grained evaluation frameworks capable of distinguishing between diverse artifact types. This guide objectively compares foundational approaches to artifact taxonomy development and dataset annotation, highlighting how these methodologies underpin the benchmarking of artifact removal algorithms. We focus on publicly available datasets that provide the essential labeled data required for training and evaluating next-generation models.

Comparative Analysis of Public Datasets for Artifact Assessment

The foundation of any robust benchmark is high-quality, annotated data. The table below summarizes and compares key public datasets that have advanced the field of artifact assessment.

Table 1: Comparison of Public Datasets for Artifact Assessment

| Dataset Name | Primary Focus | Artifact Taxonomy Granularity | Annotation Scale & Type | Key Strengths |

|---|---|---|---|---|

| MagicMirror (MagicData340K) [14] | Text-to-Image Generation | Multi-level (L1: Normal/Artifact, L2: e.g., Anatomy/Attribute, L3: e.g., Hand Structure) | 340K images; Human-annotated multi-labels [14] | Large scale; Fine-grained, hierarchical taxonomy; Detailed annotation guidelines [14] |

| SynArtifact-1K [19] | Synthetic Image Artifacts | Comprehensive (4 coarse-grained, 13 fine-grained classes e.g., Distortion, Omission) | 1.3K images; Categories, captions, coordinates [19] | Coarse-to-fine taxonomy; Annotations include descriptive captions and bounding boxes [19] |

| M-GAID [20] | Mobile Imaging (Ghosting) | Focused on high and low-frequency ghosting artifacts | 2,520 images; Patch-level (224x224) annotations [20] | Addresses mobile-specific challenges; Real-world scenarios; Precise patch-level labels [20] |

Experimental Protocols for Taxonomy Development and Annotation

A standardized, rigorous methodology is crucial for creating reliable datasets for benchmarking. The following protocols are synthesized from leading studies.

Taxonomy Development Protocol

The process begins with a systematic analysis of common failure modes in the target domain (e.g., T2I models, mobile cameras). Researchers categorize these into a logical hierarchy. For instance, MagicMirror establishes a three-tiered taxonomy: Level 1 differentiates normal images from those with artifacts; Level 2 categorizes artifacts into major groups like Anatomy and Attributes; and Level 3 provides specific labels for complex structures like "Hand Structure Deformity" [14]. Similarly, SynArtifact-1K uses a coarse-to-fine structure, first grouping artifacts into object-aware, object-agnostic, lighting, and others, before defining 13 specific artifact types like "Distortion" and "Omission" [19].

Data Collection and Annotation Protocol

- Diverse Data Sourcing: Collect prompts and generate images from a wide array of models to ensure diversity. MagicMirror, for example, sourced 50,000 prompts from user databases and generative models, then used them with multiple T2I systems like FLUX.1-dev, SD3.5, and Midjourney-v6.1 [14].

- Iterative Guideline Development: Create initial annotation guidelines, then refine them through pilot studies with expert annotators. This ensures definitions and visual examples for each label are clear and consistently applicable [14].

- Multi-Label Annotation: Annotators apply multiple L2 and L3 labels to a single image to capture all co-occurring artifacts, moving beyond a single, coarse-grained score [14].

- Quality Assurance: Implement continuous expert oversight during the large-scale labeling process to maintain high quality and inter-annotator consistency [14]. For specialized datasets like M-GAID, annotation may involve labeling specific image patches for precise artifact localization [20].
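One common way to quantify the inter-annotator consistency mentioned above is Cohen's kappa, computed per label on multi-label annotations. The sketch below is illustrative only: the two annotators, their label sets, and the choice of kappa are assumptions, not the procedure reported in [14] or [20].

```python
def label_vector(annotations, label):
    """Binary vector: 1 where an image's label set contains `label`."""
    return [1 if label in labels else 0 for labels in annotations]


def cohen_kappa(a, b):
    """Cohen's kappa for two binary rating vectors of equal length."""
    n = len(a)
    observed = sum(x == y for x, y in zip(a, b)) / n
    p_a, p_b = sum(a) / n, sum(b) / n
    expected = p_a * p_b + (1 - p_a) * (1 - p_b)
    if expected == 1.0:
        return 1.0  # both annotators gave the same single value throughout
    return (observed - expected) / (1 - expected)


# Hypothetical multi-label annotations for eight images (one set per image).
annotator_1 = [{"Anatomy"}, {"Anatomy", "Attribute"}, set(), set(),
               {"Anatomy"}, {"Attribute"}, {"Anatomy"}, set()]
annotator_2 = [{"Anatomy"}, {"Anatomy"}, set(), {"Attribute"},
               set(), {"Attribute"}, {"Anatomy", "Attribute"}, {"Anatomy"}]

kappa_anatomy = cohen_kappa(label_vector(annotator_1, "Anatomy"),
                            label_vector(annotator_2, "Anatomy"))
print(f"kappa (Anatomy) = {kappa_anatomy:.2f}")
```

Computing agreement label by label makes it easier to see which categories need clearer guidelines.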

Workflow Diagram: From Data to Benchmark

The following diagram illustrates the end-to-end workflow for constructing a fine-grained artifact assessment benchmark, from initial data preparation to the final evaluation of artifact removal algorithms.

Diagram: Artifact Benchmark Construction Workflow

This section catalogs key datasets, models, and methodological tools that serve as essential "reagents" for research in fine-grained artifact assessment.

Table 2: Key Research Reagent Solutions for Artifact Assessment

| Reagent / Resource | Type | Primary Function in Research |

|---|---|---|

| MagicData340K [14] | Dataset | Provides a large-scale, human-annotated foundation for training and evaluating fine-grained artifact assessors, particularly for T2I models. |

| SynArtifact-1K [19] | Dataset | Serves as a benchmark for end-to-end artifact classification tasks, with annotations suitable for training Vision-Language Models (VLMs). |

| M-GAID [20] | Dataset | Enables the development and testing of algorithms specifically designed for detecting and removing ghosting artifacts in mobile photography. |

| Vision-Language Model (VLM) [14] [19] | Model Architecture | Acts as the backbone for building artifact classifiers capable of joint image and text understanding, enabling detailed assessment. |

| Group Relative Policy Optimization (GRPO) [14] | Training Algorithm | Enhances VLM training for assessment tasks by using a multi-level reward system to prevent reward hacking and improve reasoning consistency. |

| Reinforcement Learning from AI Feedback (RLAIF) [19] | Training Paradigm | Leverages the output of a trained artifact classifier as a reward signal to directly optimize generative models for reduced artifact production. |

The advancement of artifact removal algorithms is fundamentally constrained by the quality and granularity of the underlying taxonomies and annotated datasets. As our comparison shows, frameworks like MagicMirror, SynArtifact-1K, and M-GAID provide the essential foundation for moving beyond coarse, single-score metrics toward detailed, diagnostic assessment. The ongoing development of large-scale, finely-labeled public datasets and the sophisticated assessor models they enable is critical for creating meaningful benchmarks. These resources empower researchers to not only measure progress but also to pinpoint specific failure modes, thereby guiding the development of more robust and reliable computational imaging and generative AI systems.

Challenges in Data Accessibility and Curation for Robust Benchmarking

Benchmarking artifact removal algorithms is foundational to progress in multiple scientific disciplines, from medical imaging to generative AI. Yet, the development of robust, reliable, and reproducible benchmarks is fundamentally constrained by significant challenges in data accessibility and curation. The integrity of any benchmark is directly dependent on the quality, scale, and representativeness of the underlying data. This guide examines these challenges through a comparative analysis of current public datasets and the experimental protocols used to evaluate artifact removal algorithms. By objectively comparing the available resources and their supporting data, this article provides researchers, scientists, and drug development professionals with a clear framework for selecting and utilizing benchmarks, thereby informing more rigorous and reproducible research in the field.

The Core Challenges: Data Accessibility and Curation

The journey to establish a meaningful benchmark begins with overcoming two intertwined hurdles: gaining access to suitable data and then curating it to a high standard.

- Data Accessibility: A primary obstacle is the scarcity of large-scale, publicly available datasets that pair artifact-laden data with corresponding artifact-free ground truth. Such paired data is essential for training and evaluating supervised artifact removal models. In medical imaging, for instance, acquiring ground truth often requires rescanning patients, which raises practical and ethical concerns, consumes valuable scanner time, and is not always feasible [21]. Similarly, in other domains, creating a high-fidelity ground truth can be prohibitively expensive or complex.

- Data Curation: Once data is accessible, it must be meticulously curated. Key curation challenges include:

  - Annotation Granularity: Coarse-grained labels (e.g., a single "artifact" score) are insufficient for diagnosing specific failure modes of algorithms. Fine-grained taxonomy, as seen in the MagicMirror benchmark which categorizes artifacts into "object anatomy," "attribute," and "interaction," is required for insightful evaluation [14].

  - Data Imbalance: Artifacts concerning specific elements, such as human hands in generated images, may be rare in a dataset, leading to models that are not adequately tested on these critical cases. Targeted oversampling strategies are needed to address this [14].

  - Standardization: The lack of universally accepted data formats, annotation guidelines, and predefined training/testing splits hinders the fair comparison of different algorithms across studies [22] [23].
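To make the data-imbalance point concrete, here is a minimal sketch of naive oversampling by duplication. The record layout, the 10% rarity, and the factor of 5 are hypothetical; this is not the sampling strategy used in [14], which is not detailed here.

```python
import random


def oversample(records, is_rare, factor, seed=0):
    """Return a shuffled copy in which each rare record appears `factor` times."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    out = []
    for rec in records:
        out.extend([rec] * (factor if is_rare(rec) else 1))
    rng.shuffle(out)
    return out


RARE_LABEL = "Hand Structure Deformity"

# Hypothetical dataset: every tenth image carries the rare label.
records = [
    {"id": i, "labels": {RARE_LABEL} if i % 10 == 0 else set()}
    for i in range(100)
]

balanced = oversample(records, lambda r: RARE_LABEL in r["labels"], factor=5)
rare_share = sum(RARE_LABEL in r["labels"] for r in balanced) / len(balanced)
print(f"{len(balanced)} records, rare share = {rare_share:.2f}")
```

Oversampling should be applied to the training split only, so that test-set statistics remain representative.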

Comparative Analysis of Public Datasets and Benchmarks

A review of recently introduced datasets highlights both the ongoing efforts to address these challenges and the varying approaches taken across different scientific domains. The following table summarizes key quantitative characteristics of several relevant benchmarks.

Table 1: Comparison of Public Datasets for Artifact Removal Benchmarking

| Dataset Name | Domain | Key Artifact Type | Data Volume & Type | Notable Features |

|---|---|---|---|---|

| MagicMirror (MagicData340K) [14] | Computer Vision (Text-to-Image) | Physical Artifacts (anatomical, structural) | 340K images; Human-annotated | Fine-grained, multi-label taxonomy (L1-L3); First large-scale dataset of its kind |

| KMAR-50K [21] | Medical Imaging (Knee MRI) | Motion Artifacts | 1,444 MRI sequence pairs; 62,506 images | Paired data (artifact vs. rescan ground truth); Multi-view, multi-sequence |

| EEG-tES Denoising Benchmark [24] | Neuroscience (EEG) | tES-induced Electrical Artifacts | Semi-synthetic dataset | Controlled, rigorous evaluation with known ground truth; Combines clean EEG with synthetic artifacts |

| PMLB [23] | General Machine Learning | N/A (General Benchmarking) | 200+ datasets for classification/regression | Curated, standardized format; Predefined training/testing splits |

These datasets illustrate a trend towards creating larger, more specialized resources. However, they also reveal a fragmentation in the field, where benchmarks are often domain-specific, making cross-disciplinary comparisons difficult. The move towards providing paired data, as demonstrated by KMAR-50K and the semi-synthetic approach in EEG research, is a critical step forward for supervised learning approaches [21] [24].

Experimental Protocols and Methodologies

Robust benchmarking requires not only data but also standardized experimental protocols. The methodologies employed in evaluating artifact removal algorithms are as important as the datasets themselves.

Quantitative Evaluation Metrics

A combination of metrics is typically used to assess different aspects of algorithmic performance:

- Image Fidelity Metrics: In image-related tasks, Peak Signal-to-Noise Ratio (PSNR) and Structural Similarity Index Measure (SSIM) are standard metrics for quantifying the similarity between the algorithm's output and the ground truth image. For example, the KMAR-50K benchmark reported U-Net achieving a PSNR of 28.468 and SSIM of 0.927 on transverse plane images [21].

- Error Metrics: Temporal or spatial error measures, such as Root Relative Mean Squared Error (RRMSE), are used to quantify the magnitude of artifact removal in signal processing domains like EEG denoising [24].

- Task-Specific Metrics: For generative models, metrics such as accuracy and recall are used. As an example of task-specific evaluation in another setting, ANN-Benchmarks, which evaluates approximate nearest-neighbor algorithms, plots recall (the fraction of true nearest neighbors found) against queries per second [25].
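Two of the metrics named above can be sketched in a few lines of plain Python. The sample values are made up for illustration, and `data_range=1.0` assumes intensities normalized to [0, 1]. SSIM is omitted because it needs windowed local statistics and is usually taken from an imaging library.

```python
import math


def psnr(reference, estimate, data_range=1.0):
    """Peak signal-to-noise ratio in dB for two equally sized value sequences."""
    mse = sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference)
    if mse == 0:
        return math.inf  # identical inputs
    return 10.0 * math.log10(data_range ** 2 / mse)


def recall(retrieved, relevant):
    """Fraction of the relevant items that appear among the retrieved ones."""
    relevant = set(relevant)
    return len(relevant.intersection(retrieved)) / len(relevant)


# Hypothetical flattened "images" with intensities in [0, 1].
reference = [0.0, 0.5, 1.0, 0.5]
estimate = [0.1, 0.5, 0.9, 0.5]
psnr_db = psnr(reference, estimate)            # about 23.0 dB

# Hypothetical nearest-neighbor IDs: three of the four true neighbors found.
recall_value = recall([3, 7, 9, 12], [3, 7, 8, 12])  # 0.75
print(f"PSNR = {psnr_db:.2f} dB, recall = {recall_value:.2f}")
```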

Standardized Benchmarking Workflows

A rigorous benchmarking workflow involves several critical stages, from dataset preparation to the final analysis of results, ensuring that evaluations are consistent, fair, and reproducible.

Standardized Benchmarking Workflow

- Define Benchmarking Objectives: The process must begin by identifying specific goals, such as improving reconstruction accuracy, execution speed, or scalability [26] [27]. This determines the choice of metrics and data.

- Select Metrics & Prepare Data: Choose relevant quantitative metrics (e.g., PSNR, SSIM, RRMSE, recall) and gather representative datasets that reflect real-world conditions, ensuring they are split into training, validation, and test sets [26] [22] [21].

- Standardize Test Environment: The hardware and software configuration must be controlled and consistent to ensure results are reproducible and comparable. This includes using the same CPU/GPU, memory, operating system, and library versions across tests [26] [27].

- Execute Benchmark Runs: Algorithms are run multiple times to account for performance variations and to gather statistically significant data [27].

- Analyze & Compare Results: Use statistical tools to interpret the data, compare algorithms against baseline models, and identify areas for improvement [26] [25].
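The "execute multiple runs, then analyze" steps above can be sketched with a small timing harness. The `moving_average` function is only a stand-in for an artifact removal algorithm, and the repeat and warm-up counts are arbitrary assumptions. Dedicated benchmarking frameworks handle timing noise more carefully.

```python
import statistics
import time


def moving_average(signal, width=5):
    """Stand-in 'algorithm': centered moving average with edge truncation."""
    half = width // 2
    out = []
    for i in range(len(signal)):
        window = signal[max(0, i - half): i + half + 1]
        out.append(sum(window) / len(window))
    return out


def benchmark(fn, data, repeats=5, warmup=1):
    """Time `fn(data)` over several runs and summarize the wall-clock results."""
    for _ in range(warmup):
        fn(data)  # warm-up runs are not recorded
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        fn(data)
        timings.append(time.perf_counter() - start)
    return {
        "runs": repeats,
        "mean_s": statistics.mean(timings),
        "stdev_s": statistics.stdev(timings),
    }


signal = [float(i % 17) for i in range(10_000)]
result = benchmark(moving_average, signal)
print(f"{result['runs']} runs: {result['mean_s']:.4f} s ± {result['stdev_s']:.4f} s")
```

Reporting the spread alongside the mean makes it easier to judge whether a speed difference between two algorithms is meaningful.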

The Researcher's Toolkit: Essential Materials and Reagents

Successful experimentation in artifact removal requires a suite of computational "reagents" and tools. The following table details key resources for developing and benchmarking algorithms.

Table 2: Essential Research Reagents and Tools for Artifact Removal Benchmarking

| Item Name | Function / Purpose | Example Use Case |

|---|---|---|

| Specialized Datasets (e.g., KMAR-50K, MagicData340K) | Provides standardized, often paired, data for training and evaluation. | Training deep learning models for MRI motion artifact removal [21] or assessing T2I generation models [14]. |

| Benchmarking Frameworks (e.g., Google Benchmark, Apache JMH) | Provides robust platforms for performance testing, handling accurate timing and statistical analysis. | Microbenchmarking the execution speed and memory usage of different sorting algorithms [26] [27]. |

| Visualization Software (e.g., Matplotlib, Tableau) | Aids in interpreting and presenting benchmarking results through charts and graphs. | Plotting recall vs. queries per second for nearest-neighbor search algorithms [26] [25]. |

| Containerization Tools (e.g., Docker) | Packages the artifact, its dependencies, and runtime environment into a reproducible, portable unit. | Ensuring an algorithm can be successfully executed by artifact evaluation committees, as required by conferences like SIGCOMM [28]. |

| Performance Profilers (e.g., cProfile, Valgrind) | Provides detailed information on code execution, including time spent in functions and memory usage. | Identifying performance bottlenecks in an artifact removal algorithm during development [27]. |

Analysis of Leading Artifact Removal Algorithms

A comparison of algorithmic performance across different benchmarks reveals their relative strengths and weaknesses. The experimental data below is synthesized from recent studies to provide a comparative overview.

Table 3: Performance Comparison of Artifact Removal Algorithms Across Domains

| Algorithm / Model | Domain | Key Performance Results (Metric, Score) | Inference Speed / Scalability |

|---|---|---|---|

| U-Net [21] | Medical Imaging (MRI) | PSNR: 28.468, SSIM: 0.927 (on KMAR-50K transverse plane) | 0.5 seconds per volume (18x faster than EDSR) |

| Multi-modular SSM (M4) [24] | Neuroscience (EEG) | Best RRMSE for removing complex tACS and tRNS artifacts | Performance dependent on stimulation type |

| Complex CNN [24] | Neuroscience (EEG) | Best RRMSE for tDCS artifact removal | Performance dependent on stimulation type |

| ArtiFade [29] | Computer Vision (T2I) | Generates high-quality, artifact-free images from blemished inputs | Preserves generative capabilities of base diffusion model |

| MagicAssessor (with GRPO) [14] | Computer Vision (T2I) | Provides fine-grained artifact assessment and labeling | Addresses class imbalance and reward hacking |

The data shows that no single algorithm is universally superior. Model performance is highly dependent on the artifact type and domain. For instance, in EEG denoising, a Complex CNN excels with tDCS artifacts, while a State Space Model (SSM) is better for more complex tACS and tRNS artifacts [24]. In medical imaging, U-Net demonstrates an excellent balance between accuracy and inference speed, a critical consideration for clinical deployment [21].

Advanced Methodologies and Future Trends

The field is rapidly evolving, with new methodologies being developed to overcome existing limitations in benchmarking.

Overcoming Data Scarcity with Semi-Synthetic Data

When paired real-world data is unavailable, a powerful alternative is the creation of semi-synthetic datasets. This involves combining clean, artifact-free data (e.g., from a controlled experiment) with synthetically generated artifacts. This approach was successfully used in EEG research, where clean EEG data was combined with synthetic tES artifacts, allowing for a controlled and rigorous model evaluation because the ground truth was known [24].
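A minimal sketch of the semi-synthetic idea: scale an artifact waveform so that the mixture has a chosen signal-to-noise ratio, keeping the clean signal as ground truth. The sinusoids below are stand-ins for real clean EEG and tES artifacts, and the sampling rate and the -5 dB target are arbitrary assumptions. The exact mixing procedure in [24] may differ.

```python
import math


def mean_power(x):
    """Average squared amplitude of a sequence."""
    return sum(v * v for v in x) / len(x)


def mix_at_snr(clean, artifact, snr_db):
    """Add `artifact` to `clean`, scaled so clean/artifact power equals snr_db."""
    scale = math.sqrt(
        mean_power(clean) / (mean_power(artifact) * 10 ** (snr_db / 10))
    )
    return [c + scale * a for c, a in zip(clean, artifact)]


fs = 250  # assumed sampling rate in Hz
t = [i / fs for i in range(fs)]  # one second of samples
clean = [math.sin(2 * math.pi * 10 * ti) for ti in t]     # stand-in clean EEG
artifact = [math.sin(2 * math.pi * 40 * ti) for ti in t]  # stand-in artifact

contaminated = mix_at_snr(clean, artifact, snr_db=-5.0)

# Because the ground truth is known, the achieved SNR can be verified directly.
residual = [y - c for y, c in zip(contaminated, clean)]
achieved_snr_db = 10 * math.log10(mean_power(clean) / mean_power(residual))
print(f"achieved SNR = {achieved_snr_db:.2f} dB")
```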

Enhancing Evaluation with Advanced Model Training

Data alone is not enough; an assessor model must be trained effectively before it can serve as a reliable benchmark. The development of MagicAssessor involved advanced training strategies such as Group Relative Policy Optimization (GRPO). This was augmented with a novel multi-level reward system that guides the model from coarse to fine-grained detection, and with a consistency reward that aligns the model's reasoning with its final output, thereby preventing "reward hacking," in which a model optimizes for the reward signal without performing the intended task [14]. The overall process of creating such a benchmark, from data curation to model deployment, proceeds through the stages listed below.

From Data Curation to Benchmark Model

- Establish Fine-Grained Taxonomy: Create a hierarchical classification of artifacts (e.g., L1: Normal/Artifact, L2: Anatomy/Attribute, L3: Hand Structure) [14].

- Collect & Generate Diverse Data: Compile prompts from various sources and generate images using a diverse suite of models to ensure broad coverage [14].

- Human Annotation with Multi-Labels: Annotators manually label the collected data according to the taxonomy, allowing multiple co-occurring artifacts to be tagged per image [14].

- Train Assessor Model (with GRPO & Rewards): A Vision-Language Model is trained using strategies like GRPO, with a multi-level reward system and data sampling that oversamples challenging positive cases to overcome class imbalance [14].

- Deploy Automated Benchmark (MagicBench): The trained model is used to construct an automated benchmark for the systematic evaluation and comparison of other models [14].
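GRPO, mentioned in the training stage above, scores each sampled response relative to the other responses drawn for the same input. The sketch below shows only that group-relative normalization step. The reward values are hypothetical, and the multi-level and consistency rewards described in [14] are not reproduced here.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one group: (r - group mean) / group std."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four responses sampled for the same image.
rewards = [1.0, 0.5, 0.0, 0.5]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])
```

Responses that score above the group mean receive a positive advantage and are reinforced, while those below the mean are discouraged.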

Looking forward, several trends are poised to shape the future of benchmarking. There is a growing emphasis on standardizing benchmarking practices across the industry to ensure consistency and comparability [26]. Furthermore, as AI is applied in high-stakes domains, benchmarking will increasingly need to include metrics for fairness, transparency, and ethical considerations beyond raw performance [26]. Finally, integrating benchmarking directly into the development lifecycle, for example through DevOps pipelines, will help ensure that performance and reliability are continuously monitored [26].

Algorithmic Approaches and Implementation Strategies

Traditional Signal Processing vs. Modern Deep Learning Paradigms

The removal of artifacts from physiological signals represents a significant challenge in fields ranging from clinical neurology to brain-computer interface (BCI) development. As research increasingly relies on data from wearable sensors and real-world environments, the demand for robust, adaptive artifact removal algorithms has never been greater. This comparison guide examines the evolving landscape of signal processing methodologies, focusing specifically on the performance of traditional signal processing techniques against modern deep learning paradigms in benchmarking studies using public datasets. The analysis is contextualized within artifact removal for electroencephalography (EEG), a domain where signal purity is paramount for accurate interpretation yet notoriously difficult to achieve due to the overlapping characteristics of neural signals and various biological artifacts.

Methodological Foundations: A Comparative Analysis

Traditional Signal Processing Approaches

Traditional signal processing methods for artifact removal are typically grounded in mathematical models of signal properties and require substantial domain expertise for effective implementation.

Regression-Based Methods: These techniques utilize reference channels to estimate and subtract artifact components from contaminated signals through linear transformation. While effective with proper references, their performance degrades significantly without dedicated reference channels, increasing operational complexity [3].

Filtering Techniques: Conventional filtering approaches employ frequency-based separation but face fundamental limitations when artifact and neural signal spectra overlap substantially, as occurs with physiological artifacts like electromyography (EMG) and electrooculography (EOG) [3].

Blind Source Separation (BSS): This category includes principal component analysis (PCA), independent component analysis (ICA), empirical mode decomposition (EMD), and canonical correlation analysis (CCA). These methods transform contaminated signals into new data spaces where artifact components can be identified and removed. While often effective, BSS approaches typically require multiple channels, sufficient prior knowledge, and manual component selection, creating bottlenecks for automated processing pipelines [3] [10].
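Of the traditional approaches above, the regression-based method is the simplest to illustrate: estimate how strongly a reference channel leaks into the EEG channel by least squares, then subtract that contribution. The signals below are synthetic stand-ins (a 10 Hz "neural" sine and a 1 Hz "EOG" sine), and the leakage factor of 0.8 is an assumption chosen for the demonstration.

```python
import math


def regress_out(eeg, reference):
    """Remove the least-squares projection of `reference` from `eeg`."""
    n = len(eeg)
    mean_e = sum(eeg) / n
    mean_r = sum(reference) / n
    cov = sum((e - mean_e) * (r - mean_r) for e, r in zip(eeg, reference))
    var = sum((r - mean_r) ** 2 for r in reference)
    beta = cov / var  # estimated propagation coefficient
    cleaned = [e - beta * (r - mean_r) for e, r in zip(eeg, reference)]
    return cleaned, beta


fs = 250  # assumed sampling rate in Hz
t = [i / fs for i in range(fs)]
neural = [math.sin(2 * math.pi * 10 * ti) for ti in t]  # stand-in neural signal
eog = [math.sin(2 * math.pi * 1 * ti) for ti in t]      # stand-in reference channel
eeg = [s + 0.8 * r for s, r in zip(neural, eog)]        # contaminated recording

cleaned, beta = regress_out(eeg, eog)
max_error = max(abs(c - s) for c, s in zip(cleaned, neural))
print(f"beta = {beta:.3f}, max error = {max_error:.2e}")
```

The recovery is near-perfect here only because the two synthetic signals are uncorrelated. With real recordings, neural activity that also appears in the reference channel would be partly subtracted as well.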

Modern Deep Learning Paradigms

Deep learning approaches learn features directly from data through layered network architectures, minimizing the need for manual feature engineering and explicit mathematical modeling of artifacts.

Hybrid Architecture Networks: Models like CLEnet integrate dual-scale convolutional neural networks (CNN) with Long Short-Term Memory (LSTM) networks and attention mechanisms. This combination enables simultaneous extraction of morphological features and temporal dependencies in EEG signals, addressing both spatial and dynamic characteristics of artifacts [3].

Transformer-Based Models: The Artifact Removal Transformer (ART) employs self-attention mechanisms to capture transient millisecond-scale dynamics in EEG signals. This end-to-end approach simultaneously addresses multiple artifact types in multichannel EEG data through supervised learning on noisy-clean data pairs [6].

Asymmetric Convolutional Networks: Approaches like MACN-MHA utilize asymmetric convolution blocks with multi-head attention mechanisms to focus on key time-frequency features. These are often combined with wavelet transform preprocessing for initial noise reduction [30].

Experimental Benchmarking: Performance Comparison

Quantitative Performance Metrics

Experimental validation of artifact removal algorithms employs multiple quantitative metrics to assess performance across different signal characteristics.

Table 1: Key Performance Metrics for Artifact Removal Algorithms

| Metric | Description | Interpretation |

|---|---|---|

| Signal-to-Noise Ratio (SNR) | Ratio of signal power to noise power | Higher values indicate better artifact rejection |

| Correlation Coefficient (CC) | Linear correlation between processed and clean signals | Values closer to 1.0 indicate better preservation of original signal |

| Relative Root Mean Square Error - Temporal (RRMSEt) | Temporal domain reconstruction error | Lower values indicate superior performance |

| Relative Root Mean Square Error - Frequency (RRMSEf) | Frequency domain reconstruction error | Lower values indicate superior performance |
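The temporal-domain metrics in the table can be sketched as follows, using commonly used definitions that individual papers may vary slightly. The four-sample signals are toy values chosen for easy checking. RRMSEf typically applies the same ratio to power spectral densities and is omitted to keep the example dependency-free.

```python
import math


def snr_db(clean, denoised):
    """SNR in dB: clean-signal energy over residual-error energy."""
    signal = sum(c * c for c in clean)
    residual = sum((c - d) ** 2 for c, d in zip(clean, denoised))
    return 10 * math.log10(signal / residual)


def correlation_coefficient(a, b):
    """Pearson correlation between two equally long sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def rrmse_temporal(clean, denoised):
    """RMS of the reconstruction error relative to the RMS of the clean signal."""
    err = sum((c - d) ** 2 for c, d in zip(clean, denoised))
    ref = sum(c * c for c in clean)
    return math.sqrt(err / ref)


clean = [1.0, -1.0, 1.0, -1.0]
denoised = [0.9, -1.1, 1.1, -0.9]

snr = snr_db(clean, denoised)                  # about 20 dB
cc = correlation_coefficient(clean, denoised)  # about 0.995
rrmse_t = rrmse_temporal(clean, denoised)      # about 0.1
print(f"SNR = {snr:.2f} dB, CC = {cc:.3f}, RRMSEt = {rrmse_t:.3f}")
```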

Benchmarking Results on Public Datasets

Comparative studies on standardized datasets provide objective performance assessments across methodological paradigms.

Table 2: Performance Comparison on EEG Artifact Removal Tasks

| Algorithm | Architecture Type | Artifact Type | SNR (dB) | CC | RRMSEt | RRMSEf |

|---|---|---|---|---|---|---|

| CLEnet | CNN + LSTM + Attention | Mixed (EMG+EOG) | 11.498 | 0.925 | 0.300 | 0.319 |

| CLEnet | CNN + LSTM + Attention | ECG | 5.13% improvement* | 0.75% improvement* | 8.08% reduction* | 5.76% reduction* |

| 1D-ResCNN | Deep Learning | Mixed (EMG+EOG) | Lower than CLEnet | Lower than CLEnet | Higher than CLEnet | Higher than CLEnet |

| NovelCNN | Deep Learning | Mixed (EMG+EOG) | Lower than CLEnet | Lower than CLEnet | Higher than CLEnet | Higher than CLEnet |

| DuoCL | Deep Learning | Mixed (EMG+EOG) | Lower than CLEnet | Lower than CLEnet | Higher than CLEnet | Higher than CLEnet |

| ART | Transformer | Multiple | Superior to other DL | N/A | N/A | N/A |

| ICA | Traditional BSS | Multiple | Lower than DL | Lower than DL | Higher than DL | Higher than DL |

| Wavelet Transform | Traditional | Multiple | Lower than DL | Lower than DL | Higher than DL | Higher than DL |

*Note: Percentage values indicate improvement over the DuoCL baseline [3] [6].

Experimental Protocols and Methodologies

Standardized experimental protocols enable fair comparison across different algorithmic approaches:

Semi-Synthetic Dataset Creation: Researchers often combine clean EEG recordings with experimentally recorded artifacts (EMG, EOG, ECG) in specific ratios to create ground truth pairs for supervised learning. This approach enables precise quantification of removal performance [3].

Real-World Dataset Validation: Algorithms are tested on experimentally collected EEG data containing unknown artifacts from various sources, including movement, vascular pulsation, and swallowing artifacts. This validates performance under realistic conditions [3].

Cross-Dataset Evaluation: Models trained on one dataset are tested on entirely different datasets to assess generalization capability, a particular challenge for traditional methods with fixed assumptions [3].

Ablation Studies: Systematic removal of specific components from deep learning architectures (e.g., attention modules) quantifies their contribution to overall performance [3].

Diagram: Experimental Workflow for Benchmarking Artifact Removal Algorithms

The Scientist's Toolkit: Essential Research Reagents

Implementation of effective artifact removal pipelines requires specific algorithmic components and data resources.

Table 3: Essential Research Reagents for Artifact Removal Research

| Resource Category | Specific Examples | Function/Purpose |

|---|---|---|

| Public Datasets | EEGdenoiseNet, MIT-BIH Arrhythmia Database | Provide standardized benchmark data for algorithm development and comparison [3] |

| Traditional Algorithms | ICA, PCA, Wavelet Transform, Regression | Establish baseline performance and handle well-characterized artifacts [3] [10] |

| Deep Learning Architectures | CNN, LSTM, Transformer, Hybrid Models | Address complex, unknown artifacts and adapt to specific signal characteristics [3] [6] |

| Performance Metrics | SNR, CC, RRMSE (Temporal & Frequency) | Quantitatively evaluate algorithm performance across multiple dimensions [3] |

| Attention Mechanisms | EMA-1D, Multi-Head Attention | Enhance feature selection capabilities and focus on relevant signal components [3] [30] |

Interpretation of Benchmarking Results

Performance Patterns Across Methodologies

The experimental data reveals several consistent patterns across benchmarking studies:

Specialization vs. Generalization: Traditional methods often excel in removing specific, well-characterized artifacts when their underlying assumptions match the data properties. In contrast, deep learning approaches demonstrate superior capability in handling unknown artifacts and adapting to variable conditions, with CLEnet showing 2.45% and 2.65% improvements in SNR and CC respectively for unknown artifact removal [3].

Data Efficiency Trade-offs: Traditional methods typically require less data for effective deployment but more expert intervention for tuning and component selection. Deep learning models demand larger, diverse datasets for training but subsequently offer more automated operation [3] [10].

Computational Resource Requirements: Traditional signal processing algorithms are generally less computationally intensive during execution, while deep learning models require significant resources for training but can be optimized for efficient inference [30].

Emerging Hybrid Approaches

The most significant trend observed in recent literature is the emergence of hybrid methodologies that combine strengths from both paradigms:

Signal Processing-Informed Deep Learning: Approaches that use wavelet transforms or other time-frequency analyses as preprocessing steps before deep learning feature extraction, leveraging the strengths of both methodologies [30].

Attention-Enhanced Architectures: Integration of attention mechanisms with conventional CNNs and LSTMs to improve feature selection, with ablation studies showing significant performance degradation when attention modules are removed [3].

Transfer Learning Applications: Using models pre-trained on source domains and fine-tuned with limited target domain data, addressing the challenge of limited labeled data in specific applications [30].

Diagram: Relationship Between Algorithmic Complexity and Performance

Benchmarking studies conducted on public datasets demonstrate that the choice between traditional signal processing and modern deep learning paradigms for artifact removal is highly context-dependent. Traditional methods maintain relevance for well-characterized artifacts and resource-constrained environments, while deep learning approaches offer superior performance for complex, unknown artifacts and automated operation. The most promising direction emerging from current research is the development of hybrid methodologies that leverage the theoretical foundations of signal processing with the adaptive capabilities of deep learning. Researchers should select approaches based on specific application requirements, considering factors such as artifact types, data availability, computational resources, and required level of automation. Future work should focus on developing more efficient architectures, improving explainability, and creating more comprehensive benchmarking datasets that reflect real-world variability.

Electroencephalography (EEG) is a cornerstone technique for measuring brain activity in clinical diagnostics, neuroscience research, and brain-computer interfaces [2]. However, EEG signals, characterized by microvolt-range amplitudes, are highly susceptible to contamination from various artifacts originating from both physiological sources (e.g., eye blinks, muscle activity, cardiac signals) and non-physiological sources (e.g., electrode pops, power line interference) [2] [1]. These artifacts can obscure genuine neural activity, leading to misinterpretation and potentially compromising clinical diagnoses [1]. Therefore, robust artifact removal is an essential preprocessing step to ensure the validity of EEG data analysis.

The field of EEG artifact removal has evolved significantly, moving from traditional methods like Independent Component Analysis (ICA) and Wavelet Transforms to modern deep learning models [2]. Benchmarking these algorithms on public datasets is crucial for objective comparison and scientific progress [31]. This guide provides a comprehensive comparison of these techniques, focusing on their operational principles, performance on standardized tasks, and applicability for research and development, particularly for an audience of scientists and drug development professionals.

Methodologies at a Glance: Core Artifact Removal Techniques

Traditional and Hybrid Signal Processing Methods

Independent Component Analysis (ICA) is a blind source separation method that decomposes multi-channel EEG signals into statistically independent components. Artifactual components are then identified and removed before signal reconstruction [32]. A key limitation is that discarding entire components can lead to the loss of underlying neural information present in that component [32].
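The rejection step can be sketched in a few lines. The example below assumes an ICA fit has already produced a mixing matrix and component time courses (for instance from a toolbox such as EEGLAB); the function name `remove_components` and the toy numbers are hypothetical and chosen only for illustration.

```python
def remove_components(mixing, sources, artifact_idx):
    """Back-project ICA components to channel space, leaving out artifacts.

    mixing:       n_channels x n_components matrix (list of rows),
                  assumed to come from a previous ICA fit
    sources:      n_components x n_samples component time courses
    artifact_idx: indices of the components judged artifactual
    """
    rejected = set(artifact_idx)
    keep = [k for k in range(len(sources)) if k not in rejected]
    n_samples = len(sources[0])
    return [
        [sum(row[k] * sources[k][t] for k in keep) for t in range(n_samples)]
        for row in mixing
    ]


# Toy example: component 1 is treated as an ocular artifact.
mixing = [[1.0, 0.5], [0.2, 1.0]]
sources = [[1.0, 2.0, 3.0], [10.0, 0.0, -10.0]]
cleaned = remove_components(mixing, sources, artifact_idx=[1])
```

Everything carried by the rejected component is discarded, including any neural activity mixed into it, which is the limitation noted above.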

Wavelet Transform (WT) is a powerful tool for analyzing non-stationary signals like EEG. It decomposes a signal into different frequency components, allowing for the identification and thresholding of coefficients associated with artifacts. Its effectiveness for single-channel EEG makes it suitable for minimalist and wearable systems [33]. The Wavelet-Enhanced ICA (wICA) method improves upon traditional ICA by applying wavelet-based correction to the artifactual independent components instead of rejecting them entirely, thereby preserving more neural data [32].

Emerging Deep Learning Models

Deep learning models learn complex, non-linear mappings from noisy to clean EEG signals in an end-to-end manner, overcoming many limitations of traditional methods [2].

- CLEnet: An advanced dual-branch network that integrates dual-scale Convolutional Neural Networks (CNN) and Long Short-Term Memory (LSTM) networks, supplemented with an improved one-dimensional Efficient Multi-Scale Attention mechanism (EMA-1D). This architecture is designed to extract both morphological and temporal features of EEG, enabling the effective removal of various artifacts, including unknown types, from multi-channel data [34].

- LSTEEG: A deep autoencoder utilizing LSTM layers for the detection and correction of artifacts in multi-channel EEG. It captures long-term, non-linear dependencies in sequential data and uses reconstruction error for anomaly detection [35].

- GAN-based Models (e.g., AnEEG): Generative Adversarial Networks (GANs), sometimes integrated with LSTM layers, are trained to generate artifact-free EEG signals. The generator produces cleaned signals, while the discriminator tries to distinguish them from ground-truth clean data [36].
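The reconstruction-error idea used for artifact detection can be illustrated without a trained network. In the sketch below, `reconstruct` stands in for a trained autoencoder and the threshold is a placeholder value; both are assumptions made for illustration and are not taken from the LSTEEG implementation.

```python
def reconstruction_mse(epoch, reconstructed):
    """Mean squared error between an epoch and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(epoch, reconstructed)) / len(epoch)


def flag_artifact_epochs(epochs, reconstruct, threshold):
    """Return one boolean per epoch: True when the error exceeds threshold."""
    return [reconstruction_mse(e, reconstruct(e)) > threshold for e in epochs]


# Hypothetical stand-in for an autoencoder trained on clean EEG: it can only
# reproduce amplitudes inside the range seen during training (here [-1, 1]).
def clip_to_clean_range(epoch):
    return [max(-1.0, min(1.0, v)) for v in epoch]


epochs = [[0.1, -0.2, 0.3], [0.0, 5.0, 0.1]]
flags = flag_artifact_epochs(epochs, clip_to_clean_range, threshold=1.0)
```

A model trained only on clean data reconstructs clean epochs well and contaminated epochs poorly, so a large error marks a likely artifact. In practice the threshold would be tuned on held-out data.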

Experimental Benchmarking on Public Datasets

The evaluation of artifact removal pipelines relies on standardized datasets and quantitative metrics to ensure fair comparisons.

Common Datasets and Performance Metrics

Key Public Datasets:

- EEGdenoiseNet [34] [36]: A semi-synthetic benchmark dataset containing clean EEG segments mixed with EOG and EMG artifacts.

- MIT-BIH Arrhythmia Database [34] [36]: An ECG database often used as the source of cardiac artifacts when building semi-synthetic datasets, in which its ECG recordings are mixed into clean EEG.

- Real 32-channel EEG Data [34]: Dataset collected from healthy subjects performing cognitive tasks, containing unknown, real-world artifacts.

- LEMON Dataset [35]: A dataset of clean EEG used for training and evaluating models like LSTEEG.
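Semi-synthetic benchmarks are built by adding a scaled artifact segment to a clean EEG segment so that the contaminated signal has a chosen SNR. The sketch below shows one way to do this. It assumes the amplitude convention SNR(dB) = 20·log10(RMS(clean) / RMS(λ·artifact)); individual datasets may define SNR differently, so the dataset's own documentation should be checked. The function names are hypothetical.

```python
import math


def rms(x):
    """Root mean square of a sequence of samples."""
    return math.sqrt(sum(v * v for v in x) / len(x))


def mix_at_snr(clean, artifact, snr_db):
    """Return clean + lam * artifact, with lam chosen to hit snr_db.

    Assumed convention: snr_db = 20 * log10(rms(clean) / rms(lam * artifact)).
    """
    lam = rms(clean) / (rms(artifact) * 10 ** (snr_db / 20))
    return [c + lam * a for c, a in zip(clean, artifact)]


# Toy segments: at 0 dB the scaled artifact has the same RMS as the EEG.
clean = [1.0, -1.0, 1.0, -1.0]
artifact = [2.0, 2.0, -2.0, -2.0]
contaminated = mix_at_snr(clean, artifact, snr_db=0.0)
```

Because the clean segment is known, it serves as the ground truth against which any denoised output can be scored.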

Key Performance Metrics:

- Signal-to-Noise Ratio (SNR) [34]: Measures the level of desired signal relative to noise. Higher is better.

- Correlation Coefficient (CC) [34] [33]: Measures the linear relationship between the cleaned signal and the ground-truth clean signal. Closer to 1 is better.

- Relative Root Mean Square Error (RRMSE) [34]: Measures the relative error in both temporal (RRMSEt) and frequency (RRMSEf) domains. Lower is better.

- Normalized Mean Square Error (NMSE) [33] [36]: A normalized measure of the overall error between cleaned and ground-truth signals. Lower is better; when reported in dB, as in Table 3, more negative values indicate less error.
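The time-domain metrics above can be computed directly from a denoised segment and its ground truth. The sketch below uses commonly cited definitions, which are assumptions here because the cited studies may differ in detail (for example in how SNR is normalized). The spectral variant RRMSEf typically applies the same ratio to power spectral densities and is omitted for brevity.

```python
import math


def rms(x):
    return math.sqrt(sum(v * v for v in x) / len(x))


def snr_db(clean, denoised):
    """10*log10 of signal power over residual error power."""
    err = sum((c - d) ** 2 for c, d in zip(clean, denoised))
    return 10 * math.log10(sum(c * c for c in clean) / err)


def correlation(clean, denoised):
    """Pearson correlation coefficient (CC)."""
    mc = sum(clean) / len(clean)
    md = sum(denoised) / len(denoised)
    cov = sum((c - mc) * (d - md) for c, d in zip(clean, denoised))
    var_c = sum((c - mc) ** 2 for c in clean)
    var_d = sum((d - md) ** 2 for d in denoised)
    return cov / math.sqrt(var_c * var_d)


def rrmse_t(clean, denoised):
    """Temporal relative RMSE: RMS(denoised - clean) / RMS(clean)."""
    return rms([d - c for c, d in zip(clean, denoised)]) / rms(clean)


def nmse_db(clean, denoised):
    """Normalized mean square error in dB (more negative is better)."""
    err = sum((c - d) ** 2 for c, d in zip(clean, denoised))
    return 10 * math.log10(err / sum(c * c for c in clean))


clean = [1.0, -1.0, 1.0, -1.0]
denoised = [0.9, -1.1, 1.1, -0.9]
scores = {
    "SNR_dB": snr_db(clean, denoised),
    "CC": correlation(clean, denoised),
    "RRMSEt": rrmse_t(clean, denoised),
    "NMSE_dB": nmse_db(clean, denoised),
}
```

Under these definitions SNR and NMSE in dB are negatives of each other, so reporting both adds no information. CC is insensitive to amplitude scaling, which is why it is usually paired with an error-based metric.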

Quantitative Performance Comparison

The following tables summarize the performance of various algorithms across different artifact removal tasks.

Table 1: Performance comparison on mixed (EMG+EOG) artifact removal using the EEGdenoiseNet dataset.

| Model | Type | SNR (dB) | CC | RRMSEt | RRMSEf |
|---|---|---|---|---|---|
| CLEnet | Deep Learning | 11.498 | 0.925 | 0.300 | 0.319 |
| DuoCL | Deep Learning | 10.123 | 0.898 | 0.345 | 0.355 |
| NovelCNN | Deep Learning | 9.456 | 0.885 | 0.361 | 0.370 |
| 1D-ResCNN | Deep Learning | 8.789 | 0.870 | 0.380 | 0.389 |
| wICA | Hybrid | 7.950 | 0.841 | 0.421 | 0.440 |
| ICA | Traditional | 7.120 | 0.810 | 0.460 | 0.481 |

Table 2: Performance on ECG artifact removal and multi-channel EEG with unknown artifacts.

| Task | Model | SNR (dB) | CC | RRMSEt | RRMSEf |
|---|---|---|---|---|---|
| ECG Removal | CLEnet | 9.815 | 0.938 | 0.227 | 0.245 |
| ECG Removal | DuoCL | 9.332 | 0.931 | 0.247 | 0.260 |
| ECG Removal | NovelCNN | 8.901 | 0.920 | 0.265 | 0.278 |
| Multi-channel Unknown | CLEnet | 8.765 | 0.891 | 0.402 | 0.410 |
| Multi-channel Unknown | DuoCL | 8.556 | 0.868 | 0.432 | 0.424 |
| Multi-channel Unknown | NovelCNN | 7.989 | 0.845 | 0.468 | 0.455 |

Table 3: Comparison of Wavelet Transform parameters for single-channel ocular artifact (OA) removal [33].

| Wavelet Method | Basis Function | Threshold | Optimal CC | Optimal NMSE (dB) |
|---|---|---|---|---|
| Discrete Wavelet Transform (DWT) | bior4.4 | Statistical | 0.963 | -19.5 |
| Discrete Wavelet Transform (DWT) | coif3 | Statistical | 0.960 | -19.1 |
| Stationary Wavelet Transform (SWT) | sym3 | Universal | 0.945 | -17.8 |
| Stationary Wavelet Transform (SWT) | haar | Universal | 0.932 | -16.5 |

Detailed Experimental Protocols

CLEnet: Architecture and Workflow

CLEnet was designed to address limitations of prior deep learning models, specifically their inability to handle unknown artifacts and multi-channel EEG data effectively [34]. Its architecture and training process are as follows:

A. Network Architecture: The model operates in three distinct stages:

- Morphological Feature Extraction & Temporal Feature Enhancement: The input EEG is processed through two parallel branches with convolutional kernels of different scales to extract features at multiple resolutions. An improved EMA-1D (Efficient Multi-Scale Attention) module is embedded within these CNNs to enhance critical temporal features and suppress irrelevant ones.

- Temporal Feature Extraction: The combined multi-scale features are passed through fully connected layers for dimensionality reduction, then fed into an LSTM network to model long-range temporal dependencies in the EEG signal.

- EEG Reconstruction: The processed features are flattened and passed through final fully connected layers to reconstruct the artifact-free EEG signal [34].

B. Experimental Protocol:

- Training: The model was trained in a supervised manner using Mean Squared Error (MSE) as the loss function.

- Datasets: The model was evaluated on three datasets: (i) EEGdenoiseNet for EMG and EOG artifacts, (ii) a semi-synthetic dataset with ECG artifacts drawn from the MIT-BIH database, and (iii) a real 32-channel dataset collected in-house.

- Evaluation: Performance was benchmarked against 1D-ResCNN, NovelCNN, and DuoCL using SNR, CC, RRMSEt, and RRMSEf metrics [34].

Diagram 1: CLEnet's three-stage architecture for multi-channel EEG artifact removal.

Wavelet-Enhanced ICA (wICA) for Ocular Artifacts

The wICA method refines the standard ICA approach to minimize the loss of neural information [32].

Experimental Protocol:

- Decomposition: Multi-channel EEG data is decomposed into Independent Components (ICs) using ICA.

- Identification: Ocular artifact components are automatically identified.

- Wavelet Correction: Instead of rejecting the entire component, the artifactual IC is processed using Discrete Wavelet Transform (DWT): a. The component is decomposed into wavelet coefficients. b. A thresholding function (e.g., adaptive threshold) is applied to these coefficients to suppress peaks corresponding to EOG artifacts (blinks and eye movements). c. The component is reconstructed from the thresholded coefficients.

- Reconstruction: The corrected IC, which now retains neural activity from non-artifactual periods, is used alongside all other components to reconstruct the cleaned multi-channel EEG signal [32].
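The wavelet correction step can be sketched for a single artifactual component. The example below is a minimal illustration under stated assumptions, not the published wICA implementation: it uses a Haar wavelet, a universal threshold with a median-based noise estimate, and simply zeroes coefficients above the threshold. The cited work may use a different wavelet and thresholding rule, and the ICA decomposition is assumed to have been done beforehand.

```python
import math
import statistics


def haar_dwt(x):
    """One level of the orthonormal Haar transform (len(x) must be even)."""
    s = 1 / math.sqrt(2)
    approx = [(x[i] + x[i + 1]) * s for i in range(0, len(x), 2)]
    detail = [(x[i] - x[i + 1]) * s for i in range(0, len(x), 2)]
    return approx, detail


def haar_idwt(approx, detail):
    """Inverse of haar_dwt."""
    s = 1 / math.sqrt(2)
    out = []
    for a, d in zip(approx, detail):
        out.extend(((a + d) * s, (a - d) * s))
    return out


def wavelet_correct_component(component, levels=3):
    """Zero large wavelet coefficients (assumed artifact), keep small ones."""
    n = len(component)
    if n % (2 ** levels) != 0:
        raise ValueError("length must be divisible by 2**levels")
    approx, details = list(component), []
    for _ in range(levels):
        approx, d = haar_dwt(approx)
        details.append(d)
    # Universal threshold; noise scale estimated from the finest details.
    sigma = statistics.median(abs(c) for c in details[0]) / 0.6745
    thr = sigma * math.sqrt(2 * math.log(n))

    def suppress(coeffs):
        return [c if abs(c) <= thr else 0.0 for c in coeffs]

    approx = suppress(approx)
    details = [suppress(d) for d in details]
    for d in reversed(details):
        approx = haar_idwt(approx, d)
    return approx


# Toy component: low-amplitude oscillation plus a blink-like plateau.
neural = [0.5 * math.sin(math.pi * i / 2) for i in range(256)]
component = [v + (20.0 if 64 <= i < 80 else 0.0) for i, v in enumerate(neural)]
corrected = wavelet_correct_component(component)
```

Note the direction of the thresholding: unlike conventional wavelet denoising, which discards small coefficients, here the large coefficients are treated as artifact and removed, so the low-amplitude activity in the component survives. The toy input is deliberately idealized; real blinks are not aligned to the wavelet grid and will not be removed as cleanly.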

Diagram 2: wICA workflow for selective ocular artifact correction.

Benchmarking Ecosystem: EEG-FM-Bench

The proliferation of models has highlighted the need for standardized evaluation. EEG-FM-Bench is a comprehensive benchmark designed to address this gap by providing a unified framework for evaluating EEG Foundation Models (EEG-FMs), including those for denoising [31].

Key Features of the Benchmark:

- Curated Tasks: Includes 14 datasets across 10 canonical EEG paradigms (e.g., motor imagery, sleep staging, emotion recognition, seizure detection).

- Standardized Protocols: Implements consistent data processing and evaluation metrics to ensure fair comparisons.

- Flexible Fine-tuning: Evaluates models using multiple strategies, such as frozen backbone fine-tuning and full-parameter fine-tuning, to assess pre-training quality and architectural design [31].

Initial findings from this benchmark reveal that models capturing fine-grained spatio-temporal interactions and those trained with multi-task learning demonstrate superior generalization across different tasks and paradigms [31].

The Scientist's Toolkit: Key Research Reagents

For researchers aiming to implement or benchmark these artifact removal techniques, the following resources are essential.

Table 4: Essential research reagents and resources for EEG artifact removal research.

| Resource Type | Name / Specification | Function & Application |
|---|---|---|
| Benchmark Datasets | EEGdenoiseNet [34] | Semi-synthetic benchmark for training and evaluating models on EMG and EOG artifacts. |
| Benchmark Datasets | MIT-BIH Arrhythmia Database [34] | Source of ECG signals for creating semi-synthetic ECG artifact datasets. |
| Benchmark Datasets | LEMON Dataset [35] | A dataset of clean EEG used for unsupervised training of models like autoencoders. |
| Software & Tools | ICA (e.g., in EEGLAB) | Standard algorithm for blind source separation and artifact removal. |
| Software & Tools | Wavelet Toolbox (MATLAB) | Implements DWT, SWT, and various thresholding functions for signal denoising. |
| Software & Tools | Deep Learning Frameworks (PyTorch, TensorFlow) | For building and training complex models like CLEnet, LSTEEG, and GANs. |
| Performance Metrics | SNR, CC, RRMSE [34] | Core metrics for quantifying denoising performance and signal preservation. |
| Performance Metrics | NMSE, SAR [33] | Additional metrics for error measurement and artifact suppression quantification. |
| Computational Framework | EEG-FM-Bench [31] | A unified open-source framework for the standardized evaluation of EEG models. |

The benchmarking data illustrate a performance hierarchy in EEG artifact removal. Traditional methods like ICA and Wavelet Transforms remain effective, particularly for specific artifacts like ocular movements and in resource-constrained scenarios [32] [33]. However, deep learning models, particularly architectures such as CLEnet that combine CNN and LSTM components, perform better on complex, mixed, and unknown artifacts in multi-channel settings [34]. In Table 1, for example, CLEnet reaches an SNR of 11.498 dB against 7.120 dB for ICA.

For researchers and drug development professionals, the choice of algorithm should be guided by the specific application. While wavelet methods offer a strong balance of performance and computational efficiency for single-channel systems, the future lies in deep learning models that can generalize across diverse and real-world conditions. The emergence of standardized benchmarks like EEG-FM-Bench will be critical in driving this progress, enabling fair comparisons and guiding the development of more robust, efficient, and generalizable artifact removal solutions [31] [2].

Functional near-infrared spectroscopy (fNIRS) and photoplethysmography (PPG) are non-invasive optical techniques that have gained significant traction in neuroscience and physiological monitoring. fNIRS measures cerebral hemodynamic changes by detecting near-infrared light passed through the scalp, providing insights into brain activity through concentration variations of oxyhemoglobin (HbO) and deoxyhemoglobin (HbR) [37]. PPG measures blood volume changes, typically in peripheral tissues, and is commonly used for heart rate monitoring and vascular assessment. Despite their advantages, both techniques are highly vulnerable to motion artifacts (MAs), which represent the most significant source of noise and can severely compromise data quality and interpretation [37] [38].

Motion artifacts originate from imperfect contact between optodes and the skin during subject movement, including head displacements, facial movements, jaw activities, and even body movements that cause inertial effects on the recording equipment [38] [39]. These artifacts manifest as spike-like transients, baseline shifts, and slow drifts that can obscure genuine physiological signals [40]. The challenge is particularly pronounced in pediatric populations, clinical cohorts with limited mobility control, and real-world applications where movement is inherent to the experimental paradigm [37]. The development of effective motion correction strategies is therefore essential for maintaining the validity and reliability of fNIRS and PPG measurements across diverse applications.

Classification of Motion Artifact Correction Approaches