BCI Competition Datasets 2025: A Guide to State-of-the-Art Results and Methodologies

Abstract

This article provides a comprehensive analysis of current Brain-Computer Interface (BCI) competition datasets and the state-of-the-art methodologies achieving top performance on them. Tailored for researchers and biomedical professionals, it explores foundational datasets like BCI Competition IV-2a and IV-2b, as well as newer, high-quality resources. It details advanced deep learning models, including transformer-based architectures and convolutional networks, that are pushing classification accuracy boundaries. The review also addresses critical challenges such as cross-session stability and data non-stationarity, offering optimization strategies. By comparing model performance across key benchmarks, this guide serves as a vital resource for developing robust, clinically applicable BCI systems.

Foundations of BCI Benchmarking: Key Public Datasets and Their Clinical Relevance

Brain-Computer Interface (BCI) research is highly interdisciplinary, combining neuroscience, signal processing, machine learning, and clinical rehabilitation. Progress in the field has been accelerated by high-quality, standardized datasets that let researchers benchmark their algorithms against common reference points. Among these, the BCI Competition IV datasets have become foundational, particularly datasets 2a and 2b, which focus on motor imagery (MI) paradigms [1]. They provide rigorously collected, annotated data and have become the de facto standard for evaluating new signal processing and classification methods [2].

Motor imagery, the mental rehearsal of physical movements without actual execution, produces characteristic patterns in electroencephalography (EEG) signals through event-related desynchronization (ERD) and event-related synchronization (ERS) in the sensorimotor cortex [3]. The reliable decoding of these patterns is crucial for developing BCIs that can restore communication and control capabilities for individuals with severe motor impairments. The BCI Competition IV-2a and 2b datasets have been instrumental in driving progress in this area by providing carefully curated data that reflects the challenges of real-world BCI applications while maintaining controlled experimental conditions [2] [1].
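
ERD and ERS are commonly quantified as the relative change in band power between a reference interval and the imagery interval. The sketch below uses the widely used percentage formulation, ERD% = (A - R) / R × 100, where R is the power in the reference interval and A the power during imagery. The function names and the toy amplitudes are illustrative assumptions and are not taken from the datasets' documentation.

```python
def band_power(samples):
    """Mean squared amplitude of an already band-pass-filtered signal."""
    return sum(x * x for x in samples) / len(samples)


def erd_percent(reference, activity):
    """Relative power change (%) from the reference to the activity interval.

    Negative values indicate desynchronization (ERD, a power decrease);
    positive values indicate synchronization (ERS, a power increase).
    """
    r = band_power(reference)
    a = band_power(activity)
    return (a - r) / r * 100.0


# Toy example (made-up amplitudes): the mu-band amplitude halves during imagery.
rest = [2.0, -2.0, 2.0, -2.0]
imagery = [1.0, -1.0, 1.0, -1.0]
print(f"{erd_percent(rest, imagery):.1f}%")  # -75.0%
```

In practice the two intervals would be taken from the same trial (for example, the fixation period and the cued imagery period) after filtering to the mu or beta band.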

This review provides a comprehensive comparison of these two foundational datasets, detailing their experimental paradigms, technical specifications, and the state-of-the-art methodologies that have been developed using them. We further present quantitative performance comparisons of contemporary algorithms and provide practical guidance for researchers working with these datasets.

Dataset Specifications and Experimental Paradigms

The BCI Competition IV-2a and 2b datasets share a common foundation in motor imagery research but differ in their specific experimental designs, subject populations, and recording configurations. Understanding these specifications is essential for selecting the appropriate dataset for a given research objective and for interpreting results within the proper context.

BCI Competition IV-2a Dataset

The BCI Competition IV-2a dataset, provided by the Graz University of Technology, contains EEG data from 9 subjects performing 4-class motor imagery tasks (left hand, right hand, feet, and tongue) [2] [1]. Each subject participated in two sessions on different days, each consisting of 6 runs with 48 trials (12 per class), for a total of 576 trials per subject. The recordings were made using 22 EEG electrodes placed according to the international 10-20 system, with signals sampled at 250 Hz, bandpass-filtered between 0.5 and 100 Hz, and passed through an additional 50 Hz notch filter to remove line noise [2]. Each trial began with a fixation cross and an acoustic warning signal, followed by a visual cue indicating the motor imagery task to be performed for 4 seconds, and then a short break before the next trial.

BCI Competition IV-2b Dataset

The BCI Competition IV-2b dataset also involved 9 subjects but focused on 2-class motor imagery (left hand vs. right hand) [2]. It was recorded using only 3 bipolar EEG channels (C3, Cz, and C4), sampled at 250 Hz with the same filtering as the 2a dataset. Each subject completed five sessions: the first two without feedback and the final three with feedback. Each session contained approximately 120 trials, with a trial structure similar to that of the 2a dataset but a 3-second motor imagery period instead of 4 seconds [2]. The reduction to two classes and fewer channels makes this dataset particularly suitable for algorithms targeting more streamlined BCI implementations.

Table 1: Technical Specifications of BCI Competition IV-2a and IV-2b Datasets

| Specification | BCI Competition IV-2a | BCI Competition IV-2b |

|---|---|---|

| Subjects | 9 | 9 |

| Classes | 4 (Left hand, Right hand, Feet, Tongue) | 2 (Left hand, Right hand) |

| EEG Channels | 22 | 3 bipolar |

| EOG Channels | 3 | 3 |

| Sampling Rate | 250 Hz | 250 Hz |

| Filtering | 0.5-100 Hz + 50 Hz notch | 0.5-100 Hz + 50 Hz notch |

| Trials per Class | 72 per session | ~60 per session (~120 trials per session) |

| Imagery Period | 4 seconds | 3 seconds |

| Key Challenge | Multi-class discrimination | Binary classification with session transfer |
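
The trial counts quoted for dataset 2a follow directly from its session structure, and the same arithmetic gives the number of samples in one imagery epoch. The sketch below is only a bookkeeping aid: the class name `MIDatasetSpec` and its fields are hypothetical, while the numbers are those stated above for IV-2a.

```python
from dataclasses import dataclass


@dataclass
class MIDatasetSpec:
    """Hypothetical container for the session layout of an MI dataset."""
    name: str
    n_classes: int
    runs_per_session: int
    trials_per_run: int
    n_sessions: int
    imagery_seconds: float
    sampling_rate_hz: int

    @property
    def trials_per_session(self):
        return self.runs_per_session * self.trials_per_run

    @property
    def trials_per_subject(self):
        return self.trials_per_session * self.n_sessions

    @property
    def trials_per_class_per_session(self):
        return self.trials_per_session // self.n_classes

    @property
    def samples_per_imagery_epoch(self):
        return int(self.imagery_seconds * self.sampling_rate_hz)


iv_2a = MIDatasetSpec("BCI Competition IV-2a", 4, 6, 48, 2, 4.0, 250)
print(iv_2a.trials_per_session)            # 288
print(iv_2a.trials_per_subject)            # 576
print(iv_2a.trials_per_class_per_session)  # 72
print(iv_2a.samples_per_imagery_epoch)     # 1000
```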

Methodologies: From Traditional Approaches to Deep Learning

The evolution of analysis methods applied to the BCI Competition IV datasets reflects broader trends in signal processing and machine learning, transitioning from carefully engineered feature extraction pipelines to end-to-end deep learning architectures.

Conventional Signal Processing and Machine Learning

Traditional approaches to motor imagery classification typically involve a multi-stage pipeline beginning with preprocessing (filtering, artifact removal), followed by feature extraction, and concluding with classification. The most successful conventional method has been the Common Spatial Patterns (CSP) algorithm, which finds spatial filters that maximize the variance for one class while minimizing it for the other [1] [3]. CSP is particularly effective for binary motor imagery classification and has formed the foundation for numerous variants and extensions. After spatial filtering, features are typically passed to classifiers such as Linear Discriminant Analysis (LDA) or Support Vector Machines (SVM) [3]. For the 4-class problem in dataset 2a, multi-class extensions of CSP or ensemble strategies combining multiple binary classifiers are typically employed.
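
The variance-ratio idea behind CSP can be illustrated with a short NumPy sketch. It solves the two-class problem by whitening the composite covariance and then diagonalizing the whitened class covariance, which is one standard way to obtain CSP filters. The function names, the trace-normalized covariance estimate, and the synthetic data are assumptions made for illustration; this is not the implementation used in any of the cited works.

```python
import numpy as np


def csp_filters(trials_a, trials_b, n_pairs=1):
    """Two-class CSP. trials_*: arrays of shape (n_trials, n_channels, n_samples).

    Returns 2 * n_pairs spatial filters as rows: the first n_pairs maximize the
    variance of class A relative to class B, the last n_pairs do the opposite.
    """
    def mean_cov(trials):
        covs = [x @ x.T / np.trace(x @ x.T) for x in trials]
        return np.mean(covs, axis=0)

    cov_a, cov_b = mean_cov(trials_a), mean_cov(trials_b)

    # Whiten the composite covariance (assumed to be full rank).
    evals, evecs = np.linalg.eigh(cov_a + cov_b)
    whitener = evecs @ np.diag(evals ** -0.5) @ evecs.T

    # Eigenvectors of the whitened class-A covariance, sorted by eigenvalue.
    d, u = np.linalg.eigh(whitener @ cov_a @ whitener)
    order = np.argsort(d)[::-1]
    filters = u[:, order].T @ whitener

    picks = list(range(n_pairs)) + list(range(-n_pairs, 0))
    return filters[picks]


def log_var_features(trials, filters):
    """Normalized log-variance of each spatially filtered trial."""
    projected = np.einsum("fc,tcs->tfs", filters, trials)
    var = projected.var(axis=2)
    return np.log(var / var.sum(axis=1, keepdims=True))


# Synthetic stand-in data: class A is strong on channel 0, class B on channel 1.
rng = np.random.default_rng(0)
trials_a = rng.standard_normal((20, 3, 250)) * np.array([3.0, 1.0, 1.0])[None, :, None]
trials_b = rng.standard_normal((20, 3, 250)) * np.array([1.0, 3.0, 1.0])[None, :, None]

filters = csp_filters(trials_a, trials_b)
feats_a = log_var_features(trials_a, filters)
feats_b = log_var_features(trials_b, filters)
print(filters.shape)                   # (2, 3)
print(feats_a.mean(axis=0), feats_b.mean(axis=0))
```

The resulting log-variance features are what would typically be passed to an LDA or SVM classifier; on this toy data the first feature is larger for class A and the second for class B.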

Deep Learning Architectures

Recent years have seen a shift toward deep learning models that can learn relevant features directly from the raw or minimally processed EEG data, potentially capturing complex patterns that might be missed by manually engineered features.

Table 2: Deep Learning Architectures for MI-EEG Classification

| Architecture | Key Components | Advantages |

|---|---|---|

| EEGNet [4] | Compact CNN with depthwise and separable convolutions | EEG-specific design, parameter efficiency |

| EEG-TCNet [4] | Combination of EEGNet with Temporal Convolutional Network | Enhanced temporal feature extraction |

| CIACNet [4] | Dual-branch CNN with attention mechanism and TCN | Rich temporal features with focused attention |

| ATCNet [4] | Attention temporal convolutional network with multi-head attention | Emphasis on temporally relevant features |

| Two-Stage Transformer [5] | Transformer-based feature extraction with handcrafted feature fusion | Combines strengths of deep learning and traditional features |

| EEGEncoder [6] | Transformer-TCN fusion with Dual-Stream Temporal-Spatial blocks | Captures both global and local dependencies |

These architectures typically incorporate several key innovations:

- Attention mechanisms that allow the model to focus on the most relevant temporal segments or features [4] [5]

- Temporal Convolutional Networks (TCNs) that capture long-range dependencies more effectively than RNNs while being easier to train [4] [6]

- Multi-branch designs that process information at different temporal or spatial scales [4]

- Hybrid approaches that combine the strengths of deep learning with domain knowledge from traditional signal processing [5]
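
To make the long-range-dependency point about TCNs concrete, the sketch below implements a plain dilated causal convolution and checks how far a single input sample can influence the output of a small stack. It assumes one convolution per level with a hypothetical kernel size of 4 and dilations of 1, 2, 4, and 8; the cited architectures use their own configurations (often with two convolutions per residual block), so the numbers are illustrative only.

```python
def causal_dilated_conv(signal, kernel, dilation):
    """1-D causal convolution: output[t] uses signal[t], signal[t - d], ... only."""
    out = []
    for t in range(len(signal)):
        acc = 0.0
        for k, weight in enumerate(kernel):
            idx = t - k * dilation
            if idx >= 0:  # implicit zero padding on the left
                acc += weight * signal[idx]
        out.append(acc)
    return out


def receptive_field(kernel_size, dilations):
    """Receptive field of a stack with one dilated causal convolution per level."""
    return 1 + (kernel_size - 1) * sum(dilations)


# Hypothetical configuration: kernel size 4, dilation doubling at each level.
dilations = [1, 2, 4, 8]
kernel = [1.0, 1.0, 1.0, 1.0]

# Push a unit impulse through the stack and count how far it spreads.
x = [1.0] + [0.0] * 99
for d in dilations:
    x = causal_dilated_conv(x, kernel, d)
reach = sum(1 for v in x if v != 0.0)

print(receptive_field(len(kernel), dilations))  # 46
print(reach)                                    # 46
```

Four levels already cover 46 samples, and the span grows exponentially with depth when the dilation doubles, which is why such stacks can cover a multi-second imagery window with few layers.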

BCI Motor Imagery Classification Workflow

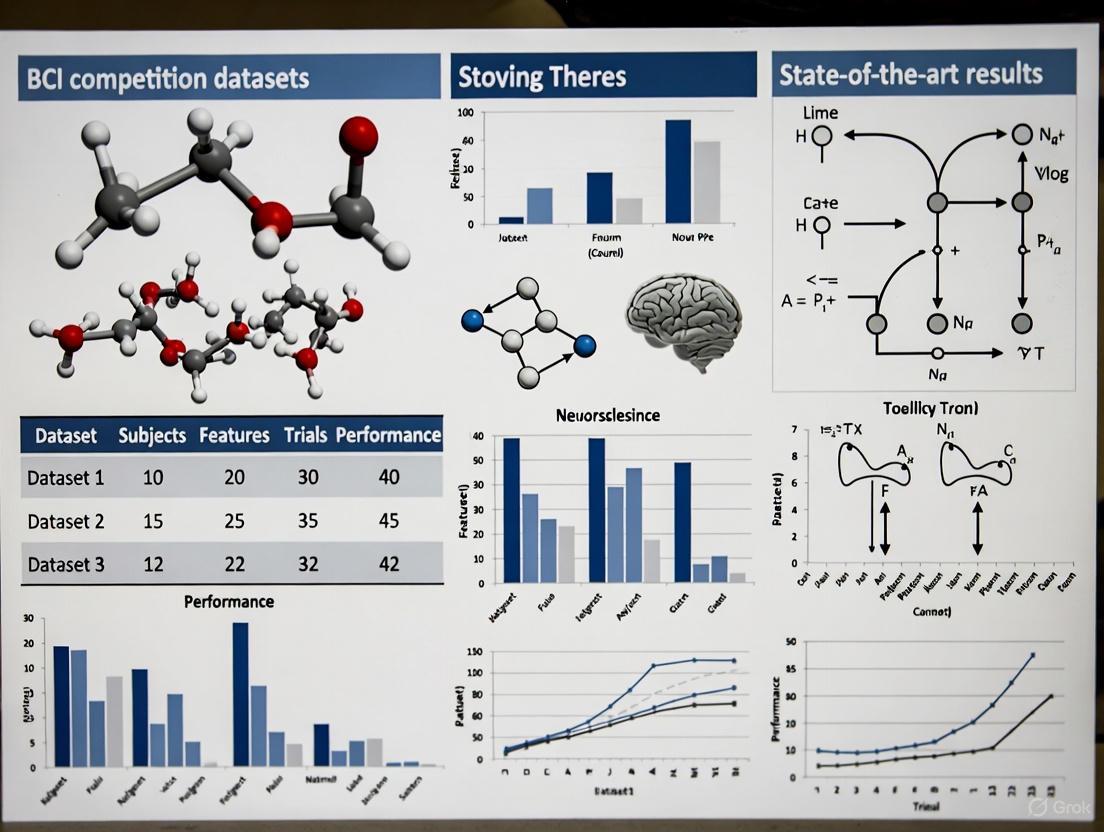

Performance Benchmarks and State-of-the-Art Results

The competitive nature of the BCI competitions has driven continuous improvement in classification performance on both datasets. Recent advances in deep learning have yielded particularly significant gains, especially for the more challenging 4-class discrimination problem in dataset 2a.

Comparative Performance Analysis

Table 3: Classification Accuracy (%) on BCI Competition IV-2a and IV-2b Datasets

| Model | BCI IV-2a (4-class) | BCI IV-2b (2-class) | Reference |

|---|---|---|---|

| FBCSP + SVM | 67.3 | 76.3 | [4] |

| EEGNet | 72.8 | 80.1 | [4] |

| EEG-TCNet | 75.1 | 82.6 | [4] |

| CIACNet | 85.2 | 90.1 | [4] |

| ATCNet | 78.6 | 84.2 | [4] |

| Two-Stage Transformer | 88.5 | 88.3 | [5] |

| EEGEncoder | 86.5 (subject-dependent); 74.5 (subject-independent) | - | [6] |

The performance trends reveal several important insights. First, the transition from traditional methods like FBCSP to deep learning approaches has consistently improved classification accuracy on both datasets. Second, the incorporation of attention mechanisms and temporal convolutional networks has provided particularly significant gains, as evidenced by the strong performance of models like CIACNet and the Two-Stage Transformer [4] [5]. Third, there remains a noticeable performance gap between subject-dependent models (trained and tested on data from the same individual) and subject-independent approaches (trained on multiple users and tested on unseen subjects), highlighting the challenge of inter-subject variability in BCI systems [6].
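
The difference between the two evaluation regimes is easiest to see in how the data are split. The sketch below builds both kinds of split for nine subjects with two sessions each. The subject labels, the session names, and the choice of "train on the first session, test on the second" for the subject-dependent case are assumptions for illustration; individual papers may use different protocols.

```python
SESSIONS = ("session_1", "session_2")


def subject_dependent_splits(subject_ids):
    """Train on a subject's first session, test on the same subject's second."""
    return [
        {"train": [(s, SESSIONS[0])], "test": [(s, SESSIONS[1])]}
        for s in subject_ids
    ]


def subject_independent_splits(subject_ids):
    """Leave-one-subject-out: train on all other subjects, test on the held-out one."""
    splits = []
    for held_out in subject_ids:
        train = [(s, sess) for s in subject_ids if s != held_out for sess in SESSIONS]
        test = [(held_out, sess) for sess in SESSIONS]
        splits.append({"train": train, "test": test})
    return splits


subjects = [f"S{i:02d}" for i in range(1, 10)]  # nine hypothetical subject labels
within = subject_dependent_splits(subjects)
loso = subject_independent_splits(subjects)

print(len(within), len(loso))   # 9 9
print(len(loso[0]["train"]))    # 16 recordings (8 subjects x 2 sessions)
print(loso[0]["test"])          # [('S01', 'session_1'), ('S01', 'session_2')]
```

In the leave-one-subject-out case the model never sees data from the test subject, which is the condition under which the accuracy gap described above appears.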

The Two-Stage Transformer model deserves special note, as it represents a sophisticated hybrid approach that combines deep learning embeddings with handcrafted features in its second stage, achieving approximately 3% improvement over comparable recent works [5]. This suggests that despite the power of deep learning, there remains valuable information in carefully engineered features that pure end-to-end approaches may not fully capture.

The Scientist's Toolkit: Essential Research Reagents

Working effectively with the BCI Competition IV datasets requires familiarity with a suite of computational tools and signal processing techniques. The following table summarizes key resources that form the essential toolkit for researchers in this domain.

Table 4: Essential Tools for BCI Competition IV Dataset Research

| Tool/Category | Specific Examples | Function | Relevance to BCI Competition IV |

|---|---|---|---|

| Signal Processing | Bandpass filters (8-30 Hz), Notch filters (50 Hz), Independent Component Analysis | Noise reduction, artifact removal | Isolate mu/beta rhythms, remove EOG artifacts [3] |

| Spatial Filtering | Common Spatial Patterns (CSP), Filter Bank CSP | Enhance discriminative spatial patterns | Critical for discriminating hand vs. foot movements [3] |

| Feature Extraction | Logarithmic variance, Power Spectral Density, Riemannian geometry | Convert signals to discriminative features | Input for traditional classifiers [5] |

| Classification Algorithms | LDA, SVM, Random Forests, Neural Networks | Map features to class labels | Binary (2b) vs. multi-class (2a) approaches differ [3] |

| Deep Learning Frameworks | TensorFlow, PyTorch, EEGNet, Braindecode | End-to-end classification | Implement architectures like EEG-TCNet, CIACNet [4] [6] |

| Evaluation Metrics | Accuracy, Kappa coefficient, F1-score | Performance assessment | Standardized comparison across studies [4] |

| Data Handling | MNE-Python, scikit-learn, NumPy | Data I/O, preprocessing, analysis | Standardized loading of GDF files [3] |
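
Of the evaluation metrics listed, the kappa coefficient is the one most often computed by hand, because it corrects accuracy for chance agreement. A minimal sketch of Cohen's kappa from a confusion matrix follows; the confusion matrix is made up for illustration (72 trials per class, matching one 2a session) and does not correspond to any reported result.

```python
def cohen_kappa(confusion):
    """Cohen's kappa from a square confusion matrix (rows: true, columns: predicted)."""
    total = sum(sum(row) for row in confusion)
    n = len(confusion)
    observed = sum(confusion[i][i] for i in range(n)) / total
    expected = sum(
        sum(confusion[i]) * sum(row[i] for row in confusion)
        for i in range(n)
    ) / (total * total)
    return (observed - expected) / (1.0 - expected)


# Made-up 4-class confusion matrix with 72 trials per class.
confusion = [
    [54, 6, 6, 6],
    [6, 54, 6, 6],
    [6, 6, 54, 6],
    [6, 6, 6, 54],
]
accuracy = sum(confusion[i][i] for i in range(4)) / 288
print(round(accuracy, 3))                 # 0.75
print(round(cohen_kappa(confusion), 3))   # 0.667
```

With balanced classes the chance level is 1/4, so an accuracy of 75% corresponds to a kappa of about 0.67; the same accuracy on a 2-class problem would give a kappa of only 0.5.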

Modern Deep Learning Architecture for MI-EEG

The BCI Competition IV-2a and IV-2b datasets have established themselves as foundational benchmarks in motor imagery BCI research, driving algorithmic innovations for over a decade. The continuous improvement in classification accuracies—from approximately 67% with traditional methods to over 88% with modern deep learning architectures on the 4-class 2a dataset—demonstrates the significant progress enabled by these carefully curated datasets [4] [5].

Future research directions are likely to focus on several key challenges. Cross-subject generalization remains a significant hurdle, with accuracy dropping by roughly 10-12 percentage points when moving from subject-dependent to subject-independent paradigms [6]. Data-efficient learning approaches that reduce calibration time are essential for practical BCI systems [7]. The integration of explainable AI techniques will become increasingly important as complex deep learning models see wider adoption, particularly for clinical applications where interpretability is crucial. Finally, the development of hybrid models that combine the strengths of traditional signal processing with the representational power of deep learning appears particularly promising, as evidenced by the success of the Two-Stage Transformer network [5].

As BCI technology continues to transition from research laboratories to real-world applications, the foundational role of standardized benchmarks like the BCI Competition IV datasets becomes ever more critical. They provide not only performance benchmarks but also a common framework for methodological comparison and innovation, accelerating progress toward robust, practical brain-computer interfaces that can improve quality of life for individuals with motor impairments.

The field of electroencephalography (EEG)-based Brain-Computer Interfaces (BCIs) has long relied on a limited set of benchmark datasets, such as the BCI Competition IV datasets, which typically involved only 9 subjects [8] [9]. While these classics have driven algorithmic progress for over a decade, they present critical limitations for contemporary research, including small sample sizes, limited session variability, and an inability to adequately represent the BCI illiteracy phenomenon affecting approximately 20-40% of users [9]. The emergence of deep learning and the need for clinically translatable systems has intensified the demand for larger, more reliable, and more diverse datasets that can support the development of robust, subject-independent models and facilitate research into cross-session and cross-subject generalization [8] [10].

This guide introduces and objectively compares two next-generation datasets—WBCIC-MI and HEFMI-ICH—that represent significant advancements by addressing these fundamental limitations. Through detailed analysis of their experimental protocols, performance benchmarks, and unique characteristics, we provide researchers with the evidence needed to select appropriate datasets for specific research objectives, from basic algorithm development to clinical rehabilitation applications.

The WBCIC-MI and HEFMI-ICH datasets represent complementary approaches to advancing BCI research, with the former focusing on scaling traditional EEG paradigms and the latter pioneering multimodal acquisition for clinical applications.

Table 1: Core Dataset Specifications and Advancements

| Specification | WBCIC-MI | HEFMI-ICH | Classic Benchmarks (e.g., BCI Comp IV-2a) |

|---|---|---|---|

| Primary Innovation | Large-scale, multi-session, high-quality MI | First hybrid EEG-fNIRS for ICH rehabilitation | Established baseline for MI algorithm development |

| Subjects (Healthy/Patients) | 62 healthy | 17 healthy + 20 ICH patients | 9 healthy [11] [9] |

| Recording Sessions | 3 sessions on different days | Information not specified | 2 sessions [11] |

| EEG Channels | 59 EEG + 5 EOG/ECG | Information not specified | 22 EEG [11] |

| Additional Modalities | - | fNIRS | - |

| MI Tasks | 2-class: Left/Right hand grasping; 3-class: + Foot hooking | Left/Right hand MI | 4-class: Left/Right hand, Feet, Tongue [11] |

| Public Availability | Figshare [8] | PubMed/Scientific Data [12] [13] | BCI Competition Platform |

Table 2: Paradigm and Trial Structure Comparison

| Parameter | WBCIC-MI | HEFMI-ICH | Typical of Classic Paradigms [9] |

|---|---|---|---|

| Average Trial Length | 7.5 seconds | Information not specified | 9.8 seconds (range: 2.5-29 s) |

| MI Duration | 4 seconds | Information not specified | 4.26 seconds (range: 1-10 s) |

| Pre-rest Duration | 1.5 seconds (cue period) | Information not specified | 2.38 seconds |

| Stimulus Type | Brief video + auditory cues | Information not specified | Text, figure, or arrow |

| Trials per Session | 200 (2-class) / 300 (3-class) | Information not specified | ~48-288 |

Experimental Protocols and Methodologies

WBCIC-MI: Protocol for Large-Scale Data Collection

The WBCIC-MI dataset was acquired during the 2019 World Robot Conference Contest, following a rigorously standardized protocol designed to ensure high-quality, multi-session data [8].

Participant Cohort and Ethics: Sixty-two healthy, right-handed participants (aged 17-30, 18 females) were recruited, all naive BCI users. The 2-class experiment involved 51 subjects, while the more complex 3-class experiment involved 11 subjects. The study received approval from the Tsinghua University Medical Ethics Committee (approval number: 20190002) and adhered to the Declaration of Helsinki principles [8].

Experimental Paradigm: Each subject completed three recording sessions on different days to capture inter-session variability. Each session lasted approximately 35-48 minutes and included:

- Eyes-open (60 s) and eyes-closed (60 s) baselines

- Five MI blocks with flexible breaks between them to prevent fatigue [8].

Each trial followed a precise structure: a 1.5-second visual and auditory cue period, a 4-second MI execution period where participants mentally repeated the imagined tasks 2-4 times, and a 2-second break period [8]. The visual cues for MI tasks were presented as brief videos on a white background, while the rest period displayed a white cross on a black background to minimize unnecessary stimuli [8].
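
The trial timing described above can be written down as a small helper for locating the imagery window of each trial. The durations come from the protocol; the helper names and the assumption of back-to-back trials with no breaks between blocks are illustrative simplifications.

```python
CUE_S, IMAGERY_S, BREAK_S = 1.5, 4.0, 2.0  # durations from the protocol


def trial_length_s():
    """Total length of one trial in seconds."""
    return CUE_S + IMAGERY_S + BREAK_S


def imagery_window(trial_index):
    """Start and end (in seconds) of the imagery period of a trial, counted from
    the onset of the first trial and assuming back-to-back trials."""
    onset = trial_index * trial_length_s()
    start = onset + CUE_S
    return start, start + IMAGERY_S


print(trial_length_s())             # 7.5
print(imagery_window(0))            # (1.5, 5.5)
print(imagery_window(2))            # (16.5, 20.5)
print(200 * trial_length_s() / 60)  # 25.0 minutes of trial time (2-class session)
```

The 25 minutes of pure trial time for a 200-trial session, plus the two 60-second baselines, is consistent with the reported session length of 35-48 minutes once the flexible breaks between blocks are included.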

Data Acquisition: EEG was recorded using a 64-channel wireless Neuracle EEG system with electrodes placed according to the international 10-20 system. Channels 1-59 recorded EEG signals, while channels 60-64 recorded electrocardiogram (ECG) and electrooculogram (EOG) signals, though the EOG/ECG channels were not used in the initial studies [8].

Figure 1: WBCIC-MI experimental workflow showing session structure and trial timing.

HEFMI-ICH: Protocol for Multimodal Clinical Data

The HEFMI-ICH dataset introduces a novel approach through synchronized EEG and functional near-infrared spectroscopy (fNIRS) acquisition, specifically designed for intracerebral hemorrhage (ICH) rehabilitation research [12] [13].

Participant Cohort: This dataset innovatively incorporates neural recordings from 17 normal subjects and 20 patients with ICH, providing a crucial resource for understanding BCI performance in clinical populations [12] [13].

Multimodal Paradigm: Under standardized left-right hand motor imagery paradigms, the dataset features systematically collected and preprocessed dual-modality neural data. The hybrid approach leverages the complementary strengths of EEG (high temporal resolution) and fNIRS (better spatial resolution and resilience to artifacts), offering a more comprehensive picture of brain activity during MI tasks [12].

Clinical Application Focus: The dataset is explicitly optimized for developing precision rehabilitation systems based on multimodal neural feedback, providing feature-engineered data specifically designed for classification algorithms and multidimensional signal decoding in patient populations [12].

Performance Benchmarks and Comparative Analysis

Quantitative Performance Metrics

The WBCIC-MI dataset demonstrates significant improvements in classification accuracy compared to classic benchmarks and contemporary alternatives, while HEFMI-ICH offers unique clinical applicability.

Table 3: Performance Benchmarking Across Datasets

| Dataset | Subject Count | Classification Accuracy | Algorithm Used | BCI Poor Performer Rate |

|---|---|---|---|---|

| WBCIC-MI (2-class) | 51 | 85.32% [8] | EEGNet | Information not specified |

| WBCIC-MI (3-class) | 11 | 76.90% [8] | DeepConvNet | Information not specified |

| HEFMI-ICH | 37 total | Information not specified | Information not specified | Information not specified |

| BCI Competition IV-2a | 9 | ~70-80% (reported in literature) [11] | Various | 36.27% (est. across datasets) [9] |

| OpenBMI | 54 | 74.7% [8] | State-of-the-art algorithm | Information not specified |

The performance advantage of WBCIC-MI is particularly notable given the larger subject pool, which makes these accuracy figures more statistically reliable and representative of real-world performance variation across users. The dataset's well-distributed performance also enables research into BCI illiteracy, allowing investigators to explore differences between high performers and low performers [8].

Signaling Pathways and Neural Correlates

Motor imagery BCI systems rely on detecting event-related desynchronization (ERD) and synchronization (ERS) in the sensorimotor cortex. The WBCIC-MI dataset captures these established neural correlates, while HEFMI-ICH extends this through multimodal acquisition of complementary signals.

Figure 2: Neural correlates and signaling pathways in motor imagery BCI, highlighting the multimodal advantage of HEFMI-ICH.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Research Reagents and Experimental Resources

| Resource | Function/Application | Dataset Context |

|---|---|---|

| Neuracle 64-channel EEG [8] | Wireless EEG acquisition with 59 EEG + 5 EOG/ECG channels; provides signal stability and effective shielding | WBCIC-MI data collection |

| EEGNet [8] [14] | Compact convolutional neural network for EEG classification; balances accuracy with computational efficiency | WBCIC-MI benchmark (achieved 85.32% 2-class accuracy) |

| DeepConvNet [8] | Deeper convolutional architecture for more complex pattern recognition in EEG signals | WBCIC-MI benchmark (achieved 76.90% 3-class accuracy) |

| Common Spatial Patterns (CSP) [9] | Spatial filtering method that maximizes the variance of one class while minimizing that of the other; foundational for MI classification | Performance evaluation across multiple datasets |

| Linear Discriminant Analysis (LDA) [9] | Classifier commonly used with CSP features; provides robust baseline performance | Standard benchmark algorithm (mean 66.53% across datasets) |

| Hybrid EEG-fNIRS Platform [12] | Synchronized acquisition system capturing complementary neural signals | HEFMI-ICH core innovation |

| MOABB Framework [10] | Open-source platform for reproducible BCI benchmarking; standardizes evaluation across datasets | Critical for comparative studies across classic and new datasets |

The WBCIC-MI and HEFMI-ICH datasets represent significant advancements over classic benchmarks, each offering unique strengths for different research applications. The WBCIC-MI dataset establishes a new standard for large-scale, high-quality MI data collection, with its multi-session design, substantial subject pool, and superior classification performance making it ideal for developing and validating robust subject-independent and cross-session algorithms [8]. The HEFMI-ICH dataset pioneers multimodal acquisition in a clinically relevant population, offering unparalleled opportunities for developing rehabilitation technologies and understanding neural correlates in patient populations [12] [13].

For researchers, the selection between these datasets should be guided by specific research objectives: WBCIC-MI for advancing core algorithmic capabilities with high-quality, large-sample data, and HEFMI-ICH for clinically translational work requiring multimodal signals and patient data. Both datasets represent the future of BCI research—moving beyond classic benchmarks to address the real-world challenges of variability, reliability, and clinical applicability that have long constrained the field.

Brain-Computer Interface (BCI) technology enables direct communication between the brain and external devices, offering significant potential for rehabilitation and assistive technologies [15]. Electroencephalography (EEG)-based BCIs, particularly those using motor imagery (MI), are widely used due to their non-invasive nature and high temporal resolution [4] [8]. The reliability and reproducibility of BCI research heavily depend on high-quality, publicly available datasets for developing and validating new algorithms [16] [9]. This guide provides a comparative analysis of modern BCI dataset specifications, focusing on channel counts, experimental tasks, and participant demographics, to aid researchers in selecting appropriate data resources.

Comparative Analysis of BCI Datasets

The table below summarizes the specifications of several contemporary and widely-used BCI datasets, highlighting the diversity in their design and scope.

| Dataset Name | Recording Channels (EEG/Other) | Participant Demographics | Motor Imagery/Execution Tasks | Key Features and Notes |

|---|---|---|---|---|

| Freewill Reaching and Grasping [17] | 29 EEG, 4 EOG | 23 healthy adults (8F/15M), aged 18-24 | Execution: Reaching and grasping one of four freely chosen cups | Freewill choice of target and timing; actual movement execution; includes continuous data for movement planning and execution |

| WBCIC-MI (2019 Contest) [8] | 59 EEG, 1 ECG, 4 EOG | 62 healthy subjects (18F), aged 17-30 | Imagery: Left/right hand-grasping; Foot-hooking (3-class) | High-quality, large-scale data (62 subjects); collected over 3 sessions on different days; addresses cross-session and cross-subject variability |

| Acute Stroke Patient MI [18] | 29 EEG, 2 EOG | 50 acute stroke patients (39M/11F), avg age 56.7 | Imagery: Left or right-handed ball grasping | Rare patient dataset (1-30 days post-stroke); includes NIHSS, MBI, and mRS clinical scores; uses a portable, wireless EEG system |

| BCI Competition IV - Dataset 2a [2] [9] | 22 EEG, 3 EOG | 9 healthy subjects | Imagery: Left hand, right hand, feet, tongue | Classic 4-class MI benchmark dataset; widely used for algorithm validation |

| BCI Competition IV - Dataset 2b [2] [9] | 3 bipolar EEG, 3 EOG | 9 healthy subjects | Imagery: Left hand vs. right hand | Low channel count (3 channels); focus on binary classification |

| BCI Competition IV - Dataset 4 [2] [19] | 48-64 ECoG | 3 subjects | Execution: Individual finger flexions | ECoG modality for higher signal resolution; focus on fine-grained motor control |

Detailed Experimental Protocols

The methodology for collecting BCI data is critical for understanding the resulting datasets and their appropriate application. The following workflow visualizes a standard experimental procedure for an MI-BCI paradigm.

A typical MI-BCI experiment, as used in the WBCIC-MI and Acute Stroke Patient datasets, follows a structured trial-based protocol [8] [18]:

- Participant Preparation and Resting State Recording: Participants are fitted with an EEG cap according to the international 10-10 or 10-20 system. The session often begins with resting-state recordings, typically 60 seconds with eyes open and 60 seconds with eyes closed, to establish baseline brain activity [8].

- Trial Structure: Each trial is structured as follows:

  - Cue Presentation (1-2 seconds): A visual or auditory cue instructs the participant on the specific MI task to perform (e.g., a left or right arrow for hand imagery) [8] [18].

  - Motor Imagery/Execution Period (3-4 seconds): The participant either mentally rehearses the movement without any physical motion (motor imagery) or performs the actual movement (motor execution). This phase captures the event-related desynchronization/synchronization (ERD/ERS) in the sensorimotor rhythms [8] [9].

  - Rest Period (1-2 seconds): A short break allows the participant to relax before the next trial, preventing fatigue [18].

- Data Acquisition and Pre-processing: EEG data is recorded continuously throughout the session. Standard pre-processing includes band-pass filtering (e.g., 0.5-40 Hz), artifact removal (e.g., using provided EOG channels to correct for eye movements), and segmenting the continuous data into epochs (trials) time-locked to the cue presentation [18].
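
The final segmentation step, cutting the continuous recording into cue-locked epochs, can be sketched in a few lines of NumPy. The function name, the synthetic 3-channel recording, the 250 Hz sampling rate, and the cue positions are all assumptions for illustration; real recordings would be loaded from the dataset files and their event markers.

```python
import numpy as np


def extract_epochs(continuous, cue_samples, sfreq, tmin, tmax):
    """Cut fixed-length epochs from continuous data.

    continuous  : array of shape (n_channels, n_samples)
    cue_samples : sample indices of the cue onsets
    sfreq       : sampling rate in Hz
    tmin, tmax  : epoch window in seconds relative to each cue
    Returns an array of shape (n_epochs, n_channels, n_epoch_samples);
    cues whose window would fall outside the recording are skipped.
    """
    start_off = int(round(tmin * sfreq))
    stop_off = int(round(tmax * sfreq))
    epochs = []
    for cue in cue_samples:
        start, stop = cue + start_off, cue + stop_off
        if start >= 0 and stop <= continuous.shape[1]:
            epochs.append(continuous[:, start:stop])
    return np.stack(epochs)


# Synthetic stand-in for a recording: 3 channels, 60 s at 250 Hz (assumed values).
sfreq = 250
data = np.random.default_rng(0).standard_normal((3, 60 * sfreq))
cues = [2 * sfreq, 10 * sfreq, 18 * sfreq, 59 * sfreq]  # the last cue is too late

epochs = extract_epochs(data, cues, sfreq, tmin=0.0, tmax=4.0)
print(epochs.shape)  # (3, 3, 1000)
```

Band-pass filtering and artifact correction would normally be applied to the continuous data before this step, so that filter edge effects do not fall inside the epochs.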

Performance Metrics and State-of-the-Art Results

Dataset quality is often reflected in the classification performance achieved by standard and advanced algorithms. Baseline accuracy is therefore a useful, though indirect, indicator of how suitable a dataset is for developing robust BCIs; figures from datasets with different tasks, class counts, and subject pools are not directly comparable.

- BCI Competition IV Datasets: These legacy datasets have reported accuracies ranging from 66.06% to 77.57% with traditional machine learning methods like Common Spatial Patterns (CSP) and Linear Discriminant Analysis (LDA) [15]. A large-scale meta-analysis of public datasets found a mean classification accuracy of 66.53% for two-class MI problems across 861 sessions, with about 36% of users being classified as "BCI poor performers" [9].

- Modern High-Quality Datasets: Newer, larger datasets demonstrate significantly higher performance. The WBCIC-MI dataset has achieved an average accuracy of 85.32% for two-class and 76.90% for three-class tasks using deep learning models like EEGNet and DeepConvNet, indicating superior signal quality and usability [8].

- Advanced Algorithm Performance: Novel deep learning architectures have pushed performance even further. The CIACNet model achieved 85.15% and 90.05% accuracy on the BCI IV-2a and IV-2b datasets, respectively [4]. Another hybrid method combining statistical channel reduction with a deep learning framework (DLRCSPNN) reported accuracy improvements of 3.27% to 42.53% for individual subjects on BCI Competition III Dataset IVa, achieving accuracies above 90% for all subjects [15].

The Scientist's Toolkit: Essential Research Reagents and Materials

This table lists key resources and their functions for conducting BCI research, from data collection to analysis.

| Item | Function in BCI Research |

|---|---|

| Multi-channel EEG System (e.g., 64-channel Neuracle system [8]) | Records electrical brain activity from the scalp with high temporal resolution. The number of channels (e.g., 29, 59, 118) impacts spatial information. |

| Electrooculogram (EOG) Electrodes | Records eye movements. Essential for identifying and removing ocular artifacts that contaminate EEG signals, thereby improving signal quality [17] [8]. |

| Portable/Wireless EEG System (e.g., ZhenTec NT1 [18]) | Enables more flexible and comfortable data collection, which is particularly useful for clinical settings and patient populations. |

| Common Spatial Patterns (CSP) Algorithm | A standard feature extraction technique that finds spatial filters maximizing the variance of one class of EEG signals while minimizing that of the other, highly effective for MI task discrimination [15] [18]. |

| Deep Learning Models (e.g., EEGNet, EEG-TCNet, CIACNet [4]) | Neural network architectures designed for EEG data. They can automatically learn complex spatial-temporal features from raw or pre-processed signals, often leading to state-of-the-art classification performance. |

| Standardized Clinical Scales (e.g., NIHSS, MBI, mRS [18]) | Used in patient studies to quantitatively assess stroke severity and functional independence, allowing for correlation between neural data and clinical status. |

The landscape of BCI datasets is diverse, with specifications tailored to different research needs. Key differentiators include the number and type of recording channels, the nature of the task (imagery vs. execution, cued vs. freewill), and the participant population (healthy vs. clinical). While legacy competition datasets remain valuable benchmarks, newer, larger, and more standardized datasets are emerging. These modern collections offer higher quality recordings, include auxiliary signals like EOG for better artifact handling, and are accompanied by detailed clinical metadata, enabling more robust and clinically relevant BCI research. Researchers should select datasets based on the specific requirements of their work, whether for developing generalizable algorithms, studying fine-grained motor control, or creating translational solutions for patient rehabilitation.

Brain-Computer Interface (BCI) research stands at a pivotal crossroads, balancing between remarkable laboratory demonstrations and the practical demands of clinical implementation. Traditional BCI competitions, such as BCI Competition IV and the 2020 International BCI Competition, have primarily driven algorithmic advancements through standardized datasets collected from healthy subjects under controlled conditions [20] [21]. While these competitions have significantly advanced the state-of-the-art in decoding algorithms, they have simultaneously highlighted a critical limitation: the lack of representation from real-world patient populations who ultimately stand to benefit most from BCI technologies [20] [22].

The emergence of datasets like HEFMI-ICH represents a shift toward addressing this translational gap. As the first hybrid EEG-fNIRS motor imagery dataset specifically designed for intracerebral hemorrhage (ICH) rehabilitation research, HEFMI-ICH provides synchronized electroencephalogram (EEG) and functional near-infrared spectroscopy (fNIRS) recordings from both normal subjects and ICH patients [12]. It comprises recordings from 17 normal subjects and 20 patients with ICH under a standardized left-right hand motor imagery paradigm, with systematically collected and preprocessed dual-modality data [12]. This approach departs from traditional BCI datasets and offers a clinical bridge for developing more applicable rehabilitation technologies.

This analysis examines how next-generation datasets like HEFMI-ICH address the limitations of traditional BCI competitions through direct comparison of their characteristics, experimental protocols, and clinical relevance. By objectively comparing the composition, methodology, and application potential of these dataset types, we provide researchers with a framework for selecting appropriate data resources based on their translational objectives.

Comparative Analysis: Traditional BCI Competitions vs. Clinically-Focused Datasets

The fundamental differences between traditional BCI competition datasets and clinically-oriented datasets like HEFMI-ICH span multiple dimensions, from participant composition to data collection protocols and intended applications. The table below summarizes these key distinctions:

Table 1: Comparison between Traditional BCI Competition Datasets and Clinical Bridge Datasets

| Characteristic | Traditional BCI Competition Datasets | Clinical Bridge Datasets (e.g., HEFMI-ICH) |

|---|---|---|

| Participant Population | Primarily healthy subjects [21] [20] | Mixed: 17 normal subjects + 20 ICH patients [12] |

| Data Modalities | Typically single modality (EEG or ECoG) [21] | Multimodal: Synchronized EEG + fNIRS [12] |

| Clinical Context | Limited or absent [20] | Specific disease focus: Intracerebral Hemorrhage [12] |

| Experimental Paradigm | Standardized motor imagery tasks [21] | Standardized left-right hand MI tailored for rehabilitation [12] |

| Primary Application | Algorithm development and competition [21] [20] | Development of precision rehabilitation systems [12] |

| Data Accessibility | Publicly available for competition purposes [21] | Publicly available to facilitate rehabilitation research [12] |

| Target Research Outcome | Improved decoding accuracy [22] | Clinical translation and patient rehabilitation [12] |

The comparative analysis reveals that datasets like HEFMI-ICH address critical limitations of traditional approaches by incorporating patient populations, multimodal data acquisition, and rehabilitation-specific paradigms. This shift enables the development of BCI systems that account for the neurophysiological differences between healthy individuals and patients with brain injuries, which is crucial for creating effective clinical interventions.

Experimental Protocols and Methodologies

HEFMI-ICH Data Collection Protocol

The HEFMI-ICH dataset employs a meticulously designed experimental protocol that balances scientific rigor with clinical applicability. The methodology incorporates:

Participant Recruitment: The dataset includes neural recordings from 17 normal subjects and 20 patients with intracerebral hemorrhage, giving two groups of comparable size that enable comparative analysis between healthy and affected populations [12].

Experimental Paradigm: Subjects perform standardized left-right hand motor imagery tasks, which are fundamental movements targeted in stroke and ICH rehabilitation [12]. This paradigm selection directly aligns with clinical rehabilitation goals.

Multimodal Data Acquisition: The synchronized collection of EEG and fNIRS signals provides complementary information about electrical activity and hemodynamic responses in the brain [12]. This multimodal approach increases the robustness of neural decoding by capturing different aspects of brain activity.

Data Preprocessing: The resource provides feature-engineered data optimized for classification algorithms and multidimensional signal decoding [12], reducing the preprocessing burden on researchers and facilitating faster development of rehabilitation algorithms.

Diagram: Experimental workflow for collecting clinically relevant BCI data

Traditional BCI Competition Protocols

In contrast to clinically-focused datasets, traditional BCI competitions typically employ highly standardized protocols optimized for benchmarking algorithmic performance rather than clinical translation:

BCI Competition IV Dataset 4: This dataset featured ECoG signals for individual finger movement from three epileptic patients [22]. While it included patient data, the focus remained on fundamental motor decoding rather than rehabilitation applications.

Limited Clinical Context: Traditional competitions typically provide minimal clinical metadata, focusing instead on the raw neural signals and task labels [21] [20]. This limitation restricts investigators' ability to account for clinical variables that significantly impact system performance in real-world settings.

Algorithm-Centric Design: The experimental protocols prioritize clean, well-controlled data acquisition that facilitates comparison between decoding algorithms [21], but may not account for the artifacts and variability common in clinical environments.

The transition from laboratory demonstrations to clinically applicable BCI systems requires specialized research reagents and computational tools. The table below details key resources that enable this translational research:

Table 2: Research Reagent Solutions for Clinical BCI Development

| Research Tool | Function/Purpose | Example Implementation |

|---|---|---|

| Hybrid EEG-fNIRS Systems | Synchronized acquisition of electrical and hemodynamic brain activity | HEFMI-ICH dataset incorporating dual-modality neural recordings [12] |

| Automated ICH Segmentation Algorithms | Precise delineation of hemorrhage regions on CT scans | nnU-Net framework for automatic ICH segmentation on CT datasets [23] |

| Radiomics Feature Extraction | Quantitative analysis of medical imaging characteristics | PyRadiomics pipeline extraction of 107 original features from NCCT scans [24] |

| 3D Convolutional Neural Networks | Analysis of volumetric medical imaging data | 3D CNN regressor for ICH onset prediction [23] |

| Gradient Boosted Regression Trees | Predictive modeling from complex clinical and imaging features | XGBoost algorithm for onset estimation using radiomics features [23] |

| Motor Imagery Paradigms | Standardized protocols for eliciting reproducible neural signals | Left-right hand motor imagery tasks in HEFMI-ICH [12] |

These specialized tools enable researchers to address the unique challenges of clinical BCI development, including heterogeneous patient populations, pathological brain states, and the need for robust signal processing techniques that can handle clinical noise and variability.

Clinical Applicability and Validation Frameworks

The ultimate test of any BCI dataset lies in its ability to facilitate development of systems that perform reliably in clinical settings. Traditional competition metrics like decoding accuracy provide limited insight into real-world applicability. Datasets like HEFMI-ICH enable more meaningful validation through:

Cross-Population Generalization: By including both healthy subjects and ICH patients, researchers can explicitly test how well algorithms generalize from healthy to impaired neurophysiology [12]. This is crucial for developing systems that work reliably across the spectrum of patient presentations.

Multimodal Correlation: Synchronized EEG-fNIRS acquisition enables researchers to explore relationships between electrical and hemodynamic signals in pathological brains [12], potentially leading to more robust decoding approaches that leverage complementary information sources.

Rehabilitation-Relevant Outputs: The focus on motor imagery for upper limb rehabilitation aligns with clinically meaningful outcomes [12], enabling direct translation to neurorehabilitation applications.

Diagram: Pathway from data acquisition to clinical implementation

The evolution of BCI datasets from competition-focused benchmarks to clinically-relevant resources like HEFMI-ICH represents significant progress toward bridging the translational gap in neurotechnology. By incorporating real patient populations, multimodal data acquisition, and rehabilitation-specific paradigms, these datasets enable development of algorithms that account for the complexities of pathological neurophysiology.

While traditional BCI competitions will continue to drive algorithmic innovations, the future of clinical BCI development depends on resources that prioritize ecological validity and clinical relevance. Datasets like HEFMI-ICH provide essential stepping stones toward this goal, offering researchers the opportunity to develop and validate systems in contexts that more closely resemble real-world clinical scenarios.

The continued development of such clinically-focused datasets, coupled with appropriate validation frameworks that measure both algorithmic performance and clinical utility, will accelerate the translation of BCI technologies from laboratory demonstrations to meaningful interventions that improve patient outcomes in neurorehabilitation.

State-of-the-Art Algorithms: From Deep Learning to Hybrid Models

Electroencephalography (EEG)-based Brain-Computer Interfaces (BCIs) have emerged as a transformative technology for enabling direct communication between the brain and external devices. Within this domain, motor imagery (MI)—the mental rehearsal of physical movements without actual execution—represents one of the most widely investigated paradigms due to its applications in neurorehabilitation, prosthetic control, and assistive technologies [8] [25]. The core challenge in MI-BCI systems lies in accurately decoding noisy, non-stationary, and subject-specific EEG signals to classify intended movements.

Deep learning approaches, particularly Convolutional Neural Networks (CNNs), have demonstrated remarkable success in overcoming these challenges by automatically learning discriminative spatiotemporal features from raw EEG data. This review provides a comprehensive performance comparison of two dominant CNN-based architectures that have shaped the field: the foundational EEGNet and its advanced successor EEGNeX. Framed within the context of benchmark BCI competition datasets, this analysis synthesizes experimental data to guide researchers in selecting and implementing these architectures for state-of-the-art MI decoding.

EEGNet: A Compact Baseline Architecture

EEGNet, introduced by Lawhern et al. (2018), established itself as a compact, versatile CNN baseline adaptable across various BCI paradigms [26] [27]. Its design principles emphasize parameter efficiency and robust generalization even with limited training data. The architecture employs three sequential blocks:

- Temporal Convolution: Learns frequency-specific filters through a 2D convolutional layer.

- Depthwise Convolution: Applies spatial filters across electrodes separately to each temporal feature map, so that each learned frequency band gets its own spatial filters.

- Separable Convolution: Efficiently combines depthwise and pointwise convolutions to summarize features across both temporal and spatial dimensions [26] [28].

This structured approach enables EEGNet to effectively extract and integrate spectral, spatial, and temporal features from multi-channel EEG inputs, making it a widely adopted benchmark.
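
To make the parameter efficiency of the three blocks concrete, the sketch below counts convolution weights for one EEGNet-style configuration. The settings (8 temporal filters, depth multiplier 2, 16 pointwise filters, a 64-sample temporal kernel, a 16-sample separable kernel, 22 electrodes) are assumptions chosen for illustration, not values reported in this article, and biases, batch normalization and the classifier layer are ignored. The helper names are hypothetical.

```python
def eegnet_conv_weights(n_electrodes=22, f1=8, depth=2, f2=16,
                        temporal_kernel=64, separable_kernel=16):
    """Count convolution weights in an EEGNet-style stack (biases ignored)."""
    # Block 1: f1 temporal filters, each sliding along the time axis only.
    temporal = f1 * temporal_kernel
    # Block 2: depthwise spatial filters, `depth` per temporal feature map,
    # each spanning all electrodes.
    depthwise = f1 * depth * n_electrodes
    # Block 3: separable convolution = one temporal filter per feature map
    # (depthwise part) followed by a 1x1 pointwise mix into f2 maps.
    maps = f1 * depth
    separable = maps * separable_kernel + maps * f2
    return {
        "temporal": temporal,
        "depthwise": depthwise,
        "separable": separable,
        "total": temporal + depthwise + separable,
    }


def standard_conv_weights(in_maps, out_maps, kernel):
    """Weights of an ordinary convolution that mixes all maps at every tap."""
    return in_maps * out_maps * kernel


counts = eegnet_conv_weights()
print(counts)
# For comparison: a standard convolution with the same shape as block 3.
print(standard_conv_weights(16, 16, 16))
```

Under these assumptions the separable block uses 512 weights where an ordinary convolution of the same shape would use 4,096, which is the kind of saving the compact design relies on.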

EEGNeX: An Enhanced Successor

EEGNeX represents a significant architectural evolution, designed to enhance the extraction of global temporal and spectral representations while maintaining computational efficiency [26] [27]. It introduces several key modifications over EEGNet:

- Reinforced Spectral Extraction: Replaces the initial temporal convolution with a pair of stacked standard 2D convolutions (reported as two layers of 8 filters each, versus a single 16-filter layer in EEGNet) to better capture shallow spectral information [27].

- Expanded Temporal Receptive Field: Substitutes depthwise separable convolution with dilated convolution, which enlarges the receptive field without increasing parameters or computational cost, thereby capturing longer-range temporal dependencies [26].

- Inverse Bottleneck Structure: Incorporates a structure with an expansion ratio of four to improve feature transformation capacity and information flow [27].

- Optimized Activation Strategy: Reduces the number of activation layers to minimize information loss and uses padding strategically to preserve signal length and expand the effective receptive field [26].

These innovations enable EEGNeX to model more complex temporal dynamics and spectral patterns inherent in EEG signals, addressing limitations of the original EEGNet architecture.
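
The claim that dilation widens the receptive field without adding parameters can be checked with a little arithmetic. The sketch below is illustrative only: the kernel length of 16 and the dilation rates are hypothetical, not EEGNeX's published settings, and stride 1 is assumed.

```python
def effective_kernel(kernel_size, dilation):
    """Span in samples covered by one dilated kernel (stride 1)."""
    return dilation * (kernel_size - 1) + 1


def tap_offsets(kernel_size, dilation):
    """Sample offsets, relative to the first tap, that the kernel reads."""
    return [k * dilation for k in range(kernel_size)]


kernel_size = 16  # hypothetical temporal kernel length
for dilation in (1, 2, 4):
    span = effective_kernel(kernel_size, dilation)
    # The number of learned weights stays equal to kernel_size.
    print(f"dilation={dilation}: weights={kernel_size}, span={span} samples")
```

At a 250 Hz sampling rate, the same 16 weights would cover 0.064 s without dilation and 0.244 s with a dilation of 4.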

Architectural Evolution Workflow

Diagram: Key architectural differences and evolutionary pathway from EEGNet to EEGNeX and its hybrid variants

Performance Comparison on Benchmark Datasets

Model performance is quantitatively evaluated on standardized, publicly available BCI competition datasets, which serve as common benchmarks for comparing MI decoding algorithms. The table below summarizes the classification accuracy of EEGNet, EEGNeX, and other notable architectures.

Table 1: Performance Comparison of CNN-based Models on Major BCI Competition Datasets

| Model | BCI IV-2a (4-class) | BCI IV-2b (2-class) | Key Architectural Features | Experimental Protocol |

|---|---|---|---|---|

| EEGNet | 76.90% (3-class, reported on WBCIC-MI rather than IV-2a) [8] | 85.32% (2-class, reported on WBCIC-MI rather than IV-2b) [8] | Compact, depthwise & separable convolutions | Cross-subject validation, 250 Hz data, 1.5-6 s trial segmentation [28] |

| EEGNeX | 83.10% [26] | ~2.1-8.5% improvement over EEGNet [26] | Dilated convolutions, inverse bottleneck, reinforced spectral layers | MOABB evaluation, 11 diverse MI datasets, statistical significance testing (p<0.05) [26] |

| MBMANet | 83.18% [29] | - | Multi-branch structure with multiple attention mechanisms | End-to-end decoding, 9-subject evaluation, no subject-specific hyperparameter tuning [29] |

| CIACNet | 85.15% [25] | 90.05% [25] | Dual-branch CNN, improved CBAM attention, TCN | 70-15-15 train-validation-test split, kappa score evaluation (0.80) [25] |

| AMEEGNet | 81.17% [28] | 89.83% [28] | Multi-scale EEGNet, fusion transmission, ECA module | End-to-end, minimal preprocessing, 0.5-100Hz filtered data [28] |

| EEG-SGENet | 80.98% [27] | 76.17% [27] | Integration of SGE module for spatial enhancement | Lightweight design focus, BCI IV 2a & 2b dataset evaluation [27] |

Performance Analysis and Trends

The experimental data reveals several key insights:

EEGNeX's Consistent Advancement: EEGNeX demonstrates a statistically significant performance improvement of 2.1%–8.5% over EEGNet across various scenarios and datasets, establishing it as a robust successor [26]. This enhancement is attributed to its improved capacity for capturing long-range temporal dependencies and richer spectral features.

Impact of Attention Mechanisms: Models incorporating attention mechanisms, such as CIACNet and AMEEGNet, consistently achieve high accuracy, particularly on the 2-class BCI IV-2b dataset (exceeding 89%) [25] [28]. The Efficient Channel Attention (ECA) module in AMEEGNet, for instance, acts as a lightweight feature calibrator, dynamically weighting important EEG channels to suppress noise and enhance discriminative spatial features [28].

Advantages of Multi-Branch Designs: Architectures like MBMANet [29] and CIACNet [25] utilize parallel branches with varied convolutional kernels or attention mechanisms to extract multi-scale features. This design mitigates hyperparameter sensitivity to intersubject variability, improving model robustness without requiring subject-specific tuning.

Experimental Protocols and Methodologies

Standardized evaluation protocols are crucial for ensuring fair and meaningful performance comparisons.

Diagram: Common experimental workflow for training and evaluating these models on public datasets

Key Methodological Considerations

- Data Segmentation: For BCI IV-2a and similar datasets, the critical "cue" and "motor imagery" phases are typically segmented from the time interval [1.5s, 6s] relative to trial onset, resulting in 1,125 data points per channel when sampled at 250 Hz [28].

- Normalization: Z-score normalization, \( x_{\text{norm}} = \frac{x - \mu}{\sigma} \), where \( \mu \) and \( \sigma \) are the mean and standard deviation of the signal, is commonly applied to eliminate channel-wise differences in signal amplitude and offset, improving training stability [30].

- Evaluation Framework: A 70-15-15 split for training, validation, and testing is frequently employed [25]. Holding out one full session per subject for testing, as on BCI IV-2a, assesses cross-session generalization, while cross-subject validation holds out entire subjects and provides a stricter test of generalizability [26] [28].
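
The three considerations above can be sketched in plain Python. This is a minimal illustration, not any cited study's pipeline: the helper names are hypothetical, the z-score is assumed to be computed per channel within a trial using the population standard deviation, and the split is a simple seeded shuffle over trial indices.

```python
import random
from statistics import fmean, pstdev


def window_to_samples(start_s, stop_s, sfreq):
    """Convert a time window in seconds to (start, stop) sample indices."""
    return round(start_s * sfreq), round(stop_s * sfreq)


def zscore(channel):
    """Z-score one channel of one trial: (x - mean) / standard deviation."""
    mu = fmean(channel)
    sigma = pstdev(channel)
    if sigma == 0:
        return [0.0] * len(channel)  # flat signal: avoid division by zero
    return [(x - mu) / sigma for x in channel]


def split_indices(n_trials, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle trial indices and cut them into train/validation/test parts."""
    idx = list(range(n_trials))
    random.Random(seed).shuffle(idx)
    n_train = int(n_trials * fractions[0])
    n_val = int(n_trials * fractions[1])
    # Whatever remains after train and validation goes to the test set.
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


start, stop = window_to_samples(1.5, 6.0, 250)
print(stop - start)  # samples per channel in the [1.5 s, 6 s] window

train, val, test = split_indices(288)
print(len(train), len(val), len(test))
```

A random trial-level split like this mixes trials from the same recording across the three sets, so it answers a different question than the session-level or subject-level hold-out described above.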

The Scientist's Toolkit: Essential Research Reagents

Implementing and advancing CNN-based EEG decoders requires a suite of computational and data resources. The following table details key components of the modern BCI research toolkit.

Table 2: Essential Research Reagents for CNN-based MI-BCI Research

| Resource | Function | Example Specifications |

|---|---|---|

| Public Benchmark Datasets | Provides standardized data for model training, benchmarking, and fair comparison. | BCI Competition IV 2a (4-class, 22 electrodes, 9 subjects) [28]; BCI Competition IV 2b (2-class, 3 electrodes, 9 subjects) [28]; High Gamma Dataset (HGD, 4-class, 44 electrodes, 14 subjects) [28] |

| Deep Learning Frameworks | Enables efficient model prototyping, training, and evaluation with GPU acceleration. | Python, PyTorch, TensorFlow, MOABB (Mother of All BCI Benchmarks) [26] |

| Computational Hardware | Accelerates the training of deep neural networks, which is computationally intensive. | NVIDIA GPUs (e.g., V100, A100, RTX series) |

| Model Architectures | Core neural network designs that extract spatiotemporal features from EEG signals. | EEGNet [26], EEGNeX [26] [27], and their variants (e.g., CIACNet [25], AMEEGNet [28]) |

| Data Augmentation Techniques | Increases effective dataset size and diversity, improving model robustness and reducing overfitting. | Discrete Cosine Transform (DCT) reorganization [31]; Time-slicing & overlapping [30] |

This comparison guide has detailed the architectural evolution and empirical performance of dominant CNN-based models for MI-EEG decoding. EEGNet remains a highly valuable, compact baseline due to its efficiency and proven generalization across paradigms. However, for researchers pursuing state-of-the-art accuracy, EEGNeX and newer hybrid architectures, particularly those incorporating multi-branch structures and attention mechanisms, report the highest accuracies on benchmark datasets like BCI Competition IV 2a and 2b.

The trajectory of the field points towards increasingly sophisticated architectures that dynamically focus on salient EEG features while efficiently modeling complex temporal and spectral relationships. Future work will likely focus on enhancing model interpretability, further improving robustness to cross-session and cross-subject variability, and optimizing these architectures for real-time, resource-constrained BCI applications.

The accurate classification of Motor Imagery (MI) tasks from electroencephalography (EEG) signals is a cornerstone of modern non-invasive Brain-Computer Interface (BCI) systems. These systems, which translate brain activity into commands for external devices, hold profound promise for neurorehabilitation and assistive technologies [6] [32]. However, EEG signals are characterized by a low signal-to-noise ratio, non-stationarity, and complex temporal-spatial dynamics, presenting significant challenges for traditional machine learning methods that often rely on manual feature engineering [6] [33].

The advent of deep learning has revolutionized EEG decoding, with Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) establishing strong baselines [34]. Recently, transformer-based models, renowned for their powerful self-attention mechanisms, have emerged as a formidable frontier in MI-EEG research [34] [35] [36]. These models excel at capturing long-range dependencies and global contextual information within data, overcoming the limitations of CNNs and RNNs [34]. This guide provides a comparative analysis of state-of-the-art attention-based models, including the novel EEGEncoder, benchmarking their performance on standardized BCI competition datasets to illuminate the path forward in temporal-spatial feature extraction.

Experimental Protocols and Benchmark Datasets

Objective comparison of MI-EEG decoding models relies on rigorous evaluation using public benchmarks. The BCI Competition IV Dataset 2a is the most widely used benchmark for multi-class MI classification [6] [37] [33].

The BCI Competition IV 2a Benchmark

This dataset is a public standard for evaluating model performance in brain-computer interface research [33].

- Subjects and Tasks: Contains EEG recordings from 9 healthy subjects performing four MI tasks: left hand, right hand, both feet, and tongue.

- Data Characteristics: Signals are recorded from 22 scalp electrodes at a 250 Hz sampling rate. Each subject completed two sessions (training and testing), with 288 trials (4 seconds each) per session.

- Core Challenge: The limited number of training samples and the presence of significant noise and artifacts make classification on this dataset challenging [33].

Standardized Preprocessing and Evaluation

To ensure fair comparison, most studies adhere to a common preprocessing pipeline and evaluation metric.

- Preprocessing: Common steps include band-pass filtering (e.g., 4-40 Hz) to isolate motor-relevant frequency bands, and signal normalization [6] [32]. Some approaches use more advanced techniques like Discrete Wavelet Transform (DWT) for noise reduction [37].

- Evaluation Metric: The primary metric for performance comparison is the average classification accuracy across all subjects, often reported in both subject-dependent and subject-independent (cross-subject) settings [6].
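
As a rough illustration of how these metrics are computed, the sketch below derives accuracy and Cohen's kappa (which some of the studies compared earlier report alongside accuracy) from a confusion matrix, and averages per-subject accuracies. The confusion matrix and the per-subject values are invented for the example and do not come from any cited study.

```python
from statistics import fmean


def accuracy(confusion):
    """Fraction of trials on the diagonal (rows = true, columns = predicted)."""
    total = sum(sum(row) for row in confusion)
    correct = sum(confusion[i][i] for i in range(len(confusion)))
    return correct / total


def cohen_kappa(confusion):
    """Agreement corrected for chance: (p_o - p_e) / (1 - p_e)."""
    n = len(confusion)
    total = sum(sum(row) for row in confusion)
    p_o = accuracy(confusion)
    row_tot = [sum(row) for row in confusion]
    col_tot = [sum(confusion[r][c] for r in range(n)) for c in range(n)]
    # Chance agreement from the marginal label frequencies.
    p_e = sum(row_tot[k] * col_tot[k] for k in range(n)) / total ** 2
    return (p_o - p_e) / (1 - p_e)


# Hypothetical 4-class result: 72 trials per class, 288 trials in total.
confusion = [
    [60, 4, 4, 4],
    [6, 58, 4, 4],
    [3, 3, 62, 4],
    [5, 5, 2, 60],
]
print(accuracy(confusion), cohen_kappa(confusion))

# Hypothetical per-subject accuracies; the reported figure is their mean.
subject_acc = [0.81, 0.64, 0.90, 0.75]
print(fmean(subject_acc))
```

When every class has the same number of trials, chance agreement equals one over the number of classes, so kappa reduces to (accuracy - 1/K) / (1 - 1/K) for K classes.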

Comparative Analysis of State-of-the-Art Models

The table below summarizes the performance and key characteristics of recent advanced models on the BCI Competition IV 2a dataset.

Table 1: Performance Comparison of Advanced Models on BCI IV 2a Dataset

| Model Name | Average Accuracy (%) | Core Architectural Innovation | Temporal-Spatial Feature Handling |

|---|---|---|---|

| EEGEncoder [6] | 86.46% (Subject-dependent) | Fusion of Modified Transformers & Temporal Convolutional Networks (TCN) | Dual-Stream Temporal-Spatial Block (DSTS) for collaborative feature capture. |

| GAH-TNet [33] | 86.84% | Graph Attention & Hierarchical Temporal Network | Integrates spatial graph attention with deep temporal feature encoding. |

| Hybrid CNN-Attention [37] | 85.53% | CNN for feature extraction with Talking-Heads Attention | Uses CNN for time-domain features and attention to enhance critical sequences. |

| Hybrid CNN-LSTM [32] | 96.06%* | Combination of CNN and LSTM networks | CNN extracts spatial features; LSTM captures temporal dependencies. |

| EEG-TCNet [37] | ~79.40% (Baseline) | Temporal Convolutional Networks | A strong baseline model using TCN for temporal modeling. |

Note: The 96.06% accuracy reported for the Hybrid CNN-LSTM model was achieved on the PhysioNet EEG Motor Movement/Imagery Dataset, not the BCI IV 2a dataset, so it is not directly comparable to the other rows. It is included to highlight the potential of hybrid architectures and to underscore how strongly dataset selection affects reported results.

In-Depth Model Methodologies

EEGEncoder: Transformer-TCN Fusion

The EEGEncoder model introduces a novel architecture designed to overcome the limitations of standalone transformers or TCNs [6]. Its workflow involves:

- Downsampling Projector: The raw EEG signals first pass through a module of convolutional and average pooling layers. This reduces the sequence length and noise while preparing the data for deeper analysis [6].

- Dual-Stream Temporal-Spatial (DSTS) Block: This is the core innovation. It employs parallel streams:

- A Temporal Convolutional Network (TCN) stream to capture fine-grained local temporal patterns.

- A Stable Transformer stream, enhanced with modern architectural improvements, to capture global dependencies and long-range interactions within the signal.

- Feature Fusion and Classification: The outputs from both streams are integrated, allowing the model to leverage both local and global contexts for the final classification decision [6].

GAH-TNet: Graph-Based Hierarchical Attention

The GAH-TNet model emphasizes the natural graph structure of EEG electrodes distributed over the scalp [33]. Its methodology consists of:

- Graph Attention Temporal Encoding (GATE): Models the spatial dependencies between different EEG channels using a graph structure based on the physical electrode layout. This block also encodes short-term temporal dynamics [33].

- Hierarchical Attention-Guided Deep Temporal Encoding (HADTE): This two-stage block uses attention mechanisms and temporal convolutions to extract both local fine-grained features and global long-term dependency features, creating a rich, multi-scale temporal representation [33].
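
The idea of a graph built from the physical electrode layout can be illustrated with a small sketch. Everything here is an assumption made for the example: the five electrodes, their 2D coordinates and the distance threshold are hypothetical, and the aggregation step uses uniform neighbour weights as a simple stand-in for the learned attention weights that a graph attention layer would produce. It is not GAH-TNet's implementation.

```python
import math


def build_adjacency(positions, radius):
    """Connect every pair of electrodes closer than `radius`."""
    names = list(positions)
    adj = {name: [] for name in names}
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            if math.dist(positions[a], positions[b]) <= radius:
                adj[a].append(b)
                adj[b].append(a)
    return adj


def aggregate(features, adj):
    """Average each node's feature with its neighbours (uniform weights)."""
    out = {}
    for node, neighbours in adj.items():
        group = [node] + neighbours  # include a self-loop
        out[node] = sum(features[g] for g in group) / len(group)
    return out


# Hypothetical 2D layout, arbitrary units, centred on Cz.
positions = {
    "C3": (-1.0, 0.0),
    "Cz": (0.0, 0.0),
    "C4": (1.0, 0.0),
    "FCz": (0.0, 1.0),
    "CPz": (0.0, -1.0),
}
adj = build_adjacency(positions, radius=1.0)
print(adj)

# One scalar feature per electrode, e.g. band power (made-up values).
features = {"C3": 1.0, "Cz": 2.0, "C4": 3.0, "FCz": 4.0, "CPz": 5.0}
print(aggregate(features, adj))
```

A graph attention layer would replace the uniform average with weights computed from the node features themselves, so that informative neighbours contribute more.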

Hybrid CNN-Attention Model

This model combines the strengths of CNNs for local feature extraction with the selectivity of attention mechanisms [37]. Its process is:

- Spatial-Temporal Feature Extraction: A CNN is first used to extract preliminary time-domain and spatial features from the preprocessed EEG, creating time series that contain spatial information.

- Feature Sequence Enhancement: A "talking-heads" attention mechanism is applied to the feature sequences, allowing the model to adaptively focus on the most crucial time points and features for the classification task.

- Final Classification: A Temporal Convolutional Network (TCN) further abstracts the attended features, and a fully connected layer performs the final classification [37].

Diagram: EEGEncoder Architecture Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers aiming to replicate or build upon these models, the following computational "reagents" are essential.

Table 2: Key Research Reagents and Computational Tools

| Item / Resource | Function in Research | Specification / Notes |

|---|---|---|

| BCI Competition IV 2a | Primary benchmark dataset for training and evaluation. | 9 subjects, 4-class MI, 22 EEG channels [6] [33]. |

| Temporal Convolutional Network (TCN) | Captures temporal dynamics in EEG with a large receptive field. | Avoids gradient issues of RNNs; used in EEGEncoder & EEG-TCNet [6] [33]. |

| Self-Attention Mechanism | Enables the model to weigh the importance of different time points/channels. | Core of transformer models; allows capturing of global context [34] [36]. |

| Graph Neural Network (GNN) | Models the non-Euclidean spatial relationships between EEG electrodes. | Critical for models like GAH-TNet that exploit brain topology [33]. |

| Discrete Wavelet Transform (DWT) | Preprocessing technique for noise reduction and feature preservation. | Used to enhance signal quality before feature extraction [37]. |
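
To show how the self-attention mechanism listed above weighs time points, here is a minimal scaled dot-product sketch in plain Python. It is deliberately simplified: queries, keys and values are all the raw input vectors (no learned projections, single head), and the three two-dimensional "time steps" are made-up numbers, so it should be read as an illustration of the mechanism rather than any cited model's layer.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with queries = keys = values = x."""
    d = len(x[0])
    outputs, weights = [], []
    for q in x:
        # Similarity of this time step to every time step, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in x]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of all time steps.
        outputs.append([sum(w[t] * x[t][j] for t in range(len(x)))
                        for j in range(d)])
    return outputs, weights


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three time steps, two features
outputs, weights = self_attention(x)
for row in weights:
    print([round(v, 3) for v in row])
```

Because every output row mixes information from all time steps in one operation, distant samples can influence each other directly, which is the "global context" property the table refers to.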

The comparative analysis reveals that EEGEncoder, GAH-TNet, and other hybrid attention-based models are pushing the boundaries of MI-EEG decoding. Their success stems from a shared paradigm: moving beyond single-mode feature extraction to a more integrated, collaborative modeling of temporal and spatial information. While EEGEncoder leverages a direct fusion of transformers and TCNs, GAH-TNet demonstrates the power of incorporating the brain's inherent graph structure.

Future research will likely focus on several key challenges. Improving cross-subject generalization remains a primary goal, as current subject-dependent accuracy is much higher than subject-independent performance [6]. Furthermore, the development of more interpretable and explainable models is crucial for building trust, especially in clinical applications [32] [35]. Finally, as the field matures, creating efficient models that can be deployed in real-time BCI systems outside of controlled laboratory settings will be the ultimate test of their value. The rise of transformers has undoubtedly set a new course for BCI research, promising more robust and intelligent systems for neural rehabilitation and human-computer interaction.

The accurate decoding of Motor Imagery Electroencephalogram (MI-EEG) signals represents a fundamental challenge in the development of effective Brain-Computer Interface (BCI) systems. These signals are characterized by inherent complexities, including non-stationarity, low signal-to-noise ratios, and significant individual variability, which have limited the efficacy of traditional machine learning approaches [37] [25]. In response, the field has witnessed a paradigm shift toward sophisticated deep learning architectures that synergistically combine the strengths of multiple neural network components. Hybrid models integrating Temporal Convolutional Networks (TCN), Convolutional Neural Networks (CNN), and attention mechanisms have emerged as particularly powerful frameworks for tackling the nuances of MI-EEG classification.

These hybrid architectures operate on a complementary principle: CNNs excel at extracting spatial features from multi-channel EEG signals, TCNs specialize in capturing long-range temporal dependencies through dilated causal convolutions, and attention mechanisms dynamically weight the importance of different features, channels, or time points [25] [6] [38]. This tripartite synergy enables models to learn more discriminative spatial-temporal representations from raw EEG data, effectively bypassing the need for manual feature engineering while demonstrating enhanced robustness to noise and inter-subject variability. The resulting performance improvements have established these hybrid models as state-of-the-art solutions on benchmark BCI competition datasets, paving the way for more reliable real-world BCI applications in neurorehabilitation, prosthetic control, and assistive communication [39] [38].

Architectural Fundamentals of Hybrid Models

Core Components and Their Integration

Hybrid TCN-CNN-Attention models for MI-EEG decoding are constructed from specialized components that each address distinct aspects of signal processing. The CNN component typically employs both one-dimensional and two-dimensional convolutional layers to extract spatially meaningful patterns from the electrode array. Architectures such as EEGNet implement depthwise and separable convolutions to efficiently model the spatial relationships between channels while maintaining a compact parameter footprint [25] [38]. The TCN component builds upon dilated causal convolutions that exponentially expand the receptive field without proportionally increasing parameters, effectively capturing multi-scale temporal context and mitigating gradient vanishing issues common in recurrent architectures [6] [38]. The attention mechanisms incorporated into these models vary from squeeze-and-excitation blocks that model channel-wise relationships to multi-head self-attention and convolutional block attention modules (CBAM) that jointly emphasize important features across both channel and spatial dimensions [25] [39] [38].
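The receptive-field growth described above can be made concrete with a small sketch. The code below is a minimal pure-Python illustration, not the implementation of any cited model: `causal_dilated_conv1d`, `tcn_receptive_field`, and `stack_impulse_span` are hypothetical helper names, and the assumed layout (dilation doubling per block, two convolutions per block) is a common TCN convention rather than something the source specifies. Nonlinearities, normalization, and residual connections are omitted because they do not change the receptive field.

```python
def causal_dilated_conv1d(x, kernel, dilation):
    """Causal dilated convolution: y[t] = sum_j kernel[j] * x[t - j * dilation].

    Samples before the start of the signal are treated as zero (left padding),
    so the output at time t never depends on future samples.
    """
    y = []
    for t in range(len(x)):
        acc = 0.0
        for j, w in enumerate(kernel):
            idx = t - j * dilation
            if idx >= 0:
                acc += w * x[idx]
        y.append(acc)
    return y


def tcn_receptive_field(kernel_size, num_blocks, convs_per_block=2):
    """Receptive field when block b uses dilation 2**b (assumed convention)."""
    return 1 + convs_per_block * (kernel_size - 1) * (2 ** num_blocks - 1)


def stack_impulse_span(kernel_size, num_blocks, convs_per_block=2):
    """Empirically measure how many time steps a single input sample influences."""
    length = tcn_receptive_field(kernel_size, num_blocks, convs_per_block) + 8
    signal = [0.0] * length
    signal[0] = 1.0  # unit impulse at t = 0
    kernel = [1.0] * kernel_size  # all-ones kernel keeps every tap visible
    for block in range(num_blocks):
        for _ in range(convs_per_block):
            signal = causal_dilated_conv1d(signal, kernel, 2 ** block)
    last_nonzero = max(t for t, v in enumerate(signal) if v != 0.0)
    return last_nonzero + 1


# Illustrative values only: kernel size 4 and 2 blocks.
rf_formula = tcn_receptive_field(4, 2)
rf_measured = stack_impulse_span(4, 2)
```

With these illustrative settings the formula and the impulse measurement agree. Adding one more block roughly doubles the temporal context while adding only `convs_per_block` more kernels.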

The integration of these components follows several architectural patterns. Some models, like CIACNet, employ a sequential approach where features pass through CNN, attention, and TCN modules in stages [25] [4]. Others, including ATCNet and AMFTCNet, implement deeper integration with attention mechanisms woven between convolutional and temporal layers to progressively refine feature representations [39] [38]. More advanced architectures like SMMTM adopt a multi-branch framework where parallel pathways process the input at different scales or modalities, with features fused at intermediate or final layers [40]. This architectural diversity demonstrates the flexibility of the core components while maintaining the common objective of learning robust spatial-temporal representations of brain activity patterns.

Representative Model Architectures

CIACNet (Composite Improved Attention Convolutional Network): This architecture utilizes a dual-branch CNN to extract rich temporal features, an improved convolutional block attention module (CBAM) to enhance feature extraction, TCN to capture advanced temporal features, and multi-level feature concatenation for more comprehensive feature representation [25] [4].

ATCNet (Attention-based Temporal Convolutional Network): ATCNet combines CNN, multi-head self-attention, and TCN in an integrated pipeline. The model uses CNN for initial spatial-temporal feature extraction, applies multi-head self-attention to emphasize important temporal segments, and finally employs TCN to capture high-level temporal features for classification [39].

AMFTCNet (Attention-based Multi-scale Fusion Temporal Convolutional Network): This model introduces a multi-branch structure with residual connections to extract multi-scale features, a Parallel Attention Temporal Convolution (PAT) block, and a novel Product-Sum Channel Attention (PSCA) mechanism to dynamically weight and combine high-dimensional features from different scales [38].

EEGEncoder: Employing a transformer-based approach, EEGEncoder incorporates a Downsampling Projector for EEG signal preprocessing and multiple parallel Dual-Stream Temporal-Spatial (DSTS) blocks that combine TCN and stabilized transformer layers to capture both local and global dependencies in EEG signals [6] [41].

SMMTM (Separable Multi-branch Multi-attention Temporal Model): This comprehensive architecture combines spatiotemporal convolution (SC), multi-branch separable convolution (MSC), multi-head self-attention (MSA), temporal convolution network (TCN), and multimodal feature fusion (MFF) to capture features at multiple scales and resolutions [40].
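Several of the architectures above rely on self-attention over a sequence of temporal features. The sketch below shows single-head scaled dot-product self-attention in pure Python. It is a simplification under stated assumptions: the query, key, and value projections are taken to be the identity (real models learn these matrices and use multiple heads), and the function name `self_attention` and the toy input are hypothetical.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head scaled dot-product self-attention.

    tokens: list of N feature vectors (e.g. one per time window), each of length d.
    Assumption: identity Q/K/V projections, so queries = keys = values = tokens.
    Returns (outputs, weights), where weights[i][j] is how much token i attends to token j.
    """
    d = len(tokens[0])
    scale = 1.0 / math.sqrt(d)
    weights = []
    for query in tokens:
        scores = [scale * sum(a * b for a, b in zip(query, key)) for key in tokens]
        weights.append(softmax(scores))
    outputs = [
        [sum(w * value[i] for w, value in zip(row, tokens)) for i in range(d)]
        for row in weights
    ]
    return outputs, weights


# Toy input: windows 0 and 2 carry the same feature pattern, window 1 differs.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
```

In this toy case the first window assigns equal weight to itself and to the matching third window, and less weight to the dissimilar second window, which is the sense in which attention emphasizes related temporal segments.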

Table 1: Core Architectural Components of Major Hybrid Models

| Model | CNN Variant | Attention Mechanism | TCN Implementation | Feature Fusion Approach |
|---|---|---|---|---|
| CIACNet | Dual-branch CNN | Convolutional Block Attention Module (CBAM) | Standard TCN with residual blocks | Multi-level feature concatenation |
| ATCNet | EEGNet-based | Multi-head self-attention | Dilated causal convolutions | Sequential processing with attention gating |
| AMFTCNet | Multi-branch CNN | Product-Sum Channel Attention (PSCA) | Parallel Attention Temporal (PAT) blocks | Dynamic multi-scale weighting with PSCA |
| EEGEncoder | Downsampling Projector | Multi-head self-attention (Transformer) | Dual-Stream Temporal-Spatial blocks | Parallel processing with dropout |
| SMMTM | Spatiotemporal + Multi-branch separable | Multi-head self-attention | Standard TCN | Feature fusion and decision fusion |

Experimental Protocols and Benchmark Methodologies

Standardized Evaluation Frameworks

The performance of hybrid TCN-CNN-Attention models is primarily evaluated using publicly available BCI competition datasets, with BCI Competition IV-2a and IV-2b serving as the de facto standards for comparison. The BCI IV-2a dataset contains EEG recordings from 9 subjects performing 4-class motor imagery tasks (left hand, right hand, feet, and tongue) using 22 EEG channels, while the BCI IV-2b dataset comprises data from 9 subjects for 2-class motor imagery (left hand vs. right hand) with 3 bipolar channels [37] [25] [39]. Evaluation follows either subject-dependent protocols, where models are trained and tested on data from the same individual, or more challenging subject-independent protocols, which assess generalization capability across unseen subjects [6] [40].
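The difference between the two protocols comes down to how trials are partitioned. As a minimal sketch of the subject-independent case, the code below builds leave-one-subject-out splits: each subject in turn is held out for testing while the model is trained on all others. The function name `leave_one_subject_out` and the count of four trials per subject are hypothetical and chosen only to keep the example small.

```python
def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for each subject.

    subject_ids: one entry per trial giving the subject that produced it.
    No trial from the held-out subject ever appears in the training indices.
    """
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test


# 9 subjects, with a hypothetical 4 trials each for illustration.
subject_ids = [subject for subject in range(1, 10) for _ in range(4)]
folds = list(leave_one_subject_out(subject_ids))
```

A subject-dependent protocol would instead split the trials within each subject, so the same person contributes to both training and test data.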

Rigorous experimental methodologies are employed to ensure fair comparison. Standard preprocessing pipelines typically include bandpass filtering (often in the 4-40 Hz range to capture sensorimotor rhythms), noise-reduction steps such as discrete wavelet transform denoising or common average referencing, and trial segmentation around the motor imagery cue [37] [39]. Data augmentation strategies like sliding window cropping are frequently applied to increase effective dataset size and improve model robustness [39]. Performance is predominantly measured using classification accuracy and the kappa coefficient, with results reported through cross-validation schemes to improve statistical reliability. Most studies train subject-specific models rather than universal classifiers, acknowledging the significant inter-subject variability in EEG patterns [25] [40].
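Two of the steps named above, common average referencing and sliding window cropping, are simple enough to sketch directly. The code below is an illustrative pure-Python version, not a pipeline from any cited study. The helper names, the 1000-sample trial length, and the window and stride values are assumptions chosen for the example.

```python
def common_average_reference(trial):
    """Subtract the across-channel mean from every channel at each time point.

    trial: list of channels, each a list of samples of equal length.
    """
    n_channels = len(trial)
    n_samples = len(trial[0])
    means = [sum(ch[t] for ch in trial) / n_channels for t in range(n_samples)]
    return [[value - mean for value, mean in zip(ch, means)] for ch in trial]


def sliding_window_crops(trial, window, stride):
    """Cut one trial into overlapping crops that keep the channel layout.

    Every crop inherits the label of the trial it came from.
    """
    n_samples = len(trial[0])
    crops = []
    for start in range(0, n_samples - window + 1, stride):
        crops.append([ch[start:start + window] for ch in trial])
    return crops


# Illustrative trial: 22 channels x 1000 samples (values are just sample indices).
trial = [[float(t) for t in range(1000)] for _ in range(22)]
crops = sliding_window_crops(trial, window=500, stride=125)
referenced = common_average_reference([[1.0, 2.0], [3.0, 6.0]])
```

Here one trial yields five overlapping crops. Because crops from the same trial share most of their samples, they should all be kept on the same side of any train/test split to avoid leakage.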

Implementation Details and Training Protocols

The implementation of hybrid models follows careful parameter selection and optimization strategies. CNNs typically use 2D convolutional kernels with sizes adapted to temporal and spatial dimensions of EEG inputs, while TCNs employ dilated convolutions with carefully selected dilation factors to capture both short-term and long-term temporal dependencies [6] [38]. Attention mechanisms are configured with appropriate attention heads and dimensions balanced against computational constraints. Training generally utilizes the Adam optimizer with learning rates between 0.001 and 0.0001, batch sizes adapted to computational resources, and dropout regularization (typically between 0.3 and 0.5) to prevent overfitting [25] [6].
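To give some intuition for the learning-rate range quoted above, the sketch below implements a single scalar Adam update. It assumes the commonly used default hyperparameters (`beta1=0.9`, `beta2=0.999`, `eps=1e-8`), which are not stated in the source, and the function name `adam_step` is hypothetical. Deep learning frameworks provide their own optimized versions.

```python
import math


def adam_step(param, grad, state, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam update to a scalar parameter and return the new value.

    state: dict with keys "t" (step count), "m" (first moment), "v" (second moment).
    """
    state["t"] += 1
    state["m"] = beta1 * state["m"] + (1 - beta1) * grad
    state["v"] = beta2 * state["v"] + (1 - beta2) * grad * grad
    # Bias correction compensates for the zero initialization of m and v.
    m_hat = state["m"] / (1 - beta1 ** state["t"])
    v_hat = state["v"] / (1 - beta2 ** state["t"])
    return param - lr * m_hat / (math.sqrt(v_hat) + eps)


state = {"t": 0, "m": 0.0, "v": 0.0}
first_update = adam_step(param=0.0, grad=5.0, state=state, lr=1e-3)
```

On the first step the bias-corrected ratio is close to the sign of the gradient, so the parameter moves by approximately the learning rate regardless of the gradient's magnitude. This is one reason the learning rate directly sets the scale of early updates.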

To ensure fair comparisons, most studies implement identical training-test splits when benchmarking against existing approaches, with common practices including 5-fold or 10-fold cross-validation repeated multiple times with different random seeds [38] [40]. Many implementations also incorporate early stopping based on validation performance to prevent overfitting. The computational environment is typically specified, with most experiments conducted using deep learning frameworks like TensorFlow or PyTorch, often with GPU acceleration to manage the substantial computational requirements of these hybrid architectures, particularly during the hyperparameter optimization phase [6] [41].
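A seeded k-fold split is what makes "identical training-test splits" reproducible across studies. The following is a minimal sketch using only the standard library. The function name `kfold_indices` is hypothetical, and 288 is used as an illustrative trial count.

```python
import random


def kfold_indices(n_trials, k, seed):
    """Yield (train_indices, test_indices) for k shuffled folds.

    The same (n_trials, k, seed) always produces the same partition,
    so different models can be compared on identical splits.
    """
    indices = list(range(n_trials))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # fold sizes differ by at most 1
    for i in range(k):
        test = sorted(folds[i])
        train = sorted(j for f, fold in enumerate(folds) if f != i for j in fold)
        yield train, test


splits = list(kfold_indices(288, 5, seed=0))
```

Repeating the procedure with several seeds and averaging the results gives the "repeated cross-validation" variant mentioned above.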

Table 2: Standard Experimental Protocols for MI-EEG Model Evaluation

| Protocol Aspect | Standard Configuration | Variations and Notes |
|---|---|---|
| Dataset Split | 5-fold or 10-fold cross-validation | Subject-dependent vs. subject-independent paradigms |
| Preprocessing | Bandpass filtering (4-40 Hz), artifact removal | Common average referencing, wavelet denoising |
| Data Augmentation | Sliding window cropping, synthetic minority oversampling | Jittering, scaling, rotational transforms for EEG |
| Performance Metrics | Classification accuracy, Kappa coefficient | F1-score, precision, recall for class-imbalanced scenarios |
| Training Parameters | Adam optimizer, learning rate 0.001-0.0001 | Batch sizes 16-64, dropout rate 0.3-0.5 |
| Validation Approach | Hold-out validation set, early stopping | Nested cross-validation for hyperparameter tuning |
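The early stopping entry in the table can be illustrated with a short patience-based rule. This is a sketch under assumptions: the function name `early_stopping_epoch`, the patience values, and the validation losses are all made up for the example and do not come from any cited study.

```python
def early_stopping_epoch(val_losses, patience, min_delta=0.0):
    """Return (stop_epoch, best_epoch), both 0-indexed.

    Training halts once the validation loss has failed to improve by more than
    min_delta for `patience` consecutive epochs. The weights from best_epoch
    are the ones that would normally be restored.
    """
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    return len(val_losses) - 1, best_epoch


# Hypothetical validation-loss curve.
losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.69]
stop_short = early_stopping_epoch(losses, patience=3)
stop_long = early_stopping_epoch(losses, patience=5)
```

With a patience of 3 the run stops before the late improvement at the final epoch, while a patience of 5 reaches it. This shows how the patience setting trades training time against the chance of missing a later minimum.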

Performance Comparison on Benchmark Datasets

Quantitative Results Across Architectures

Performance evaluations on standard BCI competition datasets indicate that hybrid TCN-CNN-Attention models outperform conventional approaches. On the BCI Competition IV-2a dataset, which involves 4-class motor imagery classification, the AMFTCNet model achieves the highest accuracy among the models reviewed here at 87.77%, well above simpler architectures [38]. CIACNet attains 85.15% accuracy, while EEGEncoder reaches 86.46% accuracy in subject-dependent evaluation mode [25] [6]. The hybrid CNN with attention-based feature selection described in [37] achieves 85.53% accuracy, a substantial improvement over baseline models such as standard CNN (74.29%), EEGNet (78.63%), CNN-LSTM (74.35%), and EEG-TCNet (79.40%) [37]. These consistent gains across multiple independent studies point to the robustness of the hybrid approach.

For the 2-class motor imagery tasks in the BCI Competition IV-2b dataset, accuracies are often higher, as expected for binary classification, although the gap is not uniform across models. CIACNet achieves 90.05% accuracy on this dataset, while AMFTCNet reaches 88.26% accuracy [25] [38]. The SMMTM model reports 89.26% accuracy on the BCI IV-2b dataset, further supporting the effectiveness of multi-branch hybrid architectures [40]. In cross-subject evaluation, which is harder because of inter-subject variability, SMMTM maintains 69.21% accuracy on the BCI IV-2a dataset, suggesting improved generalization [40]. Taken together, these results indicate that hybrid models advance MI-EEG decoding across different task complexities and evaluation paradigms.

Table 3: Performance Comparison of Hybrid Models on BCI Competition Datasets

| Model | BCI IV-2a Accuracy | BCI IV-2b Accuracy | Cross-Subject Performance | Kappa Value |
|---|---|---|---|---|
| CIACNet | 85.15% [25] | 90.05% [25] | Not reported | 0.80 [25] |
| AMFTCNet | 87.77% [38] | 88.26% [38] | Not reported | Not reported |
| EEGEncoder | 86.46% (subject-dependent) [6] | Not reported | 74.48% (subject-independent) [6] | Not reported |
| Hybrid CNN with Attention | 85.53% [37] | Not reported | Not reported | Not reported |
| SMMTM | 84.96% [40] | 89.26% [40] | 69.21% (BCI IV-2a) [40] | 0.797 (BCI IV-2a) [40] |
| ATCNet | 87.5% (subject-dependent) [39] | 86.3% (subject-dependent) [39] | Not reported | Not reported |
| Baseline: EEGNet | 78.63% [37] | Not reported | Not reported | Not reported |
| Baseline: EEG-TCNet | 79.40% [37] | Not reported | Not reported | Not reported |
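The kappa column can be related to the accuracy columns through the definition of Cohen's kappa, which corrects observed agreement for agreement expected by chance. The sketch below computes kappa from a confusion matrix and, for the special case of perfectly balanced classes, directly from accuracy. Function names and the example confusion matrix are hypothetical. The balanced-class shortcut is an assumption that may not hold exactly for every evaluation set.

```python
def cohen_kappa(confusion):
    """Cohen's kappa from a square confusion matrix (rows = true, cols = predicted)."""
    n_classes = len(confusion)
    total = sum(sum(row) for row in confusion)
    observed = sum(confusion[i][i] for i in range(n_classes)) / total
    expected = sum(
        (sum(confusion[i]) / total) * (sum(row[i] for row in confusion) / total)
        for i in range(n_classes)
    )
    return (observed - expected) / (1 - expected)


def kappa_from_accuracy(accuracy, n_classes):
    """Shortcut valid when every true class has the same number of trials,
    so that chance agreement equals 1 / n_classes."""
    chance = 1.0 / n_classes
    return (accuracy - chance) / (1 - chance)


# Hypothetical balanced 4-class confusion matrix (72 trials per class).
confusion = [
    [60, 4, 4, 4],
    [4, 60, 4, 4],
    [4, 4, 60, 4],
    [4, 4, 4, 60],
]
kappa_full = cohen_kappa(confusion)
kappa_ciacnet = kappa_from_accuracy(0.8515, n_classes=4)
```

Under the balanced-class assumption, an accuracy of 85.15% on a 4-class task corresponds to a kappa of about 0.80, in line with the CIACNet row. Small deviations from reported values are possible, for example when classes are not perfectly balanced.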

Comparative Analysis and Performance Trends

The performance differences between hybrid models and their conventional counterparts reveal important insights about architectural efficacy. The attention mechanism component consistently provides measurable improvements, with [37] reporting accuracy gains of roughly 6 to 11 percentage points over models lacking attention modules. The integration of TCN components demonstrates particular strength in capturing temporal dependencies in EEG signals, outperforming recurrent alternatives like LSTM and GRU while offering more stable gradient propagation [38] [40]. Furthermore, multi-branch architectures such as SMMTM and AMFTCNet show advantages in extracting complementary features at different scales or frequencies, leading to more robust representations compared to single-pathway models [38] [40].
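The size of the attention-related gain can be checked against the baseline accuracies quoted earlier in this section for the hybrid CNN with attention [37]. The short calculation below treats the gains as absolute differences in percentage points, which is an interpretation on our part. The variable names are illustrative.

```python
# Accuracies (in %) on BCI IV-2a as quoted from [37] earlier in this section.
hybrid_accuracy = 85.53
baselines = {
    "CNN": 74.29,
    "EEGNet": 78.63,
    "CNN-LSTM": 74.35,
    "EEG-TCNet": 79.40,
}

# Absolute gain in percentage points over each baseline.
gains = {name: round(hybrid_accuracy - acc, 2) for name, acc in baselines.items()}
smallest_gain = min(gains.values())
largest_gain = max(gains.values())
```

The resulting gains span about 6.1 to 11.2 percentage points, which matches the quoted range when read as absolute rather than relative improvement.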

An important emerging trend is the balance between model complexity and performance. While increasingly sophisticated architectures generally deliver improved accuracy, they also demand greater computational resources and risk overfitting on limited EEG data [6] [42]. This has prompted research into efficient model design, with approaches like EEGNet demonstrating that carefully designed compact architectures can achieve competitive performance with substantially fewer parameters [25] [38]. The optimal architectural configuration appears to be task-dependent: simpler hybrids may be sufficient for 2-class discrimination, while more complex multi-branch designs yield greater benefits for challenging 4-class scenarios [25] [40]. These observations highlight the importance of matching model complexity to both the specific classification task and the available computational resources.
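One source of the parameter savings in compact designs is the use of depthwise separable convolutions in place of standard ones. The sketch below compares weight counts for a 1D convolution in both forms. The function names and the layer sizes are illustrative assumptions, and bias terms are ignored.

```python
def standard_conv_params(c_in, c_out, kernel_size):
    """Weights in a standard 1D convolution: every output channel has a
    kernel spanning all input channels."""
    return c_in * c_out * kernel_size


def separable_conv_params(c_in, c_out, kernel_size):
    """Weights in a depthwise separable 1D convolution:
    one temporal kernel per input channel, then a 1x1 pointwise mix."""
    depthwise = c_in * kernel_size
    pointwise = c_in * c_out
    return depthwise + pointwise


# Illustrative layer: 16 input channels, 16 output channels, kernel length 16.
standard = standard_conv_params(16, 16, 16)
separable = separable_conv_params(16, 16, 16)
reduction_factor = standard / separable
```

For this illustrative layer the separable form needs one eighth of the weights. The saving grows with kernel length and channel count, which helps explain why compact models remain competitive on small EEG datasets.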