Automated EEG Artifact Detection with Machine Learning: Advanced Methods for Researchers and Drug Development

Abstract

This article provides a comprehensive exploration of machine learning (ML) techniques for automatic electroencephalogram (EEG) artifact detection, tailored for researchers and drug development professionals. It covers the foundational challenge of signal contamination and its impact on data integrity, reviews the evolution from traditional methods to specialized deep learning models like Convolutional Neural Networks (CNNs), and discusses optimization strategies for real-world applications. The content further delivers a critical analysis of performance validation metrics and comparative studies, offering insights to guide the selection and implementation of robust artifact detection pipelines in clinical trials and pharmacological research.

The Critical Challenge of EEG Artifacts in Research and Drug Development

FAQ: EEG Artifacts and Signal Integrity

Q: What is an EEG artifact?

A: An EEG artifact is any recorded signal that does not originate from neural activity within the brain. These unwanted signals can stem from the patient's own body (physiological) or from the external environment or recording equipment (non-physiological) [1].

Q: Why is identifying artifacts so challenging?

A: Artifacts are challenging because they are ubiquitous, do not follow the rules of cerebral localization, are often disorganized, and can be intermixed with genuine brain signals. Furthermore, some artifacts can look deceptively similar to cerebral activity or even mimic rhythmic properties of seizures [2].

Q: Why is robust artifact removal critical for machine learning (ML) research?

A: For ML-based EEG analysis, artifacts represent a significant source of noise and confounding variables. If not properly removed, they can obscure genuine neural signatures, lead to misleading feature extraction, and ultimately result in poorly performing or biased models. Effective artifact handling is a crucial preprocessing step for building reliable automated detection systems [3] [4].

Physiological Artifacts

Physiological artifacts originate from the patient's own biological processes and body functions [5] [1].

Table: Common Physiological Artifacts in EEG

| Artifact Type | Origin | Typical Causes | Key Characteristics in EEG |
|---|---|---|---|
| Ocular Activity [2] [1] | Corneo-retinal potential (eye dipole) [1] | Blinks, saccades, lateral gaze [1] | High-amplitude, slow deflections maximal in frontal electrodes (Fp1, Fp2) [2] [1]. Lateral movements show opposite polarities at F7/F8 [2]. |
| Muscle Activity (EMG) [1] | Muscle fiber contractions [1] | Jaw clenching, talking, swallowing, frowning [1] | High-frequency, low-amplitude "broadband noise" that can obscure beta and gamma bands [1]. |
| Cardiac Activity [2] [1] | Electrical activity of the heart [1] | Heartbeat (ECG), pulse (ballistocardiogram) [1] | Rhythmic waveforms time-locked to the patient's heartbeat, often more prominent on the left side [2]. |
| Glossokinetic/Sweat [2] [1] | Tongue movement; sweat gland activity [2] [1] | Speaking; heat, stress [2] [1] | Tongue: Slow, diffuse delta activity [2]. Sweat: Very slow drifts (<0.5 Hz) in baseline [2] [1]. |

Non-Physiological Artifacts

Non-physiological artifacts are caused by external factors, such as issues with the recording equipment or environmental interference [5] [1].

Table: Common Non-Physiological Artifacts in EEG

| Artifact Type | Origin | Typical Causes | Key Characteristics in EEG |
|---|---|---|---|
| Electrode Pop [2] [1] | Sudden change in electrode-skin impedance [1] | Loose electrode, drying electrolyte gel [1] | Sudden, high-amplitude transient with a very steep upslope, confined to a single electrode with no electrical field [2]. |
| AC/Power Line [1] | Electromagnetic interference from AC power [1] | Unshielded cables, nearby electrical devices [1] | Persistent high-frequency noise with a sharp peak at 50 Hz or 60 Hz in the frequency spectrum [1]. |
| Cable Movement [1] | Physical movement of electrode cables [1] | Tugging on cables, participant movement [1] | Irregular, high-amplitude deflections; can appear rhythmic if movement is repetitive [1]. |
| Incorrect Reference [1] | Poor contact or placement of the reference electrode [1] | Dried conductive gel, loose connection, omitted electrode [1] | Abnormal signal across all channels, with abrupt shifts and abnormally high power [1]. |
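The power-line row above describes a sharp spectral peak at 50 Hz or 60 Hz. The following is a minimal sketch of how such a peak might be screened for on a single channel, using only NumPy. The function name `line_noise_ratio`, the 1 Hz and 5 Hz band widths, the 250 Hz sampling rate, and the synthetic signals are all illustrative assumptions, not part of any cited method.

```python
import numpy as np

def line_noise_ratio(x, fs, line_hz, half_width=1.0, flank=5.0):
    """Ratio of mean spectral power near `line_hz` to mean power in the flanking bands.

    Values far above 1 suggest a narrow peak at the line frequency.
    `half_width` and `flank` (in Hz) are illustrative defaults.
    """
    x = np.asarray(x, dtype=float)
    x = x - x.mean()                               # remove DC offset
    power = np.abs(np.fft.rfft(x * np.hanning(x.size))) ** 2
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    dist = np.abs(freqs - line_hz)
    peak = dist <= half_width                      # bins around the line frequency
    flanks = (dist > half_width) & (dist <= flank) # neighbouring bins used as baseline
    return float(power[peak].mean() / power[flanks].mean())

# Synthetic single-channel example (assumed 250 Hz sampling rate, 10 s of data).
rng = np.random.default_rng(0)
fs = 250.0
t = np.arange(int(10 * fs)) / fs
clean = rng.standard_normal(t.size)                         # stand-in for background EEG
contaminated = clean + 2.0 * np.sin(2 * np.pi * 50.0 * t)   # add 50 Hz interference

ratio_clean = line_noise_ratio(clean, fs, 50.0)
ratio_noisy = line_noise_ratio(contaminated, fs, 50.0)
print(f"clean: {ratio_clean:.2f}  contaminated: {ratio_noisy:.2f}")
```

In practice the decision threshold on this ratio would need to be chosen per amplifier and montage; the sketch only shows that the ratio separates the two synthetic cases.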

Machine Learning for Artifact Detection and Removal

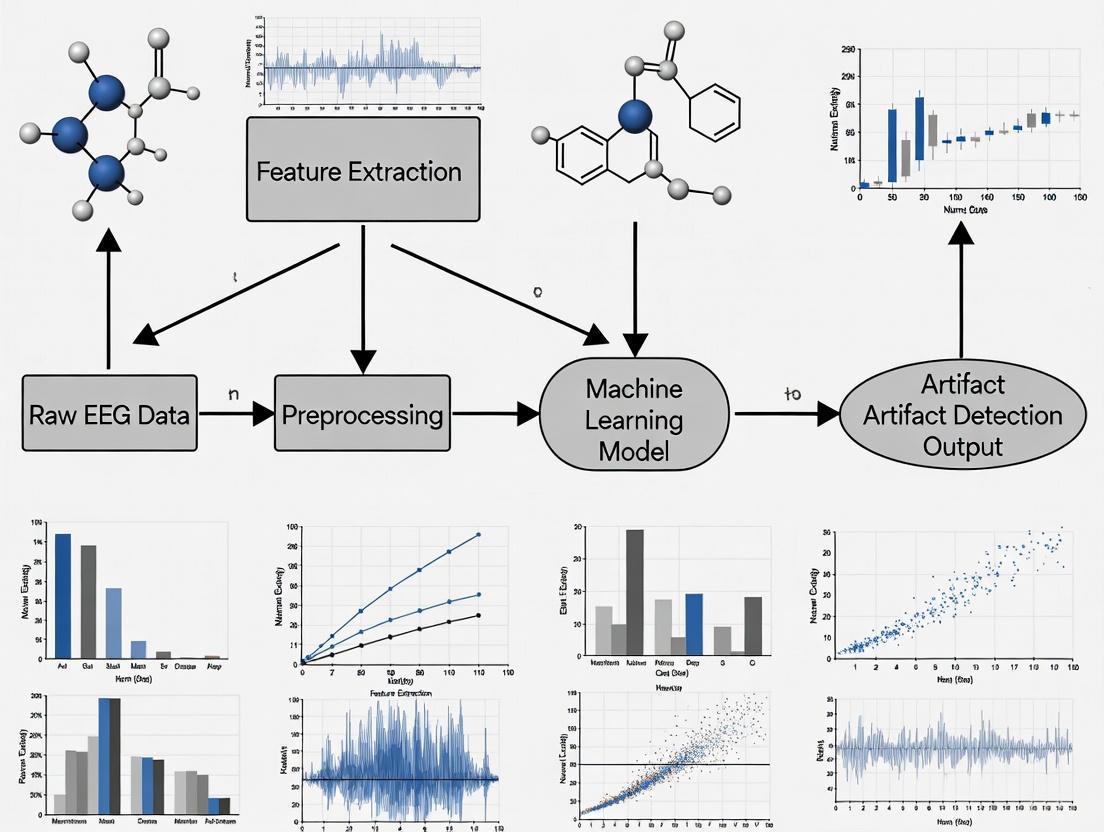

Automating artifact handling is a major focus in modern EEG research, aiming to overcome the time-consuming and subjective nature of manual review [3] [6]. The following workflow illustrates a generalized pipeline for a machine learning-based approach to this problem.

Experimental Protocol: Unsupervised Detection with LSTEEG Autoencoder

This protocol is based on the methodology described by Aquilué-Llorens & Soria-Frisch [3].

- Data Preparation: Obtain a dataset of clean, pre-processed EEG epochs (e.g., from the LEMON dataset). Partition the data into training (60%), validation (20%), and test (20%) sets.

- Model Training: Train an LSTM-based autoencoder (LSTEEG) using only the clean training data. The objective is to minimize the Mean Squared Error (MSE) between the input epoch and the reconstructed output.

- Anomaly Detection: Forward new, unlabeled EEG epochs through the trained network. Calculate the reconstruction MSE.

- Classification: Epochs with low MSE are classified as "clean" (similar to training data), while epochs with high MSE are classified as "contaminated" or artifactual (anomalies). The MSE value serves as the classification metric, and performance can be evaluated using the Area Under the ROC Curve (AUC) [3].
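The logic of this protocol (train on clean data only, then flag epochs by reconstruction error) can be sketched without a deep learning framework. The example below is not the LSTEEG network: a linear PCA projection stands in for the autoencoder so that the sketch stays short and runnable. The synthetic epochs, the 4-component bottleneck, and the 99th-percentile threshold taken from the validation set are all assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
N_SAMPLES = 128  # samples per epoch (assumed)

def make_clean_epochs(n):
    """Synthetic 'clean' epochs: a 10 Hz rhythm with random phase plus small noise."""
    t = np.arange(N_SAMPLES) / 128.0
    phase = rng.uniform(0, 2 * np.pi, size=(n, 1))
    return np.sin(2 * np.pi * 10.0 * t + phase) + 0.1 * rng.standard_normal((n, N_SAMPLES))

clean = make_clean_epochs(500)

# 60/20/20 split into training, validation, and test sets.
shuffled = clean[rng.permutation(len(clean))]
train, val, test_clean = np.split(shuffled, [300, 400])

# Linear stand-in for the autoencoder: PCA fitted on clean training epochs only.
mean = train.mean(axis=0)
_, _, vt = np.linalg.svd(train - mean, full_matrices=False)
components = vt[:4]  # 4-dimensional "bottleneck" (assumed)

def reconstruction_mse(epochs):
    """Per-epoch MSE between the input and its reconstruction."""
    centered = epochs - mean
    recon = centered @ components.T @ components
    return np.mean((centered - recon) ** 2, axis=1)

# Decision threshold calibrated on clean validation data (assumed 99th percentile).
threshold = np.percentile(reconstruction_mse(val), 99)

# Contaminate the test epochs with a slow, high-amplitude, blink-like deflection.
blink = 5.0 * np.exp(-0.5 * ((np.arange(N_SAMPLES) - 64) / 10.0) ** 2)
test_noisy = test_clean + blink

flag_clean = reconstruction_mse(test_clean) > threshold
flag_noisy = reconstruction_mse(test_noisy) > threshold
print(f"flagged clean: {flag_clean.mean():.2%}  flagged contaminated: {flag_noisy.mean():.2%}")
```

Calibrating the threshold on held-out clean data fixes the expected false-positive rate in advance; a real pipeline would replace the PCA stand-in with the trained network and keep the rest of the logic unchanged.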

Experimental Protocol: Supervised Correction with AnEEG (LSTM-GAN)

This protocol is based on the methodology described in Scientific Reports [4].

- Data Preparation: Create a paired dataset where each input is a noisy/artifact-contaminated EEG epoch, and the corresponding target is a clean, ground-truth version of the same epoch. Ground truth can be established using advanced artifact removal techniques.

- Model Architecture: Implement a Generative Adversarial Network (GAN) where:

  - The Generator is an LSTM-based network that takes the noisy EEG as input and generates a "cleaned" signal.

  - The Discriminator is a Convolutional Neural Network (CNN) that tries to distinguish between the generator's output and the true clean signal.

- Adversarial Training: Train the model in a two-step iterative process. The generator learns to produce cleaned signals that are realistic enough to "fool" the discriminator, while the discriminator becomes better at identifying real vs. generated clean signals. This competition drives the generator to produce high-quality, artifact-free EEG.

- Evaluation: Use quantitative metrics like Normalized Mean Square Error (NMSE), Root Mean Square Error (RMSE), Correlation Coefficient (CC), and Signal-to-Noise Ratio (SNR) to validate the quality of the corrected signal against the ground truth [4].
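The evaluation metrics named in the last step can be written down compactly. The sketch below uses commonly seen definitions; the exact normalizations used in [4] may differ, so treat these formulas as assumptions to be checked against the paper before comparing numbers.

```python
import numpy as np

def rmse(clean, corrected):
    """Root mean square error between ground truth and corrected signal."""
    return float(np.sqrt(np.mean((clean - corrected) ** 2)))

def nmse(clean, corrected):
    """Error energy normalized by the energy of the ground-truth signal."""
    return float(np.sum((clean - corrected) ** 2) / np.sum(clean ** 2))

def correlation_coefficient(clean, corrected):
    """Pearson correlation between ground truth and corrected signal."""
    return float(np.corrcoef(clean, corrected)[0, 1])

def snr_db(clean, corrected):
    """Signal-to-noise ratio in dB, treating the residual as noise."""
    return float(10 * np.log10(np.sum(clean ** 2) / np.sum((clean - corrected) ** 2)))

# Toy example: the corrected signal is the ground truth scaled by 0.9.
clean = np.array([1.0, -1.0, 1.0, -1.0])
corrected = 0.9 * clean

print(rmse(clean, corrected))                     # about 0.1
print(nmse(clean, corrected))                     # about 0.01
print(correlation_coefficient(clean, corrected))  # about 1.0
print(snr_db(clean, corrected))                   # about 20 dB
```

The toy example also shows why CC alone is insufficient: a uniformly attenuated signal still correlates perfectly with the ground truth, while RMSE, NMSE, and SNR register the amplitude error.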

The Scientist's Toolkit

Table: Key Computational Tools for Automated EEG Artifact Processing

| Tool / Reagent | Function / Application in Research |
|---|---|
| LSTM-based Autoencoder (e.g., LSTEEG) [3] | An unsupervised deep learning model that learns to compress and reconstruct clean EEG. It is leveraged for artifact detection by calculating the reconstruction error, where high error indicates an anomaly/artifact. |
| Generative Adversarial Network (GAN) [4] | A deep learning framework for artifact removal. It pits a generator (which cleans the signal) against a discriminator, leading to the synthesis of high-quality, artifact-corrected EEG data. |
| Independent Component Analysis (ICA) [3] [1] | A classical blind source separation technique used to decompose multi-channel EEG into independent components. Researchers can then manually or automatically (e.g., with ICLabel) identify and remove components correlated with artifacts. |
| Random Forest Classifier [6] | A traditional machine learning model effective for automated detection of artifacts, especially in specific contexts like single-channel, short-epoch neonatal EEG. |
| LEMON & EEGDenoiseNet Datasets [3] [4] | Publicly available benchmark EEG datasets that are essential for training, validating, and benchmarking the performance of new artifact detection and removal algorithms. |

Troubleshooting Guide: Addressing Common EEG Issues

Issue: Persistent noise or flat lines across all channels.

- Step 1: Check the basic electrode/cap connections. Ensure all components are plugged in correctly. Re-apply and check the impedance of the ground (GND) and reference (REF) electrodes, as a faulty reference can affect all channels [7] [1].

- Step 2: Restart the recording software and the amplifier unit. This can resolve temporary software glitches [7].

- Step 3: If possible, swap out the headbox and cables to rule out a hardware-level failure [7].

- Step 4: Check for external sources of electrical interference, such as unshielded power cables or electronic devices near the participant [1].

Issue: High-frequency noise on a single channel.

- Step 1: Identify the specific channel displaying the noise.

- Step 2: Inspect the corresponding electrode. Re-apply the electrode by cleaning the site and adding fresh conductive gel to ensure good skin contact and low impedance [7].

- Step 3: Check the specific cable and connector for that channel for any damage or looseness [1].

- Step 4: If the problem persists, try replacing the electrode entirely to rule out a "dead" or faulty sensor [7].

Troubleshooting Guides

Guide 1: Diagnosing Source Misclassification in Automated Artifact Detection

Problem: Your automated pipeline misclassifies high-entropy neural signals as artifacts, incorrectly rejecting valuable data.

Explanation: In the context of disorders of consciousness, high-entropy brain states are a hallmark of conscious awareness but can share temporal or spectral properties with muscle artifacts. Automated systems may mistakenly flag these valuable neural patterns as noise [8].

Solution:

- Step 1 - Verify with Ground Truth: Check whether the epochs flagged as artifacts contain known neural markers. For example, look for event-related potentials (ERPs) such as the P300 or N400 in the rejected trials, using a separate clean dataset as the reference for what those components should look like [9].

- Step 2 - Implement Spatial Analysis: Analyze the topographic distribution of the signal. Neural signals typically follow known spatial patterns (e.g., activity over parietal regions for certain cognitive tasks), while artifacts like muscle noise often have a more focal, frontal, or temporal distribution [8] [1].

- Step 3 - Adjust Algorithm Thresholds: If using an anomaly detection autoencoder like LSTEEG, recalibrate the threshold for the reconstruction error. Retrain or fine-tune the model on a dataset that includes high-entropy brain states to improve its discrimination capability [3].

Guide 2: Addressing Incomplete Artifact Removal in Deep Learning Models

Problem: After processing with a deep learning denoising model, residual artifacts remain in the EEG signal, potentially leading to confounds in decoding analyses.

Explanation: Deep learning models, such as the AnEEG network or LSTEEG autoencoder, are trained to reconstruct clean signals. However, with complex or high-amplitude artifacts (e.g., from motion or transcranial electrical stimulation), the model may only partially remove the noise, leaving remnants that can still skew downstream analysis [4] [3] [10].

Solution:

- Step 1 - Quality Metric Check: Calculate post-correction quality metrics like Signal-to-Artifact Ratio (SAR) and Correlation Coefficient (CC) against a ground truth. Low values indicate incomplete removal [4].

- Step 2 - Hybrid Approach: For severe artifacts, combine deep learning correction with a subsequent artifact rejection step. Models may struggle with large artifacts that completely mask brain activity; rejecting these epochs entirely preserves the integrity of the remaining data [3].

- Step 3 - Model Selection/Retraining: Benchmark different models for your specific artifact type. For instance, a multi-modular State Space Model (SSM) may outperform a convolutional network for removing artifacts from transcranial random noise stimulation (tRNS) [10]. Retrain your model on data that better represents the challenging artifacts in your experiments.

Frequently Asked Questions (FAQs)

Q1: We are using a low-density, wearable EEG system for a drug development study. Why do standard artifact removal techniques like ICA perform poorly, and what are better options?

A: Independent Component Analysis (ICA) needs a high number of channels and stable scalp coverage to separate neural sources from artifacts reliably. Wearable EEG systems often have few channels (typically below 16) and use dry electrodes, which reduces spatial resolution and increases signal instability, thus impairing ICA's effectiveness [11] [12]. Better options include:

- Artifact Subspace Reconstruction (ASR): An adaptive method that is widely applied for ocular, movement, and instrumental artifacts in wearable EEG [11].

- Deep Learning Models: Models like LSTM-based autoencoders (e.g., LSTEEG) or GANs (e.g., AnEEG) are emerging as powerful tools. They can learn complex, non-linear artifact representations and are particularly promising for muscular and motion artifacts in real-time settings [11] [4] [3].

Q2: Our multivariate pattern analysis (MVPA) shows good decoding accuracy, but we are concerned that residual artifacts might be creating false confounds. How can we verify this?

A: Your concern is valid, as artifacts can artificially inflate decoding accuracy. A recent study systematically evaluated this issue. The findings suggest that while a combination of artifact correction and rejection may not always significantly enhance decoding performance, artifact correction (e.g., using ICA for ocular artifacts) prior to analysis is still strongly recommended to minimize the risk of artifact-related confounds [9]. To verify your results:

- Control Analysis: Run your decoding algorithm on the artifact-free intervals only and compare the performance with the results from the full dataset. A significant drop in accuracy when using only clean data may indicate that artifacts were driving your initial results.

- Inspect Topographies: Examine the weight maps of your decoder. If the most influential features originate from channels known for artifact contamination (e.g., frontal sites for eye blinks), it suggests a potential confound [9].

Q3: For our automated pipeline, what is the most effective way to classify different types of artifacts (e.g., ocular vs. muscular) using machine learning?

A: Classifying specific artifact categories is a critical step for applying tailored removal strategies. The most effective approaches combine component analysis with deep learning:

- ICA with a Classifier: First, use ICA to decompose the EEG signal into independent components. Then, instead of manual inspection, use a trained classifier (e.g., a Convolutional Neural Network) to automatically label these components based on their topographic maps and power spectra. This method has achieved high accuracy (>91%) in classifying muscle, eye blink, and horizontal eye movement artifacts [13].

- End-to-End Deep Learning: Newer models like DSCnet are designed for multi-angle feature learning directly from EEG signals, which can capture spatiotemporal patterns characteristic of different artifacts without the need for a separate decomposition step [14].

Table 1: Performance Metrics of Selected Deep Learning Models for EEG Artifact Removal

| Model Name | Model Architecture | Key Metric | Performance Value | Primary Artifact Targeted | Reference Dataset |
|---|---|---|---|---|---|
| AnEEG | LSTM-based GAN | Correlation Coefficient (CC) | Higher than wavelet techniques | Muscle, Ocular, Environmental | [4] |
| DSCnet | Depthwise Separable CNN + DAFM | Classification Accuracy | 85.11% (Drug), 84.56% (Alcohol) | N/A (Addiction Detection) | Collected dataset, UCI [14] |
| ICA + ANN | ICA + Artificial Neural Network | Classification Accuracy | 91.01% ± 5.12% | Ocular (98.29%), EMG | 841 Healthy Subjects [13] |
| LSTEEG | LSTM-based Autoencoder | Area Under Curve (AUC) | Competitive performance shown | General Artifacts (Detection) | LEMON Dataset [3] |
| M4 Network | State Space Model (SSM) | Root Relative Mean Squared Error (RRMSE) | Best for tACS & tRNS | tES Artifacts | Synthetic tES Dataset [10] |

Table 2: Characteristics and Impacts of Common EEG Artifacts on Data Integrity

| Artifact Type | Origin | Temporal Signature | Spectral Signature | Primary Impact on Neural Signals & Risk of Skewed Results |
|---|---|---|---|---|
| Ocular (EOG) | Eye movements/blinks | Sharp, high-amplitude deflections (Frontal) | Dominates Delta/Theta bands | Masks cognitive low-frequency rhythms; can be misclassified as neural activity [1]. |
| Muscle (EMG) | Muscle contractions | High-frequency noise | Broadband, dominates Beta/Gamma | Obscures high-frequency neural oscillations related to cognition and motor activity [11] [1]. |
| Cardiac (ECG) | Heartbeat | Rhythmic waveforms | Overlaps multiple bands | Can create periodic confounds; challenging to detect without reference [1]. |
| Electrode Pop | Poor electrode contact | Abrupt, high-amplitude transients | Broadband, non-stationary | Can be misinterpreted as epileptiform spikes or other pathological neural events [1]. |
| Motion | Head/Body movement | Large, non-linear noise bursts | Varies; can mimic rhythms | Introduces non-linear drifts and noise, severely challenging signal interpretation in mobile EEG [11] [1]. |

Experimental Protocols

Protocol 1: Benchmarking Deep Learning Models for tES Artifact Removal

Objective: To evaluate and compare the performance of multiple machine learning models in removing artifacts induced by different Transcranial Electrical Stimulation (tES) modalities from simultaneous EEG recordings.

Methodology:

- Dataset Creation: A semi-synthetic dataset is created by adding synthetically generated tES artifacts (for tDCS, tACS, and tRNS) to clean EEG recordings. Because the clean signal is known, this provides a ground truth for rigorous evaluation [10].

- Model Training: A suite of eleven models is trained, including traditional methods and advanced deep learning architectures like Complex CNN and a multi-modular State Space Model (M4) [10].

- Performance Evaluation: Models are evaluated using three metrics calculated between the denoised output and the ground truth clean EEG:

  - Root Relative Mean Squared Error (RRMSE) in temporal and spectral domains.

  - Correlation Coefficient (CC) [10].
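The dataset-creation and evaluation steps above can be sketched as follows. The RRMSE definitions are the forms commonly used in EEG denoising benchmarks and are assumed here rather than taken from [10]. The helper `mix_at_snr`, the plain FFT-based power spectrum, and the 40 Hz sinusoid standing in for a tACS artifact are illustrative assumptions.

```python
import numpy as np

def rms(x):
    return np.sqrt(np.mean(np.square(x)))

def rrmse_temporal(clean, denoised):
    """RMS of the time-domain residual relative to the RMS of the clean signal."""
    return float(rms(denoised - clean) / rms(clean))

def power_spectrum(x):
    return np.abs(np.fft.rfft(x)) ** 2 / x.size

def rrmse_spectral(clean, denoised):
    """Same ratio, computed on power spectra instead of time series."""
    ps_clean, ps_denoised = power_spectrum(clean), power_spectrum(denoised)
    return float(rms(ps_denoised - ps_clean) / rms(ps_clean))

def mix_at_snr(clean, artifact, snr_db):
    """Scale `artifact` so that the clean-to-artifact RMS ratio equals `snr_db`, then add it."""
    scale = rms(clean) / (rms(artifact) * 10 ** (snr_db / 20))
    return clean + scale * artifact

# Semi-synthetic example: known clean signal plus a synthetic stimulation-like artifact.
rng = np.random.default_rng(1)
fs = 256
t = np.arange(2 * fs) / fs
clean = np.sin(2 * np.pi * 10 * t) + 0.2 * rng.standard_normal(t.size)
tacs_like = np.sin(2 * np.pi * 40 * t)  # hypothetical stand-in for a tACS artifact
contaminated = mix_at_snr(clean, tacs_like, snr_db=-10)

rrmse_before = rrmse_temporal(clean, contaminated)  # error with no denoising at all
rrmse_perfect = rrmse_temporal(clean, clean)        # error of an ideal denoiser
print(rrmse_before, rrmse_spectral(clean, contaminated), rrmse_perfect)
```

Reporting the "no denoising" value alongside each model's score gives a baseline: at -10 dB the temporal RRMSE of the untouched signal is 10^0.5, roughly 3.16, so any useful model must score well below that.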

Protocol 2: Unsupervised Anomaly Detection for EEG Artifacts Using an Autoencoder

Objective: To detect artifacts in EEG signals without requiring labeled data, by treating artifacts as anomalies.

Methodology:

- Data Preparation: A dataset of clean, pre-processed EEG epochs is used (e.g., from the LEMON dataset). This data is split 60/20/20 for training, validation, and testing [3].

- Unsupervised Training: An autoencoder (e.g., LSTEEG) is trained solely on the clean EEG data. The network learns to compress and reconstruct normal brain activity by minimizing the Mean Squared Error (MSE) between its input and output [3].

- Anomaly Detection: During inference, new EEG epochs (potentially containing artifacts) are fed into the autoencoder. The reconstruction MSE is calculated for each epoch.

  - A low MSE indicates the epoch is similar to the training data (i.e., clean).

  - A high MSE indicates the epoch is an anomaly (i.e., contains artifacts) [3].

- Validation: The Area Under the Receiver Operating Characteristic Curve (AUC) is used to determine the predictive ability of the reconstruction MSE for classifying noisy vs. clean epochs [3].
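For the validation step, the AUC can be computed directly from per-epoch reconstruction errors without fixing a threshold. The sketch below uses the rank interpretation of the AUC (the probability that a randomly chosen artifact epoch has a higher MSE than a randomly chosen clean epoch). The MSE values are made-up numbers for illustration only.

```python
import numpy as np

def roc_auc(scores_clean, scores_artifact):
    """AUC as the fraction of (clean, artifact) pairs in which the artifact scores higher.

    Ties count as half. This equals the area under the ROC curve obtained
    by sweeping a threshold over the scores.
    """
    c = np.asarray(scores_clean, dtype=float)[:, None]
    a = np.asarray(scores_artifact, dtype=float)[None, :]
    return float(np.mean((a > c) + 0.5 * (a == c)))

# Hypothetical reconstruction MSE values for labeled test epochs.
mse_clean = [0.010, 0.012, 0.009, 0.030]
mse_artifact = [0.025, 0.200, 0.150]

auc = roc_auc(mse_clean, mse_artifact)
print(f"AUC = {auc:.3f}")  # 11 of 12 pairs are ordered correctly
```

An AUC near 1.0 means the reconstruction error alone ranks contaminated epochs above clean ones; an AUC near 0.5 means it carries no discriminative information.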

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Automated EEG Artifact Detection Research

| Resource Name / Type | Function in Research | Specific Example / Note |
|---|---|---|
| Public EEG Datasets | Provides standardized data for training and benchmarking machine learning models. | EEGDenoiseNet [3]; LEMON Dataset [3]; UCI Alcohol Addiction Dataset [14]; PhysioNet Motor/Imagery Dataset [4]. |
| Blind Source Separation (BSS) | A foundational technique for decomposing EEG signals into constituent sources before classification. | Independent Component Analysis (ICA) is the most common method, used to generate components for subsequent automated classification [13] [1]. |
| Deep Learning Frameworks | Enables the development and training of complex models for end-to-end artifact detection and removal. | Used for architectures like GANs (AnEEG [4]), LSTM Autoencoders (LSTEEG [3]), CNNs, and State Space Models (M4 [10]). |
| Semi-Synthetic Data Generators | Allows for controlled evaluation by mixing clean EEG with known artifacts, providing a perfect ground truth. | Crucial for benchmarking, especially for artifacts like those from tES, where a clean reference is otherwise unavailable [10]. |
| Automated Classification Tools | Reduces or eliminates the need for manual inspection of components or signals, enabling high-throughput analysis. | ICLabel (a CNN for ICA component labeling [3]); Custom ANN classifiers for ICA components [13]. |

Electroencephalography (EEG) is a crucial tool in neuroscience and clinical diagnostics, but its signals are frequently contaminated by artifacts from biological sources (eye movements, muscle activity, cardiac rhythms) and environmental sources (powerline interference, electrode movement) [4]. These artifacts obscure neural information and can lead to misinterpretation in both research and clinical settings. For decades, traditional methods like Blind Source Separation (BSS) and rule-based thresholding have formed the cornerstone of EEG artifact management. However, within the context of advancing automatic artifact detection using machine learning, understanding the specific limitations of these traditional approaches becomes paramount. This technical support guide examines these limitations through experimental evidence and provides troubleshooting guidance for researchers navigating these methodological challenges.

Understanding Blind Source Separation (BSS) and Its Limitations

Blind Source Separation, particularly Independent Component Analysis (ICA), has been a prominent processing tool in EEG research for separating intracranial dipolar sources from scalp recordings without relying on head modeling [15]. BSS operates on the superposition principle, where scalp potentials are represented as a linear, instantaneous mixture of underlying neural sources [16].

Core Technical Limitation: Sensitivity to Mixing Matrix Distortions

A fundamental assumption of BSS is that the mixing matrix remains invariant—meaning sources, electrodes, and head geometry do not change during recording. In practice, this assumption is frequently violated.

Troubleshooting FAQ: BSS Component Proliferation

- Q: My BSS analysis is identifying more components than expected, with many having similar properties. What is causing this, and how can I troubleshoot it?

- A: This is a common problem linked to violations of the mixing matrix invariance assumption. Even slight electrode movement or inter-individual anatomo-functional variability in group analyses creates non-Gaussian features that impair Higher-Order Statistics (HOS) algorithms [16]. To troubleshoot:

  - Inspect Data Stationarity: Check for segments where electrode impedance may have changed or where head movement occurred.

  - Compare Algorithms: Test Second-Order Statistics (SOS) algorithms like SOBI or AJDC, which are more robust to these distortions [16].

  - For Group ICA: Avoid using a single mixing matrix for all subjects. Consider alternative approaches that account for inter-subject variability.

Experimental Protocol: Testing BSS Robustness

- Objective: To evaluate the sensitivity of different BSS algorithms to controlled distortions in the mixing matrix.

- Method (as simulated in [16]):

  - Generate Synthetic Data: Create artificial EEG data from known dipolar sources with a stable mixing matrix.

  - Introduce Distortion: Simulate a sudden electrode displacement by abruptly altering the mixing coefficients for a subset of channels.

  - Apply BSS Algorithms: Process the distorted data with multiple HOS-based (e.g., FASTICA, INFOMAX) and SOS-based (e.g., SOBI, AJDC) algorithms.

  - Evaluate Performance: Measure the quality of recovered signals and the accuracy of source localization for each algorithm.

- Key Finding: HOS-based methods are substantially more impaired by mixing matrix distortions, leading to the identification of spurious components and reduced localization efficiency [16].
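The first two method steps can be illustrated with a small NumPy simulation. This is not the simulation from [16] and it does not run any BSS algorithm; it only shows why an abrupt change in the mixing coefficients makes a single-mixing-matrix model need more components than there are true sources. The source count, channel count, Laplace-distributed sources, and the choice of channels 0 and 1 as the "displaced" electrodes are all assumptions.

```python
import numpy as np

rng = np.random.default_rng(7)
n_sources, n_channels, n_samples = 3, 8, 2000

# Known non-Gaussian sources and a stable (time-invariant) mixing matrix.
sources = rng.laplace(size=(n_sources, n_samples))
mixing = rng.standard_normal((n_channels, n_sources))
stable = mixing @ sources

# Distortion: halfway through the recording, the mixing coefficients of
# channels 0 and 1 change abruptly (a crude model of electrode displacement).
distorted_mixing = mixing.copy()
distorted_mixing[:2] = rng.standard_normal((2, n_sources))
half = n_samples // 2
distorted = np.hstack([
    mixing @ sources[:, :half],
    distorted_mixing @ sources[:, half:],
])

# Number of linearly independent components a single linear model needs.
rank_stable = np.linalg.matrix_rank(stable)
rank_distorted = np.linalg.matrix_rank(distorted)
print(rank_stable, rank_distorted)  # 3 sources, but 5 dimensions after distortion
```

With three true sources, the stable recording spans three dimensions, while the distorted recording spans five (three original directions plus two introduced by the changed channels). Any method that assumes one fixed mixing matrix must account for those extra dimensions somehow, which is consistent with the component proliferation described above.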

Table 1: Comparison of BSS Algorithm Performance Under Mixing Matrix Distortions

| Algorithm Type | Example Algorithms | Robustness to Mixing Matrix Distortion | Impact on Source Recovery |
|---|---|---|---|
| Higher-Order Statistics (HOS) | FASTICA, INFOMAX, Ext-INFOMAX | Low | Substantial impairment; creates non-Gaussian features, leading to more components than actual sources [16]. |
| Second-Order Statistics (SOS) | SOBI, UW-SOBI, AJDC | High | Significantly less sensitive; better recovery of signal quality and localization accuracy [16]. |
| Hybrid Algorithms | JADE, COMBI | Moderate | Variable performance, often intermediate between SOS and HOS [16]. |

The Thresholding Problem in Rule-Based Methods

Rule-based methods often rely on graph theoretical analyses of functional connectivity, which require thresholding to eliminate spurious connections. The choice of this threshold is arbitrary and represents a significant source of variability.

Core Technical Limitation: Arbitrary Threshold Selection

The arbitrary choice of a proportional threshold dramatically influences the global metrics of a functional connectivity network.

Troubleshooting FAQ: Inconsistent Graph Metrics

- Q: The global connectivity measures from my EEG functional connectivity analysis change drastically with different proportional thresholds. How can I ensure the stability and validity of my results?

- A: This is known as the "thresholding problem" [17]. To improve robustness:

  - Avoid Single Thresholds: Do not base conclusions on a single, arbitrarily chosen threshold.

  - Threshold-Free or Multi-Threshold Analysis: Explore threshold-free measures or report results across a range of plausible thresholds to demonstrate the stability of your findings.

  - Improve Reasoning: Document and justify the chosen thresholding method in your analysis protocol. The field is moving towards improved reasoning behind analytic choices and the adoption of different approaches [17].

Experimental Protocol: Quantifying Thresholding Variability

- Objective: To demonstrate the effect of proportional thresholding on graph network parameters.

- Method (as conducted in [17]):

  - Data Acquisition: Collect resting-state EEG from participants (e.g., 146 recordings).

  - Compute Functional Connectivity: Calculate connectivity matrices using multiple synchronization measures (e.g., wPLI, ImCoh, Coherence).

  - Apply Thresholding: Apply a series of proportional thresholds (e.g., from 5% to 30% strongest connections retained) to each matrix.

  - Calculate Graph Metrics: For each thresholded matrix, compute global graph metrics such as clustering coefficient, path length, and small-worldness.

  - Analyze Variability: Plot the graph metrics as a function of the applied threshold.

- Key Finding: Graph network parameters show significant changes as a function of the chosen threshold, which can directly influence the outcome and conclusions of a study [17].
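The thresholding and graph-metric steps can be sketched in a few lines of NumPy. The random symmetric matrix below is only a stand-in for a real connectivity matrix (e.g., wPLI), and the helper names `proportional_threshold` and `mean_clustering` are hypothetical. Only the clustering coefficient is computed; path length and small-worldness are omitted to keep the sketch short.

```python
import numpy as np

def proportional_threshold(conn, proportion):
    """Binary adjacency matrix keeping the strongest `proportion` of undirected edges."""
    n = conn.shape[0]
    rows, cols = np.triu_indices(n, k=1)          # each undirected edge once
    weights = conn[rows, cols]
    n_keep = int(round(proportion * weights.size))
    adj = np.zeros((n, n), dtype=int)
    if n_keep > 0:
        keep = np.argsort(weights)[::-1][:n_keep]  # indices of the strongest edges
        adj[rows[keep], cols[keep]] = 1
    return adj + adj.T                             # symmetrize

def mean_clustering(adj):
    """Mean local clustering coefficient of a binary undirected graph."""
    degree = adj.sum(axis=1)
    triangles = np.diag(adj @ adj @ adj) / 2.0     # triangles through each node
    possible = degree * (degree - 1) / 2.0         # neighbour pairs per node
    local = np.divide(triangles, possible,
                      out=np.zeros(len(adj)), where=possible > 0)
    return float(local.mean())

# Random symmetric matrix as a stand-in for a 32-channel connectivity matrix.
rng = np.random.default_rng(3)
n_channels = 32
raw = rng.random((n_channels, n_channels))
conn = (raw + raw.T) / 2

for p in (0.05, 0.10, 0.20, 0.30):
    adj = proportional_threshold(conn, p)
    print(f"retain {p:.0%}: edges={adj.sum() // 2}, clustering={mean_clustering(adj):.3f}")
```

Even on structureless random data the clustering coefficient changes with the retained proportion, which is why reporting a metric at a single threshold says little on its own; sweeping the threshold as in the loop above is the multi-threshold analysis recommended in the FAQ.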

Table 2: Impact of Proportional Thresholding on Global Graph Metrics (Sample Observations from [17])

| Proportional Threshold (% of strongest connections retained) | Effect on Clustering Coefficient | Effect on Characteristic Path Length | Risk of Conclusion Bias |
|---|---|---|---|
| Low (e.g., 5-10%) | May be artificially low due to oversparsification of the network. | May be artificially high. | High: Network may appear erroneously segregated. |
| Medium (e.g., 15-20%) | Potentially reflects true network structure, but "true" value is unknown. | Potentially reflects true network structure. | Medium: Highly dependent on sample and measure. |
| High (e.g., 25-30%) | May be artificially high due to inclusion of spurious weak connections. | May be artificially low. | High: Network may appear erroneously integrated. |

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Tools for EEG Artifact Research

| Item / resource | Function / purpose | Example / note |
|---|---|---|
| EEGLAB | An open-source MATLAB toolbox for processing single-trial EEG dynamics, including ICA [18]. | Provides a framework for BSS analysis, visualization, and plugin management (e.g., FIRFILT plugin). |
| TUH EEG Artifact Corpus | A public dataset of clinical EEG with expert-annotated artifacts [19]. | Used for training and validating automated artifact detection models; contains 158k+ annotations. |
| Standardized Montages | A predefined set of electrode pairs for bipolar derivation. | Reduces common-mode noise; essential for standardizing inputs to deep learning models [19]. |
| SOS BSS Algorithms (e.g., SOBI, AJDC) | For source separation when data violates the mixing matrix invariance assumption [16]. | More robust than HOS algorithms to electrode movement and group-level analysis. |
| Deep Learning Frameworks (e.g., TensorFlow, PyTorch) | For building and training specialized artifact detection models. | Enables development of CNNs and other architectures tailored to specific artifact classes [19]. |

Comparative Analysis: Traditional vs. Modern Approaches

The limitations of traditional methods have catalyzed the development of novel approaches, particularly deep learning models.

Troubleshooting FAQ: Choosing an Artifact Handling Strategy

- Q: With the rise of deep learning, should I abandon traditional methods like BSS and rule-based thresholding for artifact management?

- A: Not necessarily. The choice of method should be guided by your specific research context:

  - For Well-Defined, Stationary Data: Traditional BSS can still be effective and offers interpretability.

  - For Clinical or Noisy Data: Consider SOS-based BSS for its robustness or explore modern deep learning models.

  - For Automated, High-Throughput Processing: Specialized deep learning models are usually the stronger choice. Research shows that artifact-specific Convolutional Neural Networks (CNNs) significantly outperform rule-based methods, with F1-score improvements ranging from +11.2% to +44.9% [19]. These models can be optimized per artifact class, for example by using 1 s windows for non-physiological artifacts and 20 s windows for eye movements [19].

  - For Functional Connectivity: Move beyond single-threshold analyses and employ multi-threshold or threshold-free techniques to ensure the robustness of your findings [17].
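Two ideas from the answer above lend themselves to a short sketch: cutting a recording into windows whose length depends on the artifact class, and scoring a detector with the F1-score. The helper names, the 250 Hz sampling rate, the non-overlapping windows, and the toy labels are illustrative assumptions and do not reproduce the pipeline in [19].

```python
import numpy as np

def sliding_windows(signal, fs, window_s):
    """Split a 1-D signal into non-overlapping windows of `window_s` seconds.

    Any trailing samples that do not fill a whole window are dropped.
    """
    size = int(round(window_s * fs))
    n_windows = signal.size // size
    return signal[: n_windows * size].reshape(n_windows, size)

def f1_score(y_true, y_pred):
    """F1-score for binary labels (1 = artifact, 0 = clean)."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    tp = np.sum(y_true & y_pred)
    fp = np.sum(~y_true & y_pred)
    fn = np.sum(y_true & ~y_pred)
    denom = 2 * tp + fp + fn
    return float(2 * tp / denom) if denom else 0.0

fs = 250                                   # assumed sampling rate in Hz
recording = np.zeros(60 * fs)              # placeholder for 60 s of one channel

short_windows = sliding_windows(recording, fs, 1.0)    # e.g., non-physiological artifacts
long_windows = sliding_windows(recording, fs, 20.0)    # e.g., eye movements
print(short_windows.shape, long_windows.shape)

# Toy labels: 2 true positives, 1 false positive, 1 false negative.
f1 = f1_score([1, 1, 0, 0, 1], [1, 0, 0, 1, 1])
print(f"F1 = {f1:.3f}")
```

Because artifact windows are typically much rarer than clean ones, F1 is a more informative summary than plain accuracy when comparing a rule-based detector against a learned one on the same annotated windows.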

Table 4: Comparison of Artifact Handling Methodologies

| Methodology | Key Principle | Primary Limitations | Best-Suited Context |

|---|---|---|---|

| BSS (HOS - ICA) | Separates sources by maximizing statistical independence using higher-order statistics [15]. | Highly sensitive to mixing matrix distortions; can create spurious components [16]. | Single-subject research data with minimal head/electrode movement. |

| BSS (SOS - SOBI) | Separates sources using second-order statistics (temporal correlations) [16]. | More robust to mixing matrix non-stationarity but may have other computational limitations [16]. | Data with suspected instability (e.g., group studies, long recordings). |

| Rule-Based Thresholding | Applies fixed rules or thresholds to exclude artifactual data segments or connections. | Arbitrary threshold selection dramatically alters graph network parameters [17]. | Initial, exploratory data screening; requires careful validation. |

| Deep Learning (e.g., CNN) | Learns artifact features directly from large, labeled datasets through hierarchical feature detection [19]. | Requires large annotated datasets; "black box" nature can reduce interpretability [19]. | High-accuracy, automated detection of specific artifact classes in large datasets. |

| Hybrid Expert Schemes | Combines signal processing (e.g., energy screening) with rule-based expert knowledge [20]. | Requires careful design to encode expert knowledge effectively; may be complex to implement. | Tasks requiring precise localization and duration of specific micro-events (e.g., K-complex detection in sleep EEG) [20]. |

The limitations of traditional BSS and rule-based thresholding are not merely theoretical but have demonstrable, quantifiable impacts on EEG data analysis, as evidenced by the experimental protocols and data summarized here. The fundamental constraints of mixing matrix invariance and arbitrary threshold selection can introduce bias, variability, and spurious findings. The field is now moving toward a new paradigm characterized by more robust second-order statistics, sophisticated hybrid models that integrate expert knowledge, and specialized deep learning systems. These advanced methods offer a path toward fully automated, accurate, and reliable artifact handling, which is essential for the progression of robust machine learning applications in EEG research.

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common types of EEG artifacts I should account for in my automated detection model?

EEG artifacts can be broadly categorized as physiological (from the body) or technical (from equipment or environment). The table below summarizes the common artifacts and recommended detection methods.

| Artifact Type | Origin | Key Characteristics | Recommended ML Detection Methods |

|---|---|---|---|

| Eye Blinks & Movements [21] | Physiological (Eyes) | High-amplitude, slow deflections; frontally prominent. [21] | ICA, Template matching on EOG channels. [22] [23] |

| Muscle Artifacts (EMG) [21] | Physiological (Muscles) | High-frequency, broadband activity; most prominent above 20 Hz. [21] | ICA, Time-frequency analysis, Band-power features. [21] |

| Heartbeat Artifacts (ECG) [21] | Physiological (Heart) | Rhythmic, spike-shaped pattern; can be confounded with epileptiform activity. [21] | ICA, SSP with ECG channel correlation. [22] [23] |

| Line Noise [21] | Technical (Environment) | Sharp peak at 50/60 Hz and its harmonics. [21] | Notch filtering, Spectral analysis. [21] |

| Electrode "Pops" [21] | Technical (Electrode) | Sudden, large-amplitude, instantaneous deflection. [21] | Amplitude-thresholding, Statistical outlier detection. [21] |

| Sweat/Skin Potentials [21] | Physiological (Skin) | Very slow drifts and fluctuations. [21] | High-pass filtering, Drift-correction algorithms. [21] |
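Several of the characteristics in the table can be turned into simple numeric features. The sketch below is a minimal illustration, assuming a 250 Hz sampling rate and synthetic signals; the function names `band_power_ratio` and `peak_to_peak` are hypothetical helpers, not part of any cited toolbox. It shows how a spectral peak near 50 Hz or a large share of power above 20 Hz could flag line noise or muscle activity.

```python
import numpy as np

def band_power_ratio(x, fs, band):
    """Fraction of total spectral power of a 1-D signal that lies in `band` (Hz)."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    psd = np.abs(np.fft.rfft(x - x.mean())) ** 2
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return psd[in_band].sum() / psd.sum()

def peak_to_peak(x):
    """Amplitude range of the segment, a simple cue for electrode pops."""
    return x.max() - x.min()

fs = 250  # assumed sampling rate in Hz
t = np.arange(2 * fs) / fs
rng = np.random.default_rng(0)
alpha = np.sin(2 * np.pi * 10 * t)                   # synthetic 10 Hz "clean" rhythm
line = alpha + 0.8 * np.sin(2 * np.pi * 50 * t)      # added 50 Hz line noise
muscle = alpha + 2.0 * rng.standard_normal(t.size)   # broadband EMG-like noise

line_ratio_clean = band_power_ratio(alpha, fs, (49, 51))
line_ratio_noisy = band_power_ratio(line, fs, (49, 51))
hf_ratio_clean = band_power_ratio(alpha, fs, (20, 100))
hf_ratio_muscle = band_power_ratio(muscle, fs, (20, 100))
```

Features like these could feed a threshold rule or a traditional classifier; any cut-off values would need to be tuned and validated on annotated data.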

FAQ 2: My deep learning model for seizure detection is overfitting on my limited EEG dataset. What strategies can I use?

Overfitting is a common challenge, especially with small-sample EEG datasets [24]. You can employ several strategies:

- Data Augmentation: Use techniques like sliding windows with overlaps to artificially expand your dataset [24]. For example, cutting a 23.6-second EEG recording into 1-second windows yields 23 non-overlapping samples, and overlapping consecutive windows yields more, increasing the effective dataset size for training.

- Simplified Model Architectures: Instead of large, complex networks, use streamlined models designed for small data. For example, one study proposed a "micro-capsule network" that maintains high accuracy with reduced computational complexity and lower risk of overfitting [24].

- Transfer Learning: Leverage pre-trained models or general-purpose features learned from larger datasets and adapt them to your specific task with a smaller amount of patient-specific data [25].
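The sliding-window augmentation described above can be sketched as follows. This is an illustration under stated assumptions: the sampling rate (173.61 Hz) and recording length (4097 samples, roughly 23.6 s) are assumed values, the signal is random noise, and `sliding_windows` is a hypothetical helper.

```python
import numpy as np

def sliding_windows(signal, fs, win_s, step_s):
    """Cut a 1-D signal into windows of win_s seconds taken every step_s seconds."""
    win = int(round(win_s * fs))
    step = int(round(step_s * fs))
    n = (signal.size - win) // step + 1
    return np.stack([signal[i * step : i * step + win] for i in range(n)])

fs = 173.61               # assumed sampling rate (Hz)
n_samples = 4097          # assumed length, about 23.6 s at this rate
x = np.random.default_rng(0).standard_normal(n_samples)

no_overlap = sliding_windows(x, fs, win_s=1.0, step_s=1.0)
half_overlap = sliding_windows(x, fs, win_s=1.0, step_s=0.5)
```

With these assumed values, the non-overlapping call yields 23 windows and the 50% overlap call yields 46. Overlapping windows are correlated, so train and test splits should be made before windowing.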

FAQ 3: How can I create a model that works well across different subjects and tasks (cross-subject/cross-task)?

This is a core challenge in building generalizable EEG foundation models. The 2025 EEG Foundation Challenge highlights two key approaches [26]:

- Cross-Task Transfer Learning: Develop models using unsupervised or self-supervised pre-training on data from various cognitive tasks (e.g., resting state, movie watching). The model learns general latent EEG representations, which are then fine-tuned for a specific supervised task like predicting behavioral performance [26].

- Subject-Invariant Representations: Create robust models that explicitly learn to ignore subject-specific morphological and physiological differences. This often involves training on data from a large number of participants (e.g., 3,000+) across multiple paradigms to force the model to find common, task-related features [26].

FAQ 4: What are the trade-offs between traditional machine learning and deep learning for artifact detection?

The choice between traditional Machine Learning (ML) and Deep Learning (DL) depends on your data and resources.

| Feature | Traditional Machine Learning | Deep Learning |

|---|---|---|

| Data Dependency | Performs better with smaller datasets [24]. | Requires large datasets to avoid overfitting [24]. |

| Feature Engineering | Relies on manual feature extraction (e.g., statistical moments, entropy, bandpower) [27] [25]. | Automatic feature learning from raw or preprocessed data [27] [25]. |

| Computational Load | Generally less computationally intensive. | Can be very computationally intensive, requiring high hardware resources [24]. |

| Interpretability | Often more interpretable (e.g., knowing which feature is important). | Acts as a "black box," making it harder to understand decisions [24]. |

| Best Use Case | Well-defined artifacts with known characteristics on small-to-medium datasets. | Complex artifact patterns or when manual feature extraction is impractical on large datasets. |

Troubleshooting Guides

Issue 1: Poor performance of my artifact detection algorithm due to overlapping signal and artifact frequencies.

Problem: Some artifacts, like muscle activity, have a broad frequency spectrum that overlaps with the EEG signal of interest (e.g., beta and gamma bands), making simple frequency filtering ineffective [21].

Solution: Employ component-based methods that leverage spatial information.

- Use Independent Component Analysis (ICA): ICA can separate EEG recordings into statistically independent components. Artifacts like blinks, eye movements, and heartbeats often map to distinct components that can be identified and removed [21].

- Identify Artifact Components: Correlate components with reference EOG and ECG channels to automatically identify those corresponding to artifacts. Components with topographies focused on the eyes (for blinks) or temples (for saccades) and time courses that match the artifact can be selected for rejection [22] [23].

- Reconstruct Signal: Remove the identified artifact components and reconstruct the "cleaned" EEG signal.

The following workflow outlines this component-based approach to artifact removal:
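A minimal sketch of the identification and reconstruction steps is given here. It assumes the ICA decomposition itself (the `sources` and `mixing` arrays) has already been produced by an ICA implementation; a synthetic mixture stands in for that result. The correlation threshold of 0.8 and the helper names are illustrative assumptions.

```python
import numpy as np

def flag_components(sources, reference, threshold=0.8):
    """Indices of components whose |Pearson r| with the reference exceeds threshold."""
    r = np.array([np.corrcoef(s, reference)[0, 1] for s in sources])
    return np.flatnonzero(np.abs(r) > threshold)

def reconstruct(mixing, sources, bad):
    """Rebuild channel data with the flagged components zeroed out."""
    kept = sources.copy()
    kept[bad] = 0.0
    return mixing @ kept

# Synthetic stand-in for an ICA result: 3 sources mixed into 4 channels.
rng = np.random.default_rng(0)
t = np.arange(1000) / 250.0
neural = np.vstack([np.sin(2 * np.pi * 10 * t), np.sin(2 * np.pi * 6 * t + 1.0)])
blink = (np.abs((t % 1.0) - 0.5) < 0.05).astype(float) * 5.0   # one pulse per second
sources = np.vstack([neural, blink])
mixing = rng.standard_normal((4, 3))
eog = blink + 0.1 * rng.standard_normal(t.size)   # hypothetical EOG reference channel

bad = flag_components(sources, eog)
cleaned = reconstruct(mixing, sources, bad)
```

In practice the flagged components should also be checked against their scalp topographies, as described above, before they are removed.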

Issue 2: My model fails to generalize and performs poorly on new, unseen subject data.

Problem: High inter-subject variability in EEG signals causes models trained on one group of subjects to perform poorly on others [25].

Solution: Implement an Ensemble of Deep Transfer Learning (EDTL) strategy [25].

- Leverage Pre-trained Models: Start with general-purpose models pre-trained on large-scale datasets (e.g., ResNet, EfficientNet) to capture universal features.

- Incorporate Domain-Specific Models: Combine the pre-trained models with a custom 2D Convolutional Neural Network (2DCNN) designed for your specific EEG data characteristics.

- Create Patient-Specific Features: Use techniques like Short-Time Fourier Transform (STFT) to convert EEG signals into spectrograms, which can better capture time-frequency patterns for individual patients.

- Ensemble the Models: Combine the predictions of the pre-trained models and the custom 2DCNN. This ensemble approach improves robustness against noise and enhances adaptability to new subjects [25].

The workflow below illustrates the key steps in creating a generalizable, subject-invariant model using transfer learning and ensemble methods:
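Two of these steps, the STFT conversion and the ensembling, can be sketched with NumPy alone. This is not the EDTL implementation from [25]: the window and hop lengths, the 256 Hz sampling rate, the probability values, and the use of simple probability averaging (soft voting) are all assumptions made for illustration.

```python
import numpy as np

def stft_spectrogram(x, fs, win_s=1.0, hop_s=0.5):
    """Magnitude spectrogram (freq x time) from Hann-windowed, overlapping frames."""
    win = int(win_s * fs)
    hop = int(hop_s * fs)
    n_frames = 1 + (x.size - win) // hop
    frames = np.stack([x[i * hop : i * hop + win] for i in range(n_frames)])
    spec = np.abs(np.fft.rfft(frames * np.hanning(win), axis=1)).T
    freqs = np.fft.rfftfreq(win, d=1.0 / fs)
    return freqs, spec

def ensemble_average(prob_list):
    """Soft voting: average class probabilities from several models."""
    return np.mean(np.stack(prob_list), axis=0)

fs = 256  # assumed sampling rate (Hz)
t = np.arange(10 * fs) / fs
x = np.sin(2 * np.pi * 12 * t)          # synthetic 12 Hz test signal
freqs, spec = stft_spectrogram(x, fs)   # 2D input for an image-style CNN

# Hypothetical per-model probabilities for 2 segments x 2 classes
p_pretrained = np.array([[0.9, 0.1], [0.4, 0.6]])
p_custom_cnn = np.array([[0.7, 0.3], [0.2, 0.8]])
p_ensemble = ensemble_average([p_pretrained, p_custom_cnn])
labels = p_ensemble.argmax(axis=1)
```

Weighted averaging or a learned meta-classifier are alternative ways to combine the models; the choice should be validated on held-out subjects.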

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table lists key computational tools and materials used in modern ML-based EEG artifact detection research.

| Item Name | Function / Application |

|---|---|

| HBN-EEG Dataset [26] | A large-scale public dataset with over 3,000 participants across six tasks, ideal for training and benchmarking generalizable foundation models. [26] |

| CHB-MIT Scalp EEG Database [27] [25] | A standard public dataset for epileptic seizure detection, widely used to validate and compare the performance of new algorithms. [27] [25] |

| Bonn EEG Dataset [24] | A public dataset containing EEG recordings categorized into epilepsy-specific stages, often used for small-sample method development. [24] |

| Independent Component Analysis (ICA) [21] | A computational method used to separate mixed EEG signals into independent sources, crucial for isolating and removing artifact components. [21] |

| Short-Time Fourier Transform (STFT) [25] | A signal processing technique that converts 1D EEG time-series into 2D spectrograms (time-frequency representations), used as input for image-based deep learning models like CNNs. [25] |

| Micro-Capsule Network [24] | A streamlined deep learning architecture designed to effectively learn from small-sample EEG datasets by preserving spatial hierarchical relationships in the data. [24] |

| Python MNE Library [23] | An open-source Python package for exploring, visualizing, and analyzing human neurophysiological data, providing standard preprocessing and artifact detection tools. [23] |

Frequently Asked Questions (FAQs)

Q1: What are the most common artifacts that can compromise EEG data in clinical trials? EEG artifacts are any recorded signals not originating from neural activity. They are broadly categorized as follows [1]:

- Physiological Artifacts: Originate from the participant's body.

  - Ocular Artifacts: From eye blinks and movements (high-amplitude, low-frequency), affecting frontal electrodes.

  - Muscle Artifacts (EMG): From jaw, face, or neck muscle contractions (broadband, high-frequency noise).

  - Cardiac Artifacts (ECG): Rhythmic waveforms from heartbeats.

  - Perspiration/Respiration: Cause slow baseline drifts and impedance changes.

- Non-Physiological Artifacts: Originate from external sources.

  - Electrode Pop: Sudden, high-amplitude transients from poor electrode-skin contact.

  - Cable Movement: Creates variable noise from cable displacement.

  - AC Power Line Interference: A sharp 50/60 Hz noise from electrical environments.

Q2: Why is automated artifact detection crucial for modern drug development trials? Manual artifact review is time-consuming, labor-intensive, and subjective, which is not feasible for large-scale, multi-site clinical trials. Automated methods based on machine learning (ML) and deep learning (DL) ensure standardized, reproducible, and scalable data preprocessing. This enhances the reliability of EEG biomarkers as endpoints by reducing human error and bias, which is critical for regulatory acceptance [28].

Q3: My trial uses wearable EEG devices. Are there special considerations for artifact handling? Yes. Wearable EEG systems, which often use dry electrodes and have a low number of channels, present specific challenges [11]:

- Increased Artifact Proneness: Dry electrodes and subject mobility introduce more motion artifacts and signal instability.

- Limited Spatial Resolution: A reduced number of channels (often below 16) impairs the effectiveness of traditional source separation methods like Independent Component Analysis (ICA).

- Emerging Solutions: Techniques like Artifact Subspace Reconstruction (ASR) and deep learning models are being validated for wearable EEG. The use of auxiliary sensors (e.g., IMUs) is promising but currently underutilized [11].

Q4: How do I choose between a traditional algorithm and a deep learning model for my study? The choice depends on your data, resources, and goals. The table below summarizes key considerations based on published research [19] [29] [28].

| Method | Best For | Key Advantages | Limitations |

|---|---|---|---|

| Independent Component Analysis (ICA) | Studies with sufficient channels (e.g., >16); isolating ocular and muscular artifacts [11] [1]. | Well-established, interpretable, does not require labeled data. | Requires expert intervention for component rejection, less effective for low-density EEG [11]. |

| Machine Learning (e.g., Random Forest) | Small to medium-sized datasets; scenarios requiring high accuracy with limited training data [28]. | High performance with smaller datasets, fast training and inference. | Requires manual feature engineering in many implementations. |

| Deep Learning (CNN, LSTM, Autoencoders) | Large, labeled datasets; complex artifacts (muscle, motion); end-to-end learning [19] [29] [30]. | Automatic feature extraction, state-of-the-art accuracy, handles complex patterns. | Requires large amounts of training data; acts as a "black box"; computationally intensive [28]. |

Q5: What are the key performance metrics for evaluating an artifact detection algorithm? The choice of metrics depends on whether the task is detection (classifying an epoch as artifact-contaminated or clean) or removal (reconstructing a clean signal). Commonly used metrics include [11]:

- For Detection:

  - Accuracy: Overall correctness.

  - Selectivity (True Negative Rate): Ability to identify clean segments correctly.

- For Removal/Reconstruction:

  - Signal-to-Noise Ratio (SNR): Measures noise reduction.

  - Correlation Coefficient (CC): Measures waveform preservation.

  - Root Mean Square Error (RMSE): Quantifies difference from a clean reference.
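These metrics can be computed in a few lines. The sketch below uses one common definition of each (SNR as the ratio of clean-signal power to residual power, CC as the Pearson coefficient); published studies sometimes use slightly different formulas, and the function names and toy inputs are illustrative.

```python
import numpy as np

def detection_metrics(y_true, y_pred):
    """Accuracy and selectivity (true negative rate); 1 = artifact, 0 = clean."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    tn = np.sum((y_true == 0) & (y_pred == 0))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    accuracy = np.mean(y_true == y_pred)
    selectivity = tn / (tn + fp)
    return accuracy, selectivity

def removal_metrics(clean, denoised):
    """SNR (dB), Pearson correlation coefficient, and RMSE against a clean reference."""
    clean, denoised = np.asarray(clean, float), np.asarray(denoised, float)
    residual = denoised - clean
    snr_db = 10 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2))
    cc = np.corrcoef(clean, denoised)[0, 1]
    rmse = np.sqrt(np.mean(residual ** 2))
    return snr_db, cc, rmse

acc, sel = detection_metrics([0, 0, 0, 1, 1, 0], [0, 1, 0, 1, 0, 0])
clean = np.array([1.0, -1.0, 1.0, -1.0])
snr_db, cc, rmse = removal_metrics(clean, clean + 0.1 * np.array([1.0, 1.0, -1.0, -1.0]))
```

Removal metrics require a clean reference signal, which is usually only available for semi-synthetic data.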

Troubleshooting Guides

Guide 1: Resolving Poor Algorithm Performance on Your Dataset

Problem: Your artifact detection model is underperforming, showing low accuracy or high false positives.

Solution: Follow this systematic troubleshooting workflow.

Steps:

- Data Quality & Preprocessing Check

  - Ensure consistent preprocessing (bandpass filtering, notch filtering, referencing) across all datasets [19].

  - Verify that your training data is representative of the clinical trial population and conditions (e.g., same type of EEG headset, similar patient demographics).

  - Audit your labels. Inconsistent or inaccurate ground truth annotations are a major source of failure. Ensure high inter-rater agreement if labeled manually [19].

- Model & Training Check

  - Assess model-task fit. A model trained on adult EEG may fail on infant EEG due to different artifact characteristics [28].

  - Optimize hyperparameters like temporal window size. Research shows optimal windows vary by artifact: 1s for non-physiological, 5s for muscle, and 20s for eye movements [19].

  - If you have a small dataset, prioritize traditional ML models like Random Forest, which can outperform deep learning in data-scarce scenarios [28].

- Strategy Check

  - For complex data with multiple artifact types, consider using specialized models for each artifact class instead of a single, generic model [19].

  - When working with multi-channel data, ensure your model architecture (e.g., CLEnet) can leverage spatial relationships between channels, unlike models designed for single-channel input [29].

Guide 2: Implementing a Robust Artifact Handling Pipeline

Problem: You need to establish a standardized, end-to-end pipeline for artifact detection and removal in your trial's analysis plan.

Solution: Adopt a modular pipeline that can be tailored to your specific endpoint.

Steps:

- Standardized Preprocessing: Apply consistent filtering (e.g., 1-40 Hz bandpass, 50/60 Hz notch) and re-reference all data. This ensures uniformity before artifact handling [19].

- Choose a Detection Method: Select a method based on the FAQ and Table 1. For a balanced approach, a Random Forest classifier offers high accuracy and is less sensitive to dataset size [28].

- Select a Handling Strategy: This is a critical choice that depends on your trial's statistical power and endpoint.

  - Epoch Rejection: Simply discard data segments flagged as artifacts. This is safe but can lead to significant data loss, which may be unacceptable in trials with limited recording time.

  - Artifact Correction: Use techniques like ICA or advanced deep learning models (e.g., CLEnet, LSTEEG) to reconstruct the clean neural signal. This preserves data volume but requires rigorous validation to ensure neural signals are not distorted [29] [30].

- Validation and Reporting: Always report the percentage of data rejected or corrected in your analysis. Validate the pipeline on a held-out test set with expert annotations to ensure its performance meets the trial's quality standards.
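The epoch-rejection and reporting steps might look like the sketch below. The detector here is a simple peak-to-peak amplitude threshold standing in for whichever classifier the pipeline actually uses; the threshold value, array shapes, and the `reject_epochs` helper are assumptions for illustration.

```python
import numpy as np

def reject_epochs(epochs, is_artifact):
    """Drop flagged epochs and report the percentage of data removed."""
    is_artifact = np.asarray(is_artifact, dtype=bool)
    kept = epochs[~is_artifact]
    pct_rejected = 100.0 * is_artifact.mean()
    return kept, pct_rejected

# 8 hypothetical epochs x 4 channels x 250 samples
rng = np.random.default_rng(0)
epochs = rng.standard_normal((8, 4, 250))
epochs[2] *= 20.0   # simulate two high-amplitude contaminated epochs
epochs[5] *= 20.0

# Stand-in detector: largest per-channel peak-to-peak amplitude above a threshold.
ptp = np.ptp(epochs, axis=2).max(axis=1)
flags = ptp > 30.0
kept, pct = reject_epochs(epochs, flags)
print(f"Rejected {pct:.1f}% of epochs; {kept.shape[0]} retained")
```

Logging the rejected percentage per participant and per site makes it easier to spot recordings where data loss could bias the endpoint.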

Experimental Protocols & Benchmarking

Key Experimental Metrics from Current Literature

The following table summarizes quantitative performance data from recent studies to help you benchmark your own systems. Note that performance is highly dataset-dependent.

| Model / Approach | Artifact Type | Performance Metrics | Reference / Dataset |

|---|---|---|---|

| Deep Lightweight CNN | Eye Movements | ROC AUC: 0.975 | Temple University Hospital (TUH) EEG Corpus [19] |

| Deep Lightweight CNN | Muscle Activity | Accuracy: 93.2% | Temple University Hospital (TUH) EEG Corpus [19] |

| Deep Lightweight CNN | Non-Physiological | F1-Score: 77.4% | Temple University Hospital (TUH) EEG Corpus [19] |

| CLEnet (CNN + LSTM) | Mixed (EMG + EOG) | SNR: 11.498 dB, CC: 0.925 | EEGdenoiseNet [29] |

| Random Forest | General (Infant EEG) | Balanced Accuracy: 0.873 | BRISE Infant Dataset [28] |

| Deep Learning Model | General (Infant EEG) | Balanced Accuracy: 0.881 | BRISE Infant Dataset [28] |

| LSTEEG (LSTM Autoencoder) | General | Superior to convolutional autoencoders in detection & correction | Study-specific dataset [30] |

| Item | Function & Application | Example / Note |

|---|---|---|

| Public EEG Datasets | For training, validating, and benchmarking models. | Temple University Hospital (TUH) EEG Corpus (includes artifact labels) [19]; EEGdenoiseNet (semi-synthetic data for removal) [29]. |

| Standardized Preprocessing Tools | To apply consistent filtering, referencing, and epoching. | Toolboxes like MNE-Python, EEGLAB. Implementation should follow published protocols [19]. |

| Blind Source Separation (BSS) Tools | For traditional artifact removal methods. | Implementations of ICA (e.g., in EEGLAB) are useful for comparison and specific use cases [11] [1]. |

| Deep Learning Frameworks | For building and training state-of-the-art artifact models. | TensorFlow or PyTorch. Used to implement architectures like CNNs, LSTMs, and Autoencoders [19] [29] [30]. |

| Auxiliary Sensors | To provide additional data streams for improved artifact detection in mobile/wearable settings. | Inertial Measurement Units (IMUs) to track motion. Still underutilized but with high potential [11]. |

Machine Learning Architectures for EEG Artifact Detection: From CNNs to Hybrid Models

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using specialized, artifact-specific CNN models over a single general-purpose model?

Using multiple CNN systems, each specialized for a specific artifact class, significantly outperforms approaches that use a single model for all artifacts. Research shows that artifact-specific models allow for optimization of critical parameters, such as temporal window length, to match the unique characteristics of each artifact type. For instance, optimal window lengths were found to be 20 seconds for eye movements, 5 seconds for muscle activity, and 1 second for non-physiological artifacts [19]. This tailored approach has demonstrated F1-score improvements of +11.2% to +44.9% over traditional rule-based methods [19].

Q2: My model performs well on training data but poorly on new patient data. How can I improve its generalizability?

This is a common challenge, often stemming from the "one-size-fits-all" assumption that artifact characteristics are similar across all subjects and recording conditions [19]. To improve generalizability:

- Utilize Transfer Learning: Start with a generalized model pre-trained on a large, diverse dataset. This model can then be efficiently retrained with a limited amount of data from a new acquisition system or patient group to form a data-specific model, a process shown to be effective in iEEG studies [31].

- Employ Data Augmentation: Increase the effective size and diversity of your training dataset using techniques such as adding Gaussian white noise, using sliding windows, or employing Generative Adversarial Networks (GANs) to create synthetic EEG examples [32].

- Ensure Dataset Diversity: Train your model on datasets that feature a wide range of artifact types, recording conditions, and patient populations, such as the Temple University Hospital (TUH) EEG Corpus, which is representative of real clinical settings [19].
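As an illustration of the simplest augmentation listed above, the sketch below adds Gaussian white noise at a chosen signal-to-noise ratio. The SNR levels, the synthetic 10 Hz segment, and the `add_gaussian_noise` helper are assumptions; suitable noise levels depend on the recording setup.

```python
import numpy as np

def add_gaussian_noise(x, snr_db, rng):
    """Return a copy of x with white Gaussian noise scaled to the requested SNR (dB)."""
    signal_power = np.mean(x ** 2)
    noise_power = signal_power / (10 ** (snr_db / 10))
    return x + rng.standard_normal(x.shape) * np.sqrt(noise_power)

rng = np.random.default_rng(0)
t = np.arange(5000) / 250.0
segment = np.sin(2 * np.pi * 10 * t)   # synthetic stand-in for an EEG segment
augmented = [add_gaussian_noise(segment, snr_db, rng) for snr_db in (20, 10, 5)]
```

Each augmented copy keeps the label of its source segment, so augmentation should be applied to the training split only.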

Q3: How do I choose the right input representation (e.g., raw signal vs. time-frequency images) for my CNN?

The choice depends on the nature of the artifacts you are targeting and the network architecture.

- 1D-CNN for Raw Waveforms: Treating the time-series as a 1D image allows the CNN to learn features directly from the raw signal without the need for manual feature extraction, making it a powerful and efficient option [31]. This approach has been successfully applied in speech recognition and biological signal processing [31].

- 2D-CNN for Time-Frequency Images: Transforming the signal into a time-frequency representation (e.g., using Fourier or Wavelet transforms) can be beneficial. CNNs excel at processing these 2D images and can leverage their strong pattern recognition capabilities to identify distinctive artifact signatures in the time-frequency plane [31].

- Spatio-Temporal Processing: If your electrode spatial information is known, you can use CNNs to process multi-channel EEG data, allowing the model to learn both the spatial and temporal characteristics of artifacts [31].

Q4: What are the alternatives if I lack a large, expertly labeled dataset for training?

A lack of labeled data is a major constraint. Consider these alternative deep-learning approaches that reduce labeling dependency:

- Autoencoders for Anomaly Detection: Train a convolutional autoencoder (CAE) exclusively on clean, artifact-free EEG data. The model learns to reconstruct normal brain signals. During inference, contaminated segments will have a high reconstruction error, which can be used as an anomaly metric to detect artifacts without needing labels for the artifacts themselves [3]. This approach has achieved high accuracy (95.5%) in detecting unseen artifacts in other biological signal domains [33].

- Leverage Pre-trained Models: Use existing, validated models like ICLabel, which is a CNN designed to classify independent components derived from ICA, to automate the initial stages of your preprocessing pipeline [3].
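The reconstruction-error idea can be demonstrated without a deep learning framework. In the sketch below a PCA projection is swapped in as a linear stand-in for the convolutional autoencoder, so it illustrates the detection logic only, not the CAE from [3]. The synthetic data, the two-component basis, and the 99th-percentile threshold are assumptions.

```python
import numpy as np

def fit_linear_autoencoder(clean, n_components):
    """Fit a PCA basis on clean segments (rows); a linear stand-in for an autoencoder."""
    mean = clean.mean(axis=0)
    _, _, vt = np.linalg.svd(clean - mean, full_matrices=False)
    return mean, vt[:n_components]

def reconstruction_error(segments, mean, basis):
    """Mean squared error between each segment and its low-rank reconstruction."""
    centered = segments - mean
    recon = centered @ basis.T @ basis
    return np.mean((centered - recon) ** 2, axis=1)

rng = np.random.default_rng(0)
t = np.arange(250) / 250.0

def clean_segments(n):
    """Synthetic 'clean' EEG: a 10 Hz rhythm with random phase plus small noise."""
    phase = rng.uniform(0, 2 * np.pi, size=(n, 1))
    return np.sin(2 * np.pi * 10 * t + phase) + 0.1 * rng.standard_normal((n, t.size))

# Train on clean data only, then set the threshold on separate clean data.
mean, basis = fit_linear_autoencoder(clean_segments(200), n_components=2)
threshold = np.percentile(reconstruction_error(clean_segments(200), mean, basis), 99)

test_clean = clean_segments(20)
test_artifact = clean_segments(20) + 3.0 * rng.standard_normal((20, t.size))
flags_clean = reconstruction_error(test_clean, mean, basis) > threshold
flags_artifact = reconstruction_error(test_artifact, mean, basis) > threshold
```

No artifact labels are used at any point; the threshold comes from clean data alone, which is what reduces the labeling burden.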

Troubleshooting Guides

Problem: Model Fails to Distinguish Pathological Activity from Artifacts

Issue: The CNN misclassifies epileptiform activity (e.g., interictal spikes) as artifacts, which is a critical error in clinical applications like epilepsy evaluation.

Diagnosis and Solution: This occurs because some pathological activities and artifacts share similar characteristics in the signal [31]. The model must be explicitly designed to avoid this mutual misclassification.

- Step 1: Curate Targeted Training Labels: Ensure your ground truth annotations are performed by experts who can accurately differentiate between, for example, a pathological spike and an electrode pop artifact. The training data must reflect this crucial distinction.

- Step 2: Incorporate Spatial Information: Use a multi-channel CNN architecture that can learn the spatial distribution of signals across the scalp. Genuine neural activity follows logical topographic fields of distribution, which can help the model distinguish it from artifacts [1].

- Step 3: Algorithm Design Priority: Select or design a model with this specific requirement in mind. For instance, one CNN method for iEEG was explicitly "designed to meet the condition" of avoiding misclassification between pathological high-frequency oscillations and artifacts [31].

Problem: Inconsistent Model Performance Across Different Artifact Types

Issue: The model detects certain artifacts (e.g., eye blinks) with high accuracy but performs poorly on others (e.g., muscle noise or electrode pops).

Diagnosis and Solution: A single model configuration is not optimal for the diverse temporal and spectral characteristics of different artifacts.

- Step 1: Adopt a Multi-Model Framework: Do not rely on a single CNN. Instead, deploy a system of specialized, lightweight CNNs, where each is optimized for a specific artifact class (e.g., one for eye movements, one for muscle activity, and one for non-physiological artifacts) [19].

- Step 2: Optimize Hyperparameters per Artifact: Tune the architecture and parameters for each specialized model. The most critical parameter is the input segment length, as each artifact type has a distinct optimal temporal window for detection [19].

- Step 3: Ensemble Predictions: Combine the outputs from your specialized CNNs to generate a final, comprehensive artifact profile for the EEG signal.
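One way to combine detectors that operate on different window lengths is to project each output onto a common time grid. The sketch below assumes a 40-second recording, hard 0/1 predictions, and a simple union rule; the prediction values and the `window_preds_to_mask` helper are hypothetical.

```python
import numpy as np

def window_preds_to_mask(window_preds, win_s, total_s):
    """Expand per-window 0/1 predictions into a per-second boolean mask."""
    mask = np.zeros(total_s, dtype=bool)
    for i, flagged in enumerate(window_preds):
        if flagged:
            mask[i * win_s : (i + 1) * win_s] = True
    return mask

total_s = 40
# Hypothetical outputs of three specialized detectors (1 = artifact in that window)
detector_outputs = {
    "eye": (20, [0, 1]),                          # two 20 s windows
    "muscle": (5, [0, 0, 1, 0, 0, 0, 0, 0]),      # eight 5 s windows
    "non_physiological": (1, [0] * 39 + [1]),     # forty 1 s windows
}
profile = {
    name: window_preds_to_mask(preds, win_s, total_s)
    for name, (win_s, preds) in detector_outputs.items()
}
any_artifact = np.logical_or.reduce(list(profile.values()))
```

Keeping the per-class masks in `profile` alongside the combined mask preserves information about which artifact type was detected where.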

Workflow: Implementing an Artifact-Specific CNN Framework

Problem: High Computational Cost and Slow Training Times

Issue: Training deep CNNs on large, high-density EEG datasets is slow and computationally expensive.

Diagnosis and Solution: Complex models and large datasets naturally require significant resources. However, several strategies can mitigate this.

- Step 1: Use Lightweight Architectures: Design or select "deep lightweight" CNN architectures that maintain high accuracy with fewer parameters, making them faster to train and more suitable for deployment [19].

- Step 2: Leverage Transfer Learning: Instead of training from scratch, take a pre-trained generalized model and retrain (fine-tune) only the final layers with your specific data. This process is significantly faster and requires less data [31].

- Step 3: Utilize Hardware Acceleration: Perform training on systems with Graphics Processing Units (GPUs), which are specifically designed for the parallel computations required by CNNs and can dramatically speed up the process [31].

Experimental Protocols & Performance Data

Key Experimental Methodology for Training an Artifact-Specific CNN

The following protocol is synthesized from recent studies on artifact-specific CNNs [19]:

- Data Sourcing: Obtain EEG data from a large, publicly available corpus with expert artifact annotations, such as the Temple University Hospital (TUH) EEG Corpus.

- Preprocessing:

  - Resampling: Standardize the sampling rate across all recordings (e.g., to 250 Hz).

  - Filtering: Apply a bandpass filter (e.g., 1-40 Hz) and a notch filter (50/60 Hz) to remove line noise.

  - Montage: Convert all recordings to a standardized bipolar montage.

  - Normalization: Use a method like RobustScaler to normalize data across channels and timepoints.

- Adaptive Segmentation: Segment the continuous EEG into non-overlapping windows. Critically, use different window lengths optimized for each artifact class (e.g., 20s for eye, 5s for muscle, 1s for non-physiological).

- Model Training: Train separate CNN architectures for each artifact class. The architecture for each can be a relatively simple stack of convolutional, pooling, and fully connected layers.

- Performance Validation: Evaluate the model on a held-out test set and compare its performance (F1-score, Accuracy, ROC AUC) against standard rule-based detection methods.
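The normalization and adaptive segmentation steps of this protocol can be sketched as follows. Resampling, filtering, and montage conversion are assumed to have been done already. The scaling here is a NumPy median/IQR computation pooled over all channels, which is one reading of "across channels and timepoints" and not necessarily the exact configuration used in [19]; the channel count and recording length are hypothetical.

```python
import numpy as np

WINDOW_S = {"eye": 20, "muscle": 5, "non_physiological": 1}  # window lengths from [19]

def robust_scale(data):
    """Center on the median and divide by the interquartile range, pooled over channels."""
    median = np.median(data)
    q75, q25 = np.percentile(data, [75, 25])
    return (data - median) / (q75 - q25)

def segment(data, fs, win_s):
    """Split (channels, samples) data into non-overlapping (n, channels, win) windows."""
    win = int(win_s * fs)
    n = data.shape[1] // win
    return data[:, : n * win].reshape(data.shape[0], n, win).swapaxes(0, 1)

fs = 250  # sampling rate after resampling (Hz)
rng = np.random.default_rng(0)
recording = rng.standard_normal((22, 60 * fs)) * 50.0  # 22 hypothetical bipolar channels, 60 s
scaled = robust_scale(recording)
segments = {name: segment(scaled, fs, w) for name, w in WINDOW_S.items()}
```

Each entry of `segments` would then be passed to the CNN trained for that artifact class.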

Quantitative Performance of CNN Models for EEG Artifact Detection

The table below summarizes the demonstrated performance of CNN-based approaches for detecting various types of EEG artifacts.

| Artifact Type | Model Approach | Key Performance Metric | Reported Score | Context & Notes |

|---|---|---|---|---|

| General iEEG Artifacts | Convolutional Neural Network | F1-Score (Generalized Model) | 0.81 | Trained on one dataset, tested on another [31]. |

| General iEEG Artifacts | Convolutional Neural Network | F1-Score (Specialized Model) | 0.96 | Retrained on target dataset via transfer learning [31]. |

| Eye Movements | Specialized Lightweight CNN | ROC AUC | 0.975 | Optimal 20s window [19]. |

| Muscle Activity | Specialized Lightweight CNN | Accuracy | 93.2% | Optimal 5s window [19]. |

| Non-Physiological | Specialized Lightweight CNN | F1-Score | 77.4% | Optimal 1s window [19]. |

| Eye Blink Artifacts | 10-layer CNN | Classification Accuracy | 99.67% | Study on 30 subjects [32]. |

Research Reagent Solutions: Essential Materials & Tools

The table below lists key computational "reagents" and resources essential for developing artifact-specific CNNs for EEG.

| Resource Name | Type | Primary Function in Research |

|---|---|---|

| TUH EEG Artifact Corpus [19] | Dataset | Provides a large volume of expert-annotated, real-world EEG data for training and benchmarking artifact detection models. |

| ICLabel [3] | Pre-trained Model / Tool | A CNN-based tool that automates the classification of Independent Components (ICs) derived from ICA, streamlining a common preprocessing step. |

| EEGDenoiseNet [3] | Benchmark Dataset | A dataset designed specifically for training and benchmarking deep learning models for EEG denoising. |

| TensorFlow2 Object Detection API [34] | Software Library | An open-source framework that can be adapted for building and training object detection models, which can be repurposed for 1D signal detection tasks. |

| RobustScaler [19] | Preprocessing Algorithm | A data normalization technique that preserves relative amplitude relationships between EEG channels while standardizing the input for stable model training. |

Frequently Asked Questions (FAQs)

FAQ 1: Why is a single temporal window size not optimal for detecting all artifact classes? Different biological and non-biological artifacts have distinct temporal and spectral characteristics. For instance, eye movements are slow and span several seconds, while muscle artifacts are rapid and brief. Using a single window size fails to capture these unique dynamics effectively. Research has confirmed that specialized models with optimized window sizes significantly outperform generic approaches, with one study finding optimal windows of 20 seconds for eye movements, 5 seconds for muscle activity, and 1 second for non-physiological artifacts [19].

FAQ 2: How does an improperly chosen temporal window impact model performance? An incorrectly sized temporal window can lead to two main issues:

- Too short a window: May fail to capture the complete morphology of long-duration artifacts (e.g., eye rolls), missing crucial contextual information.

- Too long a window: Can dilute short-duration artifact features with extensive clean EEG data, making it harder for the model to learn discriminative patterns and increasing computational cost [35].

FAQ 3: What is the trade-off between data volume and sample independence when segmenting EEG? This is a key methodological consideration. Using a small temporal shift (high overlap) between consecutive windows increases the number of training samples, which can boost model performance. However, it also reduces the independence of samples. If the evaluation protocol is not stringent (e.g., not using subject-wise splits), this can lead to over-optimistic performance metrics. A larger shift provides more independent samples but may result in insufficient data volume for training complex models [35].
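The trade-off in FAQ 3 can be made concrete with a little arithmetic. The sketch below counts how many windows a recording yields for a given shift and how much of each window is shared with its predecessor. The sampling rate, recording length, and function names are illustrative assumptions, not values from the cited study.

```python
def count_windows(n_samples: int, window: int, shift: int) -> int:
    """Number of full windows of length `window` taken every `shift` samples."""
    if n_samples < window:
        return 0
    return (n_samples - window) // shift + 1


def overlap_fraction(window: int, shift: int) -> float:
    """Fraction of each window shared with the previous window."""
    return max(0.0, 1.0 - shift / window)


fs = 250                 # assumed sampling rate in Hz
recording = 600 * fs     # hypothetical 10-minute recording
window = 5 * fs          # 5-second analysis window

for shift_s in (0.5, 1.0, 5.0):
    shift = int(shift_s * fs)
    n = count_windows(recording, window, shift)
    print(f"shift={shift_s}s -> {n} windows, overlap={overlap_fraction(window, shift):.0%}")
```

Under these assumptions, a 0.5-second shift yields roughly ten times as many windows as a non-overlapping 5-second shift, but each window shares 90% of its samples with its neighbour, which is why the evaluation split matters so much.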

FAQ 4: Are deep learning models always superior to traditional methods for artifact detection? While deep learning models like Convolutional Neural Networks (CNNs) have shown remarkable performance, their effectiveness is highly dependent on correct design choices, including temporal window sizing. Studies have demonstrated that artifact-specific CNN models can significantly outperform traditional rule-based methods and other automated frameworks like FASTER. However, this advantage is fully realized only when the model architecture and parameters are tailored to the target artifact [19].

Troubleshooting Guides

Problem 1: Poor Detection Accuracy for a Specific Artifact Class

Symptoms: Your model performs well on some artifacts (e.g., muscle) but poorly on others (e.g., eye blinks).

Solution: Implement an artifact-specific multi-model pipeline.

- Isolate the Problem: Analyze your model's confusion matrix to confirm the poor performance is isolated to one or two artifact classes.

- Segment your Data: Re-segment your EEG training data using the recommended optimal window sizes for the underperforming class (see Table 1).

- Train Specialist Models: Train a dedicated model for the problematic artifact class using the optimally segmented data. A lightweight CNN is often effective for this [19].

- Deploy a Pipeline: In your inference workflow, create a pipeline where an EEG segment is evaluated by multiple specialist models in parallel, each optimized for a different artifact.
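The pipeline step above can be sketched as follows. Each specialist is paired with its own window length and sees only the most recent samples of that length. The detectors here are hypothetical stand-ins (simple callables returning a probability), not trained CNNs, and the names `run_specialists` and `specialists` are assumptions made for illustration.

```python
from typing import Callable, Dict, Optional, Tuple

import numpy as np

# A detector maps a (channels, samples) window to an artifact probability.
Detector = Callable[[np.ndarray], float]


def run_specialists(
    eeg: np.ndarray,
    fs: int,
    specialists: Dict[str, Tuple[float, Detector]],
    threshold: float = 0.5,
) -> Dict[str, Optional[bool]]:
    """Run every specialist on the most recent window of its preferred length."""
    flags: Dict[str, Optional[bool]] = {}
    for name, (window_s, detector) in specialists.items():
        n = int(window_s * fs)
        if eeg.shape[1] < n:
            flags[name] = None          # not enough data buffered yet
            continue
        flags[name] = bool(detector(eeg[:, -n:]) >= threshold)
    return flags


fs = 250
rng = np.random.default_rng(0)
eeg = rng.normal(0.0, 10.0, size=(4, 20 * fs))
eeg[0, -fs:] += 500.0                   # inject a large offset in the last second

# Placeholder detectors; in practice each would wrap a trained model.
specialists = {
    "eye_movement": (20.0, lambda w: 0.0),
    "muscle": (5.0, lambda w: 0.0),
    "non_physiological": (1.0, lambda w: float(np.abs(w).max() > 200.0)),
}
flags = run_specialists(eeg, fs, specialists)
print(flags)
```

Keeping the window length next to each detector means a new specialist can be added without touching the dispatch logic.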


Problem 2: Inconsistent Model Performance Across Subjects or Sessions

Symptoms: High accuracy for some subjects but low for others, indicating poor generalization.

Solution: Enforce a strict subject-wise evaluation protocol and review segmentation.

- Check for Data Leakage: Ensure that segments from the same subject do not appear in both training and test sets. This is a common cause of inflated and non-generalizable performance [35].

- Re-evaluate Window Shift: If you are using overlapping windows, try increasing the shift (reducing overlap) to increase sample independence. A smaller shift can improve accuracy but may inflate performance if evaluation splits are not sufficiently stringent [35].

- Augment Training Data: Use data augmentation techniques to increase the diversity of your training set and improve model robustness across different subjects and recording conditions.
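The leakage check above amounts to splitting by subject rather than by segment. A minimal NumPy sketch follows, assuming each segment carries a subject identifier; the function name and the 20% test fraction are illustrative choices.

```python
import numpy as np


def subject_wise_split(subject_ids, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) such that no subject appears in both sets."""
    subject_ids = np.asarray(subject_ids)
    subjects = np.unique(subject_ids)
    rng = np.random.default_rng(seed)
    rng.shuffle(subjects)
    n_test = max(1, int(round(test_fraction * len(subjects))))
    test_mask = np.isin(subject_ids, subjects[:n_test])
    return np.flatnonzero(~test_mask), np.flatnonzero(test_mask)


# Hypothetical dataset: 10 subjects with 50 segments each.
subject_ids = np.repeat(np.arange(10), 50)
train_idx, test_idx = subject_wise_split(subject_ids)

overlap = set(subject_ids[train_idx]) & set(subject_ids[test_idx])
print(len(train_idx), len(test_idx), overlap)
```

If scikit-learn is available, `GroupShuffleSplit` or `GroupKFold` with `groups=subject_ids` offers the same guarantee with cross-validation support.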

Problem 3: Handling Continuous, Real-Time EEG Streams

Symptoms: The model, trained on segmented data, is difficult to apply to a continuous signal for online monitoring.

Solution: Use a sliding window approach with an optimized step size.

- Define Analysis Window: Use the optimal window length for your target artifact (e.g., 5s for muscle artifacts).

- Define Step Size: Choose a step size (temporal shift) that balances latency and computational load. A common practice is to use a 1-second step, providing frequent updates without overwhelming the system.

- Buffer and Process: In your application, buffer the incoming EEG signal and run the trained model on the most recent window at every step.
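The three steps above can be sketched as a small buffering class. It keeps the most recent window of samples and calls the detector once per step after the buffer has filled. The class name, the per-sample loop, and the toy detector are assumptions for illustration; a production system would likely use a preallocated ring buffer.

```python
from collections import deque

import numpy as np


class SlidingWindowDetector:
    """Buffer a continuous stream and run a detector on the latest window at every step."""

    def __init__(self, detector, fs, window_s=5.0, step_s=1.0):
        self.detector = detector
        self.window = int(window_s * fs)
        self.step = int(step_s * fs)
        self.buffer = deque(maxlen=self.window)   # oldest samples drop out automatically
        self.since_last = 0

    def push(self, chunk):
        """chunk has shape (n_samples, n_channels); returns the detector outputs produced."""
        outputs = []
        for sample in chunk:
            self.buffer.append(sample)
            self.since_last += 1
            if len(self.buffer) == self.window and self.since_last >= self.step:
                window = np.array(list(self.buffer)).T   # (channels, samples)
                outputs.append(self.detector(window))
                self.since_last = 0
        return outputs


fs = 250
rng = np.random.default_rng(0)
stream = rng.normal(size=(10 * fs, 4))            # 10 s of hypothetical 4-channel EEG

monitor = SlidingWindowDetector(lambda w: float(np.abs(w).max()), fs)
results = []
for start in range(0, len(stream), 50):           # data arrives in 0.2 s chunks
    results.extend(monitor.push(stream[start:start + 50]))
print(len(results))
```

With a 5-second window and a 1-second step, the first decision arrives after 5 seconds of data and subsequent decisions follow every second.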

Table 1: Experimentally Validated Optimal Temporal Windows for Different Artifact Classes. This table summarizes key findings from a study that developed specialized CNN models for distinct artifact types, establishing that optimal window length is artifact-dependent [19].

| Artifact Class | Optimal Temporal Window | Key Performance Metric & Score |
|---|---|---|
| Eye Movements | 20 seconds | ROC AUC: 0.975 (97.5%) |
| Muscle Activity | 5 seconds | Accuracy: 93.2% |
| Non-Physiological | 1 second | F1-Score: 77.4% |

Table 2: Impact of Temporal Window Shift on Model Performance. This table generalizes findings on how the step size between consecutive analysis windows affects the training data and subsequent model evaluation, based on a controlled study of cognitive fatigue detection [35].

| Temporal Window Shift | Effective Training Samples | Sample Independence | Potential Impact on Reported Performance |
|---|---|---|---|
| Small Shift (High Overlap) | High number | Low | Can inflate performance metrics if test data is not strictly separated; requires subject-wise evaluation. |
| Large Shift (Low Overlap) | Low number | High | More reliable generalization but may limit data available for training complex models. |

Experimental Protocols

Protocol 1: Determining the Optimal Temporal Window for a New Artifact Class

Objective: To empirically identify the best temporal window length for detecting a specific artifact.

Materials: A dataset of EEG recordings with expert-annotated labels for the target artifact.

Methodology:

- Preprocessing: Standardize your EEG data. This includes resampling to a common frequency (e.g., 250 Hz), applying bandpass filtering (e.g., 1-40 Hz), and using a robust scaling method like `RobustScaler` to normalize the data [19].

- Segmentation: Segment the preprocessed EEG into non-overlapping windows of varying durations (e.g., 1s, 2s, 5s, 10s, 20s, 30s).

- Model Training: Train identical model architectures (e.g., a lightweight CNN) on each of the segmented datasets. Keep all hyperparameters constant except for the input size, which will adapt to the window length.

- Evaluation: Evaluate each model on a held-out test set using a comprehensive set of metrics (e.g., Accuracy, F1-Score, ROC AUC). Use a strict subject-wise split to ensure generalizable results [35].

- Analysis: Plot the performance metrics against the window lengths. The window length that yields the highest performance is optimal for that artifact-model combination.
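The scaling and segmentation steps of this protocol can be sketched in NumPy as below. Resampling and bandpass filtering are omitted. The scaling function is an assumption: it uses a single global median and interquartile range so that amplitude relationships between channels are kept, whereas scikit-learn's `RobustScaler` scales each feature column separately. Function names are hypothetical.

```python
import numpy as np


def robust_scale(eeg):
    """Center by the global median and scale by the global interquartile range."""
    q25, q50, q75 = np.percentile(eeg, [25, 50, 75])
    iqr = q75 - q25
    return (eeg - q50) / (iqr if iqr > 0 else 1.0)


def segment(eeg, fs, window_s):
    """Cut (channels, samples) into non-overlapping windows: (n_windows, channels, window)."""
    n = int(window_s * fs)
    n_windows = eeg.shape[1] // n
    trimmed = eeg[:, : n_windows * n]             # drop the incomplete tail
    return trimmed.reshape(eeg.shape[0], n_windows, n).transpose(1, 0, 2)


fs = 250
rng = np.random.default_rng(0)
eeg = robust_scale(rng.normal(0.0, 20.0, size=(19, 60 * fs)))   # hypothetical 60 s recording

datasets = {w: segment(eeg, fs, w) for w in (1, 2, 5, 10, 20, 30)}
for window_s, windows in datasets.items():
    print(f"{window_s:>2}s -> {windows.shape}")
```

Each entry in `datasets` would then be used to train one copy of the same architecture, with only the input length changing between runs.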


Protocol 2: Validating a Multi-Model Detection Pipeline

Objective: To verify that a pipeline of artifact-specific models outperforms a single generalist model.

Materials: A dataset with annotations for multiple artifact classes (e.g., from the TUH EEG Corpus [19]).

Methodology:

- Baseline Model: Train a single "generalist" model using a fixed, commonly used window size (e.g., 4 seconds) on all artifact classes.

- Specialist Models: For each major artifact class (e.g., eye, muscle, non-physiological), train a dedicated model using its respective optimal window size (from Protocol 1 or literature).

- Pipeline Integration: Create an inference pipeline that runs all specialist models on incoming data and aggregates their outputs.

- Comparative Evaluation: On a held-out test set, compare the per-class and overall performance of the specialist pipeline against the generalist baseline.
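The comparative evaluation can be sketched as a per-class F1 comparison. The labels below are made-up toy values used only to exercise the code; they are not results from any study, and the function names are hypothetical.

```python
import numpy as np


def f1_score_binary(y_true, y_pred):
    """F1 = 2*TP / (2*TP + FP + FN); returns 0.0 when there are no positives at all."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    tp = int(np.sum(y_true & y_pred))
    fp = int(np.sum(~y_true & y_pred))
    fn = int(np.sum(y_true & ~y_pred))
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def compare_per_class(y_true, generalist, specialist):
    """Map each class name to (generalist F1, specialist F1)."""
    return {
        name: (
            f1_score_binary(y_true[name], generalist[name]),
            f1_score_binary(y_true[name], specialist[name]),
        )
        for name in y_true
    }


# Toy labels for two classes (illustrative only).
y_true = {"eye": [1, 1, 0, 0, 1, 0], "muscle": [0, 1, 1, 0, 0, 1]}
generalist = {"eye": [1, 0, 0, 0, 0, 0], "muscle": [0, 1, 1, 0, 0, 1]}
specialist = {"eye": [1, 1, 0, 0, 1, 1], "muscle": [0, 1, 1, 0, 0, 0]}

report = compare_per_class(y_true, generalist, specialist)
for name, (g, s) in report.items():
    print(f"{name}: generalist F1={g:.2f}, specialist F1={s:.2f}")
```

Reporting both columns per class makes it visible when a specialist helps one artifact type but not another, which an overall average would hide.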

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Datasets for EEG Artifact Detection Research.

| Item | Function in Research | Example / Note |
|---|---|---|
| Public EEG Datasets | Provides standardized, expert-annotated data for model training and benchmarking. | TUH EEG Artifact Corpus: Contains a large number of artifact annotations across 19 categories, ideal for training and validation [19]. |
| Preprocessing Tools | Essential for standardizing raw EEG signals before segmentation and analysis. | Tools for re-referencing, filtering (bandpass/notch), and normalization (e.g., RobustScaler) are fundamental [19] [36]. |
| Blind Source Separation (BSS) | A traditional technique for separating neural activity from artifacts, often used as a baseline or for data pre-cleaning. | Independent Component Analysis (ICA) is widely used to isolate ocular and muscle artifacts [11] [9]. |
| Deep Learning Frameworks | Enables the development and training of complex, artifact-specific models like CNNs. | TensorFlow and PyTorch are common choices for implementing lightweight CNN architectures [19] [4]. |

FAQs and Troubleshooting Guides for EEG Artifact Detection Research

Autoencoders

Q1: My autoencoder fails to learn meaningful features from EEG data and just copies the input. What should I do?

This is a common sign of an overcomplete autoencoder where the bottleneck layer is too large, allowing the network to learn a trivial identity function [37] [38].

- Solution A: Implement an Undercomplete Architecture: Design your bottleneck layer with fewer dimensions than your input EEG data to force compression. For instance, if your input EEG segment has 1,000 features, try a bottleneck of 50-100 neurons [38].

- Solution B: Apply Regularization: Introduce sparsity constraints or L1/L2 weight regularization to prevent the network from merely memorizing the input. A contractive autoencoder architecture can also help by making the learned representations robust to small variations in input [37].

- Solution C: Add Noise: Train a denoising autoencoder by intentionally adding noise to your input EEG samples while training the model to reconstruct the clean version. This forces the network to learn robust features rather than copying [37].
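Solutions A and C can be combined in a few lines of PyTorch. The sketch below assumes flattened EEG segments of 1,000 features and a 64-unit bottleneck (within the 50-100 range mentioned above); the hidden-layer size, noise level, and learning rate are illustrative assumptions, and random tensors stand in for real data.

```python
import torch
from torch import nn


class UndercompleteAE(nn.Module):
    """Autoencoder whose bottleneck is much narrower than its input."""

    def __init__(self, n_features=1000, bottleneck=64):
        super().__init__()
        self.encoder = nn.Sequential(
            nn.Linear(n_features, 256), nn.ReLU(), nn.Linear(256, bottleneck)
        )
        self.decoder = nn.Sequential(
            nn.Linear(bottleneck, 256), nn.ReLU(), nn.Linear(256, n_features)
        )

    def forward(self, x):
        return self.decoder(self.encoder(x))


def denoising_step(model, optimizer, clean, noise_std=0.1):
    """Corrupt the input with Gaussian noise, then train to reconstruct the clean target."""
    noisy = clean + noise_std * torch.randn_like(clean)
    optimizer.zero_grad()
    loss = nn.functional.mse_loss(model(noisy), clean)
    loss.backward()
    optimizer.step()
    return loss.item()


torch.manual_seed(0)
model = UndercompleteAE()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
batch = torch.randn(32, 1000)          # stand-in for normalized EEG segments
losses = [denoising_step(model, optimizer, batch) for _ in range(5)]
codes = model.encoder(batch)
print(codes.shape, losses[-1])
```

Because the target is the clean segment while the input is the noisy one, the identity mapping is no longer a valid solution, and the narrow bottleneck adds a second barrier against copying.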

Q2: The reconstructed EEG signal from my autoencoder is too lossy. How can I improve the reconstruction fidelity?

The lossy nature of autoencoders is a fundamental limitation, but its impact can be managed [37].

- Solution A: Adjust the Bottleneck Size: If the bottleneck is too narrow, crucial information is lost. Systematically test reconstruction accuracy with progressively larger bottleneck layers until you find an optimal trade-off between compression and fidelity [37].

- Solution B: Align Loss Function with Data Type: For real-valued EEG data, use Mean Squared Error (MSE) loss. For binary or thresholded inputs, Cross-Entropy Loss is often more effective [38].

- Solution C: Define an Acceptable Error Tolerance: Determine the maximum tolerable reconstruction error for your specific application (e.g., artifact detection vs. signal compression) and tune your model accordingly [37].

Recurrent Neural Networks (RNNs)

Q3: My RNN performs well on recent EEG data but fails to learn long-term dependencies in extended recordings. Why?

This is the classic vanishing gradient problem, where gradients become exponentially smaller as they are backpropagated through time, so the weights receive almost no learning signal from earlier time steps [39] [40].

- Solution A: Use Advanced RNN Architectures: Replace your vanilla RNN with Long Short-Term Memory (LSTM) or Gated Recurrent Unit (GRU) networks. These incorporate gating mechanisms that regulate information flow, effectively preserving long-term dependencies [39].

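- Solution B: Activation Function and Weight Initialization: In vanilla RNNs, using ReLU or Leaky ReLU activation functions instead of sigmoid/tanh can mitigate vanishing gradients. Proper weight initialization schemes (e.g., Xavier initialization) also help [39]. Because ReLU is unbounded, it can make exploding gradients more likely, so it is best paired with gradient clipping.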

- Solution C: Gradient Clipping: To address the opposite exploding gradient problem, implement gradient clipping during training to cap the maximum gradient value and maintain stability [39].
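Gradient clipping by global norm, one common variant of the technique in Solution C, can be illustrated without any framework. The function below rescales all gradients by a shared factor whenever their combined L2 norm exceeds a threshold; the name and the example values are illustrative.

```python
import numpy as np


def clip_by_global_norm(grads, max_norm):
    """Rescale a list of gradient arrays so their combined L2 norm is at most max_norm."""
    total_norm = float(np.sqrt(sum(np.sum(g ** 2) for g in grads)))
    scale = min(1.0, max_norm / (total_norm + 1e-12))
    return [g * scale for g in grads], total_norm


# Two hypothetical gradient tensors with combined norm sqrt(9 + 16 + 144) = 13.
grads = [np.array([3.0, 4.0]), np.array([12.0])]
clipped, norm_before = clip_by_global_norm(grads, max_norm=1.0)
norm_after = float(np.sqrt(sum(np.sum(g ** 2) for g in clipped)))
print(norm_before, norm_after)
```

In PyTorch, the equivalent call is `torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm)`, placed between `loss.backward()` and `optimizer.step()`.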

Q4: What is a sensible default RNN architecture to start with for EEG sequence modeling?

When beginning a new project, start simple to establish a baseline and ensure your pipeline works [41].

- Recommended Defaults:

- Architecture: A single hidden layer LSTM model. LSTMs are more powerful than vanilla RNNs and a good starting point [41].

- Activation Function: Use `Tanh` activation for the LSTM layers [41].

- Input Normalization: Always normalize your input EEG sequences by subtracting the mean and dividing by the standard deviation [41].
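These defaults can be sketched in PyTorch as follows. `nn.LSTM` already uses tanh internally, so no activation argument is needed. The channel count, hidden size, number of classes, and the choice to normalize each channel of each sequence over time are assumptions for illustration; normalizing with training-set statistics is an equally common choice.

```python
import torch
from torch import nn


class LSTMBaseline(nn.Module):
    """Single-layer LSTM followed by a linear classification head."""

    def __init__(self, n_channels=19, hidden=64, n_classes=2):
        super().__init__()
        self.lstm = nn.LSTM(n_channels, hidden, num_layers=1, batch_first=True)
        self.head = nn.Linear(hidden, n_classes)

    def forward(self, x):                 # x: (batch, time, channels)
        _, (h_n, _) = self.lstm(x)        # h_n: (num_layers, batch, hidden)
        return self.head(h_n[-1])         # classify from the final hidden state


def normalize(x, eps=1e-8):
    """Subtract the mean and divide by the standard deviation along the time axis."""
    mean = x.mean(dim=1, keepdim=True)
    std = x.std(dim=1, keepdim=True)
    return (x - mean) / (std + eps)


torch.manual_seed(0)
batch = torch.randn(8, 250, 19) * 50.0 + 10.0   # stand-in for 1 s of 19-channel EEG at 250 Hz
inputs = normalize(batch)
logits = LSTMBaseline()(inputs)
print(logits.shape)
```

Once this baseline trains end to end, additional layers or bidirectionality can be introduced one change at a time.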

Transformers

Q5: I am getting a "CUDA out of memory" error when training a Transformer on my EEG dataset. How can I reduce memory usage?

Transformers have a self-attention mechanism with a memory complexity that is quadratic with respect to the input sequence length, making them resource-intensive [42] [43].

- Solution A: Reduce Batch Size: Lower the `per_device_train_batch_size` value in your training arguments. This is the most straightforward way to immediately reduce GPU memory consumption [43].

- Solution B: Use Gradient Accumulation: Maintain an effective large batch size by using `gradient_accumulation_steps`. This technique processes smaller batches sequentially and accumulates gradients before performing a weight update, reducing peak memory usage [43].

- Solution C: Shorten Input Sequences: Consider segmenting your EEG data into shorter, but still meaningful, time windows to reduce the sequence length presented to the Transformer [42].
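A rough back-of-the-envelope calculation shows why these three adjustments help. The sketch below estimates only the memory of the attention score matrices for one layer and computes the effective batch size under gradient accumulation. It ignores weights, other activations, optimizer state, and memory-efficient attention kernels, and it assumes one token per EEG sample, so the numbers are indicative at best. The function names are hypothetical.

```python
def attention_scores_bytes(seq_len, n_heads=8, batch_size=1, bytes_per_value=4):
    """Memory for one layer's attention scores: batch x heads x seq_len x seq_len values."""
    return batch_size * n_heads * seq_len * seq_len * bytes_per_value


def effective_batch_size(per_device_batch, accumulation_steps, n_devices=1):
    """Number of samples contributing to each weight update."""
    return per_device_batch * accumulation_steps * n_devices


fs = 250                                  # assumed sampling rate in Hz
long_seq, short_seq = 20 * fs, 5 * fs     # 20 s vs 5 s windows, one token per sample

long_bytes = attention_scores_bytes(long_seq)
short_bytes = attention_scores_bytes(short_seq)
print(f"20 s window: {long_bytes / 1e9:.2f} GB per layer")
print(f" 5 s window: {short_bytes / 1e9:.2f} GB per layer")
print(f"ratio: {long_bytes // short_bytes}x")
print("effective batch:", effective_batch_size(per_device_batch=4, accumulation_steps=8))
```

Cutting the sequence length by a factor of four reduces this term by a factor of sixteen, whereas halving the batch size only halves it, which is why shortening windows is often the most effective lever when memory is the bottleneck.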