Artifacts and Brain-Computer Interface Performance: A Comprehensive Analysis for Biomedical Research

This article provides a systematic examination of the multifaceted impact of artifacts on Brain-Computer Interface (BCI) performance, tailored for researchers and drug development professionals.

Abstract

This article provides a systematic examination of the multifaceted impact of artifacts on Brain-Computer Interface (BCI) performance, tailored for researchers and drug development professionals. It explores the fundamental challenge of distinguishing motion and muscle artifacts from physiological brain signals, which is critical for reliable data interpretation. The content surveys a spectrum of artifact handling methodologies, from established blind source separation techniques to emerging deep learning models. It further investigates optimization strategies for real-time systems, including the crucial principle of online parity, and evaluates performance trade-offs in clinical applications. Finally, the article presents a comparative analysis of validation frameworks and discusses the translational pathway from laboratory research to clinical deployment, offering a holistic resource for advancing BCI technology in biomedicine.

Understanding the Artifact Problem: From Signal Contamination to Informative Features

Brain-Computer Interface (BCI) technology, which translates neural activity into commands for external devices, has transitioned from laboratory research to real-world clinical trials, with several companies advancing human testing as of 2025 [1]. The core of any BCI system is its ability to accurately decode intended commands from electroencephalography (EEG) signals. However, the fidelity of this decoding process is critically dependent on signal quality. Artifacts—any recorded signals not originating from cerebral activity—represent a fundamental challenge, as they can obscure neural information, reduce the signal-to-noise ratio (SNR), and ultimately degrade BCI performance and reliability [2] [3].

Artifacts pose a particular threat to BCIs because they can mimic or mask genuine brain patterns, leading to misclassification by decoding algorithms. For instance, a study assessing the impact of artifact correction on Support Vector Machine (SVM) and Linear Discriminant Analysis (LDA) based decoding stressed that while artifact rejection may not always enhance performance, proper correction is essential to minimize artifact-related confounds that could artificially inflate decoding accuracy and lead to incorrect conclusions [4]. This is especially critical for mobile EEG (mo-EEG) systems, which enable monitoring in naturalistic settings but are highly susceptible to motion artifacts [5]. This paper establishes a detailed taxonomy of EEG artifacts most detrimental to BCI applications, providing a structured framework for their identification and removal to advance robust BCI development.

A Detailed Taxonomy of EEG Artifacts

EEG artifacts are broadly categorized by their origin into physiological artifacts (from the subject's body) and non-physiological artifacts (from external sources). The table below systematizes the primary artifacts affecting BCI systems.

Table 1: Taxonomy of Key EEG Artifacts Relevant to BCI Performance

| Category | Specific Type | Origin | Impact on EEG Signal | Frequency Characteristics | Primary Affected BCI Applications |
|---|---|---|---|---|---|
| Physiological | Ocular (EOG) | Corneo-retinal dipole shift from eye blinks/movement [3] | High-amplitude, slow deflections over frontal electrodes [2] | Dominant in delta/theta bands (0.5-8 Hz) [3] | Visual BCIs, P300 spellers [4] |
| Physiological | Muscle (EMG) | Muscle contractions (jaw, neck, face) [2] | High-frequency, broadband noise [3] | Broadband, 20-300 Hz; overlaps beta/gamma [2] [3] | Motor imagery, all BCIs during user movement |
| Physiological | Cardiac (ECG) | Electrical activity of the heart [2] | Rhythmic, spike-like waveforms [3] | ~1 Hz (pulse) to ~1-5 Hz (ECG) [2] | All applications, particularly with high-impedance setups |
| Physiological | Motion Artifacts | Head/body movement disrupting electrode-skin interface [5] | Large, non-linear amplitude shifts, bursts [3] | Often low-frequency (<5 Hz) drifts or broad spectrum [5] | Mobile BCIs, ambulatory systems [5] |
| Non-Physiological | Electrode Pop | Sudden change in electrode-skin impedance [3] | Abrupt, high-amplitude transient in a single channel [3] | Broadband, non-stationary [3] | All BCIs, can be mistaken for neural spikes |
| Non-Physiological | Cable Movement | Motion of electrode cables causing impedance changes/EMI [3] | Repetitive waveforms or sudden deflections [3] | Can introduce artificial low-frequency peaks [3] | Mobile and laboratory BCIs |
| Non-Physiological | Power Line Interference | Electromagnetic fields from AC power (50/60 Hz) [3] | Persistent high-frequency sinusoidal noise [3] | Sharp peak at 50 Hz or 60 Hz [3] | All BCIs in non-shielded environments |

Physiological Artifacts

Ocular Artifacts

The eye acts as an electric dipole, and movements alter this field, generating an electrooculogram (EOG) that propagates across the scalp. With amplitudes often 10-20 times greater than cortical EEG, ocular artifacts are a primary source of contamination, particularly for BCIs relying on frontal electrodes or low-frequency signals [2] [3].

Muscle Artifacts

Electromyographic (EMG) signals from facial, jaw, and neck muscle contractions are a notoriously difficult problem for BCIs. Their broadband spectrum directly overlaps the beta and gamma rhythms crucial for decoding motor commands and cognitive states, making them difficult to filter without sacrificing neural information [2] [3].

Motion Artifacts

In mobile BCI setups, motion introduces complex artifacts through electrode cable movement, changes in impedance, and head movements causing baseline shifts. These artifacts are often arrhythmic and non-linear, complicating their removal and posing a significant challenge for real-world BCI use [5].

Non-Physiological (Technical) Artifacts

Electrode and Cable Artifacts

Electrode "pops" from sudden impedance changes create sharp transients that can be mistaken for epileptiform activity or other neural events. Cable movement introduces similar artifacts that can be rhythmic, mimicking brain oscillations. Both can severely disrupt single-trial analyses essential for BCIs [3].

Environmental Interference

Power line interference (50/60 Hz) is a common issue, appearing as a sharp spectral peak that can mask high-frequency neural activity. Notch filters can remove it, but they may also distort the genuine EEG signal, particularly components close to the notch frequency [3].
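As a rough illustration of the notch filtering mentioned above, the sketch below builds a second-order IIR notch in the common audio-EQ-cookbook biquad form and applies it causally. The function names, the 250 Hz sampling rate, and the quality factor Q = 30 are illustrative assumptions, not values taken from [3].

```python
import math

def notch_coefficients(f0, fs, q=30.0):
    """Second-order IIR notch centred on f0 (Hz), normalized so a[0] == 1."""
    w0 = 2.0 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = [1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0]
    a = [1.0, -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0]
    return b, a

def apply_biquad(x, b, a):
    """Causal direct-form I filtering of a list of samples (zero initial state)."""
    y = []
    x1 = x2 = y1 = y2 = 0.0
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y

# Synthetic check: a 10 Hz "neural" rhythm plus 50 Hz mains interference.
fs = 250.0  # assumed sampling rate
samples = range(1000)
rhythm = [math.sin(2 * math.pi * 10 * n / fs) for n in samples]
mains = [math.sin(2 * math.pi * 50 * n / fs) for n in samples]
b, a = notch_coefficients(50.0, fs)
filtered = apply_biquad([r + m for r, m in zip(rhythm, mains)], b, a)
```

With these assumed settings the notch is roughly 50/30 ≈ 1.7 Hz wide, so the 10 Hz component passes almost unchanged while the 50 Hz component is suppressed once the filter's start-up transient has decayed. Lowering Q widens the notch and increases the distortion of neighboring EEG components.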

Methodologies for Artifact Detection and Removal

A range of techniques from simple filtering to advanced machine learning has been developed to mitigate artifacts. The choice of method often involves a trade-off between the fidelity of preserved neural data and the computational complexity.

Classical Signal Processing Approaches

Table 2: Classical and Blind Source Separation Artifact Removal Methods

| Method | Underlying Principle | Best For Artifact Type | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Regression (Time/Frequency) | Estimates and subtracts artifact contribution using reference channels (e.g., EOG) [2] | Ocular artifacts | Simple, computationally efficient [2] | Requires reference channels; risk of over-correction and removing neural data [2] |
| Filtering (Band-pass/Notch) | Removes signals outside a predefined frequency range [2] | Power line noise, slow drifts | Very simple, fast, and effective for non-overlapping noise [2] | Ineffective when artifact and neural frequencies overlap [2] |
| Blind Source Separation (BSS) - ICA | Separates recorded signals into statistically independent components [2] | Ocular, muscle, cardiac artifacts [6] | Reference-free; can isolate and remove specific artifact components [2] | Requires many channels; computationally intensive; manual component inspection often needed [2] |
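The regression row in the table above can be illustrated with a single EEG channel and a single EOG reference. This is a minimal time-domain sketch under simplifying assumptions (one reference channel, a constant propagation factor, synthetic sinusoids); the function name `regress_out` and all signal parameters are hypothetical.

```python
import math

def regress_out(eeg, ref):
    """Subtract the least-squares projection of a reference channel from one EEG channel.

    Returns the corrected channel and the estimated propagation factor beta.
    """
    n = len(eeg)
    mean_e = sum(eeg) / n
    mean_r = sum(ref) / n
    cov = sum((e - mean_e) * (r - mean_r) for e, r in zip(eeg, ref))
    var = sum((r - mean_r) ** 2 for r in ref)
    beta = cov / var
    cleaned = [e - beta * (r - mean_r) for e, r in zip(eeg, ref)]
    return cleaned, beta

# Synthetic example: a 10 Hz rhythm contaminated by 30% of a slow, large EOG signal.
fs = 100
t = [i / fs for i in range(200)]
neural = [math.sin(2 * math.pi * 10 * x) for x in t]
eog = [50.0 * math.sin(2 * math.pi * 1 * x) for x in t]
recorded = [s + 0.3 * e for s, e in zip(neural, eog)]
cleaned, beta = regress_out(recorded, eog)
```

In this idealized case the estimated factor comes out at essentially 0.3 and the corrected channel matches the clean rhythm, because the two synthetic signals are uncorrelated. With real data the reference channel also picks up some brain activity, which is the over-correction risk the table mentions.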

Advanced and Machine Learning Approaches

Machine learning, particularly deep learning, offers a powerful, data-driven approach to artifact removal. These models can learn complex, non-linear relationships between contaminated signals and their clean counterparts.

Motion-Net Deep Learning Algorithm: A subject-specific Convolutional Neural Network (CNN) based on a U-Net architecture was recently developed for motion artifact removal. The framework is trained and tested separately on each subject's data, which personalizes the model to that subject. A key innovation is the incorporation of Visibility Graph (VG) features, which convert time-series EEG data into graph structures, providing additional structural information that improves the model's performance, especially with smaller datasets. In experiments, Motion-Net achieved an average motion artifact reduction of 86 ± 4.13% and an average SNR improvement of 20 ± 4.47 dB [5].
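To make the visibility graph idea concrete, the sketch below builds a natural visibility graph from a short time series: two samples are linked when the straight line between them passes above every sample in between. Which VG variant and which graph-derived features Motion-Net actually uses is not stated here, so the degree sequence is only an illustrative feature, and the function names are hypothetical.

```python
def natural_visibility_edges(series):
    """Return edges (i, j), i < j, of the natural visibility graph of a time series."""
    edges = []
    n = len(series)
    for i in range(n - 1):
        for j in range(i + 1, n):
            # Samples i and j see each other if every intermediate sample lies
            # strictly below the line joining (i, series[i]) and (j, series[j]).
            visible = all(
                series[k] < series[i] + (series[j] - series[i]) * (k - i) / (j - i)
                for k in range(i + 1, j)
            )
            if visible:
                edges.append((i, j))
    return edges

def degree_sequence(series):
    """Number of visibility links per sample, usable as a simple per-sample feature."""
    degrees = [0] * len(series)
    for i, j in natural_visibility_edges(series):
        degrees[i] += 1
        degrees[j] += 1
    return degrees

example_degrees = degree_sequence([1.0, 3.0, 2.0, 4.0])
```

This brute-force construction is cubic in the worst case, which is acceptable for short epochs but would need a faster algorithm for long recordings.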

Experimental Protocol for Motion-Net:

- Data Acquisition and Preprocessing: EEG data are recorded alongside accelerometer (Acc) data to capture motion. The recordings are segmented according to experimental triggers and resampled to synchronize the EEG and Acc signals. Baseline correction is performed by subtracting a fitted polynomial.

- Feature Extraction: Two types of features are extracted from the preprocessed EEG signals: a) Raw EEG signals, and b) Visibility Graph (VG) features, which characterize the structural properties of the signal.

- Model Training and Validation: The Motion-Net model is trained using three distinct experimental approaches to evaluate its robustness. The model is trained separately for each subject, and performance is evaluated using metrics like Artifact Reduction Percentage (η), SNR Improvement, and Mean Absolute Error (MAE) against a ground-truth clean signal [5].

  - Experiment 1: Uses only raw EEG signals as input.

  - Experiment 2: Uses a combination of raw EEG and the VG feature set.

  - Experiment 3: Employs a dual-encoder structure to process raw EEG and VG features separately before merging them for the final output.
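Two of the evaluation metrics named in the protocol, SNR improvement and MAE, can be sketched as follows when a ground-truth clean signal is available. These are the conventional textbook definitions and may differ in detail from those used in [5]; the artifact reduction percentage (η) is left out because its definition is not given here. Function names are hypothetical.

```python
import math

def snr_db(clean, estimate):
    """SNR in dB, treating (estimate - clean) as the noise."""
    signal_power = sum(c * c for c in clean)
    noise_power = sum((e - c) ** 2 for c, e in zip(clean, estimate))
    return 10.0 * math.log10(signal_power / noise_power)

def snr_improvement_db(clean, contaminated, denoised):
    """Gain in SNR (dB) achieved by the denoiser relative to the contaminated input."""
    return snr_db(clean, denoised) - snr_db(clean, contaminated)

def mean_absolute_error(clean, estimate):
    """Average absolute deviation of the estimate from the ground truth."""
    return sum(abs(e - c) for c, e in zip(clean, estimate)) / len(clean)

# Toy example: a unit-amplitude signal with a 1.0 offset before and a 0.1 offset after denoising.
clean = [1.0, -1.0, 1.0, -1.0]
contaminated = [c + 1.0 for c in clean]
denoised = [c + 0.1 for c in clean]
gain = snr_improvement_db(clean, contaminated, denoised)
mae = mean_absolute_error(clean, denoised)
```

In the toy example the residual error shrinks by a factor of ten in amplitude, which corresponds to a 20 dB SNR improvement.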

The Scientist's Toolkit: Key Research Reagents and Materials

Table 3: Essential Materials and Tools for BCI Artifact Research

| Item Name | Function/Application | Specific Example/Note |
|---|---|---|
| High-Density EEG System | Acquires neural data with high spatial resolution; crucial for BSS methods like ICA. | Systems with 32+ electrodes are typical; [3] describes a lower-density example, Bitbrain's 16-channel system, filtered at 0.5–30 Hz. |
| Auxiliary Reference Sensors | Provide dedicated recordings of non-neural physiological signals for regression-based removal. | EOG electrodes for eye blinks, ECG for heart activity, accelerometers for motion [2] [5]. |
| ICA Software Toolboxes | Implement algorithms (Infomax, SOBI, FastICA) to decompose EEG and isolate artifact components. | EEGLAB toolbox includes routines for ICA and other artifact detection methods [6]. |
| Deep Learning Frameworks | Provide an environment for developing and training custom artifact removal models like Motion-Net. | Frameworks like TensorFlow or PyTorch enable the building of CNN and LSTM models [5] [3]. |
| Visibility Graph (VG) Feature Code | Converts 1D EEG time-series into graph structures for enhanced feature extraction in ML models. | Used to improve model accuracy and stability with smaller datasets [5]. |

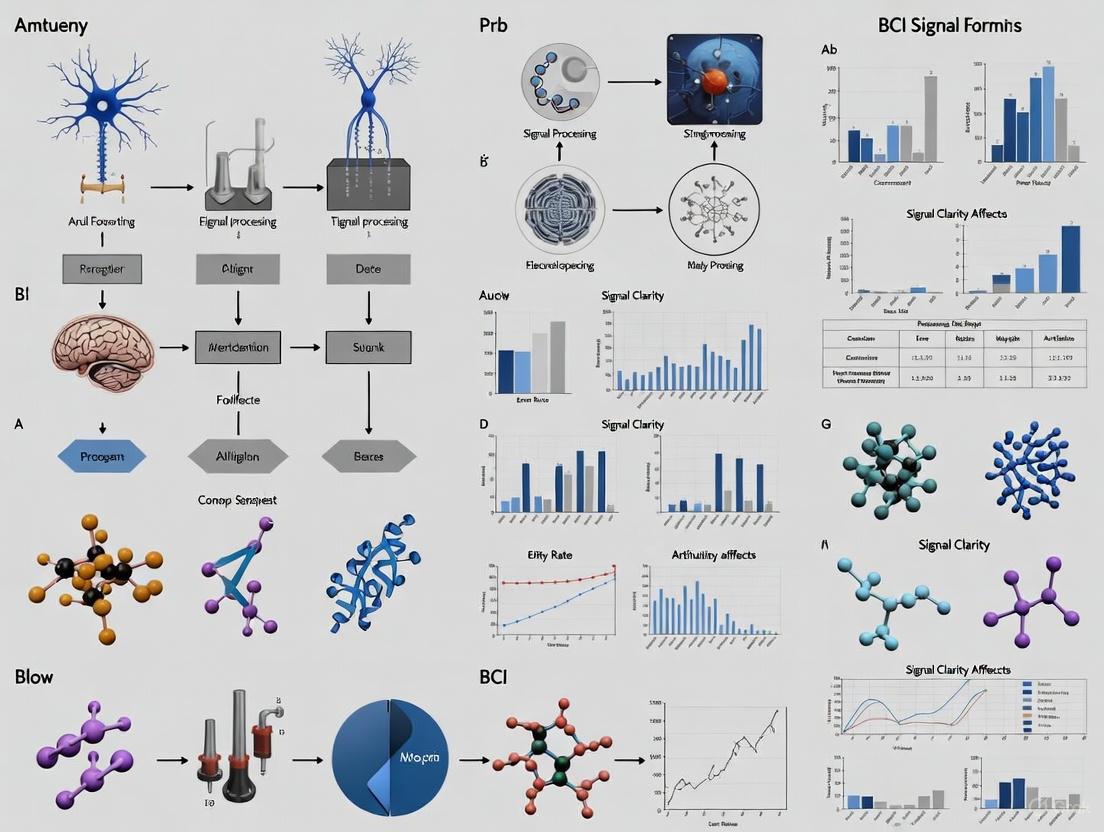

Visualizing Artifact Handling Workflows

The following diagrams illustrate the logical workflow for a general ICA-based artifact removal process and the specific architecture of the advanced Motion-Net model.

Workflow for ICA-Based Artifact Removal

Diagram 1: ICA-Based Artifact Removal

Motion-Net Model Architecture

Diagram 2: Motion-Net Dual-Encoder Architecture

The path toward reliable, real-world BCIs is inextricably linked to the effective management of EEG artifacts. The taxonomy presented herein—categorizing artifacts by their physiological and non-physiological origins—provides a critical framework for diagnosing and addressing signal contamination. While classical techniques like filtering and ICA remain pillars of artifact handling, the emergence of subject-specific deep learning models, such as Motion-Net, marks a significant advancement. These data-driven approaches offer the potential to handle complex, non-linear artifacts like those from motion, which are particularly detrimental to mobile BCI applications. As the field progresses, the continued development and refinement of these removal methodologies will be paramount in overcoming the noise barrier, ensuring that the user's neural intent, and not artifact-driven confounds, dictates BCI performance.

In brain-computer interface (BCI) research, the fidelity of neural command decoding hinges on a single, crucial metric: the signal-to-noise ratio (SNR). Artifacts—unwanted signals originating from non-neural sources—pose a fundamental challenge by degrading this SNR, effectively obscuring the neural commands essential for BCI operation. These artifacts introduce contaminating signals that can be orders of magnitude larger than the neural signals of interest, overwhelming the subtle patterns of intentional control and leading to erroneous interpretations, reduced classification accuracy, and ultimately, system failure [7] [8] [9]. As BCI technologies transition from controlled laboratory settings to real-world applications in healthcare, industry, and daily life, the imperative to understand and mitigate these disruptive signals has never been greater [10] [1]. This whitepaper examines the mechanistic pathways through which artifacts corrupt the neural signal pathway, quantifies their impact on BCI performance, and synthesizes current methodologies for restoring SNR to enable reliable neural communication.

Defining the Problem: Neural Signals Versus Artifacts

The Nature of Neural Signals in BCI

BCI systems rely on the acquisition and interpretation of electrophysiological signals representing specific brain activities. These include:

- Electroencephalography (EEG): Electrical activity measured from the scalp surface, characterized by low amplitude (microvolts) and limited spatial resolution [11].

- Event-Related Potentials (ERPs): Time-locked neural responses to specific stimuli, such as the P300 potential used in communication BCIs [9].

- Sensorimotor Rhythms: Oscillatory patterns originating from the sensorimotor cortex during motor imagery or execution [8].

These neural signals exist within specific frequency bands (Delta, Theta, Alpha, Beta, Gamma) and exhibit characteristic spatial distributions across the scalp. Their inherently weak nature makes them particularly vulnerable to contamination from stronger non-neural sources [11].

Major Artifact Classes and Their Characteristics

Artifacts in BCI systems can be categorized by their origin, properties, and impact on the signal pathway.

Table 1: Classification and Characteristics of Major BCI Artifacts

| Artifact Category | Specific Sources | Spectral Properties | Amplitude Range | Primary Impact on SNR |
|---|---|---|---|---|
| Ocular Artifacts | Eye blinks, saccades, lateral movements | Low-frequency (<4 Hz) | 50-100 μV (blinks) | Masks low-frequency neural patterns, obscures ERPs |
| Muscular Artifacts | Jaw clenching, forehead tension, head movement | Broadband (20-300 Hz) | Can exceed 100 μV | Overwhelms high-frequency neural oscillations (Beta, Gamma) |
| Motion Artifacts | Cable swings, electrode displacement, head movement | DC shifts to low-frequency | Variable, often large | Causes baseline wander, obscures all frequency components |
| Environmental Artifacts | Power-line interference, electromagnetic interference | 50/60 Hz and harmonics | Variable | Introduces narrowband noise at specific frequencies, reduces clarity |
| Instrumental Artifacts | Electrode "pops", impedance changes, amplifier saturation | DC shifts, abrupt transitions | Can saturate amplifiers | Creates signal dropouts or saturation, renders data unusable |

Each artifact type presents distinct temporal, spectral, and spatial signatures that complicate the separation of neural from non-neural content [10] [8]. The spatial distribution of artifacts further complicates their identification; ocular artifacts typically manifest most strongly in frontal regions, while muscular artifacts from jaw clenching affect temporal areas [8]. This complex interplay of artifact sources creates a multifaceted challenge for BCI signal processing pipelines, particularly in real-world environments where multiple artifact types occur simultaneously [10].

Quantifying the Impact: How Artifacts Degrade BCI Performance

Direct Effects on Signal-to-Noise Ratio and Classification Accuracy

The degradation of SNR by artifacts directly translates to measurable reductions in BCI classification performance. Research has demonstrated that artifacts can diminish the SNR of acquired brain signals, fundamentally limiting the overall performance of the BCI system [11]. One controlled investigation using real-time functional MRI found that the presence of BCI control, which engages additional cognitive processes, actually increased subjects' whole-brain signal-to-noise ratio compared to no-control conditions, highlighting how task engagement can potentially improve SNR when artifacts are properly managed [7].

The table below synthesizes quantitative findings from multiple studies on how artifacts impact specific BCI paradigms and the performance recovery possible with artifact mitigation.

Table 2: Quantified Impact of Artifacts on BCI Performance Metrics

| BCI Paradigm | Performance Metric | With Artifacts | After Artifact Mitigation | Reference |
|---|---|---|---|---|
| Hybrid BCI (Eye-tracker + EEG) | True Positive Rate (Dwell time=0.0s) | 24.6% (artifacts ignored) | 44.7% (with proposed algorithm) | [12] |
| Covert Speech Rate Classification | Fast vs. Slow Counting Classification Accuracy | ~65% (no control condition) | ~72% (with neurofeedback control) | [7] |
| P300-based Communication BCI | Classification Accuracy | Significant degradation reported | Online parity filtering improved performance | [9] |
| Self-Paced Hybrid BCI | False Positives/Minute | >2 (with artifacts) | <2 (with proposed removal) | [12] |
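The two self-paced metrics in the table above, true positive rate and false positives per minute, could be computed along the following lines. The scoring rule used in [12] is not described here, so the window-based matching, the function name, and the example timings are all assumptions made for illustration.

```python
def self_paced_metrics(event_windows, detections, recording_minutes):
    """Score a self-paced BCI run.

    event_windows: list of (start_s, end_s) periods of intended control.
    detections: list of times (s) at which the BCI issued a command.
    Returns (true_positive_rate, false_positives_per_minute).
    """
    # A window counts as a hit if at least one detection falls inside it.
    hits = sum(
        1 for start, end in event_windows
        if any(start <= d <= end for d in detections)
    )
    # Any detection outside every window is a false activation.
    false_positives = sum(
        1 for d in detections
        if not any(start <= d <= end for start, end in event_windows)
    )
    return hits / len(event_windows), false_positives / recording_minutes

windows = [(10, 12), (30, 32), (50, 52), (70, 72)]
detected = [10.5, 20.0, 31.0, 45.0, 80.0]
tpr, fp_per_min = self_paced_metrics(windows, detected, recording_minutes=2.0)
```

In this made-up run, two of the four intended commands are detected and three detections fall in rest periods, giving a true positive rate of 0.5 and 1.5 false positives per minute.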

Consequences for End-Users and Clinical Applications

The performance degradation quantified above translates directly to real-world limitations for BCI users:

- Reduced Communication Rates: For communication BCIs, decreased classification accuracy directly lowers characters-per-minute typing rates, frustrating users and limiting practical utility [9].

- Increased False Activations: In self-paced BCIs, artifacts trigger false commands during intended rest periods, leading to user frustration and potential safety risks in control applications [12].

- Cognitive Load Increases: Users must often employ compensatory mental strategies or restrict natural behaviors (e.g., blinking, swallowing) to maintain acceptable performance, increasing fatigue and reducing long-term usability [9].

These impacts are particularly significant for the target populations of assistive BCIs, including individuals with amyotrophic lateral sclerosis, spinal cord injuries, or brainstem stroke, for whom BCI technology may represent the primary channel for communication or environmental control [1] [11].
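The link between classification accuracy and communication rate noted above can be made concrete with the widely used Wolpaw information transfer rate, which gives the bits conveyed per selection as B = log2(N) + P·log2(P) + (1 − P)·log2((1 − P)/(N − 1)) for N equally likely targets and accuracy P. The sketch below assumes that formula's usual conditions (equiprobable targets, errors spread evenly); the function names and the example selection rate are illustrative.

```python
import math

def bits_per_selection(n_classes, accuracy):
    """Wolpaw bits per selection for n_classes targets at the given accuracy (0..1)."""
    if n_classes < 2:
        raise ValueError("need at least two classes")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    bits = math.log2(n_classes)
    if 0.0 < accuracy < 1.0:
        bits += accuracy * math.log2(accuracy)
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_classes - 1))
    elif accuracy == 0.0:
        bits += math.log2(1.0 / (n_classes - 1))
    return bits

def itr_bits_per_minute(n_classes, accuracy, selections_per_minute):
    """Information transfer rate in bits per minute."""
    return bits_per_selection(n_classes, accuracy) * selections_per_minute

# A 36-target speller at an assumed 4 selections per minute.
clean_itr = itr_bits_per_minute(36, 0.90, 4.0)
degraded_itr = itr_bits_per_minute(36, 0.70, 4.0)
```

The formula is only meaningful for accuracies at or above chance level (1/N). The example shows how a drop in accuracy caused by artifacts lowers throughput even when the selection rate is unchanged.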

Methodological Approaches: Experimental Protocols for Studying Artifact Impact

Protocol 1: Controlled Motion Artifact Induction

This systematic approach evaluates motion artifact impact on EEG signals in simulated real-world conditions:

- Participant Preparation: Fit a research-grade EEG system with electrodes positioned according to the international 10-20 system, keeping electrode impedances below 10 kΩ [8].

- Baseline Recording: Collect 5 minutes of resting-state EEG with eyes open and closed to establish individual baseline rhythms [8].

- Artifact Induction Protocol:

  - Treadmill walking: Record EEG during walking at varying speeds (1.5-4.0 km/h)

  - Head movements: Implement standardized head rotation paradigms (left-right, up-down)

  - Jaw clenching: Record during periodic jaw clenching at rates of 0.5-2.0 Hz

  - Cable manipulation: Introduce controlled cable swings during stationary periods [8]

- Parallel Task Performance: During motion conditions, participants perform BCI tasks (e.g., motor imagery, P300 spelling) to quantify combined effects on classification accuracy [8].

- Data Annotation: Synchronize motion capture data with EEG recordings to precisely tag artifact onset, duration, and type [8].

This protocol enables researchers to systematically correlate specific motion parameters with resulting artifact characteristics and their impact on SNR metrics.
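The data annotation step of this protocol could be approximated as shown below. The sketch assumes the motion reference is an accelerometer magnitude trace that has already been resampled to the EEG sampling rate; the threshold, the minimum duration, and the function name are hypothetical and would need to be calibrated for a real setup.

```python
def tag_motion_segments(acc_magnitude, fs, threshold, min_duration_s=0.0):
    """Return (onset_s, duration_s) for each run of samples above the threshold."""
    runs = []
    start = None
    for i, value in enumerate(acc_magnitude):
        if value > threshold and start is None:
            start = i                      # motion begins
        elif value <= threshold and start is not None:
            runs.append((start, i))        # motion ends just before sample i
            start = None
    if start is not None:                  # recording ended during motion
        runs.append((start, len(acc_magnitude)))
    return [
        (s / fs, (e - s) / fs)
        for s, e in runs
        if (e - s) / fs >= min_duration_s
    ]

acc = [0, 0, 2, 2, 2, 0, 0, 3, 0, 0]
segments = tag_motion_segments(acc, fs=10, threshold=1)
```

The resulting onset and duration pairs can then be attached to the EEG timeline as artifact markers. Labeling the artifact type would require additional information, such as the protocol condition that was active at the time.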

Protocol 2: Online Parity Validation for Artifact Filtering

This methodology evaluates whether offline artifact processing approaches maintain effectiveness during real-time BCI operation:

- Experimental Design: Recruit participants for a standard BCI paradigm (e.g., P300 matrix speller) with multiple sessions [9].

- Data Acquisition: Collect EEG data using standard protocols with both high-density (research) and low-density (consumer-grade) systems [9].

- Processing Conditions:

  - Conventional filtering: Apply digital filters to the entire dataset after collection

  - Online parity filtering: Process only the segmented data epochs that would be available during closed-loop control [9]

- Model Training and Testing: Train classification models separately on data from each processing condition, then evaluate performance using offline simulations of online operation [9].

- Performance Metrics: Compare true positive rates, false positive rates, and information transfer rates between conditions to quantify the "online parity gap" [9].

This approach addresses the critical disconnect between offline processing methods and real-time BCI operation, ensuring that artifact handling techniques evaluated in research will translate effectively to clinical and consumer applications [9].
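The difference between the two processing conditions can be seen in a small simulation. The sketch below uses a simple causal one-pole high-pass filter as a stand-in for whatever filter a real pipeline uses. It compares filtering the whole recording before cutting an epoch with filtering only the epoch itself, as a closed-loop system would have to. The filter choice, the 1 Hz cutoff, the 20 μV offset, and all names are assumptions rather than details from [9].

```python
import math

def highpass(x, fs, cutoff_hz):
    """Causal one-pole (RC-style) high-pass filter starting from a zero state."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    a = rc / (rc + 1.0 / fs)
    y, x_prev, y_prev = [], 0.0, 0.0
    for xn in x:
        yn = a * (y_prev + xn - x_prev)
        y.append(yn)
        x_prev, y_prev = xn, yn
    return y

def epoch_conventional(recording, fs, start, stop, cutoff_hz=1.0):
    """Offline habit: filter the full recording, then cut the epoch."""
    return highpass(recording, fs, cutoff_hz)[start:stop]

def epoch_online_parity(recording, fs, start, stop, cutoff_hz=1.0):
    """Online-style: filter only the samples inside the epoch."""
    return highpass(recording[start:stop], fs, cutoff_hz)

fs = 100
# A 10 Hz rhythm riding on a 20 uV electrode offset.
recording = [20.0 + math.sin(2 * math.pi * 10 * n / fs) for n in range(1000)]
offline_epoch = epoch_conventional(recording, fs, 800, 900)
online_epoch = epoch_online_parity(recording, fs, 800, 900)
```

In the offline version the filter has long since settled by the time the epoch begins, so the offset is gone. In the online-style version the offset appears as a large start-up transient at the beginning of the epoch. A classifier trained on the first kind of epoch would therefore see systematically different inputs during closed-loop use, which is the gap the protocol is designed to measure.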

The Research Toolkit: Methods and Technologies for Artifact Management

Signal Processing Approaches

Researchers employ a diverse arsenal of computational techniques to combat artifacts in BCI systems:

- Independent Component Analysis (ICA): A blind source separation technique that identifies statistically independent components in multichannel EEG data, allowing for selective removal of artifact-dominated components [4] [13]. This approach is particularly effective for ocular and muscular artifacts when sufficient spatial sampling is available.

- Wavelet Transform Methods: Techniques like Stationary Wavelet Transform (SWT) with adaptive thresholding effectively isolate and remove transient artifacts while preserving neural signal integrity, making them suitable for real-time applications [12].

- Canonical Correlation Analysis (CCA): Separates neural signals from artifacts based on their different autocorrelation properties, effectively targeting rhythmic artifacts like power-line interference [9].

- Deep Learning Approaches: Emerging transformer-based architectures like the Artifact Removal Transformer (ART) show promise for end-to-end denoising of multichannel EEG signals, simultaneously addressing multiple artifact types through learned representations [13].

- Adaptive Filtering: Employs reference signals (e.g., from EOG or accelerometers) to dynamically estimate and subtract artifact contributions from EEG channels [8].
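Of the techniques listed above, adaptive filtering is the simplest to sketch. The example below uses a least-mean-squares (LMS) update, one common choice among several adaptive algorithms, to estimate how strongly a reference signal leaks into an EEG channel and to subtract it sample by sample. The step size, the single filter tap, and the synthetic signals are illustrative assumptions.

```python
import math

def lms_cancel(primary, reference, mu=0.01, n_taps=1):
    """LMS noise canceller: returns (cleaned_signal, final_weights)."""
    weights = [0.0] * n_taps
    history = [0.0] * n_taps            # recent reference samples, newest first
    cleaned = []
    for d, r in zip(primary, reference):
        history = [r] + history[:-1]
        artifact_estimate = sum(w * h for w, h in zip(weights, history))
        error = d - artifact_estimate   # the error doubles as the cleaned sample
        weights = [w + 2.0 * mu * error * h for w, h in zip(weights, history)]
        cleaned.append(error)
    return cleaned, weights

# Synthetic example: a 10 Hz rhythm plus 0.8 times a slow reference artifact.
fs = 100
n = 2000
neural = [0.5 * math.sin(2 * math.pi * 10 * i / fs) for i in range(n)]
reference = [math.sin(2 * math.pi * 1.3 * i / fs) for i in range(n)]
primary = [s + 0.8 * r for s, r in zip(neural, reference)]
cleaned, weights = lms_cancel(primary, reference)
```

After an initial adaptation period the weight settles near the true leakage factor of 0.8 and the output tracks the underlying rhythm. Because the weights keep adapting, the same loop can follow slow changes in artifact coupling, which is the main attraction of this approach for real-time use.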

Hardware and Experimental Design Solutions

Beyond computational approaches, several hardware and methodological strategies help mitigate artifacts at their source:

- Auxiliary Sensors: Integration of electrooculography (EOG), electromyography (EMG), and inertial measurement units (IMUs) provides reference signals for adaptive filtering and ground truth for artifact identification [10] [8].

- Dry Electrode Systems: Modern dry electrodes reduce application time and improve user comfort, though they may be more susceptible to motion artifacts than traditional wet electrodes [10].

- Physical Stabilization: Mechanical systems that stabilize electrode cables reduce motion-induced artifacts during user movement [8].

- Online Parity Design: Experimental protocols that maintain consistency between training and operational conditions improve real-world performance of artifact handling methods [9].

Table 3: Research Reagent Solutions for BCI Artifact Management

| Tool Category | Specific Examples | Primary Function | Considerations for Use |
|---|---|---|---|
| Signal Processing Algorithms | Independent Component Analysis (ICA), Wavelet Transform, Canonical Correlation Analysis | Separate neural signals from artifacts in recorded data | Computational demand, channel count requirements, real-time capability |
| Deep Learning Architectures | Artifact Removal Transformer (ART), Convolutional Neural Networks | End-to-end denoising of contaminated signals | Training data requirements, generalizability across subjects |
| Reference Sensors | EOG electrodes, EMG sensors, inertial measurement units (IMUs) | Provide auxiliary signals for adaptive filtering | Additional hardware complexity, data synchronization |
| EEG Acquisition Systems | Research-grade amplifiers, active electrodes, shielded cables | Maximize native signal quality while minimizing environmental interference | Cost, portability, setup time |
| Validation Datasets | Semi-simulated EEG, controlled artifact induction protocols | Benchmark performance of artifact handling methods | Ecological validity, ground truth availability |

Emerging Solutions and Future Directions

The frontier of artifact management in BCI research is characterized by several promising developments:

- Advanced Deep Learning Architectures: Transformer-based models like the Artifact Removal Transformer (ART) represent a significant advancement in end-to-end EEG denoising, demonstrating superior performance in restoring multichannel EEG signals compared to conventional methods [13]. These models excel at capturing the transient millisecond-scale dynamics characteristic of both neural signals and artifacts.

- Online Parity Principles: Growing recognition that artifact handling methods must be evaluated under conditions that match real-time BCI operation [9]. Research shows that filtering approaches maintaining online parity—where processing conditions match those during closed-loop control—can significantly improve model performance compared to conventional offline filtering applied to entire datasets.

- Hybrid Methodologies: Combining multiple approaches (e.g., ICA with wavelet transforms) creates more robust pipelines capable of addressing the complex artifact mixtures encountered in real-world BCI use [12].

- Foundation Models of Brain Activity: Emerging approaches aim to build generalizable models trained on thousands of hours of neural data from numerous individuals, potentially creating more adaptive artifact resistance that transfers across different users and sessions [14].

Artifacts represent a fundamental challenge in brain-computer interface research by systematically degrading the signal-to-noise ratio essential for reliable neural command decoding. Through multiple mechanisms—including amplitude domination, spectral overlapping, and spatial contamination—artifacts obscure the subtle neural patterns that encode user intent, directly diminishing BCI classification accuracy and practical utility. The research community has developed a sophisticated toolkit of signal processing algorithms, hardware solutions, and experimental protocols to address this challenge, with approaches ranging from component analysis and wavelet transforms to emerging deep learning architectures. As BCI technologies continue their transition from laboratory demonstrations to real-world applications, maintaining fidelity in the face of artifacts will remain a core research priority. Success in this endeavor will ultimately determine whether BCIs can fulfill their potential to restore communication and control for individuals with severe neurological disabilities, while enabling new forms of human-technology interaction for broader populations.

In brain-computer interface (BCI) research, the conventional paradigm has treated artifacts as contamination to be eliminated. Electroencephalography (EEG) and other neural recording modalities are persistently corrupted by non-neural signals originating from ocular movements, muscle activity, cardiac rhythms, and environmental noise. The longstanding challenge of artifact removal has significantly impacted neuroscientific analysis and BCI performance, driving the development of increasingly sophisticated denoising algorithms [13]. However, a paradoxical opportunity is emerging: these unwanted signals may themselves become valuable sources of information for classifying user states, intentions, and contextual factors. The artifact paradox represents a fundamental shift in perspective—from elimination to utilization—that could potentially augment BCI performance and expand their applications.

This paradox operates within a delicate balance. While artifacts undoubtedly obscure neural signals of interest, they simultaneously provide a window into user behaviors and states that are often correlated with task performance and cognitive load. The very ocular movements that distort EEG patterns can reveal visual attention strategies; the muscle artifacts that mask sensorimotor rhythms can indicate physical intention; the cardiac fluctuations can reflect emotional or cognitive states. By reframing artifacts not merely as noise but as potential information channels, BCI systems may leverage these signals to create more robust, adaptive, and context-aware interfaces. This whitepaper explores this transformative concept through quantitative analysis of artifact handling methodologies, detailed experimental protocols, and visualization of integrative approaches that harness the artifact paradox to enhance BCI classification performance.

Quantitative Analysis of Artifact Handling Methodologies

The evolution of artifact handling strategies in BCI research reveals a progression from simple removal to sophisticated utilization. The table below summarizes the performance characteristics of various approaches, demonstrating how modern methods increasingly recognize the informational value of artifacts.

Table 1: Performance Comparison of Artifact Handling Methods in BCI Applications

| Method | Primary Function | Advantages | Limitations | Classification Impact |
|---|---|---|---|---|
| Independent Component Analysis (ICA) [15] | Separates mixed signals into statistically independent components | Effective for isolating ocular and muscular artifacts; preserves neural signals | Requires manual component identification; struggles with non-stationary artifacts | Potential for reincorporating informative artifact components into classification models |
| Four Class Iterative Filtering (FCIF) [15] | Iterative artifact removal using filter banks and ICA | Designed specifically for ocular artifact mitigation, with an explicit mathematical formulation | Computational complexity; primarily focused on ocular artifacts | Improved motor imagery classification accuracy (98.575% reported) by removing contaminating signals |
| Artifact Removal Transformer (ART) [13] | End-to-end deep learning model for EEG denoising | Holistic denoising solution addressing multiple artifact types simultaneously; captures transient millisecond-scale dynamics | Requires extensive training data; black box nature limits interpretability | Significantly improves BCI performance by reconstructing clean multichannel EEG signals |
| Brain-to-Brain Synchronization Metrics [16] | Uses artifact-free intervals to compute neural alignment | Provides objective team performance metrics; enables real-time assessment | Requires clean EEG segments; correlation with performance may be negative in certain contexts | Anterior alpha total interdependence strongly correlates (-0.87) with TeamSTEPPS team performance scores |

The performance data reveal that conventional artifact removal methods like ICA establish a foundation for clean signal acquisition, while emerging approaches demonstrate the potential for artifacts to serve as valuable information sources. The strong negative correlation (mean -0.87) between anterior alpha brain-to-brain synchronization and team performance scores illustrates how neural signals, once properly isolated from artifacts, can provide objective metrics for assessing complex cognitive states [16]. This is the basis of the artifact paradox: by first understanding and removing contaminating signals, we can better identify which "artifacts" might actually carry useful information.

Table 2: Artifact Types and Their Potential Informational Value in BCI Systems

| Artifact Category | Origin | Traditional Treatment | Potential Informational Value | Extraction Challenges |

|---|---|---|---|---|

| Ocular Artifacts [15] | Eye movements, blinks | Removal via ICA or regression | Indicator of visual attention, fatigue, cognitive load | Temporal overlap with neural signals of interest |

| Muscle Artifacts [15] | Head, neck, jaw muscle activity | Filtering, rejection | Potential indicator of movement intention, stress, postural adjustments | Widespread spectral contamination |

| Cardiac Artifacts | Heartbeat, pulse | Template subtraction, filtering | Emotional arousal, cognitive effort, autonomic state | Periodic nature facilitates identification but requires precise timing |

| Environmental Noise [15] | Power line, equipment | Notch filtering, shielding | Equipment integrity, signal quality assessment | Often non-physiological, limited user state information |

Experimental Protocols for Artifact-Informed BCI Research

Protocol 1: Team Performance Assessment Through Brain-to-Brain Synchronization

This protocol demonstrates how clean neural signals, once isolated from artifacts, can provide objective performance metrics that might otherwise be obscured by contaminating signals [16].

Participants and Setting: 90 participants (15 groups of 6 simulated medical professionals) engaged in virtual simulation-based interprofessional education (SIMBIE) sessions. The controlled laboratory environment minimized confounding variables like temperature, visual and auditory noise [16].

EEG Acquisition and Preprocessing: Wireless EEG devices recorded neural activity during 30-minute virtual simulation sessions. Data preprocessing included artifact mitigation to produce clean EEG signals, which were segmented based on Unix times of verbal communications [16].

Feature Extraction: Total interdependence (TI) values, representing brain-to-brain synchronization, were computed from the clean EEG signals. These were aggregated to produce group-level TI metrics, specifically focusing on the alpha frequency band (8-12 Hz) in anterior brain areas [16].
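As a rough illustration of the feature extraction step, the sketch below computes a band-limited, coherence-based interdependence score between two EEG channels. The source does not state the formula used in [16], so the expression here (the negative mean of ln(1 - C) over the alpha band, where C is magnitude-squared coherence) is an assumed formulation, and the function name, sampling rate, and synthetic signals are all hypothetical.

```python
import numpy as np
from scipy.signal import coherence


def total_interdependence(x, y, fs, band=(8.0, 12.0), nperseg=256):
    """Band-limited interdependence score (assumed coherence-based form)."""
    # scipy returns magnitude-squared coherence, already in [0, 1]
    freqs, cxy = coherence(x, y, fs=fs, nperseg=nperseg)
    mask = (freqs >= band[0]) & (freqs <= band[1])
    cxy = np.clip(cxy[mask], 0.0, 1.0 - 1e-12)  # keep the logarithm finite
    return float(-np.mean(np.log(1.0 - cxy)))


# Synthetic demo: two "participants" sharing a 10 Hz rhythm vs. an unrelated one
rng = np.random.default_rng(0)
fs = 128  # hypothetical sampling rate
t = np.arange(0, 60, 1 / fs)
shared_alpha = np.sin(2 * np.pi * 10 * t)
person_a = shared_alpha + 0.5 * rng.standard_normal(t.size)
person_b = shared_alpha + 0.5 * rng.standard_normal(t.size)
person_c = rng.standard_normal(t.size)

ti_coupled = total_interdependence(person_a, person_b, fs)
ti_uncoupled = total_interdependence(person_a, person_c, fs)
```

In a group setting, pairwise scores like these would then be aggregated into a group-level value; how [16] performs that aggregation is not specified in the text above.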

Performance Correlation: TeamSTEPPS scores across 5 domains were independently assessed by trained raters and correlated with TI metrics. The results revealed strongly negative, statistically significant correlations (mean -0.87, SD 0.06) between group TI and group TeamSTEPPS scores [16].

Protocol 2: Motor Imagery Classification with Advanced Artifact Removal

This protocol exemplifies the conventional approach of aggressive artifact removal to enhance classification accuracy, achieving remarkable performance but potentially discarding valuable artifact-based information [15].

Dataset and Preparation: Used BCI Competition IV Datasets 2a and 2b. Preprocessing included filtering and feature extraction [15].

Artifact Removal Phase: Applied Four Class Iterative Filtering (FCIF), a technique for ocular artifact removal that combines iterative filtering with filter banks. FCIF uses iterative projection to reduce the influence of artifact-related components on the EEG channels, thereby improving EEG data quality [15].

Feature Extraction and Classification: Implemented FC-FBCSP (Four Class Filter Bank Common Spatial Pattern) algorithm to handle four-class motor imagery classification. Processed frequency-specific features using Common Spatial Pattern transformation to enhance discriminative patterns between motor imagery classes [15].
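The Common Spatial Pattern step can be sketched for the simpler two-class case as a generalized eigendecomposition of class covariance matrices, followed by log-variance features. This is a minimal illustration, not the FC-FBCSP algorithm of [15]: the four-class extension and the filter bank (which would repeat the same procedure per frequency band) are omitted, and the function names and synthetic data are hypothetical.

```python
import numpy as np
from scipy.linalg import eigh


def fit_csp(trials_a, trials_b, n_pairs=1):
    """Fit CSP filters. trials_*: arrays of shape (n_trials, n_channels, n_samples)."""
    def mean_cov(trials):
        # Trace-normalized spatial covariance, averaged over trials
        covs = [x @ x.T / np.trace(x @ x.T) for x in trials]
        return np.mean(covs, axis=0)

    cov_a, cov_b = mean_cov(trials_a), mean_cov(trials_b)
    # Generalized eigenproblem: cov_a w = lambda (cov_a + cov_b) w
    eigvals, eigvecs = eigh(cov_a, cov_a + cov_b)
    order = np.argsort(eigvals)
    # Smallest eigenvalues favor class B variance, largest favor class A
    picks = np.concatenate([order[:n_pairs], order[-n_pairs:]])
    return eigvecs[:, picks].T  # shape (2 * n_pairs, n_channels)


def csp_features(filters, trial):
    """Normalized log-variance of the spatially filtered trial."""
    variances = (filters @ trial).var(axis=1)
    return np.log(variances / variances.sum())


# Synthetic demo: class A is strong on channel 0, class B on channel 1
rng = np.random.default_rng(0)

def make_trials(scale, n_trials=30, n_samples=200):
    noise = rng.standard_normal((n_trials, 4, n_samples))
    return noise * np.asarray(scale)[None, :, None]

class_a = make_trials([3.0, 1.0, 1.0, 1.0])
class_b = make_trials([1.0, 3.0, 1.0, 1.0])
filters = fit_csp(class_a, class_b)
feat_a = csp_features(filters, class_a[0])
feat_b = csp_features(filters, class_b[0])
```

The two feature vectors point in opposite directions for the two classes, which is what a downstream classifier would exploit.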

Classification Optimization: Employed a Modified Deep Neural Network (DNN) classifier tailored to handle complex neural patterns associated with motor intentions, achieving a mean accuracy of 98.575% [15].

Visualization Frameworks for Artifact-Informed BCI Systems

The following diagrams illustrate conceptual frameworks and workflows for leveraging the artifact paradox in BCI systems.

[Figure: Integrative BCI System Leveraging the Artifact Paradox]

[Figure: Experimental Workflow for Team Performance Assessment]

Table 3: Key Research Reagents and Computational Tools for Artifact-Informed BCI Research

| Tool Category | Specific Tools/Methods | Function | Implementation Considerations |

|---|---|---|---|

| Signal Acquisition Hardware [16] | Wireless EEG devices, Intracortical microarrays, ECoG grids | Record neural signals with minimal introduced artifact | Balance between signal quality (intracranial vs. scalp) and invasiveness [17] |

| Artifact Removal Algorithms [13] [15] | ART (Artifact Removal Transformer), FCIF (Four Class Iterative Filtering), ICA | Separate neural signals from contaminating artifacts | Trade-offs between computational complexity and degree of preservation of neural information |

| Feature Extraction Methods [16] [15] | Total Interdependence (TI), Filter Bank Common Spatial Patterns (FBCSP) | Identify discriminative patterns in neural and artifact-derived signals | Selection should align with specific classification goals and signal characteristics |

| Classification Frameworks [15] | Modified Deep Neural Networks (DNN), Transformer architectures | Decode user intention from multimodal feature sets | Black box nature may conflict with mechanistic understanding goals [18] |

| Validation Metrics [13] [16] | Mean squared error, Signal-to-noise ratio, TeamSTEPPS scores, Classification accuracy | Quantify system performance and artifact impact | Multidimensional assessment provides most comprehensive evaluation |

The artifact paradox represents both a challenge and opportunity for advancing brain-computer interface systems. Traditional approaches that view artifacts purely as noise to be eliminated have demonstrated significant value, as evidenced by the remarkable 98.575% classification accuracy achieved through sophisticated artifact removal in motor imagery tasks [15]. Simultaneously, emerging research reveals that the very signals we traditionally discard may harbor valuable information about user states, intentions, and contextual factors, as demonstrated by the strong correlation between clean neural signals and team performance metrics [16].

The path forward requires a balanced approach that acknowledges both perspectives. Different BCI applications may fall at various points along the spectrum from complete artifact elimination to strategic artifact utilization. Clinical applications requiring precise neural decoding may benefit from advanced denoising methods like the Artifact Removal Transformer [13], while applications in team performance monitoring or cognitive state assessment may strategically leverage certain artifact-derived features. What remains constant is the need for rigorous methodology, comprehensive validation, and thoughtful consideration of the epistemological implications of treating complex biological signals through increasingly sophisticated but often opaque computational models [18]. By embracing rather than resisting the artifact paradox, BCI researchers can develop more robust, adaptive, and informative systems that better serve both disabled populations and emerging applications in human-computer interaction.

The transition of brain-computer interface (BCI) applications from controlled laboratory settings to dynamic real-world environments represents a paradigm shift in neurotechnology. This migration has exposed a critical challenge: the vulnerability of non-invasive electroencephalography (EEG)-based systems to motion artifacts. These artifacts, originating from various sources including muscle activity, fasciculation, cable swings, or magnetic induction, pose significant obstacles to reliable BCI operation during physical activities [19].

The escalating global incidence of neurological disorders has intensified the need for effective rehabilitation and assistive technologies, with BCI emerging as a promising solution for conditions involving sensory disorders, motor disorders, cognitive disorders, and mental disorders [20]. However, the practical implementation of BCI technology for clinical and everyday applications hinges on effectively addressing the motion artifact problem.

Motion artifacts manifest in EEG signals through multiple mechanisms, including muscle twitches in skeletal and neck muscles causing sharp transients, vertical head movements during walking leading to baseline shifts and periodic oscillations, and sudden electrode displacement during gait cycles producing amplitude bursts [5]. These artifacts can significantly distort EEG signal morphology, potentially obscuring underlying brain activity and leading to misinterpretation of neural signals [5]. The impact is particularly pronounced in mobile EEG (mo-EEG) systems, where the fundamental objective is to record brain signals from moving subjects, thus creating a complex scenario where the artifact source is inherent to the measurement context.

Quantifying the Motion Artifact Problem: Prevalence and Impact

The challenge of motion artifacts is not merely theoretical but presents quantifiable limitations to BCI performance and practicality. Research indicates that traditional EEG systems face significant limitations from movement-vulnerable rigid sensors, inconsistent skin-electrode impedance, and bulky electronics, which collectively diminish system portability and feasibility for continuous use [21]. The table below summarizes the quantitative impact of motion on BCI performance across various studies and conditions:

Table 1: Impact of Motion on BCI Signal Quality and System Performance

| Motion Condition | EEG Signal Quality Metric | BCI Performance Metric | Key Findings |

|---|---|---|---|

| Standing (Static Baseline) | Reference signal quality [22] | High accuracy for SSVEP and ERP paradigms [22] | Provides baseline for mobile condition comparisons |

| Walking (0.8-1.6 m/s) | Signal quality degradation observed [22] | Maintained performance with robust methods [22] | Motion artifacts become significant but manageable |

| Running (2.0 m/s) | Substantial signal quality reduction [22] | Performance degradation without compensation [22] | Most challenging condition requiring advanced artifact mitigation |

| Various Motions (Standing, walking, running) | N/A | SSVEP classification: 96.4% accuracy with artifact-controlled sensors [21] | Demonstrates potential of hardware solutions |

| Excessive Motions (Incl. running) | Low impedance density (0.03 kΩ·cm⁻²) maintained [21] | N/A | Sensor-level innovation enables motion resilience |

The temporal dimension of motion artifacts presents another layer of complexity. Unlike stationary artifacts, motion-induced noise often exhibits arrhythmic patterns in real-life situations because people do not always move at a consistent pace [5]. This variability complicates the process of resolving and removing these artifacts using traditional signal processing techniques. Furthermore, the problem extends beyond signal quality to user comfort and practicality. Traditional dry electrodes, while eliminating the skin irritation issues of wet electrodes, often cannot maintain stable contact without constant pressure, leading to user discomfort during movement [21]. These multifaceted challenges underscore why motion artifacts represent a primary bottleneck hindering the real-world deployment of BCIs across diverse application domains including sports with wearables, collaborative industry with co-working robots, dynamic rehabilitation exercise therapies, and the gaming industry [19].

Technical Approaches for Motion Artifact Mitigation

The research community has developed a multifaceted approach to addressing motion artifacts in BCIs, encompassing hardware innovations, signal processing techniques, and advanced algorithmic solutions. These approaches can be broadly categorized into three paradigms: sensor-based solutions, signal processing methods, and deep learning techniques.

Hardware and Sensor-Based Innovations

Hardware-level innovations improve the fundamental quality of the recorded neural signals by addressing the physical interface between the body and the recording equipment. A notable development in this domain is the motion artifact–controlled micro–brain sensor, designed to be inserted into the minuscule spaces between hair follicles [21]. These sensors achieve ultralow impedance density (0.03 kΩ·cm⁻²) on skin contact and enable high-fidelity neural signal capture for up to 12 hours, even during intense motion [21]. The compact, lightweight design minimizes inertia and reduces susceptibility to hair movements, thereby addressing a primary source of motion artifacts. This hardware supports continuous telecommunication using augmented reality and achieves 96.4% signal classification accuracy with a train-free algorithm across standing, walking, and running conditions [21].

Alternative sensor configurations include ear-EEG, which places electrodes inside or around the ear. Ear-EEG offers advantages in stability, portability, and unobtrusiveness compared with conventional scalp-EEG, though performance may be reduced for paradigms such as SSVEP because of the greater distance from the occipital cortex [22].

Signal Processing and Conventional Algorithms

Traditional signal processing approaches form the foundation of motion artifact handling in BCI systems. Basic filtering techniques using low-pass and high-pass filters represent the first line of defense, designed to remove unwanted frequency components [5]. However, their efficacy is limited when movement artifacts overlap with brain signal frequencies, as these artifacts can contaminate a broad frequency range [5]. Beyond basic filtering, more sophisticated methods include:

- Artifact Subspace Reconstruction (ASR): An adaptive method that identifies and removes components of the signal that exceed statistical norms, particularly effective for large-amplitude artifacts caused by motion [23].

- Independent Component Analysis (ICA): A blind source separation technique that decomposes multi-channel EEG signals into statistically independent components, allowing for the identification and removal of artifact-related components [23].

- Canonical Correlation Analysis (CCA): Used in conjunction with inertial measurement unit (IMU) data to enhance EEG quality across diverse artifact conditions with reduced calibration needs [23].

- Adaptive Filtering: Employs algorithms like the normalized least-mean-square to continuously adapt filter parameters based on reference signals from IMUs, particularly effective for gait-related artifacts during walking tasks [23].

These signal processing methods often form benchmark pipelines against which newer approaches are evaluated, with combinations like ASR+ICA representing established standards in the field [23].
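The adaptive filtering idea in the list above can be sketched with a normalized least-mean-square (NLMS) canceller that uses an IMU channel as the noise reference. This is a minimal single-channel illustration under stated assumptions: the filter length, step size, sampling rate, and synthetic gait signal are hypothetical, and the artifact is assumed to be a linear, causal function of the IMU signal.

```python
import numpy as np


def nlms_cancel(eeg, reference, n_taps=8, mu=0.05, eps=1e-6):
    """Subtract the part of `eeg` that is linearly predictable from `reference`."""
    weights = np.zeros(n_taps)
    cleaned = np.zeros(len(eeg))
    for n in range(len(eeg)):
        # Most recent reference samples, newest first, zero-padded at the start
        start = max(0, n - n_taps + 1)
        window = reference[start:n + 1][::-1]
        x = np.zeros(n_taps)
        x[:window.size] = window
        error = eeg[n] - weights @ x  # the error signal is the cleaned EEG sample
        weights += mu * error * x / (eps + x @ x)  # step normalized by input power
        cleaned[n] = error
    return cleaned


# Synthetic demo: 10 Hz "neural" rhythm plus a gait artifact derived from the IMU
rng = np.random.default_rng(0)
fs = 250  # hypothetical sampling rate
t = np.arange(0, 20, 1 / fs)
neural = np.sin(2 * np.pi * 10 * t)
imu = (np.sin(2 * np.pi * 1.8 * t) + 0.5 * np.sin(2 * np.pi * 3.6 * t)
       + 0.05 * rng.standard_normal(t.size))
artifact = np.convolve(imu, [2.0, 1.0, 0.5])[: t.size]  # unknown coupling path
eeg = neural + artifact

cleaned = nlms_cancel(eeg, imu)
half = t.size // 2  # evaluate after the filter has had time to adapt
mse_before = np.mean((eeg[half:] - neural[half:]) ** 2)
mse_after = np.mean((cleaned[half:] - neural[half:]) ** 2)
```

A small step size keeps the filter from tracking (and partly cancelling) the neural rhythm itself, at the cost of slower adaptation when the gait pattern changes.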

Deep Learning and Advanced Computational Approaches

Recent advances in deep learning have introduced new paradigms for motion artifact removal. Motion-Net is a subject-specific CNN-based framework for removing motion artifacts from EEG signals [5]. The model incorporates visibility graph (VG) features, which provide structural information that improves performance with smaller datasets, and it achieves an average motion artifact reduction percentage (η) of 86 ± 4.13% and an SNR improvement of 20 ± 4.47 dB [5].

Another approach is IMU-enhanced EEG artifact removal using a fine-tuned large brain model (LaBraM) [23]. This method leverages spatial channel relationships in simultaneously recorded IMU data to identify motion-related artifacts in EEG signals, and the model learns to focus on the IMU channels that are truly correlated with EEG motion artifacts [23].

For motor imagery classification specifically, hierarchical attention-enhanced deep learning architectures have achieved up to 97.25% accuracy on four-class motor imagery tasks. They combine spatial feature extraction through convolutional layers, temporal dynamics modeling via long short-term memory networks, and selective attention mechanisms for adaptive feature weighting [24].

Table 2: Performance Comparison of Motion Artifact Handling Techniques

| Method Category | Specific Technique | Reported Performance | Key Advantages | Limitations |

|---|---|---|---|---|

| Hardware Solution | Micro–brain sensors between hair strands [21] | 96.4% SSVEP classification accuracy across standing, walking, and running; 12-hour stable use | High fidelity during intense motion; Long-term stability | Requires specialized hardware |

| Signal Processing | ASR+ICA Pipeline [23] | Established benchmark for comparison | Well-understood; Extensive validation | Limited adaptability to new motion patterns |

| Deep Learning | Motion-Net [5] | 86% artifact reduction; 20 dB SNR improvement | Subject-specific; Effective with smaller datasets | Requires per-subject training |

| Multi-modal Fusion | IMU-Enhanced LaBraM [23] | Outperforms ASR+ICA across varying motions | Leverages direct motion measurement; Attention mechanisms | Requires synchronized IMU data |

| Classification | Attention-Enhanced CNN-RNN [24] | 97.25% MI classification accuracy | High precision; Interpretable through attention | Computationally intensive |

Experimental Protocols and Methodological Considerations

Robust experimental design is crucial for advancing the understanding and mitigation of motion artifacts in BCI systems. Several innovative protocols have emerged that enable rigorous evaluation of BCI performance under dynamic conditions.

Mobile BCI Data Acquisition Paradigms

Comprehensive datasets capturing EEG signals during various motion states are fundamental for developing and validating artifact handling techniques. The mobile BCI dataset by Lee et al. represents a significant contribution, containing scalp- and ear-EEGs with ERP and SSVEP paradigms recorded while participants engaged in activities at four different speed conditions: standing (0 m/s), slow walking (0.8 m/s), fast walking (1.6 m/s), and slight running (2.0 m/s) [22]. This dataset includes synchronized data from 32-channel scalp-EEG, 14-channel ear-EEG, 4-channel electrooculography (EOG), and 9-channel inertial measurement units (IMUs) placed at the forehead, left ankle, and right ankle [22]. The incorporation of IMU data is particularly valuable as it provides direct measurement of motion dynamics that can be correlated with EEG artifacts. For the ERP tasks, participants identified target ('OOO') and non-target ('XXX') stimuli, each shown for 0.5 seconds across 300 trials, while for SSVEP tasks, participants focused on one of three flickering stimuli displayed at different frequencies (5.45, 8.57, and 12 Hz) [22]. This comprehensive approach enables multifaceted analysis of motion artifacts across different BCI paradigms and movement intensities.
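To make the SSVEP paradigm concrete, the sketch below scores an EEG epoch against sine and cosine reference signals at the three stimulus frequencies using canonical correlation analysis, a common training-free approach to SSVEP detection. It is not presented as the analysis used in [22]; the sampling rate, epoch length, number of harmonics, and synthetic data are assumptions for illustration.

```python
import numpy as np


def canonical_corr(x, y):
    """Largest canonical correlation between two multichannel signals (samples in rows)."""
    x = x - x.mean(axis=0)
    y = y - y.mean(axis=0)
    qx, _ = np.linalg.qr(x)  # orthonormal basis for each column space
    qy, _ = np.linalg.qr(y)
    return np.linalg.svd(qx.T @ qy, compute_uv=False)[0]


def ssvep_reference(freq, fs, n_samples, n_harmonics=2):
    """Sine/cosine templates at the stimulus frequency and its harmonics."""
    t = np.arange(n_samples) / fs
    columns = []
    for h in range(1, n_harmonics + 1):
        columns += [np.sin(2 * np.pi * h * freq * t), np.cos(2 * np.pi * h * freq * t)]
    return np.column_stack(columns)


def classify_ssvep(eeg, fs, candidates=(5.45, 8.57, 12.0)):
    """eeg: (n_samples, n_channels). Returns the best-matching frequency and all scores."""
    scores = [canonical_corr(eeg, ssvep_reference(f, fs, eeg.shape[0])) for f in candidates]
    return candidates[int(np.argmax(scores))], scores


# Synthetic demo: three noisy channels driven by the 8.57 Hz stimulus
rng = np.random.default_rng(0)
fs, seconds = 100, 4  # hypothetical acquisition settings
t = np.arange(fs * seconds) / fs
eeg = np.column_stack([np.sin(2 * np.pi * 8.57 * t + phase) for phase in (0.0, 0.7, 1.4)])
eeg = eeg + rng.standard_normal(eeg.shape)

predicted, scores = classify_ssvep(eeg, fs)
```

Running the same classifier on epochs from each speed condition is one simple way to quantify how much motion degrades SSVEP decoding.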

Fast-Training Asynchronous BCI Protocols

The development of efficient training paradigms represents another important methodological advancement. Traditional cue-based paradigms for generating training data often lead to extended training periods due to long intervals between cue symbols (typically >8 seconds) to allow for visual evoked potentials to subside [25]. A novel experimental paradigm incorporating a rotational cue with continuous rotation at varying rotational speeds addresses this limitation by minimizing visual cue effects while maintaining short inter-trial intervals [25]. This approach enables the collection of 300 cued movement trials in just 18 minutes, with the continuously rotating cross ensuring no abrupt visual cues are introduced during the waiting phase for the next motion [25]. The variable inter-trial intervals (2.50-4.75 seconds) prevent habituation and improve the ecological validity of the training data. Critically, research demonstrates that classifiers trained on data produced by this paradigm exhibit characteristics similar to those observed during self-paced movement and can accurately detect executed movements with an average true positive rate of 31.8% at a maximum rate of 1.0 false positives per minute [25].
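A quick arithmetic check shows that the reported session length is consistent with the reported interval range. The calculation assumes the inter-trial intervals are drawn uniformly from the stated range, which the source does not confirm, and it ignores any pauses between blocks.

```python
# Values reported in the text
interval_min_s, interval_max_s = 2.50, 4.75
n_trials = 300

# Assumption: intervals are uniformly distributed, so the mean is the midpoint
mean_interval_s = (interval_min_s + interval_max_s) / 2
session_minutes = n_trials * mean_interval_s / 60

print(f"mean interval: {mean_interval_s:.3f} s, session: {session_minutes:.2f} min")
```

Under that assumption the session lasts roughly 18 minutes, in line with the figure reported in [25].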

Individual Finger Movement Decoding Protocols

Advancements in decoding precision have enabled increasingly sophisticated BCI applications. Recent work has demonstrated real-time noninvasive robotic hand control at the individual finger level using movement execution (ME) and motor imagery (MI) paradigms [26]. The experimental protocol involves participants performing ME and MI tasks with individual fingers (thumb, index, and pinky) of their dominant hand while EEG is recorded. Participants receive both visual feedback (on-screen color changes indicating decoding correctness) and physical feedback (corresponding robotic finger movements in real time) [26]. The implementation of fine-tuning mechanisms is particularly noteworthy, where a base model trained on initial data is further refined using same-day data collected in the first half of the session, significantly enhancing task performance by integrating session-specific learning with user adaptation to real-time feedback [26]. This approach has achieved real-time decoding accuracies of 80.56% for two-finger MI tasks and 60.61% for three-finger tasks, demonstrating the feasibility of naturalistic noninvasive robotic hand control at the individuated finger level despite the substantial overlap in neural responses associated with individual fingers [26].

Table 3: Research Reagent Solutions for Motion-Artifact Resilient BCI Research

| Resource Category | Specific Tool/Technology | Function/Purpose | Example Implementation |

|---|---|---|---|

| Sensor Technologies | Micro–brain sensors [21] | Enable high-fidelity neural signal capture during motion by minimizing skin-electrode impedance | Arrays of low-profile microstructured electrodes with highly conductive polymer inserted between hair follicles |

| Multi-modal Data Acquisition | Inertial Measurement Units (IMUs) [23] | Provide direct measurement of motion dynamics for artifact correlation and removal | 9-axis IMUs (3-axis accelerometer, gyroscope, magnetometer) placed at forehead and ankles |

| Reference Datasets | Mobile BCI Dataset [22] | Benchmarking and algorithm development across various motion conditions | Publicly available dataset with scalp-EEG, ear-EEG, and IMU data during standing, walking, running |

| Signal Processing Libraries | Artifact Subspace Reconstruction (ASR) [23] | Adaptive identification and removal of artifact components in multi-channel EEG | Real-time processing pipeline integrated with ICA for motion artifact handling |

| Deep Learning Frameworks | EEGNet [26] | Compact convolutional neural network architecture optimized for EEG-based BCIs | Base architecture for individual finger movement decoding with fine-tuning capability |

| Experimental Paradigms | Rotational Cue Protocol [25] | Fast generation of training data for asynchronous movement-based BCIs | Continuous rotation of visual cue with variable speed to minimize visual evoked potentials |

| Validation Metrics | Artifact Reduction Percentage (η) [5] | Quantitative assessment of motion artifact removal effectiveness | Performance benchmark for comparing different artifact handling methods |

The systematic investigation of motion artifacts in real-world BCI applications reveals a technology at a critical juncture, where significant progress has been made but fundamental challenges remain. The integration of multi-modal approaches combining hardware innovations, advanced signal processing, and deep learning represents the most promising path forward. Sensor-level innovations like micro–brain sensors that achieve ultralow impedance density [21] address the problem at its physical source, while algorithmic advances such as IMU-enhanced large brain models [23] and attention-enhanced deep learning architectures [24] provide sophisticated software-based solutions. The emergence of comprehensive public datasets [22] and standardized evaluation protocols enables rigorous comparison of different approaches and accelerates progress in the field. Future research directions should focus on enhancing the generalizability of artifact removal methods across diverse populations and movement patterns, reducing computational requirements for real-time processing on wearable platforms, and developing closed-loop systems that dynamically adapt to changing motion conditions. As these technical challenges are addressed, the translation of BCI technology from laboratory environments to real-world applications in neurorehabilitation, assistive communication, and human augmentation will accelerate, ultimately fulfilling the promise of seamless integration between human intention and external device control.

Methodologies for Artifact Management: From Traditional ICA to Deep Learning

Independent Component Analysis (ICA) has established itself as a gold-standard blind source separation (BSS) technique in brain-computer interface (BCI) research, primarily addressing the critical challenge of artifact contamination that severely impacts BCI performance and reliability. ICA is defined as a statistical approach that transforms multidimensional random data into features that are as statistically independent from one another as possible, primarily used to separate mixed signals into their source components [27]. In the context of BCI systems, which rely on the single-trial classification of ongoing EEG signals for real-time operation, artifacts originating from ocular movements, muscle activity, and cardiac rhythms can masquerade as neural signals of interest, leading to misleading conclusions and substantially diminished classification accuracy [28] [29]. The fundamental strength of ICA lies in its ability to separate these artifactual source components from neural signals without requiring prior knowledge of the mixing process—a capability known as blind source separation [27] [30]. This technical guide explores the core principles, variants, and methodological applications of ICA, with specific emphasis on its role in enhancing BCI performance through effective artifact removal and neural feature preservation.

Mathematical Foundations and Core Algorithms

Theoretical Framework and Assumptions

ICA operates on the principle of separating a multivariate signal into statistically independent, non-Gaussian components. The linear mixing model, which forms the basis of most ICA applications in BCI research, is expressed as:

X = AS

where X is the observed data matrix (e.g., multichannel EEG recordings), A is the unknown mixing matrix, and S contains the independent source components [27]. The goal of ICA is to estimate an unmixing matrix W such that:

S = WX

thereby recovering the statistically independent source signals from the observed mixtures [27]. The solution to this problem relies on three fundamental assumptions: (1) the source signals are statistically independent; (2) the source signals have non-Gaussian distributions; and (3) the mixing matrix is square and invertible [30]. The assumption of non-Gaussianity is particularly important: measures such as negentropy or kurtosis quantify how far a component departs from a Gaussian distribution, and maximizing them serves as a practical proxy for the statistical independence of the components [27] [30].
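The mixing model and its inversion can be illustrated with a compact NumPy sketch of symmetric FastICA using a tanh nonlinearity. This is a teaching sketch rather than a production implementation: the three synthetic sources, the mixing matrix, and the function names are hypothetical, and recovered components are only defined up to permutation, sign, and scale.

```python
import numpy as np


def sym_decorrelate(w):
    """Symmetric orthogonalization: W <- (W W^T)^(-1/2) W, computed via SVD."""
    u, _, vt = np.linalg.svd(w)
    return u @ vt


def fastica(x, n_iter=200, tol=1e-6, seed=0):
    """Estimate S = W X for x of shape (n_channels, n_samples). Returns (S, W)."""
    centered = x - x.mean(axis=1, keepdims=True)
    # Whitening: rotate and scale so the channel covariance becomes the identity
    eigvals, eigvecs = np.linalg.eigh(np.cov(centered))
    whitening = eigvecs @ np.diag(eigvals ** -0.5) @ eigvecs.T
    z = whitening @ centered

    rng = np.random.default_rng(seed)
    w = sym_decorrelate(rng.standard_normal((z.shape[0], z.shape[0])))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        g_prime = 1.0 - g ** 2
        # Fixed-point update that increases non-Gaussianity of each component
        w_new = g @ z.T / z.shape[1] - np.diag(g_prime.mean(axis=1)) @ w
        w_new = sym_decorrelate(w_new)
        converged = np.max(np.abs(np.abs(np.diag(w_new @ w.T)) - 1.0)) < tol
        w = w_new
        if converged:
            break
    unmixing = w @ whitening
    return unmixing @ centered, unmixing


# Synthetic demo: X = A S with three non-Gaussian sources
rng = np.random.default_rng(0)
t = np.linspace(0, 1, 4000, endpoint=False)
sources = np.vstack([
    np.sin(2 * np.pi * 5 * t),            # smooth rhythm
    np.sign(np.sin(2 * np.pi * 7 * t)),   # square wave
    rng.laplace(size=t.size),             # heavy-tailed noise
])
mixing = np.array([[1.0, 1.0, 1.0], [0.5, 2.0, 1.0], [1.5, 1.0, 2.0]])
observed = mixing @ sources

estimated, unmixing = fastica(observed)
# Match each true source to its best estimate, ignoring sign and order
corr = np.abs(np.corrcoef(np.vstack([sources, estimated]))[:3, 3:])
best_match = corr.max(axis=1)
```

The correlation-based matching at the end is needed precisely because ICA cannot determine the order or polarity of the sources.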

Key ICA Algorithms and Implementation

Multiple algorithms have been developed to solve the ICA problem, each with distinct optimization strategies and practical considerations:

Table 1: Major ICA Algorithms and Their Characteristics

| Algorithm | Optimization Strategy | Key Features | BCI Application Considerations |

|---|---|---|---|

| FastICA | Maximizes non-Gaussianity using negentropy approximation | Fast convergence, deterministic output, memory efficient | Suitable for online BCI systems due to computational efficiency [27] [31] |

| Infomax | Minimizes mutual information between components | Neural network-based optimization, maximizes information transfer | Implemented in EEGLAB toolbox, effective for EEG decomposition [27] |

| JADE | Uses joint diagonalization of cumulant matrices | Based on fourth-order cumulants, robust performance | Computationally intensive for high-density EEG [27] |

| Picard | Likelihood optimization with extended ICA model | Faster convergence, robust to dependent sources | Suitable for real EEG where complete independence may not hold [32] |

ICA Variants and Methodological Advances

Temporal and Spatial ICA Approaches

Depending on the application domain, ICA can be implemented with either temporal or spatial focus. For fMRI data analysis, spatial ICA (sICA) is typically applied, producing as many components as there are data points in the processed time course data [31]. In contrast, EEG-based BCI applications often leverage temporal ICA to separate sources based on their statistical independence in the time domain. The cortex-based ICA (cbICA) approach represents an advanced variant that restricts the ICA decomposition to cortical voxels, substantially reducing calculation time and typically improving the resulting decomposition by focusing the ICA to relevant neural activity [31].

Nonlinear Extensions and Hybrid Methodologies

While standard ICA assumes linear mixing of sources, real-world BCI scenarios often involve nonlinear interactions. Recent advances have explored nonlinear ICA extensions to address these challenges [27] [33]. Post-nonlinear mixtures represent an important special case where a nonlinearity is applied to linear mixtures, with ambiguities essentially the same as for linear ICA problems [33]. Additionally, hybrid frameworks have emerged that combine ICA with other signal processing techniques to enhance artifact removal. The Hybrid ICA-Regression method, for instance, integrates ICA, regression, and high-order statistics to identify and eliminate ocular activities from EEG data while preserving neuronal signals [29]. Similarly, the recently developed Artifact Removal Transformer (ART) employs transformer architecture to capture transient millisecond-scale dynamics characteristic of EEG signals, demonstrating superior performance in denoising multichannel EEG data for BCI applications [13].

Experimental Protocols and Methodologies

Standardized ICA Workflow for EEG Artifact Removal

A systematic methodology for implementing ICA in BCI research involves several critical stages, each requiring careful execution to ensure optimal artifact removal while preserving neural signals of interest:

Data Acquisition and Preprocessing: Acquire multichannel EEG data according to the international 10-20 system or another appropriate montage. Apply band-pass filtering (typically 1-40 Hz) to remove slow drifts and high-frequency noise that can negatively affect ICA performance [32]. Filtering is essential because slow drifts reduce the independence of the assumed-to-be-independent sources, making it harder for the algorithm to find an accurate solution [32].

Data Decomposition: Perform ICA decomposition using the preferred algorithm (FastICA, Infomax, or Picard). Determine the number of components based on explained variance or using dimensionality reduction techniques like Principal Component Analysis (PCA). For EEG data, PCA is typically used to reduce the sensor (channel) dimension before the ICA decomposition is fitted [32].

Component Identification and Classification: Identify artifactual components using automated classification methods or expert inspection. Automated classifiers may use features from the frequency, spatial, and temporal domains, with Linear Programming Machines (LPM) achieving performance on par with inter-expert disagreement (<10% Mean Squared Error) [28].

Signal Reconstruction: Reconstruct clean EEG signals by projecting the components back to sensor space while excluding the components identified as artifactual. In MNE-Python, the reconstruction can be controlled by the n_pca_components parameter, which may reduce the rank of the data if additional dimensionality reduction is desired [32].
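The four stages above can be sketched end to end on synthetic data. The following is a minimal illustration under stated assumptions, not the pipeline of any cited study: it uses a toy symmetric FastICA written in NumPy rather than a toolbox implementation, a four-channel mixture with a hand-picked mixing matrix, mean removal as a stand-in for band-pass filtering, and excess kurtosis as a deliberately simple stand-in for the automated classifiers discussed in [28]. All function and variable names are hypothetical.

```python
import numpy as np

def sym_decorrelate(W):
    """Return (W W^T)^(-1/2) W so that the rows of W are orthonormal."""
    s, u = np.linalg.eigh(W @ W.T)
    return (u / np.sqrt(s)) @ u.T @ W

def fit_ica(X, n_iter=500, tol=1e-8, seed=0):
    """Toy symmetric FastICA (tanh contrast). X: channels x samples, zero mean.
    Returns the unmixing matrix that maps sensor data to components."""
    d, E = np.linalg.eigh(X @ X.T / X.shape[1])
    K = (E / np.sqrt(d)).T                      # whitening matrix D^(-1/2) E^T
    Z = K @ X
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    W = sym_decorrelate(rng.standard_normal((n, n)))
    for _ in range(n_iter):
        G = np.tanh(W @ Z)
        W_new = G @ Z.T / Z.shape[1] - (1.0 - G**2).mean(axis=1)[:, None] * W
        W_new = sym_decorrelate(W_new)
        converged = np.max(np.abs(np.abs(np.sum(W_new * W, axis=1)) - 1.0)) < tol
        W = W_new
        if converged:
            break
    return W @ K

def excess_kurtosis(S):
    """Row-wise excess kurtosis; sparse, spiky components score high."""
    Sc = S - S.mean(axis=1, keepdims=True)
    return (Sc**4).mean(axis=1) / (Sc**2).mean(axis=1) ** 2 - 3.0

def remove_components(X, unmixing, exclude):
    """Back-project to sensor space with the excluded components zeroed."""
    S_hat = unmixing @ X
    S_hat[list(exclude)] = 0.0
    return np.linalg.pinv(unmixing) @ S_hat

# Stage 1: synthetic "recording" (assumed 250 Hz, 20 s, 4 channels)
fs, seconds = 250, 20
t = np.arange(fs * seconds) / fs
rng = np.random.default_rng(42)
blink = np.zeros_like(t)
for onset in np.arange(1.0, seconds, 2.5):      # blink-like pulses
    blink += 20.0 * np.exp(-0.5 * ((t - onset) / 0.08) ** 2)
S = np.vstack([
    blink,                                      # artifact source
    np.sin(2 * np.pi * 10 * t),                 # alpha-like rhythm
    2 * ((7.3 * t) % 1) - 1,                    # sawtooth
    rng.uniform(-1, 1, t.size),                 # broadband activity
])
S -= S.mean(axis=1, keepdims=True)              # stands in for high-pass filtering
A = np.array([[1.0, 0.2, 0.1, 0.3],
              [0.6, 1.0, 0.2, 0.1],
              [0.3, 0.3, 1.0, 0.2],
              [0.1, 0.2, 0.3, 1.0]])            # hypothetical mixing matrix
X = A @ S
X_ref = A[:, 1:] @ S[1:]                        # ground truth without the blink

# Stage 2: decomposition
unmixing = fit_ica(X)
components = unmixing @ X

# Stage 3: flag the most kurtotic component as artifactual
artifact_idx = int(np.argmax(excess_kurtosis(components)))

# Stage 4: reconstruction without the flagged component
X_clean = remove_components(X, unmixing, [artifact_idx])

mse_before = np.mean((X - X_ref) ** 2)
mse_after = np.mean((X_clean - X_ref) ** 2)
print(f"MSE vs. artifact-free reference: {mse_before:.4f} -> {mse_after:.6f}")
```

On real recordings the ground-truth reference is unavailable, which is why the validation metrics and simulated datasets discussed in the next subsection matter. A toolbox implementation such as those listed in Table 3 would normally replace the toy `fit_ica` above.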

Validation Methodologies and Performance Metrics

Rigorous validation is essential to establish the efficacy of ICA-based artifact removal in BCI applications. Standard evaluation approaches include:

Table 2: Performance Metrics for ICA-Based Artifact Removal in BCI

| Metric Category | Specific Metrics | Interpretation in BCI Context |
|---|---|---|
| Time-Domain Accuracy | Mean Squared Error (MSE), Mean Absolute Error (MAE) | Quantifies signal preservation after artifact removal; lower values indicate better performance [28] [29] |
| Information Preservation | Mutual Information between original and reconstructed signals | Measures retention of neural information; higher values indicate less neural signal loss [29] |
| BCI Performance | Classification accuracy of intentional control commands | Direct measure of impact on BCI efficacy; improvements demonstrate practical utility [28] |
| Component Classification | Sensitivity, Specificity for artifactual component identification | Evaluates accuracy of automated IC classification systems [28] |

Experimental protocols typically employ simulated, experimental, and standard EEG datasets to evaluate and analyze the effectiveness of ICA methods [29]. For instance, studies have demonstrated that optimized linear classifiers based on six features can achieve performance on par with inter-expert disagreement (<10% MSE) on reaction time data, with generalization to auditory ERP paradigms (15% MSE) and motor imagery BCI setups [28]. Critically, research has shown that discriminant information used for BCI is preserved when removing up to 60% of the most artifactual source components, highlighting the robustness of ICA for BCI applications [28].
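Several of the metrics in Table 2 reduce to a few lines of array arithmetic. The sketch below is illustrative only: the function names are hypothetical, the mutual information estimate uses a simple 2-D histogram (one of several possible estimators, with the bin count chosen arbitrarily), and the toy inputs are invented for the example rather than taken from any cited dataset.

```python
import numpy as np

def mse(reference, estimate):
    """Mean squared error between a reference and a reconstructed signal."""
    return float(np.mean((np.asarray(reference) - np.asarray(estimate)) ** 2))

def mae(reference, estimate):
    """Mean absolute error between a reference and a reconstructed signal."""
    return float(np.mean(np.abs(np.asarray(reference) - np.asarray(estimate))))

def mutual_information(x, y, bins=16):
    """Histogram-based mutual information estimate in nats."""
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)
    py = pxy.sum(axis=0, keepdims=True)
    nz = pxy > 0
    return float(np.sum(pxy[nz] * np.log(pxy[nz] / (px @ py)[nz])))

def sensitivity_specificity(true_artifact, predicted_artifact):
    """Sensitivity and specificity of artifactual-component labels."""
    t = np.asarray(true_artifact, dtype=bool)
    p = np.asarray(predicted_artifact, dtype=bool)
    tp, fn = np.sum(t & p), np.sum(t & ~p)
    tn, fp = np.sum(~t & ~p), np.sum(~t & p)
    return tp / (tp + fn), tn / (tn + fp)

# Time-domain accuracy on a toy signal
reference = [1.0, 2.0, 3.0, 4.0]
estimate = [1.5, 2.0, 2.0, 4.5]
print(mse(reference, estimate), mae(reference, estimate))

# Information preservation: a lightly distorted copy vs. unrelated noise
rng = np.random.default_rng(0)
x = rng.standard_normal(2000)
mi_good = mutual_information(x, x + 0.1 * rng.standard_normal(2000))
mi_bad = mutual_information(x, rng.standard_normal(2000))

# Component classification: expert labels vs. automated labels (1 = artifact)
expert = [1, 1, 1, 0, 0, 0, 0, 0]
automated = [1, 1, 0, 0, 0, 0, 0, 1]
sens, spec = sensitivity_specificity(expert, automated)
```

Histogram estimators of mutual information are biased upward for small samples, so values are best compared across methods evaluated on the same data and with the same binning rather than read as absolute quantities.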

Essential Software Tools and Implementation Platforms

Successful implementation of ICA in BCI research requires access to specialized software tools and programming environments:

Table 3: Essential Computational Tools for ICA Implementation in BCI Research

| Tool/Platform | Primary Function | Key Features for BCI Research |
|---|---|---|
| EEGLAB | MATLAB toolbox for EEG analysis | Implements ICA algorithms including Infomax, extensive visualization capabilities, plugin architecture [27] |
| MNE-Python | Python package for M/EEG analysis | Implements FastICA, Picard, and Infomax algorithms; comprehensive preprocessing and postprocessing tools [32] |
| BrainVoyager QX | fMRI analysis software | Implements spatial ICA (sICA) using FastICA; supports cortex-based ICA (cbICA) for focused decomposition [31] |
| ART (Artifact Removal Transformer) | Deep learning for EEG denoising | Transformer-based end-to-end model; removes multiple artifact sources simultaneously [13] |

Experimental Design Considerations for Optimal ICA Performance

To maximize the effectiveness of ICA in BCI applications, researchers should incorporate specific design elements:

Channel Configuration: Utilize sufficient electrode density (typically ≥19 channels) to enable effective source separation, as ICA requires multi-channel signals for meaningful decomposition [27].

Recording Parameters: Maintain consistent sampling rates (≥200 Hz recommended) and proper referencing schemes to preserve signal characteristics necessary for component identification [29].

Artifact Recording: When possible, include dedicated channels for EOG and EMG to facilitate validation of artifact component identification, though ICA does not strictly require these reference channels for successful artifact removal [28] [27].

Data Length: Ensure adequate recording duration to provide sufficient data points for stable ICA decomposition; longer recordings typically yield more robust components [32].
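These design considerations can be turned into a simple pre-flight check before running ICA. The sketch below is a hypothetical helper, not part of any toolbox. The channel and sampling-rate thresholds come from the recommendations above; the data-length rule (at least k times the squared channel count in samples, with k = 20) is a commonly quoted rule of thumb that is not taken from the sources cited here and should be treated as an assumption.

```python
from dataclasses import dataclass

@dataclass
class RecordingSpec:
    n_channels: int
    sampling_rate_hz: float
    duration_s: float

def check_ica_readiness(spec, min_channels=19, min_rate_hz=200.0, k=20):
    """Return a list of warnings; an empty list means no check failed."""
    warnings = []
    if spec.n_channels < min_channels:
        warnings.append(
            f"{spec.n_channels} channels is below the suggested {min_channels}")
    if spec.sampling_rate_hz < min_rate_hz:
        warnings.append(
            f"{spec.sampling_rate_hz} Hz is below the suggested {min_rate_hz} Hz")
    n_samples = int(spec.duration_s * spec.sampling_rate_hz)
    needed = k * spec.n_channels ** 2          # assumed rule of thumb
    if n_samples < needed:
        warnings.append(
            f"{n_samples} samples is below the heuristic minimum of {needed}")
    return warnings

# Example: a 32-channel, 250 Hz recording lasting one minute
for message in check_ica_readiness(RecordingSpec(32, 250.0, 60.0)):
    print("warning:", message)
```

The thresholds are exposed as keyword arguments so that a laboratory can substitute its own criteria.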

Impact on BCI Performance and Future Directions

The application of ICA and its variants has had a significant impact on BCI performance metrics across multiple paradigms. In motor imagery BCI systems, discriminant information is preserved when artifactual components are removed, so classification accuracy can be maintained even when a substantial portion of the components is excluded as artifactual [28]. For visual and auditory ERP-based BCIs, effective ocular and cardiac artifact removal through ICA decomposition has been shown to improve signal-to-noise ratio and enhance detection of evoked responses [28] [32]. The advent of fully automated ICA classification systems has further advanced the field by providing consistent, objective component selection that performs on par with human experts while eliminating inter-rater variability [28].

Future directions in ICA development for BCI applications include nonlinear ICA extensions to address more complex mixing environments, real-time implementation for closed-loop BCI systems, and integration with deep learning approaches such as the Artifact Removal Transformer (ART), which has demonstrated superior performance in restoring multichannel EEG signals [13] [27]. Additionally, adaptive ICA methodologies that can track non-stationarities in EEG signals represent an important frontier for enhancing the robustness of BCI systems in real-world environments outside controlled laboratory settings.

Through continued refinement of algorithms, validation methodologies, and implementation frameworks, ICA remains a cornerstone technique for enhancing the reliability and performance of brain-computer interfaces, enabling more accurate neural decoding and more robust applications in both clinical and consumer domains.

Independent Low-Rank Matrix Analysis (ILRMA) for Automatic Reduction

Independent Low-Rank Matrix Analysis (ILRMA) represents a significant advancement in blind source separation (BSS) technology, particularly for artifact reduction in brain-computer interface (BCI) systems. By unifying the statistical independence principles of independent vector analysis (IVA) with the source structure modeling capabilities of nonnegative matrix factorization (NMF), ILRMA effectively addresses the critical challenge of artifact contamination in electroencephalogram (EEG)-based BCIs. This technical guide comprehensively examines ILRMA's theoretical foundations, algorithmic implementation, and experimental validation across multiple BCI paradigms. Evidence demonstrates that ILRMA-based artifact reduction improves averaged BCI performance by over 70% compared to conventional methods, establishing its potential for enhancing reliability in real-world BCI applications where artifacts frequently compromise signal integrity [34] [35].

Electroencephalogram (EEG)-based brain-computer interfaces (BCIs) enable direct communication between the brain and external devices through the detection of specific neural activity patterns. However, the practical implementation of these systems faces a substantial challenge: biological artifacts generated from body activities (e.g., eyeblinks, eye movements, and teeth clenches) frequently contaminate EEG recordings [34]. These artifacts exhibit electrical potentials with amplitudes often significantly higher than neuronal signals and overlapping frequency characteristics, severely complicating EEG-based classification and identification tasks essential for BCI operation [34].

Traditional approaches for artifact reduction have predominantly relied on independent component analysis (ICA) and its extension, independent vector analysis (IVA). These methods employ blind source separation (BSS) to estimate neural and artifactual components based primarily on statistical independence assumptions [34] [35]. However, ICA-based techniques often yield estimated independent components that remain mixed with both artifactual and neuronal information due to the reliance solely on the independence criterion [34]. This fundamental limitation necessitates the development of more sophisticated artifact reduction techniques capable of leveraging additional properties of neural signals.

Independent Low-Rank Matrix Analysis (ILRMA) emerges as a powerful solution to this challenge by incorporating both independence assumptions and the inherent low-rank structure of source signals in the frequency domain [34]. ILRMA models biological artifacts as reproducible patterns that share a few basis functions and therefore form low-rank matrices across multiple time segments, which allows it to achieve better separation performance than conventional ICA and IVA approaches [34]. When applied to BCIs, this matrix factorization technique shows considerable potential for improving signal fidelity and subsequent classification accuracy across various experimental paradigms.

Theoretical Foundations of ILRMA

Problem Formulation: Blind Source Separation in BCIs

The fundamental challenge in EEG artifact reduction can be formulated as a blind source separation problem. In this framework, P-channel EEG observations are modeled as linear combinations of Q unknown sources, comprising both artifactual and neuronal components, plus additive noise [34]. Mathematically, this relationship is expressed as:

\[ \mathbf{x}(n) = \mathbf{A}\mathbf{s}(n) + \mathbf{d}(n) \]

where \(\mathbf{x}(n) = [x_1(n), x_2(n), \ldots, x_P(n)]^T\) represents the EEG observation at the \(n\)th sampling point, \(\mathbf{s}(n) = [s_1(n), s_2(n), \ldots, s_Q(n)]^T\) contains the unknown source signals, \(\mathbf{A}\) is a \(P \times Q\) mixing matrix, and \(\mathbf{d}(n) = [d_1(n), d_2(n), \ldots, d_P(n)]^T\) represents additive zero-mean noise [34]. The core BSS objective involves estimating both the source matrix \(\hat{\mathbf{S}} = [\hat{\mathbf{s}}(1), \ldots, \hat{\mathbf{s}}(N)] \in \mathbb{R}^{Q \times N}\) and the demixing matrix \(\mathbf{W} (= \mathbf{A}^{-1}) \in \mathbb{R}^{Q \times P}\) to blindly separate observations into artifactual and neuronal components:

\[ \hat{\mathbf{s}}(n) = \mathbf{W}\mathbf{x}(n) \]

This process enables the reconstruction of artifact-reduced signals by selectively remixing only the neuronal components [34].
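The selective remixing step can be written out explicitly. In the sketch below, the diagonal selection matrix D, the indicator values δ_q, and the use of the pseudo-inverse W^+ are notation introduced here for illustration; they are assumptions and do not appear in the cited formulation.

```latex
\[
\hat{\mathbf{x}}_{\text{clean}}(n) = \mathbf{W}^{+}\,\mathbf{D}\,\hat{\mathbf{s}}(n)
= \mathbf{W}^{+}\,\mathbf{D}\,\mathbf{W}\,\mathbf{x}(n),
\qquad
\mathbf{D} = \operatorname{diag}(\delta_1, \ldots, \delta_Q),
\qquad
\delta_q =
\begin{cases}
1 & \text{if component } q \text{ is judged neuronal},\\
0 & \text{if component } q \text{ is judged artifactual}.
\end{cases}
\]
```

When P = Q and W is invertible, W^+ equals W^{-1} and plays the role of the estimated mixing matrix; setting D to the identity then returns the original observation unchanged, so any difference between the cleaned and observed signals is attributable to the components that were zeroed.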

From ICA and IVA to ILRMA

ILRMA represents the unification of two complementary BSS approaches: independent vector analysis (IVA) and nonnegative matrix factorization (NMF). While frequency-domain ICA (FDICA) applies ICA independently to each frequency bin of a Short-Time Fourier Transform (STFT), it suffers from the permutation problem—the need to align components across frequencies [36] [37]. IVA addresses this limitation by employing a spherical generative model of source frequency vectors, assuming higher-order dependencies (co-occurrence) across frequency bins [36].

ILRMA further enhances this framework by integrating NMF-based source modeling, which exploits the low-rank time-frequency structure of source signals [36] [37]. Specifically, ILRMA models the power spectrograms of source signals using NMF, effectively capturing the co-occurrence patterns among time-frequency slots through a parts-based representation [36]. This integration enables ILRMA to simultaneously leverage statistical independence between sources while exploiting the inherent low-rank structure within each source, resulting in significantly improved separation performance, particularly for audio and EEG signals [36].
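The low-rank idea can be illustrated in isolation with a small NMF of a synthetic power spectrogram. This is a sketch under loudly stated assumptions: it uses the classic Euclidean multiplicative updates for brevity, whereas ILRMA implementations typically rely on an Itakura-Saito-type cost, and it factorizes a single, already separated source rather than estimating demixing matrices jointly. The spectral templates and all names are invented for the example.

```python
import numpy as np

def nmf_euclidean(P, rank, n_iter=200, seed=0, eps=1e-12):
    """Factorize a nonnegative matrix P (frequency x time) as T @ V.

    T holds `rank` spectral basis vectors; V holds their time activations.
    Returns T, V and the Frobenius error after each iteration.
    """
    rng = np.random.default_rng(seed)
    n_freq, n_time = P.shape
    T = rng.uniform(0.1, 1.0, (n_freq, rank))
    V = rng.uniform(0.1, 1.0, (rank, n_time))
    errors = []
    for _ in range(n_iter):
        # Multiplicative updates keep both factors nonnegative
        T *= (P @ V.T) / (T @ V @ V.T + eps)
        V *= (T.T @ P) / (T.T @ T @ V + eps)
        errors.append(float(np.linalg.norm(P - T @ V)))
    return T, V, errors

# Toy spectrogram built from two recurring spectral patterns (rank 2 by design)
rng = np.random.default_rng(1)
freqs = np.arange(1, 41)                                   # 1..40 Hz bins
templates = np.stack([
    np.exp(-freqs / 4.0),                                  # low-frequency dominated
    np.exp(-0.5 * ((freqs - 10) / 1.5) ** 2),              # peak near 10 Hz
], axis=1)                                                 # shape (40, 2)
activations = rng.uniform(0.0, 1.0, (2, 60))               # shape (2, 60)
P = templates @ activations

T, V, errors = nmf_euclidean(P, rank=2)
print("relative error:", errors[-1] / np.linalg.norm(P))
```

A source that repeats a small set of spectral shapes, as blinks or teeth clenches tend to, is well approximated with a small rank, which is the property the low-rank source model exploits.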

Table 1: Evolution of Blind Source Separation Methods

| Method | Key Principle | Advantages | Limitations |
|---|---|---|---|
| ICA/FDICA | Statistical independence between sources | Effective for instantaneous mixtures; well-established | Permutation problem in frequency domain; limited source model [36] [37] |
| IVA | Spherical multivariate model across frequencies | Solves permutation problem; models frequency dependencies | Limited modeling of time-frequency structure [36] |
| ILRMA | NMF-based low-rank source modeling + independence | Models time-frequency structure; superior separation performance | Higher computational complexity; parameter sensitivity [34] [36] [37] |

Core Algorithmic Framework

The ILRMA algorithm operates on the time-frequency representation of multichannel signals obtained through STFT. Let \(\mathbf{x}_{ij} = [x_{ij,1}, \ldots, x_{ij,M}]^T \in \mathbb{C}^{M \times 1}\) represent the complex-valued time-frequency components of the observed signal, where \(i = 1,\ldots,I\) and \(j = 1,\ldots,J\) are frequency and time frame indices, respectively [36]. The estimated source components are given by \(\mathbf{y}_{ij} = [y_{ij,1}, \ldots, y_{ij,N}]^T \in \mathbb{C}^{N \times 1}\), obtained through the demixing operation \(\mathbf{y}_{ij} = \mathbf{W}_i\mathbf{x}_{ij}\), where \(\mathbf{W}_i\) is the frequency-dependent demixing matrix [36].
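The per-frequency demixing operation maps directly onto array operations. The sketch below only illustrates the indexing convention: the array shapes, the random stand-in data, and the choice of as many sources as channels (the determined case) are assumptions made for the example, and the demixing matrices are random rather than estimated.

```python
import numpy as np

rng = np.random.default_rng(0)
n_freq, n_frames, n_channels = 5, 8, 3      # I, J, M in the text
n_sources = n_channels                      # N = M (determined case, assumed)

# Stand-in STFT of the observation: X[i, j] is the vector x_ij of length M
X = (rng.standard_normal((n_freq, n_frames, n_channels))
     + 1j * rng.standard_normal((n_freq, n_frames, n_channels)))

# One demixing matrix W_i (N x M) per frequency bin
W = (rng.standard_normal((n_freq, n_sources, n_channels))
     + 1j * rng.standard_normal((n_freq, n_sources, n_channels)))

# y_ij = W_i x_ij for every frequency bin i and time frame j at once
Y = np.einsum("inm,ijm->ijn", W, X)

# Power spectrogram per source: power[:, :, n] is the I x J matrix for source n
power = np.abs(Y) ** 2
```

Because each frequency bin has its own demixing matrix, nothing in this operation alone ties component n at one frequency to component n at another; that alignment is what the source models of IVA and ILRMA provide.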

ILRMA assumes that each source's power spectrogram \(|\mathbf{Y}_n|^2\) (where \(n\) indexes sources) follows a low-rank structure decomposable via NMF:

\[ |\mathbf{Y}_n|^2 \approx \mathbf{T}_n\mathbf{V}_n \]