Advancing Portable EEG: A Comprehensive Guide to Artifact Removal for Few-Channel Systems


Abstract

The expansion of portable, few-channel electroencephalography (EEG) into clinical diagnostics, neuropharmacology, and real-world brain-computer interfaces is critically dependent on robust artifact removal. This article provides a systematic analysis for researchers and drug development professionals, addressing the unique challenges of limited data in few-channel systems. We explore the foundational characteristics of motion, ocular, and myogenic artifacts in uncontrolled environments, detail cutting-edge methodological pipelines from adaptive filtering to deep learning, and offer optimization strategies for signal integrity. A critical validation framework compares algorithmic performance, empowering scientists to select and implement effective artifact management protocols that ensure data reliability for biomedical applications.

Understanding the Artifact Landscape in Few-Channel Mobile EEG

Few-channel, portable electroencephalography (EEG) systems represent a significant advancement for brain monitoring in real-world and clinical settings. However, their reduced electrode count presents unique and formidable challenges in managing signal artifacts. Unlike high-density laboratory systems, few-channel configurations possess an inherently limited capacity to separate true brain signals from non-neural noise, making them uniquely vulnerable to contamination. This technical support center guide details the reasons for this vulnerability and provides researchers with targeted troubleshooting and methodological guidance to enhance the reliability of their data.

The Core Vulnerability: A Comparative Analysis

The table below summarizes the key technical differences that make few-channel systems more susceptible to artifacts compared to conventional high-density systems.

Table 1: Key Characteristics of Few-Channel vs. Conventional High-Density EEG Systems

| Characteristic | Conventional High-Density EEG | Few-Channel Portable EEG | Impact on Artifact Vulnerability |

|---|---|---|---|

| Number of Channels | Often 64+ channels [1] | Typically 16 or fewer channels [1] | Greatly reduced spatial information for identifying and isolating artifact sources [1]. |

| Electrode Type | Wet/gel-based electrodes [1] | Often dry or semi-wet electrodes [1] | Higher and more unstable electrode-skin impedance, increasing sensitivity to motion and cable artifacts [1] [2]. |

| Recording Environment | Shielded lab, controlled settings [1] | Uncontrolled real-world environments [1] | Increased exposure to environmental noise and movement artifacts [1] [2]. |

| Spatial Resolution | High | Low | Limits effectiveness of source separation techniques like ICA [1]. |

| Primary Artifact Concerns | Ocular, muscle, cardiac [3] | Motion, cable noise, electrode pop, environmental interference [1] [2] | Artifacts are more frequent and harder to distinguish from neural signals. |

FAQ: Addressing Researcher Questions on Few-Channel EEG

Q1: Why are traditional artifact removal methods like ICA less effective on my portable EEG data?

Independent Component Analysis (ICA) is a powerful blind source separation technique that relies on having a sufficient number of sensor channels to isolate independent sources of signal, both neural and artifactual [1]. In a high-density system with 64 channels, ICA can reliably identify and remove components representing eye blinks or muscle noise. However, ICA can estimate at most as many components as there are channels, so in a few-channel system (e.g., 8 or 16 channels) the underlying sources typically outnumber the available signals. The algorithm is then forced to mix artifacts with neural signals in the same components, making it difficult to remove the artifact without also discarding valuable brain data [1].

Q2: What are the most common and problematic artifacts for few-channel systems?

While all artifacts are concerning, some pose a greater threat to few-channel data:

- Motion and Cable Movement Artifacts: These are highly prevalent in mobile recordings. They can cause large, low-frequency drifts or sudden, high-amplitude spikes that obscure the underlying EEG signal [2]. Their non-stationary and unpredictable nature makes them difficult to filter out.

- Electrode Pop: A sudden change in impedance at a single electrode causes a large transient spike. In a high-density array, this only affects one of many channels. In a few-channel system, losing a single channel represents a significant loss of data (e.g., 12.5% for an 8-channel system) [2].

- Muscle Artifacts (EMG): EMG has a broad frequency spectrum that overlaps with key EEG rhythms like Beta and Gamma. Without the spatial information from many channels, it is extremely challenging to differentiate muscle noise from high-frequency neural activity [3] [1].
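To make the electrode-pop point concrete, the following is a minimal sketch of how an abrupt single-channel jump might be flagged, together with the per-channel data-loss arithmetic quoted above. The function names (`detect_pops`, `channel_loss_fraction`), the robust z-score rule, and the threshold of 8 are illustrative assumptions, not a method taken from the cited sources.

```python
import numpy as np

def detect_pops(signal, z_thresh=8.0):
    """Return sample indices where the sample-to-sample jump is an extreme outlier.

    Hypothetical helper: the robust z-score rule and threshold are assumptions.
    """
    diff = np.diff(signal)
    med = np.median(diff)
    mad = np.median(np.abs(diff - med))
    # 1.4826 * MAD approximates the standard deviation for Gaussian data
    scale = 1.4826 * mad if mad > 0 else np.std(diff) + 1e-12
    robust_z = np.abs(diff - med) / scale
    return np.flatnonzero(robust_z > z_thresh) + 1

def channel_loss_fraction(n_channels, n_bad=1):
    """Fraction of the montage lost when n_bad channels are unusable."""
    return n_bad / n_channels

# Synthetic single channel: 10 uV background noise with an abrupt 400 uV jump
rng = np.random.default_rng(0)
eeg = rng.normal(0.0, 10.0, size=2000)
eeg[1200:] += 400.0

pop_samples = detect_pops(eeg)
loss = channel_loss_fraction(8)  # one bad channel out of eight
```

Here `loss` evaluates to 0.125, matching the 12.5% figure for an 8-channel system. On real recordings the threshold would need tuning against the amplifier's noise floor.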

Q3: How can I improve my experimental protocol to minimize these vulnerabilities?

Proactive protocol design is critical:

- Secure Electrode Fit: Ensure the cap or headset is snug to minimize movement. For dry electrode systems, ensure proper skin contact.

- Cable Management: Secure cables to the participant's clothing to prevent tugging and movement.

- Participant Instruction: Provide clear, simple instructions to minimize movements like jaw clenching, swallowing, or excessive blinking during critical trial periods.

- Environment Scouting: Prior to recording, scan the environment for potential sources of electrical interference (e.g., monitors, unshielded power cables) and position the participant accordingly [4] [2].

Troubleshooting Guide: From Problem to Solution

Table 2: Common Few-Channel EEG Issues and Troubleshooting Steps

| Problem | Possible Cause | Immediate Action | Long-Term Solution |

|---|---|---|---|

| High-frequency noise across all channels | AC power line interference (50/60 Hz) [2]. | Check for and distance the system from unshielded electrical devices. Ensure proper grounding of the amplifier. | Use a power line notch filter in software (with caution, as it can distort neural signals). Record in an electrically shielded environment if possible. |

| Large, slow drifts in signal | Poor electrode contact; Sweat or perspiration [2]. | Check impedance on all channels and re-apply any electrodes with high impedance. | Use high-quality conductive gel and proper skin preparation (abrasion, cleaning) to ensure stable, low-impedance connections from the start [4]. |

| Sudden, large spikes on a single channel | Electrode "pop" from sudden impedance change [2]. | Note the timestamp and channel. If possible during a break, check and re-moisten/re-apply the specific electrode. | Ensure consistent electrode gel application and secure cap fit to prevent drying or movement. |

| Unusual, persistent noise on the reference channel | Faulty, disconnected, or poorly connected reference electrode [4]. | Verify the reference electrode is properly connected and has good contact with the scalp. Try an alternative reference placement if possible. | Systematically check the entire signal chain: electrode -> cap -> headbox -> amplifier -> software [4]. |

| Signal is lost on all channels | Loose headbox connection; Amplifier or software issue [4]. | Check all physical connections from the cap to the amplifier. Restart the acquisition software and amplifier unit [4]. | Implement a pre-recording checklist to verify all system components are functional and connected before participant setup. |
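As an illustration of the software notch filter mentioned in the first row of the table, here is a minimal sketch using SciPy's `iirnotch` with zero-phase filtering. The sampling rate, quality factor, and the helper names `remove_line_noise` and `amplitude_at` are assumptions chosen for the example; the line frequency should be set to 50 or 60 Hz depending on the local mains supply.

```python
import numpy as np
from scipy.signal import iirnotch, filtfilt

def remove_line_noise(x, fs, line_freq=50.0, quality=30.0):
    """Zero-phase IIR notch at the mains frequency (parameters are illustrative)."""
    b, a = iirnotch(line_freq, quality, fs=fs)
    return filtfilt(b, a, x)

def amplitude_at(x, fs, freq):
    """Single-sided spectral amplitude at the FFT bin closest to freq."""
    spectrum = np.abs(np.fft.rfft(x)) / (len(x) / 2)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    return spectrum[np.argmin(np.abs(freqs - freq))]

# Synthetic test signal: 10 Hz "alpha" rhythm plus stronger 50 Hz interference
fs = 250.0
t = np.arange(2500) / fs
alpha = np.sin(2 * np.pi * 10.0 * t)
mains = 2.0 * np.sin(2 * np.pi * 50.0 * t)
contaminated = alpha + mains

filtered = remove_line_noise(contaminated, fs)
```

A narrow notch (high quality factor) limits distortion of neighbouring frequencies, which is the concern behind the table's "with caution" note. It will still remove any genuine neural activity at exactly the line frequency.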

Advanced Methodologies for Artifact Management

For researchers requiring robust, post-processing solutions, advanced deep-learning techniques are showing promise. For example, the CLEnet algorithm integrates a dual-scale Convolutional Neural Network (CNN) with Long Short-Term Memory (LSTM) networks and an improved attention mechanism [5]. This architecture is designed to extract both the morphological and temporal features of EEG, enabling it to separate clean EEG from artifacts even in multi-channel data containing "unknown" artifact types. One study reported that CLEnet improved the Signal-to-Noise Ratio (SNR) by 2.45% and decreased the relative root mean square error in the temporal domain (RRMSEt) by 6.94% [5].
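For readers unfamiliar with the two metrics quoted above, the sketch below shows one commonly used way to compute SNR (in dB) and the temporal relative RMSE when a clean ground-truth signal is available. These definitions are an assumption on our part; the cited study may define the metrics slightly differently.

```python
import numpy as np

def snr_db(clean, denoised):
    """SNR in dB: power of the clean signal over power of the residual error."""
    residual = denoised - clean
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum(residual ** 2))

def rrmse_temporal(clean, denoised):
    """Relative RMSE in the time domain: RMS(error) / RMS(clean)."""
    rms = lambda v: np.sqrt(np.mean(v ** 2))
    return rms(denoised - clean) / rms(clean)

# Toy example: the "denoised" output differs from ground truth by a 10% scaling error
t = np.linspace(0.0, 1.0, 500, endpoint=False)
clean = np.sin(2 * np.pi * 10.0 * t)
denoised = 1.1 * clean

snr = snr_db(clean, denoised)            # 20 dB for a 10% amplitude error
rrmse = rrmse_temporal(clean, denoised)  # 0.1
```

Higher SNR and lower RRMSEt both indicate that the output is closer to the reference. Both metrics need a ground-truth signal, which is why they are usually reported on semi-synthetic data.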

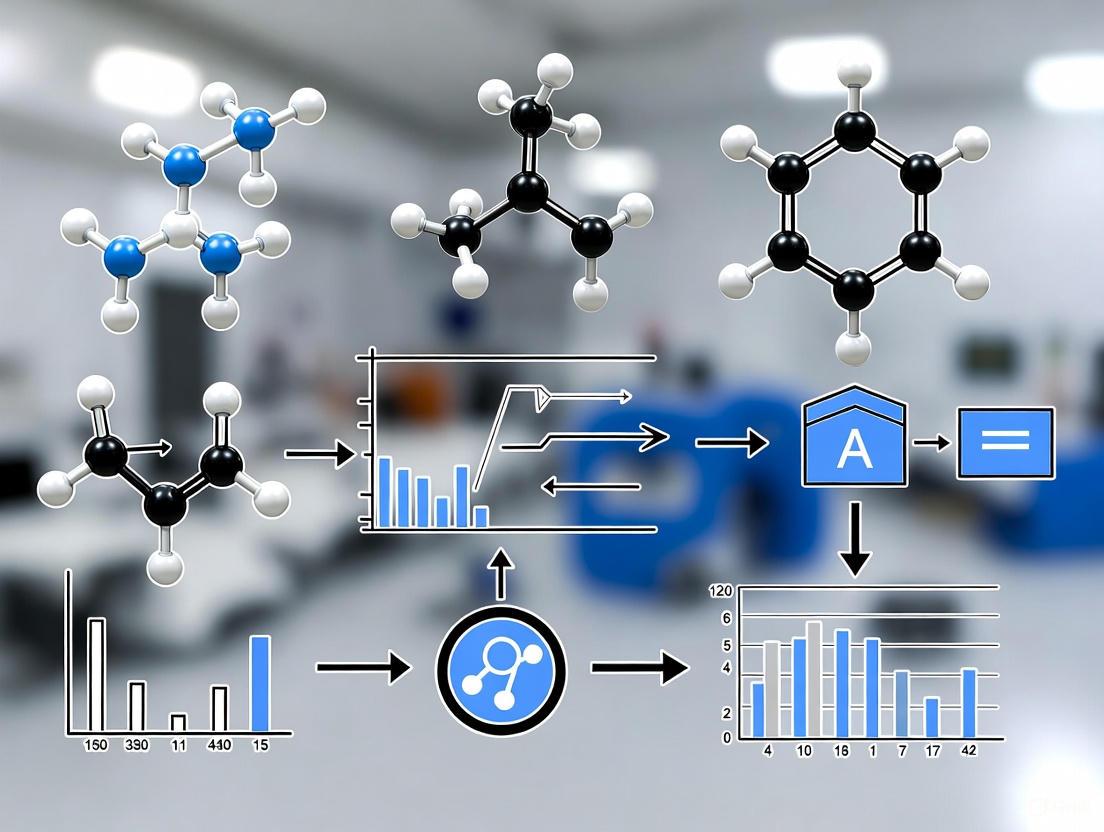

The workflow for implementing such a modern artifact removal pipeline is outlined below.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Materials for Few-Channel EEG Research

| Item | Function & Importance |

|---|---|

| Portable EEG Amplifier | The core hardware for signal acquisition. Key specifications for few-channel work include high input impedance (for dry electrodes), good common-mode rejection ratio (to reject environmental noise), and low intrinsic noise [6] [1]. |

| Dry or Semi-Wet Electrodes | Enable rapid setup and improve participant comfort for long-term, ambulatory recordings. Their use is a primary factor defining wearable EEG but requires careful management of impedance [1]. |

| Conductive Gel & Abrasion Kits | For wet electrode systems, proper skin preparation and low-impedance gel are critical for obtaining a stable signal and preventing electrode pops [4]. |

| Electrode Cap/Headset | The physical interface. A secure, well-fitting cap is essential to minimize motion artifacts. Material and design should be chosen for the target population and recording environment. |

| Auxiliary Sensors (IMU, EOG, EMG) | Inertial Measurement Units (IMUs) can track head movement, providing a reference signal for motion artifact correction. Dedicated EOG and EMG channels provide pristine reference signals for removing ocular and muscle artifacts, overcoming the limitations of few-channel source separation [1]. |

| Advanced Analysis Software | Software supporting modern techniques like deep learning (CLEnet [5]), wavelet transforms, or ICA [3] [1] is necessary for effective artifact management beyond simple filtering. |
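The auxiliary-sensor row above notes that an IMU can supply a reference signal for motion artifact correction. One classical way to exploit such a reference is adaptive noise cancellation. The following is a minimal sketch of a least-mean-squares (LMS) canceller; the function name `lms_cancel`, the tap count, and the step size are illustrative assumptions, and the reference is assumed to be scaled to roughly unit amplitude.

```python
import numpy as np

def lms_cancel(primary, reference, n_taps=8, mu=0.01):
    """LMS adaptive noise canceller (sketch; assumes a roughly unit-scale reference).

    primary   : contaminated EEG channel
    reference : auxiliary signal correlated with the artifact (e.g., one IMU axis)
    Returns the error signal, which serves as the cleaned EEG estimate.
    """
    w = np.zeros(n_taps)
    padded = np.concatenate([np.zeros(n_taps - 1), reference])
    cleaned = np.empty(len(primary))
    for i in range(len(primary)):
        x = padded[i:i + n_taps][::-1]   # newest reference sample first
        e = primary[i] - w @ x           # subtract current artifact estimate
        w = w + mu * e * x               # LMS weight update
        cleaned[i] = e
    return cleaned

# Synthetic demo: 10 Hz neural rhythm plus a delayed, scaled copy of the motion signal
fs = 250.0
t = np.arange(5000) / fs
neural = np.sin(2 * np.pi * 10.0 * t)
reference = np.sin(2 * np.pi * 1.5 * t)
artifact = 3.0 * np.concatenate([np.zeros(2), reference[:-2]])
cleaned = lms_cancel(neural + artifact, reference)

tail = slice(2500, None)  # evaluate after the filter has had time to adapt
residual_rms = np.sqrt(np.mean((cleaned[tail] - neural[tail]) ** 2))
artifact_rms = np.sqrt(np.mean(artifact[tail] ** 2))
```

The step size `mu` trades adaptation speed against distortion of the neural signal. Real IMU signals are broadband and non-stationary, so values would need tuning per recording.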

For researchers working with few-channel portable EEG systems, achieving a high signal-to-noise ratio is a fundamental challenge. The data is invariably contaminated by artifacts—electrical signals generated from non-cerebral sources. These artifacts can obscure genuine neural activity and lead to misinterpretation of data. This technical support center provides a structured taxonomy of the primary artifact types—Motion, Ocular, Myogenic, and Technical—and offers evidence-based, practical troubleshooting guides framed within the context of contemporary artifact removal research for portable EEG systems.

Artifact Taxonomy & Identification Guide

The first step in effective artifact removal is accurate identification. The table below summarizes the core characteristics of the four main artifact categories.

Table 1: Taxonomy and Key Identifiers of Common EEG Artifacts

| Artifact Category | Primary Sources | Key Characteristics in EEG | Most Affected Frequency Bands |

|---|---|---|---|

| Motion Artifacts | Head movement, electrode displacement, cable sway [7] [8] [9] | Slow drifts, sharp amplitude bursts time-locked to gait cycle (e.g., heel strike), periodic oscillations [8] [9] | Delta, Theta [8] |

| Ocular Artifacts | Eyeblinks, eye movements (saccades) [10] [11] | High-amplitude, low-frequency signals; characteristic frontally-dominant topography [12] [10] | Delta (0.5-2 Hz) [12] |

| Myogenic Artifacts | Muscle activity in face, jaw, neck, and head [7] [10] | High-frequency, non-stationary, erratic waveform patterns [7] [10] | Beta, Gamma ( > 30 Hz) [7] |

| Technical Artifacts | Power line interference, faulty electrode contact, equipment limitations [8] | 50/60 Hz steady oscillation; "electrode pops" appear as sudden, large deflections [7] [8] | Specific to noise source (e.g., 60 Hz) |

Frequently Asked Questions (FAQs) for Researchers

Q1: Our gait study shows strong rhythmic noise during running. What preprocessing steps can we take before ICA to improve source separation?

A: Motion artifacts during running can overwhelm ICA. Two effective preprocessing methods are:

- iCanClean: This method uses canonical correlation analysis (CCA) to identify and subtract noise subspaces from the EEG signal. It can use dedicated noise sensors or create "pseudo-reference" signals from the EEG itself (e.g., by notch-filtering below 3 Hz to isolate motion noise). Studies show it significantly reduces power at the gait frequency and its harmonics, leading to better ICA decomposition with more dipolar brain components [13] [9].

- Artifact Subspace Reconstruction (ASR): ASR uses a sliding-window PCA to identify and remove high-variance signal components that deviate from a clean calibration period. A k parameter of 10 is aggressive but effective, and is recommended for locomotion studies as a setting that mitigates motion artifacts without over-cleaning [9].

Q2: For our portable single-channel EEG system, how can we effectively remove eyeblink artifacts without multiple channels for ICA?

A: Multi-channel methods like ICA are not suitable for single-channel data. Instead, consider data-driven decomposition approaches:

- Fixed Frequency Empirical Wavelet Transform (FF-EWT): This recent method adaptively decomposes the single-channel signal into components referred to as intrinsic mode functions (IMFs). Components contaminated by EOG artifacts can be automatically identified using metrics like kurtosis, dispersion entropy, and power spectral density, and then removed with a specialized filter (e.g., GMETV). This method is designed to preserve essential low-frequency brain activity while targeting the 0.5-12 Hz range of eyeblink artifacts [12].

- Singular Spectrum Analysis (SSA): SSA is a subspace-based technique that can separate low-frequency oscillating noise, like EOG, from monovariate signals. Its performance can be improved with automated thresholding based on diffusion entropy [12].
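To illustrate the subspace idea behind SSA, here is a minimal sketch of basic SSA on a single channel: embed the signal in a trajectory matrix, take an SVD, and rebuild each elementary component by diagonal averaging. The window length, the choice to treat the two strongest components as the slow ocular-like artifact, and the function name `ssa_components` are assumptions for this toy example. The automated entropy-based thresholding mentioned above is not implemented here.

```python
import numpy as np

def ssa_components(x, window, n_components=None):
    """Basic SSA: return elementary components (rows) that sum to x when all are kept."""
    n = len(x)
    k = n - window + 1
    # Trajectory (Hankel) matrix: column i holds x[i : i + window]
    traj = np.column_stack([x[i:i + window] for i in range(k)])
    u, s, vt = np.linalg.svd(traj, full_matrices=False)
    r = len(s) if n_components is None else n_components
    comps = np.empty((r, n))
    for j in range(r):
        elem = s[j] * np.outer(u[:, j], vt[j])
        flipped = elem[::-1]
        # Diagonal averaging: average entries that map to the same time sample
        comps[j] = [flipped.diagonal(d).mean() for d in range(-(window - 1), k)]
    return comps

# Toy signal: large slow ocular-like wave plus a small 10 Hz rhythm
fs = 100.0
t = np.arange(800) / fs
slow = 50.0 * np.sin(2 * np.pi * 0.5 * t)
alpha = 5.0 * np.sin(2 * np.pi * 10.0 * t)
x = slow + alpha

# Assumption: the two strongest components capture the slow artifact
artifact_est = ssa_components(x, window=100, n_components=2).sum(axis=0)
cleaned = x - artifact_est
```

In practice the number of components to remove is not known in advance, which is where an automated selection criterion becomes necessary.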

Q3: We detect high-frequency noise in our forehead channels. Is this muscle or motion artifact?

A: This is most likely myogenic (muscle) artifact. Muscle contractions from the forehead, jaw, or scalp produce high-frequency, non-stationary, and erratic signals that are most prominent in the Beta and Gamma bands [7] [10]. In contrast, motion artifacts from head movement typically manifest as lower-frequency drifts or bursts time-locked to movement [8]. The Optimized Fingerprint Method uses a machine-learning model trained on features like spectral properties to automatically classify and remove such myogenic components from ICA decompositions [10].
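As a rough first check before applying any classifier, the spectral distinction described above can be sketched as a comparison of low-band and high-band power. The band edges (0.5-4 Hz versus above 30 Hz), the simple "whichever is larger" rule, and the function names are illustrative assumptions, not the Optimized Fingerprint Method.

```python
import numpy as np

def band_power(x, fs, lo, hi):
    """Sum of periodogram power between lo (inclusive) and hi (exclusive), in Hz."""
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    power = np.abs(np.fft.rfft(x - np.mean(x))) ** 2
    mask = (freqs >= lo) & (freqs < hi)
    return power[mask].sum()

def classify_artifact(x, fs):
    """Crude heuristic: high-band dominance suggests muscle, low-band suggests motion."""
    low = band_power(x, fs, 0.5, 4.0)
    high = band_power(x, fs, 30.0, fs / 2)
    return "myogenic-like" if high > low else "motion-like"

fs = 250.0
t = np.arange(1000) / fs
# Synthetic stand-ins: broadband high-frequency content vs. a slow movement-related wave
emg_like = sum(np.sin(2 * np.pi * f * t) for f in (45.0, 70.0, 95.0))
motion_like = np.sin(2 * np.pi * 1.5 * t) + 0.1 * np.sin(2 * np.pi * 40.0 * t)

label_emg = classify_artifact(emg_like, fs)
label_motion = classify_artifact(motion_like, fs)
```

This heuristic ignores the temporal signature (for example, bursts time-locked to steps), so it should be treated as a screening aid rather than a replacement for component-level classification.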

Experimental Protocols for Artifact Removal

Protocol 1: ICA-based Removal of Ocular Artifacts in Free-Viewing Paradigms

This protocol is optimized for experiments where participants freely view stimuli, generating many eye movements [11].


Detailed Methodology:

- Data Acquisition: Simultaneously record EEG and eye-tracking data.

- Filtering: Apply optimal high-pass (e.g., 2 Hz) and low-pass (e.g., 40-60 Hz) filters to the training data for ICA. This filtering step is critical for improving the quality of the ICA decomposition [11].

- Training Data Selection: Massively overweight the proportion of training data that contains myogenic saccadic spike potentials (SPs). This ensures the ICA algorithm is finely tuned to isolate these artifacts [11].

- Component Rejection: Use the synchronized eye-tracking data to objectively set thresholds for rejecting artifact-related independent components, minimizing both under-correction and over-correction [11].

Protocol 2: Deep Learning for Subject-Specific Motion Artifact Removal

This protocol uses a convolutional neural network (CNN) for end-to-end artifact removal, ideal for mobile EEG (mo-EEG) where artifact patterns are highly variable [8].


Detailed Methodology:

- Model Architecture: Implement Motion-Net, a 1D CNN based on a U-Net architecture, which is effective for signal reconstruction tasks [8].

- Feature Engineering: Extract Visibility Graph (VG) features from the EEG signals. These features convert the time-series into a graph network, capturing non-linear structural properties that enhance the model's learning, especially on smaller datasets [8].

- Subject-Specific Training: Train and test the model on a per-subject basis. This approach accounts for individual variability in both brain signals and motion artifact patterns, leading to more robust performance than generalized models. The model is trained using real EEG recordings with ground-truth references (e.g., from static conditions) [8].

- Output: The model outputs a cleaned EEG signal. This approach has demonstrated an average motion artifact reduction of 86% and a significant improvement in Signal-to-Noise Ratio (SNR) [8].
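The visibility-graph step in the protocol above can be sketched as follows. This is a minimal natural visibility graph construction that returns each sample's node degree, which could then be summarised into features. The exact VG variant and feature set used by Motion-Net are not specified in the text, so the function name and the choice of degree-based features are assumptions.

```python
import numpy as np

def visibility_degrees(y):
    """Node degrees of the natural visibility graph of a 1D series (O(n^2) sketch).

    Samples i < j are connected if every sample between them lies strictly
    below the straight line joining (i, y[i]) and (j, y[j]).
    """
    n = len(y)
    deg = np.zeros(n, dtype=int)
    for i in range(n - 1):
        max_slope = -np.inf
        for j in range(i + 1, n):
            slope = (y[j] - y[i]) / (j - i)
            # j is visible from i only if its slope exceeds all earlier slopes
            if slope > max_slope:
                deg[i] += 1
                deg[j] += 1
                max_slope = slope
    return deg

segment = np.array([1.0, 3.0, 2.0, 4.0])
degrees = visibility_degrees(segment)
features = {"mean_degree": degrees.mean(), "max_degree": degrees.max()}
```

For the four-sample segment, the peaks at indices 1 and 3 see each other over the dip at index 2, so index 1 ends up with the highest degree. Because the construction is quadratic in segment length, it is usually applied to short windows.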

The Scientist's Toolkit: Key Algorithms & Methods

Table 2: Essential "Research Reagent" Solutions for Artifact Removal

| Tool/Method | Primary Function | Key Advantage for Few-Channel EEG |

|---|---|---|

| iCanClean [13] [9] | Preprocessing of motion artifacts | Effective with pseudo-reference noise signals derived from EEG itself; improves subsequent ICA. |

| Artifact Subspace Reconstruction (ASR) [9] | Preprocessing of high-amplitude artifacts | Cleans data in real-time or offline before ICA; works on continuous data. |

| Fixed Frequency EWT (FF-EWT) [12] | Single-channel ocular artifact removal | Data-driven decomposition without needing reference channels. |

| Optimized Fingerprint Method [10] | Automatic classification of artifact components in ICA | Uses a tailored set of spatial, temporal, spectral, and statistical features for each artifact type. |

| Motion-Net (CNN) [8] | Subject-specific motion artifact removal | Does not rely on ICA; powerful for modeling complex, non-linear artifact patterns. |

The Impact of Dry Electrodes and Reduced Scalp Coverage on Signal Quality

The advancement of electroencephalography (EEG) towards portable, user-friendly, and long-term monitoring systems has driven the adoption of dry electrodes and reduced-channel arrays. These technologies are pivotal for applications in brain-computer interfaces, neuro-monitoring in drug development, and real-world cognitive studies. However, their impact on signal quality presents a significant challenge for researchers. Dry electrodes, while eliminating the preparation time and discomfort of conductive gels, are often more susceptible to motion artifacts and higher impedance. Similarly, reducing the number of electrodes compromises spatial resolution and can lower sensitivity to certain neural events. This technical support center provides evidence-based troubleshooting and FAQs to help researchers mitigate these challenges within the context of artifact removal for few-channel portable EEG systems.

Performance Data & Experimental Protocols

Quantitative Impact on Signal Acquisition

The following tables summarize key quantitative findings on how dry electrodes and reduced scalp coverage impact signal quality and operational performance.

Table 1: Impact of Reduced Electrode Arrays on Seizure Detection Sensitivity

| Study Reference | Number of EEG Channels | Seizure Type | Sensitivity | Specificity |

|---|---|---|---|---|

| [14] | 7 (Reduced Array) | Any Seizure | 70% | 96% |

| [14] | 7 (Reduced Array) | Focal Seizures | 80% | Not Specified |

| [14] | 7 (Reduced Array) | Generalized Seizures | 55% | Not Specified |

| [14] | 7 (Reduced Array) | Encephalopathic Patterns | 62% | 86% |

| [15] | 12 (Reduced Dry Array) | Neonatal Seizures | High (Correlation >0.8 with wet systems) | Not Specified |

Table 2: Dry vs. Wet Electrodes and Signal Quality Metrics

| Electrode Type | Key Advantages | Key Challenges & Signal Quality Impact | Best for Experimental Scenarios |

|---|---|---|---|

| Wet (Passive) | Excellent signal quality, low noise, stable impedance [16] | Long setup time, patient discomfort, gel can dry out [15] | Clinical diagnostics, high-fidelity lab studies |

| Dry (Passive) | Rapid setup, no gel, high patient comfort [16] | Higher impedance, more susceptible to motion artifacts [17] [15] | Short-term BCI, rapid screening, field studies |

| Active Dry | On-board amplification, superior noise immunity, good signal strength [15] | Higher cost, more complex design, requires power [15] | Long-term monitoring, movement-heavy paradigms |

Key Experimental Protocols from Literature

Protocol 1: Validating a Reduced Electrode Array for Inpatient Seizure Detection [14]

- Objective: To evaluate the sensitivity and specificity of a 7-electrode array for detecting seizures in hospitalized adults.

- Methodology:

- Data Collection: Retrospectively selected 100 EEG records (50 ictal, 50 non-ictal) from inpatients.

- Lead Reduction: Full 10-20 system recordings were digitally processed to simulate a 7-lead array (F3, F4, T7, T8, Cz, O1, O2).

- Blinded Review: Two epileptologists independently reviewed the reduced-array traces, documenting seizures and background disturbances.

- Analysis: Compared reviewers' findings to the original formal EEG report (the gold standard) to calculate sensitivity and specificity.
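The analysis step above reduces to two ratios from a confusion matrix. The sketch below shows the arithmetic. The counts are hypothetical, chosen only so that a 50 ictal / 50 non-ictal split reproduces the reported 70% sensitivity and 96% specificity; they are not taken from the study.

```python
def sensitivity_specificity(tp, fn, tn, fp):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return sensitivity, specificity

# Hypothetical counts for 50 ictal and 50 non-ictal records (illustration only)
sens, spec = sensitivity_specificity(tp=35, fn=15, tn=48, fp=2)
```

With these illustrative counts, `sens` is 0.70 and `spec` is 0.96.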

Protocol 2: Assessing a Novel Dry-Electrode Headset for Neonatal Seizure Monitoring [15]

- Objective: To develop and test a low-cost, adjustable headset with active dry-contact electrodes for continuous neonatal EEG.

- Methodology:

- Hardware Design: Created a 12-channel headset with 3D-printed, adjustable components and Ag/AgCl multi-spike electrodes.

- Signal Acquisition: Incorporated active electrodes with on-board buffering to strengthen signals and mitigate noise.

- Clinical Validation: Conducted simultaneous recordings on a pediatric patient using the custom dry-electrode device and a commercial wet-electrode system.

- Analysis: Computed cross-correlation and Signal-to-Noise Ratio (SNR) to compare signal quality between the two systems.
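A simple way to carry out the cross-correlation comparison described in the analysis step is to compute the peak of the normalized cross-correlation between the two simultaneous recordings, which also reveals any timing offset between devices. The sketch below is an assumption about how such a comparison could be done; the function name and the synthetic data are illustrative.

```python
import numpy as np

def max_normalized_xcorr(a, b):
    """Peak normalized cross-correlation between equal-length signals, and its lag.

    A positive lag means `a` is delayed relative to `b` by that many samples.
    """
    a_n = (a - a.mean()) / (a.std() * len(a))
    b_n = (b - b.mean()) / b.std()
    xc = np.correlate(a_n, b_n, mode="full")
    lag = int(np.argmax(xc)) - (len(b) - 1)
    return float(xc.max()), lag

# Synthetic stand-in: the "dry" recording is a 5-sample delayed copy of the "wet" one
rng = np.random.default_rng(42)
wet = rng.normal(size=1000)
dry = np.roll(wet, 5)

peak, lag = max_normalized_xcorr(dry, wet)
```

A peak close to 1 indicates that the two systems record nearly the same waveform once the delay is accounted for.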

Protocol 3: Combining Spatial and Temporal Denoising for Dry EEG [17]

- Objective: To investigate if combining Independent Component Analysis (ICA)-based methods (Fingerprint+ARCI) with spatial filtering (SPHARA) improves dry EEG signal quality.

- Methodology:

- Data Recording: Recorded 64-channel dry EEG from 11 healthy volunteers during a motor execution paradigm.

- Processing Pipeline: Applied multiple denoising approaches: ICA-based methods alone, spatial filtering alone, and a combination of both.

- Quality Metrics: Quantified signal quality using Standard Deviation (SD), Signal-to-Noise Ratio (SNR), and Root Mean Square Deviation (RMSD).

- Statistical Analysis: Used a generalized linear mixed effects (GLME) model to identify significant changes in signal quality parameters.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My dry-electrode EEG data has a high noise floor. What are the first steps I should take?

- A: First, verify electrode-scalp contact. Ensure the headset is snug and all electrodes are making firm contact, particularly through hair. Second, check for environmental noise sources and increase the distance from power cables or monitors. Third, if using passive dry electrodes, consider switching to active dry electrodes, which incorporate a high-input-impedance amplifier directly at the electrode site to buffer the signal and drastically reduce noise [15].

Q2: I am using a reduced channel setup (e.g., 3 channels). How can I compensate for the lost spatial information?

- A: Leverage advanced feature extraction and deep learning methods that enrich the temporal and spectral information from the few available channels. One effective method is to create a Channel-Dependent Multilayer EEG Time-Frequency Representation (CDML-EEG-TFR). This involves converting each channel's signal into a 2D time-frequency image using Continuous Wavelet Transform and then stacking these images to create a rich, multi-dimensional input that allows deep learning models to learn integrated spatio-spectro-temporal features [18].
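The stacking idea can be sketched as follows: compute a time-frequency magnitude map per channel with a Morlet continuous wavelet transform, then stack the maps along a channel axis. This is a simplified illustration, not the published CDML-EEG-TFR implementation. The frequency grid, the number of wavelet cycles, and the function name `morlet_tfr` are assumptions.

```python
import numpy as np

def morlet_tfr(x, fs, freqs, n_cycles=6.0):
    """Magnitude of a complex Morlet CWT, shape (n_freqs, n_times).

    Assumes x is longer than the longest wavelet (lowest frequency).
    """
    tfr = np.empty((len(freqs), len(x)))
    for k, f in enumerate(freqs):
        sigma_t = n_cycles / (2 * np.pi * f)
        t = np.arange(-4 * sigma_t, 4 * sigma_t + 1 / fs, 1 / fs)
        wavelet = np.exp(2j * np.pi * f * t) * np.exp(-t ** 2 / (2 * sigma_t ** 2))
        wavelet /= np.sqrt(np.sum(np.abs(wavelet) ** 2))  # unit energy
        tfr[k] = np.abs(np.convolve(x, wavelet, mode="same"))
    return tfr

fs = 250.0
t = np.arange(1000) / fs
rng = np.random.default_rng(0)
# Three synthetic channels: 12 Hz rhythm, 20 Hz rhythm, and noise
eeg = np.vstack([
    np.sin(2 * np.pi * 12.0 * t),
    np.sin(2 * np.pi * 20.0 * t),
    rng.normal(size=t.size),
])
freqs = np.arange(4, 41, 4)

# Stack per-channel maps into one (n_channels, n_freqs, n_times) array
stacked = np.stack([morlet_tfr(ch, fs, freqs) for ch in eeg])
peak_freq_ch0 = freqs[np.argmax(stacked[0, :, 500])]
```

The resulting array can be fed to a 2D convolutional model with channels as the depth dimension, in the same way as a multi-channel image.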

Q3: My analysis is confounded by physiological artifacts (e.g., blinks, muscle noise). What is a robust denoising pipeline for dry EEG?

- A: A combination of temporal and spatial techniques has been shown to be highly effective. A recommended pipeline is:

- ICA-based Correction: Use methods like Fingerprint and ARCI to identify and remove components corresponding to ocular and muscle artifacts [17].

- Spatial Filtering: Apply a spatial method like Spatial Harmonic Analysis (SPHARA) as a subsequent step. This combination has been proven to yield superior noise reduction and lower signal deviation in dry EEG data compared to either method alone [17].

Q4: Is a reduced electrode array sufficient for detecting pathological patterns like seizures?

- A: Caution is advised. While specificity can remain high (>95%), sensitivity can drop significantly. One study found a 7-electrode array had only 70% sensitivity for detecting any seizure, with performance varying by seizure type (80% for focal, 55% for generalized) [14]. The clinical or research objective must guide this decision: reduced arrays may be useful for screening, but a full array is recommended for definitive diagnosis or comprehensive analysis.

Troubleshooting Common Recording Failures

Problem: Unstable or Grayscale Impedance Readings on Multiple Channels.

- Possible Cause & Solution: This often indicates a ground or reference electrode issue.

- Action 1: Re-apply the ground (GND) and reference (REF) electrodes. Ensure thorough skin preparation (cleaning and mild abrasion).

- Action 2: Try an alternative GND placement, such as the mastoid, forearm, or sternum.

- Action 3: Systematically test components (headbox, amplifier) to isolate the fault. A problem that persists across different system components likely originates with the participant or electrode connections [4].

Problem: Excessive High-Frequency Noise in the Signal.

- Possible Cause & Solution: This is frequently caused by high electrode-skin impedance, often with dry electrodes.

- Action 1: Readjust the headset and check for poor electrode-scalp contact.

- Action 2: Ensure the subject is relaxed, as muscle tension (EMG) is a common source of high-frequency noise. Its frequency range (0 to >200 Hz) can broadly contaminate EEG signals [3].

- Action 3: For dry systems, confirm that the design includes active shielding and driven-right-leg (DRL) circuits to suppress common-mode noise [15].

The Scientist's Toolkit

Table 3: Essential Research Reagents & Materials for Few-Channel Dry EEG Research

| Item Name | Function & Explanation |

|---|---|

| Active Dry-Contact Electrodes | Electrodes with integrated high-input-impedance amplifiers. They buffer the weak EEG signal at the source, combating the high impedance and motion artifacts typical of dry systems [15]. |

| Adjustable 3D-Printed Headset | A customizable headset platform that ensures stable and consistent electrode placement across different head sizes and shapes, which is critical for reproducible results with reduced arrays [15]. |

| Blind Source Separation (BSS) Software | Software packages (e.g., implementing ICA) are crucial for decomposing multi-channel EEG data to isolate and remove artifact-laden components from brain signals [3]. |

| Continuous Wavelet Transform (CWT) Toolbox | A computational tool for creating time-frequency representations of single-channel EEG data. This is a key step in creating enriched feature sets (like CDML-EEG-TFR) for few-channel analysis [18]. |

| Spatial Filtering Algorithms (e.g., SPHARA) | Algorithms that leverage the spatial geometry of the electrode array to suppress noise and enhance the signal-to-noise ratio, complementing temporal filtering methods [17]. |

Workflow & Signaling Diagrams

Dry EEG Signal Acquisition and Processing

Reduced-Channel EEG Analysis Pathway

FAQs & Troubleshooting Guides

This section addresses frequently encountered challenges and questions in mobile EEG research, providing targeted solutions for artifact management in real-world studies.

Frequently Asked Questions

Q: What are the most effective preprocessing methods for removing motion artifacts during high-movement activities like running?

- A: Research comparing motion artifact removal approaches during overground running indicates that iCanClean and Artifact Subspace Reconstruction (ASR) are highly effective. iCanClean, which uses canonical correlation analysis (CCA) with pseudo-reference noise signals derived from the EEG data itself, has been shown to be somewhat more effective than ASR in recovering brain components and restoring expected event-related potential (ERP) patterns, such as the P300 effect, during running [9].

Q: How can I identify and remove eye-blink (EOG) artifacts from a single-channel EEG recording?

- A: For single-channel systems, an automated method using Fixed Frequency Empirical Wavelet Transform (FF-EWT) combined with a GMETV filter has been demonstrated as effective [12]. The FF-EWT decomposes the signal, and components contaminated with EOG artifacts are identified using metrics like kurtosis, dispersion entropy, and power spectral density. These artifact-related components are then removed using the GMETV filter, which helps preserve essential low-frequency EEG information [12].

Q: Our research involves participants walking in real-world environments. How can we maintain data quality without being on-site to fix issues?

- A: Establishing robust remote troubleshooting protocols is key.

- Familiarize Staff: Ensure all researchers are deeply familiar with the equipment to give clear, specific instructions to on-site assistants or participants via phone [19].

- Leverage Software Tools: Use software features to hide severely affected channels or adjust sensitivity and filters on-the-fly to mitigate the impact of poor electrode contacts without permanently altering the raw data [19].

- Define Clear Protocols: Create and follow pre-established criteria for different scenarios (e.g., when to contact on-call staff for electrode re-application) to ensure consistent and timely responses to data quality issues [19].


Q: What steps should we take if we experience significant technical interference or connectivity issues during a remote monitoring session?

- A: Technical issues require a systematic approach [19]:

- Document the Issue: Note the time and nature of the problem.

- Follow Known Solutions: If it is a known issue with a documented workaround, implement it.

- Escalate to IT: If the issue is unresolved, contact the relevant IT support (e.g., hospital IT for facility-side issues).

- Utilize Leadership Chain: Exhaust all resources and escalate within your team's leadership to liaise with facility leadership and IT for a permanent fix.


Q: How should I prepare a participant for a long-term, in-home EEG recording to minimize artifacts?

- A: Proper participant preparation is crucial for data quality [20]:

- Hygiene: Instruct the participant to shampoo their hair within 24 hours of the appointment without using any products (spray, oil, gel) afterward.

- Clothing: Advise them to wear a button-down or zippered shirt to avoid pulling off electrodes when changing.

- Activity Restrictions: Clearly state that they must avoid extreme physical activity (running, jumping, swimming), gum chewing, and consuming hard candy for the duration of the recording.

- Environment: Prepare a clear, flat surface in a well-ventilated area for the EEG equipment setup.


Methodologies & Performance Data

This section provides detailed protocols and quantitative performance metrics for key artifact removal techniques relevant to mobile EEG research.

Table 1: Motion Artifact Removal Performance During Running

The following table summarizes the effectiveness of two prominent approaches for cleaning motion artifacts from EEG data recorded during overground running, based on a comparative study [9].

| Approach | Key Mechanism | Key Parameters | Performance on Running Data |

|---|---|---|---|

| Artifact Subspace Reconstruction (ASR) | Uses a sliding-window PCA to identify and remove high-variance components based on a clean calibration period [9]. | k threshold: Standard deviation threshold for artifact identification. A lower k is more aggressive. k=20-30 is often recommended; for running, a lower value such as k=10 may be needed, and going lower still risks overcleaning [9]. | Reduced power at gait frequency; produced ERP components similar to standing tasks [9]. |

| iCanClean | Employs Canonical Correlation Analysis (CCA) to identify and subtract noise subspaces highly correlated with pseudo-reference noise signals [9]. | R² threshold: Correlation criterion for noise subtraction. R²=0.65 with a 4s sliding window is effective for running data [9]. | Most effective at reducing gait-frequency power; recovered expected P300 congruency effect; produced the most dipolar brain ICs [9]. |
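
The CCA-based subtraction summarized in the table can be illustrated with a small NumPy sketch. This is a simplified illustration of the general idea, not the iCanClean toolbox itself: the function name `cca_clean_window`, the array layout, and the single-window processing are assumptions made here, and a full pipeline would apply this over sliding windows (for example 4 s) rather than to the whole recording.

```python
import numpy as np

def cca_clean_window(eeg, ref, r2_threshold=0.65):
    """Remove EEG subspaces strongly correlated with reference noise.

    eeg: array (n_samples, n_eeg_channels) for one window.
    ref: array (n_samples, n_ref_channels) of reference noise signals.
    Hypothetical helper for illustration only.
    """
    eeg_mean = eeg.mean(axis=0)
    x = eeg - eeg_mean
    y = ref - ref.mean(axis=0)
    # Orthonormal bases for the EEG and reference column spaces.
    qx, _ = np.linalg.qr(x)
    qy, _ = np.linalg.qr(y)
    # Singular values of qx.T @ qy are the canonical correlations.
    u, s, _ = np.linalg.svd(qx.T @ qy, full_matrices=False)
    # Canonical variates on the EEG side (orthonormal time courses).
    variates = qx @ u
    noisy = variates[:, s ** 2 > r2_threshold]
    # Project the flagged components out of every EEG channel.
    cleaned = x - noisy @ (noisy.T @ x)
    return cleaned + eeg_mean
```

A lower `r2_threshold` removes more components and is therefore more aggressive, which mirrors the trade-off described for the R² criterion above.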

Table 2: Single-Channel Artifact Removal Techniques

This table compares methods designed for artifact removal when only a single EEG channel is available.

| Artifact Type | Technique | Protocol Summary | Reported Outcome |

|---|---|---|---|

| Eye-blink (EOG) | Fixed Frequency EWT + GMETV Filter [12] | 1. Decompose signal via FF-EWT into 6 IMFs. 2. Identify artifact components using kurtosis, dispersion entropy, and PSD thresholds. 3. Apply GMETV filter to remove artifact components. | Lower RRMSE, higher CC on synthetic data; improved SAR and MAE on real EEG [12]. |

| General (Ocular, Muscular, Movement) | Adaptive Wavelet-Based Renormalization [21] | A data-driven renormalization of wavelet components to adaptively attenuate artifacts of different natures. | Showed superior performance across various artifacts and signal-to-noise levels compared to alternative techniques [21]. |

Experimental Protocol: Validating Mobile EEG with Augmented Reality

This protocol outlines a methodology for studying cognition in real-world mobile settings while maintaining experimental control, using a combination of mobile EEG and Augmented Reality (AR) [22].

- Objective: To validate the use of mobile EEG in free-moving conditions combined with AR by replicating the well-established face inversion effect (greater low-frequency EEG activity for inverted vs. upright faces) [22].

- Equipment:

- EEG: 64-channel mobile EEG system (e.g., BrainVision LiveAmp) with active electrodes.

- AR: Head-mounted AR display (e.g., Microsoft Hololens 2).

- Video: Head-mounted camera for scene recording and event tagging.

- Procedure:

- Task 1 (Lab Control): Participants view upright and inverted faces on a computer screen while seated to establish a baseline neural response.

- Task 2 (Mobile with Photos): Participants walk through a corridor and view physical photographs of upright and inverted faces attached to the walls, pressing a button to tag viewing events.

- Task 3 (Mobile with AR): Participants walk through the same corridor wearing the AR headset, viewing virtual 3D faces anchored to the environment, and tag events with a button press.

- Analysis:

- Epoch-based: EEG data is filtered (e.g., 1-20 Hz for mobile tasks), and epochs are extracted around face-viewing events. Power in the theta/low-alpha band (e.g., 4-10 Hz) is compared between upright and inverted conditions.

- Continuous GLM-based: A General Linear Model (GLM) is used to relate the continuous, dynamic EEG signal to the continuous stream of face perception states (upright vs. inverted) as the participant moves naturally.

- Validation: The study successfully identified the face inversion effect across all three tasks, demonstrating that cognitively relevant neural signals can be reliably measured using mobile EEG and AR paradigms [22].
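
The epoch-based analysis step can be sketched as a band-power computation over already-extracted epochs. This is a minimal example under stated assumptions: the function name `band_power`, the Hann-windowed periodogram, and the `(n_epochs, n_samples)` layout are choices made here for illustration and are not taken from the cited study.

```python
import numpy as np

def band_power(epochs, fs, f_lo=4.0, f_hi=10.0):
    """Mean spectral power in [f_lo, f_hi] Hz for each epoch.

    epochs: array (n_epochs, n_samples) from one channel.
    fs: sampling rate in Hz.
    """
    # Remove each epoch's mean so the DC offset does not leak into low bins.
    epochs = epochs - epochs.mean(axis=1, keepdims=True)
    window = np.hanning(epochs.shape[1])
    spectrum = np.fft.rfft(epochs * window, axis=1)
    freqs = np.fft.rfftfreq(epochs.shape[1], d=1.0 / fs)
    # Periodogram normalized by the window energy.
    psd = (np.abs(spectrum) ** 2) / (fs * np.sum(window ** 2))
    mask = (freqs >= f_lo) & (freqs <= f_hi)
    return psd[:, mask].mean(axis=1)
```

The condition contrast would then be the difference between `band_power(inverted_epochs, fs).mean()` and `band_power(upright_epochs, fs).mean()`, where both epoch arrays are hypothetical inputs.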

The Scientist's Toolkit

This table lists essential computational tools and materials used in modern mobile EEG research for artifact removal and signal processing.

| Tool/Reagent | Function in Research |

|---|---|

| iCanClean | A signal processing toolbox designed to remove motion artifacts from mobile EEG by leveraging reference noise signals (from dedicated sensors or created pseudo-referentially) and Canonical Correlation Analysis (CCA) [9]. |

| Artifact Subspace Reconstruction (ASR) | An algorithm that uses a sliding-window principal components analysis (PCA) to identify and remove high-amplitude, non-stereotypical artifacts from continuous EEG data in real-time or during preprocessing [9]. |

| Fixed Frequency EWT (FF-EWT) | A signal decomposition technique that adaptively creates wavelet filters tuned to specific fixed frequencies, ideal for separating artifact-dominated components (like EOG) from neural signals in single-channel EEG [12]. |

| Independent Component Analysis (ICA) | A blind source separation method that linearly decomposes multi-channel EEG into maximally independent components, which can then be manually or automatically classified and removed if they represent artifacts [9]. |

| Mobile EEG System | A wearable, amplifier-based EEG system that allows for high-fidelity neural recordings while participants are freely moving. Often uses active electrodes and wireless data recording [22]. |

| Augmented Reality (AR) Headset | A head-mounted display that overlays virtual objects onto the real-world environment, enabling experimental control over visual stimuli in ecologically valid, real-world settings [22]. |

Workflow & System Diagrams

Signal Processing for Motion Artifact Removal

Mobile EEG-AR Experimental Workflow

Cutting-Edge Pipelines for Detection and Removal

Frequently Asked Questions (FAQs)

FAQ 1: Why should I choose VMD over the more traditional EMD for processing my single-channel EEG data?

VMD (Variational Mode Decomposition) rests on a variational formulation that recasts decomposition as a constrained optimization problem [23]. In contrast, EMD (Empirical Mode Decomposition) is an empirical sifting procedure that is often criticized for lacking a rigorous theoretical foundation [23]. In practice, VMD largely avoids the mode mixing that frequently affects EMD, where oscillations at different scales end up superimposed in a single decomposed component [24] [25]. VMD is also robust to noise, which makes it well suited to the often noisy signals from portable EEG systems [25].

FAQ 2: How do I handle the critical parameter selection for VMD, specifically the number of modes (K)?

Selecting the correct number of intrinsic mode functions (IMFs), denoted as K, is indeed a crucial and challenging step for VMD [23]. An incorrect 'K' can lead to serious decomposition errors. While this often requires analysis of the specific signal, one practical approach is to start with a parameter optimization method. Research has successfully combined VMD with fuzzy entropy to identify artifact components after decomposition [25]. For a more automated solution, you can consider newer algorithms like QVMD (Queued Variational Mode Decomposition), which can determine the modal number adaptively during the separation process, eliminating the need for this prior information [23].

FAQ 3: My decomposed signal components show significant distortion at the endpoints. What is causing this and how can it be fixed?

This is a well-known challenge known as the "end effect," and it is not unique to one method—it can occur in EMD, EWT, and VMD [23]. The distortion arises because the decomposition algorithms have limited information at the signal boundaries. A common technique to mitigate this is to perform an end elongation of the composite signal before decomposition. For instance, the QVMD method uses a Principal Component Restoring (PCR) approach, which extracts trend lines and principal components from the end regions to effectively reduce the end effect to a much lower level [23].
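
One simple form of end elongation is mirror padding: reflect the signal at both ends, decompose the extended signal, then trim the padding. The sketch below shows this generic technique, not the PCR approach used by QVMD. The wrapper `decompose_with_mirror_padding` and the toy moving-average decomposer `trend_and_residual` are hypothetical names introduced only for illustration, and any decomposition function returning an array of modes could be passed in their place.

```python
import numpy as np

def decompose_with_mirror_padding(signal, decompose, pad):
    """Run `decompose` on a mirror-extended signal and trim the result.

    decompose: callable taking a 1D array and returning modes with
               shape (n_modes, n_samples).
    pad: number of reflected samples added at each end (must be > 0).
    """
    padded = np.pad(signal, pad, mode="reflect")
    modes = np.atleast_2d(decompose(padded))
    # Drop the padded regions so the output aligns with the input.
    return modes[:, pad:-pad]

# Toy decomposer used only to demonstrate the boundary distortion:
# an 11-point moving-average trend plus the residual.
KERNEL = np.ones(11) / 11

def trend_and_residual(x):
    trend = np.convolve(x, KERNEL, mode="same")
    return np.vstack([trend, x - trend])
```

With a constant input, the unpadded trend drops toward 6/11 at the first sample because the kernel runs off the edge, whereas the padded version stays at 1. The pad length should be at least as long as the slowest oscillation of interest.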

FAQ 4: For my single-channel EEG artifact removal, which Blind Source Separation (BSS) method works best after signal decomposition?

Research indicates that the SOBI (Second Order Blind Identification) algorithm, an ICA implementation based on second-order statistics, is particularly effective for processing certain artifacts like EMG (electromyography) [24] [25]. While ICA methods based on high-order statistics are widely used, they are not as effective as SOBI for EMG artifacts [24] [25]. Therefore, for a method targeting multiple artifacts including EOG and EMG, a combination of VMD with SOBI has been shown to have a better removal effect compared to other combinations like EEMD-SOBI [24] [25].

FAQ 5: Are there readily available Python libraries to get started with these decomposition methods for my research?

Yes, several Python packages can help you implement these methods quickly. The PySDKit library provides a Scikit-learn-like interface for various signal decomposition algorithms, including EMD and VMD [26]. Specifically for EMD, the PyEMD package is available, which includes EEMD and CEEMDAN implementations [27]. For VMD and EWT, you can use the vmdpy and ewtpy packages, which have been used in comparative studies for EEG seizure detection [28].

Troubleshooting Guides

Issue 1: Poor Artifact Removal Performance in Single-Channel EEG

This guide addresses the problem of suboptimal artifact removal when using decomposition methods on a single EEG channel, which is common in portable systems.

- Symptoms: Useful brain signals are accidentally removed along with artifacts; artifacts persist in the reconstructed signal; reconstructed EEG signal appears overly smoothed or contains residual noise.

- Possible Causes & Solutions:

| Cause | Solution |

|---|---|

| Incorrect number of modes (K) in VMD | Optimize the K parameter. Start by over-specifying K and use a metric like fuzzy entropy [25] or correlation to identify and discard artifact-only components. |

| Ineffective BSS algorithm for target artifact | Switch the BSS method. For EMG artifacts, use SOBI instead of high-order statistics-based ICA [24] [25]. |

| Modal Mixing in EMD | Use an ensemble method like EEMD or CEEMDAN. These add controlled noise to create multiple derived signals, reducing mode mixing [23] [27]. |

| General performance plateau | Try a hybrid approach. Decompose with VMD, then apply SOBI to the set of IMFs to separate sources, before identifying and removing artifact components [24] [25]. |

Recommended Experimental Workflow: The diagram below illustrates a robust experimental protocol for single-channel EEG artifact removal, synthesizing recommendations from multiple studies.

Issue 2: Excessive Computational Time During Decomposition

This guide helps resolve impractically long processing times, which hinder experimental iteration and potential real-time application.

- Symptoms: Decomposition of a short signal segment takes several minutes or hours; computer becomes unresponsive during processing; unable to process large datasets in a feasible time.

- Possible Causes & Solutions:

| Cause | Solution |

|---|---|

| Using EEMD/CEEMDAN with many trials | Reduce the trials (ensemble number) parameter. Balance between performance and speed; even a lower number of trials can provide benefits [27]. |

| Sequential processing of multiple signals | Enable parallel processing. For EEMD, set the parallel flag to True and define the number of processes to utilize multiple CPU cores [27]. |

| High maximum mode number in EMD | Limit the max_imf parameter to stop decomposition after a set number of IMFs are extracted, preventing unnecessary iterations [27]. |

| VMD with high iteration count | Adjust VMD's convergence parameters (AbsoluteTolerance, RelativeTolerance) to allow for earlier stopping [29]. |

Performance Comparison of Decomposition Methods: The table below summarizes key characteristics, including relative speed, to help you choose the right method.

| Method | Key Principle | Strengths | Weaknesses | Relative Speed |

|---|---|---|---|---|

| EMD | Iterative sifting to extract IMFs [23] | Fully data-driven; intuitive | Modal mixing; end effect; no theoretical basis [23] | Medium [28] |

| EEMD | EMD on signal + multiple noise realizations [27] | Reduces mode mixing | High computational cost; residual noise [23] | Slow [28] |

| VMD | Variational optimization for mode extraction [24] | Robust theoretical basis; noise robustness [24] | Requires pre-setting mode number K [23] | Fast [28] |

| EWT | Adaptive wavelet filter bank [23] | Solid theoretical foundation | Empirical spectrum segmentation [23] | Very Fast [28] |

Issue 3: Effectively Isolating Specific Artifact Types (EOG vs. EMG)

This guide addresses the challenge of selectively removing different physiological artifacts, which have distinct characteristics.

- Symptoms: Muscle artifacts (EMG) remain after removing eye-blink artifacts (EOG); removing EMG artifacts also degrades high-frequency brain signals (e.g., gamma waves).

- Possible Causes & Solutions:

| Cause | Solution |

|---|---|

| Using the same BSS method for all artifacts | Employ a specialized BSS. SOBI (SOS-based) is particularly effective for the characteristic profiles of EMG artifacts [24] [25]. |

| Incorrect identification of artifact components | Use a quantitative identification metric. Calculate the fuzzy entropy of each component; artifact components often have significantly different entropy values compared to neural signals [25]. |

| Overlapping frequency content | Leverage joint decomposition-separation. Rely on the source separation step (SOBI/ICA) after decomposition to statistically disentangle sources even with overlapping frequencies [24]. |
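
Fuzzy entropy, recommended above as an identification metric, can be computed with a short NumPy routine. The sketch below follows one common formulation (embedding dimension `m`, tolerance `r` times the signal's standard deviation, exponential membership with power `n`). Other variants of the membership function exist, the default values are assumptions, and the threshold that separates artifact from neural components has to be chosen from your own data.

```python
import numpy as np

def fuzzy_entropy(x, m=2, r=0.2, n=2):
    """Fuzzy entropy of a 1D signal (higher means less regular)."""
    x = np.asarray(x, dtype=float)
    tol = r * x.std()

    def phi(dim):
        # Use the same number of template vectors for both dimensions.
        count = len(x) - m
        vecs = np.array([x[i:i + dim] for i in range(count)])
        # Remove each vector's own mean (local baseline).
        vecs = vecs - vecs.mean(axis=1, keepdims=True)
        # Chebyshev distance between every pair of vectors.
        dist = np.max(np.abs(vecs[:, None, :] - vecs[None, :, :]), axis=2)
        sim = np.exp(-(dist ** n) / tol)
        np.fill_diagonal(sim, 0.0)  # exclude self-matches
        return sim.sum() / (count * (count - 1))

    return np.log(phi(m)) - np.log(phi(m + 1))
```

Because the pairwise distance matrix grows quadratically with signal length, this version is intended for short component segments (a few hundred to a few thousand samples).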

Logical Decision Tree for Artifact Isolation: The following diagram provides a step-by-step strategy for tackling mixed artifacts.

The Scientist's Toolkit: Research Reagent Solutions

Essential Computational Tools and Datasets

| Tool Name | Type / Function | Role in the Experimental Pipeline |

|---|---|---|

| PySDKit [26] | Python Library | Provides a unified Scikit-learn-like API for EMD, VMD, and other decomposition methods, streamlining the analysis workflow. |

| vmdpy & ewtpy [28] | Python Packages | Dedicated, validated implementations of VMD and EWT, ensuring reliable and reproducible decomposition results. |

| PyEMD [27] | Python Library | A comprehensive suite for Empirical Mode Decomposition and its variants (EEMD, CEEMDAN). |

| MATLAB vmd [29] | MATLAB Function | The official implementation of VMD in MATLAB, offering extensive parameters for fine-tuning the decomposition. |

| Public EEG Datasets (e.g., Bonn, NSC-ND) [28] | Benchmark Data | Essential for validating new artifact removal algorithms against established benchmarks and comparing performance. |

Key Performance Metrics for Method Validation: When comparing the efficacy of different decomposition pipelines for artifact removal, quantify performance using these standard metrics, derived from semi-simulation experiments [25]:

| Metric | Definition | Ideal Outcome |

|---|---|---|

| Signal-to-Artifact Ratio (SAR) | Ratio of power in neural signal to power in artifact component. | Maximize |

| Root Mean Square Error (RMSE) | Difference between cleaned signal and ground-truth clean signal. | Minimize |

| Correlation Coefficient | Linear correlation between cleaned signal and ground-truth clean signal. | Maximize (Close to 1) |

| Spectral Distortion | Measure of unwanted changes in the frequency spectrum of the clean signal. | Minimize |
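
When a ground-truth clean signal is available (as in semi-simulated data), the error and correlation metrics can be computed directly. The helper names below are illustrative. The relative RMSE (RRMSE) is included because it is reported elsewhere in this guide. SAR and spectral distortion are omitted because their exact definitions vary between studies.

```python
import numpy as np

def rmse(cleaned, truth):
    """Root mean square error between cleaned and ground-truth signals."""
    return float(np.sqrt(np.mean((cleaned - truth) ** 2)))

def rrmse(cleaned, truth):
    """RMSE relative to the RMS amplitude of the ground truth."""
    return rmse(cleaned, truth) / float(np.sqrt(np.mean(truth ** 2)))

def correlation_coefficient(cleaned, truth):
    """Pearson correlation between cleaned and ground-truth signals."""
    return float(np.corrcoef(cleaned, truth)[0, 1])
```

Reporting RRMSE alongside RMSE makes results comparable across recordings with different amplitude scales.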

Frequently Asked Questions (FAQs)

Q1: What is the main advantage of using a subject-specific model like Motion-Net over a generalized model for motion artifact removal? Subject-specific models are trained and tested on data from individual users separately. This approach accounts for the high variability in both EEG signals and motion artifact patterns across different individuals, leading to significantly better performance. The Motion-Net framework has demonstrated an average motion artifact reduction of 86% ±4.13 and a signal-to-noise ratio (SNR) improvement of 20 ±4.47 dB, outperforming generalized models which struggle with inter-subject variability [30].

Q2: My dataset is relatively small. Can I still effectively train a deep learning model for artifact removal? Yes, incorporating specific features can enhance model performance on smaller datasets. Motion-Net successfully uses Visibility Graph (VG) features, which convert time-series EEG data into graph structures, providing additional structural information that helps the Convolutional Neural Network (CNN) learn more effectively even with limited data [30]. Other studies also use data augmentation techniques, such as adding noise or sliding window sampling, to artificially increase the size of the training set [31].
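
The two augmentation techniques mentioned in this answer can be sketched in a few lines. The function names, the overlap, and the noise level are illustrative assumptions, not settings from the cited studies.

```python
import numpy as np

def sliding_windows(signal, window, step):
    """Cut a 1D signal into overlapping segments of length `window`."""
    starts = range(0, len(signal) - window + 1, step)
    return np.stack([signal[s:s + window] for s in starts])

def add_gaussian_noise(segments, noise_std, seed=0):
    """Return a noisy copy of the segments (the input is not modified)."""
    rng = np.random.default_rng(seed)
    return segments + rng.normal(0.0, noise_std, size=segments.shape)
```

For supervised denoising, the same window boundaries must be applied to the contaminated input and to its clean target so that each training pair stays aligned.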

Q3: For a portable EEG system with only a few channels, which deep learning architecture is most suitable? Architectures designed for 1D signal processing, such as 1D CNNs, are particularly well-suited for few-channel systems. Motion-Net employs a 1D U-Net architecture, which is effective for signal reconstruction tasks [30]. Similarly, other research uses a 1D-ResCNN (Residual CNN) or combines dual-scale CNNs with LSTM networks (e.g., CLEnet) to capture both morphological and temporal features from multi-channel data, even with a limited number of electrodes [32] [33].

Q4: How do I handle different types of artifacts (e.g., eye blinks, muscle activity) with a single model? While some models are tailored for specific artifacts, newer architectures aim for generalization. For instance, the CLEnet model, which integrates CNN and LSTM layers, has shown proficiency in removing various artifacts, including EMG, EOG, and even "unknown" artifacts in multi-channel EEG data, by leveraging an improved attention mechanism to extract robust features [33]. However, achieving high performance across all artifact types with one model remains an active research challenge.

Troubleshooting Guides

Issue 1: Poor Artifact Removal Performance on New Subjects

Problem: Your model, which performed well during training, fails to generalize to data from new subjects.

Solutions:

- Adopt a Subject-Specific Approach: Retrain the model separately for each subject using their own data. This is the core methodology of Motion-Net and avoids the problem of inter-subject variability [30].

- Incorporate Advanced Features: Augment your raw EEG input with engineered features like the Visibility Graph (VG), which can improve the model's learning stability and accuracy, particularly with smaller, subject-specific datasets [30].

- Use Domain Adaptation: Explore self-supervised learning techniques that can help the model adapt to new subjects or sessions with minimal labeled data. Methods like contrastive learning and masked prediction tasks can learn robust features that are less dependent on the individual [34].

Issue 2: Low Signal-to-Noise Ratio in Portable EEG Recordings

Problem: The input EEG data from your portable device has a very low SNR, making it difficult for the model to distinguish artifacts from neural signals.

Solutions:

- Pre-process with Filtering: Apply band-pass filters to remove frequency components outside the range of interest (e.g., 1-100 Hz for EEG). However, be cautious as motion artifacts often overlap with neural signal frequencies [30].

- Leverage Multi-Modal Data: If available, use data from integrated accelerometers or gyroscopes to independently detect motion events. This information can be synchronized with EEG data to improve artifact identification [30].

- Choose a Robust Model Architecture: Implement models specifically designed for noisy signals. For example, the AnEEG model uses a Generative Adversarial Network (GAN) with LSTM layers to capture temporal dependencies and generate artifact-free signals, even from highly contaminated data [32].

Issue 3: Model Training is Unstable or Fails to Converge

Problem: During the training of your CNN model, the loss function fluctuates wildly or does not decrease.

Solutions:

- Inspect and Pre-process Data: Ensure your data is properly synchronized and that a baseline correction (e.g., subtracting a fitted polynomial) has been applied. Check for extreme outliers or non-physiological noise that could destabilize training [30].

- Adjust Hyperparameters: Tune key parameters such as learning rate and batch size. Using an adaptive learning rate scheduler can help. For optimal performance, consider using hyperparameter optimization frameworks like Optuna [35].

- Review Loss Functions: For reconstruction tasks, standard losses like Mean Squared Error (MSE) are common. Some studies, particularly those using GANs, employ advanced loss functions that incorporate temporal-spatial-frequency constraints to better guide the training process [32].

The table below summarizes the performance metrics of several deep learning models for EEG artifact removal, as reported in the cited studies.

| Model Name | Architecture Type | Primary Application | Key Performance Metrics |

|---|---|---|---|

| Motion-Net [30] | 1D U-Net CNN | Motion Artifact Removal | Artifact reduction (η): 86% ±4.13; SNR improvement: 20 ±4.47 dB; MAE: 0.20 ±0.16 |

| CLEnet [33] | Dual-scale CNN + LSTM | Multi-artifact Removal (EMG, EOG) | SNR: 11.498 dB; CC: 0.925; RRMSEt: 0.300 |

| AnEEG [32] | LSTM-based GAN | General Artifact Removal | Improved SNR and SAR values; lower NMSE and RMSE compared to wavelet techniques. |

| 1D-ResCNN [31] | 1D Residual CNN | Eye Blink Artifact Removal | Outperformed ICA and regression methods, particularly for central head electrodes. |

Experimental Protocols & Methodologies

Protocol 1: Implementing a Subject-Specific Motion-Net Framework

This protocol is based on the methodology used to develop and validate the Motion-Net model [30].

Data Collection & Preprocessing:

- Collect EEG data using a portable system alongside a synchronized motion sensor (e.g., accelerometer).

- Record data during both resting states (to obtain clean "ground-truth" segments) and motion tasks (to create artifact-contaminated data).

- Synchronize EEG and accelerometer data by resampling and aligning them based on trigger points or event markers.

- Apply a baseline correction, such as subtracting a fitted polynomial, to remove low-frequency drifts.
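
The polynomial baseline correction in the last step can be sketched with a least-squares fit. The polynomial order is an assumption here and is not specified by the protocol above.

```python
import numpy as np

def remove_polynomial_baseline(signal, order=3):
    """Subtract a least-squares polynomial trend from a 1D signal."""
    # A time axis scaled to [-1, 1] keeps the fit well conditioned.
    t = np.linspace(-1.0, 1.0, len(signal))
    coeffs = np.polyfit(t, signal, order)
    return signal - np.polyval(coeffs, t)
```

Keeping the order low matters: a high-order polynomial can start to absorb slow neural activity as well as drift, and even a cubic fit slightly distorts the first and last samples of a segment.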

Feature Engineering & Input Formation:

- Extract Visibility Graph (VG) features from the EEG time series. This converts the signal into a graph representation that captures structural properties.

- Formulate the model input by combining raw EEG signals and the extracted VG features to provide complementary information.
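
To make the Visibility Graph step concrete, the sketch below builds a natural visibility graph and returns each sample's degree (its number of visible neighbours). Two samples are connected when the straight line between them passes above every sample in between. Using the degree sequence as the feature is an assumption made for illustration, since the protocol above does not state which VG features are extracted.

```python
def visibility_degrees(series):
    """Degree of each sample in the natural visibility graph."""
    n = len(series)
    degrees = [0] * n
    for i in range(n - 1):
        for j in range(i + 1, n):
            # Samples i and j see each other if every sample between them
            # lies strictly below the line joining (i, y_i) and (j, y_j).
            visible = all(
                series[k] < series[i]
                + (series[j] - series[i]) * (k - i) / (j - i)
                for k in range(i + 1, j)
            )
            if visible:
                degrees[i] += 1
                degrees[j] += 1
    return degrees

print(visibility_degrees([1, 3, 2, 4]))  # → [1, 3, 2, 2]
```

This brute-force version has cubic cost in the worst case, so it is suited to short windows. The resulting degree sequence has the same length as the input and could be stacked with the raw signal as a second input channel.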

Model Training & Validation:

- Design a 1D U-Net CNN architecture. The encoder should downsample to capture features, and the decoder should upsample to reconstruct the clean signal.

- Train the model on a per-subject basis. Use the artifact-contaminated signals as input and the corresponding clean segments or ground-truth references as the training target.

- Use a loss function like Mean Absolute Error (MAE) or Mean Squared Error (MSE) to minimize the difference between the output and the target clean signal.

- Validate the model using a separate, held-out dataset from the same subject.

Protocol 2: Training a Multi-Artifact Removal Model (CLEnet)

This protocol outlines the procedure for training an end-to-end model capable of handling various artifacts [33].

Dataset Preparation:

- Utilize a semi-synthetic dataset (e.g., from EEGdenoiseNet) where clean EEG is artificially contaminated with recorded EOG and EMG artifacts at known SNR levels.

- For real-world validation, use a dedicated multi-channel EEG dataset containing labeled or identifiable artifacts.
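
Semi-synthetic contamination at a known SNR amounts to scaling the artifact before adding it to the clean segment. The sketch below uses the conventional amplitude-based definition, SNR = 20·log10(RMS(clean) / RMS(scaled artifact)). This is an assumption: a given dataset may define SNR differently, so check its documentation before comparing numbers.

```python
import numpy as np

def rms(x):
    """Root mean square amplitude of a signal."""
    return float(np.sqrt(np.mean(np.square(x))))

def contaminate(clean, artifact, snr_db):
    """Mix an artifact into clean EEG at a target SNR (in dB)."""
    # Scale the artifact so that rms(clean) / rms(scale * artifact)
    # equals 10 ** (snr_db / 20).
    scale = rms(clean) / (rms(artifact) * 10 ** (snr_db / 20))
    return clean + scale * artifact
```

Sweeping `snr_db` over a range of values yields training pairs of graded difficulty from the same clean and artifact segments.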

Model Architecture Setup:

- Implement a dual-branch network (CLEnet) that includes:

- A CNN branch with dual-scale convolutional kernels to extract morphological features at different scales.

- An LSTM branch to capture the temporal dependencies in the signal.

- An Improved EMA-1D (Efficient Multi-Scale Attention) module embedded in the CNN to enhance feature extraction and preserve temporal context.


End-to-End Training:

- Train the model in a supervised manner using the contaminated EEG as input and the pristine, clean EEG as the target.

- Use MSE as the loss function to guide the reconstruction of the artifact-free signal.

- Evaluate the model on a test set using metrics such as SNR, Correlation Coefficient (CC), and Relative Root Mean Square Error (RRMSE).

The Scientist's Toolkit: Research Reagent Solutions

| Item / Technique | Function in Experiment |

|---|---|

| Visibility Graph (VG) Features [30] | Converts EEG time-series into graph structures, providing supplementary structural information that enhances deep learning model accuracy, especially with smaller datasets. |

| Synchronized Accelerometer Data [30] | Provides an independent measure of subject motion, used to validate and synchronize with motion artifacts in the EEG signal for improved identification and removal. |

| Semi-Synthetic Datasets (e.g., EEGdenoiseNet) [33] | Allows for controlled model training and benchmarking by providing clean EEG signals mixed with well-defined artifacts (EOG, EMG) at known signal-to-noise ratios. |

| Optuna Hyperparameter Optimization Framework [35] | An open-source library used to automatically search for and identify the optimal set of hyperparameters (e.g., learning rate, network depth) for a deep learning model. |

| Dual-Attention Mechanism [35] | A module integrated into neural networks (like MobileNetV2) that helps the model focus on the most relevant spatial and channel-wise features for the task, improving classification accuracy. |

| 1D U-Net Architecture [30] | A convolutional network architecture with a symmetric encoder-decoder structure, particularly effective for tasks involving signal reconstruction and segmentation, such as mapping noisy EEG to clean EEG. |

Experimental Workflow and Signaling Pathways

Motion-Net Experimental Workflow

Deep Learning Model Selection Logic

Core Concepts: How Auxiliary Sensors Capture Noise

Auxiliary sensors, such as IMUs and dual-layer EEG noise electrodes, provide independent measurements of motion and environmental interference that corrupt EEG signals. They act as reference channels, enabling sophisticated signal processing techniques to isolate and remove artifacts.

Dual-Layer EEG uses mechanically coupled but electrically isolated electrodes. The scalp layer records brain signals mixed with artifacts, while the noise layer records only non-biological artifacts (e.g., from cable movement or electromagnetic interference), providing a direct reference for cleaning the scalp data [36].

Inertial Measurement Units (IMUs) are motion sensors (accelerometers, gyroscopes) that directly quantify the kinematics of the head or body. This data serves as a reference for motion artifacts introduced into the EEG signal from physical movement [37] [38].

Table: Comparison of Auxiliary Sensor Types for Noise Reference

| Sensor Type | Primary Measured Noise | Spatial Resolution | Key Advantage | Common Use Case |

|---|---|---|---|---|

| Dual-Layer Noise Electrodes | Cable movement, electromagnetic interference, electrode-skin interface noise [36] | High (channel-level) | Directly captures electrical artifacts on the scalp; no additional head-worn sensors required [36]. | Whole-body movement studies (e.g., table tennis, walking) [36]. |

| Head-Mounted IMU | Gross head motion (acceleration, rotation) [37] | Low (system-level) | Directly measures the kinematics of the head; simple to implement [38]. | Mobile BCIs during walking, running [37]. |

| Per-Electrode IMU | Local electrode motion and displacement [38] | Very High (electrode-level) | Captures localized motion at each electrode; allows for targeted artifact removal [38]. | High-motion scenarios where different electrodes experience different artifacts. |

Frequently Asked Questions & Troubleshooting

Q1: The correlation between my IMU data and EEG channels is low. What could be wrong? Low correlation often stems from misalignment between the noise measured by the IMU and the artifact seen by the EEG electrode. Consider these points:

- Spatial Location: A single head-mounted IMU may not capture localized electrode movements effectively. Using a per-electrode IMU setup can provide a more accurate reference for each channel [38].

- Signal Type: The raw acceleration from an IMU might not be the best correlate of the electrical artifact. Try integrating the acceleration signal to derive velocity, which has been shown to correlate better with motion artifacts in some scenarios [38].

- Temporal Synchronization: Even minor misalignment between the EEG and IMU data streams can drastically reduce observed correlation. Ensure precise hardware synchronization or use post-hoc alignment based on a shared trigger or event [37].
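
The signal-type and synchronization checks above can be sketched as two small helpers: one integrates acceleration to an approximate velocity, the other estimates the sample lag between an EEG channel and a reference by scanning the correlation over candidate shifts. The function names and the simple cumulative-sum integration are illustrative assumptions. Both inputs to `estimate_lag` must have the same length and sampling rate.

```python
import numpy as np

def acceleration_to_velocity(acc, fs):
    """Approximate velocity by integrating mean-removed acceleration."""
    acc = acc - acc.mean()         # remove gravity/offset before integrating
    vel = np.cumsum(acc) / fs      # rectangular integration
    return vel - vel.mean()

def estimate_lag(eeg, ref, max_lag):
    """Lag (in samples) by which `eeg` trails `ref`; negative if it leads."""
    best_lag, best_corr = 0, 0.0
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            a, b = eeg[lag:], ref[:len(ref) - lag]
        else:
            a, b = eeg[:lag], ref[-lag:]
        corr = abs(np.corrcoef(a, b)[0, 1])
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return best_lag
```

If the estimated lag is non-zero, shift one stream by that many samples before computing the EEG-IMU correlation again. Integration drift accumulates over long recordings, so a high-pass filter or windowed processing is usually needed in practice.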

Q2: When should I choose a dual-layer EEG system over a standard EEG with an IMU? The choice depends on the primary noise source in your experiment.

- Choose a Dual-Layer EEG system when the main artifacts are from cable sway, electrode movement, and electromagnetic interference. This system is specifically designed to reference these electrical artifacts directly [36].

- Use a Standard EEG with an IMU when the main artifacts are from gross head and body movements, and you have the processing pipeline to leverage the kinematic data for artifact removal [37].

Q3: I am working with few-channel, portable EEG. Which artifact removal method is most suitable? For few-channel systems, methods that can effectively leverage limited spatial information are key.

- Channel-Dependent Multilayer Time-Frequency Representations (CDML-EEG-TFR) can be a powerful approach. This method converts each channel's signal into a time-frequency image and stacks them, creating a rich input for deep learning models that can learn to disentangle brain activity from artifacts, even with few channels [18].

- Fine-tuned Large Brain Models (LaBraM) that incorporate IMU data have also shown robustness. These models can be adapted (fine-tuned) for few-channel scenarios, using the IMU's motion data to guide artifact removal with high efficiency [37].

Q4: After applying an artifact removal algorithm, I suspect it is also removing neural signals. How can I validate this? Validation is crucial. Beyond checking for improved signal-to-noise ratio, consider these strategies:

- Use a Task with Known Neurophysiology: Employ a paradigm with a well-established brain response (e.g., a visual evoked potential). If the cleaning algorithm preserves or enhances this known response, it indicates neural signals are retained [37].

- Check Component Topography: If using methods like ICA, inspect the topography of removed components. Components with a dipolar pattern that originates from brain-like regions are more likely to be neural and should be treated with caution [36] [3].

- Source Localization: Perform source localization on the data before and after cleaning. An effective algorithm should allow for more stable and physiologically plausible source estimates [39].

Experimental Protocols for Validation

Protocol 1: Validating Dual-Layer EEG Performance

Objective: To verify that the use of dual-layer noise electrodes provides cleaner brain components compared to single-layer processing [36].

Materials:

- Dual-layer EEG system (e.g., 120 scalp + 120 noise electrodes) [36].

- A paradigm inducing both neural activity and motion (e.g., table tennis drills or walking tasks).

Methodology:

- Data Collection: Record EEG data during the chosen paradigm using the dual-layer system. Ensure noise electrodes are electrically isolated but mechanically coupled to their corresponding scalp electrodes [36].

- Data Processing with Noise Reference: Process the data using the iCanClean algorithm or a similar approach (e.g., CCA) that explicitly uses the noise electrode data to identify and remove artifact components [36].

- Data Processing without Noise Reference: Process the same data using only the scalp channels and a standard artifact removal pipeline (e.g., ASR followed by ICA).

- Quantitative Comparison: For both processing streams, count the number of "high-quality" brain components after ICA. This is typically done by assessing the fit of a dipole model to each component and using an automated labeling algorithm. A higher number of brain components with a good dipole fit indicates superior preservation of neural signals [36].
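The noise-reference step above can be illustrated with a minimal sketch. It is a simplified, whole-recording CCA and not the iCanClean implementation; the function name `cca_clean`, the 0.5 correlation threshold, and the simulated data are assumptions made for illustration only.

```python
import numpy as np

def cca_clean(scalp, noise, corr_threshold=0.5):
    """Project out scalp subspaces that correlate strongly with noise channels.

    scalp: (n_samples, n_scalp) array, noise: (n_samples, n_noise) array.
    Returns the cleaned (mean-centered) scalp data and the canonical correlations.
    """
    X = scalp - scalp.mean(axis=0)
    Y = noise - noise.mean(axis=0)
    # QR-based CCA: singular values of Qx^T Qy are the canonical correlations.
    Qx, _ = np.linalg.qr(X)
    Qy, _ = np.linalg.qr(Y)
    U, corrs, _ = np.linalg.svd(Qx.T @ Qy, full_matrices=False)
    variates = Qx @ U  # orthonormal canonical variates of the scalp data
    bad = variates[:, corrs > corr_threshold]
    # Least-squares removal of the flagged variates from every scalp channel.
    cleaned = X - bad @ (bad.T @ X)
    return cleaned, corrs

# Simulated example (hypothetical data): one motion source shared by both layers.
rng = np.random.default_rng(0)
n = 2000
brain = rng.standard_normal((n, 4))
artifact = rng.standard_normal(n)
scalp = brain + np.outer(artifact, [2.0, 1.5, 1.0, 0.5])
noise = np.outer(artifact, [1.0, 0.8, 1.2, 0.9]) + 0.1 * rng.standard_normal((n, 4))

cleaned, corrs = cca_clean(scalp, noise)
before = abs(np.corrcoef(scalp[:, 0], artifact)[0, 1])
after = abs(np.corrcoef(cleaned[:, 0], artifact)[0, 1])
print(f"canonical correlations: {np.round(corrs, 2)}")
print(f"|corr| with artifact, channel 0: before={before:.2f}, after={after:.2f}")
```

In practice, such a step would typically be applied in sliding windows, and the threshold would need tuning against the brain-component count described in the quantitative comparison.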

Protocol 2: Benchmarking IMU-Enhanced Deep Learning

Objective: To evaluate the performance of a fine-tuned large brain model (LaBraM) using IMU data against an established benchmark (ASR-ICA) for motion artifact removal [37].

Materials:

- 32-channel EEG system.

- Head-mounted 9-axis IMU (3-axis accelerometer, 3-axis gyroscope, and 3-axis magnetometer).

- A mobile BCI dataset with recordings from various movement conditions (standing, slow walking, fast walking, slight running) [37].

Methodology:

- Data Preprocessing: Preprocess the EEG signals (bandpass filter 0.1-75 Hz, notch filter at 60 Hz, resample to 200 Hz). Synchronize the IMU data with the EEG [37].

- Model Fine-Tuning: Use a pre-trained LaBraM model. Project both the EEG signals and the 9-axis IMU signals into a shared 64-dimensional latent space. Train a correlation attention mapping mechanism to allow the EEG queries to attend to relevant IMU keys for identifying motion artifacts. Fine-tune the model on approximately 5.9 hours of mobile EEG-IMU data [37].

- Benchmarking: Compare the fine-tuned LaBraM model's performance against the standard ASR-ICA pipeline. Evaluation should use metrics like Mean Squared Error (MSE) and Signal-to-Noise Ratio (SNR) on a held-out test set, and critically, the classification accuracy of the underlying BCI task (e.g., ERP classification) after artifact removal [37].
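The preprocessing step of this protocol can be sketched with SciPy as follows. The filter order, the notch quality factor, the zero-phase filtering choice, and the 500 Hz input sampling rate are assumptions for illustration; only the 0.1-75 Hz band, the 60 Hz notch, and the 200 Hz target rate come from the protocol.

```python
from fractions import Fraction

import numpy as np
from scipy.signal import butter, filtfilt, iirnotch, resample_poly, sosfiltfilt

def preprocess_eeg(eeg, fs_in, fs_out=200, band=(0.1, 75.0), notch_hz=60.0):
    """Band-pass, notch, and resample EEG of shape (n_channels, n_samples)."""
    # Zero-phase Butterworth band-pass (order 4 is an assumed value).
    sos = butter(4, band, btype="bandpass", fs=fs_in, output="sos")
    out = sosfiltfilt(sos, eeg, axis=-1)
    # Zero-phase notch at the mains frequency (Q=30 is an assumed value).
    b, a = iirnotch(notch_hz, Q=30.0, fs=fs_in)
    out = filtfilt(b, a, out, axis=-1)
    # Polyphase resampling with built-in anti-aliasing.
    ratio = Fraction(int(fs_out), int(fs_in))
    return resample_poly(out, ratio.numerator, ratio.denominator, axis=-1)

# Hypothetical two-channel test signal: a 10 Hz rhythm plus 60 Hz mains noise.
fs_in = 500
t = np.arange(0, 10, 1 / fs_in)
demo = np.vstack([np.sin(2 * np.pi * 10 * t) + np.sin(2 * np.pi * 60 * t)] * 2)

processed = preprocess_eeg(demo, fs_in)
spectrum = np.abs(np.fft.rfft(processed[0]))
freqs = np.fft.rfftfreq(processed.shape[1], d=1 / 200)
amp_10 = spectrum[np.argmin(np.abs(freqs - 10))]
amp_60 = spectrum[np.argmin(np.abs(freqs - 60))]
print(processed.shape, f"60 Hz / 10 Hz amplitude ratio: {amp_60 / amp_10:.3f}")
```

The IMU stream would need to be resampled to the same 200 Hz grid before synchronization; that step is not shown here.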

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Materials for Experimental Setup

| Item Name | Function / Application | Technical Notes |
|---|---|---|
| Dual-Layer EEG Cap | Records scalp EEG and mechanically-coupled noise references simultaneously. | Ensure noise electrodes are electrically isolated. 3D-printed couplers can be used to join scalp and noise electrodes [36]. |
| Active Electrodes with IMUs | Measures local electrode motion for data-driven artifact removal. | An IMU (accelerometer/gyroscope) is mounted directly on the PCB of each active electrode [38]. |
| 9-Axis Head-Mounted IMU | Provides reference signal for gross head motion (acceleration, rotation, orientation). | Often used as a single reference for the entire EEG system. Data can be integrated to derive velocity [37] [38]. |
| iCanClean Algorithm | A dual-layer processing method using CCA to reject components correlated with noise electrodes [36]. | An alternative to ICA-based approaches that explicitly uses the noise layer. |
| Artifact Removal Transformer (ART) | An end-to-end deep learning model (transformer-based) for denoising multichannel EEG [39]. | Trained on pseudo clean-noisy data pairs; can remove multiple artifact types simultaneously. |
| Mobile BCI Dataset | A public dataset containing synchronized EEG and IMU data from various motion states. | Used for training and benchmarking algorithms. Example: Mobile BCI dataset by Lee et al. with standing, walking, and running data [37]. |

Core Concepts and Challenges

What are the primary challenges in artifact removal for few-channel portable EEG systems that hybrid frameworks aim to solve?

Few-channel portable EEG systems, crucial for real-world applications like stroke rehabilitation and emotion recognition, face significant data quality challenges. Unlike high-density lab systems, they have limited spatial information and suffer from increased data sparsity, making traditional artifact removal methods less effective [18]. Furthermore, artifacts in these systems are diverse and often unknown or mixed (e.g., EMG, EOG, ECG), occurring simultaneously without reference channels, which challenges algorithms designed for single artifact types [40]. The signals are also inherently non-linear and non-stationary, contaminated by both physiological and non-physiological noise, requiring models that can capture complex temporal and morphological features [40] [41].

How do hybrid frameworks fundamentally differ from traditional signal processing for EEG artifact removal?

Traditional methods like Independent Component Analysis (ICA) or regression require manual intervention, struggle without reference signals, and often need a large number of channels [40]. Hybrid frameworks integrate the strengths of different deep learning architectures to create an end-to-end, automated solution. They combine models excelling in spatial feature extraction (like CNNs) with those capturing long-term temporal dependencies (like LSTMs), often enhanced with attention mechanisms to adaptively focus on the most salient features for robust, automated artifact removal even with few channels and unknown noise sources [40] [41].

Troubleshooting Guides & FAQs

FAQ 1: My hybrid model performs well on synthetic data but fails on real-world portable EEG data. What could be wrong?

This is a common issue known as the synthetic-to-real domain gap. The simulated artifacts in your synthetic dataset may not perfectly capture the complexity and variability of artifacts in authentic recordings.

- Troubleshooting Steps:

- Verify Data Synthesis: Critically review the methodology used to create your semi-synthetic data. Ensure the artifact-to-EEG mixing ratios reflect realistic scenarios. If possible, incorporate real artifacts recorded separately.

- Incorporate Real Data: Fine-tune your pre-trained model on a smaller dataset of real, artifact-contaminated EEG from your specific portable system. This adapts the model to the actual signal characteristics and noise profiles of your hardware.

- Use Data Augmentation: Apply robust data augmentation techniques during training to improve model generalization. For EEG, this can include channel reorganization, random noise injection, and signal transformation [34].

- Check Model Complexity: A model that is too complex might overfit to the patterns of your synthetic data. Consider simplifying the architecture or increasing regularization (e.g., dropout layers) to improve generalization to real data.
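When reviewing the data synthesis step, it helps to make the artifact-to-EEG mixing ratio explicit. The sketch below scales an artifact segment so that the contaminated signal has a chosen SNR. It assumes a power-based SNR definition, 10·log10(P_clean / P_artifact); individual dataset papers may define SNR differently, so check the convention before comparing numbers. The function name and the simulated signals are hypothetical.

```python
import numpy as np

def mix_at_snr(clean, artifact, snr_db):
    """Add a scaled artifact to clean EEG so the mixture has the requested SNR.

    SNR is defined here (an assumption) as 10*log10(P_clean / P_scaled_artifact).
    Returns the contaminated signal and the scale factor applied to the artifact.
    """
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    scale = np.sqrt(p_clean / (p_artifact * 10 ** (snr_db / 10)))
    return clean + scale * artifact, scale

# Hypothetical segments: white-noise "EEG" and a slow, drift-like "EOG" artifact.
rng = np.random.default_rng(0)
clean_eeg = rng.standard_normal(1024)
eog = np.cumsum(rng.standard_normal(1024))

target_snr_db = -3.0
contaminated, scale = mix_at_snr(clean_eeg, eog, target_snr_db)
achieved = 10 * np.log10(np.mean(clean_eeg ** 2) / np.mean((scale * eog) ** 2))
print(f"target {target_snr_db} dB, achieved {achieved:.2f} dB")
```

Sweeping `snr_db` over a range that matches what is observed on the target hardware is one way to narrow the synthetic-to-real gap before fine-tuning on real recordings.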

FAQ 2: How can I improve the performance of my hybrid model with very limited labeled training data?

This challenge is central to few-channel EEG research. Leveraging self-supervised and transfer learning is key to overcoming data sparsity.

- Troubleshooting Steps:

- Implement Self-Supervised Learning (SSL): Use methods like contrastive learning or masked prediction tasks on your large volume of unlabeled EEG data. For example, the EmoAdapt framework integrates both contrastive learning and masking to learn robust features from limited channels without needing extensive labels [34].

- Employ Transfer Learning: Utilize a pre-trained model from a related domain. One effective method is to convert few-channel EEG signals into a Channel-Dependent Multilayer Time-Frequency Representation (CDML-EEG-TFR). You can then use a pre-trained CNN (like EfficientNet) as a feature extractor, freezing its weights and training only a new classifier head. This transfers knowledge from large image datasets to your EEG analysis task [18].

- Data Augmentation: As above, aggressively augment your limited labeled data to create more varied training examples and prevent overfitting.
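The time-frequency conversion used in the transfer learning step can be sketched as below. This is an illustrative approximation, not the CDML-EEG-TFR method as published in [18]: it uses a plain short-time Fourier spectrogram, and the window length, overlap, 40 Hz cutoff, and per-channel min-max scaling are all assumed values.

```python
import numpy as np
from scipy.signal import spectrogram

def channels_to_tfr_image(eeg, fs, fmax=40.0, nperseg=128, noverlap=96):
    """Convert EEG (n_channels, n_samples) into a stacked time-frequency image.

    Returns an array of shape (n_channels, n_freqs, n_times) scaled to [0, 1]
    per channel, plus the retained frequency and time axes.
    """
    freqs, times, sxx = spectrogram(
        eeg, fs=fs, nperseg=nperseg, noverlap=noverlap, axis=-1
    )
    keep = freqs <= fmax
    log_power = 10 * np.log10(sxx[:, keep, :] + 1e-12)
    lo = log_power.min(axis=(1, 2), keepdims=True)
    hi = log_power.max(axis=(1, 2), keepdims=True)
    image = (log_power - lo) / (hi - lo + 1e-12)
    return image, freqs[keep], times

# Hypothetical 3-channel trial (e.g., C3, Cz, C4) sampled at 250 Hz for 4 s.
fs = 250
rng = np.random.default_rng(0)
t = np.arange(0, 4, 1 / fs)
trial = 0.1 * rng.standard_normal((3, t.size))
trial[0] += np.sin(2 * np.pi * 10 * t)  # 10 Hz rhythm on the first channel

image, kept_freqs, times = channels_to_tfr_image(trial, fs)
peak_hz = kept_freqs[np.argmax(image[0].mean(axis=1))]
print(image.shape, f"channel 0 peak near {peak_hz:.1f} Hz")
```

With three channels, the stacked array has the same layout as a three-plane image, so it can be resized and passed to an image-pretrained CNN whose backbone weights are frozen while a new classifier head is trained.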

FAQ 3: The artifact removal process is distorting the genuine neural signals I want to analyze. How can I preserve signal fidelity?

The goal is to maximize artifact removal while minimizing distortion of the underlying brain signal. This requires a model that can effectively disentangle the two.

- Troubleshooting Steps:

- Analyze the Loss Function: Ensure your training objective includes a fidelity term. Using Mean Squared Error (MSE) as the loss function directly penalizes large deviations between the cleaned output and the ground-truth clean EEG, helping to preserve the original signal structure [40].

- Inspect Outputs Visually: Plot the cleaned signal alongside the raw and ground-truth (if available) signals in both time and frequency domains. Look for the preservation of key oscillatory patterns like alpha or beta rhythms after cleaning.

- Evaluate with Multiple Metrics: Don't rely on a single metric. Use a suite of evaluation criteria to assess different aspects of performance:

  - Signal-to-Noise Ratio (SNR): Measures noise reduction.

  - Correlation Coefficient (CC): Quantifies waveform shape preservation.

  - Relative Root Mean Square Error (RRMSE): Assesses amplitude accuracy in temporal and frequency domains [40].

- Consider a Hybrid Architecture with Feature Fusion: Models like CLEnet use dual-branch structures to separately process morphological (via CNN) and temporal (via LSTM) features before fusing them. This dedicated processing can lead to cleaner separation of artifact from neural signal [40].
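The evaluation metrics listed above can be computed with a few lines of NumPy and SciPy. The definitions below follow common usage in the EEG denoising literature and are assumptions rather than the exact formulas of [40]; in particular, the spectral RRMSE here compares Welch power spectral densities, and the Welch segment length is an arbitrary choice.

```python
import numpy as np
from scipy.signal import welch

def rms(x):
    """Root mean square of a 1D array."""
    return np.sqrt(np.mean(np.square(x)))

def denoising_metrics(clean, denoised, fs):
    """Return SNR (dB), CC, and temporal/spectral RRMSE for 1D signals."""
    err = denoised - clean
    nperseg = min(256, clean.size)
    _, psd_clean = welch(clean, fs=fs, nperseg=nperseg)
    _, psd_denoised = welch(denoised, fs=fs, nperseg=nperseg)
    return {
        "snr_db": 10 * np.log10(np.sum(clean ** 2) / np.sum(err ** 2)),
        "cc": np.corrcoef(clean, denoised)[0, 1],
        "rrmse_t": rms(err) / rms(clean),
        "rrmse_f": rms(psd_denoised - psd_clean) / rms(psd_clean),
    }

# Hypothetical example: a 10 Hz rhythm and a slightly noisy reconstruction.
fs = 256
rng = np.random.default_rng(0)
t = np.arange(0, 4, 1 / fs)
clean_sig = np.sin(2 * np.pi * 10 * t)
denoised_sig = clean_sig + 0.1 * rng.standard_normal(t.size)

metrics = denoising_metrics(clean_sig, denoised_sig, fs)
print({k: round(float(v), 3) for k, v in metrics.items()})
```

These metrics require a ground-truth clean signal, so they apply to semi-synthetic data; for real recordings, the visual and task-based checks remain necessary.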

Performance Data & Methodology

The following tables summarize the performance of state-of-the-art hybrid frameworks and the datasets used for their validation.

Table 1: Quantitative Performance of Hybrid Models on Key Tasks

| Model Name | Primary Architecture | Task | Key Performance Metrics |
|---|---|---|---|
| CLEnet [40] | Dual-scale CNN + LSTM + EMA-1D | Mixed Artifact (EMG+EOG) Removal | SNR: 11.50 dB, CC: 0.925, RRMSEt: 0.300, RRMSEf: 0.319 |
| CLEnet [40] | Dual-scale CNN + LSTM + EMA-1D | Multi-channel EEG, Unknown Artifacts | SNR & CC: >2.45% improvement; RRMSEt & RRMSEf: >3.30% reduction vs. other models |
| CNN-Bi-LSTM-Attention [41] | CNN + Bi-LSTM + Attention + PSO | Lower-Limb Motor Imagery Classification | Average Accuracy: 72.14% (SD: 3.60%); 4.1% improvement over baseline models |
| CDML-EEG-TFR + EfficientNet [18] | Time-Frequency Imaging + Transfer Learning | Few-Channel Motor Imagery Classification | Accuracy on BCI Comp. IV 2b: 80.21% (3 channels: C3, Cz, C4) |

Table 2: Common Datasets for Training and Benchmarking

| Dataset Name | Type | Key Characteristics | Use Case Example |
|---|---|---|---|
| BCI Competition IV 2b [18] | Real EEG | 3 channels (C3, Cz, C4), Left/Right Hand MI, 250 Hz | Benchmarking few-channel MI classification algorithms |
| EEGdenoiseNet [40] | Semi-synthetic | Provides clean EEG & artifact (EMG, EOG) for mixing | Training & evaluating artifact removal models on controlled data |
| HBN-EEG [42] | Real EEG | Large-scale (3000+ subjects), 128-channel, multiple cognitive tasks | Cross-task transfer learning and foundation model training |

Experimental Protocols

Protocol 1: Implementing a Hybrid CNN-LSTM Model with Attention for Artifact Removal

This protocol is based on the architecture of the CLEnet model [40].

- Data Preparation: Use a semi-synthetic dataset (e.g., from EEGdenoiseNet) where clean EEG is artificially contaminated with EOG and EMG artifacts at known signal-to-noise ratios. Split data into training, validation, and test sets.

- Model Architecture (CLEnet):

  - Branch 1 - Morphological Feature Extraction: Input the contaminated EEG. Process it through multiple 1D convolutional layers with kernels of different scales (e.g., 3 and 5) to extract features at various resolutions.

  - Feature Enhancement: Embed an improved 1D Efficient Multi-Scale Attention (EMA-1D) module after convolutional layers. This module performs cross-dimensional interaction to highlight important features and suppress noise, enhancing the temporal features of the genuine EEG.

  - Branch 2 - Temporal Feature Extraction: Flatten the output from the CNN-EMA blocks and reduce dimensionality with a fully connected layer. Then, feed the features into a Long Short-Term Memory (LSTM) network to capture long-range temporal dependencies in the signal.

  - Reconstruction: The output from the LSTM is passed through a final series of fully connected layers to reconstruct the artifact-free EEG signal.

- Training: Use Mean Squared Error (MSE) between the model's output and the ground-truth clean EEG as the loss function. Train using an adaptive optimizer (e.g., Adam) for a fixed number of epochs, monitoring the loss on the validation set to avoid overfitting.

- Validation: Evaluate the model on the held-out test set using metrics like SNR, CC, and RRMSE.
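The overall shape of such a model can be sketched in PyTorch. This is a minimal illustration of the dual-scale CNN, attention, LSTM, and fully connected pipeline, not the published CLEnet: the layer sizes are arbitrary, the class name `HybridDenoiser` is hypothetical, and a simple squeeze-and-excitation style channel gate stands in for the EMA-1D module.

```python
import torch
import torch.nn as nn

class HybridDenoiser(nn.Module):
    """Dual-scale CNN -> channel gate -> LSTM -> fully connected reconstruction."""

    def __init__(self, n_samples=256, conv_channels=8, steps=16, feat=16, hidden=32):
        super().__init__()
        # Two parallel 1D convolutions with kernel sizes 3 and 5.
        self.conv3 = nn.Conv1d(1, conv_channels, kernel_size=3, padding=1)
        self.conv5 = nn.Conv1d(1, conv_channels, kernel_size=5, padding=2)
        fused = 2 * conv_channels
        # Stand-in for EMA-1D (assumption): a simple learned channel gate.
        self.gate = nn.Sequential(
            nn.AdaptiveAvgPool1d(1), nn.Conv1d(fused, fused, 1), nn.Sigmoid()
        )
        self.steps, self.feat = steps, feat
        # Flatten and reduce dimensionality before the LSTM.
        self.reduce = nn.Linear(fused * n_samples, steps * feat)
        self.lstm = nn.LSTM(feat, hidden, batch_first=True)
        # Final fully connected layers reconstruct the signal.
        self.head = nn.Sequential(
            nn.Linear(steps * hidden, 256), nn.ReLU(), nn.Linear(256, n_samples)
        )

    def forward(self, x):
        # x: (batch, n_samples) single-channel contaminated EEG segments
        h = x.unsqueeze(1)
        h = torch.cat([torch.relu(self.conv3(h)), torch.relu(self.conv5(h))], dim=1)
        h = h * self.gate(h)
        h = self.reduce(h.flatten(1)).view(-1, self.steps, self.feat)
        h, _ = self.lstm(h)
        return self.head(h.flatten(1))

# Toy training loop on hypothetical data: MSE loss with the Adam optimizer.
torch.manual_seed(0)
clean = torch.sin(torch.linspace(0, 20, 256)).repeat(8, 1)
noisy = clean + 0.5 * torch.randn_like(clean)

model = HybridDenoiser()
optimizer = torch.optim.Adam(model.parameters(), lr=1e-3)
loss_fn = nn.MSELoss()
losses = []
for _ in range(20):
    optimizer.zero_grad()
    loss = loss_fn(model(noisy), clean)
    loss.backward()
    optimizer.step()
    losses.append(loss.item())
print(f"first loss {losses[0]:.3f}, last loss {losses[-1]:.3f}")
```

A real training run would iterate over mini-batches from the training split and track the validation loss each epoch, stopping or checkpointing when it stops improving.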

The workflow for this protocol is summarized in the following diagram:

Protocol 2: Leveraging Transfer Learning for Few-Channel EEG Classification

This protocol details the method for using pre-trained models when labeled EEG data is scarce, as described in [18].