Advanced CNN-LSTM Architectures for EEG Artifact Removal: A Comprehensive Guide for Biomedical Research


Abstract

This article provides a comprehensive exploration of deep learning approaches, specifically Convolutional Neural Networks (CNN) and Long Short-Term Memory (LSTM) networks, for removing artifacts from electroencephalography (EEG) signals. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of EEG contamination and the limitations of traditional methods. The review details innovative hybrid and dual-scale CNN-LSTM architectures, discusses strategies for overcoming challenges like unknown artifact removal and multi-channel processing, and presents a rigorous comparative analysis of state-of-the-art models based on metrics such as SNR and correlation coefficient. The synthesis aims to equip professionals with the knowledge to select and implement advanced denoising techniques, thereby enhancing the reliability of EEG data in clinical diagnostics and neuroscience research.

EEG Artifacts and the Deep Learning Revolution: From Classical Methods to CNN-LSTM

The Critical Challenge of Physiological Artifacts in EEG Analysis

Electroencephalography (EEG) is a crucial tool in neuroscience research and clinical diagnostics, providing non-invasive, high-temporal-resolution measurement of brain activity. However, a significant challenge in EEG analysis is the presence of physiological artifacts—signal contaminants originating from non-cerebral sources in the body. These artifacts can profoundly distort EEG recordings, potentially leading to misinterpretation of brain activity and incorrect conclusions in both research and clinical settings. Physiological artifacts differ from environmental artifacts in that they arise from the subject's own biological processes, including ocular movements, muscle activity, cardiac rhythms, and glossokinetic effects [1] [2].

The critical challenge posed by these artifacts is that their frequency content overlaps with that of genuine neural signals. For instance, eye blinks typically manifest as low-frequency components below 4 Hz, overlapping the delta band, while muscle artifacts appear as high-frequency activity above 13 Hz, overlapping the beta and gamma bands [3]. This spectral overlap makes traditional filtering insufficient for artifact removal: a filter that suppresses the artifact's frequency band also suppresses the neural activity in that band. Furthermore, some artifacts can exhibit rhythmic properties that closely resemble seizure activity or other pathological patterns, creating significant diagnostic challenges [1]. With the expanding applications of EEG in drug development, brain-computer interfaces, and real-world monitoring, addressing physiological artifacts has become increasingly urgent for researchers and clinicians alike.
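The overlap argument can be made concrete with a small synthetic experiment. The sketch below is an illustration under stated assumptions, not a model of real EEG: it sums a hypothetical 2 Hz "delta" rhythm, a 10 Hz "alpha" rhythm and a Gaussian-shaped blink, then applies an idealized FFT-domain high-pass filter at 4 Hz (real pipelines use FIR/IIR filters, but the conclusion is the same). The cutoff that targets the blink band also removes essentially all of the genuine delta activity.

```python
import numpy as np

fs = 250                                        # sampling rate in Hz (illustrative)
t = np.arange(8 * fs) / fs                      # 8 s of samples
delta = 20 * np.sin(2 * np.pi * 2 * t)          # genuine 2 Hz (delta) neural rhythm
alpha_rhythm = 10 * np.sin(2 * np.pi * 10 * t)  # genuine 10 Hz (alpha) neural rhythm
blink = 150 * np.exp(-((t - 4.0) ** 2) / (2 * 0.1 ** 2))  # blink-like transient at t = 4 s
eeg = delta + alpha_rhythm + blink


def ideal_highpass(x, fs, cutoff):
    """Zero every FFT bin below `cutoff` Hz (idealized, non-causal filter)."""
    spectrum = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(len(x), 1 / fs)
    spectrum[freqs < cutoff] = 0
    return np.fft.irfft(spectrum, n=len(x))


def band_power(x, fs, lo, hi):
    """Summed squared FFT magnitude of x between lo (inclusive) and hi (exclusive) Hz."""
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(len(x), 1 / fs)
    return power[(freqs >= lo) & (freqs < hi)].sum()


filtered = ideal_highpass(eeg, fs, cutoff=4.0)

# Fraction of the neural delta and alpha power that survives the filter
delta_kept = band_power(filtered, fs, 1.5, 2.5) / band_power(delta, fs, 1.5, 2.5)
alpha_kept = band_power(filtered, fs, 9.5, 10.5) / band_power(alpha_rhythm, fs, 9.5, 10.5)
print(f"delta retained: {delta_kept:.3f}, alpha retained: {alpha_kept:.3f}")
```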

Characterization of Major Physiological Artifacts

Ocular Artifacts

Ocular artifacts represent one of the most common categories of physiological interference in EEG recordings. These artifacts primarily include eye blinks and lateral eye movements, both originating from the electrical potential difference between the cornea (positively charged) and retina (negatively charged) [1].

Eye blinks produce characteristic high-amplitude, low-frequency deflections maximal in the bifrontal regions (electrodes Fp1 and Fp2). The underlying mechanism, known as Bell's Phenomenon, involves an upward rotation of the eyes during blinking, bringing the corneal positive potential closer to the frontal electrodes [1]. A key identifying feature of ocular artifacts is their limited spatial distribution—they should appear predominantly in frontal leads without significant spread to posterior regions. This contrasts with cerebral activity such as frontal spike and waves, which typically demonstrate a broader field extending to occipital areas [1].

Lateral eye movements generate a distinctive pattern of opposing polarities in the F7 and F8 electrodes. When looking to the right, the right cornea moves closer to F8 (creating a positive deflection), while the left retina moves closer to F7 (creating a negative deflection). The reverse pattern occurs when looking to the left. In bipolar montages, this creates characteristic phase reversals that can be identified by experienced EEG readers [1].

Muscle and Movement Artifacts

Muscle artifacts represent another major category of physiological interference, typically originating from temporalis and frontalis muscle activity. These artifacts manifest as high-frequency, low-amplitude activity often described as "myogenic" or "muscle" artifact [1]. Unlike cerebral signals, myogenic activity tends to be much faster than normal brain rhythms and is typically most prominent in awake subjects.

Chewing artifact represents a specific form of muscle interference characterized by sudden-onset, intermittent bursts of generalized very fast activity resulting from temporalis muscle contraction [1]. This artifact is often accompanied by hypoglossal (tongue movement) artifact, which appears as slower, diffuse delta-frequency activity affecting multiple channels simultaneously. The highly organized, reproducible nature of hypoglossal artifact helps distinguish it from pathological cerebral rhythms [1].

Table 1: Characteristics of Major Physiological Artifacts in EEG

| Artifact Type | Primary Sources | Frequency Characteristics | Spatial Distribution | Identifying Features |
|---|---|---|---|---|
| Eye Blinks | Cornea-retinal potential, Bell's Phenomenon | Very low frequency (<4 Hz) | Bifrontal (Fp1, Fp2) | High-amplitude positive deflections, no posterior field |
| Lateral Eye Movements | Cornea-retinal potential during lateral gaze | Low frequency (1-2 Hz) | Frontal-temporal (F7, F8) | Opposing polarities at F7/F8, phase reversals in bipolar montages |
| Muscle Artifact | Frontalis, temporalis muscle contraction | High frequency (>13 Hz, beta/gamma) | Frontal, temporal regions | Fast, spiky morphology, often bilateral but asymmetric |
| Chewing Artifact | Temporalis muscle contraction | Very high frequency (beta/gamma) | Generalized, maximum temporal | Sudden onset bursts, correlates with visible chewing |
| Hypoglossal Artifact | Tongue movement | Delta frequency (1-4 Hz) | Generalized | Slow, rhythmic, reproducible with speech/lingual movement |
| ECG Artifact | Cardiac electrical activity | ~1 Hz (heart rate) | Left hemisphere predominant | Time-locked to QRS complex, periodic occurrence |

Cardiac and Other Artifacts

Cardiac artifacts appear in EEG recordings as waveforms time-locked to the cardiac cycle. The most common form is ECG artifact, characterized by periodic deflections synchronized with the QRS complex [1]. These artifacts typically show left-sided predominance due to the heart's position in the left hemithorax and generally appear as relatively low-amplitude disturbances. A less common variant is cardioballistic artifact, which occurs when an EEG electrode is positioned directly over an artery and detects pulsation-induced movement [1].

Additional physiological artifacts include respiratory artifacts (often manifesting as slow, rhythmic baseline wander), sweat artifacts (characterized by very slow, <0.5 Hz fluctuations due to sodium chloride in sweat carrying electrical charge), and pulse artifacts [1] [2]. Each exhibits distinctive temporal, spatial, and morphological features that enable identification by trained electroencephalographers.

Quantitative Impact Assessment

The impact of physiological artifacts on EEG signal quality can be quantified using Signal-to-Noise Ratio Deterioration (SNRD), which measures the difference in SNR between artifact-free conditions and periods contaminated by artifacts [4]. Research has demonstrated that different artifact types affect specific frequency bands and electrode locations with varying intensity.

Table 2: Quantitative Impact of Physiological Artifacts on EEG Signal Quality

| Artifact Type | SNRD in Scalp EEG | Most Affected Frequency Bands | Regional Maximum Impact | SNRD in Ear-EEG |
|---|---|---|---|---|
| Jaw Clenching | High deterioration | Gamma band (>30 Hz) | Generalized, maximum temporal | Higher than scalp EEG |
| Eye Blinking | Moderate deterioration | Delta, theta bands (1-7 Hz) | Frontal regions | Minimal deterioration |
| Lateral Eye Movements | Moderate deterioration | Delta, theta bands (1-7 Hz) | Frontal, temporal regions | Significant deterioration |
| Head Movements | Variable deterioration | Broadband | Dependent on movement type | Variable deterioration |

Studies comparing artifact vulnerability between conventional scalp EEG and emerging ear-EEG platforms have revealed important differences. For instance, ear-EEG demonstrates significantly higher susceptibility to jaw-related artifacts but relative resilience to eye-blink artifacts compared to scalp systems [4]. This has important implications for the design of wearable EEG systems intended for long-term monitoring in real-world environments.

Deep Learning Approaches for Artifact Removal

CNN-LSTM Architectures

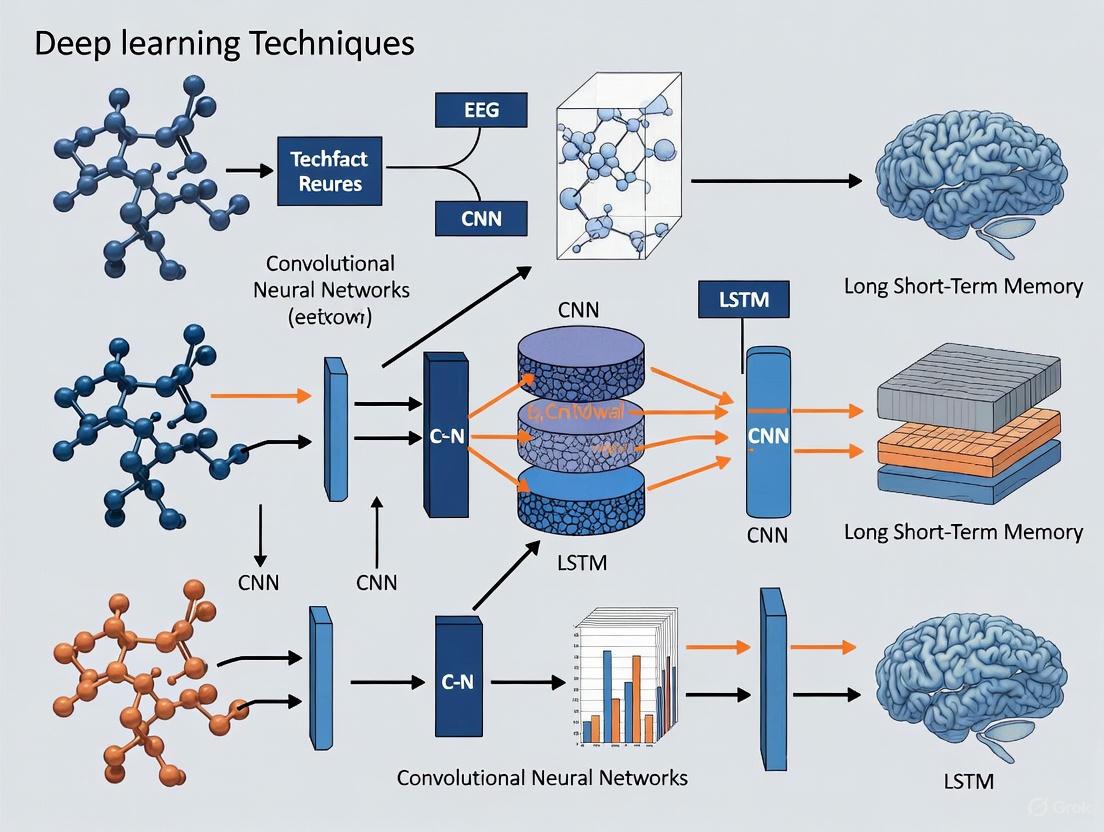

Recent advances in deep learning have produced sophisticated approaches for physiological artifact removal, with hybrid CNN-LSTM architectures demonstrating particularly promising results. These architectures leverage the complementary strengths of Convolutional Neural Networks (CNNs) for spatial feature extraction and Long Short-Term Memory (LSTM) networks for modeling temporal dependencies in EEG signals [3] [5].

The hybrid CNN-LSTM model employs a specific workflow for artifact removal. First, multi-channel EEG data are preprocessed and segmented into appropriate epochs for analysis. The CNN component then extracts spatially relevant features from the electrode array, identifying characteristic patterns associated with different artifact types. These spatial features are subsequently passed to the LSTM component, which models the temporal dynamics and context of the signal, effectively distinguishing between persistent cerebral rhythms and transient artifacts [5]. Studies incorporating simultaneous facial and neck EMG recordings have demonstrated that this approach can effectively remove muscle artifacts while preserving neurologically relevant signals such as Steady-State Visual Evoked Potentials (SSVEPs) [5].
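The data flow described above (spatial convolution across electrodes, then an LSTM over time, then a mapping back to electrode space) can be sketched as a NumPy forward pass. This is a minimal, untrained illustration, not the model of [5]: the layer sizes, the "same"-padded spatio-temporal convolution, the single LSTM layer and the linear read-out are all hypothetical choices, and the random weights stand in for parameters that would in practice be learned (e.g., in PyTorch or TensorFlow) by minimizing a loss such as the mean squared error between the output and clean EEG.

```python
import numpy as np


def conv_block(x, w, b):
    """Spatio-temporal convolution (cross-correlation, as in DL frameworks) + ReLU.

    x: (channels, time) EEG epoch; w: (filters, channels, k); b: (filters,).
    Each filter spans every electrode and k consecutive samples; zero padding
    keeps the output length equal to the input length ("same" padding).
    """
    k = w.shape[2]
    left = k // 2
    xp = np.pad(x, ((0, 0), (left, k - 1 - left)))
    windows = np.lib.stride_tricks.sliding_window_view(xp, k, axis=1)  # (channels, time, k)
    out = np.einsum("ctk,fck->ft", windows, w) + b[:, None]
    return np.maximum(out, 0.0)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_forward(seq, W, U, b):
    """Single-layer LSTM over seq: (time, features); gate order i, f, g, o."""
    hidden = U.shape[1]
    h = np.zeros(hidden)
    c = np.zeros(hidden)
    outputs = []
    for x_t in seq:
        z = W @ x_t + U @ h + b
        i = sigmoid(z[:hidden])                  # input gate
        f = sigmoid(z[hidden:2 * hidden])        # forget gate
        g = np.tanh(z[2 * hidden:3 * hidden])    # candidate cell state
        o = sigmoid(z[3 * hidden:])              # output gate
        c = f * c + i * g
        h = o * np.tanh(c)
        outputs.append(h)
    return np.stack(outputs)                     # (time, hidden)


def init_params(n_channels, n_filters=8, kernel=7, hidden=16, seed=0):
    """Random, untrained weights; all sizes are illustrative assumptions."""
    rng = np.random.default_rng(seed)
    s = 0.1
    return {
        "conv_w": s * rng.standard_normal((n_filters, n_channels, kernel)),
        "conv_b": np.zeros(n_filters),
        "W": s * rng.standard_normal((4 * hidden, n_filters)),
        "U": s * rng.standard_normal((4 * hidden, hidden)),
        "b": np.zeros(4 * hidden),
        "out_w": s * rng.standard_normal((hidden, n_channels)),
    }


def cnn_lstm_denoise(x, p):
    """Map a contaminated epoch (channels, time) to an estimate of the same shape."""
    features = conv_block(x, p["conv_w"], p["conv_b"])         # spatial stage: (filters, time)
    states = lstm_forward(features.T, p["W"], p["U"], p["b"])  # temporal stage: (time, hidden)
    return (states @ p["out_w"]).T                             # back to (channels, time)


epoch = np.random.default_rng(1).standard_normal((4, 256))  # synthetic 4-channel epoch
params = init_params(n_channels=4)
estimate = cnn_lstm_denoise(epoch, params)
print(estimate.shape)
```

Keeping the output in the same (channels, time) shape as the input is what allows such a network to be trained end to end against clean reference epochs.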

Generative Adversarial Networks (GANs)

Generative Adversarial Networks (GANs) represent another powerful deep learning approach for EEG artifact removal. The GAN framework consists of two neural networks: a generator that produces cleaned EEG signals from artifact-contaminated inputs, and a discriminator that distinguishes between the generator's output and genuine clean EEG [3]. Through this adversarial training process, the generator learns to produce increasingly realistic artifact-free signals.
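In its standard form, this adversarial game is the minimax objective below, written here with the generator conditioned on the contaminated input. The notation is introduced for illustration only: $x$ is clean EEG, $y$ is contaminated EEG, $G(y)$ is the generator's cleaned estimate, and $D(\cdot)$ is the discriminator's probability that its input is genuine clean EEG.

$$
\min_{G}\,\max_{D}\; \mathbb{E}_{x}\left[\log D(x)\right] + \mathbb{E}_{y}\left[\log\left(1 - D\left(G(y)\right)\right)\right]
$$

Many denoising GANs also add a weighted reconstruction penalty such as $\lambda\,\mathbb{E}\lVert G(y) - x \rVert_2^2$ to the generator's objective, so that each output is tied to its own clean reference rather than only to the overall distribution of clean EEG. Whether a particular model such as AnEEG uses this term, and with which weight $\lambda$, is an assumption here and should be checked in the original publication.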

Recent implementations such as AnEEG have enhanced standard GAN architectures by incorporating LSTM layers to better capture temporal dependencies in EEG data [3]. These approaches have demonstrated superior performance compared to traditional methods like wavelet decomposition, achieving lower Normalized Mean Square Error (NMSE) and Root Mean Square Error (RMSE) values while maintaining higher Correlation Coefficient (CC) with ground truth signals [3].
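The three metrics cited here can be computed as in the sketch below. NMSE conventions differ between papers (some normalize differently or report it in dB), so the version used here, squared error divided by ground-truth energy, is an assumption; the signals are synthetic.

```python
import numpy as np


def rmse(estimate, clean):
    """Root mean square error between the denoised output and ground truth."""
    return float(np.sqrt(np.mean((estimate - clean) ** 2)))


def nmse(estimate, clean):
    """Squared error normalized by ground-truth energy (one common NMSE convention)."""
    return float(np.sum((estimate - clean) ** 2) / np.sum(clean ** 2))


def cc(estimate, clean):
    """Pearson correlation coefficient between output and ground truth."""
    return float(np.corrcoef(estimate, clean)[0, 1])


t = np.linspace(0, 1, 500, endpoint=False)
clean = np.sin(2 * np.pi * 10 * t)                     # synthetic ground truth
estimate = clean + 0.1 * np.cos(2 * np.pi * 50 * t)    # imperfect reconstruction
print(rmse(estimate, clean), nmse(estimate, clean), cc(estimate, clean))
```

Lower RMSE and NMSE and a CC closer to 1.0 indicate a reconstruction closer to the ground truth.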

Experimental Protocols for Method Evaluation

Protocol for Assessing Artifact Removal in SSVEP Paradigms

Objective: To evaluate the efficacy of deep learning artifact removal methods in preserving neurologically relevant signals while eliminating muscle artifacts.

Subjects: 24 participants with normal or corrected-to-normal vision [5].

Stimuli: Steady-State Visual Evoked Potentials (SSVEPs) elicited by visual stimulation using light-emitting diodes (LEDs) flickering at specific frequencies [5].

Artifact Induction: Participants perform strong jaw clenching during recording periods to induce significant muscle artifacts known to obscure EEG signals [5].

Data Acquisition:

- EEG recorded using standard scalp electrodes according to the 10-20 system

- Simultaneous recording of facial and neck EMG signals to provide reference data on muscle activity

- Sampling rate: ≥250 Hz to adequately capture high-frequency components

- Electrode impedance maintained below 10 kΩ throughout recording

Signal Processing:

- Raw data preprocessing with bandpass filtering (0.5-70 Hz)

- Data segmentation into epochs time-locked to visual stimulation

- Application of CNN-LSTM model using EMG references for targeted artifact removal

- Performance comparison against baseline methods (ICA, linear regression)
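The epoching step in the Signal Processing list above amounts to slicing fixed windows around each stimulus onset. A minimal NumPy sketch, assuming onsets are already available as sample indices; the epoch helper, window limits and onsets are hypothetical. In practice, MNE-Python's mne.Epochs handles this together with baseline correction and event bookkeeping.

```python
import numpy as np


def epoch(data, onsets, fs, tmin, tmax):
    """Cut stimulus-locked epochs from continuous EEG.

    data: (channels, samples); onsets: stimulus onset sample indices;
    tmin/tmax: window in seconds relative to onset (tmin may be negative).
    Epochs whose window would run past either end of the recording are skipped.
    Returns an array of shape (n_epochs, channels, window_samples).
    """
    start_off = int(round(tmin * fs))
    stop_off = int(round(tmax * fs))
    epochs = [
        data[:, onset + start_off:onset + stop_off]
        for onset in onsets
        if onset + start_off >= 0 and onset + stop_off <= data.shape[1]
    ]
    return np.stack(epochs)


fs = 250
continuous = np.random.default_rng(0).standard_normal((8, 60 * fs))  # 8 channels, 60 s
onsets = [5 * fs, 20 * fs, 35 * fs, 59 * fs]     # hypothetical stimulus onsets (samples)
ep = epoch(continuous, onsets, fs, tmin=-0.2, tmax=4.0)
print(ep.shape)  # the last onset is dropped because its window exceeds the recording
```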

Outcome Measures:

- Signal-to-Noise Ratio (SNR) of SSVEP responses before and after artifact removal

- Time-domain analysis of signal morphology preservation

- Frequency-domain analysis of SSVEP peak integrity

- Quantitative metrics including NMSE, RMSE, and CC with ground truth

Protocol for Quantitative Artifact Impact Assessment

Objective: To quantify the signal-to-noise ratio deterioration caused by specific physiological artifacts in both scalp and ear-EEG configurations.

Subjects: 9 participants with no history of neurological disorders [4].

Stimuli: 40 Hz amplitude-modulated white noise presented binaurally to elicit Auditory Steady-State Response (ASSR) [4].

Experimental Conditions:

- Relaxed condition: Minimal movement, eyes open or closed

- Artifact conditions:

  - Jaw clenching (muscle artifact)

  - Eye blinking (ocular artifact)

  - Lateral eye movements (ocular artifact)

  - Head movements (motion artifact)

Data Acquisition:

- Scalp EEG from 32 electrodes according to the 10-20 system

- Ear-EEG from electrodes embedded in custom earpieces

- Reference configuration: Scalp electrodes referenced to Cz; ear electrodes referenced to concha electrodes

- Simultaneous physiological monitoring (ECG, EOG, EMG) for artifact verification

Signal Processing:

- Data preprocessing with FIR bandpass filtering (0.2-120 Hz)

- Notch filtering at 50 Hz and 100 Hz to remove power line interference

- Fourier analysis to calculate SNR at 40 Hz ASSR

- SNRD calculation as the difference between relaxed and artifact conditions
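The SNR at the 40 Hz ASSR frequency and the resulting SNRD can be sketched as follows. The SNR convention (power in the target FFT bin over the mean power of 10 neighboring bins on each side, in dB) is one common choice and an assumption here; [4] may define it differently. The signals are synthetic: a 40 Hz sinusoid in Gaussian noise, with extra broadband noise standing in for jaw clenching.

```python
import numpy as np


def ssr_snr_db(x, fs, f0, n_neighbors=10):
    """SNR (dB) of a steady-state response at f0 Hz.

    Power in the FFT bin nearest f0 divided by the mean power of the
    n_neighbors bins on each side of it (the target bin excluded).
    """
    power = np.abs(np.fft.rfft(x)) ** 2
    freqs = np.fft.rfftfreq(len(x), 1 / fs)
    k = int(np.argmin(np.abs(freqs - f0)))
    neighbors = np.r_[power[k - n_neighbors:k], power[k + 1:k + 1 + n_neighbors]]
    return float(10 * np.log10(power[k] / neighbors.mean()))


def snrd_db(relaxed, contaminated, fs, f0=40.0):
    """SNR deterioration: SNR in the relaxed condition minus SNR with the artifact."""
    return ssr_snr_db(relaxed, fs, f0) - ssr_snr_db(contaminated, fs, f0)


fs = 1000
rng = np.random.default_rng(0)
t = np.arange(10 * fs) / fs                          # 10 s recording
assr = np.sin(2 * np.pi * 40 * t)                    # synthetic 40 Hz ASSR
relaxed = assr + rng.standard_normal(t.size)         # background activity
jaw = relaxed + 3 * rng.standard_normal(t.size)      # added broadband "jaw clenching" noise
print(f"SNR relaxed: {ssr_snr_db(relaxed, fs, 40.0):.1f} dB, "
      f"SNRD: {snrd_db(relaxed, jaw, fs):.1f} dB")
```

A positive SNRD means the artifact condition lowered the ASSR SNR; computing it per electrode and per frequency band gives the topographic and band-specific comparisons listed under Outcome Measures.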

Outcome Measures:

- Frequency-band specific SNRD values for each artifact type

- Topographic distribution of artifact impact

- Comparative analysis of scalp vs. ear-EEG vulnerability

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for EEG Artifact Removal Studies

| Tool/Category | Specific Examples | Function in Research | Application Notes |
|---|---|---|---|
| EEG Recording Systems | g.USBamp amplifiers, active electrodes (g.LADYbird) | High-quality signal acquisition with minimal hardware artifact | Active electrodes reduce susceptibility to environmental interference; 32+ channels recommended for spatial analysis |
| Alternative EEG Platforms | Ear-EEG systems with custom earpieces | Discreet, long-term monitoring in real-world environments | Particularly susceptible to jaw artifacts but resistant to eye blink artifacts |
| Reference Signal Recordings | Facial and neck EMG, EOG, ECG | Provide objective reference for artifact identification and removal | Enables supervised learning approaches; critical for validating removal efficacy |
| Deep Learning Frameworks | TensorFlow, PyTorch with custom CNN-LSTM implementations | Nonlinear artifact separation from neural signals | Hybrid architectures optimal for spatiotemporal feature extraction |
| Data Augmentation Tools | Synthetic artifact generation algorithms | Expand training datasets for improved model generalization | Enables robust model training with limited clinical data |
| Evaluation Metrics | SNR, NMSE, RMSE, Correlation Coefficient | Quantitative assessment of artifact removal performance | SNR particularly valuable for evoked potential studies |
| Computational Modeling | Finite Element Method (FEM) head models | Understand artifact generation and propagation mechanisms | Explains physiological artifacts based on specific impedance changes |

Applications in Drug Development and CNS Research

The integration of advanced artifact removal techniques has significant implications for central nervous system (CNS) drug development. EEG biomarkers provide functional readouts that can predict human outcomes with higher confidence than traditional endpoints [6]. Preclinical EEG biomarkers enable real-time assessment of compound efficacy in disease-relevant models, potentially reducing late-stage attrition in drug development pipelines [6].

In practice, EEG-based pharmacodynamic measures are particularly valuable for drugs that act on the CNS, such as general anesthetics, benzodiazepines, and opioids, which generate reproducible EEG effects that correlate with drug concentration [7]. By applying sophisticated artifact removal techniques, researchers can obtain cleaner signals for pharmacokinetic/pharmacodynamic modeling, enabling more accurate dose optimization and titration [7].

Machine learning-enhanced EEG analysis has demonstrated particular utility in several therapeutic areas:

Epilepsy Drug Development: Quantitative EEG analysis can identify hidden seizure patterns not apparent in clinical seizure diaries. Where a patient might report 10 seizures per day, EEG analysis might reveal 150 subclinical events, providing a more sensitive measure of treatment efficacy [8].

Alzheimer's Disease Research: EEG can identify patients with subclinical epileptiform activity who experience faster cognitive decline. This allows for better patient stratification in clinical trials and targeted use of anti-epileptic treatments [8].

Psychiatric Drug Development: Sleep architecture metrics derived from EEG provide objective biomarkers for conditions like major depressive disorder, where sleep disturbances represent core symptoms. Artifact-free sleep EEG can distinguish between patients with insomnia versus hypersomnia, potentially predicting differential treatment response [8].

Neurodegenerative Disease Monitoring: REM sleep behavior disorder detected via EEG may serve as an early indicator of Parkinson's disease, enabling earlier intervention when treatments may be most effective [8].

Physiological artifacts remain a critical challenge in EEG analysis, with the potential to significantly distort interpretation of brain activity in both research and clinical settings. The overlapping spectral characteristics of artifacts and genuine neural signals render traditional filtering approaches insufficient for high-precision applications. Advanced deep learning approaches, particularly hybrid CNN-LSTM architectures and GAN frameworks, offer promising solutions by leveraging both spatial and temporal features to separate artifacts from brain signals.

The development of standardized experimental protocols for evaluating artifact removal efficacy, particularly those incorporating SSVEP paradigms and quantitative SNRD metrics, provides rigorous methodology for comparing different approaches. As EEG continues to grow in importance for CNS drug development and real-world brain monitoring, the implementation of robust artifact removal techniques will be essential for extracting meaningful insights from neural signals. The integration of these advanced computational approaches with high-quality EEG acquisition represents a promising path forward for both neuroscience research and clinical applications.

Electroencephalography (EEG) is a crucial, non-invasive tool for studying brain activity, with applications spanning from clinical diagnostics to brain-computer interfaces (BCIs) [9]. However, the analysis of EEG signals is profoundly complicated by the presence of artifacts—unwanted signals originating from non-neural sources, such as ocular movements (EOG), muscle activity (EMG), and cardiac rhythms (ECG) [3] [5]. The effective removal of these artifacts is a prerequisite for accurate data interpretation. For decades, traditional techniques like regression, Blind Source Separation (BSS)—including Independent Component Analysis (ICA)—and their hybrids have formed the cornerstone of EEG artifact removal protocols. While these methods have provided valuable service, they possess inherent limitations that restrict their efficacy and applicability in modern research and clinical settings. The advent of deep learning, particularly models combining Convolutional and Recurrent Neural Networks (CNNs and LSTMs), highlights these constraints and points toward a new generation of automated, data-driven solutions [10] [5]. This application note details the fundamental limitations of traditional artifact removal methods, providing a structured comparison and experimental context for researchers, particularly those engaged in drug development and neurophysiological research.

Critical Analysis of Traditional Methodologies

The following sections delineate the core principles and, more importantly, the significant limitations of the most prevalent traditional artifact removal methods.

Regression-Based Methods

Regression techniques operate on the principle of using a reference signal (e.g., from an EOG channel) to model and subtract the artifact from the contaminated EEG signal [5].

- Core Limitation: Dependency on Reference Channels. The performance of regression methods is critically dependent on the availability and quality of a separate, clean reference signal for each type of artifact [10]. In practice, obtaining such references requires additional hardware (electrodes) and increases the complexity and cost of data acquisition. The absence of a reference signal leads to a significant degradation in artifact removal performance [10].

- Additional Constraints: These methods often assume a linear and stationary relationship between the reference artifact and its manifestation in the EEG, an assumption frequently violated in real-world physiological data. This can result in either incomplete artifact removal or the inadvertent subtraction of neural signals of interest, leading to a loss of information [5].
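The regression approach and its main failure mode can be illustrated with a least-squares sketch on synthetic data. It assumes a single EOG reference and one constant propagation factor per channel (the linear, stationary model described above); the regress_out helper and all signals are hypothetical. The last lines reproduce the limitation noted in [5]: when the reference also picks up cerebral activity, part of that activity is subtracted along with the artifact.

```python
import numpy as np


def regress_out(eeg, ref):
    """Least-squares removal of a reference artifact from every EEG channel.

    eeg: (channels, samples); ref: (samples,) artifact reference (e.g., EOG).
    Assumes a single, time-invariant propagation factor per channel.
    Returns the cleaned EEG and the estimated factors.
    """
    ref_c = ref - ref.mean()
    eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
    b = (eeg_c @ ref_c) / (ref_c @ ref_c)      # one regression coefficient per channel
    return eeg - np.outer(b, ref_c), b


rng = np.random.default_rng(0)
n = 5000
brain = rng.standard_normal((2, n))                 # stand-in for cerebral activity
eog = 0.1 * np.cumsum(rng.standard_normal(n))       # slow, drifting ocular reference
true_b = np.array([0.8, 0.3])                       # frontal vs. more distant channel
eeg = brain + np.outer(true_b, eog)

cleaned, b_hat = regress_out(eeg, eog)

# Failure mode: the reference also records cerebral activity from channel 0,
# so regression removes part of that activity together with the artifact.
leaky_ref = eog + 0.5 * brain[0]
cleaned_leaky, _ = regress_out(eeg, leaky_ref)
print(b_hat, np.std(cleaned_leaky[0]) / np.std(brain[0]))
```

With a clean reference the estimated factors land near the true ones and channel 0 keeps its full amplitude; with the leaky reference a substantial part of the genuine channel-0 activity is removed.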

Blind Source Separation (BSS) and Independent Component Analysis (ICA)

BSS methods, such as ICA, are algorithmic techniques designed to separate a set of source signals from a mixture without prior knowledge of the sources or the mixing process [11]. ICA, a prominent BSS method, decomposes the multi-channel EEG signal into statistically independent components (ICs), which can then be manually or automatically classified as neural or artifactual before reconstruction of the cleaned signal [11] [12].

- Core Limitation: Requirement for Manual Intervention and Expert Knowledge. A fundamental drawback of ICA is the need for expert visual inspection to identify and label components corresponding to artifacts. This process is subjective, time-consuming, and not scalable to large datasets [12]. While tools like ICLabel [12] have been developed to automate component classification using CNNs, the underlying ICA process still requires careful pre-processing and significant computational resources, hindering fully automated pipeline implementation.

- Inherent Model Assumptions and Ambiguities: ICA relies on the statistical assumption that the source signals are independent. This assumption may not hold perfectly for complex, mixed neural signals. Furthermore, BSS methods inherently suffer from scaling and permutation ambiguities, meaning the recovered sources' amplitude and order are uncertain [11].

- Inability to Model Non-Linearities: ICA is typically a linear separation technique. However, the propagation of electrical signals through the head and tissues is a complex process that may involve non-linearities. ICA's simple linear mapping function may be insufficient to capture these complex relationships, potentially leading to suboptimal separation [12].
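The ICA decomposition itself can be sketched with the symmetric FastICA fixed-point iteration (tanh nonlinearity) in NumPy. FastICA is one of several ICA algorithms and is chosen here only for brevity; it is not necessarily the variant used in the cited studies (EEGLAB's runica, for instance, implements Infomax ICA). Two synthetic sources stand in for neural and artifact activity, and the mixing matrix is hypothetical.

```python
import numpy as np


def _sym_decorrelate(w):
    """Symmetric orthogonalization: W <- (W W^T)^(-1/2) W."""
    s, u = np.linalg.eigh(w @ w.T)
    return u @ np.diag(1.0 / np.sqrt(s)) @ u.T @ w


def fast_ica(x, n_iter=200, tol=1e-6, seed=0):
    """Symmetric FastICA with a tanh nonlinearity.

    x: (channels, samples) mixed signals.
    Returns (sources, unmixing) with sources = unmixing @ centered x.
    """
    x = x - x.mean(axis=1, keepdims=True)
    d, e = np.linalg.eigh(np.cov(x))                 # whitening from the covariance
    whitening = e @ np.diag(1.0 / np.sqrt(d)) @ e.T
    z = whitening @ x                                # whitened data, identity covariance
    rng = np.random.default_rng(seed)
    w = _sym_decorrelate(rng.standard_normal((z.shape[0], z.shape[0])))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        g_prime = 1.0 - g ** 2
        # Fixed-point update: E[g(Wz) z^T] - diag(E[g'(Wz)]) W
        w_new = _sym_decorrelate((g @ z.T) / z.shape[1] - g_prime.mean(axis=1)[:, None] * w)
        # Converged when each new row is (anti)parallel to its previous version
        done = np.max(np.abs(np.abs(np.sum(w_new * w, axis=1)) - 1)) < tol
        w = w_new
        if done:
            break
    unmixing = w @ whitening
    return unmixing @ x, unmixing


# Two synthetic sources: a smooth "neural" rhythm and a square-wave "artifact"
t = np.linspace(0, 8, 2000)
s = np.vstack([np.sin(2 * t), np.sign(np.sin(3 * t))])
mixing = np.array([[1.0, 0.5], [0.7, 1.0]])     # hypothetical electrode mixing
x = mixing @ s

sources, unmixing = fast_ica(x)
# |corr| between each true source (rows) and each recovered component (columns)
match = np.abs(np.corrcoef(np.vstack([s, sources]))[:2, 2:])
print(match.round(3))
```

Because of the scaling and permutation ambiguities noted above, the recovered rows come back in arbitrary order, sign and amplitude, so they are matched to the true sources by absolute correlation rather than compared directly. Deciding which component is the artifact is exactly the labeling step that otherwise requires expert inspection or a classifier such as ICLabel.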

Hybrid and Other Methods

To overcome the limitations of individual techniques, hybrid methods have been developed. These combine the advantages of different approaches, such as BSS with wavelet transforms (BSS-WT) or empirical mode decomposition (BSS-EMD) [11]. For instance, the SSA-CCA method uses Singular Spectrum Analysis followed by Canonical Correlation Analysis to isolate and remove muscle artifacts based on their low autocorrelation [5].

- Core Limitation: Algorithmic Complexity and Customization: While often more effective, hybrid methods can be computationally intensive and require careful parameter tuning. Their development is often targeted at specific artifact types (e.g., SSA-CCA for EMG), meaning there is no universal hybrid solution for all artifacts. Their performance may not generalize well across different EEG acquisition setups or artifact profiles [5].
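SSA-CCA involves several decomposition steps; the sketch below only illustrates the criterion it relies on, namely that broadband muscle activity has a much lower lag-1 autocorrelation than narrow-band cerebral rhythms. The signals are synthetic stand-ins (a 10 Hz sinusoid plus a little noise for EEG, white noise for EMG), and any threshold for flagging a component would be an assumption.

```python
import numpy as np


def lag1_autocorr(x):
    """Pearson correlation between a signal and itself delayed by one sample."""
    return float(np.corrcoef(x[:-1], x[1:])[0, 1])


fs = 250
rng = np.random.default_rng(0)
t = np.arange(10 * fs) / fs
eeg_like = np.sin(2 * np.pi * 10 * t) + 0.2 * rng.standard_normal(t.size)  # narrow-band rhythm
emg_like = rng.standard_normal(t.size)                                     # broadband muscle-like noise
print(lag1_autocorr(eeg_like), lag1_autocorr(emg_like))
```

Components ranked by this quantity separate cleanly in such idealized data; real EMG and EEG overlap more, which is one reason hybrid methods need careful tuning.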

Table 4: Quantitative Comparison of Traditional vs. Deep Learning Artifact Removal Performance

| Method | Key Limitation or Feature | Reported Performance (Example) | Artifact/Stimulation Type |
|---|---|---|---|
| Regression | Requires separate reference channel [10] | Performance drops significantly without reference | N/A |
| ICA (BSS) | Requires manual component inspection [12] | Effective but not automated; computationally heavy for long recordings [12] | General |
| Complex CNN (DL) | Data-hungry; high computational demand for training [13] | RRMSE: Best for tDCS artifacts [13] | tDCS |
| M4-SSM (DL) | Complex model architecture [13] | RRMSE: Best for tACS & tRNS artifacts [13] | tACS, tRNS |
| DuoCL (CNN-LSTM) | Potential disruption of original temporal features [10] | SNR & CC: Highest; RRMSEt & RRMSEf: Lowest in benchmark [14] | Hybrid/Unknown |
| CLEnet (CNN-LSTM) | Incorporates improved attention mechanism [10] | CC: 0.925; RRMSEt: 0.300 (Best for mixed EMG/EOG) [10] | Mixed (EMG+EOG) |

Experimental Protocols for Benchmarking Artifact Removal

To empirically validate the limitations of traditional methods and compare them against modern deep learning approaches, a standardized benchmarking protocol is essential. The following outlines a core experimental methodology based on current research practices.

Protocol: Semi-Synthetic Dataset Creation and Model Evaluation

Objective: To create a controlled, ground-truth dataset for the rigorous evaluation and comparison of artifact removal algorithms [13] [10].

Materials:

- Clean EEG Data: Source from public repositories like EEGdenoiseNet [10] or the LEMON dataset [12]. These should be verified to be free of major artifacts.

- Artifact Signals: Recordings of pure EOG, EMG, and ECG signals, or artifact recordings available from public repositories.

- Computing Environment: MATLAB or Python with relevant signal processing and machine learning toolboxes (e.g., EEGLAB, MNE-Python, TensorFlow/PyTorch).

Procedure:

- Data Preparation: Select epochs of clean EEG signals. Similarly, prepare epochs of artifact signals (e.g., EMG from jaw clenching [5]).

- Linear Mixing: Generate semi-synthetic contaminated EEG by linearly mixing clean EEG with artifact signals at varying Signal-to-Noise Ratios (SNRs). The clean EEG serves as the ground truth [13] [10]. Formula:

  $$\mathrm{EEG}_{\mathrm{contaminated}} = \mathrm{EEG}_{\mathrm{clean}} + \alpha \cdot \mathrm{Artifact}$$

  where $\alpha$ controls the contamination level.

- Algorithm Application: Apply the target artifact removal methods (e.g., Regression, ICA, and deep learning models such as CNN-LSTM) to EEG_contaminated.

- Performance Quantification: Compare the output of each algorithm (EEG_cleaned) against the known EEG_clean using standardized metrics:

  - Relative Root Mean Square Error (RRMSE): Measures the error in both the temporal (RRMSEt) and spectral (RRMSEf) domains. Lower values indicate better performance [13] [10].

  - Correlation Coefficient (CC): Measures the linear relationship between the cleaned and pure EEG. Values closer to 1.0 are superior [13] [10].

  - Signal-to-Noise Ratio (SNR) & Signal-to-Artifact Ratio (SAR): Higher values indicate better denoising and artifact suppression [3].
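The mixing step and the temporal and spectral RRMSE metrics of this protocol can be sketched as follows. Assumptions: SNR is taken as a power ratio in dB (other benchmarks may use a different convention, so α values are not directly comparable across papers), the PSD is a plain periodogram, and the helper names are hypothetical. CC is the ordinary Pearson correlation, e.g. np.corrcoef(estimate, clean)[0, 1].

```python
import numpy as np


def scale_for_snr(clean, artifact, snr_db):
    """Return alpha so that 10*log10(P_clean / P_(alpha*artifact)) equals snr_db."""
    p_clean = np.mean(clean ** 2)
    p_artifact = np.mean(artifact ** 2)
    return float(np.sqrt(p_clean / (p_artifact * 10 ** (snr_db / 10))))


def rrmse_t(estimate, clean):
    """Temporal RRMSE: RMS of the error divided by RMS of the clean signal."""
    return float(np.sqrt(np.mean((estimate - clean) ** 2)) / np.sqrt(np.mean(clean ** 2)))


def psd(x):
    """Periodogram estimate of the power spectral density (Welch is a common alternative)."""
    return np.abs(np.fft.rfft(x)) ** 2 / len(x)


def rrmse_f(estimate, clean):
    """Spectral RRMSE: the same ratio computed on the PSDs."""
    return rrmse_t(psd(estimate), psd(clean))


fs = 256
rng = np.random.default_rng(0)
t = np.arange(2 * fs) / fs
clean = np.sin(2 * np.pi * 10 * t)                 # stand-in for a clean EEG epoch
artifact = rng.standard_normal(t.size)             # stand-in for an EMG epoch
alpha = scale_for_snr(clean, artifact, snr_db=-3.0)
contaminated = clean + alpha * artifact            # EEG_contaminated = EEG_clean + alpha * Artifact

# Before any denoising, the "estimate" is simply the contaminated signal
print(rrmse_t(contaminated, clean), rrmse_f(contaminated, clean))
```

Sweeping snr_db over a range (for example, from strongly to mildly contaminated) and scoring each method's output with these functions yields the per-SNR performance curves typically reported in such benchmarks.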

Protocol: Validation with Real EEG and SSVEP

Objective: To assess artifact removal performance in a real experimental paradigm where the ground truth is a known neural response [5].

Materials:

- EEG Acquisition System: A multi-channel EEG system.

- EMG Acquisition System: Surface electrodes to record muscle activity from the face/neck.

- Visual Stimulus Unit: An LED screen or goggles capable of presenting a flickering stimulus to elicit Steady-State Visually Evoked Potentials (SSVEPs).

Procedure:

- Data Collection: Record EEG from participants simultaneously with EMG from relevant muscles (e.g., jaw, neck). Data are collected under two conditions:

  - Condition A (Baseline): Participant views a flickering visual stimulus without movement.

  - Condition B (Artifact): Participant views the same stimulus while performing a strong jaw clench to induce muscle artifacts [5].

- Artifact Removal: Apply the algorithms under test (e.g., ICA, regression, CNN-LSTM) to the data from Condition B.

- Performance Analysis: Calculate the SNR of the SSVEP response in the frequency domain for Condition A (clean baseline), Condition B (contaminated), and after processing Condition B with each algorithm. A successful method will restore the SSVEP SNR to a level close to that of Condition A, demonstrating effective artifact removal while preserving the neural signal of interest [5].

Table 5: Essential Materials and Tools for EEG Artifact Removal Research

| Item Name | Function / Application | Specific Examples / Notes |
|---|---|---|
| EEGdenoiseNet | A benchmark dataset of semi-synthetic EEG contaminated with EOG and EMG artifacts; used for training and testing DL models [10]. | Provides clean EEG, pure artifacts, and pre-mixed data for standardized evaluation [10]. |
| ICLabel | A CNN-based classifier that automates the labeling of Independent Components derived from ICA [12]. | Reduces, but does not eliminate, the manual effort required for ICA; a hybrid traditional-DL approach [12]. |
| Emotiv EPOC | A portable, non-invasive EEG acquisition system with 14 channels [9]. | Useful for out-of-lab studies but with lower performance compared to research-grade systems [9]. |
| Convolutional Neural Network (CNN) | Deep learning architecture ideal for extracting spatial and morphological features from multi-channel EEG data [10] [5]. | Used in models like 1D-ResCNN, NovelCNN, and as part of hybrid CNN-LSTM architectures [10]. |
| Long Short-Term Memory (LSTM) | A type of Recurrent Neural Network (RNN) designed to capture temporal dependencies and contextual information in time-series data [3] [12]. | Critical for modeling the dynamic, sequential nature of EEG signals in models like DuoCL and LSTEEG [14] [12]. |
| Blind Source Separation (BSS) | A class of algorithms to separate source signals from a mixture without prior knowledge; includes ICA, PCA, and CCA [11]. | A foundational traditional technique; used as a benchmark against which new DL methods are compared [13] [5]. |

Visualizing the Experimental and Methodological Workflow

The following diagram illustrates the typical workflow for benchmarking artifact removal methods, integrating both semi-synthetic and real-data validation protocols.

Traditional artifact removal methods, including regression and blind source separation (BSS) techniques such as ICA, are hampered by significant limitations such as dependency on reference channels, the need for labor-intensive manual intervention, and restrictive linear assumptions. Quantitative benchmarks indicate that these constraints lead to suboptimal performance compared to emerging deep learning approaches, particularly hybrid CNN-LSTM models, which capture both the spatial and temporal features of EEG while enabling full automation. For researchers in drug development and neuroscience, transitioning to these data-driven, deep learning protocols is critical for enhancing the accuracy, efficiency, and scalability of EEG analysis in both clinical and experimental settings.

Why Deep Learning? The Paradigm Shift in Automated Artifact Removal

Electroencephalography (EEG) is indispensable in clinical diagnostics and neuroscience research, yet the analysis of neural signals is profoundly hindered by contamination from various artifacts, including those of muscular, ocular, and cardiac origin [15]. For decades, the field relied heavily on traditional signal processing techniques for artifact removal. Methods such as Independent Component Analysis (ICA), regression, and adaptive filtering are built upon linear assumptions, and ICA in particular often requires extensive expert intervention to decide which components to reject [12] [15]. A fundamental limitation of these approaches is their difficulty in separating artifacts from neural signals when both occupy overlapping frequency bands, a common scenario in real-world EEG data [3]. Furthermore, techniques like ICA often require careful preprocessing and significant computational resources for large datasets, hindering the development of fully automated, real-time analysis pipelines [12]. This reliance on traditional methods created a bottleneck that limited the scalability and applicability of EEG in both clinical and research settings, particularly as more portable recording devices came into use in naturalistic scenarios [12].

The Deep Learning Revolution: A New Paradigm

The emergence of deep learning (DL) has catalyzed a paradigm shift in EEG artifact removal, moving away from linear, expert-dependent models toward end-to-end, data-driven learning systems. The core advantage of DL models lies in their capacity to learn complex, non-linear mappings directly from raw, contaminated EEG inputs to clean, artifact-free outputs [15]. This capability allows them to model the highly dynamic and non-stationary nature of both neural activity and artifacts without relying on rigid statistical assumptions or reference signals.

This revolution is powered by specialized neural network architectures, with Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) networks playing pivotal, complementary roles. CNNs excel at extracting spatially meaningful, morphological features from multi-channel EEG data, effectively identifying the spatial distribution of artifacts across the scalp [14] [16]. Conversely, LSTM networks are inherently designed to model temporal sequences, capturing long-range dependencies and the temporal evolution of EEG signals, which is crucial for distinguishing brain activity from temporally structured artifacts like muscle contractions [12] [14]. The integration of these architectures into hybrid CNN-LSTM models represents a significant advance, enabling the simultaneous exploitation of both spatial and temporal features for superior artifact suppression [5] [14] [16].

Quantitative Performance: Deep Learning Outperforms Traditional Methods

Extensive benchmarking studies demonstrate that deep learning models consistently outperform traditional techniques across a variety of metrics and artifact types. The following table summarizes the quantitative performance of several state-of-the-art DL models, highlighting their efficacy in artifact removal.

Table 1: Performance Metrics of Deep Learning Models for EEG Artifact Removal

| Model Name | Architecture Type | Key Application / Artifact Target | Reported Performance Metrics | Reference |
|---|---|---|---|---|
| DuoCL | Dual-Scale CNN-LSTM | Hybrid & Unknown Artifacts | Highest SNR & CC; lowest RRMSEt & RRMSEf | [14] |
| CLEnet | Dual-Scale CNN-LSTM with Attention | Multi-channel EEG, Unknown Artifacts | SNR ↑ 2.45%, CC ↑ 2.65%; RRMSEt ↓ 6.94%, RRMSEf ↓ 3.30% | [16] |
| M4 Network | Multi-modular State Space Model (SSM) | tACS & tRNS Artifacts | Best RRMSE & CC performance for tACS/tRNS | [13] |
| Complex CNN | Convolutional Neural Network | tDCS Artifacts | Best RRMSE & CC performance for tDCS | [13] |
| LSTEEG | LSTM-based Autoencoder | Multi-channel Artifact Detection & Correction | Superior artifact detection and correction vs. convolutional autoencoders | [12] |
| AnEEG | LSTM-based GAN | General Artifacts | Lower NMSE & RMSE; higher CC, SNR & SAR vs. wavelet techniques | [3] |

The performance superiority of DL is further evidenced by its adaptability to specific artifact types. For instance, a comprehensive benchmark of transcranial Electrical Stimulation (tES) artifact removal revealed that while a Complex CNN performed best for transcranial Direct Current Stimulation (tDCS) artifacts, a multi-modular State Space Model (SSM) excelled at removing the more complex artifacts from transcranial Alternating Current Stimulation (tACS) and transcranial Random Noise Stimulation (tRNS) [13]. This specificity underscores DL's ability to adapt to the unique characteristics of different noise sources. When applied to a visual perception task in patients with Deep Brain Stimulation (DBS) implants, machine learning classifiers confirmed that DL-based preprocessing could successfully salvage neural data, making the spatiotemporal patterns of DBS-on and DBS-off conditions highly comparable [17].

Experimental Protocols: From Benchmarking to Novel Models

Protocol 1: Benchmarking tES Artifact Removal

This protocol outlines the methodology for a comparative benchmark of machine learning methods for removing artifacts induced by Transcranial Electrical Stimulation (tES), a major challenge in simultaneous EEG monitoring [13].

- Objective: To analyze and compare the performance of 11 different artifact removal techniques across three tES modalities: tDCS, tACS, and tRNS.

- Dataset Generation: A semi-synthetic dataset was created to enable controlled evaluation. Clean EEG data was combined with synthetic tES artifacts, providing a known ground truth for rigorous model assessment [13].

- Models Evaluated: The benchmark included a range of models, with the Complex CNN and a multi-modular network based on State Space Models (M4) being highlighted [13].

- Evaluation Metrics: Models were evaluated using the following metrics, calculated in both the temporal and spectral domains:

- Root Relative Mean Squared Error (RRMSE)

- Correlation Coefficient (CC)

- Key Workflow Steps:

- Acquire clean, ground-truth EEG data.

- Generate synthetic tES artifacts (tDCS, tACS, tRNS).

- Create contaminated EEG by mixing clean EEG and synthetic artifacts.

- Apply each of the 11 artifact removal models.

- Compare the model's output to the known ground truth using RRMSE and CC.

- Outcome: The study provided clear guidelines for model selection, establishing that optimal performance is dependent on the stimulation type, thereby creating a benchmark for future research [13].
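The key workflow steps above can be sketched as a small benchmarking harness. This is an illustration under stated assumptions, not the code used in [13]: the "clean EEG" is a synthetic surrogate, the tDCS, tACS, and tRNS artifacts are deliberately simplified stand-ins (a drifting DC offset, a 10 Hz sinusoid, and broadband Gaussian noise), and `identity` and `mean_removal` are hypothetical placeholders for the 11 models actually compared. RRMSE is computed only in the time domain here.

```python
import numpy as np

FS = 250  # sampling rate in Hz (assumed)
rng = np.random.default_rng(0)


def synthetic_clean_eeg(n_samples):
    """Surrogate 'clean EEG': 10 Hz and 6 Hz rhythms plus small noise (an assumption)."""
    t = np.arange(n_samples) / FS
    return (np.sin(2 * np.pi * 10 * t) + 0.5 * np.sin(2 * np.pi * 6 * t)
            + 0.1 * rng.standard_normal(n_samples))


def synthetic_tes_artifact(kind, n_samples):
    """Deliberately simplified stand-ins for tES artifacts, not physiological models."""
    t = np.arange(n_samples) / FS
    if kind == "tDCS":
        return 5.0 + 0.5 * t  # constant offset with a slow linear drift
    if kind == "tACS":
        return 5.0 * np.sin(2 * np.pi * 10 * t)  # 10 Hz stimulation, overlapping alpha
    if kind == "tRNS":
        return 2.0 * rng.standard_normal(n_samples)  # broadband random noise
    raise ValueError(f"unknown tES modality: {kind}")


def rrmse_t(clean, denoised):
    """Temporal RRMSE: RMS of the error divided by RMS of the ground truth."""
    return float(np.sqrt(np.mean((denoised - clean) ** 2) / np.mean(clean ** 2)))


def cc(clean, denoised):
    """Pearson correlation coefficient between ground truth and output."""
    return float(np.corrcoef(clean, denoised)[0, 1])


# Hypothetical placeholder "methods"; a real benchmark plugs trained models in here.
def identity(x):
    return x


def mean_removal(x):
    return x - x.mean()


def run_benchmark(methods, modalities=("tDCS", "tACS", "tRNS"), n_samples=2 * FS):
    results = {}
    for kind in modalities:
        clean = synthetic_clean_eeg(n_samples)                          # step 1: ground truth
        contaminated = clean + synthetic_tes_artifact(kind, n_samples)  # steps 2-3: mix
        for name, method in methods.items():                            # step 4: apply models
            out = method(contaminated)
            results[(kind, name)] = (rrmse_t(clean, out), cc(clean, out))  # step 5: compare
    return results


if __name__ == "__main__":
    res = run_benchmark({"identity": identity, "mean_removal": mean_removal})
    for (kind, name), (r, c) in sorted(res.items()):
        print(f"{kind:5s} {name:13s} RRMSE_t={r:.3f} CC={c:.3f}")
```

Because each contaminated signal is built from a known `clean` array, every method can be scored against exact ground truth, which is the main reason semi-synthetic designs are used for benchmarking.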

Protocol 2: A Hybrid CNN-LSTM Model with EMG Reference

This protocol details a novel approach that uses a hybrid CNN-LSTM architecture and additional EMG recordings to specifically target muscle artifacts while preserving neurologically valid signals like Steady-State Visually Evoked Potentials (SSVEPs) [5].

- Objective: To remove muscle artifacts from EEG signals using a hybrid CNN-LSTM model trained with simultaneous facial and neck EMG signals as an artifact reference, and to preserve the integrity of SSVEP responses.

- Data Collection: EEG and EMG data were recorded from 24 participants. Subjects were presented with an LED stimulus to elicit SSVEPs while performing a strong jaw clenching action to induce significant muscle artifacts [5].

- Data Augmentation: An innovative strategy was developed to generate augmented EEG and EMG recordings, creating a larger and more diverse training dataset [5].

- Model Architecture: A hybrid CNN-LSTM network was implemented. The CNN layers likely extract spatial features from the multi-channel EEG/EMG inputs, while the LSTM layers model the temporal dynamics of the signal and artifacts.

- Evaluation Method: The algorithm's efficacy was assessed based on its ability to preserve SSVEP responses. A key metric was the change in the Signal-to-Noise Ratio (SNR) of the SSVEP after cleaning, which quantifies how well the method removes noise while retaining the neural signal of interest [5].

- Comparison: The proposed method's performance was compared against commonly used algorithms, including Independent Component Analysis (ICA) and linear regression [5].
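The SSVEP-based evaluation criterion described above can be made concrete with a common narrowband definition: spectral power at the stimulation frequency divided by the mean power of the neighboring frequency bins, expressed in dB. This is a sketch under assumptions; [5] may define SSVEP SNR differently, and the function name, the five neighbors on each side, and the 15 Hz example are illustrative choices.

```python
import numpy as np


def ssvep_snr_db(signal, fs, f_stim, n_neighbors=5):
    """Narrowband SSVEP SNR in dB: power at f_stim vs. mean power of adjacent FFT bins."""
    spectrum = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    k = int(np.argmin(np.abs(freqs - f_stim)))  # FFT bin closest to the stimulus frequency
    # Neighboring bins on both sides, excluding the target bin and the DC bin
    lo = max(k - n_neighbors, 1)
    hi = min(k + n_neighbors, spectrum.size - 1)
    neighbors = np.r_[spectrum[lo:k], spectrum[k + 1:hi + 1]]
    return float(10 * np.log10(spectrum[k] / neighbors.mean()))


if __name__ == "__main__":
    fs, f_stim = 250.0, 15.0
    t = np.arange(int(4 * fs)) / fs  # 4 s window gives 0.25 Hz resolution
    rng = np.random.default_rng(1)
    ssvep = np.sin(2 * np.pi * f_stim * t)
    print("contaminated:", ssvep_snr_db(ssvep + 3.0 * rng.standard_normal(t.size), fs, f_stim))
    print("near-clean:  ", ssvep_snr_db(ssvep + 0.1 * rng.standard_normal(t.size), fs, f_stim))
```

Comparing this value before and after cleaning gives the "change in SSVEP SNR": a method that removes muscle noise without distorting the response should raise it.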

The logical workflow for this hybrid approach is illustrated below.

The Scientist's Toolkit: Key Research Reagents & Materials

Implementing deep learning models for EEG artifact removal requires a combination of computational resources, software frameworks, and datasets. The following table details essential components for building an experimental pipeline.

Table 2: Essential Research Toolkit for DL-Based EEG Artifact Removal

| Tool / Resource | Category | Specific Examples / Functions | Application in Research |
|---|---|---|---|
| Deep Learning Frameworks | Software | TensorFlow, PyTorch | Provides the foundation for building, training, and validating CNN, LSTM, and autoencoder models. |
| Public EEG Datasets | Data | LEMON Dataset [12], EEGdenoiseNet [12] | Serves as a source of clean EEG for training or as a standardized benchmark for model evaluation. |
| Synthetic Data Generation | Methodology | Mixing clean EEG with synthetic artifacts (e.g., tES [13], EMG) | Enables controlled creation of large, labeled datasets with known ground truth for supervised learning. |
| Reference Signal Recordings | Experimental Data | Simultaneous EMG [5], EOG, or ECG | Provides a dedicated noise reference to enhance model training for specific artifact types (e.g., muscle, ocular). |
| Quantitative Evaluation Metrics | Analytical Tools | RRMSE, CC, SNR, SAR [13] [3] [16] | Offers objective, standardized measures to compare the performance of different artifact removal algorithms. |
| Computational Hardware | Hardware | GPUs (Graphics Processing Units) | Accelerates the training of complex, computationally intensive deep learning models. |

Architectural Deep Dive: Dual-Scale Feature Learning

The most advanced DL models for EEG artifact removal employ sophisticated architectures that process information at multiple scales. The DuoCL model, for instance, uses a dual-scale approach to comprehensively capture both fine-grained and broad morphological features of artifacts [14]. As shown in the diagram below, this architecture processes raw EEG through two parallel convolutional branches with different kernel sizes. One branch uses larger kernels to capture broader, coarse-grained features, while the other uses smaller kernels to identify fine-grained, local details. The outputs from these dual pathways are then reinforced with temporal dependencies captured by an LSTM network before a final reconstruction layer produces the cleaned EEG signal [14]. This multi-scale, spatio-temporal approach allows the model to adaptively remove a wide range of artifacts, including previously "unknown" types, without requiring prior knowledge of their specific characteristics [14] [16].

The paradigm shift from traditional, assumption-laden methods to deep learning-based approaches has fundamentally transformed the field of automated EEG artifact removal. The empirical evidence is clear: models leveraging CNNs, LSTMs, and their hybrids consistently achieve superior performance by learning the complex, non-linear relationships that characterize artifact contamination directly from data. This capability translates into more accurate waveform reconstruction, higher signal fidelity, and robust performance across diverse artifact types, including challenging scenarios like tES and motion artifacts.

Future research is poised to build upon this foundation by integrating self-supervised learning to reduce dependency on large, labeled datasets, and hybrid architectures that combine the strengths of different DL models [15]. Furthermore, the exploration of attention mechanisms and transformers promises to enhance the model's ability to focus on the most salient, artifact-ridden segments of the signal [16] [15]. As these technologies mature, the focus will increasingly shift towards developing efficient, interpretable models suitable for real-time clinical diagnostics and robust brain-computer interfaces, solidifying deep learning as the cornerstone of next-generation EEG analysis.

Electroencephalography (EEG) is a cornerstone tool in neuroscience research and clinical diagnostics, prized for its non-invasive nature and high temporal resolution [5] [10]. However, the recorded EEG signals are persistently contaminated by various artifacts—from physiological sources like eye movements (EOG), muscle activity (EMG), and cardiac rhythms (ECG) to environmental interference [10] [3] [15]. These artifacts significantly obscure genuine brain activity, complicating analysis and potentially leading to misdiagnosis in clinical settings or errors in brain-computer interface (BCI) applications [3] [15].

Traditional artifact removal methods, including regression, independent component analysis (ICA), and wavelet transforms, often rely on linear assumptions, manual parameter tuning, or require reference signals, limiting their effectiveness and adaptability [5] [10] [15]. Deep learning has emerged as a powerful alternative, capable of learning complex, non-linear mappings directly from noisy data. Within this domain, hybrid architectures that combine Convolutional Neural Networks (CNNs) for superior spatial feature extraction with Long Short-Term Memory (LSTM) networks for sequential temporal modeling have demonstrated remarkable efficacy [5] [10]. This document details the application notes and experimental protocols for utilizing these synergistic strengths in EEG artifact removal, providing a practical framework for researchers and scientists.

State-of-the-Art Performance Quantification

The performance of CNN-LSTM hybrid models has been rigorously evaluated against other methodologies across multiple datasets and artifact types. The following table summarizes key quantitative results, demonstrating the superior performance of hybrid architectures.

Table 1: Performance Comparison of EEG Artifact Removal Methods Across Different Studies

| Model/Approach | Artifact Type / Task | Dataset | Key Metrics | Reported Performance |
|---|---|---|---|---|
| CLEnet (CNN-LSTM with EMA-1D) [10] | Mixed (EMG + EOG) | EEGdenoiseNet | SNR (dB), CC, RRMSEt, RRMSEf | 11.498 dB, 0.925, 0.300, 0.319 |
| CLEnet [10] | ECG | EEGdenoiseNet + MIT-BIH | SNR, CC, RRMSEt, RRMSEf | +5.13%, +0.75%, -8.08%, -5.76% (all vs. DuoCL) |
| Hybrid CNN-LSTM with EMG [5] | Muscle (from jaw clenching) | Custom SSVEP (24 subjects) | SSVEP preservation | Retained SSVEP responses better than ICA and regression |
| CNN-Bi-LSTM with Feature Fusion [19] | Seizure detection | CHB-MIT | Accuracy, Sensitivity, Specificity | 98.43%, 97.84%, 99.21% |
| 1D-CNN-LSTM [20] | Lower-limb motor imagery | Custom dataset | Classification accuracy | 63.75% (binary) |
| Denoising Autoencoder (DAR) [21] | fMRI-induced (gradient & BCG) | CWL EEG-fMRI | RMSE, SSIM, SNR gain | 0.0218 ± 0.0152, 0.8885 ± 0.0913, 14.63 dB |
| Artifact Removal Transformer (ART) [22] | Multiple | Multiple BCI datasets | Signal reconstruction | Surpassed other DL models in multi-channel denoising |

These results, although drawn from different datasets, tasks, and metrics and therefore not directly comparable, point to a clear trend: hybrid CNN-LSTM models achieve high performance across diverse tasks, from direct artifact removal to subsequent classification of cleaned signals. The integration of CNNs and LSTMs provides a balanced architecture that effectively handles both the spatial morphology and temporal dynamics of EEG signals.

Detailed Experimental Protocols

This section provides a step-by-step protocol for replicating a state-of-the-art CNN-LSTM approach for EEG artifact removal, based on validated methodologies from recent literature [5] [10].

Protocol A: Implementing a Hybrid CNN-LSTM Model for Muscle Artifact Removal

Objective: To remove muscle artifacts (EMG) from EEG signals while preserving neurologically relevant components, such as Steady-State Visual Evoked Potentials (SSVEPs), using a hybrid CNN-LSTM model with additional EMG reference signals.

Materials & Dataset:

- EEG Recording System: A high-density amplifier (e.g., 64-channel) with appropriate sampling rate (≥250 Hz).

- EMG Recording System: Surface electrodes for simultaneous recording of facial and neck muscle activity.

- Stimulus Presentation Setup: A device for presenting visual stimuli (e.g., LED for SSVEP elicitation).

- Dataset: Data from 24 participants performing SSVEP tasks with and without strong jaw clenching to induce artifacts [5]. For augmentation, clean EEG and artifact signals can be artificially mixed to expand the training set.

Procedure:

- Data Acquisition & Preprocessing:

- Record raw EEG and simultaneous EMG from reference muscles (e.g., masseter, temporalis).

- Apply band-pass filtering (e.g., 0.5-70 Hz for EEG) and a notch filter (50/60 Hz) to remove line noise.

- Segment data into epochs time-locked to the visual stimulus.

- Normalize the data (e.g., z-score) for stable training.
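The segmentation and normalization steps above can be sketched in NumPy as follows. The sketch assumes the continuous recording is a `(channels, samples)` array and that stimulus onsets are already available as sample indices (for example, decoded from a trigger channel); the function names, the 0 to 1 s window, and per-epoch, per-channel z-scoring are illustrative choices rather than the settings used in [5].

```python
import numpy as np


def epoch(data, onsets, fs, tmin=0.0, tmax=1.0):
    """Cut (channels, samples) data into (n_epochs, channels, window) around stimulus onsets."""
    start_off = int(round(tmin * fs))
    stop_off = int(round(tmax * fs))
    epochs = []
    for onset in onsets:
        start, stop = onset + start_off, onset + stop_off
        if start >= 0 and stop <= data.shape[1]:  # drop epochs that run off the recording
            epochs.append(data[:, start:stop])
    return np.stack(epochs)  # raises if no epoch fits inside the recording


def zscore_epochs(epochs, eps=1e-8):
    """Normalize each channel of each epoch to zero mean and unit variance."""
    mean = epochs.mean(axis=-1, keepdims=True)
    std = epochs.std(axis=-1, keepdims=True)
    return (epochs - mean) / (std + eps)


if __name__ == "__main__":
    fs = 250.0
    raw = np.random.default_rng(0).standard_normal((8, 2500))  # 8 channels, 10 s
    onsets = [250, 1000, 2400]  # the last onset is too close to the end and is dropped
    x = zscore_epochs(epoch(raw, onsets, fs))
    print(x.shape)  # (2, 8, 250)
```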

Model Architecture Design (Hybrid CNN-LSTM):

- Input: Raw or preprocessed segments of multi-channel EEG and corresponding EMG reference data.

- Feature Extraction Branch (CNN):

- Employ 1D convolutional layers with multiple kernel sizes (e.g., 3, 5, 7) to extract local morphological features at different scales [10].

- Use activation functions (ReLU) and pooling layers to introduce non-linearity and reduce dimensionality.

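- Temporal Modeling Branch (LSTM):

- Feed the CNN feature maps, as a time-ordered sequence, into one or more LSTM layers that model the temporal dynamics of the signal and the artifacts.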

- Fusion and Output:

- The output from the LSTM sequence is passed through a series of fully connected (Dense) layers.

- The final layer reconstructs the artifact-free EEG signal with the same dimensions as the input.
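To make the data flow above concrete, here is a forward-pass-only NumPy sketch with random, untrained weights. It is not the model from [5]; it only shows how tensor shapes move through the pipeline. Assumptions: EEG and EMG each pass through their own multi-scale 1D convolution branch (kernel sizes 3, 5, 7, zero "same" padding, ReLU, and no pooling so the output length matches the input), the branch outputs are concatenated along the feature axis, a single LSTM layer runs over time, and a per-time-step dense layer maps back to the EEG channels. In practice the model would be built and trained in TensorFlow or PyTorch; all function names here are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)  # random, untrained weights: for shape illustration only


def conv1d_same(x, w, b):
    """Cross-correlation as in DL frameworks. x: (C_in, T), w: (C_out, C_in, K), odd K -> (C_out, T)."""
    k = w.shape[2]
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad)))  # zero padding keeps the output length equal to T
    windows = np.stack([xp[:, t:t + k] for t in range(x.shape[1])])  # (T, C_in, K)
    return np.einsum("tck,ock->ot", windows, w) + b[:, None]


def multiscale_branch(x, n_filters=8, kernel_sizes=(3, 5, 7)):
    """Parallel convolutions with different kernel sizes + ReLU, concatenated on the feature axis."""
    feats = []
    for k in kernel_sizes:
        w = 0.1 * rng.standard_normal((n_filters, x.shape[0], k))
        feats.append(np.maximum(conv1d_same(x, w, np.zeros(n_filters)), 0.0))
    return np.concatenate(feats, axis=0)  # (n_filters * len(kernel_sizes), T)


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm(seq, hidden):
    """Single-layer LSTM over seq of shape (T, D) -> hidden states of shape (T, hidden)."""
    W = 0.1 * rng.standard_normal((4 * hidden, seq.shape[1]))  # input weights
    U = 0.1 * rng.standard_normal((4 * hidden, hidden))        # recurrent weights
    b = np.zeros(4 * hidden)
    h, c = np.zeros(hidden), np.zeros(hidden)
    out = np.empty((seq.shape[0], hidden))
    for t, x_t in enumerate(seq):
        i, f, g, o = np.split(W @ x_t + U @ h + b, 4)  # input, forget, candidate, output gates
        c = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h = sigmoid(o) * np.tanh(c)
        out[t] = h
    return out


def cnn_lstm_denoise(eeg, emg, hidden=64):
    """eeg: (C_eeg, T), emg: (C_emg, T) -> reconstructed EEG with the same shape as eeg."""
    # Separate CNN branches for EEG and EMG, fused by concatenation before the LSTM
    feats = np.concatenate([multiscale_branch(eeg), multiscale_branch(emg)], axis=0)
    h = lstm(feats.T, hidden)  # the LSTM consumes one feature vector per time step
    W_out = 0.1 * rng.standard_normal((eeg.shape[0], hidden))
    return (h @ W_out.T).T  # per-time-step dense layer back to EEG channels


if __name__ == "__main__":
    eeg = rng.standard_normal((8, 250))  # 8 EEG channels, 1 s at 250 Hz
    emg = rng.standard_normal((2, 250))  # 2 EMG reference channels
    print(cnn_lstm_denoise(eeg, emg).shape)  # (8, 250)
```

Keeping the time axis intact through the CNN (no pooling) is what lets the final dense layer emit one reconstructed sample per input sample; if pooling is added, an upsampling or transposed-convolution stage is needed to restore the original length.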

Model Training:

- Loss Function: Use Mean Squared Error (MSE) to minimize the difference between the model's output and the target clean EEG [10] [15].

- Optimizer: Use Adam or RMSProp for adaptive learning rate adjustment.

- Validation: Use a held-out validation set to monitor for overfitting and to select the best model.

Performance Evaluation:

- Quantitative Metrics: Calculate Signal-to-Noise Ratio (SNR), Correlation Coefficient (CC), and Relative Root Mean Squared Error in time and frequency domains (RRMSEt, RRMSEf) [10].

- SSVEP Preservation: Analyze the frequency spectrum of the cleaned signal to confirm the preservation of the SSVEP peak [5].

Protocol B: CLEnet for Multi-Channel EEG with Unknown Artifacts

Objective: To remove a wide range of known and unknown artifacts from multi-channel EEG data using an advanced dual-branch CNN-LSTM architecture (CLEnet) incorporating an attention mechanism [10].

Materials & Dataset:

- Multi-channel EEG System: 32-channel or 64-channel EEG cap.

- Dataset: A combination of public semi-synthetic datasets (e.g., EEGdenoiseNet for EMG/EOG [10]) and real, task-based EEG data (e.g., from an n-back task) containing unknown physiological artifacts.

Procedure:

- Data Preparation:

- For semi-synthetic data, mix clean EEG segments with recorded EOG and EMG artifacts at varying Signal-to-Noise Ratios (SNRs).

- For real data, use expert labeling or automated tools (e.g., ICA with ICLabel [20]) to identify clean and contaminated segments.
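Mixing clean EEG with recorded artifacts at a chosen SNR can be sketched as below. The sketch assumes the RMS-based definition SNR = 10·log10(RMS(x)/RMS(λ·n)), where x is the clean segment, n the artifact, and λ the scaling factor. This is the convention commonly used with EEGdenoiseNet-style semi-synthetic data as we understand it, but some works use 20·log10 for amplitude ratios, so check the convention of any benchmark you compare against. The function names and the -7 to 2 dB default range are illustrative.

```python
import numpy as np


def rms(x):
    return float(np.sqrt(np.mean(np.square(x))))


def mix_at_snr(clean, artifact, snr_db):
    """Return clean + lam * artifact with 10*log10(RMS(clean) / RMS(lam * artifact)) == snr_db."""
    lam = rms(clean) / (rms(artifact) * 10 ** (snr_db / 10))
    return clean + lam * artifact


def make_training_pairs(clean_segments, artifact_segments, snr_range=(-7.0, 2.0), seed=0):
    """Pair each clean segment with a randomly chosen artifact at a random SNR (uniform in dB)."""
    rng = np.random.default_rng(seed)
    noisy, targets = [], []
    for x in clean_segments:
        n = artifact_segments[rng.integers(len(artifact_segments))]
        noisy.append(mix_at_snr(x, n, rng.uniform(*snr_range)))
        targets.append(x)  # the clean segment is the regression target
    return np.stack(noisy), np.stack(targets)


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    clean = rng.standard_normal((100, 512))  # stand-in for 100 clean EEG segments
    emg = rng.standard_normal((40, 512))     # stand-in for 40 recorded EMG segments
    X, Y = make_training_pairs(clean, emg)
    print(X.shape, Y.shape)  # (100, 512) (100, 512)
```

Drawing the SNR per segment exposes the network to both mild and severe contamination, which is the purpose of mixing "at varying SNRs".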

CLEnet Architecture:

- Dual-Scale CNN: Implement two parallel CNN streams with different kernel sizes to capture both fine and coarse morphological features from the input EEG.

- Improved EMA-1D Attention: Embed a 1D Efficient Multi-Scale Attention module after the CNNs. This module enhances critical temporal features and suppresses irrelevant ones by performing cross-dimensional interaction [10].

- LSTM for Temporal Dependencies: The attention-weighted features are then fed into LSTM layers to model the long-term temporal dependencies of the genuine EEG signal.

- Reconstruction: Finally, fully connected layers decode the processed features to reconstruct the clean, multi-channel EEG output.

Training and Ablation:

- Train the model end-to-end using MSE loss.

- Conduct ablation studies by removing the EMA-1D module to quantify its contribution to overall performance.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for CNN-LSTM EEG Research

| Category | Item / Tool | Function / Purpose | Example / Note |
|---|---|---|---|
| Data Acquisition | High-Density EEG System | Records scalp electrical activity. | 64-channel systems recommended for comprehensive spatial coverage [20]. |
| | EMG/EOG Amplifier & Electrodes | Records reference signals for artifacts. | Crucial for methods using auxiliary signals [5]. |
| Software & Libraries | Python | Core programming language. | Version 3.8+. |
| | TensorFlow / PyTorch | Deep learning framework for model building. | |
| | MNE-Python | EEG-specific data handling, preprocessing, and analysis. | Includes ICA implementation [20]. |
| | NumPy, SciPy | Numerical computing and signal processing. | |
| Datasets | EEGdenoiseNet [10] | Semi-synthetic benchmark with clean EEG and EOG/EMG. | For training and evaluating denoising models. |
| | CHB-MIT Scalp EEG Database [19] | Long-term recordings from pediatric patients with epilepsy. | For seizure detection tasks. |
| | Custom SSVEP Dataset [5] | EEG with induced muscle artifacts and known evoked potentials. | For evaluating artifact removal & signal preservation. |
| Computational Resources | GPU (NVIDIA) | Accelerates deep learning model training. | RTX 3090, A100, or similar. |
| | High RAM & CPU | For data preprocessing and handling large datasets. | 32 GB+ RAM recommended. |

The integration of CNNs for spatial feature extraction and LSTMs for temporal modeling represents a powerful, synergistic architecture for tackling the complex challenge of EEG artifact removal. The protocols and application notes detailed herein provide a robust foundation for researchers to implement, validate, and advance these methods. The demonstrated success of these hybrids in preserving neurologically critical information like SSVEPs, while effectively suppressing a wide array of artifacts, makes them particularly suitable for high-stakes applications in clinical diagnostics, drug development, and next-generation Brain-Computer Interfaces. Future work will likely focus on enhancing model interpretability, achieving greater computational efficiency for real-time use, and improving generalization across diverse patient populations and recording conditions.

Implementing Hybrid CNN-LSTM Models: Architectures for Effective EEG Reconstruction

Hybrid CNN-LSTM with Auxiliary EMG Input for Muscle Artifact Removal

Electroencephalography (EEG) is a crucial tool for studying human brain activity in research, clinical diagnostics, and brain-computer interface (BCI) technology due to its non-invasive nature and high temporal resolution [5]. However, accurate EEG analysis is significantly hindered by artifacts—interfering signals that do not originate from neuronal activity. Among these, muscle artifacts (electromyographic or EMG interference) present a particularly challenging problem as they generate high-amplitude interference that can overshadow genuine brain signals [5].

Muscle artifacts are especially problematic in paradigms requiring participant movement or in studies of steady-state visually evoked potentials (SSVEPs) [5]. Traditional artifact removal methods often rely solely on EEG data and face limitations due to the spectral overlap between muscle activity and neural signals of interest. This application note details a novel deep learning approach that integrates a hybrid convolutional neural network-long short-term memory (CNN-LSTM) architecture with auxiliary EMG recordings to achieve precise muscle artifact removal while preserving neurologically relevant signal components.

Technical Background and Literature Review

Traditional Muscle Artifact Removal Methods

Most conventional approaches to muscle artifact removal rely on solving a linear blind source separation (BSS) problem. Common techniques include:

- Independent Component Analysis (ICA): Separates sources by maximizing statistical independence, effective for stereotyped artifacts like ocular movements [23] [24].

- Canonical Correlation Analysis (CCA): Decomposes the signal into sources ranked by autocorrelation; particularly effective for muscle artifacts, which typically have lower autocorrelation than brain signals [23] [24].

- Regression-Based Methods: Utilize reference channels to estimate and subtract artifact components from contaminated EEG [5].

- Hybrid Methods: Combine multiple approaches (e.g., EEMD-CCA) to enhance artifact separation [24].

While these methods have demonstrated utility, they often require manual intervention, assume specific signal characteristics, or struggle to preserve neurologically relevant information when removing artifacts [23] [24].
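The regression-based approach listed above can be illustrated with ordinary least squares: each EEG channel is regressed on the reference channels, and the fitted reference-driven part is subtracted. This minimal sketch assumes a single time-invariant linear propagation from the references to the scalp and references that contain no brain activity; when a reference does pick up cerebral signal, regression also removes some of it, which is one of the limitations noted above. The function name and the synthetic data are illustrative.

```python
import numpy as np


def regress_out(eeg, ref):
    """eeg: (C_eeg, T), ref: (C_ref, T). Subtract the least-squares fit of eeg on the references."""
    # Design matrix (T, C_ref + 1): reference channels plus an intercept column
    X = np.column_stack([ref.T, np.ones(ref.shape[1])])
    # Propagation coefficients B of shape (C_ref + 1, C_eeg) solving X @ B ~= eeg.T
    B, *_ = np.linalg.lstsq(X, eeg.T, rcond=None)
    artifact = X[:, :-1] @ B[:-1]  # reference-driven part only; the intercept is not removed
    return eeg - artifact.T


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    t = np.arange(2000) / 250.0
    brain = np.vstack([np.sin(2 * np.pi * 10 * t), np.cos(2 * np.pi * 6 * t)])
    ref = rng.standard_normal((1, t.size))         # stand-in EMG/EOG reference channel
    eeg = brain + np.array([[2.0], [-1.5]]) @ ref  # linear, time-invariant leakage
    cleaned = regress_out(eeg, ref)
    print("error before:", np.sqrt(np.mean((eeg - brain) ** 2)))
    print("error after: ", np.sqrt(np.mean((cleaned - brain) ** 2)))
```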

Deep Learning Approaches in EEG Processing

Recent advances in deep learning have transformed EEG artifact removal:

- Generative Adversarial Networks (GANs): Can generate artifact-free EEG signals through adversarial training [3].

- Convolutional Neural Networks (CNNs): Effectively extract spatial and morphological features from EEG data [10].

- Long Short-Term Memory (LSTM) Networks: Capture temporal dependencies in EEG signals, crucial for maintaining brain dynamics [3] [10].

- Hybrid Architectures: Combine strengths of multiple network types for improved artifact removal [5] [10].

The Hybrid CNN-LSTM Framework with EMG Assistance

The proposed framework leverages a dual-pathway architecture that processes both EEG and simultaneously recorded EMG signals [5]. The CNN components extract spatial features from both signal types, while the LSTM layers model their temporal dynamics. The integrated network learns the complex nonlinear relationships between muscle activity and its manifestation in EEG signals, enabling precise artifact suppression.

Key Advantages

This approach offers several advantages over traditional methods:

- Utilization of Complementary Information: By incorporating EMG recordings as direct indicators of muscle activity, the model gains explicit information about artifact sources [5] [24].

- Preservation of Neural Information: The method specifically aims to retain clinically relevant components such as SSVEP responses [5].

- Adaptability: The data-driven approach can learn varied artifact patterns across different subjects and recording conditions [5] [10].

- End-to-End Processing: Eliminates the need for manual component selection or reference channel configuration [5].

Quantitative Performance Analysis

Table 1: Performance comparison of artifact removal methods across different contamination types

| Method | Artifact Type | SNR | CC | RRMSEt | RRMSEf |
|---|---|---|---|---|---|
| CNN-LSTM with EMG [5] | Muscle (SSVEP) | Significant improvement reported | - | - | - |
| CLEnet [10] | Mixed (EMG+EOG) | 11.498 dB | 0.925 | 0.300 | 0.319 |
| CLEnet [10] | ECG | +5.13% vs. DuoCL | +0.75% vs. DuoCL | -8.08% vs. DuoCL | -5.76% vs. DuoCL |
| CLEnet [10] | Multi-channel (unknown artifacts) | +2.45% | +2.65% | -6.94% | -3.30% |
| AnEEG [3] | Various | Improvement reported | Improvement reported | - | - |
| ICA variants [23] | Muscle | Moderate improvement | - | - | - |

Table 2: Comparison of methodology characteristics across different artifact removal approaches

| Method | Architecture | External Signals | Automation Level | Key Strength |
|---|---|---|---|---|
| CNN-LSTM with EMG [5] | Hybrid CNN-LSTM | EMG | Full | Preservation of SSVEP responses |
| CLEnet [10] | Dual-scale CNN + LSTM + EMA-1D | None | Full | Handles unknown artifacts in multi-channel EEG |
| AnEEG [3] | LSTM-based GAN | None | Full | Temporal dependency capture |
| EEMD-CCA with EMG array [24] | Signal decomposition + adaptive filtering | EMG array | Partial | Performance improves with more EMG channels (2-16) |
| ICA methods [23] | Blind source separation | None | Partial | Established method for various artifacts |

Experimental Protocols

Data Acquisition and Experimental Setup

Subject Preparation

- Recruit 24 participants under an approved ethics protocol [5].

- Prepare scalp sites with light abrasion and cleaning to maintain electrode impedance below 5 kΩ.

- Apply EEG electrodes according to the 10-20 international system, focusing on occipital regions for SSVEP recording.

- Place EMG electrodes on facial and neck muscles (masseter, temporalis, sternocleidomastoid) using bipolar configurations [5].

Stimulus Presentation and Task Protocol

- Present visual stimuli using LED arrays flashing at specific frequencies (e.g., 15 Hz) to elicit SSVEPs [5].

- Instruct participants to perform strong jaw clenching during specific blocks to induce muscle artifacts.

- Include resting state blocks without artifact induction for baseline measurements.

- Implement randomized block designs counterbalancing artifact and non-artifact conditions.

Data Collection Parameters

- Sample EEG signals at ≥200 Hz with appropriate anti-aliasing filters [5].

- Record EMG signals synchronously with EEG data to ensure temporal alignment.

- Maintain consistent amplifier gains and filtering settings across all channels.

- Include trigger channels to mark stimulus onset and condition changes.

Data Preprocessing Pipeline

Signal Conditioning

- Apply bandpass filter (0.5-45 Hz) to EEG signals using fifth-order Butterworth zero-phase filters [23].

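- Filter EMG signals with an appropriate bandpass (e.g., 10-200 Hz) to capture muscle activity; a 200 Hz upper edge requires a sampling rate above 400 Hz, so lower it if EMG is recorded at the EEG sampling rate.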

- Remove powerline interference with notch filters at 50/60 Hz and harmonics.
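The signal-conditioning steps above map onto SciPy's IIR design and zero-phase filtering functions (`scipy.signal.butter`, `sosfiltfilt`, `iirnotch`, `filtfilt`). This is a sketch with assumed parameters: a 1000 Hz sampling rate (the 10-200 Hz EMG band needs more than 400 Hz), 50 Hz mains, and a notch quality factor of 30; adjust them to your recording. Forward-backward filtering gives zero phase distortion but doubles the effective filter order.

```python
import numpy as np
from scipy import signal


def bandpass(x, fs, low, high, order=5):
    """Zero-phase Butterworth band-pass along the last (time) axis."""
    sos = signal.butter(order, [low, high], btype="bandpass", output="sos", fs=fs)
    return signal.sosfiltfilt(sos, x, axis=-1)


def notch(x, fs, mains=50.0, q=30.0):
    """Zero-phase notch filters at the mains frequency and its harmonics below Nyquist."""
    for f0 in np.arange(mains, fs / 2, mains):
        b, a = signal.iirnotch(f0, q, fs=fs)
        x = signal.filtfilt(b, a, x, axis=-1)
    return x


def condition(eeg, emg, fs, mains=50.0):
    """eeg: (C_eeg, T), emg: (C_emg, T), both sampled synchronously at fs Hz."""
    if fs / 2 <= 200.0:
        raise ValueError("a 10-200 Hz EMG band needs fs > 400 Hz; lower the upper edge")
    eeg = notch(bandpass(eeg, fs, 0.5, 45.0), fs, mains)    # 45 Hz edge already attenuates mains
    emg = notch(bandpass(emg, fs, 10.0, 200.0), fs, mains)  # mains lies inside the EMG band
    return eeg, emg


if __name__ == "__main__":
    fs = 1000.0
    t = np.arange(int(10 * fs)) / fs
    eeg = np.atleast_2d(np.sin(2 * np.pi * 10 * t) + np.sin(2 * np.pi * 50 * t) + 2.0)
    emg = np.atleast_2d(np.sin(2 * np.pi * 80 * t) + np.sin(2 * np.pi * 50 * t))
    clean_eeg, clean_emg = condition(eeg, emg, fs)
    print(clean_eeg.shape, clean_emg.shape)
```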

Data Segmentation

- Segment data into epochs time-locked to visual stimulus onset.

- Include baseline periods for normalization when appropriate.

- Mark artifact-contaminated segments based on experimental conditions.

Implementation of Hybrid CNN-LSTM Model

Network Architecture Specification

- Design CNN component with multiple convolutional layers (kernel sizes: 3, 5, 7) to extract spatial features.

- Implement LSTM layers with 50-100 units to capture temporal dependencies [3] [10].

- Include attention mechanisms (e.g., EMA-1D) to enhance relevant features [10].

- Create separate input branches for EEG and EMG data with late fusion.

Training Configuration

- Use Adam optimizer with learning rate of 0.001 and batch size of 64.

- Implement mean squared error (MSE) between output and clean reference as loss function.

- Apply early stopping based on validation loss with patience of 20 epochs.

- Utilize data augmentation through synthetic artifact addition to increase training diversity [5].
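Early stopping with a patience of 20 epochs can be expressed as a small, framework-independent helper, sketched below. The class name and the `min_delta` parameter are hypothetical; in Keras the built-in `tf.keras.callbacks.EarlyStopping(monitor="val_loss", patience=20)` callback serves the same purpose, while in a PyTorch training loop you would typically call a helper like this once per epoch.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` consecutive epochs."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta  # minimum decrease that counts as an improvement
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.bad_epochs = 0

    def step(self, epoch, val_loss):
        """Record this epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch  # in a real loop, checkpoint the model weights here
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


if __name__ == "__main__":
    # Simulated validation curve: improves for 30 epochs, then plateaus above the best value
    val_losses = [1.0 / (e + 1) for e in range(30)] + [0.05] * 50
    stopper = EarlyStopping(patience=20)
    for epoch, loss in enumerate(val_losses):
        if stopper.step(epoch, loss):
            print(f"stopped at epoch {epoch}, best epoch {stopper.best_epoch}")
            break
```

After stopping, the checkpoint saved at `best_epoch` (not the final weights) is the one to evaluate on the held-out set.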

Validation Methodology

- Employ k-fold cross-validation to assess model generalizability.

- Calculate performance metrics (SNR, CC, RRMSE) on held-out test sets.

- Compare with traditional methods (ICA, regression) using the same data splits [5].
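For k-fold cross-validation on multi-subject EEG, one common choice, and an assumption in this sketch, is to split by participant so that no participant's data appears in both the training and test folds; splitting by epoch would let subject-specific artifact patterns leak into the test set. The helper below is hypothetical; scikit-learn's `GroupKFold` (with the participant IDs passed as `groups`) provides equivalent functionality.

```python
import numpy as np


def subject_kfold(subject_ids, k=5, seed=0):
    """Yield (train_idx, test_idx) arrays so that each fold holds out whole participants."""
    subject_ids = np.asarray(subject_ids)
    subjects = np.unique(subject_ids)
    np.random.default_rng(seed).shuffle(subjects)  # random assignment of participants to folds
    for fold_subjects in np.array_split(subjects, k):
        test_mask = np.isin(subject_ids, fold_subjects)
        yield np.flatnonzero(~test_mask), np.flatnonzero(test_mask)


if __name__ == "__main__":
    ids = np.repeat(np.arange(24), 10)  # 24 participants x 10 epochs each
    for fold, (train_idx, test_idx) in enumerate(subject_kfold(ids, k=4)):
        assert not set(ids[train_idx]) & set(ids[test_idx])  # no participant in both sets
        print(fold, len(train_idx), len(test_idx))  # 180 train / 60 test epochs per fold
```

Reusing the same generator (same `seed`) for the deep learning model and for the ICA and regression baselines keeps the data splits identical across methods.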

Performance Evaluation Metrics

Quantitative Assessment

- Calculate Signal-to-Noise Ratio (SNR) improvements in dB scale [5] [3].

- Compute Correlation Coefficient (CC) between cleaned and artifact-free signals [3] [10].

- Determine Relative Root Mean Square Error in temporal (RRMSEt) and frequency (RRMSEf) domains [10].
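The quantitative metrics listed above can be computed as below. Definitions vary slightly between papers, so this sketch assumes: RRMSEt = RMS(ŷ - x)/RMS(x); RRMSEf = RMS(P(ŷ) - P(x))/RMS(P(x)), with P a power spectrum; SNR = 10·log10(Σx²/Σ(x - ŷ)²) in dB; and CC as the Pearson correlation, where x is the artifact-free reference and ŷ the cleaned output. The power spectrum here is a plain periodogram from `np.fft.rfft`; a Welch estimate is a common alternative.

```python
import numpy as np


def _rms(v):
    return float(np.sqrt(np.mean(np.square(v))))


def _psd(v):
    return np.abs(np.fft.rfft(v)) ** 2  # unscaled periodogram; the scale cancels in the ratio


def rrmse_t(clean, denoised):
    return _rms(denoised - clean) / _rms(clean)


def rrmse_f(clean, denoised):
    return _rms(_psd(denoised) - _psd(clean)) / _rms(_psd(clean))


def cc(clean, denoised):
    return float(np.corrcoef(clean, denoised)[0, 1])


def snr_db(clean, estimate):
    """SNR of an estimate relative to the clean reference, in dB."""
    return float(10 * np.log10(np.sum(clean ** 2) / np.sum((clean - estimate) ** 2)))


def snr_improvement_db(clean, contaminated, denoised):
    """SNR gain achieved by the cleaning step, in dB."""
    return snr_db(clean, denoised) - snr_db(clean, contaminated)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    t = np.arange(1000) / 250.0
    clean = np.sin(2 * np.pi * 10 * t)
    noise = rng.standard_normal(t.size)
    contaminated, denoised = clean + noise, clean + 0.1 * noise  # residual noise 10x smaller
    print(round(snr_improvement_db(clean, contaminated, denoised), 6))  # 20.0
```

Shrinking the residual noise amplitude by a factor of 10 reduces its power by a factor of 100, which is why the example reports an SNR improvement of 20 dB.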

Qualitative Analysis

- Visual inspection of time-domain signals before and after processing.

- Compare power spectral densities to assess artifact suppression and neural preservation.

- Generate time-frequency representations to evaluate oscillatory dynamics.

Experimental Workflow

Diagram 1: Experimental workflow for hybrid CNN-LSTM muscle artifact removal

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential research reagents and materials for implementing hybrid CNN-LSTM artifact removal

| Category | Item | Specification/Function |
|---|---|---|
| Recording Equipment | EEG Acquisition System | High-input-impedance amplifiers (>100 MΩ), 24-bit resolution, sampling rate ≥200 Hz [5] |
| | EMG Electrodes | Disposable Ag/AgCl electrodes with conductive gel for facial muscle placement [5] [24] |
| | Visual Stimulation Device | Programmable LED arrays capable of precise frequency control for SSVEP elicitation [5] |
| Computational Resources | Deep Learning Framework | TensorFlow/PyTorch with GPU acceleration for model training and inference [5] [10] |
| | Signal Processing Toolbox | EEGLAB, BBCI Toolbox, or custom Python implementations for preprocessing [23] |
| | High-Performance Computing | GPU with ≥8 GB VRAM for efficient training of hybrid CNN-LSTM models [10] |
| Validation Tools | Benchmark Datasets | EEGdenoiseNet, BCI Competition IV dataset 2b, or custom datasets with clean and contaminated signals [3] [10] |
| | Performance Metrics | Custom scripts for SNR, CC, RRMSE calculations in temporal and frequency domains [5] [10] |
| Experimental Materials | Electrode Application Supplies | Abrasive gels, conductive pastes, electrode caps for secure placement [5] |
| | Data Collection Software | LabVIEW, PsychoPy, or custom MATLAB/Python scripts for experimental control [5] |

The integration of hybrid CNN-LSTM architectures with auxiliary EMG signals represents a significant advancement in muscle artifact removal from EEG data. This approach demonstrates superior performance compared to traditional methods by explicitly modeling the relationship between muscle activity and its manifestation in EEG signals. The framework's ability to preserve neurologically relevant components such as SSVEP responses while effectively suppressing artifacts makes it particularly valuable for both research and clinical applications.

Future development directions include adapting the architecture for real-time processing in BCI applications, extending the approach to handle other artifact types (EOG, ECG), and improving generalizability across diverse subject populations and recording conditions. As deep learning methodologies continue to evolve and EEG datasets expand, data-driven approaches with multimodal inputs are poised to become the standard for robust artifact removal in electrophysiological signal processing.

Dual-Scale CNN-LSTM (DuoCL) for Morphological and Temporal Feature Learning

Electroencephalogram (EEG) artifact removal represents a critical preprocessing challenge in neuroscientific research and clinical applications. The Dual-Scale CNN-LSTM (DuoCL) model addresses fundamental limitations in conventional artifact removal methods by integrating complementary deep learning architectures to simultaneously capture morphological features and temporal dependencies inherent in EEG signals [14]. This integrated approach enables superior artifact removal performance across diverse contamination scenarios, including electromyographic (EMG), electrooculographic (EOG), and hybrid artifacts that have traditionally challenged single-method solutions [10].

Traditional EEG denoising techniques, including regression methods, blind source separation (BSS), and wavelet transformations, often require manual intervention or reference channels, or make restrictive assumptions about signal characteristics [10] [25]. Deep learning marks a significant advance, but many early neural network architectures could not adequately capture the temporal dependencies embedded in EEG, or adapt to scenarios without a priori knowledge of the artifacts [14]. The DuoCL architecture addresses these limitations through a dual-branch design that extracts features at multiple scales while preserving temporal relationships across the signal [14] [10].

Architectural Framework and Mechanism of Action

Core Architectural Components

The DuoCL model operates through three sequential phases that transform contaminated EEG input into reconstructed, artifact-reduced output:

- Phase 1: Morphological Feature Extraction – A dual-branch convolutional neural network (CNN) utilizes convolution kernels of two different scales to learn morphological features from individual samples. This multi-scale approach enables the model to capture both local and global waveform characteristics essential for distinguishing neural activity from artifacts [14].

- Phase 2: Feature Reinforcement – The dual-scale features are reinforced with inter-sample temporal dependencies captured by Long Short-Term Memory (LSTM) networks. This component models the sequential nature of EEG signals, preserving contextual information that is crucial for accurate artifact removal [14].

- Phase 3: EEG Reconstruction – The resulting reinforced feature vectors are aggregated to reconstruct artifact-free EEG via a terminal fully connected layer, which maps the processed features back to the clean signal domain [14].
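The three phases can be sketched in PyTorch as follows. This is not the published DuoCL implementation. The layer counts, channel widths, the 64-unit LSTM and the 512-sample segment length are all assumptions. Only the kernel sizes 3 and 15 follow the example configuration given later in this section. The final flatten-and-project layer is one literal reading of aggregating the reinforced features through a terminal fully connected layer.

```python
import torch
import torch.nn as nn


class DuoCLSketch(nn.Module):
    """Hypothetical dual-scale CNN-LSTM denoiser for single-channel EEG segments."""

    def __init__(self, n_samples=512, channels=32, lstm_units=64, kernels=(3, 15)):
        super().__init__()
        # Phase 1: two CNN branches that differ only in kernel scale
        self.branches = nn.ModuleList([
            nn.Sequential(
                nn.Conv1d(1, channels, k, padding=k // 2), nn.ReLU(),
                nn.Conv1d(channels, channels, k, padding=k // 2), nn.ReLU(),
            )
            for k in kernels
        ])
        # Phase 2: LSTM over time on the concatenated dual-scale features
        self.lstm = nn.LSTM(channels * len(kernels), lstm_units, batch_first=True)
        # Phase 3: fully connected layer mapping the whole feature sequence to n_samples
        self.fc = nn.Linear(n_samples * lstm_units, n_samples)

    def forward(self, x):  # x: (batch, 1, n_samples)
        feats = torch.cat([branch(x) for branch in self.branches], dim=1)  # (batch, 2*channels, T)
        seq, _ = self.lstm(feats.transpose(1, 2))                          # (batch, T, lstm_units)
        return self.fc(seq.flatten(1)).unsqueeze(1)                        # (batch, 1, n_samples)
```

With the defaults, the final layer alone holds 512 × 64 × 512 weights (about 16.8 million), so longer segments quickly make it the dominant cost. Pooling along time before the LSTM is one way to shrink it.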

Comparative Architecture Analysis

Table 1: Comparative Analysis of Deep Learning Architectures for EEG Artifact Removal

| Architecture | Core Components | Temporal Processing | Multi-scale Features | Primary Artifact Targets |
|---|---|---|---|---|
| DuoCL [14] [10] | Dual-scale CNN + LSTM | LSTM networks | Dual-branch CNN | EMG, EOG, hybrid, unknown artifacts |
| CLEnet [10] | Dual-scale CNN + LSTM + EMA-1D | LSTM networks | Dual-branch CNN with attention | Multi-channel EEG, unknown artifacts |
| MSCGRU [25] | Multi-scale CNN + BiGRU + GAN | Bidirectional GRU | Multi-scale CNN module | EMG, EOG, ECG |
| 1D-ResCNN [26] | Residual CNN | None | Inception-ResNet blocks | EMG, ECG, EOG |
| NovelCNN [14] [10] | Feedforward CNN | None | Single-scale | EMG artifacts |
| State Space Models (M4) [13] [27] | State Space Models | Sequential modeling | Multi-modular | tACS, tRNS artifacts |

Quantitative Performance Evaluation

Performance Metrics and Benchmarking

The efficacy of DuoCL has been rigorously evaluated against state-of-the-art alternatives using standardized metrics in both temporal and spectral domains [14] [10]. Key performance indicators include:

- Signal-to-Noise Ratio (SNR): Measures the ratio of clean EEG power to residual artifact power

- Correlation Coefficient (CC): Quantifies waveform similarity between reconstructed and ground-truth EEG

- Relative Root Mean Square Error (RRMSE): Evaluates reconstruction accuracy in both temporal (RRMSEt) and frequency (RRMSEf) domains [14] [10]
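For reference, the usual forms of these metrics are given below. Here x is the ground-truth clean EEG segment and x̂ is the reconstruction. Conventions vary between studies, for example whether SNR is computed from powers or from RMS amplitudes, and which PSD estimator feeds RRMSEf. These should therefore be read as typical definitions, not necessarily the exact ones used in [14] or [10].

```latex
\begin{aligned}
\mathrm{SNR} &= 10\log_{10}\frac{\lVert \mathbf{x} \rVert_2^2}{\lVert \hat{\mathbf{x}} - \mathbf{x} \rVert_2^2}\ \text{dB},\\
\mathrm{CC} &= \frac{\operatorname{Cov}(\hat{\mathbf{x}}, \mathbf{x})}{\sigma_{\hat{\mathbf{x}}}\,\sigma_{\mathbf{x}}},\\
\mathrm{RRMSE}_t &= \frac{\mathrm{RMS}(\hat{\mathbf{x}} - \mathbf{x})}{\mathrm{RMS}(\mathbf{x})},\\
\mathrm{RRMSE}_f &= \frac{\mathrm{RMS}\big(\mathrm{PSD}(\hat{\mathbf{x}}) - \mathrm{PSD}(\mathbf{x})\big)}{\mathrm{RMS}\big(\mathrm{PSD}(\mathbf{x})\big)}
\end{aligned}
```

Higher SNR and CC and lower RRMSE values indicate a reconstruction closer to the clean reference.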

Comparative Performance Data

Table 2: Performance Comparison of DuoCL Against Benchmark Models for Mixed Artifact Removal

| Model | SNR (dB) | Correlation Coefficient | RRMSEt | RRMSEf |
|---|---|---|---|---|
| DuoCL [14] | 11.498* | 0.925* | 0.300* | 0.319* |
| CLEnet [10] | ~11.50 | ~0.925 | ~0.300 | ~0.319 |
| 1D-ResCNN [10] | Lower than DuoCL | Lower than DuoCL | Higher than DuoCL | Higher than DuoCL |
| NovelCNN [14] [10] | Lower than DuoCL | Lower than DuoCL | Higher than DuoCL | Higher than DuoCL |
| MSCGRU [25] | 12.857±0.294 (EMG only) | 0.943±0.004 (EMG only) | 0.277±0.009 (EMG only) | - |

Note: Values marked with * are representative of DuoCL performance for mixed (EMG+EOG) artifact removal as reported in comparative studies [10].

Experimental Protocols and Implementation

Dataset Preparation and Preprocessing

Successful implementation of DuoCL requires appropriate dataset construction with paired contaminated and clean EEG signals:

- Semi-Synthetic Dataset Generation: Combine artifact-free EEG recordings with separately recorded artifact signals (EMG, EOG) at controlled signal-to-noise ratios [10] [28]. The benchmark EEGdenoiseNet dataset provides standardized data for this purpose [10].

- Real-World Dataset Validation: Augment semi-synthetic validation with experimentally collected data containing unknown artifact profiles. For example, 32-channel EEG recordings during n-back tasks capture naturalistic artifacts without synthetic manipulation [10].

- Signal Preprocessing: Apply bandpass filtering (e.g., 0.5-50 Hz), normalization, and segmentation into fixed-length epochs compatible with network input dimensions [10] [28].
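Semi-synthetic pairs are typically built by scaling a recorded artifact segment by a factor λ so that the mixture reaches a chosen SNR. The sketch below assumes the power-ratio definition SNR = 10·log10(P_clean / P_artifact). Some benchmarks define SNR on RMS amplitudes instead, so check the convention before comparing numbers. The sketch also normalizes each pair by the standard deviation of the noisy segment, one common choice that keeps input and target on the same scale. `mix_at_snr` and `normalize_pair` are hypothetical names.

```python
import numpy as np


def mix_at_snr(clean, artifact, snr_db):
    """Return (clean + lam * artifact, lam) with 10*log10(P_clean / P_scaled) == snr_db."""
    p_clean = np.mean(clean ** 2)
    p_art = np.mean(artifact ** 2)
    lam = np.sqrt(p_clean / (p_art * 10 ** (snr_db / 10)))
    return clean + lam * artifact, lam


def normalize_pair(noisy, clean):
    """Divide input and target by the noisy segment's std (assumed normalization)."""
    scale = noisy.std()
    return noisy / scale, clean / scale
```

Drawing `snr_db` at random for each pair is a simple way to cover a range of contamination levels during training.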

Model Training Protocol

- Network Configuration: Implement dual CNN branches with distinct kernel sizes (e.g., 3×1 and 15×1) to capture short- and long-range morphological features [14]. The LSTM component should be configured with sufficient hidden units to capture relevant temporal dependencies.

- Loss Function: Utilize Mean Squared Error (MSE) between reconstructed and ground-truth clean EEG as the primary optimization objective [10].

- Training Parameters: Employ Adam optimizer with learning rate scheduling, mini-batch processing, and early stopping based on validation loss to prevent overfitting [10].

- Validation Framework: Implement k-fold cross-validation with distinct test sets containing completely unseen data to ensure generalizability [10].

Performance Assessment Methodology

- Quantitative Metrics: Compute SNR, CC, RRMSEt, and RRMSEf between reconstructed signals and ground-truth clean EEG across all test samples [14] [10].

- Qualitative Assessment: Visual inspection of reconstructed waveforms to ensure physiological plausibility and absence of artifact-induced distortions [14].

- Downstream Task Validation: Evaluate the impact of denoising on subsequent EEG analysis tasks (e.g., classification accuracy in brain-computer interface applications) [26].

Visualization of DuoCL Architecture

Diagram 1: DuoCL three-phase architecture for EEG artifact removal

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Resources for DuoCL Implementation

| Resource | Type | Function/Purpose | Example Sources |
|---|---|---|---|
| EEGdenoiseNet [10] | Benchmark Dataset | Provides standardized semi-synthetic EEG with EMG/EOG artifacts for training and evaluation | Public repository |
| Synthetic EEG Dataset [28] | Training Dataset | Contains 80,000 examples of clean and artifact-contaminated EEG signals for CNN-LSTM training | IEEE DataPort |
| MIT-BIH Arrhythmia Database [10] | Component Data | Source of ECG artifacts for creating specialized contamination datasets | PhysioNet |
| Custom 32-channel EEG Dataset [10] | Validation Data | Real-world EEG with unknown artifacts for testing generalizability | Research institution collections |
| Dual-scale CNN-LSTM Framework [14] | Algorithm Core | Provides morphological feature extraction at different scales | Research implementations |
| LSTM Module [14] | Temporal Processor | Captures long-range dependencies in EEG time series | Deep learning libraries |
| EMA-1D Attention Mechanism [10] | Enhancement Module | Improves feature selection in advanced variants (CLEnet) | Research implementations |

Applications and Limitations

Application Scenarios

DuoCL demonstrates particular efficacy in several challenging EEG processing scenarios:

- Unknown Artifact Removal: The model maintains robust performance even when artifact characteristics are not fully known in advance, a significant advantage over specialized architectures [14].

- Hybrid Artifact Scenarios: Simultaneous contamination by multiple artifact types (e.g., EMG + EOG) is effectively addressed through the comprehensive feature learning approach [10].

- Multi-channel EEG Processing: Advanced variants like CLEnet extend the core DuoCL concept to complex multi-channel recordings while preserving inter-channel relationships [10].

Limitations and Considerations

Despite its advantages, practitioners should consider certain limitations:

- Computational Complexity: The dual-branch architecture with sequential temporal modeling requires greater computational resources than simpler CNN alternatives [10].

- Training Data Requirements: Like most deep learning approaches, performance depends on availability of representative training data with appropriate artifact diversity [10] [28].

- Architectural Refinements: Recent research indicates potential improvements through attention mechanisms (EMA-1D) and bidirectional temporal modeling (BiGRU) that address feature disruption limitations in the original DuoCL [10] [25].

The DuoCL architecture establishes a robust foundation for combining morphological and temporal feature learning in EEG artifact removal. Recent advancements build upon this core concept through several key innovations:

- Integration of Attention Mechanisms: CLEnet demonstrates that incorporating improved EMA-1D modules enhances feature selection and preservation [10].

- Bidirectional Temporal Modeling: MSCGRU replaces LSTM with bidirectional GRU networks to capture broader temporal context [25].

- Multi-scale Enhancements: Advanced architectures employ more sophisticated multi-scale feature extraction beyond dual branches [25] [26].