Adaptive Decoding Algorithms for Non-Stationary Neural Signals: From Theory to Clinical Translation


Abstract

This article provides a comprehensive examination of adaptive decoding algorithms, which are critical for interpreting the non-stationary neural signals that underlie complex brain functions and neurological disorders. Aimed at researchers, scientists, and drug development professionals, it explores the foundational challenges of neural signal variability and the limitations of traditional analysis methods. The scope spans from core methodological innovations, including Bayesian adaptive designs and transformer-based architectures, to practical optimization strategies for enhancing robustness and computational efficiency. The content also covers validation frameworks and comparative analyses, and discusses how these algorithms could inform neurotherapeutic decision-making, precision brain imaging, and the development of personalized neural prostheses.

The Challenge of Neural Non-Stationarity: Foundations and Clinical Imperatives

Troubleshooting Guide: Frequently Asked Questions

What is a non-stationary neural signal, and why is it a problem for my analysis? A non-stationary neural signal is one whose statistical properties—such as mean firing rate, variance, and relationship to movement parameters—change over time [1] [2]. This is a problem because many standard neural decoding algorithms (e.g., linear regression or Kalman filters) are built on the assumption that these statistical properties are stationary. When this assumption is violated, the model's performance degrades over time, leading to inaccurate decoding of movement intentions or other neural states [2]. For instance, a neuron's mean firing rate might steadily increase while the animal's behavior remains consistent, breaking the fixed model relationship [2].

How can I visually identify non-stationarity in my recorded neural data? You can identify potential non-stationarity by plotting the average firing rates of individual neurons across many trials over the course of an experiment. If a substantial subpopulation (around 50% in some studies) shows significant trends or variations in their averaged firing rates while behavioral outputs are consistent, this is a key indicator of non-stationarity [2]. The figure below illustrates this concept.

My decoding model performance drops during long recording sessions. Is non-stationarity the cause? Yes, this is a classic symptom. Subjects may change their level of attention or engagement, and neural representations themselves can drift over time, making a model trained on initial data less accurate for later data [2]. The solution is to move from static to adaptive decoding models that update their parameters as new neural and behavioral observations come in [2].

The Fourier Transform of my signal is difficult to interpret. Is this related to non-stationarity? Yes. The standard Fourier Transform assumes signal properties are stable over time. Applied to a non-stationary signal, it reports which frequencies are present across the whole record but not when they occur [3] [4]. This makes it poorly suited to identifying transient events or tracking how neural oscillations change over time. Time-frequency analysis techniques such as spectrograms or wavelet transforms are a better fit [5] [6].
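As a minimal sketch of the time-frequency alternative, the example below builds a synthetic signal that switches from 10 Hz to 30 Hz halfway through and computes a spectrogram with `scipy.signal.spectrogram`. The sampling rate, segment length, and signal are illustrative assumptions, not values taken from the cited studies.

```python
import numpy as np
from scipy.signal import spectrogram

fs = 500.0                        # assumed sampling rate in Hz
t = np.arange(0, 4.0, 1.0 / fs)   # 4 s of synthetic data

# 10 Hz oscillation in the first half, 30 Hz in the second half
x = np.where(t < 2.0,
             np.sin(2 * np.pi * 10 * t),
             np.sin(2 * np.pi * 30 * t))

# 0.5 s segments with 50% overlap give 2 Hz frequency resolution
freqs, times, power = spectrogram(x, fs=fs, nperseg=250, noverlap=125)

# Dominant frequency in each time bin
dominant = freqs[np.argmax(power, axis=0)]
early = dominant[times < 1.5]   # bins that lie entirely in the first half
late = dominant[times > 2.5]    # bins that lie entirely in the second half
print("early bins:", early)
print("late bins:", late)
```

A plain Fourier spectrum of `x` would show both peaks without indicating their order, whereas the per-bin dominant frequency makes the switch visible. Shorter segments localize the change better in time at the cost of coarser frequency resolution.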

What are the main sources of variation in non-stationary neural signals? Trial-by-trial variation in neural signals can be broken down into two main components, as shown in the table below.

| Component of Variation | Description | Impact on Behavior |
|---|---|---|
| Shared Variation | Correlated fluctuations across a population of neurons, often from common input. This component is expressed as neuron-neuron latency correlations [7]. | Propagates through the sensory-motor circuit to drive trial-by-trial variation in behavioral latency and performance. It is challenging to eliminate by simple averaging [7]. |
| Independent Variation | Fluctuations local to individual neurons. Surprisingly, this arises more from the underlying probability of spiking (synaptic inputs) than from the stochasticity of spiking itself [7]. | Can be reduced by averaging across a large population of neurons [7]. |

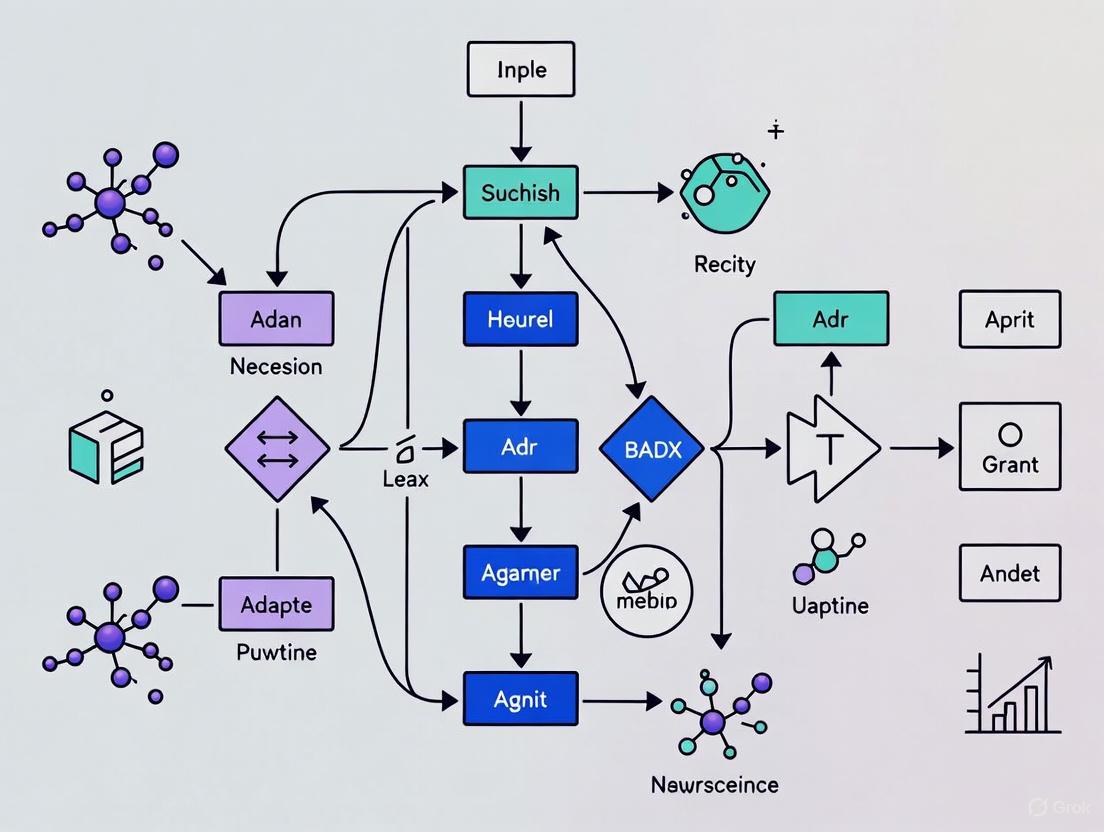

Can you provide a practical example of an adaptive algorithm for handling non-stationarity? A common approach is the Adaptive Kalman Filter. While a standard Kalman filter uses fixed parameters, an adaptive version updates its parameters (the state transition and observation matrices) over time as new training data (neural activity and measured kinematics) becomes available [2]. This allows the model to "track" the dynamic relationship between neural firing and behavior. A recursive update method can make this process computationally efficient for real-time use [2]. The following diagram outlines a general adaptive decoding workflow.

Experimental Protocols & Methodologies

Protocol 1: Quantifying Neural and Behavioral Latency Relationships

This protocol is used to investigate how trial-by-trial variations in neural response latency relate to behavioral latency [7].

- Task: Train a subject (e.g., a non-human primate) to perform a step-ramp visual pursuit task. A target appears and begins moving; the subject must initiate smooth eye movement to track it [7].

- Recording: Implant a multielectrode array in the relevant brain area (e.g., motor cortex, area MT). Record single-unit activity and behavioral kinematics (e.g., hand or eye position) simultaneously over hundreds of trials [2] [7].

- Latency Estimation:

  - Behavioral Latency: For each trial, use an objective algorithm to determine the time point at which smooth pursuit eye movement begins [7].

  - Neural Latency: For each recorded neuron, create a spike density function for each trial. Use an objective method to estimate the response latency on a trial-by-trial basis [7].

- Analysis:

  - Bin all trials for a given neuron into quintiles based on behavioral latency.

  - Calculate the mean neural latency for each quintile.

  - Perform regression analysis to determine the sensitivity (slope) of neural latency to behavioral latency. A slope of 1 would indicate a perfect one-to-one relationship [7].
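The analysis steps above can be sketched in a few lines of NumPy. The helper name `latency_sensitivity` and the simulated latencies (a true slope of 0.4 plus noise) are illustrative assumptions, not data from [7].

```python
import numpy as np

def latency_sensitivity(behav_latency, neural_latency, n_bins=5):
    """Bin trials into quantiles of behavioral latency and regress the
    per-bin mean neural latency on the per-bin mean behavioral latency."""
    order = np.argsort(behav_latency)
    bins = np.array_split(order, n_bins)           # quintiles when n_bins=5
    behav_means = np.array([behav_latency[b].mean() for b in bins])
    neural_means = np.array([neural_latency[b].mean() for b in bins])
    slope, intercept = np.polyfit(behav_means, neural_means, 1)
    return slope, behav_means, neural_means

# Simulated data for one neuron: latencies in ms, assumed true sensitivity of 0.4
rng = np.random.default_rng(1)
behav = rng.normal(100.0, 10.0, size=500)
neural = 60.0 + 0.4 * (behav - 100.0) + rng.normal(0.0, 5.0, size=500)

slope, behav_means, neural_means = latency_sensitivity(behav, neural)
print(f"estimated sensitivity: {slope:.2f}")
```

Averaging within quintiles before fitting reduces the influence of single-trial noise in the neural latency estimates, which tend to be much noisier than the behavioral ones.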

Protocol 2: Cycle-by-Cycle Analysis of Neural Oscillations

This methodology moves beyond Fourier analysis to characterize the non-sinusoidal and non-stationary properties of neural oscillations, such as those in EEG or LFP recordings [4].

- Signal Preprocessing: Filter the raw neural signal into a frequency band of interest (e.g., theta, alpha).

- Cycle Detection: Identify individual oscillatory cycles in the filtered signal by finding consecutive peaks.

- Feature Extraction: For each cycle, compute the following time-domain features:

  - Peak-Trough Symmetry: Calculate as P / (P + T), where P is the duration of the peak phase (from a rising zero-crossing to the following falling zero-crossing) and T is the duration of the trough phase (from that falling zero-crossing to the next rising zero-crossing). A value of 0.5 indicates equal time in the peak and trough phases, as in a sinusoid [4].

  - Rise-Decay Symmetry: Calculate as R / (R + D), where R is the rise time from a trough to the following peak and D is the decay time from that peak to the next trough. A value of 0.5 again corresponds to a sinusoid [4].

- Interpretation: Plot the distributions of these symmetry measures over many cycles. The mean and variance of these distributions reveal how much and how consistently the oscillations deviate from a pure sinusoid, providing a measure of aperiodicity and non-stationarity [4].
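A minimal sketch of the cycle-by-cycle features on a noise-free synthetic waveform follows. It takes rise time as trough-to-peak, decay time as peak-to-next-trough, and peak and trough durations as the intervals between zero-crossings; the asymmetric 8 Hz triangle wave and the helper names are assumptions for illustration. Real recordings would need band-pass filtering and more careful extrema detection first.

```python
import numpy as np
from scipy.signal import find_peaks, sawtooth

fs = 1000.0
t = np.arange(0, 2.0, 1 / fs)
# Asymmetric 8 Hz oscillation: rises for 30% of each cycle, decays for 70%
x = sawtooth(2 * np.pi * 8 * t, width=0.3)

peaks, _ = find_peaks(x)
troughs, _ = find_peaks(-x)

def rise_decay_symmetry(peaks, troughs):
    """Fraction of each trough-to-trough cycle spent rising."""
    values = []
    for tr in troughs:
        later_peaks = peaks[peaks > tr]
        if later_peaks.size == 0:
            break
        pk = later_peaks[0]
        later_troughs = troughs[troughs > pk]
        if later_troughs.size == 0:
            break
        rise, decay = pk - tr, later_troughs[0] - pk
        values.append(rise / (rise + decay))
    return np.array(values)

def peak_trough_symmetry(x):
    """Fraction of each cycle spent above zero (the peak phase)."""
    rising = np.where((x[:-1] < 0) & (x[1:] >= 0))[0]
    falling = np.where((x[:-1] >= 0) & (x[1:] < 0))[0]
    values = []
    for r0, r1 in zip(rising[:-1], rising[1:]):
        between = falling[(falling > r0) & (falling < r1)]
        if between.size == 0:
            continue
        peak_time, trough_time = between[0] - r0, r1 - between[0]
        values.append(peak_time / (peak_time + trough_time))
    return np.array(values)

rd = rise_decay_symmetry(peaks, troughs)
pt = peak_trough_symmetry(x)
print(f"rise-decay symmetry: {rd.mean():.2f}, peak-trough symmetry: {pt.mean():.2f}")
```

For this waveform the rise-decay symmetry sits near 0.3 while the peak-trough symmetry stays near 0.5, which shows that the two measures capture different kinds of asymmetry.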

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function in Research |
|---|---|
| Silicon Microelectrode Arrays (e.g., 100-electrode arrays) | Chronic implantation allows for long-term recording from a population of neurons in areas like motor cortex, critical for tracking non-stationarity over time [2]. |
| Multi-channel Neural Signal Acquisition System (e.g., Cerebus system) | Systems that can filter, amplify, and digitally record raw waveforms from all electrodes simultaneously at high sampling rates (e.g., 30 kHz) [2]. |
| Offline Spike Sorter (e.g., Plexon Offline Sorter) | Software used to isolate the activity of single units (individual neurons) from the recorded waveforms based on spike shape and other features [2]. |
| Robotic Arm or Kinematic Tracking System (e.g., KINARM) | Precisely measures and records the subject's behavioral output, such as joint angles or hand position, which is essential for modeling the neural-behavioral relationship [2]. |
| Time-Frequency Analysis Software | Software (e.g., custom MATLAB or Python scripts) to compute spectrograms, wavelet transforms, and other time-frequency distributions for analyzing non-stationary signal components [5]. |

Limitations of Traditional Machine Learning and Fixed Analysis Windows

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary data-related limitations of traditional machine learning for neural signal analysis? Traditional machine learning (ML) models face significant challenges with neural data due to its non-stationary, non-linear, and non-Gaussian nature. These models are highly dependent on data quality and struggle with distributional shifts that occur across different recording sessions or between different subjects. This violation of the standard assumption that data samples are independently and identically distributed (i.i.d.) severely limits model generalizability [8] [9].

FAQ 2: How do fixed analysis windows hinder accurate neural decoding? Fixed analysis windows are often ineffective for neural signals because they cannot adapt to the dynamic nature of brain activity. This is particularly problematic in applications like Steady-State Visual Evoked Potential (SSVEP) decoding, where using short, fixed windows to increase the information transfer rate can cause a significant drop in decoding accuracy. These static windows fail to capture the evolving temporal patterns of neural responses [10].

FAQ 3: Why is model interpretability a problem in clinical neurotechnology? Complex models like deep neural networks often function as "black boxes," making it difficult to understand how they arrive at a specific prediction. This lack of transparency is a major barrier to clinical adoption, as doctors and researchers require explainability to trust and effectively use a model's output for diagnostic or therapeutic decisions [9] [11].

FAQ 4: What is the "cross-subject and cross-session" generalization problem? This refers to the challenge of a decoding model, trained on data from one set of subjects or one recording session, failing to perform accurately on data from new subjects or subsequent sessions. This is caused by the inherent variability and randomness of brain electrical activity between individuals and over time [8].

Troubleshooting Guides

Issue 1: Poor Model Performance on New Subjects or Sessions

Problem: Your trained model, which performed well on its original training data, shows significantly degraded accuracy when applied to data from a new subject or a new recording session from the same subject.

Solution: Implement Domain Adaptation (DA) techniques. DA helps to minimize the distributional differences between your source domain (original training data) and target domain (new subject/session data) [8].

- Step 1: Identify the DA Approach. Choose a method based on your data and goals:

  - Feature-based DA: Transform the feature spaces of the source and target domains to make them more similar [8].

  - Model-based DA: Fine-tune a pre-trained model from the source domain using a small amount of labeled data from the target subject/session [8].

  - Instance-based DA: Adjust the weights of source domain samples, emphasizing those most relevant to the target domain [8].

- Step 2: Consider Advanced Architectures. For complex scenarios, use deep learning frameworks combined with DA. Transformer models with adaptive attention mechanisms have shown success in learning robust, subject-agnostic features from neural data like EEG [10] [11].

- Step 3: Validate Rigorously. Always test the adapted model on a completely held-out dataset from the target domain to ensure true generalizability and avoid overfitting [12].

Issue 2: Inability to Capture Dynamic Neural Patterns

Problem: Your model fails to decode short-term fluctuations in neural states, which is critical for applications like adaptive deep brain stimulation (aDBS).

Solution: Move beyond static features and fixed windows by implementing models that capture spatiotemporal dynamics.

- Step 1: Employ Adaptive Time-Series Models. Replace static analyzers with models designed for sequential data. LSTMs and Transformers can learn from the temporal context of the signal [11].

- Step 2: Leverage Temporal-Spatial Feature Extraction. Use models that can simultaneously analyze information across both time and the spatial arrangement of electrodes (channels). Transformer architectures are particularly effective at this, using multi-head self-attention to model long-range temporal dependencies and spatial attention for inter-channel interactions [11].

- Step 3: Integrate with a Closed-Loop System. Frame the decoding output to directly control stimulation parameters in real-time. This creates an intelligent adaptive DBS (iDBS) system that responds to the patient's current brain state [12].

Experimental Protocols & Data

The following table summarizes the performance of various decoding algorithms reported in the literature, highlighting the challenge of cross-subject generalization.

| Model/Algorithm Type | Key Characteristic | Reported Performance Limitation / Advantage |
|---|---|---|
| Filter Bank CCA (FBCCA) [10] | Unsupervised | Performance declines significantly in short time windows. |
| Task-Related Component Analysis (TRCA) [10] | Supervised | Exhibits weak performance under cross-subject conditions. |
| Traditional CNN/LSTM [11] | Deep Learning | Struggles with spatial connections (CNNs) or long-range temporal dependencies (LSTMs). |
| SSVEPTransformer [10] | Transformer-based | Demonstrated better performance in short time windows and cross-subject conditions compared to traditional models. |
| Adaptive Transformer [11] | Transformer with Adaptive Attention | Achieved 98.24% accuracy on EEG tasks, effectively modeling temporal-spatial relationships. |

Detailed Protocol: Domain Adaptation for Cross-Subject EEG Decoding

Aim: To improve the generalization performance of an EEG-based classification model when applied to a new, unseen subject.

Methodology:

- Data Preparation:

  - Source Domain ($D_s$): Use a publicly available EEG dataset (e.g., TUH EEG Corpus, CHB-MIT) with data from multiple subjects, $\{x_i, y_i\}_{i=1}^{N_s}$ [11].

  - Target Domain ($D_t$): Select one subject as the hypothetical new user. Hold out this subject's data, $\{x_j, y_j\}_{j=1}^{N_t}$, during initial training [8].

  - Preprocessing: Apply standard preprocessing: band-pass filtering, downsampling, and artifact removal (e.g., for eye blinks and muscle noise) [8].

- Feature Extraction:

  - Extract features from multiple domains: time, frequency, and time-frequency domains (e.g., using wavelet transforms) [8].

  - Alternatively, for an end-to-end deep learning approach, use the raw or minimally processed data and allow the model to learn its own features.

- Model Training with DA:

  - Baseline: Train a standard classifier (e.g., SVM, Random Forest) on the source domain data only. Test its performance on the held-out target subject. This establishes a performance baseline.

  - Intervention - Feature-Based DA: Implement a feature-based DA algorithm, such as aligning the source and target feature distributions in a shared latent space using a method like Least Squares Transformation [10].

  - Train a new classifier on the transformed source features and evaluate it on the target subject's data.

- Evaluation:
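The baseline-versus-adaptation comparison in this protocol can be sketched on synthetic data. The example below uses a nearest-centroid classifier and aligns the two domains by standardizing each one separately, which is a deliberately simple stand-in for feature-based DA and is not the Least Squares Transformation method cited above. The simulated "subjects", the shift and scale values, and all function names are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

def make_subject(n, shift, scale):
    """Two-class, two-feature data; shift and scale mimic subject-specific offsets and gains."""
    y = rng.integers(0, 2, size=n)
    x = rng.normal(0.0, 0.5, size=(n, 2))
    x[:, 0] += np.where(y == 1, 1.0, -1.0)   # class information lives in feature 0
    return x * scale + shift, y

def fit_centroids(x, y):
    return np.stack([x[y == c].mean(axis=0) for c in (0, 1)])

def predict(centroids, x):
    dists = np.linalg.norm(x[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)

def zscore(x):
    """Standardize each feature within one domain (uses no labels)."""
    return (x - x.mean(axis=0)) / x.std(axis=0)

x_src, y_src = make_subject(400, shift=0.0, scale=1.0)   # source domain
x_tgt, y_tgt = make_subject(200, shift=3.0, scale=2.0)   # held-out target subject

# Baseline: train on raw source features, test on raw target features
acc_baseline = (predict(fit_centroids(x_src, y_src), x_tgt) == y_tgt).mean()

# Feature-based alignment: standardize each domain, then train and test as before
acc_aligned = (predict(fit_centroids(zscore(x_src), y_src), zscore(x_tgt)) == y_tgt).mean()

print(f"baseline: {acc_baseline:.2f}, aligned: {acc_aligned:.2f}")
```

The target labels are used only for scoring, never for alignment, which mirrors the held-out-subject setup described in the protocol. Per-domain standardization only corrects offsets and gains, so real cross-subject differences will usually call for richer alignment methods.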

Experimental Workflow Visualization

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Research |
|---|---|
| Public EEG Datasets (e.g., TUH EEG Corpus, CHB-MIT) [11] | Provide standardized, annotated neural data for training and benchmarking decoding algorithms, ensuring reproducibility and comparison across studies. |
| Domain Adaptation Algorithms (e.g., Least Squares Transformation, RPA) [8] [10] | Techniques designed to minimize distributional differences between data from different subjects or sessions, directly addressing the generalization problem. |
| Transformer Architectures [10] [11] | Advanced neural network models that use self-attention mechanisms to effectively capture long-range temporal and spatial dependencies in non-stationary neural signals. |
| Open-Source ML Frameworks (e.g., TensorFlow, PyTorch) [13] | Provide the foundational tools and libraries for building, training, and testing custom deep learning models for neural decoding. |
| Hyperparameter Optimization Tools (e.g., Bayesian Optimization) [12] | Automate the search for the best model parameters, which is crucial for achieving robust performance and saving researcher time. |

Adaptive Algorithm Decision Pathway

Clinical and Research Consequences of Ignoring Temporal Variability

Foundations: Understanding Temporal Variability in Neural Signals

What is temporal variability and why is it a problem in neural decoding?

Temporal variability refers to the inconsistency in the timing of neural responses across multiple trials of the same task. In experimental settings, this means that the brain's response to an identical stimulus or the execution of an identical cognitive process (like memory recall or motor imagery) does not occur at precisely the same millisecond every time.

This is a critical problem because many standard decoding algorithms rely on time-locked analysis. These methods assume that task-relevant neural signals are consistently aligned to an external event marker. They perform decoding by analyzing data point-by-point across trials, an approach that fails when the neural dynamics shift in time. When timing is inconsistent, the averaged or analyzed signal appears blurred and degraded, much like a photograph of a moving subject taken with a slow shutter speed. This dramatically reduces the signal-to-noise ratio and compromises the accuracy of decoding mental contents [14].

How does ignoring temporal variability impact my research outcomes?

Ignoring temporal variability can lead to systematic errors and false conclusions in your research. The primary consequences are:

- Reduced Decoding Accuracy: The core performance metric for most decoding studies will be significantly lower. Models trained on misaligned data fail to learn the true neural signature of the cognitive process, leading to poor generalization on new test data.

- Invalidated Conclusions: Findings may incorrectly suggest that a certain brain area does not encode specific information, when in reality, the variability of the response masked the signal.

- Inefficient Use of Data: The statistical power of your experiments is weakened. You may need to collect significantly more data to achieve a desired effect size, increasing time and resource costs.

- Limited Clinical Translation: Algorithms developed under the assumption of fixed timing will perform poorly in real-world clinical applications, such as Brain-Computer Interfaces (BCIs), where users' neural responses are inherently non-stationary [15].

In which specific experimental paradigms is temporal variability most critical?

Temporal variability is particularly detrimental in paradigms involving covert, self-paced cognitive processes. The table below summarizes high-risk paradigms.

Table: Experimental Paradigms Highly Susceptible to Temporal Variability

| Paradigm | Reason for High Variability | Primary Consequence |
|---|---|---|
| Memory Recall | Self-paced retrieval of information; latency varies with memory strength and search effort. | Inaccurate decoding of recalled content [14]. |
| Mental Imagery | No external pacing for the onset and dynamics of the imagined scene or action. | Poor performance in imagery-based BCIs [15]. |
| Decision Making | The cognitive process of deliberation has variable duration. | Misalignment of neural correlates of evidence accumulation and choice. |
| Free-Keying Motor Tasks | Movement initiation is self-paced, unlike cue-triggered movements. | Blurred motor cortical signals and reduced classification of movement type. |

Troubleshooting Guide: Identifying Temporal Variability in Your Data

How can I diagnose if temporal variability is affecting my dataset?

Before implementing complex solutions, confirm that temporal variability is the root of your problem. Follow this diagnostic workflow:

Diagnostic Steps:

- Visual Inspection: Plot single-trial neural responses (e.g., raw signals, band power). Look for jitter in the latency of characteristic deflections or power changes relative to the event marker.

- Cross-Trial Consistency: Compute the inter-trial phase coherence (ITPC) or similar metrics. Low coherence at the time of the expected response suggests high temporal jitter.

- Time-Locked Decoding: Train a decoding model (e.g., an LDA or SVM) at each time point independently and plot the resultant accuracy over time.

- Interpret Result:

  - If decoding accuracy forms a sharp, narrow peak that falls off quickly, the informative signal is confined to a brief window, so even small trial-to-trial latency shifts will degrade time-locked decoding. This is a classic signature of temporal variability.

  - If accuracy is consistently low and flat across time, the issue may be a fundamentally low signal-to-noise ratio or an incorrect neural feature, not just misalignment [14].
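The cross-trial consistency check can be sketched with a basic ITPC computation. It assumes the trials are already band-limited and uses the Hilbert transform for phase; the 10 Hz synthetic oscillation and the two jitter levels are illustrative choices.

```python
import numpy as np
from scipy.signal import hilbert

def itpc(trials):
    """Inter-trial phase coherence per time point.

    trials: (n_trials, n_time) array of band-limited signals.
    Returns values in [0, 1]; 1 means identical phase on every trial.
    """
    phase = np.angle(hilbert(trials, axis=1))
    return np.abs(np.exp(1j * phase).mean(axis=0))

fs = 250.0
t = np.arange(0, 1.0, 1 / fs)
rng = np.random.default_rng(0)

def simulate(jitter_sd, n_trials=100):
    """10 Hz responses with a random per-trial latency shift (in seconds)."""
    shifts = rng.normal(0.0, jitter_sd, size=n_trials)
    return np.array([np.sin(2 * np.pi * 10 * (t - s)) for s in shifts])

itpc_locked = itpc(simulate(jitter_sd=0.002)).mean()     # 2 ms jitter
itpc_jittered = itpc(simulate(jitter_sd=0.030)).mean()   # 30 ms jitter
print(f"ITPC with 2 ms jitter: {itpc_locked:.2f}, with 30 ms jitter: {itpc_jittered:.2f}")
```

The same latency jitter costs more coherence at higher frequencies, because a fixed time shift corresponds to a larger phase shift. It is therefore worth computing ITPC in the band where the response of interest is expected.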

My decoding accuracy is low. Is it poor signal quality or temporal variability?

Distinguishing between these causes is essential for effective troubleshooting. The table below contrasts key indicators.

Table: Differentiating Low SNR from Temporal Variability

| Indicator | Suggests Temporal Variability | Suggests Poor Signal Quality (Low SNR) |
|---|---|---|
| Single-Trial Plots | Clear, strong responses that are misaligned (jittered) across trials. | Noisy, weak, or non-existent responses in most trials. |
| Time-Locked Decoding | Accuracy shows a prominent but narrow peak in time. | Accuracy is low and flat across the entire time window. |
| Grand Average Signal | The event-related potential/field (ERP/ERF) appears small and smeared. | The ERP/ERF is small but not necessarily smeared; noise dominates. |
| Solution | Implement alignment or adaptive algorithms like ADA. | Improve preprocessing, artifact removal, or feature extraction. |

Experimental Protocols & Solutions

What is the Adaptive Decoding Algorithm (ADA) and how do I implement it?

The Adaptive Decoding Algorithm (ADA) is a non-parametric method designed to handle temporal variability directly. Instead of assuming fixed timing, ADA performs a two-level prediction that explicitly accounts for trial-specific latency [14].

Core Protocol: Implementing ADA

Step-by-Step Methodology:

- Input Data: Your training set consists of multi-trial neural data (e.g., MEG/EEG timeseries) and corresponding task labels (e.g., Class A vs. Class B).

- Window Estimation (Training): For each training trial, ADA identifies the temporal window that is most informative for the task. This can be done using an internal cross-validation loop or a measure of discriminability between classes.

- Decoder Construction: A model (e.g., a classifier) is trained using the features extracted from the selected informative windows of all training trials. This model learns the neural patterns associated with the task, independent of their exact timing.

- Testing Phase: For a new, unlabeled test trial:

  - A sliding window analysis is performed.

  - The algorithm identifies the window within this trial that most likely contains the task-relevant signal.

  - The pre-trained decoder is applied specifically to this window to generate the final prediction (e.g., "Class A") [14].
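The two-level idea (select a window per trial, then decode from it) can be illustrated with a toy example. This is a simplified stand-in and not the published ADA implementation from [14]: it uses a single channel, a window-mean feature, and a threshold decoder, and every name and parameter below is an assumption.

```python
import numpy as np

rng = np.random.default_rng(0)
N_TIME, WIDTH = 200, 20

def simulate(n_trials):
    """Class 1 has a positive deflection, class 0 a negative one, at a random latency."""
    y = rng.integers(0, 2, size=n_trials)
    x = rng.normal(0.0, 0.5, size=(n_trials, N_TIME))
    onsets = rng.integers(20, 160, size=n_trials)
    for i, (label, onset) in enumerate(zip(y, onsets)):
        x[i, onset:onset + WIDTH] += 1.0 if label == 1 else -1.0
    return x, y

def window_means(x):
    """Mean amplitude in every sliding window of length WIDTH, per trial."""
    kernel = np.ones(WIDTH) / WIDTH
    return np.array([np.convolve(trial, kernel, mode="valid") for trial in x])

def fit(x, y):
    """Pick each training trial's most class-consistent window, then set a threshold."""
    wm = window_means(x)
    signed = wm * np.where(y == 1, 1.0, -1.0)[:, None]
    best = signed.argmax(axis=1)
    feats = wm[np.arange(len(x)), best]
    return 0.5 * (feats[y == 1].mean() + feats[y == 0].mean())

def predict_adaptive(x, threshold):
    """Decode from the window where the decoder output is most extreme."""
    d = window_means(x) - threshold
    best = np.abs(d).argmax(axis=1)
    return (d[np.arange(len(x)), best] > 0).astype(int)

def predict_fixed(x, threshold, start=90):
    """Time-locked comparison: always decode from the same window."""
    return (x[:, start:start + WIDTH].mean(axis=1) > threshold).astype(int)

x_train, y_train = simulate(300)
x_test, y_test = simulate(300)
threshold = fit(x_train, y_train)
acc_adaptive = (predict_adaptive(x_test, threshold) == y_test).mean()
acc_fixed = (predict_fixed(x_test, threshold) == y_test).mean()
print(f"adaptive window: {acc_adaptive:.2f}, fixed window: {acc_fixed:.2f}")
```

In this simulation the fixed-window decoder only succeeds on trials whose response happens to overlap its window, while the adaptive version is largely unaffected by the latency jitter. With multichannel data the window-mean feature would typically be replaced by a classifier score computed per window.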

Are there advanced signal processing techniques to manage variable, high-frequency signals?

For signals with complex, high-frequency bursts, such as neuronal spikes, traditional time-frequency decomposition methods may be insufficient. The Hyperlet Transform (HLT) is a super-resolution technique designed for this challenge.

Key Advantages of HLT:

- High Resolution: Provides highly localized representations of short signal bursts.

- Computational Efficiency: Dramatically speeds up super-resolution operations compared to other methods.

- Improved Pattern Recognition: By unmixing complex signals, HLT enhances downstream tasks like neuronal spike detection and sorting, leading to cleaner neural features for decoding [16].

How can deep learning architectures be designed to handle temporal variability?

Deep learning models can inherently learn to be invariant to certain transformations, including temporal shifts. A highly effective architecture is the Hierarchical Attention-Enhanced Convolutional-Recurrent Network.

Experimental Protocol for Motor Imagery Classification (as demonstrated in [15]):

- Spatial Feature Extraction: The architecture first uses Convolutional Neural Network (CNN) layers to extract spatial features from the input EEG signals, treating electrode locations as spatial dimensions.

- Temporal Dynamics Modeling: The spatial features are then fed into Long Short-Term Memory (LSTM) layers. LSTMs are specialized for sequence modeling and can capture the temporal evolution of the neural signal, learning which temporal patterns are diagnostic.

- Attention Mechanism: An attention layer is applied to the LSTM outputs. This layer learns to adaptively weight different time points based on their importance for the classification task. This is the key to handling variability—the model learns to "focus" on the relevant neural activity regardless of its precise timing.

- Classification: The weighted features are finally passed to a fully connected layer for classification (e.g., left-hand vs. right-hand motor imagery). This approach achieved a state-of-the-art accuracy of 97.25% on a four-class motor imagery task, demonstrating the power of explicitly modeling temporal structure and importance [15].
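The attention step can be illustrated in isolation with a softmax-weighted pooling over time. In the sketch below the hidden states and the scoring vector `w` are hand-constructed stand-ins for what the LSTM and attention layers would learn in the architecture of [15]; it is not that model's implementation.

```python
import numpy as np

def attention_pool(h, w):
    """Softmax attention over time.

    h: (n_time, n_features) sequence of hidden states
    w: (n_features,) scoring vector (learned in a real model)
    Returns the attention-weighted summary vector and the weights.
    """
    scores = h @ w
    scores = scores - scores.max()             # subtract max for numerical stability
    alpha = np.exp(scores) / np.exp(scores).sum()
    return alpha @ h, alpha

rng = np.random.default_rng(0)
n_time, n_feat = 50, 4
w = np.array([2.0, 0.0, 0.0, 0.0])             # stands in for a learned scoring vector

def make_sequence(onset):
    """Noise plus a 5-step informative burst in feature 0, starting at `onset`."""
    h = rng.normal(0.0, 0.1, size=(n_time, n_feat))
    h[onset:onset + 5, 0] += 3.0
    return h

pooled_early, alpha_early = attention_pool(make_sequence(5), w)
pooled_late, alpha_late = attention_pool(make_sequence(35), w)
print("attention mass on early burst:", round(alpha_early[5:10].sum(), 3))
print("attention mass on late burst:", round(alpha_late[35:40].sum(), 3))
```

Because the weights follow the burst wherever it occurs, the pooled vector passed to the classifier is nearly the same for both latencies. This is the sense in which attention makes the representation tolerant of timing shifts.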

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for Non-Stationary Neural Signal Research

| Tool / Algorithm | Type | Primary Function | Key Reference |
|---|---|---|---|
| Adaptive Decoding Algorithm (ADA) | Decoding Algorithm | Handles temporal jitter via trial-specific window selection. | [14] |
| Hyperlet Transform (HLT) | Signal Processing Tool | Provides super-resolution time-frequency decomposition for short bursts. | [16] |
| Hierarchical Attention Model | Deep Learning Architecture | Uses CNNs, LSTMs, and attention to weight informative time points. | [15] |
| Common Spatial Patterns (CSP) | Feature Extraction | Extracts spatially discriminative patterns; requires alignment or adaptation. | [15] |
| Filter Bank CSP (FBCSP) | Feature Extraction | Extends CSP to multiple frequency bands, improving feature robustness. | [15] |

Frequently Asked Questions (FAQs)

Can't I just use more data to average out temporal variability?

While increasing trial count can improve the signal-to-noise ratio of a grand average, it does not solve the core problem of blurring. Averaging misaligned trials will still result in a temporally smeared and potentially attenuated representation of the true neural response. This limits the resolution at which you can study neural dynamics and is ineffective for single-trial decoding, which is essential for BCIs and real-time applications.
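The smearing can be made explicit with a short derivation. It rests on a simplifying assumption that is not stated in the source: every trial contains the same response $s(t)$, shifted by an independent latency $\tau$ with density $p(\tau)$, plus zero-mean noise. The symbols $A$, $\sigma_s$, and $\sigma_\tau$ are introduced here for illustration.

```latex
% Expected trial average under latency jitter: the true response
% convolved with the latency distribution
\mathbb{E}\big[s(t-\tau)\big] = \int s(t-\tau)\,p(\tau)\,d\tau = (s * p)(t)

% Gaussian example: s(t) = A\,e^{-t^2/(2\sigma_s^2)}, \quad \tau \sim \mathcal{N}(0,\sigma_\tau^2)
(s * p)(t) = A\,\frac{\sigma_s}{\sqrt{\sigma_s^2+\sigma_\tau^2}}\,
             \exp\!\left(-\frac{t^2}{2(\sigma_s^2+\sigma_\tau^2)}\right)
```

Under these assumptions, adding trials shrinks the noise around this expectation but leaves the expectation itself unchanged. The average remains broadened to a width of $\sqrt{\sigma_s^2+\sigma_\tau^2}$ and attenuated by the factor $\sigma_s/\sqrt{\sigma_s^2+\sigma_\tau^2}$, no matter how many trials are collected.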

Is temporal variability only a concern for EEG/MEG, or also for fMRI?

Temporal variability is a concern for all neuroimaging modalities, but its impact is scaled by the temporal resolution of the technology. It is most critical for high-temporal-resolution techniques like EEG and MEG, where shifts of tens of milliseconds are meaningful. In fMRI, with its resolution of seconds, neural events happening hundreds of milliseconds apart are collapsed into a single volume. However, variability in the Hemodynamic Response Function (HRF) across brain regions and individuals is a well-studied problem that also requires careful modeling.

Are there clinical trial implications for ignoring neural temporal variability?

Yes. Ignoring temporal variability in clinical neuroscience research can lead to failed trials. For example:

- Inaccurate Endpoints: If a drug is intended to improve cognitive function (e.g., memory recall speed), but the neural biomarker for recall is misaligned and blurred, you may fail to detect a true treatment effect.

- BCI Rehabilitation: Clinical trials for BCIs used in stroke rehabilitation will see poor patient outcomes and high dropout rates if the decoding algorithm is not robust to the natural trial-to-trial variability in the patient's neural signals. Improving trial efficiency and participant retention through better technology is a major focus in the clinical trial industry [17] [18].

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: When is it necessary to record EEG and fMRI simultaneously, rather than in separate sessions?

Simultaneous recording is necessary when your research question requires that both datasets capture identical brain activity from the very same trial. This is crucial for analysis methods that rely on a direct trial-by-trial relationship between the electrophysiological (EEG) and hemodynamic (fMRI) signals, such as EEG-informed fMRI analysis [19]. If your hypothesis involves investigating the direct coupling between these signals in a resting state or during decision-making tasks, simultaneous recording is essential [19]. However, if your study design can tolerate the variance introduced by separate sessions (e.g., different sensory stimulation, habituation effects), and your analysis does not depend on a perfect one-to-one trial correspondence, then separate sessions may be preferable, as they often provide higher signal quality for each modality [19].

Q2: What are the most effective methods for handling physiological artifacts in EEG data?

The optimal method depends on the artifact type [20]:

- Eye blinks and movements: Use Independent Component Analysis (ICA) or regression-based subtraction. These artifacts are most prominent in frontal channels [20].

- Muscle artifacts (e.g., from jaw clenching): For transient artifacts, rejection of contaminated epochs is common. For persistent, localized muscle noise, ICA can be effective. Filtering can also attenuate the impact, though it may not remove it completely [20].

- Pulse (heartbeat) artifacts: If an electrocardiogram (ECG) is recorded alongside the EEG, use specialized algorithms to identify and remove the heartbeat. Without ECG, ICA or using an average reference can reduce its influence [20].

- Sweating/Skin potentials: High-pass filtering can reduce slow drifts. The best strategy is prevention by ensuring a fresh and dry recording environment [20].
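Of the methods listed above, regression-based subtraction is the simplest to sketch. The example assumes a single EOG reference channel recorded with the EEG. The function name `regress_out`, the simulated blinks, and the channel gains are illustrative assumptions.

```python
import numpy as np

def regress_out(eeg, eog):
    """Remove the part of each EEG channel that is linearly predicted by the EOG.

    eeg: (n_channels, n_samples); eog: (n_samples,) reference channel.
    Returns the cleaned EEG and the per-channel propagation coefficients.
    """
    eog_c = eog - eog.mean()
    eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
    b = eeg_c @ eog_c / (eog_c @ eog_c)        # least-squares slope per channel
    return eeg - np.outer(b, eog_c), b

rng = np.random.default_rng(0)
n_samples = 5000
eog = rng.normal(0.0, 1.0, size=n_samples)
eog[1000:1100] += 100.0                         # two simulated blinks
eog[3000:3100] += 100.0

brain = rng.normal(0.0, 5.0, size=(3, n_samples))
gains = np.array([0.5, 0.2, 0.05])              # frontal channels pick up more of the blink
eeg = brain + np.outer(gains, eog)

clean, b = regress_out(eeg, eog)
print("estimated propagation coefficients:", np.round(b, 3))
```

One known limitation is that the EOG channel also picks up some brain activity, so the subtraction can remove a small amount of neural signal along with the artifact. This is one reason ICA is often preferred when enough channels are available.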

Q3: My decoding algorithm performs poorly across different subjects or sessions. What strategies can improve generalization?

This is a classic challenge due to the non-stationarity of neural signals. Domain Adaptation (DA) techniques are designed to address this by minimizing distributional differences [8]. You can consider:

- Feature-based DA: Transforming features from different subjects/sessions into a common space where their probability distributions are similar [8].

- Model-based DA: Fine-tuning a decoder pre-trained on a source subject's (or group's) labeled data using a small amount of data from your target subject. This is highly efficient when data collection is difficult [8].

- Instance-based DA: Re-weighting or selecting samples from your source dataset that are most similar to the target data, thereby reducing the influence of less relevant samples [8].

Q4: How can we validate the concordance between EEG source localization and fMRI findings?

A robust method involves a two-pronged approach on the same cortical surface [21]:

- Independent Comparison (MEM-concordance): Compare EEG sources localized with a method like Maximum Entropy on the Mean (MEM) with significant fMRI clusters. Quantify concordance using metrics like minimal geodesic distances between local extrema and overlap measurements of their spatial extents [21].

- fMRI-Relevance Index (α): Statistically test whether the fMRI cluster can serve as a relevant prior for the EEG inverse solution. A significantly positive α index suggests that sources located within that fMRI cluster can explain the scalp EEG data, providing evidence for concordance [21].

Troubleshooting Common Experimental Issues

Table 1: Troubleshooting Common Data Quality Issues

| Problem | Possible Causes | Solutions & Checks |

|---|---|---|

| Poor EEG signal quality during simultaneous EEG-fMRI | Gradient and ballistocardiogram (BCG) artifacts [19]; Loose electrode contact [20]. | Use artifact removal algorithms designed for MRI environments [19]; Ensure cap fit and check impedances (target 5-10 kΩ) [22]. |

| Low signal-to-noise ratio in fMRI-informed EEG source imaging | Overly restrictive fMRI priors; Mismatch between hemodynamic and electrical sources. | Use multiple Temporally Coherent Networks (TCNs) from fMRI as flexible covariance priors in a Parametric Empirical Bayesian (PEB) framework (e.g., the NESOI approach) [23]. |

| Decoding performance drops with unknown timing of neural events | Temporal variability in cognitive processes (e.g., memory recall); Assumption of fixed latency in analysis. | Employ algorithms that account for trial-specific timing, like the Adaptive Decoding Algorithm (ADA), which identifies the most informative temporal window for each trial [14]. |

| Discrepancies observed between EEG and fMRI activation maps | Different physiological origins and sensitivities of the signals; fMRI may reflect metabolic load while EEG reflects synchronized pyramidal activity [19]. | This may be a valid finding. Ensure your task reliably produces signals in both modalities. Consider that the underlying neural generator may be a distributed network, parts of which are visible to only one modality [21] [19]. |

Experimental Protocols for Multimodal Integration

Protocol 1: Integrating fMRI-Derived Networks for EEG Source Imaging (NESOI)

This protocol uses fMRI to provide spatial priors for estimating EEG source dynamics [23].

- fMRI Data Processing: Use Independent Component Analysis (ICA) on the fMRI data to extract multiple Temporally Coherent Networks (TCNs). These can include both task-related and resting-state networks [23].

- Lead Field Calculation: Construct a lead field matrix based on the individual's head model and electrode positions [23].

- EEG Source Reconstruction with PEB: Set up a Parametric Empirical Bayesian (PEB) model for EEG source imaging. Use the TCNs from Step 1 as the covariance components (priors) in the source space of the model [23].

- Model Estimation: Let the PEB framework estimate the hyperparameters that control the contribution of each TCN prior, based on the scalp EEG data. This provides a solution that integrates the high temporal resolution of EEG with the high spatial resolution of fMRI TCNs [23].
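The role of the TCN covariance priors can be illustrated with a heavily simplified sketch. It assumes the hyperparameters are already known (the actual PEB framework estimates them from the scalp data, which is omitted here) and that each TCN contributes a diagonal covariance component built from its spatial map. The function `map_sources` and all variable names are hypothetical.

```python
import numpy as np

def map_sources(Y, L, tcn_maps, log_hyper, noise_var=1.0):
    """MAP source estimate under a Gaussian prior built from TCN maps.

    Y: (n_sensors, n_times) scalp EEG; L: (n_sensors, n_sources) lead field;
    tcn_maps: (n_tcn, n_sources) spatial maps; log_hyper: (n_tcn,) log-weights.
    """
    # Prior source variance: sum_k exp(lambda_k) * q_k^2 (illustrative choice)
    prior_var = (np.exp(log_hyper)[:, None] * tcn_maps ** 2).sum(axis=0)
    CLt = prior_var[:, None] * L.T  # C @ L.T for a diagonal prior covariance C
    sensor_cov = L @ CLt + noise_var * np.eye(L.shape[0])
    # J_hat = C L^T (L C L^T + noise)^-1 Y
    return CLt @ np.linalg.solve(sensor_cov, Y)

# Synthetic demo: two TCNs, activity generated only inside the first one
rng = np.random.default_rng(1)
n_sensors, n_sources, n_times = 8, 30, 20
L = rng.standard_normal((n_sensors, n_sources))
tcn_maps = np.zeros((2, n_sources))
tcn_maps[0, :10] = 1.0
tcn_maps[1, 10:20] = 1.0
J_true = np.zeros((n_sources, n_times))
J_true[:10] = rng.standard_normal((10, n_times))
Y = L @ J_true + 0.1 * rng.standard_normal((n_sensors, n_times))
J_hat = map_sources(Y, L, tcn_maps, log_hyper=np.array([0.0, -4.0]), noise_var=0.01)
```

Sources outside every TCN receive zero prior variance in this toy version and are therefore estimated as zero. A practical implementation would add a baseline component so that the fMRI priors guide rather than hard-constrain the solution.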

Workflow for fMRI-Informed EEG Source Imaging

Protocol 2: Assessing EEG-fMRI Concordance in Epileptic Spike Analysis

This protocol provides a quantitative method to compare generators of interictal spikes identified by EEG and fMRI [21].

- Data Acquisition & Preprocessing: Acquire EEG and fMRI data simultaneously. Preprocess both datasets and coregister them to the same anatomical space (e.g., the cortical surface) [21].

- fMRI Analysis: Perform an event-related fMRI analysis using the onset of spikes (marked on the EEG) as events. Identify clusters of significant BOLD response (activation/deactivation) and interpolate them onto the cortical surface [21].

- EEG Source Localization: Estimate distributed EEG sources of the averaged spikes on the cortical surface using a method like Maximum Entropy on the Mean (MEM), independent of the fMRI results [21].

- Quantify MEM-Concordance: For each fMRI cluster, calculate:

  - The minimal geodesic distance between its local extremum and that of the EEG source.

  - The spatial overlap between the extent of the fMRI cluster and the EEG source [21].

- Calculate fMRI-Relevance Index (α): For each fMRI cluster, estimate the α index to test if constraining the EEG source to that cluster significantly explains the scalp EEG data. A positive α supports concordance [21].

The Scientist's Toolkit

Table 2: Key Research Reagents & Computational Tools

| Item / Resource | Function / Application | Key Details |

|---|---|---|

| Parametric Empirical Bayesian (PEB) Framework | A flexible framework for EEG source imaging that allows incorporation of various priors, including those from fMRI [23]. | Enables the use of fMRI-derived Temporally Coherent Networks (TCNs) as covariance priors to guide the EEG inverse solution [23]. |

| Independent Component Analysis (ICA) | A data-driven method for separating mixed signals into statistically independent components [23] [20]. | Used to extract TCNs from fMRI data [23] and to isolate and remove artifacts (e.g., eye blinks, muscle noise) from EEG data [20]. |

| Domain Adaptation (DA) Algorithms | Enhances the generalization of neural decoders across subjects or sessions by minimizing distributional differences in the data [8]. | Categorized into instance-based, feature-based, and model-based approaches, with growing use in combination with deep learning [8]. |

| Adaptive Decoding Algorithm (ADA) | Decodes neural signals when the timing of cognitive events is variable and unknown across trials [14]. | A nonparametric method that, for each trial, estimates the temporal window most likely to contain task-relevant signals before decoding [14]. |

| Multimodal Dataset (e.g., from [24]) | Provides a benchmark for developing and testing new analytical methods, particularly for understanding the relationship between different neural signals. | Includes single neurons, local field potentials (LFP), intracranial EEG (iEEG), and fMRI from the same participants during a continuous naturalistic task (movie watching) [24]. |

The Role of Adaptive Decoding in Brain-Computer Interfaces (BCIs) and Neuroprosthetics

Frequently Asked Questions (FAQs)

1. What is adaptive decoding and why is it necessary in BCIs? Adaptive decoding refers to algorithms that can update their parameters over time to compensate for changes in neural signals, known as non-stationarity. These changes can be caused by factors like neuronal plasticity, learning, electrode instability, or tissue response around the implant. Without adaptation, a decoder's performance will degrade, making long-term, reliable BCI operation impossible [25].

2. What are the main technical approaches to adaptive decoding? Research has explored several methodological approaches, which can be broadly categorized [8]:

- Model-based Adaptation: Tuning the parameters of a pre-defined model (e.g., a Kalman Filter) using recent data.

- Feature-based Adaptation: Transforming neural features to align distributions from different sessions or subjects.

- Instance-based Adaptation: Intelligently weighting or selecting training samples to improve model generalization.

3. My BCI performance drops significantly across days. How can adaptive decoding help? Cross-session and cross-subject performance drops are a primary challenge that adaptive decoding aims to solve. Techniques like domain adaptation (DA) can rapidly transfer knowledge from previous, large datasets (source domain) to new sessions or subjects (target domain) with minimal new data. For instance, you can pre-train a model on source data and then fine-tune it with a small amount of target subject data, significantly reducing calibration time and maintaining accuracy [8].

4. Are there adaptive methods that don't require knowing the user's intended movement? Yes. Self-training methods like Bayesian regression updates can use the decoder's own output as a substitute for the true intended movement to periodically update the neuronal tuning model. This allows the decoder to adapt without external training signals or assumptions about the user's goals [25].

5. What is the role of deep learning in modern adaptive decoders? Deep learning models, such as Recurrent Neural Networks (RNNs) and Transformers, can automatically learn complex spatiotemporal features from neural data. Their architectures are naturally suited for sequence decoding (e.g., of sentences) and can be combined with domain adaptation techniques to create powerful, end-to-end adaptive decoders that generalize well across sessions [8] [26].

Troubleshooting Guide: Common Experimental Issues

| Problem Area | Specific Issue | Potential Causes | Recommended Solutions |

|---|---|---|---|

| Decoder Performance | High error rate on new days or with new subjects. | Distribution shift (non-stationarity) in neural data; poor generalizability of static decoder [8]. | Implement a Domain Adaptation (DA) strategy. Fine-tune a pre-trained model with a small amount of new subject/session data [8]. |

| Decoder Performance | Gradual performance decay within a single long session. | Within-session neuronal tuning changes or recording instability [25]. | Use a recursive self-training algorithm (e.g., Bayesian regression update) that updates decoder parameters every few minutes using recent decoder outputs [25]. |

| Signal Acquisition & Quality | Poor decoding accuracy despite a previously good model. | Electrode impedance changes; poor contact; neuronal recording instability; environmental noise [25]. | Verify signal quality and electrode connections. For invasive systems, check spike waveform stability. For non-invasive systems, ensure proper impedance (<2000 kOhms is a common target) [27]. |

| Real-Time Operation | Unstable or jittery control of a neuroprosthetic. | High latency or inaccurate decoding at each time step. | Employ a state-space model like an Unscented Kalman Filter (UKF). It uses a tuning model and a kinematics model to smooth predictions and is compatible with adaptive updates [25]. |

Experimental Protocols & Performance Data

Protocol 1: Bayesian Regression Self-Training for Motor Decoding

This methodology is designed for continuous adaptation in closed-loop motor BCI experiments [25].

- Initial Decoder Setup: Begin with an Unscented Kalman Filter (UKF) decoder. Its parameters are initially fit using calibration data (e.g., neural activity recorded during attempted or actual arm movements).

- Closed-Loop Operation: The user controls a cursor or prosthetic device using the BCI.

- Batch-Mode Update:

  - Every 2 minutes, collect a batch of recent neural firing rates and the corresponding decoder outputs (e.g., predicted kinematic states).

  - Use Bayesian linear regression to compute new tuning parameters. The previous parameters serve as the prior, and the new data provides the likelihood to form the posterior.

  - A transition model is applied to the parameters to account for potential drift.

  - This process can be formulated to allow for the temporary omission or addition of neurons from the population.
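The batch-mode update can be sketched as a conjugate Bayesian linear regression. The version below is a simplified illustration, not the implementation from [25]: it assumes a linear tuning model, a single shared noise variance, and a random-walk transition model that simply inflates the prior covariance. The function `self_training_update` and its parameters are hypothetical names.

```python
import numpy as np

def self_training_update(mean, cov, decoded_states, rates,
                         noise_var=1.0, drift_var=1e-3):
    """One batch update of linear tuning parameters.

    mean: (n_features, n_neurons) previous tuning parameters (the prior mean)
    cov: (n_features, n_features) previous parameter covariance
    decoded_states: (n_samples, n_features) decoder outputs used as regressors
    rates: (n_samples, n_neurons) firing rates recorded over the same batch
    """
    # Transition model (assumed random walk): widen the prior to allow drift
    prior_cov = cov + drift_var * np.eye(cov.shape[0])
    prior_prec = np.linalg.inv(prior_cov)
    X = decoded_states
    post_prec = prior_prec + X.T @ X / noise_var
    post_cov = np.linalg.inv(post_prec)
    post_mean = post_cov @ (prior_prec @ mean + X.T @ rates / noise_var)
    return post_mean, post_cov

# Synthetic demo: tuning drifts, and decoder outputs stand in for true velocity
rng = np.random.default_rng(0)
n_neurons = 10
B_old = rng.standard_normal((3, n_neurons))  # rows: baseline, vx, vy
B_new = B_old + 0.5                          # tuning after drift
true_vel = rng.standard_normal((200, 2))
decoded_vel = true_vel + 0.1 * rng.standard_normal((200, 2))
X_true = np.column_stack([np.ones(200), true_vel])
rates = X_true @ B_new + rng.standard_normal((200, n_neurons))
X_dec = np.column_stack([np.ones(200), decoded_vel])
mean, cov = self_training_update(B_old, 0.1 * np.eye(3), X_dec, rates)
```

Because the regressors are the decoder's own outputs rather than the true intended movement, the quality of each update depends on how accurate the decoder already is.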

Quantitative Performance: In offline reconstructions with non-human primates, this self-training update significantly improved the accuracy of hand trajectory reconstructions compared to a static decoder. In real-time closed-loop experiments spanning 29 days, the adaptive updates were crucial for maintaining control accuracy without requiring knowledge of the user's intended movements [25].

Protocol 2: Domain-Adaptive Speech Decoding for a Speech Neuroprosthesis

This protocol outlines the process for decoding attempted speech from a person with paralysis [26].

- Training Data Collection: The user attempts to speak 260-480 sentences, cued by a computer monitor. Intracortical neural activity is recorded from relevant speech motor areas (e.g., ventral premotor cortex).

- Model Architecture & Training: A Recurrent Neural Network (RNN) is trained to predict sequences of phonemes from neural data. To handle non-stationarity:

  - Use unique input layers for each day to account for across-day changes.

  - Implement rolling feature adaptation to account for within-day changes.

- Language Model Integration: The RNN's output (phoneme probabilities) is combined with a statistical language model to infer the most probable word sequence.

- Real-Time Evaluation: The user attempts to speak new, held-out sentences. Decoded words appear on the screen in real-time.
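One plausible reading of the rolling feature adaptation step is a rolling z-score, in which each time bin is normalized with statistics from a trailing window. The sketch below makes that assumption explicit. The window length and the function name `rolling_zscore` are illustrative and not taken from [26].

```python
import numpy as np

def rolling_zscore(features, window=200, eps=1e-6):
    """Z-score each time bin using the mean and std of the trailing window.

    features: (n_bins, n_features). The window includes the current bin.
    """
    out = np.zeros_like(features, dtype=float)
    for t in range(features.shape[0]):
        past = features[max(0, t - window):t + 1]
        out[t] = (features[t] - past.mean(axis=0)) / (past.std(axis=0) + eps)
    return out

# Synthetic demo: a slow baseline drift that the normalization largely removes
rng = np.random.default_rng(0)
T = 2000
drift = np.linspace(0.0, 5.0, T)[:, None]
raw = rng.standard_normal((T, 4)) + drift
normalized = rolling_zscore(raw, window=200)
```

A shorter window tracks faster drift but makes the normalization noisier, so the window length is a tuning choice.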

Quantitative Performance: This adaptive approach enabled a speech BCI to achieve a 23.8% word error rate on a 125,000-word vocabulary at a speed of 62 words per minute, demonstrating the feasibility of large-vocabulary decoding [26].
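For readers unfamiliar with the metric, word error rate is the word-level edit distance (substitutions, insertions, and deletions) divided by the number of words in the reference sentence. The sketch below is a generic implementation with a made-up sentence pair. It is not the evaluation code used in [26].

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length.

    Assumes a non-empty reference sentence.
    """
    ref, hyp = reference.split(), hypothesis.split()
    prev = list(range(len(hyp) + 1))  # distances from an empty reference
    for i, r in enumerate(ref, 1):
        cur = [i]
        for j, h in enumerate(hyp, 1):
            cur.append(min(prev[j] + 1,              # deletion
                           cur[j - 1] + 1,           # insertion
                           prev[j - 1] + (r != h)))  # substitution or match
        prev = cur
    return prev[-1] / len(ref)

# One substitution ("some" -> "sum") and one deletion ("please"): 2 / 6
wer = word_error_rate("i would like some water please",
                      "i would like sum water")
```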

Table 1: Performance Comparison of Adaptive Decoding Algorithms

| Adaptive Method / Study | Application | Key Metric | Reported Performance |

|---|---|---|---|

| Bayesian Self-Training [25] | Motor Control (Non-human primate) | Control Accuracy Maintenance | Maintained accuracy over 29 days in closed-loop experiments. |

| RNN with Daily Adaptation [26] | Speech Decoding (Human Clinical Trial) | Word Error Rate (125k vocabulary) | 23.8% |

| RNN with Daily Adaptation [26] | Speech Decoding (Human Clinical Trial) | Decoding Speed | 62 words per minute |

| Domain Adaptation (DA) Survey [8] | Cross-Subject/Session EEG | Generalization Accuracy | Enabled effective knowledge transfer, reducing need for extensive per-subject calibration. |

The Scientist's Toolkit: Research Reagents & Materials

Table 2: Essential Components for Adaptive BCI Research

| Item | Function in Research | Example / Note |

|---|---|---|

| Intracortical Microelectrode Arrays | Records action potentials (spikes) from populations of neurons. High-density arrays are crucial for decoding complex intentions. | e.g., 96-micro-wire arrays implanted in motor and somatosensory cortex [25] [26]. |

| Unscented Kalman Filter (UKF) | A state-space decoder for predicting continuous kinematic variables (e.g., cursor position) from neural activity. Serves as a base decoder for some adaptive methods [25]. | Preferred over standard Kalman filters for better handling of non-linear dynamics [25]. |

| Recurrent Neural Network (RNN) | A deep learning model ideal for decoding temporal sequences, such as phonemes in speech or movement trajectories. | Can be combined with custom input layers and rolling adaptation to combat non-stationarity [26]. |

| Bayesian Linear Regression | The core statistical engine for probabilistic parameter updates in self-training paradigms. | Combines prior knowledge (old parameters) with new evidence (recent data) in a principled way [25]. |

| Domain Adaptation (DA) Framework | A set of computational techniques to minimize distribution differences between training (source) and deployment (target) data domains. | Categorized into instance-based, feature-based, and model-based approaches [8]. |

Methodological Workflows

The following diagram illustrates the logical workflow of a self-training adaptive decoder, a key method for handling non-stationary signals.

This diagram outlines a high-level workflow for implementing a domain-adaptive decoding strategy, particularly useful for cross-session or cross-subject applications.

Core Algorithms and Architectures: Methodological Innovations and Applications

Bayesian Adaptive Methods for Dynamic Probability Updating

Frequently Asked Questions (FAQs)

Q1: What are the core advantages of using Bayesian methods over traditional frequentist approaches for decoding non-stationary neural signals? Bayesian methods provide a dynamic framework that integrates prior knowledge and continuously updates probabilistic beliefs with incoming data. This is crucial for non-stationary neural signals, as it allows the model to adapt to changes in signal properties over time or across sessions [28] [29] [30]. Unlike frequentist methods that often provide a single-point estimate, Bayesian approaches output probability distributions, offering a measure of uncertainty for each prediction. This is vital for assessing the reliability of decoded neural commands in brain-computer interfaces (BCIs) and for making informed decisions, especially in safety-critical applications like clinical neurotechnology [29] [30].

Q2: How can I quantify and improve the uncertainty estimates from my Bayesian neural decoder? Uncertainty in Bayesian models arises from two main sources: aleatoric (data noise) and epistemic (model uncertainty). You can improve these estimates by:

- Using Bayesian Neural Networks (BNNs): BNNs place probability distributions over weights, naturally capturing model uncertainty. Techniques like Monte Carlo Dropout or Markov Chain Monte Carlo (MCMC) sampling during inference can approximate these posteriors and provide uncertainty estimates [30].

- Proper Prior Selection: The choice of prior distribution significantly impacts uncertainty calibration. Use informative priors based on historical data or expert knowledge to constrain the model, which is particularly useful when data is limited [28] [31].

- Validation: Calibrate your model's uncertainty by checking if the predicted confidence intervals match the empirical frequency of outcomes on held-out validation data.
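The validation check above can be sketched as an empirical coverage test. The example assumes the decoder outputs a Gaussian predictive mean and standard deviation for each sample. The function `interval_coverage` and the synthetic data are illustrative.

```python
import numpy as np
from statistics import NormalDist

def interval_coverage(y_true, pred_mean, pred_std, level=0.9):
    """Fraction of held-out targets inside the central predictive interval."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.645 for level=0.9
    inside = np.abs(y_true - pred_mean) <= z * pred_std
    return inside.mean()

# Synthetic demo: a calibrated decoder versus one that reports half the true std
rng = np.random.default_rng(0)
n = 20000
pred_mean = rng.standard_normal(n)
pred_std = np.full(n, 0.5)
y_true = pred_mean + pred_std * rng.standard_normal(n)
calibrated = interval_coverage(y_true, pred_mean, pred_std)
overconfident = interval_coverage(y_true, pred_mean, 0.5 * pred_std)
```

Coverage well below the nominal level indicates overconfidence, and coverage well above it indicates intervals that are wider than necessary.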

Q3: My decoding performance drops significantly between experimental sessions. What adaptive strategies can I use? This is a classic problem of cross-session domain shift. Several domain adaptation (DA) strategies can help:

- Feature-Based DA: Transform the features from both old (source) and new (target) sessions into a shared space where their distributions are aligned. This minimizes distributional differences caused by changes in electrode impedance or neural signal properties [8].

- Model-Based DA: Fine-tune a decoder trained on source session data using a small amount of new target session data. This allows the model to adapt its parameters quickly to the new signal characteristics without requiring a full re-training from scratch [8].

- Instance-Based DA: Re-weight the importance of data points from the source session, prioritizing those that are most similar to the new target session data during model training [8].

Q4: What are the best practices for preprocessing neural data to enhance Bayesian decoding under low signal-to-noise ratio (SNR) conditions? Effective artifact removal is essential for improving SNR before decoding.

- Adaptive Spatial Filtering: For intracortical signals, use advanced methods like the weighted Common Average Reference (CAR) filter with a Kalman filter to adaptively estimate and remove common noise across channels. This has been shown to improve force decoding accuracy from local field potentials (LFPs) by 33% compared to standard CAR filters [32].

- Artifact Correction vs. Rejection: For EEG, studies show that correcting artifacts using Independent Component Analysis (ICA) is often sufficient and preferable to outright rejecting contaminated trials. While the combination of correction and rejection does not significantly boost decoding accuracy in most cases, correction is still recommended to minimize artifact-related confounds that could artificially inflate performance metrics [33].

Troubleshooting Guides

Issue 1: Poor Model Adaptation to Rapidly Changing Neural States

Symptoms: The decoder's performance lags when the subject's cognitive state, behavior, or neural patterns change quickly. The model seems to be "stuck" on previous statistics.

Diagnosis and Solutions:

| Potential Cause | Diagnostic Checks | Recommended Solution |

|---|---|---|

| Insufficiently Informative Prior | Check if the prior distribution is too diffuse ("uninformative"), causing the model to learn slowly from new data. | Use a more informative prior based on data from the initial calibration or previous sessions. Implement a "forgetting" mechanism by using a fading-memory likelihood that places more weight on recent observations [28]. |

| Fixed, Inadequate Model Structure | The model lacks the capacity to capture the new neural dynamics. | Employ a Bayesian Adaptive Regression framework. Define a model that can dynamically switch between different regimes or states. The hidden state (e.g., the intended movement direction) can be inferred using recursive Bayesian filters like the Kalman filter or particle filters, which are designed for tracking dynamic states [14] [32]. |
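One common way to realize a fading-memory mechanism is recursive least squares with an exponential forgetting factor, sketched below. This is a generic illustration under a linear decoding assumption, not the specific method of [28]. The class name and parameters are hypothetical.

```python
import numpy as np

class FadingMemoryRegressor:
    """Recursive least squares with exponential forgetting."""

    def __init__(self, n_features, forgetting=0.98, init_var=100.0):
        self.w = np.zeros(n_features)
        self.P = init_var * np.eye(n_features)
        self.lam = forgetting  # 1.0 means no forgetting

    def update(self, x, y):
        Px = self.P @ x
        gain = Px / (self.lam + x @ Px)
        self.w = self.w + gain * (y - x @ self.w)
        # Dividing by lam re-inflates uncertainty so new data keeps its weight
        self.P = (self.P - np.outer(gain, Px)) / self.lam
        return self.w

# Synthetic demo: the true weights switch abruptly halfway through
rng = np.random.default_rng(0)
w_before, w_after = np.array([1.0, -1.0]), np.array([-1.0, 2.0])
static = FadingMemoryRegressor(2, forgetting=1.0)
fading = FadingMemoryRegressor(2, forgetting=0.95)
for t in range(1000):
    x = rng.standard_normal(2)
    w_true = w_before if t < 500 else w_after
    y = x @ w_true + 0.1 * rng.standard_normal()
    static.update(x, y)
    fading.update(x, y)
err_static = np.linalg.norm(static.w - w_after)
err_fading = np.linalg.norm(fading.w - w_after)
```

With a forgetting factor of 1.0 the estimate stays close to an average of the old and new regimes, which matches the "stuck" symptom described above. Values below 1.0 trade steady-state noise for faster tracking.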

Experimental Workflow for Dynamic Tracking: The following diagram outlines a recursive Bayesian filtering approach for tracking a continuously updating neural state.

Issue 2: Decoder Performance Degradation Over Long Time Scales (e.g., Days/Weeks)

Symptoms: The decoder trained on day 1 performs poorly when applied on day 7, even with initial recalibration. This is often due to non-stationarities in the neural code.

Diagnosis and Solutions:

| Potential Cause | Diagnostic Checks | Recommended Solution |

|---|---|---|

| Covariate Shift | Compare the feature distributions (e.g., mean power in specific frequency bands) between the initial training session and the new degraded session. | Apply Domain Adaptation (DA) techniques. As highlighted in the FAQs, use feature-based DA to find a domain-invariant feature space. This allows a decoder trained on source domain data (Day 1) to generalize to a target domain (Day 7) without extensive re-labeling [8]. |

| Inadequate Handling of Neural Sparsity and Variability | The sampling or model is not adapting to the changing sparsity and information content of the neural signal. | Implement an adaptive sampling rate allocation strategy. Inspired by methods in compressed sensing, you can segment the neural feature space and allocate more "decoding resources" (e.g., model complexity) to blocks of data with higher information content (sparsity), thereby improving the efficiency and robustness of the overall decoding framework [34]. |

Protocol for Feature-Based Domain Adaptation:

- Data Preparation: Collect labeled neural data from your initial session (source domain, $D_s$) and a small amount of (potentially unlabeled) data from the new session (target domain, $D_t$).

- Feature Extraction: Extract features (e.g., power spectral density, spike rates) from both $D_s$ and $D_t$.

- Domain Alignment: Train a feature transformation network or apply an algorithm (e.g., Correlation Alignment CORAL, Maximum Mean Discrepancy MMD minimization) to project features from both domains into a new, domain-invariant feature space. The goal is to make $P(\text{features}_s) \approx P(\text{features}_t)$ [8].

- Decoder Training & Application: Train your Bayesian decoder on the transformed source domain features and their labels. This decoder should then be applied directly to the transformed target domain features for inference.
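The domain alignment step can be illustrated with a small CORAL-style sketch that whitens the source features and re-colors them with the target covariance. Two details are assumptions added for this example: the feature means are aligned as well, and a small ridge term `eps` keeps the covariance matrices invertible. The function names are hypothetical.

```python
import numpy as np

def _sym_power(C, p):
    """Raise a symmetric positive-definite matrix to the power p."""
    vals, vecs = np.linalg.eigh(C)
    return (vecs * vals ** p) @ vecs.T

def coral(Xs, Xt, eps=1e-6):
    """Align source features to the target's second-order statistics.

    Xs: (n_source, n_features); Xt: (n_target, n_features). No labels needed.
    """
    d = Xs.shape[1]
    Cs = np.cov(Xs, rowvar=False) + eps * np.eye(d)
    Ct = np.cov(Xt, rowvar=False) + eps * np.eye(d)
    # Whiten with the source covariance, then re-color with the target one
    transform = _sym_power(Cs, -0.5) @ _sym_power(Ct, 0.5)
    return (Xs - Xs.mean(axis=0)) @ transform + Xt.mean(axis=0)

# Synthetic demo: the "new session" has a different scale, mixing, and offset
rng = np.random.default_rng(0)
Xs = rng.standard_normal((500, 5))
Xt = rng.standard_normal((400, 5)) @ rng.standard_normal((5, 5)) + 3.0
Xs_aligned = coral(Xs, Xt)
```

In this variant the decoder is trained on `Xs_aligned` with the source labels and applied to the untransformed target features, which differs slightly from projecting both domains into a new shared space.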

Issue 3: High Computational Cost of Bayesian Inference in Real-Time Applications

Symptoms: The decoding algorithm cannot run in real-time due to the computational burden of sampling from posterior distributions.

Diagnosis and Solutions:

| Potential Cause | Diagnostic Checks | Recommended Solution |

|---|---|---|

| Intractable Posterior | Using exact inference for complex models, leading to slow performance. | Replace exact inference with approximate methods. Use Variational Inference (VI) to approximate the true posterior with a simpler, tractable distribution. This is often faster than MCMC sampling and more suitable for real-time BCIs [30]. |

| Overly Complex Model | The model has too many parameters or layers for the available hardware. | Use a Bayesian Neural Network (BNN) with simplified architecture. Alternatively, employ techniques like adaptive layer parallelism. While originally proposed for LLMs, the core idea is relevant: for simpler decoding decisions, use intermediate network layers to generate predictions, bypassing the full computational graph and speeding up inference without sacrificing output consistency [35]. |

Protocol: Evaluating Bayesian Adaptive Filtering for Kinematic Decoding

This protocol details how to implement and test a Bayesian adaptive filter for decoding continuous movement parameters (e.g., hand velocity) from neural signals.

1. Hypothesis: A Bayesian adaptive filter (e.g., Kalman filter) will provide more accurate and robust decoding of hand kinematics from motor cortical signals compared to a standard Wiener filter, especially in the presence of non-stationary neural tuning.

2. Materials and Reagents:

- Neural Data: Intracortical recordings from a multi-electrode array (e.g., in primary motor cortex). Include both Single-Unit Activity (SUA) and Local Field Potentials (LFP) [32].

- Behavioral Data: Simultaneously recorded hand kinematics (position, velocity, force) at a high temporal resolution.

- Preprocessing Tools: Software for spike sorting, LFP filtering, and artifact removal (e.g., using the adaptive weighted CAR method) [32].

3. Detailed Methodology:

Preprocessing:

- Apply the weighted Common Average Reference (CAR) method with a Kalman filter to remove common noise and artifacts from the intracortical channels [32].

- Extract neural features: For SUA, use binned spike counts. For LFP, extract signal power in specific frequency bands (e.g., beta: 13-30 Hz, gamma: 70-200 Hz) over sliding time windows.

- Smooth and standardize all neural features and kinematic data.

Model Definition (Kalman Filter):

- State Transition Model: $x_t = A x_{t-1} + w_t$, where $x_t$ is the kinematic state (e.g., 2D velocity) and $w_t$ is process noise.

- Observation Model: $y_t = C x_t + q_t$, where $y_t$ is the vector of neural features and $q_t$ is observation noise.

- The matrices $A$ (state transition) and $C$ (observation) are learned from a training dataset via maximum likelihood estimation.

Bayesian Recursive Inference:

- Prediction Step: Predict the next state: $p(x_t \mid y_{1:t-1}) = \mathcal{N}(x_t \mid A \mu_{t-1},\; A \Sigma_{t-1} A^\top + W)$, where $W$ is the covariance of the process noise $w_t$.

- Update Step: Update the belief with new neural data $y_t$: $p(x_t \mid y_{1:t}) = \mathcal{N}(x_t \mid \mu_t, \Sigma_t)$, where the mean $\mu_t$ and covariance $\Sigma_t$ are updated using the standard Kalman gain equations. This update is the application of Bayes' rule.

Validation:

- Use a held-out test dataset not used for model fitting.

- Quantify decoding performance using the Coefficient of Determination (R²) between the decoded and actual kinematics [32].
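The model definition, recursive inference, and validation steps can be sketched end to end as follows. This is a minimal illustration on simulated data: the least-squares fits stand in for maximum likelihood estimation under Gaussian noise, `Q` denotes the observation noise covariance (a symbol introduced here), and all function names are hypothetical.

```python
import numpy as np

def fit_kalman(X, Y):
    """Fit A, W, C, Q by least squares. X: (T, n_states); Y: (T, n_features)."""
    X0, X1 = X[:-1], X[1:]
    A = np.linalg.lstsq(X0, X1, rcond=None)[0].T
    W = np.cov(X1 - X0 @ A.T, rowvar=False)  # process noise covariance
    C = np.linalg.lstsq(X, Y, rcond=None)[0].T
    Q = np.cov(Y - X @ C.T, rowvar=False)    # observation noise covariance
    return A, W, C, Q

def kalman_decode(Y, A, W, C, Q, mu0, Sigma0):
    """Run the prediction and update steps over a sequence of observations."""
    mu, Sigma = mu0, Sigma0
    decoded = np.zeros((Y.shape[0], mu0.shape[0]))
    for t, y in enumerate(Y):
        mu_pred = A @ mu                                  # prediction step
        Sigma_pred = A @ Sigma @ A.T + W
        S = C @ Sigma_pred @ C.T + Q                      # innovation covariance
        K = Sigma_pred @ C.T @ np.linalg.inv(S)           # Kalman gain
        mu = mu_pred + K @ (y - C @ mu_pred)              # update step
        Sigma = (np.eye(len(mu)) - K @ C) @ Sigma_pred
        decoded[t] = mu
    return decoded

def r2_score(actual, decoded):
    """Coefficient of determination for each kinematic dimension."""
    ss_res = ((actual - decoded) ** 2).sum(axis=0)
    ss_tot = ((actual - actual.mean(axis=0)) ** 2).sum(axis=0)
    return 1.0 - ss_res / ss_tot

# Simulated 2D velocity and 20 standardized neural features
rng = np.random.default_rng(0)
T, n_states, n_feat = 2000, 2, 20
A_true = 0.95 * np.eye(n_states)
C_true = rng.standard_normal((n_feat, n_states))
X = np.zeros((T, n_states))
for t in range(1, T):
    X[t] = A_true @ X[t - 1] + np.sqrt(0.1) * rng.standard_normal(n_states)
Y = X @ C_true.T + rng.standard_normal((T, n_feat))

train, test = slice(0, 1500), slice(1500, T)  # held-out test segment
A, W, C, Q = fit_kalman(X[train], Y[train])
decoded = kalman_decode(Y[test], A, W, C, Q, np.zeros(n_states), np.eye(n_states))
r2 = r2_score(X[test], decoded)
```

To test the hypothesis under non-stationarity, the same pipeline would be rerun with the tuning matrix drifting over time, and the resulting $R^2$ compared against a Wiener filter baseline.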

The table below summarizes key results from selected studies on adaptive methods in neural decoding and related fields.

Table 1: Performance of Adaptive Methods in Signal Decoding and Clinical Trials

| Application Area | Method | Key Performance Metric | Result | Source |

|---|---|---|---|---|

| Force Decoding (Rat Motor Cortex) | Weighted CAR + Kalman Filter (Artifact Removal) | Decoding Accuracy (R² value) | 33% improvement in R² compared to standard CAR filters [32]. | [32] |

| EEG Decoding (Various ERP Paradigms) | ICA Artifact Correction + Artifact Rejection | Impact on SVM/LDA Decoding Performance | No significant performance improvement in vast majority of cases. Recommendation: Use artifact correction to avoid confounds, but rejection may be unnecessary [33]. | [33] |

| Clinical Trial Design | Bayesian Adaptive Design | Efficiency (Sample Size, Duration) | Can reduce number of patients exposed to inferior treatments; enables seamless Phase II/III trials, accelerating development [28] [29]. | [28] [29] |

| LLM Decoding (Computer Science) | AdaDecode (Adaptive Layer Parallelism) | Decoding Throughput (Speedup) | Up to 1.73x speedup while guaranteeing output parity with standard decoding [35]. | [35] |

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Components for Bayesian Adaptive Decoding Research

| Item | Function in Research | Specific Example / Note |

|---|---|---|

| Multi-Electrode Array | Records rich, high-resolution information about kinematic and kinetic states from multiple neurons simultaneously. Essential for obtaining the high-dimensional input for decoders [32]. | Utah array, Neuropixels probe. |

| Bayesian Neural Network (BNN) | A type of neural network that provides uncertainty estimates for its predictions. Crucial for assessing the reliability of decoded commands in safety-critical BCI applications [30]. | Can be implemented using libraries like PyTorch or TensorFlow with probability distributions over weights. |

| Domain Adaptation Algorithm | Enhances decoder generalizability across subjects or sessions by minimizing distributional differences in neural data. Addresses the problem of non-stationarity and inter-subject variability [8]. | Methods include feature-based (e.g., MMD minimization) and model-based (fine-tuning) approaches. |

| Markov Chain Monte Carlo (MCMC) Sampler | A computational method for approximating complex posterior distributions in Bayesian inference. Used when exact inference is intractable [30]. | Software: Stan, PyMC. Can be computationally intensive for real-time use. |

| Variational Inference (VI) Engine | An alternative, often faster, method for approximate Bayesian inference. Optimizes a simpler distribution to closely match the true posterior. More suitable for real-time BCI than MCMC in many cases [30]. | Often implemented automatically in probabilistic programming libraries. |

| Adaptive Spatial Filter | Removes common noise and artifacts from neural signals in a data-driven way, improving the signal-to-noise ratio before decoding [32]. | Weighted CAR filter with Kalman adaptation is an example for intracortical data [32]. |

| Kalman Filter / Particle Filter | The core algorithmic engine for recursive Bayesian state estimation. Used for dynamically tracking continuously varying neural states or kinematic parameters [14] [32]. | Kalman filter is optimal for linear Gaussian models; particle filters handle more complex, non-linear models. |

Transformer-Based Models with Adaptive Attention Mechanisms

Frequently Asked Questions

Q1: Why does my transformer model fail to decode non-stationary neural signals effectively?

Standard transformer architectures assume temporal consistency in input signals, which is often violated in neural data. The non-stationarity of neural signals—caused by factors like neuronal property variance, recording degradation, and attention fluctuations—severely limits traditional self-attention mechanisms that lack explicit frequency-domain modeling capabilities [36] [2] [37]. To address this, implement adaptive frequency-domain attention mechanisms that can dynamically emphasize fault-related frequency components while preserving long-range temporal dependencies [36].

Q2: What causes performance degradation when applying transformers to motor cortex decoding tasks?

Performance degradation typically stems from a fundamental mismatch between a fixed-parameter model's implicit assumption of stationarity and the inherent non-stationarity of neural motor signals. As observed in primate studies, neural firing patterns in motor cortex vary significantly over time: some neurons show increasing mean firing rates while kinematic parameters remain consistent [2]. This temporal variability creates a moving target for fixed-parameter models. Consider implementing adaptive Kalman filters or recurrent neural network decoders that update their parameters as new observations become available [2] [37].

Q3: How can I improve my model's robustness to recording degradation in chronic intracortical recordings?

Chronic recording degradation manifests as decreased mean firing rates and reduced numbers of isolated units over time due to factors like glial scarring [37]. Implement dual-path extraction with gated residual enhancement (GRE-DB modules) that maintain performance under signal degradation conditions [36]. Additionally, employ retraining schemes where decoders are periodically updated with new session data rather than relying solely on initial training, as this approach maintains performance when neural preferred directions change over time [37].

Q4: What computational optimizations are available for attention mechanisms in long neural signal sequences?

For long sequences of neural data, consider optimized attention implementations such as PyTorch's scaled_dot_product_attention (SDPA) with the FlashAttention-2 backend, memory-efficient attention, or cuDNN attention [38]. These fused kernels significantly reduce memory usage and computational overhead while maintaining accuracy. Architectural choices made during training, such as sliding window attention, carry over to inference and therefore shape the deployed model's capabilities [39] [38].
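As a rough illustration of sliding window attention, the NumPy sketch below builds a band mask and applies it in a reference scaled dot-product attention. It shows the masking logic only: unlike the fused kernels discussed above, it still materializes the full score matrix, so it saves no memory. The function names and sizes are illustrative assumptions.

```python
import numpy as np

def sliding_window_mask(seq_len, window):
    """Boolean mask: position i may attend to j only if |i - j| <= window."""
    idx = np.arange(seq_len)
    return np.abs(idx[:, None] - idx[None, :]) <= window

def attention(q, k, v, mask=None):
    """Reference scaled dot-product attention: softmax(q k^T / sqrt(d)) v."""
    scores = q @ k.T / np.sqrt(q.shape[-1])
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)    # block disallowed pairs
    scores -= scores.max(axis=-1, keepdims=True)    # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights

rng = np.random.default_rng(0)
q, k, v = (rng.normal(size=(8, 4)) for _ in range(3))
mask = sliding_window_mask(seq_len=8, window=2)
out, weights = attention(q, k, v, mask)
```

Each time step here attends to at most five neighbors (two on each side plus itself), which is what lets optimized kernels skip the masked-out blocks entirely.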

Troubleshooting Guides

Performance Degradation Under Noisy Conditions

Symptoms: Model accuracy decreases significantly under low signal-to-noise ratio (SNR) conditions; failure to detect periodic vibration patterns in bearing fault diagnosis applications [36].

| Problem Cause | Diagnostic Steps | Solution |
|---|---|---|
| Inadequate noise suppression | Analyze model performance across multiple SNR levels; examine attention weight distribution | Integrate ultra-wide convolutional kernels at initial stage to suppress high-frequency noise [36] |
| Limited frequency-domain modeling | Compare time-domain vs frequency-domain feature importance | Implement adaptive frequency-domain attention mechanism to highlight informative diagnostic features [36] |
| Insufficient multiscale feature extraction | Visualize activations at different network depths | Add multiscale dilated convolutions to extract hierarchical temporal features [36] |

Protocol: To diagnose noise-related issues, systematically evaluate your model using the Paderborn University and Case Western Reserve University bearing fault datasets at SNR levels from -4 dB to 10 dB. Compare your model's performance against the ALMFormer architecture, which integrates large-kernel convolution and multiscale CNN structures [36].
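Sweeping SNR levels requires a way to corrupt a clean signal at a chosen SNR. The sketch below adds white Gaussian noise scaled to a target SNR in dB and then measures the SNR actually achieved. The sinusoidal test signal, the 2 dB step, and the helper names are assumptions for illustration; the datasets named above are not loaded here.

```python
import numpy as np

def add_noise_at_snr(signal, snr_db, rng=None):
    """Return signal plus white Gaussian noise at the requested SNR (dB)."""
    rng = np.random.default_rng() if rng is None else rng
    signal = np.asarray(signal, dtype=float)
    signal_power = np.mean(signal ** 2)
    noise_power = signal_power / (10.0 ** (snr_db / 10.0))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=signal.shape)
    return signal + noise

def measured_snr_db(clean, noisy):
    """Empirical SNR of a noisy signal relative to its clean version."""
    noise = noisy - clean
    return 10.0 * np.log10(np.mean(clean ** 2) / np.mean(noise ** 2))

rng = np.random.default_rng(0)
t = np.arange(20000) / 1000.0                # 20 s at an assumed 1 kHz
clean = np.sin(2 * np.pi * 10.0 * t)         # stand-in test signal
snr_levels = range(-4, 11, 2)                # -4 dB to 10 dB
achieved = {snr: measured_snr_db(clean, add_noise_at_snr(clean, snr, rng))
            for snr in snr_levels}
```

In an evaluation loop, each noisy copy would be passed to the model under test and accuracy recorded per SNR level.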

Temporal Misalignment in Neural Decoding

Symptoms: Inconsistent decoding performance across trials; variable latency in detecting movement intention from motor cortex signals [2] [14].

| Problem Cause | Diagnostic Steps | Solution |
|---|---|---|
| Fixed temporal window assumption | Measure trial-to-trial timing variability | Implement Adaptive Decoding Algorithm (ADA) with two-level prediction that estimates optimal temporal windows per trial [14] |
| Non-adaptive model parameters | Track model performance degradation over session time | Develop adaptive Kalman filter or linear regression methods that update parameters with new observations [2] |
| Ignoring neural population dynamics | Analyze changes in preferred directions across neurons | Incorporate population vector models that account for neural property variance [37] |

Protocol: For temporal alignment issues, implement the ADA framework which first estimates, for each trial, the temporal window most likely to reflect task-relevant signals, then decodes test trials based on selection of informative windows. Validate using a model of memory recall based on real perception data [14].

Computational Bottlenecks in Long Sequence Processing

Symptoms: Slow training times; memory overflow with long neural recordings; inability to process full experimental sessions [36] [38].

| Problem Cause | Diagnostic Steps | Solution |
|---|---|---|
| Standard self-attention complexity | Profile computation time by sequence length | Replace with optimized attention kernels (FlashAttention, PyTorch SDPA, TransformerEngine) [38] |
| Inefficient attention computation | Monitor GPU memory usage during training | Implement sliding window attention or dilated attention mechanisms [39] |
| Suboptimal implementation | Compare different attention backends | Use PyTorch SDPA which dynamically selects most efficient backend based on input properties [38] |

Protocol: To address computational limitations, benchmark your attention implementation using a Vision Transformer backbone with sequence lengths matching your neural data. Compare default attention against optimized implementations like FlashAttention-2, which can reduce step time from 370 ms to 242 ms on NVIDIA H100 GPUs [38].

Experimental Protocols

Adaptive Frequency-Domain Attention Implementation

Objective: Enhance transformer robustness to noise in non-stationary neural signals through frequency-domain adaptation [36].

Workflow for Adaptive Frequency Attention

Procedure:

- Input Preparation: Segment neural signals into overlapping windows with 50% overlap, applying pre-emphasis filtering [2]

- Large-Kernel Convolution: Apply ultra-wide convolutional kernels (kernel size ≥ 64) at the initial stage to suppress high-frequency noise

- Multiscale Feature Extraction: Implement parallel dilated convolutions with dilation rates [1, 2, 4, 8] to capture temporal features at multiple timescales

- Gated Residual Enhancement: Process features through GRE-DB module with dual-path extraction and gated downsampling

- Frequency-Domain Attention: Apply adaptive frequency-domain attention to highlight diagnostically relevant frequency components

- Classification: Use feedforward layers with softmax activation for final prediction

Validation: Evaluate using 10-fold cross-validation on the Paderborn University bearing fault dataset, reporting accuracy at SNR levels from -4 dB to 10 dB [36].
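The first and third steps of the procedure above (overlapping segmentation and parallel dilated convolutions) can be sketched in plain NumPy as follows. This is only an illustration of the operations, not the ALMFormer implementation from [36]: the kernel is fixed rather than learned, and the function names and sizes are assumptions.

```python
import numpy as np

def segment(signal, win_len, overlap=0.5):
    """Split a 1-D signal into windows of win_len samples with fractional overlap."""
    hop = int(win_len * (1.0 - overlap))
    n_win = 1 + (len(signal) - win_len) // hop
    return np.stack([signal[i * hop: i * hop + win_len] for i in range(n_win)])

def dilated_conv1d(x, kernel, dilation):
    """Same-length 1-D cross-correlation with a dilated kernel (zero padded)."""
    span = (len(kernel) - 1) * dilation
    pad_left = span // 2
    padded = np.pad(x, (pad_left, span - pad_left))
    out = np.zeros(len(x))
    for j, w in enumerate(kernel):
        out += w * padded[j * dilation: j * dilation + len(x)]
    return out

def multiscale_features(window, kernel, dilations=(1, 2, 4, 8)):
    """Stack one filtered copy of the window per dilation rate."""
    return np.stack([dilated_conv1d(window, kernel, d) for d in dilations])

rng = np.random.default_rng(0)
signal = rng.normal(size=1024)                    # stand-in for one recording channel
windows = segment(signal, win_len=256, overlap=0.5)
smoothing_kernel = np.ones(3) / 3.0               # placeholder for learned weights
features = multiscale_features(windows[0], smoothing_kernel)
```

Larger dilation rates widen the receptive field without adding parameters, which is why the same three-tap kernel covers 3, 5, 9, and 17 samples across the four branches.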

Adaptive Decoding Algorithm for Temporal Variability

Objective: Overcome trial-specific timing uncertainties in cognitive tasks like memory recall or motor imagery [14].

ADA Temporal Window Selection

Procedure:

- Temporal Window Generation: For each trial, generate multiple candidate temporal windows with varying onsets and durations

- Window Scoring: Calculate likelihood scores for each window containing task-relevant signals using nonparametric density estimation

- Optimal Window Selection: Select windows with highest likelihood scores for each trial

- Feature Extraction: Compute time-frequency features (wavelet coefficients, band power) from selected windows

- Model Training: Train decoder using features from optimally selected windows rather than fixed time points

- Cross-Validation: Implement leave-one-trial-out cross-validation to assess generalizability

Validation Metrics: Use controlled simulations with known ground truth timing, plus real MEG data from memory recall experiments [14].
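The window generation and selection steps can be sketched on a small simulation with known ground-truth timing. The scoring function below (mean squared amplitude) is a deliberately simple placeholder: the method in [14] scores windows with nonparametric density estimation, which is not reproduced here. All names, durations, and signal parameters are assumptions.

```python
import numpy as np

def candidate_windows(n_samples, durations, step):
    """Enumerate (onset, duration) pairs that fit inside a trial."""
    return [(onset, dur)
            for dur in durations
            for onset in range(0, n_samples - dur + 1, step)]

def select_window(trial, windows, score_fn):
    """Return the best-scoring window and its score for one trial."""
    scores = [score_fn(trial[on:on + dur]) for on, dur in windows]
    best = int(np.argmax(scores))
    return windows[best], scores[best]

def mean_power(segment):
    # Placeholder score (assumption): mean squared amplitude of the segment.
    return float(np.mean(segment ** 2))

# Simulated trials: a 40-sample burst at a random, trial-specific onset.
rng = np.random.default_rng(0)
n_trials, n_samples, burst_len = 20, 200, 40
burst = 3.0 * np.sin(2 * np.pi * np.arange(burst_len) / 20.0)
true_onsets = rng.integers(0, 32, size=n_trials) * 5
trials = rng.normal(0.0, 0.2, size=(n_trials, n_samples))
for i, onset in enumerate(true_onsets):
    trials[i, onset:onset + burst_len] += burst

windows = candidate_windows(n_samples, durations=(30, 40, 50), step=5)
selected = [select_window(trial, windows, mean_power)[0] for trial in trials]
onset_errors = [abs(on - true) for (on, _), true in zip(selected, true_onsets)]
```

Features for decoder training would then be extracted from each trial's selected window rather than from a fixed latency.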

Non-Stationarity Robustness Evaluation

Objective: Quantify model resilience to neural population changes over chronic recording periods [37].

Simulation Parameters:

| Non-Stationarity Type | Simulation Metric | Manipulation Range |
|---|---|---|
| Recording degradation | Mean Firing Rate (MFR) | 10-100% of baseline [37] |
| Recording degradation | Number of Isolated Units (NIU) | 10-100% of baseline [37] |
| Neuronal property variance | Preferred Directions (PDs) | 0-180° rotation [37] |

Procedure:

- Baseline Establishment: Train models on initial neural recordings with original statistics

- Controlled Degradation: Systematically vary MFR, NIU, and PDs using population vector models

- Performance Tracking: Evaluate decoder performance (correlation coefficient, R²) at each degradation level

- Comparison Framework: Test multiple decoders (OLE, Kalman Filter, RNN) under both static and retrained schemes

- Breakpoint Analysis: Identify degradation thresholds where performance drops significantly
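A minimal sketch of the controlled-degradation step, assuming idealized noise-free cosine tuning and a population vector readout. It scales firing rates (MFR), optionally drops units (NIU), and rotates preferred directions (PDs), then compares a static decoder that keeps the original PDs with one that is given the updated PDs. The tuning parameters and function names are illustrative and not taken from [37].

```python
import numpy as np

def simulate_rates(directions, preferred, baseline, depth):
    """Cosine tuning: rate = baseline + depth * cos(theta - PD), floored at 0."""
    rates = baseline + depth * np.cos(directions[:, None] - preferred[None, :])
    return np.clip(rates, 0.0, None)

def degrade(preferred, baseline, depth, mfr_scale=1.0, niu_fraction=1.0,
            pd_rotation_deg=0.0, rng=None):
    """Apply MFR scaling, unit loss, and PD rotation to a simulated population."""
    rng = np.random.default_rng() if rng is None else rng
    n_units = len(preferred)
    n_keep = max(1, int(round(niu_fraction * n_units)))
    keep = np.sort(rng.choice(n_units, size=n_keep, replace=False))
    new_pd = preferred[keep] + np.deg2rad(pd_rotation_deg)
    return keep, new_pd, baseline * mfr_scale, depth * mfr_scale

def population_vector(rates, preferred, baseline):
    """Decode direction as the angle of the rate-weighted sum of PD unit vectors."""
    w = rates - baseline
    return np.arctan2(w @ np.sin(preferred), w @ np.cos(preferred))

def angular_error_deg(decoded, true):
    """Absolute angular difference in degrees, wrapped to [0, 180]."""
    return np.rad2deg(np.abs(np.angle(np.exp(1j * (decoded - true)))))

pd0 = np.linspace(0.0, 2 * np.pi, 16, endpoint=False)     # evenly spaced PDs
theta = np.linspace(-np.pi, np.pi, 12, endpoint=False)    # movement directions
keep, pd1, b1, d1 = degrade(pd0, baseline=20.0, depth=10.0, mfr_scale=0.5,
                            pd_rotation_deg=30.0, rng=np.random.default_rng(0))
rates = simulate_rates(theta, pd1, b1, d1)

static_err = angular_error_deg(population_vector(rates, pd0[keep], 20.0), theta)
retrained_err = angular_error_deg(population_vector(rates, pd1, b1), theta)
```

In this idealized setting the static decoder's error equals the PD rotation, while the decoder given the updated PDs recovers the direction; sweeping `mfr_scale`, `niu_fraction`, and `pd_rotation_deg` over the ranges in the table would produce the degradation curves used for breakpoint analysis.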

The Scientist's Toolkit

Research Reagent Solutions

| Reagent/Tool | Function | Application Note |
|---|---|---|
| ALMFormer Architecture | Integrates adaptive frequency attention with large-kernel convolution | Optimal for bearing fault diagnosis under strong noise; achieves superior recognition accuracy at various SNRs [36] |
| Adaptive Decoding Algorithm (ADA) | Nonparametric method for trial-variable neural responses | Specifically designed for cognitive processes with uncertain timing (imagery, memory recall) [14] |
| Recurrent Neural Network Decoders | Nonlinear sequential modeling of neural dynamics | Outperforms OLE and Kalman filters under small recording degradation; sensitive to serious signal degradation [37] |
| PyTorch SDPA | Optimized attention computation with multiple backends | Reduces training step time by ~35% on H100 GPUs; supports FlashAttention-2, memory-efficient attention [38] |
| Population Vector Model | Simulation of neural population dynamics with controllable non-stationarity | Enables systematic testing of decoder robustness to MFR, NIU, and PD changes [37] |
| Gated Residual Enhancement Dual-Branch | Enhanced feature representation in noisy environments | Uses dual-path extraction, gated downsampling, and residual integration [36] |

The Adaptive Decoding Algorithm (ADA) for Trial-Specific Timing

Core Algorithm Concept and Data Features

The Adaptive Decoding Algorithm (ADA) is designed to overcome a fundamental limit in neural signal analysis: the time-frequency (Heisenberg-Gabor) uncertainty principle, under which classical Fourier or standard wavelet analysis cannot determine both the exact timing and the exact frequency of impulse components in a non-stationary signal [40]. ADA addresses this by combining a model of shift-invariant pattern recognition, inspired by the human visual system's ability to identify "what" and "when" independently, with a wavelet analysis that uses Krawtchouk functions as the mother wavelet [40]. This combination allows ADA to identify the localization and frequency characteristics of impulse components in EEG signals, such as blinks (0.5-1 Hz) and muscle artifacts (approximately 16 Hz), independently of time shifts [40].

Key Quantitative Features Processed by ADA: The table below summarizes the primary types of neural signal features that ADA is designed to characterize, along with their typical values and experimental significance.

| Feature Type | Description | Example Values / Range | Experimental Significance |
|---|---|---|---|
| Impulse Components [40] | Transient, localized events in the signal (e.g., blinks, muscle artifacts). | Blinks: 0.5 - 1 Hz; Muscle artifacts: ~16 Hz | Identification and removal of noise; isolation of bursts of brain activity. |
| Rhythmic Duration [41] | Length of sustained rhythmic episodes (e.g., theta, alpha) within a single trial. | Frontal Theta: Increased duration with working memory load [41]. | Tracks temporal dynamics of cognitive processes, superior to average power estimates. |
| Trial-to-Trial Variability [42] | Stability of neural spiking activity across trials, measured by Fano Factor (FF). | FF ~1 (Poisson process); Decreased FF during working memory delay indicates stability [42]. | Distinguishes between persistent activity and intermittent burst-coding models of neural computation. |
| Preictal Features [43] | EEG changes predicting seizure onset, including spectral and complexity measures. | Start: 83 ± 60 min before seizure; Duration: 56 ± 47 min [43]. | Key for personalized seizure prediction and intervention; timing varies between individuals and seizures. |
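The Fano Factor in the table is the across-trial variance of the spike count divided by its mean, computed separately for each time bin. A minimal sketch follows; the function names and the toy spike times and counts are made up for illustration.

```python
import numpy as np

def bin_spike_counts(spike_times, t_start, t_stop, bin_size):
    """Count spikes per bin for each trial; returns an (n_trials, n_bins) array."""
    n_bins = int(round((t_stop - t_start) / bin_size))
    edges = t_start + bin_size * np.arange(n_bins + 1)
    return np.stack([np.histogram(st, bins=edges)[0] for st in spike_times])

def fano_factor(counts):
    """Across-trial variance / mean of spike counts, per time bin."""
    counts = np.asarray(counts, dtype=float)
    mean = counts.mean(axis=0)
    var = counts.var(axis=0, ddof=1)            # unbiased variance across trials
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(mean > 0, var / mean, np.nan)

# Two toy trials with spike times in seconds, aligned to stimulus onset.
toy_counts = bin_spike_counts([[0.1, 0.2, 0.6], [0.7]], 0.0, 1.0, 0.5)

# Three trials, two bins: the first bin is variable, the second is perfectly stable.
ff = fano_factor([[2, 4], [4, 4], [6, 4]])
```

Here the first bin gives a Fano Factor of 1 (variance equals mean, as for a Poisson process) and the second gives 0 (identical counts on every trial).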

Detailed Experimental Protocols

Protocol for Single-Trial Rhythm Characterization

This protocol is based on the extended Better OSCillation detection (eBOSC) method, used to characterize the power and duration of rhythmic episodes in single trials [41].

- Step 1: Data Acquisition. Record neural signals (EEG, MEG, or LFP) during the cognitive or behavioral task of interest across multiple trials.

- Step 2: Preprocessing. Apply a bandpass filter to the raw signal to focus on the frequency band of interest (e.g., theta: 4-8 Hz, alpha: 8-13 Hz).

- Step 3: Rhythm Detection.

  - Calculate the time-frequency representation of the signal using the continuous wavelet transform or similar methods.

  - Establish a power threshold and a duration threshold for defining significant rhythmic episodes.

  - For each time point and frequency, compare the signal's power against the power threshold. Cluster adjacent supra-threshold points in the time-frequency plane to define rhythmic episodes.

  - Discard episodes that do not exceed the minimum duration threshold.

- Step 4: Data Extraction. For each identified rhythmic episode in a trial, extract its duration (ms) and mean amplitude (power). For trial-specific timing, the precise onset and offset of each episode are recorded.
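The thresholding and extraction logic in Steps 3 and 4 can be illustrated for a single frequency with a short sketch. This is a simplification and not the eBOSC implementation from [41]: it operates on one power time series rather than the full time-frequency plane, and the threshold, minimum duration, and sampling rate below are arbitrary placeholders.

```python
import numpy as np

def detect_episodes(power, power_threshold, min_samples):
    """Return (onset, offset) sample indices of supra-threshold runs that
    last at least min_samples; the offset index is exclusive."""
    above = np.asarray(power) > power_threshold
    padded = np.concatenate(([False], above, [False]))
    edges = np.diff(padded.astype(int))
    onsets = np.flatnonzero(edges == 1)      # False -> True transitions
    offsets = np.flatnonzero(edges == -1)    # True -> False transitions
    return [(int(on), int(off)) for on, off in zip(onsets, offsets)
            if off - on >= min_samples]

fs = 250.0                                   # assumed sampling rate in Hz
power = np.array([0, 0, 5, 5, 5, 0, 5, 0, 5, 5, 5, 5], dtype=float)
episodes = detect_episodes(power, power_threshold=1.0, min_samples=3)

# Per-episode onset, offset, duration (ms), and mean power, as in Step 4.
summary = [{"onset": on,
            "offset": off,
            "duration_ms": 1000.0 * (off - on) / fs,
            "mean_power": float(power[on:off].mean())}
           for on, off in episodes]
```

The single supra-threshold sample at index 6 is discarded because it is shorter than the minimum duration, leaving two episodes.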

Protocol for Validating ADA on Working Memory Data

This protocol leverages the analysis of trial-to-trial variability (Fano Factor) to test ADA's performance against different theoretical models [42].

- Step 1: Neural Data Collection. Perform single-neuron recordings (e.g., from macaque PFC) during a working memory task with a delay period.

- Step 2: Spike Train Processing. For each neuron and trial, align spike times to a task event (e.g., stimulus onset). Divide the time axis into bins of size Δ (e.g., 50-500 ms).

- Step 3: Fano Factor Calculation.

- For each time bin